The AI Powered Workflow for Automated Content Tagging and Metadata Enrichment

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Operational Challenges and Strategic Imperatives

The media and entertainment industry is experiencing an unprecedented surge in digital content, with video hours, high-resolution images, and audio files doubling annually. Production studios, broadcasters, streaming platforms, and user-generated channels all contribute to a heterogeneous asset landscape. Disparate naming conventions, quality standards, and metadata schemas fragment repositories, undermining content discovery, licensing compliance, and audience engagement. The proliferation of formats—from ultra-high-definition video and immersive 360-degree footage to short-form social clips and interactive media—introduces diverse technical parameters and processing requirements that strain manual workflows.

Inconsistent or missing metadata impairs recommendation engines, search filters, and rights management systems, leading to compliance risks, revenue leakage, and viewer frustration. Manual tagging processes fail to scale, creating backlogs that delay distribution and introduce subjective discrepancies. In an era of on-demand streaming and personalized experiences, audiences expect immediate access to relevant content. The absence of a unified, automated pipeline for metadata generation translates into missed monetization opportunities—such as targeted advertising and dynamic packaging—and hinders global operations across production, syndication, and distribution channels.

Core Objectives of an AI-Driven Tagging Workflow

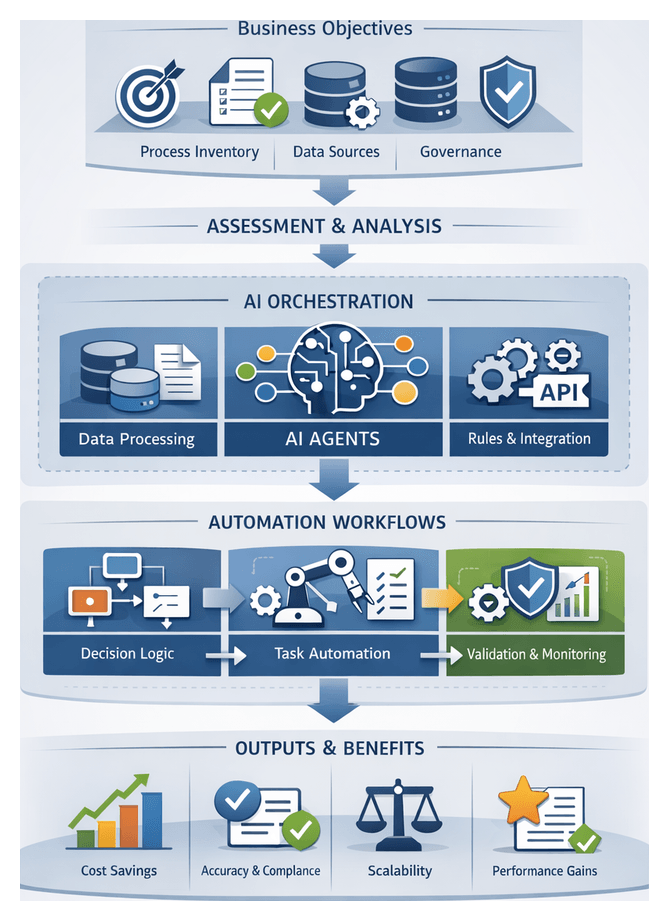

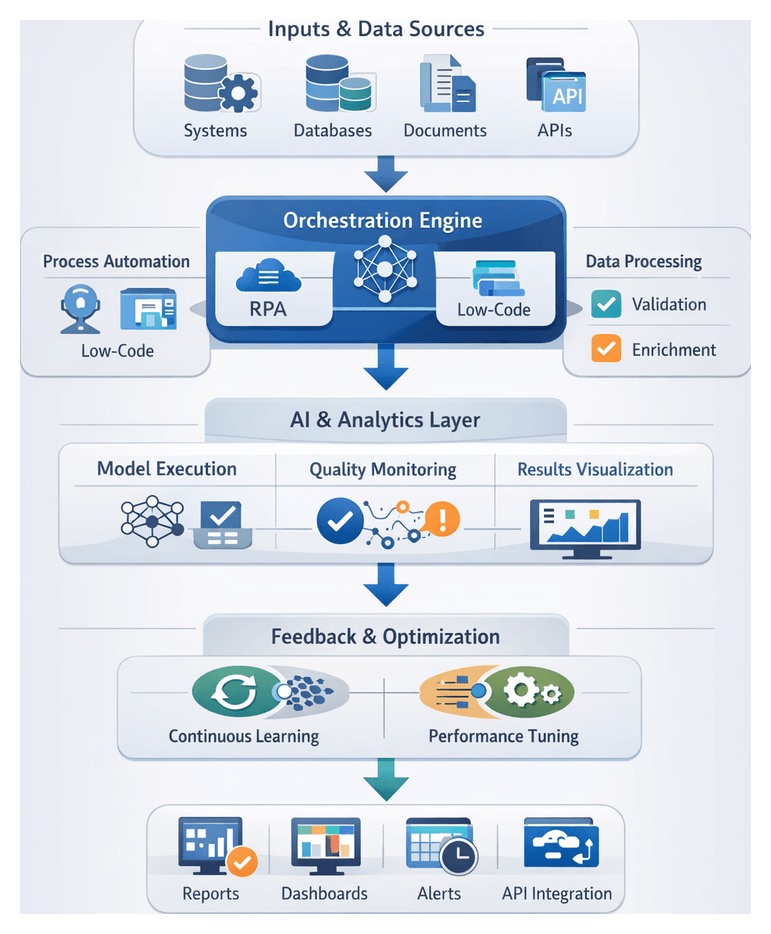

An automated, AI-driven metadata workflow addresses these challenges by ingesting raw media, extracting and standardizing technical and contextual attributes, enriching tags with semantic insights, and integrating results into content management systems. The primary objectives are to:

- Ensure reliable discovery and retrieval through consistent metadata standards.

- Accelerate time to market by automating repetitive tagging tasks.

- Enhance content monetization with targeted recommendations driven by semantic insights.

- Reduce compliance risk via automated rights and attributes classification.

- Support scalable operations across global production, syndication, and distribution networks.

Fundamental Prerequisites and Inputs

Successful implementation requires alignment of technical infrastructure, data assets, and governance policies. Key inputs include:

- Media Asset Sources: High-resolution video feeds, audio files, images, and textual transcripts from production cameras, post-production systems, and user-generated channels.

- Technical Infrastructure: Scalable storage and compute platforms—on-premises GPU clusters or cloud services such as AWS Rekognition and Google Cloud Vision—with high-throughput network connectivity.

- Existing Metadata Repositories: Legacy catalog systems and digital asset management platforms that supply initial tag sets and taxonomy definitions.

- Domain Taxonomies: Industry-specific vocabularies, controlled schemas, and hierarchical templates that guide accurate classification.

- AI Model Library: Pre-trained vision, audio, and language models, including services like IBM Watson Natural Language Understanding and OpenAI APIs.

- Integration Endpoints: Well-defined API schemas, event buses, or message queues for seamless orchestration between ingestion, AI services, and content management systems.

- Security and Compliance: Authentication controls, encryption protocols, and audit trails to protect sensitive content and adhere to regulatory standards.

- Governance Policies: Metadata standards, quality thresholds, and exception handling procedures that define acceptance criteria and human review triggers.

Organizational readiness demands cross-functional alignment among production, post-production, metadata governance, and IT teams. Consistent naming conventions, file validation rules, and sample annotations expedite AI calibration. A structured change-management plan ensures automation integrates smoothly without disrupting existing workflows or compromising editorial quality.

Structured, End-to-End Workflow Design

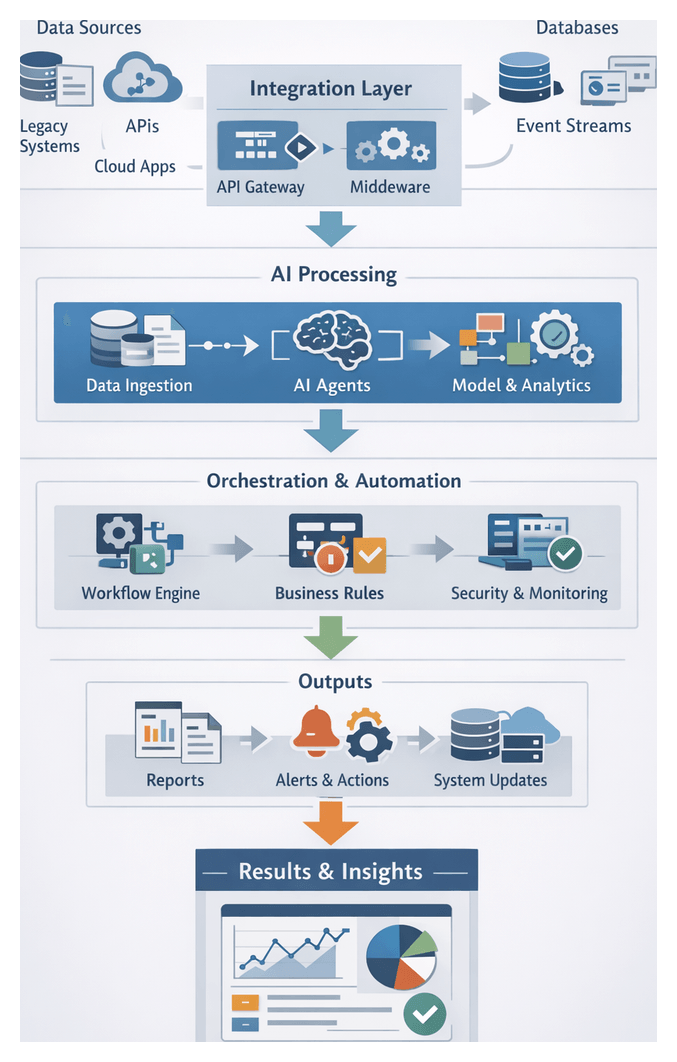

A cohesive workflow unifies ingestion, preprocessing, tagging, enrichment, and distribution modules, reducing manual handoffs and enforcing uniform standards. Core principles include:

- API-First Coordination: Event triggers and message brokers (Kafka, RabbitMQ) notify orchestration engines such as Apache Airflow to sequence tasks.

- Schema Enforcement: Metadata validation agents inspect AI-generated tags against controlled vocabularies, quarantining or auto-correcting non-conforming entries.

- Error Handling and Audit Trails: Retry policies, fallback routes, and centralized logging capture task status, enabling rapid troubleshooting and compliance reporting.

- Modular Scalability: Independent services for ingestion, preprocessing, tagging, enrichment, and CMS integration, each containerized and auto-scaled to meet demand.

- Human-In-The-Loop: Role-based review queues route low-confidence or conflicting tags to editorial teams via collaboration platforms and dashboards.

- Real-Time and Batch Processing: Event-driven pipelines for live content and scheduled jobs for archive enrichment, sharing compute and storage resources effectively.

This architecture isolates failures, supports incremental deployments, and accommodates new AI services—multilingual transcription, face recognition, or emotion analysis—by wiring them into existing event triggers and data flows.

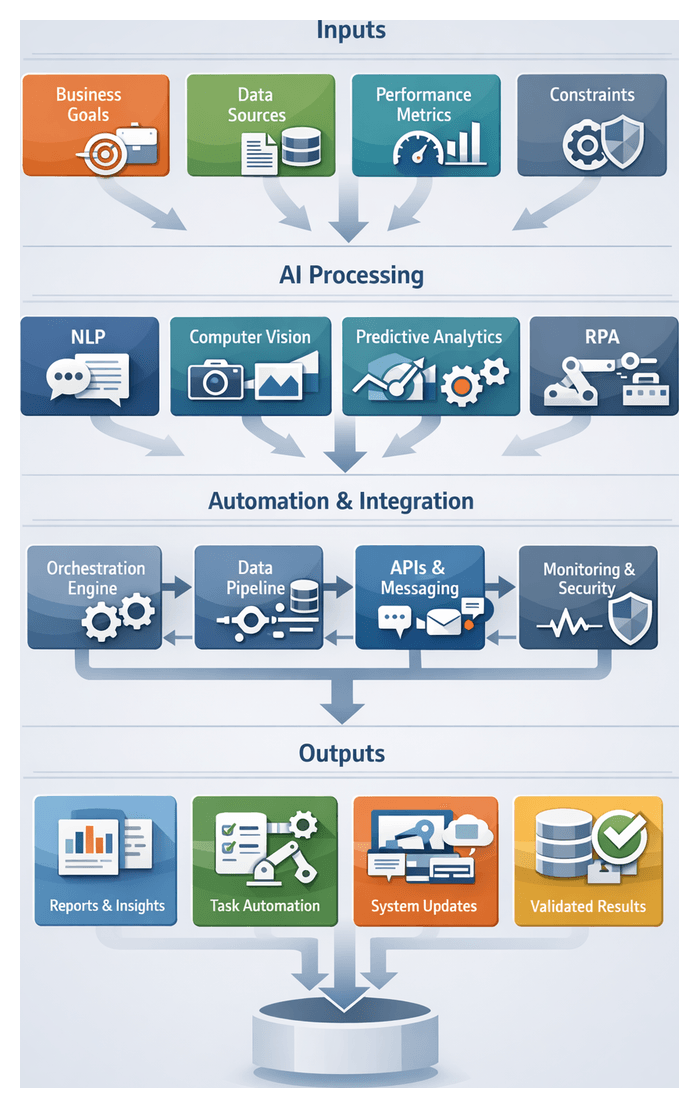

AI Technologies Powering Metadata Automation

AI capabilities transform raw assets into rich, searchable metadata through:

Computer Vision for Visual Understanding

- Object detection and localization using deep convolutional networks.

- Scene classification and context labeling.

- Facial recognition against identity registries.

- Optical character recognition (OCR) for on-screen text.

- Activity and gesture analysis for temporal tagging.

Natural Language Processing for Textual Insight

- Speech-to-text transcription with encoder-decoder and hybrid acoustic-linguistic models.

- Named entity recognition to identify people, places, and organizations.

- Sentiment and emotion analysis.

- Keyword extraction and summarization.

- Language detection and translation for multilingual catalogs.

Multimodal AI Integration

- Cross-modal embeddings that unify visual, audio, and textual features.

- Temporal alignment of transcripts and video frames.

- Contextual reasoning to link faces with spoken names or on-screen text to dialogue.

- Fusion models using transformer or graph neural network architectures.

Knowledge Graphs and Semantic Enrichment

- Entity linking to industry taxonomies and public knowledge bases.

- Relationship extraction for co-occurrence and narrative dependencies.

- Concept clustering under thematic classifications.

- Schema validation via rule-based inference and W3C SHACL engines.

Dynamic Metadata Generation

- Incremental tagging to process new frames or transcripts.

- Rule-based augmentation for composite tags like “High Action.”

- Versioned metadata management for auditing and rollback.

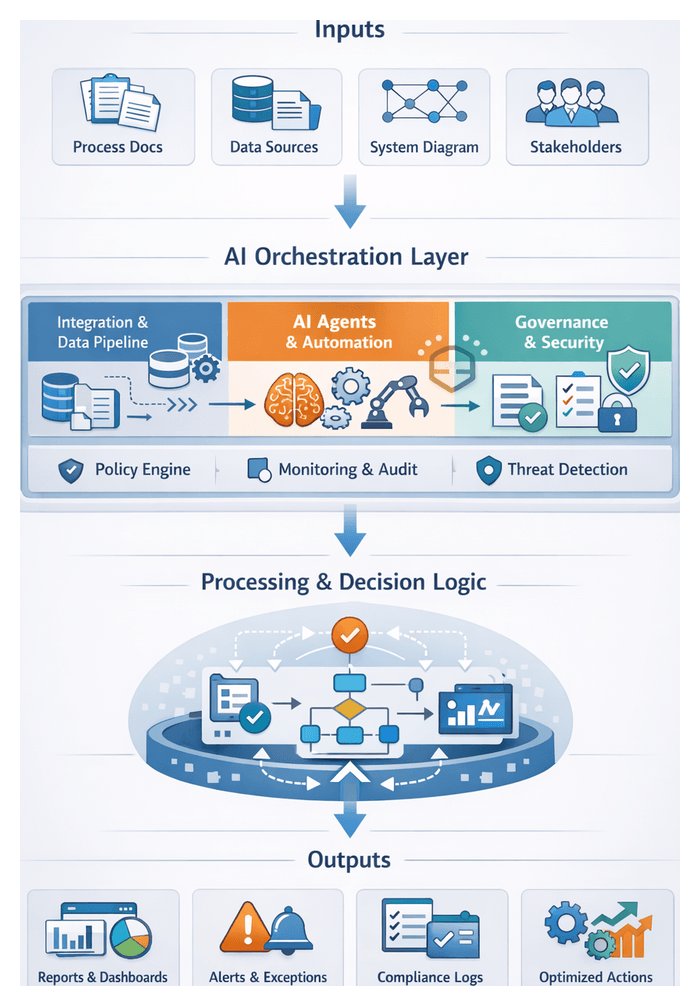

AI Agent Orchestration and Workflow Integration

- Event-driven triggers with message brokers and event buses.

- Task queues and parallel processing across compute clusters.

- Error detection, retry logic, and circuit breakers.

- API choreography with defined schemas and contracts.

Unified monitoring dashboards enable dynamic scaling decisions to meet SLAs and track processing metrics.

Supporting Infrastructure and Data Management

- Object storage for media chunks with lifecycle policies.

- Feature stores for visual descriptors, text embeddings, and semantic vectors.

- Metadata catalogs centralizing tag definitions and schema versions.

- Elastic compute clusters orchestrated via Kubernetes or similar frameworks.

Compliance systems enforce security and access controls across all storage and compute resources.

Outputs, Dependencies, and Handoffs Across Pipeline Stages

The workflow produces stage-specific artifacts and orchestrates their handoffs to maintain traceability:

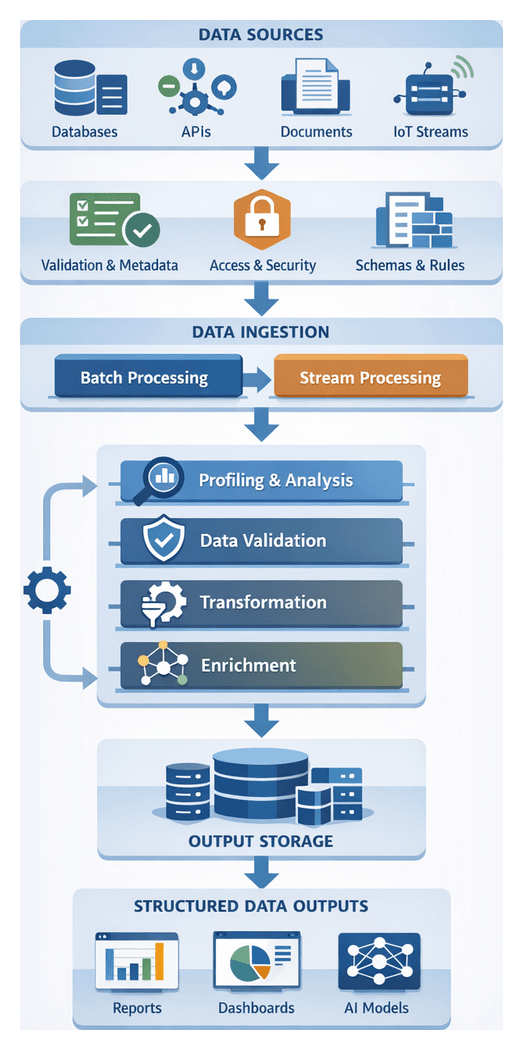

Content Acquisition and Ingestion

- Primary outputs: Raw media packages, ingestion manifests with checksums and access controls, initial metadata records.

- Dependencies: SFTP/HTTPS, authentication services, object storage endpoints, and Google Cloud Video Intelligence for format validation.

- Handoff: Orchestration triggers deliver media URIs and manifests to preprocessing engines.

Preprocessing and Quality Assurance

- Primary outputs: Transcoded files (H.264, ProRes), normalized audio, QA reports on signal-to-noise ratios and color histograms.

- Dependencies: Encoding engines like FFmpeg or Encoding.com, noise-reduction algorithms, and AWS Elemental MediaConvert.

- Handoff: Metadata connectors trigger taxonomy definition tools such as Protégé.

Taxonomy and Schema Definition

- Primary outputs: Controlled vocabularies, JSON-LD schema files, field definitions, and sample metadata payloads.

- Dependencies: Industry standards (EBUCore, IPTC), ontology management platforms, and SHACL validation services.

- Handoff: Versioned schemas are injected into model training configurations.

AI Model Selection and Training

- Primary outputs: Trained model binaries or containers, hyperparameter configurations, evaluation reports (precision, recall, F1).

- Dependencies: GPU clusters, data versioning systems, annotation tools like Labelbox, and Kubeflow.

- Handoff: Model artifacts register in MLflow or Amazon SageMaker Model Registry for orchestration.

AI Agent Orchestration Design

- Primary outputs: Workflow definitions, task queue configurations, retry policies, and runbooks.

- Dependencies: Kafka or RabbitMQ, function-as-a-service platforms, API gateways, and Prometheus or Datadog.

- Handoff: Deployed orchestration triggers invoke AI agents with model endpoints and asset pointers.

Automated Content Tagging and Classification

- Primary outputs: Metadata payloads with bounding boxes, sentiment scores, timestamped scene markers, and confidence metrics.

- Dependencies: AWS Rekognition, NLP engines like spaCy, and ensemble classification services.

- Handoff: Metadata streams publish to message buses for semantic enrichment.

Metadata Enrichment and Semantic Analysis

- Primary outputs: Knowledge graph triples (RDF), personalized tag scores, and concept linkage maps.

- Dependencies: Neo4j, Wikidata, sentiment analyzers, and AWS Personalize.

- Handoff: Enriched metadata synchronizes with CMS via connector scripts.

Integration with Content Management Systems

- Primary outputs: CMS entries with embedded metadata, audit logs, and reconciliation reports.

- Dependencies: CMS APIs such as Adobe Experience Manager, middleware connectors, and conflict resolution engines.

- Handoff: Quality control workflows queue entries for human review.

Validation, Quality Control, and Human-in-the-Loop

- Primary outputs: Approved metadata records, exception logs, reviewer comments, and compliance certificates.

- Dependencies: Review platforms, anomaly detection services, and governance frameworks.

- Handoff: Validation outcomes feed monitoring dashboards and trigger remediation.

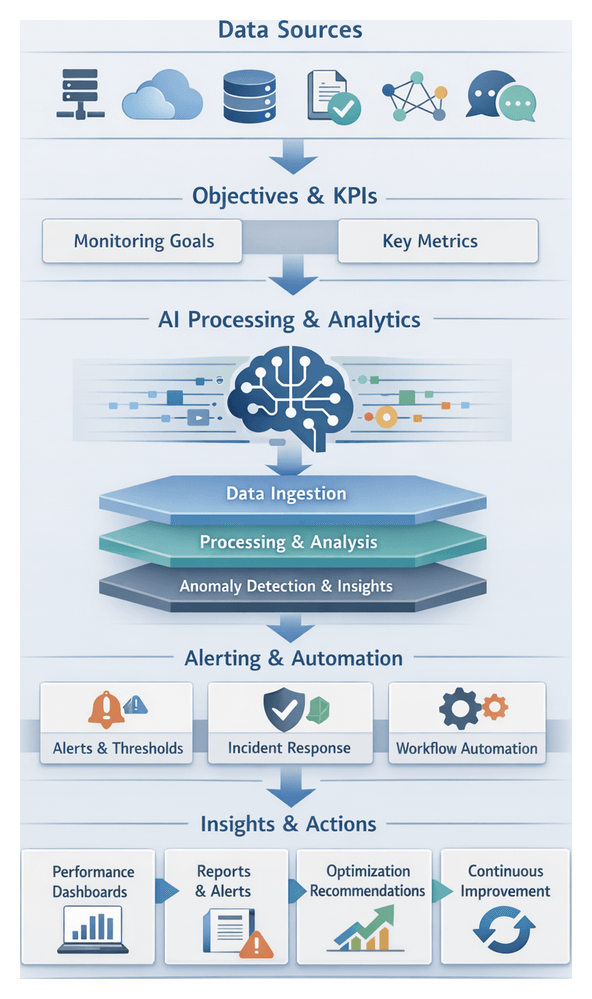

Monitoring, Feedback, and Continuous Improvement

- Primary outputs: Analytics dashboards, error rate reports, engagement metrics, and retraining job definitions.

- Dependencies: Logging infrastructures, data lakes, anomaly detection models, and scheduler services.

- Handoff: Prioritized feedback datasets commit to training repositories for iterative model refinement.

Governance, Quality Control, and Continuous Improvement

Ongoing oversight ensures metadata accuracy and relevance. Processes include statistical sampling, anomaly detection, and human review via dashboards. Reviewer feedback feeds back into model retraining and taxonomy updates, closing the loop between AI predictions and domain expertise. Governance policies enforce access controls, audit logs, and data retention rules across the pipeline. Performance monitoring of throughput, latency, and accuracy metrics informs capacity planning and SLA management. By embedding continuous feedback mechanisms and adhering to structured governance, media enterprises maintain metadata integrity and position themselves for future innovation in content discovery and monetization.

Chapter 1: Content Acquisition and Ingestion

Purpose and Scope

The acquisition stage defines the entry point for all media assets entering the end-to-end tagging and metadata pipeline. Its objectives are to capture, consolidate, and securely onboard raw content from on-set feeds, live streams, user uploads, third-party packages, and digitized archives. By enforcing uniform naming conventions, timecode alignment, and directory structures, this stage safeguards against data loss, version conflicts, and metadata gaps that can compromise downstream AI-driven processing.

Key goals include registering each asset, routing it through security and compliance checks, monitoring transfer integrity in real time, and orchestrating a transparent handoff to preprocessing systems. These measures accelerate time-to-insight, reduce manual intervention, and establish a harmonized stream of media ready for AI-driven tagging and enrichment.

Inputs and Prerequisites

Raw Media Sources

- On-set Production Feeds: High-resolution camera outputs delivered via SDI/IP or bonded cellular links, including timecode-embedded video, raw audio, and camera metadata.

- Live Broadcast and Streaming: Satellite or cloud streams using RTMP, RTSP, or MPEG-DASH protocols for low-latency capture.

- User-Generated and Partner Uploads: Files submitted through web portals or mobile apps, varying in resolution, codec, and naming.

- Third-Party Syndication Packages: Standardized containers (MXF, GXF) with sidecar XML, EDL, or JSON metadata.

- Legacy Archives and Tape Digitization: Bulk transfers from LTO libraries or tape decks, converted to modern formats via high-throughput ingest hardware.

Accepted File Formats and Codecs

- Video Containers: MXF, MOV, MP4, AVI, GXF

- Video Codecs: ProRes (422, 4444), DNxHD, H.264, H.265/HEVC

- Audio Formats: WAV (24-bit PCM), AIFF, AAC

- Image Stills: JPEG2000, TIFF, DPX

- Sidecar Metadata: XML (EBUCore, MPEG-7), JSON, CSV

- Transcript Files: SRT, VTT, TTML

Security and Compliance

- Secure Transfer Protocols: SFTP, HTTPS with TLS 1.2 , or managed solutions like IBM Aspera and Signiant.

- Authentication and Access Control: OAuth2, JWT tokens, mutual TLS, and scoped API keys.

- Encryption at Rest and In Transit: AES-256 encryption, TLS for network transfers, and integration with enterprise KMS.

- Integrity Verification: MD5 or SHA-256 checksums with automated validation.

- Audit Trails and Logging: Immutable logs for transfers, integrity checks, and user actions, retained for regulatory compliance.

Infrastructure and Integrations

- Network and Storage: Dedicated links or VPN tunnels, edge capture nodes connected to Amazon S3, Azure Blob Storage, or Google Cloud Storage with lifecycle and tiering policies.

- Authentication and APIs: RESTful endpoints secured by IAM, webhook or event triggers via AWS EventBridge, Azure Event Grid, or Google Pub/Sub.

- Monitoring and Alerting: Telemetry agents feeding dashboards that track bandwidth, transfer rates, storage utilization, and SLA compliance.

Governance and Policies

- Data Ownership: Clear stewardship and approval workflows for each incoming asset.

- Naming Conventions and Metadata Templates: Standard identifiers, language codes, and region tags linked to schema validation.

- Retention and Archival Policies: Defined schedules for raw masters, sidecar metadata, and disposal.

- Embargo and Compliance Controls: Automated gating for NDA or time-release content.

- Service Level Agreements: Documented targets for transfer throughput, availability, and error resolution.

Workflow Actions and System Interactions

Source Integration and Orchestration

Connector modules handle authentication, protocol conversion, rate limiting, and retries for SFTP servers, object storage APIs, RESTful feeds, and live ingestion points. They poll or listen for new assets, emitting ingestion events to a central orchestrator backed by a durable queue. Priority flags allow urgent or live feeds to bypass standard backlog.

Secure Transfer and Validation

Workers establish encrypted channels (SSH or TLS) to stream files into a landing zone while computing continuous SHA-256 checksums. Mismatches trigger exponential back-off retries. Transfer metrics—throughput, duration, and errors—are logged for real-time monitoring and alerts.

Profiling and Preliminary Metadata

- Format Verification: Tools such as FFmpeg inspect container type, codecs, bitrate, and resolution.

- Integrity Checks: Verification of index tables and detection of truncated frames.

- Security Scans: Malware detection against signature databases.

- Thumbnail Generation: Keyframe extraction for visual previews.

Cataloging and Event-Driven Handoffs

Assets are assigned GUIDs and registered with storage URIs in the media catalog. Profiling metadata and source context are persisted, with schema validation enforcing uniformity. A successful ingest publishes an AssetIngested event to the message bus, including GUID, URI, metadata summary, and any exception flags. Downstream preprocessing services subscribe to these events to begin automated workflows.

Error Handling, Scalability, and Compliance

- Retry and Dead-Letter Queues: Transient failures trigger retries; assets exceeding thresholds move to exception queues.

- Automated Escalations: Critical failures notify human operators through workflow dashboards.

- Elastic Worker Pools: Parallel transfers, micro-batch chunking, and adaptive scaling ensure low latency and high throughput.

- Audit Trail: Immutable logs of authentication checks, transfers, profiling outcomes, catalog entries, and event publications support regulatory audits.

AI-Driven Capabilities

- Format Detection and Transcoding with Google Cloud Video Intelligence API for container and codec recognition, routing unsupported formats to AWS Elemental MediaConvert.

- Visual and Audio Metadata Extraction using Amazon Rekognition and Microsoft Azure Video Indexer to capture scene duration, dominant colors, spoken keywords, and on-screen text.

- Automated Tagging via image classification and speech-to-text with IBM Watson Visual Recognition and Google Speech-to-Text, applying confidence thresholds and queuing low-confidence tags for review.

- Scene Boundary Detection and Keyframe Sampling through shot segmentation endpoints in the Video Intelligence API, generating representative frames for asset previews.

- Content Fingerprinting and Deduplication with ACRCloud for perceptual hashing and audio fingerprinting to merge or flag duplicates.

- Language Detection and Transcription using AWS Transcribe for speaker diarization, language codes, and time-aligned transcripts, with fallbacks to human linguists when confidence is low.

- Technical Quality Scoring via deep learning anomaly detectors to assess noise, clipping, and artifacts, integrated with Grafana dashboards for alerting.

- Metadata Normalization by NLP-driven harmonization agents that standardize terminology, date formats, and measurement units before writing to the canonical catalog.

- Error Detection and Feedback Loop capturing ingestion anomalies and human corrections to refine AI model performance over time.

Outputs, Dependencies, and Handoff Mechanisms

Key Outputs

- Raw Asset References: Immutable objects in AWS S3, Google Cloud Storage, or Azure Blob Storage with GUIDs.

- Metadata Records: Structured catalog entries in relational or NoSQL databases capturing technical attributes and checksums.

- Audit Logs and Validation Reports: Detailed records of transfer protocols, checksums, security scans, and schema compliance.

- Event Notifications: JSON payloads published to Kafka, SNS/SQS, or RabbitMQ containing assetId, storageUri, metadata pointers, and ingestion status.

Dependencies and Integration Points

- Secure Transport: SDKs or CLI tools for Aspera FASP, SFTP, HTTPS multipart uploads, and FFmpeg streaming.

- Object Storage APIs: S3-compatible, Google Cloud Storage client libraries, and Azure SDKs for upload and bucket management.

- Catalog Services: RESTful interfaces to MAM platforms or custom catalogs backed by PostgreSQL, MongoDB, or Elasticsearch.

- Validation Scanners: REST or CLI integration with antivirus engines and compliance tools.

- Messaging Infrastructure: Producer libraries for Apache Kafka, AWS SNS/SQS, or RabbitMQ clients.

- Identity and Access Management: SSO via OAuth or SAML, token-based microservice policies, and role-based permissions.

- Monitoring and Logging: Data pipelines to Splunk, ELK, or Prometheus/Grafana for metrics and log analytics.

Handoff to Preprocessing

- Event-Driven Triggers: AssetIngested events with assetId, storageUri, metadata endpoint, timestamp, and validation status.

- RESTful Invocation: Optional API calls returning HTTP 202 and job tickets for progress polling.

- Catalog State Transitions: Lifecycle fields moving from “Pending Ingest” to “Ready for Preprocessing” for polling or CDC-based detection.

- Queue Buffering: Durable queues in Amazon SQS or RabbitMQ to manage back-pressure and retries.

- Metadata Contracts: Mandatory fields for preprocessing, with remediation workflows for incomplete records.

- SLA Monitoring: Alerts on handoff latency and error rates to meet processing targets.

By unifying secure ingestion, AI-driven analysis, and event-driven orchestration, this stage delivers validated, richly annotated assets with traceable audit trails and seamless downstream integration. This foundation enables scalable, efficient preprocessing, quality assurance, and semantic enrichment across the media lifecycle.

Chapter 2: Preprocessing and Quality Assurance

Purpose and Context

The Preprocessing and Quality Assurance stage transforms heterogeneous raw media into standardized, high-quality assets ready for AI-driven metadata tagging. By applying cleansing operations, format conversions, AI-based enhancements and rigorous checks, this stage eliminates noise, enforces uniform technical specifications, and embeds critical metadata. In the fast-growing media landscape—with high-resolution video, immersive audio, and diverse user-generated content—assets often arrive with inconsistent codecs, variable lighting and audio artifacts. Effective preprocessing mitigates these challenges through domain-specific normalization routines and well-defined quality thresholds, ensuring downstream models for computer vision, speech transcription and sentiment analysis operate on reliable inputs.

Strategic Benefits

- Consistency: Uniform file formats, codecs and quality levels reduce model errors and labeling discrepancies.

- Efficiency: Automated transcoding, denoising and normalization accelerate workflows and lower manual effort.

- Accuracy: Clean, high-quality inputs maximize performance of AI engines, improving metadata precision.

- Scalability: A repeatable, event-driven pipeline handles growing asset volumes without bottlenecks.

- Governance: Embedded policy checks enforce legal, contractual and brand standards, minimizing downstream risk.

Inputs and Prerequisites

Successful preprocessing requires clearly defined inputs, environment configurations and governance policies. Key prerequisites include:

- Raw Media Assets: Video files (MP4, MOV, MKV with H.264, H.265, ProRes), audio tracks (WAV, FLAC, MP3, AAC), image sequences (JPEG, PNG, TIFF), and text transcripts/subtitles (SRT, VTT, XML).

- Format Specifications: Target resolutions (1080p, 4K, 8K), frame rates (24, 25, 30, 60 fps), bitrate ranges, color spaces (Rec.709, Rec.2020, DCI-P3) and bit depths (8-, 10-, 12-bit).

- Quality Thresholds: Minimum signal-to-noise ratios for audio, luminance and chrominance noise floors, completeness metrics (frame counts, audio durations, subtitle sync), and maximum error rates (dropped frames, artifacts, desyncs).

- Upstream Metadata: Initial asset identifiers, source tags, DRM flags, access controls, language codes and locale indicators from ingestion.

- Technical Environment: GPU-accelerated nodes or CPU clusters, FFmpeg, AWS Elemental MediaConvert, TensorFlow, PyTorch, catalog APIs, object storage and compliance with GDPR/CCPA.

- Governance Policies: Privacy consents, copyright clearances, brand guidelines, watermark requirements and audit-ready logs for traceability.

Workflow Actions and Architecture

This stage orchestrates modular services, AI processors and human checkpoints via event-driven triggers and distributed queues. Key flow actions include:

- Orchestration and Intake: Ingestion events trigger retrieval of metadata from the catalog, integrity validation via checksum and signature checks, asset classification, and task enqueuing into pipelines coordinated by systems like Apache Airflow or AWS Step Functions.

- Quality Gatekeeping: Lightweight pre-checks using FFmpeg extract container metadata for codec, resolution and duration. Non-conforming assets route to exception queues for human review; approved assets advance with annotated headers.

- Transcoding and Standardization: Containerized jobs via AWS Elemental MediaConvert or Bitmovin Encoder convert video to H.264/H.265 MP4, audio to 44.1 kHz WAV, split subtitle streams and generate thumbnails. Completion events trigger downstream AI tasks.

- Noise Reduction and Enhancement: Video denoising through convolutional neural filters and GAN-based super-resolution; audio noise suppression via spectral subtraction. Color correction, stabilization and dynamic range adjustments improve consistency.

- Scene Segmentation: AI models—such as Google Cloud Video Intelligence or OpenCV extensions—detect shot boundaries, extract key frames, and generate context clips with time buffers.

- Anomaly Detection and Quality Scoring: Autoencoder and CNN models identify missing frames, audio dropouts, flicker and compression artifacts. Engines like Amazon Rekognition Video and Microsoft Video Indexer assign numerical quality ratings and flag assets below thresholds.

- Metadata Extraction: OCR via IBM Watson Visual Recognition or Azure Cognitive Services OCR, speech-to-text with Google Cloud Speech-to-Text or AWS Transcribe, and basic object recognition feed preliminary tags into NoSQL stores.

- Error Handling and Retries: Centralized error handlers capture logs, apply retry policies, route persistent failures to human operators, and trigger notifications via Slack or email integrations.

- Coordination and Handoff: Messaging via Apache Kafka or Google Cloud Pub/Sub links microservices. Upon completion, standardized assets, manifests and metadata are published to storage and catalog services for taxonomy definition.

AI-Driven Capabilities and System Roles

- Scene Segmentation Models: Detect shot boundaries and extract representative key frames for indexing and QC.

- Anomaly Detection Engines: Identify visual and audio defects, score quality and trigger exception workflows.

- Audio Enhancement Modules: Perform noise reduction, loudness normalization and clipping detection to optimize speech clarity.

- Metadata Extraction Services: Generate on-screen text via OCR, named entities via NER and basic object labels for preliminary cataloging.

- Transcoding Orchestrators: Integrate with FFmpeg, AWS Elemental MediaConvert and Bitmovin Encoder to enforce format standards and adjust encoding parameters dynamically.

- Normalization Classifiers: Apply VFR to CFR conversion, sample rate standardization and color space remapping based on format detection models.

- Visual Enhancement Models: Use GANs and autoencoders for color correction, super-resolution and denoising, with versioned outputs for rollback.

- Error Management Agents: Monitor repeat failures, classify errors, and manage retries or human escalations.

- Resource Management Systems: Autoscale GPUs and CPUs on Kubernetes, optimize infrastructure cost and forecast demand.

- Logging and Monitoring Frameworks: Aggregate logs in Elasticsearch or Splunk, visualize metrics in Grafana or Kibana, and alert on anomalies.

- Governance Engines: Enforce retention, access controls and redaction policies based on AI-classified content sensitivity.

Outputs

- Standardized Media Assets: Transcoded video (H.264/H.265 MP4, ProRes), audio (WAV/AIFF), and stills (JPEG/PNG) produced via FFmpeg or AWS Elemental MediaConvert.

- Quality Assurance Reports: JSON/XML summaries of noise levels, SNR, color histograms and pass/fail indicators from OpenCV and Clarifai models.

- Error and Exception Logs: Timestamped entries with asset IDs, error codes and stack traces for traceability.

- Integrity Manifests: MD5/SHA-256 checksums and file size assertions accompanying each output file.

- Preview Proxies: Low-res video/audio files, thumbnails and waveform images for rapid human review.

- Normalization Profiles: Versioned records of preprocessing parameters (color space, deinterlacing, loudness settings) stored in Git or Artifactory.

- Preliminary Metadata Annotations: Technical attributes—duration, frame count, aspect ratio, channel layout—exported in sidecar files.

- Anomaly Flags: Time-coded indicators of defects directing manual review effort.

Key Dependencies

- Compute Infrastructure: CPU/GPU clusters orchestrated by Kubernetes, NVIDIA Video SDK for hardware-accelerated processing.

- Transcoding Engines: FFmpeg, GStreamer pipelines and proprietary encoders invoked via REST APIs.

- AI Models and Inference Servers: TensorFlow Serving and TorchServe hosting segmentation, anomaly detection and enhancement models.

- Storage and Catalog Services: Amazon S3, Google Cloud Storage or on-premises DAM platforms for asset and metadata management.

- Logging and Monitoring: Elasticsearch/Splunk for logs; Grafana/Kibana for dashboards and alerts.

- Security and Compliance: Encryption, IAM controls, secure API gateways and data retention policies aligned with GDPR/CCPA.

- Configuration Repositories: Git or Artifactory for normalization profiles, schema templates and quality definitions.

- Workflow Orchestration: Apache Airflow or AWS Step Functions coordinating event triggers, task queues and retry policies.

- Human Review Interfaces: Web dashboards with authentication, session locking and annotation tools for quality engineers.

Downstream Handoffs

- Asset Transfer: Normalized files and manifests deposited in ingestion buckets accessible to taxonomy and labeling services.

- Catalog Registration: Automated ingestion of preliminary metadata and QA results into the catalog, triggering taxonomy definition workflows.

- QA Report Provisioning: Detailed reports delivered to ML engineers for schema refinement and model retraining.

- Flagged Asset Notifications: Anomaly-flagged items routed to human-in-the-loop queues; post-review corrections are fed back or passed forward with exception notes.

- Profile Synchronization: Shared normalization parameter sets ensure consistency between preprocessing and model training data.

- Event Triggers: Completion notifications initiate taxonomy workshops, supplying assets, metrics and metadata to stakeholders.

- APIs for Training: RESTful or GraphQL endpoints expose preprocessed assets and metadata for automated model training pipelines.

- Documentation and Audit Logs: Comprehensive records of preprocessing activities, decisions and dependencies archived for compliance and continuous improvement.

Chapter 3: Taxonomy and Metadata Schema Definition

Purpose and Scope

The Taxonomy and Metadata Schema Definition stage establishes the semantic foundation that all tagging, enrichment, and discovery processes will rely on. By translating business objectives and industry standards into a hierarchical ontology and extensible metadata templates, organizations ensure consistent classification and seamless integration across production, distribution, and syndication workflows. Rapid expansion of media libraries—driven by streaming services, user-generated content, and global syndication—has revealed the pitfalls of ad hoc metadata practices. Inconsistent terminology, overlapping categories, and missing descriptors hinder content discoverability and elevate manual curation costs. A formal taxonomy and schema address these challenges by providing:

- A hierarchical ontology capturing genres, subgenres, formats, and contextual attributes.

- Controlled vocabularies and standardized fields for uniform metadata entry and accurate search, recommendation, and analytics.

- Alignment with frameworks such as Schema.org, EBUCore, and IPTC’s NewsML-G2 to support interoperability.

- Governance policies, versioning, and extension mechanisms for scalability and compliance with data privacy regulations like GDPR and CCPA.

By defining clear input requirements and schema constraints, organizations reduce downstream rework, streamline integration with third-party platforms, and enable advanced functions such as automated recommendation engines and audience segmentation.

Inputs and Stakeholder Collaboration

Successful taxonomy design depends on a comprehensive set of inputs and active engagement from diverse stakeholders. Early workshops and iterative feedback loops reconcile competing requirements and ensure the schema’s relevance and usability.

Key Inputs

- Industry Standards: Frameworks like Schema.org, EBUCore, and IPTC’s NewsML-G2 provide reusable classes and properties to accelerate interoperability.

- Organizational Requirements: Business rules, content strategies, and use cases specified by editorial, marketing, and programming teams.

- Existing Metadata Audit: An inventory of current fields, controlled lists, and attribute values highlighting gaps and inconsistencies.

- Technical Constraints: Integration requirements for CMS, DAM, and streaming platforms, including API schemas, data formats (XML, JSON, RDF), and performance SLAs.

- Governance Policies: Data stewardship guidelines, role-based permissions, approval workflows, and change management procedures.

- Tooling: Ontology editors and collaborative platforms such as Protégé, PoolParty Taxonomy Manager, and GraphDB.

Stakeholder Roles

- Content Strategists define genres, themes, and promotional tags.

- Production Teams specify technical metadata like codecs, resolution, and file formats.

- Legal and Rights Managers embed licensing windows, geographic restrictions, and attribution metadata.

- Data Scientists advise on taxonomy facets for segmentation, recommendation, and reporting.

- IT Architects validate schema compatibility with existing APIs, pipelines, and identity systems.

- UX and Customer Experience contribute search patterns, filter preferences, and navigation labels.

Structured governance—through a steering committee and taxonomy review board—defines decision rights, review cycles, and escalation paths. Training sessions and sandbox environments ensure metadata authors and integrators adopt the schema effectively.

Workflow Overview

The definition stage follows a structured sequence of collaborative workshops, technical processes, and governance checkpoints. These actions transform high-level requirements into a formally articulated taxonomy and controlled vocabularies that guide AI-driven tagging pipelines.

1. Governance Alignment

A steering committee of content operations, metadata governance, and AI engineering representatives convenes to approve the project charter. This charter specifies objectives for coverage and granularity, roles and responsibilities, KPIs (schema adoption rate, classification accuracy), and decision-making processes. Approved scope and timelines are communicated via the enterprise message bus to trigger task assignment and ensure auditability.

2. Requirements Capture Workshops

Facilitated sessions gather detailed use cases and edge cases from content producers, rights managers, distribution partners, legal officers, and AI engineers. Real-time collaboration tools record term suggestions, relationships, and property definitions. Outputs include a requirements matrix mapping stakeholder needs to taxonomy constructs.

3. Ontology and Vocabulary Drafting

Taxonomy architects draft hierarchical class structures (Genre Subgenre Theme), define attributes (Release Year, Production Country), specify relationships (isPartOf, hasContributor), and create controlled lists for fields like language codes and region identifiers. Tools such as Protégé and GraphDB facilitate RDF/OWL model creation with documented definitions and examples. The draft triggers an OntologyDraftReady event for reviewers.

4. Iterative Review and Validation

Parallel review streams—semantic, compliance, and technical—examine definitions, policies, and downstream compatibility. Feedback is consolidated via a collaboration platform into a master change log. Critical issues generate RevisionRequired events, returning the draft to architects. Automated version control tracks all edits.

5. Approval and Publication

The steering committee reviews key schema highlights, resolved concerns, impact analyses, and deployment timelines. Upon approval via a digital governance portal, a SchemaApproved event is published, the taxonomy version is tagged in the repository, and artifacts are published to the schema registry.

6. Technical Implementation

Integration engineers export the ontology in machine-readable formats (JSON-LD, RDF/XML), load vocabularies into a centralized metadata service or knowledge graph, update API contracts, configure access controls, and execute integration tests with sample assets. Continuous integration pipelines validate syntax and consistency, alerting teams to any failures.

7. Versioning and Event-Driven Coordination

The schema lifecycle—Draft, Review, Approval, Deployment, Deprecation, Archival—is managed through governance events (TermDeprecated, SchemaVersionRetired) on the event bus. An AI orchestrator listens for SchemaApproved to retrieve the new schema, reload controlled vocabularies in model pipelines, update enrichment agents, and promote the taxonomy into production tagging clusters after successful validation.

8. Handover to Model Training

The finalized schema is handed off to AI model training and orchestration teams with access credentials, API documentation, release notes, and sample scripts. A TaxonomyDeployed event signifies readiness for live tagging, enabling AI engineers to integrate the new taxonomy into fine-tuning and retraining workflows.

AI-Driven Capabilities

AI accelerates taxonomy design by automating pattern discovery, term suggestion, validation, and continuous evolution.

- Ontological Pattern Discovery uses unsupervised clustering and topic modeling to reveal latent concept groupings within existing metadata.

- Term Suggestion and Expansion leverages NLP pipelines with Amazon Comprehend and Google Cloud Natural Language API to propose synonyms and domain entities via NER and embedding similarity.

- Schema Validation employs rule-based engines and machine learning classifiers to enforce naming conventions, cardinality rules, and mapping accuracy against sample assets.

- Semantic Similarity and Concept Clustering applies transformer embeddings from platforms like IBM Watson Knowledge Studio and clustering algorithms to refine taxonomy structure based on semantic distance.

- Continuous Learning integrates real-time catalog updates and user feedback via Azure Text Analytics, retraining models periodically to surface emerging concepts and trends.

These AI components—Pattern Discovery Engine, Term Suggestion Module, Validation Engine, Semantic Analyzer, and Continuous Learning Orchestrator—enable data-driven, scalable creation and maintenance of a robust semantic framework.

Outputs and Integration Handoff

The completed taxonomy and schema definition stage yields structured artifacts and establishes dependencies to support downstream tagging, enrichment, and model training.

- Versioned Taxonomy Ontologies: Hierarchical concept hierarchies stored in a centralized registry for traceability.

- Controlled Vocabularies and Glossaries: Defined terms with definitions, synonyms, preferred labels, and provenance.

- Schema Templates: Metadata blueprints specifying required and optional attributes, data types, and validation constraints.

- Mapping Specifications: Crosswalk documents aligning internal terms with external standards like IPTC, Dublin Core, or Schema.org.

- API Definitions: OpenAPI-compliant endpoints exposing taxonomy lookups, term suggestions, and validation operations.

- Governance Reports: Change logs and approval records documenting term additions, deprecations, and structural updates.

Dependencies

- Domain Experts from editorial, legal, marketing, and production teams for term validation and relevance.

- Metadata Management Platforms such as PoolParty and Ontotext GraphDB for ontology hosting, version control, and API exposure.

- Asset Management Systems like Adobe Experience Manager Assets and Mosaiq for sample retrieval and metadata synchronization.

- Security and Access Controls via enterprise identity providers to enforce role-based permissions.

- Project Management Tools such as JIRA and Confluence for tracking requests, reviews, and approvals.

Handoff to Model Training

- API-Driven Retrieval: Training pipelines consume taxonomy and schema via RESTful or gRPC endpoints.

- Annotation Tool Integration: Platforms like Prodigy and Labelbox import term lists and templates for accurate labeling.

- Workflow Configuration: Labeling tasks and dataset splits defined according to metadata blueprints and relationship constraints.

- Sample Asset Sets: Preprocessed media collections, enriched with provisional metadata for early validation.

- Version Alignment: Training manifests reference taxonomy versions to ensure reproducibility and support rollback.

- Documentation: Ontology diagrams, term usage guidelines, and API integration instructions shared with data science and DevOps teams.

This robust handoff protocol ensures that supervised learning pipelines are fed with consistent, high-quality labels and that semantic integrity is maintained across the content tagging life cycle.

Chapter 4: AI Model Selection and Training

Model Training Purpose and Scope

The model selection and training stage translates business requirements and domain taxonomies into scalable AI solutions for automated content tagging and metadata enrichment. By aligning NLP, computer vision, and multimodal models with enterprise ontologies, teams establish performance baselines for scene detection, object recognition, sentiment analysis, and other tagging tasks. This approach ensures metadata consistency and accuracy across expansive video, audio, and text libraries, directly impacting content discoverability, monetization, and user engagement.

Data Inputs and Environment Prerequisites

Essential Data Inputs

- Annotated Corpora: Text datasets with transcripts, subtitles, and descriptive annotations aligned to the target taxonomy.

- Domain Sample Videos and Frames: Curated clips and key frames paired with bounding boxes, object labels, and action annotations.

- Audio Excerpts with Transcriptions: Dialogue, ambient sounds, and musical scores annotated with speaker identities and emotion labels.

- Taxonomy Definitions: Hierarchical schemas and controlled vocabularies guiding output classes during training and inference.

- Baseline Metadata Records: Existing tags, ratings, and engagement signals for transfer learning.

- Performance Benchmarks and SLAs: Historical metrics and error rate requirements for automated hyperparameter tuning in Amazon SageMaker and Google Cloud AI Platform.

- Compute Environment Specifications: GPU and CPU cluster details supporting distributed training workflows.

- Data Privacy and Compliance Guidelines: Licensing constraints and PII handling policies enforced by frameworks like Hugging Face.

- Stakeholder Requirements: Accuracy thresholds, supported languages, and content rating criteria from editorial, legal, and production teams.

Prerequisites and Conditions

- Preprocessed Assets: Format normalization, noise reduction, and quality scoring as outlined in earlier pipeline stages.

- Validated Taxonomy: Finalized metadata schema approved by domain experts to prevent model confusion.

- Sufficient Data Volume and Diversity: Minimum tens of thousands of labeled segments or frames covering varied accents, dialects, and scene contexts.

- Defined Data Split Strategy: Training, validation, and test partitions respecting content ownership and preventing data leakage.

- Reserved Compute Resources: Availability of GPU instances or on-premise servers, scheduled to.optimize cost and utilization.

- Monitoring and Logging: Telemetry agents integrated with platforms such as NVIDIA Clara for real-time visibility.

- Security and Access Controls: Role-based access control, encryption at rest and in transit, and secure code repository governance.

- Baseline Model Inventory: Catalog of pretrained checkpoints and transfer learning candidates to accelerate experimentation.

- Governance Workflows: Approval processes for bias assessment, model proposal reviews, and release sign-offs.

Workflow Actions and Integrations

The training workflow orchestrates a sequence of activities—from initial evaluation through final validation—using system-to-system integrations and human reviews. This structured flow ensures repeatable, auditable, and scalable model development.

Phase 1: Model Evaluation

- Data Retrieval: Query the metadata repository via RESTful APIs authenticated with OAuth to fetch training datasets.

- Baseline Model Loading: Fetch pretrained networks from the TensorFlow Model Garden or Hugging Face registry, orchestrated by Kubeflow.

- Automated Benchmarking: Execute inference on validation data and store metrics in MLflow.

- Stakeholder Review: Notify ML engineers and data scientists via messaging integrations for performance approval or rejection.

Phase 2: Transfer Learning and Hyperparameter Optimization

- Job Submission: Launch fine-tuning tasks on Amazon SageMaker or Google Cloud AI Platform with specified container images and dataset URIs.

- Preprocessing Pipeline: Execute DAGs in Apache Airflow, standardizing inputs, augmenting images, and tokenizing text, with events streamed via Apache Kafka.

- Hyperparameter Sweeps: Coordinate searches through Azure Machine Learning or Weights & Biases, reporting trial metrics back to the experiment tracker.

- Autoscaling: Use Kubernetes autoscaler policies defined in Infrastructure as Code to adjust compute nodes dynamically.

Phase 3: Iterative Training and Validation

- Continuous Retraining: Schedule nightly retraining cycles using cron jobs in the orchestration framework.

- Cross-Validation: Partition data into folds to evaluate model generalization, comparing results against historical benchmarks.

- Anomaly Detection: Apply statistical tests to identify performance drift, triggering alerts via the monitoring service.

- Human-in-the-Loop: Generate review tasks in ticketing systems when drift exceeds thresholds, enabling expert sample annotation and pipeline re-ingestion.

Phase 4: Versioning and Artifact Management

- Artifact Packaging: Bundle model weights, architecture definitions, and metadata into versioned container images.

- Registry Publication: Push containers to the model registry with metadata stored in a relational database.

- Deployment Descriptor Update: Tag the new model for production rollout via configuration management services.

- Audit Logging: Record parameters, metrics, and lineage in a secure ledger for compliance and forensics.

Phase 5: Final Quality Gate

- Benchmark Tests: Run end-to-end evaluations for inference latency, adversarial robustness, and bias checks.

- Dashboard Generation: Synthesize performance, fairness, and resource consumption metrics into centralized dashboards.

- Approval Workflow: Route dashboards for digital signatures; record acceptance or remedial actions.

- Release Flagging: Set flags in the configuration store to trigger downstream agent orchestration design.

Integration relies on event-driven architectures, uniform API orchestration, centralized metadata stores, and an observability stack ensuring security and governance across inter-service interactions.

AI Capabilities and Supporting Roles

Advanced AI functions and system roles coalesce to form an enterprise-grade training pipeline. These capabilities reduce manual experimentation, improve accuracy, and embed governance.

AI-Driven Capabilities

- Neural Architecture Search: Automated discovery of optimal network topologies through reinforcement or gradient-based search.

- Meta-Learning: Initialization strategies leveraging prior outcomes to accelerate adaptation to new content styles.

- Transfer Learning: Seamless import of PyTorch or TensorFlow checkpoints for domain-specific fine-tuning.

- Hyperparameter Optimization: Bayesian and evolutionary strategies executed by Ray Tune and Weights & Biases.

- Data Augmentation: Customized pipelines with Albumentations and GAN-driven synthetic data generation for rare scenarios.

- Ensemble and Distillation: Model fusion techniques and knowledge distillation to balance accuracy with inference efficiency.

- Interpretability: Saliency maps and attention visualizations for compliance audits.

- Multi-Modal Fusion: Cross-modal embeddings combining visual, textual, and audio features for contextual tagging.

System Roles and Responsibilities

- Training Orchestrator: Coordinates containerized jobs on Kubernetes, automating event-driven triggers from taxonomy updates.

- Data Pipeline Manager: Secures ingestion and preprocessing, ensuring reproducibility and lineage tracking.

- Feature Store: Serves precomputed embeddings and descriptors, providing low-latency feature access.

- Hyperparameter Tuner: Allocates resources dynamically and integrates search algorithms via Azure Machine Learning and Weights & Biases.

- Experiment Tracker: Logs configurations and metrics in MLflow, enabling comparative analytics and reproducibility.

- Validation Engine: Automates QA tests, adversarial checks, and performance alerts.

- Model Registry and Governance Agent: Manages artifact storage, approval workflows, and audit trails.

- Resource Manager: Provisions and scales GPU/CPU clusters, enforcing cost optimization and tenant isolation.

- Security and Compliance Agent: Enforces RBAC, encryption standards, and immutable audit logging.

- Collaboration Interface: Delivers real-time notifications and dashboards to stakeholders, capturing feedback for continuous improvement.

Artifacts, Dependencies, and Handoff Protocols

Output Artifacts

- Model binaries and weight files in SavedModel or ONNX formats.

- Evaluation reports detailing accuracy, recall, F1 score, and AUC.

- Hyperparameter tuning logs from Amazon SageMaker and Weights & Biases.

- Serialized preprocessing pipelines, tokenizers, and feature extractors.

- Model cards documenting purpose, provenance, limitations, and version history.

- Container images and Kubernetes manifests for scalable inference deployment.

- Versioned dataset snapshots in Amazon S3 or Google Cloud Storage.

Dependencies and Resources

- Compute clusters with GPUs/TPUs on Google Cloud AI Platform or managed EC2 GPU fleets.

- Distributed training frameworks such as TensorFlow and PyTorch.

- Feature store and data lake integration for consistent schema enforcement.

- Cataloging and registry services via MLflow or the Amazon SageMaker Model Registry.

- Hyperparameter optimization platforms like Optuna and SageMaker Automatic Model Tuning.

- Orchestration engines such as Kubeflow Pipelines and Apache Airflow.

- Security frameworks for identity and access management, encryption, and audit logging.

- Registry Event Triggers: Notifications via Apache Kafka or AWS SNS to downstream orchestration services.

- Inference Endpoint Deployment: Configuration of REST or gRPC services on Amazon SageMaker Endpoints or Google Cloud Run.

- API Contract Definitions: OpenAPI or Protocol Buffer specifications for input/output schemas and authentication.

- Resource Tagging: Propagation of labels for environment, version, and ownership to support policy enforcement.

- Access Controls: Role-based permissions granting AI agents model artifact access.

- Documentation Handover: Publication of model cards and usage guidelines to a centralized knowledge base.

Version Control and Documentation

- Git and data-version control tags aligning code commits with dataset snapshots.

- Automated model card generation capturing training context and performance summaries.

- Changelog tracking for systematic recording of iterative changes and impact assessments.

Validation and Quality Assurance

- Automated Regression Tests: Compare new outputs against established baselines.

- Bias and Fairness Assessments: Evaluate performance across demographic and content slices.

- Drift Detection: Monitor statistical deviations in input feature distributions.

Integration Testing

- Contract Testing: Validate API schema adherence and error handling conventions.

- Load Testing: Assess inference latency and throughput under simulated peak loads.

- Resilience Verification: Test fallback and retry logic for model endpoint failures.

By unifying these processes—data preparation, model evaluation, iterative training, artifact management, and rigorous validation—teams establish a robust framework that propels trained models seamlessly into AI-driven tagging orchestration, delivering consistent, high-fidelity metadata at enterprise scale.

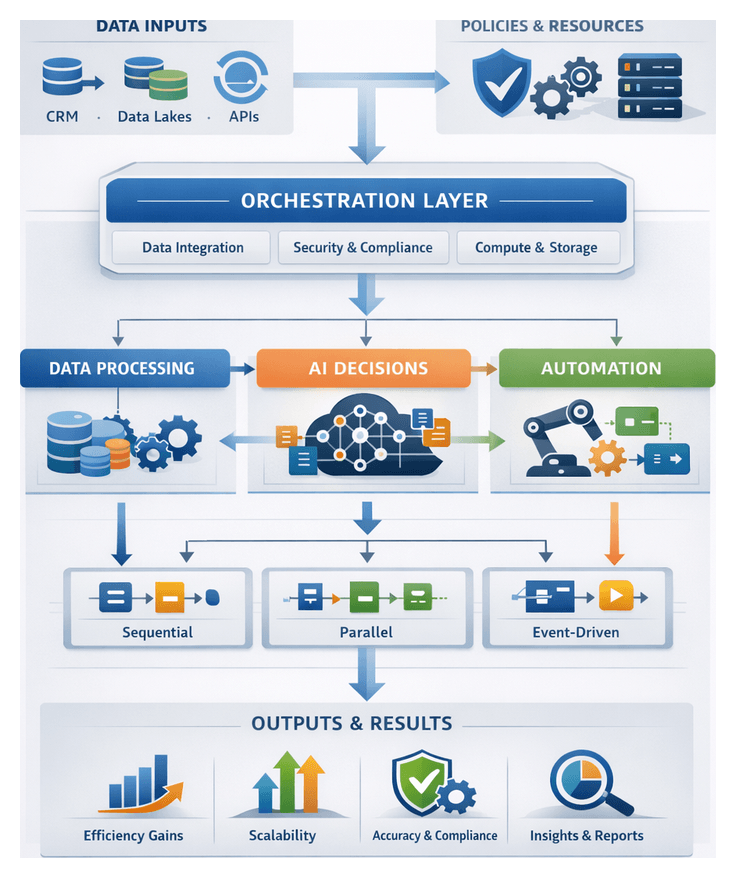

Chapter 5: AI Agent Orchestration and Workflow Design

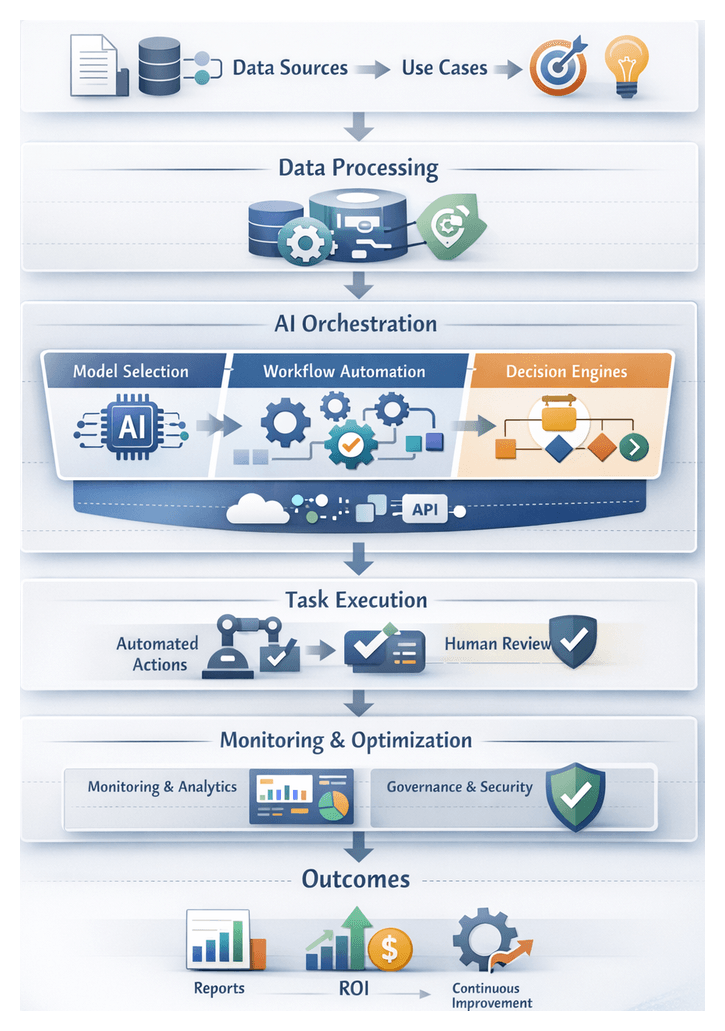

Purpose and Context of the Orchestration Stage

The orchestration stage establishes a unified control layer that coordinates specialized AI agents, external services, and system components into a seamless, end-to-end pipeline for automated content tagging and metadata enrichment. By defining clear execution paths, event triggers, and handoff protocols, this stage transforms independently trained computer vision, natural language processing, audio analysis, and semantic enrichment models into a scalable workflow capable of processing vast media libraries with minimal manual intervention.

Entertainment and media organizations manage extensive catalogs of video, audio, and image assets that must be accurately tagged and classified to support content discovery, personalization, and monetization strategies. Traditional manual approaches cannot scale to millions of hours of footage or continuous user-generated streams. The orchestration layer addresses challenges such as data transfer latency, parallel execution conflicts, inconsistent metadata outputs, and compliance requirements by enforcing standardized protocols for task initiation, handoff, error recovery, and auditability.

Prerequisites and Inputs

The orchestration design relies on a comprehensive set of organizational standards, model artifacts, and infrastructural components collected from earlier solution planning phases. Key inputs include:

- Trained AI model artifacts for computer vision, NLP, audio analysis, and multimodal inference, along with associated metadata schema definitions.

- Event definitions and messaging schemas detailing content arrival, preprocessing completion, and taxonomy updates.

- API specifications for AI agent endpoints, including input/output contract definitions, authentication mechanisms, and rate limits.

- Container images, compute cluster profiles, service quotas, and storage allocations informed by performance benchmarks and service-level objectives.

- Security and compliance policies governing data classification, encryption requirements, access control matrices, and audit logging standards.

- Governance guidelines for version control, CI/CD pipelines, release management protocols, and documentation requirements.

- Monitoring and logging specifications for capturing workflow metrics, error traces, and audit events in real time.

- Test harnesses, integration test suites, and acceptance criteria documents designed to validate orchestration logic in sandbox environments.

Infrastructure Requirements

Successful deployment demands a robust infrastructure foundation:

- A messaging backbone such as Apache Kafka or RabbitMQ for event pub/sub and task queueing.

- An API gateway and identity management services to secure agent invocations and enforce role-based access controls.

- Container orchestration platforms like Kubernetes or serverless frameworks to host and scale AI workloads.

- Centralized configuration management tools for environment parameterization, secrets injection, and feature flag support.

- Monitoring and logging infrastructure, including time-series databases and dashboards built with Prometheus and Grafana, alongside distributed tracing via Jaeger.

Governance and Compliance

Orchestration workflows must adhere to enterprise and industry regulations:

- Source control repositories for workflow definitions, infrastructure as code, and API contract schemas.

- Automated CI/CD pipelines that validate workflows, run integration tests, and promote changes across development, staging, and production environments.

- Release management protocols specifying rollback procedures, change approval boards, and version tagging conventions.

- Security guidelines for data classification, encryption at rest and in transit, and least-privilege access enforced via AWS IAM or Azure Active Directory.

- Audit logging specifications that capture user activity, service interactions, and policy violations for forensic analysis.

API and Schema Definitions

Clear API contracts and message formats prevent integration mismatches:

- OpenAPI or GraphQL definitions detailing request payloads, response structures, and error codes.

- Event schema documents in JSON Schema, Avro, or Protocol Buffers to enforce payload validation.

- Authentication and authorization protocols such as OAuth 2.0 or mutual TLS for secure API access.

Performance and Error Management

To meet operational targets, the orchestration design must include:

- Key performance indicators such as task execution times, queue latencies, and throughput thresholds.

- Retry policies, circuit breaker configurations, and dead-letter queue definitions for robust error handling.

- Incident response playbooks outlining notification channels, on-call rosters, and escalation procedures.

Workflow Design and Execution Flow

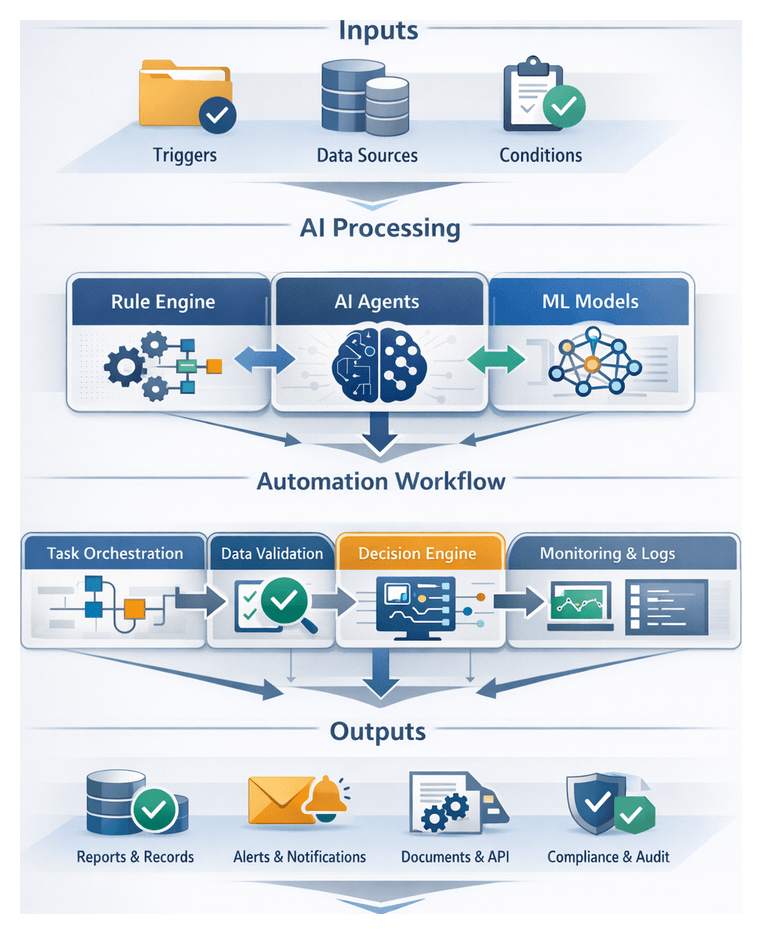

The orchestration framework implements an event-driven pipeline where validated events initiate AI-driven tasks according to parameterized workflow templates. Each stage in the flow is defined by trigger definitions, branching logic, and retry policies that ensure resilience and flexibility.

Event Ingestion and Validation

The process begins with capturing events that indicate new or updated media assets. Sources include content management systems, media repositories, manual approvals, or scheduled jobs. Upon receipt, the orchestration engine validates events against schema definitions and required metadata fields. Invalid or incomplete messages are diverted to an exception queue for remediation.

Task Orchestration and Trigger Management

Validated events are mapped to specific workflows based on routing rules. Workflow templates—defined as directed acyclic graphs using frameworks such as Apache Airflow or Prefect—specify task sequences, branching conditions, and retry behaviors. Triggers may be time-based, event-based, or conditional on asset attributes. The orchestrator issues REST or gRPC calls to microservices hosting AI models, taxonomy services, and metadata repositories.

Parallel Processing Streams

To maximize throughput, independent processing streams execute concurrently:

- Vision Stream: Object recognition, scene detection, and facial analysis via computer vision models.

- NLP Stream: Transcript analysis, entity extraction, and topic tagging through natural language processing.

- Audio Stream: Sentiment scoring, speaker diarization, and acoustic event detection on audio tracks.

- Enrichment Stream: Integration with external knowledge graphs and recommendation engines for semantic relationships.

Each stream operates on separate task queues, converging at a synchronization gate where intermediate results merge for downstream processing.

Dynamic Agent Delegation

The orchestrator leverages metadata attributes, performance metrics, and resource availability to assign tasks to optimal compute resources. High-resolution video may be directed to GPU-accelerated clusters, while short-form content runs on CPU instances. Service discovery provided by Kubernetes ensures agent endpoints are located dynamically, and autoscaling policies adjust capacity based on queue depth and resource utilization.

Error Handling and Recovery Mechanisms

Robust error management prevents task failures from cascading across the pipeline. Layers of fault tolerance include:

- Automated retries with exponential backoff for transient errors.

- Fallback agents or simplified algorithms when primary services are unavailable.

- Dead-letter queues capturing persistent failures with error context.

- Integration with Prometheus and alert routers to notify engineering teams of critical issues.

State Management and Handoff Coordination

A centralized state store records task statuses, input/output payload references, and timestamps to support idempotency and restartability. Standardized handoff interfaces package enriched metadata with provenance information before dispatching to subsequent stages.

Scalability and Autoscaling Integration

Autoscaling frameworks respond to workload fluctuations by adjusting the number of orchestrator and agent instances. Scaling triggers include Kubernetes pod metrics, message queue depths, and custom performance indicators derived from Apache Kafka consumer lag or inference latencies.

Governance, Security, and Observability

Policy engines and service meshes enforce role-based access controls, encrypt data in transit and at rest, and audit every task invocation and configuration change. Observability stacks capture metrics, distributed traces, and structured logs, with dashboards displaying end-to-end processing times, success rates, and SLA compliance.

Final Aggregation and Handoff

Upon completion of parallel streams, the orchestrator consolidates metadata—tags, confidence scores, semantic annotations, and provenance details—into a unified record. A standardized API call or event publication signals the automated classification stage to ingest enriched tags into the media asset repository.

AI Roles and Capabilities

The orchestration layer comprises modular AI agents and governance engines, each fulfilling specialized functions under central coordination. These roles ensure efficient task delegation, fault tolerance, human oversight, and continuous optimization.

- Central Orchestration Engine: Acts as the command center, parsing and routing events, scheduling tasks via DAG frameworks such as Apache Airflow or Prefect, and enforcing policies for data retention, privacy, and audit logging.

- Event-Driven Trigger Manager: Listens to event streams from platforms like AWS Step Functions or Google Cloud Pub/Sub, enriches payloads with contextual data, prioritizes tasks using ML-based models, and fans out events to parallel pipelines.

- Dynamic Task Delegation Agent: Selects optimal AI models and compute resources based on metadata characteristics, historical performance, and resource availability. Implements load balancing and elastic scaling through reinforcement learning strategies and fallback orchestration.

- Error Handling and Self-Healing Components: Detects anomalies using statistical analysis, initiates automated retries, reprovisions failed components, and escalates persistent issues to human operators with diagnostic context.

- Conversational AI and Human-in-the-Loop Interfaces: Provides chat-based supervision and exception management via platforms like Google Dialogflow. Enables asset queries, approval workflows, interactive pipeline control, and captures intervention metadata for governance.

- Logging, Monitoring, and Analytics Agents: Collects telemetry data for real-time dashboards, applies predictive analytics to forecast capacity needs, correlates enrichment results with business KPIs, and triggers alerts for anomaly detection.

- API Gateway and Integration Brokers: Facilitates interoperability between REST, gRPC, and message queue protocols. Validates metadata schemas, enforces security policies, and implements adaptive rate limiting using service mesh features from Istio or Kong.

- Governance and Policy Enforcement Modules: Tracks data lineage, applies rule-based validation for PII masking and retention schedules, generates compliance reports, and adapts policies through reinforcement learning to reflect evolving regulations.

Outputs, Dependencies, and Handoff to Tagging

Completion of the orchestration stage yields a suite of artifacts, external service dependencies, and handoff protocols that drive downstream tagging and classification engines.

Primary Outputs

- Workflow Definition Artifacts in JSON or YAML outlining task sequences, branching logic, retry policies, and timeouts.

- Agent Configuration Packages bundling model endpoints, parameter files, and runtime dependencies for consistent deployments.

- Event and Message Schemas in JSON Schema, Avro, or Protocol Buffers for payload validation.

- Operational Dashboards integrated into Apache Airflow or Prefect to visualize workflow topologies, pending tasks, and resource assignments.

- Execution Logs and Metrics captured by centralized logging services and dashboards built on Prometheus and Grafana for performance analysis.

- Error and Exception Reports summarizing failed tasks, error codes, and stack traces for rapid triage.

- State Snapshots and Checkpoints that preserve in-flight events and partial results for fault recovery and warm restarts.

- Audit Trails and Compliance Records providing immutable logs of event flows, human approvals, and configuration changes.

Key Dependencies

- Message Brokers such as Apache Kafka or RabbitMQ for event transport and task queuing.

- API Gateway and Service Mesh solutions like Istio or Kong for load balancing, service discovery, and security enforcement.

- Container Orchestration via Kubernetes or Amazon EKS to schedule agent containers and manage autoscaling.

- Compute and GPU Resources managed by cluster schedulers or Kubernetes operators to optimize inference performance.

- Metadata Repository and Configuration Store implemented with Apache Cassandra or HashiCorp Vault for secure key management and schema storage.

- Identity and Access Management through AWS IAM or Azure Active Directory for authentication and authorization.

- Monitoring and Alerting Systems such as Prometheus and Grafana for SLA monitoring and incident notifications.

- Logging and Tracing Infrastructure leveraging the ELK Stack or Lightstep for distributed log aggregation and root-cause analysis.

- Secrets Management via HashiCorp Vault for storing API keys, certificates, and credentials.

- Network and Security Policies enforcing zero-trust frameworks, VPNs, and firewall rules to secure inter-service communication.

Handoff to Automated Tagging and Classification

- Task Queues with Payload Envelopes using Amazon SQS or RabbitMQ, containing asset references, taxonomy identifiers, and invocation instructions.

- Model Endpoint References listing URIs for inference services hosted on AWS SageMaker or microservice endpoints, along with credentials and API schemas.

- Contextual Metadata Tags including initial enrichment attributes like production date, content type, and geolocation to guide classification rules.

- Execution Parameters such as retry limits, concurrency settings, and sampling thresholds optimized for cost and accuracy.

- Flow Control Signals implementing back-pressure, token buckets, and throttling configurations to prevent downstream overloads.

- Validation Schemas and Quality Gates using JSON Schema or OpenAPI definitions to verify tagged metadata before acceptance.

- Security Tokens like JWTs or OAuth 2.0 scopes scoped for downstream classification stages.

- Notification Hooks via webhooks or callback URLs for status updates and completion events integrated into monitoring dashboards.

This integrated set of outputs, dependencies, and handoff protocols constitutes the connective tissue that binds AI-driven agents into a unified, scalable, and auditable metadata enrichment system. By packaging rich context, enforcing governance, and maintaining full observability, the orchestration stage ensures that downstream tagging and classification execute accurately and reliably at scale.

Chapter 6: Automated Content Tagging and Classification

Stage Purpose and Inputs

Purpose and Objectives

The Automated Content Tagging and Classification stage systematically analyzes video, audio, text, and image assets to assign descriptive tags and classification labels. Leveraging computer vision, natural language processing, audio analysis, and ensemble learning, it generates consistent, taxonomy-compliant metadata that powers search indexing, personalized recommendations, targeted advertising, and automated workflows. Each tag is accompanied by a confidence score, enabling dynamic validation policies and human-in-the-loop review.

Use Case Scenarios

- Content Discovery and Recommendation: Scene-level insights drive recommendation engines, boosting viewer retention through personalized suggestions.

- Advertising and Brand Safety: Object and logo detection via Amazon Rekognition or Google Cloud Vision API enforces brand guidelines and ad placement rules.

- Regulatory Compliance: Automated detection of explicit content, violence, or hate speech tags assets for human review and policy enforcement.

- Closed Captioning and Subtitling: NLP-driven keyword extraction using AWS Comprehend or the OpenAI API facilitates dynamic subtitle generation and segment labeling.

- Rights Management and Syndication: Metadata on cast, crew, and licensing automates syndication workflows and royalty tracking.

- Asset Monetization and Cataloguing: Retailers and broadcasters assemble content bundles and targeted ads using enriched metadata.

Scale and Performance Considerations

To handle petabyte-scale libraries and real-time streams, design for horizontal scalability, batch and stream processing, and cost optimization. Co-locate compute near storage or deploy edge nodes for low-latency tagging of high-resolution assets. Implement auto-scaling policies on platforms such as Kubernetes or AWS Fargate, and integrate performance monitoring tools to track throughput, service latencies, and queue backlogs.

Required Inputs and Preconditions

- Preprocessed Media Assets: Standardized video (MP4, MOV), audio (AAC, WAV), and transcripts (WebVTT, SRT) from the quality assurance stage.

- Taxonomy Definitions: Controlled vocabularies, hierarchical category trees, and schema templates accessible via API or registry.

- Trained AI Models: Computer vision services such as Azure Computer Vision, NLP engines like Google Cloud Natural Language, and ensemble classifiers for multimodal fusion.

- Configuration Parameters: Confidence thresholds, resource quotas, batch sizing, and failover policies.

- Infrastructure Endpoints: Object storage (AWS S3, Google Cloud Storage), message brokers (Apache Kafka, RabbitMQ), metadata service APIs, and authentication systems.

- Governance Policies: Data privacy, compliance (GDPR, CCPA), monitoring frameworks, and human-in-the-loop review protocols.

- Stakeholder Sign-Off: Approvals from taxonomy owners, data engineers, AI practitioners, security officers, product managers, and operations teams.

Workflow Actions and Flow

The tagging workflow orchestrates event-driven triggers, microservices, and AI agents through a sequence of coordinated steps. Clear handoffs and communication protocols minimize latency and support parallel execution across distributed systems.

- 1. Event Trigger and Asset Retrieval: The orchestrator (for example, Apache Airflow) consumes an event from Kafka or RabbitMQ, validates eligibility, fetches media and transcripts from AWS S3 or Google Cloud Storage, and retrieves taxonomy schemas via API. A “Ready for Tagging” event then enqueues AI processing tasks.

- 2. Preprocessing Validation and Segmentation: A media processing microservice performs frame extraction or shot boundary detection, applies quality checks, and flags segments below thresholds for retry or human review.

- 3. Vision-Based Analysis: Computer vision agents using AWS Rekognition, Google Cloud Vision API, or Azure Computer Vision perform scene classification, object detection, face recognition, and OCR. Outputs include bounding boxes, confidence scores, and actor matches to talent databases.

- 4. Textual Analysis: NLP agents generate or ingest transcripts via IBM Watson Speech to Text or Azure Speech Services. Using AWS Comprehend or transformer models from OpenAI, they extract entities, topics, sentiment scores, and align speaker diarization metadata.

- 5. Ensemble Fusion and Tag Aggregation: A fusion engine normalizes confidence scores, applies taxonomy rules to merge tags, resolves duplicates (for instance “car” vs. “automobile”), and flags low-confidence or conflicting tags for escalation.

- 6. Contextual Enrichment: Graph-based agents link entities to external sources (IMDb, cultural databases), detect temporal patterns (narrative arcs, motifs), and incorporate personalization signals from engagement models.

- 7. Quality Assurance and Exception Handling: A monitoring service triggers alerts on SLA breaches or confidence anomalies, applies conflict resolution rules, and logs audit trails. Exceptional tasks route to human review interfaces (for example, JIRA or AgentLink AI dashboards).

- 8. Metadata Packaging and Handoff: Enriched metadata is compiled into JSON payloads conforming to schema definitions, validated for completeness, and dispatched via event topics or REST callbacks to downstream semantic enrichment or CMS connectors.

- 9. Performance Optimization: Dynamic task allocation on Kubernetes or serverless functions, in-memory caching of models and taxonomies, batch inference, and backpressure mechanisms ensure scalable, cost-effective processing.

- 10. Observability: Centralized logging (Elasticsearch, Splunk), metrics dashboards (Grafana, Prometheus), and distributed tracing with unique trace IDs provide end-to-end visibility for debugging and continuous improvement.

AI Capabilities and System Roles

AI-driven tagging pipelines combine specialized agents and infrastructure components to deliver accurate, consistent metadata. Key capabilities span computer vision, NLP, audio analysis, multimodal fusion, model serving, governance, and developer tools.

- Computer Vision: Deep learning models such as ResNet or EfficientNet detect objects, scenes, faces, logos, and perform OCR. Services include Google Cloud Vision and Clarifai, with roles like object recognition, scene segmentation, face identification, and text extraction.

- Natural Language Processing: Speech-to-text conversion by IBM Watson or Azure Speech Services, followed by named entity recognition, sentiment analysis, and topic modeling using LDA or transformer embeddings from OpenAI GPT.

- Audio Analysis: Acoustic scene classification, music recognition via Audd.io, speaker diarization, and audio quality assessment enrich metadata with ambient and speech characteristics.

- Multimodal Fusion: A confidence aggregator and conflict resolution engine align outputs across modalities, apply governance rules, and produce cohesive tag sets through a fusion orchestrator.

- Model Serving Infrastructure: Platforms such as Amazon SageMaker and MLflow host models with auto-scaling GPU/CPU clusters, API gateways for authentication, and versioned model registries.