The AI Agent Workflow Orchestration Handbook

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Understanding Data Fragmentation and Operational Silos

Large enterprises often contend with isolated information repositories and labor-intensive transitions that undermine efficiency and agility. Data silos arise when relational databases and ERP systems store structured records without cross-system interfaces, while unstructured silos embed critical business content in emails, documents, or multimedia. Streaming silos capture real-time events from IoT sensors or messaging queues that may not feed into centralized stores. Shadow IT—including departmental spreadsheets, bespoke scripts, and local databases—further fragments visibility, and third-party platforms introduce contractual and format inconsistencies.

Manual handoffs compound these challenges. Users transfer data via email attachments and shared drives, risking version confusion and unauthorized access. Copy-and-paste operations between spreadsheets and systems spawn transcription errors, while printed reports, faxed documents, and verbal instructions create delays, misplacement hazards, and untraceable decisions. The resulting latency, error rates, and compliance exposures degrade decision quality, distort reporting, and consume valuable labor hours that could fuel innovation.

Addressing these issues begins with a structured assessment to inventory data sources, map process touchpoints, and evaluate quality and accessibility. Engaging stakeholders through interviews and workshops grounds the diagnosis in real-world pain points, while performance metrics on exception rates and reconciliation efforts quantify the impact. A clear view of system inventories, architecture diagrams, standard operating procedures, and governance policies establishes the foundation for prioritizing high-impact integration and automation initiatives.

- Comprehensive Visibility—A holistic map of applications, data flows, and human interactions reveals interdependencies and hidden risks.

- Risk Mitigation—Identifying compliance gaps, data quality issues, and points of failure reduces regulatory exposure.

- Prioritization—Resource allocation targets processes with the greatest inefficiencies and manual effort.

- Stakeholder Alignment—Process owners, data stewards, and IT teams unite around a shared diagnostic framework.

Key deliverables from this diagnostic stage include a structured data source catalog, a process touchpoint map, a gap analysis report highlighting integration challenges, a prioritization matrix ranking workflows by impact and feasibility, and baseline performance metrics to benchmark future improvements.

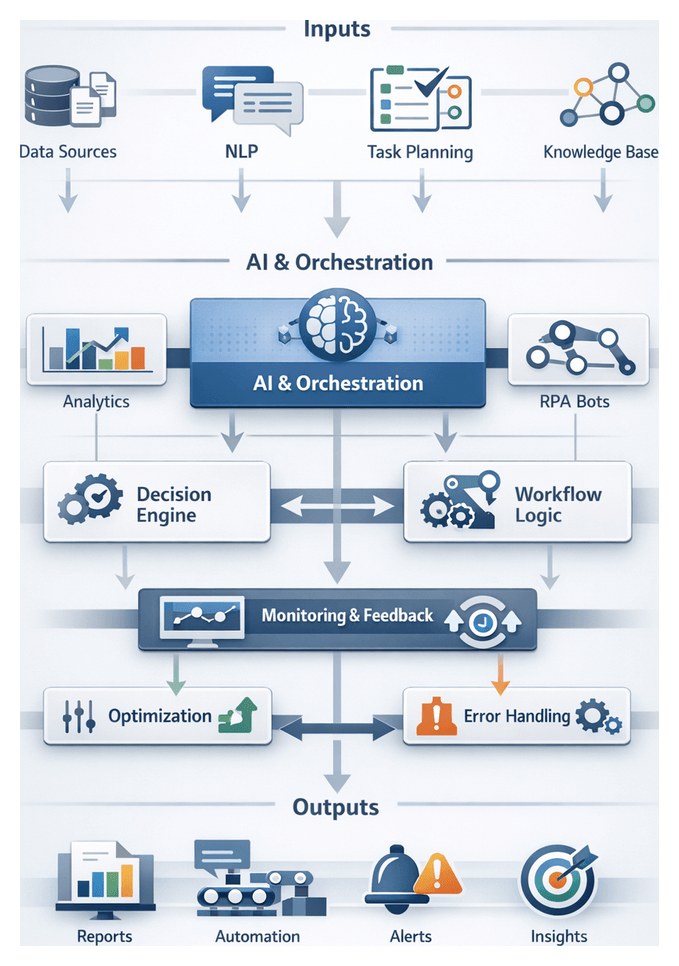

Establishing a Unified AI-Driven Orchestration Framework

To transform fragmented workflows into cohesive, end-to-end processes, enterprises must adopt an orchestration framework that aligns diverse services, AI models, bots, and human actors under consistent, transparent controls. A robust design embraces event-driven architecture, standardized interfaces, centralized visibility, dynamic routing, and extensible integrations.

Core Principles

- Event-Driven Architecture—Workflows launch in response to data arrivals, user requests, or system conditions.

- Standardized Interfaces—APIs, message queues, and publish-subscribe channels provide uniform access points.

- Centralized Visibility—A unified dashboard aggregates logs, metrics, and alerts for real-time oversight.

- Dynamic Routing—Rules engines evaluate context to direct tasks to optimal agents or fallback handlers.

- Extensible Integration—Plugin architectures enable new services to join without reworking the core engine.

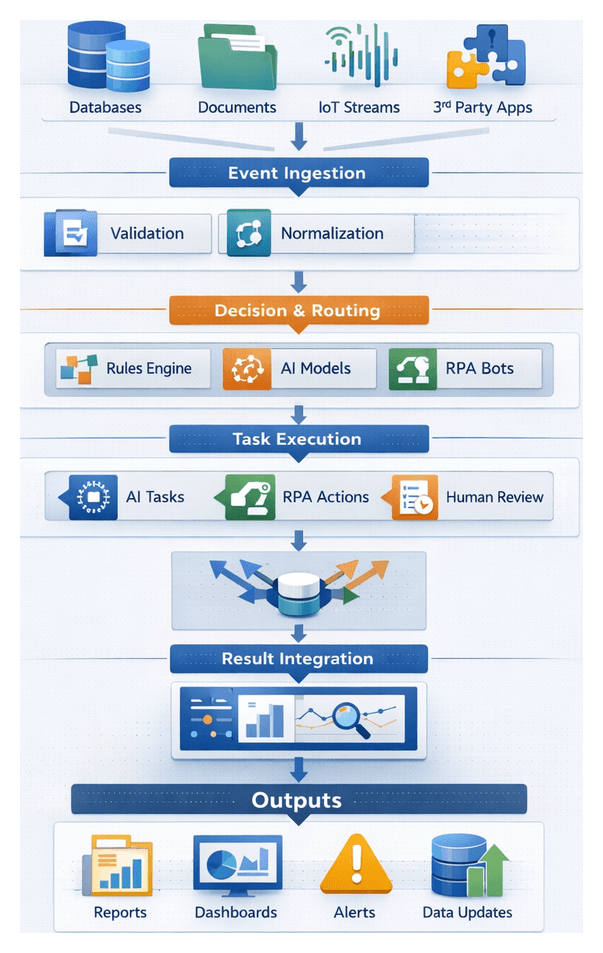

Typical Workflow Sequence

- Event Ingestion: Captured via webhooks, file watchers, or message brokers.

- Pre-Processing Validation: Schema checks, authentication, and prerequisite verifications.

- Routing Decision: A rules engine evaluates metadata (for example, priority or region) to select execution paths.

- Task Dispatch: Jobs queue or invoke AI inference endpoints, RPA bots, or human task portals.

- Concurrent Coordination: Parallel tasks synchronize via barrier patterns or join nodes.

- Result Aggregation: Outputs merge, transform, and forward to subsequent stages or datastores.

- Monitoring and Alerting: Latency, success rates, and resource utilization feed dashboards and remediation scripts.

- Completion and Audit Logging: Execution paths and parameters persist for compliance and analysis.

Coordination Mechanisms

- Priority Queuing: Tags determine execution order and resource allocation.

- Load Balancing: Distributed consumers or serverless functions adapt to demand surges.

- Heartbeat Protocols: Agents report status; missed heartbeats trigger automated failover.

- Locking and Concurrency Controls: Optimistic or pessimistic locks prevent resource conflicts.

- Timeout Strategies: Configurable timeouts detect hung tasks for reroute or retry.

Integration Touchpoints and System Roles

- Orchestration Engine: Central coordinator for workflows, state, and routing. Examples include Apache Airflow and Azure Logic Apps.

- AI Inference Services: Specialized endpoints for natural language understanding, image recognition, and predictive analytics.

- RPA Agents: Bots interfacing with legacy systems via UI automation or APIs, deployed through UiPath or Automation Anywhere.

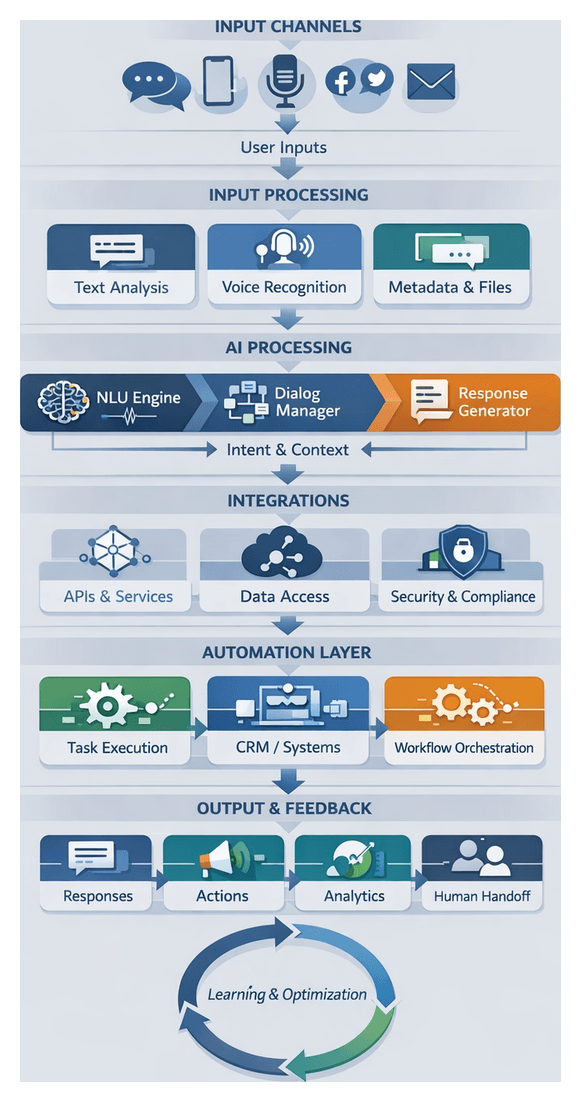

- Virtual Agents and Chatbots: Conversational interfaces for user queries, escalating to humans when needed.

- Human Task Queues: Portals for manual review, decision-making, or exception handling.

- Data Stores and Knowledge Repositories: Databases, data lakes, and semantic stores housing structured, unstructured, and enriched content.

- Monitoring and Logging Services: Telemetry collection and audit trails for governance.

Error Handling and Dynamic Rerouting

- Retry Policies: Automatic retries with back-off for transient failures.

- Fallback Routes: Alternative agents or degraded handlers when primaries fail.

- Compensation Transactions: Rollback actions in multi-step workflows to maintain consistency.

- Alert Escalation: Persistent errors prompt notifications to operations or messaging platforms.

- Dead-Letter Queues: Unprocessable messages move to holding areas for manual investigation.

By centralizing control, organizations achieve elastic resource utilization, uniform execution standards, reduced integration overhead, and accelerated time to market. Operational teams gain end-to-end visibility, governance functions enforce compliance, and business stakeholders enjoy faster, more reliable outcomes.

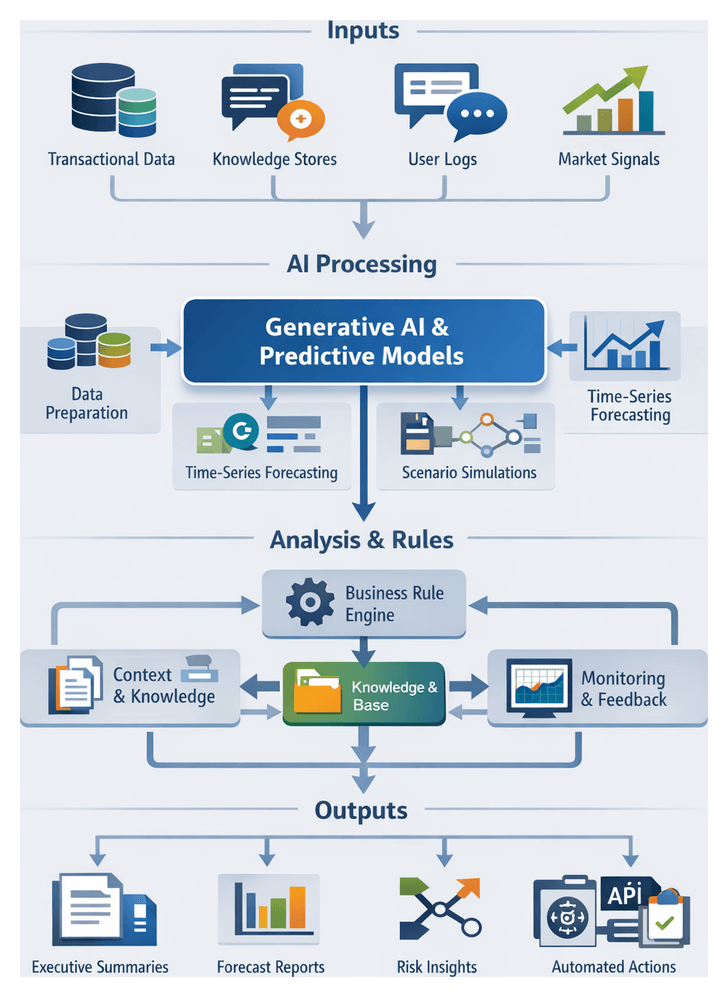

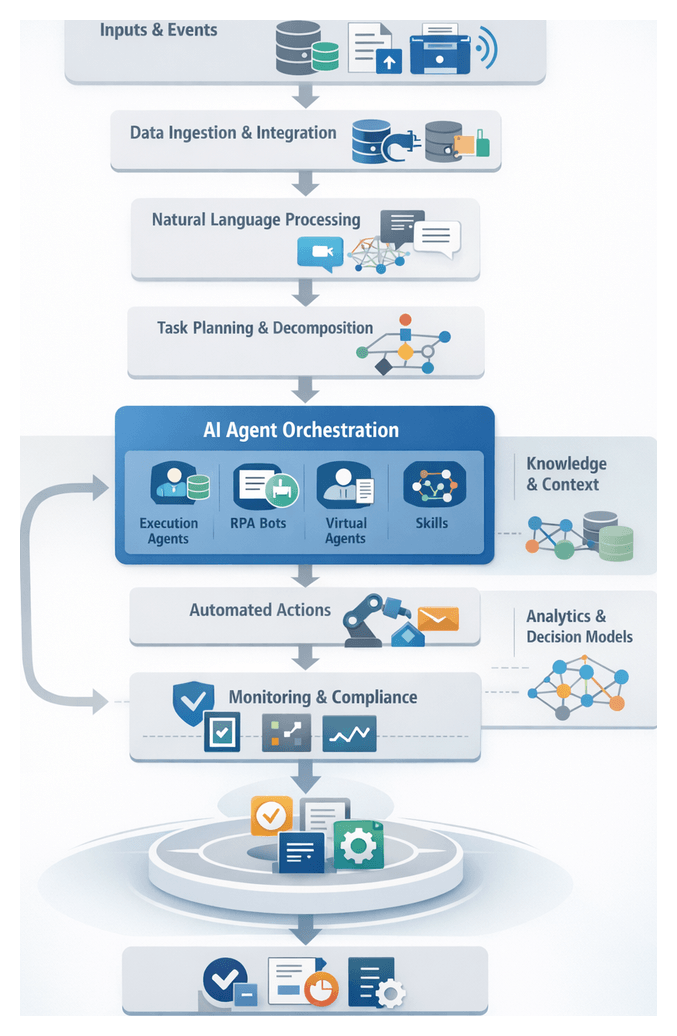

Integrating AI Agents into Enterprise Workflows

Embedding AI agents into orchestration layers transforms static pipelines into adaptive, intelligent workflows. These agents leverage machine learning models, natural language understanding, robotic process automation, decision-support systems, generative AI, and computer vision to automate tasks, collaborate with humans, and self-optimize.

Core AI Capabilities

- Natural Language Understanding: Agents parse text and speech to extract intents and entities using solutions like IBM Watson Assistant or Google Dialogflow, enabling automated ticket triage and self-service knowledge delivery.

- Robotic Process Automation: Bots deployed via UiPath or Automation Anywhere perform rule-driven tasks on legacy systems without modifying underlying applications.

- Predictive and Prescriptive Analytics: Machine learning models forecast trends, detect anomalies, and recommend actions for pricing, maintenance, and fraud prevention.

- Generative AI: Large language models such as OpenAI’s GPT and Azure OpenAI draft text, generate code snippets, and propose strategic scenarios.

- Computer Vision: Vision-enabled agents automate visual inspection and document digitization via AWS Rekognition, enhancing quality control and security monitoring.

Supporting Infrastructure

Robust systems provide orchestration, data management, security, and monitoring to ensure reliability, scalability, and compliance.

- Orchestration Frameworks: Visual pipelines, retry logic, and conditional branches powered by Apache Airflow, AWS Step Functions, or Google Cloud Workflows.

- Data Platforms and APIs: Data lakes on Amazon S3 or Azure Data Lake Store, real-time streaming with Apache Kafka, and API gateways exposing master data and business logic.

- Security and Compliance: Identity and access management via Okta or Azure Active Directory, encryption at rest and in transit, key management, and continuous compliance monitoring for standards such as GDPR, HIPAA, or PCI DSS.

- Monitoring and Logging: Centralized log aggregation with Elastic Stack or Splunk, dashboards tracking throughput, errors, and resource usage, and automated remediation triggers.

Collaborative Dynamics with Human Stakeholders

- Human-in-the-Loop Controls: Agents present recommendations with confidence scores for human review in high-impact decisions like loan approvals or medical diagnoses.

- Task Routing and Escalation: Ambiguous or exception-laden tasks route automatically to designated operators or supervisors.

- Interactive Interfaces: Conversational agents integrated into Microsoft Teams, Slack, or custom portals allow users to initiate workflows, query status, and intervene as needed.

- Training and Feedback Loops: Human corrections feed versioned datasets into model retraining pipelines, reducing manual interventions over time.

Governance, Error Management, and Scaling

- Policy Enforcement: Centralized engines codify data access rules, fairness constraints, and ethical guidelines for runtime validation.

- Exception Handling: Standardized error schemas, retry policies, and escalation protocols within the orchestration layer.

- Version Control and Auditing: Agent code, configurations, and models tracked via Git repositories with automated deployment and rollback capabilities.

- Elastic Scaling: Container orchestration platforms like Kubernetes instantiate additional agents based on queue depths and custom metrics.

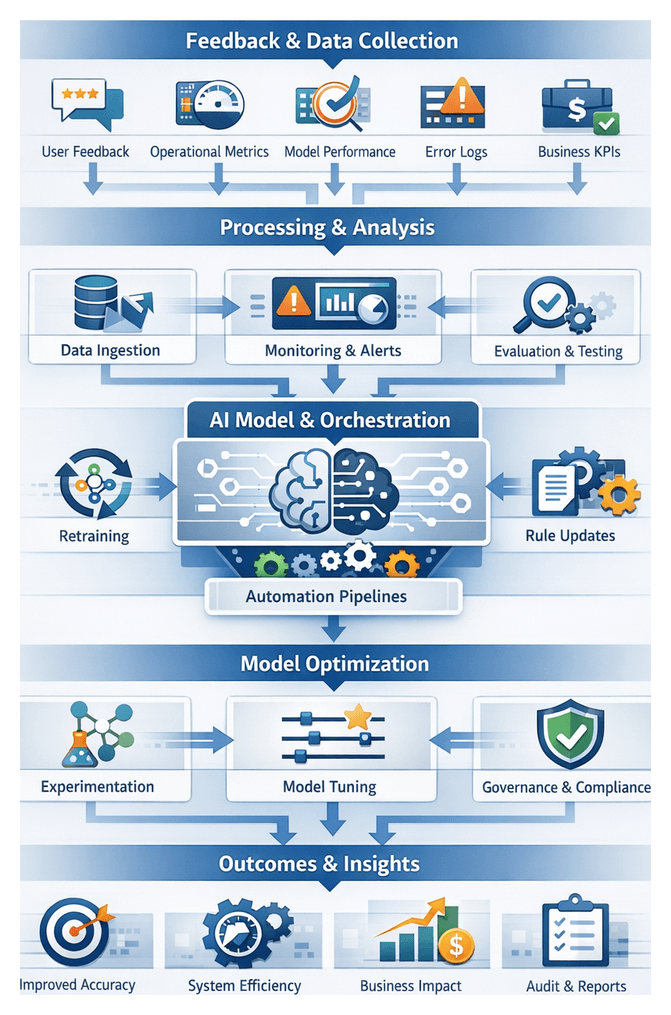

Measuring Impact and Continuous Improvement

- Quantitative Metrics: Cycle times, error rates, cost savings, and user satisfaction compared against pre-deployment benchmarks.

- Qualitative Assessments: End-user and stakeholder feedback to surface usability gaps and compliance concerns.

- A/B Testing and Experimentation: Parallel agent configurations or model versions to identify optimal strategies before full rollout.

- Retraining and Rule Refinement: Production data integrated into training pipelines to address model drift and emerging patterns.

- Scalability Planning: Infrastructure utilization reviews and forecasts guide capacity planning for future expansion.

Structural Foundations: Outputs, Dependencies, and Handoffs

To translate conceptual designs into actionable guidance, the Structural phase of the AI Agent Workflow Handbook produces core artifacts, maps interdependencies, and codifies handoff protocols. These deliverables enable coherent progression from architectural patterns to implementation and training.

Primary Outputs

- Structural Blueprint: Visual and textual representation of chapter sequencing, section objectives, and narrative flow from data ingestion to optimization.

- Dependency Matrix: Tabular mapping of prerequisite knowledge, data sources, technology versions, and inter-chapter linkages.

- Navigation Framework: Cross-references, index entries, and lookup conventions for rapid discovery of topics in tools such as Apache Airflow, Camunda, or Kubeflow.

- Handoff Guidelines: Templates, checklists, and repository links for transferring narrative drafts, diagrams, code samples, and validation scripts to downstream teams, referencing platforms like AWS Step Functions and UiPath.

Dependency Considerations

- Content Dependencies: Narrative assumes awareness of preceding topics, such as intent extraction before task planning.

- Technical Dependencies: Sample code and API examples require specific library versions cataloged in the technology stack appendix.

- Data Dependencies: Realistic sample datasets, configuration files, and synthetic streams with defined schemas ensure reproducibility.

- Compliance Dependencies: Chapters flagged for GDPR or HIPAA review before publication.

Handoff Mechanisms

- Artifact Packaging: Standardized packages containing drafts, diagrams, code, and metadata for versioning and review status.

- Review Gates: Automated checks for style compliance, hyperlink integrity, code execution, and glossary alignment.

- Repository Transfer: Committed to a central content repository with role-based access; downstream teams pull artifacts per branch policies.

- Integration Workshops: Collaborative sessions among authors, solution architects, and development leads to align patterns before coding.

Clear roles and responsibilities—documented in a RACI matrix—assign accountability for artifact creation, editorial review, technical validation, and consumption by implementation teams. By formalizing outputs, dependencies, and handoff protocols, the handbook becomes a living blueprint, guiding enterprises from strategy to operational AI-driven workflows across diverse organizational contexts.

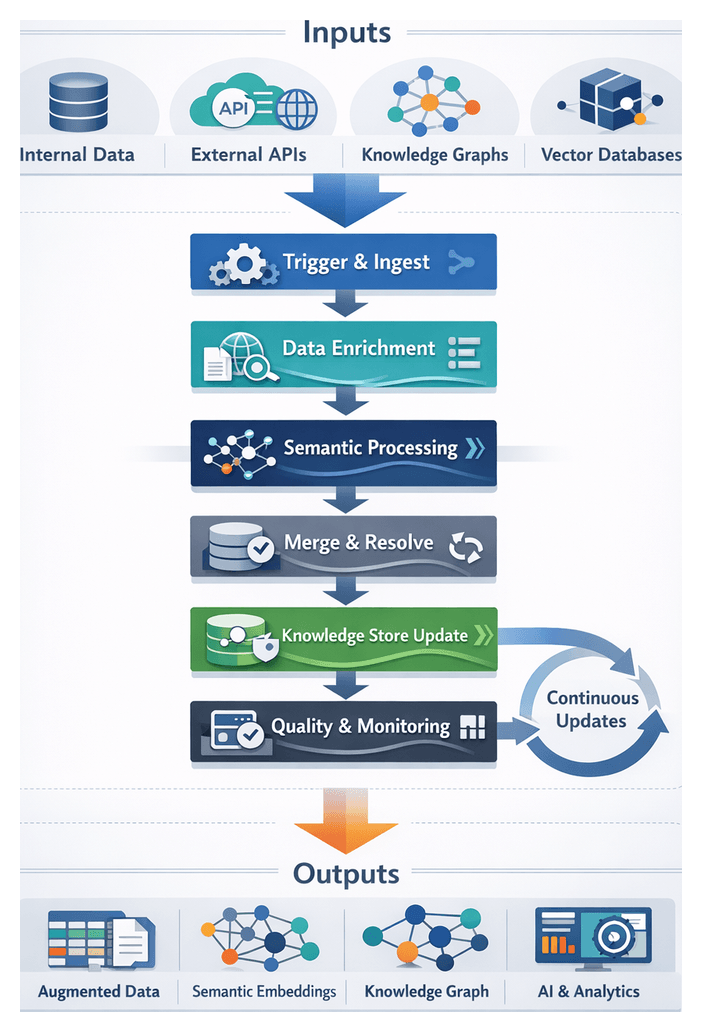

Chapter 1: Data Ingestion and Integration

Purpose and Context

In enterprise environments, data ingestion and integration establish a reliable single source of truth for AI-driven workflows. By consolidating raw inputs from transactional systems, IoT devices, third-party APIs and unstructured repositories into a central data lake, organizations eliminate silos, reduce manual effort and accelerate time-to-insight. In regulated industries, this stage also enforces traceability, compliance and auditability.

Data Sources and Characteristics

Incoming data typically falls into four categories:

- Structured Systems: Relational databases (Oracle, SQL Server), data warehouses and ERP platforms.

- Semi-Structured Repositories: JSON, XML and CSV files in object stores or content management systems.

- Unstructured Stores: Documents, emails, images and multimedia requiring indexing and metadata extraction.

- Streaming Event Streams: High-velocity data from IoT sensors, logs and message queues via Apache Kafka or Amazon Kinesis.

Prerequisites for Ingestion

- Secure Connectivity: VPN tunnels or private links, firewall rules and VPC configurations.

- Access Controls: Role-based permissions, token authentication and credential storage via HashiCorp Vault.

- Schema Registry: Centralized definitions with Apache Avro Schema Registry or AWS Glue Data Catalog.

- Governance Policies: Data ownership, retention and compliance rules (GDPR, HIPAA, SOC 2) enforced with automated checks.

Ingestion Patterns

- Batch Ingestion: Off-peak loads using Informatica or Talend.

- Micro-Batch Processing: Frequent small batches via Fivetran or Stitch.

- Real-Time Streaming: Continuous feeds with Apache Kafka or Amazon Kinesis.

- Change Data Capture: Delta captures using Debezium or AWS Database Migration Service.

Tools and Frameworks

- Integration Services: Azure Data Factory, Google Cloud Dataflow, AWS Glue.

- Orchestration: Apache Airflow.

- Event Streaming: Apache Kafka, Amazon Kinesis.

- Data Lakes: Amazon S3, Azure Data Lake Storage, Google Cloud Storage.

- Metadata & Lineage: OpenLineage, Apache Atlas.

Validation, Security and Compliance

- Schema conformity, data type checks, null/range validations and referential integrity tests.

- Statistical profiling to detect anomalies.

- Encryption in transit (TLS/SSL) and at rest (AES-256).

- Masking and tokenization of sensitive fields.

- Audit logging and compliance mechanisms to enforce GDPR, HIPAA and other regulations.

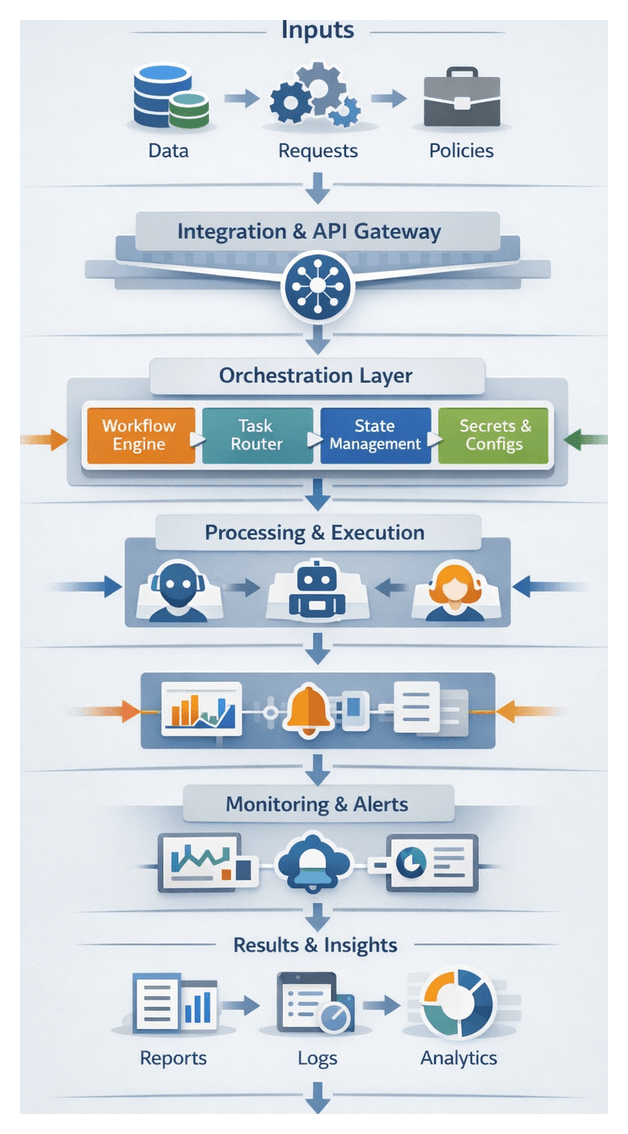

Cohesive Orchestration Framework

Background

Enterprises often operate in silos of specialized tools, causing latency, duplicated effort and governance gaps. The shift from batch workflows to event-driven, AI-augmented pipelines demands a unified orchestration layer to coordinate AI agents, legacy systems and cloud services end to end.

Framework Definition

A cohesive orchestration framework sequences, monitors and governs interactions by abstracting service interfaces into reusable primitives. Key functions include:

- Routing tasks based on business rules, priority and resource availability.

- State management with retries, compensating actions and transactional integrity.

- Centralized monitoring and audit trails for visibility and compliance.

- Governance enforcement for security, privacy and regulatory policies.

Core Benefits

Consistency of Execution

- Standardized workflows and centralized business rules.

- Replayable processes for debugging and root-cause analysis.

Scalability of Operations

- Dynamic task routing and parallel execution.

- Auto-scaling orchestration using AWS Step Functions or Apache Airflow.

Error Reduction and Resilience

- Built-in retry policies and compensating transactions.

- Centralized exception handling and audit logs for compliance.

Interaction Patterns

- Triggering Events: User actions, inbound messages, scheduled polls or alerts.

- Task Routing: Dispatching tasks to AI agents or compute clusters via queues.

- Data Handoff: Standardized schemas and payloads managed within workflows.

- State Management: Checkpoints, parallel branches and timed waits.

- Monitoring & Feedback: Dashboards, metrics and alerting for SLA breaches.

- Completion: Final output packages for downstream consumers.

Implementation Considerations

- Governance & Ownership: Establish a center of excellence to manage workflows and rules.

- Tool Selection: Evaluate platforms like Apache Airflow, AWS Step Functions and AgentLinkAI.

- Standard Interfaces: Use OpenAPI or gRPC and schema registries for message validation.

- Security & Compliance: IAM, encryption and audit logging for workflows.

- Incremental Adoption: Pilot key services before scaling scope.

- Change Management: Train teams and integrate workflow testing into DevOps pipelines.

Future-Proofing

- Onboard emerging AI services like multimodal models or streaming analysis engines.

- Apply global business rules consistently across legacy, cloud and edge environments.

- Prototype new processes in sandboxes before production deployment.

- Scale across geographies while maintaining governance.

AI Agents in Enterprise Workflows

Definition and Role

AI agents are autonomous software entities that perform tasks, make decisions and interact with systems or users based on objectives and learning capabilities. They bridge unstructured data, legacy applications, modern APIs and human stakeholders to enable dynamic, self-optimizing workflows.

Key Capabilities

- Autonomous Decision-Making: ML models and rule engines select actions without human input.

- Adaptive Learning: Continuous feedback loops refine models and strategies.

- Conversational Interaction: NLU and generation via chatbots and voice interfaces.

- Multi-Modal Processing: Combining text, images, video and sensor data.

- Integration Frameworks: Built-in connectors for legacy, SaaS and cloud services.

- Error Detection & Recovery: Monitoring, retry logic and compensating transactions.

Integration Architecture

- Central orchestration layer for task routing and prioritization.

- Standard communication protocols: RESTful APIs, message queues or event streams.

- Role-based security, OAuth and encryption.

- Modular microservices or containerized agents.

- Data management with versioned model registries.

Technical Components

- Orchestration Platforms: Apache Airflow, Kubeflow, Azure Logic Apps, AWS Step Functions.

- Agent Frameworks: Rasa, LangChain, OpenAI API, Ray RLlib.

- Monitoring & Logging: Prometheus, ELK Stack.

Deployment Strategies

- API-First: Expose agents via REST or GraphQL endpoints.

- Event-Driven: Trigger agents with Kafka or RabbitMQ.

- Containerized Microservices: Kubernetes orchestration for scaling.

- Edge Deployment: Lightweight agents on devices with offline processing.

- Hybrid Integration: On-premises components combined with cloud services.

Organizational Roles

- Solution Architects design integration and security models.

- Data Engineers prepare datasets and ensure quality.

- ML Engineers develop and retrain models.

- DevOps/AI Ops automate deployments and monitoring.

- Business Analysts define requirements and validate outcomes.

- Security Officers enforce compliance and governance.

Business Impact

- Operational efficiency gains of up to 70 percent through automation.

- Enhanced customer experience with 24/7 virtual support.

- Data-driven insights for proactive decision-making.

- Cost optimization via dynamic resource allocation.

- Risk mitigation through built-in governance and audit trails.

Challenges and Mitigation

- Legacy Constraints: Use API wrappers, middleware or RPA overlays to bridge outdated systems.

- Data Silos & Quality: Centralize data lakes, enforce governance and validation checks.

- Model Drift: Implement continuous monitoring, automated retraining and audits.

- Security Risks: Adopt zero-trust, encryption and regular penetration testing.

- Change Management: Communicate benefits, provide training and establish governance committees.

Best Practices

- Modular agent design for rapid updates as new models emerge.

- Unified observability across infrastructure and agents.

- Explainable AI techniques to build trust and meet regulations.

- Cross-functional collaboration through shared tools and feedback loops.

- Align agent objectives with measurable business outcomes.

Outputs and Handoff Mechanisms

Core Data Artifacts

- Landing zones with raw data snapshots.

- Staging tables applying standardized schemas.

- Master records merging entities from multiple sources.

- Curated views optimized for analytics.

- Data catalogs documenting schemas, quality scores and business context.

Schema Registries and Formats

- Apache Parquet for columnar analytics.

- Avro for compact binary serialization.

- ORC for Hadoop integration.

- JSON for semi-structured exchange.

- CSV for tabular snapshots.

- Relational tables in Snowflake or Databricks.

Metadata and Lineage

- AWS Glue Data Catalog or Apache Atlas for asset indexing.

- Lineage graphs capturing dependencies and transformations.

- Quality dashboards with validation results and anomaly alerts.

- Versioned scripts in Git for traceability.

- Data contracts outlining schemas, SLAs and refresh schedules.

Upstream Dependencies

- API availability from third-party providers.

- Batch extract schedules from Oracle or SQL Server.

- Streaming pipelines via Apache Kafka or Confluent Platform.

- Connectivity to Amazon S3, Azure Blob or Google Cloud Storage.

- Identity provider configurations and network performance.

Quality Gate Dependencies

- Schema validation engines enforcing types and nullability.

- Data cleansing for deduplication and normalization.

- Anomaly detection routines surfacing outliers.

- Business rule validators for referential integrity.

- Monitoring alerts triggering remediation workflows.

Handoff Mechanisms

- Batch transfers to secure FTP or shared cloud storage.

- Event notifications via Amazon SQS, Azure Service Bus or RabbitMQ.

- Real-time streaming with Kafka topics or AWS Kinesis Data Streams.

- RESTful APIs exposing JSON or Protobuf payloads.

- Database views or materialized tables for SQL access.

Orchestration and Notification

- Apache Airflow for DAG scheduling.

- Prefect for dynamic workflows.

- Dagster with type-aware pipelines.

- Azure Data Factory for hybrid ETL orchestration.

- AWS Step Functions coordinating Lambda and data tasks.

SLAs and Data Contracts

- Data freshness windows: real-time, hourly or daily updates.

- Throughput and latency targets for batch and streaming.

- Error tolerance thresholds and remediation timeframes.

- Schema change management and deprecation policies.

- Access controls and encryption requirements for sensitive data.

Security and Compliance at Handoff

- PII masking or tokenization before export.

- TLS encryption in transit and AES-256 at rest.

- RBAC enforced via IAM policies.

- Audit logging of exports, API calls and user actions.

- Regular compliance scans aligned to GDPR, HIPAA or SOC 2.

Example: Banking Customer Data Pipeline

Customer transactions flow from core banking, credit bureaus and web portals into a unified profile dataset enriched with risk scores. Nightly extracts, Kafka streams and API calls feed a Snowflake materialized view. Orchestration by Apache Airflow ensures refresh within two hours and masks sensitive account numbers before downstream consumption.

Best Practices for Seamless Handoff

- Standardize self-describing formats and enforce schema evolution policies.

- Maintain comprehensive metadata and lineage visibility.

- Automate validation and remediation before handoff.

- Use event-driven triggers to minimize latency.

- Define clear SLAs, data contracts and compliance rules with consumers.

- Monitor handoff performance and errors with dashboards and alerts.

Chapter 2: Natural Language Understanding and Intent Extraction

Purpose and Scope of the NLU and Extraction Stage

The natural language understanding (NLU) and intent extraction stage transforms diverse unstructured inputs—text documents, chat logs, social media posts, and speech recordings—into normalized, annotated representations. By detecting semantics, intent, entities, and sentiment, this stage provides the structured insights required for reliable downstream automation, decision support, and conversational interfaces. High fidelity here is crucial: errors propagate through orchestration frameworks, impacting response accuracy, compliance, and user satisfaction. In enterprise environments, organized workflows must process streaming and batch inputs at scale, ensuring consistent formatting, language identification, metadata enrichment, and secure handling before routing data to planning and execution modules.

Input Types, Preprocessing, and Metadata Enrichment

Data Sources and Formats

- Text Documents: Emails, PDFs, Word files, and web pages require character encoding normalization, markup removal, and metadata extraction.

- Chat and Messaging Logs: Exchanges from Slack or Microsoft Teams preserve context and timestamps for multi-turn dialogues.

- Social Media Content: Posts from Twitter, Facebook, or LinkedIn involve handling slang, abbreviations, and embedded media.

- Speech Recordings: Audio from call centers or voice assistants is fed through speech-to-text services such as Google Cloud Speech-to-Text, Amazon Transcribe, or Microsoft Azure Speech Services.

- Transcripts: Engine-generated transcripts include speaker diarization metadata, confidence scores, and timestamps.

Text Normalization and Audio Preprocessing

Standardizing inputs prevents inconsistencies in downstream analysis. Common preprocessing tasks include:

- Character Encoding: Converting all text to UTF-8.

- Punctuation and Casing: Retaining or removing punctuation; normalizing case; applying lemmatization and stop-word filters with libraries such as the Hugging Face Tokenizers or the OpenNLP toolkit.

- Tokenization and Segmentation: Breaking text into tokens and sentences.

- Domain Entity Patterns: Standardizing dates, currencies, and product codes via rule-based patterns.

- Audio Quality: Ensuring minimum sampling rates (16 kHz ), applying noise reduction, echo cancellation, and using pyannote.audio for speaker diarization.

Language and Domain Identification

Accurate detection of input language and domain context enables routing to specialized models. Tools like spaCy and fastText provide general-purpose language identification, while custom classifiers and glossaries refine processing for sectors such as healthcare, finance, or legal. Domain tags guide vocabulary adaptation, sentiment thresholds, and entity schemas.

Contextual Metadata and Conversation History

- Session Tracking: Persisting prior exchanges to resolve references.

- User Profiles: Incorporating language preferences, region, and entitlements.

- Channel Indicators: Noting origin (mobile, web, voice) to adjust formality and noise expectations.

- Temporal and Geospatial Context: Annotating timestamps and location data for compliance and location-based services.

Security, Compliance, and Governance Prerequisites

Enterprise-grade NLU workflows must enforce rigorous controls to protect sensitive data and ensure regulatory compliance.

- Data Encryption: Securing audio, transcripts, and annotations in transit and at rest to meet GDPR, HIPAA, or PCI DSS standards.

- Access Controls: Enforcing role-based permissions via LDAP, SAML, or OAuth integrations.

- Pseudonymization and Masking: Redacting PII or replacing with pseudonyms when appropriate.

- Audit Logging: Capturing model versions, processing steps, and user access for traceability.

- Governance Roles: Defining data owners, model custodians, and platform engineers responsible for data quality, model drift monitoring, and infrastructure reliability.

Entity Recognition and Intent Extraction Workflow

Capturing precise elements—customer names, product codes, dates—and interpreting user intent—such as order placement or support requests—requires coordinated sub-processes. This workflow generates structured insights that feed into planning and execution engines, reducing manual triage and improving routing accuracy.

Core Components

- Preprocessing Engine: Normalizes inputs and applies language detection.

- Tokenization Service: Segments text into tokens, handles compound words and emoticons.

- NER Module: Identifies entities using models such as spaCy or Amazon Comprehend.

- Intent Classifier: Fine-tuned transformer models via the Hugging Face Inference API.

- Sentiment Analysis: Services like Google Cloud Natural Language evaluate emotional tone.

- Orchestration Layer: Coordinates execution, handles retries, aggregates results, and logs outcomes.

- Knowledge Base: Supplies domain vocabularies, disambiguation rules, and contextual metadata.

High-Level Sequence of Actions

- Input Reception: Tagging with metadata—channel ID, user profile, session history—from message queues or APIs.

- Preprocessing: Applying normalization rules, expanding contractions, and filtering noise.

- Parallel Tokenization and POS Tagging: Issuing requests to multiple language models for code-mixed inputs, reconciling by confidence.

- NER Invocation: Extracting labeled spans with confidence metrics.

- Intent Classification: Assigning intent labels and probabilities.

- Sentiment Analysis: Evaluating overall polarity and emotion scores.

- Entity-Intent Correlation: Validating combinations against domain rules; low-confidence cases trigger fallbacks.

- Disambiguation: Resolving ambiguous entities via semantic graphs or heuristics.

- Handoff: Packaging results into a standardized schema and routing to task planners.

- Logging and Feedback: Recording metrics, errors, and confidence distributions for continuous improvement.

Orchestration, Error Handling, and Fallback Strategies

Coordination Patterns

- Circuit Breakers and Retries: Managing transient failures with exponential backoff.

- Parallel vs. Sequential Execution: Balancing latency and debugging complexity.

- Dynamic Routing Rules: Applying business logic to VIP customers or high-priority contexts.

- Human-in-the-Loop Triggers: Escalating ambiguous cases to case management systems.

- Contextual State Management: Tracking multi-turn dialogue in session caches or vector stores.

Error Handling and Fallback Mechanisms

- Secondary Model Invocation: Calling domain-specialized NER or intent classifiers on low-confidence segments.

- Pattern-Based Extraction: Using regex for critical entities like invoice numbers.

- Human Review Escalation: Generating tickets when automated methods fail thresholds.

- Graceful Degradation: Proceeding with highest-confidence data, flagging missing elements for downstream handling.

Output Specifications and Handoff to Planning Modules

Core Output Artifacts

- Intent Results: Primary label, ranked alternatives, and confidence scores.

- Entity Sets: Named entities with types, spans, and confidences.

- Sentiment and Emotion: Polarity, scores, and optional emotion breakdown.

- Slots and Dialogue State: Extracted slot-value pairs and session context.

- Normalized Text: Tokenized, cleaned input with language metadata.

- Processing Metadata: Timestamps, model and schema versions, error flags.

Data Schemas and Integration Patterns

Outputs conform to JSON schemas—request_id, timestamp, language, intent, entities, sentiment, slots, metadata—or alternative formats like Protocol Buffers. Handoff mechanisms include:

- Event-Driven Messages: Publishing to topics on Apache Kafka or RabbitMQ.

- RESTful Calls: Pushing JSON payloads to task planners with retry logic.

- Shared Stores: Writing records to data lakes or databases for Change Data Capture.

- Serverless Invocation: Triggering AWS Lambda or Azure Functions to initiate planning workflows.

Versioning, Traceability, and Auditing

- Model and Schema Tags: Embedding version identifiers in outputs.

- Correlation IDs: Tracing requests across ingestion, NLU, and execution.

- Audit Trails: Persisting raw inputs, processed outputs, and logs for forensic analysis.

- Access Logs: Recording administrative changes under role-based access control.

Infrastructure, Monitoring, and Lifecycle Management

Supporting Platforms and Orchestration Engines

- Data Platforms: Amazon Redshift, Google BigQuery.

- Message Queues: Apache Kafka, RabbitMQ.

- APIs and Gateways: REST or gRPC endpoints with authentication and rate limiting.

- Container Orchestration: Red Hat OpenShift, Amazon EKS.

- Knowledge Stores: Milvus, Pinecone.

- Observability: Prometheus, Grafana, Elastic Stack.

- Secrets Management: HashiCorp Vault.

- Policy Engines: Open Policy Agent.

- Workflow Engines: Apache Airflow, Kubeflow Pipelines, Temporal, Argo Workflows, commercial iPaaS like Mulesoft and IBM App Connect.

Continuous Monitoring and Optimization

Dashboards track throughput, latency, confidence distributions, and error rates. Alerts trigger on SLA breaches or recurring misclassifications. Logged data feeds into MLOps pipelines that automate retraining, model versioning, and rule refinement, ensuring workflows adapt to evolving language and domain requirements.

Agent Lifecycle and Continuous Improvement

- Version Control: Storing logic, artifacts, and configurations as code with changelogs.

- Automated Testing: Integrating unit, integration, and performance tests in CI/CD.

- Canary Deployments: Rolling out updates to a subset of traffic before full release.

- Retraining and Data Refresh: Scheduling model updates based on fresh, labeled data.

- Feedback Loops: Capturing user feedback and error reports for prioritizing enhancements.

- Scalability Planning: Designing stateless agents and autoscaling policies to meet variable loads.

Business Impact and Governance

By embedding rigorous controls, standardized schemas, and robust orchestration, enterprises achieve operational efficiency, error reduction, and enhanced customer experiences. Clear governance roles and audit trails support compliance with regulations such as GDPR, HIPAA, and PCI DSS. Continuous monitoring and lifecycle practices ensure that NLU and intent extraction remain aligned with business objectives, delivering measurable ROI through reduced cycle times, cost optimization, and sustained competitive differentiation.

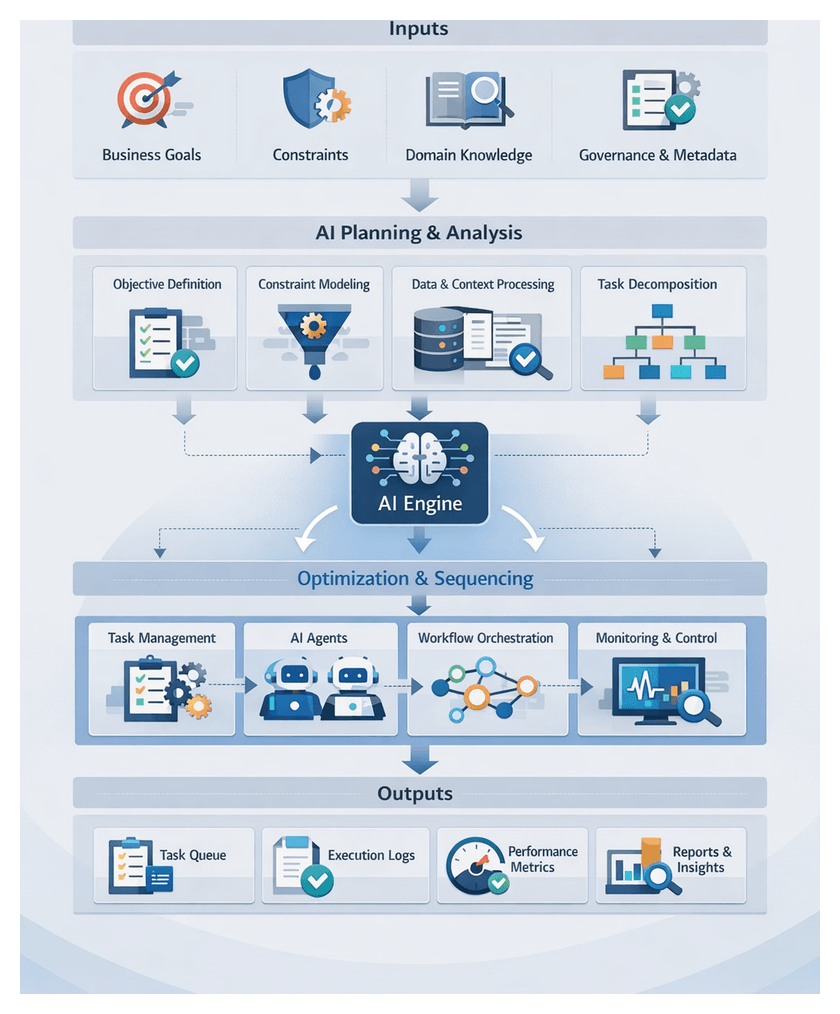

Chapter 3: Task Decomposition and Workflow Planning

Defining Goals and Gathering Planning Metadata

SMART Objectives and Strategic Use Cases

Effective AI-driven orchestration begins with clear, SMART (Specific, Measurable, Achievable, Relevant, Time-bound) objectives that translate high-level strategies into actionable use cases. Project sponsors and business analysts collaborate to define business drivers—such as revenue growth, cost reduction, compliance mandates or customer satisfaction targets—and map each to one or more use cases. These use cases specify actors, triggers, expected outcomes and acceptance criteria, forming the foundation for task planners to align every downstream task with measurable impact.

- Business drivers justifying AI orchestration investment

- Target stakeholder and end-user groups

- Performance improvement or ROI metrics

- Regulatory or compliance requirements

- Delivery time horizons and milestones

Constraints, Context and Domain Knowledge

Real-world initiatives face constraints—budget limits, resource availability, data privacy regulations, legacy system interoperability and organizational policies—that shape feasible solutions. Capturing these constraints and associated success criteria ensures planning engines can enforce quantitative targets (throughput rates, error thresholds) and qualitative measures (customer feedback scores, audit checklists).

- Financial boundaries, funding phases and cost centers

- Technical dependencies on platforms, APIs and middleware

- Data retention, classification and privacy policies

- Service level agreements and uptime requirements

- Quality benchmarks and defect tolerance levels

Embedding domain context prevents AI agents from missing critical nuances. Essential elements include ontologies, historical performance data, process maps, glossaries and industry-specific regulations. These artifacts guide decomposition algorithms to produce tasks that respect domain-specific rules and constraints.

Governance, Version Control and Metadata Repository

Establishing governance sign-offs and maintaining versioned metadata are vital for accountability and traceability. Stakeholders—project sponsors, data stewards, compliance officers and technical leads—review and approve goals, constraints and metadata definitions through documented workflows. A centralized metadata registry captures each iteration of objectives, assumptions and system requirements, facilitating impact analysis when changes occur.

- Stakeholder roles, review cycles and documented approval workflows

- Risk assessments, mitigation plans and change control processes

- Collaboration tools with version control and metadata schemas

- Tagged artifacts with timestamps, authorship and change summaries

- Automated synchronization between planning and execution repositories

Decomposition Parameters and Success Metrics

With goals, constraints and context in place, planning engines require parameters for optimal task granularity—balancing parallelism and coordination overhead. Defining minimum and maximum task sizes, dependency depths and parallelism thresholds guides decomposition algorithms. Planning metadata should also include performance indicators and feedback loop configurations to measure planning efficacy and inform iterative refinement.

- Maximum subtasks per objective and preferred execution durations

- Resource affinity constraints and data locality preferences

- Batch sizes, concurrency limits and streaming thresholds

- Target throughput rates, acceptable latencies and error tolerances

- Dashboards, automated alerts and bi-directional reporting APIs

By assembling validated objectives, constraints, domain context, governance approvals, versioned metadata and performance specifications into a planning repository, enterprises create a blueprint for scalable, error-resilient AI workflows.

Sequencing Tasks in the Planning Workflow

Dependency Graphs and Sequence Modeling

Sequencing transforms decomposed tasks into an ordered plan, addressing dependencies and parallelization opportunities. Planners construct a directed acyclic graph (DAG), where nodes represent tasks and edges denote prerequisites. Metadata—inputs, outputs, expected durations, retry policies and resource requirements—feeds into graph construction, enabling the orchestration engine to identify valid execution orders and parallel branches.

- Prerequisite relationships derived from data flows, API chains and business rules

- Resource annotations for CPU, memory, licenses or specialized hardware

- Priority weights based on business importance and SLA commitments

- Runtime estimates propagated to compute critical path durations

Static, Dynamic and Hybrid Sequencing

Static sequencing produces a fixed order at design time, ideal for predictable processes. Dynamic sequencing adapts in real time to data arrival, system load or external events. Hybrid approaches establish checkpoints where dynamic evaluation can adjust upcoming segments without reordering completed work.

- Static sequencing offers simplicity, repeatability and auditability

- Dynamic sequencing excels amid variable data and service latencies

- Hybrid models balance stability with adaptive responsiveness

Planning Engines and Optimization Algorithms

Modern orchestration frameworks—such as Apache Airflow, Prefect and Argo Workflows—provide APIs for defining DAGs, dependencies and scheduling policies. Under the hood, planners apply topological sorting, critical path method (CPM), constraint solvers and heuristic refinements to generate optimal sequences that respect resource and policy constraints.

- Topological sorting for initial dependency-compliant order

- Critical path analysis to identify duration-influencing tasks

- Heuristics to reorder non-critical tasks for increased parallelism

- Constraint solving to enforce resource limits and SLAs

Error Handling, Parallelization and Validation

Runtime anomalies—network latency, outages or data issues—require robust replanning protocols. Failure detection monitors status codes and logs; compensating actions handle side effects; graph pruning and on-the-fly reanalysis generate revised sequences; and notification triggers inform operators of significant changes.

- Independent subgraph extraction for parallel execution

- Granularity tuning to balance overhead and concurrency

- Resource contention avoidance and data locality optimization

- Backpressure management to throttle dispatch under load

Before handoff, planners validate dependency integrity, resource availability, policy compliance and sequence viability through dry-runs or simulations. Successful sequencing produces output artifacts—ordered task lists, dependency manifests, configuration payloads and dashboard updates—that are handed off via API calls, message queues or shared data tables to execution agents.

Integrating AI Agents into Enterprise Operations

Roles and Capabilities of AI Agents

AI agents automate complex workflows by performing specialized roles:

- Data Collection and Fusion—Ingest, validate and normalize structured and unstructured inputs.

- Natural Language Interaction—Understand user intents and entities with models like OpenAI GPT-4.

- Task Decomposition and Planning—Break down objectives and allocate resources using rule engines and optimization libraries like OR-Tools.

- Orchestration and Coordination—Monitor execution, manage retries and enforce load-balancing policies.

- Decision Support and Recommendation—Generate insights with predictive models built on TensorFlow or PyTorch.

- Action Execution—Automate UIs or APIs with RPA tools such as UiPath.

Supporting Systems and Orchestration Patterns

AI agents integrate with enterprise platforms via APIs, messaging and secure authentication:

- Enterprise Service Bus—Routes events and enforces security policies.

- API Gateways—Expose agent services with throttling and analytics.

- Data Lakes and Warehouses—Store raw and processed data for continuous learning.

- Event Streaming—Use platforms like Apache Kafka for reactive workflows.

Common orchestration patterns include centralized orchestrators, decentralized peer coordination, microservice-driven meshes with engines such as Apache Airflow or Azure Logic Apps, and event-driven workflows that respond to domain events in real time.

Best Practices and Real-World Example

Key implementation considerations:

- Governance—Define policies for model validation, version control and audit logging.

- Security—Enforce role-based access, encryption and secure credential management.

- Observability—Use tools like Prometheus or Azure Monitor for end-to-end visibility and alerts.

- Performance—Profile models, tune inference and scale infrastructure dynamically.

- Change Management—Communicate updates, train stakeholders and document new capabilities.

In customer onboarding, a bot built with Azure Bot Service collects applicant data, planning agents sequence credit checks and compliance reviews, and RPA bots from UiPath provision accounts on legacy systems. Orchestration agents monitor progress and escalate exceptions, reducing onboarding time from days to hours and improving accuracy and customer satisfaction.

Task Queue Outputs and Dependency Handoffs

Core Output Artifacts

The planning stage emits artifacts that drive execution:

- Task Queue Definition—List of tasks with unique identifiers, descriptions, parameters, priority levels and estimated resources.

- Dependency Graph—DAG capturing nodes with payload references, edge conditions and synchronization points.

- Scheduling Hints—Preferred execution windows, concurrency limits and affinity labels for orchestration engines like Apache Airflow.

- Validation Reports—Input schema checks, business rule compliance confirmations and anomaly logs with remediation guidance.

Upstream Dependencies and Validation

Reliable outputs depend on clean inputs from data ingestion, natural language understanding and business rule systems. Planning engines may leverage confidence scores from Google Cloud Natural Language API, refer to a central knowledge store for context resolution and synchronize rule sets to prevent compliance violations.

Handoff Mechanisms and Best Practices

Execution agents receive tasks via:

- Orchestration APIs and Message Buses—Publish tasks to queues or topics in Apache Kafka or AWS EventBridge for asynchronous pickup.

- Webhooks—Emit HTTP callbacks for real-time delivery with authentication and retry logic.

- Shared Storage—Stage large payloads in object storage, with agents retrieving file references from task definitions.

- Logging and Acknowledgment—Record delivery attempts, receive agent acknowledgments or negative acknowledgments, and integrate with monitoring platforms to trigger alerts.

- Design idempotent tasks to simplify retries.

- Embed schema versions in payloads for backward compatibility.

- Enforce data contracts defining mandatory fields and type constraints.

- Monitor end-to-end latency to detect bottlenecks.

- Automate final validation of upstream dependencies before handoff.

- Implement circuit breakers to escalate repeated failures.

By defining comprehensive output structures, validating dependencies and employing reliable handoff mechanisms, organizations ensure that planning outputs form a dependable foundation for scalable, resilient AI agent execution.

Chapter 4: Agent Orchestration and Coordination

Building a Unified AI Orchestration Framework

Operational Context and Challenges

Enterprises deploy a diverse set of AI agents, robotic process automation bots, and human workflows across multiple departments. Without a unified control layer, these capabilities operate in isolation, leading to data inconsistencies, variable reliability, and fragmented governance. Custom integrations and manual handoffs introduce latency, errors, and maintenance overhead, undermining agility and inflating total cost of ownership.

- Data inconsistency: disparate tools transform or interpret data differently, requiring manual reconciliation.

- Variable reliability: inconsistent error recovery strategies cause silent failures or process interruptions.

- Lack of visibility: stakeholders cannot trace requests end to end, impeding troubleshooting and compliance.

- Scalability constraints: each integration path must be scaled and maintained separately, multiplying effort.

- Compliance risk: decentralized policies create gaps in audit trails and regulatory adherence.

Design Principles

A cohesive orchestration framework addresses these challenges through a set of guiding principles:

- Unified control plane: a single interface for deploying, monitoring, and managing workflows across AI and RPA components.

- Declarative workflows: high-level definitions of task sequences, dependencies, and routing logic.

- Event-driven interactions: loose coupling via a central event bus or message queue, enabling asynchronous execution and scalability.

- Resilient error handling: standardized retry policies, circuit breakers, and compensating transactions for uniform failure recovery.

- Policy-based governance: central enforcement of security, compliance, and data retention policies with role-based access control.

- Observability by default: integrated logging, metrics, and tracing to provide end-to-end transparency.

Core Components

- Workflow Engine: interprets workflow definitions, schedules tasks, and manages state transitions.

- Message Bus: durable event broker such as Apache Kafka or RabbitMQ for high-throughput messaging.

- Task Router: evaluates routing rules to assign tasks to the appropriate AI agent, RPA bot, or human operator based on capacity, priority, and SLAs.

- State Store: persists workflow context and audit records to enable recovery and compliance reporting.

- API Gateway: provides secure entry points for external triggers, webhooks, and third-party integrations.

- Monitoring and Alerting Module: aggregates logs, metrics, and traces, raising alerts on anomalies.

- Secrets and Configuration Manager: centralizes credentials, feature flags, and configuration parameters for consistent access.

Coordinating Human and Automated Tasks

The framework supports both synchronous AI-driven tasks and asynchronous human approvals. Automated work items enter task queues, preserving dependencies and allowing agents to process them independently. Human tasks surface through user interfaces or notifications, with built-in escalation rules for overdue items. Integration with identity and access management enforces role-based permissions, ensuring only authorized individuals can approve or amend critical decisions.

Maintaining Consistency and Resilience

Consistency is achieved through idempotent operations and compensating actions. Retry logic with exponential backoff addresses transient failures, while circuit breakers isolate unhealthy services. Multi-step transactions employ saga patterns to orchestrate local transactions with compensation steps, preserving data integrity without monolithic two-phase commits.

Security and Governance

Centralized orchestration enforces security and compliance controls at a single point. Role-based access restricts workflow visibility and actions. Data encryption in transit and at rest protects sensitive payloads. Audit trails capture every state transition and decision, supporting regulatory reporting. Policy-as-code frameworks such as Open Policy Agent validate workflows against organizational policies before deployment.

Scalability and Elasticity

Control plane components and worker agents scale on demand via container orchestration platforms like Kubernetes. Serverless functions such as AWS Lambda and Azure Functions execute short-lived tasks without provisioning servers. Auto-scaling policies respond to queue depth or latency spikes, maintaining SLAs while optimizing resource utilization.

Scheduling and Routing Task Assignments

Purpose and Business Impact

The scheduling and routing layer forms the operational backbone of an AI orchestration framework. It assigns incoming tasks to the most appropriate agents based on business priorities, resource availability, and performance objectives. By codifying assignment logic and enforcing routing constraints, organizations achieve consistent throughput, predictable service levels, and end-to-end traceability.

- Operational efficiency: automated load balancing reduces idle time and prevents resource saturation.

- Scalability: dynamic workload distribution adapts to growing task volumes and new agent types.

- Reliability: built-in retry and escalation mechanisms mitigate transient errors and agent outages.

- Compliance: policy-driven routing enforces regulatory and data-sovereignty requirements.

- Business agility: priority schemes and policies can be updated rapidly without code changes.

Key Inputs and Metadata Requirements

- Task Descriptors: metadata including task type, priority, deadlines, and constraints (data locality, compliance tiers).

- Agent Registry: directory of agent capabilities, supported formats, concurrency limits, and health status via heartbeat messages.

- Business Policies: definitions of priority rules, affinity constraints, escalation protocols, and compliance filters.

- Resource Telemetry: real-time metrics on CPU, GPU, memory, and queue lengths to prevent overload.

- Historical Performance Data: execution logs and throughput statistics supporting predictive scheduling.

- Temporal Constraints: business hours, maintenance windows, and batch schedules governing execution timing.

Prerequisites and System Conditions

- Standardized Task Handshake: a versioned schema for task metadata transmission ensures consistency across components.

- Agent Registration Mechanism: service discovery using platforms like Apache ZooKeeper or custom registries in Kubeflow.

- Policy Engine: runtime rule evaluation supporting dynamic updates without downtime.

- Monitoring and Alerting Infrastructure: dashboards and automated alerts for queue backlogs and policy violations.

- Security and Authentication: encrypted channels and fine-grained access controls for metadata and telemetry.

- Scalable Messaging Fabric: queuing or streaming platforms such as Apache Kafka or AWS SQS to buffer tasks and absorb traffic bursts.

Scheduling Logic Patterns

- Priority Queue Scheduling: multiple tiers with preemption of lower-priority tasks for strict SLA use cases.

- Round-Robin Allocation: even distribution of homogeneous tasks across identical agents.

- Weighted Fair Queuing: proportional task shares based on agent capacity or business importance.

- Deadline-Aware Scheduling: urgency calculation to prioritize tasks with imminent deadlines.

- Load-Aware Dynamic Scheduling: real-time telemetry drives assignment away from overloaded agents.

Routing Rules and Constraints

- Capability Matching: filtering agents by declared roles such as text classification or image recognition.

- Data Locality and Compliance: enforcing data-sovereignty mandates by routing tasks to approved regions.

- Affinity and Anti-Affinity: grouping related tasks for cache efficiency or spreading them for fault tolerance.

- Escalation Paths: fallback agents, human queues, or alternate pipelines when standard processing fails.

- Concurrent Execution Limits: respecting per-agent caps to preserve performance guarantees.

Integration with Adjacent Stages

- Ingests enriched task definitions from the planning stage, including constraints and context.

- Queries the monitoring layer for real-time agent health and load metrics.

- Dispatches tasks via agent-specific queues or direct HTTP/gRPC calls.

- Records assignment decisions in execution registries and audit logs for traceability.

- Handles completion and failure updates, re-queuing tasks according to retry and escalation policies.

Coordination Rules and System Integration Roles

Coordination Rule Framework

Coordination rules externalize orchestration logic, defining task sequencing, resource assignment, error handling, and branching based on data inputs or external events.

- Sequencing Rules: enforce task dependencies, ensuring prerequisites complete before downstream steps.

- Parallelism and Synchronization Rules: govern concurrent execution and join barriers for consistency.

- Error Handling and Retry Policies: specify backoff intervals, maximum attempts, and escalation to human operators.

- Resource Allocation Rules: allocate compute, storage, or specialized hardware based on priorities and cost constraints.

- Event-Driven Triggers: enable dynamic branching in response to API callbacks, message events, or alerts.

Rule Authoring and Management

- Rule Engine Selection: platforms like Drools provide decision tables and event processing for dynamic policy evaluation.

- Version Control: store rule definitions in source repositories for audit trails, peer reviews, and rollback capabilities.

- Testing and Simulation: validate rules against synthetic and historical scenarios before production deployment.

- Approval Workflows: integrate rule changes into formal change management with role-based approvals.

- Execution Monitoring: capture metrics on rule firing frequencies, latency, and exception rates for visibility.

- API Gateway: unified entry point enforcing authentication, rate limits, and payload validation.

- Message Broker: event buses such as Apache Kafka or AgentLinkAI for scalable, asynchronous communication.

- Enterprise Service Bus: protocol translation and data transformation across legacy systems and enterprise applications.

- Adapter Layer: connectors normalizing calls to SQL, NoSQL, file systems, cloud storage, and third-party APIs.

- Event Router: rule-based filtering and enrichment of events such as anomaly detections or performance alerts.

- Monitoring and Logging Service: aggregation of trace logs, metrics, and audit records for real-time visibility.

Integration Patterns

- Request-Reply: synchronous interactions for low-latency inference requests.

- Publish-Subscribe: broadcasting updates and notifications to multiple subscribers.

- CQRS: separating read and write paths for performance optimization.

- Saga Pattern: coordinating distributed transactions with compensating actions on failure.

- Sidecar Architecture: deploying integration proxies alongside AI agent containers for cross-cutting concerns.

Security and Compliance Responsibilities

- Authentication and Authorization: implement OAuth2, JWT, or mutual TLS for service identity and access control.

- Data Encryption: enforce TLS for data in transit and AES-256 at rest.

- Data Masking and Tokenization: protect PII and financial data within integration payloads.

- Audit Logging: record message payloads, timestamps, and actor identities for compliance audits.

- Policy Enforcement: validate requests against data residency and privacy regulations before routing.

Monitoring and Auditing Integrations

- Health Checks and Heartbeats: periodic probes validate endpoint connectivity and response times.

- End-to-End Tracing: correlate transaction IDs across distributed components for root-cause analysis.

- Throughput and Error Metrics: track message volumes, queue lengths, and retry counts for capacity planning.

- Compliance Reports: automated extraction of audit trails and policy violation incidents.

- SLA Management: define objectives and monitor adherence, triggering notifications for at-risk obligations.

Best Practices and Organizational Alignment

- Maintain a centralized repository for coordination rules and integration configurations with clear ownership.

- Adopt continuous integration pipelines that validate rule changes and integration code through automated tests and security scans.

- Design modular rule sets and adapters to enable reuse and reduce coupling.

- Regularly review and prune outdated rules and connectors to minimize technical debt.

- Engage AI engineers, DevOps, security, and business process owners in defining coordination policies and integration standards.

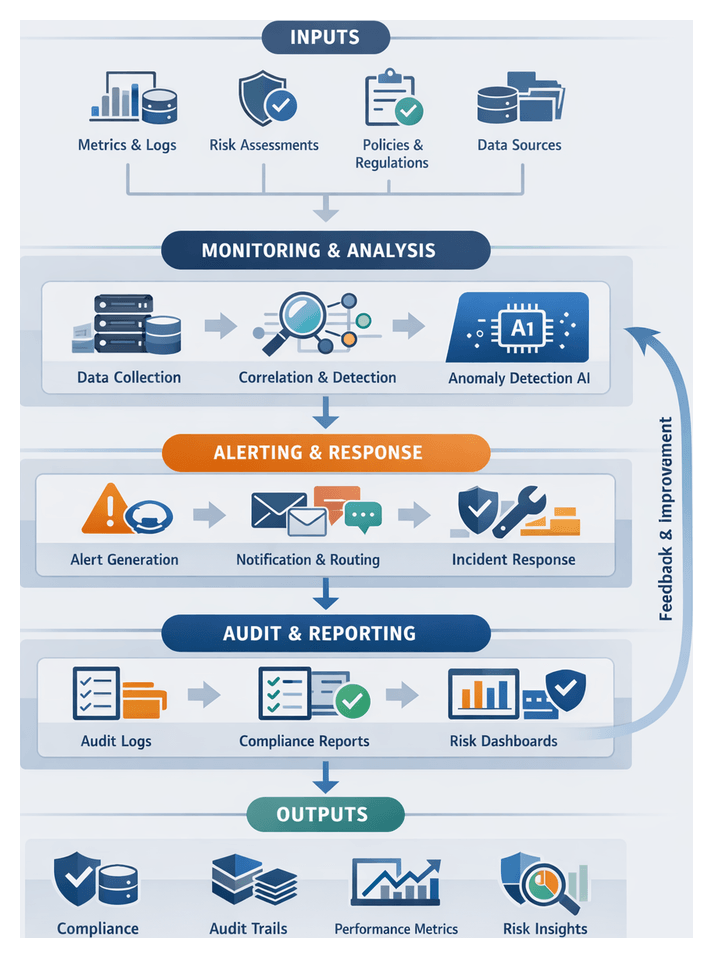

Monitoring, Alerting, and Dynamic Rerouting

Monitoring Outputs and Telemetry Sources

The monitoring stage provides continuous visibility into workflow execution, resource utilization, and service health through structured logs, real-time metrics, alerts, and audit trails. Key telemetry sources include:

- Infrastructure Monitoring Agents: tools such as Prometheus collect node-level CPU, memory, disk I/O, and network metrics.

- Application Performance Instrumentation: libraries like OpenTelemetry produce distributed traces and method-level metrics.

- Log Aggregation Services: platforms such as Grafana Loki and Splunk centralize logs for search and analysis.

- Event Streams: messaging systems like Apache Kafka capture task completions, errors, and audit events in real time.

- External Service Health Endpoints: SaaS status APIs supply uptime and response-time metrics.

Alerting Mechanisms and Thresholds

- Metric-Based Alerts: trigger when metrics cross thresholds, such as CPU utilization above 80 percent.

- Log-Based Alerts: pattern matching or anomaly detection on log streams to detect repeated failures or exceptions.

- Synthetic Transaction Alerts: periodic probes simulate end-to-end workflows and flag failures or latency deviations.

- Composite Alerts: combine multiple conditions, for example, rising API error rates alongside database latency.

Alerts route to notification channels such as email, Slack, Microsoft Teams, or incident management tools like PagerDuty, including contextual metadata and recommended remediation steps.

Dynamic Rerouting Policies and Triggers

Dynamic rerouting policies use monitoring outputs to redirect tasks to alternative agents or service instances, ensuring continuous workflow progress. Policy components include:

- Condition Evaluation: detect alert states or metric anomalies that meet rerouting criteria.

- Alternative Path Definitions: specify fallback agents, redundant endpoints, or quarantine queues.

- Priority and Weighting: assign weights to alternative paths based on reliability scores.

- Escalation Tiers: multi-stage rerouting from local retries to human intervention.

- Cool-Down Intervals: prevent oscillation by enforcing minimum residence times on new paths.

Primary triggers include real-time rule engines such as Kubeflow Pipelines for millisecond-latency rerouting, and scheduled reconciliation checks that rebalance workflows based on aggregated metrics.

Handoff to Recovery and Execution Agents

- Updated Task Metadata: tasks carry new routing instructions, retry counts, and reroute timestamps.

- Recovery Task Queues: isolated queues store tasks for manual review or delayed processing.

- Execution Reports: agents publish receipts with status, latency, and resource consumption metrics.

- Incident Tickets: systems like ServiceNow generate structured records when automated recovery fails.

Integrations with Third-Party Monitoring Tools

- Cloud-Native Monitoring: services such as AWS CloudWatch, Azure Monitor, and Google Cloud Operations.

- End-User Experience Monitoring: solutions like Dynatrace and Datadog combining RUM and synthetic checks.

- Logging and Analysis: Elastic Stack for search, visualization, and ML-driven anomaly detection.

- Incident Management: platforms like PagerDuty and Opsgenie for on-call scheduling and escalation policies.

Using Monitoring Outputs for Continuous Improvement

- Model Tuning: feedback loops from performance metrics drive retraining cycles and rule refinements.

- Compliance Reporting: audit trails and alert histories support regulatory reviews.

- Capacity Planning: resource utilization dashboards inform scaling decisions.

- Strategic Reporting: aggregated metrics quantify ROI, SLA compliance, and incident reduction.

Business Impact of Monitoring and Self-Healing

Effective monitoring and dynamic rerouting prevent silent failures, reduce mean time to detection, and maintain service reliability under fluctuating loads. By embedding self-healing capabilities, enterprises minimize manual intervention, optimize resource utilization, and enhance customer trust in AI-driven solutions.

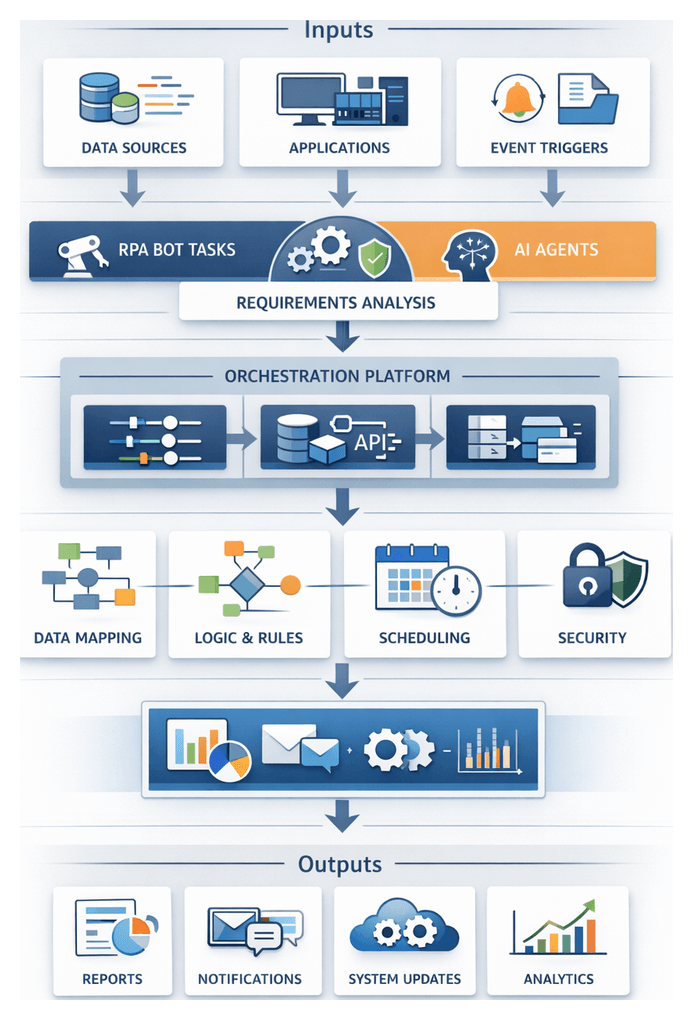

Chapter 5: Automated Action Execution with RPA Agents

Defining Automation Requirements for RPA and AI Agents

Enterprises embarking on robotic process automation (RPA) and AI-driven workflows must begin with rigorous requirements identification to ensure automation aligns with business objectives and technical constraints. This phase clarifies the scope of tasks—repetitive, rule-based activities suited for RPA and context-rich processes requiring AI agents—across legacy, cloud, and digital applications. Teams document target systems, data exchanges, event triggers, and security prerequisites to minimize implementation risk, accelerate deployment, and support scalable maintenance.

Industry trends such as regulatory compliance, 24×7 operations, and escalating transaction volumes drive the need for precise input definitions. Process complexity, fueled by hybrid architectures and manual handoffs, increases error rates and latency. Well-scoped requirements reduce scope creep, prevent runtime failures, and establish a solid foundation for orchestration and agent integration.

Key Objectives

- Clarify process boundaries, automation scope, and performance targets

- Catalog applications, interfaces, APIs, and integration points

- Define data mappings, transformation logic, and validation rules

- Establish triggers, schedules, exception scenarios, and dependencies

- Document security controls, compliance requirements, and access policies

- Align stakeholders on deliverables, timelines, and success metrics

Stakeholder Roles and Collaboration

Cross-functional teams drive comprehensive requirements gathering. Process owners and subject matter experts detail current workflows, exceptions, and performance goals. IT architects assess system architectures, API availability, and network constraints. Security and compliance teams validate data privacy, credential management, and audit logging standards. Business analysts translate workflows into actionable RPA and AI specifications, while automation developers confirm feasibility within tool constraints. Early alignment prevents rework and ensures the design reflects operational realities.

Landscape Assessment and Target Interfaces

Teams employ process mining, operational dashboards, and user interviews to quantify task frequency, duration, and exception rates. System inventories reveal legacy mainframes, web portals, SaaS platforms, desktop productivity tools, and messaging systems. Interface details—screen layouts and control hierarchies for UI automation, REST or SOAP API schemas, file formats, and database connection parameters—inform technology choices: UI scraping, direct integration, or hybrid approaches.

Data Mapping and Transformation

Automation often shuttles data between heterogeneous systems. Detailed mapping activities specify how source fields correspond to target fields, including type conversions, default values, conditional logic, and cleansing rules. Documentation includes sample inputs, expected outputs, validation checks, and error thresholds to handle missing or malformed data gracefully.

Trigger Conditions and Scheduling

Defining clear triggers ensures bots and AI agents act when business conditions warrant. Triggers may be file-based (monitoring directories or cloud storage), message-driven (enterprise messaging systems), schedule-based (cron expressions or business calendars), manual (secure portals), or hybrid combinations. Precise definitions prevent unwanted runs, align timing with downstream processes, and optimize resource utilization.

Security, Compliance, and Change Management

Automated agents require secure credential handling via vaults or credential services and operate under least-privilege access controls. Compliance obligations—data residency, encryption standards, auditability—shape input processing and logging. Before development, confirm process stability, freeze application versions, and embed change management protocols to manage UI redesigns or API updates. Regular reviews and regression testing underlie maintainable automation.

Documentation and Readiness Criteria

The requirements phase culminates in a formal Automation Requirements Specification (ARS) that includes process flow diagrams, application catalogs, data mapping tables, trigger definitions, security checklists, and testing strategies. Stakeholder sign-off on the ARS signals readiness to advance into design, development, and orchestration.

Designing a Unified Orchestration Framework

Orchestration frameworks coordinate AI agents, RPA bots, data pipelines, and microservices into cohesive, end-to-end workflows. By centralizing control logic, enterprises eliminate brittle point-to-point integrations, enforce error-handling policies, and enable scalable, repeatable architectures.

Flow Patterns and Interaction Models

Common orchestration patterns include:

- Linear sequences for straightforward pipelines

- Parallel branching for concurrent task execution

- Event-driven triggers reacting to file arrivals or anomaly signals from AI models

- Conditional routing based on business rules or AI-driven classifications

Tools like Apache Airflow and Prefect offer declarative pipelines and operators for AI and data tasks.

Coordination Planes

- Control Plane: Manages workflow definitions, schedules, retry policies, and access controls.

- Data Plane: Transfers inputs and outputs via APIs, message queues, or file systems with integrity and traceability.

- Execution Plane: Hosts agents, containers, or microservices executing tasks under orchestration directives.

Frameworks such as Camunda abstract these layers, enabling seamless integration of custom AI microservices via gRPC or REST adapters.

Building Blocks

- Workflow Designer: Visual or code-based process definition environment.

- Task Executors: Workers interfacing with databases, message brokers, RPA platforms, and AI services.

- Event Bus: Publish-subscribe infrastructure propagating state changes and triggers.

- Monitoring Dashboard: Real-time console for pipeline health, task performance, and SLA adherence.

- Audit Trail: Persistent execution records for compliance and root-cause analysis.

Open-source solutions like Kubeflow or commercial platforms such as MuleSoft can assemble these components according to security and governance requirements.

Case Example: AI-Powered Order Processing

- New orders publish events to the orchestration event bus.

- An NLU service validates customer identity.

- Conditional routing directs high-risk orders to a fraud detection microservice.

- Approved orders trigger RPA bots built with UiPath, Automation Anywhere, or Blue Prism to interact with legacy ERP systems.

- Parallel tasks update CRM records and dispatch confirmation emails.

- Exceptions invoke a human review workflow via a virtual agent.

This cohesive flow reduces manual errors, ensures consistency, and provides a clear audit trail.

Adoption Considerations

- Governance: Define roles and permissions for workflow management.

- Service Catalog: Maintain a registry of AI components, data sources, and RPA endpoints.

- Version Control: Apply CI/CD to workflow definitions with testing and systematic rollouts.

- Observability: Integrate metrics and logs into incident management tools.

- Training and Change Management: Equip teams to author, monitor, and troubleshoot orchestrated workflows.

Integrating AI Agents into Enterprise Processes

Integrating AI agents into existing enterprise processes embeds autonomous, learning-driven capabilities within operational workflows. This conjunction of cognitive automation and RPA extends automation to dynamic, context-rich activities.

Agent Typologies

- Autonomous Process Agents: Monitor event streams and initiate workflows, for example supply chain agents generating procurement requests.

- Conversational Virtual Agents: Engage users via chat or voice; platforms like Rasa and Google Dialogflow power scalable deployments.

- Data Enrichment Agents: Augment datasets via external APIs and knowledge graphs using Amazon Neptune or Neo4j.

- Analytical and Predictive Agents: Apply machine learning models via OpenAI APIs or Azure Machine Learning to generate forecasts and risk assessments.

- RPA Agents: Automate interactions with legacy systems and batch processes using UiPath, Automation Anywhere, or Blue Prism.

Supporting Architecture

- Orchestration Engine: Coordinates tasks via APIs from frameworks like those listed on AgentLinkAI.

- Integration Layer: Middleware exposing REST, gRPC, or messaging interfaces; message brokers such as Apache Kafka or RabbitMQ.

- Data Platform: Unified repository via AWS SageMaker or Google Cloud AI Platform.

- Knowledge Management: Semantic stores and vector databases like Coveo and Pinecone.

- Security and Governance: IAM, encryption, and audit logs managed with Splunk or the ELK Stack.

- Monitoring and Observability: Telemetry captured by Prometheus, Grafana, or Datadog.

Integration Patterns

- Event-Driven Architecture: Agents subscribe to event streams for loose coupling.

- Request-Response APIs: Synchronous interactions for real-time tasks.

- Command Messaging: Orchestrator publishes commands to message buses with correlation IDs.

- Service Mesh: Istio or Linkerd secure and observe microservice communications.

- Adapter-Based Connections: Adapters bridge legacy systems via UI automation or custom connectors.

Lifecycle Management

- Dynamic Provisioning: Container orchestration (e.g., Kubernetes) scales agent instances on demand.

- Versioning and Rollouts: Blue-green and canary deployments ensure safe updates.

- Health Checks: Self-assessments trigger automated restarts.

- Configuration Management: Centralized settings via HashiCorp Consul or AWS Systems Manager.

- Logging and Traceability: Audit logs capture decisions and data flows.

Security and Ethical Considerations

- IAM: Service identities, OAuth tokens, and mTLS certificates enforce least privilege.

- Data Privacy: GDPR and CCPA compliance via data masking and anonymization.

- Ethical AI: Bias mitigation, model explainability, and decision rationale logging.

- Continuous Compliance: Automated audits and alerts for policy deviations.

Strategic Impact

- Throughput: Agents process tasks 24/7, freeing human resources.

- Accuracy: Consistent business rule application reduces errors.

- Responsiveness: Event-driven agents swift reaction to critical incidents.

- Personalization: Recommendation and conversational bots engage customers at scale.

- Innovation: Modular agents accelerate new workflow assembly.

Execution Monitoring and Next-Stage Handoffs

Execution logs generated by RPA bots and AI agents capture the details, metrics, and outcomes of automated tasks. Standardizing log schemas and establishing robust storage, monitoring, and handoff mechanisms ensure traceability, compliance, and seamless data flow into subsequent AI-driven stages.

Log Artifact Taxonomy

- Execution Trace Logs: Timestamped records of UI interactions, API calls, and data events.

- Performance Metrics: Statistics on task durations, throughput, and resource utilization.

- Error and Exception Logs: Structured records with codes, stack traces, and retry actions.

- Audit Trails: Immutable chains of custody linking transactions to credentials and contexts.

- Transaction Summaries: High-level overviews of process sequences and statuses.

Standardizing Schemas

- Timestamp: ISO-8601

- Agent Identifier: e.g., UiPath Robot ID

- Transaction ID: Unique key for end-to-end correlation

- Step Name and Status Code: SUCCESS, ERROR, RETRY

- Payload References and Performance Data

- Error Details and Remediation Guidance

Compliance with these schemas simplifies integration with centralized platforms like Splunk or the ELK Stack.

Storage, Retention, and Access Control

- Retention Policies: Align with regulatory mandates and cost considerations.