Orchestrating Professional Services with AI Agents: An End-to-End Project Management Workflow

Introduction

Industry Context and Operational Complexity

Professional services firms operate at the intersection of specialized expertise and rapidly evolving client demands. Market shifts, regulatory frameworks, and technological innovation increase engagement complexity, requiring coordination across consultants, subject-matter experts, and external partners. Traditional reliance on spreadsheets, email threads, and ad hoc processes creates transparency gaps, governance challenges, and risks of misalignment with strategic objectives. Without a formal framework, firms face bottlenecks, rework, and unpredictable outcomes as engagements scale in scope, budget constraints tighten, and quality standards intensify.

Benefits of a Structured AI-Driven Workflow

Embedding an AI-driven orchestration layer into project management delivers three core advantages:

- Consistency: Standardized templates, validation rules, and automated checks enforce best practices for intake, scope definition, and resource planning.

- Error Reduction: Intelligent data capture and rule-based engines identify omissions or inconsistencies early, preventing costly downstream corrections.

- Predictability: Defined stage gates and handoff points enable accurate forecasting of timelines, budgets, and staffing needs.

These benefits shift firms from reactive firefighting to proactive governance. By leveraging AI for natural language understanding, predictive analytics, and optimization, organizations gain data-driven insights that support sustained operational excellence and high client satisfaction.

AI-Enhanced Project Intake

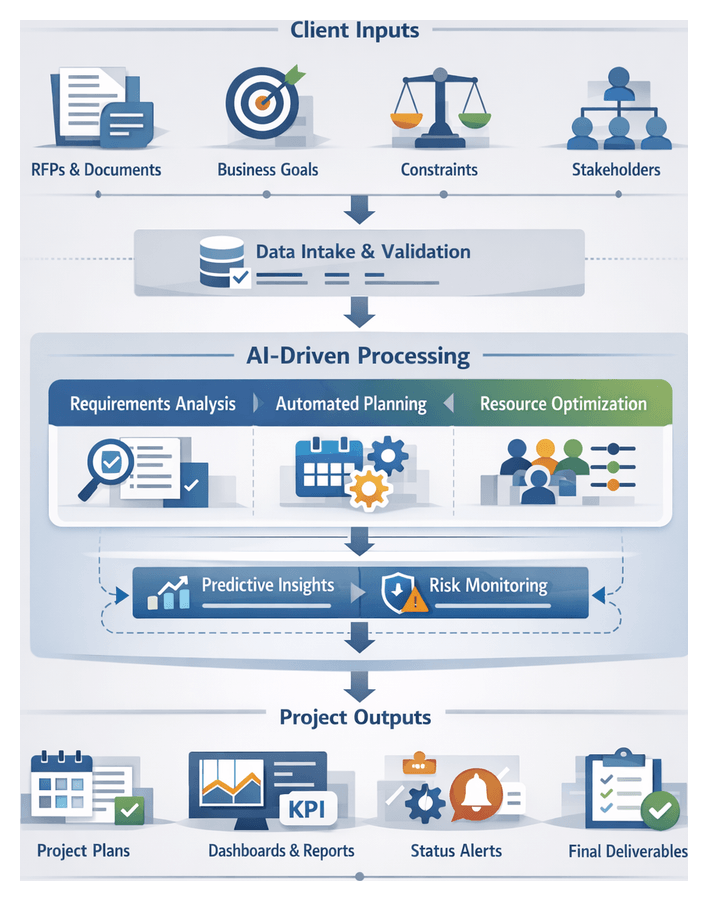

The intake stage is the gateway to the project life cycle. AI integration transforms manual proposals and unstructured emails into a rich, standardized dataset that underpins planning and execution.

AI-Driven Data Capture and Standardization

Proposals arrive in varied formats—email narratives, PDFs, slide decks, or web submissions. AI agents use advanced document understanding to extract key fields such as project titles, stakeholder names, deliverables, timelines, and budgets. Tools like IBM Watson Discovery and Google Cloud Document AI employ optical character recognition and transformer models to map unstructured text into structured schemas. Standardization libraries reconcile terminology and units—aligning “consulting hours,” “advisory days,” and “professional service units” against internal taxonomies maintained in graph databases—ensuring consistent inputs for downstream modules.
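The unit reconciliation described above can be sketched as a simple lookup. This is a minimal illustration, not a production design: the `HOURS_PER_UNIT` table and its conversion factors are assumptions made up for the example, and a real deployment would load them from the firm's own taxonomy.

```python
# Hypothetical conversion table: hours represented by one unit of each term.
# The factors are illustrative assumptions, not values from a real taxonomy.
HOURS_PER_UNIT = {
    "consulting hours": 1.0,
    "advisory days": 8.0,
    "professional service units": 4.0,
}


def to_hours(quantity: float, unit: str) -> float:
    """Convert an effort quantity to hours using the lookup table."""
    key = unit.strip().lower()
    if key not in HOURS_PER_UNIT:
        # Unknown terms are surfaced for review rather than guessed at.
        raise ValueError(f"unmapped effort unit: {unit!r}")
    return quantity * HOURS_PER_UNIT[key]


print(to_hours(5, "Advisory Days"))  # 40.0
```

Raising on unmapped terms keeps unfamiliar vocabulary visible to intake coordinators instead of silently passing inconsistent values downstream.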

Natural Language Processing for Proposal Interpretation

NLP pipelines apply named entity recognition, intent classification, and sentiment analysis to interpret client priorities, risk indicators, and implicit constraints. Engines such as OpenAI’s GPT-4 and Hugging Face transformers fine-tuned on domain-specific corpora identify urgency phrases (“must launch by Q3,” “regulatory deadline”) and categorize requirements into strategic objectives, compliance needs, technical specifications, or quality criteria. Correlating these classifications with historical data in cloud data lakes enables AI agents to infer feasibility scores and suggest clarifications before formal approval.

Automated Validation and Feedback

Extracted data undergoes intelligent validation against internal and external reference systems. Budget figures are compared against averages in Oracle NetSuite or SAP S/4HANA, while timelines are checked against delivery benchmarks stored in project portfolio management tools. Discrepancies trigger automated alerts through workflow and decision engines such as Camunda, prompting intake coordinators to review specific fields. This reduces back-and-forth communications and accelerates decision cycles.
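A benchmark comparison of this kind might look like the sketch below. The function name, the `Alert` type, and the 25% tolerance are assumptions chosen for illustration; in practice the benchmark would come from the ERP or portfolio system and the threshold from governance policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    field: str
    message: str


def check_against_benchmark(
    field: str, value: float, benchmark: float, tolerance: float = 0.25
) -> Optional[Alert]:
    """Return an Alert when value deviates from benchmark by more than tolerance."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    deviation = abs(value - benchmark) / benchmark
    if deviation > tolerance:
        return Alert(field, f"{field} deviates {deviation:.0%} from benchmark")
    return None  # within tolerance, no review needed


alert = check_against_benchmark("budget", 150_000, 100_000)
print(alert.message)  # budget deviates 50% from benchmark
```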

Integration with Enterprise Systems

Validated intake records flow into CRM, ERP, and portfolio management platforms via integration platforms as a service. Solutions like MuleSoft, Microsoft Power Automate, and Apache NiFi enable low-code connectors that map AI-processed outputs to target APIs. Client information in Salesforce is enriched, contract metadata in SAP is updated, and project initiation triggers procurement requisitions—maintaining a single source of truth and auditability.

Priority Scoring and Recommendations

Machine learning models assess each opportunity’s strategic value by analyzing win rates, margin performance, client lifetime value, and resource alignment. Models built with frameworks like TensorFlow or PyTorch compute priority scores, while CRM analytics modules such as Salesforce Einstein present recommendations to sales leaders. Automated workflows route low-scoring proposals for leadership review or propose adjustments to staffing, deal structure, and risk mitigation strategies.

Continuous Learning and Improvement

Project outcomes feed back into the AI ecosystem. MLOps platforms like MLflow and Azure Machine Learning track model performance, manage versioning, and orchestrate retraining pipelines based on real delivery metrics. User feedback from intake coordinators and collaboration tools injects active learning signals, enabling models to adapt to evolving terminology, regulatory changes, and client expectations.

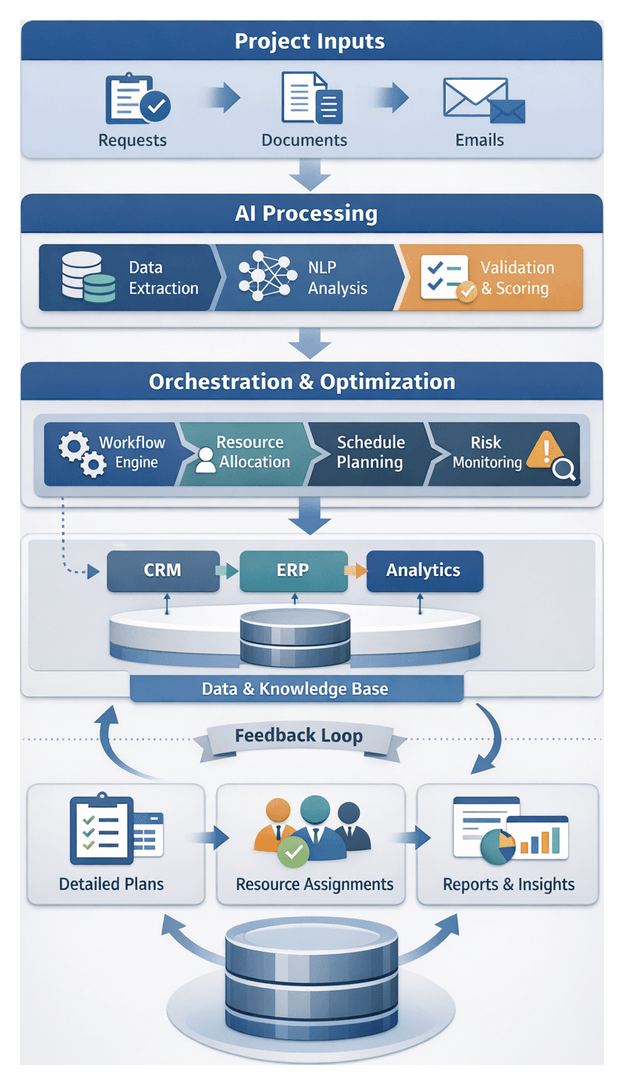

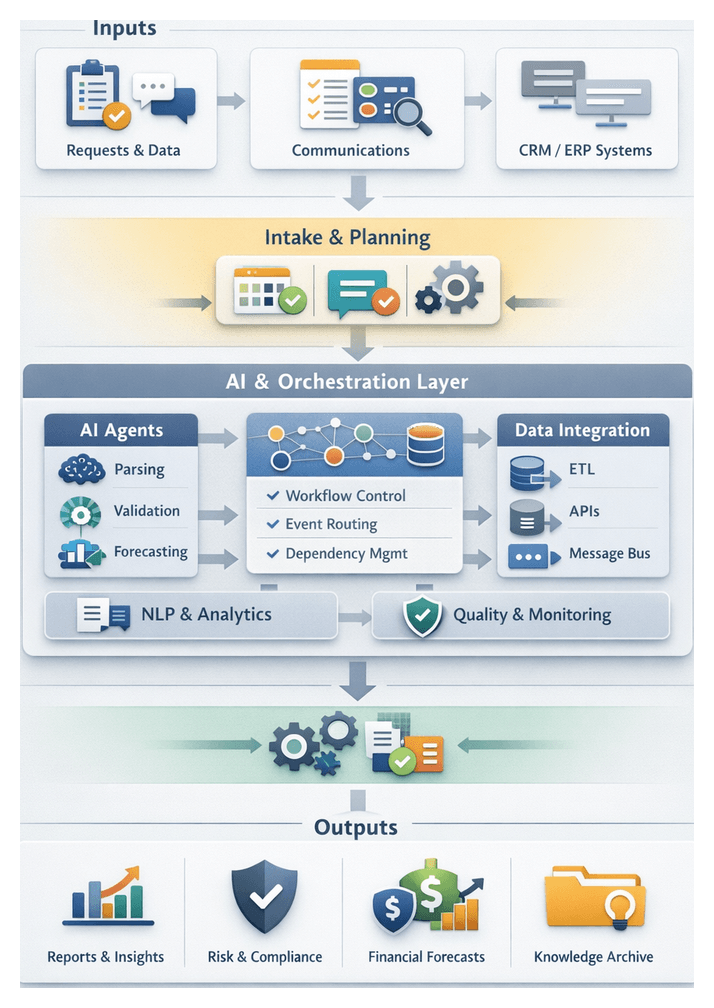

Architecture and Orchestration

The solution architecture provides a blueprint for orchestrating data flows, component interactions, and service integrations across all project stages. A layered model separates concerns, simplifies development, and supports independent scaling of critical services.

Layered Architectural Model

- Presentation Layer: Web portals, API gateways, and virtual assistants that capture intake forms and display dashboards. Outputs: Validated inputs, API calls, event messages.

- Orchestration Layer: Workflow engines and message brokers managing task sequences, AI triggers, and notifications. Dependencies: Messaging infrastructure (Kafka, RabbitMQ), rules engines, scheduling services.

- AI Services Layer: NLP parsers, predictive analytics, and optimization agents. Dependencies: Model serving platforms such as Google Cloud AI, AWS SageMaker.

- Data Management Layer: Relational and NoSQL databases, document stores, and data lakes. Outputs: Persisted artifacts, audit trails, historical analytics.

Core Components and Interactions

Modular components communicate through an event bus or message broker, ensuring loose coupling and reliable delivery. Key components include:

- Intake Agents: Validate fields and extract metadata, publishing standardized records to requirement parsers.

- Requirement Parsers: Use NLP to identify deliverables and constraints, routing structured objects to scope workflows.

- Resource Optimizers: Apply algorithms to match skills with needs, feeding allocations to the scheduling engine.

- Schedule Generators: Build timelines based on dependencies and availability, delivering schedules to task assigners.

- Task Assigners: Allocate tasks dynamically, integrating with collaboration hubs and notification services.

- Monitoring Dashboards: Aggregate KPIs and visualize trends, alerting risk engines and executives.

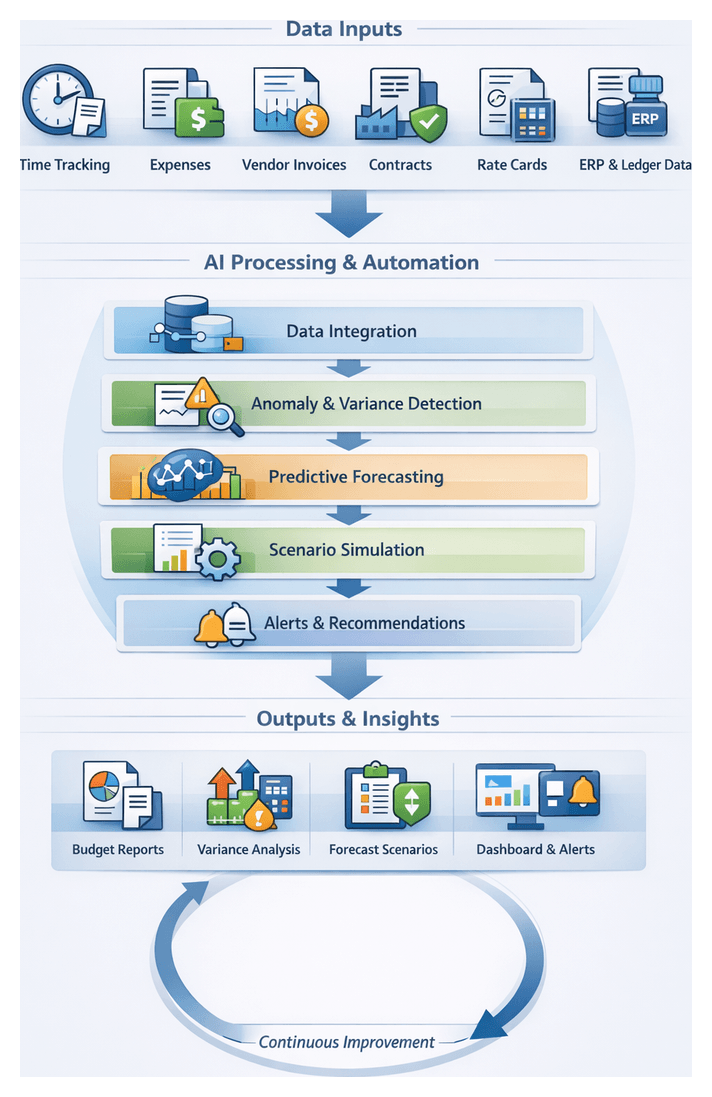

- Financial Modules: Consolidate time and expense data for variance analysis, supplying forecasts to analytics services.

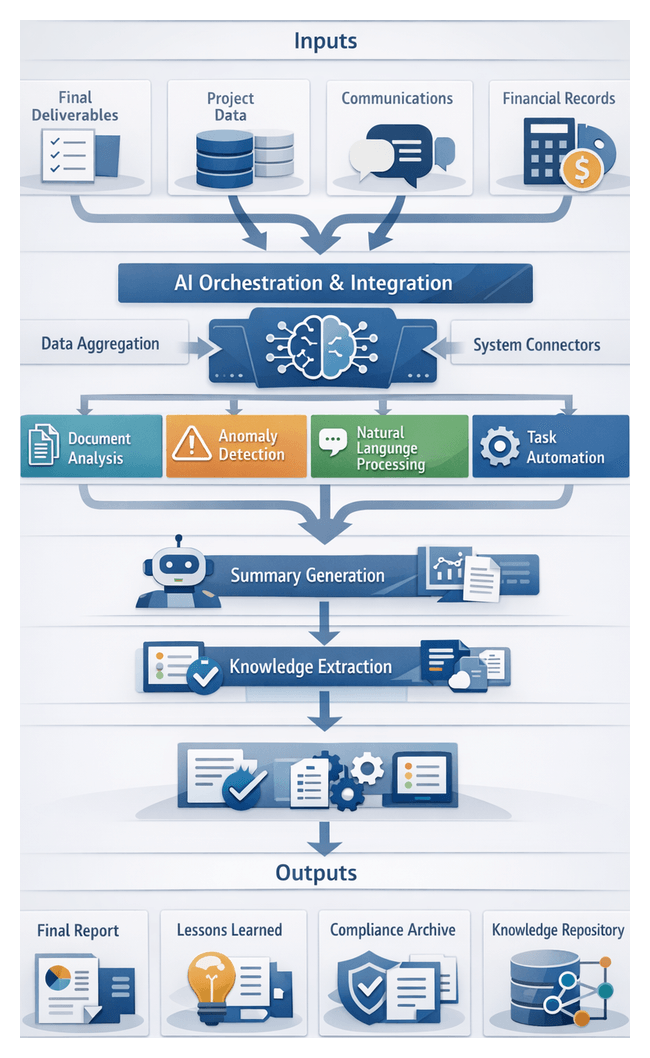

- Closure Repositories: Archive deliverables and lessons learned, exposing knowledge packages to audit systems.

Data Flow and Handoff Contracts

Clear contracts define message schemas, validation rules, delivery protocols, security requirements, and versioning strategies. Schema registries and API specifications prevent mismatches and support backward compatibility. Automated gates and monitoring alerts detect contract violations, triggering remediation workflows.
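As a rough illustration of a handoff contract check, the sketch below validates required fields, types, and the schema version of a message. The field names and the set of supported major versions are hypothetical; a real system would typically rely on a schema registry or a formal schema language rather than hand-written checks.

```python
# Hypothetical contract: field name -> accepted type(s).
REQUIRED_FIELDS = {"intake_id": str, "schema_version": str, "budget": (int, float)}
SUPPORTED_MAJOR_VERSIONS = {1}  # assumption: consumers understand 1.x only


def contract_violations(message: dict) -> list[str]:
    """Return a list of human-readable contract violations (empty if valid)."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in message:
            problems.append(f"missing field: {name}")
        elif not isinstance(message[name], expected):
            problems.append(f"wrong type for field: {name}")
    version = message.get("schema_version")
    if isinstance(version, str):
        major = version.split(".")[0]
        if not major.isdigit() or int(major) not in SUPPORTED_MAJOR_VERSIONS:
            problems.append(f"unsupported schema version: {version}")
    return problems


print(contract_violations({"intake_id": "A1", "schema_version": "2.0"}))
# ['missing field: budget', 'unsupported schema version: 2.0']
```

Checking only the major version is one common way to stay backward compatible: minor revisions may add optional fields without breaking existing consumers.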

Integration Patterns and Dependency Management

- Event-Driven Orchestration: Services react to published events, enabling asynchronous processing, retries, and dead-letter queues.

- API-First Design: REST or gRPC interfaces with SDKs and client libraries accelerate adoption.

- Microservices Isolation: Containerized or serverless deployments reduce blast radius and simplify upgrades.

- Centralized Configuration: Feature flags and policy engines enable real-time tuning of AI models and thresholds.

- Service Discovery: Dependency injection frameworks and registry services ensure reliable endpoint resolution.
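The retry and dead-letter behavior named under event-driven orchestration can be sketched in memory as follows. This is an illustrative stand-in, assuming a simple in-process queue; a broker such as Kafka or RabbitMQ would provide these mechanics in a real deployment, and `process_events` is a hypothetical helper name.

```python
from collections import deque


def process_events(events, handler, max_attempts=3):
    """Deliver each event to handler, retrying on failure.

    Events that still fail after max_attempts are returned as dead letters.
    """
    queue = deque((event, 1) for event in events)
    dead_letters = []
    while queue:
        event, attempt = queue.popleft()
        try:
            handler(event)
        except Exception:
            if attempt < max_attempts:
                queue.append((event, attempt + 1))  # re-queue for a later retry
            else:
                dead_letters.append(event)  # give up and park for inspection
    return dead_letters
```

Parking failed events in a dead-letter collection keeps one bad message from blocking the rest of the queue while preserving it for later diagnosis.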

Scalability, Security, and Governance

Auto-scaling clusters, stateless services, and partitioned data stores support performance demands. Security controls include mutual TLS, role-based access control, and audit logging. A governance layer enforces compliance with GDPR, SOC 2, and industry standards, maintaining metadata on origin, transformations, and access history.

- Audit Trails: Log every data mutation with timestamps, user or agent identifiers, and version history.

- Compliance Reporting: Prebuilt templates extract metrics for audits.

- Model Governance: Versioned AI models with documented training data, performance metrics, and bias assessments.

Chapter 1: Opportunity Identification and Project Intake

Intake Objectives and Foundational Inputs

In professional services, the intake stage transforms unstructured client inquiries into standardized data that fuels planning, resource allocation, and scheduling. A robust intake process mitigates miscommunication, aligns stakeholders early, and establishes transparency, efficiency, predictability, and scalability across engagements.

The primary objectives are:

- Capture Client Intent: Extract core goals and outcomes from emails, proposals, or presentations to prevent scope drift.

- Standardize Input Data: Record budgets, compliance mandates, and performance metrics in a consistent template to support analytics and automated workflows.

- Identify Early Risks: Surface unrealistic timelines, conflicting requirements, and regulatory gaps through structured validation rules.

- Secure Stakeholder Commitment: Obtain formal sign-off on intake summaries to align expectations before detailed planning.

Key foundational inputs include:

- Client proposal artifacts such as RFPs, contracts, and briefing documents.

- Business objectives and KPIs, for example revenue targets, cost savings, or compliance thresholds.

- Scope boundaries detailing deliverables, geographic reach, user populations, and service levels.

- Budgetary parameters including estimates, billing rates, and payment milestones.

- Timeline constraints with target dates, milestones, and blackout periods.

- Resource profiles covering required skills, certifications, and availability.

- Technical prerequisites such as infrastructure dependencies, data access permissions, and security protocols.

- Stakeholder directory listing decision-makers, technical contacts, and communication preferences.

Prerequisites for effective intake include a governance framework defining approval paths, a standardized digital form or portal with conditional logic, an automated validation engine, integration with CRM systems, AI-powered natural language processing—enabled by solutions such as the OpenAI GPT-4 API—and security controls for role-based access, encryption, and audit trails. Training and adoption plans ensure teams follow best practices and maximize tool usage.

Automated Intake Workflow and Validation

The automated intake workflow centralizes submissions from web forms, emails, CRM tickets, and chatbots into a unified queue. An orchestration layer connects to each channel via APIs, performing ingestion, metadata enrichment, preliminary classification, and secure storage. This ensures every request receives a unique intake ID and maintains end-to-end visibility through integration with identity directories and notification services.

Automated Validation and Quality Assurance

An AI-driven validation engine combines rule-based scripts with natural language processing to verify data integrity. The rule-based layer checks required fields, value formats, duplicates, and attachment conventions. Concurrently, NLP modules extract deliverable types, compliance standards, and risk indicators, flagging contradictions and mapping unstructured descriptions to templates via domain ontologies. This generates a dashboard reporting pass, warning, or fail statuses for intake coordinators.
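The rule-based layer and its pass, warning, or fail statuses could be approximated as in the sketch below. The required field names, the ISO date format, and the rule that a missing attachment is only a warning are all assumptions made for the example.

```python
import re

REQUIRED = ("client_name", "budget", "target_date")  # hypothetical field names
DATE_PATTERN = re.compile(r"^\d{4}-\d{2}-\d{2}$")


def validate_intake(record: dict) -> tuple[str, list[str]]:
    """Return (status, issues) where status is 'pass', 'warning', or 'fail'."""
    issues = []
    status = "pass"
    for name in REQUIRED:
        if record.get(name) in (None, ""):
            issues.append(f"missing required field: {name}")
            status = "fail"
    date = record.get("target_date")
    if date and not DATE_PATTERN.match(str(date)):
        issues.append("target_date is not in YYYY-MM-DD format")
        status = "fail"
    # A record that is otherwise valid but has no attachments only warns.
    if status == "pass" and not record.get("attachments"):
        issues.append("no attachments supplied")
        status = "warning"
    return status, issues


print(validate_intake({"client_name": "Acme", "target_date": "Q3"}))
# ('fail', ['missing required field: budget', 'target_date is not in YYYY-MM-DD format'])
```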

Exception Handling and Stakeholder Alerts

Records with missing or inconsistent data enter an exception sub-flow where automated notifications request client clarification. Domain-specific queries are routed to subject matter experts via collaboration platforms. Integrated chatbots powered by knowledge bases answer real-time questions. Every interaction is logged to maintain an audit trail. Critical failures require approver override before proceeding.

Handoff Readiness and Continuous Improvement

Upon validation, the system packages structured data into JSON or XML payloads and pushes them via REST API to requirements gathering platforms. Role-based notifications alert project managers and resource planners. Dashboards update to reflect the record’s status. Intake coordinators perform final sanity checks and business leads grant digital sign-off.

Metrics such as time to validation, exception loops, and common failure causes feed continuous improvement. Data scientists tune validation rules and NLP models, analysts refine intake templates with inline guidance, training materials address confusion patterns, and RPA bots automate frequent manual corrections. These feedback loops reduce exceptions, accelerate cycles, and enhance the client experience.

AI Parsing and Stakeholder Coordination

AI parsing transforms unstructured proposals—PDFs, slide decks, emails, or audio recordings—into structured data and aligns stakeholders for review. Advanced natural language processing, machine learning models, and collaboration platforms accelerate intake cycles, reduce manual effort, and ensure accurate capture of critical details.

Natural Language Processing for Proposal Interpretation

A multi-stage NLP pipeline performs text segmentation, OCR for scanned content, tokenization, part-of-speech tagging, dependency parsing, and sentiment detection. Cloud services such as IBM Watson Natural Language Understanding and Microsoft Azure Cognitive Services Text Analytics provide pre-trained models optimized for professional language.

Entity Extraction and Relationship Mapping

Named entity recognition identifies client names, dates, budget figures, deliverable descriptions, and contractual terms. Tools like spaCy and the OpenAI API detect domain-specific entities when fine-tuned. Extracted entities form a semantic graph linking deliverables to dependencies and approval authorities, enabling reviewers to query relationships without reading entire documents.
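To make the idea of a queryable semantic graph concrete, here is a small in-memory sketch. The `EntityGraph` class, the relation names, and the sample entities are hypothetical; a production system would more likely store these links in a graph database.

```python
from collections import defaultdict


class EntityGraph:
    """Minimal labeled graph: (source, relation) -> set of targets."""

    def __init__(self):
        self._edges = defaultdict(set)

    def link(self, source: str, relation: str, target: str) -> None:
        self._edges[(source, relation)].add(target)

    def related(self, source: str, relation: str) -> list[str]:
        # .get avoids creating empty entries for unknown keys.
        return sorted(self._edges.get((source, relation), set()))


graph = EntityGraph()
graph.link("Migration plan", "depends_on", "Data audit")
graph.link("Migration plan", "depends_on", "Access approval")
graph.link("Migration plan", "approved_by", "CFO")

print(graph.related("Migration plan", "depends_on"))
# ['Access approval', 'Data audit']
```

A reviewer can then ask which items a deliverable depends on, or who approves it, without rereading the source proposal.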

Semantic Classification and Prioritization

Supervised and unsupervised learning tag content by theme (compliance, technical scope, commercial terms) and assign priority based on patterns from historical projects. Topic modeling and transformer-based classifiers group related requirements for clarity and rank items such as regulatory constraints or high-value deliverables so reviewers can focus on them first.

AI-Driven Stakeholder Identification and Role Assignment

AI analyzes organizational charts, project histories, and communication logs to recommend approvers and subject matter experts. Automation tools such as UiPath Document Understanding and ServiceNow AI Document Intelligence then assign review tasks based on predicted roles, supporting correct routing and multi-level sign-off where required.

Collaboration Platforms and Notification Systems

Integrated hubs centralize communication, embed context, and automate notifications. Slack bots and Microsoft Teams integrations alert stakeholders when parsed summaries are ready. Platforms such as Asana generate task cards listing actions and deadlines. Threaded discussions and audit logs preserve decision paths and compliance evidence.

Continuous Learning and Model Refinement

Reviewer corrections and feedback signal misclassifications or missing entities, triggering automated retraining pipelines. MLOps frameworks manage version control, performance monitoring, and deployment of updated models. Metrics on precision, recall, and processing time guide refinements, ensuring the parsing engine improves over time and reduces manual intervention.
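Precision and recall for an extraction run can be computed by comparing the entities the model produced with those a reviewer confirmed. The sketch below assumes both are available as sets of strings; the sample values are invented for illustration.

```python
def precision_recall(predicted: set, actual: set) -> tuple[float, float]:
    """Compute precision and recall of predicted items against reviewed truth."""
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    return precision, recall


predicted = {"Acme Corp", "2025-09-30", "CFO", "Berlin"}
actual = {"Acme Corp", "2025-09-30", "CFO", "USD 50,000", "Data audit"}

print(precision_recall(predicted, actual))  # (0.75, 0.6)
```

Here three of the four predicted entities are correct (precision 0.75), and three of the five true entities were found (recall 0.6). Tracking both over time shows whether retraining reduces spurious extractions, missed entities, or both.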

Standardized Intake Output and Handoff

The standardized intake output packages validated data into a consistent, machine-readable format and executes a structured handoff protocol. This transition ensures downstream teams receive complete information for requirements gathering without manual re-entry or interpretation errors.

Key Deliverables and Data Outputs

- Structured intake record as JSON or XML with project details, objectives, and budget estimates.

- Client profile summary generated by NLP agents, with links to external systems like Salesforce for enriched context.

- Stakeholder matrix listing decision-makers, experts, and communication preferences.

- Risk and flag list enumerating potential issues detected during validation.

- Validation report documenting checks, missing fields, and resolution actions.

- Metadata package including timestamps, data lineage, NLP model versions, and processing status codes.

Dependencies and Integration Points

Outputs rely on upstream platforms such as Microsoft Power Automate for data capture, IBM Watson for NLP parsing, rules engines like Drools for validation, security gateways for compliance, and middleware such as an ESB or an iPaaS like MuleSoft for routing to downstream systems.

Handoff Process to Requirements Gathering

- Package outputs into a secure payload with checksum verification.

- Publish to a central repository, for example SharePoint Online or Git-based systems, with metadata tags.

- Trigger the requirements workflow in tools like Jira or ServiceNow via event-driven iPaaS scripts.

- Notify scope definition leads with links to artifacts and outstanding items.

- Record handoff events in an audit log with user identifiers and timestamps.

- Obtain digital confirmation of receipt and governance checks before completing the handoff.

This repeatable, transparent handoff mechanism eliminates manual bottlenecks, maintains data integrity, and ensures requirements gathering begins with trusted information, accelerating project timelines and driving predictable delivery outcomes.
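The first handoff step, packaging outputs with checksum verification, might be sketched as follows using SHA-256 from Python's standard library. The payload layout (`body` plus `sha256`) is an assumption for the example, and a checksum only detects accidental corruption; guarding against deliberate tampering would need a keyed signature.

```python
import hashlib
import json


def package_payload(record: dict) -> dict:
    """Serialize record deterministically and attach its SHA-256 digest."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
    return {"body": body, "sha256": digest}


def verify_payload(payload: dict) -> bool:
    """Recompute the digest on receipt and compare it with the attached one."""
    expected = hashlib.sha256(payload["body"].encode("utf-8")).hexdigest()
    return expected == payload["sha256"]


payload = package_payload({"intake_id": "A1", "budget": 120000})
print(verify_payload(payload))  # True
```

Sorting keys before hashing makes the digest independent of dictionary ordering, so the same record always produces the same checksum.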

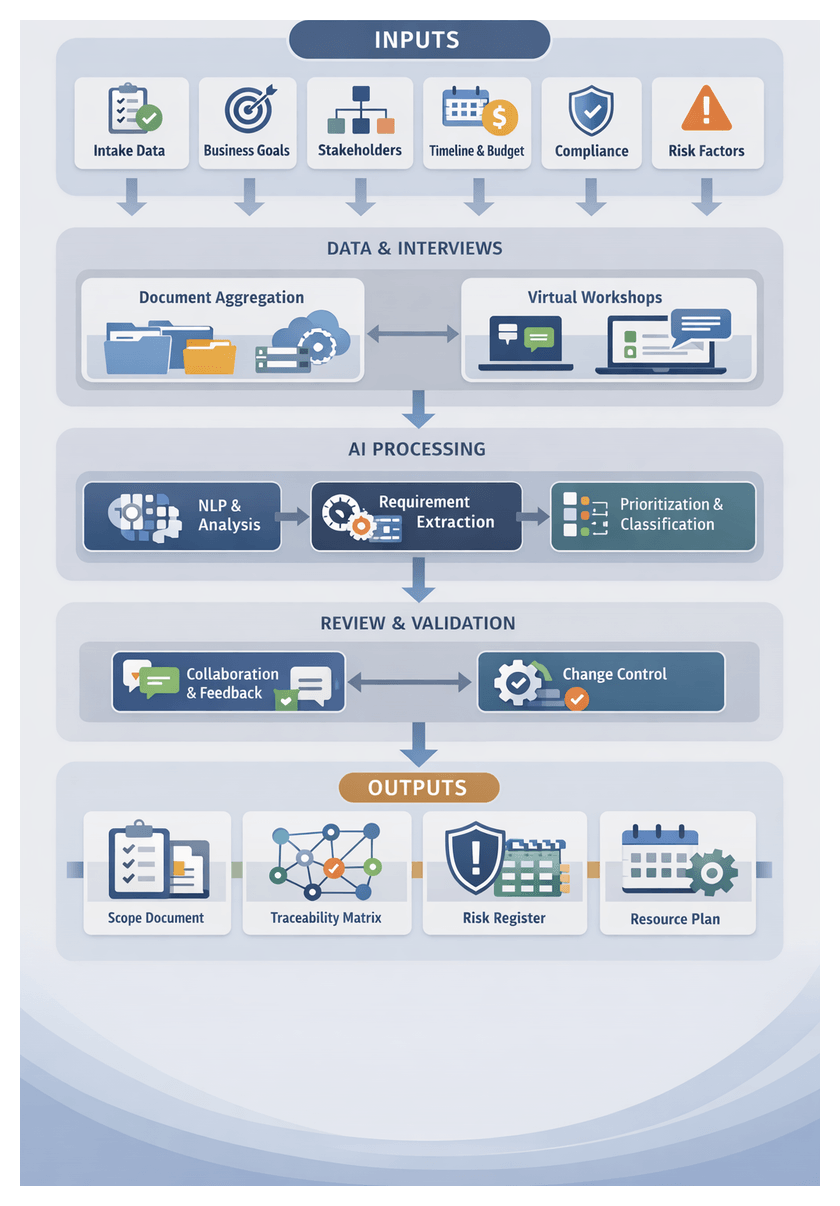

Chapter 2: Requirement Gathering and Scope Definition

Purpose and Strategic Importance of Scope Definition

The Scope Definition stage translates validated intake information into a detailed project blueprint, outlining deliverables, constraints, and measurable success criteria. By defining boundaries and expectations early, professional services firms establish a foundation for resource planning, scheduling, budgeting, and risk management. This structured approach reduces ambiguity, aligns stakeholder expectations, and minimizes costly rework or scope creep. Moreover, precise documentation of engagement parameters enables confident resource allocation, effective procurement negotiations, and proactive risk mitigation. Firms excelling at scope definition strengthen their reputation, improve proposal win rates, and achieve higher margins by avoiding late-stage changes and disputes.

Essential Inputs and Initiation Conditions

Successful scope definition depends on assembling comprehensive inputs and satisfying key prerequisites. Required inputs include:

- Validated Intake Package: Consolidated client proposal, intake form data, and stakeholder feedback.

- Business Objectives Document: Agreed goals, success metrics, and priority outcomes.

- Stakeholder Roster and Roles: Internal and external participants with decision-making authority.

- Preliminary Timeline and Milestones: Target dates for deliverables and governance checkpoints.

- Budget Parameters: Initial cost estimates, funding limits, and billing models.

- Regulatory and Compliance Requirements: Industry mandates, contractual obligations, and data protection standards.

- Risk Identification Log: Draft register of potential technical, legal, and resource challenges.

- Historical Benchmarks: Performance data from similar engagements for realistic estimates.

- Technical Constraints: Details on existing systems, network environments, and integration dependencies.

- Organizational Standards: Internal frameworks, templates, and process guidelines.

Initiation conditions must include:

- Formal Intake Approval: Stakeholder sign-off confirming readiness.

- Confirmed Availability: Scheduled workshops and interviews with client and internal teams.

- Data Access: Credentials to legacy documents, technical specifications, and policies.

- Data Quality Verification: Completeness and accuracy checks for intake information.

- Communication Channels: Established collaboration platforms and notification workflows.

- Legal Clearance: Executed non-disclosure agreements and compliance approvals.

- Baseline Objectives: Alignment on deliverables, scope boundaries, and acceptance criteria.

- Tool Configuration: Deployment of specialized software for AI analysis and document management.

The workflow comprises four primary phases: data aggregation, stakeholder engagement, AI-driven analysis, and documented scope handoff. Each phase coordinates among client representatives, project managers, subject-matter experts, and technology systems to ensure a repeatable, transparent process.

Data Ingestion and Aggregation

- Automated Pull: Scheduled jobs query repositories such as SharePoint and Box to ingest requirement artifacts.

- Metadata Enrichment: AI agents tag documents by industry, service line, and regulatory domain using tools like Microsoft Azure Cognitive Services.

- Version Control: Each item is tracked with unique identifiers, timestamps, and source references for auditability.

Virtual Interviews and Collaborative Workshops

- Scheduling Integration: Calendar APIs propose optimal slots based on participant availability.

- Pre-Interview Briefs: Context packs generated from intake data align participants on objectives.

- Real-Time Transcription: Captures dialogue, attributes speakers, and identifies action items.

- Action Item Extraction: AI agents flag commitments, deadlines, and decisions, assigning follow-up tasks automatically.
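The scheduling step can be illustrated with a toy availability model. This sketch assumes each participant's free time has already been reduced to a set of hour slots; real calendar APIs usually return busy intervals, so a conversion step would be needed first.

```python
def common_slots(availability: dict[str, set[int]]) -> list[int]:
    """Return the hour slots in which every participant is free, in order."""
    if not availability:
        return []
    shared = set.intersection(*availability.values())
    return sorted(shared)


availability = {
    "client": {9, 10, 14},
    "project manager": {10, 11, 14},
    "subject-matter expert": {10, 14, 15},
}

print(common_slots(availability))  # [10, 14]
```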

AI-Powered Requirement Extraction

- Segmentation: NLP models break transcripts and documents into candidate requirements.

- Entity Recognition: Tools such as OpenAI models and IBM Watson Discovery identify system components, user roles, and performance metrics.

- Priority Scoring: Machine learning classifiers assign priority levels based on stakeholder roles, keywords, and risk factors.

Classification, Prioritization, and Traceability

- Scope Segmentation: Requirements are grouped into user experience, infrastructure, security, and compliance workstreams.

- Priority Matrix: A weighted scoring model evaluates business value, complexity, and regulatory urgency.

- Traceability Ledger: Each requirement links back to its source and approval history, supporting impact analysis.
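A weighted scoring model for the priority matrix could be as simple as the sketch below. The weights, the 1 to 5 rating scale, and the choice to treat complexity as a penalty are assumptions for illustration; each firm would calibrate its own.

```python
# Hypothetical weights; complexity is negative because it lowers priority.
WEIGHTS = {"business_value": 5, "complexity": -2, "regulatory_urgency": 3}


def priority_score(ratings: dict[str, int]) -> int:
    """Weighted sum of 1-5 ratings across the matrix criteria."""
    return sum(weight * ratings[criterion] for criterion, weight in WEIGHTS.items())


def rank(requirements: dict[str, dict[str, int]]) -> list[str]:
    """Order requirement names from highest to lowest score."""
    return sorted(
        requirements,
        key=lambda name: priority_score(requirements[name]),
        reverse=True,
    )


requirements = {
    "Dark mode": {"business_value": 2, "complexity": 1, "regulatory_urgency": 1},
    "SSO login": {"business_value": 4, "complexity": 2, "regulatory_urgency": 5},
}

print(rank(requirements))  # ['SSO login', 'Dark mode']
```

With these weights "SSO login" scores 31 and "Dark mode" scores 11, so the compliance-driven requirement is reviewed first.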

Interactive Review and Change Management

- Reviewer Notifications: Automated alerts assign review tasks with deadlines.

- Inline Collaboration: Stakeholders comment on requirements directly, proposing edits or clarifications.

- Conflict Detection: AI monitors annotations to flag contradictory feedback or unresolved queries.

- Approval Workflow: Gating mechanisms require explicit sign-off before finalizing requirements.

System Integrations and API Coordination

- Webhook Notifications: Events trigger downstream processes like budget estimation upon requirement approval.

- Bi-Directional Sync: Updates in planning tools such as Jira or Microsoft Project reflect back to the requirement repository.

- Audit Trail Integration: Compliance systems automatically receive tagged requirements for reporting.

Scoped Deliverable Assembly and Handoff

- Document Generation: AI populates templates for scope documents, including clauses and acceptance criteria.

- Final Approval: Digital signatures validate commitment to the defined scope.

- Handoff Notification: Resource planning and scheduling teams receive automated alerts that scope artifacts are ready.

AI-Driven Analysis for Requirement Classification

AI-powered analysis transforms unstructured data—emails, transcripts, specifications—into structured requirement sets. By leveraging NLP, semantic role labeling, machine learning, and knowledge graphs, organizations accelerate classification, enforce consistency, and enable data-driven decisions.

Natural Language Processing Techniques

- Tokenization and Lemmatization: Normalizing text using services like Google Cloud Natural Language.

- Part-of-Speech Tagging: Identifying verbs for functional requirements and adjectives for quality attributes.

- Dependency Parsing: Mapping relationships between verbs, objects, and modifiers to separate the core requirement from its qualifying conditions.

- Named Entity Recognition: Recognizing entities with Amazon Comprehend and Microsoft Azure Cognitive Services Text Analytics.

Semantic Role Labeling and Ontology Integration

Semantic role labeling assigns predicate-argument structures, mapping actors, actions, objects, and conditions to conceptual frames. Custom models built with AllenNLP or spaCy integrate with knowledge graphs managed in platforms like Neo4j or Stardog. This framework enables semantic validation, redundancy detection, and compliance checks against domain ontologies.

Machine Learning for Prioritization

- Feature Engineering: Incorporating requirement type, stakeholder weight, regulatory level, and historical data.

- Supervised Learning: Training algorithms such as Random Forest and Gradient Boosted Trees on past projects.

- Ranking Models: Pairwise ranking approaches to order large requirement sets.

- Active Learning: Incorporating human feedback to refine models on evolving domains.

Integration with Requirements Management Systems

- API Connectivity: Bi-directional links with Jama Connect, IBM Engineering Requirements Management DOORS Next, or Atlassian Jira ensure seamless updates and model retraining data capture.

- Traceability Links: Automatic connections between requirements, user stories, and test cases.

- Collaboration Workflows: Automated review assignments maintain governance and accountability.

- Audit Trails: Version control records AI actions and human overrides for compliance.

Human-in-the-Loop Governance

- Review Dashboards: Present classifications with confidence scores for analyst validation.

- Feedback Mechanisms: Capture corrections to feed continuous model improvement.

- Governance Policies: Define roles, approval thresholds, and escalation paths for AI outputs.

- Performance Metrics: Monitor precision, recall, and override rates to demonstrate ROI.

Scalable Orchestration and Continuous Improvement

Deploy classification services as microservices, event-driven pipelines, or serverless functions. Implement a model registry with CI/CD for consistent testing and rollout. Measure impact through reduced manual review time, classification accuracy, cycle time improvements, and stakeholder satisfaction.

Deliverables and Handoff to Resource Planning

The final outputs of Scope Definition include a comprehensive deliverable set that underpins downstream planning, resource allocation, and execution. These artifacts, validated through quality gates, ensure alignment and traceability across the project lifecycle.

Primary Deliverable Artifacts

- Formal Scope Document: Consolidated requirements, deliverables, assumptions, and success criteria with revision history.

- Requirements Specification: Categorized functional, non-functional, and regulatory requirements, each tagged and prioritized.

- Deliverable Breakdown Structure: Hierarchical decomposition into work packages with effort estimates and acceptance criteria.

- Acceptance Criteria Matrix: Listing of requirements with test scenarios and sign-off authorities.

- Traceability Matrix: Links requirements to intake inputs, interview transcripts, and AI-classified entities.

- Constraints and Assumptions Log: Record of budget, regulatory, and technical constraints alongside assumptions.

Supporting Risk and Change Artifacts

- Preliminary Risk Register Inputs: Mapped to affected requirements with probability and impact ratings.

- Change Request Templates: Standardized forms including impact analysis and approval paths.

- Stakeholder Alignment Summary: Report capturing decisions, open questions, and sign-off status.

Dependencies and Quality Gates

- Validated Intake Data: Reconciliation of Chapter 1 context with detailed scope items.

- Interview Transcripts and Artifacts: Accessible recordings and documents in the repository.

- AI-Classified Tags: Verified outputs from IBM Watson Natural Language Understanding or OpenAI GPT models.

- Stakeholder Sign-Offs: Recorded via electronic signatures or workflows in ServiceNow Project Portfolio Management.

- Change Control Baseline: Established for any future modifications.

Automated Validation and Consistency Checks

AI engines like Microsoft Azure Form Recognizer scan scope documents for completeness, numbering consistency, and template adherence, flagging discrepancies for review.

Handoff Mechanisms to Resource Planning

- API-Driven Exchange: Export scope artifacts in JSON or XML for consumption by planning engines.

- Collaboration Integration: Post links to finalized documents in Jira or Asana with automatic synchronization of tasks.

- Notification Triggers: Automated alerts inform resource managers that scope definition is complete.

- Version Control: Check in documents with timestamps and change logs for auditability.

Mapping Scope to Resource Requirements

- Work Package Complexity: Effort estimates and technical tags guide seniority matching.

- Priority Sequencing: Milestone dates drive the allocation order for critical path items.

- Skill Requirements: Roles such as data modeling, UX design, or compliance review are matched via AI-driven talent platforms.

- Timeline Constraints: Milestone deadlines feed into scheduling engines to align availability.

Ensuring Smooth Transition

- Formal Handoff Meeting: Review artifacts with scope, planning, and delivery leads to confirm clarity and timelines.

- Automated Checklists: AI agents verify completion of required fields, approvals, and templates.

- Governance Sign-Off: Sponsors and boards sign off within the PM system.

- Real-Time Visibility: Dashboards display deliverables, effort estimates, and dependencies for delivery teams.

By rigorously defining scope and establishing clear handoff protocols, professional services organizations enable AI-driven planning engines to generate accurate capacity plans, assign the right talent, and maintain alignment with client expectations throughout the project life cycle.

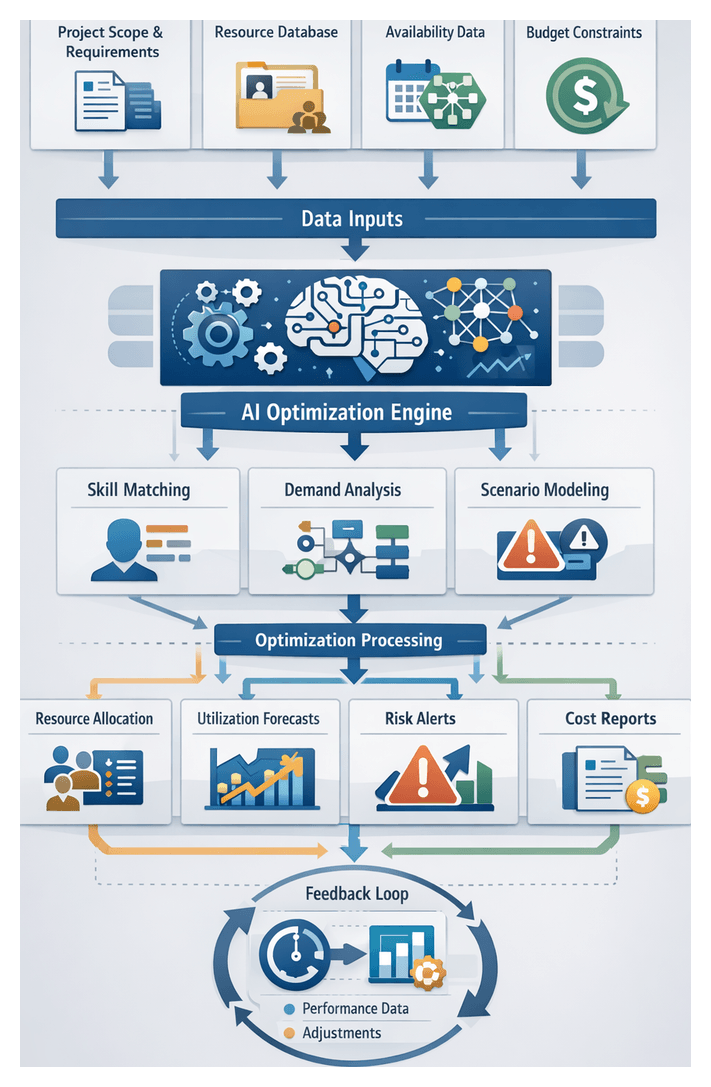

Chapter 3: Resource Allocation and Capacity Planning

In professional services organizations, escalating project complexity demands a structured approach to align staffing with strategic objectives. Resource allocation and capacity planning transforms scope documents and effort estimates into a dynamic resource model that balances demand with available talent. By integrating real-time availability, historical performance, and organizational policies, firms shift from reactive staffing to proactive workforce management. This structured process enhances utilization, mitigates risks, and underpins financial performance while delivering credible capacity forecasts for downstream scheduling and task assignment.

Key Objectives of the Resource Planning Stage

- Demand-Supply Alignment: Match project workload estimates with resources by skill, certification, and location to ensure predictability and client satisfaction.

- Utilization Optimization: Balance billable hours with professional development and bench time, preventing burnout and informing hiring or redeployment.

- Bottleneck Identification: Use analytics to flag tasks at risk due to capacity constraints, enabling timely corrective actions such as reassignments or subcontracting.

- Cost Control: Embed labor rates, overtime rules, and subcontractor fees into allocation decisions to protect profitability.

- Strategic Prioritization: Allocate scarce resources to high-value or strategic engagements first, based on contract criticality or ROI metrics.

- Scenario Analysis: Evaluate what-if scenarios—including scope changes or resource unavailability—to guide robust planning decisions.

Essential Inputs for Effective Planning

- Project Scope Documentation: Deliverables, work breakdown structures, and task durations form the basis for demand forecasting.

- Role and Skill Requirements: Standardized competency definitions ensure accurate matching of resources to tasks.

- Resource Inventory: Profiles of employees, contractors, and vendors with metadata on skills, certifications, performance, and cost rates.

- Availability Calendars: Integrated schedules from systems like Microsoft Outlook or Google Calendar capture absences and training.

- Utilization and Demand Forecasts: Historical and predictive data from Workday Adaptive Planning or SAP SuccessFactors inform capacity trends.

- Budget Constraints: Labor budgets, billing rate structures, and profit margin targets guide allocation boundaries.

- Organizational Policies: Rules for overtime, subcontractor use, diversity, and compliance embedded into planning engines.

- Integration with HR and ERP Systems: Bi-directional data flows with AI modules such as AgentLinkAI ResourceAllocator ensure consistency and reduce reconciliation.

- AI-Driven Optimization Engine: Machine learning modules from platforms like Azure Machine Learning analyze utilization patterns and recommend assignments.

- Data Quality and Governance Standards: Ownership, validation rules, and update frequencies maintain data integrity.

Prerequisites and Organizational Readiness

- Standardized Skill Taxonomy: A unified framework of roles, competencies, and proficiency levels to enable precise matching.

- Data Integration Layer: Middleware synchronizing HR, project management, and time-tracking data into a single source of truth.

- Governance and Compliance: Policies for labor regulations, data privacy, and procurement enforced in the planning engine.

- Stakeholder Endorsement: Executive sponsorship from finance, HR, and PMO to define decision rights and accountability.

- Stable Intake and Scope Artifacts: Validated intake forms and scope documents to reduce rework and resource churn.

- Technology Readiness: Infrastructure and training programs for resource planning platforms and AI modules.

- Continuous Improvement Mechanism: Feedback loops capturing actual versus planned outcomes to refine forecasting models.

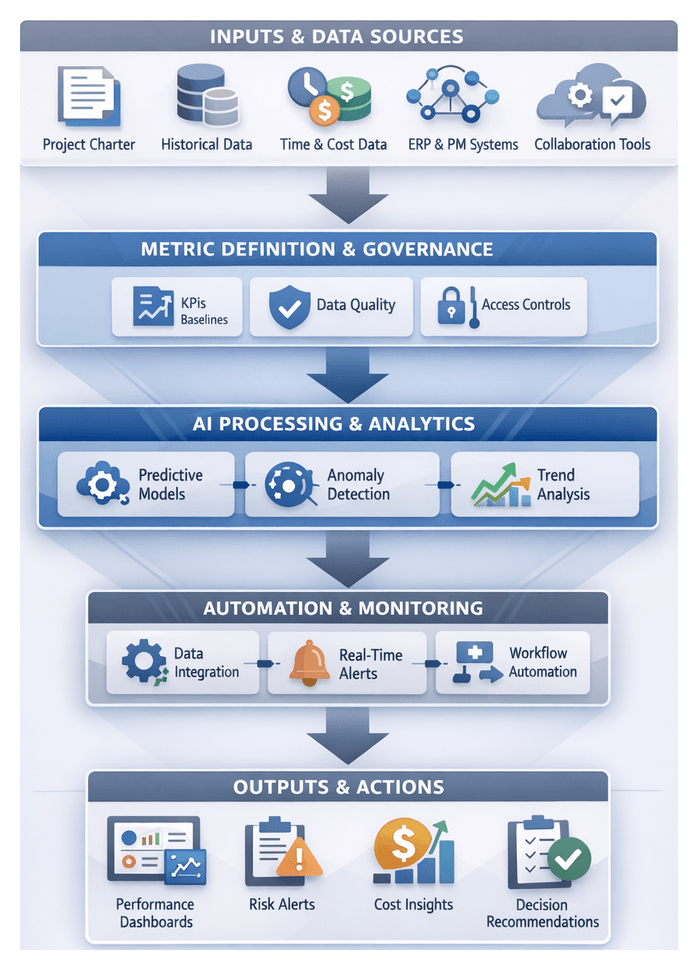

Optimization Algorithm Workflow

The optimization stage ingests validated resource profiles, demand forecasts, and project constraints to generate an optimized assignment plan. It applies mixed-integer programming, constraint programming, and metaheuristic techniques to balance objectives such as utilization, cost, and workload equity.

Data Ingestion and Preprocessing

Automated pipelines extract resource skills, availability, and performance scores from HRIS, time-tracking databases, and project intake systems. Data validation flags anomalies, while normalization converts metrics—such as part-time percentages—into fractional full-time equivalents. Change data capture ensures incremental updates, and logging frameworks surface transformation errors for rapid remediation.
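As a rough illustration of the normalization step, the sketch below converts mixed availability figures into fractional full-time equivalents and sets anomalies aside for review. The field names, the 40-hour full-time week, and the sample records are assumptions made for this example, not part of any specific HRIS schema.

```python
def to_fte(value, unit, full_time_hours=40.0):
    """Convert an availability figure to a fractional full-time equivalent."""
    if unit == "percent":
        fte = value / 100.0
    elif unit == "hours_per_week":
        fte = value / full_time_hours
    elif unit == "fte":
        fte = float(value)
    else:
        raise ValueError(f"unknown unit: {unit!r}")
    if not 0.0 <= fte <= 1.0:
        raise ValueError(f"FTE out of range: {fte}")
    return round(fte, 3)


# Hypothetical records as they might arrive from different source systems.
records = [
    {"name": "A. Rivera", "value": 60, "unit": "percent"},
    {"name": "B. Chen", "value": 32, "unit": "hours_per_week"},
    {"name": "C. Okafor", "value": 150, "unit": "percent"},  # anomaly
]

normalized, anomalies = [], []
for rec in records:
    try:
        fte = to_fte(rec["value"], rec["unit"])
        normalized.append({"name": rec["name"], "fte": fte})
    except ValueError as err:
        # Flag the record instead of silently dropping or clamping it.
        anomalies.append({"name": rec["name"], "reason": str(err)})

print(normalized)
print(anomalies)
```

Flagging out-of-range values rather than clamping them keeps the decision with a data steward, which matches the validation-then-remediation flow described above.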

Constraint Definition and Model Configuration

Hard constraints—such as skill matches, regulatory compliance, and maximum hours—are strictly enforced. Soft constraints—team composition preferences, utilization targets, and strategic priorities—are weighted within objective functions. Resource managers can adjust weights in a configuration interface or allow reinforcement learning to tune them over time based on project outcomes.
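The distinction between hard and soft constraints can be sketched in a few lines. In this minimal example, hard constraints filter out infeasible pairings, while soft constraints contribute weighted penalties to an objective. Exhaustive search stands in for a real mixed-integer or constraint solver, and the consultants, tasks, and weights are invented for illustration.

```python
from itertools import permutations

# Hypothetical data; names, rates, and hours are illustrative only.
consultants = {
    "ana": {"skills": {"data modeling", "compliance review"}, "free_hours": 30, "rate": 120},
    "ben": {"skills": {"ux design"}, "free_hours": 40, "rate": 95},
    "cy": {"skills": {"data modeling", "ux design"}, "free_hours": 20, "rate": 110},
}
tasks = {
    "schema": {"skill": "data modeling", "hours": 25},
    "wireframes": {"skill": "ux design", "hours": 20},
}
# Soft-constraint weights a resource manager might tune.
WEIGHTS = {"cost": 1.0, "idle": 10.0}


def feasible(person, task):
    """Hard constraints: required skill present and enough free hours."""
    p, t = consultants[person], tasks[task]
    return t["skill"] in p["skills"] and t["hours"] <= p["free_hours"]


def penalty(person, task):
    """Soft constraints: weighted cost plus unused share of free hours."""
    p, t = consultants[person], tasks[task]
    cost = p["rate"] * t["hours"] / 1000.0
    idle = 1.0 - t["hours"] / p["free_hours"]
    return WEIGHTS["cost"] * cost + WEIGHTS["idle"] * idle


def best_plan():
    """Try every one-person-per-task pairing and keep the lowest penalty."""
    task_names = list(tasks)
    best = None
    for people in permutations(consultants, len(task_names)):
        pairs = list(zip(people, task_names))
        if not all(feasible(p, t) for p, t in pairs):
            continue  # hard constraints are never traded off
        score = sum(penalty(p, t) for p, t in pairs)
        if best is None or score < best[0]:
            best = (score, {t: p for p, t in pairs})
    return best


print(best_plan())
```

Raising or lowering the entries in `WEIGHTS` shifts the trade-off between cost and utilization without ever admitting a plan that violates a hard constraint.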

Engine Execution Modes

The core engine combines linear programming solvers with decomposition strategies to handle large portfolios. Batch runs produce nightly master plans, while event-driven triggers enable near-real-time reoptimization for high-impact changes. Heuristics prune infeasible options early so that candidate allocation scenarios are delivered on time.

Results Evaluation and Collaboration

The engine outputs ranked scenarios detailing resource-to-project mappings, utilization rates, and cost impacts. A dashboard highlights soft constraint violations and feasibility metrics, enabling rapid trade-off analysis. Automated alerts notify project and resource managers, who can annotate, override, or escalate proposed allocations. All decisions are logged for auditability and model training.

Integration with Scheduling and HR Systems

Approved allocations are exported via secure APIs and message queues to scheduling engines and HRIS platforms. Connectors translate data for tools such as CapacityPro and WorkFlowX, while transactional safeguards prevent partial updates. Error handling routines—retry policies and manual reconciliation tasks—ensure data consistency across systems.
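A retry policy with a manual-reconciliation fallback might look like the sketch below. The `TransientError` class, the `send` callable, and the result dictionary are hypothetical stand-ins; a real connector would wrap whatever client the scheduling or HRIS platform provides.

```python
import time


class TransientError(Exception):
    """Raised by a sender when a failure is worth retrying (assumed type)."""


def push_with_retry(send, payload, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call send(payload), backing off exponentially between failed attempts."""
    for attempt in range(1, max_attempts + 1):
        try:
            response = send(payload)
            return {"status": "delivered", "attempts": attempt, "response": response}
        except TransientError as err:
            if attempt == max_attempts:
                # Retries exhausted: hand over to a manual reconciliation queue.
                return {"status": "needs_reconciliation", "attempts": attempt, "error": str(err)}
            sleep(base_delay * 2 ** (attempt - 1))


# Simulated endpoint that fails twice before succeeding.
calls = {"n": 0}


def flaky_send(payload):
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("scheduling API timeout")
    return "ok"


result = push_with_retry(flaky_send, {"plan_id": "P-1"}, sleep=lambda s: None)
print(result)
```

Passing `sleep` as a parameter keeps the function testable without real delays. Transactional safeguards against partial updates are outside the scope of this sketch.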

Feedback Loop and Continuous Optimization

Actual utilization data from time-tracking and ticketing systems feeds back into the optimization engine. Variance analysis identifies model underperformance, prompting adjustments to constraint weights, performance scores, and cost estimates. Structured retrospectives capture lessons learned and update the engine’s knowledge base, driving successive improvements in forecast accuracy.

AI Capabilities and Supporting Systems

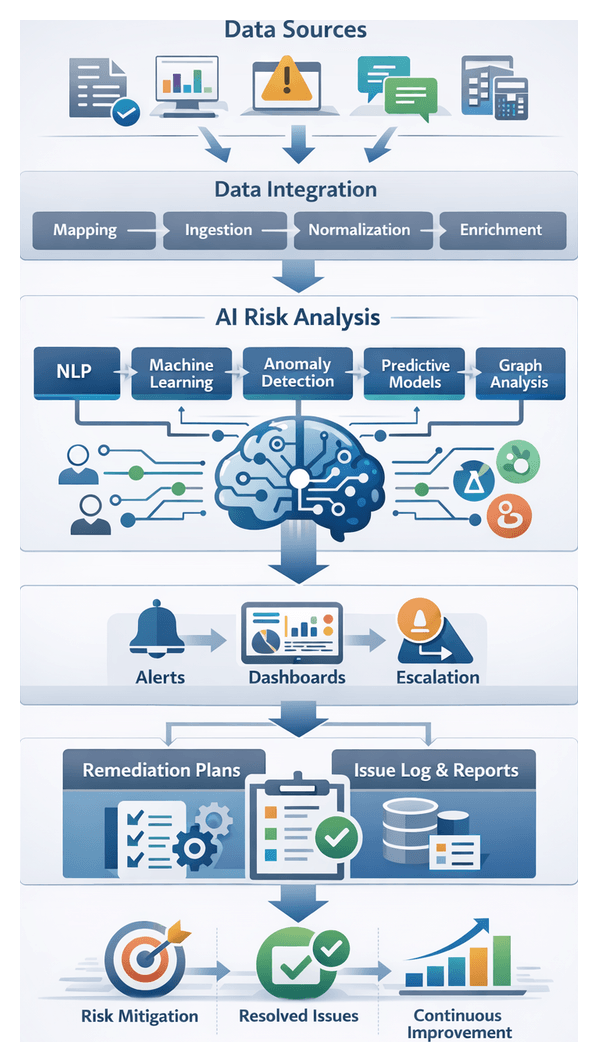

AI-driven modules and integrated platforms elevate capacity planning through predictive analytics, skills mapping, and dynamic monitoring.

- Demand Forecasting: Time series models (ARIMA, Prophet) and ensemble regressors (random forest, gradient boosting) predict resource needs. Platforms include Microsoft Azure Machine Learning and Google Cloud AI.

- Skills Ontology and Competency Mapping: Knowledge graphs and NLP pipelines extract and standardize skill tags from profiles and performance reviews. Authoritative systems include SAP SuccessFactors and Oracle Cloud HCM.

- Optimization Engines: Linear programming solvers and metaheuristics balance utilization, cost, and priorities. Libraries include IBM CPLEX and Google OR-Tools.

- Real-Time Monitoring: Continuous ingestion of timesheet entries and calendar updates enables anomaly detection and automated remediations. Visualization tools such as Microsoft Power BI and Tableau display capacity heatmaps and recommendations.

- Integration Middleware: Data orchestration with MuleSoft and Dell Boomi synchronizes ERP, CRM, and HRIS systems. Project management connectors update Microsoft Project, Jira, or Asana assignments based on capacity plans.

- Human-in-the-Loop: Explainable AI interfaces present ranked options with rationale, allowing managers to override recommendations. Overrides and confirmations feed back into learning pipelines to refine future allocations.

- Data Pipelines and MLOps: ETL workflows managed by Apache Airflow feed data into centralized warehouses. Model versioning and deployment are streamlined via Databricks, ensuring reproducibility and auditability.

- Security and Compliance: IAM enforces least-privilege access, encryption protects data at rest and in transit, and audit logs capture every recommendation and approval. Role-based controls and regulatory policy configurations ensure governance.

Capacity Plan Output and Dependencies

The culmination of the planning process is a detailed capacity plan comprising:

- A resource allocation matrix mapping tasks to roles, skills, and utilization percentages.

- A capacity forecast report outlining headcount needs, overtime projections, and under-utilization windows.

- A variance dashboard highlighting gaps between planned and available capacity by practice, geography, and time period.

- Machine-readable exports (JSON, XML) for seamless ingestion by scheduling engines and workflow orchestrators.

- Versioned plan artifacts stored in a centralized repository with audit trails for approvals and change history.

Accurate outputs depend on validated scope documents, up-to-date resource master data, real-time availability calendars, historical utilization metrics, and staffing policies. Integration with external feeds—vendor schedules, subcontractor availability, and tooling constraints—ensures end-to-end alignment.

Integration and Handoff Protocols

- Data Exchange: Machine-readable exports are transmitted via secure APIs or message queues to scheduling engines. Payloads are validated against an enterprise schema registry.

- Governance Approval: Formal sign-off from project managers, practice leads, and client stakeholders via digital workflows marks the plan as the baseline.

- Notification Triggers: Automated alerts inform resource managers and team leads, linking to detailed profiles for confirmation or dispute.

- Dependency Linking: Scheduling tasks are programmatically annotated with capacity references, ensuring timeline generation respects utilization constraints.

- Feedback Loop: Confirmations and adjustments from the scheduling engine reconcile back to the capacity planning module for continual alignment.

Quality Assurance and Governance

- Automated validation checks prevent over-allocation and enforce utilization thresholds.

- Cross-validation routines compare forecasts with historical patterns to flag anomalies.

- Role-based access controls restrict modifications to authorized users, ensuring accountability.

- Regular governance reviews surface capacity shifts and enable timely corrective actions, with all revisions versioned and communicated.
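The first check in the list above, preventing over-allocation, can be approximated by summing each person's committed fraction per period and comparing it with a threshold. The record layout and the 100 percent threshold are assumptions for this example.

```python
from collections import defaultdict


def find_over_allocations(assignments, threshold=1.0):
    """Return {(person, week): total} where the summed load exceeds threshold."""
    load = defaultdict(float)
    for a in assignments:
        load[(a["person"], a["week"])] += a["fraction"]
    # Small tolerance so that 0.5 + 0.5 is not flagged by rounding noise.
    return {key: round(total, 2) for key, total in load.items() if total > threshold + 1e-9}


# Hypothetical allocation matrix rows.
assignments = [
    {"person": "ana", "week": "2024-W10", "task": "schema", "fraction": 0.6},
    {"person": "ana", "week": "2024-W10", "task": "audit", "fraction": 0.5},
    {"person": "ben", "week": "2024-W10", "task": "wireframes", "fraction": 0.5},
    {"person": "ben", "week": "2024-W10", "task": "testing", "fraction": 0.5},
]

print(find_over_allocations(assignments))
```

A lower `threshold`, for instance 0.85, would enforce a utilization ceiling that leaves room for training and bench time.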

Risks and Mitigation Strategies

- Data Drift: Periodic model retraining and master data governance mitigate changes in skill definitions or team structures.

- Over-reliance on Historical Trends: What-if scenario analyses validate allocations for novel project demands.

- Bottleneck Concentration: Resource pools and shadow assignments provide fallback options for high-value specialists.

- Integration Latency: Near real-time APIs and event-driven architectures reduce the risk of stale capacity data.

Key Performance Indicators

- Utilization Variance: Difference between planned and actual utilization over defined periods.

- Forecast Accuracy: Deviation between modeled capacity requirements and realized staffing needs.

- Resource Conflict Rate: Frequency of schedule clashes or over-allocations identified during scheduling.

- Adjustment Turnaround Time: Time from change request to updated plan distribution.
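The four indicators above can be computed from simple period-level data. The definitions in this sketch are one reasonable reading of each KPI: actual minus planned utilization, mean absolute percentage error for forecast accuracy, conflicts per assignment, and mean hours from request to distribution. A firm may well define them differently, and the sample figures are invented.

```python
from datetime import datetime
from statistics import mean


def utilization_variance(planned, actual):
    """Actual minus planned utilization per period (positive = over plan)."""
    return [round(a - p, 3) for p, a in zip(planned, actual)]


def forecast_mape(forecast, realized):
    """Mean absolute percentage error of headcount forecasts, in percent."""
    return mean(abs(f - r) / r for f, r in zip(forecast, realized)) * 100


def conflict_rate(conflicts, assignments):
    """Share of assignments that produced a schedule clash."""
    return conflicts / assignments


def turnaround_hours(requests):
    """Mean hours between a change request and the updated plan."""
    return mean((done - asked).total_seconds() / 3600 for asked, done in requests)


print(utilization_variance([0.80, 0.75], [0.85, 0.70]))
print(forecast_mape([10, 12], [8, 12]))
print(conflict_rate(3, 60))
print(turnaround_hours([
    (datetime(2024, 3, 1, 9), datetime(2024, 3, 1, 15)),
    (datetime(2024, 3, 2, 9), datetime(2024, 3, 2, 11)),
]))
```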

By integrating AI-driven forecasting, optimization, real-time monitoring, and rigorous governance, firms transform capacity planning into a strategic capability. The resulting data-driven resource plans enable predictable delivery, optimized utilization, and enhanced stakeholder confidence across complex, multi-project portfolios.

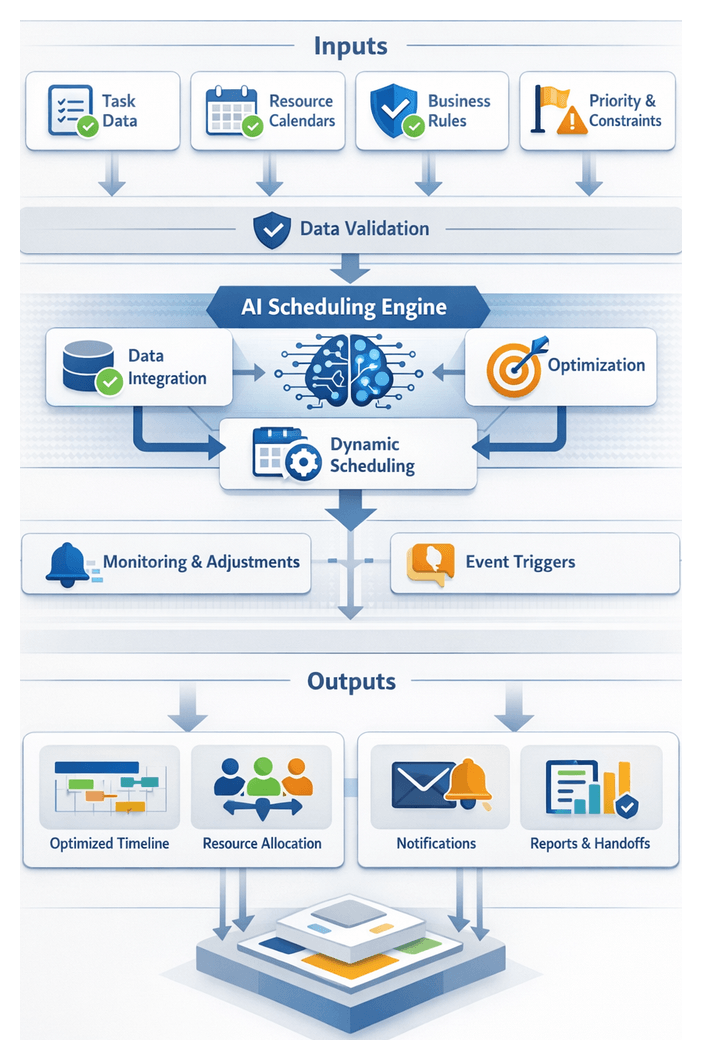

Chapter 4: Intelligent Scheduling and Timeline Optimization

Defining Scheduling Requirements and Data Sources

Purpose and Context

This stage establishes the foundational inputs and conditions necessary to generate an optimized project schedule. By defining precise scheduling requirements and identifying authoritative data sources, firms give AI-driven engines a coherent dataset from which to model dependencies, resource availability, and organizational constraints.

Objectives and Prerequisites

- Define the universe of data elements required to drive intelligent scheduling.

- Identify and validate the sources of each data element to ensure completeness and accuracy.

- Establish prerequisites and quality checks that gate the transition to the scheduling engine.

Prerequisites:

- Project scope and deliverables have been formally defined and approved.

- Resource allocation and capacity planning outputs are finalized.

- Governance rules and business calendars (holidays, blackout periods) are documented.

- Task dependencies and work breakdown structures are reviewed for accuracy.

- Stakeholder alignment is achieved on scheduling assumptions, including overtime policies and escalation protocols.

Required Inputs and Validation

- Task Definitions and Dependencies: A detailed work breakdown structure listing every task, milestone, and predecessor/successor relationship.

- Resource Availability and Calendars: Individual and team availability, including working hours, planned time off, and utilization targets, integrated with tools such as Forecast or Resource Guru.

- Business and Compliance Constraints: Organizational calendars defining holidays, maintenance windows, and regulatory reporting deadlines.

- Project and Client Priorities: Data on high-value tasks, contractual milestones, and regulatory deliverables to guide resource allocation.

Before invoking the scheduling engine, inputs must pass validation checks that verify task completeness, non-conflicting availability, alignment with organizational policies, and consistency between scope documents and task breakdowns.
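Part of this gate can be automated with a small validator. The sketch below checks that every task has a positive duration, that each predecessor exists, and that the dependency graph contains no cycles, using the standard library's `graphlib` (Python 3.9 or later). The task dictionary layout is an assumption for the example.

```python
from graphlib import CycleError, TopologicalSorter


def validate_tasks(tasks):
    """Return a list of human-readable problems; an empty list means pass."""
    errors = []
    for task_id, task in tasks.items():
        if task.get("duration_days", 0) <= 0:
            errors.append(f"{task_id}: missing or non-positive duration")
        for dep in task.get("depends_on", []):
            if dep not in tasks:
                errors.append(f"{task_id}: unknown predecessor {dep}")
    # Only known predecessors go into the graph; unknown ones are reported above.
    graph = {
        tid: [d for d in t.get("depends_on", []) if d in tasks]
        for tid, t in tasks.items()
    }
    try:
        tuple(TopologicalSorter(graph).static_order())
    except CycleError as err:
        errors.append("dependency cycle: " + " -> ".join(err.args[1]))
    return errors


good = {
    "discovery": {"duration_days": 5, "depends_on": []},
    "design": {"duration_days": 10, "depends_on": ["discovery"]},
}
bad = {
    "a": {"duration_days": 3, "depends_on": ["b"]},
    "b": {"duration_days": 0, "depends_on": ["a", "ghost"]},
}

print(validate_tasks(good))
print(validate_tasks(bad))
```

Availability conflicts and policy alignment would need their own checks; this example covers only task completeness and dependency consistency.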

Transition to Dynamic Scheduling

- Approval of task and dependency data by project managers.

- Confirmation of resource availability with team leads.

- Verification of business calendar constraints by operations teams.

- Sign-off on priority and risk settings by stakeholders.

Dynamic Scheduling and Adjustment Workflow

Input Collection and Pre-processing

The workflow begins by aggregating data from multiple systems to construct an initial view of tasks, dependencies, and availabilities. Inputs include:

- Task definitions and durations from the approved scope document.

- Dependency mappings illustrating sequencing constraints.

- Resource calendars from Microsoft Exchange and Google Calendar.

- Current workload snapshots from modules such as Reschedulr.

- Priority profiles and deadline updates via collaboration tools.

An AI-driven normalization agent reconciles date formats, aligns time zones, and flags anomalies, ensuring the scheduling engine processes a coherent input set.
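A deterministic core for such a normalization step might resemble the following sketch, which tries a short list of known date formats, attaches a default UTC offset to timestamps that carry none, and converts everything to UTC. The format list and the default-offset parameter are assumptions; values that match no format are returned as `None` so they can be flagged.

```python
from datetime import datetime, timedelta, timezone

# Formats assumed to occur in the source systems; extend as needed.
FORMATS = ("%Y-%m-%dT%H:%M:%S%z", "%Y-%m-%d %H:%M", "%d/%m/%Y %H:%M")


def to_utc(raw, default_offset_hours=0):
    """Parse raw into an aware UTC datetime, or return None if unparseable."""
    for fmt in FORMATS:
        try:
            parsed = datetime.strptime(raw, fmt)
        except ValueError:
            continue
        if parsed.tzinfo is None:
            # No zone in the source: assume the supplied local offset.
            parsed = parsed.replace(tzinfo=timezone(timedelta(hours=default_offset_hours)))
        return parsed.astimezone(timezone.utc)
    return None


samples = ["2024-03-04T09:00:00+02:00", "2024-03-04 09:00", "04/03/2024 09:00", "next Tuesday"]
for raw in samples:
    print(raw, "->", to_utc(raw, default_offset_hours=-5))
```

Fixed offsets are used here to keep the example self-contained; a production pipeline would more likely resolve named time zones so that daylight-saving changes are handled.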

Iterative Schedule Computation

An intelligent engine such as Optimal.ai computes feasible timelines using constraint-based optimization and machine learning to balance objectives:

- Minimize project duration while respecting dependencies.

- Maximize resource utilization without exceeding capacity.

- Adhere to priority weights assigned to critical tasks.

- Accommodate soft constraints like preferred working hours or skill preferences.

The engine generates a baseline in its first pass and refines through iterative heuristics and predictive models, scoring each candidate timeline against defined objectives.
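The baseline pass can be illustrated with a classic forward pass over the dependency graph, which gives each task its earliest start and finish. This sketch covers only that first pass: it ignores resource capacity, priorities, and soft constraints, which the iterative refinement described above would layer on top. Task names and durations are invented.

```python
from graphlib import TopologicalSorter

# Hypothetical work breakdown: duration in working days plus predecessors.
tasks = {
    "discovery": {"days": 5, "depends_on": []},
    "design": {"days": 10, "depends_on": ["discovery"]},
    "data_prep": {"days": 4, "depends_on": ["discovery"]},
    "build": {"days": 15, "depends_on": ["design", "data_prep"]},
}


def forward_pass(tasks):
    """Earliest start/finish per task, measured in days from project start."""
    graph = {tid: t["depends_on"] for tid, t in tasks.items()}
    schedule = {}
    # static_order() yields every task after all of its predecessors.
    for tid in TopologicalSorter(graph).static_order():
        start = max((schedule[d]["finish"] for d in tasks[tid]["depends_on"]), default=0)
        schedule[tid] = {"start": start, "finish": start + tasks[tid]["days"]}
    return schedule


baseline = forward_pass(tasks)
for tid, window in baseline.items():
    print(tid, window)
print("duration:", max(w["finish"] for w in baseline.values()))
```

In this example `build` cannot start before day 15 because `design` finishes later than `data_prep`, so the overall duration is 30 days.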

Event-Driven Adjustments

Live updates trigger real-time adjustments via subscriptions to event feeds:

- Leave approvals from HR systems.

- Scope changes from ChangePilot.

- Task completion notifications from Slack or Microsoft Teams.

- Client feedback on milestone dates via customer portals.

A scheduling agent evaluates impact using decision models and proposes alternative slots or resource substitutions automatically, minimizing manual re-planning.

Cross-System Coordination and Notifications

- Push revised timelines into platforms like Asana or Jira.

- Synchronize event changes with corporate calendars.

- Alert resource owners and project managers through email or messaging channels.

- Log adjustments in configuration management databases for audit.

AI-driven notification services prioritize messages based on role and urgency, ensuring timely updates without alert fatigue.

Validation and Stakeholder Approval

Certain adjustments, such as changes that affect executive presentations or contractual milestones, require human sign-off. The workflow generates approval requests with:

- A comparison view of original and proposed schedules.

- Impact analysis on downstream tasks, costs, and resource utilization.

- An AI-generated rationale explaining the optimization.

Stakeholders respond via integrated interfaces like ApproveNow, triggering final commits or rollbacks.

Continuous Feedback and Integration

Metrics from each scheduling run (estimate accuracy, adjustment frequency, utilization variance, and approval turnaround) feed back into machine learning models. Over time, the engine refines duration predictions and resource matching. Once the schedule is stable, the finalized timeline is packaged for handoff to task assignment modules, including detailed start/end dates, resource allocations with confidence scores, and audit trails.

AI-Driven Conflict Resolution and Resourcing

Core AI Capabilities

- Constraint Analysis: AI engines apply constraint satisfaction techniques to detect overallocation and dependency violations across large combinatorial search spaces.

- Resource Leveling: Optimization algorithms (mixed-integer programming, genetic algorithms) rebalance workloads based on skills, contractual obligations, and preferred hours.

- Scenario Simulation: Real-time what-if analyses generate alternative timelines for resource substitutions, duration adjustments, or priority shifts.

- Predictive Reassignment: Machine learning forecasts potential bottlenecks, suggesting proactive reassignments to prevent conflicts.

- Enterprise Integration: Modules exchange data with ERP systems, human capital platforms, and time-tracking applications to ensure decisions reflect real-time availability and certified skills.

- Continuous Learning: Reinforcement learning refines recommendations by learning from accepted adjustments and manual overrides.

Supporting Systems and Integration

- Data Integration Platform: Tools such as MuleSoft or Zapier unify data from human capital, project portfolio, and calendar systems.

- Centralized Analytics Repository: Platforms like Snowflake store historical records, enabling AI to learn from past outcomes.

- Workflow Orchestration: Engines such as Apache Airflow schedule ingestion jobs and trigger conflict detection routines.

- Notification and Collaboration Hub: Alerts and proposals are delivered via Slack or Microsoft Teams.

- Visualization Dashboards: Embedded BI tools display conflict heatmaps, utilization graphs, and scenario comparisons.

Conflict Resolution Workflow

- Ingest schedule data, resource calendars, and skill profiles from integrated systems.

- Run constraint analysis to detect conflicts.

- Prioritize conflicts by business impact.

- Generate resolution scenarios with estimated outcomes.

- Deliver recommendations via dashboards and collaboration channels.

- Apply approved changes automatically in the scheduling engine.

- Monitor actual progress and update AI models with real-world results.
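The detection and prioritization steps of this workflow can be sketched as a pairwise overlap check followed by a sort on business impact. Bookings are represented here as half-open day ranges, and the `priority` field is a hypothetical stand-in for whatever impact measure a firm uses.

```python
from itertools import combinations


def detect_conflicts(bookings):
    """Find overlapping bookings for the same person, highest impact first."""
    conflicts = []
    for a, b in combinations(bookings, 2):
        if a["person"] != b["person"]:
            continue
        # Half-open intervals [start, end) overlap when each starts before the other ends.
        if a["start"] < b["end"] and b["start"] < a["end"]:
            conflicts.append({
                "person": a["person"],
                "tasks": (a["task"], b["task"]),
                "impact": max(a["priority"], b["priority"]),
            })
    return sorted(conflicts, key=lambda c: c["impact"], reverse=True)


# Hypothetical bookings; start/end are day offsets from project start.
bookings = [
    {"person": "ana", "task": "schema", "start": 0, "end": 10, "priority": 3},
    {"person": "ana", "task": "audit", "start": 8, "end": 12, "priority": 5},
    {"person": "ben", "task": "wireframes", "start": 0, "end": 5, "priority": 2},
    {"person": "ben", "task": "testing", "start": 5, "end": 9, "priority": 4},
]

for conflict in detect_conflicts(bookings):
    print(conflict)
```

The pairwise comparison is quadratic in the number of bookings, which is fine for illustration; larger portfolios would typically sort intervals per person or delegate the check to a constraint solver.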

Benefits and Best Practices

- Reduced Schedule Slippage: Automated conflict resolution cuts remediation time by up to 80 percent.

- Improved Resource Utilization: Skill-based assignments increase billable utilization rates.

- Faster Decision Making: Scenario simulations and one-click approvals accelerate approval cycles.

- Higher Stakeholder Satisfaction: Proactive alerts and clear recommendations enhance transparency and confidence.

- Data Quality and Governance: Maintain accurate resource profiles and calendars to feed reliable inputs.

- Human-in-the-Loop Balance: Define thresholds for auto-apply versus manual approval to ensure oversight.

- Security and Compliance: Implement access controls and encryption for sensitive schedule and personnel data.

Optimized Timeline and Handoff Specifications

Master Schedule and Supporting Artifacts

The finalized master schedule is a comprehensive, resource-aware plan refined through AI-driven adjustments and conflict resolution. It details every activity, planned start and end dates, resource assignments, and dependencies. Outputs include interactive web views, PDF summaries, and exportable data files.

- Task Dependency Matrix with lead/lag times.

- Resource Utilization Report highlighting allocation percentages.

- Milestone Tracker listing key deliverables and approvals.

- Scenario Baselines preserving audit trails of alternative timelines.

- Schedule Change Log recording AI suggestions and stakeholder approvals.

Dependencies and Preconditions

- Validated task list and scope document aligned with deliverables.

- Confirmed resource profiles synchronized with enterprise human capital tools.

- Integrated organizational calendars via Microsoft Project or Smartsheet.

- Codified task dependency rules and compliance constraints.

- Configured risk-adjusted buffer settings based on historical variance data.

Handoff Mechanisms

- API-Driven Data Push: Use RESTful or GraphQL interfaces to transmit schedule objects to task assignment engines.

- Calendar Synchronization: Export events in iCalendar (ICS) format for import into team calendars and Jira plug-ins.

- Document Distribution: Publish PDF or XLSX versions to a centralized repository with version control.

- Notification Workflows: Trigger automated emails or in-app notifications summarizing milestones and updates.
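For the calendar synchronization mechanism, a minimal iCalendar export might look like the sketch below. It emits only the core event properties with CRLF line endings and basic text escaping; line folding for long lines, recurrence, and attendees are omitted. The `PRODID` value, the UID domain, and the event fields are placeholders.

```python
from datetime import datetime, timezone

STAMP_FORMAT = "%Y%m%dT%H%M%SZ"


def escape_text(value):
    """Escape backslash, semicolon, comma, and newline for iCalendar TEXT values."""
    return (value.replace("\\", "\\\\").replace(";", "\\;")
            .replace(",", "\\,").replace("\n", "\\n"))


def to_ics(events, prodid="-//Example Firm//Schedule Export//EN"):
    """Render events (with timezone-aware datetimes) as an iCalendar string."""
    stamp = datetime.now(timezone.utc).strftime(STAMP_FORMAT)
    lines = ["BEGIN:VCALENDAR", "VERSION:2.0", "PRODID:" + prodid]
    for ev in events:
        start = ev["start"].astimezone(timezone.utc).strftime(STAMP_FORMAT)
        end = ev["end"].astimezone(timezone.utc).strftime(STAMP_FORMAT)
        lines += [
            "BEGIN:VEVENT",
            "UID:" + ev["id"] + "@example.invalid",  # placeholder domain
            "DTSTAMP:" + stamp,
            "DTSTART:" + start,
            "DTEND:" + end,
            "SUMMARY:" + escape_text(ev["summary"]),
            "END:VEVENT",
        ]
    lines.append("END:VCALENDAR")
    return "\r\n".join(lines) + "\r\n"


events = [{
    "id": "task-101",
    "summary": "Design review, phase 1",
    "start": datetime(2024, 3, 4, 9, 0, tzinfo=timezone.utc),
    "end": datetime(2024, 3, 4, 10, 0, tzinfo=timezone.utc),
}]
ics = to_ics(events)
print(ics)
```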

Communication, Access, and Audit Trails

- Self-service portals offering interactive timeline dashboards and tailored report downloads.

- Embedded reports in Slack or Microsoft Teams for contextual discussion.

- Executive briefings delivered via slide decks or email digests with milestone snapshots and variance analyses.

- Role-based access controls to restrict sensitive resource data and provide high-level overviews to clients.

- Immutable archives and diff reports to maintain a forensic record of schedule evolution.

By defining clear outputs, dependencies, and handoff protocols at the close of the scheduling stage, firms create a solid foundation for downstream automation in task assignment, execution tracking, and performance monitoring. This structured transition minimizes miscommunication, reduces rework, and ensures all teams operate from a unified, validated timeline that drives on-time delivery and client satisfaction.

Chapter 5: Automated Task Assignment and Prioritization

In professional services environments, efficient allocation of work tasks is essential to meeting project goals, controlling budgets, and maximizing team productivity. Automated task assignment and prioritization transforms static planning outputs—defined deliverables, resource capacity plans, and timelines—into actionable work items. By leveraging AI-driven decision engines, organizations eliminate manual bottlenecks, reduce human error in skill-to-task matching, and adapt in real time to changing project demands. The result is a dynamic, data-driven workflow that balances workloads, aligns expertise with priorities, and accelerates time to value for clients.

This approach addresses the challenge of distributing large volumes of discrete tasks across multidisciplinary teams. Automated agents process extensive datasets, recognize patterns in performance history, and respond to resource availability changes, yielding a predictable delivery cadence, improved utilization, and enhanced visibility into task status for project leaders and clients. Clear matching algorithms ensure that critical path activities receive immediate attention while lower-risk tasks are queued appropriately, driving operational efficiency and reducing uncertainty.

Inputs and Prerequisites for Dynamic Assignment

Effective automation requires validated inputs and established operational conditions. Missing or inconsistent data can lead to suboptimal recommendations and reduced confidence in AI outputs. Automated validation checks and exception notifications should surface any gaps for resolution.

- Task Definitions and Metadata: Scope, estimated effort, priority, dependencies, deadlines.

- Resource Profiles: Skills, certifications, roles, performance metrics.

- Current Workload and Availability: Real-time assignments, calendar integrations, time-off data.

- Project Priority Framework: Strategic importance, client deadlines, regulatory milestones.

- Business Rules and Constraints: Billable hours limits, skill interchangeability, geographic or time-zone restrictions, budget caps.

- Dependency and Precedence Information: Task interdependencies and critical path elements.

- Performance History: Completion times, quality ratings, stakeholder feedback.

Prerequisites:

- Approved Project Baseline: Locked scope, schedule, budget, resource plans.

- Integrated Data Streams: Bi-directional connections with ERP, CRM, talent management, calendars.

- Security and Access Permissions: Roles enabling AI agents to read and write project data, with governance policies and audit trails.

- Escalation Protocols: Workflows for skill shortages, overbooked resources, or policy violations.

- Performance Monitoring Setup: Dashboards and alerts tracking assignment accuracy, completion rates, and utilization.

- Stakeholder Alignment: Clear communication on automation role, override processes, and expectations.

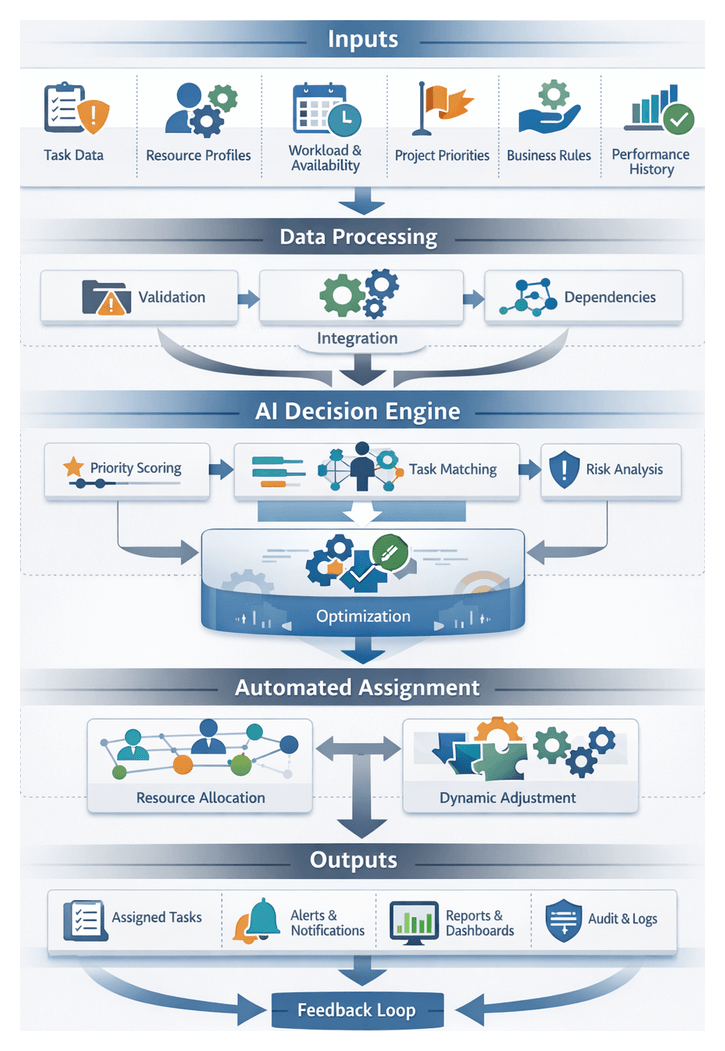

AI-Driven Prioritization and Reassignment Workflow

Data Inputs and Trigger Events

The dynamic prioritization engine continuously ingests task status updates, work-in-progress indicators, and manual status changes. It monitors resource availability signals via ServiceNow or Asana, project milestone adjustments, risk and issue alerts, and stakeholder requests from intake forms or chatbots. Triggers can be rule-based—scheduled at regular intervals or sprint boundaries—or event-driven to react immediately to significant changes, balancing stability with responsiveness.

Priority Scoring and Normalization

Raw inputs are normalized to consistent scales, extracting key features:

- Business Impact Score: Strategic value of deliverables.

- Deadline Proximity: Ratio of remaining time to effort estimate.

- Resource Skill Match Quality: Alignment of personnel skills with requirements.

- Risk Exposure Level: Severity of associated risk items.

- Stakeholder Sentiment Index: Sentiment analysis via Google Cloud AI Platform or OpenAI.

A multi-criteria decision analysis algorithm—often realized through a gradient boosting model or neural ensemble—applies weighted scoring functions. Rule overrides handle compliance deadlines or contractual obligations. Final priority scores, timestamps, and contributing metadata are stored in the task database, supporting audit requirements. The model periodically retrains on historical data to improve accuracy.
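To make the scoring step concrete, here is a simplified weighted-sum sketch with a rule override. It is a plain linear scoring function, not the gradient boosting or ensemble model mentioned above, and the weights, feature names, and 0-to-1 scaling are assumptions chosen for illustration.

```python
# Hypothetical weights; in practice these would be tuned or learned.
WEIGHTS = {"impact": 0.35, "deadline": 0.30, "skill_match": 0.10, "risk": 0.15, "sentiment": 0.10}


def deadline_pressure(days_remaining, effort_days):
    """Map effort versus remaining time onto 0..1; 1.0 means no slack left."""
    if days_remaining <= 0:
        return 1.0
    return min(1.0, effort_days / days_remaining)


def priority_score(task):
    """Weighted sum of normalized features, with a compliance override."""
    if task.get("compliance_deadline"):
        return 1.0  # rule override: compliance work always ranks first
    features = {
        "impact": task["impact"],
        "deadline": deadline_pressure(task["days_remaining"], task["effort_days"]),
        "skill_match": task["skill_match"],
        "risk": task["risk"],
        "sentiment": 1.0 - task["sentiment"],  # poor sentiment raises priority
    }
    return round(sum(WEIGHTS[name] * features[name] for name in WEIGHTS), 3)


task = {
    "impact": 0.8, "days_remaining": 10, "effort_days": 5,
    "skill_match": 0.9, "risk": 0.4, "sentiment": 0.3,
}
print(priority_score(task))                                   # 0.65
print(priority_score({**task, "compliance_deadline": True}))  # 1.0
```

Storing the individual feature values alongside the final score, as the text suggests, makes each ranking explainable after the fact.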

Dynamic Reassignment and Continuous Optimization

Following scoring, the system reevaluates assignments in a loop:

- Identify Underutilized Resources: Query capacity planner for available bandwidth.

- Match Tasks to Resources: Use a constraint solver balancing skills, availability, and workload.

- Resolve Conflicts: Evaluate impact metrics when tasks compete for the same resource.

- Update Assignments: Persist changes and trigger notifications via messaging APIs.

Continuous optimization may include simulated annealing or reinforcement learning agents that minimize projected completion times and maximize utilization.
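One pass of the loop above can be approximated with a greedy heuristic: take tasks in priority order and give each to the qualified person with the most spare capacity. This is a deliberately simple stand-in for a constraint solver, simulated annealing, or a learning agent, and all names and figures are hypothetical.

```python
def reassign(tasks, people):
    """Greedy matching: highest priority first, most spare capacity wins."""
    remaining = {name: p["free_hours"] for name, p in people.items()}
    plan, unassigned = {}, []
    for task in sorted(tasks, key=lambda t: t["priority"], reverse=True):
        candidates = [
            name for name, p in people.items()
            if task["skill"] in p["skills"] and remaining[name] >= task["hours"]
        ]
        if not candidates:
            unassigned.append(task["id"])  # would go to an escalation workflow
            continue
        chosen = max(candidates, key=lambda name: remaining[name])
        plan[task["id"]] = chosen
        remaining[chosen] -= task["hours"]
    return plan, unassigned


people = {
    "dee": {"skills": {"sql", "etl"}, "free_hours": 16},
    "eli": {"skills": {"sql"}, "free_hours": 10},
}
tasks = [
    {"id": "T1", "priority": 0.9, "skill": "sql", "hours": 12},
    {"id": "T2", "priority": 0.7, "skill": "sql", "hours": 8},
    {"id": "T3", "priority": 0.4, "skill": "etl", "hours": 6},
]

plan, unassigned = reassign(tasks, people)
print(plan)
print(unassigned)
```

Here `T3` is left unassigned because the only person with the required skill has run out of hours, which is the kind of case a more global optimizer could resolve by shifting `T1` or by escalating a capacity gap.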

System Integrations and Stakeholder Notifications

Key integrations include a project management platform such as Adobe Workfront, a resource management system like Smartsheet, communication hubs in Microsoft Teams or Slack, monitoring and logging services, and a data warehouse for historical analytics. Middleware or an event bus (Kafka, RabbitMQ) orchestrates these connections.

Notifications are delivered through in-app alerts, chat messages, email digests, and mobile pushes. They include contextual details—original assignment, new priority score, responsible resource, and expected completion window—to foster trust and clarity.

Error Handling and Exception Management

The workflow handles common exceptions:

- Resource Data Unavailability: Revert to the last known availability and schedule a reconciliation once the source is reachable again.

- Conflicting Updates: Reconcile via last-write-wins or operational transformation, logging conflicts for review.

- Model Scoring Anomalies: Flag outlier scores for manual review and retraining.

- Assignment Rejection: Reenter declined tasks into the prioritization loop immediately.

Exception events surface in an issue management system with AI-generated remediation suggestions based on past resolutions.
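The last-write-wins strategy for conflicting updates can be sketched as follows. Each record carries an `updated_at` value, assumed here to be a comparable integer timestamp, and every overwritten assignee is logged for later review. Operational transformation is considerably more involved and is not shown.

```python
def merge_last_write_wins(current, incoming, conflict_log):
    """Merge assignment updates, keeping the record with the newest timestamp."""
    merged = dict(current)
    for task_id, update in incoming.items():
        existing = merged.get(task_id)
        if existing is None:
            merged[task_id] = update
            continue
        if update["updated_at"] > existing["updated_at"]:
            winner, loser = update, existing
        else:
            winner, loser = existing, update
        if winner["assignee"] != loser["assignee"]:
            # Keep a trace of what was discarded so a person can review it.
            conflict_log.append({
                "task": task_id,
                "kept": winner["assignee"],
                "dropped": loser["assignee"],
            })
        merged[task_id] = winner
    return merged


current = {
    "T1": {"assignee": "ana", "updated_at": 100},
    "T2": {"assignee": "ben", "updated_at": 200},
}
incoming = {
    "T1": {"assignee": "cy", "updated_at": 150},   # newer: replaces ana
    "T2": {"assignee": "dee", "updated_at": 180},  # older: ben is kept
    "T3": {"assignee": "eli", "updated_at": 50},   # new task: no conflict
}

log = []
merged = merge_last_write_wins(current, incoming, log)
print(merged)
print(log)
```

Last-write-wins is simple but can discard a deliberate manual override that happens to carry an older timestamp, which is why the conflict log matters.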

AI Capabilities for Task Matching and Load Balancing

Skill Profiling and Competency Mapping

AI builds comprehensive skill profiles by aggregating data from learning management platforms, certification records, performance management systems, NLP analysis of resumes and internal bios, and on-the-job signals such as code commits or document edits. Machine learning models normalize and weight these inputs into multi-dimensional competency vectors.
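Once skills are expressed as vectors, matching a person to a task reduces to a similarity computation. The sketch below uses cosine similarity over a small, fixed skill vocabulary; the vocabulary, proficiency values, and requirement weights are invented, and real competency vectors would have many more dimensions.

```python
from math import sqrt

# Assumed shared vocabulary that defines the vector dimensions.
SKILLS = ("data modeling", "ux design", "compliance review")


def vector(profile):
    """Turn a {skill: level} mapping into a fixed-order list of levels."""
    return [profile.get(skill, 0.0) for skill in SKILLS]


def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


requirement = {"data modeling": 1.0, "compliance review": 0.5}
profiles = {
    "ana": {"data modeling": 0.9, "compliance review": 0.7},
    "ben": {"ux design": 1.0, "data modeling": 0.2},
}

scores = {name: cosine(vector(p), vector(requirement)) for name, p in profiles.items()}
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)
print({name: round(score, 3) for name, score in scores.items()})
```

Cosine similarity compares the shape of a profile rather than its magnitude, so a junior and a senior consultant with the same skill mix score alike; a proficiency floor can be applied separately as a hard constraint.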

Task Requirement Analysis

Natural language understanding transforms unstructured task descriptions into structured requirement sets. Entity extraction identifies skills and domain knowledge, dependency parsing reveals task hierarchies, and sentiment and urgency detection prioritize tasks based on client tone and deadlines.

Predictive Availability and Performance Forecasting

AI forecasts resource capacity by combining time-tracking data, calendar APIs such as Microsoft Exchange or Google Calendar, regression models that predict completion durations, and adjustments for seasonal trends, holidays, and team ramp-up periods. These forecasts prevent over-commitment and identify potential bottlenecks.

Optimization and Dynamic Matching Algorithms

At runtime, AI employs integer programming, genetic algorithms, or constraint satisfaction solvers to optimize multiple objectives: maximize skill alignment, minimize project duration, respect priority levels, and support soft constraints like learning goals. These algorithms integrate into orchestration platforms such as Asana or Jira, exposing RESTful APIs for seamless assignment updates.

Continuous Feedback Loops and Model Refinement

Continuous learning processes ingest performance feedback—actual versus estimated time logs and quality ratings—user interactions, and override events. Anomaly detection flags deviations, triggering retraining. Periodic calibration aligns skill profiles with evolving roles, ensuring models adapt to new certifications and organizational changes.

Integration with Collaboration and Workflow Tools

Assignments synchronize to platforms such as Trello, monday.com, and enterprise work management tools. Notifications and alerts via Slack or Microsoft Teams inform resources of new assignments and rebalancing. Status updates from collaboration hubs feed back into AI dashboards, closing the loop on real-time efficacy. Automated handoffs to time tracking and billing systems ensure accurate financial reconciliation.

Fairness, Transparency, and Governance

Explainability modules offer human-readable rationales for assignment decisions, listing key factors and confidence scores. Bias mitigation techniques monitor allocation patterns and trigger safeguards or manual overrides. Governance dashboards allow program managers to audit assignments, validate equity objectives, and enforce compliance. Role-based access controls protect sensitive model parameters and performance data.

Outputs and Handoff to Execution

Assigned Task List and Artifacts

The primary outputs are a structured assignment record—often machine-readable JSON or XML capturing task IDs, descriptions, assignees, priority levels, effort estimates, dates, and dependencies—and an assignment summary dashboard powered by Asana or Jira. Notification payloads—webhook events and emails—include actionable links to collaboration hubs. Integration artifacts document API endpoints, authentication tokens, payload schemas, and error-handling protocols for downstream systems.
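A structured assignment record of the kind described might be serialized and checked as in the sketch below. The field names follow the list in the paragraph above, but the exact schema, the types, and the sample values are assumptions; a production system would more likely validate against a registered schema.

```python
import json

# Assumed required fields and their expected Python types.
REQUIRED = {
    "task_id": str,
    "description": str,
    "assignee": str,
    "priority": int,
    "effort_hours": (int, float),
    "start": str,
    "end": str,
    "depends_on": list,
}


def validate(record):
    """Return a list of problems; an empty list means the record is usable."""
    problems = []
    for field, kind in REQUIRED.items():
        if field not in record:
            problems.append(f"missing {field}")
        elif not isinstance(record[field], kind):
            problems.append(f"bad type for {field}")
    return problems


record = {
    "task_id": "T-204",
    "description": "Draft data model for client reporting",
    "assignee": "ana",
    "priority": 2,
    "effort_hours": 24,
    "start": "2024-03-04",
    "end": "2024-03-08",
    "depends_on": ["T-198"],
}

payload = json.dumps(record, indent=2)
print(payload)
print(validate(json.loads(payload)))
print(validate({"task_id": "T-205", "priority": "high"}))
```

Validating after a round trip through `json.loads` checks the payload as a downstream consumer would actually receive it.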

Dependencies and System Integration

Assigned outputs rely on real-time access to the optimized timeline, resource allocation data, priority service analyses, a performance history database updated via ETL or CDC, and a governance repository enforcing policies such as segregation of duties and workload thresholds.

Handoff Mechanisms

- Webhook Notifications: HTTP callbacks to Jira or Trello when tasks are assigned.

- Message Bus Integration: Event streams via Apache Kafka or Azure Event Hubs for financial forecasting, risk monitoring, and dashboards.

- API-Driven Push: Task creation in Asana and rich message cards posted to Slack or Microsoft Teams.

- Document Distribution: Automated templates populated and shared via SharePoint or Box for regulated reporting and sign-off.

- Dashboard Refresh: BI tools update live visualizations of deadlines, resource loads, and critical paths.

- Audit Trail and Logging: All API calls, webhooks, and event publications logged with timestamps and payload snapshots.

These outputs and handoff protocols ensure seamless propagation of assignments into execution channels, providing transparency, traceability, and a foundation for ongoing performance monitoring and continuous optimization.
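
The audit trail described in the handoff list can be sketched as an append-only log that stores a timestamp and a payload snapshot for each outbound event. The in-memory list, the `record_handoff` helper, and the added SHA-256 digest are assumptions made for this example; a real deployment would write to durable, tamper-evident storage.

```python
import hashlib
import json
from datetime import datetime, timezone

# In-memory stand-in for a durable audit store (assumption for this sketch).
AUDIT_LOG: list[dict] = []


def record_handoff(channel: str, payload: dict) -> dict:
    """Append an audit entry with a UTC timestamp and a snapshot of the payload."""
    snapshot = json.dumps(payload, sort_keys=True)
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "channel": channel,
        "payload_snapshot": snapshot,
        # The digest lets a reviewer verify that the snapshot was not altered later.
        "payload_sha256": hashlib.sha256(snapshot.encode("utf-8")).hexdigest(),
    }
    AUDIT_LOG.append(entry)
    return entry


entry = record_handoff("webhook", {"task_id": "TASK-101", "assignee": "avery"})
print(entry["timestamp"], entry["payload_sha256"][:12])
```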

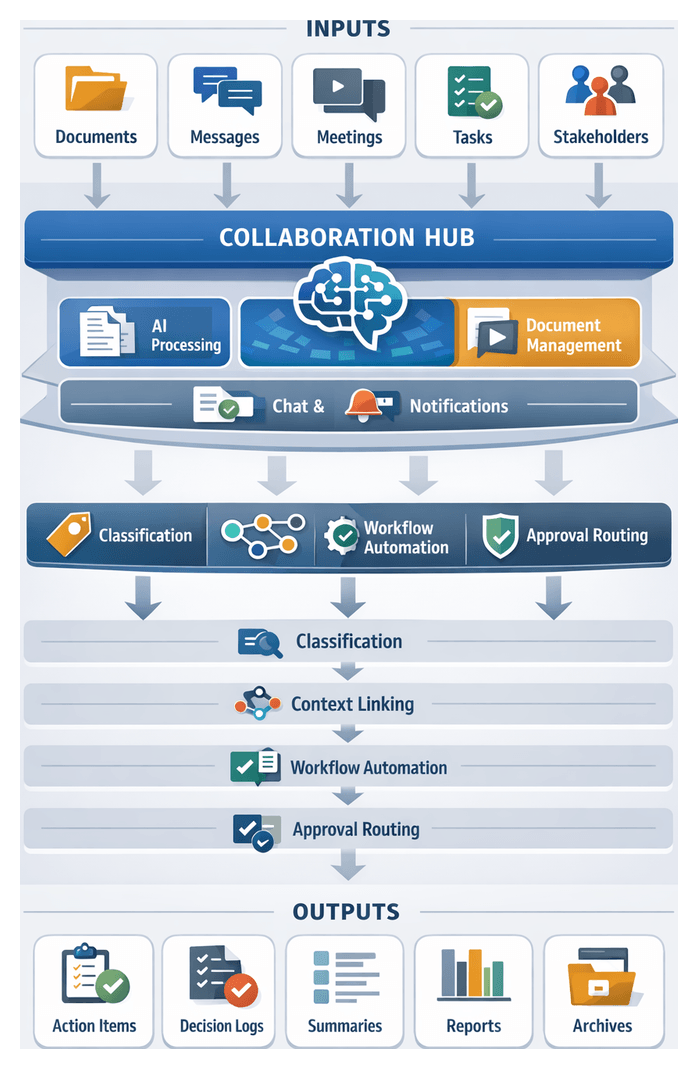

Chapter 6: Collaboration and Communication Coordination

Purpose and Scope of the Collaboration Hub

The Collaboration Hub establishes a unified environment for all project communications, documents, and decision points, breaking down silos among strategy, technology, operations, and client teams. By integrating asynchronous messaging, document repositories, meeting recordings, and AI-driven insights into a single interface, the hub ensures every stakeholder accesses the latest context. This centralized approach accelerates decision cycles, enhances accountability through auditable trails of revisions and approvals, and binds planning, execution, and review into a cohesive workflow that advances projects with clarity and alignment.

Key Objectives and Value

- Centralize project communications, documents, and events to prevent information silos and ensure consistency.

- Capture approvals, comments, and action items in context for transparent, auditable decision-making.

- Accelerate stakeholder alignment and reduce time to decision via integrated notifications and real-time updates.

- Enforce document versioning, naming conventions, and metadata tagging for efficient retrieval and compliance.

- Support synchronous and asynchronous collaboration across time zones and work patterns.

- Embed automated workflows for reviews, feedback loops, and approval cycles.

- Provide role-based access controls to safeguard sensitive information and meet data governance requirements.

Collaboration Inputs and System Integration

Effective coordination relies on high-quality inputs from diverse systems. The Collaboration Hub ingests data from:

- Document Repositories: Charters, specifications, design artifacts, and contracts stored in enterprise content management systems or cloud platforms.

- Communication Channels: Chat logs from Slack, Microsoft Teams, and email archives, ensuring all threads are captured and indexed.

- Meeting Schedules and Recordings: Calendar events and audio/video transcripts from Zoom or Webex for automated extraction of action items and decisions.

- Task and Workflow Updates: Statuses and assignments from tools like Jira and Asana.

- Stakeholder Directory: Structured data on team members, clients, and subject matter experts with roles and approval responsibilities.

- Historical Collaboration Logs: Archived communications, lessons learned, and compliance documents to inform governance and AI-driven recommendations.

- Metadata and Taxonomies: Predefined tags and classification schemas for consistent labeling and AI processing.

Key prerequisites include single sign-on and role-based access controls, robust API connectivity, unified naming and metadata standards, defined governance policies, end-user training, AI platform provisioning, and validated network performance. Upstream inputs flow from resource planning and scheduling, while downstream outputs feed risk assessment, performance monitoring, and continuous improvement processes.

AI-Enhanced Document Sharing and Contextual Communication Workflow

To maintain alignment and transparency, the document sharing workflow automates ingestion, classification, chat association, collaboration, approvals, notifications, and archival.

Document Ingestion and Routing

- Source Monitoring: Connectors poll services such as Google Drive, Box, and internal SharePoint libraries.

- File Normalization: OCR processing of PDFs, conversion of Office formats, and version assignment.

- Metadata Extraction: AI agents tag project names, deliverable types, and dates for search and compliance.

- Routing Decisions: Files are pushed to secure storage, collaboration channels, or review queues based on policies.
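
A policy-based routing decision can be expressed as an ordered list of rules applied to extracted metadata. The metadata keys and destination names below are hypothetical and stand in for whatever a firm's policies define.

```python
def route_document(metadata: dict) -> str:
    """Pick a destination for a file; the first matching rule wins."""
    if metadata.get("contains_sensitive_data"):
        return "secure-storage"
    if metadata.get("status") == "draft":
        return "review-queue"
    return "collaboration-channel"


print(route_document({"status": "draft", "project": "alpha"}))
```

Rule order matters here: sensitive files go to secure storage even when they are still drafts.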

AI-Enabled Content Classification

- Taxonomy Matching: Natural language models categorize content into proposals, specifications, or status reports.

- Confidence Scoring: Low-confidence classifications are flagged for human verification.

- Automated Tagging: Approved labels support downstream workflows and archival rules.
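
Confidence scoring usually reduces to a threshold check on the classifier's top label. The sketch below assumes the model returns a score per label. The 0.8 threshold and the label names are illustrative.

```python
def triage_classification(label_scores: dict[str, float], threshold: float = 0.8) -> dict:
    """Select the top label and flag it for human review when confidence is low."""
    label, confidence = max(label_scores.items(), key=lambda item: item[1])
    return {
        "label": label,
        "confidence": confidence,
        "needs_human_review": confidence < threshold,
    }


result = triage_classification({"proposal": 0.55, "specification": 0.35, "status report": 0.10})
print(result)
```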

Contextual Chat Thread Association

- Event Detection: Webhooks capture mentions of document IDs or titles in Slack or Microsoft Teams.

- Context Enrichment: AI extracts intent and sentiment, identifying review comments or approval requests.

- Thread Linking: Chats are bound to document metadata, preserving context for audits.

- Access Synchronization: Participants receive permissions based on role-based controls.
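
Thread linking depends on recognizing document references in chat text. The sketch below assumes documents carry IDs of the form `DOC-` followed by digits, which is a hypothetical convention, and it works on plain message dictionaries rather than a real Slack or Teams payload.

```python
import re
from collections import defaultdict

# Hypothetical ID convention: "DOC-" followed by at least three digits.
DOC_ID_PATTERN = re.compile(r"\bDOC-\d{3,}\b")


def link_threads(messages: list[dict]) -> dict[str, list[str]]:
    """Map each mentioned document ID to the chat threads that reference it."""
    links: dict[str, list[str]] = defaultdict(list)
    for message in messages:
        for doc_id in sorted(set(DOC_ID_PATTERN.findall(message["text"]))):
            if message["thread_id"] not in links[doc_id]:
                links[doc_id].append(message["thread_id"])
    return dict(links)


messages = [
    {"thread_id": "T1", "text": "Please review DOC-1042 before Friday."},
    {"thread_id": "T2", "text": "DOC-1042 and DOC-2001 both need sign-off."},
    {"thread_id": "T1", "text": "Updated DOC-1042 again."},
]
thread_links = link_threads(messages)
print(thread_links)
```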

Real-Time Collaboration and Version Control

- Lock-Free Editing: Co-authoring in Google Docs or Microsoft 365 merges concurrent edits without requiring file locks.

- Change Tracking: AI-powered diff engines highlight sentence-level modifications.

- Notification Triggers: Alerts for significant edits appear in chat or email.

- Audit Logging: All revisions and approvals are timestamped and attributed.
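
Sentence-level change tracking can be approximated with Python's standard `difflib` module. The sketch below is a plain sequence diff, not an AI-powered engine, and the sentence splitter is a simple regular expression that will mishandle abbreviations.

```python
import difflib
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., !, or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def sentence_changes(old: str, new: str) -> list[dict]:
    """List inserted, deleted, and replaced sentences between two versions."""
    old_s, new_s = split_sentences(old), split_sentences(new)
    matcher = difflib.SequenceMatcher(a=old_s, b=new_s, autojunk=False)
    return [
        {"change": tag, "before": old_s[i1:i2], "after": new_s[j1:j2]}
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


old_text = "The budget is 50,000 USD. Delivery is due in March. Scope excludes training."
new_text = (
    "The budget is 65,000 USD. Delivery is due in March. Scope excludes training. "
    "A steering committee meets monthly."
)
changes = sentence_changes(old_text, new_text)
for change in changes:
    print(change)
```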

Automated Approval Routing

- Approver Selection: Machine learning models recommend reviewers based on expertise and turnaround history.

- Summary Generation: AI extracts key changes since the last review to brief approvers.

- Sequential or Parallel Routing: Workflows follow configured sequences or circulate to multiple stakeholders.

- Escalation Handling: Unanswered requests escalate to backups or project leaders after SLA thresholds.
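
Escalation after an SLA threshold can be modeled as stepping through an ordered chain of approvers, one step per elapsed SLA window. The 48-hour window, the request structure, and the names in the chain are assumptions for this sketch.

```python
from datetime import datetime, timedelta


def current_approver(request: dict, now: datetime, sla: timedelta = timedelta(hours=48)) -> str:
    """Return who should hold the request now, escalating one step per missed SLA window."""
    elapsed_windows = int((now - request["sent_at"]) / sla)
    chain = request["escalation_chain"]
    # Stay on the last person in the chain once every earlier step has lapsed.
    return chain[min(elapsed_windows, len(chain) - 1)]


request = {
    "sent_at": datetime(2024, 1, 1, 9, 0),
    "escalation_chain": ["primary-reviewer", "backup-reviewer", "project-lead"],
}
print(current_approver(request, datetime(2024, 1, 3, 11, 0)))
```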

Contextual Notifications and Alerts

- Channel-Specific Delivery: Notifications appear in designated Slack channels or Teams groups.

- Priority Tagging: Alerts are labeled as normal, high priority, or critical; compliance checks fall into the critical tier.

- Aggregated Digests: Consolidated daily or hourly digests reduce notification fatigue.

- Adaptive Cadence: AI adjusts notification frequency based on user response patterns.
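
Priority tagging and digest aggregation can work together: critical alerts go out immediately, while everything else is batched per channel. The alert structure and channel names below are hypothetical.

```python
from collections import defaultdict


def build_digests(alerts: list[dict]) -> tuple[list[dict], dict[str, list[str]]]:
    """Separate critical alerts for immediate delivery and batch the rest by channel."""
    immediate: list[dict] = []
    digests: dict[str, list[str]] = defaultdict(list)
    for alert in alerts:
        if alert["priority"] == "critical":
            immediate.append(alert)
        else:
            digests[alert["channel"]].append(alert["message"])
    return immediate, dict(digests)


alerts = [
    {"channel": "#proj-alpha", "priority": "normal", "message": "Spec v3 uploaded"},
    {"channel": "#proj-alpha", "priority": "critical", "message": "Compliance check failed"},
    {"channel": "#proj-alpha", "priority": "high", "message": "Review requested"},
]
immediate, digests = build_digests(alerts)
print(immediate, digests)
```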

Integration with Task Management Systems

- Action Item Detection: Natural language understanding in chat identifies new tasks.

- Task Enrichment: Tasks inherit metadata such as due dates, priorities, and project codes.

- Status Synchronization: Updates in Asana or Jira propagate back to chat and document records.
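
As a stand-in for a trained language model, the sketch below detects action items with a regular expression and enriches them with a due date, priority, and project code. The message convention ("@owner ... by YYYY-MM-DD"), the "urgent" keyword rule, and the project code are assumptions. Real chat text is far less regular.

```python
import re
from typing import Optional

# Assumed convention: "@owner [please] <task> by YYYY-MM-DD".
ACTION_PATTERN = re.compile(
    r"@(?P<owner>\w+)\s+(?:please\s+)?(?P<task>.+?)\s+by\s+(?P<due>\d{4}-\d{2}-\d{2})",
    re.IGNORECASE,
)


def detect_action_item(message: str, project_code: str) -> Optional[dict]:
    """Return an enriched task dictionary, or None when no action item is found."""
    match = ACTION_PATTERN.search(message)
    if match is None:
        return None
    return {
        "owner": match["owner"],
        "title": match["task"].strip(),
        "due_date": match["due"],
        "project_code": project_code,
        "priority": "high" if "urgent" in message.lower() else "normal",
    }


task = detect_action_item(
    "@dana please update the risk register by 2024-07-15, this is urgent", "PRJ-ALPHA"
)
print(task)
```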

Archival and Compliance Review

- Automated Archiving: Files and transcripts older than retention thresholds move to long-term, write-once storage.

- Compliance Tagging: AI scans for sensitive data and applies redaction or encryption.

- Audit Artifacts: Consolidated archives include final deliverables, approval logs, and communication records.

AI-Driven Meeting Capture, Context Extraction, and Summarization

AI transforms raw meeting interactions into structured intelligence, accelerating decision cycles and maintaining comprehensive records of commitments and insights.

Meeting Capture and Transcription Workflow

- Media Ingestion: Audio/video from conferencing platforms stream to processing queues.

- Speech-to-Text Conversion: Services such as Google Cloud Speech-to-Text, Amazon Transcribe, or Azure AI Speech generate time-coded transcripts with speaker diarization.

- Time-Stamping: Precise timestamps map utterances to agendas and slide decks.
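
Mapping a time-coded utterance to an agenda item is a lookup against sorted start times. The sketch below uses binary search from the standard library. The agenda entries and their offsets in seconds are hypothetical.

```python
from bisect import bisect_right

# Hypothetical agenda: (start offset in seconds, agenda item), sorted by start time.
AGENDA = [(0, "Status update"), (600, "Budget review"), (1500, "Risks and next steps")]


def agenda_item_at(seconds: float) -> str:
    """Return the agenda item that was active at the given offset into the meeting."""
    starts = [start for start, _ in AGENDA]
    index = bisect_right(starts, seconds) - 1
    return AGENDA[max(index, 0)][1]


print(agenda_item_at(725.4))
```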

Natural Language Understanding and Context Tagging

- Intent and Topic Detection: Models label segments as status updates, decision discussions, or risk notifications.

- Entity and Action Item Extraction: Named entity recognition tags deliverables, dates, and budget figures; sequence labeling identifies tasks with owners and deadlines.

- Decision Capture: Formal approvals and direction changes are recorded.

- Risk and Issue Identification: Emerging concerns and blockers are flagged for follow-up.

Summarization and Highlight Generation

- Extractive Summarization: Ranking algorithms select salient sentences.

- Abstractive Summarization: Transformer models compose coherent summaries that paraphrase the core discussion.

- Structured Output: Bullet points and narrative overviews highlight action items, decisions, dependencies, and open questions.
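
Extractive summarization can be illustrated with a word-frequency ranker: sentences whose words occur often across the transcript score higher. This is a deliberately simple baseline, not the ranking algorithm of any particular product. The stopword list and sample transcript are illustrative.

```python
import re
from collections import Counter

STOPWORDS = {"the", "is", "on", "from", "was", "will", "a", "an", "and", "to", "of", "in", "for"}


def tokenize(text: str) -> list[str]:
    """Lowercase words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarize(text: str, max_sentences: int = 2) -> list[str]:
    """Return the highest-scoring sentences, kept in their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    frequency = Counter(tokenize(text))

    def score(sentence: str) -> float:
        tokens = tokenize(sentence)
        return sum(frequency[w] for w in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:max_sentences])]


transcript = (
    "The migration is on track. "
    "The migration budget needs approval from the steering committee. "
    "Lunch was good. "
    "The steering committee will review the migration budget on Friday."
)
summary = summarize(transcript)
print(summary)
```

Averaging by sentence length keeps long sentences from dominating, although it can favor very short ones. An abstractive model would rewrite the content rather than select from it.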

Integration with Project Artifacts and Collaboration Platforms

- Project Task Boards: Generate tickets in Jira or Azure DevOps.

- Document Repositories: Archive transcripts and summaries in SharePoint or Box.

- Knowledge Graphs: Enrich enterprise knowledge bases with new insights and relationships.

- Collaboration Hubs: Post summaries in Microsoft Teams, Slack, or Webex channels.

Supporting Systems and Security

Infrastructure components, including message queues, service orchestration platforms, data lakes, and identity management, provide scalable and secure processing. Encryption at rest and in transit, role-based access controls, consent management, data retention policies, and audit logging support compliance with GDPR, HIPAA, and SOC 2. AI models expose the reasoning behind action-item identification and risk flagging, which fosters trust in automated insights.

Operational Benefits

- Increased Efficiency: Teams focus on execution rather than minute-taking.

- Improved Accuracy: Automated extraction reduces omissions and errors.

- Accelerated Decisions: Near-real-time summaries enable prompt stakeholder action.

- Enhanced Transparency: Standardized records ensure all participants share a single source of truth.

- Scalable Knowledge Capture: AI scales processing without proportional headcount increases.

Outputs and Handoff Protocols

The Collaboration and Communication Coordination stage yields artifacts that inform risk assessment, issue management, performance monitoring, and project closure. Key outputs include:

- Meeting Summaries and Action Item Registers: AI-generated summaries linking to recordings or transcripts.

- Contextual Chat Logs: Threaded discussions from Slack, Microsoft Teams, and Google Chat, tagged by topic and phase.

- Shared Document Repositories: Version-controlled libraries with AI-extracted metadata for rapid retrieval and compliance.

- Stakeholder Notification Logs: Records of notifications with read receipts and escalation triggers.

- Collaboration Analytics Dashboards: Reports on response times, review turnaround, and attendance rates.

- Updated Project Context Models: Knowledge graphs linking stakeholders, deliverables, milestones, and threads for predictive analytics.

Dependencies for reliable outputs include integration with scheduling and task assignment, document management systems, pretrained NLP models such as OpenAI GPT and Google BERT, real-time communication feeds, a unified stakeholder directory, security frameworks, and notification workflows configured in platforms like Zapier or Microsoft Power Automate.

At stage handoff, protocols package and transfer outputs to downstream modules:

- Issue and Risk Indicators: Negative sentiment, escalated items, and severity scores forwarded to Issue Management.

- Action Item Registers: Integrated into risk and monitoring dashboards for schedule and quality correlation.

- Communication Metadata: Indexed in the project data lake for performance monitoring and KPI computation.

- Knowledge Graph Updates: Consumed by predictive analytics to refine risk scoring and forecast escalations.

- Escalation Notifications: Alerts sent to the Project Management Office with direct links to artifacts.

- Archival for Audit Trails: Finalized summaries, logs, and threads archived in compliance repositories.

Data Governance, Compliance, and Analytical Insights

Rigorous data governance underpins effective collaboration coordination. Key imperatives include:

- Information Classification: Tagging content by sensitivity levels before ingestion.