Orchestrating Omnichannel Customer Journeys with AI Agents A Practical Workflow for Retail and E Commerce

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Fragmented Data Landscape in Retail and E-Commerce

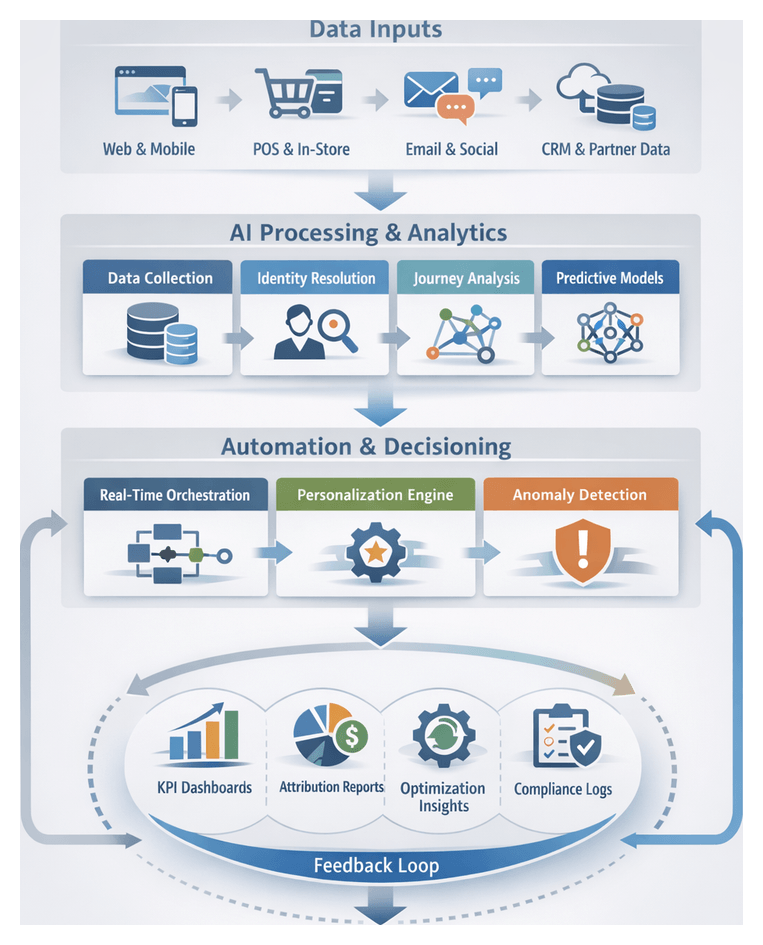

Today’s retail and e-commerce ecosystem spans an extensive network of digital and physical touchpoints. Consumers browse product catalogs on web storefronts and mobile apps, engage with brands on social channels, shop through third-party marketplaces, scan items at point-of-sale terminals, and interact with chatbots or call centers. Each interaction generates event data—page views, clicks, transactions, returns, loyalty redemptions, reviews, service requests—captured in disparate systems with proprietary schemas, authentication mechanisms and retention policies. Legacy on-premises databases, cloud-native analytics services, custom applications and partner feeds maintain isolated data silos, leading to inconsistent formats, fragmented customer views and significant blind spots for personalization and attribution.

Without a unified approach to inventorying and ingesting every data source, organizations struggle to assemble a reliable, end-to-end picture of the customer journey. Web events may stream through Apache Kafka or Amazon Kinesis, mobile analytics SDKs batch logs to cloud storage, POS terminals upload daily transaction files, and social media APIs or webhook feeds deliver sentiment and engagement metrics on varying schedules. The absence of a transparent blueprint for source connectivity, schema definitions and quality standards makes it difficult to ensure data completeness, enforce latency requirements or measure extraction SLAs reliably.

Establishing a comprehensive inventory of interaction sources and defining precise ingestion conditions is the essential first step. This foundational work catalogs every channel—web properties, content delivery networks, native mobile apps, in-store POS systems, social platforms such as Facebook and Instagram, partner marketplaces and customer support applications—and describes event schemas, payload structures and authentication credentials. By specifying data quality thresholds for latency, completeness and accuracy, and by agreeing on extraction methods—real-time streaming, batch exports or API polling—teams eliminate hidden blind spots and create a transparent roadmap for downstream normalization, integration and AI-driven enrichment.

Necessity of a Cohesive AI-Driven Workflow Framework

Addressing the complexities of a fragmented data landscape requires a structured, multi-stage workflow that orchestrates ingestion, integration, analysis and activation tasks. Rather than relying on ad hoc scripts or isolated point solutions, a cohesive framework provides end-to-end visibility, governance and error handling across the customer data pipeline. By decomposing the process into interoperable stages, organizations enable transparent handoffs, consistent artifacts and predictable outcomes, reducing operational risk and supporting scalable growth.

Key benefits of a defined workflow framework include:

- Repeatability: Standardized procedures and interfaces guarantee that data flows follow the same path with predictable results.

- Scalability: Modular stages can be parallelized or distributed across processing clusters to accommodate increasing event volumes.

- Resilience: Built-in monitoring, exception handling and retry logic detect anomalies and automatically remediate issues, preventing data loss or corruption.

- Traceability: Metadata capture at each stage preserves lineage—source timestamps, transformation parameters and validation outcomes—supporting audit and compliance requirements.

- Collaboration: Clear delineation of responsibilities across data engineering, analytics, marketing and IT fosters alignment on inputs, outputs and dependencies.

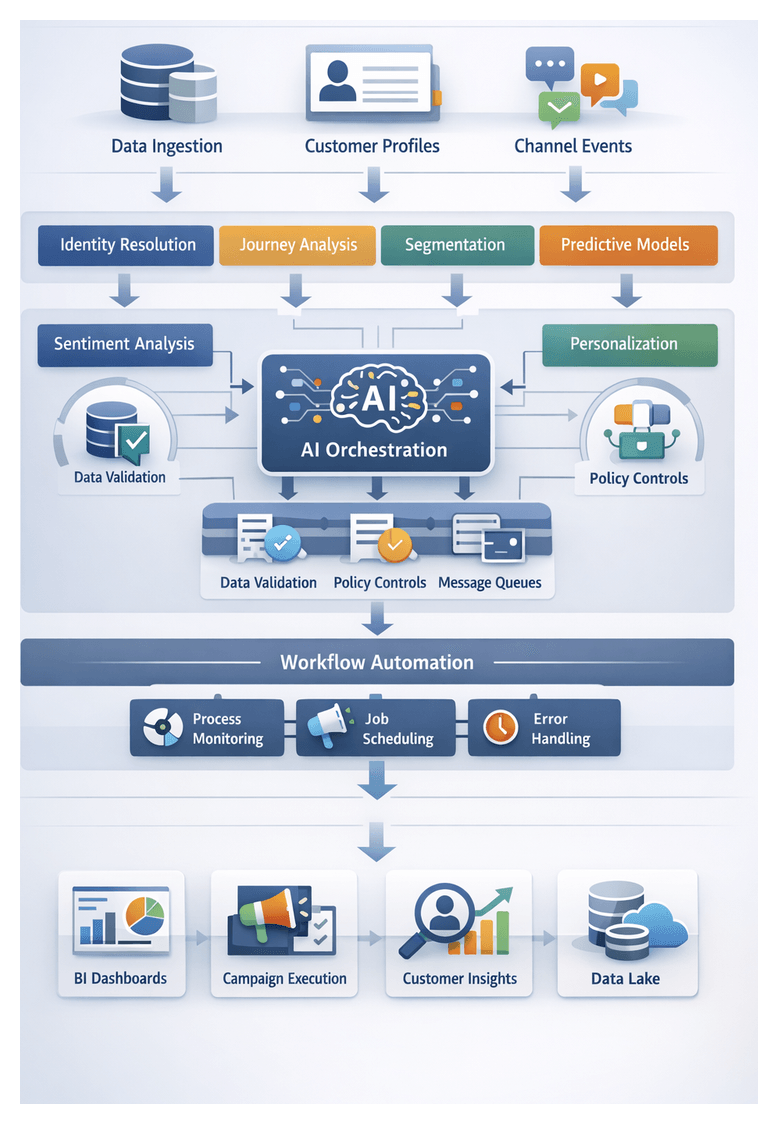

Within this orchestrated framework, AI-driven enhancements can be introduced at precise handoff points. Intelligent agents for extraction, normalization, entity matching, predictive modeling and decision orchestration integrate via standardized connectors, ensuring that downstream services—identity resolution, segmentation engines, journey analytics platforms and campaign orchestrators—receive validated, enriched data on schedule. Solutions exemplify how plug-and-play AI agents can augment existing pipelines without requiring wholesale redesigns.

Integrating AI Agents throughout the Customer Journey

AI agents encapsulate specialized capabilities into modular services that automate critical tasks across the omnichannel workflow. By embedding these agents at strategic points, organizations achieve:

- Scalability: Parallel processing of millions of interactions without manual intervention.

- Consistency: Uniform application of enrichment rules, matching logic and decision policies across all channels.

- Agility: Rapid deployment of new models or business rules through continuous integration and delivery pipelines.

- Insight: Continuous learning loops that refine predictions and recommendations over time.

The principal categories of AI agents include:

Data Extraction and Standardization Agents

- Adaptive connectors supporting REST APIs, message queues such as Apache Kafka, file systems and change-data-capture streams.

- Automated format detection to infer field delimiters and nested structures, reducing manual schema mappings.

- Runtime schema enforcement and validation to flag or reject anomalous records before downstream processing.

Data Enrichment and Semantic Interpretation Agents

- Text analysis and sentiment scoring through Google Cloud Natural Language or AWS Comprehend to extract entities, key phrases and customer sentiment.

- Computer vision models for image and video tagging, identifying products, logos or in-store behaviors.

- Contextual attribute assignment to infer demographics, purchase intent and campaign affiliations.

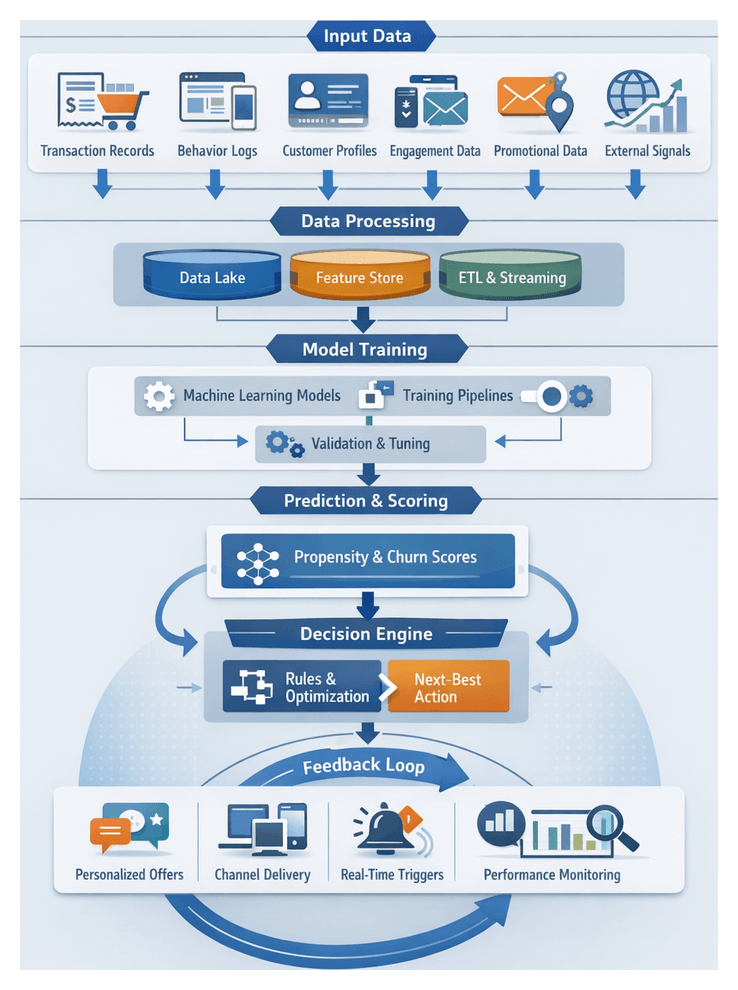

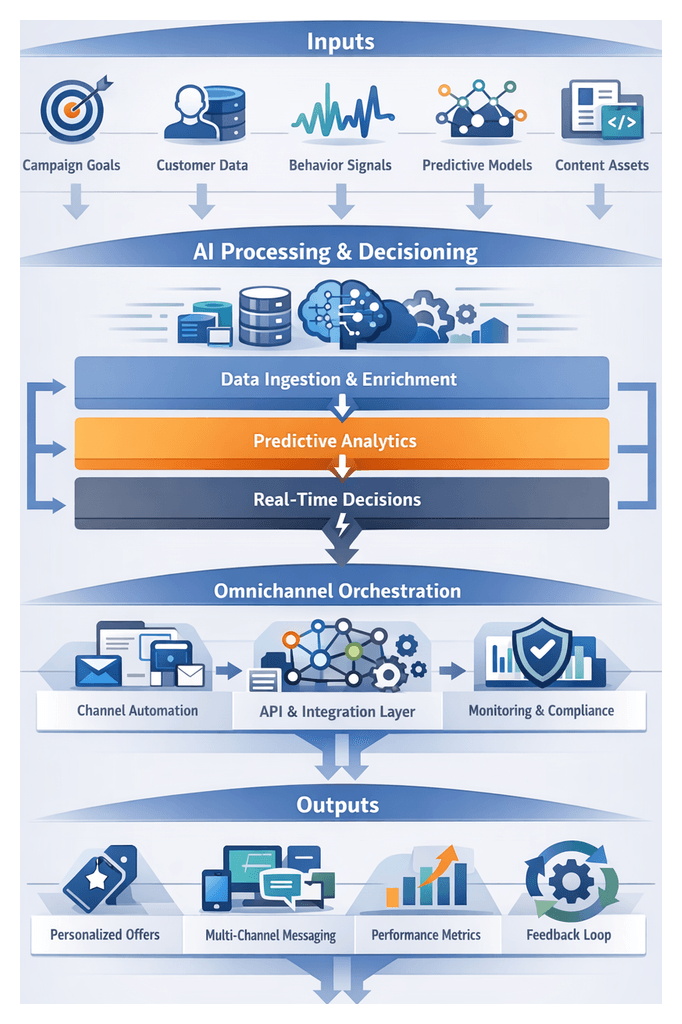

Predictive Analytics and Next-Best-Action Engines

- Feature engineering pipelines that transform raw and enriched data into model-ready features.

- Model training, hyperparameter tuning and A/B testing on platforms such as Amazon SageMaker or Azure Machine Learning.

- Low-latency inference endpoints delivering churn risk, lifetime value and propensity scores in real time.

Decision Logic and Orchestration Agents

- Rule evaluation engines enforcing deterministic policies—exclusions, budget caps and compliance constraints.

- Dynamic pathing through ML-augmented decision trees that learn from historical outcomes.

- Workflow coordination and task scheduling with platforms like Apache Airflow to guarantee reliability and retry logic.

These agents interoperate via event streams, message buses and API calls, producing enriched event streams and actionable recommendations for personalization and campaign orchestration.

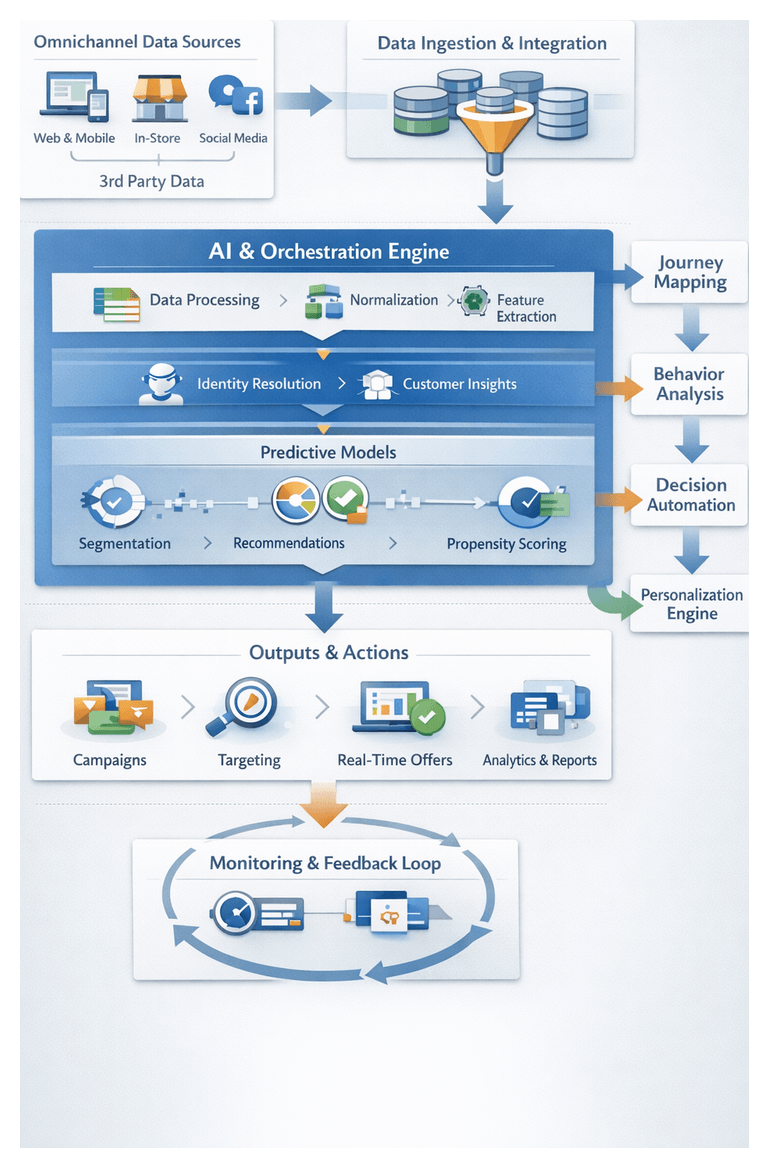

Pillars of the Omnichannel AI Workflow

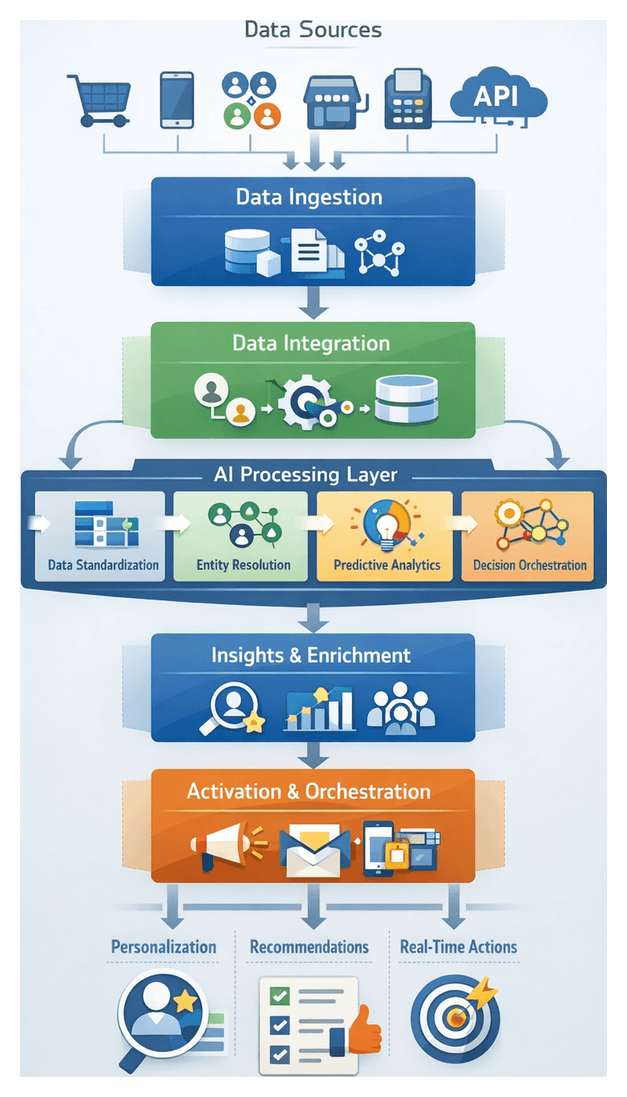

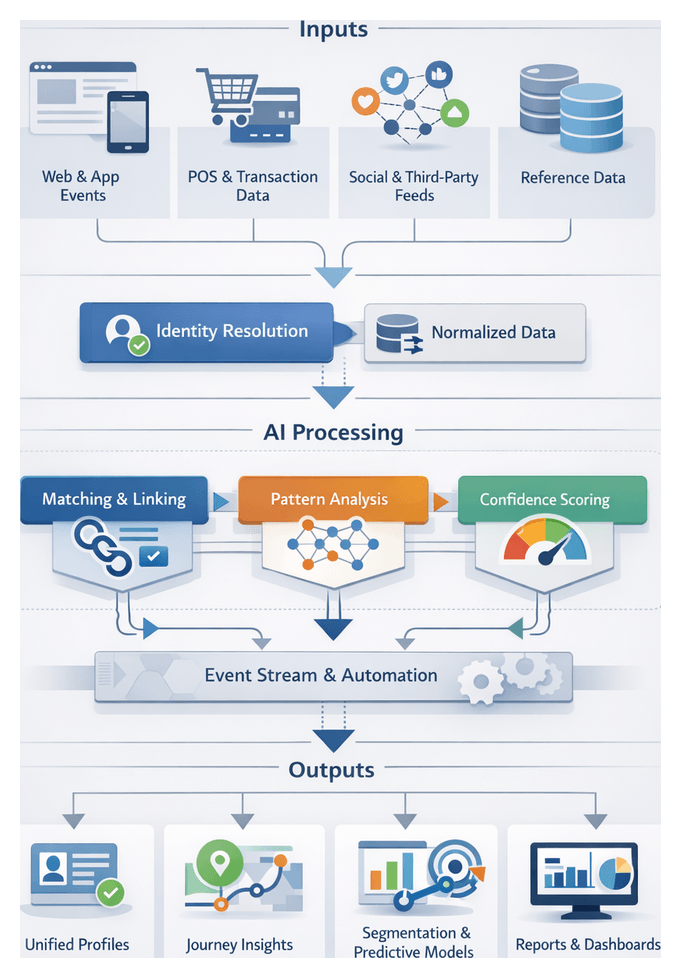

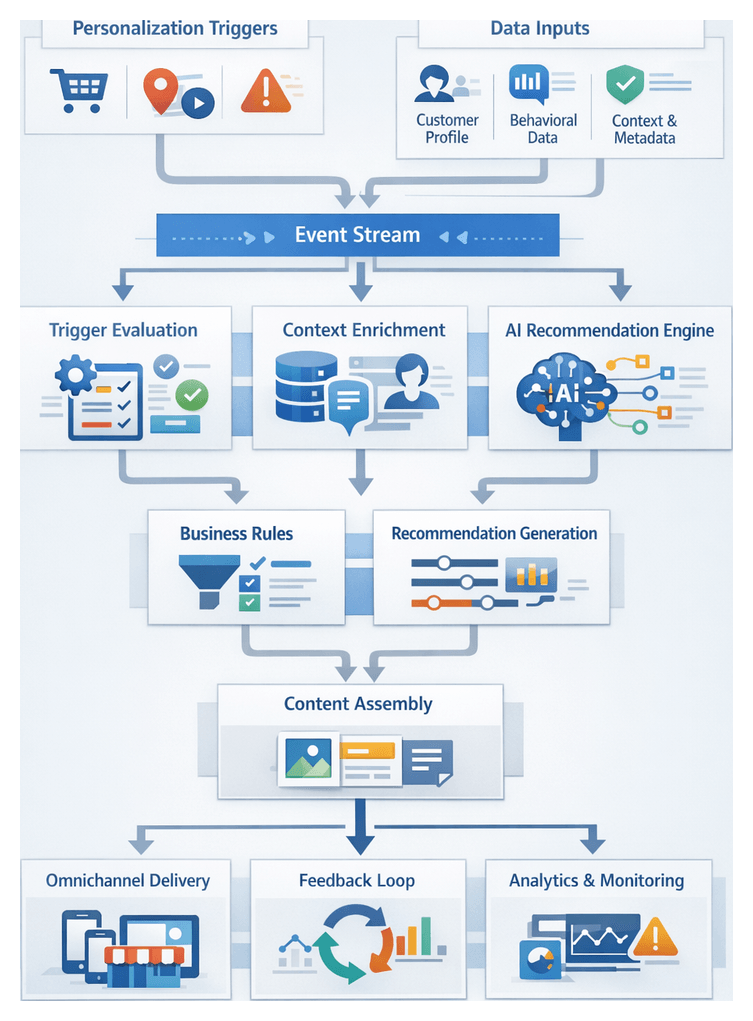

The unified workflow framework can be conceptualized in four coordinated pillars, each supported by AI agents and integration tools:

1. Data Ingestion and Capture

Ingestion agents collect raw customer events from web SDKs, mobile apps, POS terminals and social APIs. They standardize formats, enrich records with metadata and perform initial validation. High-throughput pipelines using Apache Kafka or cloud equivalents buffer and route data into data lakes or cloud storage, preserving provenance for audit and replay.

2. Data Integration and Harmonization

Integration agents map normalized event batches to a canonical data model. Entity matching services consolidate related records into unified customer and product profiles, while cleansing agents resolve anomalies and impute missing values. Harmonized data is persisted in centralized repositories—data warehouses on platforms such as Snowflake—or customer data platforms for downstream consumption.

3. Insight Generation and Profile Enrichment

Identity resolution agents merge duplicates and reconcile identities across channels. Journey analytics reconstructs time-ordered interaction paths, highlighting milestones and drop-off points. Behavioral clustering and sentiment scoring identify customer segments, while predictive models score profiles for churn risk, lifetime value and next-best actions.

4. Activation and Campaign Orchestration

Personalization engines evaluate real-time triggers—page views, cart updates, loyalty events—and select tailored messages or offers. Orchestration platforms schedule multi-touch campaigns, enforce channel priorities, and route content to delivery systems, including email service providers, push notification gateways and in-store displays. Monitoring agents track performance, update attribution data, and trigger model retraining when drift is detected.

By defining clear interfaces—message schemas, API contracts and storage conventions—this architecture supports incremental enhancements. New channels, AI agents or data sources can be onboarded by adding connector adapters and updating orchestration rules without overhauling the core pipeline.

Chapter 1: Data Ingestion and Channel Capture

Omnichannel Data Gathering

The foundational step in any omnichannel AI workflow is systematic capture of customer interactions and transactions across web browsers, mobile apps, point-of-sale terminals, social platforms and partner systems. Comprehensive event collection provides a time-stamped repository that serves as the single source of truth for personalization engines, predictive models and analytics. By defining inputs, data contracts and governance structures at this stage, organizations enable unified customer journeys, real-time recommendations, robust attribution analysis and compliance with regulations such as GDPR and CCPA.

Business Context and Importance

- End-to-end visibility: Complete logs from desktop, mobile, in-store and third-party marketplaces support precise journey mapping.

- Personalization at scale: Dynamic content delivery and next-best-action recommendations require fresh, channel-agnostic event streams.

- AI-driven analytics: High-fidelity data inputs minimize bias and improve accuracy in sentiment analysis, forecasting and attribution models.

- Operational efficiency: Automated pipelines reduce manual handling, accelerate time-to-insight and lower error rates.

- Regulatory compliance: Audit trails of every event ensure readiness for data subject requests and regulatory audits.

Key Input Sources

- Web event streams: Clickstreams, page views, form submissions from platforms like Google Analytics and Adobe Experience Platform.

- Mobile application events: In-app actions and session metadata via mobile SDKs for iOS and Android.

- Point-of-sale systems: Transactions, returns and loyalty interactions from retail management software.

- Social interactions: Likes, shares, comments and ad engagement retrieved through Facebook, Instagram, Twitter and LinkedIn APIs.

- Partner feeds: Referral data and marketplace transactions ingested via SFTP, webhooks or API calls.

- IoT and sensors: Proximity beacons, shelf monitors and smart fitting rooms enriching digital records.

- Customer service logs: Chat transcripts, email threads and call center interactions from CRM exports.

Prerequisites and Governance

- Event taxonomy and schema design: A unified model defining event types, attributes and naming conventions that drive instrumentation and downstream logic.

- Instrumentation strategy: Deployment of tracking pixels, SDKs and in-store tags coordinated across marketing, development and analytics teams.

- Privacy and compliance: Consent management, anonymization policies and retention schedules aligned with GDPR, CCPA and PCI DSS requirements.

- Secure ingestion channels: Encrypted pipelines (TLS/HTTPS), VPN or private connections to safeguard data in transit.

- API access and rate management: Valid credentials, token rotation and monitoring to prevent service disruptions.

- Latency requirements: Definition of real-time streams via message brokers like Apache Kafka or Amazon Kinesis, versus batch ingestion schedules.

- Data quality at source: Validation rules, anomaly thresholds and completeness checks to catch errors early.

- AI-enabled connectors: Intelligent agents that detect new sources, adapt to evolving formats and enrich metadata during capture.

Conditional Dependencies and Alignment

- Synchronization windows aligning session identifiers across web and mobile events.

- Time-zone normalization to ensure consistent chronology between digital and in-store logs.

- Referential integrity linking CRM identifiers to anonymous analytics records.

- Graceful handling of missing feeds with fallback values and retry policies.

Cross-Functional Collaboration

- Data engineering configures pipelines, manages infrastructure and enforces security controls.

- Marketing and analytics define event requirements, attribute priorities and measurement objectives.

- IT and security oversee compliance, credential management and network security.

- Business units coordinate with external partners on SLAs, data sharing agreements and change notifications.

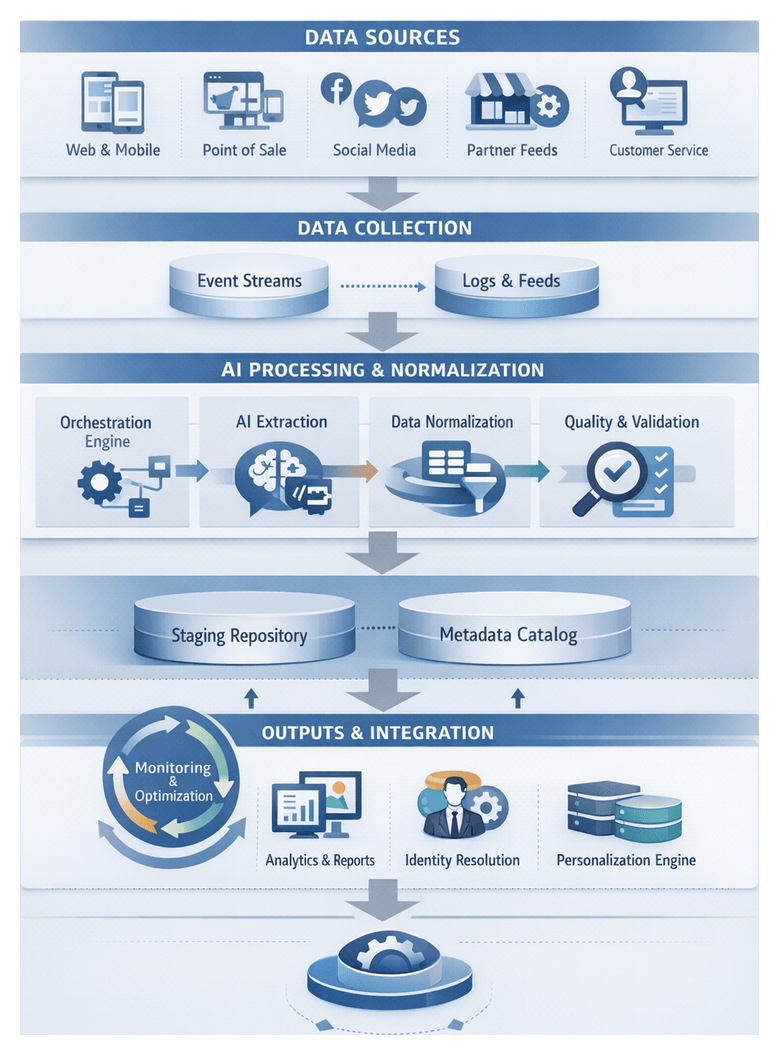

Automated Data Extraction and Normalization

Bridging raw event capture and unified integration, this stage uses orchestration engines, source connectors and AI agents to retrieve, parse and standardize heterogeneous records. By automating extraction, classification and schema mapping, organizations reduce custom coding, accelerate deployments and maintain consistent data quality at scale.

Core Workflow Components

- Orchestration Engine: Schedules jobs, enforces dependencies and manages retries using platforms such as Apache Airflow or Azure Data Factory.

- Source Connectors: Prebuilt adapters for web analytics APIs, mobile SDKs, POS databases, social platforms and partner feeds.

- AI Extraction Agents: ML modules that parse semi-structured and unstructured payloads using natural language processing and computer vision.

- Normalization Service: Rules engine for schema mapping, type conversion, timestamp alignment and reference lookups.

- Metadata Catalog: Repository tracking data lineage, schema versions, transformation rules and quality metrics.

- Staging Repository: Transient storage in a data lake or cloud object store holding normalized records before integration.

Connector Invocation Patterns

- Batch Pull: Scheduled extractions via API queries for legacy systems and partner CSV feeds.

- Event Stream: Continuous ingestion through message brokers like Apache Kafka or Amazon Kinesis, supporting at-least-once delivery and offset management.

AI-Driven Parsing and Classification

- Schema Inference Models: Supervised learning to identify field boundaries and flatten nested JSON.

- Natural Language Processing: Entity recognition and sentiment extraction from customer comments and social posts.

- Computer Vision Techniques: Decoding QR scans and shelf monitoring metadata into structured events.

Normalization Logic

- Attribute Mapping: Canonical naming of fields such as txn_id versus transactionId.

- Data Type Conversion: Standardizing numerics, dates to ISO 8601 and boolean flags.

- Reference Enrichment: Lookup tables for regions, product categories and loyalty tiers.

- Currency and Unit Standardization: Base currency conversion and uniform measurement units.

- Timestamp Alignment: Harmonizing time zones and applying ingestion timestamps.

- Anomaly Detection: Flagging outliers such as negative quantities or implausible prices.

Data Lineage and Quality Assurance

- Recording each transformation step, AI model version and connector detail in the metadata catalog.

- Automated quality checks capturing field coverage, null rates and value distributions.

- Quarantine zone for malformed or suspicious records, triggering exception workflows.

- Audit logs documenting errors, warnings and performance metrics for root-cause analysis.

Error Handling and Exception Management

- Quarantine Repository: Secure staging for failed records awaiting triage.

- Automated Alerting: Notifications via email, Slack or Microsoft Teams with sample payloads and error codes.

- Human-in-the-Loop Triage: Engineers update parsing rules or reference data and retry ingestion via API.

- Ticketing Integration: Incident tracking in Jira for resolution and root-cause documentation.

- Feedback Loop: AI agents and rules engines incorporate corrections to prevent recurrence.

Performance and Scalability

- Parallel Processing: Partition by source, event type or time window for concurrent ingestion.

- Auto-Scaling Compute: Containerized microservices on Kubernetes or serverless functions (AWS Lambda, Azure Functions).

- Streaming vs. Micro-Batch: Sub-second windows for latency-sensitive channels, longer intervals for batch feeds.

- Resource Monitoring: Metrics on CPU, memory and I/O integrated with Datadog or Prometheus dashboards.

Security and Compliance

- Authentication and Authorization: OAuth 2.0 or API keys with role-based access control (RBAC).

- Data Encryption: TLS in transit, AES-256 at rest managed by AWS Key Management Service.

- PII Masking: Obfuscation policies aligned with GDPR and CCPA applied during parsing.

- Audit Trails: Immutable logs of access, transformations and configuration changes.

Handoff to Integration

- Message Bus: Publishing normalized events to Kafka or Amazon Kinesis topics for consumption.

- File Drop: Compressed Parquet or Avro files in Amazon S3 or Google Cloud Storage for batch ingestion.

- API Push: Data ingestion into unified repositories such as Snowflake or Azure Synapse Analytics.

- Webhook Notifications: Callbacks signaling data availability with schema metadata and manifests.

AI-Powered Event Formatting and Validation

This quality-gate stage uses AI modules to classify events, detect anomalies and enforce schema integrity. Adaptive, learning-driven systems complement rule-based checks, scaling with evolving sources and reducing manual effort while ensuring clean inputs for identity resolution and predictive modeling.

Event Classification

- Semantic labeling of events like page_view, add_to_cart or coupon_redemption.

- Context enrichment flags for promotion_code, loyalty_member or abandoned_session.

- Model retraining to incorporate new event types from A/B tests or integrations.

- Confidence scoring to trigger fallback logic when certainty is low.

Anomaly Detection

- Threshold-based alerts for metrics such as event volume per minute or latency distributions.

- Clustering algorithms like Isolation Forest or DBSCAN to identify sparse clusters of unusual events.

- Time-series techniques for spikes or drops using seasonal decomposition or z-score evaluation.

- Automated triage creating incidents when critical integrity breaches occur.

Schema Validation and Normalization

- Dynamic schema inference with machine learning proposals for new attributes.

- Type coercion of timestamps, numerics and nested JSON structures.

- Missing data imputation via predictive models leveraging correlated features.

- Normalization of categorical values such as country codes and product identifiers.

Supporting Systems

- Message queues like Kafka or cloud services such as Google Cloud Dataflow.

- Metadata catalogs tracking schema definitions, model versions and validation outcomes.

- Data lakes or warehouses persisting validated and normalized events.

- Monitoring dashboards visualizing error rates, anomaly trends and throughput metrics.

Production Readiness

- Model governance with version control and performance regression tests.

- Observability of throughput, latency and error metrics.

- Graceful degradation to rule-based logic or data buffering on model failures.

- Security controls ensuring validation logs meet compliance standards.

Benefits and Considerations

- Improved data quality through automated error detection and correction.

- Scalability across diverse event types without constant rule updates.

- Adaptability via continuous learning loops for new schemas and anomaly patterns.

- End-to-end transparency with metadata annotations and lineage tracking.

Output Artifacts and Downstream Integration

The final phase of ingestion delivers structured artifacts, clear dependency mappings and automated handoff mechanisms. These outputs form the basis for data integration, identity resolution and analytics orchestration, ensuring reliability, traceability and compliance.

Defined Output Artifacts

- Raw Event Archive: Immutable, time-ordered payloads stored in object storage or a data lake for audit and reprocessing.

- Normalized Records: Schema-conformant events with uniform fields such as customerID, timestamp and eventType.

- Quality and Validation Reports: Summaries of anomalies, schema failures, duplicates and completeness metrics for data owners.

- Lineage Metadata: Provenance records tracing each event to its source, AI model versions and transformation steps.

- Processing Logs: Execution status, resource metrics and error traces captured by orchestration engines and AI agents.

Dependencies and Risk Management

- Source System Availability: SLAs and real-time monitoring of web servers, POS terminals, APIs and partner endpoints.

- Schema Registry: Central repository of canonical definitions fetched at runtime by AI parsers and classifiers.

- Anomaly Detection Services: Operational AI modules tuned to flag outliers and prevent silent data corruption.

- Enrichment Engines: Low-latency lookups against master tables for product, loyalty and promotional metadata.

- Orchestration Framework: Workflow engines that coordinate tasks, manage failure states and enforce execution order.

Automated Handoff Mechanisms

- Landing Zone Transfer: Writing normalized files to a data lake directory or table with notifications via message queues or event buses.

- Streaming Publication: Publishing cleaned event streams to Kafka or cloud streaming services for real-time consumption.

- API Callbacks: Webhooks informing downstream microservices of new batches or streams with metadata payloads.

- Orchestration Signals: Task completion events carrying schema version, processing node and anomaly flags for conditional branching.

- Metadata Catalog Updates: Registration of new datasets, partitions or offsets enabling dynamic discovery by integration pipelines.

Governance, Security and Compliance

- Access Control: RBAC for ingestion outputs, with encryption at rest and in transit.

- Data Masking: Tokenization of PII fields before landing in shared zones, with key management and vaulting.

- Audit Trails: Immutable logs of access, modification and deletion actions for regulatory audits.

- Retention Policies: Automated purging of stale archives and reports according to defined schedules.

Reliability Patterns

- Transactional Writes: Atomic operations to prevent partial writes and enable safe retries without duplication.

- Ready/Failed Markers: Status flags (_SUCCESS, _ERROR) encapsulating summary statistics for downstream decision logic.

- Automated Alerts: Monitoring of volume drops, error spikes and schema drift with escalation workflows.

- Versioned Artifacts: Tagging outputs by timestamp, schema evolution and agent code revision for reproducibility.

- Health Checks: Probes validating connectivity to sources, schema registry and AI modules, pausing ingestion on failure.

Integration with Catalogs and Lineage Tools

- Automated Metadata Ingestion: Catalog connectors retrieving lineage, schema and processing metadata from logs.

- Lineage Visualization: Graph-based tools mapping data flow from raw archives through normalization and validation.

- Impact Analysis: Forecasting downstream effects of schema or model updates to prevent incidents.

- Collaboration: Annotation of artifacts and linkage to issue tickets within the catalog interface for cross-functional alignment.

Best Practices

- Define explicit data contracts for each artifact, including field-level schemas and quality thresholds.

- Implement idempotent, transactional writes to support safe retries and avoid duplicates.

- Automate health checks and alerts to detect upstream issues before they cascade.

- Maintain centralized schema and AI model registries to coordinate parsing, classification and enrichment logic.

- Use metadata catalogs and lineage tools for end-to-end visibility and impact analysis.

- Establish clear governance around access control, data masking and audit logging.

Chapter 2: Data Integration and Harmonization

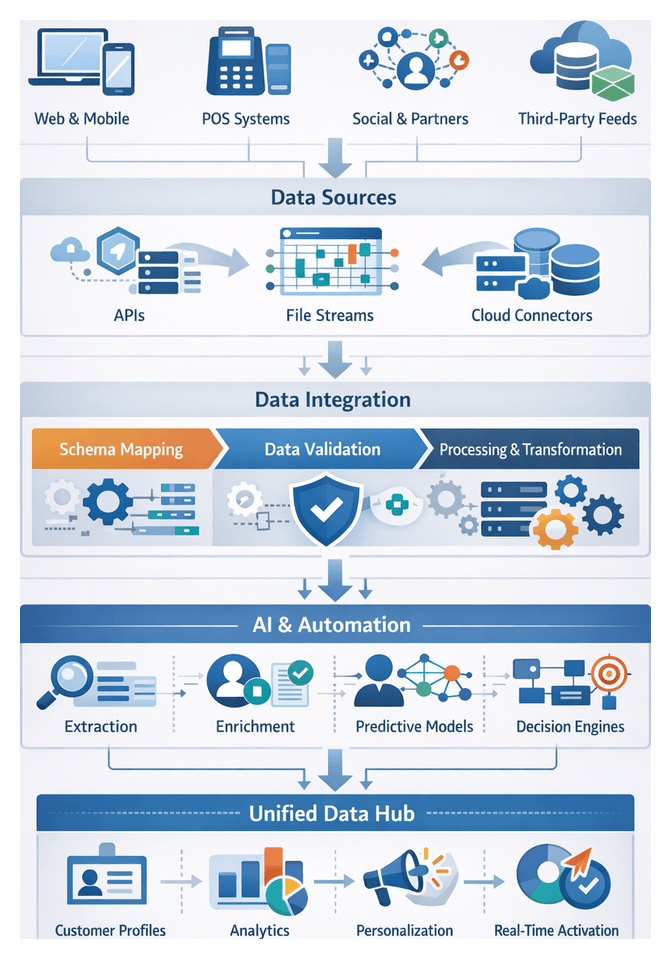

Data Landscape and Intake for Omnichannel AI Workflows

Retail and e-commerce organizations capture customer interactions across web sites, mobile apps, point-of-sale terminals, social media and partner platforms. Each touchpoint emits distinct event streams—clickstream logs, in-app engagement metrics, transactional records, sentiment data and third-party feeds—often in incompatible formats and update cadences. Without a unified intake strategy, these silos undermine personalization, fragment reporting and delay real-time decisioning.

Fragmentation arises from disparate technology stacks—legacy on-premises systems coexisting with cloud-native services—and independent business unit procurements that define asynchronous schemas and governance policies. Data arrives as nested JSON, XML feeds, flat files and proprietary APIs. Channels generate bursty volumes during peak campaigns or seasonal sales, while legacy exports may only refresh daily. Achieving comprehensive capture demands streaming connectors alongside batch pipelines, a central schema registry and automated validation to adapt to evolving definitions.

Core prerequisites for reliable intake include:

- Persistent customer identifiers to unify profiles

- Instrumented event tracking across web, mobile and in-store systems

- APIs or connectors for third-party and partner feeds

- Data governance framework covering ownership, lineage and quality

- Schema registry or catalog to track and version structures

- Security and privacy policies ensuring regulatory compliance

- Scalable infrastructure supporting both streaming and batch modes

- Monitoring and alerting for data latency, throughput and anomalies

Collaboration between IT, data engineering, security and business teams is essential. For example, marketing defines matching rules for persistent identifiers, digital teams manage API credentials for social feeds, and compliance vets data handling standards. Underpinning the intake stage with these technical and organizational conditions lays the foundation for downstream AI-driven processes.

Schema Mapping and Data Harmonization

After intake, the orchestration layer unifies heterogeneous datasets by mapping source models to a canonical schema. Automated metadata ingestion, AI-driven mapping proposals and human validation ensure that every field and entity aligns with master definitions. This coordinated approach enables consistent analytics, identity resolution and personalization.

Metadata Ingestion and Catalog Population

Connectors poll source endpoints—relational databases, data lakes, queues or SaaS APIs—and extract schema definitions, sample records and data type information into a central catalog such as Collibra or Alation. Profiling agents apply statistical and machine learning techniques to infer semantic roles, detect anomalies and classify fields by domain.

- Orchestration via engines like Apache Airflow or AWS Step Functions schedules regular schema refreshes.

- Versioned metadata tracks schema evolution over time.

- Health checks and automated retries ensure connector reliability.

Automated Mapping Rule Generation

AI-assisted engines propose field-to-field correspondences between source schemas and the target model. Semantic matching leverages metadata tags, natural language processing on field names and historical mapping patterns. The system logs confidence scores to determine which mappings auto-approve and which require human review.

- Mapping requests include source metadata, sample values and target definitions.

- Returned candidates specify transformation hints such as unit conversions.

- Low-confidence mappings generate review tasks; high-confidence ones proceed automatically.

Human-in-the-Loop Validation

Ambiguous or critical mappings route to data stewards through governance portals. Tasks include source and target context, sample data and transformation examples. Reviewers accept, adjust or annotate mappings, ensuring edge cases and legacy code sets receive proper handling.

- Review interfaces link directly to the metadata catalog and profiling dashboards.

- Approved mappings are versioned in the canonical mapping repository.

- Escalation rules enforce SLAs and notify stakeholders of overdue tasks.

Transformation Logic Execution

Finalized mappings drive ETL/ELT jobs in engines such as AWS Glue, Talend or cloud warehouses. The orchestration layer sequences transformations, allocates compute resources and enforces dependencies.

- Type casting, unit conversion and value mapping apply at field level.

- Intermediate checks validate schema conformance and data completeness.

- Errors trigger notifications and automated rollbacks per policy.

Entity Relationship Alignment

Post-transformation, workflows consult master data management platforms to reconcile customer, product and order references. Calls to the MDM API resolve foreign keys or generate exception tickets for orphan records. Corrections loop back into transformation jobs to ensure synchronized entity definitions.

Publishing to the Unified Repository

Aligned data loads into the unified customer data repository—whether a cloud data warehouse, lakehouse or CDP. Loading tasks write to production tables or object storage, with final reconciliation checks comparing record counts and key metrics against catalog expectations.

- Loading to platforms like Snowflake may leverage Snowpipe for incremental ingest.

- Post-load validations enforce completeness, uniqueness and statistical baselines.

- Successful loads open datasets for identity resolution, analytics and personalization.

Monitoring and Governance

Dashboards surface job durations, error rates and mapping coverage. Lineage tracking records every field’s journey from source to target, facilitating impact analysis when upstream changes occur. Role-based access controls restrict modifications to mappings and schema definitions, preserving dataset integrity.

Embedding AI Agents in Journey Design

AI agents automate extraction, enrichment, modeling and decision logic within customer journeys. Modular agents—each encapsulating expertise in text analysis, predictive scoring or recommendation—scale horizontally and adapt to new channels without reengineering workflows.

Data Extraction Agents

- Connector Agents: Discover API schemas, generate optimized calls and apply adaptive throttling.

- Parsing Agents: Infer nested JSON or XML structures, normalize records and flag anomalies.

Enrichment and Feature Engineering Agents

- Attribute Agents: Append demographics via third-party signals and assign loyalty tiers with hybrid ML rules.

- Context Agents: Profile devices, infer attribution channels and enrich sessions with contextual metadata.

Predictive Modeling Agents

- Propensity Scoring: Supervised models estimate purchase likelihood and churn risk.

- Value Estimation: Regression and survival analysis forecast customer lifetime value and upsell opportunities.

Next-Best-Action Agents

- Policy Engines: Combine deterministic rules with ML outputs to balance revenue, engagement and compliance.

- Personalization Engines: Rank content with learning-to-rank algorithms and optimize creatives via dynamic A/B testing.

Real-Time Orchestration Agents

- Event stream processors ingest data at sub-second scale and trigger decision pipelines.

- Delivery connectors invoke channel APIs—email, push or in-store—to execute recommendations.

- Resiliency agents detect failures and reroute interactions or initiate retries.

Integration Patterns and Governance

Standard patterns—central orchestrator invocation, event-based choreography and microservice deployments—ensure loose coupling and independent scalability. Model registries, automated monitoring and retraining pipelines maintain performance, detect drift and support compliance audits.

Delivering Harmonized Datasets and Dependencies

The harmonization stage produces consolidated fact and dimension tables, metadata catalogs and change data capture streams that feed identity resolution, enrichment and analytics. Controlled delivery and clear dependency definitions guarantee that downstream stages operate on complete, high-quality data.

Output Artifacts

- Consolidated customer master and event timeline tables

- Reference dimensions for channels, products, geography and time

- Schema registry entries and lineage logs documenting AI-driven transformations

- Delta tables or CDC topics for incremental updates

Upstream Dependencies

- Raw event feeds via secure FTP or Amazon S3, streaming topics from Apache Kafka or Confluent Cloud, and connectors like Fivetran or Stitch

- Schema mapping configurations stored in Git or Databricks Unity Catalog

- Data quality reports from validation agents highlighting exceptions

Delivery Mechanisms

- Batch exports to warehouses such as Snowflake with Snowpipe for CDC

- Streaming publication to Kafka topics with schema enforcement

- RESTful or gRPC data service APIs secured via OAuth

- External tables and materialized views for ad hoc analysis

Handoff to Identity Resolution

- Event-driven triggers or schedules in engines like Apache Airflow or Azure Data Factory launch matching jobs

- Direct reads from shared storage or API retrieval for incremental enrichment

- Schema contracts enforced via registry with backward-compatibility checks

- Automated retries and dead-letter queues for failed records

Audit, Lineage and Compliance

- Lineage captured in governance tools such as Apache Atlas or Collibra

- Immutable audit logs detailing AI-driven actions and change histories

- Role-based access controls, encryption in transit and at rest

- Data anonymization flags and retention schedules enforced via policy engines

Operational Monitoring and SLAs

- End-to-end latency and throughput metrics from raw ingestion to harmonized availability

- Error dashboards alerting on data quality breaches and schema drift

- Defined SLAs for batch windows or streaming freshness, with rollback and escalation paths

By unifying fragmented data, automating schema alignment, embedding AI agents in journey orchestration and delivering harmonized datasets with clear dependencies, organizations establish an end-to-end foundation for real-time personalization, advanced analytics and scalable customer engagement.

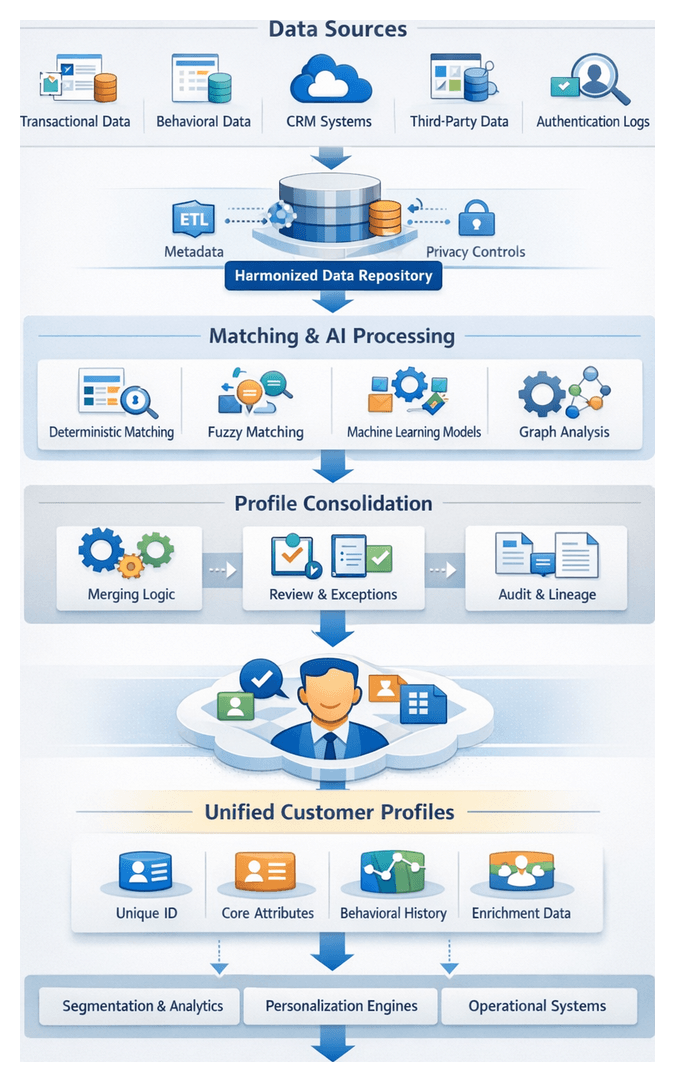

Chapter 3: Identity Resolution and Profile Enrichment

Establishing Single Customer Identities

In omnichannel retail and e-commerce, consumer journeys generate fragmented data across web sessions, mobile apps, in-store transactions, social platforms and third-party enrichments. Establishing single customer identities merges these discrete records into authoritative, persistent profiles. This unified view drives personalized experiences, accurate analytics and efficient campaign orchestration.

Key objectives include:

- Eliminating duplicate profiles and reducing data fragmentation

- Reconciling conflicting attributes such as name variants, addresses and contact details

- Assigning persistent identifiers to support cross-channel recognition

- Enabling precise lifetime value measurement and engagement metrics

- Laying a foundation for segmentation, journey mapping and real-time personalization

By the end of this stage, each customer is represented by a single, enriched profile that supports downstream AI-driven personalization engines.

Data Inputs and Prerequisites

Effective identity resolution requires harmonized input datasets and governance controls. Core prerequisites include:

- Harmonized Data Repository: Event records must be schema-mapped and format-normalized via ETL frameworks.

- Metadata and Lineage Tracking: Records should carry source identifiers, ingestion timestamps and transformation history.

- Consent and Privacy Flags: Customer opt-in statuses must be captured to enforce compliance with GDPR, CCPA and other regulations.

- Reference Tables: Standard lists for countries, currencies, product categories and channel codes ensure attribute alignment.

Common data source categories:

- Transactional Systems: Order databases, payment processors

- Behavioral Logs: Web clickstreams, mobile events, email tracking pixels

- CRM Platforms: Contact records, service tickets, loyalty data

- Third-Party Enrichments: Demographic append services, geolocation feeds

- Authentication Providers: Single sign-on logs, device fingerprints

These inputs feed deterministic and probabilistic matching engines to consolidate records.

Matching Approaches and AI Algorithms

Identity matching combines multiple strategies to balance precision and recall. AI-driven tools and algorithms automate this process at scale.

Deterministic Matching

Exact comparisons on unique identifiers deliver high precision when fields are reliable. Typical rules include exact matches on email, phone number, loyalty ID and hashed keys. Platforms such as Informatica MDM and Microsoft Azure Data Factory embed deterministic rules within ETL pipelines to standardize and compare values.

Probabilistic and Fuzzy Matching

When data contains errors or variations, probabilistic models compute similarity scores across attributes. These models apply functions like Levenshtein distance, Jaro-Winkler and cosine similarity, weighted by attribute reliability. Services evaluate candidate pairs and return confidence scores, routing high-confidence matches to automated merges and lower-confidence cases to review queues.

Supervised Learning Models

Supervised classifiers learn matching patterns from labeled record pairs. Feature engineering captures similarity metrics, contextual signals and behavioral alignment. Training platforms like Amazon SageMaker, Google Cloud AI Platform and Microsoft Azure Machine Learning support model training, validation and deployment. RESTful APIs integrate these models into master data management and customer data platforms.

Unsupervised Clustering Techniques

Clustering algorithms such as DBSCAN and hierarchical clustering group similar records without labeled data. Dimensionality reduction methods like PCA or t-SNE simplify attribute spaces before clustering. Solutions like Databricks Lakehouse enable prototyping and productionizing unsupervised workflows, feeding cluster assignments back to identity resolution engines.

Graph-Based Analysis

Graph analytics represent records and attributes as nodes and edges, uncovering indirect links through community detection and centrality algorithms. Databases like Neo4j and frameworks such as Apache Spark GraphX facilitate scalable graph processing. This approach reveals complex relationships like shared devices or networked behaviors, enriching identity resolution beyond pairwise matching.

Deep Learning and Embeddings

Deep learning models produce dense embeddings that capture latent identity signals. Autoencoders compress attribute sets into compact representations, while Siamese networks train twin encoders to separate matched and non-matched pairs. Frameworks like TensorFlow and PyTorch accelerate training on GPU clusters. Embeddings drive both supervised and unsupervised matching, improving accuracy for sparse or inconsistent identifiers.

Real-Time and Batch Architectures

Identity matching can run in batch mode—through scheduled ETL jobs—or in real time via streaming frameworks. Apache Kafka with Confluent and AWS Kinesis support sub-second resolution, powering fraud detection and live personalization. Hybrid architectures combine batch-generated master indexes with low-latency lookup services exposed via APIs.

Merging Workflows and Ambiguity Resolution

The merging stage consolidates matched records into unified profiles, managing deterministic and probabilistic flows, exceptions and auditability.

Deterministic vs Probabilistic Flows

An orchestration engine—often implemented with AWS Step Functions or Apache Kafka workflows—partitions records based on match strategy. Deterministic sets invoke MDM platforms such as IBM InfoSphere MDM for exact merges. Non-deterministic records pass to probabilistic services, which returns confidence scores and merge recommendations.

Interactive Review and Exception Handling

Ambiguous matches fall into a review console where data stewards compare attributes side by side, view interaction histories and decide on merges, non-matches or enrichment requests. Steward decisions feed back into the match rules repository and retrain probabilistic models. Exception queues capture timeouts, format violations and conflicting directives. Tools such as Google Cloud Dataflow or Databricks streaming jobs manage retries and incident ticket creation.

Data Lineage and Auditability

Every merge action logs record IDs, source tags, match strategy, confidence scores and timestamps into a metadata ledger. This ledger integrates with data catalogs to provide an auditable trail for compliance and analysis. Attributes such as merge rules, steward decisions and service interactions remain traceable back to original events.

Scalability and Performance

High-volume implementations shard records by region or segment, use micro-batches to balance throughput and latency, and auto-scale matching services via container orchestration like Kubernetes. Monitoring tools track queue depths, service latencies and merge success rates. Alerts drive dynamic resource adjustments, preventing backlogs.

Coordination with Enrichment and Analytics

Post-merge profiles trigger enrichment workflows that fetch demographic appends, geolocation lookups and behavioral scores. Unified profiles synchronize with CRM and marketing automation platforms via events or batch exports to secure data lakes. They also populate graph databases for social network and household analysis, enabling downstream journey reconstruction and analytics.

Consolidated Profile Artifacts and Handoff

Upon merge completion, the system produces consolidated profiles containing:

- Unique Customer Identifier linking to the master record

- Core Attributes: Demographics, contact data, account metadata

- Behavioral History: Chronological interaction events and purchase records

- Enrichment Metadata: Third-party appends and inferred preferences

- Confidence and Provenance Scores quantifying attribute reliability and lineage

Dependencies and Data Lineage

Profile integrity depends on:

- Harmonized dataset feeds conforming to unified schemas

- Match rule configurations and threshold settings

- Audit trails from ingestion, transformation and merge stages

- Successful enrichment service calls before profile consolidation

Handoff to Journey Reconstruction

Consolidated profiles publish to customer data platforms such as Segment CDP or Salesforce Customer 360. Journey analytics engines subscribe via message queues or change data capture pipelines to:

- Link profiles with historical touchpoints using the unique identifier

- Augment events with profile attributes—segment membership, loyalty status, risk scores

- Assemble end-to-end timelines for visualization and analysis

Integration with Segmentation and Analytics

Downstream workflows consume profiles through:

- Batch Exports in Parquet or CSV for cohort analysis and model training

- API Endpoints for real-time decisioning and personalization

- Streaming Feeds for near-real-time segment assignment and scoring

This decoupling enables experimentation with new segmentation criteria and ML models without reprocessing raw data.

Synchronization with Operational Systems

Operational applications—CRM, email service providers and marketing platforms—receive incremental profile updates. Key practices include:

- Delta exports to minimize data transfer

- Field mapping rules to align repository attributes with target schemas

- Conflict resolution policies favoring the most recent updates

- Error handling routines that log exceptions and trigger retries

Governance, Monitoring and Feedback Loops

Robust governance ensures profile quality and operational reliability. Essential components:

- Data Quality Dashboards tracking completeness, match rates and confidence distributions

- Alerting on anomalous profile volumes, low-confidence merges or sync failures

- Feedback channels for downstream teams to flag incorrect merges or missing data

- Audit logs of profile creation, updates and exports for compliance and investigation

These controls maintain trust in unified profiles and enable continuous improvement of the identity resolution process.

Chapter 4: Touchpoint Mapping and Journey Reconstruction

Linking Interactions to Customer Profiles

Establishing a unified view of each customer’s touchpoints across channels is the foundation of a robust omnichannel workflow. Raw event data—web page views, mobile app actions, point-of-sale transactions, social media engagements and third-party feed events—must be systematically associated with resolved identity records. This linking process bridges fragmented event streams and actionable insights, enabling downstream analyses such as journey reconstruction, segmentation and next-best-action modeling.

By correlating behavior across channels, organizations gain the context required to sequence behavior, detect patterns and drive data-informed decision making. A single source of truth for customer history underpins real-time personalization engines and advanced analytics including cohort evolution, channel attribution and drop-off detection. Without precise linkage, event data remains siloed by channel and identifier, preventing a holistic view of the customer experience.

Key Inputs and Data Sources

- Interaction Event Streams:

- Web clickstream logs from Tealium or mParticle

- Mobile app events collected via SDKs (in-app purchases, feature interactions, push engagements)

- Point-of-sale and loyalty program transactions streamed through Fivetran

- Social media data harvested via platform APIs (Facebook, Twitter, Instagram)

- Third-party referral events, affiliate clicks and syndicated content logs

- Resolved Identity Records:

- Unified customer profiles with canonical identifiers, hashed emails and device IDs

- Matching confidence scores and audit trails from deterministic and probabilistic resolution

- Historical merge logs preserving profile lineage for governance

- Reference Data:

- Data dictionaries defining event schemas and attribute semantics

- Metadata catalogs describing source systems, ingestion timestamps and transformation lineage

Prerequisites and Conditions

- Robust Identity Resolution Framework: High-fidelity profiles served by a CDP or data warehouse such as Segment or RudderStack

- Normalized Event Schemas: Standardized field names, timestamp formats and taxonomies enforced by automated agents

- Time Synchronization: NTP-aligned timestamps across systems to prevent ordering anomalies

- Access Controls and Privacy Compliance: Role-based restrictions, GDPR/CCPA adherence and PII pseudonymization

- High-Performance Infrastructure: Scalable compute engines such as Snowflake or Apache Spark clusters to process billions of events

- Monitoring and Alerting: Dashboards tracking link success rates, unmatched volumes and latency with automated alerts for anomalies

Operational Workflow

- Event Partitioning and Pre-Filtering: Batch events by time window or source and remove noise (internal pings, bot traffic)

- Identifier Extraction and Normalization: Extract emails, device IDs and loyalty numbers; lowercase, strip non-numeric characters and apply consistent formatting

- Lookup and Match Execution: Perform deterministic key-value lookups for exact matches; invoke fuzzy matching logic for probabilistic associations

- Confidence Scoring and Thresholding: Compute match scores, automatically link above thresholds and flag gray-zone cases for manual review

- Audit Logging and Lineage Tracking: Record source event ID, profile ID, match method and confidence for governance and model retraining

- Error Handling and Retry Logic: Route unmatched events through retry policies, classify error types and alert engineers as needed

Integrating event streaming platforms like Apache Kafka or Amazon Kinesis with CDPs such as Segment and RudderStack, machine learning services on Amazon SageMaker, data warehouses like Snowflake and Google BigQuery, and orchestration tools such as Apache Airflow or Prefect ensures modularity, scalability and resilience.

Measurement and Quality Metrics

- Link Rate: Percentage of events successfully associated with a profile

- Match Confidence Distribution: Ratio of deterministic to probabilistic matches and score distribution

- Unmatched Event Volume: Count and percentage of events failing to link by type and source

- Latency: Average and percentile processing time per event

- Audit Trail Completeness: Coverage of lineage metadata for compliance and troubleshooting

Regular review of these metrics identifies data quality issues, system bottlenecks and opportunities to refine matching logic.

Sequencing and Visualizing Customer Journeys

With interactions linked to identities, the next step imposes temporal order on events and translates ordered sequences into visual artifacts. This sequencing and visualization stage transforms raw, profile-linked touchpoints into coherent journey paths for business analysts, data scientists and AI engines.

Events emitted by the identity resolution stage—including customer identifiers, timestamps, channel tags and contextual attributes—are published to an event bus such as Apache Kafka or Amazon Kinesis. An orchestration layer then directs records through batching, sorting, sessionization, enrichment and delivery to visualization engines.

Data Flow and System Interactions

- Time Window Batching: Group events into sliding windows to accommodate late arrivals and out-of-order records

- Temporal Sorting: Sort within batches by normalized timestamps, correcting for timezone differences and clock skew

- Sessionization: Define sessions using inactivity thresholds and channel-specific idle rules to identify coherent sequences

- Enrichment: Append AI-driven metadata—intent tags, journey phases and propensity scores—via agents

- Delivery to Visualization Engines: Forward sequences through REST APIs or connectors to platforms like Google Analytics Journey Reports or Microsoft Power BI

Actors and Tools

- Data Engineers: Configure time windows, message queue topics and schema evolution

- Integration Layer: Middleware such as Adobe Experience Platform to route messages and enforce transformations

- AI Agents: Stateless microservices for intent classification and anomaly tagging

- Stream Processing Engines: Apache Flink or Tableau Prep Conductor for high-volume temporal operations

- Visualization Platforms: Journey mapping in Google Analytics Journey Reports, Microsoft Power BI and Apache Superset for exploratory analysis

- Business Analysts: Validate journeys, identify drop-off points and annotate with business context

Standardized Data Models

- EventRecord: Fields for customerId, eventTimestamp, channel, eventType, attributes and sessionId

- SessionWindow: Represents contiguous EventRecords with sessionStart, sessionEnd and inactivityThreshold

- JourneyPath: Aggregates multiple SessionWindows annotated with journeyPhase and segmentTags

- VisualizationFrame: Converts JourneyPath into nodes and edges with metrics like timeOnStep, transitionProbability and dropOffRate

These models are defined with Avro or protocol buffers to enforce type safety and version control. Schema registries validate incoming records to prevent structural drift.

Visualization Pipeline

- Data Extraction: Pull JourneyPath records from the data lake or operational store via batch jobs or real-time connectors

- Aggregation: Compute temporal metrics such as average time per touchpoint, transition frequencies and loop patterns

- Chart Generation: Produce Sankey diagrams, sequence plots and heatmaps to surface flow volumes and concentration of activity

- Dashboard Assembly: Combine visual elements with filters for channel, cohort and recency in self-service portals

- Publication: Embed dashboards within campaign orchestration consoles or BI platforms for on-demand access

Organizations often employ a mix of open source and commercial tools. Apache Superset supports exploratory work, while Tableau and Power BI serve enterprise reporting. Real-time dashboards leverage WebSocket connectors for near-zero latency updates.

Coordination and Governance

- Schema Evolution Management: Change control for EventRecord and JourneyPath definitions

- Data Quality Monitoring: Checks for missing timestamps, duplicates and sessionization errors with automated alerts

- Access Control: Role-based permissions to safeguard sensitive data in visualization tools

- Performance Tuning: Continuous measurement of pipeline latency and rendering times

- Documentation and Training: Runbooks, data dictionaries and workshops to align business and technical teams

Sequencing and visualization deliver end-to-end transparency into customer journeys, accelerate insight cycles from days to minutes and foster cross-functional alignment. Interactive journey maps inform A/B tests, UI improvements and AI model retraining by highlighting friction points and emerging patterns.

AI-Driven Path Reconstruction

While sequencing orders touchpoints, AI-driven path reconstruction uncovers hidden loops and infers missing steps in customer journeys. Advanced algorithms process high-volume event streams to generate complete journey narratives, enabling end-to-end visibility into behavior patterns that inform predictive analytics and personalization engines.

Core AI Techniques

Probabilistic Sequence Models

- Hidden Markov Models (HMMs) and Markov Decision Processes (MDPs) infer hidden states such as engagement intent or purchase readiness

- Expectation-maximization trains transition and emission probabilities on historical sequences

- Viterbi decoding reconstructs the most likely state sequence for each journey

Graph-Based Journey Analysis

- Graph databases like Neo4j or Amazon Neptune model events as nodes and transitions as edges

- Edge weights capture transition frequency, time lag or revenue value

- PageRank and modularity algorithms identify influential touchpoints and journey clusters

Deep Learning Approaches

- Recurrent neural networks (RNNs), LSTM and transformer architectures learn complex temporal dependencies

- Attention mechanisms assign dynamic importance to touchpoints based on context

- Embedding layers convert high-cardinality attributes (product IDs, campaign codes) into dense vectors

Anomaly and Pattern Detection

- Autoencoders learn compressed representations of normal journeys and flag high-error sequences as anomalies

- Density-based clustering (DBSCAN) groups rare paths, revealing niche behaviors or data issues

- Statistical process control monitors key transition metrics for sudden shifts

Supporting Systems and Infrastructure

- Event Ingestion: Apache Kafka for ordered delivery of interaction logs

- Storage: Time-series databases and data lakes for raw event persistence

- Compute: Apache Spark and serverless platforms for distributed training and inference

- Model Serving: Container orchestration systems deploy low-latency inference microservices

- Graph and Feature Stores: Persist precomputed journey graphs and feature vectors for reuse

- Orchestration Engines: Apache Airflow or Prefect schedule retraining, inference calls and data synchronization

- Monitoring: Dashboards for pipeline health, model performance and data quality alerts

Operational Considerations

- Data Consistency and Lineage: Synchronize profile updates to reconstruction pipelines with metadata tracking for reproducibility

- Latency and Throughput: Employ edge inference, model caching and event partitioning to meet sub-second requirements

- Resilience and Error Handling: Implement retry logic, dead-letter queues and fallback sequencing rules to maintain continuity

- Scalability and Continuous Improvement: Use CI/CD pipelines for automated retraining, feature integration and zero-downtime deployments

By integrating probabilistic models, graph analytics and deep learning with scalable data platforms and orchestration layers, organizations transform fragmented logs into coherent path reconstructions. These narratives uncover high-impact touchpoints, feed predictive and personalization modules and support real-time responses to emerging trends.

Journey Map Outputs and Analysis Handoffs

The final stage produces structured artifacts that serve as the input for segmentation, predictive analytics, personalization orchestration and optimization dashboards. Clear output schemas, dependency tracking and robust handoff protocols ensure that journey insights flow seamlessly into downstream processes.

Core Output Artifacts

- Journey Sequence Dataset: Chronologically ordered events enriched with profile references, campaign IDs, engagement outcomes and sentiment flags in JSON Lines or Apache Parquet

- Visual Journey Map Models: Graph representations with nodes and weighted edges exported as Vega-Lite specifications, Cytoscape JSON or SVG

- Drop-Off and Engagement Heatmaps: Aggregated matrices highlighting abandonment points and channel transitions delivered as CSV or dashboard tables

- Engagement Loop Reports: Recurring behavior patterns with counts and average durations to inform churn prediction and re-engagement strategies

- Metadata and Lineage Catalog: Registry of source identifiers, schema versions, transformation references and execution timestamps for auditability

Key Dependencies

- Identity Resolution Outputs: Accurate profiles from Segment or RudderStack

- Unified Schema Definitions: Centralized repository for event and profile schemas

- Time Synchronization Services: NTP-synchronized servers and latency monitoring

- AI Model Artifacts: Versioned predictive and pattern-detection models

- Execution Orchestration Engine: Platforms like Apache to schedule and trigger handoffs

Handoff Mechanisms

- API Endpoints: RESTful or gRPC interfaces with OpenAPI-defined payloads for on-demand retrieval

- Message Queues and Event Streams: Kafka topics or cloud pub/sub channels broadcasting notifications of new artifacts

- Scheduled Data Exports: Bulk exports to Amazon S3, Google BigQuery or Snowflake with versioned file naming

- Shared Metadata Store: Central catalog services like Apache Atlas for asset discovery and validation

Each channel enforces access controls, encryption in transit and at rest, and schema validation to prevent downstream failures. Monitoring tracks deliverable latency, file integrity checksums and API response times, ensuring prompt escalation of any disruptions.

Integration with Downstream Modules

- Segmentation and Cohort Analysis: Dynamic clustering based on path similarity, time-to-conversion metrics and channel preferences

- Predictive Modeling: Next-best-action and churn-risk models trained on loop patterns and drop-off attributes

- Personalization Orchestration: Real-time decision engines triggering contextual messages based on journey state indicators

- Optimization Dashboards: BI teams visualizing heatmaps and loop frequencies to prioritize A/B tests and UX improvements

By codifying artifacts, dependencies and handoff protocols, retail and e-commerce organizations create a repeatable link between customer behavior insights and activation platforms. This rigor accelerates time-to-value and enables continuous optimization of the omnichannel experience.

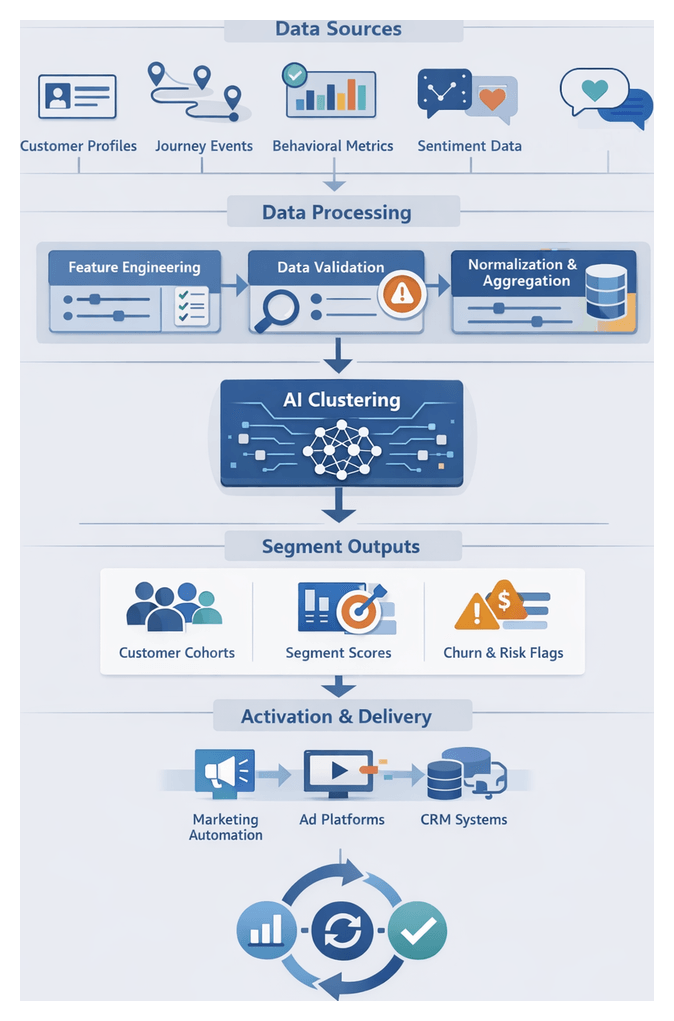

Chapter 5: Segmentation and Cohort Analysis

Purpose and Strategic Value of Behavior-Based Segmentation

In omnichannel retail and e-commerce, segmenting customer behaviors transforms raw interaction data into actionable insights. By grouping customers based on purchase patterns, engagement frequency, lifetime value and sentiment signals, organizations tailor messaging, optimize media spend and deliver experiences that resonate. Behavior-based segmentation replaces one-size-fits-all approaches, reducing wasted spend, increasing conversion rates and deepening loyalty.

- Targeted messaging and personalized content for specific cohorts

- Optimized channel budgets by focusing on high-value segments

- Precise measurement via cohort-level attribution and performance tracking

- Cross-functional alignment around a unified customer taxonomy

Operational benefits include improved return on ad spend through resource allocation by segment potential, faster multi-touch attribution analysis and agility in campaign decisions. Focusing on behavioral patterns also supports privacy compliance by avoiding sensitive attributes and underpins data governance through clear rules and quality thresholds.

Prerequisites for effective segmentation include:

- Consolidated Customer Profiles with persistent identifiers, demographic attributes and enriched data such as loyalty tier and predicted lifetime value.

- Sequenced Journey Data capturing ordered touchpoint records with timestamps, channel identifiers and session metrics.

- Behavioral Metrics including RFM parameters, browsing history, product affinities, abandoned carts and churn indicators.

- Sentiment and Qualitative Insights from behavioral and sentiment analysis providing intent tags and feedback summaries.

- Data Quality and Governance Metrics such as completeness scores, anomaly flags and lineage records with at least 95% coverage.

- Business Taxonomy and Rules defining lifecycle stages (new, active, at-risk, churned) and value tiers (high, mid, low).

- Technical Environment with scalable compute, storage and integration to AI clustering services like AWS SageMaker and Google Cloud AI Platform.

- Stakeholder Alignment on segmentation objectives, KPIs and governance processes across marketing, analytics and IT teams.

Segmentation Workflow and AI-Driven Clustering

The segmentation and cohort identification workflow orchestrates the transformation of unified profiles and journey data into meaningful customer groups. It leverages event streams, feature stores, AI clustering services and orchestration engines to keep segments current, relevant and ready for activation.

Data Streams and Orchestration

Key input sources include harmonized profiles in Snowflake, event sequences from Segment or Tealium, behavioral scores from Google Cloud AI Platform or Azure Machine Learning, and transactional data from ERP or CRM systems. Triggers may be scheduled intervals, data-readiness events or manual initiation. The orchestrator—using tools like Amazon SageMaker Pipelines or Apache Airflow—validates dependencies, allocates resources on clusters such as Databricks running Apache Spark, executes data preparation scripts and ensures end-to-end traceability.

Feature Engineering and Data Preparation

Data scientists and engineers define features including recency, frequency, monetary value, product affinity scores, channel engagement ratios and sentiment indices. The workflow involves:

- Joining journey and profile tables to enrich customer records

- Aggregating session metrics and time-windowed interactions in Apache Spark or SQL engines

- Normalizing and scaling features (z-score, min-max) for comparability

- Computing derived attributes such as lifetime value predictions using Amazon SageMaker

- Persisting engineered features to a feature store or staging tables

Data validation routines check for missing values, outliers and schema drift. Anomalies trigger exception paths for manual review or AI-based imputation via services like H2O.ai.

Clustering Algorithm Selection and Execution

Algorithm choice depends on dataset size, dimensionality and interpretability needs. Common options include:

- K-means and MiniBatch K-means via scikit-learn or Spark MLlib

- Density-based methods (DBSCAN, OPTICS) in scikit-learn for noise handling

- Hierarchical clustering in H2O.ai or Databricks for nested cohort structures

- Gaussian Mixture Models for soft assignments

- Self-organizing maps or spectral clustering for nonlinear relationships

Configuration parameters (cluster count, distance metrics, convergence thresholds) are managed in a versioned repository. Parameter tuning via grid search or Bayesian optimization may run asynchronously before production execution on Azure Machine Learning or similar platforms.

Model Monitoring, Validation and Quality Assurance

During execution, progress logs and metrics stream to dashboards such as Looker. Key indicators include silhouette scores, Davies-Bouldin index and inertia. Alerts trigger if metrics fall below SLAs, enabling rollbacks or hyperparameter re-tuning. Post-clustering, analysts and stakeholders review segment profiles in interactive notebooks or BI tools, compare against historical cohorts, conduct A/B tests, and apply business rules to merge or split clusters. Approved definitions are captured and versioned before activation.

Integration with Activation Systems

Final segments are published through APIs or batch exports to downstream systems:

- CDPs such as Segment Personas, Tealium or mParticle for real-time personalization

- Marketing automation platforms—Marketo, HubSpot, Salesforce Marketing Cloud—for email, SMS and push campaigns

- Ad platforms and social engines importing look-alike audiences derived from high-value segments

- Analytics suites updating dashboards with segment distributions and performance metrics

Secure data exchange protocols and audit logs ensure traceability of segment lineage and usage.

AI Contributions and Advanced Techniques

AI accelerates segmentation at every stage, from automated feature generation to dynamic clustering and real-time inference. A cohesive ecosystem of services, frameworks and orchestration platforms delivers precision and scalability.

Automated Feature Engineering and Data Quality

- Apache Airflow and Prefect orchestrate ETL pipelines that extract recency, frequency, monetary values and sentiment scores

- Databricks AutoML modules suggest optimal feature transformations and interactions

- H2O.ai Data Quality Monitor applies anomaly detection to flag outliers before clustering

- Feature store integration ensures consistent definitions across training and real-time inference

Advanced Clustering and Dynamic Segmentation

- Autoencoder-based embeddings in Amazon SageMaker or Microsoft Azure Machine Learning uncover nonlinear relationships

- Graph-based community detection with Amazon Neptune or Neo4j detects referral and co-purchase networks

- Online and incremental clustering on Apache Flink or AWS Kinesis Data Analytics updates segments in real time

Real-Time Inference Engines

- RESTful endpoints on Google Cloud AI Platform and Amazon SageMaker serve pre-trained models with millisecond latency

- Message buses like Kafka, AWS SNS and Azure Event Hubs distribute segmentation decisions for immediate personalization

- Embedding lightweight models in mobile apps or edge devices ensures offline segment evaluation

Monitoring, Governance and Workflow Automation

- Segment performance dashboards in Tableau and Power BI track size, stability and response rates

- Drift detection via Neptune AI and Fiddler triggers retraining workflows when boundaries no longer align

- Data catalogs such as Collibra and Alation record lineage of features and model versions

- Orchestration platforms (Apache Airflow, Prefect) and Infrastructure as Code tools (Terraform, CloudFormation) automate end-to-end pipelines

Segment Catalog and Campaign Handoff

The segment catalog consolidates defined cohorts, metadata and technical artifacts for downstream consumption. Key deliverables include:

- Segment Definitions: Human-readable names, logical criteria and business context

- Cohort Metadata: Creation date, model version, source datasets and stability scores

- Performance Baselines: Historical conversion rates, average order value, churn rates and engagement benchmarks

- Data Payload Schemas: API contracts for batch exports and real-time ingestion

- Membership Lists: Customer IDs mapped to segment IDs in tabular or JSON formats

Dependencies and Infrastructure

- Unified profiles and journey data enriched with behavioral and sentiment insights

- Storage in platforms like Google BigQuery or Adobe Experience Platform

- Orchestration frameworks such as Apache Airflow or Azure Data Factory managing refresh cadences

Handoff Mechanisms

- Batch exports to tables or object storage for platforms like Salesforce Marketing Cloud and Adobe Campaign

- API-based delivery to personalization engines such as Segment

- Streaming via Kafka or AWS Kinesis for instant campaign triggers

- Direct database synchronization through integration connectors

- Artifact versioning in a centralized registry for traceable deployments and rollback

Campaign Integration Considerations

- Trigger Configuration: Campaigns initiate based on segment membership changes

- Rule Mapping: Segment IDs map to email templates, push messages and in-app experiences

- Channel Prioritization: Sequenced outreach based on segment preferences and historical responses

- Personalization Tokenization: Dynamic content tokens populated with segment attributes

- Monitoring Hooks: Tracking parameters capture engagement by segment for analytics feedback

Feedback and Continuous Improvement

- Outcome Attribution: Linking campaign results to originating segments

- Model Retraining Triggers: Underperforming segments or behavior shifts initiate retraining on Google Cloud AI Platform

- Segment Health Dashboards: Stability scores, overlap metrics and growth rates guide merges or splits

- Governance and Compliance: Audit logs record segment creation, modification and deployment activities

Best Practices for Catalog Management

- Define clear naming conventions and versioning protocols for traceability

- Document business rationale, inclusion criteria and performance benchmarks for each segment

- Automate quality checks on membership counts and distribution anomalies prior to handoff

- Enforce role-based access controls to protect segment definitions and sensitive data

- Regularly review performance and adjust refresh cadences to balance agility and stability

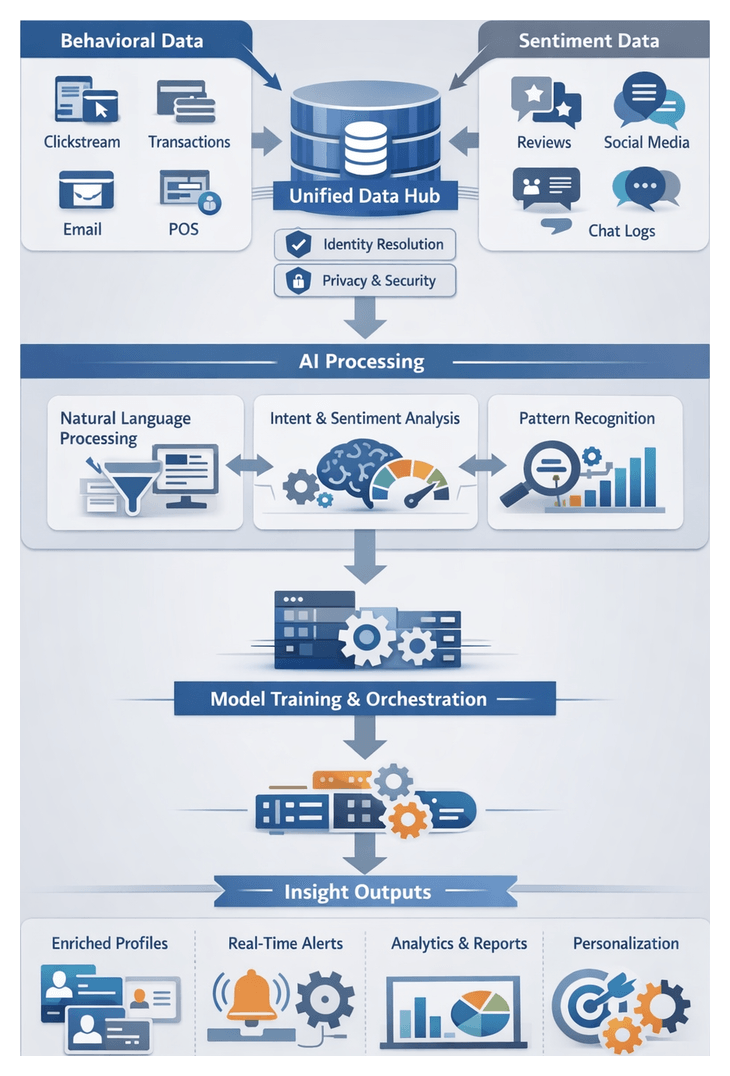

Chapter 6: Behavioral and Sentiment Analysis

Ingesting and Preparing Behavioral and Sentiment Data

The initial phase integrates quantitative interaction metrics with qualitative feedback to create a unified foundation for customer analysis. Structured records—clickstream events, transaction logs and engagement rates—are combined with unstructured inputs such as product reviews, social media comments and contact center transcripts. Aligning these signals to resolved customer identities enables accurate attribution and prepares data for advanced natural language processing and pattern recognition. Privacy, consent and security controls are applied to ensure compliance with regulations such as GDPR and CCPA.

Key Objectives

- Define the scope of behavioral metrics and feedback channels

- Verify data availability, quality and completeness across structured and unstructured sources

- Link each input to a unified customer identifier and journey segment

- Enforce privacy, security and consent management

- Partition or stream data for downstream AI workflows

Primary Data Inputs

Quantitative Interaction Metrics

- Web and mobile analytics (page views, clicks, session duration)

- Transaction records (orders, cart additions, promotions, refunds)

- Search queries and product exploration patterns

- Email and notification engagement (open rates, clicks)

- Loyalty program activity and point redemptions

- In-store point-of-sale logs

Qualitative Feedback Signals

- Product reviews and star ratings

- Social media posts and direct messages

- Survey responses and NPS feedback

- Contact center transcripts and chat logs

- User-generated content on forums and support portals

- Voice-of-customer data from focus groups

Prerequisites and Conditions

- Consolidated Data Repository: Scalable storage and real-time ingestion via platforms such as Snowflake, Google BigQuery or Azure Synapse Analytics, and streaming using Apache Kafka or Amazon Kinesis.

- Resolved Customer Identities: Identity resolution services merge cookies, device IDs and loyalty IDs into a single profile.

- Data Quality Assurance: Cleansing rules remove duplicates, correct malformed records and validate timestamps. Text sources undergo language detection, profanity filtering and spam removal using tools such as spaCy or NLTK.

- Privacy and Consent Management: Consent and retention policies enforced by OneTrust or TrustArc.

- Access Controls and Security: Role-based permissions, encryption at rest and in transit, and audit logging.

- Language and Locale Support: Locale tagging and optional translation via services like Google Translate API to guide multilingual workflows.

Organizations that satisfy these conditions can proceed to the analytical core where unstructured feedback is transformed into structured sentiment, intent and pattern insights.

Natural Language Processing and Pattern Recognition

This stage orchestrates AI-driven services to convert raw text into structured data. A distributed messaging layer decouples data producers and consumers, while preprocessing modules normalize text for analysis. Core components include intent classification, sentiment scoring and pattern detection. Model training, serving, orchestration and error handling ensure high accuracy and reliability at scale.

Data Intake and Preprocessing

- Stream text payloads via Apache Kafka or Amazon Kinesis with Avro or JSON Schema enforcement.

- Preprocess using spaCy or NLTK: language detection, tokenization, stop-word removal, lemmatization and entity masking.

- Apply backpressure controls and partition messages by channel for parallel processing.

Intent Classification, Sentiment Scoring and Pattern Detection

- Intent Classification: Transformer models such as BERT via Google Cloud Natural Language API categorize messages into intents like product inquiry or return request. Features include batch vs real-time modes, model versioning, confidence thresholds and fallback to human review.

- Sentiment Analysis: Compute polarity and emotional attributes with Amazon Comprehend or IBM Watson Natural Language Understanding. The pipeline orchestrates API calls, handles rate limits and enriches records with normalized scores.

- Pattern Recognition: Extract keywords and themes using TF-IDF, Latent Dirichlet Allocation and regex engines to surface recurring topics and domain-specific patterns.

Model Training, Deployment and Hybrid Strategies

- Feature Management: Centralized feature store feeds both batch training and real-time inference.

- Training Infrastructure: GPU clusters on Amazon SageMaker or Kubernetes, orchestrated by Kubeflow Pipelines or Apache Airflow, with experiment tracking via MLflow or Weights & Biases.

- Real-Time Serving: Models hosted on TensorFlow Serving, TorchServe or SageMaker Endpoints. Autoscaling, caching with Redis or Memcached, and monitoring with Prometheus and Grafana ensure low-latency inference.

- Hybrid Rule-Based Approaches: Incorporate sentiment lexicons, business rules and ensemble methods. Leverage Hugging Face Transformers for open collaboration and fine-tuning.

Orchestration, Enrichment and Quality Controls

- Manage workflows with Apache Airflow or AWS Step Functions, defining dependencies and retry policies.

- Integrate with knowledge bases and search indexes like Elasticsearch to enrich records with product specifications, past ticket histories and active promotions.