Orchestrating AI Driven Automated Software Testing Workflows

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Defining the Challenge of Modern Software Testing

Enterprise applications today span microservices, distributed systems, mobile platforms, APIs, and edge devices, all under stringent regulatory and security demands. Manual testing processes have become a bottleneck, unable to keep pace with rapid code commits, continuous integration and delivery pipelines, and dynamic cloud-native environments. To compete, organizations must accelerate test throughput without sacrificing functional and non-functional quality metrics.

Key factors driving this need include:

- Proliferation of Deployment Targets across public cloud, private data centers, mobile devices, and IoT endpoints, each requiring tailored test configurations.

- Increased Release Frequency with CI/CD pipelines triggering builds and deployments dozens or hundreds of times per day.

- Regulatory and Security Constraints demanding rigorous data handling, audit trails, and integrated security testing.

- Distributed System Observability requiring end-to-end coverage to detect performance degradations or integration faults.

- Parallel Development Streams—feature branching, pull requests, and hotfix workflows—that overwhelm manual capacities.

Accelerating test throughput in this complex landscape requires a structured, automated workflow that leverages intelligent orchestration and AI-driven tools to optimize execution across diverse environments and shrinking release windows.

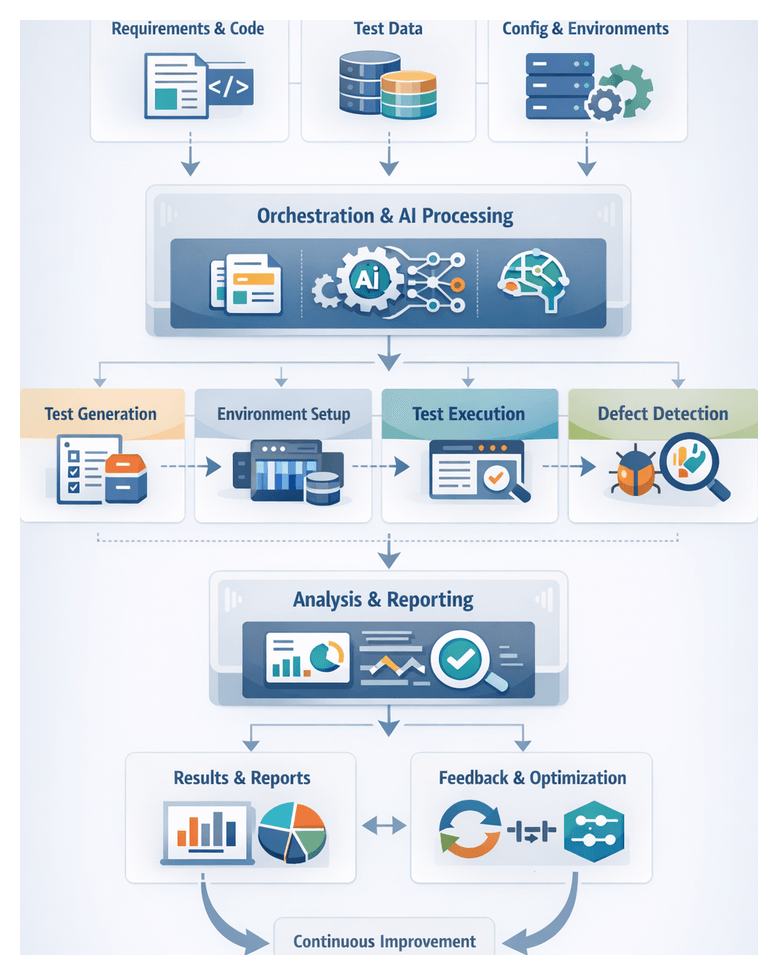

Establishing a Structured Automation Workflow

A structured automation workflow is a predefined sequence of coordinated actions, decision points, and integration interfaces that guide test artifacts from initial requirements through final analysis. By standardizing handoffs, enforcing consistent execution rules, and providing real-time monitoring, the workflow minimizes manual intervention, reduces rework, and ensures traceability.

This approach resolves key challenges:

- Tool Integration Gaps bridged by defining APIs and message formats.

- Process Variability eliminated through enforced sequences and policies.

- Lack of Visibility addressed by dashboards and progress metrics.

- Scaling Constraints overcome with parallelization, load balancing, and dynamic resource allocation.

- Governance and Compliance ensured via automated audit trails and traceable handoffs.

Key Components and Interactions

- Requirement Intake from JIRA or GitLab Issues with completeness validation and triggers for test design.

- Test Design Engine using AI agents and tools such as Selenium to generate and prioritize test cases.

- Environment Provisioner leveraging Terraform or Kubernetes to instantiate isolated test environments.

- Data Manager that anonymizes, synthesizes, and catalogs datasets for compliance and consistency.

- Execution Scheduler integrating with Jenkins or GitLab CI to orchestrate parallel test runs.

- Defect Analyzer applying machine learning to classify failures and assign root causes before routing to issue trackers.

- Reporting Dashboard aggregating metrics, trends, and predictive insights for release readiness.

Coordination and Traceability

Effective workflows define event triggers, approval gates, notification channels, escalation paths, and feedback loops. Each artifact—requirement, test case, environment configuration, dataset, or defect report—carries unique identifiers, with metadata that capture timestamps, actor details, and version information. Bidirectional links connect requirements to test cases, execution runs to environment snapshots, and defects back to code commits, ensuring auditability and rapid root-cause analysis.

Orchestration Mechanisms

- Declarative Pipelines such as Jenkins Pipeline or GitLab CI YAML definitions.

- Event-Driven Orchestration using lightweight event buses and microservices.

- Stateful Workflow Engines like Apache Airflow or Prefect for long-running workflows and visualization.

- AI-Enhanced Orchestrators that adapt workflows based on performance metrics.

Change Management and Continuous Improvement

- Review and Approval Processes for pipeline changes with automated policy checks.

- Version Control of all workflow assets in source repositories.

- Automated Policy Enforcement for security baselines and data privacy.

- Role-Based Access Control for modifying workflows and accessing sensitive data.

- Ongoing measurement of throughput, cycle time, failure rates, resource utilization, defect leakage, and coverage indices to guide AI-driven optimizations.

Positioning AI Agents Across the Testing Lifecycle

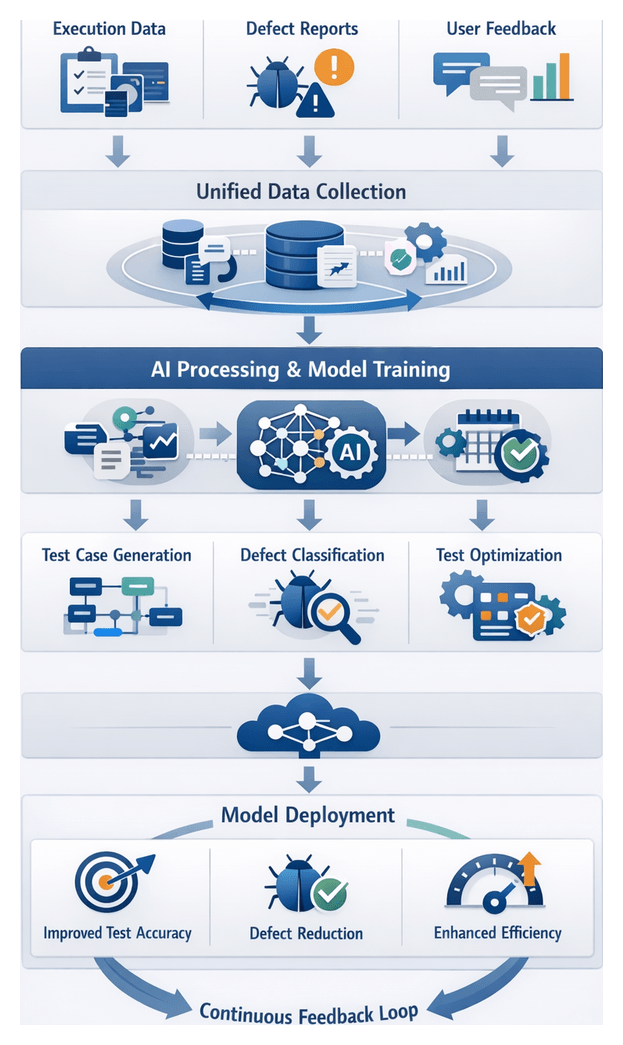

AI agents introduce dynamic intelligence across the testing pipeline, complementing traditional automation tools. They perform intelligent analysis, decision support, and continuous optimization—interpreting requirements, generating and prioritizing tests, orchestrating execution, classifying defects, and refining processes over time.

AI Agent Roles and Tools

- Requirement Analysis uses IBM Watson NLU and Azure Text Analytics to extract testable conditions and highlight ambiguities.

- Test Case Generation with Testim and Functionize employing reinforcement and deep learning to create resilient, prioritized scenarios.

- Environment Provisioning where AI modules augment Terraform for predictive capacity planning based on historical utilization.

- Synthetic Test Data Generation using Databricks libraries and GANs/VAEs to produce realistic, compliant datasets.

- Execution Scheduling integrating with Jenkins and GitLab CI/CD to dynamically reschedule or parallelize tests in response to environment health.

- Defect Detection leveraging Splunk for anomaly detection in logs, screenshots, and telemetry.

- Defect Triage with Bugsnag to assess severity and recommend assignment based on impact predictions.

- Result Analysis where Datadog and Power BI deliver trend analyses and release readiness forecasts.

- Continuous Model Refinement using MLflow to manage retraining pipelines driven by post-release metrics and feedback.

Integration with Supporting Systems

AI agents connect to requirement management platforms (JIRA), version control repositories (GitHub, GitLab, Bitbucket), CI/CD pipelines, infrastructure-as-code engines (Terraform, AWS CloudFormation), container orchestrators (Docker, Kubernetes), observability services (Prometheus, OpenTelemetry), and issue trackers (Micro Focus ALM).

Continuous Intelligence and Adaptation

By correlating data across stages—linking requirement changes to test failures or environment metrics to execution success—AI agents identify systemic inefficiencies and recommend corrective actions. Predictive analytics guide capacity adjustments, while reinforcement learning refines scheduling policies, creating a self-improving ecosystem that adapts to evolving demands.

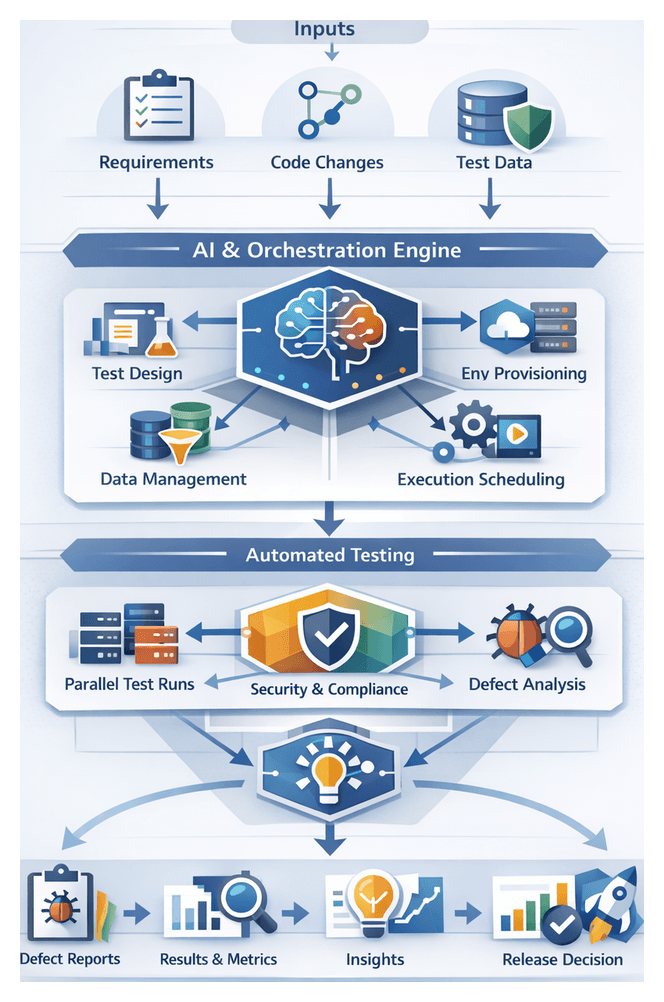

Cohesive Blueprint and Key Artifacts

This blueprint guides practitioners through a modular, AI-driven test automation pipeline composed of stages for requirements integration, test generation, environment provisioning, data management, execution orchestration, defect analysis, triage, insight extraction, and continuous feedback. Each stage produces standardized artifacts, dependencies, and handoff protocols to maintain end-to-end traceability and accountability.

Key Artifacts and Outputs

- Requirements Trace Documents mapping user stories to testable criteria.

- Generated Test Suites with priority rankings and coverage metrics.

- Environment Configuration Manifests in Terraform, Docker Compose, or Kubernetes formats.

- Synthetic Data Catalogs with schemas and generation rules.

- Execution Logs and Reports including performance metrics.

- Defect Metadata Bundles with screenshots, stack traces, and root-cause hypotheses.

- Prioritized Issue Queues and quality insight dashboards.

- Retrained AI Models with version artifacts for continuous improvement.

Dependencies and Integration Contracts

- Requirement Management Platforms (JIRA, Azure DevOps)

- Version Control Systems (GitHub, GitLab, Bitbucket)

- Infrastructure-as-Code Engines (Terraform, AWS CloudFormation)

- Container and Orchestration Services (Docker, Kubernetes)

- Data Stores and Privacy Gateways

- CI/CD Pipelines (Jenkins, GitLab CI)

- Issue Tracking Tools (ServiceNow, GitHub Issues)

- Analytics and Visualization Services (Power BI, custom dashboards)

Handoff Mechanisms and Traceability

- Artifact Repositories with immutability guarantees.

- Message Buses or Event Streaming for real-time notifications.

- RESTful APIs for synchronous payload handoffs.

- Webhook Notifications to collaboration platforms.

- Traceability Matrices automatically mapping artifacts across stages.

Enabling Continuous Feedback

- Model Evaluation Reports feeding retraining pipelines.

- Pipeline Health Dashboards for capacity forecasting.

- User Feedback Aggregates informing requirement updates.

- Defect Resolution Logs guiding prioritization refinements.

By following these outputs, integration contracts, and handoff protocols, organizations can accelerate test throughput, enhance quality assurance, and establish a resilient, adaptable AI-driven pipeline that scales with business needs.

Chapter 1: Workflow Overview and Objectives

Defining Scope and Inputs for AI-Driven Testing

A successful AI-orchestrated testing pipeline begins with clearly defined scope boundaries and well-structured inputs. Aligning objectives across development, QA, operations, and AI engineering ensures that automation efforts deliver measurable value while avoiding scope creep. This stage establishes what to automate—functional modules, test types, target environments, data domains, and integration points—and outlines exclusions for later phases. By documenting scope in requirement management systems such as Jira or Azure DevOps Boards, teams create a shared reference that drives consistent decision-making, resource allocation, and traceability from user stories to test outcomes.

Key prerequisites for workflow activation include:

- Version Control Integration via GitHub, GitLab or Bitbucket

- Infrastructure-as-Code definitions using Terraform or Ansible, and container orchestration through Docker and Kubernetes or OpenShift

- Artifact repositories such as GitHub Packages, Nexus Repository or Artifactory

- Compute resources for model training on platforms like AWS SageMaker or Google Cloud AI Platform

- Security and compliance frameworks governing data anonymization and access control

- Collaboration channels in Slack or Microsoft Teams

- Governance forums defining KPIs, metrics and stakeholder alignment

Primary inputs enable AI agents to generate, execute, and analyze tests with contextual awareness. These include:

- Requirement specifications and user stories in machine-readable form

- Source code, API definitions (OpenAPI/Swagger) and build artifacts

- Historical test artifacts from frameworks like Selenium WebDriver and Cypress

- Defect logs from Jira or Azure DevOps and performance data from tools such as New Relic or Datadog

- Environment configuration and service dependency metadata

- Test data profiles for volume, domain and privacy constraints

- Baseline quality and performance benchmarks

- Scheduling and priority rules tied to release timelines and regulatory deadlines

Decision criteria—test coverage thresholds, risk tolerances, acceptable false positive rates, and escalation rules—are embedded in governance documents. Early proof-of-concept pilots validate readiness, uncover tool compatibility gaps, and set the stage for scalable AI-driven workflows.

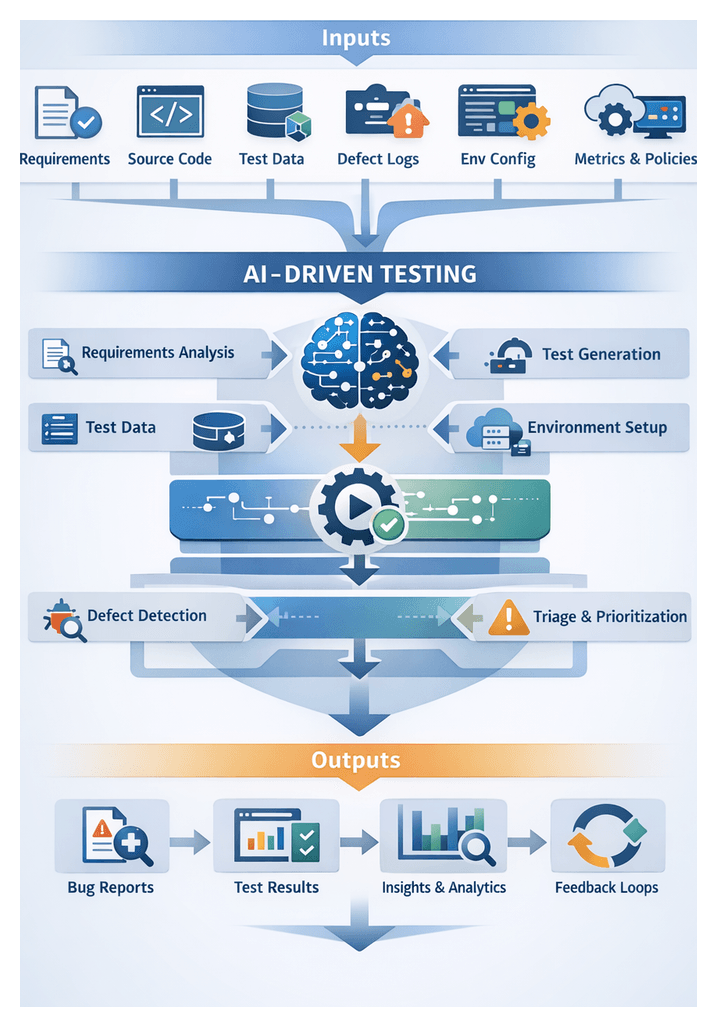

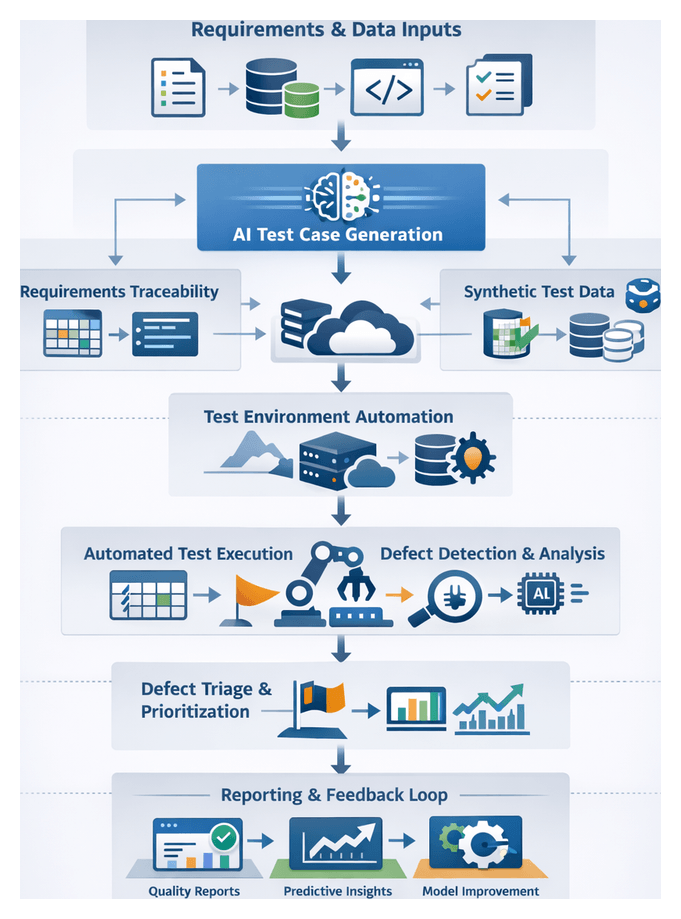

Mapping the End-to-End Workflow

Mapping workflow steps and transitions provides visibility into dependencies, system integrations, and decision gates. A high-level pipeline comprises:

- Requirements Ingestion and Validation

- Test Planning and Automated Case Generation

- Environment and Data Provisioning

- Execution Orchestration and Scheduling

- Defect Detection and Classification

- Dynamic Triage and Prioritization

- Insight Extraction and Feedback

Stage 1: Requirements Ingestion and Validation

User stories and acceptance criteria are ingested from Jira or Azure DevOps. AI agents apply NLP to extract testable conditions, validate completeness, assign risk-based priority tags, and generate clarification requests. Approved requirements and trace IDs are stored in a central repository for downstream traceability.

Stage 2: Test Planning and Automated Case Generation

Platforms like Testim and GitHub Copilot analyze requirement metadata, source code repositories, and historical defect patterns to generate and prioritize test suites. Generated cases are submitted via pull requests to a Git-backed test repo, reviewed by test leads, and tagged with execution constraints.

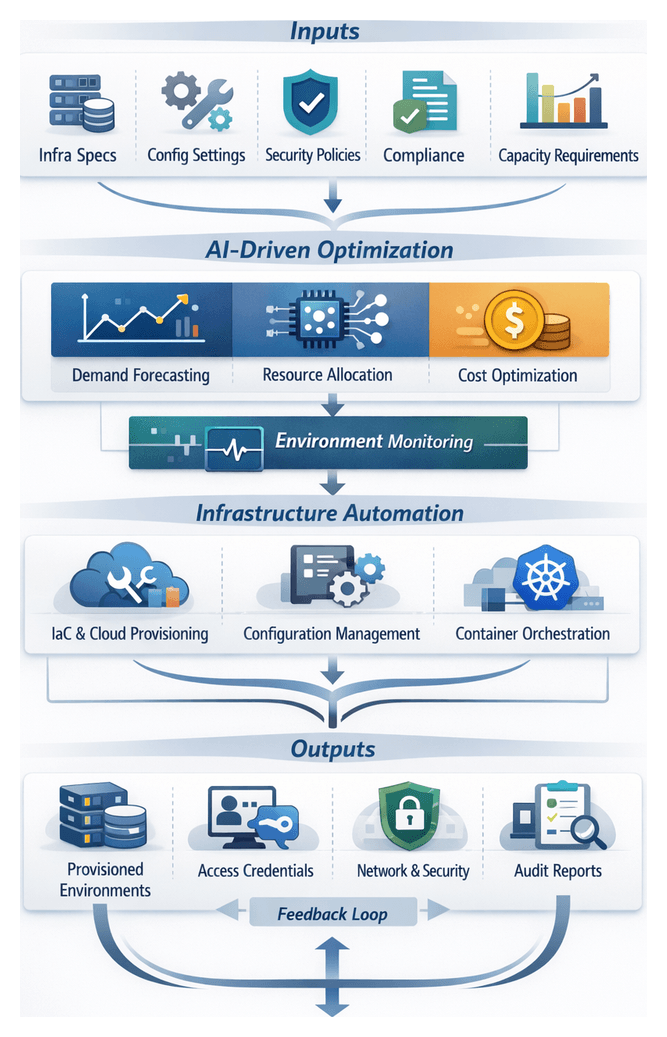

Stage 3: Environment and Data Provisioning

Infrastructure orchestration tools (Terraform, Ansible) provision containers or VMs. AI-driven capacity models forecast resource needs and balance cost with performance. Synthetic data engines such as Gretel.ai and DataRobot generate anonymized datasets. Environment health checks validate network and schema integrity before promotion to the execution queue.

Stage 4: Execution Orchestration and Scheduling

CI/CD orchestrators like Jenkins, GitHub Actions or GitLab CI distribute test suites in parallel, sequence smoke tests, and monitor telemetry. AI monitoring agents predict node failures and reroute tasks mid-run to meet throughput targets. Logs and metrics are aggregated for real-time visibility.

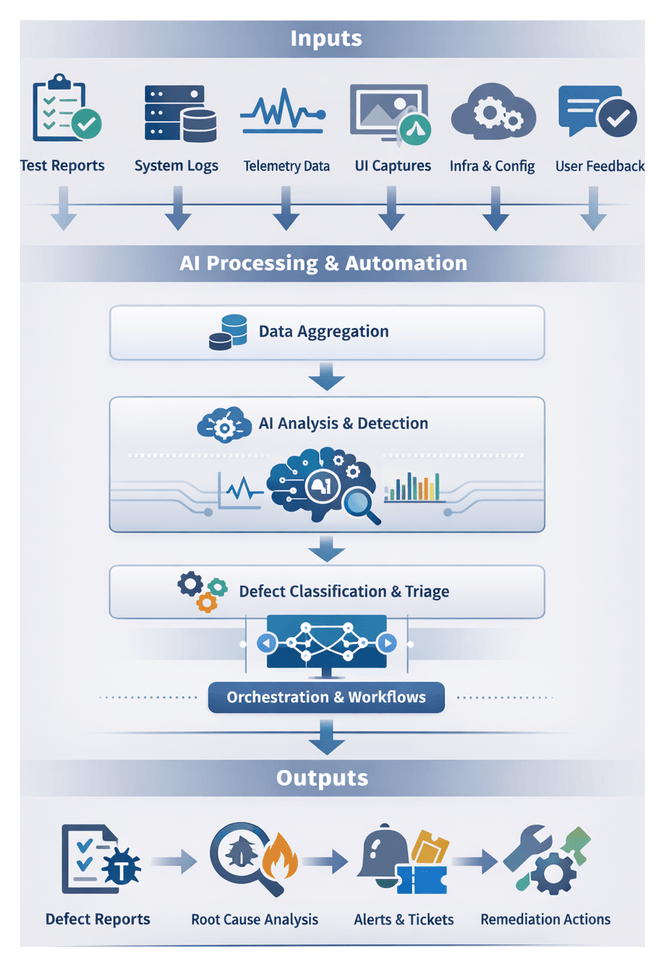

Stage 5: Defect Detection and Classification

AI engines such as Applitools and Dynatrace ingest logs, screenshots and performance data. Machine learning models identify anomalies, classify defects by severity and root cause, and enrich tickets with contextual metadata before filing them in the issue tracker.

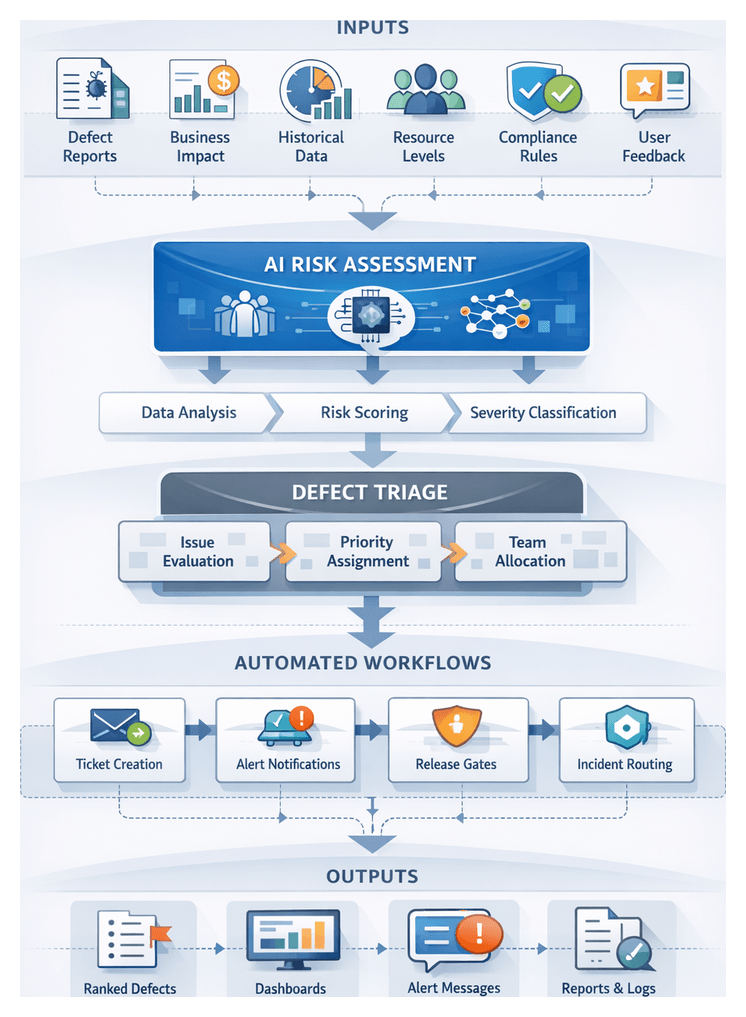

Stage 6: Dynamic Triage and Prioritization

Risk models evaluate business impact based on usage patterns, historical resolution times and compliance factors. High-risk defects are routed to subject matter experts or scheduled for hotfix sprints. Automated escalation enforces SLAs and dashboards provide backlog transparency.

Stage 7: Insight Extraction and Feedback

Analytics platforms, integrated via OData feeds or direct connections to tools like Power BI and Tableau, aggregate execution metrics and defect trends. Predictive models forecast release readiness, flag coverage gaps and recommend optimizations. Continuous feedback informs requirement refinement, test heuristics and capacity planning.

AI Functions Across the Workflow

AI capabilities—NLP, classification, predictive analytics, anomaly detection and reinforcement learning—embed intelligence at every stage. Key functions include:

Requirement Ingestion and Mapping

NLP agents extract entities, generate traceability links to existing test cases or code modules, and flag ambiguities in platforms like Jira or IBM DOORS Next.

Test Case Generation and Prioritization

Tools such as GitHub Copilot and Testim propose parameterized scripts, optimize coverage and assign risk-based priorities. AI integrates with frameworks like Selenium and Appium to produce executable artifacts.

Environment Provisioning and Capacity Planning

Predictive models in Terraform Enterprise or AWS CloudFormation estimate resource demand, automate provisioning workflows and optimize cost-performance trade-offs.

Synthetic Data Generation

Agents in Gretel.ai and DataRobot learn data distributions and preserve correlations while enforcing privacy constraints to deliver representative test datasets.

Execution Scheduling Adaptation

Reinforcement learning plugins for Jenkins or Azure DevOps dynamically parallelize workloads, reorder tests on failure and trigger self-healing actions when anomalies occur.

Defect Detection and Classification

Engines like Applitools, Dynatrace and Splunk apply computer vision, log pattern recognition and root cause prediction to streamline triage.

Dynamic Triage and Assignment

Risk scoring models integrate with Jira or Azure Boards to automate assignment, escalation and reprioritization based on real-time data.

Result Analysis and Predictive Insights

Machine learning extensions for Power BI or Tableau forecast defect hotspots, estimate on-time delivery and calculate quality health scores.

Continuous Feedback and Model Refinement

MLOps platforms like MLflow and Kubeflow orchestrate data collection, model retraining and versioned deployment to maintain AI accuracy and adapt to evolving application behavior.

Deliverables and Governance Artifacts

- Workflow Diagram mapping stages, decision points and AI functions

- Process Definition Document detailing step narratives, entry/exit criteria and outputs

- Roles and Responsibilities Matrix assigning RACI ownership

- Tooling Inventory and Integration Map cataloguing platforms and integration touchpoints

- Metrics and SLA Catalog defining KPIs, targets and reporting cadence

- Traceability Matrix linking requirements to AI-generated test cases

- Assumptions and Constraints Log documenting dependencies and governance policies

All artifacts reside in version-controlled repositories like GitHub or centralized document systems, with automated approval workflows and semantic versioning to ensure a single source of truth.

Key Metrics and Success Criteria

Process Efficiency

- Cycle Time per Stage

- Test Throughput per Pipeline Run

- Environment Provisioning Time

Quality and Coverage

- Test Coverage Rate

- Defect Escape Rate

- Classification Accuracy

Operational Reliability

- Execution Success Rate

- Integration Error Rate

- Data Generation Fidelity

AI Model Performance

- Model Accuracy

- Precision and Recall

- Retraining Frequency

Business Impact

- Release Readiness Score

- Mean Time to Detect and Resolve Defects

- Stakeholder Satisfaction Index

Dashboards in Power BI or Tableau integrate data from CI/CD, test management and AIOps tools to provide real-time alerts and periodic KPI reviews.

Dependencies and Assumptions

- Requirements Management in Jira or equivalent with clear versioning

- Existing Automation Frameworks such as Testim or Tricentis Tosca

- Infrastructure-as-Code via Terraform or Kubernetes configurations

- Data Access and Compliance for production-like sources with GDPR/HIPAA guidelines

- AI Model Libraries with available pre-trained NLP and ML models

- Cross-Functional Collaboration across Dev, QA, Security and Ops teams

- Executive Sponsorship for tooling licenses and budget

Assumptions include SME availability for model training, a DevOps culture embracing iterative feedback, and stable connectivity between on-premise and cloud services. A dependency register and mitigation plans address deviations without disrupting the workflow.

Integration Points and Handoffs

- Requirements to Test Generation: Event-driven exports via API or webhooks from Jira or Azure DevOps into AI test generators; confirmation reports returned for sign-off.

- Test Cases to Environment Provisioning: Commits trigger Jenkins or GitLab CI jobs; Terraform or Ansible templates reference test dependencies for environment setup.

- Environments to Execution: Provisioned endpoints and credentials published to the orchestrator; test runners consume serialized suites and emit status events.

- Results to Defect Analysis: Logs, screenshots and metrics streamed to data lakes; AI engines publish structured tickets with severity and root cause metadata.

- Defects to Triage: Classified issues and risk scores forwarded to dashboards; notifications dispatched via email or collaboration tools.

- Insights to Dashboards: Aggregated metrics published through OData feeds to Power BI or Tableau; automated alerts highlight release readiness and trend deviations.

Each handoff is governed by versioned API contracts, secure authentication protocols and error-handling procedures. Comprehensive logging and health checks ensure rapid failure detection and automated recovery or escalation.

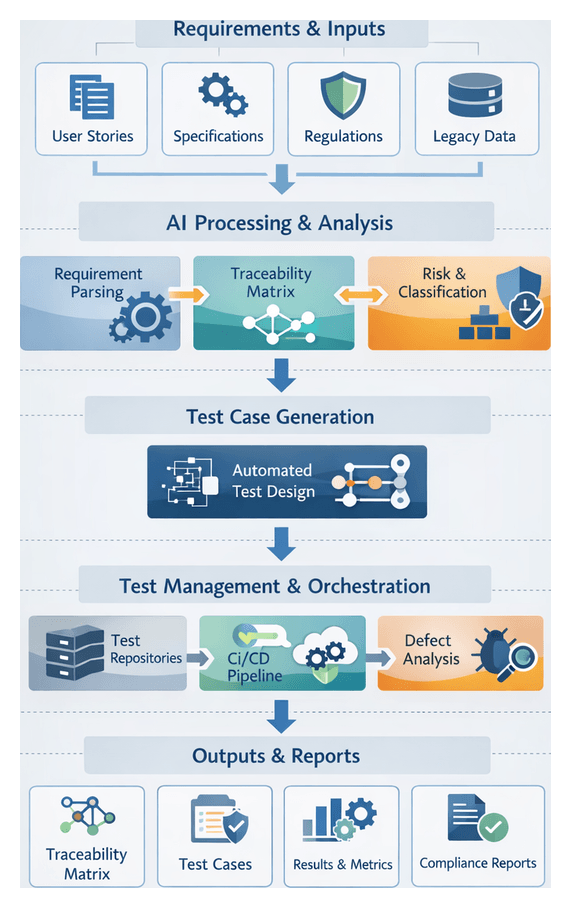

Chapter 2: Requirements Integration and Traceability

Purpose and Context of Requirement Integration and Traceability

At the outset of any AI-driven testing workflow, capturing requirements and trace inputs establishes the foundation for automated test design, execution, and compliance. This phase consolidates stakeholder needs—business objectives, user stories, use cases—alongside technical specifications, non-functional targets, and regulatory mandates into validated, structured artifacts. By defining clear objectives and scope boundaries, organizations enable bidirectional linkages between requirements and test cases, support natural language processing by AI agents, and ensure audit readiness under frameworks such as ISO 26262, FDA regulations or GDPR.

In an era of distributed teams, microservices, and continuous integration and delivery (CI/CD), manual testing struggles to maintain coverage and speed. AI-driven workflows leverage machine learning, intelligent agents, and orchestration engines to parse requirements, generate test scenarios, and analyze impact. Accurate requirement capture reduces ambiguity, minimizes rework, and accelerates delivery by ensuring automation begins with complete, approved inputs.

Prerequisites and Key Inputs

Effective requirement integration demands:

- Access to stakeholder inputs: business objectives, user personas, acceptance criteria from product owners and end users.

- Integration with platforms such as Jira, Azure DevOps, or IBM DOORS Next.

- Governance workflows for requirement review, approval, and baselining.

- Standardized templates for user stories, use cases, and non-functional requirements.

- Initial traceability matrix framework for linking requirements to design elements and test cases.

Primary inputs include:

- User stories, epics, use cases and scenarios

- Functional and non-functional specifications

- Regulatory standards (HIPAA, PCI DSS, SOX)

- Architectural diagrams, API schemas and data models

- Historical defect and test data for risk-based prioritization

Stakeholders and Early Validation

Collaboration among product owners, business analysts, system architects, QA leads, compliance officers and DevOps engineers ensures high-quality inputs. Early validation through peer reviews, workshops and automated checks for missing acceptance criteria or inconsistent priorities aligns business goals with technical constraints and primes AI agents for accurate parsing and mapping.

Orchestrating the Requirements-to-Test Workflow

Maintaining a structured workflow from requirement intake to test case generation enforces consistency, bidirectional traceability, and auditability. This orchestration integrates requirement management systems, AI analysis engines and test management repositories to reduce manual effort and accelerate the software lifecycle.

Core Workflow Actions

- Intake and normalization

- Automated parsing and classification

- Traceability matrix generation

- Handoff to test design

Intake and Normalization

Connectors extract raw requirements from sources—Jira, Azure DevOps, GitHub Issues—and normalize them into a canonical schema. Fields such as summary, description, acceptance criteria, priority and tags feed downstream AI services.

Automated Parsing and Classification

AI-driven engines apply natural language processing to extract feature sets, identify requirement types and assign risk levels. Requirements are tagged with domain entities, dependencies and suggested test scenarios, guiding the selection of unit, integration, performance or security tests.

Traceability Matrix Generation

The orchestration engine builds an initial matrix mapping requirements to test objectives. Stored in solutions like TestRail or Azure Test Plans, this matrix highlights coverage gaps and enables stakeholder review before test creation.

Handoff to Test Design

Upon matrix approval, structured payloads containing requirement IDs, classification tags, acceptance criteria and artifacts (UI mockups, API specifications) are exported to test case generation tools. Version control records these handoffs, ensuring auditability and rollback capability.

System Interactions and Team Coordination

An event-driven architecture using RESTful APIs, message queues (Apache Kafka, RabbitMQ) and service buses coordinates the flow. Quality engineers, analysts and integrators define mapping rules, refine AI models through feedback loops and maintain integration connectors. Dashboards in Grafana track traceability status and classification confidence, while notifications alert teams to exceptions or mapping conflicts.

Metrics and Benefits

- Requirement Processing Time: latency from submission to matrix update

- Classification Accuracy: ratio of AI classifications accepted without manual correction

- Coverage Ratio: percentage of requirements with linked test scenarios

- Exception Rate: frequency of manual reviews triggered by low-confidence mappings

- Integration Health: success rate of API calls and message deliveries

Structured orchestration accelerates test case generation, improves compliance readiness, enhances cross-team collaboration and creates feedback loops that continuously refine AI agents and mapping rules.

AI Agents in Requirement Analysis

Embodied within the requirements integration and traceability stage, AI agents apply NLP, machine learning and knowledge graphs to transform raw specifications into structured test logic. Their capabilities reduce interpretation errors, scale with parallel development streams and maintain continuous traceability for audit demands.

Key AI Capabilities and Systems

- Natural Language Processing: tokenization, part-of-speech tagging and dependency parsing

- Entity Extraction and Classification: feature names, user roles and business rules

- Semantic Similarity and Clustering: grouping related statements and identifying duplicates

- Knowledge Graphs: domain ontologies capturing entity relationships

- Machine Learning Models: classification of functional, security and performance requirements

- Requirements Management Integrations: Jira, Jama Connect, IBM DOORS Next

- Orchestration Engines: Jenkins, GitLab CI/CD, Azure DevOps

Roles of AI Agents

- Automated Ingestion: Normalizing artifacts from APIs or document stores.

- Semantic Parsing and Annotation: Decomposing statements into actors, actions, conditions and data types.

- Ambiguity Detection: Flagging unclear or conflicting statements and prompting stakeholder clarification.

- Classification and Prioritization: Assigning requirement categories and risk-based priorities using historical defect data.

- Mapping to Test Templates: Selecting test case templates for security, performance, load or functional scenarios.

- Dependency and Trace Link Generation: Creating bidirectional links between requirements, design artifacts and tests.

- Continuous Learning: Refining models with feedback from test execution and defect analysis.

Integration Best Practices

- Toolchain Assessment: Identify integration points and data formats.

- Model Selection and Training: Leverage APIs such as Google Cloud Natural Language API or IBM Watson Natural Language Understanding and train with domain data.

- Workflow Orchestration: Define triggers, processing steps and outputs in an automation engine.

- Human-in-the-Loop: Route low-confidence mappings to experts for review.

- Monitoring and Metrics: Track mapping accuracy, time to generation and trace coverage.

- Governance and Versioning: Maintain model version control and audit trails for annotations.

Embedded AI agents transform requirement analysis into an intelligence-driven practice, reducing ambiguity-driven rework by up to 50 percent, generating test scripts within minutes, and ensuring 100 percent linkage between requirements and test cases.

Traceable Outputs and Handoffs

This stage produces machine-readable artifacts that serve as the authoritative inputs for test design, environment provisioning and execution orchestration. Clear handoff mechanisms preserve traceability and automation efficiency.

Artifact Types and Standards

- Requirement Traceability Matrix (RTM): mapping business requirements to functional specifications and acceptance criteria.

- Testable Criteria Document: formal pass/fail conditions linked to RTM entries.

- API Contract Definitions: OpenAPI or AsyncAPI schemas for automated parsing by Postman.

- Data Model Maps: JSON schemas or entity-relationship diagrams annotated for data synthesis.

- Change Logs: metadata capturing requirement revisions, timestamps and authors.

- Trace Metadata Package: JSON or CSV exports linking requirement IDs in Jira or Azure DevOps.

Standard formats such as ReqIF, IEEE 829 templates and OpenAPI reduce custom integration work.

Dependencies and Integration Points

- Upstream Sources: Requirement platforms (Jira, IBM DOORS Next), document repositories (GitLab, GitHub).

- Downstream Consumers: AI test generators like Testim, Mabl; infrastructure as code tools (Terraform, AWS CloudFormation); data synthesis via Datagen AI; CI/CD pipelines (Jenkins, GitLab CI, Azure Pipelines); BI dashboards (Power BI, Tableau).

Handoff Mechanisms

- API-Based Transfers: push mode via RESTful endpoints or pull mode with incremental polling.

- Event-Driven Notifications: message brokers (Apache Kafka, AWS SNS) and webhooks.

- Artifact Repositories: versioned storage and access control in Nexus or Artifactory.

Quality Gates and Metrics

- Completeness Checks: ensuring every requirement maps to testable criteria.

- Format Validation: schema validators for JSON, XML and OpenAPI.

- Trace Coverage Metrics: dashboards measuring mapping completeness and quality gate pass rates.

- Dependency Verification: automated scans aligning API contracts with data models.

Best Practices for Sustained Traceability

- Consistent Naming Conventions: predictable identifiers (REQ-001, TC-001).

- Automated Synchronization: scheduled sync tasks between platforms and trace repositories.

- Audit Logging: immutable logs of artifact modifications and handoff events.

- Integrated Dashboards: real-time traceability status and KPIs.

- Governance Reviews: periodic human audits complementing automated checks.

By producing structured outputs, validating quality, and automating handoffs, organizations maintain full visibility into requirement coverage and deliver accelerated, compliant testing processes.

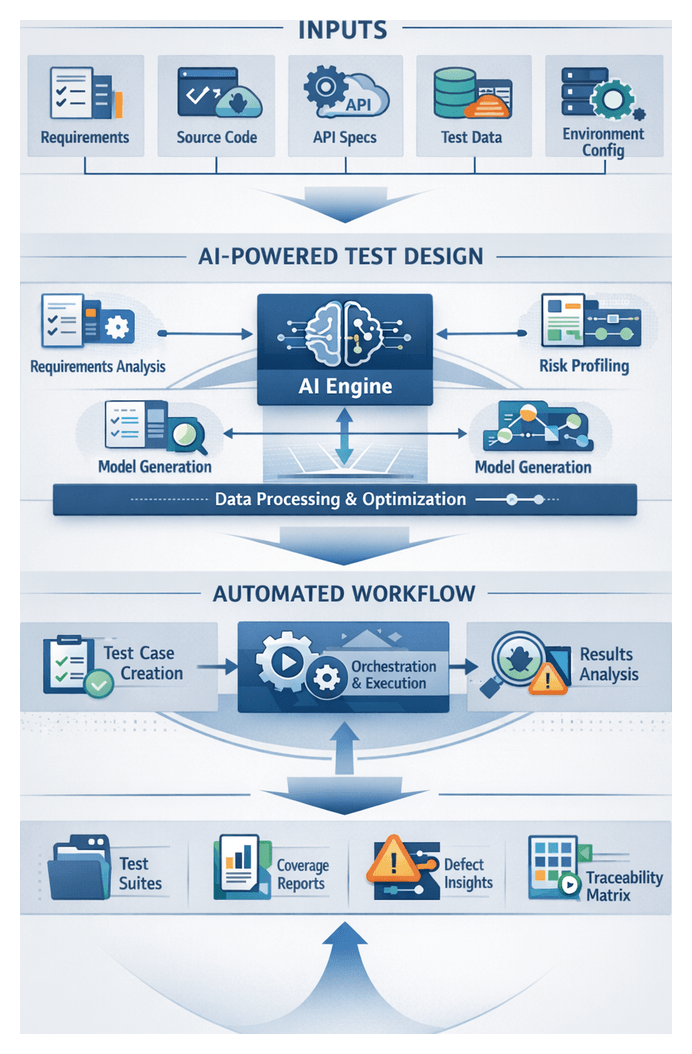

Chapter 3: AI-Powered Test Case Generation

Purpose and Strategic Importance of Automated Test Design

The automated test design stage establishes the foundation for AI-driven generation of test scenarios that align with business requirements, technical specifications, and historical quality insights. By systematically gathering and validating inputs, teams ensure that AI agents produce relevant, maintainable, and traceable test cases. Investing effort in this preparatory stage accelerates test creation, reduces manual overhead, and minimizes the risk of misalignment between testing outcomes and customer expectations.

Strategic Benefits

- Alignment of test scenarios with business priorities and compliance requirements

- Maximization of coverage through data-driven analysis of past defects and risk indicators

- Reduction of duplicate or irrelevant tests by leveraging historical execution results

- Traceability between requirements, test cases, and defects for audit and governance

- Improved maintainability through standardized input formats and metadata tagging

Inputs and Prerequisites for AI-Driven Test Design

Required Inputs

- Functional Requirements and User Stories: Specifications from requirement management systems such as Jira or Azure DevOps, including acceptance criteria, business rules, and user personas.

- Technical Specifications and API Contracts: Interface definitions, API schemas (OpenAPI/Swagger), message formats, and integration guidelines guiding boundary, edge-case, and integration tests.

- Source Code Repositories: Codebases in platforms like GitHub or GitLab for analysis of application structure, dependencies, and logical branches.

- Historical Defect and Test Execution Data: Defect records and execution logs from tools such as TestRail or QMetry, informing prioritization toward high-risk areas.

- Non-Functional Requirements: Performance benchmarks, security policies, compliance mandates (GDPR, HIPAA), and accessibility standards for load, penetration, and compliance tests.

- Domain Models and Data Schemas: Entity-relationship diagrams, data dictionaries, and canonical data models to drive synthetic test data generation.

- Environment and Configuration Definitions: Infrastructure-as-code templates, container specifications, and environment variables outlining deployment contexts.

- Traceability Matrices: Mapping documents linking requirements to existing test cases and defects for bidirectional traceability.

Prerequisites and Governance

- Structured Requirements Management: Centralized, version-controlled systems with standardized templates in Confluence or IBM DOORS.

- Accessible Artifact Storage: APIs or file shares with proper permissions and authentication for automated retrieval.

- Data Quality and Hygiene: Current, accurate inputs with controlled vocabularies, metadata conventions, and validation rules.

- Versioning and Baseline Control: Tags or branches marking stable code and requirement baselines for reproducibility.

- Integration Points and APIs: REST APIs, webhooks, or message queues connecting requirement management, code repositories, defect tracking, and test management systems.

- Governance and Compliance Framework: Policies for data privacy, intellectual property, and auditability with approval gates where necessary.

- Team Alignment and Roles: Defined responsibilities for business analysts, developers, QA engineers, and DevOps practitioners.

- Computational Resources and Security Controls: Sufficient compute capacity, secure infrastructure, access controls, network segmentation, and encryption safeguards.

Data Validation, Preprocessing and Risk Profiling

- Natural language normalization of requirement text to remove ambiguities

- Code parsing and abstract syntax tree generation to identify functions, classes, and control structures

- Schema validation of API definitions against OpenAPI specifications

- Deduplication and classification of historical defects to ensure representative sampling

- Annotation of domain entities with semantic metadata for accurate scenario mapping

- Change impact analysis from version control diffs

- Usage analytics highlighting high-traffic features

- Security advisories indicating vulnerability hotspots

- Regulatory change logs affecting compliance requirements

Structure of an AI-Powered Automation Workflow

Modern software delivery demands a structured end-to-end automation workflow that unifies teams, tools, and data flows. By codifying clear stages, triggers, and integration points, organizations eliminate ad hoc practices, accelerate feedback loops, and ensure scalable, reliable test outcomes.

Orchestration Layer

Engines such as GitLab CI or CircleCI coordinate sequence, parallelization, and conditional flows. AI agents adjust scheduling in real time based on environment health or test queue backlogs.

Integration Points and APIs

Webhooks and standardized APIs enable seamless communication. For example, merging a feature branch triggers Testim for test case generation and an API call to Terraform for environment provisioning.

Data and Artifact Exchange

Uniform schemas such as Test Anything Protocol (TAP) or JUnit XML ensure compatibility across execution engines and analytics dashboards. Synthetic datasets from platforms like mabl are injected automatically, eliminating manual data imports.

Governance and Visibility

Automated metrics—coverage reports, environment utilization, defect summaries—feed central dashboards. Role-based access controls and audit trails enforce compliance and maintain data integrity.

Coordination Across Teams and Tools

- Development to Test Handoff: Feature branches trigger AI agents to analyze code diffs and requirements, proposing scenarios to test management via API. Notifications inform QA of new suites for review.

- Test to Environment Provisioning: The orchestration layer invokes infrastructure-as-code scripts. AI-driven capacity planners predict resource needs for parallel execution.

- Execution to Defect Analysis: Runners output structured logs and telemetry. An AI defect detection service classifies failures and logs issues in tools such as Sauce Labs TestOps or Jira.

AI Models and Techniques for Scenario Generation

Machine learning and model-based techniques transform requirements, code artifacts, and historical data into executable test scenarios. This approach increases coverage, reduces design time, and adapts rapidly to evolving logic.

Key AI Techniques

- Natural Language Processing (NLP) for requirements interpretation via named entity recognition and dependency parsing.

- Model-Based Testing using finite state machines, decision diagrams, or Petri nets derived from interface definitions and code annotations.

- Pattern Mining with clustering and association rule mining on execution logs and defect repositories.

- Reinforcement Learning agents exploring scenario space, guided by defect discovery rates, coverage metrics, and performance thresholds.

Roles of AI Models

- Requirement Analyzer: Converts unstructured specifications into structured intent graphs.

- State Model Refiner: Updates formal models to reflect code and interface changes.

- Scenario Synthesizer: Generates candidate test cases by traversing models and instantiating parameters.

- Coverage Optimizer: Prunes and prioritizes scenarios based on risk and resource constraints.

- Feedback Integrator: Recalibrates models using execution results and defect outcomes.

Integration with Supporting Systems

- Requirements platforms such as Jira or IBM DOORS Next.

- Code repositories like GitHub, Subversion, or Azure Repos.

- Test management tools including TestRail and frameworks such as Selenium, Cypress, or UFT.

- CI/CD systems like Jenkins or GitHub Actions for continuous validation.

Continuous Learning and Model Refinement

- Monitor metrics such as defect yield, coverage contributions, and false positive rates.

- Collect feedback from execution logs, test outcomes, and tester annotations.

- Retrain models using platforms like Google Cloud AutoML or Microsoft Azure Machine Learning.

- Version and validate models with registry tools and A/B evaluations.

- Deploy improved models with rollback capability to production AI agents.

Case Example

Integrating Diffblue Cover for code-based scenarios with Test.ai for UI pattern learning resulted in a 30% reduction in test design time and a 20% improvement in functional coverage. Static analysis and reinforcement learning agents synchronized models and shared feedback, delivering unified backend and frontend test suites.

Generated Test Artifacts and Traceability

AI-powered test case generation produces a structured collection of artifacts that bridge requirements and execution frameworks. These outputs are comprehensive, consistent, and versioned for maintainability.

Artifact Types

- Test Definition Files: JSON, YAML, or DSL files detailing actions, parameters, expected outcomes, and exception paths.

- Metadata Manifests: Attributes such as priority, tags, estimated runtime, required data profiles, and preconditions.

- Requirement Traceability Matrix Entries: Bidirectional links between test cases and requirements, exportable to ALM platforms.

- Environment Configuration Snippets: Variables for URLs, credentials, feature flags, or container images.

- Test Data Profiles: Schema definitions and sample payloads for synthetic or masked datasets via Mabl or internal tools.

- Version Control References: Commit SHAs or branch tags aligning tests with specific application versions.

Dependency Management

Artifacts are annotated with:

- Requirement identifiers and excerpts of acceptance criteria.

- Classification of requirement types (functional, security, performance, compliance).

- Environment characteristics: OS, browser variants, service endpoints, API contract versions, data schema states, and network profiles.

Versioning and Change Management

- Store test definitions in version control with branch policies mirroring feature development.

- Apply semantic or date-based tags to distinguish artifact releases.

- Trigger AI-driven regeneration on requirement updates, producing draft tests in feature branches for review.

Handoffs to Test Execution

- Artifact Publishing: Definitions and manifests published to a shared registry detected by Jenkins, Azure DevOps, or Testim agents.

- API Registration: Orchestrators accept HTTP POST requests containing artifacts and environment tags for dynamic scheduling.

- Event-Driven Queues: Generation events published to Kafka or AWS EventBridge, with downstream consumers initiating execution workflows.

Traceability and Auditability

- Timestamps, AI engine version, and identity of the generating agent.

- Snapshots of requirement text and code baselines used as inputs.

- Rationales for scenario creation, including key phrases identified by NLP.

- Change logs detailing differences between successive test generations.

Metrics and Reporting

- Generation Throughput: Number of test cases produced per requirement per period.

- Coverage Ratio: Percentage of requirements with associated test cases by feature or component.

- Redundancy Index: Overlap between scenarios, highlighting consolidation opportunities.

- Defect Detection Rate: Ratio of defects found by generated tests versus manual suites.

- Traceability Completeness: Proportion of artifacts with valid links to requirements, environments, and version tags.

Best Practices for Managing Output Artifacts

- Define naming conventions, schema standards, and version tagging policies via a central governance team.

- Implement automated validation gates to verify artifact schema conformance before handoff.

- Enforce role-based access control on artifact repositories.

- Conduct periodic reviews to retire obsolete scenarios and refine AI model parameters.

- Integrate feedback loops from execution results and defect classification to improve generation accuracy.

Continuous Improvement and Optimization

A structured automation workflow evolves through feedback loops, data-driven insights, and periodic reviews. Key performance indicators guide refinements in throughput, coverage, and quality.

- Cycle Time: Time from code commit to test report availability, minimized through parallelization and AI-driven scheduling.

- Test Coverage and Redundancy: Analytics identify low-value or overlapping tests for removal or consolidation.

- Defect Detection Rate: Focus on early detection by enhancing scenario generation and classification accuracy.

- Resource Utilization: Predictive planning models forecast demand to prevent contention during peak periods.

Regular retrospectives and governance reviews analyze KPI trends and AI model performance. Model retraining cadences ensure alignment with evolving applications, while version-controlled pipeline definitions enable safe experimentation and rollback. Through continuous learning and structured workflows, organizations achieve higher quality at unprecedented speed.

Chapter 4: Infrastructure and Environment Automation

Purpose and Context of Environment Provisioning Stage

The environment provisioning stage establishes the infrastructure foundation for AI-driven testing workflows. It ensures that compute, networking, storage, and configuration resources are consistent, reproducible, and aligned with application requirements and production conditions. By defining precise prerequisites and inputs, this stage reduces false positives, accelerates defect identification, and enables parallel test execution without manual intervention. Over the past decade, the rise of microservices, containerization, and cloud-native architectures has driven adoption of Infrastructure as Code (IaC) tools such as Terraform and AWS CloudFormation, alongside container orchestration platforms like Kubernetes, to automate environment definitions and provisioning at scale.

In an AI-enhanced context, environment provisioning integrates predictive capacity planning, policy-driven security checks, and continuous feedback loops. AI agents leverage telemetry and historical data to forecast resource demands, optimize cost, and adjust allocations dynamically. This holistic approach streamlines automated workflows, aligns DevOps and QA teams, and embeds governance controls for auditability and compliance.

Core Inputs and Prerequisites

Effective environment provisioning relies on well-defined inputs that guide infrastructure automation tools and AI agents. The following categories capture essential specifications:

- Infrastructure Specifications: Compute profiles (CPU, memory, GPUs), storage types, OS images, and network interfaces. In cloud contexts, this translates to instance types, block volumes, and virtual network settings.

- Configuration Definitions: Software packages, middleware, environment variables, and startup scripts managed by tools like Ansible or Chef. Versioned repositories and YAML manifests ensure reproducibility and rollback capability.

- Network and Security Constraints: Virtual private networks, firewall rules, load balancer settings, and security group configurations. Policy checkers validate encryption, certificate management, and identity provider integrations prior to provisioning.

- Compliance and Governance Inputs: Framework references (GDPR, HIPAA, PCI DSS), data encryption requirements, logging retention policies, and segregation of duties. Compliance scanners cross-verify IaC definitions to produce audit-ready artifacts.

- Resource Quotas and Capacity Profiles: Limits on compute, storage, and network utilization per test run or project. Autoscaling policies reflect capacity thresholds and cost-center tagging supports chargeback and cleanup routines.

- Integration Points and Handoff Definitions: Metadata outputs (URLs, credentials, resource IDs) and status indicators consumed by CI/CD tools such as Jenkins or GitLab CI. Clear handoff schemas enable seamless transitions to test data preparation, execution, and teardown.

Best practices include maintaining a centralized IaC repository, parameterizing modules for multi-environment deployments, and incorporating linting and validation steps to catch schema drift early.

AI-Driven Capacity Planning and Allocation

Traditional static resource allocation often leads to idle capacity or bottlenecks. AI-driven capacity planning addresses this by forecasting demand and automatically adjusting resources ahead of test execution. Key components include:

- Predictive Demand Forecaster: Machine learning models—time series algorithms (ARIMA, Prophet), regression trees, and LSTM networks—analyze historical test logs, CI/CD triggers, and external signals (release schedules, feature toggles) to estimate resource requirements.

- Dynamic Resource Allocator: Reinforcement learning agents and policy-based controllers determine optimal compute, memory, and network allocations within predefined risk thresholds.

- Cost Optimization Advisor: Evaluates spot instances, reserved capacity, and rightsizing opportunities to minimize infrastructure spend while maintaining reliability.

- Environment Health Monitor: Continuously ingests telemetry from monitoring platforms like Prometheus and Grafana to detect anomalies and trigger scaling overrides or rollback actions.

Integration with IaC pipelines enables AI-generated plans to be enacted via Terraform modules, Kubernetes Cluster Autoscaler, and cloud-native autoscaling services (AWS Auto Scaling, Azure VM Scale Sets, Google Cloud Instance Groups). Closed-loop feedback compares predicted versus actual usage and refines forecasting models, while approval gates and audit trails maintain human oversight for significant budget impacts.

Key Deliverables and Artifacts

The automated environment provisioning stage produces a set of versioned artifacts that define, instantiate, and validate test environments. Deliverables include:

- Infrastructure-as-Code templates for Terraform and AWS CloudFormation, stored in version control alongside application code.

- Configuration management playbooks for Ansible or Chef, parameterized for environment variants.

- Container definitions and orchestration manifests—Docker images, Kubernetes Deployment YAML, and Helm charts—with tagged registries for traceability.

- Network and security blueprints specifying subnets, security groups, load balancers, and encryption policies.

- Service endpoint registry cataloging URLs, DNS records, ports, and authentication endpoints.

- Environment metadata and inventory in machine-readable formats (JSON, YAML) listing resource IDs, zones, capacities, and cost metrics.

- Automated health check reports capturing service availability and performance metrics against predefined thresholds.

- Access credentials and secret references managed by HashiCorp Vault, AWS Secrets Manager, or Azure Key Vault.

Version Control and Dependency Management

Rigorous version control prevents configuration drift and ensures consistency across test runs. Strategies include:

- Module registries (public or private) with pinned versions for IaC components and playbooks.

- Semantic versioning of configuration playbooks, container images, and manifests following major.minor.patch conventions.

- Immutable infrastructure patterns that replace resources rather than patching them in place.

- Dependency graph generation tools to sequence provisioning based on resource interdependencies.

- CI pipelines in Jenkins or GitLab CI for automated linting, plan-only previews, and approvers in code review processes.

- Environment-specific variable overlays to separate dev, staging, and production configurations.

Handoff, Cleanup, and Teardown Mechanisms

Seamless handoff of environment artifacts to test execution and efficient teardown are crucial for resource hygiene and cost control. Key mechanisms include:

Environment Metadata Publication

- Publishing environment identifiers and tags linked to commit hashes.

- Exposing service endpoint definitions in JSON or YAML for programmatic consumption by test runners.

- Enforcing metadata schemas to validate field types and required parameters.

Secrets and Credentials Provisioning

- Test orchestrators retrieve secrets via HashiCorp Vault, AWS Secrets Manager, or Azure Key Vault using time-scoped permissions.

- Secrets references are injected as environment variables or configuration inputs without embedding sensitive data in code.

- Audit logs record retrieval events for compliance and forensic analysis.

Notification and Event Triggers

- Messages on EventBridge or Kafka trigger CI/CD pipelines in CircleCI or GitLab CI/CD to launch test jobs.

- Dashboards update environment status flags for visibility across teams.

- Webhooks notify orchestration services when environments reach a “ready” state.

Cleanup and Teardown

- CI/CD teardown jobs invoke IaC destroy commands or cloud APIs to remove resources tagged with environment identifiers.

- Conditional logic retains environments of failed runs for debugging, while automatically destroying successful runs after a retention period.

- Shared service cleanup deregisters test-specific endpoints without disrupting common infrastructure.

- Resource usage reporting informs cost allocation and capacity optimization.

Metrics, Reporting, and Traceability

Comprehensive metrics and audit artifacts enable continuous improvement and compliance:

- Provisioning lead time from initiation to validation, highlighting IaC or script bottlenecks.

- Error and failure logs forwarded to Prometheus, ELK Stack, or other log management systems.

- Resource utilization reports during provisioning and test execution for rightsizing decisions.

- Compliance artifacts demonstrating encryption, network segmentation, and security policy enforcement.

- Capacity forecast dashboards projecting future needs based on historical trends.

- Versioned IaC commits and execution logs mapping code changes to deployed environments.

- Approval records and policy check outputs documenting manual and automated decision points.

- Environment lineage graphs illustrating module ancestry and override layers for impact analysis.

By consolidating these inputs, AI-driven allocation strategies, deliverables, and governance mechanisms, the environment provisioning stage supports a deterministic, scalable, and auditable foundation for AI-enhanced test automation. Subsequent stages—data preparation, test execution, and defect analysis—consume these artifacts to deliver rapid feedback and predictive quality insights across the software delivery pipeline.

Chapter 5: Synthetic Test Data Generation and Management

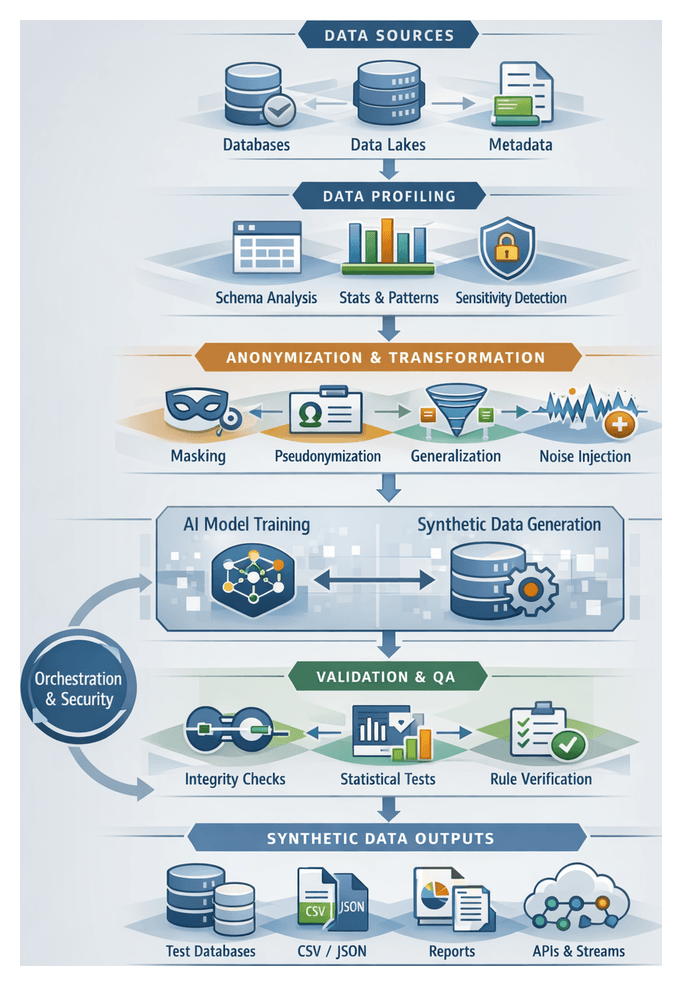

Establishing Data Requirements and Profiling Inputs

The first stage in synthetic test data generation defines the data requirements and establishes a detailed profile of existing datasets. This foundational step aligns test objectives with compliance mandates, captures statistical and relational properties of production systems, and informs the design of realistic, privacy-preserving synthetic data. By profiling data characteristics, teams mitigate the risk of misrepresentation, uncover edge cases, and build scalable data provisioning workflows.

Key outcomes include:

- High-fidelity data models that preserve distributions, constraints, and referential patterns

- Identification and classification of sensitive elements requiring masking, tokenization, or anonymization

- Documentation of business rules, domain logic, and validation criteria

- Alignment with regulatory frameworks such as GDPR, CCPA, and HIPAA

- Consensus between data engineering, compliance, and testing stakeholders

Prerequisites and Tooling

Execution of this stage depends on securing access to source environments, maintaining metadata catalogs, and leveraging automated profiling platforms. Core prerequisites include:

- Secure connectivity to transactional databases, data warehouses, and data lakes

- Up-to-date schema and lineage metadata from Informatica and Collibra

- Stakeholder alignment among business analysts, data stewards, and compliance officers

- Profiling tools like Trifacta and DataRobot to automate metric collection at scale

- Defined security policies governing data handling, anonymization thresholds, and retention

Profiling Activities and Success Metrics

Automated profiling uncovers data patterns and generates metrics that inform synthetic data models. Typical activities include:

- Schema Discovery: Extracting table and column metadata, data types, length constraints, and index definitions

- Statistical Analysis: Calculating cardinality, value frequency, distributions, mean, median, and standard deviation

- Pattern Recognition: Detecting regular expressions, date formats, and custom string patterns

- Anomaly Detection: Identifying outliers using AI techniques to ensure coverage of edge cases

- Referential Integrity Checks: Mapping parent-child relationships and detecting orphan records

- Sensitivity Classification: Tagging PII via Collibra Data Intelligence modules

To gauge profiling effectiveness, teams define acceptance criteria such as:

- Coverage ratio of profiled fields versus overall schema scope

- Data quality score derived from completeness, consistency, and validity metrics

- Null density to guide synthetic null value injection

- Uniqueness ratio to preserve high-cardinality distributions

- Anomaly rate to inform edge-case generation

- Sensitivity coverage aligned with approved anonymization methods

Synthetic Data Generation Workflow

Data Ingestion and Profiling

Source system integration uses secure connectors—such as Fivetran pipelines or custom extract scripts—to ingest data into a staging environment. Profiling engines, including Collibra and open-source alternatives, calculate metrics on cardinality, uniqueness, and null ratios. AI-driven modules detect hidden correlations and anomalies, populating a metadata catalog that drives anonymization and synthesis.

Anonymization and Transformation

Privacy transformations protect sensitive values via a combination of techniques orchestrated by engines like Apache Airflow:

- Masking and Tokenization: Replacing identifiers with irreversible tokens generated by Vault Enterprise

- Pseudonymization: Substituting real-world keys with consistent pseudonyms to preserve referential integrity

- Generalization: Converting granular data (timestamps, ages) into broader categories

- Noise Injection: Adding calibrated noise to numeric fields to maintain statistical validity

Every transformation is logged for auditability, with role-based approval gates enforcing compliance before proceeding.

Data Modeling and Schema Mapping

AI-assisted schema mapping tools infer foreign key relationships, propose synthetic reference tables, and enforce constraints. Key activities include:

- Entity Relationship Extraction: AI agents analyze catalogs to identify associations and lookup tables

- Constraint Enforcement: Defining primary key uniqueness, not-null rules, and domain validations

- Business Logic Incorporation: Reviewing and adjusting inferred rules so synthetic data reflects valid real-world scenarios

Synthetic Data Modeling and Synthesis

Platforms such as Tonic.ai and Databricks orchestrate generative models to produce synthetic records:

- Model Training: Training GANs or VAEs on profiled metrics to learn underlying distributions

- Sampling and Synthesis: Generating records at volume targets that cover edge cases and rare combinations

- Deterministic Generation: Managing seeds to reproduce identical datasets for regression testing

AI-driven monitors detect distribution drift and adjust parameters to maintain fidelity to production patterns.

Validation and Quality Assurance

Generated datasets undergo structural, semantic, and statistical validation:

- Structural Checks: Comparing generated schemas against expected definitions

- Referential Integrity: Verifying foreign key resolutions within synthetic tables

- Statistical Validation: Measuring differences in histograms, correlation matrices, and summary statistics

- Domain Rule Verification: Executing business rule engines (e.g., balance non-negativity, valid date sequences)

Failures trigger alerts in centralized monitoring platforms like ELK or Datadog, and human review gates allow parameter adjustments before continuation.

Orchestration, Security, and Compliance Controls

Workflow Orchestration

A central scheduler sequences tasks, manages dependencies, and coordinates parallel execution. Core capabilities include:

- Dependency Tracking: Ensuring profiling completes before anonymization begins

- Parallel Execution: Running environment-specific workflows concurrently

- Error Handling: Automated retries and escalations for persistent failures

- Audit Logging: Capturing execution metadata for traceability

Security and Compliance

Every workflow stage enforces privacy regulations and security policies:

- Data encryption at rest and in transit (AES-256, TLS)

- Role-based access control integrated with identity providers

- Policy enforcement via Collibra or Informatica

- Immutable audit trails recording run initiators, parameters, and outputs

- Automated data retention policies to purge raw and intermediate data

Delivery and Consumption of Synthetic Data

Artifact Types and Formats

Synthetic datasets are exported in multiple formats to support diverse test environments:

- CSV for scripts and analysis tools

- Apache Parquet for large-scale analytics

- JSON and Avro for API and event-driven testing

- SQL dumps for relational database population

- BSON/JSON for document stores

Metadata, Lineage, and Traceability

Each dataset is accompanied by machine-readable metadata capturing schema definitions, generation parameters, lineage records, and quality metrics. Registries such as SDV or AWS Glue ensure transparency and support auditability.

Compliance and Quality Reports

Automated reporting validates privacy and data quality requirements:

- Privacy assessments (k-anonymity, differential privacy, re-identification risk)

- Statistical validation (histograms, chi-square tests, KS statistics)

- Schema conformance logs for constraint violations

- Audit trails of generation runs and user approvals

Platforms like DataRobot AI Cloud and Gretel.ai provide built-in modules for privacy and quality reporting.

Integration with Test Execution Pipelines

Synthetic data is handed off via:

- Direct Database Population: Automated loaders ingest dumps into test databases

- API-Driven Ingestion: Endpoints exposed by Informatica Intelligent Cloud Services

- Artifact Repositories: Versioned stores such as Nexus or Artifactory

- Message Streams: Publishing payloads to Apache Kafka or AWS Kinesis

Consumption Patterns Across Test Stages

- Test Design: Sample datasets for parameterized scenario development

- Integration Testing: Representative records delivered via APIs or fixtures

- Performance Testing: Bulk exports in Parquet or JSON for load generators

- User Acceptance: Realistic, privacy-compliant user profiles for stakeholder validation

Automated Handoff Mechanisms

- Pipeline Triggers: Jobs initiated by Jenkins, GitLab CI, or Azure DevOps

- Webhooks and Callbacks: HTTP notifications to downstream services

- Message Brokers: Topics in RabbitMQ or Kafka signal dataset readiness

- RESTful APIs: Endpoints for dataset retrieval (e.g., /datasets/latest)

Continuous Improvement and Feedback Loops

Validation metrics and user feedback drive ongoing refinement of profiling and generation processes. Key practices include:

- Metric-Driven Tuning: Adjusting anonymization parameters and generation algorithms based on distribution drift and rule violations

- Model Retraining: Triggering GAN and VAE retraining pipelines with fresh production snapshots

- User Feedback Integration: Incorporating test team insights to close realism and coverage gaps

Roles, Responsibilities, and Best Practices

Clear ownership and governance ensure reliability and compliance:

- Data Engineers: Build pipelines, manage profiling and transformations

- AI Specialists: Develop generative models and oversee training

- Compliance Officers: Define privacy policies and approve anonymization rules

- Test Environment Managers: Provision environments and manage dataset handoffs

- Orchestration Administrators: Maintain workflow engines and scheduling

Adherence to versioned, immutable artifacts; validation gates; comprehensive documentation; and proactive monitoring minimizes friction between data generation and test execution, accelerating delivery while upholding data governance and security.

Chapter 6: Automated Test Execution and Scheduling

Purpose of Automated Test Execution

The automated test execution stage is the critical bridge between test design and result analysis, enabling continuous integration and delivery pipelines to validate application behavior under realistic conditions without manual intervention. By defining precise inputs—from code artifacts and configurations to environment endpoints and test data—and codifying trigger conditions, teams ensure tests run only when prerequisites are satisfied, minimizing resource waste, reducing false failures and delivering rapid, reliable feedback. In highly dynamic delivery environments, clear guardrails around execution timing and dependencies prevent cascading failures, resource contention and loss of confidence in the pipeline.

Prerequisites and Inputs

Execution Prerequisites

- Code and Artifact Readiness: Successful merge into the target branch or completion of a build job that passes static analysis, compilation and unit tests. Build artifacts—binaries, Docker images or serverless packages—must be published to a repository.

- Environment Provisioning and Health: Cloud resources, container clusters and external service mocks must be provisioned with completed health checks. Monitoring platforms such as Datadog or Prometheus can feed health signals.

- Configuration and Secrets Injection: Environment variables, configuration files and secrets (API keys, credentials) must be loaded via a secure vault or secrets manager such as HashiCorp Vault or AWS Secrets Manager.

- Test Data Preparation: Synthetic or masked datasets must be staged to match expected scenarios according to compliance and profiling rules.

- Dependency Service Readiness: Upstream APIs, message queues or external systems required by the application under test must be stubbed, mocked or deployed in test mode.

- Feature Flag Configuration: Feature toggles influencing application behavior should be set to the correct state.

- Scheduling Constraints: Concurrency limits, predefined time windows and resource-intensive suite schedules (nightly regressions, weekend load tests) must be defined.

Code and Build Artifact Inputs

- Source Control Reference: Commit hash, branch name or pull request identifier integrated with systems such as GitHub Actions, GitLab CI/CD or Azure DevOps Pipelines.

- Artifact Identifiers: Build numbers, semantic version strings or checksum-based tags ensure precise mapping between code and test executions.

- Container Image Locations: Fully qualified image URLs for containerized tests (for example, registry.company.com/app-under-test:1.2.3-test).

- Executable Packages: Paths to JARs, ZIPs or object-store artifacts (Amazon S3, Azure Blob).

- Build Metadata: Commit author, timestamp and build environment variables drive test selection and dynamic parameters.

Environment and Infrastructure Inputs

- Endpoint URIs: Base URLs for application services, database hosts and message brokers.

- Credentials and Access Tokens: API keys, OAuth tokens or identity provider credentials.

- Network Configuration: VPN settings, SSH tunnels or service mesh parameters.

- Infrastructure Health Indicators: Metrics confirming CPU, memory, storage and network utilization, fed by tools like Datadog or Prometheus.

- Environment Labeling: Tags identifying environment purpose (smoke, regression, performance) to route tests and collect metrics.

Test Suite and Configuration Inputs

- Suite Definitions: Manifests of test classes, scripts or scenarios managed in frameworks such as Selenium Grid, Appium or TestComplete.

- Filter Criteria: Tags, annotations or labels (critical, ui, api, database) selecting test subsets.

- Execution Parameters: Timeouts, retry policies, parallelism settings and device or browser configurations.

- Dynamic Data References: Pointers to datasets or configuration tables for data-driven tests.

- Environment-Specific Overrides: Variable files or configuration maps adjusting behavior per environment.

Data and State Inputs

- Database Snapshots or Seed Scripts: SQL dumps or migration scripts to establish a known data state.

- File System Artifacts: Configuration files, certificates or media assets required by the application.

- Message Queue Data: Preloaded messages simulating real-time events.

- Service Mocks: Virtualized contracts or stubs populated with expected responses.

Trigger Mechanisms

- Continuous Integration Events: Post-commit, merge or pull request events triggering test jobs.

- Scheduled Triggers: Cron-style schedules for nightly regressions and off-peak tests.

- Environment State Changes: Provisioning or data seeding completion events.

- Manual Intervention: On-demand execution via pipeline dashboards or chat-ops commands.

- On-Failure Retries: Conditional re-execution of flaky tests coordinated by tools such as Functionize or Testim.

Scheduling and Orchestration

Core Scheduling Logic

The orchestration layer evaluates triggers, gathers inputs from version control events, environment readiness checks and test metadata, then applies rules to select test suites and allocate execution slots. It integrates commit events from GitHub Actions or GitLab CI/CD, readiness signals from Azure DevOps or Kubernetes clusters, test case metadata and resource availability from platforms such as Selenium Grid or BrowserStack.

The scheduler determines which suites run immediately or queue for later, how many parallel instances to spawn, execution sequence when dependencies exist and fallback paths for readiness failures. These decisions are codified in pipeline definitions or APIs, such as declarative YAML in Jenkins or Kubernetes Job manifests.

Parallelization Strategies

- Test Suite Sharding: Dividing suites into smaller groups by feature or tags.

- Data-Driven Concurrency: Feeding multiple instances of the same script with varied data sets.

- Environment Pooling: Using pools of pre-provisioned containers or VMs to avoid delays.

- Dynamic Scaling: Programmatic integration with auto-scaling services such as AWS ECS or Google Kubernetes Engine to meet peak demand.

Prioritization Mechanisms

- Risk Score: Derived from defect history and code complexity, sourced from tools like SonarQube.

- Business Impact: High-priority tests for revenue-critical or compliance features.

- Dependency Hierarchy: Smoke and integration tests before deeper functional or performance suites.

- Recent Failures: Elevating tests that failed in the last cycle.

Sequencing and Dependencies

Dependency graphs model relationships between test suites, with workflow engines such as Camunda or Jenkins Pipelines executing independent nodes in parallel while deferring dependent nodes until prerequisites succeed.

CI/CD Integration

- Pre-Merge Validation: Automated tests on feature branches via GitHub Actions or GitLab CI/CD.

- Nightly Builds: Full regressions scheduled off-peak, with results fed into dashboards.

- Release Gates: Smoke and performance tests triggered by release candidate tags.

- Environment Cleanup: Post-execution teardown, metrics collection and log archiving.

Coordination and Communication

- Triggering Agents: Webhooks and API calls from source code management systems.

- Provisioning Services: Infrastructure-as-code tools like Terraform or Pulumi reporting status back to the scheduler.

- Test Execution Engines: Frameworks such as JUnit 5, TestNG or Playwright emitting progress via standard formats.

- Reporting Dashboards: Platforms like Kibana or Grafana visualizing pass rates and bottlenecks.

- Stakeholder Notifications: Alerts via Slack or Microsoft Teams on execution status and failures.

Monitoring and Continuous Improvement

- Execution Metrics: Queue lengths, wait times and durations to identify bottlenecks.

- Failure Analysis: Classifying failures by root cause to refine scheduling and resource policies.

- Capacity Forecasting: Historical data-driven predictions for proactive scaling.

- Policy Updates: Adjusting priorities and concurrency limits based on feedback.

- Retrospectives: Regular reviews of orchestration performance and improvement opportunities.

AI-Driven Adaptation and Monitoring

Environment Health Monitoring

AI-powered agents collect telemetry from infrastructure components, test harnesses and the application under test. They use unsupervised learning to establish baselines for CPU, memory, network latency and disk I/O, apply time-series anomaly detection, parse log streams with natural language processing to identify recurring errors and calculate composite health scores for each environment instance. Platforms such as Datadog or custom ML pipelines provide these capabilities.

Dynamic Scheduling Adjustments

- Priority Reordering: Reinforcement learning evaluates test criticality against health scores to sequence runs.

- Parallelization Management: Algorithms optimize concurrency levels per environment to avoid oversubscription.

- Adaptive Batching: Grouping tests with similar dependencies to reduce setup overhead.

- Context-Aware Routing: Directing tests to the healthiest available nodes based on real-time performance and historical success rates.

Anomaly Detection and Self-Healing

- Root Cause Inference: Bayesian networks analyze correlated metrics to identify underlying issues.

- Self-Healing Actions: Playbooks trigger environment resets, cache clears or service restarts on specific anomaly patterns.

- Escalation Decisioning: Failed automated remediations escalate issues to human operators with enriched context.

- Learning from Incidents: Reinforcement loops refine intervention strategies to reduce false positives.

Predictive Resource Scaling

- Demand Forecasting: Time-series models predict queue lengths and peak loads.

- Proactive Provisioning: Triggers infrastructure-as-code modules to spin up resources ahead of demand.

- Cost Optimization: Balancing performance targets and budget constraints by scaling resources based on forecast confidence.

- Workload Smoothing: Shifting scheduled test batches to flatten peaks and avoid overprovisioning.

Intelligent Load Balancing

- Performance Profiling: Supervised models classify nodes by execution speed and stability.

- Load Distribution: Constraint solving assigns test cases to nodes to minimize completion time.

- Dependency Mapping: Graph analytics ensure tests with shared resources or sequences run on compatible nodes in order.

- Resilience Routing: Rerouting in-flight tests to alternate nodes upon node failure.

Feedback Integration

- Outcome Analysis: Classification models assess run outcomes, failure modes and durations.

- Model Retraining: Execution metrics and incident data feed back into scheduling, detection and forecasting models.

- A/B Testing of Policies: Controlled experiments compare scheduling strategies to adopt superior approaches.

- Dashboard Enrichment: Visualizations surface model performance and drift, enabling retraining interventions.

Adaptive Orchestration Outcomes

By embedding intelligence at each decision point—health monitoring, scheduling adjustments, self-healing, predictive scaling and load balancing—teams achieve a resilient, adaptive test execution pipeline. Continuous feedback loops refine AI agents, driving improvements in throughput, reliability and cost efficiency to support fast-paced development cycles without compromising quality.

Execution Artifacts and Feedback

Artifact Portfolio and Structure

Automated test runs produce artifacts that document system behavior, environment state and AI-driven adaptations. Consistent structures, naming conventions and metadata schemas maximize downstream utility. Key artifacts include:

- Test Result Files: Detailed XML (JUnit), JSON or HTML files enumerating each case, status, duration and tags, correlated by build numbers or commit hashes.

- Execution Logs: Standard output, debug traces and framework messages, centralized in platforms like ELK (Elasticsearch, Logstash, Kibana) or Splunk for searchable analysis and AI-driven log pattern recognition.

- Coverage and Quality Reports: Code coverage heatmaps and metrics feeding risk engines to calculate test completeness scores.

- Performance and Resource Metrics: Telemetry on CPU, memory, disk I/O, network latency and application-specific metrics recorded by tools such as New Relic or Dynatrace.

- Environment Snapshots: Identifiers for containers, VMs or cloud instances, orchestration manifests and network topologies for reproducibility and post-mortems.

- AI Adaptation Logs: Records of scheduling decisions, routing changes and scaling events made by AI agents.

A recommended directory layout is:

- Outputs/<pipelineName>/<buildNumber>/results/