Orchestrating AI Agents for End to End Data Analysis Workflows

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Challenges of Fragmented Analytics Processes

Enterprises today generate enormous volumes of data across diverse systems, geographies, and business units. Without a unified analytics framework, information becomes trapped in departmental silos, manual handoffs introduce errors and delays, and critical insights arrive too late or not at all. Disconnected toolchains and legacy platforms amplify complexity, while inconsistent definitions and schema drift lead to broken reports and flawed models. As workloads grow, scalability constraints strain infrastructure, and security or compliance gaps expose organizations to risk. Cultural and organizational barriers further hinder collaboration, causing duplication of effort, redundant pipelines, and lost productivity.

- Data silos that limit visibility and collaboration

- Inconsistent definitions, formats, and schema drift

- Manual handoffs prone to delay and human error

- Latency issues impeding real-time or near-real-time analysis

- Lack of standardized processes, governance, and accountability

- Duplication of effort across teams and redundant pipelines

- Security, privacy, and compliance gaps in scattered systems

- Scalability and resource constraints under growing workloads

- Fragmented toolchains that increase integration complexity

- Cultural and organizational barriers that hinder collaboration

When data is isolated in on-premise databases, cloud services, or departmental spreadsheets, cross-functional reporting requires massive manual effort. Definitions such as “customer_id” versus “clientID” diverge across systems, and schema drift can trigger downstream failures without detection. Traditional handoffs involve exporting snapshots, spreadsheet cleansing, and email exchanges, each step introducing versioning errors and audit gaps. The result is delayed insights, reactive troubleshooting, and a growing backlog of unresolved data requests.

Consider a common scenario: a marketing team submits an analytics request, IT must approve elevated access to a vendor database, engineers write custom queries and export flat files, analysts discover missing fields and reopen tickets, and data scientists resort to manual imputation to meet deadlines. By the time senior leadership receives corrected analysis, the original campaign window has closed. This reactive cycle undermines agility, erodes trust in analytics, and wastes valuable resources.

AI Agents and Unified Orchestration

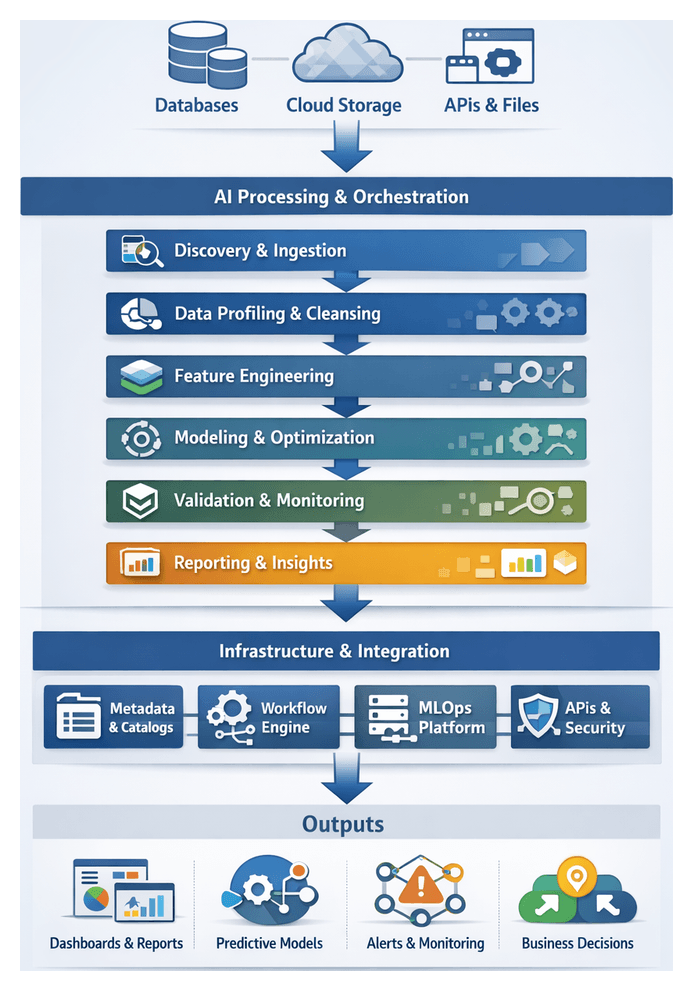

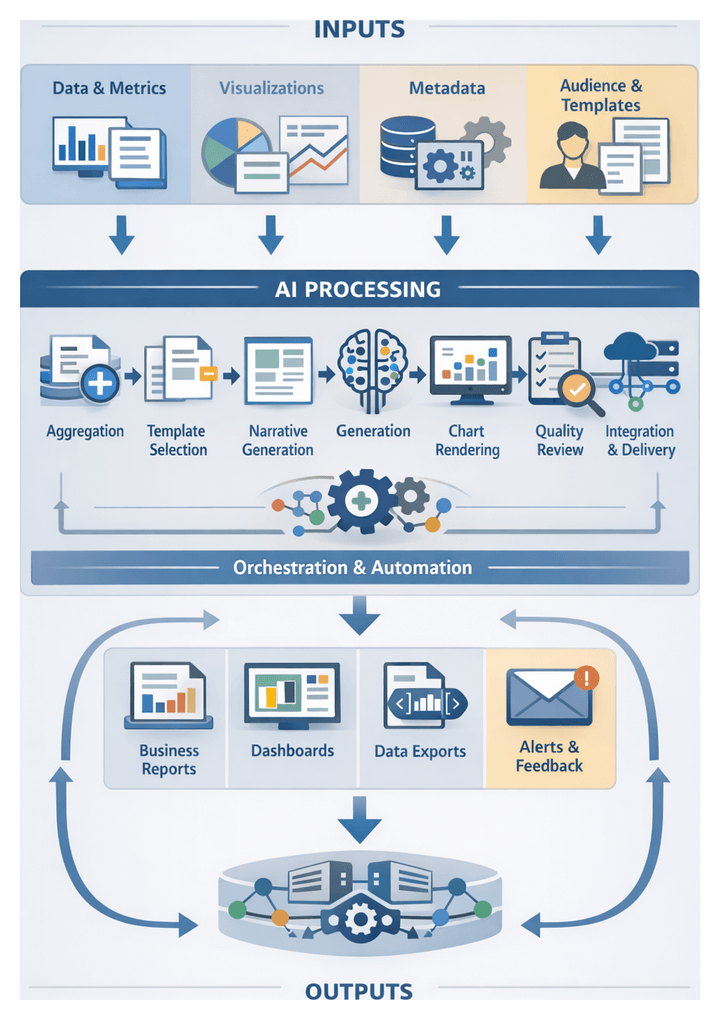

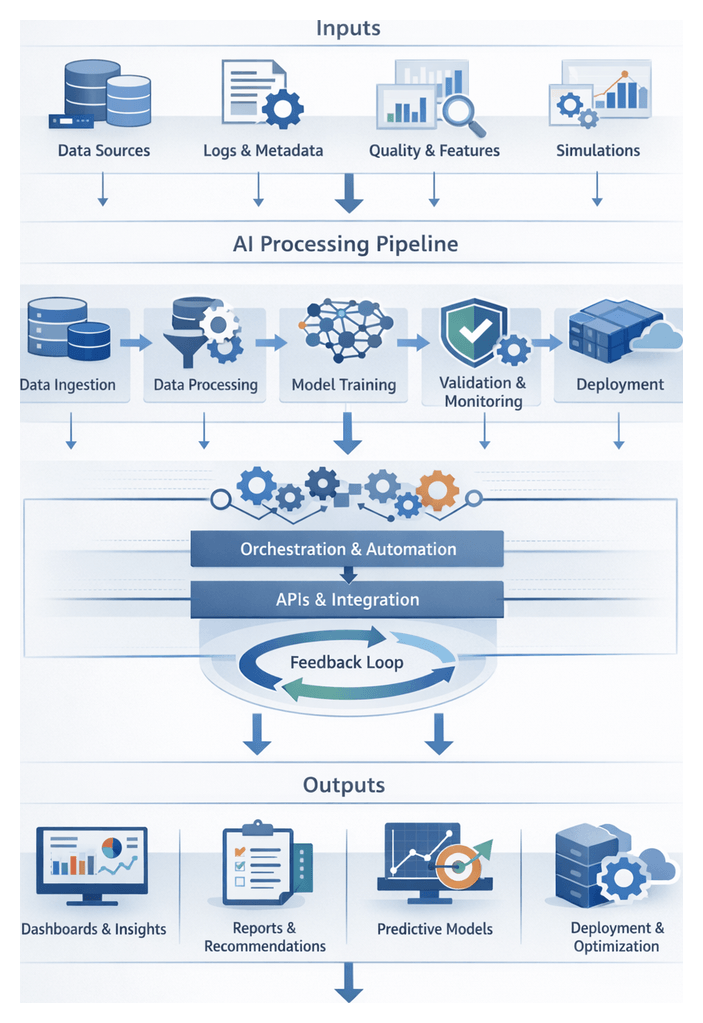

Transforming fragmented processes into an end-to-end, automated pipeline requires intelligent orchestration. Specialized AI agents operate at each stage of the analytics lifecycle—discovery, ingestion, profiling, cleansing, feature engineering, modeling, validation, and reporting—eliminating routine manual tasks and enabling teams to focus on strategic problem solving. These agents embed decision logic, self-learning capabilities, and metadata capture, ensuring consistent quality, governance, and auditability.

By autonomously detecting schema changes, triggering cleansing routines, recommending model configurations, and generating narrative summaries, AI agents remove bottlenecks and reduce time-to-insight. Centralized orchestration engines manage dependencies, scheduling, retries, and event-driven handoffs, providing real-time visibility into pipeline health and performance. As a result, organizations can scale analytics operations, enforce security policies, and maintain compliance—all while fostering cross-team collaboration and reducing technical debt.

Key AI Agent Capabilities Across the Analytics Lifecycle

- Discovery and Ingestion Agents: Scan enterprise catalogs, apply connector templates, and adapt to schema variations. Examples include Apache Airflow connectors and custom adaptive pipelines.

- Profiling and Cleansing Agents: Use statistical analysis and anomaly detection to generate quality metrics, classify outliers, and enforce standardization rules. Platforms such as H2O.ai illustrate automated rule enforcement.

- Feature Engineering Agents: Derive new variables, apply aggregations, integrate external enrichment, and assess feature importance. Solutions like DataRobot embed these capabilities within automated pipelines.

- Visualization and Pattern Recognition Agents: Generate interactive charts, detect clusters, and propose hypotheses. Tools such as Prefect and Kubeflow dashboards leverage embedded pattern detection.

- Modeling and Optimization Agents: Automate algorithm selection, hyperparameter tuning, and cross-validation. MLOps platforms like MLflow and DataRobot streamline experimentation and artifact management.

- Validation and Drift Detection Agents: Monitor production performance, detect concept drift, trigger alerts, and orchestrate retraining workflows.

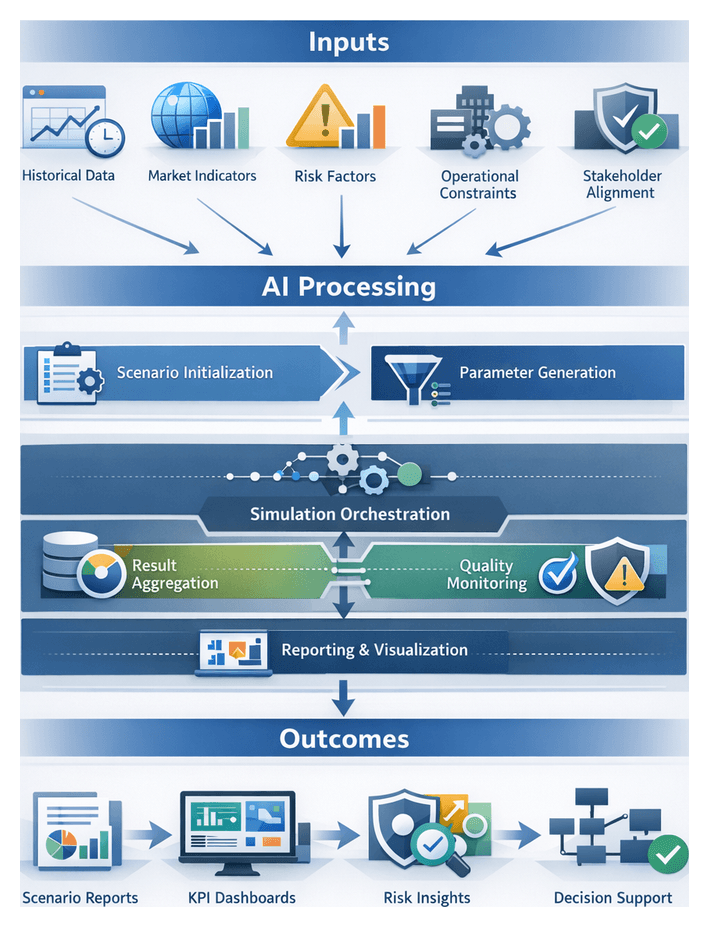

- Simulation and Scenario Planning Agents: Run stress tests, Monte Carlo simulations, and risk analyses to support strategic decision making.

- Prescriptive and Optimization Agents: Solve resource allocation, pricing, and operational planning problems using linear programming, genetic algorithms, or heuristic search.

- Narrative Generation Agents: Employ generative language models to produce executive-grade summaries, embed visualizations, and tailor language to specific stakeholders.

- Orchestration and Governance Agents: Manage Directed Acyclic Graphs (DAGs), enforce dependencies, capture audit trails, and integrate with orchestration engines.

Supporting Systems and Integration Layers

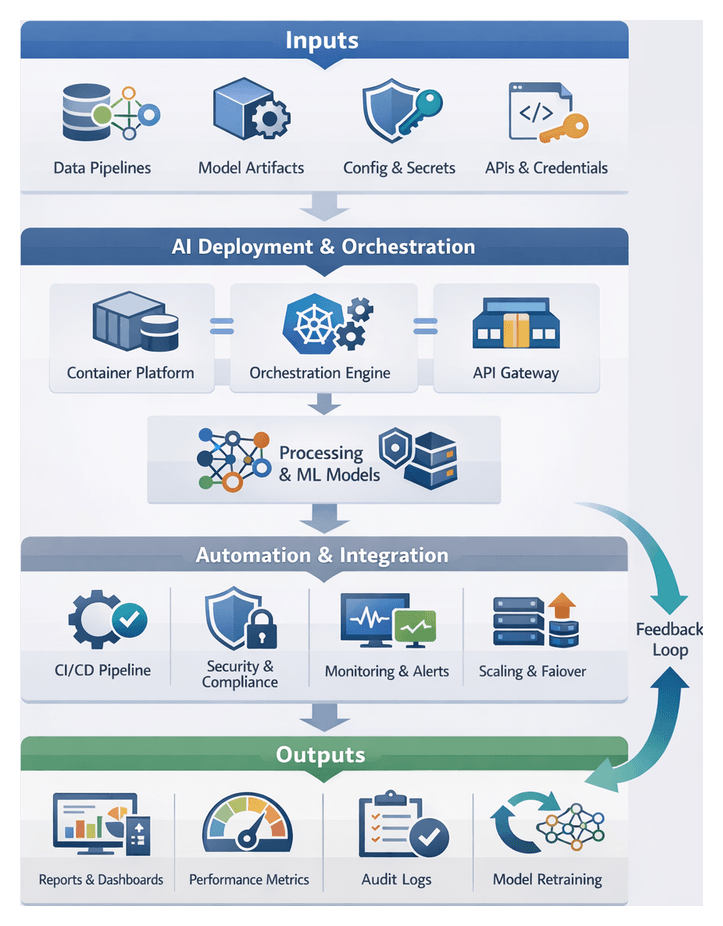

AI agents depend on a robust ecosystem of metadata services, workflow engines, and MLOps platforms. These systems provide service discovery, configuration management, and execution environments that underpin reliable automation and scalability.

- Metadata and Data Catalogs: Central repositories for schema definitions, lineage graphs, and access policies. Examples include Apache Atlas and commercial governance platforms.

- Workflow Orchestration Engines: Coordinate agent tasks, manage scheduling, and handle retries. Common tools include Apache Airflow, Prefect, and Kubeflow.

- MLOps and Model Management Platforms: Track experiments, version models, and automate deployment. Platforms like MLflow and H2O.ai Driverless AI serve these roles.

- API Gateways and Microservices: Expose agent functions via REST or gRPC, enforce authentication, and route requests to internal services.

- Containerization and Orchestration: Docker and Kubernetes enable isolated, scalable runtime environments, service discovery, and rolling updates.

- Logging and Monitoring Frameworks: Centralized log aggregation, metrics collection, and alerting with Prometheus and Grafana support real-time observability.

Operational Benefits of AI-Driven Automation

- Reduced manual effort through automation of repetitive tasks

- Accelerated time-to-insight via parallelized, self-healing pipelines

- Consistent data quality with embedded validation and lineage tracking

- Scalability to accommodate growing data volumes and user demands

- Enhanced governance with audit trails and policy enforcement

- Improved cross-team collaboration using standardized interfaces

- Adaptive intelligence as agents learn from evolving patterns

Designing a Structured AI-Driven Workflow Framework

A well-defined framework serves as the blueprint for operationalizing AI-driven analytics. It sequences processes, assigns agent responsibilities, and specifies integration points, ensuring alignment with business objectives and minimizing fragmentation. The output of this design phase guides engineering teams, data scientists, and operations in executing a cohesive, scalable solution.

Key Deliverables

- Workflow Stage Sequence Specification: Diagrams and narratives detailing each stage from discovery through deployment, including inputs, outputs, conditionals, and exception paths.

- Agent Role and Communication Matrix: Mapping of agents, responsibilities, input parameters, and messaging protocols, referencing orchestration platforms such as Apache Airflow.

- Integration Interface Definitions: API contracts, message formats, and data schemas for connecting to data lakes, feature stores, model registries, and reporting platforms like Amazon SageMaker and several other listed on AgentLinkAI.

- Metadata Catalog and Lineage Mapping: Repository of schema definitions, transformation histories, and governance rules documenting dataset provenance and audit trails.

- Orchestration Configuration Artifacts: Templates for container manifests, scheduling parameters, and CI/CD pipelines that automate agent provisioning and lifecycle management.

Dependencies and Preconditions

- Data Platform Readiness: Availability of data lakes, warehouses, or streaming platforms compatible with agent runtimes and serialization formats.

- Security and Compliance Policies: Defined access controls, encryption standards, identity integrations, and audit logging in line with regulations.

- Agent Runtime Environment: Provisioned container orchestration (Kubernetes, Docker Swarm), monitoring tools, resource quotas, and auto-scaling rules.

- Integration Tooling and Licenses: Connectors, SDKs, and middleware for ETL platforms, message brokers, and external data sources.

- Governance and Stakeholder Alignment: A steering committee to approve schemas, naming conventions, and quality standards, with regular checkpoints to align technology with business goals.

Handoff Mechanisms to Execution Teams

- Version-Controlled Repositories: Storage of design documents, diagrams, and configuration templates in Git or equivalent systems with tagged releases.

- Automated CI/CD Pipelines: Integration of artifacts into delivery workflows where changes trigger validation and staging tests for agent coordination.

- Design Review Sessions: Workshops to walk through specifications, communication matrices, and interface definitions, generating actionable backlog items.

- Technical Readiness Checklists: Standard forms to confirm environment configurations, dependency installations, and access rights before development.

- Onboarding Guides and Templates: Preconfigured code and container templates referencing naming conventions, error-handling patterns, and logging standards.

Integration Points and Governance

- Data Source Connectors: Automated interfaces to on-premise databases, cloud storage, and SaaS applications, driven by metadata-driven connectors that adapt to schema changes.

- Feature Store APIs: Standardized endpoints for registering and retrieving features to ensure consistency across modeling teams.

- Model Registry Endpoints: Interfaces for versioning and publishing trained models to support validation and deployment workflows.

- Reporting and Visualization Platforms: Event-driven handoffs to tools such as Tableau and Power BI via RESTful endpoints for automated dashboard updates.

- Monitoring and Observability Services: Integration with log aggregation, metrics platforms, and alerting frameworks to ensure SLA compliance and proactive issue resolution.

Scalability and Future Extensibility

- Modular Agent Design: Self-contained agents that can be deployed, scaled, or replaced independently.

- Microservices and API-First Approach: Service-oriented components with well-defined interfaces for independent evolution.

- Metadata-Driven Configuration: Dynamic workflows driven by catalogs and configuration files to minimize code changes for new sources or rules.

- Elastic Compute and Storage: Cloud-native infrastructure and infrastructure-as-code templates that auto-scale with demand.

- Plug-In Frameworks for New Agents: Extension points that allow integration of future AI capabilities without major refactoring.

With this structured framework in place—articulating challenges, agent roles, integration layers, deliverables, and governance—organizations can transition seamlessly from design to production. The next stage focuses on deploying autonomous agents for data discovery and ingestion, guided by the artifacts, dependencies, and handoff protocols defined here.

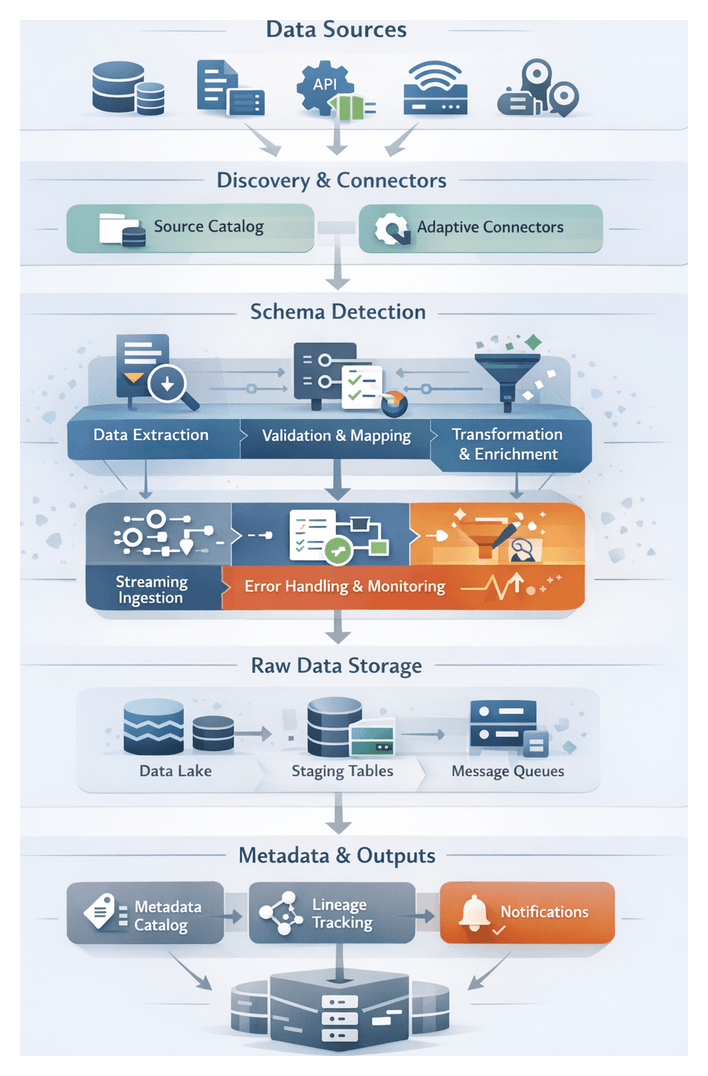

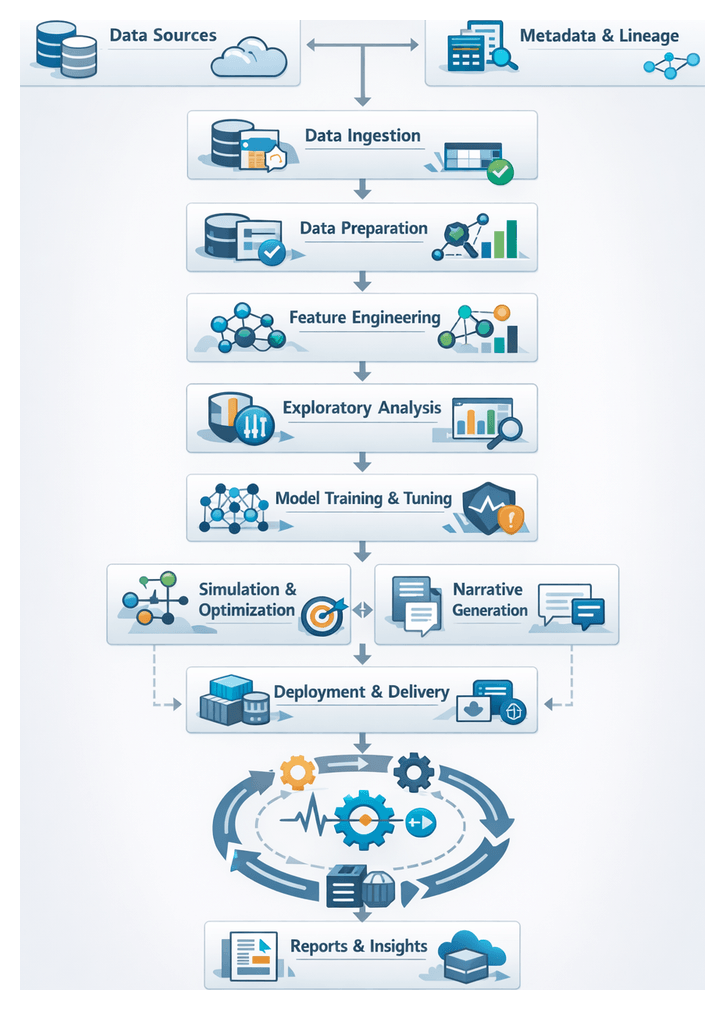

Chapter 1: Data Discovery and Ingestion

The journey from dispersed raw data to actionable insights begins with a unified discovery and ingestion stage. This foundational phase defines objectives for connecting to relational databases, NoSQL stores, streaming platforms, file shares, APIs, and edge devices. AI-driven discovery agents automate the detection of new repositories, infer logical relationships between datasets, and adapt to schema changes. By centralizing source enumeration and metadata capture, organizations enforce consistent governance, streamline security reviews, and eliminate manual handoffs that delay insight generation. Scalable pipelines ingest structured, semi-structured, and unstructured data—ranging from event logs and sensor telemetry to social media feeds and multimedia assets—into a raw landing zone that primes downstream preparation, feature engineering, and modeling efforts.

Core Capabilities and AI-Driven Tools

Modern discovery and ingestion leverage intelligent agents and orchestration layers to deliver resilience, traceability, and performance at scale. Key capabilities include automated source cataloging, adaptive connector configuration, dynamic schema management, real-time streaming ingestion, and robust error handling. These functions are supported by specialized platforms and frameworks:

- Databricks Unity Catalog for unified metadata management.

- Apache NiFi for extensible connector plugins and data flow orchestration.

- Apache Kafka for high-throughput event streaming.

- AWS Glue Data Catalog, Azure Data Catalog, and Google Cloud Data Catalog for cloud metadata synchronization.

Discovery Agents and Metadata Harvesting

Discovery agents perform automated endpoint enumeration across on-premises and cloud environments. Adaptive crawlers probe storage locations, streaming topics, and API endpoints to harvest schema definitions, file structures, protocol requirements, and access credentials. Natural language processing and pattern recognition classify data domains, detect sensitive information, and assign contextual tags. Agents populate centralized catalogs—such as Databricks Unity Catalog—and maintain an up-to-date inventory that feeds governance engines and supports policy enforcement.

Adaptive Connector Configuration

Connector agents leverage AI to create and tune connectors for JDBC, REST, file-based, and streaming protocols. Machine learning models predict optimal batch sizes, parallelism levels, and polling intervals. When source interfaces evolve, agents detect API version changes or schema shifts and automatically adjust authentication methods, request parameters, or plugin versions. In Apache NiFi environments, agents orchestrate plugin management to ensure compatibility and security compliance.

Schema Evolution and Dynamic Mapping

Before ingestion, schema interpretation agents analyze source definitions and sample records, inferring field structures, data types, and nested hierarchies. Versioned schema registries track changes over time. Upon detecting new columns, type mutations, or reordering, agents validate impacts on downstream consumers, auto-generate mapping updates or flag anomalies for human review. Mapping templates govern type casting, naming conventions, and default value assignments, ensuring consistent staging schemas across diverse sources.

Batch Ingestion Workflow

Batch ingestion handles predictable, high-volume transfers with minimal latency requirements. The workflow steps include:

- Snapshot Capture: Agents query new or changed records using watermark or change data capture (CDC) markers to enable incremental extraction.

- Data Extraction: Connectors pull records into secure staging zones. Window sizes and polling intervals are optimized to protect source performance.

- Pre-Ingestion Validation: Validation agents inspect payloads for schema compliance, missing keys, and null constraints. Quarantined batches are rerouted for cleansing.

- Transformation and Enrichment: Transformation agents apply mapping templates to standardize field names, cast types, and add metadata such as source identifiers, extraction timestamps, and lineage IDs.

- Load into Staging: Processed batches are written to data lakes, object stores, or staging tables. Completion events are registered in the metadata catalog.

- Post-Load Verification: Verification agents reconcile record counts and checksums against source statistics. Discrepancies trigger retries or alert notifications.

Streaming Ingestion Workflow

Streaming pipelines deliver low-latency data feeds into analytics platforms. The sequence typically follows:

- Topic Provisioning: Provisioning agents create or update partitions on messaging brokers (e.g., Apache Kafka) to balance load.

- Event Capture: Source adapters publish events using HTTP callbacks, WebSockets, or proprietary push interfaces.

- Stream Processing: Agents subscribe to topics, applying in-motion transformations, deduplication, and timestamp normalization.

- Windowing and Aggregation: Events are grouped into tumbling or sliding windows for preliminary metric computations.

- Continuous Delivery: Enriched events are written continuously to real-time dashboards, data warehouses, or sandboxes via high-throughput connectors.

- Stream Monitoring: Monitoring agents track lag, throughput, and error rates. Threshold breaches trigger automated scaling or operator alerts.

Error Handling and Retry Mechanisms

Resilience is built into every ingestion workflow. Agents implement layered error handling:

- Connection Errors: Back-off and retry strategies for transient network or authentication failures, with escalation of persistent issues.

- Schema Mismatches: Fallback mappings or record quarantining for unexpected field types or missing columns.

- Data Quality Violations: Anomaly resolution agents divert critical anomalies—such as null primary keys—to cleansing queues or human operators.

- Resource Exhaustion: Dynamic scaling and throttling maintain stability under high load conditions.

Orchestration and Monitoring

A central orchestration layer coordinates agent tasks via RESTful APIs and message queues. Core components include:

- Task Scheduler: Manages execution windows, dependencies, and concurrency limits.

- Metadata Catalog: Stores connector definitions, schema versions, lineage, and performance metrics.

- Event Bus: Facilitates asynchronous communication, driving state transitions with events like “ingestion success” or “error detected.”

- Resource Manager: Allocates compute resources and orchestrates containerized agent deployments to meet SLAs.

- Alerting Service: Aggregates notifications from error handling agents and delivers them via email, chat, or dashboards.

Raw Data Consolidation and Handoff Protocols

Upon completion of ingestion, raw data consolidation establishes consistent storage, annotation, and communication of datasets for downstream preparation. Standardized deliverables include object storage files, staging tables, message queue topics, metadata manifests, and lineage records. By enforcing predictable folder structures, metadata tagging conventions, and event-driven notifications, organizations minimize handoff failures and ensure end-to-end traceability.

Raw Data Deliverables

- Object Storage Files in S3, Azure Data Lake Storage, or Google Cloud Storage (formats: JSON, Parquet, Avro, CSV)

- Staging Tables in data warehouses or relational databases preserving source schemas

- Message Queue Topics on systems like Apache Kafka or AWS Kinesis

- Metadata Manifests describing schema versions, record counts, checksums, and extraction timestamps

- Lineage Records in centralized metadata repositories linking data artifacts to origin systems and agents

Dependencies and System Integration

- Extraction Success Signals from agents feeding orchestration tools such as AWS Step Functions

- Schema Registry Access for compatibility and version control

- Storage Infrastructure Availability with sufficient capacity and performance

- Security and Access Controls compliant with GDPR, CCPA, or HIPAA mandates

- Catalog and Metadata Services

Handoff Mechanisms

- File Landing and Folder Structure: Organize raw files by source, date, and run ID (e.g., /raw/sourceA/2026-02-25/run_07/).

- Event Publication: Publish events with file URIs, record counts, and manifest references to messaging topics.

- Catalog Registration and Tagging: Register datasets in the data catalog with tags: sourceSystemId, schemaVersion, extractionTimestamp, storageLocation, lineageId.

- Orchestration Workflow Transitions: Trigger data preparation workflows upon successful handoff or send alerts on failures.

- Notification and Alerting: Inform stakeholders via email, chat, or dashboards with hyperlinks to logs, catalog entries, or diagnostics.

Metadata Tagging Conventions

- sourceSystemId: code representing the originating application (e.g., ERP_12)

- schemaVersion: semantic version number (e.g., v1.3.2)

- extractionTimestamp: ISO 8601 timestamp (e.g., 2026-02-25T14:30:00Z)

- ingestionRunId: UUID for each execution

- recordCount: integer count of ingested records

- checksum: MD5 or SHA-256 hash for integrity verification

Error Handling and Retries

- Automatic Retries with exponential back-off for transient failures

- Dead-Letter Queues for unresolved records and failure manifests

- Alert Escalation to on-call engineers with diagnostic details

- Audit Logs capturing structured actions for compliance and forensics

Operational Best Practices

- Leverage Incremental Ingestion and CDC to minimize resource consumption

- Implement Data Compression and Partitioning to improve storage efficiency

- Enforce Schema Evolution Policies for compatibility and data integrity

- Adopt a Unified Orchestration Layer with platforms like Azure Data Factory or Google Cloud Dataflow

- Maintain a Centralized Data Catalog with automated metadata harvesting

- Regularly Review and Optimize SLAs with upstream and downstream stakeholders

Transition to Data Preparation and Cleaning

With raw data consolidated and handoff protocols executed, cleaning agents subscribe to event triggers, retrieve artifacts, and commence profiling. Guaranteed conditions include accessible URIs, comprehensive metadata manifests, automated workflow activation, and exception queues for human review. These foundations empower downstream AI-driven feature engineering and modeling to proceed with high-quality, traceable inputs.

Connecting to Enterprise Data Sources

The foundation of a unified analytics workflow lies in automated discovery and secure connectivity to every data repository across cloud, on-premise, and third-party systems. AI-driven agents scan warehouses, databases, file shares, APIs, and streaming platforms to build a consolidated inventory of data assets, schemas, access protocols, and quality indicators. This eliminates manual integration bottlenecks, uncovers hidden silos, and ensures downstream processes operate on a complete, governed data foundation.

Key Objectives

- Comprehensive Source Identification: Detect all structured and unstructured repositories, including under-utilized or private assets.

- Schema Interpretation and Profiling: Assess field definitions, data types, and distributions to guide ingestion logic.

- Metadata Cataloging: Enrich asset records with lineage pointers, sensitivity labels, owner details, and refresh schedules.

- Access Validation: Verify connectivity, authentication, and authorization settings to prevent runtime failures.

- Prioritization: Rank sources by strategic value, data volume, and recency to optimize ingestion order.

Inputs and Deliverables

- Credentials and Network Details: Secure tokens, certificates, VPC/subnet info, firewall rules, and proxy settings.

- Prioritization Criteria and Glossary: Business rules ranking sources and a seed dictionary of known entities.

- Agent Configuration Profiles: Templates specifying protocols (JDBC, REST, SFTP), scanning frequency, and resource quotas.

- Consolidated Inventory: Searchable catalog of detected repositories with standardized identifiers.

- Schema Extracts and Connectivity Report: Machine-readable definitions (JSON/XML) and status summaries with remediation guidance.

- Metadata Registry: Enriched asset descriptions supporting governance and self-service discovery.

Prerequisites and Handoff Conditions

- Infrastructure Readiness: Pre-provisioned network access, approved scanning windows, and containerized or serverless agent deployment.

- Governance Policies: Classification frameworks (GDPR, CCPA), masking rules, and retention guidelines.

- Logging and Audit Trails: Centralized systems capturing connection attempts, schema changes, and anomalies.

- Stakeholder Alignment: Agreed source prioritization, maintenance windows, and escalation procedures.

- Handoff Protocols: Versioned catalogs (JSON Schema or Apache Atlas), standardized metadata formats, and connectivity validation reports.

Integration with platforms delivers adaptive connector generation, intelligent schema matching, anomaly alerts, and self-service catalog interfaces, closing the loop on metadata enrichment and source management.

Profiling and Detecting Data Anomalies

Once sources are connected, an orchestration layer triggers profiling agents to compute summary statistics—counts, min/max values, null rates, histograms—and index schema definitions in a metadata catalog. Profiling tasks execute via RESTful APIs or JDBC drivers against data lakes and warehouses, with progress and metrics streamed to a monitoring dashboard.

- Orchestration polls ingestion outputs and retrieves dataset identifiers.

- Profiling agents compute aggregates and store field definitions in the catalog.

- Anomaly detection compares current metrics to historical baselines and thresholds.

- Alerts for deviations feed into an anomaly resolution queue.

A decision engine classifies anomalies—distinguishing schema evolution from data corruption—and invokes schema-evolution agents to update connectors and rerun profiling when necessary. This automated feedback loop ensures consistent awareness of data health and readiness for cleansing.

Automated Cleansing and Standardization

Following detection, a cleansing workflow applies domain-specific rules to standardize, deduplicate, impute, and enforce data types. A centralized rule repository, accessible via API, supplies transformations that execute in stages over a distributed messaging bus.

- Rule Retrieval: Fetch format patterns, value mappings, and null-handling directives.

- Bulk Standardization: Apply global normalization—date formatting, numeric scaling, text casing.

- Inconsistency Resolution: Use record linkage algorithms to identify and merge duplicates.

- Null Handling: Impute, delete, or insert placeholders based on field criticality.

- Type Enforcement: Coerce data to target schema types, capturing conversion errors for review.

Status messages and error logs publish to a centralized event store, powering dashboards for throughput, error rates, and processing durations. When automated rules cannot resolve an anomaly, a human-in-the-loop interface alerts data stewards to review samples, update rule definitions, and resume processing.

AI-Driven Rules Enforcement and Anomaly Resolution

Detection and Classification

- Statistical Profiling Engines compute dynamic baselines and flag outliers without manual threshold tuning.

- Classification Models leveraging Great Expectations categorize anomalies—format violations, referential errors, business rule conflicts.

- Metadata-Aware Contextualization using platforms like Collibra refines classification by applying semantic context and lineage information.

- NLP Modules inspect semi-structured text for sentiment shifts and terminology inconsistencies.

Rule Generation and Refinement

- Automated Rule Synthesis analyzes historical corrections to propose new validation rules.

- Active Learning Workflows present recommended rules with confidence scores for steward approval.

- Versioned Rule Repository via Informatica Data Quality tracks rule versions, approvals, and deployments.

- Policy Alignment cross-references regulatory libraries to ensure compliance.

Automated Remediation and Validation

- Pattern-Based Corrections apply transformation templates for phone, address, and date fields.

- Predictive Imputation using H2O.ai models estimates missing values from correlated features.

- Master Data Reconciliation enforces golden records by matching duplicates against authoritative sources.

- Transactional Rollbacks and version control ensure safe reversal of corrections.

Validation agents re-run classification models, verify referential integrity, and update lineage metadata to confirm that remediations adhere to rules without introducing new anomalies.

Continuous Learning and Integration

- Expert Review Dashboards and feedback loops refine models based on steward decisions.

- Automated Retraining via Amazon SageMaker updates classification and imputation models.

- Performance Monitoring tracks precision, remediation accuracy, and false positive rates.

- Orchestration with Apache Airflow or Azure Data Factory handles scheduling, dependencies, and SLAs.

- Collaboration via Jira or ServiceNow tickets streamlines escalation of complex anomalies.

Clean Dataset Delivery and Integration Handoffs

Output Artifacts

- Schema and Formats: Clean datasets in Parquet, Avro, or relational tables with embedded definitions.

- Metadata and Quality Metrics: Completeness, uniqueness, validity, and consistency scores attached to each field.

- Lineage and Provenance: Graphs linking raw sources, applied rules, steward decisions, and outputs.

Dependency Tracking and Version Control

- Dependency Graphs visualize relationships among sources, agents, and outputs.

- Automated Change Detection triggers reprocessing on rule or connector updates.

- Semantic Versioning encodes schema evolution and quality threshold conformance.

Handoff Protocols

- Secure Storage and Access: Role-based permissions on data lakes, warehouses, or catalog-managed URIs.

- Notification Triggers: Event payloads with dataset identifiers, versions, and lineage links.

- API Access: RESTful or gRPC endpoints exposing datasets under defined contracts.

Integration with Feature Engineering and Compliance

- Metadata Catalog APIs list available datasets and quality profiles for self-service discovery.

- Orchestration Workflows in Apache Airflow sequence cleaning and feature-derivation tasks.

- Shared Storage Mounts in Databricks enable direct table access.

- Audit Logs, Digital Signatures, and Access Reviews ensure traceability and regulatory compliance.

Example Implementation Pattern

- A Trifacta pipeline transforms raw data into cleaned Parquet tables in S3.

- Trifacta emits an AWS SNS event with dataset URIs and lineage pointers.

- Apache Airflow captures the message, validates output against Git-stored schemas, and updates the data catalog.

- Feature engineering DAGs then load the validated tables for analytic feature derivation.

Summary of Deliverables

- Validated, schema-compliant datasets in production-ready repositories.

- Rich metadata and lineage documentation for auditability.

- Automated notifications and orchestration triggers for downstream stages.

- Governance controls ensuring secure, compliant data access.

- Monitoring and versioning strategies to manage dataset evolution.

Chapter 3: Feature Engineering and Enrichment

Defining Feature Objectives and Inputs

Feature engineering is the stage where cleansed data is transformed into structured variables that drive predictive models, simulations, and decision-support systems. A formal feature definition process aligns data signals with business priorities, accelerates time-to-model, and ensures governance. Clear objectives and comprehensive inputs prevent ad hoc efforts, rework, and suboptimal outcomes.

Evolution and Importance

Traditional feature creation relied on manual coding in Python or R, limiting scale across enterprises. AI-driven frameworks embedded domain heuristics and meta-learning to recommend transformations, validate new variables against quality benchmarks, and automate repetitive tasks. This shift frees data teams to focus on strategic alignment, interpretation of model outputs, and reuse of high-value features.

Primary Objectives

- Business Alignment: Map features to measurable metrics—customer lifetime value, processing time reductions—to translate model outputs into strategic insights.

- Predictive Performance: Prioritize variables that maximize accuracy, reduce error rates, or improve AUC.

- Parsimony and Scalability: Select a minimal feature set that captures maximal information and supports templating for new domains.

- Interpretability: Ensure transparent transformations and clear naming conventions for stakeholder trust.

- Operational Feasibility: Define acceptable latency and resource constraints for production generation.

Core Inputs and Prerequisites

- Cleaned and Standardized Data: Free of invalid values, duplicates, and inconsistencies.

- Metadata and Data Dictionary: Field definitions, data types, lineage, and sampling timestamps.

- Business Metric Specifications: Documented targets and KPIs guiding optimization goals.

- Domain Knowledge Artifacts: Ontologies, ER diagrams, and stakeholder heuristics for rule-based transformations.

- External Enrichment Sources: Geolocation, weather, social sentiment, or economic indices invoked via connectors.

- Transformation Libraries: Access to tools such as Featuretools, DataRobot Autopilot, H2O.ai Driverless AI, or Amazon SageMaker Autopilot.

- Compute Environment: Batch and real-time configurations with container orchestration parameters.

- Governance Guidelines: Data retention, PII handling, anonymization, and consent policies.

- Versioning and Lineage Trackers: Systems recording transformation code versions and experiment metadata.

Aligning Business Goals to Feature Strategies

- Identify top-level outcomes—revenue growth, cost reduction, fraud prevention.

- Define analytical use cases—classification, regression, anomaly detection.

- Translate outcomes into metrics—recall at set false positive rates, error thresholds.

- Host ideation workshops with domain experts to brainstorm and prioritize candidate features.

- Apply scoring matrices to rank ideas by impact, complexity, and compliance risk.

Success Criteria and Environment Preconditions

- Completeness Thresholds: Minimum non-null percentages per field.

- Freshness Windows: Latency limits for time-sensitive inputs.

- Quality Benchmarks: Statistical range checks and distribution tests.

- Scalable Infrastructure: Distributed frameworks (Spark, Dask) or serverless compute.

- Feature Store Integration: Low-latency feature serving for training and inference.

- CI/CD for Analytics: Orchestration tools (Airflow, Prefect) with Git integration.

- Monitoring and Logging: Data drift signals, processing metrics, and error tracking.

- Stakeholder Roles: Data engineers, scientists, domain experts, compliance officers, ML engineers, and architects collaborating on inputs and validations.

- Feedback Loops: Define protocols for drift detection, model explainability reports, and iterative feature refinement.

Transformation and Derivation Workflow

The transformation phase bridges raw inputs and enriched features through automated operations orchestrated by AI agents. This stage applies normalization, scaling, encoding, aggregations, and statistical derivations, integrates external data, and validates outputs against governance standards. Automation ensures consistency, reduces manual coding, and accelerates time-to-insight.

System Components and Actors

- Orchestration Engine—such as Azure Data Factory or AWS Glue—manages task sequencing and retries.

- Transformation Agent—executes AI-generated scripts or code templates.

- Metadata Store—houses definitions, lineage, and version history.

- Data Processing Cluster—scalable compute resources via Apache Spark on Databricks or AWS Glue.

- External Connectors—invoke third-party APIs and reference datasets.

- Monitoring Services—track execution metrics, anomalies, and trigger alerts.

Step-By-Step Process Flow

- Ingest Specifications: Retrieve transformation logic, source fields, aggregation windows, and target formats from the metadata store.

- Pre-Profiling: Compute summary statistics to guide algorithm selection and detect distribution shifts.

- Execute Transformations: Apply scaling, encoding, date-time extraction, and normalization with full context logging.

- Derive and Synthesize: Generate rolling averages, ratios, composite scores, and time-window aggregates.

- External Enrichment: Append demographic or environmental attributes from connectors to services like WeatherAPI or OpenWeatherMap.

- Validation: Enforce null thresholds, range constraints, and statistical consistency tests with automated rollback on failure.

- Lineage Recording: Update metadata with versioned transformation parameters, agent identifiers, and timestamps.

- Handoff: Package enriched features into feature store tables or Parquet files for modeling via APIs or data contracts.

Orchestration Patterns and Collaboration

Event-driven pipelines trigger transformations upon data arrival, while scheduled jobs ensure periodic updates. Hybrid architectures combine real-time message queues with batch frameworks. Human analysts define parameters, review derivation rules via interactive interfaces, and provide annotations that AI agents use to refine future transformations.

Tooling Considerations

- Compute Scalability—distributed engines like Spark or serverless models for elasticity.

- Feature Store Integration—platforms such as Featuretools to manage and serve features.

- Security and Governance—access controls, encryption, and audit logging.

- Extensibility—plug-in architectures for custom transformations.

- Monitoring and Alerting—central dashboards for drift, latency, and errors.

Agent Strategies for Automated Feature Creation and Enrichment

AI agents accelerate feature discovery, transformation, and enrichment by leveraging meta-learning, statistical analysis, and external data integration. Centralized governance systems enforce policies, naming conventions, and lineage tracking, ensuring transparency and compliance.

Key Agent Roles and Interfaces

- Discovery Agents—statistical profiling, correlation analysis, pattern extraction, and schema exploration to propose candidates.

- Transformation Agents—apply mathematical, encoding, aggregation, and extraction operations in the most efficient execution environment.

- Enrichment Agents—ingest third-party data via dynamic connectors, align entities, synchronize temporally, and validate quality.

- Evaluation Agents—rank features using mutual information, chi-square, SHAP values, permutation importance, and cross-validation.

- Meta-Learning Agents—match current dataset meta-features to historical experiments, reuse proven pipelines, and update recommendations.

- Governance Systems—feature catalogs and metadata stores for lineage, naming, quality annotations, and access controls.

- Orchestration Platform—coordinates dependencies, scheduling, error handling, and resource allocation.

Continuous Feedback and Refinement

- Monitor model performance for feature-specific drift and trigger re-engineering workflows.

- Automate retraining when drift thresholds are exceeded.

- Capture domain expert feedback on feature utility to inform discovery agents.

- Maintain version control of feature pipelines and enable rollbacks.

By orchestrating these agents with governance systems, organizations build an automated, scalable, and auditable feature creation process that feeds directly into predictive modeling.

Packaging Enriched Feature Sets and Dependencies

Concluding feature engineering, enriched feature sets must be packaged, documented, and handed off with clear dependency information. Standardized deliverables and protocols ensure reproducibility, traceability, and seamless integration with modeling pipelines.

Output Artifacts

- Feature Matrix—tabular dataset aligned to primary keys or timestamps.

- Schema Definition—machine-readable descriptor (JSON Schema, Avro) of names, types, and ranges.

- Feature Dictionary—human-readable metadata with business definitions, logic, and units.

- Lineage Logs—records of source data, version identifiers, and transformation scripts.

- Pipeline Specifications—DAG configurations or job manifests.

- Dataset Snapshot—semantic version tag or timestamped marker for rollback and comparison.

Dependency Management

- Source Data References—connection details, schema versions, and extraction timestamps.

- Transformation Code Links—repository commits, notebook versions, or script archives.

- Parameter Records—stored thresholds, window lengths, and aggregation rules.

- Lineage Graphs—visual or machine-readable maps of raw data through transformations, supported by tools like Featuretools.

Packaging and Deployment

- Modular Archives—versioned ZIP or TAR bundles of matrices, schemas, and documentation.

- Containerization—Docker images encapsulating computation code for consistent environments.

- Semantic Versioning—MAJOR.MINOR.PATCH for breaking changes, additions, and metadata updates.

- Virtual Views—materialized or virtual tables in the data warehouse for direct querying by modeling agents.

Feature Store Integration

- Automated Ingestion—CI/CD pipelines pushing artifacts via APIs in platforms such as H2O.ai Driverless AI and DataRobot.

- Metadata Synchronization—programmatic upload of descriptions, lineage, and ownership.

- Access Controls—role-based permissions for reading approved versions and writing rights for governance teams.

- Discovery Interfaces—searchable catalogs promoting reuse and consistency across projects.

Handoff Protocols and Governance

- API Endpoints—RESTful or gRPC services for retrieving feature sets by version, supporting filtering and metadata queries.

- Manifest Files—YAML or JSON manifests enumerating available versions, locations, and parameters.

- Event Notifications—messages via Kafka or SNS triggering modeling jobs on feature publication.

- Approval Gates—sign-off steps for data stewards before production release.

- Audit Trails—immutable logs of publish, update, and rollback actions with user identity and timestamps.

- Regulatory Tags—sensitivity classifications and retention policies embedded in metadata.

- Notification Services—automated alerts via email, Slack, or Teams for stakeholders.

Best Practices

- Standardize names with clear prefixes or suffixes for domain, units, and aggregation levels.

- Document transformation logic with both human-readable descriptions and code references.

- Version early and often to simplify rollback and parallel testing.

- Enforce automated validation in CI pipelines before publication.

- Foster feature reuse via searchable catalogs and cross-team review sessions.

Adherence to these practices ensures analytical rigor, accelerates model development, and sustains enterprise-wide collaboration and compliance.

Chapter 4: Exploratory Data Analysis

Objectives and Prerequisites of Exploratory Visualization

The exploratory visualization stage transforms cleansed and enriched datasets into intuitive visual representations that reveal patterns, distributions, and anomalies. By converting complex numerical outputs into charts, graphs, and interactive dashboards, this stage empowers analysts and stakeholders to validate assumptions, uncover latent relationships, and align findings with business objectives. Visualization agents leverage machine learning and pattern-recognition algorithms to automate the generation of summaries, accelerating insight delivery and reducing manual effort.

Key aims include identifying outliers that may indicate data quality issues or emerging trends, mapping the trajectory of performance indicators over time, and guiding hypothesis refinement. In regulated industries such as finance, healthcare, and manufacturing, rigorous exploratory analysis ensures transparency, auditability, and compliance with standards. Dynamic real-time visual feedback on streaming data feeds supports both batch and live scenarios, enabling alerts on threshold breaches like spikes in customer churn or inventory anomalies.

Well-structured visualizations annotated with metadata become the foundation for downstream reporting and narrative generation. Standardizing outputs with tags for chart type, data source, variable definitions, and agent version ensures seamless handoff to narrative engines and reporting interfaces.

Required Inputs

- Cleansed and enriched dataset with standardized schemas and feature definitions

- Metadata repository detailing variable descriptions, data lineage, and quality scores

- List of analytical hypotheses, business metrics, and key performance indicators

- Visualization templates or style guides aligned to corporate branding and accessibility standards

- User persona profiles defining access levels, analytical roles, and preferred formats

- Domain reference materials such as taxonomies, glossaries, and regulatory requirements

- Configuration files for AI visualization agents specifying algorithm parameters and update frequencies

Technical Preconditions

- Access to a scalable visualization platform (for example, Tableau or Microsoft Power BI)

- Compute resources—CPU, memory, GPU—sized for interactive graphics and large datasets

- Connectivity to data warehouses, lakes, or real-time message queues

- APIs and SDKs configured for AI agents to read, write, and update dashboards

- Authentication and authorization mechanisms—OAuth tokens, LDAP integration—to control access

- Logging and monitoring infrastructure capturing agent performance and errors

- Version control and change management protocols governing dashboard updates

Dashboards and Pattern Discovery Workflow

The dashboards and pattern discovery workflow orchestrates AI-driven agents, visualization engines, and metadata repositories to produce dynamic, context-aware dashboards. It surfaces correlations, clusters, anomalies, and trends through interactive panels, guiding users toward actionable hypotheses. Central to the workflow are entry triggers, orchestration logic, pattern detection, interactive refinement, and handoff artifacts.

Workflow Entry and Initialization

The process begins when a supervisory orchestration agent publishes an event upon delivery of an enriched feature dataset and completion of pattern detection. This event enters a bus like Kafka or AWS EventBridge. A visualization orchestrator agent retrieves dataset metadata from a catalog such as Apache Atlas or Microsoft Purview, establishes a secure connection to the data lake or warehouse, and performs schema validation, data sampling, template selection, and resource allocation via platforms like Tableau or Power BI.

Pattern Detection Routines

Specialized AI-driven agents execute automated routines to detect statistically significant relationships:

- Correlation Analysis: Pairwise Pearson or Spearman computations visualized as heatmaps

- Clustering Identification: Unsupervised algorithms such as K-Means or DBSCAN via DataRobot

- Anomaly Detection: Isolation forests or autoencoder models flagging outliers in high dimensions

- Trend Extraction: Time series decomposition isolating seasonality, trend, and residuals

Results feed back into the dashboard engine via APIs or direct database writes, enabling real-time annotation of heatmaps, scatter plots, and geo-maps.

Interactive Refinement Loop

Analysts interact with dashboards to refine insights. Actions include drill-downs for deeper slices, parameter adjustments for clustering or correlation thresholds, annotation for audit trails, and visualization swaps. A user interaction agent captures events that trigger targeted sub-workflows such as new data fetches or pattern re-computations. The orchestration agent maintains session state for rollback or variant comparisons.

Automated Iterative Enhancement

An adaptive recommendation agent applies reinforcement learning to propose dashboard refinements. It logs actions and outcomes, assigns rewards based on engagement and model performance uplift, updates its policy, and delivers context-aware prompts within the UI. This closed-loop ensures that dashboards evolve with analyst preferences and data characteristics.

Error Handling and Observability

A logging agent collects API latencies, compute utilization, and data anomalies. In case of failures, the system retries operations with exponential backoff and escalates alerts via channels like Slack or Microsoft Teams. Audit logs in a central observability platform support root cause analysis and governance compliance.

Handoff Artifacts and Best Practices

Upon sign-off, final dashboards, annotated metadata, and interaction logs are packaged for downstream teams. Deliverables include:

- Dashboard definition files capturing layout, data sources, and settings

- Pattern metadata records listing correlation pairs, cluster IDs, and anomaly scores

- Exploration session reports with time-sequenced logs of user actions and recommendations

These artifacts are checkpointed in version control and registered with lineage systems, ensuring modeling agents can retrieve precise contexts for feature selection and algorithm training. Best practices include maintaining modular template libraries, metadata-driven orchestration, user-centric feedback loops, and scalable infrastructure.

Capabilities of AI-Driven Visualization and Pattern Recognition Agents

AI-driven visualization agents automate the transformation of datasets into actionable graphical summaries. Pattern recognition agents specialize in extracting higher-order structures. Together, they accelerate insight generation and reduce cognitive load.

- Automated Correlation Analysis: Agents compute Pearson, Spearman, and non-parametric correlations, rendering heatmaps where color gradients reflect association strength.

- Dynamic Clustering and Segmentation: Unsupervised algorithms such as K-Means, DBSCAN, t-SNE, or UMAP group observations and project clusters onto scatter diagrams or multidimensional plots.

- Anomaly and Outlier Detection: Density estimation, isolation forests, and autoencoder models flag deviations, overlaid as highlights on time-series or scatter visuals.

- Contextual Annotation: Natural language modules generate tooltips and summaries, explaining spikes or shifts by correlating external events or related metrics.

- Interactive Drill-Down: Users click chart segments to spawn focused sub-visualizations without manual query building.

- Adaptive Visualization Selection: Agents choose optimal chart types—histogram, box plot, network diagram—based on metadata and analysis objectives.

Pattern Recognition Agents in Practice

- Temporal Pattern Agent: Spectral analysis and change point detection annotate cycles, seasonality, and regime shifts on series plots.

- Spatial Correlation Agent: Metrics like Moran’s I and Getis-Ord Gi\* overlay clusters on choropleth maps for geospatial insights.

- Multivariate Interaction Agent: Partial dependence plots and SHAP interactions reveal joint feature influences on target metrics.

- Trend Extrapolation Agent: Regression models with confidence intervals project future scenarios on line charts.

- Graph and Network Analysis Agent: Force-directed layouts and community detection visualize relational data, highlighting central hubs and connectors.

Supporting Infrastructure Roles

- High-Performance Compute Cluster: GPU-accelerated and distributed nodes power real-time analytics with frameworks like Apache Spark.

- Data Access and Caching Layer: In-memory caches or columnar stores such as Apache Parquet enable sub-second retrieval of slices and aggregates.

- Visualization Libraries and Dashboards: Front-end frameworks like Plotly, D3.js, and platforms such as Microsoft Power BI or Tableau render interactive visuals.

- Message Bus and Event Streaming: Apache Kafka or AWS Kinesis ensure dashboards reflect current data via event-driven refreshes.

- Model Registry and Metadata Store: Central registries version models and templates, enforcing governance and lineage.

- API Gateway and Access Control: Role-based permissions secure agent endpoints for third-party integration.

- Orchestration Engine: Managers such as Apache Airflow or Prefect coordinate data retrieval, inference, and updates with retry logic and logging.

Integration Strategies

- Embedded Agent Widgets: Self-contained widgets embed visualization and pattern logic within BI tools or web portals.

- API-First Microservices: RESTful or gRPC APIs expose agent functionality for custom front ends and cross-unit reuse.

- Event-Driven Trigger Models: Agents subscribe to data change events or schedules, refreshing visuals and alerting stakeholders on emerging patterns.

Insight Reports and Analysis Handoffs

At the conclusion of exploratory analysis, structured insight reports and formal handoffs ensure seamless transition to predictive modeling. Outputs translate patterns into deliverables that support reproducibility, stakeholder alignment, and governance.

- Interactive dashboards and visualizations

- Static summary reports and documentation

- Annotated analysis notebooks

- Derived data artifacts and metadata packages

Interactive dashboards, built on Tableau or Power BI, allow parameterized exploration of correlation matrices, distribution plots, and time series analyses. Static reports synthesize key findings into paginated PDFs or HTML documents, ensuring offline reference. Analysis notebooks in Jupyter or Databricks combine code, visuals, and narrative for full transparency and reproducibility. Derived artifacts—filtered datasets, correlation matrices, and summary tables—are delivered in Parquet or CSV alongside JSON schemas.

Handoffs rely on precise dependencies:

- Versioned cleansed datasets from data preparation

- Feature schemas and data dictionaries from profiling

- Business glossaries from a data catalog

- Environment configurations for compute and visualization libraries

- User feedback from interactive review sessions

Metadata tags—source system identifiers, lineage, and quality metrics—accompany each artifact. Integration with a governance platform such as Alation ensures discoverable lineage and provenance.

Common handoff mechanisms include:

- Artifact repositories or document management systems with access controls and version histories

- Automated BI platform deployments publishing dashboards to shared workspaces

- Data catalog registrations ingesting datasets and metadata for programmatic discovery

- Event streams triggering downstream workflows and notifications upon artifact publication

Technical protocols underpinning handoffs include:

- RESTful API endpoints on data services for on-demand retrieval

- Secure file shares or cloud buckets with IAM policies

- Automated workflows orchestrated by Dagster or Apache Airflow

- CI/CD pipelines versioning notebooks and reports for analytics environments

Best practices for report and handoff management:

- Standardized naming conventions encoding project IDs, data versions, and artifact types

- Metadata headers documenting authoring agent, timestamps, and data sources

- Semantic tags linking metrics to business objectives in the governance catalog

- Strict version control enabling rollbacks

- Automated validation scripts for metadata completeness and schema conformity

A typical modeling intake package includes direct links to dashboards, downloadable reports, notebook repository URLs, dataset locations, and a metadata manifest. Integration with tools like MLflow allows data scientists to trace model performance back to specific exploratory insights. Governance demands audit logs recording artifact access, validation checks, and agent-orchestrated publishing. Containerized environments or notebook manifests capture package versions and compute configurations. A feedback loop via BI comments or project tickets ensures continuous dialogue between exploratory and modeling teams, refining analyses and enhancing collaboration.

Chapter 5: Predictive Modeling Orchestration

Modeling Objectives and Strategic Alignment

The predictive modeling stage transforms curated feature datasets into actionable insights, driving forecasts, classifications, and recommendations at enterprise scale. Defining clear objectives aligns algorithm selection, training processes, and evaluation metrics with business imperatives—whether inventory optimization, risk scoring, or customer churn prediction. Articulating target outcomes and acceptable error thresholds ensures that automated orchestration agents configure workflows to meet performance, interpretability, and compliance requirements. Embedding strategic goals into the modeling blueprint guides resource allocation decisions, balancing compute intensity, inference latency, and operational agility to maximize business impact.

Inputs and Operational Prerequisites

Automated modeling relies on a comprehensive set of inputs and conditions to ensure reproducibility, governance, and technical readiness.

Feature and Label Definitions

Agents retrieve versioned feature matrices from centralized feature stores, with clear documentation of transformation logic, source fields, and update cadences to support consistency across training and inference. Target labels—binary classes, multi-class tags, or numeric values—must be precisely defined to prevent data leakage. Orchestration systems validate label completeness, temporal alignment with feature windows, and distribution stability to ensure effective generalization.

Metadata, Lineage, and Historical Baselines

Rich metadata captures dataset versions, ingestion timestamps, data quality metrics, and transformation histories, enabling audit trails and impact analysis. Lineage information links each feature back to its origin, satisfying governance and regulatory requirements. Baseline performance metrics from previous cycles—logged by frameworks such as scikit-learn, TensorFlow, or PyTorch—provide reference points for evaluating candidate models and preventing regressions.

Infrastructure, Access Controls, and Quality Gates

Orchestrators integrate with compute platforms—on-premise Kubernetes clusters and cloud services like Azure Machine Learning, Amazon SageMaker, and Google Vertex AI—to provision CPU, GPU, or accelerator resources. Automated data quality checks gate datasets against missing values, distribution shifts, and schema changes. Secure authentication and least-privilege policies ensure agents access only approved datasets and encrypted artifact repositories, with audit logs supporting SOC2 and GDPR compliance.

Automated Algorithm Selection and Training Workflow

A mature analytics workflow orchestrates algorithm evaluation, hyperparameter optimization, and model evaluation through coordinated AI agents and orchestration layers. This flow accelerates time-to-model while enforcing consistent criteria and governance controls.

Experiment Initialization

The orchestration engine aggregates cleaned feature sets, metadata, and modeling objectives from the project configuration repository. Through an API call, the Experiment Manager initiates a session in an experiment tracking platform such as MLflow, performing actions that include:

- Registering experiment parameters and artifacts

- Allocating unique identifiers for result aggregation

- Establishing communication channels between compute nodes and the tracking system

- Validating input schema against model requirements

Algorithm Candidate Generation and Ranking

The Algorithm Selection agent consults an algorithm catalog—spanning linear models, random forests, gradient boosting, deep neural networks, and ensemble frameworks—and uses historical performance logs to prioritize candidates. It adapts selections based on data characteristics such as dimensionality and class imbalance, ranking algorithms by estimated resource consumption, training time, and predictive potential. This ranking informs compute scheduling and shapes hyperparameter search strategies.

Hyperparameter Optimization and Resource Allocation

Once candidates are chosen, the Hyperparameter Tuning agent defines search spaces and budgets, leveraging methods like grid search, Bayesian optimization, or AutoML solutions such as DataRobot and H2O.ai. The Resource Manager negotiates with the compute orchestration layer—powered by Kubernetes or Apache Airflow—to provision training environments, schedule containerized jobs, and monitor resource budgets. Coordination steps include:

- Defining parameter spaces and evaluation budgets

- Requesting compute instances via cluster APIs

- Packaging jobs into Docker containers with pre-installed dependencies

- Publishing job status updates to messaging systems such as Apache Kafka

Training Execution and Monitoring

The Training Execution agent oversees job health, streams loss and accuracy metrics to the Experiment Manager, and integrates with monitoring tools—Prometheus for system metrics and TensorBoard for model statistics. It enforces early stopping criteria, retries failed tasks, and archives model checkpoints at defined intervals. In preemptible environments, checkpoints are restored automatically to minimize progress loss.

Experiment Tracking and Metadata Management

The Metadata Manager captures hyperparameter values, convergence metrics, dataset versions, and code commit identifiers in the tracking system. Consistent tagging enables traceability from input data to trained artifacts, supports comparative analysis, and fulfills audit requirements. Metadata APIs ensure downstream stages access experiment details seamlessly.

Result Evaluation and Model Selection

Upon training completion, the Evaluation agent aggregates metrics—AUC, F1 score, MAE, inference latency—and applies predefined thresholds to filter top performers. Approved models are registered in the Model Registry, and detailed performance reports are published to reporting dashboards. Notifications trigger the Model Validation stage, ensuring only rigorously evaluated artifacts advance.

Scalability and Parallelization Strategies

To meet enterprise demands, the Parallelization agent fragments hyperparameter searches and model configurations, distributing tasks across multi-node clusters. Data sharding and vectorized processing accelerate throughput, while cloud autoscaling adjusts cluster size based on queue depth. This elastic resource management maximizes cost-performance efficiency without manual intervention.

Key Integration Points

- API endpoints for feature store retrieval

- MLflow SDK for real-time logging

- Message bus topics for job status notifications

- Compute cluster APIs for resource provisioning

- Model Registry interfaces for artifact publishing

Machine Learning Agents and System Roles

Machine learning agents automate the end-to-end model building lifecycle, coordinating training orchestration, resource management, experiment logging, and candidate recommendation. By abstracting infrastructure management, these agents allow data scientists to focus on hypothesis testing and model interpretability.

Training Orchestration Agents

Training orchestration agents translate high-level specifications into executable pipelines, provisioning environments on platforms such as AWS SageMaker and Google Vertex AI. They schedule distributed training tasks, monitor resource utilization, and handle failures or preemptions, retrying or migrating tasks to maintain throughput.

Experiment Tracking Agents

Experiment tracking agents log metadata—hyperparameters, performance curves, dataset versions, code commits—into systems like MLflow or internal stores. Unique run identifiers, real-time metric archiving, and artifact storage enable reproducible research and support regulatory audits.

Automated Model Selection Agents

Selection agents rank trained models using composite scoring functions aligned with business objectives. Advanced implementations leverage meta-learning to recommend promising hyperparameter regions based on historical data, accelerating convergence and reducing compute waste.

Resource Allocation and Parallelism Agents

These agents assess workload characteristics—data size, algorithm complexity—and select appropriate execution modes, integrating with Kubernetes to launch isolated training pods. Auto-scaling policies adjust resources based on queue depth, delivering elastic capacity in response to demand.

Integration with Validation Pipelines

Upon selection, agents package model artifacts—serialized weights, preprocessing code, environment specifications—and publish manifest files describing dependencies and performance. Downstream validation agents detect new models via metadata catalogs, triggering cross-validation, bias assessments, and compliance checks. This end-to-end traceability preserves consistency from data preparation to validated production models.

Model Outputs, Packaging, and Handoff Protocols

At the conclusion of modeling, AI agents produce deliverables—model binaries, configuration files, performance metrics, and metadata—that underpin validation and deployment.

Artifact Types and Formats

- Serialized Models: Python pickle, TensorFlow SavedModel

- Interchange Formats: ONNX, scikit-learn joblib

- Container Images: Docker bundles encapsulating runtimes

- Configuration Manifests: YAML or JSON detailing hyperparameters and feature mappings

- Feature Pipelines: Serialized transformers or scripts capturing preprocessing logic

Metadata, Lineage, and Dependency Management

- Data References: URIs to raw and processed datasets

- Version Tags: Semantic versions, commit hashes, build numbers

- Execution Logs: Structured logs of agent actions and resource metrics

- Performance Summaries: Tables of accuracy, precision, recall, and latency

- Environment Specs: Conda or pip requirements.txt and Dockerfiles

Packaging Standards and Registry Integration

Agents adhere to model exchange protocols—PMML for statistical models, ONNX for cross-framework interoperability, and MLflow Model Format for centralized management. Artifacts are registered in model registries—MLflow Model Registry, Kubeflow Metadata, or enterprise repositories—with stage tags, access policies, and governance metadata.

Handoff to Validation and Deployment

- API Transfers: RESTful endpoints accept model bundles and return validation job IDs

- Message Queues: Notifications via Kafka or RabbitMQ trigger validation workflows

- Storage Watches: Object storage events initiate validation pipelines

- Orchestration Hooks: Kubeflow Pipelines or Airflow tasks ensure seamless stage transitions

Notification, Governance, and Security

- Automated Alerts: Summaries posted to Slack or Microsoft Teams with artifact links

- Dashboard Updates: Real-time modeling progress and pending validations

- Version Control: Git tags, branches, and pull request identifiers linked to artifacts

- Security Scans: Container vulnerability assessments and data privacy checks

- Audit Trails: Immutable logs recording agent actions and approvals

Through rigorous definition of objectives, curated inputs, automated orchestration, and disciplined artifact management, organizations establish a high-velocity, reproducible modeling pipeline. This integrated ecosystem of AI-driven agents and orchestration services accelerates predictive insights while upholding governance, compliance, and operational excellence.

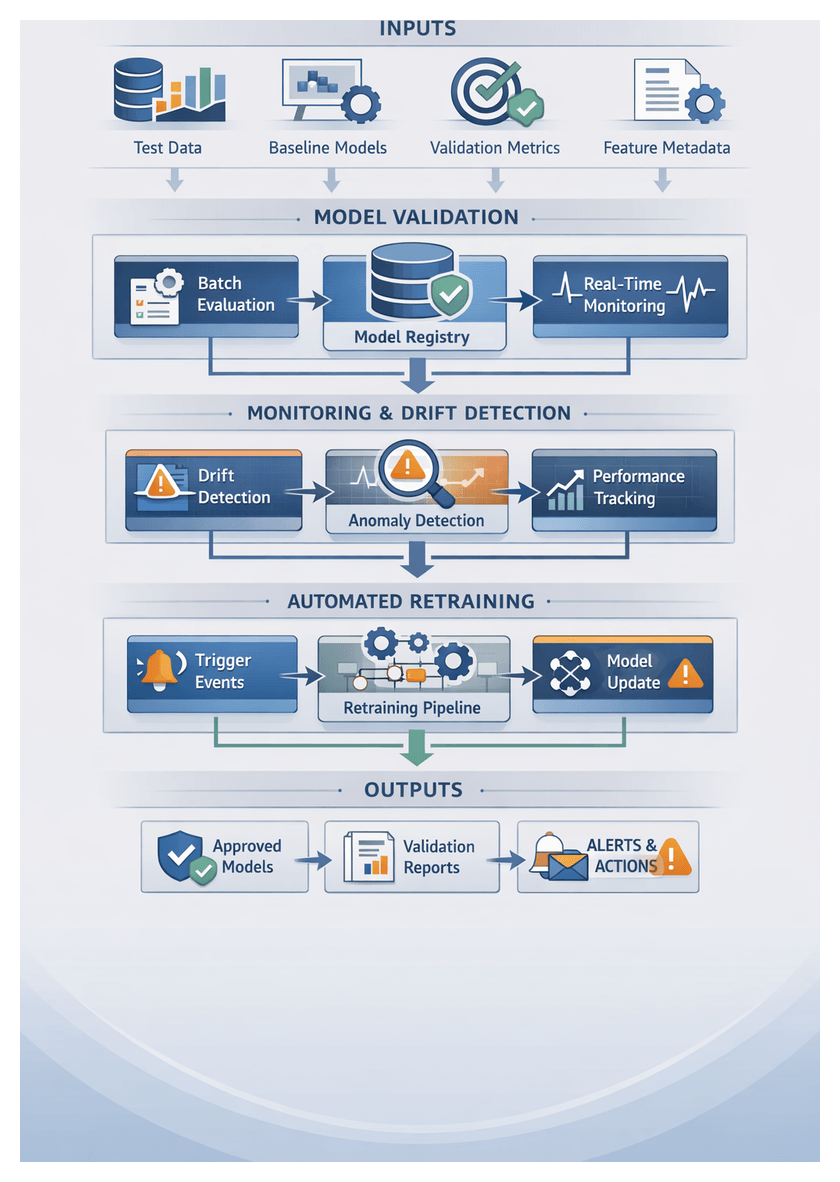

Chapter 6: Model Validation and Monitoring

Purpose of Model Validation

The validation stage serves as the critical checkpoint between model training and deployment, confirming that predictive models meet predefined performance criteria and behave reliably on unseen data. By evaluating outputs against real-world benchmarks, organizations mitigate risks related to overfitting, data drift, and unintended bias. Validation transforms a model from a black-box artifact into a production-ready asset with documented reliability metrics and governance controls.

In regulated industries such as finance, healthcare, and telecommunications, thorough validation provides evidence to satisfy compliance requirements. In dynamic markets, it ensures models maintain forecast accuracy as conditions evolve. Across all sectors, robust validation underpins stakeholder confidence, reduces costly errors, and establishes a foundation for continuous improvement.

Key Inputs and Prerequisites

Effective validation relies on clearly defined inputs and a stable environment. These include:

- Test Datasets: Hold-out data that mirrors production distributions, including seasonality and rare events.

- Validation Metrics: Core measures—accuracy, precision, recall, AUC-ROC, mean absolute error—or domain-specific KPIs with defined acceptance thresholds.

- Baseline Models: Reference systems or rule-based models for comparative performance evaluation.

- Feature Metadata: Documentation of data sources, transformation pipelines, and quality indicators to support reproducibility.

- Operational Constraints: Latency budgets, memory limits, and throughput targets aligned with service-level agreements.

- Versioning and Registry: Centralized tracking of dataset versions, metric evaluations, and model artifacts via MLflow or TensorFlow Extended.

Before validation begins, teams must ensure:

- Data Quality Assurance: Profiling to confirm completeness, correct labeling, and absence of systemic errors.

- Environment Parity: Replication of production infrastructure using platforms like AWS SageMaker or Azure Machine Learning.

- Access Controls and Governance: Role-based permissions, audit logs, and approval workflows for threshold changes and dataset modifications.

- Baseline Benchmarking: Agreed performance metrics and edge-case scenarios documented and approved by stakeholders.

- Monitoring Infrastructure: Logging frameworks and agents configured to capture inference logs, resource usage, and error rates during validation runs.

- Automated Orchestration: Integration of validation tasks into pipelines managed by Apache Airflow or Kubeflow Pipelines.

Validation Workflow and System Architecture

The validation workflow comprises batch validation, real-time monitoring, and feedback integration. Models are first evaluated against hold-out datasets and KPIs. Approved models are registered in a central repository, while monitoring agents track live performance to detect drift. Deviations trigger alerts and retraining workflows, ensuring only validated models remain in production.

System interactions span data lakes or feature stores, model registries, metrics stores, streaming platforms, and observability tools. A typical data pipeline:

- Batch Evaluation Pipeline: Ingests test datasets from a feature store, retrieves model artifacts from MLflow, computes metrics, and writes results to Prometheus or an enterprise warehouse.

- Real-Time Ingestion: Uses Apache Kafka to stream production data. Monitoring agents compute statistical summaries and push them to time-series databases for trend analysis.

- Observability Integration: Correlates inference logs, container metrics, and infrastructure telemetry with platforms like Datadog and Grafana.

- Alerting: Configured to notify stakeholders via email, Slack, or ServiceNow when thresholds are breached.

Batch Validation Sequence

- Schedule Evaluation: Triggered by a cron job or detection of new model versions in the registry.

- Retrieve Test Data: Fetch hold-out datasets and labels from the feature store.

- Load Model: Download the candidate model via the registry API.

- Compute Metrics: Execute evaluation routines for classification or regression KPIs.

- Compare Thresholds: Validate metrics against predefined benchmarks stored in a configuration repository.

- Update Registry: Mark the model as approved or rejected and record the validation report.

Failures notify data science and engineering teams with detailed reports, guiding corrective actions.

Real-Time Monitoring and Drift Detection

- Stream Ingestion: Drift Detection Agent subscribes to Kafka topics or cloud streaming services.

- Compute Drift Metrics: Continuously calculate population stability index, Kullback–Leibler divergence, or other measures.

- Evaluate Alerts: Compare metrics against sensitivity thresholds in the monitoring configuration.

- Trigger Events: Publish drift or anomaly events to the message bus.

- Initiate Investigation: Alerting Agent logs incidents in ServiceNow or Jira, enriches tickets with logs and performance trends.

AI-Driven Monitoring Agents and Automated Retraining

Specialized monitoring agents and trigger systems ensure models maintain accuracy and relevance. These components automate detection of performance degradation and initiation of retraining workflows, reducing manual oversight.

- Statistical Drift Detection: Methods such as PSI and Kolmogorov-Smirnov tests identify feature distribution shifts.

- Performance Alerts: Track accuracy, precision, recall, and AUC to trigger notifications when metrics fall below thresholds.

- Anomaly Classification: Unsupervised learning distinguishes normal variability from significant deviations.

- Adaptive Thresholding: Reinforcement learning or Bayesian optimization adjusts alert levels based on historical trends.

- Resource Monitoring: Observe compute, memory, and I/O usage to maintain service-level objectives.

Agents may leverage Amazon SageMaker Model Monitor, Kubeflow Pipelines modules, Prometheus collectors with Grafana dashboards, or DataDog anomaly detection rules.

When drift or degradation is detected, automated retraining triggers initiate end-to-end pipelines. Key components include:

- Trigger Evaluation Service: A decision engine that applies business rules and retraining policies.

- Pipeline Orchestration: Hooks into Apache Airflow or AWS SageMaker Pipelines to launch data ingestion, feature engineering, training, and validation jobs.

- Configuration Management: Dynamic injection of hyperparameters, dataset references, and version identifiers for reproducibility.

- Version Control Coordination: Interaction with MLflow or Git repositories to archive artifacts and tag new model versions.

- Automated Test Gates: Data quality checks, performance benchmarks, and bias detection integrated into the retraining workflow.

Platforms like Neptune.ai and Weights & Biases can fire webhooks to rerun pipelines when monitored metrics drop below thresholds.

Integration with MLOps infrastructure involves:

- Event Bus Architecture: Apache Kafka or AWS EventBridge to publish alerts and retraining commands.

- Service Mesh: Istio or Linkerd for secure communication among microservices.

- Unified Metadata Store: MLflow Tracking or AWS Glue Data Catalog for audit trails.

- RBAC: Kubernetes or cloud IAM permissions to control agent actions.

These components form a closed feedback loop, enabling continuous data collection, incremental model updates, performance benchmarking, and automated documentation generation. Over time, meta-learning processes refine monitoring configurations and feature engineering strategies.

Operational Best Practices:

- Define Acceptance Criteria: Document quantitative benchmarks for drift, latency, and resource usage.

- Human-in-the-Loop Oversight: Include review steps for critical retraining events.

- Alert Prioritization: Configure multi-channel notifications for timely response.

- Audit Trail Preservation: Store logs of monitoring events and retraining workflows in immutable storage.

- Scalability Testing: Simulate peak loads to validate pipeline reliability.

- Security and Privacy: Encrypt data, anonymize sensitive features, and enforce access controls.

Validation Reporting and Feedback Handoffs

Validation produces structured deliverables and handoffs that drive continuous improvement across teams and processes. Key report artifacts include: