Orchestrating AI Agents for Creative and Content Workflows

Introduction

Stage Objectives and Scope

The foundational stage establishes the strategic rationale for an AI-driven content orchestration framework. Clear objectives, such as reducing manual handoffs, ensuring a consistent brand voice, and enabling scalable production, align creative, marketing, and technology teams around shared outcomes. By mapping high-level deliverables and performance targets, stakeholders turn fragmented operations into a single pipeline that uses AI agents for ideation, drafting, review, optimization, and distribution. Agreeing on these objectives up front prevents conflicting expectations and sets the course for faster time-to-market, stronger engagement, and measurable return on investment.

Essential Inputs and Prerequisites

Successful implementation depends on grounding the framework in real organizational context. Core inputs and conditions include:

- Business strategy documentation: annual plans, campaign charters, and key performance indicators.

- Audience research data: persona profiles, engagement metrics, and channel preferences.

- Content inventories and brand guidelines: style guides, tone-of-voice specifications, and current assets.

- Process maps: flowcharts of existing workflows, resource assignments, and approval cycles.

- Baseline performance metrics: production speed, quality scores, and distribution reach.

- Technology inventory: content management systems, collaboration platforms, and AI or automation tools.

- Governance structures: executive sponsorship and decision-making protocols.

These prerequisites ensure data accessibility, cross-functional commitment, and an agreed governance model. Consolidating disparate sources into a unified intake stream and convening kick-off workshops with marketing leaders, creative directors, technology architects, and external partners establish the stakeholder alignment necessary to validate inputs and objectives. Training programs on prompt engineering, review protocols, and feedback mechanisms foster a collaborative culture that views AI agents as partners rather than replacements.

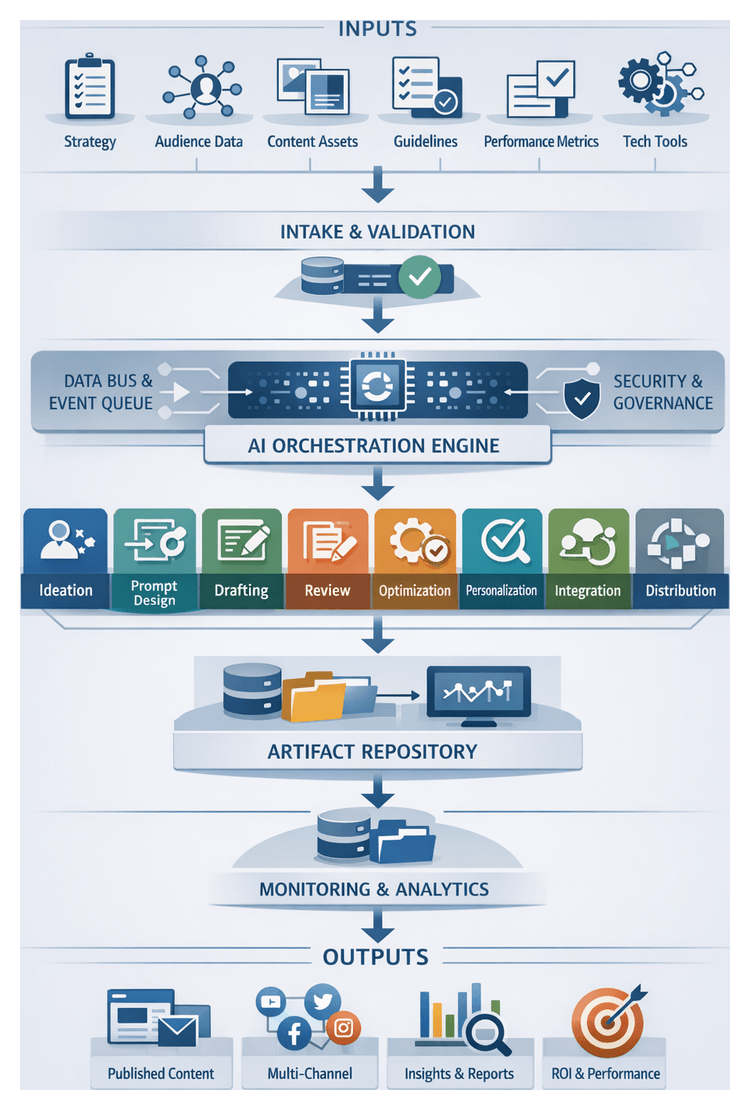

AI Orchestration Framework Overview

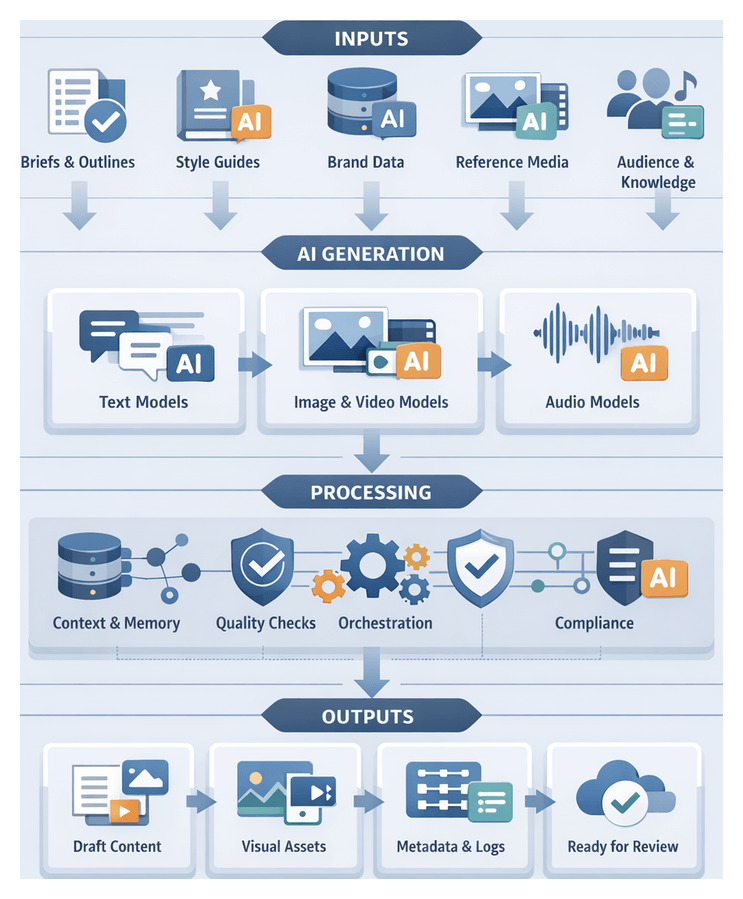

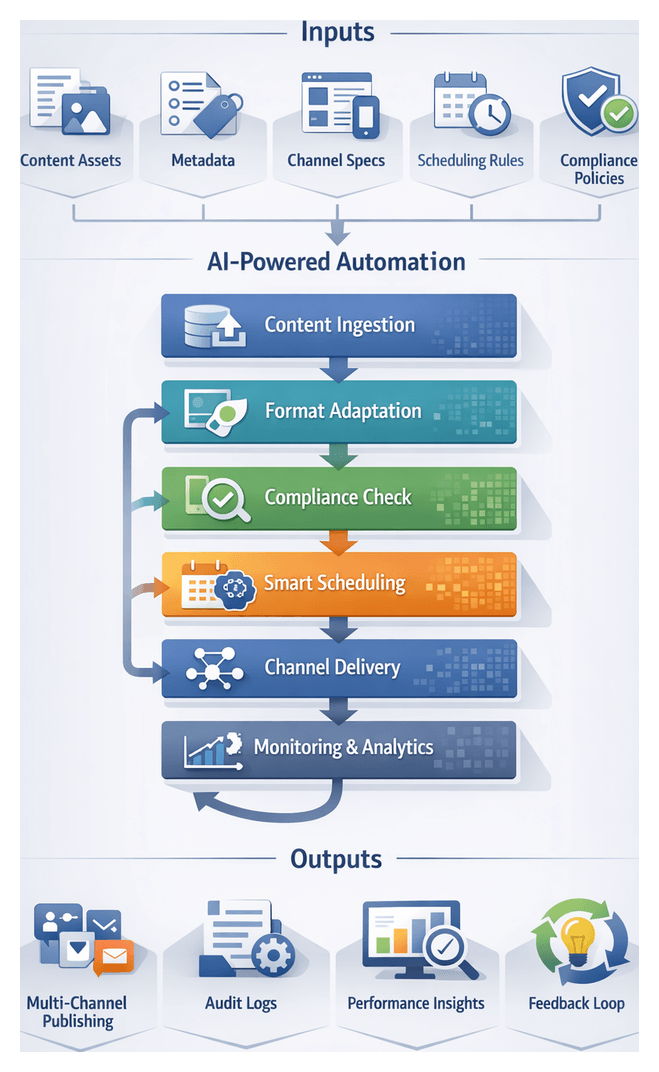

An AI orchestration framework transforms a collection of specialized tools and manual tasks into an automated pipeline with standardized stages, interfaces, and event-driven triggers. It consists of the following core components:

- Intake Layer captures requirements, source assets, audience profiles, and brand guidelines, validating and normalizing data for downstream consumption.

- Orchestration Engine schedules tasks, routes messages between agents, tracks state transitions, and enforces dependencies. Platforms such as AgentLink AI offer API-first orchestration capabilities.

- AI Agent Suite comprises specialized agents for ideation, drafting, review, optimization, personalization, integration, distribution, and analytics.

- Data Bus and Event Queue facilitate asynchronous communication, decoupling producers and consumers while ensuring reliable delivery of events.

- Artifact Repository stores inputs, intermediate deliverables, and final assets with versioning, metadata tags, and audit logs.

- Monitoring and Logging Module collects metrics, error logs, and usage data, providing dashboards and alerts for operational visibility.

- Security and Governance Layer enforces access controls, data encryption, compliance checks, and audit trails.

In this architecture, the orchestration engine listens for events on the data bus, invokes AI agents via well-defined APIs, and manages coordination through stateful workflows, bulk processing, and callback integrations. This design balances synchronous and asynchronous interactions, enabling parallel execution where dependencies allow and serial progression where handoffs require validation.
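
The event-driven coordination described above can be illustrated with a minimal, in-memory sketch. It is not the API of any particular platform: the `Orchestrator` class, the event names (`brief.validated`, `outline.ready`, `draft.ready`), and the stub agents are all hypothetical, and a real deployment would replace the in-process queue with a durable message broker.

```python
from collections import defaultdict, deque
from typing import Callable

Event = dict  # assumed shape: {"type": str, "payload": dict}


class Orchestrator:
    """Toy engine that routes events from an in-memory queue to agents."""

    def __init__(self) -> None:
        self.handlers = defaultdict(list)  # event type -> list of agents
        self.queue = deque()               # stands in for the data bus
        self.log = []                      # processed event types, in order

    def subscribe(self, event_type: str, agent: Callable[[dict], list]) -> None:
        self.handlers[event_type].append(agent)

    def publish(self, event: Event) -> None:
        self.queue.append(event)

    def run(self) -> None:
        # Drain the queue; each agent may emit follow-up events.
        while self.queue:
            event = self.queue.popleft()
            self.log.append(event["type"])
            for agent in self.handlers[event["type"]]:
                for follow_up in agent(event["payload"]):
                    self.publish(follow_up)


def ideation_agent(payload: dict) -> list:
    # Hypothetical agent: turns a validated brief into an outline.
    outline = f"Outline for {payload['topic']}"
    return [{"type": "outline.ready", "payload": {"outline": outline}}]


def drafting_agent(payload: dict) -> list:
    # Hypothetical agent: turns an outline into a first draft.
    draft = payload["outline"] + " -> draft"
    return [{"type": "draft.ready", "payload": {"draft": draft}}]


engine = Orchestrator()
engine.subscribe("brief.validated", ideation_agent)
engine.subscribe("outline.ready", drafting_agent)
engine.publish({"type": "brief.validated", "payload": {"topic": "spring launch"}})
engine.run()
print(engine.log)  # ['brief.validated', 'outline.ready', 'draft.ready']
```

Because agents only see events and emit events, a new stage can be added by subscribing another handler without changing the existing ones.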

Collaboration of Specialized AI Agents

Within the structured pipeline, each AI agent assumes a discrete role, contributing expertise and preserving context throughout the workflow. Key agents include:

- Ideation Agent transforms business requirements, audience personas, and content inventories into thematic outlines, headline variations, and concept clusters using semantic embeddings and topic modeling.

- Prompt Design Agent converts concept briefs into precise, context-rich instructions for language and multimodal models. It maintains a library of templates, tunes parameters, and aggregates relevant memory context to ensure consistency.

- Drafting Agent leverages large language models such as OpenAI GPT-4 and visual generators like Adobe Firefly to produce first-pass content. Parallel generation and quality filters support rapid iteration.

- Review Agent automates grammar checks, style alignment, and brand voice verification via tools like Grammarly. It enforces style guides, readability metrics, and change tracking for human approval.

- Optimization Agent enhances SEO and engagement using platforms such as SEMrush or MarketMuse. It integrates keyword analysis, meta description generation, and performance simulation to maximize discoverability.

- Personalization Agent crafts variant messages for audience segments by assembling modular text and media blocks based on behavioral data and persona models.

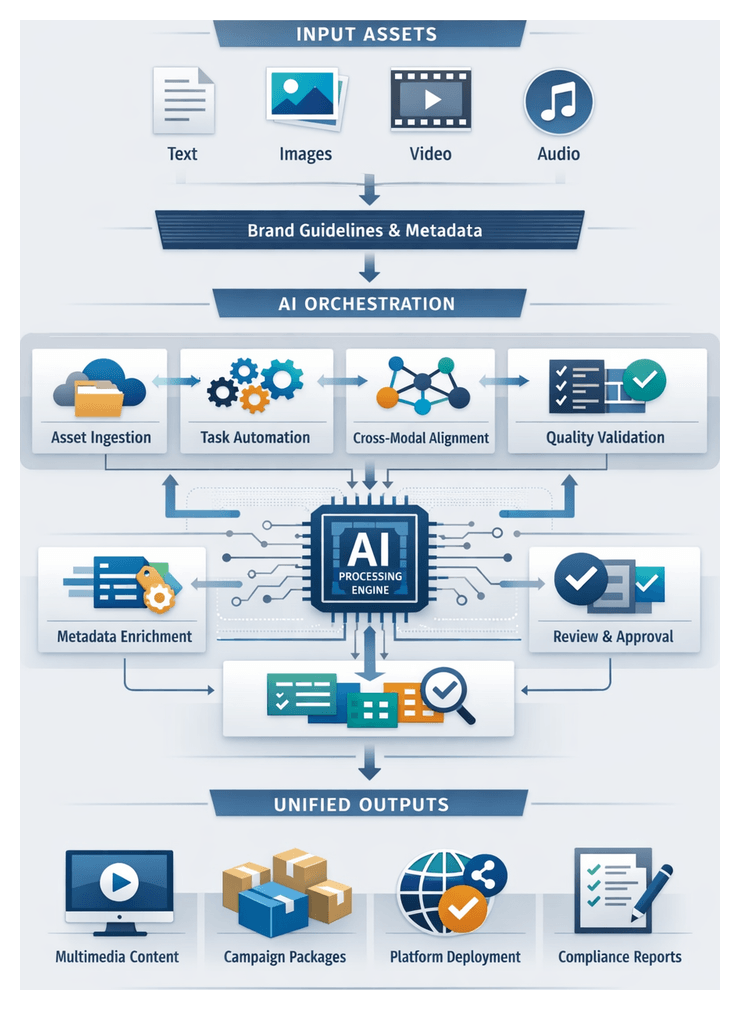

- Integration Agent merges text with images, audio, or video, coordinating transcoding, layout design, and packaging into cohesive assets.

- Distribution Agent automates scheduling, formatting, and compliance checks for multi-channel publishing across CMS, social APIs, and email platforms.

- Analytics Agent aggregates performance data, normalizes metrics, and feeds insights back into the orchestration engine for continuous refinement.

Workflow Structure, Handoffs, and Feedback Loops

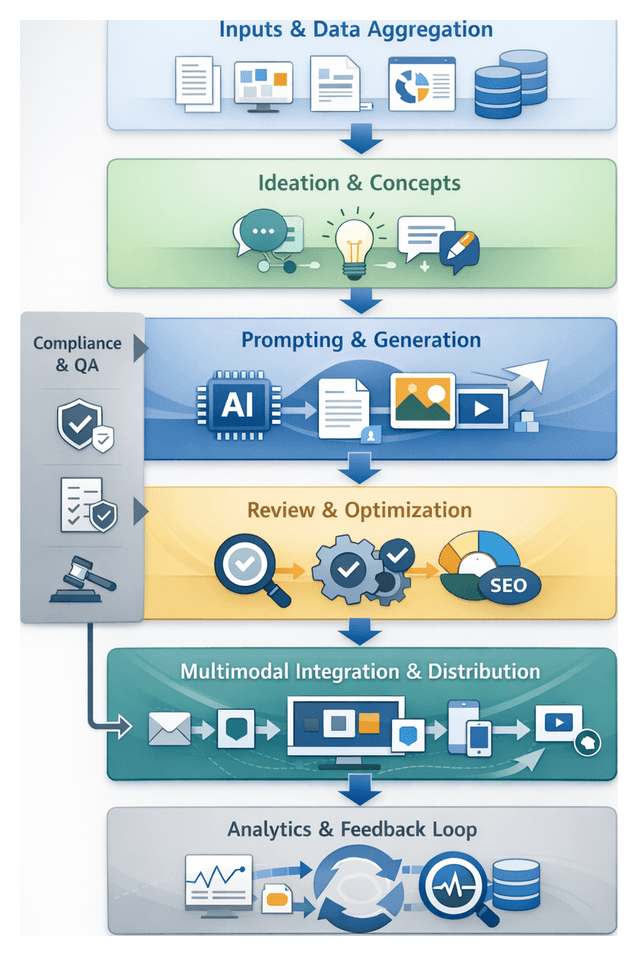

A comprehensive blueprint codifies every stage—Discovery, Ideation, Prompt Design, Drafting, Review, Optimization, Personalization, Integration, Distribution, and Analytics—along with objectives, inputs, outputs, and quality gates. Key deliverables include:

- Process flow diagrams that map dependencies, sequence tasks, and highlight parallel operations.

- Artifact schemas and metadata specifications that standardize content structures for seamless agent consumption.

- Integration matrices detailing API endpoints, authentication protocols, data formats, and error-handling procedures.

- Roles and responsibilities matrices assigning clear ownership for stages, deliverables, and integrations.

Explicit handoff protocols maintain momentum and prevent misalignment. For example:

- Discovery to Ideation: Aggregated input bundles are validated against schema compliance and published to the content repository with event notifications.

- Ideation to Prompt Design: Curated concept decks are delivered via API calls alongside contextual metadata and reviewed for brand alignment.

- Prompt Design to Drafting: Configured prompt templates trigger parallel generation pipelines in models such as OpenAI GPT-4 and Adobe Firefly, with sample runs verifying latency and output quality.

- Drafting to Review: Raw assets are pushed to editing queues where automated syntax and style checks route failures to human editors.

- Review to Optimization: Refined content, approved for compliance, is sent to optimization agents via RESTful APIs with callback URLs for enriched deliverables.

Iterative feedback loops enable content artifacts to flow backward when refinement is required. Conditional routing logic and decision points—governed by AI confidence thresholds or human approvals—ensure that drafts meeting performance criteria progress, while those needing revision return to preceding agents.
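
As a rough sketch of this conditional routing, the function below moves an artifact forward when it passes its gate and back one stage when it does not. The stage list, the 0.8 threshold, and the rule that a human decision overrides the confidence score are illustrative assumptions, not values prescribed by the framework.

```python
from typing import Optional

# Assumed linear ordering of stages for this sketch.
STAGES = ["ideation", "prompt_design", "drafting", "review", "optimization"]


def next_stage(current: str, confidence: float,
               human_approved: Optional[bool] = None,
               threshold: float = 0.8) -> str:
    """Return the stage an artifact should move to next.

    A human decision, when present, overrides the AI confidence score.
    Artifacts that pass move forward; the rest return to the preceding stage.
    The first and last stages clamp to themselves.
    """
    idx = STAGES.index(current)
    if human_approved is not None:
        passed = human_approved
    else:
        passed = confidence >= threshold
    if passed:
        return STAGES[min(idx + 1, len(STAGES) - 1)]
    return STAGES[max(idx - 1, 0)]


print(next_stage("review", 0.92))                        # optimization
print(next_stage("review", 0.55))                        # drafting
print(next_stage("review", 0.95, human_approved=False))  # drafting
```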

Scalability, Governance, and Strategic Benefits

By combining containerized deployments, serverless architectures, and modular workflow definitions, the framework scales horizontally to handle thousands of concurrent tasks. Plugin interfaces allow integration of new AI models or third-party services without disrupting the pipeline, while feature flags and versioned workflows support A/B testing and continuous improvement.

Robust security controls enforce role-based access, data encryption, and comprehensive audit logs. Policy engines validate content compliance against brand guidelines and regulatory standards, ensuring governance at every stage.

This structured approach delivers multiple strategic advantages:

- Scalability: Parallel pipelines and clear handoff contracts enable rapid capacity expansion without redesigning core processes.

- Transparency: Documented artifacts and integration points provide auditability for compliance and performance diagnostics.

- Consistency: Standardized schemas and validation rules ensure uniform quality and brand adherence across outputs.

- Agility: Modular design allows selective enhancement or replacement of stages—such as swapping a language model—without disrupting end-to-end operations.

- Collaboration: A shared blueprint aligns cross-functional teams and external partners around common objectives and governance guidelines.

With this framework in place, organizations can orchestrate creative and content workflows at scale, delivering high-quality, on-brand content with speed and precision.

Chapter 1: Discovery and Input Aggregation

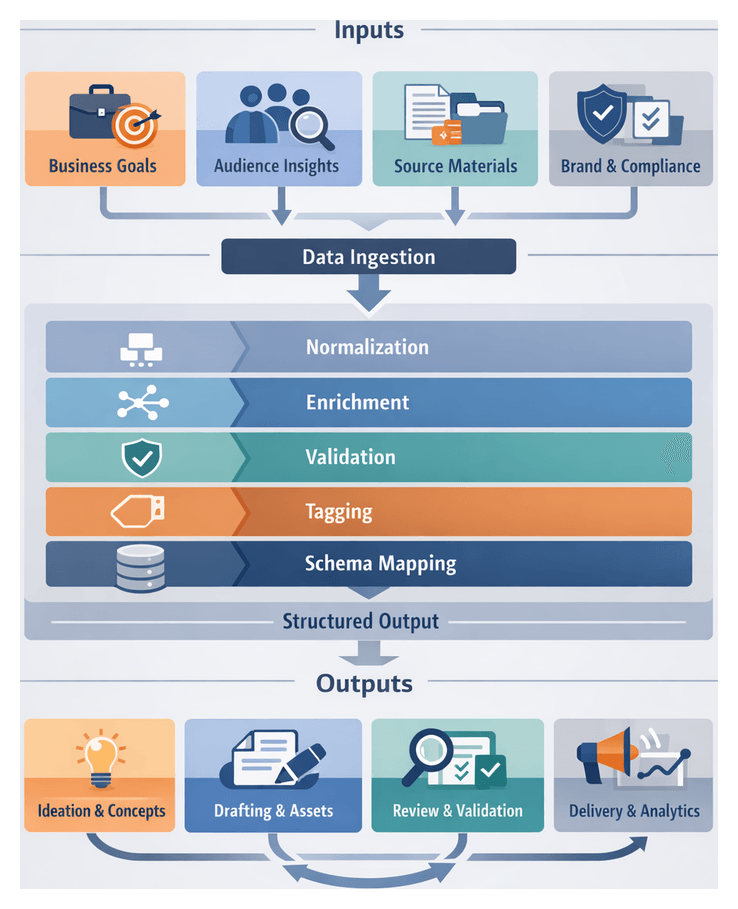

Purpose and Context of the Discovery Stage

The discovery stage establishes the strategic foundation for an AI-driven content workflow, aligning business objectives with creative execution. By consolidating stakeholder requirements, audience research, source materials, and brand guidelines into a unified, context-rich dataset, teams avoid fragmented information and ensure consistent messaging. This structured intake stream transforms organizational knowledge into machine-readable artifacts, enabling coherent ideation, drafting, and distribution at scale. In an environment marked by digital transformation and multi-channel demands, automated discovery accelerates responsiveness, preserves quality, and mitigates inefficiencies inherent in manual workflows.

- Establish a single source of truth for content requirements to minimize miscommunication.

- Capture audience insights to inform personas and tailored messaging.

- Align research reports, case studies, and competitive analyses with brand guidelines.

- Normalize and tag inputs for ready ingestion by AI agents.

- Define metadata schemas and handoff protocols for seamless transitions to ideation.

Required Inputs

Effective discovery depends on gathering diverse data types from internal and external systems and ensuring key conditions are met before pipeline initiation.

- Business Requirements: Strategic goals, campaign briefs, KPIs, compliance rules, and timelines from project management tools or stakeholder interviews.

- Audience Insights: Demographics, behavioral analytics, sentiment data, and persona documentation from CRM platforms, social listening tools, and market research databases.

- Source Materials: White papers, technical specifications, competitor collateral, intellectual property references, and performance reports from content repositories.

- Brand Guidelines: Style guides, tone documentation, visual assets, and legal disclaimers from digital asset management systems.

- Regulatory Constraints: Industry-specific rules, legal requirements, and accessibility standards.

- Technical Metadata: Format specifications, channel constraints, SEO keywords, and performance benchmarks.

Prerequisites

- Stakeholder alignment on objectives and success metrics to prevent divergent outputs.

- Configured data access permissions and integrations for secure ingestion.

- Governance framework defining roles, responsibilities, and escalation pathways for data validation.

Processing Pipeline and AI Agents

The discovery pipeline transforms raw inputs into enriched, tagged artifacts through a series of automated steps powered by specialized AI agents.

- Data Ingestion: Connector agents retrieve inputs from CRM systems, DAM platforms, content repositories, and external APIs.

- Normalization: Standardize formats, units, and taxonomies, and eliminate duplicates.

- Enrichment: Apply contextual metadata—campaign identifiers, persona tags, sentiment scores—using semantic networks and knowledge graphs.

- Validation: Run compliance and brand consistency checks, routing exceptions to human reviewers.

- Tagging and Schema Mapping: Label inputs by priority, content type, audience segment, and theme according to a predefined metadata schema.

- Structured Output Generation: Package validated inputs into machine-readable artifacts (JSON or XML) for downstream consumption.

The following agents carry out these steps:

- Connector Agents: Interface with platforms such as OpenAI GPT-4 for parsing unstructured briefs and integrate with CRM or DAM APIs for structured records.

- Normalization Agents: Use rule-based and statistical methods to standardize terminology, measurements, and date formats.

- Enrichment Agents: Leverage semantic networks to infer context, extract keywords, and map to industry taxonomies.

- Validation Agents: Execute business rules and compliance checks, flagging anomalies for human review.

- Metadata Tagging Agents: Employ supervised machine learning to classify content by theme, audience, and sentiment.
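
A simplified version of the normalization, validation, deduplication, and tagging steps might look like the following. The required fields, the record shape, and the single `content_type` tag are assumptions made for illustration. A production pipeline would use a fuller metadata schema and route the exceptions to human reviewers.

```python
import hashlib
import json

REQUIRED_FIELDS = {"source", "content_type", "text"}  # hypothetical schema


def normalize(record: dict) -> dict:
    """Collapse whitespace in the text and lowercase the content type."""
    out = dict(record)
    out["text"] = " ".join(str(record.get("text", "")).split())
    out["content_type"] = str(record.get("content_type", "")).strip().lower()
    return out


def validate(record: dict) -> list:
    """Return the required fields that are missing or empty."""
    return sorted(f for f in REQUIRED_FIELDS if not record.get(f))


def run_discovery(raw: list) -> dict:
    seen, artifacts, exceptions = set(), [], []
    for record in map(normalize, raw):
        problems = validate(record)
        if problems:
            # Invalid records are set aside for human review.
            exceptions.append({"record": record, "missing": problems})
            continue
        digest = hashlib.sha256(record["text"].encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate after normalization
        seen.add(digest)
        record["tags"] = {"content_type": record["content_type"]}
        artifacts.append(record)
    return {"artifacts": artifacts, "exceptions": exceptions}


raw_inputs = [
    {"source": "crm", "content_type": " Persona ", "text": "Ops  managers value speed."},
    {"source": "dam", "content_type": "persona", "text": "Ops managers value speed."},
    {"source": "wiki", "content_type": "brief", "text": ""},
]
result = run_discovery(raw_inputs)
print(json.dumps(result, indent=2))
```

In this run the second record is dropped as a duplicate of the first, and the third is reported as an exception because its text is empty.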

Structured Deliverables and Handoff Protocols

Upon completing discovery, the orchestrator produces artifacts and protocols that guarantee seamless handoff to ideation and concept formulation stages.

- Consolidated Input Dataset: A normalized, machine-readable file containing audience profiles, brand lexicon, stakeholder requirements, and research excerpts, tagged for priority and relevance.

- Metadata Catalog: Reference document listing origins, timestamps, confidence scores, and semantic tags for each input.

- Taxonomy and Ontology Schema: Formal representation of topic hierarchies and relationship mappings to guide concept clustering.

- Input Validation Report: Summary of anomalies, missing fields, and conflicting guidelines, with error codes and suggested resolutions.

- Delivery Manifest: Listing of artifacts, file paths or API endpoints, version identifiers, and checksums to ensure traceable handoffs.

Handoff depends on the following prerequisites:

- Access to the orchestration platform with appropriate credentials and permissions.

- Availability of input repositories (for example, AWS S3 or secure databases) configured for API queries.

- Version-controlled synchronization of taxonomies and ontologies.

- Stakeholder approval of the Input Validation Report.

- Network and security policies permitting encrypted data transfers and audit logging.

Handoff is coordinated through the following integration mechanisms:

- Event-Driven APIs: Delivery Manifest generation triggers an API call to initiate ideation workflows.

- Message Queues: Artifact metadata is published to queues (for example, Amazon SQS), enabling parallel retrieval by ideation agents.

- Webhooks and Callbacks: Notifications confirm stakeholder sign-off before automatic ingestion.

- Shared File System: Network-mounted directories with locking protocols prevent race conditions.

- Versioning Lockstep: Agents verify version tags against version control services to maintain consistency.

Integration protocols ensure that ideation agents receive precise data slices for prompt parameters, topic modeling, and thematic coherence checks. JSON or XML artifacts feed NLP services such as OpenAI GPT-4 for sentiment analysis, while taxonomies guide clustering agents. Strict checksum verification, schema validation, automated QA scripts, immutable version tags, and change logs preserve quality and reproducibility. Audit logs capture timestamps, artifact versions, agent identities, and validation outcomes, supporting regulatory compliance and performance analysis.
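
The checksum verification mentioned here can be sketched with the standard library alone. The manifest layout (`version`, `artifacts`, `path`, `bytes`, `sha256`) and the example file paths are hypothetical. The source does not prescribe a hash algorithm for manifests, so SHA-256 is an assumption.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(artifacts: dict, version: str) -> dict:
    """Describe each artifact by path, size, and SHA-256 checksum."""
    return {
        "version": version,
        "artifacts": [
            {"path": path, "bytes": len(data), "sha256": sha256_hex(data)}
            for path, data in sorted(artifacts.items())
        ],
    }


def find_mismatches(manifest: dict, received: dict) -> list:
    """Return paths that are missing or whose checksum differs."""
    bad = []
    for entry in manifest["artifacts"]:
        data = received.get(entry["path"])
        if data is None or sha256_hex(data) != entry["sha256"]:
            bad.append(entry["path"])
    return bad


artifacts = {
    "inputs/personas.json": b'{"persona": "ops-manager"}',
    "inputs/brand_lexicon.json": b'{"tone": "plainspoken"}',
}
manifest = build_manifest(artifacts, version="v1")
print(json.dumps(manifest, indent=2))

# Simulate a corrupted transfer of one artifact.
tampered = {**artifacts, "inputs/personas.json": b"{}"}
print(find_mismatches(manifest, artifacts))  # []
print(find_mismatches(manifest, tampered))   # ['inputs/personas.json']
```

A receiving agent would run the equivalent of `find_mismatches` before ingestion and refuse the handoff if the list is not empty.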

Security and governance controls for the handoff include:

- Encrypted data in transit (TLS) and at rest (AES-256).

- Role-based access control limiting agents to required fields.

- Data retention policies and automated compliance scanning.

- Periodic governance reviews to align protocols with security standards.

- Operational best practices: standardized naming conventions, sandboxed testing of changes, clear API documentation, health checks, and regular sync points between teams.

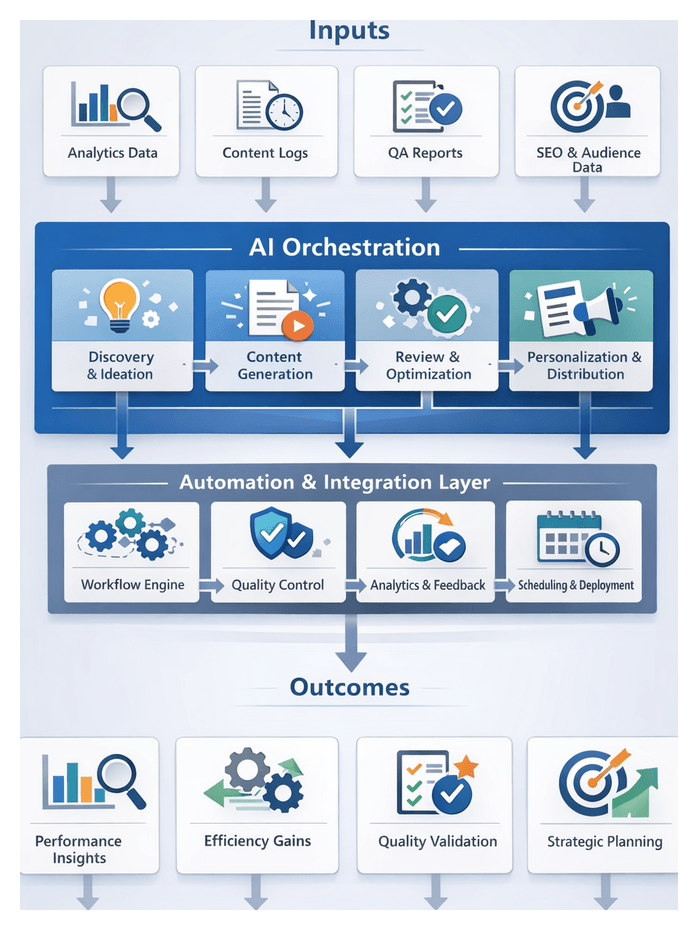

Unified AI Orchestration Framework

A structured, end-to-end AI orchestration framework integrates specialized agents and human inputs across the entire content lifecycle, ensuring consistency, scalability, and strategic focus.

- Intake and Validation: Automate ingestion and normalization of requirements, profiles, and guidelines.

- Ideation and Conceptualization: Use large language and multimodal models to generate themes, narratives, and outlines.

- Prompt Engineering: Configure precise prompts and contextual parameters for generation agents.

- Drafting and Asset Creation: Deploy text composition and media synthesis agents in parallel.

- Automated Review: Execute grammar checks, style audits, and compliance verifications.

- Optimization: Integrate SEO and engagement tools to refine discoverability.

- Personalization: Assemble tailored variants for audience segments.

- Multimodal Integration: Combine text, visuals, audio, and video into cohesive deliverables.

- Distribution Scheduling: Adapt formats, schedule releases, and call platform APIs.

- Analytics Feedback: Ingest performance data and refine workflows through feedback agents.

Coordination mechanisms—including event-driven triggers, shared knowledge repositories, API-first integrations, contextual memory, and priority escalations—ensure predictable, auditable workflows with minimal human intervention.

Business Impact and Implementation Considerations

Business benefits include:

- Accelerated Time-to-Market: Automated handoffs and parallel tasks can halve content cycle times.

- Consistent Brand Voice: Unified style checks preserve coherence across thousands of assets.

- Cost Efficiency: AI agents handle routine tasks, freeing teams for strategic work.

- Data-Driven Iteration: Real-time analytics feedback drives continuous performance improvements.

- Scalable Personalization: Hyper-targeted campaigns without linear workload increases.

- Risk Reduction: Gated approvals and audit trails ensure compliance and brand safety.

Implementation considerations include:

- Infrastructure Assessment: Map existing tools and workflows to identify integration points.

- Prioritize High-Impact Use Cases: Start with critical streams to demonstrate early value.

- Modular Architecture: Design interchangeable agent modules for future upgrades.

- Governance and Change Management: Define policies, training, and documentation for data security and approvals.

- Performance Measurement: Track cycle time, quality scores, and engagement lift via dashboards.

- Continuous Refinement: Use analytics feedback to optimize prompts, agent configurations, and workflow rules.

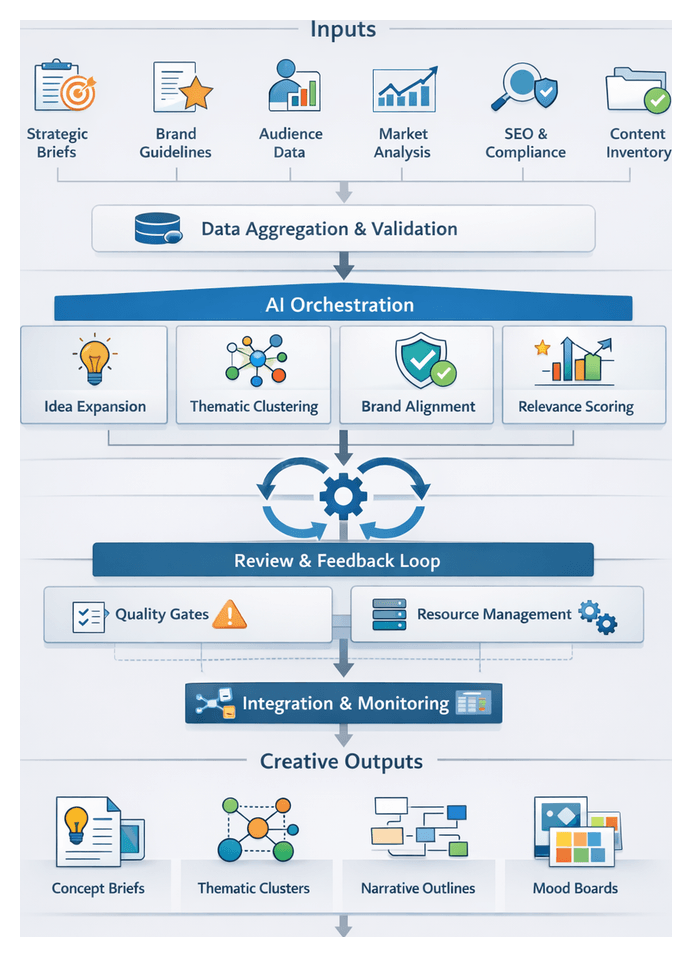

Chapter 2: Ideation and Concept Formulation

Ideation Stage Objectives and Inputs

The ideation stage transforms strategic direction and aggregated data into creative concept proposals that align with business goals, brand voice, and audience needs. By establishing clear objectives, validated inputs, and a configured environment, organizations can accelerate creative exploration, reduce rework, and maintain consistency across large-scale content operations.

- Conceptual Diversity: Generate a broad spectrum of themes, formats, and narrative angles for flexibility in content planning.

- Strategic Alignment: Ensure each concept maps directly to key performance indicators and campaign objectives.

- Brand Consistency: Embed brand pillars, tone guidelines, and design principles to preserve identity across outputs.

- Audience Resonance: Leverage personas, behavioral insights, and pain points to engage target segments.

- Scalability: Enable parallel execution of concept generation by AI agents to support rapid iteration.

- Feasibility Assessment: Evaluate resource requirements, channel constraints, and compliance factors early in the process.

Prerequisites and Environment Configuration

- Input Consolidation: Complete aggregation and tagging of strategic briefs, audience data, market analyses, and brand guidelines.

- Data Normalization and Validation: Standardize formats, perform schema checks, assign metadata tags, and score inputs for relevance and completeness.

- Tool Provisioning: Provision credentials for AI platforms such as OpenAI GPT-4, Anthropic Claude, and Jasper, and configure them with project-specific parameters.

- Computational Resources: Allocate sufficient compute, memory, and storage to support parallel AI agent execution and iterative loops.

- Stakeholder Alignment: Confirm project scope, timelines, deliverables, and compliance requirements with creative directors, marketing leads, and legal teams.

- Workflow Configuration: Define agent sequencing, parameter settings (temperature, token limits), retry logic, and alert mechanisms within the orchestration platform.
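
To make the workflow configuration item concrete, here is a minimal sketch of per-agent parameter settings with retry logic. The `AgentConfig` fields, their default values, and the exponential backoff policy are assumptions chosen for illustration. The `flaky_model` function is a stand-in for a real model call and fails twice before succeeding.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentConfig:
    temperature: float = 0.7   # assumed default
    max_tokens: int = 512      # assumed default
    max_retries: int = 3
    base_delay_s: float = 0.5


def call_with_retry(call: Callable[[AgentConfig], str], cfg: AgentConfig,
                    sleep: Callable[[float], None] = time.sleep) -> str:
    """Invoke `call`, retrying with exponential backoff on any exception."""
    if cfg.max_retries < 0:
        raise ValueError("max_retries must be >= 0")
    for attempt in range(cfg.max_retries + 1):
        try:
            return call(cfg)
        except Exception:
            if attempt == cfg.max_retries:
                raise  # retries exhausted: surface the error for alerting
            sleep(cfg.base_delay_s * 2 ** attempt)
    raise RuntimeError("unreachable")


attempts = {"n": 0}


def flaky_model(cfg: AgentConfig) -> str:
    # Simulated model endpoint that times out on the first two calls.
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TimeoutError("simulated timeout")
    return f"ok (temperature={cfg.temperature})"


delays = []  # record backoff delays instead of actually sleeping
result = call_with_retry(flaky_model, AgentConfig(), sleep=delays.append)
print(result)  # ok (temperature=0.7)
print(delays)  # [0.5, 1.0]
```

Passing `sleep` as a parameter keeps the example fast and testable. In production the default `time.sleep` applies.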

Input Categories

- Strategic Briefs: Business goals, KPIs, budget, timelines.

- Brand Guidelines: Voice, tone descriptors, visual identity rules, prohibited topics.

- Audience Insights: Personas, demographic and psychographic data, journey maps, behavior analytics.

- Market Analysis: Competitor audits, industry trends, SWOT findings.

- Content Inventory: Existing asset performance, SEO metrics, engagement scores.

- SEO Requirements: Target keywords, search intent, meta templates, linking guidelines.

- Compliance Constraints: Legal mandates, disclaimers, privacy and accessibility standards.

- Technical Specifications: Format requirements, CMS parameters, channel best practices.

Stakeholder Engagement Protocols

- Kickoff Workshops: Align on objectives, review inputs, and set expectations.

- Review Checkpoints: Schedule interim assessments of preliminary AI-generated themes.

- Structured Feedback Loops: Capture and tag stakeholder feedback for incorporation in subsequent agent runs.

- Approval Gates: Define criteria and decision rights for transitioning concepts to prompt design.

Workflow and AI Agent Collaboration

The ideation workflow orchestrates specialized AI agents in concert with human strategists and supporting systems. Through metadata-driven routing, message-bus communication, and iterative feedback loops, the process ensures transparent handoffs, quality control, and traceability from concept generation through handoff to prompt design.

Input Ingestion and Preprocessing

- Ingestion of validated JSON payloads containing personas, keywords, brand rules, and benchmarks.

- Automated metadata tagging by theme, channel, and audience segment.

- Normalization of text, charts, and images into unified semantic embeddings.

- Schema validation and conflict detection before passing inputs to ideation agents.

AI Agent Collaboration Model

- Idea Expansion Agent: Uses transformer models to brainstorm headlines, hooks, and narrative angles.

- Thematic Clustering Agent: Groups generated ideas via unsupervised learning to surface emergent themes.

- Brand Alignment Agent: Evaluates compliance with style guides and regulatory constraints.

- Relevance Scoring Agent: Ranks concepts based on audience engagement data and SEO priorities.

Agents communicate through a message bus; each publishes results under versioned topics, enabling audit trails and rollback capabilities.
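
One way to picture versioned topics is as an append-only log per topic, where a rollback republishes an earlier version instead of deleting history. The `VersionedBus` class and the topic name `ideation.clusters` are hypothetical, and this in-memory sketch leaves out the persistence and delivery guarantees a real message bus would provide.

```python
from typing import Optional


class VersionedBus:
    """Append-only topic log: every publish creates a new 1-based version."""

    def __init__(self) -> None:
        self._log = {}  # topic -> list of messages, oldest first

    def publish(self, topic: str, message: dict) -> int:
        history = self._log.setdefault(topic, [])
        history.append(message)
        return len(history)  # version number of the new message

    def read(self, topic: str, version: Optional[int] = None) -> dict:
        history = self._log.get(topic, [])
        if not history:
            raise KeyError(f"no messages on topic {topic!r}")
        if version is None:
            return history[-1]  # latest version
        if not 1 <= version <= len(history):
            raise IndexError(f"version {version} not found on {topic!r}")
        return history[version - 1]

    def rollback(self, topic: str, version: int) -> int:
        # Republish the old version so the audit trail stays intact.
        return self.publish(topic, self.read(topic, version))

    def history(self, topic: str) -> list:
        return list(self._log.get(topic, []))


bus = VersionedBus()
v1 = bus.publish("ideation.clusters", {"themes": ["speed"]})
v2 = bus.publish("ideation.clusters", {"themes": ["speed", "cost"]})
v3 = bus.rollback("ideation.clusters", v1)
print(v1, v2, v3)                     # 1 2 3
print(bus.read("ideation.clusters"))  # {'themes': ['speed']}
```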

Iterative Brainstorming Loop

- Generate initial concept batch.

- Cluster ideas into preliminary themes.

- Strategist reviews clusters, selects top candidates, and annotates with feedback tags.

- Feedback triggers refined prompts for the Idea Expansion Agent.

- Repeat until approval of 3–5 core concepts with theme titles, message pillars, and sample headlines.
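
The loop above can be sketched with deterministic stand-ins for each participant. The `generate`, `cluster`, and `strategist_review` functions are hypothetical placeholders for the Idea Expansion Agent, the Thematic Clustering Agent, and the human strategist. The target of three concepts is the low end of the range given above, and the cap of ten rounds is an assumption.

```python
from collections import defaultdict


def generate(round_no: int, feedback_tags: list) -> list:
    """Stand-in for the Idea Expansion Agent."""
    tag = feedback_tags[-1] if feedback_tags else "baseline"
    return [f"{angle}: {tag} idea r{round_no}" for angle in ("cost", "speed", "trust")]


def cluster(ideas: list) -> dict:
    """Stand-in for the clustering agent: group ideas by their theme prefix."""
    groups = defaultdict(list)
    for idea in ideas:
        groups[idea.split(":")[0]].append(idea)
    return dict(groups)


def strategist_review(clusters: dict, round_no: int) -> tuple:
    """Stand-in for the human: approve one theme per round, return a feedback tag."""
    themes = sorted(clusters)
    theme = themes[(round_no - 1) % len(themes)]
    return clusters[theme][0], f"sharpen-{theme}"


def brainstorm(target: int = 3, max_rounds: int = 10) -> tuple:
    approved, tags = [], []
    for round_no in range(1, max_rounds + 1):
        clusters = cluster(generate(round_no, tags))
        concept, tag = strategist_review(clusters, round_no)
        approved.append(concept)
        tags.append(tag)  # feedback shapes the next round's prompts
        if len(approved) >= target:
            return approved, round_no
    return approved, max_rounds


concepts, rounds = brainstorm()
print(rounds)  # 3
for concept in concepts:
    print(concept)
```

The cap on rounds matters in practice: without it, a loop gated on human approval could run indefinitely.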

Quality Gates

- Completeness Gate: Each concept must articulate a narrative arc and address key audience pain points.

- Compliance Gate: Flag regulated language and sensitive topics for legal review.

- Novelty Gate: Run semantic similarity checks against existing content to ensure originality.

- Feasibility Gate: Confirm availability of required assets and technical resources.
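
The Novelty Gate can be illustrated with a cosine similarity check. For the sake of a self-contained example, the sketch below uses simple word-count vectors in place of the semantic embeddings a real system would use, and the 0.8 similarity cutoff is an assumed value.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (a real gate would use a model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def passes_novelty_gate(concept: str, existing: list,
                        max_similarity: float = 0.8) -> bool:
    """A concept passes if it is not too similar to any existing item."""
    scores = [cosine(embed(concept), embed(item)) for item in existing]
    return max(scores, default=0.0) < max_similarity


library = ["five ways to cut onboarding time"]
print(passes_novelty_gate("Five ways to cut onboarding time", library))    # False
print(passes_novelty_gate("why customer trust drives renewals", library))  # True
```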

Integration With Supporting Systems

- Content Performance Database feeds real-time benchmarks to the Relevance Scoring Agent.

- Digital Asset Management (DAM) systems provide multimedia metadata for concept visualization.

- Brand Asset Library supplies approved logos, taglines, and style assets.

- Collaboration Platforms sync concept boards, human reviews, and audit logs.

Governance and Metrics

Every agent invocation, human annotation, and decision is recorded in an immutable audit log. Key performance indicators include:

- Concept Throughput: Number of approved themes per cycle.

- Iteration Count: Average brainstorming loops before concept sign-off.

- Review Cycle Time: Time for human strategists to annotate and approve clusters.

- Alignment Score: Brand Alignment Agent’s aggregate compliance rating.

Models and Support Systems

A diverse suite of AI models and integration layers underpins the ideation stage, enabling concept generation at scale while preserving accuracy, brand alignment, and creative breadth.

Transformer-Based Language Models

Large pretrained transformers, fine-tuned on domain-specific corpora, provide core generative capabilities:

- Create initial concept statements, headlines, and value propositions.

- Expand ideas into thematic outlines and subtopics.

- Adapt tone and style to brand voice parameters.

- Examples include GPT-4, PaLM, and Claude.

Semantic Embedding and Retrieval

Embedding models convert text into high-dimensional vectors for similarity search, enabling:

- Contextual enrichment with external examples and statistics.

- Thematic clustering to avoid redundancy and improve coverage.

- Vector databases such as Pinecone and similarity-search libraries such as FAISS support efficient retrieval.

Knowledge Graphs and Ontology Systems

- Enforce regulatory constraints and domain accuracy.

- Maintain brand taxonomy for product names and messaging pillars.

- Validate outputs in real time, flagging inconsistencies for correction.

Multimodal Generative Models

Integrate visual and audio capabilities to preview aesthetic direction:

- Generate moodboard imagery via DALL·E or Stable Diffusion.

- Create preliminary infographic layouts and audio motifs.

Contextual Memory and Conversational Interfaces

- Maintain state across brainstorming sessions for progressive refinement.

- Facilitate real-time dialogue between human leads and AI agents.

- Leverage frameworks such as LangChain to store and retrieve context from vector memory stores.

Data Pipelines and Integration Layers

- Input Aggregators: Connectors that ingest audience data, market research, and brand guidelines.

- Normalization Engines: Standardize formats, extract metadata, and tag content semantically.

- Metadata Enrichment: Automated annotation with named entity recognition and taxonomy mapping.

Orchestration Platforms

Sequence tasks, manage dependencies, and handle errors:

- Schedule parallel model invocations and allocate compute resources.

- Coordinate embedding retrieval, clustering, and expansion steps.

- Expose RESTful APIs and message queues for integration with CMS and collaboration tools.

- Collect telemetry on response times, token usage, and concept acceptance rates.

Generated Concept Deliverables and Handoff Protocols

Upon clearing quality gates, the ideation stage produces a set of standardized deliverables that serve as the foundation for prompt design, drafting, and downstream production. Rigorous dependency tracking, quality criteria, and integration protocols ensure seamless handoff and traceability.

Structured Concept Briefs

- Concept Title: Descriptive label for easy reference.

- Executive Summary: Two- to three-sentence overview of purpose and value proposition.

- Thematic Keywords: Tags capturing topic, tone, and audience focus.

- Narrative Angle: Storytelling approach or message framing.

- Target Persona Mapping: References to audience segment IDs.

- Asset Requirements: Required formats (e.g., blog posts, infographics, videos).

- Priority and Confidence Scores: AI-generated ratings for strategic fit and distinctiveness.

Briefs are exported in JSON or CSV and stored in repositories such as Airtable. Integration with automation tools like Zapier enables real-time notifications to prompt design agents.
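
A concept brief with the fields listed above could be modeled and serialized roughly as follows. The attribute names, the `concept_id` field, and the convention that scores fall between 0 and 1 are assumptions made for this sketch.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class ConceptBrief:
    concept_id: str
    title: str
    executive_summary: str
    thematic_keywords: List[str]
    narrative_angle: str
    persona_ids: List[str]
    asset_requirements: List[str]
    priority_score: float     # assumed range: 0.0 to 1.0
    confidence_score: float   # assumed range: 0.0 to 1.0

    def __post_init__(self) -> None:
        for name in ("priority_score", "confidence_score"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")


brief = ConceptBrief(
    concept_id="C-001",
    title="Faster onboarding",
    executive_summary="Shows how new teams reach first value in days, not weeks.",
    thematic_keywords=["onboarding", "speed"],
    narrative_angle="customer story",
    persona_ids=["P-ops-manager"],
    asset_requirements=["blog post", "infographic"],
    priority_score=0.8,
    confidence_score=0.65,
)
payload = json.dumps(asdict(brief), indent=2)
print(payload)
```

Validating scores at construction time means a malformed brief fails before it is published, not later in a downstream agent.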

Thematic Clusters and Matrices

- Cluster Labels: Unified theme names covering related concepts.

- Mapping Tables: Tabular links between brief IDs and cluster IDs.

- Visualization Artifacts: Graphs or heat maps from platforms such as Miro.

Narrative Outlines

- Act Headings: Phases of the story (Hook, Challenge, Resolution).

- Bullet Details: Descriptions of content in each section.

- Emotional Tone Guides: Language style recommendations.

- Data References: Links to research or statistics.

Outlines are delivered as annotated Word or Google Docs, or serialized JSON for drafting agents like GPT-4 and Claude.

Visual Mood Boards

- Image Sets: Curated collections tagged with style metadata.

- Color Palettes: Hex codes for primary and secondary palettes.

- Typography Guides: Font recommendations aligned to brand rules.

- Wireframe Sketches: Low-fidelity layouts from design tools.

Dependency Management and Quality Criteria

- Source Input IDs: References to discovery artifacts and data sources.

- Agent Version Tags: Records of AI model versions and prompt templates used.

- Data Quality Flags: Indicators for missing or ambiguous inputs.

- Compliance Labels: Classification of regulatory and sensitivity requirements.

Automated validation, supported by platforms like Copy.ai and Jasper, checks concepts for brand voice compliance, originality, relevance, and regulatory adherence. Flagged concepts enter refinement loops with human editors or specialized review agents.

Handoff Protocols

- Artifact Publication: Approved briefs and clusters published to a content repository with standardized naming conventions.

- Event Triggers: Webhook notifications invoke prompt orchestration workflows.

- Schema Translation: JSON schemas map concept fields to prompt parameters automatically.

- Access Control: Role-based permissions govern retrieval of concept details.

- Version Tracking: Integrated version control records changes from ideation through publication.
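
The Schema Translation step can be sketched as a field mapping applied to a concept brief before a prompt template is filled in. The mapping, the template wording, and the brief's field names are illustrative assumptions. The sketch uses `string.Template` from the standard library, not a prompt management tool.

```python
from string import Template

# Hypothetical mapping from concept brief fields to prompt variables.
FIELD_MAP = {
    "title": "topic",
    "narrative_angle": "angle",
    "thematic_keywords": "keywords",
}

PROMPT = Template("Write a $format about $topic. Angle: $angle. Keywords: $keywords.")


def to_prompt_params(brief: dict, fmt: str = "blog post") -> dict:
    """Translate brief fields into prompt variables; lists become comma-separated text."""
    params = {"format": fmt}
    for source_field, prompt_var in FIELD_MAP.items():
        value = brief[source_field]  # raises KeyError if the brief is incomplete
        params[prompt_var] = ", ".join(value) if isinstance(value, list) else str(value)
    return params


brief = {
    "title": "Faster onboarding",
    "narrative_angle": "customer story",
    "thematic_keywords": ["onboarding", "speed"],
}
prompt = PROMPT.substitute(to_prompt_params(brief))
print(prompt)
# Write a blog post about Faster onboarding. Angle: customer story. Keywords: onboarding, speed.
```

`Template.substitute` raises `KeyError` when a placeholder has no value, so an incomplete mapping fails loudly and never produces a prompt with a blank.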

Alignment With Subsequent Stages

- Precision Prompt Engineering: Clear mapping of concept elements to prompt variables reduces ambiguity in draft generation.

- Parallel Drafting Scalability: Consistent metadata enables orchestration engines to launch multiple drafting agents simultaneously.

- Streamlined Reviews: Standardized documentation simplifies automated and human review.

- Attribution and Analytics: Persistent concept IDs allow performance metrics to be tied back to original ideas for future optimization.

By integrating these deliverables and protocols into an end-to-end AI-driven pipeline, organizations achieve a transparent and efficient content production process that consistently delivers high-impact creative at scale.

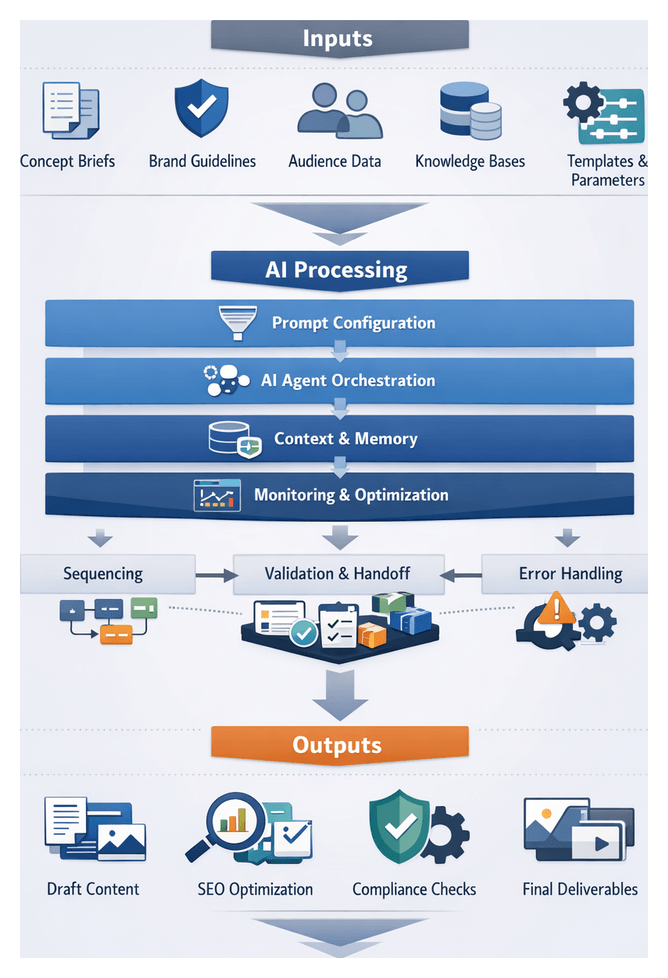

Chapter 3: Prompt Design and AI Agent Orchestration

Prompting Stage Objectives and Configuration

The prompting stage transforms strategic concepts into precise instructions that guide AI agents toward consistent, brand-aligned content generation. By defining clear objectives, contextual parameters, and quality constraints, this phase reduces ambiguity, minimizes iteration cycles, and establishes the guardrails for downstream workflows.

- Establish Clear Objectives: Translate high-level themes, tone, and deliverable formats into explicit prompt instructions.

- Configure Contextual Parameters: Set context window sizes, memory retrieval settings, and external knowledge references.

- Embed Quality and Compliance Constraints: Incorporate style guides, legal requirements, and performance targets into prompt templates.

Prerequisites and Core Inputs

Successful prompt configuration relies on validated artifacts, accessible knowledge sources, and defined governance structures.

- Concept Briefs: Topic clusters, thematic outlines, and campaign objectives.

- Brand Guidelines: Tone of voice rules, approved terminology, and visual directives.

- Audience Profiles: Demographic segments, personas, and behavioral insights.

- Source Materials: Research reports, technical documents, and prior content indexed via vector stores.

- Prompt Templates: Version-controlled frameworks for common tasks with variable placeholders.

- Model Selection Criteria: Temperature settings, token limits, and stop sequences for each model.

- Performance Metrics: Readability, SEO rankings, and engagement projections embedded as success criteria.

- Technical Configurations: API credentials, rate limits, and access controls for each AI endpoint.

- Governance Workflows: Approval processes for data privacy, brand compliance, and creative review.
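
A prompt template with variable placeholders can be as simple as a string with named slots. The sketch below uses Python's standard `string.Template`; the template wording and the variable names (`length`, `topic`, `persona`, `voice`) are illustrative assumptions, and a production setup would load the template from version control rather than define it inline.

```python
from string import Template

# Hypothetical template; in practice it would be loaded from version control.
BLOG_INTRO_TEMPLATE = Template(
    "Write a $length-word introduction about $topic for $persona. "
    "Use a $voice tone and follow the brand style guide."
)


def render_prompt(template: Template, variables: dict) -> str:
    """Fill placeholders; substitute() raises KeyError if one is missing."""
    return template.substitute(variables)


prompt = render_prompt(
    BLOG_INTRO_TEMPLATE,
    {
        "length": 120,
        "topic": "sustainable packaging",
        "persona": "procurement managers",
        "voice": "practical",
    },
)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice here: an unfilled placeholder stops the run instead of reaching the model.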

AI Tools and Platforms

- PromptLayer: Prompt version control, performance monitoring, and cost analysis.

- PromptOps: Governance automation, access controls, and compliance checks.

- LangChain: Modular prompt construction, context injection, and streaming pipelines.

- LlamaIndex: Ingestion and retrieval of unstructured data for dynamic context augmentation.

- Weights & Biases: Experiment tracking, hyperparameter logging, and output analytics.

Agent Sequencing and Interaction Patterns

Orchestration defines how specialized agents execute tasks in sequence or parallel to build and refine prompts.

- Sequential Chaining: Dependent tasks execute one after another, persisting outputs to a shared store.

- Parallel Invocation: Independent subtasks run concurrently, reducing latency and improving throughput.

- Hybrid Patterns: Groups of chained tasks execute in parallel, merging results before final optimization.
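
The three patterns above can be sketched with Python's `asyncio`. This is an illustration only: `run_agent` is a hypothetical stand-in that tags its input instead of calling a model, and the agent names are invented.

```python
import asyncio


async def run_agent(name: str, payload: str) -> str:
    """Stand-in for a real agent call; it only tags the payload."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return f"{payload}|{name}"


async def chain(payload: str, agents: list[str]) -> str:
    """Sequential chaining: each agent consumes the previous output."""
    for name in agents:
        payload = await run_agent(name, payload)
    return payload


async def hybrid(payloads: list[str], agents: list[str]) -> list[str]:
    """Hybrid pattern: run one chain per payload concurrently, then merge."""
    return await asyncio.gather(*(chain(p, agents) for p in payloads))


results = asyncio.run(hybrid(["brief-a", "brief-b"], ["enrich", "draft"]))
```

`asyncio.gather` returns results in the order the chains were submitted, which keeps the merge step deterministic even when chains finish out of order.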

Communication among agents follows standardized messaging and storage patterns:

- Request-Reply: Synchronous exchanges for critical validations with immediate feedback.

- Event-Driven Notifications: Asynchronous triggers via a message bus to initiate downstream tasks.

- Shared Context Store: Persisted artifacts in a document database or a LlamaIndex-managed index for retrieval by any agent.

- Stream Processing: Continuous pipelines using LangChain for high-volume prompt generation.

Coordination mechanisms ensure harmony across agents:

- Central Orchestrator: Master service that maintains workflow definitions and monitors progress.

- Distributed Coordination: Peer-to-peer negotiation and result merging via consensus protocols.

- Priority Queues: Task prioritization for urgent or high-impact content requests.

- Concurrency Control: Locks or versioning to prevent conflicting updates to shared contexts.
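
As one way to picture the priority queue mechanism, the sketch below uses the standard `heapq` module. The task names and priority values are invented for illustration; a real deployment would more likely rely on the priority features of its message broker.

```python
import heapq
import itertools

_counter = itertools.count()  # tie-breaker keeps FIFO order within a priority
_queue: list[tuple[int, int, str]] = []


def submit(task: str, priority: int) -> None:
    """Queue a task; lower numbers are served first."""
    heapq.heappush(_queue, (priority, next(_counter), task))


def next_task() -> str:
    """Pop the most urgent task."""
    return heapq.heappop(_queue)[2]


submit("evergreen blog post", priority=5)
submit("product recall notice", priority=0)
submit("weekly newsletter", priority=5)
order = [next_task() for _ in range(3)]
```

The monotonically increasing counter matters: without it, two tasks with equal priority would be compared by name, and submission order would be lost.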

Shared Context Management

A robust context layer tracks all artifacts and metadata through the sequence, enabling traceability and auditability.

- Context Objects: Bundles of original inputs, transformed drafts, annotations, and quality scores.

- Metadata Enrichment: Provenance data—timestamps, agent IDs, parameter settings—appended at each step.

- Versioned Storage: Snapshots of context changes stored in a versioned document repository.

- Semantic Indexing: Vector indexes supporting similarity queries to reference past prompts or examples.
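
A context object with provenance metadata and versioned updates might look like the sketch below. The class name, field names, and the optimistic version check are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ContextObject:
    """Hypothetical context bundle passed between agents."""
    content: str
    version: int = 0
    history: list[dict] = field(default_factory=list)

    def update(self, new_content: str, agent_id: str, params: dict,
               expected_version: int) -> None:
        """Apply an edit only if the caller saw the latest version."""
        if expected_version != self.version:
            raise RuntimeError("stale write: context changed since it was read")
        self.history.append({
            "agent_id": agent_id,
            "params": params,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "previous": self.content,  # snapshot enables rollback
        })
        self.content = new_content
        self.version += 1


ctx = ContextObject(content="raw brief")
ctx.update("enriched brief", "briefing-agent", {"temperature": 0.2},
           expected_version=0)
```

Requiring `expected_version` on every write is a lightweight form of concurrency control: two agents that read the same version cannot both overwrite it silently.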

Error Handling and Reliability

- Retry Mechanisms: Automated retries with exponential backoff for transient failures.

- Fallback Agents: Generic language models that assume specialized tasks when primary agents are unavailable.

- Dead-Letter Queues: Storage of irrecoverable messages for manual review.

- Alerting and Escalation: Notifications to on-call engineers with context snapshots and error logs.
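
The retry, fallback, and dead-letter mechanisms above compose naturally into one wrapper. The sketch below is a simplified illustration: `TransientError`, the in-memory `dead_letters` list, and the default attempt count and delay are hypothetical choices, and the `sleep` parameter exists only so the delay can be swapped out in tests.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, throttling)."""


dead_letters: list[dict] = []  # stand-in for a real dead-letter queue


def call_with_resilience(primary, fallback, message: dict,
                         max_attempts: int = 3, base_delay: float = 0.5,
                         sleep=time.sleep):
    """Retry the primary agent with exponential backoff, then fall back."""
    for attempt in range(max_attempts):
        try:
            return primary(message)
        except TransientError:
            if attempt < max_attempts - 1:
                sleep(base_delay * 2 ** attempt)  # delay doubles each retry
    try:
        return fallback(message)
    except Exception as exc:
        # Nothing worked: park the message for manual review.
        dead_letters.append({"message": message, "error": repr(exc)})
        return None
```

Only `TransientError` triggers a retry; any other exception from the primary agent propagates immediately, on the assumption that retrying a malformed request would fail the same way.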

Monitoring and Optimization

- Central Logging: Unified capture of request parameters, response payloads, execution durations, and resource usage.

- Traceability: Unique trace IDs linking related tasks across agents for end-to-end lifecycle analysis.

- Performance Dashboards: Real-time metrics on throughput, error rates, and latencies to identify bottlenecks.

- Compliance Records: Immutable audit logs preserving context snapshots and metadata for governance.

- Bottleneck Analysis: Identification of slow agents for optimization or replacement.

- Batching Strategies: Grouping small tasks to amortize invocation overhead.

- Dynamic Parallelism: Adjustment of parallel execution levels based on system load.

- Adaptive Sequencing: Conditional workflow branches that skip non-critical agents when thresholds are met.

Roles and Parameters for AI Collaboration

Defining agent roles and tuning parameters ensures complementary capabilities and streamlined workflows.

- Briefing Agent: Validates inputs and enriches context with knowledge-graph lookups or semantic tags.

- Drafting Agent: Generates text based on prompts, with adjustable creativity via temperature and top-p settings.

- Review Agent: Enforces grammar, style, and policy compliance using editing models or rule-based systems.

- Optimization Agent: Applies SEO analysis, keyword density checks, and structured data enrichment.

- Aggregation Agent: Merges parallel outputs, resolves conflicts, and assembles final deliverables.

Common parameters include temperature, max tokens, context window size, stop sequences, and memory retrieval settings. Continuous monitoring against readability, compliance, and SEO metrics enables dynamic parameter adjustment.
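
One way to keep per-role parameters explicit and adjustable is an immutable profile per agent. In the sketch below, the role names, the numeric values, and the `cool_down` adjustment rule are illustrative assumptions rather than recommended settings.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ParameterProfile:
    temperature: float
    top_p: float
    max_tokens: int
    stop: tuple[str, ...] = ()


# Illustrative starting values only; tune against your own metrics.
PROFILES = {
    "drafting": ParameterProfile(temperature=0.9, top_p=0.95, max_tokens=1200),
    "review": ParameterProfile(temperature=0.1, top_p=1.0, max_tokens=600),
}


def cool_down(profile: ParameterProfile, step: float = 0.1) -> ParameterProfile:
    """Return a copy with lower temperature (never below 0)."""
    new_temp = max(0.0, round(profile.temperature - step, 2))
    return replace(profile, temperature=new_temp)


adjusted = cool_down(PROFILES["drafting"])
```

Because profiles are frozen, every adjustment yields a new object, which makes it straightforward to log exactly which settings produced a given draft.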

Prompt Artifacts and Metadata

- Prompt Templates: JSON or YAML definitions with placeholders and conditional logic.

- Parameter Profiles: Configured settings for temperature, token limits, and sampling strategies.

- Execution Logs: Records of API requests, responses, latency metrics, and error codes.

- Generated Candidates: Raw text or multimodal snippets with confidence scores.

- Memory Snapshots: Serialized context states capturing prior exchanges or retrieved data.

- Relevance Scores: Semantic alignment with keywords and brand descriptors.

- Diversity Metrics: Lexical and thematic variety across candidates.

- Compliance Flags: Automatic detection of policy violations or sensitive content.

- Resource Usage: Token consumption, compute time, and memory utilization per agent.

Dependencies and Integrations

- Knowledge Bases: Corporate wikis, product documentation, and domain datasets.

- Brand Repository: Centralized tone, terminology, and style guidelines.

- AI Endpoints: OpenAI GPT-4, Anthropic Claude.

- Orchestration Platforms: Prefect, Apache Airflow.

- Parameter Store: Version-controlled sets ensuring reproducibility and auditability.

Handoff Mechanisms and Validation

- Automated Selection Engine: Filters and ranks candidates by relevance and compliance flags.

- Payload Packaging: Bundles selected excerpts, templates, metadata, and execution context into a drafting payload.

- Event Triggers: Signals downstream drafting agents via message brokers.

- API Ingestion: Drafting services receive payloads through REST or gRPC endpoints with acknowledgment logging.

- Versioning: Incremental tags correlate drafts with original prompt contexts.

- Schema Validation: Automated checks against JSON schemas to prevent missing fields or type mismatches.

- Quality Gates: Threshold checks on relevance and compliance before handoff completion.

- Health Checks: Verification of model endpoints and knowledge sources with fallback strategies.
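
Schema validation and quality gates can be combined into a single pre-handoff check. The sketch below uses a hand-rolled field check as a stand-in for a full JSON Schema validator; the field names and the `MIN_RELEVANCE` threshold are hypothetical.

```python
# Hypothetical payload contract: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "prompt_id": str,
    "candidate": str,
    "relevance": (int, float),
    "compliance_flags": list,
}
MIN_RELEVANCE = 0.7  # hypothetical threshold


def validate_payload(payload: dict) -> list[str]:
    """Return a list of problems; an empty list means the payload may pass."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected):
            problems.append(f"wrong type for {name}")
    if problems:
        return problems  # skip quality gates on malformed payloads
    if payload["relevance"] < MIN_RELEVANCE:
        problems.append("relevance below threshold")
    if payload["compliance_flags"]:
        problems.append("unresolved compliance flags")
    return problems
```

Returning every problem at once, rather than raising on the first, gives upstream agents a complete remediation list in a single round trip.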

Downstream Draft Integration

- Contextual Assembly: Reconstruction of memory snapshots and reference URIs from payloads.

- Prompt Enrichment: Augmentation with localization parameters, user preferences, or A/B test identifiers.

- Parallel Processing: Sharding payloads across multiple drafting instances with ordering controls.

- Draft Storage: Persistence in content management systems, each linked back to the original prompt artifacts.

Scalability and Resilience

- Clustered Brokers: Horizontal scaling of message queues to distribute load.

- Backpressure Management: Rate limiting and circuit breakers to protect drafting services.

- Retry and Dead-Letter Policies: Automated retries with limits and manual remediation for persistent failures.

- Endpoint Redundancy: Failover routing to alternate AI providers under heavy load or rate limits.
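
Circuit breaking and failover routing can be sketched in a few lines. This is a deliberately simplified illustration: the breaker below only counts consecutive failures and has no timed half-open state, and the provider callables are placeholders for real API clients.

```python
class CircuitBreaker:
    """Opens after consecutive failures so callers stop hitting a bad endpoint."""

    def __init__(self, threshold: int = 3):
        self.threshold = threshold
        self.failures = 0

    @property
    def is_open(self) -> bool:
        return self.failures >= self.threshold

    def record(self, success: bool) -> None:
        self.failures = 0 if success else self.failures + 1


def route(providers: dict, breakers: dict, payload: str) -> str:
    """Try providers in order, skipping any whose breaker is open."""
    for name, call in providers.items():
        breaker = breakers[name]
        if breaker.is_open:
            continue
        try:
            result = call(payload)
        except Exception:
            breaker.record(success=False)
            continue
        breaker.record(success=True)
        return result
    raise RuntimeError("no healthy provider available")
```

A production breaker would also need a way to close again, typically by allowing a trial request after a cooldown period.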

By integrating these configurations, sequencing patterns, artifacts, and handoff protocols, the prompting stage lays a robust foundation for scalable, consistent, and compliant AI-driven content production. Each element ensures seamless progression into drafting, review, and optimization, upholding strategic intent and operational efficiency.

Chapter 4: Content Drafting and Generation

Drafting Stage Overview

The drafting stage is the critical junction where conceptual outlines and thematic frameworks are transformed into first-pass content assets. Within an AI-driven workflow, this phase leverages specialized language, visual and multimedia models in parallel to generate on-brand text, imagery and supporting media at scale. Key objectives include rapid first-pass creation, consistent brand voice enforcement, multimodal asset production and traceable, metadata-rich outputs that underpin subsequent review and optimization.

- Rapid first-pass drafting using models such as OpenAI GPT-4 for narrative prose and Anthropic Claude for conversational segments.

- Brand and voice consistency driven by embedded style guides and terminology glossaries.

- Scalable, parallel generation of text, visuals and multimedia elements.

- Preservation of narrative continuity through managed context memory and versioned prompt schemas.

- Metadata annotation for end-to-end traceability and auditing.

Core Inputs and Readiness Conditions

Essential Inputs

Successful drafting depends on structured upstream artifacts that guide AI agents. Each input must adhere to machine-readable schemas (JSON, YAML) and include:

- Conceptual outlines and document skeletons defining sections, headings and key messages.

- Prompt specifications with system roles, tone directives and style constraints.

- Brand guidelines and voice profiles stored in standardized repositories.

- Audience and persona data shaping narrative angles and personalization hooks.

- Domain knowledge assets such as technical specifications, compliance rules and glossaries.

- Multimedia references including design mockups, image libraries and audio cues.

Prerequisites and System Integration

Prior to invoking drafting agents, the following conditions must be met:

- Model selection and configuration: Models assigned per modality—GPT-4, Claude, Google Vertex AI, diffusion engines like DALL·E 2 or Stable Diffusion.

- Compute resources provisioned: GPU/TPU clusters, cloud inference endpoints and autoscaling policies via Kubernetes or serverless services.

- API endpoint access and security: Rate limits, authentication tokens and monitoring hooks for each AI provider.

- Contextual memory initialization: Retrieval-augmented stores or dynamic vectors populated with upstream inputs.

- Workflow definitions: Orchestration scripts or state machines in Apache Airflow or proprietary platforms with retry and timeout policies.

- Access controls and audit trails: Role-based permissions and logging of prompt versions, input sources and draft iterations.

Quality and Compliance Conditions

Draft outputs must satisfy predefined metrics and rules:

- Automated style checks: Readability scores, vocabulary complexity and brand voice alignment via services like Grammarly Business.

- Regulatory guardrails: Embedded legal, medical or financial compliance rules in prompt definitions and post-draft filters.

- Inclusive language enforcement: DEI lexicon filters and context-aware rewriting policies.

- Security and privacy protocols: Redaction, encryption and data residency compliance.

Readiness Checklist

- Validated concept outlines and prompt templates available.

- Model endpoints health-checked and performance verified.

- Compute quotas and concurrency limits confirmed.

- Input schemas validated and tagged with metadata.

- Brand guidelines and compliance rules loaded into the orchestration environment.

- Monitoring, logging and alerting subsystems activated.

Deployment and Orchestration Architecture

Model Selection and Role Specialization

Mapping content objectives to model capabilities is essential for quality and efficiency:

- Transformer-based language models: GPT-4 or Cohere models for long-form text.

- Retrieval-augmented generation for fact-based outputs.

- Image generation engines: DALL·E 2, Stable Diffusion, and Adobe Firefly for imagery.

- Domain-specialized agents for audio/video synthesis.

Infrastructure and Supporting Systems

Robust deployment relies on containerized services and orchestration layers:

- Containerization with Docker and container registries.

- Kubernetes clusters for auto-scaling inference pods.

- API gateways exposing versioned endpoints.

- Model registries like MLflow and Hugging Face Model Hub.

- Data pipelines integrating knowledge bases and embedding stores.

Integration with Prompt and Workflow Pipelines

- Prompt templating services for centralized instruction definitions.

- Stateful context stores (Redis, PostgreSQL) to maintain dialogue history and revision state.

- Workflow engines (Apache Airflow, Camunda) coordinating dependencies and retries.

- Event buses triggering model invocations and downstream processes.

Parallel Generation Process

Task Segmentation and Agent Orchestration

A centralized orchestrator divides outlines into discrete subtasks and dispatches them to specialized agents:

- Segment text sections, image requests and video script tasks.

- Map subtasks to suitable models or microservices.

- Package prompts with style guidelines and reference materials.

- Dispatch requests concurrently via asynchronous message queues or HTTP/2 multiplexing.

- Monitor agent status and collect partial outputs in real time.

Concurrency and Resource Management

Intelligent distribution ensures stability and throughput:

- Priority queues for time-sensitive assets.

- Rate limiting to avoid API throttling.

- Batching large documents into logical chunks.

- Affinity rules to maintain context with consistent model instances.

- Backpressure handling to prevent overload.
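
A concurrency cap is one of the simpler ways to stay under provider rate limits while still drafting in parallel. The sketch below uses `asyncio.Semaphore`; the `draft` coroutine simulates model latency with a short sleep, and the default limit of two in-flight requests is an arbitrary assumption.

```python
import asyncio


async def draft_all(sections: list[str], max_concurrent: int = 2):
    """Draft sections in parallel, never exceeding max_concurrent in flight."""
    limiter = asyncio.Semaphore(max_concurrent)
    in_flight = 0
    peak = 0

    async def draft(section: str) -> str:
        nonlocal in_flight, peak
        async with limiter:  # waits here when the cap is reached
            in_flight += 1
            peak = max(peak, in_flight)
            await asyncio.sleep(0.01)  # simulated model latency
            in_flight -= 1
        return f"draft:{section}"

    drafts = await asyncio.gather(*(draft(s) for s in sections))
    return list(drafts), peak


drafts, peak = asyncio.run(draft_all(["intro", "body", "faq", "cta"]))
```

The returned `peak` value is there purely for observation; it makes it easy to confirm in a test or dashboard that the cap is actually being respected.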

Auto-Scaling and Cost Optimization

- Horizontal Pod Autoscaling in Kubernetes based on CPU, queue length and latency.

- Spot instances for interruptible batch workloads and on-demand instances for time-sensitive ones.

- Model sharding across GPUs for large inference loads.

- Real-time cost monitoring dashboards for budget-aware scaling.

Synchronization, Monitoring and Resilience

Output Aggregation and Handoff Criteria

As subtasks complete, outputs are validated, reassembled and staged for review:

- Schema and checksum validation to ensure structure compliance.

- Concatenation of text segments and association of images with captions.

- Duplicate detection and de-duplication workflows.

- Metadata attachment: timestamps, model versions, confidence scores.

- Staging assets in DAM or CMS until batch completion.

Handoff proceeds only when all subtasks have finished, quality flags are cleared and metadata enrichment is complete.
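
The reassembly and de-duplication steps can be sketched as follows. The segment shape (`position`, `text`) and the normalization rule used to detect duplicates are assumptions for illustration; real pipelines often use fuzzier similarity measures.

```python
from datetime import datetime, timezone


def aggregate(segments: list[dict], model_version: str) -> dict:
    """Reassemble segments in order, skipping repeated text."""
    seen: set[str] = set()
    ordered: list[str] = []
    for seg in sorted(segments, key=lambda s: s["position"]):
        # Normalize whitespace and case so near-identical repeats match.
        key = " ".join(seg["text"].split()).lower()
        if key in seen:
            continue
        seen.add(key)
        ordered.append(seg["text"].strip())
    return {
        "body": "\n\n".join(ordered),
        "segment_count": len(ordered),
        "model_version": model_version,
        "assembled_at": datetime.now(timezone.utc).isoformat(),
    }
```

Sorting by an explicit `position` field, rather than by arrival order, is what lets parallel agents finish in any order without scrambling the document.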

Error Handling and Dynamic Tuning

- Retry policies with exponential backoff for transient failures.

- Alternate model routing (for example, fallback to an earlier GPT-4 endpoint or a Claude model).

- Graceful degradation using placeholders for noncritical assets.

- Automated alerts and quarantine queues for persistent errors.

- Dynamic parameter adjustment: latency tracking, prompt refinements, temperature and top-k calibration.

Integration with Asset Management

- Automatic ingestion into DAM with metadata tagging.

- Version control of draft artifacts with rollback capabilities.

- Access controls for review agents and editors.

- Notification hooks triggering the review stage.

- Content indexing for search and preview generation.

Deliverables and Handoff Protocols

Draft Artifacts

- Primary text drafts in plain text or HTML, annotated with prompt and concept identifiers.

- Multimedia placeholders and low-resolution proxies with descriptive alt text.

- Structured metadata manifests (JSON/XML) detailing style metrics, word counts and coherence scores.

- Versioned output bundles including agent configuration snapshots.

- Integration adapters for CMS platforms like Contentful or WordPress.

Packaging for Automated Review

- Consolidate text, metadata and multimedia references into ZIP archives or JSON payloads.

- Annotate segments with review tags: grammar, style, factual checks, SEO readiness.

- Compute checksums (SHA-256) for integrity validation.

- Include priority levels and SLA targets for review routing.

- Deliver packages via message queues, cloud storage events or API calls.
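
A review package with an integrity checksum could be built along these lines. The payload fields and tag names are hypothetical; the one design point worth noting is that the SHA-256 digest is computed over a canonical JSON serialization, so that key ordering does not change the checksum.

```python
import hashlib
import json


def _digest(draft: dict) -> str:
    """SHA-256 over a canonical (sorted, compact) JSON form of the draft."""
    canonical = json.dumps(draft, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def package(draft: dict, priority: str = "normal") -> dict:
    """Wrap a draft with review tags, a priority level, and a checksum."""
    return {
        "draft": draft,
        "review_tags": ["grammar", "style", "factual", "seo"],
        "priority": priority,
        "sha256": _digest(draft),
    }


def verify(pkg: dict) -> bool:
    """Recompute the checksum on receipt and compare."""
    return _digest(pkg["draft"]) == pkg["sha256"]
```

A review agent would call `verify` before doing any work and reject, or request a resend of, any package that fails the check.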

Handoff Protocols and Collaboration Integration

- Emit event-driven notifications to trigger review workflows.

- Use short-lived API tokens for secure draft retrieval by review agents.

- Automate task creation in project management tools (Jira, Asana) with due dates derived from SLAs.

- Update dashboards with handoff acknowledgements and status tracking.

- Implement retry logic and escalation paths for transfer failures.

Content Management and Collaboration

- CMS ingestion via RESTful APIs into platforms such as Contentful or WordPress.

- Document exports to Google Docs or Microsoft Word for inline feedback.

- Git repository commits with pull request templates and automated quality gates.

- Real-time chat notifications in Slack or Teams with summary cards and action buttons.

Governance and Compliance Considerations

- Detailed audit logs of all handoffs and draft versions.

- Schema version management and backward compatibility controls.

- Error categorization with clear escalation mechanisms.

- Performance monitoring of handoff latency and failure rates.

- Additional checkpoints for regulated content (WCAG accessibility, data privacy, legal review).

Chapter 5: Automated Review and Refinement

Unifying Content Operations with AI Orchestration

Organizations face growing pressure to deliver high volumes of consistent, on-brand content with minimal latency. Traditional workflows built on siloed teams and disconnected tools introduce bottlenecks, inconsistent messaging, and manual overhead when stitching together drafts, reviews, and optimizations. A unified AI orchestration framework provides a structured, end-to-end mechanism that aligns content inputs, creative processes, and distribution channels. By coordinating specialized AI agents through a central controller, teams achieve reliable quality, accelerated time to market, and scalable creative output.

The rapid adoption of generative AI unlocks possibilities for automating ideation, drafting, review, and optimization tasks. However, fragmented implementations of standalone AI tools—such as Jasper.ai for text generation or Adobe Sensei for image and video assistance—often lack overarching governance. A cohesive orchestration layer standardizes data handoffs, enforces brand and compliance rules, and provides transparency into each stage of production.

Core Principles and Workflow Sequence

Effective AI orchestration rests on four foundational principles:

- Modularity: Encapsulate each stage as a discrete module with defined inputs, outputs, and performance criteria.

- Interoperability: Use standardized data schemas and communication protocols to enable seamless metadata and artifact exchange.

- Governance: Maintain centralized policy management for brand guidelines, regulatory compliance, and quality thresholds.

- Observability: Implement logging, tracing, and analytics to capture agent decisions and workflow performance.

The typical end-to-end workflow sequence is:

- Input Aggregation: Normalize business requirements, source assets, and audience insights into a structured repository.

- Concept Ideation: AI agents generate themes and outlines using models like GPT-4 or Claude.

- Prompt Configuration: Design context-aware prompts with parameter settings managed via orchestration consoles.

- Content Generation: Run language models and multimodal engines in parallel via tools like Copy.ai or in-house transformer services.

- Automated Review: Editing agents conduct grammar, style, and factual checks using Grammarly and Hemingway Editor.

- Optimization: SEO agents enrich content with keywords, meta descriptions, and readability improvements through platforms like Surfer SEO.

- Personalization: Tailor variants for audience segments using behavioral models and CRM data.

- Multimodal Integration: Assemble text, images, audio, and video into cohesive deliverables.

- Distribution Scheduling: Adapt formats and schedule releases via CMS and social media APIs.

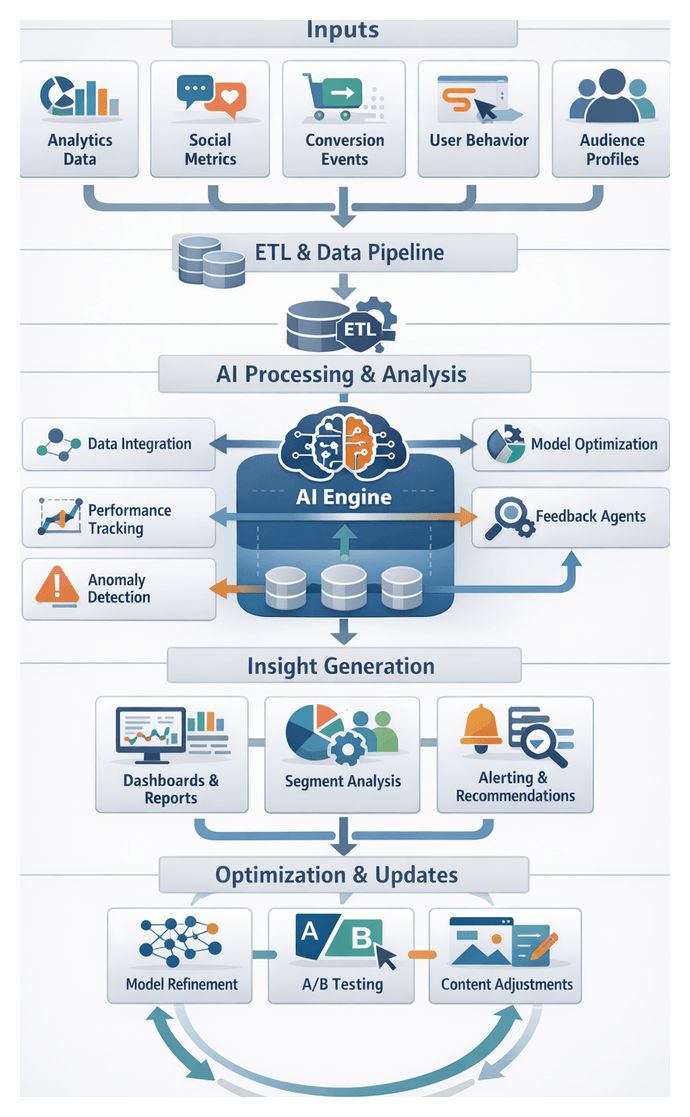

- Analytics Feedback: Ingest performance metrics to refine prompts, retrain models, and adjust workflows.

AI Agents and Their Roles

Assigning clear responsibilities to specialized AI agents ensures consistent quality and scalable creativity:

- Ideation Agents: Analyze audience data and market trends to propose content themes and narrative frameworks, leveraging large language models.

- Prompt Design Agents: Translate concepts into precise prompts, managing parameters and version control for reproducibility.

- Content Drafting Agents: Generate initial drafts, social posts, email copy, and video scripts using text and multimodal engines.

- Review and Quality Assurance Agents: Perform automated editing, fact-checking, and brand consistency audits with tools such as Grammarly and Hemingway Editor.

- Optimization and SEO Agents: Evaluate keyword integration, readability, and meta tags, guided by analytics and platforms like Surfer SEO.

- Personalization Agents: Customize tone, calls to action, and imagery based on persona profiles, CRM insights, and behavioral signals.

- Distribution Orchestration Agents: Manage multi-channel publishing, format adaptation, and scheduling through CMS connectors and social APIs.

The automated review and refinement stage serves as the critical quality gate in the content pipeline. It employs AI agents for grammar correction, style alignment, factual verification, and compliance checks to ensure every draft aligns with brand standards and regulatory requirements.

Objectives and Outputs

This stage aims to:

- Validate adherence to editorial and brand guidelines at scale.

- Identify and correct linguistic errors and factual inaccuracies.

- Enforce legal, regulatory, and accessibility standards.

- Enhance clarity and readability for the target audience.

- Produce structured feedback reports and annotated drafts for iterative refinement.

Required Inputs

Effective review relies on four key inputs:

- Draft Content Assets: Text drafts, image placeholders, scripts, and metadata tagged with unique identifiers and version numbers.

- Editorial and Style Guidelines: Brand manuals, tone documents, and style sheets accessed via CMS or APIs.

- Terminology and Glossaries: Approved term lists, product names, and legal phrases enforced through repositories and tools like Grammarly.

- Regulatory Specifications: Data privacy rules, accessibility standards, and sector regulations sourced from compliance platforms.

Prerequisites and Integration

Success depends on:

- Structured metadata handovers with author IDs, timestamps, and campaign tags.

- Agent configuration for error thresholds and domain adaptation via orchestration consoles.

- Real-time connectivity to knowledge bases, style repositories, and compliance engines.

- Version control and audit trails for all edits and feedback annotations.

- Defined escalation paths for severe issues routed to human approvers.

Refined Outputs and Approval Handoffs

Upon completion of review, the system delivers polished content assets alongside validation metadata. These artifacts provide transparency, traceability, and seamless handoff to optimization and distribution stages.

Artifact Specifications

- Final content body in HTML, Markdown, or JSON formats.

- Inline editorial annotations with automated change-tracking.

- Compliance and brand-voice reports generated by validation agents.

- Revision history logs capturing edit iterations and agent IDs.

- Quality scorecards detailing grammar error rates, readability grades, and SEO readiness.

- Metadata bundles with taxonomy tags, entity extractions, and focus keywords.

Dependency Matrix and Quality Gates

Key dependencies include:

- Initial drafts and multimedia assets from the generation stage.

- Brand voice and style guides managed in platforms like Contentful.

- Editorial policies and compliance checklists from systems such as Acrolinx.

- Tone and terminology models trained with tools like ProWritingAid.

- SEO parameters and readability thresholds provided by Surfer SEO.

- Metadata schemas and taxonomy hierarchies for downstream tagging.

Quality gates enforce criteria such as a Flesch Reading Ease score above 60, grammar error density below 1 per 1,000 words, and style compliance above 95 percent. Failed checks trigger automated rerouting or notifications for manual intervention.
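
The readability, grammar-density, and style thresholds quoted above translate directly into a gate function. The sketch below assumes the metrics have already been computed upstream; the `ReviewMetrics` fields and the gate names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewMetrics:
    readability: float       # readability score; higher means easier to read
    grammar_errors: int
    word_count: int
    style_compliance: float  # fraction in [0, 1]


def failed_gates(m: ReviewMetrics) -> list[str]:
    """Return the names of gates that did not pass (empty list = all clear)."""
    failures = []
    if m.readability <= 60:
        failures.append("readability")
    # Density is errors per 1,000 words; it must stay below 1.
    if m.word_count and m.grammar_errors / m.word_count * 1000 >= 1:
        failures.append("grammar_density")
    if m.style_compliance <= 0.95:
        failures.append("style_compliance")
    return failures
```

Returning gate names instead of a single boolean lets the orchestrator route each failure to the right remediation path, such as a rewrite agent for readability or a human editor for style.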

Handoff Mechanisms

Refined artifacts enter the next stage via:

- API-Driven Transfer: RESTful endpoints consume JSON payloads containing content bodies, annotations, and metrics.

- Message Queues: Serialized artifacts published to Kafka or AWS SNS/SQS and consumed by optimization agents.

- Repository Commits: Versioned commits to Git or object storage with webhooks triggering downstream workflows.

- Content Operations Platforms: CMS connectors tag content as “Ready for Optimization” in systems like Contentful.

- Stakeholder Notifications: Automated alerts via email, Slack, or Microsoft Teams, with approvals tracked in platforms such as Jira.

Authentication, schema validation, and error handling are enforced at each integration endpoint. Successfully handed-off content is consumed by optimization agents to enrich keywords, generate meta descriptions, and adjust structure while preserving editorial context through inline annotations and compliance reports. This rigorous approach ensures dependable quality and alignment with strategic guidelines as content moves through a scalable, AI-driven workflow.

Chapter 6: Optimization for Engagement and SEO

Fragmented Content Production Challenges

Organizations today produce diverse content—blog posts, white papers, social media updates, videos and interactive assets—across multiple channels, formats and languages. Traditional manual workflows struggle under disconnected processes, disparate tools and siloed responsibilities, leading to inefficiencies that undermine consistency, quality and scalability. Without unified frameworks, teams rely on email chains, shared drives and ad-hoc meetings, causing lost feedback, version confusion and last-minute rework. As asset volumes swell, tracking requirements and maintaining brand voice across hundreds of deliverables per quarter expose the fragility of manual production models.

Process fragmentation arises when tasks lack standardized workflows. Locally defined checklists and folder structures inhibit cross-project reuse and scaling. Technology fragmentation occurs when multiple point solutions—content management systems, project management tools and file-sharing platforms—operate without integration, slowing workflows and risking data inconsistency. Knowledge fragmentation results from scattered brand guidelines, asset data and performance metrics, eroding the ability to learn from past campaigns.

Siloed teams exacerbate misalignment: marketing strategists draft outlines without visibility into design capacity or legal review guidelines; designers and writers scramble to reconcile conflicting requirements. Manual handoffs introduce communication breakdowns—comments buried in email threads, track-change conflicts and outdated documents—leading to duplication of effort and overlooked feedback. Without automated version control, each revision cycle amplifies delays and frustration.

Inconsistent quality and brand alignment become common. Style rules, tone of voice and compliance requirements are interpreted variably, eroding brand integrity and audience trust. Data and insights remain locked in siloed analytics platforms, CRM systems and research reports, depriving teams of unified, real-time feedback for data-driven decision making. As content volumes grow, manual workflows buckle under increased demand, forcing either headcount expansion or extended timelines, neither of which aligns with agile market expectations. Bottlenecks concentrate around resource-intensive tasks such as legal review, editorial approval and multichannel formatting, lengthening time-to-market and diminishing competitive advantage.

Stakeholder frustration mounts as meetings multiply, manual status updates consume valuable time and creative teams experience workflow fatigue. Turnover rises, morale falls and operational expenses increase. This diagnostic stage of mapping current workflows—documenting tools, processes, sample artifacts, brand guidelines, performance data and stakeholder interviews—lays the foundation for a unified, AI-driven framework. Cross-functional commitment, governance frameworks and baseline metrics are prerequisites to transition from fragmented, manual production to orchestrated processes powered by AI agents.

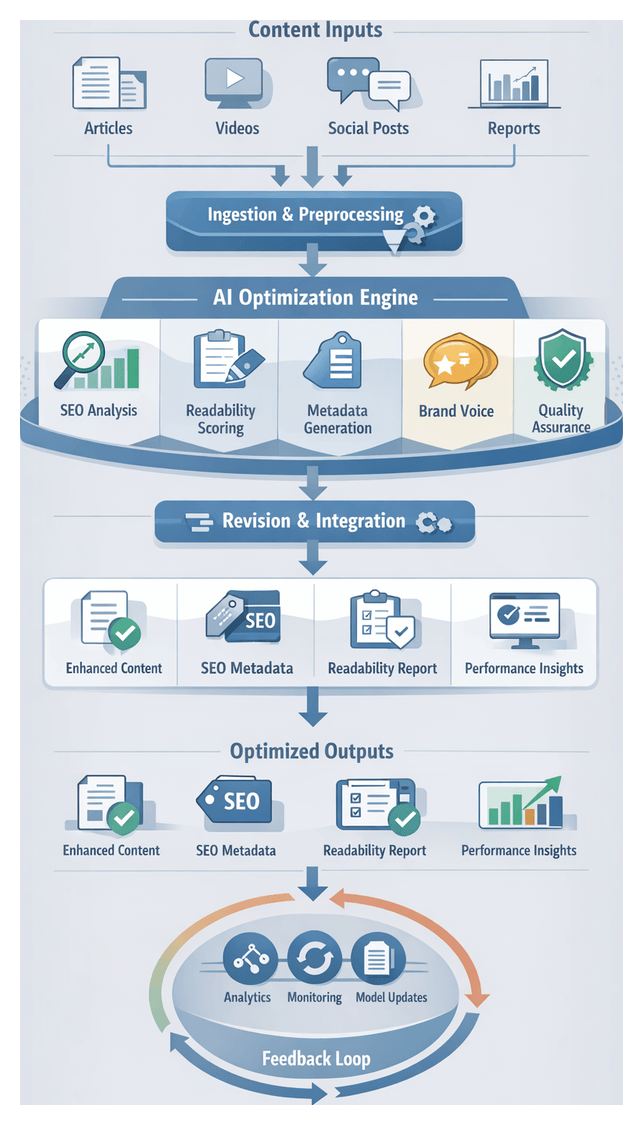

Orchestrating AI-Driven SEO and Readability Enhancement

Optimizing refined drafts for search engine visibility and reader comprehension involves coordinating specialized AI agents within a modular orchestration framework. The Optimization Orchestrator functions as a control plane, dispatching tasks, aggregating results and managing dependencies through RESTful APIs, messaging queues or SDK integrations.

Workflow Architecture and Key Components

- Content Ingestion Module: Normalizes formatting, extracts semantic tags and appends contextual metadata.

- SEO Analysis Agent: Integrates with Semrush, Surfer SEO and Clearscope to perform keyword benchmarking, heading optimization, semantic clustering and automated keyword integration via large language models.

- Metadata Generation Service: Uses MarketMuse or Frase to craft optimized title tags, meta descriptions, Open Graph and Twitter Card snippets that follow brand guidelines.

- Readability Scoring Agent: Leverages Hemingway Editor and Grammarly to compute Flesch-Kincaid, SMOG and clarity metrics, proposing sentence simplifications, active-voice transformations and jargon reduction.

- Brand Voice Agent: Loads predefined voice profiles to ensure formality, empathy and technical depth align with style guides.

- Quality Assurance Agent: Validates SEO compliance, readability thresholds and WCAG 2.1 accessibility standards, routing failures back for remediation.

- Revision Integration Engine: Applies AI-driven suggestions to content, maintaining change logs and version control for auditability.
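
To make the readability metrics concrete, the sketch below computes the two standard Flesch formulas: Reading Ease (higher is easier) and Flesch-Kincaid Grade Level (a US school grade). The formulas are the published ones, but the syllable counter is a rough vowel-group heuristic assumed here for brevity, so scores will differ slightly from commercial tools.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, with a floor of one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def readability(text: str) -> dict:
    """Compute Flesch Reading Ease and Flesch-Kincaid Grade Level."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence")
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = sum(count_syllables(w) for w in words) / len(words)
    return {
        "reading_ease": 206.835 - 1.015 * words_per_sentence
                        - 84.6 * syllables_per_word,
        "grade_level": 0.39 * words_per_sentence
                       + 11.8 * syllables_per_word - 15.59,
    }


simple = readability("The cat sat on the mat.")
dense = readability(
    "Organizational interoperability necessitates comprehensive standardization."
)
```

Both scores depend only on sentence length and syllable density, which is why suggestions such as sentence simplification and jargon reduction move them so reliably.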

Optimization Sequence

- Ingestion and Preprocessing: The orchestrator receives drafts with structured fields, performs normalization, semantic tag extraction and registers documents in the pipeline.

- Parallel SEO and Readability Analysis: The SEO Analysis Agent evaluates ranking factors, performs competitive gap analysis, suggests heading adjustments and integrates keywords contextually. Simultaneously, the Readability Scoring Agent calculates readability indices and generates linguistic refinements.

- Metadata Generation: After body optimization, the Metadata Generation Service generates title tags and meta descriptions within character limits, prioritizing keywords and brand integration. Alt text for images is crafted to boost accessibility and SEO.

- Brand Voice Consistency: The Brand Voice Agent scores drafts against voice profiles, adjusting tone and enforcing terminology conventions.

- Automated Quality Assurance: The Quality Assurance Agent runs final checks on SEO, readability and accessibility, triggering alerts or automated handoffs when a check fails.

- Deliverable Packaging: The orchestrator compiles enriched content, metadata, optimization reports and revision logs into a final package for personalization or distribution agents.

This scalable, repeatable flow is designed so that every piece of content is checked against search engine requirements, reader expectations and brand standards before it reaches digital channels.
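
The sequence above can be sketched with Python's asyncio, assuming each agent is exposed as a function. Every name here is hypothetical, and the agent bodies are trivial stand-ins for calls to real SEO or readability services; only the ordering and the parallel step mirror the description.

```python
import asyncio


async def seo_analysis(draft: dict) -> dict:
    """Stand-in for the SEO Analysis Agent (a real agent would call external tools)."""
    await asyncio.sleep(0)  # placeholder for network I/O
    return {"keywords": sorted(set(draft["body"].lower().split()))[:3]}


async def readability_analysis(draft: dict) -> dict:
    """Stand-in for the Readability Scoring Agent."""
    await asyncio.sleep(0)
    return {"word_count": len(draft["body"].split())}


def generate_metadata(draft: dict, seo: dict, max_title: int = 60) -> dict:
    """Stand-in for the Metadata Generation Service; the 60-character limit is assumed."""
    return {"titleTag": draft["title"][:max_title], "keywords": seo["keywords"]}


def quality_gate(metadata: dict, readability: dict) -> list:
    """Return a list of failures; an empty list means the draft passes."""
    failures = []
    if not metadata["titleTag"]:
        failures.append("missing title tag")
    if readability["word_count"] == 0:
        failures.append("empty body")
    return failures


async def optimize(draft: dict) -> dict:
    # Parallel SEO and readability analysis.
    seo, readability = await asyncio.gather(seo_analysis(draft), readability_analysis(draft))
    # Metadata generation depends on the SEO results, so it runs afterwards.
    metadata = generate_metadata(draft, seo)
    # Automated quality assurance decides whether the draft goes back for remediation.
    failures = quality_gate(metadata, readability)
    status = "needs_remediation" if failures else "ready"
    # Deliverable packaging for downstream agents.
    return {"id": draft["id"], "status": status, "metadata": metadata,
            "reports": {"seo": seo, "readability": readability}, "failures": failures}


package = asyncio.run(optimize({"id": "doc-1", "title": "Agent Orchestration Basics",
                                "body": "Agents coordinate tasks across the pipeline"}))
print(package["status"])
```

Using `asyncio.gather` keeps the two independent analyses concurrent while the dependent steps stay strictly ordered.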

Data-Driven Optimization Agent Roles

Specialized AI agents leverage performance data and content analytics to refine discoverability, engagement and relevance. By integrating with analytics platforms, content management systems and SEO tools, these agents apply targeted enhancements to refined drafts and metadata.

- Analytics and Insights Agents: Ingest data from Google Analytics, Adobe Analytics, social platforms and email campaigns. They normalize metrics, detect anomalies, identify trending topics and generate dashboards highlighting optimization opportunities.

- SEO Integration Agents: Perform keyword discovery with semantic analysis, generate metadata, suggest keyword placements, analyze backlink opportunities and monitor algorithm updates via Ahrefs, Moz and other SEO suites.

- Readability and Accessibility Agents: Calculate readability scores, optimize structure with hierarchical headings and bullet lists, validate image alt text, verify color contrast and offer language simplifications to support diverse audiences.

- Semantic Enrichment Agents: Identify entities, recommend contextual internal and external links, suggest topic expansions and integrate knowledge graph schemas to enhance topical authority.

- Predictive Performance Modeling Agents: Use historical data and machine learning to forecast engagement, conversions and search rankings. They simulate optimization strategies, estimate ROI and guide prioritization.

- Tone and Engagement Calibration Agents: Analyze sentiment and emotional triggers, generate headline variants, and refine calls to action for clarity, urgency and brand alignment.

- Personalization and Segment Scoring Agents: Build audience profiles from CRM and behavioral data, predict content variant performance per segment, inject dynamic parameters for personalization and monitor segment-specific metrics.

- Automation and Workflow Orchestration Agents: Coordinate job scheduling, manage dependencies, connect to external services via API gateways or message queues, and expose error and health metrics to monitoring tools such as Prometheus and Grafana.

- Model Retraining and Feedback Loop Agents: Aggregate post-publication metrics, detect performance drift, automate model retraining pipelines, manage model versioning and validate updated models before deployment.

Supporting infrastructure includes cloud data lakes or warehouses, integration middleware, SEO suites, analytics platforms and orchestration frameworks such as Apache Airflow or Prefect. Robust monitoring and logging ensure observability into agent performance and system health.
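
One way a Model Retraining and Feedback Loop Agent might detect performance drift is to compare predicted and observed metrics after publication. The sketch below uses mean absolute error against a baseline; the function names, the 1.5x tolerance and the sample click-through rates are all illustrative assumptions.

```python
from statistics import mean


def mean_absolute_error(predicted, actual) -> float:
    """Average absolute gap between predicted and observed values."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("inputs must be non-empty and the same length")
    return mean(abs(p - a) for p, a in zip(predicted, actual))


def needs_retraining(predicted, actual, baseline_mae: float, tolerance: float = 1.5) -> bool:
    """Flag drift when the current error exceeds the baseline by the tolerance factor."""
    return mean_absolute_error(predicted, actual) > baseline_mae * tolerance


# Made-up click-through rates, for illustration only.
predicted_ctr = [0.04, 0.05, 0.03]
drifted_ctr = [0.02, 0.02, 0.01]
stable_ctr = [0.045, 0.05, 0.03]
print(needs_retraining(predicted_ctr, drifted_ctr, baseline_mae=0.01))
print(needs_retraining(predicted_ctr, stable_ctr, baseline_mae=0.01))
```

A production agent would likely track several metrics and use a statistical test instead of a fixed multiplier, but the trigger logic has the same shape.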

Optimized Content Deliverables and Integration Handoffs

The optimization stage produces a comprehensive deliverables package bridging content crafting and personalized distribution. Clear definitions of outputs, dependencies and handoff protocols ensure consistency, reduce rework and accelerate time-to-market.

Primary Deliverables

- Enriched Content Assets: AI-enhanced text with keyword annotations, updated headers, optimized alt text and refined calls to action.

- Metadata and Tagging Package: Title tags, meta descriptions, structured data snippets (JSON-LD or microdata), Open Graph attributes and social card specifications.

- Readability and Engagement Report: Detailed Flesch-Kincaid scores, sentence complexity metrics and predicted time-on-page.

- Keyword Mapping Overlay: Section-level distribution of primary, secondary and long-tail keywords, with hierarchy recommendations.

- SEO Audit Summary: Automated checks for broken links, missing alt attributes, duplicate headings, internal linking opportunities and page speed insights.

- Performance Prediction Models: Machine-learning estimates of click-through rate, bounce likelihood and engagement forecasts.

- Compliance and Brand-Voice Validation: Confirmation of adherence to style guides, regulatory constraints and tone consistency.

Dependencies and Prerequisites

- Refined Draft Content: Clean copy with resolved placeholders and asset references.

- Keyword Taxonomy and Strategy: Validated target keywords and competitive benchmarks from SurferSEO and MarketMuse.

- Brand and Regulatory Guidelines: Style guides, legal copy requirements and WCAG 2.1 standards.

- Analytics and User Data Streams: Historical metrics from Google Analytics and Adobe Analytics.

- Technical Constraints: CMS templates, URL limits, image dimensions and page-speed parameters.

- Integration Credentials: Secure API keys or service accounts for headless CMS, personalization engines and distribution platforms.

- Agent Configuration Profiles: Parameter sets for SEO workflows, readability thresholds and compliance checklists maintained in orchestration tools.

Handoff Protocols

- Packaging Formats:

  - ContentPayload.json: Enriched text, metadata tags and keyword mappings.

  - SEO_Audit_Summary.xml: Structured audit results for CMS integration.

  - ReadabilityReport.pdf: Readability scores, a section-level breakdown and simplification recommendations.

- Versioning and Change Tracking: Semantic version identifiers linked to Git commits or CMS revisions.

- API-Driven Transfer: Orchestration agents push payloads to personalization engines via RESTful endpoints or message queues.

- Confirmation and Quality Gates: Handshake protocols using checksums or digital signatures to verify integrity before downstream processing.

- Human-In-The-Loop Notifications: Alerts via email or Slack for manual review of sensitive content, with links to assets in a centralized DAM.
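
The integrity handshake can be sketched with Python's standard library. The example assumes a shared secret between sender and receiver and uses an HMAC as a stand-in for a digital signature (asymmetric signatures would need a third-party library); the envelope layout and function names are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(payload: dict) -> bytes:
    """Serialize deterministically so sender and receiver hash identical bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def build_envelope(payload: dict, secret: bytes) -> dict:
    """Wrap a payload with a SHA-256 checksum and an HMAC over its canonical form."""
    body = canonical_bytes(payload)
    return {
        "payload": payload,
        "sha256": hashlib.sha256(body).hexdigest(),
        "signature": hmac.new(secret, body, hashlib.sha256).hexdigest(),
    }


def verify_envelope(envelope: dict, secret: bytes) -> bool:
    """Recompute both digests and compare them in constant time."""
    body = canonical_bytes(envelope["payload"])
    checksum_ok = hmac.compare_digest(hashlib.sha256(body).hexdigest(), envelope["sha256"])
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return checksum_ok and hmac.compare_digest(expected, envelope["signature"])


secret = b"example-shared-secret"  # illustrative only; load real secrets from a vault
envelope = build_envelope({"id": "doc-1", "version": "1.2.0"}, secret)
print(verify_envelope(envelope, secret))
```

The checksum catches accidental corruption in transit, while the keyed HMAC also detects tampering by anyone who does not hold the secret.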

Asset Packaging Specifications

ContentPayload.json

- id: Unique content identifier

- version: Semantic version tag

- body: HTML string with keyword span annotations

- metadata: Object containing titleTag, metaDescription, ogTitle and ogDescription

- keywords: Array of objects with frequency, intent and priority fields
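
A minimal sketch of how this payload could be modeled and serialized follows. The `term` field on each keyword is an assumption (the specification above names only frequency, intent and priority), and the sample values are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Keyword:
    term: str       # assumed field, not listed in the specification above
    frequency: int
    intent: str
    priority: int


@dataclass
class ContentPayload:
    id: str
    version: str
    body: str
    metadata: dict
    keywords: list = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the payload, including nested Keyword objects, to JSON."""
        return json.dumps(asdict(self), indent=2)


payload = ContentPayload(
    id="doc-1",
    version="1.2.0",
    body='<p>Learn <span class="kw">agent orchestration</span> basics.</p>',
    metadata={
        "titleTag": "Agent Orchestration Basics",
        "metaDescription": "An introduction to coordinating AI agents.",
        "ogTitle": "Agent Orchestration Basics",
        "ogDescription": "Coordinate AI agents across a content pipeline.",
    },
    keywords=[Keyword(term="agent orchestration", frequency=1, intent="informational", priority=1)],
)
print(payload.to_json())
```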

SEO_Audit_Summary.xml

- <pageSpeed>: Numeric score

- <altTextReports>: List of image alt attributes

- <linkChecks>: BrokenLinks, InternalLinks and OutboundLinks results
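
The audit summary could be produced with the standard library's ElementTree, as sketched below. The root element name, the per-image child element and the sample values are assumptions; only the three top-level element names come from the specification above.

```python
import xml.etree.ElementTree as ET


def build_audit_summary(page_speed: int, alt_texts: dict, link_checks: dict) -> str:
    """Build the audit XML as a string from plain Python values."""
    root = ET.Element("seoAuditSummary")  # assumed root element name
    ET.SubElement(root, "pageSpeed").text = str(page_speed)

    alt_reports = ET.SubElement(root, "altTextReports")
    for src, alt in alt_texts.items():
        image = ET.SubElement(alt_reports, "image", src=src)  # assumed child element
        image.text = alt

    links = ET.SubElement(root, "linkChecks")
    for name in ("BrokenLinks", "InternalLinks", "OutboundLinks"):
        ET.SubElement(links, name).text = str(link_checks.get(name, 0))

    return ET.tostring(root, encoding="unicode")


xml_text = build_audit_summary(
    page_speed=87,
    alt_texts={"hero.png": "Diagram of the optimization pipeline"},
    link_checks={"BrokenLinks": 2, "InternalLinks": 14, "OutboundLinks": 5},
)
print(xml_text)
```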

ReadabilityReport.pdf

- Executive summary of key scores

- Section-by-section readability breakdown

- Recommendations for simplification

Validation and Quality Gates

- Schema Validation: Validates payloads against registered JSON Schema and XSD definitions.

- Checksum Comparison: Confirms file integrity after transfer.

- Cross-Field Consistency Checks: Verify that metadata and content agree.

- Accessibility Audit: Assesses alt text and semantic HTML structure.

- Brand-Voice Verification: Scores alignment against style-guide vectors.
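
Several of these gates can be sketched in a few lines. The example below hand-rolls a required-field check as a stand-in for full JSON Schema validation, then runs a cross-field check and an alt-text audit; the 60-character title limit and the `term` keyword field are assumptions, and all names are hypothetical.

```python
from html.parser import HTMLParser

REQUIRED_FIELDS = {"id": str, "version": str, "body": str, "metadata": dict, "keywords": list}
MAX_TITLE_CHARS = 60  # assumed limit, adjust to the target platform


class ImgAltChecker(HTMLParser):
    """Counts <img> tags that have no alt attribute or an empty one."""

    def __init__(self):
        super().__init__()
        self.missing_alt = 0

    def handle_starttag(self, tag, attrs):
        if tag == "img" and not dict(attrs).get("alt"):
            self.missing_alt += 1


def run_quality_gates(payload: dict) -> list:
    """Return human-readable failures; an empty list means every gate passed."""
    failures = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(payload.get(name), expected_type):
            failures.append(f"schema: {name} missing or not {expected_type.__name__}")
    if failures:
        return failures  # later gates assume a well-formed payload

    title = payload["metadata"].get("titleTag", "")
    if not title or len(title) > MAX_TITLE_CHARS:
        failures.append("consistency: titleTag empty or too long")
    body_lower = payload["body"].lower()
    for keyword in payload["keywords"]:
        if keyword["term"].lower() not in body_lower:
            failures.append(f"consistency: keyword '{keyword['term']}' not found in body")

    checker = ImgAltChecker()
    checker.feed(payload["body"])
    if checker.missing_alt:
        failures.append(f"accessibility: {checker.missing_alt} image(s) without alt text")
    return failures


good = {
    "id": "doc-1",
    "version": "1.0.0",
    "body": '<h1>Agent orchestration</h1><img src="a.png" alt="Pipeline diagram">',
    "metadata": {"titleTag": "Agent Orchestration Basics"},
    "keywords": [{"term": "agent orchestration"}],
}
print(run_quality_gates(good))
```

Returning a list of failures, as opposed to raising on the first one, lets the orchestrator route a complete remediation report back to the responsible agent.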

Integration into Downstream Systems

- Personalization Engines: Ingest ContentPayload.json for segment-specific variants, dynamic token replacement and user-profile adaptation.

- Distribution Platforms: Consume metadata manifests to schedule publication across websites, email campaigns and social channels, with format conversions as needed.

- Analytics and Feedback Systems: Log performance predictions and SEO audit results for post-publication comparison against real-world metrics.

By defining optimized outputs, specifying dependencies and codifying handoff protocols, organizations achieve end-to-end automation without sacrificing quality or brand alignment. This streamlined, repeatable process connects AI-driven optimization directly to audience-focused delivery, maximizing efficiency and impact.

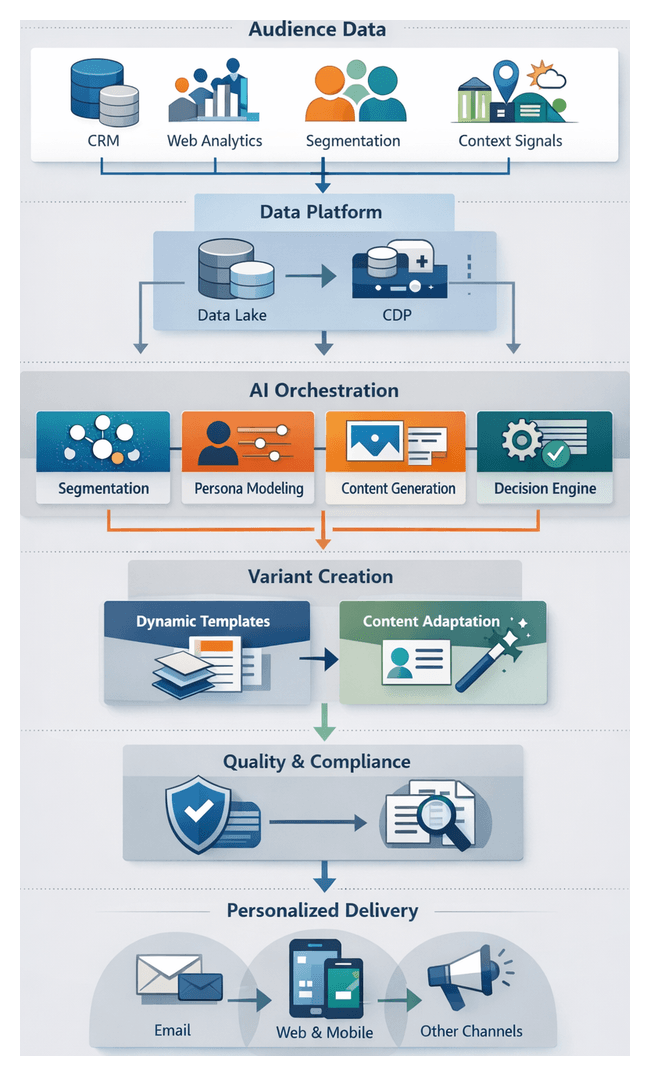

Chapter 7: Personalization and Audience Targeting

The personalization stage transforms generic content into tailored experiences by leveraging behavioral, demographic, and contextual data. By aligning messaging with individual preferences and real-time signals, organizations can boost engagement, improve conversion rates, and foster customer loyalty. This stage relies on a coordinated AI orchestration framework to generate, validate, and distribute multiple content variants while ensuring brand consistency and regulatory compliance.

Personalization Objectives and Data Requirements

Clear objectives guide AI agents and define the necessary data inputs for effective personalization:

- Deliver contextually relevant content using user profiles and messaging templates

- Improve conversion rates with dynamic calls to action aligned to individual intent

- Enhance user experience by selecting optimal format and tone for each segment

- Maintain brand consistency via centralized style and messaging guidelines

- Scale content variants efficiently through automated generation and selection

Successful personalization depends on robust audience data and infrastructure components:

- Unified Customer Profiles: Consolidated data from CRM, web analytics, email marketing, and offline sources

- Segmentation Strategy: Defined segments based on demographics, behavior, purchase history, lifecycle stage, and predictive intent

- Behavioral Data Streams: Real-time tracking of page visits, clicks, session duration, and consumption patterns

- Contextual Signals: Device type, location, time, weather, and campaign parameters

- Brand Guidelines: Centralized assets specifying tone, messaging hierarchy, and compliance rules

- Privacy Metadata: Consent statuses and data usage policies for GDPR, CCPA, and other regulations
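
Privacy metadata only helps if agents consult it before using a profile. A minimal sketch of a consent filter follows, assuming each profile carries a set of consented purposes; the `Profile` shape and the purpose labels are hypothetical and do not represent a legal interpretation of GDPR or CCPA.

```python
from dataclasses import dataclass, field


@dataclass
class Profile:
    user_id: str
    region: str
    consents: set = field(default_factory=set)  # purposes the user has agreed to


def eligible_for(profiles, purpose: str) -> list:
    """Keep only profiles whose recorded consent covers the given purpose."""
    return [p for p in profiles if purpose in p.consents]


profiles = [
    Profile("u1", "EU", {"email_personalization", "analytics"}),
    Profile("u2", "US", {"analytics"}),
    Profile("u3", "EU"),
]
print([p.user_id for p in eligible_for(profiles, "email_personalization")])
```

Applying the filter at the start of the personalization workflow means downstream agents never see profiles they are not permitted to use.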

Data Infrastructure and Integration

A scalable, low-latency data architecture is essential to feed AI agents with accurate, up-to-date inputs:

- Data Lake or Warehouse: Central repository for raw and processed audience data

- Customer Data Platform (CDP): Unified user profiles with real-time updates

- API Layer: Standardized interfaces for segmentation outputs and contextual signals

- Data Pipelines: ETL/ELT workflows to normalize, clean, and enrich data

- Event Streaming: Platforms such as Apache Kafka or Amazon Kinesis for real-time delivery of behavioral events

Integration ensures AI agents access complete, accurate profiles. Latency and throughput must support use cases like on-site personalization or send-time email optimization.

Audience Modeling and Agent Collaboration

Audience modeling interprets raw data to build detailed personas and inform content assembly. AI agents collaborate to segment users, generate profiles, and enable real-time decisioning.

Data Sources and Preprocessing

- CRM systems for transactional history and support interactions

- Web analytics for navigation paths, session duration, and engagement metrics

- Social listening tools for sentiment and topical interest

- Third-party demographic databases to enrich profiles

Feature engineering agents cleanse data and derive RFM (recency, frequency, monetary) scores, engagement indexes, and propensity indicators. Natural language processing extracts themes from feedback and social posts.
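
To make the RFM step concrete, the sketch below derives recency, frequency and monetary values from a transaction list and maps each to a 1-3 score. The cutoffs, the three-level scale and the sample transactions are illustrative assumptions; many teams use quintiles computed from their own data instead.

```python
from collections import defaultdict
from datetime import date


def rfm_raw(transactions, today: date) -> dict:
    """transactions: iterable of (customer_id, purchase_date, amount)."""
    last, count, total = {}, defaultdict(int), defaultdict(float)
    for customer, when, amount in transactions:
        last[customer] = max(last.get(customer, when), when)
        count[customer] += 1
        total[customer] += amount
    return {c: ((today - last[c]).days, count[c], total[c]) for c in last}


def score(value: float, cutoffs: tuple, reverse: bool = False) -> int:
    """Map a value to 1..len(cutoffs)+1; reverse=True rewards smaller values."""
    rank = 1 + sum(value > c for c in cutoffs)
    return len(cutoffs) + 2 - rank if reverse else rank


def rfm_scores(transactions, today: date) -> dict:
    """Return {customer: (R, F, M)} using assumed fixed cutoffs."""
    return {
        c: (score(r, (30, 90), reverse=True), score(f, (1, 3)), score(m, (100.0, 500.0)))
        for c, (r, f, m) in rfm_raw(transactions, today).items()
    }


transactions = [
    ("alice", date(2024, 3, 1), 80.0),
    ("alice", date(2024, 5, 20), 120.0),
    ("alice", date(2024, 6, 10), 450.0),
    ("alice", date(2024, 6, 25), 60.0),
    ("bob", date(2024, 1, 15), 40.0),
]
scores = rfm_scores(transactions, today=date(2024, 7, 1))
print(scores)
```

Recency is scored in reverse because a smaller number of days since the last purchase indicates a more engaged customer.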

Behavioral and Psychographic Modeling

- Clustering algorithms to identify cohorts with similar behavior

- Classification models to predict response likelihood

- Topic modeling for latent interests

- Sentiment analysis to gauge emotional drivers

Models are retrained as new data streams in, ensuring personas remain current and relevant.
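
As one example of the clustering step, the sketch below runs a small k-means (Lloyd's algorithm) in pure Python. It is a teaching sketch under stated assumptions: the two features, the sample points and the fixed starting centroids are invented, and a production Segmentation Agent would more likely rely on an established machine learning library.

```python
import math


def kmeans(points, centroids, iterations: int = 10):
    """Lloyd's algorithm; initial centroids are passed in to keep the run deterministic."""
    centroids = [tuple(c) for c in centroids]
    assignments = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        assignments = [
            min(range(len(centroids)), key=lambda i: math.dist(p, centroids[i]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for i, old in enumerate(centroids):
            members = [p for p, a in zip(points, assignments) if a == i]
            if members:
                new_centroids.append(tuple(sum(dim) / len(members) for dim in zip(*members)))
            else:
                new_centroids.append(old)  # keep an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # converged: no centroid moved
        centroids = new_centroids
    return assignments, centroids


# Hypothetical features per user: (sessions per week, pages per session).
points = [(1, 2), (2, 1), (1, 1), (9, 8), (8, 9), (9, 9)]
assignments, centroids = kmeans(points, centroids=[(0, 0), (10, 10)])
print(assignments)
```

Each resulting cluster index can then be treated as a cohort label that downstream classification or persona-generation steps consume.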

Agent Roles and Orchestration

- Segmentation Agent: Clusters users and assigns propensity scores

- Persona Generation Agent: Produces human-readable profiles with motivations and tone guidelines

- Behavior Monitoring Agent: Updates segment membership in real time

- Context Enrichment Agent: Integrates external signals like seasonal trends or industry news

- Decisioning Agent: Selects content variants on the fly based on current session behavior

An orchestration layer schedules tasks, manages dependencies, and monitors SLAs. An event bus supports asynchronous communication, while feature stores and model registries maintain version control. Governance agents enforce privacy, consent, and data lineage requirements.
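
The Decisioning Agent's on-the-fly selection can be pictured as a scoring function over eligible variants. In this sketch each variant carries a predicted score per segment plus optional boosts tied to session signals; the data layout, signal names and numbers are all hypothetical.

```python
def select_variant(variants: dict, segment: str, session: dict, default: str = "generic") -> str:
    """Pick the highest-scoring variant for a segment, adjusted by session signals."""
    best_id, best_score = default, float("-inf")
    for variant_id, spec in variants.items():
        if segment not in spec["segments"]:
            continue  # variant is not targeted at this segment
        score = spec["segments"][segment]
        # Session signals add a bonus when the variant declares interest in them.
        score += sum(bonus for signal, bonus in spec.get("boosts", {}).items()
                     if session.get(signal))
        if score > best_score:
            best_id, best_score = variant_id, score
    return best_id


variants = {
    "how_to_guide": {"segments": {"new_visitor": 0.6, "returning": 0.3}},
    "pricing_offer": {
        "segments": {"new_visitor": 0.2, "returning": 0.5},
        "boosts": {"viewed_pricing": 0.5},
    },
}
print(select_variant(variants, "new_visitor", {}))
print(select_variant(variants, "new_visitor", {"viewed_pricing": True}))
```

Falling back to a default variant for unknown segments keeps the experience intact when segment membership has not yet been computed for a user.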

Variant Generation and Targeting Workflow

This workflow converts static assets into personalized messages for each segment or user.