Orchestrating AI Agent Workflows for Scalable Employee Productivity

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Purpose and Scope

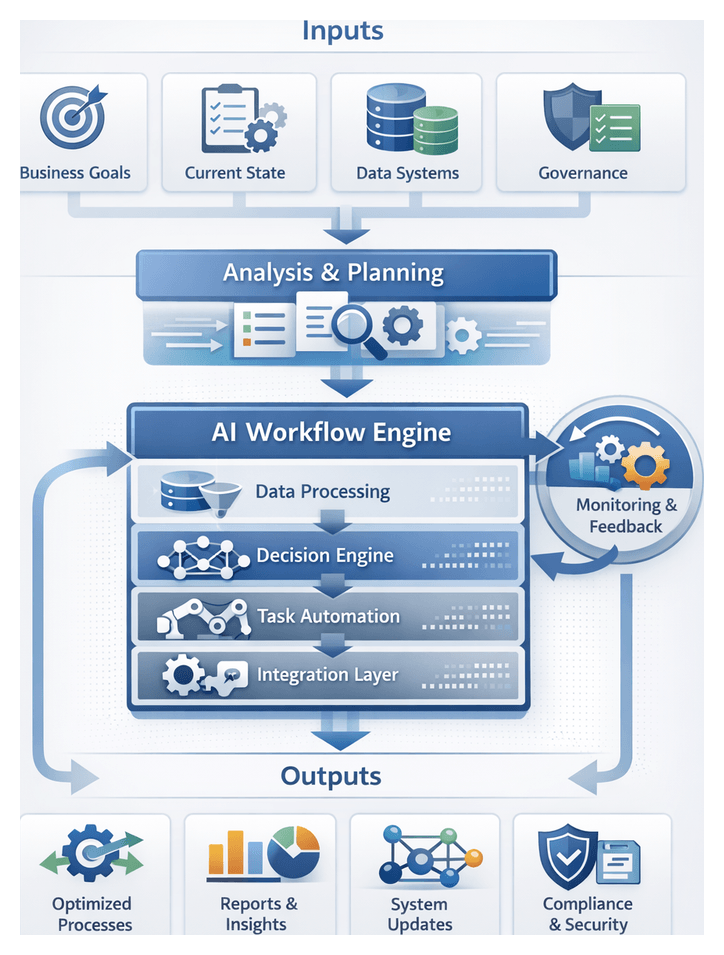

In the initial stage of an AI-driven workflow initiative, organizations establish the strategic foundation for scalable productivity. This stage clarifies the pain points caused by fragmented tasks, manual handoffs, and data silos, and it aligns executive sponsors, functional leaders, IT architects, and end users around shared objectives, success metrics, and governance principles. By documenting existing gaps and defining scope, timelines, and risk tolerance, stakeholders gain a unified vision. These preparations reduce the risk of misalignment, surface technical dependencies early, and provide a baseline for all subsequent design, integration, and deployment activities.

Inputs and Prerequisites

- Stakeholder Alignment and Vision Setting: Document high-level business objectives, ROI targets, process latency issues, success criteria, and governance models.

- Current State Assessment: Conduct process mapping, time-and-motion studies, system log reviews, and frontline interviews to quantify manual effort and identify bottlenecks.

- Data and System Inventory: Compile an inventory of CRM, ERP, HRIS, ticketing systems, databases, data lakes, APIs, middleware, messaging platforms, data quality metrics, and security mechanisms.

- Governance, Security, and Compliance: Define data stewardship roles, model governance procedures, risk assessment frameworks, audit trail requirements, and policy enforcement thresholds.

- Executive Sponsorship and Resource Commitment: Secure budget, project timelines, cross-departmental participation, KPI definitions, and escalation paths.

- Risk Identification and Mitigation: Map risks related to data quality, legacy integration, change management, and regulatory constraints, assigning responsible parties and contingency actions.

- Scope, Boundaries, and Success Metrics: Draft a scope document outlining in-scope workflows, architectural boundaries, primary metrics like cycle time reduction and error elimination, and a high-level roadmap.

Designing a Structured AI Workflow

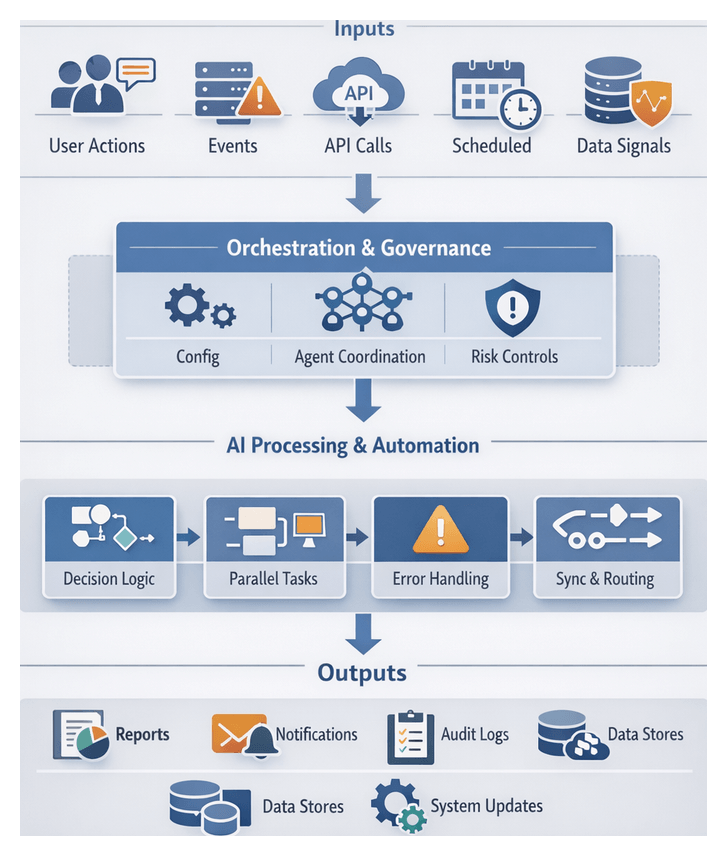

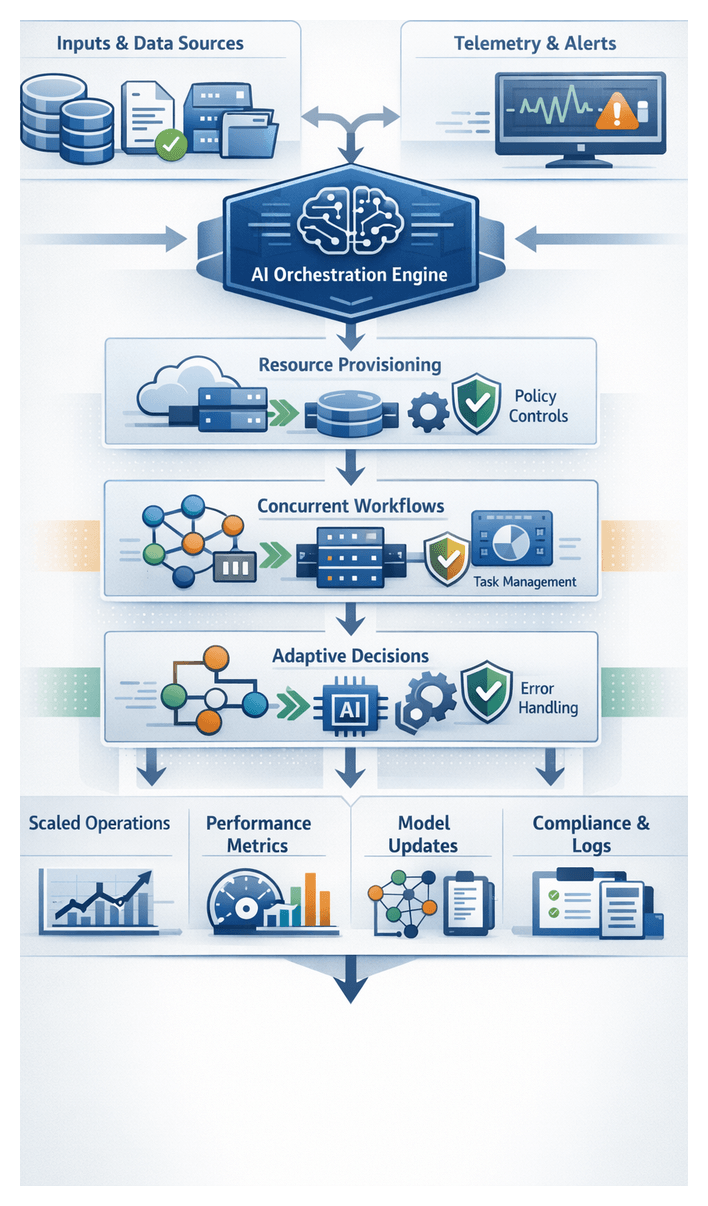

Enterprises operate within a complex mosaic of applications, data repositories, and human roles. A structured AI workflow serves as a blueprint that maps each activity to clear process steps, assigns responsibilities between automated agents and human contributors, and orchestrates data exchange. This end-to-end sequence ensures repeatability, visibility, and scalability, eliminating ad hoc decision-making and manual delays.

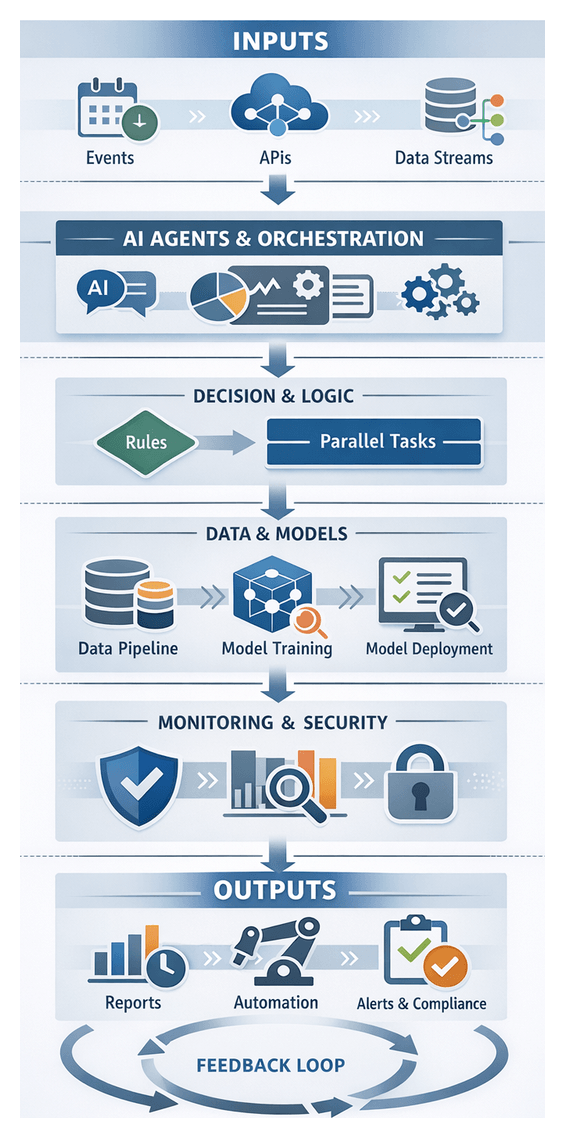

End-to-End Workflow Definition

Workflows begin with trigger conditions—such as new data arrival, user requests, or scheduled events—and proceed through AI-driven analysis, data transformations, automated actions, and user interventions. Outputs may be delivered to end users, forwarded to other systems, or fed back to support continuous optimization. Key characteristics include clarity of inputs and outputs, defined decision points leveraging AI inference or business rules, explicit integration points with systems like Salesforce, SAP S/4HANA, or document management platforms, exception handling, and audit logging.

Key Components

- Trigger Module: Initiates workflows based on events, messages, or schedules.

- Data Ingestion and Preprocessing: Extracts, cleanses, and normalizes data, applying anomaly detection and schema enforcement.

- Decision Engine: Applies business rules and model predictions, supporting branching logic and probabilistic inference.

- Task Orchestration Layer: Coordinates activity sequences, supports parallel execution, and routes tasks between AI agents and humans.

- Integration Connectors: Interface with external systems using REST APIs, message queues, or webhooks.

- Monitoring and Logging: Captures telemetry on execution times, error rates, and resource utilization for compliance and analysis.

- Feedback Loop and Learning: Ingests performance metrics and user feedback to retrain models and refine rules.
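To make the component list concrete, here is a minimal Python sketch of one run passing through a trigger, preprocessing, a decision engine, task orchestration, and logging. It is an illustration under stated assumptions, not a reference implementation: the `refund` routing rule, the handler names, and the in-memory log are hypothetical stand-ins for real model calls, human task queues, and telemetry, and the Integration Connectors and Feedback Loop components are omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class WorkflowRun:
    """One execution of the workflow; `log` stands in for Monitoring and Logging."""
    payload: dict
    log: List[str] = field(default_factory=list)

    def record(self, stage: str, detail: str) -> None:
        self.log.append(f"{stage}: {detail}")


def preprocess(run: WorkflowRun) -> None:
    # Data Ingestion and Preprocessing: enforce a minimal schema, then normalize text.
    if "text" not in run.payload:
        raise ValueError("schema violation: 'text' is required")
    run.payload["text"] = run.payload["text"].strip().lower()
    run.record("ingest", "normalized text")


def decide(run: WorkflowRun) -> str:
    # Decision Engine: a hypothetical business rule stands in for a model prediction.
    route = "human" if "refund" in run.payload["text"] else "agent"
    run.record("decide", f"route={route}")
    return route


# Hypothetical handlers: an AI agent call and a human task queue.
HANDLERS: Dict[str, Callable[[dict], str]] = {
    "agent": lambda payload: "auto-resolved",
    "human": lambda payload: "queued for human review",
}


def orchestrate(run: WorkflowRun) -> str:
    # Task Orchestration Layer: dispatch to whichever route the decision engine chose.
    result = HANDLERS[decide(run)](run.payload)
    run.record("act", result)
    return result


def on_trigger(event: dict) -> WorkflowRun:
    # Trigger Module: each incoming event (message, schedule tick, form submission) starts a run.
    run = WorkflowRun(payload=dict(event))
    run.record("trigger", event.get("source", "unknown"))
    preprocess(run)
    orchestrate(run)
    return run
```

Calling `on_trigger({"source": "email", "text": "  Refund request #42 "})` routes the request to the human queue and leaves a four-entry log (trigger, ingest, decide, act), the kind of per-step trace the Monitoring and Logging component would export.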

System Integration Patterns

Seamless interactions depend on standardized communication protocols, shared schemas, and resilient connectivity. Common patterns include:

- REST APIs for synchronous exchanges with platforms like Salesforce or SAP S/4HANA.

- Event-driven messaging via Apache Kafka, RabbitMQ, or Azure Event Grid.

- Webhooks for notifications and callbacks.

- Database connectors and file transfers for batch data.

- Authentication with OAuth2, JWT, or API keys.

For example, an AI agent may pre-screen customer support requests using a model from the OpenAI GPT series, create tickets in a service management platform, and notify teams through Microsoft Teams or Slack.
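The support example can be sketched with an in-memory publish/subscribe bus standing in for Apache Kafka, RabbitMQ, or Azure Event Grid. Each step subscribes to a topic and publishes the next event, so no step calls another directly. The keyword classifier is a hypothetical placeholder for a GPT model call, and the topic names, ticket list, and notification list are assumptions rather than any real platform's API.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List


class EventBus:
    """In-memory publish/subscribe bus standing in for Kafka, RabbitMQ, or Azure Event Grid."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Delivery is synchronous here; a real broker would deliver asynchronously.
        for handler in self._subscribers[topic]:
            handler(event)


def classify_request(text: str) -> str:
    # Hypothetical placeholder for a GPT model call; keyword rules keep the sketch runnable.
    lowered = text.lower()
    if "outage" in lowered or "unavailable" in lowered:
        return "incident"
    if "invoice" in lowered or "billing" in lowered:
        return "billing"
    return "general"


bus = EventBus()
tickets: List[Dict] = []       # stand-in for a service management platform
notifications: List[str] = []  # stand-in for Microsoft Teams or Slack messages


def pre_screen(event: dict) -> None:
    bus.publish("ticket.create", {**event, "category": classify_request(event["text"])})


def create_ticket(event: dict) -> None:
    ticket = {"id": len(tickets) + 1, "category": event["category"], "text": event["text"]}
    tickets.append(ticket)
    bus.publish("ticket.created", ticket)


def notify_team(ticket: dict) -> None:
    notifications.append(f"[{ticket['category']}] ticket #{ticket['id']} opened")


bus.subscribe("support.request", pre_screen)
bus.subscribe("ticket.create", create_ticket)
bus.subscribe("ticket.created", notify_team)
```

Publishing `{"text": "Billing portal shows the wrong invoice total"}` to `support.request` creates ticket #1 in the `billing` category and appends one notification. Replacing the bus with a real broker changes the transport, not the shape of the handlers.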

Human and AI Collaboration

AI agents excel at high-volume tasks and pattern recognition, while humans provide domain expertise and ethical judgment. Workflows should delineate autonomous AI actions and handoff points for human review. In invoice processing, AI-based OCR services extract data, reconciliation agents validate it against master data, and exceptions that exceed tolerance thresholds are routed to human approvers. Approved invoices then flow to payment through the ERP system, with real-time updates sent via chat or email.
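The invoice handoff rule can be expressed as a tolerance check against master data. In this sketch the purchase-order table, the 2 percent tolerance, and the field names are hypothetical; in practice the extracted fields would come from the OCR service and the master data from the ERP system. `Decimal` is used so currency arithmetic avoids binary floating-point rounding.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Tuple


@dataclass(frozen=True)
class Invoice:
    invoice_id: str
    po_number: str
    vendor_id: str
    amount: Decimal


# Hypothetical master data; in practice this is read from the ERP system.
PURCHASE_ORDERS = {
    "PO-1001": {"vendor_id": "V-01", "amount": Decimal("1200.00")},
    "PO-1002": {"vendor_id": "V-02", "amount": Decimal("560.00")},
}
TOLERANCE = Decimal("0.02")  # assumed: up to 2% variance is approved automatically


def reconcile(invoice: Invoice) -> Tuple[str, str]:
    """Return (route, reason), where route is 'auto_approve' or 'human_review'."""
    po = PURCHASE_ORDERS.get(invoice.po_number)
    if po is None:
        return "human_review", "unknown purchase order"
    if po["vendor_id"] != invoice.vendor_id:
        return "human_review", "vendor mismatch"
    variance = abs(invoice.amount - po["amount"]) / po["amount"]
    if variance > TOLERANCE:
        return "human_review", f"amount variance {variance:.1%} exceeds tolerance"
    return "auto_approve", "matched master data"
```

An invoice for 1,300.00 against PO-1001 (1,200.00) shows an 8.3% variance and goes to a human approver, while 1,210.00 falls within tolerance and is approved automatically.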

AI Agents as Unifying Operatives

AI agents bridge operational silos by coordinating services, automating decisions, and aligning human tasks with digital processes. They exhibit perception, reasoning, and action capabilities to monitor triggers, interpret context, and execute tasks autonomously or in tandem with users.

- Task Orchestration: Invoke services, applications, or sub-agents in defined sequences.

- Data Integration: Ingest, normalize, and route data for consistency.

- Contextual Reasoning: Apply domain rules and predictive models at runtime.

- Adaptive Learning: Refine performance through feedback and monitoring.

- Human-AI Collaboration: Facilitate handoffs and surface actionable insights.

Integration and Orchestration Patterns

- Event-driven coordination with Kafka or Azure Event Grid.

- API-first connectivity to Salesforce or SAP S/4HANA.

- RPA augmentation combining UiPath or Blue Prism with AI decision agents.

- Containerized microservices on Kubernetes.

- Hybrid workflows with approvals in Slack or Microsoft Teams.

Decision Automation and Human Oversight

Agents use rule engines, machine learning, and optimization models. They proceed autonomously when confidence exceeds a defined threshold and otherwise escalate to human reviewers. Conversational AI platforms such as IBM Watson Assistant can classify incoming requests by intent to inform customer support routing. Exception handling agents alert stakeholders, while approval workflows integrate with Oracle ERP for digital signatures and audit logging.
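A confidence gate with an audit trail might look like the following sketch. The 0.85 threshold, the `Decision` fields, and the in-memory `AUDIT_LOG` are assumptions for illustration; a production system would write to an append-only store and take the threshold from governance policy. Both the autonomous and the escalated path are logged, which is what makes every decision auditable.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List

AUDIT_LOG: List[str] = []  # stand-in for an append-only audit store


@dataclass
class Decision:
    case_id: str
    action: str
    confidence: float
    handled_by: str
    timestamp: str


def gate(case_id: str, action: str, confidence: float, threshold: float = 0.85) -> Decision:
    """Act autonomously when confidence exceeds the threshold; otherwise escalate."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    handled_by = "agent" if confidence > threshold else "human_reviewer"
    decision = Decision(case_id, action, confidence, handled_by,
                        datetime.now(timezone.utc).isoformat())
    # Both paths are logged, so autonomous and escalated decisions share one audit trail.
    AUDIT_LOG.append(json.dumps(asdict(decision)))
    return decision
```

Because the rule is "exceeds", a confidence exactly equal to the threshold is escalated; teams that prefer the opposite convention would change `>` to `>=`.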

Case Illustrations

In purchase order processing, an AI agent extracts line items via OCR and NLP models on Google Cloud AI, validates item codes against the ERP system, checks inventory, and opens tickets when discrepancies arise. In HR onboarding, agents orchestrate document collection, background checks, account and access provisioning through AWS IAM, orientation scheduling, and compliance reminders.

Operational Benefits and Considerations

- End-to-end visibility through unified dashboards.

- Consistency and compliance with automated logic.

- Accelerated cycle times and improved responsiveness.

- Resource optimization by offloading routine tasks.

- Scalability via containerized architectures.

Key considerations include data governance, security controls, telemetry collected with Prometheus and visualized in Grafana, change management, and technology selection aligned with platforms like Azure AI or open-source frameworks such as TensorFlow.

Deliverables, Dependencies, and Handoffs

The Introduction stage produces strategic artifacts that guide detailed design and implementation, and it formalizes dependencies to ensure a smooth progression to later stages.

Key Deliverables

- Process Gap Analysis Report: Identifies inefficiencies, handoffs, and silos, highlighting high-impact automation opportunities.

- Stakeholder Alignment Matrix: Maps roles, responsibilities, and communication preferences.

- Objectives and Success Metrics: Translates strategic goals into KPIs, targets, and timelines.

- High-Level Architecture Blueprint: Illustrates core AI ecosystem components, integration points, and data flows.

- Risk and Dependency Assessment: Lists technical, organizational, and compliance risks with mitigation strategies.

- Executive Recommendation Brief: Synthesizes findings for senior leadership, including timelines, resources, and cost-benefit analysis.

- Communication and Change Management Plan: Outlines stakeholder messaging, training strategies, and update cadence.

Critical Dependencies

- Executive sponsorship and defined governance frameworks.

- Stakeholder engagement sessions, including workshops and interviews.

- Access to process documentation, SOPs, and system specifications.

- Technology landscape audit covering applications, databases, and integration points.

- Data availability and quality assessments.

- Resource allocation and project team structure.

- Compliance constraints related to GDPR, HIPAA, or industry standards.

- Provisioning of collaboration and repository tools.

Handoff Mechanisms

- Gap Analysis to use-case teams for scenario prioritization.

- Success Metrics to data strategy groups for defining data requirements.

- Architecture Blueprint to agent design leads for module configuration.

- Risk Assessment to infrastructure and security teams for mitigation planning.

- Executive Brief to the steering committee for roadmap approval.

- Alignment Matrix to change management for tailored communications.

- Change Plan to the PMO for integration into project schedules.

Each handoff is accompanied by review meetings, approval checklists, version-controlled deliverables, and a centralized repository to preserve audit trails and accountability. This disciplined approach ensures that the project advances on a solid strategic and architectural foundation.

Chapter 1: Defining Productivity Objectives and Use Cases

Purpose and Scope of Objectives and Use Case Definition

Defining clear productivity objectives and consolidating use case inputs establishes the foundation for any AI agent workflow initiative. This stage translates high-level strategic imperatives into measurable performance metrics, aligns stakeholder expectations, and captures essential process and technology context. By articulating purpose and inputs at the outset, organizations maintain focus on delivering tangible business value throughout design, development, and deployment.

Many enterprises face operational complexity driven by siloed processes, manual handoffs, and fragmented reporting across marketing, sales, customer support, finance, and HR. These discontinuities undermine efficiency, obscure accountability, and frustrate employees. To address these challenges, leading organizations orchestrate AI agent workflows that automate repetitive tasks, surface actionable insights, and enforce standardized procedures. Establishing objectives and use case definitions equips teams with a clear roadmap, reducing uncertainty and accelerating time to value.

Translating Business Goals into Measurable Objectives

Translating strategic goals into quantifiable targets is critical to avoid drifting into proofs of concept with limited returns. Well-defined productivity objectives enable leaders to:

- Measure progress against benchmarks such as reduced turnaround times, error rates, or resource utilization.

- Align cross-functional teams on success criteria, avoiding misaligned priorities.

- Compare use case scenarios based on expected ROI, implementation cost, and strategic impact.

- Provide data-driven rationale for executive sponsorship, budget allocation, and change management.

Key Inputs for Objective Setting

Building meaningful objectives requires structured intake of information from business leaders, process owners, and technical teams. Essential input categories include:

- Strategic Imperatives: Documented business unit goals, enterprise strategic plans, and competitive targets that define desired outcomes.

- Stakeholder Requirements: Feedback capturing pain points, compliance needs, and success factors from department heads, frontline employees, and end users.

- Performance Indicators: Existing KPIs such as customer satisfaction scores, average handling times, throughput volumes, and cost per transaction.

- Process Documentation: Current process maps, standard operating procedures, and workflow diagrams revealing handoff points, decision gates, and data dependencies.

- Technology Landscape: Inventory of enterprise systems, data warehouses, APIs, and integration capabilities that will support or constrain agent deployment.

- Regulatory and Security Constraints: Compliance requirements, data privacy standards, and access control policies that must be enforced throughout AI workflows.

Prerequisites for Use Case Definition

Before ideation and evaluation begin, organizations must satisfy several conditions to ensure feasibility and integrity:

- Executive Sponsorship: Visible support from senior leadership secures resources, drives adoption, and aligns stakeholders.

- Cross-Functional Engagement: A steering committee or working group representing business, IT, legal, compliance, and data governance teams fosters shared ownership.

- Data Availability and Quality: Assessment of data sources for completeness, accuracy, timeliness, and accessibility is critical for reliable AI outputs.

- Technical Readiness: Documentation and validation of infrastructure capacity, API endpoints, authentication mechanisms, and toolchain compatibility.

- Governance Framework: Defined roles, decision rights, and approval workflows to manage changes in scope, data access, and performance criteria.

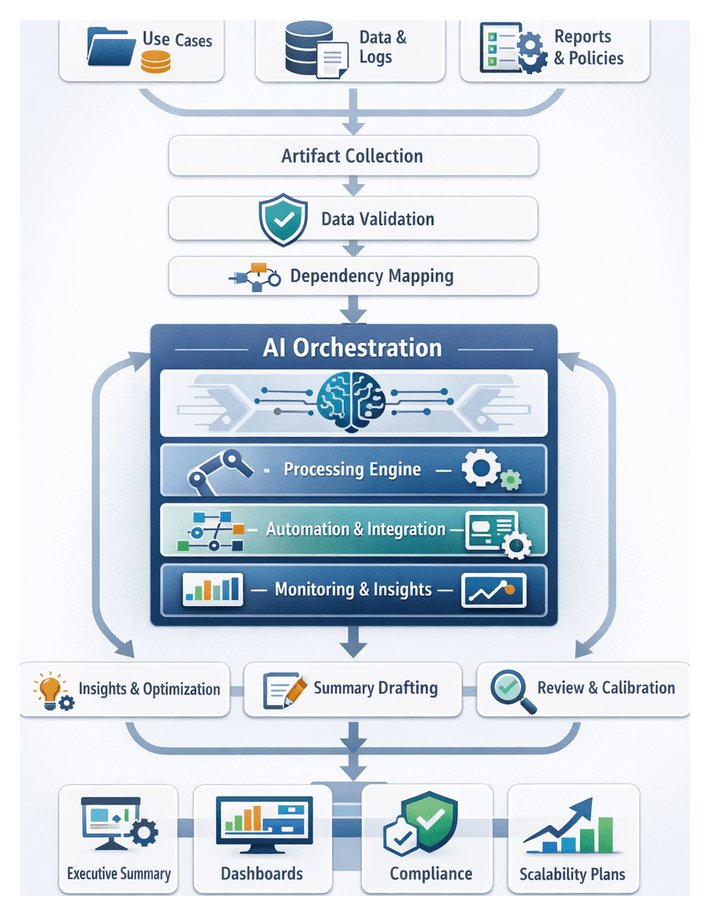

Use Case Intake and Prioritization Workflow

Managing a portfolio of AI use case proposals requires a structured intake and prioritization process. This workflow translates stakeholder requirements into validated scenarios, ranks them objectively, and secures executive approval. The following activities ensure that organizational goals drive consistent, high-impact automation initiatives.

Establishing a Use Case Intake Process

To manage ideation at scale and maintain transparency, organizations often deploy a centralized intake portal or form. Stakeholders submit proposals with scoped objectives, resource estimates, and success metrics. A typical intake solution provides templated forms that capture business requirement statements, high-level process flows, baseline performance reports, risk assessments, and technology integration matrices. Submissions are reviewed regularly in intake workshops, where standardized scoring criteria (such as expected ROI, complexity, risk level, and strategic fit) are applied to prioritize requests objectively. Governance checkpoints at defined intervals ensure that emerging priorities and resource constraints inform approval decisions.

Prioritization Criteria

- Return on Investment: Quantified benefits versus total cost of ownership.

- Time to Value: Estimated deployment timeline and speed of measurable impact.

- Implementation Complexity: Technical dependencies, integration effort, and data preparation requirements.

- Risk Exposure: Data sensitivity, regulatory impact, and operational criticality.

- Scalability Potential: Ease of extending the use case across geographies or business units.
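One common way to apply these criteria is a weighted-sum score. The sketch below rates each criterion on a 1 to 5 scale, inverts complexity and risk (where a high rating is undesirable), and sorts use cases by the result. The weights, scale, and example use cases are assumptions that a steering committee would replace with its own.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Assumed weights (summing to 1.0); a steering committee would set its own.
WEIGHTS: Dict[str, float] = {
    "roi": 0.30,
    "time_to_value": 0.20,
    "complexity": 0.20,
    "risk": 0.15,
    "scalability": 0.15,
}
# A high rating is undesirable for these criteria, so it is inverted before weighting.
LOWER_IS_BETTER = {"complexity", "risk"}


@dataclass
class UseCase:
    name: str
    ratings: Dict[str, int]  # each criterion rated 1 (low) to 5 (high)


def weighted_score(use_case: UseCase, scale_max: int = 5) -> float:
    total = 0.0
    for criterion, weight in WEIGHTS.items():
        rating = use_case.ratings[criterion]
        if not 1 <= rating <= scale_max:
            raise ValueError(f"{criterion} rating out of range: {rating}")
        if criterion in LOWER_IS_BETTER:
            rating = scale_max + 1 - rating  # maps 5 -> 1 and 1 -> 5
        total += weight * rating
    return round(total, 2)


def prioritize(use_cases: List[UseCase]) -> List[Tuple[str, float]]:
    """Return (name, score) pairs, highest score first."""
    scored = [(uc.name, weighted_score(uc)) for uc in use_cases]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

For example, a use case rated 5, 4, 2, 2, 4 (ROI, time to value, complexity, risk, scalability) scores 0.30 × 5 + 0.20 × 4 + 0.20 × 4 + 0.15 × 4 + 0.15 × 4 = 4.3 once complexity and risk are inverted to 4.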

1. Stakeholder Requirements Gathering

In this activity, project managers coordinate interviews and workshops to capture detailed requirements. Key practices include:

- Scheduling sessions via calendaring systems and collaboration suites.

- Distributing prework questionnaires through survey tools to elicit priorities.

- Recording discussions in centralized knowledge repositories for traceability.

- Tagging requirements in a requirements management system for version control.

- Assigning follow-up tasks to subject matter experts in project tracking software.

2. Defining Measurable Metrics

Once requirements are gathered, teams translate them into KPIs and target thresholds through:

- Mapping each requirement to productivity metrics such as cycle time, accuracy improvements, or customer satisfaction.

- Leveraging business intelligence dashboards to retrieve baseline performance values.

- Consulting data governance systems to verify metric definitions and lineage.

- Documenting target improvements (for example, 30 percent reduction in manual approval time).

- Storing metric definitions in a shared performance tracking repository.
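The arithmetic behind a target such as a 30 percent reduction is simple, but writing it down removes ambiguity about direction and baseline. This sketch assumes a lower-is-better metric for the target calculation; the progress function works in either direction because it divides the change achieved by the change planned. The function names and example values are hypothetical.

```python
def target_value(baseline: float, reduction_pct: float) -> float:
    """Target after a percentage reduction, e.g. 30 percent less manual approval time."""
    if not 0 <= reduction_pct <= 100:
        raise ValueError("reduction_pct must be between 0 and 100")
    return baseline * (1 - reduction_pct / 100)


def progress_toward_target(baseline: float, target: float, current: float) -> float:
    """Share of the planned improvement achieved so far (0.0 = at baseline, 1.0 = target met).

    Works for lower-is-better metrics (cycle time) and higher-is-better metrics
    (satisfaction scores), because both differences carry the same sign.
    """
    planned = baseline - target
    if planned == 0:
        raise ValueError("baseline and target must differ")
    return (baseline - current) / planned
```

With a baseline of 50 hours of manual approval time, a 30 percent reduction gives a 35-hour target; a current value of 41 hours means 60 percent of the planned improvement has been achieved.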

3. Requirement Consolidation and Validation

Consolidating and validating requirements prevents redundant or infeasible scenarios from advancing:

- Importing requirements into an AI-augmented consolidation tool to identify overlaps.

- Applying semantic clustering algorithms to group related objectives.

- Reviewing consolidated groups with stakeholders via video conferencing platforms.

- Validating groupings against data availability constraints in the enterprise data catalog.

- Updating the requirements management database to reflect validated clusters.
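Semantic clustering normally relies on text embeddings. As a dependency-free stand-in, the sketch below groups requirements by Jaccard similarity of their word sets in a single greedy pass, comparing each requirement with the first member (seed) of each existing group. This is lexical, not semantic, matching; the stop-word list and 0.2 threshold are assumptions to tune, and the output is a set of candidate groups for stakeholder review, not a final consolidation.

```python
import re
from typing import List, Set, Tuple

STOP_WORDS = {"the", "a", "an", "to", "of", "for", "and", "in", "on", "with"}


def tokens(text: str) -> Set[str]:
    # Lowercase word tokens minus stop words; embeddings would replace this in practice.
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOP_WORDS}


def jaccard(a: Set[str], b: Set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_requirements(requirements: List[str], threshold: float = 0.2) -> List[List[str]]:
    """Greedy clustering: join the first group whose seed is similar enough, else start a new one."""
    clusters: List[Tuple[Set[str], List[str]]] = []
    for req in requirements:
        req_tokens = tokens(req)
        for seed_tokens, members in clusters:
            if jaccard(req_tokens, seed_tokens) >= threshold:
                members.append(req)
                break
        else:  # no existing group was similar enough
            clusters.append((req_tokens, [req]))
    return [members for _, members in clusters]
```

Given "Reduce manual invoice approval time", "Automate invoice approval routing", "Shorten onboarding document collection", and "Speed up invoice approval for finance", the three invoice-approval requirements fall into one group and the onboarding requirement stays on its own.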

4. Scenario Modeling and Prioritization

With validated requirements, the workflow proceeds to define and rank potential AI use case scenarios:

- Drafting scenario narratives that describe process inputs, decision logic, and expected outputs.

- Estimating effort, resource needs, and anticipated ROI using scenario evaluation tools.

- Invoking an AI service, for example a large language model accessed through the OpenAI API, to score scenarios based on complexity and business impact.

- Facilitating prioritization workshops with decision-makers using shared whiteboarding applications.

- Locking in a ranked backlog of use cases in the project management platform.

5. Final Alignment and Approval

- Generating a summary report of prioritized scenarios in a document management system.

- Circulating the report to the steering committee via secure file sharing.

- Collecting approvals or feedback using e-signature and approval workflows.

- Updating contract and budget planning tools with approved scope items.

- Notifying project teams and transitioning to design activities.

Workflow Actors and System Interactions

The intake and prioritization workflow orchestrates interactions between human actors and supporting platforms:

- Business Sponsors: Define strategic objectives in portfolio management tools and sign off on priorities.

- Project Managers: Orchestrate workshops and track tasks in project management systems.

- Subject Matter Experts: Provide domain context via knowledge repositories and collaboration suites.

- Data Analysts: Retrieve baseline metrics from data warehouses and BI dashboards.

- AI Services: Apply clustering, ranking, and semantic analysis algorithms through API calls.

- Governance Officers: Validate metric definitions in compliance and data governance platforms.

- Executive Review Board: Approves finalized backlogs using e-signature and approval tracking systems.

Outputs and Handoff Criteria

Upon completion of goal translation and prioritization, the following artifacts and conditions enable a seamless transition to design and development:

- Approved use case backlog with ranked scenarios and associated KPIs.

- Consolidated requirements document stored in the centralized repository.

- Baseline metric snapshot and target improvement thresholds.

- Stakeholder alignment sign-off records.

- Handoff checklist confirming data availability, scope clarity, and resource assignments.

AI-Driven Scenario Prioritization

AI agents leverage advanced algorithms to ingest diverse inputs—business objectives, process maps, historical performance data, and stakeholder feedback—and produce objective, data-driven scenario rankings. This approach replaces manual spreadsheet assessments and accelerates decision-making while maintaining transparency and repeatability.

Fundamentals of AI-Driven Evaluation

- Requirement Ingestion: NLU agents parse stakeholder documents and workshop notes to extract requirements and KPIs.

- Feature Extraction: Machine learning models convert qualitative inputs into structured features such as automation potential and risk reduction.

- Scoring Algorithms: Decision-optimization engines weigh factors like cost, risk, time-to-value, and resource availability.

- Ranking and Recommendations: Optimization routines produce a prioritized list of scenarios with confidence scores and sensitivity analyses.
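Sensitivity analysis asks whether the top recommendation survives reasonable changes to the weights. The sketch below shifts each criterion weight up and down by a fixed step, renormalizes, and checks whether the top-ranked scenario changes. The scenario names, criterion scores (already normalized to 0 to 1 with higher meaning better), base weights, and 0.1 step are all hypothetical.

```python
from typing import Dict, List

# Hypothetical criterion scores, normalized to 0-1 where higher is better
# (cost and risk were inverted upstream, so a high value means low cost or low risk).
SCENARIOS: Dict[str, Dict[str, float]] = {
    "invoice triage": {"benefit": 0.9, "cost": 0.5, "risk": 0.55, "time_to_value": 0.6},
    "hr onboarding": {"benefit": 0.3, "cost": 0.85, "risk": 0.9, "time_to_value": 0.9},
    "contract review": {"benefit": 0.5, "cost": 0.5, "risk": 0.5, "time_to_value": 0.5},
}
BASE_WEIGHTS: Dict[str, float] = {"benefit": 0.4, "cost": 0.2, "risk": 0.2, "time_to_value": 0.2}


def rank(weights: Dict[str, float]) -> List[str]:
    """Scenario names sorted by weighted score, best first."""
    def score(name: str) -> float:
        return sum(weights[c] * v for c, v in SCENARIOS[name].items())
    return sorted(SCENARIOS, key=score, reverse=True)


def sensitivity(delta: float = 0.1) -> Dict[str, bool]:
    """For each criterion, shift its weight up and down by `delta`, renormalize so the
    weights sum to 1, and report whether the top-ranked scenario is unchanged both times."""
    baseline_top = rank(BASE_WEIGHTS)[0]
    stable = {}
    for criterion in BASE_WEIGHTS:
        unchanged = True
        for sign in (1, -1):
            adjusted = dict(BASE_WEIGHTS)
            adjusted[criterion] = max(0.0, adjusted[criterion] + sign * delta)
            total = sum(adjusted.values())
            normalized = {c: w / total for c, w in adjusted.items()}
            unchanged = unchanged and rank(normalized)[0] == baseline_top
        stable[criterion] = unchanged
    return stable
```

With these values, `sensitivity()` reports that the top choice holds under changes to the cost, risk, and time-to-value weights but flips when the benefit weight drops from 0.4 to 0.3, a signal that the recommendation hinges on how much stakeholders value benefit.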

Key AI Capabilities and Tools

- Natural Language Understanding: Services such as the OpenAI API and Microsoft Azure Cognitive Services process unstructured text.

- Machine Learning Classification and Clustering: Supervised models and unsupervised algorithms group and categorize use cases.

- Decision Optimization: Multi-objective solvers evaluate trade-offs between cost, risk, and impact.

- Knowledge Graph Analytics: Platforms such as IBM Watson and Google Vertex AI analyze relationships among processes and stakeholders.

- Explainable AI: Interpretability tools generate human-readable rationales for scenario scores.
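Multi-objective solvers often start from the set of Pareto-optimal options: scenarios that no other scenario beats on every objective at once. The sketch below filters a small list down to that front, assuming three objectives (minimize cost, minimize risk, maximize impact) with hypothetical values. A brute-force pairwise check is adequate for a portfolio of dozens of use cases; larger problems would call for a dedicated solver.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Scenario:
    name: str
    cost: float    # minimize
    risk: float    # minimize
    impact: float  # maximize


def dominates(a: Scenario, b: Scenario) -> bool:
    """True if a is no worse than b on every objective and strictly better on at least one."""
    no_worse = a.cost <= b.cost and a.risk <= b.risk and a.impact >= b.impact
    strictly_better = a.cost < b.cost or a.risk < b.risk or a.impact > b.impact
    return no_worse and strictly_better


def pareto_front(scenarios: List[Scenario]) -> List[Scenario]:
    """Keep only scenarios that no other scenario dominates, preserving input order."""
    return [s for s in scenarios if not any(dominates(o, s) for o in scenarios if o is not s)]
```

For scenarios A (cost 100, risk 0.2, impact 0.8), B (80, 0.3, 0.6), C (120, 0.4, 0.7), and D (60, 0.5, 0.5), C is dominated by A and drops out; A, B, and D remain as genuine trade-offs for decision-makers to weigh.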

Supporting Systems and Infrastructure

- Unified Data Repository: Centralized data lake or warehouse aggregates metrics, performance logs, and feedback.

- Metadata Catalog: Catalogs maintain metadata on source systems, data quality, and governance.

- Workflow Orchestration Engine: Platforms like Apache Airflow and UiPath automate sequencing of AI tasks.

- Visualization and Collaboration: Dashboards built with Tableau and Microsoft Power BI present rankings, confidence intervals, and analyses.

Agent Roles in the Prioritization Workflow

- Requirement Analysis Agent: NLU-powered agent extracts objectives and constraints from unstructured inputs.

- Feature Engineering Agent: Uses AutoML tooling to transform raw data into scoring attributes, such as quantified complexity factors.

- Scoring and Ranking Agent: Applies decision-optimization libraries to compute weighted scores and run sensitivity analyses.

- Feedback Loop Agent: Captures stakeholder feedback, refines scoring models, and supports continuous learning.

Integration with Solution Architecture

Scenario prioritization outputs feed directly into agent design and configuration. Integration points include:

- Export of prioritized use cases as structured artifacts (for example, JSON) into the design repository.

- Automatic generation of configuration templates outlining AI capabilities, data sources, and performance targets for each scenario.

- Trigger mechanisms in the orchestration engine to commence design activities, defining agent archetypes and mapping capabilities.
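A structured artifact for the design repository could be as simple as a JSON document generated from typed records. The field names, `schema_version` value, and `ready_for_design` status below are hypothetical, chosen to mirror the AI capabilities, data sources, and performance targets listed above; the validation step rejects duplicate or missing ranks before anything is exported.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass
class ScenarioConfig:
    """Hypothetical configuration template passed from prioritization to agent design."""
    scenario_id: str
    rank: int
    ai_capabilities: List[str]
    data_sources: List[str]
    performance_targets: Dict[str, float]
    status: str = "ready_for_design"


def export_backlog(configs: List[ScenarioConfig]) -> str:
    """Serialize the ranked backlog as JSON for the design repository."""
    ordered = sorted(configs, key=lambda c: c.rank)
    # Reject duplicate, missing, or non-contiguous ranks before anything is exported.
    if [c.rank for c in ordered] != list(range(1, len(ordered) + 1)):
        raise ValueError("ranks must be unique and contiguous, starting at 1")
    return json.dumps({"schema_version": "1.0", "scenarios": [asdict(c) for c in ordered]}, indent=2)
```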

Key Deliverables and Artifacts

The objectives and use case definition stage yields a structured suite of deliverables that guide design, development, and governance:

- Objectives-to-Metrics Mapping Worksheet: Aligns each strategic objective with baseline values, target improvements, calculation formulas, and ownership.

- Stakeholder Requirements Matrix: Captures inputs from business units, operations, IT, and compliance, including priorities and constraints.

- Use Case Prioritization Report: Ranks candidate scenarios based on business value, complexity, risk profile, and strategic alignment, including narrative methodology explanations.

- Scenario Definition Templates: Standardized documents covering problem statements, personas, current and future states, KPIs, data needs, integration points, and exception handling.

- Assumptions and Risk Register: Logs project assumptions, identified risks, impacts, likelihood ratings, mitigation plans, and ownership.

- Executive Summary Deck: Concise presentation summarizing objectives, prioritized use cases, key metrics, high-level timelines, and resource needs.

Dependencies, Handoffs and Quality Gates

Ensuring a smooth transition to agent design and development requires clear preconditions, handoff criteria, and governance quality gates:

Preconditions and Resource Readiness

- Data Accessibility and Quality: Confirmation that required data sources are accessible, complete, and profiled, with cleaning and labeling plans in place.

- Infrastructure Provisioning: Development, testing, and production environments set up with compute, networking, storage, and security zones.

- Tool Licensing and Environment Setup: Licenses acquired and user accounts configured for AI frameworks, API management, and collaboration platforms.

- Security and Compliance Clearance: Data handling procedures, encryption standards, and access controls reviewed and approved.

- Budget Approval and Resource Allocation: Formal sign-off on budgets, headcount, and external services, including contingency plans.

- Governance Framework Integration: Alignment with change control boards, data governance councils, and AI ethics committees.

Handoff Criteria and Quality Gates

- Deliverable Completeness Check: All artifacts—mapping worksheets, matrices, risk registers—are populated, peer-reviewed, and version-controlled.

- Stakeholder Approval Confirmation: Formal sign-off by executive sponsor, product owner, and IT leadership documented via e-signature or governance board minutes.

- Dependency Resolution Status: Critical dependencies resolved or documented as action items with owners and due dates.

- Data and Security Clearance: Validation from data stewards and security officers that data quality and compliance requirements are met.

- Integration Spec Validation: API contracts, event definitions, and authentication methods approved by integration architects.

- Risk Mitigation Initiation: High-priority risks have mitigation actions underway; all risks have monitoring plans.
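The quality gates above reduce to a simple rule: the handoff proceeds only when every gate passes, and each failure is reported with its owner. The sketch assumes each gate has already been assessed by its reviewer; the `GateCheck` structure and the example owners are hypothetical. An empty checklist is treated as an error rather than a pass, so a missing assessment cannot slip through.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class GateCheck:
    name: str
    passed: bool
    owner: str
    note: str = ""


def evaluate_handoff(checks: List[GateCheck]) -> Tuple[bool, List[str]]:
    """Return (ready, failures); the handoff is ready only if every gate passed."""
    if not checks:
        raise ValueError("no gate checks supplied")
    failures = []
    for check in checks:
        if not check.passed:
            detail = f": {check.note}" if check.note else ""
            failures.append(f"{check.name} (owner: {check.owner}){detail}")
    return len(failures) == 0, failures
```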

Stakeholder Roles and Collaboration Points

- Executive Sponsor: Provides strategic direction, secures funding, and approves executive summaries and risk registers.

- Product Owner: Validates use cases, prioritizes backlog, and ensures traceability of requirements.

- Business Analyst: Documents process flows, refines user stories, and maintains the requirements matrix.

- Data Engineering Lead: Defines ETL pipelines, collaborates on data quality, and validates data profiles.

- AI Solutions Architect: Develops architectural blueprints, selects AI models, and establishes technical constraints.

- Security and Compliance Officer: Reviews data protection measures and monitors regulatory adherence.

- Workflow Orchestration Specialist: Maps high-level flows, identifies event triggers, and confirms messaging infrastructure.

- Project Manager: Coordinates schedules, tracks dependencies, and updates status dashboards.

Best Practices for Governance and Continuous Improvement

- Maintain Data Quality Vigilance: Implement automated validation checks, periodic audits, and data stewardship practices to ensure input integrity.

- Define Transparent Scoring Criteria: Collaborate with stakeholders to establish clear weighting factors and thresholds; document parameters for traceability.

- Incorporate Human-in-the-Loop Reviews: Schedule interim checkpoints where domain experts validate AI-generated insights and rankings.

- Enable Iterative Refinement: Capture performance data from pilot deployments to recalibrate scoring models and use case priorities.

- Ensure Explainability and Auditability: Adopt explainable AI frameworks that produce human-readable rationales and maintain audit logs of automated decisions.

- Standardize Handoff Processes: Develop SOPs for review workshops, dependency tracking, and version control to ensure consistency.

- Leverage Automation: Automate notifications, version tagging, and dependency status updates to reduce manual overhead.

- Document Lessons Learned: Conduct retrospectives after handoff cycles to capture insights and update templates and checklists.

- Align with Agile Principles: Structure handoffs to support iterative sprints, providing design teams with prioritized user stories and minimal viable scenarios.

By rigorously defining objectives, executing AI-driven scenario prioritization, and enforcing robust governance and handoff processes, organizations lay the groundwork for scalable AI agent workflows that drive significant productivity improvements and strategic impact.

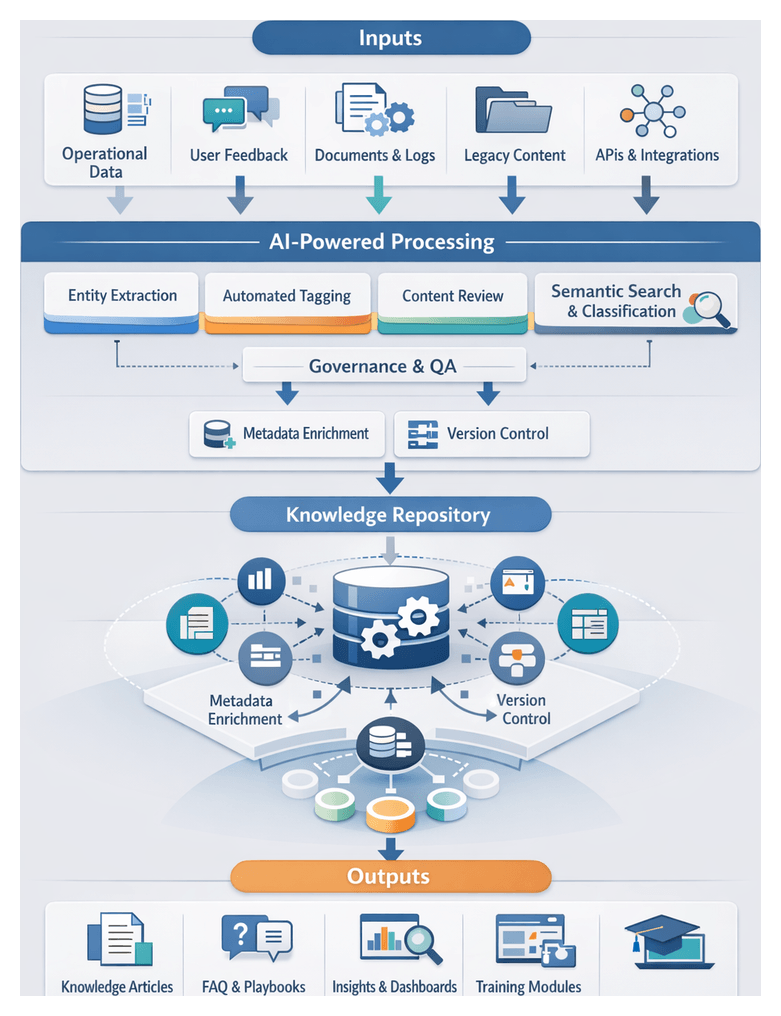

Chapter 2: Data Strategy and Preparation

The initial phase of an AI agent workflow establishes the foundation for reliable, scalable intelligence by defining how data is collected, cleansed, governed, and prepared for downstream use. Without this rigor, organizations risk feeding models with incomplete, inconsistent, or non-compliant data, leading to degraded performance, bias, and regulatory exposure. At its core, the data strategy and preparation stage aims to transform disparate raw inputs into high-quality, policy-aligned assets that drive automated decision-making and predictive analytics.

This stage focuses on three primary objectives:

- Ensuring data accuracy and consistency through standardized profiling and validation routines.

- Enforcing privacy, intellectual property, and regulatory policies via role-based controls and anonymization.

- Providing full transparency into data lineage and usage to support auditability and governance reviews.

By achieving these objectives, organizations lay a solid foundation that reduces the risk of model drift and bias, streamlines agent configuration, and accelerates continuous improvement cycles.
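For the anonymization objective, one widely used building block is keyed hashing (HMAC-SHA256) of direct identifiers, combined with a role check. Strictly speaking this is pseudonymization rather than anonymization: the same input and key always yield the same token, so records remain joinable, and under regulations such as GDPR pseudonymized data is still personal data. The field list, role names, and key handling below are assumptions; in practice the key would come from a key management service.

```python
import hashlib
import hmac
from typing import Dict, FrozenSet

SENSITIVE_FIELDS = {"email", "employee_id"}                     # assumed classification
PRIVILEGED_ROLES: FrozenSet[str] = frozenset({"data_steward"})  # assumed role model


def pseudonymize(value: str, key: bytes) -> str:
    """HMAC-SHA256 token: stable for a given key, so joins still work, and
    not recomputable by anyone who lacks the key."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record: Dict[str, object], key: bytes, role: str) -> Dict[str, object]:
    """Return a copy with sensitive fields tokenized unless the caller's role is privileged."""
    if role in PRIVILEGED_ROLES:
        return dict(record)
    return {
        name: pseudonymize(str(value), key) if name in SENSITIVE_FIELDS else value
        for name, value in record.items()
    }
```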

Industry Challenges and the Need for a Structured Data Strategy

Across sectors such as finance, healthcare, manufacturing, and retail, enterprises grapple with an explosion of data sources and formats. Customer interactions, operational sensors, and third-party services generate high-velocity streams of structured and unstructured information. Traditional IT architectures often silo data in CRM, ERP, and document management systems, impeding unified analysis and resulting in inconsistent customer profiles, disjointed supply chain insights, and missed revenue opportunities.

The proliferation of unstructured content—emails, text transcripts, support tickets—further complicates consolidation efforts, while real-time IoT feeds demand scalable ingestion patterns. Without a coordinated data strategy, projects stall amid inconsistent records, duplicate entries, and governance blind spots. Manual compliance checks become untenable, policies go unenforced, and audit trails are incomplete.

A structured data strategy aligns technical processes, organizational roles, and compliance requirements into a coherent blueprint. It defines criteria for source selection, profiling standards, and policy enforcement mechanisms, moving enterprises beyond ad hoc data gathering toward repeatable, auditable practices. This alignment minimizes friction between domain experts and data engineers, ensures regulatory obligations are met, and preserves customer trust—especially critical in heavily regulated industries.

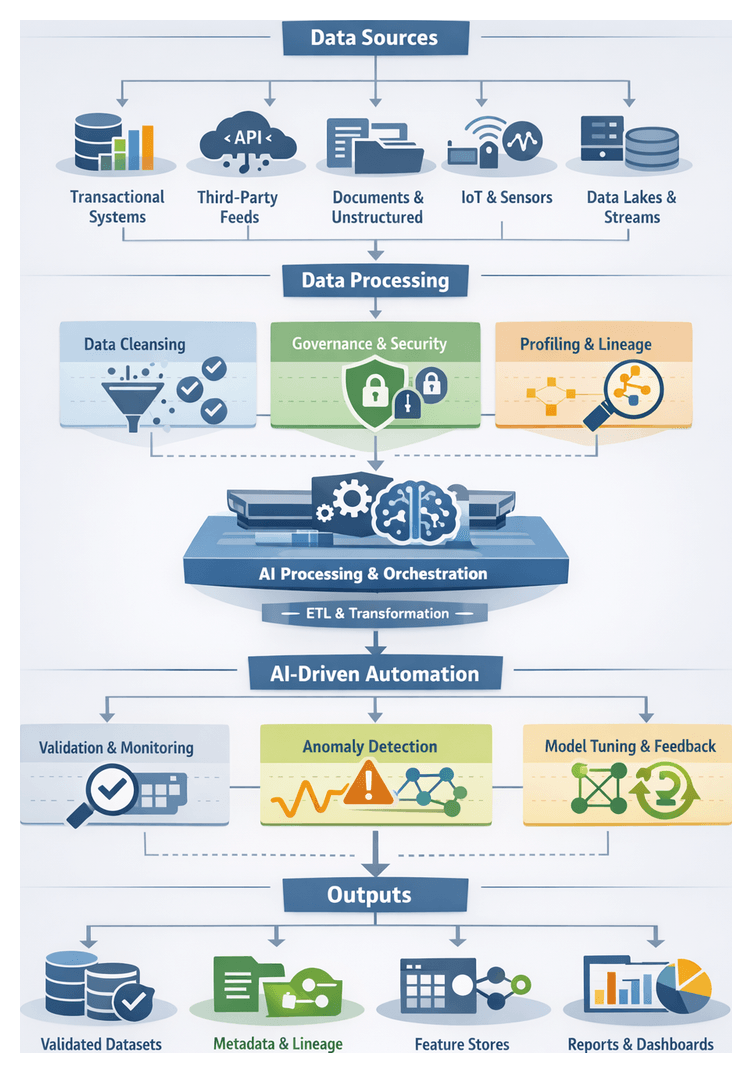

Data Inputs and Acquisition Prerequisites

Effective AI workflows depend on accurately identifying and assessing all relevant data sources. Common input categories include:

- Transactional Systems: Records from ERP, CRM, HRIS, financial ledgers, and supply chain platforms that capture business operations.

- Third-Party Data Feeds: Market intelligence, social media sentiment, geolocation, weather, and competitive benchmarks delivered via APIs.

- Document Repositories: Unstructured content stored in file shares, email archives, knowledge bases, and support ticketing systems.

- Sensor and IoT Streams: Telemetry from industrial equipment, environmental sensors, endpoint logs, and connected devices.

- Data Lakes and Warehouses: Centralized staging areas such as Databricks, Amazon S3, Hadoop clusters, or Azure Data Lake for bulk storage and archival.

- Event Streaming Platforms: Real-time capture of application logs and user interactions using platforms such as Apache Kafka.

Before ingesting data, certain prerequisites must be satisfied to ensure seamless integration, security, and compliance:

- Access Provisioning: Secure credentials, service accounts, OAuth tokens, and key management policies granted by data owners.

- Network and Connectivity: Firewall rules, VPN tunnels, VPC peering, and secure transport protocols (SFTP, HTTPS) configured between sources and ingestion endpoints.

- Cataloging and Classification: Inventory of data assets with metadata on sensitivity, retention policies, and business ownership.

- Regulatory and Contractual Review: Validation against internal policies, SLAs, and external mandates such as GDPR, HIPAA, or industry standards.

- Schema Specifications: Definitions of fields, data types, constraints, and relationships to guide downstream validations and transformations.

Neglecting these prerequisites can lead to pipeline failures, security vulnerabilities, and legal liabilities, compromising the entire AI initiative.
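Schema specifications are most useful when they are machine-checkable, so records can be rejected before they enter a pipeline. The sketch below is a minimal, stdlib-only illustration. It assumes a hypothetical `customer` schema whose field names and constraints are for demonstration only. Field definitions, types, required flags, and constraints are expressed in plain Python, and each record gets a list of violations.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class FieldSpec:
    """One field definition: expected type, whether it is required, and an optional constraint."""
    name: str
    dtype: type
    required: bool = True
    check: Optional[Callable[[Any], bool]] = None


# Hypothetical schema for illustration; real specifications come from data owners.
CUSTOMER_SCHEMA = [
    FieldSpec("customer_id", str),
    FieldSpec("email", str, check=lambda v: "@" in v),
    FieldSpec("lifetime_value", float, required=False, check=lambda v: v >= 0),
]


def validate(record: dict, schema: list) -> list:
    """Return human-readable violations; an empty list means the record conforms."""
    errors = []
    for spec in schema:
        value = record.get(spec.name)
        if value is None:
            if spec.required:
                errors.append(f"{spec.name}: missing required field")
            continue
        if not isinstance(value, spec.dtype):
            errors.append(f"{spec.name}: expected {spec.dtype.__name__}, got {type(value).__name__}")
        elif spec.check is not None and not spec.check(value):
            errors.append(f"{spec.name}: constraint failed for {value!r}")
    return errors


print(validate({"customer_id": "C-1", "email": "a@example.com"}, CUSTOMER_SCHEMA))
print(validate({"email": "invalid", "lifetime_value": -5.0}, CUSTOMER_SCHEMA))
```

In practice the same definitions could be generated from a schema registry, so ingestion checks and downstream transformations share one source of truth.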

Data Quality, Cleansing, and Enrichment

Data quality is the linchpin of reliable AI outcomes. Cleansing processes detect anomalies—missing values, inconsistent formats, duplicates, and outliers—and remediate them before data enters model training or real-time inference pipelines. A systematic approach typically comprises profiling, standardization, enrichment, and validation steps, often automated through AI-driven tools.

- Profiling and Statistical Assessment: Analyzing distributions, null counts, and pattern anomalies using Azure Machine Learning, open-source frameworks, or custom scripts.

- Deduplication and Record Linkage: Merging duplicate records based on unique keys, fuzzy matching algorithms, or heuristic rules.

- Normalization and Standardization: Uniform formatting of dates, currencies, units of measure, and categorical values according to enterprise reference models.

- Missing Value Handling: Applying imputation techniques (mean, median, predictive) or exclusion rules to address incomplete records.

- Error Correction and Validation: Cross-reference checks against authoritative sources, automated anomaly detection, and rule-based correction routines.

- Data Enrichment: Augmenting records with auxiliary attributes such as geographic coordinates, NAICS codes, demographic segments, or sentiment scores.

By leveraging tools that integrate cleansing and profiling—such as AWS Glue DataBrew for interactive transformations—teams can accelerate cleaning at scale while enforcing corporate standards.
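To make the cleansing steps concrete, here is a small stdlib sketch in place of a managed tool such as AWS Glue DataBrew. It is not DataBrew's API. It normalizes dates to ISO 8601 and drops near-duplicate names using `difflib.SequenceMatcher` with an assumed similarity threshold of 0.9. It then imputes missing revenue with the median of the known input values. The field names and accepted date formats are hypothetical.

```python
from datetime import datetime
from difflib import SequenceMatcher
from statistics import median

# Accepted source date formats (an assumption; extend to match your systems).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%b %d, %Y")


def normalize_date(raw: str) -> str:
    """Convert a date in any accepted format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def is_fuzzy_duplicate(a: str, b: str, threshold: float = 0.9) -> bool:
    """Treat two names as one entity when their similarity ratio meets the threshold."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio() >= threshold


def cleanse(records: list) -> list:
    """Normalize dates, drop fuzzy duplicates by name, and fill missing revenue
    with the median of all known input values."""
    known = [r["revenue"] for r in records if r.get("revenue") is not None]
    fill = median(known) if known else 0.0
    cleaned = []
    for rec in records:
        name = rec["name"].strip()
        if any(is_fuzzy_duplicate(name, kept["name"]) for kept in cleaned):
            continue  # keep the first occurrence and skip near-duplicates
        revenue = rec.get("revenue")
        cleaned.append({
            "name": name,
            "signup": normalize_date(rec["signup"]),
            "revenue": revenue if revenue is not None else fill,
        })
    return cleaned


raw = [
    {"name": "Acme Corp", "signup": "2024-03-01", "revenue": 100.0},
    {"name": "ACME Corp.", "signup": "01/03/2024", "revenue": 120.0},
    {"name": "Globex", "signup": "Mar 05, 2024", "revenue": None},
]
result = cleanse(raw)
```

Pairwise fuzzy comparison is quadratic in the number of kept records. At enterprise scale, record linkage usually groups candidates first (for example, by a normalized key) and only compares within each group.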

Governance, Compliance, and Metadata Management

Robust data governance frameworks codify policies and assign stewardship to ensure that all collection and cleansing activities adhere to internal and external mandates. Key governance elements include:

- Policy Definitions: Formal guidelines covering data classification, retention, access, and sharing, often documented in a governance charter.

- Role-Based Access Control: Fine-grained permissions managed via IAM, distinguishing between data stewards, engineers, and consumers.

- Metadata Cataloging: Automated lineage and provenance capture using platforms like Collibra or Immuta, facilitating impact analysis and traceability.

- Audit and Logging: Continuous recording of data access, transformations, and policy enforcement actions for regulatory audits.

- Risk Assessments: Periodic reviews to uncover compliance gaps, privacy risks, and potential breach vectors.

- Privacy Techniques: Pseudonymization, tokenization, and differential privacy applied to personally identifiable information.

Metadata repositories and data catalogs become the single source of truth, enabling business users and data professionals to discover assets, understand dependencies, and comply with governance protocols.
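Of the privacy techniques listed, keyed-hash pseudonymization is the simplest to illustrate. The sketch below uses HMAC-SHA256 from the Python standard library to replace PII fields with stable tokens, so joins and counts still work without exposing raw values. The key shown is a placeholder and, in practice, would come from a secrets manager. Lowercasing before hashing is an assumption suited to case-insensitive values such as email addresses. Vault-based tokenization and differential privacy are different techniques and are not shown.

```python
import hashlib
import hmac

# Placeholder key: in practice, load it from a secrets manager and rotate it under policy.
PSEUDONYM_KEY = b"replace-with-managed-secret"


def pseudonymize(value: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Map a PII value to a stable, keyed token using HMAC-SHA256."""
    normalized = value.strip().lower().encode("utf-8")
    digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]  # truncated for readability; keep more bits if collisions matter


def pseudonymize_record(record: dict, pii_fields: set) -> dict:
    """Return a copy of the record with the listed PII fields replaced by tokens."""
    return {
        k: pseudonymize(v) if k in pii_fields and v is not None else v
        for k, v in record.items()
    }


row = {"email": "Jane.Doe@example.com", "region": "EMEA", "ticket_count": 3}
safe_row = pseudonymize_record(row, {"email"})
print(safe_row)
```

Because the same input always yields the same token, pseudonymized keys remain joinable across datasets. That determinism is also why the key itself must be governed as a secret.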

Stakeholder Collaboration and Alignment

Effective execution demands coordinated effort across business, IT, legal, compliance, and data science teams. Domain experts articulate use cases and define data relevance, IT provisions infrastructure and security controls, legal and compliance teams verify regulatory adherence, and data engineers design, implement, and monitor pipelines. Establishing regular forums—alignment workshops, change review boards, and RACI matrices—clarifies responsibilities, accelerates decisions, and prevents misalignment.

Cross-functional dependencies extend to DevOps and IT operations, which provision compute clusters, manage container orchestration, and monitor network configurations to support pipeline demands. Business SMEs validate feature definitions against real-world scenarios, while compliance officers verify that data usage aligns with contractual obligations and privacy commitments.

Early engagement of stakeholders reduces rework, sets clear timelines for data access, and aligns expectations for quality thresholds, governance requirements, and downstream deliverables. Transparent communication channels and shared documentation portals support ongoing collaboration throughout the AI lifecycle.

Pipeline Orchestration and Workflow

Data preparation pipelines orchestrate ingestion, transformation, enrichment, validation, and delivery through reliable, repeatable workflows. The core phases include:

- Ingestion and Extraction: Capturing data via batch ETL tools such as AWS Glue and real-time streams with Apache Kafka.

- Schema Discovery and Registration: Automated inference using sample records, with schemas registered in metastore services such as the AWS Glue Data Catalog or the Hive Metastore.

- Transformation and Enrichment: Declarative transformations using dbt or programmatic scripts in Apache Spark, implementing normalization, feature creation, and dataset joins.

- Orchestration and Scheduling: Workflow coordination through engines like Apache Airflow or Prefect, defining DAGs, dependency rules, retry policies, and SLA-based alerts.

- Validation and Monitoring: Embedding quality gates with frameworks such as Great Expectations, checkpoint validations, and schema tests to ensure data integrity.

- Scaling Strategies: Partitioned processing by time windows or business units, auto-scaling compute clusters, incremental change data capture, and resource quotas to maintain performance.

- Continuous Integration: Version-controlled workflows, automated testing of transformation logic, and deployment pipelines for production updates.
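Engines such as Apache Airflow and Prefect model a pipeline as a directed acyclic graph (DAG) of tasks and their dependencies. The sketch below illustrates that scheduling idea with Python's standard-library `graphlib`. It does not use Airflow's or Prefect's API. The task names are hypothetical. Each round runs every task whose dependencies have finished; an orchestrator could run each such batch in parallel.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "ingest": set(),
    "load_reference_data": set(),
    "register_schema": {"ingest"},
    "transform": {"register_schema"},
    "enrich": {"transform", "load_reference_data"},
    "validate": {"enrich"},
    "publish": {"validate"},
}


def run_pipeline(dag: dict, run_task) -> list:
    """Run tasks in dependency order and return the order in which they executed."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not a DAG
    executed = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks with no unfinished dependencies
        for task in ready:  # an orchestrator could run this batch in parallel
            run_task(task)
            executed.append(task)
        sorter.done(*ready)
    return executed


order = run_pipeline(PIPELINE, lambda name: print(f"running {name}"))
```

Retry policies, SLA alerts, and scheduling windows sit on top of this ordering in a production engine. The dependency graph is the part that stays constant.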

Error Handling and Recovery

Robust pipelines implement intelligent retry mechanisms with exponential backoff for transient failures, fallbacks to alternative data sources, and manual intervention hooks. Error events propagate to issue tracking systems, enabling prompt investigation and minimizing data downtime.
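A minimal sketch of the retry pattern follows. It retries only failures classified as transient, using capped exponential backoff with "full jitter." After the final attempt it falls back to an alternative source, or re-raises so the error can reach issue tracking. The `TransientError` class, delay values, and simulated sources are illustrative assumptions. The `sleep` parameter is injectable so the demo runs instantly.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """A failure worth retrying, such as a timeout or throttling response."""


def with_retries(primary: Callable[[], T], fallback: Optional[Callable[[], T]] = None,
                 max_attempts: int = 4, base_delay: float = 0.5, max_delay: float = 8.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `primary`, retrying transient failures with capped exponential backoff and jitter.

    Non-transient exceptions propagate immediately. If all attempts fail and a fallback
    source is supplied, its result is returned; otherwise the error is raised so it can be
    routed to issue tracking or a manual intervention hook.
    """
    for attempt in range(max_attempts):
        try:
            return primary()
        except TransientError:
            if attempt == max_attempts - 1:
                break
            delay = min(max_delay, base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ... capped
            sleep(random.uniform(0, delay))  # "full jitter" avoids synchronized retries
    if fallback is not None:
        return fallback()
    raise TransientError(f"primary source failed after {max_attempts} attempts")


calls = {"count": 0}


def flaky_source() -> str:
    """Simulated source that times out twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("timeout")
    return "fresh data"


def unavailable_source() -> str:
    """Simulated source that never recovers."""
    raise TransientError("service unavailable")


def no_wait(seconds: float) -> None:
    """Skip real sleeping in this demo."""


print(with_retries(flaky_source, sleep=no_wait))
print(with_retries(unavailable_source, fallback=lambda: "cached snapshot", sleep=no_wait))
```

Retrying is only safe for idempotent operations. Writes should carry an idempotency key, or be structured as upserts, before they are wrapped this way.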

Observability and Logging

Structured logging in JSON format captures contextual details such as job identifiers, partition keys, and execution parameters. Health dashboards in Grafana or native UI panels provide real-time insights, while automated alerts inform on-call engineers of anomalies, failures, or SLA breaches.

These practices deliver a robust platform for data flow, enabling rapid detection and resolution of operational issues and facilitating capacity planning as workloads evolve.
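Structured JSON logging can be added with the standard `logging` module alone. In this sketch, a custom `Formatter` emits one JSON object per record and copies context fields passed through the `extra` argument. The field names `job_id`, `partition_key`, and `params` are illustrative assumptions, not a required schema.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object, including pipeline context fields."""

    CONTEXT_FIELDS = ("job_id", "partition_key", "params")  # illustrative field names

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "ts": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        for field in self.CONTEXT_FIELDS:
            if hasattr(record, field):  # populated via the `extra` argument
                payload[field] = getattr(record, field)
        return json.dumps(payload, default=str)


logger = logging.getLogger("pipeline")
handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
logger.addHandler(handler)
logger.setLevel(logging.INFO)

logger.info(
    "partition loaded",
    extra={"job_id": "daily-sales-42", "partition_key": "2024-03-01", "params": {"rows": 1200}},
)
```

Because every line is valid JSON with consistent keys, log aggregators and dashboards can filter by job or partition without fragile text parsing.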

AI-Driven Validation and Model Conditioning

Integrating AI-driven validation and conditioning into data pipelines transforms static checks into adaptive, intelligent processes that scale with data volume and complexity. Key capabilities include:

Automated Data Quality Monitoring

Continuous evaluation against predefined rules and metrics prevents corrupted or misaligned records from propagating. Schema enforcement tools, such as TensorFlow Data Validation and Great Expectations, verify field types, value ranges, and cross-field consistency. Semantic validation leverages natural language services like Azure Text Analytics to ensure categorical labels and text fields align with controlled vocabularies.

Anomaly Detection and Alerting

Real-time anomaly detectors identify distributional shifts, time-series outliers, and multivariate deviations. Statistical profiling with frameworks such as PyCaret Anomaly Detection, time-series monitoring via Amazon Lookout for Metrics or Azure Anomaly Detector, and multivariate techniques using scikit-learn alert teams to unexpected patterns. Integration with messaging platforms and incident management systems ensures rapid notification and resolution.
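Managed services hide the detection logic, but the core idea behind time-series outlier alerts can be shown with a rolling z-score. This sketch is not the algorithm used by Amazon Lookout for Metrics or Azure Anomaly Detector. It flags any point more than `threshold` standard deviations from the mean of the preceding `window` observations. The window size, threshold, and sample data are assumptions.

```python
from statistics import mean, stdev


def rolling_zscore_anomalies(values: list, window: int = 5, threshold: float = 3.0) -> list:
    """Return indices whose value lies more than `threshold` standard deviations
    from the mean of the preceding `window` observations (window must be >= 2)."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        sigma = stdev(history)
        if sigma == 0:
            if values[i] != history[0]:  # flat history: any change is unusual
                anomalies.append(i)
            continue
        if abs(values[i] - mean(history)) / sigma > threshold:
            anomalies.append(i)
    return anomalies


hourly_orders = [100, 102, 98, 101, 99, 100, 103, 240, 101, 97]
print(rolling_zscore_anomalies(hourly_orders))  # [7]: the spike is flagged
```

A single spike inflates the standard deviation of the windows that follow it, which can mask nearby anomalies. Robust variants replace the mean and standard deviation with the median and median absolute deviation.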

Model Conditioning and Continuous Tuning

Maintaining model performance requires automated hyperparameter optimization, drift detection, and calibration. Services like Amazon SageMaker Automatic Model Tuning and Google Vertex AI Hyperparameter Tuning accelerate parameter search. Drift monitoring jobs, tracked in MLflow or orchestrated with Kubeflow Pipelines, trigger retraining pipelines when live data diverges from training baselines. Calibrated models, for example those adjusted with Platt scaling, can be versioned in the Databricks MLflow Model Registry so that the probability estimates behind decision thresholds remain reliable and traceable.
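One common way to quantify "live data diverges from training baselines" is the Population Stability Index (PSI). PSI compares binned distributions of a feature in a baseline sample and a live sample. This sketch is one chosen technique, not a built-in of MLflow or Kubeflow. The equal-width binning, the epsilon for empty bins, and the 0.2 retraining threshold are all assumptions; published rules of thumb typically range from about 0.1 to 0.25.

```python
import math


def population_stability_index(expected: list, actual: list, bins: int = 10) -> float:
    """Population Stability Index of a live sample against a training baseline.

    Bins are equal-width over the baseline range; out-of-range live values fall into the
    edge bins, and a small epsilon avoids log(0) for empty bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline
    eps = 1e-6

    def proportions(sample: list) -> list:
        counts = [0] * bins
        for x in sample:
            idx = min(max(int((x - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(sample), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(psi: float, threshold: float = 0.2) -> bool:
    """Trigger retraining when drift exceeds the threshold (0.2 is an assumed cutoff)."""
    return psi > threshold


baseline = [i / 100 for i in range(1000)]  # training feature values 0.00 to 9.99
live_same = list(baseline)
live_shifted = [x + 3 for x in baseline]  # simulated upstream change
print(should_retrain(population_stability_index(baseline, live_same)))     # False
print(should_retrain(population_stability_index(baseline, live_shifted)))  # True
```

A monitoring job could compute PSI per feature on a schedule, log the values as metrics, and emit a retraining event when the threshold is crossed.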

CI/CD and Feedback Loops

End-to-end MLOps workflows, orchestrated with Prefect and CircleCI, integrate validation gates, automated testing, and containerized deployments. Event-driven pipelines leverage Apache Kafka or AWS EventBridge to channel validation failures into remediation functions or retraining triggers. Real-time dashboards deliver visibility into quality metrics, anomaly trends, and conditioning outcomes, ensuring continuous alignment between data and AI agents.

Data Outputs, Documentation, and Handoff Criteria

The culmination of data strategy, preparation, and validation yields a suite of artifacts ready for agent design and configuration. Rich metadata and well-documented transformation logic enable agent architects to understand feature semantics, data freshness, and potential biases before integrating inputs into design sprints. These outputs include:

- Curated Training and Validation Datasets: Labeled examples, feature vectors, and partitioned sets in Parquet, CSV, or JSON formats, verified against completeness and balance metrics.

- Feature Store Exports: Versioned feature tables with timestamped snapshots and lineage metadata, orchestrated through Apache Airflow and Databricks.

- Transformed Data Tables: Derived attributes, anonymized keys, one-hot encodings, and aggregated metrics maintained by Alteryx and Fivetran.

- Unstructured Data Artifacts: Tokenized text corpora, annotated image datasets in COCO or Pascal VOC formats, and enriched audio transcripts stored in document databases, object stores, or data platforms such as Snowflake.

- Metadata Catalog Entries: Detailed descriptions of each dataset, schema references, update frequencies, and owner contacts.

- Lineage Graphs and Quality Reports: Machine-readable and visual representations linking raw sources to final outputs, annotated with data quality metrics and anomaly statistics.

- Governance Certificates: Documentation of policy enforcement, masking procedures, consent records, and audit logs generated by Collibra or Immuta.

- Validation and Drift Logs: Time-stamped records of anomaly detections, drift events, and retraining triggers with rationale and resolution actions.

- Connectivity and Security Credentials: Service endpoints, API tokens, and access policies configured for downstream use.

- Transformation Scripts and Playbooks: Version-controlled code artifacts—including Python notebooks, SQL scripts, and orchestration DAGs—documenting pipeline logic and error-handling mechanisms.

- Stakeholder Sign-Off: Formal approvals from data stewards, compliance officers, and business sponsors confirming readiness for agent development.

Best Practices for Seamless Handoffs and Continuous Improvement

- Implement Version Control for Data and Code: Treat datasets, transformation scripts, and metadata as code, using Git or specialized versioning systems to track changes and roll back when necessary.

- Automate Documentation Generation: Use tools that extract schema definitions and data profiles to produce up-to-date documentation, reducing manual effort and errors.

- Establish Regular Synchronization Cadences: Schedule recurring meetings between data engineers, compliance teams, and agent designers to review output readiness, clarify requirements, and gather feedback.

- Define Clear SLAs and RTO/RPO Targets: Agree on service-level objectives for data availability, freshness, recovery objectives, and escalation paths to manage pipeline incidents.

- Centralize Artifact Storage and Discovery: Utilize a shared repository or data catalog platform as the single source of truth, providing controlled access and search capabilities across teams.

- Enforce Metadata Validation Rules: Implement automated checks to verify that required fields, descriptions, and classification tags are present before handoff.

- Provide Incremental Deliverables and Previews: Share data samples, lineage snapshots, and draft documentation early to solicit stakeholder input and avoid last-minute revisions.

- Conduct Post-Handoff Retrospectives: Gather teams to review what worked, what didn’t, and incorporate lessons learned into updated processes and playbooks.
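The "Enforce Metadata Validation Rules" practice can be as simple as a gate that lists the blockers for each catalog entry before handoff. The sketch below assumes a hypothetical set of required fields, a four-level classification taxonomy, and a minimum description length. Each organization would substitute its own catalog schema.

```python
REQUIRED_FIELDS = ("name", "description", "owner", "schema_ref", "update_frequency", "classification")
ALLOWED_CLASSIFICATIONS = {"public", "internal", "confidential", "restricted"}  # assumed taxonomy
MIN_DESCRIPTION_LENGTH = 20


def handoff_blockers(entry: dict) -> list:
    """List the reasons a catalog entry is not ready for handoff; empty means ready."""
    problems = [f"missing field: {f}" for f in REQUIRED_FIELDS if not entry.get(f)]
    description = entry.get("description")
    if description and len(description) < MIN_DESCRIPTION_LENGTH:
        problems.append("description too short")
    tag = entry.get("classification")
    if tag and tag not in ALLOWED_CLASSIFICATIONS:
        problems.append(f"unknown classification tag: {tag}")
    return problems


catalog = [
    {"name": "ticket_features_v3", "description": "Daily support-ticket features for triage agents",
     "owner": "data-eng@example.com", "schema_ref": "schemas/ticket_features_v3.json",
     "update_frequency": "daily", "classification": "internal"},
    {"name": "hr_cases_raw", "description": "HR cases", "classification": "secret"},
]
ready = [e["name"] for e in catalog if not handoff_blockers(e)]
print(ready)
```

Running a check like this in CI, alongside the transformation code, turns the handoff checklist into an automated gate rather than a manual review item.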

By institutionalizing these practices, organizations foster a culture of continuous improvement and operational excellence. Data pipelines evolve proactively to accommodate new sources, emerging compliance requirements, and shifting business objectives, ensuring that AI agents remain tuned to organizational goals.

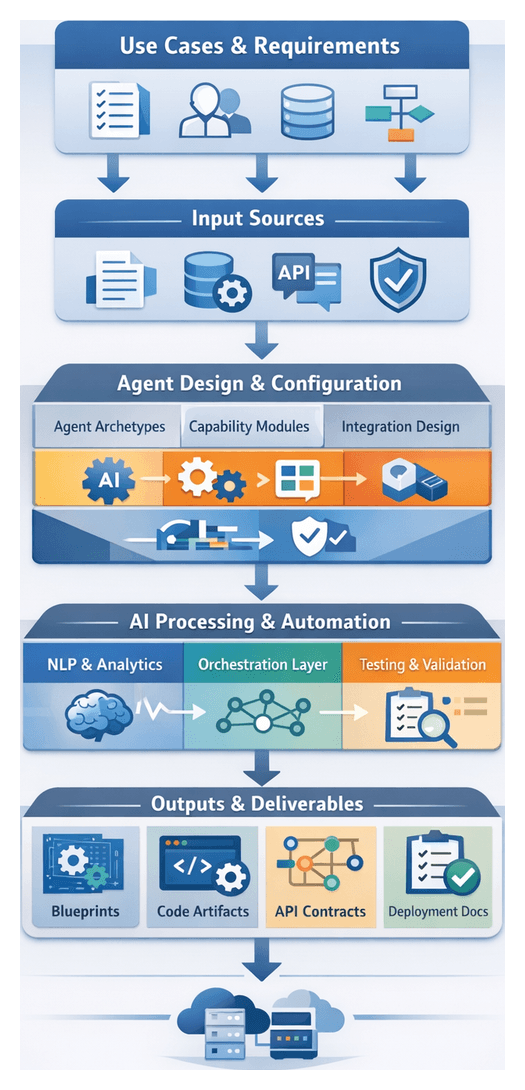

Chapter 3: Agent Design and Configuration

The Agent Design and Configuration stage bridges high-level use case definitions and the technical realization of AI agents within enterprise workflows. By translating strategic objectives into concrete agent archetypes, capability modules, and integration blueprints, this discipline ensures each agent delivers measurable value, aligns with organizational standards, and supports scalable deployment across diverse scenarios.

In complex environments, ad hoc development often leads to inconsistent results, costly rework, and low adoption. A structured design process enforces repeatable methodologies, fosters cross-functional collaboration, and promotes reuse of proven components. The outputs from this stage—blueprints, configuration manifests, and semantic documentation—lay the foundation for efficient platform provisioning, integration, and orchestration.

Key Objectives

- Define agent archetypes mapped to organizational roles and process patterns.

- Specify functional modules, AI capabilities, data schema mappings, and performance targets.

- Document integration points, API contracts, and human workflow escalations.

- Validate design assumptions against technical, operational, and compliance constraints.

Strategic Advantages

- Rapid onboarding of new use cases via standardized agent blueprints.

- Efficient collaboration and transparency with shared configuration artifacts.

- Continuous compliance and security validation through documented design reviews.

- Optimized total cost of ownership by reusing modular components.

- Traceability from business requirements to agent behavior.

Inputs and Prerequisites

- Use Case Definitions and Prioritization: Descriptions with success criteria guide archetype selection and feature sets.

- Stakeholder Requirements and Role Profiles: Interviews, workshops, and personas inform decision logic and escalation paths.

- Data Availability and Quality Assessments: Source systems, schemas, and readiness reports shape knowledge management and NLP modules.

- Process Maps and Integration Inventories: Flow diagrams and API catalogs capture triggers, transformation rules, and error-handling protocols.

- Technology Stack and Constraints: Infrastructure, middleware, and AI framework specifications determine compatibility requirements.

- Governance, Security, and Compliance Policies: Regulatory mandates and audit requirements establish design guardrails.

- Performance Targets and SLAs: Benchmarks for response times, throughput, and error rates drive tuning parameters and operational support agreements.

Environmental Conditions

- Cross-functional governance councils and working groups.

- Version control and change management processes in GitHub, GitLab, or Bitbucket.

- Accessible test and staging environments for safe validation.

- Tooling for modular development using IDEs, low-code platforms, or container frameworks.

- Training and knowledge transfer sessions leveraging Confluence and Microsoft Teams.

Handoff Criteria

- Completion of configuration blueprints with archetype definitions and module mappings.

- Approval of integration design documents including API contracts, event schemas, and data transformations.

- Validation of compliance matrices demonstrating security and regulatory alignment.

- Established test plans and acceptance criteria for performance and functionality.

- Agreement on deployment timelines, resource allocations, and cut-over strategies with operations teams.

With these prerequisites and criteria satisfied, subsequent deployment and orchestration stages proceed with clarity and minimal rework, accelerating time to value while mitigating risk.

Modular Design Workflow for Agent Setup

Building on agent archetypes and configuration inputs, the modular design workflow assembles reusable capability components into cohesive AI agent configurations. This structured process supports scalability, maintainability, and rapid iteration, guiding teams from module ideation through development, validation, and handoff for deployment.

Stage Inputs and Triggers

- Approved blueprints outlining required capabilities and interface contracts.

- Reference architectures specifying module roles and data flows.

- Prioritized scenarios and user stories.

- Provisioned environments with version control and collaboration tools.

Module Identification and Cataloging

- Function Mapping: Decompose blueprints into discrete capabilities such as intent recognition, dialogue management, data retrieval, decision logic, and external integration.

- Module Definition: Document inputs, outputs, APIs, performance SLAs, and security requirements for each capability.

- Catalog Registration: Record module metadata, version information, dependencies, and configuration parameters in a centralized registry.

- Dependency Analysis: Map shared libraries, data schemas, and service endpoints to inform integration sequencing.
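Catalog registration and dependency analysis can be prototyped with a small in-memory registry before adopting a dedicated service. This sketch stores module metadata and declares each dependency by its required major version. It then reports unregistered or incompatible dependencies. The module names, versions, and the major-version compatibility rule are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ModuleEntry:
    """Catalog metadata for one reusable capability module."""
    name: str
    version: str  # semantic version, "MAJOR.MINOR.PATCH"
    inputs: tuple = ()
    outputs: tuple = ()
    depends_on: dict = field(default_factory=dict)  # dependency name -> required major version


class ModuleRegistry:
    """In-memory stand-in for a centralized module registry."""

    def __init__(self) -> None:
        self._modules = {}

    def register(self, entry: ModuleEntry) -> None:
        self._modules[entry.name] = entry

    def unresolved(self, name: str) -> list:
        """Return dependency problems: unregistered modules or major-version mismatches."""
        problems = []
        for dep, major in self._modules[name].depends_on.items():
            found = self._modules.get(dep)
            if found is None:
                problems.append(f"{dep}: not registered")
            elif int(found.version.split(".")[0]) != major:
                problems.append(f"{dep}: requires v{major}.x, registry has {found.version}")
        return problems


registry = ModuleRegistry()
registry.register(ModuleEntry("intent_recognition", "2.1.0", ("utterance",), ("intent", "entities")))
registry.register(ModuleEntry(
    "dialogue_manager", "1.4.2", ("intent", "entities"), ("response",),
    depends_on={"intent_recognition": 2, "data_retrieval": 1},
))
print(registry.unresolved("dialogue_manager"))  # ['data_retrieval: not registered']
```

The unresolved list doubles as integration sequencing input: modules with no open problems can be integrated first.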

Module Development and Configuration

- Specification Refinement in collaboration with business analysts.

- Implementation using OpenAI API for generative language, Microsoft Bot Framework for conversation flows, or IBM Watson Assistant knowledge connectors.

- Configuration Management via infrastructure-as-code templates and environment-specific settings.

- Unit Testing with automated suites to validate functional correctness.

- Peer Review through pull requests in GitHub, GitLab, or Bitbucket.

Orchestration Layer Integration

- Module Registration with service registries and messaging topics on Apache Kafka or RabbitMQ.

- Interface Binding using frameworks such as LangChain or tools listed on AgentLinkAI.

- Configuration Propagation of secrets and connection strings via secret management services.

- Smoke Testing to verify runtime module invocation and data bindings.

Human-in-the-Loop Collaboration

- Design Workshops with cross-functional teams.

- SME Reviews for domain-specific logic.

- UX Testing with prototype agents to refine conversational flows.

- Change Management Boards coordinating releases and preventing update conflicts.

Automated Testing and Validation

- Unit Tests triggered on commits.

- Integration Tests simulating module interactions.

- Load and Stress Tests identifying performance bottlenecks.

- Security Scans with Snyk or SonarQube.

- Acceptance Testing against business-driven criteria.

Security and Compliance Checks

- Static Code Analysis for insecure patterns.

- Dependency Audits for known vulnerabilities.

- Configuration Validation of encryption, token policies, and secure protocols.

- Audit Logging of access events and data transformations.

- Policy Enforcement gating promotions until issues are resolved.

Documentation and Handoffs

- Versioned module packages or container images in artifact repositories.

- Published API specifications and event schemas.

- Configuration templates and environment variable manifests.

- Operational runbooks detailing deployment steps, health checks, and rollback procedures.

- Metadata capturing authorship, version history, dependencies, and compliance attestations.

Clear coordination across design leads, development, DevOps, security, QA, and documentation teams ensures that modules are reliable, interoperable, and ready for integration into complex multi-agent orchestrations.

AI Capabilities Mapping to Agent Roles

Mapping core AI functions to agent archetypes creates a flexible ecosystem in which each component contributes distinct value. By aligning capabilities such as language understanding, knowledge retrieval, decision intelligence, and contextual memory with modular roles, organizations can build scalable, coherent workflows.

Natural Language Processing and Understanding

NLP and NLU enable agents to parse unstructured text, recognize intents, extract entities, and manage dialogues. Transformer-based models such as OpenAI's GPT family, or pipelines built with spaCy, support sentiment analysis and multi-turn conversation management. Continuous training pipelines using TensorFlow or PyTorch keep models current with new terminology and user behavior.

Knowledge Management and Retrieval

Retrieval-augmented generation (RAG) patterns combine generative models with vector databases like Pinecone to surface relevant content from internal documentation or external sources. Graph databases model entity relationships, while automated curation workflows orchestrated with Prefect keep knowledge repositories accurate and fresh.
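The retrieval half of RAG reduces to nearest-neighbor search over embeddings, followed by prompt assembly. The sketch below uses brute-force cosine similarity over toy three-dimensional vectors; a vector database such as Pinecone would replace this with an approximate index at scale. It does not use Pinecone's client API. The document IDs, passages, vectors, and prompt template are all hypothetical; real embeddings come from an embedding model.

```python
import math

# Toy 3-dimensional embeddings; a real system would use an embedding model and a vector database.
INDEX = {
    "vpn-setup": [0.9, 0.1, 0.0],
    "expense-policy": [0.0, 0.2, 0.9],
    "vpn-troubleshooting": [0.8, 0.3, 0.1],
}
PASSAGES = {
    "vpn-setup": "Install the VPN client from the software portal and sign in with SSO.",
    "expense-policy": "Submit expenses with itemized receipts.",
    "vpn-troubleshooting": "If the VPN drops, re-register the device token.",
}


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec: list, k: int = 2) -> list:
    """Brute-force top-k search; vector databases replace this with approximate indexes."""
    ranked = sorted(INDEX, key=lambda doc_id: cosine(query_vec, INDEX[doc_id]), reverse=True)
    return ranked[:k]


def build_prompt(question: str, query_vec: list) -> str:
    """Ground the generative model by placing retrieved passages ahead of the question."""
    context = "\n".join(f"- {PASSAGES[d]}" for d in retrieve(query_vec))
    return f"Answer using only this context:\n{context}\nQuestion: {question}"


print(build_prompt("How do I get on the VPN?", [0.85, 0.2, 0.05]))
```

Restricting the model to retrieved context is what lets curation workflows influence answers. Updating or removing a passage changes what the agent can cite without retraining the model.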

Decision Intelligence and Optimization

Agents apply predictive analytics, optimization algorithms, and reinforcement learning to recommend actions or forecast outcomes. Tools such as OptaPlanner or custom Python pipelines handle scheduling, inventory planning, and dynamic pricing. Integration with real-time streams on Kafka enables live model updates and automated triggers via workflow engines.

Contextual Awareness and Memory Systems

Memory architectures combine session stores in Redis for short-term context with long-term archives in vector or relational stores. Policies for context window management and periodic pruning ensure coherent dialogues without performance decay, supporting roles from transactional assistants to compliance monitors.
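Context window management and periodic pruning can be illustrated with an in-process stand-in for a Redis session store. Redis itself offers comparable primitives, such as list trimming and key expiry. This sketch bounds memory to the most recent turns and drops turns older than a time-to-live. The turn limit, TTL, and injectable clock are assumptions chosen to keep the example deterministic.

```python
import time
from collections import deque


class SessionMemory:
    """Short-term context: keeps at most `max_turns` recent turns and prunes turns older than a TTL."""

    def __init__(self, max_turns: int = 6, ttl_seconds: float = 1800.0, clock=time.monotonic):
        self.turns = deque(maxlen=max_turns)  # the oldest turn is evicted automatically
        self.ttl = ttl_seconds
        self.clock = clock

    def add(self, role: str, text: str) -> None:
        self.turns.append((self.clock(), role, text))

    def prune(self) -> None:
        """Drop turns whose timestamp falls outside the time-to-live window."""
        cutoff = self.clock() - self.ttl
        while self.turns and self.turns[0][0] < cutoff:
            self.turns.popleft()

    def context(self) -> list:
        """Return the surviving turns, oldest first, formatted for a prompt."""
        self.prune()
        return [f"{role}: {text}" for _, role, text in self.turns]


now = [0.0]  # fake clock so the example is deterministic
memory = SessionMemory(max_turns=3, ttl_seconds=60, clock=lambda: now[0])
dialogue = [("user", "Reset my VPN token"), ("agent", "Which device?"),
            ("user", "My laptop"), ("agent", "Token reset sent")]
for step, (role, text) in enumerate(dialogue):
    now[0] = step * 10.0
    memory.add(role, text)
print(memory.context())  # the window keeps the last 3 turns
now[0] = 75.0
print(memory.context())  # the turn recorded at t=10 has expired
```

Turns evicted here would typically be summarized or written to a long-term vector or relational store, rather than discarded, when later recall matters.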

Integration with Supporting Platforms and Services

Agents rely on containerization with Docker and orchestration with Kubernetes, messaging layers like RabbitMQ or Kafka, and API gateways that manage authentication, rate limits, and versioning. CI/CD pipelines in Jenkins, GitHub Actions, or GitLab CI automate testing, security scans, and model rollouts.

Security and Compliance Integration

Role-based access controls, audit logging, and encryption standards (AES-256 at rest, TLS 1.2 or later in transit) safeguard sensitive data. Secrets are stored in HashiCorp Vault, and policy engines enforce corporate and regulatory mandates. Privacy controls anonymize or redact PII to comply with GDPR and CCPA.

Performance, Scalability, and Maintainability

Monitoring frameworks like Prometheus and Grafana track inference latency and resource utilization. Model versioning with metadata enables safe rollbacks and A/B tests. Autoscaling policies for CPU, GPU, and memory usage ensure elastic performance, while canary deployments verify new releases under real-world conditions. Observability pipelines correlate logs, metrics, and traces to diagnose distributed issues.

This systematic mapping of AI capabilities to agent roles, supported by robust platforms and governance frameworks, establishes a resilient foundation for enterprise-wide agent deployments.

Configured Output Artifacts and Integration Handoffs

The culmination of agent design yields a comprehensive set of artifacts that describe each AI agent’s capabilities, interfaces, and operational parameters. Formal handoff procedures ensure integration and deployment teams receive all necessary context and dependencies to proceed efficiently.

- Agent Blueprints and Configuration Manifests including YAML or JSON templates, metadata annotations, and references to reusable libraries or container images.

- Capability Modules and Code Artifacts comprising source code repositories, unit test suites, and references to frameworks such as OpenAI APIs and Vertex AI.

- Interface Specifications and API Contracts with OpenAPI definitions, GraphQL or event schemas, and sequence diagrams.

- Test Cases and Validation Scripts covering integration scenarios, automated tests for Jenkins or GitLab CI, and performance benchmarks.

- Operational Runbooks detailing deployment steps, secret management, monitoring configurations, and troubleshooting procedures.

- Security, Compliance, and Governance Documentation specifying RBAC policies, encryption guidelines, and audit trail requirements.

Key Dependencies

- Data Model Availability including canonical schemas, ontologies, and sanitized datasets.

- Infrastructure Prerequisites such as container registry endpoints, Kubernetes namespaces, and service mesh configurations.

- Integration Endpoint Definitions covering API gateways, message broker topics, and authentication mechanisms.

- Security and Compliance Constraints involving key management services, data residency regulations, and certificate authorities.

- Stakeholder Sign-Off by business, legal, and compliance owners on acceptance criteria and audit requirements.

Integration Handoff Workflow

- Packaging and Versioning: Tag manifests, code repositories, and container images; publish artifacts to internal registries.

- Documentation Bundle: Compile blueprints, interface contracts, runbooks, and test suites; include a dependency matrix and issue log.

- Stakeholder Review and Approval: Conduct technical walkthroughs with infrastructure engineers; obtain sign-off via Jira tickets.

- Transition to Integration: Create deployment tickets referencing artifact versions and target environments; hand over credentials via HashiCorp Vault.

- Post-Handoff Validation: Execute smoke tests in staging; document deviations and assign action items to the design team.

By maintaining clear dependencies and following a structured handoff process, organizations minimize misconfiguration risk, align multidisciplinary teams, and accelerate time to market for AI agent solutions.

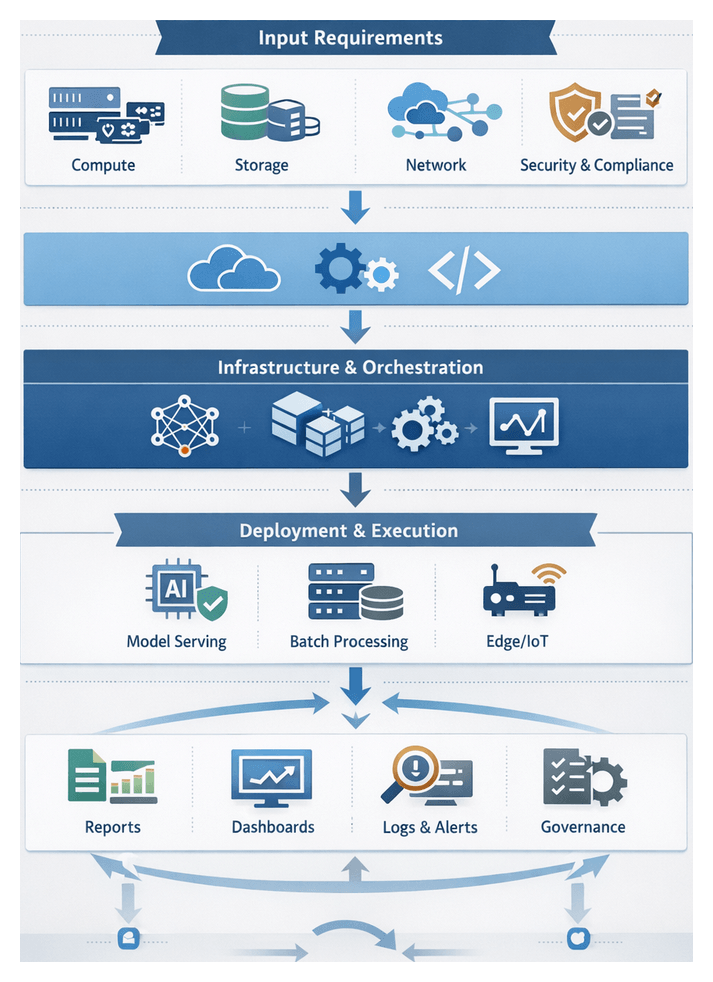

Chapter 4: Infrastructure and Platform Setup

Defining the Purpose of the Infrastructure Requirements Stage

This stage establishes the foundational environment and platform to host, orchestrate, and scale AI agent workflows. It aligns compute, storage, network, security, and compliance specifications with enterprise objectives for performance, availability, and cost efficiency. By defining clear input requirements and preconditions, teams can provision infrastructure that supports rapid deployment, consistent operations, and seamless integration with existing systems.

Enterprises face challenges such as heterogeneous environments, unpredictable workloads, and evolving security mandates. Modern solutions leverage cloud-native architectures, container orchestration platforms, and infrastructure as code to accelerate time to value while maintaining governance. In hybrid or multi-cloud landscapes, a documented set of requirements creates a repeatable, auditable process that supports both agile experimentation and mission-critical production workloads.

Prerequisites and Core Input Specifications

Before provisioning, teams must align stakeholders on objectives, assemble resource inventories, and define organizational policies. The following domains outline the essential inputs for a robust infrastructure environment.

Compute and Scalability Requirements

- Workload profiling: expected CPU, GPU, memory, and I/O characteristics of training or inference tasks

- VM or container sizing guidelines for baseline and peak demands

- Deployment model: cloud instances (Amazon Web Services, Azure, Google Cloud Platform) versus on-premises virtualization

- Container orchestration with Kubernetes or Docker Swarm

- Auto-scaling policies and thresholds to accommodate dynamic workload fluctuations
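Auto-scaling thresholds are easier to set once the scaling rule is explicit. The Kubernetes Horizontal Pod Autoscaler documents its core rule as desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue). It skips scaling when the ratio is within a tolerance of 1.0, which defaults to 0.1. The sketch below reproduces only that rule, clamped to assumed minimum and maximum replica counts. It omits stabilization windows and pod-readiness handling.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 2, max_replicas: int = 20, tolerance: float = 0.1) -> int:
    """Proportional scaling rule: ceil(current * currentMetric / targetMetric), clamped to
    [min_replicas, max_replicas]. Ratios within `tolerance` of 1.0 keep the current count
    to avoid flapping."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 inference pods at 90% average CPU against a 60% target -> scale out to 6.
print(desired_replicas(4, current_metric=90, target_metric=60))
```

Working through peak and baseline workload profiles with this rule helps choose targets and bounds that absorb bursts without overprovisioning GPU capacity.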

Storage and Data Management Inputs

- Data volume estimates and growth projections for training datasets, model artifacts, and logs

- Performance requirements for block, object, and shared file systems

- Integration with managed services such as Amazon S3, Azure Blob Storage, or Google Cloud Storage

- Backup, snapshot, and archival policies aligned with RTO and RPO objectives

- Data locality considerations to minimize latency for geo-distributed agent interactions

Networking and Connectivity Conditions

- Design of virtual networks, subnets, and IP schemes to isolate AI traffic

- Firewall rules, security groups, and load balancer specifications for ingress and egress control

- Network peering, VPN tunnels, or dedicated links (AWS Direct Connect, Azure ExpressRoute) for secure communication

- Service mesh or API gateway patterns to manage microservices-level traffic

- Bandwidth and latency targets for real-time agent coordination across regions

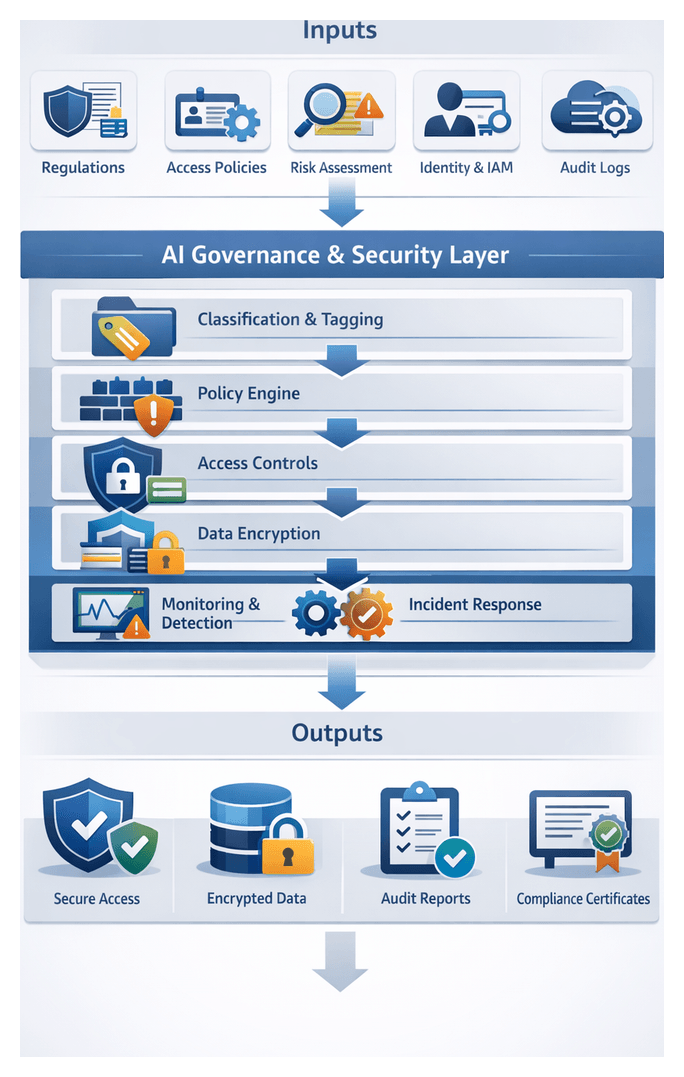

Security, Identity, and Access Control Inputs

- Role-based access control definitions in Kubernetes or cloud IAM services

- Encryption standards for data at rest and in transit using TLS, VPN, or managed keys

- Integration with secrets management solutions such as HashiCorp Vault

- Network security controls, including intrusion detection and vulnerability scanning

- Audit logging and monitoring prerequisites to support compliance frameworks

Platform Provisioning and Orchestration Tools

- Infrastructure as code: Terraform, AWS CloudFormation, Azure Resource Manager

- Configuration management: Ansible, Chef, Puppet

- Container image build and registry tools: Docker and Harbor

- CI/CD pipelines with Jenkins, GitLab CI/CD, or Azure DevOps

- Artifact repositories for model and code versioning aligned with DevOps practices

Monitoring, Logging, and Observability Inputs

- Metrics collection frameworks: Prometheus or cloud monitoring services

- Visualization and dashboards: Grafana or native cloud consoles

- Log aggregation and search: Elastic Stack or Splunk

- Distributed tracing and application performance monitoring

- Alerting thresholds and notification channels for incident response

Compliance, Governance, and Cost Management

- Regulatory standards such as GDPR, HIPAA, PCI DSS, or SOC 2

- Policy definitions for data retention, audit trails, and change management

- Budget allocations, reserved instances, and spot instance strategies

- Tagging schemas to track cost by project, environment, and business unit

- Integration with governance platforms or GRC tools to track controls and exceptions
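A tagging schema only supports cost tracking if it is enforced. The sketch below checks resources against an assumed set of required tags and aggregates monthly cost by any tag, grouping untagged spend explicitly. The resource records and tag names are hypothetical. In practice, this data would come from a cloud billing export or an inventory API.

```python
from collections import defaultdict

REQUIRED_TAGS = ("project", "environment", "business_unit")  # illustrative tagging schema

RESOURCES = [
    {"id": "gpu-train-01", "monthly_cost": 2400.0,
     "tags": {"project": "support-agent", "environment": "prod", "business_unit": "cx"}},
    {"id": "vector-db", "monthly_cost": 650.0,
     "tags": {"project": "support-agent", "environment": "prod", "business_unit": "cx"}},
    {"id": "notebook-17", "monthly_cost": 120.0, "tags": {"project": "hr-agent"}},
]


def untagged(resources: list) -> list:
    """Return ids of resources missing any required cost-allocation tag."""
    return [r["id"] for r in resources
            if any(not r.get("tags", {}).get(t) for t in REQUIRED_TAGS)]


def cost_by(resources: list, tag: str) -> dict:
    """Sum monthly cost per value of `tag`; resources lacking the tag roll up as 'untagged'."""
    totals = defaultdict(float)
    for r in resources:
        totals[r.get("tags", {}).get(tag, "untagged")] += r["monthly_cost"]
    return dict(totals)


print(untagged(RESOURCES))
print(cost_by(RESOURCES, "project"))
```

Reporting the "untagged" bucket as its own line item gives a simple compliance metric: the goal is to drive it toward zero.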

Platform Provisioning and Orchestration Workflow

An effective provisioning workflow translates architecture into a live runtime environment by coordinating cloud APIs, orchestration frameworks, and automation tools. Leveraging IaC, containerization, and CI/CD, organizations deploy consistent, repeatable platforms that support continuous integration and continuous deployment of AI agents.

Provisioning Strategy and Planning

- Capacity planning based on expected AI training, inference, and batch workloads

- Environment taxonomy with naming conventions, resource groupings, and tags

- Cost optimization through appropriate service tiers and scaling policies

- Security baseline defining network segmentation, access controls, and encryption

- Tool selection among options such as Terraform, AWS CloudFormation, Kubernetes, or Docker Swarm
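Capacity planning for inference workloads can start from Little's law, which relates average concurrency L to arrival rate λ and time spent in the system W (L = λW). The sketch below turns an expected peak request rate and per-request latency into a replica count. The per-replica concurrency and the 30% headroom default are assumptions that should come from load testing and the organization's risk tolerance; passing a high-percentile latency instead of the mean makes the estimate more conservative.

```python
import math


def replicas_needed(peak_rps: float, latency_s: float,
                    concurrency_per_replica: int, headroom: float = 0.3) -> int:
    """Estimate inference replicas with Little's law (L = lambda * W).

    peak_rps: expected peak arrival rate in requests per second
    latency_s: time a request spends in the service, in seconds
    concurrency_per_replica: requests one replica serves at once (assumed, from load tests)
    headroom: extra fraction of capacity reserved for bursts (assumed policy)
    """
    if peak_rps < 0 or latency_s <= 0 or concurrency_per_replica <= 0:
        raise ValueError("rates must be non-negative and latency/concurrency positive")
    in_flight = peak_rps * latency_s               # average concurrent requests
    at_full_load = in_flight / concurrency_per_replica
    # Add headroom, round up to whole replicas, and never go below one.
    return max(1, math.ceil(at_full_load * (1 + headroom)))
```

For example, 200 requests per second at 0.5 seconds each means about 100 requests in flight; at 8 concurrent requests per replica that is 12.5 replicas, or 17 after 30% headroom.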

Infrastructure as Code and Environment Definition

- Declarative templates for networks, compute clusters, storage volumes, and load balancers

- Parameterization of variables like region, instance size, and environment type

- Validation through static analysis or dry runs to detect errors and policy violations

- Version control and pull requests for peer review and rollback capabilities
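Dry runs are most useful when their output is checked against policy. Terraform can render a saved plan as JSON (`terraform plan -out=tfplan` followed by `terraform show -json tfplan`), and each entry in the plan's `resource_changes` array lists its planned `actions`. The minimal sketch below flags deletions and replacements so a pipeline can pause for review; treating every delete as blocking is an illustrative policy, not a Terraform default.

```python
from typing import Any, Dict, List


def destructive_changes(plan: Dict[str, Any]) -> List[str]:
    """List resources whose planned actions include 'delete'.

    This covers plain deletes as well as replacements, which Terraform reports
    as ['delete', 'create'] or ['create', 'delete'].
    """
    flagged = []
    for change in plan.get("resource_changes", []):
        actions = change.get("change", {}).get("actions", [])
        if "delete" in actions:
            flagged.append(f"{change.get('address')}: {'/'.join(actions)}")
    return flagged
```

A pipeline step can load the plan file with `json.load` and fail the job when the returned list is non-empty. Policy engines such as Open Policy Agent support richer rules over the same plan JSON.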

Containerization and Image Management

- Base image selection, choosing between minimal general-purpose images and specialized data science images

- Dockerfile authoring with multi-stage builds to minimize image size and attack surface

- Image scanning via Clair or Trivy to detect vulnerabilities

- Registry management in Amazon ECR or Google Artifact Registry with lifecycle policies

- Semantic tagging for precise rollbacks and audits
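Semantic tags enable precise rollbacks only if versions are compared numerically rather than as strings; compared lexically, `1.9.0` sorts after `1.10.0`. This sketch picks the newest release older than the one currently deployed. It assumes plain `MAJOR.MINOR.PATCH` tags with an optional `v` prefix and deliberately ignores pre-release suffixes and non-release tags such as `latest`.

```python
import re
from typing import List, Optional, Tuple

# Plain MAJOR.MINOR.PATCH with an optional "v" prefix; pre-releases do not match.
SEMVER_TAG = re.compile(r"v?(\d+)\.(\d+)\.(\d+)")


def parse_tag(tag: str) -> Optional[Tuple[int, int, int]]:
    """Return (major, minor, patch) for a release tag, or None for any other tag."""
    match = SEMVER_TAG.fullmatch(tag)
    if match is None:
        return None
    major, minor, patch = (int(g) for g in match.groups())
    return (major, minor, patch)


def rollback_target(tags: List[str], current: str) -> Optional[str]:
    """Return the newest release tag strictly older than `current`, or None."""
    current_version = parse_tag(current)
    if current_version is None:
        raise ValueError(f"not a release tag: {current!r}")
    older = []
    for tag in tags:
        version = parse_tag(tag)
        if version is not None and version < current_version:
            older.append((version, tag))
    # Tuples compare element by element, so (1, 10, 0) > (1, 9, 0) as intended.
    return max(older)[1] if older else None
```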

Orchestration and Deployment Pipelines

- Pipeline definitions in Jenkins, GitLab CI/CD, or Azure DevOps sequencing IaC and container steps

- Environment promotion with automated tests, linting, and security checks

- Helm chart management for Kubernetes releases, ingress, and secrets

- Blue/green and canary deployments to mitigate risk and enable rapid rollback

- Dependency orchestration ensuring services like databases, queues, and agents deploy in order
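Deploying services in dependency order is a topological sort. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) to group services into waves, where each wave depends only on earlier ones and the services within a wave can roll out in parallel. The service names and edges are hypothetical.

```python
from typing import Dict, List, Set
from graphlib import CycleError, TopologicalSorter

# Hypothetical service graph: each key maps to the services it depends on.
DEPENDENCIES: Dict[str, Set[str]] = {
    "postgres": set(),
    "kafka": set(),
    "vector-store": set(),
    "feature-api": {"postgres"},
    "triage-agent": {"kafka", "feature-api", "vector-store"},
    "notification-agent": {"kafka"},
}


def deployment_waves(deps: Dict[str, Set[str]]) -> List[List[str]]:
    """Group services into waves so each deploys after all of its dependencies."""
    sorter = TopologicalSorter(deps)
    try:
        sorter.prepare()
    except CycleError as exc:
        # The second element of exc.args holds the nodes that form the cycle.
        raise ValueError(f"dependency cycle: {exc.args[1]}") from exc
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # all services whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves
```

For the sample graph this yields three waves: the data stores and queue first, then `feature-api` and `notification-agent`, and finally `triage-agent`.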

Coordination, Error Handling, and Resilience

- Human approvals via ticketing or chat integrations before critical changes

- Event-driven triggers using webhooks to initiate downstream workflows

- Service mesh integration with Istio or Linkerd for secure mTLS and traffic policies

- Automated rollback and retry logic for idempotent operations

- Health checks and readiness probes to validate service functionality

- Alerting to incident management tools for on-call notifications
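Retries are safe only for idempotent operations, where repeating a call cannot duplicate its effect. A common pattern is exponential backoff with full jitter, sketched below. The `TransientError` class is a hypothetical stand-in for whatever retryable failures (timeouts, HTTP 503 responses) a real client raises, and the attempt and delay defaults are illustrative.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures that are safe to retry."""


def retry_idempotent(op: Callable[[], T], attempts: int = 5, base_delay: float = 0.5,
                     max_delay: float = 30.0,
                     sleep: Callable[[float], None] = time.sleep) -> T:
    """Run an idempotent operation, retrying transient failures with full-jitter backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return op()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # Full jitter: wait a random time in [0, min(max_delay, base * 2^attempt)].
            sleep(random.uniform(0, min(max_delay, base_delay * 2 ** attempt)))
    raise RuntimeError("unreachable")
```

Jitter spreads retries from many agents over time so that a recovering service is not hit by synchronized bursts; the injectable `sleep` keeps the function testable.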

AI Service Deployment and System Roles

Deployment bridges model development and operational value delivery by orchestrating trained models and agents into production environments. A robust strategy ensures reliability, security, and maintainability while integrating with broader IT systems.

Deployment Models

- Real-Time Inference for chatbots and recommendation engines using containerized endpoints on Kubernetes

- Batch Processing for bulk predictions with solutions such as Amazon SageMaker Batch Transform or MLflow pipelines

- Edge and IoT Deployment via containerized models on local hardware using Azure Machine Learning

Core System Roles

- Control Plane: Manages scheduling, configuration updates, and policy enforcement in Kubernetes

- Data Plane: Runs inference workloads on CPU or GPU worker nodes, with accelerators exposed to the scheduler through device plugins

- Service Mesh: Uses Istio or Linkerd sidecars for load balancing, traffic routing, and observability

- Monitoring and Logging: Collects metrics with Prometheus and logs with Fluentd or Elastic Stack

- Security Enforcement: Applies RBAC, vulnerability scanning, and audit logging integrated with identity providers

CI/CD for AI Services

- Automated testing: unit, integration, and performance tests to validate service logic and SLOs

- Artifact management: model registries such as MLflow Model Registry, or the ML Metadata store used by TensorFlow Extended (TFX) pipelines, for versioned model artifacts

- Deployment strategies: blue/green and canary rollouts via sidecar proxies
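Canary rollouts need an explicit promotion rule. The sketch below compares error rate and p95 latency between the baseline and the canary over one analysis window. The thresholds (at most a 0.5 percentage-point error-rate increase, at most a 20% latency regression, at least 500 canary requests) are assumptions to tune against the service's SLOs. Tools such as Argo Rollouts or Flagger automate this kind of analysis on Kubernetes.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Aggregated metrics for one deployment over one analysis window."""
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(baseline: WindowStats, canary: WindowStats,
                    min_requests: int = 500, max_error_delta: float = 0.005,
                    max_latency_ratio: float = 1.2) -> str:
    """Return 'promote', 'rollback', or 'wait' (all thresholds are assumed defaults)."""
    if canary.requests < min_requests:
        return "wait"  # too little traffic for a meaningful comparison
    if canary.error_rate - baseline.error_rate > max_error_delta:
        return "rollback"
    if canary.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio:
        return "rollback"
    return "promote"
```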

Scaling, Resiliency, and Observability

- Horizontal Pod Autoscaling based on CPU or custom metrics

- Cluster autoscaling with cloud provider APIs to add nodes when needed

- Scheduling GPUs, TPUs, or FPGAs via device plugins for specialized workloads

- Liveness probes to restart unhealthy containers and readiness probes to withhold traffic from containers that are not yet ready

- Replication and failover for stateless and stateful components

- Persistent volumes and object storage for data consistency

- Metrics: inference latency, throughput, error rates

- Logs: request traces and exception records

- Distributed tracing: end-to-end request tracking across services
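The Horizontal Pod Autoscaler's core calculation is documented by Kubernetes as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with no change when that ratio is within a tolerance of 1.0 (0.1 by default). The sketch reproduces only this formula plus min/max clamping; the real controller also applies stabilization windows and special handling for missing metrics and pods that are not ready.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                         min_replicas: int = 1, max_replicas: int = 10,
                         tolerance: float = 0.1) -> int:
    """Approximate the HPA core formula, then clamp to [min_replicas, max_replicas]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: skip scaling
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))
```

For example, 4 replicas averaging 90% CPU against a 60% target scale to `ceil(4 * 1.5) = 6`, while 63% against 60% falls inside the tolerance and leaves the deployment at 4.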

Governance and Collaboration

- Role-based access controls integrated with identity providers

- TLS encryption in transit, encryption at rest for stored data, and secrets kept in vault services

- Immutable audit trails recording deployment events and access requests

- Cross-functional interfaces between DevOps, data science, and IT operations

- ChatOps notifications and shared dashboards for real-time collaboration

Deployed Platform Outputs, Dependencies, and Handoff

The final stage produces operational artifacts, configuration outputs, and a dependency matrix that downstream teams use for integration. A formal handoff process confirms that every requirement is met before applications begin consuming the platform.

Key Operational Artifacts

- Service endpoint definitions: FQDNs, load balancer addresses, and port mappings

- Configuration manifests: YAML or JSON descriptors with environment variables

- Infrastructure as code artifacts: Terraform state files and CloudFormation stacks

- Container images: tagged Docker images and vulnerability scan reports

- Monitoring dashboards: preconfigured Grafana or Datadog views and alert definitions

- Logging configurations: centralized forwarding to Elastic Stack or OpenSearch

- Security credentials: TLS certificates and OAuth2 client registrations

- Model registry entries: artifacts in MLflow with version metadata and lineage

Dependency Matrix

- Network: VPC peering, firewall rules, DNS zones, and service discovery

- IAM: RBAC entries for Kubernetes or cloud IAM policies

- Storage: object buckets and database connections for model binaries and logs

- Orchestration: compatibility with Kubeflow or Argo Workflows and the custom resource definitions (CRDs) they install

- Monitoring: webhook endpoints and API tokens for incident tools such as PagerDuty or ServiceNow

- Security: vault configurations and audit log destinations

- Model lifecycle: CI pipelines triggering retraining and redeployment workflows

Handoff Process

- Artifact Packaging: publish manifests, scripts, and templates to version control with semantic tags and generate a release bundle

- Dependency Verification: provide a matrix showing each dependency’s status and remediation steps

- Integration Playbooks: deliver runbooks with API examples, authentication flows, and error-handling scenarios

- Stakeholder Sign-Off: host review sessions with infrastructure, security, and integration leads and record approvals

- Automated Gates: promote artifacts into integration environments via CD pipelines and execute smoke tests
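Dependency verification and smoke tests can feed the handoff matrix directly. In this sketch each dependency carries a check function and a remediation note, and `verify` produces one status row per dependency. The `Dependency` type and the example checks are hypothetical, and `tcp_reachable` only confirms that a port accepts connections, not that the service behind it is healthy.

```python
import socket
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Dependency:
    """One row of the dependency matrix (hypothetical structure)."""
    name: str
    check: Callable[[], bool]
    remediation: str


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> Callable[[], bool]:
    """Build a check that passes if a TCP connection to host:port succeeds."""
    def _check() -> bool:
        try:
            with socket.create_connection((host, port), timeout=timeout):
                return True
        except OSError:
            return False
    return _check


def verify(deps: List[Dependency]) -> List[Tuple[str, str, str]]:
    """Run every check and return (name, status, remediation) rows."""
    rows = []
    for dep in deps:
        try:
            ok = bool(dep.check())
        except Exception:  # a crashing check counts as a failure, not a pipeline error
            ok = False
        rows.append((dep.name, "OK" if ok else "FAIL", "" if ok else dep.remediation))
    return rows
```

For example, `Dependency("postgres", tcp_reachable("db.internal.example", 5432), "Confirm firewall rule and security group")` adds a connectivity row; the hostname is a placeholder.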

Best Practices for Smooth Transition

- Version Control Discipline: maintain strict branching and tagging for code and configuration artifacts

- Automated Validation: run connectivity, authentication, and schema compliance tests before integration

- Cross-Functional Communication: schedule regular syncs between platform engineers, security officers, and developers

- Dynamic Documentation: host runbooks and playbooks in collaborative platforms that update automatically

- Monitoring Readiness Checks: define SLIs and health checks to guard against upstream failures

By capturing deployed outputs in consistent formats, proactively managing dependencies, and formalizing handoff procedures, organizations transform complex provisioning into a transparent, repeatable process. This rigor accelerates integration and establishes the trust required for scaling AI agent workflows across the enterprise.

Chapter 5: Integration with Core Enterprise Systems

Integration Requirements and System Inputs

Successful AI-driven workflows begin with a detailed definition of integration requirements and system inputs. Technical teams, business stakeholders, and security architects must collaborate to inventory enterprise systems, define interface specifications, and establish data contracts. This upfront effort ensures AI agents interact reliably with mission-critical platforms such as Salesforce, SAP, Microsoft Dynamics 365, Workday, and ServiceNow without causing data inconsistencies or process bottlenecks.

- System Landscape Inventory: A centralized register of application names, versions, endpoints, hosting environments, and support SLAs. This serves as the single source of truth for API access coordination and change management.

- Interface and API Specifications: Machine-readable definitions of REST, SOAP, gRPC, and event-stream endpoints, including payload schemas, authentication schemes, rate limits, and error codes. Tools such as MuleSoft and Boomi (formerly Dell Boomi) can publish OpenAPI or WSDL contracts to accelerate connector development.

- Data Model Alignments: Canonical schemas, entity mappings, data dictionaries, and master data attributes. Early collaboration with data architects and use of metadata repositories or MDM platforms ensures consistent transformations.

- Authentication and Authorization: Definitions of OAuth 2.0 flows, SAML assertions, API key policies, and certificate-based schemes, along with token lifecycles and role-based access controls. Security teams enforce least-privilege principles.

- Network and Infrastructure Prerequisites: VPN configurations, VPC peering, firewall rules, proxy settings, and bandwidth capacity. Secure connectors or on-premises agents bridge ground-to-cloud (on-premises to cloud) scenarios without exposing internal networks.

- Integration Patterns: Preferred approaches such as synchronous REST calls, event-driven messaging via Apache Kafka or RabbitMQ, batch file transfers, or webhooks. Pattern selection impacts latency, throughput, and resilience.

- Data Exchange Formats: Standardized formats like JSON, XML, Avro, or CSV, with agreed serialization settings, compression, and encryption. Versioning policies prevent schema drift.

- Performance and SLA Metrics: Throughput targets, latency thresholds, concurrency limits, and retry policies. These metrics drive capacity planning and connector tuning.

- Compliance and Audit Inputs: Requirements from GDPR, HIPAA, SOX, or industry mandates, including data residency, consent management, audit logging, and encryption standards.

- Stakeholder Alignment: Business objectives, process owners, escalation paths, and support models. Early agreement on use case scope and acceptance criteria ensures integration efforts deliver intended value.

By rigorously gathering these prerequisites, organizations establish a structured framework that enables AI agents to operate securely and efficiently across diverse enterprise systems. The next stage implements these inputs through API-driven connections and data flows.

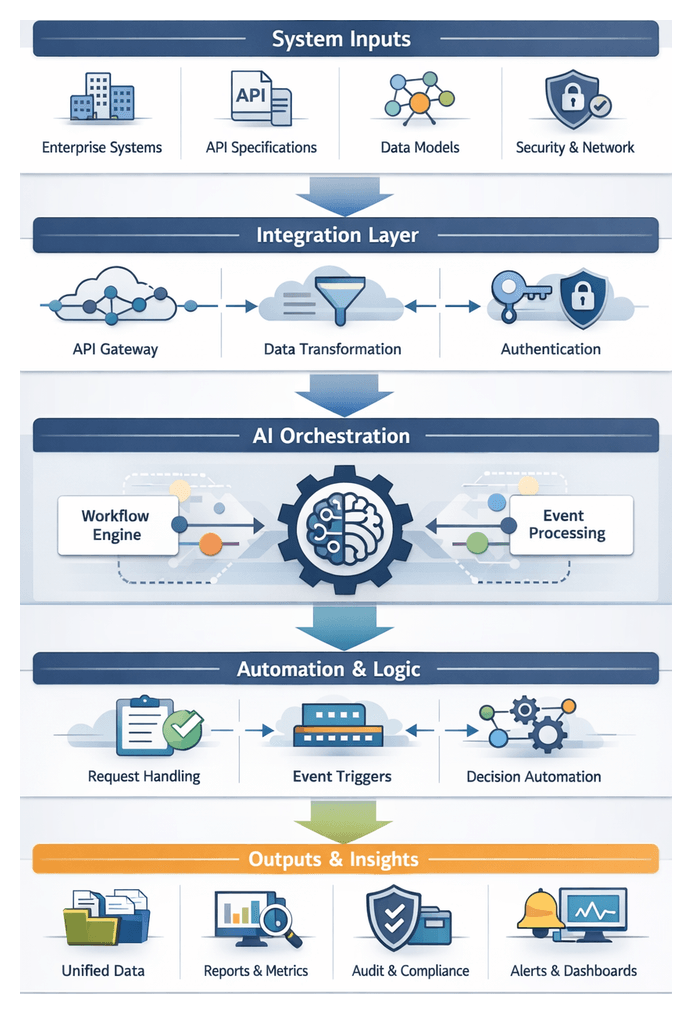

API-Driven Connection and Data Flows

The API-driven stage orchestrates real-time or near-real-time exchanges between AI agents and enterprise applications. An architectural stack typically comprises an AI orchestration layer, an API gateway or management platform, enterprise system endpoints, and message buses or transformation services. Together, these components ensure secure, resilient, and observable data flows.

Gateway Configuration and Routing

Platforms such as Amazon API Gateway, Azure API Management, MuleSoft, and Oracle Integration Cloud centralize:

- Route mappings from logical API paths to backend endpoints.

- Rate limiting, throttling, and caching policies.

- Payload transformations to align request and response schemas.

- Authentication enforcement via OAuth 2.0, JWT, or mutual TLS.

- Versioning strategies to support backward compatibility.
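Several gateways, Amazon API Gateway among them, describe their throttling in terms of a token-bucket algorithm: tokens refill at a steady rate up to a burst capacity, and each request spends one. Managed gateways configure this declaratively, but the sketch below shows the mechanics. The `rate` and `capacity` values would come from the backend's published limits, and the injectable clock exists only to make the logic testable.

```python
import time
from typing import Callable


class TokenBucket:
    """Token-bucket limiter: refills at `rate` tokens per second up to `capacity` (burst size)."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; return False when the caller should throttle."""
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # e.g. respond with HTTP 429 Too Many Requests
```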

Secure Authentication Handshakes

AI agents acquire tokens through OAuth 2.0 client credentials grants or OpenID Connect flows. Processes include:

- Service registration with identity providers to obtain client IDs and secrets.

- Token acquisition, introspection, and automated refresh logic.

- Secret management via vaults such as HashiCorp Vault or AWS Secrets Manager.
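The client credentials grant (RFC 6749, section 4.4) is a form-encoded POST of `grant_type=client_credentials` to the identity provider's token endpoint, which returns an `access_token` and usually an `expires_in` lifetime in seconds. Because this grant normally issues no refresh token, "refresh" in practice means requesting a new token shortly before the old one expires. The sketch below caches the token on that basis. The token URL, scope, 60-second skew, and 300-second fallback lifetime are assumptions; some providers require the client credentials in an HTTP Basic `Authorization` header instead of the form body, and in production the secret would be read from a vault rather than passed as a literal.

```python
import json
import time
import urllib.parse
import urllib.request
from typing import Any, Callable, Dict, Optional

PostForm = Callable[[str, Dict[str, str]], Dict[str, Any]]


def urllib_post_form(url: str, form: Dict[str, str]) -> Dict[str, Any]:
    """POST a form-encoded body and decode the JSON response (standard library only)."""
    request = urllib.request.Request(
        url,
        data=urllib.parse.urlencode(form).encode("ascii"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


class ClientCredentialsToken:
    """Caches an OAuth 2.0 client-credentials token and re-acquires it before expiry."""

    def __init__(self, token_url: str, client_id: str, client_secret: str,
                 scope: Optional[str] = None, skew_s: float = 60.0,
                 post: PostForm = urllib_post_form,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.token_url = token_url
        self.client_id = client_id
        self.client_secret = client_secret
        self.scope = scope
        self.skew_s = skew_s
        self.post = post
        self.clock = clock
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        """Return the cached token, fetching a new one if none exists or expiry is near."""
        if self._token is None or self.clock() >= self._expires_at - self.skew_s:
            form = {
                "grant_type": "client_credentials",
                "client_id": self.client_id,
                "client_secret": self.client_secret,
            }
            if self.scope:
                form["scope"] = self.scope
            body = self.post(self.token_url, form)
            self._token = body["access_token"]
            # expires_in is recommended, not required; fall back to an assumed 300 s.
            self._expires_at = self.clock() + float(body.get("expires_in", 300))
        return self._token
```

An agent would then send `Authorization: Bearer <token>` on each API call, calling `get()` every time and letting the cache decide when a new token is needed.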

Request-Driven and Event-Driven Interactions

Workflows leverage both paradigms. Request-driven interactions, in which an agent calls an API and waits for the response, include:

- Retrieving customer data before generating personalized correspondence.

- Querying inventory levels for order fulfillment decisions.

- Submitting transactions to ERP systems and confirming responses.

Event-driven interactions, in which an agent reacts to events published by source systems, include:

- A CRM emits a lead-scored event that a lead-routing agent consumes.

- An ERP publishes an order-shipped event that triggers stakeholder notifications.

- An HRIS signals an onboarding event that starts document generation and training tasks.
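On the event-driven side, the platform needs a way to map event types to the agents that handle them. The in-process router below illustrates the idea. In a real deployment the `publish` call would sit inside a Kafka or RabbitMQ consumer loop, and event type names such as `lead.scored` or `order.shipped` are hypothetical.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Event = Dict[str, Any]
Handler = Callable[[Event], None]


class EventRouter:
    """Routes events to subscribed agent handlers by their 'type' field."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str) -> Callable[[Handler], Handler]:
        """Decorator that registers a handler for one event type."""
        def register(fn: Handler) -> Handler:
            self._handlers[event_type].append(fn)
            return fn
        return register

    def publish(self, event: Event) -> int:
        """Deliver an event to every handler for its type; return how many ran."""
        handlers = self._handlers.get(event["type"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)
```

A lead-routing agent would register its entry point with `@router.subscribe("lead.scored")`; unrouted event types return 0, which is a useful signal to log or dead-letter.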

Serialization, Validation, and Transformation

Payloads undergo:

- Format conversion between JSON, XML, Avro, or Protobuf.

- Schema validation against JSON Schema or XSD definitions.

- Field mappings, default values, and lookup enrichments via transformation templates.

- Addition of metadata such as timestamps, correlation IDs, or agent identifiers.
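These steps can be combined in a single transformation function. This sketch maps fields from a hypothetical CRM payload to a canonical contact record, fills defaults, performs a minimal type check, and stamps a correlation ID, agent identifier, and UTC timestamp. The field names are assumptions, and production systems would usually validate against a full JSON Schema or XSD definition rather than the hand-written check used here to keep the example dependency-free.

```python
import uuid
from datetime import datetime, timezone
from typing import Any, Dict, Optional

# Hypothetical mapping from CRM field names to a canonical contact schema.
FIELD_MAP = {"FirstName": "first_name", "LastName": "last_name", "Email": "email"}
REQUIRED = {"first_name": str, "last_name": str, "email": str}
DEFAULTS = {"preferred_language": "en"}


def transform(payload: Dict[str, Any], agent_id: str,
              correlation_id: Optional[str] = None) -> Dict[str, Any]:
    """Map source fields, apply defaults, validate types, and stamp tracing metadata."""
    # Keep only mapped fields, renamed to their canonical names.
    record = {target: payload[src] for src, target in FIELD_MAP.items() if src in payload}
    for key, value in DEFAULTS.items():
        record.setdefault(key, value)
    # Missing fields read as None and therefore fail the type check too.
    errors = [f"{key}: expected {kind.__name__}" for key, kind in REQUIRED.items()
              if not isinstance(record.get(key), kind)]
    if errors:
        raise ValueError("; ".join(errors))
    record["_meta"] = {
        # Reuse an upstream correlation ID when present so traces stay connected.
        "correlation_id": correlation_id or str(uuid.uuid4()),
        "agent_id": agent_id,
        "transformed_at": datetime.now(timezone.utc).isoformat(),
    }
    return record
```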

Coordination and State Management

Maintaining workflow context and preventing race conditions relies on:

- Correlation IDs passed in headers for end-to-end tracing.

- Distributed caches or stateful services for short-lived state.