Maximizing Content Impact: A Practical Guide to AI-Driven Repurposing Workflow in Social Media

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

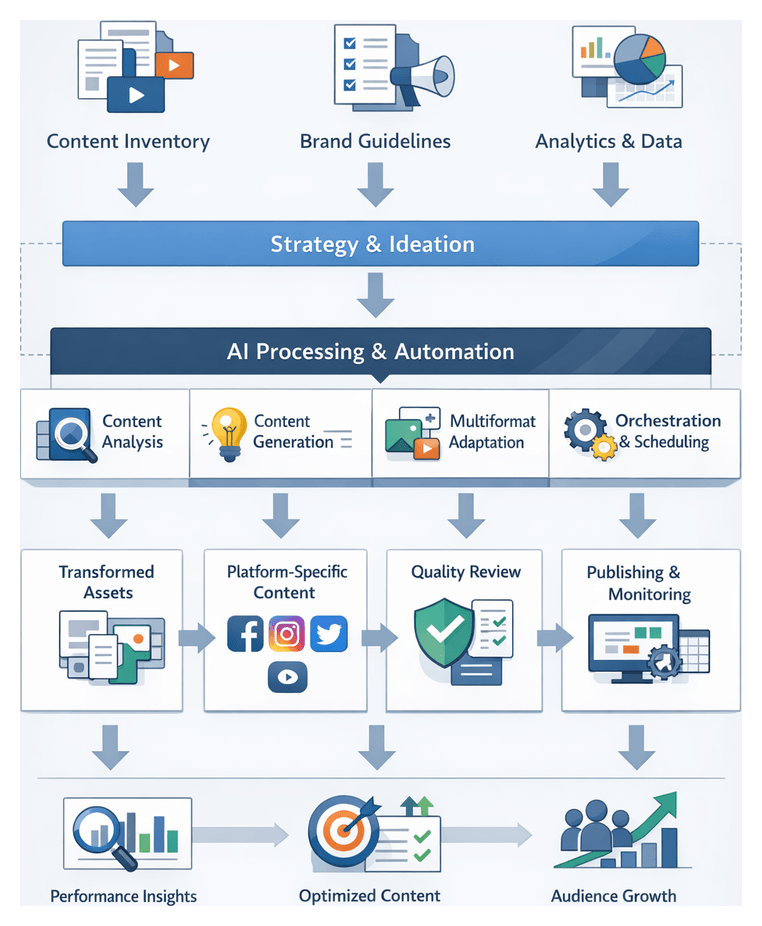

Purpose and Context of Social Media Content Operations

Organizations today face an ever-expanding landscape of social media platforms, each with unique content formats, audience behaviors, and engagement mechanics. From short-form video on TikTok to in-depth articles on LinkedIn, marketing and content teams must manage an unprecedented volume of assets. This proliferation creates strategic and operational challenges: maintaining a consistent brand voice, adhering to platform specifications, and responding quickly to emerging trends. By clearly defining these challenges and documenting existing pain points, organizations lay the groundwork for a structured workflow that can scale without sacrificing quality.

The strategic importance of this exercise extends beyond operational efficiency. When teams align on documented workflows, they can prioritize investments in people, processes, and technology. A clear understanding of channel requirements, content performance baselines, and stakeholder roles informs decisions about whether to build custom tools, adopt third-party platforms, or engage AI services. Without this foundation, attempts to automate or optimize are likely to introduce new bottlenecks or inconsistencies, undermining both agility and brand integrity.

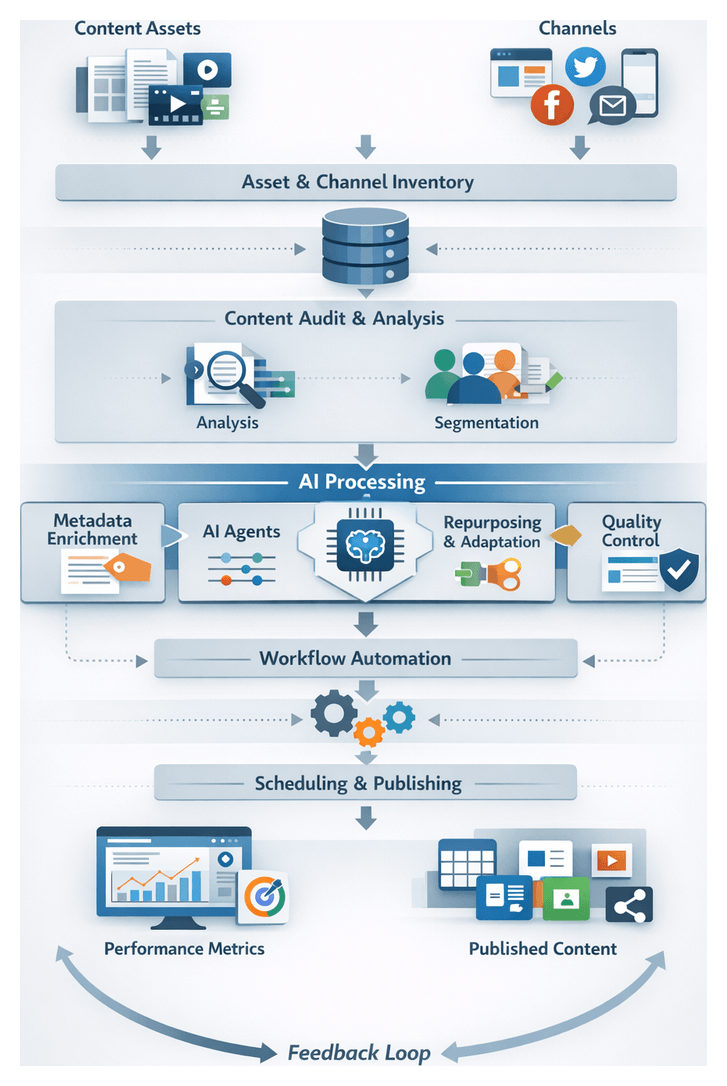

Key prerequisites for diagnosing content operations include a comprehensive content inventory, defined brand guidelines, performance metrics, cross-functional alignment, and a connected technology stack. A centralized repository of existing assets—annotated with metadata on creation date, format, audience segment, and historical engagement—serves as the single source of truth. Living brand guidelines provide rules for voice, tone, visual identity, and compliance. Performance metrics establish a baseline against which improvements are measured. With these inputs in place, teams are positioned to integrate AI-driven tools and orchestrate a repeatable workflow that accelerates time to publish while preserving brand consistency.

Operational and Scaling Challenges

- Channel Fragmentation and Format Diversity: Each social media outlet imposes its own dimensions, caption lengths, and interactive features. Manually converting long-form content into image carousels, short videos, stories, and tweets is labor intensive and prone to errors.

- Inconsistent Brand Voice and Style: As content passes through creative, editorial, design, and compliance teams, messaging drift can occur without standardized guidelines and automated checks, eroding audience trust.

- Ad-Hoc Repurposing and Fragmented Accountability: Reactive workflows coordinated via email, spreadsheets, and chat lead to unclear ownership, missed deadlines, and duplicated effort.

- Siloed Collaboration and Handoffs: Disconnected systems inhibit version control, introduce feedback loops that span multiple tools, and slow down the production cycle.

- Lack of Data-Driven Decision Making: In the absence of integrated analytics, teams rely on intuition rather than real-time insights into engagement, reach, and conversion, resulting in suboptimal content allocation.

- Scaling Constraints and Resource Limitations: Rising demand for new content strains budgets and headcount, leading to burnout, missed opportunities, and diminishing returns.

- Regulatory Compliance and Governance Overhead: Industries such as finance and healthcare require multiple layers of legal and policy reviews, adding delays and administrative complexity to every asset.

When repurposing remains an ad-hoc activity, organizations sacrifice both agility and consistency. Without a repeatable framework, content operations become unpredictable, slowing response to market opportunities and diluting the strategic impact of social media investments.

Foundations for an AI-Driven Repurposing Workflow

Before deploying AI agents or orchestration platforms, teams must establish the foundational inputs and conditions that enable process clarity and automation readiness:

- Comprehensive Content Inventory: A central repository of all source assets—including articles, white papers, videos, images, and past campaigns—enriched with metadata on performance, audience segment, format, and compliance status.

- Defined Brand Guidelines and Style Rules: A living document capturing voice, tone, visual identity, terminology, and legal requirements. This guide serves as the benchmark for both AI validations and human reviews.

- Analytics and Performance Baseline: Historical data on engagement metrics, click-through rates, conversion statistics, and audience demographics. These inputs inform AI-driven prioritization and provide benchmarks for continuous improvement.

- Cross-Functional Alignment: Clearly defined roles, responsibilities, and communication protocols among marketing, creative, legal, compliance, and IT teams. Formal handoff criteria and review cycles prevent delays and ensure accountability.

- Integrated Technology Stack: Identification and integration of core systems such as CMS, DAM, project management tools, and analytics platforms. API accessibility, secure data governance, and consistent metadata schemas enable seamless data flow.

- AI Readiness and Training Data: Curated, high-quality training datasets—including tagged samples and performance annotations—are vital for tuning AI models to the brand’s voice and thematic structures. Establishing annotation guidelines and data hygiene practices accelerates model accuracy.

With these prerequisites satisfied, organizations can proceed to design a structured workflow that leverages AI for classification, transformation, orchestration, and optimization—eliminating manual bottlenecks and enhancing strategic focus.

Structured Repurposing Workflow Framework

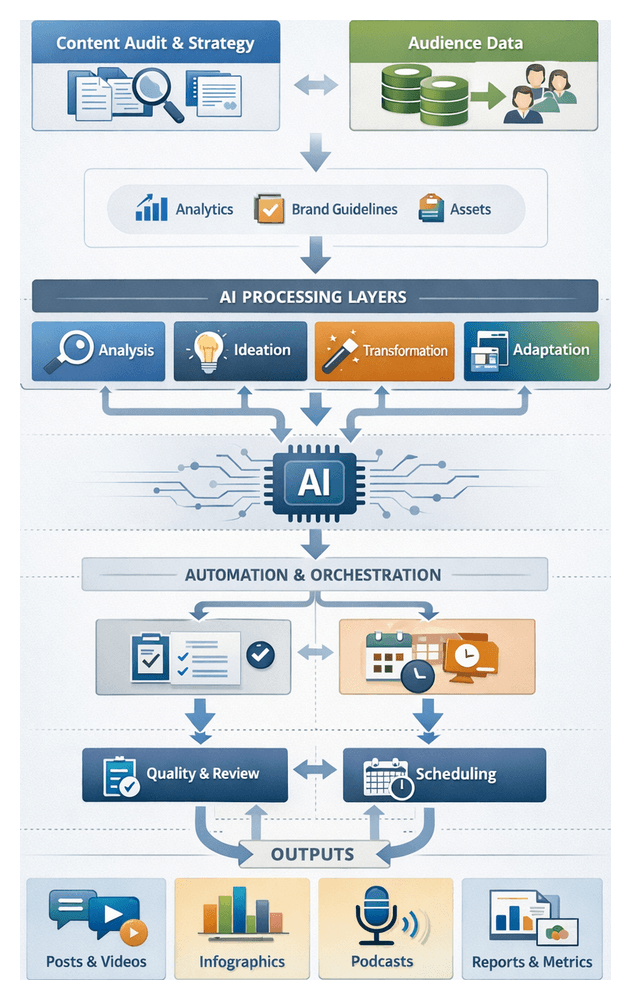

A well-defined repurposing framework codifies process stages, metadata standards, roles, governance, and performance metrics. By formalizing each element, teams achieve consistent outputs, transparency, and scalable throughput.

- Stage Taxonomy: Clearly documented phases—Audit & Inventory, Strategy Definition, Automated Ideation, Content Transformation, Platform Adaptation, Orchestration & Scheduling, Quality Control, Publishing & Distribution, and Optimization—each with entry and exit criteria that enforce handoff protocols.

- Metadata Schema: A unified tagging model capturing asset attributes such as format, theme, sentiment, risk level, target persona, and priority. Standardized metadata enables AI agents to route tasks, enforce business rules, and prioritize high-impact content.

- Role Matrix: Precise mapping of AI modules, content strategists, creative teams, compliance officers, and publishing coordinators to decision rights and review responsibilities, minimizing contention and ensuring accountability.

- Handoff Protocols: API events, task assignments, and approval flags serve as standardized triggers that move assets through stages automatically, reducing manual coordination and handoff delays.

- Governance Rules & SLAs: Embedded style guides, legal checklists, and brand policies are enforced through AI validations and human review checkpoints. Service-level agreements track metrics such as turnaround time, review accuracy, and error incidence to maintain process health.
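To make the stage taxonomy, metadata schema, and handoff protocols concrete, the following minimal Python sketch models an asset record and a gate that advances it only when the current stage's exit criteria (approval flags) are satisfied. The field names, the `EXIT_CRITERIA` table, and the rule that high-risk assets also need a `legal_review` flag are hypothetical illustrations, not a prescribed schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    """Stage taxonomy in pipeline order (names taken from the framework above)."""
    AUDIT_INVENTORY = 1
    STRATEGY_DEFINITION = 2
    AUTOMATED_IDEATION = 3
    CONTENT_TRANSFORMATION = 4
    PLATFORM_ADAPTATION = 5
    ORCHESTRATION_SCHEDULING = 6
    QUALITY_CONTROL = 7
    PUBLISHING_DISTRIBUTION = 8
    OPTIMIZATION = 9


@dataclass
class AssetMetadata:
    """Unified tagging model; field names are illustrative assumptions."""
    asset_id: str
    content_format: str
    theme: str
    sentiment: float          # e.g. -1.0 (negative) to 1.0 (positive)
    risk_level: str           # "low" | "medium" | "high"
    target_persona: str
    priority: int             # 1 = highest
    stage: Stage = Stage.AUDIT_INVENTORY
    approvals: set[str] = field(default_factory=set)


# Hypothetical exit criteria: approval flags required before an asset may leave a stage.
EXIT_CRITERIA: dict[Stage, set[str]] = {
    Stage.QUALITY_CONTROL: {"brand_review", "compliance_review"},
}


def required_approvals(asset: AssetMetadata) -> set[str]:
    """Business rule: high-risk assets additionally need legal sign-off at quality control."""
    required = set(EXIT_CRITERIA.get(asset.stage, set()))
    if asset.stage is Stage.QUALITY_CONTROL and asset.risk_level == "high":
        required.add("legal_review")
    return required


def advance(asset: AssetMetadata) -> bool:
    """Move the asset to the next stage if its exit criteria are met; return True on success."""
    if not required_approvals(asset) <= asset.approvals:
        return False  # handoff blocked: missing approval flags
    if asset.stage is Stage.OPTIMIZATION:
        return False  # final stage; looping back to audit is a separate decision
    asset.stage = Stage(asset.stage.value + 1)
    return True
```

Keeping the gate in one function means every handoff, whether triggered by an API event or a reviewer's approval, passes through the same rule check.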

Core Workflow Stages and System Interactions

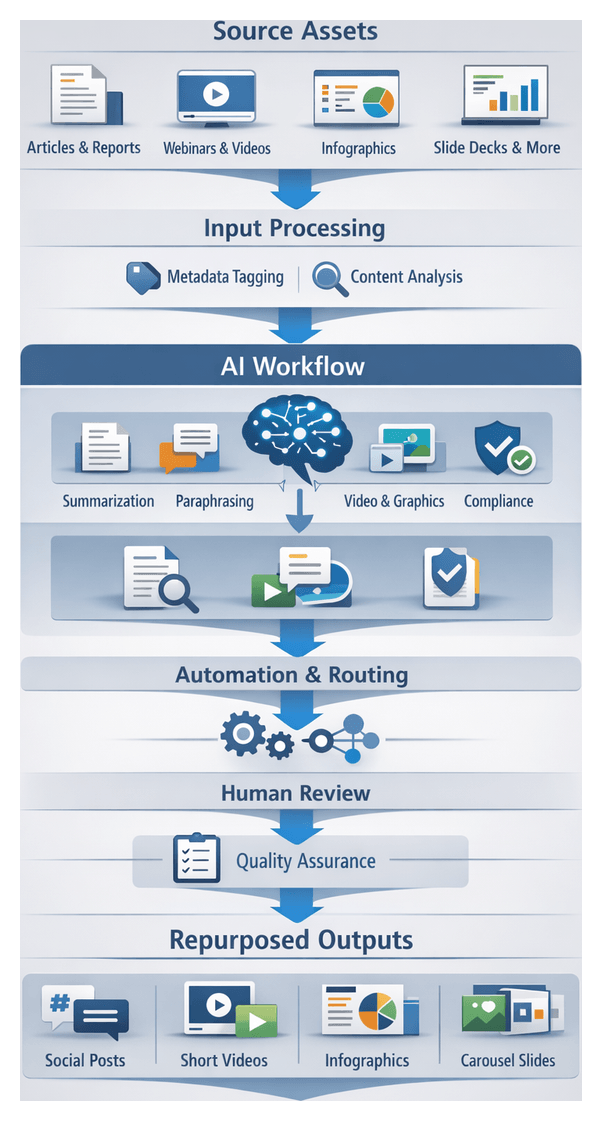

In a cohesive pipeline, assets progress through a sequence of stages powered by AI services, orchestration engines, and collaborative tools:

- Audit & Inventory: A CMS ingests existing assets. AI classification services—using OpenAI and Azure Cognitive Services—analyze performance data, detect themes and sentiment, and populate a DAM with enriched metadata for a complete content inventory.

- Strategy Definition: A collaborative strategy portal aggregates audit outputs, brand guidelines, and campaign objectives. AI analytics modules surface high-value audience segments and recommend platform priorities based on historical engagement patterns.

- Automated Ideation: Natural language generation engines produce thematic outlines, headline variations, and content angles. Generated ideas are stored in a central repository, annotated with metadata, and presented for human refinement.

- Content Transformation: AI models perform summarization, paraphrasing, translation, and multimedia script generation within an authoring environment. Draft assets—text drafts, video scripts, and graphic mockups—are generated in parallel to maximize throughput.

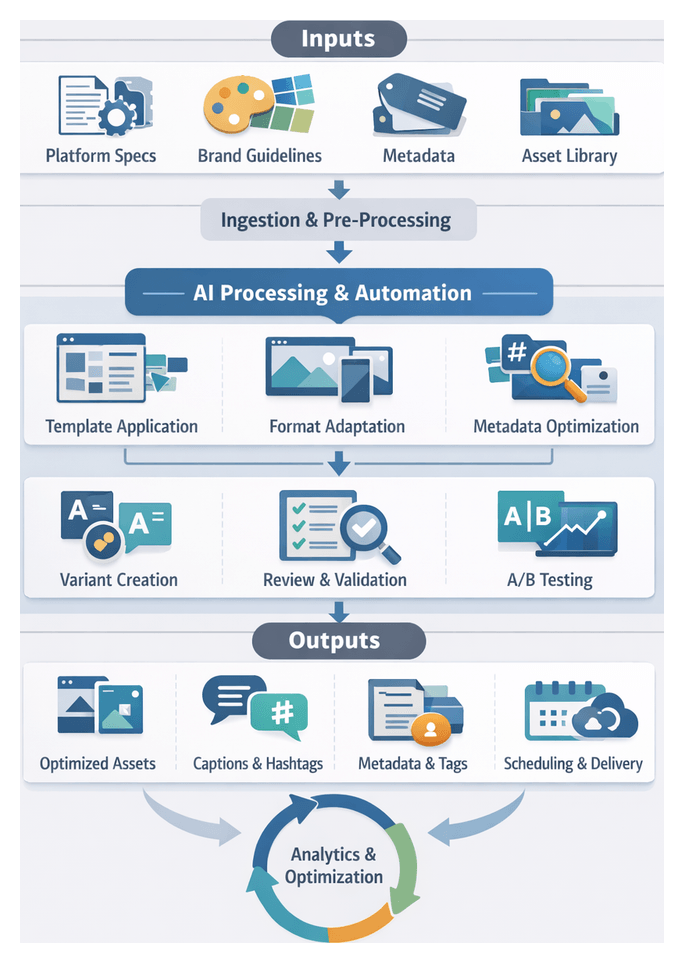

- Platform Adaptation: A styling service such as Adobe Sensei applies design templates and resizes media to meet social media specifications. AI generates captions, hashtags, and accessibility labels, validated against platform rules to ensure compliance.

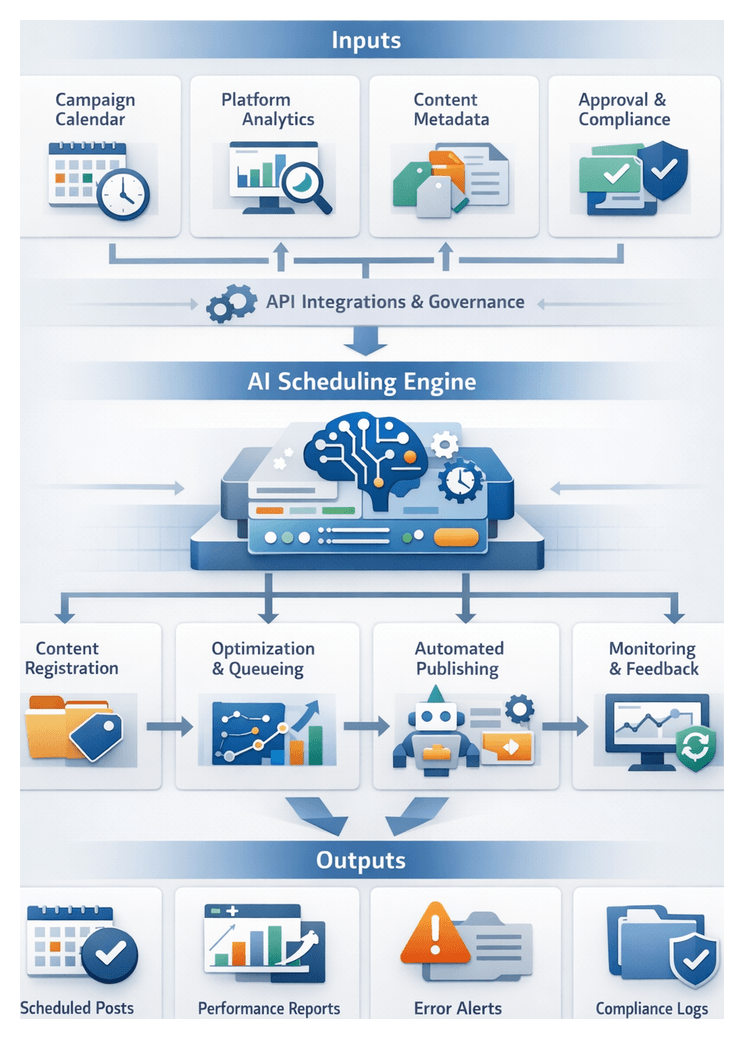

- Orchestration & Scheduling: An orchestration platform coordinates task queues, prioritizes time-sensitive campaigns, and routes assets to appropriate systems or human reviewers, while optimizing posting windows via social media APIs.

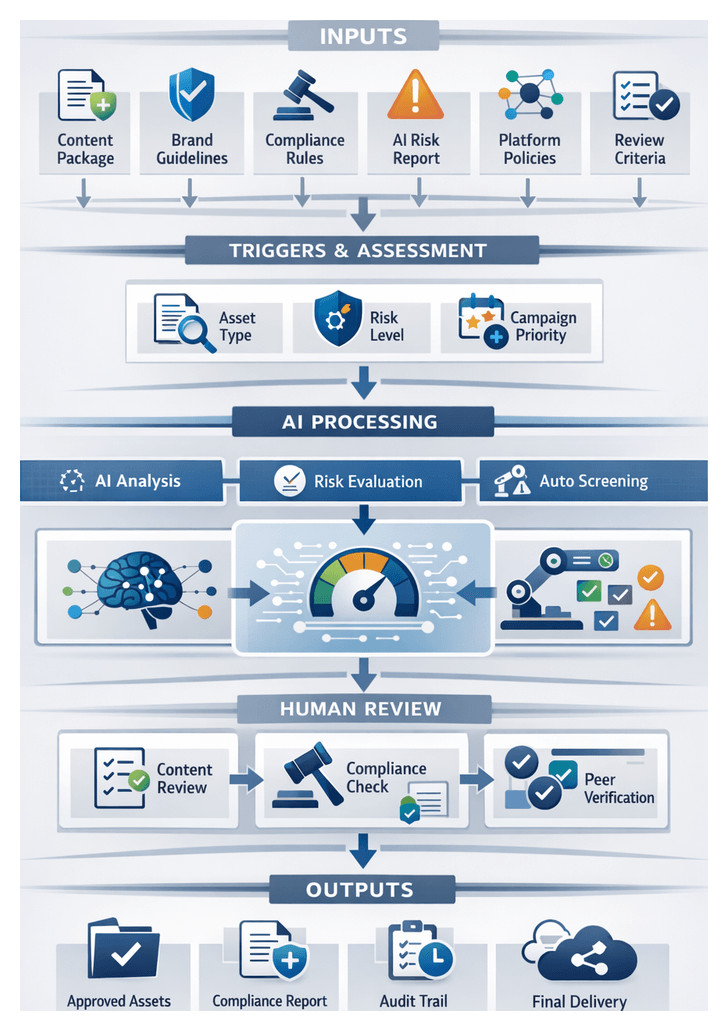

- Quality Control: A human-in-the-loop portal surfaces AI-flagged style deviations, compliance risks, and policy checks. Reviewers address issues within the interface, and approved assets receive a governance stamp before distribution.

- Publishing & Distribution: Approved content is scheduled through platforms like Buffer or Hootsuite. Scheduling rules or models can then adjust posting times based on live performance data to improve audience engagement.

- Optimization: Analytics dashboards ingest engagement metrics, A/B test results, and audience feedback. AI engines such as Google’s Vertex AI forecast performance impact and recommend iterative adjustments to headlines, calls-to-action, and publishing schedules.
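The orchestration step's prioritization of time-sensitive campaigns can be sketched as a priority queue. This assumes each task carries a deadline and a numeric priority; the `TaskQueue` class and route labels are hypothetical, and a real orchestration platform would add persistence, retries, and API event handling.

```python
import heapq
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass(order=True)
class Task:
    # Ordering: earliest deadline first, then priority (1 = most urgent), then submission order.
    deadline: datetime
    priority: int
    seq: int
    asset_id: str = field(compare=False)
    route: str = field(compare=False)  # hypothetical route label, e.g. "ai:adaptation" or "human:qc"


class TaskQueue:
    """In-memory priority queue; a production orchestrator would persist tasks and emit API events."""

    def __init__(self) -> None:
        self._heap: list[Task] = []
        self._seq = 0

    def submit(self, asset_id: str, route: str, deadline: datetime, priority: int = 3) -> None:
        heapq.heappush(self._heap, Task(deadline, priority, self._seq, asset_id, route))
        self._seq += 1

    def next_task(self) -> Optional[Task]:
        """Pop the most urgent task, or return None when the queue is empty."""
        return heapq.heappop(self._heap) if self._heap else None
```

The submission counter `seq` breaks ties so that tasks with the same deadline and priority are processed in the order they arrived.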

AI Integration and Automation Across the Workflow

Embedding AI capabilities throughout the repurposing pipeline delivers intelligence, speed, and consistency that manual or rule-based approaches cannot match. From analysis to orchestration, AI agents automate routine tasks, enforce guidelines, and surface strategic insights.

Content Analysis and Insights

- Theme Extraction: Natural language processing models—such as those in OpenAI GPT and Hugging Face transformers—scan thousands of assets to identify recurring topics, sentiment trends, and brand-related keywords.

- Audience Segmentation: Machine learning algorithms analyze engagement patterns to cluster audiences by demographics, interests, and behavioral signals, enabling precise targeting.

- Format Classification: AI classifiers automatically tag assets by media type—text, image, video—and complexity level, driving decision logic for appropriate repurposing pathways.

- Performance Benchmarking: Predictive analytics models evaluate historical data to forecast the potential reach and engagement of different content themes, guiding strategic prioritization.
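Production theme extraction would rely on NLP or transformer models. As a dependency-free stand-in, the sketch below surfaces recurring topics by counting how many assets mention each term (document frequency). The stop-word list, `min_assets`, and `top_n` values are illustrative assumptions.

```python
import re
from collections import Counter

# Tiny illustrative stop-word list; real pipelines would use an NLP library or a model.
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "for", "on",
             "with", "is", "are", "our", "how", "new", "we"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep tokens of two or more characters that are not stop words."""
    return [t for t in re.findall(r"[a-z][a-z0-9\-]+", text.lower()) if t not in STOPWORDS]


def recurring_terms(assets: dict[str, str], min_assets: int = 2, top_n: int = 10) -> list[tuple[str, int]]:
    """Return terms that appear in at least `min_assets` assets, ranked by document frequency."""
    doc_freq = Counter()
    for text in assets.values():
        doc_freq.update(set(tokenize(text)))  # count each term once per asset
    ranked = [(term, n) for term, n in doc_freq.most_common() if n >= min_assets]
    return ranked[:top_n]
```

Counting documents rather than raw occurrences keeps a single long asset from dominating the theme list.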

Automated Generation and Adaptation

- Paraphrasing and Summarization: Large language models condense long articles into concise captions or bullet lists. Fine-tuned instances of OpenAI GPT models help keep generated copy aligned with the brand voice.

- Multilingual Translation: Neural machine translation, delivered through services such as DeepL or open-source models hosted on Hugging Face, rapidly localizes content for global audiences while preserving nuance.

- Creative Variations: AI engines generate multiple headline and caption options for A/B testing across platforms such as Instagram, Twitter, and TikTok, enabling experimentation at scale.

- Media Conversion: Automated script generators produce storyboard-ready video scripts, while image generation and layout tools like Adobe Sensei and Canva assist in creating visual mockups aligned with platform dimensions.
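Summarization in this workflow is normally handled by a large language model. The sketch below swaps in a much simpler extractive technique, scoring sentences by word frequency and keeping the best ones that fit a caption length, purely to show the shape of the transformation. `summarize_to_caption` is a hypothetical name, and its 280-character default is only an example value, not a platform specification.

```python
import re
from collections import Counter


def summarize_to_caption(article: str, max_chars: int = 280) -> str:
    """Extractive stand-in for LLM summarization: keep top-scoring sentences that fit max_chars."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", article) if s.strip()]
    words = re.findall(r"[a-z']+", article.lower())
    freq = Counter(w for w in words if len(w) > 3)  # crude filter for short function words

    def score(sentence: str) -> float:
        # Average corpus frequency of the sentence's longer words.
        tokens = [w for w in re.findall(r"[a-z']+", sentence.lower()) if len(w) > 3]
        return sum(freq[w] for w in tokens) / (len(tokens) or 1)

    # Greedily take the highest-scoring sentences that still fit the length budget.
    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen: list[int] = []
    length = 0
    for i in ranked:
        extra = len(sentences[i]) + (1 if chosen else 0)  # +1 for the joining space
        if length + extra <= max_chars:
            chosen.append(i)
            length += extra
    # Restore original sentence order for readability.
    return " ".join(sentences[i] for i in sorted(chosen))
```

An LLM would paraphrase rather than copy sentences, but the contract is the same: long text in, a caption within a length budget out.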

Intelligent Orchestration and Task Routing

- Dynamic Task Scheduling: AI orchestrators assess asset status and trigger subsequent transformation steps—summarization, translation, or style adaptation—based on predefined rules and real-time conditions.

- Error Detection and Recovery: Machine learning monitors pipeline health, flags processing anomalies, and either reroutes tasks or alerts human operators via Jira and Slack integrations.

- Resource Optimization: Predictive algorithms allocate compute resources to high-priority tasks in cloud environments, balancing throughput with cost efficiency.

- Collaboration Integration: Orchestration platforms synchronize with collaboration tools to provide real-time status updates, collect feedback, and enforce approvals.
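Error detection and recovery often comes down to retrying transient failures and escalating persistent ones. A minimal sketch, assuming each pipeline step is a callable and that `on_escalate` stands in for a Jira or Slack integration (not shown); `run_with_recovery` is a hypothetical helper.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_recovery(
    step: Callable[[], T],
    on_escalate: Callable[[str], None],
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> Optional[T]:
    """Run a step, retrying with exponential backoff; escalate to a human after the last failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception as exc:  # in practice, catch each service's specific transient errors
            if attempt == max_attempts:
                on_escalate(f"failed after {attempt} attempts: {exc}")
                return None
            time.sleep(base_delay * 2 ** (attempt - 1))  # waits 0.5s, 1s, 2s, ... by default
    return None
```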

Quality Assurance and Governance Automation

- Style and Compliance Checks: Natural language understanding models compare generated content against brand lexicons and regulatory term lists, identifying deviations.

- Image Moderation: Computer vision algorithms scan visual assets for inappropriate content, logo misuse, and design inconsistencies.

- Metadata Validation: Automated scripts verify that captions, tags, and descriptions meet platform-specific requirements, reducing post-publish corrections.

- Human-in-the-Loop Reviews: Intelligent alerting surfaces flagged items to reviewers with contextual annotations, streamlining feedback loops and expediting approvals.
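Style, compliance, and metadata checks can start as plain rule matching before any model is involved. The term lists and limits below are placeholders for the real brand lexicon, regulatory term lists, and platform requirements; `check_copy` and `Finding` are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass
class Finding:
    rule: str     # "style" | "compliance" | "metadata"
    detail: str


# Illustrative rule sets; real lists come from brand guidelines and legal/compliance teams.
PREFERRED_TERMS = {"e-mail": "email", "web site": "website"}
REGULATED_PHRASES = ["guaranteed returns", "risk-free", "cure"]


def check_copy(text: str, max_chars: int, max_hashtags: int) -> list[Finding]:
    """Flag style deviations, regulated phrases, and length/hashtag limit violations."""
    findings: list[Finding] = []
    lowered = text.lower()
    for wrong, right in PREFERRED_TERMS.items():
        if wrong in lowered:
            findings.append(Finding("style", f'use "{right}" instead of "{wrong}"'))
    for phrase in REGULATED_PHRASES:
        # Word boundaries avoid false hits such as "cure" inside "secure".
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            findings.append(Finding("compliance", f'regulated phrase "{phrase}" needs legal review'))
    if len(text) > max_chars:
        findings.append(Finding("metadata", f"{len(text)} characters exceeds limit of {max_chars}"))
    hashtags = re.findall(r"#\w+", text)
    if len(hashtags) > max_hashtags:
        findings.append(Finding("metadata", f"{len(hashtags)} hashtags exceeds limit of {max_hashtags}"))
    return findings
```

Findings like these are what a human-in-the-loop portal would surface to reviewers as contextual annotations.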

Continuous Learning and Optimization

- Performance Data Ingestion: Analytics platforms feed engagement metrics and audience feedback into AI engines, refining predictive models over time.

- Model Retraining: Periodic retraining incorporates fresh data, ensuring that theme extraction, segmentation, and generation remain aligned with evolving audience preferences.

- Impact Forecasting: Advanced simulations project the potential outcomes of new repurposing strategies, helping teams prioritize high-impact experiments.

- Automated Recommendations: AI-driven dashboards surface actionable insights—such as headline adjustments, call-to-action refinements, or schedule shifts—to maximize content resonance.
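Deciding whether an A/B variant genuinely outperformed the control is a statistical question. One common approach, shown here as an illustrative sketch rather than the method any particular analytics platform uses, is a two-proportion z-test on click-through rates; it assumes independent audiences and reasonably large sample sizes.

```python
from math import erf, sqrt


def ab_test(clicks_a: int, views_a: int, clicks_b: int, views_b: int) -> tuple[float, float]:
    """Two-proportion z-test on click-through rates; returns (z, two-sided p-value)."""
    p_a, p_b = clicks_a / views_a, clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)  # CTR under "no difference"
    se = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value
```

For example, 100 clicks on 2,000 views for headline A against 140 clicks on 2,000 views for headline B gives a z of roughly 2.66, a difference unlikely to be chance at conventional significance levels.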

Implementation Best Practices and Quantifiable Benefits

Successful deployment of an AI-enhanced repurposing workflow requires careful planning and change management:

- Pilot with High-Impact Content: Validate process design and tooling integration on a priority campaign or content silo before broad rollout.

- Define Clear SLAs and KPIs: Track metrics such as audit turnaround, ideation cycle time, review accuracy, and scheduling latency to measure workflow health.

- Develop a Living Playbook: Maintain a centralized knowledge base documenting workflow rules, role responsibilities, and system configurations for ongoing reference.

- Invest in Training and Change Management: Educate users on AI capabilities, collaboration protocols, and governance requirements to drive adoption and build confidence.

- Monitor Continuously and Iterate: Use real-time dashboards to identify bottlenecks, quality gaps, and compliance issues, making data-driven adjustments to optimize throughput.

Organizations that formalize and automate their repurposing processes report significant gains, including:

- Cycle Time Reduction: Up to 50 percent faster content turnaround through parallel processing and automated handoffs.

- Consistent Messaging: Centralized governance and AI-driven style enforcement achieve uniform brand voice across channels.

- Scalable Output: Ability to double content production without proportional increases in headcount.

- Improved ROI: Data-informed prioritization focuses resources on high-impact assets, boosting engagement and conversions.

- Transparency and Control: Comprehensive audit logs and dashboards provide executives with clear insights into workflow health and content performance.

Deliverables and Handoff Guidelines

Audit & Inventory

Deliverables include an asset inventory report, channel matrix, audience mapping dossier, and taxonomy document. Dependencies comprise access to CMS, DAM, and analytics platforms, text classification tools such as OpenAI models, and sentiment analysis via Azure Cognitive Services. Handoff involves publishing CSV and JSON files with a metadata guide, followed by a kickoff meeting to review critical findings, confirm taxonomy validity, and identify content gaps.

Strategy Definition

The strategy stage produces a repurposing blueprint, platform prioritization matrix, defined success metrics, and a high-level content calendar. Dependencies include audit outputs, brand guidelines, performance benchmarks, and AI modeling tools. Handoff criteria require stakeholder approval, deliverable completeness, and alignment on priorities. Approved artifacts trigger automated tasks in the project management system and a strategy briefing session.

Analysis Outputs

Deliverables encompass segmentation reports, theme maps, audience personas, format classification lists, and a prioritized asset ranking. Dependencies include integration with analytics tools and data warehouses, as well as AI services like OpenAI NLP engines and computer vision APIs for video analysis. Handoff to ideation occurs once outputs are validated; a review meeting ensures consensus on priority segments and confirms data integrity before creative work begins.

Ideation

Idea deliverables consist of briefs, thematic outlines, headline variations, and concept boards. Dependencies include segmentation and strategy reports, brand guidelines, and ideation platforms such as Jasper. Handoff into transformation requires sign-off on idea briefs, alignment with KPIs, and a kickoff call to clarify creative intent and performance targets.

Content Transformation

Transformation outputs include draft assets—rewritten text, summaries, video scripts, infographic outlines, and translations—each tagged with source, platform, and version history. Dependencies comprise idea briefs, original asset access, and AI services such as OpenAI and DeepL. Deliverables are uploaded to a shared workspace with embedded metadata; a preprocessing checklist verifies format readiness before adaptation.

Adaptation

Adaptation produces platform-specific assets (resized images, formatted videos, caption sets, hashtags, and metadata manifests) along with a style compliance report. Dependencies include repurposed drafts, platform guidelines, and design tools with AI features such as Canva. Handoff to orchestration begins once assets pass automated and manual quality checks; integration with the CMS triggers scheduling workflows and notifies the publishing team.

Orchestration & Scheduling

Orchestration deliverables comprise execution logs, task status reports, API event triggers, and a master queue of scheduled tasks. Dependencies include adapted assets, schedule parameters, and credentials for social media APIs. AI orchestrators such as AgentLink AI coordinate with project management and CMS APIs. Handoff to quality control is automated via triggers; a manifest details asset bundles and review criteria.

Quality Control

Quality control outputs include approved asset packages, annotated feedback logs, compliance checklists, and governance reports. Dependencies involve orchestration manifests, brand guidelines, legal requirements, and AI-assisted tools like Grammarly. Handoff to distribution initiates once assets achieve final approval; approved packs are exported via API or CSV to the scheduling system, accompanied by a distribution readiness report.

Distribution

Distribution deliverables consist of publication logs, channel performance forecasts, and an updated content calendar. Dependencies are approved assets, channel credentials, posting schedules, scheduling platforms such as Buffer or Hootsuite, and predictive analytics tools for performance forecasting. Handoff to performance monitoring involves exporting logs and forecasts to the analytics platform, mapping published assets to engagement metrics for real-time tracking.

Optimization

Optimization outputs include interactive dashboards, A/B test results, recommendation reports, and update trigger logs. Dependencies encompass distribution data, audience feedback, A/B testing platforms, and AI engines like Google’s Vertex AI. Handoff to audit or strategy occurs via automated alerts and task creation in the workflow tool, prompting teams to revisit asset inventories or adjust strategic documents—completing the closed-loop process for continuous improvement.

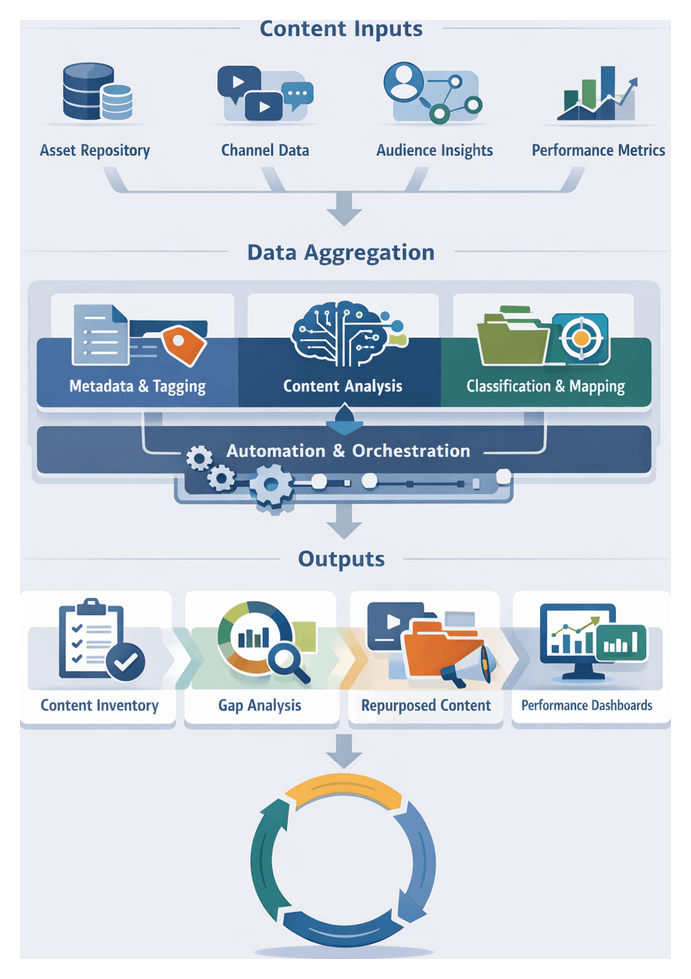

Chapter 1: Understanding the Content Ecosystem

Purpose and Scope of the Content Audit Stage

The content audit stage establishes a comprehensive, data-driven foundation for AI-powered social media repurposing. By systematically discovering, cataloguing, and analyzing every published, archived, and in-development asset, organizations gain a unified view of their content ecosystem. This clarity addresses critical challenges—duplicate content, thematic gaps, and inconsistent brand voice—while aligning existing materials with strategic objectives. A disciplined audit process not only identifies high-performing assets for rapid transformation but also flags underperforming pieces for revision or retirement, ensuring efficient use of resources.

In an era of rapidly evolving platforms and audience expectations, the audit stage anchors content operations in measurable insights. It mitigates risk by enforcing brand guidelines, secures executive sponsorship through transparent reporting, and fosters cross-functional collaboration among marketing, creative, analytics, and IT teams. With audit outputs feeding directly into AI engines, organizations achieve a seamless transition from inventory to ideation, maximizing the impact of every social media initiative.

Key Inputs and Prerequisites

To execute a thorough content audit, teams must assemble critical inputs, secure necessary access permissions, and align organizational stakeholders. The primary prerequisites include:

- Centralized Asset Repository: A single source of truth—hosted in a Content Management System (CMS) or Digital Asset Management (DAM)—containing blog posts, videos, podcasts, infographics, and social media content. Integrations allow for automated ingestion and metadata extraction.

- Channel Inventory and Permissions: A catalog of every publishing outlet—corporate blogs, YouTube, LinkedIn, Instagram, TikTok—and administrative access to analytics consoles. Unified credential management via Hootsuite or Buffer enables automated data retrieval.

- Audience Segmentation Data: Persona definitions and behavioral profiles sourced from CRM systems, CDPs, or platforms like Segment and Amplitude. These datasets include demographics, engagement history, and content preferences.

- Performance Metrics: Historical engagement statistics—views, shares, comments, conversion rates—collected from Google Analytics, native platform reports, BuzzSumo, and Sprout Social. Data must be exportable in structured formats (CSV, JSON).

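- Brand Guidelines and Voice Documentation: Style guides, tone-of-voice manuals, and visual identity standards. Language models fine-tuned or prompted with these guidelines, for example through OpenAI’s fine-tuning service, use them to enforce consistency in written copy; for narrated audio and video, a synthetic brand voice can be built with Microsoft Azure Custom Neural Voice.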

- Technology Environment: API credentials, integration middleware such as MuleSoft or Zapier, and secure sandbox environments for testing connectors and workflows without impacting production systems.

- Organizational Alignment: Executive sponsorship to secure resources, data governance policies to maintain quality and compliance, and a change management plan to communicate process shifts and stakeholder roles.

Audit Workflow and System Orchestration

Asset Ingestion and Consolidation

The audit begins with the discovery and ingestion of content assets from disparate repositories. Automated connectors and API integrations pull files, metadata, and performance records into a unified staging area. Each asset is assigned a unique identifier and tagged with source information, creation date, and content type. Ingestion errors—unsupported formats or authentication failures—trigger alerts to IT or DevOps teams, ensuring no asset is overlooked.
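The ingestion step can be sketched as follows, assuming each connector yields `(source, path, created)` tuples. The extension map and the ID scheme (a truncated SHA-256 hash of source and path, so re-ingesting the same file yields the same identifier) are illustrative choices, not a requirement of any CMS or DAM.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime
from pathlib import PurePosixPath

# Illustrative extension-to-type map; unsupported extensions are reported instead of ingested.
CONTENT_TYPES = {".md": "article", ".html": "article", ".mp4": "video",
                 ".png": "image", ".jpg": "image", ".mp3": "podcast"}


@dataclass(frozen=True)
class StagedAsset:
    asset_id: str
    source: str
    path: str
    created: datetime
    content_type: str


def ingest(records: list[tuple[str, str, datetime]]) -> tuple[list[StagedAsset], list[str]]:
    """Stage supported assets with deterministic IDs; collect alerts for everything else."""
    staged: list[StagedAsset] = []
    alerts: list[str] = []
    for source, path, created in records:
        ext = PurePosixPath(path).suffix.lower()
        content_type = CONTENT_TYPES.get(ext)
        if content_type is None:
            alerts.append(f"{source}:{path} unsupported format '{ext or 'none'}'")
            continue
        # Deterministic ID: the same source and path always produce the same identifier.
        asset_id = hashlib.sha256(f"{source}|{path}".encode()).hexdigest()[:12]
        staged.append(StagedAsset(asset_id, source, path, created, content_type))
    return staged, alerts
```

Deterministic identifiers make reruns idempotent: re-running ingestion after an authentication failure does not create duplicate inventory rows.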

Metadata Standardization and Enrichment

Inconsistent metadata hampers effective management and analysis. A two-step process ensures uniformity: first, scheduled jobs normalize field names (for example, author, publish_date, content_type) to match the organization’s taxonomy. Second, AI-driven enrichment services populate missing attributes. Natural language processing agents, such as OpenAI’s GPT-4 and Hugging Face transformers, generate concise summaries and extract topic tags and sentiment scores. Computer vision models detect branded imagery and color palettes. Assets with confidence scores below predefined thresholds are flagged for manual review by content analysts.

- Establish required metadata schema and field definitions

- Normalize existing tags to align with taxonomy

- Invoke AI taggers for theme extraction and persona relevance

- Flag low-confidence enrichments for human validation
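The two-step standardization above, normalizing field names and then accepting AI enrichments only above a confidence threshold, might look like this. The alias map, the 0.8 threshold, and the shape of `suggestions` (each field mapped to a value and a confidence score, as an AI tagger might return) are assumptions for illustration.

```python
# Hypothetical alias map from legacy field names to the organization's taxonomy.
FIELD_ALIASES = {
    "Author": "author", "writer": "author", "created_by": "author",
    "PublishDate": "publish_date", "date_published": "publish_date",
    "type": "content_type", "ContentType": "content_type",
}


def normalize(record: dict) -> dict:
    """Step 1: rename legacy fields to taxonomy names; unknown fields pass through unchanged."""
    return {FIELD_ALIASES.get(k, k): v for k, v in record.items()}


def apply_enrichment(record: dict, suggestions: dict[str, tuple[str, float]],
                     threshold: float = 0.8) -> tuple[dict, list[str]]:
    """Step 2: fill missing fields from AI suggestions; route low-confidence ones to human review."""
    enriched = dict(record)
    needs_review: list[str] = []
    for field_name, (value, confidence) in suggestions.items():
        if enriched.get(field_name):
            continue  # never overwrite values that already exist
        if confidence >= threshold:
            enriched[field_name] = value
        else:
            needs_review.append(field_name)
    return enriched, needs_review
```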

Classification, Thematic Tagging, and Audience Mapping

Once enriched, assets undergo classification through a tiered approach. Rule-based filters detect formats—articles, videos, infographics—and route them to specialized classifiers. Natural language understanding pipelines identify primary topics, subtopics, and tone in text, while computer vision services analyze visual elements in images and video frames. Thematic labels—such as “product_update,” “industry_insight,” or “customer_testimonial”—are consolidated in a theme index.

Audience mapping aligns each asset with one or more personas by cross-referencing thematic tags, engagement metrics (click-through rates, watch durations), and CRM data. High-engagement technical white papers may map to “Technical Decision Maker,” while emotional social videos link to “Brand Enthusiast.” Multiple persona associations are stored as arrays in metadata records. Marketing operations teams review initial mappings, adjusting weighting rules or segment definitions to reflect strategic priorities.

- Format detection and routing to appropriate classifiers

- Natural language processing for topic modeling and tone analysis

- Visual recognition for imagery classification

- Automated persona matching using CRM and performance data
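Persona mapping can begin as transparent weighting rules that marketing operations teams can tune. In this hypothetical sketch, theme and format weights are summed per persona, above-baseline engagement boosts the score (capped at 1.5x), and every persona that clears a threshold is returned as a list, mirroring the multi-persona arrays described above. All weights and names are illustrative.

```python
# Hypothetical weighting rules that marketing operations would tune.
THEME_WEIGHTS = {
    "industry_insight": {"Technical Decision Maker": 0.6},
    "product_update": {"Technical Decision Maker": 0.4, "Brand Enthusiast": 0.3},
    "customer_testimonial": {"Brand Enthusiast": 0.6},
}
FORMAT_WEIGHTS = {
    "white_paper": {"Technical Decision Maker": 0.3},
    "video": {"Brand Enthusiast": 0.2},
}


def map_personas(themes: list[str], content_format: str, engagement_rate: float,
                 baseline_rate: float, threshold: float = 0.5) -> list[str]:
    """Return personas whose weighted score clears the threshold, strongest match first."""
    scores: dict[str, float] = {}
    for theme in themes:
        for persona, weight in THEME_WEIGHTS.get(theme, {}).items():
            scores[persona] = scores.get(persona, 0.0) + weight
    for persona, weight in FORMAT_WEIGHTS.get(content_format, {}).items():
        scores[persona] = scores.get(persona, 0.0) + weight
    # Above-baseline engagement boosts all matches, capped at 1.5x; baseline_rate must be > 0.
    lift = min(max(engagement_rate / baseline_rate, 1.0), 1.5)
    matched = [p for p, s in scores.items() if s * lift >= threshold]
    return sorted(matched, key=lambda p: scores[p], reverse=True)
```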

Error Handling and Iterative Review

An orchestration layer coordinates the sequence of audit tasks, logging execution details and managing retries. Failed jobs—such as enrichment timeouts or classification errors—enter a dedicated support queue for manual intervention. Regular review cycles engage content strategists and subject matter experts to validate thematic tags and persona mappings. Feedback is incorporated into AI models as new training data, improving accuracy over successive iterations. Governance checkpoints allow legal and compliance teams to flag sensitive content before repurposing.

AI Integration and Automation

AI-Driven Content Analysis

Natural language processing and computer vision automate the extraction of themes, entities, and sentiment across large content repositories. Semantic tagging tools, including OpenAI’s GPT models and Hugging Face transformers, identify core messages, brand mentions, and emotional tone. Visual recognition services detect objects, scenes, and logos for precise asset classification. Machine learning algorithms then segment assets by persona, engagement patterns, and performance trends, forming the input for prioritized repurposing.

Intelligent Ideation and Transformative Repurposing

AI ideation engines generate creative angles, headlines, and thematic frameworks that adhere to brand voice guidelines. By analyzing historical data and market signals, these systems surface high-potential content concepts and clusters. Platforms like Jasper propose multiple headline variants optimized for each channel. Unsupervised learning groups assets around emerging topics, ensuring cohesive storytelling.

Transformative repurposing leverages summarization APIs and fine-tuned language models, such as custom GPT instances, to distill long-form content into micro-blogs, bullet points, or tweet-length posts. Paraphrasing tools adjust style and formality for platform-specific audiences. Script generation tools, including the AI features of editors such as Descript, help turn text into video and audio scripts with scene and dialogue suggestions, accelerating multimedia production.

- Headline and caption generation tailored to social channels

- Summarization, paraphrasing, and tone adaptation at scale

- Automated script generation for video and audio content

Cross-Platform Adaptation and Style Transfer

AI modules ensure assets conform to each platform’s technical requirements and aesthetic conventions. Computer vision APIs automatically resize and crop images, preserving focal points for Instagram, LinkedIn, TikTok, and emerging channels. Language models generate caption variants with embedded keywords and hashtags to maximize reach. Rule-based systems and machine learning enforce brand fonts, color palettes, and tone, maintaining consistency across diverse formats.

- Automated image resizing and focal point detection

- Caption optimization with embedded keywords and hashtags

- Style enforcement for visual and verbal brand alignment
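Focal-point-preserving crops come down to simple geometry once a vision model has located the focal point. This sketch computes the largest crop of a requested aspect ratio centered on that point and clamped inside the image; the focal point is an input here, and aspect ratios are parameters rather than any platform's official specification.

```python
def focal_crop(width: int, height: int, focal_x: int, focal_y: int,
               ratio_w: int, ratio_h: int) -> tuple[int, int, int, int]:
    """Largest ratio_w:ratio_h crop centered on the focal point, clamped to the image.

    Returns (left, top, right, bottom) in pixels.
    """
    # Largest box of the target ratio that fits inside the image.
    if width * ratio_h > height * ratio_w:  # image is wider than the target: keep full height
        crop_h = height
        crop_w = height * ratio_w // ratio_h
    else:  # image is taller than (or equal to) the target: keep full width
        crop_w = width
        crop_h = width * ratio_h // ratio_w
    # Center on the focal point, then shift the box back inside the image bounds.
    left = min(max(focal_x - crop_w // 2, 0), width - crop_w)
    top = min(max(focal_y - crop_h // 2, 0), height - crop_h)
    return left, top, left + crop_w, top + crop_h
```

For a 1920x1080 frame with its subject near the right edge, a square crop slides as far right as the image allows instead of cutting the subject off at the center.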

Orchestration with AI Agents

Workflow orchestration platforms manage end-to-end repurposing pipelines, sequencing tasks and integrating AI services with enterprise systems such as DAM and CMS. Engines like Zapier handle task scheduling and event triggers, while microservices architectures connect NLP, vision, and scheduling APIs to central data stores and collaboration tools. Real-time monitoring and alerting enable rapid intervention in case of anomalies.

- Task scheduling and dependency management across AI-driven steps

- RESTful API integrations and microservices for modular workflows

- Error detection, alert notifications, and automatic rerouting
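Dependency management across AI-driven steps can be expressed as a directed acyclic graph. This sketch uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to group a hypothetical pipeline into waves of steps that could run in parallel; the step names are illustrative.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each step maps to the set of steps it depends on.
PIPELINE = {
    "summarize": {"ingest"},
    "translate": {"summarize"},
    "caption": {"summarize"},
    "resize_images": {"ingest"},
    "qc_review": {"translate", "caption", "resize_images"},
    "schedule": {"qc_review"},
}


def execution_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into waves; steps within one wave have no mutual dependencies."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if dependencies are circular
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for a stable, readable order
        waves.append(ready)
        sorter.done(*ready)  # in a real engine, mark done as each task actually completes
    return waves
```

Here `execution_waves(PIPELINE)` places `resize_images` alongside `summarize`, since image work does not wait on text summarization.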

Human-in-the-Loop Governance and Continuous Optimization

Despite extensive automation, human oversight ensures brand compliance, legal alignment, and nuanced quality control. Automated policy checks scan content for sensitive terms, regulatory risks, and brand guideline violations. Suggestion overlays highlight grammatical and stylistic issues for reviewers, streamlining edits. Integrated approval workflows manage version control, comments, and sign-offs before publishing.

Post-publishing, AI-driven analytics tools collect performance data—engagement rates, conversion metrics, sentiment analysis—and feed insights back into repurposing workflows. Predictive modeling forecasts optimal posting times and formats, while automated A/B testing evaluates content variations. Real-time dashboards reveal performance trends, opportunities for iteration, and content gaps, driving a continuous improvement cycle.

- Automated compliance and style checks with flagging

- Reviewer support through suggestion overlays and sign-off modules

- Closed-loop optimization via predictive analytics and A/B testing
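Predictive posting-time models can be far more sophisticated, but a simple baseline ranks hours of the day by historical mean engagement rate. The `min_posts` guard, an assumption of this sketch, ignores hours with too few posts to be trustworthy.

```python
from collections import defaultdict
from datetime import datetime


def best_posting_hours(history: list[tuple[datetime, float]],
                       min_posts: int = 3, top_k: int = 2) -> list[int]:
    """Rank hours of day by mean engagement rate, ignoring hours with fewer than min_posts posts."""
    by_hour: dict[int, list[float]] = defaultdict(list)
    for posted_at, engagement_rate in history:
        by_hour[posted_at.hour].append(engagement_rate)
    means = {h: sum(r) / len(r) for h, r in by_hour.items() if len(r) >= min_posts}
    # Highest mean first; ties broken by the earlier hour.
    return sorted(means, key=lambda h: (-means[h], h))[:top_k]
```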

Audit Deliverables and Handoff Guidelines

Completing the audit yields a standardized package of deliverables to inform strategic planning and AI-driven transformation. Core outputs include:

- Asset Inventory Spreadsheet: A detailed table listing every item—posts, images, videos, articles—with unique IDs, channel origin, publish date, status, and enriched metadata.

- Performance Metrics Dashboard: Interactive visualizations or PDF exports summarizing likes, shares, comments, reach, and conversions by content type and channel, highlighting top and underperformers.

- Metadata and Tagging Schema Export: CSV or JSON files capturing existing tags, categories, audience segments, and custom fields, forming the taxonomy foundation for AI workflows.

- Theme and Format Classification Report: AI-generated mappings using OpenAI GPT-4 and IBM Watson NLU, including confidence scores and cross-references to source metadata.

- Content Gap Analysis: Analytical summary identifying missing topics, underrepresented formats, and audience segments lacking tailored content.

- Audit Summary Presentation: Slide deck synthesizing key findings, metrics, gaps, and recommended next steps to align stakeholders before strategy definition.

Handoff packages are version-controlled, named with standardized prefixes and date stamps (for example, AUDIT_Assets_YYYYMMDD.csv), and delivered via secure file shares or project management tools. Acceptance criteria require minimum metadata coverage—such as 95 percent of assets tagged—and validated persona assignments. Structured feedback loops address anomalies within defined service-level agreements.
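The naming convention and the 95 percent tagging threshold described above can be checked automatically before a package is released. This is a hypothetical sketch. The `tags` field name, the helper names, and the rule that an asset counts as tagged when it has at least one tag are assumptions, not part of the audit specification.

```python
from datetime import date


def handoff_filename(prefix: str, deliverable: str, day: date, ext: str) -> str:
    """Build a name such as AUDIT_Assets_20240315.csv (PREFIX_Deliverable_YYYYMMDD.ext)."""
    return f"{prefix}_{deliverable}_{day:%Y%m%d}.{ext}"


def tag_coverage(assets: list[dict]) -> float:
    """Fraction of assets that carry at least one tag (hypothetical 'tags' field)."""
    if not assets:
        return 0.0
    tagged = sum(1 for asset in assets if asset.get("tags"))
    return tagged / len(assets)


def meets_acceptance(assets: list[dict], min_coverage: float = 0.95) -> bool:
    """Apply the minimum metadata-coverage acceptance criterion."""
    return tag_coverage(assets) >= min_coverage


# Usage
print(handoff_filename("AUDIT", "Assets", date(2024, 3, 15), "csv"))  # AUDIT_Assets_20240315.csv
```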

Governance, Version Control, and Collaboration

Maintaining consistency and traceability across audit outputs demands robust governance and collaboration protocols. Key practices include:

- Naming Convention Standards: Predefined templates for file names, folder structures, and column headers, using consistent delimiters and date formats.

- Metadata Schema Documentation: Comprehensive data dictionaries and change approval processes to govern taxonomy updates and field modifications.

- Version Control and Change Logs: Committing deliverables to systems like Git or SharePoint with automated logs, enabling rollbacks and historical audits.

- Centralized Repository Structures: Hierarchical folders organized by audit date and content type, with Access Control Lists to manage permissions.

- Audit Governance Committee: Regular reviews of change requests, impact assessments for taxonomy updates, and scheduled refresh cycles to keep audit data current.

- Collaboration Protocols: Designated Slack or Teams channels, weekly stand-ups, shared Kanban boards, and clear escalation paths for data discrepancies.

Timeline and Milestones

- Week 1: Data Extraction, Access Validation, and Initial Asset Ingestion. Entry criteria: CMS/DAM credentials, API tokens, and channel analytics access. Exit criteria: complete asset discovery with unique identifiers.

- Week 2: Metadata Standardization, AI Enrichment, and Theme Mapping. Entry criteria: metadata schema definitions and AI model configurations. Exit criteria: 90 percent of assets enriched and classified.

- Week 3: Performance Dashboard Finalization and Content Gap Analysis. Entry criteria: consolidated performance exports and taxonomy alignment. Exit criteria: gap analysis report delivered with prioritized themes.

- Week 4: Audit Summary Presentation and Stakeholder Review. Entry criteria: draft deliverables and summary slides prepared. Exit criteria: stakeholder sign-off obtained and remediation requests logged.

- Week 5: Delivery of Final Handoff Package. Entry criteria: feedback incorporated and final quality checks completed. Exit criteria: handoff package versioned, shared, and acknowledged.

- Week 6: Formal Sign-Off and Transition to Strategic Planning. Entry criteria: acceptance criteria met and sign-off form completed. Exit criteria: strategy teams equipped with validated, high-quality data.

Chapter 2: Defining Repurposing Objectives and Strategy

Purpose and Context for Defining Repurposing Strategy

In today’s content-rich landscape, organizations face mounting pressure to adapt and distribute existing assets across an expanding array of social channels. Defining clear objectives and gathering the right inputs at the outset of the repurposing workflow prevents misalignment, reduces redundant effort, and accelerates turnaround. By translating high-level business imperatives—such as brand consistency, audience engagement, and campaign ROI—into actionable goals, teams can ensure every piece of source content is adapted with intent and measured against agreed metrics.

The proliferation of short-form videos, ephemeral stories, community forums and emerging platforms has magnified the complexity of content operations. Manual approaches to cropping, rewriting and retargeting long-form materials struggle to scale, often resulting in inconsistent voice, diluted messaging and missed performance benchmarks. A structured, purpose-driven strategy for repurposing content is now a strategic necessity for sustaining audience trust, maximizing asset lifespan and optimizing resource allocation across marketing ecosystems.

Defining Objectives and Gathering Inputs

At the core of a robust repurposing strategy lies a precise definition of objectives and a comprehensive catalog of inputs. Typical objectives include:

- Enhancing audience engagement through platform-tailored messaging and formats

- Maximizing content longevity via strategic format conversions

- Maintaining brand integrity by enforcing tone, style and visual guidelines

- Measuring impact with predefined KPIs such as reach, click-through and conversions

- Optimizing resources by identifying high-value assets and repurposing opportunities

Key inputs that inform and validate these objectives include:

- Brand guidelines, voice and style documentation

- Historical performance benchmarks and audience demographics

- Campaign goals and seasonal or event-driven priorities

- Audience personas and behavioral profiles

- Channel specifications—dimensions, character limits, metadata requirements

- Content audit outputs: asset inventories, theme maps, classification tags

- Competitive insights from social listening and market research

- Budget, headcount and technology constraints

Collecting these inputs ensures that objectives are data-driven, aligned with brand strategy and feasible within organizational limits.

Establishing Alignment and Prerequisites

Effective strategy definition requires cross-functional consensus and a clear governance framework. Prerequisites include:

- Facilitated stakeholder workshops to review inputs and define success criteria

- Access to shared data repositories for performance dashboards and audit reports

- Governance frameworks detailing decision-making hierarchies and review protocols

- Technology readiness assessments confirming that AI platforms—such as OpenAI’s GPT-4—are configured to process inputs

Additional conditions for a predictable, repeatable process include:

- Completion of a comprehensive content audit with assets tagged by format and performance tier

- Defined roles and handoff points across audit, strategy, ideation and execution teams

- Freshness of performance data and audience insights

- Validated toolchains, from asset management systems to AI engines

- Scheduled brand, legal and compliance reviews integrated into the workflow

When these prerequisites are in place, strategy moves swiftly from planning into execution without unnecessary friction.

Analytical Framework and AI-Driven Validation

To prioritize objectives effectively, organizations apply an analytical matrix that scores goals on strategic impact and operational feasibility. By categorizing initiatives into quick wins, strategic projects and lower-priority items, teams focus on efforts that promise maximum return.
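One way to implement the impact-feasibility matrix is sketched below. The 1-5 scales, the cutoff of 3.0, and the mapping of quadrants to the three categories named above are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Objective:
    name: str
    impact: float       # strategic impact score on an assumed 1-5 scale
    feasibility: float  # operational feasibility score on an assumed 1-5 scale


def categorize(obj: Objective, threshold: float = 3.0) -> str:
    """Map an objective to a matrix category (illustrative quadrant rules)."""
    high_impact = obj.impact >= threshold
    high_feasibility = obj.feasibility >= threshold
    if high_impact and high_feasibility:
        return "quick win"
    if high_impact:
        return "strategic project"
    return "lower priority"


def prioritize(objectives: list[Objective]) -> list[Objective]:
    """Order by category first, then by descending impact within a category."""
    rank = {"quick win": 0, "strategic project": 1, "lower priority": 2}
    return sorted(objectives, key=lambda o: (rank[categorize(o)], -o.impact))
```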

AI accelerates and refines this prioritization. By feeding performance benchmarks, audience data and competitive insights into natural language processing engines such as GPT-4 or clustering modules, teams can:

- Generate engagement lift scenarios for different repurposing angles

- Receive format and channel mix recommendations based on historical patterns

- Detect gaps in brand voice coverage across objectives

- Model resource requirements and throughput times for adaptation techniques

Upon completion, teams produce:

- A prioritized list of repurposing objectives with success metrics

- An input inventory cataloging data sources and assets

- Stakeholder approval records confirming alignment

- An AI configuration blueprint to initialize models with objectives and validation criteria

These deliverables become the foundation for strategic action planning and ideation.

Strategy Actions and Workflow

Translating objectives into execution entails a structured flow of actions and collaborations between human teams and AI platforms.

Consolidating Strategic Inputs

All brand guidelines, performance reports, audience insights and campaign briefs are centralized in a collaboration workspace. An AI engine normalizes file formats, tags content by category and consolidates conflicting versions.

Performance Benchmark Analysis

Using AI-driven tools, machine learning algorithms identify top-performing formats, optimal post times and engagement drivers. Natural language processing highlights high-impact captions and themes, while predictive models forecast reach under various scenarios.

Platform Suitability Assessment

An AI agent retrieves channel guidelines and content specifications, scores platforms on audience alignment and format compatibility, and produces a ranked channel matrix. Human teams flag emerging or niche channels for inclusion.

Defining Strategic Pillars and Themes

Through an ideation interface, stakeholders select AI-generated theme suggestions derived from high-performing content. Keyword clusters and theme relationships are visualized in a clustering map, and approved themes become metadata for each repurposing task.

Prioritization Algorithm and Task Scoring

An AI-driven scoring engine evaluates tasks on strategic impact, resource requirements, channel readiness and compliance risk. Composite scores determine execution order, with configurable weighting to reflect evolving priorities.
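A composite scoring engine with configurable weighting can be as simple as a weighted sum. In this hypothetical sketch, each criterion is assumed to be normalized to the 0-1 range. Cost-like criteria (resource requirements and compliance risk) are inverted so that lower values raise the score. The weights are placeholders to be tuned.

```python
# Placeholder weights; adjust to reflect evolving priorities.
DEFAULT_WEIGHTS = {
    "strategic_impact": 0.4,
    "channel_readiness": 0.3,
    "resource_requirements": 0.2,  # cost-like: higher requirement lowers the score
    "compliance_risk": 0.1,        # cost-like: higher risk lowers the score
}
INVERTED = {"resource_requirements", "compliance_risk"}


def composite_score(task: dict, weights: dict = DEFAULT_WEIGHTS) -> float:
    """Weighted average of 0-1 criteria, inverting cost-like criteria."""
    total = 0.0
    for criterion, weight in weights.items():
        value = task[criterion]
        total += weight * ((1 - value) if criterion in INVERTED else value)
    return total / sum(weights.values())


def execution_order(tasks: list[dict], weights: dict = DEFAULT_WEIGHTS) -> list[dict]:
    """Highest composite score runs first."""
    return sorted(tasks, key=lambda t: composite_score(t, weights), reverse=True)
```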

Sequencing and Scheduling

An orchestration module integrates with project management tools and content calendars to schedule tasks. Sequencing logic accounts for asset availability, parallelization opportunities and campaign deadlines. Interactive views allow manual adjustments, which automatically update AI processing schedules and resource assignments.
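Sequencing logic that respects asset dependencies and deadlines can be approximated with a topological sort. The sketch below uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to group tasks into waves that may run in parallel, ordering each wave by deadline. The task names are illustrative, and the sketch assumes every dependency is itself a scheduled task.

```python
from datetime import date
from graphlib import TopologicalSorter


def schedule(deadlines: dict[str, date], depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Return waves of tasks; tasks in one wave have no unmet dependencies and can run in parallel.

    Within each wave, tasks are ordered by deadline (earliest first).
    Raises graphlib.CycleError if the dependencies are circular.
    """
    sorter = TopologicalSorter({task: depends_on.get(task, set()) for task in deadlines})
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready(), key=lambda task: deadlines[task])
        waves.append(ready)
        sorter.done(*ready)
    return waves
```

Here a webinar transcript would unlock both a blog recap and video clips. These can run in the same wave, with the earlier deadline first.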

Coordination with Downstream Teams

Each task is handed off with a detailed brief—theme, format, target audience, success criteria—along with resource links, timeline dependencies and assigned roles. Integrated notifications and real-time dashboards ensure transparency and accountability as the workflow advances to ideation and execution.

AI-Driven Strategy Roles

Intelligent systems embedded in the strategy stage transform disparate data into actionable roadmaps.

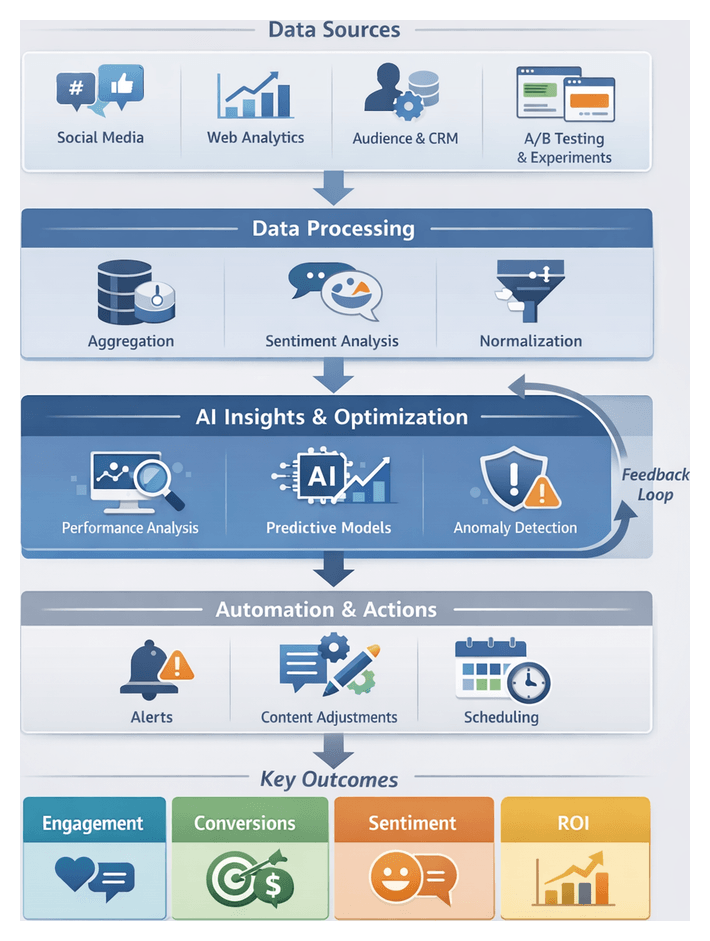

Data Aggregation and Performance Analysis

AI systems ingest metrics from social platforms, CRM and analytics dashboards. Solutions such as OpenAI GPT parse unstructured feedback, while Amazon Comprehend classifies sentiment and topic relevance. Clustering and time-series analysis reveal top assets and emerging trends.

Priority Recommendation Engines

Using frameworks like Google Cloud AI and IBM Watson Studio, reinforcement learning models rank repurposing opportunities by projected ROI, social share potential and alignment with campaign KPIs.

Scenario Modeling and Impact Forecasting

Monte Carlo simulations and “what-if” analyses quantify risk and opportunity under varying conditions, generating impact curves and probability distributions to guide trade-off decisions.
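A Monte Carlo forecast can be illustrated in a few lines. This sketch assumes, purely for illustration, that a repurposed asset's engagement rate is uniformly distributed between a low and a high estimate. Real models would draw from distributions fitted to historical data.

```python
import random
import statistics


def simulate_engagements(impressions: int, rate_low: float, rate_high: float,
                         runs: int = 10_000, seed: int = 42) -> dict:
    """Monte Carlo estimate of engagements under an uncertain engagement rate.

    Assumes the rate is uniform on [rate_low, rate_high]; returns the mean and
    the 10th and 90th percentile outcomes.
    """
    rng = random.Random(seed)  # seeded for reproducible runs
    outcomes = sorted(impressions * rng.uniform(rate_low, rate_high) for _ in range(runs))
    return {
        "mean": statistics.fmean(outcomes),
        "p10": outcomes[int(0.10 * runs)],
        "p90": outcomes[int(0.90 * runs)],
    }
```

The spread between `p10` and `p90` gives stakeholders a simple band for trade-off discussions, rather than a single point estimate.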

Audience Segmentation and Personalization Alignment

Machine learning classifiers segment audiences into micro-cohorts. Platforms recommend tailored content angles, ensuring resource investment aligns with revenue potential.

Sentiment Analysis and Tone Optimization

Natural language processing models extract tonal attributes from existing content. Generative language models propose style variants calibrated to platform conventions, streamlining preliminary drafting.

Risk Assessment and Compliance Enforcement

AI governance modules scan themes against regulatory databases and policy repositories. Tools such as the Google Cloud Vision and Video Intelligence APIs extend these checks to image and video assets.

Feedback Loop and Iterative Refinement

Post-publication metrics and feedback continuously train predictive models. Dashboards visualize variances between forecasts and outcomes, prompting recalibration when model drift exceeds thresholds.
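The drift check described above needs a concrete error metric and threshold. The sketch below assumes mean absolute percentage error (MAPE) and a 20 percent threshold. Both are illustrative choices rather than recommended values.

```python
def mean_absolute_percentage_error(forecast: list[float], actual: list[float]) -> float:
    """MAPE over pairs with a non-zero actual value."""
    pairs = [(f, a) for f, a in zip(forecast, actual) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actuals to compare against")
    return sum(abs(a - f) / abs(a) for f, a in pairs) / len(pairs)


def needs_recalibration(forecast: list[float], actual: list[float], threshold: float = 0.2) -> bool:
    """Flag the model for retraining when forecast error exceeds the threshold."""
    return mean_absolute_percentage_error(forecast, actual) > threshold
```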

Decision Support and Collaboration

Interactive platforms present scenarios, priority rankings and risk assessments, enabling cross-functional teams to run rapid “what-if” variations and maintain alignment across marketing, compliance and operations.

Key Strategy Deliverables and Handoffs

At the conclusion of strategy definition, a set of formal deliverables guides the ideation and execution teams:

- Prioritized Platform Matrix: Ranked channels and formats based on impact and feasibility

- Repurposing Roadmap: Sequenced plan mapping assets to milestones, formats and channels

- Success Metrics Framework: Defined KPIs, both quantitative and qualitative, with integrations to tools like Sprout Social

- Resource and Responsibility Assignments: Roles, ownership and AI platform integrations such as GPT-4

- Risk and Compliance Checklist: Regulatory and brand safety registry

- Budget and Timeline Estimates: Financial outline and key deadlines

- AI and Data Requirements Document: Model specifications, data schemas and integration details

Integration points include asset inventories from platforms like HubSpot, performance data from Tableau, project management in Monday.com and API integrations via MuleSoft. Handoff to ideation follows these guidelines:

- Formal sign-off by marketing, legal and brand governance stakeholders

- Cross-functional briefing sessions with strategy leads, creators and AI specialists

- Secure transfer of assets, metadata and performance logs

- Setup of dedicated collaboration channels—Slack, Microsoft Teams or project boards

- Alignment on ideation milestones and quality baselines

Best Practices for Smooth Transition

- Maintain a single source of truth in a cloud knowledge repository or integrated marketing platform

- Visualize dependencies with lightweight orchestration tools such as ZenHub or Trello

- Implement change‐control protocols to manage updates to strategy deliverables

- Ensure transparent communication with AI engineering teams when adjusting model parameters

- Continuously refine handoff documentation based on ideation team feedback

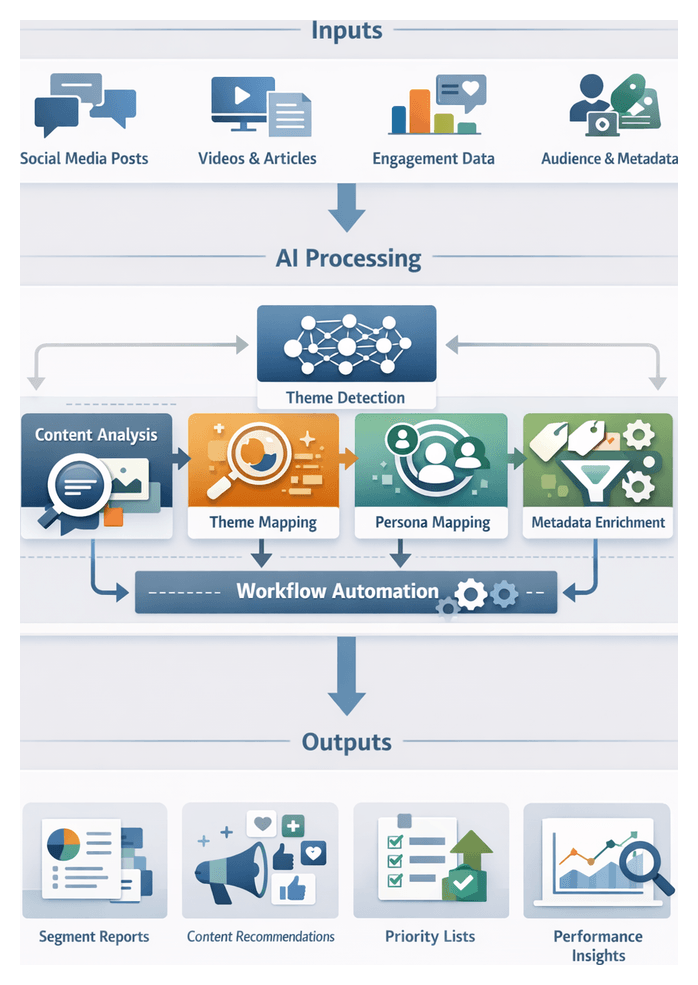

Chapter 3: AI-Driven Content Analysis and Segmentation

AI-driven content analysis and segmentation transforms disparate social media posts, videos, and articles into structured insights that guide targeted repurposing strategies. By applying advanced natural language processing, computer vision, and predictive modeling, organizations gain a precise mapping of content themes to audience segments. This enables prioritization of high-impact assets, alignment with brand voice, and optimized resource allocation across multi-channel environments.

Automated pattern detection and thematic extraction accelerate decision making, while codified workflows ensure repeatability and governance. Continuous performance data feeds refine theme taxonomies and segment definitions, creating a virtuous cycle of improvement. As a strategic compass, this stage empowers stakeholders from content strategists to data engineers to align on objectives, metrics, and handoff criteria, ultimately elevating engagement and preserving brand integrity.

Data and Infrastructure Prerequisites

Reliable AI-driven analysis depends on comprehensive inputs and robust technical conditions. Organizations should validate data completeness, consistency, and accessibility before initiating segmentation.

- Engagement Metrics: Quantitative measures—likes, shares, comments, watch times, click-through rates—sourced from platforms such as Google Analytics and social listening tools.

- Content Metadata: Information on publication dates, authorship, tags, categories, and campaign identifiers maintained in content management systems.

- Audience Demographics and Behavior: Attributes and signals—age, location, interests, session durations, conversion events—from platforms like Salesforce.

- Brand and Style Guidelines: Machine-readable tone, voice, and visual rules that inform theme extraction and segment definitions.

- Historical Performance Benchmarks: Baseline KPIs—engagement rates, conversion ratios, return on ad spend—that calibrate AI thresholds.

- Technological Infrastructure: Scalable storage and processing frameworks, including cloud data warehouses such as Snowflake, and real-time AI services like OpenAI GPT-4 and Google Cloud Natural Language API.

Key organizational and technical readiness conditions include:

- Data Quality Governance: Automated validation for missing values, inconsistent tags, and duplicates, with dashboards for anomaly detection.

- Compliance and Privacy: Encryption, consent management, and anonymization protocols to meet GDPR, CCPA, and other regulations.

- Cross-Functional Collaboration: Aligned objectives, performance thresholds, and risk parameters across marketing, data engineering, and legal teams.

- Model Validation and Bias Mitigation: Auditing AI outputs for fairness and relevance, leveraging tools like IBM Watson Natural Language Understanding.

- Compute and Orchestration Framework: Containerized AI services, such as models served with Hugging Face Transformers, orchestrated by platforms such as Kubernetes for elasticity and high availability.
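The automated validation named under Data Quality Governance can start with simple rule checks. This sketch assumes a hypothetical record schema (`asset_id`, `published`, `channel`, `tags`). It flags missing fields, duplicate IDs, and tags that differ only by case or surrounding whitespace.

```python
from collections import Counter

REQUIRED_FIELDS = ("asset_id", "published", "channel", "tags")  # hypothetical schema


def quality_report(records: list[dict]) -> dict:
    """Return asset IDs with missing fields, duplicate IDs, and inconsistent tag spellings."""
    missing = [r.get("asset_id") for r in records
               if any(not r.get(field) for field in REQUIRED_FIELDS)]

    id_counts = Counter(r.get("asset_id") for r in records)
    duplicates = sorted(i for i, n in id_counts.items() if n > 1 and i is not None)

    # Group tag spellings by a normalized key, e.g. "AI" and "ai " both map to "ai".
    variants: dict[str, set[str]] = {}
    for r in records:
        for tag in r.get("tags") or []:
            variants.setdefault(tag.strip().lower(), set()).add(tag)
    inconsistent = sorted(key for key, spellings in variants.items() if len(spellings) > 1)

    return {"missing_fields": missing, "duplicate_ids": duplicates, "inconsistent_tags": inconsistent}
```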

Segmentation Workflow

The segmentation workflow orchestrates content ingestion, AI classification, persona mapping, metadata enrichment, and handoff to ideation.

Content Ingestion and Preprocessing

- API-Driven Retrieval: Orchestrator fetches text, images, video transcripts, and metadata from CMS or DAM repositories.

- Normalization and Cleaning: Standardize encoding, resize media, extract transcripts, remove boilerplate, and correct formatting anomalies.

- Parsing and Tokenization: Apply NLP tokenizers to split text into sentences, paragraphs, and named entities; tag media with basic descriptors.

- Logging and Monitoring: Capture ingestion timestamps, asset IDs, and exceptions for real-time visibility.

AI-Driven Theme Detection and Classification

Preprocessed assets are classified to surface themes, sentiment, and relevance scores.

- Classification API Calls: Submit batches to a classification service with pretrained and custom models aligned to organizational taxonomies.

- Theme Extraction and Sentiment Analysis: Generate ranked themes and polarity scores to tailor repurposed content.

- Confidence Filtering: Assets below score thresholds enter a secondary review queue or AI quality-assurance loop.

- Taxonomy Evolution: Automated concept mining proposes new themes, with human review and model retraining to integrate updates.
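Confidence filtering reduces to a threshold split. The 0.75 cutoff and the `(asset_id, theme, confidence)` tuple format below are assumptions for illustration.

```python
def route_by_confidence(predictions: list[tuple[str, str, float]], threshold: float = 0.75):
    """Split classifier output into auto-accepted labels and a secondary review queue."""
    accepted, review_queue = [], []
    for asset_id, theme, confidence in predictions:
        target = accepted if confidence >= threshold else review_queue
        target.append((asset_id, theme, confidence))
    return accepted, review_queue
```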

Audience Persona Mapping and Segment Scoring

- Persona Retrieval: Query enterprise CDP for persona definitions, content preferences, and engagement patterns.

- Feature Embeddings: Compute semantic vectors with an embedding model, such as those available through the OpenAI embeddings API.

- Similarity Matching and Scoring: Align content embeddings to persona profiles via cosine similarity; assign composite scores reflecting thematic fit and historical engagement.

- Priority Queuing: Route high-scoring assets to ideation workflows; archive or defer lower-priority items.
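The similarity matching step can be illustrated with plain cosine similarity. In this sketch, the embedding vectors are toy three-dimensional lists standing in for real model output. Engagement is assumed to be pre-normalized to 0-1, and the 0.7/0.3 weighting of thematic fit versus historical engagement is a hypothetical choice.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity; returns 0.0 for zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def score_asset(asset_vec: list[float], personas: dict[str, list[float]],
                engagement: float, w_fit: float = 0.7, w_eng: float = 0.3) -> tuple[str, float]:
    """Return the best-matching persona and a composite of thematic fit and engagement."""
    best, fit = max(((name, cosine(asset_vec, vec)) for name, vec in personas.items()),
                    key=lambda pair: pair[1])
    return best, w_fit * fit + w_eng * engagement
```

Suppose the personas are `{"developer": [1, 0, 0], "marketer": [0, 1, 0]}` and the asset vector is `[0.9, 0.1, 0]` with engagement 0.5. The asset maps to the developer persona with a composite score of about 0.85.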

Metadata Enrichment and Orchestration

- Format, Tone, and Complexity Tags: Classify assets by format and assign mood indicators and readability levels.

- Channel Suitability Predictions: Tag optimal platforms—LinkedIn, TikTok, Instagram—based on format and persona alignment.

- Workflow Coordination: Orchestrator schedules classification, matching, and enrichment tasks; manages retries and error recovery.

- Deliverables and Handoff: Produce segment reports, enriched asset records, priority queues, and collaboration links for the ideation stage.

AI-Driven Techniques and Architectural Components

Natural Language Understanding and Topic Extraction

Apply named entity recognition, sentiment scoring, dependency parsing, and topic modeling (LDA, NMF) to surface key concepts and prevailing tones across large content sets.

Semantic Embedding and Similarity Analysis

Convert text into high-dimensional vectors with transformer encoders, then use cosine similarity and vector databases to detect related assets and support clustering and deduplication.

Unsupervised Clustering and Pattern Detection

Employ k-means, hierarchical clustering, and DBSCAN to partition embedding space into natural theme clusters and surface anomalies for discovery.
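To make the clustering step concrete, here is a plain k-means sketch over embedding vectors. For determinism, it seeds centroids with the first k points. This is a simplification: libraries such as scikit-learn use smarter seeding (k-means++) and vectorized math.

```python
import math


def kmeans(points: list[list[float]], k: int, iterations: int = 20):
    """Plain k-means; returns (cluster label per point, centroids)."""
    centroids = [list(p) for p in points[:k]]  # simplistic deterministic seeding
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid (Euclidean distance).
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels, centroids
```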

Supervised Classification and Metadata Tagging

Fine-tuned transformer classifiers map assets to brand taxonomy with multi-label support and confidence scoring, feeding feedback loops for continuous improvement.

Graph Analytics and Relationship Mapping

Construct knowledge graphs of assets, topics, influencers, and personas to reveal multi-hop connections and identify high-impact nodes and relationships.
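A knowledge graph can be prototyped as an adjacency map before moving to a graph database. This sketch uses hypothetical node names. It finds two-hop connections, such as an asset linked to a persona through a shared topic, and ranks nodes by degree as a rough proxy for impact.

```python
from collections import defaultdict


def build_graph(edges: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Undirected adjacency map from (node, node) pairs."""
    graph: dict[str, set[str]] = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def two_hop(graph: dict[str, set[str]], node: str) -> set[str]:
    """Nodes reachable in exactly two hops that are not direct neighbours."""
    direct = graph.get(node, set())
    return {n2 for n1 in direct for n2 in graph[n1]} - direct - {node}


def top_nodes(graph: dict[str, set[str]], n: int = 3) -> list[str]:
    """Most connected nodes first (degree centrality)."""
    return sorted(graph, key=lambda x: len(graph[x]), reverse=True)[:n]
```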

Supporting Systems

- ETL Pipelines: Automated data ingestion, normalization, and enrichment via streaming frameworks and batch processes.

- Feature Stores and Vector Databases: Central repositories for ML features and embeddings enabling low-latency retrieval.

- MLOps Platforms: Model versioning, retraining pipelines, drift detection, and performance monitoring.

- Workflow Orchestration: Task scheduling, dependency management, and dynamic resource allocation.

- Visualization Dashboards: Interactive theme maps, sentiment trends, and cluster heat maps for stakeholder insights.

- Human-in-the-Loop Interfaces: Annotation tools for expert validation, taxonomy updates, and governance checkpoints.

Analysis Deliverables and Transition Mechanisms

- Segment Reports: Grouped asset profiles by theme, persona, format, with engagement metrics and confidence scores.

- Theme and Topic Maps: Visual or tabular layouts showing clusters, coverage gaps, and emerging opportunities.

- Priority Asset Lists: Ranked inventories sorted by performance indicators to guide resource allocation.

- Metadata Enrichment Files: Standardized packages of tags, keywords, sentiment scores, and channel recommendations.

- Performance Analytics Extracts: Filtered KPI datasets segmented by platform and demographic cohort.

Successful delivery relies on data quality assurance, clear taxonomy and schema definitions, documented AI model configurations, secure integration with data warehouses, and defined governance roles.

Transition mechanisms include API-driven data pushes, exportable BI dashboards, automated notifications, structured CSV/JSON files for downstream engines, and versioned documentation with audit trails. These ensure seamless handoff to ideation and repurposing teams, accelerate time-to-market, and maintain transparency across stakeholders.

Governance, Auditing, and Feedback Loops

- Change Logs and Audit Trails: Track taxonomy updates, model retraining events, and manual overrides.

- Quality Review Checkpoints: Scheduled validations by strategists and analysts to correct AI outputs.

- Performance Feedback Integration: Capture creative acceptance rates and audience response to refine models.

- Access Control Policies: Role-based permissions to safeguard taxonomy and metadata integrity.

Scalability and Reusability

- Modular Reporting Templates: Adaptable layouts for new markets or verticals with localized data.

- Extensible Metadata Schemas: Flexible attribute sets that accommodate emerging content types.

- Parameterized AI Pipelines: Configurable workflows for campaign- or region-specific analysis.

- Centralized Knowledge Repositories: Libraries of validated outputs, taxonomy versions, and performance baselines for rapid onboarding and insight reuse.

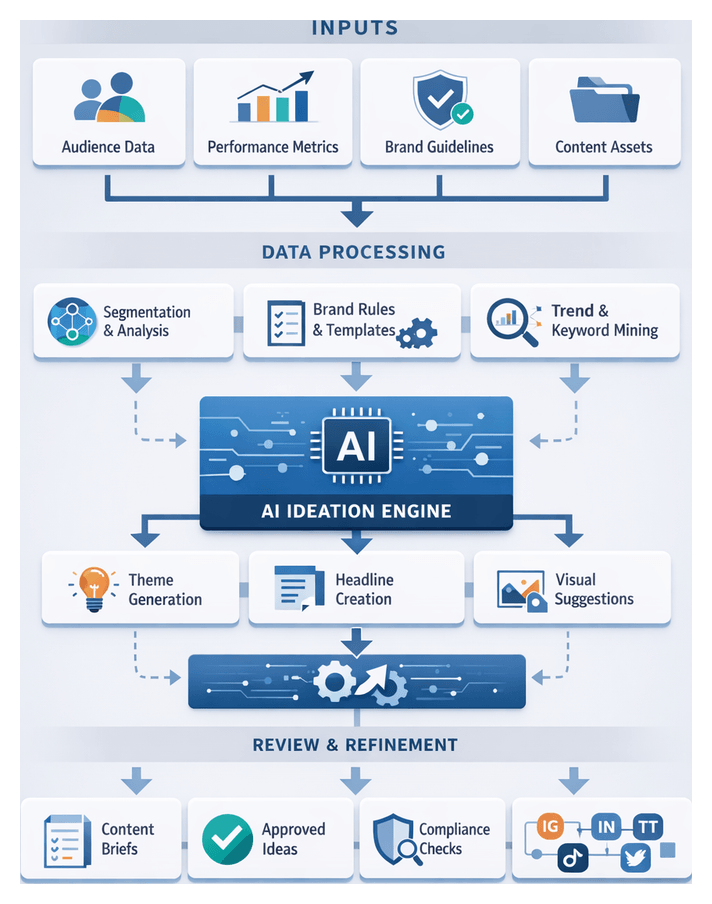

Chapter 4: Automated Content Ideation and Theme Generation

Purpose and Context of Automated Content Ideation

The automated content ideation stage transforms data insights into structured creative concepts, accelerating thematic generation while ensuring strategic alignment. By ingesting outputs from content audits, performance analytics, audience segmentation, and brand guidelines, AI-driven workflows produce on-brand idea briefs that guide writers, designers, and marketers. This approach mitigates creative bottlenecks, reduces brand drift, and delivers a repeatable system for uncovering angles tailored to defined audience segments across channels such as Instagram, LinkedIn, TikTok, and Twitter.

Advances in natural language generation and pattern recognition have made tools like GPT-4 and Google Vertex AI integral collaborators. These systems leverage historical performance metrics and brand directives to suggest headlines, themes, and narrative strategies in seconds. By defining clear ideation objectives—such as boosting engagement or reinforcing thought leadership—organizations guide AI toward outcomes that support key performance indicators like click-through rates, share counts, and conversion metrics. This human-machine feedback loop drives continuous improvement in concept relevance and creative impact.

Objectives and Governance

Well-articulated objectives direct AI systems to generate concepts that advance strategic goals. They serve as guardrails, preventing generic outputs and enabling transparent measurement. To maintain quality and compliance, organizations must establish governance structures that define roles and responsibilities for AI operators, creative leads, and compliance reviewers. Clear escalation paths for approvals, modifications, and compliance checks ensure that each idea adheres to legal, ethical, and brand standards before entering content transformation and adaptation stages.

Inputs and Prerequisites

Successful automated ideation relies on comprehensive inputs and technical readiness. Essential prerequisites include:

- Segment data from audience analysis, including thematic clusters and format preferences

- Historical performance metrics, such as engagement rates and click-through ratios

- Brand voice, tone guidelines, and approved vocabulary lists

- Audience personas and behavioral profiles capturing motivations and pain points

- Content audit reports, asset inventories, and gap analyses

- Market trends, competitive insights, and social listening data

- Campaign objectives, key messages, and SEO keyword clusters

- Cultural, seasonal, and event calendars

- Technical environment readiness: API credentials, CMS and DAM integration, and secure data pipelines

Integration of AI Tools and Platforms

Integrating AI services requires secure API connections, prompt template configuration, and real-time data ingestion. Managed AI platforms or custom orchestration pipelines built on Apache Airflow establish the technical foundation. Prompt libraries embed brand guidelines and audience cues, while analytics integrations feed performance and trend data into AI engines. Early collaboration between IT, marketing operations, and creative teams ensures seamless setup and governance enforcement.

Ideation Workflow

Triggering and Initiation

The workflow begins when the orchestration layer detects readiness signals—completed segment reports, updated performance benchmarks, and validated brand assets. A management system enqueues an ideation job, allocating compute resources and retrieving inputs from content repositories and analytics platforms. Tasks are scheduled based on priority, resource availability, and campaign timelines.

Data Ingestion and Preprocessing

Diverse inputs undergo consolidation and validation:

- Segment Aggregation: Retrieval of audience definitions, past performance data, and thematic tags.

- Asset Fetching: Import of text, images, and transcripts from the DAM system with associated metadata.

- Brand Guideline Integration: Extraction of style rules and tone directives from a centralized repository.

- Prompt Assembly: Population of templates with segment keywords, thematic phrases, and format instructions.

Automated validation ensures data completeness and quality, triggering alerts for manual resolution when discrepancies arise.
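Prompt assembly and the completeness check can be combined so that missing inputs stop the job before any model call. The template wording and field names below are hypothetical. Python's standard `string.Template` handles the substitution.

```python
from string import Template

# Hypothetical prompt template; real templates would embed fuller brand guidelines.
PROMPT = Template(
    "Write $count content angles for $segment on $channel.\n"
    "Tone: $tone. Build on these themes: $themes.\n"
    "Prefer this approved vocabulary: $vocabulary."
)
REQUIRED = ("count", "segment", "channel", "tone", "themes", "vocabulary")


def assemble_prompt(inputs: dict) -> str:
    """Fill the template, raising if any input is missing or empty so a human can be alerted."""
    missing = [key for key in REQUIRED if not inputs.get(key)]
    if missing:
        raise ValueError(f"incomplete ideation inputs: {missing}")
    fields = dict(inputs)
    fields["themes"] = ", ".join(inputs["themes"])
    fields["vocabulary"] = ", ".join(inputs["vocabulary"])
    return PROMPT.substitute(fields)
```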

AI-Driven Concept Generation

With inputs prepared, specialized AI services operate in parallel:

- Theme Expansion: A natural language generation model like ChatGPT proposes overarching themes and narrative arcs.

- Headline and Angle Generation: Pattern-recognition modules analyze high-performing headlines and recombine structures for each channel.

- Visual Concept Suggestion: Image suggestion APIs advise on mood boards and key imagery descriptors.

An API gateway orchestrates prompt payloads, monitors execution, and aggregates responses into a unified workspace. Standardized JSON schemas, retry logic, and rate-limit handling maintain throughput under heavy load.
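The retry logic and schema validation handled by the API gateway might look like the sketch below. `call_model` is a caller-supplied function, an assumption standing in for whatever client the gateway uses. It returns a JSON string and raises `RetryableError` on rate limits. The required keys form a hypothetical minimal schema.

```python
import json
import random
import time

REQUIRED_KEYS = {"theme", "headline", "channel"}  # hypothetical minimal response schema


class RetryableError(Exception):
    """Raised by the caller-supplied client on rate limits or transient failures."""


def call_with_retry(call_model, payload: dict, max_attempts: int = 5, base_delay: float = 0.5) -> dict:
    """Call the model, validate the JSON response, and retry with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            data = json.loads(call_model(payload))
            if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
                raise ValueError("response does not match the expected schema")
            return data
        except (RetryableError, ValueError):  # json.JSONDecodeError is a ValueError subclass
            if attempt == max_attempts:
                raise
            # Backoff doubles each attempt (0.5 s, 1 s, 2 s, ...) with up to 10 percent jitter.
            delay = base_delay * 2 ** (attempt - 1)
            time.sleep(delay + random.uniform(0, 0.1 * delay))
    raise RuntimeError("max_attempts must be at least 1")
```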

Iterative Refinement and Collaborative Review

Concepts undergo cyclical refinement combining automated scoring and human insight:

- Automated Scoring: A microservice assesses sentiment alignment and relevance using historical engagement data.

- Human Curation: Strategists review high-scoring ideas via a collaborative dashboard, upvoting, annotating, or requesting revisions.

- Feedback Integration: Curator inputs refine prompt templates and enforce brand lexicon compliance for subsequent AI cycles.

Typically two to three refinement cycles balance creative breadth with strategic focus, and all decisions are logged for audit trails.

Quality Assurance and Selection

Final evaluation ensures compliance with criteria:

- Alignment with campaign objectives and key messages

- Uniqueness across audience segments

- Readability, scored with readability algorithms, and emotional resonance

- Legal and regulatory compliance enforced through an AI-powered checker

Concepts meeting threshold scores are approved; others are revised or discarded based on curator guidance.
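The readability check can use a standard formula such as Flesch Reading Ease: 206.835 − 1.015 × (words per sentence) − 84.6 × (syllables per word). The syllable counter below is a rough heuristic assumed for illustration. Dedicated libraries use pronunciation dictionaries and more rules.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, drop a trailing silent 'e', minimum one."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease; higher scores indicate easier text."""
    sentences = max(len(re.findall(r"[.!?]+", text)), 1)
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return 206.835 - 1.015 * (len(words) / sentences) - 84.6 * (syllables / len(words))
```

A threshold such as 60 (an assumed value) could gate approval for consumer-facing channels.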

Packaging and Handoff

Approved ideas are compiled into structured briefs containing:

- Theme title and description

- Primary and secondary headlines

- Visual concept prompts or storyboards

- Target segment and channel notes

- Source asset references and data charts

- Version history and approval stamps

The briefs are exported to the content operations platform, triggering content transformation workflows. Metadata tags ensure correct style and format conversions, and notifications alert downstream teams.
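The structured brief can be serialized to JSON for the content operations platform. The field names below mirror the list above but are otherwise assumptions. The target platform's import schema would determine the real format.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class IdeaBrief:
    theme_title: str
    description: str
    primary_headline: str
    secondary_headlines: list[str]
    visual_prompts: list[str]
    target_segment: str
    channels: list[str]
    source_assets: list[str]
    version: int = 1
    approvals: list[str] = field(default_factory=list)  # approval stamps, e.g. reviewer IDs


def export_brief(brief: IdeaBrief) -> str:
    """Serialize a brief to indented JSON for downstream import."""
    return json.dumps(asdict(brief), indent=2)
```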

AI Functional Roles in Ideation

Semantic Pattern Detection

Transformer-based models analyze content corpora to surface recurring concepts, sentiment clusters, and lexical relationships. Tools like GPT-4 and Google Vertex AI use embeddings to detect topic clusters, emergent sub-themes, and sentiment shifts that reveal narrative opportunities.

Concept Mapping and Theme Clustering

AI-driven topic modeling platforms such as IBM Watson Natural Language Understanding organize semantic insights into hierarchical theme structures. These systems integrate with centralized knowledge graphs in the CMS to store and retrieve themed entities, preserving institutional knowledge and reducing duplication.

Headline and Angle Generation

NLG engines produce headline variants and creative angles from theme clusters. An orchestration layer manages prompt variations, filters outputs by tone and length, and enforces compliance rules.

Tone and Style Consistency

Controlled generation techniques and style transfer algorithms embed brand tokens—such as “professional yet approachable” or “innovative and bold”—into outputs. Reinforcement learning from human feedback fine-tunes models, and real-time checks against the brand repository flag divergences for review or regeneration.

Audience-Centric Personalization

AI models adjust framing based on persona profiles, emphasizing segment-specific motivators. Integration with customer data platforms ensures personalization rooted in real user behavior, enabling parallel testing of multiple angles without sacrificing relevance.

Trend Analysis and Real-Time Adaptation

Streaming analytics ingest social listening data from platforms like Twitter, TikTok, and LinkedIn. Event-driven architectures using Apache Kafka or Google Cloud Pub/Sub update theme clusters and regenerate angles to reflect emerging trends, maintaining contextual relevance.

Collaborative Co-Creation Tools

Interfaces combining side-by-side editing, suggestion tracking, and version control enable human strategists and AI agents to co-create content outlines. Inline suggestions for synonyms, tone adjustments, and headline tweaks, along with AI-summarized comment threads, ensure alignment. Integrations with Slack and Microsoft Teams capture feedback and approvals within the ideation workflow.

Continuous Learning and Improvement

Post-distribution performance data—engagement rates, click-throughs, and sentiment analysis—feeds back into automated retraining pipelines managed by MLOps frameworks like MLflow or Kubeflow. This closed-loop learning adjusts theme weightings and prompt templates, driving ongoing enhancement of ideation quality.
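One simple way to picture the weight adjustment is an exponential moving average. It nudges each theme's weight toward the theme's observed engagement relative to a benchmark. The function, its parameters, and the smoothing factor are hypothetical. In a real deployment this logic would run as a step inside the retraining pipeline rather than as a standalone script.

```python
def update_theme_weights(weights: dict[str, float], engagement: dict[str, float],
                         baseline: float, alpha: float = 0.2) -> dict[str, float]:
    """Blend each theme's weight toward its engagement relative to a baseline.

    weights:    current ideation weight per theme (1.0 = neutral)
    engagement: observed engagement rate per theme from the latest distribution
    baseline:   benchmark engagement rate used for normalization
    alpha:      smoothing factor; higher values react faster to new data
    """
    updated = dict(weights)
    for theme, rate in engagement.items():
        observed = rate / baseline          # > 1.0 means the theme beat the benchmark
        prior = weights.get(theme, 1.0)     # unseen themes start neutral
        updated[theme] = (1 - alpha) * prior + alpha * observed
    return updated

weights = {"sustainability": 1.0, "pricing": 1.0}
print(update_theme_weights(weights, {"sustainability": 0.06, "pricing": 0.02}, baseline=0.04))
```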

Idea Deliverables and Handoff Criteria

Deliverables encapsulate thematic direction, creative prompts, and metadata, ensuring downstream teams can seamlessly transform ideas into content assets. Primary deliverables include:

- Idea briefs with working titles, theme summaries, persona alignment, and suggested formats

- Thematic outlines mapping key messages, subheadings, and supporting data points

- Headline and hook options generated by tools like Jasper and Copy.ai

- Visual prompts and mood boards from platforms such as Midjourney or Canva

- Metadata annotations: SEO tags from SurferSEO, analytics insights from Google Analytics, and trending hashtags

- Priority and sequencing recommendations based on strategic value and resource estimates

Deliverables advance only after the following dependencies are validated:

- Up-to-date segment definitions and profile attributes

- Integrated brand guidelines enforced by AI workflow tools

- Current performance benchmarks and A/B test results loaded into the workflow

- Content inventory alignment to avoid duplication

- Applied regulatory and compliance filters for industry-specific requirements

Handoff criteria ensure readiness for transformation:

- Completion Indicators: Peer-reviewed briefs, headline sets meeting engagement thresholds, fully populated metadata fields

- Quality Gates: Automated brand compliance, audience alignment scoring, and regulatory approval codes

- Technical Integration: CMS API ingestion of JSON payloads, DAM transfer of visual assets, auto-created tasks in Trello or Asana, and notifications via Slack or Microsoft Teams

- Temporal Criteria: Scheduled batch releases aligned with editorial calendars and adaptive re-ideation triggers based on real-time analytics

When all criteria are satisfied, deliverables are marked “Ready for Transformation,” triggering downstream AI agents for content repurposing, format conversion, and platform adaptation. This structured approach delivers predictable throughput, consistent quality, and cross-team visibility, and lets the production of content ideas scale across the enterprise.
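The handoff gate can be expressed as a function that collects unmet criteria and changes the status only when none remain. This is a sketch under assumptions. The `IdeaBrief` fields, the required metadata keys, and the 0.7 headline-score threshold are hypothetical. The temporal and technical-integration criteria are left out for brevity.

```python
from dataclasses import dataclass, field

REQUIRED_METADATA = ("theme", "persona", "format", "seo_tags")  # hypothetical keys

@dataclass
class IdeaBrief:
    title: str
    peer_reviewed: bool
    headline_scores: list[float]               # predicted engagement per headline
    metadata: dict[str, str] = field(default_factory=dict)
    compliance_passed: bool = False
    status: str = "Draft"

def evaluate_handoff(brief: IdeaBrief, engagement_threshold: float = 0.7) -> list[str]:
    """Return the unmet criteria; mark the brief ready only when none remain."""
    problems = []
    if not brief.peer_reviewed:
        problems.append("brief not peer-reviewed")
    if not any(s >= engagement_threshold for s in brief.headline_scores):
        problems.append("no headline meets the engagement threshold")
    missing = [k for k in REQUIRED_METADATA if not brief.metadata.get(k)]
    if missing:
        problems.append("missing metadata: " + ", ".join(missing))
    if not brief.compliance_passed:
        problems.append("compliance gate not passed")
    if not problems:
        brief.status = "Ready for Transformation"
    return problems
```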

Chapter 5: Transformative Content Repurposing Techniques

Repurposing Objectives and Input Requirements

In advanced social media strategies, repurposing transforms long-form assets into channel-specific formats that maximize reach, engagement, and brand consistency. By converting articles, white papers, webinars, and reports into bite-sized posts, short videos, infographics, carousels, and interactive elements tailored for Instagram, TikTok, LinkedIn, and Twitter, organizations extend discoverability and accelerate throughput. Defining clear objectives and assembling high-quality inputs upfront ensures each repurposed asset aligns with brand guidelines and strategic priorities.

- Enhance Reach and Visibility: Optimize content length, style, and interactivity to platform norms, improving discoverability and share rates.

- Maintain Brand Voice: Preserve narrative tone and key messages through AI-powered paraphrasing and style-rule guardrails.

- Optimize Engagement Metrics: Leverage performance benchmarks—click-throughs, shares, comments, view completions—to prioritize formats with highest impact.

- Accelerate Scalability: Automate summarization, paraphrasing, formatting, and layout generation using AI tools to scale production without linear labor increases.

- Ensure Accessibility and Compliance: Integrate closed captions, alt text, and readability adjustments, adhering to legal and regulatory standards.

Effective repurposing requires robust prerequisites and well-structured inputs.

- Governance Framework: Define roles, responsibilities, and approval checkpoints for legal, brand, and compliance reviews before and after AI processing.

- Style Guide and Brand Guidelines: Document voice attributes, terminology preferences, formatting rules, and visual identity elements to guide AI models.

- Metadata and Taxonomy Schema: Implement standardized tags for topics, segments, formats, and campaign identifiers to enable automated filtering and classification.

- Technology Integration: Establish API connectivity between content management systems like Contentful, digital asset management platforms, AI services from OpenAI and Hugging Face, and collaboration tools.

- Quality Baselines: Use historical performance data to set engagement rate benchmarks that inform AI prioritization and continuous optimization.

- Asset Audit and Classification: Tag source assets by format, performance history, and repurposing potential, assigning suitability scores for specific transformations.

- Team AI Literacy: Provide training so content creators and editors understand AI configuration, output interpretation, and manual intervention requirements.

Source assets must be validated against quality criteria before entering the repurposing pipeline:

- Long-form text (articles, case studies, white papers) that is free of typos and has clearly identifiable key messages.

- Audio/video recordings (webinars, podcasts) with high audio quality, transcripts generated via Lumen5 or similar tools, and accurate time-codes.

- Graphic files (infographics, slide decks) in editable source formats (for example, Photoshop, InDesign, or PowerPoint) with version history and style annotations.

- Interactive data visualizations with clean source datasets and configuration parameters for chart reformatting.

- Captions and transcripts in SRT or VTT formats for accessibility and subtitle styling.

- Pre-approved templates and brand collateral for rapid assembly using Jasper AI or AI-powered layout assistants.

Validating metadata tags, resolution standards, and compliance ensures AI engines receive well-structured inputs, reducing errors and preserving quality during transformation.
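The sketch below is one concrete example of input validation. It checks the timing lines of an SRT caption file. Each cue must have a well-formed `HH:MM:SS,mmm --> HH:MM:SS,mmm` line, must end after it starts, and must not start before the previous cue. It covers SRT only; WebVTT uses a period before the milliseconds and begins with a `WEBVTT` header. The function names are hypothetical.

```python
import re

TIMECODE = re.compile(
    r"(\d{2}):(\d{2}):(\d{2}),(\d{3}) --> (\d{2}):(\d{2}):(\d{2}),(\d{3})"
)

def to_ms(h: str, m: str, s: str, ms: str) -> int:
    """Convert timecode components to milliseconds."""
    return ((int(h) * 60 + int(m)) * 60 + int(s)) * 1000 + int(ms)

def validate_srt(text: str) -> list[str]:
    """Check each cue's index/timing/text structure; return readable errors."""
    errors = []
    last_start = -1
    normalized = text.replace("\r\n", "\n").strip()
    blocks = [b for b in normalized.split("\n\n") if b.strip()]
    for n, block in enumerate(blocks, start=1):
        lines = block.strip().splitlines()
        if len(lines) < 3:
            errors.append(f"cue {n}: expected index, timing, and text lines")
            continue
        match = TIMECODE.fullmatch(lines[1].strip())
        if not match:
            errors.append(f"cue {n}: malformed timing line {lines[1]!r}")
            continue
        g = match.groups()
        start, end = to_ms(*g[:4]), to_ms(*g[4:])
        if start >= end:
            errors.append(f"cue {n}: start is not before end")
        if start < last_start:
            errors.append(f"cue {n}: starts before the previous cue")
        last_start = start
    return errors
```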

Transformation Workflow Overview

The repurposing operations workflow converts approved assets into a diverse portfolio of social media deliverables through a sequence of automated and manual phases. Key stages include content ingestion, metadata enrichment, automated task routing, AI-driven conversion, human review, asset management integration, and handoff to adaptation and scheduling. Consistent triggers and API interactions across specialized platforms eliminate ad-hoc processes and maintain governance.

Content Ingestion and Metadata Enrichment

Automated retrieval fetches source assets from a central repository via API endpoints—for example, Contentful or a proprietary DAM. Webhooks or scheduled jobs extract text, images, video, slide decks, and existing metadata. An enrichment microservice powered by OpenAI or Hugging Face models analyzes text to extract themes, sentiment scores, and named entities. These attributes populate custom fields—tone, primary keyword, target persona—within the asset object to guide downstream conversions.
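This is a minimal sketch of the enrichment step. It assumes each analyzer is a function that takes text and returns a field value. In practice those functions would wrap calls to an OpenAI or Hugging Face model. Here a word-frequency heuristic and a fixed placeholder stand in for them, so the example runs without network access. The field names and the asset structure are hypothetical.

```python
import re
from collections import Counter
from typing import Callable

STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "for", "with", "is", "are", "on", "your"}

def primary_keyword(text: str) -> str:
    """Stand-in for a model call: the most frequent non-stopword token."""
    words = [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]
    return Counter(words).most_common(1)[0][0] if words else ""

def enrich(asset: dict, analyzers: dict[str, Callable[[str], str]]) -> dict:
    """Return a copy of the asset with one custom field per analyzer."""
    enriched = dict(asset)
    custom = dict(asset.get("custom_fields", {}))
    for field_name, analyze in analyzers.items():
        custom[field_name] = analyze(asset["body"])
    enriched["custom_fields"] = custom
    return enriched

asset = {"id": "blog-042",
         "body": "Remote teams thrive when remote rituals are clear. Remote onboarding matters."}
result = enrich(asset, {
    "primary_keyword": primary_keyword,
    "target_persona": lambda text: "people managers",  # placeholder for a classifier call
})
```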

Automated Routing and Scheduling

An orchestration platform evaluates each asset’s type, priority, and SLA requirements, enqueuing tasks into specialized pipelines:

- Text summarization and paraphrasing for blog posts and articles

- Video script generation from transcripts

- Bullet-to-graphic conversion for infographics

- Slide condensation for carousel formats

Message brokers (e.g., RabbitMQ, AWS SQS) manage queue workloads, while SLA metadata tracks turnaround times and compliance levels for full visibility.
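The routing logic can be sketched with an in-memory priority queue per pipeline, ordered first by SLA deadline and then by priority. The pipeline names, the asset-type mapping, and the `Task` fields are hypothetical. A production system would publish to RabbitMQ or SQS queues instead. Those brokers add durability and delivery guarantees that `heapq` does not provide.

```python
import heapq
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical mapping from asset type to specialized pipeline queue.
PIPELINES = {
    "article": "text-summarization",
    "transcript": "video-script",
    "bullet_list": "infographic",
    "slide_deck": "carousel",
}

@dataclass(order=True)
class Task:
    due_at: float                           # SLA deadline (epoch seconds); sorts first
    priority: int                           # tie-breaker: lower number is more urgent
    asset_id: str = field(compare=False)
    asset_type: str = field(compare=False)

class Router:
    def __init__(self) -> None:
        self.queues: dict[str, list[Task]] = {name: [] for name in PIPELINES.values()}

    def enqueue(self, task: Task) -> str:
        """Push the task onto its pipeline's heap and return the pipeline name."""
        pipeline = PIPELINES.get(task.asset_type)
        if pipeline is None:
            raise ValueError(f"no pipeline for asset type {task.asset_type!r}")
        heapq.heappush(self.queues[pipeline], task)
        return pipeline

    def next_task(self, pipeline: str) -> Optional[Task]:
        """Pop the task with the earliest SLA deadline, or None if the queue is empty."""
        queue = self.queues[pipeline]
        return heapq.heappop(queue) if queue else None
```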

AI-Driven Conversion Engines

Conversion tasks invoke AI engines tailored to content type:

- Text Transformation: Summarization condenses content to target word counts; paraphrasing engines from Jasper AI or fine-tuned LLMs rewrite with brand voice; format conversion maps segments into templates for Twitter threads, LinkedIn posts, and Instagram captions.

- Multimedia Generation: Video scripting services produce time-coded scripts; computer vision modules auto-crop images for social dimensions; data points populate infographic templates via Canva API or similar tools.
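A greedy word-packing function is one straightforward approach to the Twitter-thread conversion mentioned above. The sketch assumes a 280-character limit and reserves room for an `(i/n)` counter. Note that `len()` only approximates the platform's own character counting, which weights URLs and some scripts differently. The function name and parameters are hypothetical.

```python
def split_into_thread(text: str, limit: int = 280, reserve: int = 8) -> list[str]:
    """Pack words into posts of at most `limit` characters, reserving room
    for a " (i/n)" counter (8 characters covers threads of up to 99 posts)."""
    budget = limit - reserve
    posts, current = [], ""
    for word in text.split():
        if len(word) > budget:
            raise ValueError(f"word longer than post budget: {word[:20]}...")
        candidate = f"{current} {word}" if current else word
        if len(candidate) <= budget:
            current = candidate
        else:
            posts.append(current)   # current post is full; start a new one
            current = word
    if current:
        posts.append(current)
    total = len(posts)
    return [f"{p} ({i}/{total})" for i, p in enumerate(posts, start=1)]
```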

Each output is tagged with metadata—aspect ratio, file type, resolution—to drive subsequent adaptation tasks.
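Auto-cropping and metadata tagging can be illustrated with a plain center crop. Here it stands in for the saliency detection a computer vision module would perform. The returned `(left, top, right, bottom)` box uses the same tuple order that Pillow's `Image.crop` accepts. The functions and metadata keys below are hypothetical.

```python
from fractions import Fraction

def center_crop_box(width: int, height: int, target_w: int, target_h: int) -> tuple[int, int, int, int]:
    """Largest centered (left, top, right, bottom) box with the target aspect ratio."""
    target = Fraction(target_w, target_h)
    if Fraction(width, height) > target:
        # Source is too wide: keep the full height and trim the sides.
        new_w = int(height * target)
        left = (width - new_w) // 2
        return (left, 0, left + new_w, height)
    # Source is too tall (or already matches): keep the full width, trim top and bottom.
    new_h = int(width / target)
    top = (height - new_h) // 2
    return (0, top, width, top + new_h)

def crop_metadata(width: int, height: int, target_w: int, target_h: int,
                  file_type: str = "jpeg") -> dict:
    """Tag the cropped output with aspect ratio, resolution, and file type."""
    left, top, right, bottom = center_crop_box(width, height, target_w, target_h)
    return {
        "aspect_ratio": f"{target_w}:{target_h}",
        "resolution": f"{right - left}x{bottom - top}",
        "file_type": file_type,
        "crop_box": (left, top, right, bottom),
    }
```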

Human Review and Orchestration

After AI processing, assets enter the digital asset management system with status flags (In Progress, Pending AI Review, Pending Human Review, Ready for Adaptation). Designated reviewers receive notifications and access a central interface to verify message accuracy, brand tone, legal compliance, and visual consistency. Feedback loops route corrections back to AI pipelines or advance assets to the next stage.
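The status lifecycle can be modeled as a small state machine that rejects illegal jumps. The allowed transitions below are an assumption, not a prescribed workflow. For example, the sketch assumes that both review stages can send an asset back to In Progress.

```python
# Hypothetical transition table over the status flags described above.
TRANSITIONS = {
    "In Progress": {"Pending AI Review"},
    "Pending AI Review": {"Pending Human Review", "In Progress"},
    "Pending Human Review": {"Ready for Adaptation", "In Progress"},
    "Ready for Adaptation": set(),
}

class Asset:
    def __init__(self, asset_id: str) -> None:
        self.asset_id = asset_id
        self.status = "In Progress"
        self.history = [self.status]   # audit trail of every status change

    def move_to(self, new_status: str) -> None:
        """Apply a transition, raising ValueError if it is not allowed."""
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"{self.asset_id}: cannot go from {self.status!r} to {new_status!r}")
        self.status = new_status
        self.history.append(new_status)
```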

A central orchestration dashboard tracks metrics such as processing time, error rates, throughput, and SLA compliance. Automated alerts flag bottlenecks or repeated rejections, while dynamic routing reassigns tasks to backup AI engines or human operators as needed.
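The dashboard figures reduce to simple arithmetic over task records. This sketch assumes each record stores start and finish times, a failure flag, and an SLA target in seconds. The record type and metric names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class TaskRecord:
    started: float       # epoch seconds
    finished: float
    failed: bool
    sla_seconds: float   # maximum allowed processing time

def dashboard_metrics(records: list[TaskRecord], window_hours: float) -> dict[str, float]:
    """Summarize completed and failed tasks observed over `window_hours`."""
    done = [r for r in records if not r.failed]
    durations = [r.finished - r.started for r in done]
    return {
        "avg_processing_s": mean(durations) if durations else 0.0,
        "error_rate": sum(r.failed for r in records) / len(records) if records else 0.0,
        "throughput_per_hour": len(done) / window_hours,
        "sla_compliance": sum(d <= r.sla_seconds for r, d in zip(done, durations)) / len(done) if done else 0.0,
    }
```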

Handoff to Adaptation and Scheduling

Assets meeting quality criteria and marked Ready for Adaptation are bundled into packages containing finalized text, multimedia files, metadata tags, style guide pointers, and channel mappings. A webhook notifies the adaptation system—which may integrate with scheduling tools via Zapier or direct APIs—that new content is available. This seamless handoff minimizes idle time and ensures repurposed deliverables flow efficiently into format-specific adaptation and publishing workflows.
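Here is a sketch of the webhook handoff. The package is serialized deterministically and signed with an HMAC, so the adaptation system can verify that it was not altered. The package fields, the shared secret, and the `X-Signature-SHA256` header name are hypothetical conventions. The HTTP request itself is omitted.

```python
import hashlib
import hmac
import json

def build_handoff(package: dict, secret: bytes) -> tuple[bytes, dict[str, str]]:
    """Serialize a Ready for Adaptation package and compute its signature header."""
    # sort_keys and compact separators make the byte output deterministic.
    body = json.dumps(package, sort_keys=True, separators=(",", ":")).encode("utf-8")
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    headers = {"Content-Type": "application/json", "X-Signature-SHA256": signature}
    return body, headers

def verify(body: bytes, signature: str, secret: bytes) -> bool:
    """Receiver side: recompute the HMAC and compare in constant time."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature)
```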

AI-Driven Format Transformation

AI engines power the core of format transformation, automating paraphrasing, summarization, style transfer, template rendering, and metadata enrichment at scale while preserving brand integrity.

Semantic Preservation and Brand Voice

Large language models like OpenAI GPT-4 and transformer systems from Hugging Face capture nuanced meanings and tone markers. Fine-tuning on brand corpora and integration with CMS style-rule engines enforce guardrails against forbidden phrases and tone drift, ensuring authentic, compliant outputs.
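The forbidden-phrase guardrail can be sketched as a whole-word, case-insensitive scan against a list of brand rules. The phrases and suggested replacements are hypothetical. This covers lexical rules only; detecting tone drift would require a classifier or a model-based check.

```python
import re
from typing import Optional

# Hypothetical brand rules: forbidden phrase -> suggested replacement (or None).
FORBIDDEN: dict[str, Optional[str]] = {
    "cheap": "affordable",
    "guarantee": None,
    "best in the world": None,
}

def check_guardrails(text: str) -> list[str]:
    """Return one message per forbidden phrase found (whole word, any case)."""
    issues = []
    for phrase, suggestion in FORBIDDEN.items():
        if re.search(rf"\b{re.escape(phrase)}\b", text, flags=re.IGNORECASE):
            msg = f"forbidden phrase {phrase!r}"
            if suggestion:
                msg += f"; consider {suggestion!r}"
            issues.append(msg)
    return issues
```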

Automated Paraphrasing and Rewriting

Sequence-to-sequence models generate unique text variations for A/B testing and platform differentiation. Controlled rewriting parameters balance novelty with fidelity, entity anchoring preserves key names and dates, and version metadata maintains an audit trail. Orchestration platforms parallelize paraphrasing tasks across AI agents, slashing turnaround times.
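Entity anchoring can be verified after paraphrasing by confirming that every anchored name, number, and date from the source still appears in the rewrite. The sketch assumes the entity list comes from an upstream NER step. It uses a plain substring match, which can produce false positives (for example, `40%` inside `140%`). The function name is hypothetical.

```python
import re

def missing_anchors(source: str, paraphrase: str, entities: list[str]) -> list[str]:
    """List anchored entities and numbers from the source that the paraphrase dropped.

    `entities` would normally come from an NER step; numbers and years are
    also collected here with a simple regex as a fallback.
    """
    numbers = re.findall(r"\d+(?:[.,]\d+)*%?", source)
    anchors = list(dict.fromkeys(entities + numbers))  # dedupe while keeping order
    lowered = paraphrase.lower()
    return [a for a in anchors if a.lower() not in lowered]
```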

Smart Summarization and Highlight Extraction

AI summarization combines extractive and abstractive methods to produce bullet lists, executive abstracts, or social captions. Services like SMMRY deliver quick summaries, while fine-tuned GPT models generate marketing-grade micro-stories. Summaries are stored alongside source files in the DAM for review and retrieval.
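The extractive half of summarization can be illustrated with a classic frequency-based scorer. Sentences whose content words recur across the document rank higher, and the top sentences are returned in their original order. This is a deliberately simple stand-in, not a description of how SMMRY or GPT models work internally. The stopword list and function name are hypothetical.

```python
import re
from collections import Counter

STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "for", "is",
             "are", "it", "that", "this", "with", "on", "as", "by"}

def content_words(sentence: str) -> list[str]:
    """Lowercased tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", sentence.lower()) if w not in STOPWORDS]

def extractive_summary(text: str, max_sentences: int = 2) -> list[str]:
    """Score each sentence by the average document frequency of its content
    words and return the top-scoring sentences in original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence: str) -> float:
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:max_sentences])]
```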

Multimodal Adaptation and Style Transfer

Vision-language models generate descriptive alt text, guide image cropping, and support style transfer to align tone with channel conventions. NLG paired with speech synthesis produces voice-over scripts optimized for timing. AI agents reference platform specification libraries to adjust word counts, sentence complexity, and calls to action.
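A platform specification library can be as simple as a lookup table that is consulted before publishing. In this sketch the `PlatformSpec` fields, hashtag caps, and call-to-action wording are hypothetical. The 280- and 3,000-character limits are commonly cited post limits for Twitter and LinkedIn, and they should be checked against current platform documentation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PlatformSpec:
    max_chars: int
    max_hashtags: int
    cta: str

# Illustrative values only; hashtag caps and CTA text are hypothetical.
SPECS = {
    "twitter": PlatformSpec(max_chars=280, max_hashtags=2, cta="Read more:"),
    "linkedin": PlatformSpec(max_chars=3000, max_hashtags=5, cta="Learn more:"),
}

def adapt(text: str, hashtags: list[str], platform: str, link: str) -> str:
    """Append CTA, link, and capped hashtags, trimming the body to fit the limit."""
    spec = SPECS[platform]
    tags = " ".join(hashtags[: spec.max_hashtags])
    tail = f" {spec.cta} {link} {tags}".rstrip()
    room = spec.max_chars - len(tail)
    if len(text) > room:
        # Cut on a word boundary and mark the truncation with an ellipsis.
        text = text[: room - 1].rsplit(" ", 1)[0] + "…"
    return text + tail
```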

Template-Driven Generation and Metadata Enrichment

Template engines in CMS or marketing suites scaffold repurposed assets. AI modules populate headlines, captions, and metadata—taxonomy codes, SEO keywords, campaign IDs—using named-entity recognition and topic modeling. Each asset emerges structured and annotated, ready for validation or direct publishing.
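Template-driven assembly can be sketched with Python's standard `string.Template`. AI-generated fields fill named placeholders, and metadata travels alongside the rendered text. The template layout, field names, and metadata keys are hypothetical.

```python
from string import Template

# Hypothetical post template; each $placeholder is filled by an AI module.
POST_TEMPLATE = Template("$headline\n\n$body\n\n$hashtags")

def render_asset(fields: dict[str, str], metadata: dict[str, str]) -> dict:
    """Fill the template and attach metadata.

    Raises ValueError for blank fields; Template.substitute raises KeyError
    if a placeholder has no value at all.
    """
    empty = [k for k, v in fields.items() if not v.strip()]
    if empty:
        raise ValueError(f"blank fields: {empty}")
    return {"text": POST_TEMPLATE.substitute(fields), "metadata": dict(metadata)}
```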

Quality Assurance and Feedback Loops

AI-powered quality control tools flag tone deviations, factual inconsistencies, and compliance issues. Review interfaces highlight changes and suggested corrections. Editor feedback via annotation APIs feeds active learning loops, continuously retraining models to reduce error rates and improve precision.
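Active learning loops often use uncertainty sampling. The outputs the model is least confident about go to editors first, so their corrections become the most informative retraining examples. The sketch assumes each output carries a `confidence` score from the QA model. That field and the function name are hypothetical.

```python
def select_for_review(outputs: list[dict], budget: int) -> list[str]:
    """Uncertainty sampling: return the ids of the `budget` least-confident outputs."""
    ranked = sorted(outputs, key=lambda o: o["confidence"])
    return [o["id"] for o in ranked[:budget]]
```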

Deliverable Packaging and Cross-Platform Handoff

The final repurposing output is a deliverable package adhering to metadata, format, and brand standards, ready for adaptation, quality control, and scheduling.

Package Composition

- Draft social media posts in text and HTML, conforming to character limits and tone guidelines

- Video and audio scripts segmented by scene or timecode with visual and audio cues

- Copy blocks for graphic overlays or infographics with layout notes

- Annotated transcripts and bullet summaries for accessibility and multi-platform reuse

- Reference links to original long-form assets for traceability

Each item includes metadata—theme, platform, persona, version—facilitating automated retrieval from a shared repository.

Dependencies and Integration Points

Deliverables align with upstream inputs and downstream requirements:

- Brand guidelines dictating tone, terminology, and visual style