End to End AI Driven Delivery Workflow in Logistics and Transportation

Introduction

Delivery Complexity and Data Foundations

Modern logistics networks operate at unprecedented scale and velocity, driven by rapid e-commerce growth, tight delivery windows and omnichannel fulfillment strategies. Customer expectations for same-day or two-hour delivery and real-time tracking have transformed delivery from a predictable, linear process into a multifaceted system. Fluctuating demand spikes—from flash sales or peak shopping events—combine with variable transportation modes, unpredictable traffic conditions and external disruptions such as severe weather or public health emergencies to create an operational landscape where agility and continuous adjustment are essential.

Geographic complexity arises from multi-hub distribution architectures, cross-dock facilities and urban fulfillment centers, each with distinct throughput capacities and labor constraints. Transfers between long-haul, intermodal rail, maritime and last-mile vehicles must be tightly synchronized to avoid bottlenecks, idle assets and missed windows. Meanwhile, emerging micromobility options—cargo bikes and on-demand couriers—serve hyperlocal routes but add further scheduling intricacies.

Regulatory frameworks at local, regional and international levels impose curfews, emission zone restrictions, driver-hours-of-service limits and customs clearance requirements. Compliance demands automated verification against electronic logging device standards and customs protocols to prevent fines, delays and reputational risk. The integration of autonomous vehicle trials and drone corridors introduces evolving safety regulations, amplifying the need for real-time governance controls embedded in operational workflows.

The final mile remains the most resource-intensive segment, often accounting for over half of total logistics costs. Residential access controls, dynamic order cancellations, varying parcel dimensions and parking restrictions render manual route planning infeasible. Organizations must factor in vehicle configurations, customer availability windows and location-specific constraints to maintain high service levels within tight cost parameters.

Underpinning these challenges is a vast ecosystem of data sources. Fleet telematics systems deliver live GPS, speed and engine diagnostics. Traffic feeds and incident alerts update congestion profiles. Weather services broadcast forecasts and severe event warnings. Order management platforms record delivery preferences, special instructions and load details. Each feed arrives in disparate formats and update cadences, requiring robust ingestion, cleansing and normalization to form a unified, real-time data foundation.

Legacy systems—transportation management (TMS), warehouse management (WMS) and enterprise resource planning (ERP) platforms—often operate in silos, limiting visibility. Integrating these core applications with external streams demands secure APIs, standard schemas and middleware adapters to reconcile geocoding standards, time zones and address validation. Without this integration, decision-making suffers from latency, inaccuracies and data gaps.

Establishing a resilient data architecture involves:

- High-bandwidth, secure connectivity between vehicles, field devices and cloud services

- Robust messaging (MQTT brokers with appropriate QoS levels, RESTful APIs with retries) that provides delivery guarantees

- Up-to-date geographic information system layers and routing graphs

- Identity and access management controls for internal and third-party applications

- Data governance policies to enforce quality, privacy and auditability standards

With these technical prerequisites in place, organizations create the foundational platform necessary to deploy advanced AI and optimization engines that drive real-time responsiveness, cost efficiency and service excellence.

Structured End-to-End AI Workflows

Ad hoc operational responses and siloed point solutions are insufficient to address the pace, volume and variability of modern delivery demands. A structured, end-to-end AI workflow provides a unified framework to orchestrate data acquisition, decision logic and resource allocation in a reliable, transparent and auditable manner. By embedding clear process flows, integration contracts and governance controls, organizations transform fragmented efforts into seamless orchestration that reduces costs, elevates service quality and ensures real-time responsiveness.

Orchestration and Sequencing

A central orchestration platform acts as the conductor of the AI workflow, sequencing stages, handling concurrency and enforcing error-handling policies. For example, pipelines built on Apache Airflow or serverless state machines using AWS Step Functions can be configured to:

- Detect schedule triggers and external events (new orders, last-mile exceptions)

- Ingest telematics feeds, traffic APIs and order databases

- Execute data cleansing and validation before publishing to shared repositories

- Run predictive models for demand forecasting and travel-time estimation in parallel

- Queue outputs for optimization engines and await completion signals

- Dispatch finalized routes to scheduling modules and notify planners of exceptions

Automated sequencing ensures correct inputs at each stage, enforces quality checks before downstream tasks proceed and captures execution metrics—task durations, failure rates and data volumes—for real-time workflow health monitoring.
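The sequencing behaviour described above can be sketched without any particular orchestrator. The following is a minimal illustration in plain Python, not an Apache Airflow or AWS Step Functions definition; the stage functions (`ingest`, `validate`, `optimize`), the context dictionary and the retry count are all hypothetical names chosen for the example.

```python
import time
from typing import Any, Callable

# Hypothetical stage type: takes the shared context and returns the updated context.
Stage = Callable[[dict[str, Any]], dict[str, Any]]


def run_pipeline(stages: list[tuple[str, Stage]], context: dict[str, Any],
                 max_retries: int = 2) -> tuple[dict[str, Any], list[dict[str, Any]]]:
    """Run stages in order, retrying failures and recording per-attempt metrics."""
    metrics = []
    for name, stage in stages:
        attempts = 0
        while True:
            attempts += 1
            started = time.perf_counter()
            try:
                context = stage(context)
                status = "ok"
            except Exception as exc:
                status = f"failed: {exc}"
            metrics.append({"stage": name, "attempt": attempts, "status": status,
                            "seconds": time.perf_counter() - started})
            if status == "ok":
                break
            if attempts > max_retries:
                # Stop here so downstream stages never run on bad inputs.
                return context, metrics
    return context, metrics


def ingest(ctx):
    ctx["orders"] = [{"order_id": "A1", "lat": 52.52, "lon": 13.40},
                     {"order_id": "A2", "lat": None, "lon": 13.41}]
    return ctx


def validate(ctx):
    # Quality gate: drop records without coordinates before routing.
    ctx["orders"] = [o for o in ctx["orders"]
                     if o["lat"] is not None and o["lon"] is not None]
    if not ctx["orders"]:
        raise ValueError("no valid orders")
    return ctx


def optimize(ctx):
    # Placeholder for a call to a routing engine.
    ctx["route"] = [o["order_id"] for o in ctx["orders"]]
    return ctx


result, run_metrics = run_pipeline(
    [("ingest", ingest), ("validate", validate), ("optimize", optimize)], {})
```

The `run_metrics` list holds one entry per attempt, which is the kind of raw material a workflow health dashboard would aggregate into task durations and failure rates.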

Integration with Enterprise Systems

Specialized AI components for forecasting, optimization and monitoring must interoperate smoothly with TMS, ERP, CRM and WMS platforms. Key integration patterns include:

- REST or gRPC endpoints for model inference services

- Consumption of normalized data from message queues or data lakes

- Publication of optimized routes and shift plans back to TMS via APIs or EDI

- Triggering CRM notifications for high-priority shipments requiring manual review

- Alignment of pick-and-pack sequences with delivery schedules in WMS

Clear integration contracts—defining schemas, protocols and retry policies—prevent brittle point-to-point connections and simplify maintenance when systems evolve.
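As one illustration of a retry policy within such a contract, the sketch below wraps a call in capped exponential backoff with jitter. The function name `call_with_retry`, the default delays and the choice of retryable exception types are assumptions made for the example, not values prescribed by any of the platforms named above.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(fn: Callable[[], T], max_attempts: int = 4,
                    base_delay: float = 0.5, max_delay: float = 8.0,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying transient errors with capped exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except (ConnectionError, TimeoutError):
            if attempt == max_attempts:
                raise  # Retries exhausted: surface the error to the caller.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Jitter spreads retries out so many clients do not retry in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the policy testable without real waiting; non-transient errors (for example a schema validation failure) are deliberately not retried.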

Human-Machine Collaboration and Governance

Even advanced AI workflows depend on human expertise for complex exceptions or high-value scenarios. Collaboration points allow operators to:

- Review routes violating regulations or cost thresholds

- Approve alternative schedules when traffic disruptions threaten performance

- Override recommendations based on local insights or emergent priorities

- Annotate feedback that feeds into continuous learning loops

Task management interfaces and mobile applications deliver risk scores, forecast uncertainties and optimization trade-off summaries. Human approvals and adjustments are captured as formal inputs for model retraining, ensuring that frontline knowledge refines future decisions.

Structured workflows embed governance across all stages, producing audit trails of data provenance, model versions, decision logic and human approvals. Immutable logs, version-controlled model registries, chain-of-custody records for data transformations and automated compliance reports enable rapid reconstruction of decision paths and adherence to regulatory obligations.

Scalable Infrastructure and Continuous Improvement

Container orchestration platforms like Kubernetes and serverless compute environments elastically allocate resources to AI modules and integration tasks. The orchestration layer monitors compute utilization, message queue backlogs and service latency against SLAs. During demand surges, additional inference instances and data processing jobs spin up automatically; during low-traffic periods, resources scale down to control costs.

A closed-loop feedback mechanism captures delivery performance metrics, exception logs and customer satisfaction scores. These outcome streams feed back into training pipelines to:

- Retrain demand forecasts for shifting shipment volumes

- Adjust traffic prediction parameters based on actual versus estimated travel times

- Tune optimization constraints to reflect evolving cost structures and SLAs

- Update rescheduling policies with new business rules or regulatory requirements

This living system adapts to evolving conditions, incorporates frontline feedback and drives incremental efficiency gains over time.
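One simple way to fold actual versus estimated travel times back into predictions is an exponentially smoothed correction factor. This is a sketch under stated assumptions: the function name, the smoothing weight `alpha` and the idea of a single multiplicative factor per road segment or zone are illustrative choices, and a production system might retrain the underlying model instead.

```python
def update_travel_time_factor(factor: float, estimated_min: float,
                              actual_min: float, alpha: float = 0.2) -> float:
    """Blend the observed actual/estimated ratio into the running correction factor."""
    if estimated_min <= 0:
        raise ValueError("estimated_min must be positive")
    observed_ratio = actual_min / estimated_min
    # alpha controls how quickly the factor reacts to new observations.
    return (1 - alpha) * factor + alpha * observed_ratio


# A trip estimated at 30 minutes that took 36 nudges the factor above 1.0,
# so later estimates for the same segment are scaled up slightly.
factor = update_travel_time_factor(1.0, estimated_min=30, actual_min=36)
```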

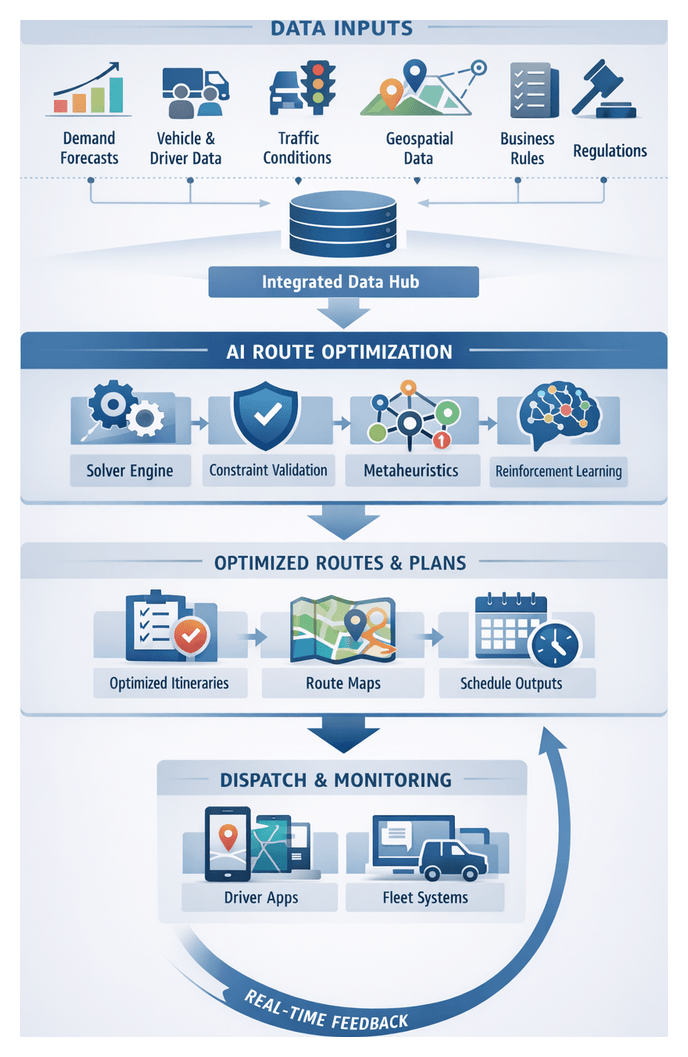

AI-Powered Routing Solutions

Routing in contemporary logistics demands dynamic, real-time adaptation to traffic fluctuations, variable demand and resource availability. AI extends beyond rule-based approaches, empowering organizations to anticipate conditions, optimize end-to-end flows and continuously learn from outcomes. Key AI capabilities and supporting systems include:

- Predictive Modeling: Machine learning algorithms forecast congestion by analyzing historical traffic, weather and event schedules. These forecasts enable proactive rerouting before bottlenecks occur.

- Optimization Engines: Advanced solvers using metaheuristics or mixed-integer programming generate efficient route plans. Open source frameworks like Google OR-Tools and commercial offerings integrate with orchestration platforms to recompute plans as new data arrives.

- Adaptive Learning Modules: Reinforcement learning agents evaluate executed routes against KPIs—on-time rates, distance, fuel usage—and adjust algorithm parameters to improve future outcomes.

- Real-Time Integration Layers: Event processing systems ingest live telematics, traffic and delivery confirmations. Stream analytics detect deviations and trigger reoptimization or exception workflows within seconds of an incident.

- Feedback Orchestration: Workflow managers ensure seamless data exchange between predictive models, optimization solvers and dispatch systems, delivering updated instructions to driver apps and exception dashboards in a synchronized fashion.

Workflow Roles of AI Components

- Data Preparation: AI agents validate and enrich incoming telematics and order data, aligning schemas and filling gaps before routing calculations.

- Prediction: Machine learning services generate traffic and demand forecasts, storing results in an intermediate data store accessible by the optimizer.

- Optimization: Routing engines retrieve forecasts and constraints, execute algorithms to produce route plans and forward assignments to dispatch modules.

- Execution Monitoring: Event handlers compare live progress to planned routes, invoking reoptimization or exception-management routines when thresholds are exceeded.

- Feedback Loop: Post-delivery data—actual travel times, service exceptions, fuel usage—feeds back into predictive and optimization models, driving continuous learning.
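The execution-monitoring role can be illustrated with a threshold check on projected delay. The `StopProgress` structure, its field names and the 15-minute threshold are assumptions for this sketch; a real event handler would derive the estimated arrival from live telematics.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class StopProgress:
    stop_id: str
    planned_arrival: datetime
    estimated_arrival: datetime  # latest ETA, assumed to come from live tracking


def stops_needing_reoptimization(stops: list[StopProgress],
                                 threshold: timedelta = timedelta(minutes=15)) -> list[str]:
    """Return the stop_ids whose projected delay exceeds the threshold."""
    return [s.stop_id for s in stops
            if s.estimated_arrival - s.planned_arrival > threshold]
```

Stops returned by this check would be handed to the reoptimization or exception-management routine; early arrivals and small delays pass through untouched.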

Embedding AI in routing can yield measurable benefits: reductions of up to 20 percent in miles driven, improvements of 10 to 15 percent in on-time performance, less manual intervention and greater resilience against disruptions. These gains translate into stronger customer satisfaction, efficient asset utilization and sustainable competitive advantage.

Architectural Blueprint and Implementation Readiness

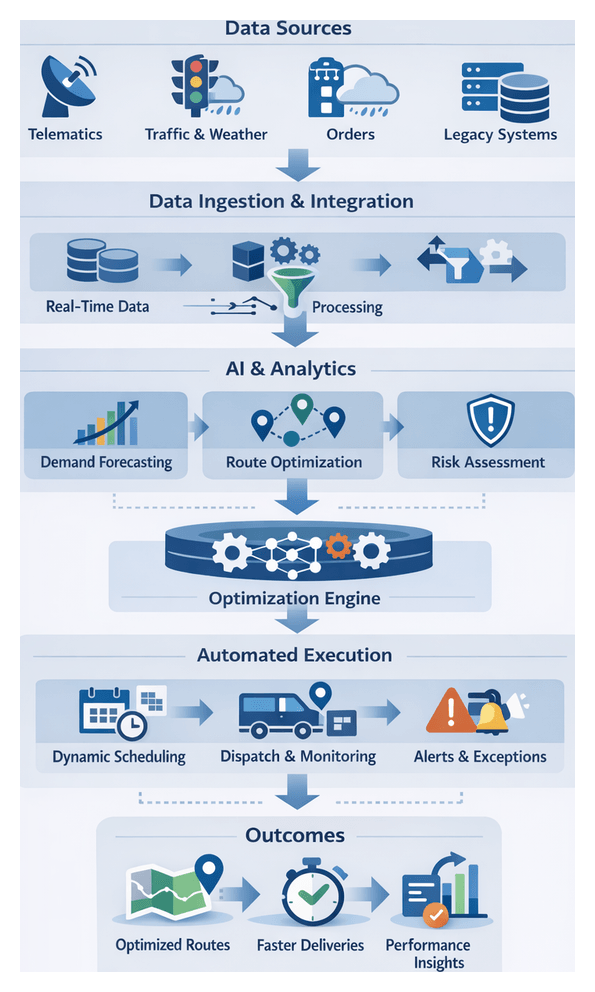

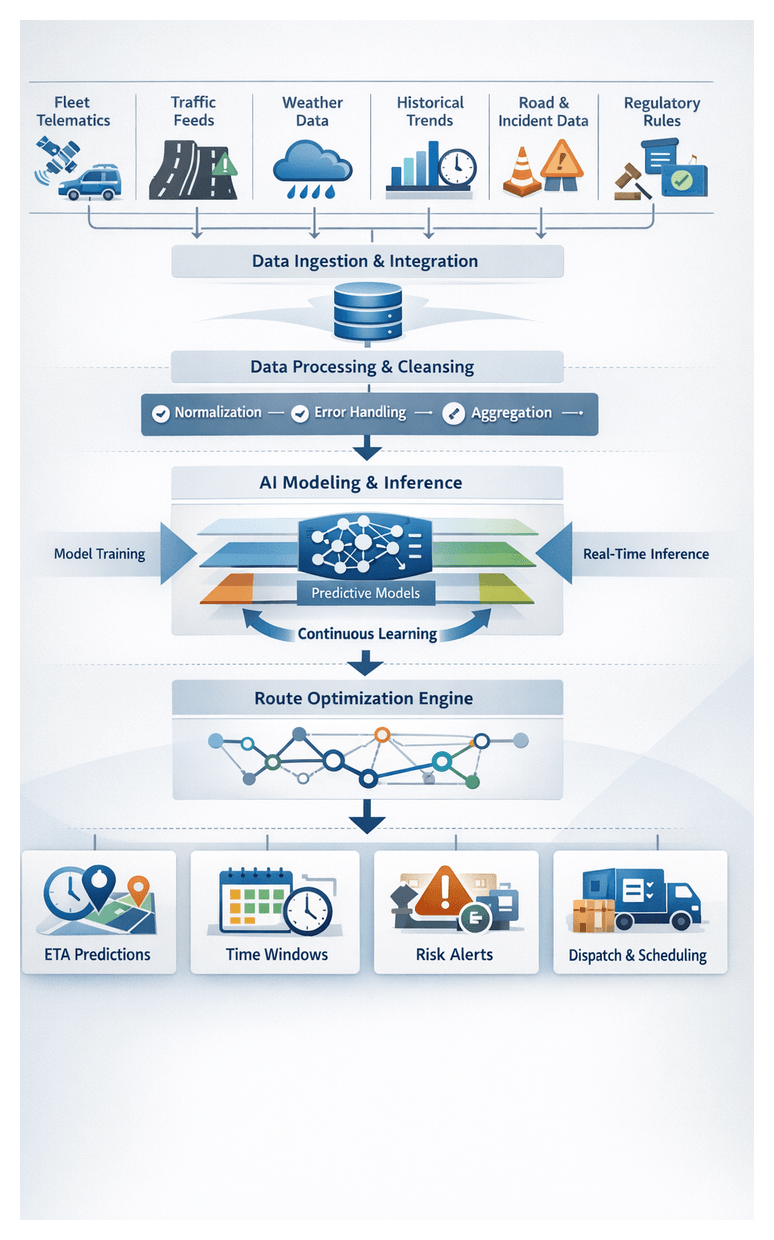

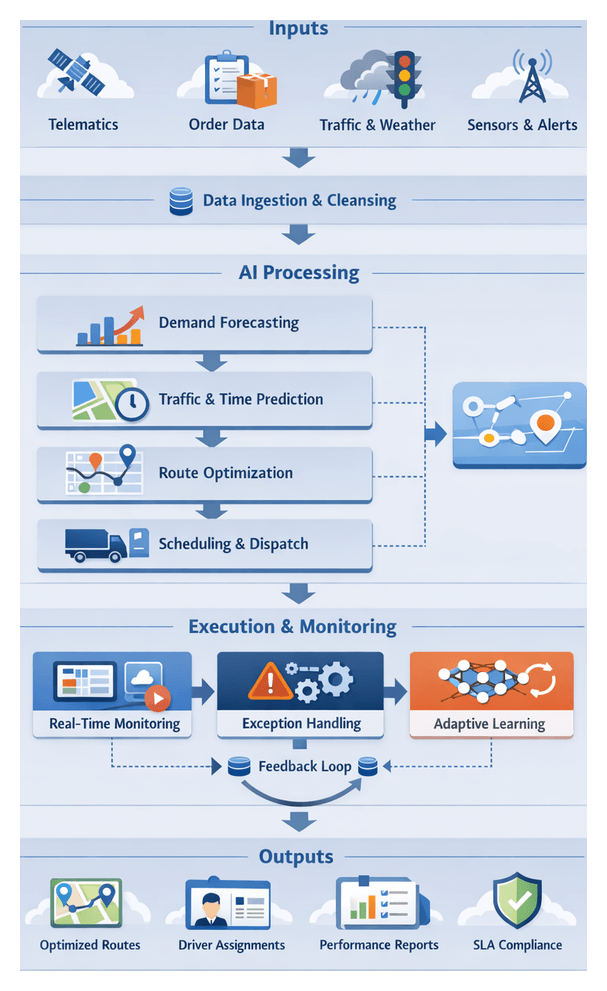

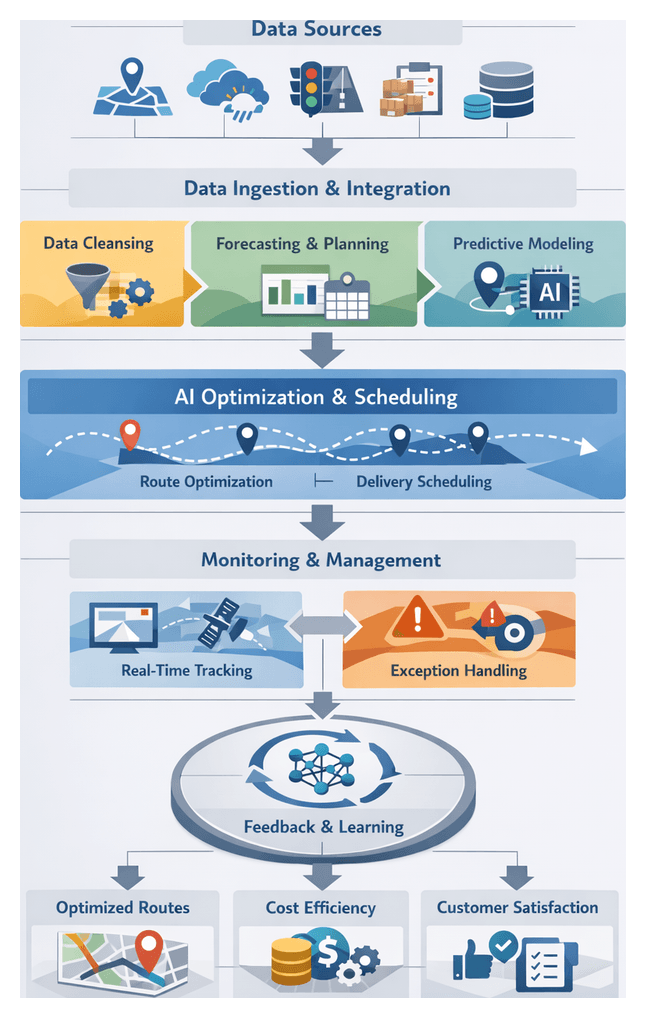

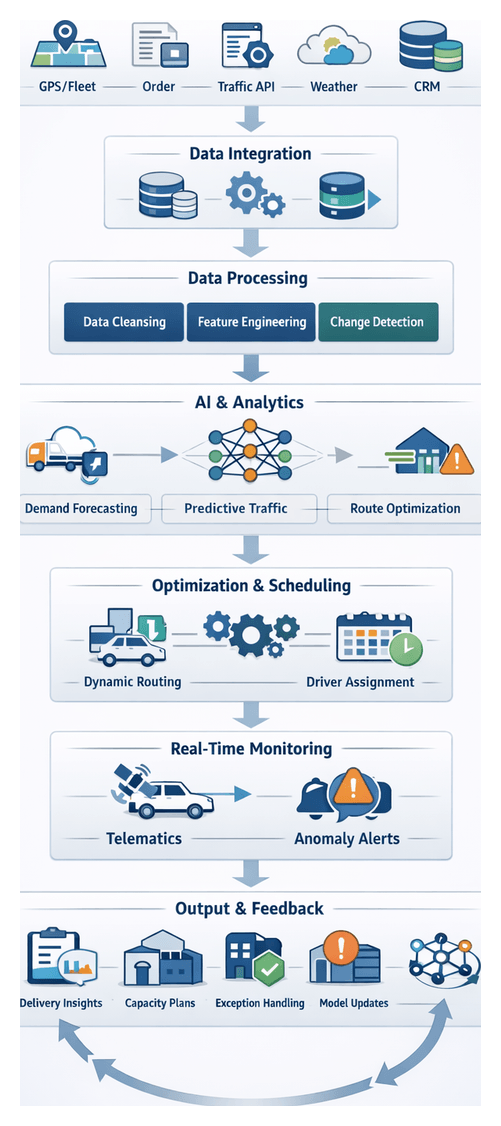

The architectural blueprint delivers a detailed representation of the end-to-end AI-driven delivery framework. It serves as a master reference for implementation teams, aligning multidisciplinary stakeholders around a cohesive vision. Key elements include:

- Logical and physical diagrams of data ingestion, processing pipelines, AI model training and inference, optimization engines and delivery interfaces

- Component definitions for microservices, AI agents, orchestration controllers and optimization solvers

- Data flow maps detailing schemas, formats and transformation rules, with identifiers for message brokers and stream processing frameworks

- Technology stack inventory of recommended platforms, libraries and infrastructure services

- Integration matrix of APIs, connectors and protocol specifications for external systems

- Security and governance overlays showing access controls, encryption gateways and audit logging principles

Dependencies and Prerequisites

Successful implementation depends on addressing data, technical, organizational and regulatory prerequisites. Critical dependencies include:

- Formalized SLAs and data access agreements with telematics, traffic, weather and order system providers

- Infrastructure readiness for high-volume ingestion, real-time processing and AI model training

- Provisioning of AI and orchestration platforms, solvers and analytics toolchains

- Compliance and security approvals for data privacy, encryption and access management

- Cross-functional alignment on roles, responsibilities and communication channels

- Verification of in-house expertise in machine learning, cloud architecture, streaming and integration

Integration Points and Validation Mechanisms

Effective handoff to development and operations teams requires structured artifacts and protocols:

- Architecture specification document with diagrams, component definitions and interface descriptions

- Version-controlled data schema repository for each feed and intermediate structure

- Integration catalog of API endpoints, message topics and sample payloads with error-handling guidelines

- Deployment guide outlining environment configurations and orchestration policies

Communication and coordination protocols include architecture review workshops, dependency tracking dashboards, integration working group meetings and change control procedures. Verification checkpoints encompass proof-of-concept deployments, interface contract testing, security reviews and performance benchmarking. Continuous architecture audits detect configuration drifts and enforce compliance with foundational principles.

Transition to Detailed Design

After handoff, teams decompose work into development sprints, guided by the architecture deliverables. Key activities include:

- Component design workshops to define classes, data structures and algorithmic flows

- Sprint planning with backlog items tied to performance, security and integration criteria

- Provisioning of development, testing and staging environments matching production topologies

- Onboarding real data feeds to validate ingestion and cleansing routines

- Setup of CI/CD pipelines enforcing code quality, integration tests and automated deployments

- Provisioning of model training clusters for reproducible experiments

- Instrumentation planning for telemetry, metrics and logs across microservices

- Security hardening with identity management, role-based access and network segmentation

- Creation of operational runbooks for incident response, data backfill and failover

- Knowledge transfer sessions to align on best practices and support responsibilities

By mapping architectural components to measurable KPIs—such as reduced delivery times and lower exception rates—teams embed real-time telemetry, configure alerts and establish feedback loops that translate strategic objectives into operational outcomes. This structured transition minimizes rework, accelerates time to value and ensures alignment between business goals, technical implementation and operational readiness.

Chapter 1: Data Ingestion and Integration

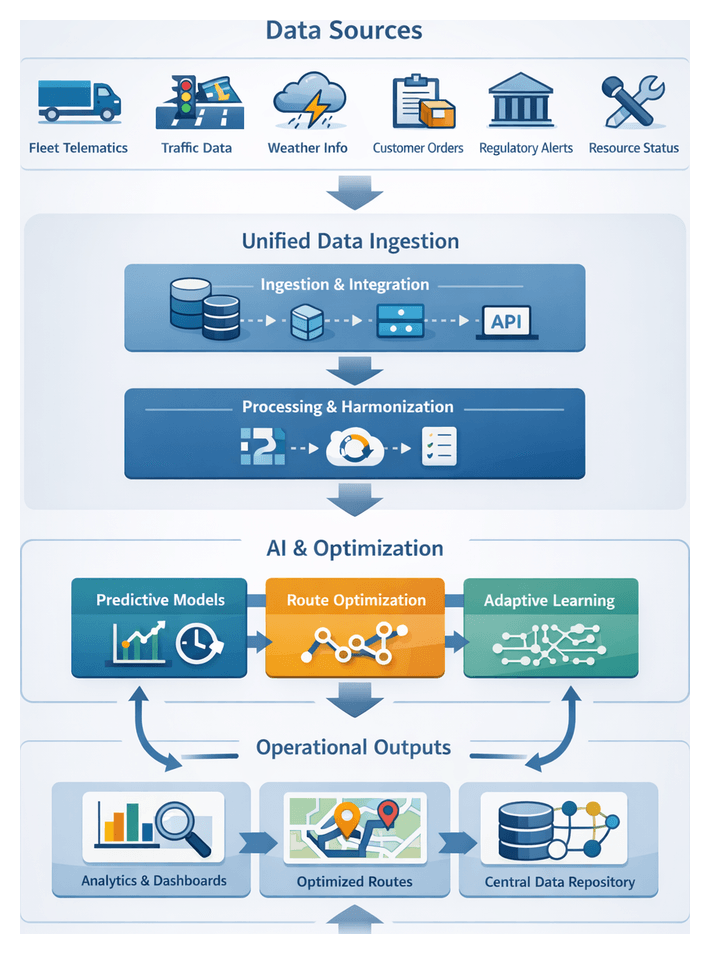

Unified Data Collection for Logistics Visibility

The foundational step in an AI-driven delivery workflow is the Unified Data Collection stage, which aggregates disparate operational data into a cohesive, timestamped repository. By capturing real-time fleet telematics, traffic conditions, weather events, customer orders and external constraints, this stage establishes the single source of truth that powers predictive models, optimization engines and adaptive decision making. A structured approach—with clear objectives, input definitions and validation checks—ensures downstream components receive accurate, consistent data while preserving lineage for governance and compliance.

Purpose and Scope

This stage delivers comprehensive visibility into:

- Fleet operations: GPS locations, vehicle speeds and usage metrics via platforms like Geotab and AWS IoT FleetWise.

- Traffic conditions: Real-time flow and incident data from TomTom Traffic API and HERE Traffic API.

- Weather updates: Current measurements and alerts from OpenWeatherMap and The Weather Company.

- Customer orders: Shipment details, time windows and special requirements from systems such as Salesforce, Oracle NetSuite and SAP ERP.

- Regulatory events: Road closures, permit requirements and special schedules published by government agencies or aggregators.

- Resource availability: Driver schedules, maintenance windows and depot capacity from workforce and fleet management solutions.

Embedding basic validation and harmonization routines at this stage reduces noise and inconsistencies before analytics, setting the quality bar for all subsequent processes.

Technical Prerequisites and Conditions

Reliable data ingestion demands the following:

- Network Resilience: Secure VPNs or dedicated lines, redundant paths and sufficient bandwidth for high-frequency streaming.

- API Access: Valid credentials with role-based controls and automated key rotation via HashiCorp Vault or Azure Key Vault.

- Time Synchronization: NTP alignment and unified ISO 8601 timestamps across sources.

- Schema Definitions: Versioned data contracts documented in a master catalog, specifying field types and units.

- Privacy and Compliance: Data sharing agreements, consent management and encryption (TLS in transit, storage-level encryption at rest).

- Infrastructure: Scaled ingestion compute or serverless functions, message brokers such as Apache Kafka or Amazon Kinesis, and monitoring tools for latency and error rates.

Operational Metrics

Key performance indicators track data quality and readiness:

- Freshness: Latency from event generation to ingestion, targeted in seconds.

- Completeness: Percentage of critical fields populated, with thresholds (e.g., 98%).

- Schema Conformance: Incoming messages matching the contract, with deviation alerts.

- Error Rates: Ratio of malformed or rejected records.

- Duplication Rates: Frequency of duplicate messages within a time window.

- Uptime: Ingestion pipeline availability against SLOs.
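Several of these indicators can be computed directly from a batch of ingested records. The sketch below assumes each record is a dictionary with `timestamp` and `ingested_at` as timezone-aware datetimes, and treats `(vehicle_id, timestamp)` as the message identity; the field names and the list of critical fields are hypothetical.

```python
from datetime import datetime, timedelta, timezone

CRITICAL_FIELDS = ("vehicle_id", "timestamp", "lat", "lon")  # assumed data contract


def ingestion_metrics(records: list[dict]) -> dict:
    """Compute completeness, duplication rate and worst-case freshness for a batch."""
    total = len(records)
    if total == 0:
        return {"completeness": 0.0, "duplication_rate": 0.0, "max_freshness_s": None}
    complete = sum(all(r.get(f) is not None for f in CRITICAL_FIELDS) for r in records)
    # Assumption: two messages with the same vehicle and event time are duplicates.
    unique_keys = {(r.get("vehicle_id"), r.get("timestamp")) for r in records}
    lags = [(r["ingested_at"] - r["timestamp"]).total_seconds()
            for r in records if r.get("timestamp") and r.get("ingested_at")]
    return {
        "completeness": complete / total,
        "duplication_rate": 1 - len(unique_keys) / total,
        "max_freshness_s": max(lags) if lags else None,
    }


t0 = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
batch = [
    {"vehicle_id": "V1", "timestamp": t0, "lat": 1.0, "lon": 2.0, "ingested_at": t0 + timedelta(seconds=2)},
    {"vehicle_id": "V1", "timestamp": t0, "lat": 1.0, "lon": 2.0, "ingested_at": t0 + timedelta(seconds=2)},
    {"vehicle_id": "V2", "timestamp": t0, "lat": None, "lon": 2.0, "ingested_at": t0 + timedelta(seconds=5)},
    {"vehicle_id": "V3", "timestamp": t0, "lat": 1.0, "lon": 2.0, "ingested_at": t0 + timedelta(seconds=1)},
]
batch_metrics = ingestion_metrics(batch)
```

Comparing `completeness` against a threshold such as the 98% mentioned above is then a one-line check in the monitoring layer.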

Data Stream Integration and Consolidation Processes

This stage orchestrates real-time and batch feeds from telematics, order platforms, traffic and weather services into a unified pipeline. Connectors interface with source systems, a messaging backbone routes events for transformation, and a consolidation layer directs harmonized data into storage optimized for analytics and AI inference.

Ingestion Layer and Connectors

Specialized connectors handle:

- Change data capture from transactional databases.

- RESTful polling for order management systems.

- Publish/subscribe APIs for telematics and traffic feeds.

Common tools include Apache Kafka, Amazon Kinesis, Azure Event Hubs, Fivetran and Talend. Orchestration engines such as Apache Airflow or AWS Step Functions schedule and coordinate connector tasks, maintaining version control over workflows.

Real-Time versus Batch Processing

- Real-Time Streams: Continuous IoT, traffic and live order feeds requiring sub-second latency, processed by engines like Apache Flink or Google Cloud Dataflow.

- Batch Extractions: Periodic database dumps scheduled off-peak, loaded into staging for ELT routines.

Schema Mapping and Transformation

Captured events are transformed to a canonical logistics event model defining fields such as vehicle_id, timestamp, location and order_id. Transformation rules include:

- Field renaming and alignment.

- Unit standardization (distance, weight, currency).

- Data enrichment by joining traffic, weather or driver profiles.

Declarative mapping languages or SQL-based pipelines execute these rules with transactional consistency. AI-driven validation agents may propose corrections for ambiguous mappings.
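A transformation rule set of this kind might look like the following sketch. The vendor payload keys (`vid`, `ts`, `order`, `lng`, `odo_miles`), the `FIELD_MAP` table and the `_meta` block are hypothetical; only the canonical field names `vehicle_id`, `timestamp`, `location` and `order_id` come from the model described above.

```python
from datetime import datetime, timezone

MILES_TO_KM = 1.609344

# Hypothetical mapping from one telematics vendor's keys to canonical names.
FIELD_MAP = {"vid": "vehicle_id", "ts": "timestamp", "order": "order_id"}


def to_canonical(raw: dict, source: str) -> dict:
    """Rename fields, standardize units and attach lineage metadata."""
    event = {FIELD_MAP[k]: v for k, v in raw.items() if k in FIELD_MAP}
    # Epoch seconds -> ISO 8601 string in UTC.
    event["timestamp"] = datetime.fromtimestamp(raw["ts"], tz=timezone.utc).isoformat()
    event["location"] = {"lat": raw["lat"], "lon": raw["lng"]}
    # Unit standardization: miles -> kilometres.
    event["odometer_km"] = round(raw["odo_miles"] * MILES_TO_KM, 3)
    # Lineage tags support audit trails and root cause analysis.
    event["_meta"] = {"source": source, "mapping_version": "1.0.0"}
    return event


canonical = to_canonical(
    {"vid": "TRK-7", "ts": 0, "order": "ORD-1", "lat": 40.0, "lng": -74.0, "odo_miles": 100},
    source="vendor-a",
)
```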

Message Queuing and Pub/Sub Coordination

- Telematics Events Topic streams raw GPS and sensor data.

- Order Updates Queue captures new, modified or cancelled orders.

- Enriched Logistics Feed emits standardized records.

Connector instances publish to source topics. Transformation services subscribe, process and republish to target topics. Consumer groups scale horizontally, while health-check messages feed monitoring dashboards.
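The subscribe, process and republish pattern can be shown with a toy in-memory broker. This is a stand-in for illustration only: `InMemoryBroker`, the topic names and the payload fields are invented for the example, and it offers none of the durability, partitioning or consumer-group behaviour of a real broker such as Apache Kafka.

```python
from collections import defaultdict
from typing import Callable


class InMemoryBroker:
    """Toy stand-in for a message broker, used only to show the flow of events."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> None:
        for handler in list(self._subscribers[topic]):
            handler(message)


broker = InMemoryBroker()
enriched: list[dict] = []


def transform(message: dict) -> None:
    # Transformation service: consume a raw event, republish a standardized one.
    broker.publish("enriched-logistics-feed",
                   {"vehicle_id": message["vid"],
                    "speed_kmh": message["speed_mph"] * 1.609344})


broker.subscribe("telematics-events", transform)
broker.subscribe("enriched-logistics-feed", enriched.append)
broker.publish("telematics-events", {"vid": "TRK-7", "speed_mph": 50})
```

After the final `publish`, `enriched` contains one standardized record, which is what a downstream consumer of the enriched feed would receive.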

Consolidation into the Central Pipeline

Standardized streams and batches merge into a unified pipeline that feeds:

- A data lakehouse for raw and curated event storage.

- A data warehouse for analytics queries.

- Streaming tables for live AI inference and dashboards.

Platforms like Databricks or Snowflake support both streaming and batch ingestion, coordinating COPY or MERGE operations, partitioning strategies and retention aligned to compliance.

Data Integrity and Traceability

Each record carries metadata tags—source identifier, original timestamp, connector version and transformation references—to support audit trails and root cause analysis. AI-driven validation agents flag anomalies for human-in-the-loop review.

Transformative AI-Driven Routing Components

With a unified data foundation in place, AI transforms static route planning into dynamic, self-optimizing workflows. Predictive models anticipate demand and travel times, optimization engines generate high-quality routes under multiple constraints, and adaptive learning frameworks continuously improve performance using operational feedback.

Predictive Modeling

Forecasting components include:

- Time Series Forecasting: ARIMA, Prophet or LSTM networks project order volumes and delivery durations.

- Spatial Analysis: Graph neural networks and geospatial clustering refine localized estimates.

- Real-Time Fusion: Streaming integration of live traffic and weather feeds with continuous retraining.

Managed platforms such as Amazon SageMaker, Google Cloud Vertex AI (the successor to AI Platform) and Azure Machine Learning streamline model development, deployment and inference.

Optimization Engines

To solve the Vehicle Routing Problem under dynamic constraints, organizations employ:

- Metaheuristics: Genetic algorithms, ant colony optimization and simulated annealing.

- Exact Solvers: Mathematical programming with IBM CPLEX.

- Large Neighborhood Search: Iterative route refinement balancing exploration and exploitation.

- Reinforcement Learning: Agents adapt policies based on feedback from route outcomes.

Tools like Google OR-Tools and enterprise orchestration platforms automate resource scheduling, data preparation and solution validation.
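To make the idea of iterative route refinement concrete, the sketch below builds a tour with a nearest-neighbour heuristic and improves it with 2-opt segment reversals. This is a deliberately simpler local search than the metaheuristics and solvers listed above, it uses no routing library, and it ignores capacities and time windows; the coordinates and function names are illustrative.

```python
import math


def route_length(route, coords):
    """Total length of a closed tour that starts and ends at route[0]."""
    return sum(math.dist(coords[route[i]], coords[route[(i + 1) % len(route)]])
               for i in range(len(route)))


def nearest_neighbour(coords, start=0):
    """Greedy construction: always visit the closest unvisited stop next."""
    unvisited = set(range(len(coords))) - {start}
    route = [start]
    while unvisited:
        nxt = min(unvisited, key=lambda j: math.dist(coords[route[-1]], coords[j]))
        unvisited.remove(nxt)
        route.append(nxt)
    return route


def two_opt(route, coords):
    """Reverse segments while doing so shortens the tour; the depot stays at index 0."""
    best = route[:]
    improved = True
    while improved:
        improved = False
        for i in range(1, len(best) - 1):
            for j in range(i + 1, len(best)):
                candidate = best[:i] + best[i:j + 1][::-1] + best[j + 1:]
                if route_length(candidate, coords) < route_length(best, coords) - 1e-9:
                    best, improved = candidate, True
    return best


# Four stops on a unit square; the initial visiting order crosses itself.
coords = [(0, 0), (1, 1), (1, 0), (0, 1)]
improved_route = two_opt([0, 1, 2, 3], coords)
```

Recomputing `route_length` for every candidate keeps the code short at the cost of speed; practical implementations evaluate only the two edges that change.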

Adaptive Learning

Continuous improvement relies on:

- Feedback collection from vehicle telemetry and customer confirmations.

- Automated retraining pipelines triggered by performance metrics.

- Hyperparameter tuning via automated machine learning techniques.

- Model versioning and rollback using platforms like MLflow.

Supporting Ecosystem

Routing AI integrates with:

- The data integration layer feeding telematics, orders and external feeds.

- A central data repository for raw and processed datasets.

- Compute orchestration engines scheduling training, inference and optimization tasks.

- APIs and microservices exposing results to Transportation Management Systems and driver apps.

- Monitoring and alerting solutions tracking route efficiency and exception rates.

Roles and Responsibilities

- Data Engineers: Build and maintain ingestion pipelines.

- Machine Learning Engineers: Develop and deploy predictive models.

- Optimization Specialists: Configure algorithms and define constraints.

- Platform Architects: Design infrastructure for orchestration and storage.

- Operations Managers: Translate business objectives into operating targets, monitor performance and manage exceptions.

- Driver Support Teams: Interface with AI outputs and handle field escalations.

Business Outcomes

- Cost Reduction: Optimized routes lower fuel consumption.

- Service Quality: Improved on-time delivery and customer satisfaction.

- Agility: Rapid response to disruptions and new orders.

- Scalability: Automated workflows handle growing volumes.

- Competitive Differentiation: Data-driven innovation in service offerings.

Feeding the Central Repository for Downstream Use

The final step in integration is populating the harmonized data into a central repository—whether a cloud data warehouse, data lake or hybrid platform—that serves as the authoritative source for forecasting, routing and scheduling systems. Clear outputs, dependencies and handoff mechanisms ensure seamless data delivery and real-time decision support.

Structured Outputs

- Unified Tables: Standardized schemas partitioned by date, region or fleet identifier.

- Metadata Catalog: Dataset descriptions, lineage and quality metrics tracked via AWS Glue Data Catalog or Apache Atlas.

- CDC Logs: Change streams capture inserts, updates and deletes for synchronized downstream state.

- Quality Reports: Versioned summaries of record volumes, missing values and validation violations.

- Audit Trails: Ingestion timestamps, job details and lineage records linking back to sources.

Downstream Dependencies

- Technical: Compute clusters, query engines and APIs with appropriate permissions.

- Data Contracts: Defined table names, column types, update frequency and freshness SLAs.

- Operational: Orchestration triggers based on load indicators, such as flag files or event notifications.

Handoff Mechanisms

- Event-Driven Notifications: Completion events published to Apache Kafka or Amazon EventBridge.

- File Drop: Parquet or ORC files deposited in object storage with downstream polling or event triggers.

- API Serving: REST or gRPC endpoints providing real-time access to integrated records.

- Shared Views: Logical views abstract physical tables for BI tools and training pipelines.

Versioning and Schema Evolution

- Semantic versioning of data schemas, with major increments for breaking changes and minor increments for backward-compatible changes.

- Central schema registry guiding consumers on compatible versions.

- Parallel publishing of old and new schema versions during migration periods.
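A consumer-side compatibility check under semantic versioning could be sketched as follows. The policy encoded here is an assumption: a consumer can read data published under the same major version and a minor version at least as new as the one it was built against, on the premise that minor releases only add optional fields. A schema registry may enforce different rules.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Split a 'major.minor.patch' string into integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def can_consume(consumer_built_for: str, published: str) -> bool:
    """Assumed policy: same major version, published minor >= consumer's minor."""
    c_major, c_minor, _ = parse_version(consumer_built_for)
    p_major, p_minor, _ = parse_version(published)
    return p_major == c_major and p_minor >= c_minor
```

During a migration period, a consumer for which `can_consume` returns `False` against the new version would keep reading the old version's parallel stream until it is upgraded.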

Governance and Access Controls

- RBAC: Least-privilege permissions for users and services.

- Masking and Encryption: Tokenization of sensitive fields and encryption in transit and at rest.

- Audit Logs: Detailed records of repository access and queries for compliance monitoring.

Monitoring and Alerting

- Job success rates and durations to detect latency or failures.

- Data freshness indicators with alerts for SLA breaches.

- Error trend analysis for schema mismatches, validation failures and connector timeouts.

Case Study: Event-Driven Repository Handoff

A global provider writes cleansed datasets into a Snowflake warehouse and publishes load-complete events to Apache Kafka. The demand forecasting module subscribes to the ‘order_ingest_complete’ topic and immediately queries the latest orders. A traffic modeling service invokes Snowflake’s REST API for sub-hourly updates. Schema changes are managed via a registry, allowing pipelines to adapt automatically. This architecture achieves end-to-end latency of under ten minutes and a job success rate of 99.9 percent.

Collaboration Practices

- Shared documentation of dataset definitions, update schedules and SLAs with regular cross-team reviews.

- Joint release planning to align pipeline deployments with downstream workflows.

- Cross-functional governance forums monitoring ingestion health and coordinating enhancements.

Chapter 2: Data Cleansing and Normalization

Delivery Complexity in Modern Logistics

The rapid expansion of global commerce has transformed delivery operations into dynamic, interconnected networks. Logistics providers now manage fluctuating demand driven by seasonality, promotions and omnichannel orders, while contending with urban congestion, regulatory restrictions and sustainability goals. To harness real-time data and advanced decision logic, organizations must first define the dimensions of delivery complexity and establish the inputs, prerequisites and conditions for an end-to-end AI-driven solution.

- Demand Volatility: Order volumes vary unpredictably based on market shifts and promotions.

- Traffic Dynamics: Time-sensitive congestion patterns, incidents and infrastructure changes.

- Operational Constraints: Vehicle capacities, driver hours and delivery time windows.

- Cost and Service Trade-Offs: Balancing fuel and labor expenses against on-time performance and customer satisfaction.

Effective complexity assessment requires high-fidelity inputs:

- Fleet telematics streams: GPS, speed, diagnostics and fuel metrics.

- Traffic and incident feeds from public sensors and crowd-sourced apps.

- Weather forecasts and real-time conditions.

- Order management data: pickup/drop-off locations, priorities and time windows.

- Regulatory policies: work-hour rules, vehicle restrictions and compliance mandates.

Prerequisites include a unified data schema that harmonizes diverse sources, standardized units of measure and robust data governance protocols. System requirements cover latency thresholds, well-documented APIs, quality assurance rules, scalability benchmarks and cross-functional alignment. Establishing clear KPIs—such as on-time delivery percentage, average dwell time and cost per mile—anchors both technical configurations and operational protocols.

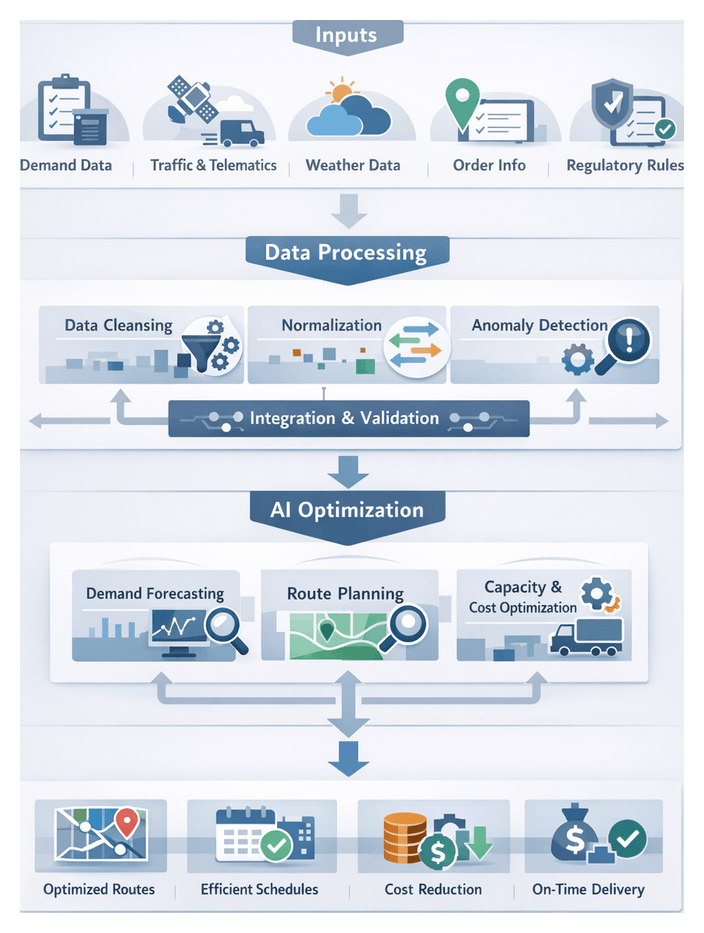

Reliable predictive analytics and optimization depend on clean, consistent data. The cleansing and normalization workflow transforms raw logistics inputs into standardized datasets, eliminating errors that could compromise downstream models.

Data Profiling and Initial Audit

Profiling tools scan incoming records to detect anomalies:

- Null or missing fields (timestamps, GPS coordinates, order IDs)

- Values outside acceptable ranges (negative distances, implausible speeds)

- Format inconsistencies (mixed date locales)

- Duplicate entries from overlapping batch and stream processes

An automated audit report summarizes issue frequencies and triggers alerts or pipeline pauses when thresholds are exceeded.
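The profiling checks and the pause threshold can be sketched in a few lines. The required fields, the speed ceiling `MAX_SPEED_KMH`, the duplicate key and the 5 percent issue rate are hypothetical values for illustration; mixed-locale date detection is omitted for brevity.

```python
from collections import Counter

REQUIRED = ("timestamp", "lat", "lon", "order_id")
MAX_SPEED_KMH = 150  # assumed plausibility ceiling for delivery vehicles


def profile(records: list[dict]) -> Counter:
    """Count issue types across a batch of records."""
    issues: Counter = Counter()
    seen = set()
    for r in records:
        if any(r.get(f) is None for f in REQUIRED):
            issues["missing_field"] += 1
        speed = r.get("speed_kmh")
        if speed is not None and not 0 <= speed <= MAX_SPEED_KMH:
            issues["out_of_range"] += 1
        # Assumption: the same order at the same timestamp is a duplicate.
        key = (r.get("order_id"), r.get("timestamp"))
        if key in seen:
            issues["duplicate"] += 1
        seen.add(key)
    return issues


def should_pause(issues: Counter, total: int, max_issue_rate: float = 0.05) -> bool:
    """Pause the pipeline when any issue type exceeds the allowed share of records."""
    return total > 0 and any(count / total > max_issue_rate for count in issues.values())
```

The `Counter` returned by `profile` doubles as the body of the audit report, and `should_pause` is the hook an orchestrator would call before releasing the batch downstream.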

Automated Correction and Reconciliation

Rule-based engines and lightweight ML classifiers remediate common defects:

- Imputation of Missing Values: Statistical or ML regressors infer delivery durations and fuel consumption.

- Format Standardization: ETL tools like Apache NiFi and Talend convert dates to ISO 8601 and unify numeric formats.

- Duplicate Elimination: Hashing and similarity algorithms purge redundant records, flagging exceptions.

- Reference Data Reconciliation: Lookup tables and API calls to master data systems, or integration tools such as Informatica PowerCenter, ensure valid vehicle, zone and service codes.
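Two of the corrections above, format standardization and duplicate elimination by hashing, are shown here with the standard library only rather than with Apache NiFi or Talend. The list of accepted input formats and the decision to treat timezone-naive values as UTC are assumptions; ambiguous locales (day-first versus month-first) need an explicit per-source rule.

```python
import hashlib
import json
from datetime import datetime, timezone

# Assumed input formats for this sketch; extend per source system.
KNOWN_FORMATS = ("%Y-%m-%dT%H:%M:%S%z", "%Y-%m-%d %H:%M:%S", "%d/%m/%Y %H:%M")


def to_iso8601_utc(value: str) -> str:
    """Parse a timestamp in a known format; naive values are assumed to be UTC."""
    for fmt in KNOWN_FORMATS:
        try:
            parsed = datetime.strptime(value, fmt)
        except ValueError:
            continue
        if parsed.tzinfo is None:
            parsed = parsed.replace(tzinfo=timezone.utc)
        return parsed.astimezone(timezone.utc).isoformat()
    raise ValueError(f"unrecognized timestamp format: {value!r}")


def record_hash(record: dict) -> str:
    """Stable digest of a record, independent of key order."""
    canonical = json.dumps(record, sort_keys=True, default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first occurrence of each exact-duplicate record."""
    seen, unique = set(), []
    for r in records:
        digest = record_hash(r)
        if digest not in seen:
            seen.add(digest)
            unique.append(r)
    return unique
```

Exact hashing only removes byte-for-byte duplicates after canonicalization; near-duplicates would need the similarity algorithms mentioned above.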

Normalization of Units and Categorical Alignment

Field values are aligned to common standards to support aggregation and comparison:

- Unit Conversion: Distance, weight and volume metrics unified (e.g., kilometers, kilograms, liters).

- Categorical Mapping: Delivery statuses and vehicle conditions harmonized via a schema registry.

- Geospatial Standardization: Coordinates validated, reprojected and enriched through geocoding APIs.

Distributed processing frameworks like Apache Spark and cloud services such as Microsoft Azure Data Factory accelerate transformations at scale.
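At record level, unit conversion and categorical mapping reduce to table lookups. The sketch below is plain Python rather than Spark or Data Factory code; the input field names, the unit abbreviations and the vendor status codes in `STATUS_MAP` are hypothetical.

```python
TO_KM = {"km": 1.0, "mi": 1.609344, "m": 0.001}
TO_KG = {"kg": 1.0, "lb": 0.45359237, "g": 0.001}

# Hypothetical vendor status codes mapped to a shared vocabulary.
STATUS_MAP = {"dlv": "delivered", "delivered": "delivered",
              "ofd": "out_for_delivery", "in transit": "in_transit"}


def normalize(record: dict) -> dict:
    """Convert distance and weight to km and kg and map status to a shared code."""
    out = dict(record)
    out["distance_km"] = record["distance"] * TO_KM[record["distance_unit"]]
    out["weight_kg"] = record["weight"] * TO_KG[record["weight_unit"]]
    # Unknown codes are kept visible instead of being silently guessed.
    out["status"] = STATUS_MAP.get(record["status"].strip().lower(), "unknown")
    for key in ("distance", "distance_unit", "weight", "weight_unit"):
        del out[key]
    return out


normalized = normalize({"order_id": "ORD-1", "distance": 10, "distance_unit": "mi",
                        "weight": 2, "weight_unit": "lb", "status": " DLV "})
```

An unrecognized unit raises a `KeyError`, which is intentional here: a record with an unknown unit is better quarantined than converted incorrectly.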

Coordination Between Systems and Actors

Efficient workflows rely on seamless interactions:

- Pipeline Orchestration: Schedulers trigger sequential or parallel tasks, manage retries and enforce SLAs.

- Cross-System Messaging: Event streams via Apache Kafka carry metadata and status updates.

- Human-in-the-Loop Validation: Dashboards enable stewards to review anomalies and approve corrections.

- Audit Logging: Transformation metadata, rules and code versions are recorded for compliance and rollback.

Adaptive Feedback Mechanisms

Continuous improvement is driven by feedback loops:

- Anomaly Trend Analysis: AI detectors identify emerging error patterns and suggest rule updates.

- Rule Performance Metrics: Correction success rates and residual error counts inform threshold tuning.

- Collaborative Knowledge Base: Engineers and domain experts share best practices and exception procedures.

Final Validation and Data Handoff

A staged quality check quarantines failing records and publishes validated datasets—tagged with version identifiers and metadata—to the central repository. Clean outputs feed demand forecasting and route optimization modules, ensuring reliable inputs for AI-driven logistics processes.

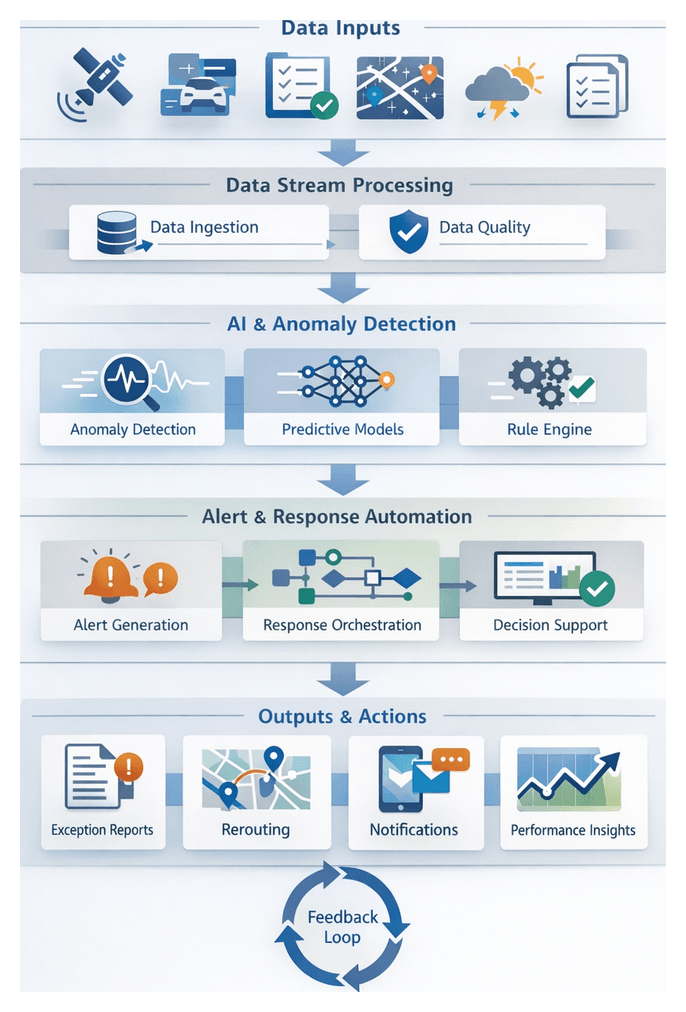

AI-Driven Anomaly Detection and Standardization

AI agents automate the detection of data anomalies and enforce standardization rules, safeguarding the integrity of downstream models.

Anomaly Detection Capabilities

Agents employ statistical and ML methods to flag deviations:

- Numeric outliers in speed or fuel streams

- Time series irregularities such as inconsistent timestamp intervals

- Semantic anomalies in categorical fields
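
For numeric outliers, a simple statistical baseline is often a useful first pass before heavier models. The sketch below uses the modified z-score based on the median absolute deviation (MAD), which is a stand-in technique chosen for brevity rather than a method the workflow prescribes. The 0.6745 constant and the 3.5 cutoff follow the common convention for this score.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return indices whose modified z-score exceeds the threshold."""
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # More than half the values are identical; the score is undefined.
        return []
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - center) / mad > threshold
    ]


# Example: one implausible reading in a stream of vehicle speeds (km/h).
speeds = [52, 55, 51, 54, 53, 50, 240, 52]
suspect_indices = mad_outliers(speeds)
```

Because the median and MAD are barely moved by a single extreme reading, this check stays stable even when the outlier is very large.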

Detection operates in two modes:

- Unsupervised: Clustering, density estimation and autoencoders identify novel errors using frameworks like scikit-learn and TensorFlow.

- Supervised: Classification models recognize known anomaly patterns, leveraging decision tree ensembles or gradient boosting.

Advanced Detection Models

- Isolation Forests: Efficient outlier identification in high-dimensional telematics.

- Local Outlier Factor: Detects density deviations in speed or location data.

- Autoencoders: Reconstruction error highlights multivariate anomalies.

- One-Class SVM: Encapsulates normal data boundaries when anomalies are rare.

- Convolutional Models: Capture temporal patterns and sensor drift.

Data Standardization and Entity Resolution

Agents enforce uniform field representations:

- Unit Conversion: Context-aware modules apply real-time conversions.

- Address Normalization: NLP with spaCy and rule sets standardize free-text entries.

- Fuzzy Matching: Levenshtein distance and probabilistic algorithms resolve entity duplicates.

- Time Zone Alignment: Timestamps are converted to UTC, with the regional zone retained where local time matters.
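
The fuzzy matching step can be illustrated with a plain Levenshtein distance. This is a minimal sketch: the normalized similarity score and the 0.85 acceptance threshold are assumptions, and production entity resolution would usually add blocking and probabilistic scoring on top.

```python
def levenshtein(a, b):
    """Edit distance counting insertions, deletions and substitutions."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution
            ))
        previous = current
    return previous[-1]


def similarity(a, b):
    """Case-insensitive similarity in [0, 1]; 1.0 means identical."""
    a, b = a.strip().lower(), b.strip().lower()
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


def best_match(name, candidates, min_similarity=0.85):
    """Return the closest candidate, or None if nothing is similar enough."""
    scored = [(similarity(name, c), c) for c in candidates]
    score, match = max(scored, default=(0.0, None))
    return match if score >= min_similarity else None
```

For example, `best_match("Acme Logistcs Ltd", ["Acme Logistics Ltd", "Apex Freight"])` resolves the misspelled name to the first candidate, while an unrelated name returns `None` and can be routed to review.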

Integration into the Cleansing Pipeline

AI agents plug into key stages:

- Initial profiling for domain-agnostic outliers

- Rule-based screening of invalid ranges

- Standardization pass for textual and categorical fields

- Secondary anomaly re-scoring to catch residual issues

Status codes, confidence scores and metadata feed a central orchestrator that routes exceptions for remediation.

Human-in-the-Loop and Active Learning

When confidence is low, records are forwarded to experts for validation. Their feedback enriches labels, tunes thresholds and drives periodic model retraining, reducing manual reviews over time.

Performance Monitoring and Continuous Improvement

- Throughput and latency metrics ensure SLA compliance.

- Detection accuracy tracked via precision and recall.

- Standardization coverage measures the share of records that were successfully normalized.

- Feedback backlog monitors pending expert reviews.
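
Precision and recall for the detector can be computed directly from the set of flagged record IDs and the set that reviewers confirmed as true anomalies. The function below is a minimal sketch; treating an empty denominator as 0.0 is a convention chosen here, not a universal rule.

```python
def precision_recall(flagged_ids, confirmed_ids):
    """Return (precision, recall) for flagged vs. expert-confirmed anomalies."""
    flagged, confirmed = set(flagged_ids), set(confirmed_ids)
    true_positives = len(flagged & confirmed)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(confirmed) if confirmed else 0.0
    return precision, recall
```

Low precision means reviewers are spending time on false alarms; low recall means defects are slipping through to downstream models.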

Roles and Handoff Dependencies

AI agents serve as automated scouts, standardization enforcers, quality sentinels and adaptive learners. Their outputs feed forecasting engines, traffic models and route optimization solvers, with exception reports documenting residual uncertainties.

Delivering Consistent Data to Predictive Models

The final handoff packages clean, normalized data—alongside metadata and versioning artifacts—for use by demand forecasting, traffic modeling, capacity planning and optimization engines.

Output Artifacts and Deliverables

- Standardized data tables with uniform schemas and units

- Data quality reports and validation logs

- Metadata registries capturing lineage and quality metrics

- Versioned snapshots for reproducibility

- Configuration manifests specifying schemas and partitions

Metadata Management and Catalog Registration

Cleaned datasets are registered in a central catalog with details on sources, transformations and quality. Catalog tools such as Apache Atlas, together with the metadata interfaces of warehouses such as Snowflake and Google BigQuery, provide APIs for metadata governance.

Dataset Versioning and Snapshotting

Immutable snapshots and semantic versioning track schema changes and logic updates. Integration with open table formats like Delta Lake or Apache Iceberg optimizes storage and query performance while supporting retention policies.
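
One lightweight way to picture the versioning scheme is a content hash for the snapshot plus a rule for which version component to bump. The sketch below is illustrative only: the change categories (`breaking_schema`, `additive_schema`, `logic_fix`) are hypothetical labels, and table formats such as Delta Lake or Iceberg manage snapshots through their own mechanisms.

```python
import hashlib
import json


def snapshot_id(rows):
    """Derive a short, deterministic identifier from the snapshot contents."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def bump_version(version, change):
    """Apply semantic versioning to a dataset version string 'MAJOR.MINOR.PATCH'."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "breaking_schema":   # removed or retyped column
        return f"{major + 1}.0.0"
    if change == "additive_schema":   # new optional column
        return f"{major}.{minor + 1}.0"
    if change == "logic_fix":         # same schema, corrected cleansing rule
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")
```

Because the identifier depends only on content, re-running the same cleansing job on the same inputs yields the same snapshot ID, which helps with reproducibility checks.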

Integration with Feature Stores

Normalized features are published to repositories such as Feast, Amazon SageMaker Feature Store and Tecton. Consistent batch and real-time pipelines ensure feature freshness and reproducibility.

Orchestration and Handoff Mechanisms

- Workflow schedulers like Apache Airflow and Prefect

- ML pipelines orchestrated by Kubeflow

- Event-driven triggers via Apache Kafka or cloud event buses

- Serverless post-processing with AWS Lambda and Azure Functions

Dependency Management and Impact Analysis

Dependency graphs link data sources, cleansing tasks and model pipelines. Platforms such as Dagster and Azure Data Factory visualize workflows, detect schema drift and prevent incompatible promotions.

Monitoring and Validation of Delivered Data

- Regular data quality checks on new partitions

- Statistical validation against historical baselines

- Automated alerts for threshold breaches

- Dashboards displaying completeness, accuracy and freshness metrics

Best Practices for Handoff to Predictive Models

- Define SLAs for data freshness, processing windows and error rates

- Establish standardized APIs or data contracts for model consumption

- Maintain logging and audit trails linking models to cleansing runs

- Encapsulate transformation logic in feature engineering frameworks

- Foster collaboration between data engineering, data science and operations

By rigorously defining delivery complexity, implementing structured cleansing and normalization workflows, deploying AI-driven agents for anomaly detection and standardization, and formalizing the handoff to predictive models, organizations can achieve scalable, accurate and adaptive logistics operations. This integrated approach reduces costs, enhances service reliability and builds a foundation for continuous improvement in modern supply chains.

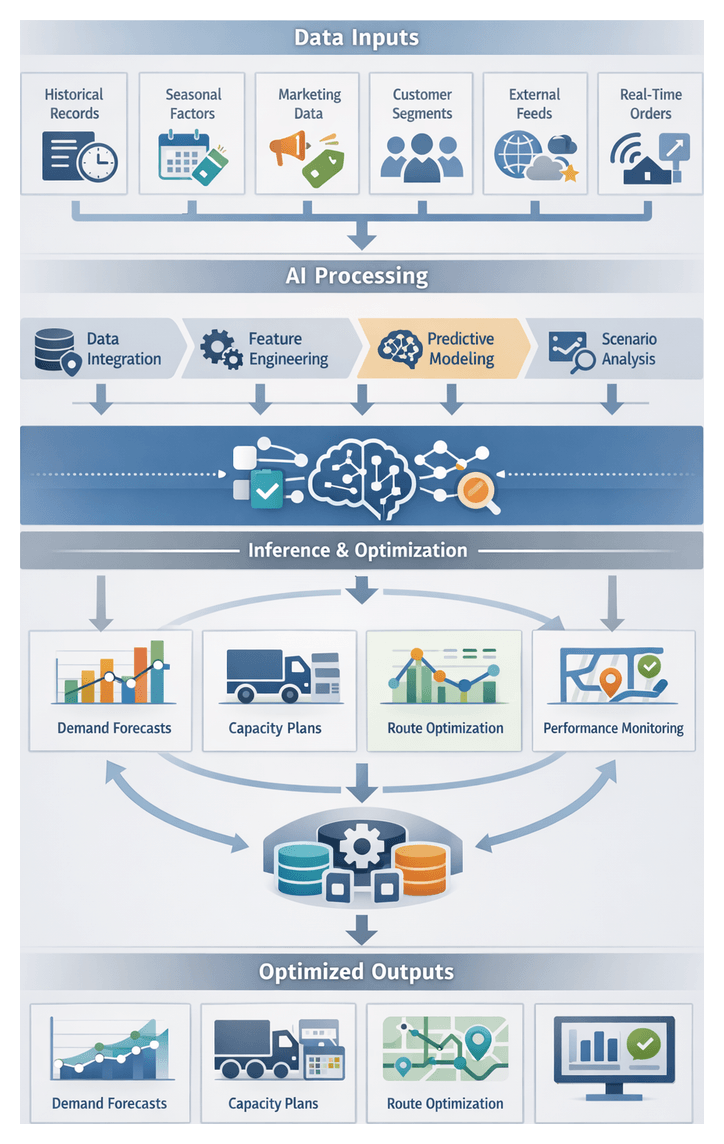

Chapter 3: Demand Forecasting and Capacity Planning

Purpose and Industry Context

The demand forecasting and capacity planning workflow transforms raw logistics and transportation data into actionable plans that align fleet resources with anticipated shipment volumes. By leveraging AI-driven models to anticipate future order volumes, delivery timing and geographic distribution, organizations shift from reactive dispatching to proactive planning. Accurate forecasts optimize resource allocation, minimize empty miles and improve service commitments, while strategic insights inform investments in fleet assets and facility expansions. In an era of e-commerce growth, seasonal promotions and supply chain disruptions, traditional rule-based approaches struggle to capture nonlinear trends and sudden spikes in demand. AI addresses these challenges through machine learning models capable of identifying temporal patterns, correlating external factors and adapting to new data, enabling carriers to anticipate demand shifts days or weeks in advance and reduce reliance on buffer capacity.

Data Inputs, Preparation, and Quality

Robust forecasting relies on a rich blend of historical, transactional and contextual data. Inputs must be cleansed, normalized and integrated into a central repository to support high-accuracy predictions.

- Historical Order Records: Time-stamped volumes, origins and destinations, product categories and service levels over a defined retrospective window.

- Seasonal and Calendar Factors: Holidays, promotional events, billing cycles and industry peaks such as back-to-school or year-end sales.

- Marketing Schedules: Discount campaigns, product launches and incentive programs that drive order frequency.

- Customer Segmentation: Patterns by account type, geography and service preferences for differentiated forecasts.

- External Feeds: Macroeconomic indicators, consumer sentiment, weather forecasts and competitor activity.

- Real-Time Transaction Streams: Incoming order confirmations for rolling short-term forecast updates.

Data quality prerequisites include consistent schemas for order dates, SKU identifiers and geographic codes; completeness thresholds for historical coverage; validity checks for outliers and improbable distances; defined latency windows for batch and streaming feeds; metadata annotation for lineage and ownership; and security controls to ensure regulatory compliance with GDPR or CCPA.

Integration requires APIs or ETL pipelines from order management, warehouse systems and customer portals; message buses such as Kafka or Azure Event Hubs for live streams; data lakes or warehouses with schema management; third-party RESTful feeds; and authentication frameworks like OAuth2 or SAML.

Upstream dependencies include cleansed and normalized order data, validated contextual feeds, centralized master reference tables and quality assessment reports confirming data meets accuracy standards.

Predictive Modeling Workflow

The predictive analytics workflow applies statistical and machine learning techniques to prepared data, producing demand forecasts that feed directly into capacity decisions. It consists of data preparation, feature engineering, model training and scenario evaluation.

Data Preparation and Feature Engineering

Data preparation extracts relevant variables, enriches raw inputs and engineers features that expose demand drivers to forecasting models. Key activities include automated data extraction from central repositories; time-series alignment into consistent intervals; feature creation such as rolling averages, trend indicators and event flags; and external data fusion via APIs or batch imports. Automated quality verification uses schema inference and integrity checks with tools like TensorFlow Data Validation and anomaly detection within scikit-learn pipelines. Proprietary platforms streamline ETL orchestration. Well-engineered features and clean inputs are essential for accurate model performance and reliable capacity projections.
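
The feature creation step can be sketched with the standard library alone. The example assumes daily order counts keyed by date and a set of known event days; the feature names are hypothetical. Note that the rolling mean here includes the current day, so for forecasting it would need to be shifted by the forecast horizon to avoid leaking the target.

```python
from datetime import date


def rolling_mean(values, window):
    """Trailing mean over `window` points; None until the window is full."""
    means = []
    for i in range(len(values)):
        if i + 1 < window:
            means.append(None)
        else:
            chunk = values[i + 1 - window : i + 1]
            means.append(sum(chunk) / window)
    return means


def build_features(daily_orders, event_days, window=7):
    """Turn {date: order_count} into one feature row per day."""
    days = sorted(daily_orders)
    volumes = [daily_orders[d] for d in days]
    means = rolling_mean(volumes, window)
    return [
        {
            "date": d,
            "orders": volume,
            "rolling_mean": mean,
            "day_of_week": d.weekday(),   # Monday = 0
            "is_event": d in event_days,  # promotion or holiday flag
        }
        for d, volume, mean in zip(days, volumes, means)
    ]
```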

Model Selection and Training

Model choice depends on demand patterns, data volume and forecast horizon. Common options include:

- ARIMA and Exponential Smoothing: For series with stable trend and modest seasonality that can be made stationary through differencing.

- Gradient Boosting Machines: Using XGBoost or LightGBM to capture nonlinear feature interactions.

- Recurrent Neural Networks and Transformers: For complex seasonal and trend decomposition over long horizons.

- Ensemble Methods: Combining algorithms to balance bias and variance and improve resilience to anomalies.

During training, models are evaluated on hold-out sets using metrics such as MAPE and RMSE. Cross-validation folds are orchestrated by workflow engines, enabling parallel training on compute clusters. AI agents monitor training jobs, implement retry logic on failures and log performance metrics for continuous improvement. Version control, governance workflows and rollback procedures ensure model artifacts adhere to organizational standards.
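
To make the evaluation metrics concrete, the sketch below pairs the simplest member of the exponential smoothing family with MAPE and RMSE. The smoothing factor of 0.3 is an arbitrary illustrative value, and skipping zero actuals in MAPE is one common convention among several.

```python
import math


def simple_exponential_smoothing(history, alpha=0.3):
    """Return the one-step-ahead forecast from simple exponential smoothing."""
    level = history[0]
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def mape(actual, predicted):
    """Mean absolute percentage error, ignoring periods with zero actuals."""
    pairs = [(a, p) for a, p in zip(actual, predicted) if a != 0]
    return 100.0 * sum(abs((a - p) / a) for a, p in pairs) / len(pairs)


def rmse(actual, predicted):
    """Root mean squared error in the units of the series."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))
```

MAPE is scale-free and easy to explain to planners, while RMSE penalizes large misses more heavily, which matters when a single badly under-forecast day drives overtime or missed windows.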

Forecast Inference and Scenario Analysis

Trained models generate demand forecasts under multiple scenarios, providing planners with insights into capacity requirements across normal, peak and stress conditions.

- Baseline Forecast: Based on recent trends, seasonality and scheduled events.

- Confidence Bounds: Upper and lower intervals to assess risk exposure.

- What-If Analyses: Simulations of hypothetical changes such as promotions or regional spikes.

Inference jobs run on nightly or intra-day cadences, triggered by workflow orchestrators that manage batch pipelines, collect outputs and push results to decision support dashboards. AI-powered monitoring services track latencies, detect throughput bottlenecks and provision additional resources during demand surges.
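
A minimal sketch of confidence bounds and a what-if adjustment follows. It assumes forecast residuals are roughly normal and uses their sample standard deviation to size the interval, which is a simplification; the percentage uplift is likewise a stand-in for a fuller scenario model.

```python
from statistics import NormalDist, stdev


def forecast_with_bounds(point_forecast, residuals, confidence=0.9):
    """Return (lower, point, upper) assuming roughly normal residuals.

    `residuals` are past (actual - forecast) errors; at least two are needed.
    """
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    spread = z * stdev(residuals)
    lower = max(0.0, point_forecast - spread)  # volumes cannot be negative
    return lower, point_forecast, point_forecast + spread


def what_if(point_forecast, uplift_pct):
    """Scale a baseline forecast by a hypothetical percentage uplift."""
    return point_forecast * (1 + uplift_pct / 100)
```

Planners could, for instance, size capacity against the upper bound of a promotion scenario rather than the baseline point forecast.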

Capacity Allocation and Output Deliverables

The convergence of forecasting and planning produces a suite of deliverables that drive downstream operations and optimization modules.

- Demand Projection Reports: Forecast tables with daily, weekly and monthly volumes broken down by region, customer segment and order type, accompanied by trend indicators.

- Capacity Allocation Plans: Recommendations for fleet composition (vans, trucks, electric vehicles), driver shift schedules, utilization targets and inventory staging for hubs.

- Scenario Analysis Dashboards: Interactive views for best-case, worst-case and baseline forecasts with sensitivity controls and threshold alerts.

- Confidence Metrics: Probability distributions, confidence intervals and risk scores highlighting under- or over-allocation potential.

- API Feeds and Data Artifacts: Structured outputs in JSON, CSV or Apache Parquet formats; RESTful endpoints delivering daily requirements; and webhooks for real-time updates.
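
The translation from a demand projection to a fleet requirement can be as simple as a ceiling division against effective vehicle capacity. The sketch below assumes a single vehicle type measured in parcels per day and a target utilization of 85%; both figures are illustrative.

```python
import math


def vehicles_required(forecast_parcels, parcels_per_vehicle, target_utilization=0.85):
    """Vehicles needed to carry the forecast at the target utilization."""
    if parcels_per_vehicle <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("capacity must be positive and utilization in (0, 1]")
    effective_capacity = parcels_per_vehicle * target_utilization
    return math.ceil(forecast_parcels / effective_capacity)


def allocation_plan(forecast_by_region, parcels_per_vehicle, target_utilization=0.85):
    """Return {region: vehicle_count} for a regional forecast."""
    return {
        region: vehicles_required(volume, parcels_per_vehicle, target_utilization)
        for region, volume in forecast_by_region.items()
    }
```

Running the same calculation on the lower bound, point forecast and upper bound gives a quick view of under- and over-allocation risk.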

AI-Driven Routing and Optimization

AI extends beyond forecasting into dynamic route optimization and adaptive learning, enabling proactive orchestration of delivery operations under multiple constraints.

Predictive Modeling for Routing

Machine learning models forecast travel times, congestion maps and demand heat-maps using historical GPS traces, traffic feeds and weather data. Data scientists develop and tune these models, while data engineers maintain pipelines that aggregate telemetry. Platforms such as Google Cloud AI, AWS SageMaker and IBM Watson provide infrastructure for training and deployment. Forecast outputs inform route planners of potential delays, enabling preemptive adjustments.

Optimization Engines

Optimization engines convert predictive outputs into executable routes by solving vehicle routing problems that account for capacities, time windows, work rules and transit time forecasts. Operations research engineers define objective functions and constraints, leveraging libraries such as OR-Tools or commercial solvers embedded in transportation management systems. Workflow orchestrators manage iterative solution cycles, seeding initial plans with heuristics and refining them via local search and metaheuristics to maximize on-time performance and resource utilization.
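
The seed-then-refine pattern can be shown on a single-vehicle tour. The sketch below builds an initial route with a nearest-neighbor heuristic and improves it with 2-opt local search. It is deliberately simplified: it uses straight-line distances and ignores capacities, time windows and work rules, which a solver such as OR-Tools would handle.

```python
import math


def route_length(route, points):
    """Total Euclidean length of a route given as a list of point indices."""
    return sum(math.dist(points[a], points[b]) for a, b in zip(route, route[1:]))


def nearest_neighbor(points, depot=0):
    """Greedy seed: always drive to the closest unvisited stop."""
    unvisited = set(range(len(points))) - {depot}
    route = [depot]
    while unvisited:
        last = points[route[-1]]
        nxt = min(unvisited, key=lambda i: (math.dist(last, points[i]), i))
        route.append(nxt)
        unvisited.remove(nxt)
    route.append(depot)  # return to the depot
    return route


def two_opt(route, points):
    """Local search: reverse segments while doing so shortens the route."""
    best = route[:]
    improved = True
    while improved:
        improved = False
        for i in range(1, len(best) - 2):
            for j in range(i + 1, len(best) - 1):
                candidate = best[:i] + best[i:j + 1][::-1] + best[j + 1:]
                if route_length(candidate, points) < route_length(best, points) - 1e-9:
                    best, improved = candidate, True
    return best
```

In practice the straight-line distances would be replaced by the predicted travel time matrix, so that the same search minimizes expected drive time rather than geometry.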

Adaptive Learning and Feedback

As deliveries execute, telematics and status updates stream back into AI modules. Machine learning engineers design retraining pipelines to incorporate new execution data, detect model drift and trigger automated retraining. Anomaly detection agents flag deviations and initiate corrective actions. Continuous integration platforms test updated models and solver parameters in sandbox environments before promotion. Reactive updates fine-tune models in response to real-time exceptions, while proactive cycles integrate historical logs and seasonal changes. This closed-loop learning refines routing accuracy and efficiency over time.

Integration, Coordination, and Governance

Seamless integration and cross-functional collaboration are essential for end-to-end capacity planning and routing orchestration.

- System Integration: Forecast exports via APIs or message queues into ERP and TMS modules; allocation rules engines translate forecasts into resource requirements; BI platforms such as Power BI or Tableau enable interactive planning dashboards; approved plans publish automatically to inventory, scheduling and workforce systems.

- Handoff to Optimization Engines: Tools consume geocoded demand points, fleet definitions and cost matrices derived from predictive travel times. Scheduling interfaces accept XML or JSON in advanced planning and scheduling (APS) schemas, direct database writes or message queue events.

- Data Exchange Protocols: OpenAPI-compliant REST endpoints, MQTT for real-time updates and secure FTP transfers maintain consistency and security. Metadata descriptors support automated validation and reconciliation.

- Organizational Roles: Solution architects design end-to-end workflows; data engineers manage pipelines; data scientists iterate models; operations research engineers define constraints; planning managers validate forecasts; IT operations ensure infrastructure scalability and recovery; DevOps engineers maintain deployment pipelines; business analysts align objectives with SLAs and cost targets.

- Governance and Quality Checks: Automated schema validation, sanity checks on volume totals, approval workflows logging reviewer identities, and audit trails linking forecasts to data versions and model parameters.

Monitoring, Feedback, and Continuous Improvement

Embedding monitoring and feedback loops ensures that forecasts and capacity plans remain accurate and aligned with actual operations.

- Forecast Accuracy Dashboards: Track error metrics over rolling windows to detect under- or over-provisioning trends.

- Error Analysis Reports: Identify drivers of model drift, including new customer segments or market shifts.

- Automated Retraining Triggers: Initiate model retraining when performance degrades beyond thresholds, using batch and streaming pipelines.

- Continuous Governance Reviews: Validate data inputs, quality controls and model artifacts against audit requirements.

- Best Practices: Maintain version control for models and outputs, implement incremental refreshes, ensure cross-functional visibility via dashboards, automate reconciliation of actuals versus forecasts, and schedule periodic model retraining using execution feedback.
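
An automated retraining trigger can be expressed as a rolling error monitor. The class below is a minimal sketch: the seven-observation window and the 15% MAPE threshold are placeholder values, and the class name is hypothetical.

```python
from collections import deque


class RetrainingMonitor:
    """Track rolling forecast error and signal when retraining is due."""

    def __init__(self, window=7, mape_threshold=15.0):
        self.errors = deque(maxlen=window)  # oldest errors fall out automatically
        self.mape_threshold = mape_threshold

    def record(self, actual, forecast):
        """Store the absolute percentage error for one period."""
        if actual != 0:
            self.errors.append(abs(actual - forecast) / abs(actual) * 100.0)

    def rolling_mape(self):
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def should_retrain(self):
        """Fire only once the window is full, to avoid reacting to one bad day."""
        window_full = len(self.errors) == self.errors.maxlen
        return window_full and self.rolling_mape() > self.mape_threshold
```

Waiting for a full window is a design choice that trades responsiveness for stability; a shorter window or a second, higher threshold could be added for faster reaction to abrupt shifts.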

By integrating demand forecasting, capacity planning and AI-driven routing within a governed, collaborative framework, organizations achieve responsive, data-driven logistics that optimize resource utilization, elevate service quality and maintain competitive advantage in dynamic markets.

Chapter 4: Predictive Traffic Modeling and Time Estimation

Traffic Prediction Data Integration

Accurate forecasting of traffic conditions is essential for AI-driven logistics orchestration. By unifying real-time vehicle telemetry, third-party traffic feeds and environmental data, organizations generate time-indexed travel time profiles that inform routing, scheduling and dispatch decisions. High-fidelity traffic predictions enable proactive management of bottlenecks, improved delivery window reliability and optimized fleet utilization across urban and intercity networks.

Key Data Inputs and Their Roles

- Fleet Telematics Streams: High-frequency GPS, speed and status signals recalibrate models with ground-truth segment travel times.

- Third-Party Traffic APIs: Network-wide insights from Google Maps Platform traffic data, the HERE Traffic API and the TomTom Traffic API augment sparse telemetry.

- Historical Traffic Archives: Time-series patterns by hour, day and season establish baselines and distinguish recurring congestion.

- Weather and Environmental Feeds: Conditions from OpenWeatherMap and national services adjust predictions for rain, snow or extreme temperatures.

- Road Network Topology: GIS data on segment lengths, lane counts and signal timings provides the spatial framework for origin-destination modeling.

- Incident and Construction Reports: Real-time alerts on accidents, closures and planned works inject perturbations into forecasts.

- Regulatory Constraints: Time-of-day restrictions, dedicated lanes and local ordinances impose hard bounds on feasible travel times.

Prerequisites and Conditions

- Unified Data Repository: A central data lake with consistent timestamps, geospatial indexing and standardized units enables seamless correlation.

- Data Quality and Validation: Ingestion routines enforce lineage, completeness and anomaly checks, with alerts for telemetry dropouts or API outages.

- Latency Tiers: Sub-minute freshness for real-time feeds and hourly or daily updates for historical archives align with service-level agreements.

- Granularity Standards: Segment resolution and time buckets (e.g., five-minute intervals) balance model accuracy and compute performance.

- Metadata Tagging: Source identifiers, confidence scores and coverage footprints allow selective weighting during training and inference.

- Compliance and Privacy: Anonymization, consent mechanisms and regional handling of location data ensure GDPR and local regulatory adherence.

Integration Workflow Overview

- Ingestion and Staging: Raw streams and batch exports arrive via APIs, message queues or file transfers, then are time-stamped and partitioned by region.

- Schema Harmonization: Telematics, traffic and weather formats map into a unified schema with consistent geospatial projections.

- Cleansing and Error Handling: Out-of-range values, duplicates and timestamp inconsistencies are corrected or purged, with reconciliation flags for manual review.

- Temporal Alignment: Differing frequencies align to the modeling interval through aggregation or interpolation.

- Feature Enrichment: Each segment record is augmented with historical averages, incident counts, weather impact scores and regulatory flags.

- Quality Gate and Notification: Data completeness, freshness and consistency checks precede admission to the predictive engine, with automated alerts on violations.

- Handoff to Predictive Modeling: Validated datasets, accompanied by partition keys and version metadata, are published to the model input queue.
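
The temporal alignment step can be illustrated by snapping telemetry to five-minute buckets and averaging per segment. This sketch assumes observations arrive as `(segment_id, timestamp, speed_kmh)` tuples and that the bucket size divides evenly into an hour.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def bucket_start(ts, minutes=5):
    """Round a timestamp down to the start of its bucket."""
    return ts - timedelta(
        minutes=ts.minute % minutes,
        seconds=ts.second,
        microseconds=ts.microsecond,
    )


def aggregate_speeds(observations, minutes=5):
    """Average speed per (segment_id, bucket_start)."""
    totals = defaultdict(lambda: [0.0, 0])  # key -> [sum, count]
    for segment_id, ts, speed in observations:
        key = (segment_id, bucket_start(ts, minutes))
        totals[key][0] += speed
        totals[key][1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


# Example: three readings on one segment fall into two buckets.
readings = [
    ("seg-1", datetime(2024, 5, 1, 10, 1, 30), 40.0),
    ("seg-1", datetime(2024, 5, 1, 10, 4, 59), 60.0),
    ("seg-1", datetime(2024, 5, 1, 10, 5, 0), 30.0),
]
profile = aggregate_speeds(readings)
```

Slower feeds such as hourly weather would go the other way, being repeated or interpolated onto the same bucket grid.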

Dependencies on Upstream Processes

This integration relies on fully ingested telematics and customer order data, standardized cleansing routines, centralized traffic archives for model bootstrapping, and a metadata repository exposing network topologies and routing constraints. Satisfying these dependencies ensures the delivery of high-quality, actionable forecasts that feed dynamic route optimization and adaptive scheduling engines.

Modeling and Inference Workflow

Transforming enriched traffic inputs into precise travel time estimates involves a two-stage pipeline: offline model development and real-time inference. Data engineers and ML practitioners build and validate models in batch environments before deploying them to low-latency services that feed route optimization modules and dispatch systems.

Offline Model Development

- Data Extraction and Feature Assembly: Historical traffic, weather and incident logs are processed in Apache Spark or Apache Flink. Geospatial normalization and static attribute enrichment prepare inputs for training.

- Feature Engineering: Temporal indicators (peak hours, holidays), spatial context (urban vs suburban) and event flags (concerts, sports) are materialized into a feature store or vector database.

- Model Selection and Tuning: Algorithms such as gradient boosting with XGBoost and LightGBM, RNN/LSTM, CNNs on spatiotemporal grids and graph neural networks are prototyped in TensorFlow and PyTorch. Hyperparameter sweeps run on distributed clusters orchestrated by Kubeflow Pipelines or SageMaker.

- Cross-Validation and Backtesting: Hold-out periods and geographic zones validate generalization. Simulated delivery scenarios compare predictions to actual records to estimate confidence intervals.

- Packaging and Versioning: Approved models are registered in MLflow with metadata on training snapshots, features and hyperparameters, then containerized for deployment.

- Approval and Deployment: Operations managers review performance reports. Canary or blue-green strategies on Kubernetes minimize risk during rollout.

Real-Time Inference Pipeline

- Stream Ingestion: GPS, sensor networks, weather APIs and incident feeds flow through Apache Kafka or Amazon Kinesis into preprocessing microservices that apply training-consistent transformations.

- Inference Service: A stateless REST or gRPC endpoint running on Kubernetes or serverless platforms loads the latest model and returns segment travel time predictions with confidence scores.

- Smoothing: Temporal filters and rolling averages stabilize outputs, preventing abrupt variations that could undermine driver trust.

- Data Flow to Optimizer: Estimated times and confidence intervals feed the dynamic route optimization engine, ensuring schedules reflect current and anticipated conditions.

- Monitoring and Alerting: Prometheus and Grafana track latency, error rates and drift metrics. Anomaly detectors flag deviations for retraining triggers.

- Retraining Loop: Automated triggers launch new training pipelines when performance drops below defined thresholds, incorporating fresh data to maintain accuracy.
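
The smoothing stage in the pipeline above can be sketched as a per-segment exponential moving average. The class name and the default smoothing factor are assumptions; a lower `alpha` gives steadier output at the cost of slower reaction to genuine changes.

```python
class TravelTimeSmoother:
    """Exponentially smooth successive travel time predictions per segment."""

    def __init__(self, alpha=0.3):
        self.alpha = alpha
        self.state = {}  # segment_id -> last smoothed value (seconds)

    def update(self, segment_id, predicted_seconds):
        previous = self.state.get(segment_id)
        if previous is None:
            smoothed = predicted_seconds  # first observation passes through
        else:
            smoothed = self.alpha * predicted_seconds + (1 - self.alpha) * previous
        self.state[segment_id] = smoothed
        return smoothed
```

Because the smoother keeps state, it would sit in a stream processor or a keyed cache rather than inside the stateless inference endpoint itself.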

System and Stakeholder Coordination

- Pipeline Orchestration: Apache Airflow or Prefect schedule preprocessing, training and deployment tasks with consistent failure handling and logging.

- Model Registry and CI/CD: Automated pipelines promote artifacts from staging to production, ensuring traceable version history and rollback capabilities.

- Dispatch Integration: Route optimization and dispatch systems subscribe to updated travel time feeds via APIs or event-driven webhooks.

- Cross-Functional Collaboration: Data scientists refine models while engineers maintain pipeline reliability. Shared dashboards provide visibility into performance and anomalies.

- Governance and Compliance: Auditors review model lineage and data usage. Automated documentation captures pipeline configurations and evaluation summaries.

Probabilistic Time Window Calculations

Beyond point estimates, probabilistic time windows assign confidence intervals around expected arrival times, enabling risk-aware scheduling and buffer optimization. Machine learning models estimate both the mean travel duration and its variance under current and forecasted conditions, transforming static commitments into adaptive delivery promises.

Key Modeling Approaches

- Gradient Boosted Trees using XGBoost or LightGBM for non-linear relationships on tabular features.

- RNNs and LSTMs capturing temporal dependencies in sequential traffic and delay data.

- CNNs on geo-gridded travel time matrices to detect local congestion patterns.

- Graph Neural Networks learning edge weights and node influences directly on road graphs.

- Bayesian frameworks, including Gaussian Processes, providing mean and uncertainty estimates for confidence intervals.

Feature Engineering and Data Integration

- Historical Travel Times aggregated by origin-destination, time of day and day of week.

- Real-Time Speeds from telematics or HERE Traffic API.

- Weather Indicators sourced from OpenWeatherMap.

- Incident Reports on accidents, closures and construction schedules.

- Road Attributes: speed limits, lane counts, intersection density, urban classification.

- Vehicle Profiles: load weight, type and historical driver behavior metrics.

Training and Continuous Learning

- Offline pipelines in Amazon SageMaker or Google Cloud AI Platform execute scheduled retraining aligned with data volume and seasonal shifts.

- Evaluation against benchmarks triggers promotions of models that improve accuracy or latency.

- Real-time feature stores supply low-latency access to precomputed features for online inference.

Handling Uncertainty and Variability

Techniques such as quantile regression, Monte Carlo dropout in neural networks and Bayesian inference yield asymmetric time windows—wider buffers where variance is high, tighter windows in stable conditions. These probabilistic outputs inform dynamic buffer sizing and risk-aware route adjustments.
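
A simple way to see why these windows come out asymmetric is to take empirical percentiles of sampled travel times. In the sketch below the samples could come from historical trips or from repeated stochastic forward passes of a model; the 10th and 90th percentiles are illustrative choices.

```python
from statistics import quantiles


def arrival_window(travel_time_samples, lower_pct=10, upper_pct=90):
    """Return (lower, median, upper) travel times from empirical percentiles."""
    cuts = quantiles(travel_time_samples, n=100, method="inclusive")
    # cuts[k - 1] is the k-th percentile (99 cut points in total).
    return cuts[lower_pct - 1], cuts[49], cuts[upper_pct - 1]


# Example: right-skewed travel times in minutes, with one congested trip.
samples = [20, 21, 22, 22, 23, 24, 25, 27, 30, 45]
low, mid, high = arrival_window(samples)
```

With right-skewed travel times the upper bound sits much further from the median than the lower bound does, so the late-side buffer is wider than the early-side one.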

Scalability and Governance

- Distributed inference on Kubernetes clusters or serverless functions using TensorFlow Serving or TorchServe auto-scales workloads based on real-time demand.

- Model governance enforces audit logs of inputs and predictions, bias detection across regions and segments, and compliance with ISO 27001 and GDPR.

Performance Metrics and Collaboration

- Mean absolute error, prediction interval coverage and inference latency track service quality against SLAs.

- Dashboards visualize drift and prompt retraining when deviation thresholds are crossed.

- Cross-functional teams align on evaluation criteria, feature refinement and threshold settings, ensuring operational insights feed back into model improvements.
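
Mean absolute error and prediction interval coverage are both straightforward to compute once actual travel times are logged. The functions below are a minimal sketch over parallel lists of actuals, point predictions and interval bounds.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute difference between actual and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def interval_coverage(actual, lower, upper):
    """Share of actual values that fell inside their predicted interval."""
    hits = sum(lo <= a <= hi for a, lo, hi in zip(actual, lower, upper))
    return hits / len(actual)
```

If intervals are meant to hold 80% of the time, observed coverage well below 0.8 suggests the model is overconfident, while coverage near 1.0 suggests buffers are wider than they need to be.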

Accurate probabilistic time windows enhance customer satisfaction, optimize resource utilization, mitigate delay risks and empower autonomous scheduling by feeding confidence scores into dispatch algorithms.

Estimated Time Outputs and Scheduling Hand-Offs

The culmination of predictive modeling delivers structured artifacts—segment-level estimates, route durations, time window boundaries and risk indicators—that feed route optimization and scheduling systems. Standardized formats, clear interface contracts and robust handoff protocols ensure seamless integration and maintain end-to-end efficiency.

Key Output Artifacts

- Segment-Level Travel Time Estimates with time-of-day adjustments.

- Route-Level Aggregated Durations summing contiguous segments.

- Probabilistic Time Window Boundaries (earliest departure, latest arrival).

- Confidence Intervals and upper quantiles (e.g., 90th-percentile travel times).

- Delay Risk Scores derived from anomaly detection and incident feeds.

- Metadata Tags for generation timestamp, model version and input snapshot.

Data and System Dependencies

- Real-Time Traffic Feeds for transient condition capture; outages can degrade accuracy.

- Historical Archives for recurring pattern learning; gaps may skew estimates.

- Weather and Event Data subject to API availability and licensing.

- Inference Infrastructure (GPU clusters, cloud ML services) for low-latency scoring.

- Preprocessing Pipelines for data normalization; schema misalignments can propagate errors.

- Model Registry Services to ensure consistent deployment of the intended artifact.

Interface Contracts and Delivery Mechanisms

- Structured Schemas in JSON or Protocol Buffers, including fields such as segment_id, estimated_duration, confidence_interval, risk_score and timestamp.

- Time Matrix Representations as sparse or indexed matrices for efficient storage and transmission.

- API Endpoints (e.g., /api/v1/traffic/estimates) and message queues for event-driven or on-demand update delivery.

- Push vs Pull Models with defined staleness SLAs and retry policies.

- Error Handling Codes and fallback to historical averages or heuristic estimates when real-time feeds fail.
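
The interface contract and fallback rule can be sketched with a dataclass that carries the fields named above. Everything beyond those field names is an assumption made for illustration: the units, the extra `source` field and the choice to leave the interval and risk score empty on fallback.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional, Tuple


@dataclass
class SegmentEstimate:
    segment_id: str
    estimated_duration: float  # seconds (assumed unit)
    confidence_interval: Optional[Tuple[float, float]]
    risk_score: Optional[float]
    timestamp: str             # ISO 8601
    source: str = "model"      # hypothetical provenance field


def estimate_or_fallback(segment_id, live_estimate, historical_average, timestamp):
    """Use the live estimate when present, else fall back to the historical average."""
    if live_estimate is not None:
        return live_estimate
    return SegmentEstimate(
        segment_id=segment_id,
        estimated_duration=historical_average,
        confidence_interval=None,  # no uncertainty estimate available
        risk_score=None,
        timestamp=timestamp,
        source="historical_average",
    )


def to_json(estimate):
    """Serialize an estimate for an API response or queue message."""
    return json.dumps(asdict(estimate), sort_keys=True)
```

Marking the provenance explicitly lets the optimizer apply wider buffers to fallback values instead of treating them as fresh predictions.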

Handoff to Route Optimization Engines

- Triggering of full or incremental optimization runs based on time, events or manual requests.

- Incorporation of Confidence Bounds via chance-constrained programming or stochastic simulations.

- Data Synchronization through transactional handoffs or distributed locks to prevent processing of stale data.

- Feedback Loop Integration capturing planned vs actual travel times for later retraining.
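
A chance constraint asks that a stop be reached by its deadline with at least a given probability. The sketch below checks that condition under two simplifying assumptions that are not from the source: arrival times are normally distributed, and segment travel times are independent so their variances add.

```python
import math
from statistics import NormalDist


def route_arrival_stats(segment_means, segment_stds):
    """Combine segment estimates into a route mean and standard deviation."""
    total_mean = sum(segment_means)
    total_std = math.sqrt(sum(s * s for s in segment_stds))
    return total_mean, total_std


def on_time_probability(mean_s, std_s, deadline_s):
    """Probability of arriving by the deadline under a normal assumption."""
    if std_s == 0:
        return 1.0 if mean_s <= deadline_s else 0.0
    return NormalDist(mean_s, std_s).cdf(deadline_s)


def satisfies_chance_constraint(mean_s, std_s, deadline_s, min_probability=0.95):
    """True when the on-time probability meets the required service level."""
    return on_time_probability(mean_s, std_s, deadline_s) >= min_probability
```

An optimizer could use such a check to reject candidate routes, or to add buffer time until the constraint holds; correlated delays along a corridor would require a covariance term or simulation instead.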

Scheduling Dependencies and Downstream Consumption

- Time Slot Assignment using earliest and latest arrival estimates for batching and slot optimization.

- Resource Allocation of drivers, vehicles and loading docks with dynamic buffer rules based on risk scores.

- Customer Notifications conveying accurate arrival windows via SMS or email.

- Real-Time Monitoring combining live tracking data with initial estimates for continuous updates.

- Performance Reporting referencing original windows to trigger exception workflows when SLAs are at risk.

Best Practices for Reliable Handoffs

- Versioned APIs and Schemas to decouple evolution of predictive and optimization components.

- Message-Driven Architectures with partitioned topics keyed by region or fleet segment for scalability.

- Monitoring Metrics for data freshness, API latency and error rates to surface issues before impact.

- Automated Fallback Rules reverting to baselines when models or pipelines fail.

- Security Controls enforcing encryption in transit, access controls and audit logging of all handoffs.

By delivering precise, timely and well-structured travel time outputs and managing their dependencies with optimization and scheduling systems, logistics providers achieve enhanced reliability, resource efficiency and customer satisfaction. This final stage bridges predictive analytics and operational execution, realizing the promise of end-to-end AI-driven logistics orchestration.

Chapter 5: Dynamic Route Optimization Engine

Purpose of the Route Optimization Stage

The route optimization stage is the nexus where predictive insights, operational constraints and strategic objectives converge to generate executable delivery plans. By translating demand forecasts, traffic predictions and resource availability into cost-effective, time-sensitive routes, this stage ensures service level agreements are met while controlling distance, travel time and operational expenses. Leveraging advanced solvers instead of manual routing or static heuristics, logistics providers gain the agility to adapt to fluctuating demand, evolving traffic conditions and regulatory requirements, thereby maintaining high on-time performance and customer satisfaction.

Key Data Inputs and Prerequisites

Effective optimization relies on timely, accurate and standardized data streams. Before invoking the solver, the following inputs must be cleansed, validated and synchronized:

- Demand Forecasts – Projected delivery volumes by region, time window and customer priority from the demand forecasting module.

- Vehicle and Driver Profiles – Vehicle capacities, fuel types, operating costs and telematics-reported current locations; driver schedules, qualifications, hours-of-service limits and rest mandates.

- Geospatial Network Data – Digital road network graphs, distance matrices, permitted routes per vehicle type; geocoded pickup/drop-off coordinates and access constraints.

- Traffic and Time Estimates – Congestion forecasts and travel time distributions sourced from predictive traffic models with live feeds and historical patterns.

- Business Rules and SLAs – Customer time windows, priority tiers, penalty costs, maximum stops per route and preferred depot assignments.

- Regulatory Constraints – Local driver hour regulations, vehicle weight and emissions restrictions, zone-specific delivery hours.

These streams feed into a central repository exposing standardized interfaces such as RESTful APIs or message queues. Timestamp alignment across sources is essential to maintain consistency, particularly for rolling horizon updates and real-time reoptimizations.

Triggering Conditions for Optimization Cycles

Optimization may be invoked under three principal scenarios to balance computational load with operational responsiveness:

- Batch Planning – Scheduled runs (e.g., nightly) that generate the next day’s complete routes from consolidated orders and forecasts.

- Rolling Horizon Updates – Mid-day reoptimizations of a moving window to incorporate new orders, cancellations or significant traffic deviations.

- On-Demand Rerouting – Real-time triggers responding to exceptions such as vehicle breakdowns, severe weather or high-priority shipments.

An orchestration layer monitors fleet status and event alerts, deciding whether a full or partial reoptimization is warranted to maintain service levels without unnecessary compute overhead.
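The decision between a full run, a partial reoptimization and no action can be sketched as a small rule set. The thresholds and the `FleetSnapshot` fields below are hypothetical placeholders; a production orchestration layer would tune them against observed compute cost and service impact.

```python
from dataclasses import dataclass
from enum import Enum


class Cycle(Enum):
    NONE = "none"
    PARTIAL = "partial"   # rolling-horizon update or on-demand reroute
    FULL = "full"         # batch planning run


@dataclass
class FleetSnapshot:
    is_scheduled_batch: bool          # e.g. the nightly planning window
    new_or_cancelled_orders: int      # order changes since the last run
    total_open_orders: int
    max_travel_time_deviation: float  # 0.3 means 30% slower than forecast
    active_exceptions: int            # breakdowns, weather alerts, priority orders


def decide_cycle(s: FleetSnapshot, churn_threshold: float = 0.10,
                 deviation_threshold: float = 0.25) -> Cycle:
    """Pick the cheapest optimization cycle that still protects service levels."""
    if s.is_scheduled_batch:
        return Cycle.FULL
    if s.active_exceptions > 0:
        return Cycle.PARTIAL
    churn = s.new_or_cancelled_orders / max(s.total_open_orders, 1)
    if churn >= churn_threshold or s.max_travel_time_deviation >= deviation_threshold:
        return Cycle.PARTIAL
    return Cycle.NONE
```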

Integration with Optimization Engines

The solver component underpins the route optimization stage, interfacing with one or more AI-driven products and libraries. Commonly used engines include:

- Google OR-Tools for open-source vehicle routing with time windows and capacity constraints.

- Gurobi for high-performance mixed-integer and quadratic programming.

- IBM ILOG CPLEX for enterprise-grade optimization with parallel heuristics.

- OptaPlanner as a Java constraint-solving toolkit supporting metaheuristics.

An orchestration microservice transforms standardized inputs into solver-specific formats, invokes the solver API, and converts outputs back into the workflow schema. Advanced implementations embed custom AI agents to adapt parameters based on historical performance and accelerate convergence.
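The transform, invoke and convert-back pattern can be illustrated with a small adapter. Everything here is a hypothetical sketch: `RoutingSolver` is an assumed interface rather than the API of any engine named above, `IndexOrderSolver` is a stand-in that performs no optimization, and Manhattan distance on planar coordinates replaces a real travel-time matrix.

```python
from typing import Dict, List, Protocol, Tuple


class RoutingSolver(Protocol):
    """Assumed solver interface: integer cost matrix in, node order out."""
    def solve(self, matrix: List[List[int]], depot: int) -> List[int]: ...


class IndexOrderSolver:
    """Stand-in solver that visits nodes in index order; swap in a real engine."""
    def solve(self, matrix: List[List[int]], depot: int) -> List[int]:
        others = [i for i in range(len(matrix)) if i != depot]
        return [depot] + others + [depot]


def optimize(stops: Dict[str, Tuple[float, float]], depot_id: str,
             solver: RoutingSolver) -> List[str]:
    # 1. Transform: map stop identifiers to the integer indices solvers expect.
    ids = [depot_id] + sorted(k for k in stops if k != depot_id)
    matrix = [
        [int(round(abs(stops[a][0] - stops[b][0]) + abs(stops[a][1] - stops[b][1])))
         for b in ids]
        for a in ids
    ]
    # 2. Invoke the solver on its native representation.
    order = solver.solve(matrix, depot=0)
    # 3. Convert back: translate indices into workflow stop identifiers.
    return [ids[i] for i in order]
```

Keeping the index mapping inside the adapter means the rest of the workflow never depends on solver-specific node numbering.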

Constraint Validation and Compliance

Before releasing routes to dispatch, the system verifies that every hard constraint is satisfied and that any soft-constraint violations stay within accepted tolerances:

- Vehicle capacity limits for weight and volume.

- Adherence to customer time windows under traffic variability.

- Driver shift durations, mandatory breaks and rest periods.

- Depot operating hours and permitted service stop windows.

- Company policies on maximum stops, prioritized segments and special handling.

Violations trigger structured error reports, which the orchestration may use to retry optimization with adjusted parameters or escalate for manual intervention. Successful validation tags routes for downstream scheduling.
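A validation pass that emits structured error reports might look like the sketch below. The violation codes, the hard/soft classification and the `PlannedStop` fields are illustrative assumptions rather than a fixed taxonomy.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PlannedStop:
    stop_id: str
    demand_kg: float
    earliest_s: int   # start of the customer time window
    latest_s: int     # end of the customer time window
    arrival_s: int    # planned arrival produced by the optimizer


@dataclass
class Violation:
    route_id: str
    stop_id: str      # empty string for route-level violations
    code: str
    severity: str     # "hard" blocks release, "soft" is reported only
    detail: str


def validate_route(route_id: str, stops: List[PlannedStop], capacity_kg: float,
                   max_stops: int, shift_end_s: int) -> List[Violation]:
    """Check one route and return every violation found (empty list if clean)."""
    found: List[Violation] = []
    load = sum(s.demand_kg for s in stops)
    if load > capacity_kg:
        found.append(Violation(route_id, "", "CAPACITY_EXCEEDED", "hard",
                               f"load {load} kg exceeds {capacity_kg} kg"))
    if len(stops) > max_stops:
        found.append(Violation(route_id, "", "MAX_STOPS_EXCEEDED", "soft",
                               f"{len(stops)} stops exceeds policy of {max_stops}"))
    for s in stops:
        if s.arrival_s > s.latest_s:
            found.append(Violation(route_id, s.stop_id, "TIME_WINDOW_LATE", "hard",
                                   f"arrival {s.arrival_s} after {s.latest_s}"))
        elif s.arrival_s < s.earliest_s:
            found.append(Violation(route_id, s.stop_id, "EARLY_ARRIVAL", "soft",
                                   f"arrival {s.arrival_s} before {s.earliest_s}"))
    if stops and stops[-1].arrival_s > shift_end_s:
        found.append(Violation(route_id, "", "SHIFT_OVERRUN", "hard",
                               f"last arrival {stops[-1].arrival_s} after shift end"))
    return found
```

An orchestrator could retry optimization when any returned violation has severity "hard" and release the route with annotations when only soft violations remain.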

Algorithmic Workflow for Route Generation

The route generation workflow executes a sequence of algorithmic actions to transform initial seeds into optimized itineraries. Each step exchanges messages via APIs or message brokers, ensuring modularity and traceability.

- Initial Route Seeding: A greedy nearest-neighbor heuristic assigns deliveries to vehicles based on estimated travel times, using current time matrices retrieved from the predictive traffic service. Combined with Google OR-Tools, the seeding phase can produce feasible starting solutions within seconds for typical instance sizes. The orchestration layer logs outcomes before advancing valid seeds.

- Constraint Validation: Seeded routes pass through a validation microservice enforcing capacities, time windows, driver regulations and special handling rules. Violations emit events that a coordination agent resolves by splitting loads or adjusting sequences. Valid routes receive compliance flags.

- Cost Function Evaluation: A cost engine computes metrics—distance, duration, fuel consumption, tolls and service penalties—and applies strategic weightings to form an objective score. Results are stored for comparative analysis during iterative improvement.

- Iterative Improvement: Local search operators (2-opt, 3-opt, swap, relocate) explore neighboring solutions. Each candidate returns to validation and cost evaluation. Decision logic accepts a candidate either because it improves the objective or under a probabilistic criterion such as simulated-annealing acceptance, then updates the incumbent solution and logs progress.

- Advanced Metaheuristics: Metaheuristic methods—guided local search, tabu search, simulated annealing—are scheduled during off-peak periods or on GPU-accelerated nodes. Integration with an AI engine dynamically adjusts penalties and manages tabu lists to diversify search and escape local optima.

- Reinforcement Learning Overlay: An RL agent selects and tunes neighborhood operations based on reward signals—solution quality, convergence speed and resource utilization. Platforms like Ray RLlib, TensorFlow Agents and OpenAI Baselines support distributed training. The agent refines policies over successive routing cycles.

- Convergence and Termination: The controller monitors iterations, time budgets and improvement thresholds. Upon meeting termination criteria, it sorts candidate routes by cost and service metrics and publishes a termination event to the orchestration broker, initiating downstream handoff.

Throughout this flow, the orchestration layer coordinates execution, handles retries, aggregates logs and publishes performance metrics. Constraint, cost and improvement services expose RESTful endpoints, while message buses decouple components for fault isolation and horizontal scaling. Data engineers, optimization specialists and operations managers collaborate via dashboards to monitor system health and solution quality.
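The seeding and iterative improvement steps can be illustrated for a single vehicle. This is a minimal sketch, not the production workflow: it assumes a small travel-time matrix `t` indexed by node, uses travel time as the only cost term, and omits capacity and time-window checks.

```python
from typing import List


def route_cost(route: List[int], t: List[List[float]]) -> float:
    """Total travel time along consecutive legs of the route."""
    return sum(t[a][b] for a, b in zip(route, route[1:]))


def nearest_neighbor(t: List[List[float]], depot: int = 0) -> List[int]:
    """Greedy seed: always drive to the closest unvisited stop."""
    unvisited = set(range(len(t))) - {depot}
    route = [depot]
    while unvisited:
        last = route[-1]
        nxt = min(unvisited, key=lambda j: (t[last][j], j))  # ties by index
        route.append(nxt)
        unvisited.remove(nxt)
    route.append(depot)
    return route


def two_opt(route: List[int], t: List[List[float]]) -> List[int]:
    """Reverse interior segments while any reversal lowers the total cost."""
    best = route[:]
    improved = True
    while improved:
        improved = False
        for i in range(1, len(best) - 2):
            for j in range(i + 1, len(best) - 1):
                cand = best[:i] + best[i:j + 1][::-1] + best[j + 1:]
                # Full re-evaluation keeps this correct for asymmetric matrices.
                if route_cost(cand, t) < route_cost(best, t):
                    best, improved = cand, True
    return best


if __name__ == "__main__":
    t = [[0, 1, 4, 3],
         [1, 0, 2, 5],
         [4, 2, 0, 10],
         [3, 5, 10, 0]]
    seed = nearest_neighbor(t)
    print(seed, route_cost(seed, t))          # [0, 1, 2, 3, 0] 16
    better = two_opt(seed, t)
    print(better, route_cost(better, t))      # [0, 2, 1, 3, 0] 14
```

In this example the greedy seed commits early to short legs and is left with an expensive final hop; a single segment reversal removes it. Recomputing the full route cost for every candidate is simple but quadratic per pass, so larger instances usually evaluate only the changed legs.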

AI Optimization Modules and System Integration

Robust route optimization combines metaheuristic solvers, reinforcement learning agents and constraint programming components within a microservices framework. This architecture supports modular deployment, dynamic scaling and seamless data exchange.

Metaheuristic Solvers

Techniques such as genetic algorithms, simulated annealing, tabu search and ant colony optimization explore large solution spaces to balance multiple objectives. Open source frameworks like Google OR-Tools provide both exact and metaheuristic routines, while commercial engines such as Gurobi and IBM CPLEX are primarily exact mixed-integer programming solvers with built-in heuristics, which makes them useful for certifying optimality gaps on smaller or decomposed subproblems. Key capabilities include:

- Initial seeding via greedy or insertion heuristics.

- Neighborhood search (2-opt, 3-opt, swap, relocate).

- Multi-objective balancing of distance, time, cost and workload.

- Termination by time limits, convergence or quality gaps.
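As one concrete instance of these capabilities, the sketch below applies simulated annealing with a swap neighborhood to a single route. The initial temperature, cooling rate and step budget are arbitrary illustrative values, and the function names are hypothetical; the acceptance rule is the standard Metropolis criterion, which accepts a worse candidate with probability exp(-delta / temperature).

```python
import math
import random
from typing import List


def route_cost(route: List[int], t: List[List[float]]) -> float:
    return sum(t[a][b] for a, b in zip(route, route[1:]))


def anneal(route: List[int], t: List[List[float]], temp: float = 10.0,
           cooling: float = 0.995, steps: int = 2000, seed: int = 0) -> List[int]:
    """Simulated annealing over swap moves; returns the best route seen."""
    if len(route) < 4:            # fewer than two interior stops: nothing to swap
        return route[:]
    rng = random.Random(seed)     # seeded for reproducible runs
    current, best = route[:], route[:]
    for _ in range(steps):        # termination by iteration budget
        i, j = rng.sample(range(1, len(current) - 1), 2)
        cand = current[:]
        cand[i], cand[j] = cand[j], cand[i]
        delta = route_cost(cand, t) - route_cost(current, t)
        # Always accept improvements; accept worse moves with a probability
        # that shrinks as the temperature cools.
        if delta < 0 or rng.random() < math.exp(-delta / temp):
            current = cand
            if route_cost(current, t) < route_cost(best, t):
                best = current[:]
        temp = max(temp * cooling, 1e-9)
    return best
```

Because the best route seen is tracked separately from the current one, the returned solution is never worse than the starting route even though the search temporarily accepts degradations.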

Reinforcement Learning Agents

RL modules treat routing as a sequential decision process, using reward signals to learn policies that generalize across demand patterns and traffic conditions. Platforms such as Ray RLlib, TensorFlow Agents and OpenAI Baselines support distributed training. Integration requires a simulation environment, experience replay stores and inference APIs for real-time policy application.
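A full RL pipeline is beyond a short example, but the core idea of learning which neighborhood operator to apply can be shown with an epsilon-greedy bandit. This is a deliberate simplification: it ignores state and treats each operator's reward as stationary, and the class, operator names and reward values are hypothetical rather than drawn from any of the platforms mentioned above.

```python
import random
from typing import Dict, List


class OperatorBandit:
    """Epsilon-greedy selection over local search operators."""

    def __init__(self, operators: List[str], epsilon: float = 0.1, seed: int = 0):
        self.values: Dict[str, float] = {op: 0.0 for op in operators}
        self.counts: Dict[str, int] = {op: 0 for op in operators}
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def select(self) -> str:
        # Explore with probability epsilon, otherwise exploit the best estimate.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.values))
        return max(self.values, key=self.values.get)

    def update(self, op: str, reward: float) -> None:
        # Incremental mean of observed rewards, e.g. cost reduction per call.
        self.counts[op] += 1
        self.values[op] += (reward - self.values[op]) / self.counts[op]
```

In use, the improvement loop would call `select()`, apply the chosen operator, and pass the resulting objective gain to `update()`.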

Constraint Programming Components

CP engines excel at encoding complex business rules, such as driver certifications, precedence constraints and specialized handling, and use propagation techniques to prune infeasible routes. The CP-SAT solver in OR-Tools and IBM CP Optimizer offer rich modeling languages. Candidate routes are submitted to CP modules through RESTful or language-binding interfaces and checked for feasibility before cost optimization.

Microservices and Messaging Framework

Each solver and AI agent is deployed as an independent container exposing job submission, status and result endpoints. An API gateway routes workloads based on complexity, while a service registry enables dynamic instance discovery. Message brokers such as Apache Kafka and RabbitMQ coordinate asynchronous job queues, backpressure control and event distribution. Data contracts define consistent payload schemas for inputs, constraints and solutions.

Real-Time Feedback and Adaptive Tuning

Telemetry on actual departure times, on-route speeds and delays streams back to the optimization pipeline. AI modules analyze deviations and adjust heuristic weights or RL rewards to improve future routing. For instance, increased penalties on congested zones redirect metaheuristic search, while RL policies update to reflect new travel time distributions. Regular retraining ensures alignment with evolving operational realities.
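The penalty adjustment described above can be sketched as an exponentially weighted update of per-zone multipliers. The smoothing factor, the floor and cap, and the zone names are assumptions chosen for illustration.

```python
from typing import Dict


def update_zone_penalties(penalties: Dict[str, float],
                          planned_s: Dict[str, float],
                          actual_s: Dict[str, float],
                          alpha: float = 0.2,
                          floor: float = 1.0,
                          cap: float = 3.0) -> Dict[str, float]:
    """Blend each zone's penalty toward its observed actual/planned ratio."""
    updated = dict(penalties)
    for zone, planned in planned_s.items():
        if zone not in actual_s or planned <= 0:
            continue  # no telemetry for this zone in the current cycle
        ratio = actual_s[zone] / planned
        previous = updated.get(zone, 1.0)
        blended = (1 - alpha) * previous + alpha * ratio
        updated[zone] = min(cap, max(floor, blended))
    return updated
```

For example, a zone planned at 600 seconds but driven in 900 seconds moves its multiplier from 1.0 to about 1.1 in one cycle. A small `alpha` damps reactions to one-off incidents, while the cap prevents a single bad day from excluding a zone entirely.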

Monitoring and Logging

Comprehensive observability underpins solution quality and transparency. Metrics on service latency, queue lengths and solver convergence are collected by Prometheus and visualized in Grafana. Job submissions, solver parameters and error traces flow into the ELK Stack for audit and root cause analysis. Integration with incident management ensures rapid responses to anomalies, preserving system resilience and performance.

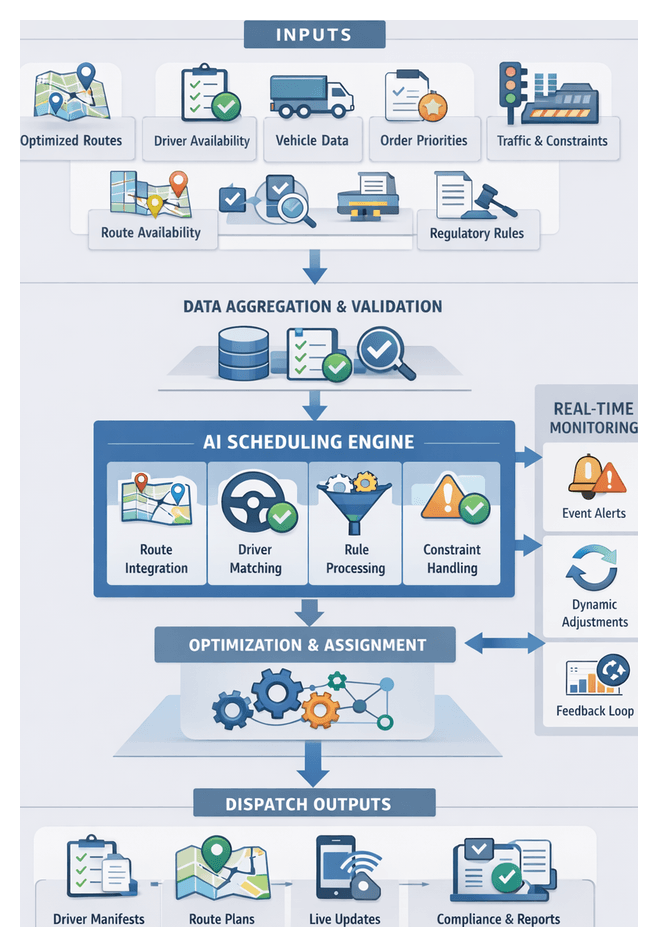

Optimized Route Outputs and Dispatch Handoffs

Upon convergence, the optimization stage emits standardized, validated artifacts that bridge strategic planning with tactical dispatch. Well-structured outputs facilitate seamless integration into scheduling platforms, driver applications and execution monitoring systems.

Key Output Artifacts

- Route Itineraries – Human-readable summaries of each vehicle’s stops, scheduled times, customer notes and handling instructions.

- Geospatial Path Models – GeoJSON or protocol buffer files with waypoints, turn-by-turn instructions and metadata on travel times and distances.

- Time-Window Allocations – JSON objects mapping each delivery to its service window with predictive confidence intervals.

- Load and Sequence Tables – Detailed cargo assignments per vehicle, including weight, volume and priority sequencing.

- Optimization Metadata – Summary metrics on total distance, drive time, fuel estimates and optimization score against baselines.

- Exception Flags – Listings of soft or hard constraint violations requiring manual review or secondary passes.
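As an illustration of the geospatial and time-window artifacts, the sketch below builds a GeoJSON FeatureCollection for one route. The property names (`route_id`, `sequence`, `window_start`, `window_end`) are hypothetical; only the GeoJSON structure itself, including the longitude-before-latitude coordinate order, follows the published format.

```python
import json
from typing import Dict, List


def route_to_geojson(route_id: str, stops: List[Dict]) -> Dict:
    """Build a path feature plus one point feature per stop.

    Each stop dict needs stop_id, lat, lon, window_start and window_end.
    A LineString needs at least two positions, so pass two or more stops.
    """
    path = {
        "type": "Feature",
        "geometry": {
            "type": "LineString",
            # GeoJSON positions are [longitude, latitude]
            "coordinates": [[s["lon"], s["lat"]] for s in stops],
        },
        "properties": {"route_id": route_id, "stop_count": len(stops)},
    }
    points = [
        {
            "type": "Feature",
            "geometry": {"type": "Point", "coordinates": [s["lon"], s["lat"]]},
            "properties": {
                "stop_id": s["stop_id"],
                "sequence": i,
                "window_start": s["window_start"],
                "window_end": s["window_end"],
            },
        }
        for i, s in enumerate(stops)
    ]
    return {"type": "FeatureCollection", "features": [path] + points}
```

The result serializes directly with `json.dumps` and can be loaded by standard mapping tools for visual review before dispatch.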

Dependencies and Data Validation

Output integrity relies on upstream inputs: demand forecasts, traffic predictions, regulatory rules, validated geocodes and external routing services such as the HERE Routing API. Final validation steps include constraint re-verification, digital twin simulations and KPI checks against historical benchmarks. Routes whose exception scores exceed configured thresholds are sent to human-in-the-loop review.

Handoff Mechanisms

- RESTful APIs – Secure JSON payloads over HTTPS for batch or real-time route submissions.

- Message Queues – Asynchronous delivery via Apache Kafka or Amazon SQS, decoupling optimization from scheduling.

- SFTP Transfers – Secure CSV or XML drops with checksum validation for ETL ingestion.

- Database Sync – Direct inserts into shared repositories, with change data capture propagating updates to analytics and dispatch systems.