AI Powered Security and Risk Management A Practical End to End Workflow

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Security Landscape and Data Challenges

Over the past decade, the enterprise security landscape has been reshaped by cloud adoption, remote work expansion, and the proliferation of IoT devices. Threat actors—from nation-state campaigns to financially motivated cybercriminals—leverage sophisticated techniques such as fileless malware, zero-day exploits, and multi-stage attacks. These campaigns traverse hybrid clouds, virtualized data centers, on-premises infrastructure, and edge networks, exploiting gaps in visibility and coordination to remain undetected for extended periods. As digital footprints grow, security teams face unprecedented volumes, velocities, and varieties of telemetry data, challenging traditional perimeter defenses and manual processes.

Modern environments generate logs from firewalls, intrusion detection systems, endpoint detection and response agents, cloud security posture assessments, vulnerability scanners, threat intelligence feeds, and identity governance solutions. Each source delivers unique schemas, timestamp conventions, and metadata attributes. This heterogeneity creates:

- Visibility gaps, forcing analysts to pivot across multiple consoles and slowing investigations.

- Inefficient workflows dominated by manual exports, spreadsheet consolidations, and ad hoc scripts.

- Inconsistent reporting for compliance and executive metrics, eroding stakeholder confidence.

- Poor data quality, with duplicate events, missing fields, and misclassifications introducing noise.

- Scalability limits, as parsing pipelines and point solutions falter under growing log volumes.

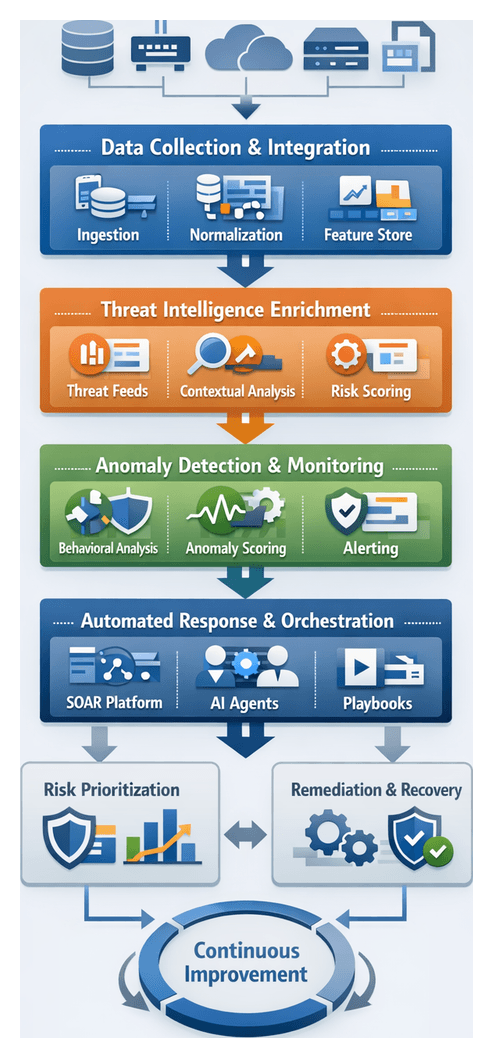

Unified Data Collection and Integration

Establishing a centralized data collection pipeline is the essential first step toward cohesive security operations. This stage aggregates and normalizes all security-relevant telemetry—logs, metrics, configuration snapshots, vulnerability findings, and threat indicators—into a unified stream. Key functions include:

- Aggregation: Ingest data from firewalls, SIEM platforms, Splunk, endpoint agents, cloud APIs, vulnerability scanners, and threat feeds.

- Normalization: Convert diverse formats into a canonical schema, apply consistent timestamp conventions, and standardize field names for source, severity, and asset identifiers.

- Deduplication and Filtering: Eliminate redundant events, suppress routine health checks, and focus processing on actionable telemetry.

- Preliminary Enrichment: Tag events with metadata—geolocation, vendor family, protocol context—to streamline downstream AI parsing and correlation.

- Reliability and Scalability: Leverage streaming platforms such as Apache Kafka or cloud-native equivalents with retry logic, backpressure handling, and horizontal scalability.

Prerequisites and Conditions

- Data Source Registry: Document all security systems with connection details, protocols (syslog, REST API, message bus), formats, and expected volumes.

- Secure Connectivity: Establish TLS, VPN, or private links and least-privilege service accounts for log and metric retrieval.

- Time Synchronization: Ensure all systems use NTP or equivalent time services for accurate event correlation.

- Governance Policies: Define classification rules, retention schedules, data residency, encryption standards, and role-based access controls.

- Schema Definition and Mapping: Develop a canonical event model covering fields such as timestamp, source IP, user identity, asset tag, and severity.

- Ingestion Infrastructure: Provision resilient message queues, streaming platforms, batch connectors, and change data capture mechanisms.

- Monitoring and Alerting: Implement dashboards tracking latency, throughput, error rates, and backlogs, with automated alerts for connector failures or schema mismatches.

- Cross-Functional Collaboration: Align security, IT operations, network engineering, and application teams on onboarding, schema updates, and change control.

- Continuous Improvement: Review performance and data quality metrics regularly to refine filtering rules and mappings.

- Security Hardening: Enforce host hardening, network segmentation, and key management for the ingestion platform and connectors.

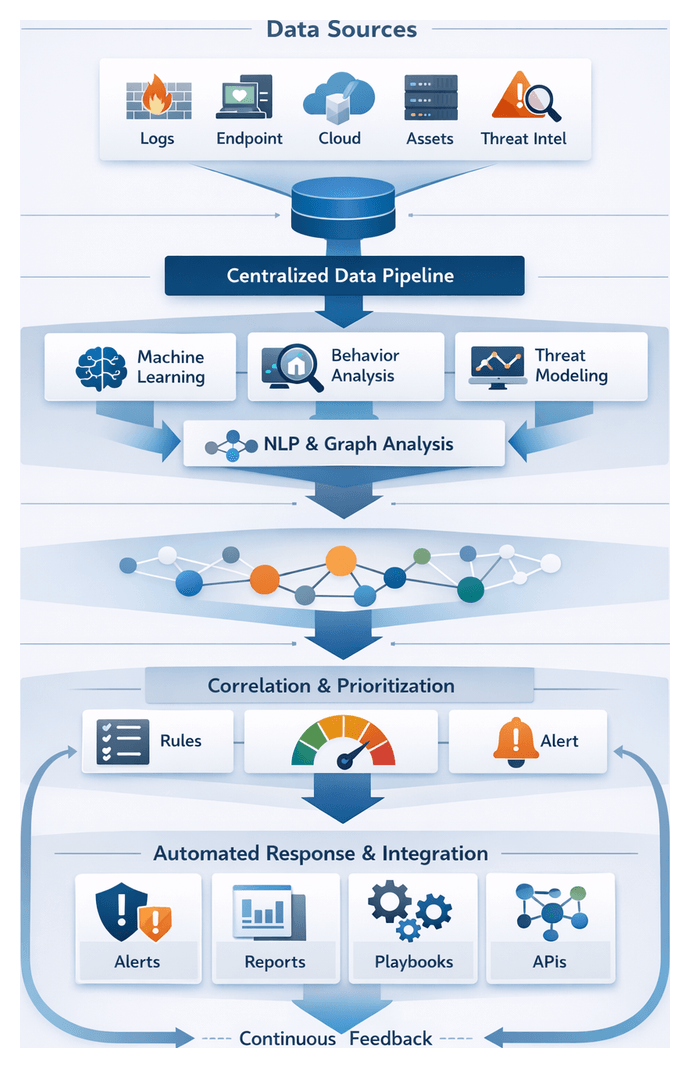

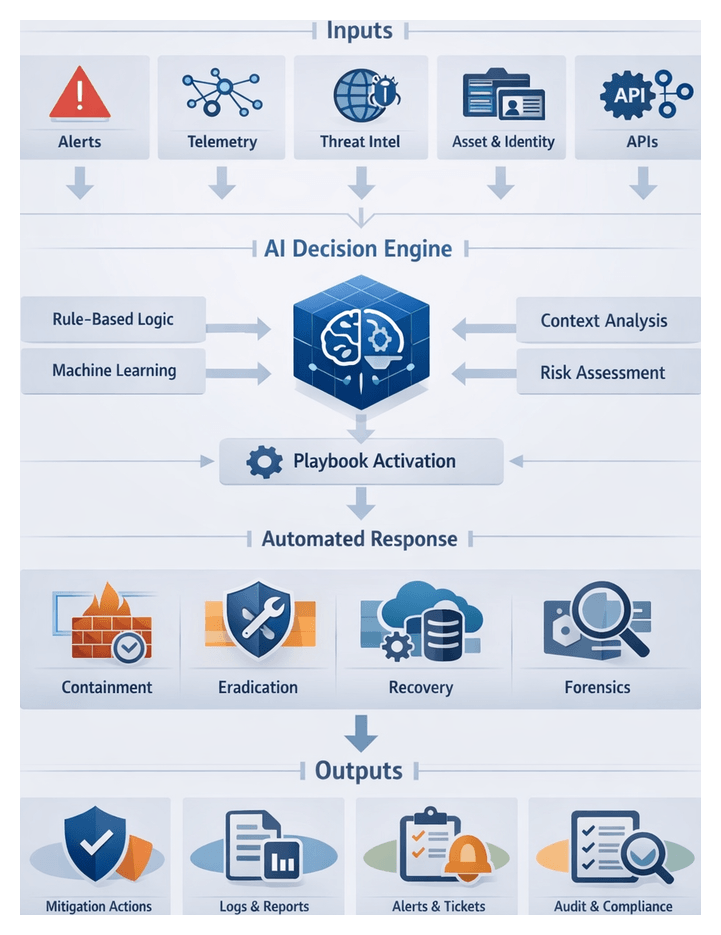

Orchestrated AI Workflows in Security

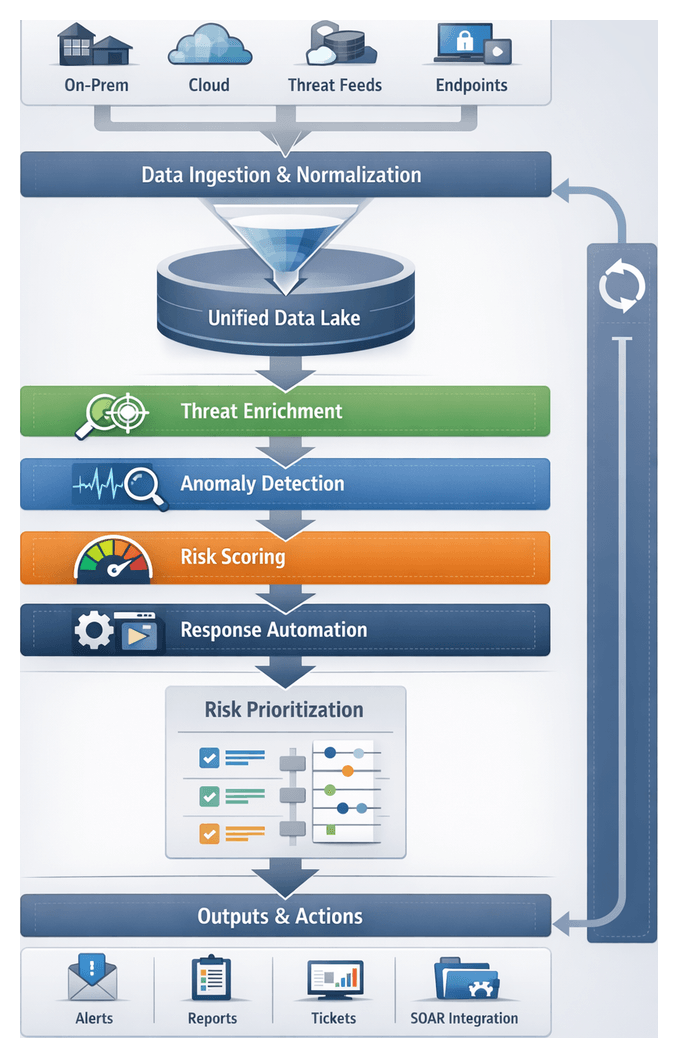

To overcome fragmented processes and manual handoffs, organizations implement an orchestrated AI workflow that unites people, processes, and technology into an adaptive pipeline. This orchestration layer acts as the traffic manager, directing data flows between detection engines, enrichment modules, ticketing systems, and response platforms. Key stages include:

- Trigger and Ingestion: Events, alerts, or scheduled scans initiate workflows via a central orchestration engine.

- Enrichment and Contextualization: AI agents retrieve relevant telemetry, threat intelligence, and asset data to add context.

- Automated Decisioning: ML models assess severity, compute risk scores, and recommend next steps.

- Task Assignment: Orchestration routes tasks to analysts, ticketing systems, or automated responders.

- Action Execution: Automated playbooks or human teams perform containment, remediation, or policy updates.

- Recording and Reporting: All actions and communications are logged for audit, analytics, and continuous improvement.

System Interactions and Integration Patterns

- Message Bus Integration: Event notifications travel via Kafka clusters or RabbitMQ queues, decoupling producers and consumers.

- RESTful APIs: Orchestration services call platforms like Cortex XSOAR to fetch playbook results or update incident statuses.

- Webhooks: Real-time cloud workload and SaaS integrations leverage webhooks to trigger flows on suspicious events.

- Data Lake Access: Enrichment engines query centralized asset inventories and historical logs stored in data lakes or via platforms such as Apache NiFi.

- Event Streaming: Bulk telemetry streams into data lakes for near-real-time consumption by AI modules.

Roles and Responsibilities

- Orchestration Engine: Manages triggers, routes tasks, enforces SLAs, tracks metrics, and logs handoffs.

- AI Enrichment Agents: Use ML and NLP to classify alerts, extract indicators, and pull context from intelligence feeds.

- Decisioning Models: Evaluate risk based on policies, historical trends, and supervised learning outcomes.

- Case Management Systems: Provide analysts with structured workspaces, integrating tasks, evidence, and communications.

- Automated Playbooks: Encapsulate conditional logic for containment, mitigation, and remediation through EDR tools and cloud APIs.

- Human Analysts: Validate AI recommendations, perform in-depth investigations, and authorize critical actions.

Auditability and Compliance

- Policy Gates: Enforce approvals and policy checks before critical actions like account suspension.

- Append-Only Logs: Record orchestration commands and AI outputs in tamper-resistant logs.

- Role-Based Access Controls: Restrict sensitive actions and data to authorized roles.

- Evidence Collection: Automatically attach artifacts such as packet captures and memory snapshots to incident records.

- Reporting Interfaces: Generate compliance reports and dashboards summarizing performance, SLA adherence, and control effectiveness.

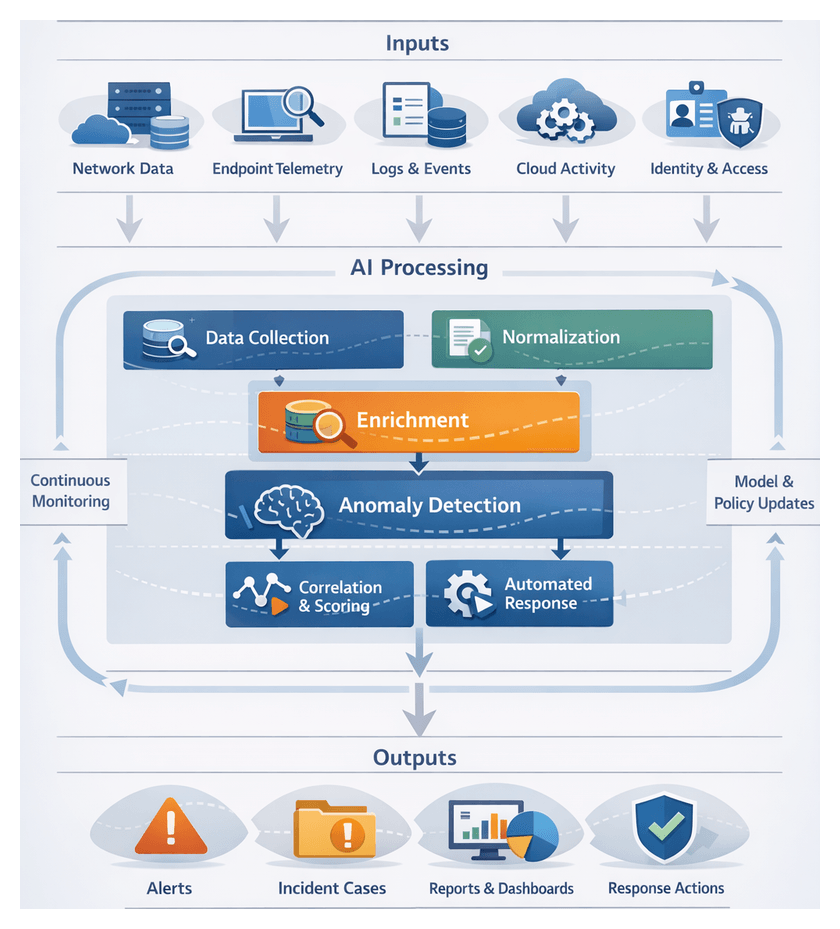

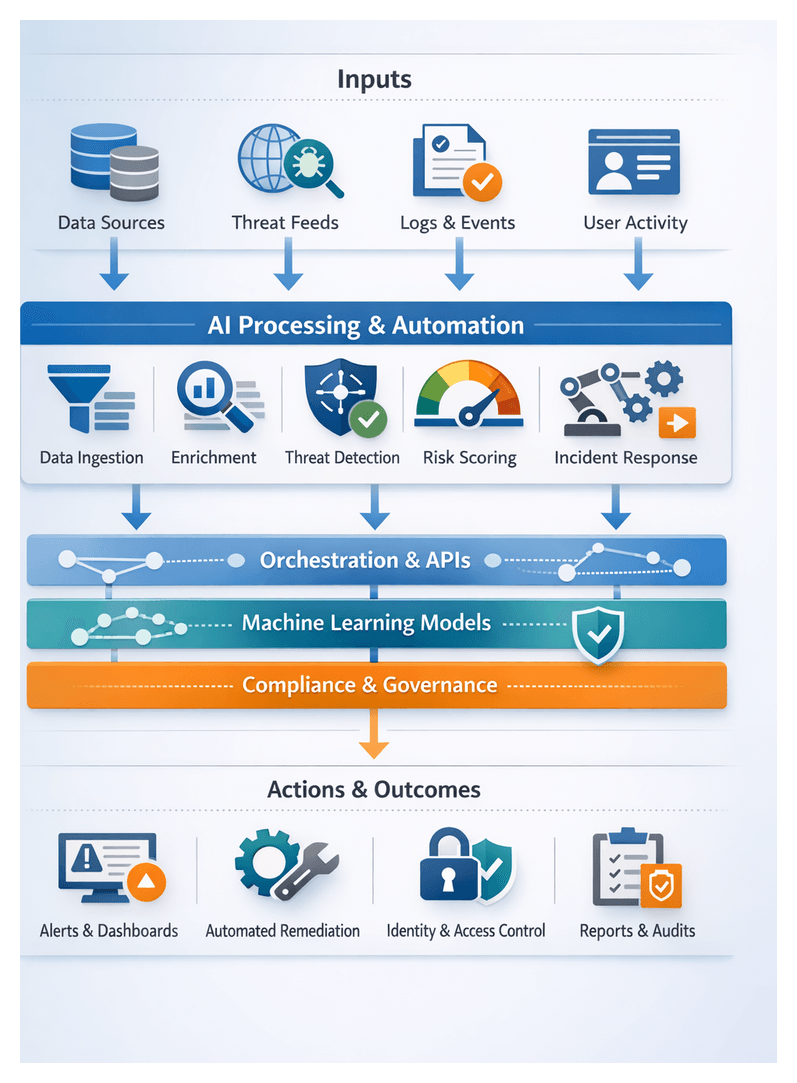

AI Agent Roles Across the Security Lifecycle

AI agents—software components leveraging machine learning, natural language processing, and decision logic—embed intelligence at each stage of the security lifecycle. A modular agent architecture accelerates threat detection, streamlines investigations, and bolsters risk management.

Data Ingestion and Enrichment Agents

- Connector Management: Maintain secure connections to sources such as SIEM platforms, Splunk, cloud logging services, and databases.

- Schema Normalization: Map vendor-specific formats into standardized records using ML classifiers.

- Contextual Tagging: Use NLP to extract entities—IP addresses, user IDs, file hashes—and enrich with metadata from CMDB systems.

- Noise Filtering: Suppress benign events through rule engines and anomaly detection models.

Detection and Analysis Agents

- Statistical Anomaly Detection: Profile normal baselines and surface deviations in network flows or user behavior.

- Signature and Heuristic Engines: Match known Indicators of Compromise using curated threat feeds.

- Behavioral Analytics: Apply deep learning to action sequences and assign risk scores to behaviors.

- Hybrid Correlation: Combine ML outputs with domain rules to reduce false positives and prioritize alerts.

Prioritization and Risk Scoring

- Asset Context Integration: Weight events using business impact data from IT service management systems.

- Threat Intelligence Correlation: Match anomalies with feeds such as VirusTotal and Recorded Future.

- Dynamic Risk Modeling: Calculate composite scores with Bayesian networks and decision trees.

- Alert Prioritization: Rank incidents to trigger high-priority workflows for critical assets.

Automated Response and Remediation Agents

- Playbook Selection: Use AI classifiers to match incidents with appropriate response procedures.

- Command Orchestration: Integrate with EDR tools, firewalls, and cloud APIs to isolate hosts or block IPs.

- Adaptive Execution: Apply reinforcement learning to refine quarantine scope and remediation timing.

- Recovery Confirmation: Run post-remediation scans and compliance checks to verify system restoration.

Orchestration and Workflow Coordination

- Task Sequencing: Ensure enrichment precedes detection and that risk scoring informs response decisions.

- State Management: Track incident states and synchronize updates across dashboards and ticketing systems.

- Exception Handling: Detect workflow failures, trigger manual approvals, and reroute tasks as needed.

- Scalability: Auto-scale containerized agent instances to handle event spikes and maintain availability.

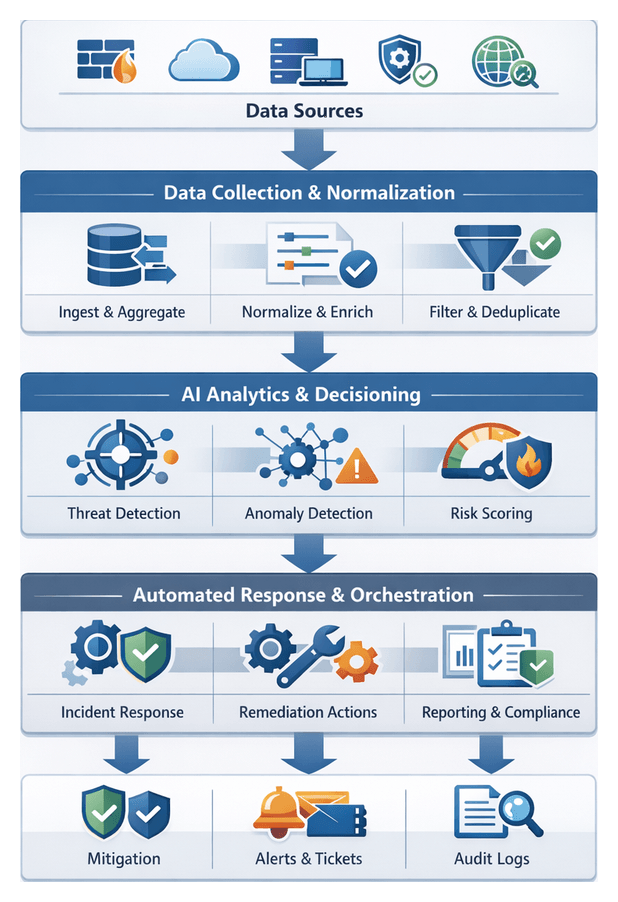

Workflow Architecture and Module Layout

A modular workflow architecture delivers consistency, scalability, and clarity. By defining clear interfaces, data contracts, and orchestration mechanics, organizations align technical teams, streamline integrations, and enable component substitution as needs evolve. The architecture is structured into three logical tiers:

- Data Orchestration Tier:

- Collection Engine: Aggregates logs, metrics, inventories, and threat feeds.

- Normalization Service: Converts inputs to canonical schemas.

- Enrichment Hub: Applies initial tagging and contextual metadata.

- Analytic Processing Tier:

- Threat Correlator: Matches indicators against internal telemetry.

- Anomaly Detector: Applies ML models for behavior baselining.

- Risk Scorer: Calculates dynamic risk ratings based on asset criticality.

- Action Execution Tier:

- Remediation Orchestrator: Automates patching and configuration updates.

- Case Management Interface: Generates investigation workflows and tickets.

- Compliance Reporter: Produces audit-ready evidence and summaries.

Interface Contracts and Handoffs

- Synchronous APIs: For on-demand risk evaluations and report generation, secured by OAuth2 or mutual TLS, documented via OpenAPI.

- Asynchronous Event Streams: Real-time telemetry, alerts, and retraining triggers over Apache Kafka topics, with schema management for backward compatibility.

- Batch Transfers: Scheduled exports for compliance archives and large scans via secure file shares or object storage.

Handoffs include validation checks with correlation identifiers and schema versions. Downstream modules acknowledge or return errors, triggering automated retries and alerts for integration issues.

Dependencies, SLAs and Governance

- Data Dependencies: Asset inventories, threat feeds, and historical logs.

- Compute Requirements: CPU, memory, GPU for model inference.

- Storage Needs: Databases, retention policies, and encryption standards.

- Network Constraints: Bandwidth, latency, and segmentation requirements.

- Third-Party Services: External APIs, licensing, and subscription tiers.

Each dependency is paired with service level agreements defining uptime targets, maintenance windows, and escalation paths. Architecture artifacts—workflow diagrams, interface catalogs, dependency matrices, and module registries—are maintained in version control with change workflows, peer reviews, and automated testing.

Readiness and Handoff to Implementation

Upon completing the architecture stage, teams receive a handoff packet containing high-resolution diagrams, interface catalogs, module registry exports, dependency matrices with SLAs, testing playbooks, deployment guides, and governance charters. Operational readiness reviews ensure that infrastructure, development, and security teams can deploy, integrate, and monitor the solution effectively. This structured transition accelerates time to value, minimizes rework, and establishes a clear chain of custody for every workflow component.

Chapter 1: Data Collection and Integration

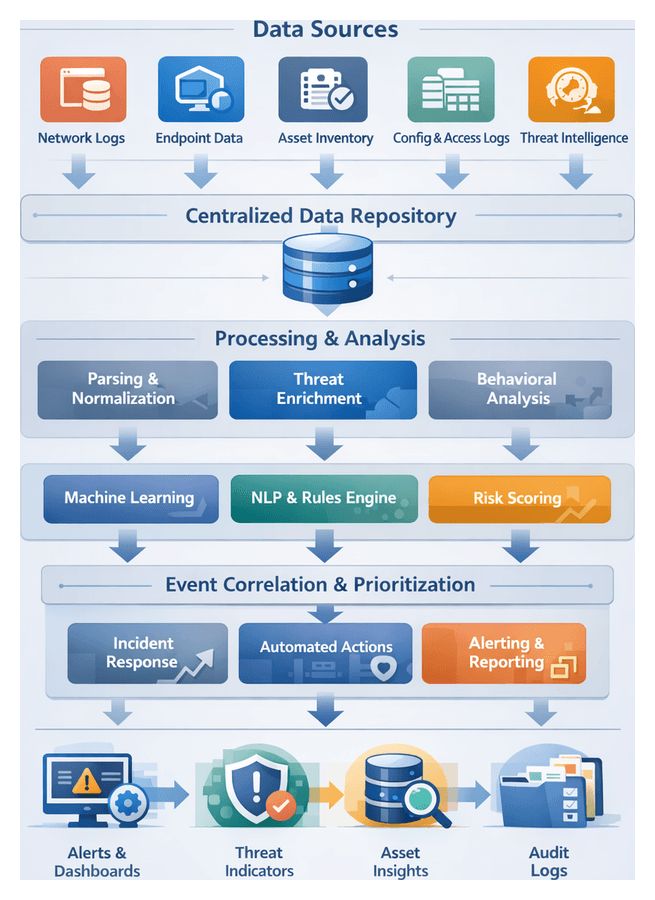

Establishing a Centralized Data Foundation

Purpose and Industry Context

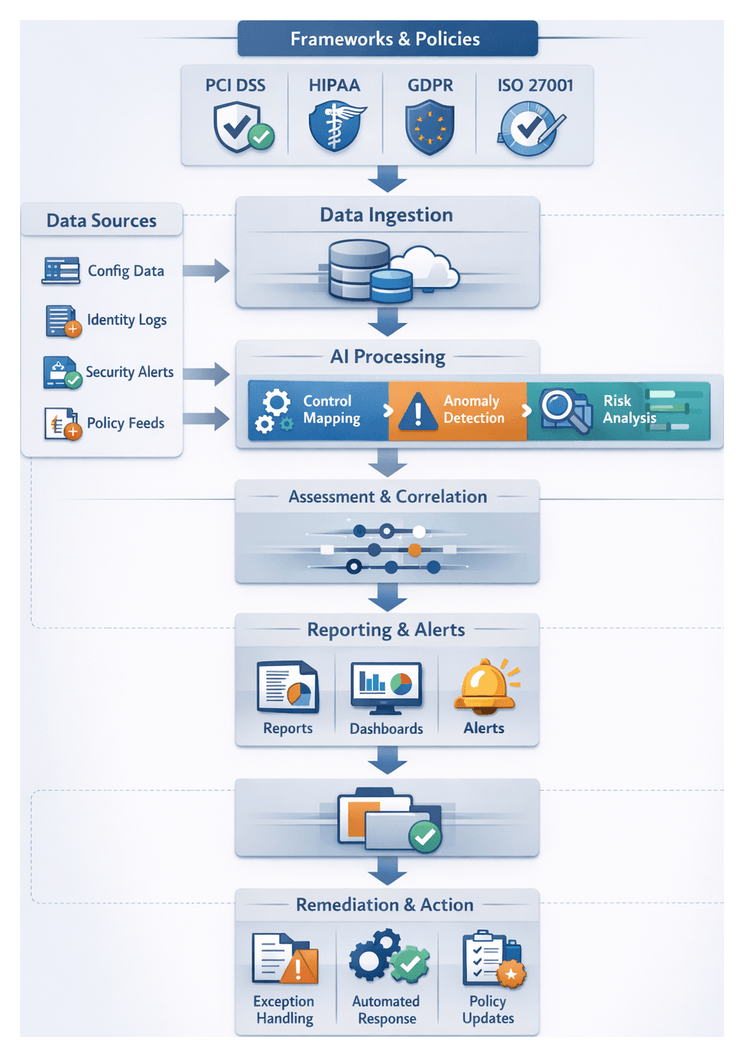

A centralized data pipeline unifies security telemetry—network logs, endpoint metrics, asset inventories, configuration records and external threat intelligence—into a single repository. This foundation eliminates silos, ensures consistent semantics and quality standards, and delivers the unified data fabric required for AI-driven analysis, risk scoring and automated response. By meeting regulatory requirements such as GDPR, HIPAA and PCI DSS, organizations in financial services, healthcare and critical infrastructure achieve both operational visibility and compliance readiness.

Core Data Inputs

- Network and Perimeter Logs: Traffic flows, firewall events and intrusion prevention records capture anomalous connections and lateral movement attempts.

- Endpoint and Host Metrics: CPU, memory and process activity from EDR platforms reveal unexpected code execution, privilege escalation and file integrity changes.

- Asset Inventory Records: Databases of hardware and software assets, including configurations and application versions, support vulnerability correlation and risk scoring.

- Configuration and Change Data: Patch histories, configuration snapshots and change control logs provide context for drift and compliance assessments.

- Identity and Access Logs: Authentication events, privileged session records and directory synchronization snapshots detect credential misuse and insider threats.

- External Threat Intelligence Feeds: Indicators of compromise, vulnerability disclosures and reputation scores from feeds in STIX/TAXII, CSV or JSON formats enrich internal records.

Prerequisites and Governance

- Data Governance Framework: Policies for ownership, retention, privacy and access control ensure alignment with organizational and regulatory mandates.

- Security Controls: TLS, VPNs, API keys and certificates protect data in transit and at rest, while role-based access controls restrict pipeline operations.

- Infrastructure Readiness: Scalable compute, storage and networking with high availability and disaster recovery provisions support high-velocity streams and large batch loads.

- Data Quality Baseline: Initial validation for completeness, timestamp accuracy and schema conformity prevents downstream errors and monitors ongoing fidelity.

- Schema and Field Mappings: A canonical model such as Elastic Common Schema guides normalization, with documented mappings from vendor-specific attributes.

Connectivity to Enterprise Platforms

- SIEM Solutions: Native ingestion connectors for Splunk and Elastic.

- Streaming Services: High-throughput collectors such as AWS Kinesis and Azure Event Hubs.

- Configuration and Asset Databases: Integrations with ServiceNow CMDB and other ITSM platforms.

- Endpoint Detection and Response: APIs from EDR vendors supply host metrics, threat alerts and forensic data.

- Cloud Workload Monitoring: Collectors for AWS CloudTrail, Azure Monitor and Google Cloud Logging.

Scalability and Compliance Considerations

- Elastic Resource Allocation: Container orchestration and auto-scaling adapt compute resources to ingestion demands.

- Partitioning and Sharding: Distributing streams by source or log type optimizes throughput.

- Batch vs. Streaming Modes: Real-time processing balanced with cost-effective batch ingestion for less time-sensitive data.

- Backpressure Management: Buffering and rate-limiting prevent downstream overload during surges.

- Retention and Security Policies: Automated purging, encryption at rest and in transit, data masking, audit trails and data residency controls satisfy legal requirements.

AI-Driven Parsing, Enrichment, and Contextualization

Transforming Raw Data into Actionable Intelligence

AI-driven parsing and enrichment bridges the gap between raw collection and advanced analysis by classifying streams, extracting entities and attaching contextual metadata. Machine learning models, NLP pipelines and rule engines reduce noise, surface high-value indicators and prepare semantically consistent records for anomaly detection, risk scoring and response orchestration.

Machine Learning for Event Classification

- Supervised classifiers assign events to known categories—firewall denials or authentication failures—based on labeled training data.

- Unsupervised clustering algorithms detect emerging patterns and outliers in high-volume streams.

- Anomaly detection models score records against historical baselines to flag significant deviations in real time.

Tools such as Splunk with the Splunk Machine Learning Toolkit and Elastic SIEM embed these models directly into the pipeline for continuous retraining and feedback-driven improvements.

Natural Language Processing for Unstructured Feeds

- Entity extraction identifies CVE codes, malware families and attacker group names.

- Topic modeling groups documents into themes to highlight emerging campaigns.

- Sentiment and intent analysis gauge urgency in threat advisories and dark-web postings.

Platforms like Recorded Future and ThreatConnect apply NLP to harvest, parse and correlate unstructured threat intelligence, enriching indicators with context and reputation metrics.

Rule Engines and Hybrid Enrichment

Rule engines codify deterministic policies and known IOCs for immediate triage, while hybrid systems route data to AI classifiers when complex inference is required. Security orchestration platforms such as Cortex XSOAR and Cisco SecureX coordinate rule execution alongside model inference based on context and performance needs.

Context Enrichment and Threat Tagging

- Asset mapping from CMDBs attaches business impact scores to hosts and services.

- Geo-location lookups translate IP addresses into country and city identifiers.

- Identity context from directory services flags privileged users and group memberships.

- Standardized tags—MITRE ATT&CK TTPs, vulnerability severity and malware taxonomy—enable precise filtering and visualization.

Metadata Management and Lineage

Data lineage frameworks record enrichment steps, model versions and rule sets applied to each record. Timestamps, pipeline stage identifiers and validation statuses support auditability, forensic analysis and retrospective corrections.

Orchestrating AI-Driven Security Workflows

Need for Orchestration

Fragmented tools and manual processes impede visibility, slow triage and introduce compliance gaps. An orchestrated AI workflow formalizes data ingestion, enrichment, detection, prioritization and response into a cohesive pipeline that delivers measurable risk reduction, consistent audit trails and rapid coordination between human analysts and machine agents.

Core Workflow Components

- Event Bus and Data Bus: Real-time transport of normalized events to analytics engines and orchestration modules.

- Orchestration Layer: Central engine enforcing workflow logic, routing events, applying business rules and tracking task status.

- AI Agents: Modular functions—natural language parsing, threat correlation, risk scoring and automated remediation.

- Human-in-the-Loop Interfaces: Dashboards and workbenches for analysts to review alerts, approve playbooks and refine models.

- Audit and Reporting Module: Captures every transition, decision rationale and response action for compliance and continuous improvement.

Workflow Flow and Interactions

- Data Ingestion and Normalization: Collectors apply format-specific parsers to attach metadata such as source, timestamp and environment tags.

- Event Routing and Pre-Filtering: Filtering agents discard low-value noise and route suspicious records to the orchestration queue.

- Intelligence Enrichment: AI agents augment events with threat feed correlation, vulnerability data and reputation scores.

- Behavioral Analysis and Detection: Analytics engines apply machine learning to identify deviations from established baselines and assign confidence scores.

- Risk Scoring and Prioritization: Models integrate asset criticality and business impact to rank incidents for response.

- Investigation Orchestration: Predefined steps—data collection, hypothesis generation, forensic capture—and human-machine task coordination.

- Automated Response Execution: Playbooks act through connectors to firewalls, EDR platforms and cloud APIs for containment and remediation.

- Feedback and Model Refinement: Remediation metrics and false-positive rates feed back into automated retraining and policy adjustment.

Integration and Coordination

- API-Based Integration: RESTful and message interfaces enable automated event push, intelligence retrieval and command execution.

- Event-Driven Architecture: Decouples producers and consumers; tasks trigger on event patterns rather than scheduled polls.

- Role-Based Task Assignment: Access controls assign tasks to appropriate analyst groups, track approvals and escalate overdue items.

- Shared Context Repository: Central store for enriched events, asset attributes and investigation notes to prevent redundant lookups.

- Audit Trail and Reporting: Real-time dashboards and audit-ready documentation capture workflow metrics and transitions.

Benefits of an Orchestrated Approach

- Reduced mean time to detect and respond through automated handoffs.

- Consistent, repeatable processes with standardized policies and playbooks.

- Optimized analyst effort as routine tasks are automated.

- Enhanced visibility and compliance via comprehensive logging and reporting.

- Scalable operations adapting to growing data volumes and evolving threats.

Delivering Unified Outputs and Handoff

Unified Artifact Formats

- Normalized Event Records: JSON objects with timestamp, source, event_type, severity and normalized fields for pattern detection.

- Asset and Inventory Catalogs: Enriched profiles of hosts, identities and cloud resources with ownership and classification metadata.

- Threat Indicator Collections: STIX or JSON feeds of IOCs, vulnerability signatures and reputation scores with confidence metrics.

- Contextual Tagging and Taxonomies: Controlled vocabularies—MITRE ATT&CK tactics, data sensitivity labels and criticality levels.

- Data Lineage and Provenance Logs: Metadata capturing source identifiers, transformation steps and quality metrics for auditability.

Validation and Quality Assurance

- Schema Conformance: Automated validators compare records against a centralized registry, routing invalid entries to exception queues.

- Referential Integrity: Cross-checks identifiers against master data sources to ensure asset and user references resolve.

- Completeness and Freshness: Thresholds for field population and maximum age limits on external feed entries to flag stale data.

- Duplicate Detection: Hashing techniques to collapse redundant records and preserve storage.

- Anomaly Filtering: Noise reduction filters drop or quarantine malformed events guided by configurable rules.

Delivery Mechanisms and SLAs

- Streaming Pipelines: Real-time topics via Apache Kafka for sub-second latency consumption.

- Batch Exports: Periodic deliveries to Snowflake or Hadoop in Parquet or Avro for model training and historical analysis.

- RESTful APIs: On-demand endpoints for ad-hoc enrichment and targeted lookups.

- File Share Interfaces: Secure FTP or object storage buckets for discrete payload drops and audit artifacts.

SLAs define latency objectives, throughput guarantees, uptime targets, error budgets and handoff notifications to ensure predictable delivery and accountability.

Error Handling and Governance

- Dead-Letter Queues: Isolated queues for records that fail validation for human review.

- Circuit Breakers: Rate-limiting controls to suspend ingestion when error thresholds are exceeded.

- Automated Rollback and Replay: Checkpointing for reprocessing from the last known good offset after systemic errors.

- Alerting and Diagnostics: Integration with Splunk and Elastic Stack to surface pipeline health metrics.

- Access Controls and Encryption: Role-based permissions, TLS in transit, AES-256 at rest and privacy filtering of PII.

Continuous Improvement of Output Quality

- Refine taxonomies to align with emerging threats and business priorities.

- Update transformation logic to capture new attributes and correct misclassifications.

- Tune SLAs based on operational metrics and incident response performance.

- Expand interfaces with GraphQL endpoints or new message bus connectors as analytics platforms evolve.

Ensuring Seamless Transitions

By standardizing outputs, formalizing handoff agreements and embedding observability, organizations deliver timely, trustworthy and actionable data to intelligence, detection, risk and response modules. This cohesive foundation accelerates threat mitigation and reinforces enterprise resilience.

Chapter 2: AI-Driven Threat Intelligence

Centralized Data Pipeline and Source Integration

Establishing a centralized data pipeline is the foundational step in any AI-driven security workflow. By aggregating infrastructure logs, endpoint telemetry, cloud metrics, asset inventories, identity records and external threat feeds, organizations eliminate silos, ensure consistency and create the raw material for advanced analytics. Solutions such as Splunk and Elastic ingest syslog streams, API calls and forwarders to provide a unified stream for downstream AI modules. This unified context supports threat detection, risk assessment and automated response at scale.

Inputs and Data Sources

- Infrastructure Logs from network firewalls, routers and switches via Splunk or Elastic.

- Endpoint Telemetry from EDR platforms such as CrowdStrike Falcon and Carbon Black.

- Cloud Metrics and Application Audits from AWS CloudTrail, Azure Monitor and Google Cloud Logging.

- Asset and Configuration Inventories maintained in ServiceNow, Device42 or Microsoft SCCM.

- External Threat Intelligence Feeds delivered via STIX/TAXII from providers like Recorded Future and ThreatConnect.

- Identity and Access Logs from Okta, Microsoft Active Directory and CyberArk.

Prerequisites for Reliable Ingestion

- Secure Connectivity: TLS-encrypted channels or VPN tunnels with least-privilege service accounts.

- Schema and Data Contracts: Canonical schemas (CEF, LEEF, JSON) with version control and deprecation policies.

- Time Synchronization: NTP/PTP across systems, original timestamps preserved and time zone normalization applied.

- Data Governance: Retention policies aligned with GDPR, HIPAA and PCI DSS, field-level encryption and audit logging.

- Scalability Planning: Horizontally scalable frameworks such as Apache Kafka, defined SLOs for latency and buffer capacity.

- Error Handling: Quarantine for malformed events, data quality metrics and alerting on ingestion failures.

- Operational Visibility: Dashboards for source health, throughput and latency; tooling for collector deployment and credential rotation.

These measures transform a collection of connectors into a resilient, secure and governed foundation for AI-driven intelligence.

Machine Learning Models for Threat Contextualization

In the enrichment stage, machine learning models elevate raw indicators into actionable insights by injecting risk scores, attribution data and behavioral context. Integrating AI-driven analysis enables filtering of noise and prioritization of high-impact threats for downstream correlation and response.

Supervised Classification

Supervised learning models—logistic regression, decision trees, random forests and gradient boosting—distinguish malicious indicators from benign ones. Historical labels on phishing domains, malware hashes and command-and-control IPs feed training pipelines. Real-time scoring is exposed through endpoints on platforms such as Amazon SageMaker and Vertex AI.

- Feature Engineering uses domain age, certificate properties and anomaly scores.

- Model Calibration ensures precision and recall with cross-validation.

- RESTful Inference Endpoints support on-demand predictions.

Unsupervised Clustering

Clustering algorithms—k-means, DBSCAN and hierarchical methods—identify latent patterns in unlabeled data. Grouping similar domains or URLs exposes campaigns and isolates outlier indicators for analyst review.

- Noise Reduction by separating anomalies.

- Campaign Identification through analyst-driven cluster labeling.

- Adaptive Grouping with incremental clustering on streaming data.

Graph-Based Relationship Mapping

Graph models represent indicators as nodes and associations—shared IPs, certificate reuse—as edges in databases such as Neo4j or Amazon Neptune. Graph neural networks and random walk algorithms compute embeddings that reveal hidden connections among threat infrastructure.

- Ingestion into graph stores from SIEM events and external feeds.

- Community Detection highlights high-risk clusters.

- Visualization of entity relationships guides threat hunting.

Natural Language Processing

NLP techniques extract entities, sentiment and relationships from unstructured text—threat reports, advisories and social media. Models like BERT or GPT derivatives perform named entity recognition on security-specific corpora.

- Text Preprocessing tokenizes and normalizes terminology.

- Entity Extraction identifies malware names, CVEs and targeted sectors.

- Contextual Embeddings link text findings back to existing indicators.

Ensemble and Hybrid Approaches

Combining multiple model types enhances accuracy and resilience. Voting ensembles aggregate classifier scores, while pipelines sequence NLP extraction, clustering and classification. Rule-based engines complement AI by enforcing signature matches.

- Stacked Models feed lower-level outputs into meta-classifiers.

- Sequential Enrichment applies multiple techniques in turn.

- Feedback Loops incorporate analyst verdicts into retraining.

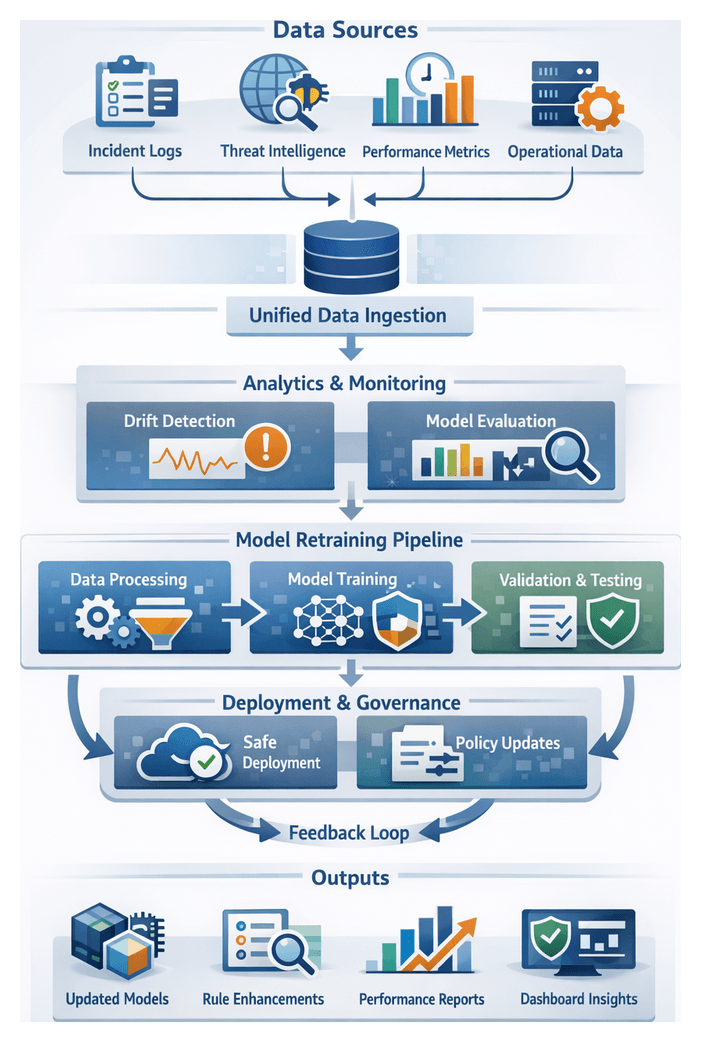

Continuous Learning and Model Governance

Automated retraining pipelines refresh models based on new labels and feature drift detection. CI/CD integration, canary deployments and automated validation guard against performance regressions. Feature stores like DataRobot Feature Store and Azure Machine Learning Feature Store centralize data for consistent training and inference.

- Data Versioning ensures reproducibility.

- Performance Monitoring tracks drift and latency.

- Rollback Plans address unexpected model behavior.

Intelligence Correlation and Prioritization Flow

This stage transforms enriched indicators and telemetry into prioritized, actionable alerts. By aligning threat data with internal asset and identity context, applying correlation rules and AI-driven pattern detection, organizations reduce noise and focus resources on high-impact events.

Data Mapping and Asset Contextualization

Accurate correlation begins with mapping indicators to assets and users. Queries against CMDBs or ServiceNow provide device owners and criticality. Identity resolution links logon events from Microsoft Active Directory and Okta to user profiles. Threat indicators from ThreatConnect feed into correlation rules alongside logs ingested by Splunk Enterprise Security.

Pattern Matching and Correlation Engines

- Rule-Based Correlation detects known TTP sequences.

- Behavioral Analysis uses ML models to spot anomalies such as data exfiltration.

- Graph Analytics surfaces multi-entity attack chains.

Platforms like IBM QRadar and Elastic Security generate composite events tagged with detection metadata and confidence scores.

Risk Scoring and Prioritization

- Severity Assignment evaluates exploitability, asset criticality and threat actor sophistication.

- Dynamic Scoring combines static risk factors with real-time intelligence.

- Thresholding auto-suppresses low-priority alerts.

Adaptive learning adjusts weights based on analyst feedback. Integration with Cortex XSOAR streamlines this feedback loop.

Orchestration and Integration

- Trigger Event from enriched feeds or streaming telemetry.

- Parallel Data Normalization, Asset Lookup and Entity Resolution.

- Routing to Correlation and Scoring Engines.

- Alert Publication via SIEM dashboards, ticketing systems or SOAR playbooks.

Microservices communicate over APIs and message queues, with defined SLAs for processing times and retry policies.

Stakeholder Coordination

- Shared Dashboards in SIEM or SOAR consoles.

- Synchronized Playbooks triggering incident response workflows.

- Feedback Channels for analysts to annotate and refine alerts.

- Governance Reviews to align rules and models with business risk.

Enriched Threat Feeds and Integration Interfaces

Structured, enriched threat feeds package correlated indicators, TTP mappings, risk scores and confidence metrics into standardized outputs for downstream systems. Adhering to schemas ensures interoperability and consistency across monitoring, risk assessment and response modules.

Structured Intelligence Outputs

- Indicator Packages in STIX-2.1 JSON, grouped by campaign or actor.

- Threat Actor Profiles summarizing attribution, malware families and historical patterns.

- MITRE ATT&CK Mappings with severity weights and detection difficulty ratings.

- Risk Scores and Confidence Metrics from ensemble models.

- Contextual Enrichment Fields including geolocation, business impact and sector tags.

Quality Controls and Dependencies

- Normalization Integrity with a unified threat taxonomy.

- Model Version Metadata embedded for reproducibility.

- Source Trust Scores to quarantine low-reliability feeds.

- Schema Compliance via a central registry and change logs.

- Latency SLAs ensuring timely enrichment.

Delivery Mechanisms

- RESTful APIs for on-demand queries.

- Publish/Subscribe streams via Kafka with Avro schemas.

- STIX/TAXII v2.1 servers for pull and push cycles.

- Webhooks for event-driven SOAR playbooks.

- Scheduled JSON or CSV exports to SFTP.

- SIEM Connectors for Splunk, Elastic Security and IBM QRadar.

- SOAR Integrations with Cortex XSOAR and ServiceNow Security Operations.

Handoff and Best Practices

- Real-Time Monitoring: Live telemetry correlation in SIEM and UEBA platforms.

- Risk Assessment: Ingestion of risk scores into vulnerability management and patching workflows.

- Case Management: Automated population of investigation templates with deep links to intelligence artifacts.

- Automated Response: SOAR playbooks triggered by confidence thresholds.

- Continuous Feedback: Post-incident data refines model parameters and source trust ratings.

- Data Validation Handshakes: Two-way acknowledgements and schema mismatch alerts.

- Schema Versioning: Feed URLs and headers embed version identifiers linked to a registry.

- SLA Monitoring: Alerts on delivery latency, consumer lag and error rates.

- Adaptive Throttling: Rate limiting and batching to protect legacy systems.

- Audit Trails: Immutable logs of enrichment transactions for compliance reviews.

This integrated workflow—from centralized ingestion through AI-driven enrichment, correlation and structured delivery—enables organizations to automate detection, prioritize threats and respond decisively, maintaining a proactive security posture in a dynamic threat landscape.

Chapter 3: Real-Time Monitoring and Anomaly Detection

Continuous Surveillance Prerequisites and Core Data Streams

Establishing continuous surveillance is the foundation of an AI-driven security workflow. It ensures real-time visibility across network, endpoint and cloud environments, feeding high-fidelity telemetry into detection engines. Prior to deploying anomaly detection models, organizations must satisfy technical, procedural and governance prerequisites to maintain data integrity, completeness and compliance.

Defining Scope, Boundaries and Retention

Security teams should first identify the assets, applications and user groups in scope for continuous monitoring. This includes:

- Network segments, virtual workloads and endpoint populations for telemetry capture

- Critical applications, services and identities that generate high-value logs

- Data retention periods and storage tiers aligned with GDPR, HIPAA or industry mandates

- Encryption and access controls to safeguard sensitive information

Clear scoping avoids overcollection and privacy breaches, balancing data depth against operational constraints.

Key Technical Prerequisites

- Synchronized Timekeeping: Configure Network Time Protocol (NTP) or Precision Time Protocol (PTP) across sensors, log forwarders and agents to ensure event correlation and accurate forensic reconstruction.

- Scalable Ingestion Infrastructure: Build a resilient data pipeline using streaming platforms such as Apache Kafka or Amazon Kinesis. Include load balancing, back-pressure controls and redundancy to prevent data loss during traffic spikes.

- Standardized Data Schema: Adopt common formats like Elastic Common Schema (ECS) or Splunk Common Information Model (CIM). Consistent field naming, timestamp formats and severity levels enable seamless cross-source correlation.

- Secure Connectivity: Encrypt telemetry in transit with TLS and at rest in storage. Implement certificate rotation and role-based access controls on ingestion endpoints.

- Baseline Profile Initialization: Collect representative data over 30–90 days under varied load conditions. This historical window enables machine learning models to learn normal behavior patterns.

- Asset and Identity Context: Enrich raw telemetry by integrating with a CMDB and identity directories such as Active Directory or Okta. Contextual attributes like asset criticality and user roles inform risk weighting.

- Retention and Compliance Policies: Implement tiered storage—hot, warm and cold—and automated deletion pipelines to comply with organizational and regulatory retention mandates.

Primary Telemetry Streams

A multi-facet view of activity is achieved by ingesting diverse telemetry:

- Network Traffic Metadata: Collect NetFlow, IPFIX and sFlow records from routers and cloud network appliances, revealing flow patterns without payload capture.

- Selective Packet Capture: Use tools like Zeek for deep protocol analysis on triggered sessions, balancing forensic depth against storage costs.

- Endpoint Telemetry: Stream process, file and registry events from agents such as CrowdStrike Falcon or Microsoft Defender for Endpoint.

- System and Application Logs: Normalize syslog, Windows Event Logs, database and web server logs using forwarders like Fluentd or Logstash.

- Cloud Service Telemetry: Capture AWS CloudTrail, Azure Monitor and Google Cloud Audit Logs to track API calls, configuration changes and access events.

- Identity and Access Management Events: Ingest authentication and authorization logs from identity providers, tracking SSO tokens, MFA events and privilege changes.

- Threat Intelligence Feeds: Integrate indicators from Recorded Future or AlienVault OTX, supplying reputations for IPs, domains and file hashes.

- Configuration Snapshots: Continuously export vulnerability scan results and compliance checks to detect drift and newly exposed risks.

Data Quality and Operational Readiness

Maintaining high-quality data streams demands ongoing governance and monitoring:

- Telemetry health dashboards for latency, error rates and ingestion volumes

- Schema validation to enforce presence and conformance of critical fields

- Adaptive sampling to control costs while preserving coverage of key events

- Enrichment pipelines for geolocation, ASN mappings and asset tagging, designed to be fault tolerant

- Privacy-preserving anonymization to protect PII without losing analytical utility

Operational governance includes 24×7 ownership, escalation procedures, change management for sensors and retention policies, and regular exercises to validate end-to-end readiness.

AI-Driven Ingestion, Normalization and Enrichment

AI capabilities automate ingestion and ensure consistency across heterogeneous data sources. Machine learning models dynamically infer schemas, classify feeds and filter noise, reducing manual configuration and maintaining parser accuracy as formats evolve.

Automated Ingestion and Schema Inference

Leading platforms such as Splunk Enterprise Security and Elastic Stack employ AI-driven parsers to:

- Automatically classify incoming sources by type and origin, routing high-priority streams appropriately

- Filter redundant or low-fidelity records, conserving pipeline capacity

- Cluster similar log formats to infer structural templates, accelerating onboarding of new devices

Contextual Enrichment and Confidence Scoring

Once normalized, raw telemetry is enriched with threat intelligence, vulnerability metadata and business context. AI engines perform:

- Entity resolution to consolidate references to the same host or identity across logs

- Context tagging for geolocation, business unit and compliance domain attributes

- Confidence scoring, weighing the reliability of enrichment sources

Platforms like IBM QRadar expose enrichment APIs that feed correlation rules and custom detections.

Behavioral Analytics and Alert Generation

Behavioral analytics leverages both statistical and machine learning methods to detect deviations from dynamic baselines, transform observations into prioritized alerts and orchestrate handoffs without overwhelming analysts.

Data Preprocessing and Feature Engineering

Structured and unstructured inputs—normalized logs from Splunk or Elastic SIEM, flow records, threat feed indicators, and user behavior logs—are validated, de-duplicated and enriched. Noise reduction filters remove benign events while enrichment agents append metadata such as asset criticality and vulnerability scores.

Baseline Modeling and Scoring

- Unsupervised learning jobs (clustering, autoencoders) run in data lakes, with model development on platforms like IBM Watson Studio.

- Automated feature pipelines transform attributes—session durations, file accesses, network statistics—for model training.

- Validated models are deployed into real-time inference layers using streaming frameworks such as Apache Flink.

- Each event is scored by deviation magnitude, threat context from Recorded Future feeds, asset criticality and user trust levels.

Aggregation, Correlation and Case Creation

Anomaly scores alone are insufficient. Correlation engines group related signals—common IPs, sequential authentication events or kill chain stages—using graph analysis. When an incident exceeds thresholds in linked events or cumulative risk, it is escalated to a case.

Notification, Assignment and Enrichment Handoffs

High-priority incidents publish structured alerts in CEF or JSON Schema to SIEM and SOAR queues. Automated tickets are created in ServiceNow or Jira Service Management. Notifications via email or collaboration platforms such as Slack alert on-duty analysts. Secondary enrichment jobs pull process traces from EDR systems, packet captures from forensics appliances and WHOIS data from VirusTotal, attaching findings to the case.

Analyst Interaction and Feedback Loops

Investigations begin in unified workspaces with event timelines, risk scores and enrichment artifacts. Analysts annotate evidence, mark false positives and update case status. These decisions feed back into retraining pipelines and policy repositories, refining detection logic and scoring thresholds.

High-Confidence Alerts and Handoff Mechanisms

High-confidence alerts signal events that meet strict statistical and behavioral criteria. Structured outputs include unique identifiers, timestamps, severity levels, source context, behavioral summaries, enriched attributes and suggested next steps. Alerts adhere to the Open Cybersecurity Schema Framework and are delivered in JSON or AVRO.

Dependencies and Validation

- Anomaly detection models in Elastic Security or Splunk UBA must be continuously validated to prevent drift.

- Threat intelligence feeds from Recorded Future and ThreatConnect require timely updates.

- Asset and identity repositories inform severity weighting; synchronization failures can misclassify alerts.

- Quality assurance filters enforce false positive controls and threshold recalibrations.

Service-level agreements measure latency from ingestion to alert generation, ensuring rapid detection of critical events.

Automated Handoff to Investigation and Response

- Case creation in ServiceNow or Jira Service Management with full alert details.

- Ingestion into SOAR platforms such as Cortex XSOAR, initiating playbooks and tracking remediation steps.

- Real-time dashboards in Microsoft Sentinel and IBM QRadar for manual review.

- Notifications through email, SMS or collaboration tools to on-call personnel.

Bidirectional integrations propagate analyst findings back to the monitoring layer, suppressing duplicates and adjusting baselines.

AI Agents for Orchestration and Automated Response

Intelligent agents bridge analytics and operational controls, executing dynamic playbooks via APIs to firewalls, endpoints and identity systems. They log each action for audit trails and learn from remediation outcomes to refine decision logic.

Policy-Driven Playbooks and Risk Prioritization

Agents follow adaptive playbooks based on incident characteristics and risk scores. AI-driven risk prioritization platforms such as Tenable.io combine threat intelligence, asset value and business context to rank alerts, guiding resource allocation.

Automated Containment and Recovery

- Endpoint isolation via EDR APIs to quarantine compromised hosts.

- Blocking malicious IPs or domains on firewalls and proxies.

- Revoking user credentials through identity management connectors.

- Coordinating patch deployment with vulnerability management tools.

- Post-remediation scans to validate system integrity and close feedback loops.

Platforms like Demisto (part of Cortex XSOAR) exemplify AI-powered response orchestration driven by reinforcement learning.

Scalability, Resilience and Continuous Improvement

To support high data volumes and evolving threats, the entire workflow leverages distributed processing, microservices and container orchestration with Kubernetes. Key features include:

- Horizontal scaling of ingestion, inference and enrichment nodes.

- Load-balanced API gateways and message queues for policy queries and alert distribution.

- Automated failover, retry mechanisms and self-healing workflows for pipeline resilience.

- Feedback loops triggering model retraining, policy tuning and enrichment rule updates.

Cross-functional roles—data engineers, data scientists, SOC analysts, SOAR engineers and governance teams—collaborate in regular reviews to align detection logic with threat intelligence, business priorities and compliance mandates. This living capability adapts to new applications, cloud services and adversary tactics, ensuring proactive defense powered by AI.

Chapter 4: Risk Assessment and Prioritization

Security Data Unification and Fragmentation Challenges

The modern threat landscape spans on-premises data centers, multi-cloud environments, edge locations and remote workforces. Each domain produces distinct streams of logs, metrics, asset inventories and external threat intelligence. When this security data resides in silos—with inconsistent schemas, isolated toolchains and delayed context—organizations face blind spots, slow detection, manual toil and compliance hurdles. Legacy ingestion pipelines buckle under high volumes, creating backlogs that impair real-time monitoring and elevate risk.

Overcoming these challenges demands a centralized data pipeline that:

- Aggregates logs and telemetry from platforms such as Splunk, Elastic and cloud provider services.

- Enforces schema normalization and data quality controls.

- Integrates threat feeds from Recorded Future and VirusTotal with internal inventories.

- Delivers enriched, metadata-rich events for downstream analytics and response.

This unified approach eliminates silos, accelerates detection, reduces manual interventions and ensures a complete audit trail for compliance frameworks such as PCI DSS, GDPR and NIST SP 800-53.

Orchestrated AI Workflows for Detection and Response

Ad hoc scripts and fragmented point tools cannot keep pace with today’s scale and complexity. Embedding AI agents within an orchestration layer creates structured, end-to-end workflows that deliver consistency, resilience and auditability. Key AI-powered components include:

- Data Ingestion Agents: Secure connectors fetch and normalize logs from SIEMs and cloud services.

- Enrichment Models: NLP and classification engines attach context—IoC mapping, actor attribution and risk tags.

- Anomaly Detection Engines: Unsupervised learning models flag deviations from behavioral baselines.

- Risk Scoring Agents: AI and statistical modules compute exploitability, impact and threat likelihood.

- Response Orchestration Agents: Playbook frameworks such as Cortex XSOAR and IBM Security SOAR automate containment and remediation tasks.

- Adaptive Learning Engines: Reinforcement learning pipelines refine models using post-incident feedback.

An orchestration backbone coordinates these agents via a fault-tolerant event bus, enforces policy management, and logs every workflow step for traceability. This design scales elastically, retries failures, and aligns security actions with business risk priorities.

Risk Evaluation and Prioritization Workflows

Transforming enriched events into prioritized risk lists requires a formalized sequence of aggregation, normalization, weighting, scoring, adjustment, grouping and handoff. This workflow delivers transparent, repeatable and auditable risk assessments that guide remediation and investment decisions.

- Input Aggregation: Collect threat intelligence from enrichment models, alerts from monitoring engines and asset metadata from CMDBs and platforms such as Splunk Enterprise Security.

- Normalization and Enrichment: Standardize data types, unify CVSS vectors and MITRE ATT&CK classifications, and append business process impact scores.

- Weight Assignment: Apply organizational and regulatory multipliers (PCI DSS, GDPR) stored in a policy repository.

- Risk Score Calculation: Combine AI-driven exploit probability (gradient boosting, neural networks) with impact projections (Monte Carlo simulation) into a 0–100 scale.

- Dynamic Adjustment: Recalibrate scores in real time based on active exploit campaigns, maintenance windows or network segmentation changes.

- Ranking and Grouping: Sort items into critical, high, medium and low tiers using clustering algorithms to batch related issues.

- Handoff: Deliver prioritized lists to ticketing systems such as ServiceNow Security Operations or dashboards, and trigger remediation playbooks.

The orchestration layer enforces SLAs, monitors latency and volume, and logs all policy versions and decisions to satisfy audit requirements.

Advanced Scoring Algorithms and Risk Modeling Engines

At the heart of risk assessment lie machine learning and probabilistic inference techniques that quantify risk across likelihood, impact and propagation. Core capabilities include:

- Supervised and Unsupervised Learning: Classification and regression models trained on historical incidents predict exploit likelihood, while clustering and anomaly detection surface novel risk patterns. Platforms such as Splunk Enterprise Security Machine Learning Toolkit and IBM QRadar Advisor with Watson provide prebuilt algorithms and feature management.

- Graph-Based Propagation and Bayesian Networks: Graph engines and Bayesian inference in suites like RSA Archer Suite model interdependencies and calculate how a compromise spreads through connected assets.

- Simulation and Scenario Analysis: Monte Carlo and discrete event simulations in platforms such as AttackIQ evaluate “what-if” scenarios, estimating loss distributions, time-to-compromise and control effectiveness.

- MLOps Framework: Feature stores, model registries, containerized inference services and workflow engines (e.g., Apache Airflow) automate training, validation, deployment and monitoring of risk models.

- Continuous Calibration: Feedback loops ingest incident closure data, detect drift, retrain models via automated pipelines and promote validated versions into production, leveraging services like Microsoft Azure Sentinel.

This combination ensures dynamic, explainable risk scores that adapt to emerging threats and changing environments.

Outputs and Handoff Mechanisms

The risk assessment stage generates deliverables that drive remediation, governance and reporting:

- Prioritized Risk Listings: Ranked inventories of assets and vulnerabilities.

- Interactive Dashboards: Heat maps, trends and KPIs via Splunk or IBM Security QRadar.

- Executive Summaries: High-level narratives and scorecards for leadership.

- Data Exports and API Feeds: JSON/CSV streams to SOAR systems and asset databases.

- Score Reports: Detailed breakdowns of criteria, weights and model versions.

These outputs are versioned, timestamped and annotated for auditability. Handoff to downstream modules occurs through:

- RESTful APIs: Integrations with vulnerability scanners like Tenable.io and Qualys VM.

- Message Queues: Real-time event streams consumed by automation platforms.

- SOAR Triggers: Playbooks in Cortex XSOAR initiate containment and patch workflows.

- Ticketing Integration: Automatic creation of remediation tickets in ServiceNow or Jira.

- Scheduled Reports: Recurring PDF or HTML summaries distributed via email or collaboration tools.

Interface-level SLAs, authentication policies and encryption requirements govern these exchanges to ensure security and reliability.

Continuous Improvement and Compliance Integration

A robust feedback framework captures remediation outcomes, new threat intelligence and control performance metrics to refine risk models and weight policies. As updates occur, the orchestrator automatically incorporates changes without disrupting core services. Compliance and audit readiness are supported through:

- Control Gap Matrices: Automated alignment to NIST CSF, ISO 27001 and other frameworks.

- Evidence Packages: Correlation of risk findings with remediation attestations and exceptions.

- Custom Dashboards: Real-time status of high-risk controls and assets for governance boards and auditors.

- Exportable Documentation: Methodologies, data sources and model configurations for external review.

By seamlessly integrating outputs with retraining workflows and compliance reporting, organizations maintain an adaptive risk posture and demonstrate proactive governance in a continuously shifting threat landscape.

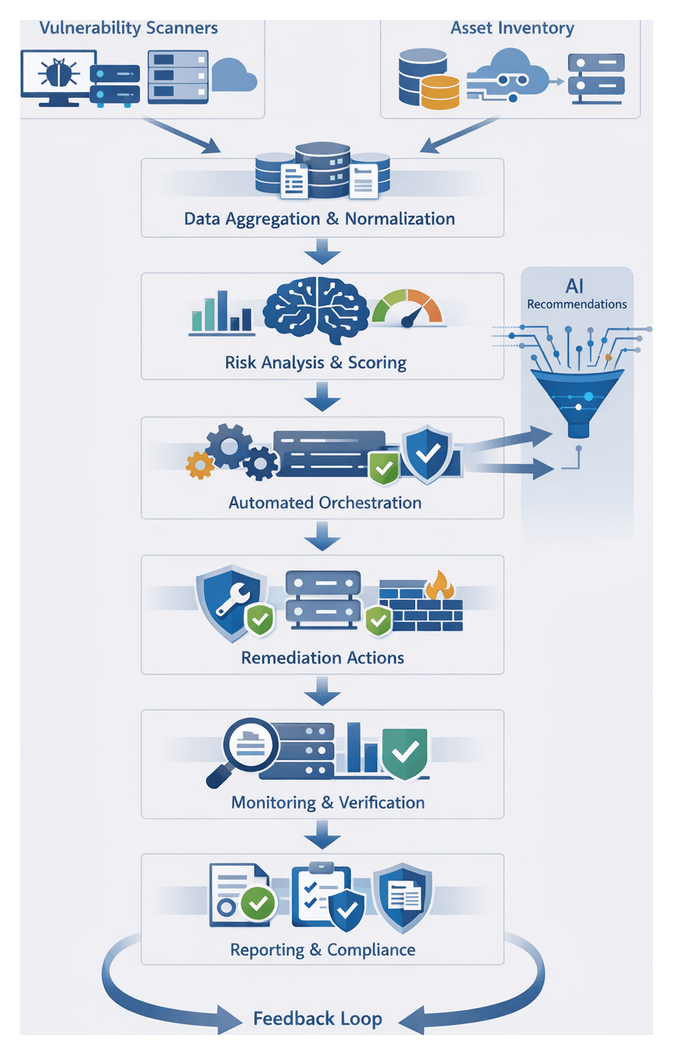

Chapter 5: Vulnerability Management Automation

Vulnerability Scan Results and Asset Inventories

Combining vulnerability scan outputs with comprehensive asset inventories establishes the foundation for automated vulnerability management. By ingesting data from multiple scanners and asset repositories, organizations create a unified view of every hardware and software component tied to known vulnerabilities. This high-fidelity dataset enables accurate risk scoring, prioritized remediation, and audit-ready reporting.

Automated vulnerability scanners such as Nessus, Qualys and Rapid7 InsightVM run both agentless and agent-based assessments alongside platforms like Microsoft Defender for Endpoint and Tanium. Manual penetration tests and red-team exercises supplement these tools. Asset data is sourced from CMDBs and ITSM systems such as ServiceNow and BMC Helix, cloud inventories from AWS Config, Azure Resource Graph and Google Cloud Asset Inventory, and network topology tools.

To ensure reliable ingestion and integrity, standardized asset identifiers (serial numbers, GUIDs) must align across systems. Scan policies—covering scope, credentials, frequency and network segmentation—should reflect asset criticality and maintenance windows. Authentication uses least-privilege service accounts and secure credential stores, while API connectors or secure file transfers provide data feeds with defined SLAs for freshness and error handling. A canonical schema for vulnerability records and asset attributes—capturing fields such as asset ID, OS version, CVE number, CVSS score, remediation recommendation and scan metadata—guides data normalization workflows that parse diverse formats, map severity levels, unify timestamps to ISO 8601, validate required fields and deduplicate records.

Connectivity between scanners, inventory repositories and ingestion pipelines requires firewall configurations, reliable APIs with retry logic, and a choice between batch exports or streaming deltas. Quality controls include record-count reconciliation, schema compliance checks, severity distribution monitoring, random sampling against source reports and SLA alerts for ingestion failures. By rigorously integrating and normalizing scan results and asset data, organizations achieve greater visibility, fewer false positives and accelerated time to remediation.

Automated Remediation Orchestration Sequence

The orchestration engine transforms enriched vulnerability data into coordinated remediation actions, replacing manual workflows with standardized, scalable automation. It ingests findings, asset context and risk scores to trigger predefined playbooks that execute patch deployments, configuration updates and compensating controls. By integrating with patch management systems, container registries, firewall controllers and cloud platforms, the engine delivers consistent, auditable and accelerated remediation.

Framework and Workflow Coordination

The typical sequence is:

- Trigger evaluation: receive vulnerability findings with severity, asset metadata and compliance requirements.

- Playbook selection: choose remediation workflows based on severity thresholds, asset criticality and business context.

- Approval gating: route high-impact actions through ITSM approvals.

- Execution: invoke APIs or automation agents to apply fixes on endpoints, servers, containers or network devices.

- Monitoring: track execution status, capture success or failure events and detect anomalies.

- Verification: perform post-remediation checks to confirm resolution and policy compliance.

- Feedback loop: update the vulnerability management platform and risk scoring engines with remediation outcomes.

Playbook Design and Modularity

Playbooks encapsulate discrete actions—OS patch deployments, container image rebuilds, firewall rule adjustments, application hardening and cloud policy enforcement. Modular design enables reuse, rapid adaptation to new vulnerabilities and centralized version control to propagate updates consistently.

Integration and Execution Engines

Orchestration platforms such as Palo Alto Networks Cortex XSOAR and Splunk Phantom provide connector libraries, state management, conditional logic and secure credential storage. They interface with vulnerability management platforms, CMDBs, endpoint management systems and cloud consoles to tailor remediation actions to operational context and minimize disruption.

Human-in-the-Loop and Approval Mechanisms

Integration with ITSM tools—ServiceNow and Jira Service Management—supports automated ticket creation, role-based approvals, time-bound escalations and comprehensive audit logs. This ensures governance for changes affecting critical systems while preserving remediation momentum.

Monitoring, Logging and Feedback

Execution logs, status dashboards and automated alerts feed back into security operations and risk engines. Post-remediation verification and compliance scans confirm vulnerability resolution. This continuous feedback maintains accurate asset risk profiles and drives improvement in playbooks and processes.

Scalability and Resilience

Best practices include:

- Distributed orchestration nodes across regions or data centers.

- Event-driven architectures with message queues to decouple modules and absorb peak loads.

- Idempotent playbooks for safe retries.

- Circuit breaker patterns to detect systemic failures and trigger fallbacks.

- Monitoring of queue backlogs, execution latencies and error rates.

Operational Metrics and AI-Driven Adaptation

Key performance indicators—mean time to remediate, percentage of automated fixes, playbook success rates, reduction in asset risk scores and patch compliance rates—guide workflow optimizations. Emerging AI capabilities enable predictive prioritization, adaptive playbook generation, anomaly detection during execution and natural language interfaces for ad hoc commands, evolving remediation ecosystems toward intelligent self-optimization.

AI-Driven Agent Recommendation and Ticketing Integration

AI models transform raw vulnerability data into prioritized remediation strategies by scoring each finding based on exploit prevalence, asset criticality and remediation complexity. Natural language processing extracts patch identifiers, change log steps and compensating controls from vendor bulletins. Reinforcement learning refines recommendations using feedback on deployment time, rollback incidents and user-reported issues.

Integration with ITSM Platforms

Structured ticket payloads—with CVE references, asset details, recommended steps, dependencies and SLA-aligned completion dates—are generated in ServiceNow or Jira. AI-driven rules route tickets to in-house teams, managed service providers or automated agents, assigning appropriate priority and resolver groups.

SOAR Orchestration and Playbooks

Orchestration engines consume AI recommendations and translate them into executable playbooks that:

- Validate patch applicability against live inventories

- Stage updates in test environments

- Deploy patches via endpoint management agents

- Coordinate reboots within maintenance windows

- Verify post-remediation compliance

Human analysts intervene at annotated checkpoints, while chat and mobile notifications keep stakeholders informed.

Dynamic Prioritization and Workflow Adjustment

Real-time threat intelligence feeds trigger reprioritization of tickets, SLA modifications or emergency playbooks that bypass standard change processes. Dynamic workflows reroute tasks, notify advisory boards or initiate out-of-band approvals when risk thresholds are exceeded.

Collaborative Feedback and Knowledge Base

Operational data from ticket updates—resolution times, rollback incidents and engineer comments—populate a semantic search-enabled knowledge base. The system auto-suggests proven solutions for recurring issues, integrating vendor documentation and internal runbooks to tailor steps to organizational configurations.

Governance, Audit Trails and Compliance

Each ticket records recommendation rationale, AI model version, timestamp and approver identity. Audit dashboards demonstrate compliance with patch policies, track backlog and measure adherence to risk-based SLAs, supporting regulatory requirements and internal governance.

Scalability, Security and Model Retraining

Microservices expose RESTful APIs for scanner ingestion across diverse platforms—Windows, Linux, macOS, network devices, industrial control systems and cloud services. Multi-tenancy partitions recommendations by business unit or geography. Encryption in transit and at rest, mutual TLS and role-based access controls safeguard vulnerability data. Continuous retraining pipelines ingest closed-ticket feedback, updated vulnerability databases and vendor advisories to refine classification, priority scoring and support for new asset types, with versioned model deployments ensuring traceability.

By embedding AI-driven recommendations into automated ticketing, organizations reduce mean time to remediation, lower manual workloads, improve compliance posture, accelerate critical fixes and enhance transparency through comprehensive audit trails.

Remediation Status Reporting and Change Management Handoffs

The final phase translates remediation actions into structured reports and integrates outcomes with change control systems. This ensures transparency, auditability and alignment with enterprise risk policies, closing the loop on the vulnerability lifecycle and demonstrating compliance to stakeholders.

Key Remediation Outputs

- Fix Confirmation Logs with time-stamped records of deployments, source identifiers and verification status.

- Compliance Check Results from post-remediation scans, policy indicators and deviation logs.

- Audit Trails and Evidence Packages consolidating actions, approvals, digital signatures and attachments.

- Executive Summary Reports featuring metrics on remediated vulnerabilities, outstanding issues, remediation velocity and risk reduction impact.

Dependencies and Validation Gates

- Accurate Asset Inventories referencing canonical identifiers from CMDBs or asset repositories.

- Consistent Scanner Configurations and up-to-date signature sets in tools like Qualys or Tenable to avoid false positives.

- Authorized Change Approvals captured in audit logs with timestamps and approver identities.

- Robust API Connectivity between remediation engines and reporting dashboards with retry and error-handling mechanisms.

- Encryption and Access Controls to secure remediation evidence in transit and at rest.

Integration with Change Management Systems

- Automated ticket creation and updates in ServiceNow or Jira, linking vulnerability IDs to ticket numbers.

- Bi-directional API calls to sync approvals and remediation status, with notifications based on escalation policies.

- Alignment of remediation schedules with maintenance windows and automated deferral logic for noncritical patches.

- Automated ticket closure upon verification scan success and generation of post-implementation review documents.

Handoff Mechanisms and SLAs

- SLA Definition mapping vulnerability severity and asset criticality to remediation targets encoded in playbooks.

- Automated Notifications at handoff points, embedding links to dashboards and ticket references.

- Escalation Paths for missed SLAs, notifying team leads, risk managers and executives across regions.

- Handoff Acknowledgments via digital signatures or confirmation clicks as audit evidence.

Feedback Loop to Risk Management

- Risk Score Adjustments that update asset risk profiles upon successful remediations or escalate on delays.

- Process Metrics—mean time to remediation, patch success rates and exception rates—informing playbook refinements.

- Model Retraining Inputs from false positives, remediation failures and exception justifications to improve AI predictions.

- Governance Reporting with real-time remediation statistics in dashboards supporting strategic planning and risk decisions.

By delivering structured remediation reports, enforcing change management handoffs and integrating results into the risk management continuum, organizations transform raw vulnerability data into enterprise-ready intelligence. The combination of automation, audit trails and governance interfaces ensures that remediation efforts are transparent, accountable and continually optimized—ultimately reducing exposure and reinforcing a mature security posture.

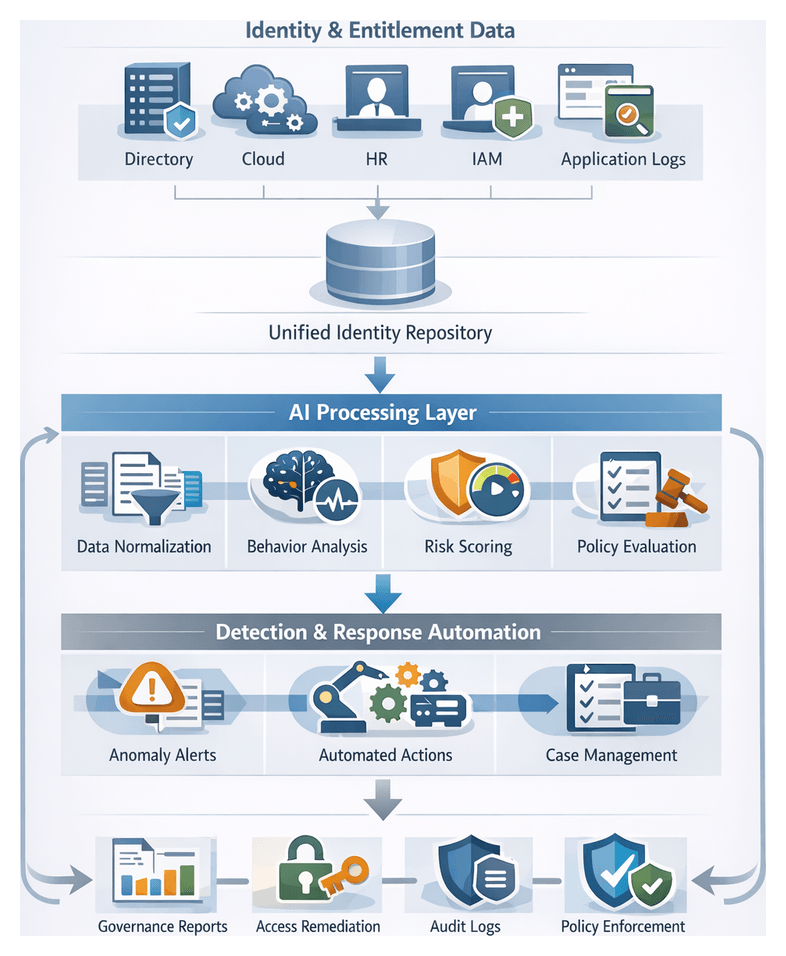

Chapter 6: Identity and Access Governance

Identity Data Collection and Entitlement Inputs

In the Identity and Access Governance stage, the objective is to aggregate identity attributes, entitlement assignments and access events from across on-premises and cloud platforms into a centralized repository. This unified identity graph provides a high-fidelity view of who has access to what resources, under which conditions and with what privileges, serving as the foundation for analytics, policy evaluation and anomaly detection.

Purpose and Scope

The primary aim of this stage is to maintain a trusted, normalized repository of identity data to support downstream governance processes. Key goals include:

- Centralizing user, service and application identities from Active Directory, Azure Active Directory, LDAP, HR systems and cloud IAM platforms

- Capturing roles, group memberships, delegated privileges and resource entitlements

- Recording authentication events, authorization decisions and privilege usage logs

- Normalizing disparate schemas to a consistent model and validating data integrity

- Enabling real-time ingestion or scheduled synchronization based on source criticality

Data Sources and Integration

Sources range from directory services and identity management platforms to HR systems, privileged access tools and application logs. Representative inputs include:

- Directory Services: Microsoft Active Directory, Azure Active Directory and LDAP servers supply core identity attributes and group hierarchies.

- IAM Platforms: Okta, SailPoint and native IAM modules expose entitlements, approval workflows and role definitions via RESTful APIs.

- HR and ERP Systems: Employment status, department assignments and lifecycle events inform orphan account detection and access revocation triggers.

- Privileged Access Management: Vault solutions record checkout logs, dynamic session credentials and just-in-time elevations.

- Cloud Providers: AWS IAM, Google Cloud IAM and Azure subscriptions provide service principals, resource-level roles and policy attachments.

- Application Logs: Authentication, single-sign-on assertions, API token usage and failed login events enrich entitlement usage analytics.

Prerequisites and Controls

Ensuring data accuracy and security requires:

- Encrypted, least-privilege service accounts and token-based authentication for all integrations

- Attribute mapping specifications to translate source schemas into a unified identity model

- Validation rules for mandatory fields, referential integrity checks and automated alerts for anomalies

- Onboarding procedures, change management workflows and rollback plans for new sources

- Data privacy controls, masking sensitive attributes and enforcing role-based access to the identity repository

With these prerequisites in place, organizations can eliminate silos, reduce manual reconciliation and lay the groundwork for adaptive, risk-driven access governance.

Continuous Access Review and Anomaly Detection

Moving beyond static reviews, continuous access review and anomaly detection orchestrates real-time monitoring of entitlement changes, authentication patterns and resource access events. By applying AI-driven models within streaming data pipelines, this phase detects privilege creep, orphaned accounts and policy violations as they emerge, triggering immediate remediation or human review.

Data Flows and Enrichment

- Streaming Ingestion: Connectors retrieve logs from identity providers, single-sign-on platforms and privileged access appliances.

- Normalization: Events standardize to a schema with fields for user ID, timestamp, action, resource and context metadata.

- Contextual Enrichment: The centralized identity store adds attributes such as role, department and historical access patterns.

- Behavioral Scoring: AI models assess deviations from baseline profiles, assigning risk scores that consider asset criticality and policy severity.

- Policy Evaluation: A policy engine applies segregation-of-duties rules, access review criteria and certification status to determine necessary actions.

- Alerting and Remediation: High-risk events generate tasks in case management systems or invoke automated remediation agents.

- Review and Resolution: Risk scores drive routing to identity administrators or automated modules for approval, revocation or escalation.

- Audit Logging: All actions, decisions and system changes feed into an immutable audit repository for compliance reporting.

Detection Models and Scoring

Core AI scenarios include:

- Privilege Creep: Clustering algorithms flag users whose privilege sets expand beyond peer norms.

- Orphaned Accounts: Rule engines detect stale accounts lacking recent activity or manager assignments.

- Anomalous Logins: Time-series forecasting and geo-IP checks identify unusual sign-on patterns.

- Excessive Group Memberships: Graph analysis finds users in critical groups exceeding policy thresholds.

- Policy Violation Prediction: Supervised classifiers learn from past audits to predict high-risk entitlement changes.

Risk scoring engines combine deviation magnitude, user risk profiles and policy weightings to prioritize events for human or automated action.

Review and Workflow Automation

- Task Creation: Tickets with event details, risk scores and recommended actions are opened in platforms like ServiceNow or Jira.

- Notification: Assigned analysts receive alerts via governance dashboards, email or collaboration tools.

- Investigation: Contextual links to related events, entitlement histories and user profiles support decision-making.

- Decision and Execution: Access approvals, revocations or escalations execute through directory and IAM connectors.

- Validation and Closure: Post-remediation monitoring confirms resolution and updates the audit trail.

- Feedback Loop: Outcome metadata refines AI models, recalibrates thresholds and reduces false positives over time.

Integration with periodic certification campaigns ensures that remediated entitlements are excluded from future review tasks and that unresolved anomalies are highlighted during formal attestation cycles.

Positioning AI Agents in the Security Process

AI agents act as intelligent intermediaries across the access governance lifecycle, automating data parsing, anomaly detection, decision support and remediation. By distributing specialized agents into microservices and event-driven pipelines, organizations can scale operations, maintain consistent audit trails and accelerate response times.

Agent Roles and Capabilities

- Data Ingestion and Normalization Agents: Parse logs (CSV, JSON, CEF) using NLP tokenization and regex patterns; integrate with Splunk and Elasticsearch for storage and indexing.

- Anomaly Detection Agents: Employ clustering algorithms (DBSCAN) and forecasting models (ARIMA, LSTM) to identify deviations from behavioral baselines.

- Threat Enrichment Agents: Query STIX/TAXII feeds and knowledge graphs for threat context, scoring indicators and mapping adversary tactics.

- Risk Scoring Agents: Combine CVSS data, asset criticality and historical outcomes to generate composite risk ratings and ranked dashboards.

- Orchestration Agents: Coordinate playbooks, retries and SLAs using Cortex XSOAR.

- Remediation Orchestration Agents: Execute containment, patch rollouts and access modifications through connectors to ServiceNow and Jira.

- Continuous Learning Agents: Monitor model drift, manage versioning with MLflow and TensorFlow Extended, and trigger retraining pipelines.

Architectural Patterns and Best Practices

- Microservices: Containerize agents with RESTful APIs or message queues for independent scaling and resilience.

- Event-Driven Pipelines: Use Kafka or AWS Kinesis streams for low-latency detection and real-time response.

- Batch Workflows: Schedule non-urgent tasks, such as compliance reporting, on Spark or Hadoop clusters.

- Hybrid Orchestration: Combine immediate alerts for critical events with batch processing for routine analyses.

- Security Controls: Enforce mutual TLS, encrypt data in transit and at rest, and apply RBAC to agent identities.