AI Enhanced Visual Effects Workflow for Creative Efficiency in Media and Entertainment

Introduction

Purpose and Strategic Foundations of an AI-Driven VFX Pipeline

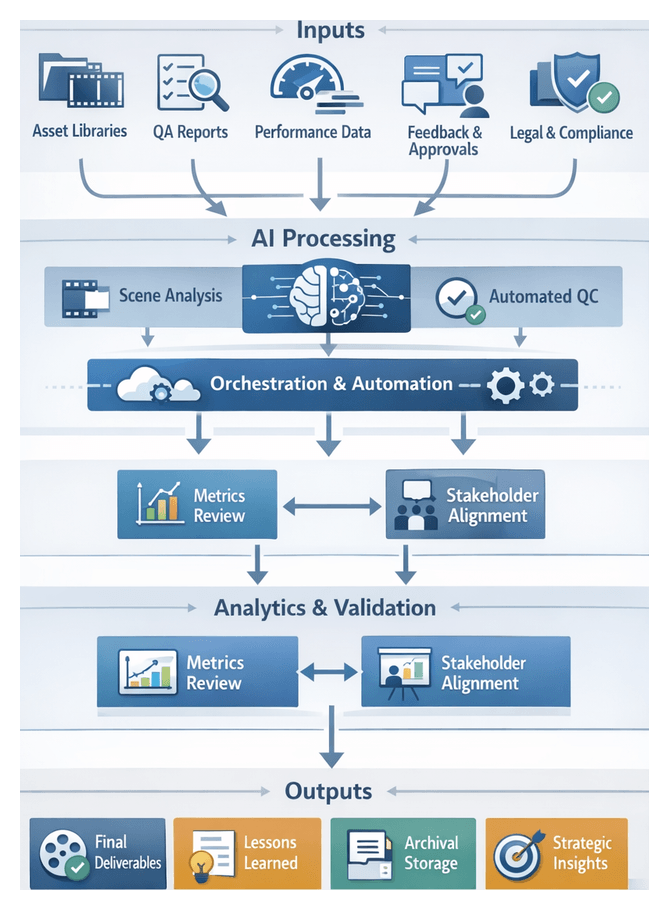

Establishing a clear foundation for an AI-enhanced visual effects pipeline aligns creative vision with technical execution and ensures that every component supports core business and artistic objectives. By defining targets such as accelerated asset turnover, consistent visual fidelity, and collaborative workflows, stakeholders agree on the balance between automation and creative control. This unified purpose prevents fragmented toolsets and manual handoffs, transforming repetitive tasks into measurable processes and guiding tool interoperability across production phases. A well-defined strategy also frames performance benchmarks and compliance requirements, empowering supervisors to monitor progress and maintain quality against quantifiable metrics.

Inputs, Requirements, and Prerequisites

Key Inputs and Stakeholder Alignment

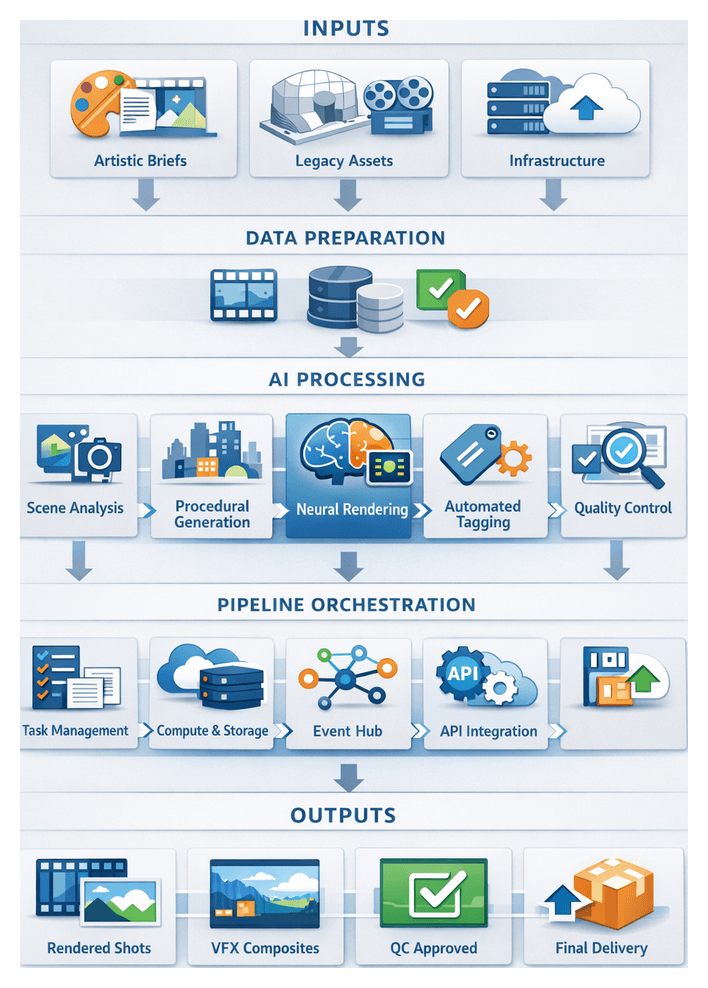

Successful pipeline design begins with a comprehensive understanding of project requirements, existing assets, and technical constraints. Critical inputs include:

- Artistic Briefs and Style Guides: Concept art, genre conventions, color palettes, and compositing standards inform AI model configurations and ensure outputs match creative intent.

- Legacy Assets and Reference Media: Texture libraries, 3D models, live-action plates, and footage archives accelerate AI training cycles and maintain brand continuity.

- Infrastructure Constraints: On-premises GPU availability, cloud rendering credits, network bandwidth, and storage quotas guide decisions on model complexity and parallelization strategies.

Engaging creative directors, VFX supervisors, pipeline engineers, and IT managers through cross-functional workshops captures diverse expectations and translates high-level goals into actionable technical specifications.

Prerequisites for Scalable AI Integration

Before deploying AI modules at scale, production teams must satisfy key conditions to guarantee stability and repeatability:

- Data Normalization: Standardize footage, texture maps, and model files with consistent frame rates, color spaces, naming conventions, and resolution settings to streamline automated ingestion.

- Metadata Standards: Define schemas for asset tagging—covering taxonomy, usage rights, version history, quality scores, and AI-generated annotations—to drive searchability and conditional logic across modules.

- Infrastructure Validation: Conduct network latency tests, storage I/O benchmarks, and GPU throughput assessments to verify that hardware can sustain AI workloads without bottlenecks.

- Model Governance: Document model provenance, training data, performance metrics, and retraining procedures to support transparency, reproducibility, and compliance with industry regulations.

- Team Training and Collaboration: Provide artists, technical directors, and engineers with hands-on labs, code repository pull-request workflows, and workshops to foster a collaborative culture and accelerate onboarding.

Quality Benchmarks and Operating Conditions

Transform subjective reviews into objective validation steps by codifying benchmarks for performance, fidelity, and compliance:

- Performance Targets: Define maximum runtimes for tasks like scene segmentation, render pass generation, and noise reduction to ensure predictable scheduling.

- Visual Fidelity Thresholds: Use metrics such as peak signal-to-noise ratio (PSNR), structural similarity index (SSIM), and texture resolution comparisons against reference sequences.

- Compliance Requirements: Encode broadcast standards (SMPTE, ITU), confidentiality protocols, and licensing restrictions into automated checks to prevent non-compliant assets from progressing.
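
As a rough illustration of how a visual fidelity threshold could become an automated check, the sketch below computes PSNR over flat sequences of 8-bit pixel values using only the Python standard library. The function names and the 35 dB threshold are assumptions made for this example, not values taken from any standard. A production check would more likely operate on image arrays and pair PSNR with SSIM.

```python
import math


def psnr(reference, candidate, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(candidate) or not reference:
        raise ValueError("inputs must be non-empty and the same length")
    # Mean squared error between the reference and the candidate.
    mse = sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)
    if mse == 0:
        return math.inf  # identical inputs
    return 10.0 * math.log10(max_value ** 2 / mse)


def passes_fidelity_gate(reference, candidate, min_psnr_db=35.0):
    """Hypothetical gate: the 35 dB default is an illustrative assumption."""
    return psnr(reference, candidate) >= min_psnr_db
```

A frame that fails the gate would be routed to manual review rather than promoted to the next stage.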

Designing a Unified AI-Driven Workflow

Orchestration and Coordination Layer

A unified workflow relies on an orchestration layer that directs data flows, schedules tasks, and monitors system health. Core components include:

- Pipeline Management System: Track tasks, versions, and approvals with ShotGrid or ftrack.

- Event Bus: Dispatch asset and metadata updates via Apache Kafka or RabbitMQ.

- Service Registry: Maintain dynamic discovery using Consul or Kubernetes API.

- Compute Controller: Orchestrate render farms and cloud batches with AWS Batch or Azure Batch.

- Workflow Engine: Coordinate AI modules and rule-based scheduling with Apache Airflow or custom schedulers.

When an artist uploads a model, the message bus triggers downstream tasks—automated texturing, look development, or scene integration—without manual intervention. Continuous health monitoring alerts engineers to potential bottlenecks before deadlines are impacted.

Data and Metadata Exchange

Consistent data exchange hinges on a common model and standardized metadata schema accessible via RESTful APIs. Key metadata fields include asset identifiers, version history, AI annotations (bounding boxes, material predictions), quality flags, and scheduling data. This shared schema enables:

- Real-time visibility of asset status and dependencies.

- Automated handoffs when metadata flags update upon job completion.

- Traceability with logged actions, timestamps, and contextual notes.
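
To make the shared schema more concrete, here is a minimal sketch of an asset record that carries the fields listed above and serializes to JSON for exchange over an API. The class name, field names, and default status value are hypothetical; an actual schema would be agreed by the pipeline team.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class AssetRecord:
    """Hypothetical shared metadata record for one asset version."""
    asset_id: str
    version: int
    status: str = "ingested"
    annotations: list = field(default_factory=list)    # e.g. bounding boxes
    quality_flags: list = field(default_factory=list)
    history: list = field(default_factory=list)        # logged status changes

    def set_status(self, new_status, note=""):
        # Record the transition so every handoff stays traceable.
        self.history.append({"from": self.status, "to": new_status, "note": note})
        self.status = new_status


def to_json(record):
    return json.dumps(asdict(record), sort_keys=True)


def from_json(payload):
    return AssetRecord(**json.loads(payload))
```

Because the history list travels with the record, a downstream service can see both the current flag and how the asset reached it.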

System Actors and Collaboration Flow

Even in an automated pipeline, human expertise is essential. Core actors and their roles include:

- Technical Directors: Configure AI models and troubleshoot pipeline failures.

- Artists: Enhance AI-preprocessed assets for modeling, texturing, lighting, and compositing.

- Producers and Supervisors: Monitor milestones, budgets, and resource allocations.

- Pipeline Engineers: Maintain the orchestration layer and integrate new services.

- Compute Administrators: Optimize on-premises and cloud resources for cost and performance.

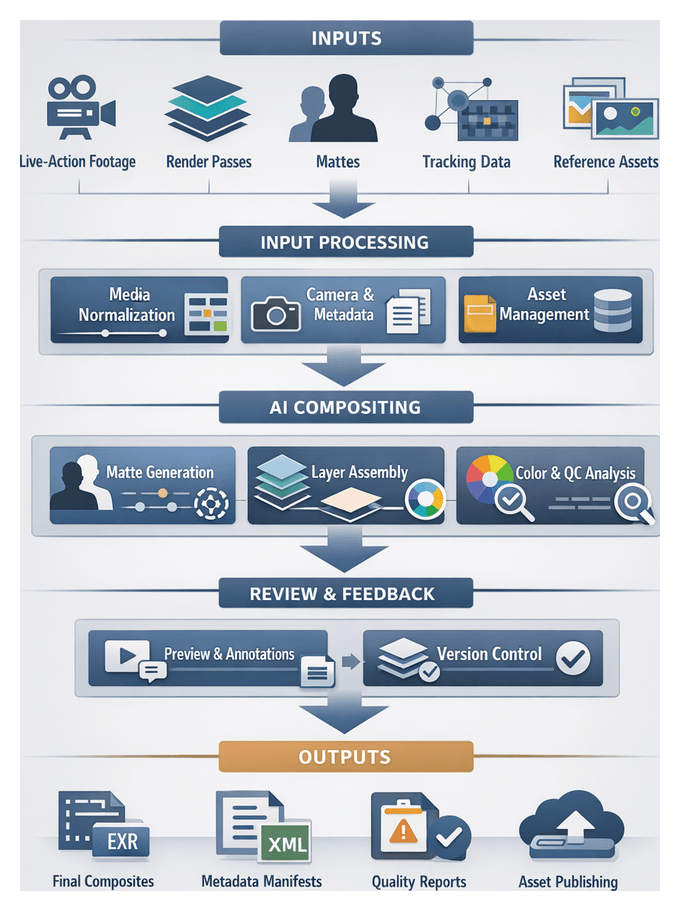

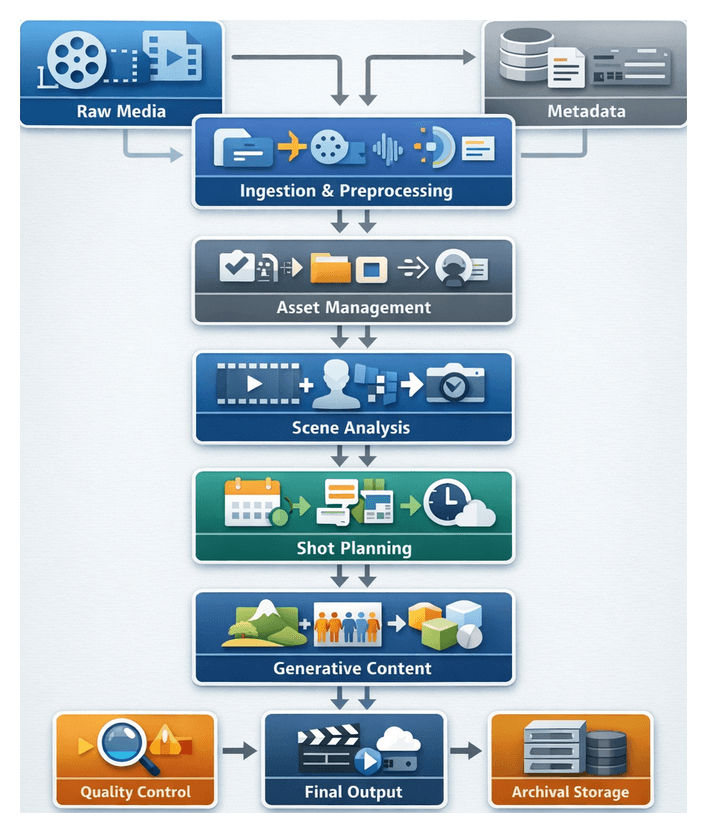

The collaboration flow sequences ingest, AI preprocessing, asset tagging, scene analysis, shot scheduling, procedural generation, neural rendering, compositing, QA, and final delivery. At each handoff, both AI agents and human reviewers share visibility into asset provenance, status flags, and pending actions.

API-Driven Integrations and Triggered Actions

Diverse tools connect via APIs and plugin adapters. Applications like Houdini and Blender expose render commands and version updates to the orchestration layer, while AI services such as Amazon SageMaker and Azure Machine Learning offer inference endpoints for annotations and style transfers. Event-condition-action rules automate workflow steps, for example:

- Upon batch preprocessing completion, invoke object detection and update metadata to “analysis_in_progress.”

- When QA flags lighting inconsistencies, open a ticket and escalate priority to “high.”

- Once all shots in a sequence are approved, initiate final format conversion jobs and notify delivery coordinators.

Cross-system authentication via OAuth and certificates maintains security while enabling rapid integrations.
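
The event-condition-action pattern described above can be sketched in a few lines. This is an in-process illustration only: the event names, the asset dictionary layout, and the `dispatch` helper are assumptions for the example, and a real deployment would receive events from a message broker rather than direct function calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    event: str                          # event name the rule listens for
    condition: Callable[[dict], bool]   # predicate evaluated on the asset
    action: Callable[[dict], None]      # side effect applied when it matches


def start_analysis(asset):
    asset["status"] = "analysis_in_progress"


def escalate_lighting(asset):
    asset["priority"] = "high"
    asset.setdefault("tickets", []).append("lighting inconsistency")


# Hypothetical rule set mirroring the first two examples in the text.
RULES = [
    Rule("preprocess.completed", lambda a: True, start_analysis),
    Rule("qa.flagged", lambda a: a.get("issue") == "lighting_inconsistency",
         escalate_lighting),
]


def dispatch(event, asset, rules=RULES):
    """Run every rule whose event and condition match; return how many fired."""
    fired = 0
    for rule in rules:
        if rule.event == event and rule.condition(asset):
            rule.action(asset)
            fired += 1
    return fired
```

Keeping conditions and actions as separate callables lets supervisors add or change rules without touching the dispatch loop.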

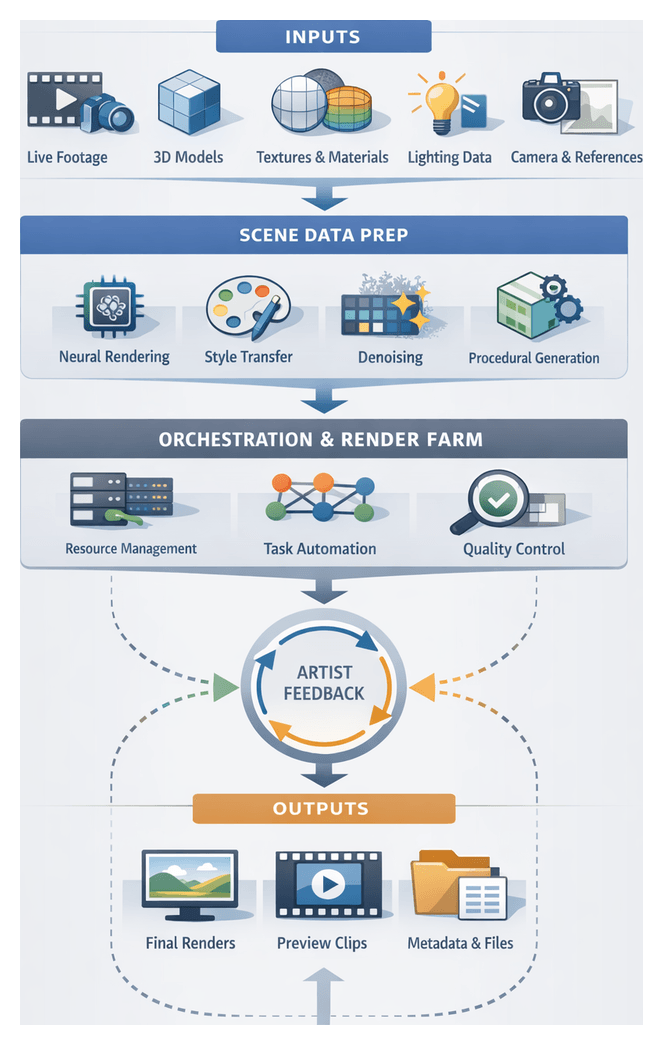

Real-World Scenario: Coordinated AI-Augmented Shot Delivery

- Footage ingest triggers denoising and normalization on a GPU cluster.

- Clean frames are cataloged and passed to a scene analysis service for segmentation.

- Procedural generation and crowd simulation modules produce environment assets and background elements.

- Neural rendering applies style transfer and material predictions for consistent aesthetic and dynamic lighting.

- Compositors receive pre-assembled layers with metadata annotations, enabling creative refinements without manual rotoscoping.

- Automated QA detects a shadow mismatch, enqueues corrective lighting tasks, and routes them back to rendering.

- Final outputs are packaged, manifests generated, and assets archived for future reuse.

Core AI Techniques and Their Roles

Neural Rendering and Style Transfer

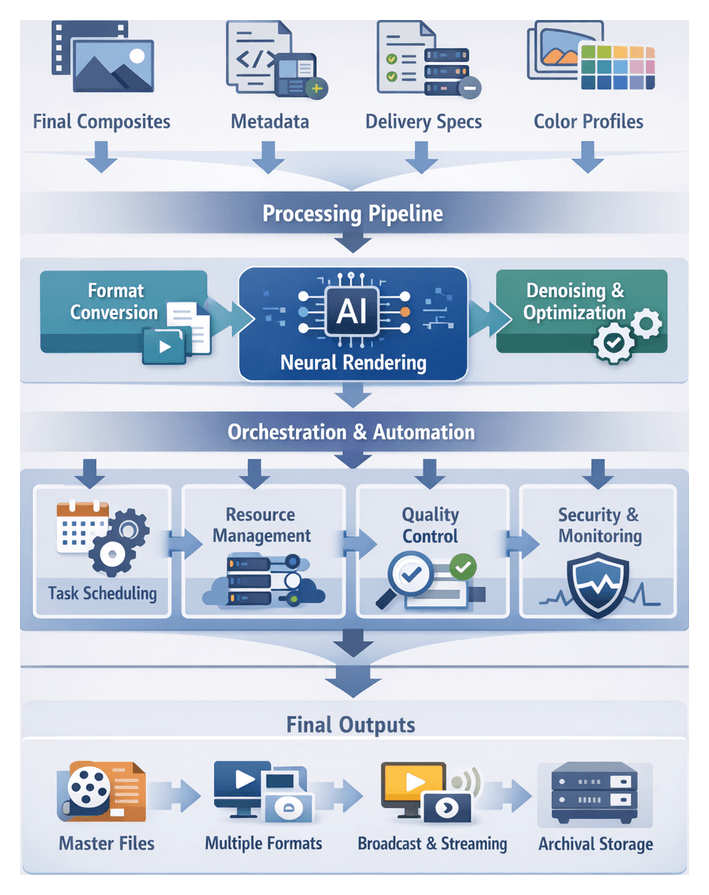

Deep networks synthesize photorealistic or stylized imagery from 3D geometry and reference art. Exposed as GPU-accelerated microservices via TensorFlow or PyTorch, these models handle relighting, denoising, and aesthetic alignment, reducing render times and ensuring consistency.

Computer Vision for Detection and Segmentation

Instance segmentation and keypoint detection models generate mattes and spatial metadata for compositing and procedural tasks. Batch or streaming services integrate through NVIDIA Omniverse connectors or Google Cloud Vision APIs, reducing manual rotoscoping and accelerating matte creation.

Predictive Analytics and Scheduling Algorithms

Forecasting models trained in Amazon SageMaker analyze historical time logs and resource metrics to optimize shot schedules, balance workloads, and flag high-risk tasks, improving on-time delivery and reducing idle compute time.

Procedural Generation and Generative Adversarial Networks

Rule-based engines in tools like SideFX Houdini generate base geometry, textures, and crowd behaviors. GANs refine these outputs, adding realistic detail and variation while respecting design constraints.

Automated Tagging and Classification

Classification models embedded in digital asset management platforms or as Azure Functions assign standardized tags to textures, audio clips, and 3D models, accelerating retrieval and enforcing version consistency.

Vector Embedding Search

Asset representations are encoded into high-dimensional vectors and stored in FAISS or Elasticsearch with vector plugins, enabling semantic search by nearest-neighbor queries and surfacing relevant assets quickly.
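
The nearest-neighbor idea can be illustrated with a brute-force cosine similarity search in plain Python. This is a deliberately simplified stand-in: libraries such as FAISS use optimized index structures for large catalogs, and the tiny three-dimensional vectors and asset names here are made up for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest_assets(query, index, k=3):
    """Return the ids of the k assets whose embeddings are closest to the query.

    `index` maps asset id -> embedding vector (a hypothetical in-memory layout).
    """
    scored = [(cosine_similarity(query, vec), asset_id)
              for asset_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [asset_id for _, asset_id in scored[:k]]
```

For example, querying an index of texture embeddings with the embedding of a reference image would return the most visually similar textures first.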

Workflow Orchestration and API Microservices

Each AI capability is exposed as a RESTful or gRPC endpoint, typically deployed on Kubernetes and coordinated by a workflow engine such as Apache Airflow, enabling elastic scaling, fault isolation, and real-time status tracking across preprocessing, analysis, rendering, and delivery stages.

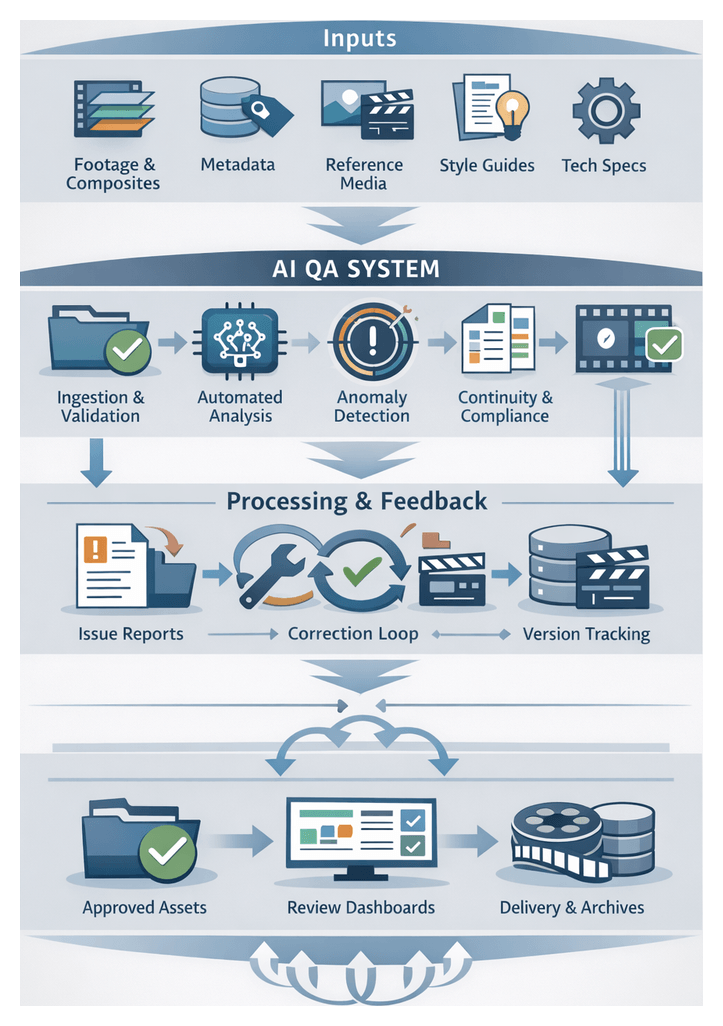

Automated QA and Anomaly Detection

Rule-based engines and neural networks inspect composite plates, detect artifacts and continuity errors, and log structured reports to JIRA or ShotGrid, speeding defect identification and reducing rework.

Deliverables, Dependencies, and Integration Mechanisms

Key Deliverables of the Foundational Stage

The initial definition stage produces structured artifacts that guide downstream teams:

- Pipeline Objectives Document detailing goals, performance targets, and quality criteria.

- Input Specification Pack covering media types, resolution standards, metadata schemas, and reference materials.

- AI Module Interface Definitions with API contracts, data formats, model versioning, and communication protocols.

- Unified Metadata Schema encompassing technical attributes and creative descriptors.

- Quality Assurance Criteria for footage integrity, naming conventions, and consistency rules.

- Workflow Diagram illustrating stages, decision gates, and dependencies.

- Risk and Dependency Register with mitigation plans and ownership assignments.

Dependencies and Prerequisites for Handoff

To ensure a seamless transition to media ingest and preprocessing, teams must satisfy prerequisites across three areas:

- Data Readiness: Access to approved storyboards, concept art, live-action plates, and centralized asset libraries with correct permissions; metadata compliant with agreed schemas.

- Toolchain Integration: Verified API connectivity for AI services, standardized application and plugin installs, and commissioned compute resources tested for performance.

- Organizational Alignment: Formal sign-off on objectives and quality benchmarks, clearly defined roles and responsibilities, and established communication protocols for issue tracking and change requests.

Integration Points and Handoff Mechanisms

Predefined touchpoints enable immediate custody transfer of assets and data:

- Ingestion Manifest and Data Mapping: Machine-readable file lists with metadata and mapping documents correlating source files to internal identifiers and folder structures.

- Quality Validation Gate: Automated reports on QA compliance and approval stamps from QA leads or VFX supervisors.

- API Endpoint and Workflow Trigger: Authenticated requests to launch preprocessing pipelines, with webhooks or message queues for status updates.

- Metadata Injection and Repository Sync: Bundled shot-level metadata ingested alongside raw media and synchronized asset catalog updates.

Traceability and Continuous Feedback

Embedding audit trails and feedback loops at every handoff enables root-cause analysis and iterative improvement:

- Historical records of manifest versions, approval timestamps, and trigger invocations.

- Logged feedback from ingest and preprocessing teams informing future pipeline releases.

- Change management processes for controlled updates to schemas, interfaces, and quality criteria.

Chapter 1: Establishing AI Pipeline Objectives and Inputs

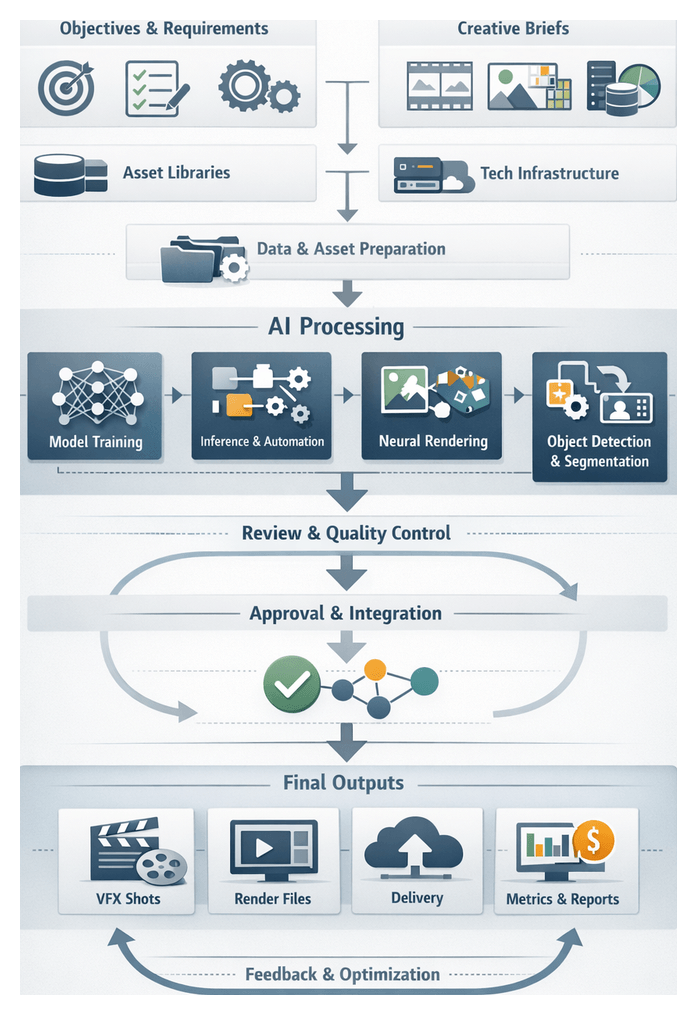

Establishing Objectives, Inputs, and Success Criteria

The foundational stage defines strategic objectives, required inputs, and success criteria to guide the AI-driven VFX pipeline. Aligning creative ambitions with operational feasibility ensures purposeful deployment of AI modules, efficient resource allocation, and consistent delivery of artistic and business outcomes.

Strategic Goals

- Clarify visual style aspirations for neural rendering and style transfer.

- Set targets for turnaround time, cost per shot, and compute utilization.

- Establish quality thresholds for artifact levels, texture fidelity, and frame consistency.

- Define collaboration protocols and data-sharing agreements across departments.

- Align pipeline capabilities with delivery schedules and platform requirements.

Required Inputs

Gather and validate all elements that drive AI modules and support downstream processes.

- Creative Briefs: Narrative context, art-direction guidelines, color palettes, concept art, storyboards, mood boards, and client specifications for resolution, color space, and delivery formats.

- Asset Libraries: 3D model repositories with version histories, texture archives annotated by resolution and UV conventions, live-action plates organized by scene and camera metadata, style reference videos, and licensed third-party datasets.

- Technical Infrastructure: Compute resources (CPU, GPU, memory, storage), network bandwidth for on-premises and cloud transfers, software stacks (deep learning frameworks, rendering engines, asset management), container orchestration platforms, and security controls.

- Data Standards: Metadata schemas for shot identifiers, timecode, camera settings; file naming conventions; interoperable formats (USD, Alembic, EXR, TIFF, FBX, USDZ); and model input/output specifications.

- Legal and Licensing: Usage rights for stock assets, open-source license compliance, talent and location releases, data privacy regulations, and audit trails for asset provenance.

Quality Benchmarks and KPIs

- PSNR and SSIM targets for denoising outputs.

- Error margins for edge artifacts and matte bleed in rotoscoping.

- Color consistency thresholds for style-transfer sequences.

- Latency goals for real-time previews.

- Throughput objectives for GPU-accelerated batch processing.

- Model accuracy rates for detection, segmentation, and stylization.

- Percentage of manual tasks automated and resource utilization efficiency.

Risk Management

Identify dependencies and mitigation strategies to prevent delays and maintain momentum.

- Data integrity risks from incomplete or corrupted inputs.

- Model performance variability due to insufficient training diversity.

- Infrastructure or cloud-service outages affecting throughput.

- Version mismatches between AI modules and creative applications.

- User adoption resistance and change-management challenges.

Core AI Integration Workflow

Mapping the integration workflow translates objectives and inputs into a coordinated sequence of actions, data exchanges, model invocations, and handoffs. This blueprint ensures that AI services, asset management systems, orchestration platforms, and creative teams operate in concert throughout the VFX lifecycle.

Key Actors and Components

- Creative Leadership: Defines artistic objectives and acceptance criteria.

- Pipeline Architects: Design APIs, data schemas, and orchestration plans.

- Data Engineers: Prepare datasets, enforce metadata standards, and secure transfers.

- AI Specialists: Train and fine-tune models for segmentation, synthesis, and rendering.

- Asset Management: Catalogs media and version histories.

- Orchestration Platform: Schedules jobs and allocates resources.

- Review Tools: Enable annotations and approval workflows.

Workflow Stages

- Conceptual Input Validation: Storyboards and style references are ingested with metadata tags for traceability.

- Data Preparation: Transcode, normalize, and enrich assets with standardized schemas.

- Model Training: Initialize networks on GPU clusters or cloud instances and track progress via the orchestration platform.

- Inference Orchestration: Expose trained models as microservices and configure sequential or parallel execution for tasks like segmentation, detection, and neural rendering.

- Automated Asset Generation: AI services produce breakdowns, layouts, and stylized frames, locking versions for review.

- Collaborative Review: Stakeholders annotate assets through integrated platforms, triggering iterative refinement.

- Quality Verification: Automated QA modules inspect artifacts and log issues back into the asset database.

- Handoff to Downstream: Approved assets are promoted to compositing or final rendering via automated triggers.

System Interactions

- Asset Management → AI Service: Secure API calls retrieve inputs with metadata.

- Orchestration → Compute Resources: Container deployments specify GPU/CPU requirements.

- AI Service → Review Platform: Outputs pushed with version tags for traceability.

- Review Platform → Orchestration: Approval or revision requests trigger conditional workflow branches.

- QA Module → Asset Management: Quality reports enrich asset histories.

Orchestration and Automation

- Declarative workflow definitions (YAML/JSON) describe stages, inputs, parameters, and conditions.

- Dynamic resource scaling optimizes GPU clusters and cloud instances.

- Pluggable connectors integrate with Autodesk ShotGrid, Foundry Nuke, or in-house systems.

- Automated retry and rollback policies handle inference or transfer failures.
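
As a small sketch of what a declarative workflow definition might look like, the example below parses a JSON description of stages and derives a valid execution order with the standard library's `graphlib` module (Python 3.9+). The stage names, the `needs` key, and the `params` field are assumptions for illustration rather than the format of any particular orchestration tool.

```python
import json
from graphlib import TopologicalSorter

# Hypothetical declarative definition: each stage lists the stages it depends on.
WORKFLOW_JSON = """
{
  "stages": [
    {"name": "ingest",  "needs": []},
    {"name": "denoise", "needs": ["ingest"], "params": {"strength": 0.5}},
    {"name": "segment", "needs": ["denoise"]},
    {"name": "tag",     "needs": ["ingest"]},
    {"name": "review",  "needs": ["segment", "tag"]}
  ]
}
"""


def execution_order(workflow_json):
    """Return stage names in an order that respects every dependency.

    TopologicalSorter raises graphlib.CycleError if the definition is cyclic.
    """
    stages = json.loads(workflow_json)["stages"]
    graph = {stage["name"]: set(stage["needs"]) for stage in stages}
    return list(TopologicalSorter(graph).static_order())
```

Stages with no dependency between them, such as `segment` and `tag` here, could be scheduled in parallel by a real orchestrator.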

Monitoring and Error Handling

- Centralized structured logs capture asset ID, model version, compute node, and timestamp.

- Health checks and alerts notify on-call teams via email, Slack, or SMS.

- Performance dashboards visualize metrics like latency and resource utilization.

- Critical errors trigger escalation workflows with ticket assignment and stakeholder notifications.
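
A structured log entry carrying the fields named above might be produced as follows. This is a minimal sketch: the field names, the level values, and the escalation rule are illustrative assumptions, and a real pipeline would usually route such lines through a logging framework and a log aggregator.

```python
import json
from datetime import datetime, timezone


def structured_log(asset_id, model_version, compute_node, message, level="INFO"):
    """Build one JSON log line with the context needed for later tracing."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "level": level,
        "asset_id": asset_id,
        "model_version": model_version,
        "compute_node": compute_node,
        "message": message,
    }
    return json.dumps(entry, sort_keys=True)


def needs_escalation(log_line):
    """Hypothetical rule: ERROR and CRITICAL entries trigger escalation."""
    return json.loads(log_line)["level"] in {"ERROR", "CRITICAL"}
```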

Agility and Continuous Improvement

Ongoing analysis of metrics, feedback, and error reports drives iterative refinement of models, workflows, and connectors. Regular post-mortem reviews identify bottlenecks, update orchestration scripts, and enhance efficiency and creative alignment.

Roles of AI Modules

Neural Rendering and Style Transfer

Neural rendering services transform scene representations into photorealistic or stylized images. Models trained with frameworks like TensorFlow and PyTorch are deployed as microservices, orchestrated by Kubeflow, and served with GPU-accelerated inference. Generated frames are tagged with style parameters and stored in the asset system for rollback and batch re-rendering.

Object Detection, Segmentation, and Tracking

Detection and segmentation accelerate rotoscoping and compositing by producing masks and object layers automatically. Computer vision libraries such as OpenCV, along with deep models built in TensorFlow or PyTorch, support architectures like Mask R-CNN and YOLOv5. Outputs, delivered as structured JSON metadata, feed into compositing engines for edge refinement and automated blending.

Predictive Analytics and Resource Forecasting

Models trained on production data from Autodesk ShotGrid and streaming platforms such as Apache Kafka forecast timelines, resource demands, and cost estimates. Time-series models like Prophet or LSTM networks drive dynamic shot planning and alert producers to capacity constraints.

Automated Metadata Extraction and NLP

Natural language processing and computer vision services extract descriptive tags, transcripts, and shot descriptions from scripts, storyboards, and dailies. Tools such as Adobe Sensei, Google Cloud Vision API, and Amazon Rekognition automate indexing, normalize taxonomies, and improve asset discoverability through RESTful integrations.

Generative Models and Procedural Synthesis

Generative Adversarial Networks and Variational Autoencoders synthesize textures and environments, while procedural systems like Houdini and generative tools like Runway ML apply these assets within geometry rules. Quality evaluator networks score candidates for artifact detection and style alignment before ingestion and metadata tagging.

AI-Driven Asset Search and Classification

Semantic search engines powered by Elasticsearch with k-NN and vector databases like Pinecone enable similarity queries across large catalogs. Embedding vectors update with each new asset, and in-context recommendations surface assets within applications such as Maya and Nuke.

System-Level Integration

All AI components operate as modular services in a service-oriented architecture managed by Kubernetes. Message brokers handle asynchronous workflows, while RESTful APIs and gRPC endpoints support synchronous calls. Inference metrics, logs, and feedback feed centralized dashboards, and automated retraining pipelines keep models robust against evolving production demands.

Foundational Stage Deliverables and Handoffs

Key Deliverables

- AI Pipeline Blueprint: Diagram and narrative detailing workflow stages, data flows, AI modules, and decision points.

- Data Schema Definitions: Metadata structures, file formats, and database schemas for asset management and automation.

- Model Specification Report: Catalog of selected frameworks—e.g., TensorFlow, PyTorch—with performance targets and evaluation metrics.

- Integration Interface Catalog: API endpoints, messaging protocols, payload schemas, and error handling conventions.

- Quality Benchmarks Document: Acceptance thresholds for image fidelity, metadata accuracy, throughput, and error rates.

Dependencies and Preconditions

- Provisioned compute clusters, network, and storage with container platforms configured.

- Sign-off from creative directors, VFX supervisors, and IT on blueprints and schemas.

- Access to sample footage, asset libraries, and annotation datasets with required permissions.

- Agreement on toolchain versions for render engines, asset management, and AI libraries.

- Security reviews, compliance documentation, and encryption standards finalized.

- Commercial and open-source license confirmations for all software and plugins.

Integration Documentation

- API Specifications: RESTful and gRPC definitions with URI paths, parameters, payload schemas, status codes, and authentication.

- Data Transfer Protocols: Secure FTP, HTTPS, or object storage APIs (Amazon S3, Azure Blob) with naming conventions and retry strategies.

- Messaging Interfaces: Event-driven integration using RabbitMQ, Apache Kafka, or cloud pub/sub with topic hierarchies and payload formats.

- Monitoring Interfaces: Logging formats, retention policies, and observability tool integrations (Grafana, Datadog).

Handoff to Media Ingest and Preprocessing

- Trigger Conditions: Foundational deliverables approved and versioned, and the readiness checklist completed.

- Delivery Mechanism: Artifacts published to a shared repository with semantic version tags and automated notifications.

- Validation Checklist: Schema compliance checks, mock API tests, and sample inferences to verify endpoint responses and metadata alignment.

- Onboarding Session: Review of deliverables, interface specifications, and roles with the ingest team to resolve outstanding questions.

- Feedback Loop: Issue tracker for gaps discovered during implementation, enabling iterative refinement of foundational artifacts.

By rigorously defining objectives, inputs, workflows, module roles, and handoff procedures, teams establish a repeatable, transparent framework that accelerates downstream development and maintains alignment across stages, ensuring the AI-enhanced VFX pipeline delivers consistent creative and operational value.

Chapter 2: Need for a Unified AI-Driven Workflow

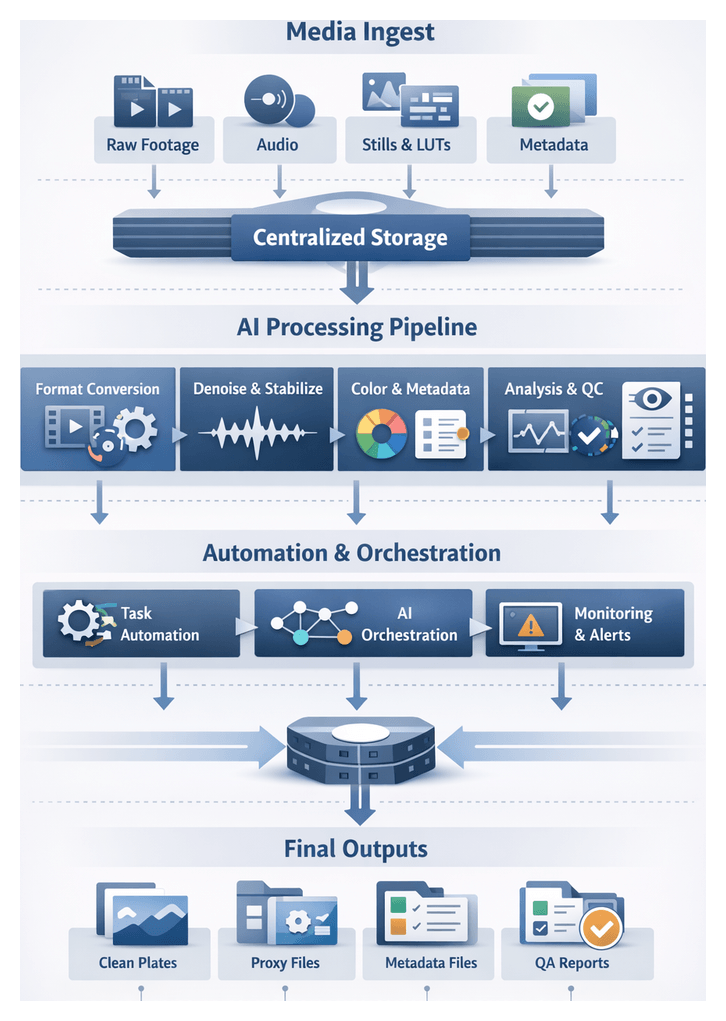

Raw Media Intake and Ingest

Purpose and Scope of Intake

The raw media intake stage establishes a unified foundation for an AI-driven visual effects pipeline by centralizing all source footage, reference materials, and ancillary assets. Automating format validation, metadata embedding, and file integrity checks minimizes manual handoffs and ensures consistent inputs for downstream modules. Key objectives include creating a single repository of standardized media, automating notifications upon ingest completion or exceptions, and embedding metadata to support search, tagging, and version control.

Input Types and Metadata Standards

A comprehensive ingest accommodates diverse formats and sidecar files while enforcing consistent metadata schemas. Typical inputs include camera RAW sequences (ARRIRAW, REDCODE, Blackmagic RAW), high-definition video (MXF, QuickTime MOV, MP4), frame sequences (EXR, DPX, TIFF), audio tracks, reference stills, LUTs, and animatics. Each asset is cataloged with capture settings and source identifiers.

- Industry schemas: Material Exchange Format (MXF), Broadcast Wave Format (BWF)

- Extensible frameworks: AAF, XML sidecar files

- Technical tags: timecode, frame rate, resolution, color space (Rec.709, ACES), audio channel mapping

- Custom fields: tracking markers, plate type, greenscreen information

AI-powered ingest managers, paired with transcoding services such as AWS Elemental MediaConvert, automate format validation, metadata extraction, and compliance enforcement.

Operational Environment and Acceptance Criteria

Effective intake demands robust infrastructure and clear protocols:

- High-throughput storage with scalable capacity

- Secure, low-latency networks linking on-set, dailies hubs, and central storage

- Permissioned access controls and audit trails

- Automated backup and replication

- Integration with production tracking systems (for example, ShotGrid)

- Preconfigured project profiles for ingest rules and naming conventions

- Monitoring dashboards for performance metrics and exception alerts

Acceptance criteria include bit-level checksum validation, consistency of frame rate and resolution, color space compliance, sync integrity, presence of essential metadata, and validity of tracking markers or greenscreen elements. AI-driven validators quarantine non-compliant assets for review, ensuring only verified inputs proceed.
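
A few of these acceptance checks can be sketched as a plain validation function that returns a list of failures and routes the asset accordingly. The metadata keys, the profile layout, and the `route` helper are hypothetical. Checksum, sync, and tracking-marker validation are left out to keep the example short.

```python
# Metadata keys every ingested asset is assumed to carry in this sketch.
REQUIRED_METADATA = ("timecode", "frame_rate", "resolution", "color_space")


def validate_asset(asset, profile):
    """Return a list of human-readable failures; an empty list means compliant."""
    failures = []
    for key in REQUIRED_METADATA:
        if key not in asset:
            failures.append(f"missing metadata: {key}")
    # Compare technical attributes against the project profile.
    for key in ("frame_rate", "resolution", "color_space"):
        if key in asset and asset[key] != profile[key]:
            failures.append(f"{key} mismatch: {asset[key]} != {profile[key]}")
    return failures


def route(asset, profile):
    """Quarantine anything with at least one failure; accept the rest."""
    return "quarantine" if validate_asset(asset, profile) else "accept"
```

Returning the full failure list, rather than stopping at the first problem, gives reviewers everything they need to fix a quarantined asset in one pass.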

Preprocessing Workflow and Orchestration

Format Conformation and Transcoding

Raw footage arrives in varied codecs and containers. An AI classifier inspects file signatures, frame rates, and resolution. Discrepancies trigger automated transcoding via FFmpeg or GPU-accelerated engines, aligning assets to project-standard formats. A centralized repository of profiles adapts dynamically to new camera technologies, routing tasks to on-premises GPU nodes or cloud instances for elastic scaling.
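
The conform step could look roughly like the sketch below, which compares probed attributes against a project profile and builds an FFmpeg command line when they differ. The profile values and the `probe` dictionary layout are assumptions for illustration (the probe data might come from a tool such as ffprobe). The command is only constructed, not executed, and audio handling is omitted.

```python
# Hypothetical project-standard profile; values are illustrative only.
PROJECT_PROFILE = {
    "fps": "24",
    "width": 1920,
    "height": 1080,
    "codec_name": "prores",    # codec name as reported when probing a file
    "encoder": "prores_ks",    # FFmpeg encoder used to produce that codec
}


def needs_transcode(probe, profile=PROJECT_PROFILE):
    """True if any probed attribute differs from the project profile."""
    return any(probe[key] != profile[key]
               for key in ("fps", "width", "height", "codec_name"))


def build_ffmpeg_command(src, dst, profile=PROJECT_PROFILE):
    """Assemble an FFmpeg argument list that conforms src to the profile."""
    return [
        "ffmpeg", "-y",
        "-i", src,
        "-r", profile["fps"],                                   # output frame rate
        "-vf", f"scale={profile['width']}:{profile['height']}",  # resize filter
        "-c:v", profile["encoder"],                             # video encoder
        dst,
    ]
```

The resulting list can be passed to `subprocess.run` on a machine where FFmpeg is installed, or handed to a job scheduler as the task payload.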

Noise Reduction, Stabilization, and Color Mapping

Once conformed, sequences pass through AI-powered denoising and stabilization. Deep convolutional networks attenuate sensor artifacts, low-light grain, and compression noise, while motion estimation corrects jitter. A confidence filter flags frames requiring manual review. Next, an AI color mapper applies lookup tables or neural transforms to convert footage into the pipeline’s working color space, embedding white balance, exposure, and dynamic range metadata.

Metadata Enrichment and QA Checkpoints

Computer vision models scan frames for scene markers and on-screen text, while speech-to-text engines transcribe dialogue. Tags ranging from camera settings to detected objects are appended in XML or JSON schemas. Integrated QA modules run frame-level anomaly detection, histogram analysis for broadcast-safe ranges, and audio-video sync checks. Automated reports summarize errors, triggering rollback or manual intervention if thresholds are exceeded.
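
As a simplified stand-in for the histogram analysis mentioned above, the sketch below measures what fraction of 8-bit luma samples fall outside the narrow legal range of 16 to 235 and applies a tolerance. The 1% tolerance and the function names are assumptions for this example; real QA tools apply more detailed rules.

```python
def out_of_range_fraction(luma_samples, low=16, high=235):
    """Fraction of 8-bit luma samples outside the [low, high] legal range."""
    if not luma_samples:
        raise ValueError("luma_samples must not be empty")
    outside = sum(1 for value in luma_samples if value < low or value > high)
    return outside / len(luma_samples)


def frame_is_broadcast_safe(luma_samples, tolerance=0.01):
    """Hypothetical threshold: allow up to `tolerance` of samples out of range."""
    return out_of_range_fraction(luma_samples) <= tolerance
```

Frames that fail this check would appear in the automated report and could trigger the rollback or manual intervention described above.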

Orchestration, Monitoring, and Human-in-the-Loop

An orchestration framework coordinates AI modules, legacy tools, and human review. Key components include:

- Event-driven messaging and RESTful APIs for task dispatch

- Kubernetes clusters hosting containerized services, auto-scaled by workload

- Pipeline schedulers defining directed-acyclic graphs of dependencies

- Resource managers allocating GPU, CPU, and memory based on real-time metrics

Human operators receive alerts when confidence scores fall below thresholds. Review dashboards present side-by-side comparisons, allow parameter adjustments, and feed corrections back into training datasets. Telemetry and logs captured by Prometheus and Grafana visualize throughput, error rates, and system health, enabling pipeline supervisors to optimize batch sizes and resource allocation.
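
The confidence-based handoff to human operators can be sketched as a simple split of frames by score. The 0.8 threshold and the frame-id-to-score mapping are illustrative assumptions; in practice the threshold would be tuned per model and per task.

```python
def route_frames(scores, threshold=0.8):
    """Split frames into auto-approved and needs-review lists by confidence.

    `scores` maps a frame identifier to a model confidence in [0, 1].
    """
    auto_approved, needs_review = [], []
    for frame, score in sorted(scores.items()):
        if score >= threshold:
            auto_approved.append(frame)
        else:
            needs_review.append(frame)
    return auto_approved, needs_review
```

Corrections made on the review list are the natural source of new training examples for the next retraining cycle.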

AI Capabilities and Supporting Infrastructure

Neural Rendering and Style Transfer

Deep convolutional and transformer-based networks synthesize photorealistic frames, enforce consistent styles, and generate intermediate samples for smooth motion. GPU-accelerated inference servers using TensorRT or custom CUDA kernels serve models deployed via MLflow or Kubeflow Pipelines. High-throughput storage tiers ensure rapid frame delivery.

Object Detection, Segmentation, and Anomaly Detection

Region proposal networks and encoder-decoder architectures identify characters, props, and environments, generating masks and mattes for compositing. Anomaly detection models compare frames against learned baselines to flag lighting mismatches, artifacts, and continuity errors. Inference clusters run atop Kubernetes, exposing gRPC and REST endpoints. Issue reports integrate with JIRA or ShotGrid for streamlined remediation.

Generative Networks and Procedural Content

GANs and procedural rule engines produce textures, environments, and crowd simulations. Hybrid CPU/GPU farms orchestrated by Slurm or HTCondor execute synthesis tasks, with parameter stores retrieving dynamic rule sets. Outputs are versioned in distributed file systems, enabling rapid background generation and variation synthesis.

Predictive Analytics and Metadata NLP

Regression and time-series models forecast workload peaks and resource allocation, driving intelligent shot assignments and load balancing across on-premises and cloud render farms. Data warehouses feed BI dashboards powered by Apache Superset or Tableau. NLP models analyze scripts and annotations to extract keywords, character names, and scene descriptors. Search clusters running Elasticsearch or OpenSearch enhance metadata search, with continuous retraining pipelines refining lexicons.

Orchestration Platforms and Data Exchange

End-to-end workflow definition, monitoring, and dynamic provisioning rely on Kubernetes with Helm charts and service meshes like Istio. Logging and monitoring via Prometheus and Grafana provide performance insights. Robust APIs, shared storage (S3 object storage, NFS file shares), and message brokers (RabbitMQ, Apache Kafka) underpin seamless data exchange, reducing friction when integrating new models or third-party tools.

Cleaned Footage Deliverables and Downstream Handoffs

Standardized Outputs and Metadata Manifests

At the end of preprocessing, the pipeline produces:

- High-resolution clean plates in EXR or DPX with consistent naming conventions

- Proxies encoded in H.264 or ProRes referencing full-resolution plates via sidecar metadata

- Timecode-aligned sequences verified against ingest logs

- Metadata manifests in JSON or XML detailing camera settings, lens calibration, color transforms, and processing logs

- QA reports covering passes run through tools like Neat Video and the DaVinci Resolve Studio Neural Engine

- Color management LUTs with version identifiers and inverse transforms

- Checksum and digital fingerprint records (SHA-256) for integrity validation
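
As a minimal sketch of the checksum records listed above, the following Python computes SHA-256 digests for delivered plates and re-checks them later. The function names, the manifest layout, and the `*.exr` default pattern are illustrative assumptions, not part of any specific pipeline.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large plates need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path, pattern: str = "*.exr") -> dict:
    """Map each matching file (path relative to root) to its SHA-256 digest."""
    files = {
        p.relative_to(root).as_posix(): sha256_of_file(p)
        for p in sorted(root.rglob(pattern))
        if p.is_file()
    }
    return {"algorithm": "sha256", "files": files}


def verify_manifest(root: Path, manifest: dict) -> list:
    """Return relative paths that are missing or whose digest has changed."""
    return [
        rel
        for rel, expected in manifest["files"].items()
        if not (root / rel).is_file() or sha256_of_file(root / rel) != expected
    ]
```

A handoff step could call `verify_manifest` after each transfer and retry only the paths it returns.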

Automated Handoff Mechanisms and Integration

Completion of cleaning triggers downstream workflows via:

- File system watchers monitoring output directories and checksum records

- Event-driven messages on RabbitMQ or Apache Kafka broadcasting “cleaned footage ready” events

- Asset status updates and notifications via platforms like ftrack and ShotGrid

- API-driven transfers to the digital asset management system

Upon handoff, assets are registered in the DAM with metadata, version control linkages, search indexing, and access permissions for high-resolution plates and proxies.
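
The "cleaned footage ready" event described above might look like the sketch below. The field names and the `cleaned_footage.ready` event type are hypothetical, and `send` stands in for whatever publish call the chosen broker client provides; no specific RabbitMQ or Kafka API is assumed.

```python
import json
import uuid
from datetime import datetime, timezone


def build_ready_event(shot_id: str, plate_dir: str, manifest_path: str) -> dict:
    """Assemble a self-describing event for downstream consumers."""
    return {
        "event_id": str(uuid.uuid4()),  # lets consumers de-duplicate redeliveries
        "event_type": "cleaned_footage.ready",
        "emitted_at": datetime.now(timezone.utc).isoformat(),
        "shot_id": shot_id,
        "plate_dir": plate_dir,
        "manifest_path": manifest_path,
    }


def publish(event: dict, send) -> bytes:
    """Serialize the event as UTF-8 JSON and pass it to a broker-specific callable."""
    body = json.dumps(event, sort_keys=True).encode("utf-8")
    send(body)
    return body
```

Keeping serialization separate from transport means the same payload can go to a queue, a webhook, or a log file without changes.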

Data Integrity, Traceability, and Communication

- Checksum verification at rest and in transit with automatic retries on mismatch

- Append-only audit logs for ingest, processing, QA, and handoff events

- Metadata-driven lineage tracking linking raw inputs, processing modules, and parameters

- Compliance checks enforcing SMPTE timecode and DPP metadata schemas, generating certificates as needed

Real-time dashboards in ShotGrid or ftrack display progress and readiness. Automated email and chat alerts via Slack or Microsoft Teams inform stakeholders, while web-based review portals enable quick validation and sign-off. Exception reporting provides actionable remediation steps and responsible parties.

Scaling Considerations

- Elastic compute clusters that auto-scale cleaning tasks based on queue depth

- Hierarchical storage management migrating inactive assets from NVMe arrays to object storage

- Bulk API registration for parallel metadata ingestion into the DAM

- Load-balanced messaging infrastructure to prevent single points of failure
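
Queue-depth-based scaling, the first item above, can be reduced to a small sizing rule. The sketch below is one possible rule under assumed parameters (`tasks_per_worker`, `min_workers`, `max_workers` are illustrative names and defaults); a real autoscaler would also smooth the signal over time.

```python
import math


def desired_workers(queue_depth: int, tasks_per_worker: int,
                    min_workers: int = 1, max_workers: int = 50) -> int:
    """Size the worker pool so each worker holds at most tasks_per_worker queued tasks."""
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    needed = math.ceil(max(queue_depth, 0) / tasks_per_worker)
    # Clamp to the configured floor and ceiling.
    return max(min_workers, min(max_workers, needed))
```

For example, 95 queued tasks at 10 tasks per worker yields 10 workers, while an empty queue falls back to the configured minimum.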

By delivering a cohesive set of high-fidelity media files, proxies, metadata packages, and QA reports—underpinned by robust handoff automation, integrity checks, and scalable infrastructure—the cleaned footage stage primes the pipeline for intelligent asset cataloging, scene analysis, and the remaining AI-enhanced VFX workflow.

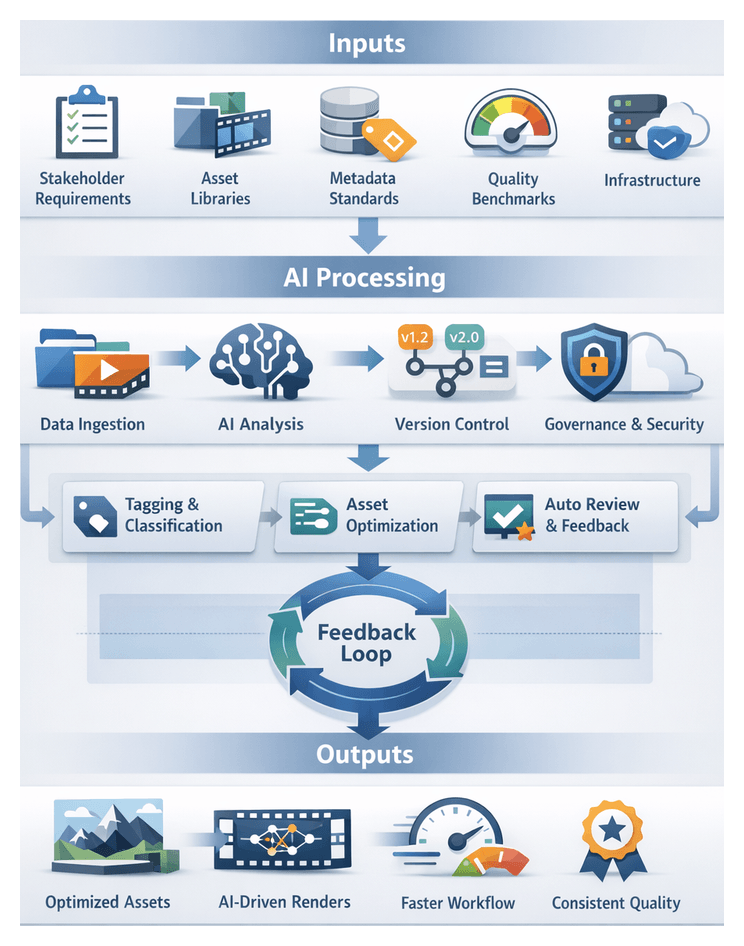

Chapter 3: Asset Cataloging Objectives and Input Sources

Defining Objectives and Inputs

The foundational stage of an AI-enhanced visual effects pipeline establishes clear objectives, aligns technical requirements with creative ambitions, and gathers critical data sources to drive efficiency, consistency, and scalable quality. By articulating strategic goals, codifying stakeholder requirements, and documenting asset inventories and governance policies, teams mitigate risks associated with fragmented workflows, misaligned deliverables, and redundant effort. A robust foundation ensures that every subsequent stage—from asset ingestion through final rendering—operates on a unified set of criteria and data standards.

Industry Context and Operational Pressures

Media and entertainment projects contend with accelerated release schedules, constrained budgets, and heightened audience expectations. Traditional VFX pipelines rely on fragmented toolsets, manual handoffs, and bespoke scripts that introduce bottlenecks and version conflicts. Unifying creative, technical, and production perspectives early in the process addresses miscommunication, enforces quality benchmarks, and defines the scope of AI-driven automation needed to maintain pace and precision under real-world constraints.

Strategic Objectives and Success Metrics

Project objectives typically revolve around three pillars: efficiency through task automation, consistency via standardized formats and naming conventions, and scalability enabled by flexible processes and data schemas. Defining measurable key performance indicators—such as reductions in manual prep time, adherence to naming conventions, shots per week throughput, and budget alignment—provides a north star for evaluating AI integration impact and enables continuous process improvement.

Key Input Categories

- Stakeholder Requirements: Creative briefs, technical specifications, style guides, and approval criteria from directors, producers, and supervisors.

- Asset Inventories: Model repositories, texture libraries, project archives, and reference material databases.

- Metadata Standards: Taxonomy schemas, tagging conventions, version control policies, and data governance rules.

- Quality Benchmarks: Frame-rate targets, resolution standards, color profiles, and fidelity thresholds aligned with delivery formats.

- Infrastructure Constraints: Compute resource availability, cloud platform selections, licensing considerations, and network bandwidth limits.

Prerequisites for Pipeline Initialization

- Governance Frameworks: Approved data governance policies, security protocols, and access controls to protect intellectual property.

- Data Availability: Verification that raw footage, reference assets, and metadata records are ingested into a centralized system.

- Technical Environment: Deployment of core AI frameworks such as TensorFlow or PyTorch and production-tracking platforms like Autodesk ShotGrid.

- Team Alignment: Consensus on project scope, timelines, milestones, and risk tolerance among creative, technical, and production stakeholders.

- Baseline Audits: Initial assessments of asset quality, metadata accuracy, and infrastructure readiness to identify gaps and remediation plans.

Data Governance and Infrastructure Integration

Data Integrity and Governance Foundations

Security and traceability begin with clear governance constructs. Role-based permissions, encryption standards, and audit logging protect assets, while version management strategies enable branching, merging, and rollback with lineage tracking. Automated validation protocols enforce metadata completeness, format compliance, and naming conventions. Retention policies define archival schedules, deletion criteria, and backup procedures that align with organizational mandates, reducing downstream rework and enhancing trust in automated processes.

Integration with Existing Infrastructure

Studios often operate hybrid environments spanning on-premises render farms, cloud compute, and third-party services. Mapping integration points includes data ingestion APIs for secure transfer from asset management systems, job scheduling interfaces for render engines or Kubernetes clusters, tiered storage architectures for hot, warm, and cold data, and centralized telemetry platforms for monitoring pipeline health and performance. Documenting these interfaces ensures seamless orchestration of AI modules within the broader ecosystem.

Quality Benchmarks and Compliance Standards

Before AI-driven tasks commence, teams define objective quality benchmarks that align with creative intent and distribution requirements. Technical specifications—resolution, frame rate, bit depth, and codec standards—combine with visual fidelity metrics such as noise level thresholds and color accuracy targets. Review criteria utilize integrated platforms like ftrack and scoring rubrics for creative feedback. By codifying compliance standards, AI algorithms are tuned to produce outputs requiring minimal manual intervention, accelerating pass rates through review cycles.

Automated Tagging and Version Control

Automated tagging and version control eliminate manual metadata drudgery, enforce consistent naming conventions, and maintain a precise audit trail of asset evolution. By embedding AI classification engines and scalable versioning systems, production teams streamline discovery, collaboration, and iteration management across distributed projects.

High-Level Workflow

- Metadata extraction and classification

- Tag taxonomy enforcement

- Version assignment and branching

- Change tracking and merge management

- Audit logging and compliance reporting

- Feedback loops for AI model refinement

Metadata Extraction and Classification

Incoming assets pass through an AI engine that analyzes visual and technical attributes. Convolutional neural networks detect objects, materials, and environments, while semantic classifiers distinguish characters, props, and backgrounds. Technical metadata (polygon counts, texture resolutions, simulation cache durations) is extracted by specialized parsers. A microservices architecture coordinated through message queues triggers each analysis step and publishes results to a centralized metadata store.

Tag Taxonomy Enforcement

A hierarchical taxonomy enforces standard tags at project, sequence, asset, technical, and creative levels. An AI-driven validation module cross-references generated tags against the approved taxonomy. Unrecognized terms are flagged for review by asset stewards, who can accept, reject, or propose updates, ensuring controlled evolution of the tag set as production requirements change.
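
The cross-referencing step can be sketched as a simple lookup against the approved vocabulary. The taxonomy levels and values below are invented for illustration, and the normalization (trim and lowercase) is an assumption about how tags would be compared.

```python
APPROVED_TAXONOMY = {
    "asset_type": {"character", "prop", "environment"},
    "material": {"metal", "cloth", "skin", "stone"},
}


def validate_tags(generated: dict, taxonomy: dict = APPROVED_TAXONOMY):
    """Split AI-generated tags into accepted tags and tags flagged for steward review."""
    accepted, flagged = {}, []
    for level, values in generated.items():
        allowed = taxonomy.get(level)  # None means the level itself is unknown
        for value in values:
            normalized = value.strip().lower()
            if allowed is not None and normalized in allowed:
                accepted.setdefault(level, []).append(normalized)
            else:
                flagged.append((level, value))
    return accepted, flagged
```

Flagged pairs keep the original spelling so that stewards see exactly what the classifier proposed.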

Version Assignment, Branching, and Merging

Assets ingest with an initial version label following structured naming conventions. Automated version increments occur on modifications, with a ledger service tracking parent-child relationships. Branches enable parallel exploration, and an automated merge process reconciles changes through three-way comparisons. Conflict resolution is guided by a merge coordinator service that surfaces conflicts to technical directors for AI-assisted or manual resolution, preserving a complete audit trail.
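
A three-way comparison can be illustrated on flat metadata dictionaries: each key is compared across the common ancestor and the two branches. This is a simplified sketch, assuming metadata is a flat key-value mapping in which `None` never appears as a real value; merging binary media would need format-aware tooling.

```python
def three_way_merge(base: dict, ours: dict, theirs: dict):
    """Merge two branches of metadata against their common ancestor.

    Returns (merged, conflicts). Conflicting keys keep the base value
    until a reviewer resolves them.
    """
    merged, conflicts = {}, []
    for key in sorted(set(base) | set(ours) | set(theirs)):
        b, o, t = base.get(key), ours.get(key), theirs.get(key)
        if o == t:
            value = o  # both branches agree (including both deleting the key)
        elif o == b:
            value = t  # only their branch changed the key
        elif t == b:
            value = o  # only our branch changed the key
        else:
            conflicts.append(key)  # both changed it, in different ways
            value = b
        if value is not None:  # a missing value means the key was deleted
            merged[key] = value
    return merged, conflicts
```

The returned conflict list is what a merge coordinator would surface to technical directors.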

Audit Logging and Compliance Reporting

Every tagging and version event is captured in robust audit logs, documenting actor identities, timestamps, and summaries. A compliance service aggregates logs into reports that support internal governance and external audits, detailing asset modifications, approval histories, and taxonomy changes.

Feedback Loops for Continuous Improvement

User corrections to tags and version conflicts feed back into training pipelines. Periodic retraining refines classification and merge models, reducing manual interventions. A metrics dashboard tracks tagging accuracy, merge conflict frequency, version turnaround time, and asset retrieval speed, enabling data-driven optimizations.

System Interactions and Notifications

- Asset Management System orchestrates ingestion and metadata storage.

- AI Classification Services handle object detection and technical parsing.

- Taxonomy Governance Module manages stakeholder reviews.

- Version Control System handles branching, merging, and delta storage for large media.

- Merge Coordinator service resolves conflicts with technical director input.

- Audit and Compliance Service collects logs and generates governance reports.

- Dashboard and Analytics Module monitors flow efficiency and model performance.

- Asset Stewards and Technical Directors guide taxonomy updates and merges.

Downstream Handoffs

- Rendering engines receive approved asset versions and metadata for neural rendering.

- Compositing platforms ingest updated assets with search-optimized tags.

- Scheduling modules adjust resource allocations based on version readiness.

- Quality assurance services use metadata to select test cases for visual consistency checks.

Security and Error Handling

Role-based permissions govern tag application, branching, and merge approvals. Authentication integrates with identity management, and encryption protects asset integrity. Automated retries and escalation paths handle classification failures, while conflict reports guide resolution of complex version conflicts, maintaining pipeline continuity.

Key AI Techniques and System Roles

A diverse set of machine learning and deep learning methods automate tasks, enhance creative options, and ensure consistent quality. Each technique integrates with supporting systems to deliver scalable performance and seamless artist workflows.

Computer Vision for Media Ingestion

Convolutional networks detect shot boundaries, extract keyframes, and label scenes. Services such as the Google Cloud Video Intelligence API integrate with message queues to notify preprocessing pipelines of new footage.

Semantic Asset Classification

Custom taxonomy models and named entity recognition assign rich metadata. Solutions like Clarifai or TensorFlow-based frameworks support batch pipelines managed by Apache Airflow and real-time metadata APIs.

Vector Embedding and Similarity Search

OpenAI’s CLIP models or custom Siamese networks generate embeddings for content-based retrieval. Indices built on Elasticsearch or FAISS provide fast nearest-neighbor queries alongside keyword filters.
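
To make the retrieval step concrete, the sketch below ranks assets by cosine similarity between a query embedding and stored embeddings. It is a brute-force, pure-Python illustration with made-up three-dimensional vectors; production systems would use much higher-dimensional embeddings and an approximate index such as the ones named above.

```python
import math


def cosine_similarity(a, b) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest_assets(query, index: dict, k: int = 3):
    """Return the k asset IDs most similar to the query, best match first."""
    scored = [(cosine_similarity(query, vec), asset_id) for asset_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(asset_id, score) for score, asset_id in scored[:k]]
```

Keyword filters can be applied before scoring by restricting `index` to assets whose tags match.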

Predictive Analytics for Scheduling

Regression models on platforms like Azure Machine Learning or AWS SageMaker forecast task durations and compute needs, feeding real-time recommendations to scheduling engines.

Generative Networks for Asset Creation

GANs and VAEs synthesize textures, crowd variations, and environments via engines such as Adobe Sensei or TensorFlow libraries. Rule engines orchestrate parameter sampling and ingest outputs with provenance metadata.

Neural Rendering and Style Transfer

Neural networks apply style transfer and cinematic grading. Implementations like NVIDIA’s Neural Style Transfer integrate into GPU clusters managed by Kubernetes, storing intermediate frames on object storage solutions like Amazon S3.

Deep Learning for Compositing and Rotoscoping

Convolutional matting networks generate alpha mattes for compositing applications such as Foundry's Nuke or Blackmagic Design's Fusion. Inference microservices process batch jobs and notify artists when results are ready for review.

Anomaly Detection for Quality Assurance

Unsupervised models detect continuity errors and rendering artifacts. Services like IBM Watson Visual Recognition extend with custom rules to enforce studio-specific standards, generating issue reports for manual inspection.

Integration and Orchestration Infrastructure

Container orchestration with Kubernetes hosts model serving pods, while workflow engines like Apache Airflow schedule interdependent tasks. A centralized feature store maintains embeddings, metadata, and preprocessed data. Message buses coordinate async processing, and monitoring frameworks provide visibility into latency, throughput, and model health.

Organized Asset Libraries and Cross-Project Dependencies

By this stage, tagged and versioned assets reside in a searchable library that supports current productions and future reuse. An intelligent catalog preserves provenance, exposes dependency graphs, and records usage histories, enabling artists to retrieve and repurpose assets on demand.

Primary Deliverables

- Centralized Catalog Database: A searchable index of assets with unique identifiers, content tags, and usage metadata, implemented on engines such as Elasticsearch.

- Hierarchical Taxonomy: Standardized categories and folder structures reflecting project conventions and supporting metadata inheritance.

- Version History and Change Logs: Audit trails of asset updates with timestamps, user annotations, and diff metadata.

- Dependency Graphs: Directed graphs capturing inter-asset relationships, generated by automated tools for impact analysis.

- AI-Enhanced Search Services: Content-based retrieval powered by classifiers and embedding models that continuously reindex assets.

- Access Control Matrices: Role-based permissions integrated with identity management and tools like Autodesk ShotGrid and ftrack.
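
Impact analysis over the dependency graphs listed above amounts to a graph traversal. The sketch below assumes the graph is stored as an adjacency mapping from each asset to the assets that use it; the asset names are hypothetical.

```python
from collections import deque


def impacted_assets(dependents: dict, changed: str) -> list:
    """Breadth-first walk over 'is used by' edges, starting from the changed asset.

    Returns every downstream asset once, nearest dependents first.
    """
    seen, order = {changed}, []
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for child in dependents.get(current, []):
            if child not in seen:  # guards against cycles and shared dependencies
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order
```

Given a changed texture, the result lists the materials, models, and shots that may need revalidation.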

Dependencies and Integration Points

- Preprocessed Assets: Tagged and versioned inputs from the tagging and version control stage.

- Storage and Data Lakes: High-concurrency file systems or object stores supporting lifecycle policies.

- Taxonomy Definitions: Controlled vocabularies and naming conventions enforced by governance teams.

- AI Models: Classification and embedding services trained on domain datasets and linked via microservices.

- Version Control Systems: Git or Perforce depots with hooks triggering metadata updates.

- Identity Services: Single sign-on and directory integrations aligning asset permissions with project dashboards.

Handoff Protocols

- Asset Manifests: Manifest files enumerating new and updated assets, semantic tags, file paths, and dependency URIs for downstream ingestion.

- API Endpoints: RESTful or GraphQL services enabling search, retrieval, and metadata queries by scene analysis and shot planning tools.

- Event Notifications: Publish-subscribe alerts for asset availability and taxonomy changes.

- Connector Plugins: Direct integration with DCC tools such as Autodesk Maya and Adobe Photoshop for in-context browsing and import.

- Analytics and Feedback: Usage metrics informing library optimization and AI model retraining.

By delivering a fully organized asset library with clear dependencies and robust handoff mechanisms, the pipeline ensures that subsequent stages—such as automated scene analysis, shot planning, and procedural generation—operate on reliable, high-fidelity resources. Cross-project dependencies are managed through shared taxonomies and versioning conventions, triggering validation checks and notifications when core assets are updated. Tiered storage, archival compliance, and metadata-driven retention policies make the library a strategic asset for future remasters and spin-offs, providing studios with a competitive edge in delivering agile, cost-effective visual effects.

Chapter 4: Framework of the AI-Enhanced VFX Workflow

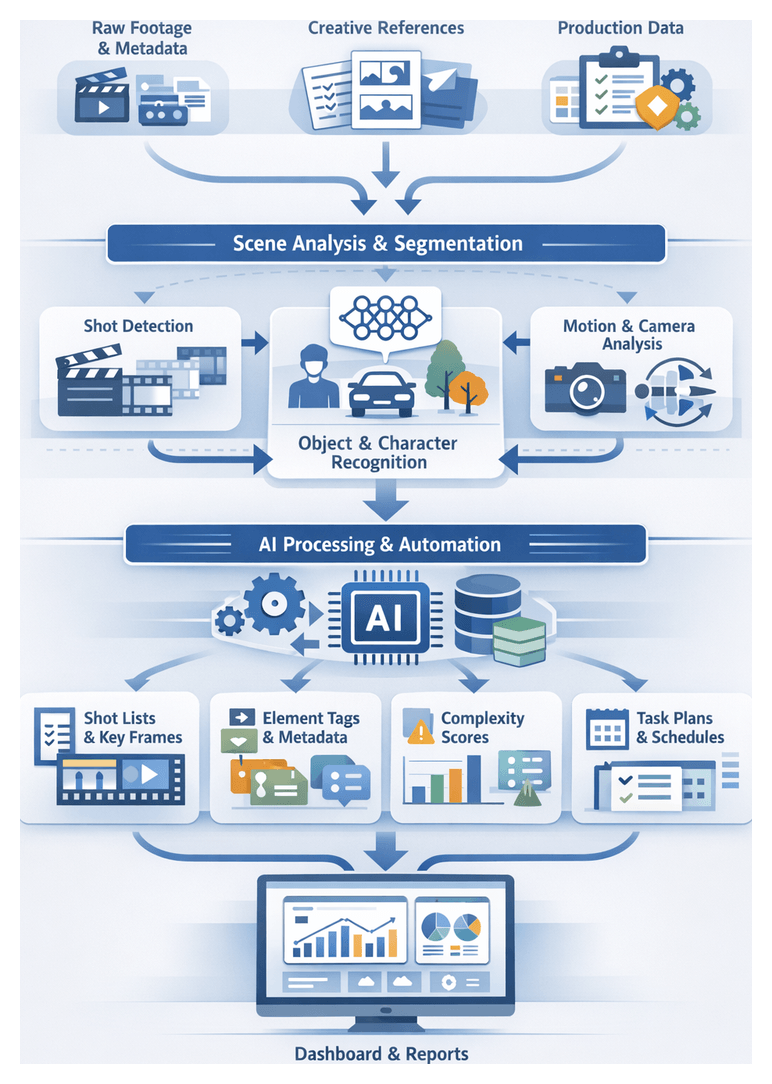

Scene Breakdown: Goals and Source Material Inputs

The scene breakdown stage transforms raw footage and reference materials into a structured blueprint that underpins every VFX task. By automating shot detection, object cataloging, and contextual prioritization, teams reduce manual ambiguity, detect complexities early, and align creative and technical stakeholders around a unified analysis.

Primary objectives:

- Shot Identification and Segmentation: AI models detect scene cuts, camera movements, and subshots to produce a definitive shot list.

- Element Cataloging: Objects, characters, environmental features, and effects requirements are recognized and labeled for targeted VFX planning.

- Contextual Prioritization: Production metadata, storyboard annotations, and creative notes are integrated to assign complexity scores, dependencies, and scheduling priorities.

Achieving these goals requires three categories of inputs:

- Raw Media and Technical Metadata: High-resolution camera plates, proxies, and on-set playback files accompanied by frame rates, resolutions, color spaces, camera model, lens data, timecode synchronization, and tracking logs. AI services such as Google Cloud Vision and Amazon Rekognition leverage this metadata to calibrate shot boundary detection and camera motion analysis.

- Creative Reference Materials: Storyboards linked to timecode ranges, concept art highlighting composition or lighting intentions, previz sequences with rough camera moves, and script breakdowns detailing dialogue beats or VFX notes. Solutions like Azure Computer Vision extract visual themes that enrich semantic tagging and narrative alignment.

- Contextual Production Data: Department briefs outlining VFX scope and budgets, artist availability schedules, workstation capabilities, style guides, quality benchmarks, and dependency maps. Incorporating these inputs allows predictive scheduling models to generate realistic timelines and resource plans.

Key prerequisites include standardized naming conventions, complete metadata at capture, version control for reference assets, and stakeholder agreement on analysis depth. When satisfied, the scene breakdown yields:

- Shot metadata packages for media asset management systems.

- Annotated breakdown spreadsheets with complexity metrics and task assignments.

- Structured JSON or XML files feeding automated segmentation and resource planning modules.

- Dashboards highlighting high-risk shots, compute and artist effort estimates, and scheduling recommendations.

This structured approach transforms a manual, error-prone process into a repeatable, AI-powered stage that accelerates analysis and anchors creative decision-making in objective data.

Scene Segmentation and Analysis Workflow

The segmentation and analysis workflow bridges media ingest and creative allocation by converting breakdown outputs into detailed metadata. Automated systems collaborate with asset repositories and production tools to detect shots, extract key frames, segment visual elements, and generate comprehensive breakdowns at scale.

Benefits:

- Eliminates manual shot logging and categorization delays.

- Ensures consistent footage interpretation across distributed teams.

- Feeds precise metadata into scheduling and resource allocation systems.

- Creates a robust foundation for specialized AI tools in downstream stages.

Initial Shot Detection and Key Frame Extraction

Once media ingestion completes, an asset management trigger invokes the shot detection module—using services like VisionPro AI or in-house neural models—to parse video streams into discrete shots. Representative key frames are extracted for rapid visual reference, segments are tagged with timestamp metadata, and the central tracking platform is updated to notify downstream services.
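
The text refers to neural shot detection; as a simpler stand-in that shows the shape of the problem, the sketch below uses the classical histogram-difference heuristic instead. Frames are assumed to be flat lists of 8-bit grayscale values, and the bin count and threshold are arbitrary illustrative choices.

```python
def histogram(frame, bins: int = 8):
    """Normalized intensity histogram of a frame given as 0-255 grayscale values."""
    counts = [0] * bins
    for pixel in frame:
        counts[min(pixel * bins // 256, bins - 1)] += 1
    total = len(frame) or 1
    return [c / total for c in counts]


def detect_cuts(frames, threshold: float = 0.5):
    """Return indices of frames that start a new shot.

    A cut is declared when half the L1 distance between consecutive
    normalized histograms (a value in [0, 1]) exceeds the threshold.
    """
    cuts, previous = [], None
    for index, frame in enumerate(frames):
        current = histogram(frame)
        if previous is not None:
            distance = 0.5 * sum(abs(a - b) for a, b in zip(previous, current))
            if distance > threshold:
                cuts.append(index)
        previous = current
    return cuts
```

Hard cuts between visually distinct shots are caught this way; dissolves and fast camera moves are where learned models tend to be needed.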

Parallel Annotation Streams

- Object and Character Detection: A convolutional neural network scans key frames to identify primary and secondary elements—actors, vehicles, props, and backgrounds. Detected objects are annotated with bounding polygons or masks and stored as structured metadata.

- Motion and Camera Analysis: A motion estimation engine tracks frame-to-frame movement vectors, identifies camera operations (zoom, pan, tilt), and computes shot complexity metrics such as motion blur prevalence and stabilization requirements.

An orchestration service consolidates annotations and motion data, performing consistency checks to verify alignment with shot boundaries and ensure no segment is overlooked.

Asset Management and Version Control Integration

Analysis data is automatically attached to asset entries in the repository, using semantic segment identifiers (for example, Scene12_Shot07_v001_analysis.json) to preserve history and allow rollback. An API contract defines endpoint specifications, authentication scopes, and data schemas for shot attributes, object lists, and motion metrics, ensuring pipeline resilience to upgrades.
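
A naming convention like the example above is easiest to enforce when it is parsed rather than assembled by hand. The pattern below is inferred from the single example filename, so the two-digit scene and shot padding and the three-digit version are assumptions.

```python
import re

# Pattern inferred from the example "Scene12_Shot07_v001_analysis.json".
ANALYSIS_NAME = re.compile(
    r"^Scene(?P<scene>\d+)_Shot(?P<shot>\d+)_v(?P<version>\d{3,})_analysis\.json$"
)


def parse_analysis_name(filename: str) -> dict:
    """Extract scene, shot, and version numbers, or raise on a nonconforming name."""
    match = ANALYSIS_NAME.match(filename)
    if match is None:
        raise ValueError(f"unexpected analysis filename: {filename}")
    return {key: int(value) for key, value in match.groupdict().items()}


def next_version_name(filename: str) -> str:
    """Return the filename for the following version of the same shot analysis."""
    parts = parse_analysis_name(filename)
    return "Scene{scene:02d}_Shot{shot:02d}_v{version:03d}_analysis.json".format(
        scene=parts["scene"], shot=parts["shot"], version=parts["version"] + 1
    )
```

Writing a new file per version, instead of overwriting, is what preserves history and makes rollback possible.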

Quality Validation and Compliance Checks

Before marking analysis as complete, automated routines verify:

- Presence of key frames and detected elements in every shot.

- Integrity of motion data and consistent frame sequences.

- Metadata schema conformance against versioned definitions.

Validation failures generate tickets in the production tracker and notify supervisors via email or chat, so data quality problems surface without routine manual spot checks.
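
The three checks above can be sketched as a single validation function over one shot record. The field names and the record layout are hypothetical; a production system would more likely validate against a versioned schema definition.

```python
REQUIRED_FIELDS = {
    "shot_id": str,
    "frame_start": int,
    "frame_end": int,
    "key_frames": list,
    "elements": list,
}


def validate_shot(record: dict) -> list:
    """Return a list of human-readable problems; an empty list means the record passes."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            problems.append(f"wrong type for {field}")
    if problems:
        return problems  # content checks below rely on a well-formed record
    if record["frame_end"] < record["frame_start"]:
        problems.append("frame range is inverted")
    if not record["key_frames"]:
        problems.append("no key frames")
    if not record["elements"]:
        problems.append("no detected elements")
    outside = [f for f in record["key_frames"]
               if not record["frame_start"] <= f <= record["frame_end"]]
    if outside:
        problems.append(f"key frames outside shot range: {outside}")
    return problems
```

Each returned string could become the body of a ticket in the production tracker.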

Handoff to Resource Planning Systems

Upon successful validation, the system pushes consolidated scene analysis packages—comprising shot complexity scores, compute requirements, annotated asset lists, and dependency mappings—to scheduling and resource planning engines. These inputs enable production managers to generate initial timelines and assign tasks to artists based on skill profiles and workload capacities.

Creative Collaboration and Monitoring

An interactive dashboard presents key frames alongside detected annotations for review by artists and supervisors. Change requests capture feedback, flag affected metadata, and trigger targeted reanalysis cycles. Meanwhile, an operational analytics service tracks throughput metrics—shots processed per hour, validation failure rates, time-to-handoff—and displays real-time dashboards. Automated alerts notify pipeline engineers when queues exceed thresholds, enabling proactive scaling or model tuning.

Core AI Techniques and System Roles

Advanced AI capabilities streamline routine tasks, guide creative decisions, and ensure consistency across complex VFX pipelines. Understanding their system roles enables studios to architect a cohesive environment in which models communicate seamlessly, accelerate production, and uphold quality standards.

Neural Rendering and Style Transfer

Neural rendering frameworks use learned representations of lighting, materials, and textures to synthesize photorealistic images rapidly. They serve as:

- Perceptual Consistency Engines that enforce unified color palettes and textures across assets.

- Iterative Feedback Modules generating intermediate frames for faster review cycles.

- Compute Offload Layers deploying optimized inference workloads on GPU clusters or cloud services.

Product Example: Nvidia Omniverse integrates neural rendering with leading DCC tools.

Object Detection and Tracking

Machine vision models identify and follow elements within footage, enabling automated segmentation, rotoscoping, and context-aware asset placement. Their roles include:

- Rotoscope Accelerators that produce matte layers for compositing software.

- Tracking Coordinators outputting motion vectors and bounding boxes for match-moving.

- Data Validation Services comparing detection outputs against reference frames to ensure temporal coherence.

Product Example: Google Cloud Vision API provides robust object detection capabilities.

Generative Adversarial Networks for Asset Synthesis

GANs synthesize high-fidelity assets—textures, environmental elements, crowd personas—from limited seed libraries. System roles include:

- Procedural Expansion Units generating varied asset sets for large-scale scenes.

- Art Direction Interfaces accepting user constraints for mood, palette, and density.

- Quality Filter Pipelines evaluating outputs against discriminator networks to ensure fidelity.

Product Example: Runway offers GAN-powered asset generation.

Predictive Analytics for Scheduling and Resource Optimization

Predictive models analyze historical project data to forecast timelines, artist workloads, and compute requirements. They function as:

- Demand Forecast Engines estimating personnel and compute needs for upcoming milestones.

- Load Balancer Modules distributing tasks across on-premises render farms and cloud instances.

- Alerting Services notifying teams when forecasted utilization exceeds thresholds.

Product Example: AWS SageMaker provides time-series forecasting algorithms.
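
As a rough illustration of a demand forecast feeding an alert, the sketch below fits an ordinary least-squares line to recent utilization samples and extrapolates one step ahead. A straight-line trend is a deliberate simplification and not the method of any product named here; the 0.9 limit is an arbitrary example threshold.

```python
def linear_forecast(history, steps_ahead: int = 1) -> float:
    """Extrapolate a least-squares trend line fitted to evenly spaced observations."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(history) / n
    slope_num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(history))
    slope_den = sum((x - mean_x) ** 2 for x in range(n))
    slope = slope_num / slope_den
    intercept = mean_y - slope * mean_x
    return intercept + slope * (n - 1 + steps_ahead)


def utilization_alert(history, capacity: float, limit: float = 0.9, steps_ahead: int = 1):
    """Return (should_alert, predicted_load) for the forecast horizon."""
    predicted = linear_forecast(history, steps_ahead)
    return predicted / capacity > limit, predicted
```

With samples of 40, 50, 60, and 70 busy nodes, the next-step forecast is 80, which would trigger an alert against a capacity of 85 but not against 100.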

Machine Vision for Asset Categorization

AI classifiers and embedding networks automate tagging, indexing, and retrieval of digital assets. Roles include:

- Metadata Extraction Services processing assets to identify attributes and attach standardized tags.

- Semantic Search Engines using vector embeddings for similarity-based retrieval.

- Version Control Integrators tracking asset iterations and dependencies.

Product Example: Clarifai delivers AI-driven tagging and search solutions.

Rule-Based Engines and Workflow Orchestration

Rule-based systems manage dependencies, error recovery, and sequence AI services according to studio policies. They include:

- Pipeline Orchestrators scheduling and executing AI tasks, enforcing data handoff rules, and monitoring service health.

- Policy Enforcement Agents applying conventions, resolution standards, and security protocols.

- Audit and Logging Services capturing execution metadata for traceability and continuous improvement.

Product Example: Netflix’s Conductor framework demonstrates large-scale workflow management (Netflix TechBlog).

Hybrid Cloud and On-Premises Integration

Hybrid deployments balance data security with scalability. Core components are:

- Data Bridge Connectors securing transfers between on-premises storage and cloud buckets.

- Auto-Scaling Controllers monitoring queue lengths and model latencies to provision resources dynamically.

- Cost Management Dashboards tracking compute usage and expenditure in real time.

Product Example: Adobe Sensei supports hybrid AI workflows within Creative Cloud.

These AI techniques, orchestrated by rule-based engines and integrated with hybrid infrastructures, form an end-to-end pipeline. Object detection feeds neural rendering; GANs generate missing assets under predictive analytics guidance; orchestration layers enforce standards; and audit logs drive model retraining and process refinement.

Analysis Reports and Resource Allocation Handoffs

Automated scene analysis culminates in comprehensive reports that translate segmentation and detection data into actionable insights for production planning, scheduling, and budgeting.

Dependencies and Input Validation

Reliable reporting depends on adherence to naming conventions, high-confidence object identification thresholds (typically above 85 percent), and version-controlled assets. Automated scripts validate shot counts, frame ranges, and asset lists against expected values, flagging discrepancies before report generation.
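
These pre-report checks might be sketched as follows. The 0.85 default mirrors the figure quoted above; the detection and shot-count structures are assumed shapes chosen for illustration.

```python
def split_by_confidence(detections, threshold: float = 0.85):
    """Separate detections at or above the confidence threshold from those below it."""
    accepted = [d for d in detections if d["confidence"] >= threshold]
    flagged = [d for d in detections if d["confidence"] < threshold]
    return accepted, flagged


def check_frame_counts(expected: dict, reported: dict) -> list:
    """Compare per-shot frame counts; return (shot_id, expected, reported) for each mismatch.

    A count of None means the shot is absent from that side.
    """
    issues = []
    for shot_id in sorted(set(expected) | set(reported)):
        want, got = expected.get(shot_id), reported.get(shot_id)
        if want != got:
            issues.append((shot_id, want, got))
    return issues
```

Running both checks before report generation keeps low-confidence detections and frame mismatches out of the master breakdown, or at least marks them explicitly.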

Report Structure and Formats

Standard deliverables include:

- A master shot breakdown in CSV or JSON for scheduling systems.

- Annotated storyboards with AI-generated object masks and environment segments.

- Complexity heat maps rendered as PNG or PDF summaries.

- Asset dependency spreadsheets detailing models, textures, and simulations.

- Risk assessment dashboards accessible via business intelligence platforms.

Uniform formats reduce friction, enabling planning and asset departments to import data without manual reformatting.
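
A master shot breakdown can be emitted in both formats from the same records, as in the sketch below. The column names are illustrative assumptions; the point is that one fixed field list drives both exports so the two files cannot drift apart.

```python
import csv
import io
import json

FIELDS = ["shot_id", "frame_start", "frame_end", "complexity"]


def to_csv(shots) -> str:
    """Render shot records as CSV with a fixed column order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(shots)  # raises ValueError if a record has unexpected keys
    return buffer.getvalue()


def to_json(shots) -> str:
    """Render the same records as a JSON document under a 'shots' key."""
    return json.dumps({"shots": shots}, indent=2, sort_keys=True)
```

Rejecting unexpected keys at export time is a cheap way to catch schema drift before a scheduling system imports the file.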

Integration with Production Platforms

Analysis reports are published to centralized tracking tools: ftrack delivers automated notifications with links to breakdown entries, while Autodesk ShotGrid ingests JSON-based shot metadata directly into task pipelines. Webhooks notify scheduling modules to update boards, and email alerts inform department leads of new assignments.

Handoff Mechanisms

Upon report completion:

- Automated export jobs write CSV and JSON files to network shares or cloud buckets.

- APIs push notifications to enterprise resource planning systems.

- Message queues broadcast events to downstream microservices.

- Email and chatbots deliver human-readable summaries with hyperlinks to detailed reports.

Parallel channels ensure that both automated systems and human stakeholders receive the information promptly.

Error Handling and Feedback Loops

Reports include an error and warning section documenting low-confidence detections, frame mismatches, missing assets, and version conflicts. Each issue entry specifies remediation steps, assigned owners, and severity levels. Tickets are created in issue-tracking systems, linked to specific report entries. Stakeholders comment on tickets, upload revised inputs, and once resolved, the system recalculates metrics and issues updated reports, maintaining an audit trail of changes.

Transition to Production Planning

Upon sign-off, the planning stage consumes deliverables to schedule:

- Artist assignments grouped by shot complexity and skill requirements.

- Render farm provisioning requests for GPU and CPU workloads based on predicted frame counts.

- Asset preparation tasks such as material optimization or model cleanup.

- Interdepartmental coordination items, including editorial alignment and vendor handoffs.

This structured handoff marks the formal transition from analysis to execution. By embedding analysis outputs into agile planning tools—such as Kanban boards or Scrum workflows with ticket creation via REST APIs—studios achieve a single source of truth, optimize resource utilization, reduce turnaround times, and minimize miscommunication. The fidelity of these reports directly influences adaptive scheduling algorithms, underscoring the strategic importance of rigorous analysis and reporting in an AI-enhanced VFX workflow.

Chapter 5: Establishing AI Pipeline Objectives and Inputs

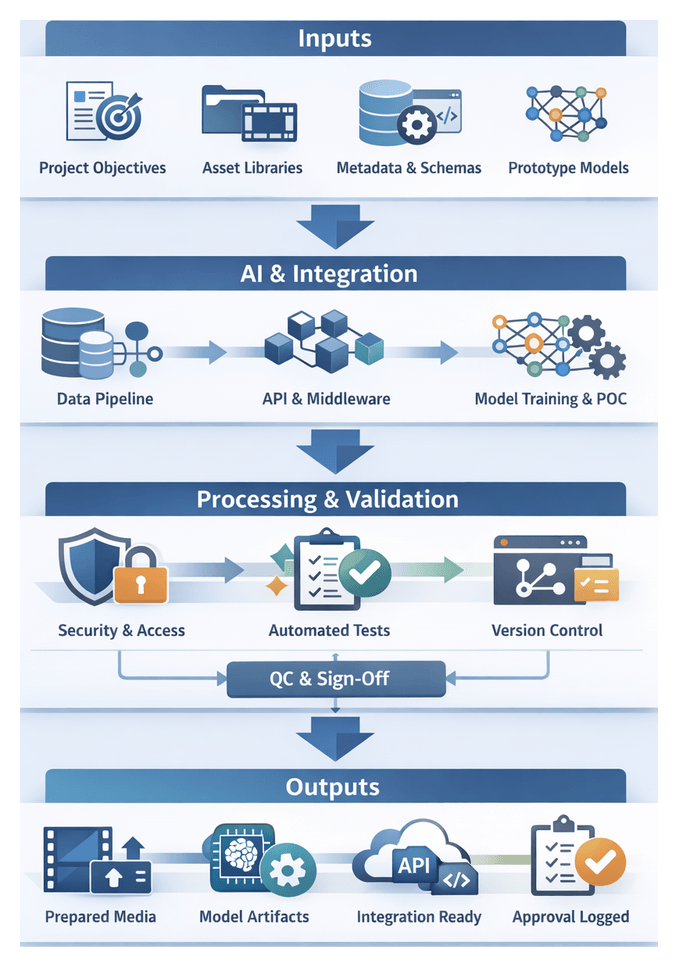

Foundational Stage Deliverables and Integration

The foundational stage of an AI-enhanced VFX pipeline establishes the baseline documentation, models, and integration agreements that guide all downstream production activities. Deliverables at this juncture serve as the contract between creative, technical, and operational teams, ensuring alignment on objectives, inputs, and success criteria. By codifying expectations early, teams reduce rework and accelerate the transition into media ingest and preprocessing.

Primary Deliverables

- Pipeline Objectives Document outlining project goals, performance targets, quality benchmarks, and stakeholder roles

- Input Specification Manifest inventorying required footage types, reference materials, asset libraries, and metadata standards

- Data Schema and Ontology Definitions for asset metadata models, annotation taxonomies, and naming conventions

- Proof-of-Concept Artifacts demonstrating AI model outputs for noise reduction, tagging, and scene analysis

- Integration Roadmap detailing API contracts, data transfer protocols, and middleware components

- Stakeholder Sign-Off Package recorded in ShotGrid for traceability

Critical Dependencies and Handoff Protocols

- Secure access to raw media repositories, whether on-premises storage or cloud buckets

- AI model licensing and provisioning, including services such as Amazon SageMaker and RunwayML, with API keys and quotas

- Validation of GPU/CPU clusters, cloud instances, or on-prem render nodes configured for preprocessing workloads

- Network and security configurations aligned with corporate policies, including IAM and encryption standards

- Assignment of engineers, data scientists, and VFX supervisors trained in AI integration

- Quality assurance criteria for model performance on sample inputs, such as noise reduction thresholds and tagging accuracy

Handoff Protocols to Media Ingest and Preprocessing

- Package Release: Versioned bundle containing data schemas, model artifacts, and configuration files in a shared repository

- Automated Validation: Schema conformance checks and sample AI process runs to verify environment readiness

- Notification and Triggering: Event messages dispatched to the media ingest queue upon successful validation

- Artifact Delivery: Secure transfer of model weights, annotation guidelines, and API endpoint details

- Sign-Off Confirmation: Formal acceptance recorded in the asset management system
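
The automated validation step above can be sketched as a simple schema conformance check. The required fields and the `validate_record` helper are hypothetical; an actual pipeline would validate against the studio's own schema definitions, possibly with a dedicated schema library.

```python
# Hypothetical minimal schema: field name -> expected Python type.
REQUIRED_FIELDS = {
    "shot_id": str,
    "frame_start": int,
    "frame_end": int,
    "colorspace": str,
}


def validate_record(record):
    """Return a list of human-readable conformance errors (empty if valid)."""
    errors = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in record:
            errors.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            errors.append(f"{name}: expected {expected.__name__}")
    # Only check the frame range once both fields are known to be well-typed.
    if not errors and record["frame_end"] < record["frame_start"]:
        errors.append("frame_end precedes frame_start")
    return errors


good = {"shot_id": "SQ010_SH020", "frame_start": 1001, "frame_end": 1120, "colorspace": "ACEScg"}
bad = {"shot_id": "SQ010_SH030", "frame_start": 1050, "frame_end": 1001, "colorspace": "ACEScg"}
```

An empty error list would allow the event message to be dispatched to the ingest queue; any error would block the handoff.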

Governance, Version Control, and Best Practices

- Git-based repositories for pipeline definitions, schema files, and CI/CD scripts with mandatory pull request reviews

- Change logs summarizing deliverable updates and dependency changes

- Audit logs capturing handoff events, validation results, and sign-off timestamps

- Rollback mechanisms preserving snapshots of prior deliverable sets

- Automated CI/CD workflows using GitHub Actions, Jenkins, or GitLab CI for validation and deployment

- Service-level agreements defining turnaround times for packaging, validation, and sign-off

- Use of open formats such as Alembic, USD, and OpenEXR for compatibility

- Centralized schema definitions and pipeline configurations as a single source of truth

- Structured feedback loops and change-control processes to iterate without disrupting the ingest pipeline

Planning Stage: Blueprinting and Metrics

The planning stage translates scene breakdown data, asset inventories, and stakeholder requirements into a structured schedule and resource allocation plan. Acting as the bridge between preparatory analysis and creative execution, it ensures every shot is assigned the right talent, compute capacity, and timeline. By codifying prerequisites and metrics, this stage underpins predictable delivery, cost control, and optimal resource utilization.

Purpose and Context

Modern VFX productions face growing shot volumes, complex simulations, and distributed teams. An AI-enhanced planning process ingests breakdown reports, asset readiness statuses, and historical performance data to generate an optimized sequence of tasks. Tasks are assigned to artists or AI modules based on skill profiles, hardware availability, and priority rankings, reducing manual coordination and enabling dynamic schedule adjustments.

Prerequisites and Conditions

- Completed scene breakdown reports with shot segmentation, VFX requirements, and asset references

- Validated asset catalogs confirming plates, models, textures, and mattes

- Standardized metadata and naming conventions for shots and assets

- Stakeholder sign-off on creative briefs, revision scopes, and quality benchmarks

- Confirmed budgets and cost constraints

- Artist profiles detailing skill sets, availability calendars, and workload limits

- Compute resource inventories, including on-premises render nodes and cloud capacity

- Historical performance data capturing average task durations, error rates, and revision cycles

- Defined milestone dates for internal reviews, client approvals, and delivery commitments

Required Metrics

- Shot Complexity Score reflecting CG layers, simulation intensity, and compositing passes

- Artist Utilization Rate as a percentage of scheduled work hours

- Resource Availability Index measuring free compute slots and license capacity

- Task Lead Time from assignment to initial deliverable submission

- Milestone Adherence Ratio tracking deadline performance

- Historical Error Frequency indicating risk of rework

These metrics drive AI-based optimization, highlight high-risk shots for buffer allocation, and enable adaptive learning to improve forecast accuracy over time.
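
As an illustration, three of these metrics can be computed with a few lines of code. The weights in `WEIGHTS` and the exact formulas are assumptions chosen for the example; the text does not prescribe how each score is calculated, and a studio would calibrate them against its own historical data.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    cg_layers: int
    sim_intensity: float  # assumed normalized to the range 0.0 to 1.0
    comp_passes: int


# Hypothetical weights; not taken from the source text.
WEIGHTS = {"cg_layers": 1.0, "sim_intensity": 10.0, "comp_passes": 0.5}


def complexity_score(shot):
    """Weighted sum of the three complexity factors."""
    return (
        WEIGHTS["cg_layers"] * shot.cg_layers
        + WEIGHTS["sim_intensity"] * shot.sim_intensity
        + WEIGHTS["comp_passes"] * shot.comp_passes
    )


def utilization_rate(booked_hours, scheduled_hours):
    """Booked hours as a percentage of scheduled work hours."""
    if scheduled_hours <= 0:
        raise ValueError("scheduled_hours must be positive")
    return 100.0 * booked_hours / scheduled_hours


def milestone_adherence(met, total):
    """Fraction of milestones delivered on or before their deadline."""
    return met / total if total else 0.0
```

For example, `complexity_score(Shot(4, 0.5, 6))` combines four CG layers, a mid-range simulation, and six compositing passes into a single number that a scheduler can rank.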

Scheduling Actions and Dynamic Workload Flow

In the scheduling stage, the planning blueprint becomes actionable timelines and assignments. A scheduling engine consumes scene complexity metrics, artist profiles, hardware availability, and milestone constraints to assemble a living production schedule. Predictive analytics, rule-based solvers, and real-time feedback loops enable dynamic adjustments without sacrificing creative quality.

Scheduling Workflow Sequence

- Task Prioritization based on critical path analysis, client urgency, and dependency chains

- Resource Matching aligning shots with artists and compute resources

- Timeline Construction assigning start and end dates while respecting deadlines

- Conflict Detection and Resolution identifying overlaps and shortages and triggering automated resolution routines

- Buffer Allocation inserting contingency time guided by historical variance data

- Schedule Publication dispatching assignments via email or chat notifications through Microsoft Teams or Slack

- Continuous Monitoring ingesting time tracking and status updates for real-time recalibration
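
The critical path analysis mentioned under task prioritization can be sketched with the standard library. The task names and durations below are hypothetical, and the sketch assumes each task has a fixed duration in days and an explicit list of prerequisites.

```python
from graphlib import TopologicalSorter


def critical_path(durations, depends_on):
    """Return (total_days, path) for the longest dependency chain."""
    graph = {task: depends_on.get(task, ()) for task in durations}
    finish = {}    # earliest finish day per task
    previous = {}  # prerequisite that determines each task's start
    for task in TopologicalSorter(graph).static_order():
        deps = depends_on.get(task, ())
        # The task starts when its slowest prerequisite finishes.
        latest_dep = max(deps, key=lambda d: finish[d], default=None)
        start = finish[latest_dep] if latest_dep is not None else 0
        finish[task] = start + durations[task]
        previous[task] = latest_dep

    # Walk back from the task that finishes last.
    node = max(finish, key=finish.get)
    total = finish[node]
    path = []
    while node is not None:
        path.append(node)
        node = previous[node]
    return total, path[::-1]


# Hypothetical per-shot task graph (durations in days).
durations = {"layout": 2, "anim": 5, "fx": 4, "lighting": 3, "comp": 2}
depends_on = {
    "anim": ["layout"],
    "fx": ["layout"],
    "lighting": ["anim"],
    "comp": ["lighting", "fx"],
}
total_days, path = critical_path(durations, depends_on)
```

Tasks on the returned path have no slack, so they are natural candidates for the highest scheduling priority.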

System Interactions and Stakeholder Touchpoints

- Breakdown System to Scheduling Engine via RESTful APIs or message buses

- Engine to Resource Database using LDAP directory lookups, with SAML-based authentication, for real-time availability queries

- Engine to Notification Service integrating with Microsoft Teams and Slack

- Engine to Visualization Dashboard in ShotGrid for Gantt charts and heatmaps

- Feedback Loop from time tracking webhooks feeding into the scheduling engine

- Supervisory Overrides applied through manual scheduling interfaces

Handling Variability with Predictive Scheduling

To address inherent uncertainty, predictive modules forecast delays and contention using historical throughput, revision rates, and peak utilization patterns. High-risk predictions trigger automatic adjustments such as reassigning tasks or provisioning additional cloud resources.

- Throughput Predictor estimating completion dates against historical curves

- Resource Contention Forecaster identifying future supply shortages

- Rework Probability Analyzer computing revision risk based on shot complexity

Dynamic Load Balancing and Real-Time Adjustments

- Trigger Detection identifying anomalies in progress or queue lengths

- Impact Analysis quantifying effects on milestones and dependencies

- Remediation Strategy Generation proposing task shifts, extended hours, or cloud scaling

- Stakeholder Notification and Approval via an approval dashboard

- Automated Rescheduling enacting the updated plan across all systems
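
Trigger detection, the first step above, can be approximated with a z-score test on recent queue lengths. This is one simple technique among many; the threshold of 3.0 and the sample history are assumptions for illustration.

```python
from statistics import mean, pstdev


def detect_trigger(history, latest, threshold=3.0):
    """Flag `latest` when it deviates strongly from the recent history."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat history: any change counts as an anomaly.
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical render queue lengths sampled at regular intervals.
queue_history = [10, 12, 11, 9, 10, 12, 11, 10]
```

A flagged sample would then be passed on to impact analysis rather than acted on directly, which keeps a single noisy reading from reshuffling the schedule.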

Governance and Compliance

- Union Work-Hour Regulations enforcing daily/weekly limits and mandatory breaks

- License and Software Constraints preventing over-booking of specialized tools

- Asset Lock-Down Windows safeguarding finalized assets during reviews

- Budgetary Controls integrating cost models for artists and cloud usage
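
Work-hour rules of this kind can be expressed as a constraint check that the scheduler runs before publishing assignments. The limits below (10 hours per day, 50 per week) are placeholder values, not actual union rules, and `check_hours` is a hypothetical helper.

```python
def check_hours(daily_hours, daily_limit=10.0, weekly_limit=50.0):
    """Return a list of violations for one artist's planned week."""
    violations = []
    for day, hours in enumerate(daily_hours, start=1):
        if hours > daily_limit:
            violations.append(f"day {day}: {hours}h exceeds daily limit of {daily_limit}h")
    total = sum(daily_hours)
    if total > weekly_limit:
        violations.append(f"week: {total}h exceeds weekly limit of {weekly_limit}h")
    return violations
```

A schedule that returns any violation would be rejected or sent back for rebalancing instead of being published.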

Predictive Forecasting and Resource Optimization

Predictive analytics and optimization engines form the analytical core of an AI-driven workflow. By applying machine learning to historical and real-time data, studios forecast shot durations, anticipate resource demands, and proactively align talent and infrastructure to workload.

Core Data Inputs and Feature Engineering

- Shot complexity metrics: polygon counts, particle effects, simulations

- Historical task durations from past projects

- Artist profiles detailing skills and average throughput

- Infrastructure logs of GPU/CPU utilization and network performance

- Project milestones, deadlines, and buffer allowances

Machine Learning Models for Demand Forecasting

- ARIMA and seasonal variants for time-series completion rates

- Gradient boosted trees (XGBoost, LightGBM) handling mixed features

- Neural networks (LSTM, GRU) for sequence prediction and regression

- Ensemble methods combining multiple forecasts

Cloud platforms such as Amazon Forecast and Google Vertex AI (formerly AI Platform) streamline model training, tuning, and deployment.
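
The ensemble idea can be shown with two deliberately simple forecasters standing in for the heavier models listed above. Exponential smoothing and a moving average are used here only because they fit in a few lines; they are not ARIMA, gradient boosting, or recurrent networks, and the weights are assumptions.

```python
def exponential_smoothing(series, alpha=0.5):
    """One-step-ahead forecast from simple exponential smoothing."""
    level = series[0]
    for value in series[1:]:
        level = alpha * value + (1 - alpha) * level
    return level


def moving_average(series, window=3):
    """One-step-ahead forecast from the mean of the last `window` values."""
    tail = series[-window:]
    return sum(tail) / len(tail)


def ensemble_forecast(series, weights=(0.5, 0.5)):
    """Weighted average of the individual forecasts."""
    forecasts = (exponential_smoothing(series), moving_average(series))
    return sum(w * f for w, f in zip(weights, forecasts)) / sum(weights)


# Hypothetical task durations (days) for recent shots of similar complexity.
durations = [4.0, 6.0, 8.0, 6.0]
forecast = ensemble_forecast(durations)
```

In practice the weights would be tuned on held-out data so that the more accurate model contributes more to the combined forecast.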

Capacity Planning and Optimization Engines

- Integer linear programming for exact resource allocation

- Heuristic algorithms (genetic algorithms, simulated annealing) for large problem spaces

- Constraint satisfaction frameworks like OptaPlanner

- Mixed-integer optimization combining continuous and discrete variables
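
For a very small instance, exact allocation can be demonstrated by exhaustive search, which finds the same optimum an integer programming solver would for the one-artist-per-shot assignment problem. This sketch is not an ILP formulation and does not scale beyond a handful of artists; the cost matrix is hypothetical.

```python
from itertools import permutations


def best_assignment(cost):
    """Assign each artist one shot, minimizing total estimated hours.

    cost[a][s] is the estimated hours for artist `a` on shot `s`;
    the matrix is assumed to be square.
    """
    n = len(cost)

    def total(assignment):
        return sum(cost[artist][shot] for artist, shot in enumerate(assignment))

    best = min(permutations(range(n)), key=total)
    return list(best), total(best)


# Hypothetical hours: rows are artists, columns are shots.
cost = [
    [9, 2, 7],
    [6, 4, 3],
    [5, 8, 1],
]
assignment, hours = best_assignment(cost)
```

Here `assignment[a]` gives the shot index for artist `a`. Larger problems would need a proper solver or one of the heuristic methods listed above, since the number of permutations grows factorially.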

Dynamic Resource Allocation Roles

- Monitoring Agents tracking shot status, artist check-ins, and render jobs

- Anomaly Detectors flagging behind-schedule tasks or performance issues

- Reallocation Brokers invoking capacity planners to rebalance workloads

- Notification Services alerting managers and artists to reassigned tasks

Integration with Pipeline Management Systems

- Production tracking in Autodesk ShotGrid (formerly Shotgun) or ftrack

- Asset management catalogs feeding complexity assessments

- Render farm APIs for automated provisioning and monitoring

- Collaboration tools like Slack and Microsoft Teams for real-time updates

Chapter 6: Mapping Core AI Integration Workflow

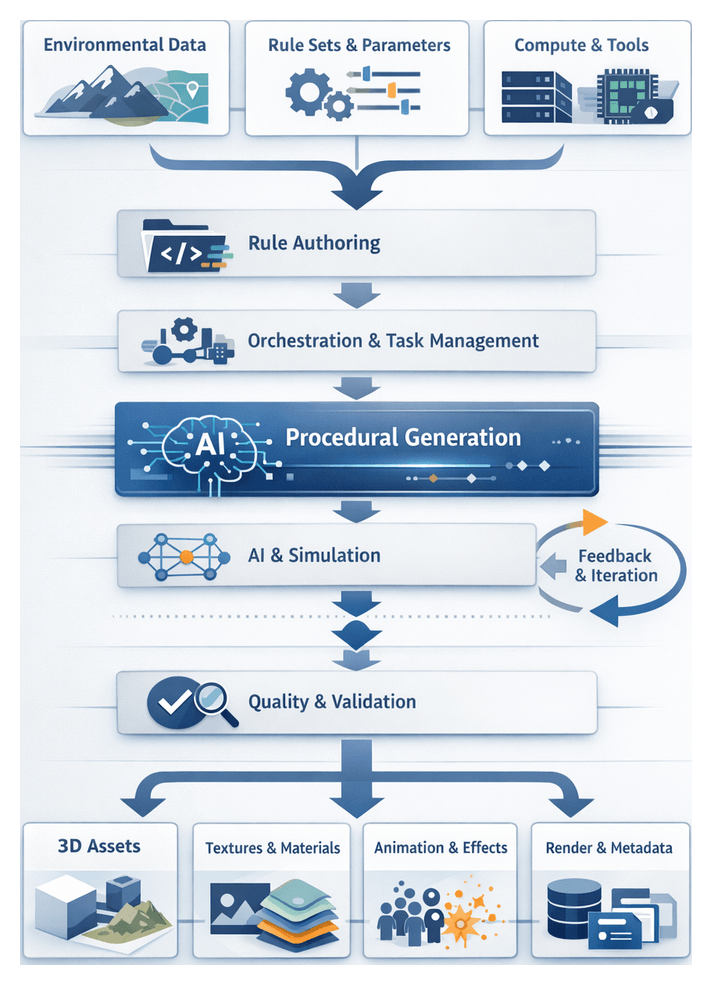

Purpose and Strategic Advantages of Procedural Generation

The procedural generation stage establishes an automated, rule-based framework for synthesizing complex visual assets at scale. By encoding artistic rules, physical behaviors, and stylistic guidelines into algorithms, teams can produce high-fidelity environments, crowds, textures, and dynamic simulations while reducing manual labor and accelerating iteration. Procedural methods deliver scalability, ensuring thousands of unique assets from minimal inputs; consistency, by applying uniform style and performance constraints; efficiency, through automated synthesis and rapid previews; flexibility, via adjustable high-level parameters; and resource optimization, by enforcing budgets suitable for real-time and final render stages. Coupled with AI-driven generative models, procedural generation empowers productions to respond swiftly to creative changes, maintain quality across sequences, and meet ambitious schedules and budgets.

Inputs, Rules, and System Prerequisites

Environmental Data and Metadata

Precise inputs form the foundation of reliable procedural synthesis. Key environmental data includes height maps and terrain meshes for topology, climate parameters for erosion and weathering, spatial coordinates for scene alignment, and material definitions for shader integration. Metadata such as shot plans, scene boundaries, and performance budgets ensures assets conform to the live-action context and technical requirements.

Rule Sets and User-Defined Parameters

Procedural rules and parameter sets translate creative briefs into executable scripts. Rule libraries, stored in version control systems, encode geometric grammars, distribution algorithms, and hierarchical relationships. Art direction constraints—style guides, mood boards, color palettes—and resource budgets define aesthetic and technical limits. User-defined controls, such as scale and density sliders, randomization seeds, and override lists, enable artists to explore variations and integrate handcrafted elements seamlessly.
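
The role of seeds and sliders can be illustrated with a minimal scatter routine. The parameter names (`density`, `scale_range`, `area`) and the uniform distribution are assumptions for the example; production rule libraries would typically apply masks, slope limits, and other distribution rules.

```python
import random


def scatter(seed, density, area=(100.0, 100.0), scale_range=(0.8, 1.2)):
    """Return (x, y, scale) instances placed uniformly over a rectangle.

    `density` is instances per square unit; the same seed always
    reproduces the same layout.
    """
    rng = random.Random(seed)  # local generator, independent of global state
    count = round(density * area[0] * area[1])
    return [
        (rng.uniform(0, area[0]), rng.uniform(0, area[1]), rng.uniform(*scale_range))
        for _ in range(count)
    ]


layout_a = scatter(seed=42, density=0.001)
layout_b = scatter(seed=42, density=0.001)
layout_c = scatter(seed=43, density=0.001)
```

Because the seed fully determines the result, an approved layout can be stored as a handful of parameters in version control and regenerated exactly, while changing only the seed yields a new variation under the same rules.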

Infrastructure and Team Coordination