AI-Enhanced Video Editing Workflows: A Practical End-to-End Guide for Media Production

Introduction

Purpose and Scope of Operational Analysis

Media production teams managing dozens of videos each week face mounting pressures: tight schedules, multiple format variants, complex approval pipelines and rapid iteration cycles. This analysis establishes a clear understanding of these operational challenges—capacity bottlenecks, quality variability and delivery delays—to guide the design of an end-to-end AI-enhanced editing workflow. By defining high-volume workflows and gathering key inputs, organizations can identify critical pain points, prioritize improvements and select AI-driven tools that align with strategic objectives, accelerating time to market and ensuring consistent, scalable video production.

Defining High-Volume Video Production and Industry Trends

High-volume video production is characterized by:

- Daily or weekly release cadences spanning dozens of individual assets.

- Multiple deliverables for social, broadcast, streaming and mobile channels.

- Approval pipelines involving creative directors, compliance reviewers, brand managers and external clients.

- Rapid iteration driven by marketing campaigns, breaking news or seasonal promotions.

Key industry trends intensify these pressures:

- Platform Proliferation: Each channel imposes unique technical and creative requirements.

- Data-Driven Creativity: Analytics inform iterative edits, A/B testing of overlays and calls to action.

- Distributed Teams: Cloud-native review and asset sharing enable collaboration across time zones.

- Cost and Time Pressures: Competition and shortened attention spans demand shorter production cycles.

- Emerging AI Capabilities: Advances in computer vision, natural language processing and generative models automate repetitive tasks and assist creative decisions.

Operational Prerequisites and Environmental Conditions

Successful integration of AI-driven modules requires foundational elements:

- Baseline Process Mapping: Detailed flowcharts capturing manual steps, decision points and handoffs.

- Governance Framework: Defined roles, version control policies, escalation procedures and QA guidelines.

- Technology Infrastructure: Scalable storage, high-throughput networks, GPU-enabled servers or cloud compute instances and secure access controls.

- Data Preparation: Standardized folder structures, naming conventions, metadata schemas and format specifications.

- Stakeholder Alignment: Executive sponsorship, cross-functional working groups and change management plans.

Collecting and normalizing metrics—project counts, turnaround times, resource inventories, process documentation and tool performance logs—provides the benchmarks against which AI interventions are measured.

Coordinating Systems, Teams and Data Exchange

Modern workflows span on-set capture tools, cloud asset management platforms and AI engines. A unified orchestration layer monitors system events, triggers automated tasks and manages notifications to keep producers, editors, sound designers, colorists and compliance officers aligned.

Typical integration points include:

- Ingestion API for media uploads.

- AI Metadata Service invocation via webhooks.

- Editing Platform Connectors syncing clip bins and timelines.

- Review Platform Webhooks propagating annotations and approval statuses.

- Render Trigger APIs for batch encoding jobs.

- Analytics Endpoints streaming performance metrics to dashboards.

Automation and human-in-the-loop checkpoints ensure quality and creative oversight. Reviewers receive batch assignments with frame-accurate annotation tools, feedback categorized by severity and change requests linked to project-management systems. SLA timers escalate overdue tasks. Clear communication protocols—real-time chat alerts, scheduled stand-ups and automated reports—combined with role definitions (Media Administrator, Data Engineer, Editor, Colorist, Sound Designer, Compliance Officer and Project Manager) maintain transparency and accountability.

Robust error-handling features include automated retries with exponential backoff, fallback paths to secondary services, escalation alerts, state checkpoints and incident tracking. Elastic scaling and modular design allow AI services to run in containerized clusters, automatically provisioning resources based on demand and enabling plug-and-play integration of new capabilities.
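The retry and fallback behavior described above can be sketched in a few lines. This is a minimal illustration, not a production orchestrator: the function name `call_with_retries`, its parameters, and the idea of passing services as plain callables are all assumptions made for the example.

```python
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


def call_with_retries(
    services: Sequence[Callable[[], T]],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try each service in order, retrying each one with exponential backoff.

    `services` lists the primary service first, followed by any fallbacks.
    """
    last_error: Optional[Exception] = None
    for service in services:
        for attempt in range(max_attempts):
            try:
                return service()
            except Exception as exc:  # a real system would catch narrower errors
                last_error = exc
                # Wait 0.5s, 1s, 2s, ... but not after the final attempt.
                if attempt < max_attempts - 1:
                    sleep(base_delay * (2 ** attempt))
    # Every service exhausted its attempts: surface the failure for escalation.
    raise RuntimeError("all services failed; escalate") from last_error
```

The `sleep` argument is injectable so the backoff schedule can be verified in tests without real waiting. State checkpoints and incident tracking would sit around this call in the orchestration layer.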

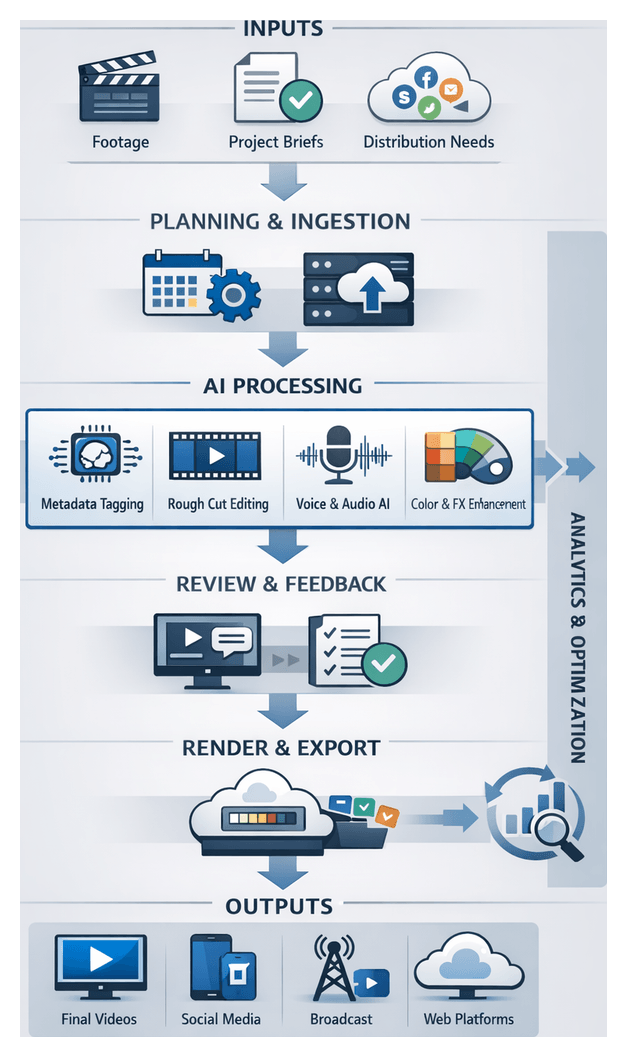

AI-Driven Workflow Phases

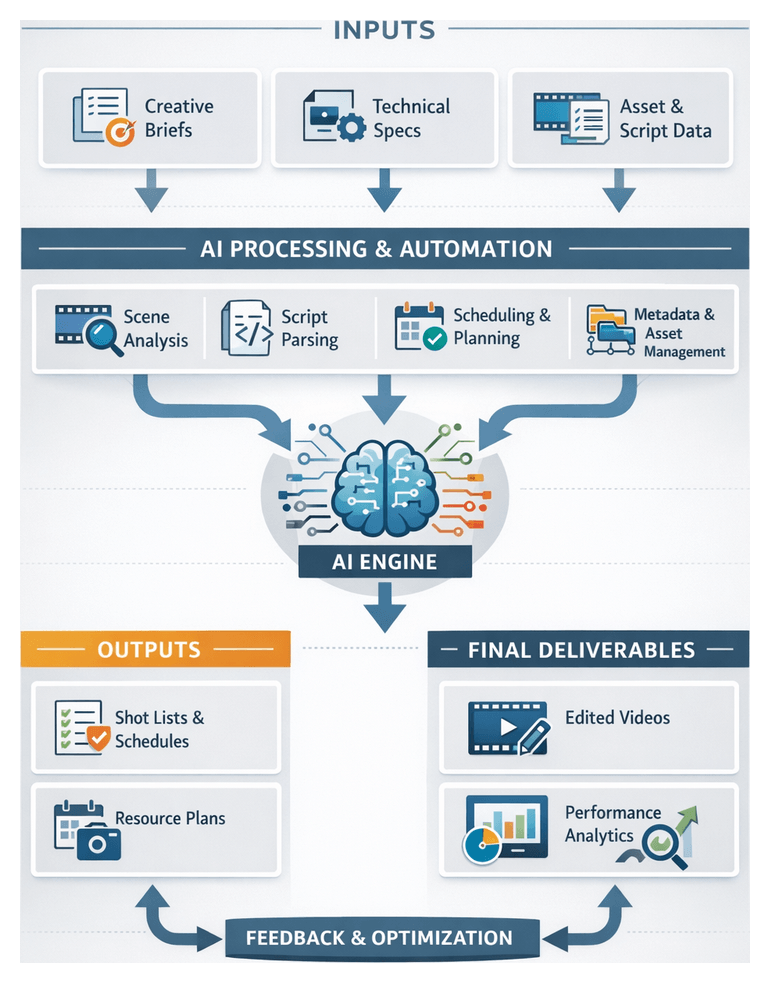

Pre-Production Planning Assistance

IBM Watson ingests project briefs, performs sentiment analysis and identifies narrative beats. Resource management platforms simulate schedules and budgets in real time. Approved timelines and shot lists populate the production pipeline via metadata schemas enforced by microservices.

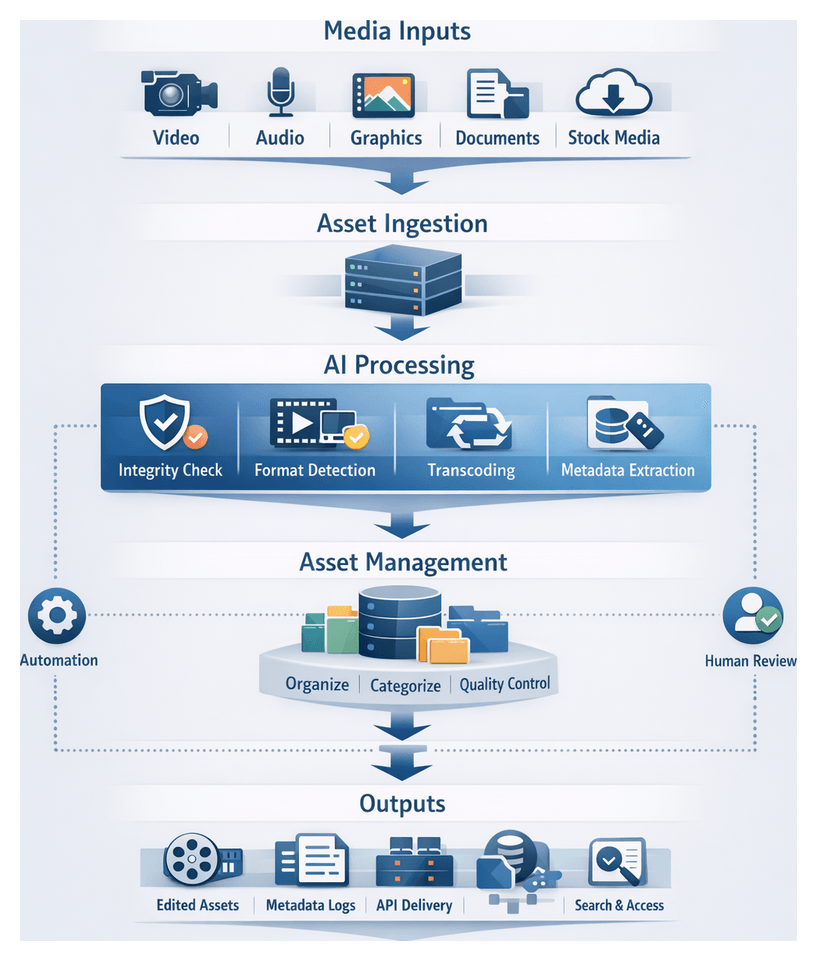

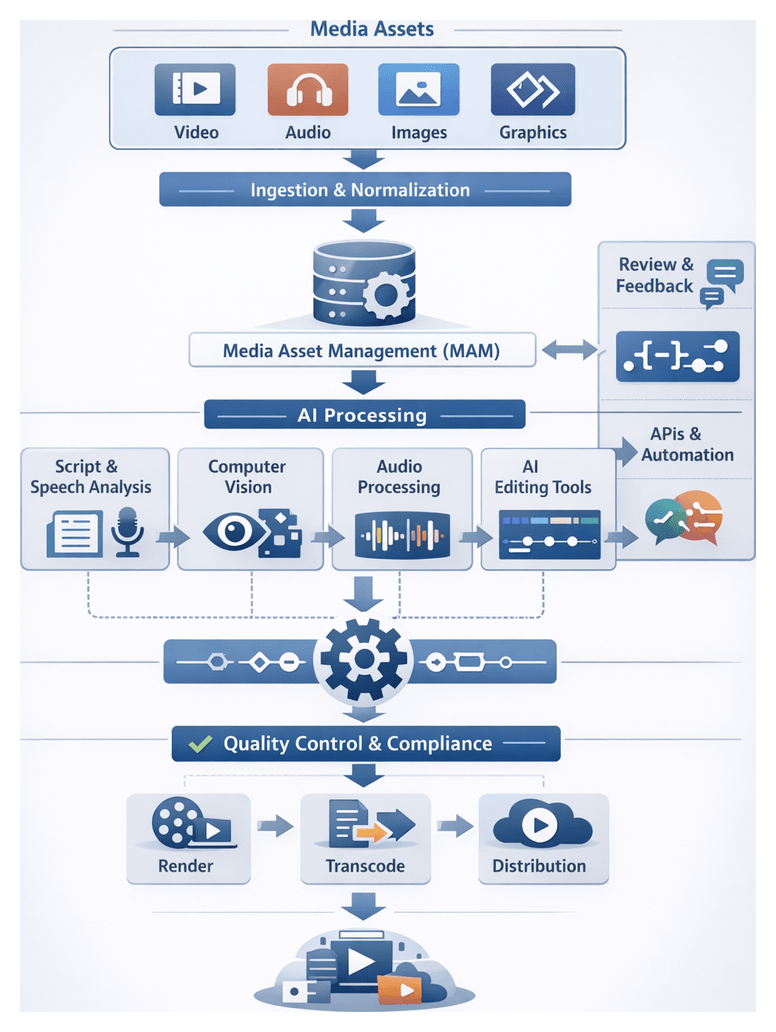

Automated Asset Ingestion and Normalization

Event-driven engines classify file types and invoke AWS Elemental MediaConvert for transcoding. A metadata bus records technical attributes and provenance. Automatic checksum verification and version control reduce manual errors, while a unified catalog presents AI-suggested tags based on scene context.

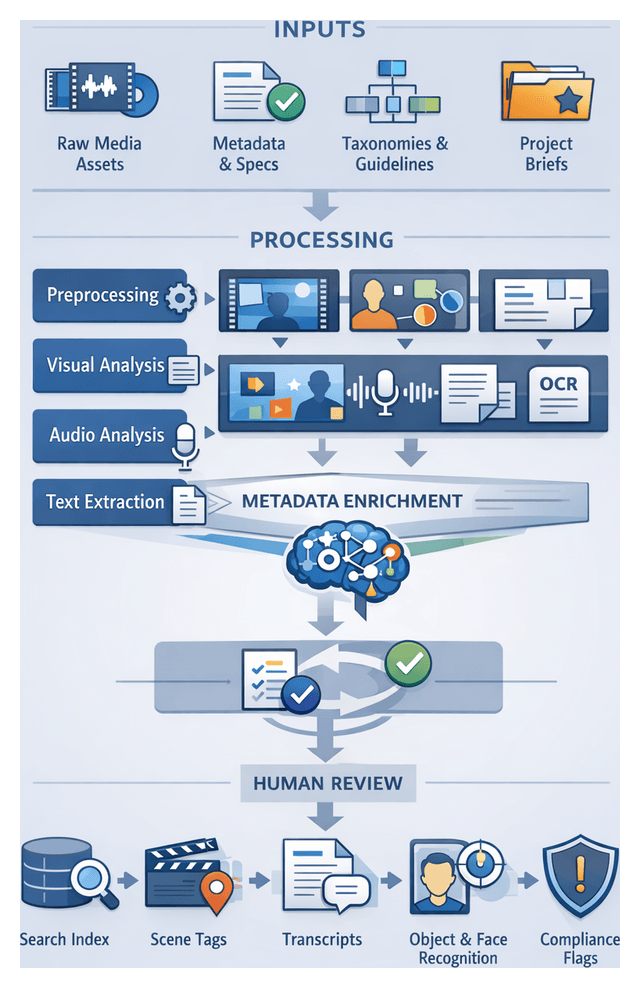

Intelligent Metadata Tagging and Search

Google Cloud Video Intelligence API, Microsoft Azure Video Indexer and Amazon Rekognition perform scene detection, object recognition and speech-to-text. A graph database links clips to speakers, locations and branded elements. Human-in-the-loop reviews refine annotations, and faceted search enables rapid asset retrieval.

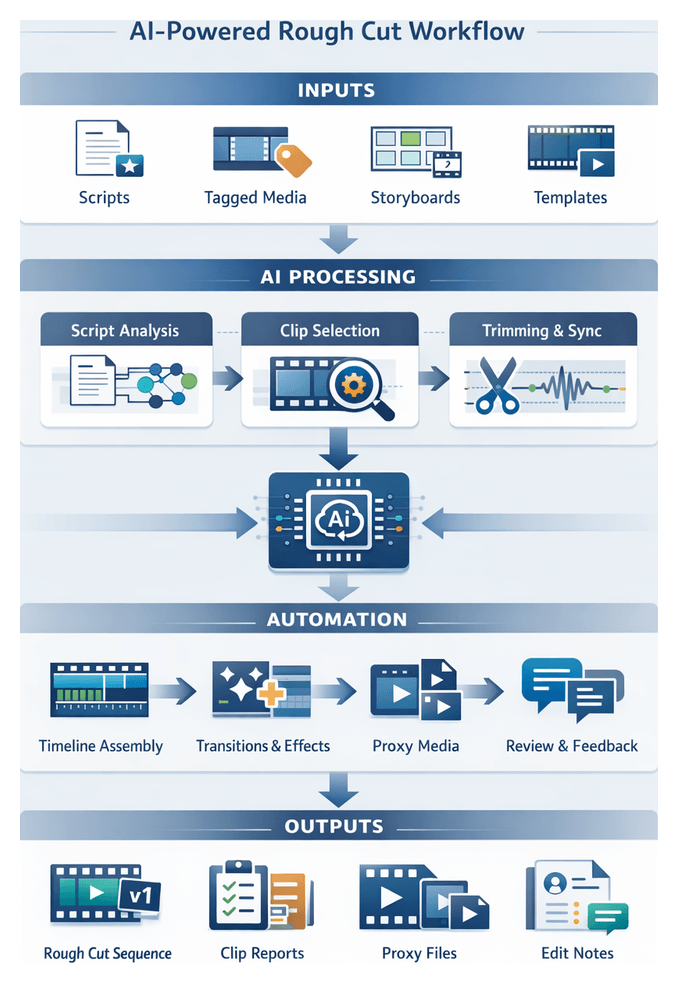

AI-Powered Rough Cut Generation

Tools like Blackbird match script cues with tagged assets, assembling preliminary timelines. Decision-support engines rank clip combinations by story flow and duration targets. Editors adjust low-confidence segments within integrated editing environments, while orchestration logs every transition.

Automated Voiceover Scripting and Narration

Copywriting modules suggest voiceover drafts. Upon approval, Adobe Sensei text-to-speech engines generate narration with controlled prosody. Tracks align automatically to visual cues, with versioning enabling auditions of alternative styles.

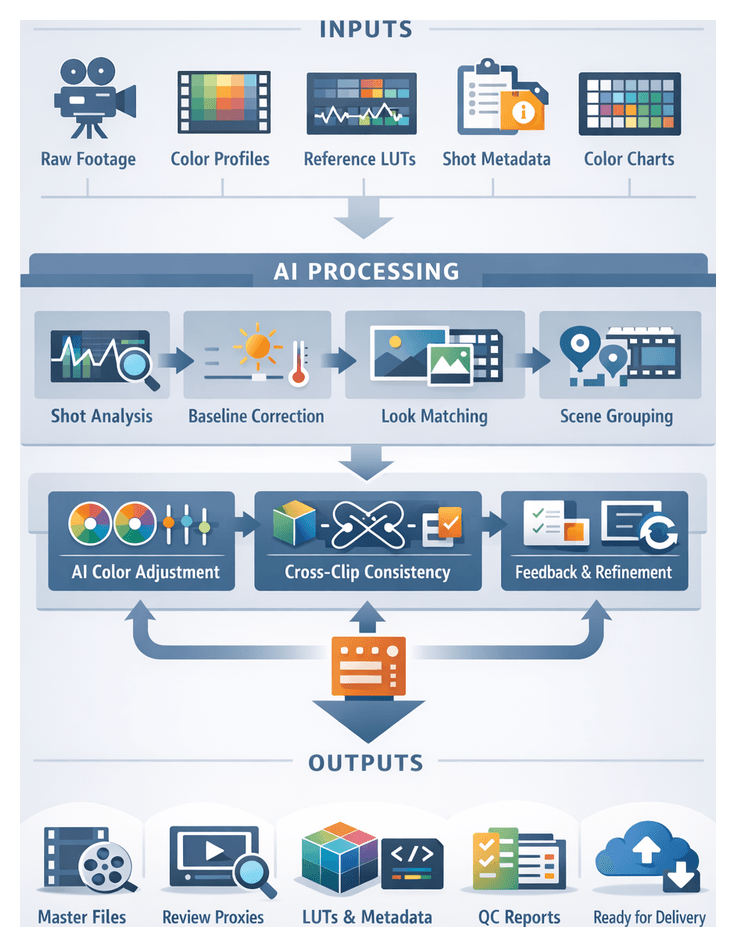

AI-Driven Color Correction and Grading

DaVinci Resolve Neural Engine applies baseline exposure corrections, creative LUTs and per-scene color matching. GPU-accelerated clusters grade multiple sequences in parallel, with feedback loops refining models based on editor preferences and performance metrics.

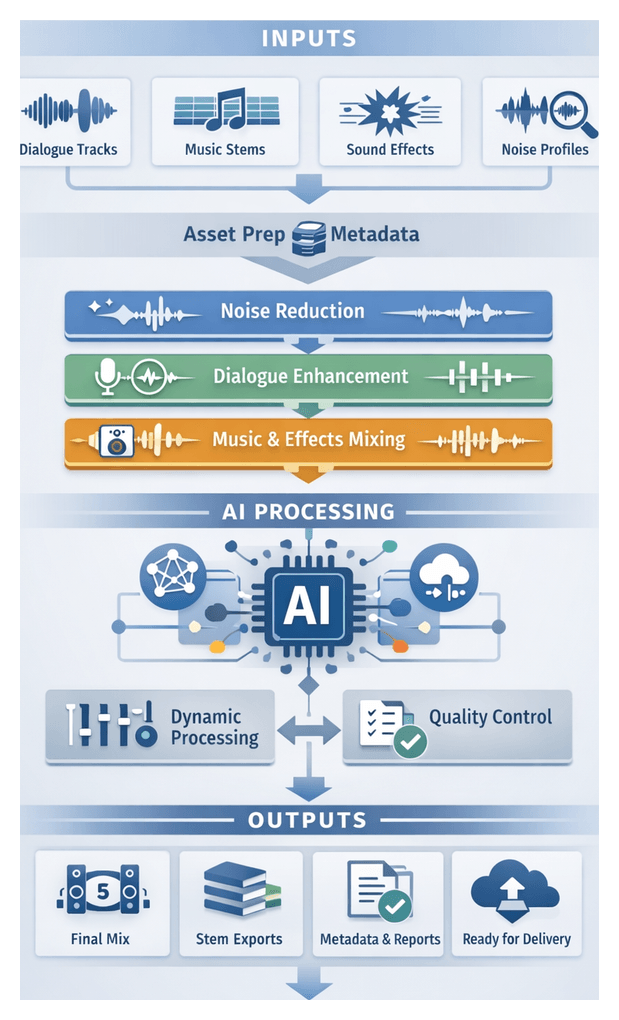

Enhanced Audio Processing and Sound Design

Deep-learning filters perform noise reduction, dereverberation and dialogue enhancement. Adaptive mixing algorithms balance stems to meet loudness standards. Editors preview mix adjustments in real time, and master stems carry metadata for broadcast and online platforms.

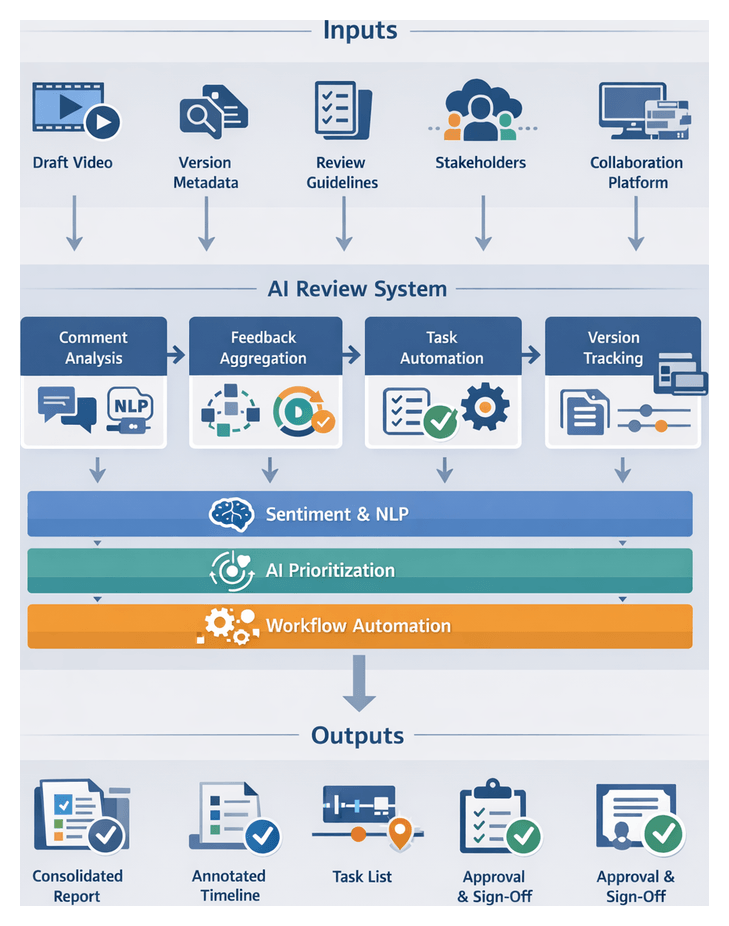

Collaborative Review and Feedback Orchestration

Frame.io centralizes draft sequences with time-coded comments. AI-driven comment analysis categorizes feedback, routes tasks to responsible roles and tracks resolution status. Real-time notifications and analytics dashboards surface recurring issues, tightening approval cycles.

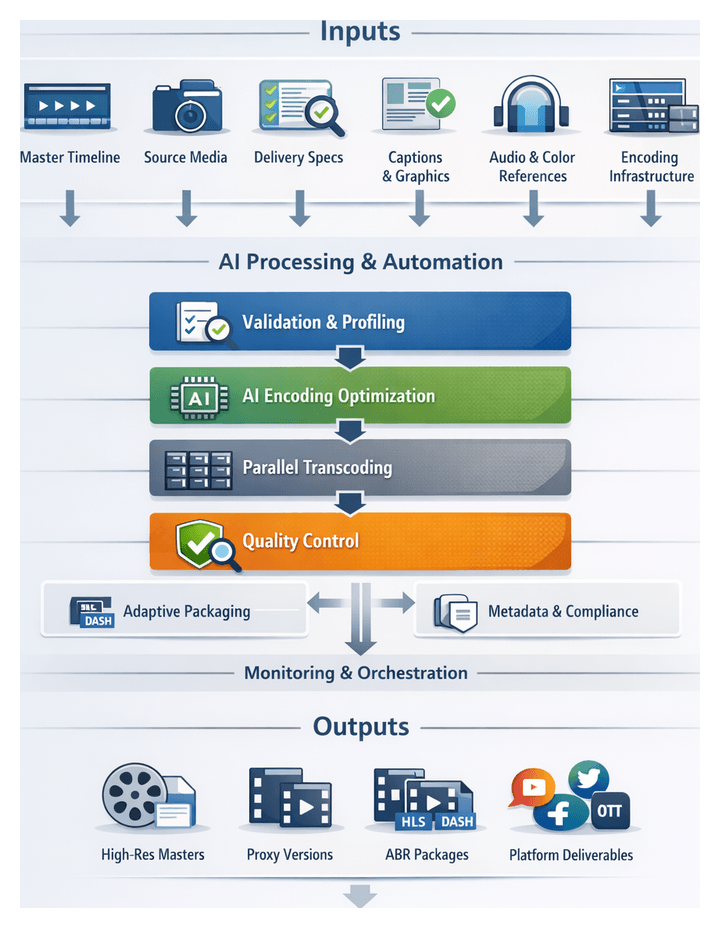

Automated Rendering and Format Conversion

An intelligent render farm, leveraging BirdDog Cloud Encoder or similar services, selects optimal encoding presets. Parallel containerized jobs via AWS Elemental MediaConvert execute batch renders. Outputs are validated against platform specifications and propagated to content delivery networks.

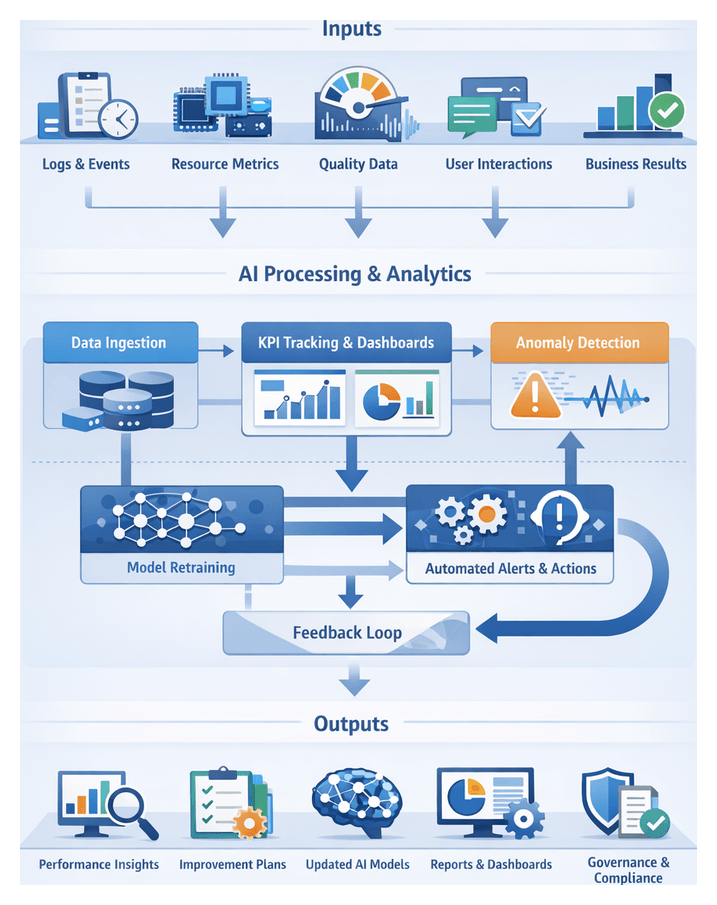

Performance Monitoring and Continuous Improvement

Telemetry agents collect processing times, error rates and resource usage. Dashboards built on Tableau or Power BI visualize key performance indicators. AI-driven insights predict bottlenecks and recommend optimizations, while periodic model retraining incorporates annotated corrections, driving continuous workflow enhancements.

Modular Solution Architecture and Governance

The end-to-end architecture decomposes into discrete stages with well-defined inputs, outputs and data contracts. Intermediate artifacts—planning documents, asset indexes, metadata catalogs, rough-cut timelines, voiceover tracks, graded clips, audio stems, review reports and rendered deliverables—propagate intelligence and structure downstream, reducing rework and ensuring traceability.

Core system components include:

- Adobe Sensei for asset tagging and sequence assembly

- DaVinci Resolve Neural Engine for color grading

- Frame.io for collaborative review and comment triage

- Avid Media Composer for AI-assisted timeline generation

- Microsoft Azure Video Indexer and Amazon Rekognition for semantic analysis

- On-premises or cloud render farms, such as BirdDog Cloud Encoder

- Analytics dashboards via Tableau or Power BI

Governance practices—schema validation, version control, automated notifications, role-based access controls, audit trails and automated QA scripts—embed quality checks at each handoff. Containerized microservices, auto-scaling compute and plug-in frameworks ensure elastic scalability and extensibility, future-proofing investments and enabling rapid integration of emerging AI innovations without disrupting ongoing operations.

Chapter 1: Pre-Production Planning and Objective Definition

Purpose and Inputs: Defining Goals and Specifications

In high-volume video production, rigorous pre-production planning sets the stage for efficiency, quality and alignment among all stakeholders. This foundational phase transforms broad concepts into actionable workflows by defining creative and business objectives, technical constraints and structured inputs that guide both human teams and AI modules. Clear goals and detailed specifications ensure that automated analyses—such as scene recognition, script parsing and scheduling algorithms—operate in service of the intended narrative and operational requirements.

Three core goals drive this stage:

- Establish Creative and Business Objectives—define narrative themes, brand tone, target audience and key performance indicators such as view counts, conversion rates or engagement metrics.

- Align Technical and Operational Parameters—specify format standards, delivery channels, budget allocations, resource availability and timeline constraints.

- Prepare Structured Inputs for AI and Human Planners—collect briefs, asset inventories, metadata taxonomies and tool configurations to enable automated processing and collaborative decision-making.

By meeting these goals, teams minimize ambiguity, reduce costly rework and create a unified reference for scripting, asset ingestion and editing. In environments juggling hundreds of assets and multiple distribution platforms, this preparation is vital for maintaining consistency under tight deadlines.

Project and Creative Briefs

The project brief encapsulates business goals, audience profiles, key performance indicators and compliance requirements. Formal sign-off ensures stakeholder alignment. Platforms provide version control, approval workflows and automated reminders to secure endorsements before production begins.

The creative brief conveys brand voice, visual references, narrative outlines and editorial tone. Integration with asset management systems via Adobe Sensei accelerates alignment by tagging and surfacing relevant mood boards, color palettes and reference clips.

Technical Specifications and Resource Inventories

Technical specifications define video resolutions, aspect ratios, codec preferences, audio standards and metadata schemas. Capturing these details early prevents downstream format mismatches. AI-driven validation tools can scan draft renders to flag encoding deviations before delivery.

Resource inventories enumerate personnel, equipment, locations and budget reserves. AI-powered scheduling engines from Celoxis or monday.com recommend optimal assignments, identify bottlenecks and simulate timeline scenarios based on team availability, gear lists and procurement lead times.

Script Analysis and Governance

Scripts provided in machine-readable formats enable AI tools like ScriptBook and OpenAI’s ChatGPT to extract scene structures, character breakdowns and sentiment analysis. Controlled vocabularies for scene categories, emotional tags and object classes ensure consistent metadata for shot list generation and searchability.

A formal governance framework outlines approval workflows, change request templates and version control practices. AI-enabled platforms automate routing of edits, maintain immutable audit trails and send deadline reminders, reducing coordination friction and ensuring every decision is documented.

Prerequisites for System Readiness

- Finalized and approved briefs, specifications and taxonomies.

- Configured AI modules for script parsing, asset tagging and scheduling.

- Provisioned asset management and collaboration platforms with established folder structures and naming conventions.

- Technical infrastructure—workstations, cloud compute and network capacity—meeting AI processing requirements.

- Active governance rules and change control workflows with defined escalation paths.

Meeting these conditions creates a controlled environment in which human planning and AI automation reinforce each other, reducing miscommunication and delivery delays as production scales.

Pre-Production Workflow Sequence

A structured pre-production sequence organizes tasks, roles and system interactions from initial kickoff through formal handoff of planning deliverables. This orchestration minimizes manual coordination and leverages AI to accelerate routine analyses, maintain consistency and foster collaboration.

Kickoff Workshop and Goal Alignment

A facilitated kickoff brings together producers, directors, editors, AI specialists and clients to define vision, technical requirements and success metrics. AI-assisted transcription services such as Otter.ai capture action items, while project management platforms like Asana record tasks, milestones and dependencies.

- Define creative vision, brand guidelines and target audience profiles.

- Document deliverables, deadlines and format specifications.

- Assign roles linked to PM tasks, with AI summarizing decisions and dependencies.

Script Parsing and Shot List Generation

The finalized script is ingested into an AI parsing engine to annotate scene boundaries, characters, props and dialogue segments. Metadata outputs include estimated shot types and resource requirements. Editors review and validate annotations through a collaborative interface before proceeding.

AI shot-list generators map narrative elements to visual templates, suggesting camera angles, framing and sequencing based on historical data and style guides. Creative teams refine entries, annotate custom directions and lock versions for integration with scheduling tools.

Scheduling and Technical Preparation

With a validated shot list, AI-powered schedulers like StudioBinder or ShotFlow integrate crew availability, location constraints and scene durations to propose optimized shoot calendars. Production coordinators review conflicts, adjust assignments and lock in dates, while automated notifications alert crew and vendors.

In parallel, technical leads complete AI-augmented questionnaires on resolution, codecs and metadata requirements. Digital Production Management software auto-generates technical specifications and folder structures, ensuring consistency for subsequent asset ingestion.

Stakeholder Review and Formal Handoff

Planning deliverables—shot lists, schedules and technical specs—are uploaded to review portals such as Frame.io or Wipster. Stakeholders receive alerts, leave time-coded annotations and approve revisions. AI-driven comment aggregation highlights common feedback themes and flags critical issues, automatically generating revision tasks.

Upon approval, the system exports XML or CSV shot lists, optimized schedules, configuration documents and annotated script breakdowns into the central media asset management platform. Notifications via Slack or Microsoft Teams inform asset managers and editors that planning is complete and raw media ingestion may begin.

AI Integration Across Production and Post-Production Stages

An end-to-end AI integration embeds intelligence at every critical decision point, transforming isolated tasks into a cohesive, adaptive ecosystem. Media asset management platforms, cloud rendering farms and collaborative review systems orchestrate data exchange, enforce security policies and deliver the computational power required by advanced analytics engines.

Automated Asset Ingestion and Format Management

Upon arrival, raw media is processed by AI-powered ingestion services that detect file formats, camera metadata and audio channels, normalizing footage into standardized containers. Transcoding engines convert unsupported codecs while preserving color space and dynamic range. Integrity validation classifiers flag corrupted frames or missing audio tracks, routing anomalies for manual inspection.

- Automatic format detection and batch transcoding.

- Integrity validation and error reporting.

- Metadata extraction and cataloging.

Intelligent Metadata Generation and Search

Computer vision models identify objects, scenes and shot types. Speech-to-text modules transcribe dialogue while sentiment analysis tools assess emotional tone. This rich metadata populates searchable indexes, enabling semantic queries by character, keyword or visual attribute. Human reviewers use confidence scores to decide which AI tags to verify first, and their corrections refine model accuracy over time.

- Automated scene and object recognition.

- Speech transcription and sentiment tagging.

- Semantic search with faceted filtering.
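One simple way to combine confidence scores with human review is to accept high-confidence tags automatically and queue the rest for a reviewer. The sketch below assumes a hypothetical `Tag` record and an arbitrary threshold of 0.8; neither comes from a specific tagging service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tag:
    clip_id: str
    label: str
    confidence: float  # 0.0 to 1.0, as reported by the tagging model


def route_tags(tags, threshold=0.8):
    """Split tags into auto-accepted ones and ones queued for human review."""
    accepted, needs_review = [], []
    for tag in tags:
        if tag.confidence >= threshold:
            accepted.append(tag)
        else:
            needs_review.append(tag)
    return accepted, needs_review
```

In practice the threshold would be tuned per label type, since a misidentified brand logo usually costs more than a misidentified background object.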

AI-Powered Rough Cut Assembly

AI edit assistants align dialogue transcripts with script references, selecting matching clips and sequencing them per the narrative outline. Timing algorithms trim excess footage, generating a cohesive rough cut. Editors can compare alternative cuts, apply transition styles and teach the system their stylistic preferences, enhancing future recommendations.

Automated Voiceover Scripting and Synthesis

Natural language generation modules process narration cues and tone guidelines to craft concise voiceover scripts. Integration with Amazon Polly or Azure Text-to-Speech synthesizes narration tracks in multiple voices and languages. Editors may override or adjust timing directly within the timeline.

AI-Driven Color Correction and Grading

Platforms like DaVinci Resolve Neural Engine analyze histograms, exposure and color balance to apply baseline corrections and creative looks. Face detection and region masks preserve skin tones while style matching engines synchronize color palettes across disparate shots.

Enhanced Audio Processing and Adaptive Mixing

AI filters remove noise, isolate dialogue and apply adaptive equalization. Tools such as iZotope RX detect artifacts and apply corrective processes. Loudness normalization algorithms ensure compliance with broadcast standards while editors preview mix adjustments in context.
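The arithmetic behind loudness normalization is straightforward once the integrated loudness has been measured. The sketch below assumes the measurement comes from an external meter and uses the EBU R 128 target of -23 LUFS as its default; the function name is hypothetical.

```python
def normalization_gain(measured_lufs, target_lufs=-23.0):
    """Return (gain in dB, linear amplitude multiplier) to reach the target."""
    gain_db = target_lufs - measured_lufs
    linear = 10 ** (gain_db / 20)  # convert dB to an amplitude ratio
    return gain_db, linear
```

A mix measured at -17 LUFS needs -6 dB of gain, which is roughly half the amplitude. A static gain like this does not address true-peak limits, which need a separate check.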

Collaborative Review and Feedback Orchestration

Cloud review portals support synchronized playback, time-coded annotations and real-time voting. AI comment categorization distinguishes technical, creative and compliance feedback, prioritizing critical issues and assigning tasks via integration with Frame.io. Automated reminders and escalation paths streamline review cycles across distributed teams.

- Real-time annotation and version tracking.

- AI-driven feedback categorization and prioritization.

- Automated task assignment and escalation.

Automated Rendering and Multi-Format Delivery

Rendering engines execute parallel batch encoding, with AI optimizers selecting codecs, bitrates and resolutions tailored to each delivery channel. Tools like Telestream Vantage scale rendering capacity in the cloud. Watermarking, caption embedding and compliance checks occur automatically, generating metadata manifests for distribution.

- Smart codec and bitrate selection.

- Parallelized batch encoding.

- Automated compliance checks and manifest generation.
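As a simplified stand-in for the AI optimizer, per-channel encoding choices can be modeled as a lookup table that expands one master into a job per delivery channel. The preset names and numeric values below are illustrative assumptions only; real targets come from each platform's delivery specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EncodePreset:
    codec: str
    width: int
    height: int
    video_kbps: int


# Hypothetical presets for illustration; not taken from any platform spec.
PRESETS = {
    "social_vertical": EncodePreset("h264", 1080, 1920, 8000),
    "streaming_hd": EncodePreset("h264", 1920, 1080, 6000),
    "streaming_uhd": EncodePreset("hevc", 3840, 2160, 16000),
}


def plan_renders(master_id, channels):
    """Return one render job per requested channel; fail fast on unknown ones."""
    unknown = [c for c in channels if c not in PRESETS]
    if unknown:
        raise ValueError(f"no preset defined for: {', '.join(unknown)}")
    return [
        {"master": master_id, "channel": c, "preset": PRESETS[c]}
        for c in channels
    ]
```

Each job in the returned list is independent, so the jobs can be dispatched to parallel workers as the section describes.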

Performance Monitoring and Continuous Improvement

Analytics engines capture metrics on task durations, resource utilization and review cycle times. Dashboards visualize bottlenecks, while predictive models forecast constraints for upcoming projects. Feedback loops retrain AI models—for example, refining color grading algorithms based on manual correction data—to drive incremental gains in accuracy, turnaround time and cost efficiency.

Plan Deliverables, Dependencies, and Handoffs

The pre-production phase delivers an authoritative suite of artifacts—briefs, specifications, schedules, shot lists and metadata schemas—that guide all subsequent production and post-production activities. Formalizing outputs, tracking dependencies and standardizing handoff protocols eliminate ambiguity and accelerate time to final cut.

Primary Outputs

- Project and Creative Briefs—narrative objectives, audience profiles, brand guidelines and performance metrics, versioned in platforms such as Frame.io.

- Technical Specification Sheet—formats, codecs, resolutions and metadata schemas that inform automated transcoding and ensure compliance with delivery standards.

- Shot List and Storyboard—scene breakdowns with timecodes, camera angles, audio cues and metadata tags generated by AI tools like Google Cloud Video Intelligence API.

- Production Schedule and Resource Plan—time-sequenced shoot calendar linked to crew, equipment and location availability, dynamically adjustable by AI schedulers.

- Risk Register and Contingency Plans—identified bottlenecks and mitigation strategies maintained in live databases for automated monitoring.

- Metadata Schema and Taxonomy Guide—controlled vocabularies expressed in XML or JSON schemas, imported into AI engines for consistent annotation.
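A controlled vocabulary is only useful if annotations are checked against it. The sketch below shows one minimal check; the field names and allowed values are hypothetical placeholders for whatever the taxonomy guide actually defines.

```python
# Hypothetical vocabulary; a real one would be loaded from the schema files.
CONTROLLED_VOCABULARY = {
    "scene_category": {"interview", "b-roll", "product", "establishing"},
    "emotion": {"neutral", "upbeat", "tense"},
}


def validate_annotation(annotation):
    """Return a list of problems; an empty list means the annotation is valid."""
    problems = []
    for field, allowed in CONTROLLED_VOCABULARY.items():
        value = annotation.get(field)
        if value is None:
            problems.append(f"missing field: {field}")
        elif value not in allowed:
            problems.append(f"{field}={value!r} not in controlled vocabulary")
    return problems
```

Returning a list of problems rather than raising on the first one lets a reviewer fix every issue in a single pass.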

Key Dependencies and Governance

- Stakeholder Approvals—creative, technical and compliance sign-offs tracked via automated review workflows.

- Project Management Integration—synchronization with Asana, Trello or Jira through API connectors to enforce task dependencies and status updates.

- Data Quality and Version Control—metadata validation against schemas and document history preserved in version control systems for auditability.

- Procurement Lead Times—equipment bookings, talent contracts and permits factored into the resource plan with AI-driven procurement forecasts.

- Regulatory Compliance—legal review cycles and policy checks embedded in the workflow, with outcomes recorded in the risk register.

Handoff Protocols

- Project Repository Initialization—creation of a dedicated folder in the media asset management system, with access controls and tagged deliverables.

- API-Driven Data Transfer—serialization of planning outputs into JSON packages pushed to the ingestion engine to configure transcoding profiles and folder structures.

- Notification Triggers—alerts via Slack or Microsoft Teams informing asset managers and editors that the repository and metadata guides are ready.

- Ticket Creation—generation of tasks in the production tracking system for ingesting footage, applying naming conventions and initiating metadata tagging.

- Audit Log Entries—immutable records of user or system actions, timestamps and delivered artifacts for compliance and continuous improvement.
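One common way to make audit records tamper-evident is to chain them by hash, so that each entry's hash covers both its own content and the previous entry's hash. This is a technique chosen for illustration, not something the workflow prescribes, and the function and field names are assumptions. Truly immutable records would also need write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash a dict deterministically by serializing it with sorted keys."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, actor, action, artifact, timestamp):
    """Append an entry whose hash covers its content and the previous hash."""
    body = {
        "actor": actor,
        "action": action,
        "artifact": artifact,
        "timestamp": timestamp,
        "prev": log[-1]["hash"] if log else GENESIS,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if no entry has been altered, removed or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True
```

Editing any earlier entry changes its hash, which breaks the `prev` link of every later entry, so `verify_chain` detects the change.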

With these planning deliverables and protocols in place, the AI-driven editing workflow proceeds seamlessly into asset ingestion, intelligent tagging and automated assembly, all aligned with the original creative vision and technical specifications.

Chapter 2: Automated Asset Ingestion and Organization

Context and Imperatives for AI-Driven Workflows

As media production scales to meet diverse delivery requirements—web, broadcast, social—AI integration at every stage is no longer optional. Intelligent services automate routine tasks, surface real-time insights, and orchestrate complex handoffs, enabling teams to maintain creative control and consistent quality. The asset ingestion stage, which consolidates raw media into a unified repository, serves as the foundation for all downstream AI-driven processes.

Asset Ingestion and Structuring

Purpose and Strategic Value

Automating asset ingestion eliminates manual overhead, enforces consistency, and provides traceability from the moment footage, audio, graphics, and ancillary assets enter the workflow. By centralizing media in normalized formats and standardized naming conventions, editors, sound designers, colorists, and stakeholders can locate, preview, and select assets rapidly. Early integrity checks, format compliance validation, and a complete audit trail mitigate risk, prevent downstream errors, and support regulatory or security requirements.

Required Inputs and Delivery Mechanisms

Effective ingestion begins with a defined set of inputs and standardized delivery pathways. Key asset categories include:

- Camera Originals: Raw sensor files or native codecs from digital cinema cameras, DSLRs, mirrorless systems, and smartphones

- Audio Recordings: Location tracks, dialogue takes, ambient sound bounces, and field mixer outputs

- Graphics and Design Elements: Motion templates, logos, lower-thirds, title animations, and images from Adobe After Effects or Illustrator

- Stock Media and Licensing Files: Third-party clips, royalty-free music, sound effects, and usage rights documentation

- Production Documents: Shot logs, camera reports, metadata spreadsheets, slate information, and continuity notes

- Auxiliary Assets: Closed captions, translation scripts, transcripts, storyboards, and animatics

Delivery mechanisms may include secure FTP/SFTP, cloud collaboration platforms such as Frame.io, direct transfer via card readers, or automated on-set backups. For remote shoots, teams must provision sufficient bandwidth or employ high-speed data shuttle services to transfer large-format files promptly.

Infrastructure and Metadata Requirements

A robust ingestion infrastructure combines high-capacity storage arrays, scalable compute nodes, and optimized network fabrics. Local SSD caches handle burst transfers, while long-term archives reside on disk or tape libraries. GPU-accelerated transcoding servers and AI inference nodes power format detection, metadata extraction, and proxy generation, leveraging solutions such as AWS Elemental MediaConvert and Amazon S3 for cloud-native workflows.

Consistent metadata schemas and naming conventions ensure automated tagging and search indexing. Essential fields—project ID, scene, take, camera angle, location, talent tags, and production dates—are agreed upon in advance. File paths and names encode these attributes, for example: PROJ123_CAMA_SC05_TK02_20260210.MOV. Automation scripts enforce these conventions, renaming files and organizing directory structures at ingest time.
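The example filename can be built and parsed with a small helper pair. The field interpretation here (project ID, camera letter, two-digit scene and take, shoot date as YYYYMMDD) is inferred from the single example above and is an assumption; adjust the pattern to the convention actually agreed for the project.

```python
import re
from datetime import date, datetime

# Pattern inferred from the example PROJ123_CAMA_SC05_TK02_20260210.MOV.
NAME_PATTERN = re.compile(
    r"^(?P<project>[A-Z0-9]+)_CAM(?P<camera>[A-Z])"
    r"_SC(?P<scene>\d{2})_TK(?P<take>\d{2})"
    r"_(?P<shot_date>\d{8})\.(?P<ext>[A-Za-z0-9]+)$"
)


def build_name(project, camera, scene, take, shot_date, ext):
    """Compose a filename that follows the convention."""
    return (
        f"{project}_CAM{camera}_SC{scene:02d}_TK{take:02d}"
        f"_{shot_date:%Y%m%d}.{ext.upper()}"
    )


def parse_name(filename):
    """Extract the encoded attributes, or raise ValueError if non-conforming."""
    match = NAME_PATTERN.match(filename)
    if match is None:
        raise ValueError(f"filename does not follow convention: {filename}")
    fields = match.groupdict()
    return {
        "project": fields["project"],
        "camera": fields["camera"],
        "scene": int(fields["scene"]),
        "take": int(fields["take"]),
        "shot_date": datetime.strptime(fields["shot_date"], "%Y%m%d").date(),
        "ext": fields["ext"],
    }
```

An ingest script could call `parse_name` on every incoming file and route anything that raises `ValueError` to manual renaming.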

Ingestion Pipeline and Orchestration

The end-to-end ingestion pipeline orchestrates interactions among watcher services, AI-driven modules, and asset management platforms. A workflow engine such as Netflix’s Conductor monitors watch folders or upload endpoints and coordinates tasks through the following sequence:

- Trigger integrity validation: compute checksums (MD5, SHA-256) and quarantine files that fail verification

- Invoke format detection: AI-enhanced engines profile codecs, frame rates, and resolutions using AWS Elemental MediaConvert or on-premise tools

- Execute parallel transcoding: generate mezzanine and proxy formats on GPU clusters or via cloud transcoding services, writing outputs to storage such as Amazon S3

- Extract and enrich metadata: automated technical metadata extraction and custom AI tagging through Clarifai or Google Cloud Video Intelligence

- Apply naming conventions: rename assets and organize them in a hierarchical repository reflecting project taxonomy

- Categorize assets: route video, audio, graphics, and stills to respective partitions within a media asset management system such as Adobe Experience Manager Assets or CatDV

- Perform quality control checks: automated QC tools scan for dropped frames, audio clipping, and color inconsistencies, generating alerts for any failures

- Update asset catalog: register new entries, update indexes, and notify stakeholders via Frame.io or other collaboration platforms
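The first step of the sequence above, integrity validation with quarantine, can be sketched with the standard library alone. The function names and the quarantine directory layout are assumptions for illustration; the expected checksum is assumed to arrive with the transfer, for example in a sidecar file.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large media files never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def validate_or_quarantine(path, expected_sha256, quarantine_dir):
    """Return True if the file matches; otherwise move it aside and return False."""
    path = Path(path)
    if sha256_of(path) == expected_sha256.lower():
        return True
    quarantine_dir = Path(quarantine_dir)
    quarantine_dir.mkdir(parents=True, exist_ok=True)
    shutil.move(str(path), str(quarantine_dir / path.name))
    return False
```

Only files that return `True` would continue to format detection and transcoding; quarantined files wait for re-transfer.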

Human-in-the-Loop and Exception Handling

While automation accelerates bulk processing, human oversight is vital at key checkpoints. After integrity validation, technical leads review flagged errors. Editors sample proxies post-transcoding to confirm visual fidelity. Production coordinators verify metadata—project codes, usage rights, and talent releases—before assets enter the MAM. The workflow engine tracks approvals and only advances batches upon sign-off.

Exception protocols maintain throughput and transparency:

- File Corruption: Quarantine corrupted files, log errors, and alert staff for re-transfer

- Metadata Mismatch: Flag conflicts between AI-extracted values and schema definitions for manual reconciliation

- Transcode Failures: Automatically retry failed jobs; escalate persistent errors with links to logs and source files stored on Amazon S3 or network volumes

Integration with ChatOps channels (Slack, Microsoft Teams) and project management systems (Jira, Asana) ensures rapid response to interruptions.

Scalability, Performance, and Monitoring

To sustain peak workloads in high-volume environments, ingestion systems employ:

- Auto-Scaling Transcoding Clusters: Containerized media servers add GPU or CPU nodes under load

- Distributed File Systems: Parallel read/write capabilities reduce I/O contention

- Batching Strategies: Micro-batches for small assets and dedicated tasks for large masters

- Priority Queues: Preemptive pipelines for urgent assets like breaking news
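The priority-queue idea can be illustrated with a binary heap. This sketch only orders pending work; it does not interrupt jobs that are already running, which true preemption would require. The class name and the convention that lower numbers mean higher urgency are assumptions.

```python
import heapq
import itertools


class IngestQueue:
    """Lower priority number is served first; ties keep arrival order."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker preserving arrival order

    def push(self, asset_id, priority):
        heapq.heappush(self._heap, (priority, next(self._counter), asset_id))

    def pop(self):
        _priority, _order, asset_id = heapq.heappop(self._heap)
        return asset_id

    def __len__(self):
        return len(self._heap)
```

A breaking-news clip pushed with priority 0 is popped before promotional assets pushed earlier with priority 5.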

Continuous monitoring dashboards aggregate performance metrics—queue times, error rates, throughput—and drive process refinements. Analytics engines recommend adjustments, such as retraining AI models or reallocating compute resources, maintaining optimal efficiency over time.

Traceability, Outputs, and Handoffs

Comprehensive audit trails record every action—file transfers, transcoding events, metadata edits—with immutable logs of hashes, timestamps, and user or AI-agent identifiers. Key outputs include:

- Structured Asset Repository: High-resolution masters and proxy derivatives organized by media type and scene identifiers

- Machine-Readable Manifests: JSON or XML files listing asset attributes and CSV reports summarizing batch imports

- Embedded and Sidecar Metadata: Core fields within files and sidecar XMP or JSON files with operator notes and ingest timestamps

- Transcoding and QC Logs: Success and failure records from QC tools and AWS Elemental MediaConvert

- Access Control Settings: Role-based permissions configured in Frame.io or Azure Media Services

These deliverables feed directly into intelligent metadata tagging and search pipelines. Handoff protocols include:

- API-Driven Transfers: POST sidecar metadata to Google Cloud Video Intelligence or IBM Watson Video Enrichment via RESTful endpoints

- Index Synchronization: Populate Elasticsearch or Solr clusters and update collaborative platforms like Frame.io and Wipster for immediate browsing

- Notifications and Task Assignments: Automated alerts in Jira or Asana and messages in Slack or Teams summarizing ingest batches and linking to asset dashboards

- QA and Approval Gates: Validation points where QA teams confirm proxy fidelity and metadata completeness before downstream processing

- Fallback Mechanisms: Retry logic for failed transfers and escalation paths to media engineers or production managers
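
The retry-and-escalate fallback can be sketched as a small wrapper with exponential backoff. The function name `transfer_with_retry`, the default attempt count, and the delays are hypothetical; `send` stands in for whatever callable performs the actual transfer, and raising `RuntimeError` stands in for escalation to a media engineer.

```python
import time


def transfer_with_retry(send, payload, max_attempts=4, base_delay=0.5,
                        sleep=time.sleep):
    """Call send(payload), retrying on ConnectionError with doubling delays."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload)
        except ConnectionError as exc:
            last_error = exc
            if attempt < max_attempts:
                # Delays grow as 0.5 s, 1 s, 2 s, ... with the defaults.
                sleep(base_delay * 2 ** (attempt - 1))
    # All attempts failed: surface the error so a human can be notified.
    raise RuntimeError(
        f"transfer failed after {max_attempts} attempts"
    ) from last_error
```

Passing `sleep` as a parameter keeps the function testable without real waiting; only errors known to be transient should be retried, so other exception types are deliberately left to propagate.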

By meticulously defining prerequisites, orchestrating AI-driven tasks, and formalizing outputs and handoffs, media teams build a transparent, auditable, and scalable foundation for all subsequent editing, tagging, and delivery processes.

Chapter 3: Intelligent Metadata Tagging and Search

In high-volume video production environments, transforming unstructured media into richly annotated, searchable resources is essential for accelerated editing, review, and delivery. By extracting descriptive, contextual, and technical metadata—such as scene boundaries, spoken dialogue, visual objects, facial appearances, and motion attributes—teams can locate, retrieve, and assemble content with precision and speed. Leveraging AI-driven pipelines reduces manual tagging bottlenecks, enforces consistency, and maintains compliance with organizational taxonomies and branding guidelines.

The objectives of this stage are threefold:

- Accurate Identification: Detect and label visual and auditory elements within each asset to support refined search queries.

- Contextual Enrichment: Map raw detection outputs to higher-level concepts, themes, and project-specific taxonomies aligned with narrative goals.

- Searchability and Accessibility: Index annotated assets in a media asset management or content management system that offers intuitive discovery interfaces.

Required Inputs

- Raw Media Assets

- High-resolution video files (MP4 with H.264/H.265) and isolated or embedded audio tracks.

- Auxiliary files such as still images, graphics, subtitles, and closed-caption files.

- Preliminary Metadata and Technical Specifications

- File naming conventions, ingestion logs, media checksums, and existing metadata fields (project ID, scene number, shoot date).

- Taxonomy Definitions and Style Guides

- Hierarchical subject structures, controlled vocabularies, keyword lists, and guidelines on terminology, language preferences, and sensitivity flags.

- Project Briefs and Creative Directives

- Narrative descriptions, brand attributes, audience targets, compliance requirements, and usage rights.

- AI Service Endpoints and Model Configurations

- Credentials and API endpoints for services such as Google Cloud Video Intelligence API, Amazon Rekognition, and Azure Video Indexer.

- Parameters for object detection thresholds, speech transcription models, and facial recognition criteria.

Prerequisites and System Conditions

- Asset Ingestion and Organization: Assets ingested into the MAM/CMS with validated integrity, normalized formats, and prescribed folder structures.

- Compute and Network Infrastructure: GPU-enabled inference servers or cloud quotas, optimized network bandwidth, and storage I/O for large media streaming.

- Data Governance and Compliance: Role-based access controls, audit trails, and privacy checks (GDPR, CCPA) for face and voice recognition.

- User Roles and Review Workflows: Metadata curators for verifying AI outputs, with human-in-the-loop feedback mechanisms to refine model performance over time.

Operational Dependencies

- Upstream: Pre-production documentation and ingestion logs that inform scene detection and supply baseline metadata.

- Downstream: Rough cut modules, voiceover scripting engines, and content recommendation systems that consume structured metadata.

Tagging and Indexing Workflow

The metadata tagging process unfolds through a coordinated sequence of phases that combine scalable AI analysis with targeted human validation. An event-driven architecture using workflow engines (for example, AWS Step Functions or Azure Durable Functions) and message queues (Amazon SQS or Google Pub/Sub) orchestrates tasks from ingestion to searchable index provisioning.

Phase 1: Asset Queueing and Preprocessing

Ingested files trigger a preprocessing routine that verifies integrity, extracts technical metadata (codec, resolution, duration), applies naming conventions, and assigns unique identifiers. A media validation service rejects or flags corrupt files, while message queues buffer tasks and distribute workloads across compute resources.

Phase 2: Automated Visual Analysis

Computer vision services such as Amazon Rekognition, Google Cloud Vision, and Clarifai perform scene detection, object recognition, and shot boundary identification. Results are consolidated by a microservice that aligns overlapping outputs, resolves conflicts via confidence thresholds, and formats aggregated data as JSON records.
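
One possible consolidation policy is sketched below: labels from several services are normalized, filtered by a minimum confidence, and de-duplicated by keeping the highest-scoring detection. The tuple layout, the `0.6` threshold, and the service names in the sample data are assumptions; the actual microservice may resolve conflicts differently.

```python
def consolidate_labels(detections, min_confidence=0.6):
    """Merge (service, label, confidence) tuples into one record per label."""
    best = {}
    for service, label, confidence in detections:
        if confidence < min_confidence:
            continue  # below threshold: drop (or route to human review)
        key = label.strip().lower()  # "Car" and "car" count as one label
        if key not in best or confidence > best[key]["confidence"]:
            best[key] = {"label": key, "confidence": confidence,
                         "source": service}
    return sorted(best.values(), key=lambda r: r["confidence"], reverse=True)


detections = [
    ("rekognition", "Car", 0.92),
    ("clarifai", "car", 0.81),
    ("rekognition", "Tree", 0.40),
    ("clarifai", "Person", 0.75),
]
records = consolidate_labels(detections)
# records[0] == {"label": "car", "confidence": 0.92, "source": "rekognition"}
```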

Phase 3: Automated Audio Analysis

Speech transcription and acoustic classification engines, including Amazon Transcribe, Google Cloud Speech-to-Text, and IBM Watson Speech to Text, process audio segments. Transcripts with timecodes, sentiment scores, and named entities enrich the metadata, while anomalies such as noise dominance are flagged.

Phase 4: Textual Content Extraction

Optical character recognition via Google Cloud Vision OCR or Azure Computer Vision extracts on-screen text from key frames. A metadata ingestion service maps custom fields from shoot logs and user notes into a unified schema.

Phase 5: Metadata Aggregation and Enrichment

An enrichment engine unifies visual labels, transcripts, and OCR results into comprehensive records. Entity resolution links detected items to knowledge graphs or brand registries, while custom models infer scene genres and sentiments. Outputs conform to standards such as IPTC or XMP.

Phase 6: Human-in-the-Loop Validation

Low-confidence tags are queued in annotation platforms like Frame.io for expert review. Editors correct misidentifications and enhance context. Feedback loops capture corrections for model retraining, improving future accuracy.

Phase 7: Indexing and Search Provisioning

Validated metadata is ingested into search engines such as Elasticsearch or Apache Solr. Custom analyzers support full-text queries, faceted navigation, and timecode retrieval. Search APIs enable editors to query by keywords, category, or production attributes, returning thumbnails with linked timecodes and confidence metrics.
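
To make the search step concrete, the sketch below builds a request body in Elasticsearch's query DSL (a `bool` query with a full-text `match` clause and non-scoring `filter` clauses). The index field names `transcript`, `category`, and `confidence` are hypothetical and would need to match the actual index mapping.

```python
import json


def build_search_query(text, category=None, min_confidence=0.0, size=20):
    """Build an Elasticsearch query body for tagged media segments."""
    filters = [{"range": {"confidence": {"gte": min_confidence}}}]
    if category is not None:
        filters.append({"term": {"category": category}})
    return {
        "size": size,
        "query": {
            "bool": {
                "must": [{"match": {"transcript": text}}],  # scored full text
                "filter": filters,                          # exact, unscored
            }
        },
    }


query = build_search_query("product launch", category="interview",
                           min_confidence=0.8)
body = json.dumps(query)  # ready to send to the index's _search endpoint
```

Putting the category and confidence conditions under `filter` rather than `must` keeps them out of relevance scoring and lets the engine cache them.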

Integrating AI Across Production Stages

Embedding AI throughout the production lifecycle ensures seamless connectivity from planning to delivery. Each stage benefits from specialized engines and cloud services that automate complex tasks, enhance consistency, and accelerate creative workflows.

Pre-Production Planning

NLP models analyze scripts and briefs to generate shot lists and storyboard outlines. Platforms like Adobe Sensei identify narrative structures and visual themes, while resource-planning engines forecast equipment and staffing needs based on historical project data.

Asset Ingestion and Normalization

AI-driven ingestion tools validate, transcode, and embed technical metadata. Services such as AWS Elemental MediaConvert and media asset management systems like Avid MediaCentral enforce standardized naming and format conventions.

Rough Cut Generation

Machine learning aligns tagged assets with script cues to assemble preliminary sequences. Embedding-based similarity, for example using embedding services from OpenAI, informs recommendations for clip ordering and transitions, producing a structured rough cut for creative refinement.

Voiceover Scripting and Narration

Language models adapt dialogue to brand voice, and text-to-speech engines such as OpenAI’s audio models generate narration in multiple languages. Audio is synced automatically to timelines, reducing reliance on studio bookings.

Color Grading and Look Matching

The DaVinci Resolve Neural Engine analyzes footage histograms and reference images to suggest exposure, white balance, and stylized LUTs. Automated grades maintain visual continuity across shots with minimal manual intervention.

Audio Enhancement and Sound Design

AI filters in tools like iZotope RX perform noise reduction, dereverberation, and adaptive mixing. Models suggest effect placements and ambient textures based on scene context, delivering polished stems ready for mastering.

Collaborative Review and Feedback

Platforms such as Frame.io aggregate comments using machine learning to categorize feedback by theme and priority. Automated notifications and sentiment analysis streamline approval cycles and maintain audit trails.

Rendering and Format Conversion

Smart encoding pipelines in AWS Elemental MediaConvert select optimal codecs and bitrates for each distribution channel, adjust compression dynamically, and inject metadata and watermarks according to delivery requirements.

Performance Monitoring and Continuous Improvement

AI dashboards track KPIs—edit turnaround times, review latencies, and resource utilization. Predictive models forecast capacity constraints, and retraining pipelines leverage human corrections to refine AI accuracy over successive projects.

Outputs and Deliverables

- Enriched Metadata Records: Scene descriptors, object and face labels, sentiment annotations, transcripts, and speaker IDs organized within hierarchical taxonomies.

- Scene Boundary Maps: Timecode markers for scene transitions, shot angles, and frame-accurate retrieval.

- Speech-to-Text Transcripts: Word-level transcripts with timestamps for closed captions and narrative analysis.

- Confidence Scores and Quality Metrics: Reliability indicators for each annotation and flags for human review.

- Visual Thumbnails with Overlayed Tags: Keyframe snapshots annotated with principal metadata for rapid browsing.

- Object Tracking Data: Temporal motion paths and spatial coordinates for advanced editing functions.

- Compliance Flags: Automated detection of restricted content for legal and policy review.

- Inter-Asset Relationship Graphs: Linked assets such as B-roll alternatives and multi-camera angles for contextual navigation.
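
As an illustration of how word-level transcripts with timestamps feed closed captions, the sketch below groups words into cues and renders them in SubRip (SRT) form. The input layout (a list of `(text, start_seconds, end_seconds)` tuples) and the seven-words-per-cue limit are assumptions; real captioning rules usually also limit characters per line and reading speed.

```python
def to_srt_time(seconds):
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def words_to_srt(words, max_words=7):
    """words: list of (text, start_seconds, end_seconds) in spoken order."""
    cues = []
    for i in range(0, len(words), max_words):
        chunk = words[i:i + max_words]
        start, end = chunk[0][1], chunk[-1][2]
        text = " ".join(word[0] for word in chunk)
        cues.append(
            f"{len(cues) + 1}\n{to_srt_time(start)} --> {to_srt_time(end)}\n{text}\n"
        )
    return "\n".join(cues)


words = [("Hello", 0.0, 0.4), ("world", 0.5, 0.9), ("again", 1.2, 1.6)]
srt = words_to_srt(words, max_words=2)
```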

Dependencies and Handoff

Essential Dependencies

- Completed Ingestion and Normalization: Verified and standardized media files.

- Pretrained and Custom Models: Access to services like Amazon Rekognition, Google Cloud Video Intelligence, and custom domain-specific models.

- Defined Taxonomy and Schemas: Controlled vocabularies, hierarchical structures, and naming conventions.

- Compute and Storage Infrastructure: GPU-enabled processing and resilient search stores (Elasticsearch or Apache Solr).

- Identity and Access Management: Secure role-based controls and enterprise single sign-on.

- Human Review Workflows: Dashboards and annotation tools for curator validation.

- API and Integration Endpoints: RESTful or GraphQL interfaces for downstream applications.

- Version Control and Change Management: Protocols for updating models and schemas without disrupting consumers.

Handoff to Downstream Systems

- Rough Cut Tools: API or flat-file delivery of shot lists, timecodes, and confidence thresholds for sequence assembly.

- Editing Interfaces: Search panels within NLE environments for drag-and-drop access to tagged thumbnails.

- Voiceover and Subtitling: Timestamped transcripts for text-to-speech engines and closed-caption file generation.

- Color Grading and VFX: Taxonomy-driven shot grouping and object masks for consistent grading and effects work.

- Review Platforms: Time-indexed comment threads and metadata-filtered feedback dashboards.

- Compliance Systems: Legal review queues for flagged assets with audit logs of detections and validations.

- Analytics and Monitoring: Metrics on indexing throughput, tag distribution, and review latency for continuous optimization.

- MAM and Distribution: Synchronization of the searchable index with Media Asset Management systems and delivery manifests.

- Retraining Pipelines: Feedback loops capturing curator corrections to improve AI models over time.

By consolidating intelligent metadata tagging, event-driven workflows, AI integrations across production stages, and clear dependencies and handoff mechanisms, media teams achieve a scalable, end-to-end ecosystem. This foundation enables precise asset discovery, rapid rough cut assembly, accelerated voiceover and color workflows, and continuous model improvement for high-quality video production at scale.

Chapter 4: AI-Powered Rough Cut Generation

In high-volume media production environments, the initial assembly of raw footage into a coherent narrative sequence often represents a significant time sink. AI-Powered Rough Cut Generation automates clip selection, ordering, and basic trimming by analyzing script cues, asset metadata, and visual patterns, reducing first-pass editing from days to minutes. This stage serves as the foundation for creative refinement, ensuring consistency across projects and enabling editors to focus on storytelling rather than technical setup.

AI-driven tools combine machine learning models trained on annotated footage, natural language processing of shooting scripts, and similarity search over visual and audio content to produce a structured preliminary edit that preserves narrative intent. The automated output is a standardized baseline with transitions, branding elements, and proxy media, which speeds up downstream workflows such as color grading, sound design, and collaborative review. The approach suits the rapid turnaround demands of news, marketing, and episodic production and yields quantifiable quality metrics for ongoing improvement.

Inputs, Prerequisites, and Technical Requirements

Required Inputs and Data Sources

- Script Documents: Finalized shooting scripts or dialogue transcripts in formats like Final Draft or Fountain, containing scene descriptions, dialogue lines, and timing notes.

- Tagged Media Assets: Video clips, audio files, and graphics enriched with metadata—scene numbers, character names, shot types, key objects, emotional tone—produced during Intelligent Metadata Tagging.

- Editing Templates and Brand Guidelines: Predefined sequence presets specifying track organization, transition defaults, color palettes, graphic overlays, and pacing parameters aligned to corporate identity.

- Timecode and Synchronization Data: Metadata establishing audio/video sync for multi-camera shoots, imported via tools such as Avid ScriptSync or built-in features in Adobe Premiere Pro.

- Reference Storyboards or Animatics: Visual guides that inform shot order and composition, enhancing the AI’s ability to align media to narrative beats.

Key Prerequisites

- Metadata Completeness: At least 95% of assets tagged with required fields—scene, take, shot type, semantic labels—to prevent sequence gaps or misordered clips.

- Consistent Naming Conventions and Folder Structures: Uniform schemes enable automated grouping and retrieval of related assets without manual intervention.

- Script-Asset Alignment Verification: Dialogue transcripts linked to media timecodes with over 98% accuracy, aided by services like Adobe Sensei speech-to-text alignment.

- Template Configuration Review: Sample footage tests to validate track counts, transition presets, and title placeholders prior to full assembly.

- Compute Resource Availability: GPU-accelerated nodes or cloud instances, as required by engines like Blackbird’s TrimInterface, to avoid delays in large-scale processing.

- Stakeholder Sign-Off: Alignment on pacing guidelines, version strategy, and approval criteria to minimize rework.

Technical and Organizational Requirements

- Centralized Media Asset Manager: A DAM/MAM platform such as Avid MediaCentral for scalable media retrieval via API.

- AI Model Configuration and Version Control: Project-specific settings for documentary, marketing, broadcast, or social media applications, with rigorous tracking of model parameters.

- User Authentication and Access Control: Role-based permissions and single sign-on integration to secure templates, metadata, and sequence files.

- Data Governance Policies: Guidelines on media retention, metadata editing, and cut ownership to prevent conflicts in collaborative workflows.

- Integration with Review Platforms: Direct handoff of rough cuts into collaborative tools—such as Frame.io or Wipster—for immediate feedback cycles.

Automated Workflow for Sequence Assembly

Scene Extraction and Script Parsing

- Script Ingestion: Upload scripts (PDF, DOCX, FDX) to the orchestration layer, normalize text, and convert to structured JSON with scene numbers, slug lines, and timecode hints.

- Scene Segmentation: Use an NLP engine—based on models like OpenAI GPT-4 or Vertex AI—to identify slug lines, dialogue blocks, and actions, tagging each scene with a unique identifier and estimated duration.

- Metadata Enrichment: Extract thematic tags (for example, “testimonial,” “demo,” “interview”) to guide subsequent asset matching.

- Event Notification: Emit completion events via Apache Kafka or AWS EventBridge, providing links to parsed JSON in the asset repository.

Asset Matching and Clip Selection

- Metadata Query Assembly: Construct search queries combining script tags—location, subject, action—with Boolean filters for resolution, frame rate, and color profile.

- Similarity Search Invocation: Invoke AI embedding services—such as Adobe Sensei or custom vector engines—to compare scene descriptors against precomputed clip embeddings.

- Candidate Review: Present top-ranked clips for editor approval or rejection; adjust thresholds or expand search parameters as needed.

- State Update: Write approved asset IDs and in/out points to the project database and notify the trimming module via REST API or messaging events.
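
The similarity search step can be illustrated with plain cosine similarity over precomputed vectors. This is a toy sketch: the two-dimensional vectors and clip IDs are made up, and a production system would typically use a vector index rather than a linear scan over every clip.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_clips(scene_vector, clip_vectors, top_k=3):
    """Return the top_k (clip_id, score) pairs, best match first."""
    scored = [
        (clip_id, cosine_similarity(scene_vector, vector))
        for clip_id, vector in clip_vectors.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


clip_vectors = {"clip_a": [1.0, 0.0], "clip_b": [0.0, 1.0], "clip_c": [1.0, 1.0]}
candidates = rank_clips([1.0, 0.0], clip_vectors)
# candidate order: clip_a, clip_c, clip_b
```

The ranked list is what an editor would see in the candidate review step; a score threshold could then decide which clips are accepted automatically.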

Automated Trimming and Synchronization

- Initial Trim Application: Extract start/end times from script cues and speech transcripts to define rough clip boundaries.

- AI Boundary Refinement: Analyze visual and audio signals to adjust trim points for natural pauses and continuity, using video frameworks like FFmpeg.

- Keyframe Alignment: Snap cut points to nearest keyframes and apply crossfades to preserve timing integrity.

- Proxy Generation: Create low-resolution clips for rapid preview, linking to high-resolution masters for final conforming.

- Error Handling: Retry failed trim jobs with alternate parameters or notify editors for manual intervention.
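
Keyframe alignment reduces to a nearest-neighbor lookup in a sorted list of keyframe timestamps. The sketch below assumes the timestamps have already been extracted (for example with a probing tool) and are given in seconds; the function name is hypothetical.

```python
import bisect


def snap_to_keyframe(cut_time, keyframes):
    """Return the keyframe timestamp closest to cut_time.

    keyframes must be a sorted, non-empty list of times in seconds.
    """
    if not keyframes:
        raise ValueError("no keyframes supplied")
    i = bisect.bisect_left(keyframes, cut_time)
    # Only the neighbors on either side of the insertion point can be nearest.
    candidates = keyframes[max(i - 1, 0):i + 1]
    return min(candidates, key=lambda k: abs(k - cut_time))


keyframes = [0.0, 2.0, 4.0, 6.0]
snapped_in = snap_to_keyframe(2.9, keyframes)   # 2.0
snapped_out = snap_to_keyframe(5.2, keyframes)  # 6.0
```

Snapping moves the cut by up to half a keyframe interval, so a pipeline that needs frame-accurate trims would re-encode around the cut instead of snapping.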

Timeline Assembly and Template Integration

- Template Selection: Choose a timeline template based on content type, target platform, and runtime requirements.

- Automated Clip Placement: Insert trimmed clips in scene order, assign tracks, apply default audio levels, and enforce transition styles.

- Branding Overlays: Populate opening animations, lower thirds, and end credits from the design asset library via integrations.

- Version Control: Commit the assembled timeline as “RoughCut_v1,” recording template identifiers and change descriptions for branching.
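
Automated clip placement amounts to walking the trimmed clips in scene order and assigning each a record-in and record-out position. The sketch below does this with non-drop-frame timecode at an assumed 25 fps; the dictionary keys and clip IDs are illustrative, and drop-frame rates such as 29.97 fps would need different arithmetic.

```python
def frames_to_timecode(frame_count, fps=25):
    """Convert a frame count to non-drop-frame HH:MM:SS:FF timecode."""
    frames = frame_count % fps
    total_seconds = frame_count // fps
    hours = total_seconds // 3600
    minutes = total_seconds % 3600 // 60
    seconds = total_seconds % 60
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


def place_clips(clips, fps=25):
    """clips: list of (clip_id, duration_frames) already in scene order."""
    timeline, cursor = [], 0
    for clip_id, duration in clips:
        timeline.append({
            "clip": clip_id,
            "record_in": frames_to_timecode(cursor, fps),
            "record_out": frames_to_timecode(cursor + duration, fps),
        })
        cursor += duration  # next clip starts where this one ends
    return timeline


timeline = place_clips([("scene1_take3", 50), ("scene2_take1", 30)])
# second clip runs from 00:00:02:00 to 00:00:03:05
```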

Interactive Adjustments and Handoff

- Proxy Timeline Review: Editors import the proxy timeline into NLEs like Adobe Premiere Pro or Avid Media Composer, relinking to high-resolution sources as needed.

- Real-Time Collaboration: Use cloud plugins for simultaneous viewing, annotation, and timecoded comments; AI aggregates feedback by type and priority.

- Iterative Refinement: Capture edit deltas and update downstream modules—color grading, audio mixing—through the orchestration layer.

- Downstream Triggering: Upon approval, initiate workflows for voiceover integration, color correction, and final audio processing, carrying metadata tags forward.

- Status Dashboard: Display assembly progress, review feedback, and handoff readiness with automated alerts for project managers.

AI Capabilities and Supporting Systems

Core Editing Algorithm Functions

- Clip Selection and Ranking: Analyze metadata attributes—scene descriptions, recognized faces, spoken keywords, visual sentiment—using services like Google Cloud Video Intelligence API or Microsoft Azure Video Indexer to assign relevance scores.

- Automated Trimming and Timing: Employ deep learning to detect natural shot boundaries, action peaks, and pauses, predicting optimal in/out points.

- Sequence Ordering: Use transformer-based NLP to interpret script segments and align clips to narrative arcs, adjusting pacing with transitional shots.

- Transition Suggestion: Recommend fades, crossfades, or hard cuts by analyzing color profiles and motion vectors, integrating with Adobe Sensei style-transfer.

- Audio-Visual Synchronization: Align separate audio tracks using waveform comparison and timecode metadata via tools like AWS Elemental MediaConvert.

- Style and Tone Matching: Apply machine learning classifiers trained on stylistically rated footage to maintain consistent mood labels such as “energetic,” “dramatic,” and “informational.”

Supporting System Roles

- Metadata Repository: Centralized NoSQL index (for example, Elasticsearch) for fast retrieval and update of clip attributes.

- AI Inference Engine: Containerized microservices hosting ranking, trimming, and assembly models on GPU clusters.

- Orchestration Layer: Workflow manager (for example, Apache Airflow, optionally deployed on Kubernetes) coordinating service execution and exposing REST APIs.

- User Interface and Dashboard: Front-end for configuring algorithm parameters, reviewing timelines, and providing feedback; integrates with Frame.io for annotation and version control.

- Feedback Loop: Logs editor adjustments to retrain models on real usage patterns.

- Security and Access Control: Enterprise authentication and role-based permissions to safeguard assets and processes.

Human-in-the-Loop Governance and Scalability

- Adjustable Confidence Thresholds: Editors define minimum scores for automatic inclusion, flagging low-confidence clips for manual review.

- Interactive Timeline Editing: Standard NLE interfaces for rearranging, trimming, or replacing clips with metadata updates in real time.

- Automated Change Logging: Transparent logs of all manual edits for quality audits and model retraining.

- Template and Style Overrides: Policy engine enforces custom rules—maximum shot length, preferred transitions—during assembly.

- Containerized Deployment and Hardware Acceleration: Dynamic scaling of AI services across on-premise or cloud GPU/TPU clusters.

- Asynchronous Processing and Caching: Non-blocking job handling with intelligent reuse of intermediate results to optimize throughput.

- Quality Metrics and Dashboards: Automated checks for shot rhythm, scene completeness, and sync accuracy; real-time visualization of performance and error rates.

- Continuous Model Retraining: Scheduled cycles incorporating fresh editor feedback to refine algorithm behavior.

Outputs, Dependencies, and Handoff Protocols

Primary Outputs

- Preliminary Timeline Sequence: Project exported in standard interchange formats such as Adobe Premiere Pro XML and Avid AAF, with clearly defined scene boundaries and transitions for handoff to further editing or review.

- Clip Selection Report: Indexed listing of media clips used, timecode in/out points, metadata references, and AI confidence scores.

- Metadata Enhancement Log: Records of tags and markers added during assembly—emotional tone flags, pacing notes, keyword timestamps.

- Proxy Media Pack: Low-resolution proxy files named to link seamlessly with original masters for review and conforming.

- Automated Notes File: Text or JSON capturing AI-generated editorial annotations, narrative flow gaps, and script-visual inconsistencies.

Key Dependencies

- Validated Script and Cue Sheet: Finalized, time-aligned text with clear scene descriptions and dialogue references.

- Comprehensive Metadata Index: Up-to-date repository of object labels, facial IDs, transcripts, and segmentation data.

- Standardized Templates: Current sequence presets defining pacing rules, transitions, and title placements.

- AI Model Configuration and Versioning: Specific weights, thresholds, and scoring functions under strict version control.

- Compute Resource Allocation: Adequate GPU or cloud compute capacity to process large media batches without bottlenecks.

Handoff Protocols

- Project File Export and Registration: Auto-ingest sequences into the asset management platform, tagging with generation metadata and version history.

- Proxy Linkage: XML mappings or EDLs linking proxy media to high-resolution masters for seamless resolution switching.

- Review Platform Integration: Upload curated previews to Frame.io or Descript, complete with timecode annotations and playback controls for stakeholders.

- Notification and Task Generation: Dispatch workflow tasks to sound design, color grading, and VFX teams via project management tools or proprietary engines like Blackbird.

- Quality Assurance Checkpoints: Automated scripts verify sequence integrity—media references, template placeholders, metadata consistency—and flag issues for correction.

By uniting precise inputs, robust AI capabilities, and structured handoff mechanisms, the AI-Powered Rough Cut Generation stage transforms the initial edit process into a predictable, high-throughput operation. This foundation unlocks accelerated creative refinement, consistent quality, and measurable improvements across every phase of video production.

Chapter 5: Automated Voiceover Scripting and Narration

Purpose and Benefits of Automated Voiceover Production

The Automated Voiceover Scripting and Narration stage adds synchronized narration to a picture-locked cut by integrating AI-driven script adaptation, style enforcement, and text-to-speech generation. This approach standardizes tone, accelerates turnaround, and reduces dependency on scarce voice talent scheduling. Media teams gain consistent narrative delivery across multiple videos and languages, rapid script iteration with immediate audio previews, scalable access to diverse voice profiles, dynamic pacing for regional audiences, and tighter alignment between narration and visuals, which reduces ADR cycles. By freeing creative and technical teams from manual audio tasks, automated voiceover production improves both quality and efficiency.

Prerequisites and Essential Inputs

Before engaging AI-driven narration tools, projects must supply accurate, approved materials and clear guidelines. Essential inputs fall into four categories.

Script and Style Guidelines

- Finalized Source Text: Approved script with annotations for scene breaks, emphasis, and pauses.

- Segmented Dialogue Blocks: Discrete script segments mapped to rough cut timeline cues.

- Alternate Variations: Translations or tone adjustments for multilingual or multi-voice versions.

- Brand Voice Profile: Style guide detailing emotional tone, pacing, vocabulary preferences, and prohibited language.

- Audience Persona: Demographic and cultural context informing accent strength, formality level, and speech rate.

Timing and Technical Data

- Locked Picture Cut Reference: Time-coded export (EDL or AAF) establishing exact segment durations.

- Metadata Timing Cues: Scene markers, subtitle timestamps, and visual event triggers for AI pacing adjustments.

- Buffer and Overlap Parameters: Defined lead-in and lead-out times for natural breathing and smooth transitions.
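
A simple pre-check can estimate whether a script segment will fit its picture-locked duration once lead-in and lead-out buffers are reserved. The sketch below assumes a flat speaking rate of 150 words per minute and 0.3-second buffers; both are illustrative defaults, and actual synthesized durations should be measured after generation.

```python
def fits_segment(text, segment_seconds, words_per_minute=150,
                 lead_in=0.3, lead_out=0.3):
    """Estimate whether narration fits a segment.

    Returns (fits, estimated_seconds, available_seconds).
    """
    estimated = len(text.split()) / words_per_minute * 60
    available = segment_seconds - lead_in - lead_out
    return estimated <= available, round(estimated, 2), round(available, 2)


line = "Our new editing workflow cuts review time in half"  # 9 words
fits, estimated, available = fits_segment(line, segment_seconds=4.0)
# estimated is 3.6 s against 3.4 s available, so this line does not fit
```

Segments that fail the check can be sent back for script tightening before any audio is synthesized, which avoids paying for renders that will be discarded.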

Voice Assets and Infrastructure

- Synthetic Voice Models: Pre-trained voices from Amazon Polly, Azure Cognitive Services Text-to-Speech, or Google Cloud Text-to-Speech, selected by language, persona, and neural quality.

- Recorded Talent Files: Approved actor recordings with metadata for speaker, language, and recording conditions.

- Audio Calibration Profiles: Reference samples defining loudness (LUFS), EQ curves, and dynamic range for AI normalization.

- API Credentials: Valid keys for TTS and audio processing platforms with adequate quotas and permissions.

- Audio Editing Environment: DAW templates, such as Adobe Audition session files, preconfigured for automated import of voice tracks.

Legal, Compliance, and Localization

- Rights and Clearances: Licensing agreements for synthetic and third-party voice assets.

- Accessibility Standards: Closed-caption accuracy, audio description requirements, and translation mandates.

- Privacy Policies: Data handling guidelines for voice prints and biometric models, ensuring compliance with regulations.

- Translation Memory and Glossaries: Approved brand terminology and technical jargon repositories for consistent localization.

AI-Driven Workflow Process

The integrated workflow combines automated tasks and human oversight to convert scripted text into synchronized voiceover tracks. Coordination is managed by an orchestration layer that interfaces with AI services, asset repositories, and editing platforms.

Script Ingestion and Refinement

- Parsing and Segmentation: AI ingests the approved script, identifies scene markers and timing cues, and divides text into manageable segments.

- Language Normalization: Modules normalize abbreviations, numbers, and technical terms to ensure accurate pronunciation.

- Style Enforcement: AI references brand voice profiles to adjust tone, pacing, and vocabulary in the script text before it is submitted to TTS services such as Amazon Polly or Google Cloud Text-to-Speech.

- Prosody Markup: Punctuation and emphasis tags are inserted to guide TTS engines on pauses and inflection.
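
Prosody markup is commonly expressed in SSML, the W3C Speech Synthesis Markup Language. The sketch below wraps sentences in `<s>` elements, inserts `<break>` pauses between them, and marks chosen words with `<emphasis>`. The helper name and defaults are hypothetical, and support for individual tags varies by engine and voice, so the output should be checked against the target service's documentation.

```python
from xml.sax.saxutils import escape


def to_ssml(sentences, pause_ms=400, emphasize=()):
    """Render sentences as an SSML document with pauses and emphasis."""
    parts = []
    for sentence in sentences:
        text = escape(sentence)  # protect &, <, > in the script text
        for word in emphasize:
            safe = escape(word)
            text = text.replace(
                safe, f'<emphasis level="strong">{safe}</emphasis>'
            )
        parts.append(f"<s>{text}</s>")
    pause = f'<break time="{pause_ms}ms"/>'
    return f"<speak>{pause.join(parts)}</speak>"


ssml = to_ssml(
    ["Sale ends today.", "Terms & conditions apply."],
    pause_ms=300,
    emphasize=["today"],
)
```

Escaping the script text first is the important detail: an unescaped ampersand in a brand name would make the document invalid XML and cause the request to be rejected.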

Human Review and Voice Selection

- Editorial Approval: Editors review refined text in a side-by-side interface, accepting or rejecting AI suggestions and adding pronunciation notes.

- Voice Catalog Query: System queries voice asset management for attributes such as gender, age, and accent.

- Voice Previews: Short samples are generated via tools like Descript Overdub or ElevenLabs Voice Lab to validate emotional inflection.

- Configuration Lock-In: Chosen voice models are registered in the rendering configuration to ensure consistency across segments.

Text-to-Speech Generation and Synchronization

- Batch API Requests: Segments are batched and submitted to TTS engines—such as Amazon Polly, Azure Cognitive Services Text-to-Speech, Google Cloud Text-to-Speech, Murf AI, or Replica Studios—optimizing throughput and handling rate limits.

- Error Handling: Transient failures are retried automatically; persistent errors escalate to audio engineers.

- Asset Ingestion: Generated audio files are validated, renamed by project ID and segment, and ingested into the media asset repository.

- Timeline Update: Project files or XML exports for Adobe Premiere Pro and Avid Media Composer are updated with the new voice clips and waveform proxies, so editors can synchronize visuals against precise audio cues.

- Alignment Module: AI analyzes video keyframes and speech boundaries to adjust offsets, crossfades, and fade-in/out markers, tagging segments requiring manual adjustment.
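
Batching can be sketched as greedy packing of script segments under a per-request character budget. The 3000-character limit below is a placeholder, not any provider's documented quota; each TTS service publishes its own limits, and the function name is hypothetical.

```python
def batch_segments(segments, max_chars=3000):
    """Group segments, in order, into batches that fit a character budget."""
    batches, current, used = [], [], 0
    for segment in segments:
        if len(segment) > max_chars:
            raise ValueError("segment exceeds the per-request limit; split it first")
        if current and used + len(segment) > max_chars:
            batches.append(current)  # close the full batch
            current, used = [], 0
        current.append(segment)
        used += len(segment)
    if current:
        batches.append(current)
    return batches


batches = batch_segments(["a" * 4, "b" * 4, "c" * 4], max_chars=8)
# batches == [["aaaa", "bbbb"], ["cccc"]]
```

Keeping segments whole and in order means each returned audio file still maps to exactly one script segment, which simplifies renaming and ingestion afterwards.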

Iteration and Quality Control

- Feedback Interface: Editors mark segments for tone, pacing, or wording changes, which the orchestration engine queues for reprocessing.

- Automated Refinement: Targeted updates regenerate audio, reattach clips, and update metrics on revision counts and turnaround times.

- Quality Assurance: Automated checks flag clipping, silence gaps, and prosody anomalies; human reviewers verify pronunciation, pacing variance, and emotional resonance against style guides.
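
Two of the automated checks, clipping and silence gaps, can be sketched directly on normalized audio samples. The code assumes samples are floats in the range -1.0 to 1.0; the thresholds are illustrative defaults, and a production check would usually work on windowed levels rather than individual samples.

```python
def find_clipping(samples, threshold=0.999):
    """Return indices of samples at or beyond full scale."""
    return [i for i, s in enumerate(samples) if abs(s) >= threshold]


def find_silence_gaps(samples, sample_rate, floor=0.01, min_gap_seconds=0.5):
    """Return (start_seconds, end_seconds) for each run of near-silence."""
    gaps, start = [], None
    for i, s in enumerate(samples):
        if abs(s) < floor:
            if start is None:
                start = i  # a quiet run begins
        else:
            if start is not None and (i - start) / sample_rate >= min_gap_seconds:
                gaps.append((start / sample_rate, i / sample_rate))
            start = None
    # Handle a quiet run that lasts until the end of the clip.
    if start is not None and (len(samples) - start) / sample_rate >= min_gap_seconds:
        gaps.append((start / sample_rate, len(samples) / sample_rate))
    return gaps


# Toy signal at 10 samples per second: speech, 0.6 s of silence, one clipped peak.
samples = [0.5] * 5 + [0.0] * 6 + [1.0] + [0.2] * 3
clipped = find_clipping(samples)
gaps = find_silence_gaps(samples, sample_rate=10)
```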

System Orchestration and Scalability

- Workflow Engine: Tasks are sequenced via a message bus and orchestrated using AWS Step Functions or Google Cloud Workflows.

- Model Serving: Containerized services using BentoML or Kubeflow coordinate TTS and refinement modules.

- Auto-Scaling: Compute resources adjust based on queue depth to meet SLAs for high-volume production.

- Monitoring and Alerts: Dashboards track synthesis latency, system utilization, and error rates, alerting administrators to anomalies.
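
Queue-depth auto-scaling can be reduced to one calculation: how many workers are needed to drain the current backlog within a target time. The sketch below is a simplified model that ignores startup latency and new arrivals; the parameter names and the worker bounds are assumptions.

```python
import math


def desired_workers(queue_depth, jobs_per_worker_per_min, target_minutes,
                    min_workers=1, max_workers=50):
    """Workers needed to clear queue_depth jobs within target_minutes."""
    capacity_per_worker = jobs_per_worker_per_min * target_minutes
    needed = math.ceil(queue_depth / capacity_per_worker)
    # Clamp so the pool never scales to zero or beyond the budgeted ceiling.
    return max(min_workers, min(max_workers, needed))


workers = desired_workers(queue_depth=120, jobs_per_worker_per_min=4,
                          target_minutes=5)
# 120 jobs / (4 jobs per minute * 5 minutes) = 6 workers
```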

Core AI Capabilities

This stage relies on specialized AI modules that convert text into expressive, brand-aligned audio, integrated seamlessly within the production ecosystem.

Text-to-Speech Engines

- Neural Synthesis: Platforms like Google Cloud Text-to-Speech, Amazon Polly, and Azure Cognitive Services Text-to-Speech use deep neural networks to produce natural-sounding audio with few audible artifacts.

- Pronunciation Accuracy: Grapheme-to-phoneme converters and custom lexicons ensure correct rendering of technical terms and proper nouns.

- Batch Processing: APIs support large volumes of text, returning audio files tagged with timing metadata for precise timeline alignment.
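
A custom lexicon can be applied before synthesis by wrapping known terms in the SSML `phoneme` element, which is defined in the W3C SSML specification. The sketch below is an assumption-laden illustration: `to_ssml` is a hypothetical helper, engine support for `phoneme` and for IPA varies, and the pronunciation shown in the usage note is illustrative only.

```python
import re
from typing import Dict
from xml.sax.saxutils import escape, quoteattr


def to_ssml(text: str, lexicon: Dict[str, str]) -> str:
    """Wrap lexicon terms in SSML phoneme tags and escape the remaining text."""
    if not lexicon:
        return "<speak>" + escape(text) + "</speak>"
    # Longer terms first so that overlapping entries prefer the longest match.
    terms = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(re.escape(t) for t in terms) + r")\b")
    parts = []
    position = 0
    for match in pattern.finditer(text):
        parts.append(escape(text[position:match.start()]))
        term = match.group(0)
        parts.append(
            f'<phoneme alphabet="ipa" ph={quoteattr(lexicon[term])}>'
            f"{escape(term)}</phoneme>"
        )
        position = match.end()
    parts.append(escape(text[position:]))
    return "<speak>" + "".join(parts) + "</speak>"
```

Calling `to_ssml("Grade in ACES & deliver.", {"ACES": "ˈeɪsɪz"})` wraps only the word ACES and escapes the ampersand, so the result stays well-formed XML.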

Style Transfer and Emotion Control

- Voice Embedding: Tools like Descript Overdub and Replica Studios capture vocal characteristics to mimic specific styles.

- Adaptive Tokens: Parameters adjust warmth, energy, and clarity at the sentence level without retraining.

- Emotion Modeling: Services such as IBM Watson Text to Speech analyze sentiment cues and apply prosody adjustments for emotional inflection.

Phoneme-Level Customization

- Forced Alignment: Algorithms match synthetic speech to video frames, generating viseme data for character animation.

- Custom Lexicons: Domain-specific vocabulary and multilingual transitions maintain lip-sync accuracy.

- Tool Integration: Outputs feed directly into Adobe Premiere Pro, Unreal Engine, or animation pipelines, reducing manual keyframe work.

Integration and Quality Assurance

- Media Asset Management: Scripts, voice profiles, and audio tracks are versioned and stored in centralized repositories.

- NLE Plugins: Editors invoke AI services from the timeline to preview and import approved voice tracks without context switching.

- Human-in-the-Loop: Automated checks flag anomalies; editors review side-by-side comparisons of script, phoneme timeline, and audio.

Scalability and Security

- Cloud-Native Architecture: GPU-accelerated inference and auto-scaling clusters handle peak production loads.

- Caching: Frequently used voice profiles and pronunciation rules are cached to reduce latency.

- Security Controls: Encryption at rest and in transit, token-based authentication, and alignment with compliance frameworks and regulations (SOC 2, ISO 27001, GDPR).

- Governance: Role-based access and audit logging protect sensitive voice data and maintain regulatory compliance.

Final Outputs and Handoffs

Upon completion, the workflow produces finalized voiceover tracks synchronized to the video timeline, accompanied by metadata manifests and deliverable packages for sound design and mixing teams.

Deliverables and Metadata Packaging

- Audio Files: Variants include clean reads, performance takes, and localized versions in uncompressed WAV (48 kHz/24-bit) or compressed MP3/AAC formats.

- Manifest File: Consolidated XML or JSON listing file names, timecode in/out points, voice model IDs, processing parameters, version history, and approval timestamps.

- Metadata Fields: Project ID, segment number, voice talent or AI model details, normalization settings, and review status.
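
The manifest described above might be assembled along these lines. The field names are assumptions drawn from the list, not a published schema, and `VoiceSegment` and `build_manifest` are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class VoiceSegment:
    file_name: str
    timecode_in: str
    timecode_out: str
    voice_model_id: str
    version: int
    review_status: str


def build_manifest(project_id: str, segments: List[VoiceSegment]) -> str:
    """Serialize segment metadata to a JSON manifest string."""
    manifest = {
        "project_id": project_id,
        "segment_count": len(segments),
        "segments": [asdict(segment) for segment in segments],
    }
    # Sorted keys keep successive manifest versions easy to diff.
    return json.dumps(manifest, indent=2, sort_keys=True)
```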

Quality Assurance and Validation

- Automated Checks: Audio analysis engines scan for clipping, unwanted silence, and prosody deviations, computing intelligibility and synchronization scores.

- Human Spot Checks: Reviewers verify pronunciation accuracy, timing variance (±50 ms), and emotional resonance against QA checklists.

- QA Report: Batch-level documentation of metrics and any segments flagged for re-rendering or manual correction.
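
The ±50 ms timing tolerance lends itself to a simple batch-level report. The sketch below assumes planned and measured segment start times are available in milliseconds; `qa_report` and the report keys are hypothetical.

```python
from typing import Dict, List


def qa_report(
    planned_ms: List[int],
    actual_ms: List[int],
    tolerance_ms: int = 50,
) -> Dict[str, object]:
    """Summarize which segments start outside the allowed timing tolerance."""
    if len(planned_ms) != len(actual_ms):
        raise ValueError("planned and actual lists must have the same length")
    flagged = []
    for index, (planned, actual) in enumerate(zip(planned_ms, actual_ms)):
        deviation = actual - planned
        # A deviation of exactly the tolerance is treated as acceptable.
        if abs(deviation) > tolerance_ms:
            flagged.append({"segment": index, "deviation_ms": deviation})
    return {
        "segments_checked": len(planned_ms),
        "segments_flagged": len(flagged),
        "flagged": flagged,
    }
```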

Handoff Protocols

- Audio Stems Export: Files with embedded timecode markers and scene transition cues.

- Manifest Delivery: Accompanied by pronunciation dictionaries or emotional tone profiles.

- AAF or FCPXML Package: For import into Avid Pro Tools or Apple Logic Pro, preserving timeline alignment.

- Synchronization Verification: Checksums confirm that files transferred intact, and waveform comparison tools confirm that narration aligns with the latest video edit.

- Review Versions: Secure cloud links to compressed previews allow final creative approvals before mix.

Version Control and Timeline Integration

Voiceover assets and manifests are registered in version control systems such as Git LFS or Perforce, tagged to milestone identifiers. Editors import approved stems into the master sequence in the NLE, and automated reconciliation scripts cross-reference video and audio timecodes to detect drift beyond thresholds. Any corrective actions are tracked in project management tools, ensuring full traceability as the project advances to final rendering and distribution.
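
A reconciliation script of the kind described above could compare timecodes after converting them to frame counts. This sketch assumes non-drop-frame timecode in `HH:MM:SS:FF` form and an integer frame rate; the function names and the two-frame default threshold are illustrative assumptions.

```python
from typing import List, Tuple


def timecode_to_frames(timecode: str, fps: int) -> int:
    """Convert non-drop-frame HH:MM:SS:FF timecode to an absolute frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    if frames >= fps:
        raise ValueError(f"frame field {frames} is not valid at {fps} fps")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def find_drift(
    pairs: List[Tuple[str, str, str]],
    fps: int,
    max_drift_frames: int = 2,
) -> List[Tuple[str, int]]:
    """Return (segment_id, drift_in_frames) for segments beyond the threshold.

    Each input tuple is (segment_id, video_timecode, audio_timecode).
    Positive drift means the audio starts later than the video cue.
    """
    flagged = []
    for segment_id, video_tc, audio_tc in pairs:
        drift = timecode_to_frames(audio_tc, fps) - timecode_to_frames(video_tc, fps)
        if abs(drift) > max_drift_frames:
            flagged.append((segment_id, drift))
    return flagged
```

Drop-frame timecode (as used at 29.97 fps) needs additional handling that this sketch deliberately omits.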

Chapter 6: AI-Driven Color Correction and Grading

The color correction and grading stage transforms raw footage into polished visual output that fulfills creative intent, technical standards, and distribution requirements. By automating exposure matching, white balance adjustments, and stylistic treatments, AI-driven tools accelerate high-volume workflows, ensure visual continuity across scenes, and uphold brand guidelines. These systems analyze tonal ranges, skin tones, and scene dynamics to propose or apply corrective transforms, freeing colorists to focus on narrative-driven artistic decisions. This stage bridges technical compliance—meeting broadcast or platform specifications—and creative finishing that reinforces mood, emphasizes story beats, and supports a unified aesthetic.

Inputs and Prerequisites

- Raw Footage Files: Camera originals in raw or log formats (ARRIRAW, REDCODE RAW, Blackmagic RAW, Sony S-Log) provide maximum dynamic range. AI engines work best with uncompressed or lightly compressed footage, which lets them analyze sensor data accurately.

- Color Profiles and Metadata: Embedded metadata—color space (Rec.709, Rec.2020, DCI-P3), exposure values, ISO, white balance—guides automated transforms. Consistent metadata standards enable AI models to interpret scene conditions reliably.

- Reference Looks and LUTs: Look-up tables (.cube, .3dl) or reference stills from creative briefs define target aesthetics. Systems such as Adobe Sensei and Colorlab AI leverage these references to synchronize grade characteristics across the timeline.

- Shot Logs and Scene Metadata: Detailed shot logs—including scene numbers, takes, lenses, filters—and AI-driven scene detection enable batch grouping. Accurate metadata tags link shots to their narrative context for uniform grading.

- Color Management Framework: An established pipeline (ACES or custom profile) ensures fidelity across tools. Platforms like DaVinci Resolve automatically conform footage to the chosen color space.

- File Naming and Organization: Consistent naming conventions and folder structures, applied during ingestion, allow AI grading modules to target correct clips without manual intervention.

- Color Charts and Calibration: Standardized charts (X-Rite ColorChecker, neutral gray cards) captured on set let AI algorithms calibrate exposure and white balance, reducing drift across time-coded shots.

Automated Color Matching Workflow

Shot Analysis and Baseline Correction

Each clip undergoes frame-by-frame analysis. Key steps include metadata retrieval, histogram profiling, white balance estimation, and computation of baseline transforms to normalize exposure and color temperature.
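
To make white balance estimation concrete, the sketch below uses the gray-world assumption, a classic heuristic that scales each channel so the channel means match. It is offered only as an illustration of a baseline transform; the source does not say which method any particular grading tool uses, and the function names are hypothetical. Pixels are assumed to be linear RGB floats between 0.0 and 1.0.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]


def gray_world_gains(pixels: List[Pixel]) -> Tuple[float, float, float]:
    """Return per-channel gains that equalize the R, G, and B means."""
    if not pixels:
        raise ValueError("at least one pixel is required")
    count = len(pixels)
    means = [sum(pixel[channel] for pixel in pixels) / count for channel in range(3)]
    gray = sum(means) / 3
    # Channels with a zero mean are left unchanged to avoid dividing by zero.
    return tuple(gray / mean if mean > 0 else 1.0 for mean in means)


def apply_gains(pixels: List[Pixel], gains: Tuple[float, float, float]) -> List[Pixel]:
    """Scale each channel by its gain, clamping results to 1.0."""
    return [
        tuple(min(1.0, value * gain) for value, gain in zip(pixel, gains))
        for pixel in pixels
    ]
```

A frame with a red cast yields a red gain below 1.0 and green and blue gains above 1.0, pulling the average toward neutral.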

Reference Look Import and Matching

Reference assets—still images or LUTs—are ingested and analyzed. AI extracts color palettes, contrast ratios, and saturation curves, then ranks compatible looks. Colorists select desired references, which the system associates with shot groups.

Scene Segmentation and Grouping

AI identifies scene boundaries from edit metadata and clusters shots by lighting, location, and lens attributes. Tags (interior/exterior, time of day) are applied, and operators refine groupings to reflect narrative and stylistic intent.

Automated Color Adjustment

- Baseline Apply: Normalizes exposure and white balance using computed transforms.

- Look Application: Applies selected LUTs and adjusts primary color wheels, contrast, and saturation to match reference descriptors.

- Local Refinement: Semantic masks isolate faces or key objects for selective skin-tone optimization and highlight/shadow control.

- Iterative Optimization: Multiple AI passes refine transforms until color metrics align with targets.

- Batch Processing: Parallel GPU or cloud instances scale grading for high-volume workloads.
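
For the look application step, the sketch below reads a 3D LUT in the .cube text format and looks up a color by nearest grid point. It assumes the default 0.0 to 1.0 input domain and skips interpolation; grading applications typically interpolate between grid points (for example trilinearly), so treat this as a way to understand the file layout rather than a production transform. The function names are hypothetical.

```python
from typing import List, Tuple

Color = Tuple[float, float, float]


def parse_cube(text: str) -> Tuple[int, List[Color]]:
    """Parse a 3D .cube LUT and return (grid_size, table)."""
    size = None
    table = []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        if line.startswith("LUT_3D_SIZE"):
            size = int(line.split()[1])
            continue
        if line[0].isalpha():
            # Other keywords such as TITLE or DOMAIN_MIN are ignored here.
            continue
        red, green, blue = (float(value) for value in line.split())
        table.append((red, green, blue))
    if size is None or len(table) != size ** 3:
        raise ValueError("missing LUT_3D_SIZE or wrong number of entries")
    return size, table


def lookup_nearest(rgb: Color, size: int, table: List[Color]) -> Color:
    """Return the LUT entry at the grid point nearest to `rgb`."""
    r, g, b = (min(size - 1, max(0, round(c * (size - 1)))) for c in rgb)
    # In .cube files the red index varies fastest, then green, then blue.
    return table[r + g * size + b * size * size]
```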

Cross-Clip Consistency and Temporal Coherence

- Shot-to-Shot Comparison: Keyframe histograms across adjacent clips are compared to minimize abrupt shifts.

- Temporal Smoothing: Parameter transitions are smoothed to ensure gradual color changes between frames.

- Global Adjustment Layer: Scene-wide modifications maintain an overarching look, counteracting minor shot-level variances.

- Consistency Reports: Dashboards highlight variance metrics and flag shots exceeding tolerances for review.
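
Two of the ideas above, shot-to-shot histogram comparison and temporal smoothing, can be sketched in a few lines. The distance measure (half the L1 difference of normalized histograms) and the exponential moving average are assumptions picked for simplicity, not the methods of any named product.

```python
from typing import List


def histogram_distance(first: List[float], second: List[float]) -> float:
    """Return a 0.0 to 1.0 distance between two histograms with matching bins."""
    if len(first) != len(second):
        raise ValueError("histograms must have the same number of bins")
    total_first, total_second = sum(first), sum(second)
    if total_first == 0 or total_second == 0:
        raise ValueError("histograms must not be empty")
    return 0.5 * sum(
        abs(a / total_first - b / total_second) for a, b in zip(first, second)
    )


def smooth(values: List[float], alpha: float = 0.3) -> List[float]:
    """Exponentially smooth a per-frame grading parameter.

    Smaller `alpha` values give slower, gentler transitions.
    """
    smoothed = []
    previous = None
    for value in values:
        previous = value if previous is None else alpha * value + (1 - alpha) * previous
        smoothed.append(previous)
    return smoothed
```

A consistency report could then flag adjacent clips whose keyframe histograms differ by more than a chosen tolerance.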

Feedback Loops and Human-in-the-Loop Overrides

- Live Previews: Real-time streaming of graded clips for immediate assessment.

- Annotation Capture: Editors annotate color adjustments directly in grading interfaces.

- Adaptive Learning: Override data and editorial notes retrain models to align future suggestions with stylistic preferences.

- Approval Gates: Automated checks verify all overrides are addressed and consistency metrics are within acceptable ranges before export.

System Integration and Data Flow

Seamless AI-driven grading depends on tightly integrated systems and a unified data ecosystem. Core components include a central asset repository feeding AI microservices, a rule-based orchestration platform, collaboration and review interfaces, and analytics dashboards.

- Asset Repository: Secure storage layer for raw footage, proxies, and project files, accessible via APIs or event triggers.

- AI Microservices: Discrete engines for shot detection, color recommendation, and metadata enrichment.

- Workflow Orchestrator: Platforms like Frame.io or cloud-native managers sequence tasks, route outputs to review interfaces, and manage dependencies.

- Review Interface: Collaborative applications for time-stamped annotations, approvals, and version control.

- Monitoring Dashboard: Real-time console reporting throughput, error rates, and model performance metrics.

Secure, API-first architectures, event-driven engines, and role-based permissions ensure elasticity, interoperability, and auditability. Infrastructure spans on-premise GPUs, cloud instances, and serverless functions provisioned dynamically based on workload.

Key AI-driven functions across the end-to-end pipeline include:

- Automated Ingestion: File validation and proxy generation via services like AWS Elemental MediaConvert.

- Intelligent Tagging: Visual analysis with Clarifai or Google Cloud Video Intelligence; speech transcription for metadata enrichment.

- Rough Cut Generation: Timeline assembly using templates in Adobe Premiere Pro powered by Adobe Sensei.

- Voiceover Scripting: Neural TTS from Descript or Amazon Polly.

- Audio Enhancement: Noise reduction and leveling in iZotope RX.

- Color Grading: Batch jobs in DaVinci Resolve and Baselight.

- Review and Feedback: Version control and annotations in Frame.io or Wipster, integrated with ftrack or ShotGrid.

- Rendering and Delivery: Multi-format exports via AWS Elemental or Azure Media Services.

Outputs, Dependencies and Handoffs

Primary Deliverables

- High-bit-depth masters (ProRes 422 HQ, DNxHR, DPX, OpenEXR).

- Timeline-conformant sequences (XML, AAF, EDL).

- Review proxies (H.264, ProRes LT with timecode burn-ins).

- Lookup tables (.cube, .3dl) capturing applied grades.

- Color chart reference stills generated by DaVinci Resolve and Colorlab AI.

Metadata Sidecars and Reference Files

- XML/JSON sidecars with per-clip transforms, lift/gamma/gain data, and keyframe information.

- Shot-level logs with timecode ranges, revision IDs, and timestamps.

- Technical QC reports summarizing histograms, scopes, and gamut compliance.

- Creative style guides pairing reference stills with notes on mood and palette.

- Checksum manifests (MD5, SHA-1) for transfer validation.
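
A checksum manifest for transfer validation can be produced with Python's standard `hashlib` module. The helper names and the two-space manifest layout (digest, then file name) are assumptions made for this sketch.

```python
import hashlib
from pathlib import Path
from typing import Iterable


def file_digest(path: str, algorithm: str = "md5", chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large masters never load fully into memory."""
    hasher = hashlib.new(algorithm)
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def build_checksum_manifest(paths: Iterable[str], algorithm: str = "md5") -> str:
    """Return one 'digest  filename' line per file, sorted by path."""
    lines = [
        f"{file_digest(path, algorithm)}  {Path(path).name}"
        for path in sorted(paths, key=str)
    ]
    return "\n".join(lines)
```

The receiving side recomputes each digest and compares it with the manifest entry before accepting the delivery.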

Version Control and Revision History

- Revision identifiers in filenames and metadata (e.g., shot1_Grade_v02.dpx).

- Sidecar markers indicating parent version, authoring profile, and modification date.

- Change logs summarizing incremental adjustments.

- Automated diff reports highlighting keyframe differences.

- Audit trails in production asset management systems for accountability and rollback.
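
Revision identifiers such as `shot1_Grade_v02.dpx` are easy to parse and increment automatically. The pattern below assumes a `shot_stage_vNN.ext` convention inferred from that single example; real naming schemes will differ, and the helper names are hypothetical.

```python
import re
from typing import Dict, Union

REVISION_PATTERN = re.compile(
    r"^(?P<shot>[A-Za-z0-9]+)_(?P<stage>[A-Za-z]+)_v(?P<version>\d+)\.(?P<ext>\w+)$"
)


def parse_revision(file_name: str) -> Dict[str, Union[str, int]]:
    """Split a revisioned file name into shot, stage, version, and extension."""
    match = REVISION_PATTERN.match(file_name)
    if match is None:
        raise ValueError(f"unrecognized revision name: {file_name}")
    info = match.groupdict()
    info["version"] = int(info["version"])
    return info


def next_revision_name(file_name: str) -> str:
    """Return the file name for the next revision, keeping zero padding."""
    match = REVISION_PATTERN.match(file_name)
    if match is None:
        raise ValueError(f"unrecognized revision name: {file_name}")
    width = len(match.group("version"))
    version = int(match.group("version")) + 1
    return (
        f"{match.group('shot')}_{match.group('stage')}"
        f"_v{version:0{width}d}.{match.group('ext')}"
    )
```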

Dependencies for Downstream Processes

- Consistent timecode and frame-rate metadata for sync accuracy in audio post and VFX.

- Embedded or sidecar color transforms readable by Adobe Premiere Pro and Blackmagic Design Fusion.

- Reference LUTs and log metadata for VFX matching in composites and CG renders.

- Proxy-to-master mapping tables allowing sound editors to work at low resolution.

- Scope delineation for shots requiring further manual grading versus final approval.

Handoff Protocols

- Automated exports to storage or cloud buckets via Aspera or S3 presigned URLs.

- Generation of AAF/XML project files linking to graded clips and proxies for review in Frame.io or Adobe Productions.

- Notifications through ftrack or ShotGrid to alert audio, VFX, and finishing teams.

- Integration with review portals for real-time annotations and ticketing.