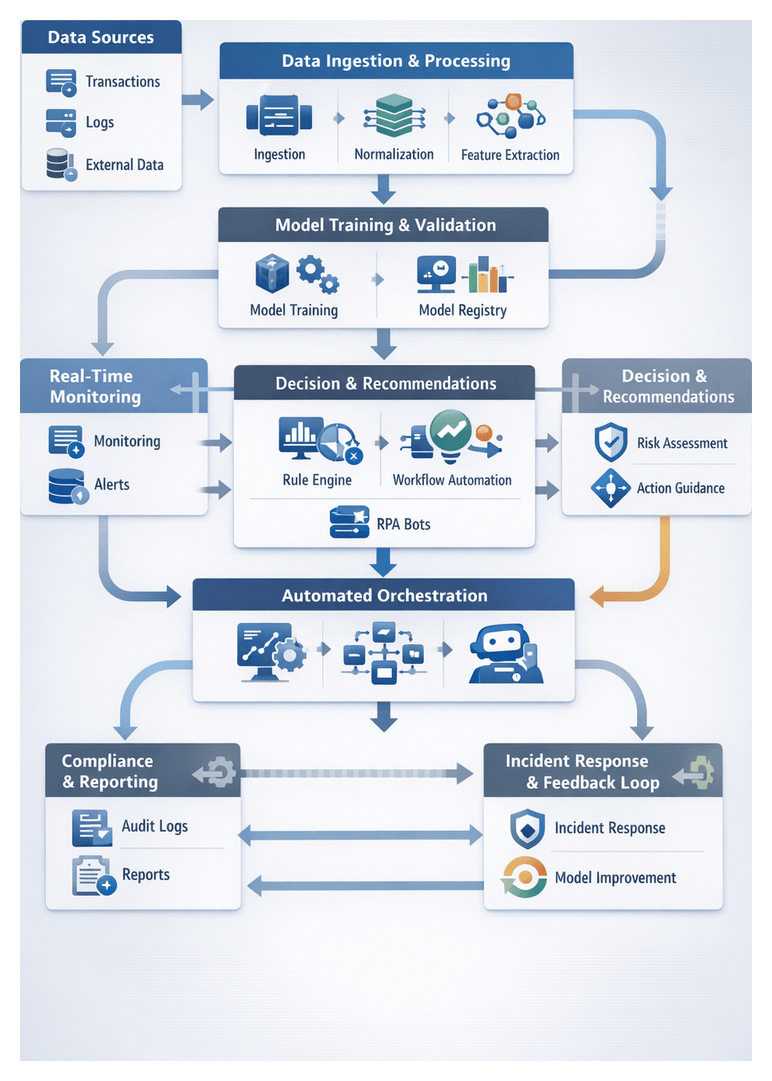

AI Enhanced Risk Mitigation Workflow for Financial Services

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Evolving Risk Landscape

Financial institutions face a rapidly shifting environment of cyber threats, complex regulations and volatile markets. Recent advancements in digital banking, open APIs and real-time transaction platforms have expanded the attack surface, allowing criminals to exploit legacy system vulnerabilities, synthetic identities and sophisticated phishing campaigns. At the same time, regulators update guidelines on anti-money laundering, data privacy and capital adequacy, demanding a proactive understanding of emerging risks.

Key drivers shaping this landscape include escalating cyberattacks such as ransomware and supply chain intrusions, intensifying regulatory mandates, market volatility from geopolitical events, digital transformation complexities and rising customer expectations for security and seamless experiences. A structured overview of these factors creates a unified risk taxonomy, aligns cross-functional teams on shared definitions and underpins AI-driven analyses with relevant context.

To build this foundation, institutions must aggregate inputs from:

- Internal Data Sources such as historical transactions, customer profiles, credit assessments and operational logs

- External Intelligence Feeds including threat actor reports, vulnerability disclosures and global sanction lists

- Regulatory and Market Data covering enforcement actions, policy updates, market indices and macroeconomic indicators

Technological prerequisites include a unified data infrastructure with real-time connectivity to intelligence sources, governed by a robust data governance framework that enforces quality and lineage. Organizational prerequisites encompass executive sponsorship, cross-disciplinary collaboration among risk, compliance, IT and business units, and a skilled analytics team. Environmental conditions such as continuous data refresh, standardized taxonomies, scalable compute resources and strong security controls ensure the overview remains current and actionable.

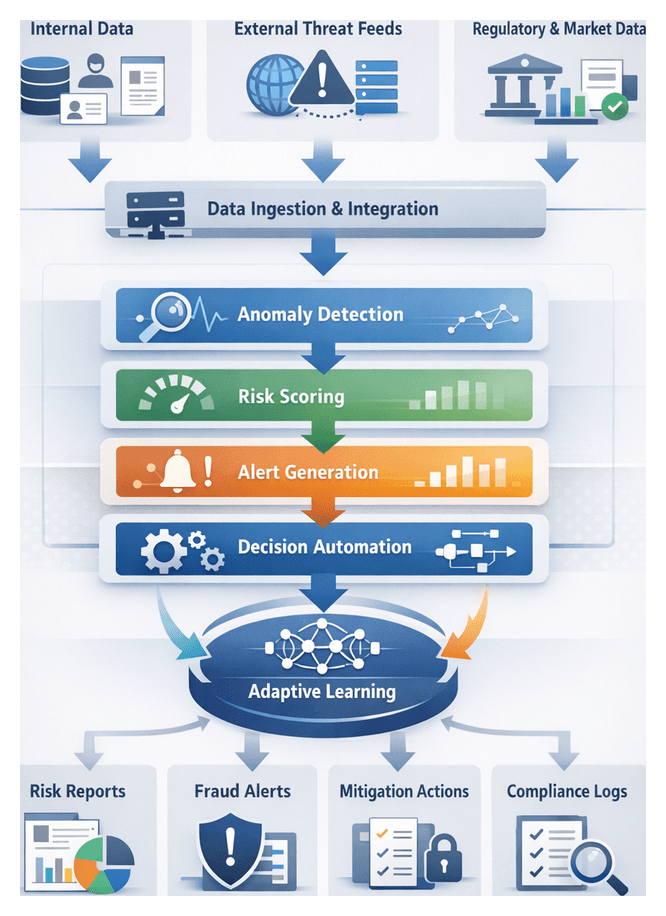

Consistent Risk Workflow Foundation

A repeatable, transparent risk workflow is essential to process high volumes of data, detect threats in real time and satisfy regulatory requirements. By codifying discrete phases—data ingestion, signal detection, risk scoring, recommendation generation and response orchestration—institutions create a predictable process flow that reduces friction and accelerates remediation.

Predictable Process Flow

- Data intake and normalization via preconfigured connectors and streaming platforms ensure uniform record formats

- Signal identification follows a scan-classify-tag pattern, applying consistent feature-extraction logic

- Risk scoring uses standardized aggregation rules to produce comparable assessments

- Decision support modules generate recommendations with approved templates and severity rankings

- Response orchestration triggers actions through defined APIs and workflow engines, enforcing policies and regulatory mandates

System Coordination

Interconnected systems—transaction engines, threat intelligence platforms, AI inference services and orchestration tools—exchange data through:

- Event bus integration for decoupled publish-subscribe messaging

- REST or gRPC API contracts with versioned schemas

- Message queues and topics for reliable delivery and alert broadcasting

- Orchestration engine connectors to invoke identity verification, transaction holds and compliance report generators

Role Alignment and Accountability

Defined roles and handoffs drive accountability:

- Data Engineers maintain ingestion pipelines and data quality

- AI/ML Engineers develop, train and version detection models

- Risk Analysts review signals and adjust thresholds

- Compliance Officers verify that workflows meet regulatory standards

- Incident Response Teams investigate alerts and manage resolution

- IT Operations oversee orchestration engines and disaster recovery

Embedded tracking, immutable event logs, versioned artifacts, digital signatures and role-based access controls ensure auditability and support root-cause investigations.

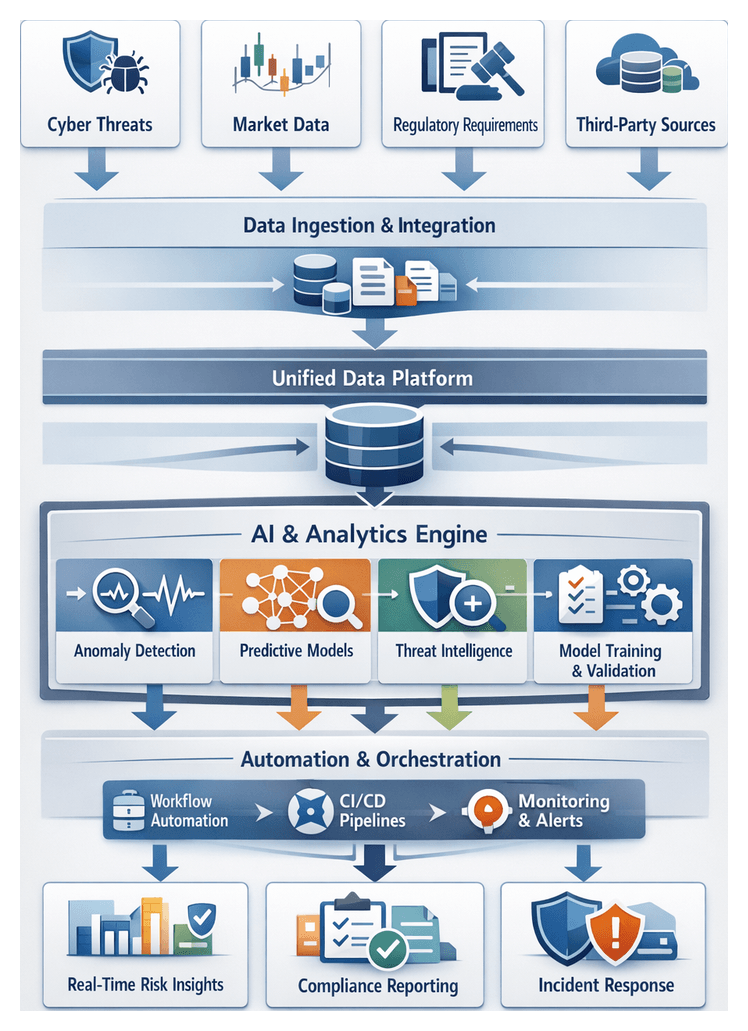

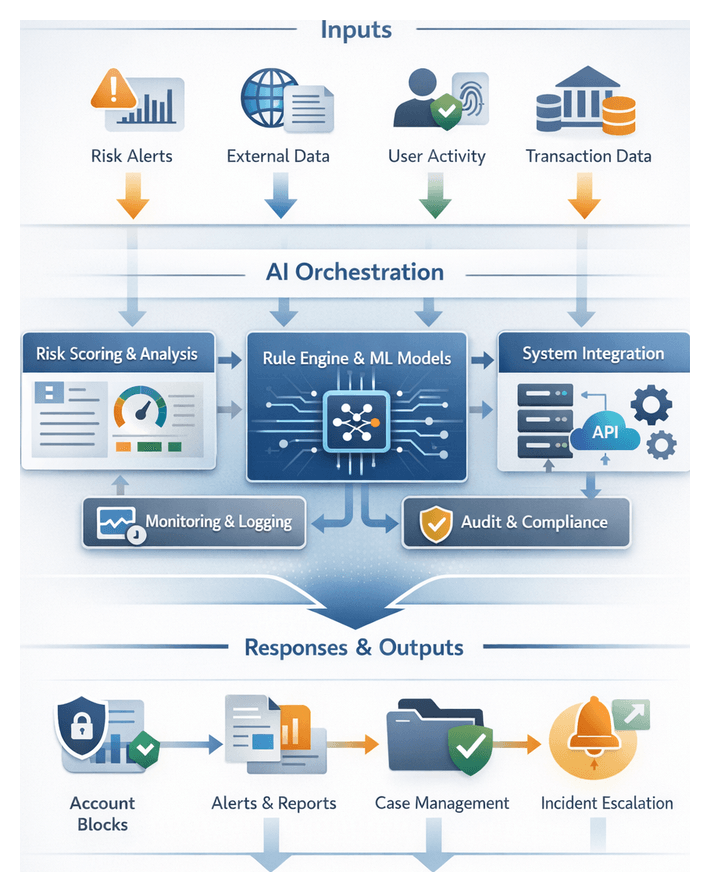

AI-Driven Risk Mitigation

Traditional rule-based systems struggle with the volume, velocity and variety of modern risk data. Integrating AI across the workflow enables automated preprocessing, real-time detection and adaptive learning to deliver timely, accurate risk insights.

Intelligent Data Connectivity and Preprocessing

AI-driven connectors and transformation engines ingest and normalize unstructured logs, extract features and reconcile taxonomies. Natural language processing identifies entity relationships in regulatory texts, while graph-based models reveal hidden transactional networks.

Supporting platforms include:

- Streaming ingestion with Apache Kafka and Confluent

- AI-powered clustering in data transformation engines to detect schema drift

- Enrichment via external threat feeds with entity resolution models

- Anomaly detection for quality assurance before downstream processing

Automated Detection and Classification

Normalized data fuels supervised and unsupervised models for real-time signal identification. Inference services score records against classification models while anomaly detection frameworks, including isolation forests and autoencoders, adapt to evolving patterns.

Key integrations feature lightweight inference engines, feature extraction pipelines computing statistical, temporal and graph metrics, and metadata tagging services that augment events with risk profiles, geolocation data and regulatory context.

Predictive Modeling and Risk Scoring

Ensemble methods combine tree-based models, gradient boosting and neural networks to generate composite risk scores that reflect both probability and potential impact. Model development and management leverage:

- TensorFlow and PyTorch for custom algorithm development

- AWS SageMaker for distributed training and drift monitoring

- DataRobot for automated feature engineering and model selection

- Experiment tracking and model registry systems for reproducibility and auditability

Orchestration and Real-Time Decisioning

Risk scores trigger automated workflows and alerts. Business logic engines enforce thresholds, prompting transaction holds, multi-factor authentication challenges or analyst review. Core components include:

- Camunda or Apache Airflow for workflow orchestration

- Edge-deployed inference services for sub-second responses

- Splunk Enterprise Security for centralized alerting and dashboards

- Integration adapters connecting core banking, CRM and incident response systems

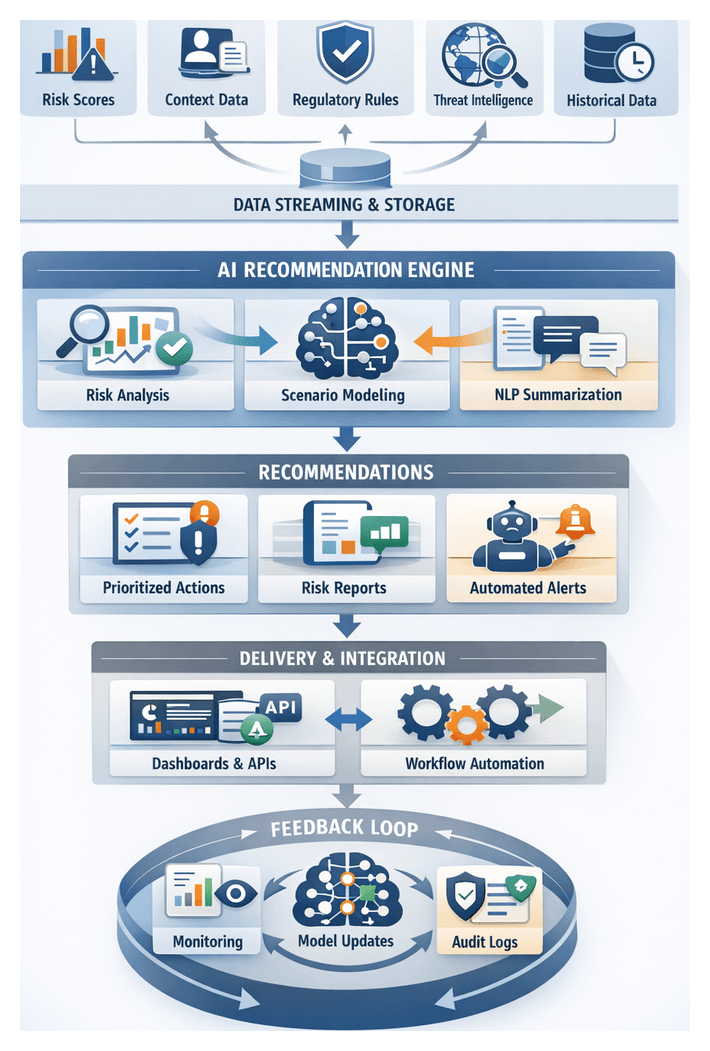

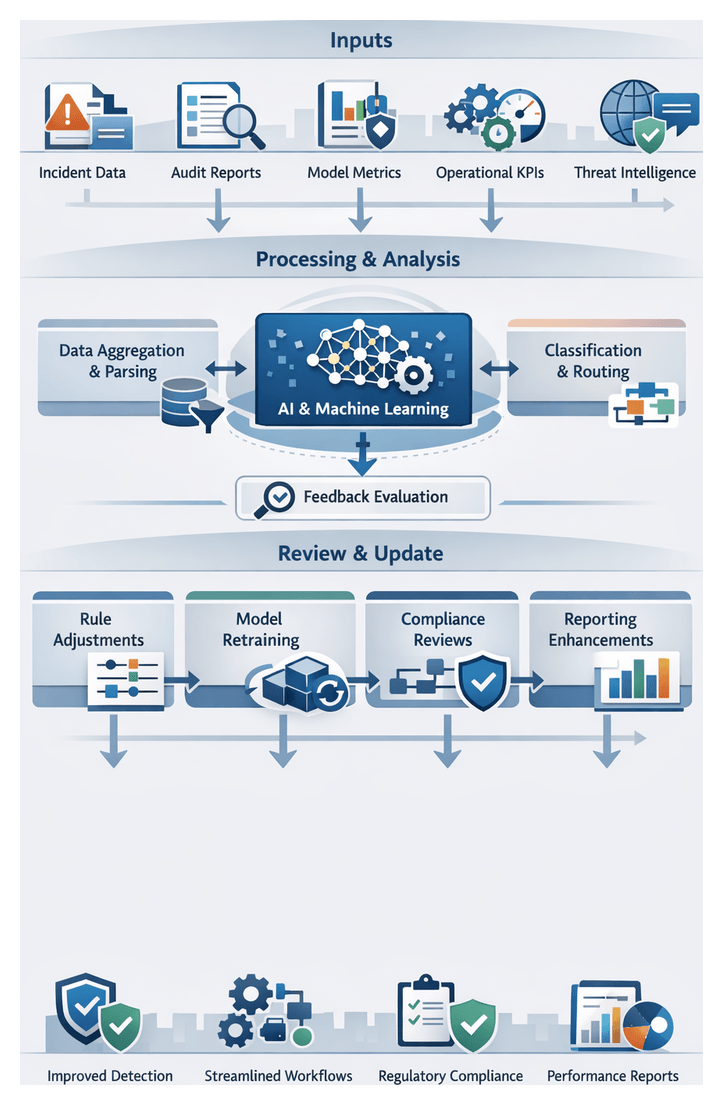

Adaptive Learning and Continuous Optimization

Feedback from incident outcomes, audit findings and policy changes feeds automated retraining pipelines and policy management modules. Automated schedulers, experiment tracking dashboards and simulation environments support model refinement and safe deployment of workflow updates.

This adaptive framework sustains high detection accuracy, reduces false positives and ensures regulatory alignment.

Framework Overview and Strategic Deliverables

Solution architects translate risk insights, regulatory mandates and organizational goals into strategic deliverables that guide implementation. These artifacts include:

High-Level Framework Outputs

- Architectural Blueprint detailing data flows, system interfaces, AI model lifecycles, security controls and compliance checkpoints

- Interface and Data Schema Definitions with API contracts, field-level metadata and validation rules

- Technology Stack Inventory listing platforms such as Apache Kafka, Databricks and monitoring dashboards

- Governance and Compliance Matrix mapping regulations to workflow stages, controls and evidence artifacts

- Risk and Performance Metrics Catalog defining KPIs such as mean time to detection, false positive rate and model drift indicators

- Roadmap and Implementation Plan with phased deliverables, milestones and resource assignments

Dependencies and Integration Points

- Data Source Availability with stable access and change-management processes for core banking, transaction logs and threat feeds

- Infrastructure Readiness provisioning for batch and streaming workloads, CI/CD pipelines and container orchestration

- Regulatory Sign-Off from legal, compliance and audit functions

- Toolchain Integration with ETL platforms, AI environments like TensorFlow or PyTorch, orchestration engines such as Apache Airflow and monitoring dashboards

- Resource Alignment including data engineers, data scientists, security analysts, DevOps and compliance specialists

- Vendor Dependencies covering SLAs for threat intelligence, market data and managed services

Handoff and Continuity

A structured handoff to implementation teams involves transferring documentation, conducting workshops, logging issues and formalizing acceptance criteria. Phased kickoff of data ingestion planning leverages interface definitions, data schemas and security controls to preserve design intent and accelerate progress toward a unified, AI-driven risk mitigation capability.

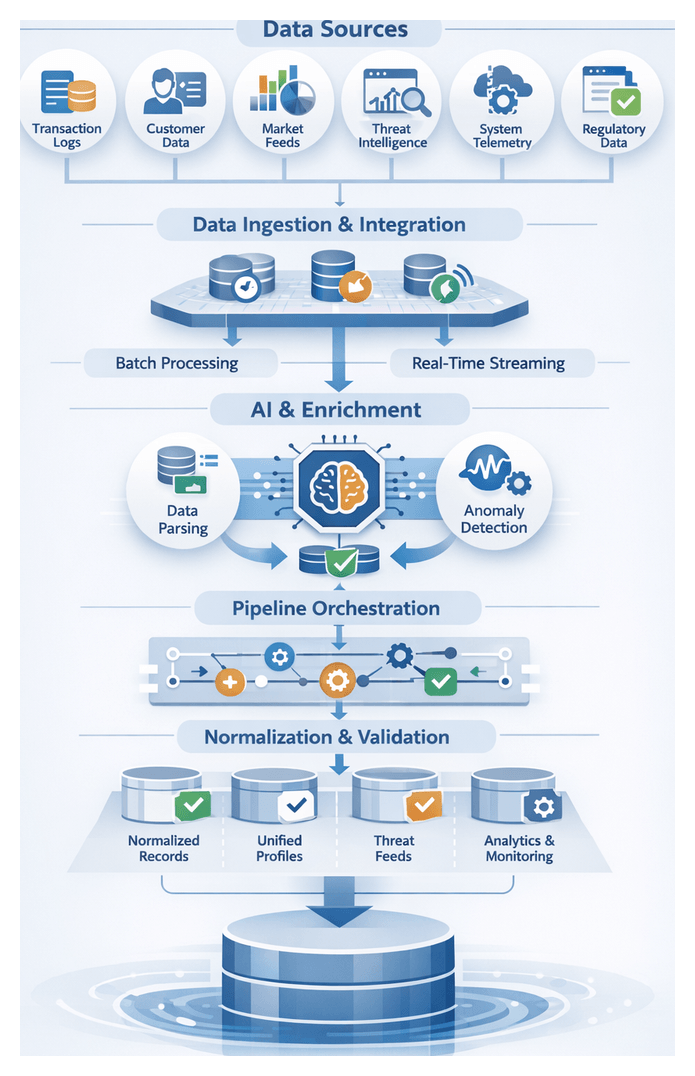

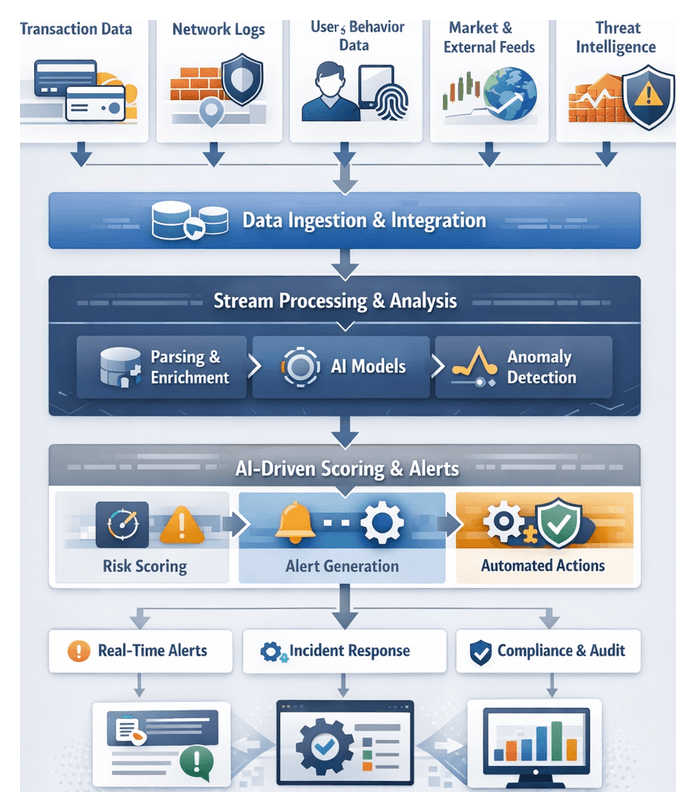

Chapter 1: Data Ingestion and Integration

Establishing a unified foundation for AI-driven risk mitigation begins with systematic data ingestion from internal and external sources. Financial institutions must collect transaction logs, customer profiles, market data, threat intelligence, and system telemetry into a consistent, high-quality dataset. This layer accelerates downstream analytics, anomaly detection, and automated decision support while enforcing governance controls.

Key Data Sources and Inputs

- Transaction Logs: Payments, transfers, trade executions, and settlement events from core banking, trading, and payment platforms.

- Customer Profiles: KYC documentation, credit scores, device fingerprints and dynamic behavioral tags.

- Market Data Feeds: Pricing, exchange rates, and indicators from vendors and public sources.

- External Threat Intelligence: Indicators of compromise, blacklists, vulnerability alerts, and threat actor profiles.

- Network and System Telemetry: Firewall logs, intrusion detection alerts, and endpoint monitoring streams.

- Regulatory Feeds: Sanctions lists, policy bulletins, and compliance updates.

Prerequisites and Connectivity

- Secure Connectivity: Provision API endpoints, SFTP/FTPS, message queues or direct database links with OAuth or mutual TLS.

- Data Governance Framework: Define classification, retention, and access policies in compliance with GDPR, PCI DSS and similar regulations.

- Schema and Format Standards: Agree on common data models, schema registries and serialization formats (JSON, Avro, Parquet).

- Infrastructure Readiness: Ensure bandwidth, VPN tunnels and hybrid cloud architectures support high-throughput ingestion.

- Latency Objectives: Establish SLAs for data freshness to guide batch versus streaming choices.

Batch and Streaming Ingestion Strategies

An optimal ingestion framework combines batch and streaming pipelines:

- Batch Ingestion: Scheduled ETL jobs using AWS Glue and Apache Spark load high-volume historical data into data lakes or warehouses.

- Streaming Ingestion: Real-time capture via Apache Kafka, Amazon Kinesis or Google Pub/Sub to detect anomalies within seconds.

AI-Driven Connectors and Enrichment

To reduce manual mapping and accelerate time to insight, AI components perform intelligent parsing, enrichment and validation:

- AI-Driven Connectors: Natural language processing and pattern recognition engines such as Apache NiFi AI Extensions or Debezium infer schemas, extract entities and suggest field mappings.

- Automated Enrichment: Integrate third-party identity verification and geolocation services to add contextual risk metadata.

- Anomaly Detection: Unsupervised models flag schema changes or data spikes, triggering remediation before they impact downstream workflows.

Data Quality and Governance

Embedding validation and profiling early ensures trustworthy inputs:

- Schema Conformity: Automated checks verify field presence and data types against the central registry.

- Completeness and Range Checks: Validate numeric fields, patterns for IBANs or SWIFT codes, and referential integrity.

- Profiling and Cataloging: Data catalogs track lineage and metadata, enabling audit trails and root-cause analysis.

- Governance Controls: Policies enforce retention, access controls and encryption in transit and at rest.

Landing and Staging

Ingested data is temporarily staged in raw form before normalization. Checkpointing, backpressure handling and dead-letter queues manage ingestion failures. Alerts and retry policies maintain pipeline resilience while preventing data loss.

Pipeline Orchestration and Normalization

Once ingested, data flows through orchestrated pipelines that standardize schemas, enforce quality rules and prepare assets for analytics. Orchestration platforms manage both batch and streaming workflows, coordinating extraction, transformation and load tasks with auditability and scalability.

Orchestration Topology and Tools

- Directed Acyclic Graphs: Platforms like Apache Airflow, Azure Data Factory or Informatica Intelligent Cloud Services schedule and execute ETL tasks in dependency order.

- Parameterization: Dynamic configurations enable pipelines to adapt to varying data partitions, time windows and environments.

- CI/CD Integration: Version control of pipeline definitions ensures repeatable deployments and rollback capabilities.

- Retry Policies and SLAs: Built-in policies detect failures, enforce deadlines and notify on-call teams.

Stream Processing for Real-Time Normalization

Low-latency risk detection relies on continuous jobs in frameworks such as Apache Flink or Spark Structured Streaming:

- Field Reconciliation: Type casting, name mapping and currency code standardization on the fly.

- Windowed Aggregations: Rolling metrics, for example five-minute average transaction volumes.

- Enrichment Lookups: Attach external risk scores or threat indicators from microservices.

- Exactly-Once Semantics: Checkpointing and offset management guarantee no data loss or duplicates.

Schema Registry and Metadata Catalog

A centralized schema registry enforces canonical definitions. Pipelines query the registry to validate incoming records and detect evolutions. A metadata catalog records lineage, capturing which DAGs and scripts generated each dataset. This supports impact analysis and accelerates troubleshooting.

Normalization Rules and Quality Checks

- Referential Integrity: Ensure customer identifiers align with profile tables.

- Range and Pattern Validation: Transaction amounts within thresholds and identifiers matching expected formats.

- Deduplication: Remove repeated events before publishing.

- Null Handling: Apply defaults or filter incomplete records.

Metrics on error rates, throughput and schema mismatches feed monitoring dashboards. Alerts route to operational teams for rapid remediation.

Team Coordination and Governance

- Data Engineers: Design and maintain pipeline logic and connectors.

- DevOps Teams: Provision and scale orchestration infrastructure.

- Risk Analysts: Define normalization requirements and risk categories.

- Security and Compliance Officers: Audit metadata logs and enforce policies.

Regular change-control processes govern schema updates, connector upgrades and pipeline modifications to prevent disruptions.

Error Handling and Recovery

- Automated Retries with Backoff: Isolate transient issues while avoiding resource thrashing.

- Quarantine Queues: Route problematic records for manual inspection.

- Checkpointing: Enable streaming jobs to resume from the last safe state.

- Incident Runbooks: Define escalation paths for batch and streaming failures.

Security and Monitoring

- Access Controls: Role-based permissions for pipeline deployment, data access and schema changes.

- Encryption: Protect sensitive fields in transit and at rest.

- Audit Logs: Capture user actions to satisfy regulatory requirements.

- Operational Metrics: Track latency, success rates, throughput and resource utilization.

Enterprise monitoring tools correlate logs and metrics across the workflow, enabling proactive scaling and tuning.

Handoff to Downstream Analytics

Upon normalization, datasets are published to curated zones in the data lake. Each asset includes:

- Standardized Schemas documented in the catalog.

- Lineage Pointers to sources and transformation scripts.

- Quality Metrics on record validity and completeness.

- Access Policies restricting consumer permissions.

These deliverables feed AI-driven detection engines, informing classification and scoring algorithms in subsequent stages.

Consolidated Data Lake Outputs and Dependencies

The culmination of ingestion, enrichment and normalization is a unified data lake that underpins risk signal detection, model training and real-time monitoring. This repository provides consistent, high-quality and traceable datasets with clear interfaces for downstream consumers.

Key Outputs

- Normalized Transaction and Event Tables: Unified records with timestamp, account IDs, counterparties and metadata ready for risk algorithms.

- Unified Customer Profiles: Versioned identity tables augmented with risk scores and relationship networks, annotated for audit.

- Enriched Threat Feeds: Contextualized external intelligence linked to internal events.

- Metadata Catalog and Data Dictionary: Centralized documentation of schemas, field definitions and lineage.

- Data Quality Scorecards: Periodic reports on completeness, accuracy, uniqueness and freshness.

- CDC Logs: Append-only change data capture streams supporting time-travel queries and backfills.

- Audit and Lineage Artifacts: Execution metrics, source offsets and transformation parameters for full traceability.

Core Dependencies

- Source System Integration: Native connectors, API gateways and CDC streams from transactional platforms and CRMs.

- Orchestration Frameworks: Apache Airflow, AWS Glue, Apache Kafka and Amazon Kinesis coordinate batch and streaming jobs.

- Schema Management: Registries and governance tools such as AWS Lake Formation and Azure Data Lake Storage.

- AI-Driven Profiling and Quality Modules: Engines like Talend Data Quality detect anomalies and suggest cleansing rules.

- Metadata and Lineage Tracking: Open lineage frameworks capture data flow through ETL scripts and AI enrichment services.

Integration with Downstream Stages

Well-defined interfaces ensure seamless handoff to risk signal detection, model training and monitoring workflows:

- Data Access APIs: RESTful and GraphQL endpoints expose parameterized views of unified tables for detection pipelines.

- Versioned Exports: Immutable snapshots in Parquet or ORC for model development, tracked by model registries.

- Streaming Subscriptions: Confluent Platform or Azure Event Hubs topics deliver live events to inference engines.

- Event Bus Notifications: Publish critical changes and quality alerts to trigger investigation or reprocessing workflows.

- Secure Data Sharing: Governed exchange via AWS Data Exchange or Azure Data Share for external collaboration.

By orchestrating ingestion, AI-driven enrichment, rigorous normalization and a robust data lake, financial institutions establish a resilient, scalable foundation for proactive, AI-enhanced risk mitigation.

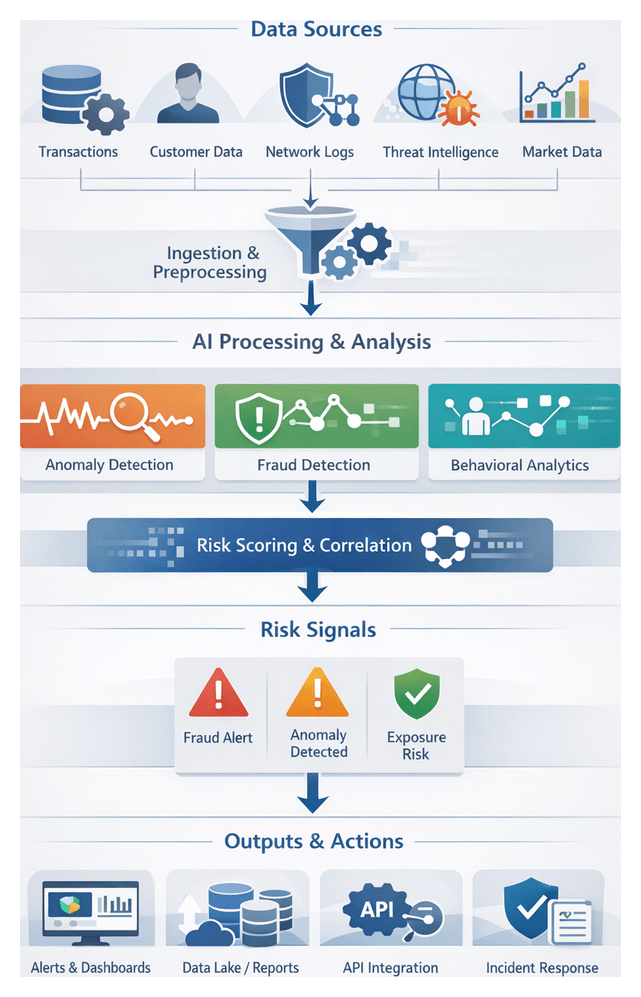

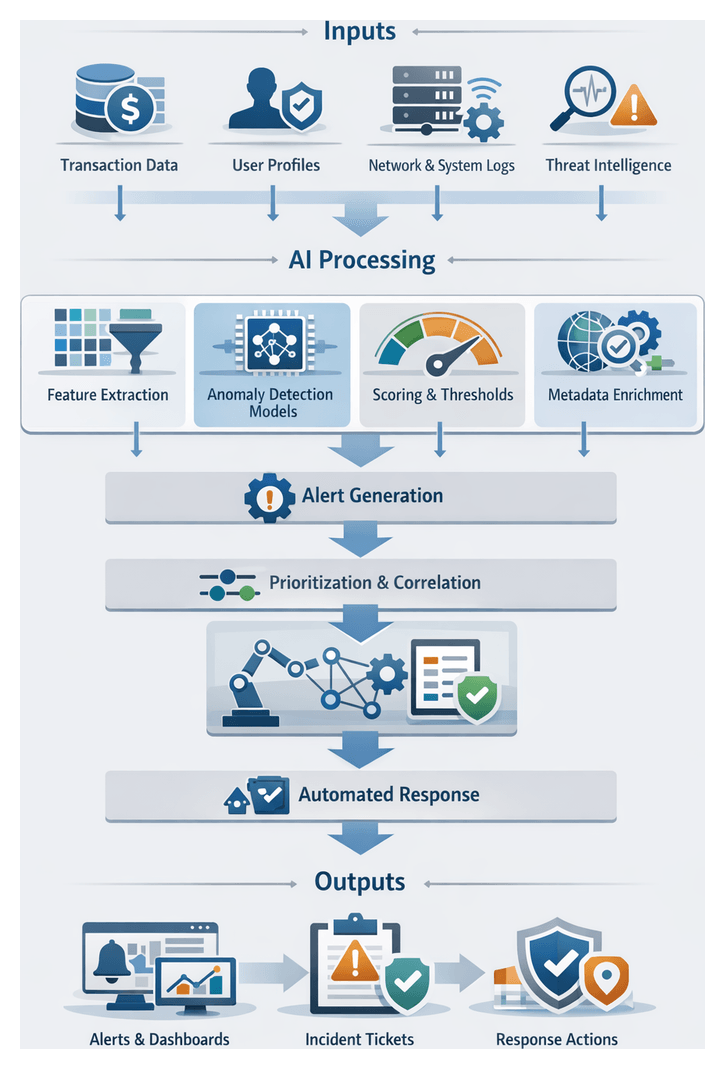

Chapter 2: Risk Signal Identification

The risk signal identification stage converts raw, normalized data into early indicators of potential fraud, cyber threats, credit exposure, and regulatory infractions. By establishing clear goals and leveraging AI-driven scanning, organizations gain the earliest possible warning of emerging risks, enabling timely mitigation and compliance.

Primary Objectives

- Detect early warning indicators of fraudulent transactions, unauthorized access, or market anomalies

- Identify deviations from behavioral baselines for customers, accounts, and counterparties

- Flag suspicious patterns in network traffic, system logs, and external threat feeds

- Provide structured metadata to support prioritization, scoring, and escalation

- Enable real-time processing to minimize detection latency and support immediate response

Data Inputs, Dependencies, and Prerequisites

Effective signal detection relies on diverse, high-quality inputs, robust infrastructure, and governance compliance. Consistency, completeness, and timeliness are critical to minimize false positives and ensure reliable performance.

Key Data Sources

- Normalized transaction records with timestamps, amounts, currencies, and counterparties

- Customer profiles, KYC attributes, and behavioral baselines

- Network and system logs, intrusion alerts, and API call metadata

- External threat intelligence feeds for indicators of compromise and malicious IPs

- Market data streams, volatility measures, and macroeconomic indicators

- Reference data such as merchant codes, risk ratings, and watchlists

Upstream Dependencies

- Data lake outputs and metadata catalog describing schemas, lineage, and refresh cadences

- AI preprocessing services for parsing, tokenization, and preliminary feature generation

- Feature store updated in real time with behavioral baselines and computed risk factors

- Model registry containing versioned AI artifacts, performance benchmarks, and inference endpoints

- Configuration store for detection rules, thresholds, and parameter settings

Prerequisites for Execution

- Validated ingestion and normalization with automated data quality checks

- Deployment of fine-tuned classification models and feature extractors

- Provisioned compute resources, including GPU or FPGA accelerators

- Secure network segmentation, encryption, and least-privilege access controls

- Regulatory mapping to AML, KYC, PSD2 and documentation for auditability

Signal Scanning and Classification Workflow

This workflow transforms ingested data streams into tagged risk events through segmentation, preprocessing, AI model inference, and enrichment. Modularity and horizontal scaling ensure low-latency analysis across varying throughput demands.

Data Stream Segmentation and Routing

Streams are routed by source, format, and priority via a message broker or streaming platform into specialized scanning modules:

- High-priority transaction streams for real-time monitoring

- User activity logs for behavioral analytics

- Network flow records for intrusion detection

- Threat intelligence feeds for reputation scoring and IOC matching

Preprocessing and Feature Extraction

Preprocessing agents perform cleansing, normalization, and feature engineering:

- Data sanitization, duplicate removal, and format standardization

- Time-window aggregation for rolling statistics

- Sessionization of events to define behavioral contexts

- Encoding categorical fields into numerical or vector representations

- Enrichment with geolocation, device fingerprinting, and watchlist checks

AI-powered preprocessing agents, such as Elastic machine learning modules, adapt feature sets in response to data drift or schema changes.

Classification Engine and Inference

Feature vectors feed into a classification engine that coordinates parallel inference across multiple models:

- Supervised fraud detection classifiers trained on historical cases

- Unsupervised anomaly detectors for deviations from baselines

- Graph-based money laundering detectors leveraging network analysis

- Natural language processing models for communication logs

Frameworks such as Apache Kafka Streams and Microsoft Azure Sentinel analytic rules dynamically select models based on policy and data attributes, yielding probability scores, confidence metrics, and explanatory features.

Risk Tagging, Prioritization, and Correlation

Inference outcomes produce structured risk signal objects enriched with metadata:

- Signal type, confidence score, and contributing features

- Source identifier, timestamp, and enrichment annotations

- Model version, processing environment, and error codes

A prioritization module applies business rules and severity thresholds to rank signals. Correlation engines, such as those in Splunk Enterprise Security, group related events into composite incidents using temporal, entity, and graph relationships.

Orchestration, Scalability, and Continuous Improvement

A centralized orchestration layer manages dependencies, retries, and resource allocation to uphold SLAs and support human-in-the-loop coordination.

Workflow Orchestration and Monitoring

- Define data pipelines in tools like Apache Airflow or IBM Watson Orchestrate, enforcing processing latencies

- Deploy stream processing jobs on Kubernetes with autoscaling policies

- Use service meshes and API gateways for secure, versioned interfaces

- Visualize metrics in Kibana or Grafana dashboards

- Integrate analyst feedback into triage dashboards and ticketing workflows

Adaptive Learning and Feedback Loops

- Collect expert labels on alerts for retraining

- Monitor data drift and model performance to trigger retraining

- Orchestrate retraining with MLflow or Kubeflow

- Present rolling evaluation metrics to identify emerging blind spots

Tagged Signal Outputs and Downstream Handoffs

Tagged signals are enriched, schema-validated artifacts that feed scoring, monitoring, and response systems. They balance contextual richness with lightweight structures for high-throughput transmission.

Output Schema Elements

- Unique identifier, detection timestamp, and source details

- Classification label, confidence score, and model metadata

- Contextual attributes such as transaction amount, geolocation, and watchlist flags

- Enrichment references to threat intelligence providers

- Processing metadata including preprocessing steps and runtime environment

Outputs conform to versioned schemas managed by registries such as Confluent or Azure Event Hubs.

Handoff Patterns

- Real-time streaming via Apache Kafka or Azure Event Hubs for low-latency dashboards

- Batch export to cloud storage or data lakes for ETL into Snowflake or Amazon Redshift

- Synchronous or asynchronous RESTful API calls to scoring and response services

- Message queues like RabbitMQ or Amazon SQS for guaranteed delivery and retry semantics

Operational Best Practices

- Maintain schema compatibility and manage evolution via registry versioning

- Design idempotent consumers to handle duplicates using unique IDs

- Monitor end-to-end latency and throughput against SLOs

- Enforce encryption in transit and at rest, and apply least-privilege access

- Implement dead-letter queues for graceful degradation during outages

- Continuously validate data quality and flag anomalies for resolution

Governance and Compliance

- Define data retention policies aligned with GDPR, PCI DSS, and SOX

- Enforce encryption standards such as AES-256 and TLS 1.2

- Apply role-based access control and data masking for sensitive fields

- Trigger automated regulatory reporting for high-risk categories

By unifying objectives, inputs, AI-driven workflows, and robust handoff mechanisms, organizations can implement a cohesive risk mitigation platform that delivers real-time detection, transparent auditability, and continuous improvement.

Chapter 3: AI Model Training and Validation

Evolving Risk Landscape and Foundational Prerequisites

The financial services industry faces an increasingly interconnected array of cyber threats, regulatory mandates, and market dynamics. Advanced fileless malware, AI-driven phishing campaigns, and living-off-the-land attacks demand continuous monitoring and adaptive defenses. Regulatory requirements—from Basel III and GDPR to PSD2—impose stringent reporting controls and force rapid policy updates. Meanwhile, high-frequency trading, digital asset platforms, and global economic volatility expose institutions to liquidity and counterparty risks. The shift toward cloud adoption, open banking, and fintech partnerships further expands the attack surface and introduces complex third-party dependencies.

Traditional siloed risk functions struggle under terabytes of daily log data, fragmented taxonomies, and rule-based systems that generate excessive false positives. Manual compliance reporting amplifies error risks and delays regulatory responses. To address these challenges, firms must adopt a unified, AI-enabled risk framework built on robust data governance, scalable infrastructure, and cross-functional oversight.

Key Drivers of Emerging Risk

- Cyber Threat Evolution: Rapidly evolving adversarial tactics require behavioral analytics and AI-powered detection.

- Regulatory Complexity: Global standards demand precise data lineage and timely audit trails.

- Market Volatility and Operational Strain: Algorithmic trading and macro uncertainty stress liquidity and capacity.

- Digital Transformation and Third-Party Dependencies: Cloud, open banking, and fintech integrations create new failure points.

Operational Implications

- Data Volume and Velocity: Near-real-time ingestion of logs, telemetry, and threat feeds outpaces legacy systems.

- Fragmented Governance: Inconsistent taxonomies hinder prioritization and escalation.

- Escalating False Positives: Rule-based alerts overwhelm analysts and obscure genuine threats.

- Regulatory Reporting Burden: Manual compilation risks non-compliance and fines.

These pressures necessitate an integrated approach that embeds AI across detection, response, and audit workflows. By unifying cyber, compliance, and market risk data, institutions can achieve proactive, real-time risk mitigation.

Prerequisites for AI-Enabled Risk Management

- Data Governance and Quality Controls: Establish ownership, retention, metadata standards, and validation rules.

- Unified Risk Taxonomy: Align terminology, severity scales, and escalation criteria across domains.

- Scalable Data Infrastructure: Deploy high-throughput ingestion platforms such as Apache Kafka and centralized data lakes.

- Interoperable Security and Compliance Tools: Integrate SIEM platforms like Splunk or IBM QRadar via open APIs into orchestration layers.

- Cross-Functional Governance Council: Form a steering committee with representatives from cybersecurity, compliance, risk management, and IT operations.

- Baseline Threat Intelligence: Subscribe to reputable feeds for Indicators of Compromise and vulnerability disclosures.

AI-Driven Model Development and Validation Workflow

Building reliable risk models requires a standardized workflow that bridges data science, experimentation platforms, validation systems, and governance checkpoints. Clear handoffs and metadata tracking ensure consistent evaluation against performance, reliability, and compliance criteria before deployment.

Candidate Model Identification and Architecture Design

Data scientists collaborate with risk analysts and business stakeholders in scoping workshops to define objectives—false positive rates, detection latency, and resource constraints. Historical incident data are reviewed to annotate features and establish target variables. A landscape assessment identifies suitable techniques—gradient boosting, neural networks, or ensemble methods—based on data volume and latency requirements. Lightweight tree-based algorithms often serve real-time needs, while deeper neural models support batch analysis.

- Define modeling goals and key performance metrics.

- Inventory candidate algorithms according to data characteristics.

- Sketch architecture diagrams showing data flow from ingestion to inference.

- Document dependencies on feature stores, compute clusters, and libraries.

Experiment Tracking and Configuration Management

A centralized experiment tracking system records hyperparameters, dataset versions, code commits, and evaluation metrics. Platforms such as MLflow or Weights & Biases capture metadata automatically, enable side-by-side run comparisons, and integrate with continuous integration services to validate code and dependencies. Container images or virtual environments are stored in shared registries to guarantee reproducibility.

- Register experiments with metadata: author, dataset snapshot, code version.

- Enable automated logging of metrics: loss curves, validation accuracy, resource usage.

- Integrate CI pipelines to enforce code quality and dependency compatibility.

- Store container images in a shared registry for reproducible execution.

Cross-Validation and Performance Benchmarking

Unbiased estimates arise from k-fold cross-validation, partitioning data into folds and aggregating performance distributions. Orchestration tools schedule parallel jobs on CPU or GPU clusters, with results visualized on dashboards to detect overfitting or variance issues early.

- Partition datasets into training and validation folds with stratification.

- Execute parallel training jobs using configured container images.

- Aggregate metrics and compute confidence intervals.

- Generate comparative analyses to highlight algorithm strengths and weaknesses.

Hyperparameter Optimization

After establishing baselines, hyperparameter tuning explores parameter spaces through grid search, random search, or Bayesian methods. Libraries like Hyperopt or managed services such as AWS SageMaker Hyperparameter Tuning automate trial execution across distributed compute instances, identifying optimal configurations based on multi-objective criteria.

- Define search spaces for learning rates, tree depths, regularization factors.

- Configure algorithms and stopping criteria to manage runtime.

- Monitor trials via unified dashboards.

- Select parameter sets balancing accuracy and latency.

Explainability and Fairness Assessment

Explainability frameworks such as SHAP and LIME generate feature contribution profiles, while bias assessments test performance across demographic or account segments. Automated scripts compare subgroup metrics to detect disparate impacts and recommend mitigation strategies such as re-sampling or constraint adjustments.

- Embed explainability tools into validation pipelines to produce feature importance reports.

- Define segments for bias evaluation per regulatory guidelines.

- Execute subgroup analyses and document disparities.

- Recommend corrective actions where bias thresholds are exceeded.

Validation Approval and Model Registration

Governance boards comprising risk managers, compliance officers, and senior data scientists review experiment logs, validation metrics, and explainability artifacts. Approved models are registered in centralized registries—such as MLflow Model Registry, Kubeflow Pipelines, Amazon SageMaker Model Registry, or Databricks Model Registry. Each entry includes artifacts, metadata, performance benchmarks, and validation sign-off, with version control ensuring immutability and audit trail integrity.

- Assemble governance packages with logs, metrics, and fairness reports.

- Conduct formal review meetings to capture approval decisions.

- Register approved artifacts and metadata in the model registry.

- Enforce version control for traceable, audited model entries.

AI Orchestration and Platform Capabilities

A robust orchestration layer coordinates resource management, experiment lifecycles, pipeline automation, and governance controls. This backbone enables scalable, compliant, and transparent AI operations within financial institutions.

Resource Management and Automated Provisioning

- Cluster Orchestration: Kubernetes allocates GPU and CPU nodes dynamically.

- Resource Autoscaling: Platforms such as AWS SageMaker and Google AI Platform scale instances based on queue depth.

- Cost Governance: Budget policies and spot instance utilization reduce infrastructure spend.

Experiment Tracking and Reproducibility

- Metadata Capture: MLflow records parameters, metrics, and dataset references.

- Artifact Management: Centralized registries store model binaries and serialized pipelines.

- Version Control Integration: DVC links data and code to experiment IDs for full traceability.

Pipeline Orchestration and Workflow Automation

- DAG Scheduling: Kubeflow Pipelines define sequential and parallel tasks.

- Parameterized Workflows: Templates support automated batch executions across scenarios.

- Integration Hooks: Prebuilt connectors link to data lakes, feature stores, and model registries.

Hyperparameter Tuning

- Grid and Random Search: Parallel trial execution at scale.

- Bayesian Optimization: Azure Machine Learning converges faster on optimal configurations.

- Early Stopping: Prune underperforming runs to allocate resources efficiently.

CI/CD Integration and DevOps Practices

- Automated Testing: Unit tests, data drift checks, and performance benchmarks run on every code commit.

- Containerization: Docker images ensure consistency across environments.

- Pipeline Triggers: Code or data updates invoke CI/CD pipelines to retrain, validate, and promote models.

Monitoring, Alerting, and Drift Management

- Performance Dashboards: Tools like Databricks visualize accuracy, latency, and throughput.

- Data Drift Detection: Statistical tests trigger retraining via predefined thresholds.

- Automated Retraining: Pipelines redeploy updated models upon validation success.

Collaboration, Governance, and Roles

- Data Scientists: Design experiments, select features, and interpret outputs.

- MLOps Engineers: Configure pipelines, manage resource policies, and enforce governance.

- Platform Administrators: Oversee cluster health, security, and cost management.

- Compliance Officers: Review audit logs and registry entries for regulatory adherence.

Model Artifacts, Integration Interfaces, and Operational Hand-off

Validated models produce artifact bundles and metadata that enable real-time risk monitoring and decision support. Clear integration patterns and handoff protocols ensure consistency, traceability, and reliability as models transition from validation to production.

Model Artifact Bundles

- Serialized Weights and Parameters: Formats such as ONNX or Protobuf for interoperability.

- Architecture Definitions: JSON or YAML for frameworks like TensorFlow and PyTorch.

- Preprocessing Pipelines: Exported via scikit-learn or custom modules to ensure consistent feature transformations.

- Container Images: Encapsulate runtime dependencies with Docker and Kubernetes.

- Model Signatures: Define input/output schemas, data types, and validation logic.

Metadata and Performance Reports

- Evaluation Metrics: ROC AUC, precision, recall, F1-score, and calibration curves.

- Confusion Matrices: Contextualize error types for threshold selection.

- Explainability Analyses: SHAP or LIME feature importance reports.

- Drift Baselines: Distribution statistics for incoming features.

- Validation Logs: Cross-validation details, hyperparameter settings, and early stopping criteria.

Model Registry and Version Control

Model registries such as MLflow Model Registry, Kubeflow Pipelines, Amazon SageMaker Model Registry, and Databricks Model Registry serve as authoritative sources for staged rollouts. Each entry includes metadata fields for performance benchmarks, ownership, compliance attestations, and dependency snapshots. Version control ensures all updates, rollbacks, and forks are meticulously tracked.

Integration Interfaces and APIs

- RESTful Microservices: Synchronous scoring of transactions via containerized endpoints.

- gRPC Endpoints: Low-latency, high-throughput inference for mission-critical flows.

- Batch Scoring: Orchestrated by Apache Airflow or Prefect.

- Serverless Functions: Scalable inference via AWS Lambda or Google Cloud Functions.

- Feature Store Integrations: Ensuring runtime parity with stores like Feast or Tecton.

Operational Hand-off to Real-Time Monitoring

- Trigger CI/CD pipelines with Jenkins or GitLab CI to deploy model containers or serverless functions.

- Update routing in API gateways such as Kong or Apigee.

- Register health checks and metrics in Prometheus and Grafana for real-time visibility.

- Rebase data transformation pipelines on validated definitions using Apache Kafka with Flink or Spark Structured Streaming.

- Conduct smoke tests with synthetic or historical transactions to verify end-to-end stability.

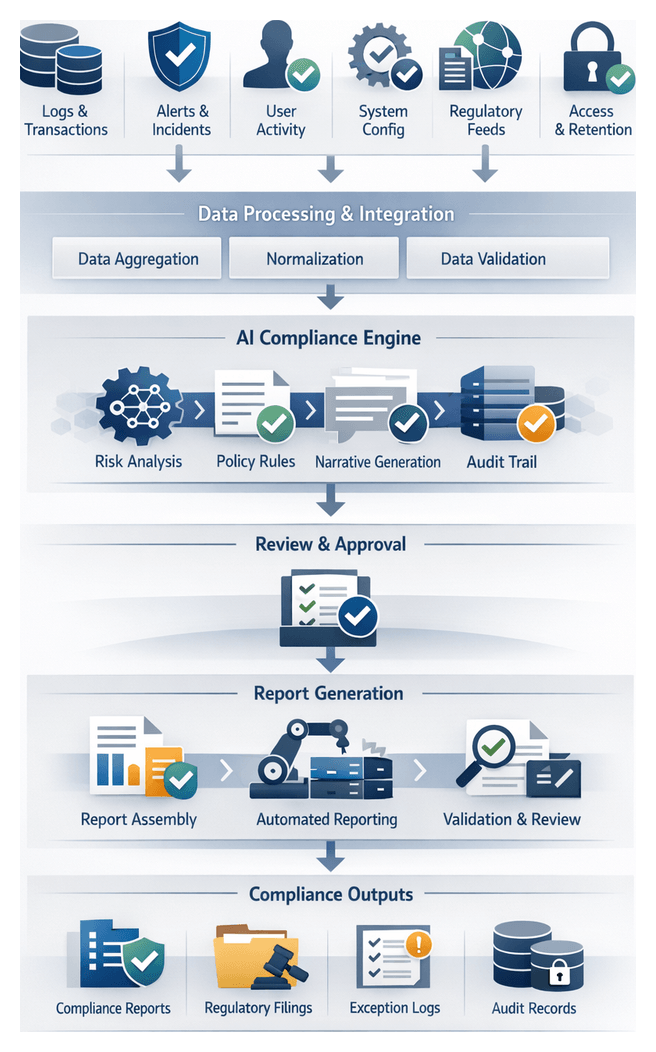

Compliance and Audit Documentation

- Versioned Approval Certificates signed by risk and compliance officers.

- Validation Reports aligned with BCBS 239 and SR 11-7, detailing data provenance and bias assessments.

- Immutable Logs of training runs and drift analyses stored in compliance vaults.

- Data Lineage Diagrams from metadata management tools.

- Regulatory Narratives generated by AI reporting engines for auditor submissions.

By centralizing artifact bundles, metadata, and integration interfaces, financial institutions can accelerate time-to-production while maintaining transparency, auditability, and resilience. This structured, AI-enabled framework transforms risk management into a proactive, competitive advantage.

Chapter 4: Real-Time Risk Monitoring

In modern financial services, risk management has evolved from periodic reviews to an uninterrupted, AI-driven capability that continuously safeguards assets, ensures compliance, and accelerates incident response. At its core, real-time risk monitoring aims to ingest diverse operational data streams, detect anomalies within milliseconds, and trigger automated or human-led interventions before events escalate. This always-on posture not only enhances detection speed but also aligns with regulatory mandates such as AML, Dodd-Frank, and PSD2, which require immediate oversight and auditability of transactions and user activities.

The strategic importance of continuous monitoring spans several dimensions:

- Real-time risk detection: Identify fraudulent transactions, insider threats, or market irregularities as they occur.

- Regulatory alignment: Satisfy immediate reporting requirements under AML frameworks and other financial regulations.

- Operational resilience: Lower mean time to detection (MTTD) and response (MTTR) via automated alerting and integration with incident management tools like ServiceNow.

- Data-driven insights: Maintain feedback loops that fine-tune AI algorithms based on live operational data.

- Scalability: Sustain performance during transaction spikes without compromising latency or accuracy.

Establishing this foundation requires a robust environment that addresses data integration, security, and system availability. Key preconditions include a low-latency, high-throughput data pipeline using platforms such as Apache Kafka or Amazon Kinesis, a unified data schema for consistent AI model interpretation, and inference endpoints deployed with tools like TensorFlow Serving. Security measures—TLS encryption, network segmentation, and role-based access controls—must safeguard data in motion and at rest, while observability systems capture metrics on data flow, model latency, and system health. Defining service-level agreements for end-to-end latency, availability, and data loss thresholds aligns cross-functional teams around performance targets and ensures accountability.

Core Data Streams and Governance

A comprehensive risk monitoring solution relies on a rich tapestry of real-time data sources. Streamed into a unified analytics fabric, these feeds empower AI engines to correlate disparate events and surface high-confidence alerts. Primary data categories include:

- Transaction Streams: Retail and wholesale payments, fund transfers, card authorizations, and wire transactions, enriched with timestamps, identifiers, amounts, and geolocation.

- Network and Infrastructure Logs: Firewall records, proxy logs, DNS queries, and system access events that reveal lateral movements or data exfiltration attempts.

- User and Entity Behavior Analytics (UEBA): Authentication events, session telemetry, and device fingerprints that detect insider threats and account takeovers.

- Market and External Data: Real-time market feeds, macroeconomic indicators, and sentiment analysis that contextualize trading risks.

- Threat Intelligence Feeds: Indicators of compromise from services like Recorded Future and open sharing platforms, integrated to enrich internal logs.

- Application and Middleware Metrics: Performance measures, queue depths, and error rates that flag operational anomalies or attack vectors.

- Regulatory and Audit Events: Policy updates, access reviews, and audit logs from compliance systems.

High-fidelity analysis demands rigorous data quality and governance practices. Ingress validation rules must enforce schema compliance and time synchronization, typically via NTP, to ensure accurate event ordering. Enrichment services augment raw feeds with customer risk profiles, geographic risk scores, and transaction purpose codes, while privacy controls anonymize or tokenize personally identifiable information in accordance with GDPR and CCPA. Recording lineage metadata enables end-to-end traceability, bolstering trust in automated risk assessments. Security and compliance preconditions—such as encrypted transport, least-privilege IAM policies, audit trails of configuration changes, data retention policies, and regular vulnerability assessments—provide the foundation for reliable and defensible continuous monitoring.

Stream Processing and Alert Generation

Transforming high-velocity event streams into actionable alerts involves a coordinated sequence of ingestion, enrichment, analytics, and routing processes. This stage leverages scalable streaming platforms, AI modules, and complex event processing to ensure that risk signals are prioritized and delivered without delay.

Data Ingestion and Partitioning

Event sources—from core banking systems and network telemetry to web application logs and threat intelligence—feed into message buses such as Confluent Platform or Amazon Kinesis. Key considerations include schema evolution management, idempotent producers to avoid duplicates, partitioning by attributes like account ID or geolocation, and transport encryption.

Parsing, Normalization, and Enrichment

Raw records in JSON, XML, or binary formats are parsed into a canonical schema. AI-powered parsers apply named entity recognition to extract critical fields, after which enrichment layers augment events with contextual metadata—geolocation risk scores, device fingerprint reputations, and recent anomaly indicators from centralized data stores.

Streaming Analytics Frameworks

Enriched events flow into frameworks such as Apache Flink or Apache Spark Streaming. These engines execute stateless and stateful operations—sliding windows, session aggregations, temporal joins—and real-time complex event processing that flags patterns like rapid failed logins or unusual fund transfers.

AI-Driven Scoring and Alert Logic

Low-latency inference services score events across fraud likelihood, compliance risks, and cybersecurity threats. Models—ranging from gradient boosted trees to neural networks—are served via APIs on inference clusters with auto-scaling. When scores exceed thresholds, alert generation modules encapsulate the event into structured payloads containing unique identifiers, processing lineage, feature contributions, severity levels, and recommended playbooks.

Orchestration, Fault Tolerance, and KPIs

A central orchestrator prioritizes alerts using rule engines that escalate high-value accounts or sanction matches and group related signals to reduce noise. Alerts are delivered to incident platforms, message queues, or notification channels with retry logic, dead-letter queues, and replay capabilities to prevent data loss. Operational teams monitor KPIs such as event-to-alert latency, throughput, false-positive rates, and uptime via dashboards built on Grafana or Kibana, enabling continuous optimization of resource allocation and processing logic.

Live AI Inference Architectures and Integration

Live inference engines convert streaming data into instant risk predictions, forming a critical bridge between data ingestion and alerting workflows. These systems must balance millisecond-level response times with high throughput, resilience, and auditability.

Inference Serving Platforms

Specialized model servers such as TensorFlow Serving, NVIDIA Triton Inference Server, and lightweight runtimes like ONNX Runtime deliver optimized runtimes supporting gRPC, REST, GPU acceleration, and dynamic batching. Managed services—Amazon SageMaker Endpoints or Google Cloud AI Platform Prediction—abstract infrastructure concerns while enforcing autoscaling and health checks.

Streaming Integration Patterns

- Pull-based invocation within Apache Flink or Apache Spark Streaming, where event batches call inference endpoints synchronously.

- Push-based publishing to topics in Apache Kafka or Confluent Platform, with consumers enriching and routing predictions.

- Serverless execution via AWS Lambda or Azure Functions triggered by streams in Amazon Kinesis or Azure Event Hubs.

Trade-offs among patterns involve considerations of throughput, latency, backpressure resilience, and operational complexity. Selection hinges on acceptable latency, fault tolerance, and platform expertise.

Model Versioning and Governance

Continuous evolution of AI models demands robust version control and canary deployment strategies. Semantic versioning of artifacts in registries like MLflow allows attributes such as feature schemas, training data snapshots, and performance metrics to be tracked. Traffic splitting directs a portion of live data to candidate models for drift analysis and false-positive monitoring, with automated rollback upon deviation from predefined health thresholds.

API Interfaces and Security

Inference endpoints expose REST or gRPC APIs secured with mutual TLS, OAuth 2.0, or token-based schemes. Protocols accommodate JSON, Avro, or Protocol Buffers payloads, while role-based authentication and rate limiting prevent unauthorized or excessive access. Audit logs capture every request and response, including timestamps, model versions, and metadata, supporting regulatory audits under Basel III, GDPR, and PSD2.

Scalability, Availability, and Monitoring

Container orchestration on platforms like Kubernetes enables horizontal autoscaling of inference pods based on CPU, GPU, or custom metrics such as request latency. Service meshes (Istio, Linkerd) implement load balancing, retries, and circuit breaking. Multi-region deployments ensure geographic resilience, with state store replication for consistent predictions. Telemetry—latency percentiles, throughput metrics, error rates, and input drift detection—feeds back into MLOps pipelines, triggering retraining or capacity adjustments.

Visualization, Alert Handoffs, and Operational Oversight

The final stage translates AI outputs into actionable intelligence via monitoring dashboards and structured alert payloads. These deliver situational awareness to security operation centers, compliance teams, and executives, while enabling automated workflows to contain threats and satisfy audit requirements.

Dashboard Metrics and Tools

Dashboards consolidate time-series risk scores, anomaly rates, and system health metrics into visual formats such as line charts, heatmaps, and top-n lists. Platforms like Splunk, Kibana, Tableau, and Microsoft Power BI support real-time updates through streaming indexes or in-memory stores, while drill-down tables enable analysts to explore event context.

Alert Structures and Delivery Channels

Structured alerts include unique identifiers, timestamps, risk and anomaly scores, contextual metadata (account IDs, device fingerprints, IP addresses), model explanations, and recommended escalation paths. Payloads are serialized in JSON or Avro to ensure compatibility with downstream systems. Handoff mechanisms include:

- Message queues (Apache Kafka, RabbitMQ) streaming alerts to security orchestration tools

- RESTful APIs consumed by SOAR platforms such as ServiceNow

- Email, SMS gateways for critical notifications

- Webhooks to ticketing and collaboration systems

- Database writes for audit and compliance repositories

Reliability, Auditability, and Access Control

High-availability clusters, replicated data pipelines, and real-time monitoring of end-to-end latency ensure service-level objectives are met. Immutable logs of alerts, dashboard snapshots at reporting intervals, and access logs detailing user interactions support AML, KYC, and other compliance programs. Role-based access controls grant security analysts detailed drill-downs, compliance officers aggregated views without PII, and executives strategic scorecards. APIs and dashboards remain extensible to accommodate new threat scenarios, visualization types, and integration endpoints.

By orchestrating these monitoring outputs with clearly defined handoff protocols and governance guardrails, financial institutions achieve a proactive, AI-driven risk management framework. Continuous feedback loops—from incident outcomes, telemetry analysis, and user interactions—drive iterative enhancements, ensuring that risk detection and response capabilities evolve in concert with emerging threats and regulatory demands.

Chapter 5: Anomaly Detection and Alerting

Anomaly detection establishes the criteria and inputs necessary to identify deviations from expected behavior in financial operations. By defining clear detection parameters and readiness conditions, organizations ensure high-fidelity signals for alert generation, minimize false positives, accelerate response times, and maintain an auditable framework aligned with regulatory mandates. Shifting from static, rule-based systems to adaptive, AI-driven models enables real-time risk mitigation that scales with transaction volumes, system logs, network flows, and user behavior data.

Effective anomaly detection aligns technical capabilities with business objectives and compliance requirements, supports continuous performance measurement, and integrates consistently with alert management and incident response workflows. In fast-moving markets where delays can incur significant financial and reputational losses, a structured detection stage delivers timely, accurate, and governance-ready risk signals.

Detection Criteria and Data Inputs

Detection criteria encompass the rules, thresholds, and statistical models that distinguish normal activity from anomalies:

- Behavioral Baselines – Statistical representations of transaction amounts, frequencies, geolocations, and channel usage over defined windows.

- Deviation Measures – Metrics such as standard deviation, clustering distances, and density estimates that quantify divergence from baselines.

- Contextual Filters – Business logic or policy constraints, for example excluding internal maintenance transactions or whitelisted entities.

Required Data Inputs

- Transaction Streams – Real-time feeds of payments, wire transfers, ATM withdrawals, and authorizations with timestamps, amounts, account IDs, merchant details, and channel codes.

- User and Account Profiles – Demographics, risk tiers, historical summaries, and relationship attributes enabling stratified baselining.

- Network and System Telemetry – Logs from authentication services, VPN gateways, database monitors, and endpoint agents revealing unusual access or exfiltration patterns.

- External Threat Intelligence – Indicators of compromise, known malicious IPs, botnet signatures, and fraud ring watchlists enhancing anomaly prioritization.

- Environmental Metadata – Market events, holidays, regulatory embargoes, and maintenance windows to adjust expected activity baselines.

Enriched features—transaction velocity, inter-account linkages, entity graphs, and device fingerprints—support unsupervised learning approaches and reduce detection latency.

Prerequisites and Operational Readiness

To guarantee data quality, model readiness, and operational alignment, anomaly detection must be underpinned by:

- Data Normalization – Harmonized schemas, units, and time zones via streaming frameworks with connectors.

- Feature Store Access – Precomputed rolling averages, z-scores, cluster assignments, and centrality measures loaded into a low-latency feature repository.

- Baseline Model Deployment – Unsupervised engines (autoencoders, isolation forests) and statistical engines deployed for inference, reflecting the latest training cycles.

- Threshold and Policy Definitions – Documented risk thresholds, alert severities, and escalation rules managed in a policy repository.

- Observability Infrastructure – Streaming platforms, message queues, and monitoring agents capturing inference metrics, detection latencies, and error rates.

Governance foundations include data lineage documentation, role-based access controls compliant with GDPR and CCPA, data masking or tokenization, and scheduled audits for completeness and consistency.

Operational alignment spans risk committees, data scientists, compliance and legal reviews, and IT/DevOps coordination to provision inference services, manage deployment pipelines, and ensure high availability.

Clear handoff conditions to alert generation require anomaly records enriched with timestamps, model identifiers, feature values, deviation scores, confidence indicators, audit trail entries, and routing instructions for downstream systems.

Detection Workflow Overview

The anomaly detection workflow orchestrates multiple systems to identify deviations, score their significance, and generate actionable alerts. Normalized event streams feed detection microservices that retrieve feature definitions from a feature store such as Feast and model artifacts from registries like MLflow or Kubeflow.

Data Flow and System Interactions

- Ingestion – Normalized streams of transaction logs, network flows, and user activities published to Apache Kafka or Amazon Kinesis.

- Feature Retrieval – Detection services query centralized stores for feature schemas and model artifacts.

- Inference Execution – Low-latency engines process events in real time; GPU clusters with NVIDIA Triton Inference Server scale for high throughput.

- Score Calculation – Raw model outputs normalized and calibrated against dynamic thresholds from HashiCorp Consul or AWS Parameter Store.

- Alert Construction – Orchestrators compile alert objects including entity IDs, timestamps, anomaly scores, confidence metrics, and feature contributions.

- Dispatch – Alerts sent to management systems such as Splunk, IBM QRadar, or Microsoft Sentinel.

Anomaly Scoring Sequence

- Score Normalization – Universal function rescales raw outputs into a fixed range (e.g., 0–100).

- Dynamic Thresholds – Adapt to time-of-day, volume, and incident rates using InfluxDB or Amazon Timestream.

- Confidence Calibration – Methods like isotonic regression or Platt scaling produce reliability measures.

- Feature Attribution – Explainability via SHAP library highlights top contributing factors.

- Metadata Enrichment – Append geolocation, device fingerprint, and risk profiles using AI APIs.

Alert Generation, Prioritization and Integration

Alert Generation and Prioritization

- Typing – Categorize by risk domain (fraud, compliance breach, system abuse) via rule engines or classification models.

- Prioritization – Compute composite scores combining anomaly severity, confidence, business impact weightings, and user risk.

- Dependency Correlation – Cross-reference active incidents using Elastic Stack for chain detection.

- Deduplication – Collapse similar alerts into single tickets with aggregated counts.

Integration with Alerting Platforms

- API Handoff – RESTful or message queue delivery to PagerDuty, ServiceNow, or Datadog using OpenC2 schemas.

- Webhooks – Push notifications to Slack or Microsoft Teams for real-time visibility.

- Dashboards – Populate Grafana or Kibana widgets showing alert volume, severity, and time-to-detect.

- Audit Logging – Record all events in immutable stores like AWS QLDB for traceability.

Coordination with Response Teams

- Ticket Generation – Auto-create incidents with full alert context and unique identifiers.

- Escalation Protocols – Playbook-driven rules trigger tiered escalations based on time and severity.

- Orchestration Hooks – Webhooks to SOAR platforms like Palo Alto Networks Cortex XSOAR or Splunk SOAR automate response actions.

- Analyst Acknowledgment – Analysts review and update incident status; orchestration engines log actions to maintain state.

- Feedback Loop – Outcomes, including false positives or confirmed breaches, feed back into model training and threshold adjustments.

AI-Driven Risk Mitigation Architecture

AI integration embeds machine learning models, automation engines, and supporting infrastructure across the risk management workflow. By combining predictive analytics, unsupervised learning, natural language processing, and robotic process automation, institutions shift from reactive defenses to proactive threat anticipation.

Core AI Capabilities

- Data Ingestion and Preprocessing – Schema inference, normalization, entity resolution, and semantic tagging via platforms like Snowflake and Databricks.

- Predictive Analytics – Supervised models (logistic regression, random forests, gradient boosting) orchestrated in AWS SageMaker and Azure Machine Learning.

- Unsupervised Anomaly Detection – Clustering, autoencoders, and isolation forests prototyped in TensorFlow and managed via MLflow.

- Natural Language Understanding – Sentiment analysis, entity extraction, and compliance mapping with Google Cloud AI Platform.

- Automated Decision Engines – Business logic and RPA workflows orchestrated by UiPath and Workato to execute holds, notifications, or referrals.

Supporting System Roles and Integration Layers

- Data Management – Central repositories in Snowflake or Amazon Redshift with metadata catalogs for lineage and quality.

- MLOps Pipelines – Experiment tracking, versioning, and model deployment via Kubeflow and MLflow.

- Streaming Layers – Low-latency ingestion in Apache Kafka or Amazon Kinesis with on-the-fly inference.

- API Management – Gateways enforcing authentication, authorization, and rate limits for risk insight consumption.

- Compliance and Logging – Immutable audit trails via ledger databases ensuring verifiable action histories.

Synergy Between AI and Supporting Systems

- Detection Speed – High-throughput Kafka pipelines and GPU-accelerated serving detect threats in milliseconds.

- Accuracy – Continuous retraining on feedback data reduces false positives, guided by data management quality rules.

- Scalability – Containerized services on Kubernetes enforce data residency and multi-tenant policies.

- Explainability – Interpretability frameworks expose feature importances for audits and analyst insight.

- Governance – Policy engines validate compliance, trigger human reviews on deviations, and archive all decisions.

This integrated architecture delivers regulatory acceleration, operational efficiency, enhanced risk posture, and continuous adaptability to emerging threats.

Alerts Output and Escalation Dependencies

Overview of Alert Outputs

Anomaly detection produces structured alerts enriched with metadata, severity ratings, and escalation directives. Key components include a unique identifier, UTC timestamp, source context, normalized anomaly score, severity level, alert type, supporting evidence, suggested actions, and escalation routing. Embedding quantitative metrics and qualitative context enables both automated orchestration and clear guidance for human investigators.

Alert Data Structure and Metadata

- Schema Version – Ensures backward compatibility as fields evolve.

- Event Context – Nested fields like user location, device fingerprint, transaction amounts, and threat intelligence tags.

- Model Metadata – References to model version, training snapshot, and performance metrics.

- Correlation Keys – Attributes linking alerts to related events in SIEM or fraud platforms.

- Audit Trail – Timestamps, service identifiers, and processing latency for compliance reporting.

- Escalation Matrix – Pointers to policies defining pathways by severity, region, or business unit.

Escalation Dependencies and Integration

- Streaming Platform – Real-time routing via Apache Kafka or cloud event services.

- SIEM – Ingestion into Splunk Enterprise Security or IBM QRadar for correlation and compliance reporting.

- Ticketing – Automated incident creation in ServiceNow or JIRA Service Management based on severity mapping.

- Notifications – Delivery via email gateways, SMS APIs, Slack, or Microsoft Teams to on-call responders.

- Access Control – Identity management integration for immediate user interventions like MFA or password resets.

Handoff Mechanisms to Incident Response

- Publish/Subscribe – Alerts published to topics; multiple consumers subscribe for parallel processing.

- API Push – Synchronous delivery via RESTful endpoints with acknowledgements and correlation tokens.

- Batch Export – Aggregated CSV or JSON uploads for offline reporting and trend analysis.

- Webhooks – HTTP callbacks trigger automated playbooks in orchestration platforms.

- Database Writes – Direct storage in SQL or NoSQL for BI tool queries and custom dashboards.

Operational Considerations and Best Practices

- Schema Governance – Formal version control and change management for alert schemas.

- Health Monitoring – Dashboards and health checks for message buses, SIEM connectors, and APIs.

- SLAs – Defined throughput and latency targets for high-severity alerts.

- Escalation Playbooks – Role-based mappings of severities to response teams and actions.

- Redundancy and Failover – Dual brokers and parallel endpoints to avoid single points of failure.

- Periodic Testing – Simulations and tabletop exercises to verify end-to-end alerting and escalation.

- Feedback Integration – Incident outcomes update thresholds and models to continuously refine detection accuracy.

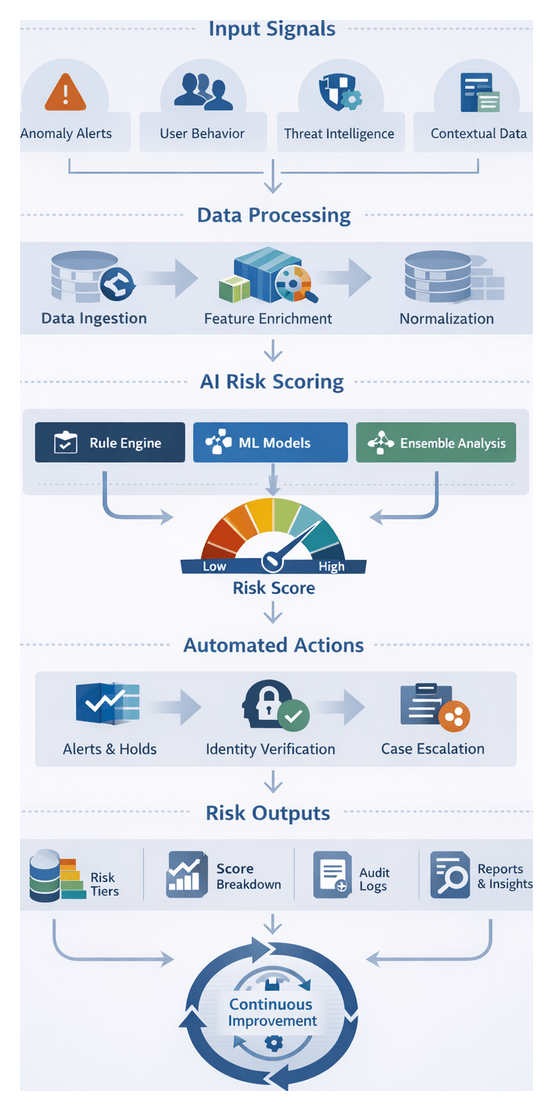

Chapter 6: Automated Risk Assessment and Scoring

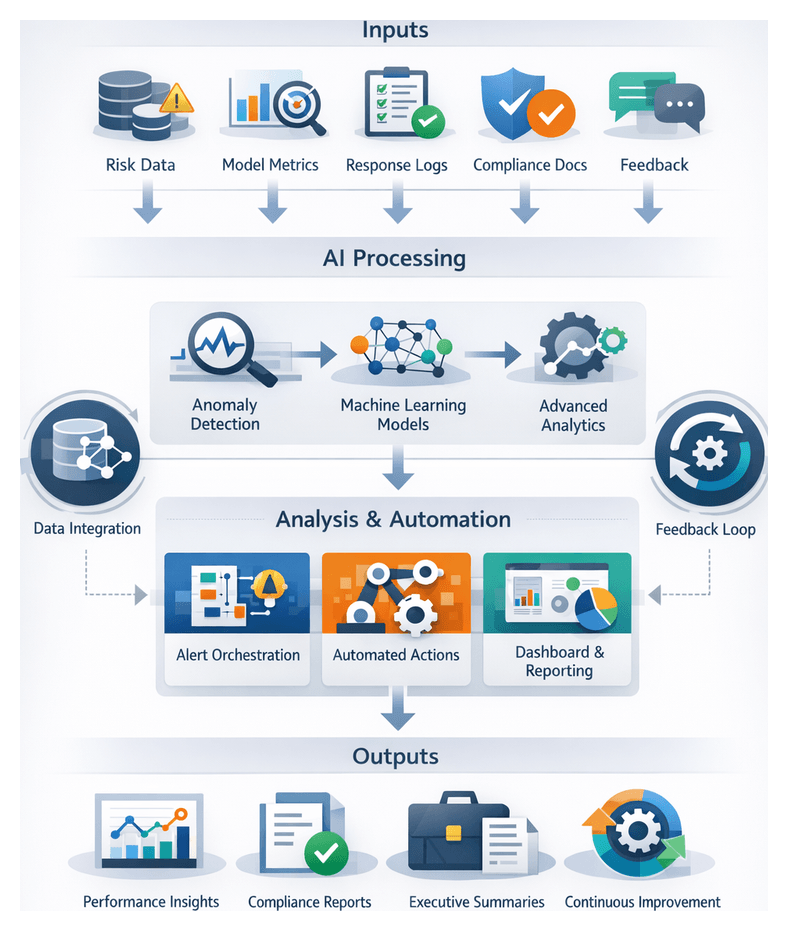

Scoring Framework Objectives and Workflow Overview

Automated risk aggregation and scoring is the analytical core of an AI-driven risk mitigation workflow. It consolidates heterogeneous risk signals—from anomaly alerts and user behavior metrics to external threat intelligence—into a single, quantifiable score. This unified risk metric enables consistent evaluation across business units, supports real-time prioritization of alerts, and triggers automated responses in accordance with organizational policies. By embedding explainability and auditability into scoring, institutions can demonstrate compliance with regulatory requirements and maintain stakeholder trust.

In a landscape characterized by sophisticated cyberattacks, evolving compliance mandates, and volatile market conditions, a coherent scoring framework prevents fragmented risk assessments and delayed responses. The objectives of this stage are to:

- Standardize threat severity quantification to deliver consistent risk evaluations across diverse systems and events.

- Prioritize incidents by impact, likelihood, and risk appetite to focus resources on high-value threats.

- Automate decision triggers—such as transaction holds, identity verifications, or case escalations—via predefined thresholds.

- Generate auditable reports with transparent scoring methodologies for regulators, auditors, and internal teams.

- Provide interpretability through explainable metrics and factor breakdowns that analysts can trust and act upon.

Successful execution requires seamless integration with upstream data ingestion, signal identification, model training, real-time monitoring, and downstream response orchestration. Together, these components form an end-to-end mechanism for proactive risk management, continuous improvement, and regulatory alignment.

Input Signals and Data Quality Requirements

The integrity of risk scores depends on high-quality input signals and contextual data. Key sources include:

- Normalized Risk Signals: Classified event tags produced by the signal identification stage, such as failed login attempts or policy violations.

- Anomaly Detection Outputs: Quantitative deviation scores from unsupervised models flagging outliers in transaction volumes or user behavior.

- Contextual Attributes: Customer profiles, account histories, device fingerprints, geolocation data, transaction metadata, and session details.

- External Threat Intelligence: Curated feeds and vulnerability reports from Recorded Future and frameworks like MITRE ATT&CK.

- Model Confidence Metrics: Probability estimates and calibration scores that guide weighting in composite calculations.

- Regulatory Parameters: Predefined thresholds, tier definitions, and policy constraints aligned with internal risk appetite and compliance frameworks.

- Historical Analytics: Aggregated incident outcomes and past scores serving as references for calibration and trend analysis.

- Environmental Context: Market volatility indices, system maintenance schedules, and live threat alerts that influence dynamic evaluations.

To ensure trustworthiness, input data must meet stringent quality and availability conditions:

- Completeness: All required fields and features must be present and populated across datasets.

- Accuracy: Data values validated through reconciliation routines, schema checks, and anomaly detection in ingestion pipelines.

- Freshness: Near real-time ingestion and processing to capture current risk landscapes with minimal latency.

- Consistency: Harmonized formats, units, and taxonomies achieved via normalization and schema mapping.

- Traceability: End-to-end lineage tracking that records data origin, transformation steps, and model inputs for audit purposes.

- Security: Role-based permissions, encryption in transit and at rest, and secure transmission channels.

- Regulatory Alignment: Compliance with GDPR, CCPA, PCI DSS, and other data protection regulations in data handling and storage.

Technical and Organizational Prerequisites

Establishing a robust scoring stage requires both a solid technical foundation and enterprise-wide alignment:

- Unified Data Platform: Scalable, high-performance repositories such as Snowflake or modern lakehouse architectures to store and query normalized data.

- Stream Processing Framework: Real-time pipelines powered by Apache Kafka or AWS Kinesis to ingest continuous event streams.

- AI/ML Infrastructure: Model development and serving environments such as Amazon SageMaker, H2O.ai, or Google Cloud AI Platform for hosting, versioning, and scaling algorithms.

- Workflow Orchestration: Engines like Apache Airflow or Kubeflow Pipelines to schedule and manage end-to-end tasks, dependencies, and retries.

- Risk Score Registry: A centralized artifact store to register scoring configurations, model metadata, and versioned pipelines for reproducibility.

- Integration APIs: Secure RESTful interfaces for receiving normalized signals and returning computed risk scores to downstream systems.

- Monitoring and Logging: Observability platforms like Splunk, the ELK Stack, Prometheus, and Grafana to track throughput, latency, errors, and drift.

- Security Frameworks: Identity management and single sign-on via Okta, combined with encryption and access controls.

- Governance and Audit Tools: Policy enforcement platforms to validate scoring configurations, maintain audit trails, and manage stakeholder approvals.

Organizational prerequisites include documented risk appetite statements, cross-functional collaboration among risk, data engineering, compliance, and IT teams, comprehensive training programs, change management processes for model updates, and incident response playbooks triggered by risk thresholds. Regular performance reviews and recalibration cycles ensure the framework adapts to emerging threats and evolving regulatory expectations.

Core Aggregation and Scoring Process

The aggregation and scoring pipeline converts upstream signals into actionable risk profiles through a coordinated sequence:

- Data Retrieval: Query normalized event streams and anomaly detection outputs from the data lake or feature store.

- Contextual Enrichment: Append customer attributes, geolocation data, device details, and historical risk trends via microservices.

- Normalization: Map disparate metrics to a common scale to ensure compatibility across scoring models.

- Rule-Based Aggregation: Apply explicit business logic—implementable via engines such as Drools or OpenRules—to assign base weights reflecting policy mandates and compliance rules.

- Algorithmic Scoring: Invoke AI models, including supervised classifiers, ensemble learners, graph-based networks, and probabilistic methods, to compute composite risk scores.

- Tier Assignment: Map numerical scores to categorical tiers (low, medium, high, critical) for rapid prioritization and routing.

- Persistence and Distribution: Store finalized scores with metadata in the risk repository and disseminate via REST APIs, message streams, or batch exports.

Orchestration frameworks ensure task dependencies and retries. Real-time inference runs on platforms such as TensorFlow Serving or NVIDIA Triton Inference Server, auto-scaled by Kubernetes. Batch scoring, for end-of-day or portfolio analyses, is managed by Apache Airflow. A message broker like Apache Kafka distributes events to fraud detection consoles, case management systems, and response orchestrators, enabling parallel, low-latency reactions.

AI Scoring Methods and System Components

Achieving high accuracy and adaptability requires a layered AI architecture and supporting systems:

- Supervised Classification: Logistic regression, random forests, gradient boosting machines, and deep neural networks translate labeled risk events into probability scores.

- Ensemble Learning: Techniques such as bagging, boosting, and stacking combine multiple models to reduce variance and improve generalization.

- Graph-Based Scoring: Graph convolutional networks and relational embeddings analyze entity relationships to detect collusive patterns and money laundering networks.

- Bayesian Models: Incorporate prior risk knowledge and update posterior probabilities as new evidence arrives, enhancing explainability by tracing belief shifts.

- Unsupervised Methods: Autoencoders, clustering, and one-class SVMs establish behavioral baselines, where deviations generate anomaly scores for hybrid integration.

- Hybrid Rule-AI Systems: Deterministic rules encoded in human-readable formats work alongside AI outputs to enforce hard thresholds and contextual overrides.

These algorithms rely on the following system components:

- Feature Store: Solutions like Feast manage versioned feature repositories, enabling consistent online and offline access for training and real-time inference.

- Inference Engines: TensorFlow Serving and NVIDIA Triton host serialized models and provide low-latency REST or gRPC endpoints, auto-scaled via Kubernetes.

- Model Registry: Tools such as MLflow archive model binaries, code, performance metrics, and support promotion workflows and rollbacks under governance controls.

- Business Rules Engine: Engines like Drools or OpenRules enforce regulatory thresholds and context-based overrides in an auditable, human-readable format.

- Workflow Orchestration: Apache Airflow, Kubeflow Pipelines, and Prefect coordinate end-to-end pipelines—from data ingestion and feature computation to model inference and alert generation.

- API and Integration Layer: RESTful endpoints, message queues, and webhooks expose risk scores, explanatory feature attributions, and confidence metrics to downstream systems under strict authentication and authorization controls.

- Explainability Modules: SHAP, LIME, and decision path visualizations decompose model outputs into feature contributions, while natural language summaries assist analysts and regulators.

- Monitoring and Feedback: Prometheus, Grafana, and custom dashboards track drift indicators, latency, throughput, and error rates. Feedback from incident outcomes feeds into retraining pipelines, closing the continuous improvement loop.

Risk Score Outputs and Downstream Integration