AI Driven Retail Sales Workflow Solutions


Introduction

Operational Challenges Facing Retail Sales Teams

Retail organizations today operate in omnichannel ecosystems where online marketplaces, mobile apps and brick-and-mortar stores generate vast, disparate data streams—from point-of-sale transactions to web clickstreams and loyalty interactions. When these sources remain siloed, sales teams lack a unified customer view, impeding demand forecasting, personalized offer management and real-time responsiveness. Legacy processes compound the issue: regional managers, category planners and store associates follow varied procedures for inventory checks, pricing adjustments and promotion rollouts, leading to duplicated efforts, approval delays and inconsistent customer experiences. Disconnected communication channels further fracture the brand narrative as digital promotions clash with in-store offers, eroding customer trust and diminishing lifetime value.

Examining these operational challenges serves three strategic aims: establishing a baseline of existing workflows and data landscapes, pinpointing the pain points that stall sales growth and defining clear objectives for workflow unification. By mapping process diagrams, data inventories and performance metrics—such as conversion rates, transaction values and stock-out frequencies—stakeholders gain a shared understanding of where automation and data integration will deliver the greatest return. This clarity paves the way for AI-orchestrated workflows that bridge silos, automate routine tasks and ensure consistent, personalized interactions at scale.

Key Inputs for Workflow Transformation

- Process Documentation

  - End-to-end workflows for order processing, inventory management, pricing updates and promotional execution

  - Standard operating procedures and exception-handling protocols

- Data Source Inventory

  - POS transaction logs, customer master records and loyalty data

  - Inventory system exports with stock levels, replenishment lead times and warehouse allocations

  - External feeds: competitor pricing, economic indicators and demand forecasts

- Technology Infrastructure Assessment

  - Existing platforms: ERP, CRM, POS, e-commerce, data warehouses and middleware

  - Network topology, data throughput and integration touchpoints

  - Current automation capabilities: batch schedules, event triggers and API endpoints

- Performance Metrics Baseline

  - Sales conversion rates, average transaction value and customer satisfaction scores

  - Inventory turnover ratios, stock-out frequencies and carrying costs

  - Promotion lift, markdown effectiveness and campaign ROI

- Stakeholder Interview Insights

  - Feedback from sales managers, store associates and customer service teams

  - Input from marketing, finance and supply chain on dependencies and priorities

- Data Governance and Quality Reports

  - Assessments of data completeness, accuracy and timeliness

  - Documentation of data ownership, stewardship roles and compliance requirements

Prerequisites for AI-Orchestrated Workflows

- Executive Sponsorship and Alignment: Leadership commitment to harmonize processes end-to-end, allocate resources, and champion change management.

- Cross-Functional Collaboration Framework: Governance structures—steering committees and working groups—to coordinate decisions across sales, marketing, operations and IT.

- Baseline Data Access Agreements: Data sharing protocols, security controls and privacy safeguards to grant AI teams access to source systems.

- Process Ownership and Accountability: Designation of process owners to maintain workflows, validate AI recommendations and oversee continuous improvement.

- Change Management Readiness: Assessment of culture, communication channels and training capacity for new tools and roles.

- Technical Environment Stabilization: Reliable systems with minimal unplanned downtime, consistent data pipelines and performant integration layers.

From Fragmentation to Orchestrated AI-Driven Workflows

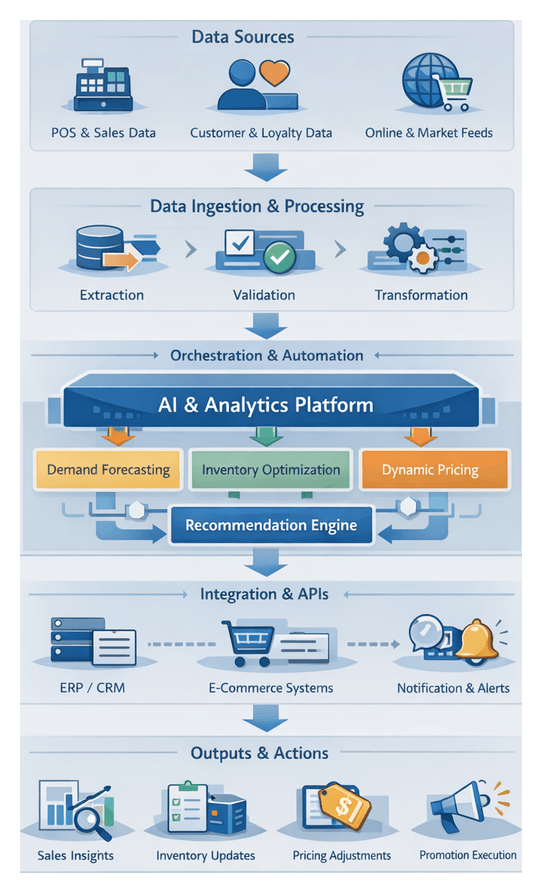

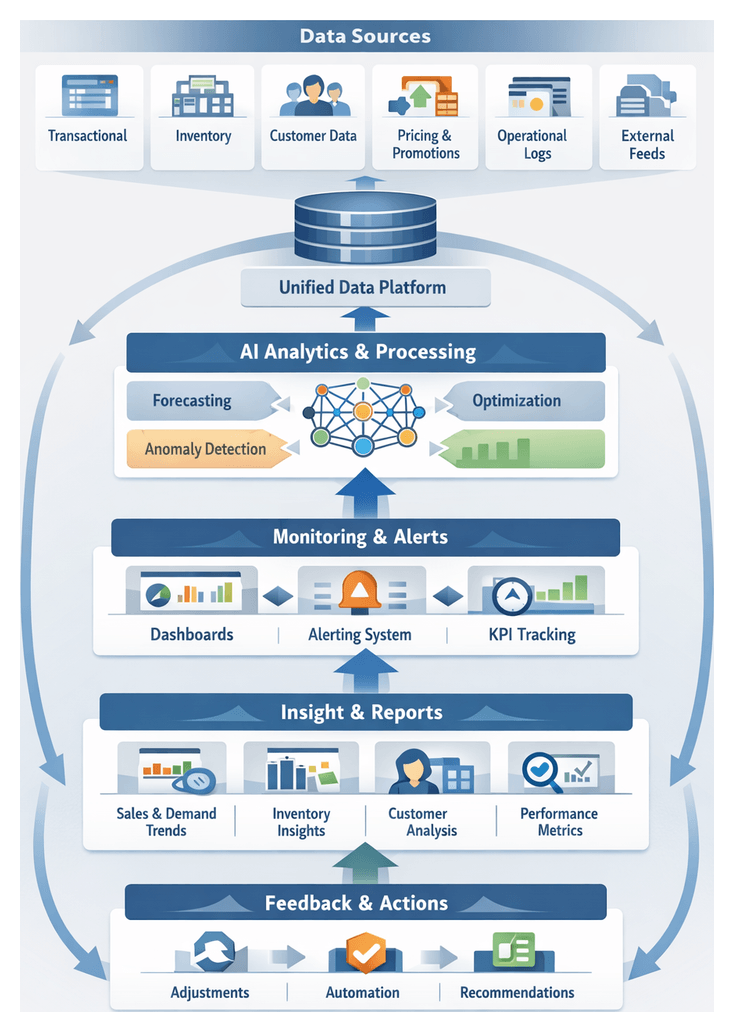

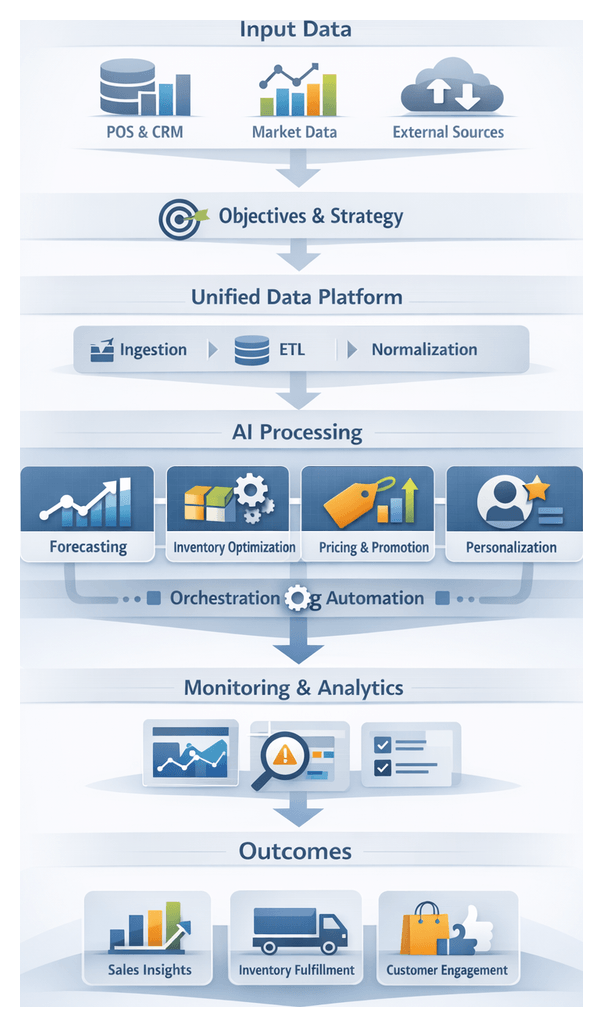

A unified workflow orchestrates data, insights and decisions across the retail sales ecosystem. Transactional, customer and inventory data flow into a centralized platform. AI agents then execute forecasting, optimization and recommendation tasks. Integration services validate outputs, route information to downstream systems or human actors and ensure transparency, speed and accuracy in delivering actionable intelligence to planning, operations and store teams.

Data Ingestion and Preprocessing

- Data Extraction: Change-data-capture connectors ingest records from POS terminals, e-commerce platforms and loyalty apps.

- Staging and Validation: Schema checkers verify format consistency; anomaly detection modules flag missing, duplicate or outlier records.

- Transformation and Enrichment: Scripts standardize units, apply currency conversions and append external attributes like regional economic indicators.

- Normalization and Loading: Cleaned, enriched records populate the unified data platform for downstream AI services.
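
The staging and validation step can be sketched in a few lines of Python. This is a minimal illustration only: the field names in `REQUIRED_FIELDS`, the `StagingResult` container and the `stage_records` function are assumptions made for the example, not part of any specific platform.

```python
from dataclasses import dataclass, field

# Hypothetical staging schema: field name -> expected Python type.
REQUIRED_FIELDS = {
    "txn_id": str,
    "store_id": str,
    "sku": str,
    "quantity": int,
    "amount": float,
}


@dataclass
class StagingResult:
    accepted: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reason) pairs


def stage_records(records):
    """Check each record against the schema and flag duplicates by txn_id."""
    result = StagingResult()
    seen_ids = set()
    for record in records:
        reason = None
        for name, expected_type in REQUIRED_FIELDS.items():
            if name not in record or record[name] is None:
                reason = f"missing field: {name}"
                break
            if not isinstance(record[name], expected_type):
                reason = f"wrong type for {name}"
                break
        if reason is None and record["txn_id"] in seen_ids:
            reason = "duplicate txn_id"
        if reason is None:
            seen_ids.add(record["txn_id"])
            result.accepted.append(record)
        else:
            result.rejected.append((record, reason))
    return result
```

Rejected records keep their rejection reason so that a data engineer can review them instead of losing them silently.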

AI Agent Orchestration and Scheduling

- Event-Driven Triggers and Time-Based Schedules: Pub/sub messages and calendars initiate AI workflows, such as daily demand forecasts.

- Dependency Management: Orchestration enforces data readiness checks before invoking inventory optimization or pricing models.

- Resource Allocation: Tasks run on compute clusters or managed cloud services, such as Amazon SageMaker or Google Cloud AI, sized according to CPU, memory and GPU needs.

- Failure Handling: Automated retry policies and escalation workflows ensure rapid recovery and alert operators when needed.
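
Dependency management can be illustrated with Python's standard `graphlib` module (Python 3.9+). The task names in `TASK_DEPENDENCIES` and the `run_workflow` helper are hypothetical. A production orchestrator would add scheduling, retries and alerting on top of this ordering logic.

```python
from graphlib import TopologicalSorter

# Hypothetical task graph: each task maps to the set of tasks it depends on.
TASK_DEPENDENCIES = {
    "ingest_sales": set(),
    "validate_data": {"ingest_sales"},
    "demand_forecast": {"validate_data"},
    "inventory_optimization": {"demand_forecast"},
    "pricing_model": {"demand_forecast"},
}


def run_workflow(dependencies, run_task):
    """Run tasks in dependency order; skip tasks whose upstream did not succeed.

    run_task is a callable that takes a task name and returns True on success.
    Returns a dict mapping each task to "ok", "failed" or "skipped".
    """
    status = {}
    for task in TopologicalSorter(dependencies).static_order():
        upstream_ok = all(status[dep] == "ok" for dep in dependencies[task])
        if not upstream_ok:
            status[task] = "skipped"
        elif run_task(task):
            status[task] = "ok"
        else:
            status[task] = "failed"
    return status
```

If `validate_data` fails in this sketch, the forecast, inventory and pricing tasks are marked as skipped rather than run on unverified data.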

System Actor Roles and Information Handoffs

- Data Engineers: Monitor pipelines, resolve validation errors and optimize storage schemas.

- Data Analysts: Validate AI model performance and provide contextual feedback.

- Inventory Planners: Adjust replenishment schedules based on demand forecasts.

- Merchandising Managers: Finalize dynamic pricing and promotion campaigns.

- Store Managers and Sales Associates: Access AI assistant insights on mobile or in-store tablets.

- IT Operations: Maintain integration health, manage API credentials and enforce security standards.

Model Retraining and Continuous Feedback

- Performance Monitoring: Dashboards track forecast accuracy, price elasticity responses and inventory turns.

- Error Analysis: Agents flag divergent predictions for root-cause investigation.

- Retraining Jobs: Updated datasets trigger automated pipelines on platforms like Salesforce Einstein.

- Validation Gates: A/B testing in controlled environments ensures only improved models go live.
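
A validation gate can be approximated offline by comparing a candidate model with the current one on held-out data. The sketch below uses mean absolute percentage error (MAPE) as the metric; the function names and the `min_improvement` threshold are illustrative assumptions. It is a holdout comparison, not a substitute for a live A/B test.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, skipping zero-demand periods."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actuals to score against")
    return sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def should_promote(actuals, champion_forecast, challenger_forecast,
                   min_improvement=0.01):
    """Promote the challenger only if it beats the champion by a margin.

    min_improvement is an absolute reduction in MAPE (0.01 = one point).
    """
    champion_error = mape(actuals, champion_forecast)
    challenger_error = mape(actuals, challenger_forecast)
    return (champion_error - challenger_error) >= min_improvement
```

Requiring a minimum margin helps avoid swapping models on differences that are likely noise.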

Integration Layer and API Interactions

- RESTful Endpoints: Forecasting agents retrieve historical data; pricing engines push updates to e-commerce platforms.

- Message Queues: Low-stock alerts publish to topics consumed by order management and fulfillment systems.

- Microservices Architecture: Decoupled services handle inventory calculations, promotion checks and segmentation queries.

- Service Discovery: Dynamic registries ensure reliable service connectivity as instances scale.
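
The publish/subscribe pattern behind low-stock alerts can be shown with an in-memory stand-in for a message broker. The `InMemoryBroker` class, the topic name `inventory.low_stock` and the `check_stock` helper are hypothetical. A real deployment would use a broker such as Apache Kafka with asynchronous delivery.

```python
from collections import defaultdict


class InMemoryBroker:
    """Stand-in for a real message broker; delivers messages synchronously."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        for handler in self._subscribers[topic]:
            handler(message)


def check_stock(broker, sku, on_hand, reorder_point):
    """Publish a low-stock alert when on-hand units reach the reorder point."""
    if on_hand <= reorder_point:
        broker.publish(
            "inventory.low_stock",
            {"sku": sku, "on_hand": on_hand, "reorder_point": reorder_point},
        )
        return True
    return False
```

The publisher does not need to know which systems consume the alert, which is what lets order management and fulfillment subscribe independently.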

Collaborative Review and Approval Gates

- Forecast Sign-Off: Planning teams review and adjust demand predictions.

- Pricing and Promotion Approval: Merchandising and legal teams vet dynamic price changes before publication.

- Inventory Allocation Confirmation: Regional operations managers confirm stock commitments.

Monitoring, Exception Handling and Resilience

- Real-Time Dashboards: Visualize throughput, latency and success rates for each workflow component.

- Anomaly Detection Agents: Identify unusual patterns—drops in data volume or error spikes.

- Automated Alerts and Escalation Policies: Notify teams via email, SMS or collaboration platforms; escalate persistent failures to executive dashboards.

- Auto-Scaling Compute and Multi-Region Deployments: Elastic infrastructure on cloud services ensures resilience during peak seasons.

- Disaster Recovery Plans: Regular data snapshots and infrastructure backups enable rapid restoration.
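
One simple way to detect a drop in data volume is a z-score check against recent history. The function name `volume_anomaly` and the default threshold of three standard deviations are assumptions for illustration. Production monitoring would typically also account for seasonality and day-of-week effects.

```python
from statistics import mean, stdev


def volume_anomaly(history, current, threshold=3.0):
    """Return True if the current record count deviates strongly from history.

    history: recent record counts for the same feed (at least two values).
    threshold: number of sample standard deviations treated as anomalous.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical observations")
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # History is perfectly flat, so any change is treated as anomalous.
        return current != mu
    return abs(current - mu) / sigma > threshold
```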

Human-AI Collaboration and Continuous Improvement

- AI-First Analysis: Agents handle high-volume tasks—price adjustments, reorder suggestions.

- Human-In-The-Loop Decisions: Strategic choices—campaign themes or regional assortments—remain under human control.

- Role-Based Dashboards: Tailored interfaces for store managers, planners and marketing leads.

- Feedback Channels: Store teams and analysts comment on AI outputs, providing business context for future model enhancements.

- Post-Event Reviews and Feature Engineering Workshops: Cross-functional teams analyze performance variances and refine predictor variables.

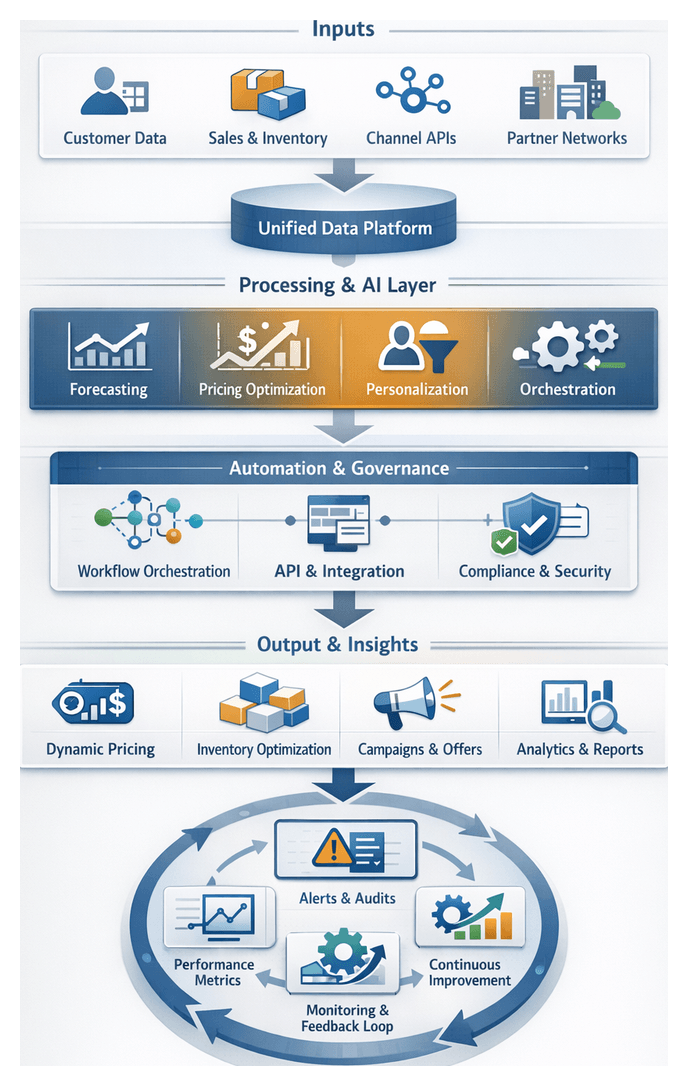

Core AI Components in the Retail Sales Solution

Advanced AI modules—forecasting agents, inventory optimization, dynamic pricing and in-store assistants—integrate with supporting systems to form a unified sales workflow. Each capability contributes distinct value, from demand prediction to personalized customer engagement.

Forecasting Agents

- Data Pipelines: Orchestrated via Apache Airflow or Azure Data Factory, these agents extract sales, traffic and weather data and apply feature engineering.

- Model Training: Services like Amazon Forecast or Microsoft Azure Machine Learning train ensembles of time-series and neural network models.

- Scenario Simulation: Monte Carlo analyses assess promotional impacts and supply disruptions, informing risk-adjusted forecasts.

- Delivery: Forecasts are served via APIs or message queues to inventory and pricing modules.
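
A Monte Carlo scenario simulation can be sketched with the standard library alone. The parameters below (a normally distributed promotional uplift and a fixed-probability supply disruption) are simplifying assumptions chosen for the example, and `simulate_promo_demand` is a hypothetical name.

```python
import random
from statistics import quantiles


def simulate_promo_demand(baseline_units, uplift_mean, uplift_sd,
                          disruption_prob, disruption_loss,
                          runs=10_000, seed=42):
    """Simulate fulfilled demand under promotion uplift and supply risk.

    Returns the 5th, 50th and 95th percentiles of simulated units.
    """
    rng = random.Random(seed)  # seeded so that runs are reproducible
    outcomes = []
    for _ in range(runs):
        # Uplift is a fraction of baseline; floor at -1 so demand stays >= 0.
        uplift = max(rng.gauss(uplift_mean, uplift_sd), -1.0)
        demand = baseline_units * (1.0 + uplift)
        if rng.random() < disruption_prob:
            demand *= 1.0 - disruption_loss  # disruption cuts fulfilled units
        outcomes.append(demand)
    cuts = quantiles(outcomes, n=20)  # 19 cut points at 5 percent steps
    return {"p05": cuts[0], "p50": cuts[9], "p95": cuts[18]}
```

Planning against the p05 to p95 range, rather than a single point forecast, is what makes the resulting forecast risk-adjusted.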

Inventory Optimization Models

- Safety Stock Calculations: Dynamic thresholds adjust to demand volatility.

- Replenishment Planning: Multi-echelon optimization in platforms like Blue Yonder Luminate or RELEX Solutions.

- Channel Allocation: Maximizes revenue by distributing stock across online, in-store and partner channels.

- Alerting and Exceptions: Low-stock notifications route to supply chain teams via collaboration tools.
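
A common textbook starting point for safety stock is z × σ × √L, where z is the service-level factor, σ the standard deviation of daily demand and L the lead time in days. The sketch below assumes independent, roughly normal daily demand and a fixed lead time. Commercial multi-echelon platforms use considerably richer models.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def safety_stock(daily_demand, lead_time_days, service_level=0.95):
    """Safety stock = z * sigma_daily * sqrt(lead time)."""
    z = NormalDist().inv_cdf(service_level)
    return z * stdev(daily_demand) * sqrt(lead_time_days)


def reorder_point(daily_demand, lead_time_days, service_level=0.95):
    """Expected demand over the lead time plus safety stock."""
    expected = mean(daily_demand) * lead_time_days
    return expected + safety_stock(daily_demand, lead_time_days, service_level)
```

Recomputing these values on a rolling demand window is one way the threshold can adjust to volatility: when recent demand becomes more variable, σ rises and so does the buffer.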

Pricing Engines

- Competitive Intelligence: APIs or web scraping ingest competitor pricing data.

- Elasticity Modeling: Algorithms estimate demand sensitivity for personalized price optimization.

- Promotion Simulation: Scenario engines forecast margin impact; approval workflows integrate with ERP systems.

- Automated Deployment: Price updates and coupon offers push to POS, e-commerce and digital signage networks via standardized APIs.

- Example Platforms: Pricefx and Dynamic Yield.
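
Elasticity modeling is often introduced with a constant-elasticity (log-log) regression, where the slope of ln(quantity) on ln(price) is the elasticity estimate. The sketch below works at an aggregate level and ignores promotions, seasonality and customer segments, all of which a real pricing engine would need to control for. Function names are hypothetical.

```python
from math import log


def estimate_elasticity(prices, quantities):
    """Slope of ln(quantity) on ln(price) by ordinary least squares."""
    if len(prices) != len(quantities) or len(prices) < 2:
        raise ValueError("need two or more matching price/quantity pairs")
    xs = [log(p) for p in prices]
    ys = [log(q) for q in quantities]
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("prices must vary to estimate elasticity")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    return sxy / sxx


def projected_quantity(current_quantity, current_price, new_price, elasticity):
    """Project demand at a new price under constant elasticity."""
    return current_quantity * (new_price / current_price) ** elasticity
```

An estimate below -1 indicates elastic demand, where a price increase is expected to reduce revenue under this model.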

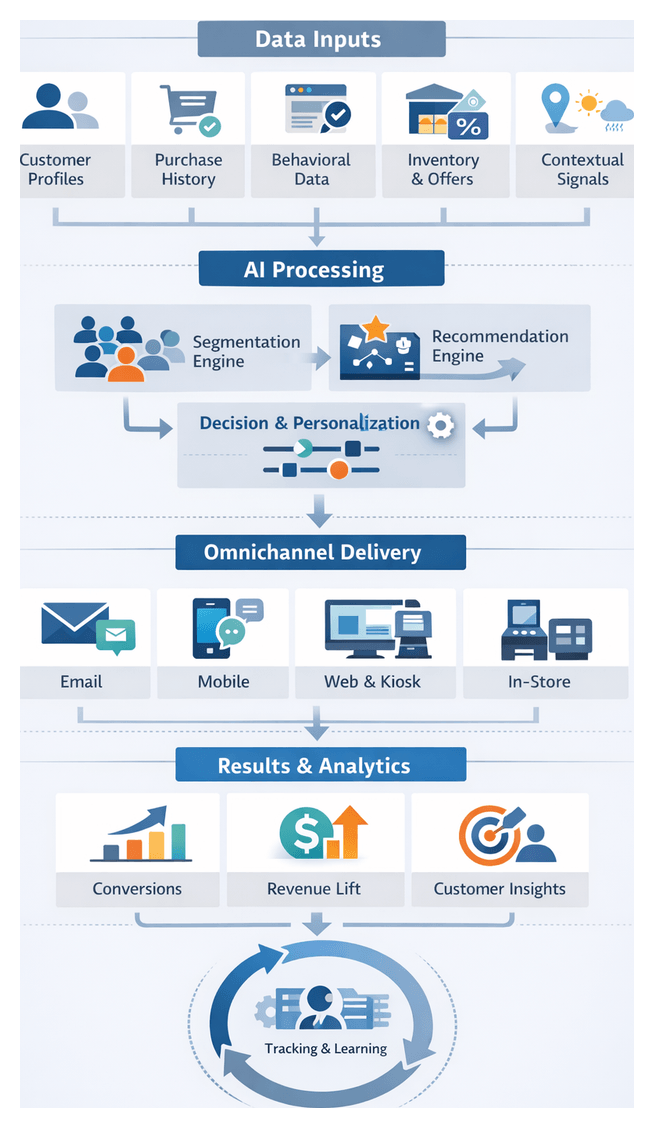

AI Assistants for Sales Associates

- Context Retrieval: IBM Watson Assistant or Salesforce Einstein surfaces customer profiles, purchase history and preferences.

- Recommendation Engines: Collaborative filtering and content-based models suggest complementary or higher-margin items.

- Inventory Lookup: Live availability across stores and fulfillment centers enables reservations and ship-to-home options.

- Task Automation and Coaching: Guides associates through upselling prompts and micro-learning modules.
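
Item-to-item co-occurrence is one of the simplest forms of collaborative filtering and shows how complementary-item suggestions can be derived from past baskets. The functions below are an illustrative sketch with hypothetical names. They do not weight by margin or popularity, which a production recommender would.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_cooccurrence(baskets):
    """Count how often each pair of items appears in the same basket."""
    co = defaultdict(Counter)
    for basket in baskets:
        for a, b in permutations(set(basket), 2):
            co[a][b] += 1
    return co


def recommend(co, basket, top_n=3):
    """Suggest items most often bought with the items already in the basket."""
    scores = Counter()
    for item in basket:
        for other, count in co.get(item, {}).items():
            if other not in basket:
                scores[other] += count
    # Sort by descending score, then by name for a stable order.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [item for item, _ in ranked[:top_n]]
```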

Supporting Systems and Integration Layers

- Unified Data Platform: Cloud lakehouse and warehouse platforms such as Databricks, Snowflake, Google BigQuery or Azure Synapse Analytics.

- Orchestration Engines: Apache Airflow or Prefect schedule tasks and manage dependencies.

- API Management and Service Mesh: Gateways secure access; meshes ensure reliable communication and observability.

- Monitoring and Logging: Centralized systems collect performance metrics, error traces and resource usage; anomaly detectors flag bottlenecks.

- Security and Compliance: Identity and access management, encryption in transit and at rest, and audit logging safeguard sensitive data.

Blueprint Outputs and Governance for Implementation

A comprehensive solution blueprint translates strategic objectives into actionable designs and artifacts. By defining architecture diagrams, data flow maps, component specifications and dependency matrices early, cross-functional teams share a single source of truth that accelerates development, testing and deployment.

Architectural Artifacts and Documentation

- Solution Blueprint Diagram: Layered visuals of ingestion pipelines, the unified data platform, AI orchestration layer (Apache Airflow or Kubeflow), and connectors to CRM, ERP and POS.

- Component Specifications: API definitions, authentication methods, message queue topics (Amazon Kinesis or Apache Kafka), data retention policies and model performance targets.

- Data Flow Maps: Tabular and graphical representations of extract-transform-load processes, annotated with quality checks and business logic rules.

- Integration Interface Definitions: Catalogue of REST endpoints, webhooks to CRM platforms like Salesforce, batch file formats and streaming schemas.

Dependency Mapping

- Data Source Dependencies: POS systems, ERP feeds and external market providers with SLAs and access credentials.

- Compute and Storage Dependencies: Cloud services—Amazon SageMaker or Azure Machine Learning—and container platforms like Kubernetes; data lake, warehouse and feature store requirements.

- AI Model Dependencies: Sequencing of training—for example, demand forecasting precedes inventory optimization and pricing simulations.

- Operational Dependency Graphs: Workflow tasks from data ingestion through AI inference to reporting.

Handoff to Development and Deployment Teams

- Artifact Repository Publication: Centralized storage in Git or documentation portals with controlled access.

- Design Review Sessions: Workshops with architects, engineers, data scientists and operations leads to confirm decisions, resolve issues and assign ownership.

- Implementation Roadmap: Phased milestones—data platform provisioning, model training pipeline deployment, integration testing and operational readiness.

- Deployment Playbooks: Infrastructure as Code templates (for example Terraform), CI/CD pipeline definitions, rollback procedures and validation checks.

Governance and Continuous Alignment

- Architecture Review Board: Periodic assessments of proposed changes and impact on downstream workflows.

- Configuration Management: Version control for diagrams, specifications and interface definitions.

- Continuous Feedback Loops: Post-pilot learnings and operational monitoring insights feed back into blueprint updates.

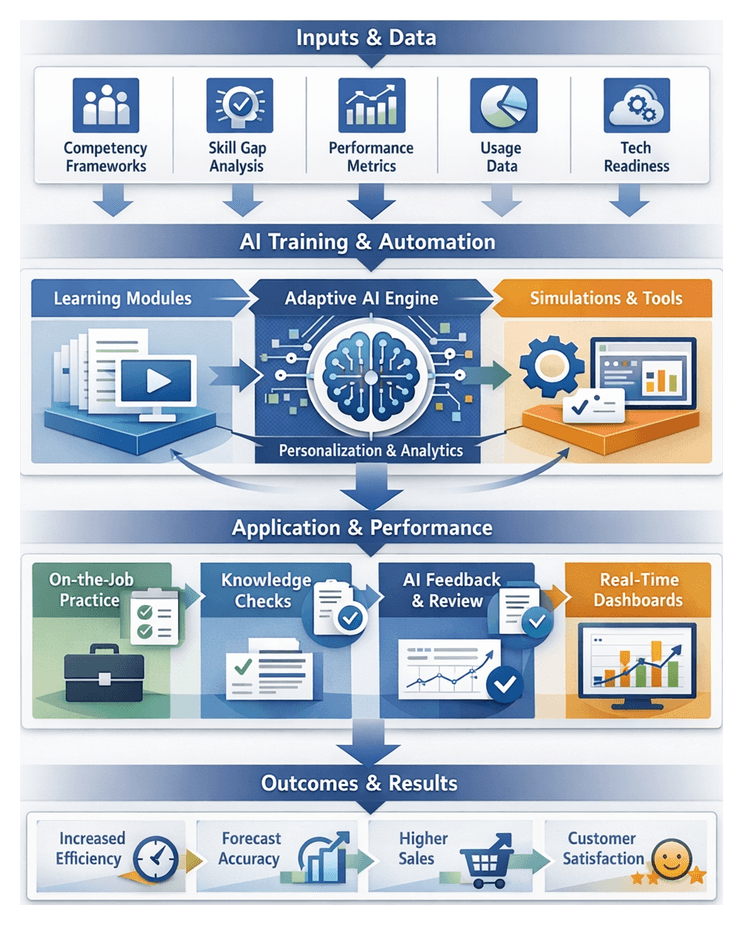

Chapter 1: Defining Sales Performance Objectives

Establishing clear, measurable sales performance objectives is the strategic cornerstone of any AI-orchestrated retail workflow. By translating corporate goals into precise revenue milestones, customer satisfaction targets and operational efficiency indicators, organizations align executive leadership, sales operations, finance, marketing and technology teams around a unified vision. This clarity ensures that downstream AI components—demand forecasting agents, inventory optimization models, pricing engines and sales assistants—operate against a shared success framework. In the absence of well-defined objectives, automated processes risk misalignment, wasted resources and inconsistent customer experiences.

In today’s dynamic retail environment—characterized by rapid market shifts and evolving customer expectations—the objectives stage acts as a nexus between strategic intent and operational execution. It informs the design of data pipelines, machine learning configurations and AI agent orchestration. Investing time in this phase creates a transparent foundation for decision making, resource prioritization and continuous performance tracking.

Industry-specialized platforms can accelerate alignment and model configuration. Such solutions typically embed best-practice objective frameworks for revenue simulation, satisfaction forecasting and KPI tracking, while still allowing customization to business-specific needs.

Key Inputs, Prerequisites and Governance Conditions

Data Inputs

- Historical Sales Data: Transaction records by channel, region, product category and time period enable trend analysis, seasonality detection and variance decomposition.

- Customer Experience Metrics: Net Promoter Scores, satisfaction surveys, repeat purchase rates and average order values deliver quantitative and qualitative insights that balance growth with loyalty.

- Operational Performance Indicators: Fulfillment lead times, stock-out frequency, days of inventory on hand and order accuracy rates establish baseline efficiency benchmarks.

- Market and Competitive Intelligence: External forecasts, competitor pricing indices and macroeconomic indicators contextualize internal targets.

- Financial Constraints: Budget allocations for marketing, promotions, inventory investment and staffing guide objectives toward healthy top-line growth and profitability.

Organizational Prerequisites

- Executive Sponsorship and Governance: C-suite commitment and a cross-functional steering committee ensure ongoing oversight and accountability.

- Stakeholder Alignment Sessions: Structured workshops capture diverse perspectives, surface conflicts and build consensus on priority metrics.

- Data Ownership and Access Policies: Agreements on stewardship, access controls and privacy compliance align teams on data quality and usage constraints.

- Technology Infrastructure Readiness: A modern data platform or cloud-native warehouse with validated connectivity to point-of-sale systems, CRM platforms and external feeds.

- Change Management Framework: Communication plans, training curricula and feedback loops prepare the organization for AI-orchestrated performance measures.

Environmental Conditions and Governance

- Regulatory Compliance: Local regulations on pricing transparency, data usage and promotional disclosures must be integrated into objective definitions.

- Data Quality Standards: Profiling exercises confirm inputs meet accuracy, completeness and timeliness thresholds, with remediation plans for gaps.

- Baseline Performance Review: Analyzing prior results—successes, shortfalls and root causes—identifies where AI automation can deliver the greatest impact.

- Risk Assessment and Mitigation: Cataloging potential obstacles—supply chain disruptions, market shifts or integration delays—with contingency strategies ensures objectives are ambitious yet achievable.

- Governance Cadence: Weekly dashboards, monthly executive briefs and quarterly checkpoints create transparency and facilitate course corrections.

Stakeholder Alignment Workflow

Initiation and Collaboration Setup

The alignment process begins with a project sponsor convening a core team of subject matter experts from marketing, finance, operations, sales and IT. This team defines scope, timeline and communication cadence. Key activities include:

- Identifying department leads and contributors for revenue, margin and operational inputs.

- Establishing a unified collaboration platform, such as Slack or Microsoft Teams, to centralize discussion, document sharing and decision logs.

- Configuring automated notifications and reminders for timely review and workshop attendance.

- Deploying a shared repository—like Atlassian Confluence—for agendas, templates and working drafts.

Data and Requirements Gathering

Using predefined templates, teams submit historical revenue breakdowns, campaign performance summaries, lead time statistics and regional quota histories. AI-powered document intelligence tools ingest spreadsheets and presentations, extract key metrics and generate executive summaries that highlight anomalies and trends. Deliverables include a consolidated data dossier, gap analysis report and a dependency matrix mapping objectives to data sources and responsible teams.

Cross-Functional Workshops and Consensus Building

Workshops follow a structured agenda:

- Presentation of aggregated metrics and draft objectives.

- Breakout discussions by function to assess feasibility and risks.

- Plenary session to report findings and propose revisions.

- Real-time objective adjustment with collaborative editing.

- AI-driven sentiment analysis to detect unresolved concerns and assign follow-up tasks.

Conflict resolution employs decision matrices, AI-assisted negotiation tools and impact simulations—powered by platforms such as Amazon Forecast—to visualize trade-offs between cost, revenue and service levels. Executive escalation paths secure sponsor endorsement when needed.

Validation, Sign-Off and Handoffs

Finalized objectives are circulated for digital signatures or electronic acknowledgments. A dependency map links each objective to downstream workflows in the data platform and forecasting models. Approved targets are published to shared dashboards, ensuring transparency. Handoff tasks include:

- Uploading objectives and metric definitions to the centralized data warehouse.

- Configuring data feeds that merge targets with historical transactions, customer profiles and inventory records.

- Triggering scheduled workflows in orchestration engines that initiate demand forecasting runs.

- Tagging forecast outputs with objective identifiers for variance analysis.

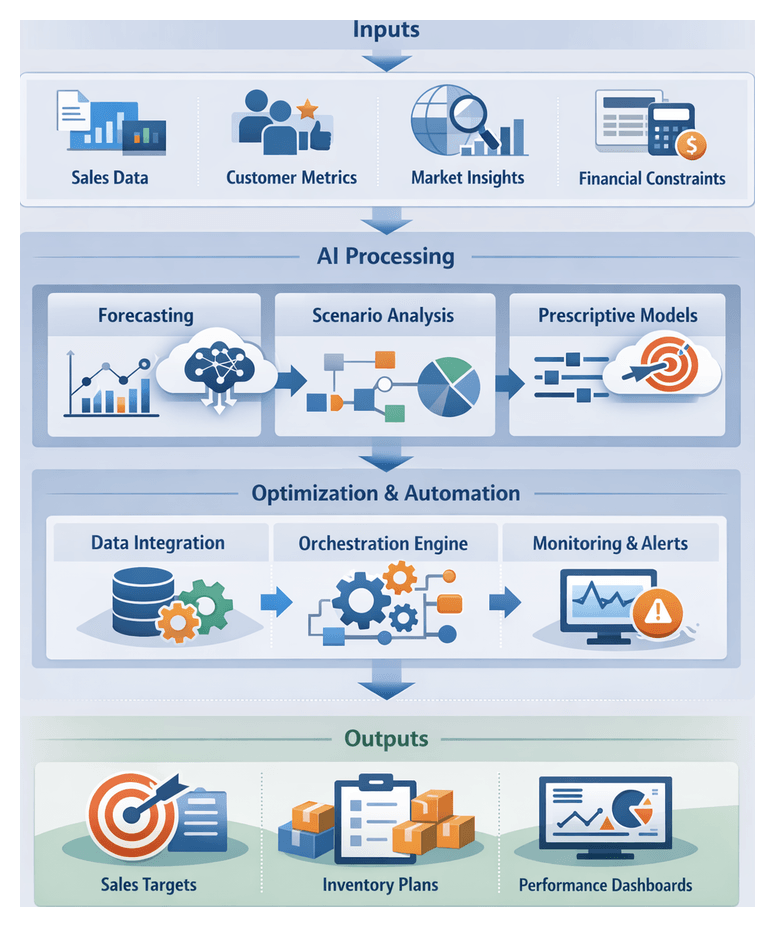

AI-Driven Capabilities and Supporting Systems

An AI-orchestrated objective-setting workflow integrates advanced analytics methods and robust architectural components to transform raw data into adaptive goals and continuous performance insights.

Core AI Capabilities

- Predictive Modeling: Time-series and regression algorithms forecast sales, traffic and margin metrics based on historical data, seasonality and market indicators.

- Scenario Simulation: Monte Carlo and what-if analyses generate outcome ranges under various pricing, assortment and spend configurations.

- Prescriptive Optimization: Constraint-based engines—such as DataRobot Decision AI—recommend target settings that maximize revenue or profit within service and capacity limits.

- Anomaly Detection: Unsupervised learning monitors performance deviations to flag emerging risks or opportunities.

- Natural Language Generation: Automated narrative summaries translate complex analytics into executive-friendly insights.

Supporting Systems

- Unified Data Platform: Consolidates point-of-sale records, inventory levels, customer profiles, marketing spend and external indicators into a centralized repository with consistent schemas and real-time access.

- Forecasting Agents: Automated services—such as Amazon Forecast and Azure Machine Learning—that generate baseline demand projections.

- Prescriptive Analytics Engine: Platforms like DataRobot ingest forecasts, inventory policies and financial objectives to recommend optimal targets.

- Orchestration Layer: Workflow management services schedule data ingestion, trigger model runs, manage dependencies and ensure end-to-end reliability.

- Visualization and Monitoring Tools: Dashboards in Microsoft Power BI and Tableau present recommended objectives, scenario comparisons and real-time performance against targets.

Integrated Workflow Stages

- Data Aggregation and Validation: The unified platform ingests sales, inventory and market feeds. ML-based data quality agents detect anomalies and trigger cleansing routines.

- Baseline Forecast Generation: Forecasting agents produce SKU- and store-level projections that inform target calculations.

- Scenario Analysis and Recommendation: The prescriptive engine simulates pricing, promotional and supply scenarios to propose balanced objectives.

- Stakeholder Review and Adjustment: Interactive dashboards and NLG summaries highlight trade-offs for cross-functional review and manual refinement.

- Target Publication and Scheduling: Approved objectives are published to the data platform and downstream systems, with orchestration services scheduling daily progress checks and alerts for threshold breaches.
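
The daily progress check against published targets can be sketched as a comparison of each KPI's actual value with its target and tolerance band. The `Objective` structure, its field names and the three status labels are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objective:
    kpi: str
    target: float
    tolerance: float  # allowed relative shortfall, e.g. 0.05 = 5 percent
    higher_is_better: bool = True


def check_progress(objective, actual):
    """Classify a KPI reading as on_track, within_tolerance or breach."""
    if objective.target == 0:
        raise ValueError("relative deviation is undefined for a zero target")
    deviation = (actual - objective.target) / abs(objective.target)
    # "Adverse" deviation is positive when the KPI moves the wrong way.
    adverse = -deviation if objective.higher_is_better else deviation
    if adverse <= 0:
        return "on_track"
    if adverse <= objective.tolerance:
        return "within_tolerance"
    return "breach"
```

An orchestration job could run this check once per day and raise an alert only for readings classified as `breach`.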

Key Deliverables, Governance and Handoffs

Primary Deliverables

- Performance Objective Document: Formal specification of revenue targets, satisfaction scores, conversion thresholds and efficiency metrics, complete with units, horizons and tolerance bands.

- KPI Catalog and Metadata Schema: Definitions, data source mappings, calculation formulas and update frequencies, enabling automated ingestion and normalization.

- Dependency Matrix: Mapping of inputs, outputs and responsible parties with data formats, delivery cadences and approval owners.

- Stakeholder RACI Chart: Roles and responsibilities for marketing, finance, operations, IT, analytics and executive oversight.

- Dashboard Prototype: Wireframes for executive and operational dashboards specifying chart types, filters, alerts and drill-down paths.

- Data Requirements Specification: Field definitions, source references, quality criteria and transformation rules for each KPI.

Stakeholder Ecosystem and Interdependencies

Core functional domains and their contributions:

- Marketing: Campaign schedules, target segments and customer lifetime value assumptions.

- Finance: Profit margins, cost forecasts and pricing assumptions.

- Operations: Throughput capacity, fulfillment metrics and labor productivity data.

- IT and Data Engineering: Platform capabilities, data access protocols and integration standards.

- Analytics and Modeling: KPI validation, data quality assessments and forecast model alignment.

- Executive Steering Committee: Final endorsement, trade-off arbitration and approval for downstream handoffs.

Handoff Mechanisms and Automation

- Shared Specification Repository: Version-controlled storage in Atlassian Confluence for traceable document access.

- API-Driven Metadata Injection: RESTful endpoints import KPI definitions into the data platform’s metadata service for lineage and dashboard templating.

- Forecasting Agent Configuration Package: A JSON/YAML bundle of targets, seasonality adjustments and scenario parameters published to the orchestration layer.

- Automated Notifications: Email and collaboration-platform alerts with links to updated artifacts and change logs.

- Integration Tickets: Automated creation of Jira issues to track data pipeline tasks, model adjustments and dashboard builds.
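
A forecasting agent configuration package might look like the JSON bundle produced below. Every key name (`version`, `objectives`, `seasonality_adjustments`, `scenarios`) and the validation rules are hypothetical. The actual contract would be defined by the orchestration layer in use.

```python
import json

# Hypothetical top-level keys expected by the orchestration layer.
REQUIRED_KEYS = {"version", "objectives", "seasonality_adjustments", "scenarios"}


def validate_config_package(package):
    """Raise ValueError if the package lacks required keys or fields."""
    missing = REQUIRED_KEYS - package.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    for objective in package["objectives"]:
        if not {"id", "kpi", "target"} <= objective.keys():
            raise ValueError(f"incomplete objective: {objective}")


def build_config_package(version, objectives, seasonality_adjustments, scenarios):
    """Assemble, validate and serialize the configuration bundle as JSON."""
    package = {
        "version": version,
        "objectives": objectives,
        "seasonality_adjustments": seasonality_adjustments,
        "scenarios": scenarios,
    }
    validate_config_package(package)
    return json.dumps(package, indent=2, sort_keys=True)
```

Validating before publishing keeps malformed targets from reaching downstream forecasting runs.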

Governance and Version Control

- Checkpoint Reviews: Scheduled domain-owner approvals documented in steering committee minutes.

- Semantic Versioning: Major version increments for structural changes and minor version increments for parameter tweaks.

- Audit Logging: Timestamped, attributed modifications retained for compliance and traceability.

- Approval Workflows: Automated routing of change requests through designated approvers.

- Rollback Procedures: Defined steps to revert to prior versions in case of errors.

Preparing Systems for Downstream Integration

- Data Model Alignment: Extending canonical schemas and ingestion pipelines to include new KPI fields and metadata.

- API Contract Definition: Publishing OpenAPI or AsyncAPI specifications for forecasting agent endpoints.

- Metadata Tagging: Cataloging KPIs, data sources and dependencies with business glossaries and sensitivity labels.

- Security and Access Controls: Role-based permissions and logging for objective definitions and orchestration triggers.

- Environment Configuration: Provisioning development, staging and production environments with Infrastructure as Code.

- Automated Testing and Validation: CI pipelines verifying KPI calculations, data transformations and API responses against specifications.

By rigorously defining objectives, aligning stakeholders, embedding AI capabilities and delivering structured artifacts with automated handoff mechanisms, retail organizations lay the groundwork for seamless integration with unified data platforms and forecasting agents. This precision at the objective-setting stage accelerates deployment, reduces rework and ensures that all downstream systems operate in concert to achieve defined sales performance goals.

Chapter 2: Building a Unified Data Platform

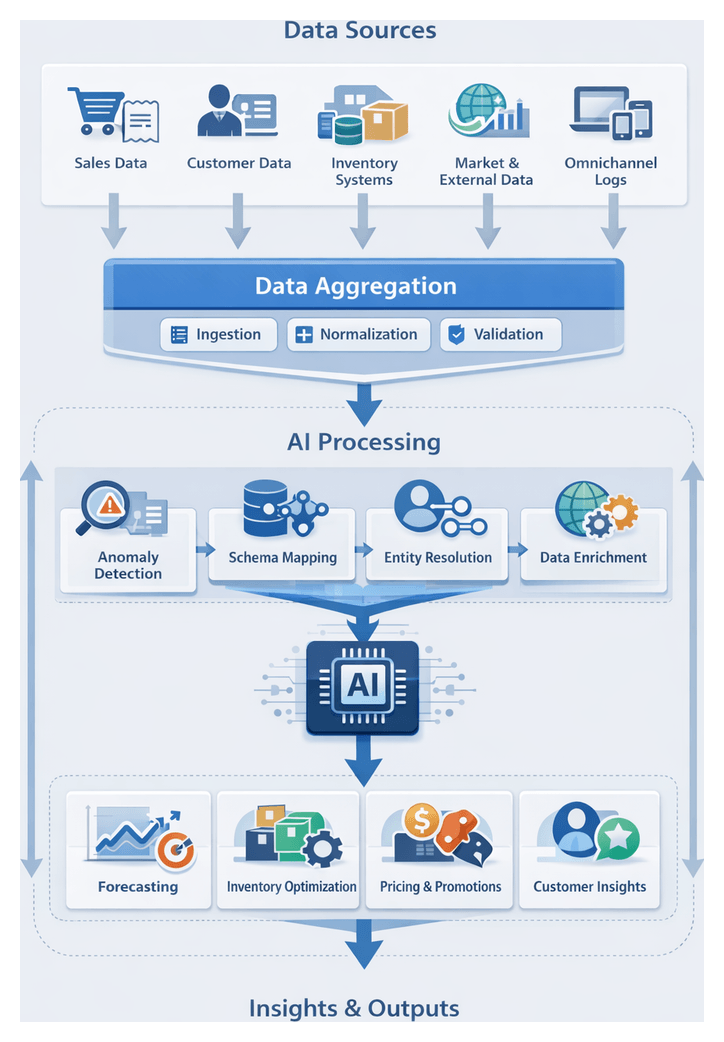

Purpose and Scope of the Data Aggregation Stage

The Data Aggregation stage establishes a unified foundation for all downstream retail analytics, forecasting, and automation workflows. Diverse streams—from point-of-sale transactions to customer loyalty records—are systematically collected, standardized into a common schema, and aligned for real-time accessibility. This centralized repository resolves fragmentation across channels, enforces data governance, and validates completeness and accuracy before feeding AI agents. Without this robust framework, forecasting accuracy, inventory optimization, dynamic pricing, and personalized engagement suffer from inconsistent inputs, undermining decision confidence and customer experiences.

- Standardize heterogeneous inputs—POS, CRM, ERP, external feeds—into a query-ready repository

- Define data ownership, governance roles, and cataloging standards via metadata tools

- Validate ingestion success, schema conformity, and freshness SLAs

- Support real-time and batch feeds to match latency requirements

Core Data Sources and Technical Prerequisites

Retail organizations integrate multiple systems to create a 360° view of operations and customers:

- Transactional Sales: Point-of-sale and e-commerce platforms, including Shopify and Oracle Retail Xstore, must stream purchase events or deliver scheduled extracts.

- Customer Relationship Management: Platforms such as Salesforce CRM supply loyalty tiers, demographics, and engagement histories for personalization and segmentation.

- Inventory and Supply Chain: ERP and warehouse solutions—SAP S/4HANA, Oracle NetSuite—provide stock levels, lead times, safety thresholds, and replenishment rules.

- External Market Indicators: Economic metrics, competitor pricing and local event calendars, delivered via APIs like Quandl (now Nasdaq Data Link) or subscription feeds, augment internal data with context.

- Omnichannel Engagement Logs: Web sessions, mobile interactions, call-center logs and social media touchpoints require event hubs or change data capture for low-latency ingestion.

Key technical conditions include:

- Data governance framework and metadata cataloging—e.g., Collibra

- Compliance with PCI DSS and GDPR/CCPA, supported by secure transfer (TLS), encryption at rest and role-based access

- Provisioned cloud warehouses—Snowflake, Google BigQuery, Azure Synapse Analytics

- API credentials and service accounts for ETL/ELT tools—Fivetran, Talend

- Agreed canonical entity definitions and schema registry for validation

Data Ingestion and Normalization Workflow

The ingestion and normalization workflow bridges raw inputs and the unified platform, ensuring consistency, accuracy, and readiness for AI-driven agents. The process orchestrates extraction, staging, transformation, validation, and loading into curated zones, leveraging both batch and streaming methodologies based on latency needs.

Actors and Infrastructure

Coordination spans source system owners, data engineering teams, integration platforms, streaming infrastructure, storage layers, monitoring services, and AI quality agents. Key tools include:

- ETL/ELT and orchestration: Apache NiFi, Informatica, Azure Data Factory, AWS Glue, Apache Airflow

- Streaming: Apache Kafka, Amazon Kinesis

- Storage: Databricks, Snowflake, Hadoop clusters

- Monitoring: Prometheus, Grafana, cloud-native dashboards

- AI Quality Agents: anomaly detection, schema adherence checks, enrichment triggers

Ingestion Patterns

- Batch Ingestion: Hourly to daily extracts for historical loads, reconciliation and bulk updates, scheduled via orchestrators.

- Streaming Ingestion: Real-time capture of transactions and events using CDC connectors, supporting dynamic pricing and replenishment alerts.

- Hybrid Approaches: Streaming deltas for freshness, full batch extracts off-peak for reconciliation, balancing resource use and completeness.

Workflow Phases

- Source registration in metadata catalog and initial schema harvesting

- Connection setup with encrypted credentials and bulk landing zone extract

- Incremental streaming via Kafka or Kinesis with raw event staging

- Schema evolution handling through registry services and automated updates

- Normalization: canonical model alignment, date/time conversion, unit and currency standardization, enumeration mapping

- Deduplication and record matching using fuzzy and probabilistic algorithms

- Enrichment: geolocation, demographic segments, third-party market trends, AI-inferred attributes

- Validation and quality checks: completeness, referential integrity, range checks, anomaly flagging

- Loading into curated zones in the data lake or warehouse with partitioning and indexing

- Audit logging and lineage tracking for traceability and compliance
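The normalization phase can be illustrated with a minimal sketch. The field names, the exchange-rate table and the channel enumeration below are hypothetical and stand in for whatever the canonical model actually defines; a real pipeline would load them from reference data.

```python
from datetime import datetime, timezone
from decimal import Decimal

# Hypothetical reference tables, hard-coded only for illustration.
FX_TO_USD = {"USD": Decimal("1"), "EUR": Decimal("1.10"), "GBP": Decimal("1.25")}
CHANNEL_MAP = {"web": "ONLINE", "ecom": "ONLINE", "store": "IN_STORE",
               "pos": "IN_STORE", "app": "MOBILE"}


def normalize_record(raw: dict) -> dict:
    """Map one raw sales event onto an assumed canonical shape."""
    # Date/time conversion: parse an ISO 8601 timestamp that carries an
    # explicit UTC offset and convert it to UTC.
    sold_at = datetime.fromisoformat(raw["sold_at"]).astimezone(timezone.utc)
    # Currency standardization: convert to one reporting currency, rounded to cents.
    amount_usd = (Decimal(raw["amount"]) * FX_TO_USD[raw["currency"]]).quantize(Decimal("0.01"))
    # Enumeration mapping: collapse source-specific channel codes.
    channel = CHANNEL_MAP[raw["channel"].strip().lower()]
    return {
        "sku": raw["sku"].strip().upper(),
        "sold_at_utc": sold_at.isoformat(),
        "amount_usd": str(amount_usd),
        "channel": channel,
    }
```

Using `Decimal` rather than floats keeps monetary rounding predictable. Unknown currencies or channel codes raise `KeyError` here, which a pipeline would normally route to the exception path rather than silently default.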

Error Handling and Monitoring

- Retry strategies with exponential backoff for transient failures

- Dead-letter queues for exception routing and steward review

- Real-time dashboards displaying latency, throughput and error metrics

- Alerting via email, Slack or Teams when SLAs are breached or volumes deviate
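A retry loop with exponential backoff and a dead-letter queue might look like the following sketch. The function name, the in-memory list used as the dead-letter queue and the default delays are assumptions chosen for illustration, not part of any specific tool.

```python
import time


def process_with_retry(handler, message, dead_letters,
                       max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call handler(message); retry failures with exponentially growing delays.

    After the final failed attempt the message is appended to `dead_letters`
    (a plain list standing in for a real dead-letter queue) for steward review.
    """
    for attempt in range(max_attempts):
        try:
            handler(message)
            return True
        except Exception as exc:  # a real pipeline would catch only transient errors
            if attempt == max_attempts - 1:
                dead_letters.append(
                    {"message": message, "error": repr(exc), "attempts": max_attempts}
                )
                return False
            # Delays grow as base_delay * 1, 2, 4, ...
            sleep(base_delay * 2 ** attempt)
    return False
```

Passing `sleep` as a parameter keeps the function testable without real waiting. Production variants often add random jitter to the delay so that many failing workers do not retry in lockstep.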

AI-Driven Data Quality and Integration

AI capabilities automate detection and resolution of data inconsistencies, accelerate source onboarding, and enforce continuous governance. Embedded at pre-ingestion, schema mapping, entity resolution, pipeline orchestration, and monitoring stages, AI can reduce manual cleansing by up to 80 percent and helps downstream agents operate on high-fidelity data.

Pre-Ingestion Validation and Anomaly Detection

Supervised and unsupervised models flag unusual patterns—spikes in returns, negative prices, missing IDs—quarantining suspect batches within ETL tools such as Talend and generating alerts for rapid resolution. Core techniques include clustering, time-series reconstruction, auto-encoders, and feedback loops that refine thresholds.
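As a much simpler stand-in for the models named above, the sketch below combines hard rules (missing IDs, negative prices) with a robust z-score on the batch return rate. The field names and the 3.5 cutoff are assumptions; the median-absolute-deviation score replaces the clustering and auto-encoder techniques purely to keep the example short.

```python
from statistics import median


def robust_z(value, history):
    """Modified z-score based on the median absolute deviation (MAD)."""
    med = median(history)
    mad = median(abs(x - med) for x in history)
    if mad == 0:
        return 0.0 if value == med else float("inf")
    return 0.6745 * (value - med) / mad


def inspect_batch(rows, return_rate_history, z_limit=3.5):
    """Return a quarantine decision plus the reasons behind it."""
    issues = []
    for i, row in enumerate(rows):
        if not row.get("transaction_id"):
            issues.append(f"row {i}: missing transaction_id")
        if row.get("price", 0) < 0:
            issues.append(f"row {i}: negative price")
    # Share of rows flagged as returns, compared with earlier batches.
    returns = sum(1 for r in rows if r.get("is_return"))
    rate = returns / len(rows) if rows else 0.0
    if abs(robust_z(rate, return_rate_history)) > z_limit:
        issues.append(f"return rate {rate:.2f} deviates from history")
    return {"quarantine": bool(issues), "issues": issues}
```

A batch with any issue is quarantined as a whole. A feedback loop of the kind described could adjust `z_limit` when stewards repeatedly release batches that were flagged.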

Automated Schema Mapping

Natural language processing and statistical profiling speed schema alignment within metadata catalogs like Collibra. AI suggests field mappings, generates ETL recipes, handles unit conversions and maintains versioned lineage for audit. Semantic similarity algorithms and user-friendly interfaces enable rapid manual overrides when needed.
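The idea of suggested field mappings can be sketched with plain string similarity from the Python standard library. This is a deliberate simplification: character-level matching replaces the semantic similarity models mentioned above, and the field names and the 0.6 threshold are illustrative assumptions.

```python
from difflib import SequenceMatcher


def _norm(name):
    """Lowercase a field name and drop common separators."""
    return name.lower().replace("_", "").replace("-", "").replace(" ", "")


def suggest_mappings(source_fields, canonical_fields, min_score=0.6):
    """Suggest the closest canonical field for each source field.

    Returns {source: (canonical_or_None, score)}; None means no candidate
    reached min_score and a person should map the field manually.
    """
    suggestions = {}
    for src in source_fields:
        scored = [
            (SequenceMatcher(None, _norm(src), _norm(c)).ratio(), c)
            for c in canonical_fields
        ]
        score, best = max(scored)
        suggestions[src] = (best if score >= min_score else None, round(score, 2))
    return suggestions
```

Returning the score alongside each suggestion supports the manual override step: reviewers can sort by score and inspect the weakest matches first.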

Entity Resolution and Enrichment

Graph algorithms and probabilistic matching unify duplicate customer profiles and product identifiers. Real-time enrichment uses APIs and machine learning scrapers to augment records with competitor prices, attribute details, and demographic data. Matching models assign a confidence score to each proposed merge so that low-certainty merges go to manual review, and validation frameworks such as Great Expectations check the merged and enriched records against declared expectations.
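A small sketch of confidence-scored matching follows. Pairwise scores decide whether two profiles merge automatically, go to review, or stay separate, and a union-find structure groups merged profiles into clusters (the connected components of the match graph). The record fields, the 0.7/0.3 weights and both thresholds are assumptions for illustration only.

```python
from difflib import SequenceMatcher
from itertools import combinations


def match_score(a, b):
    """Heuristic similarity in [0, 1] between two customer records."""
    if a["email"] and a["email"].lower() == b["email"].lower():
        return 1.0
    name = SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()
    same_zip = 1.0 if a["zip"] == b["zip"] else 0.0
    return round(0.7 * name + 0.3 * same_zip, 2)


def resolve(records, merge_at=0.9, review_at=0.7):
    """Return (clusters of record indexes, pairs queued for manual review)."""
    parent = list(range(len(records)))

    def find(i):
        # Follow parent links to the cluster root, compressing the path.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    review = []
    for i, j in combinations(range(len(records)), 2):
        score = match_score(records[i], records[j])
        if score >= merge_at:
            parent[find(i)] = find(j)      # confident match: merge clusters
        elif score >= review_at:
            review.append((i, j, score))   # uncertain match: human decides
    clusters = {}
    for i in range(len(records)):
        clusters.setdefault(find(i), []).append(i)
    return sorted(clusters.values()), review
```

Comparing every pair is quadratic in the number of records, so larger datasets usually add a blocking step (for example, only comparing records that share a postal code) before scoring.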

AI-Enhanced Pipeline Orchestration

Workflow engines—AWS Glue, Azure Data Factory—use AI modules to auto-scale compute, parallelize transformations, and prefetch external feeds. Event-driven architectures connect Kafka streams to model serving platforms such as DataRobot and Amazon SageMaker, enabling on-the-fly predictive scoring within data flows.

Continuous Monitoring and Feedback Loops

AI agents track data health indicators—completeness, accuracy, timeliness—and detect drift in source profiles. Automated tickets in governance platforms, root cause analysis via causal inference, and self-healing scripts correct minor mismatches. Feedback from forecasting accuracy, inventory optimization results, and pricing performance refines validation rules and anomaly detectors over time.

Consolidated Data Output and System Interfaces

The unified platform delivers curated data products to AI workflows and business applications via standardized schemas, metadata conventions, and flexible interfaces. This ensures forecasting agents, inventory models, pricing engines, and AI assistants receive timely, reliable inputs through well-defined handoffs.

Key Data Artifacts

- Cleaned transactional tables reconciled across channels

- Master customer profiles with unified identifiers, loyalty tiers, consent metadata

- Normalized inventory and product catalogs featuring SKUs, stock levels, lead times

- Feature store tables with precomputed demand and pricing indicators

- Metadata repository containing schema definitions, lineage and quality scorecards

Interface Patterns

- RESTful APIs for on-demand queries with OAuth 2.0 authentication

- SQL endpoints on Snowflake and Databricks Lakehouse for ad hoc analytics and batch exports

- Real-time event streams via Apache Kafka topics for low-latency updates

- Scheduled exports in CSV, Parquet or ORC to object storage with webhook or email notifications

Handoff and Orchestration

- Completion triggers from orchestration engines launch downstream forecasting and replenishment jobs

- Dependency mapping verifies dataset freshness, schema version and access rights

- Policy enforcement restricts sensitive fields, enforces retention and logs usage

- Notifications to stakeholders via email, Slack or Microsoft Teams upon updates or failures

Governance and Observability

- Lineage tracking across all transformation steps for traceability

- Performance metrics—latency, success rates, response times—monitored with AI anomaly detectors

- Comprehensive audit logs capturing schema changes, access events, orchestration actions

- Feedback loops enabling consumer teams to submit quality tickets and request schema enhancements

Best Practices for Scalable Outputs

- Define SLAs for freshness, accuracy and availability aligned to AI requirements

- Version output schemas and maintain backward compatibility

- Automate regression tests validating content and interface performance

- Adopt consumer-driven contract testing to prevent disruptions

- Decouple transformation logic from delivery layers for independent scaling

Chapter 3: Implementing AI Agents for Demand Forecasting

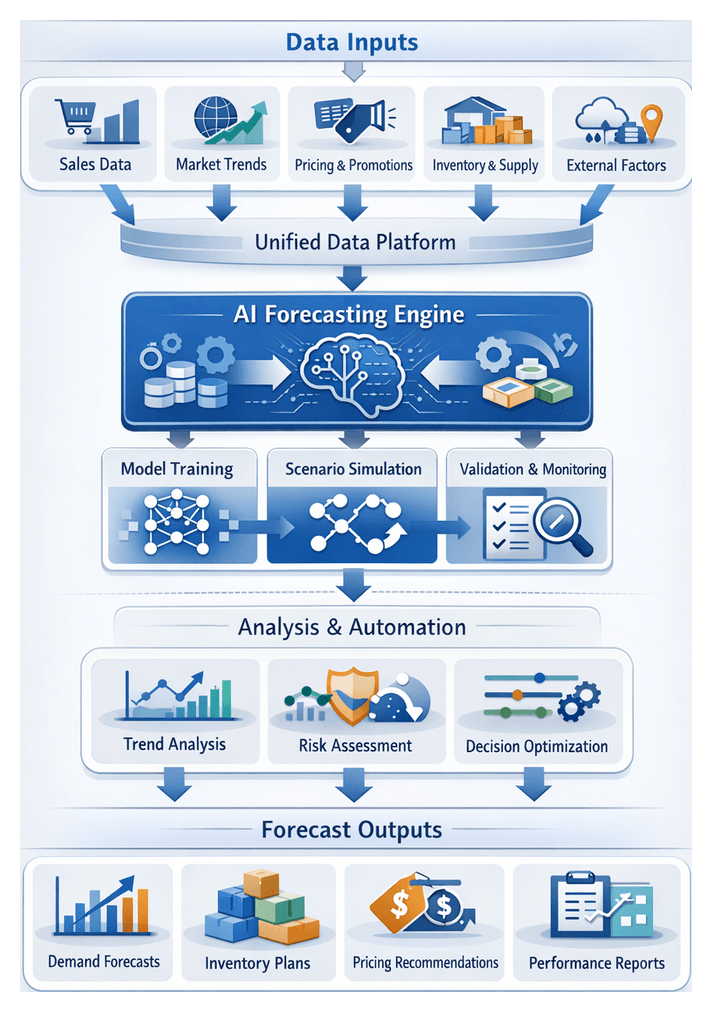

Forecasting Stage Objectives and Data Foundations

Accurate demand forecasting underpins effective inventory management, pricing strategies, promotional planning and workforce allocation in retail. The forecasting stage transforms historical transactions and contextual signals into quantitative and qualitative demand estimates, aligning cross-functional teams around common goals and enabling data-driven decision making. Key objectives include:

- Short-Term Demand Prediction: Daily and weekly sales forecasts to guide replenishment cycles and allocate stock across brick-and-mortar and e-commerce channels.

- Seasonal and Event-Driven Projections: Anticipation of demand fluctuations tied to holidays, promotions, weather events and local market activities.

- New Product Introduction Forecasts: Estimation of sell-through rates for newly launched SKUs using analogous item data and category trends.

- Channel-Level Segmentation: Separate forecasts for in-store, mobile and online channels to tailor inventory and marketing tactics.

- Risk and Uncertainty Quantification: Confidence intervals and probability distributions to support safety-stock calculations and scenario planning.

Deliverables from this stage comprise:

- Point Forecasts and Prediction Intervals for each SKU, store location and time horizon.

- Scenario Analyses under alternative assumptions—promotional spend, competitor actions or supply disruptions.

- Trend and Seasonality Components isolating recurring patterns and long-term shifts.

- Model Performance Reports measuring MAPE, RMSE, bias and service-level adherence.

- Narrative Insights explaining anomalies, emerging trends and potential drivers of demand changes.

Achieving reliable forecasts requires assembling comprehensive data inputs:

- Historical Sales Records: Transaction-level data, returns, promotions and price points.

- Promotional and Marketing Calendars: Campaign schedules, advertising spend and coupon distributions.

- Pricing and Competitive Intelligence: Historical prices, competitor pricing feeds and elasticity parameters.

- Inventory and Supply Chain Signals: On-hand stock levels, lead times, supplier metrics and distribution throughput.

- External Market Indicators: Economic data, weather patterns, local events and social media sentiment.

- Store and Channel Attributes: Footprint, customer demographics, online traffic metrics and conversion rates.

Data quality prerequisites include completeness, consistency, recency, accuracy, traceability and scalability. Organizations must integrate inputs from legacy point-of-sale systems, marketing platforms, ERPs and external feeds into a unified data repository—such as a centralized data lake or warehouse—with automated ETL/ELT pipelines managed by tools like AWS Glue or Apache Airflow. Robust governance frameworks, incorporating data profiling and anomaly detection agents, ensure ongoing adherence to quality standards.

Technical and organizational foundations encompass:

- Compute Infrastructure: GPU-enabled clusters or cloud services such as Amazon SageMaker and Azure Machine Learning for model training and inference.

- Orchestration Layer: Workflow engines such as Apache Airflow or Prefect to coordinate data ingestion, model execution and result delivery.

- API Management: Gateways like Amazon API Gateway or Apigee to expose forecasting services securely to downstream consumers.

- Cross-Functional Collaboration: Clear roles for data engineers, MLOps specialists, data scientists, demand planners and IT operations under a governance framework defining access controls, explainability requirements and retraining cadences.

Model training conditions demand sufficient historical depth (one to two years), aligned temporal granularity, outlier handling processes, engineered features (moving averages, holiday flags, elasticities), cross-validation strategies and scheduled retraining intervals. Establishing quantitative performance criteria—MAPE, RMSE, bias, service-level adherence and lead-time error—is essential for continuous monitoring and trust in automated forecasts.
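The three core accuracy measures can be computed as in the sketch below. Conventions vary between teams; this version defines the error as forecast minus actual, so a positive bias means over-forecasting, and it skips zero-demand periods when computing MAPE because the percentage error is undefined there. The function and key names are illustrative.

```python
from math import sqrt


def forecast_metrics(actual, forecast):
    """Return MAPE (percent), RMSE and bias for two equal-length series."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("series must be non-empty and of equal length")
    errors = [f - a for a, f in zip(actual, forecast)]
    # MAPE is undefined where actual demand is zero, so skip those periods.
    nonzero = [(a, e) for a, e in zip(actual, errors) if a != 0]
    mape = (100 * sum(abs(e) / abs(a) for a, e in nonzero) / len(nonzero)
            if nonzero else float("nan"))
    rmse = sqrt(sum(e * e for e in errors) / len(errors))
    bias = sum(errors) / len(errors)
    return {"mape_pct": mape, "rmse": rmse, "bias": bias}
```

For slow-moving SKUs with many zero-demand days, MAPE becomes unstable, which is one reason to track RMSE and bias alongside it.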

Forecasting Agent Workflow and Orchestration

The forecasting agent workflow operationalizes demand prediction through a coordinated sequence of automated processes and human oversight. Key stages include:

Data Retrieval, Validation and Staging

- Scheduled Extraction: Orchestration jobs query the unified data platform nightly for historical sales, promotions and external indicators.

- Schema Validation: AI-driven data quality agents verify field types, missing values and anomalies, triggering alerts via ticketing or collaboration tools.

- Normalization and Enrichment: Transformation scripts align timestamps, geocode locations and enrich customer segments using clustering models.

- Staging: Clean datasets are deposited into model staging areas accessible through secure API endpoints.

Model Execution Coordination

- Dependency Resolution: The orchestrator ensures data ingestion, quality checks and metadata updates are complete.

- Resource Allocation: Compute instances—from on-prem GPU nodes to cloud platforms—are provisioned based on model complexity and data volume.

- Task Dispatch: Containerized model runs receive configuration parameters—training window, seasonal adjustments and feature sets.

- Parallel Scenario Simulation: Concurrent executions produce baseline forecasts, promotional uplift analyses and real-world event simulations.

Training Pipeline and Version Management

- Configuration Retrieval: Hyperparameters and feature definitions are managed in registries like MLflow or Git repositories.

- Data Sampling: Modules select training, validation and test sets to balance recent trends with historical variability.

- Model Training: Forecasting algorithms such as ARIMA, LSTM networks or gradient-boosted trees train on prepared data and log performance metrics.

- Validation and Selection: Automated services compare candidate models, promoting the best performer to inference and archiving others for lineage.

- Artifact Storage: Serialized pipelines and transformation scripts are stored in artifact repositories with version tags linking code and data.

Forecast Generation and Quality Assurance

- Threshold Validation: Automated checks compare forecasts against historical bounds and business rules, flagging extremes.

- Residual Analysis: Scripts compute residual distributions to detect biases or mismatches.

- Human-in-the-Loop Review: Demand planners receive alerts for manual investigation and adjustments.

- Publishing: Approved forecasts are committed to forecast databases and exposed via APIs to inventory and pricing systems.
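Threshold validation can be as simple as the sketch below, which flags forecasts that are negative, lack history, or fall outside a tolerance band around the historical minimum and maximum. The 50 percent tolerance and the data shapes are assumptions; real business rules would usually vary by category.

```python
def flag_extremes(forecasts, history, tolerance=0.5):
    """Return (sku, reason) pairs for forecasts that need planner review.

    forecasts: {sku: forecast value}; history: {sku: list of past values}.
    """
    flagged = []
    for sku, value in forecasts.items():
        past = history.get(sku)
        if not past:
            flagged.append((sku, "no history"))
            continue
        # Widen the historical range by the tolerance on both sides.
        low = min(past) * (1 - tolerance)
        high = max(past) * (1 + tolerance)
        if value < 0:
            flagged.append((sku, "negative forecast"))
        elif not low <= value <= high:
            flagged.append((sku, f"outside [{low:.1f}, {high:.1f}]"))
    return flagged
```

Anything returned by this check would go to the human-in-the-loop review rather than being published automatically.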

Error Handling, Monitoring and Feedback Loops

- Centralized Logging: Workflow logs are aggregated into platforms like Datadog or the ELK Stack.

- Alert Rules and Automatic Retries: Monitoring agents track failures and data latency, triggering retries or notifications.

- Performance Monitoring: Real-time sales data is compared against forecasts, with deviations recorded for root-cause analysis.

- Adaptive Retraining: Workflows initiate retraining when error thresholds are breached, incorporating new ground-truth data.

- Governance Reviews: Periodic stakeholder meetings assess performance metrics, feature efficacy and process improvements.
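One way to express an adaptive retraining trigger is a rolling error monitor, sketched below. The class name, the seven-observation window and the 20 percent threshold are hypothetical; the appropriate values depend on the forecast horizon and on how costly retraining is.

```python
from collections import deque


class RetrainMonitor:
    """Track a rolling mean absolute percentage error and signal retraining."""

    def __init__(self, window=7, mape_threshold_pct=20.0):
        self.errors = deque(maxlen=window)  # oldest entries drop off automatically
        self.threshold = mape_threshold_pct

    def observe(self, actual, forecast):
        """Record one actual/forecast pair; return True when retraining is due."""
        if actual != 0:  # percentage error is undefined for zero demand
            self.errors.append(abs(forecast - actual) / abs(actual) * 100)
        window_full = len(self.errors) == self.errors.maxlen
        return window_full and sum(self.errors) / len(self.errors) > self.threshold
```

Waiting for a full window before signalling avoids retraining on one or two noisy days. An orchestrator could call `observe` as each day's sales arrive and launch the training pipeline when it returns `True`.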

Roles and Responsibilities

- Data Engineers: Build and maintain data pipelines, enforce quality standards and manage schemas.

- MLOps Specialists: Oversee orchestration services, container environments and artifact repositories.

- Data Scientists: Develop forecasting experiments, select algorithms and validate performance.

- Demand Planners: Review forecasts, conduct scenario analyses and provide feedback for model refinement.

- IT Operations: Monitor infrastructure health, ensure security compliance and resolve capacity issues.

Core AI Components in the Retail Sales Workflow

A harmonized retail AI ecosystem integrates forecasting agents with inventory optimization models, dynamic pricing engines and AI-powered sales assistants, all supported by unified data platforms and orchestration layers. Key components include:

Demand Forecasting Agents

These agents fuse point-of-sale data, ERP records and external feeds to generate probabilistic demand predictions using time-series models like ARIMA, LSTM networks and Prophet. They detect anomalies, run scenario simulations and self-calibrate as new data arrives by leveraging platforms such as Amazon Forecast and Google Vertex AI.

Inventory Optimization Models

These models convert forecasts into replenishment quantities and safety-stock levels across multi-echelon supply networks. They adjust dynamically for demand variability and lead-time uncertainty, prioritize channel allocations, and trigger real-time reorder events. Cloud-native solutions include the Blue Yonder Luminate Platform and Oracle Inventory Management.

Dynamic Pricing Engines

Leveraging real-time inventory positions, competitor prices and demand elasticity models, pricing engines recommend optimal price points and discount strategies. They incorporate competitive intelligence, promotion lift analyses and business guardrails, delivering updates to e-commerce sites and POS systems. Platforms such as Pricefx and SAP CPQ automate price workflows with audit trails and version control.

AI-Powered Sales Assistants

Embedded in mobile apps and CRM platforms, AI assistants analyze customer profiles, purchase history and real-time inventory to suggest personalized recommendations, upsell prompts and stock availability alerts. Conversational interfaces powered by natural language processing streamline product discovery. Retailers leverage Salesforce Einstein and Microsoft Copilot Studio (formerly Power Virtual Agents) to enable data-driven customer interactions.

Supporting Infrastructure and Orchestration

- Unified Data Platforms aggregating POS records, customer data and external feeds into a single source of truth.

- Event Streaming and ETL Pipelines using Confluent, Apache Kafka and AWS Glue.

- Workflow Engines such as Apache Airflow or Prefect for scheduling and dependency management.

- API Management for secure access via gateways like Amazon API Gateway.

- Monitoring and Logging with platforms such as Datadog to track performance and system health.

By encapsulating each AI capability behind microservices and orchestrating tasks through event-driven architectures, retailers achieve end-to-end visibility, modular scalability and rapid innovation. Real-time feedback loops ensure actual sales and customer responses continuously refine forecasting and pricing models.

Forecast Outputs, Integration and Governance

The forecasting stage produces structured outputs and integration points that feed downstream systems in inventory, pricing, supply chain and sales enablement.

Outputs and Dependencies

- Quantitative Forecasts: Point estimates, prediction intervals, scenario series and decomposed trend components.

- Qualitative Reports: Accuracy metrics (MAPE, RMSE), anomaly explanations and trend narratives.

- Downstream Consumers: Inventory optimizers, pricing engines, merchandising dashboards, ERP replenishment systems and AI sales assistants.

Integration Mechanisms

- Scheduled Pipelines: ETL jobs managed by Azure Data Factory or Apache Airflow deliver forecasts to data warehouses.

- RESTful APIs: On-demand query endpoints secured by API gateways return JSON payloads with forecast details.

- Event-Driven Messaging: Forecast updates published to queues and topics via Amazon SQS, Amazon Kinesis or Apache Kafka.

- Data Streaming: Platforms like Apache Flink process sub-hourly inputs and emit incremental forecast deltas.

Governance and Version Control

- Model Registry Integration: Cataloging versions, hyperparameters and training datasets in registries like MLflow.

- Output Tagging: Embedding model version and timestamp in each record for traceability.

- Monitoring Dashboards: Tracking model drift, input distribution changes and performance alerts.

Best Practices and Quality Assurance

- Standardize Forecast Schemas with fields for SKU, location, timestamp, point estimate and prediction bounds.

- Implement End-to-End Monitoring of forecast latency and consumption with alerting for failures.

- Enforce Contract Testing to validate downstream parsing of forecast payloads.

- Document Data Contracts and Refresh Cadences for each dependent system.

- Align Model Retraining Schedules with Inventory and Pricing Planning Cycles.
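A standardized forecast record with the fields listed above, plus the model version and timestamp used for output tagging, might be sketched as follows. The class, field names and validation rules are assumptions rather than a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ForecastRecord:
    sku: str
    location: str
    forecast_date: str   # ISO 8601 date the forecast applies to
    point_estimate: float
    lower_bound: float
    upper_bound: float
    model_version: str   # output tagging for traceability
    generated_at: str    # ISO 8601 timestamp of the model run

    def __post_init__(self):
        # Reject records that downstream consumers could not interpret.
        if not self.lower_bound <= self.point_estimate <= self.upper_bound:
            raise ValueError("point estimate must lie within the prediction bounds")
        if self.lower_bound < 0:
            raise ValueError("demand bounds cannot be negative")


def to_payload(record):
    """Serialize a record to JSON with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)
```

A contract test on the consumer side can then parse `to_payload` output and assert that every expected key is present, which is the kind of check the contract-testing practice above calls for.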

Example Integration Scenario

- Daily forecast files are exported to an Amazon S3 bucket, from which Snowflake also loads them, and each new object triggers an S3 event notification.

- An AWS Lambda function publishes messages to an SQS queue named “DailyForecastUpdates”.

- The Blue Yonder Luminate Platform ingests updates to recalibrate safety stocks and generate replenishment proposals.

- Simultaneously, the pricing engine queries the forecasting REST API ahead of the weekly markdown cycle.

- A Tableau dashboard retrieves forecast data via JDBC for regional planning meetings.
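The Lambda step in this scenario can be sketched as below. The code reads the standard S3 event notification structure and builds one message per new object; the sender is injected so the example runs without AWS credentials. The `make_handler` factory and the message fields are assumptions, not a prescribed design.

```python
import json
from urllib.parse import unquote_plus

QUEUE_NAME = "DailyForecastUpdates"  # queue name taken from the scenario


def build_messages(s3_event):
    """Turn an S3 event notification into a list of JSON message bodies."""
    messages = []
    for record in s3_event.get("Records", []):
        s3 = record["s3"]
        messages.append(json.dumps({
            "bucket": s3["bucket"]["name"],
            # Object keys arrive URL-encoded in S3 notifications.
            "key": unquote_plus(s3["object"]["key"]),
            "event_time": record.get("eventTime"),
        }))
    return messages


def make_handler(send):
    """Build a Lambda-style handler; `send(queue_name, body)` is injected."""
    def handler(event, context):
        bodies = build_messages(event)
        for body in bodies:
            send(QUEUE_NAME, body)
        return {"published": len(bodies)}
    return handler
```

In a deployment, `send` would typically wrap an SQS client call such as boto3's `send_message`, resolving the queue URL from the queue name once at start-up.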

Continuous Feedback and Improvement

- Automated Sales Data Ingestion routes POS and depletion data back into the forecasting pipeline for retraining.

- Error Attribution modules correlate forecast accuracy with business outcomes like promotional uplift and stockout rates.

- Adaptive Retraining Triggers launch model updates when error thresholds are exceeded.

By ensuring rigorous data governance, defined integration patterns and closed-loop feedback mechanisms, the forecasting stage becomes the linchpin of an AI-orchestrated retail workflow—enabling synchronized, data-driven decisions across inventory, pricing and sales operations.

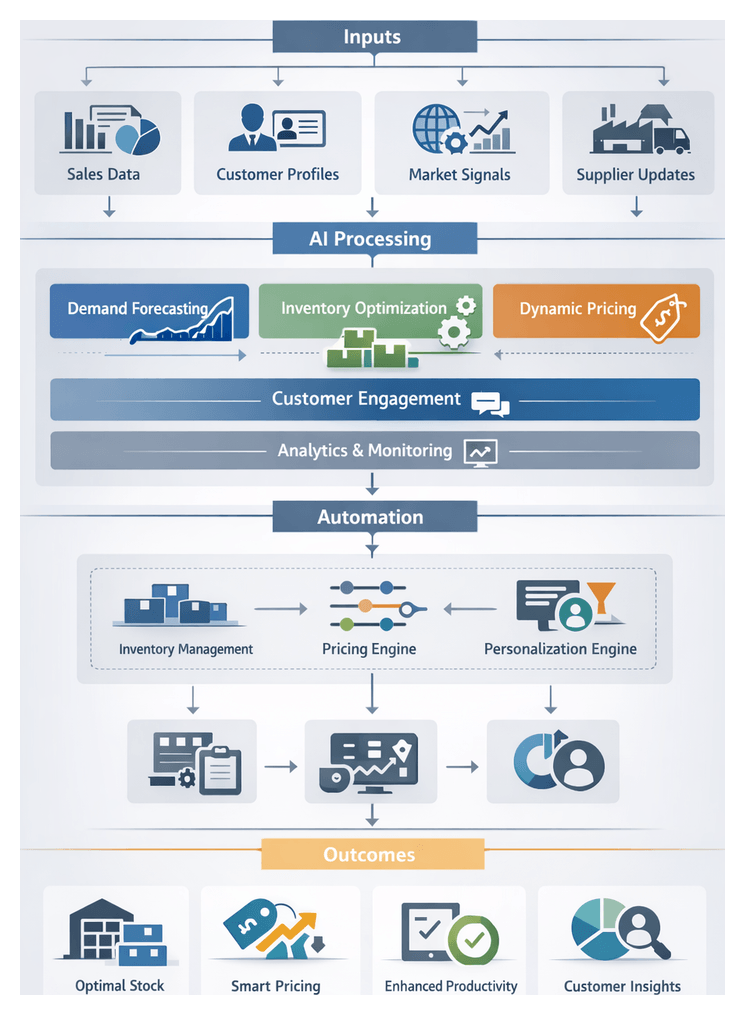

Chapter 4: Integrating AI Agents into Inventory Workflows

Purpose and Significance of Inventory Optimization

Inventory optimization is the pivotal stage where demand forecasts, operational constraints, and business objectives converge to guide stock distribution and replenishment decisions. In a modern retail environment marked by diverse sales channels, fluctuating consumer demand, and complex supply networks, manual inventory management falls short. AI-driven optimization ensures that each product is available at the right location, in the right quantity, at the right time, while minimizing carrying costs and mitigating stockout risks.

By translating predictive insights into actionable allocation plans and replenishment orders, the system dynamically adjusts stock levels across warehouses, distribution centers, and retail outlets. This proactive approach supports consistent customer experiences, reduces overstock and obsolescence, and aligns inventory investments with expected sales performance, ultimately protecting margins and enhancing operational resilience.

- Balance service levels and carrying costs with optimal stock buffers across channels

- Leverage demand forecasts to trigger timely replenishment and prevent stockouts

- Dynamically allocate inventory by channel priority and regional demand

- Support promotions and seasonal spikes with scalable safety stock strategies

- Respond rapidly to disruptions through continuous re-optimization

Prerequisites and System Integration

Data Readiness

- Demand Forecast Data: Granular forecasts by SKU, location, and time interval, adjusted for promotions and seasonality, validated for completeness.

- Current Inventory Records: Real-time visibility into on-hand stock across all nodes, with frequent synchronization to reflect receipts, transfers, and sales.

- Supply Lead Times: Supplier performance and transit metrics, including variability, to anticipate replenishment delays.

- Product Hierarchies and Attributes: Structured taxonomy of product families, SKUs, and variants, including perishability, size, and seasonality.

- Cost and Budget Parameters: Carrying cost rates, ordering costs, and budget ceilings for alignment with margin targets and working capital constraints.

- Service Level Targets: Defined fill-rate goals by channel or region to balance availability and cost efficiency.

System Integration and Governance

- Unified Data Platform Connectivity: APIs or messaging frameworks deliver forecast updates, inventory snapshots, and transactional events in near real time.

- Order Management Interface: Automated handoffs of replenishment recommendations into procurement or ERP systems.

- Alerting and Exception Handling: Integration with notification services and operational dashboards for timely issue resolution.

- Security and Access Controls: Role-based permissions, authentication, and encryption to protect data integrity and governance.

- Cross-Functional Collaboration: Defined decision rights and policies established by merchandising, supply chain, and finance teams.

- Performance Monitoring Framework: Dashboards tracking inventory turnover, stockout rates, and forecast bias, with AI-driven deviation detection.

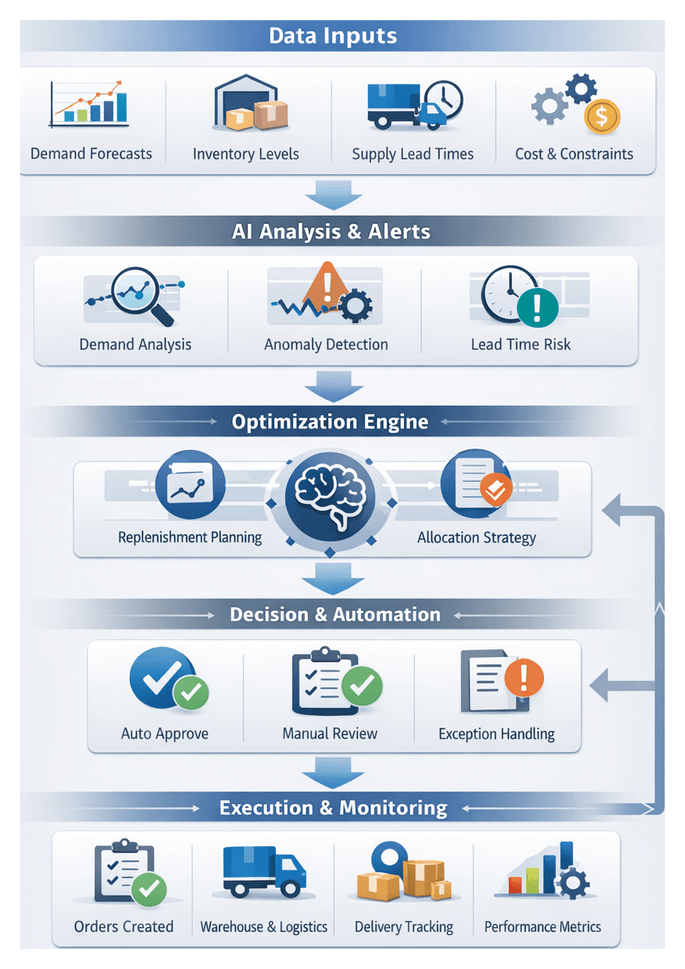

AI-Driven Replenishment and Allocation Workflow

Step 1: Continuous Inventory Monitoring

Real-time telemetry is ingested from point-of-sale transactions, IoT shelf sensors, inbound shipment notices, and external market signals. A streaming platform—such as Apache Kafka or Amazon Kinesis—normalizes events into a unified store. An AI data orchestration service enriches this data with item categorization, location hierarchies, and stock health metrics.

Step 2: Trigger Generation and Anomaly Detection

An AI agent analyzes stock levels against thresholds and forecasted demand using time-series anomaly detection. Alerts may include:

- Threshold-Based Alerts when inventory falls below safety stock

- Anomaly Alerts identifying unexpected consumption spikes or drops

- Lead-Time Risk Detection flagging potential supplier delays

Alerts enter an orchestration layer queue with metadata on trigger type, confidence score, and urgency.
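A threshold-based trigger can be sketched with a common textbook safety-stock formula, which assumes that daily demand and lead time are independent and roughly normally distributed. That formula, the parameter names and the urgency rule are assumptions introduced here; the confidence score is omitted because a deterministic threshold rule has none.

```python
from math import sqrt
from statistics import NormalDist


def safety_stock(service_level, mean_daily_demand, sd_daily_demand,
                 mean_lead_days, sd_lead_days):
    """Buffer stock covering demand and lead-time variability."""
    z = NormalDist().inv_cdf(service_level)  # e.g. about 1.645 for 95 percent
    return z * sqrt(mean_lead_days * sd_daily_demand ** 2
                    + (mean_daily_demand * sd_lead_days) ** 2)


def threshold_alert(sku, on_hand, on_order, mean_daily_demand, sd_daily_demand,
                    mean_lead_days, sd_lead_days, service_level=0.95):
    """Return an alert dict when the inventory position is below the reorder point."""
    buffer = safety_stock(service_level, mean_daily_demand, sd_daily_demand,
                          mean_lead_days, sd_lead_days)
    reorder_point = mean_daily_demand * mean_lead_days + buffer
    if on_hand + on_order >= reorder_point:
        return None
    return {
        "sku": sku,
        "trigger_type": "threshold",
        # Stock already below the buffer itself is treated as urgent.
        "urgency": "high" if on_hand < buffer else "normal",
        "safety_stock": round(buffer, 1),
        "reorder_point": round(reorder_point, 1),
    }
```

With a mean demand of 20 units per day (standard deviation 5) and a four-day lead time (standard deviation 1 day), a 95 percent service level gives a buffer of roughly 37 units and a reorder point near 117.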

Step 3: Replenishment Recommendation Generation

The orchestration layer invokes an optimization model with inputs such as demand forecasts, carrying costs, service level targets, and supplier constraints. AI-driven engines—such as Relex Solutions or Blue Yonder Luminate Planning—run constrained linear programs or heuristics to produce recommendations including order quantities, supplier selection, and delivery dates. Each suggestion receives a priority score for automated or manual processing.

Step 4: Approval and Exception Handling

Recommendations are classified by a rules engine:

- Auto-Approved: Within predefined cost and budget limits

- Manager Review Required: Exceed thresholds or involve new suppliers

- Exception Escalation: Data anomalies or conflicting signals

Notifications via Slack or Microsoft Teams and procurement portals present context, visualizations, and override options. Approved items progress to order creation.
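The three-way classification can be expressed as a small rule function. The field names, the cost limit and the supplier list below are hypothetical; an actual rules engine would load them from policy configuration.

```python
def classify(rec, auto_limit=5000.0, known_suppliers=frozenset()):
    """Route one replenishment recommendation to an approval path."""
    # Data problems take precedence over cost checks.
    if rec.get("data_anomaly") or rec["quantity"] <= 0:
        return "exception_escalation"
    cost = rec["quantity"] * rec["unit_cost"]
    if cost > auto_limit or rec["supplier"] not in known_suppliers:
        return "manager_review"
    return "auto_approved"
```

Checking for anomalies first means a recommendation built on suspect data is never auto-approved, even when its cost is small.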

Step 5: Order Creation and Execution Tracking

Approved recommendations transform into purchase orders or internal transfers. Systems involved include:

- Order Management System, which transmits purchase orders as EDI 850 documents or API calls

- Warehouse Management System for transfer tasks

- Enterprise Resource Planning for financial postings

Each API call returns an acknowledgment, and integration failures are captured for automated retries.

Step 6: Execution Monitoring and Confirmation

An AI monitoring agent tracks supplier acknowledgments, shipment statuses, and goods receipt validations. Discrepancies trigger corrective actions such as back-orders or credit memos. Execution metrics feed back into performance dashboards.

AI Functions in Stock Allocation

Integrated within the replenishment workflow, specialized AI functions optimize multi-echelon stock distribution:

- Demand Sensing and Real-Time Adjustment: Refines forecasts with point-of-sale, promotions, weather, and social media data; triggers rapid stock re-allocation.

- Predictive Allocation Modeling: Uses machine learning to estimate demand probabilities by SKU-location, incorporating seasonality and local factors.

- Optimization Engine: Solves mixed-integer programs or network flow models to maximize service levels and minimize total cost, subject to capacity and lead-time constraints.

- Scenario Simulation and What-If Analysis: Enables planners to test safety stock, lead-time, or promotion impacts and evaluate cost-service trade-offs.

- Reinforcement Learning and Adaptive Policies: Learns from outcomes to refine allocation strategies over time, optimizing for long-term KPIs.

- Exception Detection and Alerting: Identifies anomalous allocation patterns and delivers alerts for timely intervention.

Platforms such as Blue Yonder Luminate, Oracle Retail Inventory Optimization, and SAS Inventory Optimization embed these AI functions within comprehensive supply chain suites. Integration with ERP, WMS, OMS, TMS, POS, and third-party data feeds forms a coordinated orchestration layer that automates allocation execution and feedback loops.

Alerting and Downstream Handoffs

Alert Outputs and Formats

The alerting stage transforms analytic insights into actionable notifications:

- Low-Stock Alerts with SKU, location, projected run-out, and reorder suggestions

- Overstock Warnings with markdown or promotional recommendations

- Expiration Notices for perishable goods needing repricing or redistribution

- Allocation Imbalance Notifications recommending transfers

- Replenishment Execution Confirmations with order references and delivery windows

Dependencies and Integration Patterns

- Order Management Systems via RESTful APIs for automatic order creation—e.g., Blue Yonder Luminate Platform

- Warehouse Management Systems consuming imbalance notices—e.g., Relex Solutions

- Merchandising Tools for dynamic repricing

- Store Execution Apps delivering push alerts—e.g., Infor Coleman

- Analytics Dashboards subscribing to event streams—e.g., IBM Watson Supply Chain

Integration employs patterns such as event-driven messaging, webhook callbacks, scheduled polling, file-based transfers, and push notifications to ensure reliable delivery and context-rich payloads.

Monitoring, Acknowledgement, and Best Practices

Robust handoffs require end-to-end observability and governance:

- Delivery Status Dashboards using Prometheus or Grafana

- Acknowledgement callbacks and error retry policies with exponential backoff

- Security via encryption, API keys, and role-based access

- Idempotence and deduplication to prevent duplicate actions

- Schema versioning for backward compatibility

- Business escalations through PagerDuty for unacknowledged critical alerts
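Idempotent delivery with deduplication can be sketched as follows. The choice of fields that make up the idempotency key and the in-memory set of seen keys are assumptions; a production system would keep the keys in a shared store with an expiry.

```python
import hashlib
import json


class AlertDispatcher:
    """Deliver each logically distinct alert at most once."""

    KEY_FIELDS = ("type", "sku", "location", "business_date")

    def __init__(self, deliver):
        self._deliver = deliver   # callable that performs the real delivery
        self._seen = set()        # idempotency keys already delivered

    @classmethod
    def idempotency_key(cls, alert):
        """Hash the fields that define whether two alerts are the same."""
        canonical = json.dumps({k: alert[k] for k in cls.KEY_FIELDS}, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def dispatch(self, alert):
        """Return True if delivered, False if suppressed as a duplicate."""
        key = self.idempotency_key(alert)
        if key in self._seen:
            return False
        self._deliver(alert)
        # Record the key only after delivery succeeds so failures can be retried.
        self._seen.add(key)
        return True
```

Because the key ignores volatile fields such as the message text, a retried or replayed event for the same SKU, location and day does not create a second order or notification.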

By combining comprehensive alert outputs, precise integration patterns, and rigorous monitoring, the inventory optimization workflow ensures that AI-driven decisions flow seamlessly into operational systems. This end-to-end orchestration maintains alignment between real-time demand signals and inventory positions, driving superior service levels while controlling costs.

Chapter 5: Automating Pricing and Promotion Strategies

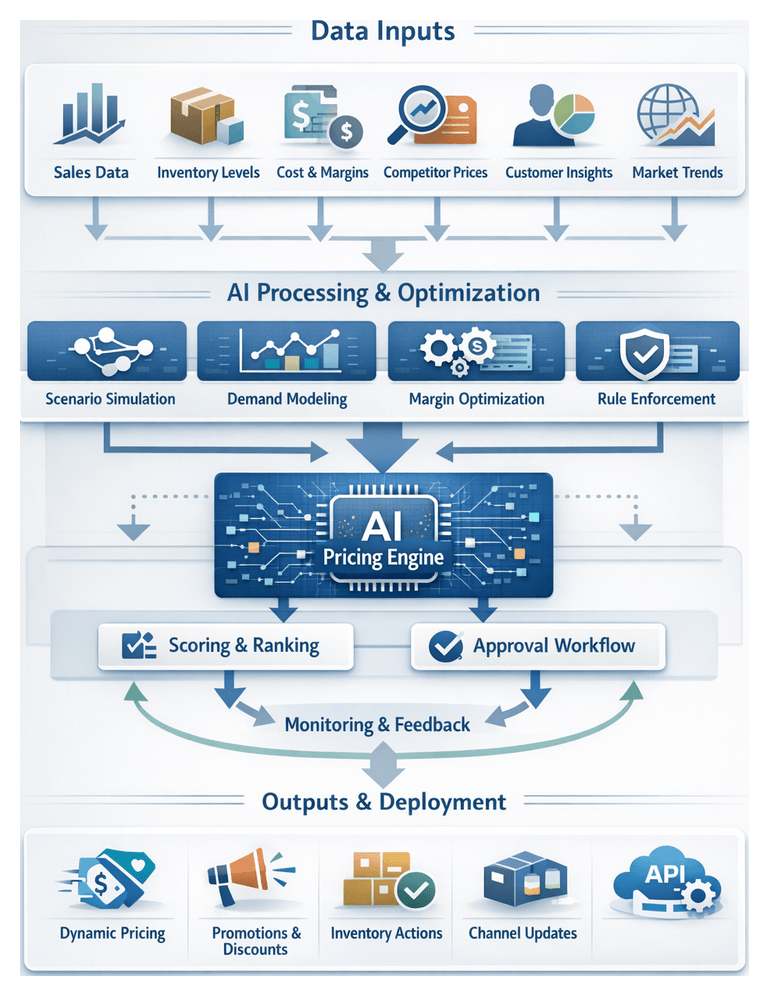

Pricing Strategy Goals and Data Foundations

Effective AI-driven pricing begins with clearly defined objectives and rigorous data prerequisites. At this stage, retailers translate high-level goals—such as target gross margin, market share expansion or inventory clearance—into quantifiable parameters for dynamic price adjustments and promotions. By aligning on numeric targets and acceptable price movement bounds, organizations ensure AI models prioritize among potentially conflicting objectives, balancing revenue growth, margin protection and customer satisfaction.

Key strategic goals include:

- Accelerating inventory turnover through optimized markdown timing and severity

- Managing customer perception by avoiding erratic price swings

- Maintaining competitive responsiveness in fast-moving segments

- Protecting profit during peak demand with surge pricing or premium assignments

To support these goals, the following data inputs must meet standards for accuracy, timeliness and completeness:

- Historical Sales and Transactions: SKU-level volumes, revenue by period, promotional lift metrics. Prerequisite: at least one full seasonal cycle retained for seasonality modeling.

- Inventory and Fulfillment: Real-time stock across distribution centers, stores and e-commerce; lead times; replenishment schedules. Prerequisite: unified ERP, WMS and POS integration.

- Cost and Margin Structures: Unit and landed costs, variable overheads, gross margin targets. Prerequisite: centralized cost repository with version control.

- Competitive Intelligence: Live feeds or daily competitor price snapshots, market basket substitution patterns. Prerequisite: integration with external providers or web-scraping services.

- Customer Segmentation and Behavior: Purchase frequency, average order value, channel preference; price sensitivity models. Prerequisite: CRM with unified identifiers and consent management.

- Promotion Calendars and Campaign Plans: Scheduled events, discount tiers, channel assignments; approval workflows. Prerequisite: marketing operations system feeding the pricing engine.

- Macroeconomic and Market Indicators: Consumer confidence indices, CPI trends, weather patterns, local events. Prerequisite: automated ingestion pipelines for third-party feeds.

- Channel Performance Metrics: Online conversion rates, cart abandonment, store footfall, fulfillment cost variances. Prerequisite: web analytics and in-store sensor data integration.

Data must be refreshed at defined cadences—sales and inventory updates every 15 minutes, competitive feeds hourly—and pass quality checks such as schema validation and anomaly detection. Pricing adjustments adhere to corporate policies, MAP agreements and regional regulations encoded in a policy engine. Robust APIs connect the unified data platform, approval workflows and POS or e-commerce systems, while audit logs capture decision lineage for compliance.
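The refresh cadences can be enforced with a simple staleness check before any pricing run. The feed names and the function are illustrative assumptions; the limits mirror the 15-minute and hourly cadences stated above.

```python
from datetime import datetime, timedelta, timezone

# Maximum acceptable age per feed, mirroring the stated cadences.
MAX_AGE = {
    "sales": timedelta(minutes=15),
    "inventory": timedelta(minutes=15),
    "competitor_prices": timedelta(hours=1),
}


def stale_feeds(last_refreshed, now):
    """Return the feeds that are missing or older than their allowed age."""
    return sorted(
        feed for feed, limit in MAX_AGE.items()
        if feed not in last_refreshed or now - last_refreshed[feed] > limit
    )
```

An orchestrator could pause price recommendations whenever this function returns a non-empty list, which matches the practice of halting workflows on data quality alerts.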

Leading AI platforms power these capabilities. For model training and inference, retailers can use services such as Google Vertex AI, while end-to-end dynamic pricing can be orchestrated by a dedicated pricing platform. Evaluating each tool’s data ingestion, explainability and real-time deployment features helps ensure alignment with business roadmaps.

Orchestration of Pricing and Promotions

A centralized orchestration layer coordinates data integration, model execution, approvals and deployment sequencing. It ingests demand forecasts, inventory positions and competitive intelligence, normalizes units and currencies, and flags anomalies through exception handlers before modeling begins.

The algorithmic recommendation loop iterates as follows:

- Scenario Simulation: Reinforcement learning agents explore price and promotional conditions against simulated customer responses.

- Elasticity Modeling: Supervised models estimate demand sensitivity using historical response patterns and segment behaviors.

- Margin Optimization: Mixed-integer programming integrates cost inputs and margin targets to enforce financial policies.

- Constraint Enforcement: Business rules—price floors, channel restrictions, tax regulations—filter invalid proposals.

- Scoring and Ranking: Candidate prices and bundles are scored on revenue lift, conversion probability and inventory impact.
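The last two steps of the loop, constraint enforcement followed by scoring and ranking, can be sketched as below. The `Candidate` fields, the `WEIGHTS` values and the two rules shown (a price floor and a minimum margin) are illustrative assumptions; a real policy engine would carry many more rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    sku: str
    price: float
    unit_cost: float
    revenue_lift: float      # expected relative revenue change, e.g. 0.05
    conversion_prob: float   # estimated probability in [0, 1]
    inventory_impact: float  # normalized sell-through benefit in [0, 1]


# Hypothetical weights; in practice these would be set by the business.
WEIGHTS = {"revenue_lift": 0.5, "conversion_prob": 0.3, "inventory_impact": 0.2}


def passes_rules(c: Candidate, price_floor: float, min_margin: float) -> bool:
    """Constraint enforcement: reject prices below the floor or margin target."""
    if c.price <= 0:
        return False
    margin = (c.price - c.unit_cost) / c.price
    return c.price >= price_floor and margin >= min_margin


def score(c: Candidate) -> float:
    """Weighted sum of the three scoring dimensions named in the text."""
    return (WEIGHTS["revenue_lift"] * c.revenue_lift
            + WEIGHTS["conversion_prob"] * c.conversion_prob
            + WEIGHTS["inventory_impact"] * c.inventory_impact)


def rank(candidates, price_floor: float, min_margin: float):
    """Filter invalid proposals, then order the rest from best to worst."""
    valid = [c for c in candidates if passes_rules(c, price_floor, min_margin)]
    return sorted(valid, key=score, reverse=True)
```

Filtering before scoring keeps invalid proposals out of the ranking entirely, so a high-scoring candidate can never displace a compliant one.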

The top-scoring recommendations enter the approval workflow, where merchandising, finance, legal and marketing teams validate, in sequence and respectively, brand alignment, budget compliance, regulatory constraints and scheduling. Automated notifications and escalation policies maintain momentum, rerouting tasks to backups when necessary.

Upon approval, the orchestration layer dispatches updates across channels:

- E-commerce platforms via REST APIs

- Point-of-sale systems through secure message queues

- Digital signage connectors and mobile app push notifications

- Partner portals via SFTP or secure API integrations

Each endpoint confirms receipt; failures trigger rollback procedures or alert support teams. Exception handling routines automatically revert price floor violations, suspend promotions on insufficient stock, pause workflows on data quality alerts and enable manual overrides for senior managers. Periodic governance reports highlight exception trends and guide policy refinements.
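The confirm-or-roll-back behavior can be illustrated with a small sketch. The `Sender` callables stand in for real REST clients, queue publishers or SFTP uploads, and the rollback policy shown (revert every channel already updated) is one assumption among several reasonable choices.

```python
from typing import Callable

# A sender takes (sku, price) and returns True when the endpoint acknowledges
# receipt. Real senders would wrap REST calls, queue publishes and so on.
Sender = Callable[[str, float], bool]


def dispatch_price(sku: str, new_price: float, old_price: float,
                   channels: dict[str, Sender]) -> dict:
    """Push a price to every channel; revert confirmed channels on failure."""
    confirmed = []
    for name, send in channels.items():
        try:
            acknowledged = send(sku, new_price)
        except Exception:
            acknowledged = False
        if not acknowledged:
            # Roll back the channels that already accepted the new price.
            for done in confirmed:
                channels[done](sku, old_price)
            return {"status": "rolled_back", "failed_channel": name,
                    "reverted": confirmed}
        confirmed.append(name)
    return {"status": "deployed", "confirmed": confirmed}
```

A production version would also alert the support team when the rollback itself fails, which this sketch does not handle.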

AI Models and Supporting Infrastructure

Dynamic pricing combines supervised learning, reinforcement learning and optimization techniques. Regression and tree-based algorithms predict price elasticity, while frameworks like TF-Agents (TensorFlow Agents) and Ray RLlib train reinforcement learning policies that balance sales volume and margin objectives. Commercial pricing platforms such as PROS and Pricefx address constrained pricing problems, which are typically formulated as mixed-integer or convex optimization programs.
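As a simple illustration of the regression approach, price elasticity can be estimated as the slope of a log-log fit of quantity against price. The sketch below uses ordinary least squares on a single SKU with synthetic data; it is a deliberately simplified stand-in for the segment-aware supervised models described here, and the function name is hypothetical.

```python
import math


def estimate_elasticity(prices, quantities):
    """Slope of ln(quantity) on ln(price), fitted by ordinary least squares."""
    xs = [math.log(p) for p in prices]
    ys = [math.log(q) for q in quantities]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# Synthetic demand generated with a known elasticity of -1.5.
prices = [8.0, 9.0, 10.0, 11.0, 12.0]
quantities = [1000 * p ** -1.5 for p in prices]
print(round(estimate_elasticity(prices, quantities), 3))
```

An elasticity below -1 indicates that demand falls proportionally faster than price rises, so a price cut would raise revenue under this simplified model.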

Operationalizing these models requires:

- Data Ingestion and Feature Store: Streaming platforms like Apache Kafka or Amazon Kinesis feed real-time events into a centralized repository to ensure consistency between training and inference data.

- Model Training and Experimentation: Platforms such as Amazon SageMaker and Google Vertex AI support versioned experiments, hyperparameter tracking and validation.

- Model Serving and Inference: Kubernetes-based environments using KServe (formerly KFServing) or managed endpoints such as those in Azure Machine Learning handle batch and real-time inference at scale.

- Orchestration and Workflow Management: Engines such as Apache Airflow or Prefect schedule retraining, feature computation and price generation tasks.

Integration layers expose model outputs via RESTful APIs or message queues. Commerce platforms like Shopify, Oracle Retail and SAP Commerce consume these feeds for automated price updates. Closed-loop feedback through A/B testing frameworks and real-time monitoring refines elasticity estimates and policy rewards. Explainable AI techniques, including SHAP and LIME, surface factor contributions for stakeholder review. Audit trails capture data versions, model artifacts and decision logs, while role-based access controls secure sensitive strategies.

Deliverables, Integration and Handoff

The pricing and promotion stage yields core deliverables that downstream systems and teams consume to execute campaigns:

- Price Adjustment Recommendations: Time-bound SKU price points with rationale from elasticity and competitive models.

- Promotional Calendar: Sequenced events mapped to dates, channels, customer segments and asset deadlines.

- Markdown and Clearance Plans: Tiered markdown thresholds tied to inventory age or levels, with velocity and margin projections.

- Test and Control Cohorts: Assignments for A/B testing including sample sizes, criteria and benchmarks.

- Pricing Matrix and Tiers: Loyalty, wholesale and regional structures, including volume discounts and bundle guidelines.

- Business Rules Documentation: Discount limits, regulatory notes and override protocols.

These outputs integrate with:

- Merchandising platforms (e.g., Pricefx) for assortment planning and allocation

- CRM systems for segment definitions and personalized offer codes

- POS and e-commerce platforms via API or batch loads for consistent pricing

- Inventory systems to trigger clearance or redistribution based on markdown plans

- Marketing automation for creative deployment and engagement tracking

- Analytics dashboards for post-campaign performance evaluation

Deliverables are packaged in standard file formats (CSV, TSV or XML), exposed through RESTful APIs with JSON payloads, broadcast through messaging platforms such as Kafka or Azure Event Hubs, or written directly to shared databases by ETL jobs. Handoff protocols include automated validation checks, stakeholder sign-off workflows, pre-production staging with end-to-end testing, synchronized release windows and notification acknowledgments. Quality controls verify margin floors, detect promotional overlaps, audit regulatory compliance and enforce brand guidelines.
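One of the quality controls named above, promotional overlap detection, reduces to an interval check. The sketch below assumes each promotion is a dictionary with hypothetical `id`, `sku`, `channel`, `start` and `end` keys, and treats two promotions as conflicting when they target the same SKU and channel on at least one common day.

```python
from datetime import date
from itertools import combinations


def find_overlaps(promos):
    """Return pairs of promotion ids that overlap on the same SKU and channel.

    Start and end dates are treated as inclusive.
    """
    overlaps = []
    for a, b in combinations(promos, 2):
        same_target = a["sku"] == b["sku"] and a["channel"] == b["channel"]
        # Two inclusive ranges intersect when each starts before the other ends.
        if same_target and a["start"] <= b["end"] and b["start"] <= a["end"]:
            overlaps.append((a["id"], b["id"]))
    return overlaps


promos = [
    {"id": "P1", "sku": "A", "channel": "web",
     "start": date(2024, 5, 1), "end": date(2024, 5, 10)},
    {"id": "P2", "sku": "A", "channel": "web",
     "start": date(2024, 5, 10), "end": date(2024, 5, 20)},
]
print(find_overlaps(promos))
```

The pairwise comparison is quadratic in the number of promotions, which is acceptable for a campaign calendar but would call for sorting by start date at larger scale.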

Monitoring, Feedback and Governance

Continuous monitoring agents track real-time performance metrics, including sales velocity, inventory depletion, competitive responses, margin variance and customer sentiment, and surface them on unified dashboards. Automated anomaly detectors flag underperformance or margin erosion, triggering corrective actions or human intervention workflows. Event and transaction logs feed back into machine learning pipelines to refine elasticity models and policy simulations. Periodic optimization reports summarize lessons learned, best-performing promotions and recommendations for future campaigns.
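A basic form of the anomaly detection mentioned above compares the latest metric value with its recent history. The sketch below uses a z-score test with an assumed threshold of three standard deviations; production detectors would typically account for seasonality and trend, which this example ignores.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` when it lies more than `threshold` standard deviations
    from the mean of `history`. The threshold value is an assumption."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily gross margin rates for one category.
margins = [0.30, 0.31, 0.29, 0.30, 0.32, 0.28]
print(is_anomalous(margins, 0.15))
```

A flagged value would open a corrective action or a human review task rather than change prices on its own.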

Governance frameworks oversee fairness, transparency and compliance. Explainability tools allow review of model drivers, while audit trails document data sources, model versions and decision outcomes. Role-based access controls and periodic policy reviews maintain stakeholder trust, ensuring that AI-driven pricing and promotions operate within prescribed guardrails and drive sustainable commercial impact.

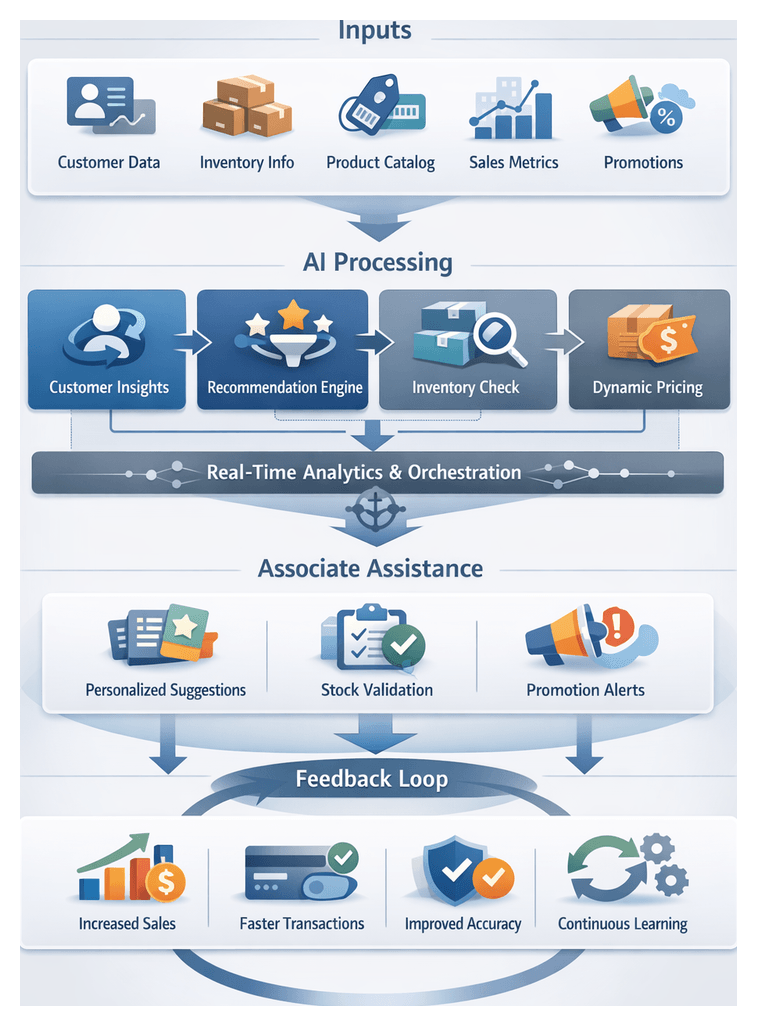

Chapter 6: Empowering Sales Associates with AI Assistants

Purpose and Business Impact of AI-Powered Sales Associate Assistance

Equipping frontline staff with real-time, context-rich guidance has become essential in modern retail. AI-powered sales associate assistance delivers personalized product recommendations, stock availability alerts, and promotional suggestions at the point of interaction. By embedding intelligent agents into associate workflows, retailers reduce decision latency, improve conversion rates, and transform staff from transactional facilitators into strategic advisors.

Deploying these tools drives measurable outcomes. Faster transaction times boost customer satisfaction and throughput during peak periods. Personalized recommendations powered by real-time context and predictive analytics increase average order value through targeted upsell and cross-sell efforts. Unified data access reduces errors and discrepancies, minimizing shrinkage and improving inventory turnover. Continuous learning loops refine recommendations and inventory forecasts, enhancing both frontline support and supply chain decisions over time.

Key Inputs, Technical Prerequisites, and Organizational Conditions

Critical Data Inputs

- Customer Profile and History: Real-time access via CRM platforms such as Salesforce, including purchase history, loyalty tier and preferences.

- Inventory Availability and Location: Live stock counts and replenishment schedules from systems like Oracle NetSuite.

- Product Catalog Metadata: Item attributes, images and pricing rules from the product information management service.

- Store Operational Metrics: Sales velocity and foot traffic analytics provided by in-store analytics platforms.

- Promotional Campaign Data: Active discounts and bundling offers governed by promotion engines such as DynamicPricing.ai.

- Contextual Signals: Weather, local events and other factors influencing customer behavior.

- Communication Channel Status: Connectivity indicators for handheld devices and failover provisions.

Technical Prerequisites

- Unified Data Platform Integration: APIs to ingest data from POS, CRM, inventory and promotion systems into the AI assistant’s data store.

- AI Assistant Infrastructure: On-premises or cloud environment hosting natural language processing services, recommendation engines and conversational modules.

- Authentication and Access Control: Single sign-on with role-based permissions governing data visibility.

- Real-Time Event Bus: Messaging framework—such as Apache Kafka or AWS EventBridge—to deliver inventory updates and customer interactions within seconds.

- Device Compatibility: Support for tablets, handheld terminals or browser-based interfaces.

- Network Resilience and Security: Encryption in transit and at rest, compliance with PCI DSS and GDPR.

Organizational Conditions

- Change Management: Training programs and communication plans preparing associates for AI-driven workflows.

- Data Governance Framework: Policies defining data ownership, quality standards and refresh cadences.

- Cross-Functional Collaboration: Coordination among IT, merchandising, marketing and operations teams.

- Performance Tracking and Feedback Loops: User satisfaction surveys and usage analytics guiding continuous refinement.

- Vendor and Tool Selection: Evaluation of platforms based on technical and security criteria.

In-Store AI Interaction Flow

The in-store interaction flow orchestrates data retrieval, decision logic and context-aware recommendations across CRM, inventory, POS and analytics systems. It guides associates through defined phases:

- Phase 1: Session Initialization and Context Retrieval

- Phase 2: AI-Driven Exploration and Recommendation

- Phase 3: Inventory Validation and Reservation

- Phase 4: Transaction Completion and Data Synchronization

- Phase 5: Feedback Capture and Continuous Learning

Phase 1: Session Initialization and Context Retrieval

Upon launch, the assistant authenticates the associate via the retail network service and retrieves role-based permissions. If a customer identifier is provided, the system queries the CRM—such as Salesforce—to fetch loyalty tier, purchase history and pending orders. Biometric verification or QR code scanning can streamline identification. Contextual parameters—active promotions, average spend, pending pickups—are cached locally to ensure low latency and support offline operation.
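The local caching step can be sketched as a small time-to-live cache that falls back to stale entries when the device is offline. The class name, the `offline` flag and the injected clock are assumptions made for illustration; they do not describe any particular assistant product.

```python
import time


class ContextCache:
    """Keep session context locally; serve stale entries only when offline."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock      # injectable so the cache can be tested
        self._items = {}

    def put(self, key, value):
        self._items[key] = (value, self._clock())

    def get(self, key, offline=False):
        entry = self._items.get(key)
        if entry is None:
            return None
        value, stored_at = entry
        # Fresh entries are always served; expired ones only without a network.
        if offline or self._clock() - stored_at <= self._ttl:
            return value
        return None


cache = ContextCache(ttl_seconds=60)
cache.put("active_promotions", ["SPRING10"])
print(cache.get("active_promotions"))
```

Returning `None` for an expired entry signals the caller to refetch from the CRM or promotion system while connectivity is available.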

Phase 2: AI-Driven Exploration and Recommendation

The assistant employs a recommendation engine combining multiple models:

- Collaborative filtering to leverage segment behaviors

- Content-based matching for style and preferences

- Trend detection from social signals

- Promotional affinity aligned with campaign rules

An orchestration service aggregates and ranks suggestions by purchase likelihood, inventory freshness and revenue targets, then presents top items as interactive cards.
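The aggregation step can be illustrated as a weighted blend of per-model scores. The model names, weights and score scale below are hypothetical, and a real orchestration service would also factor in inventory freshness and revenue targets as described above.

```python
def blend(model_scores, weights, top_n=3):
    """Combine per-model SKU scores into one ranked list of SKUs.

    model_scores maps a model name to {sku: score}; weights maps a model
    name to its blend weight. Ties are broken alphabetically by SKU.
    """
    totals = {}
    for model, scores in model_scores.items():
        weight = weights.get(model, 0.0)
        for sku, value in scores.items():
            totals[sku] = totals.get(sku, 0.0) + weight * value
    return sorted(totals, key=lambda sku: (-totals[sku], sku))[:top_n]


model_scores = {
    "collaborative": {"A": 0.9, "B": 0.4},
    "content": {"B": 0.8, "C": 0.6},
    "promo": {"C": 1.0},
}
weights = {"collaborative": 0.5, "content": 0.3, "promo": 0.2}
print(blend(model_scores, weights, top_n=2))
```

A SKU recommended by several models accumulates score from each, so agreement between models naturally pushes an item up the list.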

Phase 3: Inventory Validation and Reservation

Selected SKUs are validated via real-time API calls to the inventory system—such as Oracle NetSuite—checking on-hand, in-transit and available-to-promise quantities. If stock is insufficient, the assistant queries nearby stores or warehouses. It then requests holds through the order management service, triggering inter-store transfers or rain checks as needed. For omnichannel orders, expedited shipping options and cost trade-offs are calculated in real time.
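The validation and fallback logic can be sketched as follows. The available-to-promise formula used here (on-hand plus in-transit minus reserved) is a simplifying assumption, as are the store identifiers and the shape of the `stock` dictionary; real inventory systems expose their own definitions.

```python
def available_to_promise(on_hand, in_transit, reserved):
    """Simplified ATP: stock that can still be promised to a customer."""
    return max(on_hand + in_transit - reserved, 0)


def find_source(sku, qty, local_store, stock, nearby):
    """Return the first location that can cover `qty`, checking the local
    store first and then nearby locations in the order given."""
    for store in [local_store, *nearby]:
        levels = stock.get((store, sku))
        if levels is not None and available_to_promise(**levels) >= qty:
            return store
    return None  # no location can fulfill; offer a rain check instead


# Hypothetical stock snapshot keyed by (store, sku).
stock = {
    ("store-1", "SKU-9"): {"on_hand": 1, "in_transit": 0, "reserved": 1},
    ("store-2", "SKU-9"): {"on_hand": 4, "in_transit": 2, "reserved": 1},
}
print(find_source("SKU-9", 2, "store-1", stock, ["store-2", "store-3"]))
```

When a nearby location is returned, the assistant would request a hold through the order management service and trigger the inter-store transfer.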

Phase 4: Transaction Completion and Data Synchronization

The assistant interfaces with the POS—such as Square—to compile order details, apply promotions and process payment. Simultaneously, a synchronization API streams transaction events to analytics platforms like Tableau, updating dashboards with conversion metrics. Gift wrapping or additional services are scheduled via the workforce management module, and all session details are recorded in the CRM for follow-up.

Phase 5: Feedback Capture and Continuous Learning