AI-Driven Predictive Maintenance: An End-to-End Workflow for Transportation and Logistics

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Overview of Predictive Maintenance Challenges

Asset-intensive operations in transportation and logistics face mounting pressures from unplanned equipment failures, escalating maintenance costs, data silos, and growing technical complexity. Traditional reactive repairs and fixed-interval servicing are no longer sufficient to sustain high fleet availability, control expenditures, and meet stringent safety and compliance requirements. A shift to AI-driven predictive maintenance can address these systemic issues by leveraging real-time condition monitoring, advanced analytics, and automated decision support.

- Unplanned Equipment Failures and Their Impact
  - Operational disruptions such as missed delivery windows, network bottlenecks, and emergency maintenance that diverts resources
  - Financial penalties from idle assets, overtime labor, expedited parts procurement, and increased insurance premiums
  - Elevated safety and compliance risks, including accident potential, regulatory fines, and workforce morale challenges

- Rising Costs and Resource Inefficiencies
  - Over-servicing due to time-based intervals that ignore actual equipment condition
  - Under-servicing that misses emerging faults, leading to catastrophic breakdowns
  - Spare parts imbalances causing excess carrying costs or stockouts
  - Poorly coordinated technician schedules, idle time, and emergency call-outs

- Data Fragmentation and Visibility Gaps
  - Siloed telemetry in telematics platforms, CMMS records, and ERP schedules
  - Inconsistent naming conventions, sampling rates, and data formats
  - Latency from batch uploads and manual reconciliations, which hinders real-time insight

- Increasing Asset Complexity
  - Mixed mechanical, electronic, and software stacks with new failure modes
  - Frequent firmware updates, networked control systems, and cybersecurity considerations
  - Vendor-specific diagnostics and rapid obsolescence of sensors and control modules

Without timely warnings or a holistic view of asset health, reactive maintenance can account for up to 60 percent of a fleet's repair costs. Unplanned downtime inflates spare parts spending, undermines service reliability, and erodes customer trust. To reverse these trends, organizations must establish the technical and organizational foundations for predictive maintenance, aligning data, processes, and stakeholder objectives toward a proactive model.

Prerequisites for Predictive Maintenance

Successful deployment of AI-driven predictive maintenance depends on a clear understanding of current maintenance challenges and the assembly of key inputs. These prerequisites create a common context for diagnostics, solution design, and performance measurement.

- Historical Maintenance Records: Detailed work order logs, inspection reports, repair durations, corrective actions, and cost breakdowns for labor, parts, and downtime

- Asset Utilization Metrics: Usage patterns by vehicle or equipment type (mileage, operating hours, load cycles), KPIs such as mean time between failures (MTBF) and overall equipment effectiveness (OEE), and environmental conditions impacting degradation

- Data Infrastructure: IoT networks or edge computing platforms for real-time data capture, centralized repositories, data governance frameworks for quality and lineage, and integration interfaces with ERP, finance, and scheduling systems

- Organizational Readiness: Stakeholder alignment across operations, maintenance, IT, and finance; change management processes for new workflows; and skill assessments to identify gaps in analytics and digital literacy

With these inputs and conditions in place, a predictive maintenance initiative can quantify business impact, prioritize high-value assets, and define clear success criteria for improved uptime, cost efficiency, and compliance.

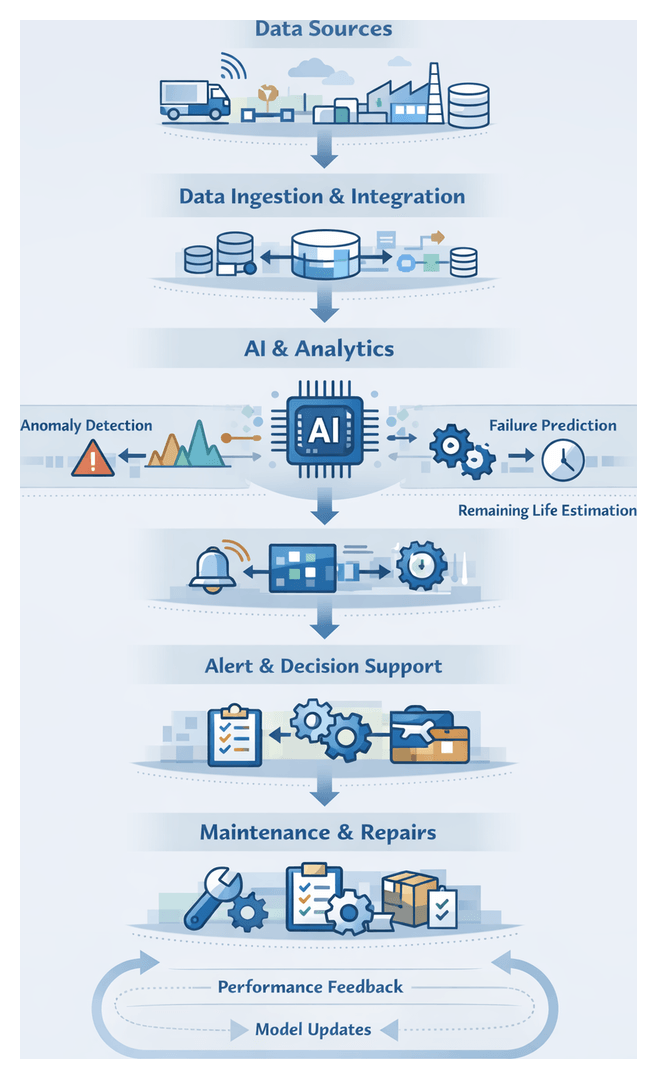

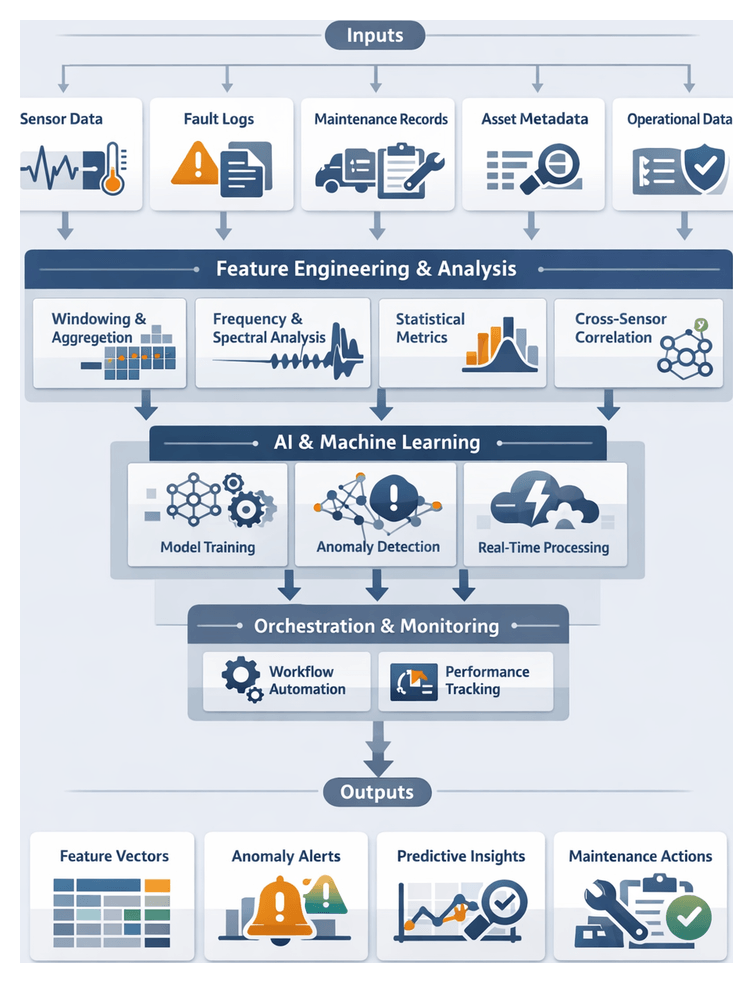

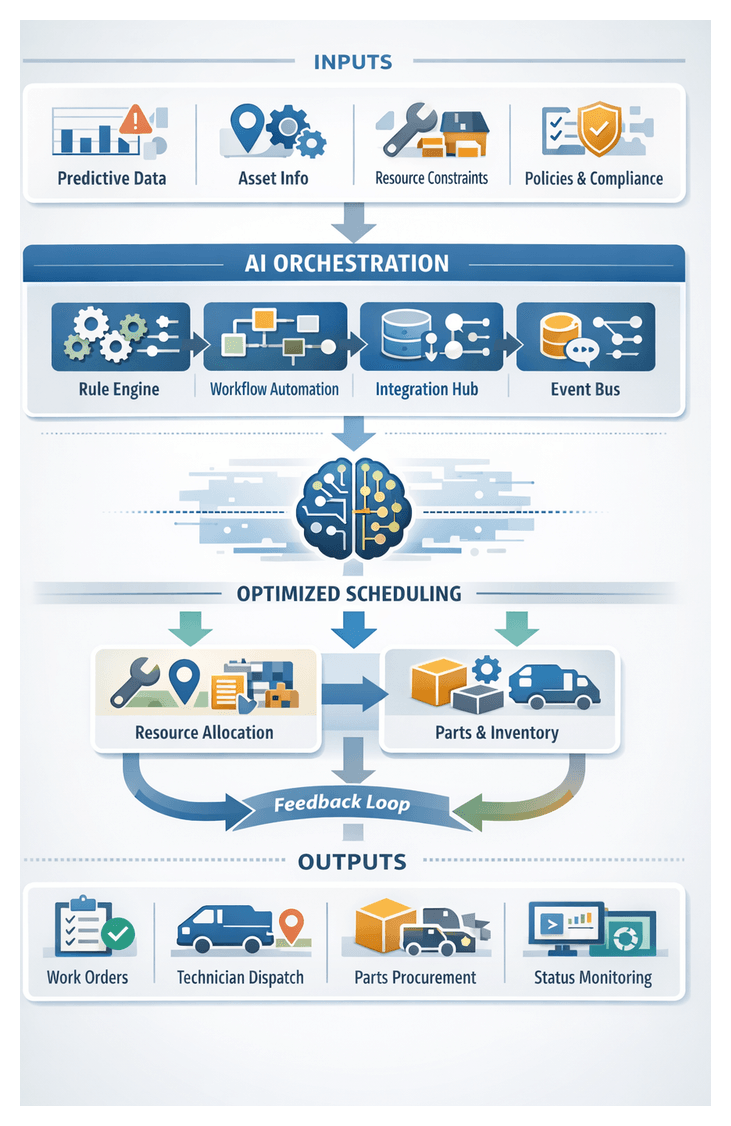

AI-Driven Predictive Maintenance Workflow

An end-to-end predictive maintenance solution integrates sensors, edge computing, cloud platforms, analytics modules, orchestration engines, and human operators. Seamless data flows and clearly defined handoffs ensure that insights reach decision makers and field crews with minimal latency and maximum context.

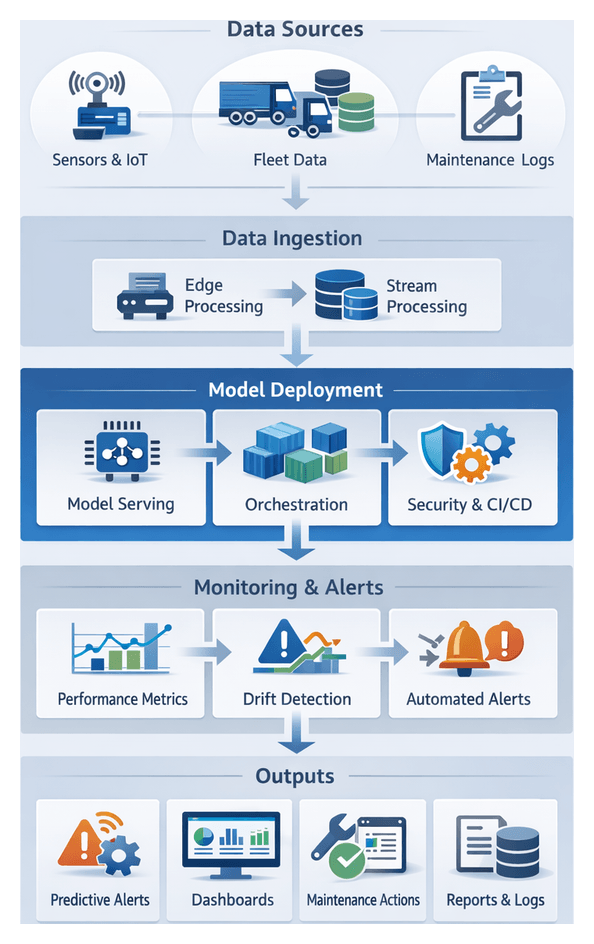

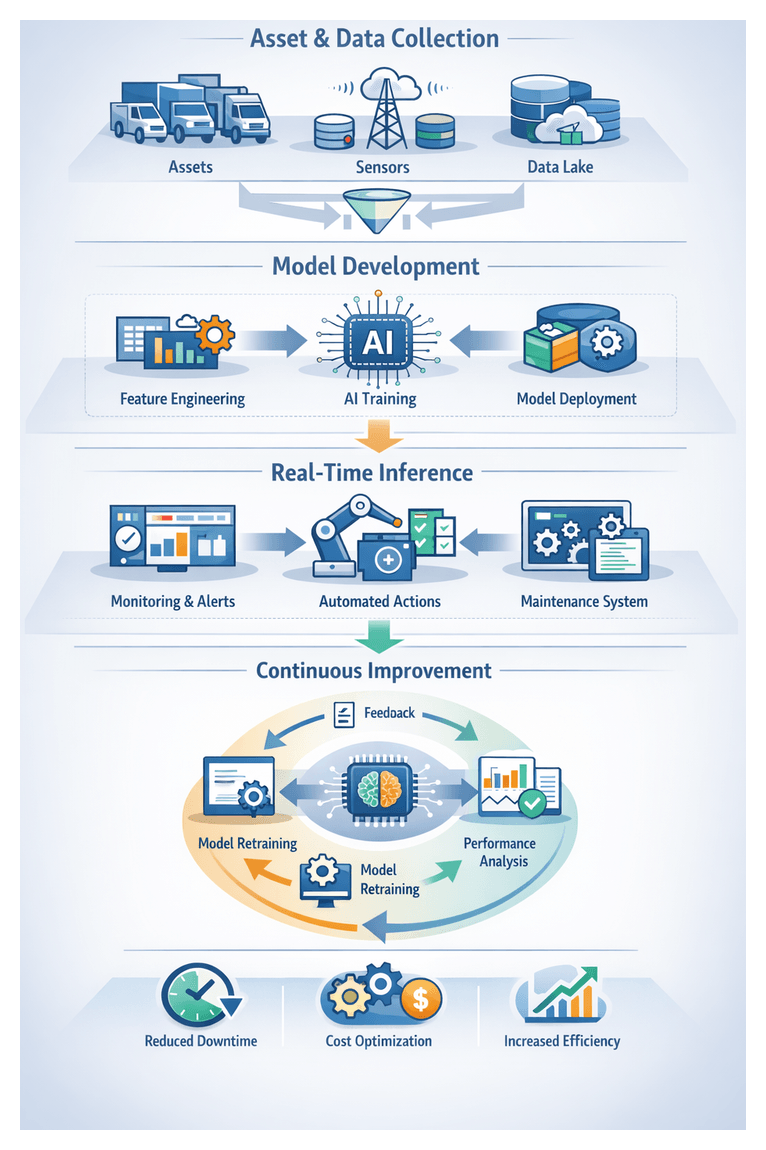

Data Acquisition and Edge Ingestion

Sensor nodes capture vibration, temperature, pressure, and GPS signals at configurable intervals. Edge gateways aggregate these streams, perform initial filtering, and forward events to a central broker. Key components include:

- IoT device management via Azure IoT Central or AWS IoT Core

- On-device noise reduction and anomaly flagging with TensorFlow Lite or PyTorch Mobile

- Publish-subscribe infrastructure using Apache Kafka or MQTT brokers

- Operational dashboards monitoring ingestion health, dropped messages, and gateway performance

Field technicians validate sensor installations and signal quality, while network engineers ensure secure, low-latency connectivity through VPN tunnels and firewall configurations.

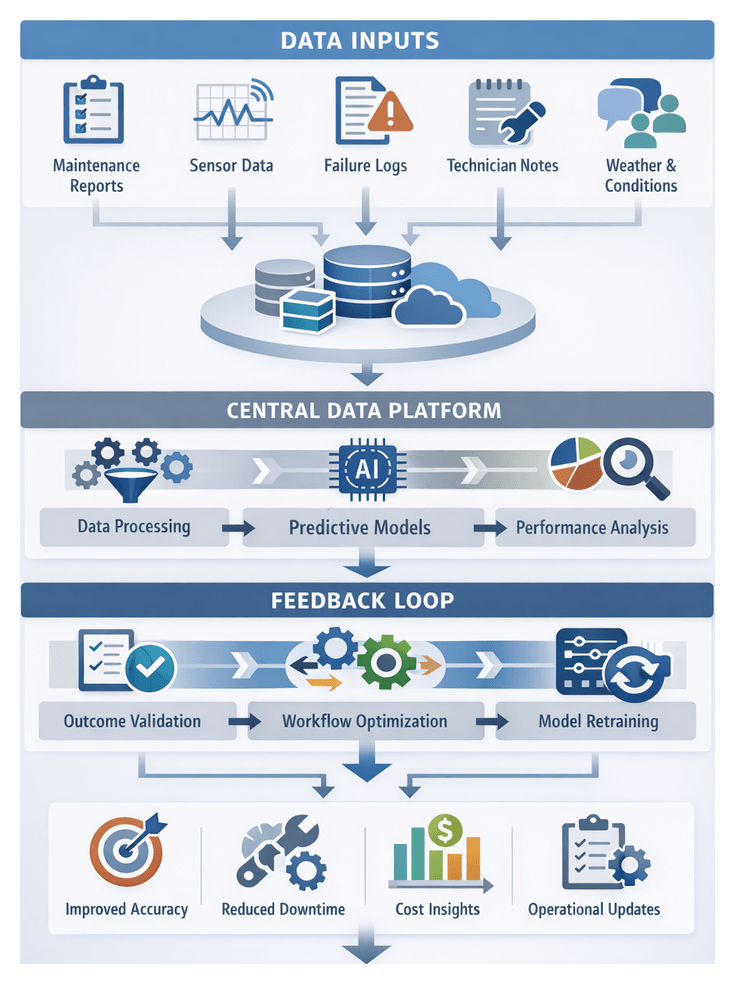

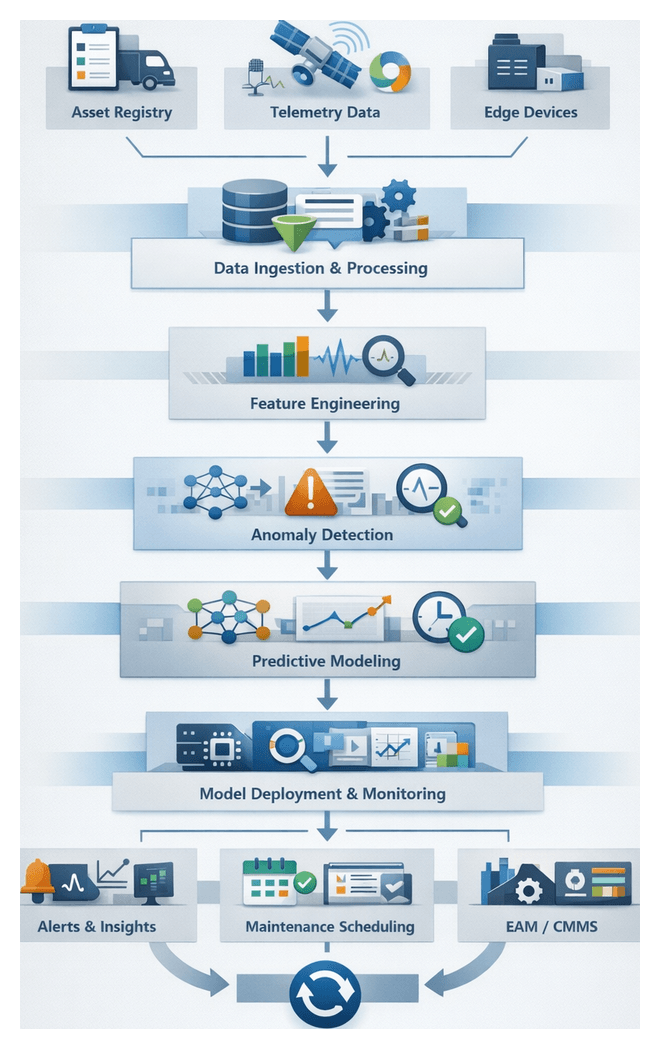

Data Integration and Central Processing

Edge and operational data converge in a unified platform for schema validation, metadata enrichment, and alignment with maintenance and scheduling records. Integration workflows leverage:

- Azure Data Factory for ETL/ELT pipelines, with transformations running in a cloud warehouse such as Snowflake

- AI-driven data quality modules that detect and correct missing timestamps, inconsistent units, and duplicates

- Metadata catalogs tracking data lineage and version history for compliance and model explainability

- Asset hierarchies managed in systems like IBM Maximo or SAP EAM, linked by data stewards
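The data quality step above can be illustrated with plain rules. The minimal Python sketch below assumes a hypothetical record shape `(sensor_id, iso_timestamp, value, unit)` and handles only Fahrenheit-to-Celsius conversion. It stands in for the AI-driven modules described and does not reproduce any specific product. It removes exact duplicates, normalizes units, and flags gaps longer than 1.5 times an assumed sampling interval.

```python
from datetime import datetime, timedelta

def clean_readings(records, expected_interval_s=60):
    """Deduplicate readings, normalize temperatures to Celsius, and report gaps.

    records: iterable of (sensor_id, iso_timestamp, value, unit); this shape is hypothetical.
    """
    seen = set()
    cleaned = []
    for sensor_id, ts, value, unit in records:
        key = (sensor_id, ts)
        if key in seen:  # exact duplicate: same sensor and same timestamp
            continue
        seen.add(key)
        if unit == "degF":  # inconsistent unit: convert Fahrenheit to Celsius
            value = (value - 32.0) * 5.0 / 9.0
            unit = "degC"
        cleaned.append((sensor_id, datetime.fromisoformat(ts), value, unit))
    cleaned.sort(key=lambda r: (r[0], r[1]))

    # Flag gaps longer than 1.5x the expected sampling interval for each sensor
    gaps = []
    limit = timedelta(seconds=1.5 * expected_interval_s)
    for prev, cur in zip(cleaned, cleaned[1:]):
        if prev[0] == cur[0] and cur[1] - prev[1] > limit:
            gaps.append((cur[0], prev[1], cur[1]))
    return cleaned, gaps
```

A learned model could replace any of these rules, for example by imputing missing values. Even then, deterministic checks like these often remain useful as a first pass.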

Shared dashboards in Microsoft Power BI or Tableau allow cross-functional teams to review ingestion metrics, quality scores, and exception reports.

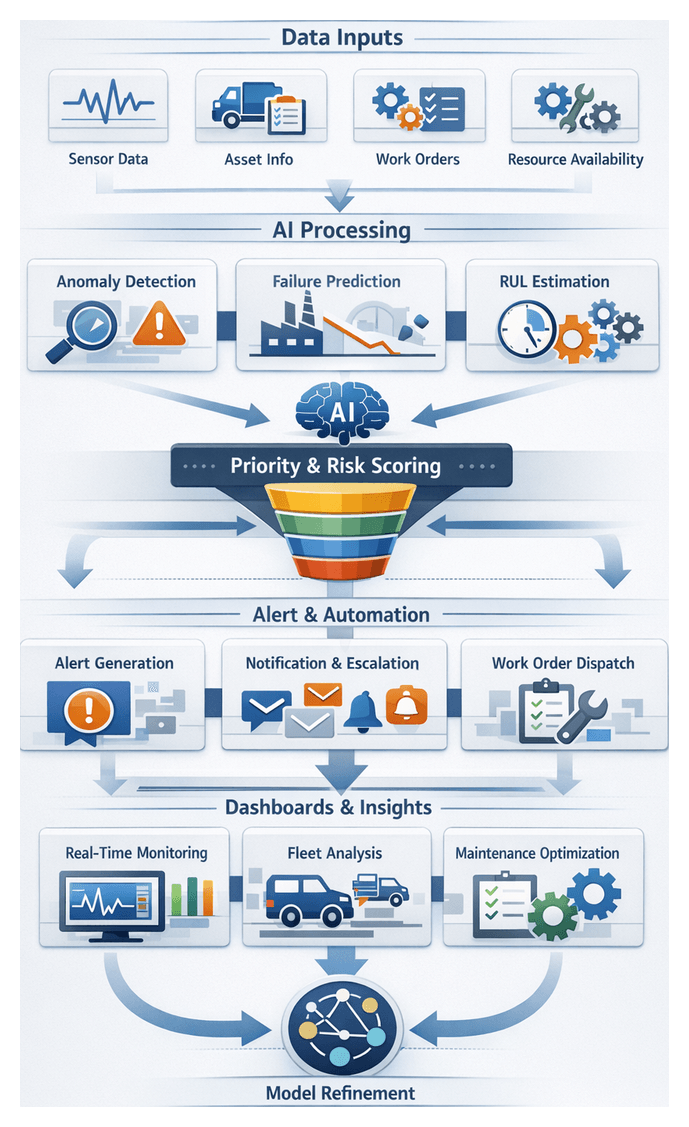

AI-Enabled Monitoring and Forecasting

With a clean, unified dataset, analytics services generate features, train models, and perform inference to detect anomalies, forecast failures, and estimate remaining useful life (RUL).

- Anomaly detection engines ingest streaming data to set dynamic thresholds, recognize multivariate pattern deviations, and enrich alerts with contextual insights

- Predictive models use feature synthesis pipelines and ensemble strategies—powered by platforms such as Amazon SageMaker or Google Cloud AI Platform—to estimate component RUL with Bayesian uncertainty bounds

- Explainable AI techniques, including SHAP analysis, reveal key drivers behind failure forecasts
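The RUL estimate described above would come from ensemble models with uncertainty bounds in production. The core idea can be shown much more simply. This sketch fits a linear trend to a rising degradation indicator, such as vibration RMS, and extrapolates it to a failure threshold. The threshold and data are hypothetical, and a straight-line fit is a deliberate simplification, not the ensemble or Bayesian method named in the text.

```python
def estimate_rul(times, health, failure_threshold):
    """Estimate remaining useful life by linear extrapolation of a rising health indicator.

    Fits health = intercept + slope * t by ordinary least squares, then solves for the
    time at which the fitted line reaches failure_threshold. Returns the remaining time
    after the last observation, 0.0 if the threshold is already passed, or None if the
    indicator is not trending upward.
    """
    n = len(times)
    if n < 2:
        raise ValueError("need at least two observations")
    t_mean = sum(times) / n
    h_mean = sum(health) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (h - h_mean) for t, h in zip(times, health))
    slope = sxy / sxx
    if slope <= 0:
        return None  # no upward degradation trend to extrapolate
    intercept = h_mean - slope * t_mean
    t_fail = (failure_threshold - intercept) / slope
    return max(0.0, t_fail - times[-1])
```

For example, readings of 1.0, 1.5, 2.0, and 2.5 at hours 0, 10, 20, and 30, with a threshold of 4.0, give roughly 30 hours of remaining life. This sketch returns only a point estimate. The residual spread of the fit is one possible input for the uncertainty bounds.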

Machine learning engineers and DevOps teams maintain CI/CD pipelines to register, deploy, monitor, and retrain models, ensuring forecast accuracy and responsiveness to data drift.

Alerting and Decision Support

Prediction outputs feed an alerting engine that correlates failure probabilities with asset criticality and operational context to prioritize notifications. Key features include:

- Scoring logic combining model confidence, business impact, and current fleet utilization

- Multi-channel notifications via email, SMS, or in-app alerts within IBM Maximo or Oracle Enterprise Asset Management

- Interactive dashboards showing geospatial maps, trend charts, and drill-down views of sensor anomalies

- Decision support assistants recommending optimal repair windows based on technician availability, parts inventory, and service agreements
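The scoring logic above combines model confidence, business impact, and utilization. One way to express it is a multiplicative score mapped to notification channels. In the minimal sketch below, the formula, the 0.5 and 0.2 cut-offs, and the channel names are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    asset_id: str
    failure_probability: float  # model confidence, 0..1
    criticality: float          # business impact weight from the asset registry, 0..1
    utilization: float          # current fleet utilization of the asset, 0..1

def alert_priority(p: Prediction) -> float:
    # Multiplicative: an alert ranks high only when probability and impact are both high.
    # Utilization scales the score between 0.5x (idle asset) and 1.0x (fully utilized).
    return p.failure_probability * p.criticality * (0.5 + 0.5 * p.utilization)

def route_alert(p: Prediction) -> str:
    score = alert_priority(p)
    if score >= 0.5:
        return "sms"            # page the on-call planner immediately
    if score >= 0.2:
        return "in_app"         # surface in the CMMS work queue
    return "dashboard_only"     # visible on dashboards, no push notification
```

A multiplicative form keeps a confident prediction on a low-impact asset from outranking a moderate prediction on a critical one. Many teams tune the weights and thresholds against historical alert outcomes.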

Maintenance planners coordinate parts reservations and field crew assignments, documenting handoffs in the CMMS and tracking collaboration through integrated ticketing systems.

Maintenance Orchestration and Execution

Automated orchestration transforms prioritized alerts into actionable work orders. The orchestration engine:

- Generates structured work orders specifying tasks, tools, and safety procedures

- Optimizes technician schedules by matching skills, certifications, and proximity

- Manages parts requisitions, inventory checks, and purchase order workflows

- Guides mobile field apps to capture completion metrics, photos, and sign-off data

Supervisors monitor job statuses in real time, intervening to resolve delays or reassign tasks. The CMMS remains the single source of truth for maintenance activities and compliance records.

Continuous Improvement and Governance

Post-maintenance data—actual downtime, repair durations, parts consumption, and subsequent performance—feeds back into analytics to validate predictions and refine processes.

- Outcome analytics compare predicted and actual failure events to measure model precision, recall, and cost benefits

- Retraining pipelines update model parameters and feature sets, then deploy improved models via automated MLOps workflows

- Process mining uncovers bottlenecks and handoff delays in orchestration logs

- Governance frameworks enforce model versioning, auditability, encryption, access controls, and compliance with standards and regulations such as ISO/IEC 27001 and the GDPR

- High-availability architectures and disaster recovery plans ensure uninterrupted monitoring and inference capabilities
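The outcome analytics above compare predicted and actual failure events. The sketch below shows that comparison under one simplifying assumption: a prediction counts as correct only when it matches an actual failure on the same `(asset_id, period)` key. The per-event cost figures are hypothetical inputs.

```python
def evaluate_predictions(predicted, actual, cost_avoided_per_tp, cost_per_fp):
    """Compare predicted and actual failure events keyed by (asset_id, period).

    Returns (precision, recall, net_benefit). Net benefit credits each true positive
    with an avoided unplanned-failure cost and charges each false positive with the
    cost of an unnecessary intervention.
    """
    tp = len(predicted & actual)   # predicted and occurred
    fp = len(predicted - actual)   # predicted but did not occur
    fn = len(actual - predicted)   # occurred but was missed
    precision = tp / (tp + fp) if predicted else 0.0
    recall = tp / (tp + fn) if actual else 0.0
    net_benefit = tp * cost_avoided_per_tp - fp * cost_per_fp
    return precision, recall, net_benefit
```

Suppose three predictions, two of which match actual failures, and one missed failure. Precision and recall are then both 2/3. With the hypothetical costs of 5,000 per avoided failure and 800 per false alarm, the net benefit is 9,200.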

This iterative cycle fosters a data-driven culture, continuously enhancing prediction accuracy, asset uptime, and maintenance productivity.

Asset Assessment and Integration

The asset assessment stage defines the fleet’s single source of truth, producing structured deliverables that guide sensor deployment, monitoring configurations, and analytics workflows.

Core Deliverables

- Asset Registry and Metadata Catalog: Central repository of unique identifiers, manufacturer specifications, maintenance history pointers, and location hierarchies with a governed schema

- Criticality Matrix: Scores for probability of failure and operational impact, combined into high, medium, or low risk classifications

- Prioritized Asset List: Ranked assets by descending risk level for focused monitoring and resource allocation

- Risk Profiles and Failure Likelihood: Quantitative scores, confidence intervals, and documented failure modes for anomaly detection calibration

- Data Quality Audit Reports: Automated validation results highlighting missing or inconsistent attributes and remediation guidance

Dependencies and Integration Patterns

- Data Sources: ServiceNow CMDB, IBM Maximo, Computer-Aided Design repositories, telematics feeds, and vendor supply chain systems

- Infrastructure: Cloud storage (AWS S3, Azure Blob Storage), ETL tools (Informatica PowerCenter, Talend), and message brokers (Apache Kafka)

- Stakeholders: Maintenance planners, reliability engineers, IT operations, data governance councils, and business leadership

Handoff Mechanisms

- API-Driven Exchange: RESTful endpoints delivering JSON payloads of asset metadata, criticality scores, and location, secured via OAuth2

- Scheduled Batch Exports: Nightly ETL jobs exporting CSV or Parquet snapshots with version tags to a shared data lake

- Event Notifications: Message topics broadcasting asset onboarding or risk score updates to sensor calibration engines and analytics orchestrators

- Documentation and Training: Data dictionaries, API specifications, sample queries, process flows, and workshops to ensure proper interpretation and use

- Feature Engineering Integration: Tagging time series data with asset risk levels and metadata to enable dynamic thresholding in anomaly detection models

- Governance and Version Control: Change control pipelines for schema definitions and registry snapshots, with automated tests and sign-off gates

By defining clear deliverables, rigorously mapping dependencies, and establishing robust handoff protocols, organizations ensure that sensor deployment, data integration, and analytics stages operate on a trusted, prioritized view of the asset fleet.

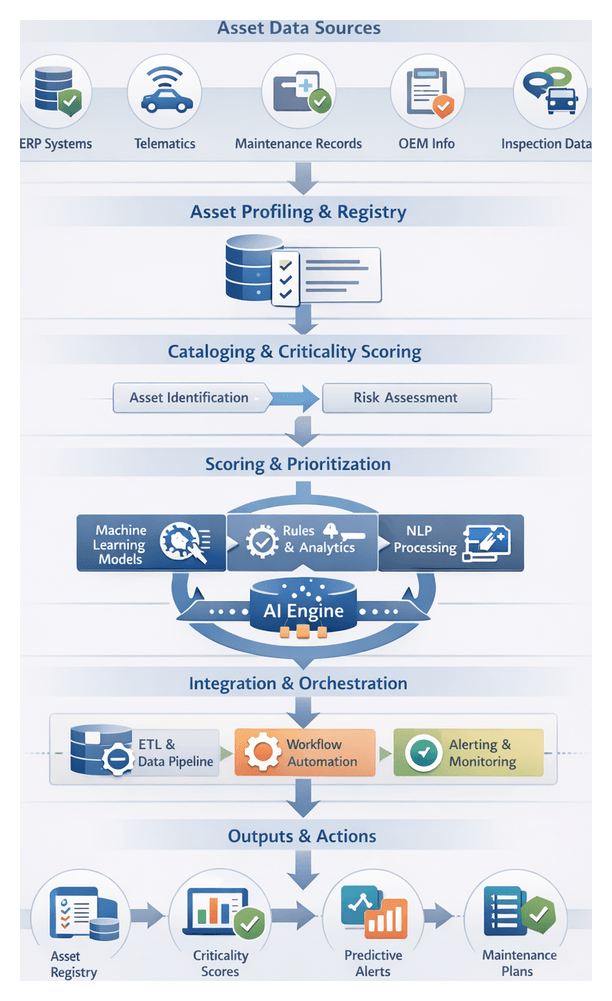

Chapter 1: Asset Inventory and Criticality Assessment

Asset Profiling and Registry

Purpose and Objectives

Establishing a comprehensive, authoritative inventory of fleet assets is the foundation of any predictive maintenance program. Asset profiling creates a single source of truth by uniquely identifying vehicles, equipment, and components, capturing manufacturer details, model specifications, installation dates, warranty information, and maintenance histories. It standardizes naming conventions and taxonomy, maps hierarchical relationships among assets and subcomponents, and aligns records with organizational structures and operational zones. Governed by policies for data stewardship, this stage ensures that machine learning models, sensor deployments, and real-time analytics operate on consistent, high-fidelity inputs.

Required Inputs

- Enterprise asset master data from ERP systems such as SAP or Oracle, CMMS platforms like ServiceNow or IBM Maximo, and configuration databases.

- Maintenance histories, including work orders, service logs, failure reports, and warranty claims.

- Telematics and usage data—mileage, engine hours, load cycles, and environmental metrics.

- Vendor and OEM documentation, technical manuals, and parts catalogs.

- Physical audit reports and field inspection records.

- Geospatial data—GPS coordinates, route assignments, depot locations.

- Regulatory certificates, inspection logs, and compliance records.

- Financial and procurement records covering acquisition costs, depreciation, and spare inventory.

Prerequisites

- Executive sponsorship and cross-functional alignment among operations, maintenance, IT, and finance.

- A data governance framework defining ownership, access controls, update schedules, and audit trails.

- Standardized taxonomy and naming conventions for asset classes, hierarchies, and attributes.

- Master data management tools or registries with API access to downstream systems.

- Secure integration capabilities connecting ERP, CMMS, telematics, and inspection applications.

- On-site verification planning with field teams and mobile data capture tools.

- Quality assurance criteria for data completeness, accuracy, and timeliness.

- Change management processes for bulk imports, overrides, and incremental updates.

Cataloging and Criticality Scoring

High-Level Workflow

- Asset discovery and onboarding

- Metadata enrichment and verification

- Risk factor assessment

- Criticality algorithm execution

- Score review and adjustment

- Registry publication and handoff

Asset Discovery and Onboarding

New and existing assets are identified through system scans and manual input, consolidating records from procurement databases, vendor catalogs, and ERP. Basic identifiers—serial numbers, acquisition dates, manufacturer details—populate the registry.

Metadata Enrichment and Verification

The registry integrates with transportation management, GPS fleet tracking, and CMMS systems to append operational schedules, maintenance histories, and route profiles. Automated validation flags missing or conflicting entries for human review.

Risk Factor Assessment

Rule-based and statistical checks evaluate factors such as failure frequency, mean time between failures, environmental exposure, and maintenance backlog. A risk engine normalizes these factors against fleet baselines to produce preliminary risk vectors.
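The normalization step can be sketched as a z-score of each factor against the fleet mean and standard deviation. Factors where a lower value means more risk, such as MTBF, have their sign flipped. The factor names and the direction convention below are assumptions for illustration.

```python
from statistics import mean, pstdev

def risk_vectors(assets, factors):
    """Normalize raw risk factors against the fleet baseline.

    assets:  dict asset_id -> dict factor_name -> raw value (hypothetical shape)
    factors: dict factor_name -> +1 if higher values mean more risk, -1 if lower values do
    Returns dict asset_id -> dict factor_name -> signed z-score (positive = riskier than fleet).
    """
    baselines = {}
    for f in factors:
        values = [attrs[f] for attrs in assets.values()]
        baselines[f] = (mean(values), pstdev(values))
    vectors = {}
    for asset_id, attrs in assets.items():
        vec = {}
        for f, direction in factors.items():
            mu, sigma = baselines[f]
            z = 0.0 if sigma == 0 else (attrs[f] - mu) / sigma
            vec[f] = direction * z
        vectors[asset_id] = vec
    return vectors

fleet = {
    "A": {"failures_per_year": 2, "mtbf_hours": 400},
    "B": {"failures_per_year": 4, "mtbf_hours": 200},
    "C": {"failures_per_year": 6, "mtbf_hours": 100},
}
vectors = risk_vectors(fleet, {"failures_per_year": +1, "mtbf_hours": -1})
```

In practice, baselines are often computed per asset class rather than across the whole fleet, so that a locomotive is not compared with a forklift.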

Criticality Algorithm Execution

Machine learning models—trained on historical incidents—combine risk vectors with operational impact metrics (utilization rates, downtime costs) to generate composite criticality scores.
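A trained model would supply the failure probability. The sketch below shows only the final combination and tiering step. It uses a simple probability-times-impact product as a stand-in for the learned combination, with a hypothetical impact blend (half utilization, half normalized downtime cost) and hypothetical tier cut-offs.

```python
def criticality_score(failure_probability, utilization, downtime_cost_per_hour, max_cost_per_hour):
    """Composite criticality = failure probability x operational impact (both 0..1)."""
    cost_factor = min(downtime_cost_per_hour / max_cost_per_hour, 1.0)
    impact = 0.5 * utilization + 0.5 * cost_factor
    return failure_probability * impact

def criticality_tier(score, high=0.3, medium=0.1):
    """Map a composite score onto the high/medium/low classes of the criticality matrix."""
    if score >= high:
        return "high"
    if score >= medium:
        return "medium"
    return "low"

def prioritize(scores):
    """Return asset IDs ranked by descending criticality score (the prioritized asset list)."""
    return sorted(scores, key=scores.get, reverse=True)
```

Keeping the tier cut-offs as parameters lets planners adjust them during score review without retraining the underlying model.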

Score Review and Adjustment

Maintenance planners and asset managers inspect score breakdowns via a decision support interface, adjust weightings for specific contexts, and annotate rationales prior to finalization.

Registry Publication and Handoff

Validated scores update the central asset registry. Downstream systems subscribe to registry events via an event-driven message bus, triggering sensor deployment orchestration and predictive analytics modules.
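The publish-and-subscribe handoff can be illustrated without a broker. In the sketch below, an in-memory bus stands in for a real message bus such as a Kafka topic. The topic name `registry.criticality.updated` and the event fields are hypothetical. Each subscriber receives its own deserialized copy of the event, as consumers of a real broker would.

```python
import json
from collections import defaultdict
from typing import Callable

class InMemoryBus:
    """Illustrative stand-in for a message bus; not a replacement for a real broker."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        payload = json.dumps(event)  # serialize as the event would travel over the wire
        for handler in self._subscribers[topic]:
            handler(json.loads(payload))  # each subscriber gets an independent copy

bus = InMemoryBus()
sensor_queue, analytics_queue = [], []
# Downstream stages (sensor deployment, analytics) subscribe to registry events
bus.subscribe("registry.criticality.updated", sensor_queue.append)
bus.subscribe("registry.criticality.updated", analytics_queue.append)
bus.publish("registry.criticality.updated",
            {"asset_id": "TRK-0042", "criticality": "high", "registry_version": "2.3.0"})
```

Including a registry version in each event helps subscribers ignore stale or out-of-order updates.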

Integration and Orchestration

An integration layer connects ERP, CMMS, fleet management, and data science platforms through APIs and batch extracts. A workflow orchestration engine monitors milestones, retries failed tasks, and logs execution details for auditability.

Exception Handling and Governance

- Central exception management triages data quality issues, routing critical failures to maintenance operations and minor issues to data stewards.

- Audit dashboards track model versions, rule sets, user overrides, and scoring trends.

- Scheduled rescoring (monthly or quarterly) and near-real-time scoring modes accommodate both stable and dynamic assets.
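The triage rule above can be expressed as a small routing function. What counts as "critical" is an assumption in this sketch: any safety-related issue, or any issue on a high-criticality asset. The issue fields are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class DataIssue:
    asset_id: str
    field: str
    description: str
    safety_related: bool
    asset_criticality: str  # "high" | "medium" | "low"

def route_issue(issue: DataIssue) -> str:
    """Send critical data quality failures to maintenance operations and minor ones to data stewards."""
    if issue.safety_related or issue.asset_criticality == "high":
        return "maintenance_operations"
    return "data_stewards"
```

Keeping the routing rule in one function makes it easy to audit and to log alongside the other governance records.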

AI-Driven Risk Evaluation and Prioritization

Machine Learning Models for Predictive Risk Scoring

Supervised algorithms such as gradient boosting machines, random forests, and neural networks learn to predict historical failure events from asset features and telemetry. Time-series models capture sequential dependencies, while unsupervised methods identify emerging anomaly patterns. Feature selection highlights key predictors, which supports both model transparency and condition monitoring.

Rule Engines and Knowledge Graphs

Domain rules enforce safety thresholds in real time and overlay knowledge graphs that link assets to manuals, part hierarchies, and service bulletins. Hybrid scoring merges statistical outputs with deterministic rules to produce comprehensive risk indices.
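One simple form of hybrid scoring lets deterministic safety rules set a floor under the statistical probability. A rule violation then always produces a high risk index, whatever the model predicts. The rules, thresholds, and floor values below are hypothetical.

```python
def hybrid_risk(ml_probability: float, readings: dict, rules: list) -> tuple:
    """Combine an ML failure probability with deterministic rules.

    Each rule is (name, predicate, floor). If predicate(readings) is True, the final
    risk is raised to at least `floor`. Returns (risk, names_of_fired_rules).
    """
    risk = ml_probability
    fired = []
    for name, predicate, floor in rules:
        if predicate(readings):
            fired.append(name)
            risk = max(risk, floor)
    return risk, fired

# Hypothetical safety thresholds for illustration only
RULES = [
    ("brake_pad_below_min", lambda r: r.get("brake_pad_mm", float("inf")) < 3.0, 0.95),
    ("coolant_overtemp", lambda r: r.get("coolant_c", float("-inf")) > 110.0, 0.90),
]
```

Returning the names of the fired rules keeps the final index explainable. A planner can see whether a score came from the model, a rule, or both.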

Natural Language Processing for Maintenance Logs

NLP techniques extract structured data—failure descriptions, symptoms, corrective actions—from free-text work orders. Named entity recognition and sentiment analysis surface latent risks and recurring issues.
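A trained named entity recognition model is the usual tool here. As a much lighter stand-in that shows the kind of structured output involved, the sketch below matches free text against small hypothetical vocabularies of components, symptoms, and actions. It is keyword matching, not NER.

```python
import re

# Hypothetical vocabularies; a production system would use a trained NER model instead
COMPONENTS = ["brake", "alternator", "turbocharger", "bearing", "coolant pump"]
SYMPTOMS = ["leak", "noise", "vibration", "overheat", "grinding"]
ACTIONS = ["replaced", "adjusted", "tightened", "cleaned", "inspected"]

def extract_entities(text: str) -> dict:
    """Return vocabulary terms found in a free-text work order, grouped by category."""
    lowered = text.lower()

    def find(terms):
        # \b anchors each term at a word start, so plurals such as "bearings" still match
        return [t for t in terms if re.search(r"\b" + re.escape(t), lowered)]

    return {"components": find(COMPONENTS),
            "symptoms": find(SYMPTOMS),
            "actions": find(ACTIONS)}

entities = extract_entities(
    "Driver reported grinding noise; replaced front brake pads and inspected wheel bearings.")
```

This returns `brake` and `bearing` as components, `noise` and `grinding` as symptoms, and `replaced` and `inspected` as actions. Keyword lists miss synonyms and misspellings, which is the gap a learned model is meant to close.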

Feature Store and Data Infrastructure

- Batch and streaming pipelines ingest sensor streams, maintenance histories, and operational logs to compute rolling aggregates and statistical summaries.

- Data quality checks detect missing values, outliers, and schema drift.

- Access controls ensure that sensitive features are visible only to authorized systems and users.
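The rolling aggregates above can be sketched with a fixed-size window per sensor stream. This version recomputes the statistics on each update for clarity, at O(window) cost per update. A high-volume pipeline would typically use incremental formulas or a stream-processing framework instead.

```python
from collections import deque
from math import sqrt

class RollingStats:
    """Fixed-window rolling mean, population standard deviation, and max for one stream."""

    def __init__(self, window: int):
        self.values = deque(maxlen=window)  # oldest value drops out automatically

    def update(self, value: float) -> dict:
        self.values.append(value)
        n = len(self.values)
        mu = sum(self.values) / n
        var = sum((x - mu) ** 2 for x in self.values) / n
        return {"mean": mu, "std": sqrt(var), "max": max(self.values), "count": n}
```

Feeding 1.0, 2.0, 3.0, and 4.0 through a window of 3 leaves [2.0, 3.0, 4.0]. The last update then reports a mean of 3.0 and a max of 4.0.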

MLOps and Model Management

An MLOps platform supports automated training pipelines, a model registry, and CI/CD for machine learning. Retraining triggers on performance degradation or new failure data, maintaining model accuracy as conditions evolve.
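The two retraining triggers named above, performance degradation and new failure data, can be combined in one check. The tolerance of 0.05 in recall and the minimum of 20 new labeled failures are hypothetical defaults.

```python
def should_retrain(recent_recall, baseline_recall, new_failure_events,
                   max_drop=0.05, min_new_events=20):
    """Decide whether to trigger retraining.

    Returns (decision, reasons). Triggers when recall has dropped more than max_drop
    below the baseline, or when enough newly labeled failure events have accumulated.
    """
    degraded = (baseline_recall - recent_recall) > max_drop
    enough_new_data = new_failure_events >= min_new_events
    return degraded or enough_new_data, {"degraded": degraded, "new_data": enough_new_data}
```

Returning the reasons along with the decision lets the MLOps pipeline record why each retraining run started, which supports the audit requirements described elsewhere.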

Scoring and Orchestration Engines

High-throughput scoring engines process thousands of assets per hour, while low-latency APIs enable on-demand assessments. Orchestration coordinates scoring jobs, resource allocation, and integration with rule engines.

Explainable AI and Decision Support

Explainability tools such as SHAP and LIME quantify feature contributions to risk scores. Interactive dashboards visualize risk concentrations, score trends, and recommended maintenance actions, integrating GIS mapping for geographic context.
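For a linear risk score f(x) = b + sum_i w_i * x_i with independent features, exact SHAP values have a closed form: phi_i = w_i * (x_i - E[x_i]). These values sum to f(x) - E[f(x)]. The sketch below computes them directly. The model coefficients and fleet averages are hypothetical. Tree ensembles and neural networks need the SHAP library's dedicated explainers instead.

```python
def linear_score(weights, bias, x):
    """Linear risk score (for example, log-odds of failure)."""
    return bias + sum(weights[f] * x[f] for f in weights)

def linear_shap(weights, x, background_means):
    """Exact SHAP values for a linear model, assuming independent features."""
    return {f: weights[f] * (x[f] - background_means[f]) for f in weights}

# Hypothetical model coefficients and fleet-average feature values
weights = {"vibration_rms": 0.8, "oil_temp_c": 0.02, "km_since_service": 0.00001}
bias = -1.5
fleet_means = {"vibration_rms": 2.0, "oil_temp_c": 90.0, "km_since_service": 20000.0}
asset = {"vibration_rms": 3.5, "oil_temp_c": 95.0, "km_since_service": 30000.0}

phi = linear_shap(weights, asset, fleet_means)
top_driver = max(phi, key=phi.get)
# Attributions add up to the gap between this asset's score and the fleet-average score
gap = linear_score(weights, bias, asset) - linear_score(weights, bias, fleet_means)
```

Here vibration contributes about 1.2 of the 1.4 gap, which makes it the dominant driver a dashboard would highlight for this asset.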

Scalability and High Availability

Container orchestration platforms like Kubernetes deploy scoring and preprocessing components across clusters. Auto-scaling and failover configurations ensure consistent throughput and resilience under varying loads.

Outputs, Dependencies, and Handoffs

Primary Deliverables

- Comprehensive Asset Registry with unique identifiers, manufacturer specifications, lifecycle status, and digital twin links.

- Criticality Matrix ranking assets by operational impact, failure probability, and cost implications.

- Asset Metadata Catalog defining required fields, value ranges, and unit conventions for data schemas.

- Risk Evaluation Report summarizing AI-derived scores, sensitivity analyses, and model attributions.

- Data Quality and Completeness Analysis identifying gaps, inconsistencies, and remediation tasks integrated with issue-tracking tools.

Data Source Dependencies

- ERP systems (SAP, Oracle) and CMMS platforms (ServiceNow, IBM Maximo)

- Fleet telematics and usage records

- Vendor OEM catalogs and technical datasheets

- Regulatory registries and compliance databases

Platform Dependencies

- ETL frameworks (Informatica, Talend, Apache NiFi)

- Automated ML platforms (DataRobot) and custom AI frameworks

- Metadata repositories (Apache Atlas, Collibra)

- Version control systems and identity/access management layers

Organizational Dependencies

- Cross-functional governance boards and steering committees

- Maintenance engineering and reliability teams

- IT and data operations for infrastructure and security

- Operations and fleet managers for usage validation

Handoff Protocols

Sensor Deployment

- Asset location, environmental context, and priority tiers guide sensor selection and scheduling.

- Data contracts specify payload structures and serialization formats for configuration tools.

Edge Processing and Data Ingestion

- Lookup tables map sensor IDs to asset identifiers and criticality tiers on edge nodes.

- Filtering and aggregation rules optimize bandwidth and highlight key signals.

- Metadata tags propagate with each data packet for routing and indexing.
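The edge-side lookup, filtering, and tagging steps can be sketched together. The lookup table contents, the packet fields, and the forwarding rule are all hypothetical. Under the assumed rule, every reading from a high-criticality asset is forwarded, while other assets forward only values above a threshold.

```python
import time

# Hypothetical lookup table pushed to the edge node from the asset registry
SENSOR_LOOKUP = {
    "VIB-001": {"asset_id": "TRK-0042", "criticality": "high"},
    "TMP-017": {"asset_id": "TRL-0310", "criticality": "low"},
}

def enrich(packet: dict):
    """Attach asset metadata to a raw sensor packet; return None for unknown sensors."""
    meta = SENSOR_LOOKUP.get(packet["sensor_id"])
    if meta is None:
        return None  # unknown sensor: quarantine or log locally instead of forwarding
    enriched = dict(packet)
    enriched.update(meta)  # metadata tags travel with the packet for routing and indexing
    enriched.setdefault("edge_received_at", time.time())
    return enriched

def should_forward(packet: dict, value_threshold: float) -> bool:
    """Forward all readings from high-criticality assets; otherwise only large values."""
    return packet["criticality"] == "high" or abs(packet["value"]) >= value_threshold
```

Dropping packets from unknown sensors at the edge keeps unregistered devices from polluting downstream datasets. Logging those drops locally preserves a trail for troubleshooting.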

Data Integration and Quality Management

- Schema registration in the data catalog enforces validation of incoming streams.

- Reference data synchronization updates asset metadata and criticality scores.

- Automated quality rules use asset attributes to flag anomalies and trigger reconciliation.
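Validating incoming streams against a registered schema can be sketched with standard-library checks. A JSON Schema validator is a common choice in practice. The schema below is hypothetical, and it treats `value` strictly as a float.

```python
# Hypothetical registered schema: field name -> (expected type, required?)
TELEMETRY_SCHEMA = {
    "sensor_id": (str, True),
    "asset_id": (str, True),
    "timestamp": (str, True),
    "value": (float, True),
    "unit": (str, True),
    "quality_flag": (str, False),
}

def validate(record: dict, schema=TELEMETRY_SCHEMA) -> list:
    """Return a list of validation errors; an empty list means the record conforms."""
    errors = []
    for field, (ftype, required) in schema.items():
        if field not in record:
            if required:
                errors.append(f"missing required field '{field}'")
            continue
        if not isinstance(record[field], ftype):
            errors.append(f"field '{field}' expected {ftype.__name__}, "
                          f"got {type(record[field]).__name__}")
    for field in record:
        if field not in schema:
            errors.append(f"unexpected field '{field}'")  # possible schema drift
    return errors
```

Reporting unexpected fields, instead of silently ignoring them, is one way to surface schema drift early.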

Governance and Compliance

- Audit trails record registry modifications, user actions, and justification codes.

- Access control lists synchronize with identity management for new or retired assets.

- Regulatory reporting feeds transmit metadata to external agencies and compliance dashboards.

Best Practices

- Maintain standardized, machine-readable data contracts for all handoff artifacts.

- Implement automated notification workflows to alert teams of new registry versions.

- Apply semantic versioning and changelogs to capture enhancements and corrective actions.

- Enforce sign-off gates with governance and engineering stakeholders before deployment.

- Leverage sandbox environments and CI/CD pipelines for end-to-end handoff testing.

- Document APIs, runbooks, and user guides in a centralized knowledge base.

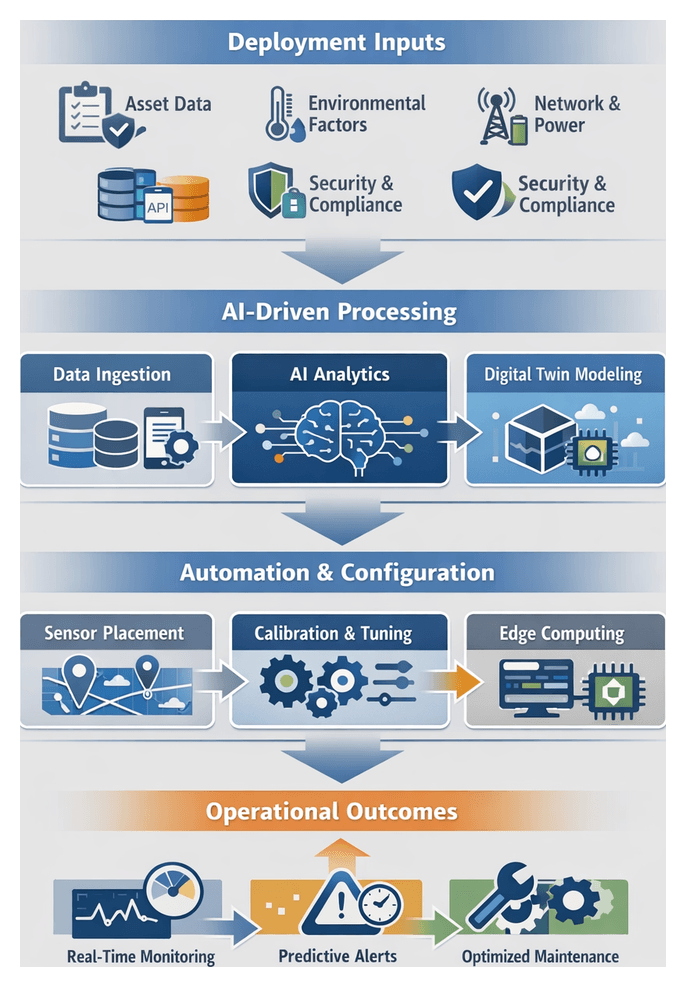

Chapter 2: Sensor Infrastructure Design and Deployment

Stage Objectives and Deployment Inputs

The sensor infrastructure design and deployment stage establishes the foundation for real-time condition monitoring in transportation and logistics. Its purpose is to translate strategic maintenance goals—such as minimizing unplanned downtime, extending asset life, and optimizing repair schedules—into a concrete deployment plan ensuring that analytics and automation workflows receive high-quality, consistent data.

Key objectives include:

- Alignment with Maintenance Use Cases: Select and place sensors to support predictive scenarios—bearing vibration monitoring, engine temperature trending, hydraulic pressure fluctuations.

- Data Quality and Continuity: Define sampling rates, resolution thresholds, and retention policies for granular, historical context.

- Risk Mitigation: Standardize installation processes, failure-mode assessments, and fallback procedures to minimize rollout disruptions.

- Scalability and Flexibility: Architect a modular ecosystem that accommodates diverse assets, protocols, and future expansion.

- Security and Compliance: Integrate encryption, authentication, and regulatory requirements (e.g., FMCSA rules, IECEx certification for hazardous areas) into hardware and network design.
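Defining sampling rates starts from the highest frequency of interest. Nyquist requires sampling above twice that frequency. The sketch below applies a 2.56x factor, a convention common in vibration analyzers that leaves headroom for anti-aliasing filters. It also estimates the resulting daily raw data volume, which informs retention policies. The 5 kHz bearing-defect band, triaxial sensor, and 16-bit samples are hypothetical inputs.

```python
def min_sampling_rate_hz(f_max_hz: float, oversampling: float = 2.56) -> float:
    """Minimum sampling rate to capture content up to f_max_hz.

    Nyquist requires fs > 2 * f_max; the 2.56 default adds headroom for a practical
    anti-aliasing filter.
    """
    if oversampling <= 2.0:
        raise ValueError("oversampling factor must exceed the Nyquist factor of 2")
    return f_max_hz * oversampling

def daily_payload_mb(fs_hz: float, channels: int, bytes_per_sample: int = 2) -> float:
    """Raw data volume per day in megabytes (10**6 bytes) for continuous sampling."""
    return fs_hz * channels * bytes_per_sample * 86_400 / 1e6

fs = min_sampling_rate_hz(5_000)      # 12,800 Hz for a 5 kHz band
mb_per_day = daily_payload_mb(fs, 3)  # triaxial accelerometer, 16-bit samples
```

At about 6.6 GB per sensor per day, continuous raw streaming is rarely practical over cellular links. This is one reason edge processing and burst sampling matter.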

Before procurement and detailed planning, projects must gather inputs across technical, operational, and organizational domains:

- Asset inventory with criticality scores and maintenance history

- Operational profiles: duty cycles, route types, ambient conditions

- Environmental surveys: temperature extremes, humidity, dust, vibration zones

- Network topology: 4G/5G coverage, LoRaWAN gateways, Wi-Fi access, satellite links

- Power and edge compute requirements: vehicle wiring, battery capacity, gateway specs

- Data integration and security policies: API specifications, protocols (MQTT, OPC UA), encryption standards

- Regulatory and safety standards: emission monitoring, explosive-atmosphere directives, electronic logging devices

- Procurement approvals and budget constraints

- Stakeholder roles, governance models, change-management plans

- Deployment timelines and sequencing guidelines

Sensor Placement and Configuration Workflow

A structured workflow ensures optimal sensor coverage, robust data capture, and seamless integration into analytics platforms. Key stages include:

Planning and Stakeholder Alignment

Project leads convene maintenance engineers, reliability analysts, IT architects, and operations managers to review criticality data, environmental constraints, and network requirements. Formal sign-off gates define roles, responsibilities, and timelines, reducing rework.

Site Survey and Asset Mapping

Field engineers and reliability specialists assess physical locations, environmental factors, and existing network and power access. Mobile survey apps such as PTC ThingWorx Navigate capture photographs, GPS coordinates, and ambient measurements. Survey outputs feed sensor modeling and risk analysis.

Sensor Selection and Placement Modeling

Engineering teams evaluate candidates by measurement range, ingress protection, edge computing capabilities, supported protocols, and power options. Placement modeling tools simulate field of view, signal attenuation, and interference to propose mounting positions that maximize data fidelity.

Network Architecture and Data Flow Design

Network architects define data flow diagrams showing connections from sensors to edge gateways and central platforms. Best practices include traffic segregation via VLANs or private APNs, redundant paths for critical sensors, device-level authentication, and QoS policies. Peer reviews ensure compliance with IT standards and security requirements.

Physical Installation and Configuration

Under maintenance-engineer guidance, technicians mount sensors with vibration-damping brackets or insulated enclosures, secure cabling, connect power, and provision devices using mobile tools or centralized platforms such as Azure IoT Central. Standardized templates define data formats, sample rates, and filter settings.

Calibration and Validation

Calibration teams apply reference signals and stress tests to verify accuracy and stability across operating conditions. AI-enhanced assistants compare live outputs to baselines, adjusting offsets and gains. Validation encompasses zero/span calibration, noise and drift checks, and end-to-end data path verification.
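Zero/span calibration derives a gain and an offset from two reference points, so that corrected = gain * raw + offset. The minimal sketch below assumes the sensor response is linear between those points. The example readings are hypothetical.

```python
def two_point_calibration(raw_zero, raw_span, ref_zero, ref_span):
    """Return (gain, offset) mapping raw readings onto reference values.

    raw_zero/raw_span: sensor readings at the two reference points
    ref_zero/ref_span: the known reference values at those points
    """
    if raw_span == raw_zero:
        raise ValueError("reference points must produce distinct raw readings")
    gain = (ref_span - ref_zero) / (raw_span - raw_zero)
    offset = ref_zero - gain * raw_zero
    return gain, offset

def apply_calibration(raw, gain, offset):
    return gain * raw + offset

# Hypothetical pressure transducer: reads 1.0 at a 0.0 bar reference and 9.0 at 10.0 bar
gain, offset = two_point_calibration(1.0, 9.0, 0.0, 10.0)
```

Here the gain is 1.25 and the offset is -1.25, so a raw reading of 5.0 corrects to 5.0 bar. Checking corrected values at a third, mid-range reference point tests the linearity assumption.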

Integration with Data Ingestion Platform

Configuration teams export metadata—location, type, units—to data catalogs and update ingestion pipelines in platforms like AWS IoT SiteWise or Apache Kafka. Schema mappings, retention policies, and security layers (TLS, token management) are defined. Automated scripts onboard new sensor streams into real-time dashboards.

Operational Readiness and Continuous Improvement

A readiness checklist verifies installation, calibration, connectivity, and documentation. Training familiarizes operations staff with maintenance procedures and health-check routines. Post-deployment, reliability engineers analyze signal patterns to refine placements and sampling settings, feeding updates back into configuration management.

AI-Enabled Configuration and Calibration

Embedding AI into configuration and calibration streamlines setup, optimizes performance, and maintains data quality via adaptive, automated processes.

Adaptive Sampling Rate Optimization

Machine learning conducts spectral analysis on historical vibration, temperature, or pressure traces to identify key frequency components. Reinforcement learning agents then propose dynamic sampling schedules aligned with operating context—engine load or ambient temperature—while respecting bandwidth and power limits.
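The spectral analysis step, which identifies dominant frequency components in a historical trace, can be shown with a direct discrete Fourier transform. An O(n^2) DFT keeps the sketch dependency-free, whereas an FFT library would be used in practice. The reinforcement learning scheduler that consumes these frequencies is not shown.

```python
import math

def dominant_frequencies(samples, fs_hz, top_k=3):
    """Return the top_k frequencies (Hz) by DFT magnitude, excluding the DC bin."""
    n = len(samples)
    mags = []
    for k in range(1, n // 2 + 1):
        real = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
        imag = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
        mags.append((math.hypot(real, imag), k * fs_hz / n))  # (magnitude, bin frequency)
    mags.sort(reverse=True)
    return [freq for _, freq in mags[:top_k]]

# Hypothetical trace: a 20 Hz component plus a weaker 50 Hz component, sampled at 200 Hz
fs = 200
trace = [math.sin(2 * math.pi * 20 * i / fs) + 0.5 * math.sin(2 * math.pi * 50 * i / fs)
         for i in range(200)]
peaks = dominant_frequencies(trace, fs, top_k=2)
```

The highest dominant frequency, multiplied by an oversampling factor, gives a lower bound for the sampling rate. An adaptive scheduler could raise or lower the rate above that floor as operating context changes.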

Automated Sensor Calibration

- Model-Based Calibration: Digital twin simulations generate synthetic reference data. ML models learn mappings between raw readings and true values, auto-estimating offset and gain.

- In-Situ Self-Calibration: Neural networks on edge gateways compare overlapping streams—e.g., dual temperature probes—to infer drifts and apply real-time corrections.

- Federated Learning: Calibration adjustments from individual vehicles aggregate in a federated framework, refining global models without sharing raw data.
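The model-based and federated items above can be combined in one small sketch. Each vehicle fits an offset and gain by least squares against reference data. Only those parameters and the sample count leave the vehicle. A FedAvg-style weighted average then forms a fleet-level prior. Treating a two-parameter linear map as the "model" is a deliberate simplification. Averaging also assumes the vehicles carry the same sensor model.

```python
def fit_offset_gain(raw, reference):
    """Least-squares fit of reference = gain * raw + offset; returns (gain, offset, n)."""
    n = len(raw)
    mx, my = sum(raw) / n, sum(reference) / n
    sxx = sum((x - mx) ** 2 for x in raw)
    sxy = sum((x - mx) * (y - my) for x, y in zip(raw, reference))
    gain = sxy / sxx
    return gain, my - gain * mx, n

def federated_average(local_fits):
    """Weight each vehicle's (gain, offset) by its sample count; raw readings never leave the vehicle."""
    total = sum(n for _, _, n in local_fits)
    gain = sum(g * n for g, _, n in local_fits) / total
    offset = sum(o * n for _, o, n in local_fits) / total
    return gain, offset

# Hypothetical local calibration data from two vehicles
fit_a = fit_offset_gain([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])  # gain 2, offset 1
fit_b = fit_offset_gain([0.0, 2.0], [2.0, 6.0])                      # gain 2, offset 2
fleet_gain, fleet_offset = federated_average([fit_a, fit_b])
```

Vehicle A contributes twice as many samples, so the fleet offset (about 1.33) sits closer to A's offset than to B's.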

Edge-Based Data Quality Validation

Lightweight anomaly-detection models at the edge validate streams before cloud ingestion. Solutions like Edge Impulse deploy TinyML classifiers on microcontrollers; NVIDIA Jetson Nano runs CNNs on multi-axis accelerometer data. Detected anomalies trigger self-tests, calibration resets, or maintenance alerts.
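The TinyML and CNN models named above are beyond a short example. The simplest edge validator of this kind is a streaming z-score check. It uses constant memory, which suits microcontrollers and gateways. The sketch below uses Welford's online algorithm for the running mean and variance. The threshold and warm-up length are hypothetical. Flagged values are excluded from the baseline, so a fault does not teach the detector that the fault is normal.

```python
from math import sqrt

class StreamingZScore:
    """Online mean/variance (Welford's algorithm) with a z-score anomaly flag."""

    def __init__(self, threshold: float = 4.0, warmup: int = 30):
        self.threshold, self.warmup = threshold, warmup
        self.n, self.mean, self.m2 = 0, 0.0, 0.0

    def _update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def check(self, x: float) -> bool:
        """Return True if x is anomalous; otherwise fold x into the baseline."""
        if self.n >= self.warmup:
            std = sqrt(self.m2 / (self.n - 1))  # sample standard deviation
            if std > 0 and abs(x - self.mean) / std > self.threshold:
                return True  # trigger a self-test, calibration reset, or alert
        self._update(x)
        return False
```

A plain z-score assumes the signal is roughly stationary. For signals that change with load or speed, the baseline would need to be conditioned on operating context.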

Digital Twin-Driven Configuration

Platforms such as Azure Digital Twins build virtual replicas of assets and environments. Genetic algorithms and Bayesian optimization explore sensor placement and orientation, generating calibration curves that preempt thermal gradients or vibration resonances. Profiles export to IoT edge managers for deployment.

Continuous Calibration and Drift Compensation

Time-series forecasting models predict gradual sensor drift by comparing live data to rolling baselines. Exceeding thresholds triggers automated recalibration—remote scale-factor adjustments or technician instructions. Continuous monitoring also retrains models to evolving conditions.
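The forecasting models described above are one option. A classic, lightweight alternative for detecting gradual drift is a two-sided CUSUM on residuals. Each residual is the live reading minus a reference, such as a rolling baseline or an overlapping redundant probe. The sketch below is that CUSUM alternative, not the forecasting approach. The slack and threshold values are hypothetical.

```python
def cusum_drift(residuals, target=0.0, slack=0.05, threshold=1.0):
    """Two-sided CUSUM on residuals (live reading minus baseline).

    Returns the index at which accumulated drift first exceeds `threshold`, or None.
    `slack` absorbs small deviations so that ordinary noise does not accumulate.
    """
    pos = neg = 0.0
    for i, r in enumerate(residuals):
        pos = max(0.0, pos + (r - target) - slack)  # accumulates upward drift
        neg = max(0.0, neg - (r - target) - slack)  # accumulates downward drift
        if pos > threshold or neg > threshold:
            return i  # trigger remote recalibration or a technician instruction
    return None
```

A sustained bias of 0.15 builds up by about 0.1 per sample once the slack is subtracted, so an alarm fires roughly ten samples after the drift starts. Alternating noise within the slack never accumulates.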

Integration with Device Management Platforms

Within device management platforms such as AWS IoT Core, AI agents assess metrics (noise floors, packet loss, anomaly frequencies) to schedule calibration profile rollouts. Staged batches and A/B testing evaluate the impact on data fidelity and network utilization.
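Staged batches need a stable way to decide which devices receive a new calibration profile. One common technique hashes each device ID into the range [0, 1). A device then falls below the rollout fraction, and stays there, as the fraction grows from, say, 5% to 25% to 100%. The salt string and device IDs below are hypothetical.

```python
import hashlib

def rollout_bucket(device_id: str, salt: str = "calib-profile-v2") -> float:
    """Deterministically map a device to [0, 1); the same input always gives the same bucket."""
    digest = hashlib.sha256(f"{salt}:{device_id}".encode()).hexdigest()
    return int(digest[:8], 16) / 0x100000000  # first 32 bits scaled into [0, 1)

def assign_profile(device_id: str, stage_fraction: float) -> str:
    """Devices below the stage fraction get the candidate profile; the rest keep the current one."""
    return "candidate" if rollout_bucket(device_id) < stage_fraction else "current"

devices = [f"GW-{i:04d}" for i in range(1000)]
stage_5 = {d for d in devices if assign_profile(d, 0.05) == "candidate"}
stage_25 = {d for d in devices if assign_profile(d, 0.25) == "candidate"}
```

Changing the salt for each new profile reshuffles which devices go first, so the same gateways are not always the early adopters. Devices still on the current profile serve as the comparison group for the A/B evaluation.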

Roles of Supporting Systems and Best Practices

- Configuration Management Databases store baseline parameters and logs.

- Digital Twin frameworks enable virtual optimization.

- IoT device registries manage credentials and firmware.

- MLOps platforms handle model versioning and deployment.

- Edge orchestration environments run containerized AI inference.

Human expertise remains essential for oversight. Interactive dashboards present AI recommendations—offset corrections, sampling adjustments—for engineer review and authorization. Operator feedback refines training data, reducing false alarms over time.

Deployment Artifacts, Dependencies, and Handoffs

At completion, the sensor infrastructure stage delivers artifacts, defines dependencies, and executes formal handoffs to data engineering and edge analytics teams.

Key Deployment Artifacts

- Network Topology: Schematics of sensor placements, gateways, and network segments, drawn with tools such as Visio stencils and annotated with VLAN IDs and any edge framework components (e.g., EdgeX Foundry).

- Configuration Templates: JSON/YAML files for provisioning—sampling rates, packet formats, encryption. Snippets for Microsoft Azure IoT Edge and AWS IoT Greengrass.

- Calibration Logs: Reports of reference measurements, drift corrections, and validation statuses.

- Asset-to-Sensor Registry: Tables linking sensor IDs to assets with locations and serial numbers.

- Security Credentials: Firewall rules, certificates, VPN endpoints documented in secret management services.

- Installation Checklists: Signed forms, torque settings, cable routing, geotagged inspection photos.

- Metadata Schema: Definitions for asset IDs, locations, sensor types, and data quality tags.

System and Infrastructure Dependencies

- Asset management and ERP systems (SAP, Oracle) for master data.

- IT networks for bandwidth provisioning, VLAN segmentation, and gateway connectivity.

- Mechanical specifications for mounting and power sources.

- Security frameworks (ISO 27001, NERC CIP) for encryption and access control.

- Vendor SLAs for firmware, calibration certificates, and support.

- Operational constraints: deployment windows, seasonal cycles, change-management board approvals.

Integration Points and Handoffs

- Commissioning Package: Configuration files, calibration logs, and topology diagrams in version-controlled repositories.

- Data Ingestion Configuration: Connectors and scripts for Apache NiFi or StreamSets Data Collector.

- Edge Orchestration: Deployment manifests for containerized preprocessing, integrated with Kubernetes or Docker Swarm.

- Metadata Registration: Automated ingestion into data catalogs for lineage and discovery.

- Security Handoff: Firewall changes, tokens, and certificates coordinated with security operations.

- Training and Readiness: Workshops, runbooks, and incident escalation paths for field and operations staff.

Quality Assurance and Change Management

- Artifact Review Board ensures deliverables meet naming, schema, and security standards.

- Automated CI/CD pipelines validate JSON/YAML schemas and configuration consistency.

- End-to-end connectivity tests record latency and packet loss metrics.

- Formal sign-offs by engineering leads; deviations managed via change requests.

- Git repositories track versions and peer reviews; Change Advisory Board evaluates major updates.
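As a minimal sketch of the CI validation step, assuming provisioning templates are stored as JSON, the check below verifies required fields, value ranges, and consistency with the asset-to-sensor registry. The field names (`sensor_id`, `sampling_rate_hz`, `packet_format`, `encryption`) and the allowed values are hypothetical, not a schema defined by Azure IoT Edge or AWS IoT Greengrass; a real pipeline might use a JSON Schema validator instead of hand-written checks.

```python
import json

# Hypothetical provisioning contract: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "sensor_id": str,
    "asset_id": str,
    "sampling_rate_hz": (int, float),
    "packet_format": str,
    "encryption": str,
}
ALLOWED_FORMATS = {"avro", "protobuf", "json"}  # assumed values
ALLOWED_ENCRYPTION = {"tls1.2", "tls1.3"}  # assumed values


def validate_template(raw, registry):
    """Validate one JSON provisioning template against the contract above and the
    asset-to-sensor registry. Returns a list of errors; an empty list means it passes."""
    try:
        cfg = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    errors = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in cfg]
    errors += [
        f"wrong type for {name}"
        for name, expected in REQUIRED_FIELDS.items()
        if name in cfg and not isinstance(cfg[name], expected)
    ]
    if errors:
        return errors  # skip value checks when the structure is already broken
    if cfg["sampling_rate_hz"] <= 0:
        errors.append("sampling_rate_hz must be positive")
    if cfg["packet_format"] not in ALLOWED_FORMATS:
        errors.append(f"unsupported packet_format: {cfg['packet_format']}")
    if cfg["encryption"] not in ALLOWED_ENCRYPTION:
        errors.append(f"unsupported encryption: {cfg['encryption']}")
    if cfg["sensor_id"] not in registry:  # cross-artifact consistency check
        errors.append(f"sensor {cfg['sensor_id']} not in asset-to-sensor registry")
    return errors
```

A CI job would run this over every template in a change set and fail the build if any returned list is non-empty.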

Best Practices for Artifact Management

- Standardize naming: include environment, asset class, date, and artifact type (e.g., PROD_VEH_ENG_20260215_NETTOPO.json).

- Centralize storage in artifact management platforms with access controls and retention policies.

- Embed metadata tags for searchability and integration with data catalogs.

- Conduct quarterly audits to retire or archive deprecated artifacts.
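The naming convention can be enforced automatically. The sketch below assumes the pattern implied by the example name: environment, one or more asset-class tokens, a YYYYMMDD date, and an artifact-type token. The allowed environments (`DEV`, `TEST`, `PROD`) and file extensions are assumptions to adapt locally.

```python
import re
from datetime import datetime
from typing import Optional

# Assumed convention: ENV_ASSETCLASS[_SUBCLASS...]_YYYYMMDD_ARTIFACTTYPE.ext
NAME_PATTERN = re.compile(
    r"^(?P<env>DEV|TEST|PROD)_"
    r"(?P<asset_class>[A-Z]+(?:_[A-Z]+)*)_"
    r"(?P<date>\d{8})_"
    r"(?P<artifact>[A-Z]+)\.(?P<ext>json|ya?ml)$"
)


def parse_artifact_name(name: str) -> Optional[dict]:
    """Split an artifact file name into its parts, or return None if it breaks the convention."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return None
    parts = match.groupdict()
    try:
        datetime.strptime(parts["date"], "%Y%m%d")  # reject impossible dates such as 20261340
    except ValueError:
        return None
    return parts
```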

By defining clear objectives, executing a rigorous placement and configuration workflow, leveraging AI for calibration, and formalizing deliverables and handoffs, organizations create a scalable, reliable sensor network that underpins effective predictive maintenance across the fleet.

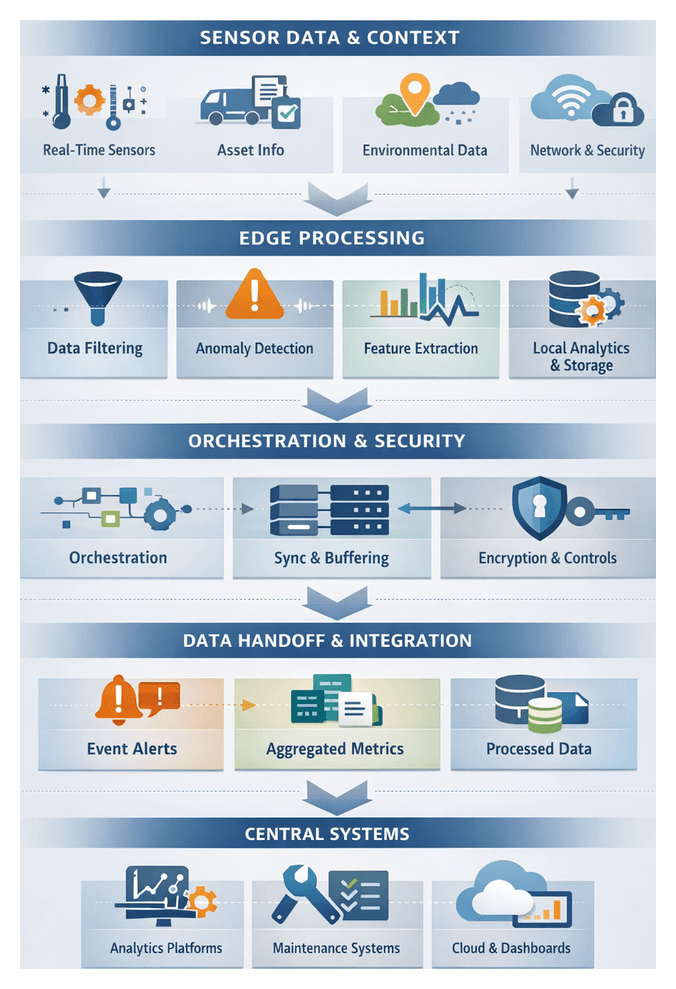

Chapter 3: Data Acquisition and Edge Processing

Purpose and Scope of Edge Processing

Data acquisition and edge processing form the critical bridge between raw sensor signals and centralized analytics in AI-driven predictive maintenance for transportation and logistics. By executing initial filtering, transformation, and anomaly flagging close to the source, edge processing reduces bandwidth consumption, lowers latency, and preserves data fidelity. This stage enables near–real-time detection of emerging equipment issues, supports adaptive maintenance scheduling, and keeps processing running locally when connectivity is intermittent. It also enforces a consistent schema and quality standards before data reaches downstream predictive models and asset management systems.

Key Inputs and Environmental Prerequisites

Successful edge processing demands comprehensive real-time and contextual data, alongside a robust operational environment. Inputs include:

- Real-Time Sensor Streams: Continuous or periodic readings from accelerometers, gyroscopes, thermal probes, pressure transducers, voltage and current sensors, and acoustic monitors.

- Sensor Metadata: Calibration coefficients, sampling frequencies, resolution parameters, and health indicators.

- Asset Static Attributes: Vehicle or equipment identifiers, manufacturing specifications, maintenance history, and criticality scores.

- Environmental Context: Geospatial coordinates, ambient weather conditions, track or road status, and loading characteristics.

- Processing Configuration: Filtering thresholds, aggregation windows, anomaly score rules, and deployment manifests.

- Network and Topology Data: Connectivity status, bandwidth availability, node hierarchy, and quality-of-service settings.

- Security Credentials: Device certificates, encryption keys, and authentication tokens.

Prerequisites include provisioned edge compute platforms, such as AWS IoT Greengrass, Azure IoT Edge, or Edge Impulse, running a lightweight Linux distribution with container support. Network infrastructure must offer LTE, 5G, Wi-Fi, or satellite links with defined latency and bandwidth guarantees. Power stability, thermal management, and environmental protection ensure reliable node operation. Time synchronization via NTP or PTP aligns multi-sensor data streams. A data governance framework enforces ownership, retention, encryption, and compliance with standards such as ISO 27001, while vehicle-bus interfaces follow protocol standards such as SAE J1939. Operational readiness requires remote provisioning, firmware update processes, and continuous monitoring of node health.

Orchestration and Workflow Management at the Edge

The orchestration layer on each edge node coordinates data pipelines, resource allocation, inter-module communication, and interactions with central systems. Processing pipelines are defined by configuration manifests that specify module order, input/output schemas, and execution triggers. When sensors stream data, the edge agent dynamically provisions containers or lightweight threads to host:

- Data Ingestion Agents that validate packets and enqueue messages locally.

- Filter Modules applying moving averages, low-pass filters or wavelet transforms to remove noise.

- Aggregators computing windowed statistics—min, max, mean, standard deviation—for feature extraction.

- Anomaly Detectors running rule-based engines or lightweight machine learning models to flag threshold breaches or probabilistic failures.

- Security Agents handling mutual TLS, key management, and authentication.

- Sync Agents managing buffered handoffs, retries, and acknowledgments with central services.
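To illustrate how these modules chain together, here is a minimal sketch of the filter, aggregator, and rule-based anomaly detector written as plain Python functions. In a real deployment each would run in its own container or thread under the edge agent; the function names and the `max_limit` threshold are hypothetical.

```python
from statistics import fmean, pstdev


def moving_average(samples, window=5):
    """Filter module: trailing moving average (len(samples) - window + 1 outputs)."""
    if not 1 <= window <= len(samples):
        raise ValueError("window must be between 1 and len(samples)")
    running = sum(samples[:window])
    out = [running / window]
    for i in range(window, len(samples)):
        running += samples[i] - samples[i - window]  # slide the window by one sample
        out.append(running / window)
    return out


def window_stats(values):
    """Aggregator module: windowed statistics used as features."""
    return {"min": min(values), "max": max(values), "mean": fmean(values), "std": pstdev(values)}


def threshold_breach(stats, max_limit):
    """Anomaly detector module (rule-based): flag when the filtered peak exceeds a limit."""
    return stats["max"] > max_limit


def process_chunk(raw, window, max_limit):
    """Chain filter -> aggregator -> detector for one chunk of readings."""
    stats = window_stats(moving_average(raw, window))
    return {**stats, "anomaly": threshold_breach(stats, max_limit)}
```

The example exposes a trade-off: a wider moving-average window suppresses noise but can also smooth away a short spike (in `[0, 0, 10, 0, 0]`, a window of 5 reduces the peak to 2.0), so window length and threshold should be tuned together.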

The orchestration engine uses a directed acyclic graph representation to manage dependencies, enforce timeouts, allocate CPU/GPU resources, and reroute data flows on failure. Configuration updates—filters, models or thresholds—are delivered via over-the-air updates or pulled from a central registry. Heartbeat signals and back-pressure mechanisms synchronize with a central orchestration plane, preventing overload of downstream platforms and ensuring consistent pipeline versions across the fleet.
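A minimal sketch of DAG-based scheduling, using Python's standard `graphlib` module: a hypothetical manifest lists each module's upstream dependencies, and the sorter groups modules into stages whose members could run in parallel. Timeouts, CPU/GPU allocation, and failure rerouting are omitted.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline manifest: module -> set of upstream modules it depends on.
MANIFEST = {
    "ingest": set(),
    "filter": {"ingest"},
    "aggregate": {"filter"},
    "anomaly": {"aggregate"},
    "security": set(),
    "sync": {"anomaly", "security"},
}


def execution_plan(manifest):
    """Group modules into stages; modules in one stage have no mutual dependencies.
    prepare() raises graphlib.CycleError if the manifest is not acyclic."""
    sorter = TopologicalSorter(manifest)
    sorter.prepare()
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        stages.append(ready)
        sorter.done(*ready)  # mark the stage complete so dependents become ready
    return stages
```

Because `prepare()` rejects cycles, running the planner on every manifest update is a cheap pre-deployment check before configuration is pushed over the air.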

To handle connectivity disruptions, the orchestration layer implements store-and-forward buffers with time-stamped journals, conflict resolution logic, and duplicate-suppression rules. Upon link restoration, records are replayed in order, so critical events are not lost in remote deployments, provided buffer capacity is sized for the longest expected outage.
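The store-and-forward behavior can be sketched as an in-memory journal, assuming each event carries a unique `event_id` and an event timestamp. A production node would persist the journal to local storage and bound the size of the duplicate-suppression set; conflict resolution is not shown. The `send` callback is a hypothetical stand-in for whatever uplink client the node uses.

```python
import time
from dataclasses import dataclass, field


@dataclass(order=True)
class JournalEntry:
    timestamp: float  # entries sort by (timestamp, seq) only
    seq: int
    event_id: str = field(compare=False)
    payload: dict = field(compare=False)


class StoreAndForward:
    """In-memory journal with duplicate suppression and ordered replay."""

    def __init__(self):
        self._journal = []
        self._seen = set()
        self._seq = 0

    def record(self, event_id, payload, timestamp=None):
        """Buffer an event; returns False if the event ID was already seen."""
        if event_id in self._seen:
            return False
        self._seen.add(event_id)
        ts = time.time() if timestamp is None else timestamp
        self._journal.append(JournalEntry(ts, self._seq, event_id, payload))
        self._seq += 1
        return True

    def replay(self, send):
        """Send buffered events oldest first; stop at the first failure so the
        remaining events stay buffered for the next attempt."""
        self._journal.sort()
        sent = 0
        while self._journal:
            entry = self._journal[0]
            if not send(entry.event_id, entry.payload):
                break
            self._journal.pop(0)
            sent += 1
        return sent

    def pending(self):
        return len(self._journal)
```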

AI-Driven Noise Reduction and Anomaly Detection

Edge AI models enhance signal quality and identify early fault indicators with minimal manual tuning. Noise reduction techniques include:

- Deep learning denoising autoencoders trained on paired clean and noisy signals.

- Adaptive filtering with LSTM networks that adjust parameters based on operating conditions.

- Wavelet transforms combined with neural predictors for fine-grained suppression of transient disturbances.

- Edge-optimized signal enhancement libraries in TensorFlow Lite and the AWS IoT Greengrass ML Inference engine.

Implementing these models requires profiling raw sensor noise spectra, selecting architectures that balance latency and accuracy, and converting artifacts via the TensorFlow Lite Converter or the OpenVINO™ toolkit. Models and parameters are deployed through edge platforms such as Azure IoT Edge or AWS IoT Greengrass. A local orchestration agent monitors inputs, invokes inference, and triggers fallback routines when data diverges from training domains.

Anomaly flagging leverages:

- Statistical control charts monitoring variance and kurtosis.

- Unsupervised clustering and density estimators like k-means or Gaussian mixture models.

- One-class classifiers such as isolation forests or one-class SVMs.

- Deep neural architectures including variational autoencoders and CNNs.

- Managed services such as Amazon Lookout for Equipment, which train anomaly detection models on historical sensor data from industrial equipment.
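As an illustration of the first technique, a Shewhart-style control chart derives limits of center ± k·sigma from in-control baseline windows and flags any window whose statistic falls outside them. This is a minimal sketch; the baseline values and k = 3 are assumptions, and either variance or excess kurtosis can be passed as the monitored statistic.

```python
from statistics import mean, pvariance, stdev


def control_limits(baseline, k=3.0):
    """Lower and upper limits (center +/- k * sigma) from in-control baseline values."""
    center = mean(baseline)
    sigma = stdev(baseline)
    return center - k * sigma, center + k * sigma


def excess_kurtosis(values):
    """Population excess kurtosis; 0 for a normal distribution, higher for impulsive signals."""
    m = mean(values)
    var = pvariance(values, m)
    if var == 0:
        return 0.0
    m4 = sum((v - m) ** 4 for v in values) / len(values)
    return m4 / var ** 2 - 3.0


def out_of_control(windows, baseline_stats, stat=pvariance, k=3.0):
    """Indices of windows whose statistic falls outside the control limits."""
    lo, hi = control_limits(baseline_stats, k)
    return [i for i, w in enumerate(windows) if not lo <= stat(w) <= hi]
```

Here `baseline_stats` must be values of the same statistic (for example, per-window variances from a healthy period), so the limits and the monitored quantity are comparable.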

Streaming inference workflows buffer and window denoised data into fixed-length frames, prioritize high-risk segments, aggregate scores over multiple windows, and publish alerts through a local broker such as Eclipse Mosquitto or to a cloud broker such as AWS IoT Core. Edge Impulse simplifies end-to-end pipeline deployment, from data ingestion and model training to live inference on constrained devices.
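The framing and multi-window aggregation steps might look like the sketch below, which assumes an upstream model already produces one anomaly score per frame. Requiring k of the last n frames to exceed the threshold damps one-off spikes; the values of k, n, and the threshold are hypothetical, and publishing to a broker is left to the caller.

```python
from collections import deque


def frames(samples, size, step):
    """Split a buffered stream into fixed-length, possibly overlapping frames."""
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]


class ScoreAggregator:
    """Alert only when at least k of the last n frame scores exceed the threshold."""

    def __init__(self, threshold, k=3, n=5):
        self.threshold = threshold
        self.k = k
        self.recent = deque(maxlen=n)  # oldest flags drop off automatically

    def update(self, score):
        self.recent.append(score > self.threshold)
        return sum(self.recent) >= self.k
```

For example, with `k=2, n=3` and threshold 0.8, the score sequence 0.9, 0.1, 0.95, 0.1 raises an alert only on the third frame.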

Supporting systems integrate AI modules with:

- Edge Data Managers maintaining local time-series databases such as InfluxDB.

- Message Brokers routing metrics and alerts to central dashboards or cloud services.

- Device Management Services delivering model updates and security patches.

- Local Actuation Interfaces triggering on-device responses when anomalies exceed thresholds.

Within the AI decision pipeline, these components cover signal preprocessing, feature extraction, anomaly scoring, alert generation, and local decision support, which together guide real-time corrective actions that limit fault severity. Deployments must address compute and memory constraints, latency requirements, power budgets, model lifecycle management, and security compliance to sustain performance.

Processed Outputs and Integration Handoffs

Edge processing yields structured data artifacts optimized for downstream analytics and enterprise systems. Primary deliverables include:

- Time-Stamped Event Logs capturing discrete occurrences—threshold breaches, state changes, anomaly flags—annotated with synchronized timestamps.

- Aggregated Metrics summarizing sensor readings over intervals to preserve trends while reducing volume.

- Compressed Data Chunks serialized in Apache Avro or Protocol Buffers with optional delta encoding.

- Anomaly Metadata embedding confidence scores or categorical labels from AI detectors.

- Preprocessed Feature Streams such as rolling standard deviations, spectral bands, and gradient profiles.

- Health-Check Heartbeats reporting node status, resource usage, and sensor health.
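Delta encoding suits slowly varying signals because successive differences are small and compress well. This sketch assumes integer-valued readings (for example raw ADC counts or fixed-point values, since float deltas would accumulate rounding error) and uses JSON plus `zlib` as a stand-in for Avro or Protocol Buffers serialization; the record layout is hypothetical.

```python
import json
import zlib


def delta_encode(values):
    """Keep the first value, then store successive differences."""
    if not values:
        return []
    return [values[0]] + [b - a for a, b in zip(values, values[1:])]


def delta_decode(deltas):
    """Rebuild the original series with a running sum."""
    out, total = [], 0
    for d in deltas:
        total += d
        out.append(total)
    return out


def pack_chunk(asset_id, start_ts, values):
    """Serialize one chunk as compressed JSON (standing in for Avro/Protobuf)."""
    record = {"asset_id": asset_id, "start_ts": start_ts, "deltas": delta_encode(values)}
    return zlib.compress(json.dumps(record).encode("utf-8"))


def unpack_chunk(blob):
    record = json.loads(zlib.decompress(blob).decode("utf-8"))
    record["values"] = delta_decode(record.pop("deltas"))
    return record
```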

Reliable handoffs depend on network availability and bandwidth, secure and updated compute modules (AWS IoT Greengrass, Azure IoT Edge), accurate time synchronization, shared schema definitions, valid security credentials, and stable power and environmental conditions.

Integration patterns include:

- Broker Publishing to Apache Kafka, Google Cloud Pub/Sub, or Azure Event Hubs with QoS controls.

- RESTful or gRPC Calls for synchronous, lower-volume handoffs with retry and circuit-breaker logic.

- Batch Transfers of files to Amazon S3, Google Cloud Storage, or Azure Blob Storage using manifests and checksums.

- Hierarchical Aggregation via intermediate gateways that consolidate streams from subordinate devices.

- Secure Pub/Sub over MQTT or AMQP with TLS and token authorization.
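The retry and circuit-breaker logic mentioned for synchronous handoffs can be sketched as follows. After a run of consecutive failures the breaker opens and rejects calls until a cool-down elapses, then lets a single trial call through. The thresholds and the `RuntimeError` used for rejection are assumptions, and the injectable `clock` exists only to make the behavior easy to test.

```python
import time


class CircuitBreaker:
    """Single-threaded circuit breaker: closed -> open after max_failures consecutive
    failures; after reset_after seconds one trial call is allowed (half-open)."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_after:
            raise RuntimeError("circuit open: handoff deferred")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call re-opens immediately; otherwise open at the limit.
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```

Retries with backoff would typically wrap `call`, so that an open breaker stops retry storms against an unavailable endpoint.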

Data integrity and lineage are maintained through immutable identifiers, SHA-256 hashing of raw inputs, embedded transformation metadata, and automatic registration in catalogs such as Apache Atlas or AWS Glue.
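A minimal sketch of the hashing and transformation-metadata part of this lineage scheme, using only the standard library. The record fields are hypothetical; registration in a catalog such as Apache Atlas or AWS Glue would use that catalog's own API and is not shown.

```python
import hashlib
import uuid
from datetime import datetime, timezone


def lineage_record(raw_bytes, transformation, params, parent_id=None):
    """Lineage entry for a processed artifact: immutable ID, SHA-256 of the raw input,
    and the transformation applied. Field names are hypothetical."""
    return {
        "artifact_id": str(uuid.uuid4()),
        "parent_id": parent_id,
        "input_sha256": hashlib.sha256(raw_bytes).hexdigest(),
        "transformation": transformation,
        "params": params,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }


def verify_input(raw_bytes, record):
    """Recompute the digest downstream to confirm the raw input is unchanged."""
    return hashlib.sha256(raw_bytes).hexdigest() == record["input_sha256"]
```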

Security and compliance measures enforce end-to-end encryption, fine-grained role-based access control, audit logging, retention policies, and secure over-the-air updates of firmware and analytics models. Operational resilience is achieved through monitoring dashboards (Prometheus, Grafana), automatic retries and dead-letter queues, local buffering with embedded databases, and self-healing workflows using orchestration frameworks.

Scalability strategies include load-adaptive batching, stream partitioning by asset group or region, elastic resource provisioning at the edge and in the cloud, and back-pressure feedback to manage ingestion rates.

An example workflow for an industrial trucking fleet applies a moving average filter, computes spectral features, packages aggregates into Avro records, publishes them to a Kafka topic, purges local buffers upon acknowledgment, and sends heartbeats over MQTT secured with TLS. Central services validate schemas, register topics, decrypt payloads and write them to a time-series database, and trigger analytics pipelines for anomaly detection and forecasting.

Clear handoff protocols with defined ownership, SLAs for data freshness, notification channels for ingestion failures, and living documentation of endpoints, topics, and schemas ensure that data engineers, maintenance planners, and operational teams can act on processed data with confidence. This structured approach enables reliable, secure, and scalable predictive maintenance workflows that minimize unplanned downtime and optimize asset utilization.

Chapter 4: Data Integration and Quality Management

In predictive maintenance for transportation and logistics, data integration and quality management establish the unified foundation for advanced analytics, machine learning models, and maintenance orchestration. This stage consolidates real-time sensor streams, event records, maintenance logs, operational schedules, master data, and external context into a centralized, high-fidelity repository. A cohesive data environment accelerates insight generation, ensures accurate failure predictions, and supports scalable operations across a heterogeneous fleet.

Core objectives include:

- Consolidating diverse data sources for end-to-end visibility of asset health and maintenance history

- Harmonizing formats, units, and semantics via standardized schemas and ontology definitions

- Implementing automated validation, cleansing, enrichment, and anomaly detection to resolve inconsistencies at scale

- Enforcing role-based access controls, encryption, and audit trails to satisfy governance and regulatory mandates

- Maintaining real-time synchronization through incremental updates and event-driven ingestion mechanisms

Data Source Inputs

Each input stream contributes unique context to the asset health profile and collectively supports comprehensive analysis:

- Real-Time Sensor Logs: Telemetry capturing vibration, temperature, pressure, fluid levels, and GPS coordinates. Streaming frameworks such as Apache Kafka and AWS IoT Core transport data, while Azure Data Factory or Apache NiFi orchestrate flows.

- Event and Fault Records: On-board diagnostics, fault code registries, and safety alerts ingested via AWS Glue or Talend Data Integration.

- Maintenance Work Orders and Service Logs: Historical and scheduled tickets, technician notes, parts replacements, and timestamps from CMMS solutions such as IBM Maximo, SAP EAM, or Infor EAM.

- Operational and Scheduling Data: Route plans, utilization metrics, driver assignments, and shift schedules from TMS or ERP platforms like SAP S/4HANA and Oracle Fusion Cloud.

- Environmental and External Context: Weather conditions, road surface quality, cargo details, and regulatory data integrated via ETL connectors and public APIs.

- Master Data and Reference Tables: Asset metadata, part catalogs, fleet configurations, and organizational hierarchies managed through MDM services to align identifiers and support criticality scoring.

Prerequisites and Technical Foundations

- Data Governance Framework: Policies that define ownership, stewardship roles, quality thresholds, and retention, and that guide validation rule design, access control enforcement, and lineage tracking.

- Connectivity and Network Topology: Reliable, low-latency links between edge collectors, on-premises systems, and cloud platforms, enabling appropriate use of streaming, micro-batch, or bulk ingestion.

- Schema and Ontology Definitions: Unified data models standardizing terminology, units, and attribute hierarchies. Predefined schemas support automated mapping via platforms like Informatica Intelligent Cloud Services.

- Data Security and Compliance: Encryption in transit and at rest, tokenization of sensitive fields, and adherence to regulations such as GDPR or CCPA.

- Resource Provisioning and Scalability Planning: Capacity planning for storage, compute, and networking with elastic scaling through Amazon S3, Google Cloud Storage, or Azure Data Lake Storage.

- Monitoring and Observability: Logging, tracing, and alerting via tools such as Datadog, complemented by data profiling in AWS Glue DataBrew, ensure timely detection of ingestion failures, schema drift, and quality degradation.

Data Ingestion and Staging

At the outset, heterogeneous inputs converge into a governed staging environment managed by an orchestration engine. Real-time streams arrive via platforms like Confluent or cloud messaging services, while batch extracts of maintenance logs and historical records are scheduled through Apache Airflow. Each pipeline includes metadata descriptors capturing source system, schema version, and ingestion timestamp to enable traceability and manage schema evolution.

Service level agreements define acceptable latency, throughput, and error budgets. The orchestration engine triggers pipelines based on time schedules, file arrival events, or upstream API calls. Incoming payloads land in raw object stores in formats such as JSON, Avro, or CSV. A metadata registry maintains the mapping between raw schemas and processing contracts, automatically notifying data stewards when schema changes occur and initiating compatibility checks. System logs record each step for audit and troubleshooting purposes.
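The compatibility check triggered by a schema change might, in simplified form, compare field sets and types between versions. The rules below (removing a required field, changing a type, or adding a required field all count as breaking) are a simplified assumption, loosely in the spirit of backward-compatibility modes in schema registries, not any registry's exact semantics.

```python
def compatibility_issues(old_schema, new_schema):
    """Compare two schema versions, each mapping field name -> {"type": str, "required": bool}.
    Returns the changes treated as breaking under this simplified rule set."""
    issues = []
    for name, spec in old_schema.items():
        if name not in new_schema:
            if spec.get("required", False):
                issues.append(f"required field removed: {name}")
        elif new_schema[name]["type"] != spec["type"]:
            issues.append(f"type changed for {name}: {spec['type']} -> {new_schema[name]['type']}")
    for name, spec in new_schema.items():
        if name not in old_schema and spec.get("required", False):
            issues.append(f"new required field: {name}")
    return issues
```

An empty list would let the new version through; otherwise the registry would hold the version and notify data stewards.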

Normalization and Schema Mapping

Once ingested, raw data undergoes normalization to align with a canonical schema. Field-level transformations convert units of measure, align timestamps to a common time zone, and cast data types using mapping definitions in tools such as Talend or Informatica. The orchestration engine retrieves versioned schemas from the metadata registry, applies transformation scripts, and routes records with unexpected fields to quarantine queues for data steward review.

Normalized data is persisted in a relational staging database or a columnar data lake. Data engineers validate sample batches against business rules—such as ensuring bearing speeds in RPM fall within plausible ranges—to confirm transformation accuracy before downstream processing.
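A minimal sketch of one normalization step, assuming a hypothetical canonical schema with fields `asset_id`, `ts_utc`, `temp_c`, and `bearing_rpm`. It converts units, aligns timestamps to UTC, casts types, routes records with unexpected fields to quarantine, and applies a plausibility check on bearing speed; the RPM bounds are placeholders, not engineering limits.

```python
from datetime import datetime, timezone

CANONICAL_FIELDS = {"asset_id", "ts_utc", "temp_c", "bearing_rpm"}  # hypothetical schema
RPM_RANGE = (0.0, 20000.0)  # placeholder plausibility bounds


def normalize(record):
    """Map a raw record onto the canonical schema.
    Returns (normalized, None) on success or (None, reason) for quarantine."""
    out = dict(record)
    if "temp_f" in out:  # unit conversion: Fahrenheit -> Celsius
        out["temp_c"] = round((float(out.pop("temp_f")) - 32.0) * 5.0 / 9.0, 2)
    if "ts" in out:  # align an ISO-8601 timestamp with offset to UTC
        ts = datetime.fromisoformat(out.pop("ts"))
        if ts.tzinfo is None:
            return None, "naive timestamp"
        out["ts_utc"] = ts.astimezone(timezone.utc).isoformat()
    unexpected = set(out) - CANONICAL_FIELDS
    if unexpected:
        return None, f"unexpected fields: {sorted(unexpected)}"
    if "bearing_rpm" in out:
        out["bearing_rpm"] = float(out["bearing_rpm"])  # type cast
        if not RPM_RANGE[0] <= out["bearing_rpm"] <= RPM_RANGE[1]:
            return None, "bearing_rpm out of plausible range"
    return out, None
```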

Validation, Deduplication, and Correction

Ensuring data integrity requires a layered approach combining deterministic checks with AI-driven analytics. Validation, deduplication, and correction workflows maintain a unified, accurate repository that underpins reliable forecasting and decision support.

Intelligent Validation and Error Handling

Rule-based engines enforce constraints on required fields, data types, sequential timestamps, and reference lookups; tools such as Great Expectations express these checks as declarative expectation suites. Concurrently, AI models learn expected attribute behavior, detect subtle anomalies, and propose adaptive validation rules.

- Behavioral Profiling: Unsupervised learning establishes baseline distributions for sensor streams and maintenance events, flagging deviations with anomaly scores.

- Contextual Consistency Checks: Natural language processing correlates free-text entries—technician notes or parts descriptions—with structured data to verify cross-domain alignment.

- Adaptive Rule Generation: Statistical inference suggests new quality rules based on emerging patterns, reducing manual maintenance of validation logic.

- Error Handling Tiers: Minor discrepancies trigger automated imputation or default substitution. Critical violations spawn incident tickets in service management systems and display alerts on a centralized error dashboard, enabling rapid triage and remediation.

- Audit Logging: All validation outcomes are logged, supporting compliance reporting and periodic data quality scorecards shared with stakeholders.
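The tiered error handling can be sketched with a few deterministic rules. Minor issues (here, a missing temperature) are repaired by median imputation from recent history and flagged; critical issues are returned for ticket creation. Field names, the rules, and the use of epoch-second timestamps are assumptions.

```python
from statistics import median

CRITICAL = "critical"
MINOR = "minor"


def validate(record, history):
    """Apply rule-based checks and sort violations into tiers.
    Returns (possibly repaired copy of record, list of (tier, message))."""
    record = dict(record)  # never mutate the caller's record
    issues = []
    for name in ("asset_id", "ts"):
        if record.get(name) is None:
            issues.append((CRITICAL, f"missing required field {name}"))
    if history and record.get("ts") is not None and record["ts"] <= history[-1]["ts"]:
        issues.append((CRITICAL, "non-sequential timestamp"))
    if record.get("temp_c") is None:
        recent = [h["temp_c"] for h in history if h.get("temp_c") is not None]
        if recent:
            record["temp_c"] = median(recent)
            record["temp_c_imputed"] = True  # flag the estimate for traceability
            issues.append((MINOR, "temp_c imputed from recent median"))
        else:
            issues.append((CRITICAL, "temp_c missing with no history to impute from"))
    return record, issues
```

Every returned issue list would also be written to the audit log, and any `critical` entry would open an incident ticket.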

Machine Learning–Driven Deduplication

Duplicate or conflicting records, arising from concurrent streams, pipeline retries, or overlapping batch windows, are resolved using AI-powered record linkage and entity resolution. Platforms such as DataRobot, or custom models built with TensorFlow or PyTorch, tune matching thresholds to distinguish true duplicates from legitimate rapid-fire events.

- Feature Embedding: Records are transformed into vectors capturing timestamps, geospatial coordinates, part numbers, and narrative text for high-dimensional similarity analysis.

- Unsupervised Clustering: Density-based algorithms group near-duplicate records despite naming variations and partial data.

- Probabilistic Matching: Graphical models estimate equivalence likelihood when identifiers are incomplete, balancing precision and recall according to business thresholds.
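As a simplified stand-in for learned entity resolution, the sketch below scores record pairs by a weighted combination of time proximity, part-number agreement, and text similarity from Python's `difflib`, in place of learned embeddings. The weights, the 60-second window, and the 0.85 threshold are hypothetical values that a trained model would normally tune.

```python
from difflib import SequenceMatcher


def similarity(a, b, max_gap_s=60.0):
    """Weighted similarity in [0, 1] between two event records (hypothetical weights)."""
    if a["asset_id"] != b["asset_id"]:
        return 0.0
    time_score = max(0.0, 1.0 - abs(a["ts"] - b["ts"]) / max_gap_s)
    part_score = 1.0 if a.get("part_no") == b.get("part_no") else 0.0
    text_score = SequenceMatcher(None, a.get("note", "").lower(), b.get("note", "").lower()).ratio()
    return 0.5 * time_score + 0.2 * part_score + 0.3 * text_score


def find_duplicates(records, threshold=0.85):
    """Index pairs judged to be duplicates. O(n^2): real systems first block
    candidates by asset and time bucket."""
    pairs = []
    for i in range(len(records)):
        for j in range(i + 1, len(records)):
            if similarity(records[i], records[j]) >= threshold:
                pairs.append((i, j))
    return pairs
```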

Consistency Enforcement and Master Data Management

An MDM hub consolidates identity attributes across source systems to maintain golden records for each asset and component. Deterministic keys—such as asset ID and event sequence—combine with probabilistic matching to detect and reconcile conflicting references. Reconciliation tasks surface in stewardship interfaces for human review, ensuring that canonical attributes—manufacturer, model, installation date—remain authoritative.

- Deterministic deduplication using unique identifiers and timestamps

- Probabilistic algorithms to resolve near-duplicates across evolving schemas

- Data steward reconciliation workflows to approve authoritative records

- Publication of golden records for downstream consumption
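Golden-record publication depends on survivorship rules. The sketch below assumes one common rule: for each attribute, keep the non-null value from the most trusted source, breaking ties by the most recent update. The source ranking is an assumption; in practice, conflicting values would also be routed to stewards for review.

```python
def golden_record(candidates, source_priority=("MDM", "ERP", "CMMS")):
    """Merge candidate records for one asset into a golden record.
    Assumed survivorship rule: per attribute, take the first non-null value after ordering
    candidates by source trust, then by most recent update."""
    rank = {src: i for i, src in enumerate(source_priority)}
    ordered = sorted(
        candidates,
        key=lambda r: (rank.get(r["source"], len(rank)), -r["updated_at"]),
    )
    golden = {}
    for rec in ordered:
        for attr, value in rec["attrs"].items():
            if value is not None and attr not in golden:
                golden[attr] = value
    return golden
```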

Automated Correction and Enrichment

AI services proactively correct errors and enrich data with inferred values and contextual metadata, minimizing manual remediation and enhancing analytic value.

- Predictive Imputation: Regression and matrix-factorization models predict missing sensor readings or maintenance attributes, tagging estimates with confidence scores for traceability.

- Canonicalization of Values: Natural language understanding maps synonyms, abbreviations, and misspellings to standardized terms. Data preparation platforms such as Trifacta and Alteryx support this standardization, and transformer-based language models can extend it to free-text fields.

- Knowledge Graph Integration: AI-driven graphs link assets, parts, failure modes, and maintenance actions, enriching records with vendor details, lead times, and compliance requirements.

- Feedback-Driven Correction Loops: User validations from technicians and planners feed back into supervised models, refining correction logic over time without manual rule updates.
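Predictive imputation with confidence tagging can be illustrated with a much simpler model than the regression and matrix-factorization approaches above: linear interpolation across short gaps. The confidence formula, which decays with gap length, is a hypothetical heuristic, not a calibrated probability.

```python
def impute_gaps(series, max_gap=5):
    """Fill None gaps by linear interpolation between neighboring readings.
    Each output item is (value, confidence, imputed). Gaps at the edges or longer
    than max_gap stay None with confidence 0."""
    out = [(v, 1.0, False) if v is not None else (None, 0.0, False) for v in series]
    n = len(series)
    i = 0
    while i < n:
        if series[i] is not None:
            i += 1
            continue
        start = i
        while i < n and series[i] is None:
            i += 1
        gap, left = i - start, start - 1
        if left < 0 or i >= n or gap > max_gap:
            continue  # no anchor on one side, or gap too long to trust
        lo_val, hi_val = series[left], series[i]
        confidence = round(1.0 - gap / (max_gap + 1), 3)  # hypothetical decay
        for k in range(gap):
            frac = (k + 1) / (gap + 1)
            out[start + k] = (lo_val + frac * (hi_val - lo_val), confidence, True)
    return out
```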

Data Enrichment and Contextual Augmentation

With clean and consistent records established, enrichment microservices augment data by linking maintenance logs to failure taxonomies, injecting operational schedules, and merging external context. Weather feeds, road surface ratings, and cargo characteristics are pulled from APIs and third-party providers. GPS coordinates map to route segments via geospatial indexes, and repair codes align with enterprise taxonomies. Each enrichment step appends lineage metadata—source, timestamp, transformation—to ensure full traceability and support feature engineering for analytics teams.
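GPS-to-segment mapping plus lineage tagging might be sketched as below, assuming each route segment is represented by a single midpoint. A linear scan with the haversine distance stands in for a real geospatial index such as an R-tree; segment IDs and lineage field names are hypothetical.

```python
import math
from datetime import datetime, timezone


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers on a spherical Earth."""
    r = 6371.0  # mean Earth radius, km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def enrich_with_segment(record, segments, max_km=1.0):
    """Attach the nearest route segment (None if farther than max_km) and append lineage."""
    def dist(seg):
        return haversine_km(record["lat"], record["lon"], seg["lat"], seg["lon"])

    best = min(segments, key=dist)
    enriched = dict(record)
    enriched["route_segment"] = best["id"] if dist(best) <= max_km else None
    enriched["lineage"] = list(record.get("lineage", [])) + [{
        "source": "route_segments",
        "transformation": "nearest_segment_midpoint",
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }]
    return enriched
```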

Quality Monitoring and Automated Feedback Loops

Continuous observability maintains data quality over time. Dashboards display metrics for record freshness, schema compliance, validation pass rates, and duplicate counts. Alerts trigger on sudden volume drops or quality degradations, prompting investigations by data engineering teams. Aggregated metrics over sliding windows detect gradual drifts in data patterns that static thresholds may miss.

Automated feedback loops leverage unsupervised clustering to identify emerging data distributions—for example, sensor firmware updates altering output characteristics. When new clusters deviate from established norms, the system quarantines affected records and notifies field engineers to inspect and recalibrate devices. This proactive approach prevents corrupted data from propagating into predictive models and operational decisions.
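The text describes clustering to catch new data distributions; a simpler substitute for illustration is a z-test on the mean of a recent sliding window against a baseline, which flags sustained shifts such as a firmware-induced offset. The threshold of 4 standard errors is an assumption, the test presumes roughly independent samples, and notifying field engineers is left out.

```python
from statistics import mean, stdev


def detect_drift(baseline, recent, z_threshold=4.0):
    """True if the recent window's mean has moved away from the baseline mean
    by more than z_threshold standard errors."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return mean(recent) != mu
    z = (mean(recent) - mu) / (sigma / len(recent) ** 0.5)
    return abs(z) > z_threshold


def route_window(records, baseline, window=50):
    """Quarantine the latest window of records if it has drifted; otherwise accept it."""
    recent = records[-window:]
    if detect_drift(baseline, [r["value"] for r in recent]):
        return {"quarantined": recent, "accepted": []}
    return {"quarantined": [], "accepted": recent}
```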

Data Products and Outputs

The integration stage delivers consolidated, high-fidelity data products that fuel downstream analytics and operations:

- Clean Data Lake: Time-aligned, gap-filled sensor streams and historical records with embedded quality metadata and anomaly flags.

- Unified Asset Histories: Harmonized chronological profiles capturing failure events, repair actions, usage patterns, and environmental contexts.

- Master Data Catalog: Indexed inventory of data domains, fields, schemas, lineage, and quality indicators.

- Data Quality Dashboard: Interactive reports visualizing missing value rates, schema compliance, validation outcomes, and duplicate summaries.

- Metadata and Lineage Reports: Machine-readable traces of each data element through ingestion, cleansing, enrichment, and integration steps.

- Enrichment Logs: Detailed records of external reference joins, semantic mappings, and AI-driven imputations.

- Schema Registry Artifacts: Versioned canonical model definitions referenced by downstream pipelines to validate assumptions and ensure compatibility.

Dependencies and Integration Requirements

- Raw Data Ingestion Pipelines: Continuous streams of sensor telemetry, maintenance logs, scheduling records, and third-party data sources powered by AWS Glue or Apache Kafka.

- Data Storage Infrastructure: Scalable object stores such as Amazon S3 or HDFS, paired with compute clusters or serverless engines for batch and micro-batch transformations.

- ETL and Orchestration Frameworks: Workflow engines like Apache Airflow that schedule, sequence, and monitor ingestion and transformation jobs.

- Schema Mapping and Standardization Rules: Templates and dictionaries maintained by data architects and SMEs to reconcile field naming, units, and data types across sources.

- AI-Driven Quality Validation Modules: Machine learning models and rule-based engines embedded in environments such as Databricks, Azure Data Factory, or Google Cloud Dataflow.

- Metadata Management Systems: Catalog services like Apache Atlas or commercial catalogs storing dataset descriptions, lineage graphs, and governance policies.

- Access Control and Security Policies: Role-based permissions, encryption keys, and network controls safeguarding sensitive data during integration workflows.

- Collaborative Processes: Handoff protocols between data engineers, quality analysts, and domain SMEs to resolve mapping ambiguities, approve schema changes, and monitor SLA adherence.

Handoffs to Downstream Analytics and Operations

- Feature Engineering and Anomaly Detection: Data scientists access unified datasets via SQL interfaces or APIs to derive time series features and feed anomaly detection models, referencing canonical schema definitions from the registry.

- Predictive Model Development: Training environments consume labeled historical data and enriched records, registering model artifacts alongside dataset snapshots for reproducibility and lineage tracking.

- Real-Time Inference Deployments: Edge and cloud inference engines subscribe to change data capture topics or Kafka streams broadcasting new integrated records in lightweight JSON payloads.

- Alerting and Visualization Systems: BI dashboards and alerting services query curated data marts through semantic layers, ensuring maintenance planners view only validated information.

- Maintenance Orchestration Engines: Work order and scheduling tools invoke integration APIs to fetch asset health indicators, creating alerts or orders only when data quality thresholds are satisfied.

- Continuous Improvement Feedback Loop: Post-maintenance outcomes, technician annotations, and actual repair times are appended to unified histories and ingested back into integration pipelines, updating enrichment logs and quality dashboards for ongoing refinement.

Chapter 5: Feature Engineering and Anomaly Detection

Feature Creation Objectives and Inputs

The feature engineering stage transforms high-velocity sensor streams and operational records into structured attributes that expose early indicators of component wear, system degradation, and abnormal conditions. Establishing clear objectives and precise input requirements ensures that downstream anomaly detection algorithms receive consistent, meaningful metrics tied to real-world behavior. By prioritizing data provenance, temporal alignment, and domain relevance, organizations create a solid foundation for scalable predictive maintenance across diverse fleets.

Key objectives for feature creation include:

- Deriving statistical summaries—mean, variance, skewness, and kurtosis—over sliding windows to quantify central tendencies and dispersion.

- Computing time-domain features such as rate of change, peak counts, and dwell time above thresholds to capture dynamic stress cycles.

- Performing frequency-domain analyses, including fast Fourier transforms and wavelet decompositions, to detect characteristic vibration signatures.

- Generating cross-sensor correlation metrics to identify interdependencies, for example between engine temperature and exhaust backpressure under varied loads.

- Integrating operational metadata—asset age, cumulative mileage, load profile, and environmental conditions—to contextualize sensor readings and adjust failure thresholds.

- Encoding maintenance histories, repair codes, and fault logs as categorical or temporal features that inform supervised models of past system behavior.
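Several of the time-domain objectives above reduce to short computations over one window. This sketch assumes evenly sampled readings with interval `dt` in seconds and a hypothetical alarm `threshold`; frequency-domain and cross-sensor features are omitted.

```python
from statistics import fmean, pvariance


def window_features(x, dt, threshold):
    """Statistical and time-domain features for one window of evenly sampled readings."""
    n = len(x)
    mu = fmean(x)
    var = pvariance(x, mu)
    sd = var ** 0.5
    skew = sum((v - mu) ** 3 for v in x) / n / sd ** 3 if sd else 0.0
    kurt = sum((v - mu) ** 4 for v in x) / n / var ** 2 - 3.0 if var else 0.0
    rates = [(b - a) / dt for a, b in zip(x, x[1:])]  # first differences per second
    peaks = sum(1 for a, b, c in zip(x, x[1:], x[2:]) if b > a and b > c)  # local maxima
    return {
        "mean": mu,
        "variance": var,
        "skewness": skew,
        "excess_kurtosis": kurt,
        "max_rate_of_change": max(map(abs, rates)) if rates else 0.0,
        "peak_count": peaks,
        "dwell_above_s": sum(1 for v in x if v > threshold) * dt,
    }
```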

Feature engineering relies on diverse input categories drawn from multiple systems:

- Raw Sensor Telemetry—Continuous time-series data from accelerometers, temperature probes, pressure transducers, current sensors, and GPS devices.

- Event and Fault Logs—Diagnostic trouble codes, safety alerts, and operator-reported events providing labeled instances of anomalies.

- Maintenance Histories—Structured records of scheduled services, replacements, and unscheduled repairs for establishing baselines.

- Asset Metadata—Vehicle attributes such as make, model, manufacturing date, engine type, and configuration to support normalization and transfer learning.

- Operational Context—Route profiles, load weights, driver behaviors, and environmental factors like ambient temperature and road conditions.

- Data Quality Indicators—Metrics such as sampling rate, completeness, and sensor health status guiding feature selection and filtering.

Prerequisites and data conditions ensure engineered features reflect true system behavior rather than collection artifacts:

- Timestamp Synchronization—Align all data to a common reference, correcting clock drift and normalizing to Coordinated Universal Time.

- Data Completeness Thresholds—Require a minimum percentage of valid readings within feature windows, with imputation or exclusion of gaps.

- Unit Standardization—Convert physical measurements to consistent units to prevent model bias.

- Noise Filtering and Baseline Correction—Apply filters and drift adjustments to remove out-of-band noise and preserve anomaly signals.

- Label Availability—Link historical failure labels or maintenance flags to feature records for supervised training.

- Schema Consistency—Maintain stable data schemas and manage changes via versioning to preserve pipeline integrity.

- Data Lineage—Record input sources, transformation logic, and timestamps for auditability and interpretability.

Effective data management underpins scalable feature engineering:

- Centralize raw and engineered data in a feature store or data lake, enabling standardized access and versioning.

- Isolate development, testing, and production environments to safeguard live pipelines and support controlled rollouts.

- Version control feature definitions and transformation scripts in a code repository, enforcing peer review and documentation.

- Apply retention policies to balance storage costs and performance, archiving older records while keeping recent data accessible.

- Leverage distributed compute frameworks such as Apache Spark or Dask to parallelize feature computations and accelerate time to insight.

Popular frameworks and managed services streamline feature creation and anomaly detection:

- scikit-learn for prototyping statistical feature extractors and baseline models.

- SciPy and Librosa for advanced spectral and wavelet analyses.

- TensorFlow and PyTorch for deep learning-based feature learners such as autoencoders and recurrent networks.

- AWS IoT Analytics and Azure Machine Learning for integrated data ingestion, feature engineering, and model training pipelines.

Workflow and Pipeline Orchestration

The feature engineering and anomaly detection workflow orchestrates extraction, transformation, and enrichment tasks across batch and streaming contexts. Coordination among scheduling tools, compute clusters, metadata services, and model hosting environments ensures a seamless flow of feature artifacts from a centralized repository to real-time inference engines and offline training systems.

Data Retrieval and Preprocessing Handoff

Orchestrators such as Apache Airflow or Kubeflow trigger extraction jobs that retrieve preprocessed sensor readings, maintenance logs, and contextual data from a unified data repository. A manifest describing file locations, schemas, and quality metrics passes between components to enable automated lineage tracking and dependency resolution.

Windowing and Time-Series Feature Computation

Continuous streams are segmented into time windows—fixed durations or event-driven intervals—over which statistical aggregations execute in parallel on distributed platforms. Core steps include:

- Defining window schemas and event time semantics.

- Scheduling batch tasks or streaming jobs by asset group.

- Applying aggregations—mean, variance, skewness, kurtosis, rolling percentiles—across sensor channels.

- Joining auxiliary metadata such as operating mode or geographic location.

- Writing interim feature tables to the warehouse and updating the metadata catalog.
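The windowing steps above can be illustrated with a short pandas sketch. It computes tumbling one-minute windows per asset, aggregates each sensor channel, and joins operating-mode metadata. The column names, helper functions, and synthetic readings are hypothetical. A production job would typically distribute this computation on Spark or Dask and write the result to the warehouse instead of keeping it in memory.

```python
import numpy as np
import pandas as pd


def kurtosis(s: pd.Series) -> float:
    return s.kurt()  # excess kurtosis (Fisher definition)


def p95(s: pd.Series) -> float:
    return s.quantile(0.95)  # 95th percentile within the window


def window_features(df: pd.DataFrame, window: str = "1min") -> pd.DataFrame:
    """Aggregate each sensor channel over fixed (tumbling) windows per asset."""
    grouped = df.groupby(["asset_id", pd.Grouper(key="timestamp", freq=window)])
    feats = grouped[["vibration", "temp_c"]].agg(["mean", "var", "skew", kurtosis, p95])
    # Flatten ("vibration", "mean") -> "vibration_mean"
    feats.columns = [f"{channel}_{stat}" for channel, stat in feats.columns]
    return feats.reset_index()


rng = np.random.default_rng(0)
ts = pd.date_range("2024-03-01", periods=120, freq="s", tz="UTC")  # two minutes at 1 Hz
readings = pd.concat(
    [
        pd.DataFrame({
            "asset_id": asset,
            "timestamp": ts,
            "vibration": rng.normal(0.5, 0.05, ts.size),
            "temp_c": rng.normal(80.0, 1.0, ts.size),
        })
        for asset in ("T-101", "T-102")
    ],
    ignore_index=True,
)
# Auxiliary metadata joined onto every window of the matching asset.
meta = pd.DataFrame({"asset_id": ["T-101", "T-102"], "operating_mode": ["highway", "urban"]})
features = window_features(readings).merge(meta, on="asset_id", how="left")
print(features.head())
```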

Spectral and Frequency-Domain Pipelines

Dedicated pipelines use SciPy to compute fast Fourier transforms, power spectral densities, and wavelet coefficients. Operators configure the frequency bands of interest in version-controlled files, and orchestration systems distribute the workloads across compute nodes, balancing the load created by sensor channels with different sampling rates.
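As an illustration of one spectral pipeline step, the sketch below uses `scipy.signal.welch` to estimate a power spectral density and then integrates it over named frequency bands. The 2 kHz sampling rate, the band names and limits, and the synthetic signal are assumptions standing in for values that would live in the version-controlled band configuration. For a sinusoid of amplitude A, the integrated band power should come out close to A²/2.

```python
import numpy as np
from scipy.signal import welch

FS = 2_000  # assumed accelerometer sampling rate (Hz)
# Hypothetical bands of interest, as they might appear in a versioned config file.
BANDS = {"shaft_1x": (20.0, 40.0), "gear_mesh": (300.0, 400.0), "bearing": (600.0, 900.0)}


def band_powers(signal: np.ndarray, fs: float = FS, bands: dict = BANDS) -> dict:
    """Integrate a Welch PSD estimate over each configured frequency band."""
    freqs, psd = welch(signal, fs=fs, nperseg=1024)
    bin_width = freqs[1] - freqs[0]
    return {
        name: float(psd[(freqs >= lo) & (freqs < hi)].sum() * bin_width)
        for name, (lo, hi) in bands.items()
    }


# Synthetic signal: 30 Hz (amplitude 1) + 350 Hz (amplitude 0.5) + small noise.
t = np.arange(0, 4.0, 1 / FS)
rng = np.random.default_rng(1)
signal = (np.sin(2 * np.pi * 30 * t) + 0.5 * np.sin(2 * np.pi * 350 * t)
          + 0.05 * rng.standard_normal(t.size))
powers = band_powers(signal)
print(powers)
```

With these inputs, the shaft band should report roughly 0.5, the gear-mesh band roughly 0.125, and the bearing band only the small noise floor.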

Statistical Baselines and Comparative Profiles

Baseline services analyze historical feature distributions using clustering and density estimation pipelines built on scikit-learn. Reference metrics—interquartile ranges, Mahalanobis distance thresholds—are emitted to an event bus powered by Apache Kafka whenever baselines update, ensuring anomaly detectors consume current envelopes.
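A baseline of this kind can be sketched with scikit-learn's `EmpiricalCovariance`, whose `mahalanobis` method returns squared Mahalanobis distances. The two-feature setup, the 99th-percentile distance threshold, and the 1.5 × IQR fences are assumptions. The JSON string stands in for the message that would be published to a Kafka topic, and the publishing call itself is not shown. The suspect point sits inside both univariate IQR fences but breaks the learned correlation, so only the multivariate check flags it.

```python
import json

import numpy as np
from sklearn.covariance import EmpiricalCovariance


def build_baseline(features: np.ndarray, quantile: float = 0.99) -> dict:
    """Summarize healthy feature vectors as a mean, a precision matrix, and thresholds."""
    cov = EmpiricalCovariance().fit(features)
    d2 = cov.mahalanobis(features)  # squared Mahalanobis distances of the training rows
    q1, q3 = np.percentile(features, [25, 75], axis=0)
    iqr = q3 - q1
    return {
        "mean": cov.location_.tolist(),
        "precision": cov.precision_.tolist(),
        "d2_threshold": float(np.quantile(d2, quantile)),
        "iqr_low": (q1 - 1.5 * iqr).tolist(),
        "iqr_high": (q3 + 1.5 * iqr).tolist(),
    }


def check(baseline: dict, x) -> dict:
    """Compare one feature vector against the published envelope."""
    x = np.asarray(x, dtype=float)
    diff = x - np.asarray(baseline["mean"])
    d2 = float(diff @ np.asarray(baseline["precision"]) @ diff)
    outside_iqr = bool(np.any((x < baseline["iqr_low"]) | (x > baseline["iqr_high"])))
    return {"d2": d2, "mahalanobis_flag": d2 > baseline["d2_threshold"], "iqr_flag": outside_iqr}


rng = np.random.default_rng(2)
# Hypothetical healthy history: [vibration RMS (g), bearing temperature (°C)], correlation 0.4.
healthy = rng.multivariate_normal([0.5, 80.0], [[0.0025, 0.02], [0.02, 1.0]], size=2000)
baseline = build_baseline(healthy)
payload = json.dumps(baseline)  # message body that could be emitted to the event bus

normal_check = check(baseline, [0.5, 80.0])
# High vibration with low temperature: each value alone looks plausible.
suspect_check = check(baseline, [0.61, 77.8])
print(normal_check, suspect_check)
```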

Batch and Real-Time Coordination

Workflows distinguish between batch feature generation for offline training and streaming computation for live detection. A shared feature store abstracts storage, unifying outputs under a consistent API to minimize training-serving skew. Synchronization mechanisms propagate new feature definitions and verify schema alignment across modes.
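Minimizing training-serving skew largely comes down to computing features from a single definition in both modes. The sketch below applies a hypothetical three-feature `window_features` function to a historical series and to a simulated stream, then checks that both paths produce the same rows. In a real deployment, the shared feature store plays this role across separate services.

```python
import statistics
from collections import deque


def window_features(values) -> dict:
    """Single (hypothetical) feature definition shared by batch and streaming paths."""
    vals = list(values)
    return {"mean": statistics.fmean(vals), "max": max(vals), "range": max(vals) - min(vals)}


def batch_features(series: list, width: int) -> list:
    """Offline path: slide a fixed-size window over a historical series."""
    return [window_features(series[i - width + 1 : i + 1]) for i in range(width - 1, len(series))]


class StreamingFeatures:
    """Online path: keep the last `width` readings and reuse the same definition."""

    def __init__(self, width: int):
        self.buf = deque(maxlen=width)

    def update(self, x: float):
        self.buf.append(x)
        return window_features(self.buf) if len(self.buf) == self.buf.maxlen else None


history = [1.0, 2.0, 4.0, 3.0, 5.0, 6.0]
offline = batch_features(history, width=3)
stream = StreamingFeatures(width=3)
online = [f for f in map(stream.update, history) if f is not None]
print(offline == online)  # True: both paths produce identical feature rows
```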

Integration with Anomaly Detection Models

Feature vectors feed into models hosted by TensorFlow Serving or PyTorch deployments. Batch scoring services apply models to historical feature tables and persist anomaly scores, while edge or cloud-function inference engines process streaming feature windows with millisecond latency. Model versions, input schemas, and scoring logs are tracked for traceability and rapid rollback.

Observability and Alerting

An observability framework monitors pipeline latency, feature drift, and inference rates, feeding dashboards powered by Grafana. Alerting rules trigger notifications when service-level objectives are breached or feature distributions deviate from norms, accelerating root-cause analysis and preserving trust in anomaly signals.

AI-Driven Detection Algorithms

AI-driven algorithms underpin robust anomaly detection by distinguishing between benign fluctuations and genuine fault precursors. Algorithms run on edge devices, centralized platforms, and decision engines to deliver timely, actionable insights.

Statistical Baseline Models

Moving averages, exponentially weighted smoothing, and ARIMA models establish dynamic thresholds that track gradual drift in operating conditions, and seasonal variants such as SARIMA extend this to recurring daily or weekly patterns. Lightweight implementations on edge controllers trigger local alerts, while streaming platforms maintain sliding windows for real-time updates.
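A minimal EWMA control band in pandas shows how exponentially weighted smoothing produces a threshold that follows slow drift. The 30-sample span, the 4-sigma band width, and the synthetic temperature trace are assumptions. Each point is compared only with statistics computed from earlier samples (hence `shift(1)`), and no alerts are raised until the warm-up period has passed.

```python
import numpy as np
import pandas as pd


def ewma_band(series: pd.Series, span: int = 30, k: float = 4.0) -> pd.DataFrame:
    """Flag points outside mean ± k·std, both exponentially weighted over past data."""
    ewm = series.ewm(span=span, min_periods=span, adjust=False)
    # shift(1): judge each point against statistics from earlier samples only
    mean = ewm.mean().shift(1)
    std = ewm.std().shift(1)
    upper, lower = mean + k * std, mean - k * std
    alert = (series > upper) | (series < lower)  # NaN bounds during warm-up compare False
    return pd.DataFrame({"value": series, "lower": lower, "upper": upper, "alert": alert})


rng = np.random.default_rng(3)
# Hypothetical coolant temperature: slow upward drift, sensor noise, and one spike.
temp = 70 + 0.01 * np.arange(500) + rng.normal(0, 0.3, 500)
temp[400] += 5.0
result = ewma_band(pd.Series(temp))
print(result.index[result["alert"]].tolist())
```

Because the band moves with the smoothed mean, the slow drift does not trigger alerts, while the spike at sample 400 does.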

Unsupervised Learning for Novel Patterns

Clustering techniques such as k-means, DBSCAN, and Gaussian mixture models segment feature spaces (combining vibration bands, temperature gradients, and usage metrics) and flag data points that fall outside dense clusters. Batch and streaming unsupervised analyses run on frameworks such as scikit-learn, TensorFlow, or PyTorch, with adaptive retraining to refine cluster definitions.
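The clustering approach can be sketched with scikit-learn's `DBSCAN`, which labels points that belong to no dense cluster as -1. The three features, the two operating regimes, and the `eps` and `min_samples` values are hypothetical and would need tuning on real fleet data.

```python
import numpy as np
from sklearn.cluster import DBSCAN
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
# Hypothetical features: [vibration band power, temperature gradient, load factor]
idle = rng.normal([0.2, 0.1, 0.3], 0.02, size=(200, 3))
loaded = rng.normal([0.6, 0.4, 0.9], 0.03, size=(200, 3))
suspect = np.array([[0.9, 0.05, 0.3]])  # high vibration while otherwise idle-like
X = np.vstack([idle, loaded, suspect])

# Scale features so that eps is measured in comparable units.
X_scaled = StandardScaler().fit_transform(X)
labels = DBSCAN(eps=0.5, min_samples=10).fit_predict(X_scaled)

anomalies = np.flatnonzero(labels == -1)  # DBSCAN marks noise points with label -1
print("clusters:", sorted(set(labels) - {-1}), "anomalous rows:", anomalies.tolist())
```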

Supervised and Semi-Supervised Classification

Where labeled failure records exist, classification models—random forests, gradient boosting, support vector machines—predict discrete health states. Pipelines in AWS SageMaker or Azure Machine Learning curate balanced datasets, tune hyperparameters, and manage model registries. Semi-supervised methods enhance coverage when labeled examples are scarce.
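A supervised classifier of this kind can be sketched with scikit-learn, outside any managed platform. The synthetic features, the rule used to create roughly 5% "degraded" labels, and the hyperparameters are assumptions. A stratified split combined with `class_weight="balanced"` is one common way to cope with rare failure labels.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(5)
# Hypothetical engineered features (e.g. trend slope, band power, temperature delta, duty cycle).
X = rng.normal(size=(2000, 4))
# Hypothetical labeling rule producing roughly 5% "degraded" examples.
risk = 1.5 * X[:, 0] + X[:, 1]
y = (risk > np.quantile(risk, 0.95)).astype(int)

# Stratify so the rare class appears in both splits in the same proportion.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)
clf = RandomForestClassifier(n_estimators=200, class_weight="balanced", random_state=0)
clf.fit(X_train, y_train)

degraded_prob = clf.predict_proba(X_test)[:, 1]  # probability of the "degraded" state
auc = roc_auc_score(y_test, degraded_prob)
print(f"test ROC AUC: {auc:.3f}")
```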

Deep Learning Architectures

Convolutional neural networks extract spatial and temporal hierarchies from spectral signatures and imagery, while long short-term memory networks capture sequential dependencies. Autoencoders compress normal behavior into compact representations, flagging anomalies when reconstruction errors exceed thresholds. GPU-accelerated clusters handle training, and edge AI chips or optimized cloud functions deliver inference at low latency. Interpretability techniques such as attention mapping and feature importance analysis provide engineers with explanations for flagged anomalies.
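The reconstruction-error idea can be shown without a deep learning framework by substituting PCA as a linear stand-in for an autoencoder. Under squared error, the optimal linear autoencoder spans the same subspace as the leading principal components. The six hypothetical channels driven by two latent factors, the 99.5th-percentile threshold, and the injected fault are assumptions. A deep autoencoder would replace `PCA` with a nonlinear encoder and decoder while keeping the same thresholding logic.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(6)
# Hypothetical normal behavior: channels 0-2 follow one latent factor, channels 3-5 another.
mixing = np.array([[1.0, 1.0, 1.0, 0.0, 0.0, 0.0],
                   [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]])
latent = rng.normal(size=(1000, 2))
normal = latent @ mixing + 0.05 * rng.normal(size=(1000, 6))

# transform() compresses 6 channels to 2 components; inverse_transform() reconstructs them.
pca = PCA(n_components=2).fit(normal)


def reconstruction_error(model: PCA, X: np.ndarray) -> np.ndarray:
    X_hat = model.inverse_transform(model.transform(X))
    return np.mean((X - X_hat) ** 2, axis=1)


threshold = np.quantile(reconstruction_error(pca, normal), 0.995)

healthy = np.array([[0.5, 0.5, 0.5, -1.0, -1.0, -1.0]])
faulty = healthy + np.array([[0.0, 0.0, 0.0, 0.8, 0.0, 0.0]])  # channel 3 breaks its group
errors = reconstruction_error(pca, np.vstack([healthy, faulty]))
flags = errors > threshold
print(errors, threshold, flags)
```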

Hybrid Rule-Based and AI Systems

Rule engines codify domain expertise—maximum temperature rises or vibration limits—while AI models adapt to evolving patterns. Confidence scores increase when rule-based checks and machine learning predictions align, prompting immediate action. A rules management service interfaces with AI pipelines to update threshold logic without redeployment.
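One way to combine rule checks with a model score is sketched below. The rule names and limits, the 0.7 model threshold, and the equal-weight confidence formula are hypothetical. Keeping the limits in a plain dictionary mimics a rules service whose thresholds can change at runtime without redeploying the model.

```python
# Hypothetical limits; a rules management service could update this mapping at runtime.
RULES = {"coolant_temp_c": 105.0, "vibration_rms_g": 1.2}


def rule_hits(reading: dict, rules: dict = RULES) -> list:
    """Names of rules whose limit the reading exceeds."""
    return [name for name, limit in rules.items() if reading.get(name, float("-inf")) > limit]


def assess(reading: dict, ml_score: float, ml_threshold: float = 0.7) -> tuple:
    """Combine rule checks with a model anomaly score in [0, 1]."""
    hits = rule_hits(reading)
    ml_flag = ml_score >= ml_threshold
    # Equal-weight confidence: half from the model score, half from any rule firing.
    confidence = 0.5 * ml_score + (0.5 if hits else 0.0)
    if hits and ml_flag:
        action = "act_now"   # rule and model agree
    elif hits or ml_flag:
        action = "review"    # only one source fired
    else:
        action = "normal"
    return action, confidence, hits


urgent = assess({"coolant_temp_c": 110.0, "vibration_rms_g": 0.8}, ml_score=0.85)
watch = assess({"coolant_temp_c": 92.0, "vibration_rms_g": 0.8}, ml_score=0.85)
quiet = assess({"coolant_temp_c": 92.0, "vibration_rms_g": 0.8}, ml_score=0.2)
print(urgent, watch, quiet)
```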

Streaming Analytics and Event Brokers

Frameworks such as Apache Kafka and Apache Flink route data flows through detection algorithms, providing at-least-once delivery guarantees and stateful computation. Streaming analytics apply windowing and incremental feature computation to preserve temporal context under variable data rates.
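Incremental window computation, of the kind a stream processor keeps as operator state, can be sketched in plain Python. This sketch assumes readings arrive in event-time order (late data is not handled) and uses running sums, so each update costs amortized O(1). The class name and the 5-second window are hypothetical. Because eviction is driven by timestamps rather than counts, the window keeps the same temporal span whether readings arrive quickly or slowly.

```python
from collections import deque


class TimeWindowStats:
    """Sliding event-time window with incremental mean and variance.

    Assumes readings arrive in timestamp order; late-data handling is omitted.
    """

    def __init__(self, width_s: float):
        self.width_s = width_s
        self.buf = deque()  # (timestamp, value) pairs currently inside the window
        self.total = 0.0
        self.total_sq = 0.0

    def add(self, t: float, x: float) -> None:
        self.buf.append((t, x))
        self.total += x
        self.total_sq += x * x
        # Evict by time, not count, so the span stays fixed under variable rates.
        while self.buf and self.buf[0][0] <= t - self.width_s:
            _, old = self.buf.popleft()
            self.total -= old
            self.total_sq -= old * old

    def mean(self) -> float:
        return self.total / len(self.buf)

    def variance(self) -> float:
        """Population variance; running sums suit a sketch but can lose precision."""
        m = self.mean()
        return max(self.total_sq / len(self.buf) - m * m, 0.0)


stats = TimeWindowStats(width_s=5.0)
# Irregular arrival times (seconds) and readings.
for t, x in [(0.0, 1.0), (0.5, 2.0), (3.0, 3.0), (3.2, 5.0), (6.5, 4.0)]:
    stats.add(t, x)
print(len(stats.buf), stats.mean(), stats.variance())
```

When the reading at 6.5 s arrives, the two readings older than 1.5 s are evicted, leaving three values with a mean of 4.0.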

Alert Scoring and Prioritization

Multi-criteria fusion engines aggregate outputs from statistical, unsupervised, supervised, and deep learning algorithms, weighting signals by severity, confidence, and asset criticality. Decision-support platforms ingest scored alerts alongside asset metadata, presenting ranked work items and recommending optimal maintenance windows based on risk and operational impact.
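A multi-criteria fusion step can be sketched as a weighted average of normalized detector scores, scaled by confidence, severity, and asset criticality. The weights, criticality classes, and multiplicative combination are assumptions and represent one plausible design, not a standard formula. Averaging only over the detectors that reported lets assets with partial detector coverage still be ranked.

```python
from dataclasses import dataclass

# Hypothetical detector weights and criticality multipliers.
DETECTOR_WEIGHTS = {"statistical": 0.20, "unsupervised": 0.20, "supervised": 0.35, "deep": 0.25}
CRITICALITY = {"A": 1.0, "B": 0.7, "C": 0.4}


@dataclass
class Alert:
    asset_id: str
    criticality: str   # asset criticality class
    scores: dict       # detector family -> normalized score in [0, 1]
    confidence: float  # in [0, 1]
    severity: float    # estimated consequence in [0, 1]


def priority(alert: Alert) -> float:
    # Weighted mean over the detectors that actually reported for this alert.
    weights = {k: DETECTOR_WEIGHTS[k] for k in alert.scores}
    fused = sum(weights[k] * s for k, s in alert.scores.items()) / sum(weights.values())
    return fused * alert.confidence * alert.severity * CRITICALITY[alert.criticality]


alerts = [
    Alert("T-205", "C",
          {"statistical": 0.95, "unsupervised": 0.9, "supervised": 0.9, "deep": 0.95},
          confidence=0.95, severity=0.9),
    Alert("T-330", "B", {"deep": 0.6}, confidence=0.5, severity=0.6),
    Alert("T-101", "A", {"statistical": 0.9, "supervised": 0.8}, confidence=0.9, severity=0.8),
]
ranked = sorted(alerts, key=priority, reverse=True)
for a in ranked:
    print(a.asset_id, round(priority(a), 3))
```

With these numbers, the class A asset with moderate scores outranks the class C asset whose detectors agree strongly, which is the intended effect of criticality weighting.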

Feedback Loops and Continuous Learning

Field feedback from maintenance confirmations and dismissals flows into annotation tools that integrate with retraining schedulers. Automated pipelines aggregate labeled events, retrain supervised classifiers, and update unsupervised cluster definitions, ensuring the detection system evolves with new failure modes and operating profiles.

Outputs, Dependencies, and Handoffs

The deliverables of feature engineering and anomaly detection serve as foundational inputs for predictive models, monitoring dashboards, and maintenance orchestration systems. Well-defined outputs, rigorous dependency management, and robust handoff protocols ensure seamless continuity across the end-to-end workflow.

Principal Deliverables

- Engineered Feature Vectors—Time-aligned metrics including trend slopes, rolling statistics, spectral components, and baseline comparisons, stored in a centralized feature store.

- Anomaly Scores and Flags—Quantitative deviation scores and categorical flags indicating potential fault conditions.

- Metadata Registrations—Records of feature definitions, computation logic, version identifiers, and data lineage in services like Feast or Amazon SageMaker Feature Store.

- Batch and Streaming Feeds—Historical exports for retraining and real-time streams for live inference.

- Event Notifications—Messages published to event buses or message brokers when anomalies exceed thresholds.

- Quality Assurance Reports—Summaries of data completeness, distribution checks, and detection performance metrics.

Upstream Dependencies

- Cleaned Data Sources—Schema-consistent, timestamp-aligned, and validated inputs from integration and quality management stages.

- Compute Environments—Scalable resources such as Apache Spark clusters, Kubernetes microservices, or serverless functions.

- Feature Store Platforms—Repositories configured with schemas and access controls for efficient retrieval.

- Machine Learning Frameworks—Version-controlled toolkits like TensorFlow, scikit-learn, and PyTorch.

- Orchestration Engines—Workflow tools such as Apache Airflow or Prefect managing directed acyclic graphs of tasks.

- Observability Stacks—Logging and monitoring platforms tracking pipeline health and performance.

- Data Governance Services—Catalogs capturing lineage, ownership, and compliance metadata.

Handoffs to Downstream Systems

- Model Development Pipelines—Batch exports of feature vectors and labeled outcomes ingested into environments for training, validation, and hyperparameter tuning using tools like MLflow.

- Real-Time Inference Engines—Streaming feature feeds and anomaly flags delivered to edge or cloud endpoints for continuous assessment.

- Alerting and Visualization Platforms—Event triggers integrated via REST APIs or message connectors into dashboards such as Grafana and incident response tools like PagerDuty.

- Maintenance Orchestration Systems—API calls or message-based integrations pushing high-confidence anomaly notifications into CMMS for automated work order creation.

- Metadata Synchronization—Automated routines registering new feature definitions and detection metrics with governance platforms to keep catalogs current.

Versioning, Traceability, and Governance

- Assign semantic version identifiers to feature definitions and transformation code, capturing changes in Git repositories.

- Record data lineage from raw sensors to engineered values using metadata services for auditability.

- Manage anomaly threshold parameters in centralized policy stores to ensure consistency across environments.

- Log event publications with timestamps and acknowledgments to support post-incident analysis and compliance.

By defining precise outputs, rigorously managing dependencies, and implementing robust handoff protocols, organizations achieve reliable, scalable AI-driven predictive maintenance. This disciplined approach ensures that engineered features, anomaly scores, and metadata artifacts power downstream models, real-time monitoring, and maintenance orchestration with precision and repeatability.

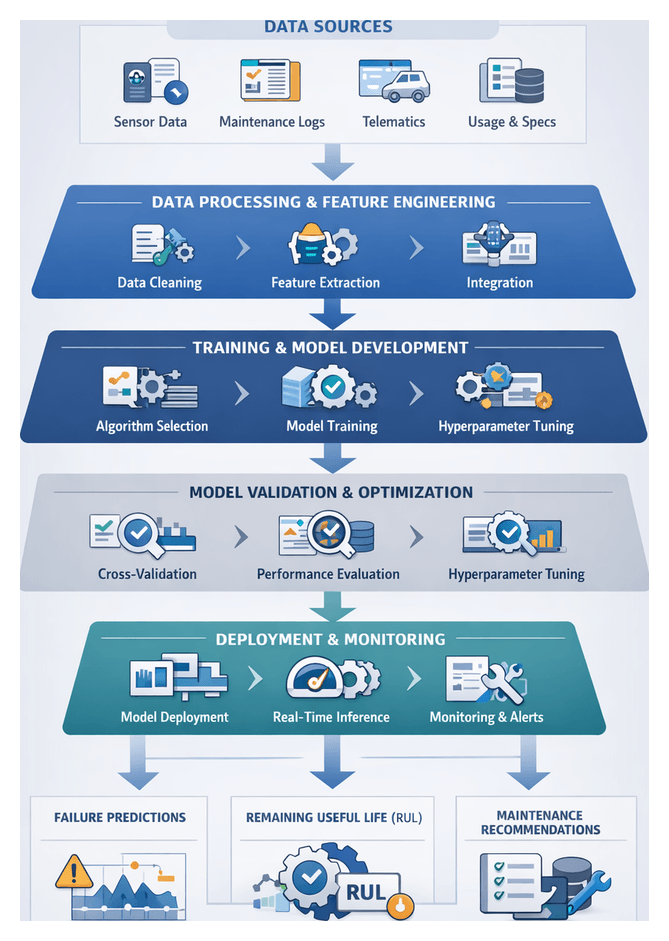

Chapter 6: Predictive Model Development and Validation

Purpose and Scope of Predictive Model Development

The predictive model development stage is the analytical core of AI-driven maintenance in transportation and logistics. By training machine learning and statistical models on historical sensor readings, telematics logs, and maintenance records, organizations can forecast failure probabilities and estimate remaining useful life (RUL) for vehicles, trailers, engines, and other mission-critical assets. These forecasts enable a shift from reactive or calendar-based servicing to condition-based maintenance, which optimizes spare-parts inventory, reduces unplanned downtime, and extends asset life. In an industry where uptime drives delivery reliability and customer satisfaction, accurate failure forecasts make it possible to plan interventions, align resources, and minimize operational disruption, while delivering quantifiable financial benefits and better-informed risk management.

Data Ecosystem and Prerequisites

Robust predictive models rely on a mature data ecosystem comprising integrated sources, preprocessing pipelines, and governance frameworks. Prior to training, the following prerequisites must be met:

- Comprehensive Asset Registry with unique identifiers, manufacturer specifications, and usage histories.

- Sensor Infrastructure and Edge Processing for vibration, temperature, and pressure readings, including noise reduction and event flagging at the point of capture.

- Unified Data Platform consolidating time-series sensor logs, maintenance histories, telematics traces, and operational schedules.

- Feature Engineering Outputs such as trend slopes, frequency components, and comparative baselines, prepared as model inputs.

- Data Quality Management routines for validation, deduplication, and correction to ensure completeness and consistency.

- Governance, Security, and Compliance Policies enforcing role-based access, data lineage tracking, and adherence to applicable regulations and standards (for example, FMCSA rules and relevant ISO standards).

Meeting these conditions ensures that predictive algorithms operate on trustworthy data, backed by standardized metadata that stakeholders can audit.

Training Data and Annotation Standards

The effectiveness of supervised learning hinges on representative inputs and high-quality labels. Core categories of training data include:

- Time-Series Sensor Data from accelerometers, thermocouples, and pressure transducers at high sampling rates.

- Maintenance and Repair Logs detailing component replacements, service actions, failure diagnoses, and labor durations.

- Failure Event Labels with fault type, severity, initiation and resolution timestamps, and corrective outcomes.