AI Driven Legal Research and Risk Mitigation Workflow Guide

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Operational Challenges in Legal Research and Risk Management

The volume and complexity of legal materials continue to grow at an unprecedented pace. Organizations generate petabytes of contracts, court filings, statutes, regulations, internal policies and external alerts each year. Simultaneously, regulatory landscapes evolve constantly across multiple jurisdictions, driven by policy reforms, technological advances and geopolitical developments. Legal teams struggle to locate relevant documents, extract key provisions and assess compliance obligations using manual processes. This leads to high costs, delayed decision making and increased risk exposure.

- Document Volume Explosion: A steady influx of master service agreements, court dockets, regulatory guidance and industry bulletins overwhelms traditional intake methods.

- Evolving Regulatory Dynamics: Continuous updates in data privacy, environmental standards and financial regulations demand real-time visibility into contract triggers and remediation actions.

- Document Complexity and Heterogeneity: Contracts may contain embedded spreadsheets, scanned signatures and multilingual annexes, while case files mix typed transcripts and discovery materials in diverse formats.

- Unchecked Risk Exposure: Manual review often misses indemnity obligations, termination deadlines or jurisdictional conflicts until after breaches occur.

- Data Silos and Fragmented Systems: Disparate repositories, on-premises servers, cloud storage and specialized applications prevent unified access and end-to-end workflows.

- Resource Constraints and Skill Gaps: Highly skilled attorneys spend excessive time on routine tasks, while locating experts in niche regulatory domains remains challenging.

- Version Control and Auditability: Ensuring traceable document versions, AI model parameters and reviewer annotations is essential for compliance audits and litigation support.

- High Stakes of Timely Research: Missed deadlines can trigger fines, sanctions or litigation setbacks, placing pressure on legal teams to deliver rapid, evidence-based insights.

Addressing these challenges requires a systematic intake process that captures, normalizes and classifies all relevant materials before applying advanced analytics. Prerequisites include a unified document management policy, standardized taxonomies, secure connectors to diverse data sources and governance frameworks for data privacy and model accountability.

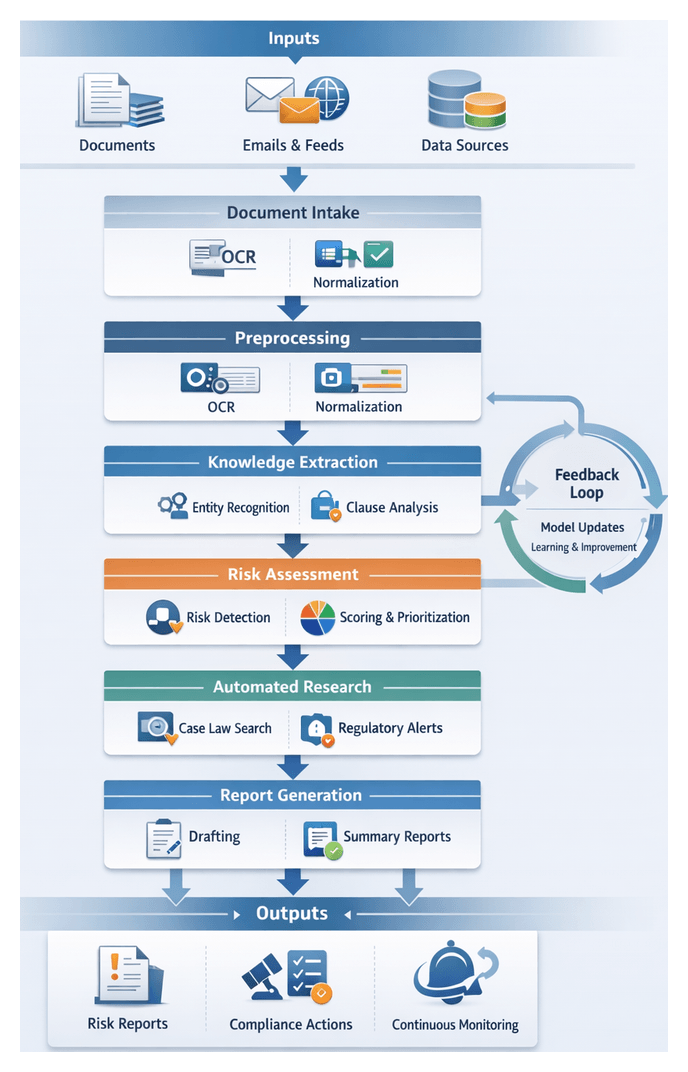

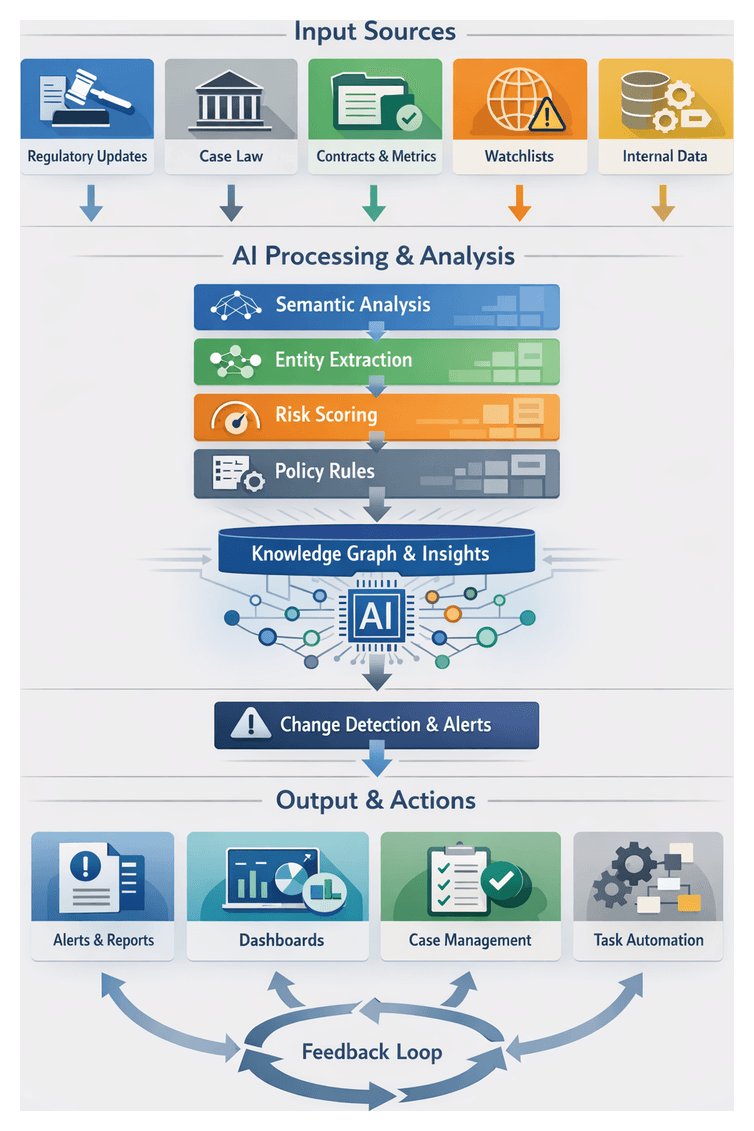

Towards a Cohesive AI Workflow

A cohesive AI workflow integrates stages of data ingestion, text processing, knowledge extraction, risk analysis and reporting into an orchestrated pipeline. By replacing ad hoc handoffs and email exchanges with standardized interfaces and event-driven triggers, legal operations achieve predictable throughput, transparent monitoring and clear accountability.

Key Participants and Systems

- Document Intake System: Ingests contracts, case files, regulatory texts and external feeds via upload portals, email services or APIs.

- Preprocessing Engine: Applies optical character recognition and format conversion, producing machine-readable text and enriched metadata.

- Knowledge Extraction Module: Uses tools like Kira Systems and Google Document AI to identify entities, clauses and relationships.

- Risk Classification Engine: Employs rule-based checks and machine-learning models on platforms such as Azure Machine Learning to flag compliance issues and contractual exposures.

- Scoring and Prioritization Service: Ranks risks by severity using business context from systems like DocuSign CLM.

- Automated Research Agents: Conduct semantic searches in databases such as Thomson Reuters Westlaw and LexisNexis.

- Report Drafting Interface: Leverages AI drafting modules like OpenAI GPT-4 and human review in platforms such as Clio.

- Orchestration Layer: Coordinates tasks, triggers exception workflows and maintains audit logs.

- Monitoring and Alerting Component: Tracks regulatory changes and contract performance, generating timely notifications.

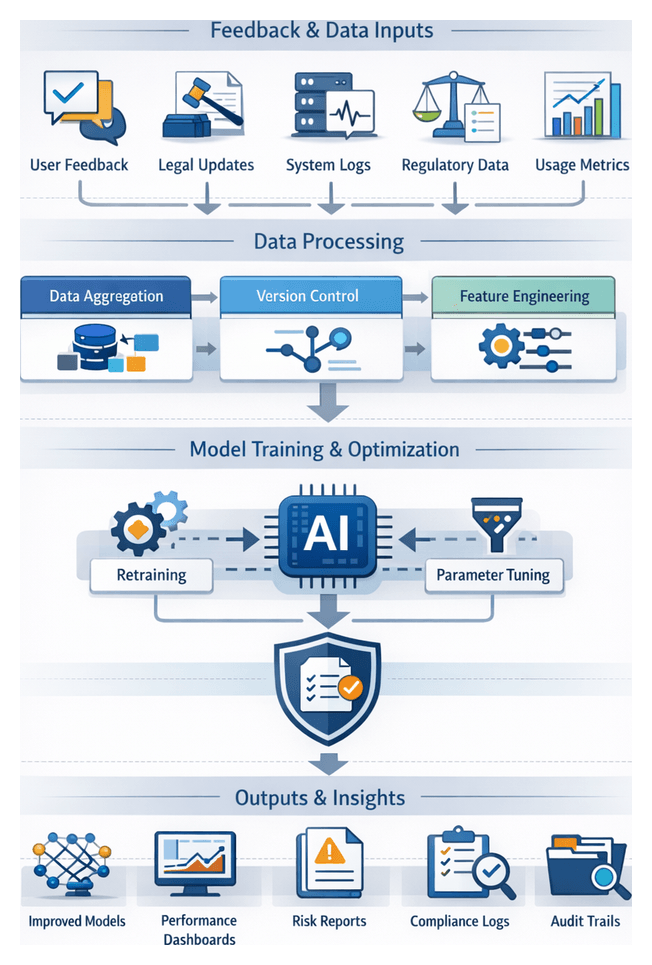

- Feedback and Learning Pipeline: Uses tools like DataRobot to retrain models based on user corrections and performance metrics.

Workflow Sequence

- Ingestion: Documents enter via portals, email connectors or APIs and are cataloged with preliminary metadata.

- Preprocessing: OCR engines and format converters produce normalized text formats stored in a unified repository.

- Extraction: NLP engines extract parties, dates, obligations and clauses, populating a knowledge graph.

- Risk Detection: Rule sets and ML classifiers analyze extracted data to generate risk flags and confidence scores.

- Scoring: Risks are quantified and ranked according to impact models and external risk factors.

- Automated Research: High-priority items trigger semantic searches for relevant case law and statutes.

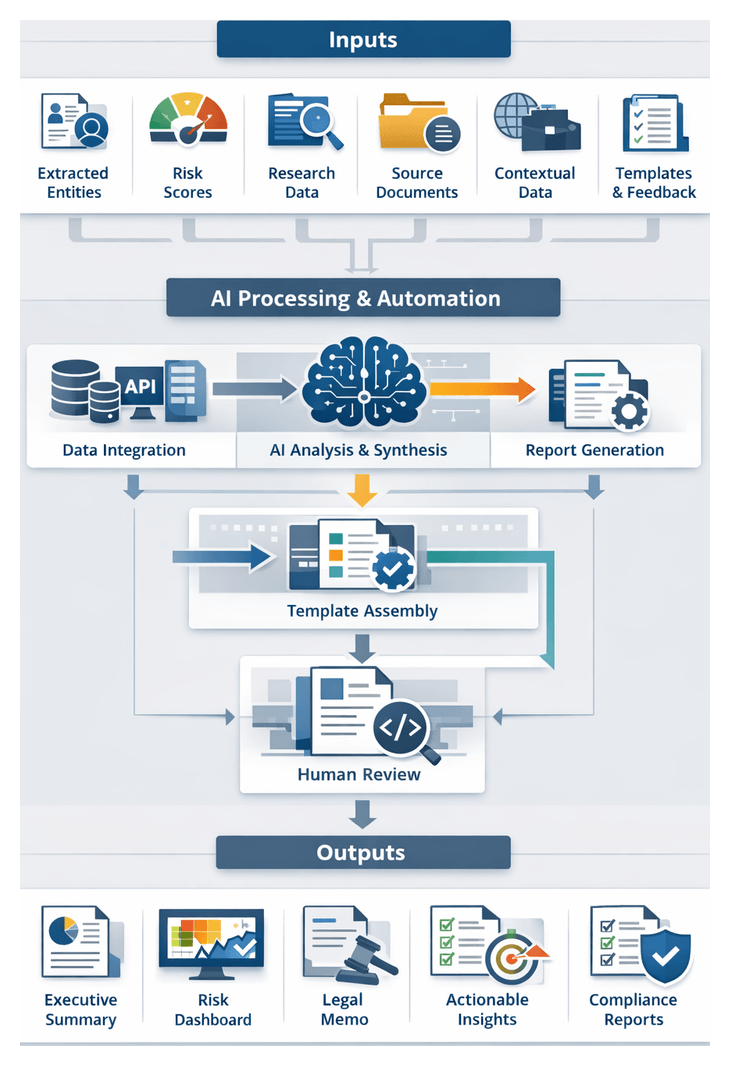

- Drafting: NLG modules compile executive summaries and dashboards for review.

- Decision Support: Approved findings are integrated into case management systems with assigned tasks and deadlines.

- Continuous Monitoring: Alerts for regulatory updates or contract expirations are routed to stakeholders.

- Feedback Loop: User annotations and performance data feed retraining pipelines, updating models and ontologies.

AI Capabilities in Research and Risk Analysis

Artificial intelligence transforms legal research by automating routine tasks, revealing hidden relationships and enabling predictive insights. By moving beyond keyword searches to context-aware models, AI enhances accuracy and accelerates time to insight.

Core Functions

- Document Classification and Topic Tagging: Pretrained classifiers such as Google Cloud Natural Language categorize documents by risk profile, jurisdiction or subject.

- Entity Extraction and Relationship Mapping: Engines like IBM Watson Discovery identify legal entities and build knowledge graphs that reveal contractual linkages.

- Semantic Search and Contextual Retrieval: Services in Microsoft Azure Cognitive Services surface relevant precedents and clauses based on intent rather than exact terms.

- Predictive Analytics and Risk Forecasting: ML models analyze historical outcomes to predict dispute likelihood and compliance violations.

- Automated Summarization and Issue Spotting: Natural language generation via OpenAI GPT-4 produces concise summaries highlighting key obligations and deadlines.

Integration and Governance

- API-First Design: Each AI capability exposes RESTful or gRPC interfaces, enabling modular scalability.

- Event-Driven Processing: Message buses such as Apache Kafka dispatch tasks and trigger downstream services automatically.

- Model Management: A registry tracks versions, training data provenance and performance metrics, with automated validation before deployment.

- Security and Compliance: End-to-end encryption, role-based access controls and audit logging ensure confidentiality and regulatory adherence.

- Explainable AI and Bias Mitigation: Tools provide feature-level explanations for risk scores, while training data audits prevent unintended bias.

Risk Signal Generation

- Clause Flagging: Pre-trained models detect non-standard indemnities or liability language for attorney review.

- Regulatory Alerts: Change-detection algorithms compare new regulations against existing obligations and trigger notifications.

- Exposure Scoring: Numeric values reflect potential financial and reputational impact, guiding prioritization.

- Contextual Classification: Hybrid engines combine policy rules with ML patterns to categorize alerts by type and severity.

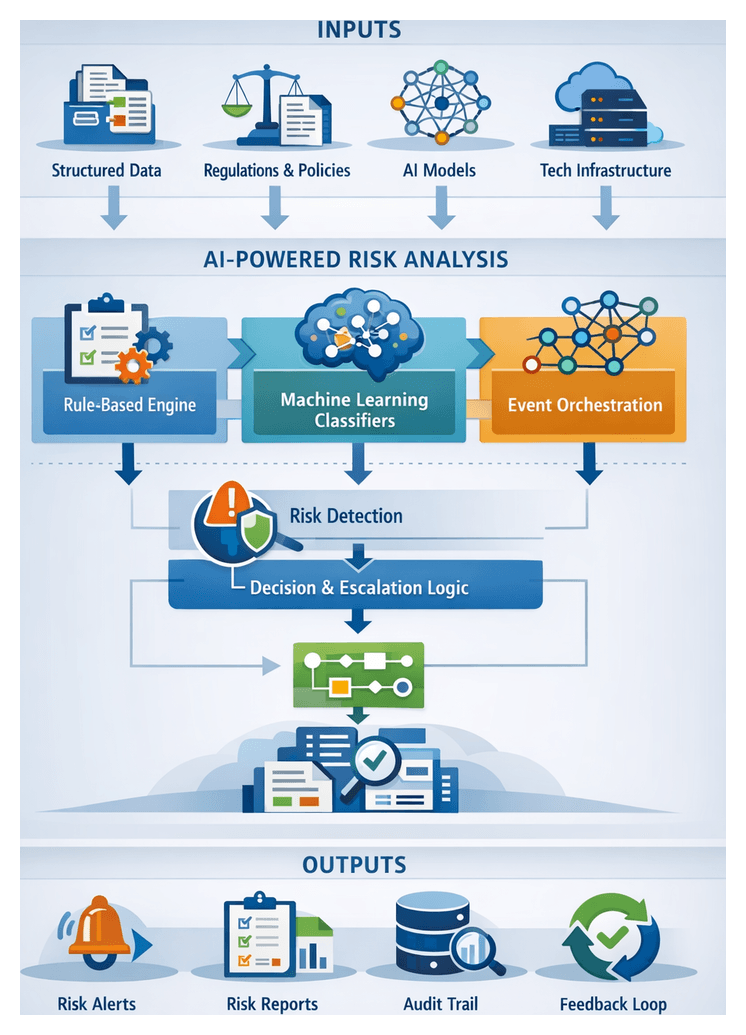

Architecture of an AI-Driven Solution

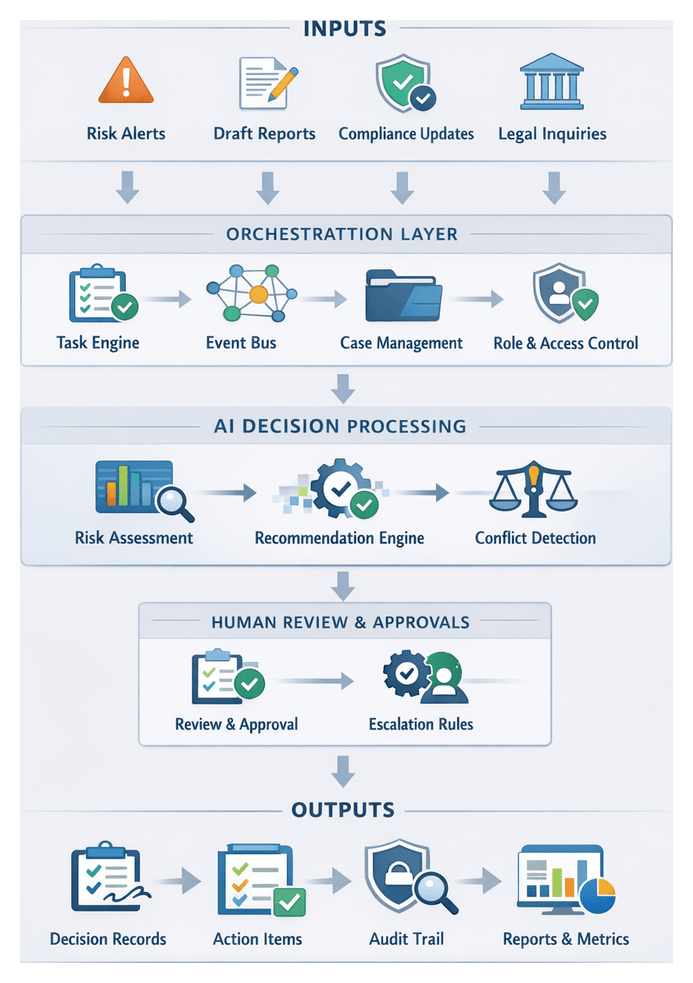

A modular, layered architecture underpins a scalable, transparent AI workflow. Distinct stages encapsulate inputs, outputs and dependencies, with a central orchestration layer managing sequencing, human-in-the-loop approvals and audit trails.

- Data Ingestion: Catalogs raw assets and metadata, publishing file pointers to preprocessing queues.

- Preprocessing and Normalization: Converts files to plain text or XML, detects languages and enriches metadata.

- Knowledge Extraction: Populates entity tables and knowledge graphs via NLP pipelines.

- Risk Identification and Classification: Generates risk flags and preliminary categories using policy engines and ML classifiers.

- Risk Scoring and Prioritization: Produces quantitative scores and priority lists based on impact models.

- Automated Legal Research: Curates case law, statutes and annotated summaries via semantic search engines.

- Insight Generation and Drafting: Creates draft memoranda, dashboards and language templates with NLG models.

- Decision Support and Orchestration: Assigns tasks, logs approvals and routes action items to compliance processes.

- Compliance Monitoring and Alerting: Delivers real-time alerts and change logs tied to performance metrics.

- Continuous Learning and Optimization: Retrains models, updates ontologies and refines workflows based on feedback.

Integration and Resilience

- Message Queues and RESTful APIs: Standardized interfaces ensure decoupled, fault-tolerant handoffs.

- Model Registry and Fallback Strategies: Tracks AI artifacts and provides rule-based backups when needed.

- Health Monitoring and Containerization: Automated checks and isolated runtime environments support reliable operations.

- Horizontal Scaling and Encryption: Stateless stages scale on demand, while data encryption safeguards sensitive content.

Implementation Roadmap

- Baseline Assessment: Map current processes, repositories and integration points.

- Pilot Deployment: Deploy ingestion, preprocessing and extraction on a representative document set.

- Iterative Expansion: Introduce risk detection, scoring and research agents, refining handoffs based on user feedback.

- Governance Onboarding: Establish policies for data privacy, access controls and model accountability.

- Continuous Improvement: Leverage feedback loops to retrain models, update taxonomies and optimize the workflow.

By aligning technology with human expertise, enforcing robust governance and adopting a modular architecture, legal teams can transform research and risk management. The result is faster, more accurate insights, reduced operational costs and a resilient platform that adapts to evolving regulations and organizational needs.

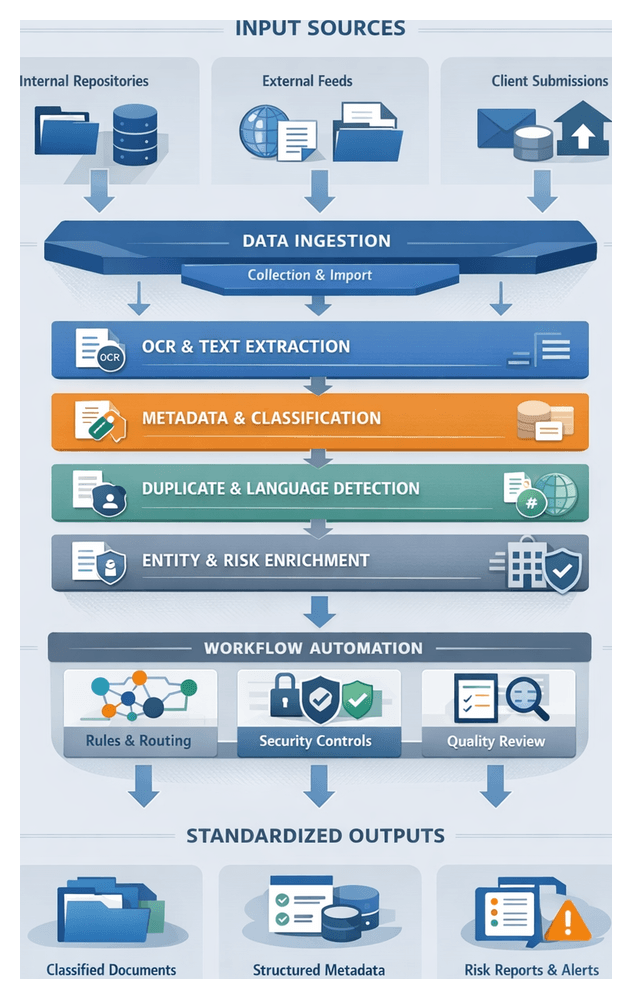

Chapter 1: Data Ingestion and Document Intake

Purpose and Goals of the Intake Stage

The Intake Stage establishes a unified, secure, and standardized foundation for AI-driven legal research and risk mitigation. By aggregating contracts, filings, regulatory bulletins, correspondence, and third-party feeds into a governed repository, the intake process eliminates manual bottlenecks and siloed information. Four core objectives guide its design:

- Unified capture of all incoming documents and data streams.

- Consistent classification through an initial taxonomy and metadata schema.

- Secure handling via encryption, access controls, and audit trails.

- Seamless routing based on document type, jurisdiction, or priority.

Achieving these goals ensures that downstream AI stages—entity extraction, risk analysis, and automated reporting—operate on organized, high-quality inputs, accelerating time-to-insight and reducing operational risk.

Prerequisites and Governance

Governance and Policy Framework

A robust governance model defines ownership, roles, and standards for retention, security, and compliance. Essential elements include:

- A firm-wide classification taxonomy for categorizing documents (agreements, pleadings, discovery materials) and tagging attributes such as jurisdiction, counterparty, and confidentiality level.

- Role-based access controls and approval workflows to restrict document viewing and routing.

- Compliance alignment with regulations such as GDPR or HIPAA, preserving audit trails and preventing unauthorized disclosures.

Technical Infrastructure and Connectivity

Scalable IT infrastructure supports high-volume ingestion and secure storage. Key requirements include:

- Elastic storage solutions, on-premises or cloud-based, with redundancy and backups.

- Encrypted network channels (VPNs or SSL/TLS) and sufficient bandwidth for batch uploads, streaming feeds, and API integrations.

- Connector frameworks for systems like SharePoint, iManage, NetDocuments and external sources such as PACER or regulatory websites.

Data Quality and Standardization

Consistent formatting and metadata integrity at intake reduce downstream errors and improve AI accuracy:

- Supported file formats (PDF/A, Word, TIFF, XML, JSON) with procedures to flag or convert unsupported types.

- Mandatory metadata fields (document date, author, source, counterparty) and controlled vocabularies.

- Exception management workflows for corrupted files or missing metadata, with quarantine buckets and notification processes.

Sources and Document Types

The intake system must ingest content from diverse internal and external locations, applying the appropriate classification and routing logic from the outset.

Internal Repositories

- Document Management Systems such as iManage or NetDocuments.

- Contract Lifecycle Management platforms like Luminance and Kira Systems.

- Case and matter management tools tracking filings and client communications.

- Shared network drives and email archives containing legacy files and draft memos.

External Feeds and Regulatory Sources

- Judicial dockets and filings via PACER or state court registries.

- Regulatory publications from the SEC, Federal Register, or European Commission.

- Industry watchlists and compliance databases from providers such as Dow Jones or Thomson Reuters.

Client and Counterparty Submissions

- Email attachments and secure portal uploads containing signed agreements or exhibits.

- Hard-copy scans digitized with OCR services like Google Cloud Vision API or Azure Form Recognizer.

- Structured data feeds from third-party providers delivering market data and research.

AI-Driven Ingestion Capabilities

The intake stage leverages AI microservices to transform raw inputs into enriched, standardized data assets.

Optical Character Recognition

Image-based files are converted to machine-readable text with high-precision OCR engines such as Amazon Textract, ABBYY FineReader, or Google Cloud Document AI. These services preserve tables, columns, and footnotes, returning text outputs with positional metadata for layout reconstruction.

Metadata Extraction and Tagging

AI models identify key attributes—title, author, dates, parties, jurisdiction—using tools like Azure Form Recognizer or IBM Watson Natural Language Understanding. Extracted fields include confidence scores and adhere to custom taxonomies, facilitating accurate indexing and search.

Preliminary Classification

Supervised classifiers based on transformer architectures or decision trees assign document types—contracts, pleadings, regulatory notices—using platforms such as Relativity or open-source frameworks built on spaCy. Low-confidence results route to human review queues, blending AI speed with expert oversight.

Language Detection and Multi-Language Support

Language detection models, including open-source libraries or commercial services like Google Cloud Translation, identify document languages to invoke appropriate OCR engines or translation workflows, ensuring global materials are processed correctly.

Duplicate Detection

Fingerprinting techniques (MinHash, SimHash) detect exact and near duplicates to prevent redundant processing and maintain version links across revisions.

Data Enrichment and External Reference Linking

Extracted entities are validated and enriched against external databases or APIs such as OpenCorporates, attaching standardized identifiers, corporate addresses, and status information that inform risk models.

Integration Patterns and Model Governance

Each AI capability operates as a microservice within an event-driven architecture, using message queues and an API gateway to orchestrate processing. Model governance frameworks monitor performance metrics—OCR character error rate, classification precision—and trigger retraining with human-verified examples when drift occurs. Versioned artifacts and validation reports ensure auditability.

Security and Compliance

All data in transit and at rest is encrypted, and role-based access controls restrict service invocation. Models flag personally identifiable information for redaction, and audit logs capture service calls and predictions for regulatory review.

Intake Workflow and System Orchestration

The end-to-end intake process transforms raw inputs into QA-validated, metadata-enriched packages ready for preprocessing. Sequential actions include source integration, central repository consolidation, pre-assessment filtering, metadata enrichment, automated classification, dynamic routing, quality control, and handoff.

- Source integration via email APIs, SFTP, watch-folders, eDiscovery connectors, and secure web portals.

- Capture and normalization using engines like ABBYY FlexiCapture, converting legacy formats into searchable PDFs and applying version control.

- Content filtering with duplicate detection, password handling, and size thresholds.

- Metadata extraction, entity recognition, and context inference with Amazon Comprehend or on-premise NLP services.

- Machine learning classification and tagging yielding topic labels, risk flags, and priority markers.

- Dynamic routing by a BPMN engine such as Camunda, directing documents to high-priority, language-specific, standard pipelines, or exception queues.

- Automated and manual quality checks ensuring metadata completeness, OCR accuracy, and sampling-based audits.

- Handoff to preprocessing via message buses or API triggers once validation criteria are met.

System interactions span capture engines, AI microservices, orchestration platforms, case management portals, and monitoring dashboards. Key roles include intake coordinators, paralegals for validation, and IT specialists for connector maintenance and model updates. Performance metrics—throughput, intake time, error rate, SLA adherence, queue depths—are tracked on dashboards with alerting to guide continuous improvement.

Outputs, Handoff, and Quality Assurance

At the intake stage’s conclusion, standardized deliverables enable seamless downstream processing:

- Document packages containing original files, OCR or native text, version IDs, and checksums, serialized as ZIP archives or JSON manifests and ingested into platforms.

- Structured metadata artifacts—classifications, extracted fields, language tags, provenance details, preliminary risk flags—stored in indexes such as Elasticsearch or managed datastores via Google Document AI.

- Quality and confidence metrics covering OCR accuracy, completeness checks, format compliance, and duplicate scores, guiding conditional routing or human review.

Handoff mechanisms include message queues (Kafka, RabbitMQ), RESTful APIs, change data capture streams, or filesystem watchers. Dependency management and versioning capture package versions, timestamped metadata snapshots, integrity proofs, and AI service release references. Error handling employs classification tiers, retry policies with backoff, engine fallback options, and dead-letter queues, with alerts escalated for human intervention. This rigorous framework ensures that preprocessing and extraction pipelines receive reliable, traceable inputs, maintaining throughput and compliance in AI-driven legal research workflows.

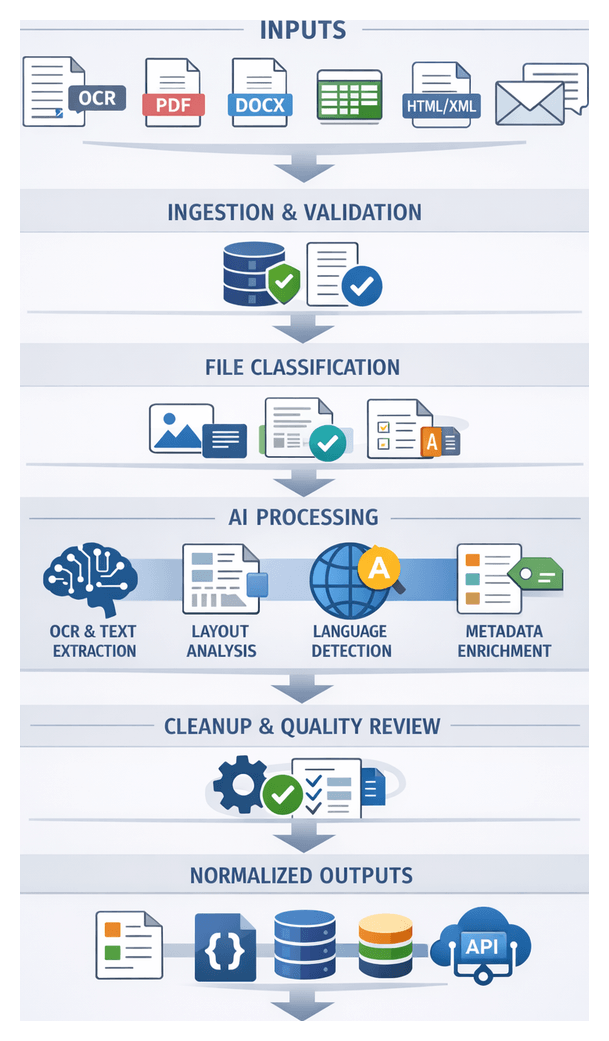

Chapter 2: Preprocessing and Text Normalization

Preprocessing Objectives and Inputs

The preprocessing stage transforms incoming legal documents—regardless of format or origin—into a unified, machine-readable text representation enriched with aligned metadata. By enforcing input validation, format conversion, and metadata alignment at scale, it minimizes manual remediation, accelerates processing cycles, and ensures full auditability. Consistent normalization underpins reliable downstream AI functions such as entity extraction, clause segmentation, and risk detection.

Key objectives of this stage include:

- Convert heterogeneous file formats into a standardized text representation suitable for NLP and machine learning.

- Validate document integrity and quality, flagging files that fail resolution, completeness, or encoding standards.

- Extract and enrich metadata attributes—such as document type, creation date, jurisdiction, and author—from headers and content.

- Annotate language, encoding, and structural markers (headings, tables, lists) to guide downstream parsing.

- Maintain traceability by logging transformation steps, tool versions, and corrective actions.

Prerequisites and Tooling

- Secure Repository Access: Raw files and ingestion metadata must reside in a version-controlled storage with system read/write permissions.

- Metadata Schema: A controlled vocabulary and schema defining required fields (for example, document_id, jurisdiction_code) and permitted values.

- Quality Thresholds: Minimum image resolution (300 DPI), file size limits, and UTF-8 encoding standards. Outliers are flagged for review.

- Language Configuration: Supported languages and character sets specified. Unsupported languages routed to human operators.

- Service Credentials: API keys and endpoints for OCR and extraction tools such as Amazon Textract, Google Cloud Vision, and ABBYY FlexiCapture.

- Error Handling Policies: Defined retry logic, fallback conversions, and escalation procedures for persistent failures.

Acceptable Input Formats

- Scanned Images (TIFF, JPEG, PNG) require OCR with high-resolution scans processed by services like Amazon Textract or Google Cloud Vision.

- Searchable PDFs are parsed directly when containing embedded text; otherwise OCR fallback applies.

- Word Documents (DOC, DOCX) leverage libraries that preserve semantic markup (headings, tables, lists).

- Spreadsheets (XLSX, CSV) convert tabular data into structured arrays, mapping headers to metadata fields.

- Markup Files (HTML, XML) strip irrelevant tags while retaining hierarchy and content structure.

- Email Archives (EML, MSG) extract headers, body text, and attachments, preserving cross-references for traceability.

Text Normalization Workflow Sequence

A central orchestration engine governs the text normalization pipeline, coordinating document repositories, AI services, metadata systems, human validation interfaces, and logging tools. The following sequence ensures that raw files become structured, high-quality inputs for downstream analysis.

- Workflow Initiation and Format Validation: The orchestration engine triggers upon receipt of ingestion outputs, invoking a format validation service to confirm supported file types. Non-compliant or corrupted files are routed to an exception queue.

- File Classification and Routing: A microservice inspects headers and MIME types to classify each file as native text, image-only, or mixed content, routing it to the appropriate processing stream.

- AI-Driven Text Extraction: Image-based and mixed content files are processed by OCR services such as Amazon Textract, Google Cloud Vision, or Azure Cognitive Services. Native text files proceed directly to encoding normalization.

- Layout Analysis and Structural Reconstruction: Bounding box metadata guides a layout engine—using solutions like Azure Form Recognizer or open-source frameworks combining Tesseract with custom heuristics—to identify paragraphs, headings, tables, and lists, reconstructing the document hierarchy.

- Language Detection and Encoding Standardization: Language identification services (for example, langdetect) tag primary and secondary languages at block level. Text is normalized to UTF-8, and unsupported languages trigger alerts.

- Metadata Enrichment and Tagging: Standardized metadata fields—title, author, date, jurisdiction, document type—are extracted and mapped using a metadata management service augmented by NLP libraries such as spaCy. Controlled vocabulary terms ensure consistency.

- Noise Reduction and Text Cleanup: Cleanup routines eliminate hyphenation errors, strip non-textual artifacts, normalize whitespace and punctuation, and correct common OCR misrecognitions. High-impact corrections are logged for audit.

- Quality Assessment and Human Review: Documents receive quality scores based on OCR confidence, layout success, and metadata completeness. Those meeting thresholds advance automatically; others enter a human review queue for validation.

- Exception Management: Errors—format incompatibility, timeouts, metadata conflicts—are logged centrally. Transient failures trigger retries; persistent issues generate alerts for operations teams.

- Versioning and Delivery: Original and normalized outputs are persisted in a version-controlled repository with timestamps, agents, and change logs. The final structured bundles—text payloads, metadata, quality metrics—are forwarded to extraction APIs.

AI-Driven Normalization Functions and Roles

AI services automate critical transformation tasks within the normalization pipeline, ensuring consistent quality and efficiency across large document volumes.

Character Recognition and Optical Text Extraction

OCR engines—such as ABBYY FlexiCapture, Google Cloud Vision, and Amazon Textract—detect printed and handwritten text, preserve font styles and reading order, and generate confidence scores for gating low-confidence segments.

Document Layout Analysis and Structural Segmentation

AI modules distinguish document regions—title pages, clause headers, tables, footnotes—using convolutional neural networks and visual cues. Platforms like Azure Form Recognizer and custom Tesseract-based heuristics assign semantic tags to guide targeted downstream processing.

Language Detection and Text Standardization

Automated language identification tools (for example, langdetect) evaluate text at sentence or paragraph granularity, enabling locale-aware tokenization and integration with translation services such as Amazon Translate or Azure Translator. Encodings and diacritics are normalized to Unicode standards.

Semantic Noise Reduction and Cleanup

Sequence-to-sequence models and transformer-based denoisers correct OCR artifacts, recombine hyphenated words, remove watermarks and graphic overlays, and standardize punctuation and special characters, improving input quality for NLP models.

Advanced Table, Chart, and Form Extraction

Deep learning modules in Azure Form Recognizer or Amazon Textract’s table analysis detect grid structures, infer merged cells, and extract header relationships. Embedded charts are transcribed to capture legends and axis labels. Outputs—CSV, JSON, or XML—preserve cell coordinates and semantic labels.

Metadata Alignment, Enrichment, and Validation

NLP models, including spaCy fine-tuned for legal entities, extract author names, execution dates, and jurisdiction references. Extracted values are mapped to a controlled schema, validated against business rules, and enriched with external data (for example, corporate registries).

Governance, Monitoring, and Dynamic Role Assignment

A governance layer tracks OCR confidence, segmentation accuracy, and entity extraction metrics in real time. Low-confidence outputs route to human reviewers; feedback loops retrain models. Dynamic assignment directs specialized content (multilingual or complex contracts) to expert teams, while audit logs capture every normalization event.

Normalized Outputs and Processing Handoffs

Upon completion of normalization, standardized artifacts and clear handoff mechanisms enable seamless integration with knowledge extraction components. Stringent quality controls, metadata schemas, and packaging formats ensure data integrity and auditability.

Core Output Artifacts

- Canonical Text Streams: Fully extracted text stripped of extraneous formatting, with consistent markup for paragraphs, headings, tables, and lists.

- Enriched Metadata Records: Structured fields capturing document IDs, source references, format types, language codes, and timestamps.

- Structural Tag Index: Mappings of text offsets to semantic tags—clause headers, numbered sections, signature blocks—for targeted extraction.

- Quality and Confidence Metrics: OCR confidence scores, language mismatch alerts, and tokenization error rates guiding downstream logic.

Metadata Schema and Enrichment

- Document ID: Persistent unique identifier across systems.

- Source Reference: Originating repository or feed name.

- Format and Language: Original file type and detected primary language.

- Normalization Timestamp: Completion time for audit and performance tracking.

- Checksum and Version: Cryptographic hash of text streams and version increments for lineage.

Packaging and Transmission Formats

- JSON Bundles: Nested representation of text, metadata, and tags conforming to canonical schemas.

- XML Transmissions: Schema-validated packages for legacy integrations.

- Parquet Files: Columnar structures for analytics pipelines and data warehouses.

- Database Inserts: Bulk loading into relational or NoSQL stores (for example, PostgreSQL, MongoDB).

Handoff Mechanisms

- Message Queues and Event Streams: Platforms like Apache Kafka or RabbitMQ enabling asynchronous triggers.

- Object Storage Notifications: S3 or Cloud Storage events invoking serverless functions.

- RESTful APIs: Synchronous or asynchronous endpoints accepting JSON or XML payloads.

- Shared File Systems: Network volumes with manifest files signaling readiness.

Dependencies and Downstream Integration

- Taxonomy Alignment: Metadata must conform to controlled vocabularies used by extraction models.

- Model Parameter Configuration: Language, domain, and document type parameters driven by metadata fields.

- Service Orchestration: Workflow engines such as Apache Airflow coordinating normalization and extraction tasks.

- Security Credentials: Authorized tokens or certificates required for artifact consumption.

Quality Assurance and Exception Handling

- Reprocessing Triggers: Low confidence or incomplete tag coverage flags documents for retry or human review.

- Partial Outputs: Generated when only sections normalize successfully, with error logs indicating skipped content.

- Audit Logs and Notifications: Centralized tracking of exceptions by type, frequency, and document attributes.

Security, Governance, and Compliance

- Encryption: TLS in transit and AES-256 at rest for all artifacts.

- Access Controls: Role-based permissions enforced via identity and access management.

- Retention Policies: Defined retention and secure purge schedules to meet data minimization requirements.

- Compliance Reporting: Automated generation of processing and access logs for audits.

Versioning and Audit Trail

- Immutable Versions: Each output assigned a unique version identifier tied to its checksum and parameters.

- Diff Reports: Textual change logs capturing manual corrections and reprocessing reasons.

- Audit Records: Tamper-evident logs documenting timestamps, principals, and model configurations.

By delivering standardized outputs, enforcing rigorous normalization standards, and defining clear handoff mechanisms, this stage ensures that downstream AI services receive high-quality, traceable inputs—enabling accurate entity recognition, clause mapping, and risk analysis within legal research workflows.

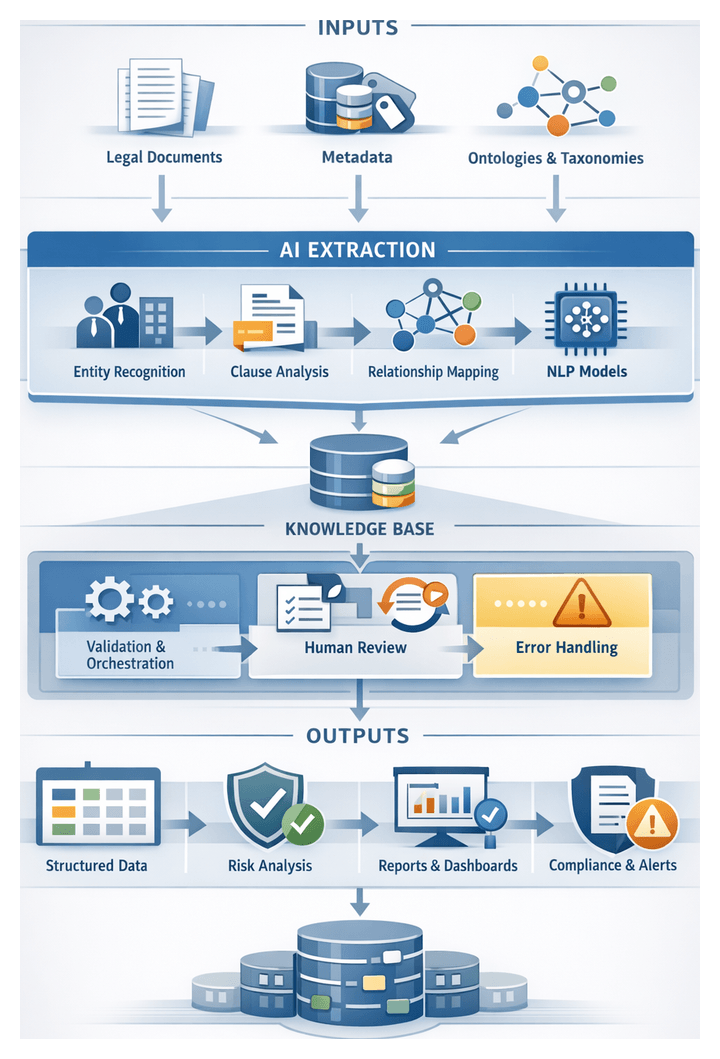

Chapter 3: Knowledge Extraction and Structuring

Purpose of the Extraction Stage

The extraction stage transforms unstructured legal documents into a structured, machine-readable knowledge base, enabling precise risk analysis, classification, and decision support. By isolating parties, obligations, clauses and relationships, this stage converts narrative text into discrete data elements that power downstream workflows. Key objectives include:

- Data Structuring: Parsing contracts, case law, regulations and memoranda to capture semantic relationships.

- Entity Recognition: Detecting parties, dates, monetary values, jurisdictions, statutes and other domain-specific elements.

- Clause Identification: Isolating provisions such as indemnities, termination rights, confidentiality obligations and compliance conditions.

- Relationship Mapping: Linking entities and clauses in a relational graph that models dependencies and cross-references.

- Knowledge Base Population: Feeding extracted elements into a centralized repository or knowledge graph for query, aggregation and traceability.

By automating these tasks, organizations accelerate review cycles, enhance risk detection accuracy and maintain rigorous audit trails for compliance and governance.

Inputs, Prerequisites, and System Integration

Normalized Text Artifacts

High-quality, preprocessed text forms the basis of reliable extraction. It must feature accurate character recognition with minimal OCR errors, consistent encoding and formatting, correct language segmentation, and preservation of structural elements such as headings, lists, tables and footnotes.

Enriched Metadata Sets

Metadata provides context for extraction logic and disambiguation. Essential elements include:

- Document Type (contract, regulatory text, case opinion, memorandum)

- Source and Date (creation or modification timestamps)

- Jurisdiction and Governing Law

- Version History and Revision Identifiers

- Custom Tags (department, matter type, risk level, client identifier)

Reference Ontologies and Taxonomies

Ontologies and taxonomies define the legal vocabulary and relationship schema. They comprise entity dictionaries, hierarchical taxonomies (for example Party Counterparty Subsidiary), ontology graphs modeling permissible relationships, and regulatory thesauri mapping citations to standardized identifiers.

Pretrained NLP Models and Resources

Extraction leverages both rule-based and machine-learning approaches. Required models and resources include:

- Named Entity Recognition (NER) models fine-tuned for legal entities (parties, dates, amounts, obligations).

- Clause Segmentation algorithms trained to detect headings, numbering schemes and semantic boundaries.

- Relation Extraction modules for inferring dependencies between entities and clauses.

- Lexical and Syntactic Tools such as tokenizers, part-of-speech taggers and domain-adapted embeddings.

System Connectivity and Integration Points

Seamless integration with upstream and downstream systems ensures efficient data flow and operational visibility. Key interfaces include:

- Data Ingestion APIs or file-transfer mechanisms delivering normalized text and metadata.

- Knowledge Base Endpoints for persisting entities, clause structures and relational graphs.

- Logging and Monitoring Services capturing extraction metrics and diagnostic data.

- Configuration Management for rules, model versions and resource definitions ensuring reproducibility.

Entity and Clause Extraction Workflow

Workflow Initiation and Input Validation

An orchestration engine—often schedule-driven by Apache Airflow—assigns unique identifiers to document packages containing text, token boundaries and metadata. Pre-extraction validation checks confirm text completeness, language consistency, and metadata integrity. Failed documents route to a staging area for manual review.

Orchestration and Service Coordination

A message broker such as Apache Kafka delivers document payloads to microservices or serverless functions in a decoupled pipeline. Core steps include enqueuing text and metadata, invoking NER and clause segmentation services, storing interim artifacts, and triggering human-in-the-loop reviews. Monitoring dashboards track queue depths, latencies and error rates to maintain high throughput.

Named Entity Recognition and Classification

Tokenized text is processed by legal-domain AI models, including:

- spaCy with custom contract corpora

- Hugging Face Transformers fine-tuned BERT variants

- OpenAI GPT-based endpoints for dynamic, context-aware extraction

These services return structured JSON with entity types, offsets, confidence scores and embeddings for downstream processing.

Clause Segmentation and Relationship Mapping

Parallel to NER, clause segmentation engines apply rule-based patterns and machine-learning classifiers to partition text into clauses (termination, indemnification, confidentiality, etc.). Dependency parsers and semantic role labelers map relationships such as “amends,” “refers to” or “imposes obligation on.” Interim knowledge graphs—often stored in Neo4j—capture these interdependencies for query-driven analysis.

Integration with Knowledge Graph and Repositories

Extracted entities and clauses are merged into a central knowledge graph with de-duplication, normalization of synonyms (for example Tenant = Lessee) and annotation of provenance metadata (document IDs, timestamps, model versions). This integration supports complex queries such as retrieving all confidentiality clauses involving a specific counterparty.

Human-in-the-Loop Review and Feedback

Critical extraction junctures are augmented with human review interfaces, often integrated into case management platforms. Reviewers validate or correct entity labels, clause boundaries and custom annotations. Feedback is captured to retrain models, ensuring iterative improvements and traceability of changes.

Error Handling and Dynamic Reprocessing

Low-confidence extractions or incomplete fragments trigger automated remediation workflows, which invoke fallback rule-based extractors, split large documents for targeted reprocessing, or escalate critical failures via alerting channels. Corrected artifacts are reintegrated into the knowledge graph and downstream systems are notified of updates.

Coordination with Downstream Systems

Validated outputs are dispatched to risk identification services through RESTful APIs, event notifications on Kafka topics, or bulk exports into data warehouses. Payloads include comprehensive metadata—extraction timestamps, model versions and reviewer logs—to support auditability and regulatory compliance.

Performance Monitoring, Scalability and Resource Management

Extraction throughput, latency, model accuracy and human-intervention rates are continuously tracked via dashboards. Container orchestration platforms like Kubernetes manage microservice scaling based on queue backlogs. High-speed NoSQL caches hold interim results, while long-term archives reside in cost-effective object storage.

Roles of NLP Models and Supporting Infrastructure

Model Archetypes and Extraction Roles

NLP models convert raw text into actionable data by performing:

- Named Entity Recognition identifying parties, dates, monetary values and jurisdictions using transformer-based models such as LegalBERT.

- Relation Extraction uncovering semantic links between entities with graph convolutional networks and dependency parsing.

- Clause Classification segmenting and labeling document sections via sequence classifiers.

- Semantic Similarity and Contextual Embeddings assessing meaning using embeddings from libraries like Hugging Face Transformers.

Supporting System Components

- Document Management Systems providing repositories, version control and metadata indexing.

- Message Queues and Stream Processors such as Apache Kafka for ordered delivery and parallel processing.

- Model Serving Platforms like TensorFlow Serving or ONNX Runtime for RESTful deployment with GPU acceleration.

- Knowledge Graph Databases such as Neo4j for efficient traversal of interconnected entities and clauses.

- Monitoring and Logging Systems using Prometheus and visualization dashboards to alert on accuracy drops or latency spikes.

Deployment and Integration Patterns

- Monolithic Pipeline chaining all NLP functions within a single service for simplicity.

- Microservice Architecture encapsulating each function in its own service to enable targeted scaling and independent updates.

- Hybrid Batch and Real-Time Processing combining asynchronous batch jobs for bulk ingests with low-latency inference for high-priority documents.

Data Flow and Annotation Management

Annotations follow a consistent schema capturing document IDs, character offsets, label types, confidence scores and model versions. A centralized schema registry ensures uniform definitions across services. A validation workbench allows legal analysts to correct annotations, feeding back into retraining workflows.

Scalability, Reliability and Performance Considerations

- Autoscaling clusters (Kubernetes) adjusting model-serving pods based on resource metrics.

- Load balancing across model instances with health-check driven routing.

- Model version control via DVC or MLflow for reproducibility and rollback.

- Caching of high-confidence outputs to bypass redundant inference.

Security and Compliance

- Encrypted data in transit (TLS) and at rest (AES-256).

- Role-based access controls and audit logging of API calls and model outputs.

- On-premises or hybrid deployments for data residency requirements.

- Use of platforms with SOC 2 and ISO 27001 certifications.

Roles and Responsibilities

- Data Engineers building pipelines, managing schemas and orchestrating workflows.

- Machine Learning Engineers fine-tuning models, optimizing inference and managing versioning.

- Legal SME Analysts defining taxonomies, reviewing outputs and guiding training data selection.

- DevOps Teams maintaining infrastructure, monitoring health and enforcing security.

- Project Managers coordinating cross-functional efforts and aligning with legal objectives.

Structured Knowledge Outputs and Handoff

Entity Tables and Attribute Profiles

Entity tables enumerate extracted concepts—counter-parties, jurisdictions, statutes, values, dates and renewal terms. Columns capture entity type, canonical name, document IDs, character offsets, confidence scores and normalization links. These tables enable rapid filtering, aggregation and risk engine queries.

Clause and Obligation Mappings

Clause mappings record identifiers, taxonomy labels, text spans, associated entities and hierarchical context. Obligation mappings link parties to actions and deadlines, specifying scope, notice periods and potential financial impact for risk scoring.

Relationship and Knowledge Graphs

Property graphs or RDF models represent entities and clauses as nodes with edges denoting relationships like “party to contract” or “clause references statute.” Extraction tools such as spaCy and AWS Comprehend facilitate semantic relationship extraction. Knowledge graphs support multi-document queries and visual dashboards for holistic risk assessment.

Document Summaries and Anchor Points

AI drafting modules—such as OpenAI GPT or Microsoft Azure Cognitive Services—generate concise summaries with anchor points linking back to source text. Human reviewers validate these summaries to accelerate stakeholder review and exception handling.

Provenance Metadata and Audit Trail

Each artifact includes extraction timestamps, model versions, processing host identifiers and applied confidence thresholds. Audit logs capture human corrections and rule overrides, ensuring traceable pipelines for compliance and dispute resolution.

Dependencies and Quality Constraints

- Preprocessing accuracy to avoid misaligned offsets and missing metadata.

- Consistent document-level metadata for context-aware extraction.

- Regular model calibration and retraining for evolving terminology.

- Version control of models, taxonomies and business rules for consistent scoring.

- Automated validation workflows to flag low-confidence extractions.

Packaging and Data Exchange Mechanisms

- JSON or Avro bundles with schema definitions for entity tables, clause mappings and graphs.

- Graph exports in GraphML, Turtle or Neo4j Bolt protocol.

- Event streams via Kafka or RabbitMQ for real-time updates.

- RESTful APIs returning structured artifacts on demand.

- Secure file transfers (SFTP or encrypted object stores) for batch exports.

Handoff to Risk Identification and Classification

- Automated triggers initiate risk pipelines upon arrival of new data bundles.

- API-driven pulls by engines of structured data at scheduled intervals or on demand to perform classification tasks.

- Streaming integration into policy engines for near-real-time high-risk detection.

- Batch processing for bulk classification, generating risk tags and escalation flags.

Integration with Downstream Systems and Reporting

- Case Management Systems (iManage, Clio) populated with entity tables and clause mappings.

- Compliance Dashboards in Tableau or Microsoft Power BI visualizing risk graphs and metrics.

- Document Management Platforms (SharePoint, Box) enriched with anchor points and summaries.

- Reporting Engines aggregating extraction volumes, model performance and human intervention rates for continuous improvement.

Chapter 4: Risk Identification and Classification

Purpose of the Risk Identification Stage

The risk identification stage transforms structured outputs from knowledge extraction into actionable risk signals. By applying rule-based logic and machine-learning classifiers, it detects compliance issues, contractual breaches and litigation triggers before they escalate. Early codification of risk enables legal teams to prioritize reviews, allocate resources efficiently and maintain an audit trail for decision support and regulatory reporting.

In high-volume, evolving regulatory environments, manual review is unsustainable. AI enhances scalability and consistency by recognising patterns across thousands of documents, freeing legal professionals to focus on validation and strategy rather than repetitive analysis.

Prerequisites and Operational Readiness

Required Inputs

- Structured Text and Metadata

- Normalized text with clause segmentation and language standardization

- Tagged metadata: jurisdiction, effective dates, counterparties, document type

- Entity relationships from knowledge extraction, covering parties, obligations and deadlines

- Regulatory and Policy Frameworks

- Libraries of statutes, industry regulations and compliance guidelines

- Internal policy rule sets and risk taxonomies

- Watchlists and sanction lists from external providers

- AI Models and Training Data

- Pretrained classifiers tuned for legal text, including transformer-based networks

- Annotated datasets of past risk assessments and remediation decisions

- Evaluation metrics and calibration parameters with defined confidence thresholds

- Technology Infrastructure

- AI platforms such as IBM Watson Discovery and Microsoft Azure Cognitive Services

- Secure storage, access controls and integration with case management systems

- Operational Alignment

- Defined roles for experts validating risk signals and refining rules

- Escalation protocols guiding transition to scoring and review stages

- Feedback mechanisms for capturing corrections and policy updates

Readiness Criteria

- Completion of data ingestion, preprocessing and knowledge extraction with audit logs

- Approval of risk taxonomies detailing financial, regulatory, reputational and operational categories

- Configuration of rule engines and ML endpoints with error-handling logic

- Stakeholder sign-off on escalation workflows and notification channels

- Periodic reviews of integration stability, model accuracy and policy alignment

Meeting these conditions ensures that risk identification aligns with legal operations strategy, regulatory obligations and organizational risk appetite.

Risk Detection Workflow

The risk detection workflow orchestrates AI services, rule engines, policy repositories and human interfaces to identify compliance violations and contractual deviations. An event-driven architecture leverages messaging platforms and orchestration services to maintain throughput and reliability.

Input Preparation and Event Generation

Structured outputs from knowledge extraction—delivered as JSON or database records—trigger the workflow via an event bus such as Apache Kafka. Components then:

- Validate payload schema completeness

- Enrich events with provenance, timestamps and processing lineage

- Route to orchestration engines like Camunda or AWS Step Functions

Rule-Based Trigger Evaluation

Deterministic rules in engines such as Open Policy Agent enforce corporate and regulatory policies. Examples include:

- Absence or modification of indemnity clauses in high-value contracts

- Expired regulatory references in jurisdiction-specific agreements

- Performance milestone deadlines at risk

Rule violations emit signals tagged with policy identifiers, severity levels and recommendation codes for classification or direct escalation.

Machine-Learning Risk Classification

Parallel to rules, ML classifiers detect nuanced risk patterns. Services may include OpenAI GPT-based classifiers or AWS Comprehend. The process involves:

- Feature extraction from entity tables, combining contract values, party risk profiles and external data

- Model inference via RESTful APIs, returning probability scores for categories like financial exposure or reputational damage

- Aggregation of rule-based signals and model outputs into unified risk vectors with confidence metadata

Decision Logic and Escalation Pathways

Orchestration engines apply decision-tree logic to consolidated signals:

- High-severity rule triggers escalate to senior counsel

- ML confidence above thresholds (for example, 0.85) generates automated alerts via YouTrack or Microsoft Teams

- Medium risks create tasks in systems like iManage or Clio

- Low-risk signals log to repositories for periodic review

Human Review and Feedback Loop

Flagged items enter review queues where analysts can validate or override AI classifications, annotate remediation plans and approve or reclassify risks. Feedback events feed continuous learning pipelines to refine models and rules over time.

Integration with Compliance Repositories

APIs to platforms such as LexisNexis and Westlaw Edge verify regulatory references and enrich signals with case law. Updates from external sources refresh policy engines and rule bases to reflect the latest legal developments.

Audit Trail and Logging

Each decision point logs timestamps, services invoked, rule and model identifiers and user actions. Centralized logging with the ELK stack or Splunk supports regulatory audits, forensic analysis and operational monitoring.

Scalability and Parallel Processing

To process large document volumes, tasks are sharded by type or jurisdiction and distributed across compute clusters or serverless functions. Autoscaling inference clusters and batch processing off-peak workloads maintain performance under variable demand.

Continuous Improvement and Versioning

Updates to rules, models and workflow logic undergo version control and testing in staging environments. Automated validation ensures that new versions maintain or improve accuracy without disrupting operations.

AI Classification Capabilities

AI-driven classification applies supervised learning, natural language processing, rule engines and knowledge graph integration to convert raw legal text into explainable risk categories.

Supervised Learning Models

- Training Data Management harnesses expert-annotated datasets to train classifiers balancing common and rare risk types

- Feature Engineering uses embeddings from TensorFlow or PyTorch to represent syntactic and semantic patterns

- Model Architectures include transformer-based classifiers (BERT, RoBERTa) and gradient-boosted trees for interpretability

- Continuous Retraining integrates user feedback and data versioning for iterative model updates

Natural Language Processing and Semantic Understanding

- Contextual Embeddings capture term meanings in context and support multilingual analysis with Google Cloud AutoML or Azure Cognitive Services

- Named Entity Recognition identifies parties, dates and values using IBM Watson Natural Language Understanding

- Semantic Role Labeling clarifies agent, action and object roles to improve risk assignment accuracy

Rule Engines and Policy Orchestration

- Deterministic Regulatory Rule Sets enforce statutes and compliance criteria

- Policy Libraries encode internal guidelines, managed via interfaces that allow legal teams to update rules

- An orchestration layer in platforms like ContractPodAi coordinates rule execution and model inference

Knowledge Graphs and Ontologies

- Ontology Management uses standards like LKIF-Core to map hierarchical legal relationships

- Graph Databases such as Neo4j store entities and relationships for rapid inference

- Semantic Enrichment links extracted entities to external reference data, augmenting risk profiles

Confidence Scoring and Explainability

- Probability Scores guide threshold-based routing and human review

- Feature Attribution employs SHAP or LIME to highlight decision drivers

- Audit Logs record inputs, model versions, rule sets and outputs for compliance

Outputs and Handoff to Scoring

The risk identification stage generates annotated documents, risk catalogs, summary matrices, alerts and validation reports, all of which feed downstream scoring and prioritization workflows.

Annotated Documents and Risk Tag Catalog

- Annotations embed risk markers with RiskType tags, clause identifiers, highlight ranges and audit metadata

- Risk tag catalogs list DocumentID, ClauseID, RiskCategory, DetectionMethod, ConfidenceScore and versioning details

Risk Summary Matrices and Alerts

- Summary matrices aggregate counts, confidence statistics, portfolio segments and trend indicators for visualization

- Real-time alerts and payloads for platforms like Microsoft Teams or Slack include critical flags and remediation recommendations

Validation and Confidence Reports

- False positive/negative counts and user override logs

- Performance metrics (precision, recall, F1 scores) and retraining recommendations

Handoff Mechanisms

- Publishing catalogs and annotations via REST APIs or event buses

- Triggering scoring workflows with DocumentID and tag references

- Supplying matrices and validation metrics for dynamic threshold calibration

- Updating matter systems with initial assessments for resource allocation

Integration Patterns, Governance and Monitoring

- Event-Driven Architecture: publish risk events on a pub/sub bus for scoring and alerting

- API-First Design: standardized REST endpoints for retrieving annotations and catalogs

- Data Contracts: strict JSON schemas or Avro contracts to enforce consistency

- Incremental Updates: delta payloads for new or changed risk tags

- Audit Trails: trace identifiers linking back to extraction and ingestion events

Governance and Traceability

- Role-Based Access Control to restrict output visibility

- Immutable logs of output generation events with model and rule versioning

- Data retention policies for archival and deletion of risk artifacts

- Encryption at rest and in transit for output data

Monitoring and Operational Metrics

- Output volumes: annotated documents and risk tags per interval

- Error rates: serialization failures and API timeouts

- Latency: from extraction completion to output availability

- Model drift indicators from validation reports

Through a comprehensive suite of outputs, rigorous governance and well-architected integration, the risk identification and classification stage establishes a reliable foundation for prioritization, research and decision support in AI-driven legal workflows.

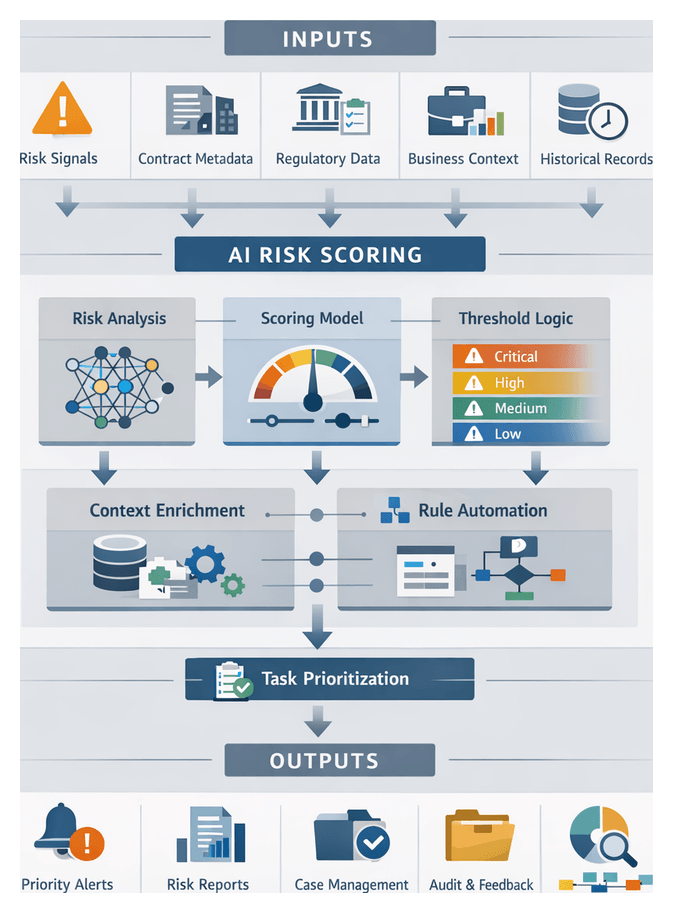

Chapter 5: Risk Scoring and Prioritization

The Role of Risk Scoring in Legal Operations

Risk scoring transforms raw risk signals into standardized metrics that quantify severity, likelihood, and potential impact. This phase bridges identification and quantification, guiding legal teams to focus on the most significant exposures, align mitigation efforts with business priorities, and enable data-driven decisions. A repeatable scoring framework ensures transparency, auditability, and a defensible rationale for prioritization.

Strategically, risk scoring aggregates individual events into a holistic view of organizational exposure, informing portfolio-level assessments. Operationally, it generates prioritized worklists for review or escalation, reducing subjective judgments and inefficiencies. Adopting a structured approach delivers consistency across teams, accelerates response times, and lays the foundation for predictive analytics and trend analysis. Embedding governance controls within scoring logic ensures that legal, financial, operational, and reputational factors are accounted for in every assessment.

- Standardize disparate risk signals into unified scoring scales.

- Rank and filter risks to guide review priorities.

- Integrate business context and regulatory requirements.

- Enable transparent criteria for audit trails and compliance reporting.

- Support ongoing monitoring and dynamic risk adjustment.

Inputs and Organizational Prerequisites for Effective Scoring

Accurate risk scoring relies on high-quality inputs and robust organizational processes. The following data categories form the backbone of the scoring stage:

- Identified Risk Signals and Classifications: Risk tags such as compliance breach, contractual non-performance, and associated confidence scores from AI classifiers, each with metadata on detection rationale and initial severity indicators.

- Entity and Contractual Metadata: Counterparty details, jurisdiction, effective and expiry dates, obligation types, penalty provisions, and governance clauses for context-aware weighting.

- Business Context and Impact Factors: Transaction volumes, financial thresholds, strategic significance, and exposure limits drawn from ERP or financial systems.

- Regulatory and Policy Frameworks: Regulatory change feeds, compliance checklists, policy version histories, and audit criteria to reflect evolving legal requirements.

- Historical Data and Calibration Sets: Records of past risk events, actual loss amounts, litigation durations, and settlement figures for training and benchmark calibration.

- Model Configuration and Parameter Settings: Algorithmic features, weighting schemes, threshold definitions, calibration datasets, and governance controls for score adjustments and escalation triggers.

Technical infrastructure and organizational processes must support these inputs:

- System integrations with document repositories, case management, analytics platforms, and dashboards to ensure bidirectional data flow.

- Data quality standards, including validation routines, error handling, and metadata consistency checks.

- Security and access controls with role-based permissions, data encryption in transit and at rest, and comprehensive audit logging.

- Governance processes, such as change advisory boards, to oversee scoring model updates and enforce policy alignment.

- Cross-functional teams of data scientists, legal experts, compliance officers, and IT specialists for model development, monitoring, and refinement.

Conditions that increase the likelihood of successful deployment include:

- Pilot and validation phases on representative contract sets to benchmark performance and identify gaps.

- Cross-functional collaboration among legal operations, risk management, compliance, finance, and IT to define weighting criteria and impact factors.

- Iterative feedback loops incorporating user feedback, expert overrides, and post-mortem analyses to refine scoring parameters.

- Continuous monitoring and reporting via real-time dashboards and periodic score distribution analyses to detect drift and maintain calibration.

- Regulatory change management processes to update scoring logic in response to new laws or policy shifts.

AI-Driven Scoring and Prioritization Workflow

The prioritization workflow transforms quantitative risk scores into actionable task lists and resource assignments. By applying threshold logic, business context rules, and escalation engines, the system elevates high-impact risks for immediate attention while routing lower-level items into standard review queues.

1. Workflow Initiation

When the risk scoring engine publishes a batch of scored items, each carries metadata including document ID, risk type, score, confidence level, and timestamp. An orchestration layer—often implemented with Microsoft Azure Logic Apps—listens for completion events and dispatches items into the prioritization queue.

- Event detection via completion messages or webhooks.

- Input validation of required fields before advancing items.

- Queue insertion into a prioritized broker such as Apache Kafka or Amazon SQS.

2. Threshold Evaluation and Tier Assignment

- Fetch risk band definitions from a policy engine like PolicyManager Pro.

- Compare raw scores against numeric ranges for tiers: Critical, High, Medium, Low.

- Assign tier tags and log mapping events for auditability.

3. Business Context Enrichment

Contextual attributes are fetched from enterprise systems—CLM, ERP, CRM—to refine priorities:

- Contract value lookup from CLM databases.

- Regulatory urgency from compliance calendars.

- Historical precedent from case management archives.

- Stakeholder priority from CRM sensitivity indicators.

4. Rule-Based Adjustments and Escalation

A decision engine such as Drools applies conditional logic:

- Escalate risks tied to revenue above configurable thresholds.

- Route cybersecurity compliance risks to a “Security Review” queue.

- Flag manual override cases for supervisory review.

5. Task Generation and Resource Mapping

With final tiers and escalation flags, the orchestration layer constructs task objects containing document references, priority scores, recommended actions, and due dates. These tasks are routed into case management platforms such as Salesforce Service Cloud or Jira Service Management via REST APIs, assigning users based on skill matrices and availability.

6. Notification and Collaboration

- Immediate alerts for Critical-tier items via SMS or push.

- Daily summaries for High and Medium tiers via email or dashboard widgets.

- Weekly reports for Low-tier items to support batch reviews.

7. Human Review and Exception Handling

- Validate AI-derived tiers and contextual enrichment.

- Adjust priorities or reassign tasks based on expert judgment.

- Capture feedback on scoring and rule effectiveness.

8. Monitoring, Auditing, and Feedback Loops

- Audit logs record every decision point and user override.

- Performance metrics feed into visualization tools like Tableau or Microsoft Power BI.

- User feedback collected in-app informs rule tuning and threshold adjustments.

9. Scalable and Resilient Design

- Event-driven architecture supports decoupled parallel processing.

- Idempotent task creation prevents duplication.

- Configuration-driven thresholds and rules enable rapid adaptation.

- High availability via clustered services and automatic failover.

AI Scoring Models and System Functions

AI-driven scoring models convert identified risks into quantifiable metrics. These models, combined with system functions for deployment, monitoring, and explainability, deliver reproducible, transparent risk assessments.

Model Selection and Architectural Patterns

- Logistic regression and linear models for interpretable binary classification.

- Ensemble methods (XGBoost, LightGBM) for high predictive accuracy.

- Deep neural networks with embeddings from OpenAI or Google Cloud Natural Language.

- Transformer architectures fine-tuned via Hugging Face Transformers.

- Anomaly detection models for novel risk patterns.

Feature Engineering and Data Inputs

- Entity-level attributes from the extraction stage (termination clauses, indemnities).

- Clause metrics: length, amendment history, template deviations.

- Semantic embeddings of clause or document text.

- Historical outcomes: dispute results and litigation records.

- Business context indicators from systems like Salesforce or Oracle ERP Cloud.

- Temporal features: time-to-renewal and notice deadlines.

Model Training, Calibration, and Validation

- Data splitting with balanced representation for training, validation, and testing.

- Hyperparameter tuning via Amazon SageMaker Autopilot or Azure Machine Learning.

- k-fold cross-validation for robustness.

- Calibration techniques (Platt scaling, isotonic regression) for probability alignment.

- Performance metrics: precision, recall, ROC-AUC, Brier score.

- Bias and fairness assessments with mitigation strategies.

System Integration and Deployment

- Model as a microservice with RESTful APIs using Kubeflow or MLflow.

- Event-driven scoring triggered by Apache Kafka or AWS EventBridge.

- Serverless inference via AWS Lambda or Azure Functions.

- Batch re-scoring jobs with AWS Batch or Google Cloud Dataflow.

- Integration into platforms such as iManage or Mitratech TeamConnect.

Real-Time Inference and Orchestration

- Low-latency inference endpoints with GPU acceleration.

- Autoscaling via Kubernetes Horizontal Pod Autoscaler or AWS Application Auto Scaling.

- Workflow orchestration using Camunda or n8n.

- State management with distributed caches like Redis.

Explainability, Auditability, and Compliance

- Feature importance analysis using SHAP or LIME.

- Decision logs stored in append-only systems such as Elasticsearch or Amazon OpenSearch.

- Integration with policy engines like IBM Operational Decision Manager for automated approvals and escalations.

- User feedback loops for overrides and annotations that feed retraining pipelines.

- Regulatory reporting for SOC 2 and GDPR transparency.

Monitoring, Retraining, and Continuous Improvement

- Telemetry collection with Prometheus and Grafana.

- Drift detection to identify shifts in feature distributions or score patterns.

- Automated retraining triggers in Azure Machine Learning or Amazon SageMaker.

- Canary deployments to validate new models before full rollout.

- Governance dashboards for executive visibility into model health and recommended updates.

Output Artifacts and Resource Handoffs

The culmination of scoring and prioritization is a suite of artifacts that quantify and contextualize risks, enabling decision support and resource allocation.

Overview of Scoring Artifacts

- Scorecard reports listing risks with severity, likelihood, impact factors, and overall indices.

- Confidence intervals that communicate model certainty and guide manual validation triggers.

- Priority flags (High, Medium, Low) for rapid filtering in dashboards.

- Annotated risk profiles with extracted clauses, entities, and AI-generated explanatory notes.

Scorecard Report Structure

- Risk ID linking to the source document.

- Document reference or file path.

- Entity and clause details that generated the risk signal.

- Severity and likelihood scores.

- Composite risk index.

- Confidence interval bounds.

- Priority flag based on configured thresholds.

Handoff Mechanisms to Downstream Systems

- Case management platforms such as LegalCaseFlow receive scorecards via API or batch uploads.

- Dashboards and BI tools ingest data for interactive monitoring.

- Automated research agents trigger targeted legal research on high-priority risks.

- Alerting services dispatch email, SMS, or in-app notifications when thresholds are exceeded.

Integration Patterns

- RESTful APIs for synchronous data access.

- Message queues for asynchronous, high-throughput event delivery.

- Scheduled exports of CSV or JSON to data lakes.

- Webhooks for real-time push notifications.

Resource Allocation and Task Assignment

- Automatic task creation in matter management systems, linking to risk profiles.

- Assignment of subject-matter experts based on jurisdiction and practice area.

- Deadline and escalation pathways defined by confidence intervals and SLAs.

- Tracking of resolution status and time spent for performance analytics.

Governance, Auditability, and Continuous Improvement

All scoring outputs carry metadata references to source documents, extraction anchors, model versions, and applied policy rules to maintain full traceability. Embedded checksums and version identifiers ensure integrity, while comprehensive logging captures every API call, file transfer, and user interaction for audit readiness.

Feedback from post-mortem assessments and user corrections feeds back into the continuous learning pipeline. This loop enables periodic retraining, threshold adjustments, and rule tuning, driving ongoing optimization of the risk scoring and prioritization framework.

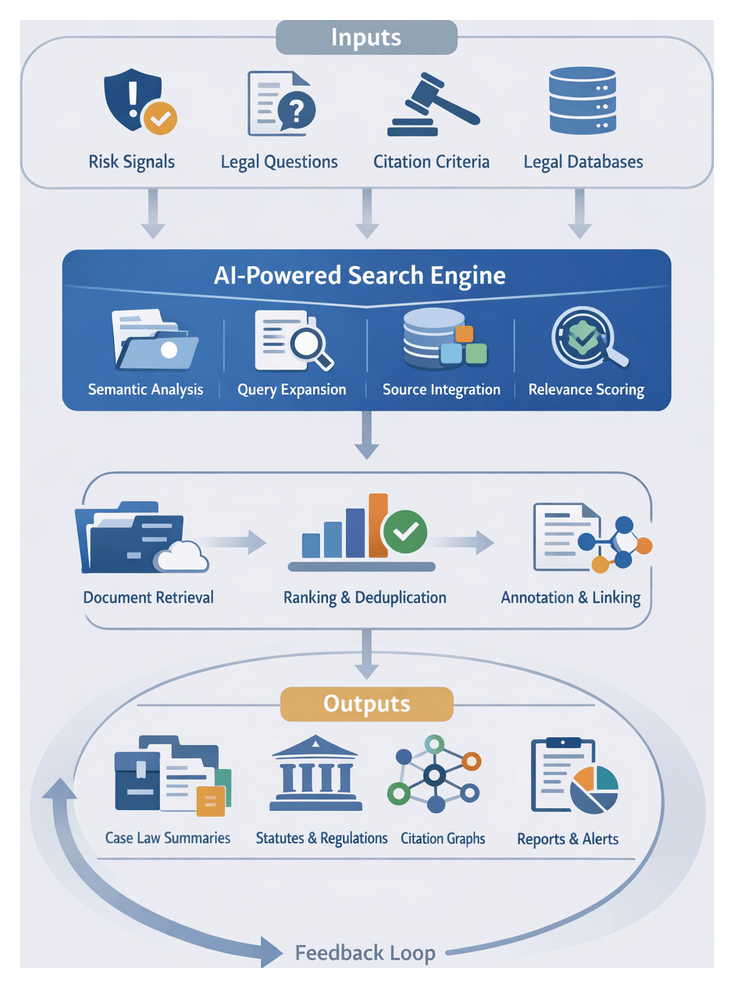

Chapter 6: Automated Legal Research

The automated legal research stage transforms identified risk signals, contractual inquiries, and compliance requirements into precise, AI-optimized searches that deliver comprehensive, accurate, and contextually relevant authorities. By leveraging semantic search, natural language understanding, and relevance scoring, this stage accelerates inquiry resolution, reduces manual effort, and minimizes oversight. Legal teams gain rapid access to statutes, case law, administrative rulings, and secondary commentary aligned with the issues surfaced in preceding workflow stages. The result is more consistent, defensible analysis and faster response to emerging risks.

Required Inputs and Prerequisites

- Extracted Entities and Risk Signals: Outputs from clause and risk identification feed query refinement. Entity tags (parties, obligations, regulatory references) carry metadata—confidence scores, context snippets, jurisdictional scope.

- Defined Legal Questions: Natural language issues or structured risk identifiers provided by legal teams guide the AI in semantic parsing and query formulation.

- Citation Patterns and Precedent Criteria: Citation networks, headnote mappings, and precedence filters (for example, binding in the Second Circuit or persuasive authority from state supreme courts) shape result ranking.

- Access to Legal Databases: Credentials and API integrations for platforms such as Westlaw Edge, LexisNexis, Bloomberg Law, and Casetext CARA.

- Semantic Search Index: An up-to-date index of legal texts, maintained internally or via services, supports concept clustering, topic modeling, and jurisdictional filtering.

- Secondary Source Libraries: Treatises, practice guides, and law review articles accessible via publisher feeds or in-house repositories supplement primary authorities.

- Jurisdictional and Language Settings: Filters for federal, state, or international law and multilingual preferences ensure retrieved materials are applicable and correctly interpreted.

- Search Agent Configuration: Role definitions (semantic matcher, citation analyzer, relevancy ranker), model priorities, and fallback protocols govern agent behavior.

- Performance Benchmarks: Response time targets, throughput requirements, and error thresholds maintain service-level agreements.

- Security and Privacy Controls: Encryption, role-based access, audit logging, and compliance with GDPR, CCPA, or client policies safeguard confidential content.

Automated Search and Retrieval Workflow

Query Submission and Interpretation

Queries originate from legal professionals, risk analysts, or triggered events within matter management systems. Inputs may include natural language questions, structured risk codes, and filters for jurisdiction or document type. An orchestration service parses the payload and invokes an AI interpretation module that applies transformer-based models to:

- Classify intent (statutory analysis, case precedent, regulatory comparison)

- Extract entities (statute names, case citations, regulatory sections)

- Apply jurisdictional and date filters

Integration with services such as Microsoft Azure Cognitive Search enriches semantic understanding, ensuring nuances of legal terminology are captured.

Query Expansion and Multi-Source Coordination

A query builder maps parsed tokens to index fields across internal and external repositories. Synonyms and legal thesaurus expansions—for example, “force majeure” to “act of God”—improve recall. Domain ontologies, retrieved from knowledge graphs or solutions, surface related legal concepts and precedent patterns. The orchestration layer dispatches expanded queries in parallel to:

- Internal repositories indexed by ElasticSearch or Apache Solr

- Subscription platforms via secure API connectors to Westlaw Edge, LexisNexis, Bloomberg Law, Casetext CARA

- Regulatory portals and government feeds through RESTful interfaces

- Secondary source libraries for treatises and commentary

Connectors log requests for auditability and monitor latency to prevent bottlenecks. As results stream in, they are unified into a consolidated set.

Relevance Scoring, Deduplication, and Annotation

Retrieved documents undergo multi-stage evaluation:

- Initial Scoring applies field-weighting and keyword density algorithms.

- ML-Driven Ranking refines scores using classifiers trained on historical research, user preferences, and citation patterns.

- Citation Analysis computes authority metrics—citation counts, appellate history, jurisdictional weight—elevating controlling precedents.

A deduplication service collapses identical or near-duplicate texts by comparing checksums and content similarity. Metadata is normalized—titles, dates, jurisdictions—ensuring uniform filtering. An entity linking module then applies named entity recognition to annotate statutes, clause references, and defined terms, anchoring them to a central knowledge graph. These annotations enable instant navigation between research outputs and extracted obligations or risk signals.

Result Packaging, Delivery, and Feedback

Once scored and annotated, results are packaged into structured containers that may include:

- Paginated lists sorted by combined relevance and authority scores

- Faceted filters for jurisdiction, source type, date range, and risk category

- Previews with highlighted terms and annotated passages

- Downloadable bundles in PDF or DOCX formats

The orchestration layer emits a JSON payload for insight generation and notifies user interfaces or case management systems when results are ready. Real-time feedback loops allow users to flag irrelevant results, adjust filters, or request query refinements. These interactions inform continuous improvement of expansion and ranking models.

AI Search Agents and System Integration

AI search agents replace manual keyword searches with context-aware retrieval, combining natural language understanding, knowledge graph reasoning, and predictive relevance. Key functions include:

- Semantic Query Interpretation: Intent classification, entity disambiguation, query expansion, and contextual filtering using transformer architectures.

- Hybrid Relevance Algorithms: Machine learning models trained on prior research, rule-based heuristics prioritizing binding jurisdictions, and fusion techniques blending both approaches. User feedback—clicks, saves, manual ratings—continuously refines scoring.

- Federated Source Connectivity: Gateways for LexisNexis, Thomson Reuters Westlaw, and Casetext CARA; open access harvesters; and internal connectors to document management systems. Normalization engines unify disparate metadata into a common schema.

- Real-Time Citation Analysis: Neutrality checks for good-law status, KeyPoint extraction of pivotal passages, citation network graphing, and automated Shepardization to classify citing references as positive, negative, or cautionary.

- Workflow Integration: API-driven handoffs to case management systems like Clio and Legal Workspace, collaboration plugins for Microsoft Teams and Slack, task automation for drafting or review, and audit logging for compliance and billing.

Governance layers—performance dashboards, model review boards, data lineage logs, and security controls—ensure accuracy, transparency, and adherence to ethical and regulatory standards.

Outputs and Integration Points