AI Driven Automated Financial Reporting An End to End Workflow for Finance and Banking

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Context and Industry Imperatives

The finance and banking sector operates under relentless pressure to deliver accurate, timely reports within stringent regulatory frameworks. Legacy systems, manual reconciliations and siloed data sources contribute to prolonged close cycles, elevated error rates and compliance risks. In this environment, an end-to-end AI-enabled reporting pipeline becomes a strategic imperative. By transforming disparate data flows into standardized, auditable outputs, institutions can accelerate decision making, meet regulatory deadlines and enhance stakeholder transparency.

Adopting a structured AI workflow addresses key operational challenges. It replaces error-prone manual tasks with automated validation checks, surfaces anomalies early and embeds continuous compliance controls. From a strategic standpoint, this approach fosters resilience against evolving regulations and market dynamics, ensuring that reporting frameworks remain adaptable, defensible and aligned with business objectives.

Structured AI-Enabled Reporting Workflows

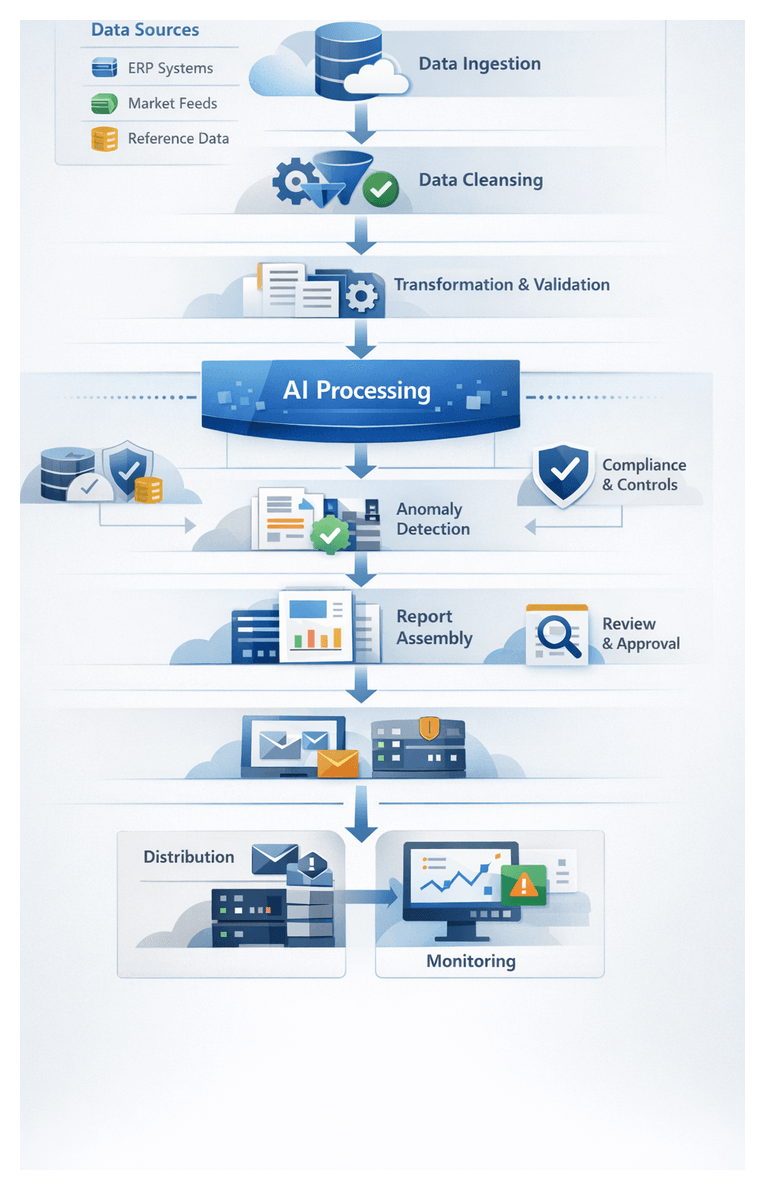

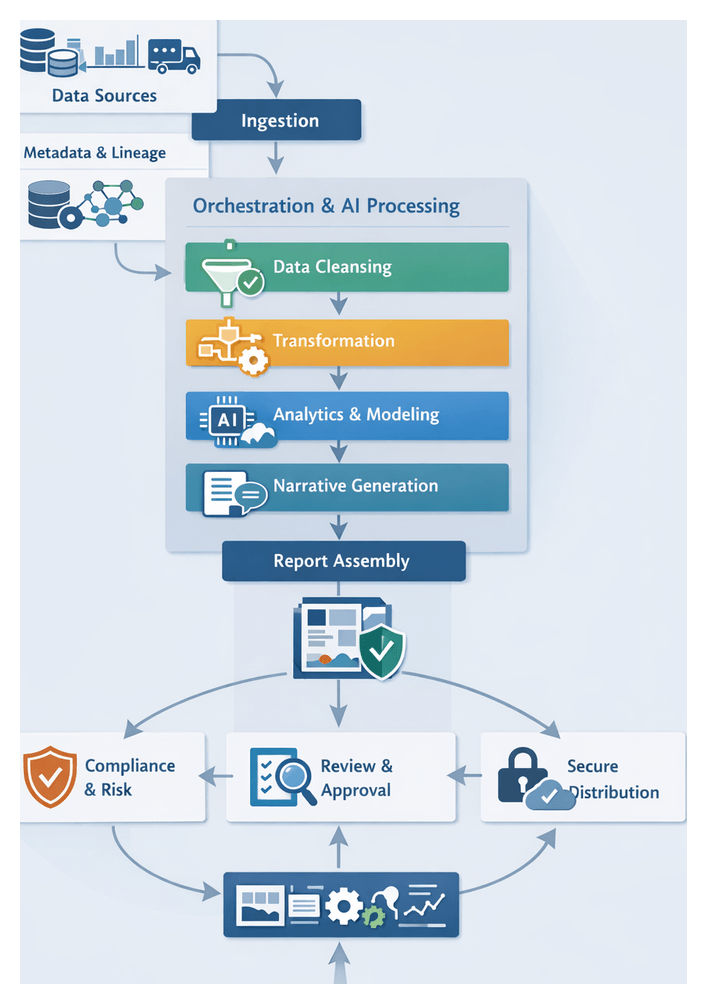

A structured AI workflow delineates discrete stages—from data ingestion through report distribution—each governed by defined inputs, outputs and handoff protocols. This modular design isolates complexity, applies targeted AI capabilities and maintains end-to-end visibility. Core elements include orchestrators that sequence tasks, AI agents that perform specialized functions and monitoring components that enforce service-level objectives and error thresholds.

- Workflow Orchestration coordinates connectors, validation engines and natural language generation services. Platforms such as Apache Airflow trigger ingestion agents, invoke cleansing routines and manage retry logic.

- AI Agents encapsulate discrete functions—schema matching, anomaly detection, narrative synthesis—allowing individual models to be updated without disrupting the broader pipeline.

- Monitoring and Governance components capture metadata, audit logs and performance metrics to support compliance, continuous improvement and drift detection.

This structured design enforces version control, exception handling and retry policies at each stage. It prevents ad hoc interventions, promotes repeatability and satisfies audit requirements. By encapsulating AI logic into autonomous agents, finance teams gain the flexibility to retrain models, refine rule sets and integrate new data sources with minimal operational risk.

Data Ingestion: Foundation for Automation

The data ingestion layer serves as the foundational pillar of an AI-driven reporting solution. Its purpose is to gather, centralize and harmonize structured and semi-structured data streams—ranging from ERP extracts to market feeds—into a unified repository. A formalized ingestion stage eliminates manual extraction efforts, accelerates the close cycle and ensures that downstream automation operates on a complete, consistent dataset.

Key goals of the ingestion layer include:

- Establishing secure, automated connections to on-premises and cloud systems

- Ingesting batch extracts and real-time streams within defined SLAs

- Capturing metadata and lineage for auditability

- Providing an extensible framework that accommodates new sources and schema changes

Successful ingestion requires collaboration among IT, data governance and finance stakeholders to define:

- Source Inventory and Access—cataloged connection details for ERP platforms like SAP, trading systems, treasury applications and external market providers such as Bloomberg and Refinitiv; credentials managed via secure vaults under least-privilege principles.

- Connector Configurations—RESTful or SOAP APIs implemented through tools like AWS Glue or Azure Data Factory, with endpoint definitions, throttling limits and retry policies.

- File Transfers and Streams—secure FTP or object-storage transfers for flat-file exports; Apache Kafka for high-frequency market feeds.

- Reference Data—exchange rates, instrument master files and regulatory taxonomies from authoritative providers.

- Network and Security—firewalls, VPNs, TLS encryption and compliance with SOC 2, ISO 27001 or FFIEC standards.

- Metadata and Schema Registry—central repository of canonical definitions, versioning and automated schema validation.

- Governance Policies—data retention, archival rules and SLOs for freshness, volume thresholds and quality metrics.

- Infrastructure Planning—provisioned compute, storage and network capacity, with auto-scaling clusters to handle peak loads.

- Change Management—version control for connector configurations, scripts and orchestrations; formal approval processes for schema or workflow updates.

- Scheduling and Handoffs—task schedules aligned with business events; orchestration rules for dependency management and alerting.

- Stakeholder Sign-off—formal alignment on data ownership, SLAs and exception handling protocols.

By codifying these prerequisites, organizations mitigate risks from fragmented sources and ad hoc extraction methods. The resulting unified data lake or staging repository becomes the single source of truth for all automation stages, from cleansing and transformation to narrative generation and distribution.

Embedding AI Agents into the Close Cycle

AI agents—autonomous software entities powered by machine learning, rule engines and natural language capabilities—transform the financial close cycle by automating data collection, validation, analysis and narrative drafting. These digital workers collaborate with human professionals, enabling finance teams to focus on interpretive and strategic activities rather than routine data handling.

Core AI capabilities include:

- Connector Orchestration—agents configure and invoke data connectors, handle authentication and manage retries automatically.

- Schema Matching—models detect and reconcile schema mismatches when source templates change.

- Anomaly Detection—unsupervised algorithms flag outliers in trial balances and transaction streams.

- Data Validation—rule engines enforce business logic and regulatory requirements.

- Preliminary Analysis—statistical routines compute KPIs and variance reports.

- Natural Language Generation—models such as GPT-4 assemble draft commentary and variance explanations.

- Continuous Learning—feedback loops capture human corrections to refine training datasets.

Supporting systems and integration components include:

- Message Brokers—Enterprise Service Bus or Apache Kafka for reliable event routing and data distribution.

- API Gateway—secure access to external feeds and third-party data.

- Orchestration Platforms—tools like UiPath coordinate agent schedules and monitor execution statuses.

- Model Management—systems such as DataRobot for versioning, performance tracking and automated deployments.

- Metadata Repository—stores schema definitions, transformation rules and audit logs.

- Secure Data Lake—centralized storage with access controls and encryption.

Common integration patterns include:

- Event-Driven Microservices—agents subscribe to domain events (for example, “new ERP batch posted”) and execute tasks asynchronously for real-time responsiveness.

- Pipeline Orchestration—central orchestrator invokes agent services sequentially or in parallel, providing structured visibility into workflow progress.

Agents embed at multiple touchpoints within the close cycle:

- Data Ingestion—fetching raw data, detecting schema changes and normalizing formats.

- Data Cleansing—applying anomaly detection, imputing missing values and logging interventions.

- Transformation—mapping entries to chart of accounts structures using classification models.

- Analytics—batch scoring forecasting models and flagging outlier trends.

- Narrative Drafting—generating preliminary commentary based on statistical outputs.

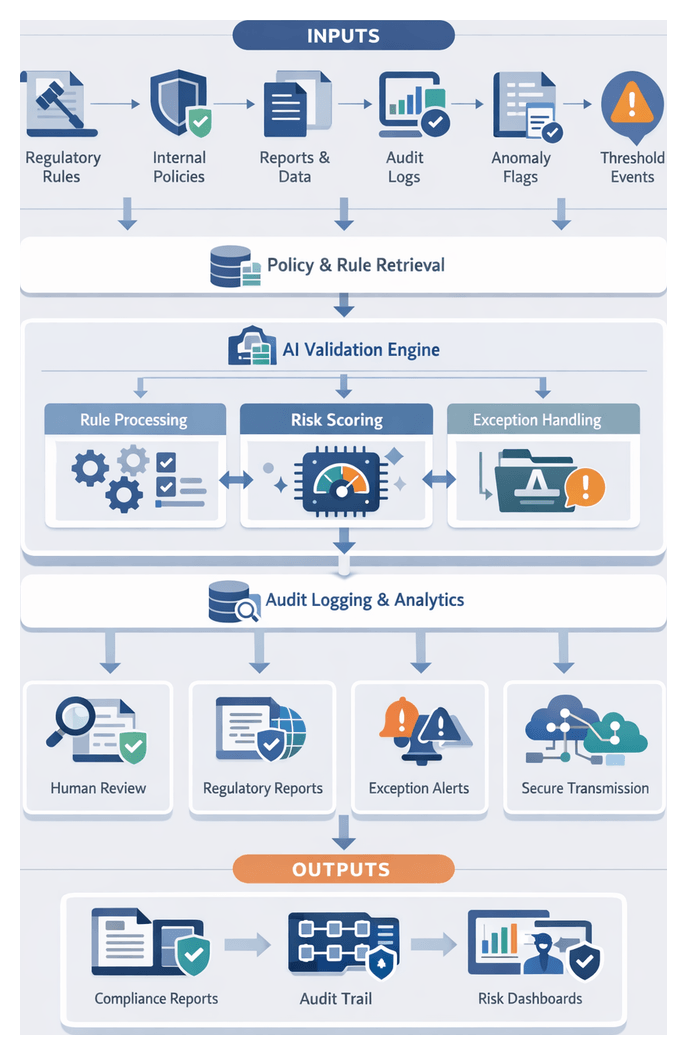

- Compliance Checking—evaluating regulatory rules and assigning risk scores to exceptions.

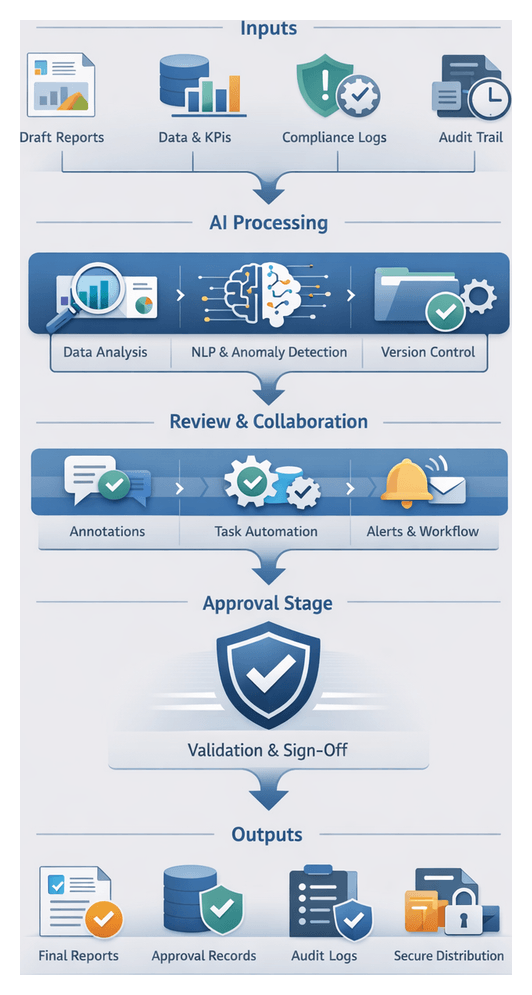

- Review Facilitation—routing tasks to reviewers, tracking annotations and sending reminders.

High-Level Architecture and Component Outputs

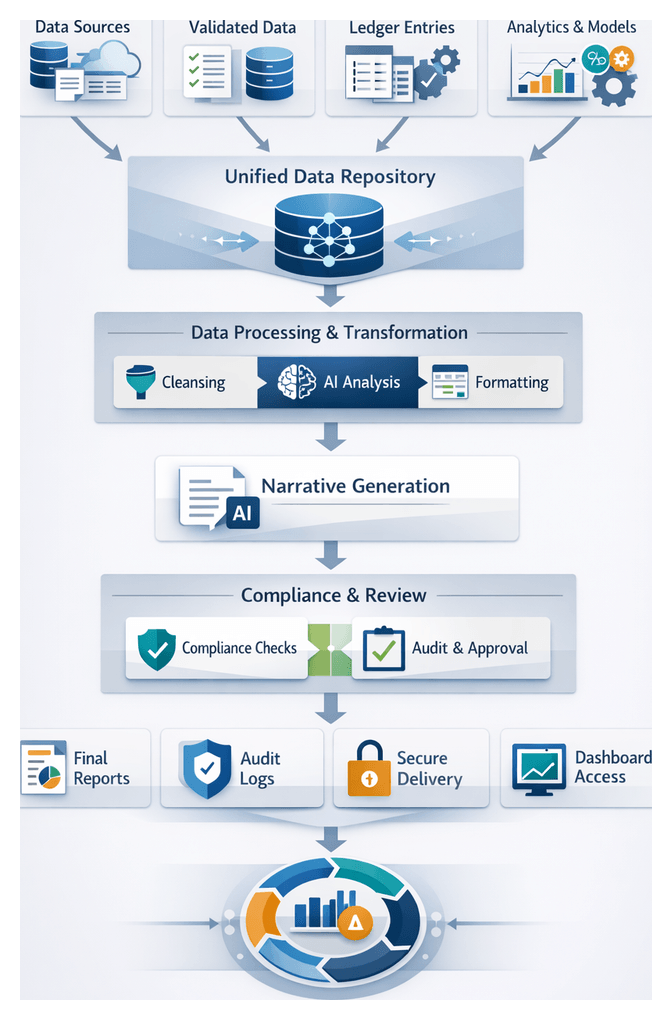

The automated reporting solution architecture integrates AI agents, traditional data stores and user interfaces into a cohesive system. At its core, this blueprint defines data flows across stages—ingestion, cleansing, transformation, analytics, narrative generation, assembly, compliance checks, review, distribution and monitoring. Each component produces well-defined outputs and relies on explicit dependencies, ensuring that handoffs maintain data integrity and context.

- Data Ingestion Layer: Output: Unified raw data snapshot combining ERP extracts, trading logs, market feeds and reference tables in a landing zone. Handoff: Populates centralized data lake or staging database and triggers the cleansing service.

- Data Cleansing Service: Output: Validated and normalized dataset with anomalies flagged. Handoff: Stores cleansed data in sandbox schema and publishes quality metrics to governance dashboard.

- Intelligent Transformation Engine: Output: Structured ledger entries aligned to chart of accounts and consolidated balances. Handoff: Writes transformed ledgers to reporting database and signals the analytics module.

- AI-Powered Analytics Module: Output: Trend analyses, variance reports, anomaly flags and forecasts. Handoff: Exposes results via RESTful endpoints for the narrative generator and dashboards.

- Natural Language Generation Service: Output: Draft narratives enriched with compliance annotations. Handoff: Delivers fragments to report assembly and archives versioned drafts.

- Report Assembly Engine: Output: Fully assembled reports (PDF, HTML, dashboards) with branding and visuals. Handoff: Publishes final reports to secure repositories and notifies review teams.

- Compliance and Risk Controls: Output: Audit logs, exception reports and risk matrices. Handoff: Feeds artifacts to review portal and archives records in an immutable ledger.

- Collaborative Review Portal: Output: Annotated report versions, reviewer comments and sign-off metadata. Handoff: Promotes approved reports to distribution module.

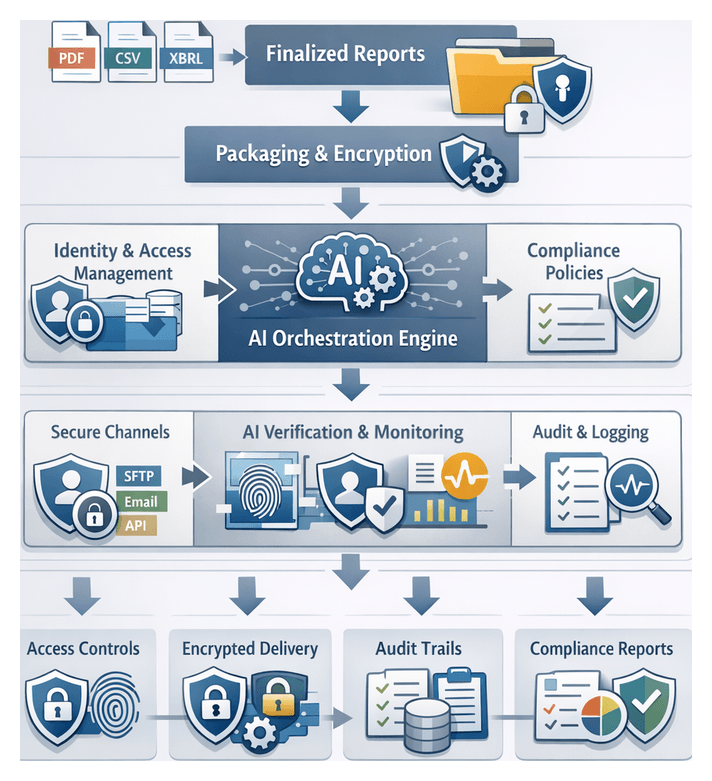

- Secure Distribution: Output: Encrypted report packages, secure links and delivery logs. Handoff: Delivers to recipient portals or email gateways and updates monitoring dashboard.

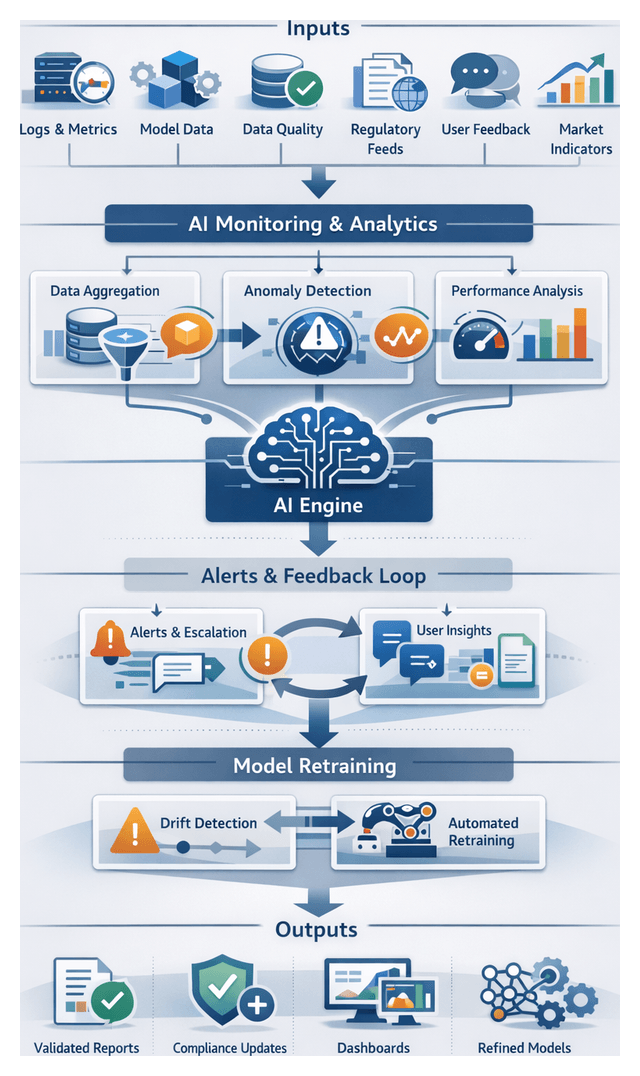

- Continuous Monitoring Layer: Output: Performance dashboards, model drift alerts and retraining configurations. Handoff: Triggers pipeline updates and workflow refinements via CI/CD pipelines and tools like Kubeflow Fairing.

Integration interfaces and system dependencies must be clearly defined, covering data connectivity (REST, SOAP, JDBC, SFTP), message buses (Apache Kafka), orchestration platforms (Apache Airflow), API gateways, metadata catalogs and security layers. Machine-readable interface specifications, data contracts and solution blueprints ensure transparency, facilitate collaboration and support audit activities.

By aligning component outputs with downstream needs and embedding feedback loops, the architecture supports continual improvement. Metrics and health indicators feed the monitoring layer, triggering retraining workflows or rule updates in a controlled DevOps environment. This design preserves the integrity of financial reporting processes while enabling agility in response to changing business requirements and regulatory demands.

Chapter 1: Data Ingestion and Integration

Data Ingestion Foundations

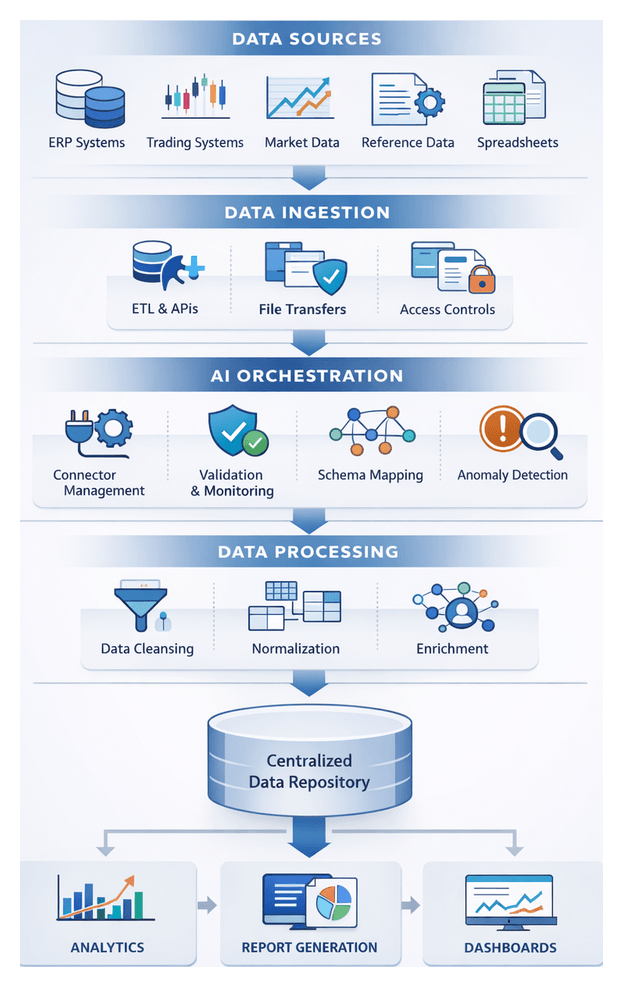

The data ingestion stage establishes the foundation for a robust, AI-driven financial reporting workflow by identifying, connecting, and consolidating disparate inputs into a unified repository. Its primary objectives are to centralize data, ensure completeness and timeliness, apply initial validation, and enforce governance and security from the outset. This orchestration accelerates downstream processes—cleansing, transformation, analytics, narrative generation, and report assembly—while minimizing manual intervention and compliance risk.

Key Data Source Types

- ERP Systems: Platforms such as SAP, Oracle Financials, and Microsoft Dynamics provide core accounting entries and ledger balances, typically accessed via native extraction utilities or REST APIs.

- Trading and Treasury Systems: Solutions like Calypso and Murex generate position files and cashflow projections, ingested via secure file transfers or specialized connectors.

- Market Data Feeds: Real-time and end-of-day price feeds from Bloomberg or Refinitiv arrive via FIX protocols, message queues, or vendor APIs.

- External Reference Data: Exchange rates, instrument master lists, and mapping tables published as CSV, XML, or JSON by third parties or regulatory bodies.

- Spreadsheets and Flat Files: Excel workbooks and text files require automated uploads, validation rules, and change-management controls.

- Unstructured Documents: PDF statements and scanned forms may be parsed by AI-driven engines to extract structured records.

Ingestion Objectives and Success Criteria

- Data Completeness: Capture all expected records, tracked by row counts and key field thresholds.

- Data Timeliness: Meet fixed close schedules and SLAs, monitoring extraction start and finish times.

- Schema Alignment: Verify column presence, data types, and field lengths before loading.

- Quality Flags: Detect nulls in key fields, invalid codes, and out-of-range amounts for review or remediation.

- Security and Access Controls: Encrypt data in transit and at rest, enforce role-based access, and maintain audit logs in line with GDPR and SOX.

Prerequisites and Conditions

- Data Access Agreements: SLAs with system owners and vendors define extraction windows and retention policies.

- Network Connectivity and Security: VPN, SSL/TLS channels, firewall configurations, certificates, and key management must be in place.

- Credential Management: Secure vaults store service accounts and API keys, with rotation policies and audit logs.

- Data Governance Framework: Documented master data definitions, taxonomies, and change-management workflows.

- Infrastructure Capacity Planning: Allocate compute, storage, and network resources to meet peak volumes.

- Monitoring and Observability: Logging frameworks, metric collectors, and alerting rules enable rapid root-cause analysis.

Role of AI Agents in Orchestration

AI-driven platforms automate connector configuration, schema discovery, and error handling. Agents monitor for schema drift, standardize field names and data types, and maintain an audit log of all ingestion activities, accelerating source onboarding and strengthening resilience against API changes.

Handoff to Downstream Processing

Upon completion, the ingestion pipeline delivers raw, structurally validated records to the cleansing stage. Change data capture markers, version tags, and run identifiers ensure traceability. Automated alerts and dashboards notify stakeholders of status, quality metrics, and any exceptions requiring review.

Orchestrating Connectors and APIs

The orchestration layer functions as the conductor of the ingestion workflow, coordinating connectors and APIs to extract, transfer, and stage data reliably and on schedule. Enterprises deploy engines such as MuleSoft Anypoint Platform or Informatica Intelligent Cloud Services, augmented by AI agents that validate configurations, detect anomalies, and trigger self-healing routines.

Connector Configuration and Scheduling

Data engineers register connectors by specifying endpoints, authentication schemes (OAuth 2.0, API keys, certificate-based TLS), extraction queries, and frequency parameters. The orchestration engine persists these definitions and generates an ingestion calendar. AI agents analyze schedules to detect overlaps and resource contention, suggesting optimizations such as staggering high-volume pulls to maximize throughput.

API Invocation and Parameterization

On schedule, the engine issues API calls or database queries, performing token retrieval, request assembly (interpolating dates, account IDs, currency codes), and payload submission over HTTPS. Responses are transformed into canonical formats (JSON-lines, Avro) and written to staging areas. AI-driven validation inspects schemas in real time, invoking fallback rules or alerts upon mismatches.

Monitoring, Logging, and Error Handling

Execution metadata—timestamps, row counts, data volumes, and performance metrics—is streamed to centralized services like Datadog or Splunk. Structured events published to Kafka or Amazon EventBridge trigger downstream tasks. Retry logic with exponential backoff handles network, authentication, or format errors, while failures beyond thresholds automatically open tickets in platforms such as ServiceNow. AI monitoring agents spot recurring issues, adjust connector parameters within guardrails, and escalate critical alerts.

Security, Authentication, and Governance

Sensitive financial data is protected by integrating with identity providers (Okta, Azure AD) to enforce role-based access. Credentials are stored in encrypted vaults like HashiCorp Vault or AWS Secrets Manager. Transport security (TLS 1.2 ) safeguards API calls, and AI-driven compliance agents verify adherence to data residency, privacy regulations, and contractual rules, quarantining non-compliant payloads for review.

Integration with Scheduling and Workflow Engines

Orchestration integrates with enterprise schedulers (Control-M, AutoSys), container platforms (Kubernetes, AWS Lambda), and workflow engines such as Apache Airflow or the AgentLinkAI Orchestration Service. AI agents coordinate across these systems to optimize resource allocation, delay or reschedule jobs during maintenance windows, and ensure end-to-end pipeline resilience.

Handoff to Normalization

Upon connector completion, the orchestration layer consolidates raw extracts into a staging repository and publishes completion events with metadata. AI-driven normalization agents detect schema mismatches and apply initial formatting rules. Dependencies recorded in the orchestration engine trigger downstream cleansing and preprocessing tasks, with automated notifications summarizing execution status and anomalies.

AI-Driven Data Collection and Normalization

Embedding AI throughout the ingestion process accelerates configuration, enhances data quality, and reduces manual intervention. AI roles focus on automating connector lifecycles, detecting schema drift, enriching metadata, and standardizing incoming records to deliver a clean, unified dataset for downstream processing.

Connector Orchestration and Self-Healing

AI-driven engines integrated with platforms like Apache Airflow and Prefect schedule connector jobs, monitor execution, and predict bottlenecks using machine learning models trained on historical metrics. Self-healing routines restart jobs, switch to fallback endpoints, or throttle requests to maintain feed continuity, alerting operations teams only when interventions exceed thresholds.

Automated Schema Detection and Field Mapping

AI models compare incoming schemas against baseline definitions, detecting added columns or type changes. Semantic similarity, historical usage, and domain ontologies guide mapping suggestions—such as aligning “trade_timestamp” with “execution_time”—which data engineers can validate, feeding corrections back to refine the model.

Semantic Enrichment and Knowledge Graph Integration

By referencing financial ontologies and domain-specific taxonomies, AI agents annotate fields with standardized descriptors—account classifications, instrument types, regulatory codes—and leverage knowledge graphs to infer relationships, ensuring each record carries both raw values and semantic context.

Anomaly Detection at Ingestion

Statistical methods and unsupervised learning detect outliers in volume, value ranges, or correlations. Suspect records—such as abnormal foreign exchange spikes—are quarantined or flagged for rapid review, preventing errors from propagating downstream.

Intelligent Prioritization and Sampling

AI agents analyze the impact of each dataset on critical reporting deliverables, allocating bandwidth and compute to high-value feeds and sampling lower-priority sources. Adaptive scheduling elevates or delays connectors in response to performance degradation, ensuring timely delivery of essential records.

Data Normalization and Standardization

Rule-based transformations and machine learning classifiers standardize units, date formats, numeric precision, and categorical labels. Currency conversions use real-time rate feeds, dates normalize to ISO 8601, and clustering algorithms group transaction types under canonical categories.

Metadata Management and Lineage Tracking

AI components capture lineage at each ingestion step, recording source systems, applied transformations, and quality checks. Centralized catalogs by Informatica or Talend recommend tags, define domains, and surface usage patterns, synchronizing technical and business metadata.

Performance Monitoring and Feedback Loops

Observability platforms equipped with AI correlate latency, error rates, and throughput with business outcomes. Feedback loops ingest user annotations and exception logs to refine anomaly thresholds, schema mappings, and prioritization heuristics over time.

Integration with Enterprise Orchestration

AI services communicate via REST APIs and event-driven architectures with platforms such as Azure Data Factory and AWS Glue, triggering upstream or downstream tasks based on real-time ingestion events, ensuring synchronized execution across connectors, normalization routines, and metadata updates.

Governance, Compliance, and Security

Policy engines enforce regulatory rules—GDPR privacy mandates, SOX controls—masking or routing sensitive data during ingestion. Continuous compliance checks generate audit logs of every decision and transformation, embedding security validation within AI agents to prevent non-compliant data from entering the reporting pipeline.

Unified Repository Architecture and Handoff

The culmination of ingestion is a centralized repository consolidating raw and normalized financial data, enriched with metadata and lineage. This authoritative store supports cleansing, transformation, analytics, narrative generation, and reporting, while providing auditability, version control, and performance optimization.

Repository Structure and Artifacts

- Raw Data Staging: Immutable copies of extracts from ERP platforms, trading systems, market feeds, and reference tables are stored in object storage like Azure Data Lake Storage or Snowflake internal stages.

- Normalized Data Domains: Core domains—general ledger entries, transaction details, currency rates, and counterparty master data—are aligned to standardized schemas via SQL, Apache Spark on Databricks, or managed pipelines in Google Cloud Dataflow.

- Metadata Catalog: Searchable definitions, field descriptions, owner contacts, ingestion timestamps, and lineage pointers reside in AWS Glue Data Catalog or open-source solutions like Apache Atlas.

Versioning and Lineage

Datasets are tagged with unique identifiers, timestamps, and checksums, while lineage platforms—such as Confluent or Pachyderm—map downstream tables to upstream raw files and transformation scripts, enabling audit-ready traceability.

Critical Upstream Dependencies

- Source System Availability: SLAs with ERP, trading engines, and market data vendors ensure extract availability and schema stability.

- Connector and API Health: Stable connectivity, valid credentials, and schema adherence are monitored by automated health checks and retry logic.

- Schema Contracts: Formal change-management workflows notify pipelines of field additions or type changes to prevent data loss.

- Security Controls: Key management, encryption certificates, and role-based permissions guarantee only authorized pipelines access repository layers.

- Network Performance: Load-balancing, compression, and incremental extraction address bandwidth demands of high-volume feeds.

Metadata-Driven Data Quality Gates

Quality gates validate record counts, field completeness, and value ranges before committing to normalized domains. AI-assisted anomaly detection flags deviations in real time, triggering exception workflows and automated correction routines. Quality metrics—null percentages, schema drift indicators, and ingestion latency—are stored in the metadata catalog for trend analysis and proactive remediation.

Handoff to Cleansing and Preprocessing

- Event Triggers: Completion events published to Apache Kafka or cloud pub/sub services include dataset identifiers, timestamps, and catalog references.

- Batch Scheduling: Workflow engines like Apache Airflow execute cleansing DAGs once all feeds arrive successfully.

- API Invocation: REST or gRPC endpoints expose schema definitions and data slices, enabling on-demand preprocessing.

- Audit Logging: Every handoff action is recorded with user and system identifiers, timestamps, and status codes in the metadata catalog.

Governance, Monitoring, and Continuous Improvement

Governance rules in the metadata catalog define retention policies and access entitlements. Monitoring dashboards track storage utilization, query performance, and data freshness, surfacing alerts for threshold breaches. Continuous improvement processes analyze ingestion metrics to refine metadata models, optimize resource allocation, and drive cost-performance balance over time.

By defining clear objectives, leveraging AI-driven orchestration, and establishing a unified repository with robust handoff protocols, finance organizations create a scalable, audit-ready foundation for end-to-end automated financial reporting.

Chapter 2: Data Cleansing and Preprocessing

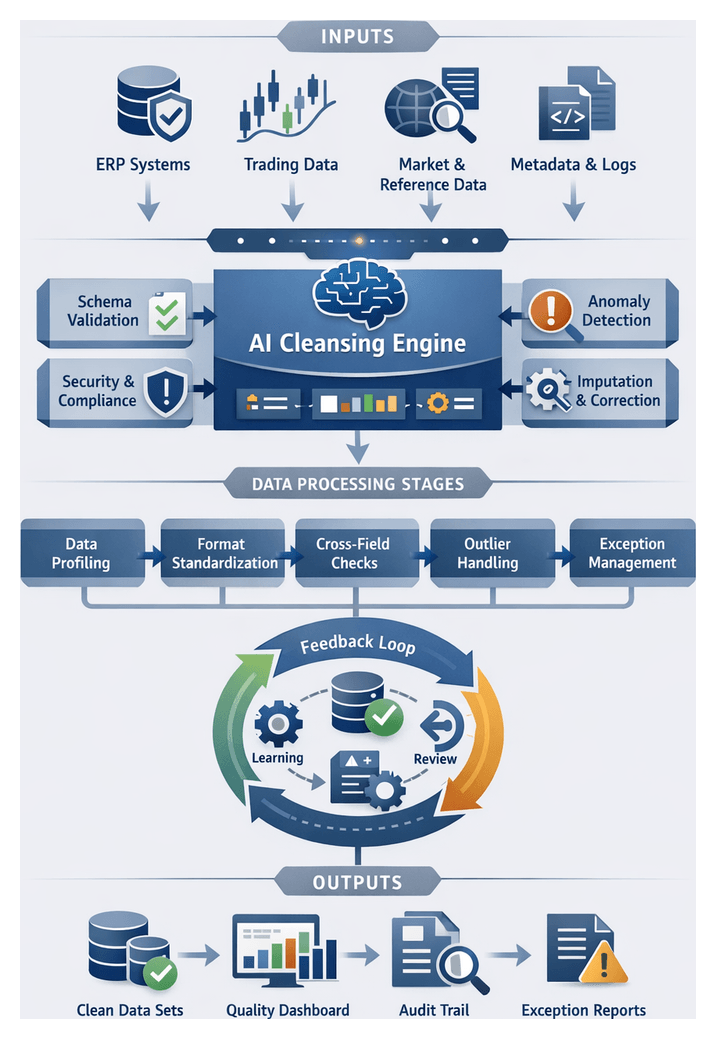

Cleansing Stage Objectives and Input Requirements

The cleansing stage establishes the quality gate for automated financial reporting workflows, ensuring that all incoming data meets rigorous standards before transformation, analysis, and narrative generation. In finance and banking, where precision and regulatory compliance are paramount, undetected data issues can lead to costly misstatements and audit exceptions. This stage defines explicit data quality objectives, catalogs acceptable input types, and enforces prerequisites to mitigate risk, accelerate close cycles, and maintain confidence in reporting outputs.

Key Data Quality Objectives:

- Completeness: All required fields and records—transaction details, account identifiers, reference codes—must be present.

- Accuracy: Values must fall within valid ranges, match reference tables, and adhere to validation rules for amounts, dates, and codes.

- Consistency: Formats for dates, numbers, and text are standardized, and discrepancies across sources are resolved.

- Validity: Data types, schemas, and business rules are enforced; any violations are flagged.

- Timeliness: Timestamps and periods align with the financial close cycle; stale or future-dated entries are quarantined.

- Traceability: Lineage metadata captures source identifiers, batch timestamps, and connector logs for audit and root-cause analysis.

Input Data Types and Sources:

- General ledger and sub-ledger extracts from ERP platforms such as SAP or Oracle Financials.

- Transactional feeds from trading systems, treasury applications, and payment networks.

- Market data streams and reference rates from vendors like Bloomberg or Refinitiv.

- External benchmarks and regulatory tables: currency codes, country classifications, taxonomies.

- Supplementary metadata: source system identifiers, batch timestamps, connector logs.

- Prior-period reconciliations and audit adjustments for comparative checks.

Input Criteria and Prerequisites

Schema Conformance:

Each dataset must match centralized schema definitions—column names, data types, and record structures—registered in a schema registry. Deviations trigger alerts or quarantine for investigation.

Contextual Metadata Requirements:

- Source System Identifier: Unique code for origin application or feed.

- Batch and File Timestamps: Extraction times to support timeliness checks.

- Record-Level Lineage Tags: Correlation IDs linking entries to ingestion logs.

- Regulatory Context Flags: Indicators for jurisdictions, currency regimes, compliance boundaries.

- Processing Context Attributes: Workflow run IDs, user approvals, configuration versions.

Access and Security Preconditions:

- Secure Credentials: Validated service accounts or token-based authentication for connectors.

- Encryption-at-Rest and In-Transit: Compliance with policies and standards like PCI DSS and GDPR.

- Role-Based Access Controls: Restrict cleansing logic modifications and data exposure.

- Audit Logging: Tamper-proof records of access, modifications, and approvals.

System and Resource Availability:

- Provisioned Compute Resources: CPU, memory, and storage to handle period-end data spikes.

- Connector Health Checks: Pre-run validations of database connections, message queues, and APIs.

- Scalability Mechanisms: Autoscaling or orchestration via platforms such as Kubernetes or Apache Airflow to manage dynamic workloads.

- Disaster Recovery Paths: Fallback routines and data snapshots for system failures or network disruptions.

Best Practices for Input Preparation:

- Implement a Centralized Data Glossary using a governance tool like Collibra to standardize definitions and maintain a single source of truth.

- Automate Schema Validation with AI-driven profiling solutions to detect schema drifts before ingestion.

- Define Modular Validation Rules in reusable components to adapt quickly to regulatory changes.

- Embed Real-Time Monitoring via dashboards powered by Google Cloud Dataprep to surface data quality metrics and alert stakeholders.

- Maintain a Quarantine Framework: Secure staging area for non-conforming data with automated notifications to data stewards.

- Foster Cross-Functional Collaboration among data owners, compliance officers, and IT operations to align on input specifications and remediation responsibilities.

Intelligent Cleansing Workflow

The intelligent cleansing workflow transforms raw ingested records into validated, consistency-enforced datasets ready for transformation. An orchestration engine coordinates profiling, anomaly detection, imputation, format standardization, and exception handling, with AI agents monitoring execution, applying machine learning models, and routing exceptions to data stewards. The following steps outline this end-to-end process:

Step 1: Data Profiling and Metadata Extraction

The orchestration engine invokes profiling services to extract statistical summaries—cardinality, null rates, value distributions—and metadata descriptors (min/max, standard deviation, pattern frequency). Tools like Informatica Data Quality catalog module automate pattern detection and seed anomaly models. Profiling results populate the metadata repository and inform thresholds for anomaly detection.

Step 2: Anomaly Detection and Scoring

An anomaly detection microservice applies rules-based checks (ISO 4217 currency codes, date range validations) and unsupervised learning algorithms to flag irregularities. Machine learning models trained on historical cleansed data compute anomaly scores, while categorical anomalies are identified using frequency-based methods and embeddings. An AI agent adjusts thresholds dynamically and logs anomalies with contextual metadata for review.

Step 3: Missing Value Identification and Imputation

Records with nulls undergo a multi-tiered imputation strategy: simple replacements with median or mode, contextual imputations using reference tables, and predictive imputations via a DataRobot model. Each attempt is scored for confidence; low-confidence cases trigger manual review alerts through the collaboration platform.

Step 4: Format Standardization and Schema Alignment

- Standardize dates to YYYY-MM-DD and validate against business calendars.

- Normalize numeric values for common precision and scale.

- Apply NLP routines for text normalization (punctuation, case).

- Reconcile source-specific schemas with the target model via a knowledge graph in Trifacta.

Schema versions are tracked, and automated mapping updates prevent drift and ensure downstream compatibility.

Step 5: Outlier Handling and Correction

- Apply statistical smoothing or percentile capping for numeric outliers.

- Cross-reference categorical outliers with master data in an MDM system; escalate unmatched codes to data stewards.

- Invoke a rule engine for complex anomalies (e.g., negative balances) to suggest corrections based on historical patterns.

Corrected records re-enter anomaly detection to confirm resolution; unresolved cases remain in exception queues.

Step 6: Referential Integrity and Cross-Field Validation

- Verify foreign keys (account IDs, branch codes) against master tables.

- Ensure debit and credit totals reconcile within each transaction.

- Validate aggregated balances against summary entries.

Violations are tagged with root-cause metadata and routed to system owners, with AI agents monitoring resolution progress.

Step 7: Error Logging, Triage, and Exception Routing

- High-severity financial discrepancies trigger immediate alerts to senior analysts.

- Medium-severity schema mismatches generate tickets in the issue management system.

- Low-severity anomalies are batched for periodic review.

An AI agent tracks ticket resolution times and escalates overdue issues, capturing detailed audit records for compliance.

Step 8: Human-In-The-Loop Review and Feedback Incorporation

- Data stewards review suggested imputations and corrections, approving or modifying changes.

- Annotations capture override rationale, feeding back into model retraining pipelines.

- AI agents detect steward decision patterns and propose rule enhancements to reduce manual interventions.

Step 9: Automated Rule Refinement and Model Retraining

- AI agents aggregate steward annotations to identify frequently overridden rules.

- Supervised learning pipelines retrain anomaly detection and imputation models with enriched datasets.

- Updated rules and models are versioned in the governance repository and validated before deployment.

Step 10: Clean Data Validation and Handoff Preparation

- Aggregate counts and reconciliations confirm no records were dropped.

- Quality dashboards display metrics—anomaly reduction, imputation success—for stakeholder sign-off.

- Cleaned datasets are stamped with version metadata and prepared for secure transfer to transformation.

Meeting predefined thresholds, the dataset is handed off to the intelligent transformation pipeline for chart of accounts mapping and regulatory classification.

AI-Driven Quality Enforcement

AI agents augment traditional validations with machine learning–driven anomaly detection, adaptive feedback loops, and automated remediation suggestions. This multi-layered approach ensures comprehensive data validation, reduces manual intervention, and supports faster close cycles, improved compliance, and stakeholder confidence.

Automated Rule-Based Validation Engines

Rule engines codify business logic, regulatory policies, and schema constraints into executable validations. AI workflows integrate leading frameworks:

- Great Expectations for defining and documenting data expectations.

- Amazon Deequ for scalable rule execution on Apache Spark.

- Custom rule modules for business-specific checks like cross-entity balance validations.

Machine Learning–Driven Anomaly Detection

- Unsupervised clustering and density estimation identify high-dimensional outliers.

- Time series models monitor periodic financial metrics for spikes, dips, and drifts.

- Autoencoder networks quantify reconstruction error to detect structural anomalies.

- Statistical methods—Isolation Forests and k-nearest neighbors—provide lightweight detectors.

Adaptive Feedback Loops and Active Learning

- Flagged records are annotated by experts; feedback retrains detection models.

- Rules engines auto-generate suggestions based on recurring error patterns.

- Active learning prioritizes labeling of ambiguous records to maximize model improvements.

Explainability and Model Interpretability

Techniques such as SHAP and LIME provide feature-level insights. Interactive dashboards trace anomaly scores to contributing factors, building trust and accelerating remediation.

Integration with Orchestration and Monitoring Systems

- Job scheduling via Apache Airflow, Prefect, or Azure Data Factory.

- Distributed execution on Apache Spark, Kubernetes, or serverless engines.

- Real-time dashboards track error rates, anomaly counts, and validation coverage.

- Audit trails capture rule versions, model configurations, and feedback actions.

Integration with Data Catalogs and Metadata Repositories

- Auto-populate metadata for new sources in catalogs like Collibra or Alation.

- Annotate validation outcomes in the catalog to expose quality scores.

- Trigger stewardship workflows when quality scores fall below thresholds.

Scalability and Performance Optimization

- Parallelize validations and ML scoring with Apache Spark clusters.

- Perform incremental validations on changed partitions to reduce processing.

- Incorporate streaming anomaly detectors for real-time pipelines.

- Leverage cloud autoscaling to handle workload peaks.

Governance, Security, and Compliance

AI agents enforce role-based access, encryption at rest and in transit, and encode compliance rules (GDPR, SOX, Basel) to flag PII exposure, segregation-of-duties violations, and risk concentration anomalies. Automated compliance reports aggregate results for regulator submissions.

Supporting Data Lineage and Audit Trails

- Capture lineage for each record: source references, applied rules, anomaly scores, and feedback outcomes.

- Version-control rule sets and ML artifacts for reproducibility.

- Generate audit summaries highlighting data quality evolution, rule changes, and retraining events.

- Feed metadata into GRC platforms for regulatory review and internal controls.

Case Study: Monthly Close Process

- Rule-Based Validations detected missing cost center codes and invalid currency conversions across 500,000 entries in under ten minutes.

- ML Anomaly Detection surfaced 2,300 unusual entries with anomalous intercompany balance spikes.

- Adaptive Feedback Loops cut false positives by 60% after two cycles through analyst annotations.

- Integration with the Airflow orchestrator ensured immediate handoff to transformation without manual delays.

- Comprehensive audit trails accelerated external reviews by 30% and satisfied regulatory auditors.

Key Benefits and Considerations

- Enhanced accuracy with combined rule-based and ML-driven validations.

- Operational efficiency by automating routine checks and focusing expert effort on exceptions.

- Scalability to accommodate growing data volumes and source diversity.

- Continuous improvement through feedback loops and adaptive models.

- Regulatory compliance via transparent audit trails and lineage records.

Success depends on governance frameworks for rule management, domain expertise for annotation, and selection of interpretable models to ensure explainability.

Clean Data Output and Downstream Handoffs

At the end of the cleansing stage, standardized, validated datasets form the foundation for reporting and analysis. These outputs carry provenance, audit trails, and contextual metadata, enabling seamless downstream workflows with minimal rework and reduced risk.

Clean Data Artifacts:

- Validated Transaction Records: Unified schema with standardized fields; exception logs for failed records.

- Master Reference Tables: Refreshed chart of accounts mappings, currency rates, and entity hierarchies.

- Data Quality Dashboard: Real-time metrics on completeness, validity, consistency, and timeliness via platforms like Talend Data Fabric or Great Expectations.

- Provenance and Lineage Records: Embedded metadata on source systems, cleansing rules, and AI agent versions.

- Exception Case Bundles: Records flagged for manual review with remediation actions and reviewer comments.

Dependencies Reviewed Before Handoff:

- Schema Contracts: Conformance to registry definitions, data types, and mandatory attributes.

- Reference Integrity: Valid foreign key resolution against master tables.

- Security Controls: Data encryption and role-based permissions in downstream environments.

- Audit Documentation: Timestamps, rule versions, and operator logs assembled for compliance.

- Service-Level Agreements: Processing times, freshness windows, and error-handling SLAs verified.

Key Data Quality Metrics:

- Completeness Score: Percentage of missing critical fields post-cleansing.

- Validity Rate: Proportion of records passing format, range, and referential checks.

- Consistency Index: Alignment across related entries, such as matching debits and credits.

- Anomaly Count: Records flagged by ML models for unusual patterns.

- Remediation Backlog: Exception cases awaiting review or rule updates.

Handoff Protocol to Transformation Stage

- Completion Signal: Cleansing workflow publishes an event to the orchestration engine once all gates clear.

- Schema Verification: Transformation workflow retrieves and compares schema from the registry.

- Metadata Synchronization: APIs update catalogs with new dataset versions, lineage details, and quality snapshots.

- Secure Data Transfer: Encrypted, permission-controlled landing zone for transformation engines.

- Pre-Transformation Validation: Lightweight checks reconfirm integrity before full processing.

- Acknowledgement and Audit Logging: Transformation stage emits an acknowledgement event with timestamps and identifiers.

Ongoing Monitoring and Issue Escalation

- Health Checks: Scheduled jobs sample staging data to verify freshness, format stability, and volume trends.

- Alerting Mechanisms: Threshold-based notifications for quality metric deviations.

- Feedback Loop: Automated tickets feed discrepancies back into cleansing workflows for rule refinement and model retraining.

By defining clear artifacts, enforcing dependency checks, automating handoffs via orchestrators like Apache Airflow, and embedding continuous monitoring, organizations ensure that the transformation stage proceeds with confidence, driving accurate, timely, and compliant financial reporting.

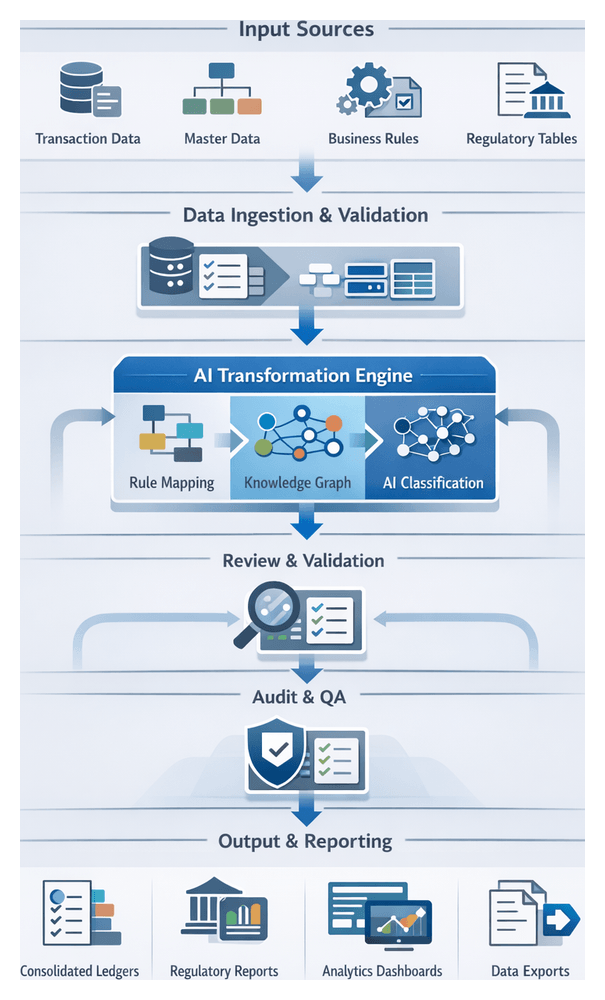

Chapter 3: Intelligent Data Transformation

Purpose and Scope of the Transformation Stage

The transformation stage is the critical nexus where cleansed transaction data is converted into structured, standardized formats ready for analysis, consolidation, and regulatory disclosure. By aligning disparate ledger entries, trading records, and external feeds with predefined charts of accounts, regulatory classifications, and organizational hierarchies, this stage enforces consistency, applies business rules at scale, and generates audit-ready outputs. Precision and traceability are essential to satisfy frameworks such as IFRS, US GAAP, and Basel III. Embedding intelligent mapping, classification, and calculation engines reduces operational risk, accelerates the close cycle, and underpins all downstream analytics, narrative generation, and report assembly functions.

- Standardize entries into a unified ledger schema aligned with corporate charts of accounts

- Map transactions to regulatory categories for compliance reporting and risk analysis

- Apply currency conversion, revaluation adjustments, and inter-company eliminations

- Maintain a complete audit trail of transformation logic, versioned rule sets, and data lineage

- Produce enriched, structured outputs for analytics, narrative generation, and reporting

Inputs, Prerequisites, and Governance

Reliable transformation depends on validated inputs, up-to-date reference data, and a governed infrastructure. Inputs must meet quality metrics—completeness, accuracy, timeliness—and carry metadata tags documenting validation status.

- Cleansed Transaction Data: Verified records from preprocessing pipelines, with anomalies remediated and cleanse logs attached

- Master Data Definitions: Account hierarchies, cost center lists, legal entity structures managed by automated tools

- Business Rule Libraries: Classification criteria, currency rates, revaluation logic, and consolidation rules versioned in a centralized repository

- Regulatory Reference Tables: Code lists and classification schemes mandated by IASB, FASB, Basel, and local authorities

- Metadata and Schema Registries: Definitions of field semantics and data types that underpin validation and output schema generation

- Audit Configuration: Settings for versioning, lineage capture, and exception thresholds

Prerequisites include sign-off on data cleansing, availability of rule libraries, aligned metadata schemas, sufficient compute capacity, secure connectivity, and configured audit logging. Business analysts and regulatory specialists maintain rule libraries, updating mapping and classification logic through automated change management workflows. A centralized metadata registry ensures consistent interpretation of data across systems, supporting audit traceability and rollback capabilities. Scalable infrastructure and orchestration platforms such as Apache Airflow or Databricks must be provisioned for efficient batch or streaming jobs. Governance teams certify that all governance and compliance requirements are met before transformation commences.

AI-Driven Mapping and Categorization Workflow

This workflow orchestrates deterministic and AI-driven components to harmonize cleansed data with reporting requirements. A centralized orchestration engine manages dependencies, invoking rule engines, knowledge graph services, and classification models in sequence.

- Data Intake: Retrieve batched records with contextual metadata from the cleansing repository

- Reference Loading: Fetch and cache the active chart of accounts and classification rules via secured API calls to the metadata service

- Rule-Based Mapping: Apply deterministic mapping rules for straightforward cases, reducing AI load

- Knowledge Graph Enrichment: Invoke a graph service to infer semantic relationships among entities, products, and regulatory categories

- AI-Driven Classification: Trigger supervised and unsupervised models for ambiguous records, producing proposed mappings with confidence scores

- Confidence Evaluation: Automatically accept high-confidence mappings, route low-confidence entries to human review, and apply secondary checks for intermediate cases

- Human-in-the-Loop Review: Present exceptions in a review interface; record decisions to refine models and rules

- Final Tagging: Assign account codes, cost centers, regulatory classifications, and standardized metadata

- Quality Assurance: Cross-validate aggregates against expected balances; trigger alerts or rollbacks for discrepancies

- Publish to Repository: Persist finalized records and lineage metadata for downstream analytics and reporting

System interactions include:

- Orchestration engine to rule engine (e.g., OpenRules, Drools)

- Orchestration to knowledge graph services (e.g., Neo4j)

- Orchestration to AI classification engines and review interfaces

- Monitoring and logging services capturing audit trails, timestamps, and payload details

Error handling distinguishes transient failures—automatically retried with backoff—from permanent errors routed to exception queues. Business rule violations and low-confidence AI failures trigger dedicated remediation workflows. Continuous feedback loops capture human corrections, rule failure patterns, and newly inferred graph relationships, feeding model retraining and rule refinement. To scale, the workflow leverages parallel processing, asynchronous integration via message queues, auto-scaling infrastructure, in-memory caching of reference data, and real-time monitoring to maintain throughput and low latency.

Key benefits include consistent application of business rules, accelerated data readiness, comprehensive auditability, rapid adaptability to policy changes, and transparent mapping decisions supported by confidence scores and exception reports.

Embedding AI Agents in Data Collection, Validation, and Preliminary Analysis

AI agents automate routine tasks throughout the reporting cycle, orchestrating connectors, enforcing quality, and generating initial insights.

- Connector Orchestration: Agents invoke REST or SOAP APIs against ERP platforms, trading systems, and market data services, managing authentication, retries, and execution logs

- Schema Discovery: Lightweight inference models detect changes in source structures and adapt mapping rules dynamically

- Incremental Retrieval: Watermarking strategies fetch only new or updated records, optimizing performance

- Logging and Audit Trails: Centralized logging captures every connector action for full traceability

- Rules-Based Checks: Predefined validations for account balances, date sequences, and cross-ledger reconciliations

- Anomaly Detection: Unsupervised models surface statistical outliers in amounts, volumes, and time series behaviors

- Master Data Validation: Fuzzy matching against reference repositories for identifier resolution

- Feedback Loops: Analyst resolutions retrain anomaly models to improve future detection

- Aggregated Metrics: Compute totals by business unit, region, and product line

- Variance Analysis: Compare current, prior, and budget figures; highlight significant deviations

- Trend Detection: Time series models identify emerging patterns and threshold breaches

- Pre-Scoring: Assign preliminary risk scores for fraud detection and compliance pipelines

Supporting systems include workflow orchestration engines such as Apache Airflow, messaging platforms like Apache Kafka or RabbitMQ, centralized metadata repositories, observability tools (Prometheus with Grafana), and security frameworks integrating OAuth or LDAP. Embedding AI agents yields faster close cycles, reduced error rates, audit readiness, horizontal scalability, and adaptive learning. Best practices involve clear objectives, a unified metadata repository, human-in-the-loop controls, incremental rollouts, continuous monitoring and retraining, and rigorous change governance.

Delivering Transformed Ledgers and Integration Interfaces

The final stage formalizes transformed ledgers into authoritative outputs and defines integration endpoints for analytics, narrative generation, and reporting systems.

- Standardized general ledger tables for profit and loss, balance sheet, and cash flow

- Subledger details for receivables, payables, fixed assets, and inventory

- Regulatory classification flags under IFRS, US GAAP, Basel III, Solvency II

- Currency translation records with rate logs and gain/loss calculations

- Audit-friendly crosswalks between source codes and chart of accounts segments

Outputs reside in cloud platforms such as Snowflake, Amazon Redshift, or Parquet files on Databricks, or on-premises in Oracle Exadata or SQL Server. Schemas are registered in catalogs like Apache Atlas or Collibra.

- Dependency Matrix: Align cleansed inputs, rule definitions (OpenRules, Drools), graph records (Neo4j), and versioned charts of accounts

- Lineage Tracking: Automated tools detect upstream changes and orchestrate targeted reprocessing to maintain responsiveness

Integration endpoints:

- Batch extraction via JDBC/ODBC for Tableau and Microsoft Power BI

- RESTful APIs through gateways like NGINX or Kong

- Message streams to Apache Kafka for ML scoring in TensorFlow Serving or PyTorch

- Secure file transfers via SFTP for regulatory filings

Data contracts specify schema definitions, refresh schedules, and error rules. Automated contract testing with Pact or Stoplight verifies conformance. Monitoring of API latency, queue lag, and batch completion uses platforms like Datadog or Splunk.

- API and Streaming Handoffs: Kafka events trigger microservices on Kubernetes; REST endpoints support dynamic report; webhook notifications alert downstream systems

- Metadata and Lineage: Orchestration engines such as Apache Airflow or Prefect capture end-to-end data journeys

- Quality Assurance: Validate control totals and balance integrity; exceptions routed for AI-driven reconciliation and reprocessing

- Security and Compliance: Encryption at rest and in transit using AWS KMS, Azure Key Vault, Google Cloud KMS; access via Auth0 or Okta; continuous compliance scanning

- Monitoring and Alerts: Track transformation runtime, API availability, data freshness, and error rates; anomaly detection on telemetry; incident response via PagerDuty or OpsGenie

- Versioning and Change Management: Track mapping rule and schema changes in Git; support concurrent schema versions; automate deployments through CI/CD pipelines

This integrated framework delivers fully validated ledger artifacts and robust interfaces, providing a scalable, audit-ready foundation for end-to-end AI-driven financial reporting, analytics, narrative generation, and executive decision support.

Chapter 4: AI-Powered Analytics and Insight Generation

Analytics Stage Objectives and Data Requirements

The analytics stage transforms cleansed, normalized data into actionable business insights for automated financial reporting. By applying statistical analysis, machine learning models, and rule-based algorithms, it generates performance indicators, forecasts, and anomaly alerts that support decision making and narrative disclosures. In finance and banking contexts, analytics must comply with IFRS, GAAP, Basel and other regulatory frameworks, ensure reproducible methodologies for key metrics—return on equity, liquidity ratios, credit exposures—and establish thresholds for early detection of outliers or compliance breaches.

Successful analytics depends on comprehensive inputs that meet strict quality and structural criteria:

- Structured Ledger Data

- Reconciled general ledger entries and sub-ledger mappings

- Account hierarchies aligned with corporate taxonomy and regulatory codes

- Financial Statements

- Balance sheet, profit & loss, and cash flow aggregates across periods and entities

- Historical Time Series

- Multi-period transaction histories and rolling windows for trend analysis and model training

- External Reference Data

- Market prices, interest rate curves, FX rates, GDP, CPI, unemployment figures, and peer benchmarks

- Metadata and Configuration

- Business rules for revenue recognition, provisioning, risk weights, and alert thresholds

- Feature definitions and serialized machine learning model artifacts

Each input must satisfy quality gates for completeness, accuracy, consistency, timeliness, lineage, and traceability. Data readiness demands a unified repository accessible via high-performance interfaces, scalable compute resources for batch and real-time analytics, containerized model deployment frameworks (Kubernetes or Docker), role-based security controls, and governance mechanisms for KPI definitions and regulatory approvals. Outputs adhere to standardized schemas—tabular reports, JSON or XML payloads with metadata annotations and confidence scores—and trigger events for narrative generation, visualization, and compliance workflows.

Statistical and Machine Learning Workflow Sequence

This stage orchestrates data ingress, feature engineering, model operations, anomaly detection, and scoring across batch and streaming contexts. Coordination between orchestration engines, feature stores, model registries, compute clusters, and alerting services ensures timely, accurate, and compliant analytical outputs.

Data Ingress and Feature Preparation

Ingestion jobs, scheduled by platforms such as Apache Airflow, extract transformed ledgers, account mappings, and regulatory labels into an analytics data lake or feature store. Metadata validation against catalog schemas confirms completeness and consistency. Feature engineering agents compute rolling aggregates, financial ratios, lag features, moving averages, volatility measures, and statistical indicators, versioning them in the feature store with lineage metadata for reproducibility.

Model Selection and Configuration

An AI orchestration engine consults a model registry—tracking algorithms from ARIMA and exponential smoothing to gradient boosted trees and neural networks—selecting candidates based on data freshness, latency requirements, and explainability constraints. Hyperparameters, cross-validation settings, and evaluation metrics are sourced from configuration files or parameter stores. A run specification outlining compute environment, data inputs, model code repository, and notification endpoints is version-controlled for audit purposes.

Batch Scoring Workflow

- Job Submission: The orchestration engine submits run specifications to platforms such as Apache Spark or Databricks.

- Data Loading: Worker nodes retrieve feature partitions from the feature store.

- Model Execution: Serialized model artifacts are fetched from the registry and applied to feature sets.

- Result Aggregation: Predictions, probability estimates, residuals, and anomaly flags are aggregated into result tables or message queues.

- Validation Checkpoint: Post-scoring services verify totals against expected ledger balances.

Completion notifications are dispatched via messaging infrastructure such as Apache Kafka, and performance metrics are logged for capacity planning and SLA tracking.

Real-Time Evaluation Pipeline

For low-latency requirements—intraday liquidity analysis or fraud detection—streaming events traverse a message bus into engines like Apache Flink. Lightweight models deployed as microservices apply inference on each event, producing scores, confidence intervals, and feature attributions for explainability. Results feed dashboards and risk systems, while feedback loops trigger retraining when data drift or low confidence is detected.

Anomaly Detection and Alerting

- Statistical tests (z-score, residual analysis) and ML-based detectors examine batch and streaming outputs.

- Rules engines evaluate anomalies against severity thresholds, routing alerts via email, collaboration platforms, or ticketing systems.

- Incident records in management tools enable analysts to review, annotate, and resolve anomalies, with feedback refining detection parameters and retraining triggers.

Audit Logging and Traceability

Every action—data retrieval, feature computation, model training, scoring, anomaly flagging—is recorded in a write-once, append-only audit log. Entries capture timestamps, actor identities, data snapshot identifiers, model versions, parameters, execution outcomes, and references to change requests or incidents, supporting tamper-evident audits and regulatory compliance.

Machine Learning Agents and MLOps Integration

Specialized AI agents automate feature engineering, model lifecycle management, scoring, monitoring, and explainability, integrating with MLOps platforms and data repositories to deliver transparent, governed analytics.

Core Agent Types

- Feature Engineering Agents: Generate temporal and statistical variables, encode categorical attributes, normalize features using libraries such as scikit-learn, and enrich with external market indicators.

- Model Orchestration Agents: Coordinate training pipelines, hyperparameter tuning, experiment tracking via MLflow or Elyra.

- Scoring and Inference Agents: Deploy models on platforms like Databricks or Kubeflow for batch and real-time predictions.

- Monitoring and Drift Detection Agents: Compute statistical divergence metrics, detect data and concept drift, trigger retraining when thresholds are exceeded.

- Explainability and Compliance Agents: Leverage SHAP and LIME to produce feature attributions, generate audit-ready documentation, and serve explainability dashboards for compliance officers.

Model Lifecycle Orchestration

Agents select algorithms using heuristics or AutoML frameworks such as TensorFlow AutoML and H2O.ai, execute hyperparameter optimization via grid or Bayesian search, parallelize training in cloud or on-prem clusters, and log experiment metadata in registries. Validation includes k-fold cross-validation, stress scenarios, baseline comparisons, and model card generation for governance review. Approved models are promoted to production with containerization on Docker and orchestration on Kubernetes.

Scoring and Deployment Patterns

Scoring agents expose RESTful APIs for low-latency inference, schedule batch inference during off-peak hours, and stream predictions through event-driven architectures like Apache Kafka. Consistency is maintained by versioning model artifacts and shared transformation code. Fallback mechanisms ensure reporting continuity during system failures.

Continuous Monitoring and Retraining

Monitoring agents track performance, compute PSI and KL divergence, compare prediction distributions to baselines, and invoke retraining pipelines when drift or degradation occurs. Notifications provide drift diagnostics to data scientists and business stakeholders, integrating with CI/CD workflows for automated promotion of approved model versions.

Human-in-the-Loop Collaboration

Despite automation, human expertise shapes analytics by reviewing ambiguous patterns, experimenting in interactive notebooks, signing off on model deployments via ticketing systems, and providing feedback on prediction accuracy. Collaborative platforms like Slack or Microsoft Teams receive alerts and reports, enabling timely decision-making in financial close activities.

Insight Outputs and Handoff Protocols

Insight outputs—anomaly flags, trend forecasts, performance metrics, risk scores, and scenario simulations—are packaged with metadata and dependencies to ensure seamless integration with narrative engines, visualization platforms, and compliance systems.

Output Types and Formats

- Anomaly Reports: Structured JSON or tabular listings of outliers and irregularities.

- Trend Forecasts: Time series projections generated with tools like Prophet and TensorFlow.

- Performance Dashboards: Aggregated KPI summaries, variance analyses, and scorecards.

- Risk and Compliance Scores: Outputs from regulatory rule engines and ML classifiers.

- Scenario Simulations: What-if analyses for market, policy, or operational changes.

Standardized Delivery Mechanisms

- Delimited Files (CSV, TSV) and database tables for bulk transfers.

- Structured JSON/XML payloads for API-driven consumption.

- Message queues via Apache Kafka or AWS Kinesis for real-time updates.

- Data cubes and RESTful endpoints for BI tools and custom dashboards.

Metadata and Lineage

- Source system identifiers, ingestion timestamps, and pipeline versions.

- Model version, hyperparameters, training dataset snapshot, and validation metrics.

- Confidence scores, p-values, and annotations explaining data imputation or overrides.

- Processing timestamps and quality flags to track SLA adherence and exception handling.

Handoff to Narrative and Visualization

- JSON payloads with metric names, values, annotations, and template selection keys for engines like DataRobot NLG.

- Controlled vocabularies mapping analytics outputs to narrative sections.

- Embedded visualization metadata—chart types, axis configurations, and drill-down hierarchies—for automatic dashboard layouts in tools such as Tableau and Power BI.

Compliance, Audit Trails, and Quality Assurance

- Immutable logs capturing insight generation events, approvals, and exception workflows.

- Rule engine inputs for regulatory validations (Sarbanes-Oxley, IFRS checks).

- Quality checks for drift detection, low confidence scores, and excessive data imputations.

- Trigger mechanisms—scheduled batch runs, event-driven invocations, and ad hoc requests—to refresh insights on demand.

By formalizing insight outputs, metadata, dependencies, and handoff protocols, the analytics stage becomes a reliable foundation for narrative generation, visualization, compliance, and audit processes, enhancing operational efficiency, governance, and regulatory resilience in financial reporting.

Chapter 5: Automated Narrative Generation

Automated narrative generation transforms quantitative analytics into clear, context-rich commentary that guides readers through financial results, risk assessments, and performance drivers. By converting statistical outputs, anomaly flags, and trend forecasts into coherent prose, organizations bridge the gap between raw data and decision-ready insight. In regulated environments such as banking and finance, this stage ensures disclosures meet compliance requirements, maintain consistency across reporting periods, and reinforce the institution’s brand voice.

Key objectives include:

- Articulating key financial movements, such as revenue variance and margin fluctuation.

- Highlighting anomalies detected by AI-driven analytics in terms of risks or opportunities.

- Providing forward-looking commentary based on forecast models for strategic planning.

- Embedding regulatory and compliance language aligned with IFRS, GAAP, or Basel requirements.

- Maintaining a consistent tone and terminology in line with corporate style guides.

By automating narrative creation, finance teams reduce drafting time, minimize human error in disclosures, and free subject-matter experts to focus on strategic review.

Essential Inputs and Prerequisites

Reliable narrative generation depends on well-defined analytical outputs, metadata, templates, and data quality controls:

- Analytical Outputs: KPIs (revenue growth, cost ratios, liquidity metrics), anomaly reports, trend analyses, forecast summaries, and segment breakdowns.

- Contextual Metadata: Reporting period definitions, regulatory reference data, risk appetite indicators, and comparative benchmarks.

- Templates and Style Artifacts: Pre-approved narrative templates (earnings commentary, MD&A), a glossary and taxonomy, brand voice guidelines, and a compliance ruleset.

- Data Quality Preconditions: Accurate, complete, and consistently formatted inputs validated against master data and reference tables.

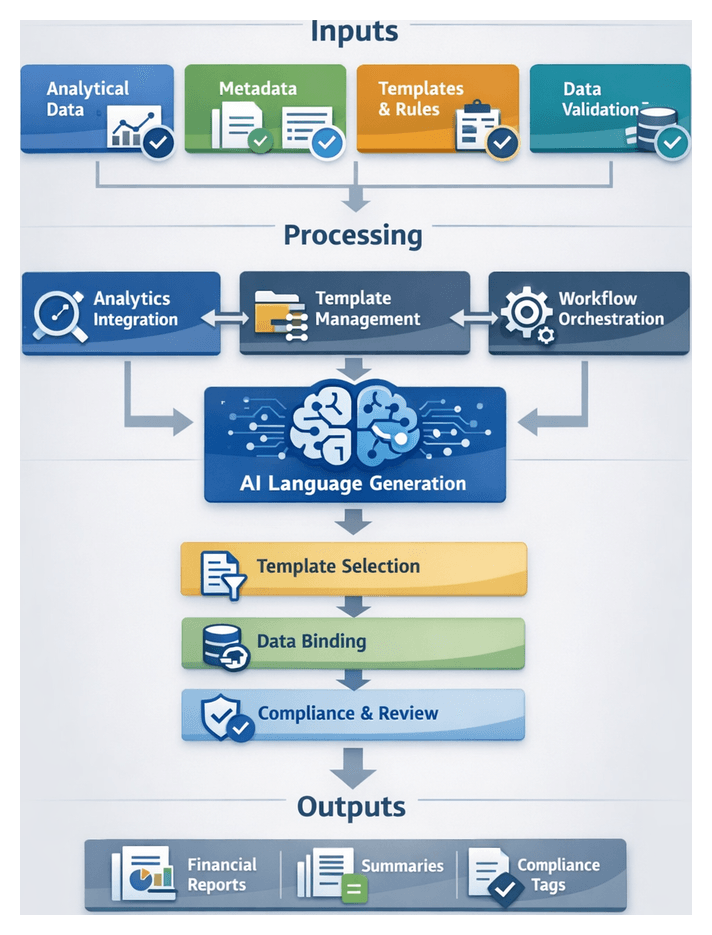

Key Components and Integration Architecture

The narrative generation stage relies on specialized AI components and integration layers to access inputs and deliver outputs into the reporting pipeline:

- Natural Language Generation Engine: Domain-tuned NLG platforms such as OpenAI GPT-4, IBM Watson Natural Language Generation, or Arria NLG.

- Template Management System: Repository for narrative templates, style guides, and compliance rules that interfaces with the NLG engine, enabling template updates without code changes.

- Analytics Integration Layer: APIs delivering anomaly flags, trend data, and forecasts from AI-powered analytics, with secure, low-latency data exchange and error handling.

- Workflow Orchestration: Orchestration platforms schedule the narrative job, monitor execution, and manage retries in case of data dependency delays.

Template Selection and Text Assembly

The system transitions from raw analytical data to structured prose through a controlled flow of template selection, data binding, content sequencing, and validation:

Initial Inputs and Repository

- Analytical outputs: time-series trends, variance analyses, anomaly flags, forecast summaries.

- Report metadata: period identifiers, entity hierarchy, regulatory framework, materiality thresholds.

- Audience profiles: tone and depth requirements for executives, auditors, or internal stakeholders.

- Compliance flags: mandatory disclosures and phrasing constraints.

Template repository entries include identifiers, modular content blocks, placeholder maps, conditional logic, styling attributes, and regulatory annotations. Each element serves a distinct purpose: identifiers streamline content retrieval, modular content blocks enhance flexibility, and placeholder maps guide content placement. Conditional logic allows for dynamic content adaptation, while styling attributes ensure visual consistency. Regulatory annotations maintain compliance, safeguarding against legal pitfalls.

Together, these components create a robust framework for efficient content management and deployment.

AI-Driven Template Ranking and Selection

- Relevance Scoring: Machine learning models assess template fit based on context, language style, and historical usage.

- Compliance Verification: Rule engines filter non-conforming templates against regulatory flags.

- Performance Heuristics: Past metrics such as review turnaround times guide template prioritization.

- Selection: The orchestrator assembles an initial structure, mapping content blocks to analytical insights.

Data Binding and Placeholder Resolution

- Placeholder matching and validation against data fields ensures that all required inputs are accounted for. Implement exception workflows to address any missing data, allowing for seamless adjustments and maintaining the integrity of the final output. This process not only enhances data accuracy but also streamlines the overall workflow, reducing the risk of errors and improving turnaround times.

- Value formatting for numbers, dates, and currencies according to regional settings.

- Contextual enrichment: pluralization, article selection, and numeric-to-text conversion.

- Cross-reference integration: inline links to charts or tables managed in the report assembly stage.

Content Sequencing and Flow

- Dependency Analysis: Ensuring logical order (e.g., overview before variance explanation).

- Transition Generation: Leveraging models such as AWS Comprehend to create connective sentences.

- Tone Consistency Checks: Style evaluation models enforce uniform vocabulary and complexity.

- Adaptive Reordering: Customizing block order based on audience profile.

Compliance, Exception Handling, and Feedback

- Regulatory and brand style audits ensure mandatory language and approved phrasing.

- Fallback mechanisms: default templates, manual intervention queues, data request triggers, and graceful degradation for missing inputs.

- Continuous learning: telemetry on template performance, exception frequency, and reviewer edits informs model retraining and rule updates.

AI Language Models and Domain Tuning

Transformer-based models such as GPT-4, Azure OpenAI Service, and Amazon Bedrock provide the generative backbone. Domain tuning ensures compliance and brand alignment through:

- Fine-Tuning: Training on historical financial narratives, regulatory filings, and annotated commentary to imbue industry jargon, compliance patterns, and corporate tone.

- Prompt Engineering: Structured input templates with placeholders, tone instructions, and regulatory context flags enable rapid adaptation without retraining.

These models integrate with metadata management, style and compliance repositories, human-in-the-loop interfaces, and monitoring frameworks that track semantic accuracy, regulatory adherence, and readability metrics. Specialized agents include:

- Model Orchestrator: Selects appropriate model instances based on report type and throughput needs.

- Domain Adapter: Manages fine-tuned checkpoints and retraining schedules aligned with regulatory updates.

- Prompt Manager: Constructs and optimizes prompt templates with dynamic context variables.

- Compliance Verifier: Executes semantic checks and regulatory rule validations.

- Feedback Integrator: Aggregates reviewer annotations and performance metrics for continuous improvement.

Generated Outputs and Downstream Handoffs

The narrative engine produces structured content artifacts in a standardized schema, ensuring seamless integration into the report assembly process:

- Executive summaries, section-level narratives, footnote explanations, and meta-segments for compliance tagging.

- JSON-like schema fields: SectionIdentifier, ContentText, ContextTags, StyleProfile, and VersionInfo.

Handoff to the dynamic report assembly stage follows a protocol of artifact publication, orchestrator notification, schema validation, content merging with visuals, and final compliance confirmation. Robust error handling addresses schema validation failures, content quality flags, dependency breakdowns, and audit trail recording. Version control tracks template, model, and content versions, along with change metadata for full traceability.

Human-in-the-loop processes manage expert review of high-risk sections through review task creation, inline annotations, and digital sign-off workflows. The result is a consistent, efficient, and scalable narrative output that accelerates report production while ensuring regulatory compliance and audit readiness.

Chapter 6: Dynamic Report Assembly and Formatting

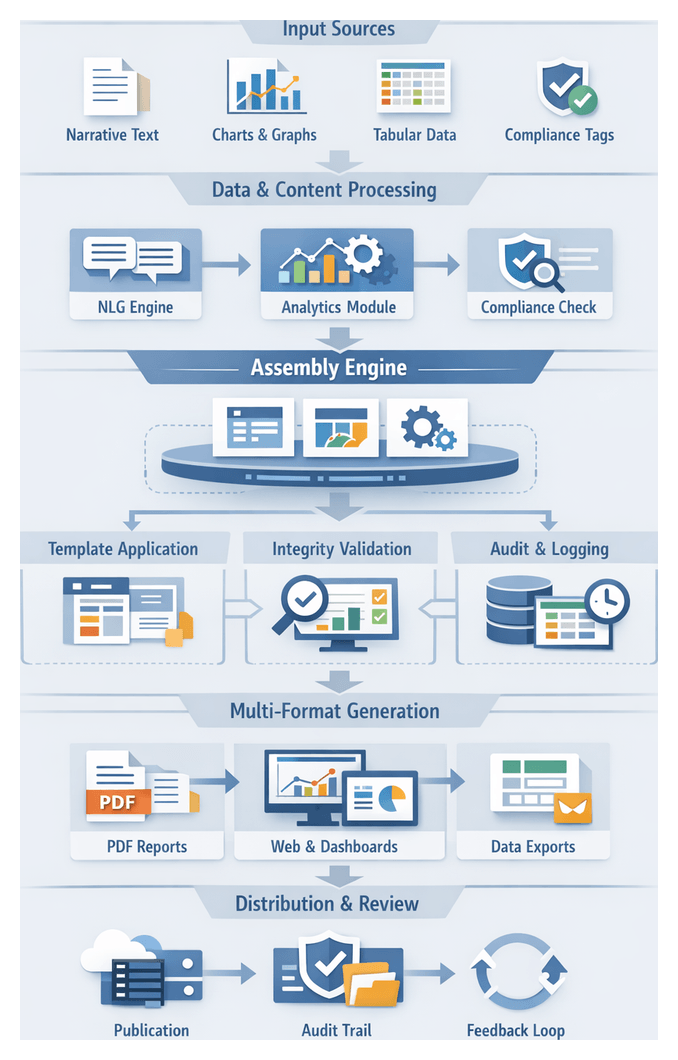

Assembly Stage: Purpose and Strategic Role

The assembly stage represents the culmination of automated financial reporting workflows, orchestrating narrative text, analytical charts, tabular data, and compliance annotations into a cohesive deliverable. Beyond aggregation, this phase validates that each component adheres to branding guidelines, regulatory requirements, and formatting standards. By integrating outputs from natural language generation modules, machine learning analytics, and compliance checks, the assembly engine produces professionally styled documents optimized for PDF, web, and interactive dashboards. In banking and finance contexts, this stage is critical for consistency, compliance, and timeliness: any error can undermine disclosures, jeopardize filings, or erode stakeholder confidence.

Key Objectives

- Integrity Validation: AI agents perform structural checks on narratives, visualizations, and tables to confirm alignment with analytics outputs and regulatory annotations.

- Template Application: Layout engines map validated components onto master templates in Adobe InDesign Server or custom HTML/CSS frameworks, enforcing style rules from font usage to pagination.

- Multi-Format Output: Generation of PDF, HTML5, and dashboard slices tailored to finance teams, auditors, and executives.

- Audit Trail Maintenance: Logging of template versions, input hashes, and AI decisions to create transparent provenance records for internal and external audits.

- Extensibility: Modular workflows allow configuration of new formats or compliance mandates without code changes, enhancing agility for design updates and regulatory shifts.

Essential Inputs and Prerequisites

Successful assembly depends on readiness of upstream assets:

- Narrative Documents: Commentary and disclosures from NLG modules, verified for compliance and clarity.

- Analytical Visualizations: Charts and infographics produced by ML engines, rendered for tools like Tableau and Microsoft Power BI.

- Tabular Data Sets: Financial line items and schedules in CSV, XLSX, or database exports.

- Regulatory Annotations: Metadata tags, footnotes, and disclosure checklists applied during compliance checks.

- Brand Assets and Templates: Logos, color palettes, typography rules, and layout definitions stored in Bynder or similar DAM systems.

- Metadata and Audit Information: Source timestamps, AI agent identifiers, and version histories for traceability.

Prerequisites include signed-off narratives, completed analytics runs with anomaly flags, validated compliance tags, updated template definitions, and network access to generation engines and asset repositories.

Layout and Template Workflow Execution

This stage transitions from content aggregation to visual composition. A template repository, layout engine, AI-driven design agents, and validation modules collaborate to arrange narratives, tables, charts, and graphics into branded, compliant templates. AI agents map content blocks to dynamic placeholders, apply style rules, and generate proofs for review, eliminating manual positioning and ensuring consistency across PDF, web, and dashboard formats.

Workflow Trigger and Template Selection

- Orchestration Signal: Triggered by completion of narrative and visualization modules in platforms like Apache Airflow or Prefect.

- Metadata Extraction: Attributes such as report type, region, language, and distribution channel guide template lookup.

- Rules-Based Selection: A template registry returns the best-matching file; fallback options raise exception flags for manual review.

Content Block Mapping