AI Agent Orchestration for Customer Interaction: An End-to-End Workflow Solutions Guide

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Bridging Communication Silos

Organizations engage customers through email, web chat, voice, social media and messaging apps. Each channel may be managed by separate systems and teams, resulting in fragmented information flows, inconsistent responses and manual handoffs. Agents spend time reconciling disparate records, and customers must repeat context, which raises resolution times and support costs while undermining satisfaction. Without a unified view of conversations and performance metrics, leadership lacks the end-to-end visibility required for data-driven decisions and continuous improvement.

Eliminating communication gaps begins with a clear articulation of objectives, the data inputs required for analysis and the organizational prerequisites for integration readiness. Establishing this foundation enables the design of an end-to-end orchestration layer that preserves context, enforces consistent service levels and unlocks advanced AI capabilities.

Strategic Objectives

- Context Preservation: Maintain complete interaction history and customer preferences across all touchpoints.

- Service Consistency: Apply uniform response standards and brand voice.

- Operational Efficiency: Eliminate manual data re-entry and streamline routing processes.

- Data-Driven Insights: Aggregate holistic datasets for analytics and trend detection.

- Scalability: Enable rapid onboarding of new channels and AI services.

Key Data Inputs

- Channel Logs: Email threads, chat transcripts, call recordings and social media feeds.

- Customer Profiles: Unique identifiers, demographics, purchase history and communication preferences.

- Routing Rules: Business logic for queue assignments, priority handling and escalations.

- System Configurations: API endpoints, data schemas and middleware specifications.

- Compliance Policies: Data retention, encryption standards and regulatory requirements.

Integration Prerequisites

- Executive Sponsorship: Leadership commitment to cross-functional collaboration.

- Governance Framework: Steering committee with IT, support, security and compliance representatives.

- Technology Audit: Inventory of messaging platforms, CRM, telephony and middleware.

- API Access: Credentials and service agreements for each channel connector.

- Data Governance: Ownership, quality standards, retention and audit controls.

- Security Baseline: Risk assessments, privacy impact analyses and architecture reviews.

- Change Management: Training strategies and communication plans for new workflows.

- Proof of Concept Environment: Sandbox instances for integration validation.

Organizations that meet these conditions minimize technical debt, ensure compliance and align stakeholders, setting the stage for a unified interaction workflow and AI-driven orchestration.

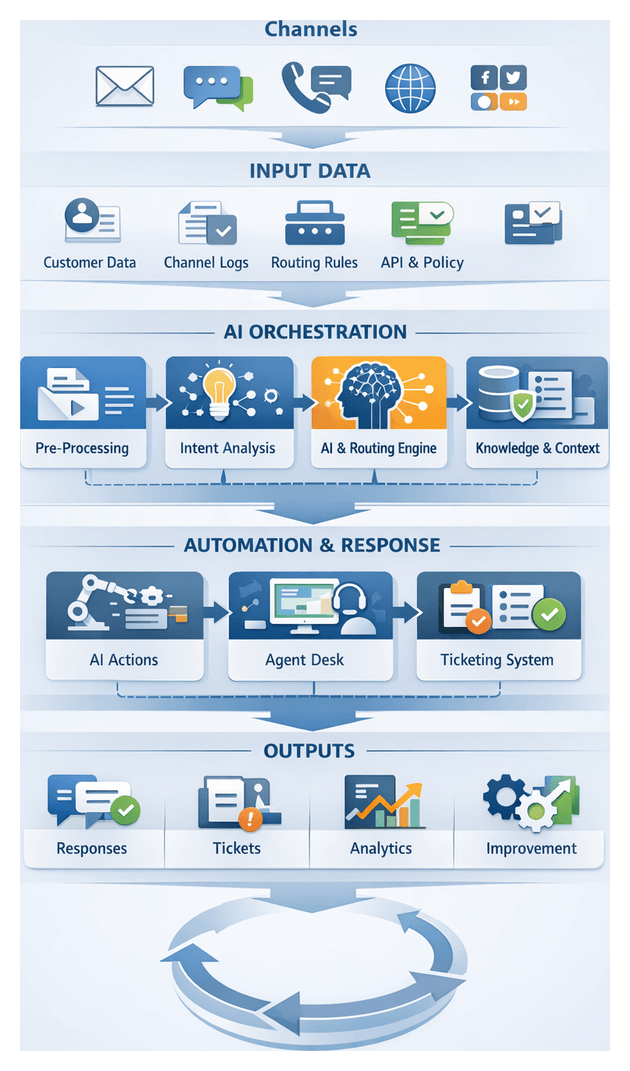

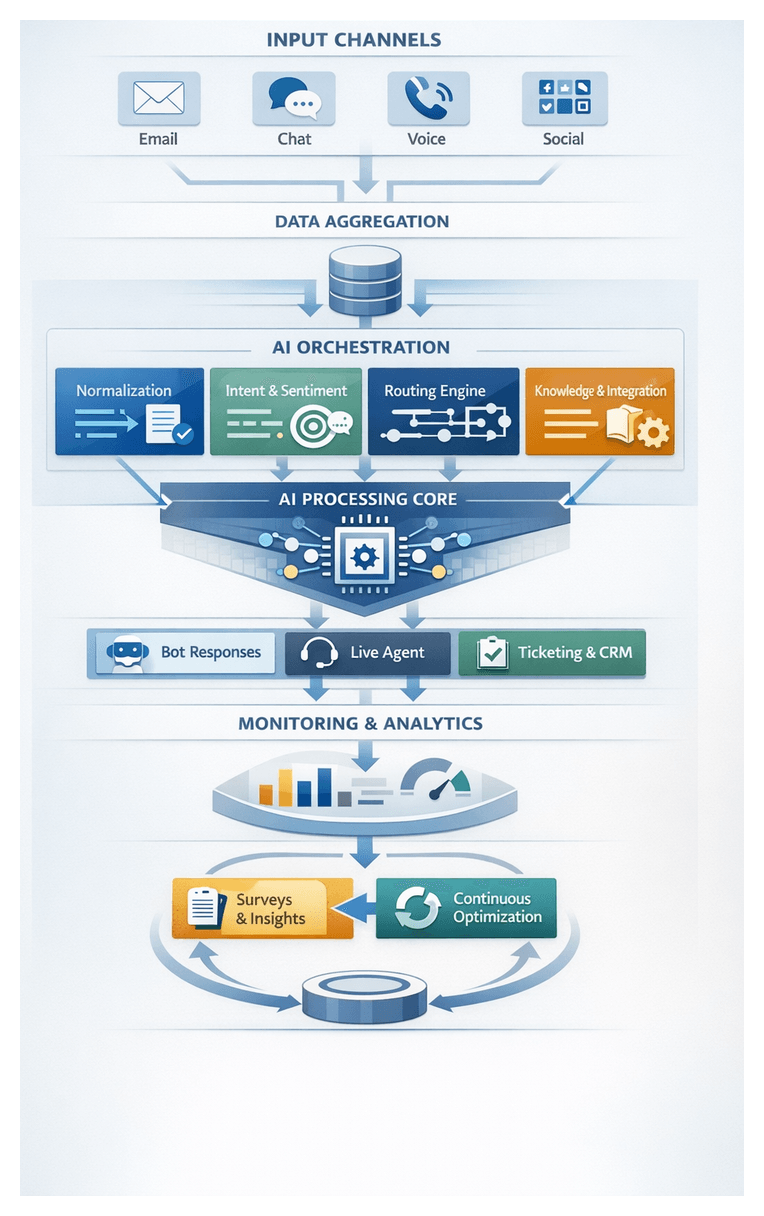

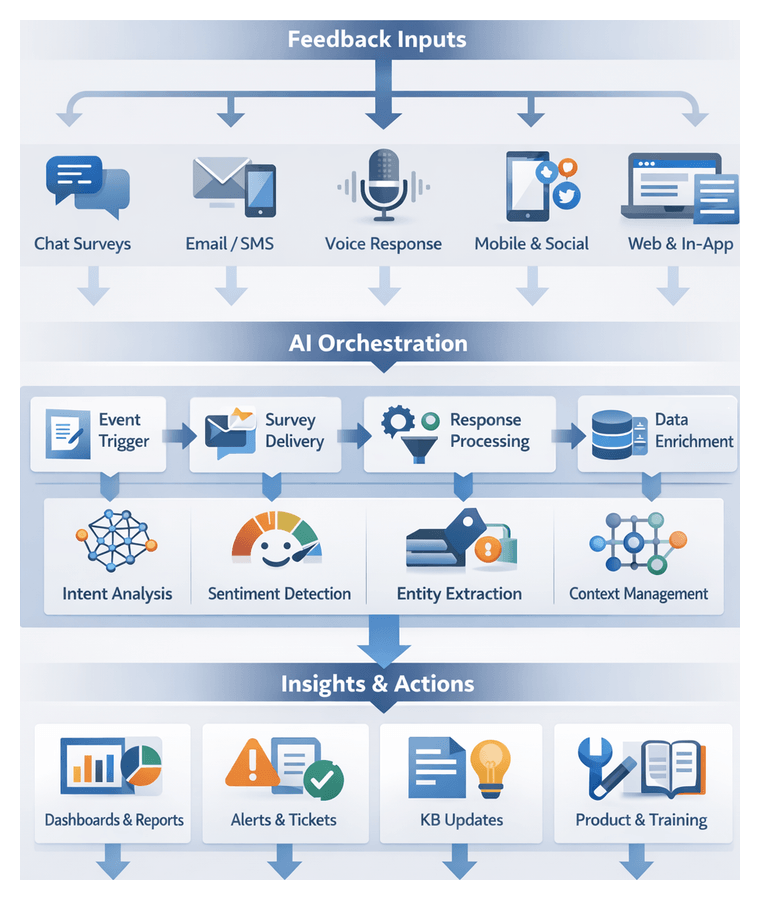

Designing the Unified Interaction Workflow

A unified interaction workflow consolidates incoming inquiries, normalizes messages and enforces consistent processing steps from reception through resolution and analytics handoff. By applying a standardized sequence of validation, enrichment, intent analysis and routing, organizations reduce response times, eliminate context gaps and achieve real-time visibility across all customer engagements.

Workflow Scope

- Channel aggregation and message normalization

- Metadata enrichment and context assembly

- Intent detection and prioritization

- Routing to AI modules or human agents

- Response generation and delivery

- Ticket creation and long-running case management

- Logging, reporting and feedback loops

Core Components

- Channel Connectors: APIs that ingest messages from email servers, chat platforms such as Cisco Webex, voice systems like Amazon Connect and social media services.

- Message Broker: Central queue or event bus that buffers inbound messages and mediates between connectors and processing modules.

- Pre-Processor: Sanitizes content, filters noise and attaches metadata including customer identifiers and geolocation.

- Orchestration Layer: Coordinates workflow steps, invokes AI services and enforces routing logic.

- AI Services: Machine learning models for intent classification, entity extraction, sentiment analysis and response generation via platforms such as the OpenAI API.

- Agent Desktop: A unified interface displaying conversation context, recommended responses and case history for human escalation.

- Ticketing System: CRM or workflow management platforms that record complex cases, manage SLAs and track resolution progress.

Interaction Flow

- Channel Reception: Inbound message arrives via connector. Broker assigns a unique interaction ID.

- Pre-Processing: Normalize encoding, remove markup and enrich payload with profile and context data.

- Intent Detection: NLP service classifies intent and returns confidence scores.

- Prioritization & Routing: Orchestration computes priority based on intent, sentiment and SLAs, routing to AI or human queues.

- Response Generation: AI module drafts replies using templates and context variables; human agents review if needed.

- Delivery & Logging: Final response is dispatched through the connector and all actions are logged.

- Escalation: Low-confidence or complex inquiries trigger ticket creation in the workflow system with full context.
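The interaction flow above can be sketched as a small synchronous pipeline. This is a minimal illustration only: `classify_intent` is a hypothetical keyword stand-in for the NLP service, the 0.7 confidence threshold is an assumed value, and a production system would pass each step through the message broker rather than calling functions directly.

```python
import re
import uuid
from dataclasses import dataclass, field

CONFIDENCE_THRESHOLD = 0.7  # hypothetical cut-off for fully automated handling
TAG_RE = re.compile(r"<[^>]+>")


@dataclass
class Interaction:
    channel: str
    text: str
    # Channel reception: every inbound message gets a unique interaction ID.
    interaction_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    log: list = field(default_factory=list)


def preprocess(interaction: Interaction) -> str:
    # Pre-processing: remove markup and collapse whitespace.
    return " ".join(TAG_RE.sub(" ", interaction.text).split())


def classify_intent(text: str) -> tuple:
    # Hypothetical keyword stand-in for the NLP intent service.
    lowered = text.lower()
    if "refund" in lowered or "invoice" in lowered:
        return "billing", 0.92
    if "error" in lowered or "crash" in lowered:
        return "technical_support", 0.85
    return "general_inquiry", 0.40


def handle(interaction: Interaction) -> dict:
    text = preprocess(interaction)
    interaction.log.append("preprocessed")
    intent, confidence = classify_intent(text)
    interaction.log.append(f"intent={intent} confidence={confidence:.2f}")
    if confidence < CONFIDENCE_THRESHOLD:
        # Escalation: open a ticket that carries the full context.
        interaction.log.append("escalated")
        return {"id": interaction.interaction_id, "route": "human_queue",
                "ticket": {"intent": intent, "text": text,
                           "history": list(interaction.log)}}
    # Response generation and delivery (template-based here).
    reply = f"Thanks for contacting us about {intent.replace('_', ' ')}."
    interaction.log.append("responded")
    return {"id": interaction.interaction_id, "route": "ai_agent", "reply": reply}


result = handle(Interaction(channel="email", text="<p>I need a refund</p>"))
print(result["route"], "|", result["reply"])
# ai_agent | Thanks for contacting us about billing.
```

Keeping the interaction ID and the running log on the same object is what lets an escalated ticket carry the full history without a second lookup.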

Error Handling & Fall-Backs

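- Automatic Retries: Transient failures re-queue messages for a bounded number of attempts, typically with exponential backoff; messages that still fail move to a dead-letter queue for review.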

- Confidence Escalation: Low-confidence classifications route to human agents.

- Graceful Degradation: If AI services are unavailable, send standard acknowledgments with ETAs.

- Alerts & Intervention: Persistent errors trigger alerts to operations teams via email or collaboration tools.

Benefits

- Consistent Service Levels: SLA-driven processes reduce variability in response quality.

- Improved First-Contact Resolution: Centralized context and intent insights enable faster outcomes.

- Enhanced Visibility: Real-time dashboards provide end-to-end performance metrics.

- Cost Reduction: Automating routine tasks allows agents to focus on complex issues.

- Scalability: Microservices and event-driven design support rapid channel onboarding.

- Continuous Improvement: Interaction data feeds analytics for model retraining and process optimization.

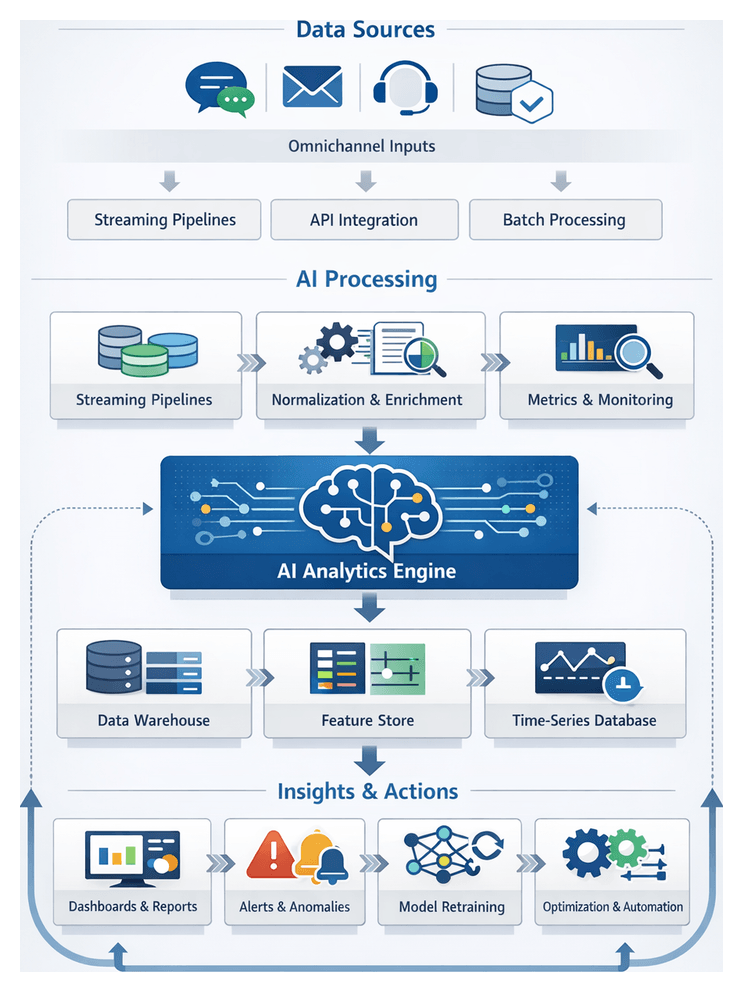

AI-Driven Orchestration Architecture

Positioned at the heart of the unified workflow, the AI orchestration layer acts as the central control plane that sequences tasks, manages state and invokes specialized services. It transforms isolated components into a coherent, automated solution capable of handling complex interactions with consistency and resilience.

The orchestration layer delivers four core capabilities:

- Message Routing & Flow Control: Directs inbound and outbound interactions across channels and AI services.

- Contextual State Management: Maintains session state, metadata and history to preserve conversation continuity.

- Service Choreography: Invokes intent detection, agent selection, knowledge retrieval, response generation, ticketing and analytics services in configurable sequences.

- Exception Handling & Escalation: Applies fallback logic for errors, low-confidence predictions and business rule breaches.

Engine Foundations

Robust orchestration relies on platforms such as IBM Cloud Pak for Integration or open-source frameworks like Node-RED. These tools provide visual workflow builders, message queue adapters and pluggable service connectors, exposing REST or gRPC APIs for management and integration.

Event-Driven Architecture

Interaction events—such as inquiry received, intent classified or response dispatched—are published to a distributed broker. Systems like Apache Kafka or Google Cloud Pub/Sub durably store and fan out events to subscribed microservices. The orchestration layer monitors streams, applies routing rules and emits new events as workflows advance.
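To make the publish/subscribe pattern concrete, here is an in-memory stand-in for a broker such as Kafka or Pub/Sub. It is only a sketch: the `EventBus` class and the topic names are hypothetical, and a real broker adds durability, partitioning and replay that this example omits.

```python
from collections import defaultdict
from typing import Callable

Event = dict
Handler = Callable[[Event], None]


class EventBus:
    """Synchronous in-memory publish/subscribe bus (no durability or replay)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)
        self.history: list[tuple[str, Event]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        self.history.append((topic, event))
        # Fan out the event to every subscriber of this topic.
        for handler in list(self._subscribers[topic]):
            handler(event)


bus = EventBus()


def on_inquiry_received(event: Event) -> None:
    # Orchestration step: classify, then emit the next workflow event.
    intent = "billing" if "invoice" in event["text"].lower() else "general"
    bus.publish("intent.classified", {**event, "intent": intent})


def on_intent_classified(event: Event) -> None:
    reply = f"Routing to {event['intent']} team"
    bus.publish("response.dispatched", {"id": event["id"], "reply": reply})


bus.subscribe("inquiry.received", on_inquiry_received)
bus.subscribe("intent.classified", on_intent_classified)
bus.publish("inquiry.received", {"id": "42", "text": "Question about my invoice"})
print([topic for topic, _ in bus.history])
# ['inquiry.received', 'intent.classified', 'response.dispatched']
```

Each handler only knows the topic it consumes and the topic it emits, which is the decoupling that lets new microservices subscribe without changing existing ones.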

Microservices & API Gateways

Individual AI functions and supporting systems run as containerized microservices behind an API gateway that enforces security, rate limiting and authentication. This abstraction allows the orchestration engine to invoke services without concern for network details, using standardized payloads in JSON or Protocol Buffers.

AI Microservices & Model Management

Core AI tasks—intent detection, entity extraction, sentiment analysis and response generation—are each deployed as versioned services. Models may be served via the OpenAI API or custom classifiers on Azure Cognitive Services. A model registry tracks versions, performance metrics and deployment history, enabling A/B testing and safe rollouts.
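A/B tests and canary rollouts need a rule for which model version serves each request. The sketch below assumes a hypothetical in-memory registry and splits traffic deterministically by hashing the interaction ID, so the same conversation always reaches the same version; the model names and the 90/10 split are illustrative.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class ModelVersion:
    name: str
    version: str
    traffic_share: float  # fraction of traffic in [0, 1]


class ModelRegistry:
    """Hypothetical registry that assigns each request to a model version."""

    def __init__(self, versions: list[ModelVersion]) -> None:
        total = sum(v.traffic_share for v in versions)
        if abs(total - 1.0) > 1e-9:
            raise ValueError("traffic shares must sum to 1.0")
        self.versions = versions

    def select(self, interaction_id: str) -> ModelVersion:
        # Map the ID to a stable bucket in [0, 1) so assignments are sticky.
        digest = hashlib.sha256(interaction_id.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:8], "big") / 2**64
        cumulative = 0.0
        for version in self.versions:
            cumulative += version.traffic_share
            if bucket < cumulative:
                return version
        return self.versions[-1]  # guard against floating-point rounding


registry = ModelRegistry([
    ModelVersion("intent-classifier", "v1", 0.9),  # current production model
    ModelVersion("intent-classifier", "v2", 0.1),  # canary candidate
])
counts = {"v1": 0, "v2": 0}
for i in range(10_000):
    counts[registry.select(f"interaction-{i}").version] += 1
print(counts)  # approximately a 90/10 split
```

Hash-based bucketing avoids storing per-conversation assignments, and shifting traffic during a rollout is just a change to `traffic_share`.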

Contextual State & Session Tracking

A centralized context store holds messages, extracted entities, intent scores, knowledge references and ticket identifiers. Databases or in-memory grids such as Redis ensure low-latency reads and writes. The orchestration layer updates this store at each stage, guaranteeing that downstream services and human agents operate on the same context snapshot.
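A minimal context store can be sketched with a plain dictionary in place of Redis. It shows each stage merging its results into a shared record and readers taking a consistent, versioned snapshot. The `ContextStore` class and field names are hypothetical; a Redis-backed design might keep one hash per interaction instead.

```python
import copy
import threading
from typing import Any


class ContextStore:
    """In-memory stand-in for a shared context store such as Redis."""

    def __init__(self) -> None:
        self._data: dict[str, dict[str, Any]] = {}
        self._versions: dict[str, int] = {}
        self._lock = threading.Lock()

    def update(self, interaction_id: str, stage: str, **fields: Any) -> int:
        # Merge one stage's output and bump the version under a single lock.
        with self._lock:
            record = self._data.setdefault(interaction_id, {"stages": []})
            record.update(fields)
            record["stages"].append(stage)
            self._versions[interaction_id] = self._versions.get(interaction_id, 0) + 1
            return self._versions[interaction_id]

    def snapshot(self, interaction_id: str) -> tuple[int, dict[str, Any]]:
        # Return a deep copy so callers cannot mutate shared state.
        with self._lock:
            return (self._versions.get(interaction_id, 0),
                    copy.deepcopy(self._data.get(interaction_id, {})))


store = ContextStore()
store.update("int-1", "ingest", channel="chat", text="Where is my order 5512?")
store.update("int-1", "intent", intent="order_status", confidence=0.94)
store.update("int-1", "entities", order_number="5512")
version, context = store.snapshot("int-1")
print(version, context["stages"])  # 3 ['ingest', 'intent', 'entities']
```

The version number lets a downstream service or agent desktop detect that the context changed after it read its snapshot.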

Integrating AI & Supporting Systems

- Intent Analysis: Pass sanitized text and metadata to the intent microservice; receive ranked labels and confidence scores for routing decisions.

- Response Generation: Invoke NLG services to assemble replies using templates, entities and context. Apply post-processing rules for brand compliance and localization.

- Knowledge Retrieval: Query vector search platforms such as Pinecone or Elasticsearch to fetch relevant articles and inject summaries into responses.

- Ticketing: Create and update cases in systems like Zendesk or ServiceNow, supplying priority, category and SLA metadata.

- Analytics: Emit structured logs and events to streaming platforms like Amazon Kinesis or Confluent for dashboarding and continuous learning.

Scalability & Resilience

Container orchestration with Kubernetes enables horizontal scaling of stateless services and clustering of stateful components. Message brokers provide backpressure management and event replay to prevent data loss. Deployment strategies such as blue/green and canary releases support safe updates, while distributed tracing tools like Jaeger or Zipkin offer end-to-end visibility for debugging and performance tuning.

Governance & Security

The orchestration layer enforces role-based access controls for workflow modifications and sensitive API invocations. Data encryption in transit and at rest protects customer information. Comprehensive audit logs capture every event, decision path and escalation trigger to support compliance with regulatory mandates such as GDPR or HIPAA.

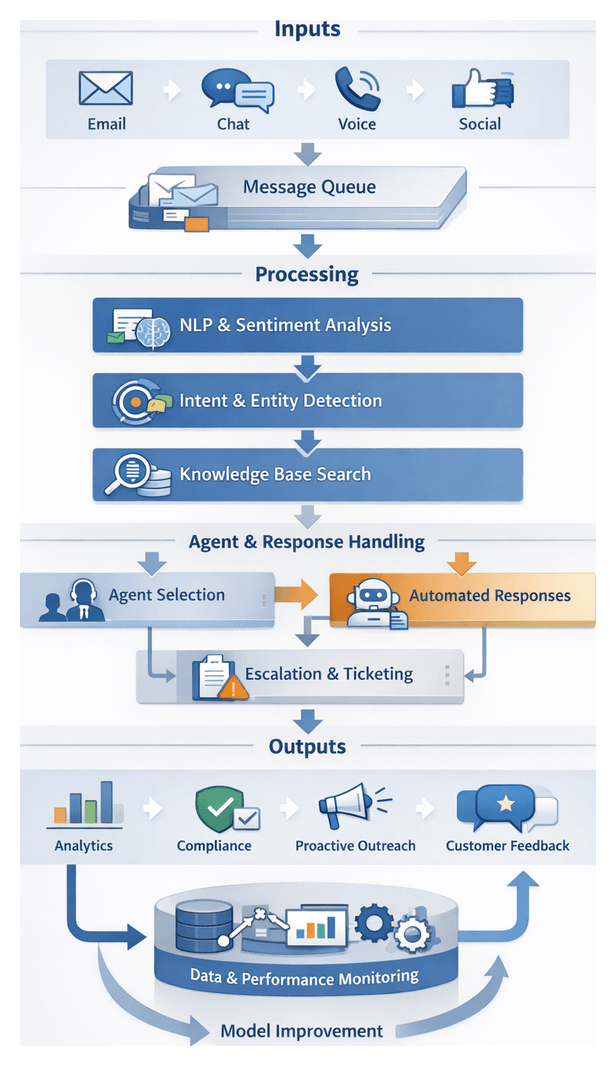

Modular System Blueprint and Integration

A modular architecture isolates responsibilities into discrete components that communicate via well-defined APIs and event streams. This approach simplifies maintenance, enables independent scaling and supports incremental enhancements. The primary modules include:

- Channel Integration Layer

- Natural Language Processing & Intent Detection

- AI Agent Selection & Orchestration

- Automated Response Generation

- Ticketing & Workflow Management

- Knowledge Base & Self-Service

- Escalation & Human Handoff

- Proactive Outreach Automation

- Feedback Collection & Sentiment Analysis

- Performance Analytics & Continuous Improvement

Core Components and Roles

- Channel Adapters: Connect to email servers, chat platforms, telephony systems and social media APIs to sanitize and normalize incoming data.

- Message Router: Evaluates metadata to assign priorities, detect language and forward requests to processing queues.

- Intent Analyzer: Runs NLP pipelines for tokenization, classification and entity extraction to produce structured data.

- Agent Selector: Applies business rules and confidence thresholds to route inquiries to AI modules or live agents.

- Response Composer: Uses NLG models and template engines to generate personalized replies.

- Ticket Generator: Creates and updates records in workflow systems with categorization, priority and SLA tagging.

- Knowledge Retriever: Performs semantic search against repositories, scores results for relevance and injects content into responses.

- Escalation Manager: Monitors confidence scores and complexity indicators to trigger agent handoffs with full context.

- Engagement Scheduler: Orchestrates proactive messages based on customer data, campaign rules and predictive timing analytics.

- Feedback Processor: Gathers survey responses, chat ratings and text feedback, applying sentiment and topic modeling.

- Analytics Engine: Aggregates logs and KPIs to drive dashboards, alerts and retraining triggers for continuous improvement.

Data Flow and Interaction Patterns

- A customer message arrives via a channel adapter and is normalized into a unified envelope.

- The router enriches the envelope with metadata and enqueues it for NLP processing.

- The intent analyzer generates a structured payload with intent labels, entities and confidence scores.

- The selector dispatches the payload to an AI agent or escalation manager based on routing rules.

- The response composer or human agent resolves the inquiry and produces a response object.

- The ticket generator creates or updates a case in the workflow system for multi-step issues.

- Resolved interactions feed the feedback processor to capture sentiment and satisfaction data.

- All logs and metrics are forwarded to the analytics engine for monitoring and model retraining.

Outputs Generated at Each Stage

- Normalized Message Envelope: Raw text, channel metadata, timestamps and identifiers.

- Intent Payload: Classification, extracted entities, language code and confidence scores.

- Agent Assignment Record: Selected AI agent or human queue, routing rationale and fallback flags.

- Response Object: Reply content, template IDs, personalization tokens and delivery metadata.

- Ticket Record: Case ID, priority, category, SLA deadline and assignment group.

- Knowledge Reference List: Ranked articles with relevance scores and retrieval timestamps.

- Escalation Bundle: Conversation history, attachments and knowledge links for live agents.

- Outreach Schedule: Timing, content variants and target segments for proactive messages.

- Feedback Dataset: Survey responses, sentiment scores and thematic codes.

- Analytics Report: Aggregated metrics, trend visualizations and model performance indicators.

Handoff Interfaces and Integration Points

- RESTful APIs for synchronous calls to intent analyzers and response composers.

- Message queues (Kafka or RabbitMQ) to buffer inbound requests and decouple services.

- Webhook callbacks for real-time notifications to CRM and ticketing platforms.

- Batch exports to data warehouses for analytics and retraining.

- OAuth-based authentication and role-based access controls for secure communication.

- JSON schemas to validate payloads exchanged between modules and external partners.

System Dependencies and Orchestration Requirements

- Container orchestration (Kubernetes) for automated deployment, scaling and self-healing of microservices.

- Service discovery tools to locate module endpoints and manage dynamic configuration.

- Distributed tracing and logging systems to monitor request flows and support troubleshooting.

- API gateways to enforce security policies, rate limits and centralized routing.

- Configuration stores (Consul or etcd) for versioned parameter control across environments.

- CI/CD pipelines to accelerate updates and maintain consistency between staging and production.

This blueprint for a unified, AI-driven customer interaction solution delivers reliable experiences, clear handoffs and actionable insights, positioning organizations to scale and innovate with confidence.

Chapter 1: Customer Inquiry and Channel Integration

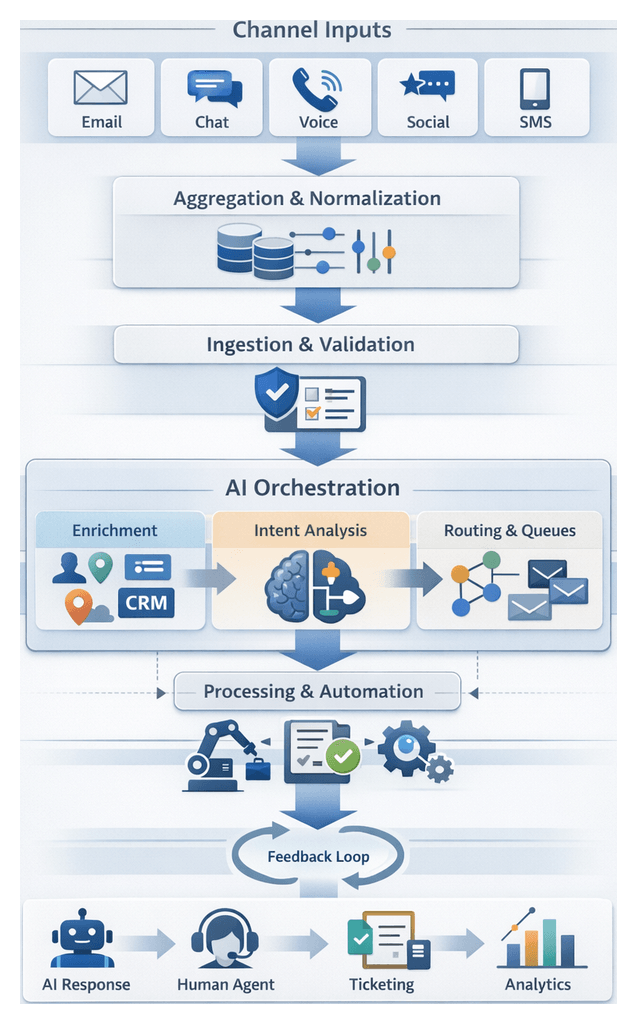

Channel Aggregation Foundation

Purpose and Industry Context

Channel aggregation consolidates customer messages from email, web chat, voice calls, social media and mobile messaging into a unified input stream. By centralizing inbound data, this layer provides a single source of truth for AI-driven processing, preventing fragmented context, duplicated efforts and inconsistent service levels. As enterprises support five or more channels, they face up to 90 percent more inquiries and threefold coordination overhead compared to single-channel operations. Leading providers such as Twilio and Zendesk break down silos, but a dedicated aggregation layer remains critical to deliver seamless omnichannel experiences, uphold regulatory compliance and reinforce risk management objectives.

Required Inputs, Connectivity and Normalization

Each channel connector must map raw payloads and metadata into a common schema. Key data elements include:

- Customer Identifier: Unique ID from CRM or identity system

- Timestamp: UTC-formatted receipt time

- Channel Type: Email, chat, voice or social

- Message Content: Text, transcript link or attachment metadata

- Session Context: Conversation ID or thread reference

- Metadata Attributes: Language code, sentiment, geolocation, user agent

- Security Tokens: API keys or OAuth tokens
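The common schema above can be expressed as a typed record. This is a sketch: the field names mirror the list, but the exact names, the `ChannelType` values and the choice to store only a reference to the security token (rather than the token itself) are assumptions for illustration.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class ChannelType(str, Enum):
    EMAIL = "email"
    CHAT = "chat"
    VOICE = "voice"
    SOCIAL = "social"


@dataclass(frozen=True)
class MessageEnvelope:
    customer_id: str                      # unique ID from CRM or identity system
    timestamp: datetime                   # receipt time, timezone-aware UTC
    channel: ChannelType                  # email, chat, voice or social
    content: str                          # text, transcript link or attachment summary
    session_id: Optional[str] = None      # conversation ID or thread reference
    metadata: dict = field(default_factory=dict)  # language, sentiment, geo, user agent
    auth_token_ref: Optional[str] = None  # reference to a stored credential, never the secret

    def __post_init__(self) -> None:
        # Reject naive or non-UTC timestamps so downstream services never guess.
        if self.timestamp.tzinfo is None or self.timestamp.utcoffset() != timedelta(0):
            raise ValueError("timestamp must be timezone-aware UTC")

    def to_json_dict(self) -> dict:
        data = asdict(self)
        data["timestamp"] = self.timestamp.isoformat().replace("+00:00", "Z")
        data["channel"] = self.channel.value
        return data


env = MessageEnvelope(
    customer_id="CUST-001",
    timestamp=datetime(2024, 5, 1, 14, 30, tzinfo=timezone.utc),
    channel=ChannelType.CHAT,
    content="My package has not arrived.",
    session_id="conv-789",
    metadata={"language": "en"},
)
print(env.to_json_dict()["timestamp"])  # 2024-05-01T14:30:00Z
```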

Technical prerequisites for channel connectivity include secure API credentials with least-privilege roles for platforms like Twilio Programmable Chat, Twilio Voice and Amazon Connect, validated webhooks or polling endpoints, agreed JSON or XML schemas, network allowlists and TLS certificates, rate limiting policies and centralized logging pipelines.

Post-ingestion normalization enforces ISO 8601 UTC timestamps, UTF-8 encoding, attachment metadata extraction, HTML sanitization, automatic language tagging and identifier unification (for example mapping user_id and customer_id to a single field). Consistent formatting enables downstream AI services to process inputs without custom per-channel logic.
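Several of these normalization rules can be sketched with the standard library alone. The alias table (`user_id`, `customer_id` and a hypothetical `cust_ref`) is an assumption, the regex-based tag stripping is a simplification where production code would use a dedicated HTML sanitizer, and language tagging is left out.

```python
import html
import re
import unicodedata
from datetime import datetime, timezone

ID_ALIASES = ("customer_id", "user_id", "cust_ref")  # hypothetical source field names
TAG_RE = re.compile(r"<[^>]+>")


def to_utc_iso8601(raw: str) -> str:
    """Parse an ISO 8601 timestamp with an offset and re-emit it in UTC."""
    if raw.endswith("Z"):
        raw = raw[:-1] + "+00:00"  # older Python versions reject the 'Z' suffix
    parsed = datetime.fromisoformat(raw)
    if parsed.tzinfo is None:
        raise ValueError(f"timestamp has no UTC offset: {raw!r}")
    return parsed.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def sanitize_text(raw) -> str:
    """Decode to UTF-8, strip HTML tags and entities, and collapse whitespace."""
    text = raw.decode("utf-8", errors="replace") if isinstance(raw, bytes) else raw
    text = html.unescape(TAG_RE.sub(" ", text))
    text = unicodedata.normalize("NFC", text)
    return " ".join(text.split())


def unify_identifier(payload: dict) -> dict:
    """Move the first non-empty ID alias into a single 'customer_id' field."""
    out = {k: v for k, v in payload.items() if k not in ID_ALIASES}
    for alias in ID_ALIASES:
        if payload.get(alias):
            out["customer_id"] = str(payload[alias])
            break
    return out


def normalize(payload: dict) -> dict:
    record = unify_identifier(payload)
    record["timestamp"] = to_utc_iso8601(record["timestamp"])
    record["content"] = sanitize_text(record["content"])
    return record


record = normalize({
    "user_id": 8812,
    "timestamp": "2024-05-01T16:30:00+02:00",
    "content": "<p>Order&nbsp;#5512   hasn&#39;t shipped</p>",
})
print(record["customer_id"], record["timestamp"], record["content"])
# 8812 2024-05-01T14:30:00Z Order #5512 hasn't shipped
```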

Security, Compliance and Operational Readiness

The aggregation layer must meet encryption, access control and data governance mandates. Requirements include TLS 1.2 or higher in transit, AES-256 encryption at rest, role-based access control, retention policies aligned with GDPR or CCPA, pseudonymization in non-production environments, immutable audit logs and consent management. Health checks and baseline tests validate readiness:

- Connectivity Verification: Test messages from each connector

- Schema Validation: Sample payloads through normalization rules

- Error Handling Simulation: Inject malformed messages to test fallbacks

- Performance Benchmarking: Latency under peak load

- Security Testing: Vulnerability scans and penetration tests

- Compliance Audit Review: Data residency and privacy checks

After validation, normalized records are published via message queues such as Apache Kafka, Amazon SQS or managed event buses, carrying routing metadata for downstream orchestration.

Orchestration of Inbound Workflows

Ingestion and Validation

Channel adapters serve as the entry point, interfacing with IMAP/SMTP servers, Twilio Programmable Chat, Twilio Voice, Amazon Connect, social APIs, SMS gateways and bots. Adapters extract payloads, transform them into a unified event schema and publish to the orchestration broker with metadata headers for language detection and priority tagging.

Validation services perform schema checks against the unified event definition, while sanitization modules apply PII redaction, antivirus scanning and content filtering. Violations route messages to dead-letter or human review queues according to business policy.
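Validation and sanitization routing might look like the following sketch. It assumes a hypothetical set of required fields and two deliberately simple regular expressions for e-mail addresses and card-like numbers; real PII redaction and antivirus scanning would rely on dedicated services, and the queue names are placeholders.

```python
import re

REQUIRED_FIELDS = {"interaction_id": str, "customer_id": str, "channel": str, "content": str}
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators


def validate(record: dict) -> list[str]:
    """Return a list of schema violations (empty when the record is valid)."""
    errors = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in record:
            errors.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            errors.append(f"wrong type for {name}: {type(record[name]).__name__}")
    return errors


def redact(text: str) -> str:
    # Simplified PII redaction: mask e-mail addresses and card-like numbers.
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


def route(record: dict) -> tuple[str, dict]:
    """Return (queue_name, record) according to a simple business policy."""
    errors = validate(record)
    if errors:
        # Malformed records go to the dead-letter queue with reasons attached.
        return "dead-letter", {**record, "errors": errors}
    return "enrichment", {**record, "content": redact(record["content"])}


queue, clean = route({
    "interaction_id": "i1", "customer_id": "c1", "channel": "chat",
    "content": "Mail me at jane.doe@example.com, card 4111 1111 1111 1111 please",
})
print(queue, "|", clean["content"])
# enrichment | Mail me at [EMAIL], card [CARD] please
```

Attaching the list of violations to dead-lettered records gives human reviewers the context they need without re-running validation.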

Enrichment and Routing

Enrichment services augment messages with context from CRM, identity and geolocation systems. Examples include:

- CRM lookups via Salesforce for customer profile and history

- Identity provider APIs for authentication status and loyalty tier

- Geolocation services for regional compliance and language preferences

- Sentiment pre-scoring using engines like IBM Watson Tone Analyzer

The orchestration engine aggregates metadata into an enriched event, then applies business rules—often via Drools—to evaluate priority, SLA requirements, channel constraints and workload distribution. The routing outcome assigns a target queue or API endpoint for AI services or human agent pools.
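Rule evaluation is often delegated to an engine such as Drools; the Python sketch below substitutes a plain ordered rule list to show the idea. Tier names, the sentiment threshold, SLA minutes and queue names are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[dict], bool]
    priority: int      # 1 = most urgent
    sla_minutes: int   # target time to first response
    queue: str


# Ordered rule list: the first matching rule wins (hypothetical values).
RULES = [
    Rule("vip_negative_sentiment",
         lambda e: e.get("tier") == "platinum" and e.get("sentiment", 0.0) < -0.5,
         priority=1, sla_minutes=15, queue="senior-agents"),
    Rule("urgent_keyword",
         lambda e: "outage" in e.get("text", "").lower(),
         priority=2, sla_minutes=30, queue="tech-escalations"),
    Rule("billing_intent",
         lambda e: e.get("intent") == "billing",
         priority=3, sla_minutes=60, queue="ai-billing-agent"),
]
DEFAULT_RULE = Rule("default", lambda e: True, priority=4, sla_minutes=240,
                    queue="ai-general-agent")


def apply_rules(event: dict) -> dict:
    """Return the routing outcome for an enriched event."""
    rule = next((r for r in RULES if r.condition(event)), DEFAULT_RULE)
    return {"rule": rule.name, "priority": rule.priority,
            "sla_minutes": rule.sla_minutes, "queue": rule.queue}


print(apply_rules({"tier": "platinum", "sentiment": -0.8, "intent": "billing"})["queue"])
# senior-agents
print(apply_rules({"tier": "standard", "intent": "billing"})["queue"])
# ai-billing-agent
```

First-match-wins ordering keeps the policy easy to audit, since rule precedence is simply list order.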

Queuing and Processing Patterns

Orchestrated events enter durable brokers such as Apache Kafka, AWS SQS or Azure Service Bus. Key considerations include message durability, consumer concurrency, idempotency keys, and time-to-live settings. Supervisors monitor queue depths and rates, generating alerts for threshold breaches to scale resources or investigate bottlenecks.
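Idempotency keys and time-to-live settings can be illustrated with a small consumer wrapper. The sketch assumes each message carries a hypothetical `idempotency_key` and an `enqueued_at` epoch timestamp. Because brokers typically deliver at least once, the consumer remembers processed keys (bounded in number) and skips duplicates; it also drops messages older than the TTL.

```python
import time
from collections import OrderedDict
from typing import Callable


class IdempotentConsumer:
    """Skips duplicate deliveries and expired messages before calling a handler."""

    def __init__(self, handler: Callable[[dict], None], ttl_seconds: float,
                 max_remembered: int = 100_000,
                 clock: Callable[[], float] = time.time) -> None:
        self.handler = handler
        self.ttl_seconds = ttl_seconds
        self.max_remembered = max_remembered
        self.clock = clock
        self._seen: "OrderedDict[str, None]" = OrderedDict()

    def consume(self, message: dict) -> str:
        key = message["idempotency_key"]
        if key in self._seen:
            return "duplicate"      # already processed: acknowledge and skip
        if self.clock() - message["enqueued_at"] > self.ttl_seconds:
            return "expired"        # stale: e.g. route to a dead-letter queue
        self.handler(message)       # if this raises, the key is not recorded
        self._seen[key] = None
        if len(self._seen) > self.max_remembered:
            self._seen.popitem(last=False)  # evict the oldest remembered key
        return "processed"


processed = []
now = [1_000.0]  # fake clock for a deterministic example
consumer = IdempotentConsumer(processed.append, ttl_seconds=300, clock=lambda: now[0])
msg = {"idempotency_key": "email-123", "enqueued_at": 900.0, "body": "Hi"}
print(consumer.consume(msg), consumer.consume(msg))  # processed duplicate
print(consumer.consume({"idempotency_key": "email-124", "enqueued_at": 100.0}))  # expired
```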

Real-time channels such as live chat or voice can bypass persistent queues in favor of in-memory routing and WebSocket callbacks to achieve sub-second latency. Asynchronous channels such as email can accumulate in batch queues for bulk processing, for example during off-peak hours, prioritizing throughput over minimal response time.

Error Handling and Recovery

Error handling frameworks classify failures as transient or permanent. Transient errors such as network timeouts trigger retries with exponential backoff. Permanent errors route messages to dead-letter queues with full context for manual triage. Alerts notify operations teams via email or messaging apps. Remediation actions are applied and messages reprocessed, ensuring no customer inquiry is lost.
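The transient/permanent split and exponential backoff can be sketched as follows. The exception classes, attempt limit, base delay and the "full jitter" strategy (a random delay up to the exponential cap) are assumptions; `sleep` is injectable so the example runs instantly.

```python
import random
import time
from typing import Callable


class TransientError(Exception):
    """Recoverable failure, e.g. a network timeout."""


class PermanentError(Exception):
    """Unrecoverable failure, e.g. a malformed payload."""


def process_with_retry(message: dict, handler: Callable[[dict], None],
                       dead_letter: list, max_attempts: int = 5,
                       base_delay: float = 0.5, max_delay: float = 30.0,
                       sleep: Callable[[float], None] = time.sleep) -> bool:
    """Return True on success; otherwise park the message in `dead_letter`."""
    for attempt in range(1, max_attempts + 1):
        try:
            handler(message)
            return True
        except PermanentError as exc:
            # Permanent errors skip retries and go straight to manual triage.
            dead_letter.append({"message": message, "error": repr(exc), "attempts": attempt})
            return False
        except TransientError as exc:
            if attempt == max_attempts:
                dead_letter.append({"message": message, "error": repr(exc), "attempts": attempt})
                return False
            # Exponential backoff with full jitter: random delay in [0, base * 2^(n-1)].
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, delay))
    return False


calls = {"n": 0}


def flaky(msg: dict) -> None:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("timeout")


dlq: list = []
ok = process_with_retry({"id": "m1"}, flaky, dlq, sleep=lambda s: None)
print(ok, calls["n"], len(dlq))  # True 3 0
```

Jitter spreads retries from many consumers over time, which keeps a recovering dependency from being hit by synchronized bursts.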

Fallback logic diverts critical inquiries to manual channels. For example, if the intent detection queue is unavailable, high-priority messages escalate to a dedicated inbox monitored by support agents.

Performance, Scalability and Observability

The orchestration engine scales horizontally on container platforms like Kubernetes, adjusting adapter and validation instances based on CPU, memory and queue metrics. Message brokers partition topics or shard queues for parallel consumption. Backpressure mechanisms throttle adapters or shed noncritical workloads when thresholds are reached. Circuit breakers protect against degraded external services.
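A circuit breaker can be sketched as a small state machine with closed, open and half-open states. The thresholds and cool-down period below are hypothetical, and the fallback (a standard acknowledgment) is just one option; established libraries and service meshes provide production-grade versions of this pattern.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing dependency until a cool-down period has passed."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # cool-down elapsed: trial calls are allowed
        return "open"

    def call(self, func: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.state == "open":
            return fallback()  # fail fast without touching the dependency
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            return fallback()
        self.failures = 0
        self.opened_at = None  # a success closes the circuit
        return result


def ack() -> str:
    return "We received your message and will reply within 2 hours."


def enrichment_down() -> str:
    raise TimeoutError("CRM lookup timed out")


now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: now[0])
for _ in range(2):
    breaker.call(enrichment_down, ack)
print(breaker.state)  # open
now[0] = 11.0
print(breaker.state)  # half-open
print(breaker.call(lambda: "enriched", ack), breaker.state)  # enriched closed
```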

Serialization optimizations leverage Protocol Buffers for high-throughput channels, and HTTP/2 multiplexing reduces overhead. Caching frequent enrichment lookups accelerates processing. Observability relies on metrics and logs collected by platforms such as Datadog and Splunk. Distributed tracing visualizes transaction flows, while dashboards display throughput, latency, error rates and queue backlogs. Alerts integrate with PagerDuty or Opsgenie for rapid incident response.

AI-Driven Coordination Capabilities

Channel Detection and Message Normalization

Upon ingestion, channel classification models detect source types and apply canonical formatting. Conversational AI services such as Amazon Lex or Google Dialogflow can convert voice and chat input into structured text, producing a normalized record with timestamp, customer identifier, content and channel metadata.

Contextual Enrichment and Intent Decisioning

AI enrichment services tag records with CRM data, session context, sentiment scores and language flags. Intent detection services powered by transformer-based models assign intent labels with confidence scores. A decisioning engine evaluates these labels against policies to select AI agents for billing, technical support or self-service, or triggers escalation when confidence is low.
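The decisioning step can be sketched as a policy table mapping intent labels to AI agents, with escalation when the top score is low or the top two labels are too close to call. The agent names, the 0.75 threshold and the 0.10 margin are hypothetical values for illustration.

```python
AGENT_POLICY = {
    "billing": "billing-agent",
    "technical_support": "tech-support-agent",
    "password_reset": "self-service-agent",
}
MIN_CONFIDENCE = 0.75
MIN_MARGIN = 0.10  # required gap between the top two labels


def decide(scores: dict[str, float]) -> dict:
    """Pick an AI agent for the best-scoring intent, or escalate to a human."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    if not ranked:
        return {"action": "escalate", "reason": "no intent scores"}
    top_label, top_score = ranked[0]
    runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
    if top_score < MIN_CONFIDENCE:
        return {"action": "escalate", "reason": f"low confidence ({top_score:.2f})"}
    if top_score - runner_up < MIN_MARGIN:
        return {"action": "escalate", "reason": "ambiguous intent"}
    agent = AGENT_POLICY.get(top_label)
    if agent is None:
        return {"action": "escalate", "reason": f"no agent for {top_label}"}
    return {"action": "route", "agent": agent, "intent": top_label}


print(decide({"billing": 0.91, "technical_support": 0.05}))
# {'action': 'route', 'agent': 'billing-agent', 'intent': 'billing'}
```

The margin check catches cases where the classifier is confident overall but cannot separate two intents, which a single threshold would miss.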

Dynamic Routing and Response Generation

Routing logic maps decisions to AI modules or human queues. Asynchronous handoffs use brokers such as Apache Kafka or cloud event buses. Draft responses from AI agents undergo final validation for brand compliance, tone and personalization. Natural language generation engines such as Microsoft Azure Language Service or OpenAI models help produce coherent, contextually relevant replies.

Integration with Supporting Systems

The orchestration layer exposes REST and gRPC endpoints via an API gateway, centralizing authentication, rate limiting and monitoring. Knowledge base queries against search platforms like Elasticsearch provide contextual articles, while ticketing interfaces invoke APIs in Zendesk or ServiceNow for deep investigation. Feedback loops retrain models on labeled outcomes to continuously optimize performance.

Strategic Value and Best Practices

An AI-driven orchestration layer delivers agility to onboard new channels, consistent customer experiences through unified context management, rapid response times via parallelized processing, and continuous improvement through analytics and model retraining. Key best practices include defining clear data contracts, implementing robust error handling and retry policies, protecting data privacy by anonymizing sensitive fields, versioning AI models and APIs, and governing business rules while monitoring for model drift.

Consolidated Output and Handoff Interfaces

Unified Message Record and Validation

The unified message record combines raw content, enriched metadata and contextual flags in a standardized data object. Components include a globally unique ID, channel information, UTC timestamps, sanitized content, enrichment metadata and priority or compliance flags. Records conform to JSON schema or Protocol Buffer definitions, abstracting channel-specific fields into a consistent structure.

Validation checks confirm connector health, schema compliance using JSON Schema validators or Apache Kafka schema registries, availability of enrichment services such as IBM Watson Natural Language Understanding, and execution of data privacy filters. Failures route records to exception queues for manual review.

Delivery Protocols to Intent Detection

Validated records are dispatched via:

- Message Queues or Topics: Publishing to an AWS SQS queue or Kafka topic named “intent-input”

- RESTful API Endpoints: Posting JSON payloads to NLP gateway endpoints with defined retry logic

- gRPC or WebSocket Streams: Persistent streams with flow control for low-latency delivery

- Event-Driven Triggers: Cloud functions such as AWS Lambda or Azure Functions activated on new record creation

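Each protocol includes acknowledgment workflows and monitoring hooks. Because these transports typically provide at-least-once delivery, consumers use idempotency keys to discard redelivered records, achieving effectively-once processing. Metrics on queue depth, latency and error rates feed centralized observability dashboards.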

Audit, Versioning and Monitoring

Audit metadata appended to each record tracks processing node identifiers, stage-level timestamps and error logs. This data persists in document stores or time-series databases for traceability. Configuration management uses schema registries, feature flags and deployment tags to negotiate schema versions, enable new connectors and correlate behavior to code releases.

Operational monitoring tracks ingestion throughput, error rates, latency and queue backlogs. Predefined alerts notify on-call engineers or trigger automated remediation, integrating with platforms like PagerDuty or Opsgenie to accelerate incident response.

Flexibility for Downstream Variations

The integration layer supports adapter plugins to transform records for specific consumers, multi-channel fan-out to analytics and intent detection, and dynamic routing rules for high-priority segments. By abstracting transport and format variations, core ingestion logic remains stable while accommodating evolving business requirements and new AI services.

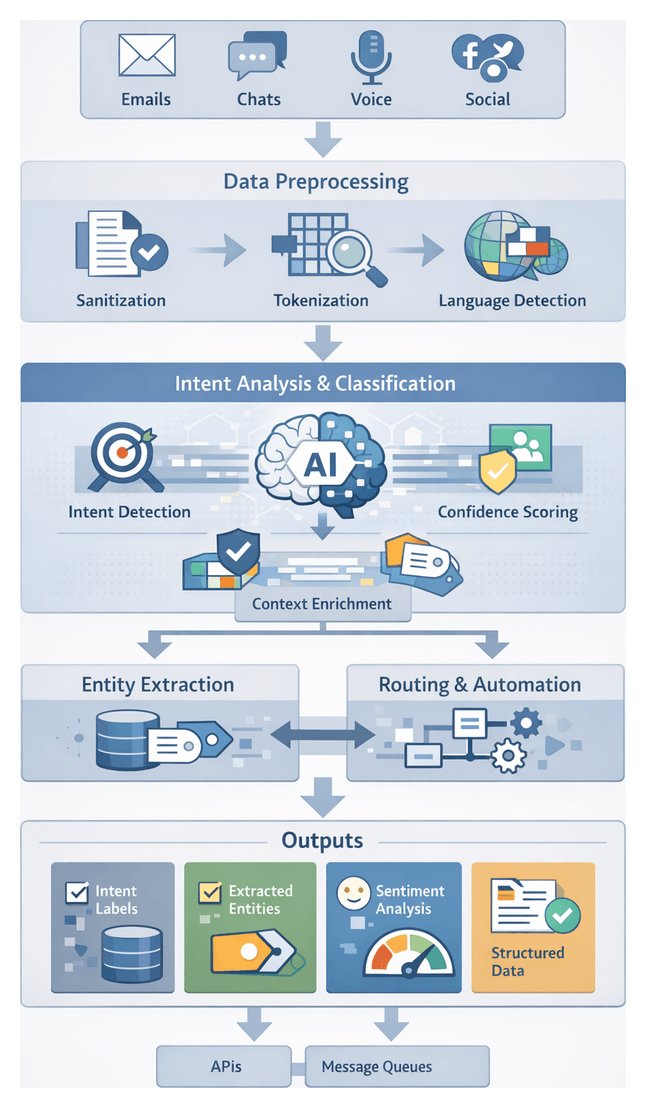

Chapter 2: Intent Detection and Natural Language Processing

Clarifying Intent Analysis Objectives and Data Requirements

The intent detection stage transforms unstructured customer inquiries—email, chat, voice transcripts or social media comments—into structured representations that capture each message’s core purpose. By defining clear objectives, specifying required inputs and establishing data quality standards, organizations ensure consistent, high-precision AI services that minimize misclassification risk and optimize workflow efficiency.

Objectives

- Identify and classify customer intents, meeting defined accuracy, precision and recall benchmarks

- Normalize diverse expressions into a standardized taxonomy and enrich messages with metadata

- Provide confidence scores to inform routing, escalation and fallback logic

- Support multilingual and domain-specific variants with structured outputs compatible with APIs and message queues

Performance Benchmarks

- Target classification accuracy above 90% on production data

- Balanced precision and recall to minimize false positives and negatives

- Average processing latency under 200 ms per message

- Scalability to handle peak throughput without performance degradation

Required Inputs

- Sanitized text transcripts or message bodies with standardized UTF-8 encoding

- Channel identifier, timestamp and session context metadata

- Customer profile attributes, prior interaction history and language/locale indicators

- Embedded content (files, links, emojis) annotated or normalized

Contextual signals—order history, sentiment scores, customer segments and open cases—boost accuracy by disambiguating similar requests. Prerequisites include reliable channel connectors, preprocessing microservices for normalization, language detection, tokenization, and low-latency model endpoints. Robust governance enforces consent checks, PII redaction, encrypted transport, audit logging and compliance with GDPR, CCPA and industry regulations.
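The accuracy, precision and recall benchmarks listed above can be checked against a labeled evaluation set. This sketch computes overall accuracy and per-intent precision and recall using only the standard library; the labels are made-up sample data, and the 90 percent target is the benchmark stated above.

```python
from collections import Counter


def intent_metrics(y_true: list[str], y_pred: list[str]) -> dict:
    """Overall accuracy plus per-label precision and recall."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty lists of equal length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted label was wrong
            fn[truth] += 1  # true label was missed
    per_label = {}
    for label in sorted(set(y_true) | set(y_pred)):
        p_den = tp[label] + fp[label]
        r_den = tp[label] + fn[label]
        per_label[label] = {
            "precision": tp[label] / p_den if p_den else 0.0,
            "recall": tp[label] / r_den if r_den else 0.0,
        }
    return {"accuracy": sum(tp.values()) / len(y_true), "per_label": per_label}


# Illustrative sample only, not real evaluation data.
y_true = ["billing", "billing", "tech", "tech", "general"]
y_pred = ["billing", "tech", "tech", "tech", "general"]
report = intent_metrics(y_true, y_pred)
print(f"accuracy={report['accuracy']:.2f} meets_target={report['accuracy'] > 0.90}")
# accuracy=0.80 meets_target=False
```

Looking at per-label figures matters because a model can clear an overall accuracy target while one intent, such as `billing` here with recall 0.5, is routinely missed.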

Text Processing Pipeline and Integration

The text processing pipeline orchestrates microservices and middleware to convert raw messages into enriched inputs for AI models. A clear flow—from initial normalization through intent classification—ensures low latency, consistency and full traceability.

Pipeline Initialization and Preprocessing

A controller service dequeues messages from an ingestion queue, validates required fields (customer ID, channel metadata, timestamp, content) and applies sanitization routines that normalize whitespace, strip unsupported characters and enforce UTF-8 encoding.

Tokenization and Normalization

Tokens are generated and normalized by engines such as spaCy or Google Cloud Natural Language, applying case folding, punctuation removal and contraction expansion to reduce variability and improve model performance.
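Case folding, contraction expansion and punctuation removal can be sketched without external libraries. This is a simplified stand-in for spaCy or Google Cloud Natural Language: the contraction table is a small illustrative sample, and the regex tokenizer ignores many edge cases those engines handle.

```python
import re

CONTRACTIONS = {  # small illustrative sample, not a complete table
    "can't": "can not", "won't": "will not", "i'm": "i am",
    "it's": "it is", "don't": "do not", "doesn't": "does not",
}
# Runs of Unicode letters/digits, optionally joined by one apostrophe.
TOKEN_RE = re.compile(r"[^\W_]+(?:'[^\W_]+)?")


def normalize_tokens(text: str) -> list[str]:
    text = text.casefold().replace("\u2019", "'")  # fold case, unify apostrophes
    tokens = []
    for raw in TOKEN_RE.findall(text):  # punctuation never matches, so it is dropped
        # Expand known contractions, then strip any remaining apostrophes.
        expanded = CONTRACTIONS.get(raw, raw)
        tokens.extend(part.replace("'", "") for part in expanded.split())
    return tokens


print(normalize_tokens("I’m locked out & can't reset my PASSWORD!!"))
# ['i', 'am', 'locked', 'out', 'can', 'not', 'reset', 'my', 'password']
```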

Language Detection and Routing

A language detection module—powered by Azure Text Analytics—returns a language code and confidence score. Supported languages proceed; unsupported queries trigger fallback flows or human review.

Preliminary Classification and Priority Assignment

Lightweight classifiers assign broad categories (billing, technical support, general inquiry) and confidence-based routing decisions. A rule engine tags priority based on customer tier, sentiment indicators or urgency keywords, influencing queue ordering and resource allocation.

Metadata Enrichment and Contextual Tagging

CRM and third-party APIs supply profile data, past interactions and open tickets. Messages are tagged with labels such as “VIP Customer” or “High Churn Risk” to inform decision-making and model selection in downstream stages.

Intent Classification and Model Coordination

An API gateway routes enriched payloads to an intent classification cluster hosted behind a load balancer. Multiple AI endpoints—optimized by domain or language—return detailed intent labels and confidence metrics. Parallel invocations enable cross-validation and conflict resolution.
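Parallel invocation with conflict resolution can be sketched with `concurrent.futures`. The three endpoint functions are hypothetical stand-ins for domain- or language-specific model services. The resolution rule is one illustrative choice among many: majority vote across endpoints, ties broken by highest mean confidence, and failed endpoints ignored.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable

Classifier = Callable[[str], tuple[str, float]]


def general_model(text: str) -> tuple[str, float]:
    return ("billing", 0.81) if "charge" in text else ("general_inquiry", 0.55)


def billing_model(text: str) -> tuple[str, float]:
    return ("billing", 0.93) if "charge" in text else ("general_inquiry", 0.40)


def flaky_model(text: str) -> tuple[str, float]:
    raise TimeoutError("endpoint unavailable")


def classify_in_parallel(text: str, endpoints: list[Classifier],
                         timeout: float = 2.0) -> dict:
    votes: dict[str, list[float]] = defaultdict(list)
    errors = 0
    with ThreadPoolExecutor(max_workers=max(1, len(endpoints))) as pool:
        futures = [pool.submit(endpoint, text) for endpoint in endpoints]
        for future in as_completed(futures, timeout=timeout):
            try:
                label, confidence = future.result()
            except Exception:
                errors += 1  # tolerate individual endpoint failures
                continue
            votes[label].append(confidence)
    if not votes:
        return {"intent": None, "confidence": 0.0, "agreement": 0, "errors": errors}
    # Majority vote first, then mean confidence as the tie-breaker.
    best = max(votes, key=lambda lbl: (len(votes[lbl]), sum(votes[lbl]) / len(votes[lbl])))
    return {"intent": best,
            "confidence": sum(votes[best]) / len(votes[best]),
            "agreement": len(votes[best]),
            "errors": errors}


result = classify_in_parallel("Why is there an extra charge?",
                              [general_model, billing_model, flaky_model])
print(result["intent"], round(result["confidence"], 2), result["errors"])
# billing 0.87 1
```

The `agreement` count can feed the same escalation logic as a confidence score: a label backed by only one of several endpoints is a natural candidate for human review.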

Error Handling and Quality Assurance

Services implement retry logic with exponential backoff for transient failures and alerting for permanent errors. A sampling mechanism diverts messages to a human-in-the-loop QA service, feeding corrections back into retraining pipelines.

Inter-Service Messaging and Logging

Asynchronous frameworks—such as Apache Kafka or AWS SQS—decouple services and support horizontal scaling. Status events flow into centralized observability platforms like Elastic Stack and AWS CloudWatch, enabling end-to-end traceability and real-time dashboards.

Handoff to Entity Extraction

Processed records—containing normalized text, tokens, language code, intent label, confidence scores, priority tags and metadata—are published to an entity extraction queue via a versioned API contract. Downstream services subscribe and perform fine-grained analysis of dates, product identifiers and references.

AI-Driven Orchestration of Customer Interactions

The orchestration layer applies AI to coordinate message intake, processing, routing and response generation across channels. By centralizing control, it delivers consistent service, automates manual tasks and maintains context continuity throughout the customer journey.

Core AI Components

- Message Processing Engine: Ingests and sanitizes inputs, annotates emotive cues and enriches with metadata.

- Intent Analysis Module: Uses transformer-based classifiers to assign intent labels and confidence scores, leveraging historical data for continuous improvement.

- Entity Extraction Service: Identifies product SKUs, dates, order numbers and sentiment indicators for personalized responses.

- Orchestration Logic Engine: Combines rule-based and AI-driven decisioning to route interactions to specialized AI agents or human teams.

- Response Generation Framework: Employs natural language generation models and knowledge base templates to draft compliant, brand-aligned replies.

- Context Management Store: Retains session variables, past interactions and agent annotations to prevent information loss.

Integration Interfaces

- API Gateway and Webhooks: Standardized REST or event-driven endpoints for inbound and outbound messages.

- Message Queueing: Brokers buffer and order high-volume traffic with at-least-once delivery semantics.

- Event Bus: Publish-subscribe architecture distributes state change events to logging, analytics and monitoring services.

- Backend Connectors: Prebuilt integrations with CRM, ticketing and knowledge management systems for real-time data retrieval.

Governance and Continuous Improvement

Operational Monitoring

Dashboards track throughput, intent detection latency, response accuracy and escalation rates. Real-time alerts flag anomalies for rapid intervention.

Quality Assurance and Compliance

Automated audits compare outputs against regulatory and brand standards. AI-powered scanners flag potential issues for human review.

Model Retraining and Feedback Loops

Labeled data from resolved cases, agent corrections and customer feedback feed into scheduled retraining cycles, ensuring model relevance in evolving contexts.

Strategic Benefits

- Consistent Customer Experience: Unified standards and brand voice across all channels.

- Scalable Automation: Modular AI components scale horizontally to meet demand.

- Accelerated Resolutions: Intelligent routing and real-time responses reduce handling times.

- Data-Driven Insights: Visibility into every workflow stage supports proactive optimization.

- Future-Ready Architecture: Decoupled, API-centric design simplifies integration of new channels and models.

Structured Output Artifacts and Downstream Handoffs

Intent detection produces structured artifacts—machine-readable records that downstream systems interpret and act upon. Standardized outputs ensure consistency, traceability and extensibility across integration points.

- Intent Labels: Ranked intents with confidence scores.

- Extracted Entities: Named entities tagged with type and text span.

- Sentiment Scores: Polarity and magnitude metrics indicating emotion or urgency.

- Language and Tone Metadata: Detected language codes and stylistic markers.

- Context Enrichment Tags: References to customer profile elements and conversation history.

- Processing Diagnostics: Model version identifiers, latency metrics and timestamps.

Schema and Format

Outputs typically adhere to JSON or Protobuf schemas, providing clear contracts between producers and consumers. Versioned schemas support backward compatibility and safe evolution. A representative record contains the following fields:

- intent: { name: "OrderStatusInquiry", confidence: 0.92 }

- entities: [ { type: "OrderID", value: "12345", start: 10, end: 15 } ]

- sentiment: { score: -0.35, magnitude: 0.78 }

- language: "en", tone: "neutral"

- context: { userId: "A789", sessionId: "S456", previousIntent: "Greeting" }

- diagnostics: { modelVersion: "v3.1.2", processingTimeMs: 85 }
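The example fields above can be assembled into a single JSON record, and entity offsets can be checked against the source text before publishing. The input sentence is hypothetical, chosen so that "12345" falls at offsets 10 to 15. Interpreting `start`/`end` as zero-based and end-exclusive (Python slice convention) is an assumption, since the schema does not state it.

```python
import json

# Hypothetical source text, chosen so that "12345" sits at offsets 10-15.
text = "My order #12345 has not arrived"

record = {
    "intent": {"name": "OrderStatusInquiry", "confidence": 0.92},
    "entities": [{"type": "OrderID", "value": "12345", "start": 10, "end": 15}],
    "sentiment": {"score": -0.35, "magnitude": 0.78},
    "language": "en",
    "tone": "neutral",
    "context": {"userId": "A789", "sessionId": "S456", "previousIntent": "Greeting"},
    "diagnostics": {"modelVersion": "v3.1.2", "processingTimeMs": 85},
}


def mismatched_spans(source: str, rec: dict) -> list:
    """Return entities whose [start, end) slice of the source differs from value."""
    return [e for e in rec["entities"] if source[e["start"]:e["end"]] != e["value"]]


payload = json.dumps(record)  # wire format for the downstream queue
```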

Dependencies and Integration Points

- Model Hosting and NLP Services: Managed APIs such as Google Cloud Natural Language API and Amazon Comprehend, or self-hosted libraries such as spaCy and Hugging Face Transformers.

- Message Brokers: Apache Kafka, RabbitMQ.

- Metadata Stores: Graph databases or key-value stores for customer profile and context data.

- API Gateways: RESTful or gRPC endpoints enforcing authentication, rate limits and contract validation.

- Monitoring Tools: Platforms like Elastic Stack and AWS CloudWatch for observability and alerting.

Downstream Handoff Mechanisms

- Synchronous API Calls: HTTP/gRPC endpoints that process requests and return routing decisions in real time.

- Asynchronous Event Publishing: Messages published to topics or queues for downstream consumers to process at their own pace.

Error Handling and Fallback Strategies

- Confidence Thresholds: Low-confidence intents trigger fallback agents or clarifying prompts.

- Entity Resolution Failures: Missing or ambiguous values invoke enrichment services or customer clarification.

- Model Unavailability: Alternate endpoints or rule-based classifiers maintain degraded service.

- Error Logging: Structured logs capture codes, stack traces and input samples for rapid diagnosis.
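Three of the fallback strategies above fit in one small function: confidence thresholds, degraded service on model unavailability, and structured error logging. The threshold value, the keyword rules and the `ModelUnavailable` exception are hypothetical. The `extra` argument of Python's `logging` module is used to attach a structured error code to the log record.

```python
import logging

logger = logging.getLogger("intent_resolution")

CONFIDENCE_THRESHOLD = 0.7  # illustrative cut-off

# Hypothetical keyword rules used only while the model is unavailable.
KEYWORD_RULES = {"refund": "BillingInquiry", "password": "AccountAccess", "order": "OrderStatusInquiry"}


class ModelUnavailable(Exception):
    """Raised by a model client when its endpoint cannot be reached."""


def rule_based_intent(text: str) -> str:
    lowered = text.lower()
    for keyword, intent in KEYWORD_RULES.items():
        if keyword in lowered:
            return intent
    return "Unknown"


def resolve_intent(text: str, model) -> dict:
    """model(text) returns (intent, confidence) or raises ModelUnavailable."""
    try:
        intent, confidence = model(text)
    except ModelUnavailable:
        # Structured context goes into `extra` so log processors can index it.
        logger.warning("model unavailable, using rules", extra={"error_code": "MODEL_UNAVAILABLE"})
        return {"intent": rule_based_intent(text), "action": "degraded_rules"}
    if confidence < CONFIDENCE_THRESHOLD:
        # Low confidence: ask a clarifying question or hand to a fallback agent.
        return {"intent": intent, "action": "clarify"}
    return {"intent": intent, "action": "proceed"}
```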

Best Practices for Reliable Handoffs

- Schema Versioning: Semantic versioning with clear migration guides.

- Contract Testing: Automated validation of input/output contracts to detect breaking changes early.

- Traceability: Embed correlation IDs in every payload for end-to-end observability.

- Security and Compliance: Enforce encryption in transit, token-based authentication and role-based access control.

- Performance SLAs: Define and monitor latency and throughput targets, with alerts for deviations.

- Comprehensive Documentation: Maintain up-to-date API guides, sample payloads and integration playbooks.
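Three of these practices can be combined in a consumer-side check: schema versioning, contract testing and correlation IDs. This is a minimal sketch under stated assumptions. The `schemaVersion` field, the required-key set and the supported major version are hypothetical. Accepting any payload with the same major version follows semantic-versioning rules, where only a major bump signals a breaking change.

```python
import uuid
from typing import Optional

# Hypothetical contract: these keys and the "schemaVersion" field are
# assumptions for illustration, not part of the guide's schema.
REQUIRED_KEYS = {"intent", "entities", "diagnostics"}
SUPPORTED_MAJOR_VERSION = 3


def parse_semver(version: str) -> tuple:
    """Parse "MAJOR.MINOR.PATCH" (an optional leading "v" is allowed)."""
    major, minor, patch = (int(part) for part in version.lstrip("v").split("."))
    return major, minor, patch


def with_correlation_id(payload: dict, correlation_id: Optional[str] = None) -> dict:
    """Return a copy of payload carrying a correlation ID (generated if absent)."""
    return {**payload, "correlationId": correlation_id or str(uuid.uuid4())}


def contract_violations(payload: dict) -> list:
    """Consumer-side contract test; an empty list means the payload is accepted."""
    problems = []
    missing = REQUIRED_KEYS - set(payload)
    if missing:
        problems.append(f"missing keys: {sorted(missing)}")
    # Under semantic versioning only a MAJOR bump signals a breaking change.
    major, _, _ = parse_semver(payload.get("schemaVersion", "0.0.0"))
    if major != SUPPORTED_MAJOR_VERSION:
        problems.append(f"unsupported schema major version {major}")
    if "correlationId" not in payload:
        problems.append("missing correlationId")
    return problems
```

Running `contract_violations` in CI against sample payloads from each producer is one way to catch breaking changes before deployment.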

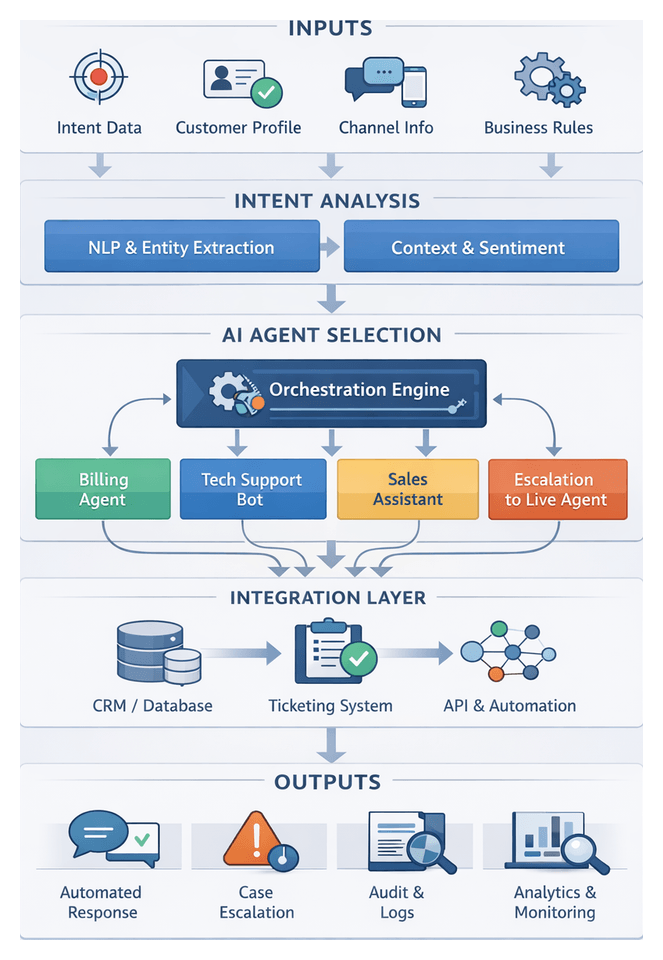

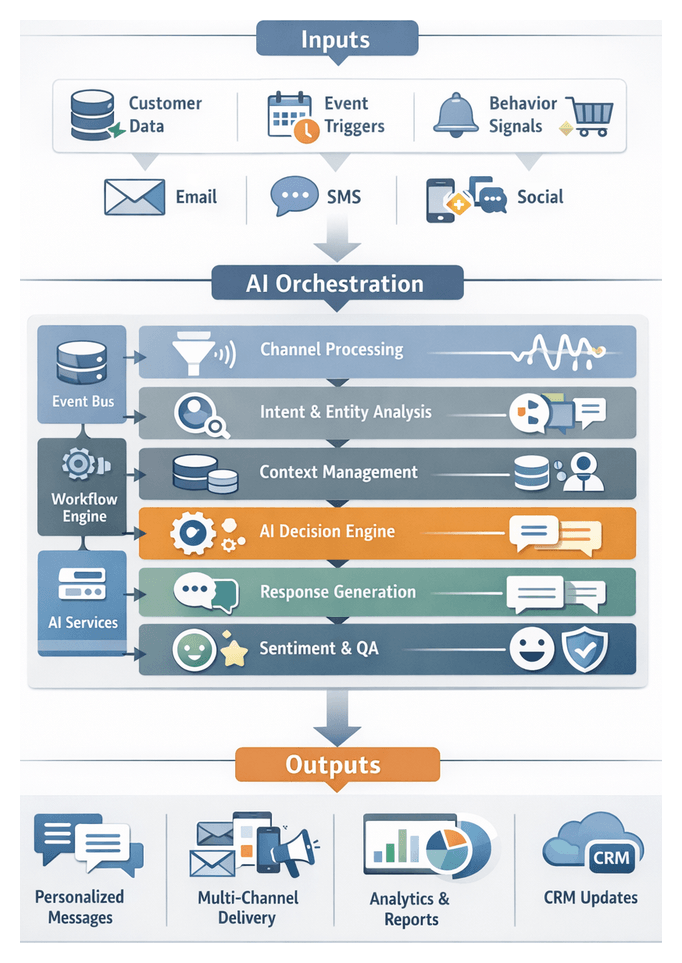

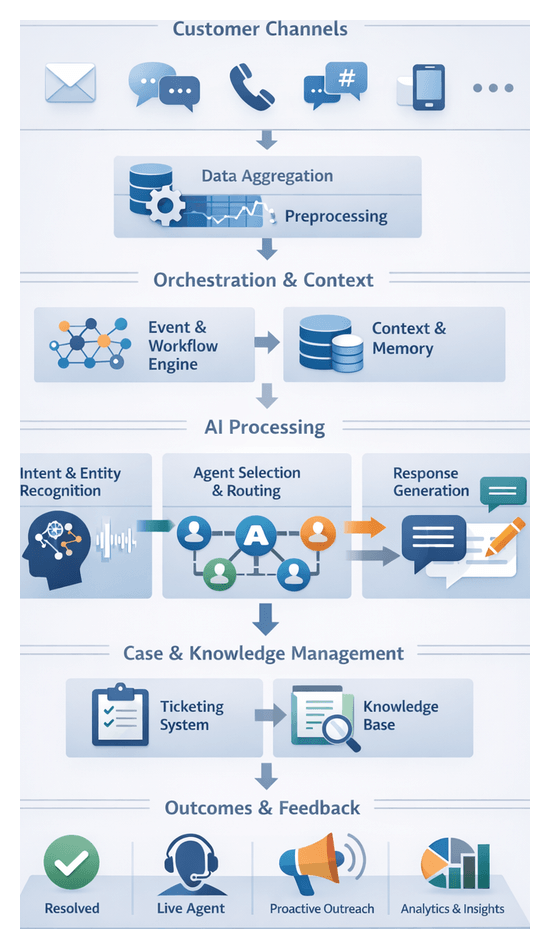

Chapter 3: AI Agent Selection and Orchestration

Purpose and Context of AI Agent Selection

Unifying customer interactions across email, chat, voice and social channels requires a modular orchestration layer that moves from broad intent detection to precise resolution. The AI Agent Selection stage sits at the heart of this pipeline, matching each inquiry to the optimal virtual assistant, chatbot or specialized AI module. By interpreting intent confidence, customer profile data and business rules, this decision hub minimizes manual handoffs, speeds responses and ensures consistent service quality while maintaining compliance with policy and regulatory boundaries.

Traditional monolithic chatbots often trade off depth for breadth, leading to escalations and unsatisfactory experiences. A modular approach divides responsibilities among purpose-built agents—for billing inquiries, technical support, product recommendations or account management—coordinated by a central orchestration engine. This engine evaluates metadata from upstream intent analysis, applies rule-based and machine learning criteria, and invokes downstream services such as ticketing systems or live agent dashboards. The result is a dynamic, context-aware workflow that aligns the right intelligence with each customer request.

Required Inputs and Prerequisites

Accurate agent routing depends on a comprehensive, schema-driven set of inputs delivered via APIs or message queues. Key data elements include:

- Intent labels and confidence scores from NLP classifiers

- Extracted entities (order numbers, dates, product IDs)

- Conversation context (dialogue history, sentiment trends)

- Customer profile attributes (tier, lifetime value, language, geography)

- Channel metadata (source application, timestamps, device context)

- Service level agreements and business rules

- Real-time sentiment scores and mood indicators

- Knowledge base references for relevant articles or templates

- System health and availability metrics for AI modules

Before invoking selection logic, the orchestration layer must ensure:

- Normalization of inbound data into a unified record

- High-fidelity intent classification with confidence thresholds

- Metadata enrichment via CRM connectors, loyalty databases and compliance registries

- Availability of agent modules with up-to-date registry information

- Policy and compliance checks for regulated data

- Configured fallback and escalation paths

- Loaded SLAs and routing rules

- Enabled monitoring and logging hooks

Routing Workflow and Decision Mechanics

Once inputs are validated, the orchestration engine coordinates with a business rules management system (BRMS) and machine learning classifiers to determine agent assignment. It applies a hierarchical rules workflow:

- Intent confidence thresholds: High scores proceed to assignment; low scores trigger fallback classification or human review.

- Customer priority segmentation: Premium-tier or high-value customers route to specialized agents or live support.

- Complexity estimation: Entity counts, conversation length and sentiment volatility guide whether a simple FAQ bot or a transactional assistant is needed.

- Channel-specific rules: Voice, regulated messaging or accessibility channels may enforce dedicated agents for compliance and security.

- Real-time load balancing: Queue lengths and processing latencies across modules are monitored to evenly distribute workload.

- Fallback and escalation conditions: Retries, health check failures or low post-response confidence trigger rerouting to general assistants or live agents.
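The hierarchical workflow above can be sketched as a chain of rules followed by health filtering and least-loaded selection. All agent names, thresholds and channel names are hypothetical. The sketch also places the channel-specific compliance rule ahead of customer tier, so that regulated channels always take precedence. That ordering is an assumption, not taken from the list above.

```python
from dataclasses import dataclass, field

CONFIDENCE_THRESHOLD = 0.6                       # illustrative
REGULATED_CHANNELS = {"voice", "regulated_sms"}  # hypothetical channel names


@dataclass
class RoutingInput:
    confidence: float
    customer_tier: str
    entity_count: int
    turns: int
    channel: str
    healthy_agents: set = field(default_factory=set)
    queue_lengths: dict = field(default_factory=dict)


def candidate_agents(r: RoutingInput) -> list:
    """Rules evaluated in order; the first matching rule defines the pool."""
    if r.channel in REGULATED_CHANNELS:
        return ["compliance_agent"]              # channel-specific rule
    if r.customer_tier == "premium":
        return ["premium_specialist"]            # customer priority segmentation
    if r.entity_count > 2 or r.turns > 5:
        return ["transactional_assistant_a", "transactional_assistant_b"]  # complexity
    return ["faq_bot"]


def select_agent(r: RoutingInput) -> str:
    if r.confidence < CONFIDENCE_THRESHOLD:
        return "fallback_classifier"             # intent confidence threshold
    # Health checks: skip modules that are currently unavailable.
    pool = [agent for agent in candidate_agents(r) if agent in r.healthy_agents]
    if not pool:
        return "live_agent"                      # escalation path
    # Real-time load balancing: the shortest queue wins.
    return min(pool, key=lambda agent: r.queue_lengths.get(agent, 0))
```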

For complex inquiries, the engine may invoke parallel calls to multiple agents—for example, a transactional assistant and a returns bot—then merge their outputs into a composite response. Retry logic, exponential backoff and circuit breaker patterns maintain resilience under load or external service degradation.
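The circuit breaker pattern mentioned here can be illustrated with a minimal closed, open and half-open state machine wrapping calls to an agent module. This is a generic sketch of the pattern, not a specific library's API. The thresholds are illustrative. The clock is injectable for testing, and the class is not thread-safe.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised instead of calling a downstream agent while the circuit is open."""


class CircuitBreaker:
    """Minimal closed / open / half-open circuit breaker (not thread-safe)."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half_open"  # allow a trial call through
        return "open"

    def call(self, func, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("agent temporarily disabled; use fallback route")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call re-opens immediately; otherwise open at threshold.
            if self.state == "half_open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

While the circuit is open, the orchestration engine can catch `CircuitOpenError` and reroute immediately instead of waiting on a degraded module.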

Interaction patterns include synchronous API calls for low-latency tasks and asynchronous messaging via Apache Kafka or RabbitMQ for event-driven processing. Webhook callbacks and streaming ingestion support long-running sessions and continuous context updates.

Integration and Handoff Interfaces

After selecting the appropriate AI agent, the orchestration layer constructs a handoff package containing:

- Structured interaction record (transcript and data payload)

- Routing metadata (decision rationale, priority flags, SLA deadlines)

- Session context token for tracking and context persistence

- API endpoint references for the selected agent module

- Monitoring hooks for status updates and error notifications

This standardized format simplifies integration with specialized AI modules, third-party agent frameworks and human agent platforms. Common external systems include CRM platforms (such as Salesforce Service Cloud), order management APIs, ticketing tools and notification gateways. Compensating actions roll back partial updates if downstream failures occur.
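Compensating actions are commonly organized as a saga: each step carries an undo action, and a failure triggers the undos of completed steps in reverse order. The step names in this sketch (CRM update, order reservation, ticket creation) are hypothetical, and the actions are plain callables rather than real API clients.

```python
from typing import Callable, List, Tuple

# (step name, action, compensating action)
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_with_compensation(steps: List[Step]) -> List[str]:
    """Run steps in order; if one fails, undo completed steps in reverse order."""
    completed: List[Tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception:
            for _, undo in reversed(completed):
                undo()  # a production saga would also log and retry failed undos
            raise
        completed.append((name, compensate))
    return [name for name, _ in completed]
```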

Outputs and Transition to Automated Response

The assignment outputs form a self-contained artifact for downstream components. Key elements are:

- Response payload: Draft text or structured fields from the AI agent

- Agent confidence score: Numerical certainty metric guiding fallback or escalation

- Intent and entity metadata: Refined labels and data points for context

- Routing directives: Next steps such as automated reply or human escalation

- Conversation context snapshot: Serialized dialogue state and session variables

- Audit records: Timestamps, agent IDs and decision rationale

- Escalation flags: Indicators for manual intervention

- Next-step recommendations: Knowledge base articles or suggested actions that speed resolution

Transition mechanisms include:

- RESTful APIs: Secure HTTP endpoints for low-latency scenarios

- Message queues and pub/sub: High-throughput delivery via Amazon SQS, Apache Kafka or RabbitMQ

- Callback webhooks: Signed payloads with retry logic for third-party integrations

- Data stream bridges: Mirroring outputs to Kafka topics for analytics and monitoring

- File-based drops: NDJSON exports to shared storage (for example, Amazon S3) for batch workflows

Standard headers—correlation IDs, timestamps and version identifiers—and schema enforcement via JSON Schema or Avro contracts ensure traceability and prevent drift.

Operational Monitoring and Governance

A robust observability framework captures logs and metrics across the orchestration workflow. Key practices include:

- Latency tracking: Measuring end-to-end processing time and alerting on threshold breaches

- Error and exception logging: Categorizing failures—serialization errors, timeouts, schema mismatches—for root cause analysis

- Confidence distribution analysis: Monitoring agent scores to detect model drift

- Handoff success rate: Tracking downstream ingestion rates and capacity issues

- Duplicate and sequence checks: Ensuring idempotency and correct ordering via tokens and sequence numbers

- Fallback and escalation trends: Identifying coverage gaps where AI needs enhancement

- Audit trail integrity: Reconciling logs against message bus archives for compliance verification
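The duplicate and sequence checks can be sketched as an idempotent consumer. It drops redelivered message IDs and records sequence gaps or late arrivals per conversation for monitoring. The field names `messageId`, `conversationId` and `sequence` are assumptions. Keeping seen IDs in an in-memory set is a simplification; a real deployment would likely use a store with expiry.

```python
from typing import Dict, List, Set, Tuple


class IdempotentConsumer:
    """Drops duplicate deliveries and records sequence anomalies per conversation.

    seen_ids grows without bound here; a real deployment would keep IDs in a
    store with a time-to-live (an assumption, not specified by the guide).
    """

    def __init__(self) -> None:
        self.seen_ids: Set[str] = set()
        self.last_sequence: Dict[str, int] = {}
        self.anomalies: List[Tuple[str, int, int]] = []  # (conversation, expected, got)

    def accept(self, message: dict) -> bool:
        if message["messageId"] in self.seen_ids:
            return False  # duplicate from at-least-once delivery
        conversation = message["conversationId"]
        sequence = message["sequence"]
        last = self.last_sequence.get(conversation, 0)
        if sequence != last + 1:
            self.anomalies.append((conversation, last + 1, sequence))
        self.seen_ids.add(message["messageId"])
        self.last_sequence[conversation] = max(sequence, last)
        return True
```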

Automated alerts from observability tools—such as Prometheus and the ELK stack—enable rapid response to anomalies. Change management, performance monitoring, risk assessments and compliance auditing govern updates to selection rules, agent configurations and threshold adjustments.

Advanced Orchestration and Continuous Improvement

Beyond core routing, advanced capabilities drive strategic optimization:

- Multi-agent collaboration: Synchronous context sharing when multiple specialists contribute to a single inquiry

- Adaptive learning feeds: Retraining triggers based on selection outcomes and customer feedback

- Business impact scoring: Tagging assignments with KPIs to measure effects on resolution rates and satisfaction

- Governance and explainability: Applying frameworks to interpret selection decisions for compliance and ethical review

- Semantic knowledge retrieval: Using vector embeddings and tools like Elasticsearch to surface relevant articles

- Template and NLG orchestration: Filling response fragments with dynamic data via engines such as OpenAI GPT models or Microsoft Turing NLG

- Scalable architecture: Containerization and Kubernetes orchestration for auto-scaling, circuit breakers for resilience and plugin frameworks for emerging AI models

By integrating these enhancements, the AI Agent Selection stage evolves into a strategic lever for continuous improvement—refining immediate resolution paths and fueling long-term advancements in the entire support ecosystem.

Chapter 4: Automated Response and Resolution

Defining Response Generation Goals and Inputs

The automated response stage transforms structured input from intent detection, entity extraction and session context into coherent, personalized replies that align with brand guidelines and service level expectations. Clear definition of objectives and requisite inputs ensures consistency, accuracy and scalability across channels.

Strategic Importance of Automated Response

- Reduced resolution time through instant reply generation, improving satisfaction and loyalty.

- Consistent brand voice and compliance via templating rules and dynamic filters.

- Scalability to absorb peak volumes without proportional headcount increases.

- Data-driven performance measurement enabling continuous model tuning.

Key Goals for Response Generation

- Accuracy: Map detected intent and entities to knowledge base entries or business logic flows.

- Relevance: Tailor responses using customer-specific context such as purchase history or support tier.

- Coherence: Maintain conversational continuity across multiple turns.

- Brand Alignment: Enforce tone, vocabulary and regulatory constraints.

- Latency: Achieve sub-second response formulation for real-time interactions.

- Escalation Readiness: Trigger human handoff when automated resolution is insufficient.

Essential Input Elements

- Intent labels and confidence scores from intent detection modules.

- Extracted entities—account numbers, product IDs, dates—identified by NLP models.

- Session context including historical transcripts and channel metadata.

- Customer profile data from CRM systems: demographics, SLA status, loyalty tier.

- Knowledge base references retrieved via semantic search tools such as the OpenAI ChatGPT Retrieval Plugin or Dialogflow knowledge connectors.

- Business rules from policy engines governing discounts, compliance and data retention.

- Template repositories containing approved phrasing, placeholders and fallback options.

Prerequisites and System Conditions

- Completion of upstream processing: channel normalization, sanitization, intent and entity analysis.

- API connectivity to CRM, knowledge management platforms and policy repositories.

- Template version control ensuring synchronized distribution across runtime environments.

- Active compliance filters for data privacy and export controls.

- Defined performance benchmarks and monitoring hooks for SLA adherence.

- Configured fallback protocols and human handoff triggers for low-confidence scenarios.

AI platforms such as Microsoft Azure Cognitive Services Language Studio, IBM Watson Assistant and OpenAI GPT-4 can help deliver timely, brand-aligned natural language replies that meet enterprise objectives.

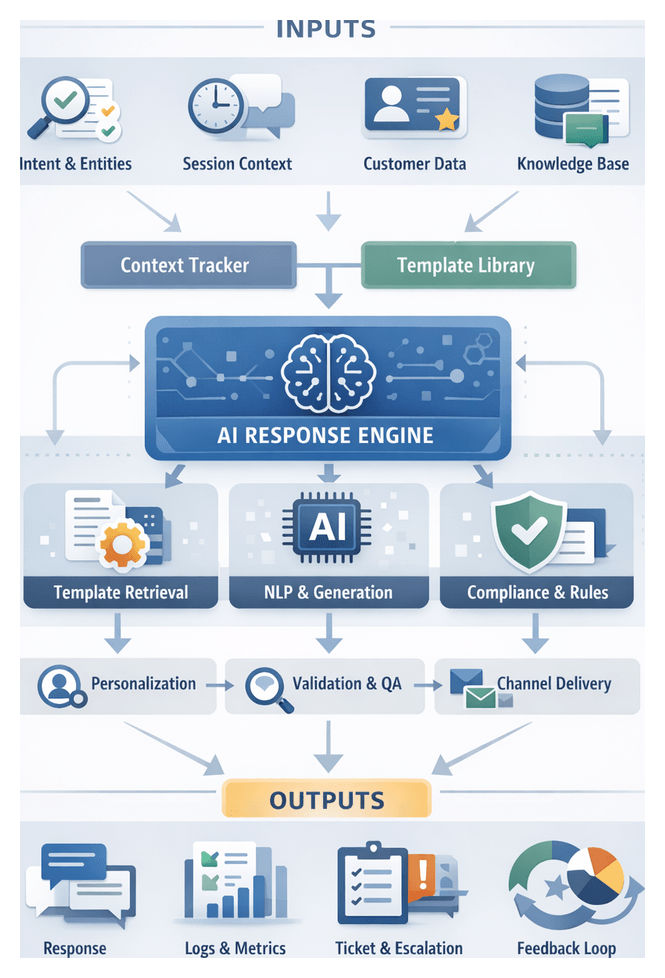

Overview of the Dynamic Response Workflow

The dynamic response workflow orchestrates template retrieval, NLG, compliance checks and multi-channel delivery to transform enriched inquiries into customer-facing messages. Centralized orchestration ensures consistent messaging, rapid turnaround and adaptive handling of edge cases.

Key Components and Actors

- AI Response Engine (for example, OpenAI GPT-4) for generative text and contextual coherence.

- Template Management Service storing response frameworks with placeholders.

- Context Tracker maintaining session history, user profile and dynamic variables.

- Compliance and Brand Consistency Module enforcing regulatory and style guidelines.

- Delivery Channels (email, SMS, chat, voice) interfacing with downstream messaging platforms.

- Ticketing and Escalation System (for example, Zendesk or ServiceNow).

- Logging and Monitoring Tools (for example, Datadog, Splunk).

Step-by-Step Workflow

- Receive Structured Inquiry: Orchestration layer accepts normalized text, intent label, entities, session context and customer attributes.

- Template Lookup: Query Template Management Service by intent and channel, retrieving base template with placeholders.

- Variable Resolution: Populate placeholders with real-time data—account balance, recent orders, loyalty status.

- Natural Language Generation: AI Response Engine refines or expands the template. Conversational platforms such as Dialogflow CX or Rasa can handle specialized, channel-specific dialogues.

- Compliance and Brand Enforcement: Draft reply passed to compliance module for prohibited term filtering and style consistency.

- Quality Validation: AI-driven validators assess grammar, sentiment alignment and factual consistency, producing a confidence score.

- Dispatch Preparation: Format reply for target channel—plain text, HTML email or SSML—and append tracking metadata.

- Delivery via Channel Connectors: Transmit reply through platform APIs and subscribe to delivery events.

- Session Update and Logging: Record delivered message, outcome data and follow-up triggers in the monitoring tool.

- Escalation Checkpoint: Trigger human handoff if confidence falls below threshold or negative sentiment is detected.

Integration Patterns

- Asynchronous Messaging Queues (for example, Kafka or AWS SQS) decouple services under load.

- API Gateways route external callbacks into the workflow securely.

- Event-Driven Triggers adjust routing and parameters on template updates or model retraining.

- State Management Stores (Redis or DynamoDB) maintain ephemeral session state.

- Monitoring Dashboards aggregate logs and metrics for real-time visibility.

- Webhooks synchronize CRM or billing updates upon resolution.

Template Selection and Personalization

- Intent and Confidence: The detected intent selects a specialized template when its confidence score is high enough.

- Customer Segment determines phrasing and offerings.

- Channel Constraints enforce character limits and media support.

- Contextual Flags invoke priority templates for urgent cases.

Placeholders such as {customer_name}, {order_id} and {next_steps_link} are populated via CRM APIs, supporting data accuracy and privacy compliance.
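Placeholder resolution and the "all placeholders resolved" consistency check can be sketched with a regular expression. The sample values in the usage line are hypothetical; in practice they would come from CRM lookups. A regex is used instead of `str.format` because `str.format` raises `KeyError` on the first missing key, while this version reports every unresolved placeholder so the reply can be blocked or routed for review.

```python
import re
from typing import Dict, List, Tuple

# Placeholders look like {customer_name}: lowercase letters, digits, underscores.
PLACEHOLDER = re.compile(r"\{([a-z0-9_]+)\}")


def render_template(template: str, values: Dict[str, object]) -> Tuple[str, List[str]]:
    """Fill known placeholders and report any that remain unresolved."""
    def substitute(match) -> str:
        key = match.group(1)
        return str(values[key]) if key in values else match.group(0)

    rendered = PLACEHOLDER.sub(substitute, template)
    # Consistency check: the reply must not leave any placeholder markup behind.
    return rendered, PLACEHOLDER.findall(rendered)
```

For example, rendering `"Hi {customer_name}, order {order_id} ships soon."` with only `customer_name` supplied returns the partially filled text plus `["order_id"]`, which should stop dispatch.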

Content Assembly and Validation

- Natural Language Refinement rewrites text to match brand voice and readability standards.

- Consistency Check verifies all placeholders are resolved and no markup remains.

- Sentiment Alignment scores tone against desired profiles for emotional resonance.

Compliance and Brand Consistency

- Regulatory Rule Matching ensures that mandatory disclosures, such as GDPR notices or financial risk warnings, are included.

- Prohibited Content Filtering redacts banned terms automatically.

- Brand Glossary Enforcement validates terminology and trademark usage.

Multi-Channel Delivery and Logging

- Protocol Adaptation converts content to REST calls, SMTP or WebSocket pushes.

- Message Sequencing respects timing constraints to avoid spamming.

- Delivery Receipts capture sent, delivered and read events via webhooks or polling.

- Error Handling and Retry Logic implement backoff and fallback to human agents on persistent failures.

Escalation and Ticketing Handoffs

- Low Confidence Score or negative sentiment triggers escalation.

- Repeated unresolved inquiries invoke human intervention.

Escalations package conversation history and metadata for ticket creation via APIs to ServiceNow or Zendesk, minimizing agent ramp-up time.
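The escalation triggers and the handoff package can be sketched together. The thresholds, sentiment scale and package field names are hypothetical. The returned dictionary stands in for the body that would be sent to a ticketing API; no ServiceNow or Zendesk call is made here.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Illustrative thresholds; each deployment would tune its own.
CONFIDENCE_FLOOR = 0.65
SENTIMENT_FLOOR = -0.5      # assumed scale: -1.0 to 1.0
MAX_UNRESOLVED_ATTEMPTS = 2


@dataclass
class ConversationState:
    conversation_id: str
    intent: str
    response_confidence: float
    sentiment: float
    unresolved_attempts: int
    transcript: List[str] = field(default_factory=list)


def escalation_reason(state: ConversationState) -> Optional[str]:
    if state.response_confidence < CONFIDENCE_FLOOR:
        return "low_confidence"
    if state.sentiment <= SENTIMENT_FLOOR:
        return "negative_sentiment"
    if state.unresolved_attempts >= MAX_UNRESOLVED_ATTEMPTS:
        return "repeated_unresolved"
    return None


def escalation_package(state: ConversationState) -> Optional[dict]:
    """Bundle history and metadata for a ticketing API, or None if no escalation."""
    reason = escalation_reason(state)
    if reason is None:
        return None
    return {
        "conversationId": state.conversation_id,
        "reason": reason,
        "intent": state.intent,
        "lastConfidence": state.response_confidence,
        "sentiment": state.sentiment,
        "transcript": list(state.transcript),
    }
```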

Monitoring, Logging and Feedback Integration

- Real-Time Metrics on throughput, latency and errors displayed on dashboards.

- Session Record Logs archived for audit and retraining.

- Customer Feedback Loop via surveys or ratings feeding model evaluation.

- Continuous Improvement Triggers launch retraining on identified failure patterns.

AI Response Models and Context Management

Generative AI engines and context management systems form the backbone of automated replies, combining language models with structured memory to deliver accurate, coherent and personalized interactions across channels.

Generative Response Engines

- OpenAI GPT-4 – state-of-the-art transformer model for nuanced language generation.

- Azure OpenAI Service – GPT models in a scalable, secure cloud environment.

- Dialogflow CX – combines rule-based flows with NLU for complex dialogues.

- IBM Watson Assistant – intent classification and response generation with hybrid deployment options.

Generation strategies include:

- Template-based NLG ensuring brand consistency and compliance.

- Retrieval-augmented generation combining knowledge base retrieval with generative models.

- Fine-tuned domain models trained on proprietary data for industry-specific terminology.

- Hybrid logic orchestrating sentiment-aware or compliance sub-models before final assembly.

Context Management Systems

- Rasa – open-source conversational AI framework with a built-in tracker store and support for custom actions.

- Amazon Lex – session management and slot resolution, integrated with other AWS services.

- Redis and DynamoDB for scalable, low-latency dialogue state storage.

Integration Architecture

- Conversation event ingestion with enriched metadata arrives via the orchestration layer.

- Context retrieval fetches dialogue state and session memory by conversation ID.

- Model invocation supplies user message, context window and knowledge snippets to the response engine.

- Response synthesis returns candidate replies with confidence scores.

- Response validation via policy engines or classifiers checks for compliance and safety.

- Context update commits reply and state changes to the context store.

- Delivery forwards the final response to the output channel with tracking metadata.
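The integration steps above can be sketched as a single turn handler. A plain dictionary stands in for the context store (Redis or DynamoDB in practice). The retriever, generator and validator are caller-supplied callables, so no particular model API is assumed. The six-turn context window and the "escalate" status for rejected replies are illustrative choices.

```python
from typing import Callable, Dict, List, Tuple

Candidate = Tuple[str, float]  # (reply text, confidence)


def handle_turn(
    event: dict,
    store: Dict[str, dict],
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, list, List[str]], List[Candidate]],
    validate: Callable[[str], bool],
    window: int = 6,
) -> dict:
    conversation_id = event["conversationId"]
    # Context retrieval by conversation ID (new conversations start empty).
    context = store.setdefault(conversation_id, {"history": []})
    recent = context["history"][-window:]
    # Model invocation with message, context window and knowledge snippets;
    # response synthesis keeps the highest-confidence candidate.
    candidates = generate(event["text"], recent, retrieve(event["text"]))
    reply, confidence = max(candidates, key=lambda c: c[1])
    # Response validation (policy or safety check supplied by the caller).
    approved = validate(reply)
    # Context update: always record the user turn, the reply only if approved.
    context["history"].append(("user", event["text"]))
    if approved:
        context["history"].append(("assistant", reply))
    # Delivery envelope with tracking metadata.
    return {
        "conversationId": conversation_id,
        "channel": event.get("channel"),
        "content": reply if approved else None,
        "confidence": confidence,
        "status": "deliver" if approved else "escalate",
    }
```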

Role of Knowledge Systems

- Vector search indexes such as Pinecone for semantic retrieval.

- Elasticsearch for keyword-based lookups.

- Knowledge graph services for entity-centric relationships.

- Content management systems storing versioned knowledge base articles.
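Semantic retrieval from a vector index ranks knowledge base articles by similarity between a query embedding and article embeddings. This sketch uses exact cosine similarity over tiny hand-written vectors with hypothetical article IDs. A managed vector index such as Pinecone would typically use approximate nearest-neighbour search over embeddings produced by a model.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k_articles(query: Sequence[float], index: Dict[str, Sequence[float]], k: int = 3) -> List[Tuple[str, float]]:
    """Exhaustive (exact) nearest-neighbour search over article embeddings."""
    scored = [(article_id, cosine_similarity(query, vector)) for article_id, vector in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```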

Monitoring and Continuous Improvement

- Response accuracy metrics via automated and human evaluation.

- Context drift detection identifying misaligned state.

- Throughput and latency monitoring to enforce performance SLAs.

- User satisfaction scoring from surveys or sentiment analysis.

- Model retraining triggers on error rates or vocabulary shifts.

Delivered Outputs and Service Continuity Handoffs

At the completion of automated response processing, the system emits structured deliverables that record AI decisions, update session context and trigger downstream actions such as ticketing, escalation and analytics.

Key Deliverables

- Response Payload: Formatted reply including text, media links and actionable suggestions.

- Updated Session Context: Conversation variables, intent and entity states, and continuity flags.

- Escalation Indicators: Markers for unresolved issues requiring human attention.

- Ticket Trigger Events: Data packets initiating ticket creation in workflow systems.

- Audit and Logging Records: Timestamps, confidence scores, decision rationale and delivered content.

- Feedback Prompts: Survey or rating requests queued for presentation.

Output Schema Elements

- messageId – Unique identifier for interaction traceability.

- timestamp – ISO-8601 finalization time of the response.

- content – Text or rich media generated by the NLG model.

- channelMetadata – Platform identifier, session token and locale.

- contextBundle – Encapsulated state including intentLabel, entities and sentiment.

- resolutionStatus – Enumeration of success, partial resolution or escalation.

- nextActions – Array of recommended follow-up steps or knowledge links.

Standardized schemas enable downstream systems to parse and process interaction records without custom adapters.
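The output schema elements can be modelled as a typed record that serializes to JSON. Field names mirror the list above and deliberately keep its camelCase. The enumeration values `success`, `partial` and `escalated` are hypothetical serializations of the three resolution states. Generating `messageId` as a UUID4 and `timestamp` as a UTC ISO-8601 string are also assumptions.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from enum import Enum


class ResolutionStatus(str, Enum):
    SUCCESS = "success"
    PARTIAL = "partial"
    ESCALATED = "escalated"


@dataclass
class ResponseRecord:
    content: str
    channelMetadata: dict   # platform identifier, session token, locale
    contextBundle: dict     # intentLabel, entities, sentiment
    resolutionStatus: ResolutionStatus
    nextActions: list = field(default_factory=list)
    messageId: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        data = asdict(self)
        # Serialize the enum by its string value for downstream parsers.
        data["resolutionStatus"] = self.resolutionStatus.value
        return json.dumps(data)
```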

Session Context Enrichment

Metadata fields such as topicHistory, customerProfileUpdates and session duration markers are persisted in high-performance stores to support seamless continuity for AI modules or live agents, reducing customer effort and avoiding repetitive queries.

Dependency on Intent and Entity Accuracy

The IntentConfidence and fallbackTrigger metadata fields carry upstream detection results forward, so downstream consumers can calibrate how far to trust each AI decision and apply escalation rules transparently.

Logging and Compliance Records

- DecisionLog: Sequence of model invocations, confidence scores and template selections.

- ContentArchive: Immutable storage references for delivered messages.

- ComplianceTags: Flags for sensitive content, PII handling and retention policies.

These artifacts feed SIEM solutions and compliance dashboards without impacting customer-facing performance.

Ticketing and Workflow Handoffs

- TicketPayload aggregates subject, priority, category and context for ticket creation.

- API calls to ServiceNow, Zendesk or Jira Service Management.

- Assignment rules based on team availability, SLA and skill profiles.

- Status synchronization ensures consistent views across AI orchestrator and human teams.

Live Agent Escalation Interfaces

- Context Snapshot summarizing intent history and prior AI responses for agent dashboards.

- Transcript Transfer to unified agent desktops preserving full conversational context.

- Suggested Knowledge Links curated by AI to accelerate resolution.

- Handoff Notification alerts agents via chat or email with deep-link access to the session.

Knowledge Base Feedback Loop

- UnmatchedQuery Reports logging inputs with no suitable template or article.

- CustomerRating Data linking satisfaction scores to response variants.

- Content Improvement Flags identifying opportunities to enrich knowledge articles.

Notifications, Alerts and Monitoring Outputs

- PerformanceMetrics—response time, API latency, model throughput—streamed to Prometheus or Datadog.

- ErrorAlerts for failed handoffs, ticket creation errors or inference exceptions.

- SLACompliance Flags triggering automated escalations as deadlines approach.

Data Persistence and Storage Dependencies

Session contexts use in-memory stores like Redis for low-latency access, while historical records and logs reside in document databases or data lakes with encryption-at-rest and role-based access controls to meet security requirements.

Analytics and Continuous Improvement

Structured records of intents and resolution outcomes feed data warehouses and AI retraining pipelines. Programmatic tagging of resolved, escalated or content-deficient cases generates labeled datasets that drive iterative optimization of NLU models, response generators and routing logic, ensuring the solution evolves in line with customer needs and business objectives.

Chapter 5: Ticketing and Workflow Management Integration

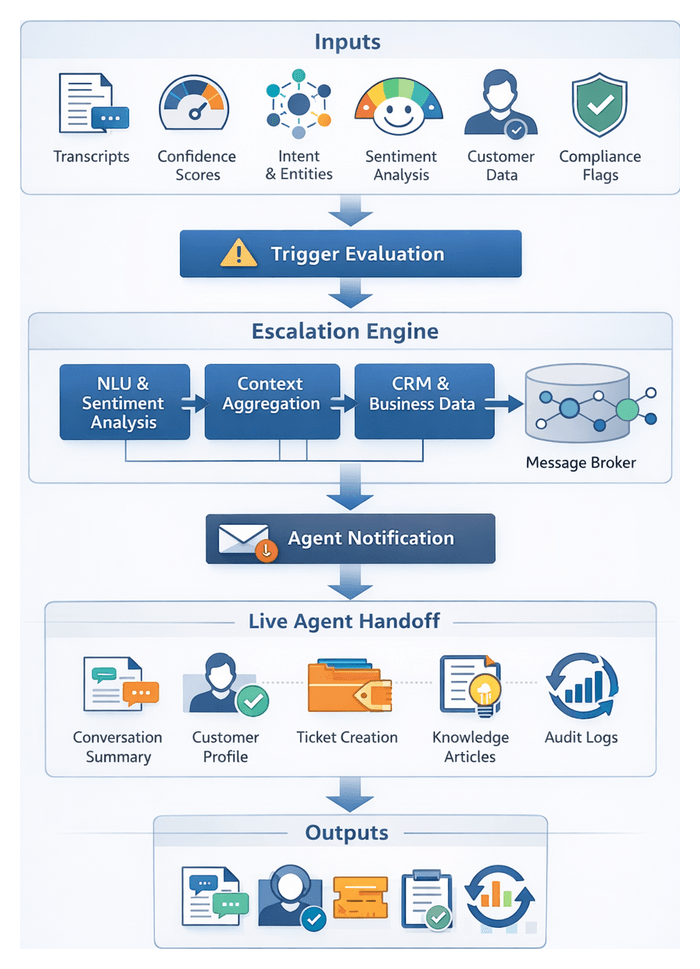

Purpose and Prerequisites for Ticket Creation

In unified customer interaction workflows, generating a support ticket marks the transition from automated resolution to structured case management. Ticket creation ensures transparent tracking, standardized handling, and consistent escalation according to service level agreements. It preserves the conversational history, captures metadata, and provides an auditable record that supports performance measurement, resource allocation, and compliance across channels.

Operational Context

Multiple communication channels—email, web chat, social media, voice bots—feed into a centralized orchestration layer. AI models address routine inquiries, resolve FAQs, and recommend knowledge articles. When an issue remains unresolved or meets escalation criteria, the orchestration engine initiates ticket creation. At that moment, the system must gather and normalize all relevant data to ensure effective handoff to human agents or specialized teams.

Key Prerequisites

- Unified Message Record: A consolidated object containing raw transcripts, intent labels, extracted entities, and sentiment scores to serve as the primary data payload.

- Identity Resolution: A persistent customer identifier, enriched via systems such as Salesforce or an internal CRM, linking the inquiry to account profiles and SLA tiers.

- Access Controls: Authorized API credentials and service accounts with role-based permissions for ticket operations.

- Taxonomy and Field Mapping: A shared data model defining categories, priorities, impacts, and custom tags to align AI classifications with routing rules.

- Service Catalog Integration: References to predefined services or offerings to automate team assignments, SLA clocks, and entitlement checks.

- Escalation Policies: Business logic specifying triggers—such as low intent confidence, repeated messages, negative sentiment, or timeout—for ticket conversion.

Required Ticket Inputs

- Customer Identifier and Contact Details: Unique customer ID, email address, phone number, or social handle, plus subaccount or organization ID in multi-tenant environments.

- Channel and Timestamp Metadata: Originating channel and normalized timestamps to ensure accurate SLA countdowns.

- Intent Labels and Confidence Scores: Primary and secondary intent classifications from NLP models, with confidence metrics to inform human review triggers.

- Extracted Entities: Structured data points—product IDs, order numbers, dates, error codes—identified via entity recognition services.

- Priority and Impact Assessment: Priority level derived from customer tier, sentiment polarity, business impact, or regulatory deadlines.

- Category and Issue Type: Taxonomy code mapping to support queues, such as “technical support,” “billing inquiry,” or “feature enhancement.”

- Subject or Summary: Auto-generated concise summary extracted from key phrases or user-provided headlines.

- Detailed Description and History: Full transcript or email thread, including attachments and screenshots, preserving chronological order.

- Sentiment and Urgency Indicators: Quantitative sentiment scores and urgency flags from real-time analysis.

- Attachments and Supporting Documents: Links or binary payloads for screenshots, logs, and error dumps, with secure storage references.

- Service Entitlement and SLA Parameters: Contractual service level definitions, response targets, and resolution deadlines.

- Correlation Identifiers: Request or trace IDs linking the ticket to preceding automated workflows or external system events.
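The required inputs above can be captured as a typed record that rejects incomplete payloads before a ticket is committed. This is a sketch under stated assumptions: the field names, the -1.0 to 1.0 sentiment scale, and the "P3" default priority are illustrative and not taken from any specific ticketing platform. Timestamps are normalized to UTC so that SLA countdowns compare correctly across channels.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class TicketInput:
    customer_id: str                  # unique ID from identity resolution
    channel: str                      # originating channel, e.g. "email" or "chat"
    received_at: datetime             # must be timezone-aware
    intent: str
    intent_confidence: float          # 0.0 .. 1.0
    category_code: str                # taxonomy code used for queue mapping
    summary: str
    transcript: list[str]             # messages in chronological order
    entities: dict[str, str] = field(default_factory=dict)
    sentiment: float = 0.0            # assumed scale: -1.0 (negative) .. 1.0 (positive)
    priority: str = "P3"
    sla_response_minutes: Optional[int] = None
    correlation_id: Optional[str] = None
    attachments: list[str] = field(default_factory=list)  # storage references, not binaries

    def __post_init__(self) -> None:
        if not self.customer_id:
            raise ValueError("customer_id is required")
        if not 0.0 <= self.intent_confidence <= 1.0:
            raise ValueError("intent_confidence must be between 0 and 1")
        if self.received_at.tzinfo is None:
            raise ValueError("received_at must be timezone-aware")
        # Normalize to UTC so SLA countdowns compare correctly across channels.
        self.received_at = self.received_at.astimezone(timezone.utc)
```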

Impact of Structured Inputs

Comprehensive, structured ticket inputs enable automated assignment, precise SLA calculations, proactive notifications, data-driven analytics, and reduced misrouting. High-quality tickets accelerate time to resolution, increase first-contact resolution rates, and improve overall customer satisfaction.

Ticket Initialization and AI-Driven Enrichment

Trigger Conditions and Data Capture

The orchestration engine triggers ticket creation when intent confidence falls below a threshold, complexity metrics exceed predefined limits, or customers request human assistance. The payload includes the original transcript, channel metadata, timestamps, detected entities, and knowledge base references used during automated resolution attempts.
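The three trigger conditions can be expressed as a small check that returns every reason a conversation should become a ticket. Recording all of the reasons, rather than only the first, keeps the payload useful for later analysis. In this sketch the 0.6 confidence threshold and 0.8 complexity limit are hypothetical values. Real thresholds would come from the escalation policy.

```python
from dataclasses import dataclass

# Hypothetical thresholds; production values come from the escalation policy.
CONFIDENCE_THRESHOLD = 0.6
COMPLEXITY_LIMIT = 0.8


@dataclass
class ConversationState:
    intent_confidence: float   # top intent confidence from the NLP model
    complexity_score: float    # assumed 0..1 complexity metric
    human_requested: bool      # customer explicitly asked for an agent


def ticket_triggers(state: ConversationState) -> list[str]:
    """Return the reasons (possibly none) for converting a conversation into a ticket."""
    reasons = []
    if state.intent_confidence < CONFIDENCE_THRESHOLD:
        reasons.append("low_confidence")
    if state.complexity_score > COMPLEXITY_LIMIT:
        reasons.append("high_complexity")
    if state.human_requested:
        reasons.append("human_requested")
    return reasons
```

A conversation with 0.45 confidence, low complexity, and no explicit request yields `["low_confidence"]`.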

Sanitization and Enrichment

Before committing the ticket, the system sanitizes sensitive data to comply with privacy regulations. Concurrently, AI services annotate the payload with classification tags, sentiment scores, and preliminary categories. Natural language processing models extract additional context—such as product identifiers or billing codes—to streamline downstream routing and reporting.
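The sanitization step can be sketched with pattern-based redaction. The regular expressions below are illustrative only and are an assumption, not a compliance-grade PII detector. Production systems typically rely on a dedicated PII detection service. The card pattern runs first so that the broader phone pattern does not consume card digits.

```python
import re

# Illustrative patterns; order matters (card numbers before phone numbers).
PATTERNS = [
    ("CARD", re.compile(r"\b(?:\d[ -]?){13,16}\b")),
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("PHONE", re.compile(r"\+?\d[\d ()-]{7,}\d")),
]


def sanitize(text: str) -> str:
    """Replace matched sensitive values with bracketed placeholders."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


print(sanitize("Card 4111 1111 1111 1111, mail jane.doe@example.com, call +1 (555) 123-4567"))
```

Short identifiers such as a four-digit order number pass through unchanged. However, any run of nine or more digits is redacted as a phone number, which errs on the side of over-redaction.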

Contextual Metadata Enrichment

- Intent Labeling: Classifying the inquiry’s purpose—troubleshooting, billing query, feature request—using services like IBM Watson Natural Language Classifier or custom transformer models.

- Entity Extraction: Identifying product names, order numbers, service plans, and error codes via Azure Cognitive Services.

- Sentiment Scoring: Assessing tone and urgency to flag negative sentiment or escalation language.

- Customer Context Mapping: Enriching tickets with account tier, past interactions, and contract details through APIs such as Salesforce Einstein.

These enrichment steps produce a robust ticket record combining unstructured text with structured metadata, delivered via reliable message queues to downstream workflow engines.

Automated Classification and Routing

Classification and Priority Scoring

- Multi-Label Classification: Assigning categorical tags—technical support, account management, product feedback—using ensemble models that blend gradient-boosted trees and deep neural networks.

- Priority Estimation: Calculating urgency scores based on sentiment intensity, customer segment, and historical resolution times.

- SLA Adherence Prediction: Forecasting the probability of meeting service level agreements to flag at-risk tickets for expedited handling.

- Cross-Channel Correlation: Detecting duplicate cases across email, chat, and social media by matching topic embeddings to consolidate conversations under a single ticket ID.
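Priority estimation can be approximated as a weighted blend of the signals named above: sentiment intensity, customer segment, and historical resolution time. This is a hand-tuned sketch, not the ensemble models described in the list. The weights, segment multipliers, and P1 to P4 cutoffs are all hypothetical.

```python
# Hypothetical segment multipliers; unknown segments default to the consumer value.
SEGMENT_WEIGHT = {"enterprise": 1.0, "business": 0.6, "consumer": 0.3}


def priority_score(sentiment: float, segment: str,
                   avg_resolution_hours: float, sla_hours: float) -> float:
    """Combine signals into an urgency score in [0, 1].

    sentiment: -1.0 (very negative) .. 1.0 (very positive)
    avg_resolution_hours: historical mean resolution time for similar tickets
    """
    negativity = max(0.0, -sentiment)                           # only negative tone adds urgency
    segment_w = SEGMENT_WEIGHT.get(segment, 0.3)
    sla_pressure = min(1.0, avg_resolution_hours / sla_hours)   # similar tickets run close to the SLA
    return 0.4 * negativity + 0.35 * segment_w + 0.25 * sla_pressure


def priority_level(score: float) -> str:
    """Map the score onto discrete priority levels."""
    if score >= 0.7:
        return "P1"
    if score >= 0.5:
        return "P2"
    if score >= 0.3:
        return "P3"
    return "P4"
```

For example, an enterprise customer with strongly negative sentiment (-0.8), where similar tickets take 6 of 8 SLA hours, scores about 0.86 and lands in P1.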

Enriched and scored tickets are ingested by platforms like Zendesk AI and Freshdesk Freddy via RESTful APIs, updating dashboards and agent worklists automatically.

Routing Decision Orchestration

- Owner Recommendation: Machine learning models suggest the most qualified agent or team based on historical performance, skill profiles, and current workload.

- Escalation Path Identification: Rule-based engines informed by AI predictions trigger automatic escalation to senior tiers or specialized task forces.

- Workload Balancing: Reinforcement-learning algorithms distribute tickets in real time to maximize throughput and minimize response latency.

- Dynamic Queue Reshuffling: Continuous reassessment of assignments as new tickets arrive or agent availability changes.
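As a simple baseline for owner recommendation, the sketch below filters agents by skill and spare capacity. It then prefers the lowest relative load, breaking ties on historical resolution rate. This is a greedy heuristic substituted for illustration, not the machine learning or reinforcement-learning approaches described above. The `Agent` fields are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    name: str
    skills: set[str]          # category codes the agent is qualified for
    open_tickets: int
    capacity: int             # maximum concurrent tickets
    resolution_rate: float    # historical share resolved without reassignment


def recommend_owner(category: str, agents: list[Agent]) -> Optional[Agent]:
    """Pick a qualified agent with spare capacity: lowest load first, then best history."""
    candidates = [a for a in agents if category in a.skills and a.open_tickets < a.capacity]
    if not candidates:
        return None  # nobody free: keep in the team queue or escalate
    return min(candidates, key=lambda a: (a.open_tickets / a.capacity, -a.resolution_rate))
```

Re-running this selection whenever tickets arrive or agent availability changes gives a crude form of the dynamic reshuffling described above.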

Routing capabilities integrate with orchestration layers in ServiceNow Virtual Agent and Jira Service Management, exposing assignment events through webhooks and message streams.

Predictive SLA Management

- Time-to-Resolution Forecasting: Regression models predict remaining resolution time based on ticket attributes and historical trends.

- Proactive Alerting: Automated notifications to escalation contacts when breach risks exceed thresholds.

- Impact Analysis: Simulation engines evaluate the effect of reassigning tickets or reallocating staff on overall SLA compliance.
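Proactive alerting can compare a model's predicted remaining work with the time left before the SLA deadline. In this sketch, `predicted_remaining` stands in for the output of a time-to-resolution regression model. The 0.8 alert threshold is a hypothetical value. A ratio above 1.0 means the model expects a breach.

```python
from datetime import datetime, timedelta


def breach_risk(created_at: datetime, sla: timedelta,
                predicted_remaining: timedelta, now: datetime) -> float:
    """Ratio of predicted remaining work to time left before the SLA deadline."""
    time_left = (created_at + sla) - now
    if time_left <= timedelta(0):
        return float("inf")  # deadline already passed
    return predicted_remaining / time_left


def needs_alert(risk: float, threshold: float = 0.8) -> bool:
    """Notify escalation contacts once the risk ratio reaches the threshold."""
    return risk >= threshold
```

With an 8-hour SLA and 6 hours elapsed, a prediction of 90 remaining minutes gives a risk of 0.75 (no alert), while a prediction of 3 hours gives 1.5 (alert).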

Streaming analytics frameworks connect AI inference services to management dashboards and alert systems for real-time SLA monitoring.

Feedback Loop and Model Retraining

- Outcome Tagging: Recording resolutions, reopen flags, and escalation events to label training data.

- Performance Monitoring: Tracking model accuracy, precision, recall, and drift to detect degradation.

- Automated Retraining Pipelines: Scheduled workflows that extract labeled tickets, retrain models, validate performance, and deploy updated versions.

- Human-in-the-Loop Validation: Subject matter experts review AI suggestions, providing high-quality signals and governance oversight.
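Performance monitoring can use outcome tags as ground truth. In the sketch below, the category an agent confirmed at closure is compared with the category the model predicted. The labels and the recall floor are hypothetical. The function returns the labels whose recall has degraded enough to justify a retraining run.

```python
def precision_recall(y_true: list[str], y_pred: list[str], label: str) -> tuple[float, float]:
    """Per-label precision and recall from confirmed (true) and predicted categories."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def labels_needing_retraining(y_true: list[str], y_pred: list[str],
                              labels: list[str], min_recall: float = 0.8) -> list[str]:
    """Labels whose recall on recently closed tickets fell below the floor."""
    return [lbl for lbl in labels if precision_recall(y_true, y_pred, lbl)[1] < min_recall]
```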

Continuous learning cycles ensure enrichment and routing capabilities evolve with changing customer behavior and service offerings.

Workflow Actions, Status Tracking, and Escalations

Rule-Based Assignment and Recommendations

Upon creation, the workflow engine evaluates assignment rules that consider ticket category, customer tier, language preferences, and agent skills. Platforms like ServiceNow and Zendesk expose rule engines via REST APIs, enabling custom logic for team or agent pool selection. AI-driven owner recommendations can surface alongside rule-based assignments to guide supervisors or support fully automated deployments.
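Platforms such as ServiceNow and Zendesk configure assignment rules in their own formats. The platform-neutral sketch below shows only the underlying idea: rules are evaluated in order, and the first match wins. The rule names, conditions, and team names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Ticket:
    category: str
    tier: str
    language: str


@dataclass
class Rule:
    name: str
    condition: Callable[[Ticket], bool]
    team: str


# Hypothetical rules, most specific first.
RULES = [
    Rule("enterprise_billing",
         lambda t: t.category == "BILLING" and t.tier == "enterprise", "billing_priority"),
    Rule("spanish_support", lambda t: t.language == "es", "support_es"),
    Rule("technical", lambda t: t.category == "TECH-SUPPORT", "tier1_technical"),
]


def assign_team(ticket: Ticket, default: str = "general_queue") -> str:
    """Return the team of the first matching rule, or the default pool."""
    for rule in RULES:
        if rule.condition(ticket):
            return rule.team
    return default
```

Because order decides precedence, a Spanish-language technical ticket goes to `support_es` rather than `tier1_technical`. An AI owner recommendation could be shown next to this result instead of replacing it.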

Status Automation and Notifications

Tickets progress through statuses (New, In Progress, On Hold, Resolved) driven by events captured by the orchestration layer. Automated notifications inform customers of acknowledgments and status changes via their preferred channels. Internal teams receive alerts for SLA breaches or tickets lingering beyond thresholds. Notification logic consolidates messages to prevent alert fatigue and keep actionable items clear.

- Customer Acknowledgment: Confirmation messages with ticket ID, expected response time, and self-service portal links.

- Team Alerts: Summarized notifications with direct ticket links sent to support groups.

- Escalation Triggers: Real-time monitoring services dispatch alerts to management dashboards or SMS for high-priority items.

- Chat Ops Integration: Structured messages via webhooks to collaboration channels for on-the-spot discussion and case claiming.
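Event-driven status automation works best when invalid transitions are rejected before any notification goes out. The transition table below is an assumed lifecycle that includes reopening a resolved ticket. Actual transitions are defined by each workflow platform's configuration.

```python
from enum import Enum


class Status(Enum):
    NEW = "New"
    IN_PROGRESS = "In Progress"
    ON_HOLD = "On Hold"
    RESOLVED = "Resolved"


# Assumed lifecycle; RESOLVED -> IN_PROGRESS models a reopen.
ALLOWED = {
    Status.NEW: {Status.IN_PROGRESS, Status.ON_HOLD, Status.RESOLVED},
    Status.IN_PROGRESS: {Status.ON_HOLD, Status.RESOLVED},
    Status.ON_HOLD: {Status.IN_PROGRESS, Status.RESOLVED},
    Status.RESOLVED: {Status.IN_PROGRESS},
}


def transition(ticket_id: str, current: Status, target: Status) -> tuple[Status, str]:
    """Validate a status change and build the customer-facing notification text."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    return target, f"Ticket {ticket_id} is now {target.value}."
```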

Escalation Mechanisms and Supervisory Coordination

The orchestration layer continuously evaluates ticket age against SLA definitions. When a ticket approaches its breach threshold, the layer automatically escalates it to a higher support tier or managerial queue, which can include reassigning ownership and elevating priority. Supervisors use dashboards that aggregate tickets by status, priority, and SLA risk, while real-time metrics and predictive alerts guide ad hoc interventions and maintain accountability across support operations.
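Age-based escalation can be sketched as an escalation ladder keyed on the share of the SLA window that has elapsed. The 50% and 80% rungs, the tier names, and the one-step priority bump are hypothetical choices for illustration.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical ladder: share of the SLA window elapsed -> escalation target.
LADDER = [(0.5, "tier2"), (0.8, "manager_queue")]
PRIORITY_ORDER = ["P4", "P3", "P2", "P1"]


def escalate(created_at: datetime, sla: timedelta, priority: str,
             now: datetime) -> tuple[Optional[str], str]:
    """Return (escalation target, priority); target is None if no rung is reached."""
    elapsed = (now - created_at) / sla
    target = None
    for share, rung in LADDER:
        if elapsed >= share:
            target = rung  # keep the highest rung reached
    if target is not None:
        idx = PRIORITY_ORDER.index(priority)
        priority = PRIORITY_ORDER[min(idx + 1, len(PRIORITY_ORDER) - 1)]
    return target, priority
```

The function is stateless, so a caller that runs it on every evaluation cycle should record which rung a ticket already reached. Without that record, the ticket's priority would be raised again on each cycle.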

Structured Ticket Outputs and Handover Protocols

Structured Ticket Object

- Unique Identifier: Globally unique ticket ID for cross-system traceability.

- Inquiry Metadata: Channel, timestamp, customer identifier, language code, and customer segment.

- Intent and Entity Data: Intent labels, confidence scores, extracted entities, and sentiment metrics.

- Priority and SLA Parameters: Priority level and associated service level agreement thresholds.

- Assignment Information: Suggested or assigned support group, queue, or agent.

- Contextual History: Conversation transcripts, AI summaries, attachments, and related ticket references.

- Escalation Flags: Indicators for urgent handling, compliance requirements, or regulatory considerations.

- Audit Metadata: Provenance data capturing AI model versions, action logs, and event records.

The ticket object is serialized in JSON or XML to align with schemas defined by enterprise or cloud-based workflow platforms.
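A JSON serialization of the structured ticket object might look like the sketch below. The grouping roughly follows the field list above, but the key names, the example model version string `intent-clf-v3`, and the sample values are hypothetical. They do not represent the schema of any particular workflow platform.

```python
import json
import uuid
from datetime import datetime, timezone


def build_ticket_object(customer_id, channel, intent, confidence, entities,
                        priority, sla_minutes, queue, transcript, model_version):
    """Assemble a ticket object; key names are illustrative, not a platform schema."""
    return {
        "ticket_id": str(uuid.uuid4()),  # globally unique identifier
        "metadata": {
            "channel": channel,
            "customer_id": customer_id,
            "created_at": datetime.now(timezone.utc).isoformat(),
        },
        "intent": {"label": intent, "confidence": confidence, "entities": entities},
        "sla": {"priority": priority, "response_minutes": sla_minutes},
        "assignment": {"queue": queue},
        "history": transcript,
        "escalation_flags": [],
        "audit": {"model_version": model_version},
    }


ticket = build_ticket_object(
    "C-1001", "chat", "billing_query", 0.42, {"order_id": "A-17"},
    "P2", 60, "billing_ops", ["I was charged twice for order A-17."], "intent-clf-v3",
)
payload = json.dumps(ticket, ensure_ascii=False)
```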

APIs and Integration Protocols

- RESTful Endpoints: Create, update, and query operations for downstream systems to retrieve or modify ticket data.

- Message Queues and Streams: Asynchronous broadcasts of events—ticket.created, ticket.updated, ticket.closed—to subscribed consumers.

- Webhooks: Callback URLs receiving HTTP notifications upon defined ticket events.

- Polling Interfaces: Periodic API queries based on timestamps or sequence numbers where push integrations are not viable.

Each integration enforces authentication, authorization, and data validation to ensure security and integrity.
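For webhooks, one common way to authenticate the sender is an HMAC signature over the raw request body. The sketch below assumes a shared secret and a hex-encoded HMAC-SHA256 signature. Each platform documents its own header name and signing scheme, so treat this as a generic pattern only. The constant-time comparison avoids leaking signature information through timing.

```python
import hashlib
import hmac


def sign(secret: bytes, body: bytes) -> str:
    """Hex-encoded HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, body: bytes, signature: str) -> bool:
    """Accept the callback only if the signature matches, using a constant-time compare."""
    return hmac.compare_digest(sign(secret, body), signature)
```

Verification must run on the exact bytes received, before any JSON parsing or re-serialization, because even a whitespace change alters the digest.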

Downstream Dependency Management

- Knowledge Base Updates: Tickets with novel issues queue for content author review, populating self-service portals.

- Case Escalation Engines: Specialized workflows for legal reviews, engineering investigations, or compliance checks via API handovers.

- Field Service Dispatch: Resource scheduling modules assign technicians and manage routes based on on-site support requests.

- Feedback and Survey Systems: Post-closure surveys feed sentiment analysis pipelines for continuous improvement.

- Analytics and Reporting: Ticket data streams into data lakes and analytics services for monitoring resolution times, workload distribution, and quality metrics.

Collaborative Workspaces and Human Handoff

- Unified Context View: Consolidated display of conversation history, AI annotations, customer profile, and SLA status.

- Knowledge Recommendations: Contextual article suggestions ranked by relevance to ticket entities and sentiment signals.

- Response Templates: Prebuilt messages dynamically populated with extracted entities.

- Collaboration Tools: Integrated chat threads, escalation request buttons, and internal tagging for subject-matter consultations.

- Status Controls: Lifecycle actions (pending, on hold, resolved) with SLA countdown timers that alert agents to approaching deadlines.
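Response templates populated with extracted entities can be sketched with Python's standard `string.Template`. The template key, its wording, and the placeholder names are hypothetical. `safe_substitute` leaves any placeholder without a matching entity visible, so the agent can fill it in instead of sending a broken message.

```python
from string import Template

# Hypothetical template; placeholder names match extracted entity keys.
TEMPLATES = {
    "billing_refund": Template(
        "Hi $customer_name, we located order $order_id and have started a refund "
        "of $amount. You will receive confirmation within $sla_hours hours."
    ),
}


def render_response(template_key: str, entities: dict[str, str]) -> str:
    """Fill a response template; unknown placeholders remain for the agent to complete."""
    return TEMPLATES[template_key].safe_substitute(entities)
```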

Audit Logging and Compliance

- Timestamped events for creation, updates, assignments, and closures.

- Agent actions including status changes, internal notes, and resolutions.

- AI model version identifiers and input/output records for enrichment and classification.

- Error logs for failed handover attempts or integration timeouts.

- Change history for modifications to priority, category, or SLA parameters.

Comprehensive logs support post-incident reviews, regulatory audits, and model governance.
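The audit events listed above can be captured as uniform, timestamped records that carry the acting agent or model and, where relevant, the model version. The minimal in-memory sketch below assumes hypothetical field names and action values. A real deployment would write to durable, access-controlled storage.

```python
import json
from datetime import datetime, timezone
from typing import Optional


class AuditLog:
    """Append-only, in-memory audit trail (illustrative only)."""

    def __init__(self) -> None:
        self._events: list[dict] = []

    def record(self, ticket_id: str, actor: str, action: str,
               details: Optional[dict] = None, model_version: Optional[str] = None) -> dict:
        event = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "ticket_id": ticket_id,
            "actor": actor,            # agent ID, service account, or model name
            "action": action,          # e.g. created, priority_changed, handover_failed
            "details": details or {},
            "model_version": model_version,
        }
        self._events.append(event)
        return event

    def history(self, ticket_id: str) -> list[dict]:
        """All events for one ticket, in the order they were recorded."""
        return [e for e in self._events if e["ticket_id"] == ticket_id]

    def export_jsonl(self) -> str:
        """One JSON object per line, suitable for shipping to a log store."""
        return "\n".join(json.dumps(e) for e in self._events)
```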

Feedback Loops and Continuous Improvement

- Resolution Metadata: Elapsed time, resolution codes, root cause classifications, and customer satisfaction ratings.

- Closed-Loop Feedback: Flags for knowledge base updates and model retraining based on new inquiry types.

- Reporting Aggregates: Summarized metrics for dashboards monitoring operational health and identifying bottlenecks.

These artifacts feed analytics pipelines that drive SLA compliance reporting, trend detection, and algorithmic refinements, ensuring each customer inquiry transitions seamlessly from automated handling to human resolution within a unified support ecosystem.
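The reporting aggregates described above can be derived from closed-ticket resolution metadata. This sketch assumes each closed ticket carries `hours_to_resolve`, `sla_hours`, and an optional `csat` rating. These field names are illustrative and not part of any specific schema.

```python
from statistics import median


def summarize(closed_tickets: list[dict]) -> dict:
    """Aggregate resolution time, SLA compliance, and satisfaction for a dashboard."""
    if not closed_tickets:
        return {"tickets": 0}
    hours = [t["hours_to_resolve"] for t in closed_tickets]
    within_sla = sum(1 for t in closed_tickets if t["hours_to_resolve"] <= t["sla_hours"])
    rated = [t["csat"] for t in closed_tickets if t.get("csat") is not None]
    return {
        "tickets": len(closed_tickets),
        "median_hours_to_resolve": median(hours),
        "sla_compliance": within_sla / len(closed_tickets),
        "avg_csat": sum(rated) / len(rated) if rated else None,
    }
```

The median is used instead of the mean so that a few long-running tickets do not mask typical resolution times.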

Chapter 6: Knowledge Base and Self-Service Enablement

Addressing Fragmented Customer Communications

In an era defined by rapidly evolving customer expectations and proliferating channels—email, chat, voice calls and social media—organizations struggle with siloed data and inconsistent protocols. Fragmentation leads to context loss, redundant inquiries and operational overhead as agents reconcile parallel threads. Disparate metadata standards and varying SLAs across platforms result in delayed responses and uneven service quality. Poor traceability undermines the ability to personalize interactions, detect trends or resolve issues proactively. Overcoming these gaps requires a deliberate assessment of the channel landscape and unified input standards that ensure every message is captured, enriched and standardized before processing.