AI Agent Orchestration for Business Solutions

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

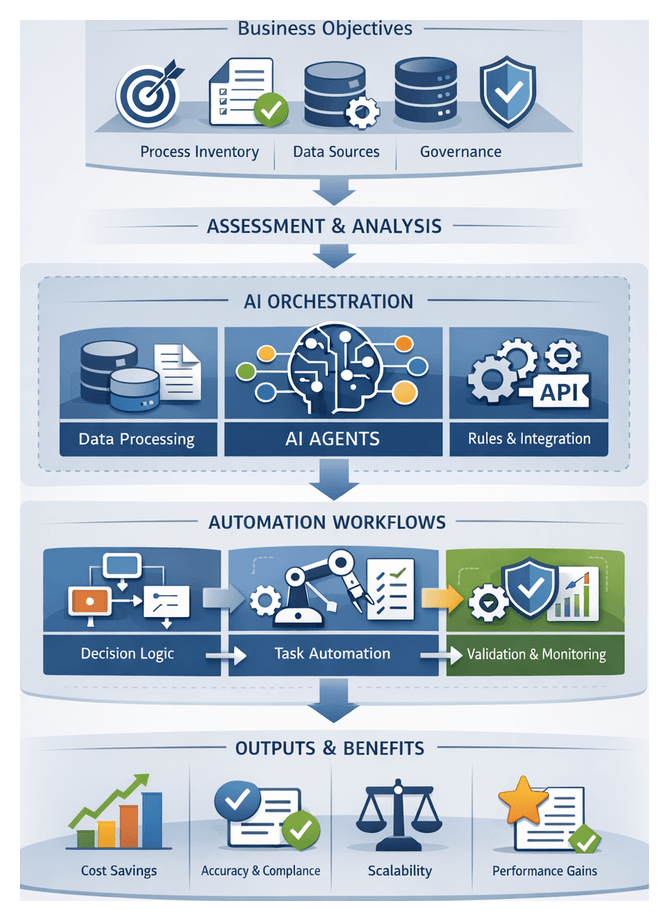

Understanding Core Automation Challenges

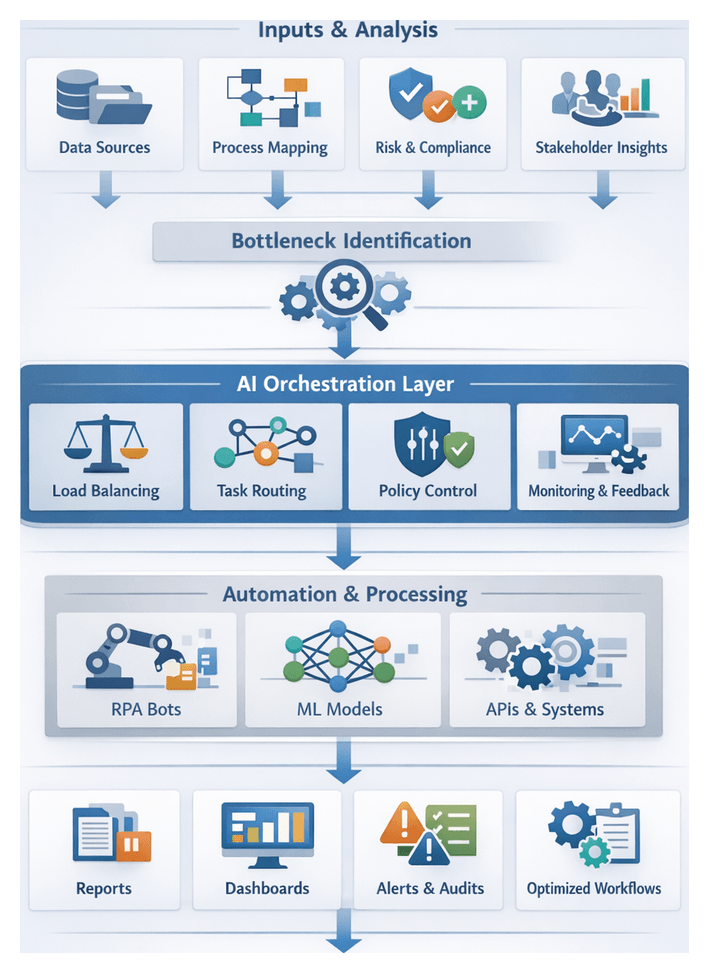

The first phase of any AI-driven orchestration strategy focuses on diagnosing the fundamental obstacles that impede efficiency, reliability, and scalability. By mapping existing workflows, pinpointing manual touchpoints, and uncovering technology constraints, teams establish a clear baseline from which to design targeted automation interventions. This rigorous assessment ensures that resources address root causes—such as process fragmentation, data inconsistencies, and governance gaps—rather than superficial symptoms.

Purpose of the Assessment

- Document end-to-end processes, including manual tasks, system handoffs, decision gateways, and exception paths.

- Quantify operational pain points by analyzing cycle times, error rates, rework frequencies, and compliance incidents.

- Evaluate automation readiness by reviewing data quality, process documentation standards, and the integration maturity of existing applications.

- Define clear objectives and success metrics—such as throughput increases, cost reductions, or customer satisfaction improvements—that align with strategic priorities.

- Engage stakeholders across business, IT, risk, and compliance functions to validate findings and secure executive sponsorship for the initiative.

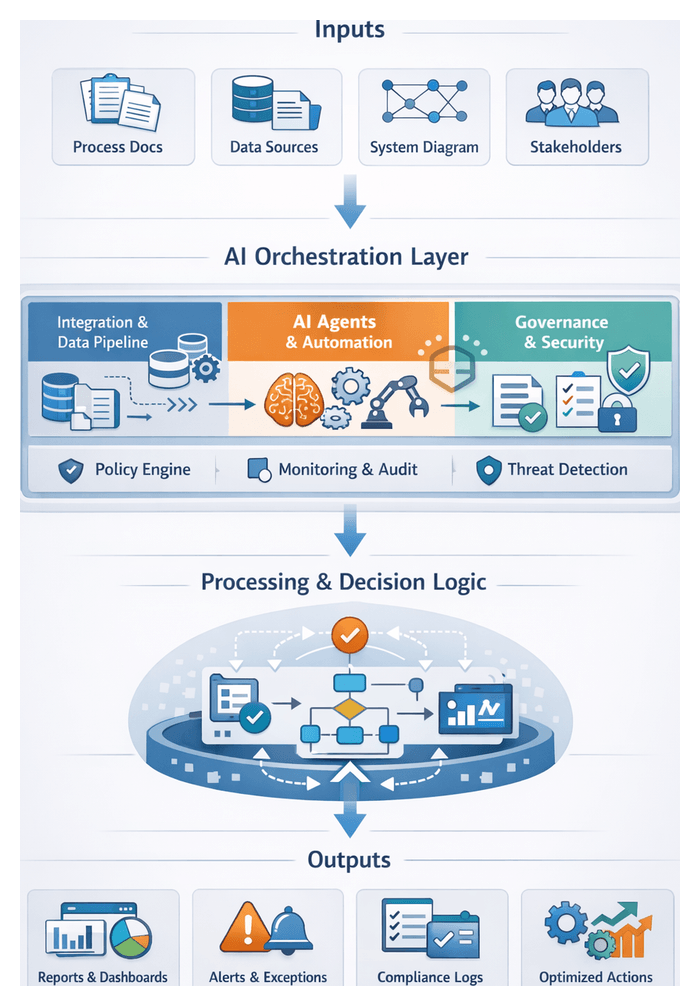

Inputs Required

- Process Artifacts: Detailed maps, swimlane diagrams, standard operating procedures, and any legacy automation scripts offering insight into current task flows.

- Performance Data: Historical dashboards, reports, and logs from ERP, CRM, or custom applications capturing throughput, delays, and exception volumes.

- Technology Inventory: An itemized catalog of systems, databases, integration platforms, APIs, and middleware, including data formats and access methods.

- Stakeholder Feedback: Interviews and surveys with process owners, frontline operators, compliance officers, and IT support teams to capture qualitative insights on pain points and improvement goals.

- Exception Logs and Audit Records: Incident reports, customer complaints, and regulatory findings that highlight systemic weaknesses and risk exposures.

- Regulatory Frameworks: Documentation of relevant standards, governance policies, data privacy mandates, and audit requirements guiding workflow design.

Prerequisites and Success Conditions

- Executive Sponsorship: Demonstrable commitment from senior leadership, including budget approval, governance oversight, and cross-functional alignment.

- Cross-Functional Team: A structured project team with business analysts, IT architects, data engineers, compliance specialists, and change managers.

- Secure Access: Authorized connectivity to systems, databases, APIs, and file repositories, supported by data governance and security clearances.

- Documentation Standards: Agreed formats and notation conventions (BPMN, value stream mapping, flowcharts) to ensure consistency and clarity.

- Baseline Metrics: Established performance benchmarks and qualitative user feedback to measure the impact of subsequent automation.

- Change Management Plan: Communication and training strategies to prepare stakeholders for process automation and foster adoption.

- Risk Mitigation Framework: Identification of potential risks—such as data integrity issues or integration failures—and predefined mitigation plans.

With these elements in place, organizations can transition from discovery to design, confident that they understand both the operational landscape and the governance requirements necessary for scalable AI-driven workflows.

Imperative for Structured Orchestration

Point solutions, one-off scripts, and siloed automations often deliver short-term gains but fail to scale without a cohesive orchestration framework. Structured orchestration unifies dispersed automation efforts, enforces consistency, and provides end-to-end visibility across complex, multi-system workflows. By imposing a formal workflow blueprint, enterprises reduce technical debt, strengthen governance, and ensure reliable performance as processes evolve.

Limitations of Ad Hoc Automation

- Lack of Visibility — Fragmented tools and scripts operate without a centralized dashboard, obscuring performance trends and bottleneck identification.

- Inconsistent Error Handling — Custom retry logic and undocumented failure modes lead to silent errors, manual firefighting, and data corruption.

- Siloed Knowledge — Sparse or outdated documentation forces teams to reverse-engineer solutions when issues arise, increasing support overhead.

- Deployment Drift — Version mismatches and evolving APIs break integrations; without governance, corrective patches are applied unevenly.

- Governance Gaps — Security and compliance teams lack centralized oversight, complicating audit readiness and policy enforcement.

Core Attributes of Structured Orchestration

- Workflow Blueprint — A detailed map of activities, decision points, and exit conditions, ensuring predictable execution paths.

- Central Coordination Engine — A dedicated orchestration platform triggers tasks, manages dependencies, and enforces sequencing.

- Reusable Components — Standardized connectors, templates, and agent interfaces accelerate development and maintain consistency.

- Dynamic Scaling — The orchestration layer adapts to volume changes, branching logic, and resource constraints through load balancing.

- Auditability — Integrated logging, metrics collection, and dashboards deliver real-time insights into performance and compliance.

- Governance Controls — Role-based access, approval gates, and policy checks embedded in workflows safeguard sensitive operations.

Interactions Across Systems and Stakeholders

Structured orchestration coordinates:

- AI Agents performing tasks such as document extraction, predictive scoring, or natural language understanding.

- Enterprise Applications supplying data and events via APIs, message queues, or file transfers.

- Human Participants reviewing exceptions, making approvals, and providing critical judgments.

- Orchestration Services handling task scheduling, routing logic, and state management.

- Monitoring Tools capturing execution logs and alerting on anomalies.

Measurable Benefits

- Scalability — Extend standardized workflows across new geographies and business units without proliferating custom code.

- Reliability — Centralized error handling and retry policies minimize failure rates and downtime.

- Transparency — Unified dashboards and audit trails provide stakeholders with clear visibility into process health.

- Compliance — Embedded security and governance controls support regulatory requirements.

- Agility — Reusable components accelerate new automation deployments.

- Maintainability — A cohesive orchestration layer simplifies version control, testing, and change management.

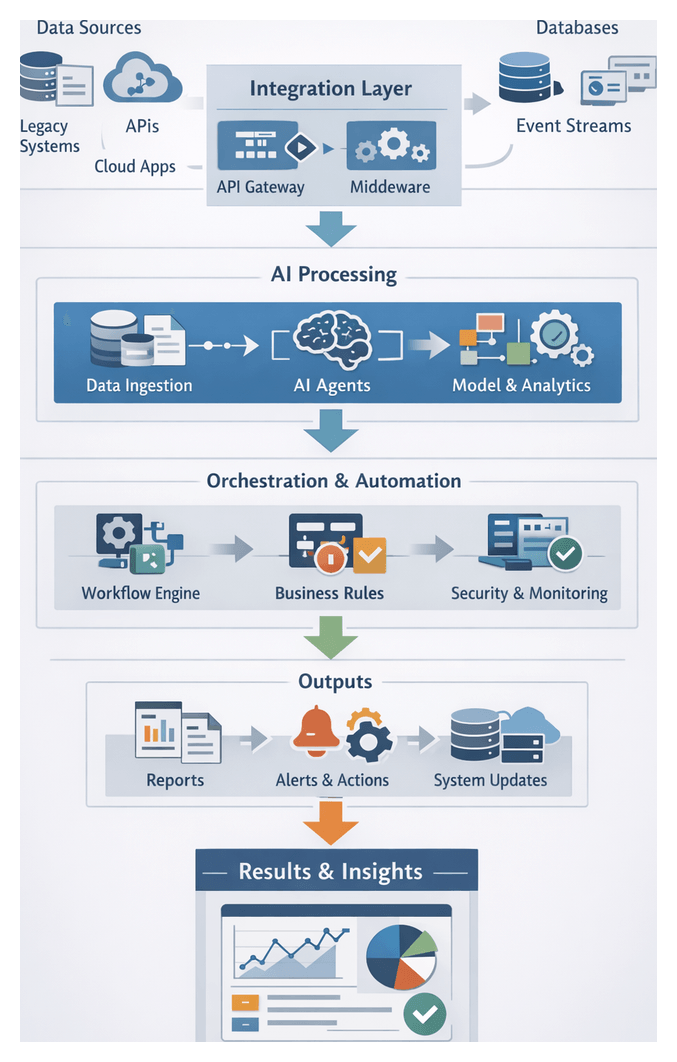

Leading platforms illustrate how AI agents, enterprise systems, and human workflows can be integrated under a unified orchestration layer to deliver consistent, auditable outcomes at scale.

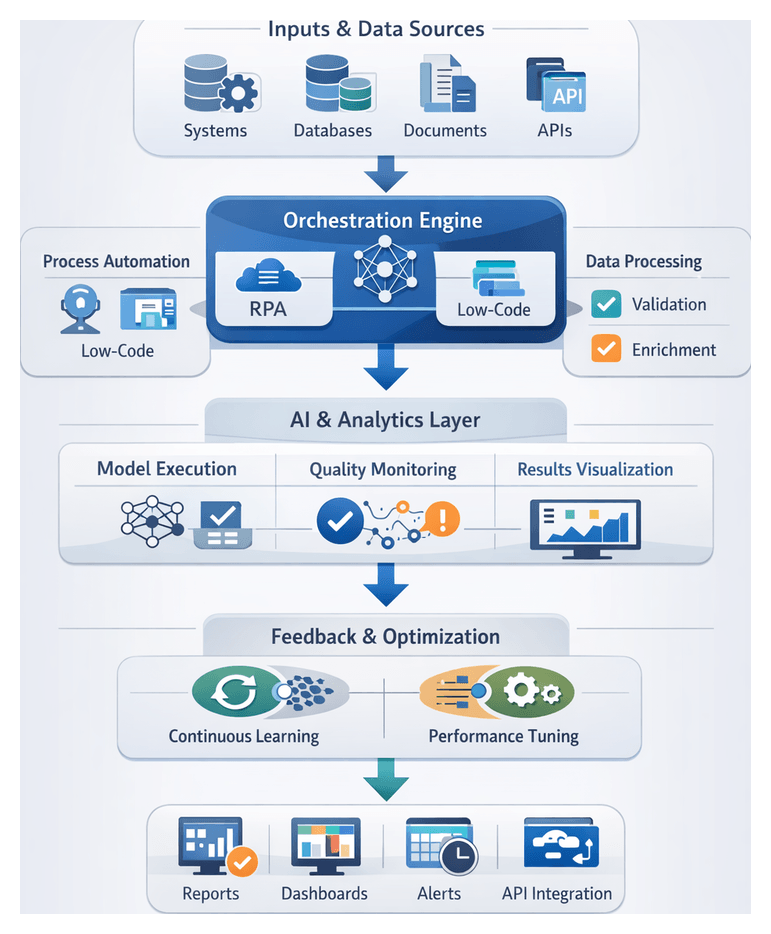

Positioning AI Agents Within the Workflow Framework

AI agents are specialized actors within orchestrated workflows, responsible for tasks ranging from data ingestion to decision execution. Defining their roles, integration interfaces, and governance mechanisms ensures that each agent contributes reliably to end-to-end process objectives.

Mapping Agent Roles to Functional Layers

Agents can be categorized by the layer in which they operate:

- Input Processing Agents ingest and normalize raw inputs—scanning documents, parsing unstructured text, and validating data accuracy.

- Analytic and Reasoning Agents apply machine learning models, statistical analyses, or rule engines to generate insights such as predictive scores or risk assessments.

- Decisioning Agents interpret analytic outputs against business rules and context to recommend actions, trigger human reviews, or invoke downstream processes.

- Orchestration and Coordination Agents oversee the workflow, monitor task statuses, manage retries, and enforce transactional integrity.

Integrating Data Connectivity and System Interfaces

- API Gateways and RESTful Services provide standardized endpoints for agent invocation, with versioning and schema validation to support upgrades.

- Message Queues and Streaming leverage platforms such as Apache Kafka or Amazon Kinesis to decouple producers and consumers and buffer data spikes.

- Database Connectors and Data Lakes supply agents with secure access to structured repositories, warehouses, or lakehouse architectures.

- Authentication and Authorization employ OAuth, API keys, or token-based security with least-privilege principles and secret rotation policies.

Orchestrating Decision Logic: Rule-Based Versus AI-Driven

Decision gateways may implement:

- Rule-Based Logic for deterministic, auditable checks—compliance thresholds, eligibility rules, and binary conditions.

- AI-Driven Logic utilizing services such as OpenAI or Google Cloud AI to handle ambiguous inputs, classify text, or predict outcomes.

Hybrid architectures combine both approaches—routing high-risk exceptions to human review based on model confidence scores and applying fallback rules to ensure continuity.

Designing Seamless Inter-Agent Handovers

Formal handovers define the contract between agents, specifying:

- Structured payloads (JSON or protocol buffers) with explicit fields and metadata.

- Quality thresholds—minimum confidence scores, completeness checks, and validation rules.

- Versioning schemes and schema evolution paths to maintain backward compatibility.

- Error handling protocols—retry logic, exception queues, and escalation workflows for validation failures.

Balancing Synchronous and Asynchronous Operations

Workflows often mix:

- Synchronous Calls for user-facing interactions—chatbots, approval portals—requiring low latency and clear error messaging. Prebuilt APIs like Microsoft Azure Cognitive Services deliver sub-second responses.

- Asynchronous Flows for batch analytics, data enrichment, and long-running ML tasks. Agents publish events or use work queues, enabling orchestrators to monitor and trigger downstream steps upon completion.

Embedding Governance, Security, and Monitoring

Governance is woven into each orchestrated workflow:

- Access Controls enforce role-based permissions at API and infrastructure layers.

- Audit Logging captures agent activations, input parameters, and decisions in centralized repositories to support GDPR, SOC 2, and other compliance mandates.

- Performance Monitoring tracks throughput, error rates, and resource utilization; dashboards and alerting agents detect anomalies in real time.

Leveraging Orchestration Infrastructure

- Workflow Engines such as Apache Airflow define task graphs, manage parallelism, and persist execution state.

- Container Platforms like Docker and Kubernetes package agents for consistent runtime environments and auto-scaling.

- Service Meshes secure and observe service-to-service communication with circuit breaking and resilience patterns.

- Configuration Services centralize parameters and feature flags, enabling dynamic adaptation without redeployment.

Outputs, Dependencies, and Handoffs

At the culmination of the introductory phase, stakeholders receive a comprehensive package of artifacts that crystallize the project’s strategic rationale, technical assumptions, and governance framework. These deliverables pave the way for detailed design and implementation.

Key Outputs and Deliverables

- Challenge Assessment Summary: A report detailing critical pain points, process bottlenecks, data quality gaps, and manual intervention hotspots.

- Orchestration Imperative Statement: A strategic memorandum explaining the shortcomings of ad hoc automations and the necessity for a formal orchestration framework.

- AI Agent Role Matrix: A mapping of agent types—such as conversational assistants, document intelligence engines, and analytics agents—to specific tasks. For example, an IBM Watson document processing engine for invoice extraction or a cognitive assistant for customer triage.

- Solution Blueprint Overview: A layered narrative and visual outline of the end-to-end orchestration strategy, from objective definition through monitoring and continuous improvement.

- Scope Definition and Assumptions: Documentation of in-scope processes, excluded systems, data readiness levels, and preliminary technology stack considerations.

Dependencies and Input Requirements

- Executive and Process Owner Interviews: Scheduled sessions to validate strategic goals, pain point assessments, and success metrics.

- Process Documentation Access: Existing SOPs, flowcharts, policy manuals, and legacy scripts to inform current-state mapping.

- Data Maturity Audit: Preliminary profiling of datasets to evaluate completeness, quality, metadata availability, and governance controls.

- Technology Landscape Inventory: A comprehensive list of CRM, ERP, document repositories, RPA tools, and AI services for integration planning.

- Governance and Compliance Guidelines: References to industry regulations, security policies, and audit frameworks shaping workflow constraints.

- Stakeholder Alignment Workshops: Working sessions where cross-functional teams endorse assessments, refine assumptions, and agree on the high-level blueprint.

Handoff Mechanisms to Detailed Design

- Introduction Stage Sign-Off Packet: A versioned digital package containing all deliverables, stored in the project repository for traceability.

- Transition Workshop: A facilitated meeting to review outputs, confirm priorities, assign leads for Chapter 1 tasks, and schedule data-gathering activities.

- Data and Process Inventory Template: A standardized schema—spreadsheet or database—for capturing process maps, data sources, input formats, and initial success metrics.

- Roles and Responsibilities Matrix: An updated RACI chart defining ownership for each deliverable and upcoming design tasks.

- Traceability Log: A living document capturing key decisions, assumption changes, and risk items, ensuring transparency as the project progresses.

- Governance Gateway Approval: Formal sign-off by the steering committee or governance board, certifying that the introduction phase meets quality, compliance, and strategic alignment criteria.

By delivering structured outputs, securing necessary inputs, and embedding robust handoff protocols, organizations establish a disciplined foundation for subsequent chapters—starting with defining business objectives and use cases—thereby reducing risk and accelerating time to value in AI-driven orchestration initiatives.

Chapter 1: Defining Business Objectives and Use Cases

Establishing Foundational Inputs and Process Prioritization

Purpose and Industry Context

Laying the groundwork for AI-driven workflow automation begins with identifying high-impact business processes and defining the requisite inputs for successful execution. In complex enterprise environments—characterized by dispersed data silos, legacy systems, and evolving customer demands—targeted automation ensures that resources focus on initiatives aligned with strategic objectives. By cataloging existing workflows, evaluating baseline performance, and securing stakeholder commitments, organizations mitigate the risk of low-value pilots and set clear metrics for measuring return on investment.

Leading firms employ systematic methods—such as process mining, lean management, and value stream analysis—augmented by specialized to map and score candidate processes against business goals. This structured approach avoids ad hoc deployments and ensures that automation efforts leverage AI capabilities where they deliver maximum benefit.

Key Benefits of Focusing on High-Value Processes

- Accelerated ROI through rapid cost savings and productivity gains in high-effort or high-error areas.

- Stronger stakeholder buy-in as early wins demonstrate tangible value in functions like order processing or invoice reconciliation.

- Enhanced risk management by validating compliance controls in well-defined processes.

- Greater scalability potential via standardized inputs and repeatable patterns suited for AI agent orchestration.

- Alignment with strategic imperatives—whether top-line growth or cost optimization—reinforcing executive support.

Essential Inputs and Prerequisites

- Business Objectives Documentation: Clear articulation of targets, such as revenue growth, customer satisfaction, or cost reduction.

- Comprehensive Process Inventory: Registry of workflows, owners, system dependencies, documented procedures, and performance metrics.

- Baseline Performance Data: Quantitative measures—cycle times, error rates, throughput—enabling objective comparison.

- Data Availability Assessment: Audit of required data sources, formats, quality standards, access permissions, and integration points.

- Executive Sponsorship and Governance: Steering committees and sponsors to ensure resource allocation and compliance oversight.

- Technology Capability Review: Inventory of existing automation tools, AI platforms, and middleware to identify compatibility and gaps.

- Cross-Functional Teams: Collaboration across IT, operations, compliance, and business units for end-to-end accountability.

Conditions for Success

- Established data governance framework covering stewardship, quality controls, and lineage tracking.

- Process transparency via documented maps or process mining tools to uncover hidden variants.

- Consensus on prioritization criteria—impact, complexity, risk—among departmental leaders.

- Clear understanding of current automation maturity to build on existing investments.

- Change management readiness with communication and training plans to support adoption.

- Defined success metrics, including time savings, error reduction, scalability potential, and financial impact.

Process Identification Activities

- Process Mapping Workshops: Facilitate sessions with owners and frontline staff using tools such as Lucidchart or Microsoft Visio to capture workflows, decision points, variations, and exceptions.

- Data Readiness Assessment: Leverage profiling techniques and services like Google Cloud Dataflow to validate data accessibility, accuracy, and consistency.

- Value-Effort Scoring: Apply a matrix to weigh volume, cost, error rates, and strategic relevance against integration complexity and data preparation requirements.

- Risk and Compliance Review: Engage compliance officers to document privacy, audit trail, and encryption requirements for regulated processes.

- Technology Compatibility Analysis: Evaluate connectors and middleware needed for platforms such as Adobe Document Services, ABBYY FlexiCapture, and Azure Cognitive Services.

- Alignment Workshops: Confirm that prioritized processes map to executive KPIs and that necessary data sources, SMEs, and technology components are in place or road-mapped.

Articulating Workflow Actions and Sequences

Concept and Importance

Transitioning from high-level objectives to detailed task sequences creates the blueprint for AI agent orchestration. A well-defined workflow map ensures that tasks, decision criteria, data handoffs, and exception paths are transparent and aligned with business requirements. This clarity prevents isolated automation, uncovers dependencies and bottlenecks, and provides the reference for configuring orchestration engines and AI agents.

Scope Definition and Role Identification

Begin by delineating workflow boundaries—trigger events and final deliverables. Identify all participants:

- Human stakeholders such as business analysts and compliance officers.

- AI agents for language understanding, predictive analytics, anomaly detection, and document processing.

- Enterprise systems including CRM databases, ERP modules, document repositories, and messaging queues.

Define responsibilities, inputs, outputs, and access constraints for each role, informing permissions and exception-handling protocols.

Modeling Process Flows

- Identify core tasks from trigger to outcome.

- Sequence tasks logically, indicating dependencies.

- Define parallel branches for concurrent activities like validation and enrichment.

- Model decision points with conditions based on data values, confidence scores, or policy rules.

- Document loops and retries for quality checks, approvals, and error recovery.

This exercise uncovers hidden dependencies and informs resource allocation, scheduling, and performance expectations.

System and Agent Interactions

- Specify data formats and schemas (JSON, XML, CSV).

- Document API endpoints, message queue topics, and file paths.

- Define authentication and authorization methods such as OAuth scopes or certificates.

- Outline error handling strategies for timeouts, validation failures, and exceptions.

- Establish latency and throughput requirements to meet SLAs.

Codifying these details reduces integration risk and ensures reliability.

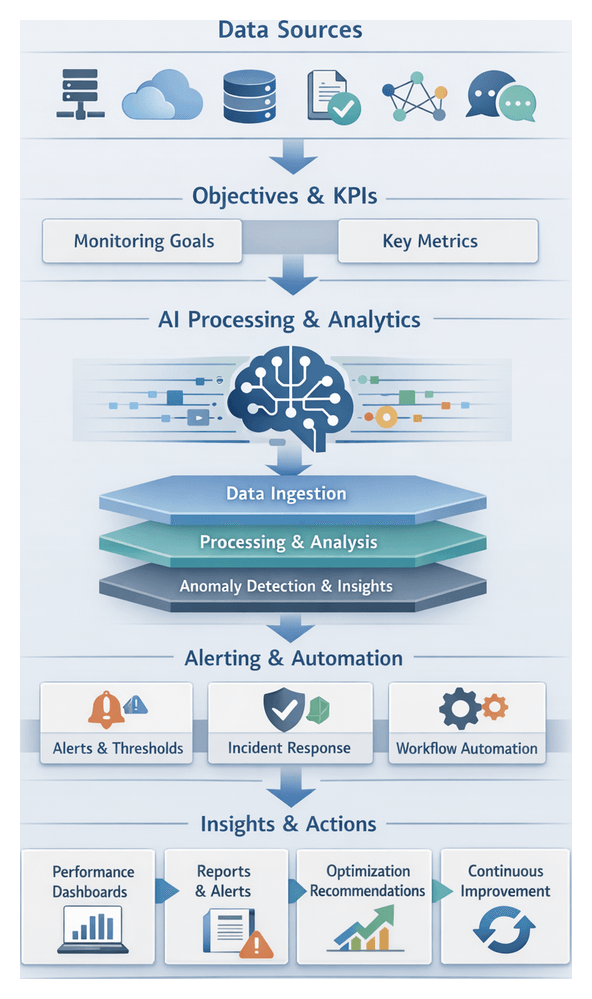

Transparency, Monitoring, and Improvement

- Centralized monitoring dashboards for real-time visibility into workflow stages, pending tasks, and throughput metrics.

- Event streaming and logging in standardized formats to record actions, decisions, and data transformations.

- Lineage tracking metadata linking outputs to original inputs, model versions, and configurations.

- Automated notifications and alerts for SLA breaches or anomalies.

These practices support rapid issue diagnosis, compliance demonstration, and iterative optimization.

Tools and Techniques

- BPMN for standardized depiction of workflows and message flows.

- Flowcharting software with swimlanes and layered diagrams.

- Collaborative platforms for real-time co-authoring of process maps.

- Version control with Git to track changes and support continuous delivery.

- Built-in workflow editors and monitoring modules in AI orchestration platforms.

Aligning AI Agent Roles with Strategic Objectives

Defining Strategic Objectives and Metrics

Translate organizational ambitions into discrete objectives—such as reducing cycle times by X percent, improving first-contact resolution, enhancing data accuracy, or increasing lead conversion. Pair each objective with measurable KPIs, for example a five-minute average handle time or a 10 percent reduction in invoice exceptions.

Cataloguing Agent Capabilities

Construct a capability matrix for AI agent types:

- NLP Agents—OpenAI or Google Cloud AI.

- Document Intelligence—Azure Cognitive Services or IBM Watson.

- Analytics and Predictive Modeling—Amazon SageMaker or Databricks.

- RPA Bots—UiPath or Automation Anywhere.

Score each agent against strategic objectives to prioritize deployment.

Mapping Agents to Workflow Stages

- Data Ingestion: Use document intelligence agents to extract invoice details, reducing manual errors by 80 percent.

- Validation and Enrichment: Deploy analytics agents to cross-check values against historical trends.

- Decision Support: Leverage NLP chatbots for dynamic stakeholder interaction and case updates.

- Execution and Reporting: Employ RPA bots to post transactions in ERP systems and update dashboards.

Governance and Accountability

- Define ownership for agent performance, maintenance, and versioning.

- Establish reporting cadences for agent-level KPIs and exception volumes.

- Specify escalation criteria for low confidence scores or anomaly rates.

Integrating Supporting Systems

- Centralized data repositories for consistent access and audit trails.

- MLOps and CI/CD pipelines—Kubeflow or Prefect—for model lifecycle management.

- Workflow orchestrators such as Apache Airflow to coordinate agent executions.

- Identity and access management frameworks for secure, least-privilege access.

Driving Continuous Improvement

- Monitor throughput, accuracy, and exception metrics in real time.

- Retrain models or adjust parameters to address performance declines.

- Rebalance workloads between agents and human operators for optimal efficiency.

- Identify new use cases where agents can be repurposed for additional value.

Defining Deliverables and Process Handoffs

Core Deliverables

Each use case definition should yield:

- Business Case Document outlining objectives, benefits, risks, and strategic alignment.

- Use Case Canvas capturing scope, actors, data inputs, decision points, and success metrics.

- Process Flow Diagram with swimlanes, branching logic, and agent interactions.

- Data Inventory and Schema Definitions cataloging sources, field formats, and permissions.

- Metrics Framework listing KPIs, SLAs, and targets for accuracy, throughput, and latency.

- RACI Matrix assigning roles and responsibilities.

Deliverable Standards and Quality Gates

Enforce consistent templates and naming conventions to facilitate peer review and reuse. Implement quality gates—completeness checks, stakeholder sign-offs, and policy validations—using automation platforms like UiPath or Microsoft Power Automate to trigger workflows and approvals.

Dependency Mapping

Map relationships between:

- Cross-functional teams, data stewards, legal, and AI specialists.

- Systems and data sources including CRM, ERP, data warehouses, and third-party APIs.

- AI agents requiring upstream preprocessing or human validation.

- Technology stack elements such as middleware, message queues, and monitoring tools.

Visualize dependencies with matrices or graphs in platforms.

Handoff Protocols

Define trigger conditions and handshake mechanisms—for example, validated dataset availability or performance thresholds—that initiate downstream tasks. Specify machine-readable schemas and API contracts (JSON Schema, OpenAPI) to minimize integration friction. Embed SLAs for latency and error rates, and outline review cycles with automated notifications and audit logs for end-to-end accountability.

Tools and Templates for Management

- Jira or Asana for use case templates, task assignments, and approval workflows.

- Confluence or SharePoint for document repositories and version histories.

- Git and GitHub for managing schemas, API contracts, and configuration files.

- Slack or Microsoft Teams with integrated bots for handoff notifications.

Governance and Compliance Integration

- Policy references for GDPR, HIPAA, and industry regulations.

- Risk assessment templates for data sensitivity and AI bias.

- Access control matrices ensuring segregation of duties.

- Automated audit trails capturing version changes, reviewer comments, and approvals.

Transparency and Accountability Practices

- Checklist-driven sign-off templates detailing required artifacts and approvals.

- Automated notifications for handoff initiation, review, and completion.

- Version control tags and change logs summarizing updates.

- Stakeholder dashboards providing real-time visibility into deliverable status and SLA compliance.

- Periodic audits to identify process gaps and standardization opportunities.

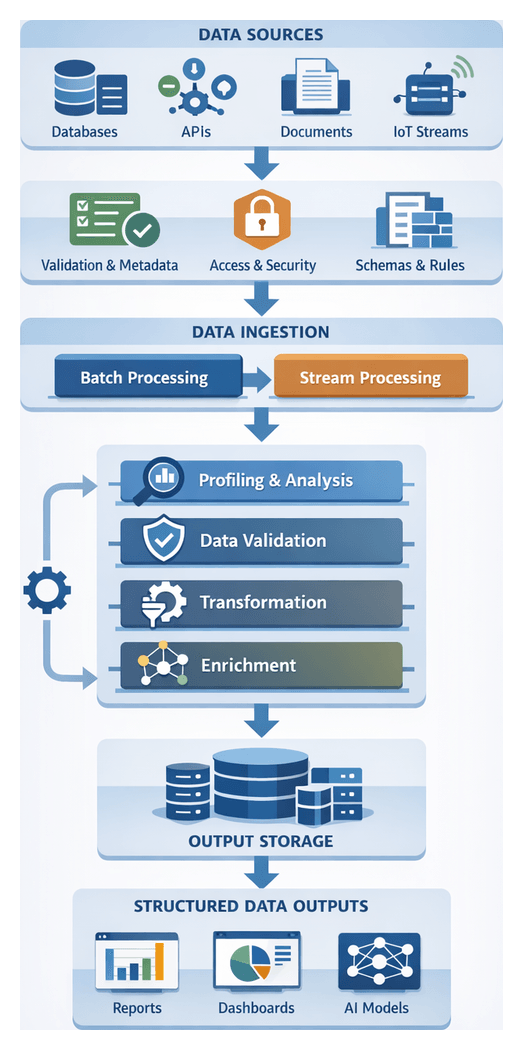

Chapter 2: Data Collection and Preprocessing

Preparing and Ingesting Quality Data Inputs

The foundation of any AI-driven workflow is high-quality, consistent data. This stage identifies all relevant sources, applies initial validation rules, and records metadata and lineage to ensure transparency and governance. By defining clear objectives—consolidating transactional databases, document repositories, IoT feeds, external APIs and more—and enforcing completeness, accuracy and timeliness checks, organizations reduce downstream errors and accelerate time to value.

Key Inputs and Prerequisites

- Source System Definitions: A catalog of enterprise data warehouses, document management systems, object stores and third-party APIs.

- Data Schemas and Formats: Relational schemas, JSON or CSV layouts that describe field names, types and constraints.

- Business Glossaries and Ontologies: Domain taxonomies and code lists that ensure consistent interpretation across teams.

- Quality and Compliance Rules: Thresholds for required fields, acceptable value ranges and regulatory mandates (for example, GDPR or HIPAA).

- Access Credentials and Connectivity: Secure tokens, API keys, network gateways and compute resources sized for ingestion workloads.

- Stakeholder Alignment: Roles for data owners, stewards and IT operations, plus documented governance policies and SLAs for latency and error handling.

- Metadata Capture Mechanisms: Automated lineage, timestamp and transformation logging for audit readiness.

Ingestion Strategies

Choosing between batch and streaming ingestion depends on use-case requirements for freshness, volume and cost.

- Batch Ingestion: Aggregates data on a fixed schedule—hourly, daily or weekly—and processes large volumes in bulk. Common tools include Databricks, AWS S3 and Azure Data Factory.

- Stream Ingestion: Captures events in real time to support immediate insights and automated responses. Architectures often leverage Apache Kafka, AWS Kinesis or Google Cloud Storage with Pub/Sub.

Tools and Platforms for Data Collection

- ETL Platforms: Talend, Informatica provide connectors, workflows and quality components.

- Cloud Data Services: Azure Data Lake, Amazon Redshift, BigQuery offer scalable storage and metadata management.

- API Gateways: MuleSoft, Kong simplify secure access to web services.

- Data Catalog and Governance: Alation, Collibra automate metadata harvesting, lineage tracking and policy enforcement.

Orchestrating Data Cleansing and Transformation

This stage converts heterogeneous raw inputs into reliable, standardized datasets ready for AI processing. An orchestration engine sequences profiling, validation, transformation and enrichment tasks, ensuring traceability and governance at each step and delivering a structured output for modeling or analytics agents.

Core Components and Workflow Sequence

- Ingestion Layer: Interfaces that capture new data from file stores, databases and APIs.

- Profiling Service: Tools such as Trifacta or open-source libraries like Pandas profiling detect patterns, nulls and outliers.

- Validation Engine: Rule-based systems that enforce data types, ranges and referential integrity.

- Transformation Modules: Bulk normalization, aggregation and schema mapping via AWS Glue or Azure Data Factory.

- Enrichment Agents: AI-driven services such as DataRobot Paxata augment records with external reference data or predictive features.

- Orchestration Engine: A workflow manager that sequences tasks, handles retries and maintains audit logs.

- Output Repository: A data mart or staging area where cleansed datasets are stored and cataloged.

Typical Action Flow

- Trigger ingestion on schedule or file arrival.

- Invoke profiling service and generate a quality report.

- Execute validation rules; route failures to exception queues.

- Apply normalization, type casting and schema alignment.

- Call enrichment agents to append reference data or compute features.

- Run post-transformation checks to confirm standardization.

- Aggregate results into the output store and notify consumers.

- Produce audit logs and data lineage metadata.

Data Profiling and Validation

Profiling AI agents analyze distributions, null frequencies and pattern deviations to guide validation. Tools such as Great Expectations and TensorFlow Data Validation automate rule generation and anomaly detection. Rule-based engines enforce schema conformance, uniqueness and referential integrity, diverting violations for automated or manual remediation.

Transformation and Enrichment Integration

Standardization includes upper-casing codes, normalizing dates and mapping source schemas to target models using platforms like Informatica or Talend. AI agents hosted on Google Cloud Dataflow perform imputation and feature engineering. Natural language processing agents extract structured fields from unstructured text. Orchestration handles API calls, rate limits and error retries for seamless automation.

AI-Driven Validation and Enrichment Roles

Specialized AI agents enforce quality gates and enrich records with contextual features. By embedding validation and enrichment into the orchestration layer, teams maintain traceability, accelerate insights and improve decision accuracy.

Validation Agents

- Schema Conformance: Verifying field types, lengths and required attributes.

- Anomaly Detection: Identifying outliers, data drift or sudden distribution shifts with statistical and ML techniques.

- Uniqueness and Referential Integrity: Detecting duplicates or orphaned records using fuzzy matching and foreign-key checks.

- Completeness Checks: Flagging missing values and triggering imputation or exception workflows.

Validation agents integrate with Apache Airflow or Prefect to schedule checks post-ingestion and quarantine suspect batches until issues are resolved.

Enrichment Agents

- Entity Resolution: AI models link disparate records into unified profiles.

- Metadata Tagging and Classification: Using the OpenAI API or TensorFlow NLP pipelines to label text and generate semantic embeddings.

- Geospatial Enrichment: Converting addresses to coordinates and appending demographic data.

- Feature Engineering: Generating rolling averages, risk scores and derived metrics via AWS SageMaker Feature Store.

- Third-Party Data Integration: Appending firmographics, credit ratings or market indicators from external APIs.

Metadata and Governance Systems

- Data Cataloguing: Platforms like Azure Purview and Collibra store metadata, track lineage and enable business-glossary search.

- Policy Enforcement Engines: Automating privacy, retention and masking rules for compliance.

- Audit Logging: Recording every validation check, enrichment step and steward approval to support regulatory audits.

Delivering Structured Outputs and Handoffs

Finalizing the preprocessing stage involves producing well-defined output artifacts, formalizing data contracts with downstream consumers and establishing handoff mechanisms that preserve integrity and traceability.

Output Specifications and Formats

- File Formats: JSON, CSV, Apache Parquet or Avro chosen for schema complexity, compression and compatibility.

- Schema Definitions: Documents that list field names, types, allowed ranges and nullability.

- Partitioning Strategy: Organizing by date, region or business unit to optimize query performance.

- Metadata Enrichment: Attaching record counts, timestamps and lineage identifiers to outputs.

- Versioning Rules: Embedding semantic version numbers or timestamps for reproducibility and rollback.

Data Contracts and Dependency Mapping

- Consumption Points: AI agents, data warehouses, BI tools or enterprise applications that ingest the dataset.

- Refresh Cadence: Real-time streams, micro-batches or nightly full refreshes.

- Error Boundaries: Acceptable missing or anomalous record rates and escalation paths.

- Access Controls: Role-based permissions and encryption requirements for sensitive fields.

Quality Assurance and Validation Checks

- Structural Tests: Ensuring outputs match declared schemas with no extra or missing columns.

- Completeness Checks: Verifying record counts and required partitions.

- Referential Integrity: Confirming foreign-key relationships resolve correctly.

- Value Range Assertions: Detecting out-of-bounds values or unexpected null patterns.

- Duplicate Detection: Removing repeated records based on primary or composite keys.

Handoff Mechanisms and Triggering Conditions

- Event-Driven Messaging: Emitting messages to brokers when new data is available.

- API Notifications: Posting webhooks to registered endpoints for on-demand fetch.

- Scheduled Pulls: Downstream processes query object stores or catalogs for new versions.

- File System Watches: Monitoring paths for appearance of files matching naming conventions.

- Orchestration Workflows: Progressing tasks only when prior outputs pass quality gates.

Governance, Traceability and Audit Trails

- Lineage Metadata: Capturing source systems, transformation versions and operator identifiers.

- Processing Metrics: Logging job runtimes, record counts and validation outcomes.

- Immutable Artifacts: Archiving raw and transformed outputs in write-once storage.

- Audit Queries: Providing searchable interfaces for compliance reporting and investigations.

Integration into Downstream Workflows

- Model Training Pipelines: Consuming feature tables for retraining and batch inference.

- Real-Time Inference Engines: Fetching lookup tables and normalization parameters for live predictions.

- Reporting and Visualization: Loading dimensional tables into BI platforms for dashboards.

- Operational Applications: Importing reference data into CRM, ERP or order management systems.

Error Handling, Retry Logic and Scalability

- Automatic Retries: Configurable backoff and retry limits for failed tasks.

- Partial Reloads: Selective reprocessing of affected partitions instead of full pipeline restarts.

- Manual Intervention Gates: Pausing workflows and notifying stakeholders when failures exceed thresholds.

- Fallback Datasets: Using cached versions of previous outputs when fresh data is unavailable.

- Distributed Storage and Asynchronous Delivery: Leveraging object stores, message queues and partition pruning to scale throughput and minimize contention.

By rigorously preparing, cleansing, validating and enriching data, then delivering structured outputs with formal contracts and handoff mechanisms, organizations create a resilient, transparent and scalable foundation for all downstream AI and business processes.

Chapter 3: Selecting and Configuring AI Agents

Defining Agent Selection Criteria and Inputs

Establishing clear selection criteria and input specifications creates the foundation for a reliable, scalable AI agent orchestration solution. Organizations articulate business objectives and technical requirements to guide the choice of AI agents, ensure data integrity, and confirm compatibility with existing systems. A structured approach reduces risk, avoids rework, and accelerates deployment.

The objectives of this phase are:

- Clarify functional requirements by translating business goals into precise AI capabilities.

- Ensure data readiness through profiling of sources, formats, and quality metrics.

- Align technical constraints including infrastructure, integration points, and compliance mandates.

- Standardize evaluation via a reproducible framework comparing cost, performance, security, and support.

Successful agent selection relies on four key input categories:

- Business Objectives and Use Cases – Defined goals such as automating invoice approval, extracting customer insights, or routing support tickets.

- Data Characteristics – Profiles of volume, variety, velocity, veracity, formats, error rates, and update frequencies.

- Performance Targets – Benchmarks for accuracy, response time, throughput, and cost per transaction aligned to SLAs.

- Integration and Deployment Constraints – Connectivity requirements, API standards, deployment models, and regulatory obligations.

Before evaluating agents, teams must satisfy key prerequisites:

- Use Case Definition Complete – Documented workflows with decision points, input/output artifacts, roles, and KPIs.

- Data Inventory and Quality Assessment – Cataloged repositories, formats, error rates, and governance policies.

- Technology Stack Overview – Middleware, API gateways, connectivity diagrams, and security frameworks.

- Stakeholder Alignment – Consensus on risk profiles, data usage guidelines, and rollout strategies.

- Budget and Timeline Constraints – License costs, resource projections, and milestone dates for proof-of-concept and full rollout.

With these conditions met, define a weighted scoring model across dimensions such as:

- Capability Fit

- Performance under realistic workloads

- Scalability for volume and concurrency

- Security, compliance, and certifications

- Ease of integration via SDKs and APIs

- Total cost of ownership

- Vendor support and community engagement

This objective framework enables comparison of solutions like Amazon SageMaker, Hugging Face document extraction, or conversational models powered by OpenAI GPT-4. Once agents are shortlisted, specify precise inputs including data schemas, context window limits, preprocessing rules, error handling protocols, and security controls. Documenting these specifications in standardized templates ensures seamless configuration of input pipelines and validation of data conformity before runtime.

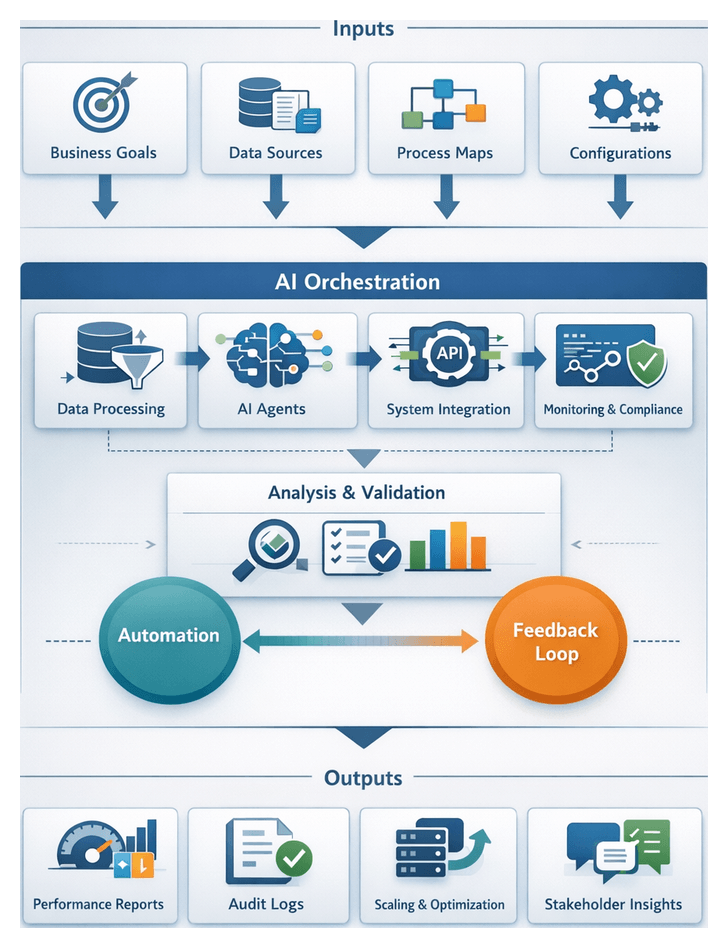

Establishing the Imperative for Structured Orchestration

Fragmented, ad hoc automation initiatives often lead to coordination gaps, data silos, and unpredictable outcomes. To scale AI-driven workflows reliably, organizations must adopt structured orchestration frameworks that deliver end-to-end visibility, traceability, and governance.

Common failures of point solutions include:

- Lack of Coordination across disparate scripts and bots.

- Limited Visibility into multi-stage process health.

- Inconsistent Outcomes due to varied error-handling logic.

- Fragmented Governance bypassing enterprise policies.

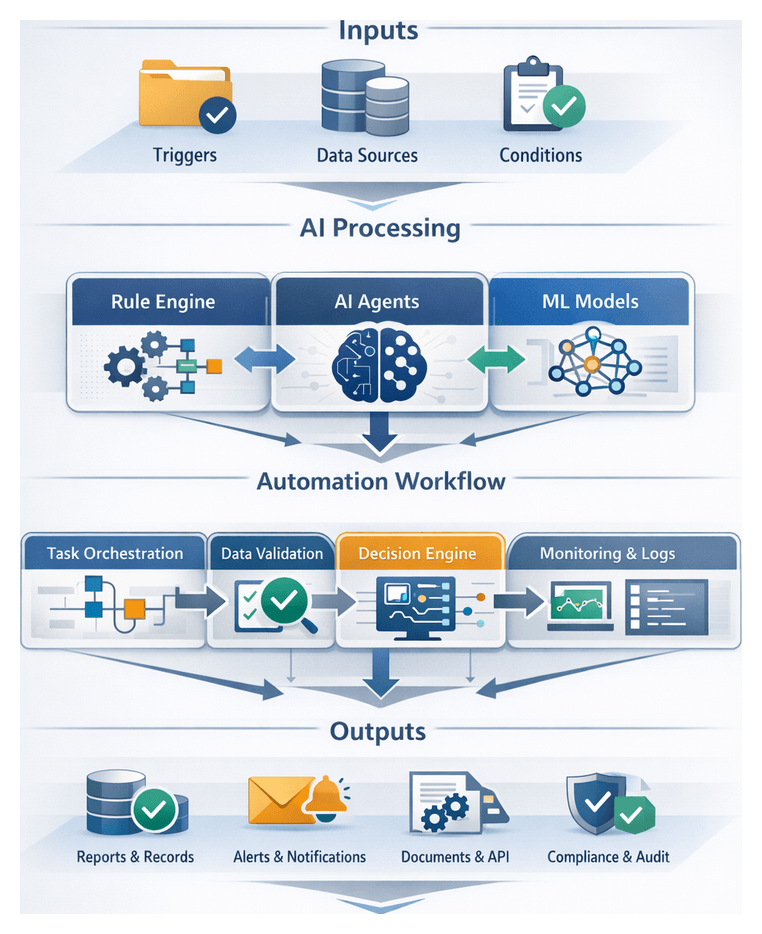

Structured orchestration defines how tasks flow among AI agents, humans, and legacy systems. Core interaction patterns include:

- Sequential Processing where each stage waits for completion signals.

- Parallel Execution of independent tasks with coordinated aggregation.

- Event-Driven Triggers that activate stages based on data events or manual approvals.

- Conditional Routing using decision gateways on model outputs or business rules.

- Feedback Loops that refine processes using performance metrics or user input.

Embedding governance controls at each transition enforces:

- Role-Based Access Controls for initiation, modification, and approval.

- Approval Gates for compliance reviews or managerial sign-offs.

- Audit Trails capturing inputs, outputs, and decision criteria.

- Policy Enforcement to reject or quarantine data violating standards.

Defining end-to-end workflow objectives ensures alignment with business goals. Typical metrics include throughput targets, latency requirements, accuracy thresholds, compliance ratios, and resource utilization levels.

Roles in the orchestration ecosystem include:

- Orchestration Engine – Central conductor enforcing sequencing and dependency rules via tools such as UiPath Orchestrator.

- AI Agents – Specialized for tasks like language understanding, vision inspection, or predictive scoring.

- Human Operators – Intervene at approval gates, resolve exceptions, and provide feedback.

- Enterprise Systems – Source and sink data through standardized APIs, keeping CRM, ERP, and repositories synchronized.

- Monitoring and Analytics Services – Aggregate logs and metrics, with platforms like DataRobot generating alerts and dashboards.

Modern architectures leverage event-driven integration and centralized state stores to decouple components, react instantly to data changes, and maintain process state. Robust error-handling patterns such as automated retries, fallback paths, escalation workflows, and compensation transactions preserve continuity. Continuous measurement of stage-level throughput, error rates, resource consumption, and user satisfaction enables dynamic optimization and iterative refinement of orchestration logic.

Mapping AI Capabilities and Integration Roles

AI agents provide diverse capabilities that must be aligned to workflow tasks and supported by integration systems. Understanding each function and its supporting infrastructure is essential for a cohesive solution.

Core AI capabilities include:

- Natural Language Processing

- Computer Vision

- Predictive Analytics and Machine Learning

- Robotic Process Automation

- Conversational AI and Virtual Assistants

- Knowledge Graph and Semantic Reasoning

NLP Agent Functions

NLP agents perform text analysis, entity extraction, sentiment detection, and document understanding. They convert unstructured content into structured data using techniques such as tokenization and named entity recognition. Common engines include OpenAI API and TensorFlow models.

Computer Vision Agent Functions

Vision agents interpret images and video for object detection, optical character recognition, and quality inspection. Scalable deployments leverage TensorFlow with orchestration on Kubernetes.

Predictive Analytics and ML Agents

These agents analyze historical data to forecast trends and generate probability scores. Pipelines often use Apache Airflow for orchestration, with models built in TensorFlow and real-time scoring via Apache Kafka.

Robotic Process Automation Agents

RPA agents automate rule-based tasks across applications without code changes to legacy systems. Platforms such as UiPath and Automation Anywhere provide low-code design environments. Integration with NLP or vision agents enables end-to-end automation of both cognitive and structured steps.

Conversational AI and Virtual Assistants

Conversational agents manage multi-turn dialogues using NLP and context tracking. Solutions like Google Dialogflow and IBM Watson Assistant integrate with back-end APIs to process service requests within user conversations.

Supporting systems facilitate communication, data flow, and governance:

Orchestration Platform Responsibilities

Orchestration engines coordinate task sequencing, retries, and dependency tracking. Tools include Apache Airflow and Camunda.

Data Integration and Pipeline Systems

Platforms such as MuleSoft, Dell Boomi, and Informatica handle schema mapping, quality checks, and batch or streaming ingestion.

Messaging, Event Streaming and API Gateways

Decoupled communication relies on Apache Kafka, RabbitMQ, or AWS EventBridge, while API gateways such as Kong and AWS API Gateway enforce security and protocol translation.

Monitoring, Logging and Feedback Agents

Operational visibility is provided by agents collecting metrics and traces, visualized in dashboards via Prometheus and Grafana.

Security, Compliance and Governance Agents

Security gateways and policy engines such as Open Policy Agent enforce access controls, encryption, and audit logging across workflows.

Error Handling, Recovery and Resilience Strategies

Define retry policies, fallback routes, dead-letter queues, and confidence-score thresholds to route low-confidence results to human review. Patterns like circuit breakers and back-pressure controls prevent cascading failures.

Ensuring Scalability and Performance Efficiency

Container orchestration on Kubernetes enables auto-scaling and health checks of AI services. Performance testing guides capacity planning to maintain throughput and latency targets.

Version Control, Model Management and Deployment Pipelines

Frameworks such as MLflow manage model artifacts, metadata, and versioning. CI/CD pipelines automate testing and deployment of new agent configurations across environments.

Strategic Alignment of Capabilities and Roles

A task-to-agent mapping matrix clarifies responsibilities, reduces overlap, and supports governance. Continuous review of performance and resource utilization drives iterative refinement and sustained business value.

Specifying and Managing Agent Outputs

Defining clear output specifications is critical for seamless handoffs in AI orchestration. Outputs must include content structure, format, and metadata to enable automated validation, routing, and integration into downstream systems.

Output Format Standards and Protocols

- JSON for nested structures and web service integration.

- XML for schema-validated exchanges in enterprise service buses.

- CSV for tabular exports and analytics workflows.

- Binary Formats such as Protocol Buffers and Avro for high-throughput serialization.

Data Schema and Metadata Requirements

- Field Definitions specifying names, types, ranges, and cardinality.

- Schema Versioning for backward compatibility and controlled migrations.

- Provenance Metadata including agent IDs, model versions, and timestamps.

- Quality Metrics such as confidence scores and error flags.

Identifying Dependencies and Input-Output Mapping

- Input-Output Matrices linking agent outputs to downstream inputs.

- Conditional Dependencies that trigger alternative paths based on output values.

- Data Transformation Rules for field renaming, type conversions, and filtering.

Designing Handoff Interfaces

- Transport Mechanisms including RESTful APIs, message queues, event streams, or direct database writes.

- Authentication and Security using API tokens, OAuth2, mutual TLS, and encryption.

- Error Handling via retry policies, dead-letter queues, and alert thresholds.

- Timeouts and SLAs to enforce performance objectives.

Ensuring Reliable Handoffs and Continuity

- Idempotency Controls to safely process repeated messages.

- Stateful Checkpointing for resuming long-running processes.

- Circuit Breakers and Back-off Strategies to protect downstream systems.

- Monitoring and Alerting on success rates, latencies, and error volumes.

Versioning and Change Management

- Semantic Versioning for interface updates and compatibility.

- Schema Evolution Policies to add, deprecate, and migrate fields.

- Canary Deployments and A/B Testing for gradual rollouts.

- Documentation and Change Logs to inform developers and stakeholders.

Validation and Monitoring of Agent Outputs

- Schema Validation using automated validators to enforce format and types.

- Quality Gates with rules on confidence scores and error rates.

- Operational Dashboards aggregating throughput, latency, and quality metrics.

- Feedback Loops integrating human-in-the-loop review to improve future accuracy.

By standardizing output formats, enforcing schema and metadata contracts, and implementing reliable handoff interfaces with robust validation and monitoring, organizations ensure that AI agent outputs integrate smoothly into complex workflows. This rigor underpins resilient, scalable, and maintainable AI-driven solutions that consistently deliver business value.

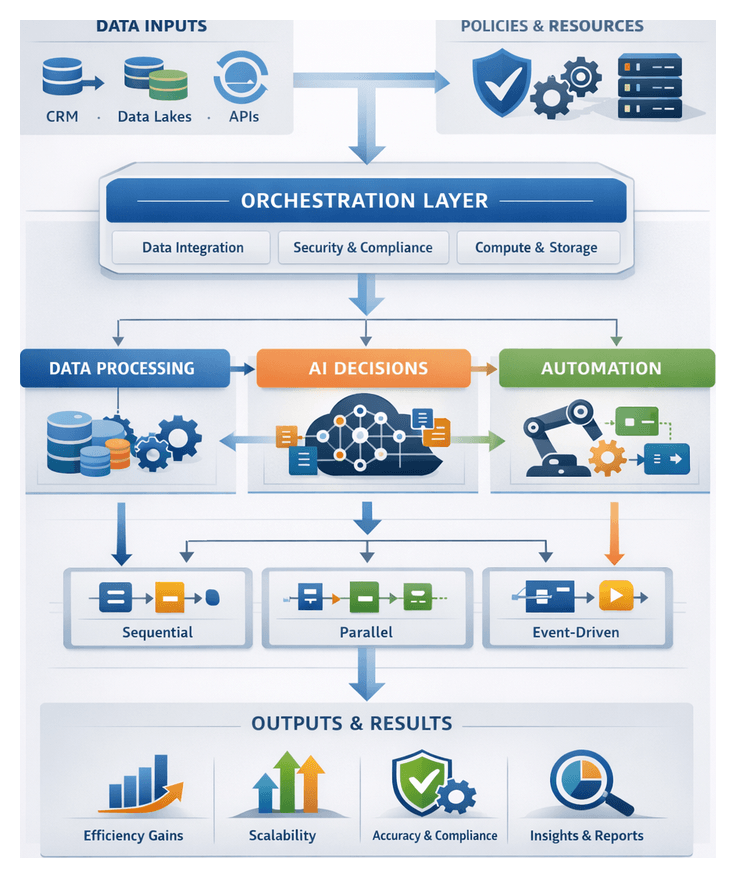

Chapter 4: Designing the Workflow Architecture

Establishing Workflow Objectives and Data Inputs

Designing an AI-driven orchestration framework begins with clearly defining workflow objectives and assembling a comprehensive blueprint of data inputs. Objectives translate strategic imperatives—such as reducing operational costs or accelerating time-to-market—into concrete performance targets. Common objective categories include:

- Efficiency gains: streamline manual processes, eliminate redundant tasks, and reduce end-to-end cycle times through automation.

- Reliability and consistency: enforce standardized procedures, minimize error rates, and maintain data integrity across all execution paths.

- Scalability: architect systems that accommodate growth in transaction volumes, new business lines, or geographic expansion without significant redesign.

- Transparency and auditability: embed logging and monitoring at decision points to provide end-to-end visibility into data transformations and agent interactions.

- Compliance adherence: incorporate regulatory requirements—such as GDPR, HIPAA, or SOX—into workflow logic and validation gates from the outset.

Establishing these objectives requires collaboration among executive sponsors, process owners, data engineers, and IT architects. Stakeholder workshops capture desired outcomes, map success metrics—like percentage reduction in manual handoffs or error rates—and define acceptable performance thresholds. Documented use cases and key performance indicators (KPIs) ensure that design decisions remain aligned with business strategy.

Concurrently, teams must identify all data inputs that feed the orchestration layer. Data sources often span enterprise systems such as CRM, ERP, HR platforms, cloud data lakes, and external feeds including market data or social media streams. To create a robust data input catalog:

- Inventory all systems: list each source system, database, API, or file repository that provides process-critical information.

- Define schemas and formats: record field-level metadata, message protocols, file types (CSV, JSON, XML), and document structures.

- Assess accessibility: determine whether direct queries, RESTful APIs, message brokers, event streams, or batch file transfers are required.

- Evaluate quality and lineage: profile data for completeness, consistency, and accuracy, and trace its origin to establish trust levels.

- Estimate frequency and volume: forecast data arrival patterns, throughput requirements, peak loads, and latency tolerances.

Early profiling of data inputs mitigates integration risks by revealing transformation requirements, potential cleansing needs, and capacity constraints. It also informs decisions about infrastructure provisioning and network bandwidth.

Defining prerequisites and operational conditions is equally important. Before agent orchestration can commence, teams must ensure:

- Compute and storage provisioning: secure adequate CPU, memory, GPU, and disk resources for data ingestion, model training, and inference workloads.

- Security and compliance controls: implement identity and access management policies, encryption for data in transit and at rest, and audit logging mechanisms.

- Data governance and stewardship: assign ownership of data domains, establish versioning protocols, and define retention and archival policies.

- Service-level agreements: validate availability, throughput, and latency commitments for internal and external APIs, middleware, and third-party services.

- Model readiness: confirm that AI models have undergone rigorous training, validation, performance benchmarking, and bias testing according to organizational standards.

With objectives and prerequisites established, the next step is to set initial parameters and constraints that govern workflow execution. These settings may include:

- Performance thresholds: acceptable ranges for response times, throughput, error rates, and model confidence scores.

- Resource quotas: limits on CPU, memory, or GPU usage per agent or service tier to prevent resource contention.

- Timeout and retry policies: rules for handling failed or delayed operations, including retry intervals, backoff strategies, and escalation pathways.

- Concurrency limits: maximum number of parallel tasks or threads allowed for each orchestration node.

- Data retention rules: duration for which intermediate artifacts, logs, and transaction records are preserved before archival or deletion.

Finally, cross-functional validation workshops map objectives to data inputs and parameters, review prerequisite fulfillment, perform risk assessments for data quality and integration dependencies, and secure stakeholder buy-in. This alignment process transforms high-level goals into a concrete, traceable design blueprint that informs subsequent agent orchestration and implementation activities.

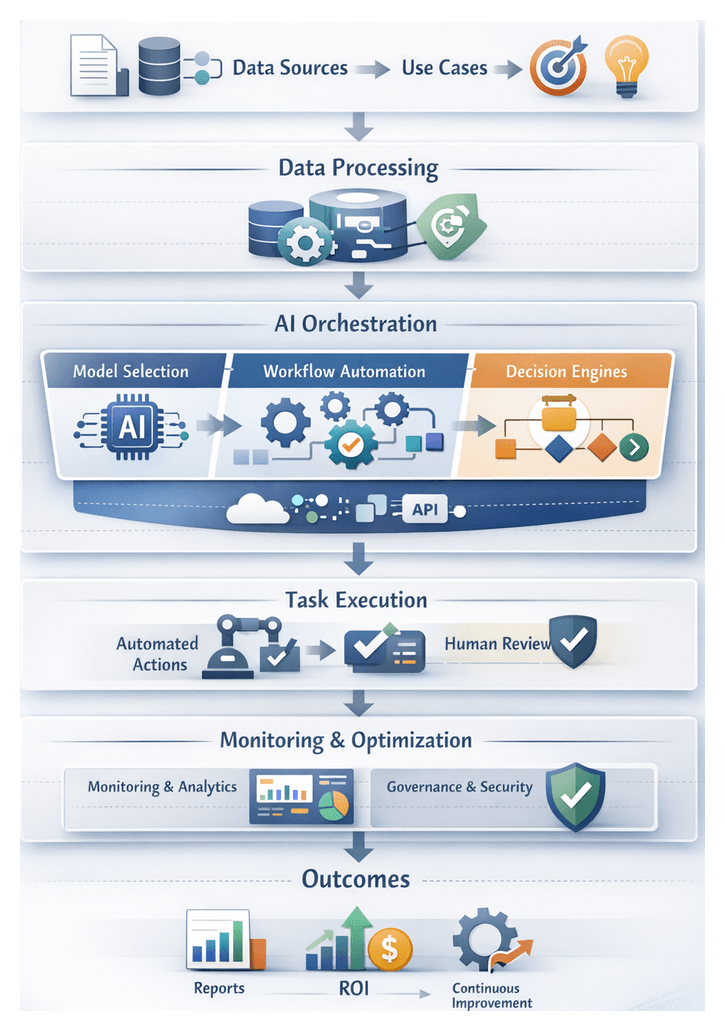

Agent Sequence Orchestration

Defining how AI agents are activated, sequenced, and coordinated is critical for end-to-end process automation. Sequence orchestration ensures that data flows smoothly, decisions occur at the right time, and systems interact without manual intervention. The design involves mapping activation flows, specifying triggers, selecting coordination patterns, and implementing robust synchronization and error handling.

Mapping Activation Flows

A comprehensive activation flow map documents each agent’s inputs, outputs, and logical dependencies. This map should:

- Visualize end-to-end logic: from data ingestion and preprocessing to analytics, decision, and action agents.

- Identify parallelism opportunities: determine which agents can run concurrently without data conflicts.

- Highlight decision gateways: specify conditional branches for exceptions, escalations, or alternate paths.

Collaborative modeling sessions using BPMN tools or low-code workflow designers help align process architects, data engineers, and AI specialists. These sessions result in swimlane diagrams, sequence flows, and state transition diagrams that serve as reference blueprints for implementation.

Designing Trigger Conditions

Triggers define when agents execute. Common types include:

- Event-Driven Triggers initiated by messages on a queue or event bus. For example, a document ingestion agent runs when a new file is uploaded to a repository.

- Time-Based Triggers scheduled at fixed intervals or via cron expressions, ideal for batch analytics or periodic synchronization.

- State-Change Triggers activated when specific data attributes hit predefined thresholds, such as inventory levels or credit utilization rates.

- User-Action Triggers invoked by user requests or API calls for on-demand processing.

Clear trigger semantics and validation logic ensure that preconditions are met before execution, reducing errors and enhancing predictability.

Coordination Patterns

Three primary coordination patterns guide agent interaction:

- Sequential Coordination agents run in a fixed order, suitable for linear workflows where each step depends on prior outputs.

- Parallel Coordination agents execute concurrently on independent tasks or data partitions, improving throughput with synchronization points to merge results.

- Publish-Subscribe Coordination agents subscribe to events published by upstream producers, decoupling components and enabling dynamic topology changes.

Hybrid approaches often combine these patterns, governed by conditional gateways that route execution dynamically based on runtime data.

Implementing Event-Driven Architecture

Event-driven architectures decouple agent interactions and support scalable, responsive workflows. Organizations can leverage platforms such as Apache Kafka, AWS EventBridge, and Azure Event Grid. In this model, agents publish events upon completion or failure, and downstream agents subscribe to relevant event types. This approach reduces direct dependencies, supports dynamic scaling, and simplifies the introduction of new agent types without modifying existing producers.

Synchronization and Dependency Management

Managing complex dependencies and synchronization involves techniques such as:

- State Machines modeled with services like AWS Step Functions or Azure Logic Apps, defining each step, transitions, and error handling behaviors.

- Distributed Locks and Semaphores managed through systems like Redis or ZooKeeper to prevent race conditions when agents update shared resources.

- Barrier Synchronization pausing parallel tasks at barrier points until all participants complete, useful for batch aggregation or synchronized model ensembles.

Explicit dependency metadata allows orchestration engines to optimize scheduling, minimize idle time, and regulate backpressure when downstream systems become saturated.

Error Handling and Retry Mechanisms

A resilient orchestration framework anticipates and mitigates failures with:

- Retry Policies configured with exponential backoff and jitter to handle transient errors gracefully.

- Compensation Workflows that reverse partial updates or rollback transactions when a later stage fails irrecoverably.

- Dead-Letter Queues for isolating events that exhaust retry attempts, enabling manual inspection and remediation without blocking the main event stream.

- Alerting and Escalation to notify operators or trigger alternative processing paths when error thresholds are breached.

Documenting error classifications, expected recovery behaviors, and escalation paths ensures that the orchestration layer remains transparent and maintainable as the agent ecosystem evolves.

AI Decision Point Coordination

Orchestrating AI-driven decisions requires integrating machine learning models, deterministic business rules, and human oversight into a unified decision layer. This coordination layer ensures that each decision point receives appropriate inputs, executes with the required logic, and hands off results to downstream systems or agents, with human intervention only when necessary.

Embedding Business Rules and AI Models

Decision workflows often combine:

- Rule Engines for deterministic policies, thresholds, and approvals.

- Machine Learning Models for probabilistic predictions, confidence scoring, and anomaly detection.

- Human-in-the-Loop Checks for high-risk or ambiguous cases.

For example, a loan underwriting process may reject incomplete applications via rules, call an AI model hosted on AWS SageMaker to predict default risk, and route borderline cases to human analysts. This layered approach balances consistency, speed, and accuracy.

Data Pipelines and System Orchestration

Reliable decision coordination depends on well-architected data pipelines that deliver structured and unstructured inputs to AI agents. Patterns include:

- Event-Driven Pipelines using Apache Kafka to publish data changes and trigger model evaluations in real time.

- Batch ETL Jobs that aggregate features for scheduled inference runs.

- Real-Time APIs that supply contextual data to conversational agents powered by Google Cloud AI Platform.

Orchestration engines coordinate these pipelines, enforcing data contract versioning, handling retries, and monitoring performance metrics and SLAs.

Middleware and Event-Driven Frameworks

Middleware abstracts protocol differences between REST, gRPC, and messaging, manages secure credentials and tokens, routes outputs to appropriate consumers, and load-balances inference requests. Event-driven services such as AWS EventBridge and Azure Event Grid enable low-latency, scalable event routing and decoupled architectures.

Consistency, Traceability, and Monitoring

Transparent decision making requires capturing:

- Input Data Snapshots at each decision node.

- Model Versions, Hyperparameters, and Training Data Lineage.

- Decision Outcomes, Confidence Scores, and Applied Business Rules.

- Audit Metadata including Timestamps, Agent or User IDs, and Exception Flags.

Centralized dashboards—such as IBM Watson OpenScale—aggregate logs and metrics, enabling trend analysis, drift monitoring, and governance reporting. This data supports continuous improvement of decision models and processes.

Leveraging AI Orchestration Platforms

Specialized platforms provide low-code designers, prebuilt connectors for CRM, ERP, and document management, policy templates for approvals and guardrails, and monitoring consoles. An examples includes OpenAI APIs integrated via custom connectors. Standardizing on a unified orchestration layer reduces integration complexity and accelerates time-to-value.

Agent Roles and Collaboration

Different AI agents fulfill specialized functions within decision workflows:

- Analytics Agents analyze numeric or time-series data for forecasting, anomaly detection, and scoring.

- Natural Language Agents interpret unstructured text, extract intent, sentiment, and entities from documents, emails, or chat messages.

- Document Processing Agents convert PDFs, images, and scanned forms into structured records via OCR, classification, and extraction models.

- Recommendation Agents generate personalized suggestions and content rankings using collaborative filtering or deep learning.

These agents collaborate at decision points, feeding results into a central decision engine or state machine, which then routes outcomes to downstream systems or human reviewers as needed.

Best Practices for Decision Point Management

- Modularize agents by function to allow independent updates, testing, and scaling.

- Implement canary deployments to test new models on a small subset of traffic before full rollout.

- Define escalation paths for decisions below confidence thresholds, routing them to secondary models or human experts.

- Automate model retraining with feedback loops that use outcome data to schedule training jobs on platforms like Azure Cognitive Services.

- Regularly audit and refactor business rules to prevent conflicts, redundancies, or coverage gaps as policies evolve.

Deliverables and Handoff Protocols

The culmination of the design phase is a comprehensive set of deliverables and handoff protocols that guide development, integration, and governance. These artifacts ensure clarity on scope, data requirements, execution flow, and compliance obligations.

Core Deliverables

- Process Specification Documents containing narrative descriptions, swimlane diagrams, sequence flows, and data flow diagrams that define each workflow step, decision gateway, and agent interaction.

- Data Contract Definitions with formal schemas (JSON Schema, XML Schema Definition, Avro) specifying field metadata, validation rules, sample payloads, and versioning conventions.

- Dependency Matrix tabulating each component—AI agents, rule engines, external APIs, data stores—including upstream and downstream dependencies, execution order, and failure impact classifications.

- Handoff Protocols detailing API endpoints, HTTP methods, URL patterns, request/response schemas, message broker topics, file transfer conventions, timeout thresholds, retry policies, and error codes.

- Governance and Compliance Checklist specifying security controls (TLS 1.2 , AES-256), IAM policies, audit logging requirements, data masking rules, regulatory mappings (GDPR, HIPAA, SOX), and stewardship responsibilities.

Mapping Dependencies and Resiliency

Dependency mapping involves:

- Component Identification assigning unique identifiers to agents, rule modules, external systems, and human tasks, categorized by functional domain.

- Input and Output Cataloging defining exact data elements each component consumes and produces, referencing data contracts and sample payloads.

- Execution Sequencing specifying logical order or timestamp dependencies, trigger conditions, and concurrency constraints.

- Failure Impact Assessment classifying potential failures as transient or terminal, and linking each to error handling patterns like retry, compensate, or escalate.

- Resiliency Planning identifying critical path components, specifying high availability configurations, load-balancing strategies, backup agents, and alternative data sources.

Formal Handoffs and Control Gates

- API-Based Handoffs for synchronous interactions: define REST or gRPC endpoints, authentication schemes, and contract tests.

- Event-Driven Handoffs for asynchronous integrations: specify event topics, payload schemas, retention policies, and consumer group configurations using platforms like Apache Kafka or AWS EventBridge.

- File Exchange Handoffs for batch workflows: outline directory structures, naming conventions, transfer protocols (FTP, SFTP), checksum or digital signature procedures, and polling schedules.

- Human Task Handoffs for manual approvals: integrate with business process management suites like Camunda or Apache Airflow, specifying user interfaces, access controls, SLA timers, and escalation rules.

- Control Gates and Validation embedding automated checks at each handoff for schema compliance, data integrity, threshold validations, and business rule assertions, with defined error notifications and rollback procedures.

Versioning and Change Management

Workflows must adapt over time. Formal versioning and change management processes include:

- Semantic Versioning applies MAJOR.MINOR.PATCH conventions to data contracts, incrementing versions based on compatibility impacts.

- Component Release Cycles define branching strategies, release windows, and CI/CD pipelines for automated integration and end-to-end testing.

- Impact Assessment Workflows engage a change review board to evaluate proposed modifications, update dependency matrices, and revise compliance checklists prior to production rollout.

Tooling and Best Practices

Effective execution relies on integrated toolchains for design, documentation, and governance. Recommended tools include:

- Workflow Modeling with BPMN editors such as the Camunda Modeler to design, version, and export process diagrams.

- API Documentation using Swagger/OpenAPI or Postman to publish interactive specifications and generate client libraries.

- Event Schema Registries like Confluent Schema Registry to manage Avro, JSON, or Protobuf schemas and enforce compatibility rules.

- Policy-as-Code Frameworks such as Open Policy Agent (OPA) or enterprise GRC platforms for automated compliance checks and policy enforcement.

By delivering comprehensive specifications, mapping all dependencies, and defining rigorous handoff and compliance protocols, teams create a scalable, reliable, and auditable foundation for AI-powered workflows. This robust design phase bridges the gap to development and deployment, ensuring smooth implementation and continuous optimization.

Chapter 5: Integration with Enterprise Systems

Background and Purpose of Enterprise Integration

In today’s digital transformation landscape, enterprises leverage AI-driven automation to optimize decision-making and customer experience. However, realizing the full potential of intelligent agents requires seamless integration with both legacy and modern systems. Transitioning from monolithic architectures to API-led microservices promotes reuse, scalability and agility—replacing brittle point-to-point connections with standardized interfaces. Early articulation of integration requirements prevents costly refactoring and enables parallel innovation among business, IT and AI specialists.

Hybrid cloud architectures further complicate integration, as on-premises databases, private cloud services and public cloud applications must coexist under consistent security and performance policies. Integration planning must address network latency, data sovereignty and governance across environments. Establishing clear parameters at the outset ensures reliable data exchange, maintains compliance and supports AI-driven workflows at enterprise scale.

Defining Integration Scope and Interfaces

System Inventory and Data Endpoints

Effective integration begins with a comprehensive inventory of systems, APIs and data sources:

- Application landscape documentation, including vendor names, versions and deployment models.

- API specifications and service contracts for REST endpoints, SOAP operations and payload schemas.

- Data dictionaries or metadata catalogs detailing field definitions and business rules.

- Authentication mechanisms such as OAuth tokens, API keys, SAML assertions or JSON Web Tokens.

- Network topology diagrams, firewall rules and VPN requirements.

- Service level agreements specifying throughput, latency and availability targets.

Streaming and event-driven sources—such as Apache Kafka or corporate event buses—require definitions of topic schemas, retention policies and consumer groups. Callback URLs, webhook configurations and queue endpoints support asynchronous workflows, while idempotency protocols and retry policies guard against duplicate processing.

Prerequisites: Security, Network and Compliance

Before integration work begins, teams must establish:

- Service accounts with least-privilege access and secure token vaults for credentials.

- Identity federation or single sign-on with providers like Okta.

- Encryption in transit and at rest to protect sensitive data flows.

- Firewall rules, dedicated virtual private clouds and load-balancing configurations for high availability.

- Compliance checks for data residency, audit logging and industry mandates such as GDPR, HIPAA or SOX.

Staging and sandbox environments enable connectivity testing, capacity planning and failover simulations, ensuring that integration channels can handle production loads and recover gracefully from component failures.

Alignment with Business Objectives

Integration endpoints must support key workflow goals, for example:

- Real-time synchronization between CRM systems—such as Salesforce—and AI customer service agents.

- Batch or streaming ingestion from ERP platforms like SAP S/4HANA into predictive analytics agents.

- Document retrieval from Microsoft SharePoint for AI-driven content classification.

- Event triggers from Apache Kafka to initiate AI agent workflows.

Pilot integrations with representative endpoints validate performance, data transformations and security policies—informing the broader integration roadmap and refining data mappings and error-handling logic before enterprise-wide deployment.

Endpoint Interfaces and Data Contracts

Each integration point requires a defined data contract that specifies:

- Operation definitions, including HTTP verbs, URI templates and input parameters.

- Payload structures with field-level definitions, data types and validation rules.

- Response codes and error payload formats to support exception handling.

- Asynchronous patterns such as publish-subscribe or request-reply.

- Schema versioning strategies for backward compatibility.

Publishing API documentation to a developer portal—with sample requests, sandbox credentials and interactive consoles—facilitates contract-driven development. Tools like Swagger Codegen or OpenAPI Generator can scaffold stubs and client libraries, while CI/CD pipelines and semantic versioning ensure stable interfaces for AI agents.

Tooling for Enterprise Integration

Common integration platforms and middleware include:

- MuleSoft Anypoint Platform for API-led connectivity and reusable assets.

- Dell Boomi AtomSphere for low-code integration flows and pre-built connectors.

- IBM App Connect for event-driven and API integrations.

- Azure Logic Apps for serverless orchestration.

- Apache Camel and Spring Integration for code-centric routing and transformation.

Selection criteria include connector availability, security standard support, scalability, monitoring features and total cost of ownership. Commercial suites offer enterprise support and connectors, while open-source frameworks reduce licensing costs but may require more internal expertise.

Middleware Workflows and API Orchestration

Role of Middleware

The middleware layer orchestrates data flows, API calls and business logic across enterprise systems and AI agents. By abstracting connectivity, providing transformations and managing transactional integrity, it decouples AI services from core applications and enforces consistent validation rules before and after agent execution. Centralized logging, distributed tracing and security policies ensure traceability and governance.

Orchestration Models and Patterns

Key middleware patterns include:

- Request-Response: Synchronous API calls for low-latency tasks.

- Publish-Subscribe: Asynchronous messaging via brokers for long-running AI processes.

- Choreography: Event-driven handlers coordinating actions without a central orchestrator.

- Central Orchestration: Defined workflows with sequence control, error handlers and compensation transactions.

API Gateway and Management

An API gateway consolidates endpoints, enforces security and provides traffic management:

- Authentication and authorization using OAuth 2.0 or JWT.

- Rate limiting, throttling and IP whitelisting.

- Request transformation, such as XML-to-JSON conversion.

- Centralized logging for audit trails and performance metrics.

Integration Workflow Sequence

- An upstream system issues a request to the API gateway.

- The gateway applies validation and routes to the orchestrator.

- The orchestrator invokes AI agents via REST or gRPC.

- Agents process inputs—such as document text or transaction data—and return results.

- The orchestrator applies transformations, merges context and triggers subsequent tasks or publishes messages to queues.

- For asynchronous flows, workers resume when agents complete processing.

- Final results are compiled and returned through the gateway or pushed to target systems.

Error Handling and Reliability

Resilient orchestration employs:

- Retry policies with exponential back-off for transient failures.

- Circuit breakers to halt calls to failing endpoints.

- Compensation transactions for rollback on partial failures.