The Creative Synergy: Harmonizing AI Agents and Human Ingenuity in Content Creation

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Setting the Stage for the Modern Digital Content Landscape

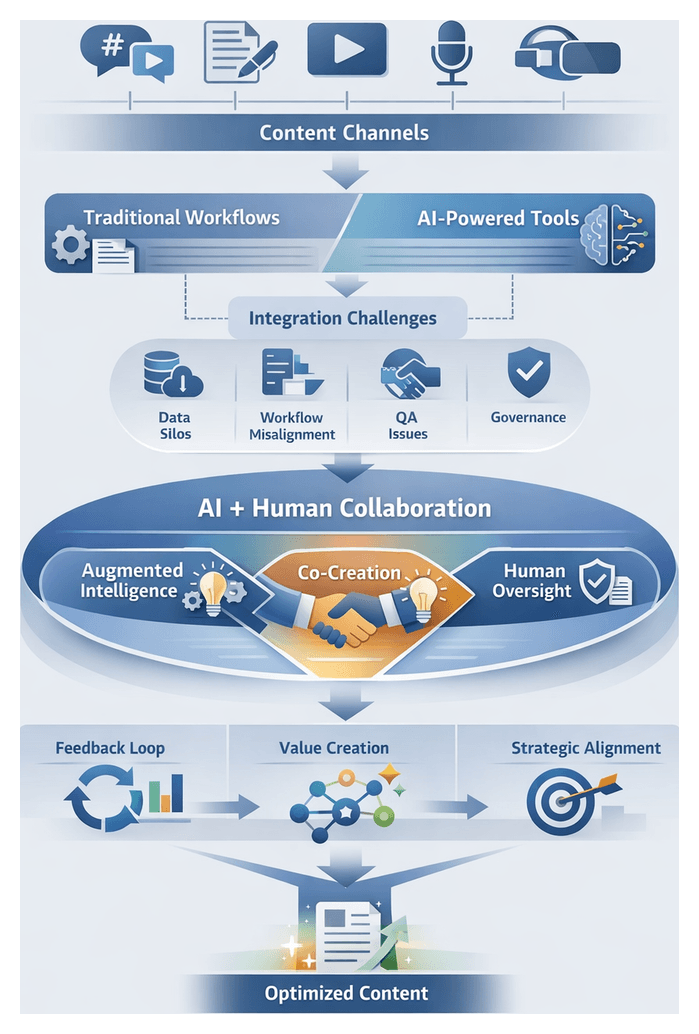

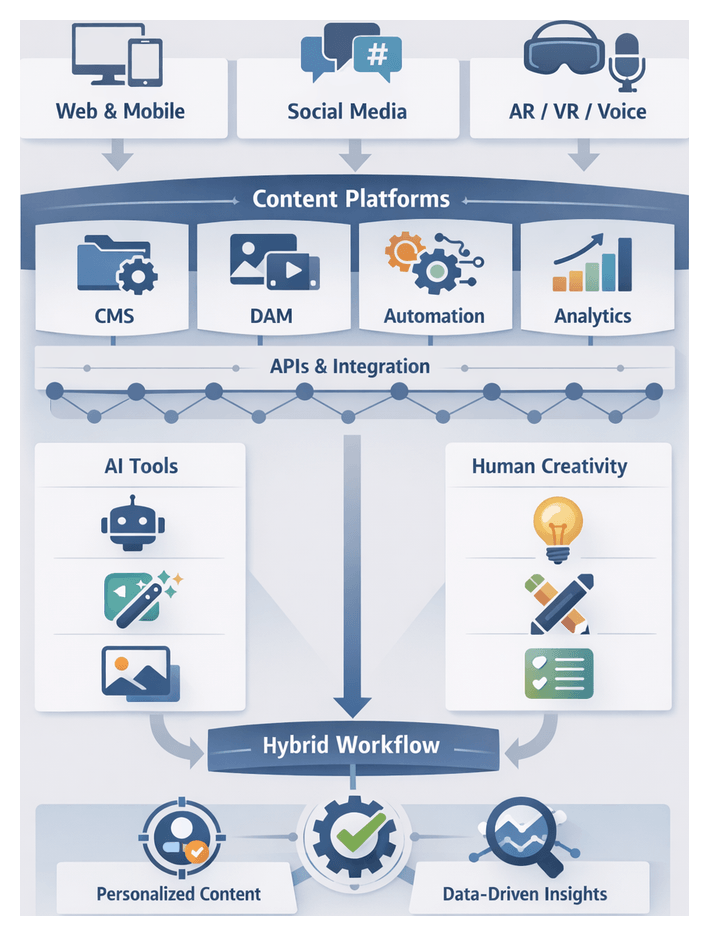

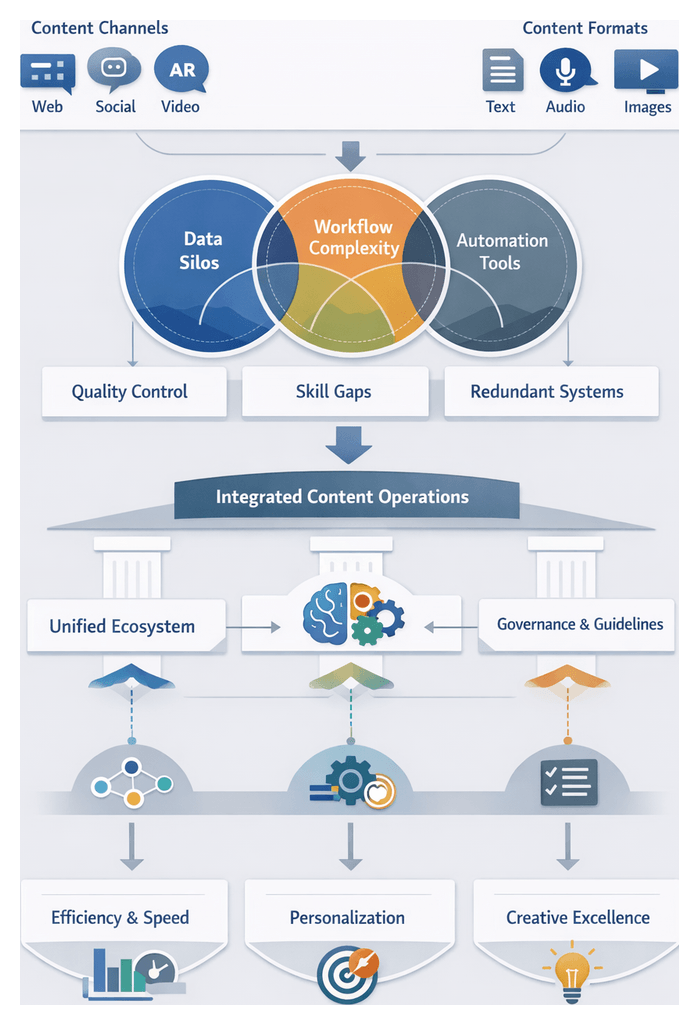

The creation and distribution of digital content today unfolds amid a proliferation of channels, formats and ever-rising audience expectations. Brands must craft narratives for social feeds, blogs, video platforms, podcasts, messaging apps, emails, white papers, interactive tools and immersive experiences in augmented and virtual reality. Each channel demands its own storytelling approach, visual assets, metadata strategy and performance metrics. To sustain relevance and achieve scale, organizations have moved from manual drafting and desktop publishing to content management systems such as WordPress, Drupal and HubSpot, and then to marketing automation suites that enable rule-based personalization and scheduling. More recently, AI-driven tools such as OpenAI's ChatGPT, Copy.ai, Jasper and Grammarly have introduced natural language understanding, generation and optimization capabilities, promising substantial productivity gains.

Proliferation of Channels and Formats

- Social media: Short-form visuals, concise captions, interactive polls and stories to drive engagement and shares.

- Blogs and articles: In-depth narratives enriched with data visualizations, infographics, and embedded multimedia for thought leadership.

- Video content: Scripts, storyboards, subtitles and layered audio-visual storytelling optimized for platforms like YouTube and TikTok.

- Podcasts: Structured outlines, show notes and supporting resources to boost discovery, SEO and listener retention.

- Interactive guides: Quizzes, calculators, configurators and immersive modules that adapt to real-time user inputs.

This diversity places immense pressure on content teams to deliver optimized assets rapidly. Legacy editorial calendars and manual hand-offs often become bottlenecks, prompting the adoption of automated engines that can repurpose core messaging, generate localized variants and enforce style guidelines at scale.
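Enforcing style guidelines at scale typically begins with rules a machine can check. The following is a minimal sketch, assuming a hypothetical style guide reduced to a preferred-terms list and a maximum sentence length; real brand guidelines cover far more (tone, claims, formatting), and the rule names and limits here are illustrative only.

```python
import re
from dataclasses import dataclass


@dataclass
class StyleIssue:
    rule: str
    detail: str


# Hypothetical house rules: term to avoid -> preferred term.
PREFERRED_TERMS = {"utilize": "use", "e-mail": "email"}
MAX_WORDS_PER_SENTENCE = 25  # assumed limit, not a universal standard


def check_style(text: str) -> list[StyleIssue]:
    """Return the style-rule violations found in a draft."""
    issues = []
    for avoid, preferred in PREFERRED_TERMS.items():
        # Whole-word, case-insensitive match.
        if re.search(rf"\b{re.escape(avoid)}\b", text, flags=re.IGNORECASE):
            issues.append(StyleIssue("preferred-term", f"replace '{avoid}' with '{preferred}'"))
    # Naive sentence split on terminal punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        word_count = len(sentence.split())
        if word_count > MAX_WORDS_PER_SENTENCE:
            issues.append(StyleIssue("sentence-length", f"{word_count} words in one sentence"))
    return issues


for issue in check_style("We utilize AI to draft every e-mail."):
    print(issue.rule, "|", issue.detail)
```

Checks like these catch mechanical drift cheaply, leaving human editors to judge the voice and nuance that rules cannot capture.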

Evolution of Content Production Methods

Content workflows have progressed in stages. Early models relied on manual drafting, siloed reviews and desktop publishing. The emergence of CMS platforms streamlined publishing and templating, while marketing automation enabled bulk campaigns and simple personalization rules. Programmatic content further allowed dynamic data insertion—user location, behavior and demographics—into preapproved templates, boosting relevance yet lacking creative nuance. The latest wave introduces AI-driven agents that draft coherent text across topics, optimize headlines for SEO, recommend imagery based on semantic analysis and perform translations for global audiences.
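At its core, programmatic content is template filling: approved copy with a few variable slots populated from user data. A minimal sketch using Python's standard `string.Template`; the template wording and the `first_name`, `segment` and `city` profile fields are hypothetical.

```python
from string import Template

# A preapproved template: placeholders are the only variable parts.
APPROVED_TEMPLATE = Template(
    "Hi $first_name, new arrivals for $segment shoppers are now in stock near $city."
)

# Fallback values keep the message on-brand when a profile field is missing or empty.
DEFAULTS = {"first_name": "there", "segment": "all", "city": "you"}


def render(profile: dict) -> str:
    """Fill the approved template with profile data, falling back to defaults."""
    values = {**DEFAULTS, **{key: value for key, value in profile.items() if value}}
    # substitute() (unlike safe_substitute()) raises KeyError if any placeholder
    # lacks a value, so a template change that adds a new slot fails loudly.
    return APPROVED_TEMPLATE.substitute(values)


print(render({"first_name": "Ana", "segment": "outdoor", "city": "Denver"}))
print(render({"first_name": "", "segment": "outdoor"}))
```

Every sentence is still fixed in advance, which is the limitation noted above: the output becomes more relevant, but not more creative.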

Standalone tools such as Copy.ai and Jasper, along with integrated modules within enterprise suites, now handle first drafts, A/B headline tests and variant creation. Despite these capabilities, many organizations deploy AI in isolated pockets: one team uses an AI for email subject lines while another relies on human editors for press releases, leading to redundant efforts, inconsistent brand voice and uneven quality.

Integration Challenges Across Systems and Teams

- Siloed Tool Ecosystems: Disconnected platforms impede seamless asset hand-off and unified reporting.

- Data Fragmentation: Inconsistent metadata and scattered audience profiles limit personalization fidelity.

- Process Misalignment: Manual approval gates stall automated drafts, while unsupervised AI output may stray from brand guidelines.

- Quality Assurance Gaps: AI can generate errors or biased content that require human review for factual accuracy and legal compliance.

- Change Management Resistance: Creative teams may perceive AI as a threat, underutilizing its potential.

- Technical Debt: Legacy systems often lack APIs or plugin architectures needed for real-time AI integration.

Addressing these hurdles demands cross-functional collaboration among IT, marketing, legal and creative departments. Shared objectives, clear governance and continuous feedback loops unite stakeholders around integration milestones, ensuring that automated capabilities amplify rather than fragment brand consistency.

Creative Synergy: Balancing AI and Human Ingenuity

Creative synergy emerges when AI agents and human creators contribute complementary strengths—algorithmic efficiency, data-driven insights and generative power alongside emotional intelligence, cultural context and storytelling craft. Rather than replacing human ingenuity, AI augments human judgment, stimulating ideation, accelerating iteration and handling routine tasks. Humans, in turn, refine AI outputs, inject strategic intent and ensure that narratives resonate authentically with target audiences.

Interpretive Perspectives on Collaboration

- Augmented Intelligence: AI as a cognitive amplifier, surfacing latent patterns, accelerating research and automating repetitive tasks, freeing creatives for strategic thinking.

- Co-Creative Agents: Generative algorithms act as partners, proposing narrative arcs, stylistic variations or visual concepts that humans shape and contextualize.

- Human-in-the-Loop Governance: Embedded checkpoints where human oversight adjudicates AI recommendations, essential in regulated or brand-sensitive contexts to maintain compliance and ethical standards.

Analytical Frameworks for Assessing Synergy

Collaboration Maturity Model

- Ad Hoc Automation: Isolated AI pilots without governance or integration.

- Defined Processes: AI roles codified within workflows, basic performance tracking.

- Managed Collaboration: Continuous feedback loops between AI outputs and human review, supported by collaborative tooling.

- Optimized Co-Creation: Dynamic adaptation of models based on human input, co-design of prompts and parameters.

- Strategic Synergy: Full integration of AI and human workflows within enterprise content strategy, driving continuous innovation.

Value Co-Creation Framework

- Resource Integration: Algorithmic data processing combined with human expertise to yield differentiated content.

- Actor Roles: Clear division—AI handles sentiment analysis, humans craft narratives—ensuring mutual reinforcement.

- Value Propositions: Benefits such as personalized content at scale, interactive storytelling and rapid experimentation.

- Experience Co-Creation: Audience engagement shaped by AI recommendations and human storytelling techniques.

Decision-Action Feedback Loop

- Iteration Velocity: Time taken to complete each cycle of AI proposal, human review and AI refinement.

- Decision Accuracy: Rate of AI outputs approved without modification, indicating alignment with human preferences.

- Learning Amplification: Improvement in AI performance through human feedback, measured by reduced revision rates and enhanced output quality.
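All three measures can be computed from ordinary review records. The sketch below assumes a hypothetical `Review` record per AI proposal (proposal time, decision time, number of human edits) and defines revision rate as the share of outputs needing any edit; these field names and definitions are illustrative choices, not a standard, and the sample data is made up.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Review:
    proposed_at: datetime  # when the AI proposal was generated
    decided_at: datetime   # when a human approved it
    edits: int             # human edits made before approval


def iteration_velocity(reviews: list[Review]) -> timedelta:
    """Mean time from AI proposal to human decision."""
    return timedelta(seconds=mean((r.decided_at - r.proposed_at).total_seconds() for r in reviews))


def decision_accuracy(reviews: list[Review]) -> float:
    """Share of AI outputs approved without modification."""
    return sum(r.edits == 0 for r in reviews) / len(reviews)


def learning_amplification(before: list[Review], after: list[Review]) -> float:
    """Reduction in revision rate between two periods.

    Revision rate is defined here as 1 - decision_accuracy, so the reduction
    equals the gain in decision accuracy.
    """
    return decision_accuracy(after) - decision_accuracy(before)


# Made-up sample data for two review periods.
t0 = datetime(2024, 1, 1, 9, 0)
period_a = [Review(t0, t0 + timedelta(hours=4), 3), Review(t0, t0 + timedelta(hours=2), 0)]
period_b = [Review(t0, t0 + timedelta(hours=h), e) for h, e in [(1, 0), (1, 0), (2, 1), (1, 0)]]

print(iteration_velocity(period_a), iteration_velocity(period_b))
print(decision_accuracy(period_a), decision_accuracy(period_b))
print(learning_amplification(period_a, period_b))
```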

Managing Interpretive Tensions

- Authenticity vs. Scale: Preserving human voice and emotional resonance while scaling production.

- Control vs. Autonomy: Balancing centralized governance of AI outputs with creative freedom.

- Innovation vs. Consistency: Enabling exploration of generative models without violating brand standards.

- Transparency vs. Efficiency: Disclosing AI involvement can foster trust but may complicate workflows.

Periodic stakeholder reviews and audience testing panels help navigate these tensions, ensuring that hybrid content meets standards for authenticity, creativity and alignment with strategic goals.

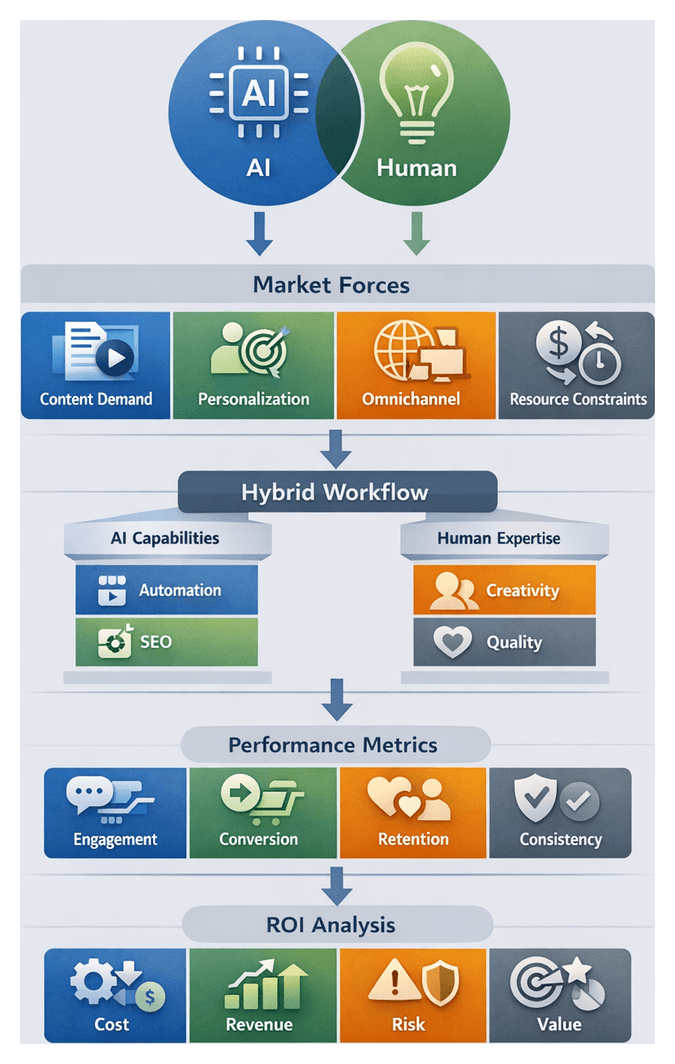

Market Imperatives and Emerging Technologies

Enterprises today face relentless market pressures: surging content volumes, demand for hyper-personalization, cost optimization imperatives and algorithmic complexity. Audiences expect timely, contextually relevant communications across every touchpoint. Automated content generation addresses throughput but often lacks the nuance needed for sustained engagement. AI-human synergy offers a strategic path forward, combining real-time data insights with human editorial expertise to craft semantically rich narratives that perform well in search and recommendation algorithms.

Cost and Performance Drivers

Budgets are scrutinized for measurable ROI. AI agents excel at drafting routine updates, generating data-driven headlines and performing keyword analysis, reducing manual effort and cycle times. Human editors and creative directors are indispensable for ensuring messaging aligns with brand strategy, cultural sensibilities and legal requirements. Hybrid models allocate repetitive tasks to AI while reserving complex editorial judgment for specialized talent, optimizing costs without compromising quality.

Key AI-Driven Tools and Platforms

- ChatGPT for conversational ideation, rapid draft iterations and prompt-based refinement.

- Jasper AI for persona-based templates, tone adaptation and variant generation.

- Adobe Firefly for text-to-image generation and design asset creation.

- Canva Magic Write for integrated generative writing in collaborative design workflows.

- Microsoft Copilot for AI assistance across productivity applications and document authoring.

- Google Cloud AI suite for enterprise-grade AI services embedded in content management systems.

Forward-looking organizations integrate these tools into unified creative ecosystems that ensure interoperability, govern version control and preserve asset traceability from ideation through publication.

Competitive Dynamics and Industry Trends

Early adopters of AI-human frameworks report campaign launches up to 40 percent faster, along with higher engagement and retention. Agencies and in-house studios face CTOs and CFOs who demand evidence of impact on KPIs such as conversion, time-on-page and brand lift. Cross-functional governance committees align marketing, IT, legal and finance on AI investments, ethical guidelines and resource allocation. Meanwhile, digital-native competitors use micro-personalization, voice assistants and social commerce channels to experiment rapidly, compelling legacy brands to upskill teams in prompt engineering, model evaluation and data interpretation.

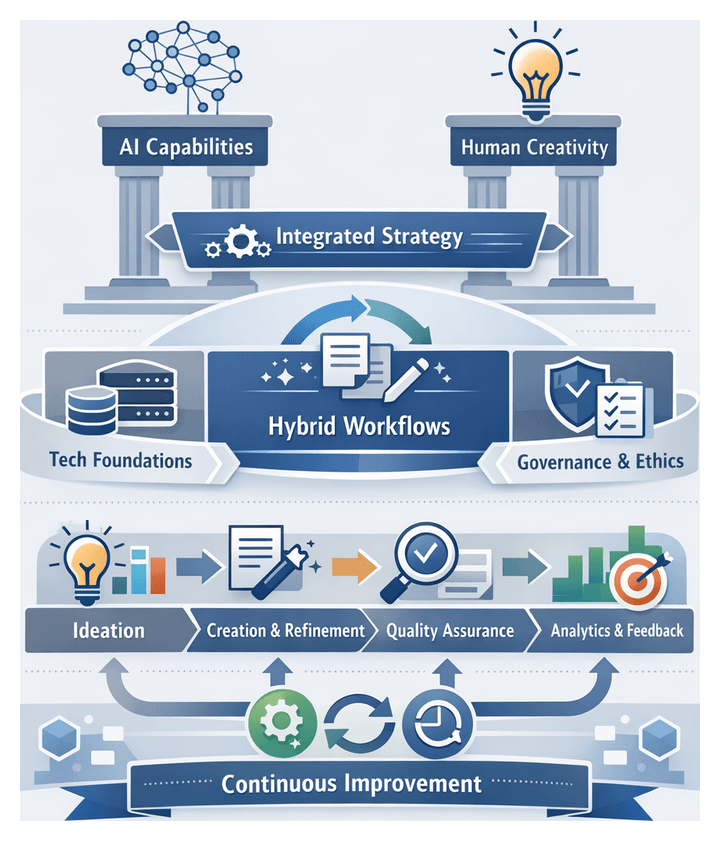

Strategic Frameworks for Integration, Governance and Future Readiness

Realizing the full potential of AI-human synergy requires structured decision-making tools, robust governance, continuous performance monitoring and a culture of experimentation. Organizations must align technological investments with strategic content objectives, ensure ethical and legal compliance, and equip teams with the skills to co-create effectively with AI.

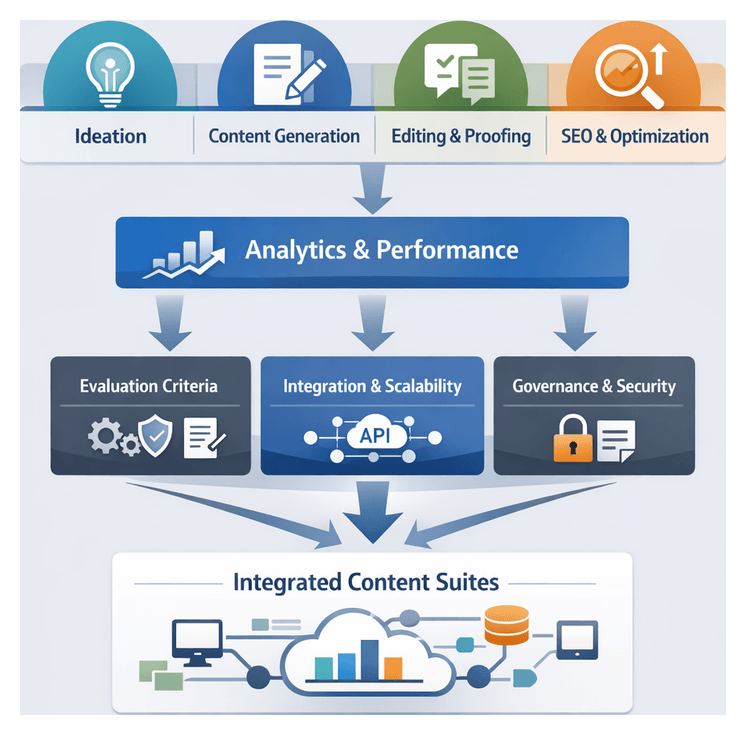

Analytical Frameworks for Resource Allocation

Value-Chain Mapping

Document the end-to-end content process—from ideation, research and drafting to review, distribution and analytics—to identify stages where AI augmentation delivers maximum efficiency and where human curation is essential.

Capability-Suitability Matrix

Plot AI strengths (speed, scalability, pattern recognition) against human strengths (nuance, originality, audience empathy) to assign tasks to the optimal collaborator.
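A capability-suitability matrix can be reduced to a simple scoring rule. In this sketch each task is rated on an assumed 1 to 5 scale for how much it draws on AI strengths (speed, scalability, pattern recognition) and on human strengths (nuance, originality, audience empathy); the task names, ratings and the 0.5 tie margin are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    speed: int         # AI-strength ratings, assumed 1-5 scale
    scale: int
    patterns: int
    nuance: int        # human-strength ratings, assumed 1-5 scale
    originality: int
    empathy: int


def assign(task: Task, margin: float = 0.5) -> str:
    """Assign a task to the collaborator whose strengths it depends on most."""
    ai_fit = (task.speed + task.scale + task.patterns) / 3
    human_fit = (task.nuance + task.originality + task.empathy) / 3
    if ai_fit - human_fit > margin:
        return "AI"
    if human_fit - ai_fit > margin:
        return "human"
    return "AI draft + human review"  # close scores: collaborate


tasks = [
    Task("keyword research", 5, 5, 5, 1, 1, 1),
    Task("brand manifesto", 1, 1, 2, 5, 5, 5),
    Task("campaign headline", 4, 3, 4, 4, 4, 3),
]
for t in tasks:
    print(f"{t.name}: {assign(t)}")
```

Averaging keeps the rule transparent; a team could weight individual strengths differently without changing the structure.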

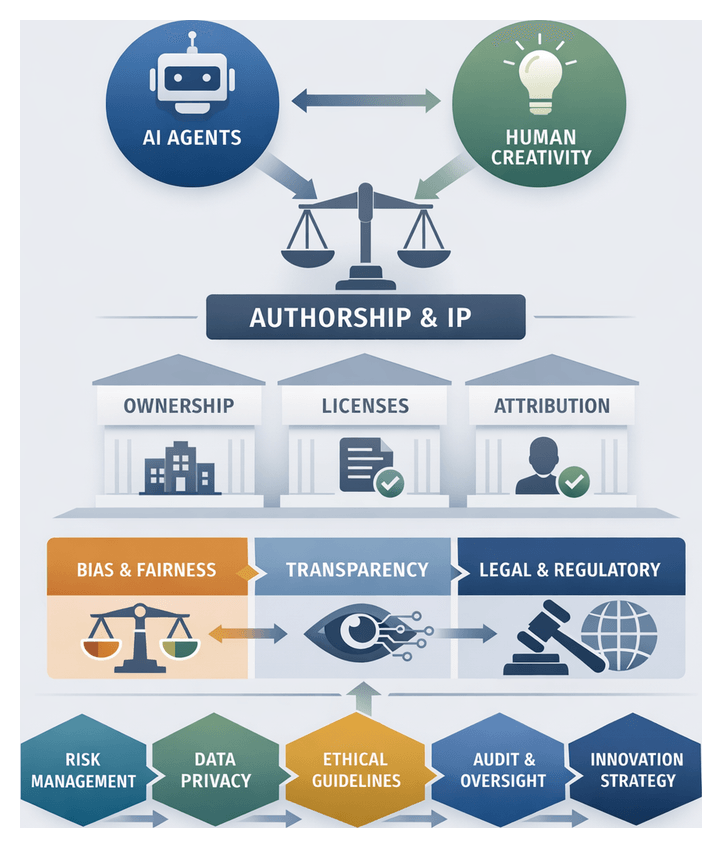

Governance and Risk Management

- Establish clear policies for authorship attribution, intellectual property and ethical AI usage.

- Implement rigorous review processes to detect bias, factual errors and tone inconsistencies.

- Form cross-functional review boards to oversee brand alignment, regulatory compliance and reputational risk.

Tool Selection and Interoperability

Choose AI platforms that integrate via APIs and plugins with existing content management and analytics systems to avoid workflow disruption. Consider flexible solutions such as ChatGPT for customizable integration and prompt management.

Performance Monitoring and Continuous Learning

- Track real-time engagement, conversion rates and brand lift to evaluate hybrid content effectiveness.

- Refine prompt designs, review workflows and training datasets based on analytics insights.

- Establish experiment labs and knowledge-sharing forums to pilot emerging AI modalities and share best practices.

Key Limitations and Risk Factors

Even advanced AI models can misunderstand context, exhibit cultural insensitivity or surface biased language influenced by training data. Overreliance on AI for novelty risks homogenization, as models recombine existing patterns rather than generate fundamentally original ideas. Robust human oversight, diverse training data and deliberate sessions of human-only brainstorming mitigate these risks.

Future-Focused Considerations

Advances in multimodal AI, adaptive co-authoring interfaces and immersive experiences will continue to reshape content creation. Organizations that maintain a learning mindset, engage proactively with ethical and regulatory developments, and contribute to industry standards will lead in crafting responsible, innovative and impactful storytelling experiences.

Summary of Strategic Insights

- An integrated view of where AI provides scale and where human creativity remains indispensable.

- Analytical tools—value-chain mapping and capability-suitability matrices—to guide task allocation.

- Governance frameworks and risk management processes to ensure quality, compliance and brand integrity.

- Recommendations for capability building, interoperable tool selection and performance monitoring.

- A roadmap for continuous adaptation to evolving AI capabilities and market dynamics.

By synthesizing these insights into clear strategies and governance structures, content leaders can architect adaptive, ethically grounded operations that harness both AI efficiency and human ingenuity for high-impact digital storytelling.

Chapter 1: The Evolution of Content Creation in the Digital Age

The Evolving Digital Content Ecosystem

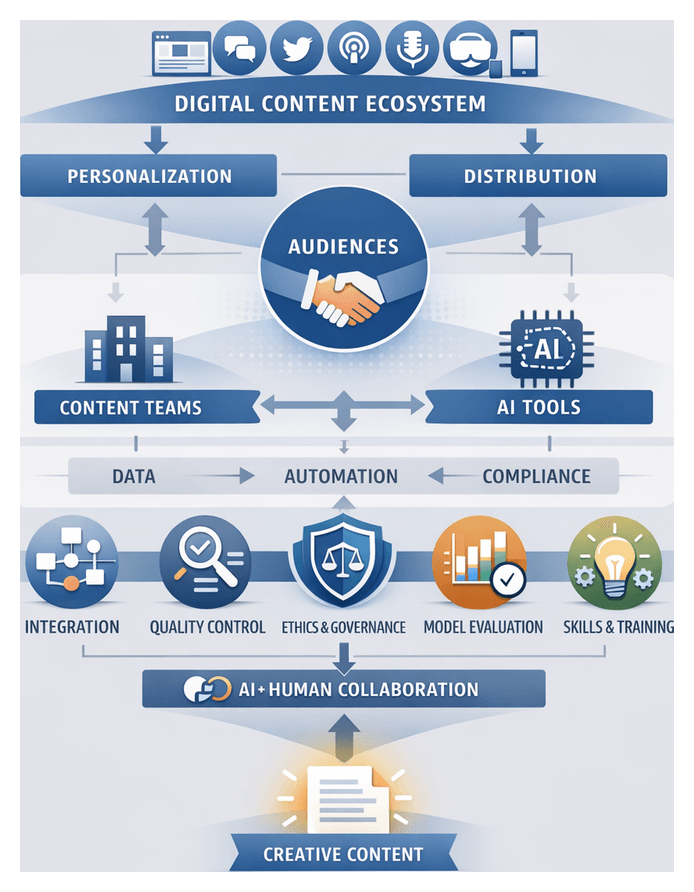

Organizations today operate within a hyperconnected environment in which digital content proliferates across diverse platforms: websites, blogs, social media networks, mobile applications, podcasts, video streaming services and immersive virtual events. Audiences expect personalized, on-demand experiences, placing pressure on brands and publishers to generate targeted content at scale. Each channel carries unique technical requirements, audience behaviors and performance metrics. Social networks such as LinkedIn, Facebook, X (formerly Twitter) and emerging platforms demand real-time posting and community engagement. Owned properties host long-form assets such as whitepapers, e-books and case studies, designed to capture leads and establish thought leadership. Visual and audio formats, from YouTube videos and live streams to podcast series and short-form TikTok clips, cater to varied consumption preferences. Interactive environments in mobile apps and virtual events further raise expectations for curated content and user participation.

This diversity of formats, frequencies and responsiveness creates a production environment where traditional manual processes struggle to keep pace. To maintain visibility and audience trust, organizations must balance breadth with depth—ensuring that content resonates in context while scaling volume efficiently.

Automation, AI Tools, and Integration Challenges

To meet escalating demands, enterprises have adopted automation technologies ranging from content management systems (CMS) and marketing automation platforms to advanced AI agents. Early solutions enabled scheduling, distribution and basic personalization. Recent advances in natural language processing and machine learning have produced specialized generative applications capable of:

- Producing initial drafts from outlines or prompts by leveraging models such as GPT-4 and Google’s Gemini;

- Optimizing headlines, meta descriptions and social snippets to improve SEO and engagement;

- Analyzing audience behavior and sentiment across platforms, feeding insights back into topic selection and tone calibration;

- Automating translation and localization workflows for global reach;

- Orchestrating multiple specialized agents for ideation, drafting and optimization;

- Streamlining collaborative review through platforms like Jasper and interactive assistants such as ChatGPT.

By automating routine or data-intensive tasks, these tools free human teams to focus on strategy, creativity and relationship building. Yet integrating these systems with human artistry introduces significant challenges:

- Maintaining Brand Voice and Quality: AI excels at consistent syntax but may lack contextual nuance. Human oversight is essential to preserve tone, values and storytelling conventions.

- Data Silos and Workflow Fragmentation: Disconnected point solutions for SEO, social scheduling or translation can lead to version conflicts and handoff delays.

- Governance and Compliance: Automated models may reproduce biases or sensitive content. Clear audit trails and governance workflows are imperative to manage risk.

- Role Clarity and Skill Gaps: As AI handles keyword research or draft generation, human roles must evolve toward strategic oversight, editorial leadership and prompt engineering.

- Technical Integration and Scalability: Robust APIs, middleware and data governance models are required for seamless collaboration between CMS, analytics platforms, automation engines and AI services.

Addressing these challenges demands a holistic approach: defining end-to-end content lifecycles, aligning stakeholders on quality standards, and establishing integrated operating models.

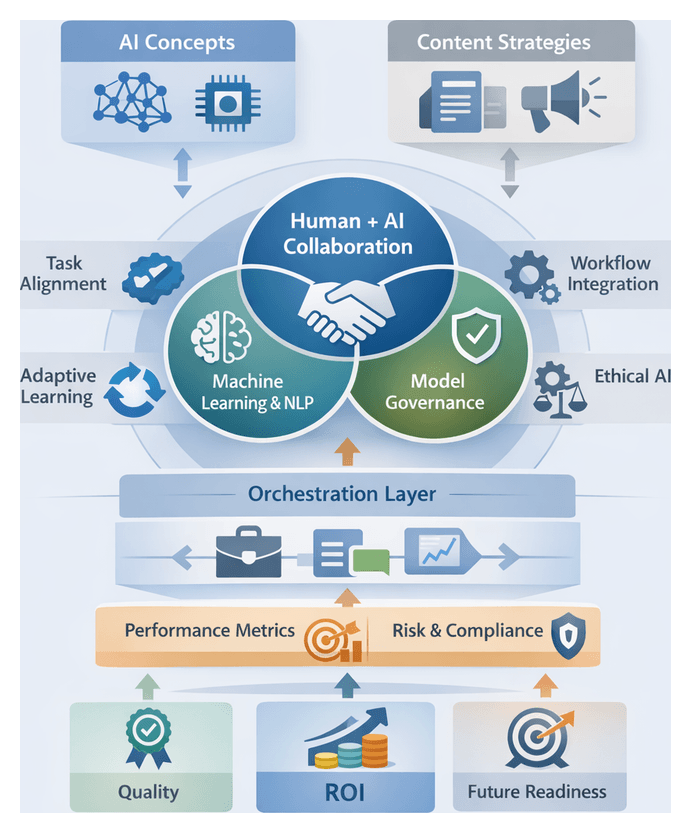

Models of AI-Human Creative Collaboration

Creative synergy refers to the dynamic collaboration between AI agents and human creators that yields content exceeding what either could achieve alone. Thought leaders increasingly frame AI as a catalyst that amplifies human capacity rather than a replacement. Three industry orientations illustrate this shift:

- Augmentation-First Approach: AI suggestions for headlines, story arcs or visual palettes integrate directly into creative environments. Editorial assistants propose alternative sentence structures and image-generation tools supply mood boards for human refinement.

- Co-Creation Frameworks: AI agents learn from author revisions, engaging in iterative dialogs with writers. Interactive media and game design teams use these frameworks to generate plot variations that evolve with human adjustments.

- Data-Driven Innovation: AI analyzes engagement metrics and sentiment data to inform human brainstorming. Predictive models identify trending topics and optimal publication schedules, while creatives ensure narrative vision and brand consistency.

To guide organizational design, experts propose interpretive frameworks:

- Complementarity Model: Allocates routine, data-intensive processes—keyword research, A/B testing, template copy—to AI, reserving cultural context, emotional resonance and strategic judgment for humans.

- Human-in-the-Loop Paradigm: Treats AI outputs as provisional drafts. Human editors validate, refine or reject suggestions to align content with brand voice and compliance standards.

- Adaptive Learning Cycle: AI models continuously learn from human corrections and style guidelines. Creators periodically recalibrate parameters and introduce new data sources, fostering co-evolution.

- Orchestration Architecture: A central layer routes content briefs to specialized modules—natural language processors, image synthesizers, sentiment analyzers—and aggregates outputs for human review, enabling scale with consistent governance.
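The orchestration architecture can be sketched as a registry that routes a brief to specialized modules and gathers their outputs into one package that waits for human review. The module functions below are hypothetical stand-ins for calls to real language, image or sentiment services.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ReviewPackage:
    brief: str
    outputs: dict[str, str] = field(default_factory=dict)
    status: str = "awaiting human review"  # nothing publishes without sign-off


class Orchestrator:
    def __init__(self) -> None:
        self._modules: dict[str, Callable[[str], str]] = {}

    def register(self, capability: str, module: Callable[[str], str]) -> None:
        self._modules[capability] = module

    def run(self, brief: str, capabilities: list[str]) -> ReviewPackage:
        """Route the brief to each requested module and aggregate the results."""
        package = ReviewPackage(brief)
        for cap in capabilities:
            if cap not in self._modules:
                raise KeyError(f"no module registered for {cap!r}")
            package.outputs[cap] = self._modules[cap](brief)
        return package


# Hypothetical stand-ins for real services.
def draft_text(brief: str) -> str:
    return f"Draft copy for: {brief}"


def suggest_image(brief: str) -> str:
    return f"Image prompt: hero shot illustrating '{brief}'"


def score_sentiment(brief: str) -> str:
    return "neutral"  # a real analyzer would score the generated draft


orchestrator = Orchestrator()
orchestrator.register("text", draft_text)
orchestrator.register("image", suggest_image)
orchestrator.register("sentiment", score_sentiment)

pkg = orchestrator.run("spring product launch", ["text", "image", "sentiment"])
print(pkg.status, pkg.outputs)
```

Because governance lives in the central layer (here, the fixed review status), new modules can be added without each one re-implementing approval rules.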

Strategic Frameworks and Performance Metrics

Measuring AI-human synergy requires multidimensional metrics combining quantitative and qualitative lenses:

- Creative Impact: Assesses originality, narrative depth and emotional resonance through expert panels or audience scoring systems.

- Operational Efficiency: Tracks time-to-publication, throughput volumes and cost per asset, comparing hybrid workflows to human-only processes.

- Engagement Quality: Goes beyond click metrics to measure dwell time, interaction depth and social sentiment, combining analytics data with language models such as ChatGPT or Google’s Gemini (formerly Bard) for sentiment analysis.

- Governance and Compliance: Monitors adherence to editorial, regulatory and ethical standards. Automated auditing tools flag biases, copyright issues and brand-voice deviations.

A dual-axis model helps teams map projects by operational metrics (content velocity, cost, throughput) and qualitative metrics (brand alignment, engagement depth, creative originality). A project's position indicates whether it is better suited to machine-driven volume production or human-led narrative work.
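The dual-axis mapping can be expressed as a quadrant lookup. This sketch assumes each project already has an operational-priority score and a qualitative-priority score normalized to the range 0 to 1; the 0.5 threshold, the sample projects and the two extra quadrant labels (hybrid and low priority) are illustrative assumptions beyond the two outcomes the model names.

```python
def quadrant(operational: float, qualitative: float, threshold: float = 0.5) -> str:
    """Place a project on the dual-axis grid."""
    for name, value in (("operational", operational), ("qualitative", qualitative)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} score must be in [0, 1], got {value}")
    high_ops = operational >= threshold
    high_qual = qualitative >= threshold
    if high_ops and not high_qual:
        return "machine-driven volume"
    if high_qual and not high_ops:
        return "human-led narrative"
    if high_ops and high_qual:
        return "hybrid: AI drafts at scale, humans refine"
    return "low priority: batch or defer"


projects = {
    "SKU description refresh": (0.9, 0.2),
    "founder story video": (0.3, 0.9),
    "flagship campaign": (0.8, 0.8),
}
for name, (ops, qual) in projects.items():
    print(f"{name}: {quadrant(ops, qual)}")
```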

Content lifecycles typically span three phases: ideation and research, creation and synthesis, and review and refinement. AI agents excel in the first two phases by aggregating data, suggesting structures and producing draft variants. Human creators dominate the refinement phase—ensuring contextual appropriateness, verifying factual accuracy in regulated contexts, and infusing cultural sensitivity.

Embedding continuous feedback loops connects human edits and audience responses back into AI model fine-tuning and editorial guidelines, fostering an adaptive ecosystem of collective intelligence.

Sector-Specific Applications and Market Dynamics

The convergence of AI agents and human ingenuity has become a strategic imperative across industries. Firms adopting hybrid content models report up to a 40% reduction in production time and a 25% increase in engagement metrics. Recent technological enablers include GPT-4, Google’s Gemini, orchestration platforms like Jasper, and generative imagery tools such as Adobe Firefly.

Sector-specific impacts:

- Digital Media and Publishing: AI generates data briefs and topic synopses and assists with real-time fact-checking, freeing journalists for investigative reporting.

- E-commerce and Retail: Automated product descriptions and dynamic promotional copy scale catalog updates, while human strategists refine messaging for key customer segments.

- B2B Marketing and Thought Leadership: Machine-assisted research aggregation supports whitepaper drafting, with experts injecting domain credibility and strategic framing.

- Financial Services and Insurance: AI helps screen routine communications for regulatory compliance, enabling advisors to focus on personalized insights and relationships.

- Education and Training: Intelligent tutoring systems personalize learning pathways, complemented by instructional designers who embed pedagogical nuance.

Competitive differentiation arises from a two-stage model: machine-driven volume followed by human-led refinement. This blend delivers freshness and relevance without sacrificing brand distinction.

Governance, Ethics, and Implementation Considerations

Effective implementation requires proactive governance, ethical safeguards and careful change management:

- Governance Frameworks: Define roles, responsibilities and decision rights across AI agents and human creators. Establish prompt approval protocols, editorial review gates and escalation paths for quality exceptions.

- Ethical Safeguards: Conduct bias audits, enforce data provenance standards and maintain transparency reports. Declare AI-assisted content and document audit trails to uphold audience trust.

- Technical Integration: Ensure interoperability between CMS, analytics platforms and AI engines. Anticipate challenges when connecting tools such as Copy.ai and Jasper, addressing latency, data silos and security vulnerabilities.

- Talent Development: Realign team structures around emerging roles—prompt engineering, data analysis, AI ethics. Offer structured training to bridge skill gaps and foster collaborative mindsets.

- Scalability Roadmaps: Adopt phased rollouts, piloting high-velocity content zones before enterprise-wide deployment. Use dual-track pilots to compare AI integration in social media campaigns versus long-form thought leadership.

- Limitations and Risks: Acknowledge boundary conditions—contextual accuracy in regulated industries, overreliance on automation, QA overhead and the tension between scalability and customization.
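Prompt approval protocols, editorial review gates and escalation paths can be modeled as a small state machine in which AI output advances only through explicit human actions and every step lands in an audit trail. The states, the allowed transitions and the rule that two rejections force escalation are assumptions made for this sketch, not a prescribed workflow.

```python
from enum import Enum, auto


class State(Enum):
    DRAFT = auto()       # AI-generated and provisional
    IN_REVIEW = auto()   # waiting at an editorial review gate
    ESCALATED = auto()   # quality exception routed to a senior reviewer
    APPROVED = auto()    # cleared for publication


# Allowed (state, action) -> next state; anything else is refused.
TRANSITIONS = {
    (State.DRAFT, "submit"): State.IN_REVIEW,
    (State.IN_REVIEW, "approve"): State.APPROVED,
    (State.IN_REVIEW, "reject"): State.DRAFT,
    (State.IN_REVIEW, "escalate"): State.ESCALATED,
    (State.ESCALATED, "approve"): State.APPROVED,
    (State.ESCALATED, "reject"): State.DRAFT,
}


class ContentItem:
    MAX_REJECTIONS = 2  # assumed threshold before resubmissions go to escalation

    def __init__(self, title: str) -> None:
        self.title = title
        self.state = State.DRAFT
        self.rejections = 0
        self.audit_log: list[tuple[str, str, State, State]] = []  # (actor, action, from, to)

    def apply(self, action: str, actor: str) -> State:
        key = (self.state, action)
        if key not in TRANSITIONS:
            raise ValueError(f"{action!r} is not allowed from {self.state.name}")
        new_state = TRANSITIONS[key]
        if action == "reject":
            self.rejections += 1
        # After repeated rejections, a resubmission skips the normal gate and is escalated.
        if action == "submit" and self.rejections >= self.MAX_REJECTIONS:
            new_state = State.ESCALATED
        self.audit_log.append((actor, action, self.state, new_state))
        self.state = new_state
        return new_state


item = ContentItem("Quarterly newsletter")
for action, actor in [("submit", "ai-agent"), ("reject", "editor"),
                      ("submit", "ai-agent"), ("reject", "editor"),
                      ("submit", "ai-agent")]:
    item.apply(action, actor)
print(item.state.name)  # ESCALATED after two rejections
```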

By institutionalizing governance mechanisms, embedding continuous feedback loops and fostering a culture of collaborative innovation, organizations can harness AI’s scalability without relinquishing the human spark that drives memorable storytelling.

Chapter 2: Understanding AI Agents and Their Capabilities

The Modern Digital Content Ecosystem

The volume and variety of digital content have surged as audiences engage across web pages, social media, mobile apps, podcasts, video platforms and emerging immersive environments. Static HTML pages and basic content management systems have given way to dynamic blogs, micro-content streams and multimedia pipelines spanning video, audio, interactive graphics and even augmented reality. With each new device—from smart speakers to wearable displays—content teams juggle distribution, personalization and compliance across an ever-expanding landscape.

Consumers now expect data-driven, personalized messaging delivered at precisely the right moment on their preferred channels. Marketing, editorial and product teams compete to satisfy these expectations while preserving brand voice, quality standards and regulatory compliance. Traditional human-only workflows often buckle under these demands, prompting organizations to adopt automated tools to boost efficiency without sacrificing creativity or authenticity.

AI-Driven Automation: Tools and Integration

Automated systems have moved from experimental pilots to core components of modern content operations. Natural language generation engines power first drafts of blog posts, product descriptions and email campaigns. Machine learning modules analyze performance metrics, suggest topics, and optimize distribution tactics in real time. Automated proofreading and style-checking tools enforce brand guidelines and accelerate review cycles.

Leading platforms such as OpenAI GPT-4 and Adobe Sensei provide APIs and pre-built integrations for popular content management systems. Marketing automation suites embed AI-driven segmentation, personalization and predictive analytics. Yet deploying these services requires careful configuration, training on domain-specific data, and seamless connectors to legacy repositories, editorial calendars, analytics stores and customer profiles.

- Data Silos and Fragmented Systems: Integrating AI across isolated media asset libraries, editorial plans and customer profiles demands both technical glue and organizational alignment.

- Quality Assurance: Automated drafts can contain factual errors or tone mismatches. Robust review frameworks are essential to ensure accuracy and brand consistency.

- Workflow Alignment: Embedding AI tools often requires redefining roles and retraining staff. Change management strategies help overcome resistance and unlock benefits.

- Governance and Compliance: Policies must address intellectual property, data usage, attribution and regulatory requirements for AI-generated content.

- Talent and Skills: New roles such as prompt designers and AI content curators bridge editorial expertise and technical fluency.

- Bias and Ethical Risks: Models trained on unbalanced data can perpetuate stereotypes. Bias audits, dataset diversification and ethical guardrails are critical.

Evaluating and Selecting NLP and ML Solutions

Organizations employ quantitative benchmarks and human-centered evaluations to assess NLP models. Key metrics include:

- Perplexity and Cross-Entropy: Measure how well a model predicts held-out text. Lower values indicate better prediction, which generally tracks fluency.

- BLEU and ROUGE: Gauge n-gram overlap between machine outputs and human references; BLEU is typically used for translation and ROUGE for summarization.

- Precision, Recall and F1: Evaluate classification and information extraction accuracy.

- Human Evaluation Panels: Expert reviewers assess clarity, tone and cultural appropriateness beyond statistical metrics.
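On toy inputs, the statistical metrics above can be computed directly. The following is a minimal sketch using whitespace tokenization and unigram ROUGE-1 recall only; BLEU, which combines clipped n-gram precisions with a brevity penalty, is omitted for brevity, and real evaluations would rely on established tokenizers and scoring packages. Perplexity here is the exponential of the mean negative log-probability the model assigned to each actual token.

```python
import math
from collections import Counter


def perplexity(token_probs: list[float]) -> float:
    """exp of the cross-entropy (mean negative log-likelihood, in nats) over tokens."""
    cross_entropy = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(cross_entropy)


def rouge1_recall(candidate: str, reference: str) -> float:
    """Share of reference unigrams also found in the candidate, with clipped counts."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum(min(count, cand[word]) for word, count in ref.items())
    return overlap / sum(ref.values())


def precision_recall_f1(predicted: set[str], gold: set[str]) -> tuple[float, float, float]:
    """Set-based precision, recall and F1, e.g. for extracted metadata tags."""
    tp = len(predicted & gold)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(gold) if gold else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# A model that assigns probability 0.25 to every correct token is as uncertain
# as a uniform choice among 4 options, so its perplexity is 4.
print(perplexity([0.25, 0.25, 0.25, 0.25]))
print(rouge1_recall("the cat sat on the mat", "the cat lay on the mat"))
print(precision_recall_f1({"ai", "marketing", "seo"}, {"ai", "seo", "ethics", "governance"}))
```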

Comparative benchmarks across architectures guide tool selection:

- Autoregressive Models: GPT-4 and similar next-token generators excel at coherent generation, conversational agents and creative writing.

- Encoder-Decoder Models: T5, BART and Pegasus specialize in abstractive summarization and translation.

- Encoder-Only (Contextual Embedding) Models: BERT and RoBERTa power classification, sentiment analysis and metadata tagging.

- Retrieval-Augmented Generation: Combines external knowledge bases with generative models to enhance factual accuracy in regulated contexts.
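Retrieval-augmented generation can be outlined in two steps: retrieve the most relevant passages from a trusted knowledge base, then place them in the prompt so the model answers from supplied facts. This sketch uses plain word-overlap scoring as a stand-in for the embedding search a real system would typically use, and it stops at building the prompt because the generation call depends on the chosen model provider. The knowledge-base entries are invented examples.

```python
import re

# Invented examples of a trusted, approved knowledge base.
KNOWLEDGE_BASE = [
    "Policy 12: All investment content must include the standard risk disclaimer.",
    "Policy 7: Product claims require a cited source approved by legal.",
    "Style note: Use sentence case for headlines.",
]


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Rank documents by shared query words (a crude proxy for semantic search)."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)
    return [d for d in ranked[:k] if q & tokens(d)]  # drop passages with no overlap


def build_prompt(query: str, passages: list[str]) -> str:
    """Ground the model in retrieved passages and tell it not to go beyond them."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. If the context is insufficient, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


question = "What must investment content include?"
print(build_prompt(question, retrieve(question, KNOWLEDGE_BASE)))
```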

Strategic frameworks align technology with content objectives:

- Capability-Maturity Grid: Plots tools by linguistic fidelity, domain adaptability and production readiness.

- Use-Case Fit Analysis: Matches architectures to tasks such as ideation, drafting, localization or analytics.

- Resource Footprint Assessment: Weighs computational costs, latency and infrastructure requirements. Hosted services such as IBM Watson and open-source libraries such as Hugging Face Transformers are benchmarked for scalability and ease of integration.

- Governance and Compliance Filters: Ensures model selection aligns with privacy regulations, bias mitigation and auditability needs.
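Taken together, these frameworks resemble a weighted decision matrix with hard compliance gates. The sketch below applies governance requirements as pass/fail filters first and then ranks the remaining candidates by weighted scores; the tool names, criteria, weights and scores are hypothetical placeholders, not benchmark results.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    scores: dict[str, float]  # criterion -> score on an assumed 0-10 scale (higher is better)
    compliance: set[str]      # governance requirements the tool satisfies


# Hypothetical weights; "latency_cost" scores are higher for faster, cheaper tools.
WEIGHTS = {"linguistic_fidelity": 0.4, "domain_fit": 0.3, "latency_cost": 0.3}
REQUIRED = {"data_residency", "audit_logging"}


def rank(candidates: list[Candidate]) -> list[tuple[str, float]]:
    """Drop non-compliant tools, then sort the rest by weighted score."""
    eligible = [c for c in candidates if REQUIRED <= c.compliance]  # hard filter
    scored = [
        (c.name, round(sum(WEIGHTS[k] * c.scores[k] for k in WEIGHTS), 2))
        for c in eligible
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


candidates = [
    Candidate("Tool A", {"linguistic_fidelity": 9, "domain_fit": 6, "latency_cost": 5},
              {"data_residency", "audit_logging"}),
    Candidate("Tool B", {"linguistic_fidelity": 7, "domain_fit": 8, "latency_cost": 8},
              {"data_residency", "audit_logging"}),
    Candidate("Tool C", {"linguistic_fidelity": 10, "domain_fit": 9, "latency_cost": 9},
              {"audit_logging"}),
]
print(rank(candidates))
```

Applying governance as a filter rather than as another weighted criterion ensures a high-scoring but non-compliant tool (Tool C here) can never win on raw capability.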

Embedding AI Agents in Creative Workflows

Adopting AI agents has become a strategic imperative in creative environments such as marketing agencies, newsrooms, e-learning studios and corporate communications. These systems catalyze ideation, amplify human talent and optimize output. Expert frameworks include:

- Creative Augmentation Model: Positions agents on a spectrum from ideation support to near-autonomous generation, defining required human oversight.

- Human-in-the-Loop Paradigm: Embeds continuous feedback loops where human judgment refines AI suggestions to preserve nuance and brand voice.

- Sociotechnical Systems View: Examines the interplay of technology, organizational structure and human behavior.

- Capability Maturity Matrix: Maps stages from template-based drafting to context-aware narrative construction, guiding investment priorities.

Deployment spans sectors:

- Marketing and Advertising: Rapid A/B testing of copy variants, persona development and keyword optimization at scale.

- Media and Publishing: AI summarization and data visualization accelerate news workflows and surface emerging stories.

- E-Commerce and Retail: Personalized product descriptions and campaign messaging trained on consumer behavior data boost conversions.

- Education and E-Learning: Adaptive lesson content, formative assessments and scenario narratives aligned to learner progress.

- Corporate Communications: Automated draft generation for newsletters, executive speeches and stakeholder reports, paired with sentiment analysis.
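Rapid A/B testing of copy variants still needs a check that an observed difference is not noise. A common approach is a two-proportion z-test on click-through or conversion counts; the sketch below implements it with the standard library only, and the traffic numbers are made up for illustration.

```python
import math


def two_proportion_z_test(clicks_a: int, views_a: int,
                          clicks_b: int, views_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: variants A and B share one click-through rate."""
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)  # common rate under H0
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if std_err == 0:
        raise ValueError("pooled rate is 0 or 1; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value for a standard normal statistic: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up traffic for two headline variants.
z, p = two_proportion_z_test(clicks_a=120, views_a=2400, clicks_b=165, views_b=2400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Testing many variants at once inflates the chance of a false positive, so teams typically apply a multiple-comparison correction or rely on a dedicated experimentation platform.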

Managing Risks: Governance, Ethics, and Oversight

AI agents operate within evolving ethical and regulatory frameworks addressing data privacy, intellectual property and disclosure requirements. Effective oversight models balance automation and human control through:

- Governance Structures: Clear decision rights and accountability channels for AI-human collaboration.

- Ethical Guardrails: Policies on data provenance, authorship attribution and transparency to uphold trust.

- Explainability Protocols: Feature-importance analyses, attention visualizations and model cards for auditability.

- Bias Mitigation: Quantitative disparity scores, dataset diversification and diverse focus groups to detect and correct bias.

- Oversight Archetypes: Advisory (brainstorming partner), validation (automated reviewer) and augmentation (task execution under supervision).
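Quantitative disparity scores compare how outcomes differ across groups. One common choice, shown here, is the demographic parity difference: the gap between the highest and lowest rates of a favorable outcome across groups, for example how often generated copy for each audience segment passes tone review. The segment labels, records and 0.1 tolerance are illustrative assumptions.

```python
from collections import defaultdict


def positive_rates(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of favorable outcomes per group."""
    totals: defaultdict[str, int] = defaultdict(int)
    positives: defaultdict[str, int] = defaultdict(int)
    for group, favorable in records:
        totals[group] += 1
        positives[group] += int(favorable)
    return {group: positives[group] / totals[group] for group in totals}


def parity_difference(records: list[tuple[str, bool]]) -> float:
    """Demographic parity difference: highest minus lowest group rate."""
    rates = positive_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical outcomes: (audience segment, did generated copy pass tone review?)
records = (
    [("segment_a", True)] * 45 + [("segment_a", False)] * 5
    + [("segment_b", True)] * 30 + [("segment_b", False)] * 20
)

TOLERANCE = 0.1  # assumed policy threshold
gap = parity_difference(records)
print(f"parity gap = {gap:.2f}; flag for audit: {gap > TOLERANCE}")
```

A large gap is a signal for human investigation rather than proof of bias; both the outcome being compared and the grouping need careful definition.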

Domain practitioners recognize that AI’s semantic comprehension is statistical, not experiential. Surface-level coherence may mask gaps in contextual understanding, cultural sensitivity and substantive reasoning. Human review—particularly in regulated industries such as finance, healthcare, legal and pharmaceuticals—is therefore indispensable.

Building a Sustainable Hybrid Model

The most effective content strategies combine AI proficiency with human creativity under clear governance. Key strategic considerations include:

- Stakeholder Buy-In: Engage creatives, legal teams and executives in pilot programs to define success criteria and foster collaboration.

- Performance Metrics: Develop KPIs capturing efficiency gains, quality improvements, audience engagement and compliance.

- Change Management: Provide training, knowledge-sharing forums and incentives to encourage experimentation while preserving core creative values.

- Continuous Monitoring: Implement feedback loops to track error rates, user sentiment and model drift, enabling iterative refinement.

- Infrastructure Planning: Balance on-premises clusters with elastic cloud resources; techniques such as model quantization and selective offloading can help control cost and latency.

- Knowledge Management: Centralize content libraries, taxonomies and metadata to support cross-functional reuse and ongoing AI training.
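Continuous monitoring of error rates can start simply. This sketch tracks the share of AI drafts that human reviewers flag within a rolling window and raises an alert when that share exceeds a baseline plus a tolerance. The class name, window size and thresholds are hypothetical. A sustained rise is only a proxy signal for model drift, not a diagnosis.

```python
from collections import deque


class ErrorRateMonitor:
    """Rolling share of AI drafts that human reviewers flagged.

    window, baseline and tolerance are hypothetical settings a team would
    derive from its own historical review data.
    """

    def __init__(self, window=50, baseline=0.10, tolerance=0.05):
        self.results = deque(maxlen=window)  # 1 = flagged, 0 = accepted
        self.baseline = baseline
        self.tolerance = tolerance

    def record(self, flagged):
        self.results.append(1 if flagged else 0)

    def error_rate(self):
        return sum(self.results) / len(self.results) if self.results else 0.0

    def drifting(self):
        # Alert only once the window is full, to avoid noisy early readings.
        full = len(self.results) == self.results.maxlen
        return full and self.error_rate() > self.baseline + self.tolerance


monitor = ErrorRateMonitor(window=10)
for flagged in [False] * 8 + [True] * 2:
    monitor.record(flagged)
print(monitor.error_rate(), monitor.drifting())
```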

By foregrounding analytical rigor, ethical stewardship and collaborative cultures, organizations can harness AI’s transformative potential without ceding creative control. Hybrid workflows unlock scalability, resilience and strategic agility—positioning content operations as a driver of brand value, customer engagement and revenue growth in the digital age.

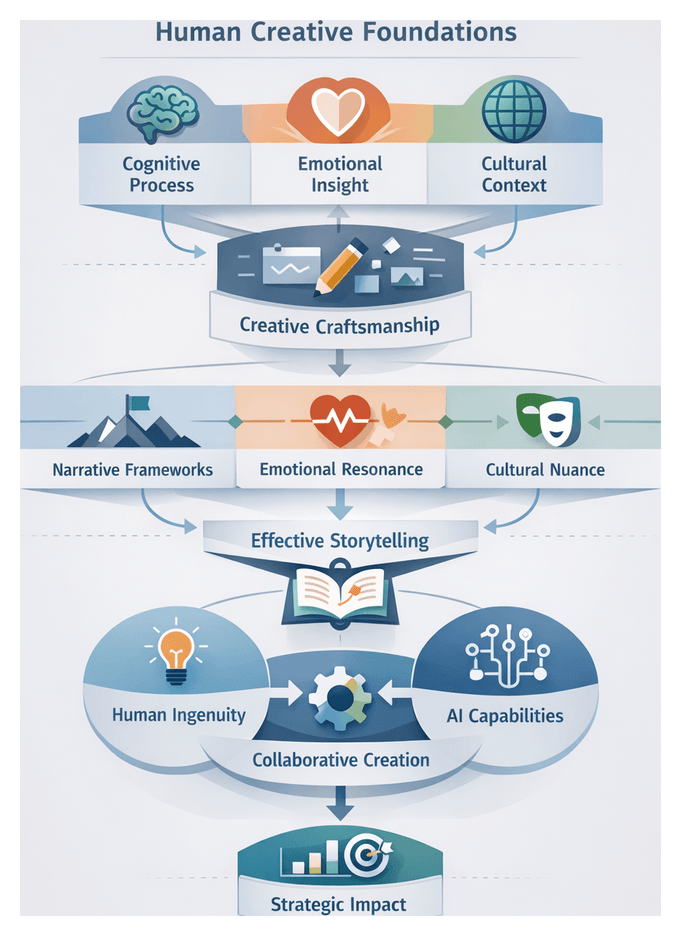

Chapter 3: The Essence of Human Creativity in Writing

Human Creative Foundations

At the core of compelling content lies a uniquely human capacity to blend cognitive rigor, emotional insight and cultural awareness. This creative foundation extends beyond assembling words or data—human authors exercise judgment, intuition and lived experience to craft narratives that resonate, inspire and endure. Understanding these cognitive and expressive pillars is essential for organizations seeking depth, authenticity and strategic impact in their content.

Cognitive Drivers of Creativity

Human creative thought unfolds through interrelated mental processes that enable ideation, problem solving and narrative construction. Key components include:

- Divergent Thinking: Generating multiple possibilities rather than a single solution, opening pathways for originality.

- Associative Memory: Drawing on past experiences and knowledge to form unexpected connections between ideas.

- Analogical Reasoning: Applying patterns from one domain to enrich concepts in another, fostering layered meaning.

- Metacognition: Reflecting on one’s own thinking to refine ideas, mitigate biases and guide creative choices.

By balancing exploration with critical evaluation, human creators navigate complexity and select concepts that align with strategic goals.

Emotional and Contextual Insight

Emotion infuses content with resonance. Empathy enables writers to inhabit audience perspectives, tailoring tone, pacing and anecdotes to evoke curiosity, trust or urgency. Emotional regulation ensures that passion enhances rather than overwhelms clarity. Equally vital is cultural nuance: human authors adapt language to regional idioms, professional jargon and evolving trends, weaving metaphors and symbols that draw on shared histories and societal norms. This deep contextual attunement nurtures trust and relevance in ways purely algorithmic systems cannot fully replicate.

Iterative Craftsmanship and Authentic Voice

True creativity thrives through cycles of ideation, critique and refinement. Initial concepts evolve through peer review, audience testing and editorial scrutiny. Editors make stylistic choices—word selection, sentence rhythm and narrative voice—that elevate clarity and reinforce brand personality. Personal voice and authenticity emerge when authors integrate their values, experiences and anecdotes, forging a human connection that builds loyalty. Ethical stewardship underpins every creative decision, guiding fairness, representation and transparency to maintain credibility and respect diverse perspectives.

Storytelling and Emotional Nuance

Storytelling is the vehicle through which ideas gain emotional depth and cultural significance. Human authors structure narratives that engage audiences from opening to resolution, employing frameworks and emotional arcs that transcend mere information delivery.

Narrative Architecture

Creators draw on classic and contemporary models to frame content strategically:

- Hero’s Journey: Positioning brands or individuals as protagonists overcoming challenges and driving transformation.

- Problem–Solution Arc: Establishing empathy through shared pain points, then showcasing unique value propositions.

- Anthropological Storytelling: Leveraging cultural metaphors and archetypal motifs to deepen audience resonance.

Selecting and adapting these architectures requires contextual judgment to align narrative structures with organizational goals and audience expectations.

Emotional Resonance

Emotional drivers engage attention, shape memory and motivate action. Content strategists apply insights from Emotional Contagion Theory, Affective Neuroscience and Brand Emotion frameworks to craft subtext, tension and release. While sentiment analysis and A/B tests can validate impact, the initial creation of nuanced emotional beats remains an interpretive art that relies on empathy, cultural literacy and lived experience.

Cultural Context and Interpretive Frameworks

Effective narratives navigate cultural ecosystems where symbols, tropes and conventions carry diverse meanings. Human practitioners use Intercultural Communication theory, Cultural Semiotics and ethnographic Contextual Inquiry to ensure authenticity and avoid misinterpretation. They embed intentional ambiguity, symbolic layering and rhetorical devices that invite audiences to co-construct meaning—an engagement depth beyond current AI capabilities.

Building Trust Through Story

Trust emerges from transparency, consistency and authenticity. Human authors incorporate verifiable data, clear attribution and personal anecdotes as authenticity signals. Editorial review and peer feedback loops validate narrative integrity, while ethical guidelines ensure balanced perspectives and prevent manipulative tactics. This conscientious approach reinforces credibility across channels and strengthens audience relationships.

AI-Human Creative Synergy

Blending human ingenuity with AI-driven tools creates a co-creative model where each party amplifies the other’s strengths. Rather than replacing human insight, AI agents handle routine tasks and data synthesis, freeing creators to focus on narrative strategy, emotional nuance and judgment.

Conceptualizing Synergy

Creative synergy evolves from tool-centric automation to collaborative partnerships. AI accelerates research, draft generation and pattern recognition, while humans contextualize outputs, refine tone and ensure strategic alignment. This reciprocal workflow enhances scalability without sacrificing brand voice or emotional depth.

Industry Frameworks

- Complementary Roles Model: Assigning research, data analysis and rapid iteration to AI, and contextual judgment, narrative design and ethical oversight to human experts.

- Orchestration Framework: Treating AI agents—such as GPT-4—and platforms like Jasper AI as instrumental sections in a creative orchestra, with human strategists conducting overall thematic direction.

- Human-in-the-Loop Continuum: Embedding continuous feedback loops in tools like ChatGPT, where human reviewers guide generative models through iterative prompts and brand-specific adjustments.

- Value-Creation Matrix: Mapping tasks by innovation and repeatability—automating high-volume summaries and reports, while reserving bespoke storytelling for human teams supported by AI-driven research and sentiment analysis.
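One way to operationalize the Value-Creation Matrix is a two-by-two classification. In this sketch, innovation and repeatability are team-assigned scores between 0 and 1, and the 0.5 cutoff is arbitrary. The framework as described names only the high-repeatability corner (automation) and the high-innovation corner (human storytelling with AI research), so the labels for the other two quadrants are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    innovation: float     # 0 = formulaic, 1 = highly novel (team-assigned)
    repeatability: float  # 0 = one-off, 1 = recurring at high volume


def quadrant(task, cutoff=0.5):
    """Place a task in a 2x2 value-creation matrix; the cutoff is an assumption."""
    novel = task.innovation >= cutoff
    recurring = task.repeatability >= cutoff
    if recurring and not novel:
        return "automate with AI"  # e.g. high-volume summaries and reports
    if novel and not recurring:
        return "human-led with AI research"  # bespoke storytelling
    if novel and recurring:
        return "hybrid: AI drafts, humans shape"  # assumed label
    return "low priority"  # assumed label


for t in [Task("weekly sales summary", 0.2, 0.9), Task("brand manifesto", 0.9, 0.1)]:
    print(t.name, "->", quadrant(t))
```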

Measuring Impact

Successful synergy balances efficiency, quality and strategic impact:

- Efficiency Metrics: Cycle time reduction and cost per asset—AI often cuts drafting time by 30–50 percent, allowing human talent to focus on high-value work.

- Quality Metrics: Brand voice consistency and error rates—tools like Grammarly enforce style guidelines, while human editors validate emotional resonance and cohesion.

- Strategic Impact Metrics: Engagement lift, conversion rate and share of voice—hybrid content frequently outperforms fully manual or automated outputs in click-through and social amplification.

A balanced scorecard ensures that efficiency gains do not compromise narrative quality or audience trust.
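A balanced scorecard can enforce this constraint directly. The sketch below assumes each metric group has already been normalized to a 0 to 1 scale. The weights and the quality floor are illustrative choices. Returning None when quality dips below the floor is one design option for preventing efficiency gains from masking a quality decline.

```python
def cycle_time_reduction(hours_before, hours_after):
    """Fractional reduction in cycle time, e.g. 0.4 for a 40 percent cut."""
    return (hours_before - hours_after) / hours_before


def balanced_score(efficiency, quality, impact, weights=(0.3, 0.4, 0.3), quality_floor=0.7):
    """Weighted score over metric groups already normalized to 0-1.

    Returns None when quality is below the floor, so efficiency gains
    cannot offset a quality decline. Weights and floor are illustrative.
    """
    if quality < quality_floor:
        return None
    w_eff, w_qual, w_imp = weights
    return w_eff * efficiency + w_qual * quality + w_imp * impact
```

A value from cycle_time_reduction can feed the efficiency input directly when it falls between 0 and 1.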

Governance and Adoption Strategy

Implementing AI–human workflows requires cross-functional collaboration among content operations, data science and legal teams. Defining decision rights, quality standards and compliance thresholds establishes clear handoff points where AI outputs undergo human review and augmentation. Pilot projects yield insights that inform tool selection, scaling strategies and talent development, enabling organizations to evolve alongside advances in generative AI while preserving creative leadership.

Strategic Considerations and Future-Proofing

Maximizing the value of human creativity and AI synergy demands nuanced strategy, continuous evaluation and adaptive organizational design.

Balancing Novelty and Familiarity

Innovative ideas must align with audience expectations and brand coherence. Too much novelty can alienate followers; too little risks blending into a crowded landscape. Creative teams reconcile this by anchoring fresh concepts in recognizable brand signals and audience values, ensuring both differentiation and relevance.

Ethics and Authenticity

Authenticity is an ethical imperative and strategic differentiator. Human teams uphold transparency, consent and accurate attribution, especially when handling personal stories or sensitive topics. Embedding ethical review processes in workflows safeguards reputation and aligns content with corporate values.

Expertise, Scalability and Integration

High-value content often demands deep domain knowledge—legal, medical or financial expertise—that transcends AI’s pattern-based outputs. Organizations must allocate human specialists to tasks requiring interpretive frameworks and scenario analyses, while leveraging AI for routine updates, data summaries and preliminary research. Clear integration points and handoff protocols ensure that AI-generated suggestions are contextualized and refined by domain experts.

Organizational Design and Continuous Evolution

Human creativity flourishes in environments that foster autonomy, cross-functional collaboration and psychological safety. Leaders should assemble teams combining veteran storytellers, data analysts and cultural consultants. Decision matrices guide when to deploy premium creative resources versus AI-assisted workflows. Regularly reviewing performance metrics—engagement depth, sentiment analysis and conversion rates—enables strategic recalibration, ensuring that resource allocation adapts to audience feedback and market dynamics. Investing in training for narrative design, visual storytelling and ethical stewardship equips human talent to lead in an AI-augmented future.

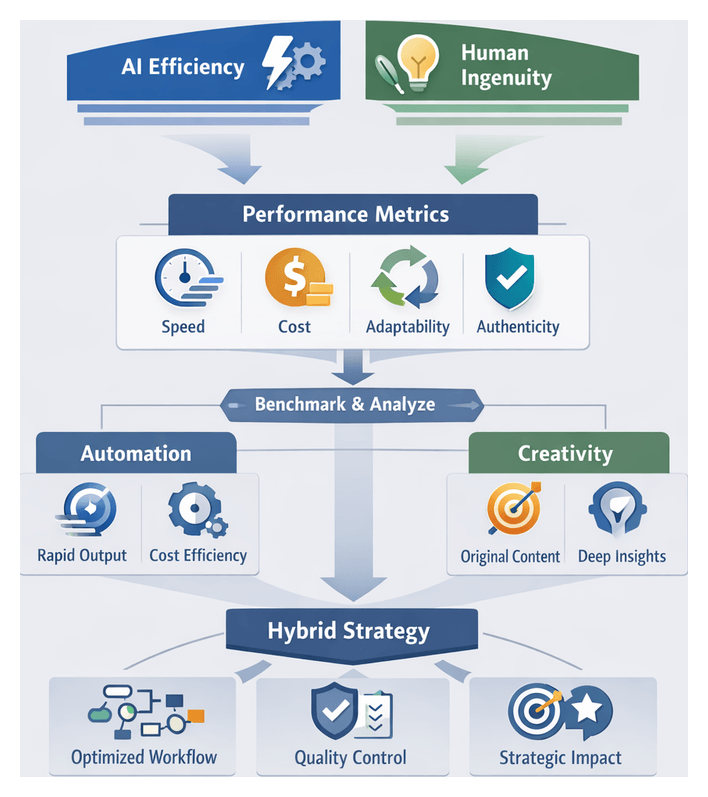

Chapter 4: Comparative Analysis - AI Efficiency Versus Human Ingenuity

Contextualizing Efficiency and Ingenuity Metrics

Organizations producing digital content must navigate the dual imperatives of operational speed and creative depth. As AI-driven systems such as GPT-4 and Jasper AI automate routine tasks, decision-makers require a clear evaluative framework. Efficiency captures measurable gains in productivity and cost savings, while ingenuity reflects human contributions to narrative originality, emotional resonance, and strategic framing. By defining precise metrics for these dimensions, teams can align investments, optimize workflows, and choose whether to deploy automated agents or human experts for each content initiative.

Defining Core Performance Metrics

A robust framework rests on four primary metric categories that balance operational performance with creative quality:

- Speed: Time from task initiation to published deliverable, including draft generation and revision cycles.

- Cost: Total resource expenditure, spanning subscription fees, compute costs, salaries, and overhead.

- Adaptability: Ease of repurposing content across formats, channels, and audience segments.

- Authenticity: Alignment with brand voice, narrative originality, and emotional impact.

Anchoring analysis in these categories enables balanced assessment of AI’s throughput advantages against the nuanced capabilities of human creativity.

AI Efficiency in Content Workflows

Modern AI agents excel at rapid output and standardized formatting. Platforms like GPT-4 and Jasper AI generate headlines, outlines, and full drafts within seconds, reducing time to first draft by orders of magnitude. Efficiency measurement involves tracking turnaround times for defined tasks, such as metadata creation or SEO-driven paragraphs, and comparing them to human benchmarks.

Cost analysis aggregates subscription and compute expenses, yielding indicators such as cost per word and cost per finalized draft. For adaptability, AI systems can produce multiple content variants (blog posts, email subject lines, ad copy) from a single prompt, and teams measure the share of AI-generated variants that require no manual edits. Authenticity assessment involves brand compliance checks against style guides, where AI outputs often fall short, underscoring the need for human review of tone consistency and narrative alignment.
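These indicators reduce to simple ratios. The sketch below assumes the team logs total subscription and compute spend for a reporting period alongside word counts, finalized drafts and the edit status of each variant. The function and field names are hypothetical.

```python
def ai_efficiency_indicators(subscription_cost, compute_cost, words_generated,
                             finalized_drafts, variants_total, variants_unedited):
    """Cost and adaptability ratios for one reporting period (field names hypothetical)."""
    total_cost = subscription_cost + compute_cost
    return {
        "cost_per_word": total_cost / words_generated,
        "cost_per_finalized_draft": total_cost / finalized_drafts,
        # Share of AI-generated variants published without manual edits.
        "zero_edit_share": variants_unedited / variants_total,
    }
```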

Human Ingenuity and Depth of Insight

Human authors contribute domain expertise, cultural awareness, and storytelling finesse that machines cannot fully replicate. Although the initial drafting process is slower, requiring research, interviews, and iterative editing, these investments yield high-stakes deliverables such as white papers, thought leadership pieces, and brand manifestos.

Cost components include salaries, benefits, and ongoing professional development, varying with specialization and project complexity. Human adaptability is evident in seamless integration of qualitative research and stakeholder feedback. Authenticity metrics, including narrative coherence, voice consistency, and cultural sensitivity, typically favor human authorship.

To quantify ingenuity, organizations deploy:

- Peer review scores on analytical rigor and originality.

- Audience engagement metrics—time on page, social shares, sentiment analysis.

- Qualitative feedback from focus groups and expert panels.

Comparative Benchmarking and Workflow Design

Benchmarking requires side-by-side comparisons of AI and human outputs using uniform criteria. Typical paired tasks might involve generating a 500-word blog post via an AI agent and commissioning an expert writer for an equivalent piece. Key indicators include time to first and final draft, cost per draft, number of revisions, and post-publication performance metrics. Aggregating data across content types produces baseline values to guide hybrid workflow design.
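Aggregating paired results into baselines could look like the following sketch. Each record is assumed to hold a content type, an author type ("ai" or "human"), hours to final draft, cost and revision count. Medians are used here instead of means because a few outlier projects can otherwise skew the baseline, though either choice is defensible.

```python
from collections import defaultdict
from statistics import median


def benchmark_baselines(records):
    """Median hours, cost and revisions per (content_type, author_type).

    Each record is assumed to be a tuple:
    (content_type, author_type, hours_to_final, cost, revisions)
    """
    grouped = defaultdict(list)
    for content_type, author_type, hours, cost, revisions in records:
        grouped[(content_type, author_type)].append((hours, cost, revisions))
    baselines = {}
    for key, rows in grouped.items():
        hours, costs, revisions = zip(*rows)
        baselines[key] = {
            "median_hours": median(hours),
            "median_cost": median(costs),
            "median_revisions": median(revisions),
        }
    return baselines
```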

Foundational insights for hybrid workflows include:

- Task segmentation by metric thresholds: Assign high-volume, low-complexity tasks to AI and reserve nuanced narrative development for human teams.

- Quality checkpoints: Implement human review stages for any content where authenticity is critical.

- Iterative prompt calibration: Use performance feedback to refine AI prompt structures and improve alignment with brand standards.

- Skill development: Train writers in prompt engineering and AI oversight to enhance strategic contributions.

Strategic Content Roles and Contextual Suitability

Optimal division of labor depends on content objectives, risk profiles, and resource capabilities. Critical contextual considerations include:

- Market-Driven Volume: In e-commerce, news media, and financial services, high-volume demands favor AI platforms like GPT-4 and Jasper AI for rapid generation of product descriptions, updates, and reports. Human specialists oversee brand storytelling and validate emerging cultural nuances.

- Regulatory Compliance: Sectors such as healthcare, pharmaceuticals, and banking require precise terminology and disclaimers. Tools like Compliance.ai scan documents for non-compliant language, while legal experts interpret policy shifts and retain decision-making authority.

- Creative Branding: Emotional engagement campaigns demand narrative layering, cultural literacy, and symbolic language. AI drafts may offer headline variants or brainstorming prompts, but human teams craft and refine brand ethos through metaphors and allegories.

- Data-Intensive Personalization: Marketing platforms such as Optimizely and Dynamic Yield deliver AI-driven content recommendations and real-time A/B testing. Strategists define segmentation logic, audit outputs for fairness, and balance personalization with data privacy regulations.

- Crisis Communication: In emergencies, AI tools like Crisp monitor social sentiment and draft initial statements. Communication specialists ensure authenticity, ethical sensitivity, and adherence to organizational values in final messaging.

- Interactive Experiences: Conversational platforms such as Dialogflow and Rasa handle routine user interactions, while human agents manage escalations and deliver domain-expert responses.

This mapping aligns task complexity, creative depth, compliance demands, time sensitivity, and ethical risk with the strengths of AI and human contributors.

Governance, Quality Control, and Cost Considerations

Maintaining brand integrity and regulatory compliance requires robust governance frameworks. Key elements include:

- Multi-stage reviews: AI drafts undergo human editing for factual accuracy, tone alignment, and bias mitigation.

- Prompt templates and content filters: Guardrails that constrain AI outputs within approved style and compliance parameters.

- Performance analytics: Dashboards that monitor throughput, engagement, and anomaly detection to inform continuous refinement.

- Transparency policies: Disclosures of AI usage where appropriate to preserve audience trust and meet evolving regulations.
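Prompt templates and content filters can be combined in a thin layer that runs before human review. The sketch below uses a hypothetical template, banned-phrase list and required disclaimer. A production filter would draw these from the organization's own style guide and compliance rules. Passing the filter routes a draft to human review rather than straight to publication.

```python
import re

# Hypothetical policy values; a real team would load these from its style guide.
BANNED_PHRASES = ["guaranteed returns", "risk-free", "cure"]
REQUIRED_DISCLAIMER = "This content is for informational purposes only."

PROMPT_TEMPLATE = (
    "Write a {length}-word {format} about {topic} for {audience}. "
    "Use an approachable, expert tone and avoid superlatives."
)


def build_prompt(**fields):
    """Fill the approved template so every request starts from the same constraints."""
    return PROMPT_TEMPLATE.format(**fields)


def check_draft(text):
    """Return a list of policy issues; an empty list means the draft moves on to human review."""
    issues = []
    for phrase in BANNED_PHRASES:
        # Word boundaries keep "cure" from matching inside words such as "secure".
        if re.search(r"\b" + re.escape(phrase) + r"\b", text, flags=re.IGNORECASE):
            issues.append(f"banned phrase: {phrase}")
    if REQUIRED_DISCLAIMER not in text:
        issues.append("missing disclaimer")
    return issues
```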

Cost analysis must account for AI subscription fees, integration and fine-tuning expenses, and human resource investments in talent acquisition and training. Hybrid deployments optimize total cost of ownership by automating standardized tasks and directing human expertise toward innovation and brand differentiation.
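Total cost of ownership comparisons can be made explicit with a small cost model. This sketch assumes integration and fine-tuning are one-off costs amortized over a chosen number of years (three by default, an assumption), while subscriptions, staff and training recur annually. Dividing by annual asset volume gives a comparable cost per asset for manual and hybrid setups.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    subscriptions: float = 0.0  # recurring AI platform fees
    integration: float = 0.0    # one-off, amortized below
    fine_tuning: float = 0.0    # one-off, amortized below
    staff: float = 0.0          # salaries and benefits
    training: float = 0.0       # recurring upskilling spend
    amortization_years: int = 3

    def annual_tco(self):
        one_off = (self.integration + self.fine_tuning) / self.amortization_years
        return self.subscriptions + one_off + self.staff + self.training


def cost_per_asset(model, assets_per_year):
    return model.annual_tco() / assets_per_year
```

Running cost_per_asset on a manual CostModel and a hybrid one at the same annual volume puts both options on one scale; the inputs should come from the team's own budgets.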

Limitations, Mitigation, and Continuous Improvement

AI capabilities remain bounded by training data quality and algorithmic design. Common limitations include:

- Bias and outdated information: Unchecked training corpora can perpetuate stereotypes or present obsolete facts.

- Creative boundaries: Machines struggle with truly novel metaphors, cultural subtleties, and emotional depth.

- Over-automation risks: Excessive reliance on AI can erode brand uniqueness and audience trust.

Mitigation strategies encompass:

- Regular model updates: Incorporate sector-specific data and brand lexicons to minimize informational gaps and stylistic drift.

- Bias audits: Apply frameworks such as those recommended by the AI Now Institute to detect and address unintended prejudices.

- Human challenge sessions: Encourage writers to critique AI suggestions, propose original ideas, and inject proprietary research.

- Feedback loops: Capture performance data to refine prompts, model inputs, and editorial processes over time.

Decision Frameworks for Hybrid Deployment

Strategic content allocation benefits from a structured decision matrix. Key steps include:

- Assess Content Complexity: Rate initiatives on a spectrum from formulaic to narrative-rich.

- Evaluate Volume Requirements: Estimate output scale and parallelization needs.

- Determine Risk and Compliance Levels: Identify regulatory or reputational stakes.

- Allocate Resources: Assign routine, high-volume tasks to AI agents and strategic, high-impact projects to human experts.

- Monitor and Iterate: Use speed, adaptability, authenticity, and engagement metrics to refine the hybrid model continuously.
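The first four steps can be encoded as a rule-based triage. The 1 to 5 scales, thresholds and output labels below are assumptions for illustration. Risk is checked first so that regulated or reputationally sensitive work always stays human-led, regardless of volume.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    complexity: int  # 1 = formulaic ... 5 = narrative-rich
    volume: int      # 1 = one-off ... 5 = high-volume, parallel
    risk: int        # 1 = low stakes ... 5 = regulated or reputationally sensitive


def allocate(item):
    """Map complexity, volume and risk ratings to a staffing choice (thresholds assumed)."""
    if item.risk >= 4:
        # High regulatory or reputational stakes: humans lead, AI only assists.
        return "human-led with AI research support"
    if item.complexity <= 2 and item.volume >= 4:
        return "AI agent with sampled human review"
    if item.complexity >= 4:
        return "human expert, AI for drafts and variants"
    return "hybrid: AI draft, full human edit"
```

The fifth step, monitoring and iteration, would then adjust these thresholds as speed, adaptability, authenticity and engagement data accumulate.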

This framework ensures that each content initiative leverages the optimal blend of AI efficiency and human ingenuity.

Strategic Compass for AI-Human Collaboration

By integrating precise efficiency and ingenuity metrics, organizations can craft hybrid workflows that deliver both scale and distinction. AI platforms accelerate repetitive processes and surface data insights, while human talent infuses content with strategic framing, emotional resonance, and ethical judgment. Continuous benchmarking, governance, and iterative calibration enable teams to capitalize on emerging AI capabilities without compromising the authenticity and creative depth that underpin lasting audience engagement and brand equity.

Chapter 5: Striking the Optimal Balance with Hybrid Workflows

The Evolving Digital Content Landscape

Over the past decade, the proliferation of digital channels has transformed content production into a complex, multi-dimensional ecosystem. Websites, blogs, social media platforms, video services, newsletters, podcasts, interactive applications, and immersive environments now coexist, each with unique formatting requirements, audience behaviors, and performance metrics. Audiences expect seamless experiences across devices, demanding relevance, timeliness, and personalization. In response, content teams navigate a continuous cycle of planning, production, distribution, analysis, and iteration, all under pressure to scale while upholding quality and brand integrity.

Several key trends have intensified both the volume and complexity of content needs:

- Personalization at Scale: Real-time segmentation and AI-driven dynamic content assembly tailor messaging to individuals’ interests, locations, and stages in the customer journey.

- Multimedia Integration: Campaigns often blend text, imagery, video, audio, interactive graphics, and augmented reality, demanding coordinated workflows across disciplines.

- Rapid Turnaround: Real-time marketing and news cycles compress ideation, approval, and publication timelines, heightening demand for automated support.

- Global Reach: Localization and translation workflows multiply the number of content variants to serve diverse markets and languages.

- Data-Driven Optimization: Performance analytics continuously inform creative processes, requiring agile systems that can iterate in response to insights.

Traditional manual processes, even when bolstered by basic content management systems, struggle to keep pace with these demands. To address scale and efficiency challenges, organizations have progressively adopted automated tools ranging from rule-based marketing platforms to advanced AI-driven assistants. Early marketing automation handled email campaigns and lead scoring, while modern solutions powered by natural language processing and machine learning enable automated topic ideation, headline generation, keyword optimization, summarization, and draft article production.

On the design side, template-driven graphic tools and video editing assistants auto-generate visual assets at scale. Emerging AI agents further extend capabilities by autonomously executing end-to-end workflows: researching topics, assembling outlines, drafting copy, suggesting multimedia elements, and optimizing distribution timing. When applied effectively, these solutions accelerate production, reduce costs, and ensure consistency across high-volume initiatives.

Hybrid Creativity: AI and Human Collaboration Models

Maximizing the benefits of automation requires integrating AI-driven tools with human creativity through structured collaboration models. Industry practitioners describe three strategic paradigms:

- Human Augmentation: AI tools operate as intelligent assistants that enhance human capacity without supplanting creative authority. In centaur teams, human writers and editors work in tight feedback loops with models. For example, ChatGPT and GPT-4 generate initial drafts and outlines, while humans refine narrative flow and ensure brand voice.

- Orchestration: An AI conductor dynamically allocates tasks across human and machine agents based on real-time performance metrics. This service-oriented approach adapts workflows in response to shifting priorities, routing research, drafting, and review tasks to the most efficient contributors.

- Modular Embedding: AI capabilities are treated as interchangeable components within existing toolchains. Language models, semantic engines, and analytics modules plug into platforms where they deliver maximum leverage, enabling teams to swap or upgrade components without disrupting core processes.
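The core loop of the Orchestration paradigm, routing each task to the contributor expected to finish it soonest, can be sketched as follows. The contributor records, observed turnaround times and queue-length penalty are hypothetical stand-ins for the real-time performance metrics the paradigm relies on. The requires_human flag lets governance rules override pure speed.

```python
from dataclasses import dataclass, field


@dataclass
class Contributor:
    name: str
    kind: str    # "ai" or "human"
    skills: set  # task types this contributor can handle
    avg_hours: dict = field(default_factory=dict)  # task type -> observed turnaround
    queue: int = 0  # tasks currently assigned


def route(task_type, contributors, requires_human=False):
    """Assign a task to the eligible contributor with the lowest expected completion time."""
    eligible = [c for c in contributors
                if task_type in c.skills and (c.kind == "human" or not requires_human)]
    if not eligible:
        raise ValueError(f"no contributor can handle {task_type!r}")

    def expected_hours(c):
        # Turnaround per task, scaled by how much work is already queued.
        return c.avg_hours.get(task_type, float("inf")) * (c.queue + 1)

    best = min(eligible, key=expected_hours)
    best.queue += 1
    return best.name
```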

These paradigms rest on interpretive frameworks that guide the alignment of tools and tasks. The sociotechnical systems perspective examines how AI influences roles, communication patterns, and knowledge flows. The task-technology fit model identifies which content tasks—ideation, drafting, research, optimization—are best suited to specific AI capabilities delivered by platforms such as Jasper and MarketMuse. Participatory design frameworks involve creators in tool selection and customization, preserving tacit knowledge and ensuring solutions reflect day-to-day practices.

Thought leaders emphasize balanced integration strategies. Andrew Ng advises focusing on high-leverage tasks that are scarce, specific, and strategic. Erik Brynjolfsson highlights productivity gains from complementary AI systems that expand human expertise. Ben Shneiderman’s human-centered AI manifesto calls for transparency, control, and accountability within AI-mediated workflows. Case studies illustrate these principles in action: editors at The New York Times use Adobe Sensei to surface data-driven story ideas while retaining final narrative control; startups rely on Jasper for rapid copy testing, applying human curation to select high-impact versions; and teams embed Grammarly as an in-line quality gate to offer real-time style guidance without overriding authorial intent.

Strategic Integration and Organizational Alignment

Successfully harmonizing AI-driven automation with human creativity involves addressing common integration challenges:

- Data and System Silos: Content assets, metadata, analytics, and brand guidelines often reside in disparate tools. Teams spend excessive time exporting, transforming, and reimporting data.

- Inconsistent Tooling and Interfaces: Each platform may employ different user experiences, asset libraries, and governance models, leading to fragmented creative environments.

- Workflow Friction: Rigid approval and handoff processes can negate automation gains. Bottlenecks arise when AI-generated drafts require extensive human revision or compliance checks.

- Skill Gaps and Role Uncertainty: Content professionals may lack expertise in prompt engineering and AI oversight, while AI specialists may be unfamiliar with narrative craft and brand strategy.

- Quality Control and Trust: Without transparent governance and audit trails, stakeholders hesitate to rely on AI-assisted outputs for high-stakes communications.

- Governance and Compliance: Embedding regulatory requirements, brand guidelines, and ethical standards into automated workflows adds complexity to integration projects.

To overcome these obstacles, organizations should establish a governance and operating model that aligns leadership vision, team structures, and cultural mindsets. Key elements of this model include:

- Leadership Sponsorship: Senior executives must champion AI-human collaboration as a core strategic priority, providing visible advocacy, dedicated resources, and integration of hybrid metrics into performance dashboards.

- Cross-Functional Governance: A governance council with representatives from editorial, legal, compliance, IT, and marketing ensures policy consistency, addresses data privacy, and maintains brand integrity.

- Center of Excellence: Establish a CoE or hybrid content lab that defines standards, monitors performance, stewards ethical considerations, and disseminates best practices across the organization.

- Change Management: Adapt frameworks such as Kotter’s eight-step process or ADKAR (awareness, desire, knowledge, ability, reinforcement) to guide teams through the transition, balancing centralized oversight with local experimentation.

- Talent Development: Invest in upskilling programs covering prompt design, data literacy, AI oversight, and creative synthesis, fostering a culture of continuous learning and collaboration.

- Tool Interoperability: Aim for a composable technology architecture where platforms integrate seamlessly with content management systems and analytics suites, reducing friction and avoiding siloed operations.

Ensuring Quality, Governance, and Continuous Improvement

Maintaining content integrity and driving strategic impact requires robust controls, iterative feedback loops, and forward-looking practices:

Balancing Autonomy and Human Control

Frameworks that define an autonomy spectrum, from advisory modes to full automation, help teams determine the optimal level of machine involvement for each content type. Human-in-the-loop (HITL) models ensure that AI suggestions are vetted by experts, preserving editorial judgment and mitigating risks such as hallucination or bias. Explainable AI (XAI) techniques can add transparency into algorithmic reasoning; where tools such as GPT-4 or MarketMuse expose confidence scores or attribution data, practitioners can use these signals to calibrate trust.
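An autonomy spectrum with HITL gating might be expressed as follows. The four levels, the 0.85 threshold and the use of a single 0 to 1 confidence score are assumptions. What counts as confidence depends on what a given tool exposes, so the threshold should be tuned against actual review outcomes.

```python
from enum import Enum


class Autonomy(Enum):
    ADVISORY = 1      # AI suggests; humans write
    REVIEWED = 2      # AI drafts; every draft is human-reviewed
    SPOT_CHECKED = 3  # AI publishes; humans review a sample
    AUTOMATED = 4     # AI publishes unattended when confident


def needs_human_review(level, confidence, threshold=0.85, sampled=False):
    """Decide whether an AI output goes to a human reviewer.

    confidence is whatever 0-1 score the team's tooling exposes; the
    threshold is an assumption to be tuned against review outcomes.
    """
    if level in (Autonomy.ADVISORY, Autonomy.REVIEWED):
        return True
    if confidence < threshold:
        return True  # low confidence always escalates to a human
    return level is Autonomy.SPOT_CHECKED and sampled
```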

Analytical Frameworks and Metrics

To evaluate hybrid workflows, organizations deploy both qualitative and quantitative metrics:

- Throughput and Efficiency: Time-to-publication, revision rates, and cost per piece.

- Quality and Engagement: Audience satisfaction, click-through rates, dwell time, and sentiment analysis.

- Creativity and Innovation: Number of unique concepts generated, brand lift, and perceived emotional resonance.

Four analytical lenses guide decision-making:

- Capability Maturity Models: Assess proficiency across governance, technology infrastructure, talent, and performance, identifying gaps and prioritizing strategic initiatives.

- Cost-Benefit Analysis: Quantify production cost savings, projected revenue uplift, and the value of redeployed human bandwidth for high-impact work.

- Risk-Reward Matrices: Plot initiatives by potential benefit versus operational or reputational risk to allocate human oversight proportionate to stakes.

- Feedback-Driven Optimization Cycles: Employ agile retrospectives, A/B testing, and continuous feedback from analytics dashboards to fine-tune AI models and editorial guidelines.
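A/B testing in these optimization cycles often compares click-through rates between two content variants. The sketch below implements a standard two-proportion z-test using only the Python standard library. It assumes independent impressions and reasonably large samples; the function name is illustrative.

```python
from math import erf, sqrt


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Two-sided z-test for a difference in click-through rate between variants A and B."""
    p_a = clicks_a / views_a
    p_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all (e.g. zero clicks), so no evidence of a difference
    z = (p_b - p_a) / se
    # Standard normal CDF via the error function: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value
```

With 100 clicks from 2,000 views for variant A and 150 from 2,000 for variant B, the test gives z of about 3.27 and a two-sided p-value near 0.001.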

Mitigation of Limitations and Future-Proofing

Awareness of potential pitfalls enables proactive risk management:

- Quality Variability: Institute multi-stage human reviews to validate tone, accuracy, and brand alignment.

- Algorithmic Bias: Use bias detection tools, diverse training data, and periodic audits to ensure fairness.

- Overreliance on Templates: Encourage human-led ideation sessions to maintain narrative freshness.

- Data Security: Anonymize inputs, enforce access controls, and consider private cloud or on-premises deployments.

- Change Fatigue: Implement phased rollouts, gather stakeholder feedback, and celebrate quick wins to sustain momentum.

Future-proofing hybrid content operations involves continuous model refresh processes, adaptive governance that evolves with technological advances, expansion into multimodal content formats, and establishment of scalable centers of excellence that pilot new AI capabilities. By embedding these practices, organizations position themselves to thrive amidst dynamic market demands and technological breakthroughs, harnessing the synergy of AI agents and human creativity to deliver differentiated content at scale.

Chapter 6: Evaluating Quality, Voice, and Brand Consistency

Understanding the Modern Digital Content Ecosystem

In today’s era of rapid technological change, organizations rely on digital content to inform and engage their audiences and to differentiate themselves from competitors. Content travels across websites, mobile apps, social media, community forums, voice assistants, and immersive experiences such as AR/VR, each demanding its own format, interaction model, and performance criteria. Smartphones, tablets, wearables, and smart speakers intensify the need for responsive design, adaptive media, and voice-enabled interfaces that ensure accessibility and seamless user experiences across devices.

Underlying this ecosystem is a sophisticated technology stack. Content management systems such as WordPress and HubSpot CMS serve as the backbone for authoring, versioning, and publishing. Digital asset management platforms centralize rich media assets for efficient reuse. Marketing automation and CRM integrations enable segmentation and personalized messaging at scale. Analytics suites measure key performance indicators—page views, click-through rates, dwell time, conversion metrics—and feed insights back into editorial planning.

As organizations pursue headless architectures and API-first designs, they decouple content creation from presentation, allowing developers and marketers to deliver dynamic, context-driven experiences across web, mobile, email, and IoT devices. This flexibility supports rapid innovation but increases complexity in implementation, requiring tighter coordination among IT, marketing, creative, and compliance teams. Maintaining a coherent narrative across disparate channels demands rigorous governance and unified content strategies.

Consumers now expect on-demand information and personalization that respects their privacy, while regulations such as GDPR and CCPA constrain how personal data may be collected and used. At the same time, multi-touch attribution and semantic search require content teams to integrate SEO best practices, voice search readiness, and accessibility standards. Balancing discoverability, compliance, and audience relevance across search engines, social platforms, and direct interactions is a strategic imperative for modern digital leaders.

Evolving Content Operations and Collaborative Workflows

Traditional linear content production—strategy, drafting, editing, design, development—often led to bottlenecks, version conflicts, and siloed teams. In contrast, agile content operations, or ContentOps, emphasize cross-functional collaboration, modular content, and iterative delivery. Creative sprints borrowed from software development accelerate ideation, prototyping, stakeholder reviews, and optimization based on real-time analytics.

Centralized editorial calendars, metadata taxonomies, and design systems ensure consistency while allowing regional teams to adapt messages for local audiences. As organizations scale globally, AI-driven content tagging and translation workflows reduce manual effort, enabling dynamic page assembly and automated content recommendations grounded in user profiles and behavioral data.

Cloud-based authoring tools such as Google Docs and Microsoft 365 facilitate real-time coauthoring, commenting, and versioning across geographies. Collaborative design platforms like Figma support interactive prototyping and feedback loops between designers, copywriters, and developers. Project management systems—Asana, Trello, Jira—track tasks, deadlines, and dependencies, providing visibility into throughput and resource allocation.

Despite these advances, aligning teams around a unified content vision remains challenging. Localization introduces additional layers—multilingual editorial review, regional regulatory considerations, and nuanced cultural adaptation. Clear communication protocols, robust approval processes, and shared governance frameworks are essential to ensure brand, legal, and quality standards are consistently met.

The Rise of AI-Driven Content Tools

Automated content generation tools have matured from simple templates to sophisticated platforms that leverage large language models and retrieval-augmented generation. OpenAI’s ChatGPT and Jasper offer intuitive interfaces for generating blog posts, social media updates, email sequences, and more, guided by brand-specific training and custom prompts.

Copy.ai and other specialized platforms enable marketers to rapidly prototype headlines, product descriptions, and ad copy. Enterprise solutions connect AI to internal knowledge bases, improving factual accuracy and brand alignment. Prompt engineering techniques refine outputs by specifying tone, context, audience, and desired length.
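A prompt that spells out tone, context, audience, and length can be assembled from a reusable template rather than typed ad hoc. The sketch below is a minimal, tool-agnostic illustration. The `PromptSpec` fields and the line-per-parameter layout are assumptions, and the resulting string could be pasted into, or sent to, whichever generation platform a team uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical bundle of the prompt parameters named in the text."""
    task: str
    tone: str
    context: str
    audience: str
    max_words: int


def build_prompt(spec: PromptSpec) -> str:
    """Render the spec as a labeled, line-per-parameter prompt string."""
    if spec.max_words <= 0:
        raise ValueError("max_words must be positive")
    return "\n".join([
        f"Task: {spec.task}",
        f"Tone: {spec.tone}",
        f"Context: {spec.context}",
        f"Audience: {spec.audience}",
        f"Length: no more than {spec.max_words} words",
    ])


if __name__ == "__main__":
    # Illustrative values only.
    spec = PromptSpec(
        task="Write a product description for a reusable water bottle",
        tone="friendly and concise",
        context="spring sustainability campaign; highlight recycled materials",
        audience="eco-conscious commuters",
        max_words=80,
    )
    print(build_prompt(spec))
```

Because the template is data, brand teams can version it alongside the style guide and vary one parameter at a time when comparing outputs.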

Visual content creation has been revolutionized by diffusion-based image generators such as Midjourney and DALL·E, which can produce illustrations, concept art, and mockups on demand. For multimedia, Synthesia, Lumen5, and Descript automate video and audio production, offering text-to-video synthesis, automated editing, AI-powered voiceovers, and transcription.

Beyond generation, intelligent assistants such as Grammarly and Hemingway App analyze grammar, style, and readability in real time. SEO tools like Clearscope and Surfer SEO recommend keyword usage, content structure, and metadata enhancements to improve search visibility. Analytics-driven platforms monitor performance data continuously, suggesting subject lines, posting schedules, and visual assets that drive engagement.

Drivers and Challenges of Automation

Multiple factors fuel the adoption of AI-driven content tools:

- Scalability Imperative: Deliver localized, personalized messaging across multiple brands, languages, and markets without proportionally expanding teams.

- Acceleration: Real-time social interactions and news cycles demand swift ideation, drafting, A/B testing, and publishing.

- Cost Efficiency: Automating repetitive or formulaic tasks—metadata generation, product descriptions, data-driven reports—frees creative resources for strategic initiatives.

- Data-Driven Insights: AI systems analyze audience behavior at scale, uncovering content gaps, trending topics, and optimal distribution channels.

- Competitive Pressure: Brands integrating AI into content operations can outpace rivals with higher content velocity and precision.

- Talent Scarcity: The digital skills gap and demand for specialized writers and designers make automation an essential augmentation strategy.

- Continuous Learning: Automated tools enable rapid incorporation of performance feedback, fostering a test-and-learn culture.

Yet organizations face significant hurdles when embedding AI into established workflows:

- Disparate Technology Stacks: Siloed platforms and AI services lacking unified APIs lead to manual integrations and error-prone handoffs.

- Quality Assurance: AI outputs may miss brand voice subtleties, cultural context, or regulatory requirements, necessitating thorough human review.

- Voice and Tone Consistency: Generic training data can yield inconsistent outputs. Expertise in prompt engineering and style modeling is required to maintain brand personality.

- Algorithmic Bias and Hallucination: Models trained on broad data sets can perpetuate prejudices or produce plausible-sounding but fabricated content. Bias audits, fact-checking, and provenance documentation are essential.

- Intellectual Property: Uncertainties around rights for AI-generated content require clear policies on licensing, attribution, and reuse.

- Change Management: Without strategic communication, training, and leadership support, AI integration can face resistance from creative professionals.

- Data Privacy and Security: Sharing proprietary or customer data with third-party AI providers demands encryption, access controls, and contractual safeguards.

- Overreliance on Automation: Excessive dependence on AI can produce formulaic, homogenized content that fails to differentiate the brand.

- Vendor Lock-In: Custom integrations or proprietary platforms may limit future flexibility and increase switching costs.

Hybrid Content Governance and Human-Driven Creativity

Amidst automation, human creativity remains essential for storytelling, emotional resonance, and ethical judgment. Effective hybrid workflows assign AI agents to data-intensive and formulaic tasks—such as metadata tagging, initial draft creation, and keyword optimization—while human experts focus on narrative strategy, brand storytelling, and contextual adaptation.

Key strategic considerations for hybrid workflows include:

- Governance Frameworks: Establish clear policies for AI usage, data governance, prompt design, approval hierarchies, and intellectual property rights.

- Role Definition: Define responsibilities for AI specialists (prompt engineers, data scientists), content creators, and editors. Invest in training programs for AI tool proficiency and ethical considerations.

- Editorial Guidelines: Maintain a centralized style guide informing AI training data and human briefs. Update guidelines to reflect evolving customer insights and regulatory changes.

- Integration and Interoperability: Prioritize platforms with open APIs, prebuilt connectors, and support for standardized data formats (JSON, XML). Centralize planning, asset management, and analytics in a unified dashboard.

- Iterative Feedback Loops: Implement cyclical review processes where human editors refine AI outputs, and learnings inform model fine-tuning and prompt template updates.

- Pilot Programs and Centers of Excellence: Launch small-scale pilots to validate use cases, capture best practices, and build internal expertise. Establish an AI Content Center of Excellence to govern adoption and performance.

- Performance Measurement: Use balanced scorecards to assess operational efficiency, content quality, and business impact. Track metrics like turnaround times, error rates, engagement, and ROI.

- Change Management: Foster a culture of experimentation. Communicate strategic rationale for automation, celebrate early wins, and address concerns through training and open forums.
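Parts of a balanced scorecard can be reduced to a few per-source summaries. The sketch below assumes each published item carries its turnaround time, the number of errors caught in review or after publication, and session counts from an analytics export. The field names and the "ai-assisted"/"human" source labels are hypothetical, and ROI is omitted because it depends on cost and revenue data the text does not specify.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class PublishedItem:
    source: str              # hypothetical label, e.g. "ai-assisted" or "human"
    turnaround_hours: float  # time from brief to publication
    errors_found: int        # errors caught in review or after publication
    engaged_sessions: int
    sessions: int


def scorecard(items: List[PublishedItem]) -> Dict[str, Dict[str, float]]:
    """Summarize turnaround, errors per item, and engagement rate for each content source."""
    summary: Dict[str, Dict[str, float]] = {}
    for source in sorted({item.source for item in items}):
        group = [item for item in items if item.source == source]
        total_sessions = sum(item.sessions for item in group)
        engaged = sum(item.engaged_sessions for item in group)
        summary[source] = {
            "avg_turnaround_hours": mean(item.turnaround_hours for item in group),
            "errors_per_item": sum(item.errors_found for item in group) / len(group),
            # Guard against division by zero when no sessions were recorded.
            "engagement_rate": engaged / total_sessions if total_sessions else 0.0,
        }
    return summary


if __name__ == "__main__":
    # Illustrative inputs, not real performance data.
    items = [
        PublishedItem("ai-assisted", 4.0, 1, 30, 100),
        PublishedItem("ai-assisted", 6.0, 3, 20, 100),
        PublishedItem("human", 24.0, 0, 45, 100),
    ]
    for source, metrics in scorecard(items).items():
        print(source, metrics)
```

Grouping by source makes it easy to compare hybrid and fully human workflows on the same dimensions before deciding where to expand automation.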

Frameworks for Brand Voice Alignment

Maintaining a consistent brand voice across AI-generated and human-authored content is vital for credibility and differentiation. Organizations apply a mix of analytical frameworks:

- Rule-Based Systems: Tools like Acrolinx enforce machine-readable style rules, vocabulary controls, and punctuation standards.

- Machine Learning Models: Language models fine-tuned on brand-specific corpora, through platforms such as Jasper AI or the OpenAI API, help maintain semantic consistency and adapt tone to context.

- Hybrid Templates and Prompt Engineering: Modular content blocks—opening statements, value propositions, calls to action—paired with precise prompts guide AI to generate contextually relevant text under human supervision.

- Voice Monitoring and Feedback: Real-time analytics tools such as Brandwatch and Grammarly track sentiment and voice consistency across audience interactions and flag deviations for review.
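To show what machine-readable style rules can look like, the sketch below applies a small vocabulary list and two punctuation rules to a draft. It is a hypothetical, self-contained checker built on Python's `re` module. It does not reflect Acrolinx's actual rule format or API, and the specific rules are placeholders for a brand's own style guide.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical vocabulary controls: discouraged term -> preferred term.
PREFERRED_TERMS = {
    "utilize": "use",
    "e-mail": "email",
    "cutting-edge": "advanced",
}


@dataclass
class Finding:
    rule: str
    excerpt: str
    suggestion: str


def check_style(text: str) -> List[Finding]:
    """Apply vocabulary and punctuation rules; return one Finding per violation."""
    findings: List[Finding] = []
    # Vocabulary control: whole-word, case-insensitive match on discouraged terms.
    for term, preferred in PREFERRED_TERMS.items():
        pattern = rf"\b{re.escape(term)}\b"
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            findings.append(Finding("vocabulary", match.group(0), preferred))
    # Assumed punctuation standard: no repeated exclamation marks.
    for match in re.finditer(r"!{2,}", text):
        findings.append(Finding("punctuation", match.group(0), "!"))
    # Assumed punctuation standard: one space after sentence-ending punctuation.
    for match in re.finditer(r"([.?!]) {2,}", text):
        findings.append(Finding("spacing", match.group(0), match.group(1) + " "))
    return findings


if __name__ == "__main__":
    draft = "Utilize our e-mail form!!  Our team replies within a day."
    for finding in check_style(draft):
        print(f"[{finding.rule}] {finding.excerpt!r} -> {finding.suggestion!r}")
```

Rules like these catch mechanical deviations cheaply, leaving human editors and ML-based tone checks to judge the subtler aspects of voice.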

Companies often adopt a layered approach, integrating these frameworks into governance processes. Voice alignment maturity models progress from ad hoc manual checks to predictive machine learning systems, culminating in adaptive AI-human loops that self-optimize over time.

Implications for Brand Trust and Audience Engagement

Brand trust is built through consistency, authenticity, and emotional resonance. In hybrid ecosystems where AI generates initial drafts and humans refine nuance, governance frameworks and collaborative protocols ensure brand integrity.

Transparency about AI usage—through disclosure statements like “Drafted with AI Assistance” and published ethical guidelines—reduces audience uncertainty and positions the brand as both innovative and responsible. In sectors like healthcare and finance, AI-assisted chatbots such as IBM Watson Assistant embed sentiment analysis and escalate complex inquiries to human experts, reinforcing trust in sensitive contexts.

Industry context influences trust dynamics. Regulated sectors prioritize factual accuracy and compliance, creative industries emphasize originality and cultural relevance, and B2B marketing demands data-driven insights framed by domain expertise. Regardless of context, blending AI insights with human judgment preserves credibility and fosters long-term loyalty.

Community engagement further enhances trust. Brands use AI to identify trending topics and streamline content calendars but rely on community managers to facilitate discussions, curate user-generated content, and host live events. This hybrid approach combines scalability with genuine human connection, sustaining brand advocacy over time.

Standardizing Brand Voice Ecosystems

Delivering a coherent brand presence across diverse channels and formats requires a unified ecosystem that defines tone, style, and thematic priorities. Key elements include:

- Core Voice Attributes: Define three to five non-negotiable pillars (for example, approachable expertise, narrative warmth, and technical precision) that underpin all content.

- Content Archetype Mapping: Assign tailored voice profiles to formats such as blog posts, product pages, social updates, and email campaigns, ensuring both AI agents and human writers share a common reference.

- Voice Reference Library: Maintain an annotated repository of exemplar texts for training human teams and fine-tuning AI models.

- Modular Style Guide: Integrate general grammar rules with channel-specific modules, and embed AI-powered editing via Jasper AI or GPT-4.

- Editorial Checkpoints: Embed style evaluations at outline approval, first draft review, and final edit for both AI-generated and human-authored content.
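One way to give AI agents and human writers the same reference is to store archetype voice profiles and checkpoint order as shared data. The sketch below assumes the four archetypes and the three example pillars listed above. The channel notes, the per-archetype sentence-length limits, and the helper names are hypothetical. It enforces the outline, first-draft, and final-edit checkpoints in order and flags sentences that exceed an archetype's limit, whether a human or an AI agent wrote the draft.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Editorial checkpoints, in the order every draft must pass them.
CHECKPOINTS = ("outline approval", "first draft review", "final edit")

# Example core voice pillars shared by every archetype.
CORE_ATTRIBUTES = ("approachable expertise", "narrative warmth", "technical precision")


@dataclass(frozen=True)
class VoiceProfile:
    archetype: str
    core_attributes: Tuple[str, ...]
    channel_notes: str        # hypothetical guidance for this format
    max_sentence_words: int   # hypothetical readability limit


PROFILES = {
    "blog post": VoiceProfile("blog post", CORE_ATTRIBUTES, "story-led, conversational", 25),
    "product page": VoiceProfile("product page", CORE_ATTRIBUTES, "benefit-first, scannable", 20),
    "social update": VoiceProfile("social update", CORE_ATTRIBUTES, "one idea per post", 15),
    "email campaign": VoiceProfile("email campaign", CORE_ATTRIBUTES, "personal, single call to action", 20),
}


@dataclass
class ContentItem:
    archetype: str
    author: str  # "ai" or "human"; both follow the same checkpoints
    passed: List[str] = field(default_factory=list)


def next_checkpoint(item: ContentItem) -> Optional[str]:
    """Return the next checkpoint the item must pass, or None when all are done."""
    if len(item.passed) < len(CHECKPOINTS):
        return CHECKPOINTS[len(item.passed)]
    return None


def record_checkpoint(item: ContentItem, checkpoint: str) -> None:
    """Record a passed checkpoint, refusing to skip or repeat steps."""
    expected = next_checkpoint(item)
    if checkpoint != expected:
        raise ValueError(f"expected {expected!r}, got {checkpoint!r}")
    item.passed.append(checkpoint)


def long_sentences(text: str, archetype: str) -> List[str]:
    """Return sentences longer than the archetype's word limit."""
    limit = PROFILES[archetype].max_sentence_words
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [s for s in sentences if len(s.split()) > limit]


if __name__ == "__main__":
    draft = ContentItem("product page", author="ai")
    record_checkpoint(draft, "outline approval")
    print(next_checkpoint(draft))  # first draft review
    print(long_sentences("Light, durable, and fully recyclable. Ships free.", "product page"))  # []
```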