Securing the Future with Intelligence: A Comprehensive Guide to AI Agents in Security and Risk Management

Introduction

Historical Evolution of Security Operations

Over the past thirty years, security operations have transformed from basic perimeter defenses into sophisticated, data-driven command centers. Early approaches relied on firewalls, intrusion detection systems, and signature-based antivirus software to protect network boundaries. Human analysts manually reviewed logs and hunted for threats, a reactive process that struggled to keep pace with the growing volume and complexity of attacks. The advent of Security Information and Event Management (SIEM) platforms addressed some limitations by aggregating logs and generating near-real-time alerts. Solutions such as IBM Security QRadar Advisor with Watson and Splunk Phantom introduced automation for alert triage, though significant human oversight remained essential.

The shift to big data analytics and cloud infrastructures gave rise to distributed Security Operations Centers (SOCs) capable of ingesting terabytes of telemetry. Endpoint Detection and Response (EDR) platforms like Palo Alto Networks Cortex XDR and CrowdStrike Falcon moved the focus inside the network perimeter, employing behavioral analytics and machine learning to detect anomalies on endpoints, servers, and cloud workloads. Despite these advances, the volume of alerts often overwhelmed security teams, revealing an urgent need for more intelligent, adaptive mechanisms that could learn from data, prioritize risk, and automate routine response actions.

Today’s landscape demands a transition from reactive detection to proactive, autonomous defense. Platforms such as Rapid7 InsightVM apply machine learning to vulnerability management, while the Darktrace Enterprise Immune System uses network immunology to model normal behavior and detect deviations. Yet adversaries innovate faster than traditional controls, driving organizations toward AI agents capable of end-to-end threat management with minimal human intervention.

Defining AI Agents within Security and Risk Contexts

AI agents in security are autonomous software entities that perceive environmental signals, reason over complex data sets, and execute actions to mitigate risk. Unlike rule-based automation, they employ adaptive learning and probabilistic reasoning to interpret threat indicators, assess risk likelihood, and recommend or enact countermeasures. Practitioners evaluate these agents across three core capacities:

- Perception: ingesting diverse streams of telemetry, logs, and external threat intelligence

- Reasoning: applying statistical inference, pattern analysis, or rule induction to predict adversarial behavior

- Action: initiating alerts, orchestrating workflows, or autonomously enforcing policies to contain or neutralize threats
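The three capacities above can be read as one loop. The following is a minimal sketch, not a reference design: the `Event` fields, the scoring weights, and the action names are all hypothetical and chosen only to make the perceive, reason, act flow concrete.

```python
from dataclasses import dataclass


@dataclass
class Event:
    source: str          # hypothetical label, e.g. "edr" or "firewall"
    failed_logins: int
    known_bad_ip: bool


def perceive(raw: dict) -> Event:
    """Perception: turn a raw telemetry record into a typed event."""
    return Event(
        source=raw.get("source", "unknown"),
        failed_logins=int(raw.get("failed_logins", 0)),
        known_bad_ip=bool(raw.get("known_bad_ip", False)),
    )


def reason(event: Event) -> float:
    """Reasoning: combine indicators into a risk score in [0, 1].

    The 0.6 / 0.4 weights are illustrative assumptions, not tuned values.
    """
    score = min(event.failed_logins / 10, 1.0) * 0.6
    if event.known_bad_ip:
        score += 0.4
    return round(score, 2)


def act(score: float) -> str:
    """Action: map the score to enforcement, a workflow, or logging."""
    if score >= 0.8:
        return "block_source"
    if score >= 0.4:
        return "open_ticket"
    return "log_only"


raw = {"source": "edr", "failed_logins": 8, "known_bad_ip": True}
decision = act(reason(perceive(raw)))
print(decision)
```

A production agent would replace the hand-written score with a learned model, but the separation of the three stages stays the same.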

To navigate the expanding ecosystem of AI-driven solutions, organizations employ interpretive frameworks and taxonomies:

- Function-Based Taxonomy: categorizing agents by core functions such as threat detection, incident response, vulnerability assessment, identity management, and compliance monitoring

- Autonomy Maturity Model: defining stages from semi-autonomous tools that require human approval to fully autonomous systems capable of closed-loop decision-making

- Data-Centric versus Behavior-Centric Agents: distinguishing solutions that rely on known indicators of compromise from those leveraging anomaly detection and predictive forecasting

- Integration Spectrum: positioning agents along a continuum from standalone point tools to deeply embedded components in Security Orchestration, Automation, and Response (SOAR) platforms

Rigorous evaluation criteria guide solution selection and alignment with risk management goals:

- Detection Accuracy and Coverage: true positive and false positive rates, mean time to detect, and scenario breadth

- Explainability and Transparency: human-interpretable justifications and auditability of decisions

- Scalability and Performance: throughput, latency, and capacity to process large data volumes

- Integration Flexibility: API support, data model compatibility, and ease of embedding within existing workflows

- Governance and Compliance Alignment: policy engines, audit logging, and adherence to frameworks such as NIST SP 800-53 and ISO 27001

- Operational Resilience: failover mechanisms, adversarial robustness, and behavior under degraded conditions
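The detection accuracy criterion above comes down to a few ratios over a confusion matrix. As a small sketch, with made-up counts used purely for illustration, the function below computes true positive rate, false positive rate, and precision.

```python
def detection_rates(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute basic detection metrics from confusion-matrix counts."""
    tpr = tp / (tp + fn) if (tp + fn) else 0.0        # share of real threats caught
    fpr = fp / (fp + tn) if (fp + tn) else 0.0        # share of benign events flagged
    precision = tp / (tp + fp) if (tp + fp) else 0.0  # share of alerts that are real
    return {"tpr": tpr, "fpr": fpr, "precision": precision}


# Illustrative counts only: 100 malicious events hidden among 9,900 benign ones.
rates = detection_rates(tp=90, fp=400, tn=9500, fn=10)
print(rates)
```

With these counts the detector catches 90 percent of threats and flags only about 4 percent of benign events, yet fewer than one in five alerts is a real threat. This is why false positive rate needs to be read alongside event volume when comparing products.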

Market Drivers and Challenges for AI Adoption

Several converging factors accelerate AI agent adoption in security and risk management:

- Expansion of Attack Surfaces: cloud migration, microservices, Internet of Things (IoT), and remote work models increase the number of endpoints and applications requiring protection.

- Escalating Threat Sophistication: advanced persistent threats (APTs), ransomware-as-a-service, and automated exploit frameworks employ AI-driven techniques to evade detection.

- Talent Shortages: a global cybersecurity workforce gap, estimated in the millions of unfilled positions, leaves teams overstretched and contributes to alert fatigue and analyst burnout.

- Regulatory Pressures: stringent requirements for continuous monitoring, audit trails, and rapid incident reporting in finance, healthcare, and critical infrastructure sectors.

- Demand for Speed and Accuracy: organizations require faster decision-making under resource constraints to reduce dwell time and limit damage.

Legacy tools, designed for static perimeters, struggle in dynamic hybrid environments. Human analysts cannot sustain manual processes at scale, prompting a shift toward AI agents that shoulder routine tasks, surface high-priority incidents, and augment expert decision-making.

Ecosystem Forces Shaping Autonomous Decision-Making

The rise of AI agents reflects broader technological and market dynamics:

- Machine Learning Democratization: open-source frameworks and libraries enable rapid deployment of supervised, unsupervised, and reinforcement learning models.

- Cloud and API-Driven Integrations: microservices architectures and API-first designs allow agents to interlink SIEM, SOAR, identity providers, and cloud consoles, fostering cross-domain orchestration.

- Zero-Trust and Risk-Based Models: continuous risk assessment at every transaction and access request requires adaptive controls orchestrated by AI agents.

- Economic Imperatives: rising total cost of ownership for traditional SOC tools, staffing challenges, and alert fatigue drive interest in automation that reduces time-to-containment and delivers measurable ROI.

These forces converge to create a fertile environment for AI agents to execute autonomous security decision-making tasks, operating at machine speed while preserving human oversight where it matters most.

Urgency of Autonomous Intelligence in Threat Landscapes

Today’s adversaries use automation, machine learning, and cloud computing to launch large-scale, adaptive campaigns. Polymorphic malware, self-propagating worms, and fileless techniques evade signature-based defenses, while expanding cloud and IoT environments defy static scans. Manual SOC workflows ingest millions of alerts daily, resulting in alert fatigue, high error rates, and extended dwell times that leave attackers free to explore networks and exfiltrate data.

With over three million unfilled cybersecurity positions globally, organizations cannot rely solely on human expertise. Autonomous intelligence agents ingest heterogeneous data streams, correlate events across domains, and surface high-fidelity insights without constant human intervention. Environments where this matters most include:

- Cloud-native architectures with rapid asset provisioning

- IoT and operational technology in manufacturing and energy

- Remote and hybrid workforces with distributed endpoints

- DevSecOps pipelines integrating vulnerability assessment into CI/CD

- Supply chain ecosystems requiring real-time monitoring of third-party dependencies

For example, Darktrace Enterprise Immune System establishes dynamic patterns of life for every device, alerting on deviations. IBM Security QRadar Advisor with Watson uses natural language processing to contextualize alerts and automate root cause analysis. Palo Alto Networks Cortex XDR correlates telemetry across endpoints, networks, and cloud workloads to orchestrate behavior-based detection and automated response. These capabilities shrink detection-to-response windows from days to minutes, reducing the scope and impact of breaches.

Gartner projects that by 2025, organizations adopting AI-driven security operations will achieve 50 percent lower operational costs and 30 percent fewer successful breaches. Autonomous intelligence shifts security teams from reactive firefighting to proactive, risk-centric activities—threat hunting, adversary simulation, and resilience testing—supported by continuous situational awareness.

Reader Objectives and Insights Roadmap

This guide equips security and risk management professionals with the strategic, analytical, and conceptual frameworks needed to adopt AI agents effectively. By linking historical evolution, theoretical foundations, and practical perspectives, readers will learn to align AI capabilities with business objectives, governance models, and operational realities.

Intended Audience

- Chief Information Security Officers and risk executives

- Security operations managers and architects

- IT and DevOps leaders integrating compliance automation

- Data scientists and machine learning engineers

- Governance, risk, and compliance professionals

- Ethics and privacy officers

- Consultants and analysts advising on AI and enterprise resilience

Core Strategic Takeaways

Readers will gain a nuanced understanding of how to:

- Justify AI investments through risk reduction, operational efficiency, and compliance improvements

- Frame AI agents as collaborative partners that augment human expertise

- Design governance models balancing autonomy with oversight

- Measure key outcomes such as dwell time reduction, return on security investment, and compliance posture

Analytical and Interpretive Frameworks

The guide introduces models such as pattern-of-life analysis for insider threats, graph theory for fraud detection, and probabilistic risk analysis for financial transactions. It also presents scenario-based reasoning for incident prioritization, decision trees for remediation orchestration, and feedback loops for continuous learning.

Chapter Mapping

- Foundation of AI Agents in Security and Risk Management: terminology, agent taxonomy, architecture

- Threat Detection and Predictive Analytics Agents: anomaly detection and forecasting

- Automated Incident Response Agents: comparing rule-based and adaptive approaches

- Vulnerability Assessment Agents: autonomous scanning and exploit simulation

- Identity and Access Management Agents: adaptive authentication and policy adaptation

- Fraud Detection and Financial Risk Management Agents: real-time transaction integrity

- Compliance Monitoring Agents: NLP-driven regulation interpretation and continuous auditing

- Behavioral Analytics and Insider Threat Agents: unsupervised learning and contextual response

- Integration and Interoperability of AI Security Agents: modular architectures and standards

- Future Trends and Ethical Considerations: generative techniques, bias mitigation, governance

Key Considerations and Next Steps

- Ensure data quality and feature engineering to avoid skewed insights

- Plan for integration complexity with standardized APIs and data transformation layers

- Address model bias and explainability, maintaining audit trails for regulated environments

- Assess organizational readiness, including culture, skills, and governance structures

- Monitor changing threat tactics and update models with agile retraining mechanisms

- Select vendors with extensible roadmaps to avoid platform lock-in

Establish a cross-functional team to translate these insights into a pilot program. Define success criteria, prioritize high-impact use cases, and implement feedback loops for continuous refinement. With a foundation of responsible innovation and strategic alignment, organizations can operationalize autonomous intelligence and build a resilient, future-ready security posture.

Chapter 1: Foundation of AI Agents in Security and Risk Management

Security Operations Evolution and Market Drivers

Over the last two decades, security operations have transformed from manual, perimeter-focused defenses into complex, data-driven ecosystems. Early approaches relied on firewalls, intrusion detection systems and antivirus solutions to protect static network boundaries, with analysts manually correlating logs and investigating alerts. As enterprises embraced cloud services, mobile workforces and interconnected supply chains, the security perimeter dissolved and threat actors deployed advanced tactics such as targeted phishing, fileless malware and multi-stage campaigns. This shift generated vast volumes of unstructured data and alert noise, overwhelming traditional Security Information and Event Management platforms and manual processes.

Key market forces driving the adoption of AI agents in security operations include:

- Escalating threat complexity that demands continuous analysis of heterogeneous data streams.

- Global talent shortages that constrain 24×7 monitoring and response capabilities.

- Regulatory pressure from standards such as GDPR and CCPA, which require robust incident detection and reporting.

- Operational efficiency imperatives to balance constrained budgets with proactive threat prevention.

These drivers have fostered a new paradigm integrating AI-driven agents capable of learning from data, reasoning about risk and autonomously executing response actions. Organizations now view autonomous intelligence as a strategic imperative to maintain resilience in the face of evolving threats.

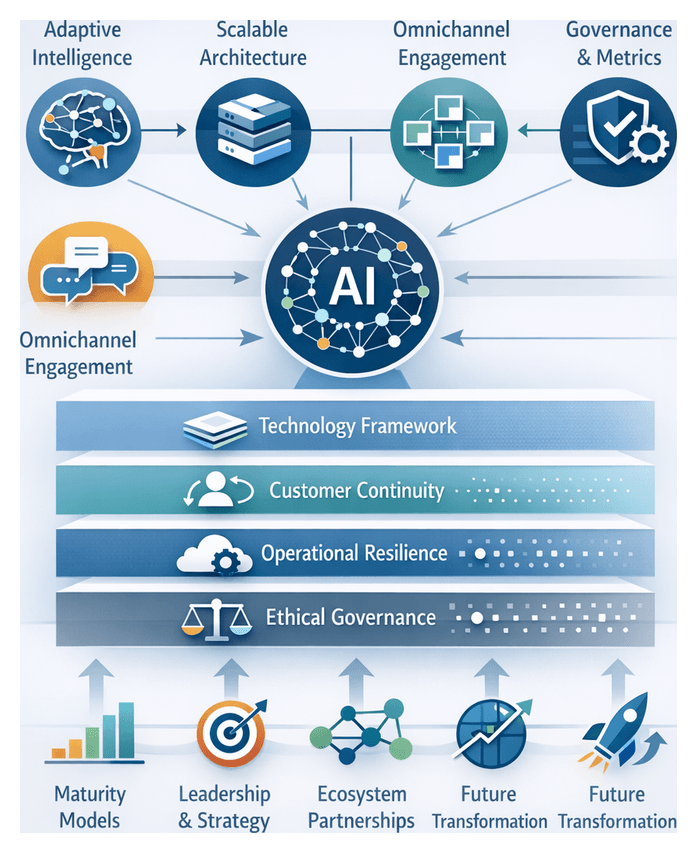

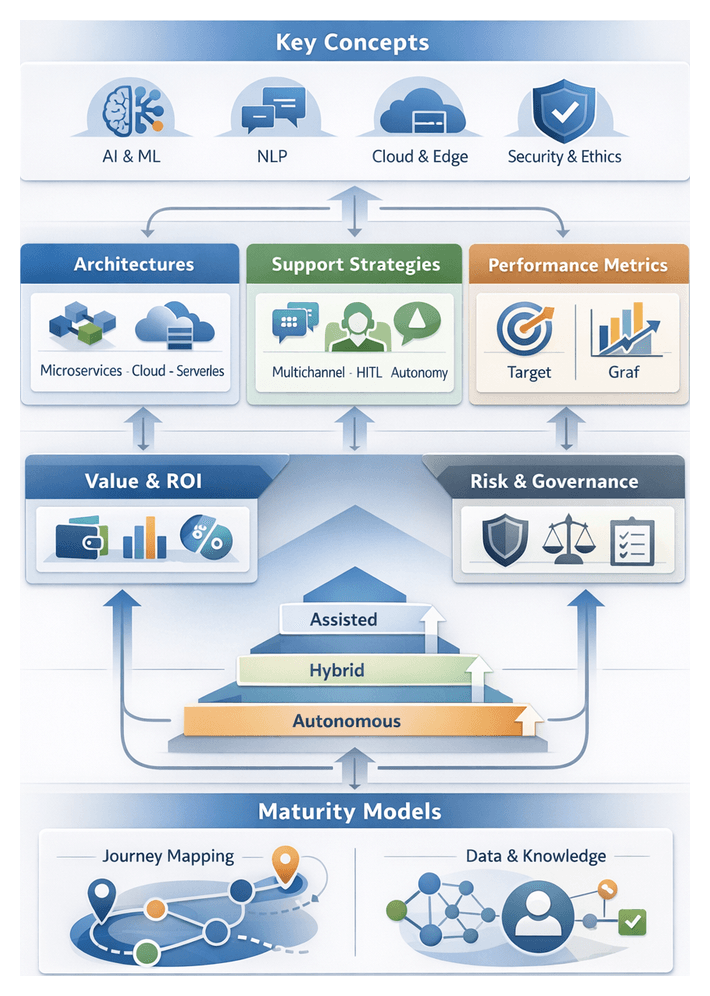

Conceptual Framework for AI Agents

In security and risk management, AI agents are software entities that perceive their environment, make decisions based on analytical models and execute actions to achieve specific objectives. They extend beyond static rule engines by learning continuously, adapting to new threats and collaborating with human operators.

An AI agent architecture comprises three core modules:

- Sensing and Data Ingestion: Gathering telemetry from network sensors, endpoint agents, cloud workloads, identity logs and external threat intelligence feeds to ensure visibility across hybrid infrastructures.

- Reasoning and Decision Making: Applying supervised, unsupervised and reinforcement learning models alongside probabilistic inference and knowledge graphs to assess risk levels and prioritize responses.

- Action and Orchestration: Executing containment, remediation or recovery tasks—such as isolating endpoints, modifying firewall rules or revoking compromised credentials—via integration with platforms like Splunk Phantom and IBM Security SOAR.

AI agents can be classified by autonomy level:

- Advisory agents that recommend playbooks for human approval.

- Semi-autonomous agents that automate low-risk tasks while escalating complex scenarios.

- Fully autonomous agents that optimize response policies with minimal human intervention.

Defining these autonomy tiers clarifies governance requirements and guides integration into existing security ecosystems.
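One way to make the autonomy tiers operational is an approval gate that every proposed action passes through. This is a sketch under stated assumptions: the action names and their risk ratings are hypothetical, and a real deployment might keep approval requirements even at the highest tier.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    ADVISORY = 1
    SEMI_AUTONOMOUS = 2
    FULLY_AUTONOMOUS = 3


# Hypothetical risk ratings; a real catalogue would come from governance policy.
ACTION_RISK = {
    "enrich_alert": "low",
    "isolate_endpoint": "medium",
    "revoke_credentials": "high",
}


def needs_human_approval(tier: Autonomy, action: str) -> bool:
    """Return True when the action must wait for an analyst."""
    risk = ACTION_RISK.get(action, "high")  # unknown actions are treated as high risk
    if tier is Autonomy.ADVISORY:
        return True                 # advisory agents only recommend
    if tier is Autonomy.SEMI_AUTONOMOUS:
        return risk != "low"        # automate low-risk tasks, escalate the rest
    return False                    # fully autonomous agents act on their own


print(needs_human_approval(Autonomy.SEMI_AUTONOMOUS, "isolate_endpoint"))
```

Keeping the gate in one function gives auditors a single place to review what each tier is allowed to do.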

Modern Threat Landscape and the Case for Autonomous Intelligence

Cyber threats are growing in sophistication and scale. Recent trends include the explosive growth of Ransomware-as-a-Service, high-impact supply chain exploits, an expanding Internet of Things attack surface and stealthy advanced persistent threats. Traditional human-centric Security Operations Center models struggle to match the speed and complexity of these evolving risks.

Autonomous intelligence addresses these challenges by:

- Operating at machine speed to analyze terabytes of data in real time and initiate containment within seconds.

- Scaling consistently across on-premises, multi-cloud and edge environments.

- Learning continuously through feedback loops and reinforcement to refine detection models and reduce false positives.

Organizations that delay integrating autonomous AI agents risk exposure to advanced attacks, regulatory penalties and reputational harm.

Learning and Decision-Making Paradigms

Supervised Learning

Supervised learning models—such as random forests, support vector machines and deep neural networks—are trained on labeled datasets to achieve high accuracy on known threats. Advantages include predictable performance, alignment with audit trails and model explainability via feature importance and tools like LIME. Challenges include the effort to label data at scale and class imbalance. Platforms like IBM Security QRadar Advisor with Watson integrate supervised models with expert curation to streamline data annotation.

Unsupervised Learning

Unsupervised techniques such as clustering, autoencoders and density estimation detect anomalies without labeled data, uncovering novel attack vectors and insider threats. They adapt baselines in dynamic environments, but may produce higher false positives. Tiered alerting and correlation with threat intelligence feeds mitigate noise. Darktrace Enterprise Immune System exemplifies unsupervised deep learning that models enterprise “self” for rapid anomaly detection.
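The idea of learning a baseline without labels can be shown with a far simpler stand-in than clustering or autoencoders: a trailing-window z-score. The sketch below is that simpler statistical technique, not any vendor's method, and the window size, threshold, and login counts are illustrative assumptions.

```python
from statistics import mean, stdev


def zscore_anomalies(values, window=5, threshold=3.0):
    """Flag indices whose value deviates sharply from the trailing-window baseline."""
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score undefined, so skip this point
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Made-up hourly login counts with one obvious spike at index 6.
logins_per_hour = [12, 14, 13, 15, 14, 13, 90, 14, 12]
print(zscore_anomalies(logins_per_hour))
```

Once the spike enters the trailing window it inflates the baseline's standard deviation, so nearby points become harder to flag. Tiered alerting and correlation with other signals help compensate for that kind of blind spot.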

Reinforcement Learning

Reinforcement learning (RL) enables agents to optimize response strategies based on reward signals, balancing containment speed against business impact. Through simulated attack drills, RL agents learn multi-stage mitigation sequences and cumulative risk reduction. Early deployments use sandboxed environments, often built on frameworks like OpenAI Gym (now maintained as Gymnasium), before transitioning to controlled production. Palo Alto Networks Cortex XDR incorporates RL-inspired analytics for continuous policy refinement.
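To illustrate learning from a reward that trades containment against business impact, here is a deliberately small sketch: a single-state, epsilon-greedy bandit rather than a full multi-stage RL agent. The action names, the benefit and impact values, and the noise range are hypothetical simulator settings.

```python
import random

# Hypothetical simulator values: (containment benefit, business impact).
ACTIONS = {
    "monitor": (0.1, 0.0),
    "isolate_host": (0.9, 0.2),
    "shutdown_segment": (1.0, 0.8),
}


def simulate_reward(action, rng):
    """Reward = containment benefit minus business impact, plus bounded noise."""
    benefit, impact = ACTIONS[action]
    return benefit - impact + rng.uniform(-0.1, 0.1)


def train(episodes=500, epsilon=0.1, seed=7):
    rng = random.Random(seed)
    q = {a: 0.0 for a in ACTIONS}   # running reward estimate per action
    n = {a: 0 for a in ACTIONS}     # times each action was tried
    names = list(ACTIONS)
    for step in range(episodes):
        if step < len(names):
            action = names[step]            # try every action once first
        elif rng.random() < epsilon:
            action = rng.choice(names)      # explore
        else:
            action = max(q, key=q.get)      # exploit the best estimate so far
        reward = simulate_reward(action, rng)
        n[action] += 1
        q[action] += (reward - q[action]) / n[action]  # incremental mean update
    return q


q_values = train()
best_action = max(q_values, key=q_values.get)
print(best_action)
```

The agent settles on isolating the host because shutting down the whole segment contains slightly more but costs far more in disruption. A multi-stage agent would add state and discounting on top of the same update idea.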

Hybrid and Ensemble Strategies

Combining learning paradigms enhances resilience against concept drift and adversary evolution. Common patterns include:

- Parallel ensembles that fuse supervised and unsupervised outputs via voting or meta-learning.

- Layered analysis where unsupervised models triage anomalies and supervised classifiers confirm threats.

- Contextual reinforcement using supervised pre-training followed by RL fine-tuning in feedback loops.

Effective orchestration of hybrid architectures requires standards for model governance, version control and continuous validation.
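The layered pattern above can be sketched as a two-stage filter. Both scoring functions here are toy stand-ins for trained models, and the field names, ports, and threshold are hypothetical; only the control flow is the point.

```python
def layered_detect(events, anomaly_score, classify, triage_threshold=0.7):
    """Stage 1 triages by anomaly score; stage 2 confirms with a classifier."""
    confirmed = []
    for event in events:
        if anomaly_score(event) < triage_threshold:
            continue                 # looks normal: never reaches the classifier
        if classify(event):
            confirmed.append(event)  # anomalous and classified as malicious
    return confirmed


def toy_anomaly_score(event):
    """Stand-in for an unsupervised model: large outbound transfers look unusual."""
    return min(event["bytes_out"] / 1_000_000, 1.0)


def toy_classifier(event):
    """Stand-in for a supervised model: flags ports assumed to be suspicious."""
    return event["dest_port"] in {4444, 6667}


events = [
    {"id": 1, "bytes_out": 20_000, "dest_port": 443},
    {"id": 2, "bytes_out": 900_000, "dest_port": 443},
    {"id": 3, "bytes_out": 950_000, "dest_port": 4444},
]
hits = layered_detect(events, toy_anomaly_score, toy_classifier)
print([e["id"] for e in hits])
```

Because the classifier only sees what the triage stage passes on, the cheaper model absorbs most of the volume, which is the main operational appeal of layering.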

Reasoning and Decision Frameworks

Reasoning engines translate model scores into actionable decisions using Bayesian networks, rule engines and knowledge graphs. For example, platforms like Splunk Phantom embed rule-based playbooks, while knowledge graphs informed by the MITRE ATT&CK framework support multi-hop inference and threat attribution. Integrating symbolic logic with machine-learned signals balances agility with governance.
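The simplest building block of such probabilistic reasoning is a single Bayes' rule update that turns a detector's alert into a probability of compromise. The sketch below assumes illustrative values for the prior and the detector's rates, and it treats two alerts as conditionally independent, which real signals often are not.

```python
def posterior_malicious(prior: float, tpr: float, fpr: float) -> float:
    """P(malicious | alert) via Bayes' rule.

    prior: P(malicious) before the alert
    tpr:   P(alert | malicious)
    fpr:   P(alert | benign)
    """
    evidence = tpr * prior + fpr * (1 - prior)
    return (tpr * prior) / evidence if evidence else 0.0


# Illustrative numbers: 1 in 1,000 sessions is malicious.
after_one_alert = posterior_malicious(prior=0.001, tpr=0.95, fpr=0.02)

# A second alert from an independent detector, using the first posterior as the new prior.
after_two_alerts = posterior_malicious(prior=after_one_alert, tpr=0.95, fpr=0.02)

print(round(after_one_alert, 3), round(after_two_alerts, 3))
```

One alert leaves the probability below 5 percent because benign sessions vastly outnumber malicious ones; a second corroborating signal lifts it to roughly 69 percent. That jump is the reason correlation across sources matters more than any single score.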

Autonomous Security Functions

Autonomous security functions embed intelligent decision-making into routine risk management workflows, reflecting an organization’s security maturity.

Continuous Monitoring and Observability

Agents ingest real-time telemetry from network logs, endpoints, cloud services and third-party feeds. Unsupervised learning and behavioral analytics establish dynamic baselines, surfacing subtle deviations. Analysts rely on dashboards from platforms such as Microsoft Sentinel and Darktrace Enterprise Immune System to maintain situational awareness across kill chain phases and regulatory controls.

- Frameworks like the OODA loop structure continuous detection workflows.

- Data integration from SIEM, EDR and cloud-native logs enables unified threat visibility.

- Compliance-driven monitoring generates evidence streams aligned with NIST SP 800-53 and ISO 27001.

Dynamic Threat Mitigation and Response

Adaptive agents convert static playbooks into context-aware remediation strategies. Using decision trees and Markov models, agents select actions that minimize time to containment while preserving business continuity. Platforms such as IBM Security QRadar Advisor with Watson and Palo Alto Networks Cortex XDR automate playbooks with human-in-the-loop checkpoints for ambiguous scenarios.

- Graduated response models contain high-confidence, low-impact threats automatically and escalate ambiguous or high-severity alerts to analysts.

- Response tactics refine continuously based on historical effectiveness and asset criticality.

- Policy guardrails ensure automated actions comply with governance and risk appetite.
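Selecting an action that limits damage while preserving business continuity can be framed as minimizing expected cost within a policy guardrail. This is a simplified decision-theoretic sketch, not a decision tree or Markov model, and the response catalogue, prevention rates, and cost units are hypothetical.

```python
# Hypothetical catalogue: share of damage each response prevents, and its disruption cost.
RESPONSES = {
    "monitor": {"prevents": 0.0, "disruption": 0.0},
    "isolate_endpoint": {"prevents": 0.9, "disruption": 5.0},
    "block_subnet": {"prevents": 0.99, "disruption": 40.0},
}


def expected_cost(action: str, p_threat: float, damage: float) -> float:
    """Residual expected damage plus the disruption the response itself causes."""
    response = RESPONSES[action]
    residual = p_threat * damage * (1 - response["prevents"])
    return residual + response["disruption"]


def choose_response(p_threat: float, damage: float, allowed: set) -> str:
    """Pick the cheapest response among those the policy guardrail allows."""
    candidates = [a for a in RESPONSES if a in allowed] or ["monitor"]
    return min(candidates, key=lambda a: expected_cost(a, p_threat, damage))


everything = set(RESPONSES)
print(choose_response(0.8, 100.0, everything))    # likely threat
print(choose_response(0.02, 100.0, everything))   # unlikely threat
print(choose_response(0.8, 100.0, {"monitor"}))   # guardrail forbids containment
```

When the guardrail leaves only monitoring available for a likely threat, that is the natural point to escalate to an analyst rather than act.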

Contextual Threat Hunting and Proactive Defense

Autonomous agents enable hypothesis-driven threat hunting by correlating cross-domain indicators against frameworks like MITRE ATT&CK. Tools such as Splunk Phantom and CrowdStrike Falcon orchestrate automated hunt campaigns, executing query templates and enrichment workflows to surface concealed threats.

- Hunt paradigms apply risk scoring and asset context to prioritize investigations.

- Threat intelligence feeds and behavioral baselines enrich hunt outcomes.

- Proactive defense allocates resources toward high-value investigations.

Policy Enforcement and Automated Compliance

Autonomous compliance agents translate regulatory texts into machine-executable rules, detecting and remediating policy deviations. Natural language processing helps map regulatory language to concrete control requirements, while risk-based controls such as Okta Adaptive MFA adjust authentication requirements in real time. Dashboards highlight compliance gaps and correlate them with operational changes.

- Governance taxonomies align enforcement with GDPR, PCI DSS and SOX.

- Risk-based prioritization focuses analysts on high-impact deviations.

- Audit trails support external audits and internal policy reviews.

Adaptive Vulnerability Management

Agents continuously assess exploit likelihood, asset exposure and threat context to prioritize remediation. Platforms like Rapid7 InsightVM integrate vulnerability detection with patch orchestration, using predictive risk scores based on CVSS metrics and real-world exploit data.

- Predictive scoring guides patching priorities by combining vulnerability metrics with threat intelligence.

- Adaptive scanning schedules optimize resource use and minimize operational impact.

- Integration with CMDBs maintains context-rich asset inventories.
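Predictive scoring of this kind can be sketched as a product of severity, exploit likelihood, and asset criticality. The multiplicative formula, the 1 to 5 criticality scale, and the finding identifiers are assumptions made for illustration; the exploit probability is assumed to come from a threat intelligence source such as an exploit-prediction feed.

```python
def risk_score(cvss: float, exploit_probability: float, asset_criticality: int) -> float:
    """Combine severity, likelihood, and asset context into a 0-100 score.

    cvss:                CVSS base score, 0-10
    exploit_probability: estimated chance of exploitation, 0-1
    asset_criticality:   hypothetical 1-5 rating taken from the CMDB
    """
    return round((cvss / 10) * exploit_probability * (asset_criticality / 5) * 100, 1)


# Made-up findings with placeholder identifiers.
findings = [
    {"id": "VULN-A", "cvss": 9.8, "exploit_probability": 0.02, "asset_criticality": 2},
    {"id": "VULN-B", "cvss": 7.5, "exploit_probability": 0.90, "asset_criticality": 5},
    {"id": "VULN-C", "cvss": 5.3, "exploit_probability": 0.40, "asset_criticality": 4},
]

ranked = sorted(
    findings,
    key=lambda f: risk_score(f["cvss"], f["exploit_probability"], f["asset_criticality"]),
    reverse=True,
)
print([f["id"] for f in ranked])
```

The finding with the highest CVSS score ends up last because it is unlikely to be exploited and sits on a low-criticality asset, which is exactly the reordering that context-aware prioritization is meant to produce.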

Cross-Domain Orchestration and Collaborative Defense

Maximal value emerges when agents collaborate through shared data schemas and orchestration platforms, enabling end-to-end workflows. Modular architectures and standards like STIX/TAXII and OpenC2 allow platforms such as IBM Security SOAR to coordinate detection, response and compliance functions in alignment with defense-in-depth and zero trust principles.

- Standardized APIs and messaging protocols enable coherent threat intelligence exchange.

- Performance metrics such as mean time to detect and respond evaluate orchestration efficacy.

- Collaborative defenses preserve accountability while accelerating coordinated actions.
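As a concrete example of a shared data schema, the sketch below builds a STIX 2.1 Indicator object as plain JSON using only the standard library. The helper name is hypothetical, the IP address comes from a documentation-only range, and the output has not been validated against a STIX library; publishing it over TAXII is not shown.

```python
import json
import uuid
from datetime import datetime, timezone


def make_indicator(ipv4: str, name: str) -> dict:
    """Build a minimal STIX 2.1 Indicator for a single IPv4 address."""
    now = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S.%f")[:-3] + "Z"
    return {
        "type": "indicator",
        "spec_version": "2.1",
        "id": f"indicator--{uuid.uuid4()}",
        "created": now,
        "modified": now,
        "name": name,
        "pattern": f"[ipv4-addr:value = '{ipv4}']",
        "pattern_type": "stix",
        "valid_from": now,
    }


indicator = make_indicator("198.51.100.7", "Suspected command-and-control address")
print(json.dumps(indicator, indent=2))
```

Because every agent emits and consumes the same object shape, a detection agent and a response agent from different vendors can exchange this indicator without custom translation code.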

Strategic Insights and Adoption Roadmap

Organizational Readiness Dimensions

Successful AI agent adoption rests on people, processes and culture that support data-driven decision making. Readiness dimensions include:

- Data Maturity: Integrated, cleansed and contextualized data feeds underpin accurate models.

- Skill and Expertise: Cross-disciplinary teams of domain experts, data scientists and analysts are essential for continuous tuning.

- Process Integration: Embedding agents into incident response, vulnerability management and access review workflows ensures seamless augmentation.

- Executive Sponsorship: Leadership commitment secures budget and drives cultural acceptance of automated frameworks.

- Governance and Oversight: Policies for model validation, performance monitoring and exception handling maintain accountability and compliance.

Technology Alignment and Integration Considerations

AI agents deliver maximum value within cohesive, interoperable ecosystems. Critical factors include:

- Modular Architectures: Decoupled components for ingestion, analytics and orchestration enable incremental deployment and upgrades.

- Standards-Based Interfaces: RESTful APIs and common data schemas facilitate integration between SIEM, SOAR and ITSM tools.

- Vendor Ecosystem Compatibility: Agents should interoperate across vendors, for example by integrating anomaly detection from Darktrace with orchestration in Splunk Phantom, or by pairing endpoint analytics from CrowdStrike Falcon with IBM Security QRadar Advisor with Watson.

- Scalability and Performance: Distributed computing and elastic cloud platforms support high-velocity data streams.

- Security and Resilience: Secure development lifecycles and adversarial testing harden agents against manipulation.

Data and Analytics Foundations

Robust analytic frameworks underpin AI agent effectiveness. Key considerations include:

- Model Transparency: Explainable AI techniques build trust and support forensic justification.

- Bias and Drift Management: Continuous validation and adaptive retraining guard against performance degradation.

- Hybrid Approaches: Layering rule-based logic with supervised, unsupervised and RL models ensures comprehensive detection.

- Contextual Enrichment: Incorporating threat intelligence, asset criticality and business context aligns decisions with risk appetites.

- Real-Time vs Batch Processing: Balancing streaming analytics for immediate response with batch analysis for strategic insights optimizes resources.

Governance, Risk and Compliance Frameworks

Robust governance balances agility with control. Essential elements include:

- Policy Definition: Defining agent autonomy boundaries, escalation thresholds and human-in-the-loop checkpoints.

- Auditability and Reporting: Logging agent decisions, model versions and data inputs for investigations and audits.

- Compliance Alignment: Mapping agent capabilities to ISO 27001, NIST SP 800-53 and GDPR control objectives.

- Risk Appetite Management: Incorporating residual risk from false positives and negatives into risk registers.

- Ethical Safeguards: Conducting privacy impact assessments and ethical reviews for behavioral analytics.

Measuring Impact and Maturity

To demonstrate value, organizations track metrics aligned with AI agent deployment stages:

- Detection Efficacy: True and false positive rates, time to detection.

- Response Acceleration: Mean time to containment and remediation.

- Operational Efficiency: Analyst productivity gains and automation coverage.

- Business Risk Reduction: Changes in breach frequency, remediation windows and compliance violations.

- Maturity Progression: Stages from pilot to full integration and continuous optimization.
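The response metrics above are averages over incident timestamps. A minimal sketch follows, using two invented incident records and hypothetical field names; real data would come from the case management or SOAR platform.

```python
from datetime import datetime
from statistics import mean

# Invented incident records for illustration only.
incidents = [
    {"start": "2024-01-01T00:00", "detected": "2024-01-01T02:00", "contained": "2024-01-01T03:00"},
    {"start": "2024-01-05T10:00", "detected": "2024-01-05T10:30", "contained": "2024-01-05T12:30"},
]


def hours_between(earlier: str, later: str) -> float:
    """Elapsed hours between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(later) - datetime.fromisoformat(earlier)
    return delta.total_seconds() / 3600


# Mean time to detect: from the start of the intrusion to detection.
mttd = mean(hours_between(i["start"], i["detected"]) for i in incidents)
# Mean time to contain: from detection to containment.
mttc = mean(hours_between(i["detected"], i["contained"]) for i in incidents)

print(f"MTTD: {mttd} h, MTTC: {mttc} h")
```

Tracking both numbers separately shows whether an agent deployment is improving detection, response, or both.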

Key Limitations and Risk Considerations

AI agents offer transformative potential but present inherent risks:

- Data Quality Constraints: Inaccurate or biased inputs lead to flawed outputs.

- Automation Bias: Overreliance without human validation can exacerbate errors.

- Adversarial Manipulation: Without adversarial testing, agents remain exposed to attackers who probe and evade their logic.

- Scalability vs Precision: Simplifying models for scale may reduce detection fidelity.

- Vendor Lock-In: Proprietary platforms can hinder future innovation and increase costs.

Phased Adoption Roadmap

Industry leaders recommend a phased approach to integrate AI agents effectively:

- Conduct a readiness assessment, aligning AI objectives with risk priorities.

- Launch targeted pilots in high-impact use cases such as anomaly detection and automated triage.

- Establish cross-functional governance bodies for policy, validation and ethical oversight.

- Scale deployment iteratively, investing in data infrastructure, talent and platform integration.

- Embed continuous feedback loops driven by performance metrics, user feedback and threat intelligence.

By aligning strategic insights with pragmatic execution, organizations can embed AI agents as integral pillars of their security and risk management ecosystem, balancing innovation with governance to achieve resilient, adaptive defenses.

Chapter 2: Threat Detection and Predictive Analytics Agents

Security Operations Evolution and Market Drivers

Over the last two decades, security operations have transitioned from perimeter-centric defenses—firewalls, intrusion prevention systems, antivirus—to intelligence-driven, proactive postures. Traditional controls depended on static rule sets and known signatures, protecting defined network edges. With the advent of cloud computing, mobile devices, remote workforces, and IoT proliferation, the security boundary has dissolved. Attackers exploit zero-day vulnerabilities, encrypted channels, and living-off-the-land techniques to bypass legacy defenses. In response, enterprises must achieve continuous visibility and resilience across hybrid architectures.

Several market forces are accelerating this transformation:

- Digital Transformation: Large-scale adoption of public and private clouds, microservices, and containerization requires holistic monitoring and dynamic policy enforcement across distributed assets.

- Regulatory and Compliance Pressure: Frameworks such as GDPR, CCPA, PCI DSS, and sector-specific mandates impose stringent requirements for data protection, breach reporting, and audit trails.

- Talent Shortages: A global cybersecurity skills gap limits the capacity of security operations centers (SOCs) to manually investigate alerts, driving automation to augment human expertise.

- Threat Complexity: Advanced persistent threat actors, ransomware gangs, and supply-chain attackers deploy multi-stage campaigns requiring rapid detection and containment.

- Data Proliferation: Exponential growth in telemetry from endpoints, applications, network devices, and OT sensors overwhelms manual processing capabilities.

- Cost Optimization: Organizations seek to reduce total cost of ownership by consolidating disparate security tools into integrated platforms and leveraging machine learning to streamline processes.

These dynamics have spurred next-generation security platforms—XDR, SOAR, threat intelligence systems—that incorporate artificial intelligence and machine learning. While these platforms improve coordination, many SOCs still face high alert volumes, lengthy investigation cycles, and inconsistent risk prioritization. Embedding autonomous AI agents into security ecosystems enables closed-loop workflows that ingest data, analyze signals, make decisions, and automate remediation. This agent-centric model unlocks scalable, adaptive defenses capable of responding to evolving threat vectors in real time.

AI Agent Framework and Integration

An AI agent within a security ecosystem is an autonomous software entity that perceives its environment, analyzes telemetry, learns from data, and takes actions. A comprehensive agent framework comprises:

- Perception Modules: Agents collect and normalize heterogeneous data—logs, network flows, endpoint sensor readings, identity management events, vulnerability feeds—through parsers, collectors, and enrichment engines. Data is structured and tagged to support downstream analysis.

- Analytical Core: Machine learning techniques—supervised, unsupervised, reinforcement learning—operate alongside statistical engines and heuristic rules. These components detect anomalies, profile behaviors, and forecast attacker tactics. Deep neural networks, autoencoders, clustering algorithms, and time-series models collaborate to refine detection accuracy.

- Decision Layer: Policy engines and probabilistic scoring mechanisms evaluate risk thresholds, context, and business impact. Decision logic orchestrates whether to escalate alerts, initiate automated containment, or continue monitoring. Human-defined playbooks integrate with adaptive policies to maintain governance and oversight.

- Action and Orchestration Interfaces: Agents interface with security controls—next-generation firewalls, endpoint protection platforms, cloud security APIs, identity providers—and with SOAR engines to execute remediation steps. Playbooks define multi-stage workflows across tools, ensuring coordinated response.

- Learning Feedback Loop: Outcomes of automated actions, analyst investigations, and post-incident reviews feed back into training pipelines. Reinforcement learning and model retraining reduce false positives and improve detection of emerging threats.

In an integrated ecosystem, specialized agents—threat-hunting assistants, vulnerability scanners, insider-risk monitors—operate concurrently at network, endpoint, identity, and application layers. They publish insights to a centralized orchestration platform, enabling situational awareness and granular control. This modular architecture augments human analysts, reduces manual toil, and delivers repeatable, consistent outcomes.
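
As a rough illustration of how the five components fit together, the sketch below wires perception, analysis, decision, action, and feedback into one small class. Every name in it (`Event`, `Agent`, the field names, the 0.8 and 0.5 thresholds, and the toy scoring weights) is hypothetical and chosen only for illustration; a production agent would delegate each step to real collectors, trained models, and SOAR connectors.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    """Normalized telemetry record (hypothetical schema)."""
    source: str
    failed_logins: int
    bytes_out: int


@dataclass
class Agent:
    contain_threshold: float = 0.8   # assumed value: auto-contain at or above
    escalate_threshold: float = 0.5  # assumed value: hand off to an analyst
    feedback: list = field(default_factory=list)

    def perceive(self, raw: dict) -> Event:
        # Perception: normalize a raw record, defaulting missing fields.
        return Event(
            source=str(raw.get("src", "unknown")),
            failed_logins=int(raw.get("failed_logins", 0)),
            bytes_out=int(raw.get("bytes_out", 0)),
        )

    def analyze(self, event: Event) -> float:
        # Analytical core: a toy heuristic in [0, 1] standing in for a model.
        login_signal = min(event.failed_logins / 10, 1.0)
        volume_signal = min(event.bytes_out / 1_000_000, 1.0)
        return 0.6 * login_signal + 0.4 * volume_signal

    def decide(self, score: float) -> str:
        # Decision layer: map the score onto a policy outcome.
        if score >= self.contain_threshold:
            return "contain"
        if score >= self.escalate_threshold:
            return "escalate"
        return "monitor"

    def act(self, event: Event, decision: str) -> dict:
        # Action interface: a real agent would call a firewall or EDR API here.
        return {"source": event.source, "action": decision}

    def learn(self, outcome: dict, analyst_verdict: str) -> None:
        # Feedback loop: keep labeled outcomes for later retraining.
        self.feedback.append({**outcome, "verdict": analyst_verdict})

    def handle(self, raw: dict) -> dict:
        event = self.perceive(raw)
        return self.act(event, self.decide(self.analyze(event)))
```

Calling `Agent().handle({"src": "10.0.0.5", "failed_logins": 10, "bytes_out": 0})` yields an `escalate` decision under these assumed weights. Keeping each stage behind its own method is what lets specialized agents swap in different models or connectors without changing the loop.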

Anomaly Detection and Predictive Model Evaluation

Anomaly detection and threat forecasting models are the analytical backbone of proactive defense. Security teams, data scientists, and risk managers evaluate these models by both theoretical soundness and real-world performance. Model categories include:

- Supervised Classification Models: Algorithms such as decision trees, random forests, support vector machines, and deep neural networks trained on labeled datasets distinguish malicious from benign events with high precision, given quality labels. However, they may falter when encountering novel threats.

- Unsupervised and Semi-Supervised Techniques: Clustering, density estimation, and autoencoders learn normal behavior profiles and detect outliers without extensive labeling. Semi-supervised methods combine limited labeled samples with abundant unlabeled data to improve sensitivity.

- Statistical and Rule-Based Models: Z-score analysis, moving averages, time-series decomposition, and threshold-based heuristics offer interpretable detection of deviations from historical baselines.

- Time-Series Forecasting: ARIMA, exponential smoothing, and state-space models forecast key metrics—network traffic, system calls, user logins—and flag deviations as early warnings.

- Hybrid and Ensemble Frameworks: Layered pipelines integrate supervised classifiers, unsupervised detectors, and forecasting models to address varied threat characteristics and reduce blind spots.
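
To make the statistical category concrete, here is a minimal z-score detector using only the Python standard library. The function name and the default threshold of 3.0 standard deviations are assumptions (3.0 is a common convention, not a value taken from this guide), and real telemetry usually needs seasonality handling that this sketch omits.

```python
from statistics import mean, pstdev


def zscore_anomalies(baseline, observations, threshold=3.0):
    """Return (index, value, z) for observations far from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        # A flat baseline has no spread, so any different value is a deviation.
        return [(i, x, float("inf")) for i, x in enumerate(observations) if x != mu]
    flagged = []
    for i, x in enumerate(observations):
        z = (x - mu) / sigma
        if abs(z) >= threshold:
            flagged.append((i, x, z))
    return flagged


# Example: hourly login counts (made-up numbers) against a recent baseline.
baseline_logins = [100, 102, 98, 101, 99, 100, 103, 97]
print(zscore_anomalies(baseline_logins, [101, 99, 140, 100]))
```

Only the third observation (140) is flagged here. The appeal of this family of models is that an analyst can read the threshold and the baseline directly, which is harder with the learned models earlier in the list.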

Rigorous validation ensures model reliability. Key metrics and practices include:

- Confusion Matrix Analysis: Measuring true positives, false positives, true negatives, and false negatives to compute precision and recall.

- F1 Score and Fβ Measures: Balancing precision and recall according to cost of missed threats versus false alarms.

- ROC AUC: Evaluating trade-offs between true positive rate and false positive rate across thresholds.

- False Positive Rate and Alert Fatigue Metrics: Tracking proportion of low-risk alerts to maintain SOC efficiency.

- Detection Latency (Time-to-Detect): Measuring delay between anomaly onset and alert generation to minimize dwell time.

- Precision at K (P@K): Assessing accuracy within top-ranked anomalies to focus analyst effort.

- Cross-Validation and Bootstrapping: Ensuring generalizability and confidence intervals for performance metrics.
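
Several of the metrics above reduce to a few lines of arithmetic. The sketch below computes confusion-matrix counts, precision and recall, the general F-beta score, and precision at K for binary labels (1 for malicious, 0 for benign). Function names are illustrative; in practice a library such as scikit-learn provides equivalent, better-tested routines.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth and pred:
            tp += 1
        elif pred:
            fp += 1
        elif truth:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def precision_recall(tp, fp, fn):
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def f_beta(precision, recall, beta=1.0):
    """beta > 1 weights recall (missed threats) more; beta < 1 weights precision."""
    b2 = beta * beta
    denom = b2 * precision + recall
    return (1 + b2) * precision * recall / denom if denom else 0.0


def precision_at_k(y_true, scores, k):
    """Fraction of the k highest-scored items that are truly malicious."""
    if k <= 0:
        raise ValueError("k must be positive")
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    return sum(label for _, label in ranked[:k]) / k
```

Choosing `beta=2` is one way to encode the judgment that a missed threat costs more than a false alarm; the right value depends on the organization's own cost estimates.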

Security environments evolve rapidly, necessitating drift detection and bias mitigation. Techniques such as the Synthetic Minority Over-sampling Technique (SMOTE) and cost-sensitive learning address class imbalance, while continuous drift monitoring triggers model retraining when data distributions shift. Interpretability methods, including feature importance ranking, SHAP values, LIME explanations, and rule extraction via decision trees, provide transparency for governance and build analyst trust.
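
One common way to quantify a distribution shift is the Population Stability Index (PSI), which compares binned proportions of a feature between a reference window and a recent window. The guide does not prescribe a specific drift statistic, so PSI is offered here only as an example; the 0.2 retraining threshold is a widely quoted rule of thumb, and the bin count and epsilon floor are likewise assumptions.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a reference and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is flat

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def needs_retraining(expected, actual, threshold=0.2):
    """True when the shift exceeds the (assumed) PSI threshold."""
    return psi(expected, actual) > threshold
```

A check like this would typically run per feature on a schedule, with the result feeding whatever retraining pipeline the team already operates.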

Integration with SIEM and SOAR platforms enhances operational value. For example, IBM Security QRadar Advisor with Watson and Splunk Phantom ingest anomaly scores into centralized dashboards and automate incident response playbooks. Proprietary solutions like Darktrace Enterprise Immune System use unsupervised pattern discovery to detect novel threats in network telemetry. Security data scientists emphasize custom feature engineering, enriching models with threat intelligence feeds, vulnerability metrics, and user behavior logs to improve detection fidelity.

Strategic model selection involves trade-offs:

- Balancing Sensitivity and Specificity: Defining acceptable false positive rates against risk tolerance and resource capacity.

- Resource Allocation: Assessing in-house development costs versus off-the-shelf solutions, factoring expertise, data labeling, and validation processes.

- Governance and Compliance: Ensuring model decision trails and explainability meet regulatory requirements for audit and reporting.

- Vendor versus In-House Expertise: Choosing between managed detection services for rapid deployment and internal teams for deeper customization and control.

Operational Scenarios for Threat Forecasting

Threat forecasting provides strategic foresight by transforming real-time telemetry into predictive insights. Key operational scenarios include:

Network Security Operations

Forecasting engines analyze streaming flow data, endpoint logs, and vulnerability disclosures to project attack vectors and exploitation probabilities. SOC teams use severity forecasts to prioritize investigations, adjust sensor thresholds, and allocate analyst shifts. Scenario-based workshops map high-confidence predictions to containment playbooks using MITRE ATT&CK taxonomies, ensuring actionable intelligence.

Fraud Monitoring in Financial Services

Transaction monitoring agents synthesize sequential payment data, customer profiles, device fingerprints, and external economic indicators to estimate fraud likelihood. Outputs presented as risk bands drive proactive rule updates, credit limit adjustments, and customer re-authentication. Forecast models also support regulatory stress tests by simulating loss scenarios under varying market conditions.

Supply-Chain Risk Assessment

Forecasting bots ingest supplier performance metrics, open-source intelligence, and logistics data to predict disruptions—from component shortages to cybersecurity breaches in vendor networks. “If-then” impact matrices link forecasted risks to operational outcomes, guiding procurement strategies, alternative sourcing, and contingency budgeting.

Cloud and Hybrid Infrastructure Monitoring

Agents reconcile telemetry from public clouds—AWS, Azure, Google Cloud—and on-premises systems to forecast misconfigurations, emerging container vulnerabilities, and anomalous identity activities. Forecast outputs feed adaptive policy engines that dynamically enforce security controls, aligning with DevSecOps pipelines and continuous integration workflows.

Identity-Centric Forecasting

User and entity behavior analytics track login patterns, access requests, and device usage to forecast credential compromise or insider malicious activity. Risk trajectories—gradual deviations from historical baselines—trigger adaptive authentication and access reviews, balancing security and user experience.
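
A risk trajectory can be approximated by smoothing per-session risk scores so that a single noisy spike does not trigger step-up authentication, while a sustained climb does. The sketch below uses an exponentially weighted moving average; the smoothing factor of 0.3, the 0.6 threshold, and the function names are all assumptions made for illustration.

```python
def ewma(values, alpha=0.3):
    """Exponentially weighted moving average; returns the smoothed series."""
    smoothed = []
    current = None
    for v in values:
        current = v if current is None else alpha * v + (1 - alpha) * current
        smoothed.append(current)
    return smoothed


def first_step_up(risk_scores, alpha=0.3, threshold=0.6):
    """Index where smoothed risk first reaches the threshold, or None."""
    for i, s in enumerate(ewma(risk_scores, alpha)):
        if s >= threshold:
            return i
    return None


# A one-off spike stays below the threshold after smoothing ...
print(first_step_up([0.1, 0.1, 0.9, 0.1, 0.1]))
# ... while a gradual, sustained rise eventually crosses it.
print(first_step_up([0.2, 0.4, 0.6, 0.8, 0.9, 0.9, 0.9]))
```

A lower `alpha` makes the trajectory slower to react and therefore gentler on user experience; a higher one tightens security at the cost of more prompts.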

Industrial Control Systems and Operational Technology

Forecasting in OT environments combines sensor readings, command logs, and maintenance histories to anticipate equipment failures or cyber-physical attacks. Cross-disciplinary teams use risk matrices mapping probability against safety, production, and compliance impacts. Predictions inform preventative maintenance schedules and isolation of critical subsystems.

Third-Party and Ecosystem Monitoring

Agents monitor vendor breach disclosures, patch cycles, and financial health metrics to forecast third-party risk exposure. Composite risk indices inform vendor scorecards, contractual terms, and insurance strategies within a holistic ecosystem risk model.

Collaborative Forecasting and Intelligence Sharing

Federated learning and shared threat intelligence feeds enable organizations to collaboratively train forecasting models without exchanging sensitive data. Sector-specific taxonomies and joint governance frameworks expand horizon scanning capabilities and accelerate discovery of emerging threat patterns.
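
The aggregation step at the heart of many federated schemes can be sketched as a sample-weighted average of locally trained model weights, often called federated averaging. The guide does not name a particular algorithm, so this is one plausible instance; the function name and the flat list-of-floats weight format are assumptions, and real deployments usually layer secure aggregation or differential privacy on top.

```python
def federated_average(client_updates):
    """Combine (weights, n_samples) pairs into one global weight vector.

    Each participant shares only its model weights and sample count,
    never the underlying telemetry.
    """
    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("at least one client must contribute samples")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must share the same model shape")
        for j, w in enumerate(weights):
            averaged[j] += w * n / total
    return averaged


# Two hypothetical organizations with different data volumes.
print(federated_average([([1.0, 2.0], 100), ([3.0, 4.0], 300)]))
```

Weighting by sample count means a participant with more observations pulls the global model further, which is a design choice a joint governance framework may want to cap.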

Strategic Insights and Critical Deployment Considerations

Threat detection and predictive analytics agents elevate security operations from reactive to anticipatory postures. Key strategic insights include:

- Comprehensive Data Ingestion: Broad coverage across network, endpoint, cloud, identity, and OT data to minimize blind spots while retaining signal specificity.

- Hybrid Model Portfolios: Combining supervised classifiers for known threats, unsupervised detectors for zero-day anomalies, and ensemble techniques for layered defense.

- Robust Validation and Metrics: Employing confusion matrix analysis, ROC AUC, detection latency, precision at K, and continuous drift monitoring to maintain model efficacy.

- Explainable and Transparent AI: Integrating SHAP, LIME, and rule extraction to satisfy audit, compliance, and executive reporting requirements, fostering trust in algorithmic decisions.

- Seamless Platform Integration: Leveraging open APIs and messaging protocols to connect predictive engines with SIEM, SOAR, ticketing, and orchestration systems, ensuring coordinated response.

- Governance, Privacy, and Ethics: Aligning data usage policies with GDPR, CCPA, and sector mandates; establishing oversight mechanisms to prevent misuse of predictive signals.

- Total Cost and Resource Planning: Accounting for infrastructure, data engineering, model management, and specialized talent in cost-benefit analyses and phased rollouts.

- Cross-Functional Collaboration: Bringing together security engineers, data scientists, threat intelligence analysts, legal, and business stakeholders to align analytic hypotheses with organizational risk appetite.

Industry Perspectives and Tool Integration

Security leaders emphasize vendor neutrality and open frameworks to avoid lock-in. Open source libraries such as scikit-learn, TensorFlow, and PyTorch enable custom model development, while managed solutions accelerate deployment. Benchmarking against public datasets like UNSW-NB15 and KDD Cup 1999 provides reference points, but real-world complexity requires production validation. Integration ease (API support, connector availability, dashboard unification) influences tool selection. Platforms that offer threat intelligence fusion, behavioral analytics, and incident orchestration under unified interfaces deliver higher ROI. Vendors differentiate by offering prepackaged playbooks, enrichment connectors to threat intelligence feeds, and low-code interfaces for custom analytics. Organizations must weigh the flexibility of open source against the turnkey capabilities of commercial platforms, aligning choices with maturity levels and strategic roadmaps. Collaborative ecosystems, enabled by standards such as STIX/TAXII, facilitate sharing of indicators and contextual threat data across stakeholders.

Continuous Improvement and Governance

Continuous improvement requires establishing governance forums, such as analytics councils and security architecture boards, that oversee model performance reviews, change management, and risk appetite alignment. Documented processes for model versioning, incident feedback incorporation, and periodic audit ensure sustainable predictive security programs. Training for analysts and executives on interpreting model outputs and understanding algorithmic limitations fosters organizational readiness and accelerates adoption.

Critical deployment considerations include:

- Data Quality and Completeness: Centralizing telemetry in data lakes with standardized schemas to ensure reliable model inputs and avoid sensor blind spots.

- Model Bias and Concept Drift: Implementing continuous retraining pipelines and fallback anomaly detection for novel threats.

- Balancing False Positives and False Negatives: Calibrating thresholds to align with resource capacity and risk tolerance, with tiered alert strategies for high-confidence automation and human review of lower-confidence events.

- Performance and Scalability: Provisioning compute and stream processing platforms to sustain real-time inference at scale and minimize detection latency.

- Integration Complexity: Prioritizing architectural standardization and open interfaces to reduce friction in embedding predictive agents within existing workflows.

- Governance and Compliance: Establishing audit trails for model decisions, data retention policies, access controls, and anonymization techniques to adhere to privacy regulations.

- Ethical Oversight: Formulating clear policy guardrails to govern the use of behavior-based analytics and prevent discriminatory or intrusive outcomes.

- Resource and TCO Planning: Conducting rigorous cost-benefit analyses for software licensing, infrastructure provisioning, data engineering, and talent acquisition.

By embedding autonomous AI agents within a modular architecture and enforcing disciplined governance, organizations can automate routine security tasks, elevate threat awareness, and anticipate adversarial changes. Iterative proofs-of-concept, phased deployments, and continuous feedback loops anchor a resilient, adaptive defense posture capable of meeting evolving strategic and operational objectives.

Chapter 3: Automated Incident Response and Remediation Agents

Contextualizing AI-Driven Incident Orchestration

Over the last twenty years, incident response has evolved from manual playbooks and analyst-driven workflows into sophisticated, AI-augmented systems. Early security operations centers struggled under the weight of rising alert volumes and complex threats—polymorphic malware, fileless attacks and coordinated campaigns that moved at machine speed. Legacy rule-based systems could not adapt to novel tactics, leading to delayed containment and unaddressed risks. This urgency spurred the adoption of orchestration platforms and automation, paving the way for AI-driven incident orchestration.

AI-driven incident orchestration coordinates artificial intelligence agents to manage end-to-end response workflows. It extends traditional SOAR capabilities by embedding adaptive learning and contextual reasoning throughout the incident lifecycle—alert triage, threat enrichment, decision guidance, containment, remediation and post-incident analysis. This shift from static playbooks to intelligent workflows empowers organizations to ingest heterogeneous data, derive real-time insights and execute actions with speed and precision.

Architectural Foundations

An effective AI-driven orchestration platform comprises interdependent modules that work in concert to automate and refine response actions.

- Data Ingestion and Normalization: Aggregates telemetry from endpoints, networks, cloud services, identity systems and external feeds. Normalization engines convert disparate formats into a unified schema for downstream analysis.

- Analytics and Intelligence Layer: Employs supervised, unsupervised and reinforcement learning models to detect anomalies, enrich alerts with threat context and assign risk scores. Natural language processing parses unstructured threat reports and security bulletins.

- Decision Orchestration Engine: Coordinates response workflows by translating risk assessments into conditional tasks across detection, containment and remediation phases. It supports parallel execution, escalation protocols and dynamic playbook adjustments.

- Automation and Execution Modules: Interfaces with firewalls, endpoint detection and response agents, identity management and cloud platforms to carry out containment and remediation via robust API integrations.

- Feedback and Learning Loop: Captures outcomes from automated actions, analyst overrides and post-incident reviews. This loop continuously refines model parameters and decision thresholds to improve future response accuracy.

- Governance and Audit Framework: Logs agent decisions, executed actions and human interventions. Enforces role-based access controls, change management procedures and compliance reporting for transparency and accountability.

At its core, the platform consolidates asset inventories, user identities, network flows, process behaviors and threat intelligence. Timely ingestion, deep contextual enrichment and high data integrity enable AI agents to generate multidimensional risk scores that inform precise, prioritized actions.

Adopting AI-driven orchestration addresses persistent SOC challenges:

- Alert fatigue mitigation through automated false-positive filtering

- Accelerated response via automated isolation of endpoints and revocation of compromised credentials

- Standardized protocols across global teams to ensure consistency

- Scalability to handle growing digital footprints without proportional headcount increases

- Continuous improvement driven by feedback loops that refine decision logic

Decision Frameworks for Automated Response

Incident response decision frameworks guide automated or semi-automated remediation actions along two primary axes: the choice between rule-based schemas and adaptive learning models, and the level of context awareness incorporated into decision logic.

Rule-Based Versus Adaptive Models

Rule-Based Logic

Encodes expert knowledge as if-then statements within static playbooks. It delivers predictability, ease of audit and regulatory compliance by executing predefined action sequences when alert conditions trigger. However, its rigidity can impede adaptation to novel attack patterns, requiring manual overrides or delayed responses.
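
A static playbook of this kind can be sketched as an ordered list of condition and action pairs. The rule names, alert fields, and action strings below are hypothetical and do not correspond to any specific SOAR product's playbook format.

```python
# Each rule: (name, condition over an alert dict, ordered list of actions).
RULES = [
    (
        "ransomware_behavior",
        lambda a: a.get("type") == "malware" and a.get("severity", 0) >= 8,
        ["isolate_endpoint", "open_ticket"],
    ),
    (
        "known_bad_ip",
        lambda a: a.get("type") == "network" and a.get("ip_reputation") == "malicious",
        ["block_ip"],
    ),
]


def run_playbook(alert, rules=RULES):
    """Return the actions of the first matching rule, else a manual fallback."""
    for name, condition, actions in rules:
        if condition(alert):
            return {"rule": name, "actions": list(actions)}
    # No rule matched: this is the rigidity the text describes, so defer to a human.
    return {"rule": None, "actions": ["route_to_analyst"]}
```

Because every outcome traces back to a named rule, the audit trail is trivial to produce. The fallback branch shows the cost: anything the authors did not anticipate lands on an analyst's queue.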

Adaptive Learning

Leverages historical incident data, real-time telemetry and threat intelligence to evolve remediation strategies. Supervised and reinforcement learning enable systems to propose contextually relevant actions and reduce analyst fatigue. The trade-off lies in transparency: complex models may lack explainability, necessitating robust governance to maintain trust.

Interpretive Frameworks and Governance Hierarchies

Practitioners align decision logic to established frameworks for clarity and control:

- NIST Special Publication 800-61r2 to map automated actions onto preparation, detection, containment, eradication and recovery phases.

- MITRE ATT&CK to prioritize responses against high-impact adversary tactics and techniques.

A tiered approval hierarchy balances speed and risk:

- Tier 1 Automated Actions: Low-risk tasks like isolating endpoints or blocking malicious IPs, executed without human intervention.

- Tier 2 Analyst-Review Actions: Mid-risk measures such as applying host-based firewall rules or resetting credentials, requiring confirmation.

- Tier 3 Expert Approval: High-impact steps like system reimaging or network segmentation changes, reserved for senior analysts.
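
The three tiers translate naturally into an authorization check that runs before any action executes. In this sketch the action identifiers and role names are hypothetical, and unknown actions default to the strictest tier as a deliberately conservative assumption.

```python
from enum import Enum


class Tier(Enum):
    AUTOMATED = 1
    ANALYST_REVIEW = 2
    EXPERT_APPROVAL = 3


# Mapping mirrors the examples given for each tier; identifiers are illustrative.
ACTION_TIERS = {
    "isolate_endpoint": Tier.AUTOMATED,
    "block_ip": Tier.AUTOMATED,
    "apply_host_firewall_rule": Tier.ANALYST_REVIEW,
    "reset_credentials": Tier.ANALYST_REVIEW,
    "reimage_system": Tier.EXPERT_APPROVAL,
    "change_network_segmentation": Tier.EXPERT_APPROVAL,
}


def authorize(action, approver_role=None):
    """Return True if the action may proceed given who (if anyone) approved it."""
    tier = ACTION_TIERS.get(action, Tier.EXPERT_APPROVAL)  # unknown: strictest tier
    if tier is Tier.AUTOMATED:
        return True
    if tier is Tier.ANALYST_REVIEW:
        return approver_role in {"analyst", "senior_analyst"}
    return approver_role == "senior_analyst"
```

For example, `authorize("block_ip")` proceeds with no approver, while `authorize("reimage_system", "analyst")` is refused until a senior analyst signs off.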

Integration with Security Orchestration Platforms

Security orchestration platforms provide the connective tissue for AI agents, standardizing data exchange, maintaining audit trails and enforcing policies.

Experts assess platform capabilities by integration breadth, data model consistency, workflow flexibility, analytics and governance controls. They categorize AI agent integration maturity into three levels:

- Data-Centric Integration: Agents enrich incident records via APIs but remain external to core playbooks.

- Workflow Integration: Agents execute discrete tasks—alert triage, enrichment or containment—within orchestrated playbooks.

- Adaptive Orchestration: Agents autonomously adjust playbooks in real time, leveraging feedback loops to refine response sequences.

Deeper integration elevates agent autonomy—from classification to direct execution of containment actions—while orchestration platforms enforce guardrails through policy definitions, approval thresholds and exception handling protocols.

Vendor strategy influences integration decisions. A single-vendor ecosystem simplifies data schemas and support but may limit innovation. A best-of-breed approach fosters access to specialized tools at the cost of increased connector management. Industry standards such as STIX/TAXII, OpenC2 and MITRE ATT&CK enable interoperability by aligning data taxonomies and playbook development across diverse environments.

Comprehensive governance spans change management, performance monitoring, cross-functional communication and training. Integrated platforms become learning hubs where AI agents and security professionals co-evolve—tuning playbooks, optimizing hand-offs and updating threat models to sustain resilience.

Balancing Speed and Accuracy

Automated response demands a strategic calibration of speed to limit dwell time and accuracy to preserve operational continuity. Key insights include:

- Response latency directly impacts risk exposure; every reduction in time-to-contain narrows the window available for lateral movement and exfiltration.

- Precision preserves business workflows and stakeholder trust by minimizing false positives.

- Dynamic calibration—continuous adjustment of thresholds based on feedback loops and performance metrics—is essential.

- Human-machine collaboration, with escalation protocols and in-the-loop checkpoints, delivers optimal outcomes.

- Governance and auditability underpin trust through transparent logging and explainable decision trails.

Analytical Frameworks

Practitioners employ quantitative models to guide response profiles:

- Delay-Cost Analysis: Weighs expected losses from delayed containment against costs of misdirected interventions to derive optimal latency targets.

- Confidence Threshold Optimization: Uses ROC and precision-recall curves to set dynamic confidence scores that trigger automated or human-reviewed actions.

- Risk Score Calibration: Aligns actions with contextual risk assessments—asset criticality, user profiles and threat intelligence—to prioritize high-impact events.

- Continuous Feedback Loops: Feeds outcomes of automated actions—successful containments, false positives or user impacts—back into learning models to self-optimize over time.
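
Delay-cost analysis and confidence threshold optimization can be combined in a simple empirical form: replay labeled historical alerts, charge a cost for each automated action on a benign event and for each malicious event left waiting, and pick the confidence threshold with the lowest total. The cost figures and function names are assumptions; a real analysis would estimate costs from incident data and validate on held-out alerts.

```python
def expected_cost(scored_alerts, threshold, cost_false_positive, cost_delay):
    """Total cost of auto-acting on every alert scoring at or above threshold.

    scored_alerts: iterable of (confidence_score, is_malicious) pairs.
    """
    total = 0.0
    for score, is_malicious in scored_alerts:
        acted = score >= threshold
        if acted and not is_malicious:
            total += cost_false_positive  # disrupted a legitimate workflow
        elif not acted and is_malicious:
            total += cost_delay           # containment waited for a human
    return total


def best_threshold(scored_alerts, cost_false_positive, cost_delay):
    """Lowest-cost threshold among observed scores, plus a 'never act' option."""
    candidates = sorted({s for s, _ in scored_alerts} | {1.01})
    return min(
        candidates,
        key=lambda t: expected_cost(scored_alerts, t, cost_false_positive, cost_delay),
    )


history = [(0.95, True), (0.9, True), (0.7, False), (0.6, True), (0.3, False), (0.1, False)]
print(best_threshold(history, cost_false_positive=1, cost_delay=10))
print(best_threshold(history, cost_false_positive=20, cost_delay=1))
```

When delay is expensive the chosen threshold drops to 0.6 for this made-up history, and when false positives are expensive it rises to 0.9, which is the speed and accuracy trade-off expressed in numbers.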

Governance, Limitations and Future Directions

Robust governance ensures automated interventions comply with policies, SLAs and regulatory constraints. Essential controls include role-based approval gates, transparent decision logs, human-in-the-loop controls for high-impact actions and legal reviews for compliance. Even advanced systems face inherent limitations:

- Data Quality and Context Gaps: Noisy or incomplete telemetry can misguide agents.

- Model Bias and Drift: Static training data may fail to represent evolving adversary tactics.

- Adversarial Manipulation: Attackers may probe response behaviors to induce false positives or evade detection.

- Operational Overhead: Continuous model retraining and governance reviews demand dedicated resources.

- Integration Complexity: Interfacing with legacy infrastructure and third-party services can introduce latency and misconfigurations.

Looking ahead, innovation will converge on:

- Adaptive Orchestration Engines that self-adjust policies and thresholds in real time through meta-learning.

- Contextual Policy Networks built on knowledge graphs to assess downstream impacts before executing interventions.

- Explainable AI Enhancements that generate natural language justifications for automated decisions to improve audit readiness.

- Collaborative Intelligence Ecosystems leveraging federated learning to share anonymized threat insights and response strategies across organizations.

By grounding AI-driven orchestration in a framework of architectural rigor, decision analytics, integration maturity and robust governance, security teams can accelerate response velocity without sacrificing precision—transforming incident management into a resilient, adaptive discipline.

Chapter 4: Vulnerability Assessment and Penetration Testing Agents

Evolution of Vulnerability Assessment and the Rise of Autonomous Discovery

Over the past two decades, vulnerability assessment has transitioned from intermittent manual penetration tests and scheduled network scans to continuous, automated processes embedded within security operations. The periodic cadence of early practices often left extended windows of exposure between assessments. As threat actors deployed automated scanners, exploit kits, and sophisticated chaining techniques, traditional tools, bound by predefined signatures and static rule sets, struggled to keep pace with distributed, cloud-native, and microservices architectures.

Modern enterprise infrastructures span on-premises data centers, multi-cloud environments, containerized applications, Internet of Things endpoints, and remote devices. Each introduces unique protocols, configurations, and threat vectors. The convergence of operational technology and information technology further expands the attack surface to include industrial control systems and embedded devices with real-world safety implications. The result is an imperative for security teams to adopt self-driving, adaptive, and context-aware vulnerability discovery.

Key market and operational drivers fueling this shift include escalating threat sophistication, continuous delivery in DevOps pipelines, talent shortages, regulatory mandates for timely remediation evidence, and the demand for real-time risk posture visibility. These forces converge to transform vulnerability assessment into an autonomous discipline that continuously identifies, correlates, and prioritizes vulnerabilities with minimal human intervention.

Core Mechanisms of AI-Powered Vulnerability Discovery

Asset Identification and Profiling

Autonomous agents maintain a dynamic inventory of hardware and software assets by ingesting telemetry from network sensors, endpoint agents, cloud management APIs, and container orchestrators. Machine learning models classify unknown devices based on network behavior, protocol fingerprints, and system metadata. This continuous profiling keeps ephemeral workloads and rapidly changing resources within the scope of assessment.

Contextual Vulnerability Correlation

AI systems correlate scan results with threat intelligence feeds, exploit databases, and internal incident logs. Natural language processing analyzes vulnerability descriptions and advisories to surface active exploit campaigns targeting specific software versions. By filtering out low-risk findings, security teams focus on exposures most likely to be weaponized.

Risk-Based Prioritization and Scoring

Autonomous platforms assign risk scores using predictive algorithms that factor in asset criticality, network exposure, exploitability metrics, and historical incident data. Reinforcement learning refines these models over time, aligning remediation recommendations with observed outcomes. This approach mitigates alert fatigue by directing attention to high-impact vulnerabilities.

Analytical Foundations and Continuous Learning

Machine learning underpins each stage of autonomous discovery. Supervised models trained on historical scan data distinguish true positives from false alarms, while unsupervised clustering detects novel vulnerability patterns. Feature extraction algorithms identify subtle indicators of weakness, such as unusual port combinations and misconfigured access controls. Graph analytics map relationships among assets, vulnerabilities, and threat actors, enabling simulation of potential attack paths and proactive defense planning.
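
The attack-path simulation mentioned above can be illustrated with a breadth-first search over a directed graph in which an edge means "an attacker on this asset can reach and exploit that one". The asset names and the adjacency-dict representation are hypothetical; commercial tools build such graphs from scan and configuration data.

```python
from collections import deque


def shortest_attack_path(graph, start, target):
    """Return the fewest-hop path from start to target, or None if unreachable."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


# Hypothetical environment: edges represent exploitable reachability.
reachability = {
    "internet": ["web01"],
    "web01": ["app01", "jump01"],
    "app01": ["db01"],
    "jump01": ["db01"],
    "db01": [],
}
print(shortest_attack_path(reachability, "internet", "db01"))
```

Remediating the vulnerability behind any single edge on every such path (here, the exposure on `web01`) severs the route, which is why path analysis often changes remediation order relative to raw severity scores.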

Feedback loops continuously refine analytical models. As remediation actions unfold, agents adjust scanning heuristics and prioritization criteria to enhance accuracy and reduce manual tuning efforts.

Defining and Evaluating AI Agents in Security Ecosystems

AI Agent Taxonomy and Frameworks

- Detection Intelligence: Ingest and correlate heterogeneous data sources to identify anomalies, signature deviations, and emerging attack patterns.

- Decision Orchestration: Apply rule sets, probabilistic models, or reinforcement learning to determine optimal response actions within contextual constraints.

- Autonomous Execution: Initiate containment or remediation tasks—such as endpoint isolation or firewall adjustments—with varying degrees of human oversight.

Industry frameworks such as the MITRE ATT&CK® matrix, Gartner’s SIEM maturity model, and the NIST Artificial Intelligence Risk Management Framework guide practitioners in mapping agent capabilities to strategic objectives and identifying gaps in control coverage.

Perspectives on Autonomy and Adoption Models

- Human-in-the-Loop: Analysts approve critical response actions, ensuring decisions remain traceable to input data and model logic.

- Adaptive Automation: Agents begin with low-risk tasks, such as alert triage, and progressively advance to automated remediation under strict guardrails.

- Full Autonomy: Agents manage end-to-end incident workflows independently in high-velocity threat environments, minimizing human latency.

Organizations deploy AI agents via three primary models:

- Center-Led Deployment: A centralized team pilots agents, develops best practices, and extends capabilities to business units.

- Federated Approach: Business units select and manage agents tailored to their risk profiles under overarching governance.

- Managed Services Partnership: External providers deliver AI-driven operations through platforms such as CrowdStrike Falcon or Palo Alto Networks Cortex XDR.

Performance Metrics and Key Indicators

- Detection Accuracy: True positive rate versus false positive rate against benchmark data sets or red-team exercises.

- Mean Time to Detect (MTTD) and Mean Time to Respond (MTTR): Speed and efficiency in identifying and mitigating threats.

- Alert Volume Reduction: Percentage decrease in alerts requiring human analysis.

- Cost Avoidance: Financial impact of incidents prevented or contained by agent actions.

- Model Drift and Update Cadence: Frequency of retraining cycles to maintain detection fidelity.
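
The time-based and volume-based indicators above are straightforward to compute from incident records. In this sketch MTTD is measured from occurrence to detection and MTTR from detection to resolution; definitions vary between organizations, so treat that choice, along with the record field names, as an assumption.

```python
from datetime import datetime


def mean_minutes(pairs):
    """Mean elapsed minutes across (start, end) datetime pairs."""
    if not pairs:
        raise ValueError("no incidents to average")
    seconds = sum((end - start).total_seconds() for start, end in pairs)
    return seconds / len(pairs) / 60


def mttd_mttr(incidents):
    """incidents: dicts with 'occurred', 'detected', and 'resolved' datetimes."""
    mttd = mean_minutes([(i["occurred"], i["detected"]) for i in incidents])
    mttr = mean_minutes([(i["detected"], i["resolved"]) for i in incidents])
    return mttd, mttr


def alert_reduction_pct(alerts_before, alerts_after):
    """Percentage decrease in alerts requiring human analysis."""
    return 100.0 * (alerts_before - alerts_after) / alerts_before


incidents = [
    {"occurred": datetime(2024, 1, 1, 10, 0), "detected": datetime(2024, 1, 1, 10, 30),
     "resolved": datetime(2024, 1, 1, 12, 30)},
    {"occurred": datetime(2024, 1, 2, 9, 0), "detected": datetime(2024, 1, 2, 9, 10),
     "resolved": datetime(2024, 1, 2, 9, 40)},
]
print(mttd_mttr(incidents), alert_reduction_pct(1000, 250))
```

Tracking these figures before and after an agent rollout gives a like-for-like view of its effect, provided the incident timestamps are recorded consistently.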

Continuous Validation in Dynamic Environments

Continuous validation treats security as an ongoing assurance process that adapts to shifting configurations, application updates, and dynamic workloads. Unlike static scans, validation agents integrate with DevSecOps pipelines, container orchestration platforms, serverless architectures, and multi-cloud deployments to provide a real-time risk snapshot for informed prioritization.

By aligning with COBIT objectives and the NIST Cybersecurity Framework, continuous validation extends passive monitoring to proactive verification of control effectiveness. Agents act as automated auditors, evaluating infrastructure-as-code templates, container images, and runtime behavior against policy baselines.

Integration with Cloud Security Posture Management tools—such as Qualys Cloud Platform, Tenable.io, and Palo Alto Networks Prisma Cloud—enables machine learning-driven risk scoring across diverse cloud services. Shift-left validation embeds policy-as-code checks into build pipelines, preemptively reducing exposures before deployment.
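
A policy-as-code check in a build pipeline amounts to evaluating declared resources against machine-readable rules and failing the build on any violation. The resource schema and the two policies below are hypothetical; dedicated policy engines offer far richer rule languages, so this only shows the shape of the idea.

```python
# Each policy: (description, predicate that returns True when the resource complies).
POLICIES = [
    (
        "storage buckets must not be public",
        lambda r: r.get("type") != "storage_bucket" or not r.get("public", False),
    ),
    (
        "disks must be encrypted",
        lambda r: r.get("type") != "disk" or r.get("encrypted", False),
    ),
]


def evaluate(resources, policies=POLICIES):
    """Return (resource_name, policy_description) for every violation found."""
    violations = []
    for resource in resources:
        for description, complies in policies:
            if not complies(resource):
                violations.append((resource.get("name", "<unnamed>"), description))
    return violations


template = [
    {"name": "logs", "type": "storage_bucket", "public": True},
    {"name": "data", "type": "disk", "encrypted": True},
    {"name": "scratch", "type": "disk"},  # encryption not declared
]
for name, rule in evaluate(template):
    print(f"{name}: {rule}")
```

A pipeline step would typically exit non-zero when `evaluate` returns anything, blocking deployment until the template is corrected. Treating an undeclared setting as non-compliant, as the `scratch` disk shows, is a deliberately strict default.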

Continuous attack surface mapping leverages network discovery, cloud API enumeration, and orchestration visibility to construct real-time asset inventories. Predictive models trained on historical exploit data forecast which vulnerabilities are likely to be weaponized—for example, Tenable.io’s Predictive Prioritization. Integration with threat intelligence feeds contextualizes findings with active exploit campaigns, enhancing the relevance of validation outputs.

Optimizing Coverage and Managing False Positives

Balancing Coverage Depth and Breadth

Effective assessment balances comprehensive visibility across networks, endpoints, applications, containers, and cloud services (breadth) with thorough protocol analysis, code-level review, and exploit simulation (depth). A tiered scanning strategy assigns high-depth, frequent assessments to mission-critical assets, while lower-priority resources receive broader, less intensive scans. Dynamic risk scoring frameworks adjust priorities in real time based on threat intelligence, business context, and historical patterns. Tools such as Rapid7 InsightVM and Tenable.io facilitate this adaptive scanning approach.

Risk-Based Remediation Workflows

Autonomous agents synthesize exploitability metrics, active threat feeds, and asset roles to generate prioritized remediation queues. Composite risk scores extend CVSS baselines with contextual modifiers—such as compensating controls and network segmentation—enabling cross-functional teams to allocate patches, configuration updates, or network isolations where they matter most.
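One way to picture a composite risk score is as a CVSS base score scaled by contextual modifiers. The function below is a minimal sketch: the multipliers, the `asset_weight` parameter, and the clamping to the 0 to 10 range are assumptions chosen for illustration, not a calibrated or standardized formula.

```python
def composite_risk(cvss_base: float, exploit_active: bool, asset_weight: float,
                   compensating_controls: int, segmented: bool) -> float:
    """Scale a CVSS base score (0-10) by contextual modifiers, clamped to 0-10.

    All multipliers below are illustrative assumptions, not calibrated values.
    """
    if not 0.0 <= cvss_base <= 10.0:
        raise ValueError("cvss_base must be between 0 and 10")
    score = cvss_base * asset_weight          # asset role raises or lowers impact
    if exploit_active:
        score *= 1.5                          # active exploitation in threat feeds
    score *= 0.9 ** compensating_controls     # each control reduces exposure
    if segmented:
        score *= 0.8                          # network segmentation limits reach
    return round(min(score, 10.0), 2)

findings = {
    "critical-cve-on-isolated-host": composite_risk(9.8, False, 0.5, 2, True),
    "medium-cve-under-attack": composite_risk(6.5, True, 1.0, 0, False),
}
# Highest composite risk first, regardless of the raw CVSS ordering.
remediation_queue = sorted(findings, key=findings.get, reverse=True)
```

In this example the medium-severity finding under active exploitation outranks the critical finding on an isolated, well-controlled host, which is the reordering that contextual scoring is meant to produce.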

Reducing False Positives and Alert Fatigue

Multi-stage validation pipelines cross-verify initial findings against threat intelligence databases, historical scan results, and behavioral telemetry. Correlation with evidence of active reconnaissance or exploit attempts filters out low-confidence alerts. Human-in-the-loop feedback refines detection heuristics and suppression rules, while detailed explainability—highlighting code injection vectors or affected libraries—accelerates triage.
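The multi-stage idea can be sketched as a sequence of corroboration checks that raise a finding's confidence and record why. The field names, confidence increments, and the 0.6 threshold below are hypothetical values for illustration only.

```python
from dataclasses import dataclass, field

@dataclass
class Finding:
    finding_id: str
    base_confidence: float            # scanner-reported confidence, 0..1
    in_threat_intel: bool = False     # matches a known exploited weakness
    seen_in_prior_scans: int = 0      # how many earlier scans reported it
    recon_observed: bool = False      # telemetry shows probing of the asset
    evidence: list[str] = field(default_factory=list)

def validate(finding: Finding) -> float:
    """Run each corroboration stage and return an adjusted confidence in 0..1."""
    confidence = finding.base_confidence
    if finding.in_threat_intel:
        confidence += 0.2
        finding.evidence.append("threat-intel match")
    if finding.seen_in_prior_scans >= 2:
        confidence += 0.1
        finding.evidence.append("repeated across scans")
    if finding.recon_observed:
        confidence += 0.2
        finding.evidence.append("active reconnaissance observed")
    return min(confidence, 1.0)

def triage(findings, threshold=0.6):
    """Split findings into alerts to raise and low-confidence items to suppress."""
    alerts, suppressed = [], []
    for f in findings:
        (alerts if validate(f) >= threshold else suppressed).append(f.finding_id)
    return alerts, suppressed

batch = [
    Finding("F1", 0.5, in_threat_intel=True),
    Finding("F2", 0.4),
    Finding("F3", 0.35, seen_in_prior_scans=3, recon_observed=True),
]
alerts, suppressed = triage(batch)
```

The `evidence` list is a simple stand-in for the explainability output an analyst would see during triage; analyst feedback could then be used to adjust the increments or the threshold.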

Continuous Improvement and Governance

Metrics-driven programs track scanning accuracy, coverage gaps, and alert validation rates. Periodic recalibration of machine learning models ensures alignment with evolving threat tactics. Post-remediation data informs risk scoring refinements, closing the loop between assessment, remediation, and validation. Governance frameworks establish roles, escalation paths, and exception procedures, supplemented by manual reviews and red teaming to address blind spots.

Integration with Security Platforms

- SIEM: IBM Security QRadar Advisor with Watson

- SOAR: Splunk Phantom

- Ecosystem Orchestration: Cisco SecureX

- Asset and Ticketing Systems: Unified dashboards correlate vulnerability data with incident records and IT service management workflows

- DevSecOps Toolchains: Embedding scans in code repositories, build servers, and deployment pipelines

Strategic Value and Organizational Impact

Adopting autonomous discovery and continuous validation delivers accelerated detection cycles, improved accuracy, resource optimization, enhanced resilience, and measurable ROI. Security teams shift from reactive patch management to predictive risk control, strengthening board-level confidence and supporting compliance by demonstrating a mature, data-driven approach. Embedding these practices within enterprise risk management fosters cross-team accountability, aligns performance metrics—such as mean time to validation and control compliance rates—with strategic objectives, and sustains cybersecurity investments as digital environments evolve.

Chapter 5: Identity and Access Management Agents

Security Operations Evolution and Market Drivers

Over the past two decades, security operations have shifted from manual log reviews and rule-based intrusion detection to centralized SIEM platforms and SOAR solutions. However, the rapid adoption of cloud infrastructure, containerization, and distributed architectures has outpaced legacy tools. Market drivers accelerating this transformation include the expanding attack surface from cloud and IoT deployments, increasingly sophisticated threat actors, stringent regulatory mandates for continuous monitoring and real-time reporting, a global shortage of skilled security professionals, and the demand for cost efficiency and operational resilience. These forces have rendered manual processes and static rules insufficient, creating an imperative for solutions that learn from data, reason about novel threats, and orchestrate responses at machine speed. Autonomous AI agents in security and risk management represent this paradigm shift, promising proactive, adaptive protection rather than reactive defense.

Framing AI Agents in Security and Identity Ecosystems

An AI agent in security and risk management perceives its environment through diverse telemetry, analyzes threats with machine learning, decides on optimal response strategies based on contextual risk assessments, and acts by executing containment, remediation, or investigation workflows without explicit human intervention. These functional dimensions—perception, analysis, decision, and action—span a spectrum from specialized detection modules to comprehensive orchestration engines. Industry examples illustrate this range:

- Threat Detection Agents such as Darktrace Enterprise Immune System, leveraging unsupervised learning to identify emerging anomalies

- Predictive Analytics Agents like IBM Security QRadar Advisor with Watson, forecasting threat trajectories from historical patterns

- Incident Response Agents including Splunk Phantom and Palo Alto Networks Cortex XDR, orchestrating rapid containment and remediation

- Vulnerability Assessment Agents such as Rapid7 InsightVM, using machine learning to prioritize critical weaknesses

- Identity and Access Agents like Okta Adaptive MFA, enforcing dynamic authentication policies informed by behavioral intelligence

By mapping AI agents to these roles, security leaders can evaluate emerging technologies, understand integration points with existing platforms, and identify essential capabilities for modern attack surfaces.

Understanding Behavioral Biometrics in Adaptive Authentication

Behavioral biometrics analyzes patterns in human interactions—keystroke dynamics, mouse trajectories, touchscreen gestures, and application usage—to continuously verify identity. Unlike static credentials, behavioral signals provide ongoing, probabilistic risk indicators. Integrated into risk-based authentication frameworks, these signals enable adaptive policies that respond in real time to deviations from user baselines.

Analytical Frameworks and Model Interpretation

Behavioral models employ supervised learning, anomaly detection, and probabilistic scoring, often within a Bayesian framework or through ensemble methods that combine autoencoders, clustering algorithms, and support vector machines. Dashboards map risk scores to policy thresholds, enabling dynamic triggers such as step-up authentication or session termination. Built-in logging supports audit trails and post-incident forensics.
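To make the Bayesian framing concrete, the sketch below updates the probability that a session belongs to an impostor using Bayes' rule in odds form. The prior, the likelihood ratios, and the assumption that signals are independent are all illustrative; a production model would estimate these from data.

```python
import math

def posterior_impostor(prior: float, likelihood_ratios: list[float]) -> float:
    """Update P(impostor) with independent evidence via Bayes' rule in odds form.

    Each ratio is P(observation | impostor) / P(observation | genuine user)
    and must be positive. Treating signals as independent is a simplifying
    (naive Bayes) assumption.
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    log_odds = math.log(prior / (1.0 - prior))
    log_odds += sum(math.log(lr) for lr in likelihood_ratios)
    return 1.0 / (1.0 + math.exp(-log_odds))

# Hypothetical session: unusual typing rhythm (20x more likely for an impostor)
# and unusual mouse movement (10x more likely), starting from a 1% prior.
risk = posterior_impostor(0.01, [20.0, 10.0])
```

With these example numbers the posterior rises from 1 percent to roughly 67 percent, showing how two moderately strong signals can together justify a step-up challenge even when the prior is low.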

Evaluating Performance and Operational Viability

- Accuracy Metrics: False acceptance rate (FAR), false rejection rate (FRR), and equal error rate (EER), the operating point at which FAR equals FRR, guide maturity assessments, with target EERs typically below 5 percent.

- Scalability: Solutions must handle millions of events across desktops, laptops, and mobile devices without performance degradation.

- Privacy and Compliance: Pseudonymization, consent management, and data minimization ensure alignment with GDPR, CCPA, HIPAA, and internal governance frameworks.
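The accuracy metrics above can be computed from labeled similarity scores. The following sketch assumes a session is accepted when its score meets or exceeds a threshold; the sample scores are made up for illustration, and the EER is approximated by scanning observed scores as candidate thresholds.

```python
def far_frr(genuine: list[float], impostor: list[float],
            threshold: float) -> tuple[float, float]:
    """Return (FAR, FRR) when sessions scoring >= threshold are accepted."""
    far = sum(s >= threshold for s in impostor) / len(impostor)
    frr = sum(s < threshold for s in genuine) / len(genuine)
    return far, frr

def approximate_eer(genuine: list[float], impostor: list[float]) -> tuple[float, float]:
    """Scan observed scores as thresholds and return (threshold, EER estimate)
    at the point where FAR and FRR are closest."""
    best = None
    for t in sorted(set(genuine) | set(impostor)):
        far, frr = far_frr(genuine, impostor, t)
        gap = abs(far - frr)
        if best is None or gap < best[0]:
            best = (gap, t, (far + frr) / 2)
    return best[1], best[2]

# Made-up similarity scores for genuine users and impostors.
genuine_scores = [0.9, 0.8, 0.75, 0.6, 0.4]
impostor_scores = [0.7, 0.5, 0.3, 0.2, 0.1]
threshold, eer = approximate_eer(genuine_scores, impostor_scores)
```

Lowering the threshold reduces false rejections at the cost of more false acceptances, so the chosen operating point is a policy decision as much as a modeling one.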

Policy Adaptation and Risk-Based Controls

Dynamic access control adjusts authentication requirements based on composite risk scores derived from behavioral anomalies, geolocation, device reputation, and network context. Policies evolve from silent monitoring for minor deviations to step-up challenges or session termination for high-risk events. Guidance from bodies such as the OpenID Foundation and the FIDO Alliance informs consistent implementations that uphold the principle of least privilege.
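The escalation from silent monitoring to step-up challenges to session termination can be sketched as a weighted score mapped onto thresholds. The weights, signal names, and cutoffs below are hypothetical and would need calibration against real outcomes.

```python
from dataclasses import dataclass

# Illustrative weights; a real deployment would calibrate these against data.
WEIGHTS = {"behavior": 0.4, "geolocation": 0.2, "device": 0.25, "network": 0.15}

@dataclass
class Signals:
    behavior: float      # each signal is a risk value in 0..1
    geolocation: float
    device: float
    network: float

def composite_score(s: Signals) -> float:
    """Weighted sum of the four signals; stays in 0..1 because weights sum to 1."""
    return sum(WEIGHTS[name] * getattr(s, name) for name in WEIGHTS)

def decide(s: Signals) -> str:
    """Map the composite score to an escalating policy action."""
    score = composite_score(s)
    if score >= 0.7:
        return "terminate_session"
    if score >= 0.4:
        return "step_up_challenge"
    return "monitor_silently"

routine = decide(Signals(0.1, 0.0, 0.1, 0.2))
suspicious = decide(Signals(0.6, 0.8, 0.3, 0.2))
hostile = decide(Signals(0.9, 1.0, 0.8, 0.7))
```

A linear weighted sum is the simplest choice and is easy to explain to auditors; it could be swapped for a learned model where the trade-off in transparency is acceptable.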

Key Performance Indicators

- Authentication Efficiency: Reduction in step-up challenges versus baseline multi-factor authentication rates

- Risk Mitigation Impact: Decline in account takeover incidents and fraudulent access events

- User Friction Index: Satisfaction scores and dropout rates during authentication flows

- Operational Cost Savings: Decreases in helpdesk tickets for password resets and access issues

- Compliance Alignment: Audit readiness and evidence of adherence to data privacy regulations

Challenges and Future Directions

Behavioral biometrics faces ongoing debate over model bias, adversarial resilience, and privacy impact. Continuous validation, bias audits, and red-teaming exercises mitigate these risks. Emerging trends include federated learning for privacy preservation, explainable AI for transparency, and converged risk scoring platforms that integrate behavioral signals, threat intelligence, and network telemetry. Solutions like BioCatch and BehavioSec exemplify advanced behavioral verification in practice.

Managing Privileged Access at Scale

Privileged Access Management (PAM) has evolved from siloed controls to a strategic, identity-centric discipline aligned with Zero Trust principles. Modern environments feature a proliferation of high-risk identities—human users, machine credentials, API tokens, and service accounts—across cloud services, microservices, IoT devices, and distributed workforces. Effective PAM at scale requires intelligence-driven frameworks that assess risk, enforce policies, and adapt in real time.

Risk Models and Core Capabilities

- Contextual Risk Scoring: Real-time evaluations of user behavior, session attributes, geolocation, and device posture guide access decisions.

- Just-In-Time Privilege: Temporary, task-bound credentials minimize the exposure from dormant accounts.

- Secrets Management Integration: Vault solutions centralize storage and rotation of secrets, as exemplified by HashiCorp Vault.

- Behavioral Analytics: Machine learning detects anomalous privileged activity—command sequences, lateral movements—flagging potential insider threats.
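The interaction between contextual risk scoring and just-in-time privilege can be sketched as a grant whose lifetime shrinks as risk rises. The `Grant` type, the `issue_grant` function, the 0.8 refusal cutoff, and the lifetime formula are hypothetical; a real deployment would delegate credential issuance to a vault or PAM platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
import secrets

@dataclass(frozen=True)
class Grant:
    principal: str
    role: str
    token: str
    expires_at: datetime

    def is_valid(self, now: datetime) -> bool:
        return now < self.expires_at

def issue_grant(principal: str, role: str, risk_score: float,
                now: datetime, max_minutes: int = 60):
    """Issue a task-bound grant whose lifetime shrinks as contextual risk rises.

    risk_score is assumed to be in 0..1; requests at or above 0.8 are refused.
    """
    if risk_score >= 0.8:
        return None
    minutes = max(5, int(max_minutes * (1.0 - risk_score)))
    return Grant(principal, role, secrets.token_urlsafe(16),
                 now + timedelta(minutes=minutes))

start = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = issue_grant("alice", "db-admin", 0.5, start)       # 30-minute grant
refused = issue_grant("alice", "db-admin", 0.9, start)     # too risky
```

Because the grant carries its own expiry, no standing privileged account has to exist between tasks, which is the exposure that just-in-time access is meant to remove.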

PAM Maturity Continuum

- Discovery and Inventory of all privileged identities, including embedded credentials

- Vault and Control via platforms such as CyberArk and BeyondTrust, with role-based access, rotation, and session recording

- Automation and Orchestration integrated with ITSM, DevOps pipelines, and cloud platforms for credential provisioning and policy enforcement

- Intelligent Threat Analytics leveraging AI to correlate identity signals and prioritize alerts

AI-Enhanced Privileged Access Management

Advanced PAM platforms discover new credentials in code repositories and cloud consoles, apply natural language processing to translate policy documents into enforceable rules, and embed behavioral scoring engines to contextualize sessions. Identity governance systems such as SailPoint and Ping Identity provide entitlement reviews and role mining, ensuring privileged roles align with business functions and segregation of duties requirements.

Governance and Compliance Integration

- Periodic Privilege Access Reviews to validate assignments against business needs

- Policy Change Advisory Boards balancing security objectives with operational requirements

- Risk Acceptance Processes for controlled exceptions with defined sunset clauses

- Continuous Improvement Cycles informed by post-mortem analyses and analytics

Regulatory mandates such as SOX, HIPAA, and PCI DSS drive the embedding of compliance controls into PAM policy engines and real-time dashboards, turning reporting exercises into integrated outcomes of intelligent access management.

Balancing Security with User Experience

Effective identity and access strategies achieve robust protection without undue friction. Core tensions arise between risk coverage and user fatigue, between model accuracy and adaptation speed, between privilege restriction and operational efficiency, and between privacy safeguards and observability. Addressing these tensions requires analytical frameworks and governance mechanisms that prioritize both security and usability.

Analytical Frameworks

- Risk-Based Authentication Matrix: Contextual signals determine authentication requirements, with continuous monitoring of false positive and false negative rates.

- User Friction and Adoption Scorecard: Metrics such as time-to-authenticate, failure rates, and support ticket volume correlate with security incident data to identify high-burden controls.

- Behavioral Drift Analysis: Tracking deviations in keystroke dynamics, mouse movement, and touch gestures to measure drift tolerance and maintain model sensitivity without lockouts.
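Behavioral drift analysis can be illustrated with an exponentially weighted moving average that lets a user's baseline follow gradual change while still flagging abrupt deviations. The feature (mean inter-key interval in milliseconds), the smoothing factor, and the tolerance are assumptions chosen for the example.

```python
class DriftingBaseline:
    """Track one per-user feature with an exponentially weighted moving
    average so the baseline follows gradual drift. Assumes initial > 0."""

    def __init__(self, initial: float, alpha: float = 0.1, tolerance: float = 0.25):
        self.mean = initial
        self.alpha = alpha            # how quickly the baseline adapts
        self.tolerance = tolerance    # allowed relative deviation before flagging

    def observe(self, value: float) -> bool:
        """Return True if the value is anomalous; adapt only on normal values."""
        deviation = abs(value - self.mean) / self.mean
        if deviation > self.tolerance:
            return True
        self.mean = (1 - self.alpha) * self.mean + self.alpha * value
        return False

baseline = DriftingBaseline(200.0)
# Gradual slowing of typing is absorbed; the abrupt jump to 400 ms is flagged.
flags = [baseline.observe(v) for v in (210.0, 220.0, 230.0, 400.0)]
```

Updating the baseline only on values judged normal avoids letting an attacker's behavior pull the profile toward itself, at the cost of slower adaptation after a genuine abrupt change such as a new keyboard.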

Strategy and Governance Considerations

- Define a Friction Budget to quantify acceptable authentication steps per scenario

- Adopt Iterative Policy Refinement through cross-functional teams that integrate security, UX, and compliance perspectives

- Invest in Explainable AI Techniques to maintain transparency and regulatory auditability

- Implement Just-In-Time and Just-Enough Access workflows for temporary, context-driven privilege elevations

- Ensure Data Privacy and Ethical Use via anonymization, retention limits, and user consent protocols

- Align with Compliance and Audit Requirements through traceable logs of authentication events, risk scores, and policy decisions
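A friction budget can be made measurable by comparing observed authentication steps against a per-scenario limit. The scenario names and budget values below are hypothetical placeholders.

```python
# Hypothetical friction budget: maximum authentication steps per scenario.
FRICTION_BUDGET = {"low_risk_login": 1, "new_device_login": 2, "privileged_action": 3}

def over_budget(observed_steps: dict[str, list[int]]) -> dict[str, float]:
    """Return, per scenario, the fraction of flows that exceeded the budget."""
    report = {}
    for scenario, steps in observed_steps.items():
        budget = FRICTION_BUDGET[scenario]
        exceeded = sum(1 for n in steps if n > budget)
        report[scenario] = exceeded / len(steps) if steps else 0.0
    return report

report = over_budget({
    "low_risk_login": [1, 1, 2, 1],
    "privileged_action": [2, 3, 3, 4],
    "new_device_login": [],
})
```

Tracking this fraction over time gives cross-functional teams a shared number to review during iterative policy refinement.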

Limitations and Potential Pitfalls

- Overfitting Behavioral Models can increase false rejections of legitimate users when user patterns evolve

- Shadow IT Workarounds may arise when excessive friction pushes users toward unsanctioned tools

- Bias and Discrimination Risks require rigorous bias testing and diverse data sampling

- Integration Complexity with existing directories and platforms can introduce latency and failure points

- Over-Automation Without Oversight may reduce visibility into edge-case decisions; a hybrid human-in-the-loop model balances efficiency with accountability

Strategic Recommendations and Roadmap