Optimizing Workplace Productivity: Insights and Strategies for AI Agent Implementation

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

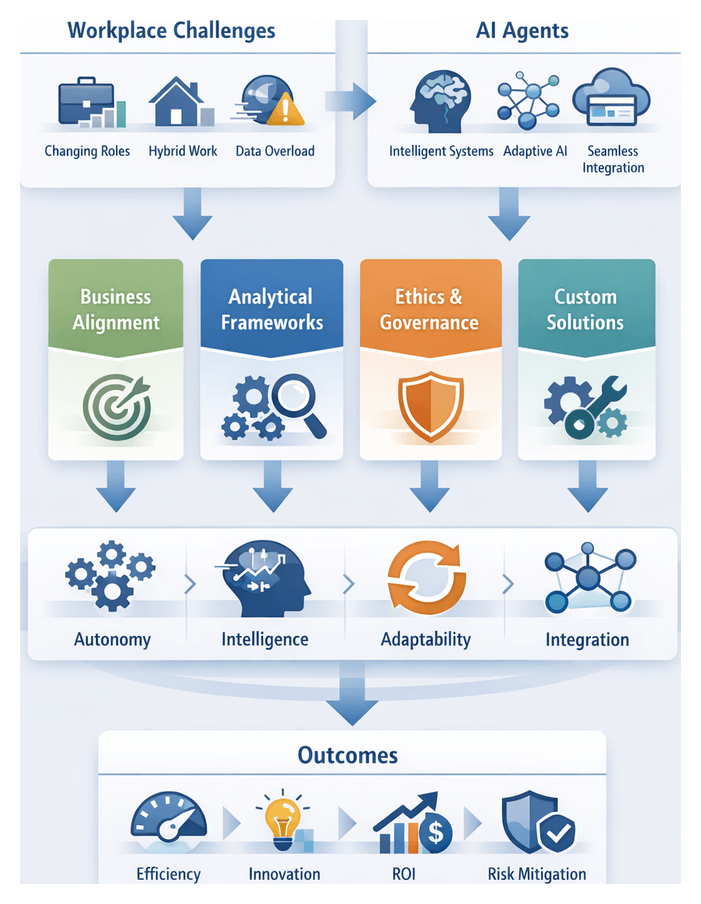

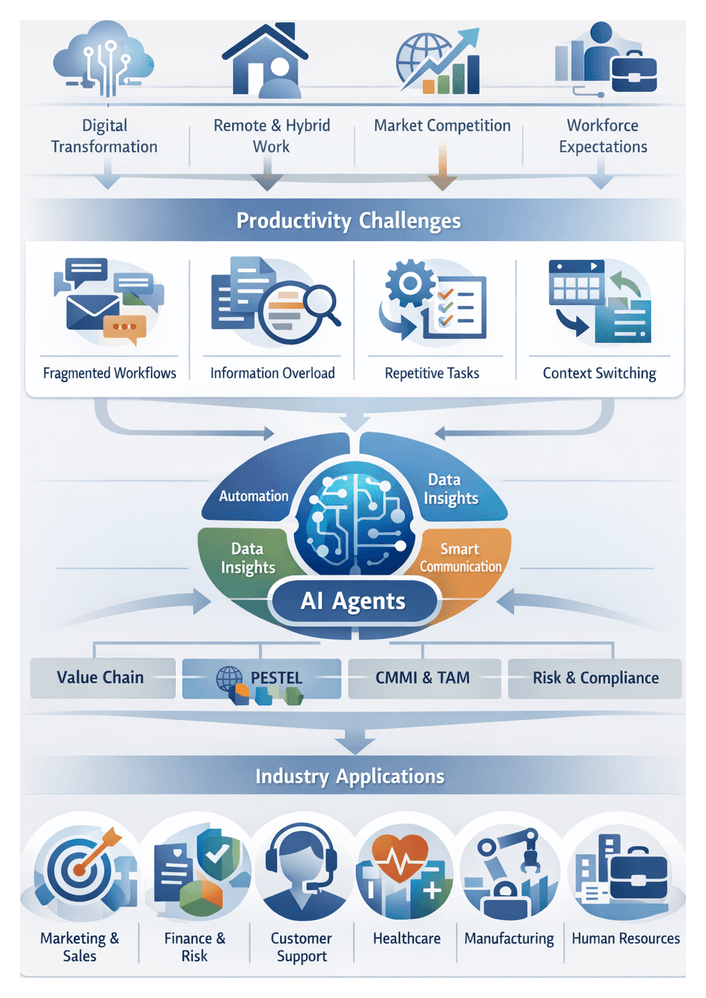

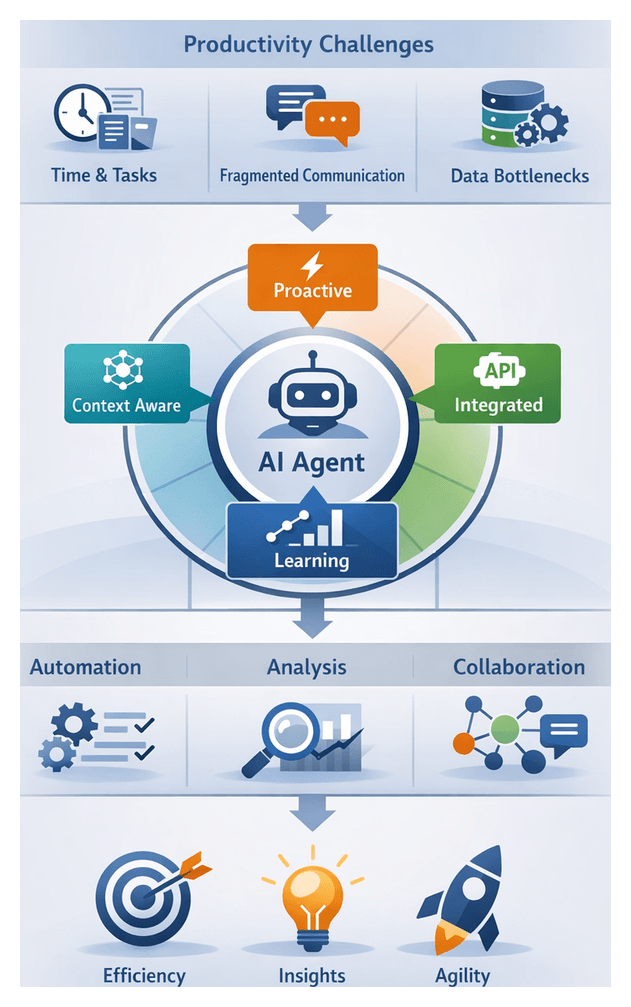

Evolving Productivity Challenges in the Modern Workplace

Shift in Employee Roles and Expectations

Over the past decade, the definition of productivity has transcended traditional metrics of hours worked or basic output. Knowledge workers are now expected to master domain-specific expertise alongside digital literacy, cross-functional collaboration and adaptive problem-solving. As business processes integrate data analytics, customer engagement platforms and automated workflows, employees must balance creative tasks with transactional responsibilities. This heightened complexity blurs the lines between operational duties and strategic decision-making, requiring individuals to dynamically reallocate focus between routine administration and high-value innovation. Concurrently, talent shortages and evolving workforce preferences have intensified expectations around autonomy, continuous development and meaningful work, prompting organizations to redesign workflows that support both business objectives and employee well-being.

Hybrid and Distributed Work Dynamics

The rise of hybrid and remote work has reshaped communication norms and availability expectations. Synchronous meetings now coexist with asynchronous messaging in platforms like Slack and Microsoft Teams, offering flexibility but introducing coordination challenges. Time zone differences and cultural nuances complicate information flow, often resulting in duplicated efforts and delayed decisions. Without clear protocols and shared repositories, teams struggle to maintain alignment and preserve institutional knowledge. Organizations must therefore invest in documented processes, transparent project management and collaboration tools that support both real-time and self-directed work.

Data Overload and Cognitive Strain

Employees now contend with an unprecedented proliferation of digital information. Email threads, chat channels, project dashboards and document libraries generate constant notifications that fracture attention. Studies indicate that knowledge workers may lose up to twenty minutes after an interruption before regaining full focus on complex tasks, extending completion times and increasing error risk. Routine activities—such as data entry, information retrieval and scheduling—consume significant mental bandwidth, leaving little capacity for strategic thinking. Recognizing cognitive load as a critical inhibitor of productivity underscores the need for solutions that reduce fragmentation and enable deeper concentration.

Persistent Operational Bottlenecks

Despite investments in enterprise systems, many organizations still face entrenched sources of friction:

- Information silos that hinder cross-departmental visibility

- High volumes of repetitive administrative tasks such as scheduling and data formatting

- Email overload leading to delayed critical communication and decision-making

- Scheduling conflicts across disparate calendar systems

- Manual data entry and reconciliation with high error rates

- Lengthy approval cycles due to lack of automated routing and validation

These bottlenecks not only slow operations but also erode employee engagement and heighten burnout risk. Organizations that fail to address these low-value tasks struggle to reallocate resources toward higher-order activities, which prolongs product development timelines and undermines customer satisfaction.

The Growing Performance Gap

While tools such as ERP systems, collaboration suites and analytics platforms promise streamlined processes, integration challenges and inconsistent usage often limit their impact. Employees revert to workarounds—spreadsheets, personal trackers or ad hoc communication channels—that bypass standardized workflows and obscure data insights. This misalignment between technology investment and actual productivity outcomes reflects a deeper issue: static solutions lack the contextual awareness and adaptability required to manage end-to-end workflow complexity.

Emergence of AI Agents as Cognitive Collaborators

From Static Automation to Dynamic Intelligence

AI agents represent a paradigm shift from rule-based scripts to autonomous systems capable of interpreting natural language, learning from context and adapting over time. Unlike traditional automation, which executes predefined tasks, AI agents employ machine learning and natural language processing to understand user intent and surface relevant information proactively. Use cases range from intelligent scheduling assistants and email triage to strategic decision-support systems that analyze data trends and recommend next steps. By offloading routine work—such as meeting coordination and document drafting—AI agents reduce cognitive burden and free employees to focus on activities that drive innovation.

Technological Maturity and Scalability

Recent advances in large language models and real-time reasoning have propelled AI agents into production-ready offerings. Solutions such as ChatGPT, Google Gemini and Microsoft 365 Copilot connect to enterprise applications through APIs and integrations and run on cloud infrastructure that scales to enterprise deployments. Key trends include:

- Model Performance – Near-human accuracy on many language, image and code benchmarks narrows the gap between manual and automated tasks.

- API-Driven Integration – Managed AI services and APIs from vendors such as AWS enable rapid embedding of agent capabilities into existing workflows.

- Distributed Infrastructure – Elastic cloud compute and edge deployments support the low latency that mission-critical workloads require.

This confluence of technological readiness and vendor support reduces adoption risk and accelerates time to value, marking a transition from isolated pilots to enterprise-scale deployments.

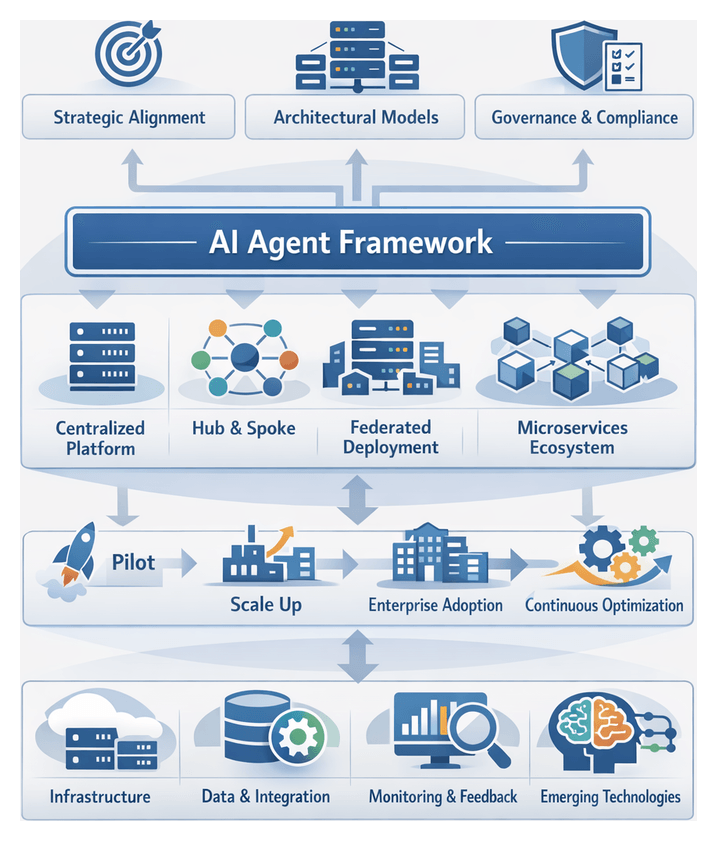

Conceptual and Analytical Frameworks for AI Agent Adoption

To guide strategic investment and governance, organizations employ a suite of analytical lenses:

- Technological Maturity Models – Benchmark agent autonomy, learning proficiency and integration depth, drawing on the Gartner Hype Cycle and Capability Maturity Model Integration.

- Socio-Technical Systems Theory – Examine the interplay between people, processes and technology to optimize adoption and align with organizational culture.

- Value Chain Analysis – Identify high-impact deployment areas across procurement, customer service and product development for competitive differentiation.

- Dynamic Capabilities Framework – Assess how agents enable sensing, seizing and transforming business processes in response to market shifts.

- Ethical and Governance Models – Embed transparency, fairness and accountability through bias audits, data minimization and audit trails.

Enterprises translate these frameworks into actionable assessments by evaluating agents across four key dimensions:

- Autonomy – Percentage of tasks executed end-to-end without human intervention.

- Intelligence – Accuracy of language understanding and predictive analytics, as demonstrated by conversational platforms such as IBM Watson Assistant and Google Dialogflow.

- Adaptability – Speed and effectiveness of model refinement through successive retraining cycles.

- Integration – Seamlessness of connection with CRMs, ERPs and collaboration platforms, as with Salesforce Einstein's embedding within Salesforce CRM.

Weighted scoring matrices enable prioritization of use cases based on strategic objectives—for example, emphasizing integration and intelligence for customer support, or autonomy and adaptability for internal knowledge management.
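A weighted scoring matrix reduces to multiplying each dimension rating by an objective-specific weight and summing the results. The Python sketch below assumes a hypothetical 1 to 5 rating scale and illustrative weights that are not drawn from any published framework; `UseCase`, `weighted_score` and `prioritize` are illustrative names.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical weights per strategic objective (each row sums to 1.0).
# Customer support emphasizes integration and intelligence; internal
# knowledge management emphasizes autonomy and adaptability.
OBJECTIVE_WEIGHTS = {
    "customer_support": {"autonomy": 0.15, "intelligence": 0.35,
                         "adaptability": 0.15, "integration": 0.35},
    "knowledge_management": {"autonomy": 0.35, "intelligence": 0.15,
                             "adaptability": 0.35, "integration": 0.15},
}


@dataclass
class UseCase:
    name: str
    ratings: dict[str, float]  # each dimension rated 1 (weak) to 5 (strong)


def weighted_score(use_case: UseCase, weights: dict[str, float]) -> float:
    """Sum of each dimension rating multiplied by its weight."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(w * use_case.ratings[dim] for dim, w in weights.items())


def prioritize(use_cases: list[UseCase], objective: str) -> list[tuple[str, float]]:
    """Rank use cases from highest to lowest weighted score for one objective."""
    weights = OBJECTIVE_WEIGHTS[objective]
    ranked = [(uc.name, round(weighted_score(uc, weights), 2)) for uc in use_cases]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Example: the same two candidates rank differently under each objective.
triage_bot = UseCase("Ticket triage bot", {"autonomy": 3, "intelligence": 5,
                                           "adaptability": 3, "integration": 4})
wiki_agent = UseCase("Internal wiki agent", {"autonomy": 5, "intelligence": 3,
                                             "adaptability": 4, "integration": 2})
print(prioritize([triage_bot, wiki_agent], "customer_support"))
print(prioritize([triage_bot, wiki_agent], "knowledge_management"))
```

Under these illustrative weights the triage bot leads for customer support while the wiki agent leads for knowledge management, which is the kind of objective-dependent ranking the matrix is meant to surface.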

Overlaying maturity models with ethical and socio-technical considerations helps balance feasibility with user acceptance, guiding investments that adhere to compliance requirements and organizational culture.

Design Principles and Governance Considerations

Embedding AI agents effectively demands a holistic approach combining design thinking, agile practices and robust governance:

- Role Definition – Clarify whether agents function as assistants, collaborators or autonomous operators to shape user interfaces and policy frameworks.

- Trust and Transparency – Incorporate confidence scores, decision trace logs and explainability dashboards to build user trust.

- Human-Agent Collaboration Models – Establish “human-in-the-loop” and “human-on-the-loop” protocols for oversight and feedback.

- Agile Development – Use iterative sprints, rapid prototyping and cross-functional workshops to ensure agents address real pain points.

- Change Management – Deploy training programs, documentation and communication plans to foster cultural readiness and skill development.

- Bias Mitigation and Privacy – Apply diverse sampling, differential privacy and federated learning techniques to prevent discriminatory outputs and maintain compliance.

- Auditability and Accountability – Design traceable workflows and assign oversight roles—such as ethics committees or AI stewardship councils—to monitor agent behavior and governance adherence.
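Several of these principles (confidence scores, human-in-the-loop and human-on-the-loop oversight, decision trace logs) can be combined into a single routing gate. The sketch below is one possible design, assuming the agent reports a confidence value between 0 and 1; the 0.90 threshold, the set of high-risk actions and the class names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentDecision:
    action: str
    confidence: float  # agent-reported confidence in [0, 1]
    rationale: str     # short explanation surfaced to reviewers


@dataclass
class OversightRouter:
    """Routes each agent decision to automatic execution or human review.

    Low-confidence or high-risk actions go to a human (human-in-the-loop);
    everything else executes automatically but is still logged so overseers
    can monitor it after the fact (human-on-the-loop).
    """
    auto_threshold: float = 0.90
    high_risk_actions: frozenset[str] = frozenset({"approve_payment", "delete_record"})
    audit_log: list[dict] = field(default_factory=list)

    def route(self, decision: AgentDecision) -> str:
        needs_review = (decision.action in self.high_risk_actions
                        or decision.confidence < self.auto_threshold)
        outcome = "human_review" if needs_review else "auto_execute"
        # Every decision is recorded, whatever the outcome, to keep an audit trail.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": decision.action,
            "confidence": decision.confidence,
            "rationale": decision.rationale,
            "outcome": outcome,
        })
        return outcome
```

Under these assumptions a reminder sent with 0.97 confidence executes automatically, while a payment approval always waits for a reviewer regardless of confidence. In practice the threshold would be tuned against observed error rates.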

Strategic Imperatives and Competitive Dynamics

As AI agents move from pilot to mainstream, competitive dynamics intensify. Organizations augmenting human teams with agent-driven insights can accelerate decision cycles, optimize costs and elevate customer experiences through responsive chatbots and personalized interfaces. Viewed through Porter’s Five Forces framework, intelligent agents heighten rivalry and raise barriers for late adopters. Research from McKinsey indicates that enterprises implementing AI at scale report 20–30 percent improvements in key performance indicators, setting new benchmarks for operational excellence.

Workforces now expect seamless, intelligent assistants embedded in tools such as Google Gemini (formerly Bard) and mobile scheduling apps. Technology Acceptance Model research shows that perceived usefulness and perceived ease of use are key predictors of adoption. Organizations that delay risk strategic disintermediation, talent drain and a patchwork of fragmented point solutions that undermines long-term agility.

Roadmap Overview and Core Strategic Themes

This guide presents a structured path for evaluating, integrating and scaling AI agents, centered on four interdependent themes:

- Alignment with Business Objectives – Map agent capabilities to clear goals using scorecards and value-at-stake analyses to focus on high-impact scenarios.

- Analytical Rigor – Employ performance and trust metrics (throughput, error rates, explainability scores) and ROI calculators that account for usage-based charges for AWS AI services, subscription fees for Microsoft 365 Copilot and licensing for Google Gemini (formerly Bard).

- Domain-Specific Customization – Integrate industry ontologies, proprietary data and bespoke models to address unique workflows across marketing, finance and operations.

- Ethical Stewardship and Governance – Implement bias detection, privacy-preserving architectures and audit frameworks that align with GDPR, CCPA and sector regulations.

Subsequent chapters explore practical applications—from administrative automation with ChatGPT plugins and scheduling via Asana and Trello to collaboration enhancements in Zoom and strategic decision support with IBM Watson. Architectural patterns for ERP, CRM and data warehouse integration, measurement frameworks for ROI and domain-specific customization techniques round out the roadmap.

Key considerations—including data quality, integration complexity and cultural readiness—will be examined in depth to temper expectations with practical risk assessments and ensure sustainable success.

Expected Outcomes and Next Steps

By internalizing the insights, frameworks and case studies presented, readers will develop the capability to:

- Define Targeted AI Agent Strategies – Prioritize use cases using strategic alignment and the Capability Value Matrix.

- Quantify Performance Gains and ROI – Track metrics such as throughput, error reduction and labor cost savings to validate impact.

- Customize Agents for Domain Impact – Leverage industry ontologies and custom data pipelines for deeper integration and higher adoption.

- Govern and Scale Responsibly – Implement ethical oversight, bias mitigation and compliance protocols as agents assume greater autonomy.

- Anticipate Future Trends – Incorporate emerging capabilities—multimodal interfaces, autonomous learning loops and federated architectures—into long-term roadmaps.

A blended approach of executive strategy sessions, technical deep dives, functional workshops and ethics forums will help cross-functional teams translate this guide into actionable pilot initiatives. Regular review cadences, continuous learning programs and governance checkpoints will ensure AI agent deployments deliver sustainable competitive advantage and foster an innovation culture.

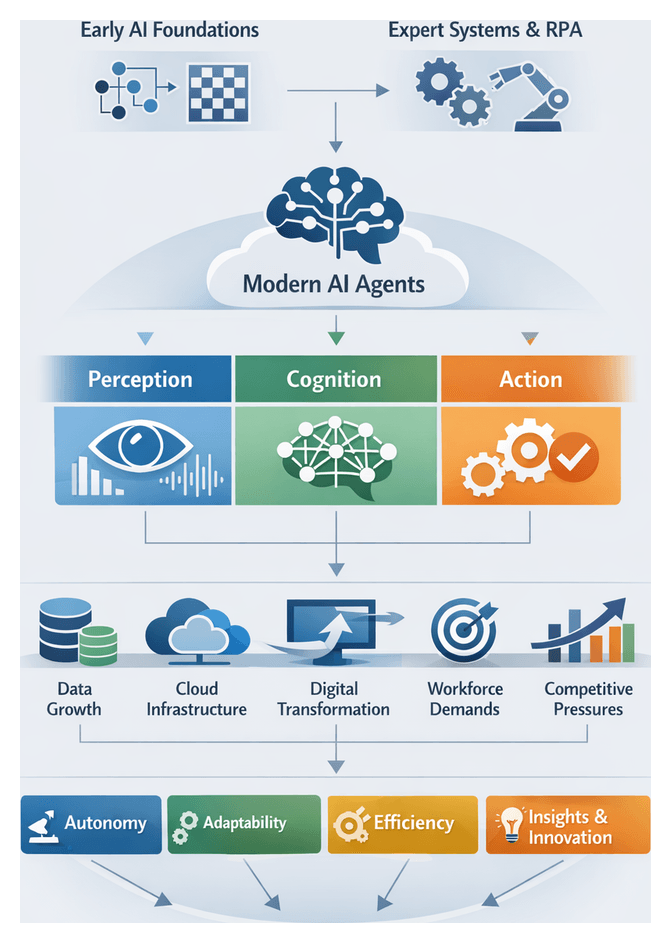

Chapter 1: Foundations of AI Agents in the Workplace

Historical Evolution and Business Definition of AI Agents

The idea of software entities capable of autonomous reasoning dates back to early computing pioneers such as Alan Turing and John McCarthy, whose work in the 1950s and 1960s laid the groundwork for symbolic, rule-based systems. Initial applications focused on constrained problem-solving tasks, exemplified by chess programs and theorem provers. The 1970s and 1980s saw the rise of expert systems, if-then rule engines applied to medical diagnosis and engineering design, which required extensive knowledge engineering and could not learn from new data.

With the advent of Robotic Process Automation (RPA) in the early 2000s, software bots began automating repetitive back-office tasks by mimicking user interactions, streamlining data entry and invoice processing. In the 2010s, advances in machine learning and natural language processing gave rise to intelligent virtual assistants such as Siri and Alexa, introducing flexible conversational interfaces and probabilistic reasoning.

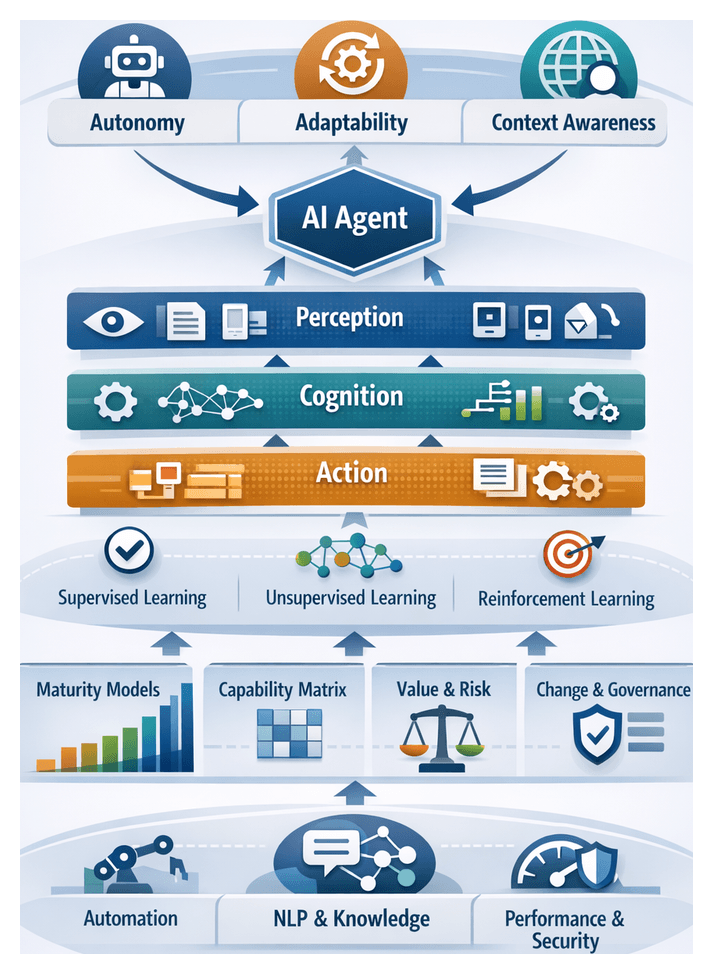

Today’s AI agents combine deep learning, large language models, cloud computing, and real-time analytics to perceive inputs, reason over information, and take actions toward predefined objectives. In business contexts, an AI agent exhibits:

- Autonomy: Operating with minimal human intervention to initiate tasks and make decisions.

- Adaptability: Learning from data and interactions to refine behavior over time.

- Context Awareness: Interpreting unstructured inputs—natural language or visual data—and adjusting responses to situational nuances.

At their core, AI agents encompass three functional layers: perception (ingesting and interpreting text, speech, or sensor data), cognition (applying machine learning models or business rules to derive insights and plan next steps), and action (executing tasks such as database updates, message dispatch, or workflow triggers).
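The perception, cognition and action layers can be illustrated as a simple pipeline. This minimal Python sketch uses keyword rules as a stand-in for the machine learning models a real agent would apply in the cognition layer, and it only records intended actions rather than calling real systems; all function names and action labels are hypothetical.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class Percept:
    source: str
    text: str


@dataclass
class Plan:
    action: str
    payload: dict = field(default_factory=dict)


def perceive(raw: str, source: str = "email") -> Percept:
    """Perception: normalize an unstructured input (here, collapse whitespace)."""
    return Percept(source=source, text=" ".join(raw.split()))


def reason(percept: Percept) -> Plan:
    """Cognition: keyword rules stand in for an ML model or business-rule engine."""
    text = percept.text.lower()
    if "invoice" in text:
        match = re.search(r"invoice\s*#?\s*(\d+)", text)
        return Plan("route_to_finance", {"invoice_id": match.group(1) if match else None})
    if "meeting" in text or "schedule" in text:
        return Plan("propose_meeting_slots")
    return Plan("escalate_to_human", {"reason": "no matching rule"})


def act(plan: Plan, outbox: list[tuple[str, dict]]) -> None:
    """Action: record the intended downstream call instead of executing it."""
    outbox.append((plan.action, plan.payload))


def run_agent(messages: list[str]) -> list[tuple[str, dict]]:
    """Run every message through perceive -> reason -> act."""
    outbox: list[tuple[str, dict]] = []
    for raw in messages:
        act(reason(perceive(raw)), outbox)
    return outbox
```

Keeping the three layers as separate functions means the rule-based `reason` step could later be swapped for a model call without changing how inputs are ingested or actions are dispatched.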

Core Pillars and Drivers of AI Agent Adoption

AI agent capabilities can be organized into three interconnected pillars:

- Data Perception: Ingesting and normalizing inputs from diverse sources, including emails, documents, APIs, and live data streams.

- Reasoning and Decision Making: Applying analytics, predictive models, and business rules to identify opportunities, risks, or next best actions.

- Task Execution: Interfacing with users, third-party systems, or downstream processes to complete activities and close feedback loops.

Several forces drive enterprise adoption:

- Digital Transformation Imperative: Organizations digitize processes to stay competitive, investing in intelligent automation.

- Data Proliferation: Exponential growth of structured and unstructured data creates demand for AI-driven analysis and decision support.

- Cloud-Native Architectures: Scalable infrastructure lowers barriers to deploying sophisticated AI models at enterprise scale.

- Workforce Expectations: Employees expect seamless digital experiences and self-service tools that enhance productivity.

- Competitive Dynamics: Early AI agent adopters gain efficiency advantages, raising industry benchmarks for productivity.

Strategically, AI agents extend automation into domains requiring judgment, adaptability, and learning, delivering:

- Operational Agility: Reconfiguring workflows in response to market shifts or internal changes.

- Scalability: Replicating trained agents across teams and geographies without proportional headcount increases.

- Innovation Acceleration: Offloading cognitive tasks to agents so human teams focus on creative and strategic work.

- Enhanced Decision Quality: Grounding decisions in data-driven insights and reducing the errors introduced by manual data parsing.

Industry Perspectives and Capability Frameworks

Analysts categorize AI agents along a continuum from rule-based RPA to cognitive automation with natural language understanding and adaptive learning. Three archetypes emerge:

- Transactional Bots: Automate repetitive data tasks with high reliability and low complexity.

- Conversational Assistants: Engage employees or customers via chat or voice, balancing automated resolution with seamless human hand-off.

- Advisory Agents: Generate strategic insights by analyzing structured and unstructured data, surfacing trends and anomalies.

Industry frameworks for evaluating agent capabilities include:

- Maturity Model Analysis: Stages from basic task automation to fully autonomous, context-aware agents.

- Capability Matrices: Mapping functions like natural language processing, computer vision, and predictive analytics to use cases.

- Value-Risk Assessment: Balancing quantitative ROI projections with qualitative risks—data sensitivity, compliance exposures, and change management.

- Open Architecture Index: Measuring interoperability with enterprise systems, data warehouses, and analytics platforms.

- Human-AI Collaboration Scorecards: Assessing interaction effectiveness, user satisfaction, and hand-off processes.

Key interpretive dimensions span:

- Autonomy and Decision-Making: Calibrating agent authority to risk tolerance and governance mechanisms.

- Learning and Adaptation: Refining models through supervised and reinforcement learning, with feedback loops incorporating user corrections and outcome metrics.

- Integration and Interoperability: Evaluating API readiness, data schema alignment, event-driven flows, and performance benchmarks.

- Explainability and Trust: Exposing decision logic, confidence scores, and audit trails to foster credibility and compliance.

Roles and Domain Applications

AI agents fulfill distinct strategic roles that span operational, tactical, and strategic domains:

- Process Orchestrators: Trigger subprocesses, monitor status, and escalate exceptions to reduce cycle times and costs.

- Engagement Assistants: Interface through chat, voice, or email, scaling support operations and improving satisfaction scores.

- Insight Generators: Analyze data to surface recommendations, measured by prediction accuracy and business impact.

- Compliance Monitors: Continuously review transactions and communications for policy adherence, tracking violation detection and remediation times.

- Knowledge Curators: Index organizational content to provide context-aware answers, gauged by relevance and user engagement.

Administrative Workflows

Automating email triage, scheduling, and document processing reallocates human cognitive resources to higher-value work. AI agents analyze message content, sender reputation, and calendar cues to prioritize emails, auto-draft replies, and suggest meeting slots using solutions such as Microsoft Power Automate. Document-processing agents equipped with OCR and semantic extraction ingest invoices, contracts, and purchase orders. Platforms such as UiPath classify, validate, and route documents, shifting focus from data entry to exception management.
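The email prioritization described above can be sketched as a weighted blend of three signals: message content, sender reputation and calendar context. The keyword list, reputation values and 0.5/0.3/0.2 weights below are illustrative assumptions, not how Microsoft Power Automate or any other product scores mail.

```python
from __future__ import annotations

from dataclasses import dataclass

# Illustrative keyword list; a production agent would use a trained classifier.
URGENT_TERMS = ("urgent", "asap", "deadline", "today")


@dataclass
class Email:
    sender: str
    subject: str
    body: str


def priority_score(email: Email, sender_reputation: dict[str, float],
                   upcoming_attendees: set[str]) -> float:
    """Blend content, sender and calendar signals into a 0-1 priority score."""
    text = f"{email.subject} {email.body}".lower()
    # Content signal: two or more urgency terms saturate at 1.0.
    content = min(1.0, sum(term in text for term in URGENT_TERMS) / 2)
    # Sender signal: unknown senders get a low default reputation.
    reputation = sender_reputation.get(email.sender, 0.3)
    # Calendar signal: the sender attends one of the recipient's upcoming meetings.
    calendar = 1.0 if email.sender in upcoming_attendees else 0.0
    return round(0.5 * content + 0.3 * reputation + 0.2 * calendar, 3)


def triage(emails: list[Email], sender_reputation: dict[str, float],
           upcoming_attendees: set[str]) -> list[Email]:
    """Order the inbox from highest to lowest priority."""
    return sorted(emails,
                  key=lambda e: priority_score(e, sender_reputation, upcoming_attendees),
                  reverse=True)
```

A message from a reputable sender who is also in an upcoming meeting and who writes "deadline" and "ASAP" would rise to the top, while a newsletter mentioning "today" would score low despite the keyword.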

Customer-Facing Operations

In support centers, AI agents triage tickets by topic and urgency, assign them to queues, and predict escalation risk using historical resolution and sentiment analysis. Conversational agents such as IBM Watson Assistant and Amazon Lex provide 24/7 engagement, balancing containment and hand-off metrics, and integrating with CRM systems, knowledge bases, and live chat.

Supply Chain and Logistics

Agents monitor inventory across warehouses, apply predictive analytics to forecast stockouts and surpluses, and trigger replenishment orders via platforms like Blue Yonder Luminate Platform. In the order-to-cash cycle, agents orchestrate billing, shipping, and exception handling, linking order management systems with logistics providers to optimize carrier selection and accelerate cash conversion.
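One common way to decide when to trigger a replenishment order is the textbook reorder-point rule, which pads expected demand over the supplier lead time with safety stock: reorder point = d̄ × L + z × σ_d × √L, where d̄ and σ_d are the mean and standard deviation of daily demand, L is the lead time in days and z comes from the target service level. The Python sketch below assumes independent, roughly normally distributed daily demand and a fixed lead time; it illustrates the general technique, not the forecasting method used by Blue Yonder or any other platform.

```python
from __future__ import annotations

import math
from statistics import NormalDist, mean, stdev


def reorder_point(daily_demand: list[float], lead_time_days: float,
                  service_level: float = 0.95) -> float:
    """Expected demand over the lead time plus safety stock."""
    avg = mean(daily_demand)
    sigma = stdev(daily_demand)                 # sample std dev of daily demand
    z = NormalDist().inv_cdf(service_level)     # about 1.645 for 95 percent
    safety_stock = z * sigma * math.sqrt(lead_time_days)
    return avg * lead_time_days + safety_stock


def replenishment_qty(on_hand: float, on_order: float, daily_demand: list[float],
                      lead_time_days: float, order_qty: float,
                      service_level: float = 0.95) -> float:
    """Order `order_qty` units once inventory position falls to the reorder point."""
    position = on_hand + on_order               # stock on hand plus open orders
    rop = reorder_point(daily_demand, lead_time_days, service_level)
    return order_qty if position <= rop else 0.0


demand = [20, 22, 18, 25, 15, 20, 20]           # hypothetical last 7 days of sales
print(round(reorder_point(demand, lead_time_days=4), 1))
```

With this sample the reorder point lands a little above 90 units: 80 units of expected lead-time demand plus roughly 10 units of safety stock.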

Human Resources and Employee Services

Conversational agents guide new hires through orientation, training modules, and policy acknowledgments, escalating queries to HR partners when needed. Analytics on engagement, time-to-productivity, and satisfaction correlate agent-facilitated onboarding with faster integration and reduced attrition.

IT and Technical Operations

AIOps agents ingest telemetry, detect anomalies, correlate events, and suppress noise using solutions like Moogsoft and Splunk IT Service Intelligence. Coupled with runbook automation, they reduce mean time to detect and respond, shifting from reactive firefighting to proactive system management.
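Anomaly detection on telemetry can start with something as simple as a rolling z-score. The sketch below flags a metric value that sits more than three standard deviations from its trailing window and uses a cooldown period as a crude form of alert suppression. Window size, threshold and cooldown are illustrative assumptions; tools such as Moogsoft and Splunk IT Service Intelligence apply considerably more sophisticated event correlation.

```python
from __future__ import annotations

from collections import deque
from statistics import mean, pstdev


def detect_anomalies(values: list[float], window: int = 10, threshold: float = 3.0,
                     cooldown: int = 5) -> list[int]:
    """Return indices whose z-score against the trailing window exceeds `threshold`.

    After an alert, further alerts are suppressed for `cooldown` points so one
    incident does not page responders repeatedly.
    """
    history: deque[float] = deque(maxlen=window)
    alerts: list[int] = []
    last_alert: int | None = None
    for i, value in enumerate(values):
        if len(history) == window:
            mu, sigma = mean(history), pstdev(history)
            is_outlier = sigma > 0 and abs(value - mu) / sigma > threshold
            in_cooldown = last_alert is not None and i - last_alert <= cooldown
            if is_outlier and not in_cooldown:
                alerts.append(i)
                last_alert = i
        history.append(value)
    return alerts


# Hypothetical API latency samples (ms) with a spike starting at index 10.
latency = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 250, 255, 101, 99, 100]
print(detect_anomalies(latency))  # → [10]
```

The second high reading at index 11 would also exceed the threshold on its own, but it falls inside the cooldown, so responders see one alert for the incident instead of two.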

Marketing and Sales Enablement

Agents analyze engagement data to assign lead scores and refine models based on closed-won and closed-lost outcomes through platforms such as Salesforce Einstein. They also generate draft content, optimize channel selection, and adjust timing using multi-touch attribution and marketing mix modeling frameworks, ensuring campaign performance aligns with objectives.
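Multi-touch attribution assigns conversion credit across the channels a buyer touched. The sketch below implements two simple rule-based models: linear (equal credit) and position-based (40 percent to the first touch, 40 percent to the last, 20 percent shared by the middle touches). These are generic heuristics, not Salesforce Einstein's method, and the 40/20/40 split is a common convention rather than a standard.

```python
from __future__ import annotations


def attribute(touchpoints: list[str], revenue: float,
              model: str = "linear") -> dict[str, float]:
    """Split one conversion's revenue across the ordered channel touchpoints."""
    n = len(touchpoints)
    if n == 0:
        return {}
    if model == "linear":
        weights = [1 / n] * n                   # equal credit to every touch
    elif model == "position":
        if n <= 2:
            weights = [1 / n] * n               # first and last split evenly
        else:
            middle = 0.2 / (n - 2)              # 20 percent shared by middle touches
            weights = [0.4] + [middle] * (n - 2) + [0.4]
    else:
        raise ValueError(f"unknown attribution model: {model}")
    credit: dict[str, float] = {}
    for channel, weight in zip(touchpoints, weights):
        # A channel touched more than once accumulates credit for each touch.
        credit[channel] = credit.get(channel, 0.0) + weight * revenue
    return {channel: round(amount, 2) for channel, amount in credit.items()}


journey = ["webinar", "email", "paid_search", "email", "demo"]
print(attribute(journey, 10_000))
print(attribute(journey, 10_000, model="position"))
```

Comparing the two outputs shows how the choice of model shifts credit: the linear model rewards the repeated email touches most, while the position-based model favors the webinar that opened the journey and the demo that closed it.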

Strategic Foundations for Deployment

Strategic Alignment and Goal Definition

- Articulate business outcomes in quantitative terms—reductions in overhead or cycle times.

- Map agent capabilities to specific process bottlenecks or value levers.

- Establish executive sponsorship and cross-functional governance to align technical teams and business units.

Organizational Readiness and Cultural Considerations

- Leadership commitment and change management capacity.

- Data literacy and technical skills within the workforce.

- Process maturity and standardization.

- Openness to experimentation and iterative learning.

Data Infrastructure and Quality Imperatives

- Real-time data pipelines and cleansing protocols to minimize bias and errors.

- Metadata management for discoverability and lineage tracking.

- Scalable storage and compute architectures for high-volume processing.

Governance, Risk Management, and Ethical Safeguards

- Defined roles for model stewardship spanning data scientists, compliance officers, and business owners.

- Risk assessments for algorithmic bias, privacy breaches, and operational failures.

- Audit trails and documentation standards for input, output, and decision rationale traceability.

- Ethics review panels to validate use cases against corporate values and regulations.

Integration Complexity and Technical Constraints

- API gateways and microservices to encapsulate agent functions.

- Event-driven data flows and message buses for real-time coordination.

- Containerization and orchestration platforms for deployment, scaling, and rollback.

- Security architectures with encryption, authentication, and role-based access control.

Measurement, Evaluation, and Continuous Refinement

- Define KPIs aligned to goals—time saved, accuracy, and user satisfaction.

- Deploy monitoring dashboards for trends, anomalies, and utilization metrics.

- Implement feedback mechanisms for error reporting and improvement suggestions.

- Conduct periodic governance reviews to update models and incorporate new data.

Limitations and Strategic Cautions

- Potential algorithmic bias from incomplete training data.

- Performance degradation in novel scenarios beyond training scope.

- Integration challenges with legacy or poorly documented systems.

- Regulatory uncertainties affecting agent autonomy.

- Workforce tensions without proactive reskilling and role redesign.

By internalizing these strategic, organizational, technical, and governance considerations, enterprises can navigate AI agent deployment with confidence, unlocking sustainable productivity gains and competitive advantage.

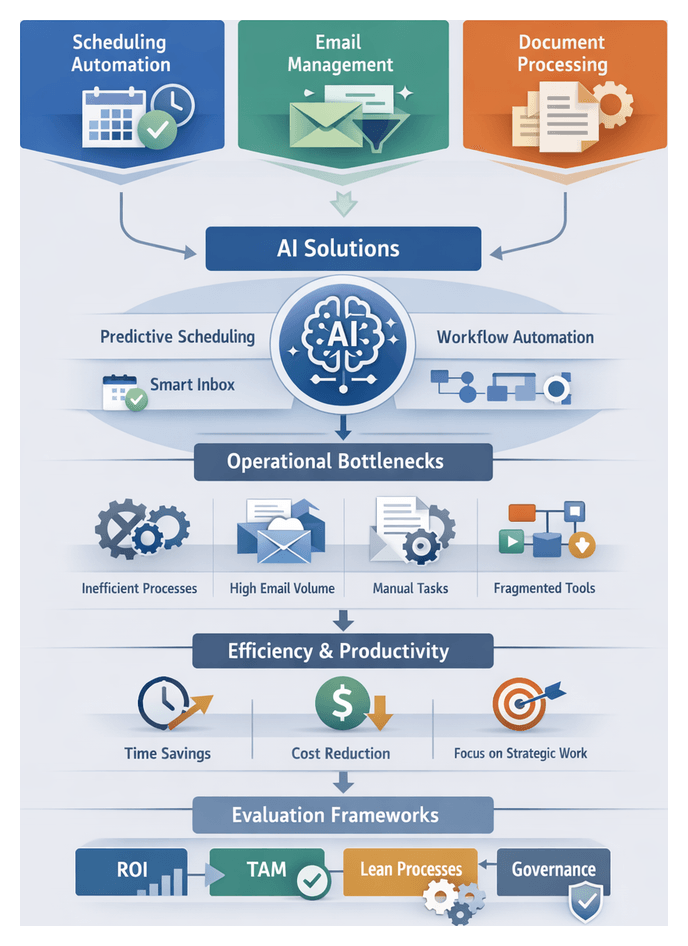

Chapter 2: Automating Administrative Tasks for Efficiency

Defining Administrative Automation and Its Impact

Administrative tasks underpin day-to-day operations, from coordinating complex schedules and managing high volumes of email to processing critical documents. These routine activities can consume up to 30 percent of employee time, diverting focus from strategic initiatives. Traditional workflows rely heavily on manual effort—checking calendars, drafting standard responses and routing documents—creating a burden on staff and driving labor costs upward.

Administrative automation applies intelligent software agents, robotic process automation and natural language processing to streamline or eliminate repetitive tasks. By integrating artificial intelligence and machine learning, organizations reduce time spent on low-value activities and reallocate resources toward strategic, creative and customer-facing work. AI-driven agents deliver predictive scheduling, smart email management and automated document handling, enabling enterprises to scale support functions without proportional headcount increases and to maintain service levels during periods of growth or sudden demand spikes.

Operational Challenges and Bottlenecks

Outdated processes and fragmented technologies create common productivity drains:

- Inefficient scheduling coordination, leading to back-and-forth email threads and missed meetings

- High email volume, resulting in delayed responses and information overload

- Manual document routing and approval cycles, causing process delays and versioning errors

- Disparate tools for travel bookings, expense reports and invoice processing

- Repetitive data entry across multiple systems, increasing the risk of human error

These bottlenecks slow operations, frustrate staff and undermine customer satisfaction. Without real-time visibility into pending tasks and approvals, managers respond reactively rather than planning proactively, eroding competitive advantage in fast-paced industries.

AI-Powered Administrative Solutions

Scheduling Simplification

Coordinating meetings is time-consuming. Intelligent scheduling agents automate core steps:

- Analyze participants’ calendars to identify optimal meeting times

- Generate and send invitations automatically

- Manage rescheduling requests and update all stakeholders

- Integrate with video-conferencing platforms and room booking systems

Tools such as Calendly reduce negotiation overhead by sharing real-time availability, and automated reminders and agenda distribution help raise attendance rates.
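The core of calendar analysis is finding windows where every participant is free. The following is a minimal Python sketch, assuming busy intervals have already been fetched from each calendar and expressed as naive datetimes in one shared time zone; `common_free_windows` is a hypothetical helper, not part of any calendar API.

```python
from __future__ import annotations

from datetime import datetime, timedelta


def common_free_windows(busy_by_person: dict[str, list[tuple[datetime, datetime]]],
                        day_start: datetime, day_end: datetime,
                        duration: timedelta) -> list[tuple[datetime, datetime]]:
    """Return (start, end) windows in which every participant is free for at least `duration`."""
    # 1. Pool everyone's busy intervals and merge overlapping ones.
    merged: list[tuple[datetime, datetime]] = []
    for start, end in sorted(iv for busy in busy_by_person.values() for iv in busy):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    # 2. Walk the gaps between busy blocks, clipped to the working day.
    windows: list[tuple[datetime, datetime]] = []
    cursor = day_start
    for start, end in merged:
        gap_end = min(start, day_end)
        if gap_end - cursor >= duration:
            windows.append((cursor, gap_end))
        cursor = max(cursor, end)
    if day_end - cursor >= duration:
        windows.append((cursor, day_end))
    return windows


def at(hour: int, minute: int = 0) -> datetime:
    return datetime(2024, 5, 6, hour, minute)   # hypothetical meeting day


busy = {"alice": [(at(9), at(10, 30)), (at(13), at(14))],
        "bob": [(at(10), at(11)), (at(15, 30), at(16))]}
for start, end in common_free_windows(busy, at(9), at(17), timedelta(hours=1)):
    print(start.strftime("%H:%M"), "-", end.strftime("%H:%M"))
```

Merging overlapping busy blocks first keeps the gap scan to a single pass over sorted intervals. Ranking the resulting windows (for example, avoiding early mornings for remote participants) would be a separate step.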

Email Management Optimization

AI-enabled email agents enhance inbox efficiency by:

- Filtering and categorizing incoming messages by priority and topic

- Suggesting or auto-generating draft responses using natural language generation

- Scheduling follow-up reminders and tracking unanswered items

- Performing sentiment analysis to flag urgent or sensitive communications

Solutions such as Superhuman, Boomerang and built-in features in Microsoft Outlook and Gmail’s Priority Inbox help users process email strategically, reducing clutter and ensuring critical messages receive timely attention.

Document Handling and Workflow Automation

AI agents transform document processing by:

- Automatically extracting key information from scanned or digital documents

- Classifying and tagging items based on content and metadata

- Routing documents through predefined approval paths with status tracking

- Ensuring compliance with version control and audit requirements

Platforms such as UiPath, Automation Anywhere and Blue Prism integrate optical character recognition and AI-driven classifiers to digitize workflows. Legal teams also leverage agents integrated with DocuSign for automated signature routing and audit trails, freeing counsel to focus on high-value negotiation.
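The extract, classify and route sequence can be sketched on plain text that OCR has already produced. The regular expressions, field names and 5,000 approval threshold below are hypothetical; commercial platforms such as UiPath, Automation Anywhere and Blue Prism rely on trained extraction models rather than hand-written patterns.

```python
from __future__ import annotations

import re

# Hypothetical patterns for a plain-text invoice produced by OCR.
FIELD_PATTERNS = {
    "invoice_number": r"Invoice\s*(?:No\.?|Number|#)\s*[:#]?\s*([A-Z0-9-]+)",
    "total": r"Total\s*(?:Due)?\s*:?\s*\$?([\d,]+\.\d{2})",
    "vendor": r"Vendor\s*:\s*(.+)",
}
APPROVAL_LIMIT = 5000.00  # illustrative threshold for manager sign-off


def extract_fields(text: str) -> dict[str, str | None]:
    """Pull each required field from the text, or None if it is missing."""
    fields: dict[str, str | None] = {}
    for name, pattern in FIELD_PATTERNS.items():
        match = re.search(pattern, text, flags=re.IGNORECASE)
        fields[name] = match.group(1).strip() if match else None
    return fields


def route(fields: dict[str, str | None]) -> str:
    """Choose an approval path based on completeness and amount."""
    if any(value is None for value in fields.values()):
        return "exception_queue"                # missing data goes to manual review
    amount = float(fields["total"].replace(",", ""))
    return "manager_approval" if amount > APPROVAL_LIMIT else "auto_approve"


invoice_text = "Vendor: Acme Supplies Ltd\nInvoice No: INV-2041\nTotal Due: $7,250.00"
fields = extract_fields(invoice_text)
print(fields, route(fields))
```

Documents missing any required field drop into an exception queue, so staff handle only the cases the agent cannot complete on its own.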

Frameworks and Metrics for Evaluating AI Agents

Analytical Frameworks

To assess AI agent performance, organizations draw on multiple models:

- Technology Acceptance Model (TAM) evaluates perceived usefulness and ease of use, linking factors to adoption rates.

- Return on Investment (ROI) Analysis quantifies cost savings from time reclaimed versus implementation expenses.

- Lean Process Improvement identifies waste and cycle-time reduction opportunities in administrative workflows.

- Employee Experience Frameworks measure impacts on satisfaction, stress levels and work-life balance.

- Governance and Compliance Models ensure data privacy, audit trails and algorithmic transparency.
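A basic ROI analysis compares the value of reclaimed time with implementation and subscription costs. The sketch below assumes the saved hours are actually redeployed to productive work, values them at a loaded hourly rate, assumes 46 working weeks per year and ignores discounting; every input in the example is a hypothetical figure, not vendor pricing.

```python
from __future__ import annotations

import math


def automation_roi(hours_saved_per_week: float, loaded_hourly_rate: float,
                   annual_subscription: float, implementation_cost: float,
                   working_weeks: int = 46, years: int = 3) -> dict[str, float]:
    """Undiscounted ROI of an automation agent over a fixed horizon."""
    annual_benefit = hours_saved_per_week * loaded_hourly_rate * working_weeks
    total_benefit = annual_benefit * years
    total_cost = implementation_cost + annual_subscription * years
    roi_pct = 100 * (total_benefit - total_cost) / total_cost
    # Months of net benefit needed to recover the up-front implementation cost.
    monthly_net = (annual_benefit - annual_subscription) / 12
    payback_months = implementation_cost / monthly_net if monthly_net > 0 else math.inf
    return {"annual_benefit": annual_benefit, "total_cost": total_cost,
            "roi_pct": roi_pct, "payback_months": payback_months}


result = automation_roi(hours_saved_per_week=40, loaded_hourly_rate=55,
                        annual_subscription=18_000, implementation_cost=60_000)
print({k: round(v, 1) for k, v in result.items()})
```

With 40 hours saved per week at a $55 loaded rate, an $18,000 annual subscription and a $60,000 implementation, this yields roughly 166 percent ROI over three years and a payback period of under nine months. Halving the hours saved is a quick sensitivity check on how much the result depends on that assumption.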

Key Metrics for Scheduling Agents

- Time-to-Schedule – Average elapsed time from request to calendar confirmation.

- Meeting Fill Rate – Percentage of proposed slots accepted by all participants.

- Reschedule Frequency – Average number of rescheduling events per meeting.

- Participant Satisfaction – Survey scores on ease and responsiveness.

- Calendar Utilization – Percentage of working hours occupied by meetings.

- Administrative Overhead Reduction – FTE hours saved from scheduling tasks.

Vendors like Calendly and x.ai publish benchmarks to guide performance targets and track improvements over time.
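Several of these scheduling KPIs can be computed directly from a log of meeting requests. The record fields and sample data below are illustrative assumptions about what a scheduling agent might log; participant satisfaction and FTE savings require survey and time-tracking data and are omitted.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class MeetingRequest:
    requested_at: datetime
    confirmed_at: datetime | None   # None if the meeting was never scheduled
    slots_proposed: int
    slots_accepted_by_all: int
    reschedules: int
    duration: timedelta


def scheduling_metrics(requests: list[MeetingRequest],
                       working_hours: float) -> dict[str, float]:
    """Compute four scheduling KPIs; assumes at least one confirmed meeting."""
    confirmed = [r for r in requests if r.confirmed_at is not None]
    time_to_schedule_h = mean((r.confirmed_at - r.requested_at).total_seconds() / 3600
                              for r in confirmed)
    fill_rate = (sum(r.slots_accepted_by_all for r in requests)
                 / sum(r.slots_proposed for r in requests))
    meeting_hours = sum(r.duration.total_seconds() / 3600 for r in confirmed)
    return {
        "time_to_schedule_hours": round(time_to_schedule_h, 2),
        "meeting_fill_rate_pct": round(100 * fill_rate, 1),
        "reschedules_per_meeting": round(mean(r.reschedules for r in confirmed), 2),
        "calendar_utilization_pct": round(100 * meeting_hours / working_hours, 1),
    }


day = datetime(2024, 5, 6, 9)   # hypothetical log for one week
log = [
    MeetingRequest(day, day + timedelta(hours=2), 3, 1, 0, timedelta(hours=1)),
    MeetingRequest(day + timedelta(hours=1), day + timedelta(hours=25), 2, 1, 1,
                   timedelta(hours=1)),
    MeetingRequest(day + timedelta(days=1), None, 3, 0, 0, timedelta(hours=1)),
]
print(scheduling_metrics(log, working_hours=40))
```

Tracking these values per week, before and after an agent is introduced, gives the trend line that benchmark comparisons depend on.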

Key Metrics for Email Agents

- Processing Throughput – Emails processed per hour, including classification and response generation.

- Classification Accuracy – Percentage of messages correctly categorized by priority or topic.

- Response Quality Score – Evaluations of coherence, relevance and tone of automated replies.

- Inbox Zero Achievement Rate – Percentage of users maintaining an empty inbox at day’s end.

- Engagement and Trust Levels – User surveys on confidence in agent-generated content.

- Risk and Escalation Metrics – Frequency of false positives and negatives in urgent communications.

Solutions such as Superhuman and enterprise features in Outlook and Gmail provide analytics dashboards for continuous model refinement.
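Classification accuracy and the false positives and negatives for urgent messages can be computed from a labeled sample of agent decisions. The following is a minimal sketch, assuming predicted and human-verified labels are available as parallel lists and that "urgent" is the label that matters most for escalation; the label names are hypothetical.

```python
from __future__ import annotations


def email_agent_metrics(predicted: list[str], actual: list[str],
                        urgent_label: str = "urgent") -> dict[str, float]:
    """Accuracy over all labels plus error counts for the urgent class."""
    if not actual or len(predicted) != len(actual):
        raise ValueError("need equally sized, non-empty label lists")
    pairs = list(zip(predicted, actual))
    accuracy = sum(p == a for p, a in pairs) / len(pairs)
    tp = sum(p == urgent_label and a == urgent_label for p, a in pairs)
    fp = sum(p == urgent_label and a != urgent_label for p, a in pairs)  # false alarms
    fn = sum(p != urgent_label and a == urgent_label for p, a in pairs)  # missed urgent mail
    return {
        "classification_accuracy": accuracy,
        "urgent_false_positives": fp,
        "urgent_false_negatives": fn,
        "urgent_precision": tp / (tp + fp) if tp + fp else 0.0,
        "urgent_recall": tp / (tp + fn) if tp + fn else 0.0,
    }


predicted = ["urgent", "routine", "urgent", "newsletter", "routine", "urgent"]
actual = ["urgent", "urgent", "routine", "newsletter", "routine", "urgent"]
print(email_agent_metrics(predicted, actual))
```

Reporting false negatives separately matters because a missed urgent message usually costs more than a false alarm, even when overall accuracy looks healthy.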

Document Automation Metrics

- Cycle Time Reduction – Percentage decrease in document approval times.

- Extraction Accuracy – Rate of correctly captured data fields.

- Exception Rate – Frequency of manual interventions required.

- Compliance Incidents – Number of audit findings or versioning errors.

- Cost Savings – Reduction in headcount and error-related expenses.

Strategic Themes and Implementation Considerations

Successful automation aligns with high-value business priorities and employs a phased, modular approach. Pilot deployments in defined contexts build stakeholder confidence and reduce risk before broader roll-outs. Scalability and interoperability ensure that agents integrate into a cohesive ecosystem rather than functioning as isolated point solutions.

Key considerations include:

- Process Mapping – Document existing workflows and identify rule-based tasks for automation.

- Technology Integration – Ensure seamless connectivity between calendars, email clients and document repositories.

- Data Governance – Establish policies for secure access, privacy compliance and auditability.

- Performance Measurement – Define metrics for time saved, error reduction and user satisfaction.

- Vendor Strategy – Balance proprietary platforms against open-ecosystem tools for flexibility and cost control.

Change Management and Organizational Readiness

End-user trust and skills are critical. Integrated training programs should combine technical onboarding with clear communication about productivity gains. Explainability features in agent interfaces allow users to review decision rationales and override actions. Feedback loops and governance councils ensure performance metrics remain tied to business outcomes and employee satisfaction.

Governance, Compliance and Vendor Ecosystems

Robust governance models embed compliance checkpoints within agent logic, enforce data retention and audit requirements, and monitor algorithmic bias. Procurement teams use multi-criteria decision analysis to compare vendors on features, security, support and total cost of ownership. Automation champions in each department serve as liaisons, identify friction points and drive grassroots adoption.
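Multi-criteria decision analysis covers many methods. The simplest is the weighted-sum model, sketched below. The criteria weights and the 1 to 5 vendor scores are hypothetical. The total-cost-of-ownership score is inverted, so a higher score means a lower cost.

```python
# Hypothetical criteria weights (must sum to 1).
WEIGHTS = {"features": 0.35, "security": 0.30, "support": 0.15, "tco": 0.20}

# Hypothetical 1-5 scores; for "tco" a higher score means a LOWER total cost of ownership.
VENDORS = {
    "Vendor A": {"features": 5, "security": 3, "support": 4, "tco": 2},
    "Vendor B": {"features": 4, "security": 5, "support": 3, "tco": 4},
    "Vendor C": {"features": 3, "security": 4, "support": 5, "tco": 5},
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted-sum model: multiply each criterion score by its weight and add them up."""
    return sum(weights[c] * scores[c] for c in weights)


def rank_vendors(vendors: dict, weights: dict[str, float]) -> list[tuple[str, float]]:
    """Return (vendor, score) pairs sorted from best to worst."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("criteria weights should sum to 1")
    ranked = [(name, weighted_score(s, weights)) for name, s in vendors.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


for name, score in rank_vendors(VENDORS, WEIGHTS):
    print(f"{name}: {score:.2f}")
```

In this sample, Vendor A leads on features but finishes last once security and cost are weighted in. Procurement teams usually vary the weights to check whether the ranking holds up.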

Future Outlook and Adaptive Strategies

Administrative AI agents will evolve toward hyperautomation, combining robotic process automation with large language models and computer vision. This convergence will enable interpretation of unstructured data—such as PDF attachments and scanned forms—and execution of complex multi-step transactions. Organizations preparing for this shift adopt modular architectures and plugin frameworks, decoupling agent logic from core applications to allow rapid upgrades of underlying AI models.

Continuous improvement cycles, rigorous A/B testing and cross-functional steering committees will review performance dashboards, surface emerging risks and align roadmaps with corporate objectives. Explainable AI and privacy-enhancing technologies such as differential privacy and federated learning will reduce data exposure while maintaining model fidelity. Training programs will evolve from static tool instruction to dynamic skill development, teaching employees to calibrate, audit and interpret agent outputs. In this future state, administrative AI agents serve not only as efficiency levers but as catalysts for a more adaptable, data-driven workplace ethos.
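Differential privacy can be illustrated with the classic Laplace mechanism applied to an aggregate workplace metric. The sketch below is minimal and rests on assumptions. The metric, the count and the epsilon value are hypothetical, and it builds Laplace noise from the difference of two exponential samples. A production deployment would use a vetted privacy library rather than hand-rolled noise.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw Laplace(0, scale) noise as the difference of two Exp(1) samples, rescaled."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Laplace mechanism: adding or removing one employee changes a count by at most 1,
    so noise with scale sensitivity / epsilon yields epsilon-differential privacy."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)
# Hypothetical metric: how many people on a team had more than 20 hours of meetings this week.
print(round(private_count(37, epsilon=0.5, rng=rng), 1))
```

A smaller epsilon adds more noise and gives stronger privacy. Over many queries, the reported values still average out to the true figure.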

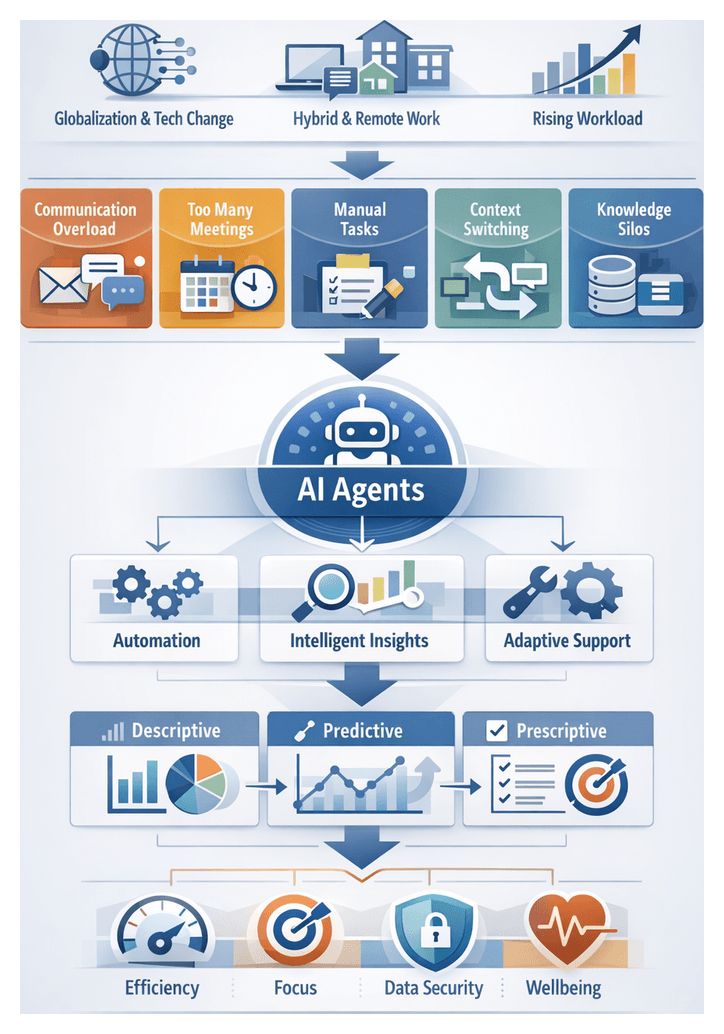

Chapter 3: Enhancing Time Management with Personal AI Assistants

The Evolving Productivity Landscape

Over the past decade, globalization, rapid technological change and shifting workforce demographics have transformed how work gets done. Hybrid and remote arrangements are now standard, requiring seamless coordination, intelligent automation and adaptive support systems. Incremental process tweaks no longer suffice as task complexity and volume outpace traditional productivity models.

Core operational bottlenecks include:

- Communication Overload: Employees spend up to 30 percent of their time managing emails and messages, delaying decisions and obscuring priorities.

- Meeting Proliferation: Virtual and hybrid meetings often lack clear agendas, consuming unproductive hours and exacerbating decision fatigue.

- Manual Administrative Tasks: Scheduling, data entry and document management remain labor-intensive and error-prone, consuming time that could be spent on higher-value work.

- Context Switching: Frequent interruptions incur hidden costs, with individuals requiring up to 15 minutes to regain full focus.

- Fragmented Knowledge Access: Information silos and outdated search processes hinder rapid problem solving, leading to redundant work and delayed responses.

Knowledge workers lose an average of 2.5 hours per day to non-core tasks and disruptions, translating into millions in unrealized labor value and contributing to stress, burnout and turnover. Competitive pressures, digital transformation agendas, evolving workforce expectations, hybrid work models and data proliferation are converging to elevate productivity optimization to a strategic imperative.
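Going from hours lost to "millions in unrealized labor value" is straightforward arithmetic. The sketch below uses the 2.5 hours per day cited above. The workday count, fully loaded hourly cost and headcount are assumptions, chosen only to show the scale involved.

```python
HOURS_LOST_PER_DAY = 2.5    # figure cited in the text
WORKDAYS_PER_YEAR = 230     # assumption
LOADED_HOURLY_COST = 60.0   # assumption: fully loaded cost per hour, USD
HEADCOUNT = 1_000           # assumption


def unrealized_labor_value(hours_per_day, workdays, hourly_cost, headcount):
    """Annual value of time spent on non-core tasks and disruptions."""
    return hours_per_day * workdays * hourly_cost * headcount


per_person = unrealized_labor_value(HOURS_LOST_PER_DAY, WORKDAYS_PER_YEAR, LOADED_HOURLY_COST, 1)
total = unrealized_labor_value(HOURS_LOST_PER_DAY, WORKDAYS_PER_YEAR, LOADED_HOURLY_COST, HEADCOUNT)
print(f"Per employee: ${per_person:,.0f} per year")  # Per employee: $34,500 per year
print(f"Organization: ${total:,.0f} per year")       # Organization: $34,500,000 per year
```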

AI agents—software entities capable of autonomous task execution, contextual assistance and continuous learning—emerge as a powerful response. By managing routine tasks, understanding priorities, integrating with enterprise systems and generating data-driven insights, agents extend human capacity and address the root causes of inefficiency. Advances in natural language processing, machine learning and cloud-native frameworks, coupled with rich organizational data and a growing vendor ecosystem, create a window of opportunity for AI-driven productivity transformation. Vendors like Clara Labs illustrate early success in autonomous scheduling and email triage.

Analytical Frameworks and Time Tracking Tools

Time tracking tools have evolved into sophisticated analytical platforms that translate raw timestamps into actionable insights. Evaluation frameworks focus on metrics such as data accuracy, granularity, user adoption and engagement, insight utility and integration with broader productivity ecosystems.

Interpretive Models and Metrics

Organizations apply three dominant analytical models:

- Descriptive Analysis: Visualizations of time distribution reveal macro-level patterns, such as peak focus periods and context-switch frequency.

- Predictive Modeling: Machine learning algorithms forecast workloads, anticipate bottlenecks and estimate time requirements for recurring tasks.

- Prescriptive Recommendations: AI-driven guidance on schedule optimization, break timing and task batching leverages behavioral science principles.
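Descriptive analysis is the foundation for the other two models. The minimal sketch below computes two descriptive measures, time per category and context-switch frequency. It reads from a hypothetical time-tracking export. The tuple format is an assumption, since real time-tracking tools expose their own export schemas.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical export, sorted chronologically: (start, end, category)
LOG = [
    ("2024-05-06 09:00", "2024-05-06 09:40", "email"),
    ("2024-05-06 09:40", "2024-05-06 11:10", "deep work"),
    ("2024-05-06 11:10", "2024-05-06 11:25", "chat"),
    ("2024-05-06 11:25", "2024-05-06 12:00", "email"),
    ("2024-05-06 13:00", "2024-05-06 14:00", "meeting"),
]
FMT = "%Y-%m-%d %H:%M"


def minutes_by_category(log):
    """Total minutes spent in each activity category."""
    totals = defaultdict(float)
    for start, end, category in log:
        delta = datetime.strptime(end, FMT) - datetime.strptime(start, FMT)
        totals[category] += delta.total_seconds() / 60
    return dict(totals)


def context_switches(log):
    """Number of times consecutive entries change category."""
    categories = [category for _, _, category in log]
    return sum(1 for prev, cur in zip(categories, categories[1:]) if prev != cur)


print(minutes_by_category(LOG))  # {'email': 75.0, 'deep work': 90.0, 'chat': 15.0, 'meeting': 60.0}
print(context_switches(LOG))     # 4
```

Aggregated over weeks and teams, these two figures become the distribution charts and context-switch trends that predictive and prescriptive models then build on.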

Expert Considerations

Key factors when selecting a solution include:

- Contextual Accuracy: Distinguishing billable from non-billable work and capturing overhead tasks.

- Behavioral Insights: Identifying collaboration hotspots and focus patterns for targeted interventions.

- Privacy Compliance: Ensuring data protection through anonymization, consent mechanisms and adherence to regulations.

- Scalability: Managing concurrent users, centralized administration and performance at enterprise scale.

Comparative Analysis of Leading Platforms

- RescueTime: Automated categorization of computer activity with AI models assigning productivity scores to websites and applications.

- Toggl Track: Manual and semi-automated time entry with AI-powered idle detection and predictive project forecasting.

- Clockify: Free tier with AI-enhanced time entry suggestions and API support for custom dashboards.

- Time Doctor: Screenshot monitoring, keystroke analytics and prescriptive alerts to address productivity lags.

- Microsoft Viva Insights: Organizational analytics combined with personal well-being recommendations for focus and collaboration balance.

Standard interpretive frameworks such as the Eisenhower Matrix, cognitive load indexes, and collaboration heatmaps anchor analytical outputs in established productivity theories, converting tool outputs into strategic insights on process optimization, resource allocation, and employee well-being.

AI Agents in Individual Workflows

Embedding AI-driven assistants into personal workflows transforms time management from a static plan into a dynamic collaboration. Key capabilities include autonomous task management, context-aware scheduling, notification triage and data-driven recommendations. The following scenarios illustrate how agents amplify individual performance.

Morning Briefings and Agenda Alignment

Agents scan communication channels and data feeds overnight to deliver concise, tailored briefs that align daily agendas with organizational priorities. This reduces time spent gathering dispersed information and enables leaders to focus on strategic decision-making.

Deep Work and Focus Management

Products such as Clockwise use machine learning to identify optimal focus windows, suppress non-urgent notifications and schedule uninterrupted work blocks. Organizations report up to 40 percent increases in focused time for writing, analysis and design tasks.

Adaptive Scheduling in Dynamic Environments

In fast-paced sectors, agents monitor calendar changes and resource commitments in real time, proposing schedule adjustments that preserve high-priority tasks. This reduces manual rescheduling friction and helps professionals maintain resilience amid shifting demands.

Meeting Preparation and Follow-Up

Tools like Otter.ai automate agenda creation, transcribe discussions and extract action items, integrating summaries into task lists. This accelerates readiness and ensures accountability by closing the loop on follow-up.

Interrupt Management and Notification Triage

By assessing sender importance and message urgency, agents prioritize high-value notifications and defer non-critical communications. Integration with platforms like RescueTime helps measure context-switch reductions and their impact on effective working hours.

Contextual Task Sequencing

Agents dynamically reorder to-do lists based on location, energy levels and deadlines, aligning tasks with Eisenhower Matrix principles to optimize effort allocation across varying contexts.
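A minimal sketch of Eisenhower-style sequencing follows. It uses only two of the signals mentioned above, deadline and importance, and leaves out location and energy level. The `Task` fields and the two-day urgency threshold are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    title: str
    due: date
    important: bool  # hypothetical label: aligned with organizational priorities


QUADRANT_ORDER = {"do": 0, "schedule": 1, "delegate": 2, "eliminate": 3}


def quadrant(task: Task, today: date, urgent_within_days: int = 2) -> str:
    """Eisenhower Matrix: urgency comes from the deadline, importance from the label."""
    urgent = (task.due - today).days <= urgent_within_days
    if urgent and task.important:
        return "do"
    if task.important:
        return "schedule"
    if urgent:
        return "delegate"
    return "eliminate"


def sequence(tasks: list[Task], today: date) -> list[Task]:
    """Order by quadrant, then by nearest deadline within each quadrant."""
    return sorted(tasks, key=lambda t: (QUADRANT_ORDER[quadrant(t, today)], t.due))


today = date(2024, 5, 6)
tasks = [
    Task("Update team wiki", date(2024, 6, 1), False),
    Task("Board deck", date(2024, 5, 7), True),
    Task("Expense report", date(2024, 5, 6), False),
    Task("Quarterly strategy draft", date(2024, 5, 20), True),
]
for t in sequence(tasks, today):
    print(quadrant(t, today), t.title)
```

An agent would re-run this ordering whenever deadlines or priorities change, which is what makes the to-do list dynamic rather than static.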

Personalized Break and Recharge Recommendations

By analyzing usage patterns and wearable-derived signals, agents suggest timely breaks and brief wellness activities, supporting sustained focus and reducing burnout risk.

End-of-Day Reflection and Planning

Agents prompt daily reviews, summarizing accomplishments, flagging incomplete items and identifying emerging priorities. Personalized insights on focus versus meeting time foster self-awareness and continuous improvement.

Role-Specific Use Cases

- Sales Professionals: Recommended follow-up windows and personalized outreach templates based on communication analytics.

- Software Developers: Uninterrupted coding intervals aligned with sprint deadlines and task context.

- Researchers and Analysts: Curated literature, data extraction and dedicated research blocks for evidence-based decisions.

- Customer Support Specialists: Prioritized ticket handling by urgency and customer value, with scheduled proactive outreach.

Adoption, Governance, and Continuous Improvement

User Acceptance and Change Dynamics

Adoption success depends on perceived usefulness and ease of use, the two core constructs of the Technology Acceptance Model, while Rogers’ Diffusion of Innovations theory describes how new practices spread from innovators and early adopters to the broader organization. Pilot programs, early adopters and internal champions help refine value propositions and build momentum. Cultural readiness and clear communication are critical to prevent low utilization and user frustration.

Balancing Personalization with Scalability

Mass-customization strategies combine standardized core functionalities with configurable preferences. Segmenting users into personas and offering tiered service levels ensures consistent support while allowing power users to access advanced modules. Governance around customization boundaries preserves enterprise-wide scalability.

Data Privacy, Security, and Compliance

AI assistants process sensitive calendar entries, emails and task lists. Compliance with frameworks such as NIST SP 800-53 and ISO/IEC 27001, as well as regional regulations like GDPR, CCPA and LGPD, requires privacy-by-design principles, minimal data retention, encryption and robust audit trails. Role-based access controls and consent mechanisms protect employee trust.

Ethical Considerations

Transparency, fairness and accountability underpin trustworthy AI, guided by IEEE and European Commission frameworks. Advisory boards and algorithmic impact assessments guard against bias and unintended consequences. Clear feedback channels empower users to report inaccuracies and drive continuous ethical refinement.

Strategic Alignment and Integration

Aligning AI assistant deployments with corporate objectives—such as employee engagement, operational efficiency and innovation—requires defining KPIs like time saved, task backlog reduction and collaboration metrics. Conceptual integration mapping ensures seamless interoperability with email, calendar, project management and communication platforms, accelerating time to value.

Measuring Impact and Continuous Improvement

Balanced scorecards combine utilization rates, task completion time reductions, user satisfaction scores and Net Promoter Scores. Time-series analytics isolate the assistant’s contribution, informing iterative enhancements to algorithms, interfaces and feature sets. This feedback loop aligns with agile practices and fosters a data-driven culture.

- Utilization: Percentage of eligible users actively engaging with the assistant.

- Efficiency Gains: Average reduction in task turnaround times.

- User Satisfaction: Survey ratings and qualitative feedback.

- Accuracy: Rate of correct suggestions versus manual intervention.

- ROI: Cost savings relative to deployment and maintenance expenses.
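Most scorecard metrics reduce to simple ratios. The minimal sketch below assumes the underlying counts come from usage logs and surveys. The function names and sample values are hypothetical. ROI is taken here as net savings divided by cost, which is one common convention. The Net Promoter Score uses the standard 0 to 10 scale, with promoters at 9 to 10 and detractors at 0 to 6.

```python
def utilization(active_users: int, eligible_users: int) -> float:
    """Percentage of eligible users actively engaging with the assistant."""
    return 100.0 * active_users / eligible_users


def efficiency_gain(before_minutes: list[float], after_minutes: list[float]) -> float:
    """Average reduction in task turnaround time, as a percentage."""
    before = sum(before_minutes) / len(before_minutes)
    after = sum(after_minutes) / len(after_minutes)
    return 100.0 * (before - after) / before


def accuracy(accepted_suggestions: int, total_suggestions: int) -> float:
    """Share of suggestions accepted without manual intervention."""
    return accepted_suggestions / total_suggestions


def roi(annual_savings: float, annual_cost: float) -> float:
    """(savings - cost) / cost, where cost covers deployment and maintenance."""
    return (annual_savings - annual_cost) / annual_cost


def net_promoter_score(ratings: list[int]) -> float:
    """NPS on 0-10 ratings: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


print(utilization(420, 600))                                 # 70.0
print(efficiency_gain([30, 40, 50], [20, 30, 40]))           # 25.0
print(accuracy(180, 200))                                    # 0.9
print(roi(250_000, 100_000))                                 # 1.5
print(net_promoter_score([10, 9, 9, 8, 7, 6, 3, 10, 9, 5]))  # 20.0
```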

Change Management and Training

Structured onboarding—self-guided tutorials, live workshops and peer mentoring—accelerates adoption. Change champions demonstrate real-world use cases, and micro-learning modules embedded within assistants provide just-in-time guidance, reducing reliance on traditional training.

Limitations, Risks, and Long-Term Outlook

AI assistants may struggle with complex context, cross-domain tasks and nuanced preferences, risking automation bias and eroded critical thinking. Risk registers should address algorithmic errors, data drift and vendor lock-in. Ongoing investments in model retraining, data quality and feature evolution are essential. Emerging advances in multimodal AI and federated learning promise enhanced context awareness and privacy, but introduce new governance challenges.

Risk Mitigation Strategies

- Establish an AI governance council for policy oversight.

- Implement regular model evaluations to detect drift.

- Maintain transparent documentation of capabilities and limitations.

- Design fallback procedures for unresolved tasks.

- Periodically review vendor agreements to ensure flexibility and portability.
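Drift detection can use many statistics, and the text does not prescribe one. The sketch below illustrates a widely used option, the Population Stability Index (PSI). It compares a baseline distribution with a current one. The bins, the proportions and the alert thresholds in the comment are hypothetical or rule-of-thumb values.

```python
import math


def population_stability_index(expected: list[float], actual: list[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum((a - e) * ln(a / e)) over matching bins of two distributions.

    `expected` and `actual` are bin proportions that each sum to 1, e.g. the share of
    assistant suggestions per confidence band at launch versus this month.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # guard against log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


baseline = [0.10, 0.20, 0.40, 0.20, 0.10]
current = [0.05, 0.15, 0.35, 0.25, 0.20]
psi = population_stability_index(baseline, current)
# Commonly cited heuristic: < 0.1 stable, 0.1-0.25 moderate shift, > 0.25 investigate.
print(f"PSI = {psi:.3f}")  # PSI = 0.136
```

A scheduled job could compute this for each model input and output and raise a ticket when the value crosses the agreed threshold. That gives the governance council a concrete retraining trigger.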

By combining proven analytical frameworks, strategic alignment, ethical stewardship and robust governance, organizations can unlock the full potential of AI-driven time management. Leaders who balance innovation with prudent risk management will capture sustainable productivity gains and drive transformational outcomes.

Chapter 4: AI-Driven Collaboration and Team Dynamics

The Role and Value of AI-Driven Collaboration Agents

In an era of hybrid and remote work, organizations grapple with dispersed teams, fractured workflows, and information overload that undermine productivity and cohesion. Collaborative AI agents embed intelligence into everyday platforms to automate routine coordination, surface critical insights, and maintain contextual continuity across conversations, documents, and tasks. Unlike rule-based bots, these agents harness natural language processing, machine learning, and predictive analytics to interpret user intent, anticipate next steps, and execute actions autonomously or semi-autonomously. Key capabilities include real-time meeting transcription and summarization, contextual task suggestions, intelligent notification filtering, calendar and project-management integration, and adaptive learning that tailors assistance to team behavior. By reducing administrative burden and ensuring aligned understanding of priorities, AI agents transform collaboration into a strategic asset that accelerates decision cycles, improves accountability, and scales as organizations grow.

Foundational Concepts and Evolution of AI-Augmented Platforms

Effective AI agents rest on three foundational principles. Contextual awareness enables agents to ingest data from communication channels, document repositories, and project systems, constructing holistic models of ongoing work. Adaptive learning continuously refines agent behavior based on interaction patterns, user feedback, and outcome data, ensuring relevant and personalized support. Human-centered automation emphasizes augmentation over replacement, with agents offering suggestions, alerts, and draft content that preserve human judgment and foster trust.

Collaboration platforms have evolved from basic file sharing and centralized chat to integrated ecosystems featuring video conferencing, interactive whiteboards, and holistic project management. AI agents represent the next evolutionary leap: proactively initiating tasks, summarizing complex discussions, and coordinating workflows without explicit prompts. Milestones include:

- Rule-based workflow automation for fixed tasks, such as scheduled reminders

- Conversational chatbots responding to keywords and simple commands

- Predictive assistants that suggest meeting times and auto-categorize content

- Proactive AI agents embedded in communication and productivity suites

Advances in large-scale language models, reinforcement learning, and context modeling have endowed agents with deeper language understanding, reasoning over data, and seamless collaboration alongside human users.

Analytical Frameworks and Performance Metrics for Real-Time Collaboration

Organizations apply structured models to assess how AI agents affect collaboration within socio-technical systems. Leading frameworks include:

- Socio-Technical Systems Model examines alignment of agents with workflows, cultural norms, and communication patterns, evaluating user acceptance and training needs.

- Collaboration Maturity Model tracks progression from siloed communication to integrated, AI-enabled teamwork, using metrics on coordination transparency and adaptive response.

- Social Network Analysis maps team interactions, measuring network density and information flow, enhanced by metadata such as topic tags and sentiment indicators.
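Network density is the most basic Social Network Analysis measure. It is the number of observed ties divided by the number of possible ties. The minimal sketch below treats interactions as undirected. The team members and edges are hypothetical stand-ins for ties mined from chat or meeting metadata.

```python
# Hypothetical team and undirected interaction ties.
MEMBERS = ["ana", "ben", "chen", "dara", "eli"]
INTERACTIONS = {("ana", "ben"), ("ana", "chen"), ("ben", "chen"), ("chen", "dara")}


def network_density(members, edges) -> float:
    """Observed ties / possible ties, where possible = n(n-1)/2 for an undirected graph."""
    n = len(members)
    possible = n * (n - 1) / 2
    observed = len({frozenset(e) for e in edges})  # treat (a, b) and (b, a) as one tie
    return observed / possible if possible else 0.0


def isolated_members(members, edges):
    """Members with no recorded ties: candidates for boundary-spanning interventions."""
    connected = {person for edge in edges for person in edge}
    return [m for m in members if m not in connected]


print(network_density(MEMBERS, INTERACTIONS))   # 0.4
print(isolated_members(MEMBERS, INTERACTIONS))  # ['eli']
```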

Key performance indicators balance quantitative throughput with qualitative user experience. Widely adopted metrics include:

- Response Latency – average time between user queries and AI-augmented replies, with lower latency correlating to faster decision cycles.

- Resolution Rate – proportion of interactions fully handled by agents without human escalation.

- Meeting Efficiency Index – combines meeting duration, agenda adherence, and follow-up completion; solutions such as Microsoft Teams Copilot report 30 to 40 percent reductions in meeting time.

- Collaboration Engagement Score – frequency of AI suggestions adopted, corrections to AI outputs, and user-initiated clarifications, indicating trust and integration into workflows.

- Error Reduction Rate – decline in miscommunications and duplicated work; Slack GPT cites up to a 25 percent drop in coordination errors.

Pilot studies quantify real-world impacts. A consulting firm saw a 20 percent increase in decision throughput and 15 percent fewer follow-up meetings after deploying Zoom IQ for automated transcription. A technology company integrating Otter.ai achieved a 35 percent rise in stakeholder alignment by sharing AI-generated highlights. Financial services managers saved eight hours weekly using chat-based agents and calendar assistants, while knowledge management platforms with embedded AI indexing cut information retrieval time by 40 percent.

Measurement challenges include isolating AI impact from concurrent improvements, unifying analytics across platforms, ensuring user privacy and compliance, and avoiding metric fatigue by focusing on high-impact KPIs. Emerging analytical directions point to predictive collaboration analytics, prescriptive insights for meeting design, sentiment-driven morale indicators, and continuous closed-loop learning that dynamically refines agent behavior.

Enhancing Communication, Coordination, and Decision-Making

AI agents reshape both formal and informal team interactions, improving media richness, boundary spanning, and shared mental models. By capturing meeting transcripts, tagging decisions, and indexing documents, agents reduce information asymmetry. For example, Cisco Webex Assistant generates real-time summaries and action-item lists that circulate instantly, strengthening cross-functional linkages and enabling social network ties based on emergent expertise.

Decision velocity accelerates when agents analyze conversation threads, surface pending questions, and propose relevant data or historical precedents. Slack AI exemplifies in-flow assistance that aligns with Lean Management principles, minimizing delays and cognitive load. Unified workspaces with dynamic ontologies, such as Microsoft Viva, reconcile data from CRM, ERP, and content systems, fostering shared context and overcoming terminology barriers.

Behavioral shifts accompany technological gains. Real-time nudges prompt inclusion of diverse contributors and highlight linguistic biases, cultivating more inclusive dialogues. Yet organizations must guard against overreliance on AI, preserving human critical evaluation through complementary strengths models. In distributed environments, asynchronous summaries, prioritized alerts, multilingual transcription, and AI-driven scheduling optimize global collaboration across time zones and geographies.

Evaluating communication impact combines metrics like reductions in email volume, average response times, and decision latency with user satisfaction surveys. Tracking the adoption rate of AI-generated summaries and auto-indexed documents against project outcomes builds data-driven narratives on collaboration efficacy. Mitigation strategies address alert fatigue with configurable thresholds, foster trust through explainable AI, implement privacy governance in line with standards such as ISO 27001, and drive cultural adoption through stakeholder engagement and pilots.

Strategic Alignment, Governance, and Adoption

Embedding AI agents into organizational strategy requires linking agent capabilities to corporate priorities via Balanced Scorecard or OKR frameworks. Real-time transcription, agenda management, and automated task assignment should map directly to cycle-time reduction, enhanced alignment, and accelerated decision-making. Governance forums that include executives and team leads enable iterative calibration of agent parameters, balancing ROI forecasts with day-to-day usability. Agile governance cycles or staged maturity models guide transitions from pilots to enterprise-wide deployments.

Change management is critical. The ADKAR model (Awareness, Desire, Knowledge, Ability, Reinforcement) structures each individual’s transition, and cultivating “AI champions” encourages peer advocacy. Scenario-based workshops using Microsoft Copilot, Slack AI, or Zoom’s AI Companion expose accuracy and attribution concerns in realistic contexts. Tracking engagement rates, task completion times, and sentiment survey results identifies friction early and informs tailored training.

Governance structures must embed ethical oversight. Drawing on IEEE’s Ethically Aligned Design and the European Commission’s Ethics Guidelines for Trustworthy AI, committees define policies for content validation, escalation protocols, and audit trails. RACI matrices assigned to AI-generated deliverables ensure that responsibility and accountability rest with designated human roles, preserving agility while safeguarding decision integrity.

Technical Integration, Security, and Continuous Improvement

Seamless interoperability with communication, project-management, and knowledge systems underpins agent effectiveness. A service-oriented architecture leveraging REST APIs, webhooks, and authorization and identity standards such as OAuth 2.0 or SAML ensures secure, real-time data exchange among tools like Jira, Asana, and Zoom’s AI Companion. Semantic alignment through enterprise ontologies and metadata tagging reconciles task identifiers across platforms, while periodic integration audits detect schema drift and performance bottlenecks.
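Securing webhook traffic often means verifying a signature on each incoming payload. Many platforms use an HMAC-SHA256 signature computed with a shared secret. Header names, encodings and signing rules differ between vendors, so check each tool's own documentation. The minimal sketch below shows only the generic check. The secret and the payload are hypothetical.

```python
import hashlib
import hmac

# Hypothetical shared secret configured when the webhook was registered.
WEBHOOK_SECRET = b"replace-with-a-secret-from-your-vault"


def sign(payload: bytes, secret: bytes = WEBHOOK_SECRET) -> str:
    """Hex HMAC-SHA256 signature, as a sender would attach it to the request."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify(payload: bytes, received_signature: str, secret: bytes = WEBHOOK_SECRET) -> bool:
    """Recompute the signature and compare in constant time to resist timing attacks."""
    return hmac.compare_digest(sign(payload, secret), received_signature)


body = b'{"event": "task.created", "task_id": "PROJ-123"}'
good = sign(body)
print(verify(body, good))         # True
print(verify(body + b" ", good))  # False: the payload was altered in transit
```

The agent rejects requests that fail verification before parsing them, so forged or tampered events never reach downstream task systems.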

Security and privacy demand end-to-end encryption of audio and transcript data, role-based access controls, and compliance with GDPR, HIPAA, or CCPA requirements. Explicit user consent for recording and data processing, rigorous bias audits using fairness metrics, and human-in-the-loop safeguards in high-risk scenarios uphold ethical standards. Continuous performance monitoring integrates dashboards tracking meeting time saved, transcription accuracy, and follow-up completion rates alongside embedded feedback prompts. Regular retrospective sessions review metrics, share best practices, and prioritize feature enhancements, ensuring that AI agents evolve in alignment with team dynamics and strategic objectives.

Chapter 5: Intelligent Knowledge Management and Decision Support

Foundations of AI-Driven Knowledge and Decision Support

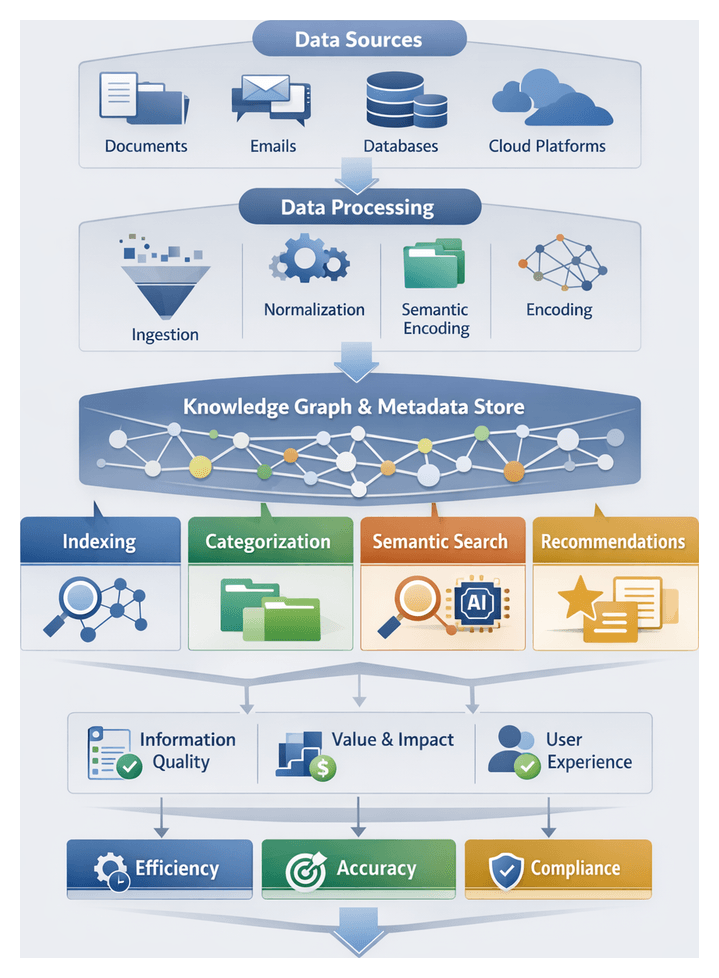

Enterprises generate exponential volumes of structured and unstructured data—from documents and emails to video, code repositories, and collaborative platforms. Traditional keyword search struggles with scale, context and evolving domain vocabularies, leaving knowledge workers spending up to thirty percent of their time hunting for relevant information. AI-powered knowledge management and decision-support agents apply machine learning and natural language processing to transform raw data into semantically rich knowledge graphs and embeddings, delivering precise indexing, categorization, search and recommendations aligned with organizational context.

Core functions of these agents include:

- Indexing: Automated extraction of key terms, entities and relationships to build searchable representations.

- Categorization: Supervised and unsupervised classification of content into topics, domains or project taxonomies.

- Semantic Search: Interpretation of user intent through natural language queries, extending beyond exact keyword matches.

- Recommendation and Discovery: Proactive surfacing of related documents, experts and best practices based on behavior, roles and project context.

- Summarization: Generation of concise abstracts that enable rapid assessment of document relevance.

- Continuous Learning: Feedback loops refine algorithms based on usage patterns, new content ingestion and search success rates.

Architecturally, these systems comprise:

- Data Ingestion: Connectors and pipelines aggregating content from enterprise systems, cloud repositories and collaboration tools.

- Preprocessing and Normalization: Tokenization, language detection and entity extraction to prepare text for deeper analysis.

- Semantic Encoding: Transformer-based embedding models that convert text into dense vector representations capturing meaning and relationships.

- Metadata Store and Knowledge Graph: Graph databases or document stores maintaining entity relationships, annotations and taxonomy mappings.

- Search and Retrieval Engine: Hybrid frameworks combining vector similarity search with inverted indexes for keyword fallback and filters, exemplified by Elastic Enterprise Search and Microsoft Azure Cognitive Search.

- User Interface and APIs: Dashboards, chat interfaces and RESTful endpoints that enable seamless querying and result delivery.

- Feedback and Analytics: Monitoring modules tracking query performance, user satisfaction and content gaps to drive iterative improvements.
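Hybrid retrieval can be sketched by blending a semantic score with a keyword score. The example below makes several simplifying assumptions. The three-dimensional "embeddings" are made-up stand-ins for transformer outputs. The keyword score is a simple term-overlap fraction rather than BM25. The linear blend with weight `alpha` is one fusion strategy among several; reciprocal rank fusion is another. None of this reflects the internals or APIs of the products named above.

```python
import math

# Hypothetical corpus: text plus a precomputed (made-up) embedding per document.
DOCS = {
    "travel-policy": ("Travel expense reimbursement policy", [0.9, 0.1, 0.0]),
    "onboarding": ("New hire onboarding checklist", [0.1, 0.9, 0.1]),
    "per-diem": ("Per diem rates for business trips", [0.8, 0.2, 0.1]),
}


def cosine(a, b):
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query, text):
    """Fraction of query terms that appear verbatim in the document (keyword fallback)."""
    terms = query.lower().split()
    words = set(text.lower().split())
    return sum(t in words for t in terms) / len(terms) if terms else 0.0


def hybrid_search(query, query_vec, alpha=0.7):
    """Blend scores: alpha * semantic similarity + (1 - alpha) * keyword overlap."""
    scored = [
        (doc_id, alpha * cosine(query_vec, vec) + (1 - alpha) * keyword_score(query, text))
        for doc_id, (text, vec) in DOCS.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


for doc_id, score in hybrid_search("business trip costs", [0.85, 0.15, 0.05]):
    print(f"{doc_id}: {score:.3f}")
```

The travel policy shares no keywords with the query, yet its embedding still ranks it second, ahead of the unrelated onboarding checklist. That is the gap semantic search closes over pure keyword matching.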

Adopting AI-driven retrieval transforms decision quality, operational efficiency and collaboration. Timely access to comprehensive insights reduces reliance on intuition, automates routine discovery tasks and fosters cross-functional teamwork by surfacing expertise profiles and project-specific documents. Automated classification and tagging support compliance and risk mitigation, while continuous learning ensures systems scale with data volumes and evolving vocabularies.

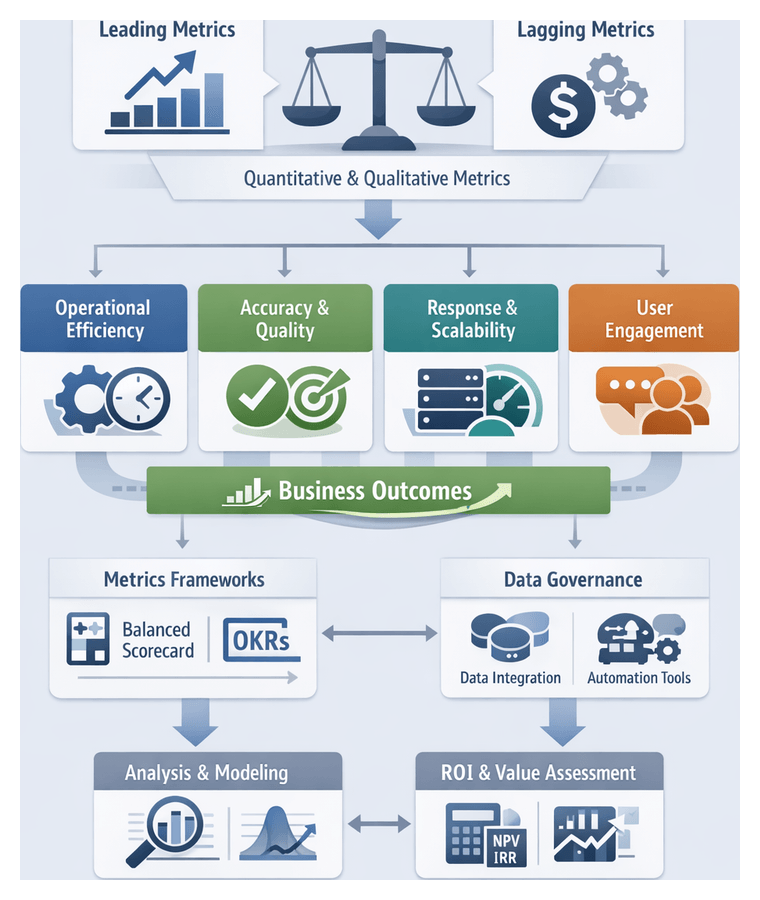

Evaluating AI Decision Support Effectiveness

Structured analytical frameworks guide organizations in assessing AI decision-support systems on multiple dimensions: data integrity, algorithmic robustness, user experience and business impact. The Information Quality Framework examines accuracy, completeness, consistency and timeliness of insights. The Value Realization Framework aligns outputs with strategic objectives, quantifying benefits in terms of revenue uplift, cost avoidance and risk mitigation. McKinsey’s Value-at-Stake model estimates economic impact by mapping improved decision accuracy and speed to financial metrics, while the Technology Acceptance Model links perceived usefulness and ease of use to adoption rates.

Key metrics and methodologies include:

- Algorithmic Metrics: Precision, recall and F1 scores for classification tasks; mean absolute error and root mean squared error for predictive analytics.

- Process Metrics: Time-to-decision and decision throughput measure acceleration of business processes and volume of decisions per unit time.

- User-Centric Metrics: Net Promoter Score (NPS) gauges willingness to recommend the system; Decision Confidence Index tracks acceptance versus override rates of AI suggestions.

- Data Quality Dimensions: Accuracy, completeness, consistency and timeliness of underlying datasets and model outputs.
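The algorithmic metrics above have standard definitions. The minimal sketch below implements them without external libraries. The labels and forecasts are hypothetical sample data. In practice, a library such as scikit-learn provides equivalent, well-tested functions.

```python
import math


def precision_recall_f1(y_true: list[int], y_pred: list[int]) -> tuple[float, float, float]:
    """Binary classification metrics with 1 as the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def mae(actual: list[float], forecast: list[float]) -> float:
    """Mean absolute error: average magnitude of forecast errors."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def rmse(actual: list[float], forecast: list[float]) -> float:
    """Root mean squared error: penalizes large errors more heavily than MAE."""
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))


# Hypothetical: did the agent correctly flag documents as "needs compliance review"?
labels = [1, 0, 1, 1, 0, 0, 1, 0]
preds = [1, 0, 0, 1, 1, 0, 1, 0]
print(precision_recall_f1(labels, preds))  # (0.75, 0.75, 0.75)

# Hypothetical: forecast versus actual weekly ticket volume.
print(mae([100, 120, 90], [110, 115, 90]))  # 5.0
print(rmse([100, 120, 90], [110, 115, 90]))
```

RMSE comes out above MAE here because the single 10-unit miss dominates the squared errors. Comparing the two shows whether a model's errors are evenly spread or driven by occasional large misses.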

Interpretive models from decision theory and behavioral economics enrich evaluation. Prospect theory reveals how framing risk-reward trade-offs influences user preferences. Human-AI teaming frameworks assess optimal task allocation between analysts and AI agents, using metrics like cognitive workload reduction and synergy scores. Domain-specific considerations apply tailored requirements:

- Financial Services: Regulatory compliance demands explainability and audit trails. Back-testing against historical outcomes validates predictive power, while SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) serve as critical evaluation tools.

- Healthcare: Diagnostic recommendations require validation against clinical trial data and peer-reviewed literature. Performance extends to patient outcomes—readmission rates, treatment efficacy and adverse event reduction—benchmarking against FDA guidelines for Software as a Medical Device.

- Manufacturing: Predictive maintenance and supply-chain optimization emphasize unplanned downtime reduction, asset utilization and inventory turnover. Systems are evaluated on their ability to forecast equipment failures with sufficient lead time.

Emerging analytical platforms integrate advanced evaluation capabilities. IBM Watson Decision Platform visualizes real-time accuracy metrics and business impact estimations. Microsoft Azure Machine Learning offers automated interpretability reports detailing feature importance and decision pathways. Amazon SageMaker Clarify provides bias detection and explainability analysis. Google Cloud Vertex AI Model Monitoring tracks prediction drift and data skew. DataRobot provides a unified interface for benchmarking models against business metrics under consistent evaluation protocols.

Embedding these frameworks into governance structures ensures continuous alignment of AI agents with strategic goals and regulatory requirements. Cross-functional review committees leverage performance reports to inform model retraining schedules, data augmentation efforts, and user interface enhancements, fostering stakeholder trust and driving sustained improvements in decision quality, operational efficiency, and competitive advantage.

Strategic Applications of AI Research Assistants

AI-powered research assistants extend analytical capabilities across market analysis, risk modeling, competitive intelligence, investment strategy and regulatory compliance. By integrating structured data (sales figures, financial statements) with unstructured inputs (social media, news feeds, customer reviews) and applying natural language processing, sentiment analysis and predictive modeling, these agents surface actionable insights that inform high-level strategy within established frameworks.

Market Analysis and Trend Forecasting

Organizations combine real-time point-of-sale data with social listening analytics to produce adaptive demand forecasts. AI assistants continuously correlate transaction volumes with consumer sentiment signals, enabling product managers to adjust inventory and promotions on a daily cadence. Platforms like DataRobot automate feature engineering, model selection and performance validation, allowing analysts to focus on interpretation. Interpretive models such as Porter’s Five Forces and the Ansoff Matrix translate AI-generated signals into coherent market entry, diversification and positioning strategies.

Scenario Planning and Risk Modeling

AI-driven scenario tools ingest probabilistic distributions of macroeconomic indicators, geopolitical events and industry variables to simulate multiple futures. Monte Carlo simulations quantify probabilities of supply shocks, demand fluctuations and operational interruptions. Features include dynamic parameter adjustment based on leading indicators, automated correlation analysis, sensitivity dashboards and graphical what-if visualizations. Palantir Foundry provides an ontology-driven environment that aligns simulation components with enterprise data definitions, supporting cross-group consistency and enabling validation against historical crises to enhance preparedness.
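The sketch below is a minimal Monte Carlo simulation of the supply-shock and demand-fluctuation case described above. Every distribution and parameter in it is a hypothetical assumption: normally distributed demand around 10,000 units, a 10 percent disruption probability, uniform capacity ranges and a 9,000-unit target. A real model would fit these inputs to historical and leading-indicator data.

```python
import random
import statistics


def simulate_quarter(rng: random.Random) -> float:
    """Units fulfilled in one simulated quarter (all distributions hypothetical)."""
    demand = max(rng.gauss(10_000, 1_500), 0.0)      # demand fluctuation, floored at 0
    supply_shock = rng.random() < 0.10               # 10% chance of a supplier disruption
    capacity = rng.uniform(6_000, 8_000) if supply_shock else rng.uniform(9_500, 12_000)
    return min(demand, capacity)                     # cannot sell more than can be supplied


def run_monte_carlo(trials: int = 20_000, target: float = 9_000, seed: int = 7):
    """Return mean units, 5th-percentile units and probability of missing the target."""
    rng = random.Random(seed)
    outcomes = [simulate_quarter(rng) for _ in range(trials)]
    shortfall_probability = sum(o < target for o in outcomes) / trials
    p5 = statistics.quantiles(outcomes, n=20)[0]     # first of 19 cut points = 5th percentile
    return statistics.mean(outcomes), p5, shortfall_probability


mean_units, p5_units, p_short = run_monte_carlo()
print(f"Expected units: {mean_units:,.0f}")
print(f"5th percentile: {p5_units:,.0f}")
print(f"P(units < 9,000): {p_short:.1%}")
```

A sensitivity dashboard would rerun this with different disruption probabilities or capacity ranges and show how the shortfall probability responds.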

Competitive Intelligence and Mergers & Acquisitions

Strategic M&A teams employ AI assistants to automate due diligence by scanning patent databases, litigation records, human capital disclosures and media reports. Agents flag targets with rapidly growing patent citation networks and assess leadership continuity risk by analyzing executive profiles. Solutions like Thomson Reuters Eikon AI merge real-time news analytics with entity-level risk scoring, categorizing rumors, probes and executive changes to inform bid strategies, deal structures and regulatory anticipation.

Portfolio Optimization and Investment Strategy

Asset managers combine quantitative models (mean-variance optimization, risk parity) with qualitative factors such as ESG ratings. AI agents recommend portfolio configurations that balance projected returns, volatility and strategic objectives. IBM Watson Discovery enriches these models with insights extracted from regulatory filings, earnings transcripts and ESG disclosures. Portfolio managers apply multi-factor attribution frameworks to interpret AI outputs and to assess whether proposed allocations support risk-adjusted returns and long-term value creation.
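As a small illustration of the two quantitative models named above, the sketch below computes naive risk-parity weights (inversely proportional to volatility, ignoring correlations) and the closed-form minimum-variance split for a fully invested two-asset portfolio. Real optimizers handle many assets, constraints and estimated covariance matrices; this is only a worked example of the underlying arithmetic.

```python
import math

def inverse_volatility_weights(vols):
    """Naive risk-parity weights: proportional to 1/volatility (correlations ignored)."""
    inv = [1.0 / v for v in vols]
    total = sum(inv)
    return [x / total for x in inv]

def min_variance_two_assets(sigma1, sigma2, rho):
    """Closed-form minimum-variance weights for a fully invested two-asset portfolio."""
    cov = rho * sigma1 * sigma2
    w1 = (sigma2 ** 2 - cov) / (sigma1 ** 2 + sigma2 ** 2 - 2 * cov)
    return w1, 1.0 - w1

def portfolio_volatility(w1, w2, sigma1, sigma2, rho):
    """Volatility of a two-asset portfolio given weights, volatilities and correlation."""
    var = (w1 * sigma1) ** 2 + (w2 * sigma2) ** 2 + 2 * w1 * w2 * rho * sigma1 * sigma2
    return math.sqrt(var)

# Example: a 10%-volatility asset and a 20%-volatility asset, uncorrelated.
rp_weights = inverse_volatility_weights([0.10, 0.20])     # about [0.667, 0.333]
mv_weights = min_variance_two_assets(0.10, 0.20, 0.0)     # about (0.8, 0.2)
```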

Regulatory Compliance and Policy Strategy

AI assistants continuously scan regulatory announcements, public consultations and enforcement actions to map evolving policy landscapes. Agents extract obligations, deadlines and reporting requirements, presenting them in interactive policy registers. Thomson Reuters Regulatory Intelligence classifies developments by jurisdiction, business line and entity type, enabling unified compliance views. Compliance officers apply likelihood-impact matrices to prioritize remediation and align initiatives with strategic risk tolerances.
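A likelihood-impact matrix reduces to scoring and ranking. The sketch below assumes a common 1 to 5 scale on each axis, multiplies the two scores, and maps the product to bands; the scale, the band cut-offs and the example obligations are hypothetical and should reflect each organization's own risk tolerance.

```python
from dataclasses import dataclass

@dataclass
class Obligation:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain) chance of non-compliance
    impact: int      # assumed scale: 1 (minor) to 5 (severe) consequence

    def __post_init__(self):
        for field_name in ("likelihood", "impact"):
            if not 1 <= getattr(self, field_name) <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5")

def risk_band(score):
    """Map a likelihood x impact score (1..25) to a band; cut-offs are assumptions."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"

def prioritize(obligations):
    """Rank obligations by likelihood x impact, highest first, with a band label."""
    scored = [(o.name, o.likelihood * o.impact) for o in obligations]
    scored.sort(key=lambda item: item[1], reverse=True)
    return [(name, score, risk_band(score)) for name, score in scored]

register = [
    Obligation("Website disclosure refresh", 3, 1),
    Obligation("Quarterly transaction report", 4, 4),
    Obligation("Data retention policy update", 2, 5),
]
ranked = prioritize(register)
```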

Cross-cutting analytical viewpoints ensure that AI-generated insights integrate seamlessly into strategic decision making:

- Framework Integration: Embedding insights within Porter’s Five Forces, Balanced Scorecard or resilience models to maintain coherence with strategy.

- Bias Mitigation: Applying audit protocols and data-quality checks to validate representative inputs.

- Human Augmentation: AI surfaces evidence and recommendations, while senior leaders provide context, judgment and ethical oversight.

- Continuous Feedback Loops: Post-decision reviews compare projected outcomes with actual performance to refine models and interpretive criteria.

Alignment, Governance and Integration for Business Impact

To realize measurable returns, organizations must align agent capabilities with high-value objectives such as accelerating time-to-insight, reducing decision cycles and improving collaboration. Key steps include:

- Outcome Definition: Articulate target decisions or processes, setting KPIs like reduced research time or increased forecast accuracy.

- Stakeholder Engagement: Involve executives, domain experts and end users early to validate use cases, prioritize features and secure adoption.

- Value Realization Timeline: Balance rapid prototyping with strategic road-mapping, defining milestones for pilots and phased roll-outs.

- Cost-Benefit Analysis: Compare total cost of ownership—including licensing, integration and maintenance—against anticipated efficiency and decision-quality gains.
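The cost-benefit comparison can be framed as a discounted cash-flow calculation. The sketch below assumes a one-time integration cost in year 0 followed by constant annual licensing, maintenance and benefit figures; every number in the example is hypothetical, and quantifying "decision-quality gains" as a single annual benefit is itself a simplifying assumption.

```python
def net_present_value(cash_flows, discount_rate):
    """NPV of yearly net cash flows; cash_flows[0] is year 0 and is not discounted."""
    return sum(cf / (1 + discount_rate) ** year for year, cf in enumerate(cash_flows))

def agent_business_case(upfront, annual_license, annual_maintenance,
                        annual_benefit, years, discount_rate):
    """Hypothetical business case: upfront cost, then recurring costs and benefits."""
    flows = [-upfront] + [annual_benefit - annual_license - annual_maintenance] * years
    total_cost = upfront + (annual_license + annual_maintenance) * years
    return {
        "npv": net_present_value(flows, discount_rate),
        "undiscounted_roi": (annual_benefit * years - total_cost) / total_cost,
    }

case = agent_business_case(
    upfront=100_000, annual_license=30_000, annual_maintenance=20_000,
    annual_benefit=120_000, years=3, discount_rate=0.08,
)
```

With these illustrative inputs the NPV is roughly 80,400 and the undiscounted ROI is 44%.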

Effective data governance underpins agent reliability. Establish metadata standards, taxonomy alignment and continuous stewardship. Key governance components include:

- Metadata Management: Consistent tagging and classification schemas for precise indexing and semantic search.

- Data Lineage and Provenance: Tracking data origin, transformations and usage to support auditability and compliance.

- Quality Controls: Automated validation rules, deduplication and anomaly detection to uphold data accuracy and relevance.

- Access and Security Policies: Role-based permissions and encryption standards to protect sensitive information while enabling collaboration.
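The quality-control component can be illustrated with three small checks: rule-based validation against a metadata schema, deduplication by identifier, and z-score anomaly detection on a numeric signal such as daily ingestion volume. The field names, allowed classifications and thresholds are hypothetical. Note that with small samples a z-score can never exceed (n - 1)/sqrt(n), so the example uses a lower threshold than the default.

```python
from statistics import mean, stdev

REQUIRED_FIELDS = ("doc_id", "title", "owner", "classification")  # hypothetical schema
ALLOWED_CLASSIFICATIONS = ("public", "internal", "confidential")   # hypothetical taxonomy

def validate(record):
    """Return a list of rule violations for one metadata record."""
    errors = [f"missing {f}" for f in REQUIRED_FIELDS if not record.get(f)]
    classification = record.get("classification")
    if classification and classification not in ALLOWED_CLASSIFICATIONS:
        errors.append("unknown classification")
    return errors

def deduplicate(records, key="doc_id"):
    """Keep the first record seen for each key value."""
    seen, unique = set(), []
    for r in records:
        if r.get(key) not in seen:
            seen.add(r.get(key))
            unique.append(r)
    return unique

def zscore_anomalies(values, threshold=3.0):
    """Indices of values more than `threshold` sample standard deviations from the mean."""
    if len(values) < 2:
        return []
    mu, sd = mean(values), stdev(values)
    if sd == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mu) / sd > threshold]

daily_volumes = [10, 10, 11, 9, 10, 10, 50, 10]
outliers = zscore_anomalies(daily_volumes, threshold=2.0)  # flags index 6
```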

Seamless integration with enterprise systems ensures agents embed in daily workflows. Best practices include:

- API Consistency: RESTful or GraphQL interfaces with clear versioning to prevent disruptions.

- Data Synchronization: Near-real-time or batch updates that maintain consistency between source systems and knowledge indices.

- Modular Architecture: Microservices or containerized components that can be deployed and scaled independently.

- Vendor Ecosystem Alignment: Tools supporting standard connectors or prebuilt integrations—such as embedding an intelligent assistant into SharePoint or Slack.
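Batch-style data synchronization is often implemented with a "high-water mark": each run picks up only rows changed since the previous run. The sketch below assumes each source row carries hypothetical `id`, `updated_at` and `body` fields, and it uses a plain dictionary as a stand-in for a search or vector index.

```python
from datetime import datetime

def incremental_sync(source_rows, index, watermark):
    """Upsert rows changed after `watermark` into `index`; return the new watermark.

    source_rows: iterable of dicts with hypothetical 'id', 'updated_at' (datetime)
                 and 'body' keys.
    index:       dict mapping id -> body, standing in for a knowledge index.
    """
    new_watermark = watermark
    for row in source_rows:
        if row["updated_at"] > watermark:
            index[row["id"]] = row["body"]  # upsert: insert new or overwrite stale entry
            new_watermark = max(new_watermark, row["updated_at"])
    return new_watermark

rows = [
    {"id": "a", "updated_at": datetime(2023, 12, 31), "body": "old"},
    {"id": "b", "updated_at": datetime(2024, 1, 2), "body": "new"},
    {"id": "c", "updated_at": datetime(2024, 1, 3), "body": "newer"},
]
index = {}
wm = incremental_sync(rows, index, datetime(2024, 1, 1))
```

This simple version does not propagate deletions and can miss rows committed late with a timestamp at or before the stored watermark; production pipelines usually add change-data-capture or a small overlap window to cover those cases.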

User adoption hinges on change management. Role-based training, champion networks, intuitive user experiences and structured feedback loops foster trust and engagement. Scalability demands elastic compute resources, distributed knowledge graphs and vector databases, comprehensive performance monitoring and caching strategies for high-frequency queries.
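A caching strategy for high-frequency queries can be as simple as a least-recently-used (LRU) cache whose entries also expire after a time-to-live (TTL), so that popular answers are served quickly without going stale indefinitely. The sketch below is a single-process illustration built on `collections.OrderedDict`; the sizes and TTL are assumptions, and distributed deployments would typically use a shared cache service instead.

```python
import time
from collections import OrderedDict

class TTLCache:
    """LRU cache whose entries expire `ttl_seconds` after they were stored."""

    def __init__(self, max_entries=1024, ttl_seconds=300.0, clock=time.monotonic):
        self.max_entries = max_entries
        self.ttl = ttl_seconds
        self.clock = clock          # injectable clock makes expiry testable
        self._data = OrderedDict()  # key -> (expires_at, value)

    def get(self, key):
        item = self._data.get(key)
        if item is None:
            return None
        expires_at, value = item
        if self.clock() >= expires_at:
            del self._data[key]      # expired: drop it and report a miss
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return value

    def put(self, key, value):
        self._data[key] = (self.clock() + self.ttl, value)
        self._data.move_to_end(key)
        while len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry
```

In use, an agent would check `cache.get(query)` first and only run retrieval and generation on a miss, storing the result with `cache.put(query, answer)`.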

Ethical, compliance and security safeguards must be embedded throughout deployment. Conduct regular bias audits, implement privacy controls in compliance with GDPR or CCPA, maintain access logs and audit trails, and enforce end-to-end encryption with robust key management. Continuous improvement relies on usage analytics, periodic model retraining, governance review boards and prioritization frameworks that balance quick wins with long-term enhancements.
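One way to make access logs and audit trails tamper-evident is to chain entries with cryptographic hashes, so that each entry's hash covers the previous one. The sketch below shows that technique with SHA-256 from Python's standard library; it is an illustrative assumption, not a requirement of GDPR or CCPA. Real logs would also record timestamps and be anchored in write-once storage, because a hash chain alone cannot detect truncation of the most recent entries.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(log, user, action, resource):
    """Append an access-log entry whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"user": user, "action": action, "resource": resource, "prev_hash": prev_hash}
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry

def verify_chain(log):
    """Return False if any entry was altered, reordered or removed from the middle."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

audit_log = []
append_entry(audit_log, "analyst1", "read", "contracts/2024/acme.pdf")
append_entry(audit_log, "agent-svc", "summarize", "contracts/2024/acme.pdf")
append_entry(audit_log, "analyst1", "export", "summaries/acme.txt")
```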

Organizations should anticipate limitations—model errors, domain coverage gaps, interpretability challenges and maintenance overhead—and design human-in-the-loop processes to mitigate risks. By aligning AI agents and Microsoft Copilot with strategic goals, enforcing rigorous governance, integrating seamlessly and fostering a culture of continuous learning, enterprises unlock lasting efficiency gains and enhanced decision quality.

Chapter 6: Integrating AI Agents with Enterprise Systems

Architectural Foundation for AI Agent Integration

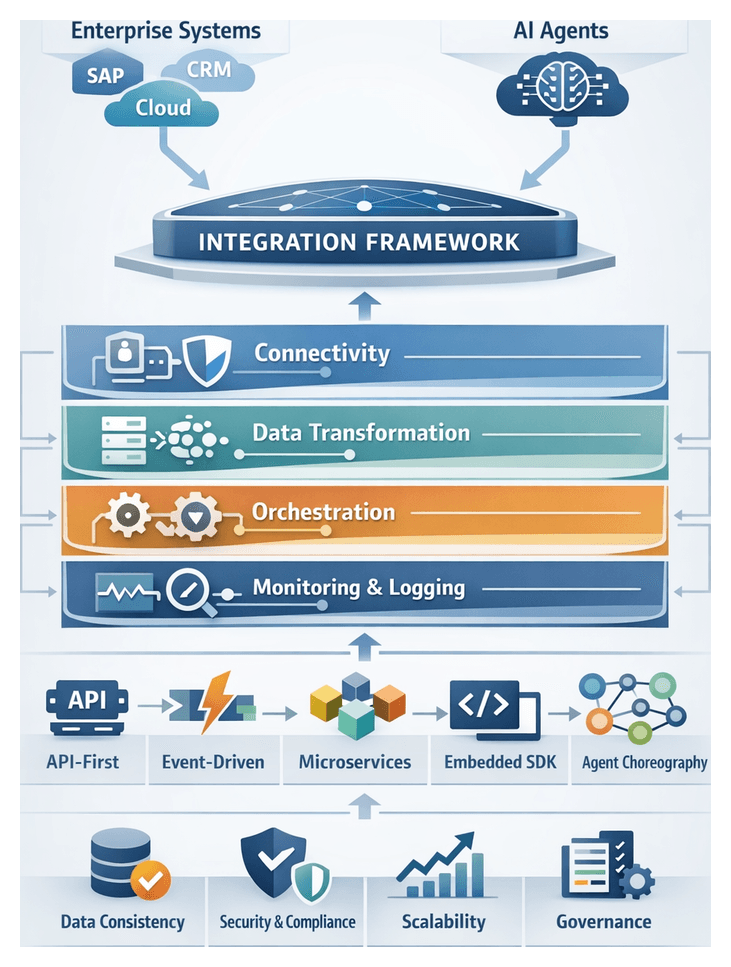

Modern enterprises often juggle a constellation of core business platforms such as SAP, Oracle and Salesforce alongside legacy systems, other cloud services and bespoke line-of-business applications. As organizations embrace AI agents to automate tasks, generate insights and enhance decision-making, embedding those agents seamlessly into this complex ecosystem becomes critical. Absent a structured integration framework, AI agents risk becoming isolated tools that fail to leverage existing data and processes, undermining both efficiency and strategic alignment.

A comprehensive integration architecture delivers multiple benefits:

- Ensuring data consistency through unified exchange conventions and schema validations.

- Maintaining security and compliance with standardized authentication, authorization and auditing.

- Promoting scalability via reusable connectors and defined integration patterns that support cross-departmental deployment.

- Facilitating governance by exposing clear service contracts and enabling version control of integration artifacts.

- Reducing time to value through prebuilt templates and low-code connectors that minimize custom development.

The architecture is organized into distinct layers, each addressing a specific concern:

- Connectivity Layer provides secure, authenticated channels between AI agents and enterprise systems. It includes API gateways and message brokers, with platforms such as MuleSoft and Dell Boomi supporting REST, SOAP, JMS and other standards.

- Data Transformation Layer normalizes heterogeneous formats through schema mapping, field validation and enrichment processes. Streaming solutions like Apache Kafka and ETL frameworks handle both real-time and batch transformations.

- Orchestration Layer governs the flow of tasks across agents and services. Workflow engines such as Camunda and Microsoft Power Automate offer model-driven and low-code tooling for designing complex business processes.

- AI Agent Layer embodies domain-specific capabilities—natural language understanding, computer vision, predictive analytics—executing logic based on orchestration inputs and interacting with downstream systems.

- Monitoring and Logging Layer captures observability data, including logs, performance metrics and audit trails. Solutions like Splunk and Elastic Stack aggregate and visualize this information to support troubleshooting and optimization.
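The layered model can be sketched as a chain of functions, each owning one concern. In the illustration below, a token check stands in for the connectivity layer, a field mapping with validation stands in for the transformation layer, a trivial rule stands in for an AI agent, and a loop that logs each step plays the orchestration and monitoring roles. Every name, token and field here is hypothetical; real deployments would use the gateways, brokers and workflow engines named above.

```python
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent-integration")

VALID_TOKENS = {"demo-token"}  # hypothetical credential store for the connectivity layer

def connectivity_layer(request):
    """Authenticate the caller before any downstream processing runs."""
    if request.get("token") not in VALID_TOKENS:
        raise PermissionError("unauthenticated request")
    return request["payload"]

def transformation_layer(payload):
    """Map a source record onto a canonical shape and validate its fields."""
    amount = float(payload["amt"])
    if amount < 0:
        raise ValueError("amount must be non-negative")
    return {"customer_id": str(payload["cust"]), "amount": amount}

def agent_layer(record):
    """Stand-in for an AI agent: a trivial rule that flags large orders for review."""
    return {**record, "review_required": record["amount"] > 10_000}

def orchestrate(request, steps: list[Callable]):
    """Orchestration layer: run steps in order and emit logs for the monitoring layer."""
    data = request
    for step in steps:
        data = step(data)
        log.info("step=%s status=ok", step.__name__)
    return data

PIPELINE = [connectivity_layer, transformation_layer, agent_layer]
result = orchestrate({"token": "demo-token", "payload": {"cust": 42, "amt": "12500"}}, PIPELINE)
```

Keeping each concern in its own function mirrors the layer boundaries: authentication or schema mapping can change without touching agent logic.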

Proven Integration Patterns

- API-First Pattern – Agents expose and consume RESTful interfaces, managed by an API gateway that enforces routing, security and rate limits.

- Event-Driven Pattern – Agents subscribe to event streams published on platforms like Amazon EventBridge or Apache Kafka, reacting to business events in real time.

- Microservices Pattern – AI capabilities are deployed as independent microservices in container platforms such as Amazon EKS or Google Kubernetes Engine, enabling granular scaling and lifecycle management.

- Embedded SDK Pattern – Integration libraries are embedded directly into existing applications, offering streamlined authentication, data exchange and on-device model execution.

- Agent Orchestration Pattern – Complex processes coordinate multiple specialized agents through BPMN models or custom workflows that define data hand-offs and decision points.
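The event-driven pattern is easiest to see with a toy publish/subscribe bus. The in-memory `EventBus` below is a hypothetical stand-in for a broker such as Kafka or EventBridge: agents register handlers for an event type and react whenever a matching event is published. Unlike a real broker, it is synchronous, in-process and offers no persistence, retries or delivery guarantees.

```python
from collections import defaultdict

class EventBus:
    """In-memory publish/subscribe bus (illustration only, not a broker client)."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # event type -> list of handlers

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, event):
        for handler in self._subscribers[event_type]:
            handler(event)

bus = EventBus()
alerts = []

def inventory_agent(event):
    """Hypothetical agent that reacts to order events by flagging low stock."""
    if event["stock_after"] < event["reorder_point"]:
        alerts.append(f"reorder {event['sku']}")

bus.subscribe("order.created", inventory_agent)
bus.publish("order.created", {"sku": "A-100", "stock_after": 3, "reorder_point": 10})
```

Because publishers only know event types, not consumers, new agents can be added by subscribing them without changing the systems that emit events.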

API and Data Interoperability

Rather than treating AI agents as disconnected add-ons, leading enterprises embed them within a connected ecosystem where seamless data exchange, consistent semantic definitions and robust interface governance are strategic imperatives. Interoperability spans two dimensions:

- Interface Interoperability – The protocols, schemas and security mechanisms that permit one system to invoke or be invoked by another. Common protocols include REST, gRPC and GraphQL; payload formats encompass JSON, XML and Apache Avro; authentication and authorization mechanisms range from OAuth 2.0 with JWT bearer tokens to mutual TLS.

- Data Interoperability – The shared understanding, mapping and transformation of data elements as they traverse disparate systems. This includes canonical data models, schema harmonization, data lineage and quality enforcement.

Several interpretive frameworks help architects assess interoperability maturity and prioritize investments:

- Connectivity Maturity Models – Define levels from isolated proofs-of-concept to fully orchestrated, event-driven ecosystems that minimize custom code and maximize reuse.

- Semantic Interoperability Grids – Catalog data domains, entity definitions and taxonomies, supported by governance processes to ensure evolving schemas remain aligned.

- Open versus Proprietary API Evaluations – Weigh trade-offs between vendor lock-in and rapid integration benefits, considering open-source gateways and community-driven standards.

When selecting interface technologies, organizations balance technical requirements with strategic implications:

- Developer Productivity – SDK availability, community examples and learning curves associated with new protocols or frameworks.

- Vendor Ecosystem Alignment – Compatibility with integration platforms such as MuleSoft or Dell Boomi and support for hybrid, multi-cloud deployments.

- Operational Resilience – Fault-tolerance patterns like circuit breakers and exponential backoff, and observability through distributed tracing and API analytics.
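The two fault-tolerance patterns named above are commonly combined: retries with exponential backoff absorb brief glitches, while a circuit breaker stops calling a dependency that keeps failing so it has time to recover. The sketch below is a minimal single-threaded illustration; the thresholds, delays and "full jitter" strategy are assumptions, and production systems usually rely on a hardened resilience library or service mesh.

```python
import random
import time

class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the circuit is open."""

class CircuitBreaker:
    """Opens after `failure_threshold` consecutive failures; allows a trial call after `reset_timeout`."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; call skipped")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open the circuit
            raise
        self.failures = 0  # success closes the circuit
        return result

def retry_with_backoff(fn, attempts=4, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Retry fn with exponential backoff and full jitter; re-raise the last error."""
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # do not keep retrying against an open circuit
        except Exception:
            if attempt == attempts - 1:
                raise
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))
```

A typical composition is `retry_with_backoff(lambda: breaker.call(fetch_crm_record, record_id))`, where `fetch_crm_record` is a hypothetical client function.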

Data interoperability relies on metadata repositories, data catalogs and master data management. Key domains include:

- Schema Harmonization – Defining canonical models for core entities and mapping proprietary schemas to common formats.

- Data Lineage and Provenance – Tracking origin, transformations and consumption paths to support auditability under regulations such as GDPR and CCPA.

- Quality and Integrity Metrics – Automated validation rules, completeness and accuracy checks, and periodic reconciliations.
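Schema harmonization and a basic completeness check can be sketched together. The field names below are loosely modeled on a CRM account object and an ERP customer master and should be treated as illustrative; the canonical customer model and the completeness score are assumptions chosen to show how proprietary fields map to one common format.

```python
# Illustrative mappings from two source systems to one canonical customer model.
FIELD_MAPS = {
    "crm": {"Id": "customer_id", "Name": "name", "BillingCountry": "country"},
    "erp": {"KUNNR": "customer_id", "NAME1": "name", "LAND1": "country"},
}
CANONICAL_FIELDS = ("customer_id", "name", "country")

def to_canonical(system, record):
    """Rename source fields to canonical names; return (record, completeness 0..1)."""
    mapping = FIELD_MAPS[system]
    out = {canon: record[src] for src, canon in mapping.items() if src in record}
    missing = [f for f in CANONICAL_FIELDS if not out.get(f)]
    completeness = 1 - len(missing) / len(CANONICAL_FIELDS)
    return out, completeness

erp_customer, erp_score = to_canonical("erp", {"KUNNR": "0000100042", "NAME1": "Acme GmbH", "LAND1": "DE"})
crm_customer, crm_score = to_canonical("crm", {"Id": "001XYZ", "Name": "Acme GmbH"})
```

Tracking the completeness score per source over time gives a simple quality metric that can feed the reconciliation processes described above.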

To measure interoperability success, enterprises track metrics such as API latency and throughput, error rates and reliability (MTBF, MTTR), data consistency scores and integration velocity (time to onboard new sources and expose agent endpoints). Aligning these indicators with executive dashboards and balanced scorecards enables data-driven decisions on scaling or remediating integration efforts.
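The reliability metrics can be computed directly from incident records. The sketch below assumes each incident is a (failure start, service restored) pair within a single reporting window and uses the common simplification MTBF = total uptime / number of failures. The incident data is hypothetical.

```python
from datetime import datetime, timedelta

def reliability_metrics(incidents, window_start, window_end):
    """MTBF and MTTR (hours) plus availability from (failure_start, restored_at) pairs."""
    if not incidents:
        return {"mtbf_h": None, "mttr_h": None, "availability": 1.0}
    window = (window_end - window_start).total_seconds()
    downtime = sum((restored - start).total_seconds() for start, restored in incidents)
    n = len(incidents)
    uptime = window - downtime
    return {
        "mtbf_h": uptime / n / 3600,
        "mttr_h": downtime / n / 3600,
        "availability": uptime / window,
    }

def error_rate(total_requests, failed_requests):
    """Fraction of requests that failed; 0.0 when there was no traffic."""
    return failed_requests / total_requests if total_requests else 0.0

# Hypothetical 30-day window with a 1-hour and a 3-hour outage.
start = datetime(2024, 6, 1)
end = start + timedelta(days=30)
incidents = [
    (start + timedelta(days=3), start + timedelta(days=3, hours=1)),
    (start + timedelta(days=10), start + timedelta(days=10, hours=3)),
]
metrics = reliability_metrics(incidents, start, end)  # MTBF 358 h, MTTR 2 h
```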

Open standards shape future interoperability by supporting vendor-agnostic architectures and contract-first development:

- OpenAPI and AsyncAPI for machine-readable API contracts and code generation.

- GraphQL Federation for unified queries across multiple services, reducing over-fetching or under-fetching of data.

- OData for standardized query capabilities and metadata annotations in enterprise datasets.

Scalability, Performance, and Reliability

Deploying AI agents to support mission-critical processes demands architectures that can elastically scale, withstand failures and deliver consistent performance. Cloud-native environments, orchestrated via containers and microservices, underpin these requirements.