Mastering AI and Human Agent Collaboration: Strategic Insights for Business Excellence


Introduction

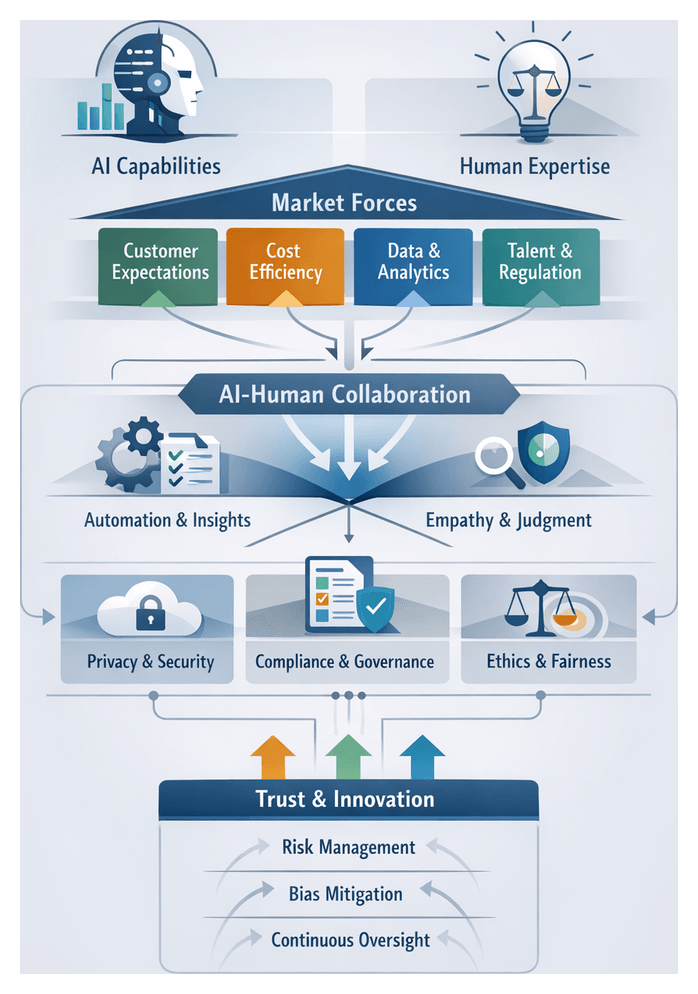

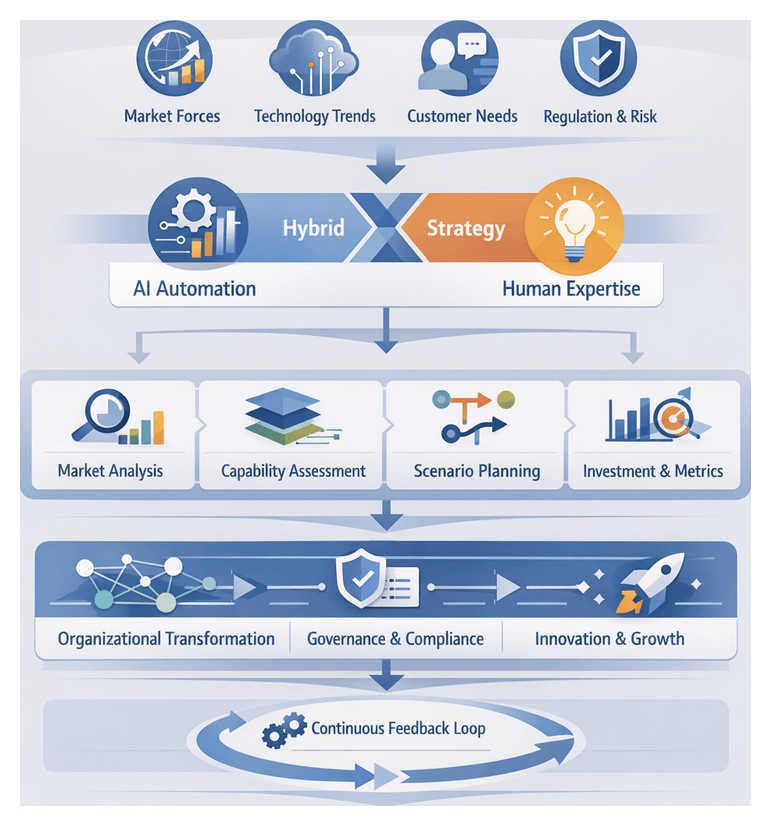

Market Drivers and Strategic Imperatives

Enterprises today are compelled by intensified competition, shifting customer expectations, and cost pressures to integrate artificial intelligence agents with human professionals. Globalization and digital transformation have lowered barriers to entry, raising the bar for service quality and speed. AI platforms such as Salesforce Einstein and IBM Watson Assistant leverage machine learning, natural language processing, and predictive analytics to handle routine inquiries 24/7, analyze vast data sets, and deliver consistent responses. These capabilities reduce response latency, improve first-contact resolution rates, and allow human agents to focus on complex, emotionally sensitive, or strategic engagements.

Customer expectations now emphasize omnichannel continuity, instant resolution, and personalized experiences. AI agents efficiently manage order status checks, account lookups, and basic troubleshooting across chat, voice, social, and messaging channels, ensuring uniformity and scalability. However, when interactions require empathy, nuanced judgment, or negotiation, human professionals remain essential. Hybrid models route inquiries based on defined criteria—complexity, sentiment, customer value—balancing the efficiency of automation with the authenticity of human engagement to sustain satisfaction and loyalty.
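The routing criteria named above (complexity, sentiment, customer value) can be sketched as a small rule set. This is a minimal illustration rather than a description of any vendor's routing engine: the `Inquiry` fields, the score scales, and every threshold are hypothetical and would need tuning for a real deployment.

```python
from dataclasses import dataclass


@dataclass
class Inquiry:
    complexity: float      # 0.0 (trivial) to 1.0 (highly complex); assumed scale
    sentiment: float       # -1.0 (very negative) to 1.0 (very positive); assumed scale
    customer_value: float  # 0.0 to 1.0, e.g. a normalized value tier; assumed scale


# Illustrative thresholds only; real values would be set per organization.
COMPLEXITY_LIMIT = 0.6
NEGATIVE_SENTIMENT_LIMIT = -0.4
HIGH_VALUE_LIMIT = 0.8


def route(inquiry: Inquiry) -> str:
    """Return "human" or "ai" for a single inquiry."""
    if inquiry.complexity > COMPLEXITY_LIMIT:
        return "human"  # too complex for automated handling
    if inquiry.sentiment < NEGATIVE_SENTIMENT_LIMIT:
        return "human"  # frustrated customer, empathy needed
    if inquiry.customer_value >= HIGH_VALUE_LIMIT:
        return "human"  # high-value account gets a person
    return "ai"


print(route(Inquiry(complexity=0.2, sentiment=0.1, customer_value=0.3)))   # ai
print(route(Inquiry(complexity=0.2, sentiment=-0.7, customer_value=0.3)))  # human
```

Ordering the checks from most to least decisive keeps the rules easy to audit, which matters when routing decisions are later reviewed for fairness.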

Operationally, organizations confront rising labor costs, workforce shortages, and unpredictable demand cycles. Intelligent automation absorbs volume spikes without costly temporary staffing, drives down cost per contact, and generates analytics that highlight process bottlenecks. Human agents, relieved of repetitive tasks, are redeployed to high-value roles such as relationship management, upselling, and complex problem resolution—enhancing job satisfaction, reducing turnover, and unlocking revenue opportunities. Consulting firms report up to 30 percent productivity gains from effective hybrid deployment.

Regulatory and ethical imperatives also shape integration strategies. Data privacy laws such as GDPR and CCPA mandate strict controls over customer information. Bias mitigation, transparency, and accountability are integral to governance frameworks. Human oversight is embedded in hybrid architectures to audit AI recommendations, manage escalations, and ensure compliance with internal policies and external regulations. This collaborative governance model safeguards against unintended consequences and reinforces customer trust.

Sector-specific considerations illustrate diverse interpretations of hybrid models. In financial services, AI drives fraud detection and compliance monitoring while advisors handle complex wealth planning. Healthcare organizations combine diagnostic agents with clinician judgment under patient-safety constraints. Retailers deploy conversational bots for routine queries and escalate VIP or context-rich cases to brand-trained human staff. Business process outsourcers use AI for high-volume transactional tasks, reserving human expertise for exceptions and escalations. Each industry tailors hybrid ecosystems to its risk profile, regulatory demands, and customer dynamics.

Conceptual Framework and Interpretive Models

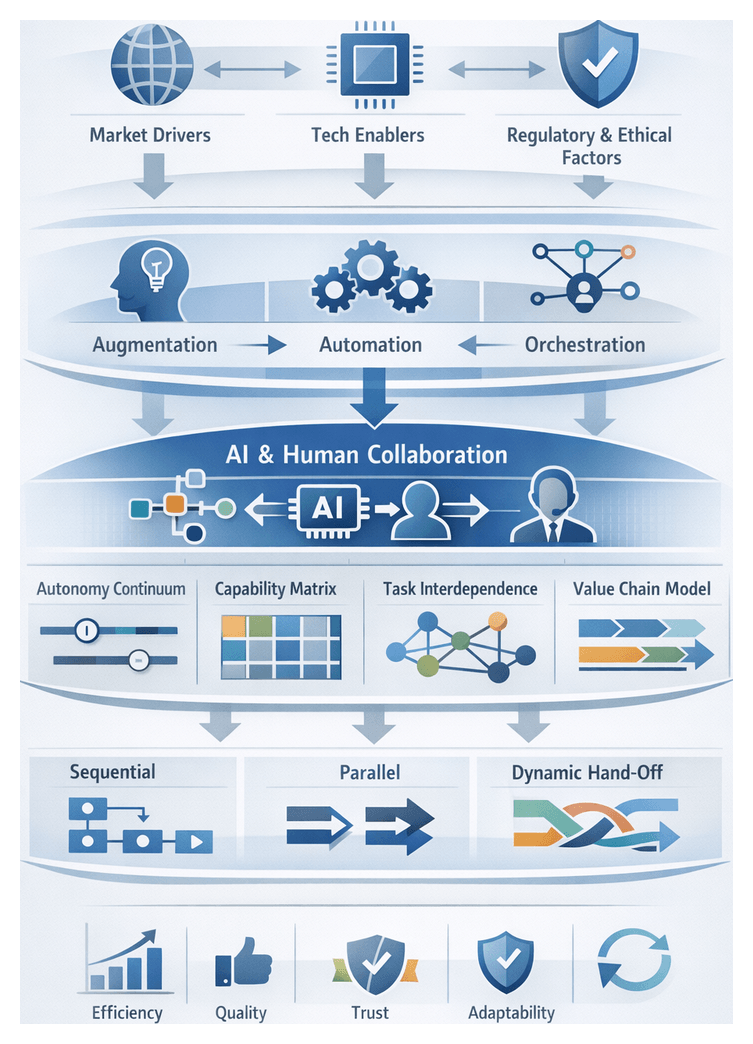

Effective orchestration of AI and human agents relies on a shared conceptual landscape that distinguishes interaction archetypes:

- Augmentation: AI enhances human capabilities by providing real-time insights and recommendations.

- Automation: AI autonomously executes low-complexity, high-volume tasks.

- Orchestration: Human agents oversee AI activities, handling exceptions and escalation decisions.

Analytical frameworks guide design, evaluation, and governance:

- Continuum of Autonomy and Control maps tasks along a spectrum from full human leadership to complete automation, clarifying hand-off points.

- Capability Synergy Matrix aligns AI strengths—scale, speed, precision—with human strengths—empathy, adaptability, relationship building—to optimize task assignments.

- Task Interdependence Model categorizes tasks as pooled, sequential, reciprocal, or team-based, revealing where AI reduces hand-off friction and where human coordination is vital.

- Value Chain Integration Model assesses collaboration across primary and support activities, highlighting opportunities to reshape procurement, operations, and after-sales service.

From an architectural perspective, three high-level collaboration patterns emerge:

- Sequential Model: AI agents handle initial, routine stages and transfer complex cases to human agents at predefined decision nodes.

- Parallel Model: AI gathers data, suggests options, and human agents simultaneously craft personalized responses, reducing cycle time.

- Dynamic Hand-Off Model: Orchestration engines monitor context, performance metrics, and compliance triggers to route tasks in real time between AI and human agents.
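To make the Dynamic Hand-Off Model more concrete, the sketch below re-evaluates ownership of a conversation after every turn instead of only at intake. The `Conversation` class, the `process_turn` function, and the 0.7 confidence floor are assumptions made for illustration. Handing a conversation back from the human agent to the AI is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    owner: str = "ai"                                # current handler: "ai" or "human"
    handoff_log: list = field(default_factory=list)  # reasons for each hand-off


def process_turn(conv, confidence, compliance_flag, min_confidence=0.7):
    """Re-check ownership after one turn and record any hand-off reason."""
    if conv.owner == "ai" and compliance_flag:
        conv.owner = "human"
        conv.handoff_log.append("compliance trigger")
    elif conv.owner == "ai" and confidence < min_confidence:
        conv.owner = "human"
        conv.handoff_log.append("low confidence")
    return conv.owner


conv = Conversation()
# Each tuple is (model confidence for the turn, compliance trigger fired?).
for confidence, compliance_flag in [(0.95, False), (0.90, False), (0.55, False)]:
    process_turn(conv, confidence, compliance_flag)

print(conv.owner, conv.handoff_log)  # human ['low confidence']
```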

Evaluative criteria ensure collaboration effectiveness. Quantitative metrics include response time improvements, error-rate reductions, and scalable volume handling. Qualitative measures cover customer sentiment, perceived service quality, and agent satisfaction. Composite indexes integrate data from Salesforce Einstein dashboards, interaction logs from IBM Watson Assistant, performance reviews, and scenario-based stress tests simulating peak demand and regulatory scrutiny. This rigorous assessment supports continuous improvement and aligns collaboration initiatives with strategic goals such as loyalty, cost containment, and innovation velocity.
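One simple way to build such a composite index is a weighted average of metrics that have already been normalized to a common 0 to 1 scale. The metric names, scores, and weights below are hypothetical. The guide does not prescribe a specific formula, so treat this as one possible construction.

```python
def composite_index(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of metric scores already normalized to the 0-1 range."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Hypothetical normalized scores: two quantitative, two qualitative.
scores = {
    "response_time": 0.8,
    "error_rate": 0.9,
    "customer_sentiment": 0.7,
    "agent_satisfaction": 0.6,
}
weights = {
    "response_time": 0.3,
    "error_rate": 0.2,
    "customer_sentiment": 0.3,
    "agent_satisfaction": 0.2,
}

print(round(composite_index(scores, weights), 2))  # 0.75
```

Dividing by the total weight means the weights do not have to sum to exactly one, which makes it easier to add or drop a metric later.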

Trust and governance frameworks underpin sustainable hybrid models. The Trust Calibration Model examines how transparency, explainability, and performance consistency influence human acceptance of AI. Ethics review boards comprising domain experts, ethicists, and technologists oversee algorithmic decision-making and embed escalation pathways. Emerging lenses such as Human-Centered AI prioritize experience design, Ethical Synergy frameworks integrate fairness auditing and bias detection, and Adaptive Collaboration approaches foster continuous learning loops where AI evolves from human feedback and agents upskill via AI-driven training recommendations.

Timeliness of Hybrid Models

The convergence of technological maturity, market expectations, and strategic imperatives makes this a critical moment for adopting hybrid AI-human ecosystems. Key technological enablers include:

- Large Language Model advances, exemplified by OpenAI's GPT-4 and Google's PaLM 2, which deliver nuanced intent understanding and near-human fluency.

- Multimodal intelligence platforms like Google Bard and Vision AI offerings that integrate text, image, and audio processing for enriched interactions.

- Real-time learning capabilities within Microsoft Azure Cognitive Services and reinforcement learning frameworks that refine agent performance based on live feedback.

- Enterprise-grade integration via MLOps practices and standardized APIs in solutions such as IBM Watson, facilitating seamless connectivity with CRM, knowledge bases, and workforce management systems.

Customer expectations for 24/7 availability, hyper-personalization, and ethical transparency heighten the urgency. Hybrid models deliver always-on support through AI, reserving human involvement for sensitive or complex matters. They synchronize context across channels, ensuring seamless omnichannel experiences.

Under economic and operational pressures—rising labor costs, talent scarcity, and volatility—organizations require scalable service models. AI agents address high-volume, low-complexity requests cost-effectively, while humans focus on consultative and high-risk interactions. Hybrid frameworks enable rapid prototyping of conversational capabilities, real-time analytics for iterative improvement, and operational resilience during disruptions such as supply chain setbacks or health crises.

Analytical models help assess urgency:

- Technology Adoption Lifecycle signals that the early majority must move beyond pilots to mainstream hybrid operations.

- Customer Experience Maturity Model benchmarks progress from reactive support to proactive, anticipatory service.

- Capability Gap Analysis maps required competencies against current human and AI strengths, identifying immediate integration priorities.
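A capability gap analysis of the kind listed above can be approximated by comparing required and current competency levels. The competency names and the 1 to 5 rating scale in this sketch are invented for illustration.

```python
def capability_gaps(required, current):
    """Return (competency, gap) pairs where the current level falls short,
    sorted from the largest gap to the smallest."""
    gaps = {
        name: level - current.get(name, 0)
        for name, level in required.items()
        if current.get(name, 0) < level
    }
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)


# Hypothetical ratings on a 1-5 scale.
required = {"intent_recognition": 4, "empathy": 5, "compliance_review": 4}
current = {"intent_recognition": 4, "empathy": 3, "compliance_review": 1}

print(capability_gaps(required, current))
# [('compliance_review', 3), ('empathy', 2)]
```

Competencies missing from `current` are treated as level 0, so an entirely absent capability surfaces as the largest possible gap.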

Risks of delayed adoption include competitive disadvantage, cost escalation from aging legacy systems, talent attrition due to repetitive work, and regulatory exposure from unsupervised AI implementations. Industry analysts project that by 2025, 75 percent of service organizations will embed AI agents in at least one channel (Gartner), while Forrester reports 20–40 percent reductions in handling times for hybrid deployments. IDC highlights that tech-driven challengers use hybrid models as a key differentiator, raising the stakes for incumbents.

Guide Objectives and Analytical Tools

This guide provides senior leaders and domain specialists with a structured roadmap and analytical toolkit for designing, implementing, and governing hybrid AI-human ecosystems. Readers will benefit from:

- A synthesized analysis of market drivers—competitive, technological, customer, operational, and regulatory—that shape the hybrid imperative.

- An interpretive framework for classifying tasks by cognitive load, emotional risk, and strategic value, mapping them to augmentation, automation, or orchestration archetypes.

- Sector-based use cases in finance, healthcare, retail, and business process outsourcing, illustrating contextual adaptations and measurable outcomes.

- High-level architectural patterns—sequential, parallel, and dynamic hand-off—and design principles for seamless workflows and conversational continuity.

- A balanced impact measurement approach combining quantitative metrics (handle time, error rates, scalability) with qualitative indicators (customer sentiment, agent satisfaction).

- A critical examination of ethical, legal, and compliance considerations, including data privacy safeguards, bias mitigation strategies, and governance protocols.

- A forward-looking outlook on emerging agent capabilities—multitask learning, affective computing, decentralized AI—and a strategic roadmap for sustainable integration.

Core analytical tools featured throughout include:

- Performance Dimension Matrix: Evaluating accuracy, scalability, empathy, and adaptability across agent configurations.

- Task Complexity Stratification: Mapping tasks by volatility, ambiguity, and emotional risk to appropriate agent archetypes.

- Integration Maturity Curve: Assessing organizational readiness across technology, culture, and governance dimensions.

- Impact Measurement Spectrum: Balancing velocity-driven metrics such as handle time with relational outcomes like loyalty and advocacy.

- Ethical Risk Profile: Layering transparency, fairness, and accountability considerations onto deployment scenarios.

Considerations and Pathways to Operationalization

To translate strategic insights into operational results, organizations should pursue a phased mobilization strategy:

- Diagnostic Assessment: Audit existing workflows, data infrastructures, governance frameworks, and skill inventories.

- Strategic Prioritization: Identify high-impact, low-risk use cases aligned with organizational objectives.

- Pilot and Validate: Conduct controlled experiments to test hybrid models, collect performance data, and refine protocols.

- Scale and Integrate: Leverage modular architectures, open APIs, and reusable components to expand successful pilots across channels and regions.

- Govern and Adapt: Establish oversight committees for continuous performance measurement, ethical compliance, and iterative improvement loops.

Key considerations include:

- Data Quality and Governance: Ensure robust pipelines, clear ownership, and consistent standards.

- Talent and Skill Development: Evolve human roles toward analytical oversight, exception handling, and empathetic engagement.

- Technology Interoperability: Integrate legacy systems and disparate platforms via open APIs or middleware.

- Risk Management and Compliance: Embed regulatory and ethical protocols directly into AI workflows.

- Change Management and Cultural Alignment: Secure stakeholder buy-in through transparent communication, iterative training, and pilot successes.

- Cost-Benefit Balance: Employ phased deployments for pilot validation, incremental scaling, and recalibration of ROI expectations.

- Vendor and Ecosystem Selection: Evaluate partners for technical excellence, ethical commitments, and roadmap alignment.

While this guide offers a comprehensive strategic foundation, practitioners must remain vigilant to rapid technological change, context-specific variability, integration complexity, and evolving ethical and legal frameworks. By fostering a learning mindset, adaptive governance, and cross-industry collaboration, organizations can anticipate future evolutions—from affective computing to decentralized AI—and maintain a competitive edge through effective AI-human collaboration.

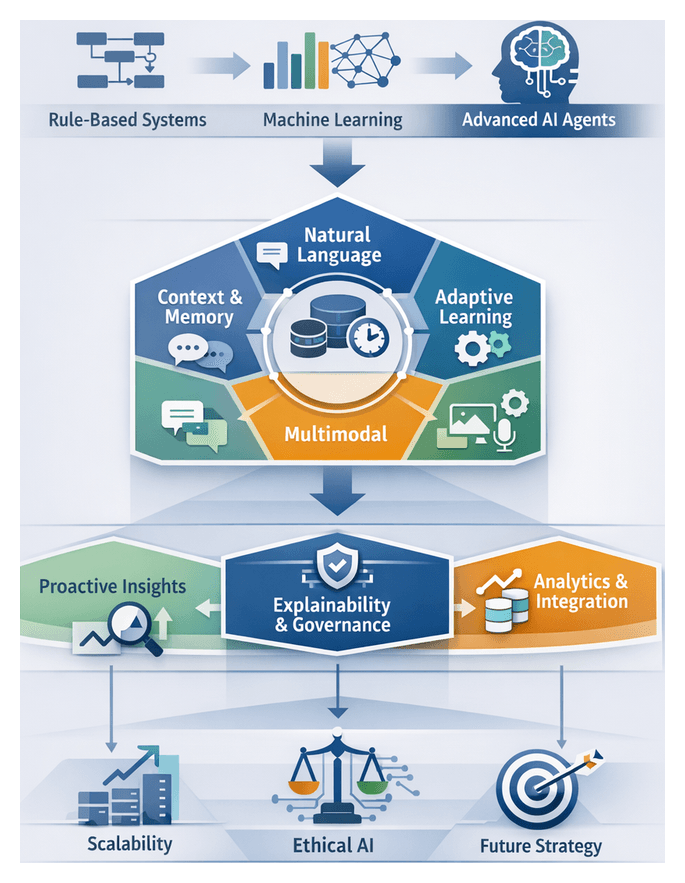

Chapter 1: The Emergence of AI Agents in Modern Enterprises

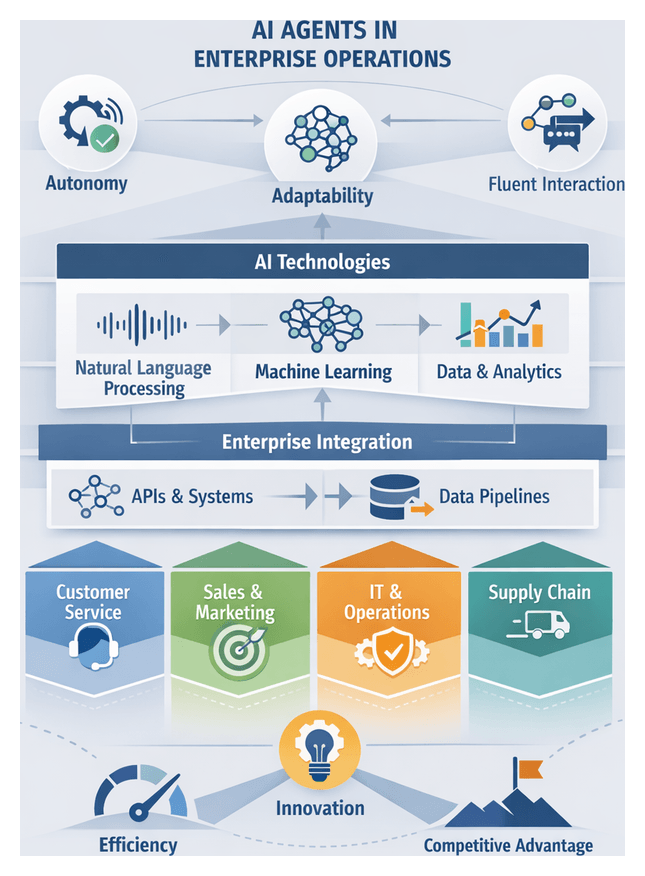

Defining AI Agents in Enterprise Operations

AI agents are autonomous, software-based collaborators that perform tasks, make decisions, and engage with users in ways that closely mimic human interaction. Unlike traditional automation tools that follow predefined workflows, these agents leverage natural language processing, machine learning, and advanced analytics to interpret unstructured inputs, adapt to evolving circumstances, and continuously refine their performance. They act as intelligent intermediaries between systems, processes, and people, operating around the clock, scaling to meet fluctuating demand, and delivering personalized experiences. This capability enables organizations to drive efficiency, foster innovation, and achieve competitive differentiation across diverse functions.

The adoption of AI agents aligns with broader digital transformation initiatives, as enterprises face ever-growing data volumes, heightened customer expectations, and intensifying competitive pressures. By orchestrating disparate data sources, automating routine inquiries, and surfacing actionable insights, AI agents reduce response times, minimize errors, and free human talent to focus on high-value activities. Embedding intelligence at the operational edge—whether in customer support, supply chain optimization, or internal help desks—creates a foundation for continuous process innovation and strategic agility.

AI agents distinguish themselves through five defining features:

- Autonomy: Initiating actions based on real-time data analysis, triaging tasks by priority, and escalating issues when human intervention is required.

- Adaptability: Refining models over time via machine learning, improving intent recognition and outcome prediction.

- Fluent Communication: Managing multi-turn conversations and maintaining contextual awareness through advanced natural language understanding and generation.

- Integration Capability: Connecting seamlessly with enterprise systems via APIs to orchestrate workflows and exchange data.

- Scalability: Operating in cloud or distributed architectures to handle high volumes of concurrent interactions without performance degradation.

In contrast to basic chatbots driven by decision trees or robotic process automation (RPA) that excel at rule-based tasks, AI agents combine adaptive learning with probabilistic reasoning and conversational design. This versatility allows them to guide customers through personalized product recommendations, troubleshoot technical issues in real time, and support decision-making processes.

Core technologies underlie AI agent capabilities:

- Natural Language Processing modules that parse text or speech, extracting intent, entities, and sentiment.

- Machine Learning architectures—including supervised, unsupervised, reinforcement, and hybrid models—that learn from historical data to optimize decisions.

- Knowledge Graphs and semantic ontologies that provide contextual reasoning by linking concepts and relationships.

- Real-time Analytics and event-driven frameworks that detect patterns and trigger automated actions.

- Data Management Pipelines that ensure high-quality inputs, supported by monitoring and feedback loops for continuous improvement.

AI agents find application across enterprise domains: customer service for routine inquiries and transactions; sales and marketing for lead qualification and campaign personalization; IT support for fault diagnosis and automated remediation; human resources for onboarding and policy guidance; and supply chain for demand forecasting and logistics coordination. Leading platforms include IBM Watson Assistant, Google Dialogflow, Microsoft Azure Bot Service, and open source solutions like Rasa. Selecting the right platform requires balancing deployment flexibility, language coverage, analytics depth, and ecosystem compatibility.

Core Technologies: Natural Language Processing, Machine Learning, and Data Management

Natural Language Processing has evolved from rigid, rule-based engines to flexible, data-driven models emulating human communication. Enterprises evaluate NLP solutions on several dimensions:

- Semantic Accuracy: Capturing intent and nuance, especially in specialized domains such as finance, healthcare, or legal services.

- Throughput at Scale: Maintaining low-latency performance under peak loads, often targeting sub-100-millisecond response times.

- Language Coverage: Supporting multiple languages and dialects for consistent global customer experiences.

- Integration Flexibility: Connecting with CRM, ERP, and back-office systems to orchestrate cross-channel workflows.

Transformer-based architectures such as BERT variants and GPT iterations retain context over longer spans and parallelize better than earlier recurrent models. Benchmarking studies compare models across metrics like perplexity, F1 score, and human evaluation ratings. Open-source communities supplement vendor offerings by sharing pre-trained checkpoints and fine-tuning recipes.

Machine Learning architectures power AI agents’ decision-making capabilities:

- Supervised Learning: Leveraging labeled datasets for intent classification and entity recognition within conversational agents.

- Unsupervised and Self-Supervised Learning: Extracting latent representations via autoencoders or contrastive learning, and pretraining on large unlabeled corpora by predicting masked tokens or next sentences.

- Reinforcement Learning: Optimizing dialogue policies and decision strategies through reward-driven simulations before live deployment.

- Hybrid Architectures: Blending supervised fine-tuning with reinforcement policy updates to balance precision on known intents with adaptability for novel scenarios.

Technical maturity models classify agent architectures on a continuum from static batch models to fully autonomous systems capable of real-time calibration. An organization's position on that continuum guides its investment in MLOps tooling, retraining pipelines, and governance mechanisms.

Data Management is the foundation for scalable intelligence. Key domains include:

- Data Quality and Lineage: Ensuring traceability for bias audits, privacy compliance, and drift diagnostics.

- Scalable Storage and Access: Employing distributed file systems, data lakes, or columnar warehouses for rapid retrieval of large corpora.

- Metadata and Feature Management: Maintaining centralized registries that capture transformation logic, feature importance, and lineage graphs to reduce redundancy and accelerate new use-case onboarding.

Cross-functional councils of data engineers, compliance officers, and business analysts oversee data governance policies, service-level objectives for freshness and reliability, and investments in cataloging and validation platforms.

Assessing Readiness: Maturity Frameworks and Interpretive Models

Comprehensive maturity assessments help organizations evaluate preparedness for advanced AI agents across strategic, operational, and technical dimensions. Prominent frameworks cover:

- Strategy Alignment: Executive sponsorship, budget allocation, and defined use-case roadmaps.

- Process Integration: Change management practices, cross-team collaboration, and governance structures.

- Technology Infrastructure: Evaluation of compute resources, machine learning frameworks such as TensorFlow and PyTorch, model orchestration platforms, and deployment pipelines.

- Data Ecosystem: Coverage of data sources, quality assurance processes, and regulatory compliance.

- Performance Monitoring: Continuous evaluation of agent accuracy, user satisfaction, and cost efficiency via dashboards and automated alerts.

Expert analyses guide platform selection and investment prioritization through several lenses:

- Vendor Ecosystem Analysis: Comparing integrated, single-vendor offerings, such as those built on OpenAI GPT models, against modular, best-of-breed components.

- Total Cost of Ownership: Accounting for licensing, training overhead, data management, and governance expenses in lifecycle models.

- Risk and Compliance: Addressing data privacy regulations, model interpretability, and bias mitigation requirements.

- Scalability Roadmaps: Planning for horizontal scaling of inference clusters, model versioning, and disaster recovery.

- Talent and Skills Alignment: Assessing in-house expertise in ML engineering, data science, and DevOps alongside upskilling programs.
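The total-cost-of-ownership lens in the list above comes down to simple lifecycle arithmetic: one-time costs plus recurring costs over the planning horizon. The cost categories follow the ones named in that bullet. The figures, and the choice to treat training as a one-time cost, are purely hypothetical.

```python
def total_cost_of_ownership(one_time_costs, annual_costs, years):
    """Sum one-time costs plus recurring annual costs over the given horizon."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return sum(one_time_costs.values()) + years * sum(annual_costs.values())


# Hypothetical figures in a single currency unit.
one_time_costs = {"training": 60_000}
annual_costs = {
    "licensing": 120_000,
    "data_management": 40_000,
    "governance": 25_000,
}

print(total_cost_of_ownership(one_time_costs, annual_costs, years=3))  # 615000
```

This sketch ignores discounting and price escalation. A fuller lifecycle model would likely add both.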

AI-Human Synergy: Strategic Imperatives and Market Context

Recent technological breakthroughs, including foundation models like GPT-4 and enhancements in computer vision, speech recognition, and multimodal fusion, have elevated AI from experimental to mission-critical. Cloud-based GPU clusters from providers such as Google Cloud and Microsoft Azure, together with Google's TPUs, democratize access to high-performance computing, while edge inference accelerators and model distillation techniques support real-time responsiveness.

Customer expectations for immediate, personalized interactions and 24/7 availability make hybrid AI-human models essential. AI agents resolve routine inquiries rapidly, allowing human professionals to address high-value scenarios that require empathy, negotiation, or complex judgment. In sectors such as retail and finance, brands deploying AI-assisted recommendations and proactive alerts achieve higher loyalty, while failure to meet digital standards drives customer churn.

Market leaders employ hybrid triage systems to optimize resource allocation. AI agents pre-screen support tickets, classify complexity, and autonomously resolve standard issues or route prioritized cases to specialized teams. In financial services, AI-driven compliance monitoring frees analysts for strategic risk assessments. In healthcare, virtual assistants handle patient triage, enabling clinicians to focus on diagnosis and treatment. Retailers leverage AI for dynamic pricing and inventory alerts, enhancing human-led consultations and premium support lines.

Regulatory and ethical frameworks underscore the importance of human-in-the-loop controls. AI agents generate traceable decision logs, flag ambiguous scenarios for review, and operate within defined guardrails to ensure fairness, transparency, and accountability.
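A traceable decision log with a review flag might look like the sketch below. The record fields, the `log_decision` name, and the 0.75 review threshold are assumptions for illustration. A production system would also persist the records to durable, access-controlled storage.

```python
import json
from datetime import datetime, timezone


def log_decision(case_id, decision, confidence, review_threshold=0.75):
    """Build a JSON audit record and flag low-confidence decisions for review."""
    record = {
        "case_id": case_id,
        "decision": decision,
        "confidence": confidence,
        # Ambiguous (low-confidence) outcomes are routed to a human reviewer.
        "needs_human_review": confidence < review_threshold,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record, sort_keys=True)


print(log_decision("C-1001", "approve_refund", confidence=0.62))
```

Emitting one self-describing JSON line per decision keeps the log easy to search during an audit or regulatory inquiry.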

Strategic Considerations: Implementation, Governance, and Risks

Successful AI agent deployment extends beyond technology installation. Leaders must address organizational, technical, and ethical dimensions:

- Data Strategy and Management: Invest in unified architectures, metadata management, and real-time ingestion to ensure representative, high-quality data.

- Integration with Legacy Systems: Employ API-led architectures and standardized protocols to connect AI platforms with CRM, ERP, and knowledge bases.

- Change Management and Cultural Alignment: Communicate objectives and limitations transparently, build AI literacy, and foster collaborative mindsets among human agents.

- Governance, Compliance, and Ethics: Establish frameworks addressing privacy, algorithmic fairness, and auditability, supported by ethical oversight councils.

- Performance Measurement and Continuous Feedback: Define KPIs such as resolution times, escalation rates, and satisfaction scores, and embed feedback loops for model adaptation.

- Talent and Skill Investments: Develop upskilling programs in data interpretation, prompt engineering, and ethical AI, while training human supervisors in exception handling and trust calibration.
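The KPIs named in the list above (resolution times, escalation rates, satisfaction scores) can be derived directly from interaction records. The record layout and the 1 to 5 satisfaction scale in this sketch are hypothetical.

```python
from statistics import mean


def compute_kpis(interactions):
    """Compute basic KPIs from a non-empty list of interaction records."""
    if not interactions:
        raise ValueError("at least one interaction is required")
    return {
        "avg_resolution_minutes": mean(i["resolution_minutes"] for i in interactions),
        # True counts as 1, so the sum is the number of escalated interactions.
        "escalation_rate": sum(i["escalated"] for i in interactions) / len(interactions),
        "avg_csat": mean(i["csat"] for i in interactions),
    }


# Hypothetical records; csat is a 1-5 satisfaction score.
interactions = [
    {"resolution_minutes": 4, "escalated": False, "csat": 5},
    {"resolution_minutes": 6, "escalated": False, "csat": 4},
    {"resolution_minutes": 10, "escalated": True, "csat": 3},
    {"resolution_minutes": 20, "escalated": False, "csat": 4},
]

print(compute_kpis(interactions))
```

Recomputing these values on a fixed schedule and comparing them with a pre-deployment baseline gives the feedback loop a concrete signal to act on.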

Organizations must also heed limitations and risks:

- Algorithmic Bias and Fairness Concerns: Conduct regular bias audits, employ diverse training corpora, and integrate fairness-aware algorithms.

- Over-Reliance and Automation Bias: Maintain clear decision rights and ensure human review of high-impact or ambiguous cases.

- Transparency and Explainability Challenges: Incorporate explainability features to diagnose errors and satisfy regulatory inquiries.

- Data Privacy and Security Risks: Implement robust access controls, encryption, and anonymization to comply with regulations like GDPR or CCPA.

- Technical Debt and Maintainability: Enforce version control, lifecycle management, and scheduled retraining to prevent performance degradation.

- Regulatory and Legal Uncertainties: Monitor evolving laws on automated decision-making, data sovereignty, and digital consumer rights with early legal engagement.

Balancing ambition with prudence involves articulating clear objectives, adopting iterative pilots, ensuring sustained human oversight, evolving governance structures, and fostering a culture of continuous learning. By anchoring AI agent initiatives in data integrity, transparent governance, and adaptive feedback mechanisms, organizations can harness the transformative potential of AI-human ecosystems to achieve sustainable competitive advantage.

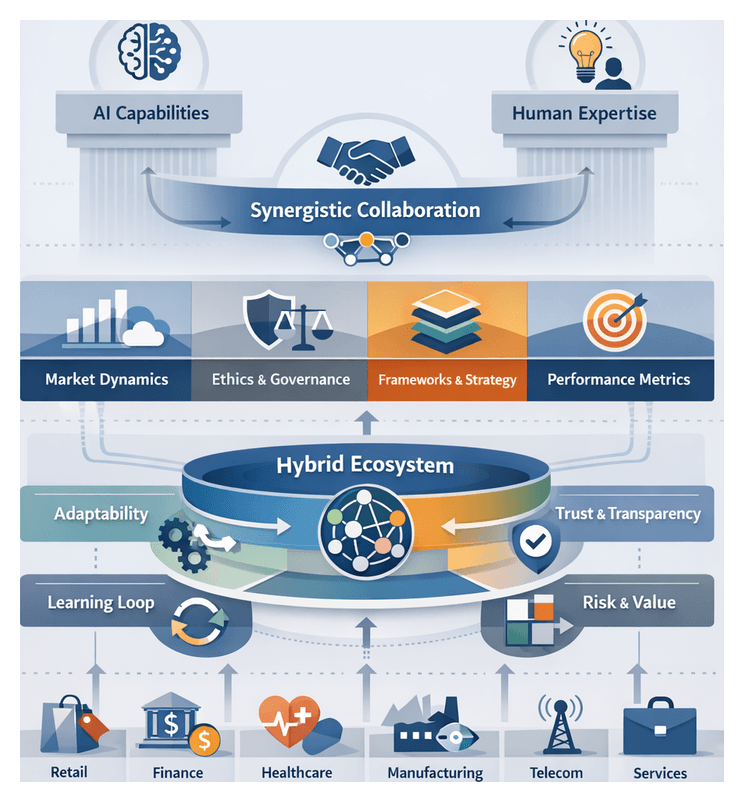

Chapter 2: The Enduring Value of Human Agents

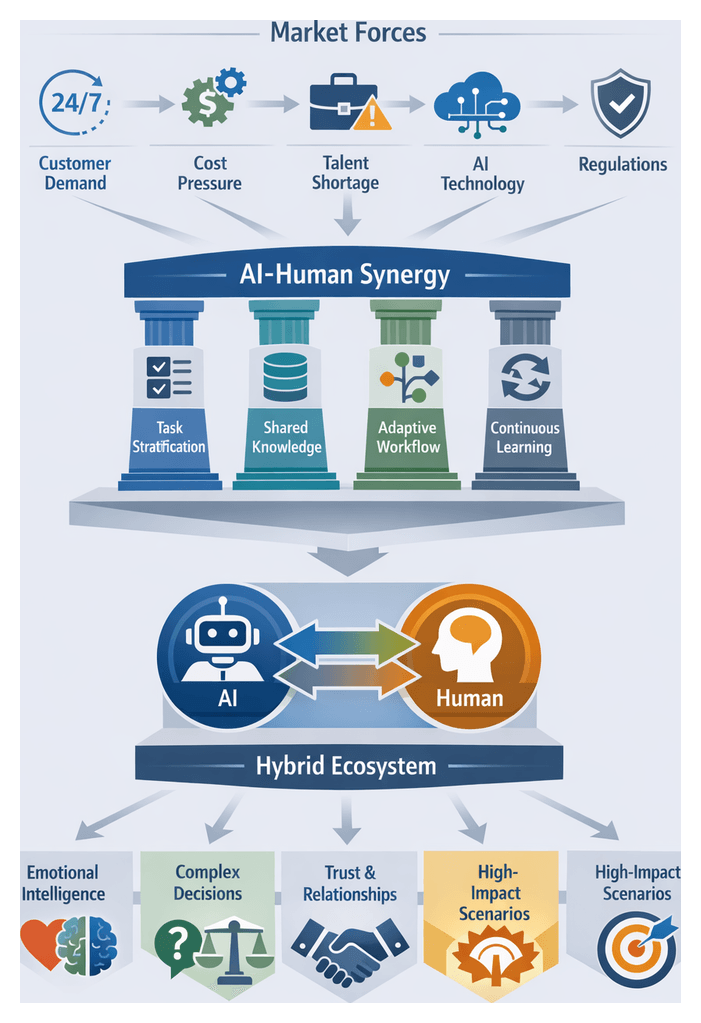

Market Forces Driving AI-Human Collaboration

Organizations today must deliver seamless, efficient, and personalized customer experiences under constant cost and talent pressures. Customer expectations for real-time, context-aware support across voice, chat, email and emerging channels have transformed competition from price wars to experience wars. Meanwhile, labor shortages and regulatory requirements raise the stakes for effective governance of both automated and human workflows. Advances in cloud computing, data analytics and machine learning have lowered the barriers to deploying intelligent automation at scale, yet no fully human or fully automated model can satisfy the complexity of modern customer journeys. A strategic fusion of AI capabilities with human expertise is essential for operational agility, service consistency and sustainable differentiation.

Key market forces include:

- Escalating customer demand for 24/7, omnichannel interactions.

- Cost pressures that drive automation while preserving high-value human touchpoints.

- Talent shortages and burnout in customer-facing roles.

- Technological advances enabling scalable intelligent automation.

- Regulatory and privacy mandates requiring controlled human-AI governance.

Framework for AI-Human Synergy

Orchestrating AI and human agents as complementary co-pilots hinges on four principles that assign tasks by complexity, share a unified knowledge layer, adaptively route interactions and sustain continuous learning loops.

- Task Stratification: AI addresses high-volume, deterministic queries; humans handle ambiguous, emotionally charged or high-risk scenarios.

- Shared Knowledge Ecosystem: A unified data layer delivers real-time context, customer history and sentiment analysis to both AI and humans.

- Adaptive Collaboration: Dynamic workflows enable AI to escalate or defer to human agents based on confidence thresholds, sentiment cues and compliance triggers.

- Continuous Learning Loops: Human feedback refines AI models, while AI-driven insights inform coaching, decision support and performance metrics.

This structured approach unlocks operational efficiency, consistency of service and enhanced customer satisfaction by leveraging each agent’s intrinsic strengths.

Timing and Technological Enablers

Recent developments amplify the viability of hybrid models:

- Advances in Natural Language Understanding: Transformer-based architectures interpret context, intent and sentiment with unprecedented accuracy.

- Proliferation of Generative AI: Large language models generate coherent, human-like text and voice across channels.

- Real-Time Analytics and Orchestration: Millisecond-scale monitoring of interaction streams enables rapid AI or human intervention.

- Evolution of Customer Expectations: Consumers accept AI when it delivers speed, relevance and personalization.

- Strategic Imperatives for Agility: Economic volatility demands resilient, scalable engagement platforms.

These trends lower cost and complexity barriers, positioning hybrid AI-human ecosystems as strategic differentiators rather than experimental pilots.

Evaluating Human Capabilities in Hybrid Ecosystems

Emotional Intelligence

Emotional intelligence (EI) in professional contexts encompasses self-awareness, self-regulation, social awareness and relationship management. Organizations deploy psychometric instruments such as EQ-i 2.0 and ESCI to benchmark agent capabilities, framing scores as developmental indicators. Real-time platforms augment these assessments with behavioral analytics: Qualtrics integrates sentiment analysis of customer feedback into performance dashboards, while Salesforce Einstein applies machine learning to chat and voice transcripts to identify empathetic language and deliver coaching prompts.

Judgment Under Complexity

Judgment, the ability to make sound decisions amid uncertainty, relies on pattern recognition and mental simulation. Scenario-based assessments and simulations score agents on decision quality, resolution speed and adherence to ethical guidelines. Organizations align evaluation criteria with strategic objectives, whether minimizing compliance risk in financial services or maximizing first-call resolution in support centers.

Evaluation criteria spanning emotional intelligence and judgment include:

- Accuracy of emotional perception and matching customer affective states.

- Consistency of self-regulation under stress.

- Adaptability of judgment across evolving products, regulations or demographics.

- Effectiveness of social awareness in identifying unspoken needs.

- Quality of decision-making in novel scenarios balancing risk and advocacy.

- Integration of feedback into continuous improvement cycles.

Leading practices include multi-source assessments, evaluator calibration sessions and transparency of scoring methodologies to mitigate biases and uphold trust.

Contexts Where Human Expertise Prevails

High-Stakes and Risk-Sensitive Environments

- Financial markets and wealth management where advisors interpret volatility and complex trade-offs.

- Healthcare and medical triage requiring diagnostic judgment and ethical sensitivity.

- Crisis management and incident response demanding rapid coordination and accountability.

Emotionally Charged and Conflict-Intensive Interactions

- Dispute resolution and complaints handling, where empathy drives loyalty more than speed.

- Support for vulnerable or at-risk populations relying on nonverbal cue detection.

- Ethical and moral deliberations involving sensitive personal data or end-of-life decisions.

Culturally Sensitive and Multilingual Engagements

- Cross-border customer service requiring cultural fluency and regulatory awareness.

- Market expansion and localization guided by qualitative research and community engagement.

- High-touch hospitality and luxury brands delivering personalized, etiquette-driven experiences.

Complex Decision-Making Under Ambiguity

- Strategic consulting and advisory services that synthesize cross-disciplinary insights.

- Product innovation and co-creation workshops led by human facilitators.

- Regulatory interpretation and policy guidance in evolving compliance landscapes.

Trust Building and Long-Term Relationship Management

- B2B sales and enterprise account management leveraging industry networks and negotiation acumen.

- Subscription and membership services focusing on adoption coaching and renewal negotiations.

- Brand ambassadorship and thought leadership signaling authenticity and trust.

Human Agents as Strategic Differentiators

Human agents bring adaptive reasoning, cultural fluency and relational intelligence that algorithms cannot replicate. Core competencies such as empathy, contextual judgment and emotional regulation transform routine exchanges into moments of value co-creation. Analytical lenses such as Service-Dominant Logic, the socio-technical systems framework, the resource-based view, the job characteristics model and the emotional labor framework help organizations assess and cultivate these strategic assets.

Operationalizing Hybrid Collaboration

Human networks entail higher per-interaction costs, scalability constraints and performance variability. Training investments must cover technical, regulatory and soft-skill domains, while support programs address emotional labor and mitigate biases. Privacy, compliance and governance frameworks are essential to maintain consistency, protect data and uphold ethical standards.

Key Considerations for Integration

- Process mapping and segmentation to allocate human oversight where stakes and complexity are highest.

- Skills development and role definitions aligned with active listening, ethical reasoning and cultural competence.

- Governance structures specifying escalation pathways, decision rights and compliance controls.

- Technology orchestration that routes interactions based on AI-detected triggers such as sentiment or regulatory flags.

- Performance metrics combining quantitative indicators with qualitative sentiment and brand perception assessments.

- Cross-functional collaboration among data scientists, service designers, compliance and HR specialists.
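The routing consideration in the list above can be made concrete with a small rule-based sketch. It is illustrative only: the `Interaction` fields, the queue names and the sentiment threshold are assumptions introduced here, and a real deployment would take these signals from whatever sentiment and compliance tooling is already in place.

```python
from dataclasses import dataclass

# Hypothetical threshold, chosen for illustration only.
NEGATIVE_SENTIMENT_THRESHOLD = -0.4


@dataclass
class Interaction:
    text: str
    sentiment: float       # assumed scale: -1.0 (very negative) to 1.0 (very positive)
    regulatory_flag: bool  # True when an upstream check marks the topic as regulated


def route(interaction: Interaction) -> str:
    """Return a queue name for the interaction based on simple triggers."""
    # Regulatory flags take precedence: compliance-sensitive topics go to humans.
    if interaction.regulatory_flag:
        return "human_compliance"
    # Strongly negative sentiment is escalated to a human support agent.
    if interaction.sentiment <= NEGATIVE_SENTIMENT_THRESHOLD:
        return "human_support"
    # Everything else stays with the automated agent.
    return "ai_agent"
```

Checking the regulatory flag before sentiment is a design choice: it keeps compliance escalation independent of how the customer happens to phrase the request.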

Future Outlook for Human Agents

As generative AI and predictive analytics mature, human professionals will increasingly assume oversight, mentorship and innovation facilitation roles. Continuous learning architectures—blending microlearning, real-time coaching and communities of practice—will sustain adaptive expertise in advanced critical thinking, ethical judgment and creative problem-solving. By positioning humans as stewards of brand integrity and customer relationships, organizations can orchestrate seamless hybrid ecosystems that deliver enriched experiences, resilient performance and enduring competitive advantage.

Chapter 3: Comparative Strengths and Limitations

Contrasting Performance Dimensions

Evaluating artificial intelligence agents, human professionals, and hybrid models requires a structured framework across four core dimensions: accuracy and precision; scalability and speed; empathy and emotional intelligence; and adaptability and learning. By mapping capabilities to these axes, organizations can align resource allocation with strategic objectives, balancing efficiency, quality, and customer experience.

Accuracy and Precision

Accuracy reflects an agent’s ability to deliver correct outcomes, while precision measures consistency across repeated tasks. AI systems excel in structured data environments, achieving error rates below one percent in document processing and compliance screening. Machine learning classifiers and anomaly detection algorithms sustain high throughput with minimal variance. Human agents, on the other hand, handle ambiguous or context-dependent tasks more effectively, interpreting nuanced requests and applying judgment. Manual processes typically exhibit error rates of three to five percent, influenced by fatigue and cognitive bias. Best practice combines AI for bulk processing and exception flagging, routing edge cases to human experts to maximize overall accuracy while controlling risk.
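One common way to implement bulk processing with exception flagging is a confidence threshold: items the model is confident about are processed automatically, and the rest are queued for human review. The sketch below assumes each prediction carries a confidence score between 0 and 1. The tuple layout, the sample data and the 0.9 threshold are hypothetical and would need tuning against real error data.

```python
from typing import Iterable, List, Tuple

# (item_id, predicted_label, confidence); an assumed layout for illustration.
Prediction = Tuple[str, str, float]


def triage(
    predictions: Iterable[Prediction],
    confidence_threshold: float = 0.9,
) -> Tuple[List[Tuple[str, str]], List[Tuple[str, str]]]:
    """Split predictions into auto-processed items and items for human review."""
    auto_processed: List[Tuple[str, str]] = []
    human_review: List[Tuple[str, str]] = []
    for item_id, label, confidence in predictions:
        if confidence >= confidence_threshold:
            auto_processed.append((item_id, label))
        else:
            # Low-confidence items are treated as edge cases for an expert.
            human_review.append((item_id, label))
    return auto_processed, human_review


# Made-up sample data.
sample = [
    ("doc-1", "approved", 0.98),
    ("doc-2", "rejected", 0.62),
    ("doc-3", "approved", 0.91),
]
auto, review = triage(sample)
```

Raising the threshold sends more items to human review, trading throughput for lower risk. Lowering it does the opposite.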

Scalability and Speed

Scalability denotes the capacity to absorb increased workloads without proportional cost rises, while speed measures response times. AI agents offer near-instantaneous processing and parallel handling of thousands of requests per minute, constrained only by infrastructure. Cloud platforms enable dynamic scaling to meet peak-demand SLAs. Human agents require recruitment, training, and supervision, leading to plateaued performance as volume grows. Yet in complex, unpredictable scenarios, skilled professionals may resolve issues faster by drawing on cross-functional knowledge and improvisation. Hybrid operations deploy AI for standard inquiries and preserve human bandwidth for critical or high-priority interactions, ensuring rapid resolution across the spectrum of demand.

Empathy and Emotional Intelligence

Empathy and emotional intelligence are essential in customer interactions that involve distress, frustration, or high stakes. Human agents recognize tone, cultural nuances, and unspoken cues, tailoring communication to defuse tension and build rapport. AI-driven sentiment analysis and emotion recognition tools can detect negative language or stress indicators, enabling systems to suggest empathetic dialogue paths. However, algorithmic empathy is limited by training data and response templates. Effective support operations use AI to surface high-risk interactions at scale, flagging them for human intervention where genuine emotional connection is required.

Adaptability and Learning

Adaptability measures responsiveness to novel situations, while learning captures ongoing improvement. AI models can be retrained via online learning and automated pipelines, ingesting new interaction data to refine decision boundaries. Robust governance is essential to prevent drift or bias. Human agents exhibit instant adaptability, interpreting emerging policies and integrating cross-domain insights. Hybrid models close the feedback loop: AI detects shifting conversation patterns and recommends updates to scripts or knowledge bases, which humans validate and implement, ensuring both agent types evolve together in alignment with business needs.

Analytical Trade-offs for Hybrid Decision Making

Strategic integration of AI and human agents involves balancing technological capabilities against organizational priorities, risk tolerance, and stakeholder expectations. A rigorous trade-off analysis spans environmental volatility, task complexity, and ethical accountability, guiding resource allocation and governance structures.

Volatility and Resilience

In volatile markets, organizations must weigh scalable throughput against situational adaptability:

- Throughput Strengths: AI delivers predictable response times, parallel processing, and 24/7 availability.

- Adaptability Strengths: Humans apply real-time judgment, creative problem solving, and emotional resilience.

Further trade-offs arise between risk containment and opportunity capture:

- Containment-Focused Automation: standardizes workflows to minimize operational risk but may overlook novel revenue streams.

- Opportunity-Driven Human Intervention: empowers personalized cross-sell and loyalty moments at the expense of consistency.

Scenario planning and volatility modeling help establish thresholds for AI autonomy and human oversight, balancing resilience with agility.

Complexity and Flexibility

Complex tasks require reconciling analytical precision with contextual reasoning:

- Pattern Recognition: AI achieves high recall and reproducible analytics in well-defined domains like fraud detection and predictive maintenance.

- Contextual Reasoning: Humans interpret soft cues, reconcile conflicting objectives, and navigate ambiguous regulations.

Standardization versus customization also shapes deployment models:

- Standardized Automation: leverages reusable templates and micro-interactions for efficiency, trading off personalization.

- Custom Human Engagement: delivers bespoke solutions and relationship building, requiring ongoing training and quality assurance.

Modular service architectures combine automated frameworks for routine flows with configurable human touchpoints for differentiated engagements.

Ethical Accountability

Balancing transparency, fairness, and performance is critical as AI assumes greater autonomy:

- Transparency versus Optimization: AI models may optimize metrics without explainable logic, while human agents articulate reasoning but risk concealing biases.

- Bias Mitigation versus Efficiency: algorithmic fairness initiatives demand data governance and audits; diversity and inclusion programs require cultural change.

Dual-track approaches embed bias detection in AI pipelines and convene human oversight committees for edge-case review. Compliance with regulations such as GDPR requires explainable interactions and audit trails.

Key analytical viewpoints include:

- Trade-Space Mapping: visualizing performance envelopes for AI and human agents across metrics

- Scenario Simulation: stress-testing hybrid models under extreme volatility and complexity

- Governance Layering: defining decision rights, escalation protocols, and continuous audits

- Continuous Feedback Loops: integrating customer sentiment and operational telemetry to recalibrate trade-offs

Operational Suitability Scenarios

Mapping interaction characteristics—volume, complexity, emotional intensity—to agent capabilities reveals six archetypal scenarios. Leading organizations design ecosystems where AI, humans, or hybrids operate where they deliver optimal value.

Routine, High-Volume Interactions

Tasks such as billing inquiries, password resets, and order tracking demand speed, accuracy, and cost efficiency. Conversational AI chatbots and virtual assistants automate repetitive flows, with continuous learning loops that refine models based on fallback rates. Human supervisors monitor quality and handle exceptions, creating an AI-first triage with human fallback to maintain customer satisfaction while reducing costs.

Complex, Knowledge-Intensive Engagements

In technical troubleshooting and multi-step problem solving, AI-augmented desktops assist human agents by retrieving knowledge-base articles, surfacing historical cases, and suggesting diagnostic pathways. This “human-in-the-loop” model reduces cognitive load and accelerates resolution while preserving expert oversight for nuanced judgments.

Emotionally Charged Interactions

Customer complaints and crisis communications require genuine empathy and active listening. AI sentiment analysis serves as an early detector, routing high-risk cases to specialized human agents. This hybrid approach optimizes resources: bots handle routine feedback, while humans manage the emotional labor needed to restore trust and loyalty.

Rapid-Response, Real-Time Channels

Live chat, social media, and messaging apps demand instantaneous responses. AI provides immediate acknowledgments and basic information, while human agents intervene for nuanced issues or sentiment shifts detected by real-time analytics. Tiered workflows leverage AI scalability during peak events and human judgment for critical escalations.

Personalized, Consultative Sales Engagements

Cross-sell and up-sell scenarios benefit from AI-driven recommendation engines that analyze behavioral data and generate next-best offers. Human representatives then craft tailored proposals, negotiating contract terms and fostering long-term relationships. Analytics dashboards presenting customer lifetime value and propensity scores guide human prioritization.

Regulated or High-Stakes Compliance Scenarios

In financial advice, insurance underwriting, and healthcare consultations, AI enforces rule-based validations, flags exceptions, and logs audit trails. Human experts retain accountability for discretionary approvals and interpret grey-area regulations. “Compliance guardrails” ensure routine checks are automated, with predefined escalation paths for complex judgments.

Adaptive Collaboration in Hybrid Dynamics

Certain interactions blend volume, complexity, and emotional weight, such as real-time fraud alerts coupled with anxious customers. Predictive routing engines evaluate sentiment, customer value, and complexity indicators to assign tasks dynamically. Intelligent workflows orchestrate AI for continuous monitoring and humans for trust-critical segments, forming an adaptive collaboration model that responds to evolving signals.
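A predictive routing engine of this kind can be approximated by a weighted score over the three signals. The following sketch is a simplification under stated assumptions: the weights, thresholds, signal scales and the three assignment labels are all hypothetical, and a production engine would more likely learn them from historical outcomes than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    sentiment: float       # assumed scale: -1.0 (very negative) to 1.0 (very positive)
    customer_value: float  # assumed normalized to 0.0 .. 1.0
    complexity: float      # assumed normalized to 0.0 .. 1.0


# Illustrative weights and thresholds only.
WEIGHTS = {"sentiment": 0.4, "customer_value": 0.3, "complexity": 0.3}
HUMAN_THRESHOLD = 0.6
ASSIST_THRESHOLD = 0.35


def escalation_score(signals: Signals) -> float:
    """Combine the signals into a single 0..1 score; higher means more need for a human."""
    # Only negative sentiment raises the score; positive sentiment counts as zero.
    negativity = max(0.0, -signals.sentiment)
    return (
        WEIGHTS["sentiment"] * negativity
        + WEIGHTS["customer_value"] * signals.customer_value
        + WEIGHTS["complexity"] * signals.complexity
    )


def assign(signals: Signals) -> str:
    """Map the score to one of three handling modes."""
    score = escalation_score(signals)
    if score >= HUMAN_THRESHOLD:
        return "human"
    if score >= ASSIST_THRESHOLD:
        return "human_with_ai_assist"
    return "ai"
```

In the fraud-alert example, an anxious high-value customer with a complex case would score high on all three signals and be assigned to a human, while the AI keeps monitoring in the background.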

Synthesis: Strategic Priorities and Governance

Optimal agent strategies are contingent on the relative importance of accuracy, scalability, empathy, and adaptability within specific business objectives. A disciplined prioritization framework aligns capabilities with mission-critical outcomes:

- Rank interaction types by impact on customer loyalty and revenue

- Map performance dimensions against risk thresholds (compliance, privacy)

- Calculate marginal benefits of automation versus human oversight

- Balance long-term scalability needs with upfront investment constraints

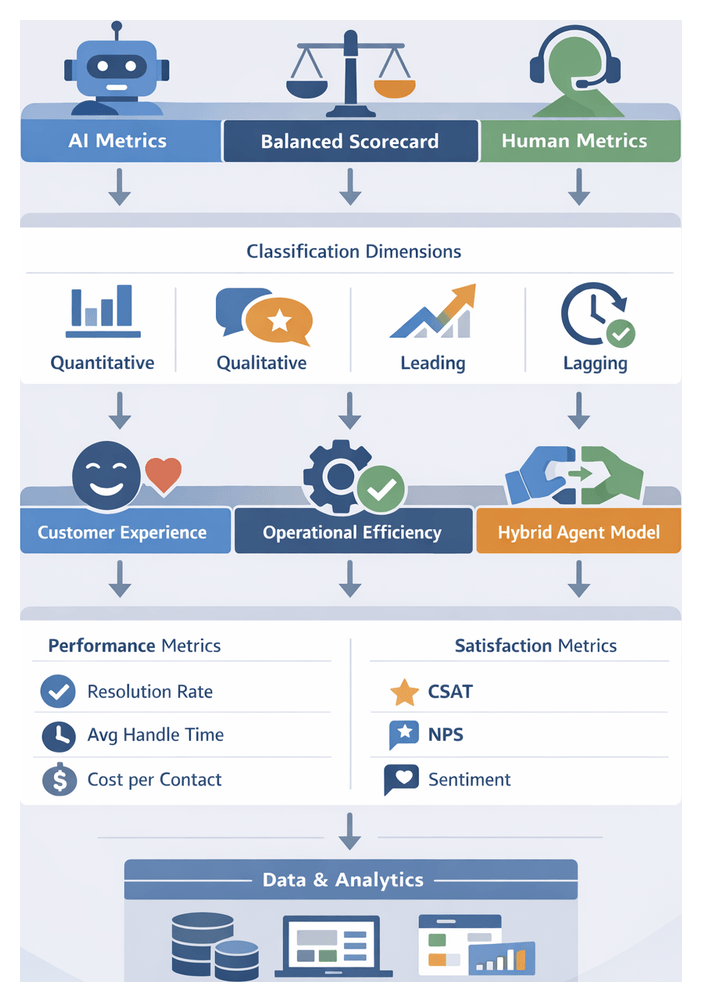

Strategic imperatives include:

- Phased Integration Roadmap: pilot hybrid models in defined use cases to validate hypotheses, refine hand-over logic, and measure impact before scaling

- Shared Knowledge Ecosystems: develop unified repositories feeding AI training pipelines and human knowledge bases for consistency and rapid learning

- Balanced Scorecard Monitoring: combine quantitative metrics (resolution time, error rates, cost per interaction) with qualitative indicators (customer sentiment, brand perception, agent satisfaction)

- Governance and Ethics Frameworks: establish steering committees, define ethical principles (transparency, fairness, accountability), map regulatory obligations, embed bias-detection pipelines, and enforce privacy impact assessments

- Culture of Collaboration: foster cross-functional teams where data scientists, operations leaders, and frontline agents co-author process improvements

Key limitations and risk considerations include data quality dependencies, adaptation latency, governance constraints, customer trust variations, cost structures, and ethical bias exposure. Addressing these requires ongoing capability development, continuous feedback, and iterative review.

Future readiness demands modular architectures that accommodate emerging AI capabilities such as contextual understanding and zero-shot learning, periodic trade-off reassessment, continuous upskilling for human agents, and scenario planning to stress-test hybrid models. By institutionalizing rigorous governance, strategic alignment, and adaptive performance calibration, organizations can harness the synergistic potential of AI and human professionals for sustained competitive advantage.

Chapter 4: Industry Use Cases and Business Applications

Market Dynamics and Strategic Imperatives

Organizations face converging pressures from digital disruption, customer expectations, technological advances, competitive intensity, economic constraints, talent realities and regulatory imperatives. Ubiquitous connectivity and on-demand services raise the bar for instantaneous, personalized experiences across channels. Automated agents powered by ChatGPT or Dialogflow can field routine inquiries at scale, while human professionals address complex or emotionally sensitive interactions. Fragmented architectures undermine continuity as customers shift between messaging, voice assistants and social media. Integrating AI and human workflows on unified platforms preserves context and enables seamless handovers, reducing friction and reinforcing brand loyalty.

Breakthroughs in large language models, reinforcement learning, cloud-based inference and specialized accelerators are expanding the scope of tasks suited to AI. Solutions like Microsoft Copilot embed assistants into productivity suites and Amazon Lex powers scalable conversational interfaces. Low-code/no-code tools democratize process automation, enabling business users to configure hybrid workflows without deep technical expertise. To sustain agility, organizations adopt modular, API-driven architectures that support iterative upgrades of individual components without disrupting end-to-end processes.

Competitive pressure accelerates innovation cycles. Startups harness AI to rapidly prototype novel services, while incumbents deploy hybrid agent models to maintain speed-to-market. Embedding AI-driven sentiment analysis to flag high-value interactions for human escalation ensures that strategic issues receive tailored attention. This balance accelerates deployment while preserving quality and trust.

Economic efficiency remains central. AI agents provide elastic capacity, scaling with demand to contain labor costs, improve first-contact resolution and optimize resource allocation through real-time analytics. Delegating repetitive processes to AI can reduce cost per interaction by up to 40 percent, freeing budget for strategic initiatives.

Talent constraints in advanced analytics, AI governance and conversational design demand new roles—AI trainers, prompt engineers and hybrid supervisors—alongside upskilling for frontline agents. Comprehensive training in data literacy, ethical AI and change management ensures that human professionals can interpret AI insights and intervene judiciously.

Regulatory frameworks—GDPR, CCPA and industry-specific mandates—require transparent AI decision-making, auditability and respect for data subject rights. Ethical considerations around bias, fairness and accountability necessitate governance policies, model validation protocols and human review of high-stakes decisions. Proactive compliance builds trust and mitigates legal risks.

Evaluating Hybrid Performance and Value

Assessing the impact of pure AI, human-only and hybrid models requires standardized metrics across operational efficiency, customer experience and risk management. Operational benchmarks include average handle time (AI chatbots often halve resolution durations), first-contact resolution rates above 80 percent and cost-per-contact reductions of 20–40 percent under hybrid strategies. Customer experience is tracked through satisfaction scores above 90 percent, net promoter lifts of around 10 points and sentiment analysis that monitors emotional tone. Risk management metrics cover compliance accuracy near 100 percent, error rates below 1 percent in critical processes and escalation frequencies that show whether AI autonomy is set appropriately without degrading quality.
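The operational metrics named above are simple ratios over contact records. As a minimal sketch, assuming each contact is logged with a handle time, a first-contact resolution flag, an escalation flag and a cost (the `Contact` fields are hypothetical names, not taken from any particular platform), they can be computed as follows.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Contact:
    handle_seconds: float
    resolved_first_contact: bool
    escalated_to_human: bool
    cost: float


def summarize(contacts: List[Contact]) -> Dict[str, float]:
    """Compute average handle time, FCR rate, escalation rate and cost per contact."""
    if not contacts:
        raise ValueError("at least one contact is required")
    total = len(contacts)
    return {
        "average_handle_time_s": mean([c.handle_seconds for c in contacts]),
        # Booleans sum as 0/1, so these are simple proportions.
        "first_contact_resolution_rate": sum(c.resolved_first_contact for c in contacts) / total,
        "escalation_rate": sum(c.escalated_to_human for c in contacts) / total,
        "cost_per_contact": sum(c.cost for c in contacts) / total,
    }
```

Running the same function separately over AI-only, human-only and hybrid contact logs gives like-for-like figures for comparing the three models.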

Industry-specific outcomes vary. In retail and e-commerce, Dialogflow-powered order tracking and product recommendations cut handle times by 50 percent, while human agents manage complex returns and loyalty issues. Financial services firms embedding IBM Watson for automated KYC achieve over 99 percent compliance accuracy and a 60 percent reduction in manual reviews, with advisors handling exceptions. Healthcare providers using Microsoft Azure Bot Service improve first-contact resolution by 30 percent for scheduling and prescription refills, while clinicians oversee diagnostic support. High-volume support centers leveraging AgentLink AI report 25 percent cost savings and maintain satisfaction above 88 percent through iterative feedback between AI and human teams.

Interpretive frameworks guide strategic evaluation. The balanced scorecard aligns financial goals with efficiency metrics, customer objectives with satisfaction and loyalty, internal processes with compliance accuracy and learning with employee engagement. The technology adoption lifecycle contrasts performance across adopter segments, from innovators to laggards, revealing accelerated maturity and larger efficiency gains in hybrid deployments. The value realization model tracks benefits over time against investment baselines, balancing cost savings, revenue uplifts and risk mitigation to calculate net return.
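The value realization calculation can be sketched as cumulative benefits minus the initial investment, tracked period by period. This is a simplified illustration: the dictionary keys and the idea of reporting a payback period are assumptions made here, and the sketch ignores discounting and ongoing running costs.

```python
from itertools import accumulate
from typing import Dict, List, Optional


def period_benefit(period: Dict[str, float]) -> float:
    """Total benefit for one period across the three benefit types."""
    return period["cost_savings"] + period["revenue_uplift"] + period["risk_mitigation"]


def cumulative_net_return(investment: float, periods: List[Dict[str, float]]) -> List[float]:
    """Net return after each period: cumulative benefits minus the investment baseline."""
    running_totals = accumulate(period_benefit(p) for p in periods)
    return [total - investment for total in running_totals]


def payback_period(investment: float, periods: List[Dict[str, float]]) -> Optional[int]:
    """Return the first period (1-based) in which net return is non-negative, if any."""
    for index, net in enumerate(cumulative_net_return(investment, periods), start=1):
        if net >= 0:
            return index
    return None
```

Keeping the three benefit types as separate inputs makes it visible when a business case rests mostly on risk mitigation, which is usually the hardest of the three to quantify.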

Experts caution that no single metric suffices. Gartner recommends tuning AI autonomy triggers so that escalation rates stay near 15 percent, the point beyond which customer satisfaction gains diminish. McKinsey finds that combining AI automation with targeted human intervention can boost productivity by up to 30 percent while preserving net promoter increases of 8–12 points. Forrester urges continuous sentiment monitoring, with real-time dashboards that prompt timely human support when emotion signals spike.

Emerging patterns show that hybrid maturity correlates with stable outcomes: systematic refinement of hand-off protocols and feedback loops secures consistent resolution and satisfaction rates. Domain-specific AI models outperform general-purpose bots by 20–30 percent in intent recognition. Empowered human agents who interpret AI recommendations drive higher resolution rates and lower error frequencies. Robust governance frameworks underpin compliance and customer trust, ensuring sustainable performance.

Contextualizing Hybrid Agent Strategies

Effective hybrid models consider external market conditions, customer profiles, technological readiness, cultural factors, competitive dynamics and vendor ecosystems. Multidimensional context analysis informs which collaboration models deliver optimal value, the timing of initiatives and governance structures needed for resilience and ethical integrity.

Regulatory and Compliance

In finance, healthcare and telecommunications, data residency rules and cross-border transfer restrictions dictate on-premises versus cloud processing. Auditability standards require comprehensive logging, while professional codes of conduct shape agent training. Risk-based frameworks, such as the European Banking Authority’s stress tests or the U.S. Food and Drug Administration’s Software as a Medical Device (SaMD) guidance, guide layered governance, with periodic AI validation and human final approval in critical scenarios.

Customer Segmentation and Behavior

Demographic and behavioral profiling reveals which cohorts embrace AI and which demand human interaction. Journey maps and personas combine quantitative indicators—channel switching, resolution times—with qualitative feedback to pinpoint friction points. Channel affinity analysis informs the mix of text bots, voice assistants and human operators. Behavioral triggers—abandoned carts, negative sentiment—activate dynamic routing rules, ensuring empathetic human engagement where self-service falls short.

Technology Maturity

Organizations with unified data platforms, modular APIs and real-time analytics enable seamless AI-human handoffs. Assessments using Capability Maturity Model Integration (CMMI) reveal levels of standardization, automation and continuous improvement. High maturity correlates with formal governance, embedded change management and integrated performance dashboards, facilitating rapid prototyping and iterative refinement.

Culture and Capabilities

A culture valuing experimentation and data-driven decision-making accelerates hybrid adoption. Change models—Kotter’s eight steps, Schein’s cultural dimensions—help leaders articulate vision, mobilize champions and reinforce new behaviors. Cross-functional governance forums and performance systems aligned with collaborative KPIs—such as joint resolution rates—foster shared accountability. Training in critical thinking, emotional intelligence and ethical reasoning equips teams to co-manage AI-human interactions.

Competitive Dynamics

Porter’s Five Forces and the Blue Ocean Strategy canvas reveal how customer bargaining power, threat of substitution and market fragmentation influence the urgency of hybrid investments. Brands facing low switching costs must deliver seamless experiences through intelligent coordination, while cost leaders may emphasize AI for high-volume tasks and reserve human expertise for premium segments. Time-to-market for new offerings often begins with AI prototypes, with human oversight ensuring compliance and quality.

Vendor Ecosystems

Vendor selection shapes integration complexity and total cost of ownership. Frameworks like the Gartner Magic Quadrant and Forrester Wave help map provider capabilities, distinguishing platform leaders and niche specialists. Turnkey implementations and co-development alliances influence customization speed and knowledge transfer. Licensing and usage-based pricing require alignment with projected volumes and seasonal peaks. Reference architectures accelerate prototyping but must align with data governance. Partnerships with system integrators and managed service providers support end-to-end lifecycle management.

Scenario-based planning exercises layer these factors onto potential use cases, using heat maps, decision matrices and impact-effort charts to translate context into clear strategic pathways. Continuous reassessment and feedback loops ensure that hybrid agent strategies evolve alongside market conditions and technological progress.

Lessons from Hybrid Deployments and Future Directions

Key Success Factors

- Strategic alignment between business objectives and hybrid processes

- Robust data governance and unified integration

- Human-centric workflow design with clear escalation protocols

- Continuous monitoring and feedback loops between AI and human agents

High-performing implementations define clear outcomes—such as improved resolution rates or increased upsell—leveraging AI for scale and human judgment for nuance. Data streams must be unified and high quality to maintain contextual relevance. Feedback loops, where human experts review AI suggestions to refine models, drive adaptive resilience and support iterative improvements.

Common Pitfalls

- Overestimating AI capabilities without sufficient change management

- Fragmented escalation processes for edge-case handling

- Ethical and regulatory oversights leading to bias or compliance gaps

- Operational complexity when human-AI coordination lacks modular architecture

Premature scaling of underprepared systems can frustrate users and expose risks. Clear change-management programs, structured training and stakeholder buy-in are essential to avoid underperformance. Governance checkpoints and audit trails prevent unintended bias and maintain compliance. Modular architectures and defined accountability mitigate scalability challenges.

Strategic Considerations

- Modular, API-driven architectures for incremental capability upgrades

- Balanced investment in human expertise and AI innovation

- Transparent governance with documented decision criteria and bias mitigation

- Communities of practice for cross-functional knowledge sharing

Incremental pilots of advanced AI modules in controlled environments allow organizations to validate performance before enterprise-wide rollout. Cross-functional committees ensure alignment on ethics, compliance and strategic priorities. Communities of practice foster shared learning among AI engineers, domain experts and customer-facing teams, accelerating continuous improvement.

Limitations and Future Research

Quantifying long-term returns remains challenging, especially for intangible benefits like brand loyalty and employee engagement. Multilingual and cross-cultural deployments expose variations in expectations and legal requirements that general models may not address. As AI roles deepen, algorithmic bias and transparency concerns intensify, demanding rigorous validation and interpretability frameworks. Hybrid ecosystems are dynamic; periodic reassessment against evolving standards, channels and behaviors is critical. Collaboration among academia, industry consortia and regulators can close empirical gaps in ethical certification, real-time bias detection and adaptive governance.

Hybrid AI-human collaboration is a strategic imperative, not a transitional phase. By aligning technological innovation with organizational readiness, embedding robust governance and fostering continuous learning, enterprises can unlock new horizons of customer value and operational excellence.

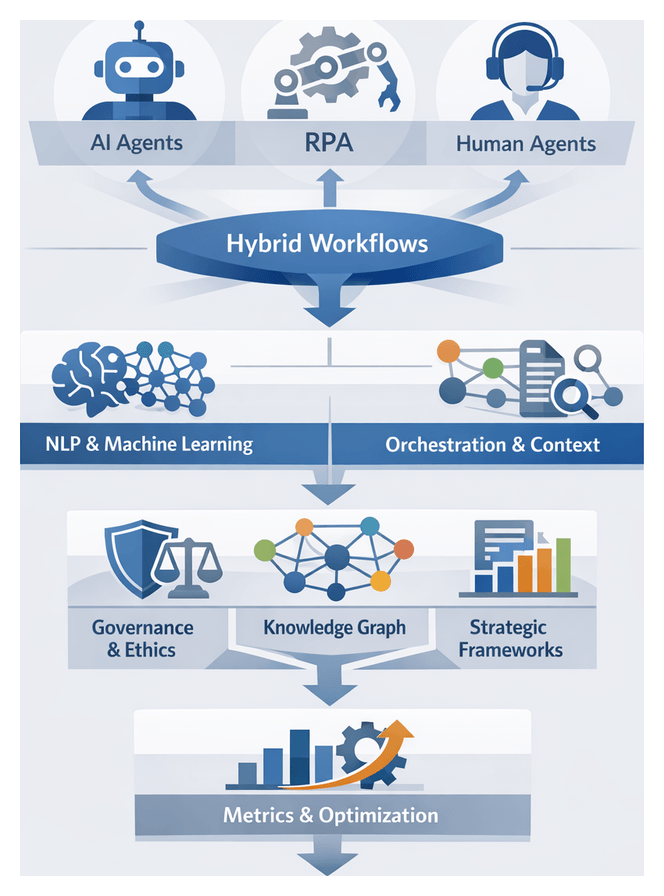

Chapter 5: Architecting Hybrid Collaboration Models

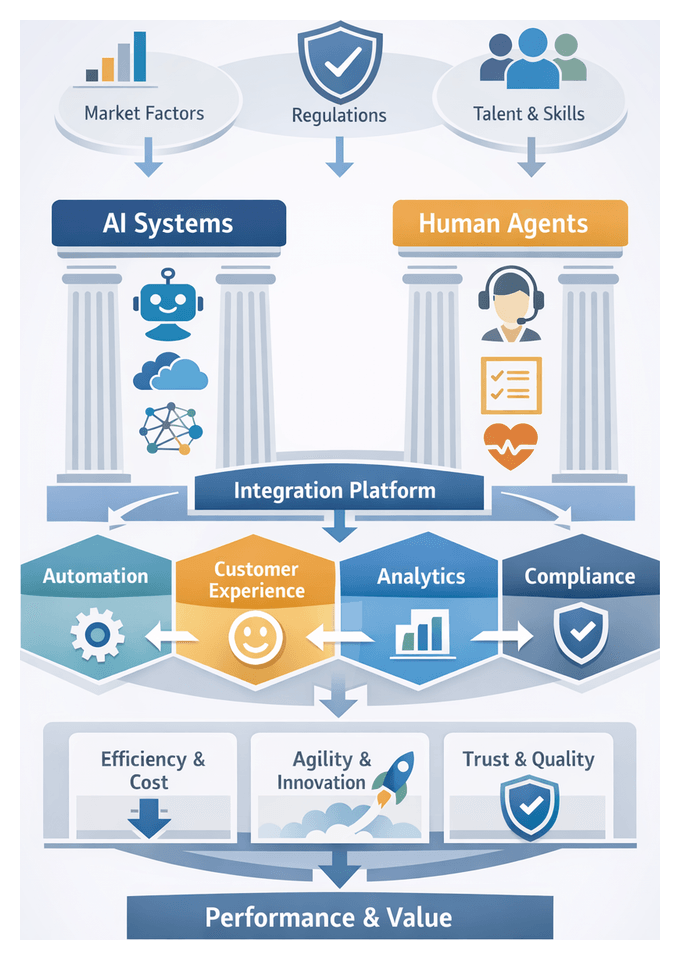

Market Forces Driving AI-Human Collaboration

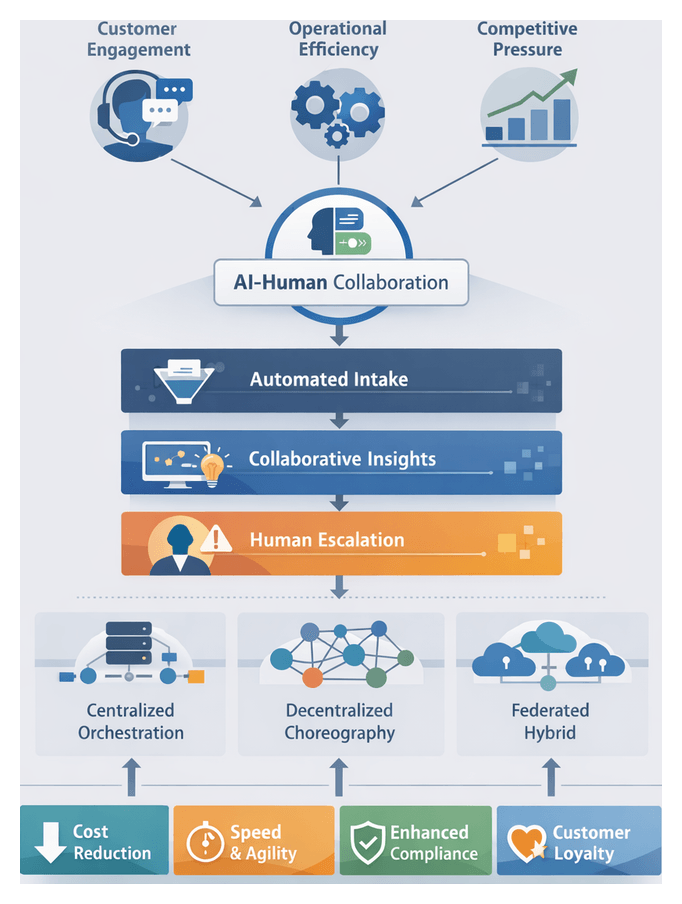

In today’s competitive landscape, organizations face mounting pressure to deliver personalized, responsive experiences while containing costs and maintaining agility. Rising labor expenses, evolving customer expectations, and digital-first disruptors have accelerated the shift toward integrated AI-human models. Three key drivers underscore this transition:

- Complex Customer Engagement: Clients demand real-time, omnichannel support that blends empathy with efficiency.

- Operational Efficiency: Automating routine tasks frees human agents to focus on exceptions and strategic interventions.

- Competitive Urgency: Digitally native challengers force legacy enterprises to adopt AI augmentation or risk falling behind.

By aligning automated intelligence with human judgment, hybrid collaboration becomes a strategic imperative. Firms that invest in complementary AI-human capabilities can reduce costs, accelerate response times, and enhance customer loyalty without increasing headcount.

Strategic Timing and Technological Readiness

Recent advances in deep learning, natural language processing, and real-time analytics have matured AI platforms to enterprise-grade reliability. Solutions like IBM Watson now deliver contextual understanding once limited to human experts. Simultaneously, remote work trends and talent shortages have heightened the value of augmenting human agents with AI to handle routine volumes and maintain service levels during peak demand.

Regulatory developments—such as GDPR and CCPA—and scrutiny around algorithmic fairness further drive hybrid adoption. Automated controls complemented by human oversight offer a robust framework for compliance, bias mitigation, and data privacy. Organizations that act now can capture first-mover advantages, embed scalable models, and build capabilities to accommodate future innovations.

Conceptual Framework for AI-Human Synergy

Effective hybrid models harness the unique strengths of AI and humans through layered interactions. A three-stage architecture illustrates this synergy:

- Automated Intake: AI platforms such as OpenAI GPT-4 parse inquiries, classify intent, and resolve routine issues.

- Collaborative Processing: AI surfaces insights—sentiment cues, risk flags, personalized recommendations—and delivers them via unified dashboards to human agents.

- Strategic Escalation: Human experts handle complex or emotionally sensitive cases, applying contextual judgment and empathy.

Key principles include seamless transition between AI and human agents, transparent data exchange that enables models to learn from human interventions, and governance protocols to ensure quality and trust. By framing AI and people as collaborators, organizations unlock collective intelligence to tackle intricate challenges swiftly and accurately.
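The three stages can be sketched as a small pipeline in which a case either finishes at intake or passes, with AI-generated insights attached, to a human. Everything below is an illustrative stand-in: the keyword matching replaces a real language model, and the intent names, the `Case` fields and the list of negative cue words are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict

# Hypothetical intents that the AI may resolve without human involvement.
ROUTINE_INTENTS = {"order_status", "password_reset"}
# Hypothetical cue words standing in for a sentiment model.
NEGATIVE_CUES = {"angry", "frustrated", "unacceptable"}


@dataclass
class Case:
    message: str
    intent: str = "unknown"
    insights: Dict[str, str] = field(default_factory=dict)
    handled_by: str = ""


def automated_intake(case: Case) -> Case:
    """Stage 1: classify intent and resolve routine issues."""
    text = case.message.lower()
    if "password" in text:
        case.intent = "password_reset"
    elif "order" in text:
        case.intent = "order_status"
    if case.intent in ROUTINE_INTENTS:
        case.handled_by = "ai"
    return case


def collaborative_processing(case: Case) -> Case:
    """Stage 2: attach insights that a human agent would see on a dashboard."""
    words = set(case.message.lower().replace(",", " ").replace(".", " ").split())
    if words & NEGATIVE_CUES:
        case.insights["sentiment_cue"] = "negative"
    return case


def strategic_escalation(case: Case) -> Case:
    """Stage 3: hand the enriched case to a human expert."""
    case.handled_by = "human"
    return case


def handle(message: str) -> Case:
    """Run a message through the three stages, stopping early if the AI resolves it."""
    case = automated_intake(Case(message))
    if case.handled_by == "ai":
        return case
    return strategic_escalation(collaborative_processing(case))
```

Because the insights travel on the same `Case` object that the human receives, the hand-over needs no separate context lookup.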

Integration Architectures for Scalability and Governance

Selecting the right architecture is critical to sustaining high-volume hybrid interactions. Three dominant models prevail:

Centralized Orchestration

A core engine coordinates data flows among AI components, human interfaces, and backend systems. Platforms like Google Contact Center AI and IBM Watson Assistant provide unified consoles for intent recognition, agent hand-offs, and policy enforcement. Centralization simplifies monitoring, compliance, and auditability but can become a bottleneck under peak loads unless designed for horizontal scalability and fault tolerance.

Decentralized Choreography

Decision logic is distributed across microservices that communicate via event streams or message buses. On Azure, for example, AI services can publish and consume events through the Event Grid and Service Bus messaging services. Choreography offers superior scalability and fault isolation but requires robust service meshes to enforce governance, security, and traceability across components.
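To make the choreography idea concrete, here is a minimal in-process sketch in which no central engine decides the flow; each service simply reacts to events and publishes new ones. The `EventBus` class, the topic names, and the keyword-based classifier are all hypothetical stand-ins for a real message broker and real AI services.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Event = dict
Handler = Callable[[Event], None]


class EventBus:
    """Toy publish/subscribe bus standing in for a real message broker."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)
        # Every published event is recorded so the flow stays traceable.
        self.audit_log: List[Tuple[str, str]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        self.audit_log.append((topic, event["session_id"]))
        for handler in list(self._handlers[topic]):
            handler(event)


bus = EventBus()
human_queue: List[Event] = []


def classify_intent(event: Event) -> None:
    # Placeholder classifier: a real service would call an NLP model here.
    intent = "billing_dispute" if "dispute" in event["text"].lower() else "order_status"
    bus.publish("intent.classified", {**event, "intent": intent})


def decide_route(event: Event) -> None:
    topic = "handoff.requested" if event["intent"] == "billing_dispute" else "bot.reply"
    bus.publish(topic, event)


bus.subscribe("inquiry.received", classify_intent)
bus.subscribe("intent.classified", decide_route)
bus.subscribe("handoff.requested", human_queue.append)

bus.publish("inquiry.received", {"session_id": "s-1", "text": "I want to dispute a charge"})
```

The audit log is the part that speaks to the governance concern raised above: because no single component sees the whole flow, traceability has to be built into the messaging layer itself.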

Federated Hybrid Architectures

Combining orchestration and choreography, federated designs use a lightweight central layer for session management while enabling local autonomy for processing. This model supports regional data residency, centralized monitoring of model drift, and balanced governance. Financial institutions, for example, route classification tasks through a managed API and handle credit adjudications within local data centers.

Architects evaluate solutions against key dimensions:

- Resilience and Fault Tolerance: failover strategies, recovery objectives, and chaos-engineering practices.

- Operational Visibility: quality of telemetry, alert thresholds, and dashboard completeness.

- Scalability and Performance: capacity under realistic and burst traffic scenarios.

- Governance and Compliance: enforcement of data policies, access controls, and audit logging.

- Flexibility and Extensibility: ease of onboarding new AI services, workflows, or third-party integrations.
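One way to apply the dimensions above is a weighted scoring matrix. The sketch below is illustrative only: the weights and the 1-to-5 ratings are placeholder values invented for the example, not findings about any architecture or vendor, and each organization would substitute its own.

```python
from typing import Dict

# Hypothetical weights reflecting one organization's priorities (sum to 1.0).
WEIGHTS: Dict[str, float] = {
    "resilience": 0.25,
    "visibility": 0.15,
    "scalability": 0.25,
    "governance": 0.20,
    "flexibility": 0.15,
}


def weighted_score(ratings: Dict[str, float],
                   weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine per-dimension ratings (1 to 5) into a single score."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[dim] * ratings[dim] for dim in weights)


# Placeholder ratings for illustration only.
candidates = {
    "centralized": {"resilience": 3, "visibility": 5, "scalability": 3,
                    "governance": 5, "flexibility": 2},
    "choreography": {"resilience": 4, "visibility": 2, "scalability": 5,
                     "governance": 3, "flexibility": 4},
    "federated": {"resilience": 4, "visibility": 4, "scalability": 4,
                  "governance": 4, "flexibility": 4},
}

# Highest-scoring option first.
ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]),
                reverse=True)
```

The ranking is only as good as the weights, so it is worth rerunning the comparison with alternative weightings to see how sensitive the outcome is.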

Vendors like Salesforce Einstein and Twilio Flex offer preconfigured connectors and programmable frameworks to accelerate deployments. Strategic criteria—alignment to business objectives, technical debt, vendor lock-in, data governance maturity, and innovation velocity—guide phased architectures that evolve from turnkey pilots to modular, open ecosystems.

Seamless Hand-over Mechanisms

The transition between AI and human agents critically impacts service quality and efficiency. High-performing organizations embed seamless hand-overs that preserve context, maintain conversational continuity, and dynamically prioritize escalations.

Enhancing Customer Experience Consistency

Cohesive transitions require unified session histories, real-time sentiment alerts, and escalation rules tied to customer value. When chatbots smoothly pass cases to humans, brands minimize customer effort and protect Net Promoter Scores.
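A hand-over that preserves context and prioritizes by customer value can be sketched as a small data packet plus a priority queue. Everything here is an assumption made for illustration: the `HandoverPacket` fields, the tier weights, and the urgency formula are hypothetical and would be replaced by an organization's own escalation rules.

```python
import heapq
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical weighting of customer tiers.
TIER_WEIGHT = {"priority": 2.0, "standard": 1.0}


@dataclass
class HandoverPacket:
    """Context that travels with a conversation when it moves to a human."""
    session_id: str
    customer_tier: str
    sentiment: float       # -1.0 (very negative) to 1.0 (very positive)
    ai_confidence: float   # confidence of the AI's last classification
    transcript: List[str] = field(default_factory=list)

    def urgency(self) -> float:
        # Assumed formula: more negative sentiment and a higher tier
        # both raise urgency.
        return TIER_WEIGHT.get(self.customer_tier, 1.0) * (1.0 - self.sentiment)


class EscalationQueue:
    """Serves the most urgent hand-over first; ties keep arrival order."""

    def __init__(self) -> None:
        self._heap: List[Tuple[float, int, HandoverPacket]] = []
        self._counter = 0

    def push(self, packet: HandoverPacket) -> None:
        # heapq is a min-heap, so urgency is negated to pop the largest first.
        heapq.heappush(self._heap, (-packet.urgency(), self._counter, packet))
        self._counter += 1

    def pop(self) -> HandoverPacket:
        return heapq.heappop(self._heap)[2]


queue = EscalationQueue()
queue.push(HandoverPacket("s-1", "standard", 0.2, 0.9, ["Where is my order?"]))
queue.push(HandoverPacket("s-2", "priority", -0.6, 0.4, ["This is the third time I have asked."]))
queue.push(HandoverPacket("s-3", "standard", -0.9, 0.7, ["I am cancelling my account."]))
next_case = queue.pop()
```

Because the transcript travels inside the packet, the human agent who picks up `next_case` sees the conversation so far and does not need to ask the customer to repeat it.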

Strengthening Operational Resilience

Seamless hand-overs enable elastic workforce utilization and operational redundancy. During peak events or staffing gaps, AI absorbs routine volumes while human specialists focus on exceptions. This load smoothing reduces average handling times and supports adherence to service-level agreements.

Aligning Training and Workforce Development

As AI handles more routine tasks, agents must be upskilled for nuanced, high-value interactions. Role redefinition, contextual training with AI-generated dialogue logs, and continuous feedback loops ensure agents stay engaged and effective.

Governance and Compliance

Robust controls for data minimization, role-based access, audit trails, and consent management safeguard privacy and regulatory adherence during hand-overs.

Monitoring and Continuous Improvement

Hand-over data enables analysis of success rates, time-to-resolution deltas, sentiment shifts, and qualitative feedback. Treating this data as a strategic asset drives iterative refinements and aligns technology with human workflows.

Contextual Applications

- Retail and E-commerce: AI manages product inquiries, passing complex returns to human agents during peak sales.

- Financial Services: Virtual assistants handle basic account questions, escalating suspicious transactions to advisors.

- Healthcare and Insurance: AI triages symptom checks and policy inquiries, with licensed professionals addressing clinical or claim complexities.

- Telecommunications and Utilities: Chatbots resolve billing questions, handing off technical troubleshooting to senior support staff.

Leadership Imperatives

Executives must invest in scalable hand-over infrastructures, align cross-functional teams, embed policies within ethical AI frameworks, and redefine talent strategies to reflect hybrid roles. Mastery of seamless transitions distinguishes industry leaders in AI-driven customer engagement.

Design Principles for Operational Harmony

Aligned Orchestration of Agent Roles

Define clear boundaries and hand-off points that assign automated tasks—like data queries and forecasting—to AI, and complex advisory interactions to human professionals. Role taxonomies guide decision engines and training programs to ensure strategic alignment.

Transparency and Shared Context

Capture and display metadata—sentiment scores, AI confidence levels, previous outcomes—in unified dashboards. Transparency fosters trust, enables coherent experiences, and supports accountability.

Modular Architecture for Scalability and Flexibility

Decouple AI services from core engagement systems using microservices and standardized APIs. Conversational modules built on Google Dialogflow or OpenAI GPT-4 can evolve independently, reducing vendor lock-in and simplifying compliance.

Continuous Feedback Loops and Performance Calibration

Implement closed-loop learning where agents annotate AI outputs, flag anomalies, and feed qualitative insights back into model retraining. Metrics such as correction frequency and post-escalation satisfaction measure calibration effectiveness.
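The two metrics named above can be computed from a simple interaction log. This is a minimal sketch under stated assumptions: the `Interaction` record, the 1-to-5 satisfaction scale, and the exact-match definition of a "correction" are hypothetical simplifications.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Interaction:
    ai_suggestion: str           # what the AI proposed
    final_response: str          # what the agent actually sent
    escalated: bool              # whether the case was handed to a human
    csat: Optional[int] = None   # assumed 1-5 survey score, if provided


def correction_frequency(log: List[Interaction]) -> float:
    """Share of interactions where the agent changed the AI's suggestion."""
    if not log:
        return 0.0
    corrected = sum(1 for i in log if i.ai_suggestion != i.final_response)
    return corrected / len(log)


def post_escalation_satisfaction(log: List[Interaction]) -> Optional[float]:
    """Mean satisfaction across escalated cases that returned a survey."""
    scores = [i.csat for i in log if i.escalated and i.csat is not None]
    return sum(scores) / len(scores) if scores else None


def retraining_examples(log: List[Interaction]) -> List[Tuple[str, str]]:
    """Pairs of (AI output, human correction) to feed back into retraining."""
    return [(i.ai_suggestion, i.final_response)
            for i in log if i.ai_suggestion != i.final_response]


log = [
    Interaction("Your order ships Friday.", "Your order ships Friday.", False),
    Interaction("Refund denied.", "Refund approved as a goodwill gesture.", True, 4),
    Interaction("Reset link sent.", "Reset link sent.", False, 5),
    Interaction("Plan upgraded.", "Plan upgraded.", True, 5),
]
```

A falling correction frequency over successive retraining cycles is one signal that the loop is working; a flat or rising one suggests the annotations are not reaching the model.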

Governance, Accountability, and Ethical Guardrails

Establish oversight boards to review fairness metrics, privacy impact assessments, and stress-test scenarios. Embed bias audits and incident response plans into design workflows to uphold ethical standards.

Human-Centric Augmentation and Empowerment

Present AI recommendations, including confidence levels, alternative options, and risk alerts, in ergonomically designed interfaces. Scenario-based simulations prepare agents to interpret outputs and refine prompts, reinforcing human agency.

Alignment with Business Strategy and Key Metrics

Map hybrid workflows to KPIs—average handle time, first-contact resolution, upsell conversion—and apply A/B tests to validate value drivers. Dashboards integrating AI logs with business analytics enable real-time performance tracking.
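An A/B test on a rate-based KPI such as first-contact resolution can be evaluated with a two-proportion z-test. The sketch below uses only the standard library; the function name and the sample counts are made up for illustration and do not come from any real deployment.

```python
import math
from typing import Tuple


def two_proportion_z_test(success_a: int, n_a: int,
                          success_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in rates B minus A."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are degenerate")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: contacts resolved on first touch out of 1,000 each.
z, p_value = two_proportion_z_test(success_a=700, n_a=1000,   # existing workflow
                                   success_b=750, n_b=1000)   # hybrid workflow
significant = p_value < 0.05
```

Statistical significance is only part of the decision; the size of the lift should also be weighed against the cost of running the hybrid workflow.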

Adaptability and Resilience in Evolving Environments

Embed scenario planning and capability roadmaps to evaluate performance under spikes or regulatory changes. Cross-functional councils and centers of excellence oversee technology refresh cycles, ensuring graceful pivots.

Applying these principles involves constraints and trade-offs that should be planned for:

- Data Quality Constraints: High-integrity pipelines are essential to prevent biased or incomplete inputs.

- Change Management Complexity: Address cultural resistance and skills gaps through stakeholder alignment and phased rollouts.

- Regulatory Uncertainty: Maintain agile policies to adapt to evolving transparency and privacy mandates.

- Interoperability Challenges: Leverage middleware or service meshes to bridge legacy systems and vendor platforms.

- Resource and Cost Trade-offs: Balance investments in governance, feedback mechanisms, and maintenance against efficiency gains.

- Ethical and Reputation Risks: Conduct continuous audits to detect bias and protect customer trust.

Adhering to these design principles—aligned orchestration, transparency, modularity, iterative feedback, robust governance, human-centric augmentation, strategic alignment, and adaptability—enables organizations to build sustainable, scalable AI-human collaboration models that deliver differentiated customer experiences and long-term competitive advantage.

Chapter 6: Organizational Readiness and Cultural Alignment

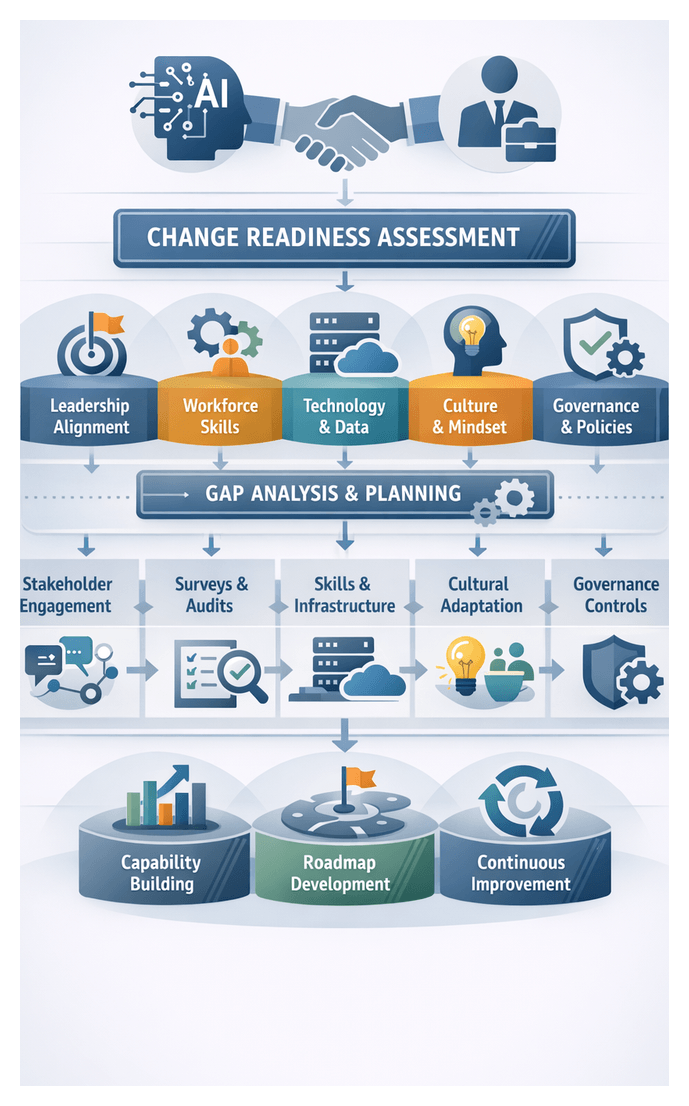

Assessing Change Readiness and Capabilities

Organizations preparing to integrate AI agents alongside human professionals must first evaluate their capacity for change. A readiness assessment aligns leadership vision, workforce skills, technology infrastructure, cultural mindsets, and governance processes to ensure strategic investments in hybrid collaboration deliver maximum value. Readiness is a continuum reflecting an enterprise’s ability to adapt and learn, not a binary state.

This assessment clarifies AI objectives, highlights gaps, and engages stakeholders across executive sponsors, IT, operations, HR, and frontline teams. It examines both tangible assets—data architecture, integration platforms, automation frameworks—and intangible attributes such as leadership commitment and cultural openness.

Key Dimensions of Readiness

- Leadership Alignment and Vision

- Workforce Skills and Competencies

- Technology and Data Infrastructure

- Cultural Mindsets and Behavioral Change

- Governance, Policies, and Processes

Leadership Alignment and Vision

Leaders articulate a clear vision for AI-human collaboration and link it to measurable outcomes. Indicators include executive sponsorship, strategy integration, and transparent communication of goals and milestones. High alignment accelerates decision paths and cross-functional cooperation.

Workforce Skills and Competencies

Hybrid teams require both technical proficiency—data literacy and AI concepts—and adaptive skills such as problem solving and critical thinking. Assessments map existing skills to required capabilities, review training programs, and evaluate talent mobility. Continuous development and cross-training foster collaboration between AI specialists and business users.

Technology and Data Infrastructure

Robust ecosystems support seamless AI workflows from data ingestion to real-time inference and human handover. Evaluate data quality frameworks, API-driven integration architectures, cloud scalability, and security controls. Audits reveal infrastructure gaps that may hinder pilot programs or full deployments.

Cultural Mindsets and Behavioral Change

Cultivating openness to experimentation, trust in AI outputs, and collaboration norms is essential. Recognize and reward cross-disciplinary problem solving, and support teams as they absorb new workflows. Visible role modeling and storytelling reinforce desired behaviors.

Governance, Policies, and Processes

Clear decision rights, escalation protocols, ethical controls, and continuous improvement mechanisms ensure safe and efficient collaboration. Strong governance reduces risk and ambiguity, while weak processes invite delays and inconsistent outcomes.

Conducting the Assessment

- Stakeholder Interviews to capture qualitative insights.

- Surveys and Diagnostic Tools to quantify readiness and benchmark performance.

- Document and System Review of strategies, process documentation, and technology inventories.

- Gap Analysis Workshop with cross-functional teams to validate priorities.

- Roadmap Development assigning owners, timelines, and success criteria.
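The survey and gap-analysis steps above can be reduced to a small calculation: average the survey responses per readiness dimension, compare against a target, and rank the shortfalls. The dimension keys, the 1-to-5 scale, the targets, and the sample responses below are all hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple

# Short keys for the five readiness dimensions (naming is an assumption).
DIMENSIONS = ["leadership", "workforce", "technology", "culture", "governance"]


def gap_analysis(survey_scores: Dict[str, List[int]],
                 targets: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (dimension, gap) pairs for shortfalls, largest gap first."""
    gaps = []
    for dim in DIMENSIONS:
        current = mean(survey_scores[dim])
        gap = round(targets[dim] - current, 2)
        if gap > 0:  # only dimensions below target need action
            gaps.append((dim, gap))
    return sorted(gaps, key=lambda item: item[1], reverse=True)


# Illustrative responses on a 1-5 scale and illustrative targets.
survey_scores = {
    "leadership": [4, 4, 5],
    "workforce": [2, 3, 3],
    "technology": [3, 3, 4],
    "culture": [2, 2, 3],
    "governance": [3, 4, 4],
}
targets = {"leadership": 4.0, "workforce": 4.0, "technology": 4.0,
           "culture": 3.5, "governance": 4.0}

priorities = gap_analysis(survey_scores, targets)
```

The ranked output is a starting point for the gap analysis workshop rather than a substitute for it, since interview findings often change which gaps matter most.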

Building Foundational Capabilities

- Targeted Skill Development through training, certifications, and mentorship.

- Technology Upgrades including modern data platforms and scalable compute resources.

- Governance Framework Establishment defining policies and oversight committees.

- Cultural Activation via innovation labs and co-creation workshops.

- Process Reengineering to integrate AI-driven decision support and human checkpoints.

Monitoring Progress and Adapting

- Periodic Re-assessment of readiness dimensions.

- Performance Metrics such as deployment velocity, user satisfaction, and model accuracy.

- Governance Reviews evaluating ethical compliance and risk exposure.

- Continuous Learning through communities of practice and post-implementation reviews.

An ongoing readiness capability ensures that organizations evolve with technological advances and market shifts, enabling sustained AI-human collaboration.

Leadership and Stakeholder Roles

Effective AI-human integration depends on clearly defined roles and governance structures. Responsibilities span executive sponsors, middle managers, frontline champions, cross-functional bodies, and external partners. Analytical frameworks such as RACI matrices, stakeholder salience models, and maturity assessments provide structure and objectivity.

Executive Sponsors and Coalition

A coalition of executives—including the CEO, CFO, and COO—provides legitimacy, budget, and strategic coherence. Steering committees and performance dashboards track adoption milestones, reinforce urgency, and model AI-driven decision making in leadership forums.

Middle Management as Translators

Middle managers bridge strategy and operations, embedding AI objectives into team goals and KPIs. They coordinate pilots, facilitate training, and sustain momentum through feedback loops. Clear role charters and accountability mechanisms ensure alignment with workflows.

Frontline Champions

Operational champions validate AI use cases in live environments, advocate for tools among peers, and convene communities of practice. Incentives such as recognition programs empower grassroots innovation and inform governance decisions.

Cross-Functional Governance

A two-tiered structure—an oversight board and an implementation council—balances strategic policy with technical execution. Legal, compliance, HR, IT, and business units define risk thresholds, ethical guardrails, and operational standards, preventing siloed decision-making.

External Stakeholders

Vendor partnerships, consulting alliances, regulatory bodies, and industry consortia extend governance beyond the enterprise. Joint steering committees, co-development roadmaps, and policy forum participation ensure compliance and drive innovation.

Role Clarity and Accountability

Tools such as the RACI matrix and stakeholder heat maps define decision rights and engagement levels. Living role charters, updated through governance reviews, reduce ambiguity and accelerate issue resolution.
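A RACI matrix is easy to check mechanically. The sketch below applies two common RACI conventions, exactly one Accountable role and at least one Responsible role per task; the task and role names are hypothetical examples.

```python
from typing import Dict, List

# task -> role -> one of "R", "A", "C", "I"
RaciMatrix = Dict[str, Dict[str, str]]


def validate_raci(matrix: RaciMatrix) -> List[str]:
    """Return a list of human-readable problems; empty means the matrix is valid."""
    problems = []
    for task, assignments in matrix.items():
        codes = list(assignments.values())
        invalid = set(codes) - set("RACI")
        if invalid:
            problems.append(f"{task}: invalid codes {sorted(invalid)}")
        accountable = codes.count("A")
        if accountable != 1:
            problems.append(
                f"{task}: expected exactly one Accountable, found {accountable}")
        if "R" not in codes:
            problems.append(f"{task}: no Responsible role assigned")
    return problems


# Hypothetical tasks and roles for illustration.
matrix = {
    "approve_escalation_rules": {"exec_sponsor": "A", "ops_manager": "R",
                                 "compliance": "C", "frontline": "I"},
    "retrain_intent_model": {"it_lead": "A", "exec_sponsor": "A",
                             "data_team": "R"},
    "review_bias_audit": {"oversight_board": "A", "compliance": "C"},
}

issues = validate_raci(matrix)
```

Running a check like this as part of each governance review helps keep living role charters from drifting into ambiguity as responsibilities change.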

Leadership Mindsets and Influence