Intelligent Defenders: AI Agents Revolutionizing Threat Detection in 2026

Introduction

Security Landscape in 2026 and Emerging Threats

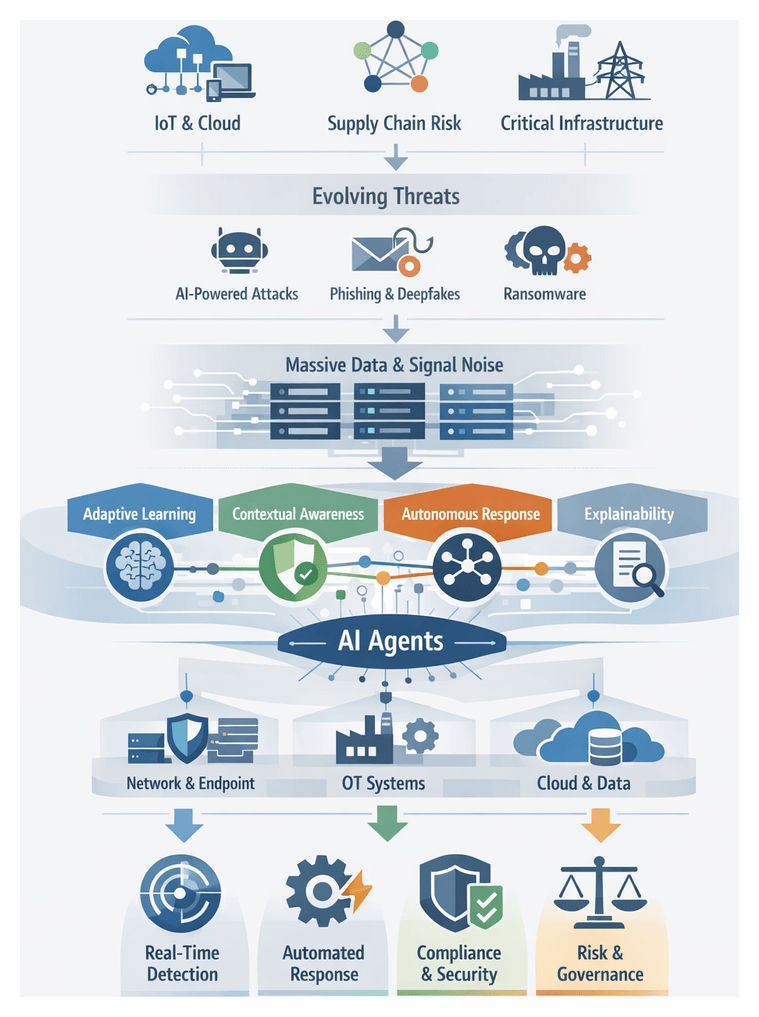

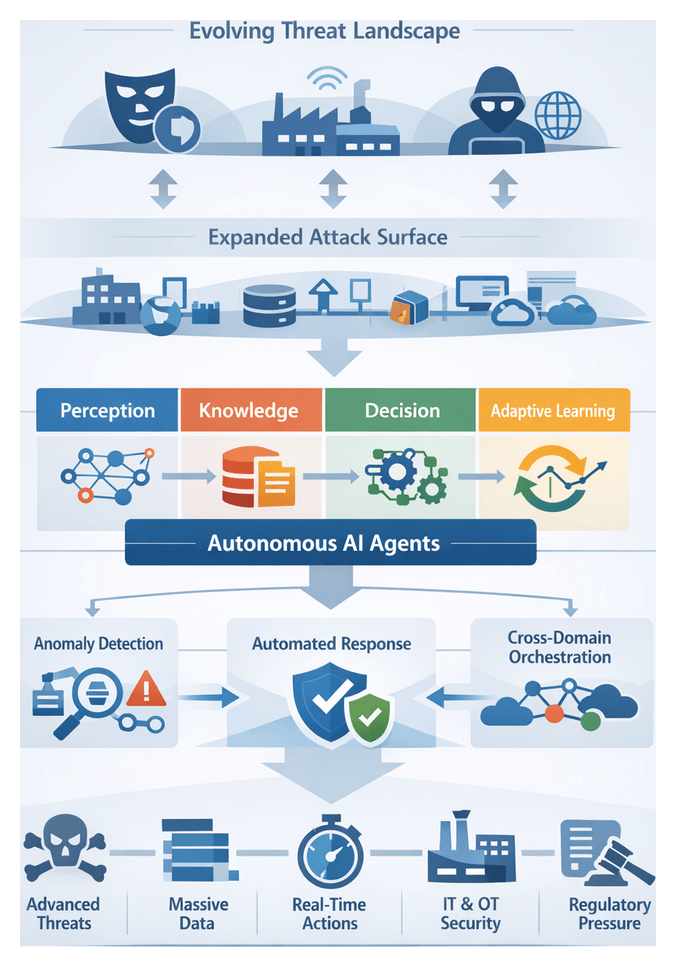

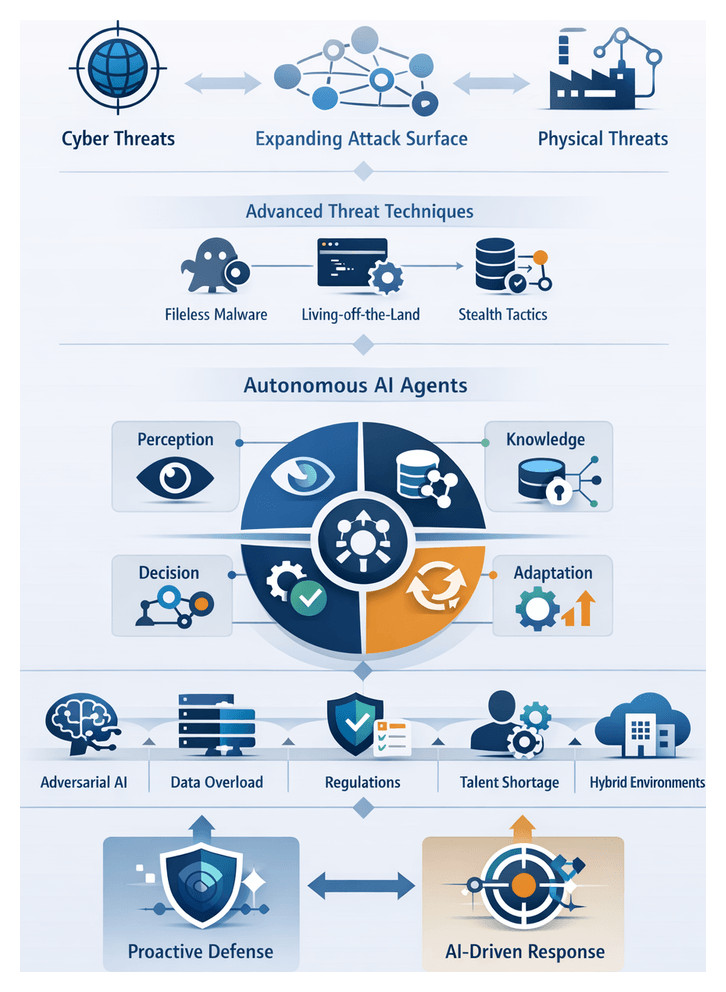

As organizations enter 2026, the security landscape has evolved into a complex battlefield spanning digital and physical realms. The rapid adoption of Internet of Things sensors, 5G-enabled edge computing clusters and expansive multi-cloud infrastructures has broadened the attack surface. Each connected device, cloud instance and third-party integration represents a potential foothold for adversaries. Supply chain interdependencies further amplify risk, as a breach in one vendor ecosystem can cascade through multiple downstream organizations.

Malicious actors now leverage automation and artificial intelligence to orchestrate large-scale campaigns. Automated reconnaissance tools map network topologies in seconds, while machine-generated phishing and voice deepfakes evade human scrutiny. Ransomware-as-a-service platforms dynamically customize payloads to bypass signature-based defenses. In this environment, static patch-after-breach strategies prove insufficient against adversaries who adapt faster than manual processes can respond.

Critical infrastructure sectors—energy grids, water treatment facilities and transportation networks—depend on operational technology systems originally designed without cybersecurity in mind. In late 2024, a minor vulnerability in an industrial controller allowed attackers to disrupt pipeline operations, illustrating how cyber-physical convergence can imperil millions of consumers. Such incidents underscore the necessity for real-time detection across network telemetry and sensor-level anomalies.

The volume and velocity of security data have exploded. Network flows, endpoint logs, user behavior analytics, threat intelligence feeds and cloud audit records collectively generate hundreds of millions of events per day. Even skilled analysts using platforms like Microsoft Sentinel (formerly Azure Sentinel) or Splunk struggle to prioritize alerts and uncover subtle attack patterns buried within routine noise.

Emerging threat categories amplify this complexity. Generative AI crafts highly personalized social engineering lures, adversarial machine learning corrupts detection models, and insider threats exploit legitimate privileges across blurred hybrid work environments. Legacy architectures built around perimeter defenses and static rule sets cannot adapt at the required speed or scale. Organizations thus face mounting regulatory pressures, board-level demands for transparent security metrics and supply chain mandates that raise the stakes of non-compliance.

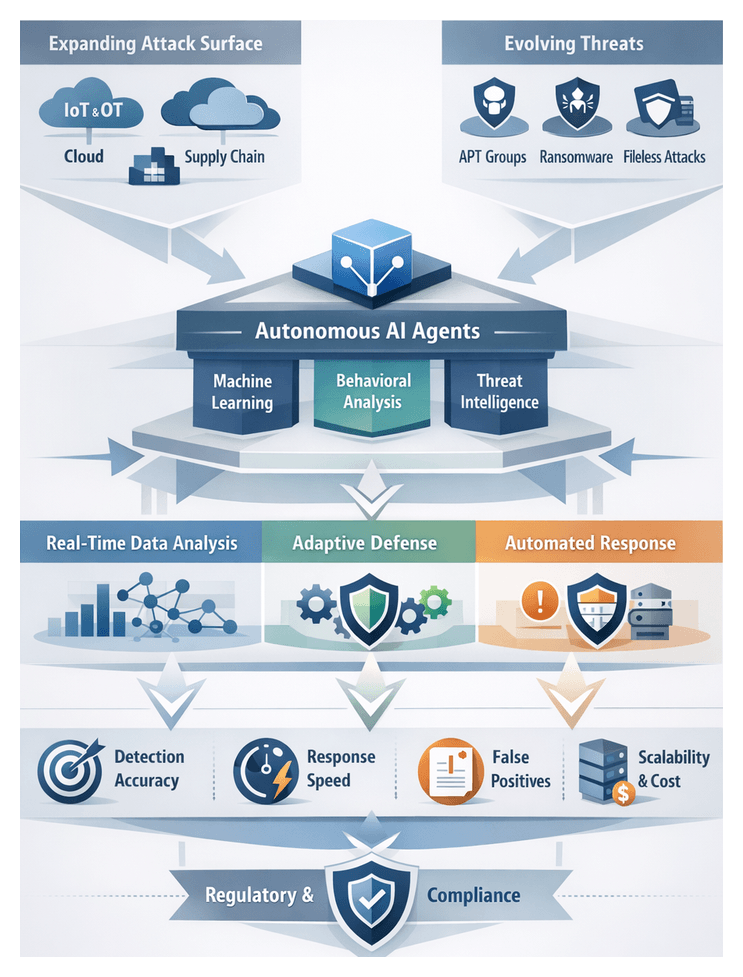

To thrive in this dynamic environment, security teams must transition from reactive to proactive postures. Detection capabilities should evolve beyond signature matching toward behavioral analysis and anomaly detection driven by advanced machine intelligence. Automated workflows need to triage and remediate incidents with minimal human intervention, reserving expert resources for strategic tasks. Leading-edge organizations are piloting autonomous agent frameworks such as Darktrace Antigena and CrowdStrike Falcon Fusion XDR. These platforms integrate network metrics, endpoint process flows, user authentication events and external intelligence to construct holistic situational awareness. Agents can then initiate containment actions—isolating compromised hosts or quarantining malicious processes—often before human analysts become aware of an incident.

Deploying autonomous defenses at scale presents challenges in integration, orchestration and governance. Security leaders must define escalation thresholds, human override policies and audit trails. Investments in data hygiene and labeling practices ensure reliable inputs for learning algorithms, while cross-functional teams refine detection rules and guide continuous improvement. The convergence of cyber and physical threats, AI-driven adversary techniques and data volume growth has created a turning point: autonomous AI agents offer the potential to detect nuanced deviations, coordinate multi-vector responses and learn iteratively from emerging threat patterns. Operating continuously at machine speed, they bridge the gap between detection and action.

Conceptual Framework of Autonomous AI Agents

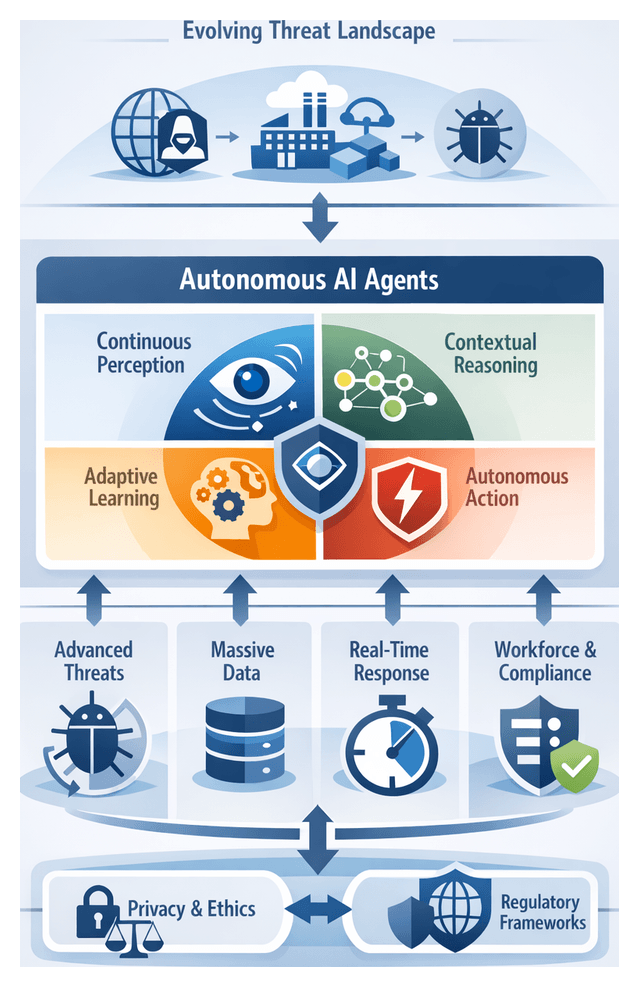

Autonomous AI agents represent a paradigm shift from tool-centric defenses to self-governing systems that operate with minimal human intervention. These dynamic entities sense environmental changes, evaluate emergent threats and execute adaptive responses. Unlike rule-based engines that rely on static signatures, agents ingest heterogeneous data streams, apply probabilistic inference and refine internal models over time to address polymorphic threats and zero-day exploits at scale.

Standards organizations and research consortia provide reference models that guide agent adoption. The National Institute of Standards and Technology (NIST) positions agents within the cyber resiliency lifecycle, while the MITRE ATT&CK framework offers a threat behavior ontology for categorization and prediction. Security operations leaders assess agent efficacy using metrics such as mean time to detect (MTTD), reduction in dwell time, true positive rate and false positive rate. Cost-benefit analyses examine total cost of ownership and projected return on investment when integrating agents into existing workflows.

Analysts apply classic decision-making theories, notably the OODA loop (Observe, Orient, Decide, Act), to autonomous agents. Instead of human operators, agents ingest telemetry, contextualize threat indicators, determine optimal response paths and execute containment or remediation steps continuously at machine speed. In Security Operations Centers, agents serve as force multipliers—escalating high-fidelity alerts, recommending investigative playbooks and automating routine triage tasks. Conceptually, an agent functions as a junior analyst with specialized capabilities, learning from human feedback to refine future actions.
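
The OODA mapping described above can be made concrete with a small sketch. It is illustrative only: the `Observation` fields, the scoring weights and the response thresholds are assumptions chosen for the example, not values taken from any product or standard.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Observe: one telemetry sample (fields are illustrative)."""
    host: str
    failed_logins: int
    is_critical_asset: bool


def orient(obs: Observation) -> float:
    """Orient: turn the raw signal into a 0..1 risk score using asset context."""
    risk = min(obs.failed_logins / 20.0, 1.0)  # assumed scale: 20 failures = max
    if obs.is_critical_asset:
        risk = min(risk * 1.5, 1.0)  # assumed uplift for critical assets
    return risk


def decide(risk: float) -> str:
    """Decide: map risk to a response path (thresholds are assumptions)."""
    if risk >= 0.8:
        return "isolate_host"
    if risk >= 0.4:
        return "escalate_to_analyst"
    return "log_only"


def act(obs: Observation, action: str) -> str:
    """Act: a real agent would call an EDR or firewall API here."""
    return f"{action}:{obs.host}"


def ooda_step(obs: Observation) -> str:
    """Run one full Observe-Orient-Decide-Act pass for a single observation."""
    return act(obs, decide(orient(obs)))
```

In practice an agent would run `ooda_step` continuously over a telemetry stream, and analyst feedback on escalated cases would feed back into the weights used in `orient`.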

Interpretive frameworks such as the security mesh view agents as interconnected nodes within a distributed fabric, sharing threat intelligence, harmonizing risk assessments and orchestrating coordinated responses. Within zero trust architectures, agents dynamically validate every transaction and user behavior, granting access based on continuous risk scoring. This alignment with zero trust principles—where trust is never assumed and decisions derive from real-time analytics—reinforces agents as dynamic policy engines.

Core Attributes of Autonomous AI Agents

- Adaptive Learning: Utilization of reinforcement learning and continuous retraining to evolve detection models in response to emerging threats.

- Contextual Awareness: Correlation of user behavior, asset profiles and external intelligence to generate alerts with richer context.

- Decentralized Execution: Operation at network edges and endpoints to minimize latency and maintain coverage during segmentation.

- Autonomous Response: Initiation of containment measures—such as isolating endpoints or modifying firewall rules—without manual approval.

- Explainability: Provision of human-readable justifications for decisions to support auditability and regulatory compliance.

From a governance perspective, risk officers incorporate agent-derived metrics into enterprise risk management models, informing board-level reporting. Auditors review logs that record decision rationales and evidence of change management for autonomous rule updates. In regulated industries, vendor evaluations use request-for-proposal frameworks that map agent capabilities—threat coverage, scalability, integration maturity and model transparency—to business outcomes, guiding procurement decisions.
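
One way to make decision logs reviewable by auditors is to chain each entry to its predecessor with a hash, so that later edits become detectable. The sketch below assumes a hypothetical in-memory `AuditTrail` class. It shows tamper evidence only and does not replace write-once storage or access controls.

```python
import hashlib
import json


class AuditTrail:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same body always hashes the same.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, action: str, rationale: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {"action": action, "rationale": rationale, "prev_hash": prev_hash}
        entry = dict(body, hash=self._digest(body))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {
                "action": entry["action"],
                "rationale": entry["rationale"],
                "prev_hash": entry["prev_hash"],
            }
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Recording the rationale alongside the action ties the audit requirement to the explainability attribute listed above: the log states what the agent did and why.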

Drivers Accelerating AI-Based Threat Detection

- Proliferation and Sophistication of Cyber Attacks: Adversaries employ automated exploitation, polymorphic malware and coordinated campaigns. Zero-day exploits have surged, while fileless techniques account for over a quarter of new attack methods. Platforms like Darktrace demonstrate how unsupervised learning models surface previously unseen threats by inferring malicious intent from anomalous activity.

- Explosion of Security Data and Signal Noise: Terabytes of daily telemetry across network flows, endpoint logs, cloud events and threat feeds overwhelm analysts. Autonomous AI agents use scalable data-fusion and statistical models to distill noisy inputs into actionable insights. For example, Google Chronicle applies high-throughput logging and threat scoring to reveal critical events hidden within billions of records.

- Imperative for Real-Time Detection and Response: Automated attacks can traverse networks and exfiltrate data within minutes. Streaming analytics architectures with sub-second threat scoring enable platforms like Microsoft Sentinel to trigger adaptive countermeasures instantly, reducing dwell time and limiting impact.

- Regulatory and Compliance Pressures: Regulations and standards such as GDPR, CCPA and PCI DSS demand rapid breach detection, reporting and data protection. Automated detection technologies that log decision rationales and maintain immutable audit trails help substantiate compliance during audits.

- Persistent Skills Shortage and Operational Constraints: A global talent gap of over three million cybersecurity roles hinders 24×7 operations. AI agents automate routine analysis, triage incidents and execute low-risk remediation, allowing analysts to focus on threat hunting and strategy.

- Digital Transformation and Expanded Attack Surface: Cloud migration, software-defined networks and hybrid work models introduce diverse entry points. Unified agents such as CrowdStrike Falcon combine endpoint telemetry with cloud analytics to detect lateral movement and account compromise in real time.

- Convergence of Cyber and Physical Security: Cross-domain attacks exploit both IT and OT systems. AI agents that ingest data from access controls, surveillance platforms, industrial sensors and network logs provide a unified risk view, essential for protecting critical infrastructure.

- Competitive and Reputational Imperatives: Cyber resilience is a key differentiator in finance, healthcare and retail. Transparent metrics on time-to-detect, time-to-contain and false positive rates demonstrate proactive security postures that bolster stakeholder trust and brand equity.
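
The real-time detection driver in the list above depends on scoring events as they arrive rather than in batches. A minimal sketch of that idea is a per-source sliding window. The class name, window length and event limit are assumptions for illustration, and this is not how any named platform implements streaming analytics.

```python
from collections import defaultdict, deque


class SlidingWindowScorer:
    """Scores each source by how many events it produced in the last N seconds."""

    def __init__(self, window_seconds: float = 60.0, limit: int = 5):
        self.window = window_seconds
        self.limit = limit  # event count that maps to the maximum score of 1.0
        self._events = defaultdict(deque)

    def score(self, source: str, timestamp: float) -> float:
        recent = self._events[source]
        recent.append(timestamp)
        # Drop events that have fallen out of the window.
        while recent and recent[0] <= timestamp - self.window:
            recent.popleft()
        return min(len(recent) / self.limit, 1.0)
```

Each call does a constant amount of amortized work, which is what keeps per-event scoring latency low. A production system would also need to expire idle sources and handle out-of-order timestamps.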

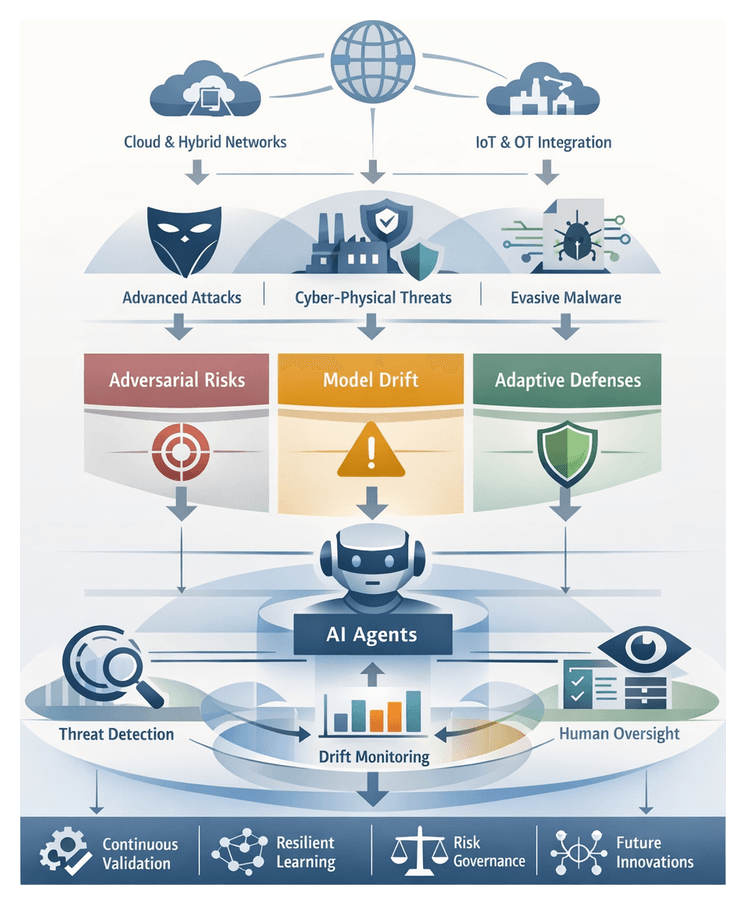

Key Considerations and Limitations

- Data Quality and Bias: Agents depend on large volumes of training data that may contain inconsistencies or biases, leading to blind spots or discriminatory detection patterns.

- Model Drift and Adversarial Manipulation: Attackers may exploit vulnerabilities in models through adversarial inputs or induce concept drift. Continuous monitoring and robust retraining pipelines are essential.

- Integration and Interoperability: Seamless integration with heterogeneous security stacks and orchestration frameworks requires standardized interfaces and data schemas.

- Governance, Ethics and Privacy: Policies must govern autonomous actions, human override processes and data handling to ensure accountability, transparency and compliance with evolving AI regulations.

- Human Oversight and Skills Gap: Effective deployment relies on skilled teams capable of interpreting AI outputs, investigating alerts and tuning agent parameters.

- Scalability and Performance Trade-Offs: Real-time inference demands significant compute resources. Balancing throughput, latency and cost is critical to maintain operational performance.

- Explainability and Trust: Black-box models can erode stakeholder confidence. Investing in explainable AI techniques supports transparency and auditability.

- Resource and Cost Constraints: Licensing commercial platforms, building proprietary models and maintaining data pipelines can strain budgets. Clear cost-benefit analyses are necessary to prioritize investments.

- Emerging Unknown Threats: Rapid adversary innovation, including zero-day exploits, deepfake social engineering and quantum-enabled cryptanalysis, requires continuous research and collaboration to anticipate new vectors.

- Vendor Lock-In and Technical Debt: Dependence on specific platforms creates lock-in risks. Open architectures and modular frameworks help preserve strategic flexibility.
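
The model drift item in the list above calls for continuous monitoring. One common way to quantify drift in a single feature is the Population Stability Index (PSI), sketched below. The function name and the binning scheme are assumptions for this example, and the frequently quoted cutoffs (about 0.1 for moderate and 0.25 for significant drift) are rules of thumb, not a standard.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            counts[max(0, min(bins - 1, index))] += 1  # clamp out-of-range values
        # Floor each proportion so empty bins do not produce log(0).
        return [max(count / len(values), 1e-6) for count in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A monitoring job could compute this per feature on a schedule and trigger retraining or analyst review when the value crosses an agreed threshold.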

By acknowledging these limitations and addressing them through governance, skilled personnel and resilient architectures, organizations can harness the full potential of autonomous AI agents while mitigating attendant risks. As threats continue to converge across cyber and physical domains, success will hinge on strategic alignment, continuous evaluation and a balanced integration of human expertise and machine intelligence.

Chapter 1: The Evolution of Threat Detection

The Security Landscape in 2026 and New Emerging Threats

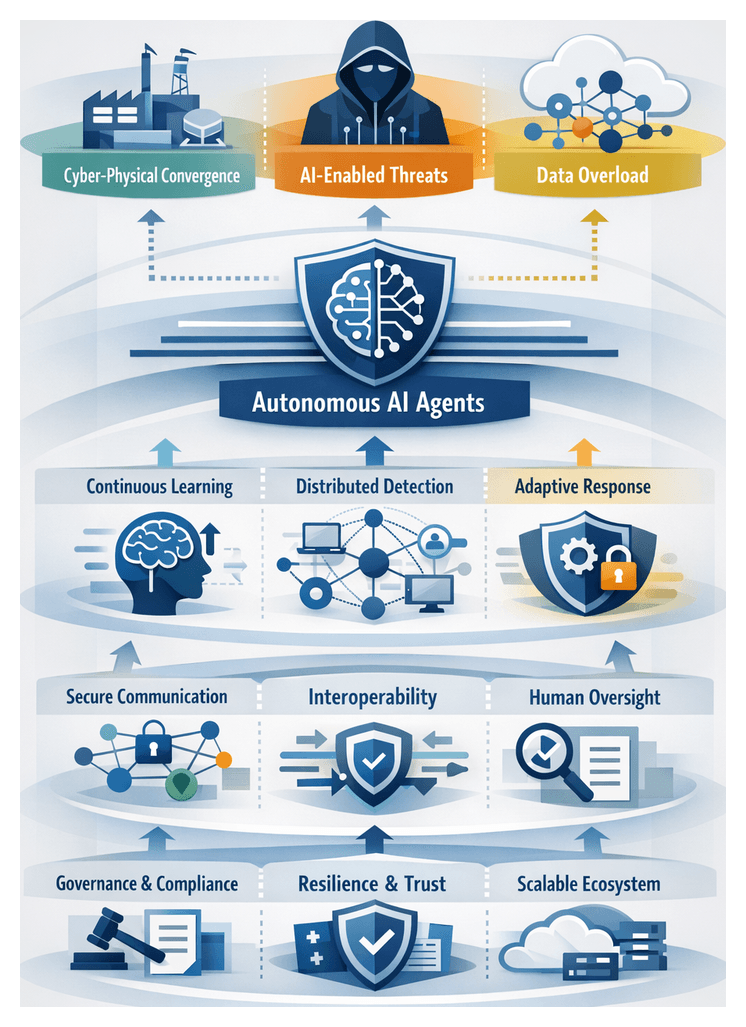

As organizations enter 2026, the security environment has evolved far beyond perimeter defense and signature-based detection. The integration of operational technology with information technology, the rapid proliferation of cloud services and the ubiquity of edge devices have blurred traditional boundaries, creating hybrid physical-cyber scenarios across smart cities, manufacturing floors and critical infrastructure. Cybercriminals and nation-state actors systematically exploit supply-chain weaknesses, injecting malicious components into software and hardware distribution channels. They leverage polymorphic malware frameworks, AI-driven social engineering and automated propagation tools to conduct targeted phishing, credential stuffing and deepfake campaigns that can traverse global networks within seconds, demanding defenses that operate at scale and in real time.

Modern digital ecosystems introduce novel attack vectors across industrial control systems, smart building infrastructure and connected medical devices, enabling adversaries to move laterally with minimal friction. Concurrently, digital transformation initiatives drive accelerated adoption of microservices architectures, containerized workloads, serverless functions and multi-cloud deployments. While these technologies offer unprecedented agility and efficiency, they also expand the threat surface and complicate vulnerability management. Security teams must ingest and analyze a deluge of telemetry from network flow logs, packet captures, endpoint sensors, user and entity behavior analytics, threat intelligence feeds and IoT device metrics—overwhelming traditional security operations centers and manual processes.

Regulatory frameworks such as the California Consumer Privacy Act and the European Union’s General Data Protection Regulation have heightened the stakes for data protection and transparency. Companies are required to demonstrate accountability in their security controls, maintain auditable logs and report breaches within strict timeframes. Industry standards bodies—from the National Institute of Standards and Technology Cybersecurity Framework to the MITRE ATT&CK knowledge base—provide structured guidance for threat modeling and risk management. Yet implementation gaps persist as organizations struggle with fragmented telemetry sources, siloed toolsets and the scarcity of skilled analysts. Against this backdrop, autonomous AI agents emerge as a strategic imperative for orchestrating real-time threat detection, reducing human burden and maintaining compliance in a dynamic threat landscape.

Autonomous AI Agents as a Conceptual Framework

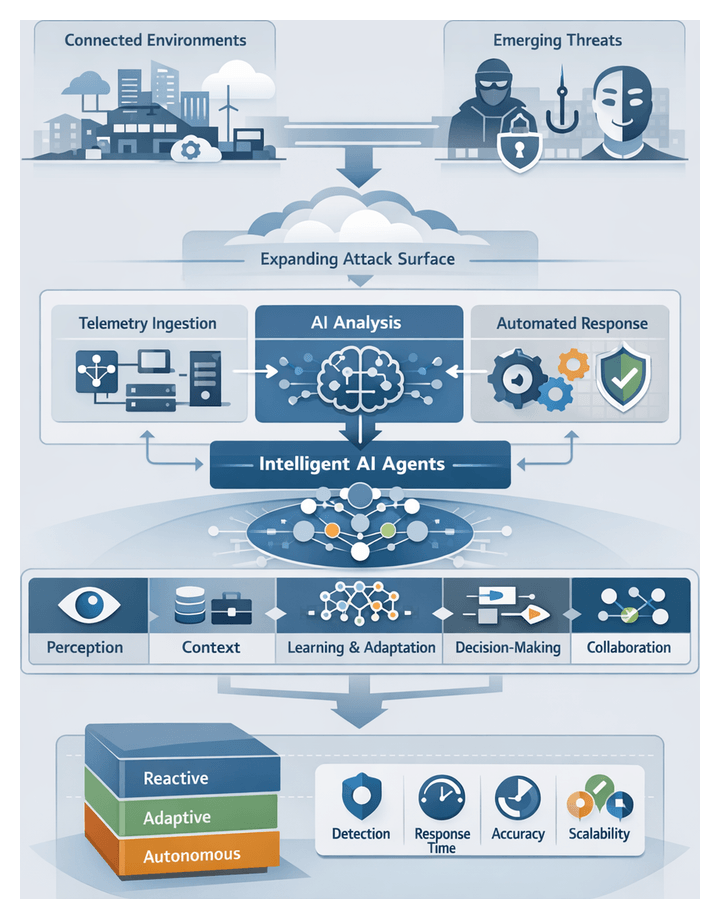

Autonomous AI agents represent a paradigm shift from reactive, rule-based security controls to proactive, self-directed systems that continuously learn, reason and act. Conceptualized as intelligent defenders, each agent maintains an ongoing perception of its environment, ingesting diverse telemetry streams, synthesizing contextual information and executing mitigation actions without manual intervention. By embedding advanced analytics engines, adaptive learning loops and policy-driven decision logic, autonomous agents evolve their detection strategies over time, refining models through feedback from false positives, adversarial testing and confirmed incidents.

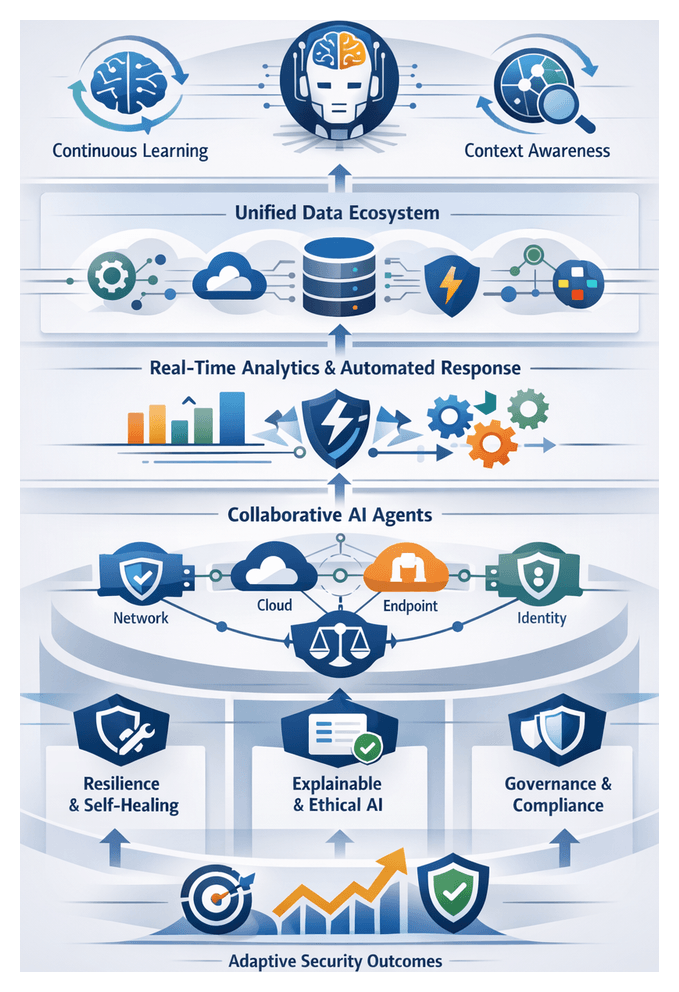

Within a multi-agent ecosystem, these systems collaborate and share intelligence to maximize coverage and reduce mean time to detection. Agents delegate tasks to human analysts or peer agents, escalate high-risk alerts and coordinate containment workflows across network segments, cloud environments and edge deployments. Research in autonomous systems underpins this approach, emphasizing robust decision-making under uncertainty, explainability and federated learning to balance centralized governance with distributed adaptability.
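
The federated learning idea mentioned above can be sketched as weighted parameter averaging: each agent trains locally and shares only model weights, and a coordinator averages them in proportion to how much data each agent saw. This is a simplified illustration with a hypothetical `federated_average` function and plain Python lists. It omits secure aggregation, privacy protections and multiple training rounds.

```python
def federated_average(updates):
    """Average model weights from several agents, weighted by local sample count.

    `updates` is a list of (weights, n_samples) pairs, where `weights` is a
    list of floats with the same length for every agent.
    """
    total_samples = sum(n for _, n in updates)
    dimension = len(updates[0][0])
    return [
        sum(weights[i] * n for weights, n in updates) / total_samples
        for i in range(dimension)
    ]
```

For example, an edge agent that saw 100 samples and a data-center agent that saw 300 would contribute with a 1:3 ratio, so raw telemetry never has to leave either site.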

Core Attributes

- Perception: Continuous ingestion and interpretation of network flow, endpoint activity, application logs and IoT telemetry to capture a comprehensive view of the threat surface.

- Contextual Reasoning: Correlation of signals with historical baselines, asset criticality, business processes and enterprise risk models to prioritize threats according to impact.

- Analysis and Adaptive Learning: Integration of supervised, unsupervised and reinforcement learning algorithms that detect anomalies, update detection thresholds and refine heuristics autonomously.

- Decision Autonomy: Ability to initiate response actions—ranging from alert escalation to automated containment—based on predefined risk policies and dynamic threat assessments.

- Collaboration: Information sharing between agents and human teams via standard protocols, enabling coordinated responses and collective intelligence.

- Scalability: Elastic performance across high-velocity data streams and distributed environments, from on-premises data centers to edge deployments.

Maturity Models and Benchmarking

Organizations leverage maturity models to chart their progress toward fully autonomous defense. Typical maturity levels include:

- Reactive Monitoring: Agents detect known threat signatures and generate alerts for human analysts, with minimal automation of responses.

- Adaptive Detection: Agents employ machine learning to identify anomalies and adjust thresholds, yet require manual validation and tuning before acting.

- Assisted Response: Agents propose or initiate predefined containment measures—such as quarantining compromised endpoints—subject to analyst approval.

- Autonomous Defense: Agents execute multi-stage response workflows, update detection heuristics in real time and coordinate with peer agents without human intervention.

Standardized benchmarking frameworks—such as MITRE ATT&CK evaluations—assess agent performance across key indicators:

- Detection Accuracy: Percentage of simulated or real-world threats correctly identified.

- Time to Detect (TTD): Elapsed time from initial compromise to agent recognition of malicious activity.

- Time to Respond (TTR): Interval from threat detection to automated or orchestrated containment actions.

- Alert Noise Ratio: Volume of non-actionable or false-positive alerts per genuine security incident.

- Adaptation Rate: Frequency and effectiveness with which models update to counter emerging adversary tactics.
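
Several of the indicators above can be computed directly from incident records. The sketch below assumes a hypothetical `Incident` record with timestamps in seconds. It covers detection accuracy, mean TTD, mean TTR and alert noise ratio, and leaves out adaptation rate, which needs model version history.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Incident:
    compromised_at: float            # seconds since test start
    detected_at: Optional[float]     # None if the agent missed the incident
    contained_at: Optional[float]    # None if no containment action was taken


def benchmark(incidents, false_alerts):
    """Summarize agent performance over a set of genuine incidents."""
    detected = [i for i in incidents if i.detected_at is not None]
    contained = [i for i in detected if i.contained_at is not None]
    return {
        "detection_accuracy": len(detected) / len(incidents),
        "mean_ttd": mean(i.detected_at - i.compromised_at for i in detected),
        "mean_ttr": mean(i.contained_at - i.detected_at for i in contained),
        # Non-actionable alerts per genuine incident.
        "alert_noise_ratio": false_alerts / len(incidents),
    }
```

The sketch assumes at least one detected and one contained incident. `statistics.mean` raises an error on empty input, so a real report would need to handle those cases explicitly.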

Vendors like Vectra AI and Darktrace publish anonymized performance data from these benchmarks, enabling security teams to compare autonomous efficacy and drive vendor selection based on empirical metrics. Smaller organizations often adopt turnkey platforms—such as Microsoft Sentinel or CrowdStrike Falcon—that embed autonomous detection modules out of the box, prioritizing seamless integration and minimal customization.

Drivers Accelerating AI-Based Threat Detection

Several converging factors are accelerating the adoption of autonomous AI agents in enterprise security:

- Explosion of Data Volume and Velocity: Organizations generate terabytes of security-relevant telemetry daily, exceeding the processing capacity of manual and rule-based systems and necessitating automated analysis at machine speed.

- Increased Sophistication of Adversaries: Attackers leverage automation, polymorphic and fileless malware, as well as AI-powered social engineering, to evade traditional signature-based defenses and bypass static rule sets.

- Operational Constraints: Security teams face talent shortages, burnout and budget pressures. Autonomous agents handle routine alert triage, freeing analysts to focus on strategic threat hunting and incident response.

- Regulatory and Compliance Imperatives: Evolving privacy laws and industry mandates demand auditable, transparent security operations. AI-driven logging and decision-tracking provide verifiable trails to support compliance with CCPA, GDPR, PCI DSS and emerging AI governance regulations.

- Need for Real-Time Decision-Making: In a landscape where threats can propagate globally within seconds, automated agents minimize dwell time and limit potential damage by executing immediate containment actions.

These drivers have prompted vendors to integrate machine learning libraries, graph analytics engines, real-time streaming architectures and explainable AI frameworks into their platforms. Open-source initiatives and commercial offerings alike emphasize agent orchestration, federated learning and human-AI teaming to meet enterprise governance and scalability requirements.

Guide Overview and Reader Outcomes

Organized to support a logical progression from foundational concepts to strategic deployment, this guide provides analytical frameworks, conceptual models and practical case studies. Readers will advance through the following themes:

- Evolution from static signature engines to adaptive, AI-driven detection and the strategic implications for security operations

- Anatomy of an AI agent: perception modules, knowledge repositories, decision engines and adaptive learning loops

- Machine learning paradigms—supervised, unsupervised, reinforcement and transfer learning—and their applications in threat detection

- Data ecosystem mapping: normalization techniques, data quality considerations and integration of diverse telemetry sources

- Real-time analytics: streaming architectures, event correlation methods and automated countermeasure workflows

- Multi-agent collaboration and human-AI teaming frameworks for coordinated detection and response

- Cross-sector case studies with performance metrics, scalability considerations and operational impacts in finance, healthcare, infrastructure and retail

- Technical resilience: adversarial manipulation, model drift, false positives and strategies for sustaining agent reliability over time

- Ethical, privacy and regulatory considerations aligned with global governance frameworks, including the EU AI Act and the NIST AI Risk Management Framework

- Future trends: explainable AI, predictive threat hunting, quantum-enhanced detection and self-healing network architectures

By engaging with this guide, readers will:

- Align AI agent architectures with business objectives, risk appetites and security goals

- Apply comparative frameworks to evaluate learning paradigms, data quality impacts and analytics design trade-offs

- Assess scalability, performance and resilience of multi-agent deployments in hybrid environments

- Balance innovation with ethical, privacy and compliance imperatives through governance models and audit mechanisms

- Anticipate how emerging computational paradigms and threat hunting methodologies will reshape future defense strategies

Applying Insights to Security Roadmaps

Security teams can operationalize these frameworks by conducting internal maturity assessments aligned with the thematic pillars. Starting with threat landscape analysis, organizations should map existing capabilities against desired maturity levels and identify gaps across agent anatomy, data ecosystem and operational workflows. Cross-functional workshops facilitate human-AI teaming exercises to define roles, responsibilities and escalation protocols. Illustrated case studies provide performance benchmarks, while compliance checklists guide regulatory alignment. Structured roadmaps and pilot programs enable iterative refinement, supporting continuous improvement and rigorous model governance.

Contextual Boundaries

- Scope of Analysis: Focuses on conceptual frameworks and strategic guidance; detailed implementation requires further technical research.

- Rapid Technological Change: Agent capabilities and adversarial tactics evolve quickly; frameworks should be revisited and adapted periodically.

- Data Variability: Quality, volume and formats of telemetry differ across environments; data normalization and fusion strategies must be tailored locally.

- Regulatory Diversity: Global jurisdictions impose varying requirements on data sovereignty, privacy and AI governance; region-specific legal analysis is recommended.

- Case Study Applicability: Benchmarks from early adopters serve as reference points but may not directly translate to all organizational contexts.

Chapter 2: Anatomy of an AI Agent

Security Landscape and Emerging Threats

As organizations enter 2026, security defenses face an intricate fusion of cyber and physical attack vectors. The boundary between traditional IT environments and operational technology has dissolved under the pressure of hybrid work models, cloud migrations, and the proliferation of Internet of Things devices. Industrial control systems, smart buildings, and networked medical equipment now connect directly to corporate networks and public cloud services, offering adversaries diverse paths to exploit software vulnerabilities, manipulate programmable logic controllers, or compromise supply-chain components.

Malicious actors leverage automation and artificial intelligence to accelerate reconnaissance, craft highly personalized spear-phishing campaigns, and execute adversarial machine learning to evade detection. Custom generative models produce phishing content tailored to specific organizational contexts, while automated lateral-movement frameworks identify and exploit network misconfigurations without human intervention. As a result, breach lifecycles compress from weeks to hours, demanding security solutions that match this velocity.

The volume, velocity, and variety of security telemetry have grown exponentially. Petabytes of packet captures, endpoint logs, cloud audit trails, and user behavior analytics arrive daily and overwhelm traditional rule-based systems. Security operations centers struggle to extract meaningful signals from this noise in real time, which leads to alert fatigue and leaves threats undetected. Simultaneously, regulatory regimes and standards such as GDPR, CCPA, PCI DSS, and HIPAA impose stringent breach notification timelines and transparency requirements, while geopolitical tensions drive nation-state actors to target critical infrastructure sectors.

Key dimensions of this evolving threat environment include:

- Convergence of cyber and physical systems, expanding attack surfaces across IT, OT, and IoT domains

- AI-augmented adversaries automating vulnerability discovery, evasion techniques, and phishing workflows

- Supply-chain compromises propagating malicious code across thousands of downstream environments in minutes

- Overwhelming telemetry growth challenging real-time analysis and signal discrimination

- Heightened regulatory and geopolitical pressures requiring rapid detection, transparent reporting, and compliance management

Legacy signature engines and static correlation rules can no longer keep pace with polymorphic malware, fileless attacks, or cloud misconfigurations. Excessive false positives and missed novel threats erode trust in alerts, while manual tuning cannot adapt swiftly enough. To confront this relentless environment, enterprises must embrace adaptive security architectures powered by autonomous AI agents capable of continuous learning, decentralized decision-making, and coordinated response across dispersed infrastructures.

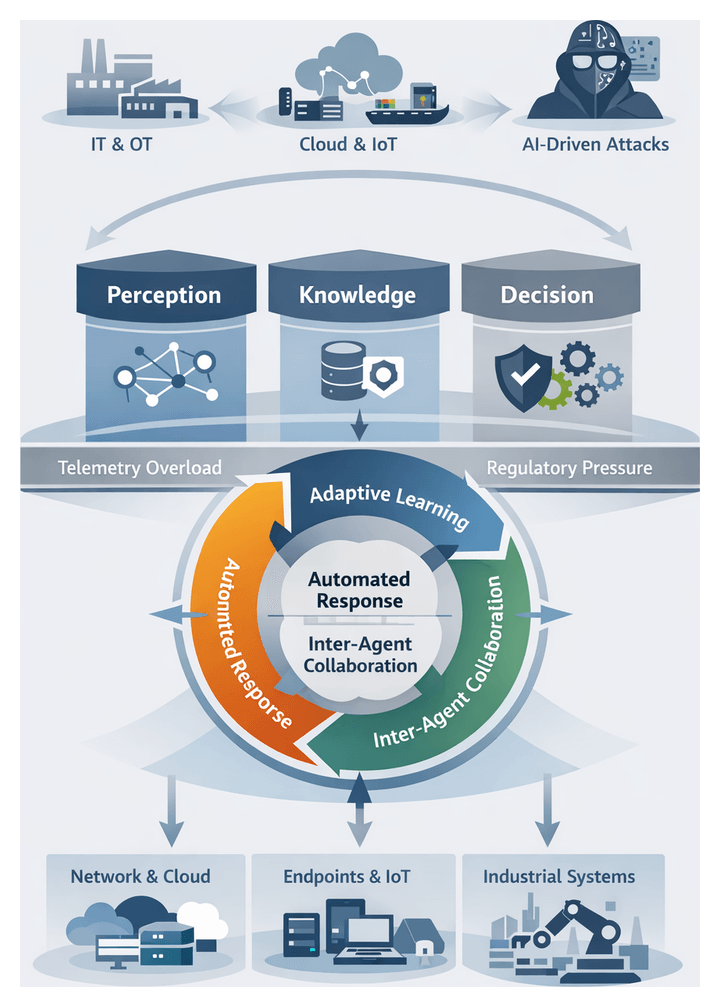

Conceptual Framework of Autonomous AI Agents

Autonomous AI agents represent a transformative approach to threat detection and response. These software entities function as intelligent sensors and responders, continuously perceiving inputs from diverse data sources, reasoning over contextual knowledge, and executing actions to neutralize or escalate incidents within predefined governance boundaries. Unlike traditional tools that depend on static signatures or rule sets, autonomous agents evolve their detection logic through feedback loops, refine confidence thresholds, and collaborate with peer agents to share threat intelligence.

Defining Attributes

- Perception and Contextual Awareness: Agents ingest network flows, host process logs, cloud API events, and threat intelligence feeds, normalizing and enriching raw data into structured events.

- Adaptive Learning Loops: Supervised, unsupervised, and reinforcement learning models enable agents to refine detection criteria, reinforce accurate identifications, and suppress false positives over time.

- Decentralized Decision Making: Localized analytics allow agents to autonomously trigger automated countermeasures—such as workload isolation or endpoint quarantine—when risk thresholds are exceeded.

- Inter-Agent Collaboration: Distributed agents exchange anonymized anomaly summaries and coordinate remediation actions across network segments and cloud regions.

- Governance-Conscious Autonomy: Policy and compliance guardrails define the scope of automated actions, ensuring that agents escalate complex incidents to human analysts according to organizational risk frameworks.

The agent architecture is modular. It comprises a perception layer for data ingestion and feature extraction, a knowledge repository for contextual enrichment, decision engines that execute inference and risk evaluation, and action modules for response orchestration. By decoupling these components, organizations can introduce new detection algorithms, integrate additional threat feeds, and update policies without disrupting core capabilities.
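
The decoupling described above can be illustrated by wiring the four layers together as interchangeable functions, with a governance guardrail between decision and action. All names here (`ModularAgent`, `ASSET_TIERS`, the action strings) are hypothetical, and the raw input format is invented for the example.

```python
from typing import Callable, Dict, Set

Event = Dict[str, object]


class ModularAgent:
    """Perception, knowledge, decision and action layers as swappable callables."""

    def __init__(self, perceive: Callable[[str], Event],
                 enrich: Callable[[Event], Event],
                 decide: Callable[[Event], str],
                 act: Callable[[str, Event], str],
                 allowed_actions: Set[str]):
        self.perceive, self.enrich = perceive, enrich
        self.decide, self.act = decide, act
        self.allowed_actions = allowed_actions  # governance guardrail

    def handle(self, raw: str) -> str:
        event = self.enrich(self.perceive(raw))
        action = self.decide(event)
        if action not in self.allowed_actions:
            action = "escalate"  # anything outside policy goes to a human
        return self.act(action, event)


def perceive(raw: str) -> Event:
    host, count = raw.split(",")  # assumed input format: "host,failed_logins"
    return {"host": host, "failed_logins": int(count)}


ASSET_TIERS = {"db01": "critical"}  # stand-in for a knowledge repository


def enrich(event: Event) -> Event:
    return {**event, "tier": ASSET_TIERS.get(str(event["host"]), "standard")}


def threshold_decide(event: Event) -> str:
    return "quarantine" if int(event["failed_logins"]) > 10 else "ignore"


def act(action: str, event: Event) -> str:
    return f"{action}:{event['host']}"
```

Replacing `threshold_decide` with a different decision function, or narrowing `allowed_actions`, changes agent behavior without touching the other layers.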

As a cohesive paradigm, autonomous AI agents align security operations with the dynamic nature of modern attacks, delivering continuous surveillance, rapid containment, and scalable defenses that adapt alongside evolving threat tactics.

Core Drivers of AI-Based Threat Detection

Several converging trends have elevated AI-driven detection from an innovation to an operational imperative:

- Escalating Attack Sophistication: Polymorphic malware, living-off-the-land techniques, and adversarial machine learning evade signature-based defenses, highlighting the need for behavior-centric analytics

- Data Volume and Velocity: Trillions of daily telemetry events—from endpoints, networks, clouds, and applications—overwhelm manual correlation, necessitating automated anomaly detection and prioritization

- Real-Time Decision Requirements: Automated response engines embedded within agents close the gap between detection and remediation, containing threats before they propagate

- Talent Shortages and Cost Pressures: The cybersecurity skills gap forces organizations to maximize efficiency; autonomous agents shoulder routine tasks, enabling lean teams to focus on advanced threat hunting and strategic initiatives

- Regulatory and Compliance Imperatives: Continuous monitoring and detailed audit trails support obligations under GDPR, CCPA, PCI DSS, HIPAA, and emerging national cybersecurity directives

- Digital Transformation and Cloud Adoption: As enterprises migrate to public clouds and microservices, security controls must adapt to ephemeral workloads; agents integrate with cloud APIs to maintain full visibility

Together, these drivers create a compelling case for embedding AI-based threat detection throughout security architectures. Organizations that harness machine learning, automation, and autonomous decision-making gain a decisive edge in detecting and disrupting sophisticated attacks.

Interplay of Perception, Knowledge, and Decision Modules

Effective AI-driven security depends on the tight integration of perception, knowledge representation, and decision engines. Industry practitioners view this triad as a unified analytical system where each layer enhances and depends on the others to deliver high-fidelity detections.

Perception as Threat Sensing

The perception layer functions as a global sensor network, gathering raw telemetry from endpoints, network taps, cloud environments, and external threat feeds. Leading solutions—such as IBM QRadar, Splunk, and Darktrace—employ advanced normalization and feature extraction to convert diverse data formats into consistent, enriched events. Breadth (coverage across network, host, cloud, and application layers) and depth (inspection granularity, from packet headers to system call traces) determine detection accuracy and analysis latency.

Knowledge Representation and Contextualization

Knowledge modules assign meaning to perceived events by fusing threat intelligence, attack graph constructs, behavioral baselines, and policy rules. The MITRE ATT&CK framework provides a standardized ontology, while proprietary feeds from CrowdStrike and Cortex XDR enrich detection with the latest indicators of compromise. Centralized knowledge stores ensure consistency; distributed repositories enhance local context awareness. Key evaluation criteria include coverage scope, update cadence, and the ability to reconcile conflicting sources without redundancy or bias.

Decision Engines and Analytical Frameworks

Decision engines synthesize perception outputs and knowledge context to determine risk and recommend responses. Architectures range from rule-based systems offering explainability, to statistical anomaly detectors adept at spotting deviations, to graph-based inference models that map entity relationships for multistage attack detection. Platforms like Microsoft Defender for Endpoint pair confidence scoring with explainable AI interfaces, enabling analysts to trace detections back to contributing features and accelerate validation.
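The interplay of the three modules described above can be illustrated with a minimal sketch. It assumes a toy in-memory knowledge store and a hand-picked scoring rule; the names `KNOWLEDGE`, `CRITICAL_ASSETS`, `perceive`, `enrich`, and `decide`, the raw field names, and all weights and thresholds are hypothetical and not taken from any vendor platform.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    source: str
    entity: str
    action: str
    context: dict = field(default_factory=dict)


# Hypothetical knowledge store: observed action -> ATT&CK technique ID and risk weight.
KNOWLEDGE = {
    "powershell_encoded_command": {"technique": "T1059.001", "weight": 0.7},
    "new_admin_account": {"technique": "T1136", "weight": 0.6},
}
CRITICAL_ASSETS = {"dc01"}  # hypothetical asset inventory


def perceive(raw: dict) -> Event:
    """Perception: normalize a raw record into a consistent event."""
    entity = raw.get("host") or raw.get("user") or "unknown"
    return Event(source=raw.get("src", "unknown"),
                 entity=entity.lower(),
                 action=raw.get("event", "").lower())


def enrich(event: Event) -> Event:
    """Knowledge: attach technique mapping and asset criticality."""
    event.context.update(KNOWLEDGE.get(event.action, {}))
    event.context["critical_asset"] = event.entity in CRITICAL_ASSETS
    return event


def decide(event: Event) -> dict:
    """Decision: turn enriched context into a score and a verdict."""
    score = event.context.get("weight", 0.1)
    if event.context.get("critical_asset"):
        score = min(1.0, score + 0.25)
    if score >= 0.8:
        verdict = "escalate"
    elif score >= 0.5:
        verdict = "review"
    else:
        verdict = "log"
    return {"entity": event.entity,
            "technique": event.context.get("technique"),
            "score": round(score, 2),
            "verdict": verdict}


def run_pipeline(raw: dict) -> dict:
    return decide(enrich(perceive(raw)))
```

Because each stage only consumes the previous stage's output, a new feed or scoring rule can be swapped in without touching the other two functions, which is the property the triad is meant to provide.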

Integrative Architectures and Data Flows

Orchestration layers coordinate synchronous pipelines for immediate correlation alongside asynchronous, event-driven streams—often leveraging Apache Kafka—for scalable, modular analysis. Hybrid designs route high-priority events through low-latency paths while funneling bulk telemetry into micro-batches for deep retrospective examination. This dual approach balances throughput, elasticity, and end-to-end latency requirements.
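The hybrid routing idea can be sketched with in-memory queues standing in for a real message bus. This is an illustration under stated assumptions: the `HybridRouter` class, the `severity` field, and the threshold and batch size are hypothetical, and a production design would more likely use separate topics or consumer groups than Python lists.

```python
from collections import deque


class HybridRouter:
    """Route urgent events to a low-latency path and the rest into micro-batches."""

    def __init__(self, batch_size=3, priority_threshold=8):
        self.batch_size = batch_size
        self.priority_threshold = priority_threshold
        self.fast_path = deque()   # consumed immediately by correlation logic
        self.batches = []          # completed micro-batches for retrospective analysis
        self._pending = []         # events waiting for the current batch to fill

    def route(self, event: dict) -> str:
        if event.get("severity", 0) >= self.priority_threshold:
            self.fast_path.append(event)
            return "fast"
        self._pending.append(event)
        if len(self._pending) >= self.batch_size:
            self.flush()
        return "batch"

    def flush(self) -> None:
        """Close the current micro-batch, even if it is only partly full."""
        if self._pending:
            self.batches.append(self._pending)
            self._pending = []
```

Calling `flush()` on a timer as well as on size keeps bulk telemetry from waiting indefinitely during quiet periods.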

Strategic Evaluation Models

Security leaders employ frameworks such as the OODA loop, Belief-Desire-Intention cognitive models, the Kill Chain, and the Diamond Model of Intrusion Analysis to assess module synergy and emergent capabilities. Performance metrics—mean time to detect (MTTD), mean time to respond (MTTR), precision, recall, and alert fatigue indices—quantify operational efficiency. Comparative studies show that architectures with tightly integrated perception and knowledge modules can reduce false positives by up to 30%, while graph-based decision engines accelerate threat containment by 20–25%.

Deployment Contexts for Autonomous Agents

Optimal placement and configuration of AI agents across varied environments ensure comprehensive threat coverage. Deployment contexts include:

Network-Scale Deployments

Distributed agents act as sensors at data centers, branch offices, and cloud peering points. Lightweight edge modules detect anomalies early, while central inference clusters perform deep packet inspection and behavioral analytics. Integration with network detection and response platforms—such as Darktrace—leverages unsupervised learning models to establish baselines of “normal” traffic and flag subtle deviations, even within encrypted channels.
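As a rough illustration of baselining, the sketch below flags observations that sit far from a historical mean. It is a deliberately simple z-score check, not the unsupervised models that commercial platforms use; the function name `flag_deviations` and the threshold of 3 standard deviations are assumptions made for the example.

```python
from statistics import mean, stdev


def flag_deviations(history, observed, z_threshold=3.0):
    """Return (value, z_score) pairs for observations far from the baseline."""
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation of the baseline window
    flagged = []
    for value in observed:
        z = (value - mu) / sigma if sigma > 0 else 0.0
        if abs(z) >= z_threshold:
            flagged.append((value, round(z, 2)))
    return flagged


# Hypothetical per-minute byte counts (in KB) for one host.
baseline = [100, 110, 95, 105, 90, 100]
current = [102, 180, 98]
suspicious = flag_deviations(baseline, current)
```

Because the check uses only volume metadata, it applies equally to encrypted sessions, although real traffic usually needs seasonality-aware baselines rather than a single mean.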

Endpoint and Workload Integration

Endpoint agents instrument kernel processes, user-mode activity, and in-memory execution to detect fileless attacks and living-off-the-land techniques. Solutions like CrowdStrike Falcon and Microsoft Defender for Endpoint combine on-device inference with cloud orchestration for cross-endpoint correlation, threat hunting, and automated response at scale.

Cloud-Native and Containerized Environments

AI agents interface with container orchestrators such as Kubernetes to monitor microservices, API calls, and resource utilization. Native detection services like Amazon GuardDuty and Google Cloud Security Command Center embed AI agents within the provider’s infrastructure, enabling continuous assessment of configuration drift and malicious API behavior under the shared responsibility model.

Industrial Control Systems and IoT Networks

Resource-constrained sensors monitor industrial protocols (Modbus, DNP3, and proprietary SCADA communications) using passive or side-channel techniques. Vendors such as Claroty provide agent-lite appliances that inventory assets, track communication patterns, and detect command-sequence anomalies within segmented OT zones, safeguarding critical infrastructure operations.

Hybrid and Sector-Specific Architectures

Organizations with heterogeneous estates deploy hybrid agent architectures blending network, endpoint, and workload detection. Cross-domain correlation engines aggregate agent alerts with external feeds and historical incident data, mapping observations to MITRE ATT&CK techniques for prioritized response. Sector-specific adaptations include:

- Financial Services: Ultra-low latency agents monitor high-frequency trading systems and payment networks for transaction anomalies and insider threats, while supporting PCI DSS audit trails.

- Healthcare: Agents balance detection precision with HIPAA privacy requirements, distinguishing anomalous behavior in electronic health record systems and medical IoT devices without interrupting clinical workflows.

- Retail and E-Commerce: Agents integrate with point-of-sale networks and web application firewalls to detect payment card skimmers, credential harvesting, and unusual user journeys across online storefronts.

- Critical Infrastructure: Fault-tolerant agents operate under NERC CIP and IEC 62443 standards, providing early warning of coordinated attacks on utilities, transportation networks, and telecommunications backbones.

Risk-based models, kill-chain analysis, and resource-impact assessments guide agent placement and configuration, ensuring that detection coverage aligns with asset criticality and operational constraints.

Design Considerations and Performance Insights

Adopting autonomous AI agents requires careful balancing of architectural complexity, operational performance, and resource overhead. Security leaders should evaluate the following dimensions:

Component Complexity Versus System Overhead

Monolithic architectures minimize inter-module latency but complicate updates and scaling. Microservices designs enable independent component upgrades and horizontal scaling, though they introduce network serialization overhead. Clear API contracts and interface definitions facilitate targeted optimization of critical modules.

Scalability Trade-offs

Distributed agents processing parallel workloads require model synchronization. Eventual consistency reduces coordination delays but may produce temporary detection gaps, while strong consistency ensures uniform behavior at the cost of higher transport overhead. Organizations must align these trade-offs with latency requirements and autonomy preferences.

Accuracy Versus Latency

Ensemble learning models and multi-stage correlation pipelines improve detection precision and reduce false positives but demand greater compute cycles. Tiered decision frameworks conduct rapid initial scans for urgent threats, followed by in-depth secondary analysis, balancing speed and accuracy.
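A tiered decision framework of this kind might look like the following sketch. The signal names (`known_bad_hash`, `failed_logins`, `new_geo`, and so on) and both thresholds are hypothetical, and `deep_score` is a simple vote count standing in for a real ensemble model.

```python
def quick_scan(event: dict) -> bool:
    """Stage 1: cheap checks that justify immediate action."""
    return bool(event.get("known_bad_hash")) or event.get("failed_logins", 0) > 20


def deep_score(event: dict) -> float:
    """Stage 2: slower multi-signal score (stand-in for an ensemble model)."""
    signals = [
        event.get("failed_logins", 0) > 5,
        bool(event.get("new_geo")),
        bool(event.get("off_hours")),
        bool(event.get("privileged_account")),
    ]
    return sum(signals) / len(signals)


def triage(events, deep_threshold=0.5):
    """Run the fast stage first; only unresolved events pay for deep analysis."""
    urgent, suspicious, benign = [], [], []
    for event in events:
        if quick_scan(event):
            urgent.append(event["id"])        # act now, skip deep analysis
        elif deep_score(event) >= deep_threshold:
            suspicious.append(event["id"])    # queue for analyst review
        else:
            benign.append(event["id"])
    return {"urgent": urgent, "suspicious": suspicious, "benign": benign}
```

The expensive stage runs only on events the cheap stage could not resolve, which is where the latency saving comes from.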

Resource Demand and Cost Management

AI inference and model training impose demands on CPU, GPU, memory, and storage resources. Elastic provisioning and usage-based scaling in cloud environments can optimize cost efficiency, while on-premises deployments may leverage hardware accelerators selectively for compute-intensive tasks.

Adaptability and Maintenance

Continuous learning loops sustain detection effectiveness but require staging environments for model validation, rollback mechanisms, and auditable change controls. Industries with stringent uptime mandates, such as healthcare and energy, often adopt more conservative update cadences to preserve stability.

Explainability and Analyst Trust

Transparent AI models that surface feature contributions, confidence intervals, and decision paths empower analysts to validate alerts and refine detection logic. Embedding contextual metadata—related historical events, threat intelligence matches, and policy references—enhances situational awareness and reduces triage time.

Integration with Security Ecosystems

Agents should support standardized data schemas—CEF, STIX/TAXII—and offer prebuilt connectors for SIEM, SOAR, and identity management platforms. Harmonized data flows enable unified dashboards, cross-system automation, and consolidated reporting, preventing siloed insights that obscure multi-stage attack patterns.

Robustness and Resilience Under Adversarial Conditions

Adversaries may attempt to poison models or exploit blind spots. Defensive measures—such as input sanitization, anomaly-based model monitoring, ensemble diversification, and graceful fallback modes (signature-based detection or manual escalation)—safeguard system integrity. Regular red-team exercises and adversarial testing detect drift early and restore efficacy before gaps widen.

Performance Benchmarking and Metrics

- True Positive Rate (TPR) and False Positive Rate (FPR)

- Mean Time to Detect (MTTD) and Mean Time to Respond (MTTR)

- Throughput (events processed per second) and end-to-end latency

- Resource utilization under peak load

- Model drift indicators and retraining frequency

- Alert prioritization accuracy

Establishing baseline benchmarks under representative workloads guides capacity planning and supports compliance reporting. Continuous monitoring of metric trends reveals emerging constraints or model degradation, triggering proactive tuning or scaling measures.
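Several of the metrics listed above reduce to simple arithmetic over confusion-matrix counts and incident timestamps. The sketch below assumes each incident record carries hypothetical `occurred`, `detected`, and `resolved` datetimes, and it treats MTTR as the time from detection to resolution; organizations define these intervals differently, so the boundaries here are an assumption.

```python
from datetime import datetime


def rates(tp: int, fp: int, tn: int, fn: int):
    """Return (true positive rate, false positive rate)."""
    tpr = tp / (tp + fn) if (tp + fn) else 0.0
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    return tpr, fpr


def mean_minutes(pairs) -> float:
    """Mean gap in minutes across (start, end) datetime pairs."""
    gaps = [(end - start).total_seconds() / 60 for start, end in pairs]
    return sum(gaps) / len(gaps)


def mttd_mttr(incidents):
    """MTTD: occurrence to detection. MTTR: detection to resolution."""
    mttd = mean_minutes([(i["occurred"], i["detected"]) for i in incidents])
    mttr = mean_minutes([(i["detected"], i["resolved"]) for i in incidents])
    return mttd, mttr


# Hypothetical incident log.
incidents = [
    {"occurred": datetime(2026, 1, 10, 10, 0),
     "detected": datetime(2026, 1, 10, 10, 30),
     "resolved": datetime(2026, 1, 10, 12, 30)},
    {"occurred": datetime(2026, 1, 11, 9, 0),
     "detected": datetime(2026, 1, 11, 9, 10),
     "resolved": datetime(2026, 1, 11, 9, 40)},
]
```

Tracking these values per week or per release makes the trend analysis described above a matter of comparing successive outputs.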

Limitations and Future Directions

Despite their advanced capabilities, AI agents face inherent limitations: encrypted traffic and proprietary protocols may evade inspection, data biases can skew detections toward familiar patterns, and resource constraints may limit model complexity. Complementary defenses—network segmentation, manual threat hunting, and active deception—remain vital components of a layered security strategy. Looking forward, advances in hybrid human-AI workflows, edge inference, and quantum-enhanced analytics promise to address current gaps, while evolving data sovereignty regulations and privacy mandates will shape the design of context-aware, locally processing agents.

By thoughtfully balancing these design considerations and performance insights, security leaders can deploy autonomous AI agents that deliver scalable, adaptive, and transparent threat detection—prepared to meet the challenges of 2026 and beyond.

Chapter 3: Machine Learning Foundations

As organizations enter 2026, the security landscape has grown more complex and perilous. Traditional perimeter defenses no longer suffice against converging cyber and physical attack vectors. Ubiquitous connectivity, remote work, cloud adoption and the proliferation of Internet of Things devices have expanded the attack surface. Nation-state actors, organized cybercrime syndicates and opportunistic hackers exploit supply-chain compromises, fileless malware and advanced social engineering to disrupt operations and steal data. Operational technology networks converge with information technology systems, creating real-world safety risks in sectors such as energy, transportation and manufacturing. A successful breach can trigger production halts, safety incidents and regulatory violations with severe financial and reputational costs.

To counter these threats, organizations must shift from reactive, signature-based defenses to adaptive, intelligence-driven approaches. Real-time threat detection, context-aware analytics and proactive containment strategies are essential for resilience. In this context, autonomous AI agents emerge as a pivotal innovation, offering continuous monitoring, rapid decision-making and self-directed response capabilities that can outpace advanced adversaries.

Autonomous AI Agents as a Conceptual Framework

Autonomous AI agents represent a new paradigm in threat detection. Unlike traditional security tools that rely on static rules and manual intervention, autonomous agents integrate perception, knowledge, reasoning and learning in a unified architecture. They operate with a high degree of self-direction, continuously sensing their environment and adapting to emerging threats.

Key attributes of autonomous AI agents include:

- Self-governance, coordinating sensing and response workflows without manual orchestration

- Continuous learning, adapting to new threat patterns through online learning mechanisms

- Real-time autonomy, enabling sub-second threat identification and containment

- Collaborative intelligence, where multiple agents share insights to build a collective defense posture

Agents ingest diverse data streams—network telemetry, endpoint logs, user behavior analytics and external intelligence feeds—through perception modules. Knowledge repositories maintain formalized threat intelligence alongside contextual awareness of organizational assets and policies. Decision engines evaluate observations against evolving threat models, weighing risk factors and response options. Reinforcement learning techniques refine policies over time: upon detecting a novel threat, an agent triggers an immediate response—such as isolating an endpoint or throttling network access—while updating its internal models for improved future defense.

Drivers Accelerating AI-Based Threat Detection

Several factors are driving the rapid adoption of AI-driven threat detection and autonomous agent architectures:

- Escalating Attack Sophistication: Adversaries use polymorphic malware, living-off-the-land techniques and deepfake social engineering to evade static controls.

- Data Volume and Velocity: Security data streams—from network flows to cloud audit logs—have grown exponentially, overwhelming manual analysis.

- Workforce Constraints: Talent shortages and alert overload strain security teams, making automated triage and response essential.

- Real-Time Decision Needs: Rapid lateral movement of threats demands instantaneous containment without awaiting manual approval.

- Regulatory and Compliance Pressures: Frameworks such as GDPR and CCPA require demonstrable data protection controls and incident response audit trails.

- Cloud and Hybrid Architectures: Distributed environments introduce visibility gaps; agents ensure consistent monitoring across on-premises, cloud and edge.

Comparing Learning Paradigms for Threat Detection

The choice between supervised learning and reinforcement learning frameworks is a strategic decision that shapes detection capabilities and operational workflows.

Data Dependency and Labeling Requirements

Supervised models require labeled datasets of historical attacks and benign activity, creating labeling bottlenecks and potential dataset imbalances. Reinforcement learning reduces labeling overhead by interacting with simulated or live environments, using reward signals to guide learning. High-fidelity simulations or sandboxed environments are critical to avoid policies that perform poorly in production.

Adaptability to Evolving Threats

Supervised models detect known patterns but struggle with novel or polymorphic attacks until retrained. Reinforcement agents continuously refine policies through exploration and exploitation, adapting to changing adversary behaviors in real time. Safeguards are needed to prevent policy drift and ensure safe exploration.
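Exploration and exploitation can be illustrated with an epsilon-greedy bandit, which is the simplest relative of full reinforcement learning (it has no state transitions). The action names, the `SIMULATED_REWARD` table, and the class `ContainmentBandit` are hypothetical; in practice rewards would come from a sandbox or simulation rather than a fixed lookup.

```python
import random


class ContainmentBandit:
    """Epsilon-greedy choice among response actions, learning from rewards."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {a: 0 for a in self.actions}
        self.values = {a: 0.0 for a in self.actions}

    def best_action(self):
        return max(self.actions, key=lambda a: self.values[a])

    def choose(self):
        untried = [a for a in self.actions if self.counts[a] == 0]
        if untried:
            return untried[0]                       # try every action once
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)    # explore
        return self.best_action()                   # exploit

    def update(self, action, reward):
        self.counts[action] += 1
        # Incremental mean of the rewards observed for this action.
        self.values[action] += (reward - self.values[action]) / self.counts[action]


# Hypothetical sandbox rewards: containment success minus business disruption.
SIMULATED_REWARD = {"alert_only": 0.1, "throttle_network": 0.4, "isolate_host": 1.0}


def train(bandit, rounds=200):
    for _ in range(rounds):
        action = bandit.choose()
        bandit.update(action, SIMULATED_REWARD[action])
    return bandit
```

Keeping `epsilon` small and restricting the action list to reversible responses is one simple way to bound the risk of exploration in a live environment.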

Interpretability and Explainability

Supervised methods built on decision trees, random forests or gradient boosting offer clearer feature attributions and auditability. Tools such as TensorBoard, the visualization toolkit that accompanies TensorFlow, support model inspection. Reinforcement learning policies, especially deep networks, are often opaque, requiring explainability layers or surrogate models to translate decisions into human-readable insights.

Training Complexity and Resource Considerations

Supervised training scales with dataset size and model complexity but benefits from parallelizable pipelines. Reinforcement learning demands extensive sampling of state-action trajectories and high-fidelity environment simulations, often necessitating specialized hardware accelerators and greater infrastructure investment.

Sample Efficiency and Convergence Time

Supervised models achieve high sample efficiency with labeled data and data augmentation. Reinforcement agents may require millions of interactions before policy convergence. Hybrid approaches that pretrain on labeled datasets before environment interaction can accelerate convergence.

Response Time and Real-Time Constraints

Supervised inference typically executes in milliseconds, suitable for high-throughput environments. Reinforcement-based decision engines may introduce additional latency due to sequential state evaluations; optimizations like model pruning and quantization help reduce response times.

Robustness Against Adversarial Manipulation

Supervised classifiers can be evaded by adversarial examples crafted to exploit model weaknesses. Reinforcement agents, with feedback-driven learning, can develop countermeasures but require explicit adversarial training or environment perturbations to maintain resilience.

Integration Within Existing Security Frameworks

Supervised models integrate smoothly via APIs with SIEM platforms and endpoint protection tools. Reinforcement agents require tighter coupling with enforcement mechanisms—such as automated containment systems and dynamic network segmentation—entailing more complex integration and strict access controls.

A comparative overview illustrates their complementary strengths:

- Supervised Methods: Pros: high interpretability, predictable resource demands, rapid inference. Cons: limited adaptability, labeling bottlenecks, retraining latency.

- Reinforcement Methods: Pros: continuous learning, reduced labeling reliance, adaptive resilience. Cons: high computational overhead, opaque policies, integration complexity, risk of policy drift.

Hybrid ensembles leverage both paradigms—using frameworks such as OpenAI Gym and Ray RLlib—to filter high-confidence threats with supervised classifiers and apply reinforcement-driven containment strategies. Feedback from adaptive modules enriches labeled datasets, creating a virtuous cycle of continuous improvement.

Deploying Models for Novel Threat Recognition

Recognizing zero-day exploits and polymorphic malware demands a shift from static training to continuous, context-driven adaptation. Deploying models for novel threat detection reshapes the architecture and governance of security analytics platforms.

Continuous Model Training and Adaptation

Continuous training pipelines enable agents to ingest telemetry and external intelligence in near real time. Solutions from Darktrace and Microsoft Sentinel embed retraining triggers tied to anomalous event rates or flagged indicators of compromise. Governance mechanisms, such as drift detection and statistical quality gates, ensure retraining does not degrade performance or introduce bias.
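One common way to implement a drift check is the population stability index (PSI), which compares how a feature is distributed in the training window versus recent data. The sketch below is an assumption about how such a gate could work, not a description of any vendor's mechanism; the bin count and the 0.2 threshold are conventional rules of thumb and should be tuned.

```python
import math


def psi(expected, actual, bins=4):
    """Population stability index between a reference and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0   # fall back to 1.0 if all values are equal

    def histogram(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1   # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = histogram(expected), histogram(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(expected, actual, threshold=0.2):
    """Quality gate: trigger retraining only when drift exceeds the threshold."""
    return psi(expected, actual) > threshold
```

A gate like this is usually paired with a holdout evaluation, so that a retrained model is promoted only if it also matches or beats the current one on known cases.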

Balancing Generalization and Specialization

Effective novel threat recognition balances broad anomaly detection with asset-specific specialization. Tiered model architectures funnel suspicious events from general detectors into specialized submodels for critical assets. Ensemble strategies combine unsupervised clustering with supervised classification to surface and validate context-rich anomalies.

Leveraging Transfer and Meta-Learning Frameworks

Transfer learning accelerates readiness by fine-tuning pre-trained models based on global threat intelligence—such as representations derived from CrowdStrike Falcon data—on local network logs. Meta-learning frameworks further enable rapid adaptation from few labeled examples, essential for low-frequency but high-impact attack types.

Contextual Feature Engineering and Embedding

Embedding temporal, spatial and behavioral contexts enhances sensitivity to irregular patterns:

- Temporal embeddings capturing session durations and sequence patterns

- Graph-based features mapping relationships between IP addresses, user accounts and devices

- User behavior profiles establishing baselines and flagging deviations for high-risk roles
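The three feature families above can be derived from the same session records. The following sketch assumes each record has hypothetical `user`, `src_ip`, `device`, `start`, and `end` fields (times in epoch seconds) and computes a temporal feature, simple graph-degree features, and a per-user profile.

```python
from collections import defaultdict


def build_features(events):
    """Return per-user temporal and graph-based features from session events."""
    durations = defaultdict(list)
    ips = defaultdict(set)
    devices = defaultdict(set)
    for e in events:
        durations[e["user"]].append(e["end"] - e["start"])   # temporal context
        ips[e["user"]].add(e["src_ip"])                      # edge: user -> IP
        devices[e["user"]].add(e["device"])                  # edge: user -> device
    return {
        user: {
            "mean_session_seconds": sum(d) / len(d),
            "distinct_ips": len(ips[user]),
            "distinct_devices": len(devices[user]),
            # Degree of the user node in the user/IP/device graph.
            "graph_degree": len(ips[user]) + len(devices[user]),
        }
        for user, d in durations.items()
    }
```

The resulting dictionary can serve as a baseline profile: a later window in which `distinct_ips` or `graph_degree` jumps for a high-risk role is a candidate deviation.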

Operational Implications and Strategic Considerations

Adaptive deployments require an integrated operating model blending AI-driven insights with human oversight:

- Explainability Tools: Platforms like IBM QRadar Advisor with Watson and Palo Alto Networks Cortex XDR supply contextual rationales for alerts.

- Cross-Functional Governance: Data scientists, threat hunters and compliance officers align on data retention, audit policies and performance SLAs.

- Resilience Planning: Redundant deployments across cloud and on-premises environments safeguard against service disruptions, with fallback rules maintaining baseline coverage during retraining.

Key Considerations in Model Selection and Adaptation

Model choice and adaptation strategies are shaped by data realities, operational constraints and regulatory frameworks. Critical considerations include:

- Data sufficiency and label reliability, ensuring representative and up-to-date samples

- Concept drift and model staleness, requiring online learning or scheduled retraining

- Adversarial vulnerability, mitigated through adversarial training and defense-in-depth

- Resource and latency constraints, balancing model complexity with real-time responsiveness

- Explainability and compliance, favoring transparent models or integrated interpretability layers

- Integration overhead, managing the complexity of ensemble or hybrid architectures

- Computational footprint and deployment topology, leveraging edge inference or split architectures

- Data governance and privacy, incorporating federated learning or differential privacy as needed

- Adaptation mechanisms, including canary testing, performance monitoring and automated rollback procedures

- Cross-disciplinary collaboration, aligning data scientists, security analysts and executive stakeholders

By adopting a holistic approach that integrates robust governance, continuous evaluation and cross-functional engagement, organizations can harness the full potential of machine learning for threat detection while maintaining resilience against evolving adversaries.

Chapter 4: Data Ecosystem and Integration

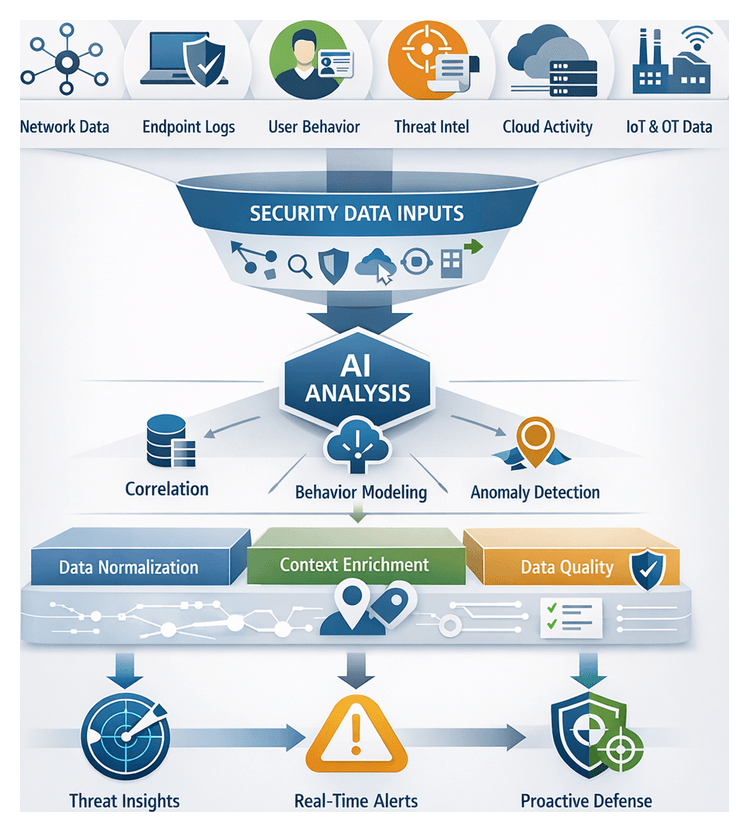

Security Data Inputs for AI-Driven Detection

AI-driven threat detection depends on a rich, diverse foundation of security data spanning network activity, endpoints, identities, applications, industrial systems, and external intelligence. Organizations operating across on-premises networks, cloud platforms, Internet of Things (IoT) deployments, and operational technology (OT) environments generate vast streams of telemetry, logs, and contextual signals. Autonomous agents synthesize these inputs to model baselines, detect anomalies, and predict emerging threats in real time. The following overview describes the core categories of security data that underpin next-generation detection architectures.

- Network Telemetry

- Endpoint and Workload Logs

- User and Entity Behavior Analytics (UEBA)

- Threat Intelligence Feeds

- Cloud and Application Activity Logs

- IoT and Operational Technology Sensor Data

- External Open Data Sources and Dark Web Indicators

Network Telemetry

Network telemetry—including packet captures, flow records, firewall logs, DNS queries, and proxy logs—reveals communication patterns and volumetric trends. AI agents analyze metadata such as protocol usage, session durations, and volumetric anomalies to detect lateral movement, data exfiltration, and command-and-control callbacks. Platforms like Splunk and Elastic ingest and index massive telemetry volumes for real-time correlation and retrospective hunts. Enrichment with geo-location databases and IP reputation services provides context for prioritizing alerts and isolating high-risk traffic.

Endpoint and Workload Logs

Endpoint detection and response (EDR) and workload protection solutions produce detailed logs of process executions, file accesses, registry modifications, and system calls. AI agents use these records to reconstruct attack kill chains, trace malware behaviors, and assign risk scores to hosts. Leading platforms such as CrowdStrike Falcon and Palo Alto Networks Cortex XDR deliver continuous visibility into host activities and enable automated containment actions for anomalous behaviors.

User and Entity Behavior Analytics (UEBA)

As credential-based attacks and insider threats rise, UEBA platforms analyze authentication logs, access patterns, file transfers, and resource utilization to distinguish normal from anomalous behaviors. Solutions like Microsoft Sentinel feed AI models with enriched behavioral profiles, enabling detection of account takeovers, privilege misuse, and insider collusion. Correlating UEBA data with network and endpoint signals produces a holistic view that reduces false positives and accelerates investigations.

Threat Intelligence Feeds

External threat intelligence enriches local telemetry with indicators of compromise (IOCs), malware signatures, threat actor profiles, and emerging tactics. Feeds from commercial vendors, open-source communities, ISACs, and dark web monitoring services—such as Recorded Future and Anomali—supply dynamic blocklists and narrative intelligence. AI agents match local events against IOCs and leverage threat actor trends to adapt detection rules proactively.
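Matching local events against indicators is, at its core, a set lookup. The sketch below assumes a hypothetical in-memory feed using documentation-range IP addresses and a reserved `.example` domain; the event field names `dest_ip` and `domain` are also assumptions, and real feeds would typically arrive through a threat intelligence platform.

```python
# Hypothetical feed; addresses come from ranges reserved for documentation.
IOC_FEED = {
    "ip": {"203.0.113.7", "198.51.100.23"},
    "domain": {"bad.example"},
}


def match_iocs(event: dict, feed: dict = IOC_FEED):
    """Return (ioc_type, indicator) pairs that the event matches."""
    hits = []
    ip = event.get("dest_ip")
    if ip in feed["ip"]:
        hits.append(("ip", ip))
    domain = (event.get("domain") or "").lower().rstrip(".")
    for ioc in sorted(feed["domain"]):
        # Match the indicator itself and any of its subdomains.
        if domain == ioc or domain.endswith("." + ioc):
            hits.append(("domain", ioc))
    return hits
```

Set membership keeps each lookup constant-time, which matters when every flow record is checked against a feed that changes hourly.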

Cloud and Application Activity Logs

Cloud platforms emit audit trails, API call logs, configuration changes, and access records via services like AWS CloudTrail, Azure Activity Log, and Google Cloud Audit Logs. Web application firewalls and performance monitors add event streams reflecting user interactions and anomalous HTTP requests. AI agents correlate cloud and on-premises telemetry to detect misconfigurations, token misuse, privilege escalations, and suspicious administrative actions, recommending policy adjustments and automated remediation.

IoT and Operational Technology Sensor Data

Industrial control systems, smart buildings, and critical infrastructure rely on sensors, PLCs, and embedded devices communicating over protocols such as Modbus, DNP3, and OPC UA. Specialized collectors ingest SCADA traffic, vibration and energy meter readings, and firmware inventories. Platforms like Nozomi Networks and Claroty enable AI models to detect deviations from process norms, unauthorized firmware changes, and lateral movement between IT and OT networks.

External Open Data Sources and Dark Web Indicators

Public repositories such as the National Vulnerability Database and exploit archives, combined with dark web monitoring by platforms like Intel 471, reveal zero-day disclosures, planned ransomware campaigns, and credential sales. Integrating these open data streams with internal telemetry and commercial intelligence enables forward-looking detection, allowing AI agents to adjust focus on emerging exploit patterns before full-scale attacks occur.

Harmonizing and Normalizing Security Data

Diverse data sources require alignment against common models and contextual enrichment to support accurate cross-domain analytics. Effective harmonization strategies encompass schema alignment, canonical modeling, metadata tagging, and temporal and spatial consistency frameworks. These foundations enable unified feature sets for machine learning and graph-based analyses.

Semantic Schema Alignment

Aligning source-specific attributes—IP addresses, user IDs, event types—to a unified vocabulary prevents semantic drift. Teams often extend frameworks such as MITRE ATT&CK to include organizational roles, regulatory contexts, and custom threat classes. Layered schema strategies maintain core entity consistency while accommodating evolving peripheral attributes, preserving correlation integrity and alert enrichment.

Canonical Data Modeling

A flexible canonical model bridges heterogeneous formats by ingesting each feed into a neutral representation capturing both event details and security context. Versioned schema contracts ensure backward compatibility and traceability as fields evolve. This approach balances the need for forensic granularity with real-time processing constraints.
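A canonical model with a versioned contract can be sketched as a single immutable record type plus one adapter per feed. The `CanonicalEvent` fields, the `SCHEMA_VERSION` value, and the firewall and EDR field names below are all hypothetical and chosen only to show the shape of the approach.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

SCHEMA_VERSION = "1.0"  # bump when the contract changes


@dataclass(frozen=True)
class CanonicalEvent:
    schema_version: str
    timestamp: str      # ISO 8601, UTC
    source_type: str
    actor: str
    action: str
    target: str


def from_firewall(rec: dict) -> CanonicalEvent:
    """Map a hypothetical firewall record (epoch seconds, src/dst) to the model."""
    ts = datetime.fromtimestamp(rec["epoch"], tz=timezone.utc).isoformat()
    return CanonicalEvent(SCHEMA_VERSION, ts, "firewall",
                          rec["src"], rec["verdict"].lower(), rec["dst"])


def from_edr(rec: dict) -> CanonicalEvent:
    """Map a hypothetical EDR record (ISO timestamp with offset) to the model."""
    ts = datetime.fromisoformat(rec["time"]).astimezone(timezone.utc).isoformat()
    return CanonicalEvent(SCHEMA_VERSION, ts, "edr",
                          rec["user"], "process_start", rec["process"])
```

Stamping every record with `schema_version` lets downstream consumers handle old and new layouts side by side while a schema change rolls out.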

Metadata Enrichment and Contextual Tagging

Enriching raw inputs with geolocation confidence, user risk scores, historical anomaly indices, asset criticality, and vulnerability tags transforms data into actionable intelligence. Metadata tags guide automated decision engines and provide transparent hooks for analysts to understand AI reasoning, strengthening trust in outcomes.

Temporal and Spatial Consistency Frameworks

Consistency frameworks align timestamps across time zones, reconcile clock drift, and map network segments and cloud regions into unified topologies. Sliding-window analyses preserve event order, while watermarking flags ingestion delays. These measures prevent misclassification of routine activities and ensure reliable anomaly detection over synchronized timeframes.
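Timestamp alignment and late-arrival flagging can be sketched in a few lines. This example assumes ISO 8601 timestamps that carry an explicit UTC offset and uses a fixed lag tolerance as a simplified stand-in for a streaming watermark; the field names `event_time` and `ingest_time` and the five-minute tolerance are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def to_utc(ts: str) -> datetime:
    """Parse an ISO 8601 timestamp with an offset and convert it to UTC.

    Timestamps without an offset would be interpreted as local time,
    so feeds lacking one need an explicit time zone mapping first.
    """
    return datetime.fromisoformat(ts).astimezone(timezone.utc)


def order_and_flag(events, max_lag=timedelta(minutes=5)):
    """Sort events by UTC event time and mark those ingested too late."""
    out = []
    for e in events:
        event_time = to_utc(e["event_time"])
        ingest_time = to_utc(e["ingest_time"])
        out.append({**e,
                    "event_time_utc": event_time,
                    "late": ingest_time - event_time > max_lag})
    return sorted(out, key=lambda e: e["event_time_utc"])
```

Ordering by event time rather than arrival time is what keeps a delayed log from one region from appearing to follow activity it actually preceded.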

Interpretive Frameworks

Normalized data can be analyzed using multi-dimensional vector modeling for unsupervised clustering and anomaly scoring, or probabilistic graph models where entities become nodes linked by communication edges. Blending these approaches enables identification of suspicious corridors of activity and scoring of individual events, provided normalization integrity is maintained through cross-disciplinary validation exercises.

Normalization in Vendor Ecosystems

Vendors embed normalization modules within their platforms: Splunk offers the Common Information Model, IBM QRadar uses Device Support Modules, and Elastic Security integrates ingest pipelines with processors for date, geolocation, and threat enrichment. Organizations evaluate built-in strategies against adaptability needs, supplementing with custom microservices or community libraries to fill protocol gaps.

Governance and Adaptive Normalization

Data stewardship councils comprising IT operations, risk, compliance, and threat intelligence define normalization policies, approve schema changes, and oversee testing. Guardrail models combine non-negotiable rules with extension points for novel feeds. Looking ahead, AI-driven pattern recognition promises self-supervised inference of canonical structures, requiring validation mechanisms for schema drifts and clear analyst intervention pathways.

Ensuring Data Quality for Accurate Detection

Data quality—completeness, consistency, timeliness, accuracy, and relevance—directly impacts detection performance. Autonomous agents processing flawed or incomplete inputs generate elevated false positives and negatives, delayed alerts, and model drift. The following dimensions and strategies outline how to assess and enhance data ecosystems for optimal threat detection.

- Completeness and Coverage: Gaps in network flows or endpoint logs hinder correlation, increasing dwell times. Organizations with under 80 percent log coverage in critical segments experience up to 30 percent longer breach dwell times.

- Consistency and Standardization: Disparate formats and timestamp conventions require canonical schemas—such as OCSF—or tools like Splunk AI Advisor to standardize events and reduce heuristic workarounds.

- Timeliness and Latency: Streamed ingestion pipelines, exemplified by Microsoft Sentinel, minimize lag to support real-time anomaly detection. Legacy batch transfers introduce blind spots.

- Accuracy and Veracity: Misconfigured sensors, faulty collectors, or adversarial poisoning degrade analytic trust. Automated integrity checks aligned with NIST controls ensure only authentic data feeds models.

- Relevance and Representativeness: Domain-specific behaviors—such as medical device telemetry—require custom enrichment. Off-the-shelf models often underperform without tailored threat intelligence, as recommended by Elastic Security.
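Two of these dimensions, completeness and timeliness, are straightforward to measure directly. The following sketch assumes a hypothetical event schema (`REQUIRED_FIELDS`, `ingested_at`) and a 60-second lag budget chosen only for illustration.

```python
from datetime import datetime, timedelta, timezone

REQUIRED_FIELDS = ("timestamp", "src_ip", "user", "action")  # hypothetical schema

def completeness(events):
    """Fraction of required fields that are present and non-empty across all events."""
    total = len(events) * len(REQUIRED_FIELDS)
    filled = sum(
        1 for e in events for f in REQUIRED_FIELDS if e.get(f) not in (None, "")
    )
    return filled / total if total else 0.0

def late_fraction(events, max_lag=timedelta(seconds=60)):
    """Fraction of events whose ingestion lag exceeds max_lag."""
    late = sum(1 for e in events if e["ingested_at"] - e["timestamp"] > max_lag)
    return late / len(events) if events else 0.0

t0 = datetime(2026, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
sample = [
    {"timestamp": t0, "src_ip": "10.0.0.5", "user": "alice", "action": "login",
     "ingested_at": t0 + timedelta(seconds=5)},
    {"timestamp": t0, "src_ip": "10.0.0.6", "user": "", "action": "login",
     "ingested_at": t0 + timedelta(seconds=300)},
]
coverage = completeness(sample)   # 7 of 8 required fields are populated
late = late_fraction(sample)      # 1 of 2 events arrived after the lag budget
```

Tracking these ratios per source over time makes gaps visible before they surface as missed detections.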

Domain-Specific Data Quality Challenges

- Network Traffic: Packet sampling and drops impede detection of low-and-slow exfiltration. Cisco Secure Network Analytics addresses this with enriched flow metadata but relies on robust capture strategies.

- Endpoint Telemetry: Sensor misconfigurations and agent version mismatches—common in deployments of CrowdStrike Falcon or VMware Carbon Black—fragment host visibility.

- Cloud and Containers: Ephemeral logs and inconsistent tagging across auto-scaled workloads generate noise and blind spots.

- OT and ICS: Proprietary protocols and infrequent communications challenge agents trained on IT data. Platforms like Nozomi Networks and Claroty provide specialized collectors for industrial traffic.

- User Behavior Analytics: Incomplete identity resolution and inconsistent multi-factor authentication logs dilute anomaly baselines, increasing false positives.

Assessing Data Quality

- Data Quality Maturity Model: Guides progression from ad hoc collection to optimized, monitored pipelines, prioritizing schema validation and redundant collectors.

- NIST SP 800-92: Provides guidance on security log management, including which events and fields to capture, giving a baseline that asset context and intelligence tags can then enrich.

- Forrester Data Quality Framework: Aligns quality KPIs—false alert reduction, mean time to detection—with business risk, securing investment in foundational improvements.

Impacts of Poor Data Quality

- False Positives: Incomplete or inconsistent data inflates benign anomalies, overwhelming analysts and delaying response.

- False Negatives: Missing telemetry creates blind spots exploited by advanced actors.

- Detection Latency: Ingestion delays extend adversary dwell times.

- Model Drift: Static schemas and outdated training sets degrade relevance, missing new attack patterns.

- Compliance Risks: Incomplete audit trails expose organizations to regulatory fines and reputational harm.

Strategies to Improve Data Quality

- Data Governance and Ownership: Clear stewardship for each source ensures schema consistency and quality monitoring.

- Automated Validation and Profiling: Continuous profiling detects schema drift and ingestion anomalies early enough for proactive remediation.

- Domain-Enriched Models: Augment logs with MITRE ATT&CK mappings, asset criticality, and vulnerability scores for richer feature engineering.

- Cross-Domain Data Fusion: Federated query and semantic harmonization across IT, OT, cloud, and identity sources enable composite threat insights.

- Continuous Feedback Loops: Analyst input refines data classifications and exception handling, strengthening model trust over time.
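Automated validation can start with something as simple as comparing each incoming event against an expected schema. The sketch below is one possible shape for such a check; `EXPECTED_SCHEMA` and its fields are hypothetical.

```python
EXPECTED_SCHEMA = {"timestamp": str, "src_ip": str, "bytes": int}  # hypothetical

def detect_drift(event, schema=EXPECTED_SCHEMA):
    """Report fields that are missing, unexpected, or of the wrong type."""
    missing = sorted(set(schema) - set(event))
    unexpected = sorted(set(event) - set(schema))
    wrong_type = sorted(
        k for k in schema.keys() & event.keys()
        if not isinstance(event[k], schema[k])
    )
    return {"missing": missing, "unexpected": unexpected, "wrong_type": wrong_type}

# A collector upgrade starts emitting "bytes" as a string and adds a "geo" field.
report = detect_drift(
    {"timestamp": "2026-01-01T00:00:00Z", "src_ip": "10.0.0.5",
     "bytes": "1024", "geo": "DE"}
)
```

Aggregating these reports per source gives stewards a concrete signal for when a feed has changed shape and needs a schema review.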

Integrating Diverse Data Streams

Bringing together heterogeneous security data into a unified analytics architecture presents challenges across governance, scalability, interoperability, and compliance. A principled integration strategy balances these dimensions to deliver resilient, adaptable detection ecosystems.

Data Governance and Quality Assurance

- Implement a centralized metadata registry capturing source attributes, ingestion timestamps, schema versions, and transformation histories to support traceability and root-cause analysis.

- Define stewardship roles accountable for de-duplication, timestamp reconciliation, and semantic validation of log fields.

- Schedule periodic data profiling to measure completeness, consistency, and accuracy, guiding feed enhancements or decommissioning.

- Apply risk-based data classification at ingestion, enforcing masking, encryption, and retention policies for sensitive or regulated data.
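Classification-driven handling at ingestion might look like the following sketch. The policy table, field names, and demo key are all hypothetical; a real pipeline would source the key from a key management service and would likely default unknown fields to a more restrictive rule than "keep".

```python
import hashlib
import hmac

# Hypothetical classification policy: field name -> handling rule
POLICY = {"user_email": "hash", "card_number": "mask", "src_ip": "keep"}

def apply_policy(event, policy=POLICY, key=b"demo-key-not-for-production"):
    """Return a copy of the event with sensitive fields pseudonymized or masked."""
    out = {}
    for field, value in event.items():
        rule = policy.get(field, "keep")
        text = str(value)
        if rule == "hash":
            # Keyed hash keeps values joinable across events without exposing them.
            out[field] = hmac.new(key, text.encode(), hashlib.sha256).hexdigest()[:16]
        elif rule == "mask":
            # Keep only the last four characters visible.
            out[field] = "*" * max(len(text) - 4, 0) + text[-4:]
        else:
            out[field] = value
    return out

safe = apply_policy(
    {"user_email": "a@example.com", "card_number": "4111111111111111",
     "src_ip": "10.0.0.5"}
)
```

Keyed hashing preserves the ability to correlate events for the same identity, which matters for detection, while masking suits values that only need to be recognizable to a human reviewer.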

Scalability and Performance Trade-offs

- Combine centralized data lakes with streaming platforms so that hot-path analytics run on in-memory or partitioned stores optimized for low-latency inference, while historical analysis stays in the lake.

- Use orchestration frameworks that decouple compute from storage, allowing parallelism adjustments without duplicating raw datasets.

- Establish end-to-end latency benchmarks from ingestion to alert generation, tuning partitioning strategies and identifying pipeline bottlenecks.

- Plan elastic capacity for peak ingestion events—major software updates or global incidents—and align provisioning with budget cycles.
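End-to-end latency benchmarks are usually expressed as percentiles rather than averages, since a few slow events can hide behind a healthy mean. The sketch below uses the nearest-rank method on hypothetical ingestion-to-alert latencies; the sample values are illustrative only.

```python
import math

def percentile(values, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]

# Hypothetical ingestion-to-alert latencies in milliseconds
latencies_ms = [120, 95, 110, 4000, 130, 105, 98, 115, 125, 102]
p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
mean_ms = sum(latencies_ms) / len(latencies_ms)
```

Here the median stays near 110 ms while the tail reaches 4000 ms, which is the kind of gap that points to a partitioning or back-pressure problem in one pipeline stage.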

Interoperability and Vendor Ecosystems

- Adopt standards such as STIX/TAXII for threat intelligence and Common Event Format for logs to reduce transformation overhead and future-proof integrations.

- Evaluate vendor support for modular connectors and extensible SDKs enabling bi-directional data flow between detection, ticketing, and orchestration systems.

- Maintain an inventory of licensed components, open-source libraries, and custom integrations, reviewing periodically for end-of-life risks.

- Conduct interoperability tests validating schema compliance, throughput, and error handling under simulated loads.
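To show why a shared log format reduces transformation overhead, here is a simplified parser for a Common Event Format record. It assumes the standard seven-field pipe-delimited header followed by a key=value extension, and it deliberately ignores escaped `=` characters inside extension values; the vendor and product names in the sample line are hypothetical.

```python
import re

HEADER_FIELDS = ("version", "vendor", "product", "device_version",
                 "signature_id", "name", "severity")

def parse_cef(line):
    """Parse one CEF record into header fields plus an extension dictionary."""
    parts = re.split(r"(?<!\\)\|", line, maxsplit=7)   # split on unescaped pipes
    if len(parts) < 8 or not parts[0].startswith("CEF:"):
        raise ValueError("not a CEF record")
    parts[0] = parts[0][len("CEF:"):]
    record = dict(zip(HEADER_FIELDS, parts[:7]))
    # Extension values may contain spaces, so read up to the next " key=" or end of line.
    record["extension"] = dict(re.findall(r"(\w+)=(.*?)(?=\s+\w+=|$)", parts[7]))
    return record

sample_line = ("CEF:0|ExampleVendor|ExampleIDS|1.0|100|Port scan detected|7|"
               "src=10.0.0.1 dst=10.0.0.9 spt=1232 msg=Repeated SYN probes")
parsed = parse_cef(sample_line)
```

Because every CEF source shares this header layout, one parser of this kind can serve many feeds, leaving per-vendor work to the extension keys.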

Security and Compliance Constraints

- Enforce fine-grained access controls and role-based policies at the pipeline level to restrict sensitive data fields to authorized processes.

- Apply end-to-end encryption for data in transit and at rest, backed by robust key management aligning with audit and compliance requirements.

- Perform privacy impact assessments to document lawful bases for personal data processing and retention under relevant regulations.

- Capture audit logs of data access, model training, and inference outcomes to support forensic investigations and compliance demonstrations.

Continuous Monitoring and Adaptation

- Define KPIs for pipeline health—ingestion completeness, transformation error rates, and model drift alerts—to drive proactive maintenance.

- Schedule architecture reviews aligned with intelligence updates, assessing the need for new data feeds or normalization adjustments.

- Adopt a modular integration approach, treating data connectors as replaceable components for rapid onboarding of innovative sources.

- Establish cross-functional governance forums—including security operations, data engineering, legal, and compliance—to evaluate and approve pipeline changes.

By aligning governance rigor with operational agility, planning for scalable performance, ensuring interoperability, and embedding continuous monitoring, organizations can integrate diverse security data streams into cohesive detection ecosystems. Such resilient architectures evolve alongside adversary tactics and regulatory mandates, sustaining effective AI-driven threat detection.

Chapter 5: Real-Time Analytics and Decision Making

Security Landscape and the Imperative for AI-Driven Defense

As organizations enter 2026, the security environment is more complex and dynamic than ever. Boundaries between cyber and physical domains have dissolved, enabling supply chain compromises, deepfake-enabled social engineering, and coordinated campaigns that disrupt digital systems, operational technology, and safety-critical infrastructure simultaneously. Nation-state actors and criminal syndicates exploit legacy defense gaps, mixing zero-day exploits with human-operated ransomware and targeted data exfiltration to maximize impact.

The proliferation of connected devices, from industrial control systems to consumer Internet of Things products, sharply expands the attack surface. Each endpoint becomes both a potential intrusion vector and a dependency nexus, where a breach on a low-value sensor network can cascade through production lines or critical services. Concurrently, networks now generate petabytes of logs, flows, and events daily, overwhelming security teams struggling to identify actionable indicators in a constant data firehose.

Physical safety and cyber resilience are inseparable imperatives. An attack that disables factory robotics or manipulates building controls can jeopardize human life and erode public trust. In this context, static, manual security practices have become obsolete. Organizations must adopt solutions capable of continuous adaptation, real-time analysis, and autonomous response to stay ahead of rapidly evolving threats.

Conceptual Framework of Autonomous AI Agents

Autonomous AI agents represent a next-generation paradigm for continuous threat detection and response. These software entities integrate four core components:

- Perception modules that collect and preprocess diverse data streams—network telemetry, endpoint sensors, user behavior analytics, and external intelligence.

- Knowledge repositories storing historical patterns and evolving threat intelligence, enabling contextualization of observations against a rich informational backdrop.

- Decision engines employing machine learning and heuristic reasoning to detect anomalies, assess risk, and determine appropriate countermeasures.

- Adaptive learning loops that refine models over time by incorporating feedback from confirmed detections and from analyst review of false positives.

Unlike static signature engines and rule-based systems that require constant manual updates, autonomous agents discover new indicators of compromise through self-learning and orchestrate countermeasures with minimal human intervention. This reduces mean time to detection and response while scaling to complex, distributed environments. Leading implementations include Splunk AI-Driven Security Analytics and Microsoft Sentinel, both of which combine modular architectures with real-time inference and automated containment across cloud-native and on-premises deployments.

Drivers Accelerating AI-Based Threat Detection

Several converging factors compel organizations to embrace AI-driven threat detection:

- Escalating Attack Sophistication: Adversaries leverage supply chain poisoning, living-off-the-land techniques, encrypted command-and-control, and deepfakes that static defenses cannot counter.

- Explosion of Data Volume: Enterprise environments process millions of security events per second across distributed cloud and edge networks, overwhelming rule-based filtering and leading to alert fatigue.

- Demand for Real-Time Decisions: High-impact breaches unfold in minutes; organizations require instantaneous detection and automated response to contain lateral movement and prevent data exfiltration.

- Resource and Talent Constraints: The cybersecurity skills gap leaves teams understaffed and overextended. Autonomous agents augment human expertise by automating routine analysis and incident triage.

- Regulatory and Liability Pressures: Data privacy regulations such as GDPR and CCPA, along with emerging AI governance frameworks, demand transparent, auditable security controls.

- Convergence of IT and OT Security: Integrated visibility across operational technology and information technology is essential for holistic threat detection in industrial and critical infrastructure settings.

Together, these drivers create an imperative for solutions that combine high-throughput data ingestion, adaptive learning, and automated orchestration—characteristics inherent to the autonomous agent model.

Correlation Techniques for Anomaly Detection

Effective anomaly detection involves aggregating and contextualizing disparate signals into coherent threat narratives. Correlation techniques elevate isolated deviations into actionable insights by applying three foundational principles:

- Temporal Association: Align events by timestamp to uncover sequences or clusters indicating causality.

- Contextual Linkage: Overlay metadata—user identities, geolocation, process hierarchies—to tie events across infrastructure layers.

- Analytical Weighting: Use probabilistic or heuristic scoring to quantify relationship strength between events.
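The three principles can be combined in a few lines. The sketch below groups events by user (contextual linkage), slides a time window over each group (temporal association), and sums per-type weights (analytical weighting). The weights, window, threshold, and event fields are hypothetical values chosen for illustration.

```python
from collections import defaultdict

# Hypothetical per-event-type weights
WEIGHTS = {"failed_login": 0.2, "priv_escalation": 0.5, "large_upload": 0.4}

def correlate(events, window_s=300, threshold=0.8):
    """Flag the first window per user whose combined event weight reaches threshold."""
    by_user = defaultdict(list)
    for e in sorted(events, key=lambda e: e["ts"]):
        by_user[e["user"]].append(e)
    findings = []
    for user, evs in by_user.items():
        start = 0
        for end in range(len(evs)):
            # Shrink the window until it spans at most window_s seconds.
            while evs[end]["ts"] - evs[start]["ts"] > window_s:
                start += 1
            score = sum(WEIGHTS.get(e["type"], 0.1) for e in evs[start:end + 1])
            if score >= threshold:
                findings.append((user, evs[start]["ts"], evs[end]["ts"], round(score, 2)))
                break
    return findings

sample_events = [
    {"ts": 0, "user": "alice", "type": "failed_login"},
    {"ts": 30, "user": "alice", "type": "failed_login"},
    {"ts": 90, "user": "alice", "type": "priv_escalation"},
    {"ts": 10, "user": "bob", "type": "failed_login"},
    {"ts": 5000, "user": "bob", "type": "large_upload"},
]
findings = correlate(sample_events)
```

No single event for alice is alarming on its own; the finding arises only because three related events fall within the same five-minute window, which is the essence of correlation.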

Statistical Correlation Models

Statistical frameworks track co-occurrence frequencies and baseline distributions, employing metrics such as Pearson’s correlation coefficient or mutual information to quantify relationships. Configurable modules in platforms like Splunk and Elastic SIEM enable tuning of sensitivity thresholds to balance false positives and detection coverage across high-velocity data streams.
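For reference, both metrics can be computed with the standard library alone. This is a minimal sketch: `pearson` assumes neither series is constant, and `mutual_information` treats its inputs as discrete labels. The sample series are hypothetical.

```python
import math
from collections import Counter

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

def mutual_information(xs, ys):
    """Mutual information in bits between two discrete sequences."""
    n = len(xs)
    px, py, pxy = Counter(xs), Counter(ys), Counter(zip(xs, ys))
    return sum(
        (c / n) * math.log2((c / n) / ((px[x] / n) * (py[y] / n)))
        for (x, y), c in pxy.items()
    )

# Hypothetical per-minute counts: failed logins vs. outbound connections
r = pearson([1, 2, 3, 4], [2, 4, 6, 8])
mi_dependent = mutual_information([0, 0, 1, 1], [0, 0, 1, 1])
mi_independent = mutual_information([0, 0, 1, 1], [0, 1, 0, 1])
```

Pearson's coefficient captures only linear relationships between numeric series, whereas mutual information also detects non-linear dependence between categorical signals, which is why platforms often expose both.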

Graph-Based Detection Frameworks

Graph analytics model entities—users, devices, processes—as vertices and interactions—network connections, file operations, API calls—as weighted edges. Traversal algorithms and community detection reveal anomalous subgraphs indicative of lateral movement or command-and-control infrastructures. Solutions such as IBM QRadar integrate graph databases to accelerate detection of stealthy, multistage intrusions, though adoption requires substantial compute resources and graph-theory expertise.
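A toy version of this idea, using only the standard library, is sketched below: authentication events become directed edges, breadth-first traversal shows what an attacker could reach from a given host, and a simple out-degree check flags unusual fan-out. Host names and the fan-out limit are hypothetical, and real deployments would use weighted edges and proper community detection.

```python
from collections import defaultdict, deque

def build_graph(edges):
    """Adjacency sets for a directed graph of (source, destination) pairs."""
    graph = defaultdict(set)
    for src, dst in edges:
        graph[src].add(dst)
    return graph

def reachable(graph, start):
    """Breadth-first traversal: every node reachable from start."""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}

def high_fanout(graph, limit=3):
    """Nodes that connect out to more than `limit` distinct peers."""
    return sorted(n for n, out in graph.items() if len(out) > limit)

# Hypothetical authentication edges observed in one hour
auth_edges = [("ws-17", "srv-a"), ("ws-17", "srv-b"), ("ws-17", "srv-c"),
              ("ws-17", "srv-d"), ("srv-a", "db-1"), ("ws-02", "srv-a")]
g = build_graph(auth_edges)
blast_radius = reachable(g, "ws-17")
suspects = high_fanout(g)
```

The traversal surfaces `db-1` as reachable from the workstation even though no direct connection exists, which is the multistage path that event-by-event analysis tends to miss.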

Sequence and Temporal Analysis

Sequence mining and time-series analysis identify recurrent event chains that diverge from established workflows. Sliding window techniques, hidden Markov models, and burst detection uncover anomalous patterns such as off-hours database queries or latency anomalies. Stream processors like Apache Flink and Esper enable continuous sequence detection at scale, but maintaining relevance demands adaptive window sizing and contextual filters to reduce spurious alerts.
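A sliding-window burst detector can be sketched in a few lines. The window length and event limit below are hypothetical; a stream processor would apply the same logic incrementally rather than over a sorted list.

```python
from collections import deque

def detect_bursts(timestamps, window_s=60, max_events=5):
    """Return the timestamps at which the trailing window first exceeds max_events."""
    window = deque()
    alerts = []
    in_burst = False
    for ts in sorted(timestamps):
        window.append(ts)
        # Drop events that have aged out of the trailing window.
        while ts - window[0] > window_s:
            window.popleft()
        if len(window) > max_events:
            if not in_burst:
                alerts.append(ts)   # alert once per burst, not once per event
            in_burst = True
        else:
            in_burst = False
    return alerts

# Hypothetical database query times in seconds: sparse, then a burst, then quiet
query_times = [0, 100, 200, 300, 301, 302, 303, 304, 305, 306, 900]
burst_starts = detect_bursts(query_times)
```

The fixed window here illustrates the tuning problem noted above: too short a window misses slow bursts, too long a window flags normal activity, which is why adaptive sizing is needed in practice.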

Integrated Threat Context Correlation