Intelligent Compliance: Leveraging AI Agents for Regulatory Excellence in the Utilities Industry

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

Regulatory Complexity in the Utilities Sector

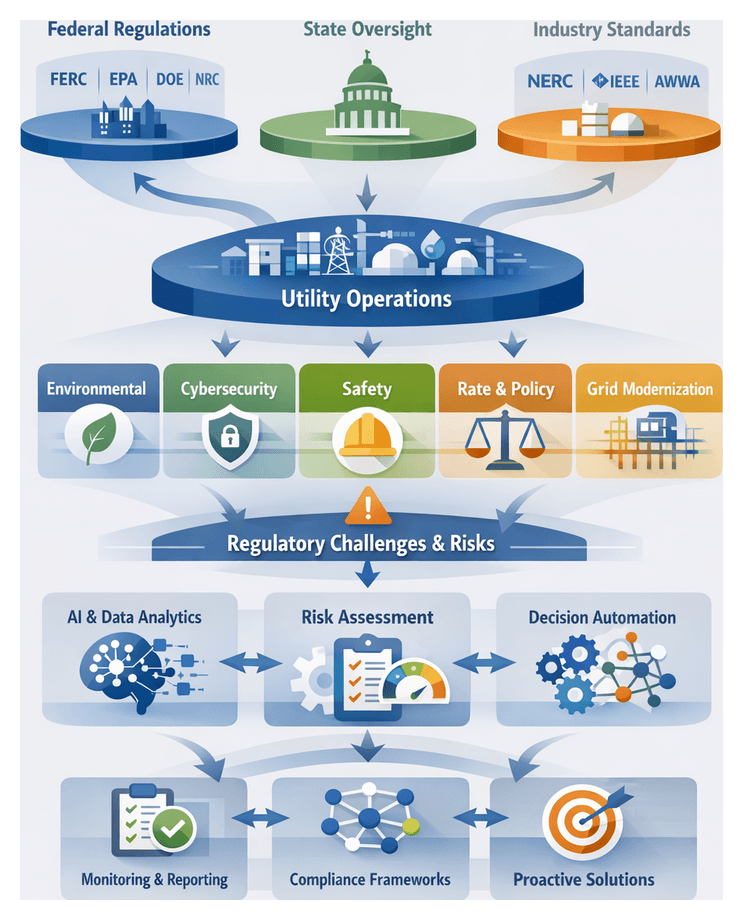

The utilities industry operates at the nexus of public interest and critical infrastructure, governed by a multifaceted network of statutes, administrative rules, technical standards, and jurisdictional mandates. From electricity generation and distribution to water treatment and natural gas transmission, utility providers must satisfy safety, reliability, environmental stewardship, and fair-pricing obligations. Federal authorities such as the Federal Energy Regulatory Commission (FERC) and the Environmental Protection Agency (EPA) set baseline requirements, alongside the North American Electric Reliability Corporation (NERC), the FERC-certified Electric Reliability Organization whose reliability standards become mandatory once FERC approves them. State public utility commissions, environmental agencies, and emergency management offices layer additional rules on top. Industry standards bodies, including the Institute of Electrical and Electronics Engineers (IEEE) and the American National Standards Institute (ANSI), further contribute technical guidelines, which regulations often incorporate by reference.

Originally conceived under the principle of natural monopoly oversight, U.S. utility regulation evolved through landmark legislation such as the Federal Power Act and the Public Utility Regulatory Policies Act. Over time, regulators introduced measures addressing grid reliability, environmental impact, cybersecurity, and market competition. States enacted renewable portfolio standards, energy efficiency obligations, and consumer protection rules, resulting in a labyrinth of overlapping requirements. Utilities operating across multiple jurisdictions contend with distinct compliance timetables and enforcement mechanisms, demanding centralized governance and coordinated tracking systems to avoid missed deadlines and inconsistent reporting.

Drivers of Regulatory Proliferation and Data Imperatives

Technological innovation, policy objectives, and stakeholder expectations have accelerated regulatory proliferation. Smart grid deployments, advanced metering infrastructure, and Internet-connected sensors improve operational visibility but introduce data privacy concerns, cybersecurity vulnerabilities, and novel failure modes. Regulators responded with mandates such as NERC Critical Infrastructure Protection (CIP) standards, state-level data breach laws, and smart grid interoperability requirements. The resulting obligations now span several overlapping domains:

- Environmental regulations governing emissions, water use, and waste management

- Renewable portfolio standards and carbon reduction targets

- Consumer protection rules for billing accuracy and service transparency

- Cybersecurity mandates and data privacy frameworks

Parallel to regulatory tightening, utilities face an explosion of data. Smart meters, supervisory control and data acquisition (SCADA) systems, customer information platforms, and third-party sensors generate terabytes of operational, environmental, and financial data daily. This data is fragmented across asset management tools, CRM systems, and dedicated monitoring platforms, yet it must be harmonized rapidly to meet compressed reporting cycles. Ensuring data veracity and maintaining audit trails strain legacy manual workflows and spreadsheets. This creates strong demand for analytical frameworks that can ingest diverse data types, reconcile anomalies, and surface compliance insights in near real time.
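The sketch below illustrates the reconciliation step in minimal form. It assumes two hypothetical feeds, for example a meter data platform and a billing system, that both report interval consumption keyed by meter ID and interval start. The function flags records that are missing from either side or that disagree beyond a relative tolerance. The record layout, the 1% tolerance and the sample values are illustrative assumptions, not real data.

```python
# Hypothetical record layout: (meter_id, interval_start, kwh)
Reading = tuple[str, str, float]


def reconcile(source_a: list[Reading], source_b: list[Reading],
              tolerance: float = 0.01) -> dict[str, list]:
    """Compare two feeds keyed by (meter_id, interval_start) and classify discrepancies."""
    index_a = {(meter, ts): kwh for meter, ts, kwh in source_a}
    index_b = {(meter, ts): kwh for meter, ts, kwh in source_b}
    report: dict[str, list] = {"missing_in_a": [], "missing_in_b": [], "mismatched": []}
    for key in sorted(index_a.keys() | index_b.keys()):
        if key not in index_a:
            report["missing_in_a"].append(key)
        elif key not in index_b:
            report["missing_in_b"].append(key)
        else:
            a, b = index_a[key], index_b[key]
            # Relative difference against the larger magnitude; the floor avoids division by zero
            denom = max(abs(a), abs(b), 1e-9)
            if abs(a - b) / denom > tolerance:
                report["mismatched"].append((key, a, b))
    return report


# Illustrative sample data, not real readings
meter_feed = [("M1", "2024-01-01T00:00", 1.20), ("M1", "2024-01-01T00:15", 1.10),
              ("M2", "2024-01-01T00:00", 0.90)]
billing_feed = [("M1", "2024-01-01T00:00", 1.20), ("M1", "2024-01-01T00:15", 1.25),
                ("M3", "2024-01-01T00:00", 0.50)]
print(reconcile(meter_feed, billing_feed))
```

In practice, each discrepancy category would feed its own exception queue, so that corrections are documented before figures reach a regulatory filing.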

Operational Challenges and the Case for Intelligent Compliance

Traditional compliance approaches—manual reviews, static checklists, siloed subject-matter expertise—struggle under the weight of expanding regulations and data volumes. Fragmentation leads to inconsistent metrics, version control issues, and labor-intensive reconciliation. Data residing in separate systems impairs end-to-end visibility, obscuring risk exposure until an audit or incident occurs. Non-compliance penalties range from financial fines and operational restrictions to reputational damage and, in extreme cases, license revocations.

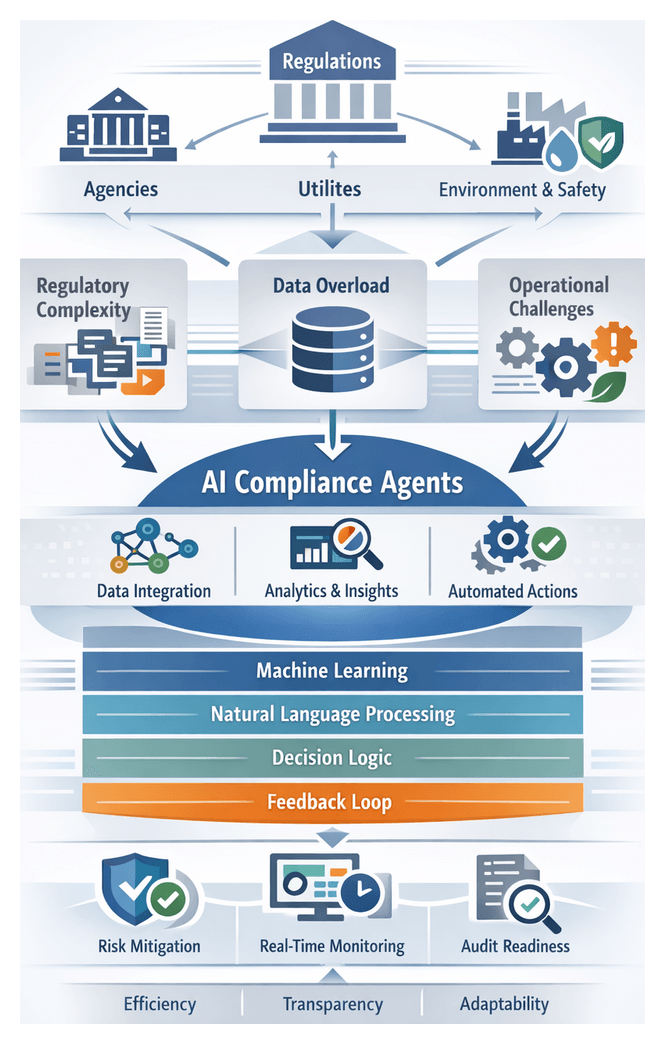

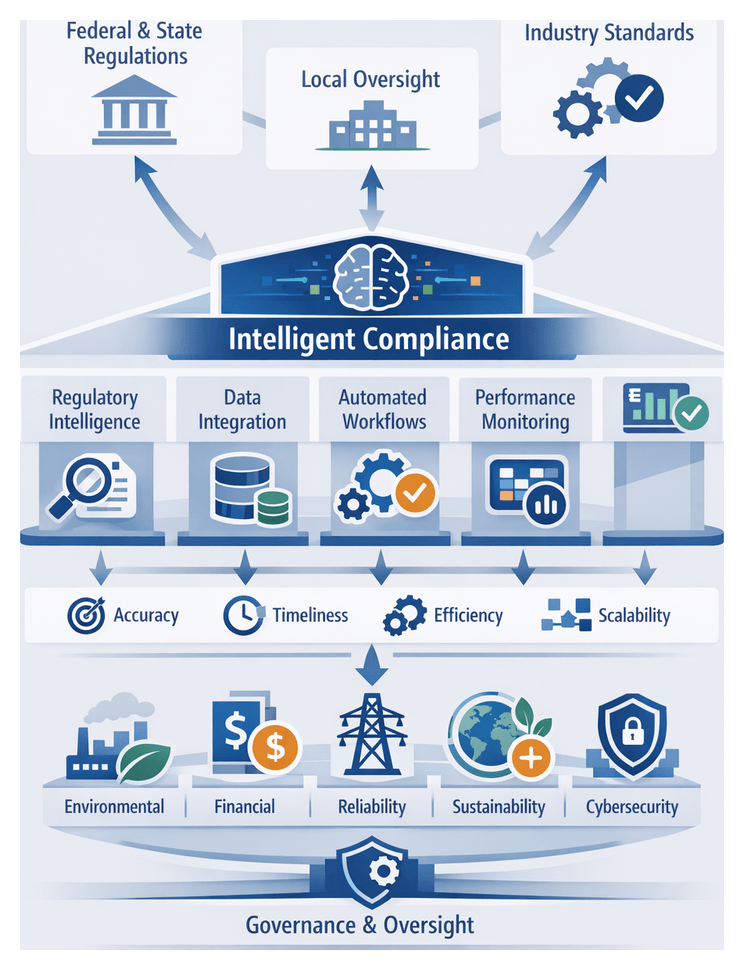

Intelligent compliance solutions, powered by artificial intelligence and automation, offer a transformative alternative. By integrating data from disparate sources, applying advanced analytics, and automating routine tasks, utilities can establish continuous monitoring, rapid adaptation to rule changes, and proactive risk management. AI-driven agents ingest regulatory texts, extract obligations, map requirements to internal processes, and generate actionable insights. Real-time dashboards, anomaly detection alerts, and automated submission workflows shift compliance from reactive to proactive, freeing experts to focus on strategic planning.

Automated audit trails, version control, and evidence repositories enhance audit readiness. When regulators request information, teams respond swiftly with accurate documentation. Scenario analysis and impact forecasting enable modeling of proposed regulatory changes, supporting mitigation strategies before rules take effect. In a sector driven by decarbonization goals, digital innovation, and dynamic stakeholder expectations, intelligent compliance agents empower utilities to navigate complexity with agility, reduce costs, and foster a culture of continuous improvement.
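Hash chaining is one common way to make an audit trail tamper-evident. Each record stores a SHA-256 digest of its own content plus the previous record's digest, so editing an earlier record breaks every later link. The sketch below is a minimal, in-memory illustration using only the Python standard library. The `AuditTrail` class, its field names and the sample entries are hypothetical. A production system would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(entry: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so identical content always produces the same hash
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditTrail:
    """Append-only, in-memory log in which each record carries its predecessor's hash."""

    FIELDS = ("seq", "actor", "action", "evidence_ref")

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, actor: str, action: str, evidence_ref: str) -> dict:
        prev = self.records[-1]["hash"] if self.records else GENESIS
        entry = {"seq": len(self.records), "actor": actor,
                 "action": action, "evidence_ref": evidence_ref}
        record = {**entry, "prev_hash": prev, "hash": _digest(entry, prev)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every link. Editing or reordering records, or deleting any record
        except the last, makes verification fail."""
        prev = GENESIS
        for rec in self.records:
            entry = {k: rec[k] for k in self.FIELDS}
            if rec["prev_hash"] != prev or rec["hash"] != _digest(entry, prev):
                return False
            prev = rec["hash"]
        return True


trail = AuditTrail()
trail.append("agent-01", "drafted Q1 emissions report", "evidence/q1-emissions.csv")
trail.append("analyst.jdoe", "approved Q1 emissions report", "evidence/q1-emissions.csv")
print(trail.verify())  # True
trail.records[0]["action"] = "edited after approval"
print(trail.verify())  # False
```

A chain on its own cannot stop someone who is able to rewrite the entire log, and it does not detect removal of the newest record. Periodically recording the latest hash in a separate system closes both gaps.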

Foundations of AI-Driven Compliance Agents

Compliance agents are autonomous or semi-autonomous systems integrating machine learning, natural language processing (NLP), and decision automation. Machine learning models recognize patterns in structured and unstructured data, NLP interprets the semantic content of statutes and guidance, and decision engines translate insights into governed actions. Conceived as socio-technical systems, agents operate within feedback loops of continuous learning, rule refinement, and audit reporting.

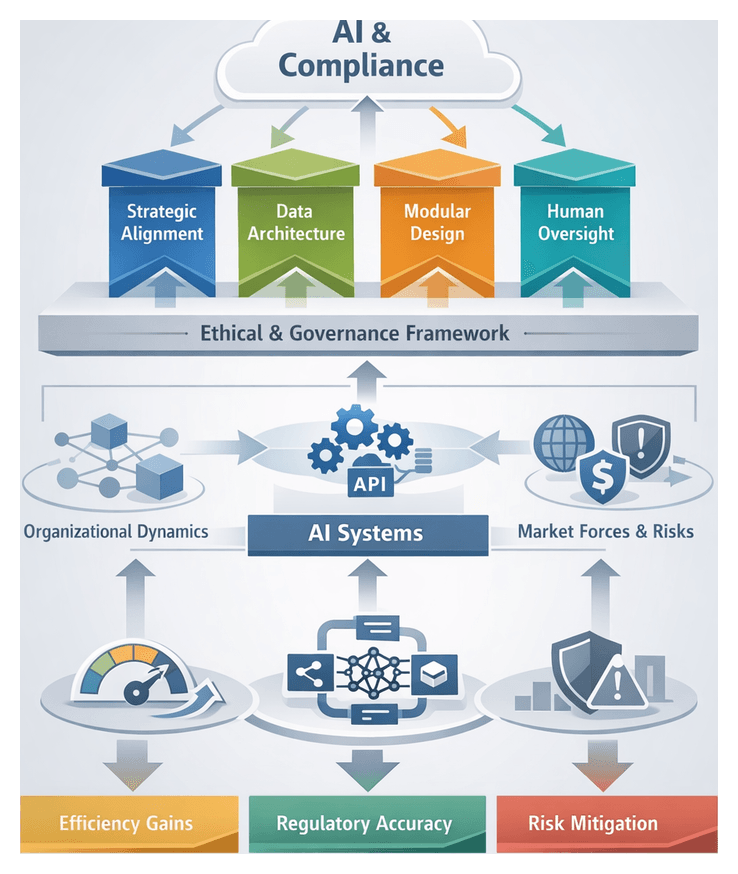

An AI-driven compliance architecture comprises modular layers:

- Data Acquisition and Pre-Processing: Normalization of structured data and NLP tokenization, annotation, and entity extraction from regulatory texts.

- Pattern Recognition and Classification: Machine learning classifiers tag text segments by regulatory category, detect anomalies in time-series data, and predict risk scores.

- Inference and Decision Logic: Business rules, thresholds, and escalation policies trigger workflows, notifications, or report generation.

- Feedback and Continuous Learning: Incorporation of regulator feedback and audit findings into retraining pipelines, refining both statistical models and rule definitions.

Balancing precision and recall in semantic feature extraction is critical to minimize false positives while capturing regulatory nuance. Pattern recognition models require regular retraining to accommodate evolving regulations and operational changes. Decision logic engines must adhere to change-control processes mirroring traditional policy governance to preserve traceability and accountability.
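The four architectural layers can be sketched as small functions that hand results to one another. In this sketch, the decision layer only automates confident results. The example is deliberately simplified and hypothetical: a keyword lookup stands in for trained machine learning classifiers. The category names, keyword sets and 0.6 confidence threshold are all assumptions.

```python
import re
from collections import Counter

# Hypothetical keyword sets standing in for trained classifiers
CATEGORY_KEYWORDS = {
    "cybersecurity": {"cyber", "access", "incident", "electronic"},
    "environmental": {"emission", "emissions", "discharge", "effluent", "stormwater"},
    "reliability": {"outage", "contingency", "frequency", "reserve"},
}


def preprocess(text: str) -> list[str]:
    """Layer 1: normalize case and tokenize into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def classify(tokens: list[str]) -> tuple[str, float]:
    """Layer 2: count keyword hits per category; confidence is the winner's share of hits."""
    hits = Counter({cat: sum(t in kws for t in tokens) for cat, kws in CATEGORY_KEYWORDS.items()})
    total = sum(hits.values())
    if total == 0:
        return "unclassified", 0.0
    label, count = hits.most_common(1)[0]
    return label, count / total


def decide(label: str, confidence: float, threshold: float = 0.6) -> str:
    """Layer 3: auto-route confident results; escalate uncertain ones to a human analyst."""
    if label == "unclassified" or confidence < threshold:
        return "escalate_to_analyst"
    return f"route_to_{label}_owner"


def record_feedback(store: list[dict], text: str, predicted: str, corrected: str) -> None:
    """Layer 4: keep analyst corrections as labelled examples for the next retraining cycle."""
    store.append({"text": text, "predicted": predicted, "label": corrected})


clause = "Each entity shall report any cyber security incident affecting electronic access points."
label, confidence = classify(preprocess(clause))
print(label, confidence, decide(label, confidence))
```

Routing low-confidence or ambiguous text to an analyst, rather than guessing, is one way to trade a little automation for fewer false positives. The corrections captured in layer 4 then feed the retraining described above.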

Analytical Frameworks and Industry Perspectives

Practitioners apply interpretive lenses to evaluate compliance agents:

- Governance, Risk, and Compliance (GRC) Model: Assesses the agent’s role in enterprise risk management, control implementation, and continuous monitoring.

- Technology Adoption Maturity Framework: Charts progression from rule-based prototypes to self-learning agents, evaluating people, processes, and technology readiness.

- Socio-Technical Systems Analysis: Examines human-machine interactions, accountability flows, and change management dependencies.

- Regulatory Text Analytics Paradigm: Measures semantic accuracy and agility in translating legal prose into structured policy ontologies.

Leaders in utilities emphasize regulatory complexity mitigation, data-centric intelligence, transparency, and scalability. Vendor platforms such as IBM Watson and Microsoft Azure Cognitive Services demonstrate convergent pipelines that normalize data, apply NLP and machine learning, enforce decision logic, and support continuous learning. Evaluation criteria include model accuracy, semantic coverage, explainability metrics, latency, throughput, and governance features such as audit trails and change histories.

Market Trends and the Evolving Solution Landscape

Escalating regulatory demands, data proliferation, and economic risks have sparked widespread interest in intelligent automation. Industry surveys reveal that most utility providers are piloting or evaluating AI-enabled platforms for compliance management. Key market drivers include:

- Scalability: Automated agents update regulatory mappings across jurisdictions without manual intervention.

- Transparency: Explainable AI and automated audit logs offer regulators clear evidence of decision-making.

- Cost Efficiency: Reduced reliance on external consultants and manual workflows cuts compliance overhead.

Modular, API-driven offerings integrate with enterprise systems, embedding best-practice workflows while allowing customization and governance. Organizations adopting these solutions report accelerated regulatory approvals, improved audit readiness, and the ability to redeploy compliance personnel to strategic projects.

Strategic Outcomes and Guide Objectives

This guide equips executives, compliance officers, and technical leaders to transform compliance from a cost center into a strategic enabler. Core objectives include:

- Contextual Mastery: Align business goals with compliance mandates amid evolving policy landscapes.

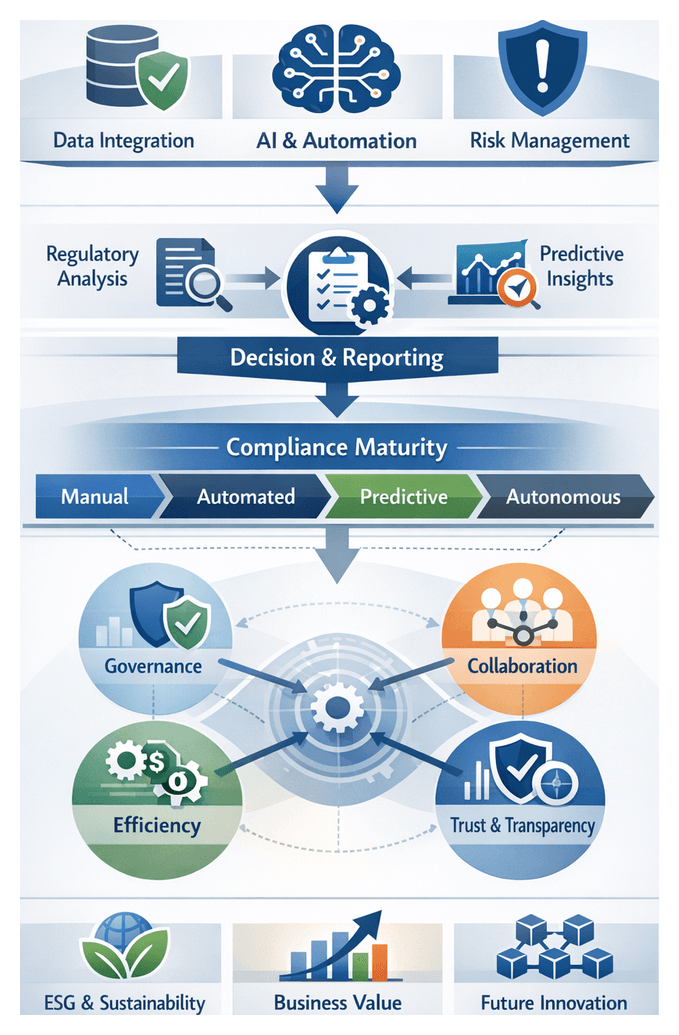

- Analytical Frameworks: Apply models such as the Regulatory Complexity Index, Compliance Maturity Continuum, Data Governance Quadrant, and Risk-Return Trade-off Matrix to prioritize initiatives.

- Strategic Perspective: Harness AI agents to shift from reactive reporting to proactive risk management and competitive advantage.

- Critical Insights: Understand trade-offs around interpretability, data integrity, policy uncertainty, and ethical considerations.

- Forward-Looking Guidance: Anticipate emerging regulatory and technological trends to shape adaptable roadmaps.

Engaging with these frameworks enables readers to diagnose compliance challenges, evaluate AI methodologies for asset management, environmental reporting, and billing, align technology and policy strategies, establish governance models that balance autonomy and control, anticipate shifts in regulations and AI innovation, and foster cross-functional collaboration across compliance, IT, operations, and legal teams.

Key Considerations and Limitations

A balanced perspective recognizes that AI agents, while powerful, operate within constraints:

- Regulatory Ambiguity: Broad or evolving language in statutes requires human judgment to resolve gray areas.

- Data Quality and Completeness: Incomplete or inconsistent data can skew analytics, producing false positives or undetected risks.

- Interpretability vs. Performance: Complex models may deliver higher detection rates but challenge auditability and board oversight.

- Integration and Change Overhead: Embedding AI into legacy landscapes demands stakeholder alignment, training, and cultural readiness.

- Policy Evolution: Adaptable architectures are essential to accommodate new rulemakings and guidance updates.

- Ethical and Legal Dimensions: Privacy, algorithmic bias, and liability frameworks must be governed through policy, not just technology.

- Resource Constraints: Smaller utilities may require phased deployments and partnerships to mitigate budget and talent limitations.

By maintaining awareness of these factors, organizations can craft AI compliance strategies that are resilient, aligned with capacity, and capable of evolving alongside regulations and technology. This guide serves as a strategic reference—bridging regulatory imperatives and AI innovation, and empowering industry leaders to navigate complexity with confidence.

Chapter 1: The Evolving Regulatory Landscape in Utilities

Federal, State, and Industry Oversight

The utilities sector delivers essential services—electricity, natural gas, water and wastewater management—under an intricate web of regulations designed to protect public health, ensure system reliability and guard the environment. At the federal level, authorities such as the Federal Energy Regulatory Commission (FERC), Environmental Protection Agency (EPA), Department of Energy (DOE) and U.S. Nuclear Regulatory Commission (NRC) set standards for interstate transmission, emission limits, energy policy and nuclear safety. Complementing these mandates, state public utility commissions oversee rate cases, integrated resource planning, local safety codes and consumer protections. Utilities operating in multiple jurisdictions must reconcile diverse statutes, commission orders and municipal ordinances, often confronting overlapping or conflicting obligations.

Industry bodies further shape compliance requirements. The North American Electric Reliability Corporation (NERC) enforces Critical Infrastructure Protection (CIP) standards for bulk power system security. The Institute of Electrical and Electronics Engineers (IEEE) issues technical and safety specifications. The National Institute of Standards and Technology (NIST) provides cybersecurity frameworks. The American Water Works Association (AWWA) publishes consensus standards for water quality and treatment. NERC standards are mandatory once approved by FERC. Voluntary consensus standards, by contrast, become enforceable when regulators incorporate them by reference into rules or permits. Either way, utilities must continuously align controls and assets with evolving criteria. Several policy and technology trends are expanding these obligations:

- Decarbonization mandates and renewable portfolio standards drive new planning and reporting obligations.

- Integration of customer-sited solar, storage and electric vehicle charging introduces varied interconnection rules and tariffs.

- Heightened cybersecurity threats prompt stringent NERC CIP requirements and commission-specific audits.

- Grid modernization projects—smart meters, automated outage management—raise data privacy and interoperability challenges.

- Environmental and health regulations—emissions, stormwater, water quality—require sophisticated monitoring and reporting systems.

Utilities must coordinate legal, engineering and operations teams to manage multiple permitting processes, reporting deadlines and audit responses. Centralized compliance calendars and conflict resolution protocols are essential to avoid project delays, enforcement actions and reputational harm. High-fidelity data from SCADA networks, customer information systems, environmental monitors and financial ledgers must be aggregated, validated and documented to satisfy agency audit trails and evolving form requirements.

Risk Frameworks and Emerging Policy Trends

A proactive risk-based approach prioritizes obligations by severity, likelihood and remediation timeframe. Regulatory impact assessments quantify potential fines and the probability of enforcement, while escalation protocols ensure critical issues reach senior leadership swiftly. Looking ahead, utilities face new mandates on transportation electrification, climate risk disclosures, performance-based ratemaking and data privacy. Continuous regulatory intelligence—monitoring rulemaking dockets and stakeholder consultations—combined with scenario planning and stakeholder engagement, transforms compliance into a strategic enabler of innovation and competitive advantage.
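The prioritization logic described above can be sketched as a small risk register. In this hypothetical example, severity and likelihood sit on assumed 1-to-5 scales and multiply into a 25-point risk score. Ties are broken by the shortest remediation window. A regulatory impact assessment is reduced to an expected penalty: the potential fine multiplied by the probability of enforcement. The obligation names, figures and the escalation threshold of 15 are illustrative, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Obligation:
    name: str
    severity: int            # assumed ordinal scale: 1 (minor) to 5 (critical)
    likelihood: int          # assumed ordinal scale: 1 (rare) to 5 (almost certain)
    remediation_days: int    # days left to close the gap
    potential_fine: float    # estimated penalty if enforced, in dollars
    p_enforcement: float     # estimated probability of enforcement, 0 to 1

    @property
    def risk_score(self) -> int:
        return self.severity * self.likelihood

    @property
    def expected_penalty(self) -> float:
        # Expected-value estimate: potential fine weighted by enforcement probability
        return self.potential_fine * self.p_enforcement


def prioritize(obligations: list[Obligation]) -> list[Obligation]:
    # Highest risk score first; ties broken by the shortest remediation window
    return sorted(obligations, key=lambda o: (-o.risk_score, o.remediation_days))


def needs_escalation(o: Obligation, threshold: int = 15) -> bool:
    # Hypothetical policy: scores of 15 or more (out of 25) go to senior leadership
    return o.risk_score >= threshold


register = [
    Obligation("CIP patch evidence", 4, 4, 30, 1_000_000, 0.2),
    Obligation("Stormwater sampling log", 3, 2, 10, 50_000, 0.5),
    Obligation("Outage report filing", 4, 4, 5, 250_000, 0.4),
]
for o in prioritize(register):
    print(f"{o.name}: score={o.risk_score}, expected=${o.expected_penalty:,.0f}, "
          f"escalate={needs_escalation(o)}")
```

A multiplicative score is only one convention. Some organizations weight severity more heavily or use a lookup matrix instead, and the same register structure accommodates either.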

Foundations of AI-Driven Compliance Agents

Core Capabilities and Interpretive Frameworks

AI-driven compliance agents leverage machine learning, natural language processing (NLP), knowledge representation and decision automation to ingest regulatory texts, extract obligations and map them to organizational processes. Three dimensions distinguish these solutions:

- Cognitive capability: Depth of semantic understanding needed to identify entities, relationships and conditional obligations in statutes and codes.

- Reasoning and decision logic: Application of learned knowledge and policy rules to evaluate scenarios and generate recommendations.

- Actionability: Integration with reporting pipelines, audit workflows and operational controls for automated or semi-automated remediation.
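The sketch below makes the cognitive-capability dimension concrete. It uses regular expressions to pull deontic cues (shall, must, may and their negations), conditions and deadlines out of regulatory sentences. It is a rule-based stand-in for the NLP models these agents use, not a legal parser. The cue lists, the naive sentence splitter, the `ExtractedObligation` structure and the sample text are all assumptions.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Simplified cue lists (an assumption, not a complete legal grammar); negated forms come
# first so "shall not" is tried before "shall"
MODALS = re.compile(r"\b(shall not|must not|may not|shall|must|is required to|may)\b",
                    re.IGNORECASE)
CONDITION = re.compile(r"\b(if|when|unless|provided that)\b[^,;.]*", re.IGNORECASE)
DEADLINE = re.compile(r"\bwithin\s+(\d+)\s+(hours?|days?|months?)\b", re.IGNORECASE)


@dataclass
class ExtractedObligation:
    sentence: str
    modality: str              # "obligation", "prohibition" or "permission"
    condition: Optional[str]
    deadline: Optional[str]


def modality_of(cue: str) -> str:
    cue = cue.lower()
    if cue.endswith(" not"):
        return "prohibition"
    return "permission" if cue == "may" else "obligation"


def extract_obligations(text: str) -> list[ExtractedObligation]:
    results = []
    # Naive sentence split after a period or semicolon
    for sentence in re.split(r"(?<=[.;])\s+", text.strip()):
        cue = MODALS.search(sentence)
        if not cue:
            continue
        cond = CONDITION.search(sentence)
        due = DEADLINE.search(sentence)
        results.append(ExtractedObligation(
            sentence=sentence,
            modality=modality_of(cue.group(1)),
            condition=cond.group(0).strip() if cond else None,
            deadline=f"{due.group(1)} {due.group(2)}" if due else None,
        ))
    return results


sample = ("The operator shall notify the Commission within 24 hours if a reportable incident "
          "occurs. Personnel must not share access credentials. "
          "The entity may submit filings electronically.")
for ob in extract_obligations(sample):
    print(ob.modality, "|", ob.condition, "|", ob.deadline)
```

The word-boundary anchors matter. For example, "shall notify" is correctly read as an obligation rather than a prohibition because "shall not" must end at a word boundary.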

Interpretive frameworks guide agent design:

- Regulatory knowledge graphs represent statutes, sub-clauses, controls and organizational units as interconnected nodes, enabling impact analysis and dependency visualization.

- Compliance ontologies define formal vocabularies for regulatory concepts, interpretation rules and process mappings, ensuring semantic consistency.

- Risk-based modeling integrates requirements with risk appetite and operational priorities to prioritize alerts and quantify residual risk.

- RegTech taxonomies categorize compliance tasks—monitoring, reporting, auditing—into modular components for gap analysis and tool selection.
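A regulatory knowledge graph can be approximated as an adjacency list running from requirements to controls to organizational units. Impact analysis then reduces to a breadth-first traversal from the changed requirement. The node names below borrow NERC CIP requirement numbering only as illustrative labels, and the edges are hypothetical rather than an actual control mapping.

```python
from collections import deque

# Illustrative edges: requirement -> control -> business unit or asset group
EDGES: dict[str, list[str]] = {
    "CIP-007 R2": ["Patch management control"],
    "CIP-010 R1": ["Baseline configuration control"],
    "Patch management control": ["IT Operations", "Substation SCADA"],
    "Baseline configuration control": ["Substation SCADA", "Change Advisory Board"],
}


def impacted(changed: str, edges: dict[str, list[str]]) -> list[str]:
    """Breadth-first traversal: every node reachable from the changed requirement,
    in order of distance from it."""
    seen = {changed}
    order: list[str] = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for neighbor in edges.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                order.append(neighbor)
                queue.append(neighbor)
    return order


print(impacted("CIP-010 R1", EDGES))
# ['Baseline configuration control', 'Substation SCADA', 'Change Advisory Board']

# Dependency view: units touched by both requirements
print(set(impacted("CIP-007 R2", EDGES)) & set(impacted("CIP-010 R1", EDGES)))
```

The intersection shows how the graph surfaces shared dependencies. A unit reached from several requirements is where a single rule change can ripple into multiple control updates.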

Evaluative Criteria and Ethical Governance

Organizations assess AI agents using both technical and governance metrics. Key performance indicators include recall and precision in requirement classification, time-to-insight for new regulations, reduction in manual review hours and percentage of automated remediation actions. Governance teams measure interpretability (transparent rationales for recommendations) and maintainability (ease of updating models and ontologies).

- Semantic accuracy: Correct identification and classification of obligations, exceptions and tasks within regulatory texts.

- Integration depth: Connectivity with enterprise data sources, operational systems and reporting platforms.

- Scalability: Capacity to process high volumes of regulatory changes and data without performance degradation.

- Governance controls: Audit trails, model version control and role-based access management.

- User adoption: Ease of use for compliance analysts and reduction in manual interventions.
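Several of the KPIs listed above reduce to simple arithmetic once an analyst-labelled reference set exists:

- Precision is TP / (TP + FP): the share of flagged clauses that really are obligations.
- Recall is TP / (TP + FN): the share of true obligations the agent found.
- F1 is the harmonic mean of precision and recall.

The sketch below assumes clause IDs are plain strings. The sample sets and review-hour figures are hypothetical.

```python
def classification_metrics(predicted: set[str], actual: set[str]) -> dict[str, float]:
    """Precision, recall and F1 for clauses flagged as obligations versus an analyst-labelled gold set."""
    tp = len(predicted & actual)   # correctly flagged
    fp = len(predicted - actual)   # flagged but not an obligation (false alarm)
    fn = len(actual - predicted)   # obligation the agent missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def percent_reduction(before: float, after: float) -> float:
    """Relative reduction, e.g. in manual review hours, expressed as a percentage."""
    return 100.0 * (before - after) / before


# Hypothetical evaluation: clause IDs flagged by the agent vs. those an analyst confirmed
flagged = {"s1", "s2", "s3", "s4"}
gold = {"s1", "s2", "s5"}
print(classification_metrics(flagged, gold))
print(percent_reduction(before=400, after=260))  # 35.0 (illustrative review hours per quarter)
```

In this example, precision is 0.5 and recall is about 0.67. An agent tuned this way would miss few obligations but generate extra review work, which is the precision-recall trade-off governance teams weigh.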

Regulatory authorities and industry bodies influence agent design through guidelines on data integrity, auditability and control objectives. FERC and NERC emphasize cybersecurity and reliability standards. European regulators stress transparency and explainability. ISO standards provide governance frameworks: ISO/IEC 27001 for information security management, and ISO 37301 (which superseded ISO 19600 in 2021) for compliance management systems. Ethical AI principles from IEEE, OECD and the European Commission underscore fairness, transparency, accountability and privacy. Robust governance structures must define human oversight, exception-handling protocols, change-control procedures and audit logging to align AI recommendations with legal and ethical requirements.

Interoperability and Maturity Models

Interoperability hinges on open data standards such as the Common Information Model (CIM) and RESTful APIs, enabling seamless integration between AI agents, SCADA systems, ERP modules and document repositories. Industry consortia like the Open Compliance and Ethics Group (OCEG) promote reference architectures and shared regulatory libraries to reduce vendor lock-in and integration costs.

Maturity frameworks guide adoption stages:

- Baseline: Manual processes supported by static rule engines and spreadsheets.

- Transitional: Partial automation with AI-assisted review and periodic model retraining.

- Advanced: Integrated agents with continuous learning, automated report generation and semi-autonomous control loops.

- Fully autonomous: Self-governing systems that detect, decide and act on compliance issues with real-time audit trails and minimal human oversight.

The Imperative for Intelligent Compliance

Regulatory Tightening as a Catalyst for Innovation

Regulatory bodies have intensified oversight across environmental protection, cybersecurity, data privacy and consumer rights. This trend is illustrated by FERC orders on distributed energy resources, EPA air emission and water discharge standards under the Clean Air and Clean Water Acts, and state wildfire mitigation regulations. Utilities face expanded reporting mandates, cross-jurisdictional overlaps and data-driven enforcement. Agencies increasingly demand machine-readable filings, such as XBRL and structured XML, pressuring organizations to automate data extraction and validation.
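The sketch below shows automated validation ahead of a machine-readable submission. It checks required fields and then serializes records to XML with Python's standard `xml.etree.ElementTree` module. The `EmissionsFiling` element, the field names and the validation rules are hypothetical and do not correspond to any actual agency schema. Real filings would be validated against the agency's published schema, such as an XBRL taxonomy or XSD.

```python
import xml.etree.ElementTree as ET

# Hypothetical schema: element and field names are illustrative, not an agency format
REQUIRED_FIELDS = ("facility_id", "period", "pollutant", "quantity", "unit")


def validate(record: dict) -> list[str]:
    """Return human-readable problems; an empty list means the record passes."""
    errors = [f"missing {f}" for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    quantity = record.get("quantity")
    if isinstance(quantity, (int, float)) and quantity < 0:
        errors.append("quantity must be non-negative")
    return errors


def to_filing_xml(records: list[dict]) -> str:
    """Validate every record first, then serialize the batch as one XML document."""
    for rec in records:
        problems = validate(rec)
        if problems:
            raise ValueError(f"record {rec.get('facility_id')}: {'; '.join(problems)}")
    root = ET.Element("EmissionsFiling")
    for rec in records:
        item = ET.SubElement(root, "Record")
        for field in REQUIRED_FIELDS:
            ET.SubElement(item, field).text = str(rec[field])
    return ET.tostring(root, encoding="unicode")


print(to_filing_xml([{"facility_id": "F-1", "period": "2024-Q1",
                      "pollutant": "NOx", "quantity": 12.5, "unit": "tons"}]))
```

Validating the whole batch before building any XML means a single bad record stops the submission. No partially valid document is produced.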

AI-driven agents transform compliance from reactive to proactive. Natural language processing parses regulatory bulletins, applies semantic tagging and maps new requirements to policies and procedures. For example, AgentLinkAI integrates regulatory feeds with a centralized taxonomy, enabling compliance leads to visualize directive impacts across operations, cybersecurity and environmental management. Living compliance architectures cascade changes automatically through policy libraries, control frameworks and audit checklists, reducing update delays and material risk exposure.

Data Proliferation and Scalable Risk Management

Smart grid technologies, advanced metering and real-time SCADA systems generate petabytes of data annually—from time-series sensor feeds to maintenance logs. This volume and velocity, combined with structural heterogeneity across relational databases, NoSQL stores, flat files and document repositories, complicate unified compliance reporting and traceability.

- Continuous data lineage and governance demand end-to-end traceability of how data informs audit reports and regulatory filings.

- Machine learning–powered data orchestration automates schema mapping, data quality assessment and metadata generation.

- Semantic indexing tools such as IBM Watson Discovery extract entities and context from large document collections, accelerating validation against regulatory requirements.

- Cloud-native catalog services like Microsoft Purview (formerly Azure Purview) classify data by sensitivity and regulatory attributes, providing a unified view of compliance posture.

Robust data governance—master data management, quality dashboards and defined stewardship roles—ensures reliable inputs to AI models. High-integrity data underpins accurate risk assessments, policy mappings and anomaly detection, creating a cycle of continuous compliance improvement.

Escalating Consequences and Predictive Insights

Non-compliance penalties have grown in magnitude and frequency. EPA and FERC civil penalties can accrue per violation per day, quickly reaching millions of dollars, and are often accompanied by remedial orders. Customer trust erodes rapidly after breaches or environmental infractions. Mandatory shutdowns, litigation costs and long-term remediation (environmental cleanup, cybersecurity overhauls, third-party certifications) further burden utilities.

Predictive risk models powered by supervised learning analyze historical incident data against current operational metrics to forecast non-compliance scenarios. Platforms such as Palantir Foundry combine real-time ingestion with customizable risk dashboards, enabling teams to allocate resources to high-priority areas before issues escalate. Case studies highlight the financial stakes: penalties exceeding $30 million after a data breach, remediation costs over $100 million following environmental violations. These outcomes underscore that the cost of inaction far outweighs investments in intelligent compliance solutions.
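Logistic regression trained by batch gradient descent is a minimal illustration of a supervised risk model. It is not a description of how any particular platform, such as Palantir Foundry, works internally. The sketch uses two hypothetical features per facility-month: permit-limit exceedances and overdue maintenance work orders. The label indicates whether a notice of violation followed. The data is synthetic and the hyperparameters are assumptions. Production models would use richer features, held-out validation and calibration checks.

```python
import math


def sigmoid(z: float) -> float:
    # Numerically stable logistic function for large positive or negative z
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(w: list[float], x: list[float]) -> float:
    """w[0] is the intercept; w[1:] are feature weights."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))


def train_logistic(X: list[list[float]], y: list[int],
                   lr: float = 0.2, epochs: int = 10_000) -> list[float]:
    """Batch gradient descent on the mean log-loss."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = predict_proba(w, xi) - yi  # derivative of log-loss w.r.t. the logit
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad)]
    return w


# Synthetic history: [permit exceedances, overdue work orders] -> notice of violation (1) or not (0)
X = [[0, 0], [1, 0], [0, 1], [1, 1], [3, 2], [4, 3], [5, 2], [3, 4]]
y = [0, 0, 0, 0, 1, 1, 1, 1]
weights = train_logistic(X, y)
print(f"low-risk site:  {predict_proba(weights, [0, 1]):.2f}")
print(f"high-risk site: {predict_proba(weights, [4, 3]):.2f}")
```

The predicted probabilities can feed a risk dashboard directly. Sites above a chosen probability would be scheduled for inspection first, which is the resource-allocation step the paragraph describes.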

Strategic Frameworks and Deployment Considerations

Guide Objectives and Anticipated Outcomes

This guide equips executives, compliance leaders and technology architects with strategic frameworks to harness AI agents for regulatory excellence. Readers will be able to:

- Articulate the evolving regulatory architecture, key agencies and policy trends shaping compliance obligations.

- Deconstruct core AI methodologies—machine learning, natural language processing, rule-based engines—and assess their suitability for specific regulatory tasks.

- Compare architectural paradigms—from centralized platforms to federated modules—and evaluate trade-offs in scalability, latency and governance.

- Assess data integration and governance requirements, identifying critical sources, quality thresholds and stewardship models.

- Synthesize best practices for automating reporting workflows, anomaly detection and risk scoring, focusing on accuracy, speed and auditability metrics.

- Anticipate organizational challenges—stakeholder alignment, change management, talent development—and devise mitigation strategies.

- Analyze real-world cases to extract lessons learned, success factors and common pitfalls.

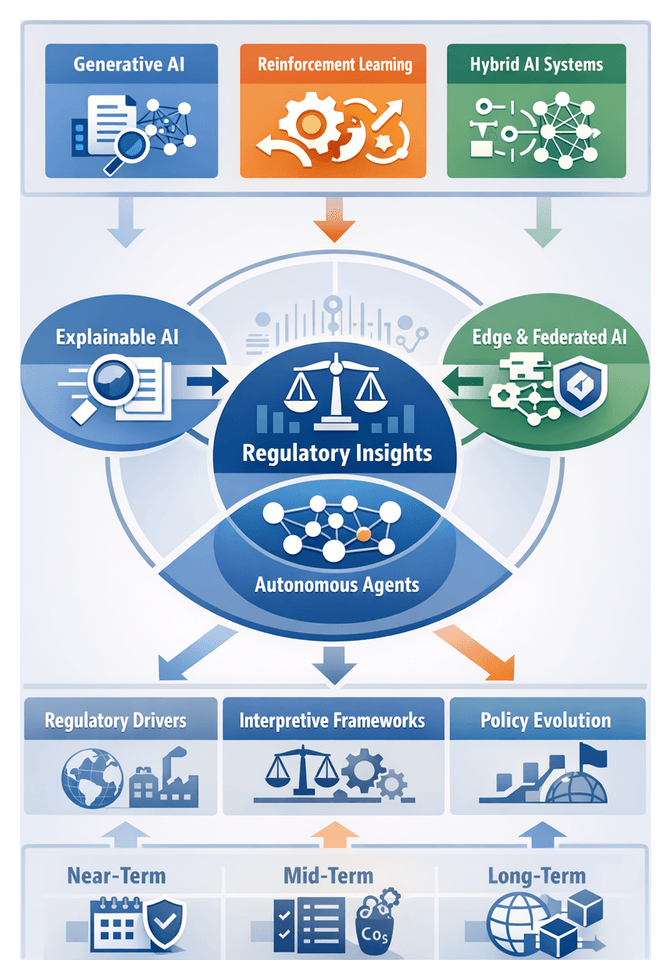

- Project emerging trends—generative models, regulatory sandboxes, data privacy evolutions—and craft a forward-looking innovation agenda.

Key Considerations and Limitations

- Data quality and completeness: Fragmented or siloed data can undermine AI outputs. Invest in integration platforms and stewardship before expecting consistent results.

- Model interpretability: Prioritize transparent frameworks and post-hoc analysis capabilities to satisfy audit requirements.

- Regulatory volatility: Design modular rule libraries and retraining workflows to accommodate shifting policy regimes.

- Governance and accountability: Define human oversight roles, exception-handling protocols and validation checkpoints.

- Integration with legacy systems: Plan for API development, data format harmonization and middleware to bridge SCADA, GIS and ERP platforms.

- Cultural and skills barriers: Foster collaboration between data scientists, regulatory experts and operations personnel; invest in upskilling.

- Vendor ecosystem evaluation: Avoid lock-in by selecting platforms that support open standards, interoperability and extensibility.

- Performance monitoring: Track false positive rates, model drift and latency; establish periodic retraining and recalibration processes.

- Cost-benefit alignment: Conduct rigorous analyses that account for direct savings and indirect value such as risk reduction and stakeholder trust.
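The Population Stability Index (PSI) is one common way to monitor drift. It compares the binned distribution of a model input or score at validation time against recent production data: PSI is the sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the expected and actual bin proportions. The sketch below assumes bin proportions are already available or computed from fixed bin edges. The 0.1 and 0.25 cut-offs are a widely used rule of thumb rather than a regulatory requirement. The status labels and sample distributions are hypothetical.

```python
import math


def bin_proportions(values: list[float], edges: list[float]) -> list[float]:
    """Share of values in each bin [edges[i], edges[i+1]); the last bin also includes edges[-1].
    Values outside the edges are not counted, so shares may sum to less than 1."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(edges) - 1):
            is_last = i == len(edges) - 2
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two lists of bin proportions."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


def drift_status(value: float) -> str:
    # Rule-of-thumb bands; the status labels are hypothetical
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "monitor"
    return "schedule_retraining"


baseline = [0.25, 0.25, 0.25, 0.25]      # score distribution at validation time
production = [0.10, 0.20, 0.30, 0.40]    # illustrative recent distribution
value = psi(baseline, production)
print(round(value, 3), drift_status(value))
```

Running such a check on a schedule, and logging each result, gives the periodic recalibration process an auditable trigger rather than an ad hoc one.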

Chapter 2: Key Compliance Challenges and Risks

Regulatory Landscape and Compliance Challenges

The utilities industry encompasses generation, transmission and distribution of electricity, natural gas, water and wastewater treatment—all essential services governed by a complex web of federal, state and local regulations. Agencies such as the Federal Energy Regulatory Commission (FERC), the Environmental Protection Agency (EPA) and the North American Electric Reliability Corporation (NERC) establish mandates on market design, emissions limits and grid reliability. State public utility commissions set rate structures, safety requirements and service quality standards, while municipalities impose additional rules on water sourcing, discharge and stormwater control. This multilayered framework often creates overlapping obligations, varied reporting formats and conflicting compliance timelines across jurisdictions.

Regulations in the utilities sector fall into distinct categories:

- Reliability and performance standards, including contingency planning and outage reporting defined by NERC and regional transmission organizations.

- Environmental compliance, covering air emissions, water effluents, waste management and habitat protection enforced by the EPA and state agencies.

- Cybersecurity mandates, such as NERC Critical Infrastructure Protection (CIP) standards, requiring secure operational technology and incident reporting.

- Consumer protection and rate regulation, overseeing pricing, service territories, complaint resolution and affordability programs.

- Occupational health and safety rules administered by OSHA and state equivalents, ensuring safe practices for field crews and plant operators.

Traditional compliance approaches reliant on manual data gathering, spreadsheets and periodic audits struggle to keep pace with real-time data feeds from sensors, inspections and external monitoring systems. Disparate data silos force teams to reconcile information across ERP platforms, environmental systems, cybersecurity logs and billing databases, increasing the risk of errors, omissions and delayed submissions. For utilities operating in multiple regions, compliance processes must align governance structures, data schemas and audit trails across different reliability orders, water quality standards and disclosure requirements.

Recent shifts in energy policy—driven by decarbonization targets, renewable portfolio standards and distributed energy resource integration—have further expanded the compliance perimeter. Smart grid deployments and advanced metering infrastructure generate new data streams and security considerations, prompting regulators to tighten disclosure obligations and performance benchmarks. In this evolving landscape, proactive compliance strategies powered by artificial intelligence and automation have emerged as critical capabilities. Leading platforms demonstrate how AI-driven agents can parse regulatory texts, map requirements to operational controls and automate data ingestion, validation and report generation.

Key Risk Dimensions in Utility Compliance

Effective compliance management demands a holistic understanding of risk factors spanning data quality, process integrity, regulatory interpretation, technological vulnerabilities, cultural dynamics and financial exposures. Analytical frameworks—drawing on standards like ISO 31000, COSO ERM, ISO 9001 and ISO 8000—guide organizations in identifying, evaluating and treating compliance risks.

Data Integrity and Process Efficiency

Accurate, complete and timely data underpins every regulatory submission and audit trail. Deficiencies in data governance—such as inconsistent definitions, incomplete lineage and lack of version control—drive misreporting of emissions, energy consumption and safety incidents. Utilities apply data quality scorecards, reconciliation protocols and performance indicators to monitor error rates and correction times. Implementing a single source of truth through metadata registries and robust stewardship models elevates data integrity from a back-office function to a core compliance imperative.
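A data quality scorecard can start with a few ratio metrics computed per batch. The sketch below assumes a hypothetical `Reading` record and treats `None` as a missing value. It scores three dimensions:

- Completeness: the share of readings present.
- Validity: the share of present values inside an assumed physical range.
- Uniqueness: the share of distinct sensor/timestamp keys.

The 95% target is an assumption that a data stewardship policy would set.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reading:
    sensor_id: str
    timestamp: str
    value: Optional[float]  # None represents a missing measurement


def quality_scorecard(readings: list[Reading], low: float, high: float) -> dict[str, float]:
    """Completeness, validity and uniqueness ratios for a batch of readings."""
    n = len(readings)
    present = [r for r in readings if r.value is not None]
    valid = [r for r in present if low <= r.value <= high]
    unique_keys = {(r.sensor_id, r.timestamp) for r in readings}
    return {
        "completeness": len(present) / n if n else 0.0,
        "validity": len(valid) / len(present) if present else 0.0,
        "uniqueness": len(unique_keys) / n if n else 0.0,
    }


def below_target(card: dict[str, float], target: float = 0.95) -> list[str]:
    """Dimensions that miss the (assumed) 95% quality target and need steward follow-up."""
    return [dim for dim, score in card.items() if score < target]


batch = [
    Reading("S1", "2024-03-01T00:00", 10.0),
    Reading("S1", "2024-03-01T01:00", None),     # missing value
    Reading("S1", "2024-03-01T02:00", 250.0),    # outside the assumed 0-200 range
    Reading("S1", "2024-03-01T02:00", 250.0),    # duplicate record
    Reading("S2", "2024-03-01T00:00", 12.0),
]
card = quality_scorecard(batch, low=0.0, high=200.0)
print(card, below_target(card))
```

Tracking these ratios per batch over time turns the scorecard into the error-rate indicator described above. The time between a dimension falling below target and its recovery gives a measurable correction time.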

Legacy workflows and manual processes create operational bottlenecks. Manual data entry, paper approvals and siloed reporting tools contribute to delays, human error and gaps in auditability. Process inefficiency is evaluated using metrics such as cycle time variance, error frequency and resource utilization. Lean Six Sigma methods and BPMN (Business Process Model and Notation) diagrams help map process flows, quantify non-value-added activities and prioritize automation initiatives.

Regulatory Interpretation and Technological Vulnerabilities

Voluminous and technical regulatory texts often contain ambiguous definitions that vary by jurisdiction. Interpretive risk arises when overlapping mandates from FERC, EPA and state commissions lead to divergent compliance approaches. Risk matrices and scenario analyses help quantify the probability of misinterpretation against potential impact, informing internal guidance and reducing subjectivity in compliance judgments.

The convergence of operational technology and information systems expands the cybersecurity attack surface. Smart grids, IoT devices and AI analytics platforms enhance efficiency but require adherence to NERC CIP standards on access management and incident reporting. The NIST Cybersecurity Framework supports asset categorization, threat modeling and control evaluation. Continuous monitoring and dynamic risk scoring align security controls with evolving threats.

Organizational Culture and Financial Exposures

A compliance culture that treats obligations as strategic enablers fosters ownership across engineering, operations, legal and finance teams. Maturity models such as the Compliance Maturity Model and CMMI benchmark governance clarity, training effectiveness and leadership engagement. Surveys and focus groups gauge employee perceptions and guide targeted interventions.

Financial, legal and reputational exposures converge when compliance failures occur. Direct fines, remediation costs, increased insurance premiums and litigation risks can threaten viability. Analytical techniques like Monte Carlo simulation and sensitivity analysis quantify downside scenarios, while risk appetite statements define acceptable loss thresholds. A unified risk register consolidates exposures and supports cross-functional decision making.
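A Monte Carlo loss model can be sketched in a few lines. Each simulated year draws whether each compliance event occurs, using an assumed annual probability. If the event occurs, a cost is drawn from an assumed range. The event list, uniform cost distributions, random seed and risk-appetite threshold below are all hypothetical. The outputs are the mean annual loss and an empirical 95th percentile, which can be compared against a risk appetite statement.

```python
import random
import statistics

# Hypothetical events: (annual probability, minimum cost, maximum cost) in dollars
EVENTS = [
    (0.10, 500_000, 2_000_000),      # e.g., reliability standard violation
    (0.30, 50_000, 200_000),         # e.g., late or inaccurate filing
    (0.02, 5_000_000, 20_000_000),   # e.g., major environmental release
]


def simulate_annual_loss(events: list[tuple[float, float, float]],
                         trials: int = 10_000, seed: int = 42) -> dict[str, float]:
    rng = random.Random(seed)  # seeded for reproducible runs
    losses = []
    for _ in range(trials):
        total = 0.0
        for probability, low, high in events:
            if rng.random() < probability:
                total += rng.uniform(low, high)  # uniform cost is a simplifying assumption
        losses.append(total)
    losses.sort()
    return {
        "mean": statistics.fmean(losses),
        "p95": losses[int(0.95 * trials) - 1],  # simple empirical percentile
    }


RISK_APPETITE_P95 = 2_500_000  # hypothetical board-approved threshold
result = simulate_annual_loss(EVENTS)
print(result, "within appetite:", result["p95"] <= RISK_APPETITE_P95)
```

For these inputs the analytical expected loss is 0.10 × 1.25M + 0.30 × 125k + 0.02 × 12.5M = 412,500 dollars, and the simulated mean should land close to it. Sensitivity analysis amounts to rerunning the simulation with one probability or cost range changed at a time.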

Integrated Analytical Frameworks

Combining quantitative and qualitative methodologies ensures a balanced view of compliance risk. ISO 31000 provides principles for risk identification, analysis and treatment, while the COSO ERM framework links risk to strategy and performance. Bowtie analysis visualizes the pathways from threats through a central risk event to its consequences, identifies the critical preventive and mitigating controls, and informs how resources are allocated among those barriers. Triangulating insights from multiple frameworks uncovers control weaknesses and their financial implications, guiding strategic investment in mitigation efforts.

Imperative for Intelligent Compliance Solutions

The convergence of tightening regulations, exponential data growth and escalating non-compliance costs has transformed compliance from a periodic obligation into a continuous, data-driven discipline. Traditional manual approaches are no longer sufficient to meet real-time reporting demands and predictive risk mitigation requirements.

Regulatory Tightening and Data Proliferation

Agencies worldwide are introducing more stringent rules on emissions reporting, reliability metrics and data security. NERC CIP standards are evolving to counter emerging cyber threats, while state commissions tie incentives to performance measures such as outage duration and customer service. Utilities must track greenhouse gas outputs with greater granularity and frequency, meet shorter reporting deadlines and satisfy broader disclosure requirements.

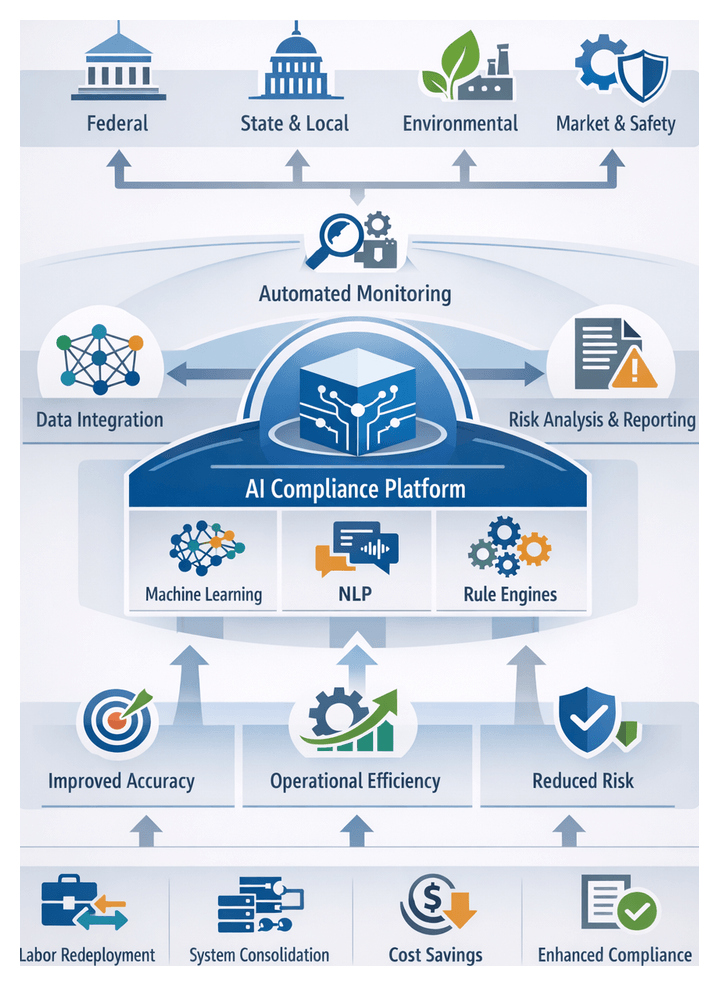

Smart grid technologies and advanced metering infrastructure generate terabytes of data daily. Integrating network management systems, customer information platforms, environmental sensors and third-party feeds challenges legacy ETL processes. Intelligent compliance solutions employ machine learning to classify unstructured documents, natural language processing to extract obligations and anomaly detection to flag deviations, delivering near real-time dashboards for proactive decision making.
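
Production systems use trained NLP models for obligation extraction, but the idea can be illustrated with a keyword baseline: sentences containing binding modal phrases such as "shall" or "must" are treated as candidate obligations, and a simple pattern pulls out relative deadlines. The patterns and sample text below are assumptions for illustration; a baseline like this misses obligations phrased in other ways and is best used to seed labeled training data for a real model.

```python
import re

# Modal phrases that commonly signal a binding obligation (keyword baseline).
OBLIGATION_RE = re.compile(r"\b(?:shall|must|(?:is|are) required to)\b", re.IGNORECASE)
# Relative deadlines such as "within 24 hours" or "within 90 days".
DEADLINE_RE = re.compile(r"\bwithin\s+(\d+)\s+(hours?|days?|months?)\b", re.IGNORECASE)


def extract_obligations(text):
    """Split text into sentences and keep those containing an obligation cue."""
    sentences = re.split(r"(?<=[.;])\s+", text.strip())
    obligations = []
    for sentence in sentences:
        if OBLIGATION_RE.search(sentence):
            m = DEADLINE_RE.search(sentence)
            deadline = f"{m.group(1)} {m.group(2).lower()}" if m else None
            obligations.append({"text": sentence, "deadline": deadline})
    return obligations


# Hypothetical permit-style text.
sample = (
    "The operator shall report any exceedance within 24 hours. "
    "Monitoring data may be retained electronically. "
    "Each unit must be stack tested within 90 days of startup."
)
for ob in extract_obligations(sample):
    print(ob)
```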

Escalating Costs and Competitive Dynamics

Penalties for regulatory breaches can reach millions of dollars per infraction, and indirect costs include heightened scrutiny, higher insurance rates and reputational damage that can affect credit ratings and financing capacity. Some industry estimates put total non-compliance costs (remediation, legal fees and opportunity losses) at five to ten times the initial penalty. Early detection and corrective action by AI-driven agents can reduce this multiplier effect.

Compliance excellence also drives competitive differentiation. Microgrid developers and energy service companies increasingly compete on reliability, sustainability and transparency. Proactive compliance performance enhances ESG ratings, attracts investors and builds stakeholder trust. Automated disclosures and continuous improvement demonstrated through AI-powered dashboards serve as reputational assets in regulatory hearings and public forums.

Assessing Urgency and Organizational Strategy

Analytical models guide investments in intelligent compliance. The Risk-Opportunity-Control model balances breach probability and impact against operational benefits and existing control strength, signaling where AI investment is likely to yield the greatest return. Regulatory Change Radars track rulemaking activity, comment periods and enforcement actions, prioritizing automation in high-velocity areas. The Compliance Maturity Curve benchmarks progression from basic reporting to predictive assurance, identifying gaps between current capabilities and future imperatives.

Boards and executives must treat compliance as a cross-functional competency. Capital budgets should allocate funds for AI platforms, data architecture upgrades and change management. Talent strategies must emphasize data literacy, regulatory expertise and analytical skills. Establishing a Regulatory Technology Council ensures executive oversight of tool selection, integration and AI-related risks, embedding compliance innovation within broader corporate objectives.

Application Contexts and Pilot Approaches

Intelligent compliance solutions apply across generation, transmission, distribution and retail. In generation, AI agents monitor emissions, flag permit deviations and automate environmental reporting. In transmission and distribution, they analyze outage data against service standards and prepare reliability filings. Retail operations benefit from tariff compliance checks, data privacy controls and billing accuracy monitoring. Utilities often pilot in one domain to validate ROI, refine data governance and build expertise before scaling enterprise-wide.

Integrated Risk Mitigation and Strategic Considerations

Mitigating compliance risk in utilities requires an integrated approach anchored in data visibility, process automation and governance controls. By embedding analytics and AI-driven workflows within everyday operations, utilities can shift from reactive remediation to proactive risk management.

Pillars of Proactive Risk Management

- Unified Data Visibility: Consolidate SCADA, ERP, environmental monitoring and financial systems into a cohesive analytics layer for cross-cutting analysis of anomalies and compliance thresholds.

- Intelligent Automation: Encode regulatory logic into machine-readable rules, deploy machine learning for anomaly detection and automate report generation, validation and exception handling.

- Governance and Control Architecture: Define roles, responsibilities and escalation paths; implement audit trails for AI-driven decisions; ensure transparency and accountability.

- Cross-Functional Collaboration: Establish integrated governance committees and joint risk assessments with engineering, operations, legal and finance to foster shared risk ownership.

- Adaptive Monitoring and Feedback: Employ real-time analytics and periodic model recalibration to update risk parameters and control thresholds in line with regulatory changes and technological evolution.
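
The audit trail for AI-driven decisions named under the governance pillar can be made tamper-evident by chaining entries with hashes, so that altering any past record invalidates the chain. The class and field names below (`AuditTrail`, `agent`, `model_version`) are hypothetical. The chain only detects tampering: someone able to rewrite the whole log could recompute every hash, so in practice the latest hash would also be anchored in a separate, access-controlled store.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry):
    """SHA-256 over the entry's canonical JSON form, excluding its own hash."""
    body = {k: v for k, v in entry.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log in which each entry stores its predecessor's hash."""

    def __init__(self):
        self.entries = []

    def record(self, agent, decision, inputs, model_version):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "decision": decision,
            "inputs": inputs,
            "model_version": model_version,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """True only if every entry is unmodified and correctly linked."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != _digest(entry):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("emissions-agent", "flag_exceedance", {"unit": "U2", "nox_ppm": 41.2}, "v1.3")
trail.record("emissions-agent", "no_action", {"unit": "U3", "nox_ppm": 18.0}, "v1.3")
print(trail.verify())                          # True
trail.entries[0]["decision"] = "no_action"     # simulate an after-the-fact edit
print(trail.verify())                          # False
```

Recording the model version alongside each decision lets auditors tie any determination back to the exact model or rule set that produced it.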

Implementation Considerations and Limitations

Strategic planning and execution must address inherent challenges to realize sustainable mitigation benefits:

- Data Quality and Completeness: Invest in data stewardship, standardized schemas and periodic quality audits to prevent false positives and uncover genuine risk signals.

- Model Interpretability: Apply explainable AI techniques and document algorithms so that auditors and regulators can verify decision criteria and trace outcomes.

- Regulatory Dynamics: Design modular systems capable of rapid rule updates to accommodate new requirements without full redeployment.

- Cultural Readiness: Execute change management strategies—stakeholder education, role redefinitions and governance alignment—to overcome resistance and skill gaps.

- Integration Complexity: Plan for custom connectors, data normalization and phased migrations to integrate legacy systems with AI platforms.

- Human-in-the-Loop Oversight: Balance automation with expert review by defining thresholds for AI alerts and manual intervention.

- Automation Bias: Regularly validate rules and anomaly detection models to detect drift, bias or gaps that could mask non-compliance events.

- Cost-Benefit Alignment: Establish clear metrics—such as reductions in fines, audit findings and manual effort—to measure AI investment ROI and guide resource allocation.

Risk mitigation is an ongoing journey requiring continuous assessment cycles, governance reviews and adaptive roadmaps. By acknowledging data, model and organizational limitations, utilities can sustain regulatory excellence, enhance operational resilience and maintain trust with regulators, investors and customers.

Chapter 3: Fundamentals of Artificial Intelligence in Compliance

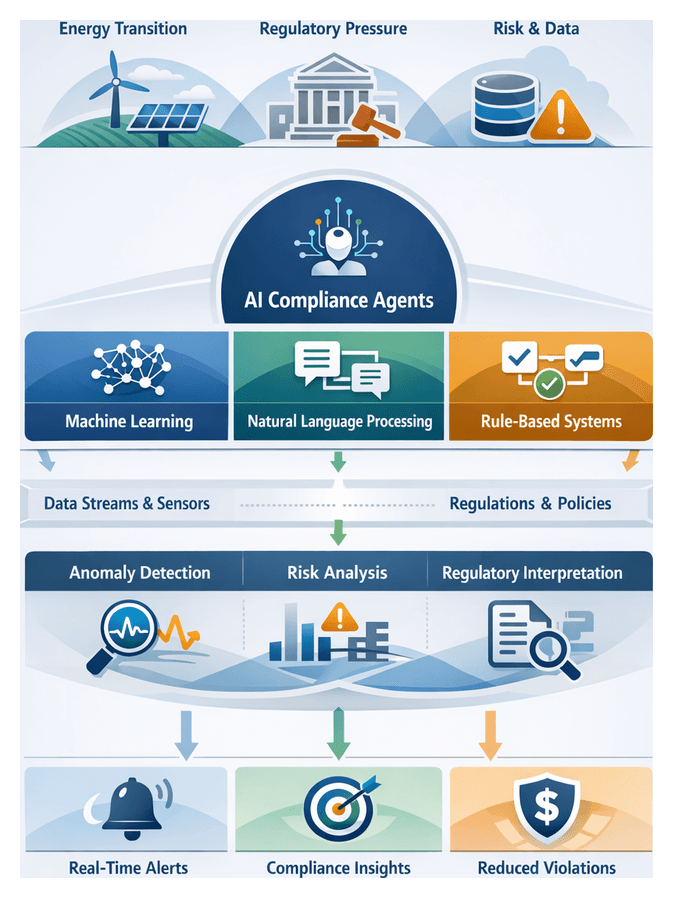

Driving Forces Behind Intelligent Compliance Agents

The utilities sector faces unprecedented pressure from energy transition mandates, decarbonization targets and investor demands for transparent environmental, social and governance disclosures. Simultaneously, regulators are tightening requirements—North American Electric Reliability Corporation’s critical infrastructure protection standards emphasize continuous monitoring, while the U.S. Environmental Protection Agency imposes stricter emission thresholds and accelerated reporting windows. Against this backdrop, legacy manual compliance processes struggle to scale, prompting utilities to adopt AI-driven compliance agents that interpret complex statutes, reconcile multi-jurisdictional rules and deliver actionable insights in real time.

Petabytes of data stream from smart meters, SCADA systems, distributed energy resources and weather sensors, generating compliance-relevant signals such as voltage excursions, emission readings and contingency events. Without intelligent parsing and contextual analysis, critical anomalies either slip through unnoticed or trigger costly false positives. Meanwhile, the financial and reputational cost of non-compliance can run to millions of dollars, and by some estimates major breaches reduce enterprise value by up to five percent. By embedding machine learning and natural language processing into compliance workflows, utilities shift from reactive, labor-intensive review cycles to proactive, pattern-based risk detection and streamlined reporting.

Across environmental, reliability and cybersecurity functions, AI agents continuously monitor operational metrics against regulatory thresholds—automatically generating notifications for emission deviations, analyzing grid event logs against NERC criteria or correlating threat intelligence with access records for intrusion detection. These contextual applications demonstrate how compliance intelligence extends human expertise, integrating domain ontologies, regulatory taxonomies and operational metadata into an end-to-end analytic framework that supports strategic agility and stakeholder trust.
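
Monitoring an operational metric against a regulatory threshold often means comparing a rolling average, not a single reading, against a permit limit. The sketch assumes hourly NOx readings in ppm, a hypothetical 42 ppm limit and a three-hour averaging window; actual averaging periods and limits come from each unit's permit.

```python
from collections import deque


def rolling_exceedances(readings, limit, window):
    """Yield (timestamp, rolling average) whenever the mean of the last
    `window` readings exceeds `limit`."""
    recent = deque(maxlen=window)
    for timestamp, value in readings:
        recent.append(value)
        if len(recent) == window:
            average = sum(recent) / window
            if average > limit:
                yield timestamp, round(average, 2)


# Hypothetical hourly NOx readings (ppm) from a CEMS feed.
hourly_nox = [("01:00", 38.0), ("02:00", 41.0), ("03:00", 44.0),
              ("04:00", 47.0), ("05:00", 39.0), ("06:00", 30.0)]
for timestamp, average in rolling_exceedances(hourly_nox, limit=42.0, window=3):
    print(f"ALERT {timestamp}: 3-hour average {average} ppm exceeds 42.0 ppm")
```

Note that the 03:00 hourly value (44 ppm) exceeds the limit, but the three-hour average (41 ppm) does not; alerting on the averaged quantity the permit actually regulates avoids that kind of false positive.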

Foundational AI Methodologies

Machine Learning Foundations

Machine learning algorithms learn patterns from historical and real-time data to classify documents, forecast risks and detect anomalies without explicit rule coding. Key categories include:

- Supervised Learning: Trains models on labeled data to predict outcomes such as regulatory category or risk level.

- Unsupervised Learning: Discovers structure in unlabeled data, for example clustering incident reports to reveal emerging compliance issues.

- Semi-supervised Learning: Combines limited expert-tagged records with large unlabeled corpora to extend coverage when manual annotation is costly.

- Reinforcement Learning: Learns optimal sequential decision policies, with potential for dynamic workflow routing in complex reporting processes.

Platforms like Amazon SageMaker and Google Cloud Vertex AI (the successor to Google Cloud AI Platform) enable utilities to build, train and deploy models at scale. Predictive analytics modules leverage diverse datasets, from audit logs to sensor readings, to provide early warning of compliance breaches and support scenario analysis.

Natural Language Processing Techniques

Natural language processing transforms unstructured regulatory text into structured data elements that drive automation. Core techniques include:

- Text Classification: Assigning clauses to categories such as environmental, financial or safety obligations.

- Named Entity Recognition: Extracting references to statutes, agencies, thresholds and technical terms.

- Semantic Parsing: Interpreting legislative intent to map obligations into actionable rules.

- Sentiment and Context Analysis: Evaluating narrative content in reports or stakeholder communications for risk indicators.

Pre-trained services such as IBM Watson Natural Language Understanding, Amazon Comprehend and Google Cloud Natural Language API accelerate requirement extraction. Open-source libraries like spaCy and transformer models from Hugging Face support fine-tuning on utility-specific corpora, improving accuracy on domain-specific terminology.
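
Text classification of clauses can be illustrated with multinomial Naive Bayes, a simple supervised baseline of the kind a pre-trained service or fine-tuned transformer would typically outperform. The class name, the three categories and the six training clauses below are hypothetical; a usable model would need many expert-labeled clauses.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesClauseClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, clauses, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for text, label in zip(clauses, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        total = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_docs in self.class_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            # Log prior plus smoothed log likelihood of each token.
            score = math.log(n_docs / total)
            for w in tokenize(text):
                score += math.log((counts[w] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical labeled clauses.
train = [
    ("Sulfur dioxide emissions shall not exceed the permitted limit", "environmental"),
    ("The facility must monitor wastewater discharge and stack emissions", "environmental"),
    ("Rate filings shall include audited financial statements", "financial"),
    ("Cost recovery requests must document capital expenditures", "financial"),
    ("Workers must use lockout procedures before maintenance", "safety"),
    ("Personnel shall wear arc flash protection near energized equipment", "safety"),
]
clf = NaiveBayesClauseClassifier().fit([t for t, _ in train], [y for _, y in train])
print(clf.predict("Report stack emissions exceeding the limit"))
```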

Rule-Based Systems and Expert Engines

Rule-based engines encode explicit “if-then” logic to enforce deterministic compliance checks. Their attributes include:

- Deterministic Outcomes: Ensures consistent decisions, critical for auditability and regulatory reporting.

- Modular Rule Authoring: Enables subject-matter experts to codify new mandates as discrete rules without deep programming.

- Execution Transparency: Provides traceability from data inputs through rule evaluations to final determinations.

- Scalability: Processes high-volume transactions, such as meter validations or permit renewals, in near real time.

Business rule management systems like Drools and IBM Operational Decision Manager facilitate integration of rule logic with ML and NLP pipelines, achieving both interpretability and adaptability.
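
The attributes listed above can be illustrated with a minimal rule evaluator that checks every rule in a fixed order and keeps a trace line per rule, so identical inputs always give identical, explainable results. It is a plain-Python sketch, not the API of Drools or IBM Operational Decision Manager; the rule IDs, the 35-day read interval and the field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Rule:
    rule_id: str
    description: str
    violated: Callable[[dict], bool]  # True when the record breaks the rule


@dataclass
class Evaluation:
    record_id: str
    violations: list = field(default_factory=list)
    trace: list = field(default_factory=list)


def evaluate(record, rules):
    """Apply every rule in order and keep one trace line per rule."""
    result = Evaluation(record_id=record["id"])
    for rule in rules:
        fired = rule.violated(record)
        status = "FAIL" if fired else "pass"
        result.trace.append(f"{rule.rule_id} {status}: {rule.description}")
        if fired:
            result.violations.append(rule.rule_id)
    return result


# Hypothetical meter-validation rules.
RULES = [
    Rule("MTR-001", "reading must be non-negative", lambda r: r["kwh"] < 0),
    Rule("MTR-002", "read interval must not exceed 35 days", lambda r: r["days_since_last_read"] > 35),
    Rule("MTR-003", "estimated reads need a reason code", lambda r: r["estimated"] and not r.get("reason_code")),
]

record = {"id": "M-1001", "kwh": 812.5, "days_since_last_read": 41, "estimated": True}
result = evaluate(record, RULES)
print(result.violations)  # ['MTR-002', 'MTR-003']
print("\n".join(result.trace))
```

Because each rule is a separate object, a new mandate can be added by appending a `Rule` without touching the evaluator, which mirrors the modular authoring described above.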

Integrative Architectures and Hybrid Approaches

Robust compliance agents orchestrate machine learning, natural language processing and rule-based logic within cohesive pipelines. Common integration patterns include:

- Preprocessing Pipeline: NLP modules extract entities and obligations from new regulations, feeding structured data into ML classifiers for risk scoring.

- Decision Orchestration: Rule engines leverage ML predictions to route cases, with confidence thresholds triggering human review for ambiguous items.

- Continuous Feedback Loop: Audit outcomes and user corrections retrain ML models and refine rule definitions to adapt to policy changes.
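
The decision-orchestration and feedback-loop patterns can be sketched as a router that acts on a model's confidence: high-confidence predictions proceed automatically, mid-range ones go to an expert, low-confidence ones open a manual investigation, and reviewer overrides are logged as future training data. The two thresholds, the labels and the route names are hypothetical and would be calibrated against validation data and the utility's risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    case_id: str
    label: str          # hypothetical labels: "violation" or "compliant"
    confidence: float   # model probability for `label`, between 0 and 1


AUTO_THRESHOLD = 0.90    # hypothetical: act without review at or above this
REVIEW_THRESHOLD = 0.60  # hypothetical: below this, open a manual investigation

corrections = []  # (case_id, model_label, reviewer_label) for the next retraining cycle


def route(p: Prediction) -> str:
    if p.confidence >= AUTO_THRESHOLD:
        return "open_violation_case" if p.label == "violation" else "auto_close"
    if p.confidence >= REVIEW_THRESHOLD:
        return "expert_review"
    return "manual_investigation"


def record_review(p: Prediction, reviewer_label: str) -> None:
    """Keep reviewer overrides so they can be used to retrain the model."""
    if reviewer_label != p.label:
        corrections.append((p.case_id, p.label, reviewer_label))


cases = [
    Prediction("C-101", "violation", 0.95),
    Prediction("C-102", "compliant", 0.72),
    Prediction("C-103", "violation", 0.41),
]
for c in cases:
    print(c.case_id, route(c))
record_review(cases[1], "violation")   # the expert disagrees with the model
```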

Advanced deployments incorporate large language models such as GPT-4 from OpenAI for conversational assistants, automatic drafting of filings and narrative explanations. Interpretive frameworks—regulatory knowledge graphs, compliance ontology layers and human-in-the-loop checkpoints—balance automation throughput with auditability and control.

Rigorous evaluation frameworks employ performance metrics such as precision-recall curves and ROC analysis to optimize trade-offs between false positives and missed obligations. Explainability techniques like SHAP and LIME provide visibility into model decisions, reinforcing interpretability as a core compliance control alongside documented versioning, bias assessments and governance policies.
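
Trading false positives against missed obligations comes down to choosing a decision threshold on the model's scores. The sketch computes precision and recall at each candidate threshold and picks the highest threshold that still meets a recall target, reflecting the view that a missed obligation usually costs more than an extra review. The scores, labels and the 0.95 recall target are made up for illustration.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when every score >= threshold is flagged."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
    precision = tp / (tp + fp) if tp + fp else 1.0  # convention when nothing is flagged
    recall = tp / (tp + fn) if tp + fn else 1.0     # convention when there is nothing to find
    return precision, recall


def pick_threshold(scores, labels, min_recall):
    """Highest threshold whose recall meets the target, or None."""
    for t in sorted(set(scores), reverse=True):
        precision, recall = precision_recall(scores, labels, t)
        if recall >= min_recall:
            return t, precision, recall
    return None


# Hypothetical validation set: model scores and whether each item truly is an obligation.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
labels = [True, True, False, True, False, True, False, False]
print(pick_threshold(scores, labels, min_recall=0.95))
```

Sweeping every threshold and plotting the resulting (recall, precision) pairs yields the precision-recall curve mentioned above.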

Strategic Considerations for Responsible AI Adoption

Aligning Strategy, Culture, and Governance

Successful AI initiatives begin with leadership commitment and cross-functional collaboration. Governance bodies comprising compliance, data science, legal and risk representatives define policies for model development, approval workflows and exception handling. Executive sponsorship clarifies how AI supports risk management, operational efficiency and stakeholder trust, securing resources for data stewardship and continuous monitoring.

Ensuring Data Quality and Integration

Data underpins every AI component. Organizations must profile and standardize sources—regulatory archives, sensor feeds, billing records and third-party disclosures—remediating missing values, duplicates and schema inconsistencies. A unified data catalog and documented lineage support audit trail requirements and ensure a single source of truth for compliance agents accessing enterprise systems via modular ingestion layers.

Interpretability, Ethical Oversight, and Risk Management

Transparent decision logic is non-negotiable in regulated environments. Utilities should favor explainable models—rule-based hybrids, decision trees or attention-driven NLP architectures—that allow subject-matter experts to review inferences, annotate rationale and intervene when necessary. Ethics and legal counsel must oversee bias assessments, fairness evaluations and privacy-by-design principles to prevent discriminatory outcomes in applications such as customer usage analysis.

Risk management frameworks correlate AI use cases with potential liabilities, applying heat maps to prioritize oversight. Continuous governance ensures prompt response to deviations from expected performance and aligns with evolving regulatory guidelines on algorithmic transparency and data protection.

Scalability, Performance, and Continuous Monitoring

AI agents must accommodate surges in data volume and processing demands. Cloud-native architectures, containerized services orchestrated with Kubernetes and elastic resource allocation deliver high availability and rapid failover. Performance benchmarks should mirror peak operational conditions and regulatory deadlines to prevent delays that incur penalties.

Continuous monitoring tracks key metrics—false positive rates, processing latency, audit coverage and data distribution shifts. Defined thresholds trigger model retraining, governance reviews or reversion to manual processes, maintaining alignment with regulatory intent and minimizing undetected violations.
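
One common way to quantify a data distribution shift is the population stability index (PSI), which compares the binned distribution of a feature at training time with its recent distribution. The sketch maps PSI to the actions named above using the widely quoted rule-of-thumb cut-offs of 0.10 and 0.25; those cut-offs, the bin edges and the sample values are assumptions to be tuned per model.

```python
import bisect
import math


def bin_shares(values, edges):
    """Share of values in each [edges[i], edges[i+1]) bin; the top edge is inclusive."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        if 0 <= i < len(counts):
            counts[i] += 1
        elif v == edges[-1]:
            counts[-1] += 1
        # Values outside [edges[0], edges[-1]] are ignored in this sketch.
    total = max(sum(counts), 1)
    # Floor empty bins at a small share so the log term stays finite.
    return [max(c / total, 1e-4) for c in counts]


def psi(baseline, recent, edges):
    e, a = bin_shares(baseline, edges), bin_shares(recent, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_action(value):
    if value < 0.10:
        return "stable"
    if value < 0.25:
        return "governance_review"
    return "retrain_or_revert_to_manual"


edges = [0, 15, 20, 25, 30]  # hypothetical bins for a feature such as feeder load (MW)
baseline = [10, 12, 14, 16, 18, 20, 22, 24, 26, 28]
recent = [16, 21, 22, 23, 24, 26, 27, 28, 29, 29]
score = psi(baseline, recent, edges)
print(round(score, 2), drift_action(score))
```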

Change Management, Metrics, and Vendor Ecosystem

Adoption reshapes workforce roles, augmenting human expertise with AI-driven insights. Training programs in model validation, data interpretation and exception management build analytics literacy across compliance, legal and operations teams. Cross-functional workshops and executive communication plans reinforce desired behaviors.

Quantifiable metrics—reduction in report preparation time, decrease in compliance violations, improved risk scoring accuracy and lower operational costs—demonstrate return on investment. Real-time dashboards enable leadership to monitor progress and refine resource allocation.

Vendor selections should weigh total cost of ownership, integration capabilities with existing IT infrastructure and domain expertise in utilities. Proof-of-concept engagements and case studies inform decisions between proprietary platforms such as Microsoft Azure AI and Google Cloud AI, specialized startups or open-source alternatives.

Phased rollouts of high-impact, well-understood use cases mitigate risks, build institutional knowledge and manage expectations regarding AI capabilities. Transparent communication of model confidence levels and fallback protocols preserves appropriate human vigilance.

By integrating these strategic considerations—from data governance and interpretability to scalability and change management—utilities can deploy AI-driven compliance agents that deliver sustained accuracy, efficiency and regulatory resilience. Those who master these foundational elements will secure a lasting competitive advantage in regulatory excellence.

Chapter 4: AI Agent Architectures and Capabilities

Regulatory Complexity and Drivers

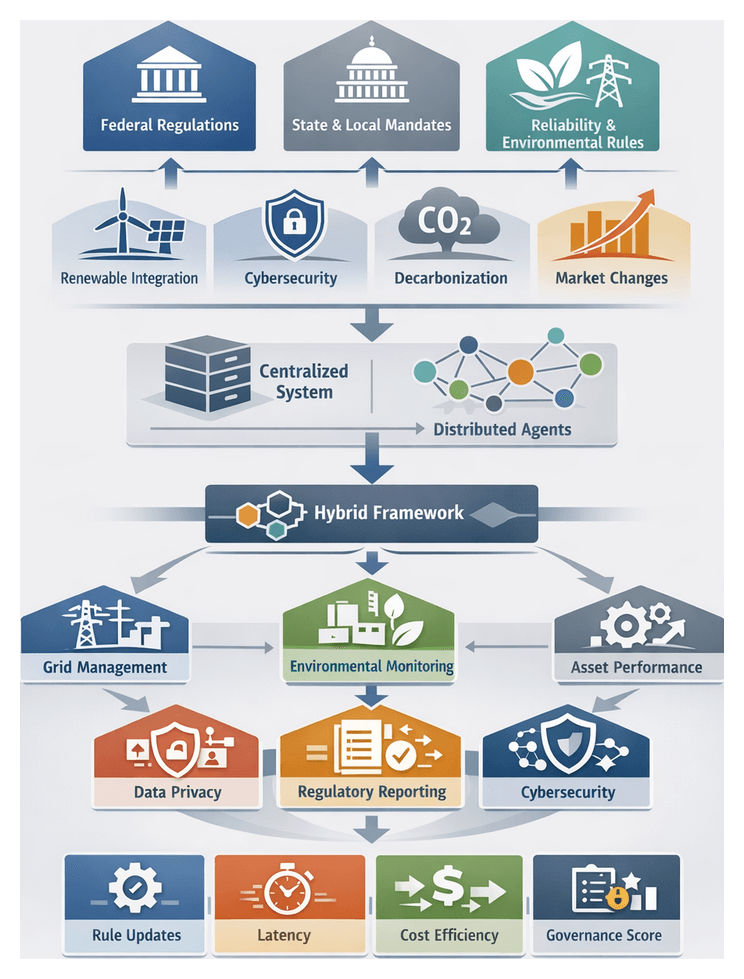

The utilities sector navigates one of the most intricate regulatory landscapes of any industry. Providers of electricity, gas, water and wastewater services must comply with statutes, rules and guidelines issued by federal bodies such as the Federal Energy Regulatory Commission (FERC) and the Environmental Protection Agency (EPA), the North American Electric Reliability Corporation (NERC) and its regional entities, state public utility commissions and local authorities. These overlapping mandates address safety, reliability, environmental protection and market conduct, and evolve in response to technological advances, policy shifts and emerging risks.

Regulatory complexity arises not merely from rule volume but from diverse oversight bodies, rapid policy change, technical detail in standards and jurisdictional variations. Drivers such as the integration of renewable and distributed energy resources, smart grid digitalization, cybersecurity threats, decarbonization targets and market restructuring further amplify compliance demands. Utilities must translate legal text into actionable controls, maintain audit readiness and meet service objectives—all within a dynamic, multi-jurisdictional environment.

Historically, utility regulation began in the early 20th century with government-granted monopolies and consumer safeguards. The energy crises of the 1970s led to FERC's creation in 1977, and environmental incidents brought stringent EPA pollution controls. Wholesale market liberalization in the 1990s, followed by the 2003 Northeast blackout, led the Energy Policy Act of 2005 to make reliability standards mandatory and enforceable, with NERC (founded in 1968) designated as the Electric Reliability Organization, while state-level frameworks expanded in parallel. Today's regulatory landscape reflects converging drivers:

- Integration of renewables and interconnection standards

- Smart grid technology with cybersecurity and data privacy obligations

- Climate policies driving emissions reporting and decarbonization targets

- Complex tariff and billing regulations under market restructuring

- Demand for transparency in governance, reporting and performance metrics

Utilities operating across state lines face jurisdictional overlaps. A single transmission project may require FERC approval, compliance with NERC reliability standards, state environmental assessments and local zoning clearances. Within a state, multiple agencies may share authority over aspects such as stormwater discharge, species protection and rate recovery. Variations in interpretation and enforcement mean a requirement considered immaterial in one jurisdiction may trigger penalties in another.

Traditional compliance methods—periodic audits, manual checklists and isolated point solutions—lack holistic visibility and agility. They strain resources, delay rule change identification and fragment data systems, increasing non-compliance risks and diverting skilled professionals from strategic tasks. To overcome these challenges, leading utilities are adopting intelligent compliance solutions powered by artificial intelligence, leveraging machine learning, natural language processing and decision automation to transform reactive compliance into proactive risk management.

AI Compliance Agent Architectures

Modeling Paradigms

AI-driven compliance agents in utilities are designed under two primary paradigms: centralized architectures and distributed frameworks. These models are evaluated for scalability, performance, governance and resilience.

- Centralized Architectures: A single compliance engine ingests regulatory texts, operational telemetry and reporting data, applying a unified rulebase for consistent interpretation. Benefits include a single source of truth, streamlined governance and simpler integration. Challenges include potential performance bottlenecks and increased latency for edge-level anomaly detection.

- Distributed Architectures: Domain-specific agents operate closer to data sources or functional units, each with localized rule sets and data contexts. This model delivers modularity, fault tolerance and faster real-time monitoring. It requires sophisticated orchestration, federated policy management and greater governance overhead to maintain consistency.

Hybrid and Modular Approaches

Many utilities adopt hybrid frameworks, combining centralized oversight with distributed execution. A central policy engine disseminates high-level rules to edge agents, which apply context-specific logic. Modular design allows discrete compliance modules—for document classification, anomaly scoring or report generation—to be orchestrated by central workflows while deployed across distributed infrastructure. This federated model accommodates incremental adoption and balances governance with local agility.

Evaluative Frameworks and Metrics

Architectural selection is guided by strategic priorities, operational contexts and risk profiles. Key dimensions include regulatory volatility, operational latency tolerance and governance maturity. Use-case mapping—such as environmental reporting or cybersecurity anomaly detection—ensures alignment with business objectives. Critical analytical metrics include:

- Time to rule update propagation across agents

- End-to-end decision latency from data ingestion to compliance output

- Model drift detection rate to identify misalignment with current regulations

- Resource cost per compliance transaction

- Governance compliance score aggregating audit findings
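
Several of these metrics can be computed directly from timestamped event logs. The sketch covers three of them (rule-update propagation time, end-to-end decision latency and cost per transaction); the agent names, timestamps and cost figures are hypothetical, and propagation is defined here, as an assumption, as the time until the slowest agent acknowledges the new rule version.

```python
from datetime import datetime
from statistics import median


def propagation_seconds(published, acks):
    """Seconds until the slowest agent acknowledged the new rule version."""
    return max((t - published).total_seconds() for t in acks.values())


def median_latency_seconds(events):
    """Median seconds from data ingestion to compliance output."""
    return median((out - ingested).total_seconds() for ingested, out in events)


def cost_per_transaction(total_cost, n_transactions):
    return total_cost / n_transactions if n_transactions else float("nan")


ts = datetime.fromisoformat
published = ts("2024-03-01T09:00:00")
acks = {
    "agent-substation-a": ts("2024-03-01T09:02:30"),
    "agent-substation-b": ts("2024-03-01T09:05:00"),
    "agent-emissions": ts("2024-03-01T09:01:10"),
}
events = [
    (ts("2024-03-01T10:00:00"), ts("2024-03-01T10:00:02")),
    (ts("2024-03-01T10:00:05"), ts("2024-03-01T10:00:06")),
    (ts("2024-03-01T10:00:10"), ts("2024-03-01T10:00:19")),
]
print(propagation_seconds(published, acks))       # 300.0
print(median_latency_seconds(events))             # 2.0
print(cost_per_transaction(12_000, 480_000))      # 0.025
```

Using the slowest acknowledgment, rather than the average, reflects that a distributed deployment is only consistent once every agent runs the new rules.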

Leading practitioners report a trend toward federated compliance platforms to orchestrate distributed services. Fully centralized solutions remain popular for their lower integration complexity and predictable ownership costs, while distributed frameworks excel where sub-second response is critical, such as real-time grid reliability monitoring.

Deployment Contexts in Utilities

AI compliance agents deliver value across diverse operational domains. Each context has unique regulatory pressures, data landscapes and stakeholder demands.

Grid Management and Stability

- Real-time Data Ingestion from SCADA, PMUs and power-flow models

- Anomaly Detection and Alerting using machine learning for voltage and frequency deviations

- Regulatory Reporting Automation with tools like IBM Watson to generate mandatory grid performance submissions

Environmental Compliance and Emissions Monitoring

- Sensor Integration from continuous emissions monitoring systems (CEMS), effluent meters and ambient air quality sensors

- Predictive Compliance Forecasting to model emission trends and guide operational adjustments

- Automated Permit Management tracking conditions, deadlines and policy updates

Asset Performance and Reliability

- Condition-Based Monitoring of vibration, oil quality and thermal imaging data

- Maintenance Compliance Tracking against inspection schedules and regulatory intervals

- Risk-Based Decision Support integrating risk scores with compliance priorities

Customer Billing and Data Privacy

- Personal Data Classification via NLP tools to enforce CCPA and GDPR policies

- Billing Accuracy Verification detecting metering errors and unauthorized consumption

- Consent Management automating logging of customer preferences within CRM systems
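
Personal data classification usually combines NLP entity recognition with pattern matching. The pattern half can be sketched with regular expressions that detect and mask common identifiers before text reaches downstream analytics. The patterns, including the `ACCT-` account-number format, are hypothetical and deliberately simple; they are not a complete definition of personal information under CCPA or GDPR.

```python
import re

# Simple, illustrative patterns; not a complete definition of personal data.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"(?<!\d)(?:\(\d{3}\)\s?|\d{3}[-. ])\d{3}[-. ]\d{4}(?!\d)"),
    "ACCOUNT": re.compile(r"\bACCT-\d{8}\b"),  # hypothetical account-number format
}


def classify_and_mask(text):
    """Return the masked text and the set of PII categories found."""
    found = set()
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            found.add(label)
    return text, found


masked, categories = classify_and_mask(
    "Customer jane.doe@example.com called from (555) 867-5309 about ACCT-12345678."
)
print(masked)
print(sorted(categories))
```

The returned categories can drive policy decisions, for example routing records that contain personal data to systems with stricter retention and access controls.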

Regulatory Reporting and Audit Readiness

- Report Generation Automation to format structured and unstructured data into regulatory templates

- Cross-Functional Data Integration unifying finance, operations and legal inputs

- Interactive Audit Portals offering regulators real-time dashboard access for collaborative review

Demand Response and Energy Trading

- Market Rule Interpretation with NLP parsing evolving rulebooks and penalty structures

- Bid Compliance Verification simulating market scenarios against regulatory constraints

- Settlement Exception Monitoring analyzing trading outcomes and flagging discrepancies

Cybersecurity and Critical Infrastructure Protection

- Threat Intelligence Integration ingesting global feeds to contextualize attacks

- Automated Vulnerability Assessments scanning configurations and access logs

- Incident Response Coordination orchestrating evidence collection and notification workflows

Across these domains, key themes emerge. Domain specialization ensures agents understand industry terminology and regulatory hierarchies. Strong data governance supports data integrity, lineage and security. Explainability and auditability remain essential, demanding transparent models and traceable decision pathways. Hybrid models combining AI automation with expert oversight yield balanced compliance strategies that transform regulatory obligations into proactive, efficient operations.

Architectural Trade-offs and Best Practices

Key Trade-offs

- Latency versus Accuracy: Real-time monitoring favors streamlined pipelines, while periodic reporting supports deep inference.

- Autonomy versus Control: Higher agent autonomy accelerates workflows but necessitates human review checkpoints and guardrails.

- Centralization versus Distribution: Centralized models simplify governance; distributed agents enhance resilience and local responsiveness.

- Interpretability versus Performance: Complex models offer superior pattern recognition; rule-based modules maintain transparency.

- Customization versus Standardization: Tailored configurations reflect local regulations; standardized templates support consistency and faster deployment.

Best Practice Recommendations

- Adopt a Layered Governance Model: Centralize policy authoring and distribute runtime inference to local subsystems.

- Implement Transparent Policy Encoding: Use explainable rule engines for core logic and reserve opaque ML for anomaly scoring.

- Leverage Modular Interfaces: Define clear API contracts between ingestion, reasoning and reporting modules.

- Employ Adaptive Thresholds: Calibrate detection parameters based on historical performance and regulatory risk tolerance.

- Prioritize Data Lineage: Capture metadata at each stage to trace data origin, transformations and usage.

- Integrate Human Oversight Loops: Escalate high-impact or ambiguous decisions to expert review.

- Plan Incremental Adoption: Pilot agents in low-risk functions to refine models before enterprise rollout.

- Establish Continuous Validation: Replay historical events to test agent responses and retrain models regularly.

- Maintain an Extensible Framework: Architect for plug-and-play integration of new AI capabilities.

- Foster Cross-Functional Collaboration: Align IT, compliance, data science and operations through shared governance forums.
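
The adaptive-threshold practice can be illustrated by deriving an alert level from a high percentile of historical readings, then capping it at a fraction of the regulatory limit so that calibration never lets the alert level drift past what the rule allows. The function name, the 95th percentile and the margin values are assumptions; a lower margin would suit obligations with lower risk tolerance.

```python
import statistics


def calibrate_threshold(history, percentile, regulatory_limit, margin):
    """Alert level = lower of the historical percentile and margin * regulatory limit."""
    cuts = statistics.quantiles(history, n=100, method="inclusive")  # 99 cut points
    historical = cuts[percentile - 1]  # cuts[k - 1] is the k-th percentile
    return min(historical, regulatory_limit * margin)


history = list(range(1, 101))  # stand-in for 100 historical daily readings
print(calibrate_threshold(history, 95, regulatory_limit=100, margin=0.90))
print(calibrate_threshold(history, 95, regulatory_limit=100, margin=0.98))
```

With the tighter margin the regulatory cap binds; with the looser margin the historical percentile does, so alerts track normal operating behavior while staying below the limit.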

Considerations and Limitations

- Regulatory Evolution Risk: Architectures must adapt to amendments and jurisdictional divergences.

- Data Quality Dependencies: Efficacy depends on completeness, accuracy and timeliness of inputs.

- Model Degradation: Continuous retraining and drift detection are required to maintain relevance.

- Governance Overhead: Distributed topologies increase synchronization and version control demands.

- Interpretability Challenges: Deep learning models may obscure decision rationales without explainability frameworks.

- Integration Complexity: Legacy silos and proprietary interfaces may require middleware solutions.

- Change Management: Organizational readiness and cultural alignment are critical for adoption.

Chapter 5: Data Integration and Governance for AI-driven Compliance

Understanding the Utilities Data Ecosystem

The utilities sector operates on a foundation of diverse, high-volume data streams that span generation, transmission, distribution, customer management and market operations. Real-time outputs from advanced metering infrastructure and SCADA systems coexist with historical trend logs, transactional records and external feeds such as weather forecasts, market prices and cybersecurity intelligence. Geographic information systems map assets and environmental constraints, while customer information systems maintain billing, service and demographic profiles. For AI-driven compliance agents to deliver accurate monitoring, predictive risk detection and automated reporting, stakeholders must first catalog these data domains, identify sources, and map flows end to end.

This multifaceted landscape presents both opportunity and complexity. On one hand, AI models can fuse operational telemetry with external indices to detect regulatory breaches before they occur. On the other hand, siloed repositories, inconsistent schemas and varying latency profiles can undermine analytics and trigger false positives. A comprehensive data inventory establishes the baseline for architecture design, data quality assurance and governance policies that together enable reliable, auditable AI systems.

Integration Strategies and Frameworks

Integrating disparate data sources into a coherent fabric is a prerequisite for any AI-enabled compliance framework. Data may be classified into operational systems (AMI meters, SCADA telemetry, maintenance logs), enterprise platforms (ERP financial modules, CIS databases), geospatial layers (GIS asset maps, environmental zones), regulatory filings (FERC submissions, state reports) and external feeds (weather, market pricing, inspection reports). Key integration challenges include:

- Format Diversity – Relational databases, time-series platforms, document repositories, flat files and IoT streams each require specialized connectors and parsers.

- Access Restrictions – Legacy systems may lack robust APIs, while contractual or regulatory constraints limit third-party data usage.

- Latency Variability – Real-time monitoring demands streaming ingestion, whereas financial and regulatory reports often rely on batch updates.

- Semantic Inconsistencies – Divergent naming conventions, units of measure and metadata definitions across vendors and regions introduce ambiguity.

- Security and Privacy – Sensitive telemetry and customer information require encryption, masking and role-based access controls.
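Semantic inconsistencies are often handled by mapping vendor fields onto one canonical schema and converting units on ingestion. In this minimal sketch, the alias table, the `normalize_reading` helper and the choice of kilowatt-hours as the canonical unit are all hypothetical. Unknown fields or units raise an error instead of passing ambiguous data downstream.

```python
# Hypothetical vendor field names mapped to one canonical name.
FIELD_ALIASES = {
    "kwh": "energy_kwh",
    "energy_kwh": "energy_kwh",
    "EnergyDelivered": "energy_kwh",
    "mtr_id": "meter_id",
    "MeterID": "meter_id",
}

# Conversion factors from each supported unit to kilowatt-hours.
TO_KWH = {"Wh": 0.001, "kWh": 1.0, "MWh": 1000.0}


def normalize_reading(record: dict) -> dict:
    """Rename fields to canonical names and convert energy to kWh.

    The record must carry its energy unit under the key "unit". Unknown
    fields or units raise KeyError so ambiguity surfaces early.
    """
    factor = TO_KWH[record["unit"]]
    out = {}
    for key, value in record.items():
        if key == "unit":
            continue
        canonical = FIELD_ALIASES[key]
        out[canonical] = value * factor if canonical == "energy_kwh" else value
    out["unit"] = "kWh"
    return out


print(normalize_reading({"MeterID": "M-100", "EnergyDelivered": 2.5, "unit": "MWh"}))
```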

To address these obstacles, utilities are adopting layered architectures such as data fabrics or data meshes that replace point-to-point integrations with decoupled, governed self-service access. Automated, API-driven pipelines enforce data contracts, while metadata management and cataloging tools provide discoverability and consistency. Core platforms in this space include AWS Lake Formation for secure data lake builds, Microsoft Purview for unified data governance, Informatica for intelligent data quality, IBM Watson Knowledge Catalog for metadata management and Collibra for governance orchestration. Data processing architectures such as Lambda (separate batch and streaming layers) and Kappa (a single streaming pipeline that replays history when reprocessing is needed) inform design choices for real-time versus batch processing, guiding tool selection and operational policies.

Interpretive models like the Data Integration Maturity Model and risk-impact matrices help organizations prioritize initiatives. By ranking data sources according to compliance risk and implementation complexity, teams can align roadmaps with strategic objectives, ensuring that AI agents access the most critical feeds first.
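A risk-impact matrix can be expressed directly in code. This sketch assumes each source has been scored from 1 to 5 for compliance risk and implementation complexity, and that a score of 3 or more counts as high. The quadrant names and example scores are illustrative and do not come from any standard.

```python
def quadrant(risk: int, complexity: int, cutoff: int = 3) -> str:
    """Place a source in a 2x2 risk-impact matrix (scores on a 1-5 scale)."""
    high_risk = risk >= cutoff
    high_effort = complexity >= cutoff
    if high_risk and not high_effort:
        return "quick win"
    if high_risk and high_effort:
        return "strategic project"
    if not high_risk and not high_effort:
        return "opportunistic"
    return "defer"


def prioritize(sources: dict) -> list:
    """Order sources by highest compliance risk; lower complexity breaks ties."""
    return sorted(sources, key=lambda name: (-sources[name][0], sources[name][1]))


# Hypothetical (risk, complexity) scores from a scoring workshop.
scores = {
    "scada_telemetry": (5, 4),
    "ami_reads": (5, 2),
    "gis_assets": (3, 3),
    "weather_feed": (2, 1),
    "legacy_work_orders": (2, 5),
}

for name in prioritize(scores):
    print(name, quadrant(*scores[name]))
```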

Ensuring Data Quality and Stewardship

The integrity of AI-driven compliance hinges on rigorous data quality metrics. Core dimensions include:

- Accuracy – Validation against calibrated sensors, manual audit checks and external reference datasets.

- Completeness – Ensuring required fields such as meter readings, geographic coordinates and customer attributes are fully populated.

- Consistency – Harmonizing naming conventions, code lists and units of measure across systems.

- Timeliness – Aligning data freshness with use cases, from sub-second grid stability monitoring to monthly financial reconciliations.

- Lineage – Tracking provenance through documented ingestion and transformation pipelines to support audit trails.
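Several of these dimensions can be measured as simple ratios over a batch of records. The sketch below covers completeness, timeliness and a plausibility range check used as a rough accuracy proxy. The field names, the 24-hour freshness window and the 0 to 1,000 kWh range are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone

REQUIRED = ("meter_id", "reading_kwh", "read_at", "latitude", "longitude")


def completeness(records):
    """Share of records with every required field present and non-null."""
    ok = sum(all(r.get(f) is not None for f in REQUIRED) for r in records)
    return ok / len(records) if records else 0.0


def timeliness(records, now, max_age):
    """Share of records read no more than max_age before now."""
    fresh = sum(
        r.get("read_at") is not None and now - r["read_at"] <= max_age
        for r in records
    )
    return fresh / len(records) if records else 0.0


def plausibility(records, low=0.0, high=1000.0):
    """Rough accuracy proxy: share of readings inside an assumed physical range."""
    values = [r["reading_kwh"] for r in records if r.get("reading_kwh") is not None]
    return sum(low <= v <= high for v in values) / len(values) if values else 0.0


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
records = [
    {"meter_id": "M1", "reading_kwh": 12.5, "read_at": now - timedelta(minutes=10),
     "latitude": 39.9, "longitude": -75.1},
    {"meter_id": "M2", "reading_kwh": None, "read_at": now - timedelta(hours=30),
     "latitude": 39.8, "longitude": -75.2},
    {"meter_id": "M3", "reading_kwh": 5000.0, "read_at": now - timedelta(hours=1),
     "latitude": 40.0, "longitude": -75.0},
    {"meter_id": "M4", "reading_kwh": 8.0, "read_at": now - timedelta(minutes=5),
     "latitude": None, "longitude": -75.3},
]

print(completeness(records), timeliness(records, now, timedelta(hours=24)), plausibility(records))
```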

Standards such as ISO 8000 for data quality and the Utility Industry Architecture Framework (UIAF) provide templates for governance processes, while DAMA International’s Data Management Body of Knowledge (DAMA-DMBOK) and ISO/IEC 38500 inform stewardship policies. Embedding these standards into data cataloging platforms such as Collibra and Microsoft Purview enables automated profiling, enrichment and policy enforcement.

Effective stewardship requires clearly defined roles:

- Data Owner – Senior leader who specifies requirements, approves policies and resolves conflicting definitions.

- Data Steward – Practitioner responsible for day-to-day quality monitoring, metadata governance and issue resolution.

- Data Custodian – IT professional who manages storage, security controls and pipeline deployments.

- Governance Council – Cross-functional committee overseeing policy creation, dispute resolution and strategic alignment.

- AI Model Owner – Domain expert validating agent outputs, monitoring performance and authorizing model updates.

- Model Risk Officer – Specialist ensuring interpretability, fairness and consistency with regulatory criteria.

- Compliance Auditor – Internal or external reviewer conducting periodic assessments of data processes and model behavior.

Formalizing these roles in a governance charter and leveraging platforms such as Collibra or Microsoft Purview for automated workflow management ensures accountability and transparent audit trails.
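A governance charter can be checked mechanically for segregation-of-duties conflicts. In this sketch the conflicting role pairs are hypothetical examples of the kind a governance council might define, and assignments are modeled as `(person, role, asset)` tuples.

```python
# Hypothetical role pairs that one person should not hold on the same asset.
CONFLICTING_ROLES = {
    frozenset({"AI Model Owner", "Model Risk Officer"}),
    frozenset({"Data Custodian", "Compliance Auditor"}),
    frozenset({"AI Model Owner", "Compliance Auditor"}),
}


def sod_violations(assignments):
    """Find people holding conflicting roles on the same asset.

    assignments: iterable of (person, role, asset) tuples.
    Returns a sorted list of (person, asset, role_a, role_b) tuples.
    """
    held = {}
    for person, role, asset in assignments:
        held.setdefault((person, asset), set()).add(role)
    found = []
    for (person, asset), roles in held.items():
        for pair in CONFLICTING_ROLES:
            if pair <= roles:  # both conflicting roles are held
                role_a, role_b = sorted(pair)
                found.append((person, asset, role_a, role_b))
    return sorted(found)


charter = [
    ("alice", "AI Model Owner", "outage_report_agent"),
    ("alice", "Model Risk Officer", "outage_report_agent"),
    ("bob", "Data Custodian", "ami_lake"),
    ("carol", "Compliance Auditor", "ami_lake"),
]

print(sod_violations(charter))
```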

Governance Foundations for AI-Driven Compliance

Policy, Standards and Frameworks

A robust governance framework integrates policy definitions, technical controls and organizational structures to satisfy legal, ethical and operational requirements. Key components include data usage policies, privacy thresholds, model transparency criteria and escalation procedures for anomalous outputs. Technical guidelines can align with the National Institute of Standards and Technology (NIST) AI Risk Management Framework, while risk and compliance registers catalog regulatory obligations, data sensitivities and model risks.

Technical Controls and Continuous Monitoring

- Access Management – Role-based controls and segregation of duties restrict data and model artifacts to authorized users.

- Encryption and Masking – Encrypt data at rest and in transit, and apply masking or anonymization in non-production environments.

- Audit Trails – Immutable logs of data access, pipeline executions and decision outputs support forensic analysis.

- Automated Policy Enforcement – Policy engines block non-conforming data flows or model behaviors in real time.

- Performance and Drift Monitoring – Continuous checks on model accuracy and input distributions trigger governance workflows when metrics deviate.
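Input-distribution drift is commonly measured with the Population Stability Index (PSI). PSI compares the binned proportions of a baseline sample with those of a recent one as the sum of (actual - expected) × ln(actual / expected). The sketch below is a plain-Python version. The bin edges, the sample values and the 0.1 and 0.25 cutoffs (a widely used rule of thumb, not a regulatory standard) are assumptions.

```python
import math


def psi(expected, actual, bins):
    """Population Stability Index between a baseline and a recent sample.

    bins are sorted edges such as [0, 10, 20, 30]; the last bin includes its
    right edge and values outside the range are ignored. A small floor avoids
    log(0) when a bin is empty.
    """
    floor = 1e-6

    def proportions(sample):
        counts = [0] * (len(bins) - 1)
        for x in sample:
            for i in range(len(bins) - 1):
                in_last = i == len(bins) - 2 and x == bins[-1]
                if bins[i] <= x < bins[i + 1] or in_last:
                    counts[i] += 1
                    break
        total = sum(counts) or 1
        return [max(c / total, floor) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(value, warn=0.1, alert=0.25):
    """Map a PSI value to a status using rule-of-thumb cutoffs (assumed)."""
    if value >= alert:
        return "alert"
    if value >= warn:
        return "warn"
    return "stable"


bins = [0, 10, 20, 30]
baseline = [5, 15, 25, 5, 15, 25]  # e.g. inputs seen during model validation
recent = [21, 25, 26, 27, 28, 29]  # e.g. inputs seen this week

score = psi(baseline, recent, bins)
print(round(score, 2), drift_status(score))
```

An "alert" status would be the trigger for the governance workflow described above, such as a review by the AI Model Owner.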

Regulatory Alignment and Privacy Safeguards

Utilities must map data and model activities to sector-specific requirements, such as FERC regulations, state public utility commission rules and NERC CIP standards, as well as to data privacy laws like GDPR and CCPA. Maintaining a regulatory register with retention schedules, data subject rights and impact assessments supports compliance checks before deployment and audit readiness afterward.
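Retention schedules in a regulatory register can drive automated disposal checks. The retention periods below are placeholders, not actual FERC, NERC or state requirements, and the `disposal_due` helper is hypothetical. A legal hold always overrides expiry.

```python
from datetime import date


def add_years(d: date, years: int) -> date:
    """Add calendar years, moving Feb 29 to Feb 28 in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


# Placeholder register: record class -> retention period in years.
RETENTION_YEARS = {
    "reliability_event_log": 3,
    "customer_billing": 7,
    "emissions_monitoring": 5,
}


def disposal_due(record_class: str, created: date, today: date,
                 legal_hold: bool = False) -> bool:
    """True if a record has passed its retention period and is not on hold."""
    if legal_hold:
        return False
    return today >= add_years(created, RETENTION_YEARS[record_class])


print(disposal_due("reliability_event_log", date(2020, 6, 1), date(2023, 6, 1)))
```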

Governance Maturity and Improvement

Adopting a maturity model—from Ad Hoc and Repeatable stages to Defined, Managed and Optimized—helps benchmark governance capabilities and prioritize enhancements. Metrics dashboards track effectiveness, while continuous improvement cycles incorporate lessons from audits, regulatory changes and operational incidents.

Limitations and Mitigation Strategies

- Bureaucratic Delays – Implement agile governance processes to balance oversight with innovation velocity.

- Resource Constraints – Leverage cloud-based governance platforms to reduce upfront investment and scale controls.

- Regulatory Ambiguity – Maintain close dialogue with regulators and update frameworks as AI-specific guidance emerges.

- Cultural Resistance – Build executive sponsorship and integrate governance training into professional development.

- Data Fragmentation – Employ data fabrics or meshes to unify visibility and enforce consistent policies across legacy and modern systems.

Strategic Recommendations

- Align Governance with Business Imperatives – Ensure compliance frameworks support reliability, cost efficiency and customer satisfaction goals.

- Prioritize by Risk – Use risk-impact matrices and compliance value stream mapping to focus on high-leverage data domains.

- Automate Controls – Adopt policy-as-code, integrated data catalogs and model governance tools to scale enforcement without proportional headcount increases.

- Foster a Stewardship Culture – Embed governance responsibilities into performance metrics and reward proactive data quality management.

- Iterate Continuously – Regularly review and update policies, tools and roles to reflect regulatory shifts, emerging risks and technological advances.
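Policy-as-code means writing governance rules as versioned, testable code that a pipeline evaluates before acting. The sketch below shows the idea with two hypothetical policies and a `FlowRequest` type invented for illustration. Dedicated engines such as Open Policy Agent express similar rules in their own policy language.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class FlowRequest:
    """A proposed data movement that a pipeline or agent wants to execute."""
    dataset: str
    classification: str   # e.g. "public", "customer_pii", "restricted"
    destination_env: str  # e.g. "production", "test"
    masked: bool


@dataclass(frozen=True)
class Policy:
    name: str
    violates: Callable[[FlowRequest], bool]


# Hypothetical policies; in practice these live in version control with reviews.
POLICIES = [
    Policy("no-unmasked-pii-outside-production",
           lambda r: r.classification == "customer_pii"
           and r.destination_env != "production" and not r.masked),
    Policy("no-restricted-data-in-test",
           lambda r: r.classification == "restricted" and r.destination_env == "test"),
]


def evaluate(request: FlowRequest) -> Tuple[bool, List[str]]:
    """Allow the flow only if no policy is violated; report which ones were."""
    violated = [p.name for p in POLICIES if p.violates(request)]
    return (not violated, violated)


print(evaluate(FlowRequest("billing_accounts", "customer_pii", "test", masked=False)))
```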

By integrating diverse data sources through governed architectures, enforcing rigorous quality and stewardship standards, and embedding comprehensive governance foundations, utilities can unlock the full potential of AI-driven compliance agents. This integrated approach transforms compliance from a reactive, manual exercise into a strategic capability—ensuring regulatory adherence, mitigating risk and enabling continuous operational excellence.

Chapter 6: Automating Regulatory Reporting Processes

Regulatory Complexity and the Need for Intelligent Compliance

The utilities sector operates under a dense web of federal, state, and local regulations designed to ensure reliable service, protect public health, and meet environmental objectives. Landmark statutes such as the Federal Power Act, the Clean Air Act, and the Safe Drinking Water Act delegate rulemaking to agencies including FERC and the EPA, while state public utility commissions impose additional requirements on rates, safety, and reporting. Industry standards add further obligations: NERC Reliability Standards are mandatory and enforceable for bulk power system entities, while AWWA and IEEE standards are generally voluntary unless a regulation incorporates them by reference. Utilities must reconcile these overlapping mandates with operational priorities such as grid resilience, renewable integration, cybersecurity, and digital transformation, across multiple jurisdictions with distinct reporting formats and approval processes. Emerging policy goals around climate resilience, distributed energy resources, and data privacy continue to expand the regulatory perimeter and intensify compliance demands.

Traditional compliance models relying on manual data gathering, spreadsheets, and periodic audits struggle to keep pace with evolving requirements. Data silos across grid management, water treatment, billing, and asset maintenance hinder unified compliance oversight. Regulators issue dozens of rule modifications, guidance memos, and reporting clarifications each quarter, requiring constant workflow adjustments and resource reallocation. The financial and reputational consequences of non-compliance—fines, operational restrictions, license revocations, and stakeholder distrust—make proactive, strategic compliance management essential.

An intelligent compliance framework addresses these challenges by combining regulatory intelligence, integrated data repositories, automated workflows, and performance dashboards. Natural language processing scans regulatory texts to extract obligations and map them to internal processes. Machine learning models analyze historical filings to predict audit focus areas and recommend data quality improvements. Decision automation triggers review workflows when anomalies or missing submissions are detected. Centralized rule libraries standardize how requirements are expressed, while data lakes consolidate operational metrics for real-time monitoring. Cross-functional governance structures ensure auditability of algorithmic decisions, model interpretability, and secure data pipelines. Change management prepares staff to trust intelligent tools while retaining the expertise needed for nuanced regulatory interpretation.
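Production systems would use trained language models for obligation extraction, but a keyword baseline shows the shape of the task. This sketch flags sentences containing modal cues such as "shall" or "must" and pulls out simple "within N days/hours" deadlines. The cue list, the deadline pattern and the sample text are assumptions.

```python
import re

# Modal phrases that often signal a binding obligation in regulatory text.
OBLIGATION = re.compile(r"\b(shall|must|is required to|are required to)\b", re.IGNORECASE)
# Simple deadline pattern such as "within 30 days" or "within 5 business days".
DEADLINE = re.compile(r"\bwithin\s+(\d+)\s+(day|business day|hour|month)s?\b", re.IGNORECASE)


def extract_obligations(text: str):
    """Split text into sentences and return those carrying an obligation cue."""
    sentences = re.split(r"(?<=[.;])\s+", text.strip())
    results = []
    for sentence in sentences:
        if OBLIGATION.search(sentence):
            m = DEADLINE.search(sentence)
            results.append({
                "text": sentence,
                "deadline": (int(m.group(1)), m.group(2).lower()) if m else None,
            })
    return results


sample_rule = (
    "Each registered entity shall report a qualifying outage within 24 hours. "
    "Supporting documentation may be retained electronically. "
    "The utility must file an annual summary within 90 days of year end."
)

for obligation in extract_obligations(sample_rule):
    print(obligation)
```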

Analytical Foundations: Accuracy, Timeliness, Efficiency, and Scalability

Deploying AI-driven reporting agents requires rigorous analytical frameworks to measure performance across four dimensions: accuracy, timeliness, operational efficiency, and regulatory adaptability. Decision makers evaluate systems using quantitative metrics, interpretive models, and cost frameworks to ensure that intelligent automation delivers credible, scalable compliance outcomes.

Accuracy Metrics

- Precision: the proportion of flagged regulatory elements that are correct; high precision minimizes false positives.

- Recall: the proportion of actual requirements that the agent captures; high recall minimizes false negatives.

- F1 Score: the harmonic mean of precision and recall, combining both into a single performance indicator, with minimum thresholds set for regulatory filings.

- Error Distribution Analysis: examining patterns in false positives and false negatives to diagnose systemic biases and guide model retraining and data enrichment.

- Threshold Sensitivity: adjusting confidence cutoffs to match risk tolerance, trading off between capturing subtle compliance signals and avoiding unnecessary reviews.
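Precision, recall, F1 and threshold sensitivity can all be computed from reviewer labels and agent confidence scores. In this sketch the labels and scores for eight clauses are invented for illustration. Raising the threshold trades recall for precision.

```python
def prf1(y_true, y_pred):
    """Precision, recall and F1 for binary labels (1 = regulatory element)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def sweep(y_true, scores, thresholds):
    """Threshold sensitivity: metrics at each candidate confidence cutoff."""
    return {th: prf1(y_true, [int(s >= th) for s in scores]) for th in thresholds}


# Hypothetical reviewer labels and agent confidence scores for eight clauses.
labels = [1, 1, 1, 0, 1, 0, 0, 1]
scores = [0.95, 0.80, 0.65, 0.60, 0.40, 0.30, 0.20, 0.55]

for th, (p, r, f) in sweep(labels, scores, [0.3, 0.5, 0.7]).items():
    print(f"threshold={th:.1f} precision={p:.2f} recall={r:.2f} f1={f:.2f}")
```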

Timeliness and Workflow Efficiency

- Cycle Time Reduction: AI agents automate repetitive tasks, shortening reporting cycles from weeks to days.

- Parallel Processing: Concurrent handling of multiple reporting streams eliminates sequential bottlenecks and supports last-minute data updates.

- Continuous Reporting: Embedding validation checks into operational systems enables near real-time compliance monitoring and proactive issue resolution.

Model-monitoring platforms such as IBM Watson OpenScale and Microsoft Azure AI track agent quality and drift, while workflow analytics dashboards track task durations, exception rates, and throughput. Techniques like value stream mapping and process mining quantify non-value-added activities and highlight opportunities for further automation. In one case, an AI reporting agent reduced human review time by 70 percent, freeing staff for strategic analysis and regulatory strategy planning.
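Process mining derives measures such as cycle time and exception rate from event logs. The minimal calculation below assumes a hypothetical log of `(case_id, activity, timestamp)` tuples and an activity named `exception_raised`. Dedicated process-mining tools add process discovery and conformance checking on top of measures like these.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical event log: (case_id, activity, timestamp) per report filing.
events = [
    ("RPT-1", "data_extracted", datetime(2024, 3, 1, 8, 0)),
    ("RPT-1", "validated", datetime(2024, 3, 1, 9, 30)),
    ("RPT-1", "submitted", datetime(2024, 3, 2, 10, 0)),
    ("RPT-2", "data_extracted", datetime(2024, 3, 1, 8, 0)),
    ("RPT-2", "exception_raised", datetime(2024, 3, 1, 11, 0)),
    ("RPT-2", "validated", datetime(2024, 3, 3, 16, 0)),
    ("RPT-2", "submitted", datetime(2024, 3, 4, 9, 0)),
]


def cycle_times_hours(log):
    """Hours from each case's first event to its last event."""
    stamps = defaultdict(list)
    for case, _, ts in log:
        stamps[case].append(ts)
    return {c: (max(t) - min(t)).total_seconds() / 3600 for c, t in stamps.items()}


def exception_rate(log, marker="exception_raised"):
    """Share of cases that hit at least one exception activity."""
    cases = {c for c, _, _ in log}
    flagged = {c for c, a, _ in log if a == marker}
    return len(flagged) / len(cases) if cases else 0.0


print(cycle_times_hours(events), exception_rate(events))
```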

Resource Allocation and Cost Structures

- Labor Redeployment: Compliance personnel shift from manual data validation to exception resolution and policy interpretation.

- Operational Cost Savings: Error reduction and fewer external consultancy fees lower total cost of compliance.

Financial evaluation frameworks such as Total Cost of Ownership (TCO) and Return on Compliance Investment compare pre- and post-automation expense profiles. A mid-Atlantic utility documented a 25 percent net reduction in compliance operating costs within six months of deploying an AI solution, attributing nearly half of the savings to internal teams that now handle regulatory inquiries using automated insights. Tools from Google Cloud AI support scenario planning to model long-term cost trajectories under changing regulatory regimes.
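A simplified TCO and return-on-compliance-investment calculation is sketched below. All cost figures are hypothetical and unrelated to the mid-Atlantic case. The model is undiscounted, and defining return as net savings divided by the automation investment is an assumption about how the metric is computed.

```python
def total_cost(annual_costs: dict, years: int, one_time: float = 0.0) -> float:
    """Undiscounted TCO: one-time costs plus recurring annual costs over the horizon."""
    return one_time + years * sum(annual_costs.values())


YEARS = 3
IMPLEMENTATION = 400_000  # hypothetical one-time deployment cost (USD)

# Hypothetical annual compliance costs (USD) before and after automation.
before = {"staff_hours": 900_000, "consultants": 250_000, "penalty_reserve": 100_000}
after = {"staff_hours": 600_000, "consultants": 120_000, "penalty_reserve": 60_000,
         "platform_licenses": 150_000}

tco_before = total_cost(before, YEARS)
tco_after = total_cost(after, YEARS, one_time=IMPLEMENTATION)
net_savings = tco_before - tco_after

# Investment = one-time cost plus recurring platform licenses over the horizon.
investment = IMPLEMENTATION + YEARS * after["platform_licenses"]
roci = net_savings / investment

print(f"TCO before: ${tco_before:,.0f}  after: ${tco_after:,.0f}")
print(f"Net reduction: {net_savings / tco_before:.1%}  ROCI: {roci:.2f}")
```

A fuller evaluation would discount future cash flows and model scenarios such as new reporting mandates.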

Scalability and Regulatory Adaptability

- Modular Rule Management: Rapid incorporation of new rule sets into the agent’s knowledge base without major system overhauls.

- Data Throughput Capacity: Maintenance of SLA-compliant processing times under elevated reporting volumes.

- Configurability: User interfaces that enable non-technical staff to adjust mappings, validation logic, and report parameters.
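Modular rule management can be approximated with a registry that rule modules add to. Supporting a new jurisdiction then means registering rules rather than changing the validation engine. The `rule` decorator, the jurisdiction keys and both example rules are hypothetical.

```python
from typing import Callable, Dict, List, Optional

Check = Callable[[dict], Optional[str]]

# Registry of validation rules keyed by jurisdiction.
RULES: Dict[str, List[Check]] = {}


def rule(jurisdiction: str):
    """Decorator that registers a validation rule for a jurisdiction."""
    def register(fn: Check) -> Check:
        RULES.setdefault(jurisdiction, []).append(fn)
        return fn
    return register


@rule("federal")
def has_reporting_period(report: dict) -> Optional[str]:
    """Illustrative rule: every filing needs a reporting period."""
    return None if report.get("period") else "missing reporting period"


@rule("state_x")  # hypothetical state rule set
def outage_minutes_nonnegative(report: dict) -> Optional[str]:
    """Illustrative rule: outage duration cannot be negative."""
    return None if report.get("outage_minutes", 0) >= 0 else "negative outage minutes"


def validate(report: dict, jurisdictions: List[str]) -> List[str]:
    """Run every registered rule for the given jurisdictions; return error messages."""
    errors = []
    for j in jurisdictions:
        for check in RULES.get(j, []):
            msg = check(report)
            if msg:
                errors.append(f"{j}: {msg}")
    return errors


print(validate({"outage_minutes": -5}, ["federal", "state_x"]))
```

Adding another jurisdiction is a matter of decorating new functions with, for example, `@rule("state_y")`; `validate` itself stays unchanged.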

Performance and stress tests simulate multi-jurisdictional filing volumes to confirm that validation workflows meet sub-second targets. Technology maturity assessments help organizations prioritize enhancements, such as natural language rule ingestion and automated regulatory change detection, to maximize adaptability as policy landscapes evolve.
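Automated regulatory change detection can begin with a line-level comparison of two versions of a rule text. This sketch uses `difflib.ndiff` from Python's standard library. The sample rule texts are invented, and a production system would also need document retrieval and semantic comparison.

```python
import difflib


def detect_changes(old_text: str, new_text: str):
    """Return (added_lines, removed_lines) between two versions of a rule text."""
    added, removed = [], []
    for line in difflib.ndiff(old_text.splitlines(), new_text.splitlines()):
        # ndiff prefixes: "+ " added, "- " removed, "  " unchanged, "? " hints.
        if line.startswith("+ "):
            added.append(line[2:])
        elif line.startswith("- "):
            removed.append(line[2:])
    return added, removed


old_rule = "Report outages within 48 hours.\nRetain logs for 3 years."
new_rule = ("Report outages within 24 hours.\nRetain logs for 3 years.\n"
            "Notify the commission of cyber incidents.")

added, removed = detect_changes(old_rule, new_rule)
print("Added:", added)
print("Removed:", removed)
```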

Context-Specific Reporting Domains