AI-Driven Automation in Compliance and Risk Management: An Industry Insights Guide

Introduction

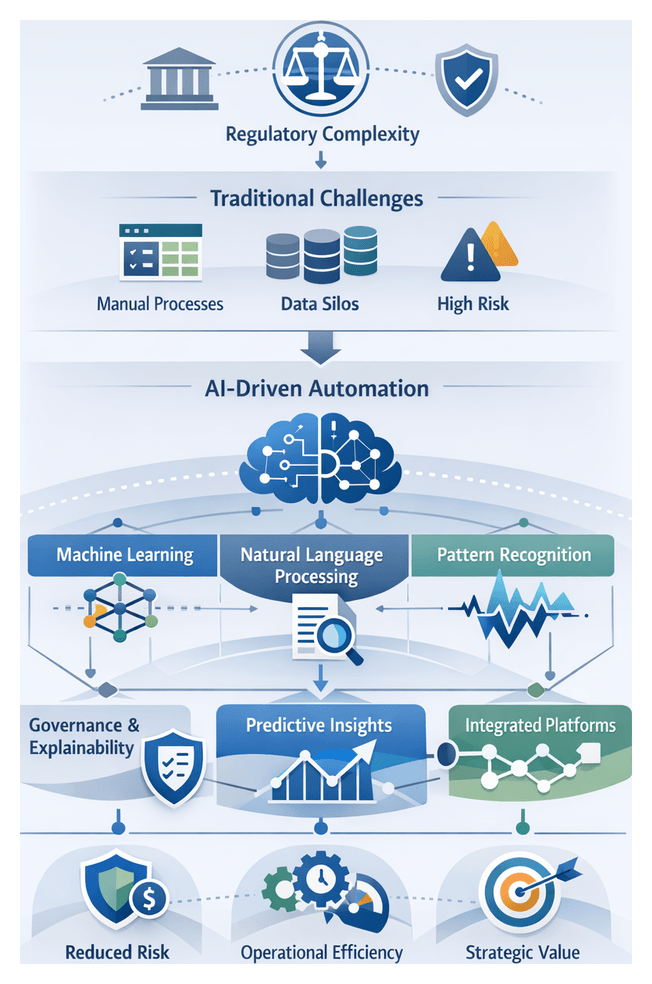

Current Compliance and Risk Management Challenges

Organizations today face an unprecedented convergence of regulatory complexity, operational inefficiency, and heightened risk exposure. Global standards—Basel III, the European Union’s Anti-Money Laundering Directives, Sarbanes-Oxley, GDPR—and dozens of other mandates impose extensive documentation, reporting deadlines, and validation checkpoints. Compliance teams often allocate more than ten percent of annual budgets to manual review, data aggregation, and exception handling, producing error rates of 5–20 percent and delaying risk detection by days or weeks. Legacy controls rely on spreadsheet reconciliations, static rule engines, and siloed data across disparate systems, undermining visibility and responsiveness.

Meanwhile, regulators are shifting toward continuous supervision, real-time reporting, and proactive risk management. Institutions must be able to produce scenario analysis, stress testing, and transparent audit trails on demand. Without an automated, analytics-driven technology foundation, many firms struggle to adapt to new rules, harness rich data assets, and maintain control effectiveness. The result is a triad of challenges:

- Rising complexity from overlapping, rapidly changing regulations.

- Operational inefficiency due to fragmented, manual processes.

- Heightened risk exposure from detection and response delays.

Addressing these challenges requires moving beyond traditional compliance operating models toward solutions that transform compliance from a cost center into a strategic enabler of resilience and growth.

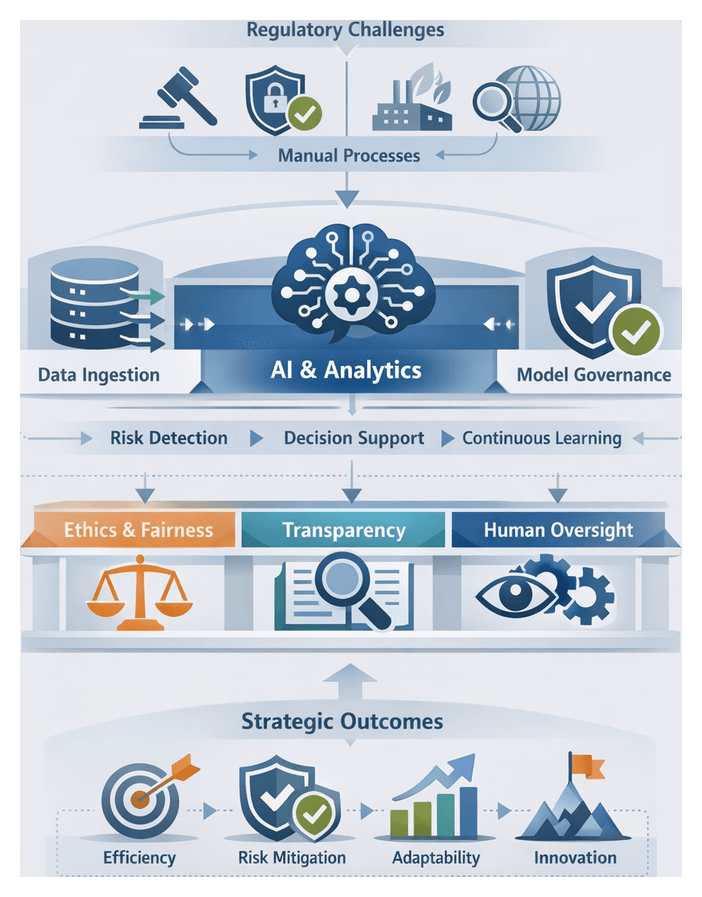

Conceptual Framing for AI-Driven Automation

AI-driven automation applies machine learning, natural language processing (NLP), predictive analytics, and knowledge graphs to compliance and risk workflows. Unlike rule-based engines, AI models learn from historical data, adapt to new patterns, and detect complex relationships that manual processes miss. In practice, organizations use AI to classify regulatory documents, extract clauses, score transaction patterns, forecast exposures, and link entities and regulations into unified views for rapid impact analysis.

By shifting from reactive verification to proactive insight, AI enhances control efficacy, operational resilience, and insight generation. Machine learning algorithms flag anomalous behavior in real time; NLP engines parse policy updates across jurisdictions; clustering techniques group correlated events for deeper investigation. Knowledge graphs map entities, transactions, and controls in a dynamic, end-to-end compliance architecture supporting continuous monitoring and rapid adaptation.
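To make the knowledge-graph idea concrete, here is a minimal sketch of impact analysis as a breadth-first traversal. The graph layout, the node labels, and the `impacted_nodes` helper are all hypothetical; a production system would typically sit on a graph database rather than an in-memory dictionary.

```python
from collections import deque


def impacted_nodes(graph: dict[str, list[str]], start: str) -> list[str]:
    """Return every node reachable from `start`, in breadth-first order."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                order.append(neighbor)
                queue.append(neighbor)
    return order


# Hypothetical links: a regulation maps to controls, controls map to systems.
compliance_graph = {
    "regulation:data-security-rule": ["control:encryption", "control:access-review"],
    "control:encryption": ["system:payments-db"],
    "control:access-review": ["system:payments-db", "system:crm"],
}

impacted = impacted_nodes(compliance_graph, "regulation:data-security-rule")
print(impacted)
# ['control:encryption', 'control:access-review', 'system:payments-db', 'system:crm']
```

When the regulation node changes, the traversal lists the controls and systems that may need review, which is the "rapid impact analysis" the text describes.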

Strategic implementation approaches include establishing a Compliance Center of Excellence; defining use-case roadmaps with measurable objectives; adopting agile development cycles; and integrating with enterprise data platforms such as Palantir Foundry or in-house lakes. Early pilots with IBM Watson for document review or Microsoft Azure Cognitive Services for text analytics validate value and build stakeholder confidence. Model risk management frameworks oversee development, validation, and monitoring in line with supervisory guidance.

Interpretive frameworks guide AI investments:

- Maturity Models: Phases from pilot to optimization define governance needs and resource allocations.

- Risk-Reward Matrices: Balance anticipated risk reduction against implementation complexity.

- Control Taxonomies: Map AI capabilities to preventive, detective, and corrective controls for uplift estimation.

- Ethical and Governance Benchmarks: Shape requirements for transparency, fairness, and accountability.

Evaluative criteria encompass model accuracy (precision, recall, false positive rates), explainability, data governance alignment, operational integration, scalability, and compliance and ethics risk. Embedding human-in-the-loop controls, upskilling teams in data literacy and model interpretation, and positioning AI as an augmentation of human expertise ensure balanced, sustainable adoption.
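As a minimal sketch of the accuracy criteria named above, the snippet below derives precision, recall, and false positive rate from reviewed alert counts. The `ConfusionCounts` structure and the sample numbers are illustrative assumptions, not figures from any real program.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Alert outcomes after analyst review (hypothetical structure)."""
    true_positives: int   # alerts confirmed as real issues
    false_positives: int  # alerts dismissed as benign
    false_negatives: int  # real issues the model missed
    true_negatives: int   # benign items correctly left alone


def _ratio(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def evaluate(c: ConfusionCounts) -> dict[str, float]:
    """Compute the three accuracy metrics from raw counts."""
    return {
        "precision": _ratio(c.true_positives, c.true_positives + c.false_positives),
        "recall": _ratio(c.true_positives, c.true_positives + c.false_negatives),
        "false_positive_rate": _ratio(c.false_positives, c.false_positives + c.true_negatives),
    }


# Made-up counts for illustration only.
counts = ConfusionCounts(true_positives=40, false_positives=160,
                         false_negatives=10, true_negatives=9790)
metrics = evaluate(counts)
print(metrics)
```

In this made-up sample, recall is high (0.8) while precision is low (0.2): most real issues are caught, but four out of five alerts are noise. That trade-off is what the evaluative criteria are meant to surface.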

Relevance of AI Adoption in Today’s Environment

Four converging forces make AI adoption in compliance and risk management a strategic imperative:

- Data Proliferation and Complexity: Organizations manage structured transactions and unstructured content—emails, social media, legal agreements—at volumes beyond manual frameworks. AI techniques such as NLP and pattern recognition synthesize these streams, prioritize high-risk cases, and provide explanatory insights. Financial firms apply real-time anomaly detection to payment patterns; life sciences companies use NLP to analyze regulatory submissions and scientific literature for labeling compliance.

- Shifting Regulatory Demands: Supervisory bodies worldwide now require explainability, transparency, and ethical controls for automated systems. Jurisdictions in the EU, Singapore, and U.S. states are publishing AI risk-management principles. Institutions that implement retrainable models can adjust to rule changes in days rather than months, reducing reporting errors and enforcement risks. Governance frameworks aligned with COSO or ISO 31000 ensure version control, validation protocols, and documentation standards.

- Technological Maturity: Cloud computing, containerization, and MLOps platforms underpin scalable model training and monitoring. Open-source ecosystems—TensorFlow, PyTorch, scikit-learn—alongside enterprise solutions from SAS and many others accelerate experimentation and production performance. Prebuilt models for text classification, anomaly detection, and predictive analytics now include built-in governance and bias detection tools.

- Economic and Competitive Pressures: Early adopters report 20–30 percent cost reductions in reporting and monitoring, faster remediation of control gaps, and enhanced audit readiness. AI-driven insights extend beyond compliance to credit risk, operational resilience, and ESG, creating unified risk platforms. Firms that delay face escalating burdens and lose market agility.

In this landscape, AI is not an optional upgrade but a cornerstone of agile, insight-driven compliance that supports strategic objectives, optimizes resource allocation, and anticipates emerging threats.

Strategic Roadmap and Key Considerations

Roadmap and Key Learning Objectives

This guide progresses from foundational concepts to advanced applications, providing strategic insights and practical considerations for AI-driven compliance:

- Foundations of Compliance and Risk Management: Traditional frameworks and the limits of manual controls.

- The Regulatory Landscape in the AI Era: Mapping AI-related requirements and supervisory guidelines.

- Core AI Techniques: Pattern recognition, NLP, supervised learning, and their compliance applications.

- Data Governance and Quality: Stewardship, lineage, and validation best practices.

- AI for Risk Detection and Predictive Analytics: Anomaly detection and proactive mitigation.

- Automating Reporting and Filings: Intelligent architectures for document classification and audit readiness.

- Enhancing AML and Fraud Prevention: Behavioral analytics and adaptive detection frameworks.

- Integrating AI into Enterprise Risk Frameworks: Governance models and stakeholder engagement.

- Assessing Performance and ROI: Metrics, cost-benefit analysis, and continuous improvement loops.

- Future Trends and Ethics: Generative models, real-time decision engines, and responsible AI governance.

Critical Considerations for Effective Adoption

- Cultural Transformation: Secure executive sponsorship, foster cross-functional collaboration, and cultivate a data-driven mindset across risk, compliance, and technology teams.

- Skills and Competencies: Invest in upskilling compliance professionals, data scientists, and IT staff in analytical literacy, model governance, and domain knowledge.

- Data Quality and Integration: Harmonize data sources, enforce consistent taxonomies, and implement rigorous validation to safeguard model accuracy and regulatory trust.

- Regulatory Alignment and Model Risk: Adopt formal model risk frameworks, including documentation, version control, and change-control procedures, to meet supervisory expectations.

- Bias Mitigation and Explainability: Employ interpretable architectures and post hoc explainers to detect and correct bias, ensuring transparency for internal governance and external audits.

- Vendor and Technology Evaluation: Conduct due diligence on platforms and providers, evaluating technical capabilities, compliance certifications, and data security protocols.

- Scalability and Performance: Design modular architectures and leverage elastic compute resources to maintain consistent performance under peak demand.

- Continuous Governance: Establish AI steering committees or risk councils for ongoing oversight, performance reviews, and alignment with evolving objectives and regulations.

Limitations and Cautions

- Dependence on Historical Data: Models trained on past patterns may mispredict novel risk behaviors or unprecedented regulatory changes.

- Over-Reliance on Automation: Excessive trust in algorithmic outputs can erode professional judgment; human oversight is essential for edge cases and context interpretation.

- Regulatory Ambiguity: Divergent AI guidelines across jurisdictions require agility to adapt and avoid compliance gaps.

- Bias and Fairness Risks: Even well-governed models can perpetuate historical biases; continuous monitoring and corrective measures are mandatory.

- Integration Complexity: Interoperability with legacy systems may extend implementation timelines; phased strategies minimize disruption.

- Maintenance and Model Drift: Without retraining protocols and monitoring, performance can degrade as data distributions shift.

- Cost of Ownership: Licensing, infrastructure, and governance costs contribute to total ownership; holistic cost-benefit analyses should account for long-term maintenance and scalability.
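The model drift caution above can be monitored with a simple distribution comparison. The sketch below uses the population stability index (PSI), one common choice rather than a method this guide prescribes. The bin counts are invented, and the `eps` floor for empty bins is an assumption.

```python
import math


def psi(expected_counts: list[int], actual_counts: list[int], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions.

    `expected_counts` holds bin counts from the training period and
    `actual_counts` holds counts for the same bins in recent data.
    """
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both inputs must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    if expected_total == 0 or actual_total == 0:
        raise ValueError("each distribution needs at least one observation")
    score = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor each share at eps so an empty bin does not produce log(0).
        expected_share = max(expected / expected_total, eps)
        actual_share = max(actual / actual_total, eps)
        score += (actual_share - expected_share) * math.log(actual_share / expected_share)
    return score


training_bins = [100, 200, 300]   # hypothetical score distribution at training time
recent_bins = [300, 200, 100]     # hypothetical distribution in production

stable = psi(training_bins, training_bins)
shifted = psi(training_bins, recent_bins)
print(stable, round(shifted, 3))
```

A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift. Any threshold used in practice should be agreed with model risk management rather than taken from this sketch.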

Pathways for Ongoing Evolution

- Iterative Pilots and Proofs of Concept: Target high-impact areas—transaction monitoring, report generation—to validate assumptions and refine governance before scaling.

- Cross-Industry Collaboration: Participate in consortia, standards bodies, and regulatory sandboxes to accelerate best practices and inform supervisory dialogues.

- Advanced Analytics Exploration: Investigate generative AI for scenario simulation, graph analytics for network risk, and real-time decision engines for proactive controls.

- Responsible AI Frameworks: Embed ethical guidelines, impact assessments, and transparency protocols to uphold evolving norms and regulatory expectations.

- Integrated Risk Intelligence Platforms: Develop unified systems combining compliance data, risk indicators, and AI analytics for holistic visibility and faster decision-making.

- Continuous Skills Development: Maintain training programs, certifications, and talent pipelines to sustain capability and foster innovation in compliance analytics.

Chapter 1: Foundations of Compliance and Risk Management

Complex Challenges in Compliance and Risk Management

Organizations today navigate a labyrinth of regulatory demands from bodies such as the Basel Committee, the European Securities and Markets Authority and the Financial Action Task Force. Jurisdictional fragmentation, sector specialization and rapid policy updates force compliance teams to interpret evolving guidelines, maintain extensive documentation and demonstrate adherence across diverse business lines. Manual processes—spreadsheets for filings, checklist-based control execution and human-driven risk assessments—are increasingly strained by data silos and volumes that far outstrip human capacity.

Reliance on manual controls introduces high error rates, latency in issue detection and opaque audit trails. When critical data resides in departmental silos, consolidating and reconciling inputs can consume vast resources. Industry surveys show that up to 60 percent of compliance professionals spend the majority of their time on data aggregation rather than on strategic analysis. This focus on routine work limits organizational agility, delays response to regulatory change and increases exposure to financial loss, reputational damage and supervisory scrutiny.

Traditional risk models struggle with the velocity and variety of modern data. Digital transaction volumes have surged, new instruments such as cryptocurrencies pose novel threats, and unstructured sources (customer communications, social media and news feeds) contain hidden risk signals. Rule-based engines become harder to maintain as rule counts and data volumes grow, while static controls lose predictive power as behavior shifts. Simultaneously, regulatory texts, internal policies and contractual agreements number in the thousands, requiring meticulous tagging, version control and cross-referencing. Without a unified taxonomy or semantic indexing, retrieval and audit readiness become laborious.

These operational challenges quickly cascade into strategic risks. Executive boards lack real-time visibility into compliance posture, hindering critical decision-making. In sectors such as financial services and healthcare, even brief compliance lapses can lead to injunctions, customer attrition and long-term reputational harm. Faced with budget constraints, many organizations divert resources from innovation to maintain manual processes, eroding their competitive edge.

Against this backdrop, interest in advanced solutions has surged. Boards demand faster reporting cycles and transparent dashboards. Chief risk officers seek tools that automate routine tasks, reduce false positives and deliver deeper insights into emerging risk patterns. Compliance teams aim to streamline investigation workflows and redeploy experts toward high-value advisory roles. These pressures set the stage for artificial intelligence as a transformative force in compliance and risk management.

Conceptual Foundations of AI-Driven Automation

From Rules-Based to Learning-Based Systems

AI-driven automation transcends traditional rule-based technologies by incorporating adaptive learning capabilities. Whereas robotic process automation automates scripted tasks against structured inputs, AI systems such as machine learning models and natural language processing engines learn from historical data, detect evolving patterns and generalize to novel scenarios. Common AI techniques include:

- Supervised learning for transaction monitoring, training on labeled examples of suspicious activity to assign risk scores.

- Unsupervised learning for anomaly detection, uncovering outliers without predefined labels.

- Reinforcement learning to optimize decision workflows through continuous feedback loops.

- Natural language processing (NLP) for entity recognition, semantic parsing and sentiment analysis across regulatory texts, contracts and communications.

- Pattern recognition and statistical methods for real-time deviation alerts against established behavior baselines.

These techniques enable proactive, intelligence-driven risk management. Use cases span automated document classification, policy mapping, regulatory reporting and continuous control validation. By augmenting human judgment with data-driven insights, organizations shift from reactive compliance to predictive and prescriptive frameworks.
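The last technique in the list, deviation alerts against a behavior baseline, can be illustrated with a z-score check. This is a minimal sketch: the three-standard-deviation threshold and the sample figures are assumptions, and real monitoring would usually account for seasonality and trend as well.

```python
from statistics import mean, stdev


def deviation_alert(baseline: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` when it lies more than `threshold` sample standard
    deviations away from the baseline mean."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any departure at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical daily payment totals for one account.
daily_totals = [1020.0, 980.0, 1005.0, 995.0, 1010.0, 990.0]

print(deviation_alert(daily_totals, 1015.0))  # False: within normal variation
print(deviation_alert(daily_totals, 1500.0))  # True: far outside the baseline
```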

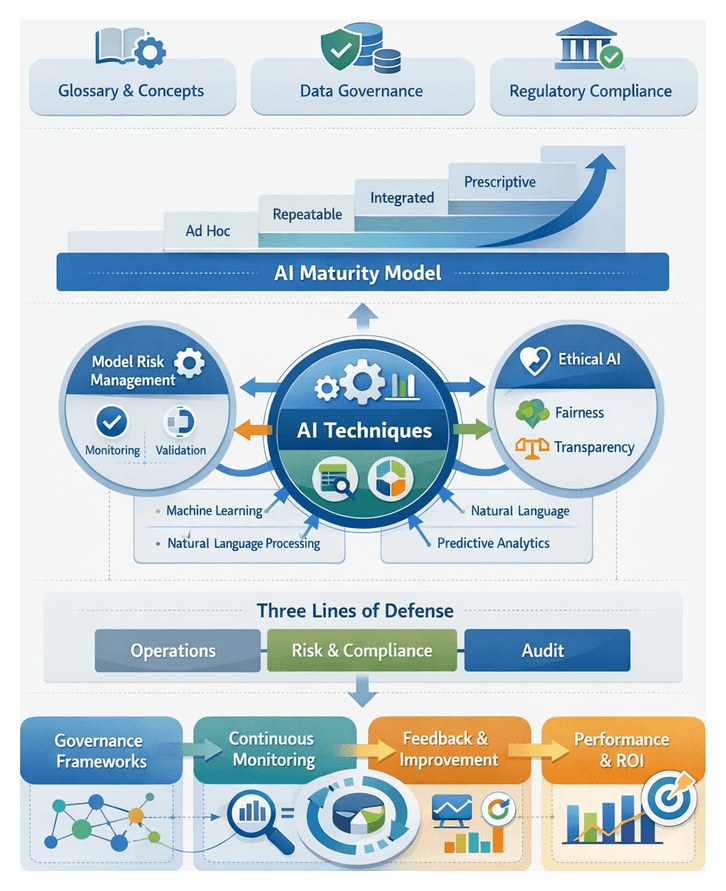

Governance, Explainability and Maturity Models

Robust governance structures are essential for defensible AI deployment. Regulatory bodies emphasize explainability, auditability and accountability for algorithmic decisions. Leading practices include:

- Explainability techniques such as SHAP and LIME, which attribute individual predictions to input features so reviewers can check model outputs against control objectives.

- Audit trails documenting data lineage, training processes and parameter changes.

- Governance committees—model risk management forums and AI ethics boards—with clear escalation paths.

To assess organizational readiness, many enterprises adopt AI maturity models. A common five-stage framework spans:

- Ad hoc experimentation with isolated pilots.

- Repeatable AI integration into existing workflows.

- Deployment of predictive models for risk scoring.

- Implementation of prescriptive recommendations for control optimization.

- Transition to self-learning systems with minimal human intervention.

By situating initiatives within such models, firms can prioritize investments, identify capability gaps and measure incremental value. A hybrid approach—overlaying machine-learning predictions with rule-based validations—ensures both adaptability and auditability.
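One way to picture the hybrid approach is a decision function in which a model score and explicit rules can each trigger escalation, with every trigger recorded as a human-readable reason. The thresholds, the `restricted` country set, and the `Decision` type below are hypothetical placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    escalate: bool
    reasons: list[str] = field(default_factory=list)


def hybrid_decision(model_score: float, amount: float, country: str,
                    score_threshold: float = 0.8,
                    amount_limit: float = 10_000.0,
                    restricted: frozenset[str] = frozenset({"XX"})) -> Decision:
    """Combine a learned risk score with deterministic rule checks.

    Rules fire regardless of the model score, so a documented rule
    cannot be silently overridden by the model.
    """
    reasons = []
    if model_score >= score_threshold:
        reasons.append(f"model score {model_score:.2f} >= {score_threshold:.2f}")
    if amount >= amount_limit:
        reasons.append(f"amount {amount:.2f} >= limit {amount_limit:.2f}")
    if country in restricted:
        reasons.append(f"country {country} is on the restricted list")
    return Decision(escalate=bool(reasons), reasons=reasons)


# The model sees low risk, but the amount rule still forces a review.
decision = hybrid_decision(model_score=0.35, amount=12_000.0, country="DE")
print(decision.escalate, decision.reasons)
```

Keeping the reasons alongside the outcome is what gives the combination its auditability: a reviewer can see whether the model, a rule, or both triggered the escalation.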

Practitioner perspectives emphasize augmentation over replacement. AI excels at pattern recognition and high-volume tasks, but expert oversight remains crucial for interpreting ambiguous regulations, managing model bias and fulfilling ethical obligations. Commercial platforms accelerate adoption—for example UiPath integrates RPA and AI, IBM Watson powers natural language understanding, and Automation Anywhere orchestrates end-to-end automation workflows. These tools demonstrate how AI augments human expertise in real-world compliance environments.

Strategic Considerations for Modernizing Compliance Foundations

Dynamic Governance and Data as a Strategic Asset

Compliance frameworks must evolve from static rulebooks to adaptive socio-technical systems. Dynamic governance constructs—control assurance cycles, model risk committees and AI ethics boards—establish decision rights, escalation paths and continuous feedback loops. Policies, control objectives and risk indicators are mapped to real-time data feeds, enabling rapid calibration of procedures.

High-quality data underpins every AI-driven solution. A holistic data lifecycle approach encompasses stewardship, lineage tracking and integrity controls. Analytical frameworks such as the Data Management Maturity Model guide institutions through capability levels in metadata standards, version control and data quality monitoring. Platforms like those listed on AgentLink AI offer modular connectors for ingesting regulatory updates and transaction data, facilitating seamless integration with existing systems.

Cultural Alignment, Risk Tolerance and Technology Convergence

Successful AI adoption hinges on cross-functional collaboration and talent alignment. Compliance teams, data scientists, IT architects and legal experts must co-design use cases, pilot solutions and scale validated models. Learning programs that build data literacy and AI proficiency within compliance functions foster a culture of continuous improvement and experimentation.

As AI assumes greater roles in monitoring and decision-making, risk appetites and tolerance thresholds require recalibration. Traditional qualitative risk matrices should be augmented with quantitative models that reflect behavioral indicators and stress-testing scenarios. This disciplined approach ensures automated controls operate within acceptable boundaries while maximizing detection efficacy.

Architectural convergence is equally critical. Open architectures, API-driven data exchanges and metadata registries reduce silos and lower total cost of ownership. Integrated platforms unify AI engines, case management tools and enterprise risk systems, enabling scalable, auditable workflows and end-to-end traceability.

Ethical, Regulatory and Operational Resilience

AI offers transformative potential but must be deployed with awareness of ethical and regulatory constraints. Variability in supervisory guidelines for model governance and algorithmic accountability demands pragmatic alignment strategies. Organizations should adopt explainable AI standards and fairness metrics to detect bias and maintain stakeholder trust.

Hybrid human-machine models strike an optimal balance between efficiency and oversight. Automated engines surface anomalies and generate recommendations, while experts validate context, refine policies and address novel regulatory interpretations. This human-in-the-loop paradigm preserves resilience, ensures ethical use and mitigates the risk of model failures.

Investment decisions in AI-driven compliance should rest on rigorous cost-benefit analyses. Frameworks for return on investment encompass direct savings in labor and remediation, as well as qualitative gains in audit readiness, regulatory relationships and competitive differentiation. Scenario-based financial modeling helps executives prioritize initiatives that deliver the greatest strategic value.

By integrating dynamic governance, data excellence, cultural transformation and disciplined risk calibration, organizations can modernize compliance foundations and harness AI-driven automation at scale. These strategic considerations provide the groundwork for detailed exploration of technology selection, implementation methodologies and continuous performance measurement in the chapters that follow.

Chapter 2: The Regulatory Landscape in the AI Era

Traditional Compliance and Risk Management Challenges

Organizations today contend with an unprecedented volume and complexity of regulatory requirements spanning multiple jurisdictions, each with distinct reporting formats, timelines and risk thresholds. Financial institutions, healthcare providers, energy companies and technology firms struggle to translate evolving data privacy, cybersecurity, environmental and anti-fraud directives into operational processes. Manual approaches—where policy analysts track updates in legal portals, control owners maintain spreadsheets and audit teams perform periodic reviews—introduce high error rates, limited scalability, latency between rule issuance and implementation, siloed information flows and resource-intensive operations. As cyber threats adapt in real time, supply chain exposures multiply and reputational risks escalate via social media, reliance on point-in-time assessments and rigid rule engines leaves critical warning signs undetected. In this dynamic environment, continuous monitoring, rapid anomaly detection and predictive insight become imperative.

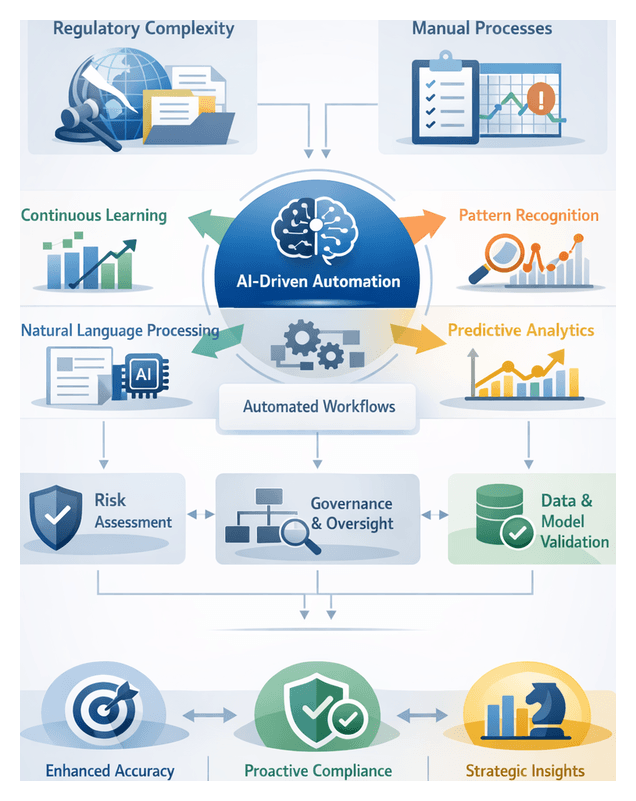

AI-Driven Automation: Conceptual Foundations and Strategic Value

AI-driven automation transforms static, manual processes into adaptive, data-centric systems that learn from historical patterns and evolve in real time. By applying machine learning, natural language processing and advanced analytics to vast structured and unstructured datasets, organizations can uncover latent relationships, extract regulatory obligations and forecast risk trajectories. This capability shifts compliance from reactive remediation to proactive mitigation, amplifying the expertise of compliance professionals and enabling focus on policy interpretation, stakeholder engagement and strategic risk planning.

- Continuous Learning: Models refine their accuracy over time by training on historical compliance events, transaction records and regulatory interpretations.

- Pattern Recognition: Advanced algorithms detect unusual transaction clusters, deviations in contract language and other emerging risk patterns.

- Natural Language Understanding: AI systems parse legislation, guidance and internal policies to extract definitions, obligations and control requirements.

- Predictive Analytics: Forward-looking risk scoring and trajectory forecasting enable resource allocation ahead of emerging threats.

- Automated Workflows: Integration with enterprise systems triggers alerts, case creation and remediation assignments automatically.

In boardrooms, AI is positioned not merely as a back-office efficiency tool but as a strategic enabler of predictive compliance. Early adopters gain enhanced risk visibility, accelerated decision cycles and the agility to meet stringent regulatory timelines. By infusing intelligence into core processes, organizations can transform compliance from a cost center into a capability that delivers operational resilience and competitive differentiation.

Analytical and Interpretive Frameworks for AI Integration

To guide evaluation and deployment of AI-driven initiatives, organizations leverage structured frameworks and analytical criteria that align technology with risk governance and investment priorities.

- Capability Maturity Models assess progress from ad hoc processes to fully integrated AI-enabled risk management, defining stages such as pilot deployment, enterprise scaling and continuous improvement.

- Risk-Based Governance Structures realign control activities based on risk severity and likelihood, replacing uniform process flows with adaptive risk scoring and exception-driven workflows.

- Technology Adoption Curves distinguish innovators, early adopters and the pragmatic early majority, informing engagement and change strategies.

Key analytical considerations include:

- Data Quality and Representativeness: Training datasets must reflect the operational environment and risk universe to prevent model bias.

- Model Validation and Stress Testing: Rigorous back-testing and rare event simulations assess stability and resilience.

- Governance and Oversight Protocols: Clear ownership, escalation pathways and audit trails are essential across model development, deployment and monitoring.

- Transparency and Explainability: Techniques such as SHAP values or LIME analysis support interpretability and regulatory transparency.

- Scalability and Performance Metrics: Throughput, latency and resource utilization must align with real-time or batch processing requirements.

- Change Management and Cultural Adoption: Training, communication and stakeholder collaboration drive acceptance and minimize resistance.

Emerging analytical trends include hybrid intelligence models that combine rule-based engines with machine learning, continuous feedback loops that retrain models based on human analyst decisions, explainability as a compliance enabler and risk-adjusted automation roadmaps aligned with organizational maturity and appetite.

Evolving Regulatory Drivers and System Design Implications

Regulatory frameworks such as the European Union’s AI Act and guidance from the U.S. Office of the Comptroller of the Currency emphasize transparency, accountability and robust model governance. Automated risk management platforms must embed compliance by design, incorporating version control, audit trails and explainability layers into their architecture. System design now demands a dual focus on analytical performance metrics and demonstrable adherence to evolving standards.

Model Governance and Interpretability

Governance frameworks establish clear ownership, documented decision rights and standardized validation processes. Organizations select algorithms that balance predictive power with interpretability and often apply the Basel Committee’s principles for effective risk data aggregation and risk reporting (BCBS 239) to ensure auditability across the model lifecycle.

Data Privacy and Ethical Considerations

Ingesting sensitive data subject to GDPR, the California Consumer Privacy Act or HIPAA calls for data minimization, pseudonymization and secure lineage tracking. Privacy by design ensures analytical processes respect user rights while maintaining model accuracy.
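As a rough illustration of minimization and pseudonymization before data reaches a model, the sketch below keeps only an allow-list of fields and replaces the customer identifier with a keyed hash. The field names and the demo key are assumptions. Whether keyed hashing satisfies a given regulation is a legal question this example does not settle.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Return a deterministic keyed pseudonym (HMAC-SHA256, hex encoded).

    The same identifier and key always give the same pseudonym, so records
    can still be joined, but the mapping cannot be rebuilt without the key.
    """
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, allowed_fields: set[str]) -> dict:
    """Drop every field that is not explicitly allowed."""
    return {key: value for key, value in record.items() if key in allowed_fields}


# Demo key only; a real key would come from a secrets manager.
demo_key = b"demo-key-do-not-use"

raw_record = {
    "customer_id": "C-1001",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "amount": 250.0,
}

model_input = minimize(raw_record, {"customer_id", "amount"})
model_input["customer_id"] = pseudonymize(model_input["customer_id"], demo_key)
print(sorted(model_input))  # ['amount', 'customer_id']
```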

Auditability and Documentation

Comprehensive logs of data inputs, model versions, parameter settings and output explanations support internal and external audits. In regulated sectors like financial services, the ability to trace every automated decision back to documented governance processes is non-negotiable.
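One possible way to make such logs tamper-evident is to chain entries by hash, so that altering an earlier record invalidates the chain. This is a sketch of that idea, not a description of any particular platform. The payload fields (`model_version`, `input_id`, `output_score`) are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical payload dump."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], payload: dict) -> dict:
    """Append `payload` to `log`, linking it to the previous entry."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(prev_hash, payload),
    }
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and confirm that the links are intact."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["entry_hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"model_version": "1.4.0", "input_id": "txn-001", "output_score": 0.91})
append_entry(audit_log, {"model_version": "1.4.0", "input_id": "txn-002", "output_score": 0.12})
chain_ok = verify_chain(audit_log)
print(chain_ok)  # True
```

Editing any stored payload afterwards causes `verify_chain` to return `False`, which gives auditors a cheap integrity check on the decision history.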

Integration, Deployment and Operational Governance

Automated solutions must integrate seamlessly with heterogeneous technology stacks, spanning legacy databases, on-premise applications and third-party platforms. Robust extract, transform and load processes preserve data integrity and lineage, while APIs enforce authentication, encryption and role-based access. Integrations with platforms such as IBM Watson and Palantir often inform vendor selection and deployment strategies.

Modular Architecture and Scalability

Microservices and containerization support isolated updates to components like anomaly detection engines or reporting interfaces without disrupting the entire system. Cloud-native deployments with elastic compute resources accommodate peak processing loads and simplify the incorporation of new data feeds and rule sets.

Operational Monitoring and Escalation

Automated risk management systems generate real-time alerts when performance thresholds degrade or anomalies occur. Escalation workflows route these alerts through predefined channels to compliance officers, risk managers and audit teams, ensuring timely human intervention. Dashboards and case management tools streamline the tracking, investigation and resolution of incidents.
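The escalation logic described here might look like the following sketch, in which the size of a metric's shortfall below its floor decides who is notified. The role names come from the text; the 5 percent and 20 percent cut-offs and the `Alert` type are purely illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Alert:
    metric: str
    value: float
    floor: float  # minimum acceptable value; assumed to be greater than zero

    @property
    def shortfall(self) -> float:
        """Relative gap below the floor (0.0 when the metric is healthy)."""
        return max(0.0, (self.floor - self.value) / self.floor)


def route(alert: Alert) -> Optional[str]:
    """Pick an escalation channel from the size of the shortfall."""
    gap = alert.shortfall
    if gap == 0.0:
        return None                   # metric is within tolerance
    if gap < 0.05:
        return "compliance_officer"   # minor degradation
    if gap < 0.20:
        return "risk_manager"         # material degradation
    return "audit_team"               # severe degradation


print(route(Alert("recall", 0.85, 0.80)))  # None
print(route(Alert("recall", 0.78, 0.80)))  # compliance_officer
print(route(Alert("recall", 0.70, 0.80)))  # risk_manager
print(route(Alert("recall", 0.50, 0.80)))  # audit_team
```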

Analytical Frameworks for Risk Prioritization

Clustering algorithms and predictive analytics group related risk events and assign severity, likelihood and impact scores. Decision trees and scoring models translate raw anomaly data into prioritized action items, aligning with standards such as ISO 31000 and the COSO ERM framework.
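A simple scoring model of this kind can be sketched as follows. The multiplicative formula and the 1 to 5 scales are assumptions chosen for illustration. Neither ISO 31000 nor COSO ERM prescribes a specific formula, and the event names are invented.

```python
from dataclasses import dataclass


@dataclass
class RiskEvent:
    name: str
    severity: int    # 1 (minor) to 5 (critical)
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)

    @property
    def priority(self) -> int:
        """Composite score: higher means the event should be handled sooner."""
        return self.severity * self.likelihood * self.impact


def prioritize(events: list[RiskEvent]) -> list[RiskEvent]:
    """Return events ordered from highest to lowest priority."""
    return sorted(events, key=lambda event: event.priority, reverse=True)


events = [
    RiskEvent("late regulatory filing", severity=2, likelihood=3, impact=2),      # 12
    RiskEvent("sanctions screening gap", severity=5, likelihood=2, impact=4),     # 40
    RiskEvent("duplicate payment cluster", severity=3, likelihood=4, impact=3),   # 36
]

ranked = prioritize(events)
print([event.name for event in ranked])
# ['sanctions screening gap', 'duplicate payment cluster', 'late regulatory filing']
```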

Vendor Strategies and Cross-Industry Contexts

Vendors differentiate their automated risk platforms with configurable rule engines, audit log management and regulatory content libraries that address use cases from transaction monitoring in banking to claims analysis in healthcare. Roadmaps emphasize interoperability with broader governance, risk and compliance suites and prioritize features that support continuous learning and regulatory change management.

Across industries, AI-driven compliance solutions address common themes: financial services deploy them for anti-money laundering and credit risk; healthcare providers detect billing anomalies and fraud; manufacturers monitor supply chain risks; energy companies anticipate equipment failures with reporting implications. Despite varied data domains, the core challenge remains aligning advanced analytics with sector-specific compliance mandates.

Continuous Calibration, Validation and Ethical Oversight

Model performance degrades as data patterns shift and regulatory expectations evolve. Systems therefore incorporate calibration processes that compare predicted risk against actual outcomes, together with validation frameworks, such as the Federal Reserve’s SR 11-7 guidance on model risk management, that call for back-testing and sensitivity analysis. Ethical considerations, including bias detection and mitigation, ensure equitable treatment across customer segments and uphold reputational integrity.
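Comparing predicted risk against actual outcomes can be done in several ways. The sketch below shows two common ones: the Brier score and a binned calibration table. The sample predictions and outcomes are made up, and the equal-width binning is an assumption.

```python
def brier_score(predicted: list[float], actual: list[int]) -> float:
    """Mean squared gap between predicted probability and the 0/1 outcome."""
    if not predicted or len(predicted) != len(actual):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(predicted)


def calibration_table(predicted: list[float], actual: list[int],
                      n_bins: int = 5) -> list[tuple[float, float, int]]:
    """Group cases into equal-width probability bins and return, per non-empty
    bin, (mean predicted risk, observed event rate, number of cases)."""
    bins: list[list[tuple[float, int]]] = [[] for _ in range(n_bins)]
    for p, a in zip(predicted, actual):
        index = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[index].append((p, a))
    table = []
    for members in bins:
        if not members:
            continue
        mean_predicted = sum(p for p, _ in members) / len(members)
        observed_rate = sum(a for _, a in members) / len(members)
        table.append((mean_predicted, observed_rate, len(members)))
    return table


# Hypothetical back-test: predicted risk versus whether an issue was confirmed.
predicted_risk = [0.1, 0.1, 0.9, 0.9]
confirmed = [0, 0, 1, 1]

score = brier_score(predicted_risk, confirmed)
table = calibration_table(predicted_risk, confirmed)
print(round(score, 4), table)
```

A well-calibrated model shows observed rates close to the mean predicted risk in each bin. Persistent gaps in one direction suggest recalibration or retraining is due.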

Feedback loops involving compliance officers, data scientists and business stakeholders drive iterative refinements. Regular review cycles assess alert quality, investigation outcomes and user experience, fostering a learning culture that enhances system performance and regulatory alignment over time.

Core Strategic Imperatives, Limitations and Future Outlook

Successful AI-driven compliance initiatives rest on several cross-cutting imperatives:

- Governance Alignment: Clarify oversight responsibilities, decision rights and escalation pathways across AI and risk governance frameworks.

- Data Integrity: Elevate data quality, lineage and stewardship to core control objectives, ensuring model reliability and auditability.

- Cross-Functional Collaboration: Engage legal, compliance, risk, IT and business stakeholders to foster shared ownership and break down silos.

- Model Governance and Validation: Institute independent validation, continuous monitoring and documentation to guard against drift and bias.

- Scalable Architecture: Adopt modular, interoperable platforms—such as Microsoft Azure AI or Google Cloud AI—to facilitate integration and growth.

- Measurement and Value Realization: Define key performance indicators (accuracy, timeliness, cost savings) and link them to business outcomes.

Organizations must also navigate inherent limitations and risks:

- Regulatory Ambiguity: Divergent supervisory expectations across jurisdictions can create uncertainty around acceptable AI practices.

- Data Bias: Historical biases in training data can propagate discriminatory outcomes without ongoing mitigation strategies.

- Model Explainability: Complex algorithms may lack transparency, challenging stakeholder trust and regulatory justification.

- Cultural Resistance: Insufficient change management can impede adoption as workflows and decision rights evolve.

- Skill Constraints: Talent shortages in data science, IT architecture and regulatory expertise can slow implementation.

- Vendor Lock-In: Dependence on proprietary platforms without exit strategies may limit flexibility and escalate costs.

- Security and Privacy: Large-scale processing of sensitive data demands robust cybersecurity controls and strict access management.

Framework for Evaluating AI-Driven Compliance Initiatives

A structured evaluation framework helps organizations maximize success:

- Strategic Readiness Assessment: Benchmark maturity in data governance, technology infrastructure and risk culture, identifying capability gaps and leadership sponsorship needs.

- Regulatory and Ethical Alignment: Map AI use cases against applicable regulations and ethical standards, embedding transparency, accountability and bias mitigation by design.

- Technology Fit and Scalability: Evaluate platforms for interoperability, modularity and support for continuous learning, favoring open architectures to avoid vendor lock-in.

- Operational Integration: Define process flows, roles and responsibilities for data inputs, model execution, exception handling and escalation, and plan robust training and communication.

- Performance Measurement: Establish dashboards for real-time monitoring of key metrics such as false positive rates, investigation cycle times and cost avoidance.

- Continuous Improvement: Embed feedback loops that feed production data back into model retraining, periodic validation and governance forums to detect drift and emergent biases.

Actionable Next Steps for Responsible AI Adoption

To harness AI’s full potential in compliance and risk management, organizations should:

- Prioritize data governance to ensure the integrity, traceability and quality of inputs driving AI models.

- Embed ethical and human-centric safeguards—such as transparency, human-in-the-loop oversight and bias detection—throughout the model lifecycle.

- Align AI initiatives with enterprise risk frameworks and strategic objectives for seamless oversight and value capture.

- Adopt modular, scalable platforms that support interoperability, continuous learning and vendor neutrality.

- Establish robust measurement systems and feedback loops to drive ongoing refinement and responsiveness to emerging risks.

- Invest in culture change and capability building to embed data-driven insights into compliance decision-making.

By following a structured, analytical and ethical approach, organizations can transform compliance and risk management from a cost center into a strategic enabler, achieving operational resilience and sustained competitive advantage in a rapidly evolving regulatory landscape.

Chapter 3: Essential Concepts of Artificial Intelligence and Machine Learning

Evolving Compliance and Risk Management Challenges

Organizations operating in regulated industries face a rapidly shifting landscape of requirements and expectations. Regulatory bodies continually update rules addressing financial misconduct, data privacy, and environmental, social, and governance standards—often with compressed timelines and ambiguous guidance. Compliance teams must interpret, operationalize, and monitor controls without disrupting business activities. Traditional approaches, reliant on manual processes, fragmented data, and periodic reviews, introduce latency in risk detection, elevate error rates, and obscure audit trails.

Multiple business units frequently maintain inconsistent taxonomies, leading to misaligned reporting and data quality issues such as missing fields, duplicate records, and varied formatting. When regulators request evidence of adherence, teams scramble to reconcile disparate sources, extend remediation timelines, and increase headcount—amplifying costs and detracting from strategic risk management. Meanwhile, digital transformation accelerates data generation across structured and unstructured sources—social media, logs, third-party feeds—creating blind spots that legacy systems cannot integrate or analyze effectively.

- Escalating regulatory volume and pace of change

- Heavy reliance on manual, error-prone processes

- Fragmented data sources and inconsistent taxonomies

- Delayed risk detection and remediation

- High operational costs and reactive scaling

- Limited integration of structured and unstructured data

AI-Driven Automation Framework for Compliance

Artificial intelligence offers a new paradigm by embedding compliance into business-as-usual operations through continuous, adaptive automation. Four strategic components underpin this framework:

- Data Ingestion and Normalization: Pipelines that collect and harmonize information from internal systems, external feeds, and unstructured sources such as emails, documents, and audio transcripts.

- Machine Learning and Pattern Recognition: Models that detect anomalies, classify regulatory content, and predict risk events using supervised learning, unsupervised clustering, and deep learning techniques.

- Process Orchestration: Intelligent workflows that assign alerts, route review queues, and trigger controls to minimize manual intervention.

- Governance and Monitoring: Layers that ensure transparency, auditability, continuous model validation, and alignment with regulatory expectations.

Early pilots—such as document classification or transaction monitoring—evolve into integrated platforms spanning risk assessment, control testing, reporting, and remediation. Leading solutions include IBM Watson, Microsoft Azure AI, Google Cloud AI, Amazon SageMaker, and specialized offerings like those on AgentLinkAI. Robotic process automation, such as UiPath bots, further orchestrates data flows and model inferences at scale.

Advanced Analytics: NLP, Pattern Recognition, and Data Proliferation

Compliance and risk management confront vast volumes of structured and unstructured data—transaction records, policy documents, communications, and multimedia content. Natural language processing (NLP) and pattern recognition decode policy texts, extract risk signals, and detect anomalies in transactional data, transforming data proliferation from a burden into an enabler of deeper risk visibility.

Statistical approaches, symbolic rule-based systems, and neural architectures each offer trade-offs in interpretability, precision, and adaptability. Hybrid strategies blend controlled vocabularies with probabilistic models to balance coverage and accuracy. Deep learning models—such as BERT and GPT-based embeddings—capture semantic nuances across document corpora, supporting tasks like policy cross-referencing, regulatory change detection, and sentiment analysis.

Organizations invest in robust preprocessing—tokenization, lemmatization, part-of-speech tagging—and overlay custom glossaries to prevent critical misclassification. Feature engineering extracts regulatory risk indicators from sentiment intensity, policy deviation counts, and semantic similarity measures, improving model precision. When labeled data are scarce, domain adaptation and transfer learning fine-tune pretrained models on smaller, regulatory-specific datasets.
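To illustrate the semantic similarity feature mentioned above, here is a minimal sketch that scores how closely an internal policy clause tracks a regulatory requirement. It uses a plain bag-of-words cosine similarity rather than the pretrained embeddings discussed earlier, and the sample sentences are invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split into alphanumeric tokens (no lemmatization)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between the term-count vectors of two texts."""
    va, vb = Counter(tokenize(a)), Counter(tokenize(b))
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(
        sum(c * c for c in vb.values())
    )
    return dot / norm if norm else 0.0


regulation = "Customer records must be retained for five years"
policy_a = "Records of each customer are retained for five years"
policy_b = "Employees must complete annual training"

similarity_a = cosine_similarity(regulation, policy_a)
similarity_b = cosine_similarity(regulation, policy_b)
```

In this toy case `policy_a` scores higher than `policy_b`. A production feature would likely replace the term counts with embedding vectors, since word overlap alone misses paraphrases.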

Multimodal pattern recognition extends analysis to time series, network graphs, and multimedia. Sequence analysis and community detection algorithms reveal anomalous transaction flows and hidden relationships in entity networks. Pattern libraries codify suspicious transaction typologies, enabling automated triage and alert prioritization. Throughout, quantitative metrics—precision, recall, F1 score, and cost-weighted evaluations—guide threshold calibration to balance risk of false negatives against investigative resource consumption.
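The threshold calibration described above can be sketched as a search over candidate alert thresholds that minimizes a cost-weighted sum of missed cases and false alarms. The scores, labels, and cost figures below are hypothetical.

```python
def confusion_counts(scores, labels, threshold):
    """Count true/false positives and negatives when alerting at score >= threshold."""
    tp = fp = fn = tn = 0
    for s, y in zip(scores, labels):
        flagged = s >= threshold
        if flagged and y:
            tp += 1
        elif flagged:
            fp += 1
        elif y:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def metrics(tp, fp, fn):
    """Precision, recall and F1, returning 0.0 where a ratio is undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def best_threshold(scores, labels, cost_fn, cost_fp, candidates):
    """Pick the candidate threshold with the lowest total misclassification cost."""
    def total_cost(t):
        _, fp, fn, _ = confusion_counts(scores, labels, t)
        return fn * cost_fn + fp * cost_fp
    return min(candidates, key=total_cost)


scores = [0.1, 0.2, 0.35, 0.4, 0.6, 0.7, 0.8, 0.9]
labels = [0, 0, 1, 0, 0, 1, 1, 1]
candidates = [0.3, 0.5, 0.75]

# A missed case costing 50x a false alarm favors a low threshold.
risk_averse = best_threshold(scores, labels, cost_fn=50, cost_fp=1, candidates=candidates)
# Expensive investigations favor a high threshold.
capacity_bound = best_threshold(scores, labels, cost_fn=1, cost_fp=5, candidates=candidates)
```

Making the two cost parameters explicit turns the trade-off between false negatives and investigative workload into a documented, reviewable decision.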

Regulatory interpretability expectations, such as the GDPR provisions on automated decision-making (often described as a right to explanation) and supervisory expectations from the Office of the Comptroller of the Currency, call for transparent AI systems. Explainability frameworks like LIME and SHAP explain individual (local) predictions, and SHAP attributions can also be aggregated into global views of feature importance. Model cards and AI fact sheets document intended use cases, performance characteristics, and limitations to support audits and regulatory reviews.
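To show what a local explanation contains, the sketch below computes exact Shapley values for a toy three-feature risk score by averaging each feature's marginal contribution over every feature ordering. This is the quantity that SHAP-style tools approximate; it is not the SHAP library's API, and the scoring function and its weights are invented for illustration.

```python
from itertools import permutations


def risk_score(x):
    """Toy score with one interaction term; the weights are illustrative only."""
    return (
        0.2 * x["large_amount"]
        + 0.1 * x["new_counterparty"]
        + 0.3 * x["high_risk_country"]
        + 0.2 * x["large_amount"] * x["high_risk_country"]
    )


def shapley_values(model, instance, baseline):
    """Exact Shapley values: average marginal contribution over all orderings."""
    names = list(instance)
    orderings = list(permutations(names))
    phi = {name: 0.0 for name in names}
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # switch this feature from baseline to actual
            updated = model(current)
            phi[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in phi.items()}


instance = {"large_amount": 1, "new_counterparty": 1, "high_risk_country": 1}
baseline = {"large_amount": 0, "new_counterparty": 0, "high_risk_country": 0}
attributions = shapley_values(risk_score, instance, baseline)
```

The attributions sum to the difference between the instance's score and the baseline score, with the interaction term split evenly between the two features involved. Exact enumeration grows factorially with the number of features, which is why practical tools rely on approximations.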

Accelerators of AI Adoption: Regulatory Evolution, Technology Maturity, and Economics

Converging forces have turned AI-driven compliance automation from a strategic option into an operational imperative:

- Regulatory Evolution: New mandates, including real-time transaction monitoring, forward-looking credit loss provisioning under CECL and IFRS 9, and data privacy standards, expand compliance scope and granularity. Supervisory expectations for AI explainability and data ethics in jurisdictions such as Singapore and Hong Kong further drive interest.

- Technology Maturity: Cloud computing, open-source frameworks, and pretrained models provide on-demand scalability and modular deployment. Managed services like IBM Watson, Microsoft Azure AI, and Google Cloud AI minimize entry barriers, while TensorFlow and PyTorch empower custom model development.

- Economic Pressures: Rising operational costs, resource constraints, and the need for cost containment position AI as both a cost-avoidance and value-creation lever. Machine learning-driven transaction monitoring can reduce investigation volumes by up to 70 percent, and AI-powered document classification accelerates underwriting and claims adjudication.

- Competitive Differentiation: AI-augmented compliance offers proactive intelligence—market microstructure anomaly detection in trading, counterparty screening in supply chains, and predictive modeling in insurance. Platforms such as Palantir Foundry and DataRobot enable unified analytics and automated ML pipelines, delivering operational insights and regulatory confidence.

Organizations across financial services, healthcare, manufacturing, and beyond tailor AI strategies to domain-specific risk taxonomies, data governance requirements, and regulatory enforcement intensities. Industry consortia—FS-ISAC, HIMSS—facilitate best practice exchange on AI governance and model risk management.

Strategic Integration: Governance, Explainability, and Continuous Improvement

Successful AI adoption in compliance necessitates integration into enterprise risk and control frameworks, governed by clear policies and supported by cross-functional collaboration:

- Alignment with Control Objectives: Map AI techniques to risk categories—supervised models for transaction classification, unsupervised methods for anomaly detection, NLP for policy interpretation—to ensure measurable control outcomes.

- Multi-Layered Governance: Structure evaluation across data integrity, analytical logic, and oversight layers. Incorporate model risk management protocols—inventory, validation, monitoring, change-control—aligned with guidance such as SR 11-7.

- Explainability and Transparency: Use Shapley value analysis, attention visualization, and rule-extraction to provide global and local explanations. Maintain metadata logs and lineage tracking to document training data provenance and decision thresholds.

- Data Quality and Bias Mitigation: Implement continuous monitoring of feature drift, missing data, and bias metrics. Embed fairness assessment tools and remediation processes to uphold ethical standards.

- Human-in-the-Loop and Role Clarity: Define escalation points for manual review in high-risk scenarios. Ensure clear demarcation of responsibilities among data scientists, compliance officers, and audit functions.

- Continuous Learning Cycles: Establish feedback loops capturing false positives, audit findings, and changing risk patterns to retrain models and refine feature sets for resiliency.

- Documentation and Audit Readiness: Produce model cards, AI fact sheets, and technical notes detailing assumptions, limitations, performance benchmarks, and governance controls to support regulatory examinations.

- Strategic Roadmapping: Employ AI maturity models to sequence capability development—data readiness, model sophistication, governance maturity, cultural adoption—and align investments with long-term objectives.
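The feature drift monitoring named in the list above can be sketched with the Population Stability Index (PSI), one common drift measure. The bin counts are made up, and the 0.1 and 0.25 cut-offs in the comments are a widely used rule of thumb rather than a regulatory requirement.

```python
import math


def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI between a reference distribution and a current one over the same bins."""
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        e_pct = max(expected / expected_total, eps)  # eps avoids log(0) on empty bins
        a_pct = max(actual / actual_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi


# Bin counts of one model feature at training time versus in production.
training_bins = [50, 30, 20]
stable_bins = [48, 31, 21]
shifted_bins = [20, 30, 50]

psi_stable = population_stability_index(training_bins, stable_bins)
psi_shifted = population_stability_index(training_bins, shifted_bins)
# Rule of thumb: below 0.1 is stable, above 0.25 warrants investigation.
```

Tracking this value per feature on a schedule gives the feedback loops described above a concrete trigger for retraining or revalidation.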

By integrating AI techniques within disciplined governance structures and embedding transparent, ethical practices, organizations can transform compliance from a reactive burden into a proactive, strategic asset. This positions risk management to deliver enhanced visibility, operational efficiency, and regulatory resilience in an increasingly complex environment.

Chapter 4: Data Governance and Quality for Automated Compliance

Current Challenges in Compliance and Risk Management

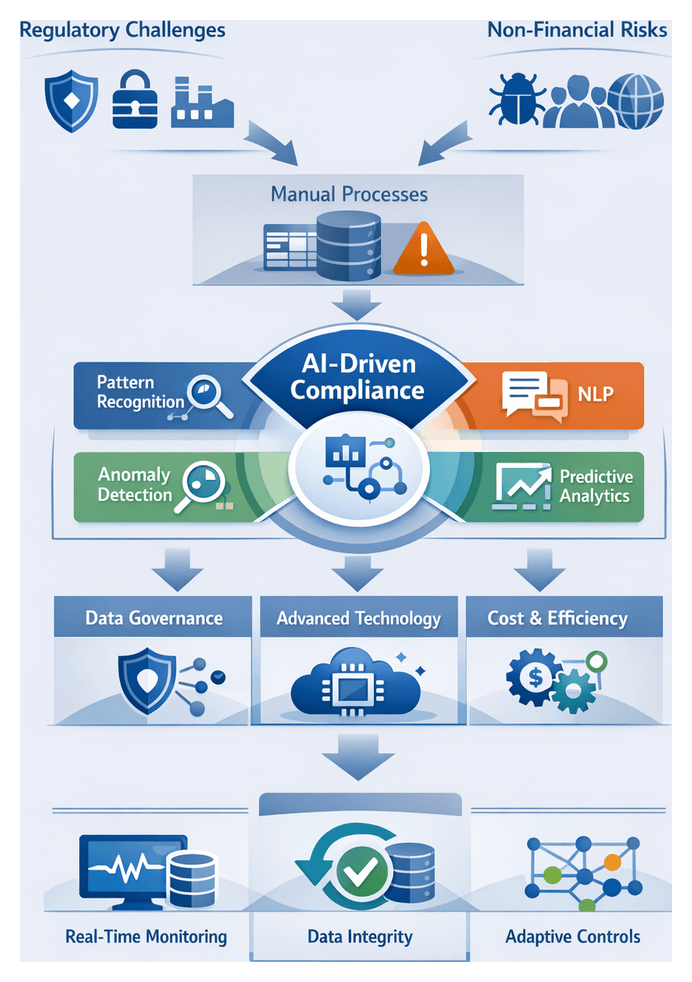

Organizations face a dynamic regulatory landscape with overlapping standards in data privacy, financial integrity, cybersecurity and environmental resilience. Traditional compliance relies on manual controls, spreadsheet tracking and siloed systems that hamper timely issue identification. Risk teams spend excessive time reconciling disparate data sources rather than analyzing emerging threats, resulting in inconsistent assessments and rising costs. At the same time, exposure to nonfinancial risks such as cyber incidents, third-party failures and reputational events materializes rapidly and can cascade across global operations. Manual processes introduce latency in detection and remediation, increasing vulnerability to fines, legal actions and brand damage. Compliance functions must therefore evolve to demonstrate real-time risk awareness, maintain comprehensive audit trails and adapt to changing regulations without proportionally growing headcount.

AI-Driven Paradigm for Automated Compliance

Artificial intelligence transforms compliance from static rule enforcement to dynamic, data-driven insights. Key capabilities include:

- Pattern Recognition: Machine learning uncovers risk indicators and relationships that manual reviews may miss.

- Natural Language Processing: Automated text analysis interprets policies, contracts and guidance.

- Anomaly Detection: Unsupervised algorithms surface outliers without exhaustive rule sets.

- Predictive Analytics: Forecasting models anticipate risks, enabling proactive remediation.

Implementing AI-driven automation requires a human-in-the-loop approach, combining domain expertise with algorithmic efficiency. Models should be designed for explainability, ethical alignment and regulatory compliance. By treating automation as a strategic enabler of an adaptive control environment, organizations can scale controls as operations grow and regulations evolve.

Enablers for AI Adoption in Compliance

Several converging factors make AI adoption in compliance timely and impactful:

- Data Proliferation: Terabytes of structured and unstructured data—from customer transactions to third-party feeds—demand scalable analytics.

- Regulatory Evolution: Supervisory bodies favor principles-based regimes and real-time monitoring capabilities.

- Technological Maturity: Cloud platforms and pre-built AI services reduce deployment barriers. Leading solutions such as IBM Watson, Microsoft Azure AI and Google Cloud AI provide ready-to-use models and tools.

- Cost Pressures: Automating repetitive tasks frees talent for strategic risk management and stakeholder engagement.

- Competitive Differentiation: AI-enabled compliance supports faster product launches, broader market access and stronger governance reputations.

By shifting from periodic, backward-looking reporting to continuous, forward-looking insights, firms can align compliance with broader digital finance and data-driven decision-making initiatives.

Data Governance: Integrity, Lineage and Stewardship

Robust data governance underpins all AI-driven compliance efforts. Three interdependent dimensions are critical:

- Stewardship: Clear data ownership and accountability ensure policies are enforced across business, risk and technology functions.

- Integrity: Controls such as versioning, validation checks and reconciliation preserve data accuracy, completeness and consistency over time.

- Lineage: Mapping data flows from source to model input provides the audit trails necessary for regulatory confidence.

Industry frameworks guide these practices. ISO 8000 defines data quality principles, BCBS 239 emphasizes traceability for risk aggregation, and DAMA DMBoK outlines metadata management and stewardship roles. Regulations like GDPR implicitly mandate provenance and integrity for personal data. Organizations that align internal controls with these standards can articulate a coherent data quality posture to auditors and supervisors.

Architectural Lenses for Lineage Visibility

Comprehensive lineage analysis requires multiple viewpoints:

- Logical Lineage: Business-level data flows across functional domains without technical detail.

- Physical Lineage: Technical artifacts such as database tables, ETL scripts and cloud services trace transformations at a granular level.

- Operational Lineage: Runtime factors including data latency, job schedules and system dependencies reveal processing bottlenecks.

Integrating these lenses delivers the transparency needed for model validation, forensic audits and regulatory inquiries.

Automated Techniques for Lineage Discovery

To reduce manual burdens and improve accuracy, organizations leverage automation powered by AI and advanced metadata tools. Techniques include:

- Metadata Harvesting Engines: Systems that scan pipelines, query logs and configurations to infer lineage without manual input.

- Natural Language Metadata Extraction: NLP analyzes code comments and documentation to enrich lineage repositories.

- Graph-Based Lineage Models: Property graphs allow complex impact analysis and interactive visualization of dependencies.

These tools accelerate the establishment of lineage repositories and support continuous updates as data ecosystems evolve. Governance frameworks must validate machine-generated lineage and contextualize it for stakeholders.
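As a minimal sketch of graph-based lineage, the example below stores lineage as a directed graph and answers two common questions: what is affected downstream if a source changes, and which sources feed a given asset. The dataset names are hypothetical, and a plain dictionary stands in for the property-graph store a real deployment would use.

```python
from collections import deque

# Edges point from an upstream asset to the assets derived from it.
LINEAGE = {
    "core_banking.transactions": ["staging.txn_clean"],
    "crm.customers": ["staging.customer_master"],
    "staging.txn_clean": ["features.txn_velocity", "reports.sar_filing"],
    "staging.customer_master": ["features.txn_velocity"],
    "features.txn_velocity": ["models.aml_risk_score"],
    "models.aml_risk_score": ["reports.sar_filing"],
}


def downstream(graph, start):
    """Breadth-first search for every asset reachable from start."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def upstream(graph, target):
    """Every asset that feeds target, found by traversing the reversed graph."""
    reverse = {}
    for source, children in graph.items():
        for child in children:
            reverse.setdefault(child, []).append(source)
    return downstream(reverse, target)


impacted = downstream(LINEAGE, "crm.customers")
sources = upstream(LINEAGE, "features.txn_velocity")
```

Here a change to `crm.customers` reaches the risk model and the regulatory report, which is the kind of impact statement an auditor or model validator would ask for.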

Data Validation in Compliance Use Cases

Rigorous data validation ensures downstream analytics and reports are built on reliable inputs. Key use cases include:

Transaction Monitoring and Suspicious Activity Reporting

Validation checks for completeness, format consistency and reconciliation against master data guard against false positives and blind spots. Risk-based rules prioritize high-risk transactions and enforce temporal checks to detect missing or duplicate entries.
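The checks described above can be sketched as a single validation pass over a transaction batch. The field names, the three-letter currency pattern (a format check only, not a lookup against the official code list), and the sample records are assumptions for illustration.

```python
import re
from datetime import datetime

REQUIRED_FIELDS = ("txn_id", "account_id", "amount", "currency", "timestamp")
CURRENCY_CODE = re.compile(r"[A-Z]{3}")


def validate(transactions, known_accounts):
    """Return (record index, issue) pairs for completeness, format and master-data checks."""
    issues, seen_ids = [], set()
    for index, txn in enumerate(transactions):
        missing = [f for f in REQUIRED_FIELDS if txn.get(f) in (None, "")]
        if missing:
            issues.append((index, "missing: " + ",".join(missing)))
            continue  # skip format checks on incomplete records
        if txn["txn_id"] in seen_ids:
            issues.append((index, "duplicate txn_id"))
        seen_ids.add(txn["txn_id"])
        if not CURRENCY_CODE.fullmatch(txn["currency"]):
            issues.append((index, "bad currency format"))
        try:
            datetime.fromisoformat(txn["timestamp"])
        except ValueError:
            issues.append((index, "bad timestamp"))
        if txn["account_id"] not in known_accounts:
            issues.append((index, "unknown account"))
    return issues


batch = [
    {"txn_id": "T1", "account_id": "A1", "amount": 120.0, "currency": "USD",
     "timestamp": "2024-03-01T09:30:00"},
    {"txn_id": "T2", "account_id": "A1", "amount": 75.5, "currency": "",
     "timestamp": "2024-03-01T10:00:00"},
    {"txn_id": "T1", "account_id": "A2", "amount": 10.0, "currency": "eur",
     "timestamp": "2024-03-01T11:00:00"},
    {"txn_id": "T3", "account_id": "A9", "amount": 0, "currency": "GBP",
     "timestamp": "2024-13-01"},
]
issues = validate(batch, known_accounts={"A1", "A2"})
```

Records that fail these checks would typically be routed to an exception queue rather than silently dropped, so that gaps in monitoring coverage remain visible.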

Regulatory Reporting and Disclosure Filings

Schema validation ensures required fields conform to templates. Automated reconciliation aligns internal balances with regulatory aggregates, while exception workflows manage out-of-tolerance variances.
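A minimal sketch of the reconciliation step, assuming internal balances and reported aggregates are keyed by the same line-item names. The line items, amounts, and the 0.01 tolerance are hypothetical; `Decimal` is used so that monetary comparisons are not affected by binary floating-point rounding.

```python
from decimal import Decimal


def reconcile(internal, reported, tolerance=Decimal("0.01")):
    """List line items that are missing on one side or differ by more than tolerance."""
    exceptions = []
    for item in sorted(internal.keys() | reported.keys()):
        if item not in internal or item not in reported:
            exceptions.append((item, "missing on one side", None))
            continue
        difference = internal[item] - reported[item]
        if abs(difference) > tolerance:
            exceptions.append((item, "out of tolerance", difference))
    return exceptions


internal = {
    "loans": Decimal("1000.00"),
    "deposits": Decimal("2500.005"),
    "fx": Decimal("10"),
}
reported = {
    "loans": Decimal("1000.00"),
    "deposits": Decimal("2499.00"),
    "derivatives": Decimal("5"),
}
exceptions = reconcile(internal, reported)
```

Each returned tuple is a candidate for the exception workflow, carrying the signed difference so that reviewers can see the direction of the variance.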

Risk Assessment Models

Statistical consistency checks, outlier detection and cross-validation against independent sources embed quality controls within model governance lifecycles.

Vendor and Third-Party Data Integration

Automated checks align taxonomies, verify currency conversions and flag missing fields in external data feeds to prevent sanctions screening errors and misclassifications.

Sanctions and Watchlist Screening

Probabilistic matching algorithms require clean inputs to minimize false positives. Iterative validation loops reconcile screening hits against known good records, combining automation with human adjudication.
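The sketch below illustrates why clean inputs matter for name screening. It normalizes names (accents, casing, word order) and then scores them with the standard library's `difflib.SequenceMatcher`, a string-similarity heuristic used here as a simple stand-in for the probabilistic matching models that screening products employ. The names and the 0.85 threshold are fictional and illustrative.

```python
import unicodedata
from difflib import SequenceMatcher


def normalize_name(name):
    """Strip accents, lowercase, drop commas and sort tokens to ignore word order."""
    decomposed = unicodedata.normalize("NFKD", name)
    without_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    return " ".join(sorted(without_accents.lower().replace(",", " ").split()))


def screen(name, watchlist, threshold=0.85):
    """Return watchlist entries whose similarity to name meets the threshold."""
    candidate = normalize_name(name)
    hits = []
    for entry in watchlist:
        score = SequenceMatcher(None, candidate, normalize_name(entry)).ratio()
        if score >= threshold:
            hits.append((entry, round(score, 3)))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


watchlist = ["Ivan Petrov", "Maria Gonzalez"]  # fictional entries

reordered = screen("Petrov, Ivan", watchlist)
accented = screen("María González", watchlist)
misspelled = screen("Ivan Petrow", watchlist)
unrelated = screen("John Smith", watchlist)
```

Hits near the threshold are the ones best suited to human adjudication, and adjudicated outcomes can feed back into the choice of threshold.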

Enterprise Risk Dashboards

Tiered validation—from source system checks to dashboard-level sanity assessments—ensures aggregated metrics accurately reflect underlying exposures.

Model Risk Management

Validation checkpoints at normalization, feature engineering and enrichment stages document data provenance and transformation logic as part of the audit trail.

Organizations embed these validation frameworks within governance platforms such as Collibra or Microsoft Purview (formerly Azure Purview) to monitor quality metrics, manage exceptions and update rules through feedback loops.

Strategic Principles for Trustworthy Data Practices

A strategic governance framework for AI-driven compliance integrates the following principles:

- Explicit Policy Definition: Codify enterprise-level data standards and usage rules referencing industry benchmarks.

- Role-Based Stewardship: Assign data ownership across cross-functional teams to foster accountability.

- Metadata and Lineage Automation: Deploy cataloging tools and lineage solutions for rapid impact analysis and audit readiness.

- Risk-Aligned Prioritization: Focus on high-value datasets supporting risk models, transaction monitoring and regulatory reports.

- Continuous Monitoring: Implement automated health checks and alerts to detect schema changes, drift and anomalies in near real time.

Leaders must anticipate limitations—such as fragmented repositories, legacy constraints and evolving regulatory guidance—and adopt phased implementation strategies. Emerging considerations include streaming governance architectures, data drift detection, privacy-preserving techniques and integration with DevOps and MLOps workflows.

Measuring Data Governance and Performance Interplay

Measuring maturity and performance fosters continuous improvement. Key indicators include coverage metrics for lineage across logical, physical and operational layers; quality scores for metadata completeness and transformation accuracy; remediation backlogs; and audit findings. By linking governance KPIs to reduced regulatory issues and faster issue resolution, organizations demonstrate tangible value. High governance maturity accelerates model development by reducing data uncertainty, while reliable AI outcomes reinforce investment in stewardship. A balanced approach that marries agility with rigorous controls enables enterprises to realize strategic benefits in compliance and risk management.

Chapter 5: Leveraging AI for Risk Detection and Predictive Analytics

Current Compliance and Risk Management Challenges

Organizations today face unprecedented regulatory complexity as global markets expand and cross-border activities multiply. Each jurisdiction imposes unique rulemaking authorities, reporting requirements, and supervisory expectations, while frequent policy updates demand continuous protocol revisions. Manual processes and legacy systems struggle to adapt, creating data silos, fragmented workflows, and paper-based review cycles that introduce delays, gaps in oversight, and heightened operational risk.

Financial institutions and regulated entities are under intense pressure to demonstrate timely, accurate, and fully auditable controls. Error rates in manual transaction monitoring and document review can trigger material regulatory findings and costly remediation. Audit trails may lack the granularity examiners require, leading to repeat inquiries and fines. Clients and investors also demand transparent, data-driven insights into risk governance, expecting organizations to identify emerging threats before they materialize into compliance failures or financial losses.

Despite these pressures, many risk and compliance teams remain overburdened by spreadsheets, disjointed reporting systems, and manual reconciliations. Routine tasks—policy interpretation, regulatory gap analysis, exception investigation—consume significant headcount. As transaction volumes grow and new data sources emerge, traditional approaches become unsustainable, leaving organizations exposed to oversight lapses and strategic blind spots.

Leaders recognize the need to modernize controls, enhance data integrity, and accelerate decision cycles. They seek solutions that augment human expertise with algorithmic precision, freeing compliance professionals to focus on high-value activities. Understanding the scope of these challenges is the critical first step toward transformation in compliance and risk management.

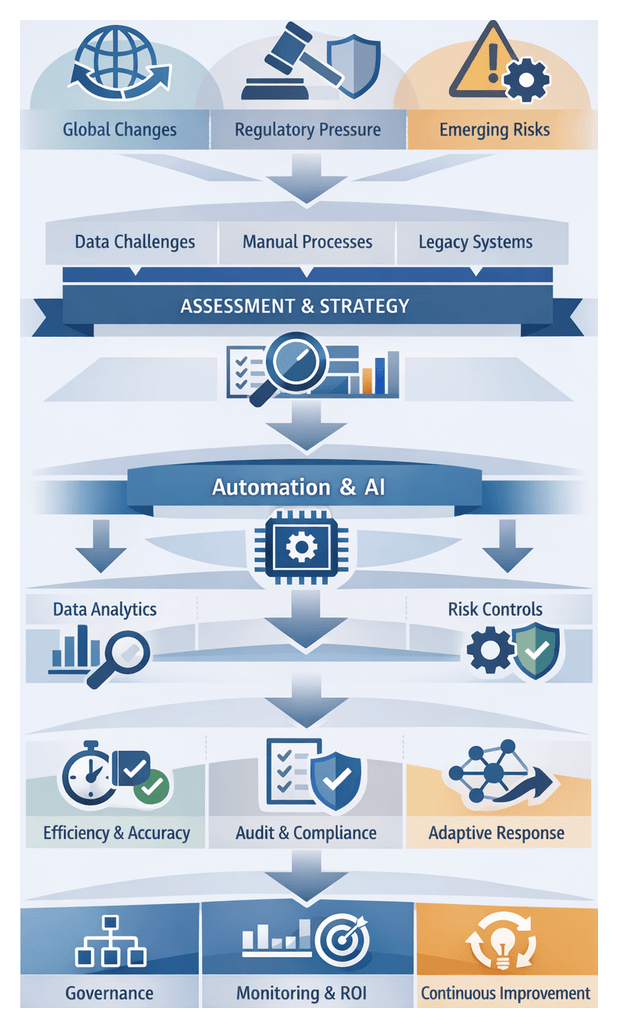

AI-Driven Automation Framework

Artificial intelligence presents a fundamentally different approach to compliance and risk management. Rather than relying on static rule-based engines and manual review, AI-driven automation leverages machine learning, natural language processing, and pattern recognition to analyze large, complex datasets and generate insights at scale. This paradigm reframes compliance controls as dynamic, continuously learning systems.

The framework comprises three interconnected layers:

- Data layer: Ingests structured and unstructured information from transaction feeds, document repositories, communication logs, and external sources. Advanced preprocessing—entity resolution, data normalization—ensures a unified, high-quality dataset.

- Analytics layer: Applies supervised and unsupervised machine learning to detect anomalies, classify documents, and predict risk trajectories. Natural language processing automates extraction of obligations from regulatory texts and internal policies, reducing manual interpretation time.

- Orchestration layer: Integrates analytic outputs into workflows and decision frameworks. Automated alerts, risk scores, and evidence packages feed into case management systems for rapid assignment, investigation, and escalation. Dashboards provide real-time visibility for risk committees and audit functions.
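The three layers above can be sketched as a small pipeline: a normalization step, a scoring step, and a routing step. Everything here is a simplified assumption: the field names, the placeholder country codes, the queue names, and the hand-written score that stands in for a trained model.

```python
from dataclasses import dataclass

HIGH_RISK_COUNTRIES = {"XX", "YY"}  # placeholder codes, not real jurisdictions


@dataclass
class Alert:
    txn_id: str
    score: float
    queue: str


def normalize(raw):
    """Data layer: trim strings, standardize casing and coerce the amount to float."""
    return {
        "txn_id": raw["txn_id"].strip(),
        "country": raw["country"].strip().upper(),
        "amount": float(raw["amount"]),
    }


def score(txn):
    """Analytics layer stand-in: a fixed-weight score in place of a trained model."""
    value = 0.0
    if txn["amount"] >= 10_000:
        value += 0.5
    if txn["country"] in HIGH_RISK_COUNTRIES:
        value += 0.4
    return value


def route(txn, value):
    """Orchestration layer: map the score to a case-management queue."""
    if value >= 0.8:
        queue = "escalation"
    elif value >= 0.4:
        queue = "analyst_review"
    else:
        queue = "auto_close"
    return Alert(txn["txn_id"], value, queue)


def process(raw):
    txn = normalize(raw)
    return route(txn, score(txn))


alert = process({"txn_id": " T1 ", "country": "xx", "amount": "12000"})
```

Keeping the layers as separate functions lets each one be validated, replaced, or audited independently, which matters once the scoring step becomes a governed model.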

Adoption of AI is driven by several converging forces. The volume, velocity, and variety of data have grown exponentially, overwhelming traditional systems. Regulatory expectations now emphasize model risk management, data lineage, and explainable decisions. Technological maturity—cloud platforms, distributed computing, open-source frameworks—lowers barriers to deployment. Economic pressures and competitive dynamics demand efficiency gains, while proactive risk management is increasingly viewed as a source of market differentiation.

Organizations often pursue a phased approach, beginning with proofs of concept in high-volume, high-pain areas such as transaction monitoring or regulatory change management. Rapid prototyping and iterative refinement build confidence, while cross-functional governance ensures alignment with risk appetite. A center of excellence can eventually steward broader deployment, codifying best practices and ensuring consistent controls across business lines.

Comparative Predictive Modeling Approaches

Predictive modeling has become a cornerstone for anticipating threats and allocating resources efficiently. Organizations evaluate methodologies, from classical statistical techniques to advanced machine learning, on analytic rigor, interpretability, and operational resilience. A multifaceted strategy harnesses the strengths of various approaches.

Regression Analysis and Statistical Modeling

Linear and logistic regression, along with generalized linear models (GLMs), quantify relationships between variables—transaction volume, client risk scores, policy change frequency—and compliance outcomes such as suspicious activity alerts or breach probabilities. Regression is prized for transparency: coefficients offer direct measures of variable impact, simplifying model governance and explanations to regulators. Regularization techniques (L1, L2) and stepwise selection mitigate overfitting, though strict linearity assumptions may understate complex interactions.
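To make the transparency point concrete, the sketch below shows how logistic regression coefficients translate into odds ratios and predicted probabilities. The coefficients are invented for illustration and were not fitted to any data.

```python
import math

# Hypothetical coefficients (log-odds scale) for a suspicious-activity model.
COEFFICIENTS = {
    "intercept": -3.0,
    "cash_intensive": 1.2,
    "new_account": 0.7,
    "cross_border": 0.4,
}


def predict_probability(features):
    """Logistic model: probability = 1 / (1 + exp(-(intercept + sum of coef * value)))."""
    z = COEFFICIENTS["intercept"] + sum(
        COEFFICIENTS[name] * value for name, value in features.items()
    )
    return 1.0 / (1.0 + math.exp(-z))


def odds_ratios():
    """exp(coefficient) is the multiplicative change in odds per unit of the feature."""
    return {
        name: math.exp(coef)
        for name, coef in COEFFICIENTS.items()
        if name != "intercept"
    }


base = predict_probability({"cash_intensive": 0})
flagged = predict_probability({"cash_intensive": 1})
ratios = odds_ratios()
```

With these assumed values, a cash-intensive business has roughly 3.3 times the odds of an alert, holding other factors constant. A statement in that form can be placed directly in model documentation.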

Time Series Forecasting Methods

For risk metrics tracked over time, such as daily compliance case volumes, fraud loss estimates, and sanction screening hits, time series methods (ARIMA, exponential smoothing, state-space models) capture seasonality, trend components, and residual patterns. These methods support early detection of anomalies relative to expected baselines. Hybrid frameworks incorporating external regressors or multivariate extensions (VAR, dynamic factor models) address interrelated series and exogenous events, balancing complexity with governance requirements for validation and interpretability.
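As a minimal sketch of baseline-relative anomaly detection, the example below applies simple exponential smoothing (no trend or seasonal terms) to a daily case-volume series and flags days that deviate from the one-step-ahead forecast by more than a set fraction. The series, the smoothing factor, and the 50 percent tolerance are illustrative assumptions.

```python
def smoothing_anomalies(series, alpha=0.3, tolerance=0.5):
    """Flag points deviating from the smoothed forecast by more than tolerance * forecast."""
    level = series[0]  # initialize the baseline with the first observation
    flagged = []
    for t in range(1, len(series)):
        forecast = level  # one-step-ahead forecast is the current smoothed level
        if abs(series[t] - forecast) > tolerance * forecast:
            flagged.append((t, series[t], round(forecast, 2)))
        # Update the level after the comparison so the forecast never sees the future.
        level = alpha * series[t] + (1 - alpha) * level
    return flagged


daily_cases = [100, 104, 98, 101, 180, 103, 99]
anomalies = smoothing_anomalies(daily_cases)
```

Note that the spike still feeds into the smoothed level, which temporarily raises the baseline. A more robust variant might exclude flagged points from the update.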

Ensemble Learning and Hybrid Techniques

Ensemble methods—random forests, gradient boosting machines, stacking algorithms—offer superior predictive accuracy and noise resilience by combining multiple base learners. They excel in heterogeneous data environments where linear models fall short. However, interpretability challenges arise. Post-hoc tools like SHAP and LIME provide feature contributions, but regulators often demand intrinsically interpretable models. Organizations therefore deploy ensembles for high-volume screening, then apply simpler rule-based or regression models for decision justification.

Platforms such as IBM SPSS Statistics and Microsoft Azure Machine Learning illustrate the trade-off between analytic power and governance overhead: they require scalable infrastructure, robust feature pipelines, and specialized talent. The main trade-offs across the three model families are:

- Transparency versus performance: Regression and time series offer clarity; ensembles deliver accuracy at the cost of opacity.

- Scalability and resources: Classical methods are computationally efficient; advanced algorithms demand scalable infrastructure and expertise.

- Adaptability: Time series and ensemble models can track concept drift when they are refit or retrained on recent data; static regression models risk staleness without frequent updates.

A structured selection framework considers data characteristics, risk appetite, and governance maturity. Stress testing under extreme scenarios evaluates model resilience to regime shifts, sudden fraud tactics, or policy overhauls. Embedding domain knowledge—regulatory guidance nuances, illicit behavior typologies, jurisdictional risk differentials—through hybrid rule-based and machine learning approaches enhances detection rates while preserving interpretability.

Proactive Threat Mitigation

Integrating predictive analytics transforms organizations from reactive responders to anticipatory risk stewards. Rather than waiting for incidents or inquiries, leaders can forecast emerging threats, model scenarios, and deploy controls before issues escalate. This shift reshapes risk culture, decision-making, and strategic planning.

Anticipatory Risk Management

Predictive models inform strategic and operational decisions. Time series forecasting, ensemble learning, and scenario simulations identify patterns preceding compliance breaches or unusual behaviors. Risk committees and executives access probability-weighted forecasts of non-compliance incidents, enabling them to adjust risk appetites, refine controls, and align resource commitments to forecasted signals. Embedding predictive indicators into governance forums ensures that analytics directly influence policy updates, budget allocations, and audit planning.

Strategic Resource Allocation

Predictive risk scoring quantifies the likelihood and potential impact of future incidents across regions, product lines, or customer segments. Organizations shift from broad-brush allocations to targeted investments in high-risk areas. Financial institutions may redeploy monitoring personnel to transaction corridors flagged for elevated risk. Manufacturers might forecast supplier non-performance by blending external market indicators with procurement data, enabling preemptive engagement of alternative vendors or contractual safeguards.
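The allocation logic described above can be sketched by ranking segments on expected loss (likelihood multiplied by impact) and dividing reviewers proportionally. The corridor names, probabilities, and impact figures are hypothetical, and proportional allocation is only one of several reasonable policies.

```python
def allocate_reviewers(segments, total_reviewers):
    """Split reviewers in proportion to expected loss, using largest-remainder rounding."""
    expected = {
        name: likelihood * impact for name, (likelihood, impact) in segments.items()
    }
    total_expected = sum(expected.values())
    raw = {name: total_reviewers * e / total_expected for name, e in expected.items()}
    allocation = {name: int(share) for name, share in raw.items()}
    leftover = total_reviewers - sum(allocation.values())
    # Hand remaining reviewers to the segments with the largest fractional shares.
    by_remainder = sorted(raw, key=lambda name: raw[name] - allocation[name], reverse=True)
    for name in by_remainder[:leftover]:
        allocation[name] += 1
    return expected, allocation


# (probability of an incident, estimated impact) per transaction corridor.
segments = {
    "corridor_a": (0.10, 500_000),
    "corridor_b": (0.02, 1_000_000),
    "corridor_c": (0.05, 200_000),
}
expected_loss, allocation = allocate_reviewers(segments, total_reviewers=10)
```

In practice a floor per segment is often added so that low-scoring areas still receive minimum coverage.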

Early Warning Systems

Enhanced early warning systems combine anomaly detection, clustering algorithms, and rule-based triggers to generate near-real-time alerts. Predictive models calibrate thresholds to balance false positives and sensitivity to subtle shifts. Key applications include:

- Regulatory reporting: Automated monitoring tracks filing deadlines and flags content anomalies weeks before submission windows close.

- Transaction surveillance: Pattern recognition uncovers evolving money-laundering typologies by identifying transaction clusters that deviate from customer profiles.

- Operational risk: Sensor data analysis in manufacturing and energy sectors anticipates equipment failures, reducing environmental incidents and compliance violations.

- Third-party risk: Social media sentiment analysis and external news monitoring surface reputational threats associated with suppliers or partners.
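The combination of anomaly detection and rule-based triggers described above can be sketched in a few lines. This minimal example assumes a z-score against each customer's own transaction history as the anomaly detector and a fixed amount limit as the rule; the threshold values and the sample history are hypothetical, and a production system would likely use richer models.

```python
import math
from statistics import mean, stdev


def zscore(value: float, history: list[float]) -> float:
    """Standardized deviation of a new value from a customer's own history."""
    if len(history) < 2:
        return 0.0  # not enough history to estimate spread
    center = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Flat history: any different value is treated as maximally unusual.
        return 0.0 if value == center else math.inf
    return (value - center) / spread


def evaluate(value: float, history: list[float],
             z_threshold: float = 3.0, hard_limit: float = 10_000.0) -> list[str]:
    """Return the reasons, if any, that a transaction should raise an alert."""
    reasons = []
    if value >= hard_limit:
        reasons.append("rule: amount at or above hard limit")
    if abs(zscore(value, history)) >= z_threshold:
        reasons.append("anomaly: deviates from customer profile")
    return reasons


# Hypothetical prior transaction amounts for one customer.
history = [120.0, 95.0, 110.0, 130.0, 105.0]
print(evaluate(115.0, history))     # within profile and under the limit
print(evaluate(900.0, history))     # unusual for this customer
print(evaluate(12_000.0, history))  # breaches the rule and the profile
```

Raising `z_threshold` reduces false positives at the cost of sensitivity to subtle shifts, which is the trade-off that threshold calibration has to settle.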

Organizational Resilience and Culture

Adoption of predictive analytics fosters a mindset of continuous vigilance. Cross-functional collaboration among risk, compliance, IT, and business units becomes essential for data sharing and refining analytic assumptions. Change management challenges include establishing clear accountability for model interpretation, defining escalation pathways for alerts, and communicating model limitations. Workshops, simulation exercises, and executive briefings reinforce how predictive signals translate into preemptive actions, cultivating trust and a culture of anticipatory stewardship.

Strategic Considerations for Predictive Risk Solutions

Effective deployment of AI-driven predictive risk solutions requires a holistic approach that spans methodology selection, data stewardship, interpretability, governance, and continuous improvement. The following principles guide risk leaders in harnessing predictive capabilities while managing associated risks.

First, predictive models function as components of a dynamic ecosystem rather than standalone tools. A portfolio approach—combining supervised, unsupervised, and ensemble techniques—captures diverse risk signals and balances analytic power with interpretability.

Second, data quality and governance are non-negotiable. Documented provenance, lineage tracking, and continuous validation safeguard against misleading signals and regulatory censure.

Third, interpretability and transparency enable stakeholder trust and regulatory compliance. Explainable AI methods—feature importance, surrogate models, rule extraction—translate algorithmic decisions into human-readable rationales.

Fourth, threshold calibration demands iterative alignment with operational realities. Pilot deployments, feedback loops, and dashboards comparing predicted scores to actual outcomes converge on optimal settings that balance detection rates and investigation costs.

Fifth, cross-functional governance ensures that predictive outputs integrate with policy frameworks, workflows, and strategic risk appetites. A multidisciplinary steering committee clarifies decision rights and streamlines issue escalation.

Sixth, the evolving regulatory environment mandates comprehensive documentation and audit trails—recording data inputs, feature engineering steps, model versions, and performance metrics—to align with supervisory expectations.

Seventh, vigilance against model drift and changing risk landscapes is essential. Automated monitoring for statistical drift, scheduled retraining cycles, and complementary detection mechanisms preserve model relevance and reliability.

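Eighth, ethical considerations and bias mitigation must be embedded throughout the model lifecycle. Fairness assessments, such as disparate impact ratios, identify unintended biases, while mitigation techniques, such as fairness through unawareness (withholding protected attributes from the model), help correct them.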

Ninth, investment in change management and upskilling ensures that risk professionals develop data literacy, statistical reasoning, and model interpretation skills, fostering effective collaboration between technologists and domain experts.

Finally, treat predictive models as evolving assets subject to continuous improvement. Benchmark against industry innovations, pilot emerging techniques such as graph-based risk networks or reinforcement learning, and refine governance frameworks to stay ahead of threat actors and regulatory changes.
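The automated drift monitoring named in the seventh principle can be illustrated with the population stability index (PSI), one widely used statistic for comparing a baseline score distribution with a recent one. The sketch below is an assumption about how such a check might look; the bin edges, sample scores, and the 0.25 alert level (a common rule of thumb, not a regulatory requirement) are illustrative.

```python
import math
from bisect import bisect_right


def bin_fractions(values: list[float], edges: list[float]) -> list[float]:
    """Fraction of values falling in each bin defined by consecutive edges."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) - 1)
    for v in values:
        # Clamp so that out-of-range scores fall into the first or last bin.
        i = min(max(bisect_right(edges, v) - 1, 0), len(counts) - 1)
        counts[i] += 1
    return [c / len(values) for c in counts]


def psi(expected: list[float], actual: list[float],
        edges: list[float], eps: float = 1e-6) -> float:
    """Population stability index between a baseline and a recent sample."""
    total = 0.0
    for e, a in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        # Floor empty bins at eps to avoid log(0); this inflates PSI for empty bins.
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]     # scores at validation time
recent = [0.6, 0.7, 0.8, 0.9, 0.85, 0.95, 0.3, 0.65]    # scores from the latest window

drift = psi(baseline, recent, edges)
if drift > 0.25:  # rule-of-thumb alert level, assumed here
    print("significant drift: schedule review or retraining")
```

A check like this would typically run on a schedule per model and per key input feature, with results written to the same audit trail as other model performance metrics.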

- Adopt a portfolio of modeling techniques to capture diverse risk signals.

- Embed rigorous data governance throughout the model lifecycle.

- Pursue explainability by integrating explainable AI methods.

- Calibrate detection thresholds through iterative feedback loops.

- Align predictive outputs with cross-functional governance structures.

- Document data and model artifacts comprehensively for audit readiness.

- Monitor for drift and schedule regular model retraining.

- Assess and mitigate bias to ensure ethical outcomes.

- Invest in upskilling to bridge data science and domain expertise.

- View predictive solutions as living systems, continuously evolving.
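To make the bias assessment concrete, the disparate impact ratio mentioned under the eighth principle compares the rate of favorable outcomes between two groups. The sketch below assumes binary outcomes (1 for favorable, such as an account not being flagged) and uses the four-fifths (0.8) level as an illustrative screening threshold; the group data are invented.

```python
def favorable_rate(outcomes: list[int]) -> float:
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable)."""
    if not outcomes:
        raise ValueError("outcomes must not be empty")
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(protected: list[int], reference: list[int]) -> float:
    """Favorable-outcome rate of the protected group divided by the reference group's."""
    reference_rate = favorable_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return favorable_rate(protected) / reference_rate


# Hypothetical model decisions for two customer groups.
protected_group = [1, 1, 0, 1, 0, 1, 1, 0, 1, 0]   # 6 of 10 favorable
reference_group = [1, 1, 1, 0, 1, 1, 1, 1, 0, 1]   # 8 of 10 favorable

ratio = disparate_impact_ratio(protected_group, reference_group)
if ratio < 0.8:  # illustrative screening level, assumed here
    print("ratio below screening level: investigate features and thresholds")
```

A ratio below the screening level does not by itself establish unfair treatment; it signals that the model's inputs and thresholds deserve closer review.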

By embracing these strategic imperatives, organizations can construct a resilient and responsible framework for anticipating risks, optimizing resource allocation, and maintaining regulatory alignment. AI-driven predictive analytics thus transforms compliance and risk management from cost centers into strategic enablers of resilience and sustainable growth.

Chapter 6: Automating Reporting and Regulatory Filings with Intelligent Systems

Current Compliance and Risk Management Challenges

Organizations in regulated industries confront a complex and dynamic environment of rules, expectations and enforcement actions. Geopolitical shifts, financial crises and cross-border market integration have accelerated regulatory change across finance, healthcare, energy and manufacturing sectors. Financial firms face anti-money laundering directives, consumer protection standards and capital adequacy rules. Healthcare providers must navigate patient privacy mandates, reimbursement frameworks and quality reporting requirements. Energy and manufacturing companies comply with varying environmental, health and safety regulations. Overlapping mandates in data protection, ethics and risk governance further amplify complexity.

This complexity strains traditional compliance frameworks. Manual controls, spreadsheets and legacy workflow systems are ill-equipped to reconcile disparate data sources at the necessary scale and speed. Compliance teams devote vast hours to gathering documentation, cross-referencing policies and validating exceptions. Risk officers rely on human review to detect emerging threats, increasing the probability of oversight and error. As a result, organizations face elevated exposure to fines, reputational harm and strategic disruptions.

Simultaneously, volumes of structured and unstructured data—from transaction records and communications archives to third-party assessments and regulatory updates—have grown exponentially. Conventional processes lack the scalability to manage this data deluge. Key risk indicators either emerge too late or remain buried in isolated silos, leaving organizations vulnerable to material losses when latent threats go undetected.

Manual frameworks also impede agility. When new rules arrive, teams must update control matrices, retrain staff and reconfigure checklists under tight deadlines. Audit cycles extend to accommodate documentation backfills, and strategic initiatives stall as resources shift to close compliance gaps. This misalignment between regulatory demands and operational capabilities forces compliance functions into reactive mode, turning processes into friction points rather than sources of assurance.

AI-Driven Transformation: Core Concepts

Artificial intelligence introduces a paradigm shift in compliance and risk management, moving from retrospective review to proactive intelligence. AI-driven automation augments human expertise by applying machine learning algorithms and natural language processing to high-volume data ingestion, pattern identification and decision support. Compliance practitioners focus on complex judgments, while AI handles routine analysis and monitoring.

- Data Ingestion and Harmonization—Automated pipelines process structured feeds alongside unstructured sources such as contracts, emails and regulatory bulletins, normalizing inputs into a unified risk data repository.

- Pattern Recognition and Anomaly Detection—Unsupervised learning models continuously scan data for deviations from norms, surfacing unusual transaction patterns, policy exceptions or control breakdowns for human review.

- Natural Language Understanding—Advanced NLP techniques extract entities, obligations and risk indicators from voluminous text, making compliance documents, guidance and third-party reports searchable and analyzable at scale.

- Predictive Analytics—Supervised learning models forecast emerging risk exposures by identifying precursors in historical data, enabling teams to calibrate controls before issues escalate.

- Workflow Orchestration—Intelligent automation coordinates task assignment, escalation and documentation, ensuring consistent rule application and preserving audit trails.

Integrated, these capabilities enable high-frequency monitoring, real-time exception handling and continuously updated dashboards. Compliance teams gain speed, precision and resilience, aligning operations with evolving regulatory expectations.

Converging trends make AI adoption imperative. Data volumes have outstripped human processing capacity. Regulators emphasize model risk management, transparency and data lineage. Cloud-based machine learning services, pre-trained language models and low-code tools enable pilots in weeks. Competitive pressures reposition compliance from cost center to strategic enabler, and multinational enterprises require scalable, consistent approaches across jurisdictions. Early adopters realize measurable benefits in cost reduction, risk mitigation and strategic agility.

Analytical Foundations of Document Classification

Document classification lies at the heart of automated reporting and regulatory filings. Organizations must interpret vast volumes of unstructured text—compliance manuals, regulatory notices and financial disclosures—and assign each document to appropriate reporting categories. Classification methods fall into four analytical approaches, each with distinct advantages and trade-offs:

- Rule-Based Systems—Rely on handcrafted patterns and ontologies. They offer transparent decision logic and low data requirements but demand continuous maintenance as regulations evolve.

- Classical Machine Learning—Techniques such as support vector machines, naïve Bayes classifiers and decision trees transform text into numeric features. They excel with structured, well-labeled data and allow transparent error analysis but struggle with semantic nuances.

- Deep Learning—Architectures like convolutional neural networks and transformer models automate feature extraction. Models such as BERT leverage contextual embeddings for superior semantic capture but require substantial computational resources and specialized expertise.

- Hybrid Frameworks—Combine rule filters with machine learning classifiers. For example, initial rule-based tagging pre-filters documents into broad categories, followed by transformer-based fine-grained classification for precision, controlling computational overhead.
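The hybrid pattern in the last bullet can be sketched as a two-stage pipeline. To stay self-contained, this example swaps the transformer stage for a tiny multinomial naive Bayes classifier written from scratch; the rules, labels, and training sentences are hypothetical and far smaller than any realistic corpus.

```python
import math
import re
from collections import Counter, defaultdict

# Stage 1: hypothetical hand-written rules that assign a broad category.
# Rules are checked in order and the first match wins.
BROAD_RULES = {
    "aml": re.compile(r"\b(laundering|suspicious activity|sanctions)\b", re.I),
    "privacy": re.compile(r"\b(personal data|data subject|consent)\b", re.I),
}


def broad_category(text: str) -> str:
    for label, pattern in BROAD_RULES.items():
        if pattern.search(text):
            return label
    return "other"


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayes:
    """Tiny multinomial naive Bayes with add-one smoothing (stage 2 stand-in)."""

    def fit(self, texts, labels):
        self.word_counts = defaultdict(Counter)
        self.doc_counts = Counter(labels)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for c in self.word_counts.values() for w in c}
        return self

    def predict(self, text):
        # Ignore words never seen in training.
        tokens = [t for t in tokenize(text) if t in self.vocab]
        n_docs = sum(self.doc_counts.values())
        best_label, best_score = None, -math.inf
        for label, n in self.doc_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n / n_docs)
            score += sum(math.log((counts[t] + 1) / denom) for t in tokens)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


def classify(text, fine_models):
    """Rule-based broad tag first, then a fine-grained model if one exists for it."""
    broad = broad_category(text)
    model = fine_models.get(broad)
    return broad, (model.predict(text) if model else None)


aml_model = NaiveBayes().fit(
    ["File a suspicious activity report for structured cash deposits",
     "Suspicious activity report deadline for cash deposits",
     "Screen counterparties against the updated sanctions list",
     "Sanctions list update requires rescreening of counterparties"],
    ["sar_filing", "sar_filing", "sanctions_screening", "sanctions_screening"],
)
fine_models = {"aml": aml_model}
print(classify("New sanctions list published; rescreen all counterparties", fine_models))
print(classify("Quarterly earnings release", fine_models))
```

Because the fine-grained model only runs on documents that pass the rule filter, the more expensive stage sees a fraction of the traffic, which is how the hybrid design controls computational overhead.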

Model performance evaluation starts with accuracy, precision, recall and F1 score, but these headline metrics must be read against the asymmetric cost of errors. In compliance contexts, false negatives carry significant risk while false positives waste review resources. Organizations therefore prioritize recall for high-risk categories and precision where tolerance for false alarms is low. Receiver operating characteristic curves and area under the curve analyses inform threshold selection, while confusion matrices reveal inter-category misclassification patterns. Multi-label classification adds micro and macro averaging, which balance performance across frequent and rare classes.
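The difference between micro and macro averaging is easiest to see on a small multi-label example. The sketch below computes per-label counts and both averages of F1 using only the standard library; the labels and predictions are invented for illustration.

```python
def f1(tp: int, fp: int, fn: int) -> float:
    """F1 from raw counts; defined as 0.0 when there is nothing to score."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def label_counts(y_true: list[set], y_pred: list[set]) -> dict:
    """True positives, false positives, and false negatives for each label."""
    labels = sorted(set().union(*y_true, *y_pred))
    counts = {}
    for label in labels:
        tp = sum(label in t and label in p for t, p in zip(y_true, y_pred))
        fp = sum(label not in t and label in p for t, p in zip(y_true, y_pred))
        fn = sum(label in t and label not in p for t, p in zip(y_true, y_pred))
        counts[label] = (tp, fp, fn)
    return counts


def macro_f1(counts: dict) -> float:
    """Unweighted mean of per-label F1: rare labels count as much as frequent ones."""
    return sum(f1(*c) for c in counts.values()) / len(counts)


def micro_f1(counts: dict) -> float:
    """F1 over pooled counts: dominated by the frequent labels."""
    tp = sum(c[0] for c in counts.values())
    fp = sum(c[1] for c in counts.values())
    fn = sum(c[2] for c in counts.values())
    return f1(tp, fp, fn)


# Hypothetical gold labels and predictions for five filings.
y_true = [{"aml"}, {"aml", "privacy"}, {"privacy"}, {"aml"}, {"esg"}]
y_pred = [{"aml"}, {"aml"}, {"privacy"}, {"aml", "privacy"}, {"aml"}]

counts = label_counts(y_true, y_pred)
print("macro F1:", round(macro_f1(counts), 3))
print("micro F1:", round(micro_f1(counts), 3))
```

Here the rare `esg` label is missed entirely, which pulls the macro average well below the micro average; that gap is the signal to inspect recall on minority classes.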

Benchmarking against shared tasks—such as the LegalEval benchmarks or Financial Document Classification challenges—provides external validation. Cross-validation and out-of-sample testing guard against overfitting, while periodic re-evaluation aligns models with new regulations.

Regulatory taxonomies like XBRL tagging for financial disclosures or the EU sustainability taxonomy impose standardized labels and hierarchies. Ontology mapping aligns text to defined concepts, while knowledge graph embeddings capture semantic relationships for context-aware classification. Unsupervised clustering uncovers latent categories, enabling taxonomy evolution to address emergent regulatory topics.

Unstructured text environments introduce additional challenges. Documents arrive in varied formats (PDFs, Word files and HTML notices), and scanned documents require OCR for text extraction, which risks recognition errors and inconsistent metadata. Multilingual content demands language detection and translation pipelines. Domain adaptation via transfer learning with pre-trained models, for example using IBM Watson Natural Language Classifier or Amazon Comprehend, mitigates data scarcity but requires vigilant error analysis and recalibration to address domain drift.

Bias and fairness concerns arise if models prioritize common categories at the expense of minority classes. Structured audits, stakeholder reviews and continuous performance monitoring ensure balanced recall across all classes.

Strategically, document classification models triage incoming filings, route submissions to specialized teams and flag urgent regulatory changes. Explainability and auditability—supported by tools like Microsoft Azure Text Analytics—are vital. Decision logs capturing feature influences enable transparent reporting to regulators and internal auditors. Governance frameworks integrate classification metrics into risk dashboards, triggering review when performance falls below thresholds and engaging cross-functional committees to manage taxonomy updates.
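A decision log and a performance-threshold trigger of the kind described above might look like the following sketch. The field names, the model version string, and the recall floor are all hypothetical; the feature contributions are assumed to come from whatever explainability method the organization uses.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class DecisionLogEntry:
    """One classification decision, recorded for auditors and regulators."""
    document_id: str
    model_version: str
    predicted_label: str
    confidence: float
    top_features: dict[str, float]  # feature -> contribution, from the explainer
    logged_at: str                  # UTC timestamp in ISO 8601 format


def log_decision(document_id: str, model_version: str, predicted_label: str,
                 confidence: float, top_features: dict[str, float]) -> str:
    """Serialize a decision as one JSON line suitable for an append-only log."""
    entry = DecisionLogEntry(
        document_id=document_id,
        model_version=model_version,
        predicted_label=predicted_label,
        confidence=confidence,
        top_features=top_features,
        logged_at=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(entry), sort_keys=True)


def labels_needing_review(recall_by_label: dict[str, float], floor: float) -> list[str]:
    """Labels whose monitored recall has fallen below the governance floor."""
    return sorted(label for label, recall in recall_by_label.items() if recall < floor)


line = log_decision(
    document_id="filing-0001",            # hypothetical identifier
    model_version="classifier-2024.1",    # hypothetical version tag
    predicted_label="sanctions_screening",
    confidence=0.93,
    top_features={"sanctions": 0.41, "counterparties": 0.22},
)
print(line)
print(labels_needing_review({"aml": 0.97, "privacy": 0.91, "esg": 0.62}, floor=0.90))
```

Writing one JSON object per line keeps the log easy to append to and to query later, and the review function gives the risk dashboard a simple rule for escalating to the cross-functional committee.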

Practical Impacts on Audit Readiness and Transparency