AI Agents Unlocked: Insights into Automation and Strategic Impact

Introduction

Evolution of Autonomous AI Agents

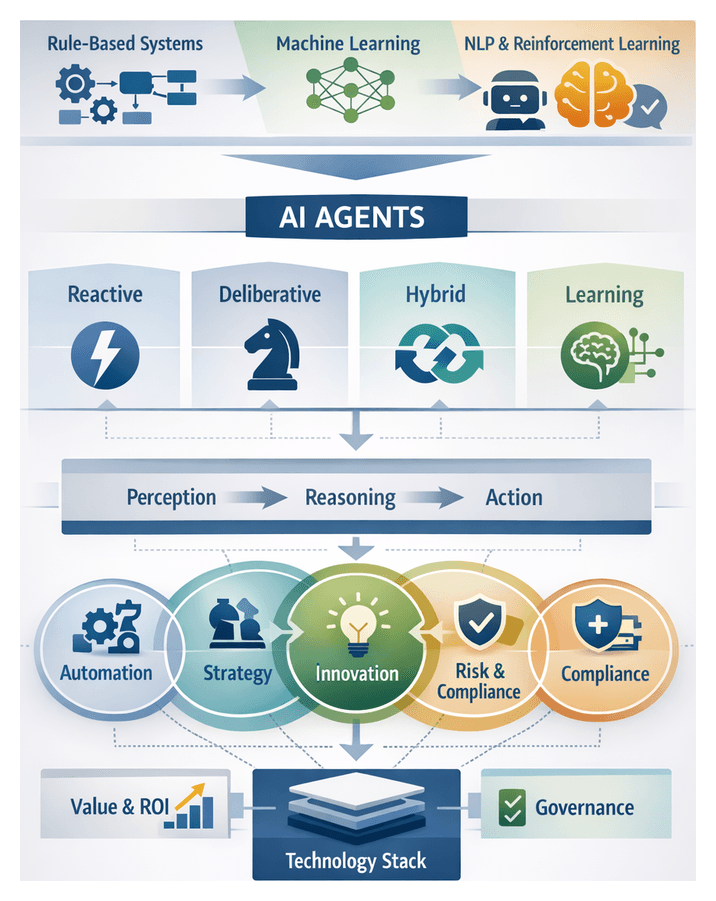

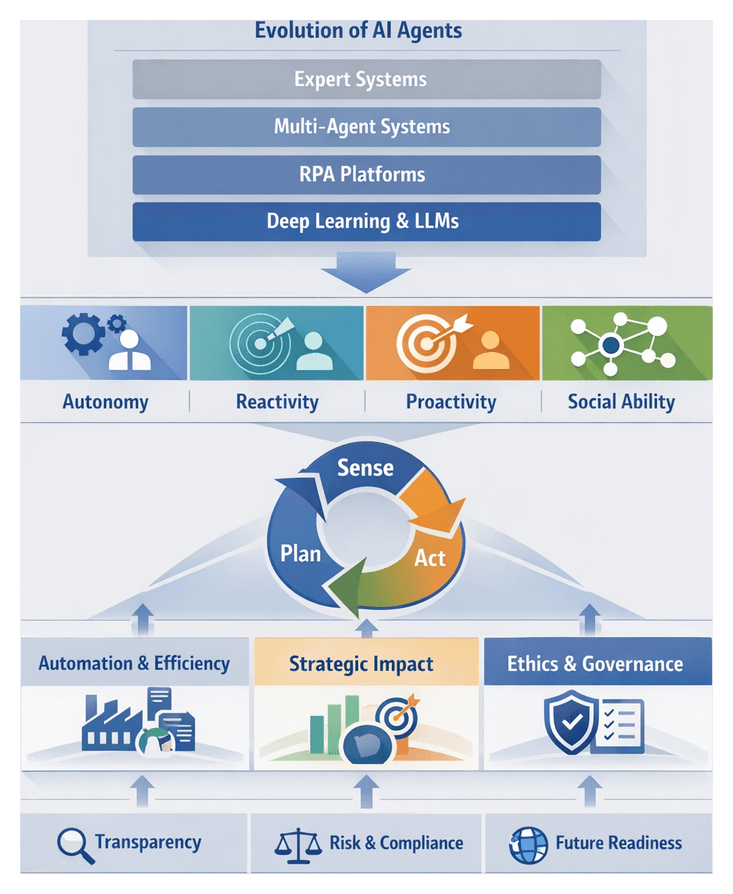

Autonomous AI agents have evolved through successive waves of innovation, each expanding the capabilities of systems to perceive, decide, and act with minimal human oversight. In the 1960s through the 1980s, early automation centered on rule-based “expert systems” that encoded domain knowledge into deterministic logic engines. Projects such as DENDRAL at Stanford and MYCIN demonstrated that chemical analysis and medical treatment protocols could be captured in rule libraries, while commercial deployments like XCON automated computer order configurations. Although these systems offered transparent decision paths and clear audit trails, they struggled to scale as business contexts shifted and rule bases ballooned.

From the 1990s onward, statistical machine learning ushered in a new paradigm. Algorithms such as decision trees, support vector machines, and ensemble methods like random forests became practical through increased compute power and the proliferation of digital data. Open source libraries, notably scikit-learn (first publicly released in 2010), later standardized model development and evaluation, while enterprise analytics vendors embedded predictive modules into dashboard tools. IBM’s Watson, which won Jeopardy! in 2011, famously combined multiple learning methods to answer complex queries, illustrating how data-driven models could adapt without explicit rule authoring.

The 2010s saw rapid advances in natural language processing and conversational interfaces. Deep learning architectures, first recurrent neural networks and later transformers, revolutionized text understanding and generation. Google’s BERT (2018) popularized transformer-based contextual embeddings, and OpenAI’s GPT series steadily increased generative fluency, culminating in GPT-4 in 2023. Cloud services such as Amazon Lex, Microsoft LUIS, and Google Dialogflow democratized chatbot deployment, enabling organizations to automate customer support, HR help desks, and knowledge management workflows.

Deep reinforcement learning integrated deep neural networks with trial-and-error policy optimization, marking another milestone. DeepMind’s AlphaGo and OpenAI Five showcased superhuman performance in complex games, while industry applications emerged in warehouse robotics, autonomous driving simulations, and dynamic supply chain planning. These agents learned continuously from simulated environments, refining strategies without manual intervention.

Together, these waves—rule-based systems, statistical learning, NLP-driven interfaces, and deep reinforcement learning—have converged under market pressures demanding speed, adaptability, and resilience. Understanding this evolution equips leaders to recognize the foundations upon which next-generation autonomous agents are built.

Defining Autonomy and Agency

Autonomy denotes a system’s capacity to perceive its environment, make decisions based on that perception, and execute actions without direct human control. Agency extends this concept by emphasizing goal-directed behavior and adaptive strategy formation. Rather than viewing autonomy as a binary trait, industry practitioners interpret it along a spectrum—from reactive systems that trigger predefined workflows to deliberative architectures capable of planning, learning, and self-optimization.

Core interpretive models guide solution design:

- Reactive Architectures: Real-time responses to stimuli, ideal for domains like network security monitoring.

- Deliberative Architectures: Internal simulation and planning layers, suited for strategic decision support.

- Hybrid Architectures: Integration of reactive modules with deliberative planners to balance speed and long-term optimization.

- Learning-Driven Architectures: Continuous retraining with operational data, underpinning personalization engines and adaptive assistants.

These models inform the selection of frameworks such as OpenAI’s GPT-based agents or Google Bard for natural language reasoning, versus platforms like IBM Watson Assistant when auditability and compliance are critical.

Evaluative dimensions extend beyond performance metrics to include capability scope, adaptability, transparency, compliance readiness, integration flexibility, and risk profile. By applying these lenses, stakeholders align on the level of agency required to meet functional objectives and strategic goals.

Market Forces and Strategic Imperatives

Competitive differentiation increasingly hinges on autonomous capabilities. Organizations that deploy AI agents to automate complex workflows, personalize customer interactions, and generate real-time insights capture market share through faster time to decision and 24/7 service availability. Within Gartner’s Pace-Layered Application Strategy, which separates slow-changing systems of record from faster-changing systems of differentiation and innovation, AI agents fit most naturally in the agile outer layers that interface with legacy back ends, enabling rapid responsiveness to evolving requirements.

Efficiency remains a top priority as digital scale amplifies labor costs and process complexity. Autonomous agents reduce manual effort in customer service triage, data reconciliation, and workflow orchestration, freeing professionals to focus on strategic and creative work. Consulting firms report typical operational cost reductions of 20–40 percent over two years, driven by labor efficiency, process reliability, and continuous learning loops that refine agent behavior over time.

Innovation itself is a strategic imperative. AI agents serve as experimental platforms for prototyping new products and business models at minimal cost. Agents can simulate user interactions, gather real-time feedback, and adapt features on the fly—accelerating design sprints in industries from insurance underwriting to pharmaceutical trial management.

Resilience and risk mitigation have risen in importance amid global uncertainties. Agents enforce compliance policies, flag deviations in real time, and generate audit trails, bolstering accountability in regulated sectors. Financial institutions leverage agents for anti-money laundering checks by correlating transaction patterns across disparate sources, while clinical research uses trial-monitoring agents to ensure protocol adherence.

Workforce transformation accompanies agent adoption. Human-in-the-loop models pair agent-driven analysis with human validation, optimizing talent deployment and enhancing job satisfaction. In recruitment, AI agents screen resumes and schedule interviews, improving time to hire and candidate quality.

Industry-specific drivers illustrate these forces in context. In retail, agents power dynamic pricing, predictive inventory replenishment, and conversational shopping assistants. Manufacturing relies on digital twins and predictive maintenance, while logistics employs routing agents to optimize delivery networks. Healthcare and utilities deploy clinical decision support agents and grid management systems that balance renewable integration and demand.

Learning Objectives and Analytical Frameworks

This eBook equips leaders with a structured approach to autonomous AI agents through clear learning objectives and cross-cutting frameworks.

- Contextualize historical and market forces shaping agent technologies.

- Define and categorize autonomous agents to assess applicability across business challenges.

- Build a business case that addresses competitive, operational, and strategic imperatives.

- Understand core technologies—machine learning, NLP, knowledge graphs, and planning algorithms.

- Apply architectural principles—modularity, orchestration, and integration patterns—for scalable and resilient systems.

- Evaluate domain-specific impacts in IT operations, marketing, finance, HR, and compliance.

- Measure impact and establish continuous feedback loops for sustainable value.

- Implement governance and ethical safeguards aligned with emerging regulations.

To support these goals, the following frameworks guide strategic decision-making:

- Evolutionary Lens: A timeline of agent milestones showing how each technological advance addressed market needs.

- Capability-Autonomy Taxonomy: Categorization of agents by autonomy level and task complexity for clear solution comparisons.

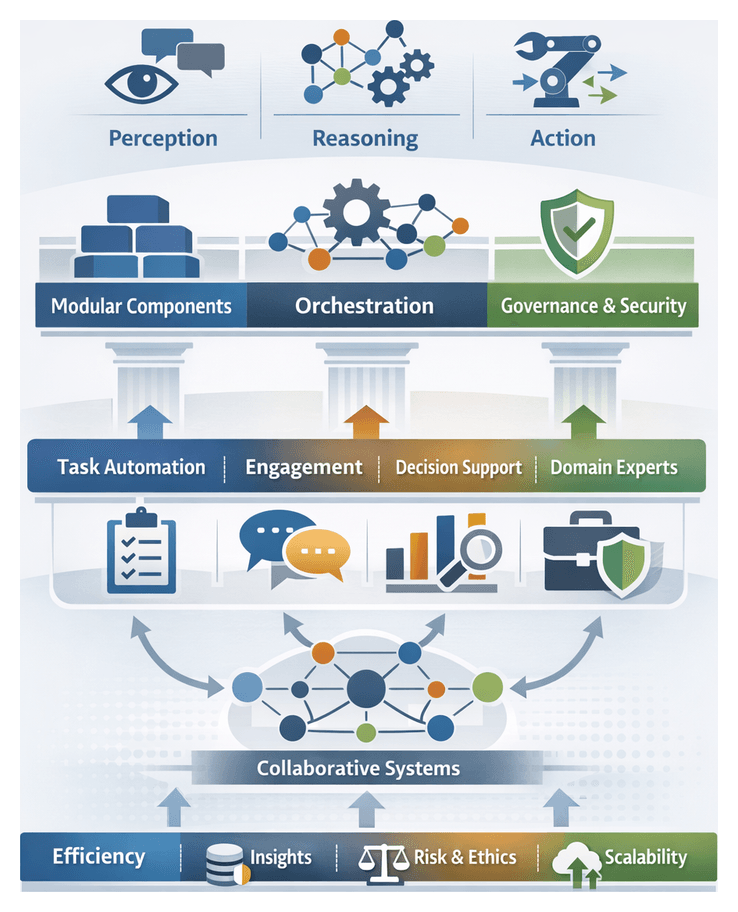

- Technology Stack Decomposition: Analysis of perception, reasoning, and action layers to illustrate interoperability of learning, NLP, and planning components.

- Architecture and Orchestration Patterns: Trade-offs among monolithic, microservice, event-driven, and hybrid designs for agility and scalability.

- Domain Impact Matrices: Mapping of agent use cases to operational and strategic outcomes across functions.

- Value Realization and ROI Models: Quantitative and qualitative approaches for estimating cost avoidance, revenue enhancement, and user satisfaction.

- Governance and Ethical Safeguards: Principles and control points for transparency, fairness, and accountability.

Key Challenges and Considerations

Successful agent adoption requires navigating technical, organizational, and governance hurdles. Key considerations include:

- Data Quality and Availability: Incomplete, siloed, or poorly annotated data undermines learning processes and can embed biases.

- Integration Complexity: Legacy systems and human workflows demand extensive API development and refactoring.

- Organizational Readiness: Cultural resistance, skill gaps, and change management often pose greater risks than technical obstacles.

- Scalability Constraints: Proof-of-concepts may not scale without sufficient compute resources, maintenance processes, and retraining capabilities.

- Governance and Ethical Risks: Autonomous decisions require transparent explainability, bias detection, and ethical guardrails to maintain trust.

- Regulatory Uncertainty: Evolving data privacy and algorithmic accountability mandates necessitate ongoing compliance monitoring.

- Vendor Lock-In: Proprietary platforms without open standards can restrict future innovation; assess extensibility and community support.

- Cost-Benefit Trade-Offs: Align expectations around ROI, balancing quick wins with strategic long-term investments.

Keeping these challenges in view ensures that agent initiatives deliver sustainable competitive advantage while balancing ambition with pragmatism.

Chapter 1: Foundations of Autonomous AI Agents

Evolution of Autonomous Agent Technologies

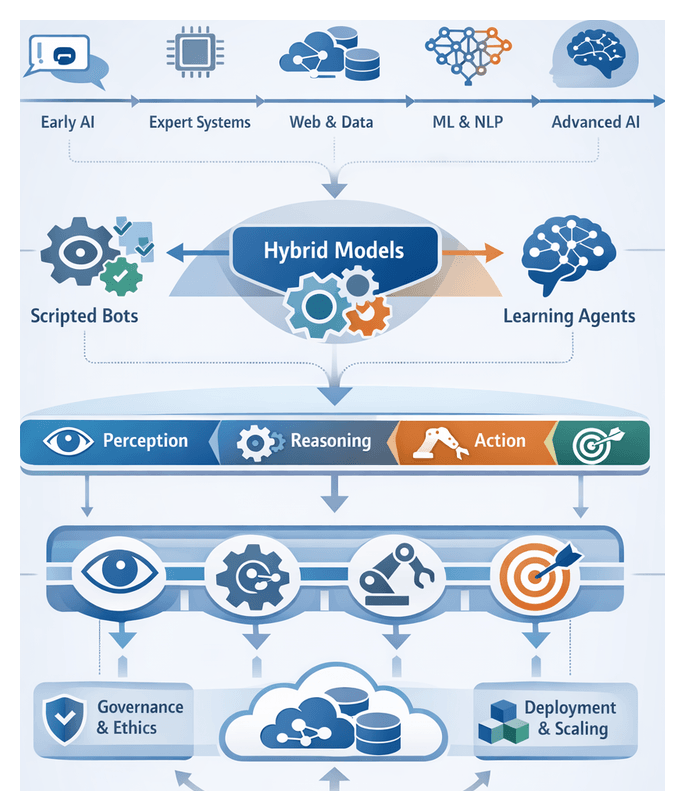

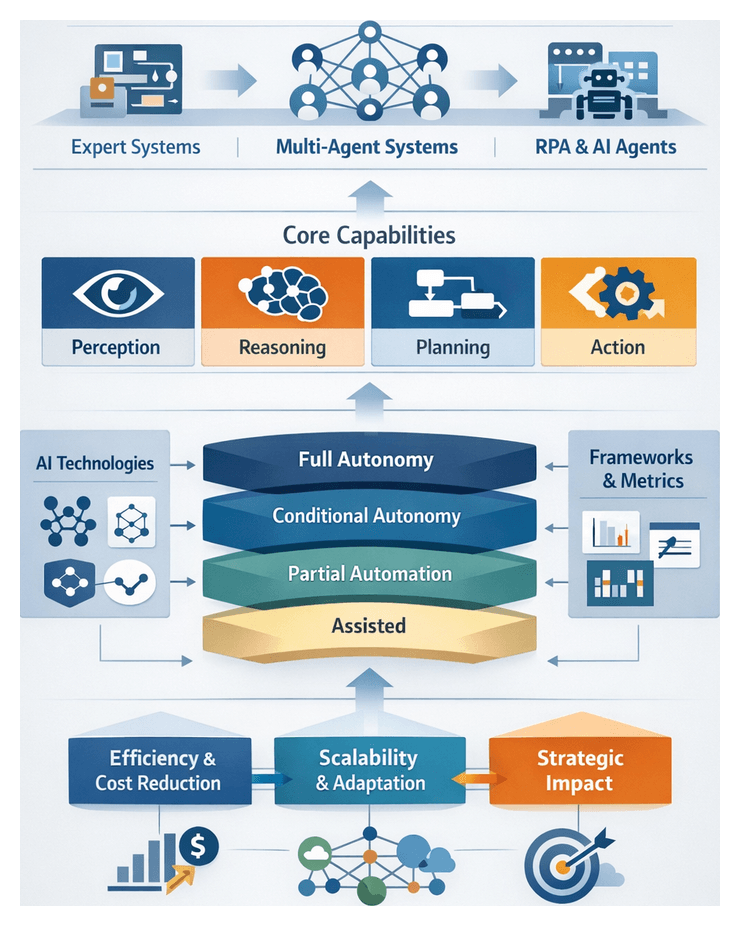

Over six decades, intelligent software agents have evolved from academic curiosities to strategic enablers across industries. Early prototypes such as ELIZA demonstrated scripted conversational patterns in the 1960s, paving the way for 1980s expert systems that embedded domain expertise in knowledge bases and inference engines to support medical diagnosis and financial analysis. The emergence of the internet and distributed computing in the 1990s spurred demand for automated solutions capable of processing data at scale and coordinating workflows across networks. Breakthroughs in statistical machine learning and natural language processing in the early 2000s enabled agents to learn from data and handle unstructured language inputs.

In the 2010s, advances in deep neural networks, cloud infrastructure, and GPU hardware rapidly expanded agent capabilities. Frameworks such as Rasa and Microsoft Bot Framework introduced ecosystems for building conversational assistants, while research into reinforcement learning and cognitive architectures produced agents capable of trial-and-error adaptation. Today, autonomous AI agents leverage large language models such as OpenAI’s GPT-4, hybrid planning algorithms, and integrated perception, reasoning, and action modules to execute multi-step workflows with minimal human oversight. Key milestones include the shift from rule-based systems to statistical learning, integration of unstructured data processing, and orchestration of autonomous workflows.

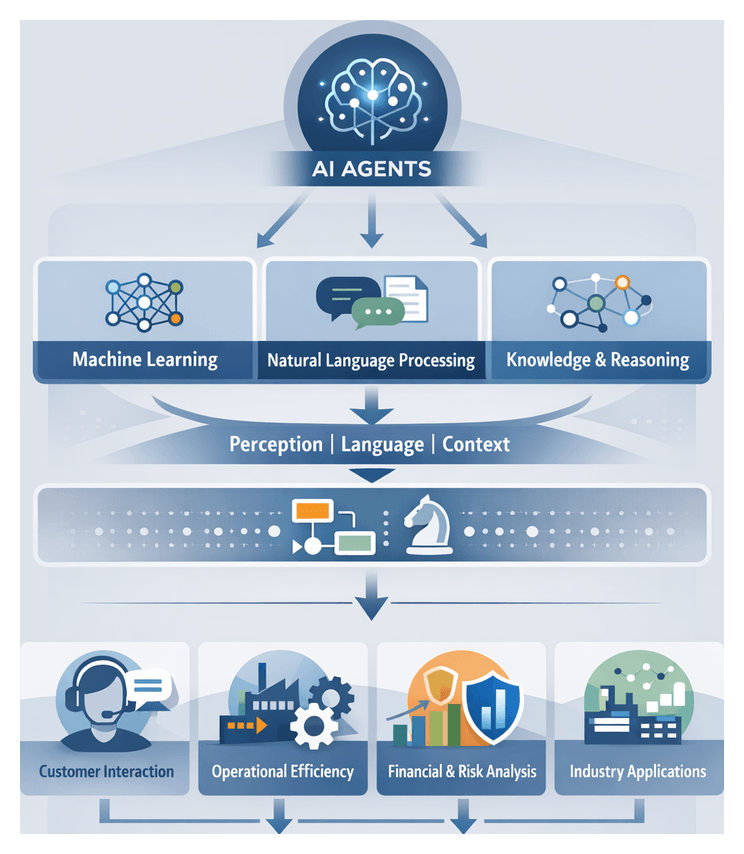

Defining Autonomous AI Agents

An autonomous AI agent is a software entity with four core properties: perception, reasoning, action, and goal orientation. The perception layer interprets inputs from structured data streams, text, and sensors using techniques such as natural language processing, vision modules, and anomaly detection. The reasoning component applies algorithms—including knowledge representations, ontologies, and automated planning—to evaluate options, infer context, and prioritize objectives. Action modules execute decisions by invoking APIs, generating communications, or modifying data. A goal-driven architecture ensures that the agent adapts its plan dynamically in response to new information, optimizing strategies to achieve defined outcomes. Framed within disciplines such as artificial intelligence, decision theory, and human–computer interaction, this definition provides a conceptual baseline for assessing readiness across data quality, algorithmic maturity, integration flexibility, and governance structures.
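The four properties can be made concrete with a deliberately small sketch. The `ThermostatAgent` class, its method names, and the temperature readings below are hypothetical and chosen only for illustration; a production agent would replace each method with far richer perception, reasoning, and action components.

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Toy agent that keeps a room near a target temperature (hypothetical example)."""
    target: float                  # goal orientation: the outcome the agent pursues
    tolerance: float = 0.5         # acceptable deviation from the goal
    log: list = field(default_factory=list)

    def perceive(self, raw_reading: str) -> float:
        # Perception: turn a raw input such as "18.2C" into a structured observation.
        return float(raw_reading.strip().rstrip("C"))

    def reason(self, temperature: float) -> str:
        # Reasoning: compare the observation with the goal and choose an option.
        if temperature < self.target - self.tolerance:
            return "heat"
        if temperature > self.target + self.tolerance:
            return "cool"
        return "idle"

    def act(self, decision: str) -> str:
        # Action: a real agent would invoke an API here; this one records the command.
        self.log.append(decision)
        return decision

    def step(self, raw_reading: str) -> str:
        # One full perceive -> reason -> act cycle.
        return self.act(self.reason(self.perceive(raw_reading)))


agent = ThermostatAgent(target=21.0)
decisions = [agent.step(reading) for reading in ["18.2C", "21.3C", "23.9C"]]
print(decisions)  # ['heat', 'idle', 'cool']
```

Keeping the three stages as separate methods mirrors the layered definition above and lets each stage be tested or swapped independently.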

Scripted Bots Versus Learning Agents

Conceptual Distinctions

Scripted bots and learning agents represent two ends of an automation spectrum. Scripted bots follow predetermined workflows and conditional rules, excelling in stable environments with deterministic requirements. Learning agents leverage machine learning and reinforcement learning to infer patterns, adapt to novel inputs, and optimize decisions over time. In scripted solutions, intelligence resides in comprehensive rule sets; in learning agents, intelligence emerges through data-driven model refinement. Hybrid approaches combine rule-based scaffolding with adaptive learning to balance predictability and flexibility.

Industry Perspectives on Scripted Bots

Scripted bots are widely used in finance, human resources, customer service, and IT support to automate high-volume tasks such as invoice processing, identity verification, and incident resolution. They offer transparent behavior, ease of compliance, and rapid deployment within weeks using existing process documentation. Key evaluation metrics include throughput, accuracy, and failure rates under controlled inputs. Limitations arise when processes involve dynamic data, unstructured inputs, or evolving rules, leading to “rule fatigue” and brittle automations. A maturity model assessing process stability, exception frequency, and rule complexity helps identify candidates best suited for scripted automation.

Analytical Frameworks for Learning Agents

Learning agents are categorized by autonomy and capability maturity. Foundational agents use supervised learning for classification, entity extraction, and recommendation tasks. Intermediate agents apply reinforcement learning and unsupervised methods for policy optimization, anomaly detection, and clustering. Advanced agents synthesize information across domains, negotiate outcomes, and self-improve via continuous feedback loops. Organizations should pilot learning agents in low-risk contexts—such as support ticket routing based on sentiment analysis—before scaling to critical decision support or resource allocation roles. Governance frameworks emphasize explainability, bias detection, and traceable decision provenance to build trust and meet regulatory requirements.

Comparative Evaluation Criteria

- Adaptability Versus Predictability: Scripted bots deliver consistent outcomes; learning agents offer adaptability with potential unpredictability.

- Scalability of Intelligence: Rule sets must grow with every new case and exception, so maintenance effort rises with coverage; learning agents leverage model retraining and transfer learning for broader generalization.

- Governance Overhead: Rule-based systems favor auditability; learning agents require robust model governance, bias monitoring, and explainability mechanisms.

- Development Lifecycle: Scripted bots follow traditional software lifecycles; learning agents follow data-centric lifecycles of collection, training, validation, and continuous improvement.

- Operational Maintenance: Script modifications demand manual updates; learning agents depend on automated retraining pipelines and monitoring for concept drift.

Hybrid Architectures and Transitional Models

Many enterprises adopt hybrid models that integrate rule-based scaffolding with learning-driven enhancements. For instance, a support workflow may begin with a scripted decision tree and escalate complex queries to a learning agent trained on historical resolutions. Fraud detection systems often layer deterministic rules over anomaly detection models, combining known signature filters with machine learning to identify novel threats. The “automation continuum” framework maps this journey, illustrating how optimal balances between scripted and learning components evolve as data maturity, governance frameworks, and organizational capabilities advance.
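The layering described for fraud detection might be sketched as follows. Everything here is an assumption made for illustration: the rule values, the function names, and the use of a simple z-score as a stand-in for a trained anomaly detection model.

```python
from statistics import mean, stdev
from typing import Optional

BLOCKED_COUNTRIES = {"XX"}   # hypothetical signature rule
HARD_LIMIT = 10_000.0        # hypothetical per-transaction ceiling


def rule_check(txn: dict) -> Optional[str]:
    """Deterministic layer: return a reason if a known rule fires, else None."""
    if txn["country"] in BLOCKED_COUNTRIES:
        return "blocked country"
    if txn["amount"] > HARD_LIMIT:
        return "amount over hard limit"
    return None


def anomaly_score(amount: float, history: list) -> float:
    """Stand-in for a learned model: z-score of the amount against past amounts."""
    sigma = stdev(history)
    return 0.0 if sigma == 0 else abs(amount - mean(history)) / sigma


def assess(txn: dict, history: list, z_threshold: float = 3.0) -> tuple:
    """Apply the rules first, then fall back to the anomaly layer."""
    reason = rule_check(txn)
    if reason is not None:
        return ("reject", reason)              # known pattern: fully auditable
    if anomaly_score(txn["amount"], history) > z_threshold:
        return ("review", "unusual amount")    # novel pattern: route to a human
    return ("approve", "no rule or anomaly triggered")


history = [40.0, 55.0, 38.0, 60.0, 47.0, 52.0]   # made-up past amounts
print(assess({"amount": 50.0, "country": "US"}, history))
print(assess({"amount": 900.0, "country": "US"}, history))
print(assess({"amount": 20_000.0, "country": "US"}, history))
```

Running the rules first keeps known cases deterministic and explainable, while the statistical layer only decides cases the rules do not cover.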

Strategic Alignment and Governance

Organizational Alignment

Shared foundational definitions of autonomous agents enable senior leaders, architects, and business units to prioritize initiatives that deliver competitive advantage. Clarity around autonomy levels, learning requirements, and integration points informs project selection, budget allocations, and governance structures. By anchoring strategic discussions in a unified agent taxonomy, organizations reduce misalignment risks, foster cross-functional collaboration, and sequence investments in data infrastructure, security controls, and model lifecycle management to match maturity.

Risk Management and Governance Contexts

Defining agent autonomy—from deterministic rule enforcement to adaptive learning—allows governance teams to tailor control frameworks to specific risk profiles. Classification schemas inform approval workflows and testing protocols: recommendation agents may require periodic bias assessments and human-in-the-loop validation, while routine extraction agents need standard security reviews. Embedding these definitions into risk and compliance matrices creates nuanced policies that balance innovation velocity with regulatory requirements and ethical considerations.

Investment and Resource Prioritization

Executives evaluate autonomous agent investments against defined benchmarks such as self-learning rates, interpretability, and integration flexibility. A phased development taxonomy—prototype, pilot, scale, and continuous improvement—guides resource allocation for infrastructure, talent, and data operations. Clear demarcation of development phases enables accurate budgeting for model retraining, security assessments, and governance activities, reducing overruns and improving stakeholder confidence in agent roadmaps.

Analytical Frameworks for Foundational Assessment

Consulting and practitioner frameworks assess agent foundations through maturity models, value chain analyses, and risk–benefit matrices. Capability maturity models chart progression from scripted workflows to fully autonomous agents, ensuring conceptual clarity at each stage. Value chain analyses map agent use cases to competitive differentiation points, while risk–benefit matrices juxtapose autonomy levels with operational resilience and reputational impact. These frameworks surface dependencies—data governance, integration standards—and identify areas for foundational refinement before scaling.

Industry-Specific Application Scenarios

Foundational requirements vary by sector. Financial services demand auditability and explainability for trading and credit scoring agents. Manufacturing prioritizes integration with IIoT networks, low latency, and resilience for adaptive control. Healthcare emphasizes patient safety, privacy, and clinical validation, necessitating human-in-the-loop approvals. Retail focuses on personalization and real-time inventory management, orienting foundations toward customer data orchestration and omnichannel elasticity. Tailoring foundational definitions to sector imperatives ensures relevance and compliance.

Core Attributes of Autonomous Agents

Autonomous agents combine eight functional characteristics:

- Perception and Contextual Awareness: Ingest and interpret data from sensors, applications, and text using NLP and vision modules to establish situational models.

- Autonomy and Proactiveness: Plan and execute actions aligned with goals, from reactive responses to self-directed, adaptive behaviors.

- Adaptive Learning: Employ supervised classifiers, reinforcement learning, and continuous pipelines to refine models and improve decision accuracy.

- Interoperability: Integrate with legacy systems and third-party platforms via standardized APIs and middleware.

- Explainability: Apply feature attribution and policy visualization to audit decisions and ensure compliance.

- Security and Privacy: Enforce access controls, encryption, and privacy principles to protect data and meet regulations such as GDPR and CCPA.

- Scalability and Resilience: Use microservices, containers, and event-driven orchestration to support variable workloads and recover from failures.

- Ethical and Governance Alignment: Embed bias mitigation, fairness metrics, and human oversight within lifecycle management.

Evaluating and Prioritizing Agent Initiatives

Decision makers should map agent capabilities to business objectives, maturity, and risk appetite across eight lenses:

- Business Alignment: Prioritize use cases with measurable KPIs, such as reduced mean time to resolution or increased conversion rates.

- Data Readiness: Assess completeness, consistency, and governance of data sources for reliable model training and inference.

- Organizational Adoption: Evaluate change management capacity, talent availability, and collaboration frameworks across AI governance teams, data stewards, and domain experts.

- Technology Maturity: Examine platforms for machine learning, knowledge graphs, and orchestration, balancing turnkey solutions with open-source flexibility.

- Integration Complexity: Identify dependencies, transformation requirements, and bottlenecks, leveraging ESBs and API gateways for streamlined connectivity.

- Governance and Compliance: Define processes for model validation, ethical reviews, and audit trails to satisfy internal policies and external regulations.

- Total Cost of Ownership: Estimate costs for development, infrastructure, maintenance, model retraining, and governance oversight, including data labeling and security testing.

- Time to Value: Adopt phased deployments that deliver early wins and enable incremental scaling based on lessons learned.

Managing Risks and Limitations

Autonomous agents introduce novel risks that require proactive mitigation:

- Model Opacity and Bias: Conduct regular audits to detect unintended correlations and ensure fairness.

- Data Drift: Monitor performance degradation, trigger retraining pipelines, and validate alignment with current contexts.

- Overreliance and Deskilling: Maintain human oversight, escalation protocols, and training to preserve expertise and critical thinking.

- Regulatory Uncertainty: Track AI legislation, engage in industry consortia, and update governance frameworks as standards evolve.

- Security Vulnerabilities: Integrate threat modeling, penetration testing, and runtime anomaly detection to protect against adversarial manipulation.

- Integration Technical Debt: Favor modular architectures and review dependencies to avoid brittle solutions and upgrade roadblocks.
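For the data drift item above, one common monitoring approach is the Population Stability Index (PSI), which compares how a feature is distributed at training time and in production. The sketch below is a minimal version under stated assumptions: equal-width bins derived from the baseline, a small `eps` to avoid taking the logarithm of zero, and the widely cited rule of thumb that values above roughly 0.25 indicate a significant shift. The function name and sample data are hypothetical.

```python
import math


def psi(baseline, current, bins=5, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0            # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)   # clamp out-of-range values to edge bins
            counts[idx] += 1
        # Replace empty bins with eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    expected, actual = proportions(baseline), proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


baseline = list(range(100))                    # made-up training-time values
shifted = [v + 40 for v in baseline]           # same shape, shifted upward

RETRAIN_THRESHOLD = 0.25                       # rule-of-thumb cutoff, tune per use case
for name, sample in [("unchanged", baseline), ("shifted", shifted)]:
    score = psi(baseline, sample)
    print(name, round(score, 3), "retrain" if score > RETRAIN_THRESHOLD else "ok")
```

In practice a check like this would run on a schedule per feature, with the threshold crossing used to trigger the retraining pipeline.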

Roadmapping for Sustainable Deployment

Organizations should translate insights into actionable roadmaps through six practices:

- Cross-Functional Governance: Establish a steering committee with representatives from business, IT, data science, legal, and compliance to oversee use case prioritization, standards, and ethics.

- Modular Reference Architecture: Define service contracts for perception, reasoning, learning, and actuation layers. Use containerization and orchestration to decouple components and streamline updates.

- Phased Adoption Roadmap: Sequence pilot initiatives, scaling criteria, and impact milestones. Begin with low-risk, high-value scenarios to build momentum and expand as governance and capabilities mature.

- Data and Talent Ecosystem: Develop robust pipelines for continuous training and invest in upskilling teams on MLOps, AI ethics, and domain knowledge.

- Continuous Feedback Loops: Embed performance metrics, user feedback channels, and automated monitoring to refine algorithms, governance policies, and strategic priorities.

- Culture of Responsible Innovation: Encourage experimentation within guardrails, recognize teams that achieve ethical compliance and business impact, and disseminate learnings to foster a community of practice.

Chapter 2: Enabling Technologies Behind AI Agents

Core Technologies Powering Autonomous Agents

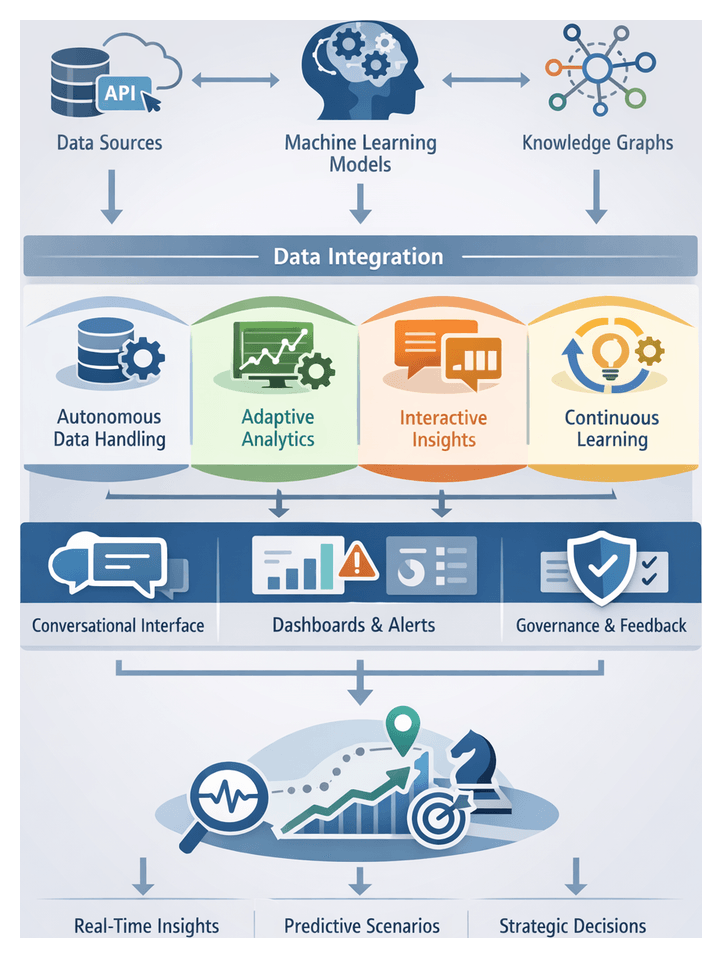

Autonomous AI agents leverage a layered technology stack—machine learning, natural language processing, knowledge representation and planning algorithms—to perceive environments, reason over data and execute actions with minimal human intervention. Recent advances in these domains have converged to enable use cases ranging from intelligent customer engagement to real-time operational management. Understanding the capabilities, dependencies and representative tools in each layer is essential for organizations seeking to architect robust agent solutions.

Machine Learning Foundations

Machine learning equips agents with predictive and adaptive capabilities. Key paradigms include supervised learning for classification and regression, unsupervised techniques for clustering and anomaly detection, reinforcement learning for sequential decision making, and deep learning architectures—such as convolutional neural networks and recurrent networks—for high-dimensional data modeling. Production-ready frameworks like TensorFlow and PyTorch support distributed training, hardware acceleration and integration with data pipelines, enabling agents to ingest sensor streams, user interactions and log data at scale.

Natural Language Processing and Understanding

Natural language processing allows agents to interpret, generate and interact via human language. Core components include tokenization, syntactic parsing, embedding representations, named entity recognition, intent classification and dialogue management. Transformer-based models such as BERT and generative systems like GPT-4 underpin modern language understanding and generation. Open-source libraries—spaCy and Hugging Face Transformers—and managed services like Google Cloud Natural Language accelerate development of conversational interfaces, sentiment analysis pipelines and text summarization modules.

Knowledge Representation and Reasoning

Knowledge frameworks supply the structured context needed for inference and decision making. Knowledge graphs and ontologies encode entities, relationships and business rules in machine-readable formats, enabling semantic search, rule evaluation and data integration across heterogeneous sources. Graph databases such as Neo4j and managed services like Amazon Neptune deliver scalable storage, query performance and ontology management. Standards like RDF and OWL ensure interoperability, while vector stores capture semantic proximities for similarity search and memory augmentation.

Planning, Orchestration and Decision Algorithms

Planning algorithms enable agents to sequence actions, allocate resources and adapt to dynamic conditions. Classical search methods (A\*, planning graphs), hierarchical task networks, dynamic programming and Monte Carlo tree search drive strategic and operational decision making. Supporting tools cover both experimentation and production deployment of multi-stage processes: OpenAI Gym (now maintained as Gymnasium) provides simulation environments for training and evaluating policies, Apache Airflow handles pipeline orchestration and Kubernetes operators coordinate services.
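As an illustration of classical search, the following is a minimal A\* sketch on a small grid, using Manhattan distance as the heuristic. The grid format (a list of strings with `#` marking blocked cells) and the function name are assumptions made for this example.

```python
import heapq


def a_star(grid, start, goal):
    """Return a shortest 4-connected path from start to goal, or None if unreachable."""
    def h(cell):
        # Manhattan distance: never overestimates for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(h(start), 0, start)]          # entries are (f = g + h, g, cell)
    best_cost = {start: 0}
    parent = {start: None}
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:            # walk parents back to the start
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        if g > best_cost[cell]:
            continue                           # stale queue entry, already improved
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < len(grid) and 0 <= nc < len(grid[0])
            if inside and grid[nr][nc] != "#":
                new_g = g + 1
                if new_g < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_g
                    parent[(nr, nc)] = cell
                    heapq.heappush(frontier, (new_g + h((nr, nc)), new_g, (nr, nc)))
    return None


grid = [
    "....",
    ".##.",
    "....",
]
path = a_star(grid, (0, 0), (2, 3))
print(len(path) - 1, "moves:", path)
```

The same structure carries over to non-spatial planning: cells become states, moves become actions, and the heuristic becomes an optimistic estimate of remaining cost.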

Together, these core technologies form an interlocking ecosystem. Machine learning and NLP confer perception and language capabilities; knowledge graphs supply contextual depth; and planning frameworks drive purposeful action. Recognizing their interdependencies is the first step toward designing autonomous agents that reliably deliver business value.

Analytical Frameworks for Technology Integration

Evaluating and integrating AI technologies requires structured frameworks that align technical maturity with strategic objectives. Organizations employ technology maturity matrices, risk registers and composite scorecards to benchmark capabilities, anticipate challenges and guide investments across predictive intelligence, language understanding and knowledge integration dimensions.

Assessing Machine Learning Maturity

Machine learning initiatives move from ad hoc experimentation to governed, enterprise-scale deployment. Key evaluation criteria include data quality scorecards, bias-variance trade-off analyses, model robustness under data drift and explainability measures. Tools such as IBM Watson OpenScale and DataRobot trace feature contributions, monitor model performance and support regulatory reporting. Reinforcement learning projects leverage simulation environments to evaluate convergence speed, reward stability and transferability to real-world contexts.

Evaluating NLP Pipelines

NLP evaluation spans intrinsic metrics (perplexity and cross-entropy), benchmark scores on GLUE/SuperGLUE, and extrinsic measures like end-to-end task success and user satisfaction. Fine-tuning strategies are assessed for training convergence, catastrophic forgetting and inference latency. Open-source toolkits (spaCy, Hugging Face models) facilitate rapid prototyping, while dialogue systems employ metrics on fallback rates, average turn length and escalation frequency to refine conversational policies.
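Perplexity and cross-entropy are two views of the same quantity: perplexity is the exponential of the average negative log-probability the model assigns to each observed token. A minimal sketch, assuming the per-token probabilities have already been obtained from a model (the values below are made up):

```python
import math


def cross_entropy(token_probs):
    """Average negative log-probability per token, in nats."""
    return -sum(math.log(p) for p in token_probs) / len(token_probs)


def perplexity(token_probs):
    """Exponential of the cross-entropy; lower means the model is less 'surprised'."""
    return math.exp(cross_entropy(token_probs))


uniform = [0.25, 0.25, 0.25, 0.25]      # model is guessing among 4 equally likely tokens
confident = [0.9, 0.8, 0.95, 0.85]      # model assigns high probability to each token

print(round(perplexity(uniform), 3))    # 4.0
print(perplexity(confident) < perplexity(uniform))  # True
```

A perplexity of 4 in the uniform case can be read as the model being as uncertain as if it were choosing among four equally likely tokens at every step.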

Benchmarking Knowledge Structures

Knowledge graphs are evaluated on connectivity measures, average path lengths and clustering coefficients to gauge navigability and expressiveness. Ontology assessments consider coverage breadth, hierarchical depth and axiomatic soundness. Temporal knowledge graphs introduce metrics for consistency across time-stamped snapshots. Governance frameworks track schema versioning, access controls and update lineage to ensure reliability and compliance in regulated industries.
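Two of these structural measures can be computed with a few lines of standard-library Python. The sketch below treats the graph as undirected and unlabeled, which is a simplification of a real knowledge graph; the entity names and helper functions are hypothetical.

```python
from collections import deque
from itertools import combinations


def average_path_length(adj):
    """Mean shortest-path length over all connected node pairs, via BFS from each node."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for nb in adj[node]:
                if nb not in dist:
                    dist[nb] = dist[node] + 1
                    queue.append(nb)
        total += sum(dist.values())
        pairs += len(dist) - 1                 # reachable nodes other than the source
    return total / pairs if pairs else 0.0


def clustering_coefficient(adj, node):
    """Fraction of a node's neighbor pairs that are themselves directly linked."""
    nbs = adj[node]
    k = len(nbs)
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(nbs, 2) if b in adj[a])
    return links / (k * (k - 1) / 2)


# Hypothetical entities: a triangle of Customer, Order, Product plus a Warehouse leaf.
adj = {
    "Customer": {"Order", "Product"},
    "Order": {"Customer", "Product"},
    "Product": {"Customer", "Order", "Warehouse"},
    "Warehouse": {"Product"},
}

print(round(average_path_length(adj), 3))                 # 1.333
print(round(clustering_coefficient(adj, "Product"), 3))   # 0.333
```

Short average paths suggest that related facts can be reached in few hops, while low clustering around a hub may point to sparsely connected neighborhoods worth enriching.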

Strategic Fusion and Risk Management

At advanced maturity stages, organizations integrate NLP interfaces to capture user intent, ML models to infer probabilities and knowledge graphs to constrain decision pathways. Composite scorecards combine performance, cost and risk metrics to prioritize initiatives, while risk registers assign ownership and mitigation controls for bias, data inconsistency and model drift. This strategic fusion supports complex scenarios such as regulatory risk assessment, personalized financial advice and adaptive supply chain orchestration.
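A composite scorecard of this kind reduces to a weighted sum once each metric is normalized. The sketch below is one possible formulation, not a standard: the weights, the 0-to-1 scales, and all scores are invented for illustration, and cost and risk are inverted so that a higher composite score is always better.

```python
# Hypothetical weights; in practice these come from stakeholder agreement.
WEIGHTS = {"performance": 0.5, "cost": 0.2, "risk": 0.3}


def composite_score(metrics, weights=WEIGHTS):
    """Weighted score in [0, 1]; cost and risk count against an initiative."""
    benefit = {
        "performance": metrics["performance"],
        "cost": 1.0 - metrics["cost"],     # lower cost -> higher benefit
        "risk": 1.0 - metrics["risk"],     # lower risk -> higher benefit
    }
    return sum(weights[k] * benefit[k] for k in weights)


# Made-up scores on a 0-to-1 scale for the scenarios named in the text.
initiatives = {
    "regulatory risk assessment": {"performance": 0.8, "cost": 0.6, "risk": 0.7},
    "personalized financial advice": {"performance": 0.9, "cost": 0.5, "risk": 0.4},
    "supply chain orchestration": {"performance": 0.6, "cost": 0.3, "risk": 0.2},
}

ranked = sorted(initiatives, key=lambda n: composite_score(initiatives[n]), reverse=True)
for name in ranked:
    print(f"{composite_score(initiatives[name]):.2f}  {name}")
```

Because the ranking is sensitive to the chosen weights, it is worth re-running the comparison with alternative weightings before committing to a priority order.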

Emerging Industry Use Cases

Converging AI technologies have given rise to high-impact applications across sectors. Below are representative use cases that illustrate how autonomous agents deliver strategic and operational value.

- Customer Service and Conversational Interfaces: NLP and dialogue management underpin agents that handle inquiries, perform triage and execute transactions. Platforms like IBM Watson Assistant and OpenAI ChatGPT API are assessed on first-contact resolution, customer effort score and cost-to-serve metrics.

- Manufacturing and Predictive Maintenance: ML models trained on sensor data predict equipment failures; rule-based planners schedule maintenance. Industrial IoT platforms—GE Predix, Siemens MindSphere—use knowledge graphs to capture asset hierarchies and failure modes, enhancing MTBF and reducing unplanned downtime.

- Financial Forecasting and Risk Management: Supervised and unsupervised learning models forecast markets and detect anomalies. Knowledge graphs map exposures and instrument hierarchies. Solutions such as Bloomberg Quant Platform integrate ML outputs into portfolio optimization, stress testing and regulatory reporting.

- Healthcare Diagnostics and Clinical Decision Support: ML models applied to imaging and EHR data, combined with NLP extraction and knowledge graphs, recommend care protocols. Services like Google Cloud Healthcare API and IBM Watson Health are assessed on diagnostic accuracy, time-to-diagnosis and clinician adoption rates.

- Supply Chain and Logistics Optimization: Demand-forecasting models, planning algorithms and NLP-driven risk analysis optimize inventory and routing. Platforms such as SAP Integrated Business Planning enable scenario planning, reducing working capital and improving service levels.

- Marketing Personalization and Recommendation Engines: Collaborative filtering, content-based and hybrid models drive real-time personalization. Salesforce Einstein illustrates how knowledge graphs and sentiment analysis enhance offer sequencing and dynamic pricing.

- HR Analytics and Talent Management: NLP resume parsing, ML scoring and knowledge graphs mapping competencies enable workforce planning. LinkedIn Talent Insights supports diversity metrics, succession modeling and retention forecasting.

- Legal Research and Compliance Monitoring: NLP review of contracts, knowledge graph encoding of regulations and automated workflows reduce review cycles. Solutions like Thomson Reuters Westlaw track false positive rates, audit readiness and human-in-the-loop checkpoints.

- Research and Knowledge Discovery: Knowledge graphs connect scientific findings; topic modeling surfaces trends; semantic search powers exploration. Semantic Scholar accelerates hypothesis generation and cross-disciplinary insights.

- Energy Management and Smart Grid: Load forecasting and dispatch planning balance supply and demand, while knowledge graphs model grid dependencies. IoT and digital twin frameworks such as Schneider Electric EcoStruxure measure load balance, demand response and renewable integration.

Across domains, leaders emphasize data quality, lineage and governance, value chain analysis and risk-reward evaluation to transition from proofs-of-concept to sustainable, scalable deployments.

Governance, Scalability and Integration Imperatives

Deploying autonomous agents at scale requires addressing technological maturity, data and model governance, performance constraints, security, ethics, interoperability and total cost of ownership. A phased, evidence-based approach aligns initiatives with strategic objectives and risk management frameworks.

- Technology Maturity Assessments: Evaluate readiness of ML models, NLP modules and knowledge graph infrastructures through pilot programs and performance benchmarks. Avoid premature integration of prototypes that may introduce instability or maintenance burdens.

- Data Quality and Model Governance: Implement provenance tracking, schema versioning and continuous validation. Use platforms such as TensorFlow Extended and Hugging Face Accelerated Inference to embed governance checkpoints, detect drift and enforce compliance.

- Scalability and Performance: Balance horizontal and vertical scaling strategies. Leverage containerization, microservices and observability tools for capacity planning, real-time monitoring and latency management to meet service-level objectives.

- Security and Compliance: Adopt end-to-end encryption, role-based access controls and audit trails. Integrate security-by-design principles, regular penetration testing and threat modeling to protect sensitive data and automated actions under regulations such as GDPR and HIPAA.

- Ethical and Bias Risk Mitigation: Establish bias detection algorithms, fairness constraints, ethics committees and impact assessments. Ensure transparent explainability of decision pathways and involve multidisciplinary teams in scenario planning.

- Vendor Lock-In and Interoperability: Favor open standards and container-agnostic architectures. Use formats like ONNX (Open Neural Network Exchange) and industry-standard messaging protocols to preserve portability and flexibility.

- Total Cost of Ownership and ROI Validation: Account for infrastructure, retraining, monitoring and talent acquisition costs. Develop financial frameworks that map cost drivers to measurable outcomes, with ongoing reassessments and feedback loops.

By critically addressing these imperatives, organizations can mitigate risks, govern autonomous behaviors and unlock the strategic advantages of AI agents across enterprise functions.

Chapter 3: Architecture and Design Principles

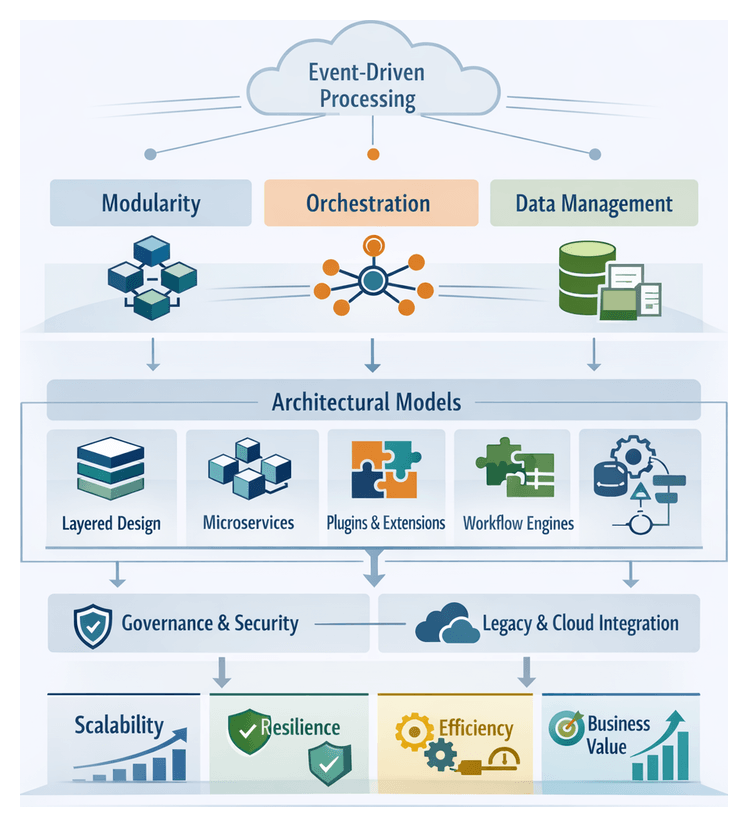

Framing Autonomous Agent Architecture

Autonomous AI agents have evolved from experimental prototypes into mission-critical components that drive strategic outcomes in modern enterprises. Establishing a coherent architecture is essential to balance rapid feature delivery with security, compliance, and operational resilience. A well-structured framework decomposes complex capabilities into modular building blocks, aligns cross-functional teams, and manages risk across cloud platforms, legacy systems, and real-time data streams.

By treating architecture as a strategic asset rather than a technical afterthought, organizations unlock advantages in scalability, resilience, maintainability, governance, and interoperability. Clear separation of concerns and robust orchestration layers ensure that expanding workloads, fault tolerance, policy enforcement, and seamless integration with enterprise applications occur without unmanageable technical debt.

Core Concepts in Agent Design

Every architectural decision for autonomous agents rests on foundational concepts that guide design and evaluation:

- Modularity: Decompose agent responsibilities into cohesive modules—perception, reasoning, planning, execution—that can be developed, tested, and scaled independently.

- Orchestration: Implement supervisory layers to coordinate task assignment, state synchronization, and inter-module communication, supporting complex, multi-step workflows.

- Event-Driven Processing: Favor asynchronous, message-centric interactions to enhance throughput and responsiveness under variable loads.

- Data Management: Define clear data contracts, transformation pipelines, and semantic enrichment processes for knowledge retrieval, analytics, and reporting.

- Extensibility: Design plugin-style interfaces or microservices that permit new skills, integrations, and learning capabilities to be added without disrupting operations.

- Observability: Embed logging, metrics, and tracing at module boundaries to enable real-time health checks, root-cause analysis, and continuous improvement.

These guiding principles establish a shared vocabulary for architects, engineers, and business stakeholders to communicate requirements, trade-offs, and quality attributes throughout the design lifecycle.

Foundational Architectural Models

Several established models serve as blueprints for scaling autonomous agents. Organizations often adopt hybrid patterns to satisfy performance, governance, and resilience requirements:

- Layered Architecture: Components are organized into hierarchical tiers—presentation, orchestration, data—promoting decoupling and reuse when agents interface with diverse channels and data stores.

- Microservices Architecture: Functionality is decomposed into independently deployable services communicating via lightweight APIs or event streams. This model excels in fault isolation and team autonomy.

- Plugin or Agent-as-a-Service: Core capabilities are hosted centrally while domain-specific extensions load dynamically, accelerating customization and specialized skill updates.

- Orchestrated Workflow Engines: A central engine, often rules-driven or state-machine based, coordinates multi-step processes across agents and external systems. It suits complex tasks requiring human-in-the-loop interventions.

Hybrid approaches frequently combine layered and microservices patterns with event-driven orchestration to align agility with governance objectives.
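
As an illustration of the state-machine style of workflow engine described above, the sketch below models a single agent task that can either execute automatically or pause for human approval. The state names, event names and the `Workflow` class are hypothetical and chosen only for this example; a real engine would add persistence, timeouts and retries.

```python
from enum import Enum


class State(Enum):
    RECEIVED = "received"
    ANALYZED = "analyzed"
    AWAITING_APPROVAL = "awaiting_approval"
    EXECUTED = "executed"
    REJECTED = "rejected"


# Allowed transitions: (current state, event) -> next state.
TRANSITIONS = {
    (State.RECEIVED, "analyze"): State.ANALYZED,
    (State.ANALYZED, "auto_execute"): State.EXECUTED,
    (State.ANALYZED, "escalate"): State.AWAITING_APPROVAL,
    (State.AWAITING_APPROVAL, "approve"): State.EXECUTED,
    (State.AWAITING_APPROVAL, "reject"): State.REJECTED,
}


class Workflow:
    """Tracks one task and refuses any transition not listed in TRANSITIONS."""

    def __init__(self):
        self.state = State.RECEIVED
        self.history = [State.RECEIVED]

    def fire(self, event):
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(
                f"event {event!r} not allowed in state {self.state.value}"
            )
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state


# Human-in-the-loop path: analyze, escalate, then wait for an approver.
wf = Workflow()
wf.fire("analyze")
wf.fire("escalate")
wf.fire("approve")
```

Keeping the transition table as data rather than scattered conditionals is one way to make the permitted paths auditable, since the `history` list records every state the task passed through.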

Modularity and Orchestration: Analytical Insights

From an analytical perspective, modularity and orchestration are interdependent facets that determine an organization’s capacity to adapt autonomous agents to changing business, compliance, and performance demands.

Conceptualizing Modularity: Modularity entails logical separation, performance isolation, and interface clarity. Metrics for coupling and cohesion gauge the quality of decomposition. High cohesion and low coupling enable parallel development, targeted testing, and incremental upgrades.

Frameworks such as Domain-Driven Design partition functionality into bounded contexts aligned with business domains—customer engagement, compliance monitoring, predictive analytics—achieving traceability between technical modules and strategic objectives.
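
The text does not name a specific coupling metric, so the sketch below uses one common choice as an assumption: afferent coupling (how many modules depend on a module), efferent coupling (how many modules it depends on) and the instability ratio, efferent / (afferent + efferent). The module names and the `DEPENDENCIES` map are hypothetical.

```python
# Hypothetical module dependency map: module -> modules it calls.
DEPENDENCIES = {
    "perception": {"data_contracts"},
    "reasoning": {"perception", "data_contracts"},
    "planning": {"reasoning"},
    "execution": {"planning", "data_contracts"},
    "data_contracts": set(),
}


def coupling_report(deps):
    """Return afferent/efferent coupling and instability for each module."""
    report = {}
    for module, targets in deps.items():
        efferent = len(targets)  # outgoing dependencies
        afferent = sum(1 for other in deps.values() if module in other)
        total = afferent + efferent
        # Instability near 0: many dependents, costly to change.
        # Instability near 1: few dependents, cheap to change.
        instability = efferent / total if total else 0.0
        report[module] = {
            "afferent": afferent,
            "efferent": efferent,
            "instability": instability,
        }
    return report


report = coupling_report(DEPENDENCIES)
```

In this toy map, `data_contracts` has an instability of 0 because three modules depend on it and it depends on nothing, which suggests that changes to it deserve the strictest review. `execution` sits at 1 and can be changed with little ripple effect.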

Interpreting Orchestration Models: Orchestration governs module interactions, exception flows, and policy enforcement. Centralized orchestration offers predictability and auditability, while event-driven choreography enhances resilience and scalability. Evaluations consider visibility into runtime behavior, policy enforcement granularity, and support for dynamic workflow adjustments.

Balancing Flexibility and Control: Modularity fosters flexibility but can challenge governance when many services require consistent policy application. Orchestration embeds organizational policies—data access rules, security protocols, quality thresholds—into execution fabric, counterbalancing modular autonomy.

Analysts employ dual-axis maturity frameworks plotting modular depth against orchestration intelligence. Organizations that score high on both axes, pairing fine-grained modular decomposition with AI-enhanced rule engines, are better positioned for rapid innovation and resilient operations.

Metrics for Architecture Health

Quantitative metrics underpin continuous assessment of modularity and orchestration effectiveness. Key indicators include:

- Change Lead Time: Time from code change initiation to successful deployment. Shorter lead times suggest that modules can be changed and released independently.

- Incident Isolation Rate: Proportion of faults confined to a single module, signaling effective failure containment.

- Policy Compliance Score: Percentage of workflows that automatically enforce governance rules, indicating orchestration rigor.

- Inter-Module Latency: Average response time of calls between modules, measuring the performance impact of decomposition.

Integrating these metrics into dashboards allows decision-makers to correlate architectural adjustments with outcomes such as reliability, user satisfaction, and regulatory adherence.
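
The four indicators above can be computed from ordinary delivery and incident records. The following sketch assumes hypothetical record shapes and field names (`started`, `deployed`, `modules_affected`, `policy_enforced`); real data would come from a CI/CD system, an incident tracker and a tracing backend.

```python
from datetime import datetime

# Hypothetical records; field names are illustrative only.
DEPLOYMENTS = [
    {"started": datetime(2024, 1, 1, 9), "deployed": datetime(2024, 1, 2, 9)},
    {"started": datetime(2024, 1, 3, 9), "deployed": datetime(2024, 1, 3, 21)},
]
INCIDENTS = [
    {"modules_affected": 1},
    {"modules_affected": 3},
    {"modules_affected": 1},
    {"modules_affected": 1},
]
WORKFLOWS = [{"policy_enforced": True}] * 4 + [{"policy_enforced": False}]
CALL_LATENCIES_MS = [12.0, 18.0, 15.0]


def change_lead_time_hours(deployments):
    """Mean hours from change initiation to successful deployment."""
    seconds = [
        (d["deployed"] - d["started"]).total_seconds() for d in deployments
    ]
    return sum(seconds) / len(seconds) / 3600


def incident_isolation_rate(incidents):
    """Share of incidents confined to a single module."""
    isolated = sum(1 for i in incidents if i["modules_affected"] == 1)
    return isolated / len(incidents)


def policy_compliance_score(workflows):
    """Percentage of workflows that enforced governance rules automatically."""
    enforced = sum(1 for w in workflows if w["policy_enforced"])
    return 100 * enforced / len(workflows)


def inter_module_latency_ms(latencies):
    """Mean response time of calls between modules, in milliseconds."""
    return sum(latencies) / len(latencies)


dashboard = {
    "change_lead_time_hours": change_lead_time_hours(DEPLOYMENTS),
    "incident_isolation_rate": incident_isolation_rate(INCIDENTS),
    "policy_compliance_score": policy_compliance_score(WORKFLOWS),
    "inter_module_latency_ms": inter_module_latency_ms(CALL_LATENCIES_MS),
}
```

Averages are used here for brevity. Percentiles are often more informative for latency and lead time, because a few slow outliers can hide behind a healthy mean.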

Aligning Architecture with Business Objectives

Architecture ultimately serves strategic goals. Early engagement with stakeholders ensures design decisions map directly to desired outcomes:

- Stakeholder Workshops: Define success criteria, risk tolerances, and compliance requirements with business analysts, security leads, and operations teams.

- Use Case Prioritization: Sequence agent capabilities based on strategic impact, cost savings, and integration complexity.

- Value Stream Mapping: Visualize end-to-end workflows to pinpoint where agent autonomy yields maximal efficiency and risk reduction.

- Architectural Roadmapping: Plan phased deliveries that balance quick wins with incremental rollout of advanced features—continuous learning loops, multi-agent coordination.

Grounding architecture in business drivers secures executive support, optimizes resource allocation, and enables adaptation as priorities evolve without sacrificing stability.

The Strategic Imperative for Adoption

Competitive pressures, operational demands, and innovation imperatives have made autonomous AI agents a strategic necessity across industries:

- Market Dynamics: Digital-native entrants use agents for personalized experiences, real-time supply chain optimization, and accelerated service development, raising the bar for incumbents.

- Operational Efficiency: Agents automate repetitive tasks—data extraction, incident triage, customer inquiries—reducing toil, minimizing errors, and reallocating human effort to high-value work.

- Innovation Acceleration: Continuous insights, automated experimentation, and co-creation with human teams drive rapid hypothesis testing and solution refinement.

- Workforce Transformation: Agents augment human capabilities, prompting reskilling, role evolution, and talent attraction focused on AI oversight and strategic collaboration.

Delayed or partial adoption exposes organizations to market share loss, diminished data leverage, increased technical debt, and compliance vulnerabilities.

Interpretive Frameworks for Decision Makers

Leaders employ analytical lenses to guide agent initiatives:

- Agency Spectrum Analysis: Position agents along a continuum from passive executors to fully autonomous self-optimizers.

- Perception-Reason-Action Loop: Decompose functionality into sensory inputs, inferential processes, and operational outputs to identify performance bottlenecks.

- Task Decomposition Frameworks: Translate high-level objectives into discrete, agent-executable tasks to enable modular orchestration.

- Value Chain Integration: Assess where agents can augment or replace human effort across procurement, production, distribution, and customer engagement.

- Risk-Reward Quadrants: Balance efficiency gains against risks of accuracy, bias, and system fragility.

These frameworks provide common terminology for cross-functional teams and support scenario-based architectural reviews that stress-test modular and orchestration capabilities against future workloads and regulatory changes.

Key Considerations and Limitations

Successful agent deployments require addressing inherent constraints:

- Data Quality and Availability: Incomplete or biased data undermines agent reliability and risks amplifying errors.

- Integration Complexity: Legacy systems and siloed data stores can impede seamless orchestration and interoperability.

- Governance Overhead: Monitoring tools, policy enforcement mechanisms, and audit trails demand investment to mitigate operational risks.

- Ethical and Regulatory Risks: Domains such as finance and healthcare impose stringent compliance, fairness, and explainability requirements.

- Talent and Skill Gaps: Shortages of practitioners versed in AI technologies and domain workflows can delay adoption and compromise quality.

- Change Management: Cultural inertia and process realignment require structured communication and leadership commitment.

- Security and Privacy: Exposed APIs, third-party models, and insufficient access controls create vulnerabilities to breaches and adversarial manipulation.

By acknowledging these limitations up front, organizations can establish realistic timelines, allocate resources effectively, and prioritize remediation strategies.

Bridging Strategy to Implementation

Embedding autonomous AI agents within enterprise roadmaps demands a disciplined approach that unites architecture, metrics, governance, and change management. Organizations should:

- Incorporate modular and orchestration maturity assessments into governance forums.

- Align investment in containerization, workflow engines, and monitoring platforms with strategic business cases.

- Define clear key performance indicators—cycle time reduction, compliance adherence, user satisfaction—to measure agent impact.

- Establish continuous learning and upskilling programs to prepare teams for evolving roles.

- Implement scenario-based stress tests to uncover architectural debt and guide roadmap adjustments.

Through iterative delivery, rigorous measurement, and stakeholder alignment, enterprises can transform autonomous AI agents from isolated experiments into integrated, resilient capabilities that deliver sustainable competitive advantage.

Chapter 4: Automating Operations and IT Management

The Rise of Autonomous AI Agents in IT Operations

Autonomous AI agents have emerged as a critical enabler for modern IT operations, shifting routine monitoring, incident response and system maintenance from manual processes to intelligent automation. Faced with distributed cloud environments, microservices architectures and relentless demands for uptime and responsiveness, traditional operational models struggle to maintain agility and reliability.

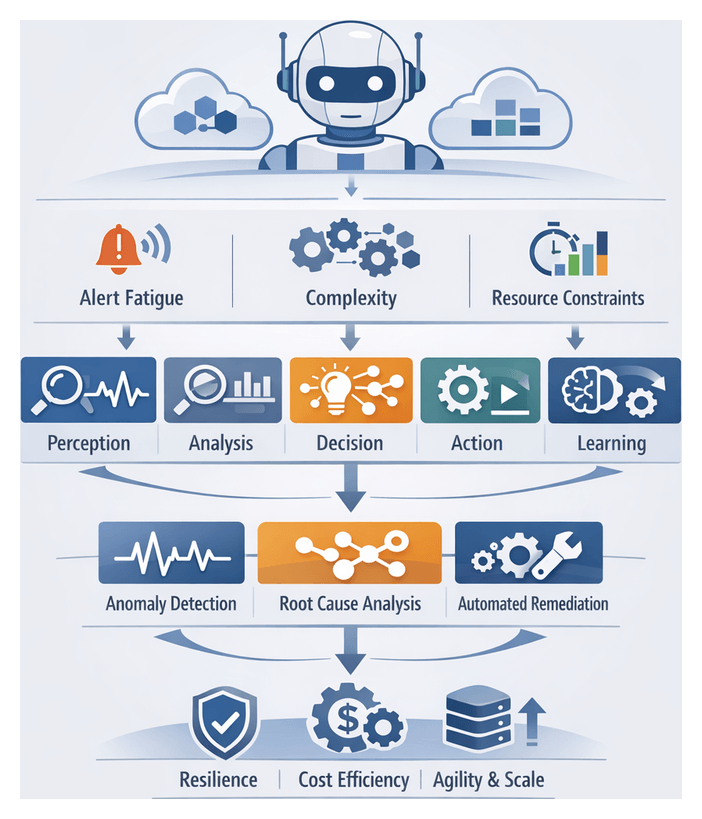

Organizations confront a host of operational challenges that elevate risk and consume valuable capacity:

- Alert fatigue from high volumes of notifications across diverse monitoring tools

- Root cause complexity due to interconnected services and dynamic dependencies

- Resource constraints as skilled personnel are tied up in repetitive tasks

- Rapid scale and velocity of containers, serverless functions and ephemeral workloads

- Stringent reliability expectations driven by service-level objectives and regulatory demands

Autonomous AI agents address these pressures by continuously ingesting telemetry, detecting anomalies, diagnosing issues, executing remediation playbooks and learning from outcomes. Unlike static scripts or rule-based workflows, they adapt over time through feedback loops, refining thresholds and optimizing actions to accelerate incident resolution and reduce operational toil.

Defining Autonomous AI Agents

- Perception: Continuous ingestion and contextualization of logs, metrics and traces

- Analysis: Anomaly detection algorithms and correlation engines to identify emerging incidents

- Decision-making: Probabilistic models and causal inference to select remediation strategies

- Action: Automated execution of workflows such as scaling resources, restarting services or invoking diagnostics

- Learning: Feedback loops that refine detection thresholds and response tactics based on past results

Historical Evolution

Automation in IT operations has advanced through distinct phases. Early monitoring tools generated alerts when static thresholds were crossed. Integration frameworks then enabled simple orchestration scripts for routine tasks. The advent of AIOps platforms introduced machine learning–driven anomaly detection and alert correlation, reducing noise and clustering related events. Solutions such as IBM Watson AIOps and Dynatrace pioneered dynamic baselining and root cause analysis. Today’s autonomous agents represent the next phase, weaving detection, diagnosis and remediation into a single, adaptive layer of intelligence that scales across dynamic environments.

Strategic Imperatives for Adoption

- Operational resilience: Early anomaly detection and standardized remediation minimize downtime and maintain service continuity

- Cost efficiency: Automation of repetitive tasks lowers total cost of ownership and frees teams for strategic reliability projects

- Agility and scalability: Real-time orchestration aligns with containerized and serverless infrastructures, eliminating manual bottlenecks

Core Capabilities and Strategic Benefits

To deliver transformative value, autonomous agents integrate multiple capabilities into a unified framework:

- Anomaly Detection: Advanced statistical and unsupervised learning models for time series outlier identification

- Event Correlation: Graph-based and probabilistic algorithms to group related alerts into coherent incidents

- Root Cause Analysis: Causal inference and dependency mapping to pinpoint underlying triggers

- Automated Remediation: Executable playbooks that modify system state, scale resources or invoke external services

- Knowledge Retention: Continuous learning mechanisms that capture incident histories and best practices for future refinement

These core functions translate into three primary benefits:

- Operational resilience: Predictable reliability through proactive fault detection and self-healing actions

- Cost efficiency: Reduction of manual toil and reallocation of human expertise to high-value engineering tasks

- Agility and scalability: Programmatic interfaces for dynamic scaling, patching and configuration management
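
The anomaly detection capability listed among the core functions can be illustrated with a simple statistical baseline. The sketch below flags points that deviate sharply from a rolling window using a z-score. The window size, threshold and the `LATENCY_MS` series are assumptions for illustration; production agents would typically use more robust, seasonality-aware models.

```python
from statistics import mean, stdev


def rolling_zscore_anomalies(values, window=5, threshold=3.0):
    """Flag points whose z-score against the preceding window exceeds threshold."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            continue  # flat window: z-score is undefined, so skip
        z = (values[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((i, values[i]))
    return anomalies


# Hypothetical latency samples in milliseconds with one obvious spike.
LATENCY_MS = [100, 102, 98, 101, 99, 100, 103, 97, 250, 101, 99]

anomalies = rolling_zscore_anomalies(LATENCY_MS)
# Only index 8 (the 250 ms spike) is flagged for this series.
```

Note that the spike itself enters the next few windows and inflates their standard deviation, which temporarily makes the detector less sensitive. Excluding flagged points from the baseline, or using median-based statistics, are common ways to reduce that effect.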

Measuring Impact: Reliability, Toil Reduction and ROI

Core Metrics for Reliability

Organizations track established Site Reliability Engineering indicators to quantify agent impact:

- Service Level Objectives (SLOs) for uptime and latency targets

- Error budgets defining acceptable margins of failure

- Mean Time to Detect (MTTD) and Mean Time to Acknowledge (MTTA)

- Mean Time to Resolve (MTTR) and change failure rates

Agents optimized for continuous anomaly detection and automated remediation can drive statistically significant improvements in these metrics, enabling near-real-time alerting and faster recovery.
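
A short worked example shows how these indicators relate. The sketch below derives an error budget from an availability SLO and computes MTTD and MTTR from incident records. The incident data is hypothetical, and MTTR is measured here from fault start to resolution, which is one of several conventions in use.

```python
def error_budget_minutes(slo_target, window_days=30):
    """Allowed downtime in minutes for an availability SLO over the window."""
    return window_days * 24 * 60 * (1 - slo_target)


def mean_minutes(incidents, start_key, end_key):
    """Average gap in minutes between two per-incident timestamps."""
    gaps = [i[end_key] - i[start_key] for i in incidents]
    return sum(gaps) / len(gaps)


# Hypothetical incidents; values are minutes since each fault began.
INCIDENTS = [
    {"started": 0, "detected": 4, "resolved": 34},
    {"started": 0, "detected": 6, "resolved": 26},
]

budget = error_budget_minutes(0.999)  # roughly 43.2 minutes over 30 days
mttd = mean_minutes(INCIDENTS, "started", "detected")
mttr = mean_minutes(INCIDENTS, "started", "resolved")
downtime = sum(i["resolved"] - i["started"] for i in INCIDENTS)
remaining = budget - downtime  # negative means the budget is exhausted
```

In this example two incidents consume 60 minutes against a budget of about 43 minutes, so the remaining budget is negative. Under an error-budget policy that would typically shift priority from feature work to reliability work.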

Quantifying Toil Reduction

Toil encompasses repetitive, manual operational tasks that scale with service growth. To measure toil reduction, organizations analyze workload categories such as alert triage, log analysis, patch application and incident documentation. Key evaluation criteria include:

- Task coverage: Percentage of manual tasks fully or partially automated

- Time saved: Aggregate human hours reclaimed per period

- Quality of execution: Accuracy and consistency compared to manual processes

- Impact on staff utilization: Shift of workforce allocation toward strategic projects

High-impact deployments often achieve over a 50 percent reduction in manual alert triage within the first quarter, with continued gains as agents mature.

Analytical Frameworks and Interpretive Models

- Reliability ROI Model: Correlates downtime costs, preventable incidents, MTTR reduction and salary savings to forecast payback period and net present value. Sensitivity analyses account for learning curves and diminishing returns.

- Operational Risk Index: Quantifies service risk exposure by weighting incident severity, failure frequency and automated remediation percentage. Guides prioritization of agent deployment to high-risk domains.
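
The two models above can be sketched with a few lines of arithmetic. The formulas below are simplified assumptions rather than a standard definition: net present value discounts yearly benefits at a fixed rate, payback interpolates linearly within a year, and the risk index multiplies severity, frequency and the share of incidents that still need manual remediation. All input figures are hypothetical.

```python
def net_present_value(initial_cost, annual_benefits, discount_rate):
    """Discount each year's benefit to today and subtract the upfront cost."""
    discounted = sum(
        benefit / (1 + discount_rate) ** year
        for year, benefit in enumerate(annual_benefits, start=1)
    )
    return discounted - initial_cost


def payback_period_years(initial_cost, annual_benefits):
    """Years until cumulative benefits cover the cost, or None if never."""
    cumulative = 0.0
    for year, benefit in enumerate(annual_benefits, start=1):
        if benefit > 0 and cumulative + benefit >= initial_cost:
            # Linear interpolation within the year the cost is recovered.
            return year - 1 + (initial_cost - cumulative) / benefit
        cumulative += benefit
    return None


def operational_risk_index(severity, incidents_per_month, automated_fraction):
    """Higher values mean more unmitigated risk (illustrative weighting)."""
    return severity * incidents_per_month * (1 - automated_fraction)


# Hypothetical inputs: benefits grow after year one as the agent matures.
COST = 500_000
BENEFITS = [200_000, 300_000, 300_000]

npv = net_present_value(COST, BENEFITS, discount_rate=0.10)
payback = payback_period_years(COST, BENEFITS)
risk = operational_risk_index(
    severity=4, incidents_per_month=10, automated_fraction=0.25
)
```

Re-running the calculation with lower benefit estimates or a higher discount rate is a simple form of the sensitivity analysis mentioned above.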

Case Illustrations

- E-commerce platform: A log-analysis agent reduced high-severity database incidents by 35 percent, cut manual health checks by 45 percent and saved $120,000 monthly in operational costs.

- Financial services firm: An autonomous remediation agent accelerated critical patch application by 80 percent, reduced unpatched vulnerabilities by 60 percent and improved compliance audit scores.

- Telecommunications provider: An anomaly detection agent improved MTTD from 20 minutes to under five, reduced traffic-anomaly incidents by 30 percent and freed 40 percent of field engineering time for network optimization.

Balancing Automation Benefits and New Failure Modes

To avoid unintended consequences, organizations establish guardrails around agent actions:

- Monitor false positive and false negative rates to prevent alert fatigue or undetected incidents

- Maintain detailed logs of agent decisions for audit and post-incident review

- Integrate automated actions with change management pipelines and approval workflows

- Ensure agents scale reliably during major outages or traffic spikes
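
One way to express these guardrails in code is a small policy gate that sits between an agent's decision and its execution. The sketch below auto-approves low-risk actions, requires an approver callback for anything else and records every decision. The `Action` and `Guardrail` classes, the risk labels and the action names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    name: str
    risk: str  # "low", "medium" or "high" (illustrative labels)


@dataclass
class Guardrail:
    auto_approve_risks: frozenset = frozenset({"low"})
    audit_log: list = field(default_factory=list)

    def decide(self, action, approver=None):
        """Return the decision for an action and append it to the audit log."""
        if action.risk in self.auto_approve_risks:
            decision = "executed"
        elif approver is not None and approver(action):
            decision = "executed_with_approval"
        else:
            decision = "blocked"
        self.audit_log.append(
            {"action": action.name, "risk": action.risk, "decision": decision}
        )
        return decision


guardrail = Guardrail()
first = guardrail.decide(Action("restart_cache", "low"))
second = guardrail.decide(Action("failover_database", "high"))
third = guardrail.decide(
    Action("failover_database", "high"),
    approver=lambda action: True,  # stands in for a change-approval workflow
)
```

The approver callback is where an integration with a change management pipeline would plug in, and the audit log gives post-incident reviewers a record of what the agent attempted and why it was allowed or blocked.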

Continuous Refinement

- Comparative A/B testing of agent-augmented versus manual workflows

- Error budget tracking to monitor impact on service-level objectives

- Inclusion of agent contributions in post-mortem analyses for ongoing improvement

- Monitoring machine learning performance metrics such as precision, recall and latency
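
Precision and recall for an alerting agent can be computed by comparing its alerts with incidents confirmed afterwards. The sketch below assumes two hypothetical, aligned lists of booleans, one per evaluation interval.

```python
def precision_recall(predicted, actual):
    """Compute precision and recall from aligned boolean sequences."""
    tp = sum(1 for p, a in zip(predicted, actual) if p and a)
    fp = sum(1 for p, a in zip(predicted, actual) if p and not a)
    fn = sum(1 for p, a in zip(predicted, actual) if not p and a)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical evaluation: did the agent alert, and was there a real incident?
AGENT_ALERTED = [True, True, False, True, False, False, True, False]
REAL_INCIDENT = [True, False, False, True, True, False, True, False]

precision, recall = precision_recall(AGENT_ALERTED, REAL_INCIDENT)
```

Low precision corresponds to false positives and alert fatigue, while low recall corresponds to missed incidents, so tracking both guards against tuning one at the expense of the other.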

Strategic Insights for Decision Makers

- Prioritize high-impact domains with significant incident costs and toil intensity for early adoption

- Invest in robust observability platforms that unify logs, metrics and traces

- Define governance frameworks for agent authority, escalation criteria and human oversight

- Foster cross-functional collaboration among reliability, development, security and business teams

- Plan for scalable evaluation templates to maintain consistency as adoption expands

Deployment Scenarios and Organizational Readiness

Autonomous agents derive strategic value when aligned with specific operational contexts. Leaders must interpret agent capabilities through domain-specific lenses and match deployment scenarios to organizational objectives and risk profiles.

Enterprise IT Environments

- Proactive capacity management across mainframes, virtual clusters and legacy systems

- Automated incident triage integrated with enterprise service management platforms

- Predictive fault detection and automated failover coordination for mission-critical services

Cloud and Hybrid Architectures

- Predictive autoscaling based on traffic forecasts rather than reactive thresholds

- Cost governance by identifying underutilized assets and recommending rightsizing actions

- Hybrid synchronization of on-premises and cloud resources to minimize service disruptions
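
To show the difference between predictive and reactive scaling, the sketch below fits a straight-line trend to recent request rates, forecasts the next interval and sizes the replica count ahead of demand. The least-squares trend, the 20 percent headroom and the per-replica capacity are assumptions for illustration; real forecasts usually account for seasonality.

```python
import math


def linear_forecast(history, steps_ahead=1):
    """Extrapolate a least-squares line fitted to equally spaced samples."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(history) / n
    denom = sum((x - x_mean) ** 2 for x in range(n))
    slope = sum(
        (x - x_mean) * (y - y_mean) for x, y in enumerate(history)
    ) / denom
    intercept = y_mean - slope * x_mean
    return intercept + slope * (n - 1 + steps_ahead)


def replicas_needed(forecast_rps, rps_per_replica, headroom=0.2, min_replicas=1):
    """Replica count that covers forecast load plus a safety margin."""
    required = forecast_rps * (1 + headroom) / rps_per_replica
    return max(min_replicas, math.ceil(required))


# Hypothetical requests per second over the last five intervals.
RECENT_RPS = [100, 120, 140, 160, 180]

forecast = linear_forecast(RECENT_RPS)  # trend continues to 200 rps
replicas = replicas_needed(forecast, rps_per_replica=50)
```

A reactive threshold would only add capacity after load crossed a limit, whereas this approach provisions for the forecast value before the traffic arrives.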

DevOps and Continuous Delivery Pipelines

- Pipeline health monitoring to detect flaky tests, code quality regressions and configuration drifts

- Release approval orchestration by collating security, performance and stakeholder inputs

- Rollback intelligence recommending targeted rollbacks based on root cause analysis

Site Reliability Engineering and Incident Management

- Alert correlation to cluster related events and reduce on-call burnout

- Automated runbook triggers for predefined remediation actions under human oversight

- Preliminary incident report generation synthesizing timeline events and impact estimates

Network and Security Operations

- Threat hunting automation correlating intrusion detection events with user behavior analytics

- Policy enforcement by automatically remediating deviations from network segmentation rules

- Vulnerability response prioritizing patches based on exploit likelihood and asset criticality
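
The vulnerability prioritization idea above can be sketched as a simple scoring function. The product of exploit likelihood and asset criticality used here is an assumed weighting, and the identifiers and scores are hypothetical.

```python
def priority_score(vuln):
    """Score = probability of exploitation x business criticality of the asset."""
    return vuln["exploit_likelihood"] * vuln["asset_criticality"]


def prioritize(vulns):
    """Return vulnerabilities ordered from most to least urgent."""
    return sorted(vulns, key=priority_score, reverse=True)


# Hypothetical findings: likelihood in [0, 1], criticality on a 1-5 scale.
FINDINGS = [
    {"id": "VULN-1", "exploit_likelihood": 0.9, "asset_criticality": 2},
    {"id": "VULN-2", "exploit_likelihood": 0.3, "asset_criticality": 5},
    {"id": "VULN-3", "exploit_likelihood": 0.6, "asset_criticality": 4},
]

patch_order = [v["id"] for v in prioritize(FINDINGS)]
```

Here the moderately likely exploit on a highly critical asset outranks the most likely exploit on a low-criticality asset, which is the trade-off this kind of scoring is meant to surface.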

Edge Computing and IoT Operations

- Predictive maintenance through local analysis of sensor streams and just-in-time interventions

- Local orchestration of firmware updates and configuration changes without continuous connectivity

- Data triage at the edge to filter and enrich streams before central transmission

Regulated Industries

- Comprehensive logging of agent actions to satisfy audit and compliance requirements

- Strict data handling policies to protect sensitive customer or patient information

- Alignment of automated changes with formal change advisory board processes

Adoption Models and Maturity

- Pilot initiatives in low-risk environments to validate efficacy and ROI assumptions

- Centers of Excellence to define standards, share best practices and govern expansion

- Phased rollouts aligned with operational priorities and capacity for change absorption

Assessing Readiness and Risk

- Risk–reward matrices to balance operational gains against skill gaps and data quality challenges

- Governance alignment through steering committees spanning IT, security, compliance and business stakeholders

- Skill development programs for data science, AI strategy and agent oversight capabilities

- Change management communication plans to cultivate trust and address concerns early

Best Practices and Key Caveats

Strategic Alignment

- Link agent KPIs to business outcomes such as MTTR, service availability and cost per ticket

- Embed automation roadmaps within IT service management and reliability maturity models

- Periodically revalidate objectives to prevent mission drift and retire outdated agents

Data Quality and Observability

- Unify logs, metrics and traces into a single context layer

- Enforce data governance policies for schema evolution, retention and access controls

- Use adapters or middleware to instrument legacy systems and translate proprietary formats

Incremental Deployment

- Begin with low-risk, high-volume tasks to tune policies and escalation criteria

- Define success metrics, feedback loops and rollback procedures for pilot programs

- Roll out agents progressively across development, staging and production environments

Cross-Functional Collaboration

- Include operations, development and security teams in automation design discussions

- Maintain transparent decision logs for audit and stakeholder review

- Convene regular sessions combining quantitative data with qualitative feedback

Continuous Monitoring and Adaptive Tuning

- Implement adaptive algorithms that adjust sensitivity based on performance metrics

- Monitor agent-generated alert fatigue indices to detect excessive false positives

- Establish cadences for model retraining, rule updates and playbook refinements

Key Caveats to Address

- Overautomation and Alert Fatigue: Excessive low-value alerts can desensitize operators and obscure genuine incidents.

- Platform Maturity Dependency: Early-stage observability frameworks may lack robust APIs, limiting integration scope.

- Shadow Automation: Decentralized scripts can lead to redundancy, conflicts and maintenance overhead.

- Security and Compliance Risks: Privileged agents must use secure credentials and generate audit trails for regulatory adherence.

- Opaque Decision Models: Black-box behavior can erode trust; explainability frameworks are essential.

- Hidden Total Cost of Ownership: Ongoing investments in retraining, playbook updates and topology tracking can exceed initial estimates.

- Scalability Constraints: Agents tuned for specific workloads may struggle in hybrid or multi-cloud environments with diverse configurations.

Governance Frameworks

- Maturity Model Assessment: Plot current and target states across detection accuracy, response sophistication and governance rigor to guide investment roadmaps.

- Risk-Benefit Analysis Matrix: Categorize use cases by impact severity and frequency to determine autonomy levels and human oversight requirements.

Autonomous AI agents represent a paradigm shift in IT operations. Their promise of reduced manual toil, accelerated incident resolution and enhanced system reliability can be realized only through disciplined alignment with strategic objectives, robust data foundations, phased deployments, cross-functional collaboration and vigilant governance. By embracing these best practices and addressing key caveats, organizations can harness the transformative potential of operational automation while preserving resilience, compliance and human-centered oversight.

Chapter 5: AI Agents in Sales, Marketing and Customer Engagement

Evolution of Autonomous Agent Technologies

Over the past four decades, the landscape of intelligent systems has undergone a series of paradigm shifts. In the 1980s and 1990s, expert systems attempted to encapsulate human expertise in rule-based engines for domains such as medical diagnosis and financial planning. Although these early systems laid the foundation for automated decision-making, they were constrained by brittle rule sets and an inability to handle unanticipated scenarios.

By the late 1990s and early 2000s, research in multi-agent systems introduced software entities capable of cooperating, negotiating and coordinating to achieve complex objectives. Academic frameworks such as JADE and coordination models like TSpaces illustrated how agents could form dynamic coalitions and distribute computation across networked environments. Despite their promise, integration challenges and a lack of standardized protocols limited enterprise adoption.

The 2010s witnessed the rise of robotic process automation with platforms such as UiPath, Automation Anywhere and Blue Prism. These tools enabled organizations to script repetitive user-interface interactions at scale, reducing manual effort and error rates. However, their deterministic workflows lacked the cognitive agility needed to interpret unstructured data or adapt to evolving business rules.

The emergence of large-scale machine learning and natural language processing has given rise to a new class of autonomous AI agents that perceive context, reason over diverse data sources and execute multi-step workflows. Solutions such as ChatGPT, Microsoft Copilot, Watson Assistant and Dialogflow demonstrate the ability to generate human-like responses, extract key entities and integrate with downstream systems via APIs. These agents can handle customer inquiries, perform predictive maintenance checks and orchestrate complex service operations with minimal human intervention.

Concurrently, enterprises face three converging pressures: talent shortages in areas such as data science and IT support, rising customer expectations for personalized experiences and an explosion of structured and unstructured data sources. Together, these market forces have made it more urgent to adopt intelligent automation systems that learn continuously, make decisions in real time and coordinate work across siloed applications. The current challenge is to harness these autonomous AI agents to build resilient, scalable and contextually aware business processes.

At the enterprise level, organizations are combining knowledge graphs and semantic ontologies with API-driven architectures to model domain entities and relationships. This integration supports real-time context sharing across distributed agents and legacy systems, enabling more nuanced reasoning and coordination in complex workflows.

Conceptualizing Autonomous AI Agents

At their core, autonomous AI agents are software constructs endowed with four interrelated capabilities: perception, reasoning, planning and action. Unlike traditional bots that execute predefined scripts, these agents interpret new inputs, update internal state and adjust their strategies dynamically.

- Perception: Ingesting structured and unstructured data streams such as transactional records, sensor feeds and natural language inputs.

- Reasoning: Modeling knowledge, evaluating potential actions and weighing outcomes against objectives and constraints.

- Planning and Decision Making: Generating multi-step workflows or dialogue protocols that progress from current states to desired goals.

- Action and Adaptation: Executing plans via system integrations or user interfaces and refining behavior through feedback loops.

Autonomy exists on a continuum rather than as a binary attribute. Organizations commonly map agent use cases to discrete levels of oversight and sophistication:

- Assisted Agents: Offer alerts or suggestions with final human approval.

- Partial Automation Agents: Execute routine tasks under defined conditions, invoking human oversight for exceptions. Conversational flows with scripted branching built on Amazon Lex are one example.

- Conditional Autonomy Agents: Adapt behavior within policy constraints, escalating or learning when encountering novel scenarios.

- Full Autonomy Agents: Assume end-to-end responsibility, self-monitor performance and continuously update policies through learning pipelines.

Mapping specific business scenarios—such as conversational assistants, forecasting tools or supply-chain orchestrators—to these autonomy levels guides architectural decisions and governance models. For instance, an IT incident response agent in assisted mode may only suggest remediation steps, while a full autonomy deployment could identify anomalies, predict root causes and implement corrective actions without human approval.
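One way to make the autonomy continuum concrete is to encode the four levels as an enumeration and gate each action on it. The sketch below is illustrative only: the names `AutonomyLevel` and `requires_human_approval` and the two boolean inputs are assumptions, not part of any product or standard, and the conditional tier is simplified to a single policy check.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The four oversight tiers described above (names are illustrative)."""
    ASSISTED = 1
    PARTIAL = 2
    CONDITIONAL = 3
    FULL = 4


def requires_human_approval(level: AutonomyLevel,
                            is_routine: bool,
                            within_policy: bool) -> bool:
    """Return True when a human must sign off before the agent acts."""
    if level is AutonomyLevel.ASSISTED:
        return True                # agent only suggests; a human always decides
    if level is AutonomyLevel.PARTIAL:
        return not is_routine      # routine tasks run; exceptions go to a human
    if level is AutonomyLevel.CONDITIONAL:
        return not within_policy   # adapts freely inside policy, escalates outside
    return False                   # FULL: end-to-end responsibility
```

Applied to the incident response example, an assisted deployment always returns `True`, while a full autonomy deployment never asks for approval. That is why the governance model, not only the architecture, has to change between levels.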

Interpretive Frameworks

To evaluate and prioritize agent initiatives, decision makers use analytical lenses such as the Capability-Outcome Matrix, which cross-references functional competencies—natural language understanding, predictive analytics and robotic process automation—with desired strategic outcomes like cost reduction, revenue growth and compliance assurance. By plotting capabilities against objectives, organizations identify portfolio gaps and investment priorities.
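A Capability-Outcome Matrix can be held in a simple nested mapping and scanned for gaps. The following is a minimal sketch under stated assumptions: the 0 to 5 maturity scale, the placeholder scores and the function name `portfolio_gaps` are all hypothetical and chosen only to show the mechanics.

```python
# Rows are capabilities, columns are strategic outcomes.
# Scores are placeholder maturity ratings on an assumed 0-5 scale.
matrix = {
    "natural language understanding": {
        "cost reduction": 4, "revenue growth": 3, "compliance assurance": 1},
    "predictive analytics": {
        "cost reduction": 2, "revenue growth": 4, "compliance assurance": 1},
    "robotic process automation": {
        "cost reduction": 5, "revenue growth": 1, "compliance assurance": 2},
}


def portfolio_gaps(matrix, threshold=3):
    """Return outcomes for which no capability reaches the threshold."""
    outcomes = {outcome for row in matrix.values() for outcome in row}
    return sorted(
        outcome for outcome in outcomes
        if all(row.get(outcome, 0) < threshold for row in matrix.values())
    )


gaps = portfolio_gaps(matrix)
```

With these placeholder scores, `gaps` contains only "compliance assurance", which would flag that outcome as the candidate for further investment.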

The Socio-Technical Integration Model emphasizes the interplay between agent autonomy and human workflows, examining trust calibration, role redefinition and change management. Recognizing that agents operate within organizational cultures, this model underscores the need for aligned processes and governance structures to enable seamless human-agent collaboration.

Theoretical Perspectives and Metrics

Cognitive architectures such as Soar and ACT-R propose layered modules for perception, working memory and decision control, while robotics paradigms adopt sense-plan-act loops to continuously refine an agent’s internal model of its environment. Industry reference architectures adapt these concepts into modular systems comprising knowledge graphs, decision orchestration engines and machine learning pipelines for supervised, unsupervised and reinforcement learning.

Analysts quantify autonomy through metrics that capture responsiveness, proficiency, adaptability and compliance. Decision latency measures the time from event perception to action execution, while success rate tracks completed tasks without human correction. Adaptation index evaluates performance improvement when agents encounter novel data, and compliance adherence gauges alignment with policy constraints. Benchmarking against pilot studies or industry reports enables organizations to calibrate maturity levels and set performance targets.
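The four metrics can be computed from a log of agent task records. The sketch below assumes a hypothetical `TaskRecord` schema, and it assumes one possible definition of the adaptation index (the change in success rate between an earlier and a later batch of novel cases), since the text does not prescribe a formula.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    perceived_at: float      # time the event was perceived, in seconds
    acted_at: float          # time the action was executed, in seconds
    completed: bool          # task reached its goal
    human_corrected: bool    # a person had to fix the result
    policy_compliant: bool   # action stayed within policy constraints


def decision_latency(records):
    """Mean time from event perception to action execution."""
    return mean(r.acted_at - r.perceived_at for r in records)


def success_rate(records):
    """Share of tasks completed without human correction."""
    return sum(r.completed and not r.human_corrected for r in records) / len(records)


def compliance_adherence(records):
    """Share of tasks that stayed within policy constraints."""
    return sum(r.policy_compliant for r in records) / len(records)


def adaptation_index(early_records, late_records):
    """Assumed definition: success-rate change from early to late novel cases."""
    return success_rate(late_records) - success_rate(early_records)
```

All four functions expect non-empty lists. A production version would also need to decide how to treat tasks that are still in flight.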

Strategic Implications and Roadmapping

The way an enterprise frames its autonomy ambitions shapes its strategic roadmap. A full autonomy-first framing can drive ambitious research and rapid proof-of-concept initiatives but may introduce governance risks and unintended behaviors. Conversely, a phased approach that begins with assisted modes can build user trust and deliver quick wins, laying the foundation for more advanced deployments.

Leaders adopt a hypothesis-driven methodology: they launch pilot programs to validate theoretical assumptions, measure outcomes against frameworks like the Capability-Outcome Matrix and iterate on architectural designs informed by cognitive models. This calibrated framing helps ensure that investments in autonomous agents yield sustainable value while maintaining organizational readiness for increasingly sophisticated autonomy levels.

Common challenges in agent deployments include data quality issues, integration complexity and establishing user trust through transparent decision explanations. Enterprises address these through master data management practices, standardized APIs and human-in-the-loop checkpoints that refine agent reasoning and build stakeholder confidence.

Key Chapter Objectives

- Trace the evolution of agent technologies from rule-based systems to cognitive, self-directed agents.

- Define core concepts and autonomy taxonomies to align stakeholder expectations and guide architecture.

- Assess market drivers and strategic urgency for agent adoption across business functions.

- Identify enabling technologies—machine learning, natural language processing and planning algorithms—underpinning agent capabilities.

- Apply interpretive frameworks to prioritize use cases and measure performance metrics.

- Anticipate governance, ethical and operational considerations essential for responsible integration.

- Develop a phased roadmap for iterative adoption, continuous improvement and enterprise-scale deployment.

Transforming Go-To-Market with Autonomous Agents

In sales and marketing, autonomous AI agents redefine the go-to-market model as a dynamic marketplace ecosystem. Agents collaborate with human teams, channel partners and systems to deliver synchronized, personalized experiences across the customer journey, replacing rigid, campaign-centric approaches.

Strategic shifts include:

- Value Proposition Evolution: Agents experiment with micro-variations of offers, bundles and messaging in real time, leveraging A/B testing frameworks to refine propositions based on live customer feedback rather than fixed quarterly cycles.

- Adaptive Pricing Mechanisms: Through integration with pricing engines and competitive intelligence feeds, agents adjust price tiers, discounts and promotional offers dynamically, optimizing for individual propensity scores and market conditions.

- Continuous Lifecycle Management: The linear funnel transforms into an always-on loop where agents monitor engagement signals, detect churn risks, re-engage lapsed accounts and recommend upsell or cross-sell opportunities without manual triggers.

- Collaborative Channel Networks: Autonomous agents coordinate messaging and handoffs across digital, field and partner channels, ensuring consistent brand voice while adapting execution to channel-specific dynamics.
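To illustrate the A/B testing that underpins the first shift above, the sketch below compares the conversion rates of two offer variants with a two-proportion z-test. It uses only the standard library. The function name and the choice of this particular test are assumptions, and real experimentation platforms often use sequential or Bayesian methods instead.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for variant B versus variant A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("conversion rates of 0% or 100% give no variance to test")
    z = (p_b - p_a) / se
    # Two-sided tail probability under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

An agent running many micro-variations at once would also need to correct for multiple comparisons, which this sketch does not attempt.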

Real-Time Personalization and Segmentation

Living segmentation allows agents to refine audience clusters continuously. By ingesting streaming transactional data, behavioral analytics and third-party feeds, agents can detect emergent micro-segments—niches of high-propensity customers responding to novel triggers. Frameworks guiding investment include:

- Contextual Relevance Framework: Aligns content and offers with real-time variables such as location, device and recency of interactions.

- Predictive Segment Dynamics: Prioritizes segments based on forecasted conversion velocity and lifetime value to allocate engagement resources effectively.

- Feedback Loop Integration: Feeds performance metrics from agent interactions back into segmentation models, automating model retraining and driving continuous refinement.
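Predictive Segment Dynamics can be sketched as a ranking over forecast values. The example below assumes a hypothetical `Segment` record and a simple priority score (forecast weekly conversions multiplied by forecast lifetime value). The scoring rule is an illustration, not a prescribed formula.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    conversions_per_week: float   # forecast conversion velocity
    lifetime_value: float         # forecast value per converted customer


def prioritize(segments):
    """Order segments by expected weekly value, highest first."""
    return sorted(
        segments,
        key=lambda s: s.conversions_per_week * s.lifetime_value,
        reverse=True,
    )
```

In a living segmentation setup, the forecasts would be refreshed by the feedback loop and the ranking recomputed each time the models are retrained.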

Channel Orchestration and Attribution

Agents operating across email, chat, social media, digital advertising and field platforms require a unified orchestration layer to manage both sequential and parallel engagements:

- Sequential Coordination: Agents negotiate handoffs between channels, escalating from bot-driven interactions to human sales reps when intent thresholds or risk criteria are met.

- Parallel Engagement: Multiple agents support a single customer concurrently, delivering personalized content recommendations while other agents manage transactional inquiries or technical support.
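The sequential handoff rule can be expressed as a small routing function. This is a minimal sketch: the 0.8 intent threshold, the `risk_flag` input and the channel labels are hypothetical values chosen for illustration.

```python
def route_conversation(intent_score: float,
                       risk_flag: bool,
                       intent_threshold: float = 0.8) -> str:
    """Escalate to a human rep when intent is high or a risk criterion fires."""
    if risk_flag or intent_score >= intent_threshold:
        return "human_sales_rep"
    return "bot"
```

Keeping the threshold as a parameter lets an orchestration layer tune it per channel without changing the routing logic.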

Attribution analytics must evolve from last-click and other single-touch models to causal inference frameworks that leverage dwell times, sentiment shifts and micro-conversion events captured by agents. This granular attribution clarifies the true drivers of pipeline acceleration and informs budget allocation, channel investment and ongoing optimization of agent behaviors.
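The contrast between single-touch credit and a causal estimate can be shown with two small functions. The sketch assumes journeys are logged as ordered channel lists and that a randomized holdout group exists. A holdout comparison is only the simplest causal design and stands in here for the richer frameworks the text describes.

```python
from collections import Counter


def last_touch_credit(journeys):
    """Credit each conversion to the final channel touched.

    journeys: iterable of (ordered list of channel names, converted flag).
    """
    credit = Counter()
    for touches, converted in journeys:
        if converted and touches:
            credit[touches[-1]] += 1
    return credit


def incremental_lift(exposed_conversions, exposed_total,
                     holdout_conversions, holdout_total):
    """Conversion-rate difference between randomly exposed and held-out groups."""
    return (exposed_conversions / exposed_total
            - holdout_conversions / holdout_total)
```

Last-touch credit answers "which channel came last", whereas the lift estimate answers "how many conversions would not have happened without the agent", which is the question budget allocation depends on.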

Organizational Structures and Talent Shifts

As agents assume routine tasks—lead qualification, follow-up scheduling and basic personalization—human roles shift toward strategic oversight, creative problem solving and relationship management. Emerging roles include:

- Agent Strategists: Define decision logic, engagement rules and performance metrics that steer agent behavior.

- Data Interpreters: Translate agent-generated insights—such as micro-segment discoveries and sentiment trends—into actionable business strategies.

- Experience Curators: Design human-agent collaboration points, ensuring seamless handoffs and preserving brand authenticity.

Matrixed “fusion teams” that co-locate data scientists, marketing technologists and sales leaders enable rapid iteration of agent strategies and align accountability for outcomes. Without these structural and talent shifts, organizations risk underutilizing agent capabilities or encountering cultural resistance that slows adoption.

Governance, Compliance and ROI Considerations

Scaling autonomous agents in regulated or consumer-facing markets requires robust governance frameworks. Policy domains include data privacy and security under regulations such as GDPR and CCPA, message approval workflows, escalation protocols for high-risk interactions and auditability of decision logs. Transparent disclosures of AI involvement and consent management enhance customer trust and reinforce brand integrity.

Strategic investment models must account for the continuous nature of agent programs, including data pipeline maintenance, model retraining and orchestration tuning. Organizations measure success using a balanced scorecard:

- Cost Avoidance Metrics: Reduced manual follow-up time, shorter sales cycles and lower content production costs.

- Revenue Acceleration Indicators: Uplifts in deal velocity, win rates and incremental revenue from agent-sourced opportunities.

- Customer Equity Enhancements: Improvements in lifetime value, retention rates and referral volumes driven by elevated engagement quality.

- Strategic Agility Scores: Speed of campaign launches and new segment entry enabled by agent workflows.
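The four scorecard dimensions can be rolled up into a single weighted figure. In the sketch below, each dimension score is assumed to be normalized to the range 0 to 1 beforehand. The function name and the idea of combining the dimensions through a weighted average are assumptions made for illustration.

```python
def balanced_score(scores, weights):
    """Weighted average of normalized dimension scores (each in 0..1)."""
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[dim] * w for dim, w in weights.items()) / total_weight
```

Called with equal weights for cost avoidance, revenue acceleration, customer equity and strategic agility, the function returns the plain mean of the four scores. Unequal weights let leadership state explicitly which dimension matters most in a given planning cycle.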

Early adopters report engagement rate uplifts of 15 to 30 percent and reductions in sales cycle length by up to 25 percent, illustrating the measurable impact of agent-driven go-to-market strategies on top-line growth and operational efficiency.

Strategic and Operational Takeaways for Engagement Automation

The deployment of autonomous AI agents in customer engagement requires a holistic approach that marries strategic vision with operational rigor. The following takeaways draw on the analytical, technical and organizational dimensions discussed earlier.

Strategic Insights

Autonomous agents redefine competitive advantage by shifting the focus from cost efficiency to the orchestration of personalized, context-aware experiences. Success depends on aligning agent objectives with enterprise goals, embedding experimentation into workflows and leveraging agents as compounding sources of strategic insight.

- Personalization at scale drives higher engagement and deeper relationships.

- Integration of agent KPIs with business targets ensures measurable impact.

- Hypothesis-driven A/B testing and multivariate analysis accelerate learning.

- Cross-channel orchestration reinforces brand consistency and maximizes reach.

- Agents uncover latent segments and behavioral patterns to inform strategy.

- Governance and transparency reinforce customer trust and brand integrity.

Operational Imperatives