Smart Inventory: AI Agents and Predictive Stocking for the Future of Supply Chains


Introduction

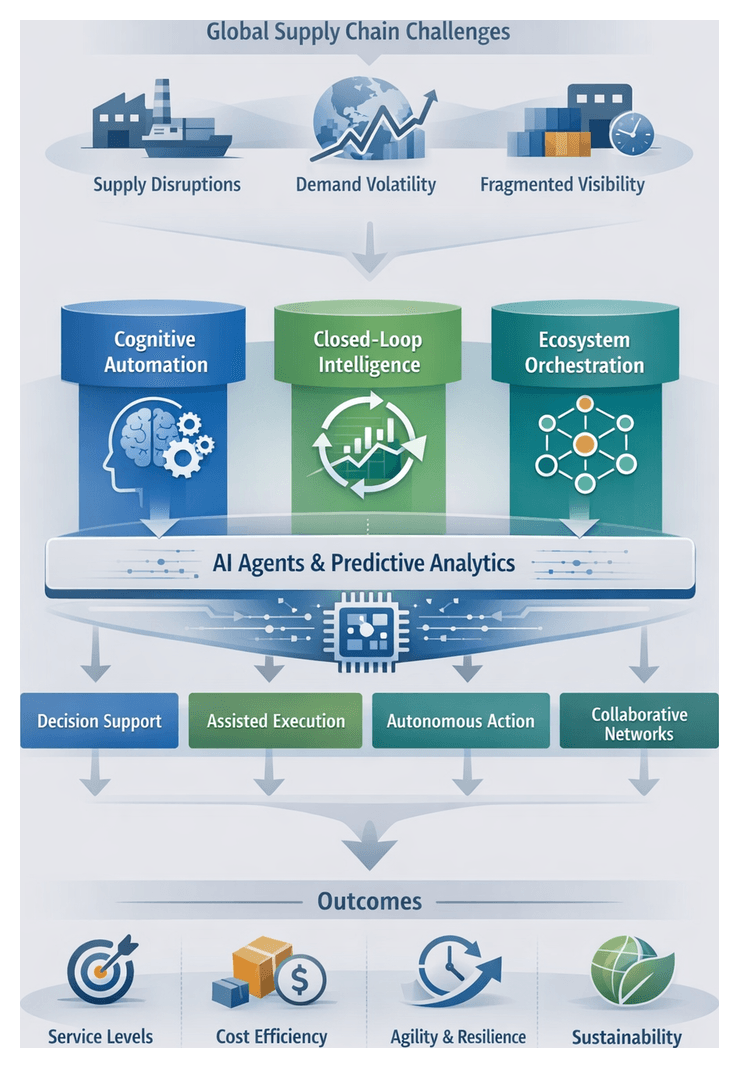

Modern Inventory Challenges in Global Supply Chains

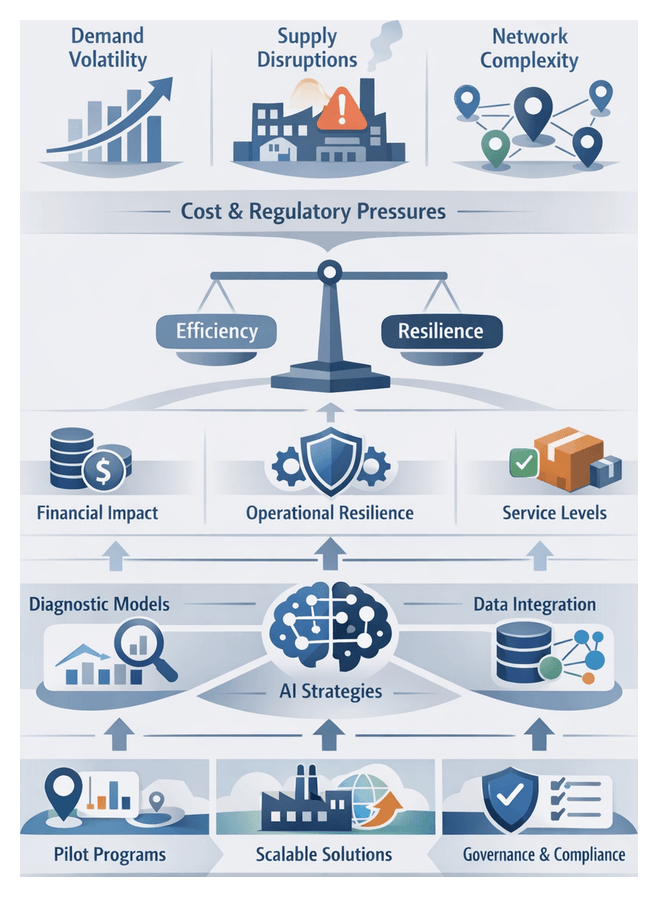

In today’s interconnected economy, inventory management sits at the nexus of product availability, cost control, and service quality. As organizations expand sourcing networks across continents, they encounter unprecedented complexity: multi-tiered suppliers, variable lead times, regional demand fluctuations, and transportation disruptions. Traditional models based on fixed reorder points and periodic reviews struggle to maintain reliable guidance in this dynamic environment. Fragmented visibility, static parameters, and siloed decision making drive either excessive holding costs or critical stockouts, undermining operational resilience and competitive positioning.

Complex global networks expose organizations to diverse variables at each node—capacity constraints, quality issues, customs delays, and geopolitical events. Conventional inventory approaches calibrated for one segment can produce imbalances elsewhere, inflating safety stock across low-risk tiers while leaving high-risk nodes vulnerable. Rapid shifts in consumer behavior, fueled by digital channels and emerging market trends, further amplify demand volatility. Promotional activities, seasonal surges, and off-premise channels such as e-commerce and subscriptions introduce erratic patterns that simple extrapolation methods cannot capture.

Disruptions from natural disasters, trade restrictions, health crises, or labor shortages often trigger reactive measures—expedited freight, regional stock reallocations, or halted production—incurring premium costs and eroding reliability. Fragmented visibility across suppliers and transport modes leaves planners with blind spots, while conflicting objectives among procurement, operations, finance, and sales impede alignment on safety stock strategies and contingency plans.

The financial impact of rising inventory costs is substantial. Capital tied up in idle stock represents lost opportunity for innovation or expansion. Warehousing expenses scale with volume and duration, and accelerated product life cycles heighten obsolescence risks. Decentralized fulfillment to meet customer expectations of same-day or next-day delivery multiplies stock positions and increases waste when demand forecasts miss the mark. Legacy systems and data silos perpetuate latency and errors, while functional incentives discourage enterprise-wide collaboration. In this context, a shift toward intelligence-driven inventory management becomes imperative for organizations seeking to optimize service levels, reduce costs, and enhance resilience.

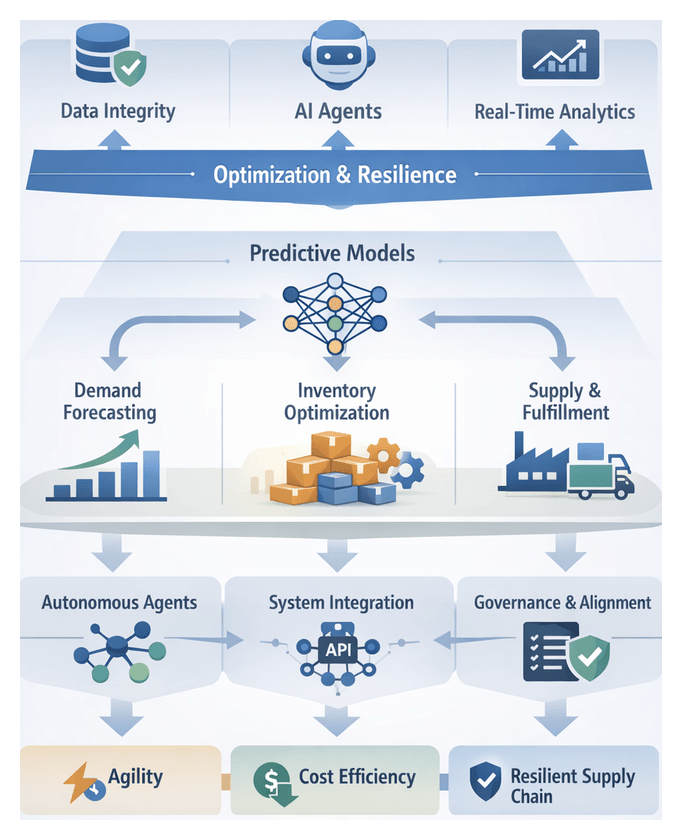

Framing AI Agents and Predictive Stocking Concepts

Reframing inventory management through AI agents and predictive stocking turns static rules into a dynamic, data-driven discipline. An AI agent in this context is an autonomous or semi-autonomous software entity that ingests multiple data streams (transactional records, supplier performance metrics, market indicators), reasons about stock requirements, and executes decisions once reserved for human planners. Predictive stocking encompasses the forecasting algorithms and statistical estimators that anticipate demand fluctuations and supply variability.

Conceptual Frameworks

- Cognitive Automation: AI agents emulate expert judgment in demand planning, continuously calibrating safety buffers using machine learning models.

- Closed-Loop Intelligence: Forecasting engines and agents form an iterative feedback loop, executing replenishment actions, monitoring outcomes, and refining algorithms in real time.

- Ecosystem Orchestration: Specialized agents coordinate across demand sensing, supplier collaboration, and logistics optimization, negotiating lead times and contingency plans within an integrated network.

Agent Autonomy and Adaptability

Agent functionality is evaluated along autonomy and adaptability dimensions. Autonomy tiers range from decision support to fully autonomous replenishment:

- Decision Support: Agents recommend actions; human planners retain final approval.

- Assisted Execution: Agents handle routine tasks under predefined rules, escalating exceptions.

- Autonomous Replenishment: Agents interface with ERP systems to trigger orders and transfers.

- Collaborative Networks: Agents coordinate across external partners, adjusting parameters based on shared data.

Adaptability reflects learning mechanisms:

- Static Learning: Periodic offline retraining.

- Incremental Learning: Continuous updates from recent performance data.

- Reinforcement-Driven Adaptation: Agents optimize decisions via reinforcement learning, using service levels and cost metrics as reward signals.

Performance Metrics and Interpretive Frameworks

Predictive stocking efficacy extends beyond forecast accuracy metrics such as mean absolute percentage error (MAPE) or weighted root mean square error (wRMSE). Leading organizations assess:

- Service Level Optimization: Achieving target fill rates with minimal stockouts.

- Inventory Capital Efficiency: Reducing working capital through precise buffer calibration.

- Supply Responsiveness: Adapting to demand spikes or supplier delays.

- Cycle Time Reduction: Shortening the interval from signal generation to on-shelf availability.

Domain Perspectives

Supply chain practitioners focus on risk mitigation, process stability, and governance, viewing predictive stocking as an evolution of reorder-point models with clear audit trails and escalation protocols. Technology vendors emphasize autonomy and rapid deployment. Platforms such as Blue Yonder Luminate, IBM Watson Supply Chain, Oracle Cloud SCM, and Kinaxis RapidResponse champion self-healing supply networks with minimal human oversight. Bridging these views requires integrated frameworks that address operational realities and technological ambitions.

Key Debates

- Explainability versus Performance: Balancing black-box model accuracy with regulatory and stakeholder transparency requirements.

- Centralized Core versus Edge Autonomy: Central digital platforms offer unified control, while distributed intelligence at warehouses accelerates local decision making.

- Short-Term Gains versus Long-Term Resilience: Aggressive inventory reduction can expose vulnerabilities during demand anomalies.

- Human Oversight versus Full Automation: Industry risk profiles dictate the degree of human-in-the-loop governance.

Unified Taxonomy

A consolidated taxonomy maps predictive stocking and agent capabilities across five domains:

- Data Intelligence: Diversity of data sources from transactional logs to macroeconomic signals.

- Analytical Rigor: Forecasting sophistication, including ensemble and probabilistic models.

- Decision Autonomy: Execution authority from recommendations to self-initiated replenishment.

- Integration Flexibility: Ease of connecting agents with ERP, TMS, and warehouse systems.

- Governance and Controls: Exception workflows, auditability, and compliance features.

Strategic Imperative for Intelligent Stocking

Intelligent stocking—driven by AI agents and predictive analytics—is now a strategic necessity rather than an innovation. By treating inventory as strategic capital, organizations optimize stock levels in real time, anticipate demand shifts, and allocate resources dynamically. The benefits extend beyond cost reductions to enhanced service levels, risk management, and market responsiveness.

Market Volatility and Demand Uncertainty

With volatility as the norm, traditional forecasting falters. AI-driven stocking reads early indicators, such as social media sentiment and macroeconomic data, and recalibrates inventory recommendations in near real time, turning supply chains into agile value generators that mitigate the financial impact of erratic demand.

Digital Transformation Trends

Cloud adoption, IoT integration, and embedded analytics platforms underpin intelligent stocking as a hallmark of digital maturity. Solutions from Blue Yonder and Kinaxis RapidResponse illustrate how predictive and prescriptive analytics are embedded into core workflows, enabling API-driven orchestration and continuous forecast refinement.

Competitive Differentiation

Service excellence based on optimized inventory positions distinguishes market leaders. AI-empowered stocking delivers service level gains of 5–15 percentage points and reduces carrying costs by 10–20 percent, bolstering customer loyalty and elevating enterprise valuation.

Regulatory and Sustainability Drivers

Stricter reporting on carbon footprints and waste disposal, combined with corporate ESG agendas, drive the integration of environmental and social metrics into stocking algorithms. AI-informed stocking minimizes overstock, supports circular economy goals, and meets compliance mandates.

Globalization and Risk Landscapes

In multi-tier networks, intelligent stocking continuously assesses risk signals—port congestion, supplier health, commodity volatility—and adapts buffers across nodes. During disruptions, AI agents reprioritize shipments, adjust regional stocks, and activate secondary sourcing to preserve service commitments.

Strategic Takeaways

- Resilience: Predictive insights create adaptive buffers against volatility.

- Efficiency: Dynamic alignment minimizes capital lock-up and waste.

- Competitiveness: Agility and service excellence drive differentiation.

- Sustainability: Integrated ESG metrics enhance compliance and reputation.

Thematic Insights and Practical Considerations

Six core insights define the frontier of AI-driven inventory management:

- Volatility as a Constant: Policies grounded in probabilistic forecasts replace deterministic reorder points.

- Agent-Based Autonomy: Continuous feedback systems enable real-time replenishment within defined guardrails.

- Strategic Resilience: Predictive stocking builds networks that absorb shocks and recover with minimal manual intervention.

- Data Integrity and Integration: Robust, governed data architectures underpin accurate demand and supply signals.

- Cross-Functional Alignment: Collaboration among procurement, operations, finance, and IT turns insights into unified strategies.

- Continuous Learning: Feedback loops monitor forecast accuracy, measure KPIs, and trigger model retraining as conditions evolve.

Core Limitations

- Model Scope: SKU-level optimization demands network-wide context to avoid unintended interactions.

- Data Quality: Bias from incomplete or inconsistent data undermines forecast reliability.

- Technical Debt: Legacy systems require phased integration and may limit real-time connectivity.

- Human-Machine Alignment: Opaque model logic or unclear governance erodes planner trust and blurs accountability for agent decisions.

- Governance: Automated decisions must comply with procurement policies and audit requirements.

- Model Drift: Evolving market conditions necessitate ongoing monitoring, retraining, and version control.

- Cybersecurity: Protecting real-time data flows and agent interfaces demands strong encryption and access controls.

Strategic Frameworks

Multiple interpretive lenses guide AI-driven deployments:

- Dynamic Capabilities: Sensing, seizing, and transforming resources using real-time intelligence.

- Digital Maturity: Assessing readiness across data infrastructure, process standardization, and culture.

- Risk-Return Analysis: Balancing service improvements against carrying cost trade-offs and autonomy thresholds.

- Systems-of-Systems: Ensuring local optimizations align with global performance metrics.

Implications for Practitioners

- Redefine Success Metrics: Include forecast bias, fill-rate variability, and resilience indicators alongside traditional KPIs.

- Establish Governance Councils: Align replenishment policies, approve agent parameters, and manage trade-offs across functions.

- Create a Center of Excellence: Multidisciplinary teams manage data pipelines and model development, and institutionalize best practices.

- Conduct Test-and-Learn Pilots: Isolate factors such as seasonality and promotions to validate ROI in controlled environments.

- Implement Continuous Feedback: Dashboards and alerts track agent performance, enabling rapid tuning and corrective action.

Chapter 1: Foundations of Modern Inventory Management

Modern Inventory Challenges in Global Supply Chains

Global supply chains today span continents, products and partners, creating intricate networks that magnify disruptions and strain traditional inventory practices. Rapid shifts in consumer demand, driven by digital commerce and evolving preferences, undermine the predictive power of historical data. At the same time, geopolitical events, raw material shortages and logistics bottlenecks introduce lead-time variability and stock-out risks. Meanwhile, SKU proliferation and multi-echelon distribution raise coordination complexity across factories, warehouses, cross-docks and retail locations. Finally, balancing working capital against service commitments demands ever-finer trade-offs: excess safety stock ties up funds while insufficient buffers threaten lost sales and customer dissatisfaction. In this volatile environment, static reorder formulas and preset safety-stock rules are no longer adequate. Organizations need dynamic, data-driven strategies that adapt in real time to emerging signals and reconcile cost-service tensions across the network.

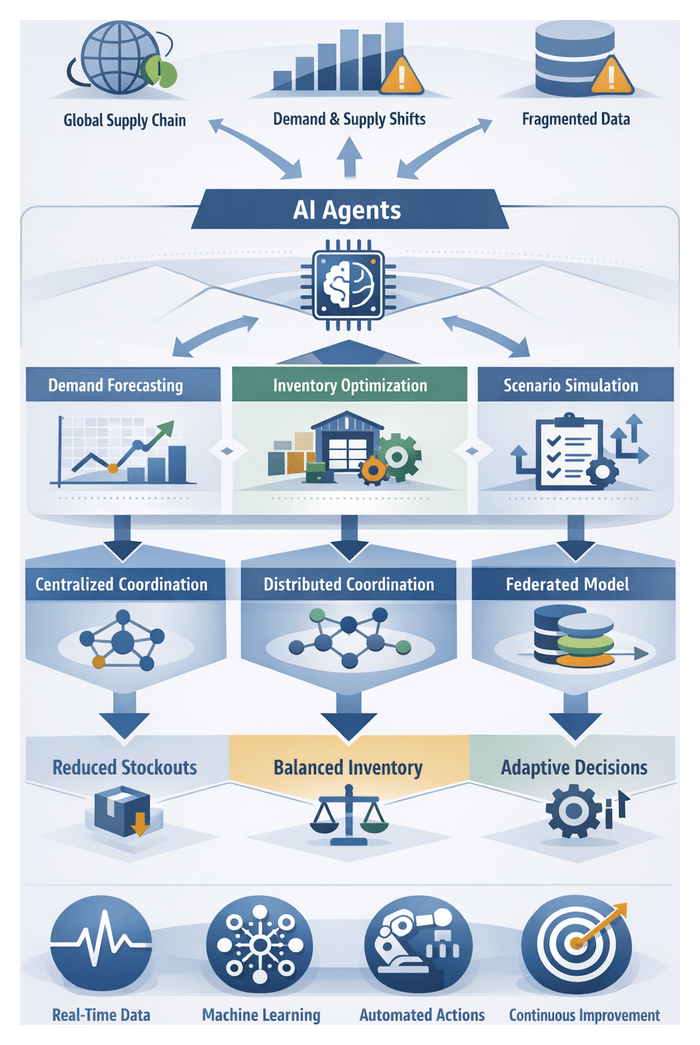

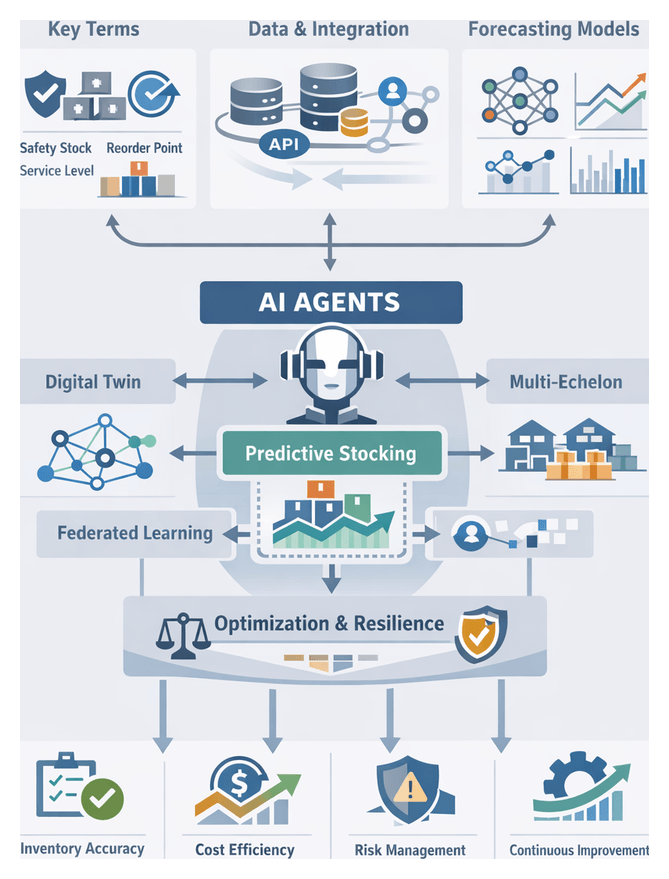

Conceptual Foundations: AI Agents and Predictive Stocking

Intelligent stocking frameworks hinge on two interdependent elements: AI agents and predictive stocking techniques. AI agents are autonomous software entities characterized by four essential attributes: the ability to make independent decisions, coordinate with other agents and systems, respond swiftly to fluctuations in demand or supply, and anticipate disruptions to adjust inventory parameters proactively. Predictive stocking enhances traditional forecasting by leveraging machine learning and probabilistic models to calculate reorder points and safety-stock levels dynamically. This process involves real-time demand sensing, which integrates point-of-sale, e-commerce, and external market data; lead-time estimation models that refine supplier expectations based on performance metrics; multi-echelon coordination algorithms that optimize stock placement across the supply chain; and autonomous decision engines that initiate purchase orders, transfers, or emergency replenishments within established parameters. By integrating AI agents with predictive stocking, businesses can transform inventory management from a reactive cost center into a strategic asset that enhances service levels and capital efficiency.

Core Metrics and Multi-Objective Optimization

At the heart of any AI-driven inventory system lie three core metrics: safety stock, reorder points and service-level targets. Safety stock quantifies the buffer required to absorb demand variability and supply disruptions; in AI-enabled environments it becomes a dynamic variable influenced by real-time market trends, supplier reliability and logistical risk. Reorder points set the trigger thresholds for replenishment; by leveraging probabilistic demand distributions and live lead-time forecasts, AI agents recalibrate these thresholds continuously and tailor them to product clusters based on demand patterns and margin profiles. Service levels express the probability of meeting demand without stockouts and translate into penalty functions within optimization algorithms, balancing revenue impact against carrying costs. Rather than optimizing each metric in isolation, multi-objective frameworks integrate them via weighted objective functions, Pareto-front analyses or constraint programming. Cross-functional feedback loops ensure that shifts in one parameter—such as elevated service targets—are assessed in terms of their downstream effects on working capital and risk exposure.
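As a rough illustration of how safety stock and reorder points relate to a service-level target, the sketch below uses the textbook formula for normally distributed daily demand and lead time. The function names and the example figures are assumptions introduced here, not values from any specific platform, and "service level" is read as a cycle service level (the probability of no stockout during one replenishment cycle).

```python
from math import sqrt
from statistics import NormalDist


def safety_stock(service_level, mean_demand, sd_demand, mean_lead_time, sd_lead_time):
    """Buffer stock for a target cycle service level.

    Assumes daily demand and lead time (in days) are independent and
    roughly normal; real demand is often skewed or intermittent.
    """
    z = NormalDist().inv_cdf(service_level)  # z-score for the target probability
    # Standard deviation of demand over the lead time, combining
    # demand variability and lead-time variability.
    sigma_lead_time_demand = sqrt(
        mean_lead_time * sd_demand ** 2 + mean_demand ** 2 * sd_lead_time ** 2
    )
    return z * sigma_lead_time_demand


def reorder_point(service_level, mean_demand, sd_demand, mean_lead_time, sd_lead_time):
    """Expected demand over the lead time plus the safety stock buffer."""
    expected_lead_time_demand = mean_demand * mean_lead_time
    return expected_lead_time_demand + safety_stock(
        service_level, mean_demand, sd_demand, mean_lead_time, sd_lead_time
    )


# Hypothetical SKU: 100 units/day (sd 20), 5-day lead time (sd 1 day), 95% target.
buffer_units = safety_stock(0.95, 100, 20, 5, 1)
trigger_level = reorder_point(0.95, 100, 20, 5, 1)
print(round(buffer_units, 1), round(trigger_level, 1))
```

An agent that recalibrates these thresholds continuously would, in this simplified view, re-run the same calculation whenever its estimates of the demand or lead-time parameters change.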

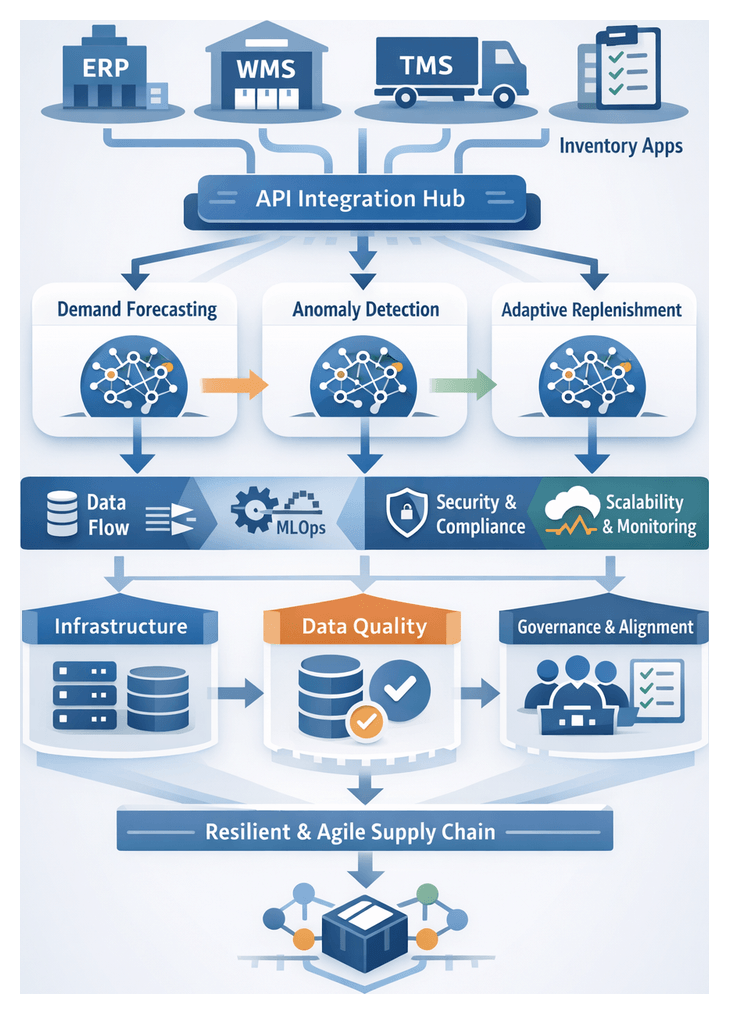

Analytical and Maturity Frameworks

Supply chain leaders often gauge their predictive stocking capabilities through maturity models that progress from descriptive analytics (reporting past performance) and diagnostic analyses (root-cause investigations) to predictive forecasting (anticipating demand trajectories) and prescriptive recommendations (autonomous replenishment actions). The apex stage—adaptive learning—features AI agents that continuously refine models based on execution data and emergent market signals. From an analytical standpoint, the power of these systems emerges when predictive models and agent autonomy form a closed feedback loop: demand insights inform automated orders, execution outcomes feed back into model retraining, and control-theory principles ensure system stability around target inventory levels. Leading frameworks emphasize four governance pillars: data fidelity for accurate and timely signals; algorithmic robustness and explainability; orchestration architecture that seamlessly integrates AI agents with ERP, WMS and supplier portals; and governance structures defining accountability, auditability and exception-handling protocols.

Industry Adaptations and Use Cases

Sectors leverage intelligent stocking concepts tailored to their unique needs. High-volume retail emphasizes short-term demand sensing and inventory clustering to reduce markdown risks. Discrete manufacturing prioritizes synchronizing multi-tier bills of materials, ensuring component availability while controlling work-in-progress levels. In pharmaceutical supply chains, risk-based assessments are crucial for maintaining critical drug availability within regulatory frameworks. Consumer electronics face the challenge of rapid SKU sunsetting and reallocating safety stock as product lifecycles shorten. Practitioners in these fields monitor key metrics like forecast accuracy, inventory turns, fill-rate attainment, and cash-to-cash cycle time. A/B pilot frameworks evaluate the performance of AI-enabled SKUs against traditional methods, with benchmarks showing that a mean absolute percentage error (MAPE) below 15 percent can lead to 10–20 percent reductions in inventory while achieving a 95 percent fill rate. Additionally, third-party studies from leading analyst firms demonstrate that integrating predictive analytics platforms with autonomous reorder agents can drive double-digit improvements in service levels and working capital efficiency.

Data Architecture and Governance

Robust data infrastructure underlies every predictive stocking initiative. Organizations must develop holistic data domain maps that integrate sales transactions, external market signals (including weather and economic indicators), supplier performance metrics and internal operational telemetry. Governance maturity models adapted from frameworks such as CMMI guide practices for data stewardship, version control and lineage tracking, ensuring transparency and compliance. Quality-control mechanisms—real-time anomaly detection, outlier screening and missing-value thresholds—guard against concept drift and data skew. Event-driven architectures and message queuing protocols support continuous data streams, while schema evolution processes allow AI models to accommodate new product attributes, changing service agreements and evolving cost structures.

Implementation Strategies and Change Management

Embedding AI agents within organizational structures requires clear delineation of decision rights, escalation protocols and human-agent collaboration models. Interpretive lenses such as the Viable System Model help align agent autonomy with oversight and accountability. Stakeholder alignment tools—RACI matrices—clarify roles during integration with ERP, warehouse management and procurement systems. Organizational readiness assessments measure digital fluency, change-management capacity and executive sponsorship, with high-readiness environments reporting faster time to value. Pilot programs should define performance metrics, establish cross-functional governance forums and apply iterative scaling approaches. Robust error-handling and rollback capabilities in data pipelines preserve resilience during initial deployments.

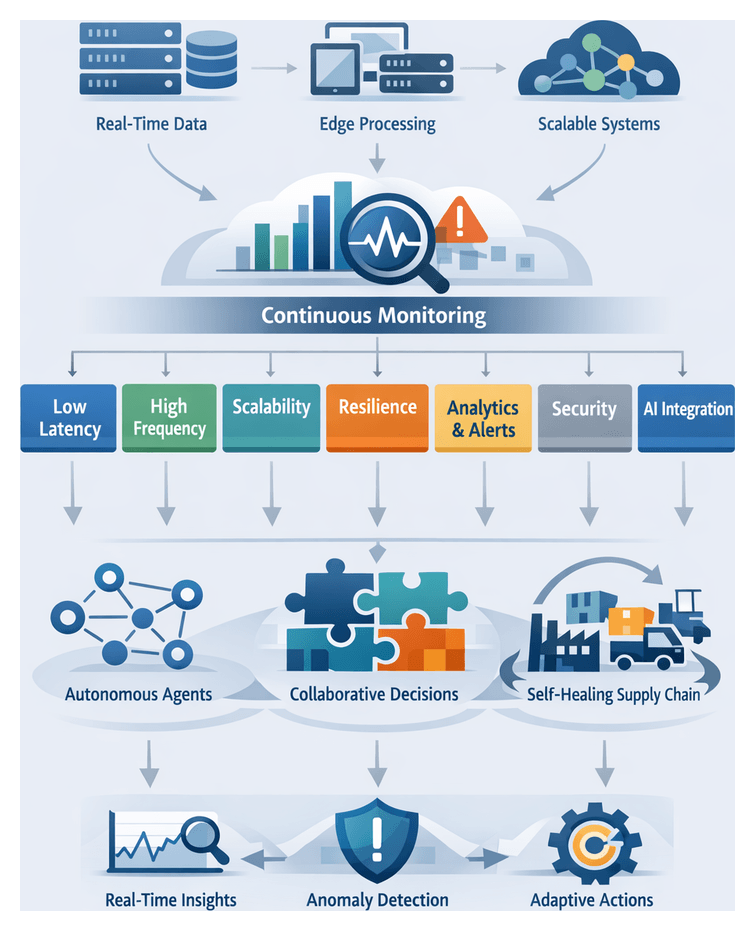

Resilience, Real-Time Analytics, and Autonomous Replenishment

Advanced scenario modeling techniques—probabilistic risk networks and Monte Carlo simulations—enable stress-testing of inventory buffers under extreme demand and supply shocks. Real-time analytics architectures borrow from stream processing to balance decision latency against computational complexity. Unsupervised learning algorithms detect anomalies in order patterns, warehouse throughput and supplier lead times, triggering autonomous replenishment orders or exception workflows. Effective governance frameworks define allowable intervention thresholds, human override mechanisms and audit trails, ensuring that AI agents enhance resilience without compromising risk controls.
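To make the anomaly-detection step concrete, here is a minimal sketch that flags unusual supplier lead times with a modified z-score based on the median absolute deviation (MAD). This simple statistical rule stands in for the unsupervised learning algorithms mentioned above; the function name, the 3.5 threshold, and the sample data are assumptions for illustration.

```python
from statistics import median


def mad_anomalies(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds the threshold.

    Uses the median and the median absolute deviation, which are less
    distorted by the outliers being searched for than mean and stdev.
    """
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return []  # no spread to measure against; nothing is flagged
    return [
        i for i, v in enumerate(values)
        if abs(0.6745 * (v - center) / mad) > threshold
    ]


# Hypothetical lead times in days for one supplier; index 6 is a late delivery.
lead_times = [5, 6, 5, 7, 6, 5, 21, 6]
flagged = mad_anomalies(lead_times)
print(flagged)
```

In a governed deployment, a flagged index would more plausibly open an exception workflow than trigger an order directly, in line with the intervention thresholds and override mechanisms described above.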

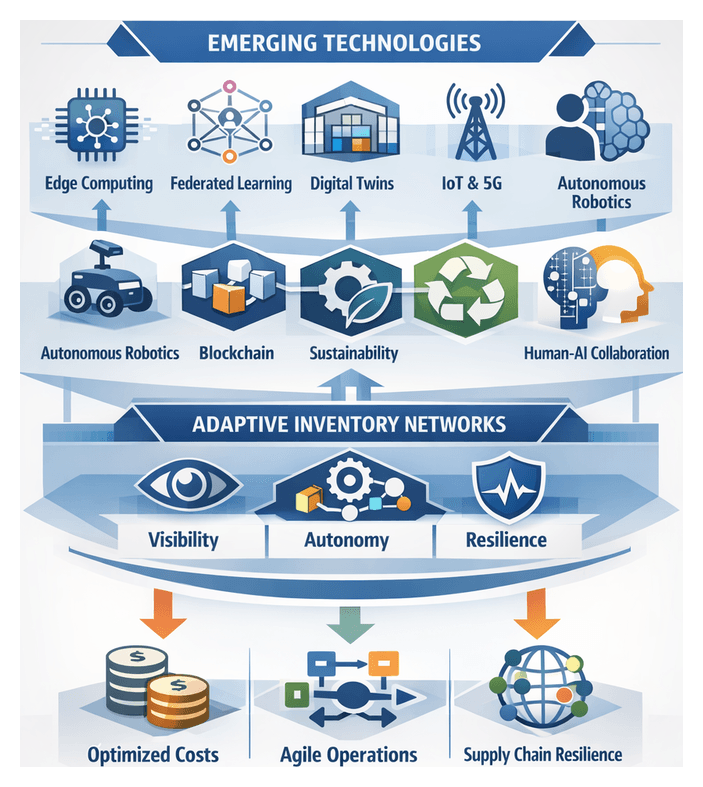

Emerging Trends, Limitations, and Future Readiness

- Edge Computing Paradigms: Deploying inference engines at warehouse and factory nodes to reduce latency and support localized replenishment logic.

- Federated Learning Architectures: Enabling collaborative model training across partner networks without exchanging raw data, enhancing forecast accuracy while preserving privacy.

- Digital Twin Simulations: Creating virtual replicas of supply-chain nodes to perform what-if analyses and align stocking policies with real-world performance.

- Blockchain Traceability: Leveraging immutable ledgers for verified supplier reliability records, informing predictive adjustments and compliance audits.

Despite their promise, AI-driven stocking systems face limitations: data bias and quality gaps can degrade model performance, opaque algorithms may erode stakeholder trust, and cybersecurity vulnerabilities pose operational risks. Over-reliance on automation without adequate human oversight can lead to systemic blind spots. Effective governance must embed explainability, continuous model validation, access controls and clear escalation protocols to mitigate these challenges and sustain long-term value.

Reader Outcomes and Strategic Steps

- Diagnose Inventory Challenges by mapping volatility, carrying costs and stock-out risks to targeted AI solutions.

- Design Data Architectures that integrate essential internal and external data domains with rigorous governance practices.

- Frame Intelligent Agent Roles, defining decision scopes, learning mechanisms and coordination models within the supply chain.

- Evaluate Forecasting Techniques, comparing statistical and machine learning methods against operational interpretability and computational constraints.

- Develop Predictive Replenishment Strategies, configuring dynamic safety stocks, adaptive reorder points and multi-echelon rules driven by probabilistic models.

- Plan Integration and Deployment, assessing technical architectures, change-management requirements and scaling pathways.

- Implement Real-Time Analytics, establishing continuous monitoring, anomaly detection and autonomous replenishment triggers.

- Build Risk-Resilient Frameworks through scenario analysis, stress-testing and contingency buffer design.

- Overcome Adoption Barriers with stakeholder alignment, pilot frameworks and performance measurement practices.

- Anticipate Future Trends by exploring edge computing, federated learning, digital twins and blockchain traceability.

Chapter 2: Data Architecture and Quality for Predictive Insights

Data Foundations for Predictive Inventory Management

Accurate demand forecasting depends on a comprehensive data architecture that captures internal operations and external market signals. By integrating sales history, inventory transactions, supplier metrics, product attributes, macroeconomic indicators, and event-based data, organizations gain the multidimensional context required for reliable predictive models. These inputs become the signals that drive dynamic replenishment decisions, safety stock calibration, and strategic network planning.

Key Data Domains

- Sales and Transaction History: Granular SKU-level order and return records reveal seasonality, trend shifts, and promotional impacts.

- Inventory and Warehouse Operations: Real-time stock-on-hand, inbound receipts, outbound shipments, and cycle count variances highlight throughput and bottlenecks.

- Supplier Performance Metrics: Lead-time variability, fill rates, and defect ratios inform buffer requirements and stockout risk.

- Product Attributes and Lifecycle Data: Stage-of-life classification, physical characteristics, and substitution relationships refine demand projections.

- Market and Macroeconomic Indicators: Currency fluctuations, commodity price indices, consumer sentiment, and employment rates contextualize demand for discretionary goods.

- Environmental and Event-Based Data: Weather conditions, holiday calendars, trade restrictions, and social media sentiment serve as leading indicators of demand anomalies.

Temporal Resolution and Architecture Patterns

Aligning data granularity with product dynamics is critical. Fast-moving goods may require hourly or daily intervals, while industrial items suit weekly or monthly aggregates. Key considerations include time interval consistency, lookback window selection, and multi-horizon forecasting for tactical and strategic planning.

Common data architecture patterns include centralized data warehouses for batch reporting, data lakes for flexible analysis, and hybrid or lambda architectures that blend streaming ingestion with batch retraining. Selection should reflect existing infrastructure, data volume growth, and latency requirements.

External Signal Integration

Leading platforms such as Amazon Forecast and Google Cloud AI Forecast demonstrate the value of enriching sales data with external factors. Effective integration involves source validation, feature engineering (for example, temperature deviations or sentiment indexes), and correlation analysis to quantify the impact on demand.

Data Quality and Master Data Management

Predictive model performance hinges on data accuracy, consistency, and completeness. Automated data profiling detects anomalies, while error correction workflows fill gaps, standardize codes, and remove duplicates. Tools such as Talend Data Quality and Informatica Data Quality automate cleansing tasks and reduce model bias.
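A small sketch of what automated profiling might check before records reach a forecasting model: duplicate rows and missing required values. It is written in plain Python rather than against any of the tools named above, and the field names (`sku`, `date`, `qty`) are hypothetical.

```python
from collections import Counter


def profile_records(records, required_fields, key_fields=("sku", "date")):
    """Summarize duplicate keys and missing values in a list of dict records."""
    key_counts = Counter(tuple(r.get(f) for f in key_fields) for r in records)
    # Every occurrence beyond the first for a given key counts as a duplicate.
    duplicate_rows = sum(count - 1 for count in key_counts.values() if count > 1)
    missing = {
        field: sum(1 for r in records if r.get(field) in (None, ""))
        for field in required_fields
    }
    return {"rows": len(records), "duplicate_rows": duplicate_rows, "missing": missing}


# Hypothetical transaction extract with one duplicate and one missing quantity.
sample = [
    {"sku": "A", "date": "2024-01-01", "qty": 5},
    {"sku": "A", "date": "2024-01-01", "qty": 5},
    {"sku": "B", "date": "2024-01-01", "qty": None},
]
print(profile_records(sample, required_fields=["sku", "qty"]))
```

A report like this could feed the data quality scorecards discussed later, with thresholds deciding whether a batch is accepted, corrected, or quarantined.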

Master Data Management practices establish canonical definitions for products, customers, suppliers, and locations. Data governance frameworks enforce policies through stewardship committees, metadata catalogs, and role-based access controls, ensuring integrity and compliance with regulations like GDPR and CCPA.

Data Governance and Integration Strategies

Robust data governance and seamless integration form the backbone of predictive intelligence. Governance transforms compliance obligations into strategic assets by ensuring data integrity, lineage visibility, and secure access. Integration strategies balance centralized repositories with decentralized, domain-oriented approaches.

Governance Pillars

- Policy and Standards: Guidelines for data classification, accessibility, and permissible use aligned with regulations.

- Roles and Responsibilities: Defined data custodians, stewards, and domain experts enforcing quality thresholds.

- Data Quality Metrics: KPIs for accuracy, completeness, consistency, timeliness, and validity feeding into scorecards.

- Lineage and Traceability: End-to-end tracking of data origin, transformations, and consumption for auditability.

Integration Patterns and DataOps

Practitioners weigh batch ETL/ELT, streaming event-driven flows, and API-led connectivity. Batch pipelines suit structured operational data, while streaming platforms like Apache Kafka and Confluent enable low-latency updates. API-driven microservices enhance modularity. High-maturity organizations orchestrate these patterns through DataOps workflows with CI/CD for data pipelines.

Leading integration and governance suites include Informatica, Collibra, and open-source tools like Apache NiFi for real-time flow management.

Monitoring, Metrics, and Lineage

Integration success is measured through latency compliance, throughput consistency, error rates, and recovery time objectives. Automated anomaly detection on pipeline performance and data quality—powered by platforms such as DataRobot—provides proactive alerts for data drift. Lineage visualization tools like Alation enable rapid impact analysis and accelerate root-cause investigations.

Contextual Use Cases of AI-Driven Forecasting

Predictive models deliver maximum value when aligned with specific operational and strategic contexts. Continuous validation against service level targets, fill rate thresholds, and cost minimization objectives ensures that insights translate into actionable decisions across diverse inventory scenarios.

SKU-Level Forecasting in High-Variability Environments

Segment SKUs by variability metrics—coefficient of variation, intermittency, outlier events—to tailor forecasting approaches. Hybrid models combining statistical smoothing with machine learning capture sudden shifts. Exception-based review frameworks automate replenishment for stable items while surfacing anomalies for expert intervention.
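One common way to operationalize this segmentation is to classify each SKU by its average inter-demand interval (ADI) and the squared coefficient of variation (CV²) of its non-zero demand sizes. The sketch below uses the widely cited cutoffs of 1.32 and 0.49; those cutoffs, the category labels, and the function name are assumptions brought in for illustration rather than part of the framework described here.

```python
from statistics import mean, pstdev


def classify_demand(history, adi_cutoff=1.32, cv2_cutoff=0.49):
    """Label a demand history as smooth, erratic, intermittent, or lumpy."""
    nonzero = [d for d in history if d > 0]
    if not nonzero:
        return "no demand"
    adi = len(history) / len(nonzero)              # periods per demand occurrence
    cv2 = (pstdev(nonzero) / mean(nonzero)) ** 2   # variability of demand sizes
    if adi < adi_cutoff:
        return "smooth" if cv2 < cv2_cutoff else "erratic"
    return "intermittent" if cv2 < cv2_cutoff else "lumpy"


# Hypothetical weekly demand histories.
print(classify_demand([10, 12, 11, 9, 10, 11]))
print(classify_demand([0, 0, 1, 0, 0, 20, 0, 0, 2]))
```

Under an exception-based review, smooth items would be natural candidates for automated replenishment, while lumpy or erratic items would be surfaced to planners.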

Multi-Echelon Planning and Network Effects

In distribution networks with central warehouses, regional centers, and retail outlets, forecasts at each node must account for lead-time variability, replenishment policies, and transshipment. Simulation-based validation under disruption scenarios quantifies error propagation and informs safety stock calibration to balance service levels against carrying costs.

Seasonal and Promotional Demand

Decompose time series into trend, seasonal, and event-driven components. Enhance additive or multiplicative models with promotional intensity, competitor activity, and search trends. Lift factor methodologies and causal inference isolate the incremental impact of promotions, calibrated through cross-validation across seasons and regions.
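The simplest form of a lift factor is the ratio of average sales in promoted periods to average sales in non-promoted periods. The sketch below computes only that naive ratio: it does not remove trend or seasonality and is not a causal estimate, so it should be read as a starting point. The function name and sample figures are hypothetical.

```python
from statistics import mean


def promo_lift(sales, promo_flags):
    """Ratio of mean promoted sales to mean baseline sales."""
    baseline = [s for s, promoted in zip(sales, promo_flags) if not promoted]
    promoted_sales = [s for s, promoted in zip(sales, promo_flags) if promoted]
    if not baseline or not promoted_sales:
        raise ValueError("need at least one promoted and one baseline period")
    return mean(promoted_sales) / mean(baseline)


# Hypothetical weekly unit sales; weeks 4 and 5 ran a promotion.
weekly_sales = [100, 110, 90, 150, 160, 100]
on_promo = [False, False, False, True, True, False]
print(promo_lift(weekly_sales, on_promo))
```

A more careful version would compute the baseline from the deseasonalized series and validate the factor across seasons and regions, as the paragraph above suggests.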

New Product Introduction and Lifecycle Phases

For items lacking historical data, use analog forecasting by clustering new products with mature items sharing attributes. Employ phased models that shift from qualitative inputs—market research, expert judgment—to quantitative techniques as sales data accrues, refining accuracy over the product lifecycle.
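As a hedged sketch of analog forecasting, the example below ranks mature items by how many attributes they share with the new product and averages the launch curves of the closest matches. Counting matching attributes is a deliberately simple stand-in for a proper clustering step, and the attribute names and sales figures are invented for illustration.

```python
def analog_forecast(new_attrs, catalog, k=2):
    """Average the launch-period sales of the k most similar mature items.

    catalog is a list of (attributes_dict, launch_sales_list) pairs.
    """
    if not catalog:
        raise ValueError("catalog must contain at least one mature item")

    def similarity(attrs):
        # Number of attributes on which the mature item matches the new one.
        return sum(1 for key, value in new_attrs.items() if attrs.get(key) == value)

    analogs = sorted(catalog, key=lambda item: similarity(item[0]), reverse=True)[:k]
    horizon = min(len(sales) for _, sales in analogs)
    return [sum(sales[t] for _, sales in analogs) / len(analogs) for t in range(horizon)]


# Hypothetical catalog of mature items with their first three weeks of sales.
catalog = [
    ({"category": "shoes", "price_band": "mid"}, [10, 20, 30]),
    ({"category": "shoes", "price_band": "high"}, [20, 30, 40]),
    ({"category": "hats", "price_band": "low"}, [100, 100, 100]),
]
print(analog_forecast({"category": "shoes", "price_band": "mid"}, catalog))
```

As actual sales accrue, the analog curve would be blended with, and eventually replaced by, a forecast fitted to the product's own history.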

Omnichannel Fulfillment and Inventory Pooling

Unified forecasts must serve store replenishment, e-commerce shipping, and click-and-collect pickups. Hierarchical time series methods reconcile national, regional, and store forecasts. Real-time allocation engines adjust safety stock distribution in response to forecast updates, optimizing service across channels without inflating total inventory.
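Reconciliation can be illustrated with its simplest variant: scaling independently produced store forecasts proportionally so that they add up to the higher-level forecast. This proportional top-down scaling is only one of several reconciliation approaches, and the store names and numbers below are hypothetical.

```python
def top_down_reconcile(total_forecast, store_forecasts):
    """Scale store-level forecasts so that they sum to the total forecast."""
    base_sum = sum(store_forecasts.values())
    if base_sum == 0:
        raise ValueError("store forecasts sum to zero; proportions are undefined")
    return {
        store: total_forecast * forecast / base_sum
        for store, forecast in store_forecasts.items()
    }


# Hypothetical case: the regional model predicts 1000 units,
# while the three store models together predict 1200.
stores = {"store_a": 300, "store_b": 500, "store_c": 400}
reconciled = top_down_reconcile(1000, stores)
print(reconciled)
```

The design choice here is to trust the aggregate forecast over the store-level ones; bottom-up or optimal-combination methods make the opposite or a blended assumption.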

Risk Mitigation and Scenario-Based Planning

Monte Carlo simulations and “what-if” analyses explore supplier disruptions, geopolitical events, and demand shocks. Integrate risk indicators—supplier lead-time volatility, market sentiment, climate risk—into scenario models. Executive dashboards display worst-case coverage, service breach probabilities, and incremental holding costs to inform contingency strategies.
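A service breach probability of the kind shown on such dashboards can be estimated with a few lines of Monte Carlo simulation. The sketch below draws a random lead time and random daily demand for each trial and counts how often demand over the lead time exceeds the stock on hand. The normal demand model, the discrete lead-time choices, and all parameter values are assumptions made for illustration.

```python
import random


def stockout_probability(on_hand, mean_daily_demand, sd_daily_demand,
                         lead_time_choices, trials=10_000, seed=42):
    """Estimate the chance that lead-time demand exceeds available stock."""
    rng = random.Random(seed)  # fixed seed so scenario runs are comparable
    breaches = 0
    for _ in range(trials):
        lead_time = rng.choice(lead_time_choices)  # days until replenishment arrives
        demand = sum(
            max(0.0, rng.gauss(mean_daily_demand, sd_daily_demand))  # no negative demand
            for _ in range(lead_time)
        )
        if demand > on_hand:
            breaches += 1
    return breaches / trials


# Hypothetical scenarios: normal supply versus a disruption that doubles lead times.
normal = stockout_probability(700, 100, 20, [5, 6, 7])
disrupted = stockout_probability(700, 100, 20, [10, 12, 14])
print(normal, disrupted)
```

Comparing the two outputs gives a rough sense of how much additional buffer a disruption scenario would require to hold the same breach probability.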

Integration with Business Planning and Financial Objectives

Embed forecasts into Sales and Operations Planning cadences and integrate with ERP platforms such as SAP Integrated Business Planning or Oracle NetSuite. Align model performance metrics—mean absolute percentage error, bias—with financial KPIs like return on invested capital and inventory turns to ensure measurable business impact.
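The two model metrics named above can be computed directly from paired actuals and forecasts. In this sketch, periods with zero actual demand are skipped in the MAPE calculation because the percentage error is undefined there, and bias is defined as forecast minus actual, so a positive value means over-forecasting. Both conventions are assumptions, since organizations define these metrics in slightly different ways.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, in percent, over periods with non-zero actuals."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("MAPE is undefined when all actuals are zero")
    return 100 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def forecast_bias(actuals, forecasts):
    """Mean error (forecast minus actual); positive values indicate over-forecasting."""
    errors = [f - a for a, f in zip(actuals, forecasts)]
    return sum(errors) / len(errors)


# Hypothetical three-period comparison.
actual_units = [100, 200, 50]
forecast_units = [110, 180, 55]
print(mape(actual_units, forecast_units), forecast_bias(actual_units, forecast_units))
```

Tracking bias alongside MAPE matters for the financial link: a persistently positive bias tends to inflate inventory and depress turns even when MAPE looks acceptable.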

Analytical Insights, Anticipated Outcomes, and Critical Considerations

Anticipated Competencies and Outcomes

- Strategic Framing of Inventory Challenges: Diagnose volatility drivers and position AI solutions as targeted responses.

- Architectural Appreciation of Data Pipelines: Grasp data integration, governance, and quality assurance roles in forecasting.

- Interpretive Frameworks for AI Agents: Evaluate autonomous agent architectures, coordination protocols, and decision frameworks.

- Analytical Assessment of Forecasting Techniques: Compare ARIMA, gradient boosting, and deep learning against SKU lifecycles and promotional patterns.

- Optimization Trade-Off Analysis: Balance service levels, holding costs, and stock-out risks using sensitivity and multi-echelon models.

- Integration and Change Management Insights: Map dependencies between AI agents, ERP platforms, data infrastructures, and stakeholders.

- Risk and Resilience Planning: Master scenario modeling to quantify supply disruptions and design buffer strategies.

- Future-Readiness and Emerging Technologies: Anticipate the impact of edge computing, federated learning, digital twins, and autonomous logistics.

Key Analytical Insights

- Data Integrity as Foundation: Treat governance as a strategic enabler to normalize, version, and trace all inputs.

- Forecasting Beyond Point Estimates: Adopt probabilistic and scenario-based frameworks to quantify uncertainty.

- Agent Coordination Dynamics: Explore multi-agent negotiation protocols and reward structures for network-wide optimization.

- End-to-End Optimization Lens: Integrate replenishment decisions with procurement, production, and distribution processes.

- Real-Time Analytics Imperative: Leverage streaming anomaly detection and event-driven triggers for supply chain responsiveness.

- Interpretability and Trust: Use SHAP values and attention-weight visualizations to validate model outputs.

- Change Management for AI Integration: Align stakeholder mapping, communication flows, and governance checkpoints across teams.

- Resilience Through Predictive Buffers: Apply stress-testing and dynamic safety stock methodologies across multi-tier networks.

- Continual Learning and Model Governance: Establish drift detection, retraining cadences, and model-ops pipelines for ongoing calibration.

- Future-Proofing Inventory Architectures: Explore federated learning and digital twins with platforms like Azure Machine Learning and Amazon Forecast.

Critical Considerations and Limitations

- Data Quality and Representativeness: Historical biases and outliers can skew forecasts without rigorous profiling and outlier treatment.

- Infrastructure and Latency Constraints: Legacy warehouses may bottleneck real-time analytics; assess compute, bandwidth, and event-processing frameworks.

- Model Complexity vs. Transparency: Deep learning may sacrifice interpretability; simpler models or explainable AI may be needed for auditability.

- Organizational Readiness and Skill Gaps: Cross-disciplinary teams require data engineering, machine learning, and supply chain expertise.

- Change Management and Stakeholder Alignment: Pilot programs and clear governance help build trust in autonomous decision-making.

- Scalability and Maintenance Overheads: Automate version control, testing, and deployment through model-ops to manage growth in SKUs and nodes.

- Integration Complexity: Middleware and pre-integration assessments reduce schema mismatches and authentication hurdles with ERP and WMS systems.

- Regulatory and Ethical Considerations: Ensure AI policies and override rules comply with regulations in critical supply contexts.

- External Unpredictables: Complement data-driven forecasts with manual contingency protocols for black swan events.

- Cost-Benefit Calibration: Develop rigorous financial models to compare software, hardware, and change management costs against expected ROI.

Chapter 3: Understanding AI Agents in Supply Chains

Addressing Modern Inventory Challenges with AI Agents

Global supply chains have evolved into complex, multi-tiered networks spanning continents and stakeholders. Demand volatility—driven by shifting consumer preferences and unpredictable market trends—combines with supply-side disruptions such as natural disasters and geopolitical shifts to upend traditional inventory methods. Fragmented data across legacy enterprise resource planning (ERP), warehouse management, and point-of-sale systems often results in siloed insights, manual reconciliation delays, and decisions based on incomplete information. As a result, organizations face stockouts, overstock, inflated carrying costs, and degraded service levels. Incremental improvements to rule-based systems no longer suffice; the need for tools that process real-time signals, adapt continuously, and coordinate decisions across nodes has become paramount. AI agents equipped with predictive stocking capabilities offer a transformative approach, shifting inventory management from reactive responses to anticipatory orchestration.

Fundamentals of AI Agents and Predictive Stocking

AI agents are autonomous software constructs that perceive their environment, apply reasoning, and execute actions to meet predefined objectives. In supply chains, these agents ingest diverse data streams—historical sales, lead times, market indicators—and leverage machine learning to forecast demand, calculate dynamic safety stocks, and simulate scenarios. Unlike static rule-based systems, AI agents continuously refine their models based on real-time outcomes, enabling:

- Continuous demand estimation using time-series models, regression analysis, or neural networks.

- Dynamic inventory policy optimization that adjusts reorder points and safety stocks in response to emerging trends.

- Scenario simulation to evaluate the impact of disruptions and alternative fulfillment strategies.

AI agents integrate forecasting with execution, autonomously placing purchase orders, routing replenishments, and triggering exception workflows. For example, an agent may detect a regional surge in SKU demand, assess supplier capacities and lead times, and recommend staggered replenishment plans. If transportation delays occur, the agent recalibrates safety stocks and explores alternative routes, all without manual intervention. When embedded within ecosystems such as IBM Watson Supply Chain or integrated with Microsoft Azure AI services, these agents unlock real-time decision making at scale, balancing service levels, cost efficiency, and sustainability objectives.
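To make the recalibration step concrete, the sketch below uses a widely taught safety stock formula that combines demand and lead-time variability under a normal approximation. It is one common formulation, not necessarily the one any particular platform applies, and the parameter values are illustrative.

```python
from math import sqrt
from statistics import NormalDist

def safety_stock(service_level, mean_demand, sd_demand, mean_lead_time, sd_lead_time):
    """Safety stock under normally distributed demand and lead time.

    Demand figures are per day and lead times are in days.
    """
    z = NormalDist().inv_cdf(service_level)
    return z * sqrt(mean_lead_time * sd_demand ** 2 + mean_demand ** 2 * sd_lead_time ** 2)

def reorder_point(service_level, mean_demand, sd_demand, mean_lead_time, sd_lead_time):
    """Expected lead-time demand plus safety stock."""
    return mean_demand * mean_lead_time + safety_stock(
        service_level, mean_demand, sd_demand, mean_lead_time, sd_lead_time)

# Stable transport versus a scenario where delays make lead times more variable.
stable = reorder_point(0.95, mean_demand=100, sd_demand=20, mean_lead_time=7, sd_lead_time=0)
delayed = reorder_point(0.95, mean_demand=100, sd_demand=20, mean_lead_time=7, sd_lead_time=1)
print(f"reorder point, stable lead time:   {stable:.0f}")
print(f"reorder point, variable lead time: {delayed:.0f}")
```

An agent that re-estimates the lead-time standard deviation from recent deliveries would raise the reorder point automatically when delays start to appear.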

Architecting and Coordinating AI Agents

Effective deployment of multiple AI agents hinges on coordination architectures, communication protocols, and negotiation mechanisms. Organizations typically choose from three paradigms:

- Centralized Coordination: A single orchestration hub aggregates data and issues directives, simplifying global optimization but introducing potential bottlenecks.

- Distributed Coordination: Peer-to-peer agent communication enhances scalability and resilience, requiring robust consensus protocols to maintain alignment.

- Federated Coordination: A hybrid model combining local autonomy with periodic synchronization via a federated controller, balancing responsiveness with oversight.

Standardized communication protocols ensure semantic interoperability. Industry frameworks like the FIPA Agent Communication Language (FIPA ACL) define message performatives such as request, inform, propose, and agree, while lightweight JSON or XML schemas transported through message brokers carry domain-specific attributes. Negotiation mechanisms address conflicting objectives across agents:

- Contract Net Protocol for task allocation through calls for proposals and bid evaluation.

- Auction-Based Mechanisms, including combinatorial auctions, to allocate resources based on bid competitiveness and demand variability.

- Consensus Algorithms such as iterative averaging or belief propagation, driving agreement on shared variables like reorder quantities.
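Of the mechanisms listed, iterative averaging is the easiest to sketch. In the example below, each agent repeatedly nudges its proposed reorder quantity toward those of its neighbors; on a connected, symmetric network with a sufficiently small step size, all agents converge to the network average. The topology, step size, and quantities are illustrative assumptions.

```python
def average_consensus(values, neighbors, step=0.3, rounds=50):
    """Run synchronous iterative averaging over a symmetric communication graph."""
    current = dict(values)
    for _ in range(rounds):
        updated = {}
        for agent, value in current.items():
            # Move toward each neighbor's current value by a fraction of the gap.
            adjustment = sum(current[n] - value for n in neighbors[agent])
            updated[agent] = value + step * adjustment
        current = updated
    return current

# A distribution center linked to two stores; the stores do not talk to each other.
proposals = {"dc": 100.0, "store_a": 140.0, "store_b": 180.0}
links = {"dc": ["store_a", "store_b"], "store_a": ["dc"], "store_b": ["dc"]}

agreed = average_consensus(proposals, links)
for agent, quantity in agreed.items():
    print(f"{agent}: {quantity:.2f}")
```

The step size must stay below the reciprocal of the largest neighbor count (here 0.5) for the updates to remain stable.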

Performance evaluation spans operational, technical, and strategic metrics:

- Operational: Order fulfillment rate, stockout frequency, inventory turnover.

- Technical: Message latency, throughput, error rates.

- Strategic: Adaptability to demand shifts, resilience against disruptions, cost-benefit ratios.

By aligning coordination protocols with network topology, governance constraints, and performance targets—and by leveraging simulation tools for stress testing—organizations can select coordination architectures that drive resilience and scalability.

Transforming Inventory Processes with AI Agents

Embedding AI agents into inventory workflows reshapes decision dynamics, organizational roles, and continuous learning cycles:

Redefined Decision Dynamics

- Accelerated response times through real-time ingestion of sales, supplier, and logistics signals.

- Contextual prioritization that weighs service targets, cost thresholds, and lead-time variability to present ranked replenishment scenarios.

- Enhanced forecast predictability as agents learn seasonality, promotions, and trend patterns, reducing error and smoothing replenishment.

Transformed Organizational Roles

- Planners evolve into orchestrators who interpret agent insights, manage exceptions, and adjust risk parameters.

- Governance frameworks define agent autonomy levels, escalation thresholds, and audit processes.

- Cross-functional forums align supply chain planning, procurement, and finance teams around agent-generated recommendations.

Continuous Learning and Feedback

- Online learning techniques enable incremental model refinements as new data arrives.

- Anomaly-driven retraining triggers model updates when forecast errors exceed thresholds.

- Knowledge graphs capture causal relationships, accelerating adaptation to recurring disruptions.
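The anomaly-driven retraining trigger described above might be sketched as a rolling error monitor. The class name, window length, and threshold below are hypothetical; real deployments would typically pair such a check with statistical drift tests.

```python
from collections import deque

class DriftMonitor:
    """Flags retraining when the rolling mean absolute percentage error is too high."""

    def __init__(self, window=3, mape_threshold=20.0):
        self.errors = deque(maxlen=window)
        self.mape_threshold = mape_threshold

    def record(self, actual, forecast):
        """Store the latest percentage error and report whether to retrain."""
        if actual != 0:
            self.errors.append(abs(actual - forecast) / abs(actual) * 100)
        return self.should_retrain()

    def should_retrain(self):
        # Only decide once the window is full, to avoid reacting to a single outlier.
        window_full = len(self.errors) == self.errors.maxlen
        return window_full and sum(self.errors) / len(self.errors) > self.mape_threshold

monitor = DriftMonitor(window=3, mape_threshold=20.0)
observations = [(100, 95), (100, 110), (100, 90), (100, 150)]
flags = [monitor.record(actual, forecast) for actual, forecast in observations]
print(flags)
```

Requiring a full window before triggering trades a short detection delay for fewer unnecessary retraining runs.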

Real-World Application Contexts

- High-velocity consumer goods: Agents tune reorder suggestions hourly using web analytics and social sentiment.

- Multi-echelon networks: Agents coordinate buffers across factories, distribution centers, and retail outlets to minimize total system cost.

- Perishable assortments: Agents integrate spoilage rates and weather forecasts to balance freshness and waste.

- New product introductions: Agents leverage analog clustering and early sales signals to bootstrap forecasts.

Technology Ecosystem Integration

Successful agent deployments integrate with existing ERP and WMS landscapes. Leading platforms include:

- Blue Yonder’s Luminate Platform, embedding autonomous replenishment agents within its supply chain suite.

- IBM Sterling Inventory Control Tower, leveraging Watson AI for demand sensing and network-wide optimization.

- DataRobot for automated model building and API-based deployment of predictions alongside legacy systems.

Organizational and Technical Readiness

Cultural Alignment and Governance

Adopting AI agents demands executive sponsorship, data-driven mindsets, and agile change management. Readiness assessments gauge data literacy, collaboration, and governance maturity, guiding organizations from awareness to advanced agent autonomy. Key elements include leadership endorsement, cross-functional forums, targeted training on AI concepts, and feedback loops for refining agent decisions.

Data Infrastructure and Integration

AI agents require comprehensive, high-quality data. A federated data mesh approach accelerates integration of ERP modules, WMS, transportation platforms, and third-party sources while maintaining domain ownership and governance. Event-driven architectures and message brokering—using frameworks such as Apache Kafka—support real-time visibility. For cloud-based pipelines, services such as Azure Event Grid enable seamless data flow between operational systems and agent platforms.

Technical Scalability and Performance

Scaling from pilot to enterprise involves aligning agent architectures with decision rhythms. Containerized deployments on Kubernetes offer elasticity for inference workloads, while serverless functions handle event-triggered tasks. Hybrid models offload training to centralized cloud resources and deploy inference at the edge near warehouse execution systems. Performance metrics include compute utilization, inference latency, autoscaling policies, and observability through telemetry pipelines.

Trust, Interpretability, and Risk Management

Building trust requires explainable models, bias detection, and policy enforcement. Techniques such as SHAP values and counterfactual analysis illuminate agent reasoning, while rule-based guardrails ensure compliance with business and regulatory constraints. Risk mitigation strategies include continuous validation against legacy systems, fallback mechanisms to manual processes, periodic retraining to address model drift, and stress-testing with digital twins. Cross-functional incident response teams investigate anomalies and restore operations swiftly.

Continuous Improvement and Future Outlook

Recognizing limitations—such as cold-start challenges and multi-agent objective conflicts—guides ongoing refinement. Integrating alternative data sources, implementing meta-learning or reinforcement learning, and developing arbitration layers for conflicting agent recommendations enhance performance. Industry standards like those emerging from the Open Agent Standard Consortium and federated learning approaches will drive interoperability and collaboration across supply chain partners. Advancements in edge-native coordination and blockchain-anchored messaging promise ultra-low latency decisions and audit-ready trails. Organizations that invest in holistic agent ecosystems, embrace iterative improvement, and participate in standards development will secure lasting competitive advantage and resilient supply chains.

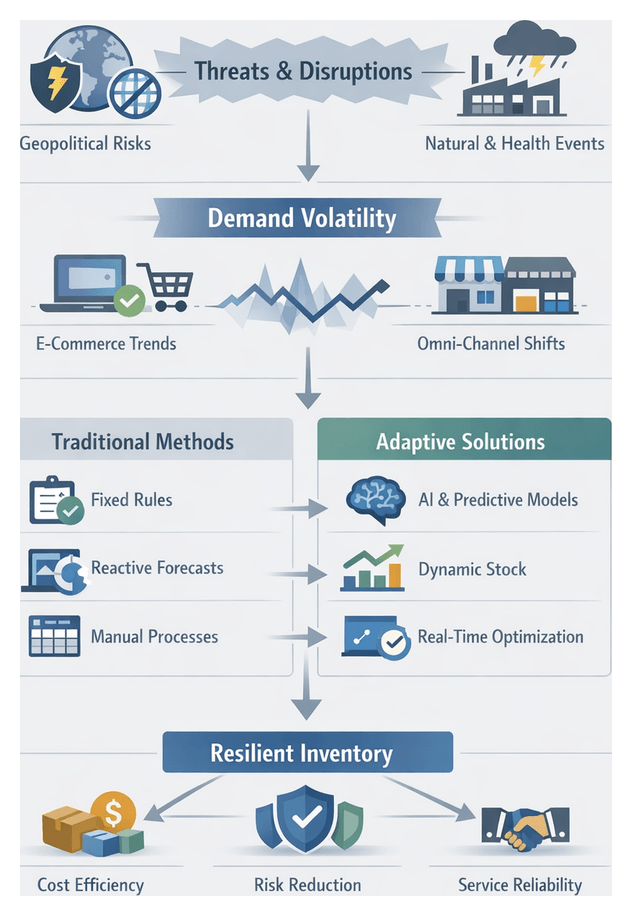

Chapter 4: Machine Learning Techniques for Demand Forecasting

Industry Volatility and Demand Uncertainty

Global supply chains have grown more complex and interdependent, exposing inventory systems to geopolitical tensions, natural disasters, pandemics and regulatory shifts that can disrupt production, logistics and lead times overnight. At the same time, digitization, e-commerce and dynamic marketing amplify demand volatility through real-time trends, overlapping promotions and shifting channel preferences.

- Regional conflicts and sanctions can close critical trade lanes without warning

- Natural disasters in manufacturing hubs may halt output for weeks

- Public health measures can affect labor availability, transportation capacity and consumer behavior simultaneously

- Digital marketing campaigns and social media trends trigger rapid, localized demand spikes

- Omnichannel fulfillment requires balancing shared inventory across retail stores, online channels and marketplaces

In this environment, rigid safety-stock rules and static reorder points either inflate carrying costs or expose businesses to stockouts. An adaptive, data-driven inventory framework is essential to navigate frequent shocks, minimize risk and maintain service levels.

Limitations of Traditional Inventory and Forecasting Models

Conventional planning methods—periodic review systems, fixed reorder thresholds and uniform safety-stock policies—rest on assumptions of stable lead times and demand patterns. These assumptions break down under modern volatility, leading to:

- Reactive decision cycles that lag market shifts and amplify oscillations

- One-size-fits-all buffers that ignore SKU-level volatility and criticality

- Reliance on historical data alone, without forward-looking risk indicators

- High manual overhead as planners consolidate data across spreadsheets

The result is working capital tied up in slow movers and insufficient stock for fast movers, with the bullwhip effect magnifying small disruptions into costly imbalances.

Objectives for Intelligent Inventory Management

- Enhance forecast accuracy by integrating transaction records, market signals and disruption indicators

- Dynamically adjust safety stock and reorder parameters in real time

- Reduce carrying costs while maintaining or improving service levels

- Automate routine adjustments and exception handling to cut manual cycle times

- Provide cross-functional visibility so procurement, logistics and finance share a single source of truth

Realizing these objectives requires continuous optimization routines, exception-driven dashboards and AI algorithms that learn from incoming data and evolving risk landscapes.

Comparative Analysis of Forecasting Techniques

Demand-forecasting models are evaluated on predictive accuracy, robustness and operational feasibility. Key metrics include Mean Absolute Percentage Error, Root Mean Square Error and Weighted Absolute Percentage Error. Common methodological categories are:

Time-Series Models

Classic methods such as ARIMA, exponential smoothing and Holt-Winters deliver transparency and computational efficiency. Implementations are widely available in open-source R and Python packages, and related additive models such as Prophet are also widely used.

- Strengths: Explainable seasonal and trend components; low data requirements; fast training

- Limitations: Linear assumptions; difficulty incorporating external regressors; sensitivity to structural breaks
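The transparency of these methods is easiest to see in simple exponential smoothing, the building block that Holt-Winters extends with trend and seasonal terms. The sketch below is a bare-bones version with a fixed smoothing parameter; the value of `alpha` and the sample series are illustrative.

```python
def exponential_smoothing_forecast(series, alpha):
    """One-step-ahead forecast from simple exponential smoothing."""
    level = series[0]
    for observation in series[1:]:
        # Blend the newest observation with the running level.
        level = alpha * observation + (1 - alpha) * level
    return level

weekly_sales = [100, 110, 120]
next_week = exponential_smoothing_forecast(weekly_sales, alpha=0.5)
print(next_week)  # 112.5
```

A higher `alpha` reacts faster to recent changes but passes more noise into the forecast; in practice it is usually fitted by minimizing historical error.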

Regression-Based Approaches

Machine-learning regressions—XGBoost, Random Forest, elastic net—enable inclusion of promotional calendars, pricing and macroeconomic indicators. Platforms such as Azure Machine Learning streamline feature engineering and model evaluation.

- Strengths: Handles heterogeneous signals; robust to outliers; scalable to large SKU portfolios

- Limitations: Reduced interpretability with complex interactions; extensive hyperparameter tuning; batch retraining latency

Neural Network Architectures

Deep-learning models (LSTM, GRU and transformer-based networks) capture nonlinear demand patterns and multi-step dependencies. Frameworks like TensorFlow and PyTorch provide the building blocks for training and serving these models.

- Strengths: Superior handling of promotions and complex seasonality; multi-horizon coherence; transfer learning opportunities

- Limitations: Black-box nature; high data volume requirements; need for GPU acceleration

Hybrid and Ensemble Strategies

Blending models—such as combining ARIMA baselines with gradient-boosting residuals or integrating neural forecasts via weighted averaging—yields variance reduction and accuracy gains. Managed services like Amazon Forecast automate ensemble selection at scale.

- Evaluated on ensemble consistency, model diversity and operational complexity

- May offer MAPE improvements on the order of 2–4 percent, though gains vary by dataset, and increases maintenance and governance demands
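Weighted averaging can be sketched as follows, using weights inversely proportional to each model's validation error. This weighting rule is one simple choice among many, and the model names and figures are placeholders.

```python
def inverse_error_weights(validation_errors):
    """Weight each model by the inverse of its validation error, normalized to sum to 1."""
    inverse = {name: 1 / error for name, error in validation_errors.items()}
    total = sum(inverse.values())
    return {name: value / total for name, value in inverse.items()}

def blend(forecasts, weights):
    """Combine per-model forecasts period by period using the given weights."""
    horizon = len(next(iter(forecasts.values())))
    return [sum(weights[model] * forecasts[model][t] for model in forecasts)
            for t in range(horizon)]

validation_mape = {"arima": 10.0, "gradient_boosting": 5.0}
model_forecasts = {"arima": [90.0, 120.0], "gradient_boosting": [120.0, 150.0]}

weights = inverse_error_weights(validation_mape)
combined = blend(model_forecasts, weights)
print({name: round(w, 3) for name, w in weights.items()})
print([round(value, 1) for value in combined])
```

Weights should be re-estimated on fresh validation data, since a model that led last season may lag after a structural break.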

Application Contexts for Forecasting Methods

Effective forecasting aligns methodologies with the nature of the product, data availability and risk profile. Key contexts include:

- Product Lifecycle: Causal regressions or Bayesian frameworks for new product introductions; exponential smoothing or gradient boosting in growth phases; SARIMA in maturity; machine learning for decline management

- Seasonality and Cycles: Deterministic approaches (STL, SARIMA) for stable seasonal patterns; tree-based regressors and RNNs in Amazon Forecast for shifting seasonal interactions

- Promotions and Events: Time-series models with impulse functions; multivariate regressions in Azure Machine Learning for cross-SKU lift and substitution; Bayesian models for elasticity analysis

- New Product Introductions: Analogue mapping and hierarchical aggregation; expert elicitation with Bayesian updating to address data sparsity

- Multi-Echelon Networks: Centralized forecasts for baseline planning; edge-level adjustments with lightweight algorithms; graph neural networks to model spatial-temporal dependencies

- Turnover and Obsolescence: LSTM networks for high-frequency FMCG demand; probabilistic decay models for perishables and season-limited items

- Regional Variations: Local econometric regressions; transfer learning to adapt neural models across markets; clustering to share parameters among similar regions

- Granularity Trade-Offs: Fine-grain SKU forecasts for high-impact items; coarse-grain category forecasts for sparse data; dynamic granularity strategies based on SKU velocity

Model Selection Criteria and Key Considerations

Data Characteristics and Model Alignment

Match model complexity to data volume and granularity. Use ARIMA and exponential smoothing for stable, aggregate series. Deploy gradient boosting or deep networks when transaction frequency and feature diversity warrant advanced methods. Cloud services such as Vertex AI Forecasting automate covariate integration, while LSTM architectures handle long sequential inputs.

Complexity Versus Interpretability

Balance accuracy gains against the need for transparency. Linear and hierarchical time-series models offer clear parameter interpretations. Black-box methods require explainability toolkits—SHAP, LIME or integrated gradients—often embedded in solutions like Azure Machine Learning.

Scalability and Maintenance

- Retraining Frequency: Trigger retraining based on error drift rather than fixed schedules

- Version Control: Use MLflow registries and containerization to manage model lifecycles and rollbacks

- Automation: Orchestrate data pipelines and exception alerts to minimize manual interventions

Infrastructure Constraints

Evaluate hosting options and compute requirements. Statistical methods run on commodity hardware; deep learning benefits from GPU clusters. Consider data-sovereignty needs when choosing between cloud and on-premises deployments, and assess total cost of ownership.

Integration with Business Processes

Ensure forecasts seamlessly feed inventory optimization, procurement planning and executive dashboards. Align model outputs with KPIs—service levels, inventory turnover, lost-sales reduction—and involve cross-functional committees in decision-making to secure stakeholder buy-in.

Risk Factors and Governance

- Concept drift: Monitor for shifts in consumer behavior or disruption patterns

- Data quality: Implement rigorous cleansing and anomaly detection

- Overfitting/Underfitting: Guard with cross-validation and out-of-sample testing

- Explainability versus accuracy: Weigh marginal precision gains against stakeholder trust

- Operational scalability: Automate high-frequency retraining and prediction delivery

Model selection should be a continuous strategic practice. Organizations that institutionalize performance reviews, maintain robust governance and align technology with business objectives will harness both classical and modern forecasting to deliver resilient, cost-effective inventory management in an increasingly volatile landscape.

Chapter 5: Predictive Stocking Strategies and Optimization

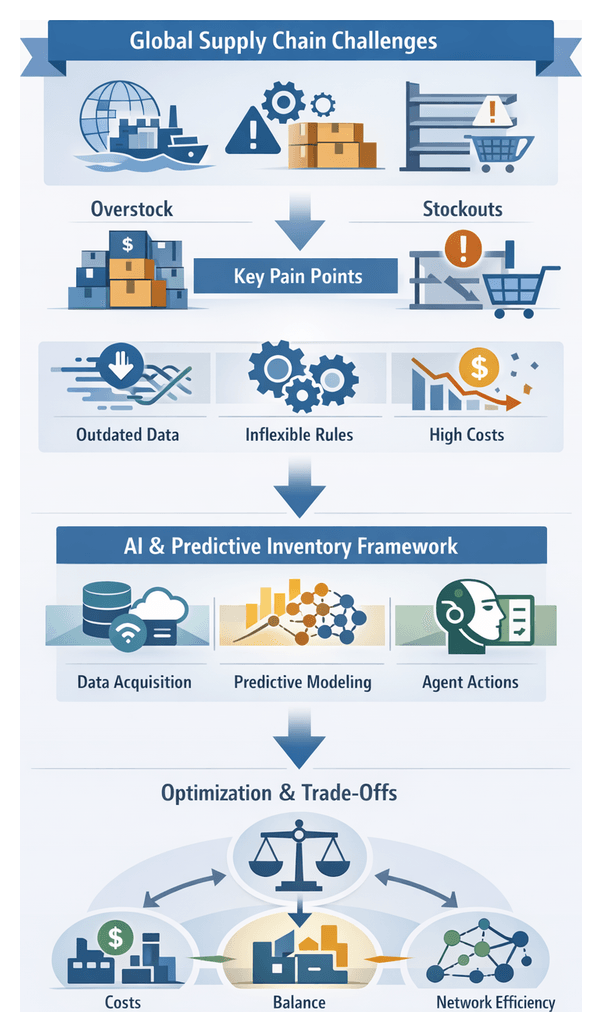

Modern Inventory Challenges in Global Supply Chains

Global supply chains today operate under unprecedented complexity. Companies source components across continents, adjust to fluctuating consumer demand in real time, and navigate evolving regulatory regimes. Traditional inventory management—relying on static reorder points and fixed safety stock buffers—struggles to address lead-time variability, geopolitical disruptions, and seasonal demand surges. The result is a precarious balance between overstocking, which ties up capital and erodes margins, and stockouts, which damage customer loyalty and revenue.

Recent shocks, from pandemic-induced factory closures to port congestion and material shortages, have revealed the brittleness of conventional replenishment models. Siloed operations limit multi-tier supplier visibility, and delayed demand signals amplify the bullwhip effect. SKU proliferation and niche assortments further complicate linear forecasting approaches.

Three core pain points emerge:

- Inability to process real-time data streams from point-of-sale systems, supplier performance feeds, social media trends and external market indicators, leading to outdated or inaccurate demand projections.

- Rigid replenishment rules that cannot adapt instantaneously to sudden disturbances such as supplier failures or promotional spikes.

- Magnified financial impacts of excess inventory or lost sales due to thin margins and high customer fulfillment expectations.

Organizations must shift from reactive, manual planning to predictive, autonomous decision-making to enhance resilience, reduce waste and maintain competitive advantage.

AI Agents and Predictive Stocking Framework

Implementing predictive stocking requires intelligent agents—autonomous software entities that perceive their environment, make decisions based on objectives, and act to optimize inventory. These agents leverage advanced predictive analytics, applying machine learning algorithms to uncover demand patterns, seasonality and emergent market signals.

The framework rests on three pillars:

- Data Acquisition: Integrates structured and unstructured inputs—including historical sales, supplier lead-time distributions, macroeconomic indicators, weather forecasts and social sentiment—into a unified repository.

- Predictive Modeling: Utilizes time-series decomposition, ensemble learning and deep neural networks to generate probabilistic demand forecasts at the SKU and location level.

- Agent Orchestration: Translates forecasts into replenishment directives, with continuous feedback loops measuring performance against predictions to refine strategies.

AI agents negotiate replenishment cycles, initiate emergency orders and schedule lateral transfers between distribution centers without human intervention. They evaluate trade-offs in real time—balancing holding costs, service-level objectives and sustainability targets—to dynamically calibrate reorder points and inventory allocations.

Analytical Examination of Optimization Trade-Offs

Evaluating Economic Trade-Offs

Effective inventory optimization balances carrying costs against the revenue impact of stockouts. Total Cost of Ownership (TCO) and cost-to-serve models capture:

- Holding Costs: Warehousing, insurance, obsolescence and capital costs for on-hand inventory.

- Stockout Penalties: Lost sales, expedited shipping and customer churn.

- Ordering Costs: Transaction costs and variability costs from unpredictable demand.

- Opportunity Cost: Foregone returns on alternative uses of tied-up capital.

Scenario analysis simulates incremental safety stock adjustments against service level gains. Tools like Amazon Forecast enable finance and operations teams to model how service level improvements affect profitability, offering a dynamic cost curve instead of static order-point calculations.
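A minimal sketch of such a cost curve appears below. It assumes normally distributed lead-time demand and uses the standard normal loss function to estimate expected units short per replenishment cycle; the cost rates and demand spread are illustrative, and this is not a description of how any named tool works internally.

```python
from statistics import NormalDist

def cycle_cost(service_level, sd_lead_time_demand, holding_cost, stockout_cost):
    """Holding cost of safety stock plus expected shortage cost for one cycle."""
    std_normal = NormalDist()
    z = std_normal.inv_cdf(service_level)
    safety_stock = z * sd_lead_time_demand
    # Standard normal loss function: expected shortfall in units of standard deviation.
    unit_loss = std_normal.pdf(z) - z * (1 - std_normal.cdf(z))
    expected_short = sd_lead_time_demand * unit_loss
    return holding_cost * safety_stock + stockout_cost * expected_short

levels = [0.80, 0.90, 0.95, 0.99]
curve = {level: cycle_cost(level, sd_lead_time_demand=100,
                           holding_cost=2.0, stockout_cost=20.0)
         for level in levels}
for level, cost in curve.items():
    print(f"service level {level:.0%}: cost per cycle {cost:.0f}")
best_level = min(curve, key=curve.get)
```

Under these assumptions the curve bottoms out where the stockout probability equals the ratio of holding cost to stockout cost (here 2/20, a 90 percent service level), which is why pushing to 99 percent raises total cost.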

Balancing Responsiveness and Efficiency

Supply chains must be both agile and cost disciplined. Responsiveness addresses demand spikes and supply shocks, while efficiency avoids waste across thousands of SKUs. Differentiated service policies align stocking rules with SKU velocity:

- High-velocity items: Aggressive reorder triggers, smaller economic order quantities and intra-day demand sensing.

- Slow-movers: Periodic review cycles and higher safety stocks to minimize stockouts amid sporadic demand.

Segmentation frameworks such as ABC and XYZ categorize SKUs by consumption value and demand variability, guiding tailored stocking strategies.
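The sketch below shows one way to assign ABC and XYZ classes. The cumulative-value cutoffs (80 and 95 percent) and the coefficient-of-variation cutoffs (0.5 and 1.0) are common rules of thumb used here as assumptions; organizations tune them to their own portfolios.

```python
from statistics import mean, pstdev

def abc_classes(annual_value, a_cut=0.80, b_cut=0.95):
    """Classify SKUs by cumulative share of annual consumption value."""
    total = sum(annual_value.values())
    classes, cumulative = {}, 0.0
    ranked = sorted(annual_value.items(), key=lambda kv: kv[1], reverse=True)
    for sku, value in ranked:
        cumulative += value / total
        classes[sku] = "A" if cumulative <= a_cut else "B" if cumulative <= b_cut else "C"
    return classes

def xyz_class(demand_history, x_cut=0.5, y_cut=1.0):
    """Classify a SKU by the coefficient of variation of its demand."""
    cv = pstdev(demand_history) / mean(demand_history)
    return "X" if cv <= x_cut else "Y" if cv <= y_cut else "Z"

values = {"sku1": 7000, "sku2": 2000, "sku3": 700, "sku4": 300}
abc = abc_classes(values)
steady = xyz_class([10, 10, 10, 10])
sporadic = xyz_class([0, 0, 40, 0])
print(abc, steady, sporadic)
```

Combining the two labels (for example AX versus CZ) gives the grid that differentiated service policies are usually attached to.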

Multi-Echelon Considerations

Inventory decisions at one network node affect the entire supply chain. Multi-echelon optimization (MEO) evaluates:

- Centralization vs. Decentralization: Central buffers reduce aggregate safety stock but increase regional lead times.

- Transshipment Costs: Regional stock transfers may prove more economical than direct supplier replenishment.

- Risk Pooling Benefits: Aggregating demand variability across sites lowers safety stock, balanced against network transport costs.

Platforms such as Kinaxis RapidResponse and Relex Solutions offer multi-echelon modules that weigh transportation expenses against inventory savings, integrating lead-time variability metrics to ensure robust buffer sizing.
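The risk-pooling effect can be illustrated with a short calculation. The sketch assumes independent, normally distributed demand at each site, in which case pooled variability grows with the square root of the summed variances rather than with the sum of standard deviations. Correlated demand would reduce the benefit, and transport costs are left out.

```python
from math import sqrt
from statistics import NormalDist

def decentralized_safety_stock(site_sds, service_level):
    """Total safety stock when every site buffers its own demand."""
    z = NormalDist().inv_cdf(service_level)
    return sum(z * sd for sd in site_sds)

def pooled_safety_stock(site_sds, service_level):
    """Safety stock for one central buffer, assuming independent site demands."""
    z = NormalDist().inv_cdf(service_level)
    return z * sqrt(sum(sd ** 2 for sd in site_sds))

# Four regional sites, each with a lead-time demand standard deviation of 50 units.
site_sds = [50, 50, 50, 50]
separate = decentralized_safety_stock(site_sds, 0.95)
pooled = pooled_safety_stock(site_sds, 0.95)
print(f"decentralized: {separate:.0f} units, pooled: {pooled:.0f} units")
```

With four identical sites the pooled buffer is half the decentralized total, a saving that then has to be set against longer regional lead times and added transport cost.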

Sensitivity and Scenario Analyses

Robust stocking strategies require understanding sensitivity to key assumptions. Analysts perform:

- Parameter Sensitivity: Varying safety stock multipliers, service level targets and demand volatility inputs to test solution robustness.

- Stress Testing: Simulating supply disruptions, demand spikes and lead-time fluctuations to quantify resilience.

- What-If Modeling: Evaluating alternative supplier performance, promotional events and geopolitical risks to outline outcome ranges.

Advanced platforms automatically recalibrate scenarios as new ERP and IoT data arrive, ensuring continuous alignment with evolving conditions.

Key Performance Indicators

Diverse metrics must be evaluated collectively:

- Fill Rate: Percentage of demand fulfilled from stock on hand.

- Inventory Turnover: Frequency of stock cycling, indicating capital efficiency.

- Days of Supply: Number of days current stock will last at the forecast consumption rate, highlighting potential overstock or understock.

- Order Cycle Time: Lead time from order placement to receipt, influencing safety stock.

High fill rates with low turnover suggest excessive buffers, while rapid turnover paired with low fill rates indicates stockout risk. Comparative dashboards and peer benchmarking establish realistic performance targets.
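The following sketch computes three of these indicators and applies the joint reading described above. The thresholds in `diagnose` (a 95 percent fill target and a floor of four turns per year) are hypothetical placeholders, not benchmarks.

```python
def fill_rate(units_demanded, units_filled_from_stock):
    """Percentage of demand fulfilled from stock on hand."""
    return 100 * units_filled_from_stock / units_demanded

def inventory_turnover(cost_of_goods_sold, average_inventory_value):
    """Number of times inventory is cycled over the period."""
    return cost_of_goods_sold / average_inventory_value

def days_of_supply(on_hand_units, forecast_daily_demand):
    """Days the current stock will last at the forecast consumption rate."""
    return on_hand_units / forecast_daily_demand

def diagnose(fill_rate_pct, turns, fill_target=95.0, turns_floor=4.0):
    """Read fill rate and turnover together rather than in isolation."""
    if fill_rate_pct >= fill_target and turns < turns_floor:
        return "possible excess buffers"
    if fill_rate_pct < fill_target and turns >= turns_floor:
        return "possible stockout risk"
    return "no flag"

fr = fill_rate(units_demanded=1000, units_filled_from_stock=980)
turns = inventory_turnover(cost_of_goods_sold=1_200_000, average_inventory_value=400_000)
dos = days_of_supply(on_hand_units=600, forecast_daily_demand=40)
print(fr, turns, dos, diagnose(fr, turns))
```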

Decision Governance Frameworks

Effective governance ensures that optimization insights translate into reliable policies. Key elements include:

- Model Assumptions: Regular validation of demand forecasts, lead-time distributions and cost estimates.

- Policy Overrides: Defined criteria for manual exceptions in cases like new product launches or critical customers.

- Continuous Review Cadence: Scheduled re-optimization of parameters to reflect market shifts.

Such governance fosters transparency, aligns finance, procurement and operations, and mitigates over-reliance on black-box outputs.

Strategic Use Contexts for Predictive Stocking

Predictive stocking strategies deliver value across diverse operational environments. Tailoring models to demand patterns, risk exposures and service mandates maximizes impact.

High-Velocity and Fast-Moving Consumer Goods

Environments with rapid turnover—such as consumer packaged goods—require real-time demand sensing, intra-day replenishment triggers and micro-seasonal safety stock adjustments. Forecast granularity at hourly or daily levels informs tiered reorder points. Lead-time sensitivity analyses guide buffer sizing. Continuous feedback loops refine anomaly detection and demand drivers. Solutions like IBM Sterling Inventory Insight with Watson ingest real-time sales streams and generate automated reorder suggestions, reducing out-of-stock incidents and carrying costs by up to 15% in the first year.

Seasonal and Promotional Planning

Industries such as fashion and consumer electronics face short-duration demand surges that defy typical patterns. By mapping promotional calendars to probabilistic demand distributions and event lift multipliers, organizations calibrate safety stocks in alignment with promotional intensity and lead-time elasticity. Scenario-based simulations stress-test inventory positions under varying campaign parameters. Platforms like SAP Integrated Business Planning synchronize marketing forecasts with supply chain constraints, ensuring balanced service levels and minimal post-event markdown inventory.

Spare Parts and Service Inventory

Capital-intensive industries—such as aerospace and energy—contend with intermittent, skewed demand for long-tail SKUs. Bayesian updating of failure distributions and multi-tier stocking between central warehouses and regional hubs balances responsiveness against inventory investment. Oracle Cloud SCM integrates maintenance schedules and warranty data, applying predictive algorithms to position critical parts, reduce mean time to repair and improve equipment effectiveness.
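Bayesian updating of a failure rate can be sketched with a Gamma prior and Poisson-distributed failures, a conjugate pairing that keeps the arithmetic simple. The prior parameters and observed counts below are illustrative assumptions, and real spare-parts models often use richer failure distributions.

```python
def update_failure_rate(prior_shape, prior_rate, failures, exposure_months):
    """Gamma-Poisson update: return the posterior (shape, rate) parameters."""
    return prior_shape + failures, prior_rate + exposure_months

def expected_failures_per_month(shape, rate):
    """Mean of a Gamma(shape, rate) distribution over the monthly failure rate."""
    return shape / rate

# Prior belief: roughly 0.5 failures per month, held with little confidence.
prior = (2.0, 4.0)
# Field data: 3 failures observed across 12 months of operation.
posterior = update_failure_rate(*prior, failures=3, exposure_months=12)

prior_mean = expected_failures_per_month(*prior)
posterior_mean = expected_failures_per_month(*posterior)
print(f"prior mean: {prior_mean}, posterior mean: {posterior_mean}")
```

The posterior mean moves from the prior toward the observed rate of 0.25 failures per month, and the shift grows as more operating months accumulate.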

Agile Buffer Strategies in Contingent Networks

In volatile global networks, risk-adjusted buffer analysis maps disruption probabilities against cost trade-offs, optimizing buffer placement for network-wide service metrics. Safety stocks adjust dynamically in response to real-time alerts and predictive risk scores. Coupa Supply Chain Design simulates disruption scenarios and recommends buffer nodes that reduce expected stockout costs by up to 20% in high-risk regions.
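The trade-off between disruption probability and cost can be illustrated with a deliberately simple expected-cost model. The sketch below assumes a single node, a single disruption scenario and linear costs; all parameter names and figures are hypothetical and do not reflect any specific product's algorithm.

```python
def expected_cost(buffer_units, disruption_prob, demand_during_disruption,
                  holding_cost_per_unit, stockout_cost_per_unit):
    """Holding cost of the buffer plus probability-weighted stockout cost."""
    shortfall = max(0, demand_during_disruption - buffer_units)
    holding = holding_cost_per_unit * buffer_units
    expected_stockout = disruption_prob * stockout_cost_per_unit * shortfall
    return holding + expected_stockout


def best_buffer(candidates, **scenario):
    """Pick the candidate buffer size with the lowest expected cost."""
    return min(candidates, key=lambda units: expected_cost(units, **scenario))


# Hypothetical scenario: 10% disruption risk, 500 units of exposed demand.
high_risk = dict(disruption_prob=0.10, demand_during_disruption=500,
                 holding_cost_per_unit=2.0, stockout_cost_per_unit=50.0)
low_risk = dict(high_risk, disruption_prob=0.02)
```

With linear costs the result is all-or-nothing: a buffer pays off only when the disruption probability times the stockout cost exceeds the holding cost per unit. A real network model would add nonlinear costs, multiple nodes and correlated disruptions.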

Omnichannel and E-Fulfillment Environments

Unified inventory views and responsive replenishment are essential when online and physical channels converge. Models incorporate cross-channel demand correlations and multi-touch attribution to forecast facility-level fulfillment volumes. Safety stocks account for local sales and internal transfers. Manhattan Active Warehouse Management enables intelligent agents to prioritize fulfillment waves, adjust buffers dynamically and maintain consistent service expectations across channels.

Perishable Goods and Cold Chain Logistics

Perishables—such as food and pharmaceuticals—require expiration-aware safety stocks and decay-adjusted demand forecasts. Shelf-life segmentation, temperature excursion data and spoilage rates inform buffer sizes that balance waste reduction with availability. Blue Yonder Luminate Platform integrates real-time telemetry to refine spoilage predictions, reducing waste by up to 25% annually while upholding quality standards.
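A standard way to balance waste against availability for a single-period perishable item is the classic newsvendor model, sketched below. This is an illustrative technique chosen for the example, not the method of any platform named in the text. It assumes normal demand, and the cost parameters are hypothetical.

```python
from statistics import NormalDist


def critical_ratio(underage_cost, overage_cost):
    """Share of demand scenarios worth covering, given the two unit costs."""
    return underage_cost / (underage_cost + overage_cost)


def perishable_order_quantity(mean_demand, demand_std, unit_margin, unit_spoilage_loss):
    """Newsvendor quantity: the demand quantile at the critical ratio.

    unit_margin is the profit lost per unit of unmet demand (underage);
    unit_spoilage_loss is the loss per unit that expires unsold (overage).
    """
    ratio = critical_ratio(unit_margin, unit_spoilage_loss)
    return NormalDist(mean_demand, demand_std).inv_cdf(ratio)
```

When spoilage loss rises relative to margin, for example after a temperature excursion shortens remaining shelf life, the critical ratio falls and the recommended quantity drops below mean demand.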

Implementation Pillars and Essential Takeaways

Successful predictive stocking initiatives rest on five interdependent pillars: data integrity, model rigor, operational integration, organizational alignment and continuous governance.

Data Quality and Governance

- Ensure completeness and consistency of historical sales, lead times and supplier reliability data.

- Establish data governance processes for ownership, stewardship and validation across ERP and warehouse systems.

- Use scalable architectures for real-time ingestion of transactional and external signals, feeding managed forecasting services such as Amazon Forecast or Google Cloud AI Platform.

- Regularly audit for data bias and drift to detect non-stationary patterns from promotions and disruptions.

- Balance data enrichment with privacy and compliance, ensuring alignment with regional regulations.

Model Design and Interpretability

Trade-offs between accuracy, complexity and transparency guide model selection. Hybrid frameworks that combine explainable time-series models with targeted machine learning ensembles often strike a workable balance between forecast accuracy and stakeholder trust. Embed interpretability aids, such as feature importance and partial dependence plots, into evaluation dashboards. Sensitivity analyses on hyperparameters and feature sets quantify forecast uncertainty and inform safety stock adjustments.

Operational Integration and Scalability

APIs and microservices enable modular integration of forecasting outputs into ERP and warehouse execution systems. For example, IBM Watson Studio offers deployment pipelines that link model predictions to automated reorder triggers. Plan for compute elasticity to handle peak processing needs during promotions and period-end cycles. A phased rollout—piloting select SKUs in controlled centers—validates performance while minimizing operational risk.

Organizational Alignment and Change Management

Moving to AI-driven stocking redefines roles across functions. Supply planners become analytical interpreters; procurement uses forward-looking insights in supplier negotiations; finance adjusts working capital forecasts. Leadership must foster a data-driven culture, invest in upskilling and establish cross-functional governance committees to align service-level targets, KPIs and escalation protocols. Transparent communication of objectives, pilot outcomes and feedback loops accelerates adoption.

Governance, Monitoring and Continuous Improvement

Ongoing oversight detects model degradation and sustains performance. Define KPIs—forecast accuracy, fill rate, turnover—and set thresholds for automated alerts. Continuous monitoring dashboards track demand drift and supply disruptions, triggering retraining or scenario updates. Root-cause analyses of forecast errors guide iterative feature engineering. Periodic reviews of safety stock multipliers ensure alignment with evolving risk tolerances. Feedback loops between planners and data scientists drive a test-learn-refine cycle for incremental improvements.
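The KPI-and-threshold idea can be sketched as a small check that a monitoring job might run on each reporting cycle. The threshold values and metric names below are hypothetical placeholders; real targets depend on the business and the product category.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, skipping periods with zero actual demand."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("MAPE is undefined when all actuals are zero")
    return sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def fill_rate(units_ordered, units_shipped):
    """Share of ordered units that were shipped from available stock."""
    return units_shipped / units_ordered if units_ordered else 1.0


# Hypothetical thresholds: ("max", x) alerts above x, ("min", x) alerts below x.
THRESHOLDS = {
    "mape": ("max", 0.20),
    "fill_rate": ("min", 0.95),
    "inventory_turns": ("min", 6.0),
}


def kpi_alerts(metrics, thresholds=THRESHOLDS):
    """Return one alert message per KPI that breaches its threshold."""
    alerts = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            continue  # KPI not reported this cycle
        breached = value > limit if kind == "max" else value < limit
        if breached:
            alerts.append(f"{name}={value:.3f} breaches {kind} threshold {limit}")
    return alerts
```

Alerts produced this way can feed the retraining or scenario-update triggers described above, with planners reviewing any breach before parameters change.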

Key Limitations and Cautionary Notes

- Data Scarcity for New Products: Cold-start challenges require analog projections or expert judgment for SKUs with limited history.

- Market Volatility and Black-Swan Events: Models excel within historical regimes but may fail under unprecedented disruptions; stress-testing remains essential.

- Model Overfitting and Technical Debt: Complex ensembles deliver short-term gains but increase maintenance overhead; favor interpretability to manage technical debt.

- Integration Bottlenecks: Legacy systems may lack real-time interfaces; plan modernization roadmaps to avoid brittle point-to-point connections.

- Regulatory Constraints: Data privacy and trade compliance can limit external data enrichment and cross-border forecasting.

- Human-In-The-Loop Dependencies: Critical decisions—such as safety stock overrides—often require expert judgment; avoid fully black-box deployments.

Chapter 6: Integrating AI Agents with Enterprise Systems

Enterprise System Landscape and Integration Drivers

Global supply chains run on a patchwork of enterprise resource planning platforms, warehouse management systems, transportation management solutions, and specialized inventory applications. These systems underpin procurement, production planning, order fulfillment, and logistics execution, yet their architecture is often heterogeneous, shaped by mergers, phased upgrades, regional customizations, and legacy on-premise deployments. As e-commerce growth intensifies demand volatility and raises customer expectations for rapid fulfillment, organizations seek to embed AI agents capable of continuous demand forecasting, real-time anomaly detection, and adaptive replenishment in their existing environments. By integrating intelligent agents without discarding core investments, companies can pursue resilience and agility while maintaining stability, compliance, and performance across ERP and warehouse management frameworks.

Technical Architecture for AI Agent Integration

API Orchestration and Interface Standardization

Rather than creating point-to-point connections, leading organizations adopt an intermediary integration layer to harmonize interfaces, transform payloads, enforce versioning, and embed governance rules. Platforms such as MuleSoft and Boomi enable unified service endpoints across ERP, WMS, CRM, and third-party applications. This strategic API orchestration reduces schema mismatches, simplifies connector maintenance, and allows AI agents to invoke a standardized set of services for data retrieval and action execution.

Data Flow Design and Latency Management

Timely decision making requires balancing batch processing and real-time streaming. Batch pipelines handle large volumes of transactions but introduce latency, while event-driven architectures using technologies like Apache Kafka enable near-instantaneous transmission of order, shipment, and inventory events. A hybrid approach reserves streaming feeds for critical signals and scheduled batch jobs for less time-sensitive data, ensuring downstream systems remain performant and consistent even under high event volumes.
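The hybrid approach can be pictured as a router that sends critical events to a streaming handler immediately and buffers everything else for the next scheduled batch. The sketch below is broker-agnostic and makes no Apache Kafka calls; the event types and the class name are hypothetical.

```python
# Hypothetical set of event types considered time-critical.
STREAMING_EVENT_TYPES = {"stockout", "order_placed", "shipment_delayed"}


class HybridRouter:
    """Route critical events to a stream handler and buffer the rest for batch."""

    def __init__(self, stream_handler):
        self.stream_handler = stream_handler  # callable invoked immediately
        self.batch_buffer = []                # events awaiting the next batch run

    def route(self, event):
        """Dispatch one event and report which path it took."""
        if event["type"] in STREAMING_EVENT_TYPES:
            self.stream_handler(event)
            return "stream"
        self.batch_buffer.append(event)
        return "batch"

    def flush_batch(self):
        """Hand over buffered events to the scheduled batch job and reset the buffer."""
        batch, self.batch_buffer = self.batch_buffer, []
        return batch
```

In a production setting the stream handler would publish to an event broker and `flush_batch` would be called by a scheduler. Keeping the routing rule in one place makes it easy to promote an event type from batch to streaming later.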

Model Operationalization and MLOps

Operationalizing machine learning models demands production-grade frameworks to version, deploy, monitor, and retrain inference services. Open-source solutions such as Kubeflow and MLflow, alongside managed pipelines offered by cloud providers, automate continuous integration and continuous deployment of models. AI agents access inference endpoints to generate restocking recommendations and demand anomaly alerts. Feedback loops that detect drift, log mispredictions, and trigger retraining pipelines are essential to sustain model accuracy and business impact.
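One widely used drift signal is the population stability index (PSI), which compares the distribution of a feature at training time with its recent distribution. The sketch below is an illustrative choice rather than a feature of Kubeflow or MLflow, and the 0.25 threshold is a commonly cited rule of thumb that should be treated as an assumption.

```python
import math


def psi(reference, current, n_bins=10, eps=1e-6):
    """Population stability index between a reference and a current sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant reference sample

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clip out-of-range values to edge bins
            counts[idx] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def needs_retraining(reference, current, threshold=0.25):
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return psi(reference, current) > threshold
```

A monitoring job could compute this per feature on a schedule and trigger the retraining pipeline when `needs_retraining` returns true, while logging the PSI value for later root-cause analysis.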

Security, Compliance, and Governance

Inventory data often contains sensitive partner agreements, pricing schedules, and customer histories. Integration architects enforce end-to-end encryption, role-based access controls, and audit trails to safeguard data across on-premise and cloud environments. Identity-aware proxies, token-based authorization built on OAuth 2.0 with JSON Web Tokens (JWT), and governance tools from providers like Informatica help ensure that AI agents operate within defined security perimeters and comply with regulations such as GDPR and CCPA.

Scalability, Resilience, and Observability

AI-driven workloads exhibit peaks during promotions, seasonal surges, and supply disruptions. Elastic compute and storage resources—provisioned through cloud platforms like Microsoft Azure and Amazon Web Services—allow auto-scaling of model serving clusters. Containerization strategies, orchestration frameworks, and circuit breaker patterns ensure resilience, while observability platforms monitor latency distributions, error rates, and resource utilization. Failover mechanisms enable AI agents to degrade gracefully or switch to fallback modes when downstream systems are unavailable, preserving core operational continuity.
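The circuit breaker pattern with a fallback mode can be sketched in a few lines. This is a simplified, single-threaded illustration with hypothetical names: after a configurable number of consecutive failures the breaker stops calling the primary service for a cooldown period and serves the fallback instead.

```python
import time


class CircuitBreaker:
    """Stop calling a failing dependency for a cooldown period and use a fallback."""

    def __init__(self, failure_threshold=3, reset_after_seconds=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_seconds = reset_after_seconds
        self.clock = clock        # injectable so the cooldown can be tested
        self.failures = 0         # consecutive failures seen so far
        self.opened_at = None     # time the breaker opened, or None when closed

    def call(self, primary, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_seconds:
                return fallback()  # open: skip the primary entirely
            # Cooldown elapsed: allow one trial call; a single failure reopens.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = primary()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # success closes the breaker fully
        return result
```

For an inventory agent, `primary` might call a model inference endpoint while `fallback` returns a static reorder point, so replenishment continues in a degraded but safe mode while the downstream system recovers.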

Organizational Readiness and Governance

Cross-Functional Alignment and Decision Rights

Successful AI integration hinges on cross-functional collaboration among supply chain planners, IT architects, data scientists, compliance officers, and executive sponsors. A steering committee or Center of Excellence establishes integration standards, performance metrics, and accountability frameworks. RACI matrices delineate roles for pipeline maintenance, model performance oversight, and operational authority, ensuring that AI agent recommendations are trusted, actionable, and aligned with strategic objectives such as inventory turnover and fill rate improvement.

Skills, Change Management, and Cultural Readiness

Integrating AI agents requires specialized skills in API design, event streaming, data engineering, MLOps, and change management. Organizations bridge capability gaps through targeted training programs, partnerships with system integrators, and “train-the-trainer” initiatives. Rigorous change management frameworks, informed by models such as the Technology Acceptance Model, address stakeholder concerns, communicate benefits, and pilot AI workflows in controlled settings. By positioning AI agents as partners rather than replacements, companies foster a culture of data-driven decision making and mitigate resistance.

Vendor and Partner Ecosystem Dynamics

Enterprises assemble ecosystems of ERP, WMS, integration middleware, cloud platforms, and AI specialists. Strategic selections (such as standardizing on Oracle for core ERP, engaging SAP for warehouse management, and using specialist tools like DataRobot for automated machine learning and model monitoring) balance ecosystem coherence with domain innovation. Collaborative partnership models, including co-innovation workshops and joint governance boards, align roadmaps, prevent version mismatches, and speed issue resolution as integration complexity scales.

Infrastructure Flexibility and Data Management at Scale

Elastic Compute and Containerization

Supporting growth in data volume and agent concurrency requires infrastructure platforms that auto-scale compute clusters and decouple data stores. Modular architectures leverage cloud services from Azure and AWS, while containerization and orchestration tools such as Docker and Kubernetes enable independent deployment, updates, and rollbacks of AI agent services. Without elasticity and isolation, enterprises risk latency spikes, outages, and slower decisions at peak loads.

Master Data Consistency and Quality Controls

Master data inconsistencies—duplicate SKUs, misaligned location identifiers, or conflicting supplier records—undermine AI agent decisions. Enterprises establish single sources of truth, enforce consistent naming conventions, and implement automated validation rules. Solutions like DataRobot assist by detecting drift in feature distributions, while metadata catalogs document source systems, refresh cadences, and data quality metrics. Versioned data pipelines with rollback capabilities preserve lineage and enable forensic analysis when anomalies arise.
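Automated validation rules for master data can start very simply. The sketch below flags SKUs that differ only in case or punctuation and records that reference unknown locations. The normalization rule, field names and sample values are hypothetical, and real rules would follow the organization's own naming conventions.

```python
from collections import defaultdict


def normalize_sku(raw_sku):
    """Canonical form: uppercase with all non-alphanumeric characters removed."""
    return "".join(ch for ch in raw_sku.upper() if ch.isalnum())


def find_duplicate_skus(records):
    """Group raw SKU spellings that collapse to the same canonical form."""
    groups = defaultdict(set)
    for record in records:
        groups[normalize_sku(record["sku"])].add(record["sku"])
    return {key: sorted(raw) for key, raw in groups.items() if len(raw) > 1}


def validate_record(record, known_locations):
    """Return a list of rule violations for one master data record."""
    errors = []
    if not record.get("sku", "").strip():
        errors.append("missing sku")
    if record.get("location") not in known_locations:
        errors.append(f"unknown location: {record.get('location')!r}")
    return errors
```

Running such checks at pipeline ingestion, and rejecting or quarantining failing records, keeps inconsistent identifiers from reaching the features that AI agents consume.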

Security, Privacy, and Regulatory Compliance

- Role-based access controls and least-privilege principles restrict AI agents’ reach to sensitive financial or contractual data.

- TLS encryption for API communications and field-level encryption at rest prevent unauthorized exposure.

- Comprehensive audit logs capture agent inputs, model versions, and decision outputs for regulatory audits and incident investigations.

- Geo-fencing controls ensure compliance with data sovereignty regulations across jurisdictions.

Continuous Monitoring, Maintenance, and Risk Mitigation

Performance Monitoring and Model Drift Detection

Key performance indicators—forecast error rates, fill rates, decision latency—inform service-level objectives for AI agents. Automated monitoring alerts detect deviations, triggering retraining pipelines when drift is observed. Shadow deployments run new model versions in parallel to compare performance before full roll-out, reducing risk of degradation and preserving accuracy across multi-tier networks.
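A shadow deployment comparison can be reduced to scoring both model versions against the same realized demand and promoting the challenger only if it is clearly better. The sketch below uses mean absolute error and a hypothetical minimum relative gain of 5%; both the metric and the margin are assumptions for illustration.

```python
def mean_absolute_error(actuals, forecasts):
    """Average absolute gap between realized demand and forecast."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / len(actuals)


def shadow_verdict(actuals, champion_forecasts, challenger_forecasts, min_relative_gain=0.05):
    """Compare the live (champion) and shadow (challenger) models on the same period."""
    champion_mae = mean_absolute_error(actuals, champion_forecasts)
    challenger_mae = mean_absolute_error(actuals, challenger_forecasts)
    # Relative error reduction achieved by the challenger; zero if champion is perfect.
    gain = (champion_mae - challenger_mae) / champion_mae if champion_mae else 0.0
    return {
        "champion_mae": champion_mae,
        "challenger_mae": challenger_mae,
        "relative_gain": gain,
        "promote": gain >= min_relative_gain,
    }
```

Because the challenger's outputs are only logged and never acted on during the shadow period, a poor model version costs nothing operationally. The comparison window should span enough demand cycles to be representative.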

Limitations and Mitigation Strategies

- Data availability gaps from remote sites: mitigate via hybrid batch-stream architectures that default to safe thresholds when real-time feeds lag.