Harnessing AI Agents for Business Insights: A Strategic Guide to Data-Driven Decision Making

Introduction

The Evolution of Business Analytics

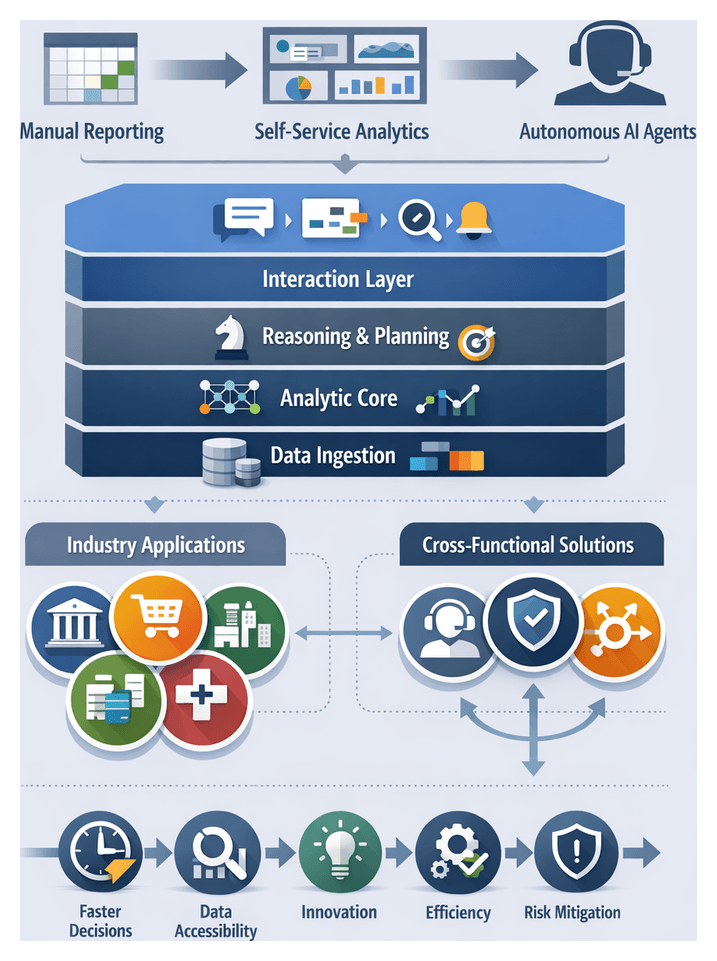

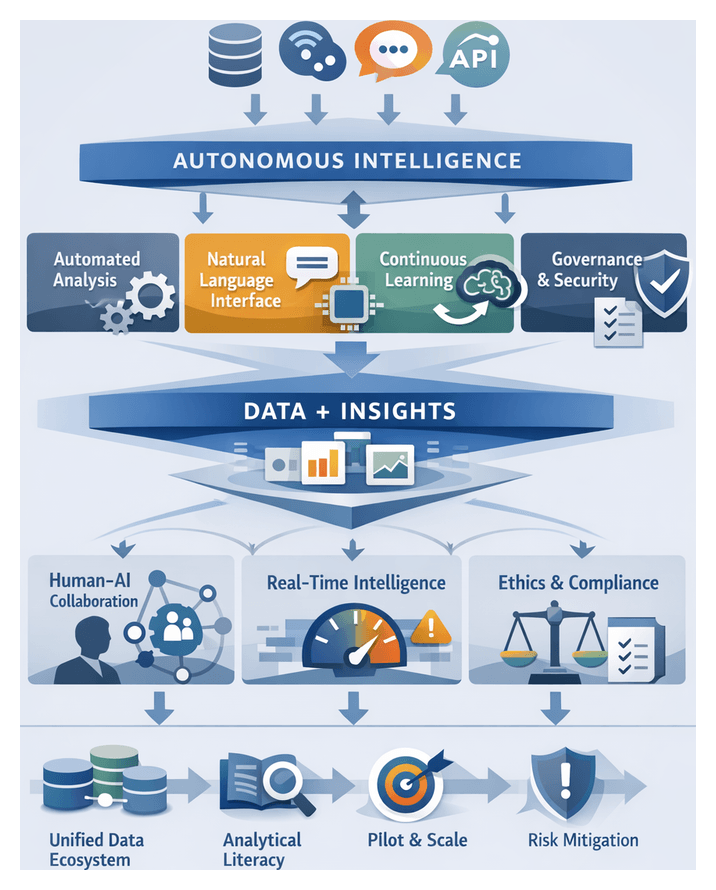

Over the past several decades, business intelligence has advanced from manual ledgers and overnight batch reports to self-service dashboards and, more recently, to autonomous intelligence platforms powered by AI.

In the 1980s and 1990s, organizations centralized data in warehouses and relied on IT-driven reporting. The early 2000s introduced self-service tools that empowered analysts and line-of-business managers to query data directly. Despite these gains, rising data volumes, complexity, and accelerated decision cycles revealed the limitations of static dashboards and delayed insights.

Today’s enterprises ingest streams of structured and unstructured data from CRM systems, IoT sensors, web logs, social media, and external marketplaces. Traditional BI workflows struggle to keep pace as analysts devote most of their time to cleaning and integrating data rather than extracting insights.

Autonomous intelligence shifts the paradigm from retrospective reporting to proactive, prescriptive analytics. AI agents continuously ingest and normalize multi-modal data, detect patterns, forecast trends, and recommend actions with minimal human intervention. This transition aligns decision support with the tempo of modern business, reducing latency between data arrival and strategic action.

Several factors have converged to drive this evolution:

- Data Proliferation—Rapid growth in data sources and formats demands automated ingestion and synthesis.

- Computational Advances—Deep learning, reinforcement learning, and natural language processing enable adaptive models that refine themselves over time.

- Cloud Infrastructure—Scalable storage and distributed compute lower barriers to enterprise-grade analytics.

- API-Driven Ecosystems—Seamless integration with operational systems embeds intelligence into workflows.

- Demand for Agility—Competitive pressures require fast, contextually relevant recommendations on demand.

By embedding AI agents within decision workflows, organizations transition from nightly ETL and static dashboards to continuous insight delivery. This real-time orientation not only accelerates responses to anomalies—from supply chain disruptions to shifts in customer sentiment—but also enriches strategic depth through holistic analyses that integrate predictive modeling, simulation, anomaly detection, and optimization.

Defining Autonomous AI Agents and Architecture

Autonomous AI agents represent a spectrum of capabilities, from rule-driven alerts to fully self-optimizing systems. Industry maturity models typically describe progression through:

- Decision Support—Agents surface insights based on predefined criteria.

- Goal Orientation—Agents execute tasks within boundaries and refine performance via feedback.

- Autonomous Decisioning—Agents negotiate constraints, reallocate resources, and learn new objectives without human directives.

Architectural paradigms for autonomous agents include:

- Reactive Agents—Respond to stimuli with simple inference engines, suited for structured environments.

- Deliberative Agents—Incorporate planning and knowledge representation for multi-step reasoning.

- Hybrid Cognitive Agents—Combine symbolic rules with statistical learning and leverage large language models for natural language interfaces.

To assess readiness, organizations employ evaluative frameworks that map analytics maturity from descriptive and diagnostic through predictive and prescriptive stages to autonomous decisioning. Performance metrics span both technical and business dimensions:

- Accuracy and Precision—Alignment of predictions with actual outcomes.

- Timeliness—Latency in generating decisions at required data velocities.

- Adaptability—Speed of learning new patterns and handling concept drift.

- Resource Efficiency—Computational cost and scalability under load.

- Business Impact—Improvements in revenue, cost savings, risk mitigation, and customer satisfaction.
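
As a minimal sketch of the first metric family, accuracy and precision can be computed from paired outcome labels as shown below. The function names and the convention that 1 marks the positive class are illustrative assumptions, not part of any particular framework.

```python
def accuracy(y_true, y_pred):
    """Share of predictions that match the actual outcome."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision(y_true, y_pred, positive=1):
    """Share of predicted positives that were actually positive."""
    predicted = [t for t, p in zip(y_true, y_pred) if p == positive]
    if not predicted:
        return 0.0  # no positive predictions; treated as 0.0 in this sketch
    return sum(t == positive for t in predicted) / len(predicted)


# Hypothetical outcomes: 1 = event occurred, 0 = it did not.
actual = [1, 0, 1, 1, 0]
forecast = [1, 1, 1, 0, 0]
print(accuracy(actual, forecast), precision(actual, forecast))
```

Accuracy rewards every correct call, while precision looks only at the cases the agent flagged, which is why the two can diverge on the same data.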

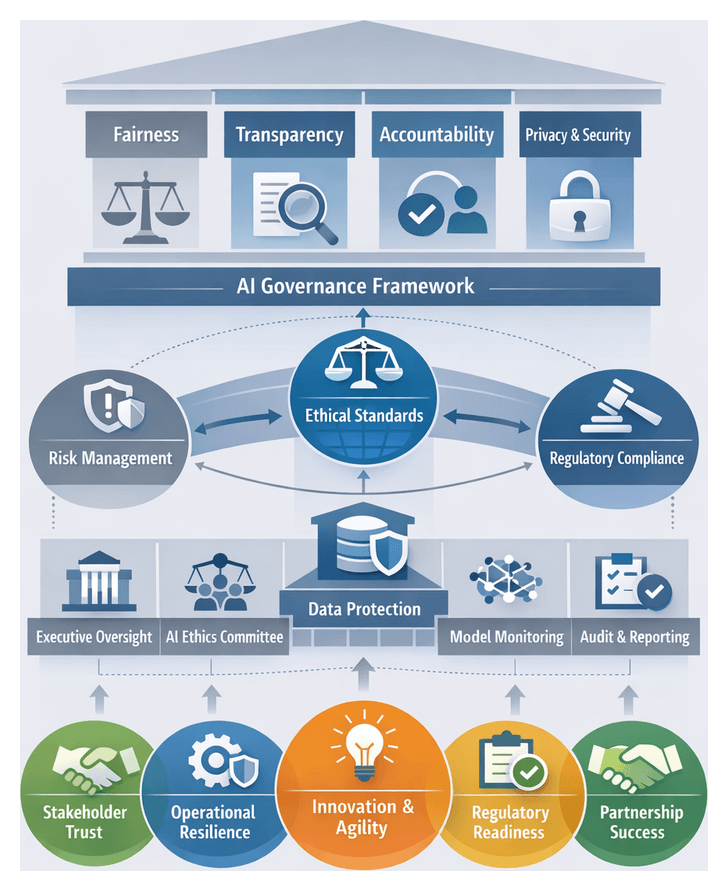

As autonomy increases, explainability, trust, and governance become critical. Effective oversight requires:

- Explainable Decisions—Agents provide rationales via feature importance, counterfactuals, or human-readable rules.

- Governance Controls—Policies for approval, escalation, and model revision maintain risk boundaries.

- Continuous Monitoring—Automated detection of drift, bias, and performance degradation ensures reliability.
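
One way to make continuous monitoring concrete is a drift score that compares live inputs with a training-time baseline. The sketch below uses the Population Stability Index (PSI), which is one common choice among several; the bin count, the epsilon floor, and the sample data are assumptions made for illustration.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each share at a small epsilon so the logarithm is defined.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # hypothetical training data
live = [0.5 + i / 200 for i in range(100)]    # hypothetical shifted traffic
print(round(population_stability_index(baseline, live), 2))
```

A score near zero suggests the distributions match. Many teams treat values above roughly 0.25 as a signal to investigate, although that cutoff is a rule of thumb rather than a standard.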

Domain-specific requirements shape agent design. Financial services emphasize stress testing and compliance; manufacturing prioritizes real-time production scheduling and predictive maintenance; healthcare demands patient data privacy and transparent diagnostic reasoning; retail focuses on segmentation and sentiment analysis. Balancing fully autonomous operation with human-in-the-loop review ensures that routine decisions are automated while high-stakes scenarios escalate to experts under structured protocols.
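
The split between automated and escalated decisions can be expressed as a simple routing rule. The following is a hedged sketch only: the `Decision` fields, the threshold values, and the `route_decision` name are hypothetical and would be set by each organization's risk policy.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    confidence: float   # model confidence in [0, 1]
    impact_usd: float   # estimated financial exposure of acting


def route_decision(decision, min_confidence=0.9, max_auto_impact=10_000):
    """Automate routine, high-confidence decisions; escalate everything else."""
    if decision.confidence >= min_confidence and decision.impact_usd <= max_auto_impact:
        return "automate"
    return "escalate"


print(route_decision(Decision("reorder stock", 0.97, 2_500)))         # automate
print(route_decision(Decision("suspend supplier", 0.97, 250_000)))    # escalate
```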

The Imperative for AI-Driven Insights

In a landscape defined by rapid change and intensified competition, continuous, adaptive insights have become essential. Market leaders and nimble startups alike leverage AI agents to personalize experiences, optimize operations, and accelerate innovation. The inability to harness real-time analytics risks operational inefficiencies, lost market share, and suboptimal strategic decisions.

Data Velocity and Complexity

Enterprises process vast volumes of streaming and static data—IoT telemetry, transaction logs, unstructured text, and social signals. Autonomous AI agents automate data curation, anomaly detection, and trend identification, delivering holistic insights instead of outdated snapshots.

Real-Time Agility

Agents deliver decision-ready recommendations on demand. In logistics, they optimize routes in response to live traffic and weather data. In energy grids, they balance supply and demand instantaneously. Embedding real-time analytics transforms decision making into a continuous practice, enabling rapid pivots and risk mitigation.

Scalability and Resource Optimization

By automating routine analytical tasks—data cleansing, correlation analysis, alert generation—agents free specialized teams to focus on strategic interpretation. Marketing strategists, for example, rely on sentiment analysis agents to process millions of social posts, while cybersecurity teams triage alerts flagged by threat detection agents.

Regulatory and Ethical Compliance

Compliance with GDPR, CCPA, and industry mandates demands traceability, transparency, and bias mitigation. Autonomous agents must incorporate governance frameworks that document data lineage, justify analytical logic, and safeguard sensitive information.

Human-AI Collaboration

The greatest value emerges when AI agents augment, not replace, human judgment. Collaborative models pair machine-driven scenario projections with expert contextual insights. Feedback loops between users and agents drive continuous learning and alignment with evolving business priorities.

Measuring Value and ROI

Effective ROI frameworks integrate operational KPIs—decision latency reduction, forecast accuracy—with business outcomes—revenue growth, cost savings, customer retention. Insurance carriers, for instance, track improvements in claims cycle times and customer satisfaction. Supply chain leaders measure reductions in stock-outs and working capital requirements.

Application Domains

- Customer experience management through dynamic personalization and sentiment monitoring.

- Supply chain resilience via predictive demand and risk forecasting.

- Financial planning with continuous budgeting, scenario modeling, and anomaly detection.

- Product innovation through trend analysis, competitive benchmarking, and market gap identification.

- Risk management and compliance via automated monitoring of regulatory changes and audit preparedness.

Synthesizing Principles and Forward Outlook

Across maturity models and industry case studies, several themes unite autonomous intelligence implementations:

- Converged Analytics and Conversation—Natural language interfaces translate data into narratives aligned with strategic priorities.

- Interoperability—Seamless integration across legacy systems, cloud services, and external APIs underpins scalability.

- Automation with Oversight—Balanced human-in-the-loop governance refines agent behavior and manages risk.

- Ethics and Trust—Explainability, bias mitigation, and continuous monitoring build stakeholder confidence.

- Domain-Driven Design—Tailored agents yield higher adoption and more actionable outputs than one-size-fits-all solutions.

- Continuous Learning—Agents that learn from feedback and evolving data deliver increasingly precise recommendations.

Embedding these principles transforms operating models: decision cycles accelerate as real-time insights replace batch reporting; departments gain democratized access to consistent intelligence; skilled professionals shift toward scenario planning and creative problem solving; and automated monitoring agents detect anomalies before they escalate.

Despite these benefits, organizations must address key considerations:

- Data Quality and Governance—Accurate, consistent data and clear lineage are foundational.

- Bias and Fairness—Regular assessments prevent inherited training biases from distorting outcomes.

- Explainability Versus Complexity—Balancing model performance with transparent reasoning is critical in regulated sectors.

- Integration Challenges—Clear architectural patterns are needed to avoid fragmented deployments.

- Governance and Accountability—Defined ownership, audit trails, and remediation processes safeguard trust.

- Skill Gaps—Upskilling, role redefinition, and cultural change require sustained leadership support.

- Cost-Benefit Tradeoffs—Comprehensive TCO analyses ensure investments deliver projected value.

Looking ahead, mature organizations will establish centers of excellence to standardize best practices, share reusable components, and curate dynamic catalogs of agent capabilities. The most transformative agents will blend analytical rigor with expansive research and document parsing by converging tools such as ChatExcel, Raycast, Consensus, Perplexity, Humata, and AskYourPDF. These integrated agents will anticipate strategic inflection points, recommend portfolio adjustments, and propose policy changes in real time.

Realizing this vision requires investment in scalable infrastructure, robust governance frameworks, and talent development that bridges data science, domain expertise, and ethical stewardship. Organizations that embed autonomous agents as core components of an intelligent enterprise will sustain competitive advantage in an era defined by data velocity and complexity.

Chapter 1: The Evolution of AI Agents in Business Insights

Emergence of Autonomous Intelligence in Business

Organizations have progressed from manual reporting in the 1990s to intelligent agents that continuously monitor data, detect patterns, and recommend actions with minimal human intervention. Early BI efforts relied on static spreadsheets and scheduled reports. The advent of OLAP enabled interactive, multidimensional analysis but still required expert query designers. In the 2010s, self-service analytics democratized data exploration but left users overwhelmed by dashboards and alerts. The rise of streaming sources, IoT, and unstructured inputs demanded a new generation of autonomous AI agents capable of end-to-end ingestion, analysis, and proactive guidance.

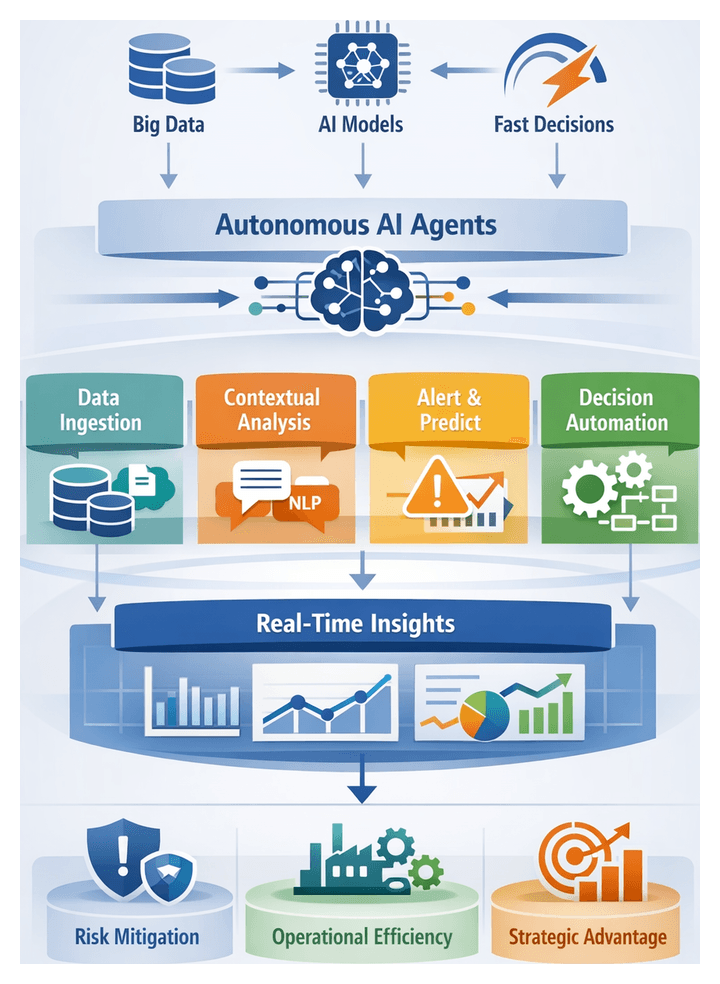

An autonomous AI agent integrates four core components:

- Data Ingestion Layer: Collects and normalizes data from databases, document repositories, streaming feeds, and APIs.

- Analytic Core: Applies machine learning, natural language processing, and statistical models to detect anomalies, classify information, and forecast outcomes.

- Reasoning and Planning Module: Evaluates scenarios using business rules and optimization routines, prioritizing actions to achieve high-level objectives.

- Interaction Layer: Delivers insights through conversational interfaces, automated workflows, and embedded alerts.

Distinctive capabilities include context awareness, adaptive learning, goal-oriented planning, and explainable reasoning. These features enable continuous monitoring of sales performance, supply chain dynamics, and customer sentiment, transforming disparate inputs into strategic guidance and elevating decision making from reactive to anticipatory.
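
The four components listed above can be sketched as a small pipeline. This is an illustrative toy under stated assumptions: the function names, the z-score rule, and the threshold of 3 are all hypothetical stand-ins for much richer production logic.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Insight:
    metric: str
    value: float
    z_score: float


def ingest(raw_records):
    """Data ingestion layer: normalize names and coerce values to float."""
    return [(str(r["metric"]).strip().lower(), float(r["value"])) for r in raw_records]


def analyze(history, observations):
    """Analytic core: score each observation against its own history."""
    insights = []
    for metric, value in observations:
        past = history.get(metric, [])
        spread = pstdev(past) if len(past) > 1 else 0.0
        z = (value - mean(past)) / spread if spread else 0.0
        insights.append(Insight(metric, value, z))
    return insights


def plan(insights, threshold=3.0):
    """Reasoning and planning: keep large deviations, most severe first."""
    flagged = [i for i in insights if abs(i.z_score) >= threshold]
    return sorted(flagged, key=lambda i: abs(i.z_score), reverse=True)


def interact(actions):
    """Interaction layer: render each prioritized item as a readable alert."""
    return [f"{a.metric}: value {a.value:g} is {a.z_score:+.1f} sigma from history"
            for a in actions]


history = {"daily_sales": [100, 102, 98, 101, 99]}
records = [{"metric": " Daily_Sales ", "value": "140"}]
for line in interact(plan(analyze(history, ingest(records)))):
    print(line)
```

Keeping each layer as a separate function mirrors the component boundaries, so any one stage can be replaced without touching the others.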

The Transition to Conversational Analytics

Conversational AI interfaces replace menu-driven dashboards with natural language dialogue, lowering barriers for nontechnical users. Successful implementations translate colloquial queries into precise database operations, maintain context across multiple turns, and integrate insights seamlessly into workflows.
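
To illustrate how a colloquial question might become a database operation while context carries across turns, here is a deliberately small rule-based sketch using Python's built-in `sqlite3`. The `sales` table, the allowlisted metrics and dimensions, and the `translate` and `ask` helpers are assumptions for illustration; production systems typically rely on language models and a governed semantic layer rather than keyword matching.

```python
import re
import sqlite3

# Allowlists keep generated SQL limited to known, safe fragments.
METRICS = {"revenue": "SUM(revenue)", "orders": "COUNT(*)"}
DIMENSIONS = {"region", "quarter"}


def translate(question, context):
    """Turn a colloquial question into SQL, reusing context from earlier turns."""
    words = re.findall(r"[a-z]+", question.lower())
    metric = next((w for w in words if w in METRICS), context.get("metric"))
    dimension = next((w for w in words if w in DIMENSIONS), context.get("dimension"))
    if metric is None or dimension is None:
        raise ValueError("clarification needed: name a metric and a dimension")
    context.update(metric=metric, dimension=dimension)
    return (f"SELECT {dimension}, {METRICS[metric]} FROM sales "
            f"GROUP BY {dimension} ORDER BY {dimension}")


def ask(conn, question, context):
    """Run one conversational turn against the database."""
    return conn.execute(translate(question, context)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, quarter TEXT, revenue REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?, ?)",
                 [("EMEA", "Q1", 100.0), ("EMEA", "Q2", 150.0), ("APAC", "Q1", 80.0)])

context = {}
first = ask(conn, "Show revenue by region", context)
follow_up = ask(conn, "And now by quarter?", context)  # metric carried over
print(first, follow_up)
```

The second question never mentions revenue; the stored context supplies it, which is the multi-turn behavior described above. When neither the question nor the context names a metric, the sketch raises an error that a real agent would turn into a clarifying question.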

Evaluative Criteria

Adoption frameworks extend models such as the Technology Acceptance Model to include metrics for linguistic fluency, intent recognition reliability, and domain terminology handling. Key dimensions include usability, context retention, and governance:

- Usability and Cognitive Load—Evaluators assess clarity of system prompts, support for follow-up questions, error recovery, and feedback mechanisms that build trust.

- Context Retention and Dialogue Management—Agents must preserve semantic memory, ask clarifying questions, and maintain session continuity to support complex, multi-turn inquiries.

- Analytical Accuracy and Data Governance—Compliance with access controls, exposure of lineage metadata, and audit logging are critical in regulated environments.

Vendor Platforms

- Power BI Q&A provides deep integration with Azure services and semantic modeling for interactive Q&A.

- Tableau Ask Data offers an intuitive question builder and seamless transition to visualizations.

- ThoughtSpot specializes in AI-driven search analytics that scale across large data lakes.

Enterprise Adoption Scenarios and Strategic Impact

Autonomous AI agents now move beyond pilots to strategic deployments across functions and industries. Illustrative use cases reveal common patterns in value creation:

Industry-Specific Scenarios

- Financial Services—Real-time fraud detection and risk scoring streamline investigations and strengthen compliance readiness.

- Retail and E-Commerce—Demand forecasting, dynamic pricing, and personalized promotions drive margin uplift and customer loyalty.

- Manufacturing and Supply Chain—Predictive maintenance and rerouting simulations reduce downtime and inventory costs.

- Healthcare and Life Sciences—Clinical decision support and drug discovery agents accelerate diagnoses and research cycles.

- Energy and Utilities—Grid balancing forecasts and anomaly detection enhance resilience and environmental compliance.

Cross-Functional Deployments

- Customer Experience Management—Agents analyze omnichannel interactions and sentiment to personalize engagement and pre-empt issues.

- Risk, Compliance, and Audit—Continuous policy monitoring and automated audit reporting reinforce governance frameworks.

- Strategic Planning and Corporate Development—Competitive intelligence agents recommend M&A targets and market entry strategies.

- Talent Management and HR—Recruitment and upskilling agents match candidates, forecast workforce needs, and curate training pathways.

Strategic Impact

- Accelerated Decision Cycles—Weeks of analysis condense to minutes, enabling rapid responses to emerging trends.

- Data-Driven Culture—Natural language access empowers nontechnical stakeholders to interrogate data directly.

- Innovation Acceleration—Agents surface novel correlations and scenario projections, inspiring new offerings.

- Resource Optimization—Predictive insights guide targeted allocation of inventory, R&D budgets, and workforce deployment.

- Risk Mitigation—Continuous alerts preempt compliance and security incidents.

Interpretive Frameworks and Prerequisites

Leaders assess deployments through maturity models, value chain mapping, strategic alignment models, and Return on Analytics (ROA) analyses. Successful scaling depends on foundational capabilities:

- Data Readiness—Governed, accessible data pipelines ensure agent reliability.

- Leadership Sponsorship—Executive buy-in secures resources and cross-functional collaboration.

- Governance and Ethics—Policies for privacy, fairness, and transparency maintain stakeholder trust.

- Skill Development—AI literacy programs and cross-disciplinary teams foster effective adoption.

- Change Management—Pilots, feedback loops, and clear communication ease transitions to AI-augmented workflows.

Key Strategic Lessons for AI Agent Deployment

The journey from manual BI to autonomous agents offers critical lessons to guide future initiatives:

- Balance Automation and Expertise—Delegate data ingestion and routine analysis to agents, while retaining expert oversight for contextual judgment and ethical considerations.

- Ensure Data Integrity—Invest in data cataloging, lineage tracking, and anomaly detection to protect against error propagation and maintain trust.

- Design for Interpretability—Embed explainable components, visualize key drivers, and provide confidence metrics to foster transparency and accountability.

- Integrate Technologically and Culturally—Establish cross-functional governance, pilot programs, and training to align stakeholders and reduce resistance.

- Align with Strategic Objectives—Define clear performance indicators, prioritize high-ROI use cases, and tie agent features to business goals.

- Navigate Regulatory and Ethical Standards: Embed compliance checks, data minimization, and fairness constraints within agent workflows.

- Embrace Iterative Improvement—Leverage feedback loops and performance monitoring to refine models, adapt to new data, and sustain relevance.

- Plan for Scalability—Adopt modular architectures that balance complex strategic analytics with lightweight microservices for real-time queries.

- Mitigate Bias and Ensure Equity—Conduct bias audits, enforce fairness during training, and monitor outcomes across demographic dimensions.

- Foster Cross-Disciplinary Collaboration—Combine domain experts, data scientists, and engineers in co-located teams to align technical solutions with business needs.

- Recognize Autonomy Limits—Implement hybrid models where ambiguous or high-risk decisions escalate to human experts.

- Conduct Economic Analysis—Include data engineering, integration, change management, and support costs in total cost of ownership assessments.

- Develop a Roadmap for Next-Generation Agents—Plan for advanced capabilities such as multimodal processing and agent-to-agent collaboration, anchored by iterative value delivery and governance.

By internalizing these lessons, organizations can navigate the complexities of autonomous intelligence, harness its transformative potential, and maintain a competitive edge in an evolving data-driven landscape.

Chapter 2: Underlying Technologies Powering AI Agents

Technological Foundations of Autonomous AI Agents

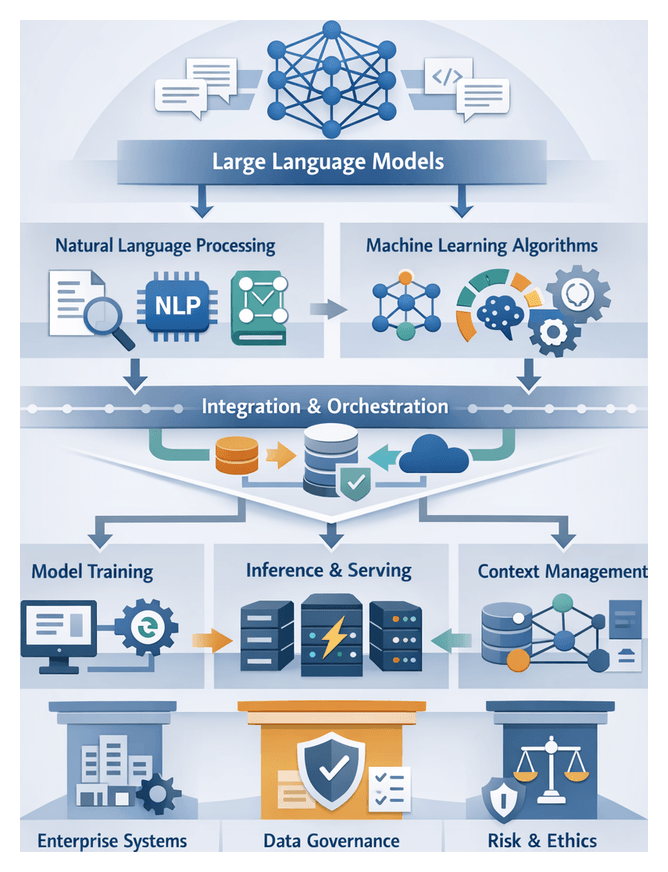

Large Language Models as Cognitive Engines

Large language models power autonomous agents by interpreting, generating, and reasoning over human language. Based on transformer architectures and self-attention mechanisms, these models learn contextual relationships across vast text corpora during pretraining. OpenAI’s GPT series and the open models distributed through the Hugging Face Transformers library are prominent examples of high-capacity models that can be fine-tuned for domain-specific tasks such as financial analysis or legal contract interpretation. Enterprises often deploy these models in secure enclaves or private clouds to protect sensitive data while still benefiting from ongoing community improvements.

Natural Language Processing and Semantic Understanding

Specialized NLP engines refine raw text into structured representations. Core components include tokenization, part-of-speech tagging, named entity recognition, dependency parsing, and semantic role labeling. Tokenizers handle subword units to accommodate rare or domain-specific terms. Entity recognition modules extract company names, dates, and monetary amounts. Dependency parsers and semantic role labelers resolve grammar, intent, and “who did what to whom.” Frameworks such as spaCy and adapters within Hugging Face Transformers integrate large language models with task-specific pipelines, ensuring high-throughput, accurate semantic processing.
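
To show what turning raw text into a structured representation looks like, the sketch below extracts monetary amounts and ISO-style dates with regular expressions. This is a rule-based stand-in chosen so the example runs without model downloads; it is not how spaCy or transformer pipelines perform entity recognition, and the patterns and labels are illustrative assumptions. Company names, for instance, would need a statistical model or a gazetteer.

```python
import re

# Hypothetical, deliberately narrow patterns for two entity types.
ENTITY_PATTERNS = {
    "MONEY": re.compile(r"[$€£]\d+(?:,\d{3})*(?:\.\d+)?(?:\s?(?:million|billion))?"),
    "DATE": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
}


def extract_entities(text):
    """Return (surface text, label) pairs in order of appearance."""
    found = []
    for label, pattern in ENTITY_PATTERNS.items():
        for match in pattern.finditer(text):
            found.append((match.start(), match.group(), label))
    return [(surface, label) for _, surface, label in sorted(found)]


sentence = "Acme paid $1,250.50 on 2024-03-15 and $3 million later."
print(extract_entities(sentence))
```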

Machine Learning Beyond Language Models

Beyond language tasks, autonomous agents rely on a variety of machine learning algorithms. Supervised techniques such as gradient-boosted trees, convolutional neural networks, and recurrent architectures enable predictive analytics and classification. Unsupervised methods such as k-means clustering and autoencoders support customer segmentation and anomaly detection. Reinforcement learning optimizes decision policies through sequential interactions and is applicable to dynamic pricing or workflow orchestration. Implementation ecosystems like TensorFlow and PyTorch facilitate distributed training, GPU acceleration, and model serialization, while explainability tools such as SHAP and LIME provide transparency for compliance and stakeholder trust.
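
As a small illustration of the unsupervised case, the following sketch runs k-means on a single numeric feature to separate customers into segments. It is written in plain Python for readability, assuming one-dimensional data and a deterministic initialization; real workloads would normally use a library implementation and several features.

```python
def kmeans_1d(values, k, iterations=20):
    """Cluster one numeric feature into k groups; returns (centroids, labels)."""
    ordered = sorted(values)
    # Deterministic start: spread the initial centroids across the sorted data.
    centroids = [ordered[(len(ordered) - 1) * i // max(k - 1, 1)] for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda c: abs(v - centroids[c]))
            clusters[nearest].append(v)
        # Recompute each centroid as its cluster mean; keep empty clusters in place.
        updated = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
        if updated == centroids:
            break  # assignments have stabilized
        centroids = updated
    labels = [min(range(k), key=lambda c: abs(v - centroids[c])) for v in values]
    return centroids, labels


monthly_spend = [10, 12, 11, 95, 100, 98]  # hypothetical customer spend
centroids, labels = kmeans_1d(monthly_spend, k=2)
print(centroids, labels)
```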

Data Orchestration for Scalable Workflows

Robust data infrastructure underpins autonomous intelligence, coordinating ingestion, cleansing, transformation, and feature engineering. Streaming platforms like Apache Kafka and NATS handle low-latency message brokering, while workflow managers such as Apache Airflow and Prefect orchestrate batch pipelines. Cloud-native services like AWS Glue and Google Cloud Dataflow offer managed, serverless alternatives with built-in connectors. Metadata management via data catalogs and lineage tracking ensures auditability, while feature stores such as Feast and Tecton centralize definitions to maintain consistency across training and inference.
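
Workflow managers resolve task dependencies before running anything. The sketch below imitates that idea with the standard library's `graphlib.TopologicalSorter` (Python 3.9+). The task names and the `run_pipeline` helper are hypothetical, and this is not the Airflow or Prefect API; it only shows the dependency-ordering principle those tools build on.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies):
    """Run tasks in dependency order, passing each its upstream results."""
    order = list(TopologicalSorter(dependencies).static_order())
    results = {}
    for name in order:
        upstream = {dep: results[dep] for dep in dependencies.get(name, ())}
        results[name] = tasks[name](upstream)
    return order, results


# Each task receives a dict of the outputs it depends on.
tasks = {
    "ingest": lambda up: [" 12", "7 ", None, "5"],
    "cleanse": lambda up: [int(x) for x in up["ingest"] if x is not None],
    "features": lambda up: {"total": sum(up["cleanse"]), "count": len(up["cleanse"])},
}
# Maps each task to the set of tasks that must finish first.
dependencies = {"ingest": set(), "cleanse": {"ingest"}, "features": {"cleanse"}}

order, results = run_pipeline(tasks, dependencies)
print(order, results["features"])
```

Declaring dependencies as data, rather than hard-coding call order, is what lets an orchestrator detect cycles, retry a single failed step, or run independent branches in parallel.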

Integrating Data, Models, and Infrastructure

Data Pipelines and Orchestration Frameworks

Effective AI agents treat pipelines, models, and infrastructure as an ecosystem rather than isolated layers. Data engineers build ETL and event-streaming processes to handle transactional databases, IoT feeds, and third-party APIs. Apache Kafka and cloud-native event buses supply real-time data streams. Batch processing on Databricks and Snowflake supports large-scale transformations. Integration metrics—end-to-end latency, error rates, and throughput—guide tool selection. Monitoring, alerting, and automated remediation uphold data integrity, driving resilience in AI deployments.

Model Training and Continuous Learning

MLOps platforms and experiment-tracking tools enable reproducible research and production-grade pipelines. Solutions like Kubeflow and MLflow integrate version control, hyperparameter tuning, and CI/CD for model retraining. Retraining triggers—time-based or performance-driven—balance data freshness with operational risk. Distributed training on GPU clusters, spot instances, or serverless environments optimizes resource utilization. This continuous learning approach ensures agents adapt to evolving customer behaviors and market shifts.
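
The two kinds of retraining trigger mentioned above can be combined in a single check. This is a minimal sketch: the 30-day age limit, the 0.05 accuracy tolerance, and the function name are assumptions, and in practice these thresholds would come from the team's own service-level objectives.

```python
from datetime import datetime, timedelta


def retraining_reasons(last_trained, now, current_accuracy, baseline_accuracy,
                       max_age=timedelta(days=30), max_drop=0.05):
    """Return the triggers that fired; an empty list means no retraining yet."""
    reasons = []
    if now - last_trained >= max_age:
        reasons.append("time-based")
    if baseline_accuracy - current_accuracy > max_drop:
        reasons.append("performance-driven")
    return reasons


# Hypothetical model: trained 12 days ago, accuracy fell from 0.90 to 0.82.
print(retraining_reasons(datetime(2024, 5, 20), datetime(2024, 6, 1), 0.82, 0.90))
```

Returning the reasons rather than a bare boolean keeps an audit trail of why each retraining run was started.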

Inference Workflows and Real-Time Serving

Deployment strategies vary from batch inference to online serving. TensorFlow Serving, TorchServe, and NVIDIA Triton Inference Server provide scalable endpoints for model inference. Key considerations include latency guarantees, autoscaling, and fault tolerance with circuit breakers and canary deployments. Performance monitoring—P99 latency, throughput under load, and cost per inference—informs infrastructure tuning. A mature serving layer integrates A/B testing and fallback mechanisms to maintain availability of critical AI services.
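
The circuit-breaker and fallback ideas can be sketched in a few lines. The class below is an illustrative simplification with assumed defaults (three failures, a 30-second cool-down); it is not taken from any serving framework, and production breakers usually add metrics, per-endpoint state, and thread safety.

```python
import time


class CircuitBreaker:
    """Route calls to a fallback while the primary model keeps failing."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock            # injectable so tests can control time
        self.failures = 0
        self.opened_at = None

    def call(self, primary, fallback, *args):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback(*args)   # open: skip the failing primary
            self.opened_at = None        # half-open: allow one trial call
        try:
            result = primary(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback(*args)
        self.failures = 0                # success closes the circuit
        return result


def unstable_model(x):
    raise RuntimeError("inference backend unavailable")


breaker = CircuitBreaker(max_failures=2)
print([breaker.call(unstable_model, lambda x: "cached answer", i) for i in range(3)])
```

After the second failure the breaker opens, so the third request goes straight to the fallback without touching the failing backend.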

Context Management and State Handling

Stateful context management is essential for coherent agent interactions. Vector databases such as Pinecone and Weaviate index embeddings, support namespace isolation, and perform similarity searches. Session tracking and retrieval-augmented generation frameworks preserve conversation histories and decision contexts. Balancing in-memory caches and persistent stores optimizes speed and durability. Rigorous privacy controls safeguard sensitive identifiers and personal preferences, meeting data protection standards.
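
At its core, the similarity search a vector database performs is a nearest-neighbor lookup over embeddings. The toy index below shows that idea with cosine similarity and per-namespace isolation. It is an in-memory illustration with hypothetical names and hand-written two-dimensional vectors, not the Pinecone or Weaviate client API, and it scans every vector rather than using an approximate index.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyVectorIndex:
    """Brute-force similarity search with one isolated store per namespace."""

    def __init__(self):
        self._spaces = {}

    def upsert(self, namespace, item_id, vector):
        self._spaces.setdefault(namespace, {})[item_id] = list(vector)

    def query(self, namespace, vector, top_k=3):
        scored = [(cosine(vector, stored), item_id)
                  for item_id, stored in self._spaces.get(namespace, {}).items()]
        return [item_id for _, item_id in sorted(scored, reverse=True)[:top_k]]


index = TinyVectorIndex()
index.upsert("sales", "pricing", [1.0, 0.0])
index.upsert("sales", "churn", [0.0, 1.0])
index.upsert("sales", "discounts", [0.9, 0.1])
index.upsert("hr", "attrition", [0.0, 1.0])

print(index.query("sales", [1.0, 0.0], top_k=2))
```

Queries against the `sales` namespace never see the `hr` entry, which is the isolation property that keeps one team's context out of another's retrieval results.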

Enterprise Integration and Governance

Interoperability and Architectural Alignment

Seamless integration with legacy systems and modern services depends on API-first strategies, event-driven architectures, and domain-driven design. Standardized interfaces reduce friction, enabling AI agents built on OpenAI models or Microsoft Copilot to plug into existing workflows. Microservices and containerization facilitate modular upgrades, supporting evolving model versions and context services without disrupting enterprise operations.

Data Governance, Quality, and Compliance

Embedding AI agents amplifies the need for robust governance. Frameworks like the Data Management Maturity Model guide capabilities in metadata management, lineage tracking, and policy enforcement. Detailed catalogs document data sources, transformation logic, and feature vectors. Access controls enforce role-based permissions and encryption protocols. Quality assurance rules and statistical monitors detect anomalies or bias, ensuring that autonomous agents produce reliable, auditable outputs aligned with regulatory requirements.

Organizational Roles and Change Management

Successful integration reshapes organizational structures. A Center of Excellence coordinates best practices and governance for AI agent lifecycles. Hybrid teams of data engineers, AI specialists, and business analysts align technical feasibility with domain relevance. Change management programs prepare staff through transparent communication and training, framing agents as collaborative tools. Performance metrics span technical KPIs—latency and throughput—and strategic impact measures such as decision cycle time and cost savings.

Risk, Ethics, and Explainability

Scaling AI agents raises risk, ethical, and compliance considerations. Methodologies like OCTAVE Allegro assess threats and prioritize mitigations. Privacy impact assessments guard against regulatory penalties. Bias and fairness audits leverage SHAP and LIME for interpretable analytics. Zero-trust security models protect endpoints and data stores. Transparent explainability satisfies regulators and fosters stakeholder trust, balancing the power of deep neural networks with the need for interpretability in regulated industries.

Balancing Trade-Offs for Scalable, Sustainable AI

Model Scale, Performance, and Cost

High-capacity LLMs such as GPT-4 excel in broad reasoning but impose computational and latency costs. Smaller domain-tuned models offer faster responses at lower expense. A dual-tier architecture leverages a general model for complex queries and lightweight models for routine tasks, optimizing resource utilization while maintaining analytical depth.
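
A dual-tier setup needs some rule for deciding which tier handles a request. The sketch below uses a crude keyword and length heuristic purely for illustration; the marker words, the word limit, and the tier labels `general-llm` and `domain-small` are hypothetical, and a real router might instead use a trained classifier or the small model's own confidence.

```python
# Hypothetical cue words that suggest multi-step reasoning is needed.
COMPLEX_MARKERS = {"why", "explain", "compare", "forecast", "scenario"}


def choose_model(query, max_simple_words=12):
    """Send long or reasoning-heavy queries to the large tier, the rest to the small one."""
    words = [w.strip("?,.!") for w in query.lower().split()]
    if len(words) > max_simple_words or COMPLEX_MARKERS & set(words):
        return "general-llm"
    return "domain-small"


print(choose_model("Total sales yesterday?"))
print(choose_model("Explain why churn rose in Q3"))
```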

Deployment Paradigms and Vendor Ecosystems

On-premise environments grant control over data governance and latency but demand capital investment and operational expertise. Public cloud platforms provide elasticity and managed services for AI workloads. A hybrid approach balances regulatory compliance with agility. Proprietary solutions often include optimized performance and support SLAs, while open-source frameworks such as Hugging Face Transformers promote transparency and avoid lock-in. Enterprises commonly adopt blended strategies, standardizing on open standards and selectively integrating proprietary modules for specialized functions.

Processing Paradigms and Real-Time Constraints

Batch workflows on Databricks and Snowflake suit large-scale transformations but incur latency. Streaming architectures with Apache Kafka or cloud event buses enable low-latency insights for customer sentiment monitoring or fraud detection. High-frequency decision environments may deploy quantized models at the edge to meet millisecond constraints, accepting modest accuracy trade-offs. Analysts align processing paradigms with service-level objectives and query patterns to meet business needs.
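
The accuracy trade-off of quantization can be seen in a few lines. This sketch applies symmetric int8 quantization to a short list of weights, which is one common scheme among several; the helper names and sample weights are assumptions, and real toolchains quantize whole tensors with calibration data.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0, 0.123]  # hypothetical model weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, worst_error <= scale / 2 + 1e-12)
```

Each weight is stored in one byte instead of four or eight, and the rounding error per weight is bounded by half the scale factor, which is the modest accuracy cost referred to above.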

Limitations and Operational Considerations

- Data privacy and governance require anonymization, lineage tracking, and strict access controls to meet GDPR, CCPA, and industry-specific mandates.

- Talent shortages in data engineering, machine learning, and DevOps necessitate ongoing training, partnerships, and upskilling programs.

- Vendor lock-in and interoperability challenges can be mitigated with abstraction layers, open standards, and containerized deployments.

- Total cost of ownership includes compute resources, model retraining, infrastructure maintenance, and staffing overhead, demanding rigorous cost governance.

- Change management and cultural readiness hinge on transparent communication, stakeholder involvement, and incremental rollouts that demonstrate value.

- Algorithmic bias and ethical implications require regular audits, fairness metrics, and integration of bias detection methodologies into development pipelines.

- Scalability boundaries must be addressed through elastic provisioning, distributed inference, and capacity planning to accommodate peak demand.

- Continuous monitoring of model drift, latency trends, and usage patterns, coupled with automated retraining and remediation, secures ongoing agent reliability.

Chapter 3: Data Analysis and Visualization with AI Agents

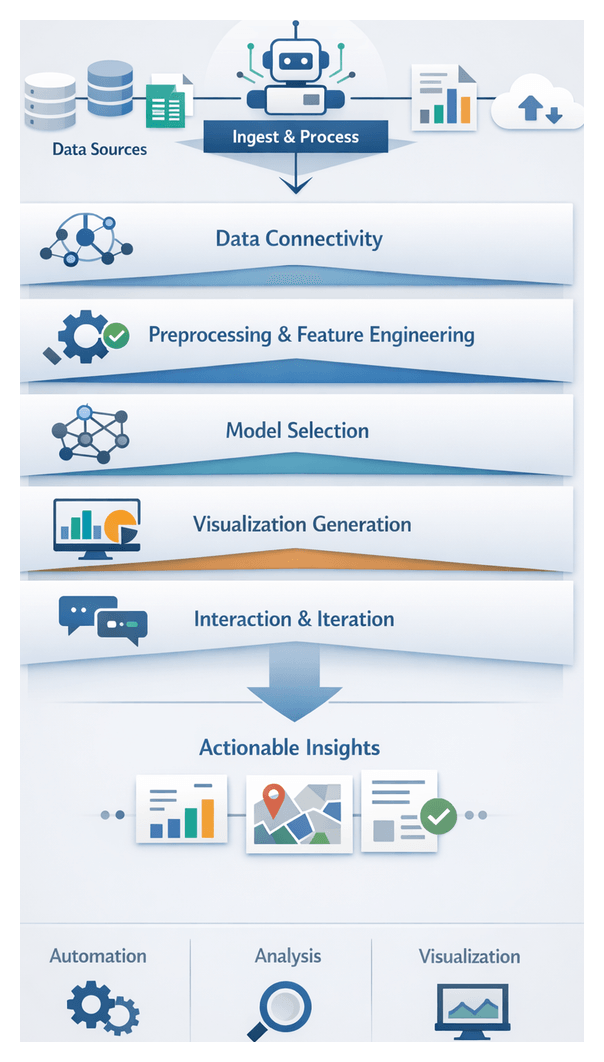

Autonomous AI-Driven Data Interpretation Agents

In an era defined by rapidly expanding data volumes and complexity, autonomous AI-driven agents transform raw information into actionable insights without manual intervention. These systems ingest structured and unstructured sources—relational databases, spreadsheets, logs, documents and streaming feeds—perform automated cleansing, normalization and feature engineering, apply statistical tests or machine learning models, and generate context-aware visualizations and narrative summaries in real time. By automating connectivity to on-premises and cloud data platforms, handling schema variations and missing values, and selecting optimal analytical methods, they accelerate the analytics lifecycle and free decision makers to focus on strategy rather than data mechanics.

At the heart of these agents lies an end-to-end workflow comprising five stages:

- Data Connectivity: Securely establishing connections to diverse sources and reconciling authentication protocols.

- Preprocessing and Feature Engineering: Cleansing raw inputs, detecting outliers, imputing missing values and deriving new features.

- Analytical Model Selection: Automatically choosing and tuning statistical or machine learning algorithms based on metadata and objectives.

- Visualization Generation: Mapping results to charts, heat maps, geospatial plots or dashboards, annotating key patterns and enabling interactivity.

- Interaction and Iteration: Supporting conversational queries and GUI controls that allow users to refine analyses and drill into specific segments.
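
The preprocessing stage in the list above can be illustrated with two of its steps: imputing missing values and detecting outliers. The sketch uses median imputation and the 1.5 x IQR rule from the standard `statistics` module; both are common defaults chosen here as assumptions, not the method of any specific agent.

```python
from statistics import median, quantiles


def impute_and_flag(values):
    """Fill missing entries with the median and flag outliers by the IQR rule."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    filled = [fill if v is None else v for v in values]
    q1, _, q3 = quantiles(observed, n=4)  # quartiles of the observed data
    spread = q3 - q1
    low, high = q1 - 1.5 * spread, q3 + 1.5 * spread
    outliers = [i for i, v in enumerate(filled) if v < low or v > high]
    return filled, outliers


readings = [10, 12, None, 11, 13, 95, 12]  # hypothetical sensor values
filled, outliers = impute_and_flag(readings)
print(filled, outliers)
```

The function returns outlier positions rather than dropping them, leaving the decision to exclude, cap, or investigate each value to a later stage or a human reviewer.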

By embedding logging, versioning and audit trails at each stage, autonomous agents also uphold governance, compliance and reproducibility standards essential for regulatory frameworks such as SOX, GDPR and HIPAA. They democratize analytics through intuitive interfaces, natural language querying and presentation-ready graphics, empowering business analysts, marketing managers and operational leaders to derive insights without relying on scarce data science resources.

Evaluating Visualization Agents

Selecting the right visualization agent requires systematic evaluation across strategic dimensions. Two leading solutions—ChatExcel and Raycast—exemplify distinct approaches tailored to different user personas and workflows. Decision makers should assess capabilities in data input handling, natural language understanding, visualization variety, interaction workflows, integration flexibility, customization, performance and governance.

Data Input Handling and Preprocessing

ChatExcel interfaces directly with spreadsheets, parsing cell ranges, formulas and pivot tables via automated heuristics that detect headers, dates and categorical variables. This hands-off approach suits teams working with moderately sized tabular data. Raycast relies on API-based ingestion of JSON or CSV streams from back-end services. Its explicit data mapping offers flexibility for high-velocity pipelines but demands careful configuration to meet governance and accuracy requirements.
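To make the idea of header and type heuristics concrete, here is a minimal sketch of column type inference over spreadsheet cells. It is an assumption-laden illustration, not ChatExcel's actual logic: the function name `infer_column_type`, the date formats and the `max_categories` cutoff are all hypothetical.

```python
from datetime import datetime

def infer_column_type(cells, max_categories=10):
    """Guess a column's type from its string cells."""
    values = [c.strip() for c in cells if c and c.strip()]
    if not values:
        return "empty"

    def is_number(s):
        try:
            float(s.replace(",", ""))  # tolerate thousands separators
            return True
        except ValueError:
            return False

    def is_date(s):
        for fmt in ("%Y-%m-%d", "%d/%m/%Y", "%m/%d/%Y"):
            try:
                datetime.strptime(s, fmt)
                return True
            except ValueError:
                continue
        return False

    if all(is_number(v) for v in values):
        return "numeric"
    if all(is_date(v) for v in values):
        return "date"
    # Few distinct values relative to the row count suggests a category.
    if len(set(values)) <= min(max_categories, len(values) // 2):
        return "categorical"
    return "text"
```

Heuristics of this kind are convenient for moderately sized tables, but ambiguous inputs (for example, dates that parse under more than one format) are a reason to surface the inferred types to the user for confirmation.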

Natural Language Understanding and Prompt Engineering

ChatExcel leverages transformer-based models to interpret free-form queries such as “Show quarterly revenue growth by region” and to maintain context for follow-up prompts. Its conversational paradigm supports exploratory analysis akin to a dialogue with a data analyst. Raycast employs AI-assisted command palettes where shorthand keywords map to predefined scripts. This controlled lexicon reduces misinterpretation but limits open-ended inquiry for nontechnical users.

Visualization Variety and Fidelity

ChatExcel generates bar, line, scatter, heatmap and pie charts with configurable axes, color palettes and annotations, producing high-resolution images suitable for executive presentations. It also exports to BI platforms such as Tableau or Power BI. Raycast delivers lightweight inline charts and widgets optimized for rapid prototyping within developer UIs. Its minimalistic style accelerates ad hoc checks but lacks the polish required for stakeholder-facing reports.

User Interaction and Iteration Workflows

Through its chat interface, ChatExcel enables iterative refinement of filters, parameters and drill-downs. Users may encounter session drift over prolonged dialogs, requiring context resets. Raycast’s command chaining facilitates efficient, script-driven transformations but can disrupt the exploratory flow for unstructured analyses. Organizations often maintain shared script repositories to standardize workflows and democratize knowledge across teams.

Integration with Existing Systems

ChatExcel offers native connectors to cloud spreadsheet services, single sign-on and data synchronization across teams. It supplements BI ecosystems via export functions. Raycast features a marketplace of community-contributed extensions for GitHub, Datadog and SQL databases. While the open ecosystem accelerates customization, it also demands rigorous security vetting and governance processes to manage extension provenance and mitigate risks.

Customization and Extensibility

Organizations can extend ChatExcel’s template library through JSON configurations to align with corporate branding and proprietary metrics. Raycast’s JavaScript-based scripts afford maximal flexibility for bespoke transformations and visual logic, but place maintenance burdens on engineering teams. Governance frameworks for script lifecycle management are vital to prevent technical debt.

Performance, Scalability and Cost Efficiency

ChatExcel scales reliably for spreadsheets under several hundred megabytes, though inference latency and licensing costs can rise with larger deployments. Raycast executes commands locally, minimizing cloud latency and consumption fees, but may require hybrid architectures to balance speed with centralized governance. A thorough total cost of ownership analysis should account for inference costs, infrastructure provisioning and support overhead.

Governance, Security and Compliance

ChatExcel provides data encryption at rest and in transit, granular access controls and detailed audit logs. Raycast’s open extension model necessitates zero-trust principles, role-based access controls and network segmentation to secure script execution. Organizations with mature engineering security practices can leverage Raycast effectively, provided periodic penetration testing and sandbox environments are in place.

Actionable Data Storytelling in Practice

Autonomous agents excel when they frame analytical outputs as narratives that guide stakeholders from context through insights to recommendations. The following case studies demonstrate how ChatExcel and Raycast elevate charts into strategic stories.

Retail Demand Forecasting and Inventory Optimization

A national retailer integrated ChatExcel into its BI platform to forecast SKU-level demand across 350 stores. By synthesizing point-of-sale data, promotional calendars and weather feeds, the agent overlaid confidence bands on historical trends, flagged high-variance locations for daily review and embedded collaborative annotations from sales, supply chain and finance teams. This narrative approach reduced lost sales by 15 percent and excess inventory by 12 percent within three quarters.

Healthcare Operational Efficiency and Patient Flow Analysis

A regional hospital network deployed Raycast to visualize patient flow across emergency, surgical and inpatient units. Interactive heat maps narrated bottlenecks by time of day and care pathway, contextualized against staffing levels and case complexity. Sequential storyboards were reviewed in operational huddles, driving a 20 percent improvement in operating room efficiency and a 25 percent reduction in emergency department boarding times.

Financial Services Risk Monitoring and Compliance

An investment bank’s risk division adopted an AI visualization agent to narrate Value-at-Risk movements, portfolio concentrations and stress-test outcomes. The dashboard framed VaR changes as a story, identifying currency and credit drivers, visualizing compliance guardrails with sentiment-based alerts and linking insights to hedging strategies. Daily risk sign-off time fell by 30 percent, and senior interventions became timelier during market volatility.

Manufacturing Predictive Maintenance and Trend Insights

A global manufacturer used an autonomous agent to combine sensor telemetry, maintenance logs and failure histories into interactive trend narratives. Anomaly annotations highlighted inflection points, lifecycle chapters guided inspection cadences and narrative dashboards synchronized predictive insights with shift schedules. Unplanned downtime fell by 40 percent and maintenance costs by 22 percent over six months.

Marketing Campaign Performance and Attribution Modeling

A consumer goods firm deployed an agent to ingest multi-channel spend, clickstream data and sales lift metrics. Layered bar charts and annotated time-series plots walked marketers through channel contributions, lift context and budget reallocation scenarios. This narrative-driven attribution modeling delivered a 12 percent uplift in marketing ROI and boosted confidence in investment decisions across regions.

Best Practices and Strategic Considerations

Maximizing value from AI visualization agents requires rigorous accuracy protocols, proactive mitigation of limitations and alignment with organizational strategy.

- Ensure Data Provenance and Governance: Track lineage, enforce access controls and maintain audit logs to detect biases and comply with regulations.

- Validate Model Outputs: Define domain-specific benchmarks, perform spot-checks with subject-matter experts and monitor error rates, confidence intervals and model drift.

- Mitigate Common Limitations: Retrain agents regularly to counter model drift, validate on held-out data to detect overfitting, embed rule-based heuristics to maintain business relevance, adopt standardized visualization guidelines and pair black-box models with explainability modules.

- Embed Governance Frameworks: Establish policies for data access, model updates and user permissions, ensuring accountability and consistency.

- Foster Interdisciplinary Collaboration: Align data scientists, analysts and executives through joint workshops and shared roadmaps that connect technical capabilities with strategic objectives.

- Implement Change Management: Provide training, documentation and knowledge sharing to build AI fluency and accelerate adoption among end users.

- Adopt Iterative Evaluation: Treat deployments as continuous improvement cycles by tracking usage metrics, soliciting feedback and refining agent parameters over time.

- Integrate with BI Ecosystems: Ensure seamless interoperability through well-designed APIs, minimizing duplication and preserving historical reporting contexts.

Emerging Trends and Future Directions

- Multimodal Insight Generation: Fusion of tabular data, text, images and streaming media will yield richer narratives, demanding robust context management.

- Adaptive Visualization Agents: Systems will learn user preferences to personalize styles while maintaining transparency of customization algorithms.

- Ethical Visualization Practices: Frameworks will guide decisions on highlighting or obscuring sensitive information, ensuring privacy and avoiding manipulative representations.

- Democratization of Data Storytelling: Low-code interfaces will empower nontechnical users, with guardrails to prevent misinterpretation or misuse of insights.

- Real-Time Collaborative Analytics: Distributed teams will co-author dashboards and narrative reports in real time, requiring synchronization protocols and conflict-resolution strategies.

By integrating these practices, organizations can harness autonomous AI-driven interpretation and visualization agents as strategic assets, translating raw data into a sustainable competitive advantage.

Chapter 4: Knowledge Discovery and Research with AI Agents

The Evolution and Imperative of Autonomous AI in Enterprise Analytics

Over the past three decades, business intelligence has transformed from static, IT-generated reports into dynamic, self-service environments accessible to line-of-business users. Advances in data storage and computing power spawned interactive dashboards that visualized key performance indicators in near real time, yet these platforms still relied on specialized skills to construct queries and interpret results. As data volumes, velocities, and varieties grew—spanning social media sentiment, sensor feeds, and external market intelligence—traditional BI architectures struggled to integrate and analyze information with the agility required for competitive decision making.

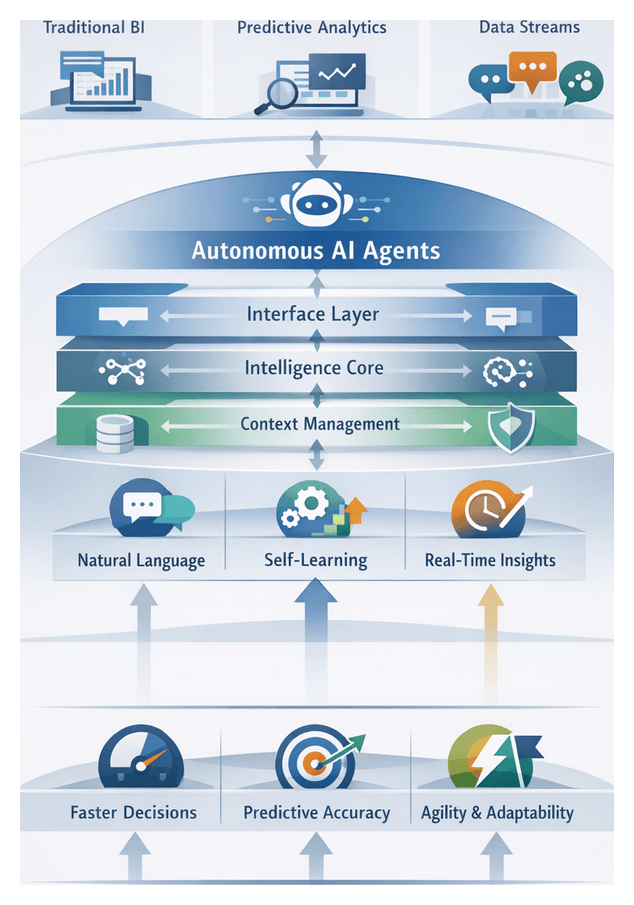

Predictive analytics and machine learning models extended analytics into forecasting and pattern detection, but these efforts often operated in isolated silos, demanded expert data science resources, and delivered insights with latency that limited impact. Autonomous AI agents represent the next frontier in enterprise analytics: systems that proactively ingest diverse data sources, apply advanced reasoning algorithms, and surface actionable recommendations in conversational or programmatic form. By embedding domain context, continuous learning, and real-time data streams, these agents elevate BI from descriptive and diagnostic disciplines to prescriptive, self-optimizing systems.

Today’s markets shift in seconds, customer preferences evolve across channels, and risks emerge without warning. Autonomous AI agents continuously scan complex data landscapes, detect anomalies and emerging trends, and engage decision makers with scenario analyses and execution workflows. This level of responsiveness and contextual understanding was unattainable a decade ago, making autonomous intelligence a strategic imperative for organizations seeking accelerated decision cycles, improved forecast accuracy, and enhanced agility.

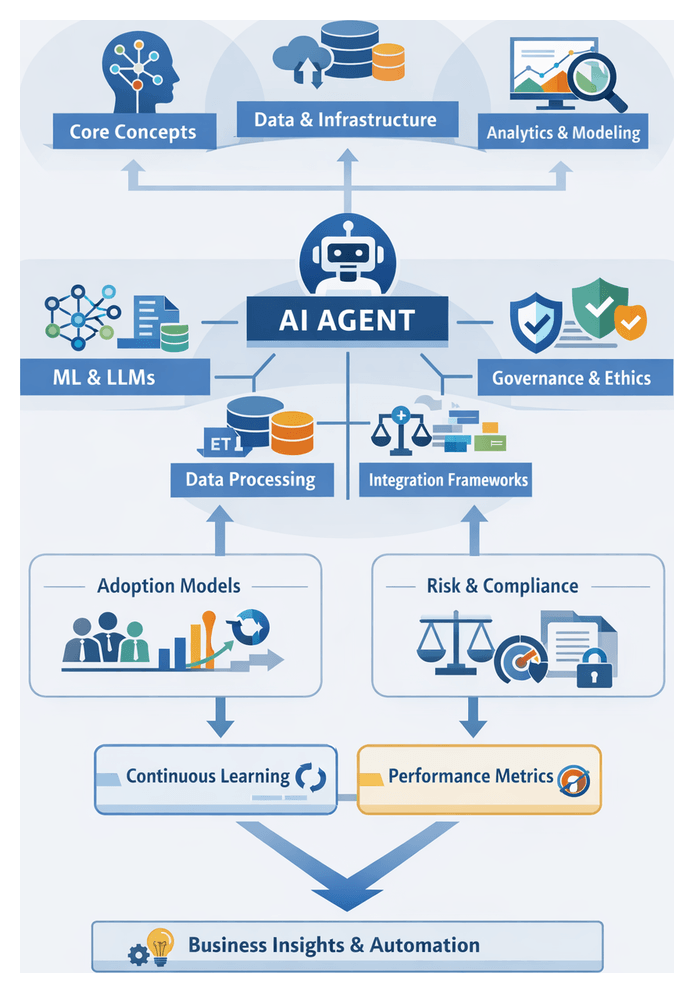

Architecting Autonomous AI Agents for Strategic Decision Making

An autonomous AI agent is a system designed to perceive its environment, make decisions, and execute actions with minimal human intervention. In enterprise settings, agents ingest raw data from structured databases, streaming feeds, documents, and knowledge bases; apply statistical methods, machine learning algorithms, and large language models; and generate recommendations or trigger tasks that drive outcomes.

A typical architecture comprises four interconnected layers:

- Data Ingestion: Unifies disparate sources into a consistent format for downstream processing.

- Context Management: Maintains session state, organizational rules, and domain ontologies to ensure policy alignment.

- Intelligence Core: Applies natural language processing, predictive analytics, reinforcement learning, and reasoning to interpret inputs and evaluate actions.

- Interface Layer: Delivers insights via conversational chatbots, API endpoints, or embedded dashboard plugins and orchestrates follow-up workflows.

Key capabilities distinguish autonomous agents:

- Natural Language Understanding: Enables users to pose questions in everyday language.

- Memory and Context Retention: Supports multi-step dialogues, refines analyses based on feedback, and recalls prior interactions.

- Self-Learning Mechanisms: Continuously adapt model parameters and update knowledge bases as new data and outcomes emerge.

In practice, an AI agent might monitor sales and inventory data, detect a regional stock shortage, generate replenishment scenarios, simulate their cost and delivery impacts, present the options through a messaging interface, and trigger purchase orders once a decision is reached. This integration of data processing, strategic reasoning, and automated execution transforms raw information into tangible business value.
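The replenishment example can be sketched in a few lines. This is a simplified illustration under stated assumptions: the `StockLevel` record, the seven-day cover rule and the 14- and 28-day scenario options are hypothetical, and daily demand is assumed to be positive.

```python
from dataclasses import dataclass

@dataclass
class StockLevel:
    region: str
    on_hand: int
    daily_demand: float  # assumed > 0

def detect_shortages(levels, min_days_cover=7):
    """Return records whose stock covers fewer than min_days_cover days."""
    return [s for s in levels if s.on_hand / s.daily_demand < min_days_cover]

def replenishment_scenarios(stock, unit_cost, options=(14, 28)):
    """Build one order scenario per target days-of-cover option."""
    scenarios = []
    for days in options:
        qty = max(0, round(days * stock.daily_demand) - stock.on_hand)
        scenarios.append({
            "region": stock.region,
            "target_days": days,
            "order_qty": qty,
            "cost": qty * unit_cost,
        })
    return scenarios
```

In a full agent, the scenarios would be presented to a decision maker, and only the approved option would trigger a purchase order.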

Measuring and Optimizing Information Retrieval Efficiency

In knowledge discovery and research, measuring retrieval efficiency is critical to ensure AI-driven platforms surface relevant, high-quality insights. Evaluation spans technical metrics tied to algorithms and business-oriented indicators that reflect user satisfaction and strategic impact.

Indexing and Query Processing

Efficient retrieval begins with content representation. Traditional inverted indexes map terms to document locations for fast keyword lookups. Modern platforms integrate vector embeddings from transformer-based models to capture semantic relationships, enabling retrieval of conceptually similar passages even without exact keyword matches. Key evaluation criteria include index size, update latency, and retrieval speed for both keyword and semantic queries.
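A minimal sketch of the inverted index described above, assuming whitespace tokenization and boolean AND matching (both simplifications; production systems add stemming, stop-word handling and positional data):

```python
from collections import defaultdict

def build_inverted_index(docs):
    """Map each lowercased term to the set of document ids containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index

def keyword_search(index, query):
    """Return ids of documents that contain every query term."""
    terms = query.lower().split()
    if not terms:
        return set()
    postings = [index.get(t, set()) for t in terms]
    return set.intersection(*postings)

# Hypothetical three-document corpus.
docs = {
    1: "quarterly revenue growth",
    2: "revenue forecast by region",
    3: "patient flow analysis",
}
index = build_inverted_index(docs)
```

A query for "sales" returns nothing here even though documents 1 and 2 are conceptually related, which is the gap that embedding-based semantic retrieval is meant to close.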

Ranking and Relevance Scoring

After candidate passages are retrieved, relevance scoring orders results. Statistical models like BM25 rely on term frequency and inverse document frequency for interpretability and scale. Neural ranking models fine-tune transformer architectures on click-through data or explicit relevance judgments to deliver richer semantic understanding at higher computational cost. Composite frameworks blend signals such as semantic similarity, lexical relevance, content freshness, and source authority. Platforms like Perplexity weight these signals dynamically based on query context and user preferences.
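For reference, the BM25 scoring mentioned above can be sketched as follows. The parameters `k1=1.5` and `b=0.75` are commonly used defaults rather than values from this guide, and the IDF uses the smoothed "+1" variant so that scores stay non-negative.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each tokenized document against the tokenized query."""
    n = len(docs)
    avgdl = sum(len(d) for d in docs) / n
    # Document frequency: number of documents containing each term.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in query:
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            # Length normalization penalizes documents longer than average.
            norm = tf[term] + k1 * (1 - b + b * len(d) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores
```

Because every term in the formula is a simple count, each score can be traced back to term frequency, rarity and document length, which is the interpretability advantage noted above.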

- Normalized Discounted Cumulative Gain (nDCG): Measures how closely a ranking matches graded relevance judgments, discounting relevant results that appear lower in the list.

- Mean Reciprocal Rank (MRR): Averages, across queries, the reciprocal of the rank at which the first relevant result appears.

- Click-Through Rate Lift: Indicates user engagement improvements after deploying new scoring models.
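The two ranking metrics in the list above can be computed as in this minimal sketch. One simplifying assumption is labeled in the code: the ideal ordering for nDCG is derived from the judged results themselves, which is only exact when the judged list contains every relevant item.

```python
import math

def dcg(relevances):
    """Discounted cumulative gain for relevance grades in ranked order."""
    return sum(rel / math.log2(rank + 1)
               for rank, rel in enumerate(relevances, start=1))

def ndcg(relevances, k=None):
    """nDCG, with the ideal ranking taken from the same judged list (assumption)."""
    ranked = relevances[:k] if k else relevances
    ideal = sorted(relevances, reverse=True)[:len(ranked)]
    ideal_dcg = dcg(ideal)
    return dcg(ranked) / ideal_dcg if ideal_dcg > 0 else 0.0

def mean_reciprocal_rank(result_lists):
    """result_lists: per query, booleans marking relevant results in ranked order."""
    total = 0.0
    for results in result_lists:
        for rank, is_relevant in enumerate(results, start=1):
            if is_relevant:
                total += 1.0 / rank
                break  # only the first relevant result counts
    return total / len(result_lists)
```

A query with no relevant result contributes zero to MRR, so the metric penalizes outright misses as well as late hits.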

Precision, Recall, and Trade-Offs

Precision reflects the proportion of retrieved items that are relevant, while recall measures the proportion of relevant items that are retrieved. High-recall settings—such as regulatory analysis or systematic literature reviews—prioritize exhaustive retrieval through aggressive query expansion and lower scoring thresholds, accepting increased noise. High-precision contexts—where executives need concise, decision-ready answers—tighten ranking thresholds and emphasize authoritative sources to minimize review burden.

- Precision@K: Proportion of relevant items among top K results.

- Recall@K: Proportion of all relevant items retrieved within top K results.

- F1 Score: Harmonic mean of precision and recall for balanced evaluation.
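A short sketch of these three measures, assuming results are identified by document ids and that the set of relevant ids is known from a judged test collection:

```python
def precision_at_k(ranked_ids, relevant_ids, k):
    """Share of the top-k results that are relevant."""
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / k

def recall_at_k(ranked_ids, relevant_ids, k):
    """Share of all relevant items that appear in the top-k results."""
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids) if relevant_ids else 0.0

def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical ranking: two of the four relevant documents appear in the top 5.
ranked = ["d1", "d2", "d3", "d4", "d5"]
relevant = {"d1", "d3", "d9", "d10"}
p = precision_at_k(ranked, relevant, 5)  # 0.4
r = recall_at_k(ranked, relevant, 5)     # 0.5
```

Lowering the scoring threshold typically lengthens the result list, raising recall while diluting precision, which is the trade-off described in this section.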

User-Centric Metrics and Benchmarking

Beyond algorithmic measures, user-centric metrics capture the real-world experience of analysts and decision makers. Time to Insight quantifies the interval from initial query to identification of a decision-informing finding. Query Success Rate measures the share of sessions yielding actionable answers without external tools. Session abandonment rate, user satisfaction scores, and assisted query reduction further illuminate adoption and productivity gains.

Benchmarking frameworks—leveraging public datasets such as TREC and custom, domain-specific test collections—enable comparative analysis across systems and over time. Continuous monitoring and A/B testing assess indexing strategies, ranking adjustments, and interface changes against production baselines. Visual dashboards, statistical significance analyses, and cost-benefit evaluations ensure that technical improvements translate into tangible business outcomes.
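One common way to check whether an A/B difference in a rate metric such as Query Success Rate is statistically significant is a two-proportion z-test. The sketch below is one option among several and assumes independent sessions and samples large enough for the normal approximation.

```python
import math

def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a, p_b = success_a / total_a, success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (p_b - p_a) / se
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, `two_proportion_z_test(200, 1000, 260, 1000)` compares a hypothetical baseline with 200 successful sessions out of 1,000 against a variant with 260 out of 1,000.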

Deployment Scenarios for Competitive Intelligence

AI-enabled research agents automate the aggregation and analysis of news feeds, patent filings, financial disclosures, and social media streams, transforming competitive intelligence into a continuous capability. By embedding real-time data inputs and probabilistic trend forecasts into established frameworks—SWOT analysis, Porter’s Five Forces, and PESTLE—organizations convert raw signals into strategic narratives that guide planning, innovation, and risk mitigation.

- Strategic Planning and Scenario Analysis

- Product Development and Innovation Pipeline

- Mergers, Acquisitions, and Partnership Due Diligence

- Regulatory Monitoring and Compliance Forecasting

- Market Entry and Expansion Decisions

- Pricing Strategy and Revenue Optimization

- Benchmarking and Performance Tracking

Product strategists leverage platforms like Consensus and Perplexity to distill research abstracts into prioritized feature lists and technology readiness levels. M&A teams accelerate due diligence by extracting patterns from filings, mapping executive networks, and gauging social sentiment. Regulatory teams scan global rulings and draft regulations, correlating approval timelines with competitor pipelines. Expansion initiatives assess regional dynamics through local reports, price lists, and consumer sentiment. Pricing strategists ingest competitor offers and inventory data to forecast optimal price points. Benchmarking units track public financial metrics, patent counts, web traffic, and social engagement to situate performance relative to peers.

Implementing these scenarios transforms competitive intelligence units into continuous insight centers. AI agents handle data acquisition and preliminary analysis, enabling human experts to focus on interpretation, hypothesis validation, and strategic recommendation. Cross-functional integration via open APIs and standardized schemas embeds insights into dashboards, ERP systems, and collaboration platforms, ensuring real-time guidance within everyday processes. Governance protocols—combining automated quality checks with human review—maintain data integrity and ethical usage. Continuous improvement loops compare decision outcomes against AI forecasts, recalibrating agent performance in alignment with evolving market conditions and organizational strategies.

Evaluating Research Accuracy and Insight Depth

Deploying AI agents for knowledge discovery requires rigorous evaluation of both accuracy—alignment of assertions with verifiable facts—and insight depth—the capacity to situate those facts within broader conceptual frameworks. A layered validation approach combines automated metrics and expert review. Precision, recall, and F1 score establish a quantitative baseline, while subject matter specialists assess contextual interpretation, thematic coverage, and synthesis quality.

Assessing Depth through Concept Mapping

Concept mapping techniques evaluate how effectively an agent connects discrete data points into coherent idea networks. Metrics such as coverage ratio (proportion of core concepts captured) and coherence score (logical relationships among concepts) guide depth assessments. Domain-specific ontologies and taxonomies further tailor these measures to organizational priorities.
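The text does not give formulas for these metrics, so the sketch below shows one possible operationalization. Treating coverage as set overlap with a list of core concepts, and coherence as the share of extracted links that also appear in a reference concept map, are both assumptions made for illustration.

```python
def coverage_ratio(extracted_concepts, core_concepts):
    """Share of core concepts found among the agent's extracted concepts."""
    core = {c.lower() for c in core_concepts}
    found = {c.lower() for c in extracted_concepts}
    return len(core & found) / len(core) if core else 0.0

def coherence_score(extracted_links, reference_links):
    """Share of extracted concept links present in a reference map.

    Links are undirected pairs, so ("a", "b") equals ("b", "a").
    """
    extracted = {frozenset(link) for link in extracted_links}
    reference = {frozenset(link) for link in reference_links}
    return len(extracted & reference) / len(extracted) if extracted else 0.0
```

The list of core concepts and the reference map would typically come from the domain ontology or from subject matter experts.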

Source Reliability and Bias Mitigation

AI agents draw on heterogeneous repositories with varying credibility. Source weighting schemas assign reliability scores based on peer review status, author credentials, publication date, and publisher reputation. Periodic bias audits address algorithmic, recency, and topical biases by analyzing output distributions and enforcing guardrails that preserve historical context alongside emerging research.
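A source weighting schema of this kind can be sketched as a weighted sum of normalized signals. The weights, the five-year recency half-life and the field names below are hypothetical placeholders that an organization would calibrate for itself.

```python
from datetime import date

# Hypothetical weights; they sum to 1.0 so scores stay within [0, 1].
WEIGHTS = {
    "peer_reviewed": 0.4,
    "author_credentials": 0.2,
    "recency": 0.2,
    "publisher_reputation": 0.2,
}

def recency_score(published, today, half_life_years=5.0):
    """Decay from 1.0 toward 0.0, halving every half_life_years."""
    age_years = max(0.0, (today - published).days / 365.25)
    return 0.5 ** (age_years / half_life_years)

def reliability_score(source, today):
    """Combine the four signals; credential and reputation inputs are in [0, 1]."""
    signals = {
        "peer_reviewed": 1.0 if source["peer_reviewed"] else 0.0,
        "author_credentials": source["author_credentials"],
        "recency": recency_score(source["published"], today),
        "publisher_reputation": source["publisher_reputation"],
    }
    return sum(WEIGHTS[name] * value for name, value in signals.items())
```

A strong recency weight is itself a source of recency bias, so the weights are a natural target for the periodic bias audits described above.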

Operationalizing Balanced Scorecards

Balanced scorecards integrate retrieval effectiveness, synthesis quality, source reliability, and operational efficiency into a unified evaluation framework. Target thresholds align with risk appetite and strategic goals. Regular reviews and stakeholder alignment sessions drive iterative improvements and ensure that AI-driven insights meet performance expectations.

Governance and Continuous Model Stewardship

Governance protocols combine automated quality checks—such as citation verification and contradiction detection—with human oversight to mitigate hallucinations and contextual errors. Drift detection algorithms and scheduled retraining maintain alignment with evolving knowledge landscapes. Feedback loops compare actual decision outcomes against AI forecasts, enabling agents to recalibrate weighting schemes and improve relevance over time.

By uniting technical rigor, human judgment, and robust governance, organizations can scale AI-driven research workflows without compromising on accuracy, depth, or ethical standards. The orchestration of autonomous AI outputs within human-led strategic processes delivers sustainable competitive advantage in an era defined by complexity and rapid change.

Chapter 5: Document Comprehension and Q&A Agents for Enterprises

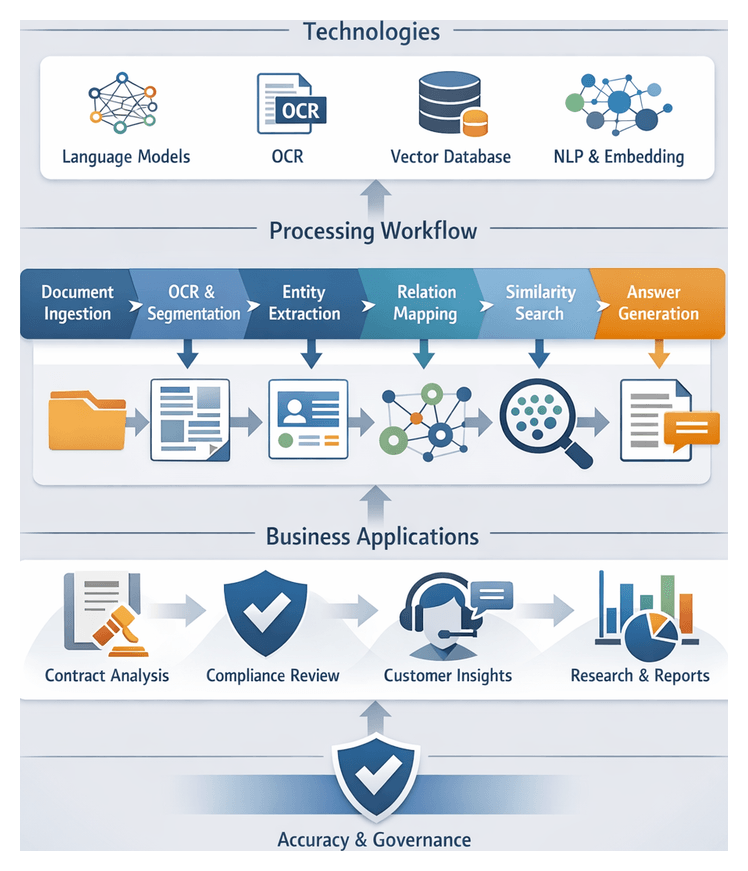

Core Mechanisms of Document Analysis Agents

Document analysis agents leverage advanced natural language processing and machine learning to convert unstructured text into structured insights. These autonomous systems support enterprises in interpreting legal contracts, technical manuals, customer correspondence, and regulatory filings by automating parsing, semantic indexing, and contextual understanding. Key capabilities include optical character recognition for scanned files, text segmentation to detect chapters and tables, entity recognition for names, dates, and monetary values, relation extraction to map connections between clauses, semantic embedding for similarity search, and natural language generation for summaries and query responses.

Fundamental Technologies

- Transformer-Based Language Models such as BERT, RoBERTa, and GPT, fine-tuned on domain-specific corpora to capture semantic nuance.

- OCR Engines that preserve document layout while converting images and PDFs into machine-readable text.

- Embedding Frameworks like Sentence-BERT that generate high-dimensional vectors for sentences and paragraphs.

- Vector Databases including Pinecone and Milvus for rapid similarity search over millions of embeddings.

- Hybrid Information Retrieval Pipelines combining inverted-index search with neural re-ranking to balance speed and relevance.

- Natural Language Generation Modules that produce coherent extractive or abstractive summaries tailored to user queries.

Operational Workflow

- Document Ingestion: Automated transfer from content management systems, cloud storage, email servers, or network drives.

- Preprocessing and OCR: Layout analysis and text cleaning for scanned or image-based content.

- Segmentation and Annotation: Division into logical units annotated with metadata (author, date, type).

- Embedding Generation: Conversion of each unit into vector representations.

- Indexing and Storage: Storage of embeddings in vector databases alongside traditional search indexes.

- Query Processing: Natural language queries embedded and matched against the index to retrieve relevant passages.

- Answer Generation: Generative models synthesize retrieved content into concise, context-aware responses.

- Delivery and Feedback: Results delivered via UI or API, with user feedback captured to refine models.
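The segmentation, embedding, indexing and query steps above can be illustrated end to end with a toy pipeline. The term-count "embedding" here is a deliberate stand-in for a sentence encoder, and the in-memory list stands in for a vector database. All function names are illustrative.

```python
import math
from collections import Counter

def segment(text):
    """Split a document into paragraph-level units."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]

def embed(text):
    """Toy embedding: a term-count vector (a real system would use an encoder)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def build_index(documents):
    """documents: {doc_id: text}. Returns rows of (doc_id, unit_no, text, vector)."""
    return [(doc_id, i, unit, embed(unit))
            for doc_id, text in documents.items()
            for i, unit in enumerate(segment(text))]

def retrieve(index, query, top_k=1):
    """Return the top_k units most similar to the query, with their source ids."""
    q = embed(query)
    ranked = sorted(index, key=lambda row: cosine(q, row[3]), reverse=True)
    return [(doc_id, unit_no, text) for doc_id, unit_no, text, _ in ranked[:top_k]]
```

Returning the document id and unit number with each passage is what later allows an answer to cite its evidence.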

Enterprise Use Cases

- Contract Review and Management: AI agents such as Humata identify termination rights, indemnities, and renewal terms to accelerate due diligence.

- Regulatory Compliance: Automated analysis of policy updates and audit reports to pinpoint obligations in finance, healthcare, and manufacturing.

- Customer Support Knowledge Bases: Conversational agents powered by AskYourPDF answer complex inquiries with document-backed responses.

- Research and Competitive Intelligence: Real-time summarization of white papers, market studies, and news releases.

- Internal Knowledge Discovery: Consolidation of siloed repositories to surface historical decisions and expertise across teams.

- Policy Analysis in Healthcare and Finance: Extraction of reimbursement guidelines and reporting standards to identify compliance gaps.

Accuracy Assessment in Legal and Regulatory Review

Reviewing legal and regulatory documents demands precision to avoid penalties and operational risk. AI-driven comprehension agents must be evaluated through rigorous frameworks that address the complexities of legal language, benchmark performance, and ensure governance and auditability.

Complexities of Legal Language

Legal texts feature specialized jargon, multi-party clauses, cross-references to statutes or schedules, and context-dependent definitions. Effective agents employ domain-adapted tokenization, specialized entity recognition, and custom ontologies to preserve semantic fidelity. Accuracy assessment extends beyond word-level matching to verifying that obligations, rights, and conditions are correctly mapped and contextualized.

Key Evaluation Metrics

- Precision, Recall, and F1-Score for extracted entities and clauses.

- Clause Detection Rate: Proportion of relevant clauses identified versus a human-annotated gold standard.

- Obligation Extraction Accuracy: Correct capture and labeling of duties, rights, and prohibitions.

- Cross-Reference Resolution Score: Ability to link in-text references to statutes, schedules, or prior clauses.

- Context Preservation Index: Degree to which extracted segments retain interpretive context.

Benchmarking and Interpretive Frameworks

Benchmarking leverages validated datasets such as the Contract Understanding Atticus Dataset (CUAD) and proprietary, annotated corpora focused on risk clauses and indemnity provisions. Beyond metrics, interpretive frameworks from legal hermeneutics and discourse analysis diagnose systematic errors:

- Hermeneutic Circle: Iterative contextual review refining clause interpretation and overall document purpose.

- Rhetorical Structure Theory: Verification that functional roles of clauses (elaboration, justification, condition) are preserved.

- Ontological Alignment: Mapping entities and relationships to a formal legal ontology for consistency.

- Pragmatic Context Evaluation: Recognition of situational cues such as jurisdictional scope or industry regulations.

Human-in-the-Loop Auditing

- First-Pass Extraction Review: Legal professionals annotate false positives and omissions.

- Semantic Consistency Check: Experts verify obligations, rights, and definitions in context.

- Regulatory Compliance Validation: Compliance officers confirm alignment with current statutes.

- Continuous Feedback Loop: Annotated corrections refine model performance over time.

Platforms like Humata and AskYourPDF support collaborative auditing by enabling trackable annotations and side-by-side comparisons of source text and AI summaries.

Confidence Calibration and Governance

- Aggressive thresholds for low-risk documents; conservative thresholds, combined with mandatory human review, for high-risk contracts.

- Adaptive thresholding based on document type, clause complexity, and stakeholder risk tolerance.

- Versioned model deployment, explainability logs, and access controls to maintain audit trails.

- Dashboards tracking false-positive and false-negative rates for continuous refinement.
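The thresholding policy in the list above might be expressed as a small routing function. The tier names and cutoff values are hypothetical and would be tuned against the false-positive and false-negative rates tracked on the dashboards.

```python
# Hypothetical confidence cutoffs per risk tier.
THRESHOLDS = {"low": 0.70, "medium": 0.85, "high": 0.95}

def route_extraction(confidence, risk_tier):
    """Decide whether an extraction is auto-accepted or sent to a reviewer."""
    if risk_tier == "high":
        # High-risk contracts always get human review; confidence only
        # determines how urgently the item is queued.
        if confidence >= THRESHOLDS["high"]:
            return "human_review"
        return "human_review_priority"
    if confidence >= THRESHOLDS[risk_tier]:
        return "auto_accept"
    return "human_review"
```

Keeping the cutoffs in a single versioned table makes threshold changes auditable alongside model versions.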

Interactive Question-and-Answer Applications

Interactive Q&A agents transform static document repositories into conversational partners. By engaging in dialogue, users obtain context-aware insights that accelerate decision cycles, reduce cognitive load, and democratize organizational knowledge.

Contract Negotiation and Due Diligence

AI assistants parse clause hierarchies, compare deviations from standard templates, and flag risk exposures. Teams can query “Which contracts expose us to termination fees over one million dollars?” or “Where do indemnification obligations cover third-party conduct?” Real-time analysis of nested references and cross-document relationships expedites negotiations and drives consistency.

Regulatory Compliance and Policy Interpretation

Compliance officers query policy documents against regulatory texts to surface divergences and generate tailored summaries. Questions such as “Which internal policies require updates for the new data privacy regulation?” yield pinpointed clauses, recommended revisions, and comparative tables between legacy and updated language.

Customer Support and Service Intelligence

Support teams use agents like Humata and AskYourPDF to translate technical manuals and service logs into step-by-step guidance. Queries such as “How do I reset the network module on the X500 router?” produce coherent solutions drawn from manuals and past support cases, reducing resolution times and improving customer satisfaction.

Knowledge Sharing and Onboarding

Centralized Q&A interfaces unify training materials, project archives, and best practice guides. New hires ask “What were the key milestones in Project Orion?” or “Which templates does finance use for quarterly forecasts?” The agent retrieves project plans, meeting minutes, and reporting frameworks, accelerating ramp-up and fostering continuous learning.

Executive Briefing and Strategic Decision Support

Leaders pose high-level queries such as “What are the top regulatory risks in Europe?” or “How does our roadmap compare to competitors?” Agents synthesize multiple strategic documents into concise, evidence-based insights, enhancing agility and focus at the executive level.

- Interactive Q&A agents convert repositories into conversational partners, improving speed and precision.

- Contract analysis benefits from real-time clause comparison and risk identification.

- Compliance teams gain proactive policy interpretation and streamlined audit readiness.

- Support functions deliver faster, document-backed solutions.

- Onboarding leverages centralized access to institutional memory.

- Executives extract high-level summaries without manual report review.

Security, Governance, and Operational Resilience

Secure and reliable deployment of document AI requires multi-layered safeguards, transparent governance, and robust operational practices to mitigate risks and maintain trust.

Security and Privacy Architecture

- End-to-end encryption with enterprise key management systems.

- Zero-trust network principles to isolate AI resources and reduce lateral movement.

- Data loss prevention policies to block unauthorized exfiltration of sensitive content.

- Secure enclaves or homomorphic encryption to keep sensitive data protected during inference.

- Sanitization, tokenization, and redaction applied before invoking services such as Google Document AI or Azure Form Recognizer.
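
As a minimal sketch of the redaction step above, the snippet below replaces sensitive substrings with typed placeholders before text would be sent to an external document service. The `PATTERNS` table and the `redact` function are illustrative assumptions, not part of any vendor SDK, and two regular expressions are far from a complete detection strategy.

```python
import re

# Hypothetical patterns for illustration only; production redaction needs
# locale-aware and domain-specific rules (names, account numbers, and so on).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace every match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(redact("Contact jane.doe@example.com, SSN 123-45-6789."))
```

Typed placeholders keep the sentence structure intact, so downstream extraction can still work on the redacted text.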

Explainability and Transparency

- Evidence tracing by logging source passages alongside AI responses.

- Presentation of confidence scores and probability distributions for key extractions.

- User feedback loops capturing corrections to refine model outputs.

- Model registry tracking versions, training snapshots, and fine-tuning parameters for auditability.
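
One way to picture evidence tracing is a log record that stores each answer together with its source passages, confidence scores, and the model version that produced it. The sketch below uses hypothetical class and field names (`Evidence`, `TracedAnswer`, `model_version`); an actual audit schema would follow the organization's own logging standards.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class Evidence:
    document_id: str   # identifier of the source document
    passage: str       # text span the answer relied on
    confidence: float  # model-reported score, assumed to lie in [0, 1]


@dataclass
class TracedAnswer:
    question: str
    answer: str
    model_version: str  # ties the response back to a model registry entry
    evidence: list[Evidence] = field(default_factory=list)
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_log_line(self) -> str:
        """Serialize the record as one JSON line for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)
```

Each record is self-describing, so an auditor can reconstruct what the agent said, why, and under which model version.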

Governance and Compliance

- Data classification aligned with regulatory and organizational risk profiles.

- Approval gates for data ingestion, model fine-tuning, and production rollout.

- Standard operating procedures for incident response when outputs contravene policy.

- Comprehensive audit trails of data access, model interactions, and user overrides.

- Cross-border data transfer controls and rights to explanation under privacy statutes.

Operational Resilience

- Automated validation suites comparing AI outputs against expert-validated benchmarks.

- Monitoring for model drift, data drift, and service latency with alerting mechanisms.

- Disaster recovery plans with fallback to human review or legacy parsing systems.

- Capacity planning and load testing to maintain performance during peak demand.
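
Data drift monitoring can be illustrated with the population stability index (PSI), one common drift statistic among several. The sketch below is a pure-Python approximation under simple assumptions: equal-width bins derived from the baseline sample and a small floor for empty bins. The alert cut-offs often quoted for PSI (around 0.1 and 0.2) are rules of thumb and should be calibrated per use case.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population stability index between a baseline and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]
    shifted = [0.5 + i / 200 for i in range(100)]
    print(psi(baseline, baseline), psi(baseline, shifted))
```

An alerting job could compute this per feature on a schedule and raise a notification when the value crosses the agreed threshold.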

Key Considerations and Limitations

- Data Sensitivity: Anonymization and on-premises hosting for highly regulated content.

- Model Hallucinations: Human-in-the-loop review for high-stakes documents to intercept fabricated outputs.

- Domain Specificity: Fine-tuning on curated corpora to improve handling of specialized jargon.

- Regulatory Complexity: Ongoing adaptation to evolving requirements across jurisdictions and sectors.

- Explainability Constraints: Partial visibility into transformer decision logic necessitates thorough testing.

- Integration Trade-Offs: Middleware and API orchestration to connect AI agents with legacy systems.

- Resource Intensity: Substantial compute and storage demands that impact total cost of ownership.

- Human Oversight Balance: Defined thresholds for automation versus manual validation to sustain quality control.
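
The idea of defined thresholds for automation versus manual validation can be sketched as a simple routing rule. The threshold values and the three route names below are hypothetical; in practice they would be set by the governance body and tuned against observed error rates.

```python
def route(
    confidence: float,
    high_stakes: bool,
    auto_threshold: float = 0.9,    # hypothetical cut-off for straight-through processing
    review_threshold: float = 0.6,  # hypothetical cut-off below which a human must review
) -> str:
    """Decide how an AI extraction is handled based on stakes and confidence."""
    if high_stakes or confidence < review_threshold:
        return "human_review"   # high-stakes documents are always reviewed
    if confidence >= auto_threshold:
        return "auto_approve"
    return "spot_check"         # mid-confidence outputs are sampled for review


if __name__ == "__main__":
    print(route(0.95, high_stakes=False))
    print(route(0.95, high_stakes=True))
    print(route(0.75, high_stakes=False))
```

Routing high-stakes documents to review regardless of confidence reflects the hallucination risk noted above: a fabricated output can still carry a high score.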

A holistic approach combining advanced technology, rigorous evaluation, collaborative governance, and continuous monitoring enables enterprises to unlock the full potential of AI-driven document intelligence while managing associated risks.

Chapter 6: Competitive Intelligence and Market Analysis Agents

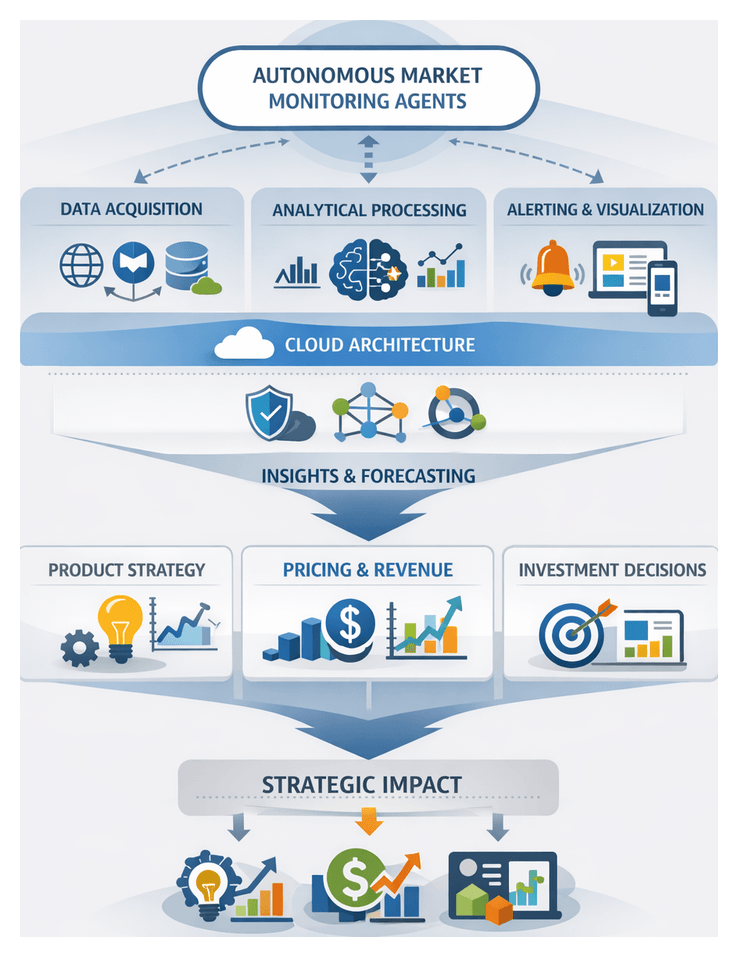

Strategic Foundations of Autonomous Market Monitoring Agents

In an environment defined by accelerating digital footprints and intensifying competitive pressures, autonomous market monitoring agents have emerged as essential tools for continuous competitive intelligence. These software entities ingest structured and unstructured data—from news feeds, social media, regulatory filings, competitor websites and internal systems—then apply natural language processing, machine learning and predictive analytics to detect trends, anomalies and strategic signals in real time. Unlike periodic reports or static dashboards, these agents operate 24/7, delivering actionable insights that inform product development, pricing, investment decisions and risk mitigation.

At their core, market monitoring agents integrate three capabilities:

- Data Acquisition: Continuous web scraping, API ingestion and connector-based integration with CRM, ERP and regulatory databases to maintain broad coverage and up-to-date information.

- Analytical Processing: Modular libraries of statistical classifiers, topic models, sentiment analyzers and time-series forecasters that classify, cluster and predict market variables.

- Intelligent Alerting and Visualization: Customizable rule engines and natural-language summarization that deliver insights via dashboards, mobile alerts and conversational interfaces.

These components rest on a cloud-native architecture that ensures elastic compute, modular workflows and secure governance. Semantic enrichment through knowledge graphs and domain ontologies adds industry-specific context, while logging, version control and access management uphold compliance and auditability. Together, they support a closed-loop intelligence cycle in which data is ingested, insights are generated and recommendations are delivered to decision makers.

Historically, market intelligence relied on manually assembled reports that lagged behind real-time events. Early automation, in the form of rule-based scrapers and keyword alerts, reduced manual effort but lacked scalability and adaptability. The integration of machine learning in the 2010s, followed more recently by large language models, enabled automated classification, entity extraction and sentiment scoring. Today’s agents incorporate reinforcement learning for adaptive thresholds and deep semantic understanding, elevating competitive intelligence from a periodic function to an embedded, strategic capability.

Strategic implications are far-reaching. In product development, agents surface feature gaps and emerging customer needs. Pricing teams leverage competitor price movements and elasticity models for dynamic revenue management. Corporate development uses opportunity scoring and patent analysis for M&A screening. Risk management gains early warnings of compliance violations or supply-chain disruptions. By embedding autonomous monitoring across organizational workflows, enterprises shift from reactive responses to proactive strategies.

Methodologies for Trend Forecasting

Selecting the right forecasting methodology is critical to translating data streams into reliable projections. Competitive intelligence teams balance model interpretability, computational requirements and adaptability to evolving market conditions. Key approaches include:

- Statistical Time-Series Models — Techniques such as ARIMA, Holt-Winters exponential smoothing and structural time-series analysis offer transparent decomposition into trend, seasonal and irregular components. Evaluative criteria include metrics like AIC, BIC and Ljung-Box tests to assess fit and residual autocorrelation.

- Machine Learning and AI-Driven Techniques — Algorithms such as Random Forests, XGBoost, Support Vector Regression and neural architectures like LSTM networks capture nonlinear behaviors and complex interactions. Platforms such as DataRobot and H2O.ai provide automated model selection, hyperparameter tuning and explainability modules using SHAP values and partial dependence plots.

- Pattern Recognition and Signal Detection — Clustering (K-Means, DBSCAN) and anomaly detection (Isolation Forest, One-Class SVM) frameworks identify emergent behaviors and regime shifts. Event-based signals from platforms like PredictHQ augment historical trends to surface early warning indicators.

- Ensemble and Hybrid Strategies — Stacking, blending or weighted averaging of statistical and AI models leverages complementary strengths. Analysts assess diversity metrics, out-of-sample robustness and resilience to concept drift through back-testing and Monte Carlo simulations.

- Real-Time and Streaming Forecasting — Online learning algorithms in libraries such as River and incremental stochastic gradient descent enable continuous model updates. Streaming architectures powered by KX or Apache Kafka balance latency and accuracy through sliding-window recalibration and anomaly thresholds.

- Agent-Based Simulations and Scenario Forecasting — Monte Carlo simulations and multi-agent interactions generate a spectrum of plausible futures. Resources like those listed on AgentLink AI illustrate how simulated outputs integrate with SWOT or OODA frameworks to anticipate competitor moves and regulatory shifts.
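
To make the statistical family above more concrete, the following sketch implements Holt's linear method (double exponential smoothing), a simpler relative of Holt-Winters without the seasonal component. The function name `holt_forecast` and the default smoothing parameters are assumptions chosen for illustration; a production model would normally come from a tested statistics library and be tuned by back-testing.

```python
def holt_forecast(
    series: list[float],
    alpha: float = 0.5,  # level smoothing factor (illustrative default)
    beta: float = 0.3,   # trend smoothing factor (illustrative default)
    horizon: int = 3,
) -> list[float]:
    """Forecast `horizon` steps ahead with Holt's linear trend method."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    # Initialize level and trend from the first two observations.
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        prev_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
    return [level + h * trend for h in range(1, horizon + 1)]


if __name__ == "__main__":
    # A perfectly linear series should be extrapolated along the same line.
    print(holt_forecast([10, 12, 14, 16]))
```

Because the method exposes only a level and a trend, its forecasts are easy to explain to decision makers, which is the interpretability advantage attributed to statistical models above.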

When comparing methodologies, organizations use multi-criteria decision frameworks that consider data characteristics (volume, granularity, stationarity), model complexity (compute needs, expertise), interpretability (transparency, diagnostics), scalability (across markets and product lines), resilience (to drift and disruptions) and integration (with existing BI platforms and data pipelines). Interpretive frameworks such as Predict-Prepare-Perform or Balanced Scorecards ensure that forecasts map to readiness indicators and strategic objectives, transforming raw projections into actionable guidance.
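
A multi-criteria comparison of this kind is often implemented as a weighted scoring matrix. The sketch below uses three of the criteria named above; the weights, the 1 to 5 scores, and the candidate names are invented purely for illustration and carry no empirical meaning.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Sum of criterion scores multiplied by their weights."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[c] * scores[c] for c in weights)


def rank(candidates: dict[str, dict[str, float]], weights: dict[str, float]):
    """Return (name, score) pairs sorted from best to worst."""
    scored = {name: weighted_score(s, weights) for name, s in candidates.items()}
    return sorted(scored.items(), key=lambda item: item[1], reverse=True)


# Hypothetical weights and scores on a 1-5 scale.
WEIGHTS = {"interpretability": 0.4, "scalability": 0.3, "resilience": 0.3}
CANDIDATES = {
    "statistical": {"interpretability": 5, "scalability": 2, "resilience": 2},
    "machine_learning": {"interpretability": 3, "scalability": 4, "resilience": 4},
}

if __name__ == "__main__":
    for name, score in rank(CANDIDATES, WEIGHTS):
        print(f"{name}: {score:.2f}")
```

Making the weights explicit turns a subjective preference into something the governance team can review and adjust.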

Embedding Insights into Strategic Planning

Integrating agent-generated intelligence into strategic planning elevates static annual roadmaps to dynamic, adaptive frameworks. Cross-functional synthesis of signal interpretation and hypothesis testing ensures that market signals become core inputs to product, pricing and investment decisions.

Aligning Intelligence with Product Roadmaps

- Trend Extrapolation: Agents detect shifts in keyword usage across developer forums, social media and patent filings, informing quantitative forecasts of feature demand.

- Gap Analysis: Automated benchmarking against competitor feature launches and customer sentiment highlights white-space opportunities.

- Risk Scenario Calibration: Monitoring supply-chain disruptions or regulatory developments enables contingency planning within roadmap governance.

These insights feed into iterative feedback loops within product governance forums, allowing teams to adjust feature sequencing and resource allocation as market signals evolve.

Informing Pricing and Revenue Strategies

- Competitive Benchmark Monitoring: Continuous scraping of listed prices, discounts and bundling practices feeds into dynamic pricing engines.

- Elasticity Modeling: Agent-driven simulations incorporating historical sales data and macroeconomic indicators support real-time adjustments.

- Promotion Effectiveness Evaluation: Immediate attribution of campaign performance enables iterative refinement of discount levels and timing.

By embedding these streams into value-based pricing models and customer lifetime value analyses, organizations sustain margins while responding swiftly to competitive moves.
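
Elasticity modeling ultimately rests on estimating how demand responds to price. As a minimal worked example, the function below computes arc (midpoint) price elasticity from two observed price and quantity points. The figures are invented, and real elasticity models would control for seasonality, promotions and macroeconomic factors.

```python
def arc_elasticity(q1: float, q2: float, p1: float, p2: float) -> float:
    """Midpoint price elasticity of demand between two observations."""
    pct_quantity = (q2 - q1) / ((q1 + q2) / 2)
    pct_price = (p2 - p1) / ((p1 + p2) / 2)
    return pct_quantity / pct_price


if __name__ == "__main__":
    # Illustrative numbers: price rises from 10 to 12, units fall from 100 to 80.
    e = arc_elasticity(q1=100, q2=80, p1=10, p2=12)
    print(f"elasticity = {e:.2f}")
    print("revenue before:", 10 * 100, "after:", 12 * 80)
```

An absolute value above 1 indicates elastic demand: in this example the price increase lowers revenue from 1000 to 960.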

Guiding Investment and Portfolio Decisions

- Market Attractiveness Scoring: AI agents assess growth rates, competitive intensity and regulatory risks, informing net present value and risk-adjusted return analyses.

- Portfolio Diversification Analysis: Comparative intelligence on adjacent markets identifies mitigation strategies for concentration risks.

- M&A Target Screening: Automated profiling of potential acquisition candidates based on innovation signals, patent activity and partnership announcements.

Standardized decision gates map intelligence outputs to predefined criteria, ensuring alignment with corporate risk appetite and long-term value creation targets.
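
A decision gate based on risk-adjusted net present value can be sketched as follows. It assumes the agent's market risk assessment is translated into a risk premium added to the discount rate, which is one possible convention rather than a prescribed method. The function names, cash flows and rates are hypothetical.

```python
def npv(rate: float, cash_flows: list[float]) -> float:
    """Net present value; cash_flows[0] occurs today (t = 0)."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def passes_gate(
    cash_flows: list[float],
    base_rate: float,
    risk_premium: float,   # assumed to be derived from agent risk scoring
    min_npv: float = 0.0,  # hypothetical hurdle set by corporate risk appetite
) -> bool:
    """Approve only if risk-adjusted NPV meets the predefined hurdle."""
    return npv(base_rate + risk_premium, cash_flows) >= min_npv


if __name__ == "__main__":
    flows = [-100.0, 60.0, 60.0]  # illustrative investment and returns
    print(passes_gate(flows, base_rate=0.08, risk_premium=0.02))
    print(passes_gate(flows, base_rate=0.08, risk_premium=0.10))
```

The same cash flows pass at a low risk premium and fail at a high one, showing how intelligence on market risk can change an investment decision.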

Cross-Functional Integration and Governance

Enterprises establish intelligence councils or centers of excellence that define interpretation protocols, data quality standards and escalation pathways. Cross-functional representation from strategy, finance, product, marketing and legal harmonizes perspectives on conflicting signals. Balanced Scorecards incorporate agent-derived metrics across financial performance, customer satisfaction, internal processes and learning and growth. Scenario planning workshops leverage agent simulations to stress-test strategic assumptions, embedding clear accountability and feedback loops that refine both AI models and human interpretation.

Measuring Strategic Impact

Effectiveness is gauged through leading indicators—such as time from insight to action and the percentage of revenue influenced by agent-informed decisions—and lagging measures like market share changes and ROI on strategic initiatives. Economic Value Added (EVA) analyses isolate incremental value from intelligence-driven projects, while risk reduction metrics quantify avoided losses. A comprehensive impact dashboard triangulates these metrics, providing executives with visibility into how autonomous insights translate into tangible outcomes.
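
Economic Value Added is conventionally defined as net operating profit after tax (NOPAT) minus a capital charge, that is, the weighted average cost of capital (WACC) multiplied by invested capital. The sketch below applies that standard formula to invented figures. Isolating the incremental value of an intelligence-driven project by comparing results with and without it is an assumption about how such an analysis might be set up.

```python
def eva(nopat: float, invested_capital: float, wacc: float) -> float:
    """Economic Value Added: NOPAT minus the capital charge."""
    return nopat - wacc * invested_capital


def incremental_eva(with_project: float, without_project: float) -> float:
    """Value attributable to a project, given EVA with and without it."""
    return with_project - without_project


if __name__ == "__main__":
    # Illustrative figures only.
    baseline = eva(nopat=1_000_000, invested_capital=8_000_000, wacc=0.10)
    with_intel = eva(nopat=1_200_000, invested_capital=8_500_000, wacc=0.10)
    print(baseline, with_intel, incremental_eva(with_intel, baseline))
```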

Interpretive Frameworks

AI outputs gain strategic meaning when anchored in established models. Porter’s Five Forces benefits from empirical signals on competitor actions, supplier dynamics and customer bargaining power. PESTEL analyses draw on real-time geopolitical and regulatory intelligence, while SWOT assessments integrate trend data to validate strengths and expose threats. Technology adoption lifecycle models forecast diffusion curves using early adopter sentiment and channel readiness. By weaving AI insights into these frameworks, organizations ensure decisions rest on both robust data and time-tested conceptual structures.

Key Considerations for Adoption and Governance

- Data Quality and Coverage: Evaluate data vendors and public feeds for completeness, timeliness and bias, balancing proprietary subscriptions with open-source data.

- Analytical Framework Alignment: Map AI-driven intelligence to strategic models—such as Porter’s Five Forces or PESTEL—to enhance interpretability and executive buy-in.

- Systems Integration: Ensure seamless connections to CRM, ERP, planning tools and BI platforms, embedding trend dashboards within Microsoft Power BI or Salesforce via APIs.

- Governance and Oversight: Define policies for model retraining, data retention and access control. Cross-functional governance teams should monitor performance metrics, audit logs and ethical risk indicators.

- Change Management and Skill Development: Invest in training and rotational programs to cultivate hybrid analysts skilled in data engineering, model validation and strategic interpretation.

- Scalability and Performance Trade-Offs: Balance real-time processing needs against compute costs. High-frequency monitoring may demand streaming architectures, while strategic forecasting can leverage batch workflows.

Limitations and Risk Management