Design Intelligence: AI Agents as Creative Partners in the Age of Automation

Introduction

Transforming the Creative Landscape with AI Automation

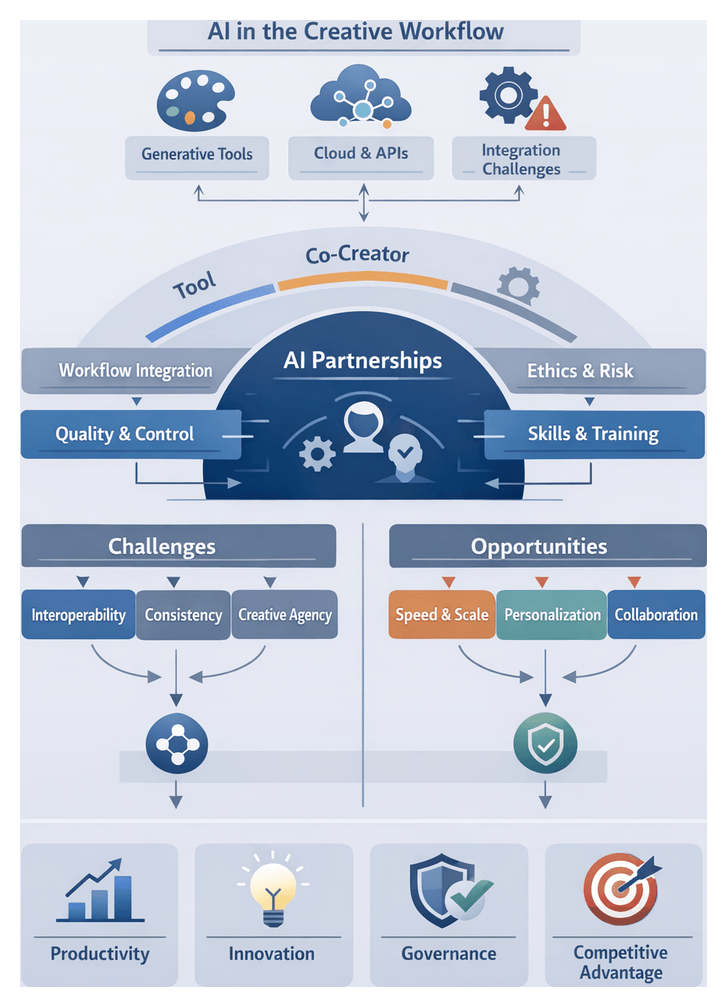

Across marketing agencies, design studios, publishing houses and in-house teams, advances in artificial intelligence, machine learning and cloud compute are reshaping every stage of the creative workflow. Breakthroughs in deep learning, the democratization of GPUs and AI accelerators, and the availability of open datasets and pre-trained models have lowered the barrier to entry for powerful generative and analytic tools. Leading engines such as DALL·E 3, Midjourney and Adobe Firefly produce complex visuals from simple prompts. Platforms like Runway ML enable real-time video and image manipulation, while Canva’s Magic Write and various Figma plugins offer AI-driven copy generation, layout suggestions and responsive design tweaks. These systems move beyond repetitive tasks, entering realms of ideation, style exploration and brand-consistent adaptation.

Faced with competitive pressures for faster turnaround, higher content volume and personalized experiences, creative teams see AI as a path to speed, scale and variation. Yet pilot projects often stall at integration points, quality control and governance concerns. Designers worry about preserving creative agency, while technical teams navigate custom integrations with legacy systems. These frictions define a problem space of unfulfilled potential, organizational resistance and the risk of unintended outcomes.

Challenges and Opportunities at Scale

As organizations explore creative automation, they encounter systemic challenges that must be addressed to realize AI’s promise:

- Workflow Integration and Interoperability: Disparate AI tools and cloud APIs require custom connectors or manual handoffs, leading to bottlenecks and version conflicts.

- Quality Control and Consistency: Generative outputs can vary widely in style and brand alignment, demanding review processes that may erode efficiency gains.

- Maintaining Creative Agency: When AI becomes a primary source of ideas, designers risk being relegated to curators rather than authors, impacting engagement and job satisfaction.

- Skill Gaps and Training Needs: Effective AI use requires prompt engineering, model assessment and data literacy—skills many creatives must now acquire.

- Data Privacy, Bias and IP: Models trained on large uncurated datasets can reproduce stereotypes or infringe on rights; emerging legal frameworks around AI-generated content add complexity.

Despite these hurdles, AI opens transformative possibilities:

- Accelerated Productivity: Automating background removal, color palette generation and text layout frees teams to focus on high-value concept work.

- Expanded Creative Exploration: Generative models surface novel visual directions and copy variations, reducing creative blocks.

- Hyper-Personalization: Real-time analysis of user data enables tailored visuals and messaging at scale.

- Real-Time Collaboration: Cloud-based AI fosters synchronous co-creation, with instant alternatives and performance predictions.

- New Business Models: On-demand design services, dynamic advertising networks and subscription platforms adapt content automatically to performance data.

- Democratization of Design: User-friendly AI interfaces empower non-designers to produce professional assets with minimal training.

Reframing AI Agents as Co-Creative Partners

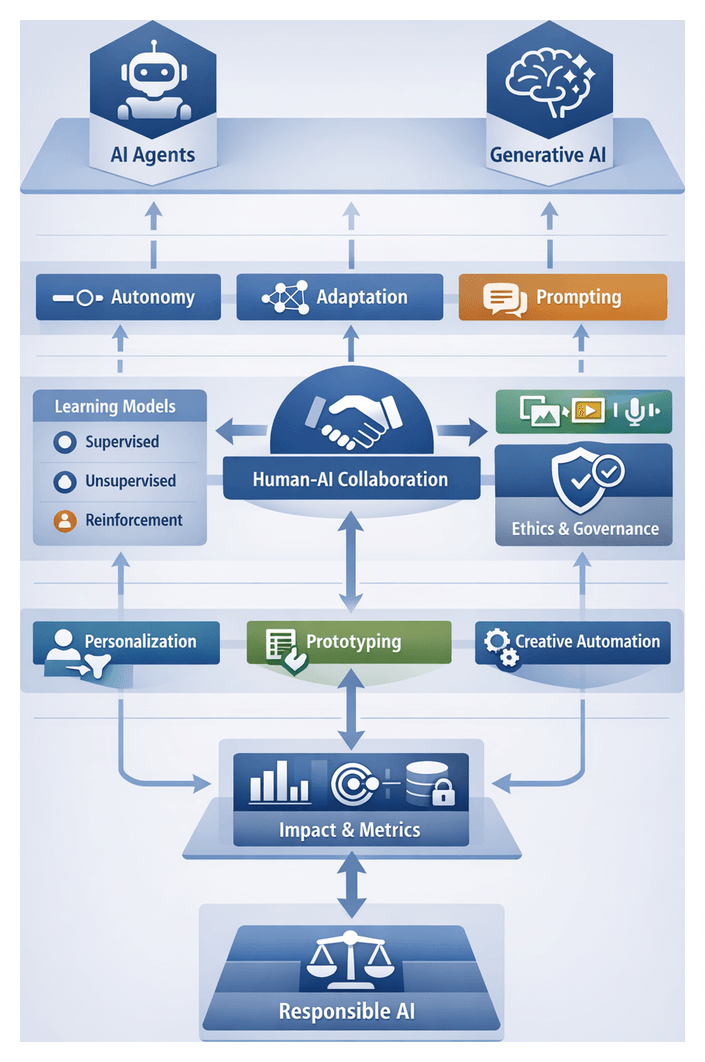

Moving beyond the tool paradigm, autonomous AI agents can be viewed as active collaborators that learn, adapt and contribute original ideas. Collaboration spans a continuum of agency—from reactive assistants executing predefined tasks to co-creators generating divergent concepts and engaging in iterative dialogue. Practitioners classify these modes by autonomy, contextual understanding and adaptive feedback loops powered by models such as GPT-4.

Two complementary frameworks guide this reframing:

- Agency Continuum Model: Ranges from rule-based Tool stage to high-autonomy Co-creator stage, enabling organizations to assess maturity and define integration targets.

- Mixed-Initiative Interaction Framework: Distinguishes between human-led, AI-led and shared-initiative modes, informing interface design and feedback mechanisms.

In branding, tools like Adobe Firefly batch-generate logo concepts that specialists curate. In digital product design, integrated modules of DALL·E 2 and Midjourney suggest iconography and illustrative assets aligned to brand guidelines. In architectural workflows, generative layout agents evaluate constraints and propose spatial configurations for human adaptation. Across domains, challenges of attribution, bias reinforcement, trust calibration and evolving skill sets call for robust governance and interpretive clarity.

The Strategic Imperative to Adopt AI in Design

The shift from “if” to “how fast” reflects AI’s transition from experimental novelty to strategic necessity. Early adopters capture first-mover advantages in talent, service offerings and market perception. Lagging teams risk falling behind in speed to market, cost efficiency and personalized experiences. Four interrelated forces drive this urgency:

- Market Competition and Customer Expectations: Brands leveraging AI for dynamic storytelling and targeted content outperform those relying on manual processes.

- Accelerated Innovation Cycles: AI-driven rapid prototyping and real-time iteration compress design timelines.

- Data-Driven Decision Making: AI systems surface insights from user behavior at scale, informing creative variants for segmented audiences.

- Resource Constraints and Efficiency Demands: Lean teams and tight budgets benefit from automating routine tasks, reallocating human effort to strategic work.

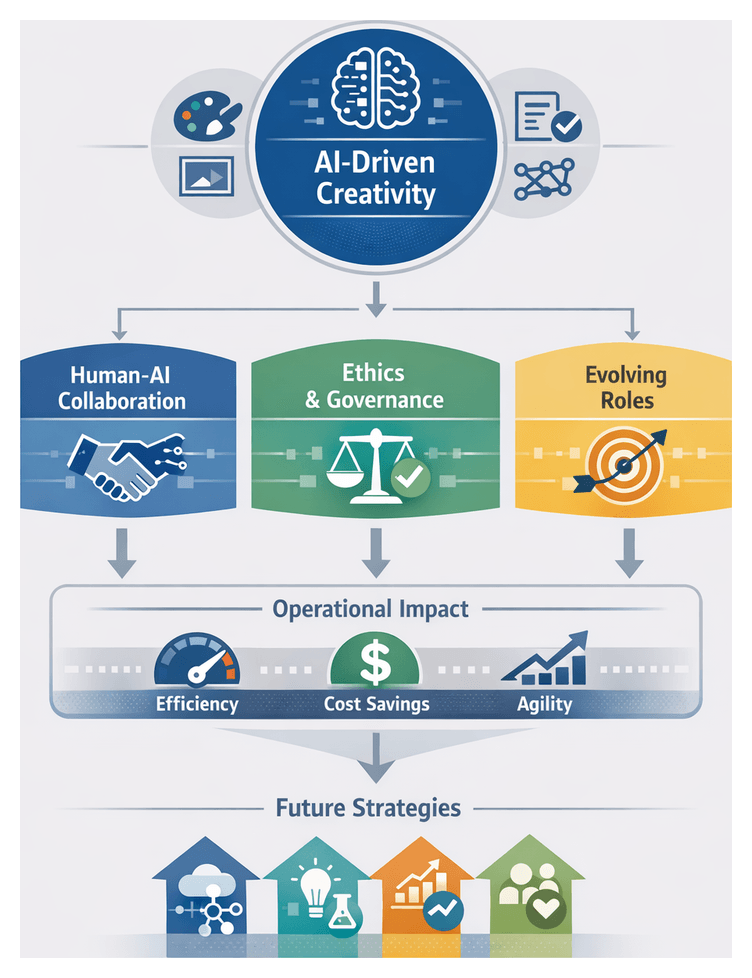

Forward-thinking organizations embed AI competency within design maturity frameworks that span technical fluency, strategic alignment and cultural readiness. Cross-functional Center of Excellence models coordinate pilots, share learnings and govern tool portfolios. Design curricula increasingly mandate AI literacy, ensuring new graduates can direct, critique and co-create with agents. Ethical stewardship—guardrails for bias, transparency and data privacy—becomes a core dimension of AI readiness.

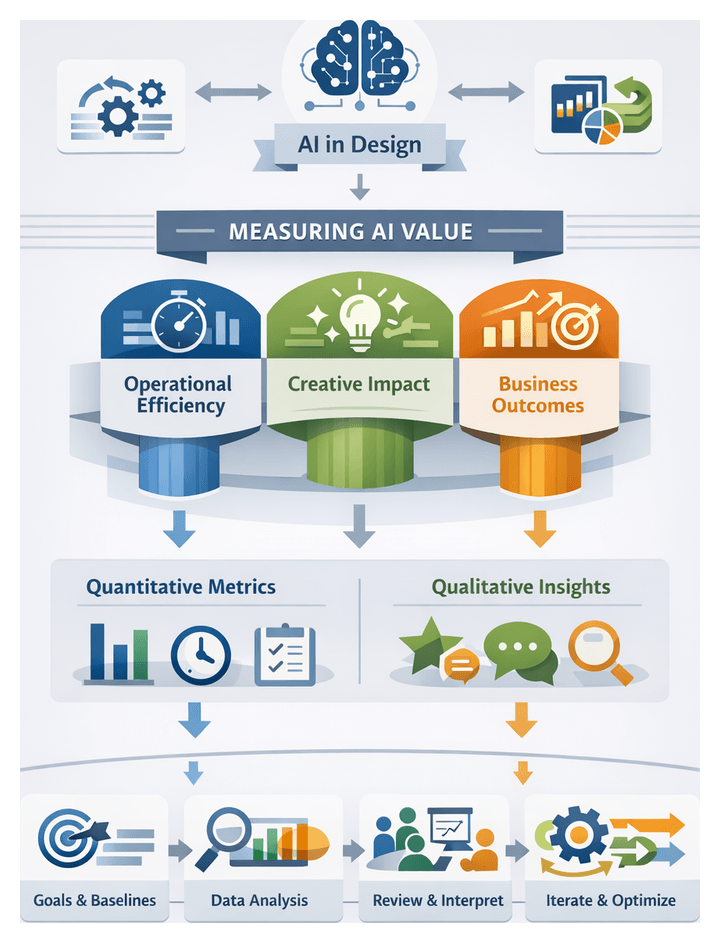

A phased rollout—from low-risk pilots like automated asset resizing to advanced generative collaborations—builds confidence, refines protocols and informs governance before scaling to mission-critical initiatives. Quantitative metrics (time savings, campaign performance) paired with qualitative assessments (creative diversity, brand alignment) track both efficiency and differentiation. This dual lens enables design leaders to navigate adoption with strategic clarity.

Navigational Overview of the Guide

This guide offers a structured exploration of AI agents in design practice, blending historical context, conceptual definitions and practical insights. Key chapters include:

- Evolution of AI in Design: From rule-based systems to adaptive, generative agents.

- Defining AI Agents: Autonomy, learning, adaptation and generative capabilities.

- Integrating AI Agents: Human-AI partnership models and workflow optimization.

- Ideation and Concept Generation: Generative prompting, divergent thinking and expanded creative horizons.

- AI-Assisted Prototyping: Real-time feedback, iteration speed and quality trade-offs.

- Personalization and User-Centered Design: Data-driven customization for engagement.

- Human-AI Co-Design Dynamics: Social, cognitive and organizational dimensions of co-creativity.

- Ethical and Responsible Use: Frameworks for bias mitigation, transparency and governance.

- Measuring Impact and ROI: Quantitative and qualitative metrics for stakeholder value.

- Future Trends and Innovations: Next-generation architectures, multimodal tools and immersive creativity environments.

Throughout, interpretive lenses such as Sociotechnical Systems Theory, Human-Machine Teaming Models, Co-Creativity and Affordance Theory, Data-Driven Decision Frameworks and Ethical AI Governance illuminate strategic choices. Key takeaways include:

- The shift from automation to collaboration positions AI agents as partners that augment creativity through adaptive learning.

- Effective integration relies on strategic entry points in existing workflows and mixed-initiative interfaces balancing autonomy and oversight.

- Generative prompting and iterative feedback loops enhance ideation, but require calibration to prevent bias and stagnation.

- Real-time prototyping accelerates cycles but demands rigorous UX frameworks to preserve intent.

- Hyper-personalization unlocks engagement but involves trade-offs in privacy, consent and fairness.

- Designer roles evolve toward facilitation and curation, fostering trust and collaboration.

- Metrics for AI impact should combine efficiency gains with measures of innovation, satisfaction and creative diversity.

- Emerging multimodal and immersive tools will redefine creative boundaries, calling for ongoing skill development.

Practitioners must remain mindful of limitations—data bias, transparency challenges, overreliance risks, organizational readiness, regulatory compliance, ROI measurement and evolving model maturity. By synthesizing these insights, frameworks and caveats, design professionals can chart a strategic path to integrate AI agents, harness creative potential and navigate the complexities of real-world adoption.

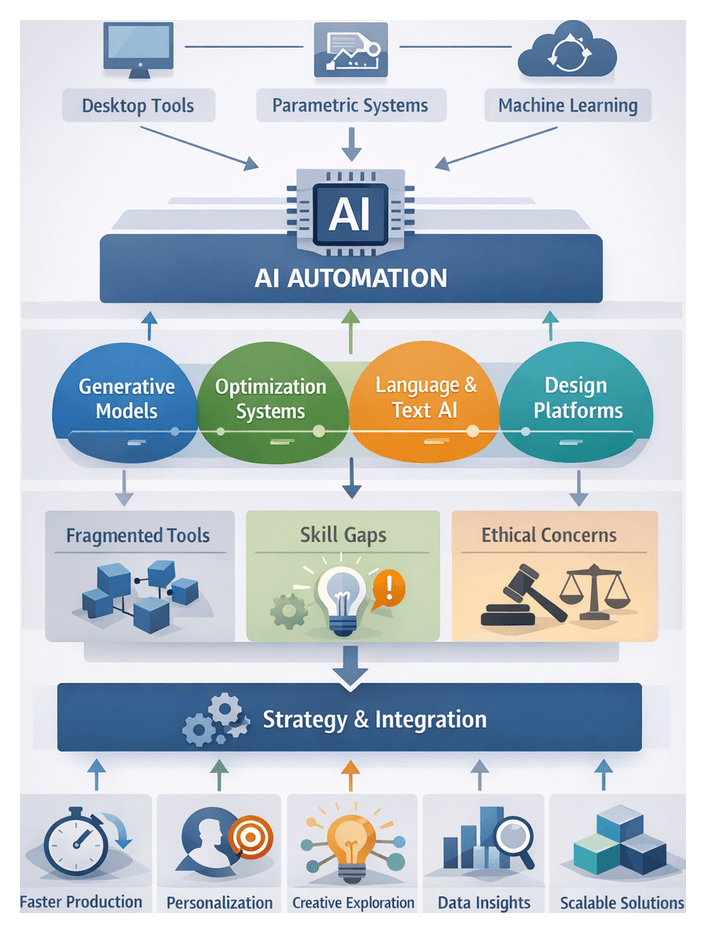

Chapter 1: The Evolution of AI in Design

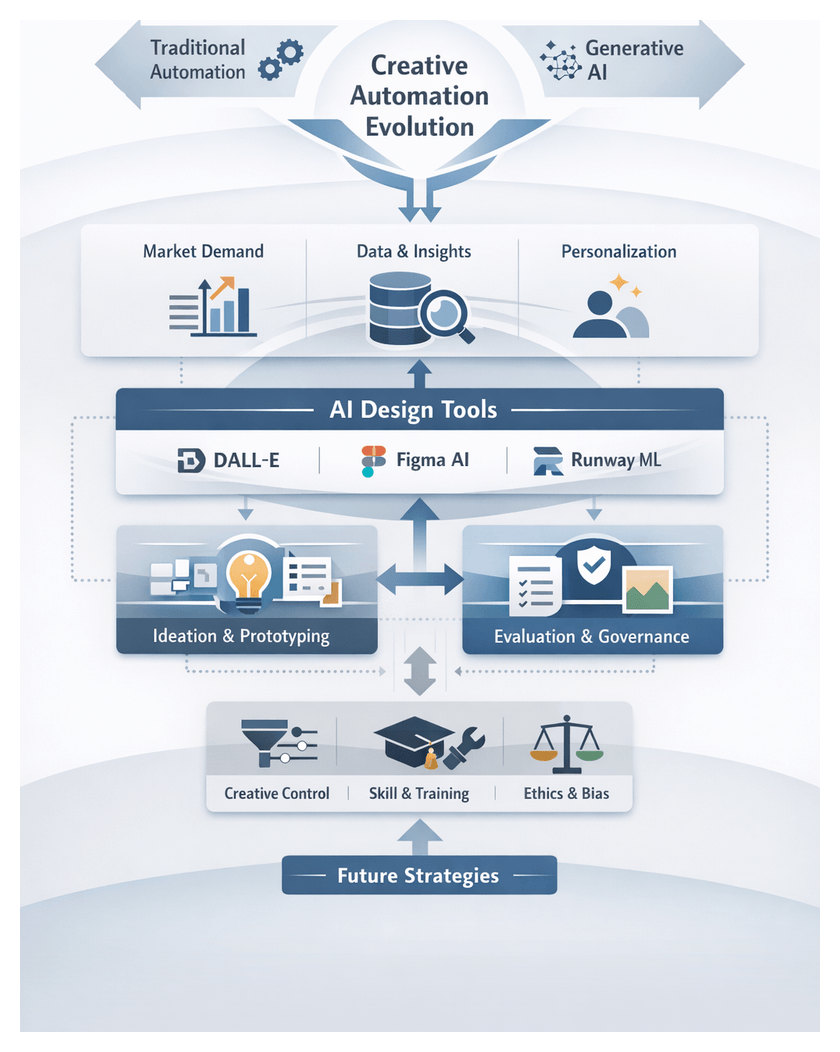

Creative Automation Landscape and Challenges

The integration of automation technologies has reshaped traditional design workflows, moving from manual sketching and iterative mock-ups to AI-driven systems that generate concepts, color palettes, and complete layouts with minimal human intervention. Platforms like DALL·E, Midjourney, Adobe Firefly, and Runway ML leverage large neural networks trained on extensive visual and textual datasets to produce novel imagery and style treatments in seconds. Writing assistants such as Jasper and automated layout engines embedded in leading graphic applications streamline content generation for blogs, marketing materials, and interactive experiences. Repetitive tasks such as background removal, style transfer, and template assembly can now be executed with unprecedented speed and consistency.

However, many organizations struggle to integrate disparate tools into cohesive workflows, leading to fragmentation and quality variability. Designers often spend significant time on post-processing and curation to ensure outputs meet standards. Intensified turnaround demands create tension between speed and conceptual depth, challenging teams to balance exploratory experimentation with efficiency. Without a clear framework, businesses risk diluting brand consistency, undermining creative integrity, and missing opportunities for innovation. At the same time, AI-driven systems offer the potential to offload routine tasks, freeing designers for strategic visioning, user research, and cross-disciplinary collaboration. Generative tools can surface unexpected visual combinations, inspire fresh narratives, accelerate prototyping, and enable personalization at scale while maintaining quality and consistency standards.

AI Agents as Collaborative Partners

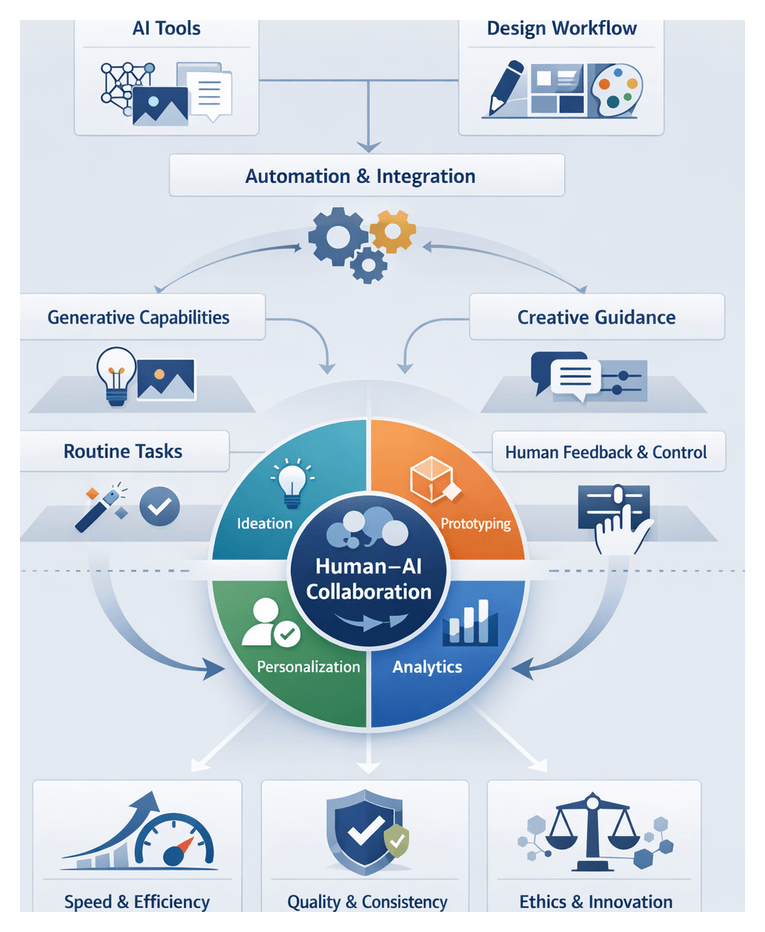

Moving beyond static utilities, AI agents function as adaptive collaborators that learn from interactions and refine outputs over time. Unlike macros or plugins, these agents possess autonomy, contextual understanding, and multimodal interaction capabilities. By framing them as partners, design teams engage in a bidirectional dialogue where agents propose variations, offer data-driven insights, or simulate user journeys, and designers provide evaluative feedback to guide subsequent recommendations. This iterative exchange fosters synergies that transcend traditional brainstorming sessions.

- Contextual Understanding: Parsing briefs, design system constraints, and brand guidelines to generate relevant suggestions.

- Generative Capacity: Producing novel visual or textual artifacts, from mood boards to interactive prototypes.

- Adaptive Learning: Refining outputs based on explicit feedback and implicit usage patterns.

- Multimodal Interaction: Engaging seamlessly across text, imagery, and code for cross-disciplinary workflows.

By positioning agents as co-creators, organizations unlock a spectrum of creative possibilities. Junior designers leverage agent suggestions to bridge skill gaps, while senior practitioners focus on high-impact decisions and mentorship. This model democratizes advanced capabilities, cultivates diverse perspectives, and fosters inclusive ecosystems where human insight and machine fluency combine to generate innovative outcomes.

The Imperative for AI Adoption

Accelerating breakthroughs in transformer architectures, self-supervised learning, and cloud compute have made sophisticated generative models accessible to studios of all sizes. Competitive pressures and evolving consumer expectations demand faster ideation, higher personalization, and data-backed creative decisions. Remote and distributed teams require collaborative platforms that maintain creative momentum across geographic divides. Economic constraints drive organizations to maximize talent productivity by delegating routine tasks to AI.

Early adopters of AI-augmented workflows iterate more rapidly, respond to market feedback with agility, and build a culture of experimentation. Cloud-based services and pre-trained models lower barriers to entry, enabling small agencies and in-house teams to deploy advanced agents without extensive infrastructure investment. This democratization intensifies competitive pressure: integration is now an imperative rather than an option.

Design leaders must develop clear roadmaps, governance frameworks, and skill-building initiatives to guide human-agent collaboration. Those who master AI-driven creativity will define the next era of design excellence; laggards risk inefficiency, stagnation, and brand irrelevance. Embedding agents across ideation, prototyping, personalization, and user testing transforms AI from a tactical enhancement into a strategic asset.

Analytical Frameworks and Collaboration Models

Establishing a shared vocabulary for human-AI collaboration is essential. The Collaboration Spectrum maps interactions along initiative (human-led to agent-led) and autonomy (low to high), allowing teams to position applications such as Adobe Sensei’s generative fill or Autodesk Generative Design within a coherent taxonomy. The MATE model (Mediator-Agent-Tool-Environment) highlights roles agents can play: orchestrating resources, generating content, serving as specialized tools, or acting within a broader ecosystem.

Some maturity schemes propose autonomy tiers from Level 1 (algorithmic suggestion) to Level 5 (self-driven generation with human oversight). A Level 2 moodboard system might curate imagery for designer selection, while a Level 4 agent could propose layouts, simulate user interactions, and refine designs independently. These gradations enable organizations to set achievable goals, monitor progress, and benchmark maturity against peers.
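One way to make such tiers operational is to encode them as a policy that decides whether an agent action runs automatically, waits for human review, or is blocked. The sketch below is illustrative only: the tier names for Levels 2 to 4, the `AgentPolicy` class and its thresholds are assumptions introduced here, not part of any published standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Five tiers. Only the Level 1 and Level 5 descriptions come from the text;
    the names for Levels 2 to 4 are hypothetical labels."""
    SUGGESTION = 1    # algorithmic suggestion
    CURATION = 2      # e.g. a moodboard system curating imagery for selection
    ASSISTED = 3      # assumed intermediate tier
    INDEPENDENT = 4   # e.g. an agent proposing and refining layouts on its own
    SELF_DRIVEN = 5   # self-driven generation with human oversight


@dataclass
class AgentPolicy:
    max_level: AutonomyLevel     # highest tier the team currently allows
    review_from: AutonomyLevel   # tiers at or above this need human sign-off

    def decide(self, requested: AutonomyLevel) -> str:
        """Return 'auto', 'needs_review' or 'blocked' for a requested tier."""
        if requested > self.max_level:
            return "blocked"
        if requested >= self.review_from:
            return "needs_review"
        return "auto"


# Example: allow up to Level 4, but require review from Level 3 upward.
policy = AgentPolicy(max_level=AutonomyLevel.INDEPENDENT,
                     review_from=AutonomyLevel.ASSISTED)
```

Raising `max_level` or `review_from` over time gives a team a simple, auditable way to widen agent autonomy as confidence grows.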

The human-in-the-loop paradigm remains central. Interactive machine learning methodologies, exemplified by Runway ML, allow real-time steering and validation of generative models through visual interfaces. Continuous feedback cycles embed human judgment at critical junctures, preserving authorship, ethical oversight, and brand authenticity.

To assess collaborative effectiveness, firms augment traditional KPIs—task completion time, error rates, resource utilization—with measures of novelty, serendipity, and design diversity. Quantitative sentiment analysis and diversity indices gauge the breadth of AI-mediated ideation, while A/B testing compares user engagement of agent-augmented prototypes against control designs. These metrics demonstrate not only efficiency gains but also enhanced creative impact.
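A diversity index can take many forms. As a minimal sketch, assuming each concept has already been mapped to a non-zero embedding vector by some model (not shown here), the mean pairwise cosine distance gives a rough measure of how widely a set of concepts spreads. The function names are hypothetical.

```python
import math
from itertools import combinations


def cosine_distance(a, b):
    """1 minus cosine similarity; assumes both vectors are non-zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def diversity_index(embeddings):
    """Mean pairwise cosine distance across a set of concept embeddings.

    0.0 means every concept points in the same direction; larger values
    mean the set covers more of the embedding space.
    """
    pairs = list(combinations(embeddings, 2))
    if not pairs:
        return 0.0
    return sum(cosine_distance(a, b) for a, b in pairs) / len(pairs)


# Toy comparison: a narrow set of concepts versus a more varied one.
narrow = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.1]]
varied = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
```

Comparing `diversity_index(narrow)` with `diversity_index(varied)` shows the varied set scoring higher, which is the kind of signal a team could track across ideation sessions.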

Core Strategic Insights

- Reframing Automation as Collaboration: Shifting from task execution to a dialogue where agents contribute context-aware perspectives enriches the creative process and fosters divergent exploration.

- Market and Technology Drivers Create Urgency: Rapid advances in neural architectures and intensifying demands for novel user experiences make timely AI integration essential to maintain competitive relevance.

- Human-Centered Agent Frameworks: Emphasizing shared mental models, transparent feedback loops, and mixed-initiative control safeguards creative agency and aligns outputs with human intent.

- Modular Integration Pathways: Introducing agents incrementally across ideation, prototyping, and personalization reduces friction and demonstrates measurable value at each stage.

- Ethical Stewardship as Differentiator: Addressing bias, transparency, and accountability cultivates trust and protects brand integrity in an environment sensitive to algorithmic fairness.

- Multimodal and Immersive Horizons: Investing in vision, language, and spatial computing will redefine creative expression in AR/VR and beyond, positioning early adopters for leadership.

Guiding Principles for Responsible Implementation

- Iterative Pilots with Measurable Outcomes: Launch small-scale experiments targeting specific creative tasks. Define success metrics—ideation velocity, user satisfaction, error reduction—and refine agent configurations based on performance data.

- User-Defined Boundaries: Empower designers to set guardrails for agent behavior. Configurable prompts, adjustable autonomy levels, and manual override mechanisms ensure human judgment remains central.

- Cross-Functional Collaboration: Form multidisciplinary teams—data science, user research, legal, ethics—to align technical capabilities with brand values, regulatory requirements, and user needs.

- Transparent Communication: Document agent workflows, data sources, and decision logic. Sharing this knowledge fosters trust, accelerates adoption, and facilitates continuous learning.

- Continuous Learning Culture: Capture lessons from each integration cycle. Conduct post-mortems to surface technical, organizational, and ethical challenges, iterating on process improvements.

- Ethics by Design: Embed bias mitigation, accessibility checks, and privacy considerations throughout the design lifecycle. Formalize guidelines and conduct periodic audits to maintain accountability.

Considerations and Future Directions

While AI-driven creativity offers significant promise, practitioners must navigate data quality constraints, domain biases, and interpretability trade-offs. Roles will evolve toward strategic oversight, facilitation, and curation, requiring targeted upskilling. Tool proliferation risks workflow fragmentation without clear integration standards and API governance. Intellectual property, attribution, and cultural sensitivities demand proactive policies to safeguard assets and promote inclusive outcomes.

Looking ahead, advances in self-supervised and continual learning will yield more adaptive agents, while the convergence of AI with AR/VR platforms will transform spatial storytelling. Emerging regulatory frameworks and industry standards for responsible AI will shape transparent and accountable agent use. New metrics—diversity of concept space, emotional resonance, human-AI synergy indices—will better capture the qualitative impact of co-creative processes. Participation in open ecosystem collaborations and research consortia can accelerate innovation and interoperability.

By integrating strategic insights, ethical guardrails, and practical adoption principles, design organizations can harness the full potential of AI agents. This balanced approach ensures that intelligent automation amplifies human ingenuity, delivering transformative creative outcomes in an era defined by rapid technological change.

Chapter 2: Defining AI Agents and Their Creative Potential

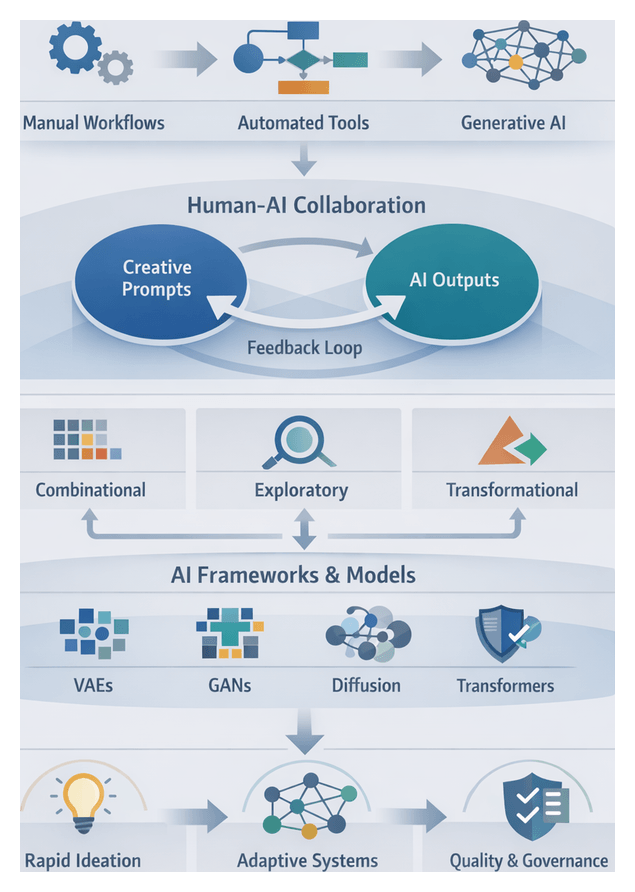

Creative Automation and the Generative Shift

The creative industries have evolved from fully manual workflows to rule-based automation and now to data-driven generative systems. Early scripting engines and parametric tools accelerated tasks like layout adjustments and batch asset generation, yet they remained brittle and required extensive oversight. Today, platforms such as Adobe Sensei and Midjourney leverage deep learning to analyze vast image and text corpora, infer stylistic patterns, and generate novel design proposals at scale. These capabilities unlock rapid concept exploration and personalized content, but also introduce challenges: fragmented handoffs between AI suggestions and human judgment, overwhelming volumes of options, and inconsistent output quality that can erode trust in machine-generated ideas.

Integrating generative engines into coherent workflows is now a central problem. Creative teams must balance machine throughput with human aesthetic oversight, ensuring that AI enhances rather than disrupts momentum. Brands that master this integration gain competitive advantage through accelerated ideation, richer visual languages, and scalable personalization.

AI Agents as Collaborative Design Partners

Moving beyond isolated features, autonomous AI agents embody continuous, goal-oriented processes that perceive context, learn from interactions, and refine outputs without human initiation at every step. An agent combines analytical pattern recognition with generative modeling: it ingests a designer’s brief, translates qualitative goals into parametric specifications, and proposes color palettes, typography treatments or initial mockups aligned with brand guidelines and user preferences.

Adopting AI agents requires a mindset shift from “tool invocation” to “collaboration engagement.” Designers craft prompts, review ranked alternatives, and supply feedback that the agent internalizes. Over iterative loops, human creativity co-evolves with machine speed and scalability, reducing effort spent filtering irrelevant suggestions and ensuring consistency across deliverables.

Technical Foundations of Generative Creativity

Computational Creativity Frameworks

Industry practitioners draw on academic models, notably Margaret Boden’s tripartite framework of combinational, exploratory and transformational creativity, to assess agent capabilities. Combinational systems remix existing assets, exploratory engines search defined concept spaces, and transformational models redefine constraints to generate entirely new visual grammars. Geraint Wiggins’ generative indices (novelty, typicality and quality) help quantify output characteristics and align AI proposals with brand standards and user expectations.

Machine Learning Architectures

Generative agents rely on diverse architectures. Variational Autoencoders (VAEs) generate by sampling from a learned latent distribution, Generative Adversarial Networks (GANs) enhanced realism through adversarial training, and diffusion models, employed by platforms like Runway ML, incrementally refine noise into coherent images. Transformer-based models now enable multimodal synthesis of text, image and audio, with parameter count and attention layers influencing conceptual breadth. Reinforcement learning introduces goal-oriented adaptation, where reward functions tied to user engagement steer creativity, though poorly defined rewards can lead to repetitive outputs. Modular pipelines that decouple perception, reasoning and generation support interpretability, a crucial factor in regulated industries.

Data, Embeddings and Governance

Agents’ creative potential hinges on curated datasets that reflect brand aesthetics, functional requirements and cultural contexts. Supervised learning offers control through labeled inputs, while unsupervised methods uncover latent patterns in unstructured data. Self-supervised paradigms enable large-scale pretraining without extensive annotation. Embedding spaces drive latent exploration: for example, Adobe Sensei integrates learned embeddings into design applications, letting practitioners adjust semantic directions such as “increase warmth” or “abstract geometry” via intuitive interfaces. Data governance frameworks, such as the Data Ethics Canvas, ensure provenance tracking, bias auditing and regulatory compliance in sectors like healthcare and finance.
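Adjusting a semantic direction in an embedding space can be illustrated with simple vector arithmetic. The sketch below is a generic illustration, not any vendor's API: it assumes a direction can be estimated as the difference between the mean embedding of examples that have a quality ("warm") and the mean of examples that lack it ("cool"). The three-dimensional toy vectors and all function names are made up for the example.

```python
def mean_vector(vectors):
    """Component-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def semantic_direction(positive, negative):
    """Difference of means: points from the 'negative' examples
    toward the 'positive' ones."""
    mean_pos = mean_vector(positive)
    mean_neg = mean_vector(negative)
    return [p - n for p, n in zip(mean_pos, mean_neg)]


def shift(embedding, direction, strength):
    """Move an embedding along a direction; strength acts like a slider."""
    return [e + strength * d for e, d in zip(embedding, direction)]


# Toy embeddings (hypothetical): first axis ~ warm tones, last axis ~ cool tones.
warm = [[0.9, 0.2, 0.1], [0.8, 0.3, 0.1]]
cool = [[0.1, 0.3, 0.9], [0.2, 0.2, 0.8]]

warmth = semantic_direction(warm, cool)
warmer = shift([0.5, 0.5, 0.5], warmth, strength=0.5)
```

In a real system the shifted vector would be decoded back into an image or palette by the generative model; here it only shows how an "increase warmth" control could map onto a single scalar.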

Adaptive Learning and Emergence

Online learning methods allow agents to update their models based on real-time designer feedback, refining style recommendations as users select preferred variants. Meta-reinforcement learning introduces emergent behaviors that expand design boundaries through iterative critique loops. Federated learning enables decentralized adaptation, letting regional teams fine-tune shared models on local aesthetics without centralizing sensitive data. These adaptive paradigms drive co-creative evolution but necessitate guardrails—constraint programming and manual curation—to maintain predictability and brand consistency.
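The core idea of updating from designer selections can be sketched without any machine learning library. The example below is a deliberately simple stand-in for online learning: it keeps one preference score per style and nudges it with an exponential moving average each time a designer picks a variant. The class name, the style labels and the learning rate are assumptions for illustration.

```python
class StylePreferenceModel:
    """Tracks a running preference score per style from designer choices."""

    def __init__(self, styles, learning_rate=0.2):
        self.learning_rate = learning_rate
        # Start every style at a neutral score of 0.5.
        self.scores = {style: 0.5 for style in styles}

    def record_feedback(self, shown, chosen):
        """Update only the styles that were shown: the chosen one moves
        toward 1.0, the rejected ones move toward 0.0."""
        for style in shown:
            target = 1.0 if style == chosen else 0.0
            self.scores[style] += self.learning_rate * (target - self.scores[style])

    def ranked(self):
        """Styles ordered from most to least preferred."""
        return sorted(self.scores, key=self.scores.get, reverse=True)


model = StylePreferenceModel(["minimal", "bold", "retro"])
for _ in range(3):
    # The designer is shown two variants and picks "bold" each time.
    model.record_feedback(shown=["minimal", "bold"], chosen="bold")
```

After these three rounds `model.ranked()` puts "bold" first, while "retro", which was never shown, keeps its neutral score. A cap on how far scores may drift is one simple form of the guardrails mentioned above.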

Evaluation and Quality Assessment

Assessing AI creativity combines quantitative metrics with qualitative human judgment. Metrics such as Fréchet Inception Distance (FID) gauge visual fidelity, while bespoke indices evaluate form complexity or harmonic consistency. Human evaluation remains the gold standard: A/B testing and blind studies by design juries and user panels rate outputs for novelty, relevance and emotional resonance. Interactive evaluation tools embedded in suites like Figma or Sketch track variant exploration, feed usage data back to models and monitor human trust. Explainable AI techniques—saliency maps and decision-path visualizations—offer transparency, critical for compliance and stakeholder confidence.
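For reference, FID compares the statistics of feature vectors extracted by an Inception network from real and generated images. In the notation introduced here (not part of the text above), $\mu_r, \Sigma_r$ are the mean and covariance of the real-image features and $\mu_g, \Sigma_g$ those of the generated images:

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
$$

Lower values indicate that the generated images are statistically closer to the reference set. The score says nothing about brand fit or novelty, which is why it is paired with human evaluation.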

Use Cases Across Design Domains

Branding and Campaign Development

Agencies and in-house teams use adaptive agents to iterate visual identities and campaign assets. Adobe Firefly dynamically adapts color palettes and typography to market data, while brand semiotics frameworks ensure narrative coherence. Agents act as “creative accelerators,” surfacing unexpected alternatives that spark storytelling innovation and reduce multi-channel time-to-market.

User Experience and Interaction Design

UX teams integrate lightweight agents into platforms like Figma to suggest adaptive layouts based on behavior analytics. Co-design workshops and human-centered design frameworks validate that interaction proposals align with user journeys and accessibility standards, ensuring automation enriches empathy-driven strategy.

Product, Industrial and Architectural Design

Industrial designers employ Autodesk Generative Design to explore form-finding, weight distribution and sustainability constraints, accelerating prototyping cycles. Architects use generative plugins in BIM platforms to simulate solar exposure, pedestrian flow and structural efficiency. Performance-based design frameworks evaluate energy performance and occupant comfort, grounding agent innovation in cost, quality and environmental targets.

Entertainment, Media and Fashion

Studios leverage Midjourney and DALL·E to prototype storyboards, character concepts and narrative scenes. Storytelling models like the hero’s journey guide selection and adaptation of machine-generated visuals. In fashion, agents analyze trend data and material metrics to propose patterns and colorways, with forecasting frameworks ensuring commercial viability and sustainability alignment.

Analytical Frameworks for Contextual Application

- Human-Agent Co-Creative Loop: Iterative feedback between designer intent and machine suggestions.

- Contextual Integrity Assessment: Cultural, ethical and regulatory considerations for outputs.

- Value-Driven Design Metrics: Alignment of generative exploration with strategic KPIs.

- Multimodal Synthesis Evaluation: Integration analysis of visual, auditory and spatial dimensions.

Strategic Insights and Responsible Integration

Transformational Strengths

- Scalable Generative Output: Vast design spaces explored with minimal resource scaling.

- Advanced Pattern Recognition: Detection of latent relationships across multimodal inputs.

- Data-Driven Adaptation: Continuous refinement based on feedback and performance metrics.

- Diversification of Ideation: Controlled randomness breaks cognitive biases and entrenched patterns.

- Multimodal Synthesis: Unified text, image, audio and 3D concept presentations.

Limitations and Challenges

- Contextual Nuance: Cultural, symbolic and brand-specific subtleties require human validation.

- Opacity and Explainability: Complex model internals resist straightforward interpretation.

- Bias Propagation: Training datasets may perpetuate stereotypes without rigorous mitigation.

- Dependence on Data Quality: Diversity and currency of data directly affect creativity and accuracy.

- Overreliance and Skill Atrophy: Excessive automation risks erosion of human design intuition.

- Resource Constraints: High-performance models demand substantial compute and energy investments.

Guiding Principles for Adoption

- Purpose-Driven Alignment: Define specific creative roles for agents—ideation, prototyping or personalization.

- Human-Centric Oversight: Curate outputs to validate ethics, brand integrity and design quality.

- Iterative Feedback Loops: Embed prompt engineering and annotation tools for rapid adaptation.

- Transparency and Explainability: Document model provenance, training data and decision logic.

- Bias Detection and Mitigation: Screen datasets and outputs with quantitative fairness metrics.

- Ethical Data Practices: Ensure consent, anonymization and compliance with data protection laws.

- Scalable Infrastructure: Balance on-premises and cloud resources for peak workloads.

- Continuous Skill Development: Invest in training for prompt design, data analytics and AI literacy.

Evaluating Agent Impact

- Output Quality Metrics: Objective alignment with guidelines and subjective expert reviews.

- Efficiency Measurements: Time savings, reduced revisions and resource reallocations.

- User Engagement Indicators: Performance lifts in click-through rates, dwell time and conversions.

- Innovation Index: Tracking breakthrough concepts and cross-domain integrations.

- Ethical and Compliance Audits: Regular checks against accessibility, brand ethics and regulations.
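For the user engagement indicators above, a lift in click-through rate only means something if it is unlikely to be noise. A minimal sketch, assuming a simple two-variant test with large samples, is the two-proportion z-test below. The function name and the example counts are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Compare click-through rates of control (a) and variant (b).

    Returns the z statistic and a two-sided p-value under the
    normal approximation.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control design vs. agent-augmented design.
z, p = two_proportion_z_test(clicks_a=100, views_a=2000,
                             clicks_b=140, views_b=2000)
```

With these made-up counts the variant's higher rate yields a p-value below 0.01. Real campaigns also need a sample size fixed in advance and a correction when many variants are tested at once.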

Future-Proofing Design Practices

- Modular Adoption Pathways: Phase in agents for discrete tasks before end-to-end workflows.

- Interoperability Standards: Open APIs and shared schemas to avoid vendor lock-in.

- Cross-Disciplinary Collaboration: Governance bodies spanning design, data science, ethics and strategy.

- Ongoing Monitoring: Dashboards and risk indicators to track performance, bias and satisfaction.

- Exploration of Emerging Modalities: Early experiments in immersive AI, generative audio and experiential interfaces.

By combining strategic frameworks, technical insights and responsible practices, design organizations can harness AI agents to amplify creativity, drive innovation and maintain ethical rigor. This balanced approach ensures that agents serve as authentic collaborators—extending human ingenuity rather than replacing it—and position teams to lead the next era of design excellence.

Chapter 3: Integrating AI Agents into the Design Workflow

The Evolution of Design Workflows with AI Agents

Design practices have continually advanced alongside innovations in technology. From manual sketching and physical mock-ups to digital canvases and cloud-based collaboration, each toolset has reshaped how ideas are conceived, refined and delivered. Today, autonomous AI agents—software entities capable of learning from data, adapting to context and generating original outputs—are redefining creative workflows. Rather than executing preset commands, these agents collaborate by proposing alternatives, automating repetitive tasks and learning from feedback.

Early applications of machine learning in design appeared as intelligent features within established platforms. Adobe Sensei introduced content-aware fill, auto-tagging and style transfer, while Autodesk Generative Design enabled engineers to specify constraints and explore optimized structures automatically. More recent tools such as Runway ML and Canva Magic Write democratize generative design with intuitive interfaces, moving from passive automation toward active collaboration.

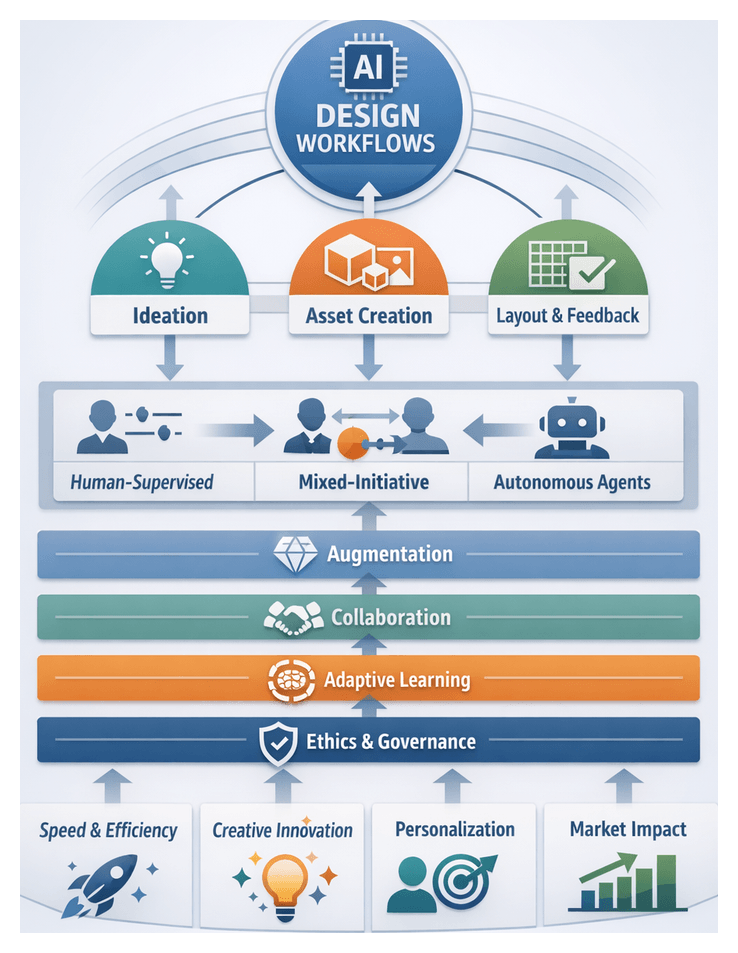

Embedding AI agents effectively requires identifying workflow entry points where automation augments human creativity without disrupting focus. Common integration points include:

- Ideation Support—Analyzing mood boards, branding guidelines and market trends to generate initial sketches or concept palettes.

- Asset Generation—Producing icon libraries, 3D models and style-consistent elements that reduce manual creation effort.

- Layout and Composition—Applying typographic hierarchies, grid systems and responsive design rules to automate page and screen arrangements.

- Real-Time Feedback—Monitoring progress to flag accessibility issues, enforce brand consistency and suggest refinements to color contrast or spacing.

- Personalization Engines—Adapting components dynamically based on user behavior or preference data for hyper-targeted experiences.

Underlying these integrations are core principles designed to preserve human agency and foster trust:

- Unobtrusive Augmentation—Integrate agents within familiar tools to minimize workflow disruption.

- Human-Centered Collaboration—Establish bidirectional feedback loops that let designers guide objectives and agents propose data-backed insights.

- Adaptive Learning—Enable continuous refinement based on project history, user feedback and performance outcomes.

- Transparency and Explainability—Surface rationales behind suggestions through annotations or interactive visualizations.

- Scalable Governance—Implement frameworks to manage brand compliance, ethical standards and oversight of automated processes.

Strategic Drivers of AI Adoption in Design

Design organizations face a convergence of market pressures and internal imperatives that make AI integration urgent. Accelerated time to market, enhanced creative capacity and data-driven decision making have become prerequisites for competitive differentiation.

- Accelerated Time to Market—Agents automate repetitive tasks, generate multiple concept variations and provide instant feedback, shortening iteration cycles and enabling faster launches.

- Enhanced Creative Capacity—Delegating routine adjustments to AI frees designers to focus on strategic vision and nuanced aesthetic decisions.

- Data-Driven Decisions—Agents ingest analytics, A/B test results and behavioral data to inform design elements and predict user preferences.

- Personalization at Scale—Autonomous variant generation supports hyper-targeted campaigns and dynamic content that would overwhelm manual workflows.

- Resource Optimization—Automating asset creation and layout planning minimizes errors, reduces manual labor and improves collaboration across distributed teams.

External factors reinforce these strategic drivers:

- Market and Competitive Urgency—Globalized demand and digital transformation reward organizations that iterate concepts in days rather than weeks.

- Technological Accessibility—Cloud-based APIs and plug-and-play services make generative image platforms, automated layout engines and intelligent content assistants widely available.

- Client Expectations—Stakeholders demand personalized, data-backed experiences with rapid validation and omnichannel consistency.

- Talent Dynamics—Designers versed in AI tools are in high demand; organizations must upskill teams to avoid widening skill gaps.

- ROI Imperatives—Leaders must justify AI investments with pilot KPIs such as reduced concept cycles, asset reuse rates and improved engagement metrics.

- Regulatory and Ethical Contexts—Evolving standards on algorithmic bias, data privacy and content authenticity require proactive governance frameworks.

Collaboration Paradigms and Role Allocation

Choosing a collaboration model and role allocation strategy is critical for balancing creative control with efficiency gains. Three predominant paradigms have emerged:

Human-Supervised Models

Designers set objectives, guide agents with detailed instructions and review outputs before approval. This “low autonomy, high control” approach maintains predictability, preserves brand integrity and builds trust during early AI adoption.

- Benefits: Direct creative control, adherence to guidelines, iterative learning of agent capabilities.

- Limitations: Slower turnaround, designer fatigue from oversight, underutilization of generative potential.

Autonomous Agent Models

Agents generate concepts, select visual elements and propose layouts with minimal human input. Known as “high autonomy, low control,” this model accelerates prototyping and supports large-scale exploration.

- Benefits: Rapid concept generation, reduced manual workload, exploration of unconventional styles.

- Limitations: Risk of misaligned outputs, poor interpretability of decisions, potential loss of human nuance.

Mixed-Initiative Models

Designers and agents engage in dynamic exchanges: humans seed high-level ideas, agents generate proposals, and subsequent refinements alternate until goals are met. This co-creative synergy leverages complementary strengths.

- Benefits: Combines human intuition with machine augmentation, fosters iterative refinement, balances control and innovation.

- Limitations: Requires advanced UX for interaction management, potential cognitive overhead, complex governance to resolve conflicts.

Role allocation frameworks guide task distribution between humans and agents:

- Capabilities-Risk Matrix—Maps task complexity against risk. Low-risk, low-complexity tasks (e.g., background pattern generation) go to agents; high-risk, high-complexity tasks (e.g., brand identity) remain human-led; mid-range tasks use mixed-initiative.

- Value-Chain Partitioning—Decomposes the design process from research to delivery, assigning data analysis and rapid prototyping to agents while retaining narrative crafting and stakeholder alignment for human teams.
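
As a rough illustration, the capabilities-risk matrix can be expressed as a small routing function. The sketch below assumes complexity and risk are each scored on a 0 to 1 scale and uses illustrative cut-offs of 0.33 and 0.66. The `Owner` enum, the `allocate` function and both thresholds are hypothetical, not part of any established framework. Off-diagonal cases, such as low complexity but high risk, are treated here as mixed-initiative, which is only one possible reading of "mid-range".

```python
from enum import Enum


class Owner(Enum):
    AGENT = "agent"
    MIXED = "mixed-initiative"
    HUMAN = "human"


def allocate(complexity: float, risk: float,
             low: float = 0.33, high: float = 0.66) -> Owner:
    """Route a task to an owner from complexity and risk scores in [0, 1].

    The cut-offs are illustrative assumptions; a team would tune them.
    """
    for name, value in (("complexity", complexity), ("risk", risk)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    if complexity <= low and risk <= low:
        return Owner.AGENT   # e.g. background pattern generation
    if complexity >= high and risk >= high:
        return Owner.HUMAN   # e.g. brand identity work
    return Owner.MIXED       # everything in between, including off-diagonal cases
```

Keeping the thresholds as parameters lets a team tighten or relax agent ownership without changing the routing logic.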

Emerging frameworks emphasize adaptability:

- Dynamic Autonomy Spectrum—Agents adjust their initiative based on real-time performance assessments and thresholds set by design teams.

- Trust Calibration Metrics—Quantify confidence in agent recommendations to inform oversight levels.

- Hybrid Creative Intelligence—Multi-agent ecosystems collaborate among themselves and with humans to produce composite designs.
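
One way to picture how trust calibration could feed a dynamic autonomy spectrum is a rolling acceptance rate: the share of recent agent suggestions that designers accepted. The sketch below is a minimal example under that assumption. The `TrustTracker` class, the window size and the promotion and demotion thresholds are all hypothetical choices made for illustration.

```python
from collections import deque


class TrustTracker:
    """Rolling acceptance rate used as a simple trust score (an assumption)."""

    def __init__(self, window: int = 20,
                 promote_at: float = 0.8, demote_at: float = 0.5):
        if not 0.0 <= demote_at <= promote_at <= 1.0:
            raise ValueError("expected 0 <= demote_at <= promote_at <= 1")
        self.outcomes = deque(maxlen=window)  # keeps only the latest reviews
        self.promote_at = promote_at
        self.demote_at = demote_at

    def record(self, accepted: bool) -> None:
        """Log whether a designer accepted the agent's latest suggestion."""
        self.outcomes.append(bool(accepted))

    def trust(self) -> float:
        """Share of accepted suggestions in the window; 0.0 with no history."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def autonomy(self) -> str:
        """Map the trust score onto the three collaboration paradigms."""
        score = self.trust()
        if score >= self.promote_at:
            return "autonomous"
        if score >= self.demote_at:
            return "mixed-initiative"
        return "human-supervised"
```

Starting from zero trust means a new agent begins in the human-supervised mode and earns more initiative only as reviews accumulate.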

Organizational and Tactical Considerations for Integration

AI integration is as much a strategic transformation as a technical deployment. Key organizational factors include:

- Leadership Vision—Articulate how AI supports business objectives such as acceleration, differentiation and cost savings.

- Cultural Readiness—Foster a data-driven, experimental mindset and address concerns about creative autonomy through transparent communication.

- Skill Development—Provide training in prompt engineering, agent interaction paradigms and ethical guardrails.

- Governance Frameworks—Establish policies for data usage, version control, intellectual property and compliance with emerging regulations.

- Cross-Functional Teams—Embed AI expertise within squads of UX researchers, data scientists and creative leads to accelerate experimentation and optimization.

- Pilot Initiatives—Define measurable KPIs for small-scale projects to demonstrate ROI and build stakeholder support.

Ethical, Technical, and Operational Constraints

Maximizing AI benefits requires navigating inherent limitations and ethical considerations:

- Data Quality and Bias—Agents trained on narrow or biased datasets may perpetuate stereotypes. Implement data governance and bias-detection checkpoints.

- Opacity of Generative Processes—Complex models often operate as black boxes. Provide provenance metadata and rationale for suggestions to maintain trust.

- Infrastructure and Latency—High-performance compute or cloud inference incurs costs and may introduce delays in real-time workflows.

- Integration Overhead—Custom APIs, prompt libraries and version compatibility demand specialized technical support, straining small teams.

- Scalability Challenges—As output volumes grow, manual review can become a bottleneck. Balance automation with quality-assurance processes.

- Intellectual Property and Attribution—Clarify ownership of agent-generated assets and honor licensing requirements for third-party content.

- User Privacy—Adhere to data protection regulations and opt-in policies when leveraging behavioral data for personalization.

- Accountability—Define responsibility for final design decisions, especially in regulated or brand-sensitive contexts.

Best Practices for Sustainable Adoption

- Embrace a Partnership Model—Treat AI agents as co-creators. Leverage their generative capabilities to expand ideation while preserving human oversight.

- Start with High-Impact Use Cases—Prioritize concept diversification, rapid iteration and data-driven personalization where agents deliver clear efficiency gains.

- Invest in Data and Infrastructure—Curate representative design assets and establish scalable computing environments to support agent performance.

- Cultivate Ethical Oversight—Implement bias detection, transparency requirements and clear attribution policies to maintain creative integrity and user trust.

- Foster Continuous Learning—Iterate on collaboration models, monitor performance metrics and adapt governance as agent capabilities evolve.

By aligning strategic vision, collaboration models and governance frameworks, design organizations can harness AI agents not as mere tools but as strategic enablers of sustained creative innovation, operational agility and competitive advantage.

Chapter 4: Enhancing Ideation and Concept Generation

Creative Automation Landscape and Evolution

The convergence of creativity and automation has evolved from simple rule-based scripts to sophisticated generative systems that redefine design practice. Early automation relied on macros and heuristics to manage repetitive tasks—batch resizing, color correction and basic layout suggestions. Advances in machine learning and deep neural networks have given rise to platforms such as Adobe Sensei, DALL·E and Runway ML, which analyze vast design repositories to extract stylistic parameters and synthesize novel visual concepts from textual prompts or existing assets.

Simultaneously, AI democratization embeds generative capabilities within familiar environments. Designers using Figma’s AI features can auto-generate icons, refine illustrations or convert sketches into vectors without leaving their workspace. This integration accelerates iteration, fosters experimentation and reduces time to market.

Key drivers accelerate automation adoption:

- Market Pressure: Brands demand continuous content (social media graphics, personalized campaigns and responsive interfaces) at a scale and pace that manual workflows cannot sustain.

- Data-Driven Insights: Analytics, A/B tests and engagement metrics provide rich context. Automation tools process this data to identify effective visual patterns, informing generative outputs with empirical grounding.

- Hyper-Personalization: Consumers expect customized experiences. Automation platforms generate variant-rich collateral—from localized ads to adaptive packaging—without manual overhead for each permutation.

Challenges accompany opportunity:

- Creative Control: Preserving brand coherence and nuanced aesthetics demands careful calibration of model parameters and human oversight.

- Skill Gaps: Designers must acquire competencies in prompt engineering, data interpretation and model evaluation.

- Data Quality and Bias: Generative systems risk reflecting historical biases or limited stylistic diversity, requiring rigorous dataset curation and inclusive design frameworks.

Thoughtfully implemented, automation transforms the design lifecycle. Rapid concept exploration generates dozens of proposals in minutes, fostering divergent thinking. Structured inputs allow non-design stakeholders to contribute to ideation, enhancing cross-functional collaboration. Emergent styles surfaced by generative tools inspire innovation, positioning organizations as trendsetters rather than followers.

Addressing automation’s complexity requires a framework that spans technical, organizational and ethical dimensions. Technical considerations include agent architectures, interoperability and performance benchmarks. Organizational factors involve role realignment, training and change management. Ethical dimensions cover transparency, bias mitigation and intellectual property. This holistic approach positions AI agents as collaborative partners that amplify human creativity within resilient, adaptable design ecosystems.

Analytical Framework for Generative Prompting

Generative prompting has become central to guiding AI agents toward meaningful outputs. Prompts function as interpretive instruments, shaping how systems balance creativity with constraints. Experts evaluate prompts through structured frameworks, employ quantitative and qualitative metrics, and institute governance to ensure consistency and accountability.

Generative Prompting as Interpretive Exchange

- Contextual Anchoring – Embedding domain terminology, brand guidelines and project objectives situates generative responses within defined boundaries, reducing off-brand or incoherent outputs.

- Constraint Layering – Specifying color palettes, aspect ratios and typographic hierarchies alongside higher-level directives balances creative freedom with feasibility.

- Iterative Reflection – Treating prompts as living documents supports feedback loops where AI proposals are reviewed, critiqued and reformulated into refined prompts, cultivating emergent ideas beyond single-pass generation.
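
The three ideas above can be sketched as a small prompt builder: context is anchored first, constraints are layered on explicitly, and reviewer feedback produces a new prompt rather than overwriting the old one. This is a minimal sketch, assuming prompts are plain text. The `LayeredPrompt` class, its field names and the output layout are hypothetical and not tied to any particular generative tool. It requires Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class LayeredPrompt:
    directive: str                                              # high-level creative task
    context: list[str] = field(default_factory=list)            # contextual anchoring
    constraints: dict[str, str] = field(default_factory=dict)   # constraint layering

    def render(self) -> str:
        """Assemble the layers into one prompt string."""
        parts = []
        if self.context:
            parts.append("Context: " + "; ".join(self.context))
        parts.append("Task: " + self.directive)
        if self.constraints:
            rules = "; ".join(f"{k}: {v}" for k, v in self.constraints.items())
            parts.append("Constraints: " + rules)
        return "\n".join(parts)

    def refine(self, feedback: str) -> "LayeredPrompt":
        """Iterative reflection: return a new prompt carrying reviewer feedback."""
        return LayeredPrompt(
            self.directive,
            self.context + [f"Reviewer note: {feedback}"],
            dict(self.constraints),
        )
```

Because `refine` returns a new object, each round of critique leaves the earlier prompt intact for comparison.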

Prompt Taxonomies and Archetypes

- Exploratory Prompts – Early-stage ideation: “Imagine a brand identity inspired by…” prioritizes novelty and uncovers unforeseen design territories.

- Directional Prompts – Mid-project guidance: “Generate three logo concepts using geometric sans-serif typography and warm gradients” balances openness with specificity.

- Refinement Prompts – Optimization stage: “Enhance visual hierarchy and improve legibility” focuses on iterative improvements.

- Constraint-Driven Prompts – Challenge models: “Blend Art Deco and brutalist aesthetics within a single packaging form” supports innovative risk-taking and stress-tests algorithmic flexibility.

Evaluation Metrics and Methodologies

- Quantitative Metrics – Diversity, relevance and novelty measured via feature-space clustering, semantic similarity scores computed on embeddings from models such as CLIP or dedicated text-embedding models, and deviation from historical archives.

- Qualitative Evaluations – Structured design reviews rate outputs on aesthetic appeal, brand alignment and user empathy with Likert scales or semantic differential scales.

- A/B Testing and User Studies – Prototypes tested with target audiences using platforms like Optimizely and Lyssna, measuring task completion time and preference rankings.

- Iterative Scoring Systems – Composite scores reflect prompt clarity, output coherence and alignment with objectives, evolving as teams learn prompt–output correlations.
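
To make the quantitative metrics more concrete, the sketch below computes two of them from embedding vectors: relevance as the mean cosine similarity between each output and the brief, and diversity as the mean pairwise cosine distance among outputs. It assumes the embeddings have already been produced by some model and are passed in as plain lists of floats. The function names and the choice of cosine distance are illustrative assumptions, not a standard.

```python
import math
from itertools import combinations


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


def relevance(brief, outputs):
    """Mean similarity of each output embedding to the brief embedding."""
    if not outputs:
        raise ValueError("need at least one output")
    return sum(cosine_similarity(brief, o) for o in outputs) / len(outputs)


def diversity(outputs):
    """Mean pairwise cosine distance (1 - similarity) across outputs."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        return 0.0  # a single output has no spread
    return sum(1 - cosine_similarity(a, b) for a, b in pairs) / len(pairs)
```

A batch that scores high on relevance but near zero on diversity suggests the prompt is too tightly constrained for early-stage exploration.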

Governance Mechanisms

- Prompt Libraries – Centralized repositories of approved templates categorized by use case and design phase streamline kickoffs and enforce brand compliance.

- Prompt Auditing and Version Control – Tracking prompt iterations maintains audit trails for retrospection and accountability.

- Cross-Functional Review Boards – Designers, strategists and data scientists vet prompt strategies against ethical standards, brand values and technical feasibility.

- Educational Initiatives – Workshops, certification programs and mentorship networks democratize prompt expertise.
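
A prompt library with an audit trail can start out very small. The sketch below keeps every saved revision of a named template in memory, together with its author, a UTC timestamp and a SHA-256 digest of the text. The `PromptLibrary` and `PromptVersion` names, the in-memory storage and the rule that unchanged text creates no new version are assumptions for illustration. A real team would more likely back this with a version-control system or a database.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    author: str
    created_at: str   # ISO 8601 timestamp in UTC
    digest: str       # SHA-256 of the prompt text


class PromptLibrary:
    def __init__(self):
        self._history = {}  # template name -> list of PromptVersion

    def save(self, name, text, author):
        """Store a new revision; identical text does not create a new version."""
        versions = self._history.setdefault(name, [])
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if versions and versions[-1].digest == digest:
            return versions[-1]
        entry = PromptVersion(
            version=len(versions) + 1,
            text=text,
            author=author,
            created_at=datetime.now(timezone.utc).isoformat(),
            digest=digest,
        )
        versions.append(entry)
        return entry

    def latest(self, name):
        """Return the most recent revision of a template."""
        return self._history[name][-1]

    def audit_trail(self, name):
        """List (version, author, short digest) for every revision."""
        return [(v.version, v.author, v.digest[:8]) for v in self._history[name]]
```

Freezing each `PromptVersion` prevents past entries from being edited after the fact, which is what makes the history usable for retrospection.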

Interpretive Challenges

- Model Opacity – Subtle prompt phrasing or parameter shifts can cause output divergence, complicating root-cause analysis.

- Bias Amplification – Without careful design, prompts may reinforce stereotypes; bias audits and debiasing techniques are essential.

- Semantic Drift – Over repeated feedback loops, an agent’s interpretation of the same prompt may shift; continuous calibration and revalidation protocols detect drift early.

- Scalability of Expertise – Reliance on expert prompt engineers creates bottlenecks; low-code tools and in-platform guidance help scale capabilities.

Drivers of Urgency in Design Practice

AI integration is no longer optional but a strategic imperative. Machine learning–driven workflows accelerate ideation, enable data-informed decisions and deliver hyper-personalized experiences. As generative systems mature, they transition from novelties to indispensable collaborators, reshaping competitive dynamics across industries.

Market and Competitive Pressures

Globalization and digital transformation intensify competition. AI agents that autonomously generate mockups, variant assets for A/B tests or sentiment-driven visuals can help early adopters capture market share and command premium positioning.

Technological Advancements

Advances in diffusion and multimodal models power platforms like DALL·E, Midjourney and Adobe Firefly, which synthesize high-fidelity imagery, typographic treatments and style remixes within seconds. As latency decreases and context awareness deepens, teams that delay adoption risk having their manual processes fall behind generative speed and scale.

Evolving Consumer Expectations

Personalization has shifted from broad demographic targeting to one-to-one design responsiveness. AI analyzes interaction logs and psychographic profiles to recommend elements that heighten engagement in e-commerce, streaming and mobile apps.

Organizational Imperatives

Budget and bandwidth constraints drive automation of repetitive tasks—layout variants, testing simulations and accessibility checks—freeing designers for strategic problem solving. AI agents become catalysts for lean operations and rapid iteration.

Implications for the Profession

- Skill Evolution: Mastery of vector editors is complemented by prompt engineering, data literacy and model evaluation.

- Strategic Differentiation: Firms offer AI-driven services—faster concept generation and data-backed design rationales—as competitive advantages.

- Risk Management: New vectors of IP ambiguity, bias and regulatory compliance necessitate governance frameworks.

- Ethical Responsibility: Practitioners must ensure outputs reflect inclusive values, avoid stereotypes and maintain transparency.

Contexts of Application

- Brand and Marketing Agencies: AI generates multi-variant social graphics, video storyboards and copy suggestions for dynamic pitches.

- Product and UX Teams: Systems inform wireframes, simulate flows and propose micro-interactions to optimize funnels and accessibility.

- Industrial and Architectural Design: Generative design evaluates materials, sustainability and ergonomics for prototype configurations.

- Content Creation and Publishing: Media outlets use AI for image editing, video clipping and headline generation under tight content cycles.

- Open Source and Community Ventures: Collaborations on platforms like Hugging Face refine model checkpoints, document and mitigate biases, and share best practices.

Strategic Frameworks for Readiness

- Diffusion of Innovations: Mapping stakeholders from innovators to laggards identifies champions and anticipates resistance.

- Gartner Hype Cycle: Tracking technologies from the peak of inflated expectations through to the plateau of productivity sets realistic timelines for adoption.

- Design Thinking Integration: Embedding AI exploration within empathize, define, ideate, prototype and test phases preserves human empathy alongside algorithmic efficiency.

Balancing Speed with Foresight

Urgency must be tempered with governance. Clear objectives, success metrics and ethical guardrails enable controlled experimentation. Documented outcomes and iterative scaling transform urgency into disciplined innovation that delivers sustainable value rather than transient novelty.

Strategic Pillars and Actionable Takeaways

The following thematic pillars synthesize essential insights for integrating AI agents as creative collaborators. Each pillar highlights strategic opportunities, potential limitations and practical considerations.

- Evolutionary Foundations: Understand the shift from rule-based scripts to collaborative intelligence. View AI agents as dynamic participants in feedback loops, not static tools.

- Defining Agent Capabilities: Assess autonomy, adaptability, contextual awareness and generative fluency, capabilities that rest on probabilistic models, reinforcement learning and transformer architectures. Balance innovation with oversight via guardrails and validation criteria.

- Integration and Workflows: Compare supervised, mixed-initiative and autonomous collaboration frameworks. Design entry points—prompt checkpoints, peer reviews, sandbox environments—and invest in change management, knowledge repositories and transparent communication.

- Ideation and Concept Generation: Employ layered prompting frameworks for divergent exploration and targeted refinement. Structure review sessions treating outputs as provocations. Guard against semantic drift with diverse data and periodic taxonomy reevaluation.

- Prototyping and Iteration: Leverage interactive style transfer and correction engines for rapid mockup cycles. Implement versioning, anomaly detection and human-in-the-loop validation to maintain quality control.

- Personalization and User-Centered Design: Integrate clustering, recommendation systems and behavioral models for hyper-personalized variations. Uphold ethical data practices—transparent collection, consent and bias audits—and monitor engagement metrics to refine parameters.

- Human-AI Co-Design Dynamics: Foster shared mental models via dialogue protocols, annotations and interpretability layers. Position designers as curators and ethical stewards, while agents handle pattern discovery and trial generation. Build trust with performance baselines and error-reporting mechanisms.

- Ethical Considerations: Mitigate biases through representative sampling, corrective algorithms and third-party audits. Ensure transparency with provenance tracking and usage logs. Establish governance defining acceptable use cases, escalation paths and review boards.

- Measuring Impact and ROI: Combine quantitative KPIs—time savings, error reduction and throughput gains—with qualitative indicators like creative satisfaction and stakeholder buy-in. Use case studies and before-after comparisons. Revisit metrics as business objectives and agent functionalities evolve.

- Future Trends and Roadmapping: Monitor self-supervised learning, multimodal synthesis and distributed intelligence. Explore AI in AR/VR/MR environments for immersive ideation. Design modular architectures for emerging APIs, model updates and interoperable data schemas.

Key Considerations and Limitations

- Outputs depend on data quality; invest in curation and validation.

- Over-reliance on templates risks homogenized outcomes; inject human novelty.

- Navigating IP, licensing and privacy requires legal collaboration.

- AI literacy gaps and cultural resistance demand targeted education and leadership alignment.

- Attribution of AI contribution can be challenging; refine evaluation frameworks.

- Legacy systems and data silos may require phased pilots and infrastructure upgrades.

- Rapid technology shifts necessitate continuous scanning of new platforms and models.

By embracing these strategic pillars with analytical rigor and organizational foresight, design teams can transform AI into a genuine creative partner. This framework empowers practitioners to harness generative capabilities, maintain human-centered excellence and drive sustainable innovation in a rapidly evolving landscape.

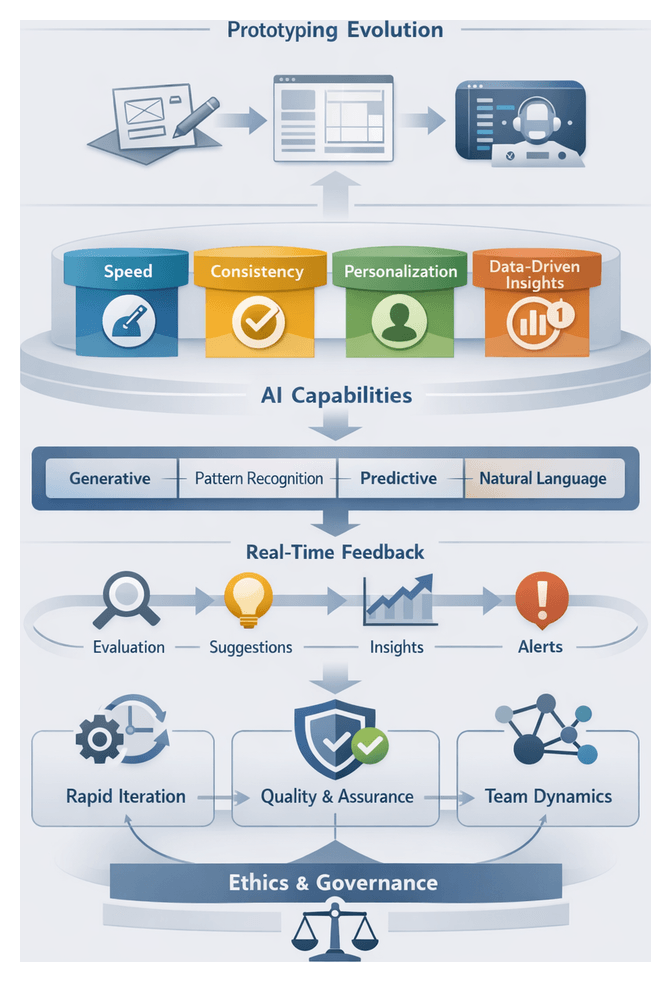

Chapter 5: AI-Assisted Prototyping and Iteration

Evolution and Drivers of AI-Powered Prototyping

Prototyping has evolved from paper sketches and foam mock-ups to digital wireframes and interactive simulations, serving as the critical bridge between abstract ideas and tangible outcomes. Recent advances in artificial intelligence have ushered in a paradigm shift, transforming prototyping from a manual exercise into an intelligent, data-driven activity. Platforms like Uizard convert hand-drawn sketches into functional interfaces, while Framer employs intelligent layout engines to suggest responsive designs tailored to content and user behavior. Creative studios integrate tools such as Runway ML to generate dynamic visual assets in real time, accelerating iteration and enabling rapid experimentation.

Design teams adopt AI-assisted prototyping in response to multiple strategic pressures: compressed time-to-market, rising customer expectations for polished interactive experiences, increasing product complexity, and the need for seamless coordination across design, engineering, and data science disciplines. AI integration addresses these challenges by:

- Increasing velocity: Automated asset creation and intelligent layouts enable same-day prototype revisions.

- Enhancing consistency: Machine learning enforces brand guidelines and design-system rules, reducing visual drift.

- Improving confidence: Data-driven feedback on usability and performance informs adjustments with empirical evidence.

- Scaling personalization: Dynamic content and adaptive interfaces cater to diverse user segments without manual rework.

By automating routine tasks and shortening feedback cycles, AI-powered prototyping lets designers focus on strategic, higher-order creative decisions.

Fundamental AI Capabilities

AI-driven prototyping leverages four core technical functions that reshape how interfaces are generated and refined:

- Generative modeling: Architectures such as generative adversarial networks and variational autoencoders synthesize novel visual assets, layout variations, and interaction patterns based on learned distributions from design datasets.

- Pattern recognition: Convolutional neural networks and clustering algorithms detect recurring design motifs and component relationships, enabling automated grouping and reuse.

- Predictive adaptation: Reinforcement learning agents anticipate user behaviors and environmental contexts, proposing adjustments—such as resizing buttons or repositioning navigation—to optimize engagement and accessibility.

- Natural language understanding: Transformer-based models interpret textual prompts, design briefs, or stakeholder feedback to generate content suggestions, annotations, and iteration directives within prototypes.

Combined, these capabilities enable AI agents to evolve from passive assistants into active collaborators, shaping design solutions based on learned patterns and real-time user data.

Real-Time Feedback and Interpretation Frameworks

Real-time feedback capabilities represent a major advancement in AI-assisted prototyping, allowing teams to assess and refine concepts without traditional handoff delays. Feedback types include:

- Evaluative feedback: Agents assess elements against heuristics or best practices, highlighting contrast issues per WCAG or typographic inconsistencies based on trained corpora.

- Generative suggestions: Tools such as Adobe Sensei propose alternative layouts, color palettes, and asset variations aligned with brand identity.

- Predictive insights: Platforms like Framer forecast engagement metrics and flag usability risks before user testing.

- Contextual alerts: Agents monitor design activity and notify teams of scope deviations or style-guide conflicts, as seen in Uizard.
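
The WCAG contrast check mentioned under evaluative feedback is one of the few items here with a precise published formula, so it makes a useful worked example. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio, and the level AA thresholds of 4.5:1 for normal text and 3:1 for large text. The function names are hypothetical, and colors are assumed to be 8-bit sRGB tuples.

```python
def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per WCAG 2.x."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg, bg) -> float:
    """Contrast ratio between two colors, from 1 (identical) up to 21."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg, bg, large_text: bool = False) -> bool:
    """Level AA: at least 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)
```

For example, `passes_aa((0x77, 0x77, 0x77), (255, 255, 255))` is false because mid-gray `#777777` on white falls just short of 4.5:1, while the slightly darker `#767676` passes.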

Practitioners interpret agent feedback through established frameworks:

- Human-in-the-Loop theory: Emphasizes continuous collaboration where feedback becomes a conversational exchange, fostering shared mental models and trust.

- Distributed cognition: Positions AI agents as external cognitive artifacts, distributing pattern recognition and error detection across tools and collaborators.

- Feedback loop optimization: Focuses on minimizing latency between designer action and agent response to preserve momentum and maximize creative output.

Effectiveness is measured by metrics such as accuracy rate, response latency, adoption rate, designer satisfaction, and iteration velocity. Explainable AI frameworks—like heatmaps indicating which interface areas influenced contrast-check recommendations—enhance trust and allow designers to validate or override suggestions. To manage cognitive load, teams implement threshold-based alerting, delivering high-confidence critiques immediately while batching lower-priority insights for review during reflection phases.
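
Threshold-based alerting of this kind can be sketched in a few lines. The example below assumes each critique carries a confidence score between 0 and 1 reported by the agent. The `Critique` class, the `triage` function and the 0.85 default threshold are hypothetical and would need tuning against real designer feedback.

```python
from dataclasses import dataclass


@dataclass
class Critique:
    message: str
    confidence: float  # agent's self-reported confidence in [0, 1] (assumed)


def triage(critiques, threshold: float = 0.85):
    """Split critiques into those shown immediately and those batched.

    High-confidence items interrupt the designer right away; the rest are
    held for a later reflection phase, ordered from most to least confident.
    """
    immediate, batched = [], []
    for critique in critiques:
        if critique.confidence >= threshold:
            immediate.append(critique)
        else:
            batched.append(critique)
    batched.sort(key=lambda c: c.confidence, reverse=True)
    return immediate, batched
```

Raising the threshold reduces interruptions at the cost of delaying some valid warnings, so the value is a trade-off between focus and early detection.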

Emerging challenges include adaptive learning overhead, cross-modal feedback for AR/VR and voice interfaces, conflict resolution when multiple agents produce divergent suggestions, and securing project data in compliance with privacy regulations.

Implications for Iteration Speed, Quality, and Team Dynamics

AI-driven agents compress feedback cycles and reallocate human effort. Continuous monitoring and suggestions allow designers to iterate through dozens of variations in the time previously required for a single mockup. Key impacts include:

- Resource efficiency: AI amortizes routine refinements across many permutations, reducing per-iteration cost and freeing senior talent for strategic work.

- Exploration vs. refinement: Adaptive variation thresholds, constraint-driven generation, and tiered review processes balance creative divergence with convergent quality checks.

- Quality assurance: Automated and human-centric layers flag accessibility violations, brand inconsistencies, and performance issues, ensuring only high-fidelity prototypes reach user testing.

- Team evolution: Roles shift toward curator-strategists who direct AI agents and synthesize outputs. Cross-disciplinary collaboration intensifies as DesignOps coordinates human-agent workflows.

- Timeline compression: Some organizations report project durations shrinking by roughly 30–50 percent, though results vary by project type; teams must realign milestone definitions and manage elevated delivery expectations.

- Risk management: Innovation sandboxes, decision matrices weighing AI suggestions against qualitative criteria, and iterative risk assessments maintain innovation bandwidth while preventing premature convergence on suboptimal solutions.

Successful integration views AI agents not merely as speed tools but as partners in structured, purposeful creative exploration.

Key Considerations for Responsible Deployment

Maximizing AI-driven prototyping requires balancing efficiency gains with strategic oversight and ethical governance. Critical considerations include:

- Balancing innovation velocity and creative agency: Set clear objectives and constraints before generative runs to ensure prototypes remain purposeful.

- Data quality and representativeness: Audit training datasets for bias, apply fairness metrics, and validate outputs against inclusive design principles, especially when using Uizard or Adobe Sensei.

- Transparency and interpretability: Embed explainable AI layers so designers understand why agents suggest specific refinements.

- Governance and ethics: Form cross-functional committees to review privacy, bias, and accessibility compliance during prototyping.

- Curating outputs: Adopt a two-stage process where AI exploration is followed by expert refinement, ensuring domain expertise shapes final designs.

- Hybrid team structures: Invest in training for prompt engineering, model evaluation, and data ethics. Leverage DesignOps to orchestrate human-agent collaboration.

- Technical and resource constraints: Evaluate total cost of ownership for cloud services versus on-premises deployments. Conduct proofs of concept to align prototyping ambitions with IT capacity.

- Integration with design toolchains: Use plugins and APIs—such as the Figma AI Plugin—to surface AI suggestions within Figma, Sketch, or Adobe XD with minimal context switching.

- Preserving brand consistency: Embed design-system tokens for color palettes, typography scales, and component libraries into generative models to prevent deviations.

- Accessibility and inclusive design: Enforce semantic markup, contrast checks, and keyboard navigability by default, especially when exploring parametric layouts with Autodesk Dreamcatcher.

- Measuring impact: Combine quantitative KPIs—such as iteration count and defect reduction—with qualitative feedback from designers and users via surveys and workshops.

- Iterative calibration: Document temperature settings, prompt templates, and style embeddings to align agent behavior with project goals and accelerate future prototyping.

- Intellectual property and ownership: Review vendor agreements for derivative work clauses. Define clear policies on asset provenance and licensing.

- Culture of experimentation: Create sandbox environments, internal hackathons, and show-and-tell sessions to encourage low-stakes trials and knowledge sharing.

- Long-term model evolution: Maintain modular pipelines and partnerships with research labs to integrate emerging techniques without disrupting workflows.

- Alignment with business objectives: Ensure prototyping metrics map to strategic goals—whether reducing time-to-market, improving engagement, or lowering development costs—and review ROI regularly.
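The contrast checks named in the accessibility item above are one of the easier guardrails to automate. The following is a minimal sketch, assuming color pairs are available as hex strings and that the WCAG 2.x AA threshold of 4.5:1 for body text is the target. The function names and the `tokens` structure are illustrative and do not come from any particular design tool.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, always >= 1 regardless of argument order."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def failing_pairs(tokens: dict, minimum: float = 4.5) -> dict:
    """Return the token pairs whose contrast falls below the minimum ratio.

    `tokens` maps a hypothetical token name to a (foreground, background) pair.
    """
    failures = {}
    for name, (fg, bg) in tokens.items():
        ratio = contrast_ratio(fg, bg)
        if ratio < minimum:
            failures[name] = round(ratio, 2)
    return failures


if __name__ == "__main__":
    generated = {
        "body-text": ("#767676", "#FFFFFF"),
        "muted-text": ("#777777", "#FFFFFF"),
    }
    print(failing_pairs(generated))
```

A check like this could run on every generated variant before it reaches human review, so that designers spend their time on judgment calls rather than on arithmetic.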

By embedding these considerations into AI-driven prototyping initiatives, organizations can harness the full potential of autonomous agents while safeguarding creative agency, ethical standards, and design integrity.

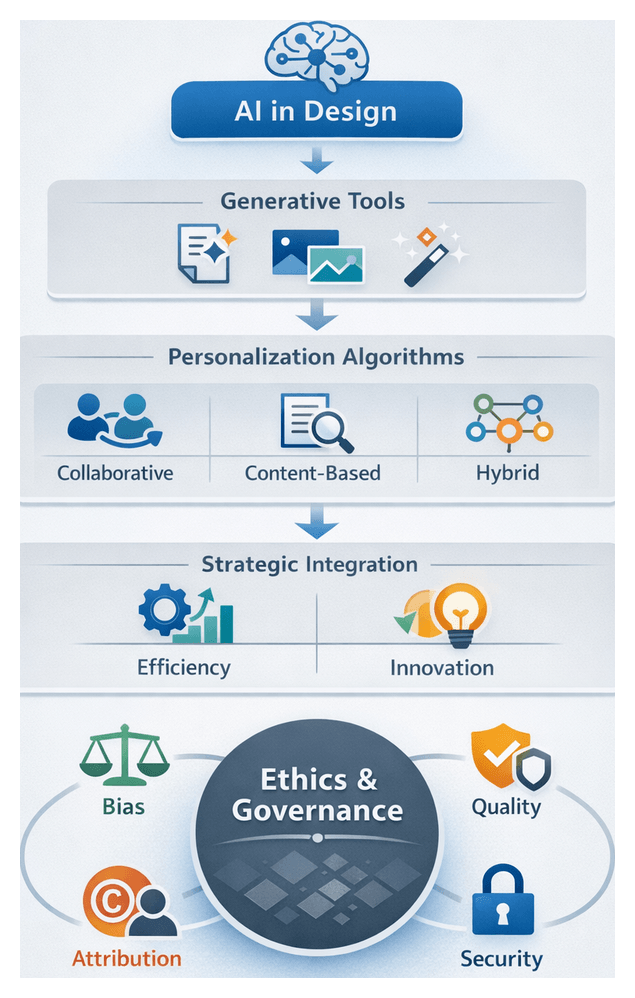

Chapter 6: Personalization and User-Centered Design

The Evolution of Creative Automation and Design Intelligence

Over the past decade, design practice has undergone a paradigm shift driven by the maturation of automation and machine learning technologies. Early desktop publishing tools automated layout calculations and alignment rules, but today’s AI-driven platforms extend far beyond rule-based logic. Systems such as Adobe Sensei and Canva’s Magic Write integrate predictive text, image suggestion, and style adaptation directly into design workflows. Specialized generative imagery engines like DALL·E 3, Midjourney, and Stable Diffusion convert text prompts into high-fidelity visuals, enabling rapid exploration of aesthetic possibilities. Meanwhile, branding engines such as Looka and Tailor Brands generate logos and color palettes by analyzing industry trends and user inputs, and video platforms like Runway ML offer real-time editing and style transfer powered by cinematic datasets.

As these capabilities proliferate, the definition of design work expands to include the orchestration of AI agents alongside traditional creative methods, allowing human professionals to focus on strategic direction, narrative development, and creative curation.

Data-Driven Personalization in Design

Data-driven customization has become fundamental for delivering personalized experiences at scale. Rather than relying on static personas, organizations now transform behavioral signals—clickstreams, browsing patterns, purchase histories—into tailored interfaces and multimedia content. Three core algorithmic approaches underpin modern personalization:

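- Collaborative Filtering: User-based methods infer preferences from the behavior of similar users, while item-based methods rely on items that tend to be engaged with together. Diversity constraints and temporal decay factors help limit echo chamber effects and stale recommendations; cold-start cases, where a new user or item has little interaction history, typically call for content features or hybrid fallbacks.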

- Content-Based Filtering: Metadata and feature analysis—textual embeddings, visual descriptors, semantic tags—drive recommendations with high interpretability, essential in regulated industries.

- Hybrid Models: Ensembles or cascade architectures blend collaborative and content-based outputs, optimizing precision, recall, and serendipity through weighted re-ranking and contextual signals.
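The three approaches above can be illustrated with a small weighted hybrid. This is a minimal sketch under stated assumptions rather than a production recommender: the template names, ratings, and feature vectors are invented for illustration, the collaborative part is item-based cosine similarity over a tiny rating table, and `alpha` is a hypothetical blending weight.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def item_rating_vectors(ratings, items):
    """Represent each item by the ratings it received from every user."""
    users = sorted(ratings)
    return {i: [ratings[u].get(i, 0.0) for u in users] for i in items}


def mean_similarity(history, candidate, vectors):
    """Average similarity between a candidate and the user's past items."""
    sims = [cosine(vectors[candidate], vectors[h]) for h in history]
    return sum(sims) / len(sims) if sims else 0.0


def hybrid_rank(user, ratings, features, alpha=0.5):
    """Rank unseen items by alpha * collaborative + (1 - alpha) * content score."""
    items = sorted(features)
    collab_vectors = item_rating_vectors(ratings, items)
    seen = ratings.get(user, {})
    history = [i for i in items if seen.get(i, 0) > 0]
    candidates = [i for i in items if i not in history]
    scores = {
        c: alpha * mean_similarity(history, c, collab_vectors)
        + (1 - alpha) * mean_similarity(history, c, features)
        for c in candidates
    }
    return sorted(scores, key=scores.get, reverse=True)


# Illustrative data: users rating design templates on a 1-5 scale.
RATINGS = {
    "ana": {"hero_minimal": 5, "hero_bold": 1},
    "ben": {"hero_minimal": 4, "card_minimal": 5},
    "cy": {"hero_bold": 5, "card_bold": 4},
}
# Content features: [is_hero, is_card, is_minimal, is_bold]
FEATURES = {
    "hero_minimal": [1, 0, 1, 0],
    "hero_bold": [1, 0, 0, 1],
    "card_minimal": [0, 1, 1, 0],
    "card_bold": [0, 1, 0, 1],
}

if __name__ == "__main__":
    print(hybrid_rank("ben", RATINGS, FEATURES))
```

Setting `alpha` to 1.0 reduces this to pure collaborative filtering and 0.0 to pure content-based filtering. A user with no history receives a score of zero for every item, which is why real systems need a separate cold-start fallback.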

Advanced personalization incorporates real-time adaptation and predictive modeling. Session-based recommendations adjust content dynamically, predictive user segmentation identifies transient states, and reinforcement learning agents optimize long-term engagement policies. Platforms like Adobe Sensei, Dynamic Yield, and Optimizely provide cloud-native APIs and microservices architectures for scalable deployment, with performance evaluated on latency, responsiveness, and prediction stability. Explainability tools such as LIME and SHAP support ethical transparency by attributing individual recommendations to input features, which helps build audit trails for recommendation logic.

Strategic Imperatives and Adoption Drivers

The convergence of powerful GPUs, specialized AI accelerators, and accessible frameworks like TensorFlow and PyTorch has lowered barriers to entry for AI-powered design. Cloud services, including Adobe Sensei and Runway ML, enable studios of all sizes to integrate generative functions without deep in-house expertise. Concurrently, competitive pressures demand novel, personalized experiences and faster time to market. Agencies report that AI agents halve the effort spent on asset variations, allowing teams to focus on storytelling and brand differentiation. Tools like Adobe Firefly and AI-assisted plugins support rapid prototyping and iterative feedback loops within collaboration platforms such as Figma and Sketch.

Strategically, organizations must view AI integration as a dynamic capability—sensing opportunities, seizing them through experimentation, and reconfiguring resources to sustain competitive advantage. This requires ambidextrous leadership that balances exploratory AI initiatives with the exploitation of existing creative strengths, aligning investments with clear KPIs tied to efficiency, quality, and innovation.

Challenges, Ethical Considerations, and Governance

Despite its promise, creative automation introduces challenges that demand rigorous oversight:

- Quality Control and Consistency: Automated outputs vary in fidelity. Ensuring brand alignment and project relevance often requires manual refinement and strict governance frameworks.

- Bias and Representation: Training data may encode cultural or socioeconomic biases. Regular audits, diverse training sets, and fairness evaluation protocols are essential to mitigate representational risks.

- Creative Agency and Attribution: Generative artifacts raise authorship and intellectual property questions. Clear licensing and attribution practices preserve human creative identity while respecting contributor rights.

- Skill Gaps and Change Management: Effective collaboration with AI agents necessitates new literacies in prompt engineering, model evaluation, and data interpretation. Structured upskilling and cultural incentives foster adoption.

- Integration Complexity: Embedding AI within existing toolchains—whether Adobe Creative Cloud or the OpenAI API—requires technical orchestration, security vetting, and cross-functional coordination.

- Governance and Accountability: Delegating creative decisions to autonomous systems challenges traditional hierarchies. Defined escalation paths, audit trails, and ethical impact matrices ensure transparency and legal compliance.

- Cost Structure: AI adoption adds compute infrastructure, data acquisition, and maintenance expenses. Financial planning must balance these costs against projected efficiency gains and strategic value.

- Generalization versus Specialization: Foundation models offer breadth but may lack domain nuance, while specialized models deliver precision at higher development cost. Selecting the right model mix is critical.

Analytical Frameworks and Organizational Best Practices

Design leaders leverage a variety of interpretive lenses to navigate AI integration:

- Human-Agent Symbiosis Model: Views designers and AI agents as co-evolving partners, emphasizing iterative dialogues rather than static prompts.

- Capability Maturity Framework: Maps AI adoption from experimental proof-of-concept to strategic alignment and business model innovation.

- Personalization Maturity Model: Stages progress from rule-based targeting to autonomous, multi-channel personalization, guiding investment roadmaps.

- Value-Ethics Triangle: Balances user relevance, business gains, and ethical integrity to illuminate trade-offs in personalization aggressiveness.

- Ethical Impact Matrix: Cross-references agent capabilities with bias vectors and accountability mechanisms, prioritizing risk mitigation.

- Value Realization Taxonomy: Distinguishes direct efficiency gains from strategic value creation, aiding stakeholder alignment on ROI narratives.

- Co-Design Cognitive Schema: Examines shared mental models, interaction protocols, and the externalization of creative intent in human-AI collaboration.

Best practices include establishing cross-functional governance teams that unite design leadership, data science, legal counsel, and ethics officers. Continuous experimentation through A/B testing, multivariate analysis, and holdout cohorts validates value propositions while guarding against false positives. Robust data governance helps keep datasets representative and compliant. Explainability tools such as LIME and SHAP foster trust, particularly in regulated sectors. Cloud-native architectures and microservices support scalable deployment, and phased pilot programs with clear KPIs enable organizations to validate benefits before scaling.
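To make the A/B testing step concrete, the sketch below applies a two-proportion z-test to compare a personalized variant against a control. It is one common way to guard against false positives, not a method prescribed here; the conversion counts and the 0.05 significance level are assumptions chosen for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_a / n_a: conversions and visitors in the control cohort.
    conv_b / n_b: conversions and visitors in the variant cohort.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def is_significant(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """True when the observed difference is unlikely under the null hypothesis."""
    _, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return p_value < alpha


if __name__ == "__main__":
    # Hypothetical pilot: 2,000 visitors per cohort.
    z, p = two_proportion_z_test(conv_a=200, n_a=2000, conv_b=260, n_b=2000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

Fixing the sample size and significance level before the experiment starts, and keeping a holdout cohort that never sees the personalized experience, helps keep such a test honest when many variants are being compared.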

Key Takeaways for Practitioners

- Position AI agents as collaborators that amplify human insight rather than replace creative expertise.

- Adopt a phased integration strategy, starting with small-scale experiments to validate value before enterprise-wide rollout.

- Invest in interdisciplinary governance structures that unify design, data science, ethics, and legal domains.

- Commit to ongoing skill development in prompt engineering, model interpretation, and AI literacy.

- Prioritize data integrity and bias mitigation as foundational elements of generative design initiatives.

- Balance efficiency gains with creative diversity by maintaining open-ended exploration alongside structured prompts.

- Plan for the full cost lifecycle of AI agents, including infrastructure, integration, licensing, and maintenance.

- Ensure transparency around AI contributions in stakeholder communications to uphold credibility and informed collaboration.

- Monitor emerging trends—edge personalization, multimodal synthesis, federated learning—to anticipate how next-generation AI will reshape design practice.

Chapter 7: Human-AI Co-Design Dynamics

The Evolution and Current Landscape of Creative Automation

The creative industries are experiencing a profound transformation as automation and artificial intelligence reshape traditional design workflows. Originating with desktop publishing tools that automated pagination and basic formatting, the field has progressed through scripting interfaces in vector drawing applications and rule-based parametric systems in manufacturing and architecture. Over the past decade, machine learning has accelerated this evolution. Data-driven inference now enables generative systems to recognize patterns, propose compositions, and produce original assets from vast image, layout, and text datasets. Creative outputs have shifted from the product of predefined scripts to emergent artifacts informed by statistical models and large-scale training data.

Today’s market offers a spectrum of AI-driven design assistants. Generative adversarial networks create photorealistic textures and characters, reinforcement learning optimizes interface usability, and natural language models draft headlines, product descriptions, and narratives. Platforms such as Adobe Sensei integrate automated asset tagging and style transfer. Canva proposes brand-consistent templates, while Autodesk Generative Design evolves structural components via simulation data. Specialized tools like Runway ML and Midjourney enable rapid visual concept prototyping. Together, these applications illustrate both the promise of accelerated creativity and the complexity introduced by an expanding ecosystem of AI capabilities.

Several forces drive adoption of creative automation. Businesses demand faster time-to-market for campaigns, product launches, and digital experiences. Consumer expectations for personalized content at scale continue to rise. Competition for talent and budget pressures compel organizations to streamline workflows. Meanwhile, access to cloud-based AI services democratizes advanced capabilities for small studios and independent creators. Rapid improvements in compute resources and open-source machine learning frameworks lower the barrier to innovation. As a result, both legacy agencies and in-house teams are under pressure to evaluate AI solutions, integrate them into existing pipelines, and measure their impact on quality and efficiency.

Challenges and Strategic Considerations

Despite clear benefits, integrating AI into creative operations presents significant challenges. Addressing these issues requires strategic alignment, robust governance, and continuous learning.

Fragmented Tool Ecosystems