Data Analysis AI Agents Insights Harnessing Autonomous Intelligence for Deeper Data Understanding

To download this as a free PDF eBook and explore many others, please visit the AugVation webstore:

Introduction

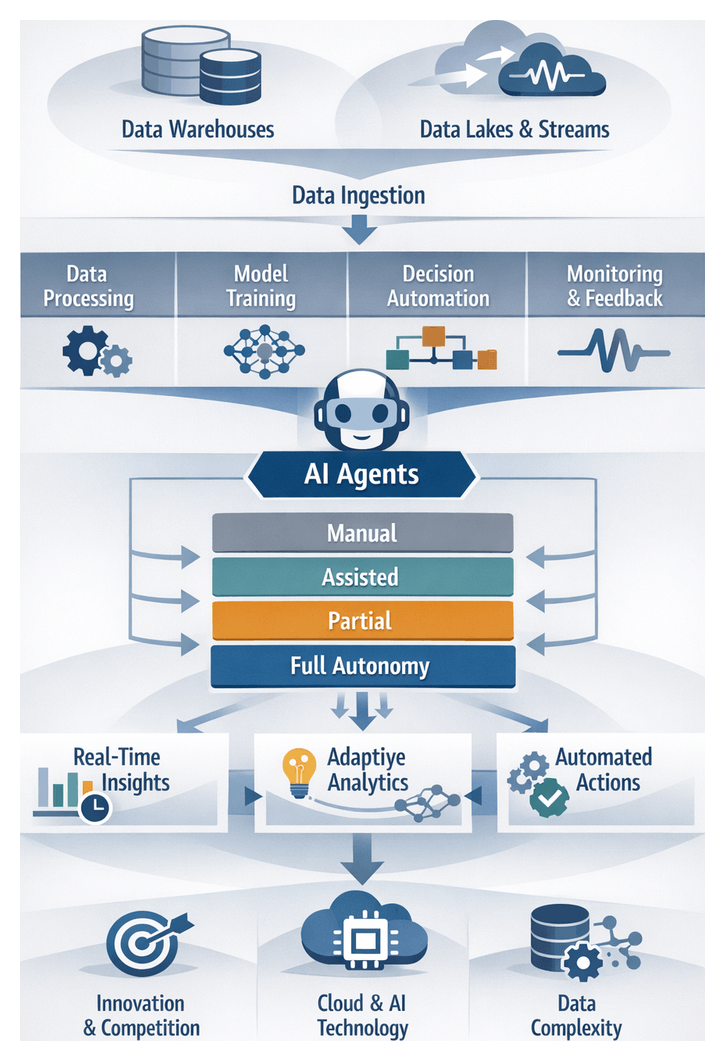

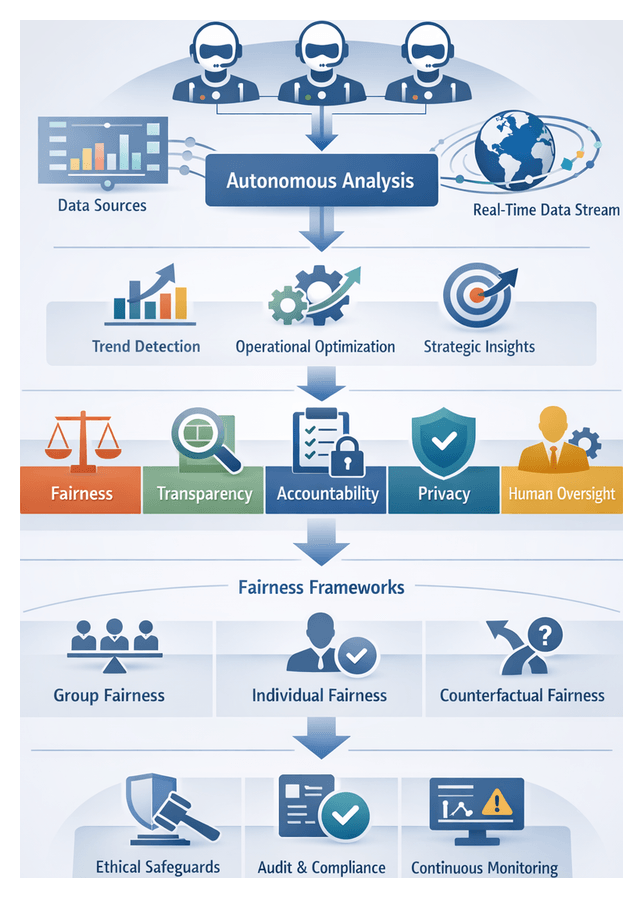

Evolution of Data Autonomy in Analytics Ecosystems

Organizations today face unprecedented volumes, varieties, and velocities of data across every function and industry. Early digital transformation efforts centralized information into relational warehouses and business intelligence platforms. With the advent of cloud-native solutions like Snowflake and Databricks, enterprises built sprawling data lakes and real-time ingestion pipelines. At the same time, sources such as Internet of Things devices, mobile apps, and social media introduced unstructured and semi-structured streams that overwhelmed manual extract-transform-load processes and static reporting. In response, data autonomy has emerged as a strategic imperative, embedding intelligence into analytics workflows through self-directed AI agents that generate proactive insights and orchestrate end-to-end processes without constant human intervention.

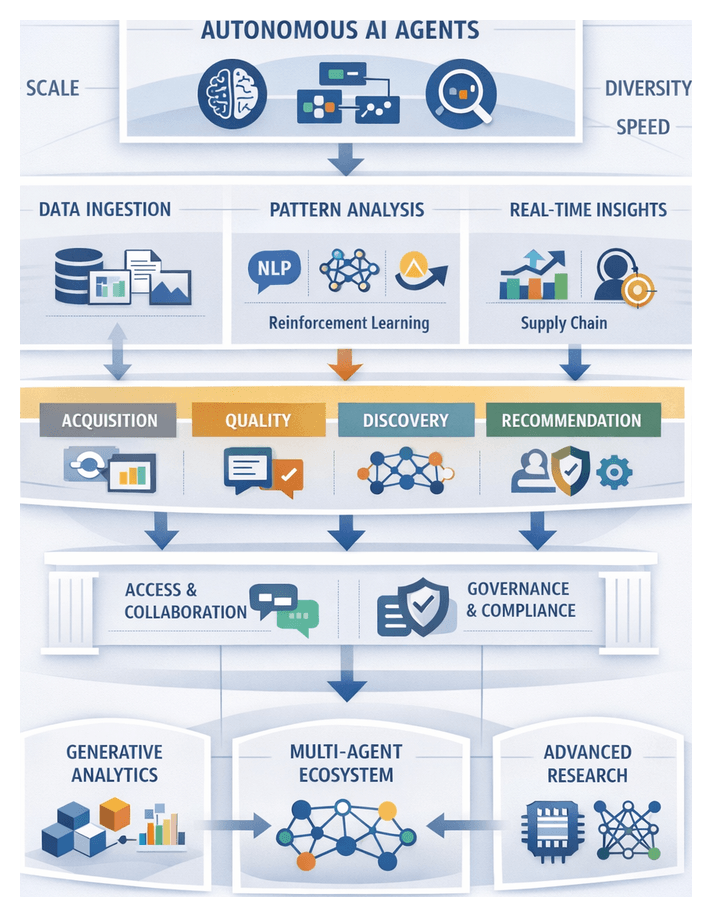

From Centralized Warehouses to Intelligent Agents

The analytics landscape has shifted from scheduled batch jobs and human-driven hypothesis testing to systems capable of continuous self-configuration. Autonomous AI agents leverage machine learning, natural language processing, and optimization algorithms to ingest diverse data sources, detect anomalies, retrain models in real time, and recommend actions. This evolution addresses challenges of scale, complexity, and speed by automating data quality monitoring, resource provisioning, and contextual reasoning, ultimately accelerating decision cycles and enabling organizations to manage information assets at unprecedented scale.

Defining Autonomous AI Agents

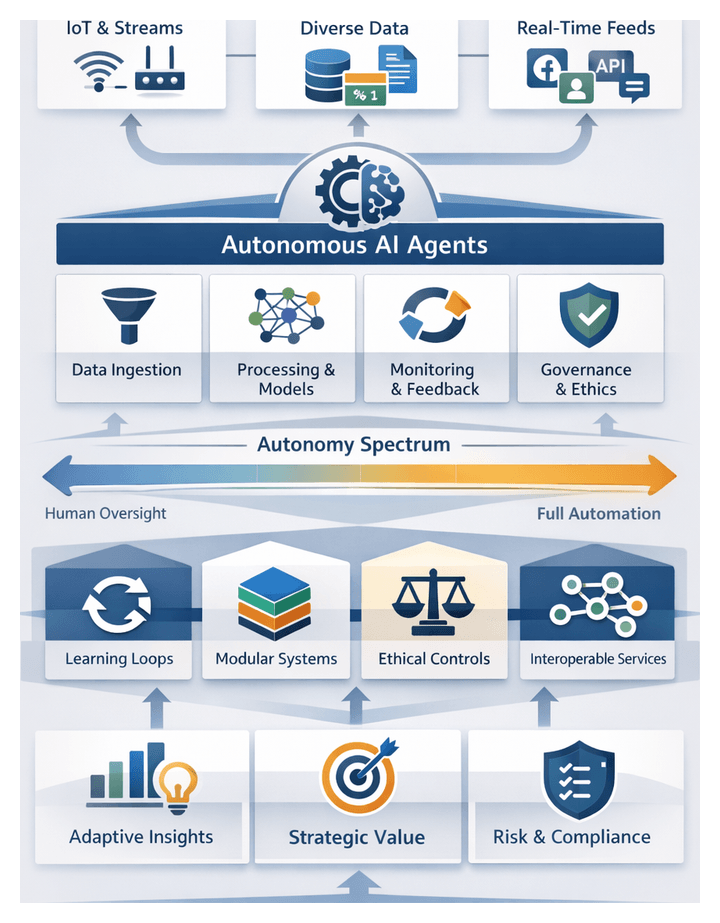

What Is Data Autonomy?

Data autonomy refers to the capacity of an analytics system to independently manage key stages of data ingestion, processing, analysis, and interpretation. Unlike traditional tools that execute predefined queries or dashboards on demand, autonomous agents initiate tasks, prioritize objectives, adapt to changing conditions, and iterate through feedback loops. This self-directed behavior transforms analytics from a reactive service into a strategic driver of value.

Core Components of Autonomous Agents

- Perception engines that connect to structured databases, cloud object stores, APIs, and streaming sources.

- Preprocessing modules for cleaning, normalization, and schema evolution management.

- Model training frameworks that evaluate algorithms, select optimal configurations, and manage retraining triggers.

- Decision logic leveraging probabilistic planning, reinforcement learning, and utility-driven optimization.

- Monitoring layers for drift detection and self-healing pipelines.

- User interfaces that generate visualizations, narratives, and recommendation alerts.

Autonomy Spectrum

Practitioners assess agent capabilities along a continuum:

- Mundane Automation: Fixed scripts executing static tasks.

- Assisted Intelligence: Human-in-the-loop confirmation before actions.

- Partial Autonomy: Self-governed routine processes with escalation for complex decisions.

- Full Autonomy: End-to-end workflow management and adaptation without human input.

Distinguishing Agents from Traditional Software

- Dynamic Adaptation versus Static Scheduling: Agents adjust pipelines in real time based on data anomalies and emerging patterns.

- Contextual Reasoning versus Pre-configured Rules: Natural language understanding and semantic analysis enable agents to interpret user intent and business context.

- Self-Improvement versus Manual Tuning: Continuous learning loops allow agents to refine decision policies without expert intervention.

- Unified Orchestration versus Point Solutions: Agents integrate ingestion, modeling, and delivery into cohesive workflows.

Drivers of Autonomous Analysis

Market Catalysts

Heightened competitive intensity, commoditization of insights, and the demand for real-time differentiation compel organizations to seek faster, deeper analytical capabilities. Start-ups and digital entrants leverage data-driven strategies to challenge incumbents, eroding traditional barriers to entry. Meanwhile, open-source libraries and platforms such as Microsoft Azure Cognitive Services and Databricks SQL Analytics democratize basic analytics, making agility and contextual interpretation key differentiators. In high-frequency domains—financial trading, digital marketing, supply chain orchestration—agents deliver sub-second insights that manual or scheduled processes cannot achieve.

Technological Enablers

Advances in cloud compute elasticity, foundational AI models, and data integration frameworks underpin the rise of autonomous agents. Hyperscale providers offer on-demand GPU and CPU resources for rapid model training and inference. Pretrained transformers and graph neural networks empower agents to process unstructured text, recognize semantic relationships, and generate interpretive narratives. Platforms like Google Vertex AI and Amazon SageMaker standardize workflows for fine-tuning these models on enterprise data. Data virtualization, metadata orchestration, and event streaming allow agents to access hybrid, multi-cloud, and on-premises sources without cumbersome ETL, maintaining alignment with evolving schemas through metadata-driven pipelines.

Organizational Imperatives

Scarcity of data science talent, decentralization of decision rights, and a cultural shift toward data-driven decision making drive agent adoption. Autonomous agents amplify existing teams by automating routine tasks and translating results into business-friendly narratives, enabling analysts to focus on domain interpretation. In federated models aligned with data mesh principles, agents deployed at the edge empower local teams under centralized governance guardrails. This balance accelerates insight delivery while preserving compliance and auditability.

- Competitive Tension elevates the cost of insight latency.

- Compute Elasticity lowers the barrier to iterative experimentation.

- Data Heterogeneity demands autonomous integration across silos.

- Talent Gaps shift roles toward interpretation and oversight.

- Decentralized Governance fosters agility within compliance frameworks.

Strategic Insights and Interpretive Frameworks

Analytical Value Chain and Feedback Loops

The analytical value chain encompasses raw data acquisition, quality assurance, modeling, visualization, and decision activation. Autonomous agents introduce continuous feedback at each stage: real-time data quality checks, dynamic model refinement, adaptive visualization updates, and automated action triggers. The coherence of these loops determines an agent’s effectiveness in maintaining contextually relevant insights as new data arrives.

Maturity Continuum

Organizations map agent implementations against a maturity continuum to guide adoption and risk management. Early stages involve guided assistants requiring frequent prompts. Intermediate levels feature partial autonomy with human oversight on anomalies. The apex delivers fully self-directed systems that plan and execute analytical experiments autonomously. Benchmarking against these milestones forces clarity on readiness, dependency gaps, and governance requirements before scaling.

Trust Calibration and Governance

Trust in autonomous analysis hinges on transparency, explainability, and performance consistency. Frameworks such as explainable AI libraries and model cards document decision pathways and variable influences. Governance dashboards track metrics like error rates, drift detection events, and corrective actions. Cross-functional committees ensure ethical standards, regulatory compliance, and strategic alignment govern agent behavior, preserving the delicate balance between autonomy and human authority.

Deployment Considerations

- Data Governance and Quality: Define ownership, stewardship policies, and robust metadata catalogs to ensure reliable inputs.

- Legacy Integration: Ensure API compatibility, real-time connectivity, and schema alignment with existing BI platforms and operational systems.

- Explainability and Auditability: Implement frameworks for interpretable reasoning, regulatory reporting, and ethical oversight.

- Scalability and Performance: Choose architectures—distributed clusters or edge compute—that balance responsiveness with cost and resource constraints.

- Change Management and Adoption: Engage analysts through pilots, training, and clear role definitions to foster collaborative human-AI workflows.

- Security and Compliance: Apply identity access controls, encryption, and standards (GDPR, HIPAA) to protect sensitive information.

Roadmap for Practitioners

- Conduct a Readiness Diagnostic: Evaluate data maturity, infrastructure capabilities, and cultural readiness for autonomous analytics.

- Define High-Value Use Cases: Prioritize scenarios such as anomaly detection, demand forecasting, or fraud monitoring where agents can deliver rapid impact.

- Launch Controlled Pilots: Validate agent capabilities in sandbox environments, refine governance mechanisms, and capture lessons.

- Establish Governance Foundations: Implement performance monitoring dashboards, retraining triggers, bias mitigation processes, and ethical review boards.

- Scale via Modular Architectures: Leverage reusable analytics components and standardized integration patterns to expand across business units.

- Foster an AI-Enabled Culture: Provide ongoing training, reward data-driven decision making, and embed agent collaboration into routine workflows.

Chapter 1: Foundations of AI Agents in Data Analytics

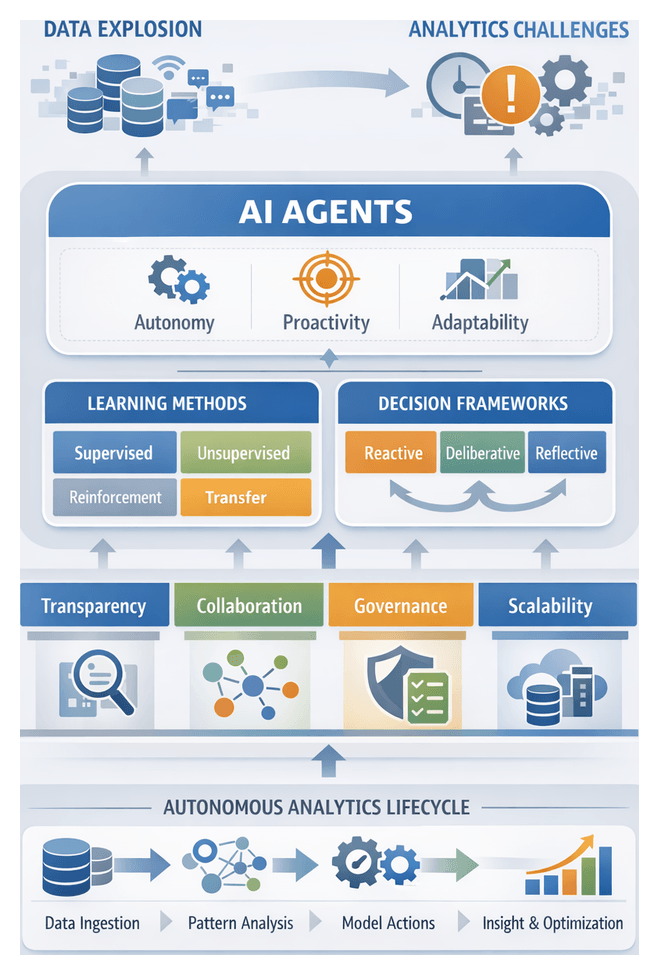

The Imperative for Autonomous Analytics

In data-driven enterprises, volumes of structured and unstructured information—ranging from transactional logs and IoT streams to social media feeds—have outstripped the capacity of manual analysis workflows. Traditional extract, transform and load pipelines and static business intelligence tools struggle to adapt to dynamic data characteristics, schema drift and real-time processing demands. This data explosion, coupled with intense competitive pressures and a shortage of skilled analytics professionals, drives the need for self-directed systems that minimize human intervention and accelerate time to insight. Autonomous analytics systems ingest, process and interpret diverse datasets continuously, detecting anomalies, optimizing processing pipelines and scaling compute resources according to defined success metrics such as completeness, consistency and relevance. By embedding intelligence into core data operations, organizations can shift from delayed reporting to a living intelligence layer that propels innovation across finance, manufacturing, retail and beyond.

Defining AI Agents and Core Attributes

At the heart of the autonomous analytics paradigm are AI agents—software entities endowed with autonomy, proactivity and adaptability. Autonomy enables agents to initiate, plan and execute analytical workflows without granular human direction. Proactivity equips them to anticipate needs by monitoring performance indicators and initiating corrective actions before thresholds are breached. Adaptability ensures that agents refine their models and strategies in response to evolving data patterns, schema changes or shifting business priorities. Two additional attributes—transparency and collaboration—are essential for auditability and trust: transparency surfaces decision logs, model feature importances and rationale artifacts, while collaboration supports iterative feedback loops with human analysts and adjacent systems.

Analytical autonomy extends these principles by engaging agents in hypothesis generation, validation and refinement cycles. For example, an agent may detect a shift in customer churn rates, formulate causal hypotheses, test them against historical records and present findings along with confidence estimates. Decision mechanisms rely on statistical inference, optimization heuristics and machine learning techniques such as supervised, unsupervised, reinforcement and transfer learning. Reinforcement learning optimizes long-term objectives through trial-and-error, while probabilistic models quantify uncertainty and guide data acquisition when confidence is low. Rule-based components encode domain expertise and governance constraints, aligning agent behaviors with organizational policies.

AI agent architectures typically integrate modular pipelines for perception, reasoning and action. Perception modules handle data ingestion and feature extraction using libraries such as TensorFlow for deep learning and spaCy for natural language processing. Reasoning layers leverage algorithms from clustering to Bayesian networks to interpret patterns and propose next steps. Action modules orchestrate downstream tasks, triggering data transformations, model retraining, alerts, or visualization updates. Together, these modules enable end-to-end autonomy in the analytics lifecycle.

Architectures and Decision-Making Paradigms

AI agents manifest across a spectrum of decision-making frameworks—reactive, deliberative and reflective. Reactive agents execute event-driven pipelines when predefined triggers occur. Deliberative agents maintain internal goal models, selecting sequences of analytical operations based on projected outcome utility. Reflective agents add meta-reasoning layers that monitor performance, diagnose regressions and adapt strategies dynamically.

Learning paradigms include:

- Supervised learning for training predictive models on labeled data (for example, credit scoring or churn prediction).

- Unsupervised learning for pattern recognition and anomaly detection in unlabeled datasets.

- Reinforcement learning for optimizing sequential decision policies in dynamic pricing or resource allocation.

- Transfer learning for adapting pre-trained models to related tasks, reducing data and compute requirements.

To guide agent selection and evaluation, practitioners employ interpretive taxonomies such as the autonomy spectrum, which ranges from assisted analysis—where agents suggest actions for human approval—to full autonomy, where agents manage entire analytical pipelines. The International Data Architecture Association’s functional taxonomy further categorizes agents by roles: exploratory profiling, predictive modeling, prescriptive optimization and monitoring. Socio-technical frameworks position agents within enterprise ecosystems of governance, data literacy and culture, emphasizing that technical proficiency alone is insufficient for success.

Tools and Platforms for Autonomous Analysis

Modern autonomous analytics leverage a mix of open source libraries and cloud-native services to streamline development, deployment and governance. Key technologies include:

- TensorFlow and PyTorch for building and training deep learning models at scale.

- spaCy and Hugging Face for natural language processing and transformer-based inference.

- Amazon SageMaker and Microsoft Azure Machine Learning for managed model training, deployment and MLOps workflows.

- DataRobot and H2O.ai for automated model governance, versioning and drift monitoring integrated into unified interfaces.

- IBM Watson Studio for end-to-end data science lifecycle management, from data preparation to deployment.

These platforms encapsulate best practices in model evaluation, explainability and ethical governance. They facilitate composable agent libraries, sandbox experimentation environments and integrated performance dashboards that track metrics such as latency, accuracy and business KPIs.

Governance, Collaboration, and Integration

Robust governance and clear collaboration models are critical to scaling autonomous analytics responsibly. Interaction paradigms span human-in-the-loop—where agents pause for human judgment—to human-on-the-loop, which provides transparency and override capabilities, and human-out-of-the-loop for standardized, low-risk scenarios. In regulated industries, human-on-the-loop frameworks prevail, using confidence thresholds to trigger manual review and explainable AI tools such as SHAP or LIME to produce feature-level impact summaries.

Governance councils comprising stakeholders from analytics, IT, legal and business units define policies on data access, model validation, audit trails and recovery protocols. Key artifacts include ethical impact assessments, immutable logs of data lineage and decision rationales, performance scorecards and rollback procedures. Cross-functional ownership models ensure shared responsibility for agent performance, infrastructure costs and change management, fostering a culture of accountability and trust around autonomy.

Integration pilots validate end-to-end workflows within legacy ecosystems, addressing data latency, API constraints and system interoperability. By phasing in agent responsibilities—starting with targeted tasks such as anomaly detection—organizations build confidence and refine governance controls before expanding autonomy.

Deployment Strategy and Best Practices

Successful autonomous analytics initiatives follow a pragmatic, phased approach:

- Align use cases to maturity: Begin with high-data-maturity domains and clearly measurable business outcomes, such as time-series forecasting or structured anomaly detection.

- Define success metrics: Go beyond model accuracy to track time-to-insight reduction, decision cycle acceleration and error rate improvements within executive dashboards.

- Govern incremental autonomy: Establish a roadmap that gradually reduces manual checkpoints as agents demonstrate consistent performance and compliance alignment.

- Embed feedback loops: Automate retraining triggers based on operational metrics—such as data drift or accuracy declines—with human review for major model updates.

- Invest in interpretability: Adopt frameworks that visualize decision pathways and quantify feature contributions, bridging technical and business stakeholder perspectives.

- Foster cross-functional stewardship: Create joint teams combining data scientists, IT architects and business users to share accountability for agent life cycles and outcomes.

By incorporating these practices into strategic planning, organizations can mitigate risks associated with data quality dependencies, transparency gaps and cultural readiness, laying the foundation for expanding autonomy in analytics.

Future Directions and Continuous Evolution

The autonomous analytics landscape evolves rapidly, and future-proofing initiatives requires flexibility and vigilance. Recommended approaches include:

- Modular architectures: Design agent frameworks with interchangeable components for data connectors, modeling engines and visualization tools, enabling seamless upgrades without system overhauls.

- Real-time health monitoring: Implement performance dashboards that track model drift, data latency and compute utilization, with automated alerts to prevent silent failures.

- Experimentation sandboxes: Maintain isolated environments for testing new algorithms, data sources and multi-agent coordination models, fostering innovation while containing risk.

- Elastic infrastructure: Leverage cloud-native services for dynamic scaling of compute and storage, ensuring responsiveness as analytic workloads expand.

- Research collaboration: Engage with academic and industry forums to stay current on emerging techniques in generative analytics, explainability and agent orchestration.

- Ethical and regulatory vigilance: Continuously audit agent behaviors against evolving privacy standards, bias metrics and compliance guidelines to safeguard stakeholder trust.

By institutionalizing these practices, organizations can sustain and extend the value of autonomous analytics, adapting to new data challenges and unlocking ever deeper insights.

Chapter 2: Core Technologies Powering Data Analysis Agents

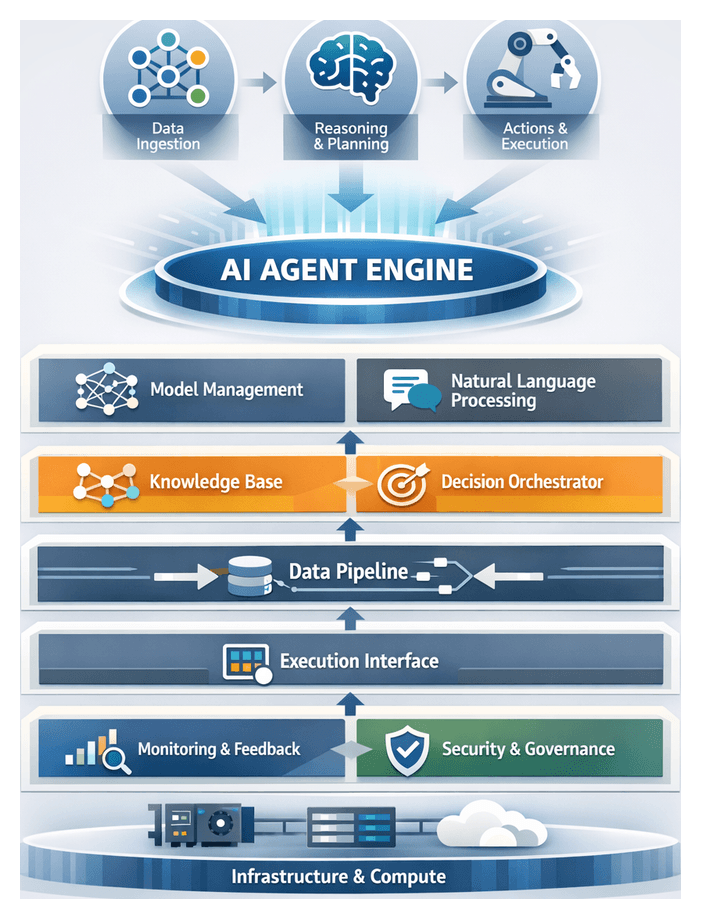

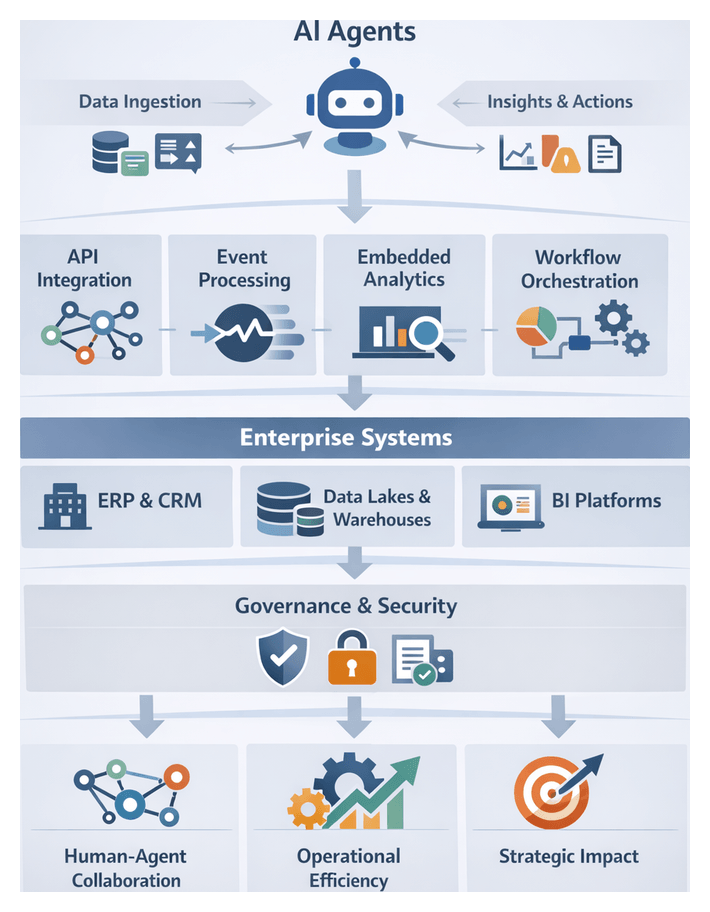

Context and Importance of Engine Components in Autonomous AI Agents

In today’s data-intensive enterprises, autonomous AI agents serve as vital collaborators for analytics, decision support and process automation. At their core, these agents rely on a cohesive engine architecture that unifies sensing, reasoning, action and learning. Well-designed engine components transform isolated software modules into a platform capable of ingesting diverse data streams, interpreting intent, executing tasks and continuously refining performance with minimal human intervention. Understanding this foundational layer is essential for architects, data engineers and business leaders seeking to deploy reliable, scalable and adaptive analytical solutions.

Engine components provide unified interfaces for model serving, natural language processing, workflow orchestration and governance. Without a robust engine, agents struggle to integrate heterogeneous sources, maintain reliability under load or adapt to evolving business objectives. This section lays out the core concepts, architecture patterns and trade-offs that underpin modern autonomous analytics platforms, equipping readers to evaluate or design enterprise-grade AI agents.

Layered Architecture of AI Agent Engines

A clear separation of concerns is achieved through a layered engine design. Each layer exposes standardized interfaces, enabling modular development, rapid experimentation and component interchangeability. Common layers include:

- Infrastructure and Compute Layer: Scalable orchestration of GPUs, CPUs and storage abstractions

- Data Ingestion and Preprocessing Layer: Connectors to databases, event streams, data lakes and APIs

- Model and Language Services Layer: Hosting of machine learning models, NLP engines and rule-based interpreters

- Decision and Planning Layer: Goal formulation, multi-step planners and optimization routines

- Execution and Action Layer: Interfaces to applications, BI platforms, dashboards and downstream systems

- Monitoring, Feedback and Governance Layer: Metrics collection, user feedback, audit trails and policy enforcement

This modular layout allows, for example, swapping an NLP with a transformer served via Hugging Face Transformers without altering orchestration logic. It also supports independent scaling, testing and governance of each component.

Core Engine Components and Their Roles

- Model Management and Serving Module: Manages model lifecycles, version control, A/B tests and rollouts. Common frameworks include TensorFlow Serving and TorchServe.

- Natural Language Understanding and Processing Engine: Extracts intent, entities and context for text or speech interactions. Options range from OpenAI’s GPT models for generative tasks to Rasa NLU for custom intent pipelines.

- Data Pipeline and Integration Manager: Orchestrates data flows from transactional systems, IoT sensors and third-party APIs. Tools such as Apache Kafka, Apache NiFi and cloud data services enable real-time streaming and batch ingestion.

- Knowledge Base and Context Repository: Stores structured knowledge graphs, ontologies and context snapshots for long-term reasoning. Graph databases like Neo4j or Ontotext GraphDB support semantic queries and relationship traversal.

- Decision Orchestrator and Planner: Interprets objectives, formulates multi-step plans and selects actions using constraint solvers, integer programming or reinforcement learning agents.

- Execution Interface and Action Dispatcher: Translates high-level decisions into API calls, SQL queries, dashboard updates or notifications. Workflow engines like Apache Airflow ensure reliable task scheduling and retries.

- Monitoring, Feedback and Self-Learning Loop: Captures performance indicators, user corrections and error rates to trigger retraining, hyperparameter tuning or policy updates. MLOps platforms such as MLflow and Kubeflow Pipelines streamline these loops.

- Security, Compliance and Governance Layer: Enforces access controls, encryption and audit logging. Policy engines like Open Policy Agent integrate governance frameworks for regulatory compliance.

Integration Patterns and Data Flow Architectures

Seamless cooperation among engine components is achieved through established integration patterns. Key approaches include:

- Event-Driven Architecture—Components communicate via message buses (e.g., Apache Kafka or AWS EventBridge), enabling real-time triggers from ingestion through decisioning.

- API-First Modular Design—Each service exposes REST or gRPC endpoints, with the orchestration layer handling sequencing, retries and version management.

- Shared Data Lake with Metadata Catalog—Centralized storage of raw and processed data alongside metadata, enabling components to read and write governed by a catalog service.

- Service Mesh for Secure Communication—Microservices use a mesh (e.g., Istio or Linkerd) to enforce encryption, policies and traffic routing transparently.

Data flow architectures range from batch processing for massive historical datasets to real-time streaming. Batch frameworks offer predictable execution windows, while streaming platforms such as Apache Pulsar, AWS Kinesis and Google Cloud Pub/Sub deliver low-latency event handling. Organizations choose between Lambda (combined batch and real-time) and Kappa (stream-only) patterns based on latency tolerance, consistency needs and operational overhead.

Model Integration, Standardization and Interpretability

Standardized interfaces ensure interoperability among heterogeneous model components. Formats like the Open Neural Network Exchange (ONNX) and Predictive Model Markup Language (PMML) enable model exchange across frameworks such as TensorFlow and PyTorch. Key criteria include serialization fidelity, language-agnostic exchange, backward compatibility and community governance. Containerized function interfaces, inspired by serverless paradigms, further enhance portability across cloud and on-premises environments.

Rigorous tracking of data provenance and model lineage is vital for transparency and compliance. Metadata catalogs and graph-based lineage systems document sources, transformations, feature derivation and hyperparameters. Interpretability frameworks embed explanatory annotations—feature attributions, counterfactuals and surrogate models—enabling stakeholders to reconstruct decision rationales. Organizations often align with the FAIR Data Principles for findability, accessibility, interoperability and reusability of data and models.

Performance Considerations and Infrastructure

Performance is multidimensional, encompassing inference latency, throughput, model accuracy, resource efficiency and sustainability. Decision makers use cost-performance curves, Pareto analyses and multi-objective matrices to balance these factors. Model architectures—from deep transformers and graph neural networks to classical algorithms—are evaluated via benchmarks like MLPerf Inference. Inference engines such as TensorFlow Serving, PyTorch Serve and the NVIDIA Triton Inference Server optimize execution, support dynamic batching and manage GPU memory.

Model optimization techniques including quantization (INT8), pruning and distillation reduce operational footprints and accelerate inference. Data pipeline throughput depends on processing strategies: micro-batch frameworks like Apache Spark Streaming versus true streaming systems. Hardware accelerators—GPUs, TPUs, FPGAs and AI ASICs—offer trade-offs in latency, energy efficiency and programming complexity. Cloud services such as AWS SageMaker, Google Cloud Vertex AI and Azure Machine Learning provide elastic endpoints, while hybrid deployments combine on-premises accelerators with cloud bursting for peak loads.

Balancing throughput and latency involves adaptive batching, priority queuing and distributed inference via Kubernetes or Kubeflow. Observability through time-series databases, distributed tracing and dashboards enables rapid detection and remediation of performance degradations. Sustainability considerations, guided by frameworks like the Green Software Foundation’s Energy Impact Model, factor energy consumption and carbon footprint into technology choices.

Explainability requirements introduce additional compute overhead. Tools such as NVIDIA Nsight and Intel VTune profile runtime behavior, uncover bottlenecks and support continuous integration pipelines. Contextual scenarios—from millisecond-latency trading to diagnostic healthcare—dictate distinct performance and explainability priorities. Standard benchmarks and governance protocols, including MLPerf and emerging regulatory frameworks, ensure accountability and comparability across deployments.

Cost-optimization strategies—spot instances, reserved capacity, serverless inference and dynamic scaling—align infrastructure spending with usage. By integrating performance targets with budget constraints, organizations achieve cost-effective scalability without sacrificing analytical agility.

Balancing Innovation with Practical Constraints

Pursuing advanced agent capabilities must be balanced against operational realities. Defining clear performance objectives tied to business value prevents overengineering. Pilot initiatives scoped for immediate ROI limit complexity, while roadmapped vendor maturity guides upgrade paths. Allocating resources for iterative improvements rather than large-scale upfront development accelerates time-to-value.

Scalability demands—distributed processing, microservices and specialized hardware—introduce integration and maintenance overhead. Leaders weigh horizontal scaling via Kubernetes against monolithic prototypes, and distributed datastores against single-node databases. Vendor ecosystems present trade-offs between proprietary lock-in and open-source flexibility. Hybrid architectures and abstraction layers help future-proof systems.

Total cost of ownership analyses encompass licensing, compute, data engineering, model training, monitoring and security audits. ROI frameworks compare development expenses and operational costs against revenue uplifts, cost reductions and risk mitigation. Multidisciplinary teams and data-driven cultures ensure AI insights are trusted and acted upon. Change management includes training, cross-functional collaboration, governance councils and user feedback loops.

Governance and security checkpoints—data catalogs, role-based access controls, encryption protocols, audit logging and compliance reviews—protect against unauthorized access and regulatory violations. Balancing high-performance models with interpretability may involve surrogate explainers, hybrid architectures and calibrated acceptance criteria. Modular designs, versioning practices and CI/CD pipelines mitigate technical debt. Ethical frameworks enforce bias detection, transparency, diverse oversight and human intervention in high-stakes scenarios, ensuring that autonomous agents uphold organizational and societal values.

Chapter 3: Intelligent Data Preparation and Quality Management

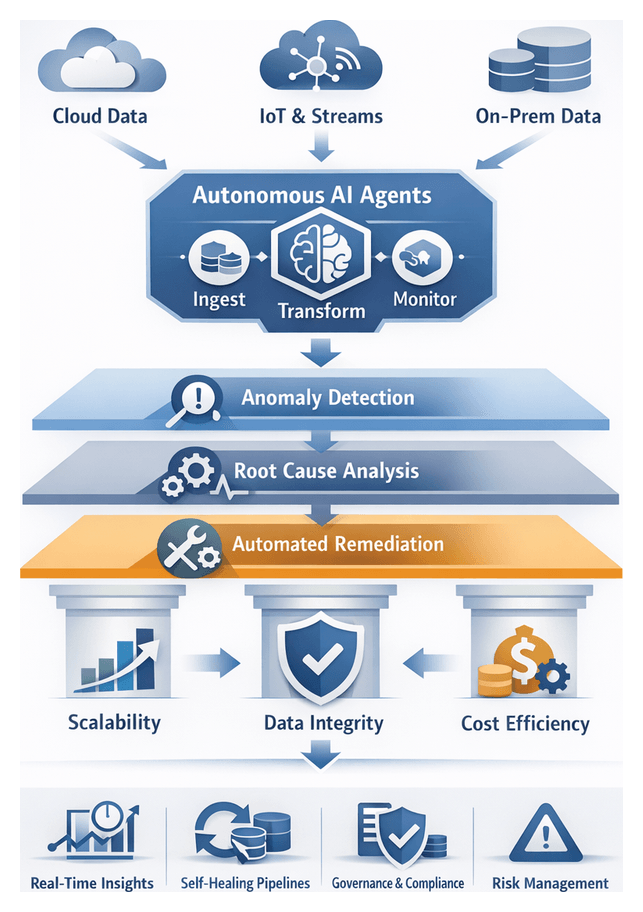

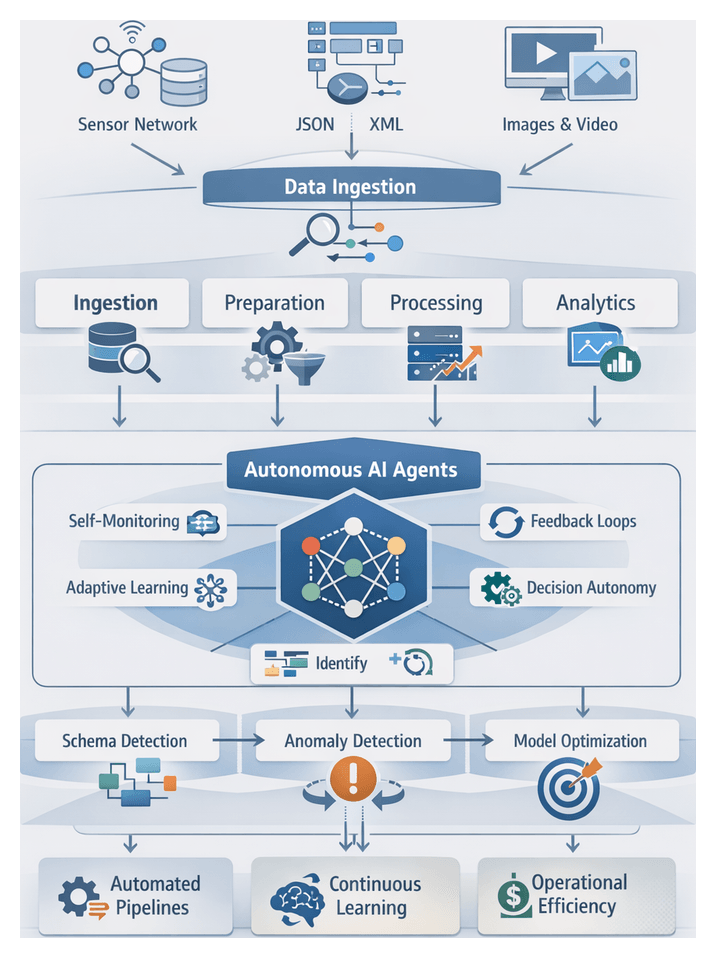

Data Autonomy: Transforming the Analytics Ecosystem

Enterprises today generate and process massive volumes of data across cloud platforms, streaming applications, on-premise repositories and edge devices. As data sources multiply and stakeholders demand real-time insights, traditional extract-transform-load (ETL) architectures strain under the weight of scale, complexity and speed. Data autonomy—a model in which intelligent software agents assume end-to-end responsibility for data stewardship—addresses these challenges by embedding machine learning, natural language processing and real-time orchestration throughout the analytics lifecycle.

Autonomous agents perform self-directed tasks that span source identification, ingestion, validation, transformation, cataloging and monitoring. They detect anomalies, resolve inconsistencies, adapt to schema changes and maintain transparent lineage. By shifting repetitive preparation work from humans to machines, organizations can:

- Scale ingestion and cleansing to petabyte volumes.

- Navigate diverse formats and evolving schemas.

- Deliver insights on demand for agile decision making.

Three converging trends drive the urgency for autonomy: the explosion of structured and unstructured sources including IoT and social feeds; the imperative for instantaneous, predictive and prescriptive analytics; and resource constraints that limit the availability of specialized data engineers. In response, data professionals now define high-level objectives, quality thresholds and governance policies, while agents translate those specifications into operational workflows, surface exceptions and learn from feedback.

Self-Healing Pipelines: Conceptual Foundations

Self-healing pipelines extend data autonomy by detecting, diagnosing and remediating issues without direct human intervention. Moving beyond simple scheduling and alerting, these pipelines embody closed-loop logic to sustain data integrity, flow continuity and analytical reliability under dynamic conditions.

Layered Architecture for Anomaly Management

- Anomaly Detection Layer: Monitors schema conformity, row counts and distribution patterns.

- Diagnostic Layer: Correlates deviations with latency spikes, schema shifts or upstream failures to identify root causes.

- Remediation Layer: Executes predefined or adaptive fixes, from schema reconciliation and data imputation to process restarts and transaction replays.

Detection Mechanisms

- Rule-based monitoring using integrity constraints and thresholds.

- Statistical profiling of time-series baselines and multivariate correlations.

- Machine learning classifiers trained on labeled incident data.

Rule-based approaches offer transparency and ease of auditing but can be brittle. Statistical methods adapt to drift yet risk false positives during legitimate shifts. Machine learning models provide nuanced detection but require curated training sets and ongoing governance.

Automated Remediation Strategies

- Schema Reconciliation: Dynamic field mapping and optional attribute handling.

- Data Imputation: Statistical interpolation, predictive modeling or reference lookups.

- Process Rescheduling: Rerouting flows to alternate clusters or pausing until upstream systems stabilize.

- Rollback and Replay: Reverting to the last known good state and replaying batched transactions.

Leading practices combine deterministic fixes for low-risk anomalies with conditional workflows for complex issues, preserving resilience without compromising data fidelity.

Interpretive Frameworks

- Resilience-First Model: Prioritizes rapid recovery via hot failovers and process duplication.

- Data-Integrity Model: Emphasizes detection precision, audit trails and remediation traceability.

- Cost-Efficiency Model: Balances automation benefits against infrastructure and maintenance overhead.

Financial services often adopt data-integrity models with strict compliance tracking, whereas digital media platforms lean toward resilience-first architectures to support high-velocity personalization.

Metrics for Effectiveness

- Mean Time To Detection (MTTD): Time from anomaly onset to detection.

- Mean Time To Repair (MTTR): Duration between detection and remediation.

- Automated Remediation Rate: Percentage of incidents resolved without human intervention.

- False Positive Rate: Share of alerts triggered by non-actionable deviations.

- Data Quality Impact Score: Composite of downstream error rates, drift incidents and stakeholder feedback.

Top-performing pipelines achieve automation rates above 80 percent with false positive rates below 10 percent, though actual figures depend on domain complexity and data variability.

Building Trust and Mitigating Risk

Continuous Quality Assurance

Autonomous quality management agents monitor data streams in real time, enforcing schema validation, completeness thresholds and anomaly detection. They generate contextual alerts that explain the nature and severity of quality issues, enabling analysts, executives and operational teams to interpret insights with full awareness of any caveats.

Predictive Risk Management

By modeling historical anomaly patterns and integrating external signals—such as system performance metrics or update schedules—agents can forecast potential quality degradations. This forward-looking approach allows data teams to allocate resources proactively, ensuring that critical dashboards and reporting pipelines remain accurate and actionable.

Governance, Compliance and Auditability

Quality agents embed policy enforcement directly into data pipelines, validating compliance with retention schedules, privacy regulations like GDPR and CCPA, and internal standards. Automated policy checks reduce manual governance overhead while producing audit-ready logs of detected issues, corrective measures and stakeholder notifications.

Cross-Functional Collaboration and Culture

Agents classify quality incidents by domain, severity and business impact, providing a shared language for data engineers, analysts and business users. Contextual metadata—source identifiers, change timestamps and anomaly signatures—facilitates rapid root-cause analysis and strategic discussions. Over time, stakeholders internalize quality principles through exposure to agent-generated insights, accelerating data literacy and fostering a culture of continuous improvement.

Accelerating Decision Cycles

By preemptively gating data quality at ingestion, agents prevent flawed information from propagating through analytics pipelines. Near-real-time validation within event-driven architectures surfaces issues within seconds, enabling rapid response to market dynamics, environmental factors or operational disruptions.

Best Practices for Autonomous Data Preparation

- Establish Clear Governance Frameworks: Define steward roles for metadata standards, quality metrics and transformation logic. Codify approval workflows to ensure agents operate within business and regulatory boundaries.

- Define Measurable Quality Metrics: Agree on KPIs such as duplication rates, missing value thresholds and schema drift frequencies. Monitor metrics through dashboards or alerts to keep agents calibrated.

- Leverage Explainable Transformation Logic: Choose platforms like Trifacta and Alteryx that expose visual lineage views, rule annotations and correction rationales to empower auditability and stakeholder trust.

- Preserve Domain Context: Enrich agent knowledge bases with business glossaries, domain ontologies and custom dictionaries to avoid semantic misinterpretation in specialized areas.

- Implement Iterative Feedback Loops: Capture false positives, negatives and edge cases from stewards and end users. Incorporate curated examples into training corpora or rule sets to refine agent inference patterns.

- Balance Automation with Human Oversight: Set confidence thresholds and escalation policies to ensure that complex schema conflicts, ambiguous corrections or high-impact anomalies are reviewed by humans.

- Ensure Scalability and Performance: Architect pipelines on distributed engines or cloud-native services like Informatica and Talend. Perform load testing with representative datasets to validate throughput under peak demand.

- Embed Security and Privacy Controls: Enforce encryption at rest and in transit. Integrate data masking or tokenization policies and align with identity and access management solutions to protect sensitive information.

Caveats and Strategic Considerations

- Bias Propagation: Agents trained on historical data may inherit and amplify existing biases. Regular bias audits and detection routines are essential.

- Overfitting to Past Patterns: Pipelines that rely heavily on historical anomalies may struggle with novel event types. Schedule periodic retraining with forward-looking scenarios.

- Semantic Misalignment: In complex domains such as healthcare or finance, human-in-the-loop reviews remain indispensable to handle nuanced hierarchies and specialized terminology.

- Transparency Tradeoffs: Advanced machine learning techniques can improve correction accuracy but reduce interpretability. Balance model sophistication with auditability and regulatory requirements.

- Integration Complexity: Mismatches in APIs, metadata models or schema evolution can impede seamless agent deployment. Mitigate risk through phased pilot projects and sandboxed integration.

- Talent and Maintenance Costs: Sustaining agent performance demands data engineering and machine learning expertise. Budget for talent acquisition and upskilling initiatives.

- Governance Overhead: Multiple autonomous pipelines with diverging rulesets can complicate oversight. Maintain a central registry of agent configurations, transformation libraries and version histories.

- Latency Sensitivity: Lightweight rule checks upstream and deeper cleansing downstream can balance speed and quality in low-latency, event-driven environments.

Future Directions

- Predictive Maintenance for Data Flows: Use time-series forecasting to preempt resource bottlenecks and adjust allocations dynamically.

- Reinforcement Learning in Remediation: Employ feedback-driven policy optimization to refine correction strategies based on success metrics and cost signals.

- Cross-Pipeline Orchestration: Coordinate self-healing actions across interdependent workflows to prevent cascading failures.

- Explainable Remediation: Generate human-readable rationales for automated fixes to enhance auditability and stakeholder confidence.

- Natural Language Interfaces: Enable conversational data requirements specification, allowing agents to interpret intent and orchestrate complex pipelines.

- Federated Learning for Collaboration: Extend autonomy across organizational boundaries, facilitating secure model training on shared datasets without exposing raw data.

Chapter 4: Automated Exploratory Analysis and Visualization

Embracing Analytical Autonomy in the Data-Driven Enterprise

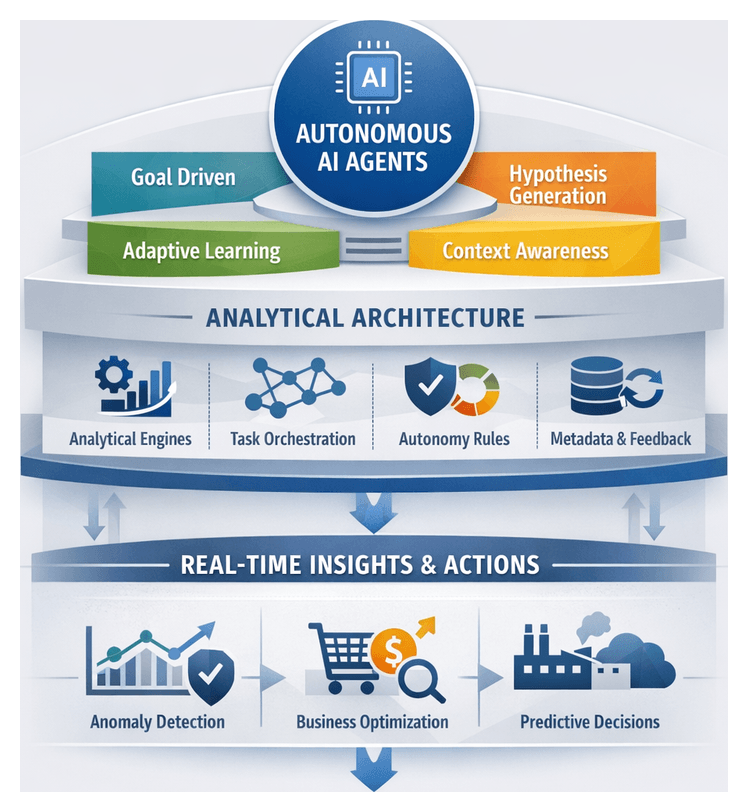

The volume, variety, and velocity of enterprise data—from transactional records and sensor feeds to social media streams—are growing exponentially. Traditional analytics approaches that rely on manually configured queries, static dashboards, and batch reporting struggle to keep pace with modern demands. They become bottlenecks, requiring extensive human intervention and delaying critical decisions. Analytical autonomy represents a transformative shift: software agents that explore, interpret, and generate insights from data with minimal human direction. These autonomous AI agents can self-direct analytical workflows, formulate hypotheses, refine methods through adaptive learning, and maintain context awareness—enabling organizations to move from reactive analysis to proactive, real-time decision support.

Core Components of Autonomous AI Agents

Autonomous AI agents integrate multiple capabilities to deliver end-to-end analytical autonomy:

- Goal Orientation – Agents pursue defined business outcomes, such as demand forecasting or risk detection, autonomously selecting analytical techniques to meet objectives.

- Proactive Hypothesis Generation – Guided by statistical relevance and strategic priorities, agents formulate and evaluate their own hypotheses rather than waiting for human queries.

- Adaptive Learning – Through iterative analysis and feedback loops, agents refine models and choose more effective methods as new data or context emerges.

- Context Awareness – Agents maintain representations of business drivers, historical metrics, and stakeholder preferences to ensure insights align with organizational goals.

- Explainability – Despite autonomous operation, agents produce audit trails and reasoning summaries that enable transparency and trust.

The underlying architecture typically comprises:

- Analytical engines powered by statistical estimators, machine learning models, and natural language processing modules.

- Task orchestration frameworks that sequence data ingestion, model training, validation, and reporting, while managing exceptions.

- Autonomy protocols with rules and thresholds to determine when agents proceed independently versus seeking human input.

- Metadata registries tracking data definitions, lineage, and usage history for traceability and governance.

- Continuous feedback loops that monitor outcome accuracy, user satisfaction, and business impact, guiding model retraining and retirement of obsolete pathways.

Evaluating Dynamic Visualization Algorithms

Dynamic visualization algorithms translate complex data flows into interactive, real-time visual representations. Effective evaluation combines technical performance metrics with human-centric criteria.

Analytical Evaluation Criteria

- Performance Latency – Time from data update to visual refresh under peak loads.

- Scalability – Handling high-volume, high-velocity streams without degradation.

- Adaptability – Support for on-the-fly reconfiguration of charts and filters.

- Resource Efficiency – CPU, GPU, and memory utilization during interactive sessions.

- Interpretability – Clarity of visual encoding and support for user annotations.

- Integration Capability – API compatibility with platforms like Microsoft Power BI, Grafana and AI-driven engines.

Algorithm Taxonomies and Frameworks

- Event-Driven Renderers – Trigger updates based on user actions or system events, common in dashboard tools.

- Streaming Accumulators – Aggregate data over moving windows for operational monitoring.

- Incremental Layout Engines – Gradually recompute visual layouts, typical in network visualizations.

- Hybrid Models – Combine batch processing with micro-updates to balance throughput and responsiveness.

- Context-Aware Adaptors – Dynamically select visual encodings based on data characteristics and user intent.

- Generative Pipelines – Leverage machine learning to propose new views or highlight anomalies automatically.

Performance, Interpretability, and Integration

Leading platforms such as Tableau, powered by smooth transition animations and history panels, reinforce trust through explainable transitions. Solutions like ThoughtSpot and Qlik Sense employ associative engines to reconfigure views dynamically as users probe new dimensions. Integration with AI-driven agents further enhances value: agents can recommend chart types, propose feature transformations, or surface anomalies directly within visualization layers. Compatibility with open standards—OData, MQTT, Apache Arrow—ensures seamless connectivity to machine learning endpoints and platforms like Amazon QuickSight and Grafana.

Governance and Compliance

As dynamic visualizations interact with sensitive data, robust governance is essential. Algorithms must support role-based access control, data masking, audit logging, and comply with protocols such as SAML and OAuth. Compliance with GDPR, HIPAA, and SOC 2 is evaluated through simulated audits and breach scenarios to confirm that dynamic updates do not expose unauthorized data subsets.

Cost-Benefit and Future Directions

Evaluation extends to total cost of ownership and ROI, considering licensing fees, infrastructure costs, and maintenance overhead. Open-source frameworks like D3.js often reduce licensing expenses but may require additional development resources compared to proprietary engines. Emerging research explores reinforcement learning for optimizing update strategies, multimodal interfaces, and generative storyboards that anticipate user queries. The ability of algorithms to orchestrate self-optimizing visual experiences will increasingly differentiate technology choices.

Applications of Autonomous Insight Generation

Autonomous AI agents drive value across diverse industries by discovering patterns and anomalies without explicit human scripting.

- Self-Service Business Intelligence – Platforms such as Microsoft Power BI and Amazon QuickSight embed AI-driven anomaly detection and narrative generation, enabling non-technical users to pose natural-language queries and receive interactive visualizations and summaries.

- Financial Services – Solutions like DataRobot deploy unsupervised clustering and temporal analysis to detect evolving fraud schemes and optimize risk scoring, balancing false-positive rates with detection sensitivity while maintaining regulatory transparency.

- Healthcare – IBM Watson Studio integrates NLP to extract variables from clinical notes, supporting early warning systems for sepsis, optimizing operating room schedules, and uncovering treatment-outcome associations underpinned by evidence-based practice.

- Retail and E-Commerce – ThoughtSpot‘s search-based interface and Qlik Sense agents analyze clickstream logs, social media sentiment, and inventory levels to recommend product bundles, dynamic pricing, and targeted promotions.

- Manufacturing – DataRobot and IoT analytics platforms ingest sensor telemetry and environmental data to forecast equipment failures and recommend maintenance schedules, shifting operations from reactive to condition-based strategies.

- Marketing Analytics – Salesforce Einstein Analytics surfaces high-impact campaign variables and audience segments, enabling mid-campaign pivots that optimize media mixes and improve marketing ROI.

- Supply Chain and Logistics – Qlik Insight Advisor models what-if scenarios—supplier delays, demand spikes—by correlating external indicators like weather and geopolitical events, enabling proactive resilience planning.

- Smart Cities and Environmental Monitoring – Autonomous agents analyze traffic flows, utility usage, and air quality metrics to detect hotspots, forecast infrastructure stress points, and inform urban planning and sustainability initiatives.

Across these applications, autonomous insight generation accelerates sensemaking, democratizes analytics, and reframes professionals as strategic interpreters who validate and contextualize agent outputs through human-in-the-loop mechanisms.

Benefits and Limitations of Automated Exploration

Key Benefits

- Accelerated time to insight through unsupervised clustering, anomaly detection, and dynamic correlation analysis.

- Democratization of data discovery via AI-powered self-service interfaces.

- Scalable exploration across petabyte-scale repositories and streaming telemetry.

- Unbiased pattern detection that surfaces non-intuitive relationships.

- Consistent, repeatable workflows with traceable lineage supporting auditability.

- Continuous, real-time monitoring that maintains situational awareness.

Critical Limitations

- Dependence on data quality and coverage, requiring rigorous stewardship and metadata management.

- Interpretability challenges due to opaque algorithms and proprietary heuristics.

- Risk of spurious correlations without human judgment to assess business relevance.

- Contextual gaps—agents may overlook domain-specific cycles or regulatory events.

- Infrastructure constraints from compute-intensive processes, necessitating hybrid architectures.

- Governance and ethical implications, including bias perpetuation and privacy concerns.

Balancing Automation and Oversight

Maximizing value from automated exploration demands a hybrid approach: embedding governance checkpoints, defining escalation criteria, and integrating domain expert review stages. Regular calibration exercises, in which analysts compare agent-generated insights with manual benchmarks, help maintain accuracy and alignment with evolving objectives.

Strategic Implications

Automated exploration should be viewed as a force multiplier rather than a replacement for expert analysis. Investments in data infrastructure, transparency tools, and change management foster an environment where AI-driven agents augment human capabilities. Continuous monitoring of performance metrics and user feedback loops ensures that autonomous agents adapt to business shifts and maintain analytic integrity. By combining machine efficiency with human judgment, organizations can harness the full potential of AI-driven discovery while controlling for unintended consequences.

Chapter 5: Predictive Modeling and Forecasting by AI Agents

The Rise of Autonomous Data Agents

The modern analytics landscape is undergoing a profound shift as organizations embrace data autonomy—systems that manage ingestion, transformation, analysis, and reporting with minimal human intervention. Autonomous AI agents form the vanguard of this evolution, leveraging adaptive machine learning, natural language processing, and continuous learning frameworks to navigate complex, high-velocity data environments. By ingesting raw inputs, executing analytical workflows, and surfacing actionable insights, these agents reduce reliance on manual configurations, democratize analytics, and accelerate decision cycles.

Driving Forces of Data Autonomy

- Explosive Data Growth: Enterprises generate petabytes of structured and unstructured data from sensors, transactions, social media, and operational systems.

- Increasing Complexity: Hybrid clouds, microservices, and multi-vendor ecosystems complicate integration, lineage tracking, and governance.

- Speed of Business: Competitive markets demand real-time insights. Manual analytics pipelines struggle to keep pace with rapid decision windows.

- Talent Scarcity: A shortage of skilled data scientists and engineers limits scalability of handcrafted models and pipelines.

- User Expectations: Business stakeholders expect self-service access, intuitive interfaces, and personalized insights without lengthy IT cycles.

Autonomous agents address these challenges by automating data preparation, model selection, and anomaly detection. Continuous learning loops enable them to adapt analytical strategies as new patterns emerge, while embedded governance ensures compliance, privacy, and security at every stage.

Defining Data Autonomy

Data autonomy encompasses the system’s ability to self-orchestrate end-to-end workflows, refine models over time, reason contextually about datasets, and deliver proactive insights. Core capabilities include:

- Self-Orchestration: Automated scheduling and dependency management based on data arrival and business priorities.

- Adaptive Learning: Dynamic feature engineering, schema evolution, and hyperparameter tuning in response to shifting data distributions.

- Contextual Reasoning: Customizing analytical techniques to dataset characteristics and stakeholder objectives.

- Proactive Insights: Surfacing trends, anomalies, and predictive signals without explicit queries.

- Governance Enforcement: Integrating audit trails, privacy checks, and compliance controls into automated processes.

Foundational Components

Implementing autonomous analytics requires a cohesive architecture that unites several pillars:

- Machine Learning Platforms: Solutions like DataRobot and H2O.ai provide automated model building, feature generation, and tuning capabilities.

- Natural Language Processing Engines: NLP modules translate business queries into workflows and generate narrative explanations of results.

- Data Storage and Processing: Scalable warehouses such as Snowflake and lakehouse platforms like Databricks Autoloader offer unified, efficient storage and compute.

- Workflow Orchestrators: Apache Airflow and Kubeflow manage scheduling, monitoring, and dependency resolution for end-to-end pipelines.

- Metadata and Catalog Services: Data catalogs centralize schema definitions, lineage metadata, and usage statistics for asset discovery and validation.

- Observability and Monitoring: Platforms such as Splunk and Datadog supply telemetry to detect drift, performance issues, and security anomalies.

- API and Integration Layers: Standardized connectors enable seamless interaction with ERP, CRM, IoT, and third-party systems.

Organizational Impact and Implementation

Delegating routine analytics tasks to autonomous agents yields strategic benefits:

- Accelerated Time to Insight: Real-time pipelines and continuous modeling reduce latency from data generation to decision support.

- Scalable Expertise: Encoded best practices and heuristics allow rapid deployment of advanced analytics without proportionate staffing increases.

- Consistency and Repeatability: Standardized processes ensure reproducible results and reduce human error.

- Democratization of Analytics: Self-service interfaces empower non-technical users to pose complex questions and receive contextualized interpretations.

- Enhanced Governance: Embedded compliance checks and audit logs uphold privacy, security, and regulatory alignment.

To prepare for autonomous analytics, organizations must define unified data strategies, select scalable AI and orchestration tools, establish governance frameworks, upskill teams in AI collaboration, and pilot autonomous workflows before enterprise-wide adoption.

Validating Autonomous Models

Multi-Dimensional Validation Frameworks

Model validation in an autonomous context extends beyond pass/fail checks to a layered framework assessing generalizability, robustness, and strategic relevance. Two complementary approaches guide validation:

- Statistical Rigor: Techniques such as k-fold cross-validation, nested validation, and bootstrap resampling quantify uncertainty and guard against overfitting.

- Strategic Relevance: Cost-benefit analyses, ROI projections, and scenario planning align metric thresholds with business objectives and risk tolerances.

Hybrid validation models embed economic loss functions or utility curves, enabling decision-makers to weigh technical performance alongside anticipated business impact.

Core Metrics Across Use Cases

Performance metrics vary by analytical task, and interpreting them requires domain context. Key measures include:

- Classification: Precision, recall, F1 score, ROC AUC, and area under the precision-recall curve, selected based on class imbalance and misclassification costs.

- Regression: Mean absolute error (MAE), root mean squared error (RMSE), R-squared, mean bias error, and median absolute deviation, each reflecting different sensitivities to outliers and variance.

- Forecasting: MAPE variants, mean absolute scaled error (MASE), and skill scores against naïve benchmarks, illuminating incremental predictive value.

- Anomaly Detection: True positive rate, false alarm rate, and time to detection, crucial for operational resilience.

Experts recommend a balanced scorecard that contextualizes multiple metrics side by side to avoid optimizing on a single dimension at the expense of long-term value.

Domain-Specific Validation Approaches

Domain constraints shape validation methods:

- Financial Services: Backtesting against historical market data and stress testing under hypothetical volatility scenarios reveal drift and resilience.

- Healthcare: Calibration curves and fairness audits ensure predicted probabilities align with observed outcomes and do not disadvantage demographic groups.

- Retail and Marketing: Uplift modeling and causal inference techniques measure the incremental impact of targeted interventions through hold-out experiments and ROI analysis.

- Manufacturing and IoT: Time-to-event analysis and survival curves assess predictive maintenance models against downtime logs and safety thresholds.

Balancing Accuracy, Complexity, and Interpretability

Pursuing marginal accuracy gains often increases model complexity and reduces transparency. Validation frameworks therefore incorporate an interpretability axis, evaluating models on predictive power, complexity, and explainability. Techniques such as partial dependence plots, surrogate trees, SHAP, and LIME offer post-hoc insights, but organizations must calibrate their use against stakeholder expertise and regulatory requirements. In high-stakes settings, simpler or hybrid rule-based architectures may be favored to satisfy auditability and human oversight.

Emerging Validation Methodologies

- Meta-Validation: Embedding validation logic within autonomous workflows to generate dynamic reports across model iterations, cohorts, and feature sets.

- Counterfactual Evaluation: Simulating input perturbations to assess resilience and uncover latent failure modes beyond static test sets.

- Adversarial Validation: Training classifiers to distinguish training from production data, quantifying drift and triggering retraining workflows.

- Stakeholder-Centric Metrics: Capturing decision confidence, user satisfaction, and downstream business outcomes by linking predictions to dashboards and feedback loops.

Integrating Validation into Governance

Validation artifacts—error analyses, calibration plots, fairness audits—feed compliance and audit teams, documenting due diligence and supporting risk committees in setting error thresholds, escalation protocols, and human review gates. Automated governance policies can accelerate low-risk model deployment while enforcing human oversight for high-complexity or high-impact applications.

Continuous Validation and Monitoring

In dynamic environments, continuous validation is essential. Real-time dashboards track performance metrics over time, detecting drift before business impact. Statistical process control charts, cohort analysis, and periodic recalibration protocols maintain model reliability, while data quality checks ensure inputs remain consistent with validation assumptions. Automated alerts and feedback loops trigger retraining or intervention, balancing agility with stability.

Scaling Forecasting Agents Across Industries

Key Operational Domains

Autonomous forecasting agents have become strategic imperatives across sectors:

- Retail and Consumer Goods: Demand forecasting for thousands of SKUs, promotional uplift modeling, and inventory optimization.

- Supply Chain and Logistics: Multi-echelon replenishment, lead-time prediction, and capacity planning in global networks.

- Financial Services: Real-time risk assessment, market trend analysis, and automated portfolio rebalancing.

- Energy and Utilities: Grid load forecasting, renewable generation variability modeling, and dynamic pricing strategies.

- Healthcare and Life Sciences: Patient admission forecasts, resource demand planning, and epidemiological projections.

- Manufacturing and Industrial IoT: Predictive maintenance scheduling, throughput forecasting, and yield estimation.

Strategic Implications of Scale

Centralized, scalable forecasting infrastructures synchronize decision cycles, reduce repetitive retraining tasks, accelerate response to market disruptions, and integrate probabilistic forecasts into risk management dashboards. By automating model distribution and parallel scenario simulations, organizations free analysts to focus on interpretation and strategic action.

Contextual Analytical Frameworks

- Maturity Model for Predictive Intelligence: From ad-hoc statistical forecasts to fully autonomous, self-optimizing systems.

- Decision Value Chain Analysis: Mapping how forecasts flow through decision points and quantifying marginal value.

- Reliability-Complexity Trade-Off Grid: Balancing sophistication, explainability, and operational overhead.

- Capability Footprint Matrix: Charting functionality across frequency, horizon, granularity, and integration depth.

Data Characteristics and Scalability

Scalability depends on data volume, velocity, heterogeneity, seasonality, and governance. High-frequency IoT streams require streaming architectures, while periodic sales figures may tolerate batch updates. Combining structured records with unstructured text or imagery amplifies complexity. Automated metadata management, lineage tracking, and anomaly detection ensure data quality at scale.

Industry Perspectives on Deployment

- Centralized Governance Advocates: Standardize model registries, enterprise data lakes, and validation protocols for distributed agents.

- Federated Innovation Proponents: Empower business units to customize pipelines within central guardrails.

- Human-AI Partnership Models: Blend agent-driven forecasts with expert review cycles.

- Fully Autonomous Experimenters: Pilot continuous-learning agents that update in production based on performance feedback.

Embedding Forecasting into Decision Cycles

By aligning forecast horizons with operational cadences, agents inform:

- Tactical Adjustments: Near-real-time demand signals for inventory and pricing on daily or hourly timescales.

- Operational Planning: Weekly forecasts for workforce scheduling, production runs, and logistics routing.

- Strategic Roadmaps: Quarterly and annual scenarios underpinning budgets, capital investments, and expansion plans.

Ethical and Governance Considerations

- Transparency: Document model assumptions, data sources, and drift detection for auditors and decision-makers.

- Fairness and Bias: Review forecast outputs for disparate impacts, especially in lending, pricing, and resource allocation.

- Accountability: Assign ownership of agent-driven decisions and define escalation paths for high-risk contexts.

- Regulatory Compliance: Adhere to financial reporting standards, patient privacy laws, and industry-specific mandates.

Integrating Agents into Enterprise Strategy

Successful deployment relies on modular architectures that plug into existing BI platforms and data warehouses without full platform replacement. Governance councils with cross-functional stakeholders align forecasting outputs with strategic and ethical objectives. Performance measurement frameworks quantify agent-driven value, balancing efficiency gains with long-term innovation potential.

Emerging Trends in Forecasting

- Generative Scenario Modeling: Agents simulate economic and operational scenarios with narrative explanations.

- Multi-Agent Ecosystems: Specialized agents negotiate and co-optimize forecasts across business domains.

- Context-Aware Adaptivity: Agents adjust logic based on exogenous signals such as social media sentiment or geospatial events.

Technical Architecture and Risk Mitigation

Data Quality and Feature Robustness

Forecasting accuracy hinges on input data integrity. Enterprises must assess source lineage, perform statistical profiling to detect concept drift, and incorporate domain expertise to select causally relevant features. Treating data quality as an ongoing practice ensures models remain robust as market conditions evolve.

Algorithm Selection and Model Complexity

Choosing between expressive architectures and simpler algorithms involves a strategic trade-off. Initial deployments often use interpretable models—such as generalized linear models or tree-based ensembles—to establish performance baselines. Complex models like deep neural networks require extensive cross-validation and stress-testing to justify their incremental accuracy gains and manage tuning, resource, and explainability challenges.

Interpretability and Explainability Constraints

Regulated industries demand transparency in decision logic. Post-hoc tools like SHAP and LIME provide local explanations but may not reveal deeper interactions in opaque models. Hybrid architectures that combine rule-based components with machine learning often satisfy both performance and auditability requirements for high-stakes forecasts.

Infrastructure Scalability and Performance

Large-scale forecasting shifts computational burdens to production environments. Robust infrastructure—distributed compute clusters, GPU nodes, and elastic cloud resources—must be provisioned and scaled dynamically. Capacity planning, containerization, and model quantization help optimize resource usage, but require rigorous validation to preserve predictive fidelity. Network throughput, storage architecture, and data I/O all influence pipeline reliability and latency.

Regulatory and Ethical Compliance

Forecasting agents in regulated sectors must adhere to frameworks such as GDPR, Basel guidelines, or HIPAA. Embedding ethical guardrails—bias audits, transparency logs, and documented decision pathways—ensures alignment with corporate values and external mandates. Interdisciplinary review boards comprising data scientists, legal advisors, and domain experts provide oversight at each stage of the model lifecycle.

Continuous Monitoring and Model Evolution

Autonomous agents require ongoing surveillance to detect drift, accuracy loss, or emergent anomalies. Real-time dashboards track forecast error distributions, calibration curves, and responses to exogenous shocks. Feedback loops trigger retraining or human intervention when deviations exceed predefined tolerances. Thoughtful retraining schedules and version control balance agility with stability, preventing overfitting to transient noise.

Strategic Limitations and Risk Trade-Offs

- Dependence on historical patterns that may not hold in unprecedented events.

- Risk of amplifying latent biases in training data, leading to inequitable outcomes.

- Operational fragility when infrastructure or monitoring frameworks are immature.

- Regulatory constraints favoring transparency over complexity may limit model sophistication.

- Trade-offs between frequent retraining and stability to avoid oscillating predictions.

By navigating these trade-offs with disciplined governance, robust architecture, and cross-functional collaboration, organizations can harness the speed, scale, and insight of autonomous forecasting agents while mitigating the inherent uncertainties of dynamic markets.

Chapter 6: Prescriptive Analytics and Decision Support Agents

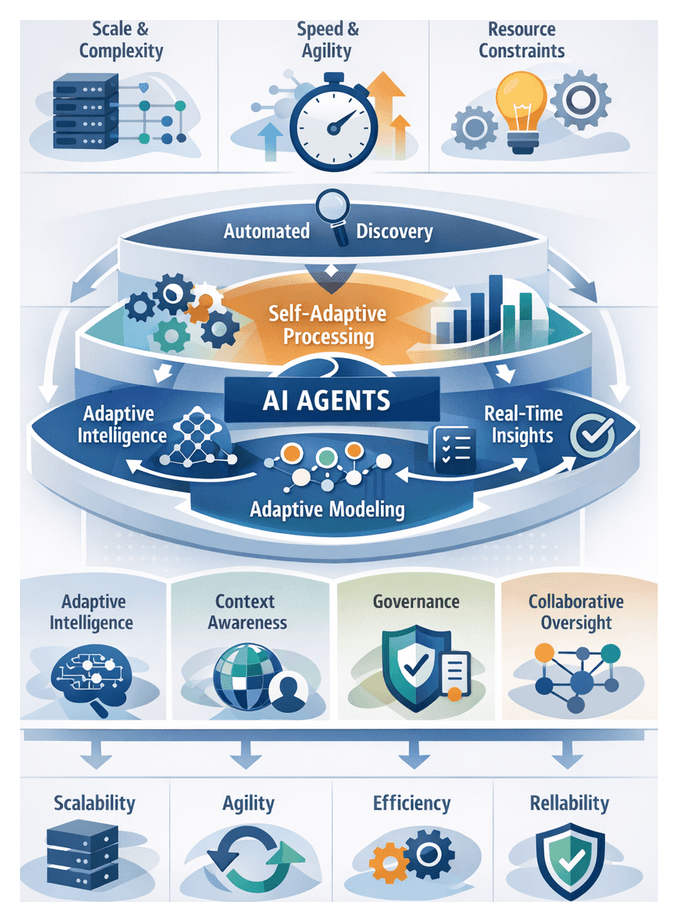

Data Autonomy in the Modern Analytics Ecosystem

The emergence of data autonomy marks a transformative shift in how organizations capture, process, and act upon information. Autonomous analytics systems manage data ingestion, transformation, modeling, and insight generation with minimal human intervention, leveraging adaptive intelligence, continuous learning, and self-governance. As enterprises contend with ever-increasing data scale, variety, and velocity, autonomous functions become essential to sustain agility, scale operations, and sharpen competitive differentiation.

Traditional analytics pipelines relied on manual extraction, cleansing, modeling, and scheduled reporting. These linear workflows struggled under demands for real-time insights, evolving schemas, and exponential growth in structured and unstructured sources. Data autonomy relocates routine tasks—such as schema detection, data profiling, feature extraction, anomaly identification, and model retraining—to intelligent agents that continuously adapt to changing conditions and user interactions.

Key forces driving this evolution include:

- Scale and Complexity: Organizations ingest data from IoT devices, streaming platforms, legacy systems, and external APIs, challenging static architectures.

- Speed of Decision-Making: Competitive markets demand rapid conversion of raw data into actionable intelligence.

- Resource Constraints: Talent shortages in data engineering and analytics incentivize automation of repetitive tasks.

- Democratization of Analytics: Business users require self-service capabilities, guided by agents that abstract technical complexity.

By embedding autonomous agents within analytics platforms, organizations realize:

- Agility in Insight Delivery: Pipelines self-repair in response to schema changes and data anomalies, minimizing downtime.

- Scalability Across Use Cases: Agents scale horizontally, monitoring multiple datasets in parallel without proportional increases in human oversight.

- Operational Efficiency: Automation of data validation and profiling frees analysts to focus on strategic interpretation and hypothesis testing.

- Consistency and Reliability: Governance frameworks enforce compliance and quality thresholds, reducing the risk of unauthorized analyses.

Where legacy analytics follow a linear sequence—data acquisition, manual preparation, static modeling, scheduled reporting, ad hoc analysis—autonomous frameworks operate as a continuous loop:

- Automated Data Discovery and Classification

- Self-Healing Data Preparation with Anomaly Detection

- Adaptive Model Training and Validation

- Real-Time Insight Streaming and Alerting

- Proactive Recommendations and Action Suggestions

Each phase incorporates feedback loops that monitor data quality, model performance, and user feedback, fostering continuous refinement. This closed-loop design aligns analytics outputs with evolving business goals and data characteristics.

Successful adoption reshapes organizational roles:

- Data Leaders: Define strategic objectives, governance policies, and ensure alignment with corporate goals.

- Analytics Translators: Bridge domain expertise and agent capabilities, contextualizing recommendations for stakeholders.

- IT and Infrastructure Teams: Provision scalable compute and storage, manage security controls, and integrate autonomous platforms.

- Business Users: Interact with intuitive interfaces, explore agent-generated insights, and execute actions confidently.

Foundational concepts include:

- Autonomy Continuum: Balancing advisory agents and fully self-executing systems to fit use-case requirements.

- Adaptive Intelligence: Agents learn from new data while maintaining explainability and compliance.

- Context Awareness: Interpreting business context, user intent, and domain rules to deliver relevant recommendations.

- Collaborative Oversight: Establishing trust through transparency, feedback mechanisms, and well-defined boundaries between human and agent tasks.

Analytical Foundations of Prescriptive Optimization

Prescriptive analytics transforms predictive insights into actionable recommendations through optimization techniques. Practitioners evaluate methods based on problem structure, solution guarantees, computational behavior, and alignment with business objectives. Optimization frameworks fall into three broad categories:

- Exact Techniques: Guarantee optimal solutions for well-defined mathematical models. Tools such as IBM ILOG CPLEX and Gurobi Optimizer deliver enterprise-grade solvers valued for rigorous proofs of optimality.

- Heuristic and Metaheuristic Strategies: Include greedy algorithms, genetic algorithms, simulated annealing, and tabu search. Frameworks like Google OR-Tools provide modules for rapid, near-optimal solutions in complex, non-convex domains.

- Hybrid Frameworks: Combine exact and heuristic elements, for example interleaving linear relaxations with local search. Prototyping often leverages SciPy Optimize before scaling to specialized solvers.

Industry experts interpret optimization through multiple analytical lenses:

Problem Structure and Formulation

- Linear vs. non-linear: Linear models enable fast convergence; non-linear models capture curvature and multimodal landscapes.

- Discrete vs. continuous: Integer variables introduce combinatorial complexity; continuous relaxations aid bounding and guiding searches.

Constraint Handling

- Hard Constraints: Inviolable rules such as regulatory or capacity limits.

- Soft Constraints: Penalty functions or slack variables allow trade-offs; frameworks like goal programming assign weights to preferences.

Objective Landscape and Multi-Objective Trade-Offs

- Single-Objective: Simplifies optimization but may overlook broader goals.

- Multi-Objective: Techniques such as weighted sum, ε-constraint, and evolutionary algorithms map Pareto frontiers.

Uncertainty and Robustness

- Deterministic: Assumes certainty, yielding precise recommendations.

- Stochastic Programming: Models input distributions in two-stage or multi-stage formulations.

- Robust Optimization: Seeks solutions that perform well under worst-case deviations.

Scalability and Computational Trade-Offs

- Complexity Analysis: Balances runtime against solution accuracy.

- Parallel and Distributed Architectures: Platforms such as Azure Machine Learning support distributed model fitting but require orchestration.

Evaluation criteria extend beyond raw solution quality:

- Solution Robustness: Performance under data deviations, measured by out-of-sample regret and stability indices.

- Interpretability: Understandability of decision rules; linear models and rule-based approaches rank highly.

- Integration Agility: Seamless connectivity to data pipelines, BI platforms, and human-in-the-loop controls via REST APIs and orchestration tools like Apache Airflow or Kubernetes.

- Cost of Computation: Licensing, infrastructure, and expertise expenses balanced against managed services.

- Governance and Auditability: Logging of decision rationale, version control, and traceable solution paths, critical in regulated industries.

Scenario simulation complements these frameworks. Common strategies include:

- Monte Carlo Simulation: Sampling input distributions to assess distributional performance and tail risks.

- Decision Tree Analysis: Mapping sequential decision points to compare expected value and risk-adjusted outcomes.

- Rolling Horizon Simulation: Periodic re-optimization in dynamic environments such as supply chains, emphasizing adaptation speed and stability.