Agents of Change: How AI Agents Are Revolutionizing 24/7 Customer Support in 2026


Introduction

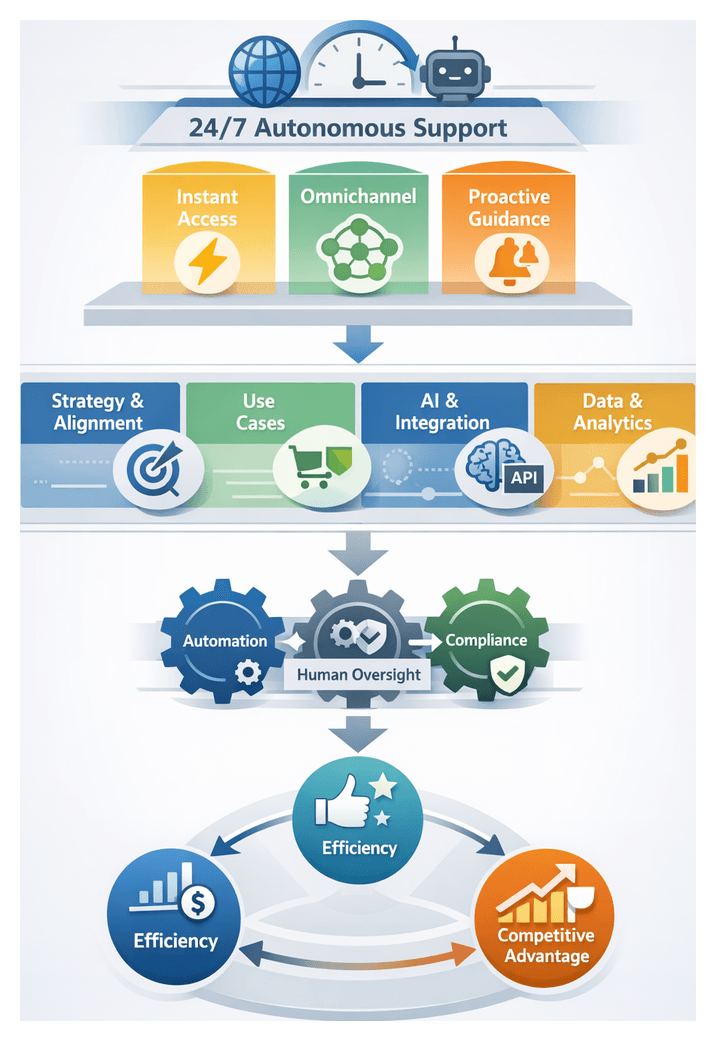

The Imperative for Continuous, AI-Driven Support

In today’s digitally connected world, customers expect assistance any time, anywhere. The rise of global commerce and instant-access platforms has blurred the boundaries of traditional business hours, making service interruptions a costly liability. Industry research shows that a single hour of support downtime can cost large enterprises upwards of $100,000 in lost revenue and customer churn. Customers who encounter friction during critical interactions—such as checkout, account recovery or technical troubleshooting—are significantly less likely to return, underscoring the strategic value of uninterrupted support for long-term growth and brand credibility.

Delivering true 24/7 service challenges conventional call center models built on fixed shifts and manual routing. Rigid schedules and siloed knowledge bases struggle to keep pace with fluctuations in demand, seasonal peaks and unexpected surges driven by product launches or marketing events. Service leaders must therefore design infrastructures that blend human expertise with automated capabilities, enabling rapid triage, intelligent routing and consistent resolution regardless of volume or time zone.

Meeting these demands is not merely an operational efficiency play; it is fundamental to customer loyalty. Surveys reveal that over 75 percent of consumers consider immediate response time critical to their satisfaction. High-value B2B clients similarly demand personalized responses within minutes. Across demographics, from digitally native younger cohorts who favor messaging channels to traditional customers reliant on email or voice, the expectation for speed and continuity remains constant.

Around-the-Clock Customer Expectations

Digital-first experiences on social media, streaming services and mobile apps have instilled an on-demand mindset among consumers. Any delay in communication is perceived as a service failure. Data points illustrate the stakes:

- 40 percent of customers abandon a chat session if they do not receive a reply within one minute

- Organizations that respond within five minutes see a 30 percent increase in customer satisfaction

- Proactive outreach during off-hours can reduce inbound volume by up to 20 percent

- Customers who experience latency during checkout or technical support are 50 percent less likely to complete a purchase or renew a subscription

Beyond initial engagement, customers expect proactive updates on issue status, seamless transitions between channels and personalized guidance informed by prior interactions. Maintaining this level of responsiveness requires unified data platforms and AI-driven orchestration layers that can access context, enrich conversation histories and deliver proactive notifications without human intervention.

Competitive Differentiation Through Agility

In markets saturated with comparable offerings, customer support has emerged as a key differentiator. Brands that enable continuous, reliable assistance build trust advantages that are difficult for competitors to replicate. Early adopters of AI-enabled platforms such as Salesforce Einstein, Zendesk and LivePerson leverage automated routing, self-service bots and intelligent escalation to transform support touchpoints into growth opportunities.

Challenger brands increasingly market always-on availability as a core promise. Incumbent organizations, in turn, invest in automation to maintain relevance and drive renewal rates. One global travel company, for example, integrated Zendesk with AI-driven chatbots to extend its support window. Within six months, average first-response times during off-hours decreased by 75 percent and customer satisfaction scores rose by 15 points. By positioning continuous availability as a differentiator, the company not only improved loyalty but also reduced reliance on costly outsourced services, channeling savings into innovation.

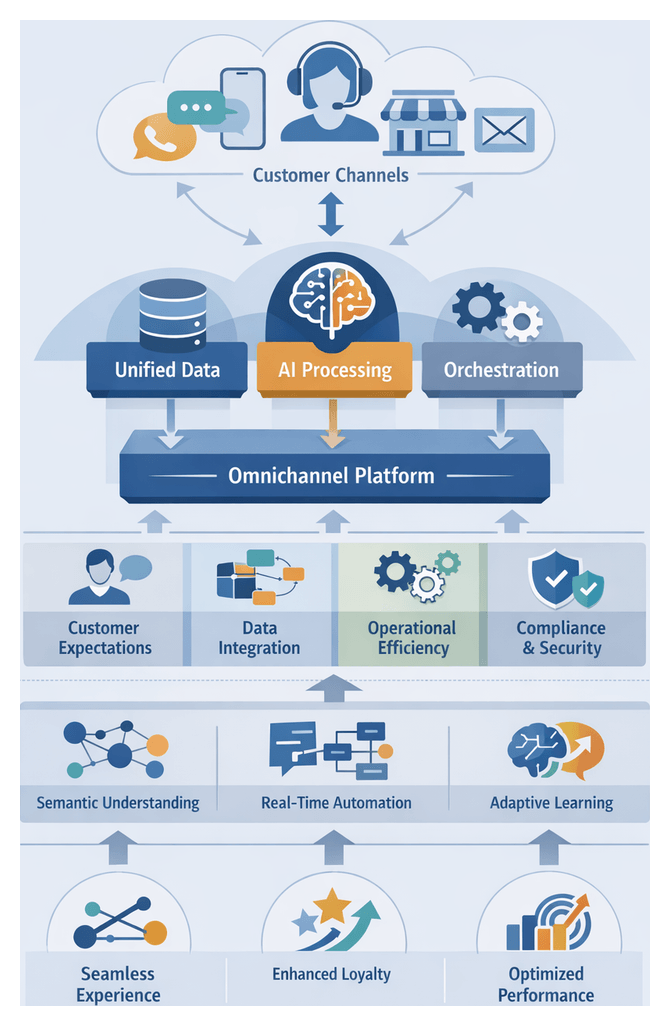

Globalization and Omnichannel Complexity

As businesses expand internationally, support teams confront the challenges of diverse time zones, languages and cultural expectations. Without mechanisms to bridge these divides, critical customer inquiries can remain unattended for hours, undermining brand trust. Traditional solutions—follow-the-sun rotations, offshore teams and outsourced vendors—often struggle with knowledge consistency, quality control and cultural alignment.

Compounding this is the proliferation of digital channels. Customers may begin a conversation on live chat, share photos via messaging apps, continue via social media and eventually switch to voice. Disconnected back-end systems and manual handoffs disrupt context, forcing customers to repeat themselves. To deliver frictionless omnichannel experiences, organizations must adopt unified data architectures and intelligent orchestration layers that manage context enrichment, routing and channel-specific adaptations in real time.

Self-service channels add another dimension of complexity. Well-maintained knowledge bases and interactive FAQs can deflect routine inquiries, but outdated or poorly structured resources frustrate users and increase support volume. Integrating AI-driven semantic search and guided troubleshooting flows ensures that self-service content remains relevant, context-aware and capable of resolving issues without human intervention, thus extending support coverage around the clock.

Economic and Operational Drivers

Sustaining a fully human-staffed 24/7 operation inflates labor expenses and risks burnout. Fixed headcount plans often lead to overstaffing during slow periods and understaffing during peaks. Analysts estimate that deploying AI agents for routine inquiries can reduce contact center costs by up to 30 percent over three years.

In hybrid support models, AI agents—configured to handle low-complexity, high-volume tasks—free human specialists to focus on strategic and emotionally nuanced interactions. When complexity arises, human teams intervene with full context provided by AI-driven session transcripts and knowledge graphs. This approach delivers near-continuous coverage at a fraction of the cost of a purely human operation, while preserving service quality for critical cases.

Realizing these efficiencies demands careful change management. Organizations must invest in training and upskilling to shift workforce roles from rule-based task execution to oversight, conversational design and exception handling. Process realignment, transparent communication and ethical frameworks are equally important to maintain trust and foster adoption.

AI Agents as the Cornerstone of Modern Support

From Scripted Bots to Cognitive Assistants

The evolution from traditional chatbots to AI agents marks a transformative leap. Conventional bots follow predetermined scripts and decision trees, requiring manual rule updates. In contrast, AI agents leverage advanced machine learning, natural language processing and cognitive reasoning to interpret intent, maintain context and learn from every interaction.

Leading platforms such as IBM Watson Assistant, Amazon Connect and Google Cloud Contact Center AI illustrate how AI agents can autonomously handle large volumes of customer inquiries. These systems employ intent classification, sentiment analysis and knowledge graph integrations to resolve issues accurately and escalate appropriately when human intervention is needed. Over time, their supervised and reinforcement learning capabilities refine dialogue strategies and expand coverage without continuous human oversight.

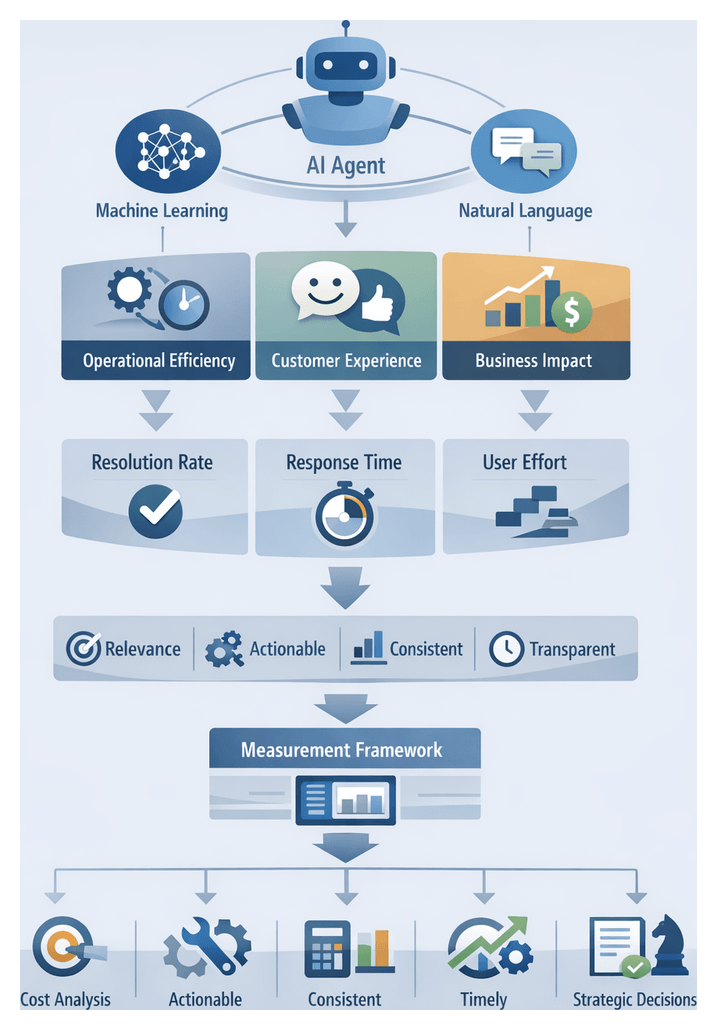

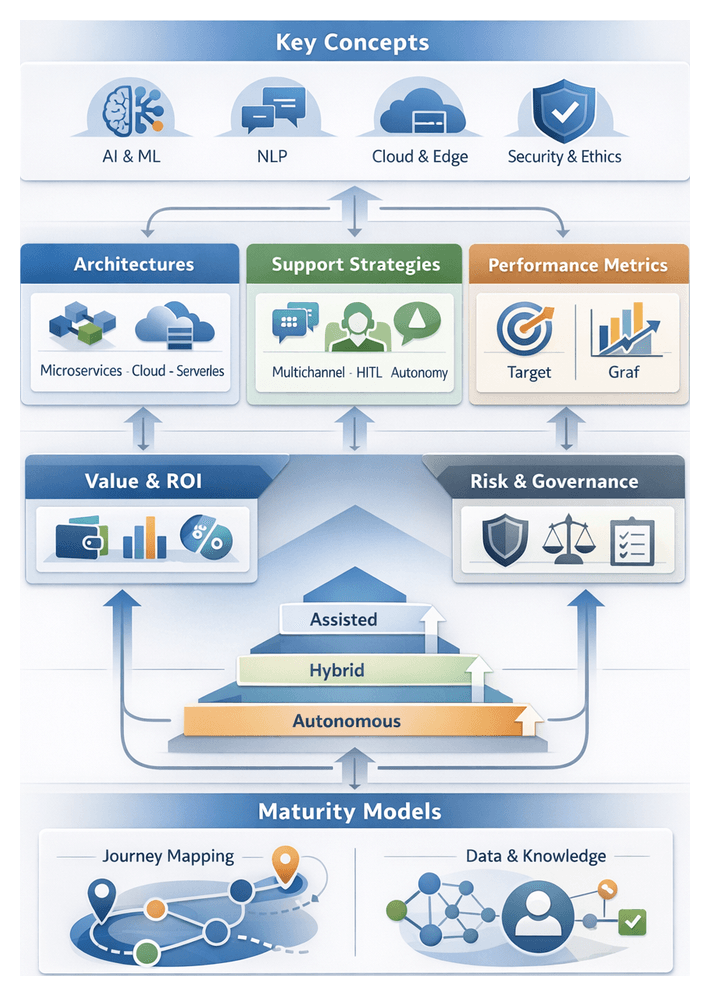

Analytical Frameworks for Understanding AI Agents

Evaluating AI agents requires multidimensional frameworks that align technology capabilities with business objectives:

- Capability Taxonomy—Classifies features into perception, comprehension, decision making and action. This helps organizations identify which cognitive capabilities—such as multi-turn dialogue or sentiment detection—are essential for their support goals.

- Maturity Model—Stages agent sophistication from Level 1 rule-based automation to Level 5 fully autonomous, self-optimizing systems, guiding roadmap planning and incremental enhancements.

- Value Continuum—Maps functionalities to business metrics like efficiency gains, cost avoidance and customer satisfaction uplift, highlighting trade-offs between rapid deployment of basic bots and long-term investment in advanced AI.

Distinct Capabilities and Evaluation Criteria

To distinguish AI agents from legacy automation and assess vendor offerings, organizations focus on several key dimensions:

- Flexibility versus Rigidity—AI agents adapt responses based on real-time context, whereas traditional bots rely on static scripts.

- Learning Capability—Agents employ supervised and reinforcement learning to refine interactions without manual rule updates.

- Contextual Understanding—Agents maintain dialogue state across sessions, enabling personalized recommendations and continuity.

- Proactive Engagement—Predictive analytics enable agents to detect rising frustration, forecast issues and initiate outreach or next-best actions.

- Integration Depth—Seamless data exchange with CRM systems, knowledge bases and analytics platforms supports unified customer experiences.

Evaluation criteria typically include:

- Response Accuracy—Precision in intent classification, recall rates and F1 metrics.

- Resolution Efficiency—First-contact resolution rates, average handle times and deflection percentages.

- Scalability and Resilience—Load testing results, auto-scaling capabilities and fault tolerance.

- Learning Velocity—Retraining frequency, volume of annotated data required and improvement in model performance.

- Governance and Compliance—Data privacy controls, bias mitigation measures and auditability aligned with GDPR, CCPA and industry regulations.
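
The response-accuracy criterion above can be made concrete with a small calculation. The following is a minimal sketch, assuming a labelled sample of conversations where both the true intent and the agent's predicted intent are known; the intent names and the sample data are hypothetical and chosen only for illustration.

```python
from collections import Counter

def per_intent_scores(y_true, y_pred):
    """Return {intent: (precision, recall, f1)} from two parallel label lists."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # the predicted intent was wrong
            fn[truth] += 1  # the true intent was missed
    scores = {}
    for intent in set(y_true) | set(y_pred):
        predicted = tp[intent] + fp[intent]
        actual = tp[intent] + fn[intent]
        precision = tp[intent] / predicted if predicted else 0.0
        recall = tp[intent] / actual if actual else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        scores[intent] = (precision, recall, f1)
    return scores

# Hypothetical evaluation sample: true intents vs. the agent's predictions.
y_true = ["billing", "billing", "technical", "account", "technical", "billing"]
y_pred = ["billing", "technical", "technical", "account", "technical", "account"]
scores = per_intent_scores(y_true, y_pred)

for intent, (p, r, f1) in sorted(scores.items()):
    print(f"{intent:10s} precision={p:.2f} recall={r:.2f} f1={f1:.2f}")
```

Reporting the scores per intent rather than as a single average makes it easier to see which request types the agent misroutes, which in turn informs where escalation rules are needed.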

Technological Catalysts Fueling Adoption

The recent surge in AI adoption is driven by breakthroughs in computational power, data availability and algorithmic innovation. Transformer-based models, popularized by platforms such as ChatGPT, have elevated natural language understanding to new levels of accuracy and nuance. At the same time, cloud-based services like Amazon Lex, Google Dialogflow and Microsoft Azure Bot Service offer scalable, consumption-based access to enterprise-grade AI tools, significantly lowering the barrier to entry.

Pre-trained models, transfer learning and collaborative open research have accelerated development cycles, shifting the challenge from building algorithms to fine-tuning models on domain-specific data and designing conversational flows. As a result, organizations can deploy pilot projects in weeks rather than months, iterating rapidly based on user feedback and performance metrics.

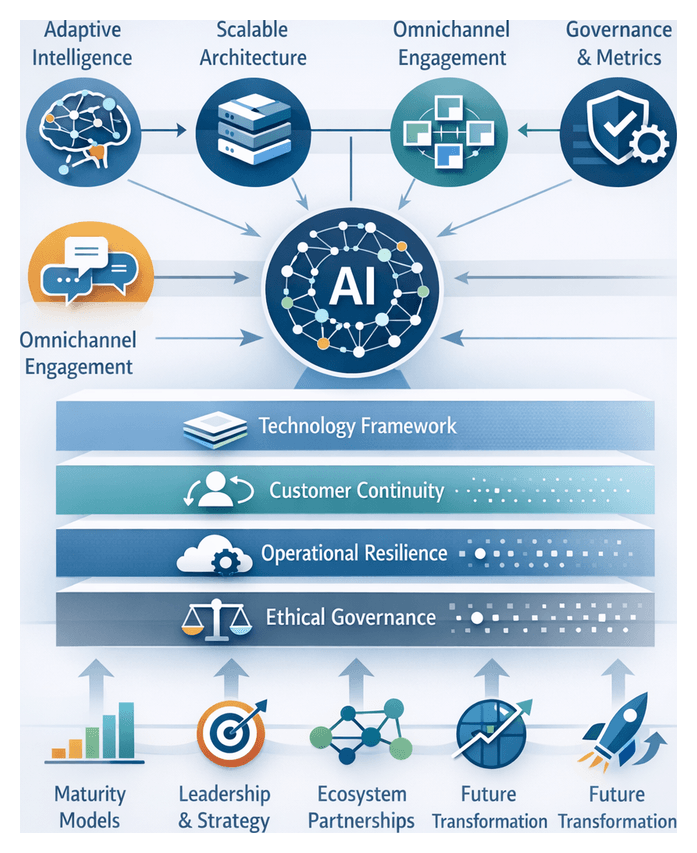

Strategic Implications and Roadmap

To translate market demand into operational excellence, service leaders must adopt a strategic framework encompassing demand analysis, technology selection, organizational alignment and continuous optimization. Key steps include:

- Assess support requirements against customer journeys to identify high-impact automation opportunities.

- Evaluate AI platforms using structured matrices that weigh NLP performance, integration ease, security certifications and licensing models.

- Rearchitect workflows to blend AI agents with human experts, defining clear escalation protocols and governance policies.

- Implement pilot programs with measurable success metrics—first-contact resolution, response time, deflection rate and customer sentiment scores.

- Scale based on iterative feedback loops, refining AI models, expanding channel coverage and aligning resources to emerging demands.

Embedding agile principles in support operations enables rapid adaptation to new channels, evolving customer preferences and technological advances. Governance structures, data privacy safeguards and ethical guidelines must be integral to this journey to maintain compliance and customer trust.

Building a Resilient Support Ecosystem

Analytical Models and Decision Tools

Strategic planning for AI-driven support is supported by robust analytical models that bring clarity to complexity:

- Support Maturity Assessment Framework—Benchmarks organizational capabilities across people, processes, technology, data and governance, pinpointing areas for improvement in self-service adoption, AI accuracy and escalation protocols.

- AI Technology Selection Matrix—Compares vendors and platforms on parameters such as NLP performance, integration ease, security certifications and licensing structures, using weighted scoring to reflect strategic priorities like scalability or explainability.

- Customer Journey Analytics Model—Maps every support touchpoint to business outcomes, overlaying sentiment, effort scores and resolution effectiveness to identify high ROI intervention zones.

- Performance and Measurement Blueprint—Establishes a layered metrics architecture with real-time dashboards, periodic health checks and longitudinal studies, balancing quantitative KPIs such as average response time and containment rate with qualitative customer feedback.

- Stakeholder Alignment Canvas—Visualizes roles, responsibilities and decision rights across IT, operations, compliance and customer experience teams, reducing governance friction and accelerating approval cycles.
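
The weighted scoring used in the AI Technology Selection Matrix can be illustrated in a few lines. This is a sketch under stated assumptions: the criteria names mirror those listed above, while the weights, the 1-to-5 ratings and the vendor labels are hypothetical placeholders that a selection team would replace with its own values.

```python
def weighted_score(ratings, weights):
    """Weighted average of criterion ratings; weights need not sum to 1."""
    total_weight = sum(weights.values())
    return sum(ratings[criterion] * w for criterion, w in weights.items()) / total_weight

# Hypothetical weights reflecting strategic priorities.
weights = {
    "nlp_performance": 40,
    "integration_ease": 30,
    "security_certifications": 20,
    "licensing": 10,
}

# Hypothetical 1-5 ratings gathered during vendor evaluation.
vendors = {
    "vendor_a": {"nlp_performance": 4, "integration_ease": 3,
                 "security_certifications": 5, "licensing": 2},
    "vendor_b": {"nlp_performance": 3, "integration_ease": 5,
                 "security_certifications": 4, "licensing": 4},
}

totals = {name: weighted_score(r, weights) for name, r in vendors.items()}
ranking = sorted(totals, key=totals.get, reverse=True)
print(ranking, totals)
```

Changing the weights and re-running the ranking is a simple form of sensitivity analysis: if the preferred vendor changes under a small shift in priorities, the decision probably needs more evidence.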

Interpretive Perspectives

Beyond structured frameworks, interpretive lenses provide strategic insights that uncover hidden risks and opportunities:

- Risk Management Lens—Focuses on data privacy exposures, vendor concentration risks and continuity threats, supporting scenario planning for system outages, cyber incidents and regulatory shifts.

- Ethical AI Lens—Prioritizes fairness, transparency and user dignity in automated interactions, guiding bias detection, transparency protocols and consent mechanisms.

- Customer-Centricity Lens—Ensures AI engagements uphold empathy and brand values, aligning automated experiences with human-centric expectations across demographics and use cases.

- Operational Resilience Lens—Examines architectural redundancies, load balancing and failover strategies required to sustain uninterrupted service even under peak demand.

- Cross-Channel Consistency Lens—Evaluates coherence of AI-driven responses across digital, voice and messaging platforms, highlighting the importance of a unified knowledge core to prevent fragmentation.

- Innovation Velocity Lens—Balances rapid experimentation in sandbox environments with governance guardrails, promoting continuous learning and model improvements without compromising production stability.

Key Considerations and Limitations

Implementing AI agents at scale requires acknowledging key constraints and planning accordingly:

- Data Readiness and Quality—High-fidelity, representative datasets are essential. Addressing data silos, inconsistent taxonomies and classification errors demands significant remediation effort.

- Regulatory and Compliance Boundaries—Strict mandates in finance, healthcare and other sectors—such as GDPR, HIPAA and PCI-DSS—govern data handling, model explainability and auditability, carrying legal and reputational consequences for non-compliance.

- Model Interpretability—While black-box algorithms may excel in performance, explainable AI techniques are vital for impact analysis, stakeholder assurance and regulatory alignment.

- Cultural Resistance and Change Management—Frontline teams may view AI as a threat. Effective adoption hinges on transparent communication, skills development programs and leadership endorsement to frame AI as a collaborator.

- Total Cost of Ownership—Beyond licensing fees, recurring expenses for cloud infrastructure, data pipelines, ongoing model retraining and integration maintenance must be factored into long-term budgets.

- Integration Complexity—Legacy systems lacking modern APIs or standardized schemas may require reengineering or middleware to achieve seamless interoperability with AI platforms.

- Multilingual and Multicultural Support—Operating in global markets demands localized NLP models that account for language diversity, dialects and cultural nuances, adding layers of development and maintenance complexity.

- Evolving Technology Landscape—Rapid advances in generative AI, computer vision and predictive analytics can outpace existing solutions, underscoring the need for vendor-neutral, modular architectures to mitigate obsolescence risk.

- Ethical and Social Impacts—Automated interactions risk dehumanization or bias amplification. Continuous monitoring, user feedback loops and governance review boards are essential to safeguarding trust.

Looking Ahead: A Roadmap for Continuous Innovation

Achieving AI-enabled, round-the-clock support is a strategic journey that unfolds in phases. The initial steps involve high-level market analysis and organizational readiness assessments to establish a clear baseline for ambition and capability. Subsequent phases focus on targeted pilot projects that validate use cases and measure outcomes against predefined success criteria—such as first-contact resolution, average handle time reduction and customer effort score improvement.

Later chapters will delve into the core AI technologies that power autonomous support—machine learning algorithms, natural language understanding engines and knowledge graph architectures—examining how they interoperate with leading platforms like Salesforce Einstein, Zendesk, IBM Watson Assistant and Google Cloud Contact Center AI. Readers will learn how to design omnichannel strategies that personalize interactions across digital, voice and messaging channels, underpinned by robust data governance and ethical frameworks.

The journey culminates with measurement and optimization protocols—frameworks for defining key performance indicators, conducting total economic impact analyses, and establishing continuous feedback loops. Governance structures, bias mitigation strategies and real-world case studies from retail, finance, healthcare and telecommunications will illustrate critical success factors and common pitfalls.

Equipped with this comprehensive strategic toolkit—encompassing analytical models, interpretive lenses and phased implementation roadmaps—executives and practitioners can navigate the complexities of AI agent adoption. The result is a resilient, scalable support ecosystem that delivers seamless, empathetic customer experiences around the clock, transforming support from a cost center into a sustainable competitive advantage.

Chapter 1: The Evolution of Customer Support in the Digital Age

Industry Dynamics Driving 24/7 Support

In the digital economy, customer expectations have shifted from acceptance of fixed operating hours to an expectation of uninterrupted availability. Mobile applications, social media, and real-time messaging have conditioned users to demand immediate responses. A delay of even a few hours can erode trust, drive abandonment, and prompt customers to turn to competitors. Sectors such as e-commerce, financial services, and travel are especially sensitive because urgent inquiries often arise outside traditional business hours. Ensuring round-the-clock service is no longer a luxury; it is the baseline for a leading customer experience.

Competitive pressures amplify the need for continuous availability. In markets saturated with similar products and pricing, service responsiveness becomes a key differentiator. Organizations offering 24/7 support consistently report higher net promoter scores, reduced churn, and increased cross-sell opportunities. Global brands leveraging perpetual assistance across time zones have seen measurable growth in repeat purchases and subscription renewals, demonstrating that sustained investment in continuous support yields long-term returns that outweigh initial costs.

Global expansion introduces further complexity. Distributed customer bases across multiple time zones create service gaps when support centers operate on local schedules. Staggered shifts and follow-the-sun models mitigate coverage issues but introduce management, training, and quality-control challenges. Inconsistent language mastery, cultural nuances, and handoff risks can undermine the promise of a unified global brand experience.

Traditional support models face mounting operational strains. Extending human-agent coverage to nights and weekends incurs elevated labor costs, complex shift planning, high turnover, and quality assurance challenges across distributed or outsourced teams. During peak demand or product launches, scalability falters. Manual processes, knowledge silos, and fragmented routing further increase resolution times and limit first-contact success. Moreover, regulatory mandates such as GDPR for personal data, PCI DSS for payment card data, and HIPAA for healthcare impose stringent response and audit requirements, compounding operational burdens.

Technological accelerators have responded to these dynamics. Cloud platforms, omnichannel systems, and real-time analytics lay the groundwork for more scalable and resilient support frameworks. Automation and artificial intelligence now promise to handle routine inquiries and orchestrate engagement across channels. Understanding these industry forces establishes the strategic imperative for transitioning to AI-driven, 24/7 support models.

The AI Agent Paradigm

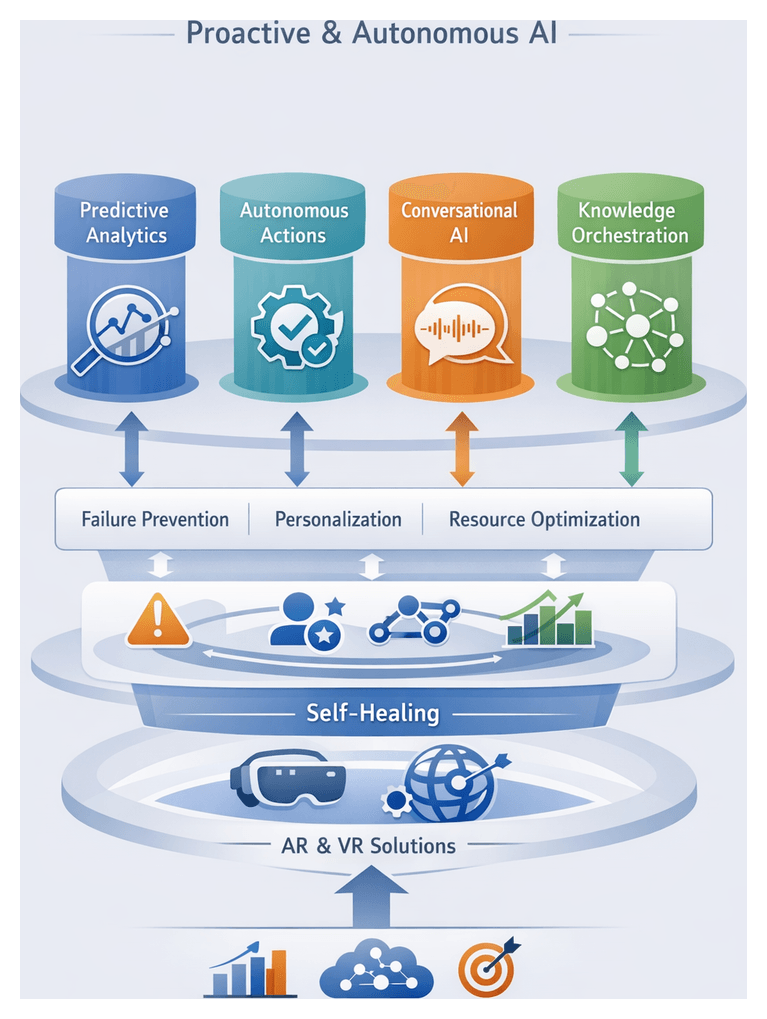

AI agents are software entities that engage autonomously with users, interpret natural language input, and execute tasks toward resolution. Unlike traditional scripted chatbots or IVR menus, AI agents integrate learning capabilities, context management, and decision logic to handle complex, multi-turn inquiries without continuous human intervention. Their maturity can be assessed across four dimensions: intelligence, autonomy, adaptability, and integration.

At the simplest level, agents rely on keyword matching and predefined decision trees. At the most advanced, they harness deep learning models and knowledge graphs to infer intent, anticipate needs, and initiate proactive outreach. This intelligence spectrum provides a roadmap for organizations to assess current capabilities and plan incremental enhancements.

Distinguishing AI Agents from Traditional Automation

- Response Scope: AI agents adapt responses based on real-time analysis of context, sentiment, and user history; rule-based systems follow rigid scripts.

- Learning Mechanisms: AI agents refine intent classifiers and response strategies through machine learning pipelines ingesting conversation logs; traditional tools require manual script updates.

- Context Retention: AI agents maintain dialogue state over extended interactions; legacy models reset context after each query.

- Proactivity: Advanced agents trigger notifications, reminders, and follow-up tasks without explicit user prompts; rule-based systems lack autonomous initiative.

Taxonomy of AI Agents

- Assistive Virtual Assistants: Embedded in digital interfaces for navigation, FAQs, and simple transactions. Examples include Zendesk Answer Bot and IBM Watson Assistant.

- Transactional Chatbots: Execute predefined tasks such as appointment booking or returns processing. Platforms include Google Dialogflow and Microsoft Azure Bot Service.

- Autonomous Service Agents: Handle end-to-end support workflows, integrating with back-office systems for data retrieval and updates.

- Predictive Engagement Agents: Use predictive analytics and user profiling to anticipate issues—such as subscription renewals or payment failures—and initiate proactive outreach.

Analytical Frameworks and Governance

- Autonomy Maturity Model: Stages from human-assisted to fully autonomous delivery, guiding capability roadmaps and risk mitigation.

- Cognitive Capability Matrix: Assesses perception (input interpretation), reasoning (decision logic), and action (task execution).

- Service Science Lens: Views agents within a socio-technical ecosystem, emphasizing co-creation of value, governance, and performance monitoring.

- ROI and Value Mapping: Combines cost savings, efficiency gains, and customer satisfaction impacts into a business case with sensitivity analysis and payback timelines.

Balancing autonomy and oversight through human-in-the-loop protocols, confidence thresholds, and continuous bias detection ensures ethical, auditable, and compliant operations. Key performance indicators—first-contact resolution, deflection rates, customer effort scores, and net promoter scores—quantify progress from reactive support to predictive engagement.
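
One way to picture the confidence thresholds and human-in-the-loop protocols mentioned above is a small routing rule. The sketch below is illustrative only: the threshold values, the route names and the list of sensitive intents are assumptions, not settings from any particular platform.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    intent: str
    confidence: float  # model's probability for its top intent, 0.0 to 1.0

# Hypothetical intents that always go to a person, regardless of confidence.
SENSITIVE_INTENTS = {"fraud_report", "account_closure"}

def route(pred, auto_threshold=0.85, review_threshold=0.60):
    """Decide who handles a request based on intent sensitivity and confidence."""
    if pred.intent in SENSITIVE_INTENTS:
        return "human_agent"
    if pred.confidence >= auto_threshold:
        return "ai_agent"                # resolved autonomously
    if pred.confidence >= review_threshold:
        return "ai_with_human_review"    # AI drafts, a human approves
    return "human_agent"                 # too uncertain to automate

print(route(Prediction("password_reset", 0.93)))
print(route(Prediction("billing_dispute", 0.70)))
print(route(Prediction("fraud_report", 0.99)))
```

Logging each routing decision alongside the confidence value gives auditors a trail to review and provides the data needed to tune the thresholds over time.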

Technological Enablers for Autonomous Support

Recent advances have made AI agents both practical and imperative for modern support. Large-scale transformer architectures such as GPT-4 have delivered breakthroughs in natural language understanding and generation, enabling fine-tuning with modest data volumes to capture domain-specific terminology. Cloud providers have embedded AI services into elastic platforms: Vertex AI, Amazon Lex, and Watson Assistant offer preconfigured pipelines for intent recognition, sentiment analysis, and dialogue management.

Open-source communities around Hugging Face and TensorFlow have fostered continuous innovation in lightweight, domain-adaptable models. The microservices paradigm allows modular AI components to integrate seamlessly with existing CRM platforms, knowledge bases, and analytics pipelines. Cloud migration, omnichannel engagement, and integrated analytics underpin proactive monitoring and elastic scaling.

Competitive imperatives drive adoption. Organizations benchmark service agility in sub-minute response times, viewing AI capabilities as strategic assets. Proprietary training data, domain ontologies, and feedback loops create barriers to imitation and form the basis of a sustainable advantage. Economic pressures, including rising labor costs, agent shortages, and demands for operational efficiency, further propel AI integration. Cloud-based agents offer variable cost structures, measurable handle-time reductions, and the potential for payback within months.

Regulatory frameworks such as GDPR, CCPA, and industry-specific mandates catalyze responsible AI deployment through privacy-by-design, consent management, and auditable data governance. Frameworks like the NIST AI Risk Management Framework guide balanced innovation with accountability, reinforcing brand trust and competitive positioning.

Organizational readiness—driven by executive sponsorship, cross-functional collaboration, and agile governance—ensures rapid iteration of prototypes, continuous learning from performance metrics, and incremental scaling. Assessments of digital maturity, skill gaps, and clear accountability structures align technology deployment with long-term support transformation.

Strategic Principles for Next-Generation Support

Designing resilient, AI-augmented support requires service models that are agile, customer-centric, and sustainable. Leading organizations apply the following principles to guide future service design.

Aligning Support Strategy with Business Objectives

- Map customer journeys to strategic priorities and metrics such as retention, upsell rates, and satisfaction indices.

- Define success criteria in business terms—customer lifetime value impact, net promoter score improvements, and market share gains.

- Embed support considerations in product and service roadmaps via early collaboration among support, product, and marketing teams.

Balancing Automation with Human Empathy

- Implement tiered engagement models where AI agents handle routine inquiries and seamlessly escalate complex or sensitive issues to human agents.

- Maintain human-in-the-loop oversight with real-time monitoring protocols and escalation thresholds.

- Establish personalization guardrails to mitigate bias and privacy risks, defining clear boundaries for automated decision-making.

Designing for Scalability and Resilience

- Adopt elastic capacity planning to anticipate peak demand without excessive overprovisioning.

- Ensure failover and redundancy with distributed backups and automatic rerouting of critical components.

- Deploy continuous performance monitoring frameworks to identify anomalies before customer impact.
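
As an illustration of the continuous performance monitoring described above, the following sketch flags response-time samples that deviate sharply from a rolling baseline. It is a simple z-score check, not a production monitoring design; the window size, threshold and latency values are hypothetical.

```python
from statistics import mean, stdev

def find_anomalies(samples, window=5, z_threshold=3.0):
    """Return indexes of samples far above the mean of the preceding window."""
    anomalies = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score is undefined, skip this sample
        z = (samples[i] - mu) / sigma
        if z > z_threshold:
            anomalies.append(i)
    return anomalies

# Hypothetical first-response times in seconds, one per monitoring interval.
latencies = [1.1, 1.0, 1.2, 0.9, 1.1, 1.0, 4.8, 1.1]
print(find_anomalies(latencies))
```

A limitation of this sketch is that an anomalous value enters the baseline for the next few windows, which temporarily reduces sensitivity; real deployments typically use more robust statistics or exclude flagged points.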

Prioritizing Contextual Continuity

- Aggregate cross-channel interaction histories into unified records for seamless dialogue resumption.

- Leverage sentiment analysis and intent signals to adapt conversational tone and to decide between self-service and human intervention.

- Orchestrate omnichannel transitions—chatbot to live chat or voice—while preserving context.
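
The idea of aggregating cross-channel histories into a unified record can be sketched as a grouping and sorting step. The event fields used here (`customer_id`, `channel`, `timestamp`, `text`) are assumed for illustration; real schemas will differ by platform.

```python
from collections import defaultdict
from datetime import datetime

def build_timelines(events):
    """Group interaction events by customer and order them chronologically."""
    timelines = defaultdict(list)
    for event in events:
        timelines[event["customer_id"]].append(event)
    for history in timelines.values():
        history.sort(key=lambda e: datetime.fromisoformat(e["timestamp"]))
    return dict(timelines)

# Hypothetical events arriving from separate channel systems, out of order.
events = [
    {"customer_id": "c-1", "channel": "voice",
     "timestamp": "2026-01-05T10:30:00", "text": "Calling about the router issue"},
    {"customer_id": "c-2", "channel": "email",
     "timestamp": "2026-01-05T08:00:00", "text": "Invoice question"},
    {"customer_id": "c-1", "channel": "chat",
     "timestamp": "2026-01-05T09:15:00", "text": "My router keeps dropping"},
]

timelines = build_timelines(events)
for event in timelines["c-1"]:
    print(event["timestamp"], event["channel"], event["text"])
```

With a single ordered history per customer, an agent picking up the voice call can see the earlier chat and avoid asking the customer to repeat the problem.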

Embedding Governance, Ethics, and Compliance

- Adopt ethical AI policies outlining acceptable use cases, data handling, and bias mitigation.

- Align with regional and industry regulations—GDPR, CCPA, HIPAA—through consent management and data sovereignty controls.

- Maintain transparent communication, explainability of AI responses, and clear escalation paths for contested decisions.

Leveraging Data as a Strategic Asset

- Establish unified data models for customer profiles, product catalogs, and interaction logs.

- Implement data quality management processes for cleansing, enrichment, and validation to limit model degradation caused by poor or stale data.

- Embed privacy-by-design with anonymization, encryption, and access controls from data ingestion through the rest of the data lifecycle.
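
A small part of privacy-by-design can be shown in code: replacing direct identifiers before interaction logs reach analytics or training pipelines. The sketch below uses keyed hashing, which is pseudonymization rather than full anonymization, and a deliberately simple email pattern; the key, identifiers and sample text are hypothetical, and encryption and access controls are out of scope here.

```python
import hashlib
import hmac
import re

# Simplified pattern for illustration; it will not catch every valid address.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def pseudonymize(customer_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a stable keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()

def mask_emails(text: str) -> str:
    """Replace email addresses in free text with a placeholder token."""
    return EMAIL_RE.sub("[EMAIL]", text)

key = b"demo-key-store-in-a-secrets-manager"  # hypothetical key
record = {
    "customer": pseudonymize("cust-42", key),
    "text": mask_emails("Contact me at jane.doe@example.com please"),
}
print(record)
```

Because the same key always yields the same token, records for one customer can still be joined for analysis without exposing the original identifier.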

Cultivating Organizational Readiness and Culture

- Foster cross-functional collaboration among IT, operations, marketing, and compliance to break down silos.

- Invest in training programs to upskill agents in AI oversight, data literacy, and emotional intelligence.

- Secure executive sponsorship and define clear accountability for AI governance, performance targets, and iterative improvements.

Evaluating Future Service Models

- Strategic Alignment: Assess how the design advances core business objectives and customer loyalty.

- Technical Feasibility: Evaluate existing infrastructure, data maturity, and vendor capabilities.

- Operational Impact: Estimate staffing, training, and process redesign requirements.

- Risk and Compliance: Identify regulatory obligations, ethical considerations, and mitigation strategies.

- Customer Acceptance: Gauge user sentiment through pilots or surveys to refine flows and escalation thresholds.

By integrating these strategic principles, organizations can design support models that not only meet the demands of continuous availability but also drive long-term differentiation, operational efficiency, and customer trust.

Chapter 2: Anatomy of AI Agents: Core Technologies and Capabilities

Machine Learning as the Decision Engine

Machine learning transforms historical interaction logs into predictive models that guide autonomous support. Supervised techniques ingest chat transcripts, agent resolutions, and outcomes to train classifiers—from decision forests and support vector machines to deep neural networks and transformers—that determine the next best actions in real time. Feature engineering converts raw inputs such as message text, sentiment, metadata, and past resolution codes into structured vectors that capture patterns essential for accurate predictions. Iterative retraining cycles refine model weights as new labeled data arrives, enabling agents to adapt to evolving customer concerns and product changes.

- Unsupervised clustering detects emerging topics and shifting sentiment trends without labeled examples.

- Reinforcement learning optimizes multi-turn dialogues by assigning rewards to successful resolutions and penalties to unsatisfactory exchanges.

- Continuous integration pipelines automate data ingestion, model retraining, and validation, while monitoring frameworks flag drift and performance degradation.
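As an illustration of how a monitoring job might flag drift, the sketch below compares the intent mix in a baseline window against a recent window using the Population Stability Index. The category names, sample counts, and the 0.2 threshold are assumptions chosen for the example, not values from any specific platform.

```python
import math
from collections import Counter

def distribution(labels, categories):
    """Share of each category, with add-one smoothing so no share is zero."""
    counts = Counter(labels)
    total = len(labels) + len(categories)
    return {c: (counts[c] + 1) / total for c in categories}

def population_stability_index(baseline, recent, categories):
    """PSI = sum((q - p) * ln(q / p)) over categories, p = baseline, q = recent."""
    p = distribution(baseline, categories)
    q = distribution(recent, categories)
    return sum((q[c] - p[c]) * math.log(q[c] / p[c]) for c in categories)

# Hypothetical intent labels observed in two time windows.
CATEGORIES = ["billing", "technical", "account"]
baseline = ["billing"] * 50 + ["technical"] * 30 + ["account"] * 20
recent = ["billing"] * 20 + ["technical"] * 60 + ["account"] * 20

psi = population_stability_index(baseline, recent, CATEGORIES)
DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff; tune per deployment
needs_retraining = psi > DRIFT_THRESHOLD
```

In a pipeline, a value above the threshold would typically open a retraining job or an alert rather than retrain automatically.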

Natural Language Processing for Human-Centric Interactions

Natural language processing equips AI agents to interpret and generate human language through intent recognition, entity extraction, sentiment analysis, and context management. Transformer-based models pre-trained on large corpora and fine-tuned on domain-specific data drive high-precision intent classifiers, ensuring messages about billing, technical issues, or account updates are routed correctly. Named entity recognition pipelines combine statistical tagging with gazetteers to extract dates, product names, error codes, and user identifiers, enabling personalized and efficient backend operations.

- Sentiment analysis gauges emotional tone, allowing agents to adapt tone or escalate to live specialists when frustration peaks.

- Dialogue state tracking maintains memory across multi-turn and multi-channel interactions, preserving context and avoiding redundant questions.

- Fallback mechanisms incorporate rule-based grammars or human intervention for ambiguous or high-risk scenarios.
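The escalation and fallback behavior described above can be sketched as a small routing function. The thresholds, intent names, and route labels here are hypothetical; a production system would calibrate them against observed outcomes.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    intent: str
    confidence: float  # classifier probability in [0, 1]
    sentiment: float   # -1.0 (very negative) to 1.0 (very positive)

# All values below are illustrative assumptions.
HIGH_RISK_INTENTS = {"close_account", "dispute_charge"}
MIN_CONFIDENCE = 0.75
ESCALATE_SENTIMENT = -0.6

def route(pred: Prediction) -> str:
    """Pick a handling path for one classified message."""
    # High-risk requests and strongly negative sentiment go to a person.
    if pred.intent in HIGH_RISK_INTENTS or pred.sentiment <= ESCALATE_SENTIMENT:
        return "human_agent"
    # Ambiguous predictions fall back to a clarifying question.
    if pred.confidence < MIN_CONFIDENCE:
        return "clarifying_question"
    return "automated_flow"
```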

Knowledge Graphs as the Semantic Backbone

Knowledge graphs represent domain entities—products, services, workflows—and the relationships between them, enabling AI agents to navigate complex knowledge domains. Nodes such as “router,” “firmware update,” or “network outage” connect via edges denoting compatibility, causation, or prerequisites. This flexible structure supports dynamic schema evolution and enriches graph content through automated pipelines that integrate structured manuals and unstructured forums via NLP annotations.

- Query languages like SPARQL enable real-time graph traversal to retrieve relevant resolution paths.

- Reasoning engines apply rule-based or probabilistic logic to infer implicit relationships, powering proactive recommendations and incident escalations.

- Continuous enrichment ensures the graph reflects new product versions, policy updates, and emerging support articles.
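To make the idea of traversing a graph for a resolution path concrete, here is a minimal sketch that stores relationships as triples and runs a breadth-first search. It is a plain-Python stand-in for a real graph store and query language such as SPARQL, and the triples are invented for illustration.

```python
from collections import deque

# (subject, relation, object) triples; contents are illustrative only.
TRIPLES = [
    ("network outage", "caused_by", "outdated firmware"),
    ("outdated firmware", "resolved_by", "firmware update"),
    ("firmware update", "requires", "router reboot"),
    ("network outage", "caused_by", "cable fault"),
]

def build_graph(triples):
    """Index outgoing edges by subject node."""
    graph = {}
    for subject, relation, obj in triples:
        graph.setdefault(subject, []).append((relation, obj))
    return graph

def find_path(graph, start, goal):
    """Breadth-first search; returns the shortest list of (relation, node) hops."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [(relation, neighbor)]))
    return None  # no route between the two nodes

graph = build_graph(TRIPLES)
path = find_path(graph, "network outage", "router reboot")
```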

Architectural Synergies and Scalable Pipelines

An integrated pipeline unites machine learning, natural language processing, and knowledge graphs within a modular microservices architecture. Incoming messages flow through NLP modules that detect intent and entities, then reference knowledge graph context before decision models select resolution strategies. Generated responses may invoke API calls to backend systems for ticket creation, account updates, or order inquiries. Containerized services and orchestration platforms manage service discovery, load balancing, and autoscaling, ensuring fault tolerance and minimal latency even under volatile demand.

- Automated scaling policies dynamically allocate compute resources based on predicted ticket volumes and live throughput metrics.

- Multi-region deployments bolster resilience, while auto-healing mechanisms detect and recover from failures without human intervention.

- Unified telemetry aggregates performance data—latency, resolution rates, sentiment scores—into dashboards that inform continuous optimization.

Operational Resilience and Autonomous Service Delivery

Autonomous AI agents deliver 24/7 support with digital elasticity that outpaces traditional human staffing. Predictive analytics anticipate peak loads, triggering preemptive resource provisioning and prioritizing high-value interactions. Advanced fault-tolerance features—such as circuit breakers, retry policies, and degraded-mode fallbacks—allow systems to maintain core functionality during infrastructure disruptions. This self-recovering design shifts the resilience burden from manual on-call rosters to automated monitoring and remediation frameworks.

- Resilience metrics extend beyond uptime to include self-recovery rates and adaptation to evolving query patterns.

- Sandbox environments validate new AI capabilities in parallel, ensuring that full autonomy is deployed only after rigorous performance thresholds are met.

- Escalation triggers embed human-in-the-loop checkpoints for sensitive or complex cases, balancing automation with empathetic intervention.
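A circuit breaker with a degraded-mode fallback, one of the fault-tolerance features named above, might look roughly like the following. This is a simplified sketch: the class name, defaults, and half-open handling are assumptions, and production libraries add thread safety and richer state handling.

```python
import time

class CircuitBreaker:
    """Stops calling a failing dependency for a cooling-off period."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # seconds to stay open
        self.clock = clock              # injectable for testing
        self.failures = 0
        self.opened_at = None

    def call(self, func, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()       # open: skip the dependency entirely
            self.opened_at = None       # half-open: allow one trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()           # degraded-mode response
        self.failures = 0               # success closes the circuit
        return result
```

A caller would wrap each backend request, for example `breaker.call(fetch_order, lambda: cached_order)`, where both callables are supplied by the application.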

Analytical Framework for Technology Evaluation

A structured analytical approach compares machine learning, NLP, and knowledge graph components across performance, robustness, and interpretability criteria. Key metrics include classification accuracy (precision, recall, F1-score), predictive reliability (MAE, RMSE), and throughput under stress tests. Robustness assessments examine model resilience to concept drift, adversarial inputs, and multilingual variations, while governance frameworks enforce explainability through SHAP values, LIME explanations, and audit logs.

- Robustness under real-world conditions is ensured by stress-testing models against seasonal spikes, diverse languages, and multimodal data.

- Interpretability safeguards enable compliance with regulations and ethical standards by making decision pathways transparent.

- Error attribution frameworks pinpoint failure modes—misclassified intents, parsing errors, or missing graph relations—to guide targeted improvements.

Governance, Compliance, and Ethical Oversight

Scaling autonomous agents demands clear accountability for automated actions—processing refunds, updating records, or initiating communications. Cross-functional ethics councils and privacy officers embed bias detection and fairness assessments within the AI lifecycle, using demographic parity metrics and continuous bias monitoring tools. Platforms log every agent action, supporting auditability and enabling compliance with regulations such as GDPR and industry-specific mandates.

- Role-based access controls restrict sensitive actions until compliance reviews are complete.

- Audit logs and explainability features provide traceable records of model decisions and data sources.

- Hybrid oversight models combine automated enforcement with periodic human audits to maintain trust and regulatory alignment.
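One simple form of the demographic parity check mentioned above compares the rate of favorable automated outcomes across customer groups. The sketch below uses invented data (1 means a refund was auto-approved) and hypothetical group labels; what counts as an acceptable gap is a policy decision, not something the code determines.

```python
def approval_rate(decisions):
    """Fraction of favorable outcomes in a list of 0/1 decisions."""
    return sum(decisions) / len(decisions)

def demographic_parity_difference(outcomes_by_group):
    """Largest gap in favorable-outcome rate between any two groups."""
    rates = {g: approval_rate(d) for g, d in outcomes_by_group.items()}
    return max(rates.values()) - min(rates.values()), rates

# Illustrative data only.
outcomes = {
    "group_a": [1, 1, 1, 0, 1, 1, 0, 1, 1, 1],  # 8 of 10 approved
    "group_b": [1, 0, 1, 0, 1, 1, 0, 0, 1, 1],  # 6 of 10 approved
}
gap, rates = demographic_parity_difference(outcomes)
```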

Strategic Alignment and Business Impact

Effective AI-driven support aligns with enterprise objectives through formal governance forums that bridge business leaders, support executives, and technical architects. Frameworks like Objectives and Key Results (OKRs) ensure investments advance metrics such as customer satisfaction, first contact resolution, and operational efficiency. Mapping technology capabilities (real-time analytics, multilingual support, predictive routing) to targeted outcomes creates a strategic matrix that guides vendor selection and deployment roadmaps.

- Financial services may prioritize fraud detection and compliance, while retail focuses on conversion uplift and basket size expansion.

- Cultural readiness and change management capacity influence implementation timelines and scalability potential.

- Incremental pilots validate interoperability and performance before extending autonomy to critical touchpoints.

Integration, Interoperability, and Vendor Ecosystem

AI agents must integrate seamlessly with CRM, ERP, and order management systems via API-first designs—RESTful endpoints, GraphQL schemas, or event-driven architectures based on Apache Kafka. Knowledge graphs normalize disparate taxonomies, ensuring consistent entity and intent interpretation across services. Organizations leverage vendor ecosystems that offer prebuilt connectors and industry modules, and tap open source communities for rapid innovation and risk mitigation.

Platforms such as IBM Watson and Google Dialogflow exemplify deeply integrated solutions where semantic relationships from knowledge graphs enhance reasoning across data silos, and unified analytics provide holistic service health insights.

Scalability, Maintainability, and Future-Proofing

Capacity planning employs queueing theory and load testing to validate sub-second response times under burst loads. Choices between serverless functions and containerized microservices balance the simplicity of auto-scaling with the control of orchestration. MLOps practices—version-controlled datasets, automated training pipelines, and deployable model registries—ensure reproducibility, traceability, and auditable lifecycles. Continuous monitoring dashboards detect drift and trigger retraining, while runbooks and technical documentation prevent “black-box abandonment.”
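As one example of the queueing-theory side of capacity planning, the sketch below applies the Erlang C formula for an M/M/c queue to estimate how many parallel workers keep the mean queueing delay under a target. It assumes Poisson arrivals and exponential service times, which real traffic only approximates, so load testing remains necessary.

```python
import math

def erlang_c(arrival_rate, service_rate, servers):
    """Probability that an arriving request has to wait (M/M/c queue)."""
    a = arrival_rate / service_rate  # offered load in Erlangs
    if a >= servers:
        return 1.0                   # unstable: the queue grows without bound
    top = (a ** servers / math.factorial(servers)) * (servers / (servers - a))
    bottom = sum(a ** k / math.factorial(k) for k in range(servers)) + top
    return top / bottom

def mean_wait(arrival_rate, service_rate, servers):
    """Mean time spent queueing before service starts, in the rate's time unit."""
    if arrival_rate / service_rate >= servers:
        return math.inf
    p_wait = erlang_c(arrival_rate, service_rate, servers)
    return p_wait / (servers * service_rate - arrival_rate)

def min_servers(arrival_rate, service_rate, max_wait):
    """Smallest worker count whose mean queueing delay meets the target."""
    c = 1
    while mean_wait(arrival_rate, service_rate, c) > max_wait:
        c += 1
    return c
```

For instance, `min_servers(40, 10, 0.05)` asks how many workers, each handling 10 requests per second, are needed for 40 requests per second with at most 50 ms of average queueing.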

Future-proof architectures embrace open standards such as ONNX for model interchange and Kubernetes for orchestration, and support multiple ML frameworks—from TensorFlow to PyTorch. Emerging paradigms like federated learning and edge inference expand deployment to retail stores, manufacturing lines, and mobile devices, offering new privacy and latency advantages.

Cost Modeling and Risk Mitigation

Total cost of ownership analysis incorporates subscription fees, variable cloud infrastructure costs, data labeling, and ETL overhead. Sensitivity analyses identify cost levers—data volume, model complexity, feature scope—enabling trade-offs between performance and expense. Financial projections, augmented by Monte Carlo simulations, quantify risk-adjusted returns and inform budget allocations.

- Risk registers categorize technical and organizational threats—vendor bankruptcy, security breaches, algorithmic bias—and assign mitigation plans.

- Stress tests simulate data poisoning, model drift, and infrastructure failures to validate fallback strategies and escalation protocols.

- Transparent risk communication with stakeholders builds organizational buy-in and clarifies thresholds for acceptable performance variances.
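A Monte Carlo cost projection of the kind described in this section can be sketched with the standard library alone. Every figure and distribution below is a placeholder assumption; an actual model would draw them from contracts, billing history, and volume forecasts.

```python
import random
import statistics

def simulate_annual_cost(rng):
    """One random draw of total annual cost under assumed distributions."""
    subscription = 120_000                            # fixed platform fee
    tickets = max(rng.gauss(1_000_000, 150_000), 0)   # yearly ticket volume
    cost_per_ticket = rng.uniform(0.02, 0.06)         # variable cloud cost
    labeling = rng.triangular(20_000, 80_000, 40_000) # low, high, most likely
    return subscription + tickets * cost_per_ticket + labeling

def run(trials=10_000, seed=42):
    """Summarize the simulated cost distribution."""
    rng = random.Random(seed)  # seeded so results are reproducible
    costs = sorted(simulate_annual_cost(rng) for _ in range(trials))
    return {
        "mean": statistics.fmean(costs),
        "p50": costs[trials // 2],
        "p95": costs[int(trials * 0.95)],
    }

summary = run()
```

Comparing the median with the 95th percentile gives a rough sense of how much budget contingency the assumed uncertainty implies.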

Balancing Trade-Offs with a Multidimensional Framework

A decision matrix aligns IT, legal, finance, and customer experience priorities by scoring vendor capabilities, technical fit, and risk profiles against weighted criteria. Workshops define evaluation weights, pilot deployments validate proofs of value, and comparative dashboards guide vendor selection. This structured process minimizes subjective bias and lays the groundwork for sustainable, scalable AI support systems that adapt to evolving market demands and technological innovations.
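A weighted scoring matrix of this kind reduces to a few lines of arithmetic. The criteria, weights, vendor names, and 1-to-5 scores below are hypothetical workshop outputs used only to show the mechanics.

```python
# Weights agreed in a (hypothetical) stakeholder workshop; they must sum to 1.
WEIGHTS = {"technical_fit": 0.4, "risk": 0.3, "cost": 0.2, "cx_impact": 0.1}

# Scores on a 1-5 scale, higher is better; values are illustrative.
VENDORS = {
    "vendor_a": {"technical_fit": 4, "risk": 3, "cost": 5, "cx_impact": 3},
    "vendor_b": {"technical_fit": 5, "risk": 4, "cost": 2, "cx_impact": 4},
}

def weighted_score(scores, weights):
    """Sum of weight * score across all criteria."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[c] * scores[c] for c in weights)

totals = {v: weighted_score(s, WEIGHTS) for v, s in VENDORS.items()}
ranking = sorted(totals, key=totals.get, reverse=True)
```

Re-running the ranking with alternative weight sets is a quick way to see how sensitive the outcome is to any one stakeholder's priorities.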

Chapter 3: Natural Language Processing and Understanding

Understanding Advanced Natural Language Techniques

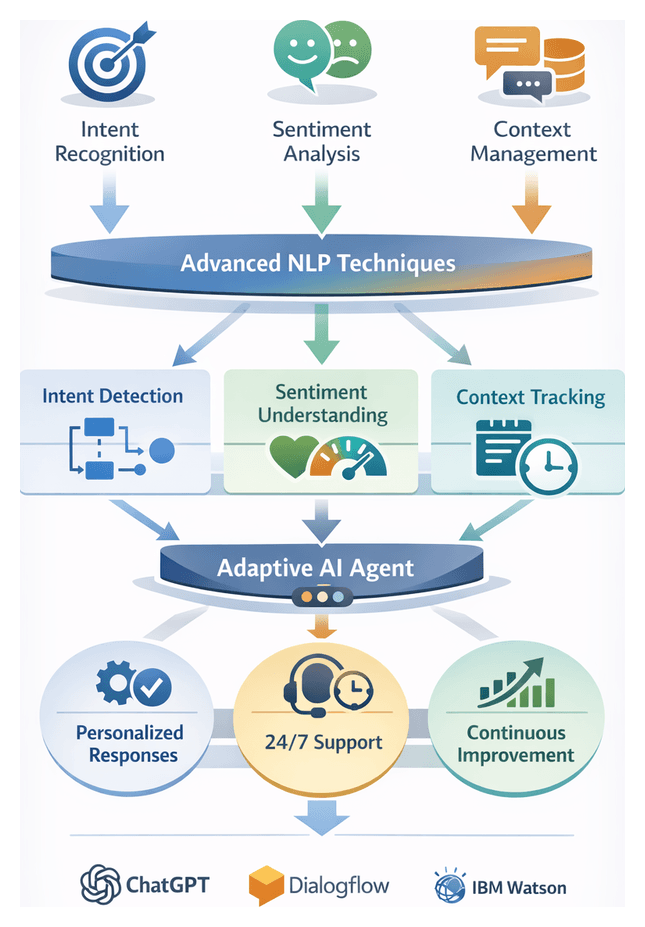

Advanced natural language techniques form the foundation of AI-driven customer support, enabling human-like interaction at scale. These techniques include intent recognition, sentiment analysis, and context management. Intent recognition deciphers user goals, sentiment analysis gauges emotional tone, and context management preserves conversational continuity. Together, they allow organizations to move beyond scripted responses toward adaptive, 24/7 support. Leading platforms such as ChatGPT, Google Dialogflow, and IBM Watson exemplify how these capabilities boost resolution rates, reduce handling times, and improve customer satisfaction.

Intent Recognition

Intent recognition maps user utterances to actionable objectives. By accurately detecting whether a user wants to “check order status,” “reset a password,” or “request a refund,” AI agents can automate full-ticket resolution without human handoffs. Advanced systems also handle compound and nested intents, supporting complex workflows.

- Tokenization and Embedding: Converting text into vectors that capture semantic relationships.

- Supervised Classification: Training models such as support vector machines, random forests, or deep neural networks on annotated datasets.

- Hierarchical Intent Structures: Organizing broad intents and specialized sub-intents to manage scalability.

Accurate intent classification drives workflow routing, knowledge retrieval, and API invocation. It enables personalized interactions, streamlines business logic, and reduces operational costs. In high-volume environments, misclassification rates above 5–10 percent can erode satisfaction. Mitigating these errors requires ongoing training pipelines, active learning, and close integration with annotation platforms.
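To show the supervised classification step in miniature, the sketch below trains a bag-of-words naive Bayes classifier on a handful of invented utterances. It stands in for the embedding-based and transformer models discussed in this chapter; the training phrases and intent names are assumptions.

```python
import math
from collections import Counter, defaultdict

# Tiny illustrative training set; real systems use thousands of examples.
TRAINING = [
    ("where is my order", "check_order_status"),
    ("track my package", "check_order_status"),
    ("order has not arrived yet", "check_order_status"),
    ("i forgot my password", "reset_password"),
    ("cannot log in reset password", "reset_password"),
    ("i want my money back", "request_refund"),
    ("please refund this purchase", "request_refund"),
]

def tokenize(text):
    return text.lower().split()

def train(examples):
    """Count words per intent, examples per intent, and the vocabulary."""
    word_counts, class_counts, vocab = defaultdict(Counter), Counter(), set()
    for text, intent in examples:
        tokens = tokenize(text)
        word_counts[intent].update(tokens)
        class_counts[intent] += 1
        vocab.update(tokens)
    return word_counts, class_counts, vocab

def classify(text, model):
    """Return the intent with the highest log-probability (Laplace smoothing)."""
    word_counts, class_counts, vocab = model
    total = sum(class_counts.values())
    scores = {}
    for intent, n in class_counts.items():
        denom = sum(word_counts[intent].values()) + len(vocab)
        score = math.log(n / total)
        for token in tokenize(text):
            if token in vocab:  # ignore words never seen in training
                score += math.log((word_counts[intent][token] + 1) / denom)
        scores[intent] = score
    return max(scores, key=scores.get)

model = train(TRAINING)
```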

Methodologies and Evaluation

- Rule-Based Systems: Handcrafted patterns and decision trees, offering transparency but limited scalability.

- Statistical Models: Classical machine learning requiring feature engineering, balancing performance and interpretability.

- Deep Learning: Architectures such as CNNs, RNNs, and transformers (for example, BERT or GPT) that process raw text with minimal feature design, delivering high accuracy at the cost of greater data and compute demands.

- Accuracy Metrics: Precision, recall, and F1 scores per intent class guide performance thresholds.

- Latency and Throughput: Inference speed under peak load must meet service-level agreements.

- Explainability: Transparent decision logic is critical in regulated industries; auxiliary tools can demystify deep learning outputs.

- Scalability: Transfer learning and fine-tuning frameworks reduce data requirements when adding new intents.

Best-in-class teams conduct iterative A/B testing, combining quantitative metrics with qualitative feedback to refine intent models continuously.
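Per-intent precision, recall, and F1 can be computed directly from paired true and predicted labels, as in the sketch below. The label lists are made up for illustration.

```python
from collections import Counter

def per_intent_metrics(y_true, y_pred):
    """Precision, recall, and F1 for every intent that appears in the data."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted this intent, but it was wrong
            fn[truth] += 1  # missed the true intent
    report = {}
    for intent in set(y_true) | set(y_pred):
        predicted = tp[intent] + fp[intent]
        actual = tp[intent] + fn[intent]
        p = tp[intent] / predicted if predicted else 0.0
        r = tp[intent] / actual if actual else 0.0
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        report[intent] = {"precision": p, "recall": r, "f1": f1}
    return report

# Illustrative evaluation data.
y_true = ["refund", "refund", "status", "status", "status", "reset"]
y_pred = ["refund", "status", "status", "status", "reset", "reset"]
report = per_intent_metrics(y_true, y_pred)
```

Reporting these figures per intent, rather than as a single accuracy number, makes it easier to see which intents need more training data.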

Sentiment Analysis

Sentiment analysis interprets the emotional context of user messages—frustration, urgency, satisfaction, or neutrality. By detecting sentiment shifts in real time, AI agents can modulate tone, escalate critical cases, and de-escalate tense interactions to protect brand reputation.

Core Techniques

- Lexicon-Based Approaches: Use predefined sentiment dictionaries with heuristic rules to assign polarity scores.

- Machine Learning Classifiers: Employ features such as n-grams and part-of-speech tags to train models like logistic regression or support vector machines.

- Deep Learning Models: Utilize CNNs and transformer-based architectures to capture complex semantic patterns and context.

Operational Benefits

- Adaptive Tone Modulation: Switching language style from neutral to empathetic when frustration is detected.

- Priority Routing: Flagging negative sentiment for rapid human review or high-priority workflows.

- Sentiment Analytics: Aggregating sentiment data to uncover product pain points and service bottlenecks.

Combining lexicon and machine learning methods often yields the best balance of interpretability and accuracy. Regular updates to sentiment dictionaries and retraining with domain-specific data guard against concept drift and maintain relevance to evolving customer language.
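A lexicon-based scorer with a basic negation rule, the simplest of the approaches above, can be sketched as follows. The word list and polarity values are invented, and the negation rule only flips the word immediately after the negation, which is a deliberate simplification.

```python
# Hypothetical mini-lexicon: word -> polarity in [-1, 1].
LEXICON = {
    "great": 1.0, "thanks": 0.5, "helpful": 0.8,
    "slow": -0.5, "broken": -0.8, "terrible": -1.0, "frustrated": -0.9,
}
NEGATIONS = {"not", "never", "no"}

def sentiment_score(text):
    """Average polarity of lexicon words; 0.0 if none are found."""
    tokens = [t.strip(".,!?").lower() for t in text.split()]
    total, hits, negate = 0.0, 0, False
    for token in tokens:
        if token in NEGATIONS:
            negate = True  # flip the polarity of the next word only
            continue
        if token in LEXICON:
            value = LEXICON[token]
            total += -value if negate else value
            hits += 1
        negate = False
    return total / hits if hits else 0.0
```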

Context Management

Context management tracks dialogue state across turns, ensuring AI agents remember past interactions, resolve pronouns, and handle follow-up questions. Unlike intent recognition and sentiment analysis, which operate per utterance, context management preserves conversational coherence over extended exchanges.

- Dialogue State Tracking: Recording variables such as user identity, selected options, and unfilled slots throughout a session.

- Context Windows: Maintaining a memory of recent exchanges to disambiguate references.

- Long-Term Profiling: Linking sessions to historical data—past tickets, preferences, and purchases—for personalized service.

Business Impact

- Reduced Repetition: Avoiding redundant questions accelerates resolution and boosts satisfaction.

- Enhanced Self-Service: Guiding users through multi-step tasks without human intervention.

- Personalized Follow-Through: Suggesting next-best actions and upsell opportunities based on stored context.

Implementations range from simple rule-based session variables to enterprise-grade state stores and graph-based representations. Ensuring secure handling of sensitive context, especially when integrated with CRM or ERP systems, is paramount.
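At the simple end of that range, dialogue state tracking can be a small in-memory object that records the active intent, filled slots, and recent utterances. The slot schema and intent name below are hypothetical, and a real deployment would persist this state in a secured store.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical schema: slots each intent needs before it can be fulfilled.
REQUIRED_SLOTS = {"request_refund": ["order_id", "reason"]}

@dataclass
class DialogueState:
    user_id: str
    intent: Optional[str] = None
    slots: dict = field(default_factory=dict)
    history: list = field(default_factory=list)

    def update(self, utterance, intent=None, entities=None):
        """Record one user turn, keeping the prior intent if none is detected."""
        self.history.append(utterance)
        if intent:
            self.intent = intent
        self.slots.update(entities or {})

    def missing_slots(self):
        """Slots the agent still needs to ask for."""
        required = REQUIRED_SLOTS.get(self.intent, [])
        return [s for s in required if s not in self.slots]
```

Because the intent carries over between turns, a follow-up such as "It's order 123" fills a slot without the user restating the request.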

When intent recognition, sentiment analysis, and context management work in concert, AI agents can emulate human adaptability and empathy. Joint interpretation enhances response relevance and emotional resonance:

- Intent-Context Alignment: Disambiguating requests dependent on prior turns, such as “Apply that discount now.”

- Sentiment-Aware Routing: Escalating interactions where negative sentiment persists across exchanges.

- Dynamic Response Generation: Leveraging advanced language models to craft tailored, contextually grounded replies.

Investing in a unified NLP architecture rather than isolated point solutions positions organizations to deliver seamless, scalable, and human-centered support around the clock.

Analytical Frameworks for Continuous Improvement

Rigorous evaluation and governance elevate AI agents from basic automation to nuanced, adaptive support. Analytical frameworks encompass both technical and business metrics, interpretive structures, and continuous feedback loops.

Performance Metrics

- Task-Level Metrics: Intent accuracy, entity extraction performance, and sentiment classification F1.

- End-to-End Measures: First-contact resolution rate, average handling time, and customer satisfaction scores (CSAT).

- Latency and Scalability: Inference speed under peak loads and the ability to onboard new intents or languages efficiently.

- Bias Monitoring: Counterfactual testing and disparate impact analysis to detect unintended biases.

- Explainability: Utilizing tools such as SHAP or LIME to clarify model decisions for compliance and auditability.

Governance and Feedback Loops

- Error Analysis Workshops: Cross-functional teams review high-impact misclassifications to resolve root causes.

- Human-in-the-Loop: Expert annotators validate edge cases and guide incremental retraining.

- Dashboards and Cadence: Weekly and monthly performance reviews align data scientists, support managers, and UX researchers.

- Benchmarking: Participating in industry challenges and sharing anonymized metrics to compare performance against peers.

Contextual Dimensions and Business Implications

Context shapes every aspect of conversational AI, from interpretation to user trust. Four key dimensions inform design and measurement:

- Session Context: Real-time dialogue state, recent utterances, and detected sentiment.

- User Context: Historical interactions, purchase history, and preferences.

- Domain Context: Industry-specific terminology and regulatory constraints.

- Environmental Context: External factors such as location, device, or time.

Embedding these dimensions enhances conversational coherence, efficiency, trust, and personalization. For example, an AI agent leveraging medical history in healthcare can deliver safer advice, as demonstrated by IBM Watson Assistant. In finance, agents integrate transaction context via Microsoft Azure Bot Service to detect anomalies and comply with regulatory standards. Retail platforms using Google Dialogflow tailor recommendations based on regional promotions and customer profiles.

Considerations for NLP Implementation

Implementing NLP-driven support requires navigating technical, organizational, and ethical complexities. Key considerations include:

- Data Quality and Annotation: Adopt clear label taxonomies and active learning to refine datasets. Monitor for data drift with continuous sampling and reannotation.

- Domain Customization: Leverage transfer learning and maintain dynamic glossaries of industry jargon. Add rule-based components alongside learned models for compliance-sensitive queries.

- Language Coverage: Calibrate multilingual embeddings and validate cultural nuances through local focus groups. Ensure regional compliance for data residency.

- Model Lifecycle Management: Apply MLOps practices for version control, automated testing, and canary releases. Integrate user feedback to drive continuous retraining.

- Metrics and Bias Monitoring: Balance technical performance metrics with business outcomes. Conduct systematic bias audits and human-in-the-loop evaluations.

- Privacy and Ethical Governance: Enforce data minimization, end-to-end encryption, and role-based access controls. Establish cross-functional ethics committees to guide policy.

- Scalability and Integration: Optimize inference with quantization and microservices architectures. Evaluate vendor lock-in risks and plan for portability.

- Organizational Alignment: Define roles such as conversation designers and compliance officers. Build centers of excellence and invest in training to foster cross-functional collaboration.

By grounding NLP initiatives in rigorous data practices, governance frameworks, and collaborative operating models, organizations can mitigate risks and unlock the full potential of AI-driven conversational support.

Chapter 4: AI Agent Architectures for Continuous Support

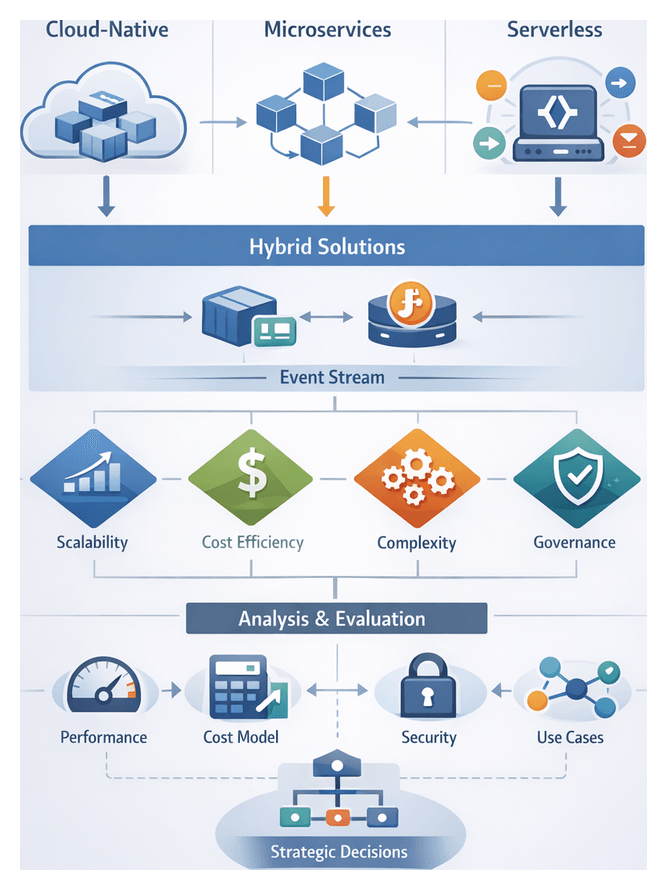

Architectural Paradigms for Scalable AI Agents

Delivering uninterrupted, high-quality AI-driven customer support around the clock demands architectures that balance control, flexibility, resilience, and cost. Three principal paradigms—cloud-native, microservices, and serverless—each offer distinct advantages and trade-offs. Many organizations adopt hybrid patterns, combining containerized core engines with event-driven functions to meet diverse workload characteristics and service-level objectives.

Cloud-Native Architecture

Cloud-native design decouples application components, leverages containerization, and automates infrastructure through orchestration. By abstracting hardware concerns, teams focus on feature delivery while platforms handle scaling, failover, and service discovery.

- Elasticity: Automatic scaling adjusts compute and network resources in real time to match demand.

- Resilience: Orchestrators detect failed components and restart or replace them without manual intervention.

- Continuous Delivery: Pipelines support blue/green or canary deployments, minimizing downtime during updates.

- Vendor Independence: Standards-based containers and open APIs ease migration across public, private, or hybrid clouds.

Core tools include Kubernetes for orchestration, Docker for container images, service meshes such as Istio or Linkerd for traffic management and security, and Infrastructure-as-Code solutions like Terraform or AWS CloudFormation to declaratively provision resources.

Microservices Architecture

Microservices decompose the platform into independently deployable services, each owning a specific capability. Teams manage the full lifecycle of their services, enabling rapid iteration without impacting the broader system.

- Independent Scaling: Components such as natural language processors or recommendation engines scale separately from lightweight modules.

- Fault Isolation: Circuit breakers and retry policies prevent failures from cascading across services.

- Polyglot Flexibility: Teams select languages and frameworks best suited for each service’s requirements.

- Targeted Deployment: Rolling updates and A/B testing enable experimentation with new AI models or dialogue flows.
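The retry policies mentioned under fault isolation are commonly implemented with exponential backoff and jitter. The sketch below is one minimal form; the defaults are assumptions, and real code would usually retry only on errors known to be transient.

```python
import random
import time

def retry(func, attempts=4, base_delay=0.1, max_delay=2.0,
          sleep=time.sleep, rng=random.random):
    """Call func, retrying on failure with exponential backoff and full jitter."""
    for attempt in range(attempts):
        try:
            return func()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            # Delay ceiling doubles each attempt, capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(ceiling * rng())  # random fraction of the ceiling
```

The jitter spreads retries from many callers over time, which helps avoid synchronized bursts against a service that is just recovering.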

Key considerations include service discovery using platforms like HashiCorp Consul or Kubernetes DNS, API gateways such as the NGINX Ingress Controller or Ambassador, and communication patterns—synchronous (REST, gRPC) versus asynchronous (message queues, event streams)—to optimize latency and consistency.

Serverless Architecture

Serverless computing abstracts server management entirely, allowing functions to execute in response to events. Providers like AWS Lambda, Google Cloud Functions, or Azure Functions handle provisioning, scaling, and patching, charging only for execution time.

- Cost Efficiency: Idle functions incur no charges, making serverless ideal for unpredictable or low-baseline workloads.

- Automatic Scaling: Functions scale automatically in response to triggers, within platform concurrency limits, without upfront capacity planning.

- Minimal Operations Overhead: Eliminates server maintenance and patch management.

Trade-offs include cold-start latency, execution time and memory limits, vendor lock-in, and the need for specialized tracing solutions to achieve observability.

Hybrid Patterns

A hybrid approach combines containerized services for core, low-latency conversational engines with serverless functions for asynchronous or bursty tasks. Managed messaging services like Amazon SQS or Google Pub/Sub bridge front-end channels with back-end pipelines, while a unified API gateway routes requests to the appropriate runtime environment. This pattern leverages the strengths of each paradigm while mitigating individual limitations.

Comparative Analysis

Selecting between cloud-native, microservices, and serverless—or blending them—requires a multidimensional evaluation of performance, cost, complexity, and governance. Decision-makers employ analytical frameworks to align architecture with business priorities and customer experience targets.

Performance and Scalability

Experts assess metrics such as latency, throughput, and resource footprint. Cloud-native systems maintain warm containers and tune autoscaling policies based on CPU, memory, or custom indicators. In serverless, cold starts introduce variability, typically ranging from tens of milliseconds to a few seconds depending on runtime, package size, and platform optimizations. Strategies like provisioned concurrency or hybrid warm services can mitigate latency spikes.

- Cloud-native pros: predictable latency, fine-tuned autoscaling, granular resource allocation.

- Cloud-native cons: operational overhead, orchestration complexity.

- Serverless pros: zero-ops scaling, pay-per-use cost model, rapid deployments.

- Serverless cons: cold-start variability, execution limits, constrained local resources.

Frameworks such as queuing theory models guide the comparison of autoscaling delays versus concurrency limits. Mapping these characteristics to service-level objectives ensures alignment with customer experience thresholds.

Cost Models and Financial Implications

Financial analysis differentiates capital expenditure on reserved infrastructure from operational expenditure on consumption-based services. Unit economics compare per-second function pricing against amortized container costs. High, steady request rates often favor containers, while spiky or low-volume workloads benefit from serverless.

- Container cost factors: reserved instance fees, utilization rates, overprovisioning buffers.

- Function cost factors: compute duration, memory footprint, invocation count, provisioned concurrency surcharges.

Monte Carlo simulations project cost variability under potential traffic patterns, enabling informed decisions on upfront commitments versus pay-as-you-go spending.
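Before running a full simulation, a simple break-even calculation shows roughly where steady container capacity becomes cheaper than per-invocation pricing. The prices used in the usage note are placeholders, not any provider's published rates.

```python
def serverless_monthly_cost(requests, avg_seconds, memory_gb,
                            price_per_gb_second, price_per_million_requests):
    """Compute charge plus invocation charge for one month of traffic."""
    compute = requests * avg_seconds * memory_gb * price_per_gb_second
    invocations = requests / 1_000_000 * price_per_million_requests
    return compute + invocations

def break_even_requests(container_monthly_cost, avg_seconds, memory_gb,
                        price_per_gb_second, price_per_million_requests):
    """Monthly request count at which serverless cost equals the container cost."""
    per_request = (avg_seconds * memory_gb * price_per_gb_second
                   + price_per_million_requests / 1_000_000)
    return container_monthly_cost / per_request
```

With assumed inputs of a 300-per-month container, 0.5-second requests at 1 GB, 0.00002 per GB-second, and 0.20 per million invocations, `break_even_requests(300.0, 0.5, 1.0, 0.00002, 0.20)` lands at roughly 29 million requests per month; below that volume the serverless option is cheaper under these assumptions.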

Operational Complexity and Governance

Cloud-native ecosystems demand robust DevOps practices, including continuous integration pipelines, container registries, and policy enforcement via tools like Open Policy Agent. The expanded attack surface requires specialized teams for cluster and network security.

Serverless reduces infrastructure management but shifts governance to event orchestration and dependency validation. Distributed tracing tools such as AWS X-Ray or Google Cloud Trace are critical for diagnosing issues across ephemeral functions.

Guidance such as the cloud providers' Cloud Adoption Frameworks offers structured guardrails, while deployment tools like the Serverless Framework standardize how functions are packaged and released. Dedicated platform teams often abstract complexity for product owners, balancing agility with compliance.

Maintainability and Evolution

Modular microservices facilitate independent lifecycles and incremental migrations. Clear APIs and versioning support backward compatibility. In serverless architectures, granular functions can proliferate, requiring naming conventions and deployment descriptors to manage scale. Testing strategies must combine unit tests for functions with integration tests that simulate event-driven workflows.

Service maturity models guide when to decompose monoliths into microservices or functions, factoring team size, release velocity, and workflow complexity.

Use-Case Alignment

Workloads such as high-volume generic queries, escalated issue triage, and proactive notifications exhibit unique demands:

- Core conversational engines in containers guarantee low latency and persistent state.

- Background tasks like transcript analysis or sentiment aggregation run as serverless functions for cost efficiency.

- Message queuing and publish/subscribe messaging via Amazon SQS or Google Pub/Sub connect interaction channels to processing pipelines.

The Function Suitability Matrix evaluates tasks by execution time, state requirements, and invocation frequency to determine the optimal execution environment.
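One way to read such a matrix is as a small set of rules over the three attributes. The sketch below is a hypothetical encoding: the thresholds, field names, and task examples are assumptions for illustration and would need to reflect a given platform's actual limits and pricing.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    max_runtime_s: float     # longest expected execution time
    stateful: bool           # needs in-memory state across requests
    calls_per_minute: float  # typical invocation frequency

# Assumed thresholds; adjust to the chosen platform.
FUNCTION_TIME_LIMIT_S = 900
STEADY_LOAD_CALLS_PER_MIN = 600

def recommend_runtime(task: Task) -> str:
    """Suggest 'container' or 'serverless' for a task."""
    if task.stateful or task.max_runtime_s > FUNCTION_TIME_LIMIT_S:
        return "container"   # long-running or stateful work
    if task.calls_per_minute >= STEADY_LOAD_CALLS_PER_MIN:
        return "container"   # steady high volume favors warm capacity
    return "serverless"      # short, stateless, infrequent or bursty
```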

Resilience and Scalability Strategies

Building resilient, scalable AI agent platforms ensures continuity in the face of failures, disruptions, and demand spikes. Resilience metrics include mean time between failures (MTBF), recovery time objectives (RTO), and graceful degradation capabilities.

Fault Tolerance and Redundancy

- Multi-Region Replication: Geographically dispersed deployments mitigate region-specific outages.

- Active-Active and Active-Passive Configurations: Balancing traffic across concurrent instances or standby failovers influences RTO and recovery point objectives (RPO).

- Data Consistency Models: Eventual consistency suits low-latency use cases, while strong consistency is critical for financial dispute support.

- Graceful Degradation: Non-critical features can be disabled to preserve core functionality under stress.

Auto Scaling and Load Management

Auto scaling reacts to real-time indicators—CPU usage, queue length, request rate—via platforms like Kubernetes and serverless frameworks such as AWS Lambda or Azure Functions. Predictive scaling, leveraging machine learning forecasts, anticipates surges tied to events like product launches or incidents, optimizing capacity before demand peaks.
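For reactive scaling on Kubernetes, the Horizontal Pod Autoscaler's documented core calculation is desired replicas = ceil(current replicas × current metric ÷ target metric). The sketch below reproduces that arithmetic with replica bounds; it omits the tolerance band and stabilization windows that the real controller applies, and the default bounds are assumptions.

```python
import math

def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=50):
    """Scale replica count in proportion to metric pressure, within bounds."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))
```

For example, four replicas averaging 90 percent CPU against a 60 percent target yield `desired_replicas(4, 90, 60)`, which evaluates to 6.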

Monitoring, Observability, and Self-Healing

Comprehensive observability integrates metrics, logs, and distributed tracing. Tools like Prometheus and Grafana provide metrics collection and dashboards for real-time insights. Chaos engineering practices, popularized by Netflix's Chaos Monkey, test system robustness through controlled failures. Automated remediation routines restart failed components, relieve resource contention, or reroute traffic to maintain service continuity without human intervention.

Selection Dimensions and Strategic Guidance

Choosing an architecture involves balancing multiple dimensions—performance, cost, complexity, security, and organizational readiness. Applying structured criteria and iterative pilots leads to informed, adaptable decisions.

Performance and Resource Efficiency

- Latency Targets: Container-based microservices on Kubernetes, whether self-managed or on a managed service such as Amazon EKS, deliver predictable response times, while serverless functions may require provisioned concurrency to meet sub-second SLAs.

- Throughput and Concurrency: Horizontal scaling through stateless microservices on AWS Fargate or serverless auto scaling must align with concurrency limits to avoid throttling.

- Elasticity: Decoupling components—API gateways, processing engines, data stores—enables granular scaling, optimizing resource allocation across peaks and troughs.

Cost, Security, and Compliance

- Cost Models: Contrast pay-per-use serverless billing with reserved or spot instances. Factor in licensing, data transfer fees, and operational overhead of CI/CD, service meshes, and observability platforms.

- Isolation: Dedicated container clusters or virtual private clouds mitigate noisy neighbor risks in multi-tenant serverless environments.

- Data Residency and Encryption: Ensure encryption at rest and in transit, consistent key management, and regulatory compliance across distributed architectures.

- Identity and Access Management: Implement least-privilege IAM roles and network-layer mutual TLS via service meshes to enforce fine-grained policies.

Organizational Alignment and Skills

- DevOps Maturity: Teams proficient in container orchestration can exploit advanced control and observability, while those new to DevOps may favor serverless for its lower operational burden.

- Vendor Lock-In: Deep reliance on proprietary cloud services accelerates initial delivery but may hinder future multi-cloud strategies. Hybrid architectures using open standards preserve flexibility.

- Community and Partner Ecosystem: Active developer communities and professional services support faster issue resolution and innovation.

Trade-Offs and Iterative Pilots

No single architecture is universally optimal. Evaluating trade-offs—complexity versus control, predictability versus flexibility, maturity of emerging technologies—requires iterative validation:

- Map business objectives—ultra-low latency, cost optimization, compliance—to technical requirements and SLAs.

- Conduct proof-of-concepts that simulate peak loads, failure scenarios, and cost models for both microservices and serverless variants.

- Invest early in end-to-end observability, tracing, and automated remediation frameworks to manage growing complexity.

- Schedule periodic architecture reviews to reassess performance data, cost trends, and team capabilities, adapting the portfolio as customer demands and technology evolve.
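
One way to make the mapping from business objectives to architecture options explicit is a weighted decision matrix. The sketch below is a minimal version: the criteria, weights and pilot scores are hypothetical and would come from an organization's own priorities and proof-of-concept results.

```python
from typing import Dict, List, Tuple


def weighted_score(weights: Dict[str, float], scores: Dict[str, float]) -> float:
    """Weighted average of criterion scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_options(weights: Dict[str, float],
                 options: Dict[str, Dict[str, float]]) -> List[Tuple[str, float]]:
    """Return (option, score) pairs, best first."""
    scored = [(name, weighted_score(weights, s)) for name, s in options.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical priorities (higher weight = more important).
    weights = {"latency": 3, "cost": 2, "operational_burden": 1}
    # Hypothetical pilot results on a 1-5 scale (5 = best).
    options = {
        "microservices": {"latency": 5, "cost": 3, "operational_burden": 2},
        "serverless": {"latency": 3, "cost": 4, "operational_burden": 5},
    }
    for name, score in rank_options(weights, options):
        print(f"{name}: {score:.2f}")
```

Re-running the same matrix at each periodic architecture review, with updated weights and pilot data, keeps the decision traceable as priorities shift.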

By systematically applying these criteria and fostering a culture of continuous improvement, organizations can architect AI agent platforms that deliver resilient, scalable, and cost-effective support aligned with both current needs and future growth.

Chapter 5: Personalization and Customer Experience Enhancement

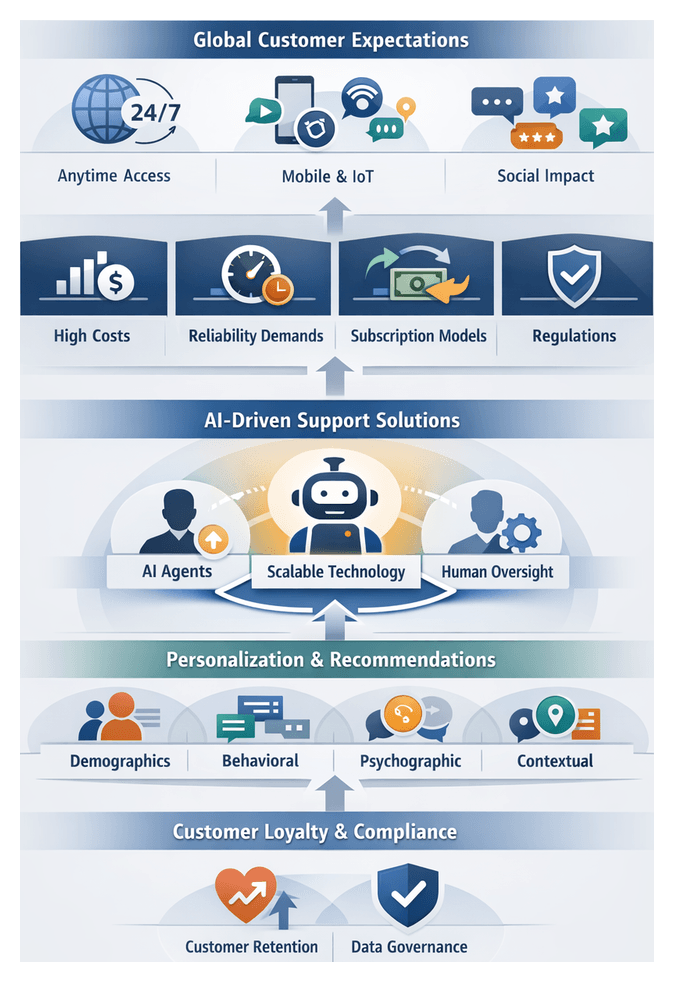

Industry Dynamics Driving 24/7 AI-Enabled Support

In today’s global, digital economy, continuous customer support has evolved from a competitive advantage to a basic expectation. Customers engage across time zones, devices and channels, demanding instant resolution and personalized experiences. Early call center models introduced shift-based staffing to extend service hours, but these solutions were constrained by high labor costs, inconsistent quality and rigid scheduling. As digital channels—email, chat and social media—emerged, brands extended coverage yet continued to struggle with the expense of round-the-clock human staffing.

Mobile ubiquity and on-demand consumer behavior have driven a dramatic shift in expectations. Research from Forrester indicates that over 70 percent of customers anticipate immediate assistance at any hour, and more than half will abandon a brand after just two poor service interactions. Subscription and service-based business models tie revenue directly to satisfaction and retention, placing further pressure on rapid issue resolution. In sectors such as e-commerce, telecommunications and finance, organizations routinely promote 24/7 support capabilities as central to their value propositions, fueling an arms race in response speed.

Social media and review platforms amplify the stakes of every support interaction. A single negative post can spread within minutes, undermining brand reputation, while a swift, public resolution can enhance perception and demonstrate customer-centric values. Regulatory requirements such as GDPR and CCPA add another dimension, mandating timely responses to data access requests and incident notifications. For example, a European telecommunications provider incurred a €35 million fine under GDPR for delayed breach reporting, illustrating that continuous support is essential for compliance as well as satisfaction.

Key Market Forces

- Global customer bases spanning multiple time zones and languages

- Ubiquity of mobile and connected devices, including IoT endpoints

- Expectation of real-time resolution and personalized interactions

- Subscription models linking support responsiveness to revenue retention

- Impact of social media and online reviews on brand trust

- Regulatory obligations for timely communication and data rights

Traditional staffing approaches face challenges in recruiting skilled agents for off-hours, sustaining quality across shifts and managing labor costs. Self-service portals and interactive voice response systems can deflect routine inquiries but often fail with complex or personalized issues. Accordingly, organizations are investing in intelligent automation to achieve scalable, consistent support. AI-powered chatbots and virtual assistants—such as IBM Watson Assistant and Microsoft Azure Bot Service—leverage natural language processing and machine learning to resolve routine cases around the clock and escalate complex queries to human experts.

Advances in cloud computing, microservices and serverless architectures enable elastic scaling of support platforms, while DevOps and continuous integration pipelines facilitate rapid iteration on AI models and content. Yet technology alone is insufficient without clear governance, cross-functional alignment and robust performance measurement. Governance structures must define AI oversight roles, data stewardship and escalation protocols. Metrics should include traditional KPIs—response time and resolution rates—as well as AI-specific measures like deflection rate, model accuracy and customer satisfaction with automated interactions.

Leading organizations adopt a hybrid model combining AI automation with human expertise. Intelligent routing allows AI agents to handle routine inquiries, freeing human agents to focus on complex or high-value interactions. For instance, one telecommunications provider implemented AgentLinkAI for first-level support, achieving a 70 percent deflection rate and reducing average resolution time by 15 percent while improving customer satisfaction. Over time, brands evolve toward unified AI orchestration platforms that integrate chatbots, voice assistants and back-end automation with CRM systems and analytics dashboards. Integrating solutions such as Zendesk with custom AI connectors ensures seamless handoffs and consistent context across automated and human channels.
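
The hybrid routing idea described above can be sketched as a small rule: send routine, confidently classified inquiries to the AI agent and everything else to a person. The intent names, the 0.8 confidence threshold and the `Inquiry` type are assumptions for illustration. The rate computed here counts inquiries routed to AI, which is only a proxy for deflection; a production metric would count inquiries actually resolved without human help.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical set of intents considered safe to automate.
ROUTINE_INTENTS = {"password_reset", "order_status", "billing_question"}


@dataclass
class Inquiry:
    intent: str
    confidence: float         # intent-classifier confidence, 0..1
    high_value: bool = False  # e.g. a key account flagged in the CRM


def route(inquiry: Inquiry, min_confidence: float = 0.8) -> str:
    """Return "ai" for routine, confidently classified inquiries, else "human"."""
    if inquiry.high_value:
        return "human"
    if inquiry.intent in ROUTINE_INTENTS and inquiry.confidence >= min_confidence:
        return "ai"
    return "human"


def routed_to_ai_rate(inquiries: List[Inquiry]) -> float:
    """Share of inquiries routed to the AI agent (a proxy for deflection rate)."""
    if not inquiries:
        return 0.0
    return sum(1 for i in inquiries if route(i) == "ai") / len(inquiries)


if __name__ == "__main__":
    sample = [
        Inquiry("password_reset", 0.95),
        Inquiry("order_status", 0.60),                     # low confidence
        Inquiry("contract_dispute", 0.90),                 # not routine
        Inquiry("billing_question", 0.90, high_value=True),
    ]
    print([route(i) for i in sample])  # ['ai', 'human', 'human', 'human']
    print(routed_to_ai_rate(sample))   # 0.25
```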

Personalization and Recommendation Frameworks for AI Support Agents

User Profiling Dimensions

Personalization rests on comprehensive user profiles. Industry practitioners classify profiling into four dimensions:

- Demographic: Static attributes like age, gender, location and occupation provide baseline segmentation but lack depth.

- Behavioral: Activity patterns—page visits, support history and transactions—support adaptive models that evolve with user behavior.

- Psychographic: Preferences, values and motivations inferred via surveys and sentiment analysis enable richer personalization but require bias mitigation.

- Contextual: Situational factors such as device type, geolocation, time of day and channel inform tone, urgency and recommended actions.

Experts evaluate profiling on accuracy, timeliness and privacy compliance. Digital-native brands emphasize behavioral and contextual signals for real-time relevance, while regulated industries balance rich insights with strict data governance.
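
The four profiling dimensions can be represented as one record per user. The sketch below is one possible shape, not a standard schema: the field names and the consent flag are assumptions, and the consent check reflects the privacy-compliance criterion mentioned above by withholding psychographic data unless the user has agreed to its use.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class UserProfile:
    user_id: str
    demographic: Dict[str, str] = field(default_factory=dict)      # age band, location
    behavioral: Dict[str, float] = field(default_factory=dict)     # visits, tickets
    psychographic: Dict[str, float] = field(default_factory=dict)  # inferred preferences
    contextual: Dict[str, str] = field(default_factory=dict)       # device, channel
    psychographic_consent: bool = False

    def usable_dimensions(self) -> List[str]:
        """List the populated dimensions that may be used for personalization."""
        usable = []
        for name in ("demographic", "behavioral", "psychographic", "contextual"):
            if not getattr(self, name):
                continue  # nothing collected for this dimension
            if name == "psychographic" and not self.psychographic_consent:
                continue  # inferred traits are withheld without consent
            usable.append(name)
        return usable


if __name__ == "__main__":
    profile = UserProfile(
        user_id="u-123",
        behavioral={"tickets_last_90_days": 2},
        psychographic={"prefers_self_service": 0.8},
        contextual={"device": "mobile", "channel": "chat"},
    )
    print(profile.usable_dimensions())  # ['behavioral', 'contextual']
```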

Recommendation Engine Selection

Translating profiles into actionable suggestions requires selecting the appropriate recommendation algorithm:

- Collaborative Filtering: Identifies user-item interaction patterns through user-based and item-based methods. Strengths include uncovering latent affinities; limitations include cold-start and popularity bias.

- Content-Based Filtering: Matches items to users based on semantic features and metadata. Mitigates new-user issues but can restrict discovery to similar content.

- Hybrid Models: Combine collaborative and content signals through ensemble strategies or context-driven switching for balanced accuracy and exploration.

- Knowledge-Based: Uses domain ontologies, rules or knowledge graphs. Integrated with platforms like Google Dialogflow, these engines suit compliance-sensitive environments.

- Deep Learning: Employs neural architectures—autoencoders, transformers—to capture complex relationships. Offers high precision at scale but requires extensive infrastructure and raises interpretability concerns.
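
To make the collaborative filtering entry concrete, the sketch below implements a small item-based variant in plain Python: items are compared by the cosine similarity of their user-interaction vectors, and unseen items are scored against what the user has already viewed. The help-article names and interaction data are invented for illustration, and a production engine would typically use a dedicated library and far more data.

```python
import math
from collections import defaultdict
from typing import Dict, List

Interactions = Dict[str, Dict[str, float]]  # user -> {item: interaction strength}


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors keyed by user."""
    dot = sum(a[u] * b[u] for u in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recommend(interactions: Interactions, user: str, k: int = 3) -> List[str]:
    """Rank unseen items by similarity to the items the user has interacted with."""
    # Invert the data to item -> {user: strength}.
    item_vectors: Dict[str, Dict[str, float]] = defaultdict(dict)
    for u, items in interactions.items():
        for item, strength in items.items():
            item_vectors[item][u] = strength

    seen = interactions.get(user, {})
    scores: Dict[str, float] = {}
    for candidate, vector in item_vectors.items():
        if candidate in seen:
            continue
        score = sum(cosine(vector, item_vectors[item]) * strength
                    for item, strength in seen.items())
        if score > 0:
            scores[candidate] = score
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores, key=lambda item: (-scores[item], item))[:k]


if __name__ == "__main__":
    views = {
        "ana": {"reset-password": 1, "enable-2fa": 1},
        "ben": {"reset-password": 1, "enable-2fa": 1, "update-billing": 1},
        "cy": {"update-billing": 1, "export-invoices": 1},
        "dee": {"reset-password": 1},
    }
    print(recommend(views, "dee"))       # ['enable-2fa', 'update-billing']
    print(recommend(views, "new-user"))  # [] (the cold-start limitation)
```

The empty result for a user with no history shows the cold-start problem directly, which is why the hybrid and content-based approaches in the list above are often layered on top.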

Evaluating Recommendation Effectiveness

Performance is measured across multiple dimensions:

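- Precision@K and Recall@K: Precision@K is the share of the top K recommendations that are relevant; Recall@K is the share of all relevant items that appear in the top K. Together they gauge how well the first few suggestions serve the user.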

- NDCG (Normalized Discounted Cumulative Gain): Weighs relevance by rank position, rewarding higher-ranked items.

- Diversity and Serendipity: Assess the variety and unexpectedness of suggestions to mitigate echo chambers.

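- Coverage and Novelty: Coverage measures how much of the catalog the algorithm is able to recommend, which is critical in troubleshooting contexts where rare articles matter; novelty measures how unfamiliar the recommended items are to the user.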

- Fairness and Bias Indicators: Evaluate demographic parity and detect systemic biases, ensuring ethical treatment of user segments.
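
The ranking metrics in this list have compact definitions. The sketch below implements Precision@K, Recall@K and NDCG@K in plain Python, using the common logarithmic discount of 1 / log2(rank + 1) with ranks starting at 1. The function names and the sample data are illustrative assumptions.

```python
import math
from typing import Dict, Sequence, Set


def precision_at_k(recommended: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of the top-k recommendations that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for item in recommended[:k] if item in relevant) / k


def recall_at_k(recommended: Sequence[str], relevant: Set[str], k: int) -> float:
    """Share of all relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for item in recommended[:k] if item in relevant) / len(relevant)


def ndcg_at_k(recommended: Sequence[str], relevance: Dict[str, float], k: int) -> float:
    """DCG of the given ranking divided by the DCG of the ideal ranking."""
    # enumerate() is 0-based, so the discount for position i is log2(i + 2).
    dcg = sum(relevance.get(item, 0.0) / math.log2(i + 2)
              for i, item in enumerate(recommended[:k]))
    ideal = sorted(relevance.values(), reverse=True)[:k]
    idcg = sum(rel / math.log2(i + 2) for i, rel in enumerate(ideal))
    return dcg / idcg if idcg > 0 else 0.0


if __name__ == "__main__":
    ranking = ["a", "b", "c", "d"]
    relevant = {"a", "c", "e"}
    print(precision_at_k(ranking, relevant, 2))  # 0.5
    print(recall_at_k(ranking, relevant, 4))     # 2 of 3 relevant items found
    print(ndcg_at_k(ranking, {item: 1.0 for item in relevant}, 4))
```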

Real-time analytics dashboards—powered by services like Amazon Personalize and Google Recommendations AI—allow continuous monitoring of performance metrics, drift detection and rapid iteration via champion-challenger testing.

Contextual Nuances in Support Environments

Support use cases impose unique design requirements:

- Intent Sensitivity: Recommendations hinge on accurate intent recognition. Platforms such as Salesforce Einstein Bots integrate NLP-driven intent frameworks to surface relevant help articles or next-best actions.

- Sentiment Adaptation: Emotion signals guide response style, with empathetic suggestions prioritized for frustrated users.

- Session Continuity: Unified data pipelines preserve context across channel switches, ensuring coherent multi-touch journeys.

- Urgency and SLAs: Engines prioritize rapid, high-accuracy recommendations when interactions are flagged as urgent or subject to service-level agreements.

Tuning algorithms to support-specific objectives and incorporating domain-specific evaluation sets are essential to maintaining trust and relevance.
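
The sentiment and urgency points above can be sketched as a re-ranking step applied to candidate suggestions. Everything numeric here is an assumption for illustration: the -0.3 frustration threshold, the 0.2 empathy bonus and the per-minute urgency penalty would all need tuning against real interaction data.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Suggestion:
    title: str
    relevance: float           # 0..1, e.g. from an intent-matching model
    empathetic: bool           # acknowledges the customer's frustration
    minutes_to_resolve: float  # estimated effort for the customer


def rank_suggestions(suggestions: List[Suggestion],
                     sentiment: float,
                     urgent: bool) -> List[Suggestion]:
    """Re-rank by relevance, adjusted for sentiment (-1..1) and urgency."""
    def score(s: Suggestion) -> float:
        value = s.relevance
        if sentiment < -0.3 and s.empathetic:
            value += 0.2                          # favor empathy for frustrated users
        if urgent:
            value -= 0.01 * s.minutes_to_resolve  # favor faster paths under an SLA
        return value

    return sorted(suggestions, key=score, reverse=True)


if __name__ == "__main__":
    candidates = [
        Suggestion("Troubleshooting guide", 0.8, False, 20),
        Suggestion("Apology and callback offer", 0.7, True, 5),
    ]
    print(rank_suggestions(candidates, sentiment=0.0, urgent=False)[0].title)
    print(rank_suggestions(candidates, sentiment=-0.6, urgent=False)[0].title)
```

With neutral sentiment the more relevant guide ranks first; for a frustrated customer the empathetic option moves ahead of it.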

Vendor Versus In-House Recommendation Solutions

Organizations choose between turnkey vendor services and custom engines based on cost, agility and control. Vendor platforms—such as Amazon Personalize and Google Recommendations AI—offer managed scalability, automated retraining and rapid time-to-value but can limit customization and data sovereignty. Proprietary in-house engines deliver full control over feature engineering and intellectual property but demand significant investment in data science talent, MLOps infrastructure and maintenance. Hybrid approaches combine vendor APIs for baseline capabilities with in-house extensions for niche use cases or proprietary data sources. A rigorous Total Cost of Ownership and Return on Investment analysis—factoring development costs, deployment timelines, vendor lock-in risks and compliance requirements—guides strategic decisions.

Impact of Tailored Support on Customer Loyalty

AI-driven personalization in support transforms interactions into dynamic dialogues that deepen emotional bonds and reinforce loyalty. By aligning assistance with individual profiles, AI agents can prompt incremental purchases, cross-sell relevant services and accelerate decision cycles. Retailers report a 25 percent uplift in average order value when support conversations reference recent browsing history or loyalty tier. Financial institutions leverage transaction history and risk profiles in tailored advisory messages, achieving higher conversion rates for account upgrades.

Churn reduction is a primary metric for personalization ROI. AI agents analyze sentiment trends, support frequency and frustration indicators to flag at-risk customers. Proactive interventions—clarifying billing issues or offering step-by-step tutorials—can reduce attrition by up to 30 percent. Subscription-based firms credit platforms like Dynamic Yield and Salesforce Einstein with significant gains in renewal rates and lifetime value through personalized retention campaigns.

Beyond economic benefits, tailored support fosters emotional resonance. When AI agents recall past issues, acknowledge milestones and adjust tone to match users’ mood, support becomes a meaningful brand touchpoint. Customers who feel understood demonstrate greater forgiveness of occasional errors and are more likely to advocate the brand. Executives apply analytical frameworks—RFM segmentation, NPS cross-tabulation, customer lifetime value modeling and composite engagement scoring—to isolate the contribution of personalized support to loyalty metrics and prioritize investment accordingly.

- RFM Analysis: Segments customers by Recency, Frequency and Monetary value to focus personalization on high-value cohorts.

- NPS Segmentation: Compares Net Promoter Score outcomes against personalization exposure to assess impact on promoters and detractors.

- Customer Lifetime Value: Projects revenue streams adjusted for uplift from personalized support and incremental sales.

- Engagement Scoring: Combines interaction depth, sentiment analysis and profile completeness to quantify loyalty drivers.
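
As an illustration of the RFM entry in this list, the sketch below scores a customer from 1 (weakest) to 3 (strongest) on each of the three dimensions. The thresholds (30 and 90 days, 3 and 10 orders, 100 and 1,000 in spend) are arbitrary assumptions; in practice they are often derived from quantiles of the customer base.

```python
from datetime import date
from typing import Sequence, Tuple


def bucket(value: float, thresholds: Sequence[float], higher_is_better: bool = True) -> int:
    """Map a value to a score from 1 to len(thresholds) + 1 using ascending thresholds."""
    raw = 1 + sum(1 for t in thresholds if value >= t)
    return raw if higher_is_better else len(thresholds) + 2 - raw


def rfm_score(last_purchase: date, orders: int, spend: float, today: date) -> Tuple[int, int, int]:
    """Return (recency, frequency, monetary) scores, each from 1 to 3."""
    days_since = (today - last_purchase).days
    recency = bucket(days_since, (30, 90), higher_is_better=False)  # fewer days is better
    frequency = bucket(orders, (3, 10))
    monetary = bucket(spend, (100, 1000))
    return recency, frequency, monetary


if __name__ == "__main__":
    today = date(2026, 2, 1)
    print(rfm_score(date(2026, 1, 20), 12, 1500.0, today))  # (3, 3, 3)
    print(rfm_score(date(2025, 9, 1), 1, 40.0, today))      # (1, 1, 1)
```

Customers scoring high on all three dimensions are natural candidates for the richest personalization, while a high monetary score paired with low recency can flag valuable accounts at risk of churn.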

Industry case studies reinforce these outcomes. Telecommunications providers that reference device upgrade cycles and usage patterns in retention offers see 40 percent higher acceptance rates. Healthcare portals powered by IBM Watson Assistant deliver medication reminders and care plan updates, achieving 15 percent higher adherence and improved satisfaction. In B2B contexts, personalized onboarding sequences and industry-specific playbooks strengthen partnerships and accelerate time to value.

Psychological and economic theories such as social exchange and prospect theory help explain loyalty dynamics. Tailored support reduces cognitive effort and emphasizes gains over losses in renewal dialogues, fostering positive reciprocity. However, personalization must balance privacy and transparency. GDPR and CCPA require transparent data practices, along with consent or opt-out rights depending on the regulation. Privacy-by-design approaches, using anonymization, pseudonymization and granular opt-out mechanisms, help keep trust intact.
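
The pseudonymization and opt-out mechanisms mentioned above can be sketched with Python's standard library. A keyed hash (HMAC-SHA256) replaces the user identifier so that records can still be linked across sessions without exposing the original ID. The record fields and function names are assumptions for illustration. Note that pseudonymized data can still count as personal data under GDPR, so the key must be stored separately and protected.

```python
import hashlib
import hmac
from typing import Dict, List


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash; the same input and key give the same token."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_personalization(records: List[Dict], secret_key: bytes) -> List[Dict]:
    """Drop opted-out users and strip direct identifiers from the rest."""
    prepared = []
    for record in records:
        if record.get("opted_out"):
            continue  # honor the opt-out before any processing
        prepared.append({
            "subject": pseudonymize(record["user_id"], secret_key),
            "segment": record.get("segment"),
        })
    return prepared


if __name__ == "__main__":
    key = b"example-key-store-in-a-secrets-manager"  # placeholder, never hard-code in practice
    rows = [
        {"user_id": "alice@example.com", "segment": "premium", "opted_out": False},
        {"user_id": "bob@example.com", "segment": "basic", "opted_out": True},
    ]
    for row in prepare_for_personalization(rows, key):
        print(row["subject"][:12], row["segment"])
```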